Unnamed: 0 (int64, 0-832k) | id (float64, 2.49B-32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19-19) | repo (stringlengths, 7-112) | repo_url (stringlengths, 36-141) | action (stringclasses, 3 values) | title (stringlengths, 1-744) | labels (stringlengths, 4-574) | body (stringlengths, 9-211k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96-211k) | label (stringclasses, 2 values) | text (stringlengths, 96-188k) | binary_label (int64, 0-1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

277,927 | 30,695,948,372 | IssuesEvent | 2023-07-26 18:36:37 | RG4421/ampere-centos-kernel | https://api.github.com/repos/RG4421/ampere-centos-kernel | opened | CVE-2023-33203 (Medium) detected in linuxv5.2 | Mend: dependency security vulnerability | ## CVE-2023-33203 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxv5.2</b></p></summary>

<p>

<p>Linux kernel source tree</p>

<p>Library home page: <a href=https://github.com/tor... | True | CVE-2023-33203 (Medium) detected in linuxv5.2 - ## CVE-2023-33203 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxv5.2</b></p></summary>

<p>

<p>Linux kernel source tree</p>

<p>L... | non_process | cve medium detected in cve medium severity vulnerability vulnerable library linux kernel source tree library home page a href found in base branch amp centos kernel vulnerable source files drivers net ethernet qualcomm emac emac c ... | 0 |

18,267 | 24,347,071,737 | IssuesEvent | 2022-10-02 13:08:43 | rathena/FluxCP | https://api.github.com/repos/rathena/FluxCP | opened | Vote - How do you process donations? | Enhancement Request Component: Payment Processor | ### Provide Details

So that I can gauge how you guys use FluxCP, can you please use the following emoji reactions for:

👍 I use the FluxCP Shop and provided Donation NPC. Shop Credits are stored via FluxCP. This is the default setup.

👎 I use the Item Shop on FluxCP but have changed the Shop Credits to use in-ga... | 1.0 | Vote - How do you process donations? - ### Provide Details

So that I can gauge how you guys use FluxCP, can you please use the following emoji reactions for:

👍 I use the FluxCP Shop and provided Donation NPC. Shop Credits are stored via FluxCP. This is the default setup.

👎 I use the Item Shop on FluxCP but hav... | process | vote how do you process donations provide details so that i can gauge how you guys use fluxcp can you please use the following emoji reactions for 👍 i use the fluxcp shop and provided donation npc shop credits are stored via fluxcp this is the default setup 👎 i use the item shop on fluxcp but hav... | 1 |

252,892 | 27,271,244,050 | IssuesEvent | 2023-02-22 22:34:22 | jmservera/simplelogger | https://api.github.com/repos/jmservera/simplelogger | closed | CVE-2021-28860 (High) detected in mixme-0.3.5.tgz - autoclosed | security vulnerability | ## CVE-2021-28860 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>mixme-0.3.5.tgz</b></p></summary>

<p>A library for recursive merging of Javascript objects</p>

<p>Library home page: <... | True | CVE-2021-28860 (High) detected in mixme-0.3.5.tgz - autoclosed - ## CVE-2021-28860 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>mixme-0.3.5.tgz</b></p></summary>

<p>A library for re... | non_process | cve high detected in mixme tgz autoclosed cve high severity vulnerability vulnerable library mixme tgz a library for recursive merging of javascript objects library home page a href path to dependency file package json path to vulnerable library node modules mixm... | 0 |

16,069 | 9,682,628,212 | IssuesEvent | 2019-05-23 09:35:27 | bkimminich/juice-shop | https://api.github.com/repos/bkimminich/juice-shop | closed | WS-2019-0066 (Medium) detected in ecstatic-3.3.0.tgz | security vulnerability | ## WS-2019-0066 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ecstatic-3.3.0.tgz</b></p></summary>

<p>A simple static file server middleware</p>

<p>Library home page: <a href="http... | True | WS-2019-0066 (Medium) detected in ecstatic-3.3.0.tgz - ## WS-2019-0066 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ecstatic-3.3.0.tgz</b></p></summary>

<p>A simple static file se... | non_process | ws medium detected in ecstatic tgz ws medium severity vulnerability vulnerable library ecstatic tgz a simple static file server middleware library home page a href path to dependency file juice shop package json path to vulnerable library tmp git juice shop node mo... | 0 |

613,971 | 19,102,676,753 | IssuesEvent | 2021-11-30 01:16:42 | MT-CTF/capturetheflag | https://api.github.com/repos/MT-CTF/capturetheflag | closed | Visually indicate pro players | Feature :star: Low Priority :sleeping: :gear: Audiovisuals | Currently, all players look the same. It is not clear which players one can count on and which are not, which players are dangerous and which are not.

I propose to indicate players with access to pro-section somehow.

It should be something simple and visible from all directions. Probably colorful hat. | 1.0 | Visually indicate pro players - Currently, all players look the same. It is not clear which players one can count on and which are not, which players are dangerous and which are not.

I propose to indicate players with access to pro-section somehow.

It should be something simple and visible from all directions. Probab... | non_process | visually indicate pro players currently all players look the same it is not clear which players one can count on and which are not which players are dangerous and which are not i propose to indicate players with access to pro section somehow it should be something simple and visible from all directions probab... | 0 |

316,514 | 9,648,635,088 | IssuesEvent | 2019-05-17 16:48:19 | zeit/ncc | https://api.github.com/repos/zeit/ncc | closed | Supporting require.resolve dynamic passing | committed dynamic require priority | Within the use cases around custom loaders (think Babel plugins, webpack loaders), there are a number of edge cases of dynamic require that come up. While Webpack can get quite far in computing dynamic requires like `require(require.resolve('./asdf.js'))`, there is a tricky case where there is a separation between the ... | 1.0 | Supporting require.resolve dynamic passing - Within the use cases around custom loaders (think Babel plugins, webpack loaders), there are a number of edge cases of dynamic require that come up. While Webpack can get quite far in computing dynamic requires like `require(require.resolve('./asdf.js'))`, there is a tricky ... | non_process | supporting require resolve dynamic passing within the use cases around custom loaders think babel plugins webpack loaders there are a number of edge cases of dynamic require that come up while webpack can get quite far in computing dynamic requires like require require resolve asdf js there is a tricky ... | 0 |

723,174 | 24,887,502,407 | IssuesEvent | 2022-10-28 09:02:44 | status-im/status-desktop | https://api.github.com/repos/status-im/status-desktop | closed | Profiles opened via deeplink stack on top of each other | bug ui Profile priority 4: minor E:Bugfixes | # Bug Report

## Description

Found during testing of https://github.com/status-im/status-desktop/pull/6450

If a profile is already open and a deep link is used for another profile then it is stacked on top. Each profile must then be closed one after the other to return to the main screen.

## Steps to reprod... | 1.0 | Profiles opened via deeplink stack on top of each other - # Bug Report

## Description

Found during testing of https://github.com/status-im/status-desktop/pull/6450

If a profile is already open and a deep link is used for another profile then it is stacked on top. Each profile must then be closed one after the ... | non_process | profiles opened via deeplink stack on top of each other bug report description found during testing of if a profile is already open and a deep link is used for another profile then it is stacked on top each profile must then be closed one after the other to return to the main screen steps to ... | 0 |

69,484 | 7,135,526,627 | IssuesEvent | 2018-01-23 01:33:51 | strongbox/strongbox | https://api.github.com/repos/strongbox/strongbox | opened | Test the packed indexes using Artifactory | good first issue help wanted testing | * Start the `strongbox-distribution`

* Deploy artifacts to one of the Maven 2 repositories

* Run the cron task for rebuilding the Maven Indexes. This should produce a packed Maven Index once it's finished.

* Create a new proxy repository in Artifactory pointing to the one in Strongbox that you've deployed the artifac... | 1.0 | Test the packed indexes using Artifactory - * Start the `strongbox-distribution`

* Deploy artifacts to one of the Maven 2 repositories

* Run the cron task for rebuilding the Maven Indexes. This should produce a packed Maven Index once it's finished.

* Create a new proxy repository in Artifactory pointing to the one ... | non_process | test the packed indexes using artifactory start the strongbox distribution deploy artifacts to one of the maven repositories run the cron task for rebuilding the maven indexes this should produce a packed maven index once it s finished create a new proxy repository in artifactory pointing to the one ... | 0 |

46,910 | 7,294,842,488 | IssuesEvent | 2018-02-26 02:53:19 | IntelVCL/Open3D | https://api.github.com/repos/IntelVCL/Open3D | closed | Optimize a pose graph, GlobalOptimizationOption | Documentation | http://www.open3d.org/docs/tutorial/Advanced/multiway_registration.html#input tells me that “Class GlobalOptimizationOption defines the loss function of the pose graph.”, but I cannot find how to define the loss function, and I also found no default loss function | 1.0 | Optimize a pose graph, GlobalOptimizationOption - http://www.open3d.org/docs/tutorial/Advanced/multiway_registration.html#input tells me that “Class GlobalOptimizationOption defines the loss function of the pose graph.”, but I cannot find how to define the loss function, and I also found no default loss function | non_process | optimize a pose graph globaloptimizationoption tell me that “class globaloptimizationoption defines the loss function of the pose graph ” but i am not find how to defines the loss function and i also found no default loss function | 0 |

21,286 | 28,481,658,949 | IssuesEvent | 2023-04-18 03:32:04 | hashgraph/hedera-mirror-node | https://api.github.com/repos/hashgraph/hedera-mirror-node | opened | Improve REST API throughput in Kubernetes | enhancement process rest | ### Problem

The performance of the REST API in Kubernetes is still not as good as the VM it replaces.

### Solution

* Increase Traefik memory

* Increase REST deployment limit to a little more than 1 CPU

* Revert autoscaling back to CPU based but with higher requests

* Reduce minimum replicas to 2 since resources h... | 1.0 | Improve REST API throughput in Kubernetes - ### Problem

The performance of the REST API in Kubernetes is still not as good as the VM it replaces.

### Solution

* Increase Traefik memory

* Increase REST deployment limit to a little more than 1 CPU

* Revert autoscaling back to CPU based but with higher requests

* Re... | process | improve rest api throughput in kubernetes problem the performance of the rest api in kubernetes is still not as good as the vm it replaces solution increase traefik memory increase rest deployment limit to a little more than cpu revert autoscaling back to cpu based but with higher requests re... | 1 |

9,444 | 12,426,673,680 | IssuesEvent | 2020-05-24 22:29:32 | burnpiro/wod-bike-dataset-generator | https://api.github.com/repos/burnpiro/wod-bike-dataset-generator | opened | Data output json format | data processing | Because of the file size, we cannot perform filter/map operations in real-time. We have to change the format of the .json file to be something like:

```

{

<day>: {

<hour>: [

{

"o": 1,

"d": 2,

"c": 15... | 1.0 | Data output json format - Because of the file size, we cannot perform filter/map operations in real-time. We have to change the format of the .json file to be sth like:

```

{

<day>: {

<hour>: [

{

"o": 1,

"d": 2,

... | process | data output json format because of the file size we cannot perform filter map operations in real time we have to change the format of the json file to be sth like o d ... | 1 |
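The restructuring this issue asks for can be sketched as follows. This is a minimal sketch, assuming the current file is a flat list of records that each carry `day` and `hour` fields alongside the `o`, `d`, and `c` keys shown in the issue. The field names `day` and `hour`, the sample values, and the helper name `group_by_day_hour` are hypothetical and are not taken from the repository.

```python
from collections import defaultdict


def group_by_day_hour(records):
    """Nest flat records as {day: {hour: [entry, ...]}}.

    Assumes each record is a dict with hypothetical "day" and "hour"
    keys plus the "o", "d" and "c" keys shown in the issue.
    """
    grouped = defaultdict(lambda: defaultdict(list))
    for record in records:
        # Keep only the per-entry keys; day and hour become dictionary keys.
        entry = {key: record[key] for key in ("o", "d", "c")}
        grouped[record["day"]][record["hour"]].append(entry)
    # Convert to plain dicts so the result serializes cleanly with json.dumps.
    return {day: dict(hours) for day, hours in grouped.items()}


# Made-up sample data, for illustration only.
sample = [
    {"day": "2020-05-01", "hour": 8, "o": 1, "d": 2, "c": 15},
    {"day": "2020-05-01", "hour": 8, "o": 2, "d": 3, "c": 4},
    {"day": "2020-05-01", "hour": 9, "o": 1, "d": 3, "c": 7},
]
nested = group_by_day_hour(sample)
```

With a layout like this, a consumer could read one day/hour bucket directly instead of filtering the whole file on every request.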

7,771 | 10,904,647,186 | IssuesEvent | 2019-11-20 09:11:19 | eclipse-theia/theia | https://api.github.com/repos/eclipse-theia/theia | opened | use `close` not `exit` event process | bug process terminal | `exit` event does not mean that all output was delivered, `close` means it: https://nodejs.org/api/child_process.html#child_process_event_close

> The 'close' event is emitted when the stdio streams of a child process have been closed. This is distinct from the 'exit' event, since multiple processes might share the sa... | 1.0 | use `close` not `exit` event process - `exit` event does not mean that all output was delivered, `close` means it: https://nodejs.org/api/child_process.html#child_process_event_close

> The 'close' event is emitted when the stdio streams of a child process have been closed. This is distinct from the 'exit' event, sinc... | process | use close not exit event process exit event does not mean that all output was delivered close means it he close event is emitted when the stdio streams of a child process have been closed this is distinct from the exit event since multiple processes might share the same stdio streams vscode... | 1 |

43,930 | 5,717,997,017 | IssuesEvent | 2017-04-19 18:30:38 | dotnet/roslyn | https://api.github.com/repos/dotnet/roslyn | closed | Type inference bug? | Area-Compilers Resolution-By Design | **Version Used**: Visual Studio 2017

**Steps to Reproduce**:

```c#

public static class NullExt

{

public static T? Some<T>(this T? value, Action<T> func) where T : struct

{

if (value != null)

func(value.Value);

return value;

}

... | 1.0 | Type inference bug? - **Version Used**: Visual Studio 2017

**Steps to Reproduce**:

```c#

public static class NullExt

{

public static T? Some<T>(this T? value, Action<T> func) where T : struct

{

if (value != null)

func(value.Value);

return val... | non_process | type inference bug version used visual studio steps to reproduce c public static class nullext public static t some this t value action func where t struct if value null func value value return value ... | 0 |

21,964 | 30,461,652,819 | IssuesEvent | 2023-07-17 07:25:22 | metabase/metabase | https://api.github.com/repos/metabase/metabase | closed | [MLv2] Segments | .Backend .Epic .metabase-lib .Team/QueryProcessor :hammer_and_wrench: | For custom expression editor migration we need to port Segments.

It is currently used like `query.table().segments.find.....`

Example: `frontend/src/metabase-lib/expressions/index.js` | 1.0 | [MLv2] Segments - For custom expression editor migration we need to port Segments.

It is currently used like `query.table().segments.find.....`

Example: `frontend/src/metabase-lib/expressions/index.js` | process | segments for custom expression editor migration we need to port segments it is currently used like query table segments find example frontend src metabase lib expressions index js | 1 |

9,814 | 12,824,724,713 | IssuesEvent | 2020-07-06 13:55:27 | prisma/prisma | https://api.github.com/repos/prisma/prisma | opened | Clarify usage of datasource url when using SQLite - `file:` is what works and `sqlite:` `sqlite://` should error | bug/2-confirmed kind/bug process/candidate | ## Problem

For SQLite in schema.prisma this works everywhere (Prisma Client JS, Prisma Client Go, Migrate) and is documented:

```

file:./dev.db

file:../dev.db

```

This is not valid (error from engine)

```

sqlite:dev.db

```

This is considered valid by the engine's parser but it doesn't work either in cli... | 1.0 | Clarify usage of datasource url when using SQLite - `file:` is what works and `sqlite:` `sqlite://` should error - ## Problem

For SQLite in schema.prisma this works everywhere (Prisma Client JS, Prisma Client Go, Migrate) and is documented:

```

file:./dev.db

file:../dev.db

```

This is not valid (error from en... | process | clarify usage of datasource url when using sqlite file is what works and sqlite sqlite should error problem for sqlite in schema prisma this works everywhere prisma client js prisma client go migrate and is documented file dev db file dev db this is not valid error from en... | 1 |
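The rule described in this issue can be sketched as a small check. This is only an illustration under stated assumptions, not Prisma's implementation: the function name `check_sqlite_url` and the error messages are hypothetical.

```python
def check_sqlite_url(url: str) -> str:
    """Return the file path of a SQLite datasource URL, or raise ValueError.

    Only the `file:` form is accepted, as the issue proposes; `sqlite:` and
    `sqlite://` are rejected with an explicit error.
    """
    if url.startswith("file:"):
        path = url[len("file:"):]
        if not path:
            raise ValueError("SQLite URL has an empty path after 'file:'")
        return path
    if url.startswith("sqlite:"):
        # Covers both `sqlite:dev.db` and `sqlite://dev.db`.
        raise ValueError("use 'file:' instead of 'sqlite:' for SQLite URLs")
    raise ValueError(f"unsupported SQLite URL: {url!r}")
```

For example, `check_sqlite_url("file:./dev.db")` returns the path part, while `check_sqlite_url("sqlite:dev.db")` raises a `ValueError` that points the user at the `file:` form.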

13,897 | 16,657,484,295 | IssuesEvent | 2021-06-05 19:52:26 | zotero/zotero | https://api.github.com/repos/zotero/zotero | opened | Option to include annotation colors via Add Note | Enhancement Word Processor Integration | Once we do https://github.com/zotero/zotero/issues/2080, we could add an option (toggleable from the citation dialog, off by default) to include annotation colors when inserting notes into word processor documents. | 1.0 | Option to include annotation colors via Add Note - Once we do https://github.com/zotero/zotero/issues/2080, we could add an option (toggleable from the citation dialog, off by default) to include annotation colors when inserting notes into word processor documents. | process | option to include annotation colors via add note once we do we could add an option toggleable from the citation dialog off by default to include annotation colors when inserting notes into word processor documents | 1 |

3,988 | 6,917,667,840 | IssuesEvent | 2017-11-29 09:25:01 | w3c/html | https://api.github.com/repos/w3c/html | opened | CFC: Merge Web Workers into HTML | process | This is a Call For Consensus (CFC) to merge the [Web Workers](https://w3c.github.io/workers/) specification into the [HTML](https://w3c.github.io/html) specification.

The reason for merging the two specifications is that it would make it easier to maintain Web Workers, and therefore more likely that the Web Workers ... | 1.0 | CFC: Merge Web Workers into HTML - This is a Call For Consensus (CFC) to merge the [Web Workers](https://w3c.github.io/workers/) specification into the [HTML](https://w3c.github.io/html) specification.

The reason for merging the two specifications is that it would make it easier to maintain Web Workers, and therefor... | process | cfc merge web workers into html this is a call for consensus cfc to merge the specification into the specification the reason for merging the two specifications is that it would make it easier to maintain web workers and therefore more likely that the web workers parts of the html specification will b... | 1 |

804,383 | 29,485,449,813 | IssuesEvent | 2023-06-02 09:22:51 | ballerina-platform/ballerina-lang | https://api.github.com/repos/ballerina-platform/ballerina-lang | opened | [Bug]: Invalid cyclic ref error for module level function | Type/Bug Priority/High Team/CompilerFE | ### Description

When there is a call to a function in another module and there is a function definition with the same name, the compiler reports a cyclic ref error.

### Steps to Reproduce

```ballerina

import ballerina/edi;

type EdiDeserialize function ();

public function fromEdi835String() {

json|error res = ed... | 1.0 | [Bug]: Invalid cyclic ref error for module level function - ### Description

When there is a call to a function in another module and there is a function definition with the same name, the compiler reports a cyclic ref error.

### Steps to Reproduce

```ballerina

import ballerina/edi;

type EdiDeserialize function ();

p... | non_process | invalid cyclic ref error for module level function description when there is a function call for another module and if there is a function definition in same name the compiler detects a cyclic ref error steps to reproduce ballerina import ballerina edi type edideserialize function publi... | 0 |

76,631 | 21,523,647,447 | IssuesEvent | 2022-04-28 16:14:35 | elastic/elasticsearch | https://api.github.com/repos/elastic/elasticsearch | closed | Improve pull request feedback times on docs only changes | >enhancement >docs :Delivery/Build Team:Docs Team:Delivery v8.3.0 | Currently the examples in the Elasticsearch documentation are tested using the functionality described here: https://github.com/elastic/elasticsearch/tree/master/docs#snippet-testing

Tests that take a lot of setup make for very slow gradle checks. For example:

> ./gradlew :docs:check

BUILD SUCCESSFUL in 18m 8s

... | 1.0 | Improve pull request feedback times on docs only changes - Currently the examples in the Elasticsearch documentation are tested using the functionality described here: https://github.com/elastic/elasticsearch/tree/master/docs#snippet-testing

Tests that take a lot of setup make for very slow gradle checks. For exampl... | non_process | improve pull request feedback times on docs only changes currently the examples in the elasticsearch documentation are tested using the functionality described here tests that take a lot of setup make for very slow gradle checks for example gradlew docs check build successful in that is alread... | 0 |

18,091 | 3,667,472,522 | IssuesEvent | 2016-02-20 01:08:58 | kumulsoft/Fixed-Assets | https://api.github.com/repos/kumulsoft/Fixed-Assets | closed | Asset Transaction - Details Page - Add New Asset | bug Fixed HIGH Ready for testing |

Refer above image and make following corrections

1. Remove/hide Custodian Field

2. Remove/hide Category field

3. Filter out MAINTENANCE, and IMPROVEMENT from this Transaction type DDL list.

4. Move Ass... | 1.0 | Asset Transaction - Details Page - Add New Asset -

Refer above image and make following corrections

1. Remove/hide Custodian Field

2. Remove/hide Category field

3. Filter out MAINTENANCE, and IMPROVEMEN... | non_process | asset transaction details page add new asset refer above image and make following corrections remove hide custodian field remove hide category field filter out maintenance and improvement from this transaction type ddl list move asset description field to new location and rename descrip... | 0 |

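276,721 | 30,525,835,097 | IssuesEvent | 2023-07-19 11:11:40 | ESC-CoM/esc-server | https://api.github.com/repos/ESC-CoM/esc-server | closed | [Setting] CORS configuration | setting security | ## 🙋🏻♂️ Environment setup

Configure CORS to allow real testing through the deployment server.

## 📖 Notes

Please add anything worth sharing here: references, issues expected to come up later, screenshots, and so on.

- Leave any additional required information as a comment.

 | True | [Setting] CORS configuration - ## 🙋🏻♂️ Environment setup

Configure CORS to allow real testing through the deployment server.

## 📖 Notes

Please add anything worth sharing here: references, issues expected to come up later, screenshots, and so on.

- Leave any additional required information as a comment.

 | non_process | cors configuration 🙋🏻♂️ environment setup configure cors to allow real testing through the deployment server 📖 notes please add anything worth sharing here references issues expected to come up later screenshots and so on leave any additional required information as a comment | 0 |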

212,533 | 16,458,062,246 | IssuesEvent | 2021-05-21 15:00:46 | microsoft/WindowsTemplateStudio | https://api.github.com/repos/microsoft/WindowsTemplateStudio | closed | Add vstemplate tests | Can Close Out Soon Testing | We should add some basic checks for our vstemplate files as @mrlacey suggested in https://github.com/microsoft/WindowsTemplateStudio/issues/4271#issuecomment-836655705.

- [x] All our vstemplate files have a TemplateID that ends with "WTS.local"

- [x] All our vstemplate files have a Name that ends with "; local)"

... | 1.0 | Add vstemplate tests - We should add some basic checks for our vstemplate files as @mrlacey suggested in https://github.com/microsoft/WindowsTemplateStudio/issues/4271#issuecomment-836655705.

- [x] All our vstemplate files have a TemplateID that ends with "WTS.local"

- [x] All our vstemplate files have a Name that ... | non_process | add vstemplate tests we should add some basic checks for our vstemplate files as mrlacey suggested in all our vstemplate files have a templateid that ends with wts local all our vstemplate files have a name that ends with local all our projecttemplates include the projecttype tag windows t... | 0 |

229,207 | 25,304,460,480 | IssuesEvent | 2022-11-17 13:13:35 | MatBenfield/news | https://api.github.com/repos/MatBenfield/news | closed | [SecurityWeek] Web Giants to Submit User Data as EU Law Comes Into Effect | SecurityWeek Stale |

**A new EU law imposing stricter online regulation comes into effect Wednesday and the biggest platforms like Facebook and Google will have until February 17 to reveal their user numbers.**

[read more](https://www.securityweek.com/web-giants-submit-user-data-eu-law-comes-effect)

<https://www.securityweek.com/web-... | True | [SecurityWeek] Web Giants to Submit User Data as EU Law Comes Into Effect -

**A new EU law imposing stricter online regulation comes into effect Wednesday and the biggest platforms like Facebook and Google will have until February 17 to reveal their user numbers.**

[read more](https://www.securityweek.com/web-giants... | non_process | web giants to submit user data as eu law comes into effect a new eu law imposing stricter online regulation comes into effect wednesday and the biggest platforms like facebook and google will have until february to reveal their user numbers | 0 |

19,060 | 25,078,269,638 | IssuesEvent | 2022-11-07 17:04:25 | FreeCAD/FreeCAD | https://api.github.com/repos/FreeCAD/FreeCAD | closed | [Bug] Bug Reporting Template Requires Previous Forum Discussion | Process | ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Forums discussion

https://www.forum.freecadweb.org/viewtopic.php?p=638121#p638121

### Version

0.21 (Development)

### Full version info

```shell

[code]

OS: Linux Mint 20.3 (X-Cinnamon/cinnamon)

Word size of FreeCAD: 64-bit

V... | 1.0 | [Bug] Bug Reporting Template Requires Previous Forum Discussion - ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Forums discussion

https://www.forum.freecadweb.org/viewtopic.php?p=638121#p638121

### Version

0.21 (Development)

### Full version info

```shell

[code]

OS: Linu... | process | bug reporting template requires previous forum discussion is there an existing issue for this i have searched the existing issues forums discussion version development full version info shell os linux mint x cinnamon cinnamon word size of freecad bit version ... | 1 |

8,228 | 11,414,658,563 | IssuesEvent | 2020-02-02 04:53:53 | MobileOrg/mobileorg | https://api.github.com/repos/MobileOrg/mobileorg | closed | Investigate switch from CocoaPods to Carthage. | development process | Determine if switching to Carthage and removing the dependency on CocoaPods to allow using `xcconfig` files is worth the effort (probably, yes).

* https://github.com/Carthage/Carthage

This should also enable removing the multiple plist config with various build settings (adhoc,debug,release).

Using the latest... | 1.0 | Investigate switch from CocoaPods to Carthage. - Determine if switching to Carthage and removing the dependency on CocoaPods to allow using `xcconfig` files is worth the effort (probably, yes).

* https://github.com/Carthage/Carthage

This should also enable removing the multiple plist config with various build set... | process | investigate switch from cocoapods to carthage determine if switching to carthage and removing the dependency on cocoapods to allow using xcconfig files is worth the effort probably yes this should also enable removing the multiple plist config with various build settings adhoc debug release us... | 1 |

11,059 | 13,893,097,004 | IssuesEvent | 2020-10-19 13:06:30 | googleapis/google-cloud-dotnet | https://api.github.com/repos/googleapis/google-cloud-dotnet | reopened | Add more APIs | type: process | The following APIs have been identified for generation:

- [ ] Access Approval API - needs C# namespace option

- [ ] App Engine Admin API - if this is google/appengine/v1, needs C# namespace option

- [x] Cloud Bigtable Admin API (been generated for ages)

- [ ] Binary Authorization API (in google/cloud/binaryauthor... | 1.0 | Add more APIs - The following APIs have been identified for generation:

- [ ] Access Approval API - needs C# namespace option

- [ ] App Engine Admin API - if this is google/appengine/v1, needs C# namespace option

- [x] Cloud Bigtable Admin API (been generated for ages)

- [ ] Binary Authorization API (in google/cl... | process | add more apis the following apis have been identified for generation access approval api needs c namespace option app engine admin api if this is google appengine needs c namespace option cloud bigtable admin api been generated for ages binary authorization api in google cloud binar... | 1 |

16,052 | 20,194,609,441 | IssuesEvent | 2022-02-11 09:30:33 | ooi-data/RS01SBPS-PC01A-05-ADCPTD102-streamed-adcp_velocity_beam | https://api.github.com/repos/ooi-data/RS01SBPS-PC01A-05-ADCPTD102-streamed-adcp_velocity_beam | opened | 🛑 Processing failed: GroupNotFoundError | process | ## Overview

`GroupNotFoundError` found in `processing_task` task during run ended on 2022-02-11T09:30:32.846480.

## Details

Flow name: `RS01SBPS-PC01A-05-ADCPTD102-streamed-adcp_velocity_beam`

Task name: `processing_task`

Error type: `GroupNotFoundError`

Error message: group not found at path ''

<details>

<summary... | 1.0 | 🛑 Processing failed: GroupNotFoundError - ## Overview

`GroupNotFoundError` found in `processing_task` task during run ended on 2022-02-11T09:30:32.846480.

## Details

Flow name: `RS01SBPS-PC01A-05-ADCPTD102-streamed-adcp_velocity_beam`

Task name: `processing_task`

Error type: `GroupNotFoundError`

Error message: grou... | process | 🛑 processing failed groupnotfounderror overview groupnotfounderror found in processing task task during run ended on details flow name streamed adcp velocity beam task name processing task error type groupnotfounderror error message group not found at path traceba... | 1 |

1,627 | 4,239,486,768 | IssuesEvent | 2016-07-06 09:38:33 | BriceChou/WeiboClient | https://api.github.com/repos/BriceChou/WeiboClient | closed | User personal center and message center issue | High In processing TODO | When the phone is in landscape mode, we can't see the content at the bottom of the page, and we can't scroll down the page.

1. Put your phone in landscape mode.

2. Open the application.

3. The personal center and message pages can't be scrolled down to the bottom. | 1.0 | User personal center and message center issue - When the phone is in landscape mode, we can't see the content at the bottom of the page, and we can't scroll down the page.

1. Put your phone in landscape mode.

2. Open the application.

3. The personal center and message pages can't be scrolled down to the bottom. | process | user personal center and message center issue when phone in landscape mode we can t see the page bottom content we can t scroll down the page let your phone in landscape mode open the application can t scroll down the personal center and message bottom page | 1 |

759 | 3,244,506,708 | IssuesEvent | 2015-10-16 02:53:02 | GFUCABAM/statler | https://api.github.com/repos/GFUCABAM/statler | opened | Link to Sentiment API | API server components Language processing | Enable the API to make a JSON request to the https://github.com/vivekn/sentiment API and store what it sends back. | 1.0 | Link to Sentiment API - Enable the API to make a JSON request to the https://github.com/vivekn/sentiment API and store what it sends back. | process | link to sentiment api enable the api to make a json request to the api and store what it sends back | 1 |

16,105 | 20,329,807,671 | IssuesEvent | 2022-02-18 09:37:14 | prisma/prisma | https://api.github.com/repos/prisma/prisma | opened | Error: [introspection-engine/connectors/sql-introspection-connector/src/introspection_helpers.rs:241:64] called `Option::unwrap()` on a `None` value on cockroachdb | kind/bug process/candidate topic: error reporting team/migrations topic: cockroachdb | <!-- If required, please update the title to be clear and descriptive -->

Command: `prisma introspect`

Version: `3.9.2`

Binary Version: `bcc2ff906db47790ee902e7bbc76d7ffb1893009`

Report: https://prisma-errors.netlify.app/report/13667

OS: `x64 linux 5.4.0-1067-azure`

JS Stacktrace:

```

Error: [introspection... | 1.0 | Error: [introspection-engine/connectors/sql-introspection-connector/src/introspection_helpers.rs:241:64] called `Option::unwrap()` on a `None` value on cockroachdb - <!-- If required, please update the title to be clear and descriptive -->

Command: `prisma introspect`

Version: `3.9.2`

Binary Version: `bcc2ff906db... | process | error called option unwrap on a none value on cockroachdb command prisma introspect version binary version report os linux azure js stacktrace error called option unwrap on a none value at childprocess node modules prisma build index... | 1 |

5,474 | 8,352,613,693 | IssuesEvent | 2018-10-02 07:17:56 | facebook/graphql | https://api.github.com/repos/facebook/graphql | closed | Status of GraphQL spec change process | 🐝 Process | This repository provides an awesome opportunity for broader community involvement in the evolution of GraphQL. The [change process](https://github.com/facebook/graphql/blob/master/CONTRIBUTING.md) is great start to making this engagement productive but it's been in draft status since #342 merged back on August 14, 2017... | 1.0 | Status of GraphQL spec change process - This repository provides an awesome opportunity for broader community involvement in the evolution of GraphQL. The [change process](https://github.com/facebook/graphql/blob/master/CONTRIBUTING.md) is great start to making this engagement productive but it's been in draft status s... | process | status of graphql spec change process this repository provides an awesome opportunity for broader community involvement in the evolution of graphql the is great start to making this engagement productive but it s been in draft status since merged back on august is there anything blocking this process fr... | 1 |

49,281 | 12,308,611,272 | IssuesEvent | 2020-05-12 07:32:50 | chocolate-doom/chocolate-doom | https://api.github.com/repos/chocolate-doom/chocolate-doom | closed | Clang compilation produces warnings | build | Compiling on macOS, using make, shows the following warnings. Ignore the warnings about libraries.

http://pastebin.com/4ANqMtHW | 1.0 | Clang compilation produces warnings - Compiling on macOS, using make, shows the following warnings. Ignore the warnings about libraries.

http://pastebin.com/4ANqMtHW | non_process | clang compilation produces warnings compiling on macos using make shows the following warnings ignore the warnings about libraries | 0 |

6,489 | 9,559,138,396 | IssuesEvent | 2019-05-03 15:52:23 | openopps/openopps-platform | https://api.github.com/repos/openopps/openopps-platform | opened | Add a question to the Education Page | Apply Process State Dept. | Who: Student and DoS

What: Additional Education requirement

Why: DoS would like to screen out applicants based off this question

A/C

- Add the following question to the "Education and Transcripts" page under "Will you continue your education after this internship has been completed?" and before the GPA question

... | 1.0 | Add a question to the Education Page - Who: Student and DoS

What: Additional Education requirement

Why: DoS would like to screen out applicants based off this question

A/C

- Add the following question to the "Education and Transcripts" page under "Will you continue your education after this internship has been co... | process | add a question to the education page who student and dos what additional education requirement why dos would like to screen out applicants based off this question a c add the following question to the education and transcripts page under will you continue your education after this internship has been co... | 1 |

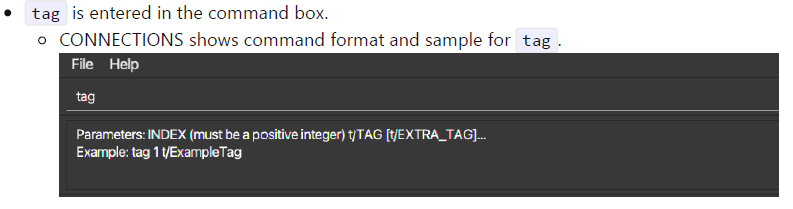

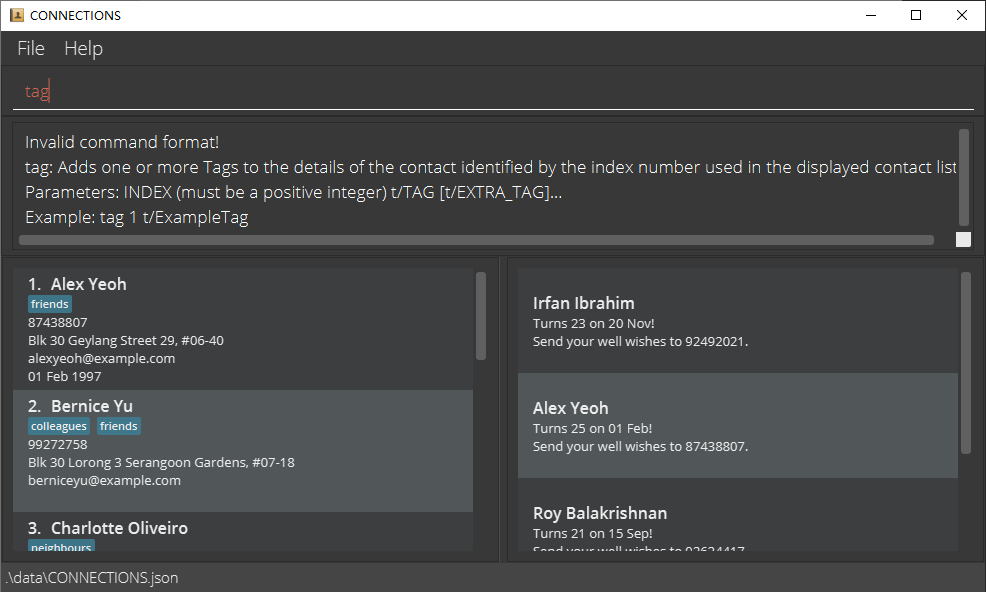

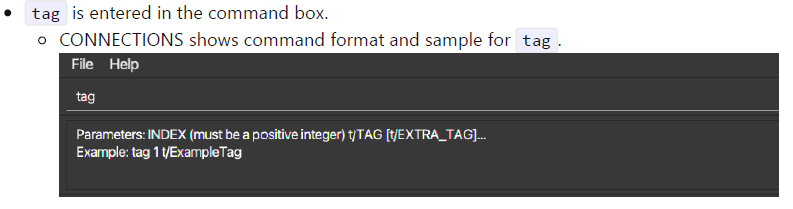

247,044 | 18,857,230,887 | IssuesEvent | 2021-11-12 08:20:29 | TTraveller7/pe | https://api.github.com/repos/TTraveller7/pe | opened | UG screenshots unconsistency in "Sample Usage" part | type.DocumentationBug severity.VeryLow | What I did: Enter `tag`

Expected:

Actual:

Comments: The same for `edit`. For Sample Usage ... | 1.0 | UG screenshots unconsistency in "Sample Usage" part - What I did: Enter `tag`

Expected:

Actual:

detected in linuxlinux-4.19.269 | security vulnerability | ## CVE-2022-42895 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.19.269</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Library home page: <a href=https://mirrors.edge... | True | CVE-2022-42895 (Medium) detected in linuxlinux-4.19.269 - ## CVE-2022-42895 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.19.269</b></p></summary>

<p>

<p>The Linux Ker... | non_process | cve medium detected in linuxlinux cve medium severity vulnerability vulnerable library linuxlinux the linux kernel library home page a href found in head commit a href found in base branch master vulnerable source files net bluetoot... | 0 |

155,624 | 12,263,656,996 | IssuesEvent | 2020-05-07 01:43:40 | QubesOS/updates-status | https://api.github.com/repos/QubesOS/updates-status | closed | linux-kernel-4-19 v4.19.114-1 (r4.0) | r4.0-dom0-cur-test | Update of linux-kernel-4-19 to v4.19.114-1 for Qubes r4.0, see comments below for details.

Built from: https://github.com/QubesOS/qubes-linux-kernel/commit/f82d9ad67aed964542a9487cc6428e2d7f5b9caf

[Changes since previous version](https://github.com/QubesOS/qubes-linux-kernel/compare/v4.19.107-1...v4.19.114-1):

QubesO... | 1.0 | linux-kernel-4-19 v4.19.114-1 (r4.0) - Update of linux-kernel-4-19 to v4.19.114-1 for Qubes r4.0, see comments below for details.

Built from: https://github.com/QubesOS/qubes-linux-kernel/commit/f82d9ad67aed964542a9487cc6428e2d7f5b9caf

[Changes since previous version](https://github.com/QubesOS/qubes-linux-kernel/com... | non_process | linux kernel update of linux kernel to for qubes see comments below for details built from qubesos qubes linux kernel update to kernel qubesos qubes linux kernel update to kernel qubesos qubes linux kernel update to kernel qubesos qubes linux kernel... | 0 |

292,682 | 25,229,027,962 | IssuesEvent | 2022-11-14 18:10:41 | WPChill/download-monitor | https://api.github.com/repos/WPChill/download-monitor | closed | incompatibility with wpml | Bug needs testing tested | **Describe the bug**

Hi, We are using your plugin. We have our website in two languages (English and French). For English download button (thru shortcode) is working fine but for French version, download button is not working.

We are getting below link with that button, https://domain.com/?lang=fr%2Fdownload%2F20306... | 2.0 | incompatibility with wpml - **Describe the bug**

Hi, We are using your plugin. We have our website in two languages (English and French). For English download button (thru shortcode) is working fine but for French version, download button is not working.

We are getting below link with that button, https://domain.co... | non_process | incompatibility with wpml describe the bug hi we are using your plugin we have our website in two languages english and french for english download button thru shortcode is working fine but for french version download button is not working we are getting below link with that button you can see l... | 0 |

14,293 | 17,266,394,652 | IssuesEvent | 2021-07-22 14:16:00 | googleapis/python-storage | https://api.github.com/repos/googleapis/python-storage | closed | Teardown of `blobs_to_delete` fixture flakes with TimeoutError. | api: storage type: process | From [this Kokoro failure](https://source.cloud.google.com/results/invocations/c4cab6ef-94f0-4609-a2ea-760344f14623/targets/cloud-devrel%2Fclient-libraries%2Fpython%2Fgoogleapis%2Fpython-storage%2Fpresubmit%2Fsystem-3.8/log):

```python

self = <urllib3.connectionpool.HTTPSConnectionPool object at 0x7fdd9aa453a0>

er... | 1.0 | Teardown of `blobs_to_delete` fixture flakes with TimeoutError. - From [this Kokoro failure](https://source.cloud.google.com/results/invocations/c4cab6ef-94f0-4609-a2ea-760344f14623/targets/cloud-devrel%2Fclient-libraries%2Fpython%2Fgoogleapis%2Fpython-storage%2Fpresubmit%2Fsystem-3.8/log):

```python

self = <urllib... | process | teardown of blobs to delete fixture flakes with timeouterror from python self err timeout the read operation timed out url storage b gcp systest kms o test blob generation prettyprint false timeout value def raise timeout self err url timeout value is ... | 1 |

9,330 | 12,340,580,963 | IssuesEvent | 2020-05-14 20:12:13 | googleapis/nodejs-recommender | https://api.github.com/repos/googleapis/nodejs-recommender | closed | promoting library to GA | api: recommender type: process | Package name: **@google-cloud/recommender**

Current release: **beta**

Proposed release: **GA**

## Instructions

Check the lists below, adding tests / documentation as required. Once all the "required" boxes are ticked, please create a release and close this issue.

## Required

- [x] 28 days elapsed since la... | 1.0 | promoting library to GA - Package name: **@google-cloud/recommender**

Current release: **beta**

Proposed release: **GA**

## Instructions

Check the lists below, adding tests / documentation as required. Once all the "required" boxes are ticked, please create a release and close this issue.

## Required

- [x... | process | promoting library to ga package name google cloud recommender current release beta proposed release ga instructions check the lists below adding tests documentation as required once all the required boxes are ticked please create a release and close this issue required ... | 1 |

98,364 | 16,373,810,096 | IssuesEvent | 2021-05-15 17:39:12 | hugh-whitesource/NodeGoat-1 | https://api.github.com/repos/hugh-whitesource/NodeGoat-1 | opened | CVE-2019-19919 (High) detected in handlebars-4.0.5.tgz | security vulnerability | ## CVE-2019-19919 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>handlebars-4.0.5.tgz</b></p></summary>

<p>Handlebars provides the power necessary to let you build semantic templates ... | True | CVE-2019-19919 (High) detected in handlebars-4.0.5.tgz - ## CVE-2019-19919 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>handlebars-4.0.5.tgz</b></p></summary>

<p>Handlebars provides... | non_process | cve high detected in handlebars tgz cve high severity vulnerability vulnerable library handlebars tgz handlebars provides the power necessary to let you build semantic templates effectively with no frustration library home page a href path to dependency file nodegoat ... | 0 |

45,655 | 13,131,642,343 | IssuesEvent | 2020-08-06 17:23:35 | jgeraigery/kraft-heinz-merger | https://api.github.com/repos/jgeraigery/kraft-heinz-merger | opened | CVE-2018-20822 (Medium) detected in node-sass-v4.13.1 | security vulnerability | ## CVE-2018-20822 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>node-sassv4.13.1</b></p></summary>

<p>

<p>:rainbow: Node.js bindings to libsass</p>

<p>Library home page: <a href=ht... | True | CVE-2018-20822 (Medium) detected in node-sass-v4.13.1 - ## CVE-2018-20822 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>node-sassv4.13.1</b></p></summary>

<p>

<p>:rainbow: Node.js ... | non_process | cve medium detected in node sass cve medium severity vulnerability vulnerable library node rainbow node js bindings to libsass library home page a href found in head commit a href vulnerable source files kraft heinz merger node modules ... | 0 |

17,039 | 22,420,243,676 | IssuesEvent | 2022-06-20 01:42:26 | lynnandtonic/nestflix.fun | https://api.github.com/repos/lynnandtonic/nestflix.fun | closed | Add [Orgazmo] | suggested title in process | Please add as much of the following info as you can:

Title: Orgazmo

Type (film/tv show): Comedy XXX

Film or show in which it appears: Captain Orgazmo by Trey Parker

Is the parent film/show streaming anywhere? Youtube

About when in the parent film/show does it appear?

Actual footage of the film/show ca... | 1.0 | Add [Orgazmo] - Please add as much of the following info as you can:

Title: Orgazmo

Type (film/tv show): Comedy XXX

Film or show in which it appears: Captain Orgazmo by Trey Parker

Is the parent film/show streaming anywhere? Youtube

About when in the parent film/show does it appear?

Actual footage of ... | process | add please add as much of the following info as you can title orgazmo type film tv show comedy xxx film or show in which it appears captain orgazmo by trey parker is the parent film show streaming anywhere youtube about when in the parent film show does it appear actual footage of the film... | 1 |

7,721 | 10,825,802,250 | IssuesEvent | 2019-11-09 18:09:31 | dita-ot/dita-ot | https://api.github.com/repos/dita-ot/dita-ot | closed | Link to keyscoped topic not properly resolved. | bug preprocess/keyref priority/medium | I'm attaching a ZIP sample.

[improperLinkToKeyScopedTopic.zip](https://github.com/dita-ot/dita-ot/files/631027/improperLinkToKeyScopedTopic.zip)

So, the DITA Map looks like this:

```xml

<topicgroup keyscope="product1_scope">

...........

<topicref keys="archiving" href="product1/c_archiving_mod.dita"/>

</top... | 1.0 | Link to keyscoped topic not properly resolved. - I'm attaching a ZIP sample.

[improperLinkToKeyScopedTopic.zip](https://github.com/dita-ot/dita-ot/files/631027/improperLinkToKeyScopedTopic.zip)

So, the DITA Map looks like this:

```xml

<topicgroup keyscope="product1_scope">

...........

<topicref keys="archivi... | process | link to keyscoped topic not properly resolved i m attaching a zip sample so the dita map looks like this xml because c archiving mod dita is referenced in two key scopes we will have in the output file two htmls for it c archiving mo... | 1 |

12,682 | 15,048,016,695 | IssuesEvent | 2021-02-03 09:40:12 | pystatgen/sgkit | https://api.github.com/repos/pystatgen/sgkit | opened | Move to NumPy's ArrayLike and DtypeLike | process + tools | Introduced in NumPy 1.20.0: https://numpy.org/doc/stable/release/1.20.0-notes.html#numpy-is-now-typed

These would replace our types in `sgkit.typing`. | 1.0 | Move to NumPy's ArrayLike and DtypeLike - Introduced in NumPy 1.20.0: https://numpy.org/doc/stable/release/1.20.0-notes.html#numpy-is-now-typed

These would replace our types in `sgkit.typing`. | process | move to numpy s arraylike and dtypelike introduced in numpy these would replace our types in sgkit typing | 1 |

11,829 | 14,655,267,750 | IssuesEvent | 2020-12-28 10:36:27 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [PM] [Dev] getting 500 error when site permission is not given | Bug P1 Participant manager Process: Dev Process: Fixed Process: Tested QA Process: Tested dev | Getting 500 error when site permission is not given

AR : Displaying general error message

ER : 'No sites found' (EC_0070) should be displayed

| 4.0 | [PM] [Dev] getting 500 error when site permission is not given - Getting 500 error when site permission is not given

AR : Displaying general error message

ER : 'No sites found' (EC_0070) should be displayed

will not create new or update the item

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: dbcc24f0-9856-a7db-f229-c383e5e5c57d

* ... | 1.0 | Persistence is not working - The app initializes a single item, but, as no persistence is setup this is the only item available: POST (or PUT) will not create new or update the item

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: dbcc24f0-9... | non_process | persistence is not working the app initializes a single item but as no persistence is setup this is the only item available post or put will not create new or update the item document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id ... | 0 |

362,311 | 25,370,256,657 | IssuesEvent | 2022-11-21 10:02:24 | IT-Academy-BCN/ita-directory | https://api.github.com/repos/IT-Academy-BCN/ita-directory | closed | Add "Recover password" link on login form's design | documentation frontend | Add the "recover password" link that exists in the project to the login page prototype.

<img width="332" alt="Captura de Pantalla 2021-10-18 a les 12 46 55" src="https://user-images.githubusercontent.com/8426157/137717201-97edf524-bb2b-44e2-87ec-df4abf1cc834.png">

| 1.0 | Add "Recover password" link on login form's design - Add the "recover password" link that exists in the project to the login page prototype.

<img width="332" alt="Captura de Pantalla 2021-10-18 a les 12 46 55" src="https://user-images.githubusercontent.com/8426157/137717201-97edf524-bb2b-44e2-87ec-df4a... | non_process | add recover password link on login form s design add the recover password link that exists in the project to the login page prototype img width alt captura de pantalla a les src | 0 |

77,252 | 26,880,858,170 | IssuesEvent | 2023-02-05 16:14:25 | vector-im/element-android | https://api.github.com/repos/vector-im/element-android | opened | Documentation needed for Android Secure Backup | T-Defect | ### Steps to reproduce

MY STEPS TO SHOW WHAT IS NOT DOCUMENTED ABOUT ANDROID SECURE BACKUP:

Make: Samsung

Model: SM-T580 (Gal... | 1.0 | Documentation needed for Android Secure Backup - ### Steps to reproduce

MY STEPS TO SHOW WHAT IS NOT DOCUMENTED ABOUT ANDROID SECUR... | non_process | documentation needed for android secure backup steps to reproduce my steps to show what is not documented about android secure backup make samsung model sm galaxy tab a os android element version g matrix sdk version olm version android s... | 0 |

195,802 | 22,360,123,255 | IssuesEvent | 2022-06-15 19:35:42 | videojs/videojs-overlay | https://api.github.com/repos/videojs/videojs-overlay | closed | CVE-2019-20149 (High) detected in kind-of-6.0.2.tgz | security vulnerability | ## CVE-2019-20149 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>kind-of-6.0.2.tgz</b></p></summary>

<p>Get the native type of a value.</p>

<p>Library home page: <a href="https://regi... | True | CVE-2019-20149 (High) detected in kind-of-6.0.2.tgz - ## CVE-2019-20149 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>kind-of-6.0.2.tgz</b></p></summary>

<p>Get the native type of a ... | non_process | cve high detected in kind of tgz cve high severity vulnerability vulnerable library kind of tgz get the native type of a value library home page a href dependency hierarchy karma tgz root library chokidar tgz braces tgz ... | 0 |

3,873 | 6,812,082,651 | IssuesEvent | 2017-11-06 00:13:03 | learn-anything/maps | https://api.github.com/repos/learn-anything/maps | closed | best path for learning clojurescript | natural language processing programming study plan | Take a look [here](https://my.mindnode.com/gDrV35KLRsdT9Ajxvr7EnN9yanPrgTx7LRzmfBUK).

If you think there is a better way one can learn clojurescript or you think the way the nodes are structured is wrong, please say it here.

Also if you think there are some really amazing resources on clojurescript that are missi... | 1.0 | best path for learning clojurescript - Take a look [here](https://my.mindnode.com/gDrV35KLRsdT9Ajxvr7EnN9yanPrgTx7LRzmfBUK).

If you think there is a better way one can learn clojurescript or you think the way the nodes are structured is wrong, please say it here.

Also if you think there are some really amazing re... | process | best path for learning clojurescript take a look if you think there is a better way one can learn clojurescript or you think the way the nodes are structured is wrong please say it here also if you think there are some really amazing resources on clojurescript that are missing or you wish something was ad... | 1 |

3,316 | 6,425,291,967 | IssuesEvent | 2017-08-09 15:08:34 | dzhw/zofar | https://api.github.com/repos/dzhw/zofar | closed | New Plugin: automated Deployment | category: technical.processes et: 32 prio: 1 status: development type: backlog.task | General deployment platform (on presentation-Server):

- [x] **Create New Plugin für contin. Integration.**

_Task for another Time:

Get another Virtual Machine for the presentation server._ | 1.0 | New Plugin: automated Deployment - General deployment platform (on presentation-Server):

- [x] **Create New Plugin für contin. Integration.**

_Task for another Time:

Get another Virtual Machine for the presentation server._ | process | new plugin automated deployment general deployment platform on presentation server create new plugin für contin integration task for another time get another virtual machine for the presentation server | 1 |

10,762 | 13,549,367,967 | IssuesEvent | 2020-09-17 08:06:02 | easably/games | https://api.github.com/repos/easably/games | reopened | 3. Dictionary with achievements | ☭ in process | - [ ] add a "My dictionary" link to the sidebar, above the "Account" link (icon at the developer's discretion)

- [ ] add a word list, structured as in the mockup; ignore the design itself, ... | 1.0 | 3. Dictionary with achievements - - [ ] add a "My dictionary" link to the sidebar, above the "Account" link (icon at the developer's discretion)

- [ ] add a word list, structured as in the mockup; ignore the design ... | process | dictionary with achievements add a my dictionary link to the sidebar above the account link icon at the developer s discretion add a word list structured as in the mockup ignore the design itself only the dictionary structure is needed within this task achievement on the word of a completed... | 1 |

19,984 | 14,884,261,477 | IssuesEvent | 2021-01-20 14:22:56 | InfectedLibraries/Biohazrd | https://api.github.com/repos/InfectedLibraries/Biohazrd | opened | All user-defined records should be returned by reference for instance methods on Windows x64 | Arch-x64 Area-Translation Bug Concept-Correctness Concept-OutputUsability | Biohazrd does not correctly handle instance methods which return records. As far as I can tell, in **all** scenarios, these types are returned by reference. (Even if they can be enregistered.)

Additionally, these functions really should be emitted as returning a pointer to the return type as well since the buffer pa... | True | All user-defined records should be returned by reference for instance methods on Windows x64 - Biohazrd does not correctly handle instance methods which return records. As far as I can tell, in **all** scenarios, these types are returned by reference. (Even if they can be enregistered.)

Additionally, these functions... | non_process | all user defined records should be returned by reference for instance methods on windows biohazrd does not correctly handle instance methods which return records as far as i can tell in all scenarios these types are returned by reference even if they can be enregistered additionally these functions r... | 0 |

128,292 | 10,523,944,380 | IssuesEvent | 2019-09-30 12:15:39 | kubernetes/kubeadm | https://api.github.com/repos/kubernetes/kubeadm | closed | update kubeadm/kinder tests for 1.16 | area/testing kind/cleanup lifecycle/active priority/important-soon | needs PRs for adding 1.16 jobs:

- [x] k/kubeadm/kinder https://github.com/kubernetes/kubeadm/pull/1744

- [x] test-infra https://github.com/kubernetes/test-infra/pull/14037

and PRs for removing 1.13 jobs once 1.16 is out:

- [x] k/kubeadm/kinder https://github.com/kubernetes/kubeadm/pull/1804

- [x] test-infra http... | 1.0 | update kubeadm/kinder tests for 1.16 - needs PRs for adding 1.16 jobs:

- [x] k/kubeadm/kinder https://github.com/kubernetes/kubeadm/pull/1744

- [x] test-infra https://github.com/kubernetes/test-infra/pull/14037

and PRs for removing 1.13 jobs once 1.16 is out:

- [x] k/kubeadm/kinder https://github.com/kubernetes/k... | non_process | update kubeadm kinder tests for needs prs for adding jobs k kubeadm kinder test infra and prs for removing jobs once is out k kubeadm kinder test infra assign area testing kind cleanup priority important soon | 0 |

148,884 | 13,249,780,332 | IssuesEvent | 2020-08-19 21:27:06 | raybellwaves/xskillscore | https://api.github.com/repos/raybellwaves/xskillscore | opened | add xarray to intersphinx_mapping | documentation | Add xarray in here

https://github.com/raybellwaves/xskillscore/blob/master/docs/source/conf.py#L122

To be able to link to the xarray docs in the See also section e.g. https://xskillscore.readthedocs.io/en/latest/api/xskillscore.pearson_r.html#xskillscore.pearson_r | 1.0 | add xarray to intersphinx_mapping - Add xarray in here

https://github.com/raybellwaves/xskillscore/blob/master/docs/source/conf.py#L122

To be able to link to the xarray docs in the See also section e.g. https://xskillscore.readthedocs.io/en/latest/api/xskillscore.pearson_r.html#xskillscore.pearson_r | non_process | add xarray to intersphinx mapping add xarray in here to be able to link to the xarray docs in the see also section e g | 0 |

22,298 | 30,854,055,117 | IssuesEvent | 2023-08-02 19:03:29 | cohenlabUNC/clpipe | https://api.github.com/repos/cohenlabUNC/clpipe | closed | Support Wildcarding For Confound Column Selection | postprocess2 medium | Allow something like comp_cor* to be used in column selection, for either confound columns setting or scrub target columns. | 1.0 | Support Wildcarding For Confound Column Selection - Allow something like comp_cor* to be used in column selection, for either confound columns setting or scrub target columns. | process | support wildcarding for confound column selection allow something like comp cor to be used in column selection for either confound columns setting or scrub target columns | 1 |
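The wildcard selection requested in the row above can be sketched with the standard library's `fnmatch` module. This is a minimal illustration, not clpipe's implementation: the helper name `select_columns` and the example column names are hypothetical.

```python
from fnmatch import fnmatchcase


def select_columns(available, patterns):
    """Return the columns matching any shell-style pattern, in their original order."""
    return [
        column
        for column in available
        if any(fnmatchcase(column, pattern) for pattern in patterns)
    ]


# Hypothetical confound column names, used only for illustration.
columns = ["csf", "white_matter", "comp_cor_00", "comp_cor_01", "trans_x"]
selected = select_columns(columns, ["csf", "comp_cor*"])
print(selected)
```

`fnmatchcase` is used instead of `fnmatch` so that matching stays case-sensitive on every platform; a pattern without wildcards simply selects the column with that exact name.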

10,888 | 13,669,204,766 | IssuesEvent | 2020-09-29 01:15:05 | knative/serving | https://api.github.com/repos/knative/serving | closed | Document the knative provisioning guide | area/API area/autoscale area/networking kind/feature kind/process lifecycle/stale | /area API

/area autoscale

/kind process

## Describe the feature

Currently we provision Knative for a quite small load, by default.

We should provide some guidance in how to provision the system; suggested replicas (for activator), resources (CPU/Memory) vs concurrency/# services/QPS.

| 1.0 | Document the knative provisioning guide - /area API

/area autoscale

/kind process

## Describe the feature

Currently we provision Knative for a quite small load, by default.

We should provide some guidance in how to provision the system; suggested replicas (for activator), resources (CPU/Memory) vs concurrenc... | process | document the knative provisioning guide area api area autoscale kind process describe the feature currently we provision knative for a quite small load by default we should provide some guidance in how to provision the system suggested replicas for activator resources cpu memory vs concurrenc... | 1 |

37,902 | 10,110,352,119 | IssuesEvent | 2019-07-30 10:03:12 | tensorflow/tensorflow | https://api.github.com/repos/tensorflow/tensorflow | closed | XLA Warning | comp:xla type:build/install | <!--This template is for miscellaneous issues not covered by the other issue categories.

For questions on how to work with TensorFlow, or support for problems that are not verified bugs in TensorFlow, please go to [StackOverflow](https://stackoverflow.com/questions/tagged/tensorflow).

If you are reporting a vulne... | 1.0 | XLA Warning - <!--This template is for miscellaneous issues not covered by the other issue categories.

For questions on how to work with TensorFlow, or support for problems that are not verified bugs in TensorFlow, please go to [StackOverflow](https://stackoverflow.com/questions/tagged/tensorflow).

If you are rep... | non_process | xla warning this template is for miscellaneous issues not covered by the other issue categories for questions on how to work with tensorflow or support for problems that are not verified bugs in tensorflow please go to if you are reporting a vulnerability please use the for high level discuss... | 0 |

21,926 | 30,446,558,590 | IssuesEvent | 2023-07-15 18:48:23 | h4sh5/pypi-auto-scanner | https://api.github.com/repos/h4sh5/pypi-auto-scanner | opened | pyutils 0.0.1b14 has 2 GuardDog issues | guarddog typosquatting silent-process-execution | https://pypi.org/project/pyutils

https://inspector.pypi.io/project/pyutils

```{

"dependency": "pyutils",

"version": "0.0.1b14",

"result": {

"issues": 2,

"errors": {},

"results": {

"typosquatting": "This package closely ressembles the following package names, and might be a typosquatting attempt:... | 1.0 | pyutils 0.0.1b14 has 2 GuardDog issues - https://pypi.org/project/pyutils

https://inspector.pypi.io/project/pyutils

```{

"dependency": "pyutils",

"version": "0.0.1b14",

"result": {

"issues": 2,

"errors": {},

"results": {

"typosquatting": "This package closely ressembles the following package nam... | process | pyutils has guarddog issues dependency pyutils version result issues errors results typosquatting this package closely ressembles the following package names and might be a typosquatting attempt pytils python utils silen... | 1 |

598,048 | 18,235,453,682 | IssuesEvent | 2021-10-01 06:07:34 | renovatebot/renovate | https://api.github.com/repos/renovatebot/renovate | closed | Add support for passing session token in AWS ECR requests | type:feature priority-3-normal datasource:docker status:ready | ### What would you like Renovate to be able to do?

When using temporary credentials for accessing AWS ECR it is necessary to pass [the so-called session token](https://docs.aws.amazon.com/AWSJavaScriptSDK/v3/latest/clients/client-ecr/modules/credentials.html#sessiontoken-1) in addition to access key ID and secret ac... | 1.0 | Add support for passing session token in AWS ECR requests - ### What would you like Renovate to be able to do?

When using temporary credentials for accessing AWS ECR it is necessary to pass [the so-called session token](https://docs.aws.amazon.com/AWSJavaScriptSDK/v3/latest/clients/client-ecr/modules/credentials.htm... | non_process | add support for passing session token in aws ecr requests what would you like renovate to be able to do when using temporary credentials for accessing aws ecr it is necessary to pass in addition to access key id and secret access key renovate currently supports passing access key id and secret access ... | 0 |

270,905 | 20,614,257,163 | IssuesEvent | 2022-03-07 11:38:21 | jamal919/bandsos | https://api.github.com/repos/jamal919/bandsos | closed | :memo: Missing project information | documentation | The project information is missing. A small description can be found here - https://www.spaceclimateobservatory.org/band-sos-bengal-delta - for the first version. | 1.0 | :memo: Missing project information - The project information is missing. A small description can be found here - https://www.spaceclimateobservatory.org/band-sos-bengal-delta - for the first version. | non_process | memo missing project information the project information is missing a small description can be found here for the first version | 0 |

41,201 | 12,831,755,549 | IssuesEvent | 2020-07-07 06:14:07 | rvvergara/todolist-api-igaku | https://api.github.com/repos/rvvergara/todolist-api-igaku | closed | CVE-2019-10795 (Medium) detected in undefsafe-2.0.2.tgz | security vulnerability | ## CVE-2019-10795 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>undefsafe-2.0.2.tgz</b></p></summary>

<p>Undefined safe way of extracting object properties</p>

<p>Library home page... | True | CVE-2019-10795 (Medium) detected in undefsafe-2.0.2.tgz - ## CVE-2019-10795 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>undefsafe-2.0.2.tgz</b></p></summary>

<p>Undefined safe wa... | non_process | cve medium detected in undefsafe tgz cve medium severity vulnerability vulnerable library undefsafe tgz undefined safe way of extracting object properties library home page a href path to dependency file tmp ws scm todolist api igaku package json path to vulnerable l... | 0 |

467 | 2,904,721,765 | IssuesEvent | 2015-06-18 19:40:01 | pwittchen/prefser | https://api.github.com/repos/pwittchen/prefser | closed | Create GitHub release of 1.0.5 | release process | Release notes for 1.0.5 are available in #37. Create release after Maven Sync and updating README.md in #41. | 1.0 | Create GitHub release of 1.0.5 - Release notes for 1.0.5 are available in #37. Create release after Maven Sync and updating README.md in #41. | process | create github release of release notes for are available in create release after maven sync and updating readme md in | 1 |

239,423 | 7,794,752,722 | IssuesEvent | 2018-06-08 04:45:23 | braun-robotics/rust-lpc82x-hal | https://api.github.com/repos/braun-robotics/rust-lpc82x-hal | opened | Handle I2C errors | good first issue priority: medium type: enhancement | No error checking is currently done by the I2C API. This of course disqualifies it from any serious use. | 1.0 | Handle I2C errors - No error checking is currently done by the I2C API. This of course disqualifies it from any serious use. | non_process | handle errors no error checking is currently done by the api this of course disqualifies it from any serious use | 0 |

14,584 | 17,703,503,284 | IssuesEvent | 2021-08-25 03:09:47 | tdwg/dwc | https://api.github.com/repos/tdwg/dwc | closed | Change term - samplingProtocol | Term - change Class - Event normative Process - complete | ## Change term

* Submitter: Paula Zermoglio @pzermoglio

* Justification (why is this change necessary?): To accommodate "summary" Events in which the specific protocols can not be attributed to specific Occurrences.

* Proponents (who needs this change): Humboldt Core Task Group (representing multiple independent s... | 1.0 | Change term - samplingProtocol - ## Change term

* Submitter: Paula Zermoglio @pzermoglio

* Justification (why is this change necessary?): To accommodate "summary" Events in which the specific protocols can not be attributed to specific Occurrences.

* Proponents (who needs this change): Humboldt Core Task Group (re... | process | change term samplingprotocol change term submitter paula zermoglio pzermoglio justification why is this change necessary to accommodate summary events in which the specific protocols can not be attributed to specific occurrences proponents who needs this change humboldt core task group re... | 1 |

18,216 | 24,274,963,283 | IssuesEvent | 2022-09-28 13:15:25 | zammad/zammad | https://api.github.com/repos/zammad/zammad | closed | Zammad doesn't like cyrillic aliases | bug verified prioritised by payment mail processing | <!--

Hi there - thanks for filing an issue. Please ensure the following things before creating an issue - thank you! 🤓

Since november 15th we handle all requests, except real bugs, at our community board.

Full explanation: https://community.zammad.org/t/major-change-regarding-github-issues-community-board/21

P... | 1.0 | Zammad doesn't like cyrillic aliases - <!--

Hi there - thanks for filing an issue. Please ensure the following things before creating an issue - thank you! 🤓

Since november 15th we handle all requests, except real bugs, at our community board.

Full explanation: https://community.zammad.org/t/major-change-regardin... | process | zammad doesn t like cyrillic aliases hi there thanks for filing an issue please ensure the following things before creating an issue thank you 🤓 since november we handle all requests except real bugs at our community board full explanation please post feature requests development qu... | 1 |

1,707 | 4,350,448,182 | IssuesEvent | 2016-07-31 08:15:55 | AkkadianGames/Nanoshooter | https://api.github.com/repos/AkkadianGames/Nanoshooter | closed | Separate Susa goals from Nanoshooter roadmap on Trello | Docs Process Ready | ## Criteria

- [x] Trello roadmaps are separated.

- [x] Nanoshooter's milestones are adjusted to fit.

| 1.0 | Separate Susa goals from Nanoshooter roadmap on Trello - ## Criteria

- [x] Trello roadmaps are separated.

- [x] Nanoshooter's milestones are adjusted to fit.

| process | separate susa goals from nanoshooter roadmap on trello criteria trello roadmaps are separated nanoshooter s milestones are adjusted to fit | 1 |

19,293 | 25,466,376,372 | IssuesEvent | 2022-11-25 05:06:15 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [IDP] [PM] UI issue in the non-organizational admin popup message | Bug P1 Participant manager Process: Fixed Process: Tested QA Process: Tested dev | UI issue is observed in the non-organizational admin popup message while adding the admin in the application

**AR:**

**ER:**

**ER:**

| area-System.Diagnostics.Process test-run-core | Opened on behalf of @AriNuer

The test `System.Diagnostics.Tests.ProcessTests/ProcessStart_OpenFileOnLinux_UsesSpecifiedProgram(programToOpenWith: \"vi\")` has failed.

Failure Message:

```

Assert.Equal() Failure

↓ (pos 2)

Expected: vi

Actual: vim-nox11

↑ (pos 2)

```

Stack Trace:

```

at Sy... | 1.0 | Test failure: System.Diagnostics.Tests.ProcessTests/ProcessStart_OpenFileOnLinux_UsesSpecifiedProgram(programToOpenWith: \"vi\") - Opened on behalf of @AriNuer

The test `System.Diagnostics.Tests.ProcessTests/ProcessStart_OpenFileOnLinux_UsesSpecifiedProgram(programToOpenWith: \"vi\")` has failed.

Failure Message:

```... | process | test failure system diagnostics tests processtests processstart openfileonlinux usesspecifiedprogram programtoopenwith vi opened on behalf of arinuer the test system diagnostics tests processtests processstart openfileonlinux usesspecifiedprogram programtoopenwith vi has failed failure message ... | 1 |

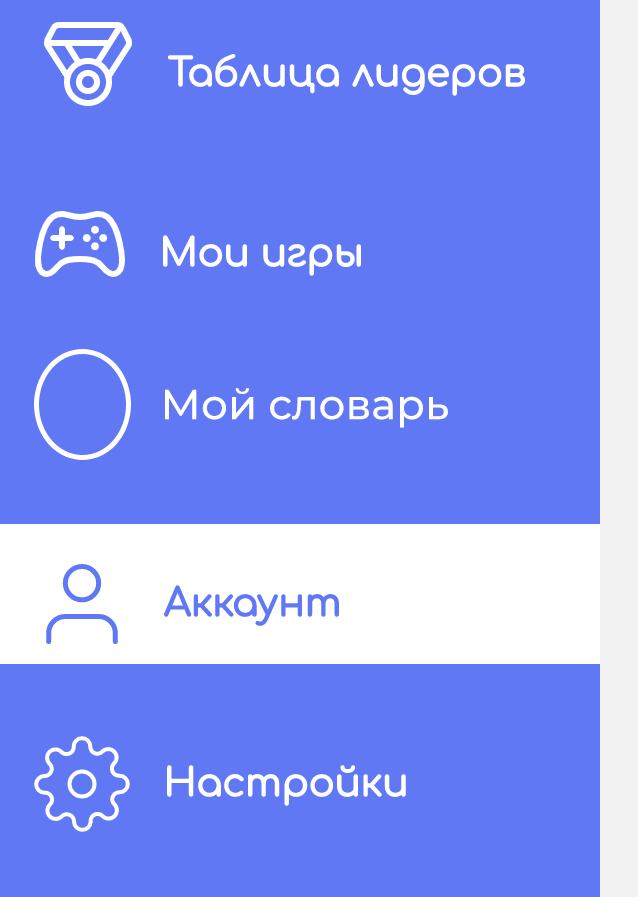

59,365 | 24,738,646,742 | IssuesEvent | 2022-10-21 01:43:14 | MicrosoftDocs/dynamics-365-customer-engagement | https://api.github.com/repos/MicrosoftDocs/dynamics-365-customer-engagement | closed | Omnichannel custom menu items in Session panel | assigned-to-author in-progress Pri2 dynamics-365-customerservice/svc | In section 1. Session panel it shows contacts on the Sitemap on the left. Is it possible to add custom persistent menu items to the Sitemap or will it only display contact items in the menu as pictured?

... | 1.0 | Omnichannel custom menu items in Session panel - In section 1. Session panel it shows contacts on the Sitemap on the left. Is it possible to add custom persistent menu items to the Sitemap or will it only display contact items in the menu as pictured?

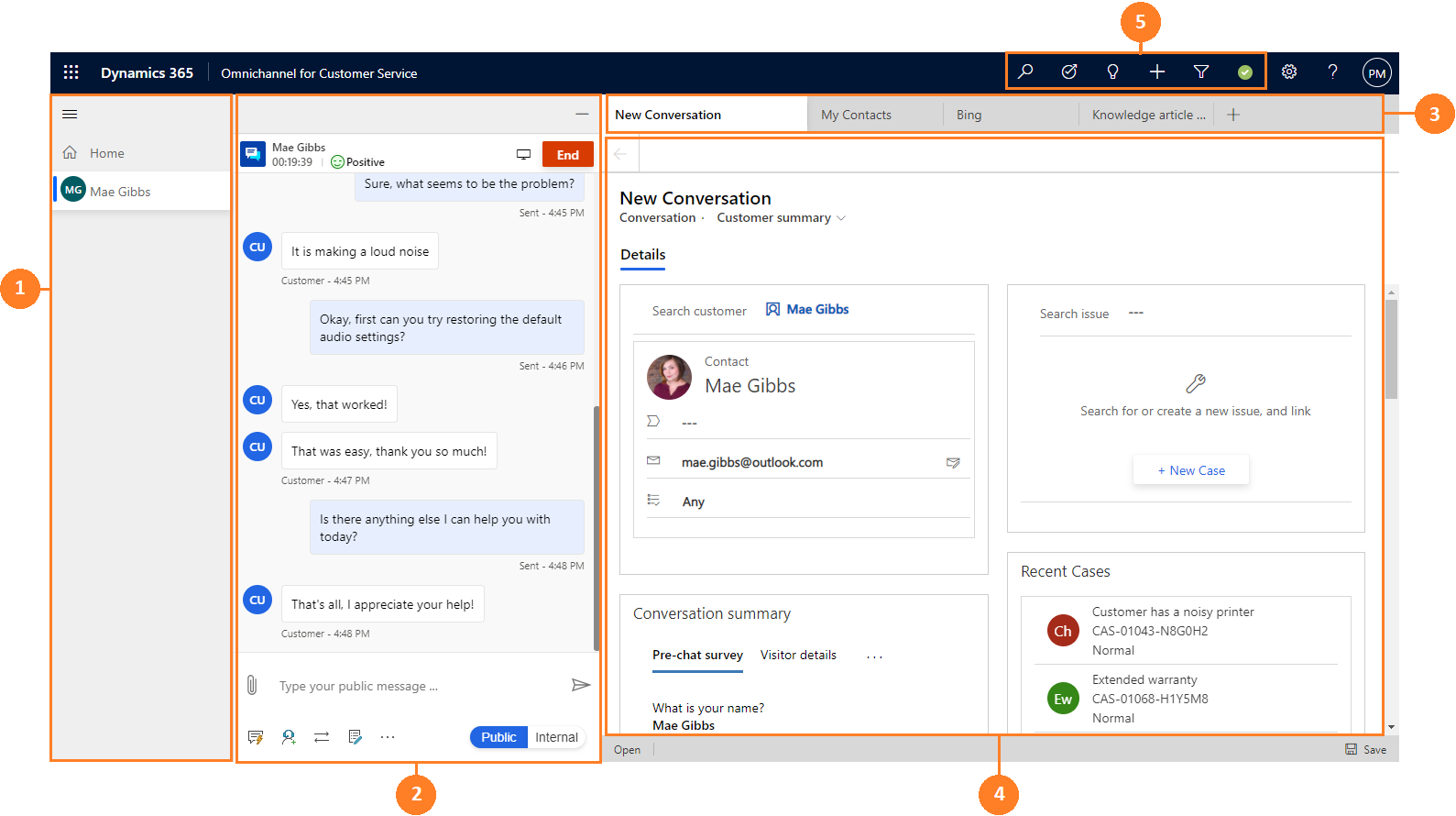

**Versions used(KubeSphere/Kubernetes)**

KubeSphere: `v3.3.1-rc.5`

Kubernetes: (If KubeSphere installer used, you can skip this)

| 1.0 | Pod replicas can not auto refresh - **Describe the bug**

The current can not auto refresh.

**Versions used(KubeSphere/Kubernetes)**

KubeSphere: `v3.3.1-rc.5`

Kubernetes: (If KubeSphere installer... | non_process | pod replicas can not auto refresh describe the bug the current can not auto refresh versions used kubesphere kubernetes kubesphere rc kubernetes if kubesphere installer used you can skip this | 0 |

275,183 | 8,575,393,369 | IssuesEvent | 2018-11-12 17:08:33 | HabitRPG/habitica-android | https://api.github.com/repos/HabitRPG/habitica-android | opened | Achievements: Bailey's 'Town Crier NPC' Achievement image is blank | Priority: minor | On bailey's profile when you view their achievements, that image doesn't show. | 1.0 | Achievements: Bailey's 'Town Crier NPC' Achievement image is blank - On bailey's profile when you view their achievements, that image doesn't show. | non_process | achievements bailey s town crier npc achievement image is blank on bailey s profile when you view their achievements that image doesn t show | 0 |

42,190 | 5,431,312,273 | IssuesEvent | 2017-03-04 00:14:14 | elegantthemes/Divi-Beta | https://api.github.com/repos/elegantthemes/Divi-Beta | closed | PHP Errors | !IMPORTANT BUG DESIGN SIGNOFF QUALITY ASSURED READY FOR REVIEW | ### Problem:

```

[01-Mar-2017 23:08:11 UTC] PHP Notice: Undefined index: depends_on in et.falgout.us/wp-content/themes/Divi/includes/builder/functions.php on line 596

[01-Mar-2017 23:08:11 UTC] PHP Warning: Invalid argument supplied for foreach() in et.falgout.us/wp-content/themes/Divi/includes/builder/functions... | 1.0 | PHP Errors - ### Problem:

```

[01-Mar-2017 23:08:11 UTC] PHP Notice: Undefined index: depends_on in et.falgout.us/wp-content/themes/Divi/includes/builder/functions.php on line 596

[01-Mar-2017 23:08:11 UTC] PHP Warning: Invalid argument supplied for foreach() in et.falgout.us/wp-content/themes/Divi/includes/buil... | non_process | php errors problem php notice undefined index depends on in et falgout us wp content themes divi includes builder functions php on line php warning invalid argument supplied for foreach in et falgout us wp content themes divi includes builder functions php on line php warning array i... | 0 |

21,766 | 30,281,977,352 | IssuesEvent | 2023-07-08 07:06:15 | X-Sharp/XSharpPublic | https://api.github.com/repos/X-Sharp/XSharpPublic | closed | The preprocessor puts an excess parenthesis (Xbase++ dialect) | bug Preprocessor | **Describe the bug**

The preprocessor puts an excess parenthesis in the wrong place

**To Reproduce .prg**

```

#xtranslate ORA_DRV(<Method>([<arg_list,...>])) => ;

(__oraResult := ORA_<Method>([<arg_list>]),;

iif(ISOBJECT(__oraResult) .and. __oraResult:isDerivedFrom(Error()),;

(Eval(ErrorBlock... | 1.0 | The preprocessor puts an excess parenthesis (Xbase++ dialect) - **Describe the bug**

The preprocessor puts an excess parenthesis in the wrong place

**To Reproduce .prg**

```

#xtranslate ORA_DRV(<Method>([<arg_list,...>])) => ;

(__oraResult := ORA_<Method>([<arg_list>]),;

iif(ISOBJECT(__oraResult) .and.... | process | the preprocessor puts an excess parenthesis xbase dialect describe the bug the preprocessor puts an excess parenthesis in the wrong place to reproduce prg xtranslate ora drv oraresult ora iif isobject oraresult and oraresult isderivedfrom error ... | 1 |

4,255 | 7,189,050,235 | IssuesEvent | 2018-02-02 12:34:05 | Great-Hill-Corporation/quickBlocks | https://api.github.com/repos/Great-Hill-Corporation/quickBlocks | closed | Solution to DDOS trace explosion problem | libs-etherlib status-inprocess type-enhancement | Sometime after block 2363154 and before block 'Tangerine', there are traces with 10's of thousands of entries. This slows down a full scan of the blockchain data (when one is looking at a traces) significantly (1,000s of times slower than scanning traces from other time periods). This makes naive scans of the entire bl... | 1.0 | Solution to DDOS trace explosion problem - Sometime after block 2363154 and before block 'Tangerine', there are traces with 10's of thousands of entries. This slows down a full scan of the blockchain data (when one is looking at a traces) significantly (1,000s of times slower than scanning traces from other time period... | process | solution to ddos trace explosion problem sometime after block and before block tangerine there are traces with s of thousands of entries this slows down a full scan of the blockchain data when one is looking at a traces significantly of times slower than scanning traces from other time periods this m... | 1 |