Unnamed: 0 (int64, 0–832k) | id (float64, 2.49B–32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7–112) | repo_url (string, length 36–141) | action (string, 3 classes) | title (string, length 1–744) | labels (string, length 4–574) | body (string, length 9–211k) | index (string, 10 classes) | text_combine (string, length 96–211k) | label (string, 2 classes) | text (string, length 96–188k) | binary_label (int64, 0–1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

5,301 | 8,121,837,688 | IssuesEvent | 2018-08-16 09:30:06 | openvstorage/framework | https://api.github.com/repos/openvstorage/framework | closed | why adding vpool can choose multiple IPs | process_wontfix | When I add or extend a vpool , I find both public ip and storage ip show up in the list widget `storage ip`. This means I can choose either ip.

However, I selected only one specific ip for storage during asd manager setup. What's the sense for vpool to use other ips? | 1.0 | why adding vpool can choose multiple IPs - When I add or extend a vpool , I find both public ip and storage ip show up in the list widget `storage ip`. This means I can choose either ip.

However, I selected only one specific ip for storage during asd manager setup. What's the sense for vpool to use other ips? | process | why adding vpool can choose multiple ips when i add or extend a vpool i find both public ip and storage ip show up in the list widget storage ip this means i can choose either ip however i selected only one specific ip for storage during asd manager setup what s the sense for vpool to use other ips | 1 |

49,876 | 13,187,284,038 | IssuesEvent | 2020-08-13 02:55:35 | icecube-trac/tix3 | https://api.github.com/repos/icecube-trac/tix3 | opened | [BadDomList] uses explicit string numbers (Trac #2209) | Incomplete Migration Migrated from Trac combo core defect | <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/ticket/2209">https://code.icecube.wisc.edu/ticket/2209</a>, reported by kjmeagher and owned by andrii.terliuk</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2019-02-13T14:15:23",

"description": "r165011/IceCube ... | 1.0 | [BadDomList] uses explicit string numbers (Trac #2209) - <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/ticket/2209">https://code.icecube.wisc.edu/ticket/2209</a>, reported by kjmeagher and owned by andrii.terliuk</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "20... | non_process | uses explicit string numbers trac migrated from json status closed changetime description icecube introduced an option which excludes doms with string number this is highly discouraged please use omtype or similar to identify new unneeded doms ... | 0 |

8,027 | 11,209,171,600 | IssuesEvent | 2020-01-06 09:51:39 | inasafe/inasafe-realtime | https://api.github.com/repos/inasafe/inasafe-realtime | closed | We need a way to test that Realtime is running | enhancement feature request orchestration ready realtime processor | Problem

It is possible for InaSAFE Realtime to stop working and for no one to notice.

Today - the last Realtime event is dated 13th July, yet there have been 17 earthquakes in Indonesia since that date, 5 of which are greater than 5.0

We seem to be unaware of system failures unless a person looks at it.

InAWARE p... | 1.0 | We need a way to test that Realtime is running - Problem

It is possible for InaSAFE Realtime to stop working and for no one to notice.

Today - the last Realtime event is dated 13th July, yet there have been 17 earthquakes in Indonesia since that date, 5 of which are greater than 5.0

We seem to be unaware of system... | process | we need a way to test that realtime is running problem it is possible for inasafe realtime to stop working and for no one to notice today the last realtime event is dated july yet there have been earthquakes in indonesia since that date of which are greater than we seem to be unaware of system fai... | 1 |

11,799 | 14,624,203,122 | IssuesEvent | 2020-12-23 05:43:26 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | Cannot convert the value of type "Microsoft.Azure.Commands.Profile.Models.PSAzureSubscription" to type "Microsoft.Azure.Commands.Common.Authentication.Abstractions.Core.IAzureContextContainer". | automation/svc cxp process-automation/subsvc product-issue triaged |

$AzureContext = Get-AzSubscription -SubscriptionId $ServicePrincipalConnection.SubscriptionID

returns error when using in Start-AzAutomationRunbook

Start-AzAutomationRunbook : Cannot bind parameter 'DefaultProfile'. Cannot convert the

"XXXXXXX-XXXX-XXXX-XXXX-XXXXXXXXXXXX" value of type "Microsoft.Azure.Comman... | 1.0 | Cannot convert the value of type "Microsoft.Azure.Commands.Profile.Models.PSAzureSubscription" to type "Microsoft.Azure.Commands.Common.Authentication.Abstractions.Core.IAzureContextContainer". -

$AzureContext = Get-AzSubscription -SubscriptionId $ServicePrincipalConnection.SubscriptionID

returns error when using... | process | cannot convert the value of type microsoft azure commands profile models psazuresubscription to type microsoft azure commands common authentication abstractions core iazurecontextcontainer azurecontext get azsubscription subscriptionid serviceprincipalconnection subscriptionid returns error when using... | 1 |

1,840 | 4,646,971,567 | IssuesEvent | 2016-10-01 06:54:30 | nodejs/node | https://api.github.com/repos/nodejs/node | opened | Investigate flaky parallel/test-tick-processor-unknown | process test tools | * **Version**: master

* **Platform**: smartos, windows

* **Subsystem**: process

I've recently started seeing `test-tick-processor-unknown` failures on `smartos14-32` and various Windows configurations in CI.

Most are merely timeouts, but I did see this instance on smartos that resulted in a [different result](h... | 1.0 | Investigate flaky parallel/test-tick-processor-unknown - * **Version**: master

* **Platform**: smartos, windows

* **Subsystem**: process

I've recently started seeing `test-tick-processor-unknown` failures on `smartos14-32` and various Windows configurations in CI.

Most are merely timeouts, but I did see this in... | process | investigate flaky parallel test tick processor unknown version master platform smartos windows subsystem process i ve recently started seeing test tick processor unknown failures on and various windows configurations in ci most are merely timeouts but i did see this instance on... | 1 |

18,899 | 24,837,755,027 | IssuesEvent | 2022-10-26 10:11:20 | hoprnet/hoprnet | https://api.github.com/repos/hoprnet/hoprnet | closed | Prevent PRs from merging if target branch is broken | devops processes | We would like to make it harder to merge PRs to a branch, particularly master and release branches, when these are broken in the sense of previous CI workflow having failed.

It should be somehow shown in the PR merge UI that a merge is not advised. Obviously we still need to be able to merge PRs in order to fix the ... | 1.0 | Prevent PRs from merging if target branch is broken - We would like to make it harder to merge PRs to a branch, particularly master and release branches, when these are broken in the sense of previous CI workflow having failed.

It should be somehow shown in the PR merge UI that a merge is not advised. Obviously we s... | process | prevent prs from merging if target branch is broken we would like to make it harder to merge prs to a branch particularly master and release branches when these are broken in the sense of previous ci workflow having failed it should be somehow shown in the pr merge ui that a merge is not advised obviously we s... | 1 |

17,498 | 23,305,508,106 | IssuesEvent | 2022-08-07 23:50:05 | lynnandtonic/nestflix.fun | https://api.github.com/repos/lynnandtonic/nestflix.fun | closed | Add Mirror, Father Mirror | suggested title in process | Please add as much of the following info as you can:

Title: The Flower that Drank the Moon

Type (film/tv show): Film

Film or show in which it appears: Ghost World (2001)

Is the parent film/show streaming anywhere?

Yes (Pluto, Hulu, Amazon Prime, Vudu, among others).

About when in the parent film/show does... | 1.0 | Add Mirror, Father Mirror - Please add as much of the following info as you can:

Title: The Flower that Drank the Moon

Type (film/tv show): Film

Film or show in which it appears: Ghost World (2001)

Is the parent film/show streaming anywhere?

Yes (Pluto, Hulu, Amazon Prime, Vudu, among others).

About when ... | process | add mirror father mirror please add as much of the following info as you can title the flower that drank the moon type film tv show film film or show in which it appears ghost world is the parent film show streaming anywhere yes pluto hulu amazon prime vudu among others about when in ... | 1 |

21,529 | 3,901,454,457 | IssuesEvent | 2016-04-18 10:55:37 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | opened | stress: failed test in cockroach/rpc/rpc.test: TestOffsetMeasurement | Robot test-failure | Binary: cockroach/static-tests.tar.gz sha: https://github.com/cockroachdb/cockroach/commits/0958303ee7be3dd3fe7281c7008f520c53c8ebbd

Stress build found a failed test:

```

=== RUN TestOffsetMeasurement

SIGABRT: abort

PC=0x4612c9 m=0

goroutine 0 [idle]:

runtime.epollwait(0x7fff00000004, 0x7fff370baed8, 0xffffffff000... | 1.0 | stress: failed test in cockroach/rpc/rpc.test: TestOffsetMeasurement - Binary: cockroach/static-tests.tar.gz sha: https://github.com/cockroachdb/cockroach/commits/0958303ee7be3dd3fe7281c7008f520c53c8ebbd

Stress build found a failed test:

```

=== RUN TestOffsetMeasurement

SIGABRT: abort

PC=0x4612c9 m=0

goroutine 0 ... | non_process | stress failed test in cockroach rpc rpc test testoffsetmeasurement binary cockroach static tests tar gz sha stress build found a failed test run testoffsetmeasurement sigabrt abort pc m goroutine runtime epollwait usr local go src runtime sys linux ... | 0 |

337,052 | 30,236,605,510 | IssuesEvent | 2023-07-06 10:39:32 | keycloak/keycloak | https://api.github.com/repos/keycloak/keycloak | closed | UserSessionProviderModelTest#testRemoteCachesParallel sessions are not removed after the test | area/testsuite kind/bug area/storage team/store team/continuous-testing | ### Before reporting an issue

- [X] I have searched existing issues

- [X] I have reproduced the issue with the latest release

### Area

testsuite

### Describe the bug

UserSessionProviderModelTest#testRemoteCachesParallel creates sessions without the realm directly in the cache, meaning they are not cleaned after th... | 2.0 | UserSessionProviderModelTest#testRemoteCachesParallel sessions are not removed after the test - ### Before reporting an issue

- [X] I have searched existing issues

- [X] I have reproduced the issue with the latest release

### Area

testsuite

### Describe the bug

UserSessionProviderModelTest#testRemoteCachesParallel... | non_process | usersessionprovidermodeltest testremotecachesparallel sessions are not removed after the test before reporting an issue i have searched existing issues i have reproduced the issue with the latest release area testsuite describe the bug usersessionprovidermodeltest testremotecachesparallel cre... | 0 |

721,144 | 24,819,526,032 | IssuesEvent | 2022-10-25 15:23:37 | AY2223S1-CS2103T-T11-2/tp | https://api.github.com/repos/AY2223S1-CS2103T-T11-2/tp | closed | Add Tests for Task and Status | enhancement Priority.High | The tests we currently have do not cover ```Task``` and ```Status``` effectively. Let's improve the testing by adding more tests for ```Task``` and ```Status```. | 1.0 | Add Tests for Task and Status - The tests we currently have do not cover ```Task``` and ```Status``` effectively. Let's improve the testing by adding more tests for ```Task``` and ```Status```. | non_process | add tests for task and status the tests we currently have do not cover task and status effectively let s improve the testing by adding more tests for task and status | 0 |

23,897 | 7,431,133,138 | IssuesEvent | 2018-03-25 11:40:50 | magicDGS/jsr203-http | https://api.github.com/repos/magicDGS/jsr203-http | closed | Add CI automatic testing for windows | DevOps build | Using [Circle-CI](https://circleci.com/), where if I remember correctly I have a free account for public projects. | 1.0 | Add CI automatic testing for windows - Using [Circle-CI](https://circleci.com/), where if I remember correctly I have a free account for public projects. | non_process | add ci automatic testing for windows using where if i remember correctly i have a free account for public projects | 0 |

27,149 | 27,753,083,817 | IssuesEvent | 2023-03-15 22:43:16 | matomo-org/matomo | https://api.github.com/repos/matomo-org/matomo | opened | Fix inconsistencies across dropdown elements | Enhancement c: Usability To Triage | Context: Through community feedback we've heard that the UI of Matomo has room for improvement. We are therefore looking at improvements (small and large) that can have an impact on making the platform easier to use, particularly for new users who are struggling the most.

Problem: Dropdown elements have visual incon... | True | Fix inconsistencies across dropdown elements - Context: Through community feedback we've heard that the UI of Matomo has room for improvement. We are therefore looking at improvements (small and large) that can have an impact on making the platform easier to use, particularly for new users who are struggling the most.

... | non_process | fix inconsistencies across dropdown elements context through community feedback we ve heard that the ui of matomo has room for improvement we are therefore looking at improvements small and large that can have an impact on making the platform easier to use particularly for new users who are struggling the most ... | 0 |

226,392 | 18,015,661,514 | IssuesEvent | 2021-09-16 13:40:42 | nodejs/node-addon-api | https://api.github.com/repos/nodejs/node-addon-api | closed | Methods without corresponding tests | test good first issue stale | Need to document then list of methods that still need tests. | 1.0 | Methods without corresponding tests - Need to document then list of methods that still need tests. | non_process | methods without corresponding tests need to document then list of methods that still need tests | 0 |

407,808 | 11,937,435,159 | IssuesEvent | 2020-04-02 12:10:43 | googleapis/nodejs-translate | https://api.github.com/repos/googleapis/nodejs-translate | closed | Synthesis failed for nodejs-translate | api: translation autosynth failure priority: p1 type: bug | Hello! Autosynth couldn't regenerate nodejs-translate. :broken_heart:

Here's the output from running `synth.py`:

```

Cloning into 'working_repo'...

Switched to a new branch 'autosynth'

Traceback (most recent call last):

File "/home/kbuilder/.pyenv/versions/3.6.9/lib/python3.6/runpy.py", line 193, in _run_module_as_... | 1.0 | Synthesis failed for nodejs-translate - Hello! Autosynth couldn't regenerate nodejs-translate. :broken_heart:

Here's the output from running `synth.py`:

```

Cloning into 'working_repo'...

Switched to a new branch 'autosynth'

Traceback (most recent call last):

File "/home/kbuilder/.pyenv/versions/3.6.9/lib/python3.6... | non_process | synthesis failed for nodejs translate hello autosynth couldn t regenerate nodejs translate broken heart here s the output from running synth py cloning into working repo switched to a new branch autosynth traceback most recent call last file home kbuilder pyenv versions lib runpy... | 0 |

15,888 | 20,075,035,674 | IssuesEvent | 2022-02-04 11:43:30 | climatepolicyradar/navigator | https://api.github.com/repos/climatepolicyradar/navigator | opened | Extract text passages using heuristic rules when adding new document | Document processing | When adding a document to the system, in either the bulk load from CCLW or when adding individual documents, the system should extract the text from that document.

The text should be extracted using a baseline method using heuristic rules to identify words, sentences and paragraphs.

Extracted text passages should be ... | 1.0 | Extract text passages using heuristic rules when adding new document - When adding a document to the system, in either the bulk load from CCLW or when adding individual documents, the system should extract the text from that document.

The text should be extracted using a baseline method using heuristic rules to identi... | process | extract text passages using heuristic rules when adding new document when adding a document to the system in either the bulk load from cclw or when adding individual documents the system should extract the text from that document the text should be extracted using a baseline method using heuristic rules to identi... | 1 |

371,885 | 10,987,019,577 | IssuesEvent | 2019-12-02 08:16:53 | yilmazvolkan/purposefulCommunityPlatform | https://api.github.com/repos/yilmazvolkan/purposefulCommunityPlatform | opened | Community Screen | Effort: High Mobile Priority: High Status: In Progress | 1. Create join to community screen

1. Create my communities feed screen

1. New functionalities for each user role | 1.0 | Community Screen - 1. Create join to community screen

1. Create my communities feed screen

1. New functionalities for each user role | non_process | community screen create join to community screen create my communities feed screen new functionalities for each user role | 0 |

324,631 | 9,906,624,793 | IssuesEvent | 2019-06-27 14:13:37 | python/mypy | https://api.github.com/repos/python/mypy | closed | Tuple slice by variable results in incorrect type | bug priority-1-normal | ```python

from typing import Tuple

sz = 2

x: Tuple[int, ...] = (1, 2)[:sz]

```

```console

$ mypy --version

mypy 0.711

$ mypy t.py

mt.py:4: error: Incompatible types in assignment (expression has type "int", variable has type "Tuple[int, ...]")

``` | 1.0 | Tuple slice by variable results in incorrect type - ```python

from typing import Tuple

sz = 2

x: Tuple[int, ...] = (1, 2)[:sz]

```

```console

$ mypy --version

mypy 0.711

$ mypy t.py

mt.py:4: error: Incompatible types in assignment (expression has type "int", variable has type "Tuple[int, ...]")

``` | non_process | tuple slice by variable results in incorrect type python from typing import tuple sz x tuple console mypy version mypy mypy t py mt py error incompatible types in assignment expression has type int variable has type tuple | 0 |

11 | 2,497,881,994 | IssuesEvent | 2015-01-07 11:59:09 | kendrainitiative/kendra_hub | https://api.github.com/repos/kendrainitiative/kendra_hub | opened | Kendra Social interaction | Architecture High priority | We have talked about integration with social media but I think the fist stage should be enabling discoverability of users within the system.

We currently have a Contacts page that lists legal entities and we have enabled user to user communications.

We should consider replacing the Legal entities listing (demo) with ... | 1.0 | Kendra Social interaction - We have talked about integration with social media but I think the fist stage should be enabling discoverability of users within the system.

We currently have a Contacts page that lists legal entities and we have enabled user to user communications.

We should consider replacing the Legal ... | non_process | kendra social interaction we have talked about integration with social media but i think the fist stage should be enabling discoverability of users within the system we currently have a contacts page that lists legal entities and we have enabled user to user communications we should consider replacing the legal ... | 0 |

16,677 | 21,780,783,796 | IssuesEvent | 2022-05-13 18:37:41 | carbon-design-system/ibm-cloud-cognitive | https://api.github.com/repos/carbon-design-system/ibm-cloud-cognitive | closed | Change frequency of dependency updates | dependencies type: process improvement | ## What will this achieve?

Currently our dependencies are automatically updated via a PR opened by a github action every week. To help with everyone's overall workload currently, we should decrease the frequency of these updates.

<!--

e.g.

- bug fix

- unit testing

- review

- enhancement

- component implem... | 1.0 | Change frequency of dependency updates - ## What will this achieve?

Currently our dependencies are automatically updated via a PR opened by a github action every week. To help with everyone's overall workload currently, we should decrease the frequency of these updates.

<!--

e.g.

- bug fix

- unit testing

- ... | process | change frequency of dependency updates what will this achieve currently our dependencies are automatically updated via a pr opened by a github action every week to help with everyone s overall workload currently we should decrease the frequency of these updates e g bug fix unit testing ... | 1 |

20,694 | 27,367,118,116 | IssuesEvent | 2023-02-27 20:07:02 | bcgov/upptime | https://api.github.com/repos/bcgov/upptime | opened | CronJob prod-dh-hold-processor-cj is stuck | status-switch status prod-dh-hold-processor-cj | Cron job is taking longer than 10 minutes to complete | 1.0 | CronJob prod-dh-hold-processor-cj is stuck - Cron job is taking longer than 10 minutes to complete | process | cronjob prod dh hold processor cj is stuck cron job is taking longer than minutes to complete | 1 |

16,965 | 22,329,826,850 | IssuesEvent | 2022-06-14 13:43:37 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | ProcessStartInfo wraps quotes arround my `--option=` | area-System.Diagnostics.Process untriaged | ### Description

I want to use `ArgumentList.Add` to safely add arguments in a secure way but it wraps double quotes around the entire string even when it is an key–value pair option.

### Reproduction Steps

```cs

using System.Diagnostics;

var info = new ProcessStartInfo("cmd");

info.UseShellExecute = false... | 1.0 | ProcessStartInfo wraps quotes arround my `--option=` - ### Description

I want to use `ArgumentList.Add` to safely add arguments in a secure way but it wraps double quotes around the entire string even when it is an key–value pair option.

### Reproduction Steps

```cs

using System.Diagnostics;

var info = new... | process | processstartinfo wraps quotes arround my option description i want to use argumentlist add to safely add arguments in a secure way but it wraps double quotes around the entire string even when it is an key–value pair option reproduction steps cs using system diagnostics var info new... | 1 |

745,772 | 26,000,154,118 | IssuesEvent | 2022-12-20 14:44:35 | keycloak/keycloak | https://api.github.com/repos/keycloak/keycloak | opened | [CVE-2022-41881] Denial of Service (DoS) vulnerability in io.netty:netty-codec-haproxy | priority/important area/dependencies status/not-vulnerable kind/cve | ### Description

### Detailed paths

- _Introduced through_: org.keycloak:keycloak-quarkus-server-app@999-SNAPSHOT › org.keycloak:keycloak-quarkus-server@999-SNAPSHOT › io.quarkus:[quarkus-vertx@2.13.5.Final](mailto:quarkus-vertx@2.13.5.Final) › io.netty:[netty-codec-haproxy@4.1.82.Final](mailto:netty-codec-haproxy... | 1.0 | [CVE-2022-41881] Denial of Service (DoS) vulnerability in io.netty:netty-codec-haproxy - ### Description

### Detailed paths

- _Introduced through_: org.keycloak:keycloak-quarkus-server-app@999-SNAPSHOT › org.keycloak:keycloak-quarkus-server@999-SNAPSHOT › io.quarkus:[quarkus-vertx@2.13.5.Final](mailto:quarkus-ver... | non_process | denial of service dos vulnerability in io netty netty codec haproxy description detailed paths introduced through org keycloak keycloak quarkus server app snapshot › org keycloak keycloak quarkus server snapshot › io quarkus mailto quarkus vertx final › io netty mailto netty code... | 0 |

6,756 | 9,882,561,376 | IssuesEvent | 2019-06-24 17:10:20 | gunn4r/material-ui-advanced-table | https://api.github.com/repos/gunn4r/material-ui-advanced-table | opened | Switch storybook webpack to use babel-loader for TS compilation | development process | Also make sure HMR and such is working. | 1.0 | Switch storybook webpack to use babel-loader for TS compilation - Also make sure HMR and such is working. | process | switch storybook webpack to use babel loader for ts compilation also make sure hmr and such is working | 1 |

20,971 | 27,819,515,451 | IssuesEvent | 2023-03-19 03:24:54 | cse442-at-ub/project_s23-cinco | https://api.github.com/repos/cse442-at-ub/project_s23-cinco | closed | Implement login and register form using the database on my local demo app | IO Task Processing Task Sprint 2 | Test

VPN into UB servers. Go to "Connect to database" issue to learn more.

Start up Apache web server, make sure the two php files login.php and register.php are in your htdocs folder.

Execute "npm start". "sudo npm start" if you have insufficent permissions.

Enter this URL http://localhost:3000.

Verify you can see a l... | 1.0 | Implement login and register form using the database on my local demo app - Test

VPN into UB servers. Go to "Connect to database" issue to learn more.

Start up Apache web server, make sure the two php files login.php and register.php are in your htdocs folder.

Execute "npm start". "sudo npm start" if you have insuffice... | process | implement login and register form using the database on my local demo app test vpn into ub servers go to connect to database issue to learn more start up apache web server make sure the two php files login php and register php are in your htdocs folder execute npm start sudo npm start if you have insuffice... | 1 |

6,548 | 9,637,599,586 | IssuesEvent | 2019-05-16 09:10:27 | zephyrproject-rtos/zephyr | https://api.github.com/repos/zephyrproject-rtos/zephyr | closed | GH labels reorg | area: Process enhancement | Following https://github.com/zephyrproject-rtos/zephyr/pull/15054 (Assuming it is merged),

this issue aims at settling other fixes for the labels which were not trivially agreed yet.

I list each proposal in a separate point below, if you agree just give it a thumbs-up, if you disagree a down (and create a separate co... | 1.0 | GH labels reorg - Following https://github.com/zephyrproject-rtos/zephyr/pull/15054 (Assuming it is merged),

this issue aims at settling other fixes for the labels which were not trivially agreed yet.

I list each proposal in a separate point below, if you agree just give it a thumbs-up, if you disagree a down (and cr... | process | gh labels reorg following assuming it is merged this issue aims at settling other fixes for the labels which were not trivially agreed yet i list each proposal in a separate point below if you agree just give it a thumbs up if you disagree a down and create a separate comment for it maybe we can handle t... | 1 |

33,678 | 2,770,905,638 | IssuesEvent | 2015-05-01 17:52:18 | GoogleCloudPlatform/kubernetes | https://api.github.com/repos/GoogleCloudPlatform/kubernetes | closed | Add support for new Docker 1.6 cgroup parent flag | area/isolation cluster/platform/mesos priority/P3 team/node | Docker 1.6 allows setting the parent cgroup which allows us to set the parent for pods, see #5671 | 1.0 | Add support for new Docker 1.6 cgroup parent flag - Docker 1.6 allows setting the parent cgroup which allows us to set the parent for pods, see #5671 | non_process | add support for new docker cgroup parent flag docker allows setting the parent cgroup which allows us to set the parent for pods see | 0 |

240,212 | 26,254,337,749 | IssuesEvent | 2023-01-05 22:33:48 | MValle21/Intelehealth-WebApp | https://api.github.com/repos/MValle21/Intelehealth-WebApp | opened | CVE-2021-23364 (Medium) detected in browserslist-4.14.0.tgz | security vulnerability | ## CVE-2021-23364 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>browserslist-4.14.0.tgz</b></p></summary>

<p>Share target browsers between different front-end tools, like Autoprefi... | True | CVE-2021-23364 (Medium) detected in browserslist-4.14.0.tgz - ## CVE-2021-23364 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>browserslist-4.14.0.tgz</b></p></summary>

<p>Share tar... | non_process | cve medium detected in browserslist tgz cve medium severity vulnerability vulnerable library browserslist tgz share target browsers between different front end tools like autoprefixer stylelint and babel env preset library home page a href path to dependency file pac... | 0 |

84,457 | 3,665,076,143 | IssuesEvent | 2016-02-19 14:45:18 | e-government-ua/iTest | https://api.github.com/repos/e-government-ua/iTest | closed | Fix errors in the tests | priority - High | https://jenkins-new.igov.org.ua/job/_iTest/241/

Fixes are needed; mainly this means replacing the full name and the elements

Full name replacement: previously it was Дмитро Олександрович Дубілет, now it is Володимир Володимирович Білявцев

fix the locators in the page object | 1.0 | Fix errors in the tests - https://jenkins-new.igov.org.ua/job/_iTest/241/

Fixes are needed; mainly this means replacing the full name and the elements

Full name replacement: previously it was Дмитро Олександрович Дубілет, now it is Володимир Володимирович Білявцев

fix the locators in the page object | non_process | fix errors in the tests fixes are needed mainly this means replacing the full name and the elements full name replacement previously it was дмитро олександрович дубілет now it is володимир володимирович білявцев fix the locators in the page object | 0 |

19,031 | 25,040,926,319 | IssuesEvent | 2022-11-04 20:40:20 | bazelbuild/bazel | https://api.github.com/repos/bazelbuild/bazel | opened | Release 5.4.0 - November 2022 | P1 type: process release team-OSS | # Status of Bazel 5.4.0

- Expected release date: Next week

- [List of release blockers](https://github.com/bazelbuild/bazel/milestone/45)

To report a release-blocking bug, please add a comment with the text `@bazel-io flag` to the issue. A release manager will triage it and add it to the milestone.

To che... | 1.0 | Release 5.4.0 - November 2022 - # Status of Bazel 5.4.0

- Expected release date: Next week

- [List of release blockers](https://github.com/bazelbuild/bazel/milestone/45)

To report a release-blocking bug, please add a comment with the text `@bazel-io flag` to the issue. A release manager will triage it and ad... | process | release november status of bazel expected release date next week to report a release blocking bug please add a comment with the text bazel io flag to the issue a release manager will triage it and add it to the milestone to cherry pick a mainline commit into simply ... | 1 |

350,052 | 10,477,909,117 | IssuesEvent | 2019-09-23 22:08:07 | spacetx/starfish | https://api.github.com/repos/spacetx/starfish | closed | Cannot do ImageStack.{transform,apply} after ImageStack.{sel,isel} | bug high priority | The resulting ImageStack takes a reference to the backing MPDataArray. When we pass the MPDataArray to the child processes, we reshape the raw byte buffer to the shape of the resulting ImageStack. However, the MPDataArray is sized to reflect the original ImageStack's size, and so the reshape operation fails. | 1.0 | Cannot do ImageStack.{transform,apply} after ImageStack.{sel,isel} - The resulting ImageStack takes a reference to the backing MPDataArray. When we pass the MPDataArray to the child processes, we reshape the raw byte buffer to the shape of the resulting ImageStack. However, the MPDataArray is sized to reflect the ori... | non_process | cannot do imagestack transform apply after imagestack sel isel the resulting imagestack takes a reference to the backing mpdataarray when we pass the mpdataarray to the child processes we reshape the raw byte buffer to the shape of the resulting imagestack however the mpdataarray is sized to reflect the ori... | 0 |

36,500 | 5,065,078,758 | IssuesEvent | 2016-12-23 10:19:23 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | github.com/cockroachdb/cockroach/pkg/storage: TestTransferRaftLeadership failed under stress | Robot test-failure | SHA: https://github.com/cockroachdb/cockroach/commits/eee8e77c21ceeb4f62b1e59d13caeb467bd2d9df

Parameters:

```

COCKROACH_PROPOSER_EVALUATED_KV=false

TAGS=

GOFLAGS=-race

```

Stress build found a failed test: https://teamcity.cockroachdb.com/viewLog.html?buildId=99098&tab=buildLog

```

I161223 09:50:10.598200 27883 sto... | 1.0 | github.com/cockroachdb/cockroach/pkg/storage: TestTransferRaftLeadership failed under stress - SHA: https://github.com/cockroachdb/cockroach/commits/eee8e77c21ceeb4f62b1e59d13caeb467bd2d9df

Parameters:

```

COCKROACH_PROPOSER_EVALUATED_KV=false

TAGS=

GOFLAGS=-race

```

Stress build found a failed test: https://teamcity... | non_process | github com cockroachdb cockroach pkg storage testtransferraftleadership failed under stress sha parameters cockroach proposer evaluated kv false tags goflags race stress build found a failed test storage store go failed initial metrics computation system config not yet a... | 0 |

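96,470 | 10,933,628,627 | IssuesEvent | 2019-11-24 04:00:33 | bigblueberry/SomethingLikeCAS | https://api.github.com/repos/bigblueberry/SomethingLikeCAS | closed | Documentation, code refactoring | Refactoring documentation help wanted | 1. The logic is (inevitably) complex. Someone as brilliantly talented as me can grasp it quickly, but the code is not intuitively beautiful and is not convenient to write or debug.

2. So the code needs to be refactored quickly into something beautiful while preserving the logic.

3. And for the sake of project extensibility, the logic and the main classes need to be documented. | 1.0 | Documentation, code refactoring - 1. The logic is (inevitably) complex. Someone as brilliantly talented as me can grasp it quickly, but the code is not intuitively beautiful and is not convenient to write or debug.

2. So the code needs to be refactored quickly into something beautiful while preserving the logic.

3. And for the sake of project extensibility, the logic and the main classes need to be documented. | non_process | documentation code refactoring the logic is inevitably complex someone as brilliantly talented as me can grasp it quickly but the code is not intuitively beautiful and is not convenient to write or debug so the code needs to be refactored quickly into something beautiful while preserving the logic and for the sake of project extensibility the logic and the main classes need to be documented | 0 |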

100,327 | 30,673,440,430 | IssuesEvent | 2023-07-26 01:48:37 | microsoft/msquic | https://api.github.com/repos/microsoft/msquic | closed | QUIC tools are compiled with RUNPATH set to an absolute path | Area: Build OS: Ubuntu | ### Describe the bug

RUNPATH is set to ` /home/runner/work/msquic/msquic/artifacts/bin/linux/x64_Debug_openssl`, which means the tools will search for libmsquic in ` /home/runner/work/msquic/msquic/artifacts/bin/linux/x64_Debug_openssl`.

```

jedihy@yi-devbox:~/good-linux/bin/linux/x64_Debug_openssl$ objdump -p s... | 1.0 | QUIC tools are compiled with RUNPATH set to an absolute path - ### Describe the bug

RUNPATH is set to ` /home/runner/work/msquic/msquic/artifacts/bin/linux/x64_Debug_openssl`, which means the tools will search for libmsquic in ` /home/runner/work/msquic/msquic/artifacts/bin/linux/x64_Debug_openssl`.

```

jedihy@y... | non_process | quic tools are compiled with runpath set to an absolute path describe the bug runpath is set to home runner work msquic msquic artifacts bin linux debug openssl which means the tools will search for libmsquic in home runner work msquic msquic artifacts bin linux debug openssl jedihy yi de... | 0 |

174,475 | 14,483,851,358 | IssuesEvent | 2020-12-10 15:38:31 | AlinTudi98/webCrawler | https://api.github.com/repos/AlinTudi98/webCrawler | closed | PageCrawler class documentation | documentation | Document the **PageCrawler** class and the members and methods it contains. | 1.0 | PageCrawler class documentation - Document the **PageCrawler** class and the members and methods it contains. | non_process | pagecrawler class documentation document the pagecrawler class and the members and methods it contains | 0 |

18,143 | 24,186,573,391 | IssuesEvent | 2022-09-23 13:47:27 | cloudfoundry/korifi | https://api.github.com/repos/cloudfoundry/korifi | closed | [Feature]: Developer can push apps using the top-level `command` field in the manifest | Top-level process config | ### Background

**As a** developer

**I want** top-level process configuration in manifests to be supported

**So that** I can use shortcut `cf push` flags like `-c`, `-i`, `-m` etc.

### Acceptance Criteria

* **GIVEN** I have the sources of an application (e.g. `tests/smoke/assets/test-node-app`)

**AND** `ma... | 1.0 | [Feature]: Developer can push apps using the top-level `command` field in the manifest - ### Background

**As a** developer

**I want** top-level process configuration in manifests to be supported

**So that** I can use shortcut `cf push` flags like `-c`, `-i`, `-m` etc.

### Acceptance Criteria

* **GIVEN** I ha... | process | developer can push apps using the top level command field in the manifest background as a developer i want top level process configuration in manifests to be supported so that i can use shortcut cf push flags like c i m etc acceptance criteria given i have the s... | 1 |

102,546 | 8,848,442,071 | IssuesEvent | 2019-01-08 07:00:35 | vavr-io/vavr | https://api.github.com/repos/vavr-io/vavr | closed | Shrinking in PropertyCheck: find a minimal counter example | feature «vavr-test» | If a property is falsified with a counter example of a specific size then branch in a subroutine, which tries to find a minimum counter example.

This can be accomplished by subsequently run the test with sizes from 0 (or 1) to the actual size.

I did this manually for finding a RedBlackTree bug. It would be helpful, i... | 1.0 | Shrinking in PropertyCheck: find a minimal counter example - If a property is falsified with a counter example of a specific size then branch in a subroutine, which tries to find a minimum counter example.

This can be accomplished by subsequently run the test with sizes from 0 (or 1) to the actual size.

I did this ma... | non_process | shrinking in propertycheck find a minimal counter example if a property is falsified with a counter example of a specific size then branch in a subroutine which tries to find a minimum counter example this can be accomplished by subsequently run the test with sizes from or to the actual size i did this ma... | 0 |
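
The idea in the vavr row above (re-running a falsified property at sizes from 0 up to the failing size) can be sketched in a few lines. This is a minimal illustration only, not vavr-test's implementation: the function name `find_smallest_failing_size`, the integer-list generator, and the toy property are all hypothetical, and because the samples are random the result is the smallest failing size that was found, not a proven minimum.

```python
import random
from typing import Callable, List, Optional, Tuple


def find_smallest_failing_size(
    prop: Callable[[List[int]], bool],
    failing_size: int,
    tries_per_size: int = 100,
    seed: int = 0,
) -> Optional[Tuple[int, List[int]]]:
    """Re-run `prop` at sizes 0..failing_size and return the first failure.

    Hypothetical helper: inputs are random integer lists of the given size.
    Returns (size, sample) for the first falsifying sample, or None.
    """
    rng = random.Random(seed)  # fixed seed keeps the search reproducible
    for size in range(failing_size + 1):
        for _ in range(tries_per_size):
            sample = [rng.randint(-10, 10) for _ in range(size)]
            if not prop(sample):
                return size, sample
    return None


def shorter_than_three(xs: List[int]) -> bool:
    """Toy property (an assumption for the demo): false for lists of length >= 3."""
    return len(xs) < 3


# The property was "originally" falsified at size 10; search upward from 0.
result = find_smallest_failing_size(shorter_than_three, failing_size=10)
```

Searching upward from size 0 keeps the sketch simple; a real shrinker would more likely shrink the failing value itself instead of resampling.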

8,188 | 11,386,902,519 | IssuesEvent | 2020-01-29 14:08:20 | googleapis/google-cloud-cpp-common | https://api.github.com/repos/googleapis/google-cloud-cpp-common | closed | CI builds are slow due to long Docker image build times | type: process | I've noticed a number of CI builds taking an abnormally long time to run... usual had been ~20 minutes but occasionally builds are taking almost an hour to complete.

I looked at logs from some of these and the bulk of the time (even in "normal" ~20 minute builds) appears to be building a Docker image. These should ... | 1.0 | CI builds are slow due to long Docker image build times - I've noticed a number of CI builds taking an abnormally long time to run... usual had been ~20 minutes but occasionally builds are taking almost an hour to complete.

I looked at logs from some of these and the bulk of the time (even in "normal" ~20 minute bui... | process | ci builds are slow due to long docker image build times i ve noticed a number of ci builds taking an abnormally long time to run usual had been minutes but occasionally builds are taking almost an hour to complete i looked at logs from some of these and the bulk of the time even in normal minute build... | 1 |

17,384 | 23,201,351,851 | IssuesEvent | 2022-08-01 21:54:23 | celo-org/celo-monorepo | https://api.github.com/repos/celo-org/celo-monorepo | closed | ODIS E2E tests to run in CI | release-process infra-and-monitoring ci Component: ODIS Component: Identity Deprioritised | As an ODIS developer, I should be able to easily identify E2E issues before production deployment

E2E tests will provide us better protection against regression going forward. Need to investigate how best to run this since it will require a deployment. | 1.0 | ODIS E2E tests to run in CI - As an ODIS developer, I should be able to easily identify E2E issues before production deployment

E2E tests will provide us better protection against regression going forward. Need to investigate how best to run this since it will require a deployment. | process | odis tests to run in ci as an odis developer i should be able to easily identify issues before production deployment tests will provide us better protection against regression going forward need to investigate how best to run this since it will require a deployment | 1 |

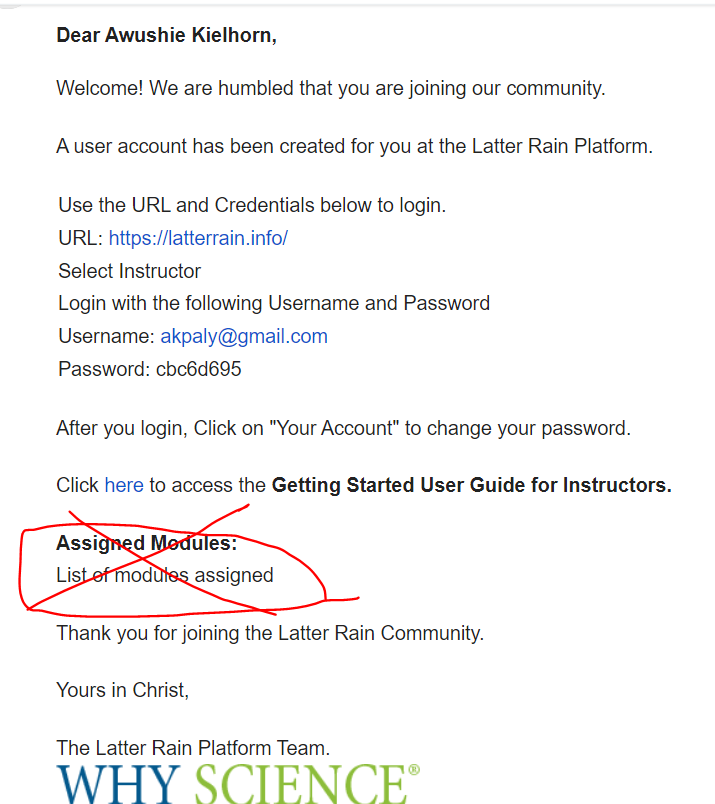

666,417 | 22,354,829,413 | IssuesEvent | 2022-06-15 14:50:30 | why-science/demo | https://api.github.com/repos/why-science/demo | opened | Edit welcome email - Remove info about modules assigned | high priority | Since itadmin@whyscience assigned modules after an account is created modules are no longer automatically assigned. Remove the text circled in the welcome emails for Coach and instructor

| 1.0 | Edit welcome email - Remove info about modules assigned - Since itadmin@whyscience assigned modules after an account is created modules are no longer automatically assigned. Remove the text circled in the welcome emails for Coach and instructor

it freezes the swiper. It will not work anymore. Unless you hide back the legend.

it freezes the swiper. It will not work anymore. Unless you hide back the legend.

detected in multiple libraries | Mend: dependency security vulnerability | ## WS-2023-0196 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>memoffset-0.6.1.crate</b>, <b>memoffset-0.5.4.crate</b>, <b>memoffset-0.5.3.crate</b>, <b>memoffset-0.2.1.crate</b>, ... | True | WS-2023-0196 (Medium) detected in multiple libraries - ## WS-2023-0196 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>memoffset-0.6.1.crate</b>, <b>memoffset-0.5.4.crate</b>, <b>me... | non_process | ws medium detected in multiple libraries ws medium severity vulnerability vulnerable libraries memoffset crate memoffset crate memoffset crate memoffset crate memoffset crate memoffset crate memoffset crate offset of function... | 0 |

7,217 | 10,347,033,702 | IssuesEvent | 2019-09-04 16:27:01 | googleapis/nodejs-bigquery | https://api.github.com/repos/googleapis/nodejs-bigquery | closed | bigquery: confirm federated sheets support range property | type: process | For some time, the BigQuery backend has supported an additional "range" property when defining a federated table against Google Drive (via GoogleSheetsOptions). Please verify this property is available to users of the library. With the next discovery release, the "beta" label for this functionality will be removed.

... | 1.0 | bigquery: confirm federated sheets support range property - For some time, the BigQuery backend has supported an additional "range" property when defining a federated table against Google Drive (via GoogleSheetsOptions). Please verify this property is available to users of the library. With the next discovery release... | process | bigquery confirm federated sheets support range property for some time the bigquery backend has supported an additional range property when defining a federated table against google drive via googlesheetsoptions please verify this property is available to users of the library with the next discovery release... | 1 |

19,355 | 25,489,587,748 | IssuesEvent | 2022-11-26 22:13:43 | OpenAsar/arrpc | https://api.github.com/repos/OpenAsar/arrpc | closed | add process scanning? | enhancement process | undecided on whether to add or not as it isn't really RPC but loosely related (not in `discord_rpc` but in `discord_game_utils` for Discord's own Native modules, for example). would expand the scope quite a bit but might be worth it. **please react/comment if you want!** it would be optional/disableable if added to eli... | 1.0 | add process scanning? - undecided on whether to add or not as it isn't really RPC but loosely related (not in `discord_rpc` but in `discord_game_utils` for Discord's own Native modules, for example). would expand the scope quite a bit but might be worth it. **please react/comment if you want!** it would be optional/dis... | process | add process scanning undecided on whether to add or not as it isn t really rpc but loosely related not in discord rpc but in discord game utils for discord s own native modules for example would expand the scope quite a bit but might be worth it please react comment if you want it would be optional dis... | 1 |

17,155 | 22,716,204,372 | IssuesEvent | 2022-07-06 02:27:13 | quark-engine/quark-engine | https://api.github.com/repos/quark-engine/quark-engine | closed | Update README for the recently released features. | issue-processing-state-06 | Recently, the team added many features to Quark (Rule Viewer, Web Report, and RadioContrast API). To illustrate the power of these features, we documented them in README with examples. However, it also causes some problems with the file.

1. Outdated information. - For example, the command introduced in the Detail Re... | 1.0 | Update README for the recently released features. - Recently, the team added many features to Quark (Rule Viewer, Web Report, and RadioContrast API). To illustrate the power of these features, we documented them in README with examples. However, it also causes some problems with the file.

1. Outdated information. - ... | process | update readme for the recently released features recently the team added many features to quark rule viewer web report and radiocontrast api to illustrate the power of these features we documented them in readme with examples however it also causes some problems with the file outdated information ... | 1 |

80,716 | 15,586,320,047 | IssuesEvent | 2021-03-18 01:40:39 | soumya132/java-code | https://api.github.com/repos/soumya132/java-code | closed | CVE-2018-14718 (High) detected in jackson-databind-2.8.1.jar - autoclosed | security vulnerability | ## CVE-2018-14718 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.8.1.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streamin... | True | CVE-2018-14718 (High) detected in jackson-databind-2.8.1.jar - autoclosed - ## CVE-2018-14718 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.8.1.jar</b></p></summary... | non_process | cve high detected in jackson databind jar autoclosed cve high severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to dependency file tmp ws scm java c... | 0 |

116,872 | 15,024,485,786 | IssuesEvent | 2021-02-01 19:42:18 | filecoin-project/slate | https://api.github.com/repos/filecoin-project/slate | closed | Add autoplay as a configurable option when viewing videos. | Design | There should be a checkbox when you're editing one of your videos, that checkbox should indicate whether or not you want the video to play automatically or wait.

| 1.0 | Add autoplay as a configurable option when viewing videos. - There should be a checkbox when you're editing one of your videos, that checkbox should indicate whether or not you want the video to play automatically or wait.

| non_process | add autoplay as a configurable option when viewing videos there should be a checkbox when you re editing one of your videos that checkbox should indicate whether or not you want the video to play automatically or wait | 0 |

27,479 | 4,056,863,996 | IssuesEvent | 2016-05-24 20:06:28 | pydata/pandas | https://api.github.com/repos/pydata/pandas | closed | Idea: use df.index/df.columns names to automatically choose axis along which to broadcast | API Design Indexing | In writing some math code in pandas, I find it necessary to do things like

df2 = df.sub(ser, axis='columns')

instead of the shorter and more intuitive

df2 = df - ser

in order to control the axis along which the series is broadcast.

I think it would be a big improvement syntactically if pandas wo... | 1.0 | Idea: use df.index/df.columns names to automatically choose axis along which to broadcast - In writing some math code in pandas, I find it necessary to do things like

df2 = df.sub(ser, axis='columns')

instead of the shorter and more intuitive

df2 = df - ser

in order to control the axis along which ... | non_process | idea use df index df columns names to automatically choose axis along which to broadcast in writing some math code in pandas i find it necessary to do things like df sub ser axis columns instead of the shorter and more intuitive df ser in order to control the axis along which the ... | 0 |

339,242 | 30,385,737,606 | IssuesEvent | 2023-07-13 00:24:41 | redhat-developer/odo | https://api.github.com/repos/redhat-developer/odo | closed | Pipeline as a code on IBMCloud for test | area/testing lifecycle/stale lifecycle/rotten area/infra needs-triage | /kind tests

/area infra

As a QE I want to create a script to automate Pipelines on IBM Cloud.

**NOTES**:

- The latest IBM Cloud modules for Ansible now include the IBM Cloud toolchain, with which we could manage our jobs as code instead of through the UI.

See https://github.com/IBM-Cloud/ansible-collection-ibm... | 1.0 | Pipeline as a code on IBMCloud for test - /kind tests

/area infra

As a QE I want to create a script to automate Pipelines on IBM Cloud.

**NOTES**:

- The latest IBM Cloud modules for Ansible now include the IBM Cloud toolchain, with which we could manage our jobs as code instead of through the UI.

See https://g... | non_process | pipeline as a code on ibmcloud for test kind tests area infra as a qe i want to create a script to automate pipelines on ibm cloud notes the latest ibm cloud modules for ansible now include the ibm cloud toolchain with which we could manage our jobs as code instead of through the ui see | 0 |

268,681 | 23,387,989,643 | IssuesEvent | 2022-08-11 15:13:21 | bitcoin/bitcoin | https://api.github.com/repos/bitcoin/bitcoin | closed | test, ci: Use the documented way to test with ThreadSanitizer | Tests | According to the [ThreadSanitizer docs](https://clang.llvm.org/docs/ThreadSanitizer.html#current-status):

> C++11 threading is supported with **llvm libc++**.

To use the ThreadSanitizer in the documented way we should build from depends with `clang++ -stdlib=libc++` (see #18820).

Related to #19024. | 1.0 | test, ci: Use the documented way to test with ThreadSanitizer - According to the [ThreadSanitizer docs](https://clang.llvm.org/docs/ThreadSanitizer.html#current-status):

> C++11 threading is supported with **llvm libc++**.

To use the ThreadSanitizer in the documented way we should build from depends with `clang++ ... | non_process | test ci use the documented way to test with threadsanitizer according to the c threading is supported with llvm libc to use the threadsanitizer in the documented way we should build from depends with clang stdlib libc see related to | 0 |

10,423 | 26,960,366,525 | IssuesEvent | 2023-02-08 17:42:09 | NIEM/NTAC | https://api.github.com/repos/NIEM/NTAC | closed | Combining JSON Schemas | NIEM 6 Architecture | Can we combine JSON schemas? Dr. Scott couldn't, but Christina has had success.

Christina: Need to add the extra stuff like `choice` to the metamodel to support community content.

Dr. Scott: Is there more than just like a sub group?

Christina, Jim Cabral, Mike Hulme all say yes.

Christina: Can add keywords ... | 1.0 | Combining JSON Schemas - Can we combine JSON schemas? Dr. Scott couldn't, but Christina has had success.

Christina: Need to add the extra stuff like `choice` to the metamodel to support community content.

Dr. Scott: Is there more than just like a sub group?

Christina, Jim Cabral, Mike Hulme all say yes.

Chr... | non_process | combining json schemas can we combine json schemas dr scott couldn t but christina has had success christina need to add the extra stuff like choice to the metamodel to support community content dr scott is there more than just like a sub group christina jim cabral mike hulme all say yes chr... | 0 |

299,480 | 22,608,391,468 | IssuesEvent | 2022-06-29 15:01:26 | gefyrahq/gefyra | https://api.github.com/repos/gefyrahq/gefyra | closed | Add guide for docker desktop | documentation | The getting started guide does only partially work for docker desktop since there is no open port or ingress installed. Some docs for that would probably really help. | 1.0 | Add guide for docker desktop - The getting started guide does only partially work for docker desktop since there is no open port or ingress installed. Some docs for that would probably really help. | non_process | add guide for docker desktop the getting started guide does only partially work for docker desktop since there is no open port or ingress installed some docs for that would probably really help | 0 |

350,804 | 31,932,334,427 | IssuesEvent | 2023-09-19 08:17:04 | unifyai/ivy | https://api.github.com/repos/unifyai/ivy | reopened | Fix jax_random.test_jax_poisson | JAX Frontend Sub Task Failing Test | | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/5648283567"><img src=https://img.shields.io/badge/-success-success></a>

|jax|<a href="https://github.com/unifyai/ivy/actions/runs/5639189771/job/15274082116"><img src=https://img.shields.io/badge/-success-success></a>

|numpy|<a hre... | 1.0 | Fix jax_random.test_jax_poisson - | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/5648283567"><img src=https://img.shields.io/badge/-success-success></a>

|jax|<a href="https://github.com/unifyai/ivy/actions/runs/5639189771/job/15274082116"><img src=https://img.shields.io/badge/-s... | non_process | fix jax random test jax poisson tensorflow a href src jax a href src numpy a href src torch a href src paddle a href src | 0 |

7,357 | 10,500,736,717 | IssuesEvent | 2019-09-26 11:12:32 | nodejs/security-wg | https://api.github.com/repos/nodejs/security-wg | closed | Update on program eligibility settings | process | This is mostly an FYI but an important one since it came as a feedback from a hacker submitting a report on H1 and expecting a bounty.

Apparently, now that we have the bounty supported when creating new scopes of projects we need to manually toggle off the "eligible for bounty". I don't think a whole lot of us has a... | 1.0 | Update on program eligibility settings - This is mostly an FYI but an important one since it came as a feedback from a hacker submitting a report on H1 and expecting a bounty.

Apparently, now that we have the bounty supported when creating new scopes of projects we need to manually toggle off the "eligible for bount... | process | update on program eligibility settings this is mostly an fyi but an important one since it came as a feedback from a hacker submitting a report on and expecting a bounty apparently now that we have the bounty supported when creating new scopes of projects we need to manually toggle off the eligible for bounty... | 1 |

20,536 | 27,191,856,339 | IssuesEvent | 2023-02-19 22:02:07 | lynnandtonic/nestflix.fun | https://api.github.com/repos/lynnandtonic/nestflix.fun | closed | Add Starblast Five from "Reboot" (Screenshots added) | suggested title in process | Please add as much of the following info as you can:

Title: Starblast Five

Type (film/tv show): TV show - sci fi

Film or show in which it appears: Reboot

Is the parent film/show streaming anywhere? Yes - Hulu

About when in the parent film/show does it appear? Ep. 1x01 - "Step Right Up"

Actual footage ... | 1.0 | Add Starblast Five from "Reboot" (Screenshots added) - Please add as much of the following info as you can:

Title: Starblast Five

Type (film/tv show): TV show - sci fi

Film or show in which it appears: Reboot

Is the parent film/show streaming anywhere? Yes - Hulu

About when in the parent film/show does i... | process | add starblast five from reboot screenshots added please add as much of the following info as you can title starblast five type film tv show tv show sci fi film or show in which it appears reboot is the parent film show streaming anywhere yes hulu about when in the parent film show does i... | 1 |

241,197 | 26,256,698,249 | IssuesEvent | 2023-01-06 01:49:27 | terranceguz/terranceguz-atom | https://api.github.com/repos/terranceguz/terranceguz-atom | opened | WS-2018-0650 (High) detected in useragent-2.3.0.tgz | security vulnerability | ## WS-2018-0650 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>useragent-2.3.0.tgz</b></p></summary>

<p>Fastest, most accurate & effecient user agent string parser, uses Browserscope'... | True | WS-2018-0650 (High) detected in useragent-2.3.0.tgz - ## WS-2018-0650 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>useragent-2.3.0.tgz</b></p></summary>

<p>Fastest, most accurate & ... | non_process | ws high detected in useragent tgz ws high severity vulnerability vulnerable library useragent tgz fastest most accurate effecient user agent string parser uses browserscope s research for parsing library home page a href path to dependency file repo with submodules... | 0 |

65,784 | 7,919,667,316 | IssuesEvent | 2018-07-04 18:12:43 | MicrosoftDocs/feedback | https://api.github.com/repos/MicrosoftDocs/feedback | closed | Some search results contain markup | assigned-to-pm by design logged-request | E.g. https://docs.microsoft.com/en-us/dotnet/api/?view=netframework-4.7&term=ImeProcessedKey

shows the following in the description:

Gets the keyboard key referenced by the event, if the key will be processed by an [!INCLUDE[TLA#tla_ime](~/includes/tlasharptla-ime-md.md)].

which includes something I think shouldn't ... | 1.0 | Some search results contain markup - E.g. https://docs.microsoft.com/en-us/dotnet/api/?view=netframework-4.7&term=ImeProcessedKey

shows the following in the description:

Gets the keyboard key referenced by the event, if the key will be processed by an [!INCLUDE[TLA#tla_ime](~/includes/tlasharptla-ime-md.md)].

which ... | non_process | some search results contain markup e g shows the following in the description gets the keyboard key referenced by the event if the key will be processed by an includes tlasharptla ime md md which includes something i think shouldn t be part of the search result ⚠ idea migrated from uservoi... | 0 |

19,151 | 25,228,229,722 | IssuesEvent | 2022-11-14 17:32:45 | pystatgen/sgkit | https://api.github.com/repos/pystatgen/sgkit | opened | Hypothesis VCF strategies | process + tools IO | It would be useful to use [Hypothesis](https://hypothesis.readthedocs.io/en/latest/index.html) to generate VCF files for testing `vcf_to_zarr` and the forthcoming `zarr_to_vcf` (#924) functions.

There is already test code in sgkit for [generating VCF files](https://github.com/pystatgen/sgkit/blob/main/sgkit/tests/io... | 1.0 | Hypothesis VCF strategies - It would be useful to use [Hypothesis](https://hypothesis.readthedocs.io/en/latest/index.html) to generate VCF files for testing `vcf_to_zarr` and the forthcoming `zarr_to_vcf` (#924) functions.

There is already test code in sgkit for [generating VCF files](https://github.com/pystatgen/sg... | process | hypothesis vcf strategies it would be useful to use to generate vcf files for testing vcf to zarr and the forthcoming zarr to vcf functions there is already test code in sgkit for but currently this is only used to generate a it should be possible to repurpose the generator to use hypothesis ... | 1 |

14,178 | 17,089,088,379 | IssuesEvent | 2021-07-08 15:11:24 | MicrosoftDocs/azure-devops-docs | https://api.github.com/repos/MicrosoftDocs/azure-devops-docs | closed | Why an Environment can only have resources like Kubernetes or a Virtual Machine? | cba devops-cicd-process/tech devops/prod product-feedback | Hi

what if my env is only a single web app or multiple web apps?

Shouldn't i write my pipeline as code(not only build but also release stages)?

What if my Staging env was composed by multiple web apps?

It would be nice to have full history divided by resource.

---

#### Document Details

⚠ *Do not edit thi... | 1.0 | Why an Environment can only have resources like Kubernetes or a Virtual Machine? - Hi

what if my env is only a single web app or multiple web apps?

Shouldn't i write my pipeline as code(not only build but also release stages)?

What if my Staging env was composed by multiple web apps?

It would be nice to have fu... | process | why an environment can only have resources like kubernetes or a virtual machine hi what if my env is only a single web app or multiple web apps shouldn t i write my pipeline as code not only build but also release stages what if my staging env was composed by multiple web apps it would be nice to have fu... | 1 |

5,831 | 8,665,880,233 | IssuesEvent | 2018-11-29 01:20:31 | cityofaustin/techstack | https://api.github.com/repos/cityofaustin/techstack | closed | Draft process page for Plan Review Checklist | Content Type: Process Page Department: Public Health Site Content Size: S Team: Content | Current: chrome-extension://oemmndcbldboiebfnladdacbdfmadadm/http://austintexas.gov/sites/default/files/files/Health/Environmental/FeeChanges/Plan_Review_Checklist_PS_100117.pdf

Drive: | 1.0 | Draft process page for Plan Review Checklist - Current: chrome-extension://oemmndcbldboiebfnladdacbdfmadadm/http://austintexas.gov/sites/default/files/files/Health/Environmental/FeeChanges/Plan_Review_Checklist_PS_100117.pdf

Drive: | process | draft process page for plan review checklist current chrome extension oemmndcbldboiebfnladdacbdfmadadm drive | 1 |

14,189 | 17,093,799,915 | IssuesEvent | 2021-07-08 21:30:34 | googleapis/python-storage | https://api.github.com/repos/googleapis/python-storage | closed | Use 'testing/constraints-{python_version}.txt' to test lower bound dependencies | api: storage type: process | The files exist, but `noxfile.py` does not use them. | 1.0 | Use 'testing/constraints-{python_version}.txt' to test lower bound dependencies - The files exist, but `noxfile.py` does not use them. | process | use testing constraints python version txt to test lower bound dependencies the files exist but noxfile py does not use them | 1 |

299,106 | 25,882,304,764 | IssuesEvent | 2022-12-14 12:08:55 | camunda/zeebe | https://api.github.com/repos/camunda/zeebe | closed | Use regular `StandaloneGateway` in `ClusteringRule` | kind/toil area/test | **Description**

Currently, `ClusteringRule` has custom code to build and configure a Gateway. This can lead to drift between test and production code, as seen in https://github.com/camunda/zeebe/pull/9669 which would have been caught by tests if the `ClusteringRule` would just use the regular `StandaloneGateway`, fo... | 1.0 | Use regular `StandaloneGateway` in `ClusteringRule` - **Description**

Currently, `ClusteringRule` has custom code to build and configure a Gateway. This can lead to drift between test and production code, as seen in https://github.com/camunda/zeebe/pull/9669 which would have been caught by tests if the `ClusteringRu... | non_process | use regular standalonegateway in clusteringrule description currently clusteringrule has custom code to build and configure a gateway this can lead to drift between test and production code as seen in which would have been caught by tests if the clusteringrule would just use the regular standalon... | 0 |

715,909 | 24,614,710,108 | IssuesEvent | 2022-10-15 06:38:31 | HaDuve/TravelCostNative | https://api.github.com/repos/HaDuve/TravelCostNative | opened | Implement Tina's feedback | 1 - High Priority Frontend MetaIssue | - [ ] Category/Overview button is not intuitive

- [ ] Invitation system is unclear (the invitation must disappear once the user has joined)

- [ ] Mark changing the profile picture as "coming soon" | 1.0 | Implement Tina's feedback - - [ ] Category/Overview button is not intuitive

- [ ] Invitation system is unclear (the invitation must disappear once the user has joined)

- [ ] Mark changing the profile picture as "coming soon" | non_process | implement tina s feedback category overview button is not intuitive invitation system is unclear the invitation must disappear once the user has joined mark changing the profile picture as coming soon | 0 |

290,989 | 25,112,991,094 | IssuesEvent | 2022-11-08 22:21:12 | elastic/kibana | https://api.github.com/repos/elastic/kibana | opened | Failing test: Chrome X-Pack UI Plugin Functional Tests.x-pack/test/plugin_functional/test_suites/resolver - Resolver test app when the user is interacting with the node with ID: firstChild when the user hovers over the primary button when the user has clicked the primary button (which selects the node.) should render a... | failed-test | A test failed on a tracked branch

```

Error: expected 0.09618055555555556 to be below 0.096

at Assertion.assert (node_modules/@kbn/expect/expect.js:100:11)

at Assertion.lessThan.Assertion.below (node_modules/@kbn/expect/expect.js:336:8)

at Function.lessThan (node_modules/@kbn/expect/expect.js:531:15)

a... | 1.0 | Failing test: Chrome X-Pack UI Plugin Functional Tests.x-pack/test/plugin_functional/test_suites/resolver - Resolver test app when the user is interacting with the node with ID: firstChild when the user hovers over the primary button when the user has clicked the primary button (which selects the node.) should render a... | non_process | failing test chrome x pack ui plugin functional tests x pack test plugin functional test suites resolver resolver test app when the user is interacting with the node with id firstchild when the user hovers over the primary button when the user has clicked the primary button which selects the node should render a... | 0 |

633,095 | 20,244,769,984 | IssuesEvent | 2022-02-14 12:43:38 | Domiii/dbux | https://api.github.com/repos/Domiii/dbux | closed | Fix async error stack (part 13) | bug priority | * [x] fix: When error is thrown from `async` function, previous `await` nodes are tagged as errors

* [x] test w/ `sequelize` -> `findOrCreate-parallel`

* The circles mark nodes where error was thrown. The other nodes (BEFORE the actual error) should not be "on fire":

*  - * [x] fix: When error is thrown from `async` function, previous `await` nodes are tagged as errors

* [x] test w/ `sequelize` -> `findOrCreate-parallel`

* The circles mark nodes where error was thrown. The other nodes (BEFORE the actual error) should not be "on fire":

*  Gecko/20100101 Firefox/91.0 -->

<!-- @reported_with: unknown -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/85789 -->

**URL**: https://www.youtube.com/

**Browser / Version**: Firefox 91.0

**Operatin... | 1.0 | www.youtube.com - see bug description - <!-- @browser: Firefox 91.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:91.0) Gecko/20100101 Firefox/91.0 -->

<!-- @reported_with: unknown -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/85789 -->

**URL**: https://www.youtube.com/

**Brow... | non_process | see bug description url browser version firefox operating system windows tested another browser yes chrome problem type something else description video freezes then picks up audio is fine steps to reproduce youtube videos are freezing seems to happen in h... | 0 |

71,495 | 3,358,567,771 | IssuesEvent | 2015-11-19 10:10:39 | readium/readium-sdk | https://api.github.com/repos/readium/readium-sdk | closed | WKWebView window.parent/top = self, new epubReadingSystem method, requires changing deep iframe injection routine | enhancement priority high [critical] Shared-JS | See:

https://github.com/readium/readium-sdk/commit/669659c2e63129b818ce13c88709fc446c776e10#commitcomment-10473931 | 1.0 | WKWebView window.parent/top = self, new epubReadingSystem method, requires changing deep iframe injection routine - See:

https://github.com/readium/readium-sdk/commit/669659c2e63129b818ce13c88709fc446c776e10#commitcomment-10473931 | non_process | wkwebview window parent top self new epubreadingsystem method requires changing deep iframe injection routine see | 0 |

98,300 | 20,670,572,047 | IssuesEvent | 2022-03-10 01:22:55 | OctopusDeploy/Issues | https://api.github.com/repos/OctopusDeploy/Issues | closed | ARC not supported in Git projects. Warning message is not clear about this. | kind/bug state/happening team/config-as-code | ### Team

- [X] I've assigned a team label to this issue

### Severity

_No response_

### Version

2022.1

### Latest Version

_No response_

### What happened?

After converting to a project to use Git, the [ARC](https://octopus.com/docs/projects/project-triggers/automatic-release-creation) settings are automatically... | 1.0 | ARC not supported in Git projects. Warning message is not clear about this. - ### Team

- [X] I've assigned a team label to this issue

### Severity

_No response_

### Version

2022.1

### Latest Version

_No response_

### What happened?

After converting to a project to use Git, the [ARC](https://octopus.com/docs/pr... | non_process | arc not supported in git projects warning message is not clear about this team i ve assigned a team label to this issue severity no response version latest version no response what happened after converting to a project to use git the settings are automatically cleared... | 0 |

22,283 | 30,833,601,016 | IssuesEvent | 2023-08-02 05:12:09 | allinurl/goaccess | https://api.github.com/repos/allinurl/goaccess | closed | Rewind persisted db to remove corrupted entries | question log-processing on-disk | I managed to run my background analytics job twice at the same time and now I have a bunch of data for "hits" that appears to be double counted (there is an obvious segment of the chart where the "hits" line is twice the height of before and after). I was wondering if it's possible for me to rewind the persisted databa... | 1.0 | Rewind persisted db to remove corrupted entries - I managed to run my background analytics job twice at the same time and now I have a bunch of data for "hits" that appears to be double counted (there is an obvious segment of the chart where the "hits" line is twice the height of before and after). I was wondering if i... | process | rewind persisted db to remove corrupted entries i managed to run my background analytics job twice at the same time and now i have a bunch of data for hits that appears to be double counted there is an obvious segment of the chart where the hits line is twice the height of before and after i was wondering if i... | 1 |

14,215 | 17,136,057,234 | IssuesEvent | 2021-07-13 02:24:28 | parcel-bundler/parcel | https://api.github.com/repos/parcel-bundler/parcel | closed | Changes in stylusrc imported files not being picked up | :bug: Bug CSS Preprocessing ✨ Parcel 2 💰 Cache | <!---

Thanks for filing an issue 😄 ! Before you submit, please read the following:

Search open/closed issues before submitting since someone might have asked the same thing before!

-->

# 🐛 bug report

<!--- Provide a general summary of the issue here -->

I have a stylus file that I use to declare variables... | 1.0 | Changes in stylusrc imported files not being picked up - <!---

Thanks for filing an issue 😄 ! Before you submit, please read the following:

Search open/closed issues before submitting since someone might have asked the same thing before!

-->

# 🐛 bug report

<!--- Provide a general summary of the issue here ... | process | changes in stylusrc imported files not being picked up thanks for filing an issue 😄 before you submit please read the following search open closed issues before submitting since someone might have asked the same thing before 🐛 bug report i have a stylus file that i use to declare vari... | 1 |

12,176 | 14,741,948,458 | IssuesEvent | 2021-01-07 11:26:18 | kdjstudios/SABillingGitlab | https://api.github.com/repos/kdjstudios/SABillingGitlab | closed | Payment Deletion Error | anc-process anp-not prioritized ant-bug | In GitLab by @pchaudhary on Feb 27, 2019, 04:31

There is an error while we delete a payment which was printed on the invoice & the billing cycle of that invoice is reverted.

This issue comes up if the account's invoice is very first. | 1.0 | Payment Deletion Error - In GitLab by @pchaudhary on Feb 27, 2019, 04:31

There is an error while we delete a payment which was printed on the invoice & the billing cycle of that invoice is reverted.

This issue comes up if the account's invoice is very first. | process | payment deletion error in gitlab by pchaudhary on feb there is an error while we delete a payment which was printed on the invoice the billing cycle of that invoice is reverted this issue comes up if the account s invoice is very first | 1 |

528,756 | 15,374,011,204 | IssuesEvent | 2021-03-02 13:19:45 | netdata/netdata-cloud | https://api.github.com/repos/netdata/netdata-cloud | closed | [BUG] Node View not loading due to Javascript errors | bug priority/high visualizations-team-bugs | **Describe the bug**

Node page not loading

**To Reproduce**

Click on a node detail like https://app.netdata.cloud/spaces/production-iqdkpt4/rooms/general/nodes/483ee1be-3cbb-47a0-a913-7d00facd5c96

**Expected behavior**

Page should render

**Error logs**

[Error] TypeError: undefined is not an object (eva... | 1.0 | [BUG] Node View not loading due to Javascript errors - **Describe the bug**

Node page not loading

**To Reproduce**

Click on a node detail like https://app.netdata.cloud/spaces/production-iqdkpt4/rooms/general/nodes/483ee1be-3cbb-47a0-a913-7d00facd5c96

**Expected behavior**

Page should render

**Error logs... | non_process | node view not loading due to javascript errors describe the bug node page not loading to reproduce click on a node detail like expected behavior page should render error logs typeerror undefined is not an object evaluating object o a r n hasownproperty funzione anonima... | 0 |

14,781 | 18,054,918,129 | IssuesEvent | 2021-09-20 06:47:16 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | Print process start time to processing logs while running | Processing Feature Request | **Feature description.**

With long-running processing algorithms it would be nice to see the start time of the process in the processing log while the process is still running. Currently when the process finishes it does tell how long the process took, but if you have a long running process and do something else whil... | 1.0 | Print process start time to processing logs while running - **Feature description.**

With long-running processing algorithms it would be nice to see the start time of the process in the processing log while the process is still running. Currently when the process finishes it does tell how long the process took, but if... | process | print process start time to processing logs while running feature description with long running processing algorithms it would be nice to see the start time of the process in the processing log while the process is still running currently when the process finishes it does tell how long the process took but if... | 1 |

255,448 | 19,303,175,078 | IssuesEvent | 2021-12-13 08:44:37 | ReceiptHero/docsHero | https://api.github.com/repos/ReceiptHero/docsHero | closed | Change Swagger UI to Redoc | documentation | Miika suggested to use [Redoc](https://github.com/Redocly/redoc) for present open API spec in documentation | 1.0 | Change Swagger UI to Redoc - Miika suggested to use [Redoc](https://github.com/Redocly/redoc) for present open API spec in documentation | non_process | change swagger ui to redoc miika suggested to use for present open api spec in documentation | 0 |

3,750 | 6,733,151,362 | IssuesEvent | 2017-10-18 14:00:13 | york-region-tpss/stp | https://api.github.com/repos/york-region-tpss/stp | closed | Watering Assignment - Add Options Create New and Continue to Work | enhancement process workflow ui ux | Add option to Discard, Temporarily Save options the Current Watering Assignment.

Creating a new card after clicking the watering assignment master card.

"Create New" will pull directly from etrans table and combined with the on-hold number from lastest assignment.

"Continue to work" will pull from the temp ta... | 1.0 | Watering Assignment - Add Options Create New and Continue to Work - Add option to Discard, Temporarily Save options the Current Watering Assignment.

Creating a new card after clicking the watering assignment master card.