Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 7 112 | repo_url stringlengths 36 141 | action stringclasses 3 values | title stringlengths 1 744 | labels stringlengths 4 574 | body stringlengths 9 211k | index stringclasses 10 values | text_combine stringlengths 96 211k | label stringclasses 2 values | text stringlengths 96 188k | binary_label int64 0 1 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

242,994 | 26,277,893,409 | IssuesEvent | 2023-01-07 01:26:03 | turkdevops/snyk | https://api.github.com/repos/turkdevops/snyk | closed | CVE-2011-4969 (Low) detected in yiisoft/yii-1.1.14 - autoclosed | security vulnerability | ## CVE-2011-4969 - Low Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>yiisoft/yii-1.1.14</b></p></summary>

<p>Yii Web Programming Framework</p>

<p>Library home page: <a href="https://api.git... | True | CVE-2011-4969 (Low) detected in yiisoft/yii-1.1.14 - autoclosed - ## CVE-2011-4969 - Low Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>yiisoft/yii-1.1.14</b></p></summary>

<p>Yii Web Progra... | non_process | cve low detected in yiisoft yii autoclosed cve low severity vulnerability vulnerable library yiisoft yii yii web programming framework library home page a href dependency hierarchy x yiisoft yii vulnerable library found in head commit a href ... | 0 |

105,051 | 22,832,341,291 | IssuesEvent | 2022-07-12 13:55:48 | sourcegraph/sourcegraph | https://api.github.com/repos/sourcegraph/sourcegraph | closed | [Accessibility]: `N/A` hard to understand when read aloud | team/code-intelligence accessibility team/frontend-platform wcag/2.1/fixing wcag/2.1 | ### Audit type

Screen reader navigation

### User journey audit issue

#33516, #33518

### Problem description

<img width="884" alt="n:a" src="https://user-images.githubusercontent.com/103087/167504500-6c228e31-688b-4262-ba26-14d18129ffd1.png">

I couldn't understand the text for `N/A` here.

### Expect... | 1.0 | [Accessibility]: `N/A` hard to understand when read aloud - ### Audit type

Screen reader navigation

### User journey audit issue

#33516, #33518

### Problem description

<img width="884" alt="n:a" src="https://user-images.githubusercontent.com/103087/167504500-6c228e31-688b-4262-ba26-14d18129ffd1.png">

... | non_process | n a hard to understand when read aloud audit type screen reader navigation user journey audit issue problem description img width alt n a src i couldn t understand the text for n a here expected behavior rename text or add an aria label that can say no... | 0 |

6,790 | 9,921,948,721 | IssuesEvent | 2019-06-30 22:59:12 | GroceriStar/fetch-constants | https://api.github.com/repos/GroceriStar/fetch-constants | closed | #### [Recipe Search][URLS][part1] | in-process | https://chickenkyiv.github.io/search-api-documentation/docs/db-schema

https://chickenkyiv.github.io/search-api-documentation/docs/db-schema

By using names on URLS from this [page](https://chickenkyiv.github.io/search-api-documentation/docs/database-tables-models/attribute/allergy)

In order to make it better, we'... | 1.0 | #### [Recipe Search][URLS][part1] - https://chickenkyiv.github.io/search-api-documentation/docs/db-schema

https://chickenkyiv.github.io/search-api-documentation/docs/db-schema

By using names on URLS from this [page](https://chickenkyiv.github.io/search-api-documentation/docs/database-tables-models/attribute/aller... | process | by using names on urls from this in order to make it better we ll create set of constants each for a different method example allergy will became export const attribute filter type allergy attribute filter type allergy | 1 |

4,300 | 7,194,970,240 | IssuesEvent | 2018-02-04 12:13:39 | w3c/html | https://api.github.com/repos/w3c/html | closed | Add attribution of WHATWG HTML, as required by the CC-BY license | editorial process | WHATWG HTML now has the following copyright notice:

> Copyright © 2018 WHATWG (Apple, Google, Mozilla, Microsoft). This work is licensed under a [Creative Commons Attribution 4.0 International License](https://creativecommons.org/licenses/by/4.0/).

Unlike the previous license, this license explicitly requires att... | 1.0 | Add attribution of WHATWG HTML, as required by the CC-BY license - WHATWG HTML now has the following copyright notice:

> Copyright © 2018 WHATWG (Apple, Google, Mozilla, Microsoft). This work is licensed under a [Creative Commons Attribution 4.0 International License](https://creativecommons.org/licenses/by/4.0/).

... | process | add attribution of whatwg html as required by the cc by license whatwg html now has the following copyright notice copyright © whatwg apple google mozilla microsoft this work is licensed under a unlike the previous license this license explicitly requires attribution although previous copying ... | 1 |

3,523 | 6,564,753,246 | IssuesEvent | 2017-09-08 03:58:05 | zero-os/0-Disk | https://api.github.com/repos/zero-os/0-Disk | closed | zeroctl rebalance command | process_wontfix type_feature | Part of the self-healing as described in https://github.com/zero-os/0-orchestrator/blob/master/specs/selfhealing/storage.md.

TODO:

+ describe parameters and how the command would look like;

+ describe what the command would do and to which storage types it would apply (if specific at all); | 1.0 | zeroctl rebalance command - Part of the self-healing as described in https://github.com/zero-os/0-orchestrator/blob/master/specs/selfhealing/storage.md.

TODO:

+ describe parameters and how the command would look like;

+ describe what the command would do and to which storage types it would apply (if specific at... | process | zeroctl rebalance command part of the self healing as described in todo describe parameters and how the command would look like describe what the command would do and to which storage types it would apply if specific at all | 1 |

6,044 | 8,854,377,592 | IssuesEvent | 2019-01-09 01:04:16 | NottingHack/hms2 | https://api.github.com/repos/NottingHack/hms2 | opened | member.ex audit | Process | After 6 years we should remove ex member records

but to keep DB integrity we likely obfuscate the data

need to look carefully at what tables are have already that need cleaning and as we add more this audit will constantly need updating

going to be best to break this down in `Entity` base files in say `app/HMS/O... | 1.0 | member.ex audit - After 6 years we should remove ex member records

but to keep DB integrity we likely obfuscate the data

need to look carefully at what tables are have already that need cleaning and as we add more this audit will constantly need updating

going to be best to break this down in `Entity` base files... | process | member ex audit after years we should remove ex member records but to keep db integrity we likely obfuscate the data need to look carefully at what tables are have already that need cleaning and as we add more this audit will constantly need updating going to be best to break this down in entity base files... | 1 |

247,773 | 26,728,863,316 | IssuesEvent | 2023-01-30 01:13:34 | Yash-Handa/GitHub-Org-Geographics | https://api.github.com/repos/Yash-Handa/GitHub-Org-Geographics | opened | CVE-2022-48285 (Medium) detected in jszip-3.2.1.tgz | security vulnerability | ## CVE-2022-48285 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jszip-3.2.1.tgz</b></p></summary>

<p>Create, read and edit .zip files with JavaScript http://stuartk.com/jszip</p>

<... | True | CVE-2022-48285 (Medium) detected in jszip-3.2.1.tgz - ## CVE-2022-48285 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jszip-3.2.1.tgz</b></p></summary>

<p>Create, read and edit .zi... | non_process | cve medium detected in jszip tgz cve medium severity vulnerability vulnerable library jszip tgz create read and edit zip files with javascript library home page a href path to dependency file github org geographics package json path to vulnerable library node mo... | 0 |

40,398 | 6,822,351,597 | IssuesEvent | 2017-11-07 19:46:46 | flyve-mdm/flyve-mdm-glpi-demo | https://api.github.com/repos/flyve-mdm/flyve-mdm-glpi-demo | closed | Move Wiki articles to project site | documentation | Hi, @Naylin15

Could you move this article to the Wiki in the project site?

https://github.com/flyve-mdm/flyve-mdm-glpi-demo/wiki/Self_create_an_user_account

Thank you. | 1.0 | Move Wiki articles to project site - Hi, @Naylin15

Could you move this article to the Wiki in the project site?

https://github.com/flyve-mdm/flyve-mdm-glpi-demo/wiki/Self_create_an_user_account

Thank you. | non_process | move wiki articles to project site hi could you move this article to the wiki in the project site thank you | 0 |

9,172 | 12,225,607,296 | IssuesEvent | 2020-05-03 06:25:24 | labnote-ant/labnote | https://api.github.com/repos/labnote-ant/labnote | closed | Make checkbox for conditions in process | process-view | Make checkbox for True/False conditions (ex. gradually, heating and etc.) in process. | 1.0 | Make checkbox for conditions in process - Make checkbox for True/False conditions (ex. gradually, heating and etc.) in process. | process | make checkbox for conditions in process make checkbox for true false conditions ex gradually heating and etc in process | 1 |

10,700 | 13,495,112,155 | IssuesEvent | 2020-09-11 22:59:34 | googleapis/java-secretmanager | https://api.github.com/repos/googleapis/java-secretmanager | closed | Promote to GA | api: secretmanager type: process | Package name: **google-cloud-secretmanager**

Current release: **beta**

Proposed release: **GA**

## Instructions

Check the lists below, adding tests / documentation as required. Once all the "required" boxes are ticked, please create a release and close this issue.

## Required

- [ ] 28 days elapsed s... | 1.0 | Promote to GA - Package name: **google-cloud-secretmanager**

Current release: **beta**

Proposed release: **GA**

## Instructions

Check the lists below, adding tests / documentation as required. Once all the "required" boxes are ticked, please create a release and close this issue.

## Required

- [ ] 2... | process | promote to ga package name google cloud secretmanager current release beta proposed release ga instructions check the lists below adding tests documentation as required once all the required boxes are ticked please create a release and close this issue required d... | 1 |

15,118 | 18,851,809,719 | IssuesEvent | 2021-11-11 22:00:34 | googleapis/google-cloud-python | https://api.github.com/repos/googleapis/google-cloud-python | closed | TablesClient: make predict no longer call `get_model` and remove related arguments [breaking change] | type: process | Currently, for every [`predict`](https://github.com/googleapis/google-cloud-python/blob/master/automl/google/cloud/automl_v1beta1/tables/tables_client.py#L2593-L2603) call, two API requests are made:

1. [`get_model`](https://github.com/googleapis/google-cloud-python/blob/master/automl/google/cloud/automl_v1beta1/table... | 1.0 | TablesClient: make predict no longer call `get_model` and remove related arguments [breaking change] - Currently, for every [`predict`](https://github.com/googleapis/google-cloud-python/blob/master/automl/google/cloud/automl_v1beta1/tables/tables_client.py#L2593-L2603) call, two API requests are made:

1. [`get_model`]... | process | tablesclient make predict no longer call get model and remove related arguments currently for every call two api requests are made predict get model is costly often but sometimes in my tests when the project contains huge number of models planning to never do get model c... | 1 |

21,308 | 28,502,253,305 | IssuesEvent | 2023-04-18 18:18:03 | daviddrysdale/python-phonenumbers | https://api.github.com/repos/daviddrysdale/python-phonenumbers | closed | United States area code 557 is not working in python-phonenumbers | process | This is a python specific issue. The parent repository shows this as a valid phone number:

Python Version: v3.10.8

Library Version: v8.13.9

Phone number area code not working: 557

Parent Repo Validation:

perf: speed severe: performance | # Background and reproduction

One of our customer wants to use `CustomPaint` to trigger `RasterCache` by setting `isComplex = true, willChange = false` as below:

```

import 'package:flutter/material.dart';

class CostlyToRasterize extends StatelessWidget {

@override

Widget build(BuildContext context) => Co... | True | CustomPaint does not respect isComplex/willChange if painters are null - # Background and reproduction

One of our customer wants to use `CustomPaint` to trigger `RasterCache` by setting `isComplex = true, willChange = false` as below:

```

import 'package:flutter/material.dart';

class CostlyToRasterize extends S... | non_process | custompaint does not respect iscomplex willchange if painters are null background and reproduction one of our customer wants to use custompaint to trigger rastercache by setting iscomplex true willchange false as below import package flutter material dart class costlytorasterize extends s... | 0 |

12,298 | 14,854,473,458 | IssuesEvent | 2021-01-18 11:21:51 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | Error messages should be updated as per document | Bug P1 Participant manager Process: Fixed Process: Tested dev | Error messages should be updated as per following document

https://docs.google.com/spreadsheets/d/1CEcgbG2Et3FCc5qbavewlNQapxNHG5kTSNMFYzdz4nc/edit?usp=sharing | 2.0 | Error messages should be updated as per document - Error messages should be updated as per following document

https://docs.google.com/spreadsheets/d/1CEcgbG2Et3FCc5qbavewlNQapxNHG5kTSNMFYzdz4nc/edit?usp=sharing | process | error messages should be updated as per document error messages should be updated as per following document | 1 |

27,062 | 4,867,181,025 | IssuesEvent | 2016-11-15 03:03:29 | TNGSB/eWallet | https://api.github.com/repos/TNGSB/eWallet | closed | e-Wallet_WebAdmin 07112016 | Defect - High (Sev-2) | As for today testing, there are 3 defects found in web admin module.

-Card Management

-Customer Service

Kindly refer the attached defects document for your perusal.

[Sev_2.zip](https://github.com/TNGSB/eWallet/files/574946/Sev_2.zip)

| 1.0 | e-Wallet_WebAdmin 07112016 - As for today testing, there are 3 defects found in web admin module.

-Card Management

-Customer Service

Kindly refer the attached defects document for your perusal.

[Sev_2.zip](https://github.com/TNGSB/eWallet/files/574946/Sev_2.zip)

| non_process | e wallet webadmin as for today testing there are defects found in web admin module card management customer service kindly refer the attached defects document for your perusal | 0 |

21,947 | 30,448,636,913 | IssuesEvent | 2023-07-16 02:00:07 | lizhihao6/get-daily-arxiv-noti | https://api.github.com/repos/lizhihao6/get-daily-arxiv-noti | opened | New submissions for Fri, 14 Jul 23 | event camera white balance isp compression image signal processing image signal process raw raw image events camera color contrast events AWB | ## Keyword: events

### Temporal Label-Refinement for Weakly-Supervised Audio-Visual Event Localization

- **Authors:** Kalyan Ramakrishnan

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV); Machine Learning (cs.LG); Sound (cs.SD); Audio and Speech Processing (eess.AS)

- **Arxiv link:** https://arxiv.or... | 2.0 | New submissions for Fri, 14 Jul 23 - ## Keyword: events

### Temporal Label-Refinement for Weakly-Supervised Audio-Visual Event Localization

- **Authors:** Kalyan Ramakrishnan

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV); Machine Learning (cs.LG); Sound (cs.SD); Audio and Speech Processing (eess.AS... | process | new submissions for fri jul keyword events temporal label refinement for weakly supervised audio visual event localization authors kalyan ramakrishnan subjects computer vision and pattern recognition cs cv machine learning cs lg sound cs sd audio and speech processing eess as ... | 1 |

8,892 | 11,986,967,840 | IssuesEvent | 2020-04-07 20:18:32 | googleapis/google-cloud-go | https://api.github.com/repos/googleapis/google-cloud-go | closed | internal/gapicgen: Go-based binary dependencies are using master instead of latest release | type: process | We've noticed that the `internal/gapicgen` system is pulling `master` of Go-based binary dependencies i.e. `protoc-gen-go_gapic` and `goimports`, rather than the version specified in `internal/gapicgen/go.mod`.

We need to ensure that the pinned versions are respected by the generation tooling so that we don't introd... | 1.0 | internal/gapicgen: Go-based binary dependencies are using master instead of latest release - We've noticed that the `internal/gapicgen` system is pulling `master` of Go-based binary dependencies i.e. `protoc-gen-go_gapic` and `goimports`, rather than the version specified in `internal/gapicgen/go.mod`.

We need to en... | process | internal gapicgen go based binary dependencies are using master instead of latest release we ve noticed that the internal gapicgen system is pulling master of go based binary dependencies i e protoc gen go gapic and goimports rather than the version specified in internal gapicgen go mod we need to en... | 1 |

40,120 | 6,800,157,238 | IssuesEvent | 2017-11-02 13:04:50 | onury/geolocator | https://api.github.com/repos/onury/geolocator | closed | Demo pages not using HTTPS | documentation | Hello,

As stated in your own README:

> Make sure you're calling Geolocation APIs (such as geolocator.locate() and geolocator.watch()) from a secure origin (i.e. an HTTPS page). In Chrome 50, Geolocation API is removed from unsecured origins. Other browsers are expected to follow.

Since the demo is not using HT... | 1.0 | Demo pages not using HTTPS - Hello,

As stated in your own README:

> Make sure you're calling Geolocation APIs (such as geolocator.locate() and geolocator.watch()) from a secure origin (i.e. an HTTPS page). In Chrome 50, Geolocation API is removed from unsecured origins. Other browsers are expected to follow.

S... | non_process | demo pages not using https hello as stated in your own readme make sure you re calling geolocation apis such as geolocator locate and geolocator watch from a secure origin i e an https page in chrome geolocation api is removed from unsecured origins other browsers are expected to follow si... | 0 |

12,482 | 14,950,090,914 | IssuesEvent | 2021-01-26 12:33:46 | panther-labs/panther | https://api.github.com/repos/panther-labs/panther | opened | Expand AWS ALB log parser to include Desync mitigation mode fields | data inputs story team:data processing | ### Description

[AWS ALB access logs](https://docs.aws.amazon.com/elasticloadbalancing/latest/application/load-balancer-access-logs.html#access-log-file-format) include 4 additional fields that the current [log parser](https://github.com/panther-labs/panther/blob/2a27d25374cde5ab88d67142d3ebc42568d2dd94/internal/log... | 1.0 | Expand AWS ALB log parser to include Desync mitigation mode fields - ### Description

[AWS ALB access logs](https://docs.aws.amazon.com/elasticloadbalancing/latest/application/load-balancer-access-logs.html#access-log-file-format) include 4 additional fields that the current [log parser](https://github.com/panther-la... | process | expand aws alb log parser to include desync mitigation mode fields description include additional fields that the current seems to ignore target port list target status code list classification classification reason the last two fields seem particularly important in th... | 1 |

1,193 | 3,690,399,345 | IssuesEvent | 2016-02-25 19:51:34 | hoodiehq/editorial | https://api.github.com/repos/hoodiehq/editorial | closed | Define a formal method in which contributors are added to the Editorial team | process | I updated the `team-roles.md` file with descriptions in https://github.com/hoodiehq/editorial/pull/23. In addition to adding the descriptions, I think we need to define a formal process in which contributors are added to the Editorials team.

Having this early on makes the transition from one (or few) contributor to... | 1.0 | Define a formal method in which contributors are added to the Editorial team - I updated the `team-roles.md` file with descriptions in https://github.com/hoodiehq/editorial/pull/23. In addition to adding the descriptions, I think we need to define a formal process in which contributors are added to the Editorials team.... | process | define a formal method in which contributors are added to the editorial team i updated the team roles md file with descriptions in in addition to adding the descriptions i think we need to define a formal process in which contributors are added to the editorials team having this early on makes the transitio... | 1 |

3,559 | 3,955,259,057 | IssuesEvent | 2016-04-29 20:12:21 | commercialhaskell/stack | https://api.github.com/repos/commercialhaskell/stack | closed | Avoid unnecessarily loading the hackage index | awaiting pr help wanted type: performance | Loading the hackage is expensive, because the encoded index cache is 18Mb. On my fairly fast computer, it takes 0.3 seconds to decode it. Search `getPackageCaches` to find the spots it's used.

There are two cases where loading the hackage currently happens unnecessarily:

1) In `loadSourceMap`, because the user ... | True | Avoid unnecessarily loading the hackage index - Loading the hackage is expensive, because the encoded index cache is 18Mb. On my fairly fast computer, it takes 0.3 seconds to decode it. Search `getPackageCaches` to find the spots it's used.

There are two cases where loading the hackage currently happens unnecessar... | non_process | avoid unnecessarily loading the hackage index loading the hackage is expensive because the encoded index cache is on my fairly fast computer it takes seconds to decode it search getpackagecaches to find the spots it s used there are two cases where loading the hackage currently happens unnecessarily... | 0 |

248,990 | 26,870,749,324 | IssuesEvent | 2023-02-04 12:35:24 | turkdevops/electron-api-demos | https://api.github.com/repos/turkdevops/electron-api-demos | closed | CVE-2022-25881 (Medium) detected in http-cache-semantics-4.1.0.tgz - autoclosed | security vulnerability | ## CVE-2022-25881 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>http-cache-semantics-4.1.0.tgz</b></p></summary>

<p>Parses Cache-Control and other headers. Helps building correct H... | True | CVE-2022-25881 (Medium) detected in http-cache-semantics-4.1.0.tgz - autoclosed - ## CVE-2022-25881 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>http-cache-semantics-4.1.0.tgz</b><... | non_process | cve medium detected in http cache semantics tgz autoclosed cve medium severity vulnerability vulnerable library http cache semantics tgz parses cache control and other headers helps building correct http caches and proxies library home page a href dependency hierarc... | 0 |

16,413 | 21,191,507,959 | IssuesEvent | 2022-04-08 17:59:19 | cypress-io/cypress | https://api.github.com/repos/cypress-io/cypress | closed | Webkit: Run internal tests across Webkit browser | process: tests type: chore browser: webkit stage: icebox | In addition to Chrome and Firefox, run our internal tests across Webkit browser.

Skip any tests in the Webkit browser that do not currently pass. | 1.0 | Webkit: Run internal tests across Webkit browser - In addition to Chrome and Firefox, run our internal tests across Webkit browser.

Skip any tests in the Webkit browser that do not currently pass. | process | webkit run internal tests across webkit browser in addition to chrome and firefox run our internal tests across webkit browser skip any tests in the webkit browser that do not currently pass | 1 |

299,408 | 25,901,504,710 | IssuesEvent | 2022-12-15 06:18:48 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | roachtest: liquibase failed | C-test-failure O-robot O-roachtest T-sql-sessions branch-release-22.2.0 | roachtest.liquibase [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/6784087?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/6784087?buildTab=artifacts#/liquibase) on release-22.2.0 @... | 2.0 | roachtest: liquibase failed - roachtest.liquibase [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/6784087?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/6784087?buildTab=artifacts#/... | non_process | roachtest liquibase failed roachtest liquibase with on release test artifacts and logs in artifacts liquibase run orm helpers go orm helpers go java helpers go liquibase go liquibase go test runner go tests run on cockroach beta tests run against liquibase... | 0 |

378,623 | 11,205,407,359 | IssuesEvent | 2020-01-05 14:04:23 | kubernetes-sigs/kubefed | https://api.github.com/repos/kubernetes-sigs/kubefed | closed | federation controller disaster recovery Support | kind/feature lifecycle/rotten priority/backlog | <!-- Please only use this template for submitting enhancement requests -->

**What would you like to be added**:

Currently, we can only have one host cluster, but if the host cluster goes down, then the federation controller will also goes down, and then the federation will not work.

**Why is this needed**:

It i... | 1.0 | federation controller disaster recovery Support - <!-- Please only use this template for submitting enhancement requests -->

**What would you like to be added**:

Currently, we can only have one host cluster, but if the host cluster goes down, then the federation controller will also goes down, and then the federati... | non_process | federation controller disaster recovery support what would you like to be added currently we can only have one host cluster but if the host cluster goes down then the federation controller will also goes down and then the federation will not work why is this needed it is better to enable the ... | 0 |

700 | 3,197,184,575 | IssuesEvent | 2015-10-01 02:00:35 | 18F/CMS.gov-developer | https://api.github.com/repos/18F/CMS.gov-developer | closed | Plan training dates | process | * [ ] CMS to consider what kind of trainings and with you.

* [ ] CMS to pick tentative dates and times.

* [ ] Add these to the [scheduled trainings document](https://github.com/18F/CMS.gov-developer/blob/master/deliverables/draft/training_schedule.md). | 1.0 | Plan training dates - * [ ] CMS to consider what kind of trainings and with you.

* [ ] CMS to pick tentative dates and times.

* [ ] Add these to the [scheduled trainings document](https://github.com/18F/CMS.gov-developer/blob/master/deliverables/draft/training_schedule.md). | process | plan training dates cms to consider what kind of trainings and with you cms to pick tentative dates and times add these to the | 1 |

174,844 | 21,300,487,115 | IssuesEvent | 2022-04-15 01:59:18 | YJSoft/nedb | https://api.github.com/repos/YJSoft/nedb | closed | WS-2017-0247 (Low) detected in ms-0.3.0.tgz - autoclosed | security vulnerability | ## WS-2017-0247 - Low Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ms-0.3.0.tgz</b></p></summary>

<p>Tiny ms conversion utility</p>

<p>Library home page: <a href="https://registry.npmjs.or... | True | WS-2017-0247 (Low) detected in ms-0.3.0.tgz - autoclosed - ## WS-2017-0247 - Low Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ms-0.3.0.tgz</b></p></summary>

<p>Tiny ms conversion utility</... | non_process | ws low detected in ms tgz autoclosed ws low severity vulnerability vulnerable library ms tgz tiny ms conversion utility library home page a href path to dependency file nedb package json path to vulnerable library nedb node modules ms dependency hierarchy ... | 0 |

8,950 | 12,059,071,918 | IssuesEvent | 2020-04-15 18:36:30 | googleapis/cloud-profiler-nodejs | https://api.github.com/repos/googleapis/cloud-profiler-nodejs | closed | Drop support for Node 8 | api: cloudprofiler priority: p2 type: process | We'd like to drop support for Node 8 before releasing v4.0.0, because we would like to reduce the number of major version changes for this module.

@bcoe and @JustinBeckwith -- At what point in time will we be able to drop Node 8?

| 1.0 | Drop support for Node 8 - We'd like to drop support for Node 8 before releasing v4.0.0, because we would like to reduce the number of major version changes for this module.

@bcoe and @JustinBeckwith -- At what point in time will we be able to drop Node 8?

| process | drop support for node we d like to drop support for node before releasing because we would like to reduce the number of major version changes for this module bcoe and justinbeckwith at what point in time will we be able to drop node | 1 |

1,926 | 2,521,738,882 | IssuesEvent | 2015-01-19 16:34:05 | Trashed/MovieSuggester | https://api.github.com/repos/Trashed/MovieSuggester | opened | MainActivity | top priority UI WIP | Build the main view!

This view should have:

* ActionBar

* Search Icon (launches search field), Settings (Icon), Help and About options

* Horizontal scrolling lists for top ten suggested movies, actors and genres

* These lists are aligned vertically on top of each other | 1.0 | MainActivity - Build the main view!

This view should have:

* ActionBar

* Search Icon (launches search field), Settings (Icon), Help and About options

* Horizontal scrolling lists for top ten suggested movies, actors and genres

* These lists are aligned vertically on top of each other | non_process | mainactivity build the main view this view should have actionbar search icon launches search field settings icon help and about options horizontal scrolling lists for top ten suggested movies actors and genres these lists are aligned vertically on top of each other | 0 |

110,514 | 9,458,893,663 | IssuesEvent | 2019-04-17 07:02:30 | Students-of-the-city-of-Kostroma/Student-timetable | https://api.github.com/repos/Students-of-the-city-of-Kostroma/Student-timetable | closed | Develop test scenarios suitable for unit-test automation for the Delete(Model model) method of the University entity | Controller Delete(Model model) Script Unit test ВУЗ ЛР04 | [Development plan.](https://docs.google.com/presentation/d/1sLkafCqJTvIAcyZ1jfNw0JrZq8o33WYKGot23z7EfzA/edit#slide=id.g4e940e7976_0_102)

[Scenario.](https://docs.google.com/spreadsheets/d/114F1wKsHoGB75gmF2p_XUR5zgbUb6IeQNX1ziO_BSIw/edit#gid=2120214548)

[Link to the class diagram.](https://docs.google.com/presentati... | 1.0 | Develop test scenarios suitable for unit-test automation for the Delete(Model model) method of the University entity - [Development plan.](https://docs.google.com/presentation/d/1sLkafCqJTvIAcyZ1jfNw0JrZq8o33WYKGot23z7EfzA/edit#slide=id.g4e940e7976_0_102)

[Scenario.](https://docs.google.com/spreadsheets/d/114F... | non_process | develop test scenarios suitable for unit test automation for the delete model model method of the university entity | 0 |

464,105 | 13,306,387,839 | IssuesEvent | 2020-08-25 20:09:37 | department-of-veterans-affairs/va.gov-team | https://api.github.com/repos/department-of-veterans-affairs/va.gov-team | closed | Expand E2E Tests for Facility Locator (Vet Centers) | Q32020-priority QA frontend stretch-goal vsa vsa-facilities | ## Background

We need to expand the test suite specifically for Vet Center searches

## Tasks

**1. Check to see if the following user stories are covered by existing tests**

-When `Vet centers` is selected as the facility type,

<details>

<summary>When `Vet centers` is selected as the facility type (exp... | 1.0 | Expand E2E Tests for Facility Locator (Vet Centers) - ## Background

We need to expand the test suite specifically for Vet Center searches

## Tasks

**1. Check to see if the following user stories are covered by existing tests**

-When `Vet centers` is selected as the facility type,

<details>

<summary>Wh... | non_process | expand tests for facility locator vet centers background we need to expand the test suite specifically for vet center searches tasks check to see if the following user stories are covered by existing tests when vet centers is selected as the facility type when vet centers i... | 0 |

17,258 | 23,041,373,793 | IssuesEvent | 2022-07-23 07:26:58 | python/cpython | https://api.github.com/repos/python/cpython | closed | test_notify_all hangs forever in sparc64 | type-bug tests 3.9 expert-multiprocessing | BPO | [40186](https://bugs.python.org/issue40186)

--- | :---

Nosy | @isidentical

<sup>*Note: these values reflect the state of the issue at the time it was migrated and might not reflect the current state.*</sup>

<details><summary>Show more details</summary><p>

GitHub fields:

```python

assignee = None

closed_at = No... | 1.0 | test_notify_all hangs forever in sparc64 - BPO | [40186](https://bugs.python.org/issue40186)

--- | :---

Nosy | @isidentical

<sup>*Note: these values reflect the state of the issue at the time it was migrated and might not reflect the current state.*</sup>

<details><summary>Show more details</summary><p>

GitHub field... | process | test notify all hangs forever in bpo nosy isidentical note these values reflect the state of the issue at the time it was migrated and might not reflect the current state show more details github fields python assignee none closed at none created at labels title t... | 1 |

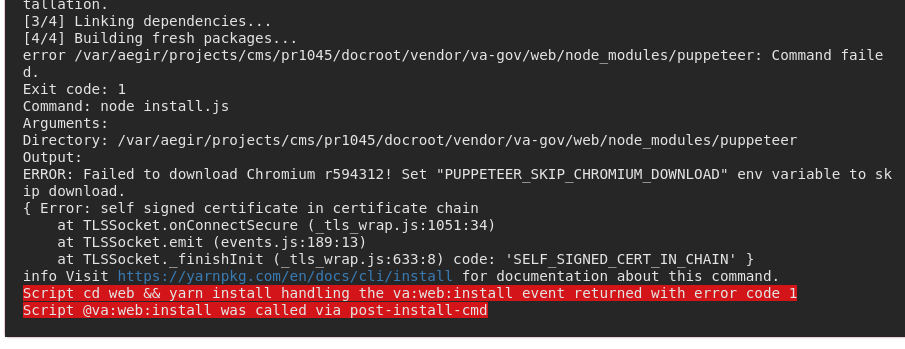

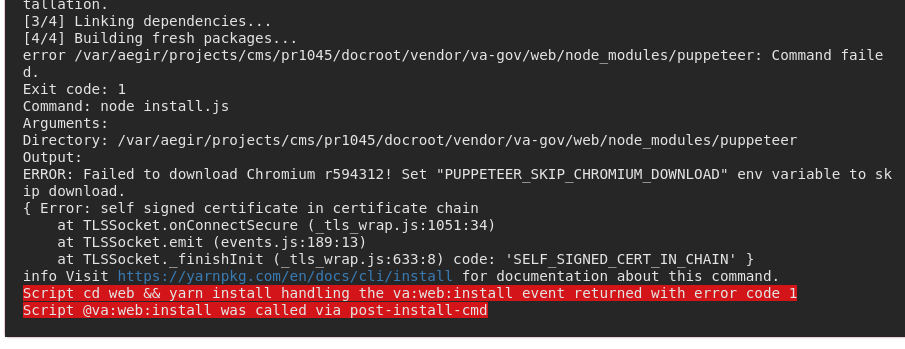

15,593 | 11,598,159,772 | IssuesEvent | 2020-02-24 22:25:47 | department-of-veterans-affairs/va.gov-cms | https://api.github.com/repos/department-of-veterans-affairs/va.gov-cms | opened | ERROR: Failed to download Chromium r594312! | Infrastructure | I've seen this flicker a few times recently. Don't really want to add the `PUPPETEER_SKIP_CHROMIUM_DOWNLOAD` env var but we may have to.

> ERROR: Failed to download Chromium r594312! Set "PUPPETEER_SKIP_... | 1.0 | ERROR: Failed to download Chromium r594312! - I've seen this flicker a few times recently. Don't really want to add the `PUPPETEER_SKIP_CHROMIUM_DOWNLOAD` env var but we may have to.

> ERROR: Failed to d... | non_process | error failed to download chromium i ve seen this flicker a few times recently don t really want to add the puppeteer skip chromium download env var but we may have to error failed to download chromium set puppeteer skip chromium download env variable to skip download error self sign... | 0 |

17,502 | 23,315,752,796 | IssuesEvent | 2022-08-08 12:22:46 | prisma/prisma | https://api.github.com/repos/prisma/prisma | closed | Primary key in model using a missing column | bug/0-unknown kind/bug process/candidate team/schema topic: prisma db pull topic: Your friendly prisma developers | Hi Prisma Team! Prisma Migrate just crashed.

## Command

`db pull`

## Versions

| Name | Version |

|-------------|--------------------|

| Platform | darwin-arm64 |

| Node | v16.13.1 |

| Prisma CLI | 4.1.0 |

| Engine | 8d8414deb36033... | 1.0 | Primary key in model using a missing column - Hi Prisma Team! Prisma Migrate just crashed.

## Command

`db pull`

## Versions

| Name | Version |

|-------------|--------------------|

| Platform | darwin-arm64 |

| Node | v16.13.1 |

| Prisma CLI | 4.1.0 ... | process | primary key in model using a missing column hi prisma team prisma migrate just crashed command db pull versions name version platform darwin node prisma cli ... | 1 |

44,381 | 12,124,137,234 | IssuesEvent | 2020-04-22 13:46:44 | carbon-design-system/ibm-security | https://api.github.com/repos/carbon-design-system/ibm-security | closed | Button loading prop should not disable button | Defect severity 4 | ## Request

The `loading` prop on the `Button` component disables the button. I don't believe that this should be the default behavior. Let the consumer decide whether to disable the button or not. If they already pass in a prop to set `loading`, they can use the same one to disable if they want.

Not sure if it is... | 1.0 | Button loading prop should not disable button - ## Request

The `loading` prop on the `Button` component disables the button. I don't believe that this should be the default behavior. Let the consumer decide whether to disable the button or not. If they already pass in a prop to set `loading`, they can use the same o... | non_process | button loading prop should not disable button request the loading prop on the button component disables the button i don t believe that this should be the default behavior let the consumer decide whether to disable the button or not if they already pass in a prop to set loading they can use the same o... | 0 |

2,870 | 5,830,076,148 | IssuesEvent | 2017-05-08 15:56:45 | AllenFang/react-bootstrap-table | https://api.github.com/repos/AllenFang/react-bootstrap-table | reopened | editable function returning false is not honoured - sort on custom formatter does not work | enhancement inprocess | First of all thanks for a great component :thumbsup: - am using it extensively.

Couple of issues that i have noticed

1. I use dataFormat that returns a React Component. The rendering is fine but the sort does not work at all. If this is going to be fixed it will be good if there will be an option whether to sort on... | 1.0 | editable function returning false is not honoured - sort on custom formatter does not work - First of all thanks for a great component :thumbsup: - am using it extensively.

Couple of issues that i have noticed

1. I use dataFormat that returns a React Component. The rendering is fine but the sort does not work at al... | process | editable function returning false is not honoured sort on custom formatter does not work first of all thanks for a great component thumbsup am using it extensively couple of issues that i have noticed i use dataformat that returns a react component the rendering is fine but the sort does not work at al... | 1 |

6,813 | 9,956,647,326 | IssuesEvent | 2019-07-05 14:28:06 | allinurl/goaccess | https://api.github.com/repos/allinurl/goaccess | closed | Issue with custom database path | log-processing | I'm trying to keep incremental logs in the btree DB. My initial command was:

```zcat /usr/local/www/usesthis.com/log/access.log.*.gz | goaccess --keep-db-files --db-path=/usr/local/share/stats/usesthis/ --log-format=COMBINED -o /usr/local/www/usesthis.com/public/stats.html -```

Which worked great, I can see a ton... | 1.0 | Issue with custom database path - I'm trying to keep incremental logs in the btree DB. My initial command was:

```zcat /usr/local/www/usesthis.com/log/access.log.*.gz | goaccess --keep-db-files --db-path=/usr/local/share/stats/usesthis/ --log-format=COMBINED -o /usr/local/www/usesthis.com/public/stats.html -```

W... | process | issue with custom database path i m trying to keep incremental logs in the btree db my initial command was zcat usr local www usesthis com log access log gz goaccess keep db files db path usr local share stats usesthis log format combined o usr local www usesthis com public stats html w... | 1 |

147,298 | 23,196,248,935 | IssuesEvent | 2022-08-01 16:39:15 | microsoft/fluentui | https://api.github.com/repos/microsoft/fluentui | closed | [Bug]: SplitButton opens the dropdown menu instead of invoking the onClick action when used with touch. | Resolution: By Design Fluent UI react (v8) Component: SplitButton | ### Library

React / v8 (@fluentui/react)

### System Info

```shell

System:

OS: Windows 10 10.0.22000

CPU: (8) x64 Intel(R) Core(TM) i7-1065G7 CPU @ 1.30GHz

Memory: 2.07 GB / 15.60 GB

Browsers:

Edge: Spartan (44.22000.120.0), Chromium (102.0.1245.44), ChromiumDev (104.0.1293.1)

Internet Exp... | 1.0 | [Bug]: SplitButton opens the dropdown menu instead of invoking the onClick action when used with touch. - ### Library

React / v8 (@fluentui/react)

### System Info

```shell

System:

OS: Windows 10 10.0.22000

CPU: (8) x64 Intel(R) Core(TM) i7-1065G7 CPU @ 1.30GHz

Memory: 2.07 GB / 15.60 GB

Browsers:

... | non_process | splitbutton opens the dropdown menu instead of invoking the onclick action when used with touch library react fluentui react system info shell system os windows cpu intel r core tm cpu memory gb gb browsers edge spartan ... | 0 |

420,406 | 28,263,462,804 | IssuesEvent | 2023-04-07 03:03:33 | AY2223S2-CS2113-T13-1/tp | https://api.github.com/repos/AY2223S2-CS2113-T13-1/tp | closed | [PE-D][Tester B] Lack of example to show command with optional description | documentation priority.Medium |

Would be good if there was an example on how to use `add` with the optional parameter description

<!--session: 1680252471869-a4ff2ce6-21c8-4fa9-ad69-6bc92f657b80-->

<!--Version: Web v3.4.7-->

---------... | 1.0 | [PE-D][Tester B] Lack of example to show command with optional description -

Would be good if there was an example on how to use `add` with the optional parameter description

<!--session: 1680252471869-... | non_process | lack of example to show command with optional description would be good if there was an example on how to use add with the optional parameter description labels severity low type documentationbug original jaredoong ped | 0 |

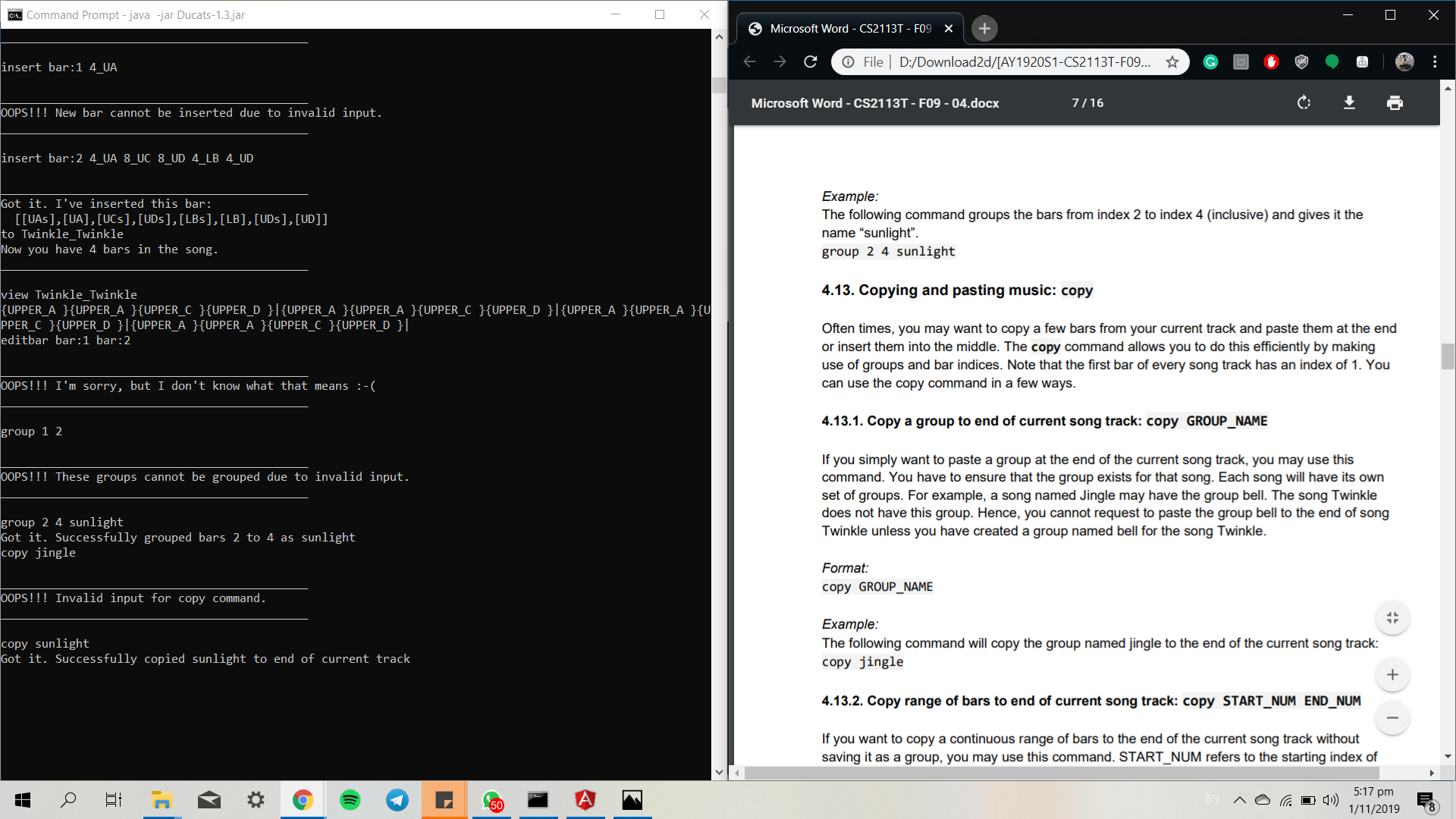

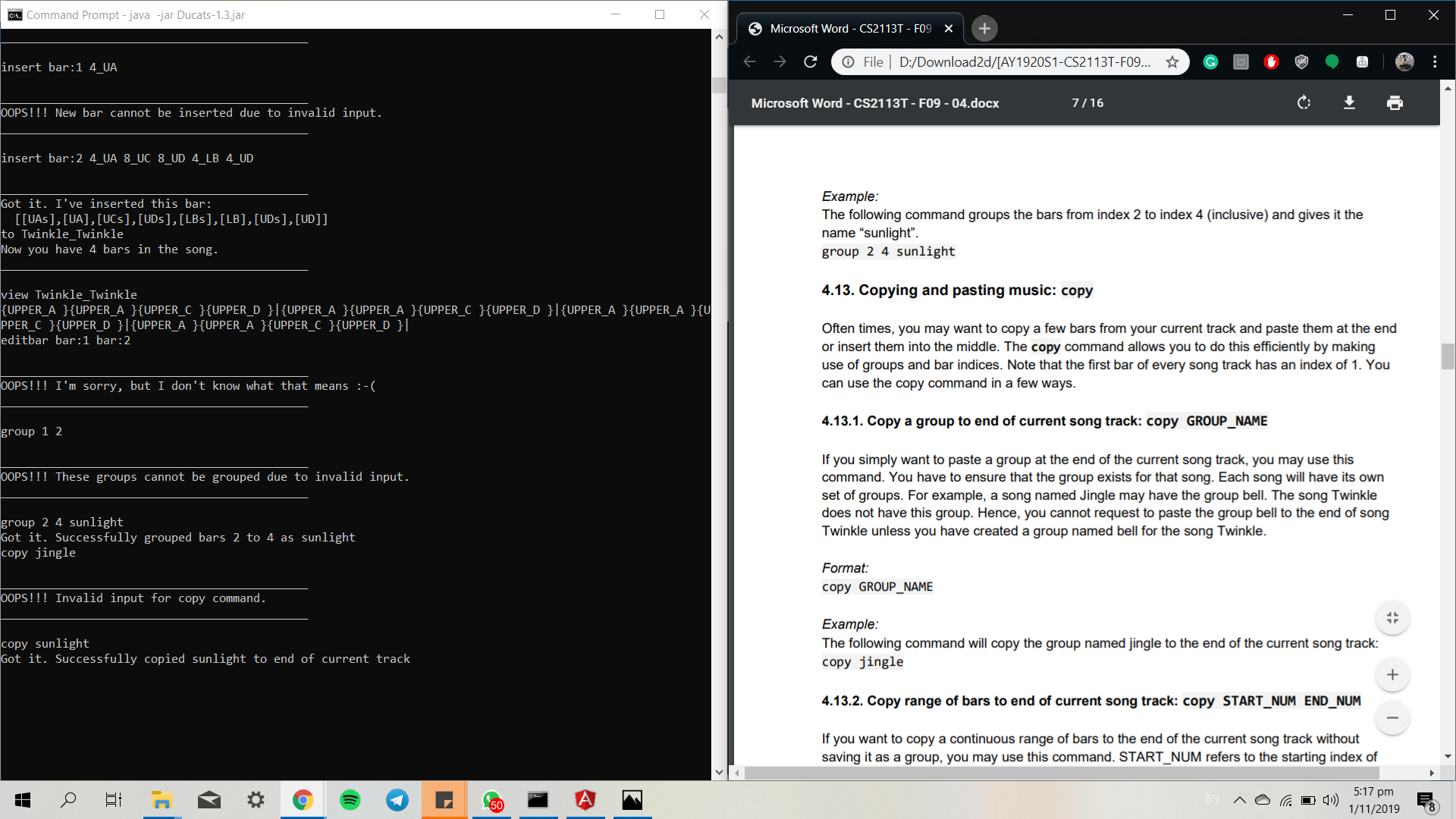

365,623 | 10,790,040,692 | IssuesEvent | 2019-11-05 13:19:37 | AY1920S1-CS2113T-F09-4/main | https://api.github.com/repos/AY1920S1-CS2113T-F09-4/main | closed | Documentation not uniform in examples, making it hard to follow. | priority.High severity.Medium status.Ongoing |

Difference in example, when executed, I won't find jingle inside. Confusing for user.

<hr><sub>[original: JasonLeeWeiHern/ped#7]<br/>

</sub> | 1.0 | Documentation not uniform in examples, making it hard to follow. -

Difference in example, when executed, I won't find jingle inside. Confusing for user.

<hr><sub>[original: JasonLeeWe... | non_process | documentation not uniform in examples making it hard to follow difference in example when executed i won t find jingle inside confusing for user | 0 |

192,570 | 15,353,232,881 | IssuesEvent | 2021-03-01 08:17:37 | kbuffington/Georgia | https://api.github.com/repos/kbuffington/Georgia | closed | Suggestion for Instructions | documentation | I'm super casual on this kind of thing (Not even coding, just installing)

So, the roadblock I ran into was on step 8. I didn't understand how to add the JScript Panel, specifically as I'm ultra new to Foobar.

Luckily your video helped me out there, through some reddit skimming, and happening to run across it on ... | 1.0 | Suggestion for Instructions - I'm super casual on this kind of thing (Not even coding, just installing)

So, the roadblock I ran into was on step 8. I didn't understand how to add the JScript Panel, specifically as I'm ultra new to Foobar.

Luckily your video helped me out there, through some reddit skimming, and ... | non_process | suggestion for instructions i m super casual on this kind of thing not even coding just installing so the roadblock i ran into was on step i didn t understand how to add the jscript panel specifically as i m ultra new to foobar luckily your video helped me out there through some reddit skimming and ... | 0 |

6,506 | 9,592,746,130 | IssuesEvent | 2019-05-09 09:40:56 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | opened | Obsolete 'GO:0046731 passive induction of host immune response by virus' and children? | multi-species process | Hello,

GO:0046731 passive induction of host immune response by virus

is defined as "The unintentional stimulation by a virus of a host defense response to viral infection, as part of the viral infectious cycle." - so that doesn't sound like any gene product is actually doing anything.

Similar for the children:... | 1.0 | Obsolete 'GO:0046731 passive induction of host immune response by virus' and children? - Hello,

GO:0046731 passive induction of host immune response by virus

is defined as "The unintentional stimulation by a virus of a host defense response to viral infection, as part of the viral infectious cycle." - so that doe... | process | obsolete go passive induction of host immune response by virus and children hello go passive induction of host immune response by virus is defined as the unintentional stimulation by a virus of a host defense response to viral infection as part of the viral infectious cycle so that doesn t sound l... | 1 |

4,311 | 7,203,155,640 | IssuesEvent | 2018-02-06 08:06:08 | qgis/QGIS-Documentation | https://api.github.com/repos/qgis/QGIS-Documentation | closed | [processing] [FEATURE] SplitWithLines | Automatic new feature Processing | Original commit: https://github.com/qgis/QGIS/commit/0e2ef065d7e89ba65db93f3a628d46c9bb31f265 by nyalldawson

Rename algorithm SplitLinesWithLines to SplitWithLines

Accept polygon as input, too

Use only selected lines to split with (if processing is set to use selection only)

Issue log message if trying to split multi ... | 1.0 | [processing] [FEATURE] SplitWithLines - Original commit: https://github.com/qgis/QGIS/commit/0e2ef065d7e89ba65db93f3a628d46c9bb31f265 by nyalldawson

Rename algorithm SplitLinesWithLines to SplitWithLines

Accept polygon as input, too

Use only selected lines to split with (if processing is set to use selection only)

Iss... | process | splitwithlines original commit by nyalldawson rename algorithm splitlineswithlines to splitwithlines accept polygon as input too use only selected lines to split with if processing is set to use selection only issue log message if trying to split multi geometries update help | 1 |

8,730 | 11,863,341,717 | IssuesEvent | 2020-03-25 19:32:27 | w3c/webauthn | https://api.github.com/repos/w3c/webauthn | closed | Add direction to somewhere to ask for help to the contributing guidelines | type:process | I have been looking around this repo and finding answers to most of the questions I have in various issues and PR's.

Does such a community exist which will accept questions for people trying to use webauthn?

It would be great if there was some line in the contributing.md which said

"If you are unsure if your issue... | 1.0 | Add direction to somewhere to ask for help to the contributing guidelines - I have been looking around this repo and finding answers to most of the questions I have in various issues and PR's.

Does such a community exist which will accept questions for people trying to use webauthn?

It would be great if there was s... | process | add direction to somewhere to ask for help to the contributing guidelines i have been looking around this repo and finding answers to most of the questions i have in various issues and pr s does such a community exist which will accept questions for people trying to use webauthn it would be great if there was s... | 1 |

7,417 | 10,541,888,562 | IssuesEvent | 2019-10-02 11:57:34 | googleapis/google-cloud-dotnet | https://api.github.com/repos/googleapis/google-cloud-dotnet | closed | Run the breaking change detector on PRs | type: process | For APIs which have already gone GA, it would be useful to run our breaking change detector against the published version.

Will need to look to see how tricky that is. | 1.0 | Run the breaking change detector on PRs - For APIs which have already gone GA, it would be useful to run our breaking change detector against the published version.

Will need to look to see how tricky that is. | process | run the breaking change detector on prs for apis which have already gone ga it would be useful to run our breaking change detector against the published version will need to look to see how tricky that is | 1 |

86,963 | 8,055,089,918 | IssuesEvent | 2018-08-02 08:12:09 | pandas-dev/pandas | https://api.github.com/repos/pandas-dev/pandas | closed | Specific Timestamps breaks time series indexing (.loc returns wrong results) | Indexing Testing Timezones good first issue | When trying to access labels (`.loc`) by using a specific list of `Timestamp` objects or a `DatetimeIndex` object (See attached csv file), the resulting index is returned in UTC offset but the original timezone is not removed.

This seems to happen only in very specific cases, when the index passed to `.loc` contains la... | 1.0 | Specific Timestamps breaks time series indexing (.loc returns wrong results) - When trying to access labels (`.loc`) by using a specific list of `Timestamp` objects or a `DatetimeIndex` object (See attached csv file), the resulting index is returned in UTC offset but the original timezone is not removed.

This seems to ... | non_process | specific timestamps breaks time series indexing loc returns wrong results when trying to access labels loc by using a specific list of timestamp objects or a datetimeindex object see attached csv file the resulting index is returned in utc offset but the original timezone is not removed this seems to ... | 0 |

400,104 | 11,769,162,500 | IssuesEvent | 2020-03-15 13:45:07 | microsoft/terraform-provider-azuredevops | https://api.github.com/repos/microsoft/terraform-provider-azuredevops | closed | Implement a Terraform data source to lookup Git repositories | good first issue priority-low | <!--- Please keep this note for the community --->

### Community Note

* Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original issue to help the community and maintainers prioritize this request

* Please do not l... | 1.0 | Implement a Terraform data source to lookup Git repositories - <!--- Please keep this note for the community --->

### Community Note

* Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original issue to help the commu... | non_process | implement a terraform data source to lookup git repositories community note please vote on this issue by adding a 👍 to the original issue to help the community and maintainers prioritize this request please do not leave or me too comments they generate extra noise for issue followers and... | 0 |

13,762 | 16,521,836,538 | IssuesEvent | 2021-05-26 15:17:53 | MicrosoftDocs/azure-devops-docs | https://api.github.com/repos/MicrosoftDocs/azure-devops-docs | closed | allowPartiallySucceededBuilds for deployment jobs | cba devops-cicd-process/tech devops/prod product-question | Hey Devops Gurus,

Is there a way to allowPartiallySucceededBuilds for deployment jobs?

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 5aeeaace-1c5b-a51b-e41f-f25b806155b8

* Version Independent ID: fd7ff690-b2e4-41c7-a342-e528b911c6e... | 1.0 | allowPartiallySucceededBuilds for deployment jobs - Hey Devops Gurus,

Is there a way to allowPartiallySucceededBuilds for deployment jobs?

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 5aeeaace-1c5b-a51b-e41f-f25b806155b8

* Version... | process | allowpartiallysucceededbuilds for deployment jobs hey devops gurus is there a way to allowpartiallysucceededbuilds for deployment jobs document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id version independent id ... | 1 |

22,625 | 31,847,770,452 | IssuesEvent | 2023-09-14 21:28:34 | metabase/metabase | https://api.github.com/repos/metabase/metabase | closed | [MLv2] Port MLv1 `hasWritePermission` to MLv2 (only for native queries) | Querying/Native .Backend .metabase-lib .Team/QueryProcessor :hammer_and_wrench: | There're a few MLv1 methods the editor uses that'd be nice to port to MLv2 as well.

Port `hasWritePermission` (only native) to MLv2

https://github.com/metabase/metabase/blob/dbfca6c6d173294ddcf97b394750574b4ef10221/frontend/src/metabase-lib/queries/NativeQuery.ts#L200 | 1.0 | [MLv2] Port MLv1 `hasWritePermission` to MLv2 (only for native queries) - There're a few MLv1 methods the editor uses that'd be nice to port to MLv2 as well.

Port `hasWritePermission` (only native) to MLv2

https://github.com/metabase/metabase/blob/dbfca6c6d173294ddcf97b394750574b4ef10221/frontend/src/metabase-lib... | process | port haswritepermission to only for native queries there re a few methods the editor uses that d be nice to port to as well port haswritepermission only native to | 1 |

75,803 | 3,476,132,228 | IssuesEvent | 2015-12-26 14:24:59 | Stephane-D/SGDK | https://api.github.com/repos/Stephane-D/SGDK | closed | recomp sprite collision | Priority-Low | Hi, rescomp readme is wrong:

Collision should be set to box by default:

https://github.com/Stephane-D/SGDK/blob/master/tools/rescomp/rescomp.txt#L143

but:

https://github.com/Stephane-D/SGDK/blob/10f2039eacac7d72a6eb63b274af6b95c91a85b8/tools/rescomp/src/sprite.c#L72

it's set to none | 1.0 | recomp sprite collision - Hi, rescomp readme is wrong:

Collision should be set to box by default:

https://github.com/Stephane-D/SGDK/blob/master/tools/rescomp/rescomp.txt#L143

but:

https://github.com/Stephane-D/SGDK/blob/10f2039eacac7d72a6eb63b274af6b95c91a85b8/tools/rescomp/src/sprite.c#L72

it's set to none | non_process | recomp sprite collision hi rescomp readme is wrong collision should be set to box by default but it s set to none | 0 |

13,548 | 16,090,323,496 | IssuesEvent | 2021-04-26 15:55:24 | kubernetes/minikube | https://api.github.com/repos/kubernetes/minikube | closed | remove deprecated jenkins jobs from set pending | kind/process lifecycle/stale priority/important-longterm | for example

windows virtualbox

podman ,,,

these still are being set as pending, even though we don't run them | 1.0 | remove deprecated jenkins jobs from set pending - for example

windows virtualbox

podman ,,,

these still are being set as pending, even though we don't run them | process | remove deprecated jenkins jobs from set pending for example windows virtualbox podman these still are being set as pending even though we dont run them | 1 |

393,762 | 11,624,364,335 | IssuesEvent | 2020-02-27 10:38:04 | dhowe/spectre | https://api.github.com/repos/dhowe/spectre | closed | Add circular cutout to profile image in templates/email.html | med-priority needs-verification | this is static html, just need the image to look like it does for avatars on the main site

you can test in templates/output.html (a generated file), then move the code to templates/email.html (the template file) when ready

if finished, check for new (unassigned) tickets from @billposters - he was testing last nig... | 1.0 | Add circular cutout to profile image in templates/email.html - this is static html, just need the image to look like it does for avatars on the main site

you can test in templates/output.html (a generated file), then move the code to templates/email.html (the template file) when ready

if finished, check for new ... | non_process | add circular cutout to profile image in templates email html this is static html just need the image to look like it does for avatars on the main site you can test in templates output html a generated file then move the code to templates email html the template file when ready if finished check for new ... | 0 |

5,863 | 8,682,734,453 | IssuesEvent | 2018-12-02 11:41:34 | bitshares/bitshares-community-ui | https://api.github.com/repos/bitshares/bitshares-community-ui | closed | Backup not functioning | Privatekey Backup bug process | when clicking on Backup (header) then on 'continue to backup' (the popup) then on 'I understand' button it won't go to the next step. | 1.0 | Backup not functioning - when clicking on Backup (header) then on 'continue to backup' (the popup) then on 'I understand' button it won't go to the next step. | process | backup not functioning when clicking on backup header then on continue to backup the popup then on i understand button it wont go to the next step | 1 |

7,328 | 10,468,918,467 | IssuesEvent | 2019-09-22 17:02:37 | produvia/ai-platform | https://api.github.com/repos/produvia/ai-platform | closed | Text Classification | natural-language-processing task wontfix | # Goal(s)

- Assign a sentence or document an appropriate category

# Input(s)

- Sentence or document

# Output(s)

- Category

# Objective Function(s)

- TBD

| 1.0 | Text Classification - # Goal(s)

- Assign a sentence or document an appropriate category

# Input(s)

- Sentence or document

# Output(s)

- Category

# Objective Function(s)

- TBD

| process | text classification goal s assign a sentence or document an appropriate category input s sentence or document output s category objective function s tbd | 1 |

12,110 | 14,740,468,313 | IssuesEvent | 2021-01-07 09:08:16 | kdjstudios/SABillingGitlab | https://api.github.com/repos/kdjstudios/SABillingGitlab | closed | RE: SA Billing - Save Credit Card to Account | anc-process anp-1 ant-bug | In GitLab by @kdjstudios on Nov 9, 2018, 11:52

**Submitted by:** "Arianna Screen" <arianna.screen@answernet.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2018-11-09-49358

**Server:** Internal

**Client/Site:** NA

**Account:** NA

**Issue:**

Today I am running credit cards and following t... | 1.0 | RE: SA Billing - Save Credit Card to Account - In GitLab by @kdjstudios on Nov 9, 2018, 11:52

**Submitted by:** "Arianna Screen" <arianna.screen@answernet.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2018-11-09-49358

**Server:** Internal

**Client/Site:** NA

**Account:** NA

**Issue:**

... | process | re sa billing save credit card to account in gitlab by kdjstudios on nov submitted by arianna screen helpdesk server internal client site na account na issue today i am running credit cards and following the instructions to save the credit cards i have a... | 1 |

12,854 | 21,012,322,589 | IssuesEvent | 2022-03-30 07:53:21 | renovatebot/renovate | https://api.github.com/repos/renovatebot/renovate | opened | [maven]org.apache.maven.plugins:maven-compiler-plugin get configuration/source and configuration/target | type:feature status:requirements priority-5-triage | ### What would you like Renovate to be able to do?

[springshell-rce-0-day-vulnerability](https://www.cyberkendra.com/2022/03/springshell-rce-0-day-vulnerability.html)

Some vulnerabilities in the Java ecosystem are based on the JDK version, org.apache.maven.plugins:maven-compiler-plugin configuration/source and conf... | 1.0 | [maven]org.apache.maven.plugins:maven-compiler-plugin get configuration/source and configuration/target - ### What would you like Renovate to be able to do?

[springshell-rce-0-day-vulnerability](https://www.cyberkendra.com/2022/03/springshell-rce-0-day-vulnerability.html)

Some vulnerabilities in the Java ecosystem ar... | non_process | org apache maven plugins maven compiler plugin get configuration source and configuration target what would you like renovate to be able to do some vulnerabilities in the java ecosystem are based on the jdk version org apache maven plugins maven compiler plugin configuration source and configuration ta... | 0 |

13,343 | 15,801,515,409 | IssuesEvent | 2021-04-03 05:17:52 | PyCQA/flake8 | https://api.github.com/repos/PyCQA/flake8 | closed | Flake8 3.0 does not work, throws AttributeError exception | bug:confirmed component:multiprocessing component:pyflakes priority:high | In GitLab by @akittas on Jul 25, 2016, 08:52

Python version: 3.5.2 64-bit

OS: Windows 10 Pro 64 bit

Installation: pip install flake8

pip version: 8.1.2

setuptools version: 25.0.1

flake 8 --version: 3.0.0 (pycodestyle: 2.0.0, mccabe: 0.5.0, pyflakes: 1.2.3) CPython 3.5.2 on Windows

running method: fla... | 1.0 | Flake8 3.0 does not work, throws AttributeError exception - In GitLab by @akittas on Jul 25, 2016, 08:52

Python version: 3.5.2 64-bit

OS: Windows 10 Pro 64 bit

Installation: pip install flake8

pip version: 8.1.2

setuptools version: 25.0.1

flake 8 --version: 3.0.0 (pycodestyle: 2.0.0, mccabe: 0.5.0, pyfl... | process | does not work throws attributeerror exception in gitlab by akittas on jul python version bit os windows pro bit installation pip install pip version setuptools version flake version pycodestyle mccabe pyflakes cpython... | 1 |

452,912 | 32,073,573,391 | IssuesEvent | 2023-09-25 09:32:47 | dappnode/DAppNodeDocs | https://api.github.com/repos/dappnode/DAppNodeDocs | closed | New documentation tree | documentation 2023 | The new documentation will follow this tree:

1. User docs

2. Dev docs

3. Smooth

4. DAO (show warning about deprecation or remove)

Inside user docs we should include:

1. Get started

a. Access Dappnode (via WiFi)

b. Set up

Register

Repository (IPFS + Ethereum)

Host password

... | 1.0 | New documentation tree - The new documentation will follow this tree:

1. User docs

2. Dev docs

3. Smooth

4. DAO (show warning about deprecation or remove)

Inside user docs we should include:

1. Get started

a. Access Dappnode (via WiFi)

b. Set up

Register

Repository (IPFS + Ethereum... | non_process | new documentation tree the new documentation will follow this tree user docs dev docs smooth dao show warning about deprecation or remove inside user docs we should include get started a access dappnode via wifi b set up register repository ipfs ethereum... | 0 |

3,210 | 9,213,817,246 | IssuesEvent | 2019-03-10 15:09:07 | jimkyndemeyer/js-graphql-intellij-plugin | https://api.github.com/repos/jimkyndemeyer/js-graphql-intellij-plugin | closed | Support for multiple schemas (on multiple endpoints) | enhancement v2-alpha v2-architecture | I need to access two different graphql endpoints from a project, where each endpoint has a different schema.

I'm currently keeping two different copies of graphql.config.json that I swap between as needed.

Is there a better way to handle this? If not, consider this an enhancement request. | 1.0 | Support for multiple schemas (on multiple endpoints) - I need to access two different graphql endpoints from a project, where each endpoint has a different schema.

I'm currently keeping two different copies of graphql.config.json that I swap between as needed.

Is there a better way to handle this? If not, con... | non_process | support for multiple schemas on multiple endpoints i need to access two different graphql endpoints from a project where each endpoint has a different schema i m currently keeping two different copies of graphql config json that i swap between as needed is there a better way to handle this if not con... | 0 |

49,212 | 6,157,458,330 | IssuesEvent | 2017-06-28 18:59:45 | mapzen/android | https://api.github.com/repos/mapzen/android | opened | Sample App Major Section: More Info | Sample App Redesign | - [ ] Links to the places demo

- [ ] Release info

- [ ] Feature list

- [ ] Contact

- [ ] Participate

- [ ] Download

- [ ] API keys

| 1.0 | Sample App Major Section: More Info - - [ ] Links to the places demo

- [ ] Release info

- [ ] Feature list

- [ ] Contact

- [ ] Participate

- [ ] Download

- [ ] API keys

| non_process | sample app major section more info links to the places demo release info feature list contact participate download api keys | 0 |

514,457 | 14,939,551,731 | IssuesEvent | 2021-01-25 17:05:05 | idaholab/raven | https://api.github.com/repos/idaholab/raven | closed | [TASK] ROMCollection.Interpolated truncated lifetime | FutureRAVENv2.1 priority_minor task | --------

Issue Description

--------

For workflow debugging, an option to limit the number of cycles sampled in an Interpolated ROMCollection would be useful.

----------------

For Change Control Board: Issue Review

----------------

This review should occur before any development is performed as a response to ... | 1.0 | [TASK] ROMCollection.Interpolated truncated lifetime - --------

Issue Description

--------

For workflow debugging, an option to limit the number of cycles sampled in an Interpolated ROMCollection would be useful.

----------------

For Change Control Board: Issue Review

----------------

This review should occu... | non_process | romcollection interpolated truncated lifetime issue description for workflow debugging an option to limit the number of cycles sampled in an interpolated romcollection would be useful for change control board issue review this review should occur bef... | 0 |

133,660 | 18,299,017,408 | IssuesEvent | 2021-10-05 23:53:38 | bsbtd/Teste | https://api.github.com/repos/bsbtd/Teste | opened | CVE-2020-11112 (High) detected in jackson-databind-2.9.5.jar, jackson-databind-2.6.7.3.jar | security vulnerability | ## CVE-2020-11112 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>jackson-databind-2.9.5.jar</b>, <b>jackson-databind-2.6.7.3.jar</b></p></summary>

<p>

<details><summary><b>jackson-d... | True | CVE-2020-11112 (High) detected in jackson-databind-2.9.5.jar, jackson-databind-2.6.7.3.jar - ## CVE-2020-11112 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>jackson-databind-2.9.5.j... | non_process | cve high detected in jackson databind jar jackson databind jar cve high severity vulnerability vulnerable libraries jackson databind jar jackson databind jar jackson databind jar general data binding functionality for jackson works on core str... | 0 |

21,442 | 29,478,655,563 | IssuesEvent | 2023-06-02 02:09:32 | cypress-io/cypress | https://api.github.com/repos/cypress-io/cypress | closed | 'webpack-preprocessor': project is missing dependency '@types/bluebird' | type: typings npm: @cypress/webpack-preprocessor stale | ### Current behavior

Cypress project type-checking fails if we try to type-check `cypress/plugins/index.js` and it includes `require('@cypress/webpack-preprocessor')`

### Desired behavior

Type-checking... | 1.0 | 'webpack-preprocessor': project is missing dependency '@types/bluebird' - ### Current behavior

Cypress project type-checking fails if we try to type-check `cypress/plugins/index.js` and it includes `require('@cypress/webpack-preprocessor')`

. | 1.0 | add installation by VM instructions - Per discussion/presentation with @golfvert, add documentation for installing wis2box by way of VM (Proxmox). | non_process | add installation by vm instructions per discussion presentation with golfvert add documentation for installing by way of vm proxmox | 0 |

21,144 | 28,121,790,020 | IssuesEvent | 2023-03-31 14:42:04 | MPMG-DCC-UFMG/C01 | https://api.github.com/repos/MPMG-DCC-UFMG/C01 | closed | Post mortem DEBUG - Trace Viewer | [2] Alta Prioridade [1] Requisito [0] Desenvolvimento [3] Processamento Dinâmico | ## Expected Behavior

We want to include Playwright's "Trace Viewer" as a debugging tool for dynamic collectors. It is a feature with an excellent interface that allows step-by-step execution of a dynamic collector, making it possible to view the pages and snapshots and to inspect the source code of each in... | 1.0 | Post mortem DEBUG - Trace Viewer - ## Expected Behavior

We want to include Playwright's "Trace Viewer" as a debugging tool for dynamic collectors. It is a feature with an excellent interface that allows step-by-step execution of a dynamic collector, making it possible to view the pages, snapshots, and ... | process | post mortem debug trace viewer expected behavior we want to include the playwright trace viewer as a debugging tool for dynamic collectors it is a feature with an excellent interface that allows step by step execution of a dynamic collector making it possible to view the pages snapshots and ... | 1 |

313,442 | 26,931,362,950 | IssuesEvent | 2023-02-07 17:03:22 | dotnet/source-build | https://api.github.com/repos/dotnet/source-build | closed | Remove security-partners-dotnet pipeline from main branch | area-ci-testing | It's my understanding that the [security-partners-dotnet.yml](https://github.com/dotnet/installer/blob/main/src/SourceBuild/content/eng/pipelines/security-partners-dotnet.yml) pipeline is not used in the main branch and only used for .NET 6/7 servicing. If so, then this file should be removed from the main branch. | 1.0 | Remove security-partners-dotnet pipeline from main branch - It's my understanding that the [security-partners-dotnet.yml](https://github.com/dotnet/installer/blob/main/src/SourceBuild/content/eng/pipelines/security-partners-dotnet.yml) pipeline is not used in the main branch and only used for .NET 6/7 servicing. If so,... | non_process | remove security partners dotnet pipeline from main branch it s my understanding that the pipeline is not used in the main branch and only used for net servicing if so then this file should be removed from the main branch | 0 |

102,065 | 11,273,465,603 | IssuesEvent | 2020-01-14 16:36:40 | GluuFederation/casa | https://api.github.com/repos/GluuFederation/casa | closed | Enable Plugins Isolated Way to Persist Configuration | Needs Documentation Needs QA enhancement | Plugin devs should have the possibility to store relevant configurations in the same database attribute the application uses for settings. It's good to have a single point of configuration

We have to offer a safe means so that developers cannot read or write other plugins configs or core configs | 1.0 | Enable Plugins Isolated Way to Persist Configuration - Plugin devs should have the possibility to store relevant configurations in the same database attribute the application uses for settings. It's good to have a single point of configuration

We have to offer a safe means so that developers cannot read or write oth... | non_process | enable plugins isolated way to persist configuration plugin devs should have the possibility to store relevant configurations in the same database attribute the application uses for settings it s good to have a single point of configuration we have to offer a safe means so that developers cannot read or write oth... | 0 |

8,492 | 11,647,929,489 | IssuesEvent | 2020-03-01 17:49:56 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | Assert failure currentProcessCpuCount == g_processAffinitySet.Count() | area-System.Diagnostics.Process untriaged | https://helix.dot.net/api/2019-06-17/jobs/5c24c550-4c42-459f-bbfd-21e69cbf8a27/workitems/System.Diagnostics.Process.Tests/console

`Libraries Test Run checked coreclr Linux x64 Debug `

```

Assert failure(PID 15210 [0x00003b6a], Thread: 15210 [0x3b6a]): currentProcessCpuCount == g_processAffinitySet.Count()

File: /... | 1.0 | Assert failure currentProcessCpuCount == g_processAffinitySet.Count() - https://helix.dot.net/api/2019-06-17/jobs/5c24c550-4c42-459f-bbfd-21e69cbf8a27/workitems/System.Diagnostics.Process.Tests/console

`Libraries Test Run checked coreclr Linux x64 Debug `

```

Assert failure(PID 15210 [0x00003b6a], Thread: 15210 [0... | process | assert failure currentprocesscpucount g processaffinityset count libraries test run checked coreclr linux debug assert failure pid thread currentprocesscpucount g processaffinityset count file w s src coreclr src vm gcenv os cpp line image home helixbot work p dotn... | 1 |

87,382 | 25,107,004,353 | IssuesEvent | 2022-11-08 17:19:58 | spack/spack | https://api.github.com/repos/spack/spack | closed | Installation issue: Binary libffi fails checksum verification | build-error | ### Steps to reproduce the issue

```

# On develop branch

spack mirror add binary_mirror https://binaries.spack.io/releases/v0.18

spack buildcache keys --install --trust

spack -vvv install /d6d3lh3

```

### Error message

==> Installing libffi-3.4.2-d6d3lh3hgkjwbifbbjuvqs2a3w5xj7pd

==> Fetching https://bin... | 1.0 | Installation issue: Binary libffi fails checksum verification - ### Steps to reproduce the issue

```

# On develop branch

spack mirror add binary_mirror https://binaries.spack.io/releases/v0.18

spack buildcache keys --install --trust

spack -vvv install /d6d3lh3

```

### Error message

==> Installing libffi-... | non_process | installation issue binary libffi fails checksum verification steps to reproduce the issue on develop branch spack mirror add binary mirror spack buildcache keys install trust spack vvv install error message installing libffi fetching gpg signature made ... | 0 |

17,611 | 23,430,202,294 | IssuesEvent | 2022-08-15 00:46:00 | sparc4-dev/astropop | https://api.github.com/repos/sparc4-dev/astropop | closed | Avoid breaking in image registration lists | bug image-processing | When the image registration fails for a list, it breaks. Add an option to ignore and only log the errors. Maybe also warn about it in the header. | 1.0 | Avoid breaking in image registration lists - When the image registration fails for a list, it breaks. Add an option to ignore and only log the errors. Maybe also warn about it in the header. | process | avoid breaking in image registration lists when the image registration fails for a list it breaks add an option to ignore and only log the errors maybe also warn about it in the header | 1 |

17,554 | 23,368,077,419 | IssuesEvent | 2022-08-10 17:07:30 | nghi-huynh/HPA_HuBMAP_Kaggle_competition | https://api.github.com/repos/nghi-huynh/HPA_HuBMAP_Kaggle_competition | closed | WSI Pre-processing: tiling + tissue segmentation | data preprocessing data preparation | WSI preprocessing: Tiling + Tissue Segmentation

**Goal**: using simple thresholding techniques like Otsu or Triangle binarization to identify tissue/non-tissue in tiles and save it for training purpose

Refer to [WSI processing: Tiling + Tissue Segmentation](https://www.kaggle.com/code/nghihuynh/wsi-preprocessing-... | 1.0 | WSI Pre-processing: tiling + tissue segmentation - WSI preprocessing: Tiling + Tissue Segmentation

**Goal**: using simple thresholding techniques like Otsu or Triangle binarization to identify tissue/non-tissue in tiles and save it for training purpose

Refer to [WSI processing: Tiling + Tissue Segmentation](https... | process | wsi pre processing tiling tissue segmentation wsi preprocessing tiling tissue segmentation goal using simple thresholding techniques like otsu or triangle binarization to identify tissue non tissue in tiles and save it for training purpose refer to for more detail rescale thresholding... | 1 |

8,007 | 7,106,350,087 | IssuesEvent | 2018-01-16 16:27:06 | OpenLiberty/open-liberty | https://api.github.com/repos/OpenLiberty/open-liberty | opened | Generate keystore.password during server create | Epic in:Security story team:Zombie Apocalypse | Implements the resolution of design issue #1175

- During `server create` time [generate a random secure password](http://crunchify.com/java-generate-strong-random-password-securerandom/) into a `keystore.password` property in `${server.config.dir}/server.env`

- We will add a new flag to server create (e.g. `se... | True | Generate keystore.password during server create - Implements the resolution of design issue #1175

- During `server create` time [generate a random secure password](http://crunchify.com/java-generate-strong-random-password-securerandom/) into a `keystore.password` property in `${server.config.dir}/server.env`

-... | non_process | generate keystore password during server create implements the resolution of design issue during server create time into a keystore password property in server config dir server env we will add a new flag to server create e g server create myserver no password which will disable th... | 0 |

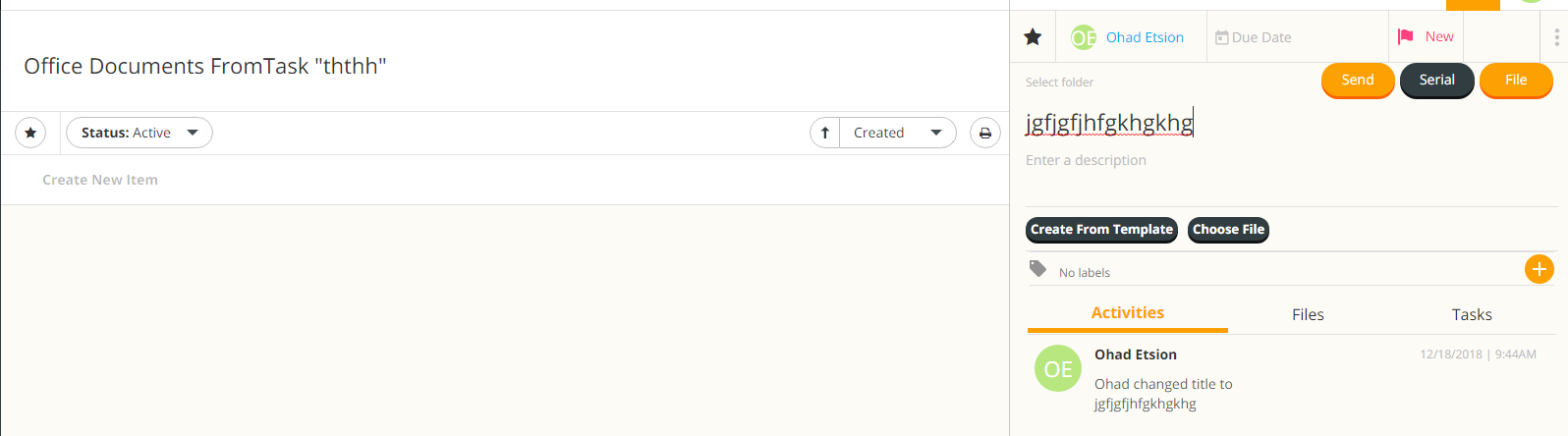

6,001 | 8,808,922,197 | IssuesEvent | 2018-12-27 16:54:37 | linnovate/root | https://api.github.com/repos/linnovate/root | closed | office documents from tasks bug | 2.0.6 Fixed Process bug critical | after creating a task, and then going to the documents tab, clicking on manage documents

create new item doesnt update the list, and after editing the document it isnt saved after refreshing the page

| 1.0 | office documents from tasks bug - after creating a task, and then going to the documents tab, clicking on manage documents