| Unnamed: 0 | id | type | created_at | repo | repo_url | action | title | labels | body | index | text_combine | label | text | binary_label |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

137,437 | 20,146,898,010 | IssuesEvent | 2022-02-09 08:33:23 | Joystream/atlas | https://api.github.com/repos/Joystream/atlas | opened | Comments Design: Video page | design | ### User stories

1. As a content viewer, I want to see other people's comments under a video I'm watching.

2. As a content viewer, I want to react to other people's comments under a video I'm watching.

3. As a content viewer, I want to sort other people's comments under a video I'm watching.

4. As a content viewer I w... | 1.0 | Comments Design: Video page - ### User stories

1. As a content viewer, I want to see other people's comments under a video I'm watching.

2. As a content viewer, I want to react to other people's comments under a video I'm watching.

3. As a content viewer, I want to sort other people's comments under a video I'm watchi... | non_process | comments design video page user stories as a content viewer i want to see other people s comments under a video i m watching as a content viewer i want to react to other people s comments under a video i m watching as a content viewer i want to sort other people s comments under a video i m watchi... | 0 |

21,718 | 30,220,022,201 | IssuesEvent | 2023-07-05 18:35:13 | microsoft/vscode | https://api.github.com/repos/microsoft/vscode | opened | Error on reviveTerminalProcesses in remote | bug remote terminal-process | Repro:

1. Open OSS

2. Open new test resolver window

3. Close non-test resolver window

4. Close all terminals

5. Exit application

6. Open OSS

7. Open pty host log

I see an error being returned from `reviveTerminalProcesses`:

```

2023-07-05 11:32:09.225 [trace] [RPC Request] PtyService#reviveTerminalProce... | 1.0 | Error on reviveTerminalProcesses in remote - Repro:

1. Open OSS

2. Open new test resolver window

3. Close non-test resolver window

4. Close all terminals

5. Exit application

6. Open OSS

7. Open pty host log

I see an error being returned from `reviveTerminalProcesses`:

```

2023-07-05 11:32:09.225 [trace]... | process | error on reviveterminalprocesses in remote repro open oss open new test resolver window close non test resolver window close all terminals exit application open oss open pty host log i see an error being returned from reviveterminalprocesses ptyservice re... | 1 |

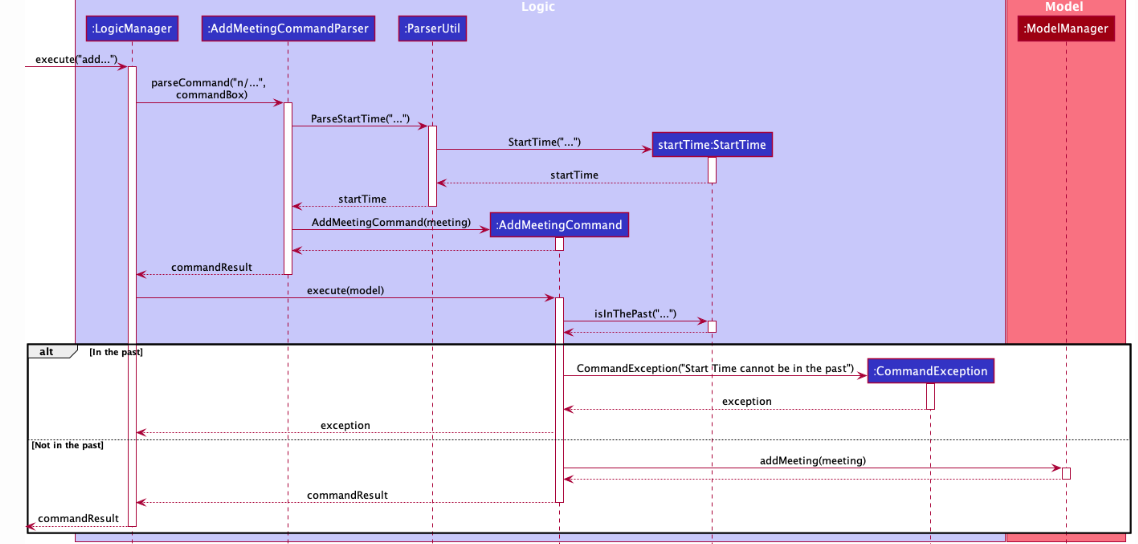

282,184 | 21,315,470,098 | IssuesEvent | 2022-04-16 07:34:42 | Cyolune/pe | https://api.github.com/repos/Cyolune/pe | opened | Large sequence diagram in Adding Meetings | severity.VeryLow type.DocumentationBug | The sequence diagram found in Adding Meetings in the DG is large and that makes it complicated. An alternative would be to use reference frames for sections like the time parsing

<!--session: 165008427238... | 1.0 | Large sequence diagram in Adding Meetings - The sequence diagram found in Adding Meetings in the DG is large and that makes it complicated. An alternative would be to use reference frames for sections like the time parsing

detected in linuxlinux-4.19.279 - autoclosed | Mend: dependency security vulnerability | ## CVE-2022-26490 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.19.279</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Library home page: <a href=https://mirrors.edge.k... | True | CVE-2022-26490 (High) detected in linuxlinux-4.19.279 - autoclosed - ## CVE-2022-26490 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.19.279</b></p></summary>

<p>

<p>The ... | non_process | cve high detected in linuxlinux autoclosed cve high severity vulnerability vulnerable library linuxlinux the linux kernel library home page a href found in head commit a href found in base branch main vulnerable source files ... | 0 |

2,410 | 5,196,598,859 | IssuesEvent | 2017-01-23 13:22:37 | raphym/Simulation_of_message_routing_by_intelligent_agents | https://api.github.com/repos/raphym/Simulation_of_message_routing_by_intelligent_agents | opened | Node / Quorum / Map modification with id | being processed | When i discover that a node is a quorum , so instead to add the new node (quorum) in the end of the vector of all elements of the map , i have to replace the old node with the new into the same place in the vector.

So for create a node with the same id that the old i change the constructor of the node class and quorum... | 1.0 | Node / Quorum / Map modification with id - When i discover that a node is a quorum , so instead to add the new node (quorum) in the end of the vector of all elements of the map , i have to replace the old node with the new into the same place in the vector.

So for create a node with the same id that the old i change t... | process | node quorum map modification with id when i discover that a node is a quorum so instead to add the new node quorum in the end of the vector of all elements of the map i have to replace the old node with the new into the same place in the vector so for create a node with the same id that the old i change t... | 1 |

15,867 | 20,036,245,689 | IssuesEvent | 2022-02-02 12:13:33 | googlefonts/noto-fonts | https://api.github.com/repos/googlefonts/noto-fonts | closed | Too many files in directories | Noto-Process-Issue | There are really too many files in the directory of fonts (notably the hinted and unhinted ones, possibly as well for some staged versions)

I really suggest using one directory by font family, or by ISO 15924 script code (like "Latn" for the base fonts, "Zsym" for symbols, "Szye" for emojis), and put in them all rel... | 1.0 | Too many files in directories - There are really too many files in the directory of fonts (notably the hinted and unhinted ones, possibly as well for some staged versions)

I really suggest using one directory by font family, or by ISO 15924 script code (like "Latn" for the base fonts, "Zsym" for symbols, "Szye" for ... | process | too many files in directories there are really too many files in the directory of fonts notably the hinted and unhinted ones possibly as well for some staged versions i really suggest using one directory by font family or by iso script code like latn for the base fonts zsym for symbols szye for emoj... | 1 |

7,330 | 24,648,554,123 | IssuesEvent | 2022-10-17 16:38:08 | bcgov/api-services-portal | https://api.github.com/repos/bcgov/api-services-portal | closed | Cypress Test - Consumer detail - edit labels | automation | 1. Manage/Edit labels spec

1.1 authenticates Mark (Access-Manager)

1.2 Navigate to Consumer page and filter the product

1.3 Click on the first consumer

1.4 Verify that labels can be deleted

1.5 Verify that labels can be updated

1.6 Verify that labels can be added

| 1.0 | Cypress Test - Consumer detail - edit labels - 1. Manage/Edit labels spec

1.1 authenticates Mark (Access-Manager)

1.2 Navigate to Consumer page and filter the product

1.3 Click on the first consumer

1.4 Verify that labels can be deleted

1.5 Verify that labels can be updated

1.6 Verify that labels can be added

| non_process | cypress test consumer detail edit labels manage edit labels spec authenticates mark access manager navigate to consumer page and filter the product click on the first consumer verify that labels can be deleted verify that labels can be updated verify that labels can be added | 0 |

377,483 | 11,171,584,125 | IssuesEvent | 2019-12-28 21:00:55 | openmsupply/mobile | https://api.github.com/repos/openmsupply/mobile | opened | Disabled the Next button until items have been chosen in a prescription | Bug: development Docs: not needed Effort: small Module: dispensary Priority: high | ## Describe the bug

Currently can proceed through the 'checkout' with no items.

### To reproduce

Steps to reproduce the behavior:

1. Create a new prescription

2. don't add any items and click next

4. See error

### Expected behaviour

`NEXT` should be disabled until at least one item has been chosen.... | 1.0 | Disabled the Next button until items have been chosen in a prescription - ## Describe the bug

Currently can proceed through the 'checkout' with no items.

### To reproduce

Steps to reproduce the behavior:

1. Create a new prescription

2. don't add any items and click next

4. See error

### Expected behav... | non_process | disabled the next button until items have been chosen in a prescription describe the bug currently can proceed through the checkout with no items to reproduce steps to reproduce the behavior create a new prescription don t add any items and click next see error expected behav... | 0 |

20,203 | 26,779,255,538 | IssuesEvent | 2023-01-31 19:41:21 | googleapis/google-cloud-node | https://api.github.com/repos/googleapis/google-cloud-node | closed | promote library to GA | type: process api: mediatranslation | Package name: **@google-cloud/media-translation**

Current release: **beta**

Proposed release: **GA**

## Instructions

Check the lists below, adding tests / documentation as required. Once all the "required" boxes are ticked, please create a release and close this issue.

## Required

- [ ] 28 days elapsed si... | 1.0 | promote library to GA - Package name: **@google-cloud/media-translation**

Current release: **beta**

Proposed release: **GA**

## Instructions

Check the lists below, adding tests / documentation as required. Once all the "required" boxes are ticked, please create a release and close this issue.

## Required

... | process | promote library to ga package name google cloud media translation current release beta proposed release ga instructions check the lists below adding tests documentation as required once all the required boxes are ticked please create a release and close this issue required ... | 1 |

8,648 | 11,789,750,207 | IssuesEvent | 2020-03-17 17:41:42 | googleapis/synthtool | https://api.github.com/repos/googleapis/synthtool | closed | Java: missing template tests | type: process | - [x] Run template generation using a sample `.repo-metadata.json` file

- [ ] Ensure any generated `pom.xml` files are valid XML | 1.0 | Java: missing template tests - - [x] Run template generation using a sample `.repo-metadata.json` file

- [ ] Ensure any generated `pom.xml` files are valid XML | process | java missing template tests run template generation using a sample repo metadata json file ensure any generated pom xml files are valid xml | 1 |

205,574 | 15,648,067,863 | IssuesEvent | 2021-03-23 04:52:21 | RTXteam/RTX | https://api.github.com/repos/RTXteam/RTX | closed | ARAX returning TRAPI-noncompliant result? | ARAX demo need to test on production question | From the BDT Friday stand-up meeting (2021.03.12),

<img width="1441" alt="Screen Shot 2021-03-12 at 9 04 20 AM" src="https://user-images.githubusercontent.com/5562107/110973381-0adf7a00-8312-11eb-98f2-e90c9bfd8471.png">

I think Sarah said that the red "50" means results that are not TRAPI-compliant. Just bringing... | 1.0 | ARAX returning TRAPI-noncompliant result? - From the BDT Friday stand-up meeting (2021.03.12),

<img width="1441" alt="Screen Shot 2021-03-12 at 9 04 20 AM" src="https://user-images.githubusercontent.com/5562107/110973381-0adf7a00-8312-11eb-98f2-e90c9bfd8471.png">

I think Sarah said that the red "50" means results... | non_process | arax returning trapi noncompliant result from the bdt friday stand up meeting img width alt screen shot at am src i think sarah said that the red means results that are not trapi compliant just bringing this up in case it is something fixable that is not currently on the doc... | 0 |

17,095 | 22,609,855,234 | IssuesEvent | 2022-06-29 16:09:20 | NationalSecurityAgency/ghidra | https://api.github.com/repos/NationalSecurityAgency/ghidra | closed | Fujitsu FR60/80 Support | Feature: Processor/Unsupported Status: Waiting on customer | Summary

----

I developed a [FR 60 Plugin](https://github.com/desrdev/ghidra-fr60) and I would like to upstream this work. I am unsure if the maintainers of this project are interested in accepting this functionality to mainline. If there is interest, would it be for generalizable functionality of the architecture or ... | 1.0 | Fujitsu FR60/80 Support - Summary

----

I developed a [FR 60 Plugin](https://github.com/desrdev/ghidra-fr60) and I would like to upstream this work. I am unsure if the maintainers of this project are interested in accepting this functionality to mainline. If there is interest, would it be for generalizable functionali... | process | fujitsu support summary i developed a and i would like to upstream this work i am unsure if the maintainers of this project are interested in accepting this functionality to mainline if there is interest would it be for generalizable functionality of the architecture or the core specific features as... | 1 |

337,561 | 30,249,536,383 | IssuesEvent | 2023-07-06 19:18:31 | unifyai/ivy | https://api.github.com/repos/unifyai/ivy | opened | Fix jax_numpy_logic.test_jax_greater | JAX Frontend Sub Task Failing Test | | | |

|---|---|

|paddle|<a href="https://github.com/unifyai/ivy/actions/runs/5478319447"><img src=https://img.shields.io/badge/-failure-red></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/runs/5475303530"><img src=https://img.shields.io/badge/-success-success></a>

|numpy|<a href="https://github.com/... | 1.0 | Fix jax_numpy_logic.test_jax_greater - | | |

|---|---|

|paddle|<a href="https://github.com/unifyai/ivy/actions/runs/5478319447"><img src=https://img.shields.io/badge/-failure-red></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/runs/5475303530"><img src=https://img.shields.io/badge/-success-success></... | non_process | fix jax numpy logic test jax greater paddle a href src torch a href src numpy a href src jax a href src tensorflow a href src | 0 |

807,398 | 30,000,383,982 | IssuesEvent | 2023-06-26 08:56:06 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.reddit.com - design is broken | priority-critical browser-fenix engine-gecko android13 | <!-- @browser: Firefox Mobile 116.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 13; Mobile; rv:109.0) Gecko/116.0 Firefox/116.0 -->

<!-- @reported_with: android-components-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/124045 -->

<!-- @extra_labels: browser-fenix -->

**URL**: https://www.red... | 1.0 | www.reddit.com - design is broken - <!-- @browser: Firefox Mobile 116.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 13; Mobile; rv:109.0) Gecko/116.0 Firefox/116.0 -->

<!-- @reported_with: android-components-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/124045 -->

<!-- @extra_labels: browser... | non_process | design is broken url browser version firefox mobile operating system android tested another browser yes chrome problem type design is broken description images not loaded steps to reproduce image browsing control with content does not load loads in chrome... | 0 |

444,020 | 12,805,139,295 | IssuesEvent | 2020-07-03 06:48:56 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | Make staging server build use staging account system | Priority: Medium Status: Fixed | Account server is https://auth.develop.strangeloopgames.com/, need to use this instead of the normal account server for staging builds.

- Find or add a compile flag to the staging build process (talk to Eugene aka Jaskes about this)

- Make the server check for this during compilation | 1.0 | Make staging server build use staging account system - Account server is https://auth.develop.strangeloopgames.com/, need to use this instead of the normal account server for staging builds.

- Find or add a compile flag to the staging build process (talk to Eugene aka Jaskes about this)

- Make the server check for... | non_process | make staging server build use staging account system account server is need to use this instead of the normal account server for staging builds find or add a compile flag to the staging build process talk to eugene aka jaskes about this make the server check for this during compilation | 0 |

65,503 | 14,727,876,951 | IssuesEvent | 2021-01-06 09:11:10 | Seagate/cortx-s3server | https://api.github.com/repos/Seagate/cortx-s3server | closed | CVE-2015-7576 (Low) detected in actionpack-4.2.2.gem | needs-attention needs-triage security vulnerability | ## CVE-2015-7576 - Low Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>actionpack-4.2.2.gem</b></p></summary>

<p>Web apps on Rails. Simple, battle-tested conventions for building and testing ... | True | CVE-2015-7576 (Low) detected in actionpack-4.2.2.gem - ## CVE-2015-7576 - Low Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>actionpack-4.2.2.gem</b></p></summary>

<p>Web apps on Rails. Simp... | non_process | cve low detected in actionpack gem cve low severity vulnerability vulnerable library actionpack gem web apps on rails simple battle tested conventions for building and testing mvc web applications works with any rack compatible server library home page a href depen... | 0 |

1,117 | 3,592,095,378 | IssuesEvent | 2016-02-01 14:52:04 | Project60/org.project60.sepa | https://api.github.com/repos/Project60/org.project60.sepa | closed | PaymentProcessor: duplicate period dropdown | bug CiviSEPA 1.1 CiviSEPA 1.2 payment processor | If you're using the SEPA payment processor on a donation page for recurring contributions with customized intervals along with other payment processors, the generated period dropdown field ("monthly", "quarterly", ...) that replaces the interval input fields will get duplicated if you change the selected payment proces... | 1.0 | PaymentProcessor: duplicate period dropdown - If you're using the SEPA payment processor on a donation page for recurring contributions with customized intervals along with other payment processors, the generated period dropdown field ("monthly", "quarterly", ...) that replaces the interval input fields will get dupli... | process | paymentprocessor duplicate period dropdown if you re using the sepa payment processor on a donation page for recurring contributions with customized intervals along with other payment processors the generated period dropdown field monthly quarterly that replaces the interval input fields will get dupli... | 1 |

11,303 | 14,106,787,513 | IssuesEvent | 2020-11-06 15:24:00 | metabase/metabase | https://api.github.com/repos/metabase/metabase | opened | Recursively process source queries | .Query Language (MBQL) Querying/Processor | Most of the middleware should not be aware of source queries, but we’d rather just be able to call the entire preprocessing middleware stack on the source query. | 1.0 | Recursively process source queries - Most of the middleware should not be aware of source queries, but we’d rather just be able to call the entire preprocessing middleware stack on the source query. | process | recursively process source queries most of the middleware should not be aware of source queries but we’d rather just be able to call the entire preprocessing middleware stack on the source query | 1 |

93,801 | 19,339,040,075 | IssuesEvent | 2021-12-15 00:48:11 | sourcegraph/sourcegraph | https://api.github.com/repos/sourcegraph/sourcegraph | opened | insights: FE space under series labels | bug ux team/code-insights | See the video below. There is a lot of extra space underneath the data series labels.

https://user-images.githubusercontent.com/1855233/146102299-8ed0e3e2-84b4-40f9-97cf-9a8b9b62c330.mov

| 1.0 | insights: FE space under series labels - See the video below. There is a lot of extra space underneath the data series labels.

https://user-images.githubusercontent.com/1855233/146102299-8ed0e3e2-84b4-40f9-97cf-9a8b9b62c330.mov

| non_process | insights fe space under series labels see the video below there is a lot of extra space underneath the data series labels | 0 |

13,647 | 16,358,668,018 | IssuesEvent | 2021-05-14 05:25:57 | Vanuatu-National-Statistics-Office/vnso-RAP-tradeStats-materials | https://api.github.com/repos/Vanuatu-National-Statistics-Office/vnso-RAP-tradeStats-materials | closed | Increase in observations when merging in Principle Commodities | bug dashboard data processing monthly report | When merging Principle Commodities there is still a jump from 12540 to 12545 observations. Not sure why this is happening

https://github.com/Vanuatu-National-Statistics-Office/vnso-RAP-tradeStats-materials/issues/49 | 1.0 | Increase in observations when merging in Principle Commodities - When merging Principle Commodities there is still a jump from 12540 to 12545 observations. Not sure why this is happening

https://github.com/Vanuatu-National-Statistics-Office/vnso-RAP-tradeStats-materials/issues/49 | process | increase in observations when merging in principle commodities when merging principle commodities there is still a jump from to observations not sure why this is happening | 1 |

118,660 | 11,985,558,139 | IssuesEvent | 2020-04-07 17:43:24 | reapit/foundations | https://api.github.com/repos/reapit/foundations | closed | Create glossary of terminology for platform API documentation | documentation platform-team | We should create a glossary that explains the terminology that our endpoints and other documentation makes use of.

- Provide clear, concise human readable documentation to explain the concepts exposed by the interactive API explorer

- Also include concepts that we talk about in our documentation (eg. customer / cl... | 1.0 | Create glossary of terminology for platform API documentation - We should create a glossary that explains the terminology that our endpoints and other documentation makes use of.

- Provide clear, concise human readable documentation to explain the concepts exposed by the interactive API explorer

- Also include con... | non_process | create glossary of terminology for platform api documentation we should create a glossary that explains the terminology that our endpoints and other documentation makes use of provide clear concise human readable documentation to explain the concepts exposed by the interactive api explorer also include con... | 0 |

21,032 | 27,972,094,880 | IssuesEvent | 2023-03-25 05:47:29 | qgis/QGIS-Documentation | https://api.github.com/repos/qgis/QGIS-Documentation | closed | Add skip/src/dstnodata params to gdal2xyz (Request in QGIS) | Processing Alg 3.32 | ### Request for documentation

From pull request QGIS/qgis#52187

Author: @lpinner

QGIS version: 3.32

**Add skip/src/dstnodata params to gdal2xyz**

### PR Description:

## Description

Adds `-skipnodata` and `-src/dstnodata` parameters to GDAL provider gdal2xyz processing algorithm. These parameters were added to GDAL... | 1.0 | Add skip/src/dstnodata params to gdal2xyz (Request in QGIS) - ### Request for documentation

From pull request QGIS/qgis#52187

Author: @lpinner

QGIS version: 3.32

**Add skip/src/dstnodata params to gdal2xyz**

### PR Description:

## Description

Adds `-skipnodata` and `-src/dstnodata` parameters to GDAL provider gdal... | process | add skip src dstnodata params to request in qgis request for documentation from pull request qgis qgis author lpinner qgis version add skip src dstnodata params to pr description description adds skipnodata and src dstnodata parameters to gdal provider processing algorithm ... | 1 |

21,996 | 30,494,875,284 | IssuesEvent | 2023-07-18 10:07:14 | MicrosoftDocs/azure-devops-docs | https://api.github.com/repos/MicrosoftDocs/azure-devops-docs | closed | Casting -> coercion | doc-enhancement cba Pri1 azure-devops-pipelines/svc azure-devops-pipelines-process/subsvc |

I think Type coercion is meant instead of Type casting in the examples with Boolean to Integer coercion

---

#### Document Details

⚠ *Do not edit this section. It is required for learn.microsoft.com ➟ GitHub issue linking.*

* ID: 77c58a78-a567-e99a-9eb7-62dddd1b90b6

* Version Independent ID: 680a79bc-11de... | 1.0 | Casting -> coercion -

I think Type coercion is meant instead of Type casting in the examples with Boolean to Integer coercion

---

#### Document Details

⚠ *Do not edit this section. It is required for learn.microsoft.com ➟ GitHub issue linking.*

* ID: 77c58a78-a567-e99a-9eb7-62dddd1b90b6

* Version Indepen... | process | casting coercion i think type coercion is meant instead of type casting in the examples with boolean to integer coercion document details ⚠ do not edit this section it is required for learn microsoft com ➟ github issue linking id version independent id conte... | 1 |

7,247 | 10,415,966,853 | IssuesEvent | 2019-09-14 09:09:38 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | GRASS v.build.polylines dosn't work in Qgis 3.4.10 | Bug Feedback Processing | In reference to issue #30680, Grass dosn't work in Qgis 3.4 to 3.8

I'm tested various GRASS algorithms and dosn't works with Qgis 3.4.10 from the Qgis process window.

> The specified GRASS 7 folder "C:\PROGRA~1\QGIS3~1.4\bin\bin" does not contain a valid set of GRASS 7 modules. Please, go to the Processing set... | 1.0 | GRASS v.build.polylines dosn't work in Qgis 3.4.10 - In reference to issue #30680, Grass dosn't work in Qgis 3.4 to 3.8

I'm tested various GRASS algorithms and dosn't works with Qgis 3.4.10 from the Qgis process window.

> The specified GRASS 7 folder "C:\PROGRA~1\QGIS3~1.4\bin\bin" does not contain a valid set... | process | grass v build polylines dosn t work in qgis in reference to issue grass dosn t work in qgis to i m tested various grass algorithms and dosn t works with qgis from the qgis process window the specified grass folder c progra bin bin does not contain a valid set of grass ... | 1 |

83,407 | 24,055,513,170 | IssuesEvent | 2022-09-16 16:27:06 | gradle/gradle | https://api.github.com/repos/gradle/gradle | closed | Nested composites with cyclic dependencies cause an error | a:bug in:composite-builds stale | Version: 4.10.nightly

Let's say you have the following dependencies between composite builds:

```

projectA --compile-->

projectB --testcompile-->

projectC --compile-->

projectA

```

This does not create a cycle due to the testcompile dependency between projectB and projectC.

... | 1.0 | Nested composites with cyclic dependencies cause an error - Version: 4.10.nightly

Let's say you have the following dependencies between composite builds:

```

projectA --compile-->

projectB --testcompile-->

projectC --compile-->

projectA

```

This does not create a cycle due t... | non_process | nested composites with cyclic dependencies cause an error version nightly let s say you have the following dependencies between composite builds projecta compile projectb testcompile projectc compile projecta this does not create a cycle due to... | 0 |

233,456 | 17,865,414,881 | IssuesEvent | 2021-09-06 08:51:40 | NorESMhub/NorESM | https://api.github.com/repos/NorESMhub/NorESM | opened | Archiving NorESM output data | Documentation | Hi,

We wish to document "Best practices for archiving", which involves both short term and long term archiving. Can you please help me with this?

**Short term archiving**

- What is the best practice to archive files from HPC machines to e.g. NIRD?

- rsync, scp, sshfs (make a mount folder)

- I use rsync, bu... | 1.0 | Archiving NorESM output data - Hi,

We wish to document "Best practices for archiving", which involves both short term and long term archiving. Can you please help me with this?

**Short term archiving**

- What is the best practice to archive files from HPC machines to e.g. NIRD?

- rsync, scp, sshfs (make a mo... | non_process | archiving noresm output data hi we wish to document best practices for archiving which involves both short term and long term archiving can you please help me with this short term archiving what is the best practice to archive files from hpc machines to e g nird rsync scp sshfs make a mo... | 0 |

13,568 | 16,105,628,489 | IssuesEvent | 2021-04-27 14:38:14 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | `process.exit` results in a segmentation fault in v12.x | confirmed-bug fs process | <!--

Thank you for reporting an issue.

This issue tracker is for bugs and issues found within Node.js core.

If you require more general support please file an issue on our help

repo. https://github.com/nodejs/help

Please fill in as much of the template below as you're able.

Version: output of `node -v`

P... | 1.0 | `process.exit` results in a segmentation fault in v12.x - <!--

Thank you for reporting an issue.

This issue tracker is for bugs and issues found within Node.js core.

If you require more general support please file an issue on our help

repo. https://github.com/nodejs/help

Please fill in as much of the templat... | process | process exit results in a segmentation fault in x thank you for reporting an issue this issue tracker is for bugs and issues found within node js core if you require more general support please file an issue on our help repo please fill in as much of the template below as you re able vers... | 1 |

7,637 | 8,017,064,813 | IssuesEvent | 2018-07-25 14:58:56 | terraform-providers/terraform-provider-azurerm | https://api.github.com/repos/terraform-providers/terraform-provider-azurerm | closed | Data Source for Azure Container Registry | good first issue new-data-source service/container-registry | <!--- Please keep this note for the community --->

### Community Note

* Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original issue to help the community and maintainers prioritize this request

* Please do not l... | 1.0 | Data Source for Azure Container Registry - <!--- Please keep this note for the community --->

### Community Note

* Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original issue to help the community and maintainers... | non_process | data source for azure container registry community note please vote on this issue by adding a 👍 to the original issue to help the community and maintainers prioritize this request please do not leave or me too comments they generate extra noise for issue followers and do not help priorit... | 0 |

14,579 | 17,703,385,488 | IssuesEvent | 2021-08-25 02:54:54 | kevingduck/gh | https://api.github.com/repos/kevingduck/gh | reopened | Big release! | New Epic Processed Epic EPIC-9876 | ## Multi-phase Release | 🔗 For linking related issues across release phases (i.e., an epic)

🚧 **NOTICE: This is an experiment. Pay no attention to that man behind the curtain! See https://github.com/github/product-operations/issues/254 for more information.** 🚧

### Each phase of a release (alpha, beta, etc.)... | 1.0 | Big release! - ## Multi-phase Release | 🔗 For linking related issues across release phases (i.e., an epic)

🚧 **NOTICE: This is an experiment. Pay no attention to that man behind the curtain! See https://github.com/github/product-operations/issues/254 for more information.** 🚧

### Each phase of a release (alp... | process | big release multi phase release 🔗 for linking related issues across release phases i e an epic 🚧 notice this is an experiment pay no attention to that man behind the curtain see for more information 🚧 each phase of a release alpha beta etc should have its own issue created in t... | 1 |

5,186 | 7,736,148,623 | IssuesEvent | 2018-05-27 23:06:38 | lgmagalhaes88/cms-app | https://api.github.com/repos/lgmagalhaes88/cms-app | closed | File upload screen | requirement template | Create a screen for uploading a .txt file in the format: `nome_da_disciplina nome_do_professor período`.

Send the file to the backend server.

After the data is processed and returned, a grid is displayed, organized by day of the week and by period, showing the classes in the first and second time slots. | 1.0 | File upload screen - Create a screen for uploading a .txt file in the format: `nome_da_disciplina nome_do_professor período`.

Send the file to the backend server.

After the data is processed and returned, a grid is displayed, organized by day of the week and by period, showing the classes in the first and sec... | non_process | file upload screen create a screen for uploading a txt file in the format nome da disciplina nome do professor período send the file to the backend server after the data is processed and returned a grid is displayed organized by day of the week and by period showing the classes in the first and sec... | 0 |

449,271 | 31,836,382,914 | IssuesEvent | 2023-09-14 13:46:01 | vatesfr/xen-orchestra | https://api.github.com/repos/vatesfr/xen-orchestra | closed | Unable to stop backup | type: enhancement :sparkles: comp: documentation :book: Priority 3: can wait/workaround :yellow_circle: status::in backlog :white_check_mark: | **XOA or XO from the sources?**

XO from sources.

**Describe the bug**

A clear and concise description of what the bug is.

My backup job is stuck ( another problem ;-) )

I can't stop it !

<img width="265" alt="image" src="https://user-images.githubusercontent.com/4645967/167281207-eee77794-0efc-4799-8ab5-7... | 1.0 | Unable to stop backup - **XOA or XO from the sources?**

XO from sources.

**Describe the bug**

A clear and concise description of what the bug is.

My backup job is stuck ( another problem ;-) )

I can't stop it !

<img width="265" alt="image" src="https://user-images.githubusercontent.com/4645967/167281207-e... | non_process | unable to stop backup xoa or xo from the sources xo from sources describe the bug a clear and concise description of what the bug is my backup job is stuck another problem i can t stop it img width alt image src to reproduce steps to reproduce the behavior ... | 0 |

102,272 | 8,823,836,572 | IssuesEvent | 2019-01-02 15:04:04 | dwyl/hq | https://api.github.com/repos/dwyl/hq | closed | What is the company PAYE reference number? (for tax return purposes) | please-test question | I need to know:

What is the company PAYE reference number? (for tax return purposes)

I'm not sure if this information is sensitive so I don't mind if you don't respond here. Apparently they're usually on your payslips but I can't see ours on there. | 1.0 | What is the company PAYE reference number? (for tax return purposes) - I need to know:

What is the company PAYE reference number? (for tax return purposes)

I'm not sure if this information is sensitive so I don't mind if you don't respond here. Apparently they're usually on your payslips but I can't see ours on t... | non_process | what is the company paye reference number for tax return purposes i need to know what is the company paye reference number for tax return purposes i m not sure if this information is sensitive so i don t mind if you don t respond here apparently they re usually on your payslips but i can t see ours on t... | 0 |

14,819 | 18,156,878,427 | IssuesEvent | 2021-09-27 03:37:36 | googleapis/google-cloud-ruby | https://api.github.com/repos/googleapis/google-cloud-ruby | closed | Bazel cannot generate google-cloud-asset-v1 because of external proto references | type: process | The google-cloud-asset-v1 library is still being generated via the docker release of gapic-generator-ruby. This is because asset-v1 references a bunch of protos, specifically:

* google/cloud/orgpolicy/v1/orgpolicy.proto

* google/cloud/osconfig/v1/inventory.proto

* google/identity/accesscontextmanager/type/device_r... | 1.0 | Bazel cannot generate google-cloud-asset-v1 because of external proto references - The google-cloud-asset-v1 library is still being generated via the docker release of gapic-generator-ruby. This is because asset-v1 references a bunch of protos, specifically:

* google/cloud/orgpolicy/v1/orgpolicy.proto

* google/clou... | process | bazel cannot generate google cloud asset because of external proto references the google cloud asset library is still being generated via the docker release of gapic generator ruby this is because asset references a bunch of protos specifically google cloud orgpolicy orgpolicy proto google cloud os... | 1 |

83,705 | 3,640,878,098 | IssuesEvent | 2016-02-13 06:27:45 | jerradgenson/auteur | https://api.github.com/repos/jerradgenson/auteur | opened | RSS URLs reference index.html | enhancement low priority | RSS feed URLs should reference article directories instead of referencing their index.html files directly. | 1.0 | RSS URLs reference index.html - RSS feed URLs should reference article directories instead of referencing their index.html files directly. | non_process | rss urls reference index html rss feed urls should reference article directories instead of referencing their index html files directly | 0 |

7,548 | 10,674,335,429 | IssuesEvent | 2019-10-21 09:14:08 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | closed | Too many term in immune signalling | multi-species process |

all of these terms must represent a gene product upstream of the immune response signalling and so, essentially are all the same process.

GO:0035419 activation of MAPK activity involved in innate immune response | part_of

-- | --

GO:0035422 activation of MAPKKK activity involved in innate immune response ... | 1.0 | Too many term in immune signalling -

all of these terms must represent a gene product upstream of the immune response signalling and so, essentially are all the same process.

GO:0035419 activation of MAPK activity involved in innate immune response | part_of

-- | --

GO:0035422 activation of MAPKKK activit... | process | too many term in immune signalling all of these terms must represent a gene product upstream of the immune response signalling and so essentially are all the same process go activation of mapk activity involved in innate immune response part of go activation of mapkkk activity involved i... | 1 |

4,139 | 7,094,784,418 | IssuesEvent | 2018-01-13 08:32:06 | triplea-game/triplea | https://api.github.com/repos/triplea-game/triplea | opened | Label Changes | category: dev & admin process discussion | Quick FYI and discussion for recent label updates. Some changes:

- rename p0 + p1 to "production - p0" and "production p1"

- regression renamed back to 'release blocker'

- type + category merged, just now 'category.*' labels, no 'type.*:' labels.

3rd is a bit more controversial, but it was not clear that havin... | 1.0 | Label Changes - Quick FYI and discussion for recent label updates. Some changes:

- rename p0 + p1 to "production - p0" and "production p1"

- regression renamed back to 'release blocker'

- type + category merged, just now 'category.*' labels, no 'type.*:' labels.

3rd is a bit more controversial, but it was not ... | process | label changes quick fyi and discussion for recent label updates some changes rename to production and production regression renamed back to release blocker type category merged just now category labels no type labels is a bit more controversial but it was not clear ... | 1 |

19,916 | 26,378,759,772 | IssuesEvent | 2023-01-12 06:27:39 | bazelbuild/bazel | https://api.github.com/repos/bazelbuild/bazel | closed | Remote download minimal will download all input files for symlink | more data needed type: support / not a bug (process) team-Remote-Exec | ### Description of the bug:

Symlink inputs(files they point to) are downloaded to host when remote_download_minimal is set.

Because C++ shared libraries are all symlinked to a solib directory, this causes all shared libraries to be downloaded.

I would expect them not to be downloaded. During execution, the actu... | 1.0 | Remote download minimal will download all input files for symlink - ### Description of the bug:

Symlink inputs(files they point to) are downloaded to host when remote_download_minimal is set.

Because C++ shared libraries are all symlinked to a solib directory, this causes all shared libraries to be downloaded.

... | process | remote download minimal will download all input files for symlink description of the bug symlink inputs files they point to are downloaded to host when remote download minimal is set because c shared libraries are all symlinked to a solib directory this causes all shared libraries to be downloaded ... | 1 |

5,216 | 8,007,146,601 | IssuesEvent | 2018-07-24 00:36:08 | GoogleCloudPlatform/google-cloud-python | https://api.github.com/repos/GoogleCloudPlatform/google-cloud-python | opened | Release 'datastore 1.7.0' | api: datastore packaging type: process | Major changes are:

- Add support for Python 3.7.

- Drop support for Python 3.4.

- Bugfix: query offsets (#4675). | 1.0 | Release 'datastore 1.7.0' - Major changes are:

- Add support for Python 3.7.

- Drop support for Python 3.4.

- Bugfix: query offsets (#4675). | process | release datastore major changes are add support for python drop support for python bugfix query offsets | 1 |

366,137 | 25,570,534,986 | IssuesEvent | 2022-11-30 17:18:54 | redpanda-data/documentation | https://api.github.com/repos/redpanda-data/documentation | opened | Create a page to explain what is Redpanda's versioning scheme | documentation | ### Describe the Issue

During the execution of this [PR](https://github.com/redpanda-data/documentation/pull/859) we mention that users should only upgrade one major version at a time. But we never clearly inform what constitutes a major version for us.

So, ideally we should create a page to clearly explain to o... | 1.0 | Create a page to explain what is Redpanda's versioning scheme - ### Describe the Issue

During the execution of this [PR](https://github.com/redpanda-data/documentation/pull/859) we mention that users should only upgrade one major version at a time. But we never clearly inform what constitutes a major version for us.... | non_process | create a page to explain what is redpanda s versioning scheme describe the issue during the execution of this we mention that users should only upgrade one major version at a time but we never clearly inform what constitutes a major version for us so ideally we should create a page to clearly explai... | 0 |

417 | 2,852,496,369 | IssuesEvent | 2015-06-01 13:56:29 | genomizer/genomizer-server | https://api.github.com/repos/genomizer/genomizer-server | closed | Fix or delete ignored tests | Data Storage enhancement Processing | I've disabled a bunch of tests that were either erroneous, depended on hardcoded data not included in the repo, were too slow or couldn't be easily fixed. Please take a look and either delete or re-enable them.

Full list of disabled tests:

- [x] `test/server/test/ServerGenomeReleaseTest.java`

- [x] `test/server/... | 1.0 | Fix or delete ignored tests - I've disabled a bunch of tests that were either erroneous, depended on hardcoded data not included in the repo, were too slow or couldn't be easily fixed. Please take a look and either delete or re-enable them.

Full list of disabled tests:

- [x] `test/server/test/ServerGenomeReleaseT... | process | fix or delete ignored tests i ve disabled a bunch of tests that were either erroneous depended on hardcoded data not included in the repo were too slow or couldn t be easily fixed please take a look and either delete or re enable them full list of disabled tests test server test servergenomereleasetes... | 1 |

37,148 | 9,968,128,604 | IssuesEvent | 2019-07-08 14:57:48 | GoogleChrome/workbox | https://api.github.com/repos/GoogleChrome/workbox | opened | Minimum required version of node should be v8 | Breaking Change workbox-build workbox-cli workbox-webpack-plugin | As per https://nodejs.org/en/about/releases/, the earliest version of node that's still in the maintenance window is v8.x.

In the Workbox v5 release, we should switch out minimum required version of node to match, and update our `babel-preset-env` transpilation accordingly for the build tools.

Additionally, we sh... | 1.0 | Minimum required version of node should be v8 - As per https://nodejs.org/en/about/releases/, the earliest version of node that's still in the maintenance window is v8.x.

In the Workbox v5 release, we should switch out minimum required version of node to match, and update our `babel-preset-env` transpilation accordi... | non_process | minimum required version of node should be as per the earliest version of node that s still in the maintenance window is x in the workbox release we should switch out minimum required version of node to match and update our babel preset env transpilation accordingly for the build tools additionall... | 0 |

271,429 | 23,603,979,815 | IssuesEvent | 2022-08-24 06:29:14 | MrBrax/LiveStreamDVR | https://api.github.com/repos/MrBrax/LiveStreamDVR | closed | Force resubscribe | needs testing | When pressing subscribe on the channel settings page, even if the subscription was deleted from the "about" page, then nothing happens.

Log just says this:

```

web_1 | 2022-06-06 19:19:50.004 | helper <INFO> Skip subscription to 23161357:stream.online (lirik), in cache.

web_1 | 2022-06-06 19:19:50.005 | helper ... | 1.0 | Force resubscribe - When pressing subscribe on the channel settings page, even if the subscription was deleted from the "about" page, then nothing happens.

Log just says this:

```

web_1 | 2022-06-06 19:19:50.004 | helper <INFO> Skip subscription to 23161357:stream.online (lirik), in cache.

web_1 | 2022-06-06 19... | non_process | force resubscribe when pressing subscribe on the channel settings page even if the subscription was deleted from the about page then nothing happens log just says this web helper skip subscription to stream online lirik in cache web helper skip subsc... | 0 |

10,170 | 13,044,162,738 | IssuesEvent | 2020-07-29 03:47:35 | tikv/tikv | https://api.github.com/repos/tikv/tikv | closed | UCP: Migrate scalar function `GetParamString` from TiDB | challenge-program-2 component/coprocessor difficulty/easy sig/coprocessor |

## Description

Port the scalar function `GetParamString` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @lonng

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/tikv/tikv/tree/master/components/tidb_query/src/rpn_ex... | 2.0 | UCP: Migrate scalar function `GetParamString` from TiDB -

## Description

Port the scalar function `GetParamString` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @lonng

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.... | process | ucp migrate scalar function getparamstring from tidb description port the scalar function getparamstring from tidb to coprocessor score mentor s lonng recommended skills rust programming learning materials already implemented expressions ported from tidb | 1 |

726,907 | 25,015,682,899 | IssuesEvent | 2022-11-03 18:35:35 | space-wizards/space-station-14 | https://api.github.com/repos/space-wizards/space-station-14 | closed | Dragons are OMEGAfucked | Issue: Bug Priority: 2-Before Release Difficulty: 1-Easy | I spawned one on live, and devour action simply didn't work. This absolutely cucks the dragon as it's 50% of what it does.

Their damage to windows/walls is also very low, I know you're supposed to be able to devour those instead of attacking them, but maybe it should be bumped up just a tiny bit? | 1.0 | Dragons are OMEGAfucked - I spawned one on live, and devour action simply didn't work. This absolutely cucks the dragon as it's 50% of what it does.

Their damage to windows/walls is also very low, I know you're supposed to be able to devour those instead of attacking them, but maybe it should be bumped up just a tin... | non_process | dragons are omegafucked i spawned one on live and devour action simply didn t work this absolutely cucks the dragon as it s of what it does their damage to windows walls is also very low i know you re supposed to be able to devour those instead of attacking them but maybe it should be bumped up just a tiny... | 0 |

3,929 | 6,848,433,191 | IssuesEvent | 2017-11-13 18:30:15 | syndesisio/syndesis-ui | https://api.github.com/repos/syndesisio/syndesis-ui | closed | Update the config.json in development mode so it's obvious you're running against the development server | dev process Priority - High | We should change the app name or otherwise update the config.json so that it's quick and easy to see that you're looking at the development server, saves having to go make some trivial change to check. | 1.0 | Update the config.json in development mode so it's obvious you're running against the development server - We should change the app name or otherwise update the config.json so that it's quick and easy to see that you're looking at the development server, saves having to go make some trivial change to check. | process | update the config json in development mode so it s obvious you re running against the development server we should change the app name or otherwise update the config json so that it s quick and easy to see that you re looking at the development server saves having to go make some trivial change to check | 1 |

13,490 | 16,018,572,081 | IssuesEvent | 2021-04-20 19:18:18 | anlsys/aml | https://api.github.com/repos/anlsys/aml | opened | Integration Tests | process:proposal | In GitLab by @NicolasDenoyelle on Mar 15, 2021, 10:42

Create a pipeline stage with integration tests.

For instance, the test could consist in:

- Fetch applications using AML.

- Build AML

- Build applications and link with AML.

- Run applications.

[XSBench](https://github.com/ANL-CESAR/XSBench) is an application ... | 1.0 | Integration Tests - In GitLab by @NicolasDenoyelle on Mar 15, 2021, 10:42

Create a pipeline stage with integration tests.

For instance, the test could consist in:

- Fetch applications using AML.

- Build AML

- Build applications and link with AML.

- Run applications.

[XSBench](https://github.com/ANL-CESAR/XSBench... | process | integration tests in gitlab by nicolasdenoyelle on mar create a pipeline stage with integration tests for instance the test could consist in fetch applications using aml build aml build applications and link with aml run applications is an application using aml that could be used a... | 1 |

19,507 | 25,818,942,330 | IssuesEvent | 2022-12-12 08:05:15 | googleapis/ruby-style | https://api.github.com/repos/googleapis/ruby-style | reopened | Dependency Dashboard | type: process | This issue lists Renovate updates and detected dependencies. Read the [Dependency Dashboard](https://docs.renovatebot.com/key-concepts/dashboard/) docs to learn more.

This repository currently has no open or pending branches.

## Detected dependencies

<details><summary>bundler</summary>

<blockquote>

<details><summar... | 1.0 | Dependency Dashboard - This issue lists Renovate updates and detected dependencies. Read the [Dependency Dashboard](https://docs.renovatebot.com/key-concepts/dashboard/) docs to learn more.

This repository currently has no open or pending branches.

## Detected dependencies

<details><summary>bundler</summary>

<blockq... | process | dependency dashboard this issue lists renovate updates and detected dependencies read the docs to learn more this repository currently has no open or pending branches detected dependencies bundler gemfile github actions github workflows ci yml actions checkout rub... | 1 |

14,497 | 17,604,292,631 | IssuesEvent | 2021-08-17 15:13:32 | qgis/QGIS-Documentation | https://api.github.com/repos/qgis/QGIS-Documentation | closed | [FEATURE][processing] Export layers information algorithm (Request in QGIS) | Processing Alg 3.18 | ### Request for documentation

From pull request QGIS/qgis#40462

Author: @nirvn

QGIS version: 3.18

**[FEATURE][processing] Export layers information algorithm**

### PR Description:

## Description

This PR adds a new algorithm to QGIS' processing toolbox named export layers information. The algorithm creates a polygo... | 1.0 | [FEATURE][processing] Export layers information algorithm (Request in QGIS) - ### Request for documentation

From pull request QGIS/qgis#40462

Author: @nirvn

QGIS version: 3.18

**[FEATURE][processing] Export layers information algorithm**

### PR Description:

## Description

This PR adds a new algorithm to QGIS' proc... | process | export layers information algorithm request in qgis request for documentation from pull request qgis qgis author nirvn qgis version export layers information algorithm pr description description this pr adds a new algorithm to qgis processing toolbox named export layers informat... | 1 |

40,741 | 10,141,071,553 | IssuesEvent | 2019-08-03 10:30:43 | STEllAR-GROUP/hpx | https://api.github.com/repos/STEllAR-GROUP/hpx | closed | install error: libparcel_coalescing.so.0.9.11 missing | category: CMake tag: wontfix type: defect | I tried to install HPX locally on a Kubuntu system using

```
cmake -DCMAKE_INSTALL_PREFIX=/home/mario/hpx_gcc ../hpx
make -j4
make install
```

and I get the following:

```
CMake Error at cmake_install.cmake:48 (FILE):
  file INSTALL cannot find
  "/home/mario/hpx_gcc/lib/hpx/libparcel_coalescing.so.0.9.11".
```

Ind... | 1.0 | install error: libparcel_coalescing.so.0.9.11 missing - I tried to install HPX locally on a Kubuntu system using

```
cmake -DCMAKE_INSTALL_PREFIX=/home/mario/hpx_gcc ../hpx
make -j4
make install
```

and I get the following:

```

CMake Error at cmake_install.cmake:48 (FILE):

file INSTALL cannot find

"/home/mario/h... | non_process | install error libparcel coalescing so missing i tried to install hpx locally on a kubuntu system using cmake dcmake install prefix home mario hpx gcc hpx make make install and i get the following cmake error at cmake install cmake file file install cannot find home mario hpx ... | 0 |

1,780 | 6,715,574,036 | IssuesEvent | 2017-10-13 21:51:11 | p4lang/p4-spec | https://api.github.com/repos/p4lang/p4-spec | closed | [PSA] Should PRE be defined to have multiple class-of-service queues per output port? | portable switch architecture | It is a relatively common feature for a queueing system to have multiple queues per output port, not merely one queue per port. Perhaps the PSA could have a constant that is part of the architecture spec, that could vary across implementations, that gives the maximum number of queues per output port, and a way to spec... | 1.0 | [PSA] Should PRE be defined to have multiple class-of-service queues per output port? - It is a relatively common feature for a queueing system to have multiple queues per output port, not merely one queue per port. Perhaps the PSA could have a constant that is part of the architecture spec, that could vary across imp... | non_process | should pre be defined to have multiple class of service queues per output port it is a relatively common feature for a queueing system to have multiple queues per output port not merely one queue per port perhaps the psa could have a constant that is part of the architecture spec that could vary across impleme... | 0 |

13,652 | 16,360,879,829 | IssuesEvent | 2021-05-14 09:16:26 | CGAL/cgal | https://api.github.com/repos/CGAL/cgal | closed | SOR CGAL PointSet | Pkg::Point_set_processing_3 question | Hi,

I am trying to follow SOR outlier removal tutorial from here:

https://doc.cgal.org/latest/Point_set_processing_3/Point_set_processing_3_2remove_outliers_example_8cpp-example.html

But it works with Point vector.

Is there any possibility to use a PointSet with color also?

If yes is it possible to show a code... | 1.0 | SOR CGAL PointSet - Hi,

I am trying to follow SOR outlier removal tutorial from here:

https://doc.cgal.org/latest/Point_set_processing_3/Point_set_processing_3_2remove_outliers_example_8cpp-example.html

But it works with Point vector.

Is there any possibility to use a PointSet with color also?

If yes is it po... | process | sor cgal pointset hi i am trying to follow sor outlier removal tutorial from here but it works with point vector is there any possibility to use a pointset with color also if yes is it possible to show a code example when i try to use pointset instead of point vector i get following error ... | 1 |

734,067 | 25,337,302,987 | IssuesEvent | 2022-11-18 17:59:06 | apache/lucene | https://api.github.com/repos/apache/lucene | closed | Add an equivalent of ant's stage-maven-artifacts for the release wizard [LUCENE-10293] | type:task legacy-jira-priority:Minor | Currently the RC Maven artifacts cannot be easily moved to Nexus. Devise a way to do it from gradle (either as a bundle ZIP or directly, the way it was done before).

---

Migrated from [LUCENE-10293](https://issues.apache.org/jira/browse/LUCENE-10293) by Dawid Weiss (@dweiss), updated Apr 14 2022

Linked issues:

- #1... | 1.0 | Add an equivalent of ant's stage-maven-artifacts for the release wizard [LUCENE-10293] - Currently the RC Maven artifacts cannot be easily moved to Nexus. Devise a way to do it from gradle (either as a bundle ZIP or directly, the way it was done before).

---

Migrated from [LUCENE-10293](https://issues.apache.org/jir... | non_process | add an equivalent of ant s stage maven artifacts for the release wizard currently the rc maven artifacts cannot be easily moved to nexus devise a way to do it from gradle either as a bundle zip or directly the way it was done before migrated from by dawid weiss dweiss updated apr linked issu... | 0 |

180,097 | 30,390,196,937 | IssuesEvent | 2023-07-13 06:16:04 | airswap/airswap-web | https://api.github.com/repos/airswap/airswap-web | closed | Add wallet confirmation screen when user interacts with metamask (or other wallet) | enhancement needs-design | We need to improve the metamask (and other wallets) flow a bit. Right now the “Sign” and “Take” button will go in a loading state when opening metamask. I think for some users it didn’t open (or they didn’t notice it?) and that’s why they were stuck. So we need a “If your wallet does not open something went wrong” mess... | 1.0 | Add wallet confirmation screen when user interacts with metamask (or other wallet) - We need to improve the metamask (and other wallets) flow a bit. Right now the “Sign” and “Take” button will go in a loading state when opening metamask. I think for some users it didn’t open (or they didn’t notice it?) and that’s why t... | non_process | add wallet confirmation screen when user interacts with metamask or other wallet we need to improve the metamask and other wallets flow a bit right now the “sign” and “take” button will go in a loading state when opening metamask i think for some users it didn’t open or they didn’t notice it and that’s why t... | 0 |

90,994 | 15,856,355,169 | IssuesEvent | 2021-04-08 02:08:46 | faizulho/storefront | https://api.github.com/repos/faizulho/storefront | opened | CVE-2020-28500 (Medium) detected in lodash-4.17.14.tgz | security vulnerability | ## CVE-2020-28500 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>lodash-4.17.14.tgz</b></p></summary>

<p>Lodash modular utilities.</p>

<p>Library home page: <a href="https://registr... | True | CVE-2020-28500 (Medium) detected in lodash-4.17.14.tgz - ## CVE-2020-28500 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>lodash-4.17.14.tgz</b></p></summary>

<p>Lodash modular util... | non_process | cve medium detected in lodash tgz cve medium severity vulnerability vulnerable library lodash tgz lodash modular utilities library home page a href path to dependency file storefront package json path to vulnerable library storefront node modules commitlint ensure ... | 0 |

15,012 | 18,723,926,419 | IssuesEvent | 2021-11-03 14:34:30 | tdwg/dwc | https://api.github.com/repos/tdwg/dwc | closed | Change term - relationshipOfResource | Term - change Class - ResourceRelationship non-normative Process - complete | ## Change term

* Submitter: Peter Desmet @peterdesmet

* Justification (why is this change necessary?): The definition is unclear, even misleading. The way it is, directionality of the relationship can be interpreted both ways. The relationship must be from subject to object.

* Proponents (who needs this change): ... | 1.0 | Change term - relationshipOfResource - ## Change term

* Submitter: Peter Desmet @peterdesmet

* Justification (why is this change necessary?): The definition is unclear, even misleading. The way it is, directionality of the relationship can be interpreted both ways. The relationship must be from subject to object.

... | process | change term relationshipofresource change term submitter peter desmet peterdesmet justification why is this change necessary the definition is unclear even misleading the way it is directionality of the relationship can be interpreted both ways the relationship must be from subject to object ... | 1 |

11,207 | 13,957,705,381 | IssuesEvent | 2020-10-24 08:14:30 | alexanderkotsev/geoportal | https://api.github.com/repos/alexanderkotsev/geoportal | opened | MT-MITA: Harvest | Geoportal Harvesting process MT - Malta | Good Morning Angelo,

Kindly can you perform a harvest for the Maltese CSW as the last harvest was performed on the 26th March 2018. On a side note is there a problem with the harvesting or its just the portal is currently being busy? Kindly can you check whether the harvesting for Malta is seen set to daily from your s... | 1.0 | MT-MITA: Harvest - Good Morning Angelo,

Kindly can you perform a harvest for the Maltese CSW as the last harvest was performed on the 26th March 2018. On a side note is there a problem with the harvesting or its just the portal is currently being busy? Kindly can you check whether the harvesting for Malta is seen set t... | process | mt mita harvest good morning angelo kindly can you perform a harvest for the maltese csw as the last harvest was performed on the march on a side note is there a problem with the harvesting or its just the portal is currently being busy kindly can you check whether the harvesting for malta is seen set to dail... | 1 |

506,053 | 14,657,297,047 | IssuesEvent | 2020-12-28 15:17:56 | ac2cz/FoxTelem | https://api.github.com/repos/ac2cz/FoxTelem | opened | PPM adjustment for RTL | RTL RTL-SDR enhancement low priority | Is it possible to implement an option to enter the ppm-value of an RTL-SDR as they differ from piece to piece (have 3 of them, one has 56 ppm, next 68, the last one 41)? | 1.0 | PPM adjustment for RTL - Is it possible to implement an option to enter the ppm-value of an RTL-SDR as they differ from piece to piece (have 3 of them, one has 56 ppm, next 68, the last one 41)? | non_process | ppm adjustment for rtl is it possible to implement an option to enter the ppm value of an rtl sdr as they differ from piece to piece have of them one has ppm next the last one | 0 |
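
The ppm request in the row above comes down to simple arithmetic, sketched here in Python. This is an illustration only, not FoxTelem code: the 145.9 MHz example frequency, the function names, and the sign convention (a positive ppm value means the dongle tunes high) are all assumptions.

```python
def ppm_offset_hz(nominal_hz: float, ppm: float) -> float:
    """Frequency error in Hz caused by an oscillator error of `ppm` parts per million."""
    return nominal_hz * ppm / 1_000_000.0


def request_for_target(target_hz: float, ppm: float) -> float:
    """Frequency to request so that the dongle actually lands on `target_hz`.

    Assumed convention (hypothetical): actual = requested * (1 + ppm / 1e6).
    """
    return target_hz / (1.0 + ppm / 1_000_000.0)


if __name__ == "__main__":
    example_hz = 145_900_000  # hypothetical downlink frequency, for illustration only
    for ppm in (56, 68, 41):  # the three dongle values quoted in the issue
        offset = ppm_offset_hz(example_hz, ppm)
        print(f"{ppm} ppm -> offset of about {offset:.1f} Hz")
```

At VHF frequencies these errors amount to several kilohertz, which is why a per-dongle setting matters.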

9,192 | 12,228,996,307 | IssuesEvent | 2020-05-03 21:55:19 | chfor183/data_science_articles | https://api.github.com/repos/chfor183/data_science_articles | opened | Integrated Tools | Data Preprocessing Machine Learning Probability and Statistic | ## TL;DR

Alteryx

RapidMiner

IBM SPSS

Orange

Google Analytics

SAS (Statistical Analysis System)

Data Robot

BigML

Knime

STATA

H2O.ai

## Key Takeaways

- 1

- 2

## Useful Code Snippets

```

function test() {

console.log("notice the blank line before this function?");

}

```

## Articles/Ressource... | 1.0 | Integrated Tools - ## TL;DR

Alteryx

RapidMiner

IBM SPSS

Orange

Google Analytics

SAS (Statistical Analysis System)

Data Robot

BigML

Knime

STATA

H2O.ai

## Key Takeaways

- 1

- 2

## Useful Code Snippets

```

function test() {

console.log("notice the blank line before this function?");

}

```

##... | process | integrated tools tl dr alteryx rapidminer ibm spss orange google analytics sas statistical analysis system data robot bigml knime stata ai key takeaways useful code snippets function test console log notice the blank line before this function a... | 1 |

17,324 | 23,142,866,745 | IssuesEvent | 2022-07-28 20:23:11 | USGS-R/drb-do-ml | https://api.github.com/repos/USGS-R/drb-do-ml | reopened | Inspect hidden states for process understanding | experiment process-guidance | An idea that has come up is to inspect the hidden states to see if they are behaving as we would expect some state or flux in the process would behave.

The two examples that have come up are:

1. a **biomass**: some representation of a biomass that accumulates in the summer, is lower in the winter, and maybe has a ... | 1.0 | Inspect hidden states for process understanding - An idea that has come up is to inspect the hidden states to see if they are behaving as we would expect some state or flux in the process would behave.

The two examples that have come up are:

1. a **biomass**: some representation of a biomass that accumulates in th... | process | inspect hidden states for process understanding an idea that has come up is to inspect the hidden states to see if they are behaving as we would expect some state or flux in the process would behave the two examples that have come up are a biomass some representation of a biomass that accumulates in th... | 1 |

6,437 | 2,588,038,355 | IssuesEvent | 2015-02-17 22:13:34 | ilios/ilios | https://api.github.com/repos/ilios/ilios | closed | load user attributes in management console asynchronously | Deprecated Functionality low priority wontfix | refs #364.

see `ilios.management.user_accounts.buildUserAddAndRolesDOM`

rewrite this whole mess to load user attributes asynchronously. | 1.0 | load user attributes in management console asynchronously - refs #364.

see `ilios.management.user_accounts.buildUserAddAndRolesDOM`

rewrite this whole mess to load user attributes asynchronously. | non_process | load user attributes in management console asynchronously refs see ilios management user accounts builduseraddandrolesdom rewrite this whole mess to load user attributes asynchronously | 0 |

188,524 | 15,164,537,027 | IssuesEvent | 2021-02-12 13:52:57 | arturo-lang/arturo | https://api.github.com/repos/arturo-lang/arturo | closed | [Reflection\boolean?] add example for documentation | documentation easy library todo | [Reflection\boolean?] add example for documentation

https://github.com/arturo-lang/arturo/blob/a971add892fe3d675b3320f356cf2d96179e2a22/src/library/Reflection.nim#L207

```text

attrs = NoAttrs,

returns = {Boolean},

# TODO(Reflection\boolean?) add example for documentation

# l... | 1.0 | [Reflection\boolean?] add example for documentation - [Reflection\boolean?] add example for documentation

https://github.com/arturo-lang/arturo/blob/a971add892fe3d675b3320f356cf2d96179e2a22/src/library/Reflection.nim#L207

```text

attrs = NoAttrs,

returns = {Boolean},

# TODO(Reflectio... | non_process | add example for documentation add example for documentation text attrs noattrs returns boolean todo reflection boolean add example for documentation labels library documentation easy example ... | 0 |

14,731 | 17,950,275,452 | IssuesEvent | 2021-09-12 15:41:24 | Leviatan-Analytics/LA-data-processing | https://api.github.com/repos/Leviatan-Analytics/LA-data-processing | closed | Implement analysis progress endpoint [3] | Data Processing Week 2 Sprint 4 | Do the implementation of the analysis progress to know in which part of the data processing flow is. | 1.0 | Implement analysis progress endpoint [3] - Do the implementation of the analysis progress to know in which part of the data processing flow is. | process | implement analysis progress endpoint do the implementation of the analysis progress to know in which part of the data processing flow is | 1 |

9,384 | 3,038,668,804 | IssuesEvent | 2015-08-07 00:28:20 | servo/servo | https://api.github.com/repos/servo/servo | closed | Write a reftest for the background attribute on the body element | A-testing C-assigned E-easy | #5851 merged without any tests. We should add one - have a reference page that uses CSS to set the background image for a page, and a test page that sets the attribute on the body element instead. See the documentation at http://testthewebforward.org/docs/reftests.html and examples at http://mxr.mozilla.org/servo/sourc... | 1.0 | Write a reftest for the background attribute on the body element - #5851 merged without any tests. We should add one - have a reference page that uses CSS to set the background image for a page, and a test page that sets the attribute on the body element instead. See the documentation at http://testthewebforward.org/do... | non_process | write a reftest for the background attribute on the body element merged without any tests we should add one have a reference page that uses css to set the background image for a page and a test page that sets the attribute on the body element instead see the documentation at and examples at you ll need ... | 0 |

820,392 | 30,771,007,132 | IssuesEvent | 2023-07-30 22:38:26 | apcountryman/picolibrary | https://api.github.com/repos/apcountryman/picolibrary | opened | Add WIZnet W5500 TCP over IP client socket | priority-normal status-awaiting_development type-feature | Add WIZnet W5500 TCP over IP client socket (`::picolibrary::WIZnet::W5500::IP::TCP::Client`) and associated mock (`::picolibrary::Testing::Automated::WIZnet::W5500::IP::TCP::Mock_Client`).

- [ ] The `Client` class should be defined in the `include/picolibrary/wiznet/w5500/ip/tcp.h`/`source/picolibrary/wiznet/w5500/ip/... | 1.0 | Add WIZnet W5500 TCP over IP client socket - Add WIZnet W5500 TCP over IP client socket (`::picolibrary::WIZnet::W5500::IP::TCP::Client`) and associated mock (`::picolibrary::Testing::Automated::WIZnet::W5500::IP::TCP::Mock_Client`).

- [ ] The `Client` class should be defined in the `include/picolibrary/wiznet/w5500/i... | non_process | add wiznet tcp over ip client socket add wiznet tcp over ip client socket picolibrary wiznet ip tcp client and associated mock picolibrary testing automated wiznet ip tcp mock client the client class should be defined in the include picolibrary wiznet ip tcp h source picol... | 0 |

10,342 | 13,170,876,107 | IssuesEvent | 2020-08-11 15:46:40 | prisma/prisma | https://api.github.com/repos/prisma/prisma | closed | .transaction([]) fails with Request Timeout Error | bug/2-confirmed kind/bug process/candidate team/support topic: previewFeatures topic: transactionApi | ## Bug description

Using the new `.transaction([])`, I am seeing this on the client (JavaScript):

```

{ Error: Request Timeout Error

at PrismaClientFetcher._request (/app/node_modules/@prisma/client/runtime/index.js:1:213208) code: 'UND_ERR_REQUEST_TIMEOUT', meta: undefined }

```

## How to reproduce

... | 1.0 | .transaction([]) fails with Request Timeout Error - ## Bug description

Using the new `.transaction([])`, I am seeing this on the client (JavaScript):

```

{ Error: Request Timeout Error

at PrismaClientFetcher._request (/app/node_modules/@prisma/client/runtime/index.js:1:213208) code: 'UND_ERR_REQUEST_TIMEOUT... | process | transaction fails with request timeout error bug description using the new transaction i am seeing this on the client javascript error request timeout error at prismaclientfetcher request app node modules prisma client runtime index js code und err request timeout meta... | 1 |

22,663 | 31,895,971,157 | IssuesEvent | 2023-09-18 01:45:13 | tdwg/dwc | https://api.github.com/repos/tdwg/dwc | closed | Change term - preparations | Term - change normative Task Group - Material Sample Process - complete Class - MaterialEntity | ## Term change

* Submitter: [Material Sample Task Group](https://www.tdwg.org/community/osr/material-sample/)

* Efficacy Justification (why is this change necessary?): The MaterialSample Task Group concluded that `dwc:preparations` should be organized under MaterialEntity rather than Occurrence, and that developing... | 1.0 | Change term - preparations - ## Term change

* Submitter: [Material Sample Task Group](https://www.tdwg.org/community/osr/material-sample/)

* Efficacy Justification (why is this change necessary?): The MaterialSample Task Group concluded that `dwc:preparations` should be organized under MaterialEntity rather than Oc... | process | change term preparations term change submitter efficacy justification why is this change necessary the materialsample task group concluded that dwc preparations should be organized under materialentity rather than occurrence and that developing a materialentity extension to rigorously addr... | 1 |

8,753 | 11,873,639,569 | IssuesEvent | 2020-03-26 17:37:27 | prisma/prisma2 | https://api.github.com/repos/prisma/prisma2 | opened | Add `cli` as executable for @prisma/cli? | kind/discussion kind/improvement process/candidate topic: cli | ## Problem

When using npx we need to do `npx -p @prisma/cli@alpha prisma2 --version`

## Solution

If we put another name for the executable in the package.json `cli` npx should work like:

`npx @prisma/cli@alpha --version` which is a lot easier (no parameters!)

## Additional context

We may want to test how it'... | 1.0 | Add `cli` as executable for @prisma/cli? - ## Problem

When using npx we need to do `npx -p @prisma/cli@alpha prisma2 --version`

## Solution

If we put another name for the executable in the package.json `cli` npx should work like:

`npx @prisma/cli@alpha --version` which is a lot easier (no parameters!)

## Addi... | process | add cli as executable for prisma cli problem when using npx we need to do npx p prisma cli alpha version solution if we put another name for the executable in the package json cli npx should work like npx prisma cli alpha version which is a lot easier no parameters additional... | 1 |

10,740 | 13,535,169,783 | IssuesEvent | 2020-09-16 07:08:34 | didi/mpx | https://api.github.com/repos/didi/mpx | closed | [Bug report] Iterating over dynamic components generates many wx:if="" attributes | processing | **Problem description**

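After upgrading to 2.6.16, rendering components by iterating with the dynamic component <component is=""> puts many wx:if attributes on each component in the build output, and a warning is raised: duplicate attribute: wx:if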

The code is written as follows:

```html

<component wx:for="{{componentsList}}" class="component" is="{{ item.name }}" componentData="{{ item.data }}" wx:key="uuid"></component>

```

The generated code is as follows:

```html

<c-title componentD... | 1.0 | [Bug report] Iterating over dynamic components generates many wx:if="" attributes - **Problem description**

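After upgrading to 2.6.16, rendering components by iterating with the dynamic component <component is=""> puts many wx:if attributes on each component in the build output, and a warning is raised: duplicate attribute: wx:if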

The code is written as follows:

```html

<component wx:for="{{componentsList}}" class="component" is="{{ item.name }}" componentData="{{ item.data }}" wx:key="uuid"></component>

```

生... | process | 动态组件遍历后,会生成很多个 wx if 问题描述 升级版本到 ,使用 动态组件 来遍历呈现组件的时候,构建后每个组件上会产生很多 wx if,然后提示警告:duplicate attribute wx if 写法如下: html 生成以后的代码如下: html 把我所有组件都wx if了一遍 | 1 |

667,687 | 22,496,683,567 | IssuesEvent | 2022-06-23 08:13:01 | fritz-marshal/fritz-beta-feedback | https://api.github.com/repos/fritz-marshal/fritz-beta-feedback | closed | Display full accuracy H0 for cosmology | enhancement triage: low priority | Hello,

We have noticed that there is a small inconsistency in the cosmological parameters in the About page on Fritz. The current parameters on the page are `FlatLambdaCDM(name=“Planck18_arXiv_v2”, H0=67.7 km / (Mpc s), Om0=0.31, Tcmb0=2.725 K, Neff=3.05, m_nu=[0. 0. 0.06] eV, Ob0=0.049).`

However, if you look at... | 1.0 | Display full accuracy H0 for cosmology - Hello,

We have noticed that there is a small inconsistency in the cosmological parameters in the About page on Fritz. The current parameters on the page are `FlatLambdaCDM(name=“Planck18_arXiv_v2”, H0=67.7 km / (Mpc s), Om0=0.31, Tcmb0=2.725 K, Neff=3.05, m_nu=[0. 0. 0.06] eV... | non_process | display full accuracy for cosmology hello we have noticed that there is a small inconsistency in the cosmological parameters in the about page on fritz the current parameters on the page are flatlambdacdm name “ arxiv ” km mpc s k neff m nu ev however if you lo... | 0 |