Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 classes) | text_combine (string, length 96 to 211k) | label (string, 2 classes) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

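7,397 | 10,523,131,038 | IssuesEvent | 2019-09-30 10:15:33 | didi/mpx | https://api.github.com/repos/didi/mpx | closed | Baidu mini program for loop: updating render data does not update the view | processing | As the title says; it works fine on WeChat and Toutiao,

"@mpxjs/api-proxy": "^2.2.27",

"@mpxjs/core": "^2.2.27",

"@mpxjs/webpack-plugin": "^2.2.29", | 1.0 | Baidu mini program for loop: updating render data does not update the view - As the title says; it works fine on WeChat and Toutiao,

"@mpxjs/api-proxy": "^2.2.27",

"@mpxjs/core": "^2.2.27",

"@mpxjs/webpack-plugin": "^2.2.29", | process | baidu mini program for loop updating render data does not update the view as the title says it works fine on wechat and toutiao mpxjs api proxy mpxjs core mpxjs webpack plugin | 1 |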

11,340 | 14,163,692,484 | IssuesEvent | 2020-11-12 03:01:32 | allinurl/goaccess | https://api.github.com/repos/allinurl/goaccess | closed | Real-time daemon seems to ignore --keep-last | bug log-processing on-disk | I am running the following command as a `systemd` service on my server:

```

goaccess /var/log/apache2/access.log.1 /var/log/apache2/access.log --restore --persist --db-path /var/spool/goaccess/realtime --real-time-html --keep-last=10 -o /var/lib/goaccess/realtime.html --daemonize --pid-file=/var/run/goaccess.pid

`... | 1.0 | Real-time daemon seems to ignore --keep-last - I am running the following command as a `systemd` service on my server:

```

goaccess /var/log/apache2/access.log.1 /var/log/apache2/access.log --restore --persist --db-path /var/spool/goaccess/realtime --real-time-html --keep-last=10 -o /var/lib/goaccess/realtime.html ... | process | real time daemon seems to ignore keep last i am running the following command as a systemd service on my server goaccess var log access log var log access log restore persist db path var spool goaccess realtime real time html keep last o var lib goaccess realtime html daemonize ... | 1 |

4,874 | 5,310,312,727 | IssuesEvent | 2017-02-12 19:00:21 | catapult-project/catapult | https://api.github.com/repos/catapult-project/catapult | opened | Catapult roll failing at "Switch clients to new JavaScript API (batch 5)" | Infrastructure Telemetry | [First failing roll](https://codereview.chromium.org/2687073004/) has just [[Telemetry] Switch clients to new JavaScript API (batch 5)](https://codereview.chromium.org/2687773003) in the commit list.

From [the log](https://luci-logdog.appspot.com/v/?s=chromium%2Fbb%2Ftryserver.chromium.win%2Fwin_chromium_x64_rel_ng... | 1.0 | Catapult roll failing at "Switch clients to new JavaScript API (batch 5)" - [First failing roll](https://codereview.chromium.org/2687073004/) has just [[Telemetry] Switch clients to new JavaScript API (batch 5)](https://codereview.chromium.org/2687773003) in the commit list.

From [the log](https://luci-logdog.appsp... | non_process | catapult roll failing at switch clients to new javascript api batch has just switch clients to new javascript api batch in the commit list from measurements detached context age in gc unittest testwithnodata failed unexpectedly traceback most recent call last fi... | 0 |

22,746 | 32,063,395,849 | IssuesEvent | 2023-09-24 22:36:41 | hsmusic/hsmusic-wiki | https://api.github.com/repos/hsmusic/hsmusic-wiki | opened | Automatically warn about tracks without any artists at all | scope: data processing type: dev friendliness | I.e, none in `Artists` on the artist nor on the album. I guess it's possible some wikis could want to use tracks without artists, but the wiki doesn't properly support that yet, and it's always a mistake on HSMusic!

This should check the update values, not computed values, for `artistContribs` (on album/track), so i... | 1.0 | Automatically warn about tracks without any artists at all - I.e, none in `Artists` on the artist nor on the album. I guess it's possible some wikis could want to use tracks without artists, but the wiki doesn't properly support that yet, and it's always a mistake on HSMusic!

This should check the update values, not... | process | automatically warn about tracks without any artists at all i e none in artists on the artist nor on the album i guess it s possible some wikis could want to use tracks without artists but the wiki doesn t properly support that yet and it s always a mistake on hsmusic this should check the update values not... | 1 |

6,469 | 9,546,672,709 | IssuesEvent | 2019-05-01 20:38:22 | openopps/openopps-platform | https://api.github.com/repos/openopps/openopps-platform | closed | Internship Opportunity - update description under logo | Apply Process Approved Opportunity Create Requirements Ready State Dept. | Who: Internship viewers

What: View U.S. in front of Dept of State

Why: State has requested we always display U.S. in front of their program name.

Acceptance Criteria:

The text under the logo on all views of the internship opportunity (creator view and applicant) should be updated to read, "U.S. Department of State"

M... | 1.0 | Internship Opportunity - update description under logo - Who: Internship viewers

What: View U.S. in front of Dept of State

Why: State has requested we always display U.S. in front of their program name.

Acceptance Criteria:

The text under the logo on all views of the internship opportunity (creator view and applicant)... | process | internship opportunity update description under logo who internship viewers what view u s in front of dept of state why state has requested we always display u s in front of their program name acceptance criteria the text under the logo on all views of the internship opportunity creator view and applicant ... | 1 |

22,577 | 31,805,275,024 | IssuesEvent | 2023-09-13 13:36:49 | GSA/EDX | https://api.github.com/repos/GSA/EDX | opened | Update personal access token for GitHub Workflow (September 2023) | process | For the EDXPROJECT_TOKEN to automate the issue workflow (adding it to EDX's Inbox in its Kanban board)

Instructions:

- Click on your user icon at the top right

- Click settings

- Scroll to bottom, click "Developer Settings"

- Under personal access tokens, click tokens classic

- You want to update the EDXPROJECT... | 1.0 | Update personal access token for GitHub Workflow (September 2023) - For the EDXPROJECT_TOKEN to automate the issue workflow (adding it to EDX's Inbox in its Kanban board)

Instructions:

- Click on your user icon at the top right

- Click settings

- Scroll to bottom, click "Developer Settings"

- Under personal acce... | process | update personal access token for github workflow september for the edxproject token to automate the issue workflow adding it to edx s inbox in its kanban board instructions click on your user icon at the top right click settings scroll to bottom click developer settings under personal access ... | 1 |

579,369 | 17,189,917,244 | IssuesEvent | 2021-07-16 09:26:50 | vortexntnu/Vortex-AUV | https://api.github.com/repos/vortexntnu/Vortex-AUV | closed | Create node for controlling gripper and lights | High priority | Should be fairly simple. The gripper is controlled as on/off via a single GPIO pin. Same story with the lights.

Should be toggleable from joystick. | 1.0 | Create node for controlling gripper and lights - Should be fairly simple. The gripper is controlled as on/off via a single GPIO pin. Same story with the lights.

Should be toggleable from joystick. | non_process | create node for controlling gripper and lights should be fairly simple the gripper is controlled as on off via a single gpio pin same story with the lights should be toggleable from joystick | 0 |

218,102 | 16,749,691,420 | IssuesEvent | 2021-06-11 20:46:38 | FragSoc/esports-bot | https://api.github.com/repos/FragSoc/esports-bot | closed | Formal minimum dependencies versions? | bug documentation | I'm pretty sure the bot needs at least python `3.8`, but I'm not too sure about that. We should formalise this. | 1.0 | Formal minimum dependencies versions? - I'm pretty sure the bot needs at least python `3.8`, but I'm not too sure about that. We should formalise this. | non_process | formal minimum dependencies versions i m pretty sure the bot needs at least python but i m not too sure about that we should formalise this | 0 |

19,012 | 25,013,112,305 | IssuesEvent | 2022-11-03 16:37:43 | MPMG-DCC-UFMG/C01 | https://api.github.com/repos/MPMG-DCC-UFMG/C01 | opened | Debug: `Headless = False` mode and video recording | [2] Alta Prioridade [1] Requisito [0] Desenvolvimento [3] Processamento Dinâmico | ## Expected Behavior

Debugging tools for collectors are needed to fix errors, especially in the new distributed system.

## Current Behavior

Currently, we do not have many debugging tools integrated into the system. Besides the Trace Viewer (#4798), other features can help with... | 1.0 | Debug: `Headless = False` mode and video recording - ## Expected Behavior

Debugging tools for collectors are needed to fix errors, especially in the new distributed system.

## Current Behavior

Currently, we do not have many debugging tools integrated into the system. Besides the ... | process | debug headless false mode and video recording expected behavior debugging tools for collectors are needed to fix errors especially in the new distributed system current behavior currently we do not have many debugging tools integrated into the system besides the ... | 1 |

4,691 | 7,526,671,763 | IssuesEvent | 2018-04-13 14:41:23 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | stdio: cannot write to stdin after #1233 | help wanted process | Continuing from https://github.com/nodejs/node/issues/9201#issuecomment-255103708. The change from #1233 makes it impossible to write to stdin, something that works in v0.10 and v0.12.

Test case:

``` js

var spawn = require('child_process').spawn;

var args = ['-e', 'process.stdin.write("ok\\n")'];

var proc = spawn(pro... | 1.0 | stdio: cannot write to stdin after #1233 - Continuing from https://github.com/nodejs/node/issues/9201#issuecomment-255103708. The change from #1233 makes it impossible to write to stdin, something that works in v0.10 and v0.12.

Test case:

``` js

var spawn = require('child_process').spawn;

var args = ['-e', 'process.s... | process | stdio cannot write to stdin after continuing from the change from makes it impossible to write to stdin something that works in and test case js var spawn require child process spawn var args var proc spawn process execpath args stdio proc stdin pipe process stdout ... | 1 |

15,906 | 2,611,532,830 | IssuesEvent | 2015-02-27 06:03:53 | chrsmith/hedgewars | https://api.github.com/repos/chrsmith/hedgewars | opened | master chef and elements | auto-migrated Component-Lua Priority-Low Type-Enhancement | ```

So, an old style I proposed to mikade and a new one, both open for discussion!

- elemental mode

Four type of teams, each with a natural element that boost a given subset of

weapons.

For example we could think of Fire, Water, Earth and Wind: the first one has a

boost for explosion weapons, the second can walk on ... | 1.0 | master chef and elements - ```

So, an old style I proposed to mikade and a new one, both open for discussion!

- elemental mode

Four type of teams, each with a natural element that boost a given subset of

weapons.

For example we could think of Fire, Water, Earth and Wind: the first one has a

boost for explosion weapo... | non_process | master chef and elements so an old style i proposed to mikade and a new one both open for discussion elemental mode four type of teams each with a natural element that boost a given subset of weapons for example we could think of fire water earth and wind the first one has a boost for explosion weapo... | 0 |

21,904 | 30,352,332,385 | IssuesEvent | 2023-07-11 20:01:29 | StormSurgeLive/asgs | https://api.github.com/repos/StormSurgeLive/asgs | opened | Improve swan max file support in `generateXDMF.f90` | enhancement incremental improvement postprocessing | It appears that this utility does not recognize `swan_DIR_max.63.nc`, `swan_TM01_max.63.nc`, `swan_TM02_max.63.nc`, or `swan_TMM10_max.63.nc`. | 1.0 | Improve swan max file support in `generateXDMF.f90` - It appears that this utility does not recognize `swan_DIR_max.63.nc`, `swan_TM01_max.63.nc`, `swan_TM02_max.63.nc`, or `swan_TMM10_max.63.nc`. | process | improve swan max file support in generatexdmf it appears that this utility does not recognize swan dir max nc swan max nc swan max nc or swan max nc | 1 |

385,078 | 11,412,236,718 | IssuesEvent | 2020-02-01 11:48:13 | islos-efe-eme/auto-news | https://api.github.com/repos/islos-efe-eme/auto-news | opened | Add scheduler for the news (Slack bot) | priority:high slack | Depends on https://github.com/islos-efe-eme/auto-news/issues/3

- [ ] Add scheduler for posting the news in the channel `gta-news` once every day. | 1.0 | Add scheduler for the news (Slack bot) - Depends on https://github.com/islos-efe-eme/auto-news/issues/3

- [ ] Add scheduler for posting the news in the channel `gta-news` once every day. | non_process | add scheduler for the news slack bot depends on add scheduler for posting the news in the channel gta news once every day | 0 |

6,772 | 3,054,334,702 | IssuesEvent | 2015-08-13 01:24:54 | facebook/osquery | https://api.github.com/repos/facebook/osquery | closed | documentation uses old added/removed format in using osqueryd docs | documentation | > https://github.com/facebook/osquery/blob/master/docs/wiki/introduction/using-osqueryd.md

Each query represents a monitored view of your operating system. The first time a scheduled query runs it logs every row in the resulting table with the "added" action. In this example, on an OS X laptop, after the first 60 se... | 1.0 | documentation uses old added/removed format in using osqueryd docs - > https://github.com/facebook/osquery/blob/master/docs/wiki/introduction/using-osqueryd.md

Each query represents a monitored view of your operating system. The first time a scheduled query runs it logs every row in the resulting table with the "add... | non_process | documentation uses old added removed format in using osqueryd docs each query represents a monitored view of your operating system the first time a scheduled query runs it logs every row in the resulting table with the added action in this example on an os x laptop after the first seconds it would log ... | 0 |

15,396 | 19,580,287,120 | IssuesEvent | 2022-01-04 20:19:44 | 2i2c-org/infrastructure | https://api.github.com/repos/2i2c-org/infrastructure | closed | Create a model for our hub capacity | type: enhancement :label: team-process | # Background

As we begin to support hub infrastructure for other communities, we will need to balance the time of each of our team members in a way that distributes work and makes our support of hubs efficient. There will likely be a non-linear model that answers "how many hubs can our team support at this moment in... | 1.0 | Create a model for our hub capacity - # Background

As we begin to support hub infrastructure for other communities, we will need to balance the time of each of our team members in a way that distributes work and makes our support of hubs efficient. There will likely be a non-linear model that answers "how many hubs ... | process | create a model for our hub capacity background as we begin to support hub infrastructure for other communities we will need to balance the time of each of our team members in a way that distributes work and makes our support of hubs efficient there will likely be a non linear model that answers how many hubs ... | 1 |

417,014 | 12,154,746,809 | IssuesEvent | 2020-04-25 09:51:52 | leinardi/pylint-pycharm | https://api.github.com/repos/leinardi/pylint-pycharm | closed | PluginException: Icon cannot be found in '/modules/modulesNode.png' | Priority: High Status: Accepted Type: Bug | **pylint-pycharm version:**

0.12.1

**description:**

pycharm issues this error on each plugin execution.

this is mostly an aesthetic bug since it doesn't seem to prevent the plugin from running.

**full traceback:**

```

com.intellij.diagnostic.PluginException: Icon cannot be found in '/modules/modulesNode.png'... | 1.0 | PluginException: Icon cannot be found in '/modules/modulesNode.png' - **pylint-pycharm version:**

0.12.1

**description:**

pycharm issues this error on each plugin execution.

this is mostly an aesthetic bug since it doesn't seem to prevent the plugin from running.

**full traceback:**

```

com.intellij.diagnost... | non_process | pluginexception icon cannot be found in modules modulesnode png pylint pycharm version description pycharm issues this error on each plugin execution this is mostly an aesthetic bug since it doesn t seem to prevent the plugin from running full traceback com intellij diagnosti... | 0 |

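59,183 | 6,630,834,260 | IssuesEvent | 2017-09-25 02:50:21 | steedos/apps | https://api.github.com/repos/steedos/apps | closed | In workflow design, if the staff of a node with a designated approval position are changed (deleted), the properties of that step can no longer be edited | fix:Done test:OK |

In the workflow designer, a handler (a designated approval position) has already been configured. When a person in that approval position is deleted or transferred, the workflow designer shows the message: the handler specified in the step has been deleted or disabled. At this point we need to modify the node by clicking the properties button on the right. The properties button does not respond! | 1.0 | In workflow design, if the staff of a node with a designated approval position are changed (deleted), the properties of that step can no longer be edited -

In the workflow designer, a handler (a designated approval position) has already been configured. When a person in that approval position is deleted or transferred, the workflow designer shows the message: the handler specified in the step has been deleted or disabled. At this point we need to modify the node by clicking the properties button on the right. The properties button does not respond! | non_process | in workflow design if the staff of a node with a designated approval position are changed deleted the properties of that step can no longer be edited in the workflow designer a handler a designated approval position has already been configured when a person in that approval position is deleted or transferred the workflow designer shows the message the handler specified in the step has been deleted or disabled at this point we need to modify the node by clicking the properties button on the right the properties button does not respond | 0 |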

30,948 | 5,889,756,334 | IssuesEvent | 2017-05-17 13:37:44 | LDMW/app | https://api.github.com/repos/LDMW/app | closed | 13/4 Call | discuss documentation | **Note: from memory, should have made notes (next time)**

The main points from the conversation were:

### Sprint planning

We will be working in a more agile way with everyone getting involved with time estimating each feature that will be built.

Fast iterations on each new feature.

### Why Wagtail?

Be... | 1.0 | 13/4 Call - **Note: from memory, should have made notes (next time)**

The main points from the conversation were:

### Sprint planning

We will be working in a more agile way with everyone getting involved with time estimating each feature that will be built.

Fast iterations on each new feature.

### Why Wa... | non_process | call note from memory should have made notes next time the main points from the conversation were sprint planning we will be working in a more agile way with everyone getting involved with time estimating each feature that will be built fast iterations on each new feature why wag... | 0 |

212,741 | 7,242,280,583 | IssuesEvent | 2018-02-14 06:47:25 | NuGet/Home | https://api.github.com/repos/NuGet/Home | closed | Engineering: NuGet.Client should produce nupkgs using SemVer 2.0.0 | Area: Engineering Improvements Priority:2 Sprint 131 | The NuGet.Client repo should make sure of SemVer 2.0.0 by producing nupkgs with release labels that include `.` instead of `-` for proper ordering.

Current: `3.6.0-rc-1950`

Expected: `3.6.0-rc.1.1950`

| 1.0 | Engineering: NuGet.Client should produce nupkgs using SemVer 2.0.0 - The NuGet.Client repo should make sure of SemVer 2.0.0 by producing nupkgs with release labels that include `.` instead of `-` for proper ordering.

Current: `3.6.0-rc-1950`

Expected: `3.6.0-rc.1.1950`

| non_process | engineering nuget client should produce nupkgs using semver the nuget client repo should make sure of semver by producing nupkgs with release labels that include instead of for proper ordering current rc expected rc | 0 |

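1,033 | 25,115,254,434 | IssuesEvent | 2022-11-09 01:03:05 | jongfeel/BookReview | https://api.github.com/repos/jongfeel/BookReview | closed | Part 3, Chapter 6: The Safety Valve in Handling Complaints | 2022 How to Win Friends & Influence People | ### Chapter 6: The Safety Valve in Handling Complaints

Let the other person finish their own story first. They know far more about their own business and problems than you do. So ask them questions. Get them to tell you things.

---

Rule 6: Let the other person do most of the talking.

Let the other man do a great deal of the talking. | 1.0 | Part 3, Chapter 6: The Safety Valve in Handling Complaints - ### Chapter 6: The Safety Valve in Handling Complaints

Let the other person finish their own story first. They know far more about their own business and problems than you do. So ask them questions. Get them to tell you things.

---

Rule 6: Let the other person do most of the talking.

Let the other man do a great deal of the talking. | non_process | part chapter the safety valve in handling complaints chapter the safety valve in handling complaints let the other person finish their own story first they know far more about their own business and problems than you do so ask them questions get them to tell you things rule let the other person do most of the talking let the other man do a great deal of the talking | 0 |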

421,589 | 28,348,841,911 | IssuesEvent | 2023-04-12 00:07:09 | fga-eps-mds/2023-1-CAPJu-Doc | https://api.github.com/repos/fga-eps-mds/2023-1-CAPJu-Doc | opened | Update the Lean Inception board | documentation eps | # Update Board

## Description.

Update the board with the CAPJU information (up to step 5 - User journey) and complete the remaining steps with the rest of the information

<!-- ### Issue related to [US <Number>](link) <If any> -->

## How to solve <Not required>

Discuss with the team and validate with the PO based on the Mura... | 1.0 | Update the Lean Inception board - # Update Board

## Description.

Update the board with the CAPJU information (up to step 5 - User journey) and complete the remaining steps with the rest of the information

<!-- ### Issue related to [US <Number>](link) <If any> -->

## How to solve <Not required>

Discuss with the team... | non_process | update the lean inception board update board description update the board with the capju information up to step user journey and complete the remaining steps with the rest of the information how to solve discuss with the team and validate with the po based on the board at the link acceptance criteria... | 0 |

12,656 | 15,026,002,684 | IssuesEvent | 2021-02-01 21:57:49 | 2i2c-org/team-compass | https://api.github.com/repos/2i2c-org/team-compass | closed | Tech Team Update: 2021-01-27 | team-process | Hey @2i2c-org/tech-team - we're a couple of days late for our latest updates. Sorry about that! Can folks fill out the [HackMD](https://hackmd.io/i2Siurp1TkmPYgn3ZgxFQw) with their own updates? ✨

- **Updates HackMD**: https://hackmd.io/i2Siurp1TkmPYgn3ZgxFQw

- **Team Sync history**: https://2i2c.org/team-compass/t... | 1.0 | Tech Team Update: 2021-01-27 - Hey @2i2c-org/tech-team - we're a couple of days late for our latest updates. Sorry about that! Can folks fill out the [HackMD](https://hackmd.io/i2Siurp1TkmPYgn3ZgxFQw) with their own updates? ✨

- **Updates HackMD**: https://hackmd.io/i2Siurp1TkmPYgn3ZgxFQw

- **Team Sync history**: ... | process | tech team update hey org tech team we re a couple of days late for our latest updates sorry about that can folks fill out the with their own updates ✨ updates hackmd team sync history todo clean up the for this update ping the team members in wai... | 1 |

13,274 | 15,757,795,002 | IssuesEvent | 2021-03-31 05:51:57 | kubeflow/internal-acls | https://api.github.com/repos/kubeflow/internal-acls | closed | Presubmit test to prevent Github-sync from being broken by inconsistent member lists | kind/bug kind/process priority/p1 | /kind process

Follow up on #344

We've seen a couple of times that Github sync is broken when a member is added to a team but not the Kubeflow org.

For example:

```

$ kubectl logs github-sync-1600450800-gz94v -n github-admin

{"component":"peribolos","file":"prow/flagutil/github.go:78","func":"k8s.io/test-infr... | 1.0 | Presubmit test to prevent Github-sync from being broken by inconsistent member lists - /kind process

Follow up on #344

We've seen a couple of times that Github sync is broken when a member is added to a team but not the Kubeflow org.

For example:

```

$ kubectl logs github-sync-1600450800-gz94v -n github-admin... | process | presubmit test to prevent github sync from being broken by inconsistent member lists kind process follow up on we ve seen a couple of times that github sync is broken when a member is added to a team but not the kubeflow org for example kubectl logs github sync n github admin component ... | 1 |

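10,268 | 13,124,712,763 | IssuesEvent | 2020-08-06 04:40:01 | didi/mpx | https://api.github.com/repos/didi/mpx | closed | [Bug report] Global config properties are lost when building for swan | processing | **Problem description**

When mpx builds for the Baidu mini program, the global config properties networkTimeout, permission, and requiredBackgroundModes are lost

The cause is as follows:

https://github.com/didi/mpx/blob/c8b6f09491abf3553db90c2442099f9f94022962/packages/webpack-plugin/lib/platform/json/wx/index.js#L210-L220

They are deleted in this file.

**Suggestion**

Every mini program platform keeps updating and iterating, so I hope the mpx maintainers also regularly review the configuration, ^@^ | 1.0 | [Bug report] Global config properties are lost when building for swan - **Problem description**

When mpx builds for the Baidu mini program, the global config properties networkTimeout, permission, and requiredBackgroundModes are lost

The cause is as follows:

https://github.com/didi/mpx/blob/c8b6f09491abf3553db90c2442099f9f94022962/packages/webpack-plugin/lib/platform/json/wx/index.js#L210-L220

They are deleted in this file.

**Suggestion**

Every mini program platform keeps updating and iterating, so I hope the mpx maintainers also regularly review... | process | global config properties are lost when building for swan problem description when mpx builds for the baidu mini program the global config properties networktimeout permission and requiredbackgroundmodes are lost the cause is as follows they are deleted in this file suggestion every mini program platform keeps updating and iterating so i hope the mpx maintainers also regularly review the configuration | 1 |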

339,571 | 10,256,254,447 | IssuesEvent | 2019-08-21 17:13:30 | NuGet/Home | https://api.github.com/repos/NuGet/Home | closed | PackagePathResolver.GetInstalledPath doesn't work with relative paths | Priority:2 Sprint 157 Type:Bug | Either make sure `PackagePathResolver.Root` is absolute [here](https://github.com/NuGet/NuGet.Client/blob/3803820961f4d61c06d07b179dab1d0439ec0d91/src/NuGet.Core/NuGet.Packaging/PackageExtraction/PackagePathHelper.cs#L123) or just make it absolute in the PackagePathResolver constructor. | 1.0 | PackagePathResolver.GetInstalledPath doesn't work with relative paths - Either make sure `PackagePathResolver.Root` is absolute [here](https://github.com/NuGet/NuGet.Client/blob/3803820961f4d61c06d07b179dab1d0439ec0d91/src/NuGet.Core/NuGet.Packaging/PackageExtraction/PackagePathHelper.cs#L123) or just make it absolute ... | non_process | packagepathresolver getinstalledpath doesn t work with relative paths either make sure packagepathresolver root is absolute or just make it absolute in the packagepathresolver constructor | 0 |

105,353 | 13,181,071,331 | IssuesEvent | 2020-08-12 13:47:42 | JamesOwers/midi_degradation_toolkit | https://api.github.com/repos/JamesOwers/midi_degradation_toolkit | closed | df_to_csv and csv_to_df should probably be in the same place | design | I'd go for having them both in that "midi" package and renaming it perhaps? (Currently csv_to_df is data_structures.read_note_csv)

Essentially, mdtk.midi (renamed) would be for file I/O and conversion, while mdtk.data_structures would be about doing things with dataframes. | 1.0 | df_to_csv and csv_to_df should probably be in the same place - I'd go for having them both in that "midi" package and renaming it perhaps? (Currently csv_to_df is data_structures.read_note_csv)

Essentially, mdtk.midi (renamed) would be for file I/O and conversion, while mdtk.data_structures would be about doing thin... | non_process | df to csv and csv to df should probably be in the same place i d go for having them both in that midi package and renaming it perhaps currently csv to df is data structures read note csv essentially mdtk midi renamed would be for file i o and conversion while mdtk data structures would be about doing thin... | 0 |

73,811 | 8,940,639,185 | IssuesEvent | 2019-01-24 00:34:16 | Opentrons/opentrons | https://api.github.com/repos/Opentrons/opentrons | opened | Thermocycler: Define Temperature Cycles (API) | WIP api design feature medium | As a Thermocycler user, I would like to be able to define temperature cycles via API.

## Acceptance Criteria

- [ ] User able to define a 'cycle' consisting of an arbitrary number of steps

- [ ] A step includes a defined temperature, temperature ramp rate, and time at that temperature

- [ ] If no temperature ram... | 1.0 | Thermocycler: Define Temperature Cycles (API) - As a Thermocycler user, I would like to be able to define temperature cycles via API.

## Acceptance Criteria

- [ ] User able to define a 'cycle' consisting of an arbitrary number of steps

- [ ] A step includes a defined temperature, temperature ramp rate, and time at... | non_process | thermocycler define temperature cycles api as a thermocycler user i would like to be able to define temperature cycles via api acceptance criteria user able to define a cycle consisting of an arbitrary number of steps a step includes a defined temperature temperature ramp rate and time at tha... | 0 |

6,976 | 10,127,500,294 | IssuesEvent | 2019-08-01 10:21:56 | bisq-network/bisq | https://api.github.com/repos/bisq-network/bisq | closed | User experience: add "Start payment" before "Payment started" button | in:gui in:trade-process was:dropped | For a new user, "Payment started" button without any clarification may be somewhat confusing. It is good that once you press it, it asks you if you have made a payment or not, but maybe it would be better if there was some instruction before that button, such as "Start payment". | 1.0 | User experience: add "Start payment" before "Payment started" button - For a new user, "Payment started" button without any clarification may be somewhat confusing. It is good that once you press it, it asks you if you have made a payment or not, but maybe it would be better if there was some instruction before that b... | process | user experience add start payment before payment started button for a new user payment started button without any clarification may be somewhat confusing it is good that once you press it it asks you if you have made a payment or not but maybe it would be better if there was some instruction before that b... | 1 |

3,815 | 6,800,316,550 | IssuesEvent | 2017-11-02 13:37:15 | syndesisio/syndesis-ui | https://api.github.com/repos/syndesisio/syndesis-ui | closed | Update third party dependencies | dev process enhancement Priority - High | Noticed while looking at https://github.com/syndesisio/syndesis-ui/issues/934 that our dependency versions are ancient at this point and many could use a bump up to a newer version. | 1.0 | Update third party dependencies - Noticed while looking at https://github.com/syndesisio/syndesis-ui/issues/934 that our dependency versions are ancient at this point and many could use a bump up to a newer version. | process | update third party dependencies noticed while looking at that our dependency versions are ancient at this point and many could use a bump up to a newer version | 1 |

239,913 | 7,800,159,509 | IssuesEvent | 2018-06-09 05:42:57 | tine20/Tine-2.0-Open-Source-Groupware-and-CRM | https://api.github.com/repos/tine20/Tine-2.0-Open-Source-Groupware-and-CRM | closed | 0007568:

do not send iMIP-messages via ActiveSync | ActiveSync Bug Mantis high priority | **Reported by pschuele on 10 Dec 2012 14:25**

**Version:** Milan (2012.03.7)

do not send iMIP-messages via ActiveSync

- check attachments, do not send message with Felamimail_Model_Message::CONTENT_TYPE_CALENDAR attachment

| 1.0 | 0007568:

do not send iMIP-messages via ActiveSync - **Reported by pschuele on 10 Dec 2012 14:25**

**Version:** Milan (2012.03.7)

do not send iMIP-messages via ActiveSync

- check attachments, do not send message with Felamimail_Model_Message::CONTENT_TYPE_CALENDAR attachment

| non_process | do not send imip messages via activesync reported by pschuele on dec version milan do not send imip messages via activesync check attachments do not send message with felamimail model message content type calendar attachment | 0 |

17,373 | 23,198,521,665 | IssuesEvent | 2022-08-01 18:54:21 | vectordotdev/vector | https://api.github.com/repos/vectordotdev/vector | closed | Make Vector more scriptable | meta: idea needs: approval needs: requirements domain: processing | In this issue I want to discuss on high level adding scripting APIs to Vector. It might not be the top priority at the moment, but I'm creating this issue now to give us enough time to think about it and discuss it.

## Introduction

### Goals and scope of this issue

The goal is to define how ideal APIs should l... | 1.0 | Make Vector more scriptable - In this issue I want to discuss on high level adding scripting APIs to Vector. It might not be the top priority at the moment, but I'm creating this issue now to give us enough time to think about it and discuss it.

## Introduction

### Goals and scope of this issue

The goal is to ... | process | make vector more scriptable in this issue i want to discuss on high level adding scripting apis to vector it might not be the top priority at the moment but i m creating this issue now to give us enough time to think about it and discuss it introduction goals and scope of this issue the goal is to ... | 1 |

95,858 | 16,112,865,488 | IssuesEvent | 2021-04-28 01:00:51 | bci-oss/keycloak | https://api.github.com/repos/bci-oss/keycloak | opened | CVE-2021-23382 (Medium) detected in multiple libraries | security vulnerability | ## CVE-2021-23382 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>postcss-6.0.23.tgz</b>, <b>postcss-6.0.1.tgz</b>, <b>postcss-7.0.27.tgz</b></p></summary>

<p>

<details><summary><b... | True | CVE-2021-23382 (Medium) detected in multiple libraries - ## CVE-2021-23382 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>postcss-6.0.23.tgz</b>, <b>postcss-6.0.1.tgz</b>, <b>postc... | non_process | cve medium detected in multiple libraries cve medium severity vulnerability vulnerable libraries postcss tgz postcss tgz postcss tgz postcss tgz tool for transforming styles with js plugins library home page a href path to dependency file keyc... | 0 |

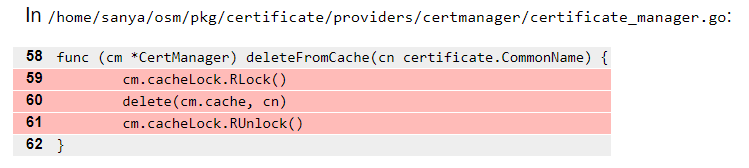

213,553 | 16,524,589,591 | IssuesEvent | 2021-05-26 18:20:09 | openservicemesh/osm | https://api.github.com/repos/openservicemesh/osm | closed | test: pkg/certificate/providers/certmanager/certificate_manager.go - delete cert from cache | area/tests size/XS | In `pkg/certificate/providers/certmanager/certificate_manager.go`, the unit test coverage is low for a deleteFromCache() func. See highlighted lines below.

Scenarios not covered:

* cert is deleted fro... | 1.0 | test: pkg/certificate/providers/certmanager/certificate_manager.go - delete cert from cache - In `pkg/certificate/providers/certmanager/certificate_manager.go`, the unit test coverage is low for a deleteFromCache() func. See highlighted lines below.

detected in linux-stable-rtv4.1.33 | Mend: dependency security vulnerability | ## WS-2021-0334 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv4.1.33</b></p></summary>

<p>

<p>Julia Cartwright's fork of linux-stable-rt.git</p>

<p>Library home page:... | True | WS-2021-0334 (High) detected in linux-stable-rtv4.1.33 - ## WS-2021-0334 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv4.1.33</b></p></summary>

<p>

<p>Julia Cartwrigh... | non_process | ws high detected in linux stable ws high severity vulnerability vulnerable library linux stable julia cartwright s fork of linux stable rt git library home page a href found in head commit a href found in base branch master vulnerable source files ... | 0 |

474,146 | 13,653,333,470 | IssuesEvent | 2020-09-27 12:14:06 | Trinityyi/boardeaux | https://api.github.com/repos/Trinityyi/boardeaux | closed | Design and style boards and cards | Priority: normal enhancement | - [x] Implement a baseline for CSS styling

- [x] Organize CSS code and handle styling conventions

- [x] Add icons/iconfont

- [x] Style cards

- [x] Style modals

- [x] Style menu

- [x] Style boards

| 1.0 | Design and style boards and cards - - [x] Implement a baseline for CSS styling

- [x] Organize CSS code and handle styling conventions

- [x] Add icons/iconfont

- [x] Style cards

- [x] Style modals

- [x] Style menu

- [x] Style boards

| non_process | design and style boards and cards implement a baseline for css styling organize css code and handle styling conventions add icons iconfont style cards style modals style menu style boards | 0 |

85,152 | 24,524,888,323 | IssuesEvent | 2022-10-11 12:26:26 | microsoft/fluentui | https://api.github.com/repos/microsoft/fluentui | closed | [Feature]: Enable screener checks to run from the screener proxy | Area: Build System Type: Epic CI | ### Library

React Components / v9 (@fluentui/react-components)

### Describe the feature that you would like added

Make screener check runs be triggered by the screener proxy instead of ADO using the GitHub API.

- [x] Convert the Azure DevOps job that runs the screener checks to a GitHub Action; | https... | 1.0 | [Feature]: Enable screener checks to run from the screener proxy - ### Library

React Components / v9 (@fluentui/react-components)

### Describe the feature that you would like added

Make screener check runs be triggered by the screener proxy instead of ADO using the GitHub API.

- [x] Convert the Azure De... | non_process | enable screener checks to run from the screener proxy library react components fluentui react components describe the feature that you would like added make screener check runs be triggered by the screener proxy instead of ado using the github api convert the azure devops job th... | 0 |

2,554 | 4,913,767,104 | IssuesEvent | 2016-11-23 13:39:33 | esdee1902/TA05_K21T03_Team3.6 | https://api.github.com/repos/esdee1902/TA05_K21T03_Team3.6 | closed | As a player, I want to hear sounds when the ball hits the wall or paddles. | Requirement | 1. Find and download the sounds: 10 minutes

2. Figure out how to add them to the source code: 30 minutes

3. Test how they affect the game: 10 minutes

Total: 50 minutes | 1.0 | As a player, I want to hear sounds when the ball hits the wall or paddles. - 1. Find and download the sounds: 10 minutes

2. Figure out how to add them to the source code: 30 minutes

3. Test how they affect the game: 10 minutes

Total: 50 minutes | non_process | as a player i want to hear sounds when the ball hits the wall or paddles find and download the sounds minutes figure out how to add them to the source code minutes test how they affect the game minutes total minutes | 0 |

80,750 | 23,296,430,545 | IssuesEvent | 2022-08-06 16:47:48 | bevyengine/bevy | https://api.github.com/repos/bevyengine/bevy | closed | Adding DefaultPlugins to the App panics with `default-features = false` | C-Bug A-Build-System A-App | ## Bevy version

0.8

## What you did

Use Bevy without default features and add the `DefaultPlugins` to the `App`

`cargo.toml`:

```toml

[dependencies.bevy]

version = "0.8.0"

default-features = false

features = ["dynamic"]

```

`main.rs`:

```rust

use bevy::prelude::*;

fn main() {

App::new().add_plu... | 1.0 | Adding DefaultPlugins to the App panics with `default-features = false` - ## Bevy version

0.8

## What you did

Use Bevy without default features and add the `DefaultPlugins` to the `App`

`cargo.toml`:

```toml

[dependencies.bevy]

version = "0.8.0"

default-features = false

features = ["dynamic"]

```

`main.r... | non_process | adding defaultplugins to the app panics with default features false bevy version what you did use bevy without default features and add the defaultplugins to the app cargo toml toml version default features false features main rs rust use bevy prel... | 0 |

7,077 | 10,227,248,737 | IssuesEvent | 2019-08-16 20:12:06 | googleapis/google-cloud-cpp-spanner | https://api.github.com/repos/googleapis/google-cloud-cpp-spanner | closed | docker cmake-super build compiles with -j1 | priority: p2 type: process | The docker cmake-super build takes significantly longer than other builds (2-3x). From the logs we see:

`make[3]: warning: jobserver unavailable: using -j1. Add '+' to parent make rule.`

@coryan suggests we could use ninja or CMAKE_BUILD_PARALLEL_LEVEL if we move to cmake-3.12+ https://github.com/googleapis/goo... | 1.0 | docker cmake-super build compiles with -j1 - The docker cmake-super build takes significantly longer than other builds (2-3x). From the logs we see:

`make[3]: warning: jobserver unavailable: using -j1. Add '+' to parent make rule.`

@coryan suggests we could use ninja or CMAKE_BUILD_PARALLEL_LEVEL if we move to ... | process | docker cmake super build compiles with the docker cmake super build takes significantly longer than other builds from the logs we see make warning jobserver unavailable using add to parent make rule coryan suggests we could use ninja or cmake build parallel level if we move to cmake... | 1 |

71,166 | 18,501,470,503 | IssuesEvent | 2021-10-19 14:10:47 | NOAA-EMC/NCEPLIBS-g2c | https://api.github.com/repos/NOAA-EMC/NCEPLIBS-g2c | closed | Skip the 1.6.3 release - next release should be 1.6.4 | build | The NCO has done a 1.6.3 release of g2clib. To avoid confusion, we will call the next release 1.6.4. | 1.0 | Skip the 1.6.3 release - next release should be 1.6.4 - The NCO has done a 1.6.3 release of g2clib. To avoid confusion, we will call the next release 1.6.4. | non_process | skip the release next release should be the nco has done a release of to avoid confusion we will call the next release | 0 |

13,240 | 15,707,747,013 | IssuesEvent | 2021-03-26 19:22:38 | correctcomputation/checkedc-clang | https://api.github.com/repos/correctcomputation/checkedc-clang | opened | `ClangTool::run` `chdir` call corrupts internal Clang include file path cache (?) | Upstream bug clang preprocessor command-line | As part of the change to expand macros before running 3C, I tried to change `convert_project` so that instead of (1) passing an adjusted version of the union of all compiler options seen in the compilation database to 3C via `-extra-arg-before`, it (2) lets 3C read the options directly from the compilation database. T... | 1.0 | `ClangTool::run` `chdir` call corrupts internal Clang include file path cache (?) - As part of the change to expand macros before running 3C, I tried to change `convert_project` so that instead of (1) passing an adjusted version of the union of all compiler options seen in the compilation database to 3C via `-extra-arg... | process | clangtool run chdir call corrupts internal clang include file path cache as part of the change to expand macros before running i tried to change convert project so that instead of passing an adjusted version of the union of all compiler options seen in the compilation database to via extra arg b... | 1 |

18,941 | 24,901,739,161 | IssuesEvent | 2022-10-28 21:48:47 | googleapis/python-bigquery | https://api.github.com/repos/googleapis/python-bigquery | closed | Run checks to use latest supported Python (3.9) | api: bigquery type: process | In `setup.py` and in README we declare that we support `Python >= 3.6`, but the nox test sessions only use Python up to 3.8. Python 3.9 is also missing from the `classifiers` list in `setup.py`.

We should bump the maximum versions to `3.9` where applicable. If tests fail in 3.9, we should bound the `python_requires`... | 1.0 | Run checks to use latest supported Python (3.9) - In `setup.py` and in README we declare that we support `Python >= 3.6`, but the nox test sessions only use Python up to 3.8. Python 3.9 is also missing from the `classifiers` list in `setup.py`.

We should bump the maximum versions to `3.9` where applicable. If tests ... | process | run checks to use latest supported python in setup py and in readme we declare that we support python but the nox test sessions only use python up to python is also missing from the classifiers list in setup py we should bump the maximum versions to where applicable if tests ... | 1 |

9,910 | 12,950,137,587 | IssuesEvent | 2020-07-19 12:02:19 | dita-ot/dita-ot | https://api.github.com/repos/dita-ot/dita-ot | closed | Cryptic error when XHTML output generate copy outer param set to not allowed value | feature good first issue preprocess priority/low stale | If I process DITA OT XHTML using DITA OT 2.x and the copy outer parameter set to:

```

-Dgenerate.copy.outer=2

```

I obtain a very cryptic error at some point like:

```

BUILD FAILED

C:\wade\DITA-OT\build.xml:41: The following error occurred while executing this line:

C... | 1.0 | Cryptic error when XHTML output generate copy outer param set to not allowed value - If I process DITA OT XHTML using DITA OT 2.x and the copy outer parameter set to:

```

-Dgenerate.copy.outer=2

```

I obtain a very cryptic error at some point like:

```

BUILD FAILED

C:\wade\DITA-OT... | process | cryptic error when xhtml output generate copy outer param set to not allowed value if i process dita ot xhtml using dita ot x and the copy outer parameter set to dgenerate copy outer i obtain a very cryptic error at some point like build failed c wade dita ot... | 1 |

84,425 | 15,721,437,312 | IssuesEvent | 2021-03-29 03:06:36 | mycomplexsoul/delta | https://api.github.com/repos/mycomplexsoul/delta | opened | CVE-2020-28502 (High) detected in xmlhttprequest-ssl-1.5.5.tgz | security vulnerability | ## CVE-2020-28502 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>xmlhttprequest-ssl-1.5.5.tgz</b></p></summary>

<p>XMLHttpRequest for Node</p>

<p>Library home page: <a href="https://r... | True | CVE-2020-28502 (High) detected in xmlhttprequest-ssl-1.5.5.tgz - ## CVE-2020-28502 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>xmlhttprequest-ssl-1.5.5.tgz</b></p></summary>

<p>XML... | non_process | cve high detected in xmlhttprequest ssl tgz cve high severity vulnerability vulnerable library xmlhttprequest ssl tgz xmlhttprequest for node library home page a href path to dependency file delta package json path to vulnerable library delta node modules xmlhttprequ... | 0 |

365,739 | 25,549,936,283 | IssuesEvent | 2022-11-29 22:28:44 | cal-itp/benefits | https://api.github.com/repos/cal-itp/benefits | closed | Make it clear which terminology to use | documentation deliverable | There have been some discussions that have come up around terminology within the Benefits project:

- "Benefit" and "discount" - cc https://github.com/cal-itp/mobility-marketplace/issues/370

- What are [the different things the user selects between](https://www.figma.com/proto/SeSd3LaLd6WkbEYhmtKpO3/Benefits-(IAL... | 1.0 | Make it clear which terminology to use - There have been some discussions that have come up around terminology within the Benefits project:

- "Benefit" and "discount" - cc https://github.com/cal-itp/mobility-marketplace/issues/370

- What are [the different things the user selects between](https://www.figma.com/p... | non_process | make it clear which terminology to use there have been some discussions that have come up around terminology within the benefits project benefit and discount cc what are is there should there be a catch all term that encompasses both the model in the app is eligibilitytype ... | 0 |

124,642 | 26,502,265,655 | IssuesEvent | 2023-01-18 11:10:55 | salmenf/webwriter | https://api.github.com/repos/salmenf/webwriter | opened | Schema-based parsing at all system boundaries | code quality core | External data (document formats, packages, etc.) should be parsed so data in the system is uniform. | 1.0 | Schema-based parsing at all system boundaries - External data (document formats, packages, etc.) should be parsed so data in the system is uniform. | non_process | schema based parsing at all system boundaries external data document formats packages etc should be parsed so data in the system is uniform | 0 |

15,883 | 20,071,519,068 | IssuesEvent | 2022-02-04 07:37:39 | plazi/treatmentBank | https://api.github.com/repos/plazi/treatmentBank | closed | figures not linked since Feb 1. | help wanted invalid processing BLR | @gsautter is there a reason that the figures in the recently batch processed articles (since Feb 1) are not linked to the images?

Could be that there is a Zenodo issue? https://tb.plazi.org/GgServer/dioStats/stats?outputFields=doc.articleUuid+doc.gbifId+doc.zenodoDepId&groupingFields=doc.articleUuid+doc.gbifId+doc.z... | 1.0 | figures not linked since Feb 1. - @gsautter is there a reason that the figures in the recently batch processed articles (since Feb 1) are not linked to the images?

Could be that there is a Zenodo issue? https://tb.plazi.org/GgServer/dioStats/stats?outputFields=doc.articleUuid+doc.gbifId+doc.zenodoDepId&groupingField... | process | figures not linked since feb gsautter is there a reason that the figures in the recently batch processed articles since feb are not linked to the images could be that there is a zenodo issue e g | 1 |

65,534 | 7,885,386,058 | IssuesEvent | 2018-06-27 12:18:37 | WordPress/gutenberg | https://api.github.com/repos/WordPress/gutenberg | closed | Create unified UI for block variants | Chrome Needs Design Feedback | Coming out of #773 and relating to other issues like #317, #728, and especially #522. There seems to be a need for a consistent style for choosing between variations on a type of block (e.g. the two types of blockquotes). I can't find a ticket tackling this across all blocks, so I hope this is an appropriate new ticket... | 1.0 | Create unified UI for block variants - Coming out of #773 and relating to other issues like #317, #728, and especially #522. There seems to be a need for a consistent style for choosing between variations on a type of block (e.g. the two types of blockquotes). I can't find a ticket tackling this across all blocks, so I... | non_process | create unified ui for block variants coming out of and relating to other issues like and especially there seems to be a need for a consistent style for choosing between variations on a type of block e g the two types of blockquotes i can t find a ticket tackling this across all blocks so i hope th... | 0 |

17,202 | 22,779,632,849 | IssuesEvent | 2022-07-08 18:07:25 | hashgraph/hedera-json-rpc-relay | https://api.github.com/repos/hashgraph/hedera-json-rpc-relay | reopened | docker service does not start, lerna missing? | bug P2 process | ### Description

Hi @Nana-EC

There seems to be an issue when I try to run the relay node as a docker service. I hope the issue is obvious once you see the logs :) Perhaps the issue is resolved once you have `lerna` as a `dependency` instead of a `devDependency`.

```sh

$ docker-compose up

relay_1 |

relay_1 ... | 1.0 | docker service does not start, lerna missing? - ### Description

Hi @Nana-EC

There seems to be an issue when I try to run the relay node as a docker service. I hope the issue is obvious once you see the logs :) Perhaps the issue is resolved once you have `lerna` as a `dependency` instead of a `devDependency`.

``... | process | docker service does not start lerna missing description hi nana ec there seems to be an issue when i try to run the relay node as a docker service i hope the issue is obvious once you see the logs perhaps the issue is resolved once you have lerna as a dependency instead of a devdependency ... | 1 |

219,894 | 17,117,650,200 | IssuesEvent | 2021-07-11 17:37:30 | phetsims/gravity-and-orbits | https://api.github.com/repos/phetsims/gravity-and-orbits | closed | CT designed API changes detected | priority:2-high status:ready-for-review type:automated-testing | ```

gravity-and-orbits : phet-io-api-compatibility : unbuilt

https://bayes.colorado.edu/continuous-testing/ct-snapshots/1623335973957/gravity-and-orbits/gravity-and-orbits_en.html?continuousTest=%7B%22test%22%3A%5B%22gravity-and-orbits%22%2C%22phet-io-api-compatibility%22%2C%22unbuilt%22%5D%2C%22snapshotName%22%3A%22... | 1.0 | CT designed API changes detected - ```

gravity-and-orbits : phet-io-api-compatibility : unbuilt

https://bayes.colorado.edu/continuous-testing/ct-snapshots/1623335973957/gravity-and-orbits/gravity-and-orbits_en.html?continuousTest=%7B%22test%22%3A%5B%22gravity-and-orbits%22%2C%22phet-io-api-compatibility%22%2C%22unbui... | non_process | ct designed api changes detected gravity and orbits phet io api compatibility unbuilt query ea brand phet io phetiostandalone phetiocompareapi uncaught error assertion failed designed api changes detected please roll them back or revise the reference api gravityandorbits general model siminfo d... | 0 |

8,282 | 11,447,519,499 | IssuesEvent | 2020-02-06 00:03:56 | parcel-bundler/parcel | https://api.github.com/repos/parcel-bundler/parcel | closed | posthtml-expressions breaks vue single file components | :bug: Bug HTML Preprocessing Stale Vue | <!---

Thanks for filing an issue 😄 ! Before you submit, please read the following:

Search open/closed issues before submitting since someone might have asked the same thing before!

-->

# 🐛 bug report

<!--- Provide a general summary of the issue here -->

When configuring `posthtml-expressions` in a project... | 1.0 | posthtml-expressions breaks vue single file components - <!---

Thanks for filing an issue 😄 ! Before you submit, please read the following:

Search open/closed issues before submitting since someone might have asked the same thing before!

-->

# 🐛 bug report

<!--- Provide a general summary of the issue here ... | process | posthtml expressions breaks vue single file components thanks for filing an issue 😄 before you submit please read the following search open closed issues before submitting since someone might have asked the same thing before 🐛 bug report when configuring posthtml expressions in a pr... | 1 |

216,083 | 16,628,317,797 | IssuesEvent | 2021-06-03 12:37:29 | simon-ritchie/apysc | https://api.github.com/repos/simon-ritchie/apysc | closed | Adjust document code block execution implementation to append jslib optional arguments | documentation enhancement | - Point to the common js lib directory (to reduce duplicated js lib files)

- Skip js lib exporting | 1.0 | Adjust document code block execution implementation to append jslib optional arguments - - Point to the common js lib directory (to reduce duplicated js lib files)

- Skip js lib exporting | non_process | adjust document code block execution implementation to append jslib optional arguments point to the common js lib directory to reduce duplicated js lib files skip js lib exporting | 0 |

27,258 | 5,327,170,033 | IssuesEvent | 2017-02-15 08:13:51 | nordsoftware/react-boilerplate | https://api.github.com/repos/nordsoftware/react-boilerplate | opened | Async/await | documentation enhancement | Async / await is not just for managing promises. It is the way to control synchronous / asynchronous execution on parts of the code.

Note that **await** may only be used in functions marked with the **async** keyword.

```js

// with

function sleep(ms) {

// Promise here is just to accomplish the sleep functi... | 1.0 | Async/await - Async / await is not just for managing promises. It is the way to control synchronous / asynchronous execution on parts of the code.

Note that **await** may only be used in functions marked with the **async** keyword.

```js

// with

function sleep(ms) {

// Promise here is just to accomplish th... | non_process | async await async await is not just for managing promises it is the way to control synchronous asynchronous execution on parts of the code note that await may only be used in functions marked with the async keyword js with function sleep ms promise here is just to accomplish th... | 0 |

233,912 | 19,086,056,759 | IssuesEvent | 2021-11-29 06:12:30 | boostcampwm-2021/iOS06-MateRunner | https://api.github.com/repos/boostcampwm-2021/iOS06-MateRunner | opened | [Unit test] TeamRunningResultViewModel | test | ## 🗣 Description

- Test that the Output is returned correctly for the given Input.

```swift

struct Input {

let viewDidLoadEvent: Observable<Void>

let closeButtonDidTapEvent: Observable<Void>

let emojiButtonDidTapEvent: Observable<Void>

}

struct Output {

var dateTime: String

var dayOf... | 1.0 | [Unit test] TeamRunningResultViewModel - ## 🗣 Description

- Test that the Output is returned correctly for the given Input.

```swift

struct Input {

let viewDidLoadEvent: Observable<Void>

let closeButtonDidTapEvent: Observable<Void>

let emojiButtonDidTapEvent: Observable<Void>

}

struct Output {

v... | non_process | teamrunningresultviewmodel 🗣 description test that the output is returned correctly for the given input swift struct input let viewdidloadevent observable let closebuttondidtapevent observable let emojibuttondidtapevent observable struct output var datetime string ... | 0 |

10,982 | 13,783,261,463 | IssuesEvent | 2020-10-08 18:56:04 | ORNL-AMO/AMO-Tools-Desktop | https://api.github.com/repos/ORNL-AMO/AMO-Tools-Desktop | closed | Efficiency Improvement | Calculator Process Heating | Graphs like O2 enrichment

Y = Savings

X = Combustion preheat temp, Flue gas temp, O2 in flue | 1.0 | Efficiency Improvement - Graphs like O2 enrichment

Y = Savings

X = Combustion preheat temp, Flue gas temp, O2 in flue | process | efficiency improvement graphs like enrichment y savings x combustion preheat temp flue gas temp in flue | 1 |

118,668 | 11,985,758,418 | IssuesEvent | 2020-04-07 18:04:54 | logicahealth/covid-19 | https://api.github.com/repos/logicahealth/covid-19 | closed | Add additional licensing statements to IG landing page. | documentation enhancement | Submitted OBO Carol Macumber of Clinical Architecture and agreed to be Stan:

For any specification that references external terminologies, it’s HL7’s policy to include the following Copyright statement.

"This HL7 specification contains and references intellectual property owned by third parties ("Third Party IP"... | 1.0 | Add additional licensing statements to IG landing page. - Submitted OBO Carol Macumber of Clinical Architecture and agreed to be Stan:

For any specification that references external terminologies, it’s HL7’s policy to include the following Copyright statement.

"This HL7 specification contains and references inte... | non_process | add additional licensing statements to ig landing page submitted obo carol macumber of clinical architecture and agreed to be stan for any specification that references external terminologies it’s ’s policy to include the following copyright statement this specification contains and references intellec... | 0 |

605 | 3,074,885,578 | IssuesEvent | 2015-08-20 10:11:07 | sysown/proxysql-0.2 | https://api.github.com/repos/sysown/proxysql-0.2 | opened | Make query retry optional | CONNECTION POOL enhancement MYSQL PROTOCOL QUERY PROCESSOR | ## Why

ProxySQL is now able to re-execute queries if they fail because they were killed or the server has gone away.

This feature should be optional

## What

* [ ] add variable mysql-query_retries_on_failure

* [ ] make a new field in mysql_query_rules | 1.0 | Make query retry optional - ## Why

ProxySQL is now able to re-execute queries if they fail because they were killed or the server has gone away.

This feature should be optional

## What

* [ ] add variable mysql-query_retries_on_failure

* [ ] make a new field in mysql_query_rules | process | make query retry optional why proxysql is now able to re execute queries if they fail because they were killed or the server has gone away this feature should be optional what add variable mysql query retries on failure make a new field in mysql query rules | 1 |

14,525 | 17,620,701,885 | IssuesEvent | 2021-08-18 14:59:11 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | closed | Changes to GO:0039580 suppression by virus of host PKR activity | multi-species process | Hi @pmasson55

GO:0039580 suppression by virus of host PKR activity is mapped to ~KW-1223~ https://www.uniprot.org/keywords/1102 and https://viralzone.expasy.org/554

Looks like proteins annotated to that term inhibit PKR, which is a kinase that regulates eukaryotic translation initiation factor 2.

So - a map... | 1.0 | Changes to GO:0039580 suppression by virus of host PKR activity - Hi @pmasson55

GO:0039580 suppression by virus of host PKR activity is mapped to ~KW-1223~ https://www.uniprot.org/keywords/1102 and https://viralzone.expasy.org/554

Looks like proteins annotated to that term inhibit PKR, which is a kinase that r... | process | changes to go suppression by virus of host pkr activity hi go suppression by virus of host pkr activity is mapped to kw and looks like proteins annotated to that term inhibit pkr which is a kinase that regulates eukaryotic translation initiation factor so a mapping to go suppressio... | 1 |

1,470 | 4,049,448,673 | IssuesEvent | 2016-05-23 14:15:14 | brucemiller/LaTeXML | https://api.github.com/repos/brucemiller/LaTeXML | closed | A bug in mathtools.sty.ltxml? | bug packages postprocessing | If I compile the below code via

`latexmlc --mathimages --destination=test-out.xml test.tex`

then I get an error: the image of the formula can’t be created. If I delete the (unused) package mathtools, then everything goes fine. (The real problem happens in a larger TEX file where I indeed need mathtools, and where the... | 1.0 | A bug in mathtools.sty.ltxml? - If I compile the below code via

`latexmlc --mathimages --destination=test-out.xml test.tex`

then I get an error: the image of the formula can’t be created. If I delete the (unused) package mathtools, then everything goes fine. (The real problem happens in a larger TEX file where I inde... | process | a bug in mathtools sty ltxml if i compile the below code via latexmlc mathimages destination test out xml test tex then i get an error the image of the formula can’t be created if i delete the unused package mathtools then everything goes fine the real problem happens in a larger tex file where i inde... | 1 |

681,651 | 23,319,592,746 | IssuesEvent | 2022-08-08 15:13:41 | SeekyCt/ppcdis | https://api.github.com/repos/SeekyCt/ppcdis | opened | Improve tail call detection | bug enhancement high priority | In a lot of cases, tail calls are missed. A branch should never happen to a stwu r1 outside of tail calls, so that could fix a lot of the current cases. Performing partial finalisation of tags before doing the tail call postprocessing could help too | 1.0 | Improve tail call detection - In a lot of cases, tail calls are missed. A branch should never happen to a stwu r1 outside of tail calls, so that could fix a lot of the current cases. Performing partial finalisation of tags before doing the tail call postprocessing could help too | non_process | improve tail call detection in a lot of cases tail calls are missed a branch should never happen to a stwu outside of tail calls so that could fix a lot of the current cases performing partial finalisation of tags before doing the tail call postprocessing could help too | 0 |

2,297 | 5,116,217,883 | IssuesEvent | 2017-01-07 01:18:36 | dotnet/corefx | https://api.github.com/repos/dotnet/corefx | closed | Test failure: System.ServiceProcess.Tests.SafeServiceControllerTests/GetServices | area-System.ServiceProcess test-run-core | Opened on behalf of @jiangzeng

The test `System.ServiceProcess.Tests.SafeServiceControllerTests/GetServices` has failed.

KeyIso.CanStop

Expected: True

Actual: False

Stack Trace:

at System.ServiceProcess.Tests.SafeServiceControllerTests.GetServices()

Build : Master - 20161215.04 (Cor... | 1.0 | Test failure: System.ServiceProcess.Tests.SafeServiceControllerTests/GetServices - Opened on behalf of @jiangzeng

The test `System.ServiceProcess.Tests.SafeServiceControllerTests/GetServices` has failed.

KeyIso.CanStop

Expected: True

Actual: False

Stack Trace:

at System.ServiceProce... | process | test failure system serviceprocess tests safeservicecontrollertests getservices opened on behalf of jiangzeng the test system serviceprocess tests safeservicecontrollertests getservices has failed keyiso canstop expected true actual false stack trace at system serviceproce... | 1 |

254,736 | 8,087,342,951 | IssuesEvent | 2018-08-09 01:10:01 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | USER ISSUE: Bullrush seed showing water when planted and then one died | Low Priority | **Version:** 0.7.0.0 beta staging-7da08558

they still have 6.6 hours to grow to maturit... | 1.0 | USER ISSUE: Bullrush seed showing water when planted and then one died - **Version:** 0.7.0.0 beta staging-7da08558

to match the defined memory limit for the pods. | 1.0 | Define default CPU requests/limits - It would be nice to define a default CPU limit (and potentially a request) to match the defined memory limit for the pods. | non_process | define default cpu requests limits it would be nice to define a default cpu limit and potentially a request to match the defined memory limit for the pods | 0 |

46,529 | 6,020,733,073 | IssuesEvent | 2017-06-07 17:05:35 | calvaryQC/website-public | https://api.github.com/repos/calvaryQC/website-public | closed | Audio Sermons Page | ✭ redesign | This is a page that will be the audio library of all sermons taught from Calvary Chapel of Queen Creek speakers for Sundays, Wednesdays, and special events.

Sermons should be:

- [x] Under the Media Menu Header

- [x] Move all current data to new site

- [x] Apply new layout | 1.0 | Audio Sermons Page - This is a page that will be the audio library of all sermons taught from Calvary Chapel of Queen Creek speakers for Sundays, Wednesdays, and special events.

Sermons should be:

- [x] Under the Media Menu Header

- [x] Move all current data to new site

- [x] Apply new layout | non_process | audio sermons page this is a page that will be the audio library of all sermons taught from calvary chapel of queen creek speakers for sundays wednesdays and special events sermons should be under the media menu header move all current data to new site apply new layout | 0 |

32,909 | 27,086,553,488 | IssuesEvent | 2023-02-14 17:24:10 | phpmyadmin/scripts | https://api.github.com/repos/phpmyadmin/scripts | closed | PHP upgrade is required for demo server | infrastructure | PHP 8.1.0+ is required.

Currently installed version is: 7.4.33 | 1.0 | PHP upgrade is required for demo server - PHP 8.1.0+ is required.

Currently installed version is: 7.4.33 | non_process | php upgrade is required for demo server php is required currently installed version is | 0 |

130,331 | 18,155,767,335 | IssuesEvent | 2021-09-27 01:12:27 | benlazarine/cas-overlay | https://api.github.com/repos/benlazarine/cas-overlay | opened | CVE-2019-12400 (Medium) detected in xmlsec-2.0.5.jar | security vulnerability | ## CVE-2019-12400 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>xmlsec-2.0.5.jar</b></p></summary>

<p>Apache XML Security for Java supports XML-Signature Syntax and Processing,

... | True | CVE-2019-12400 (Medium) detected in xmlsec-2.0.5.jar - ## CVE-2019-12400 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>xmlsec-2.0.5.jar</b></p></summary>

<p>Apache XML Security for... | non_process | cve medium detected in xmlsec jar cve medium severity vulnerability vulnerable library xmlsec jar apache xml security for java supports xml signature syntax and processing recommendation february and xml encryption syntax and processing recommendation ... | 0 |

13,884 | 16,654,744,413 | IssuesEvent | 2021-06-05 10:08:41 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [PM] Responsive issue > Multiple logos are getting displayed where the device width is small | Bug P2 Participant manager Process: Fixed Process: Tested dev | Responsive issue > Multiple logos are getting displayed where the device width is small

| 2.0 | [PM] Responsive issue > Multiple logos are getting displayed where the device width is small - Responsive issue > Multiple logos are getting displayed where the device width is small

| process | responsive issue multiple logos are getting displayed where the device width is small responsive issue multiple logos are getting displayed where the device width is small | 1 |

30,046 | 5,996,271,758 | IssuesEvent | 2017-06-03 12:41:14 | rekcuFniarB/forum-theprodigy-ru | https://api.github.com/repos/rekcuFniarB/forum-theprodigy-ru | opened | Long links in the profile's list of recent posts and comments break the forum layout | bug Component-UI Milestone-Release2 Priority-High Type-Defect |

Here is the screenshot | 1.0 | Long links in the profile's list of recent posts and comments break the forum layout -

Here is the screenshot | non_process | long links in the profile s list of recent posts and comments break the forum layout here is the screenshot | 0 |

7,650 | 10,738,746,747 | IssuesEvent | 2019-10-29 15:15:50 | prisma/prisma2 | https://api.github.com/repos/prisma/prisma2 | closed | Make Prisma generate independent of environment | bug/2-confirmed kind/bug process/candidate | `prisma generate` fails when a particular environment variable is not available. The reason(s) for this are:

```groovy

datasource ds {

provider = env("DB_PROVIDER")

url = env("DB_URL")

}

```

If `DB_PROVIDER` is used, then the generate CLI **needs** to resolve it to proceed as it needs to know the data s... | 1.0 | Make Prisma generate independent of environment - `prisma generate` fails when a particular environment variable is not available. The reason(s) for this are:

```groovy

datasource ds {

provider = env("DB_PROVIDER")

url = env("DB_URL")

}

```

If `DB_PROVIDER` is used, then the generate CLI **needs** to re... | process | make prisma generate independent of environment prisma generate fails when a particular environment variable is not available the reason s for this are groovy datasource ds provider env db provider url env db url if db provider is used then the generate cli needs to re... | 1 |

21,384 | 3,702,306,929 | IssuesEvent | 2016-02-29 16:23:05 | owncloud/core | https://api.github.com/repos/owncloud/core | opened | Make it possible to unshare all shares, or a subset, in one click | app:files design enhancement - proposed feature:sharing | **User type**: Logged-in

**User level**: All

### Description

<!--

Please try to give as much information as you can about your request

-->

From 9.0, all reshares are shown in the owner's sharing tab and there is no visual distinction between shares and reshares. Owners can only guess to which of their share... | 1.0 | Make it possible to unshare all shares, or a subset, in one click - **User type**: Logged-in

**User level**: All

### Description

<!--

Please try to give as much information as you can about your request

-->

From 9.0, all reshares are shown in the owner's sharing tab and there is no visual distinction betwee... | non_process | make it possible to unshare all shares or a subset in one click user type logged in user level all description please try to give as much information as you can about your request from all reshares are shown in the owner s sharing tab and there is no visual distinction betwee... | 0 |

13,687 | 16,444,947,031 | IssuesEvent | 2021-05-20 18:24:32 | googleapis/python-spanner-django | https://api.github.com/repos/googleapis/python-spanner-django | closed | Change tests parallelizing mechanism | api: spanner priority: p1 type: process | While working on the last PR I've got in the situation when kokoro checks passed (green), but after some time their status became red (because some parallelized tests failed - some can take [more than 20 min.](https://source.cloud.google.com/results/invocations/0417017c-7716-4076-87b7-d2a5dd5a1d48/targets)). This can c... | 1.0 | Change tests parallelizing mechanism - While working on the last PR I've got in the situation when kokoro checks passed (green), but after some time their status became red (because some parallelized tests failed - some can take [more than 20 min.](https://source.cloud.google.com/results/invocations/0417017c-7716-4076-... | process | change tests parallelizing mechanism while working on the last pr i ve got in the situation when kokoro checks passed green but after some time their status became red because some parallelized tests failed some can take this can cause problems with automerge bot that runs checks and merges pr automatica... | 1 |

18,176 | 24,224,281,486 | IssuesEvent | 2022-09-26 13:21:17 | altillimity/SatDump | https://api.github.com/repos/altillimity/SatDump | closed | multiple batch decoding no result | bug Processing | I have noticed this problem on earlier versions, but in #465 its real problem. I have batch file to offline decode recorded files (VHF, Meteor M2). Using satdump-ui.exe no problem, those are decoded each, one by one manually, but batch file processing fails to decode, created no files and .cadu have zero size. It looks... | 1.0 | multiple batch decoding no result - I have noticed this problem on earlier versions, but in #465 its real problem. I have batch file to offline decode recorded files (VHF, Meteor M2). Using satdump-ui.exe no problem, those are decoded each, one by one manually, but batch file processing fails to decode, created no file... | process | multiple batch decoding no result i have noticed this problem on earlier versions but in its real problem i have batch file to offline decode recorded files vhf meteor using satdump ui exe no problem those are decoded each one by one manually but batch file processing fails to decode created no files a... | 1 |

521,578 | 15,111,914,991 | IssuesEvent | 2021-02-08 21:05:46 | CDH-Studio/I-Talent | https://api.github.com/repos/CDH-Studio/I-Talent | closed | Frontend yarn related errors | bug high priority | **Describe the bug**

A clear and concise description of what the bug is.

there are currently 372 errors and 3 warnings from our packages.

**To Reproduce**

run yarn check

**Expected behavior**

A clear and concise description of what you expected to happen.

**Screenshots**

If applicable, add screenshots to h... | 1.0 | Frontend yarn related errors - **Describe the bug**

A clear and concise description of what the bug is.

there are currently 372 errors and 3 warnings from our packages.

**To Reproduce**

run yarn check

**Expected behavior**

A clear and concise description of what you expected to happen.

**Screenshots**

If ... | non_process | frontend yarn related errors describe the bug a clear and concise description of what the bug is there are currently errors and warnings from our packages to reproduce run yarn check expected behavior a clear and concise description of what you expected to happen screenshots if ap... | 0 |

576,056 | 17,070,133,098 | IssuesEvent | 2021-07-07 12:21:56 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | newstalk1130.iheart.com - site is not usable | browser-firefox-ios os-ios priority-normal | <!-- @browser: Firefox iOS 34.2 -->

<!-- @ua_header: Mozilla/5.0 (iPhone; CPU iPhone OS 14_6 like Mac OS X) AppleWebKit/605.1.15 (KHTML, like Gecko) FxiOS/34.2 Mobile/15E148 Safari/605.1.15 -->

<!-- @reported_with: mobile-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/79345 -->

<!-- @extra... | 1.0 | newstalk1130.iheart.com - site is not usable - <!-- @browser: Firefox iOS 34.2 -->

<!-- @ua_header: Mozilla/5.0 (iPhone; CPU iPhone OS 14_6 like Mac OS X) AppleWebKit/605.1.15 (KHTML, like Gecko) FxiOS/34.2 Mobile/15E148 Safari/605.1.15 -->

<!-- @reported_with: mobile-reporter -->

<!-- @public_url: https://github.com/... | non_process | iheart com site is not usable url browser version firefox ios operating system ios tested another browser no problem type site is not usable description page not loading correctly steps to reproduce tried to open from search browser configu... | 0 |

12,249 | 14,767,407,123 | IssuesEvent | 2021-01-10 06:34:08 | Big-Joe-Channel/edit-repo | https://api.github.com/repos/Big-Joe-Channel/edit-repo | opened | EP | assigned new mission processing | # EP94

## Notices

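- Video Title: Should you work at a small company or a big one (in 2021)? / Work for a small company or a large one?

- Thumbnail Title: A small-company director tells you how to get promoted

- Raw video: 2020-10-29_08-48-23 [Click to download](https://drive.google.com/file/d/1Mg8s0ufQuzOyCDO-CO9r4jaWOyrjp3B_/view?usp=sharing)

- Comments: bigjoe: zhiyao is sometimes a bit long-winded; cut where it needs cutting and speed up where it needs speeding up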

## Todo-List... | 1.0 | EP - # EP94

## Notices

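- Video Title: Should you work at a small company or a big one (in 2021)? / Work for a small company or a large one?

- Thumbnail Title: A small-company director tells you how to get promoted

- Raw video: 2020-10-29_08-48-23 [Click to download](https://drive.google.com/file/d/1Mg8s0ufQuzOyCDO-CO9r4jaWOyrjp3B_/view?usp=sharing)

- Comments: bigjoe: zhiyao is sometimes a bit long-winded; cut where it needs cutting and speed up where it needs speeding up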