Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 7 to 112) | repo_url (stringlengths, 36 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 744) | labels (stringlengths, 4 to 574) | body (stringlengths, 9 to 211k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96 to 211k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 188k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

292,999 | 25,258,191,487 | IssuesEvent | 2022-11-15 20:05:03 | eclipse-openj9/openj9 | https://api.github.com/repos/eclipse-openj9/openj9 | closed | [JDK19] JVMTI framepop02.java#id1 and singlestep01 Segfault | comp:vm test excluded project:loom jdk19 comp:jvmti | `framepop02/framepop02.java#id1` and `SingleStep/singlestep01` pass with the RI. These tests are related to Project Loom. The test failures will only be seen in JDK19+.

Related: #16187.

### Issue

There is a recursive infinite loop. The test registers a JVMTI event callback for `MethodEntry`, and invokes `jvmtiGe... | 1.0 | [JDK19] JVMTI framepop02.java#id1 and singlestep01 Segfault - `framepop02/framepop02.java#id1` and `SingleStep/singlestep01` pass with the RI. These tests are related to Project Loom. The test failures will only be seen in JDK19+.

Related: #16187.

### Issue

There is a recursive infinite loop. The test registers ... | non_process | jvmti java and segfault java and singlestep pass with the ri these tests are related to project loom the test failures will only be seen in related issue there is a recursive infinite loop the test registers a jvmti event callback for methodentry and invokes jvmtigetthre... | 0 |

12,676 | 4,513,659,031 | IssuesEvent | 2016-09-04 12:15:54 | nextcloud/gallery | https://api.github.com/repos/nextcloud/gallery | opened | GDrive-like grid view | coder wanted enhancement sponsor needed | _From @RoxasShadow on January 8, 2016 0:52_

For me would be great having the possibility to have a grid view similar to the Google Drive one with nice cropped image that share the same size.

I think it's an elegant and efficient way to look at the image thumbnails.

*Gallery+*

<img width="341" alt="screen shot 201... | 1.0 | GDrive-like grid view - _From @RoxasShadow on January 8, 2016 0:52_

For me would be great having the possibility to have a grid view similar to the Google Drive one with nice cropped image that share the same size.

I think it's an elegant and efficient way to look at the image thumbnails.

*Gallery+*

<img width="... | non_process | gdrive like grid view from roxasshadow on january for me would be great having the possibility to have a grid view similar to the google drive one with nice cropped image that share the same size i think it s an elegant and efficient way to look at the image thumbnails gallery img width a... | 0 |

2,949 | 5,930,338,028 | IssuesEvent | 2017-05-24 00:58:10 | triplea-game/triplea | https://api.github.com/repos/triplea-game/triplea | closed | New Issue Labeling process and wiki page | type: process | Creating labels ad-hoc is good, but not having a shared definition of what labels mean is not. We have some notes on the main readme about what labels meant. This was not a complete list.

I copy/pasted our current label set (and created a 'not fixing / ice box' label), and put them in this wiki page: https://github.... | 1.0 | New Issue Labeling process and wiki page - Creating labels ad-hoc is good, but not having a shared definition of what labels mean is not. We have some notes on the main readme about what labels meant. This was not a complete list.

I copy/pasted our current label set (and created a 'not fixing / ice box' label), and ... | process | new issue labeling process and wiki page creating labels ad hoc is good but not having a shared definition of what labels mean is not we have some notes on the main readme about what labels meant this was not a complete list i copy pasted our current label set and created a not fixing ice box label and ... | 1 |

43,948 | 5,719,686,767 | IssuesEvent | 2017-04-19 22:45:26 | brave/browser-laptop | https://api.github.com/repos/brave/browser-laptop | opened | Settings v Preferences mismatch | design feature/about-pages settings | - Did you search for similar issues before submitting this one?

Yes

- Describe the issue you encountered:

I was opening an issue for Sync, and noticed that we use _Settings_ from the top menu:

**_Edit > Settings_** for the `about:preferences` page.

Sync issue opened for reference: https://github.com/brave/s... | 1.0 | Settings v Preferences mismatch - - Did you search for similar issues before submitting this one?

Yes

- Describe the issue you encountered:

I was opening an issue for Sync, and noticed that we use _Settings_ from the top menu:

**_Edit > Settings_** for the `about:preferences` page.

Sync issue opened for ref... | non_process | settings v preferences mismatch did you search for similar issues before submitting this one yes describe the issue you encountered i was opening an issue for sync and noticed that we use settings from the top menu edit settings for the about preferences page sync issue opened for ref... | 0 |

46,937 | 13,198,015,237 | IssuesEvent | 2020-08-14 01:01:50 | orenavitov/promoted-builds-plugin | https://api.github.com/repos/orenavitov/promoted-builds-plugin | opened | CVE-2020-2230 (Medium) detected in jenkins-core-2.121.1.jar | security vulnerability | ## CVE-2020-2230 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jenkins-core-2.121.1.jar</b></p></summary>

<p>Jenkins core code and view files to render HTML.</p>

<p>Path to depende... | True | CVE-2020-2230 (Medium) detected in jenkins-core-2.121.1.jar - ## CVE-2020-2230 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jenkins-core-2.121.1.jar</b></p></summary>

<p>Jenkins c... | non_process | cve medium detected in jenkins core jar cve medium severity vulnerability vulnerable library jenkins core jar jenkins core code and view files to render html path to dependency file tmp ws scm promoted builds plugin pom xml path to vulnerable library epository org jenkin... | 0 |

16,542 | 21,567,859,771 | IssuesEvent | 2022-05-02 02:31:43 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | Custom QNetworkAccessManager for QWebPage segfaults | Processing Bug Python Console | ### What is the bug or the crash?

Using `QWebView`, I needed to accommodate for an authentication procedure where I have to capture some of the javascript responses a web page does. That requires a custom `QNetworkAccessManager` which needs to be connected with the `QWebPage`, see script below (the reason is by defa... | 1.0 | Custom QNetworkAccessManager for QWebPage segfaults - ### What is the bug or the crash?

Using `QWebView`, I needed to accommodate for an authentication procedure where I have to capture some of the javascript responses a web page does. That requires a custom `QNetworkAccessManager` which needs to be connected with t... | process | custom qnetworkaccessmanager for qwebpage segfaults what is the bug or the crash using qwebview i needed to accommodate for an authentication procedure where i have to capture some of the javascript responses a web page does that requires a custom qnetworkaccessmanager which needs to be connected with t... | 1 |

79,680 | 9,933,936,249 | IssuesEvent | 2019-07-02 13:27:51 | microsoft/terminal | https://api.github.com/repos/microsoft/terminal | closed | Bug Report | Area-Settings Issue-Question Needs-Author-Feedback Product-Terminal Resolution-By-Design |

# Environment

```none

Windows build number: Microsoft Windows [Version 10.0.18362.207]

Windows Terminal version (if applicable): unsure how to check, fresh install from the Microsoft Store

```

# Steps to reproduce

<!-- A description of how to trigger this bug. -->

Start Windows Terminal,

Attempt to move... | 1.0 | Bug Report -

# Environment

```none

Windows build number: Microsoft Windows [Version 10.0.18362.207]

Windows Terminal version (if applicable): unsure how to check, fresh install from the Microsoft Store

```

# Steps to reproduce

<!-- A description of how to trigger this bug. -->

Start Windows Terminal,

At... | non_process | bug report environment none windows build number microsoft windows windows terminal version if applicable unsure how to check fresh install from the microsoft store steps to reproduce start windows terminal attempt to move the window around it is not possible focusing and unfocu... | 0 |

8,243 | 11,420,168,833 | IssuesEvent | 2020-02-03 09:35:09 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | closed | Merge GO:0044054 rounding by symbiont of host cells into GO:0052039 modification by symbiont of host cytoskeleton | multi-species process | GO:0044054 rounding by symbiont of host cells -> 1 annotation

is a read-out of GO:0052039 modification by symbiont of host cytoskeleton (1 annotation)

These two terms will be merged.

@RLovering just informing you since the annotation to 'GO:0044054 rounding by symbiont of host cells ' is from your group.

Th... | 1.0 | Merge GO:0044054 rounding by symbiont of host cells into GO:0052039 modification by symbiont of host cytoskeleton - GO:0044054 rounding by symbiont of host cells -> 1 annotation

is a read-out of GO:0052039 modification by symbiont of host cytoskeleton (1 annotation)

These two terms will be merged.

@RLovering j... | process | merge go rounding by symbiont of host cells into go modification by symbiont of host cytoskeleton go rounding by symbiont of host cells annotation is a read out of go modification by symbiont of host cytoskeleton annotation these two terms will be merged rlovering just informing you since ... | 1 |

148,378 | 5,680,706,200 | IssuesEvent | 2017-04-13 02:30:34 | meetinghouse/cms | https://api.github.com/repos/meetinghouse/cms | opened | Dark Theme: Add "active"/"not-active" classes to /projects/tags and /posts/tags sub-menu items. | Medium Priority | @vivek-chaudhari When you click on a sub-menu item for projects (portfolios) or blog and the page loads, there is no "active" class on the active menu item. Can you add the active/not-active classes functionality to those sub-menus on dark theme like it is on the main menu?

You can see the projects sub-items on Stev... | 1.0 | Dark Theme: Add "active"/"not-active" classes to /projects/tags and /posts/tags sub-menu items. - @vivek-chaudhari When you click on a sub-menu item for projects (portfolios) or blog and the page loads, there is no "active" class on the active menu item. Can you add the active/not-active classes functionality to those ... | non_process | dark theme add active not active classes to projects tags and posts tags sub menu items vivek chaudhari when you click on a sub menu item for projects portfolios or blog and the page loads there is no active class on the active menu item can you add the active not active classes functionality to those ... | 0 |

7,756 | 10,867,564,318 | IssuesEvent | 2019-11-15 00:23:34 | googleapis/nodejs-containeranalysis | https://api.github.com/repos/googleapis/nodejs-containeranalysis | closed | Release GA | type: process | Package name: `@google-cloud/containeranalysis`

Current release: **beta**

Proposed release: **GA**

## Instructions

Check the lists below, adding tests / documentation as required. Once all the "required" boxes are ticked, please create a release and close this issue.

## Required

- [x] 28 days elapsed ... | 1.0 | Release GA - Package name: `@google-cloud/containeranalysis`

Current release: **beta**

Proposed release: **GA**

## Instructions

Check the lists below, adding tests / documentation as required. Once all the "required" boxes are ticked, please create a release and close this issue.

## Required

- [x] 28 ... | process | release ga package name google cloud containeranalysis current release beta proposed release ga instructions check the lists below adding tests documentation as required once all the required boxes are ticked please create a release and close this issue required day... | 1 |

121,426 | 10,168,139,398 | IssuesEvent | 2019-08-07 20:02:01 | davissong/first_reposit | https://api.github.com/repos/davissong/first_reposit | opened | SQA-FTest-Learning Markdown from template | test | <h3>Tests US: # </h3>

Description: trying to use markdown to show how we can write test scripts from GitHub

Test Cases (External) Link to Test Cases, <h1>trying to replace this</h2>

Where executed from:

- [x] GFE

- [x] CAG

- [x] Both UI and API are on same laptop

*Show Result: via labels like the Pass/Fa... | 1.0 | SQA-FTest-Learning Markdown from template - <h3>Tests US: # </h3>

Description: trying to use markdown to show how we can write test scripts from GitHub

Test Cases (External) Link to Test Cases, <h1>trying to replace this</h2>

Where executed from:

- [x] GFE

- [x] CAG

- [x] Both UI and API are on same laptop

... | non_process | sqa ftest learning markdown from template tests us description trying to use markdown to show how we can write test scripts from github test cases external link to test cases trying to replace this where executed from gfe cag both ui and api are on same laptop show result via... | 0 |

551,701 | 16,178,197,157 | IssuesEvent | 2021-05-03 10:23:38 | DIT112-V21/group-09 | https://api.github.com/repos/DIT112-V21/group-09 | closed | Release and test alpha-1 version | JS enhancement high-priority project sprint-2 | ## Objectives

- Finalize the Frontend app - [Milestone 1](https://github.com/DIT112-V21/group-09/milestone/1)

- Setup ElectronJS build workflow and initialize

- Automatically build and deploy alpha-1 release for macOS, Windows and Ubuntu

## Target

- By mid-week around Apr 28th, 2021

- At latest by the end of Sp... | 1.0 | Release and test alpha-1 version - ## Objectives

- Finalize the Frontend app - [Milestone 1](https://github.com/DIT112-V21/group-09/milestone/1)

- Setup ElectronJS build workflow and initialize

- Automatically build and deploy alpha-1 release for macOS, Windows and Ubuntu

## Target

- By mid-week around Apr 28th,... | non_process | release and test alpha version objectives finalize the frontend app setup electronjs build workflow and initialize automatically build and deploy alpha release for macos windows and ubuntu target by mid week around apr at latest by the end of sprint next steps after rel... | 0 |

135,573 | 18,714,904,476 | IssuesEvent | 2021-11-03 02:18:27 | ChoeMinji/react | https://api.github.com/repos/ChoeMinji/react | opened | CVE-2020-7793 (High) detected in multiple libraries | security vulnerability | ## CVE-2020-7793 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>ua-parser-js-0.7.22.tgz</b>, <b>ua-parser-js-0.7.12.tgz</b>, <b>ua-parser-js-0.7.20.tgz</b></p></summary>

<p>

<detail... | True | CVE-2020-7793 (High) detected in multiple libraries - ## CVE-2020-7793 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>ua-parser-js-0.7.22.tgz</b>, <b>ua-parser-js-0.7.12.tgz</b>, <b>... | non_process | cve high detected in multiple libraries cve high severity vulnerability vulnerable libraries ua parser js tgz ua parser js tgz ua parser js tgz ua parser js tgz lightweight javascript based user agent string parser library home page a href path... | 0 |

14,620 | 17,766,639,947 | IssuesEvent | 2021-08-30 08:23:07 | googleapis/teeny-request | https://api.github.com/repos/googleapis/teeny-request | reopened | Dependency Dashboard | type: process | This issue provides visibility into Renovate updates and their statuses. [Learn more](https://docs.renovatebot.com/key-concepts/dashboard/)

## Awaiting Schedule

These updates are awaiting their schedule. Click on a checkbox to get an update now.

- [ ] <!-- unschedule-branch=renovate/actions-setup-node-2.x -->chore(d... | 1.0 | Dependency Dashboard - This issue provides visibility into Renovate updates and their statuses. [Learn more](https://docs.renovatebot.com/key-concepts/dashboard/)

## Awaiting Schedule

These updates are awaiting their schedule. Click on a checkbox to get an update now.

- [ ] <!-- unschedule-branch=renovate/actions-se... | process | dependency dashboard this issue provides visibility into renovate updates and their statuses awaiting schedule these updates are awaiting their schedule click on a checkbox to get an update now chore deps update actions setup node action to ignored or blocked these are blocked by an existin... | 1 |

189,633 | 22,047,080,635 | IssuesEvent | 2022-05-30 03:51:13 | madhans23/linux-4.1.15 | https://api.github.com/repos/madhans23/linux-4.1.15 | closed | CVE-2019-19037 (Medium) detected in linux-stable-rtv4.1.33 - autoclosed | security vulnerability | ## CVE-2019-19037 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv4.1.33</b></p></summary>

<p>

<p>Julia Cartwright's fork of linux-stable-rt.git</p>

<p>Library home p... | True | CVE-2019-19037 (Medium) detected in linux-stable-rtv4.1.33 - autoclosed - ## CVE-2019-19037 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv4.1.33</b></p></summary>

<p... | non_process | cve medium detected in linux stable autoclosed cve medium severity vulnerability vulnerable library linux stable julia cartwright s fork of linux stable rt git library home page a href found in head commit a href found in base branch master vulner... | 0 |

20,904 | 27,746,311,852 | IssuesEvent | 2023-03-15 17:16:31 | openxla/community | https://api.github.com/repos/openxla/community | opened | Add iree-discuss redirect to appropriate ML on openxla.org | process | Would be good to have all OpenXLA mailing lists live on openxla.org. Should set up iree-discuss to migrate either to openxla-discuss or dedicated ML on openxla.org. | 1.0 | Add iree-discuss redirect to appropriate ML on openxla.org - Would be good to have all OpenXLA mailing lists live on openxla.org. Should set up iree-discuss to migrate either to openxla-discuss or dedicated ML on openxla.org. | process | add iree discuss redirect to appropriate ml on openxla org would be good to have all openxla mailing lists live on openxla org should set up iree discuss to migrate either to openxla discuss or dedicated ml on openxla org | 1 |

14,145 | 17,035,635,740 | IssuesEvent | 2021-07-05 06:39:13 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [PM] Responsive issue > Customer logo is not getting displayed on error pages | Bug P2 Participant manager Process: Fixed Process: Tested QA Process: Tested dev | AR: Customer logo is not getting displayed on error pages

ER: Customer logo should be displayed on all the error pages and if the user click on logo then user should be navigate to sign in page

| 3.0 | [PM] Responsive issue > Customer logo is not getting displayed on error pages - AR: Customer logo is not getting displayed on error pages

ER: Customer logo should be displayed on all the error pages and if the user click on logo then user should be navigate to sign in page

: The position where the uncle was in the array

- Uncle reward (just reward for the uncle)

Take a look on: https://etherscan.io/uncles | 1.0 | Add to UncleRecord - - BlockHeight: Real block number where it appeared

- Position / Index (or another name): The position where the uncle was in the array

- Uncle reward (just reward for the uncle)

Take a look on: https://etherscan.io/uncles | process | add to unclerecord blockheight real block number where it appeared position index or another name the position where the uncle was in the array uncle reward just reward for the uncle take a look on | 1 |

15,477 | 19,685,996,288 | IssuesEvent | 2022-01-11 22:14:38 | PyCQA/pylint | https://api.github.com/repos/PyCQA/pylint | closed | ValueError: generator already executing | Crash 💥 python 3.9 topic-multiprocessing Requires Astroid Update | ### Steps to reproduce

This appears very sensitive to the exact current code and environment. My attempts to create a simplified case for testing have failed. I have a commit in a local git repo of the failing state. I can publish that if needed to debug the root cause. There is nothing proprietory in the project.

... | 1.0 | ValueError: generator already executing - ### Steps to reproduce

This appears very sensitive to the exact current code and environment. My attempts to create a simplified case for testing have failed. I have a commit in a local git repo of the failing state. I can publish that if needed to debug the root cause. Ther... | process | valueerror generator already executing steps to reproduce this appears very sensitive to the exact current code and environment my attempts to create a simplified case for testing have failed i have a commit in a local git repo of the failing state i can publish that if needed to debug the root cause ther... | 1 |

11,300 | 14,105,572,282 | IssuesEvent | 2020-11-06 13:42:39 | paul-buerkner/brms | https://api.github.com/repos/paul-buerkner/brms | closed | PPC for (right-)censored data | feature post-processing | It seems like posterior predictive checks (PPCs) for censored data (in non-Cox models) are currently performed by leaving out the censored observations from the observed data. I think this is not appropriate: For example, the noncensored observations may be systematically smaller than the censored ones. (Think of the e... | 1.0 | PPC for (right-)censored data - It seems like posterior predictive checks (PPCs) for censored data (in non-Cox models) are currently performed by leaving out the censored observations from the observed data. I think this is not appropriate: For example, the noncensored observations may be systematically smaller than th... | process | ppc for right censored data it seems like posterior predictive checks ppcs for censored data in non cox models are currently performed by leaving out the censored observations from the observed data i think this is not appropriate for example the noncensored observations may be systematically smaller than th... | 1 |

12,472 | 14,940,966,887 | IssuesEvent | 2021-01-25 19:03:06 | ORNL-AMO/AMO-Tools-Desktop | https://api.github.com/repos/ORNL-AMO/AMO-Tools-Desktop | opened | Process Hst thing asked for?eating Small things | Process Heating Quick Fix | Aux - why is duty cycle first. it should be last | 1.0 | Process Hst thing asked for?eating Small things - Aux - why is duty cycle first. it should be last | process | process hst thing asked for eating small things aux why is duty cycle first it should be last | 1 |

159,144 | 6,041,203,894 | IssuesEvent | 2017-06-10 21:49:57 | svof/svof | https://api.github.com/repos/svof/svof | closed | Wrong definition of `a_darkyellow` | bug low priority simple difficulty up for grabs | > Sent By: Lynara On 2017-03-20 00:54:22

A_darkyellow, a color made by svo, is wrong - it should be {179,179,0}. It is {0,179,0}. Which is a_darkgreen. | 1.0 | Wrong definition of `a_darkyellow` - > Sent By: Lynara On 2017-03-20 00:54:22

A_darkyellow, a color made by svo, is wrong - it should be {179,179,0}. It is {0,179,0}. Which is a_darkgreen. | non_process | wrong definition of a darkyellow sent by lynara on a darkyellow a color made by svo is wrong it should be it is which is a darkgreen | 0 |

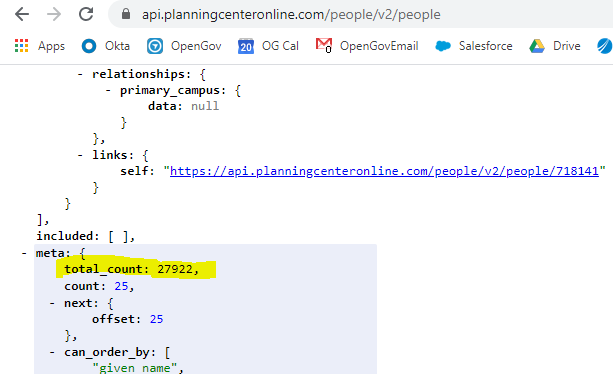

664 | 11,860,896,873 | IssuesEvent | 2020-03-25 15:33:08 | planningcenter/developers | https://api.github.com/repos/planningcenter/developers | closed | Total count is exactly 10000 when doing GET request for People (People) JSON | People | When going to https://api.planningcenteronline.com/people/v2/people on the browser, json['meta']['total_count'] reveals the real number (27922).

However, I've tried doing a GET request via Postman, an ET... | 1.0 | Total count is exactly 10000 when doing GET request for People (People) JSON - When going to https://api.planningcenteronline.com/people/v2/people on the browser, json['meta']['total_count'] reveals the real number (27922).

detected in js-yaml-3.5.5.tgz, js-yaml-3.6.1.tgz | security vulnerability | ## WS-2019-0032 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>js-yaml-3.5.5.tgz</b>, <b>js-yaml-3.6.1.tgz</b></p></summary>

<p>

<details><summary><b>js-yaml-3.5.5.tgz</b></p></summ... | True | WS-2019-0032 (High) detected in js-yaml-3.5.5.tgz, js-yaml-3.6.1.tgz - ## WS-2019-0032 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>js-yaml-3.5.5.tgz</b>, <b>js-yaml-3.6.1.tgz</b><... | non_process | ws high detected in js yaml tgz js yaml tgz ws high severity vulnerability vulnerable libraries js yaml tgz js yaml tgz js yaml tgz yaml parser and serializer library home page a href path to dependency file js demo package json path... | 0 |

20,701 | 27,385,849,614 | IssuesEvent | 2023-02-28 13:16:10 | microsoftgraph/msgraph-metadata | https://api.github.com/repos/microsoftgraph/msgraph-metadata | closed | beta generation failing because of delta function | Generator: Metadata Preprocessor metadata-issue blocking | [beta weekly generation is currently failing](https://microsoftgraph.visualstudio.com/Graph%20Developer%20Experiences/_build/results?buildId=106293&view=logs&j=4c81e8e4-6eb9-5def-7261-b44ad0fc1b7d&t=163839c6-f69e-5b5d-39e2-5b38e97d3fe3) because [a delta function on directory objects was added](https://dev.azure.com/msa... | 1.0 | beta generation failing because of delta function - [beta weekly generation is currently failing](https://microsoftgraph.visualstudio.com/Graph%20Developer%20Experiences/_build/results?buildId=106293&view=logs&j=4c81e8e4-6eb9-5def-7261-b44ad0fc1b7d&t=163839c6-f69e-5b5d-39e2-5b38e97d3fe3) because [a delta function on di... | process | beta generation failing because of delta function because which conflicts with the derived types delta functions i believe we should introduce an xsl fix that removes the functions on the derived types only for beta only for kiota based sdk for the time being until the service fixes their definition to ... | 1 |

80,728 | 3,573,587,270 | IssuesEvent | 2016-01-27 07:30:47 | OpenSRP/opensrp-server | https://api.github.com/repos/OpenSRP/opensrp-server | closed | Immediate schedule generation | BANGLADESH High Priority | When a front line (FWA) health worker visit a house and register a household. Then after that registration Opensrp server generate pregnancy surveillance schedule (psrf) after 8 weeks if it finds a eligible couple in that household registration. But the workers want to complete the surveillance in a day they are doing ... | 1.0 | Immediate schedule generation - When a front line (FWA) health worker visit a house and register a household. Then after that registration Opensrp server generate pregnancy surveillance schedule (psrf) after 8 weeks if it finds a eligible couple in that household registration. But the workers want to complete the surve... | non_process | immediate schedule generation when a front line fwa health worker visit a house and register a household then after that registration opensrp server generate pregnancy surveillance schedule psrf after weeks if it finds a eligible couple in that household registration but the workers want to complete the surve... | 0 |

4,905 | 7,783,585,384 | IssuesEvent | 2018-06-06 10:23:43 | openvstorage/framework | https://api.github.com/repos/openvstorage/framework | closed | Why are Ubuntu and centos 7vdisk automatic snapshots different? | process_wontfix | My Ubuntu has automatic snapshots of virtual disks, why centos 7 does not have automatic snapshots of virtual disks, what do I need to do? | 1.0 | Why are Ubuntu and centos 7vdisk automatic snapshots different? - My Ubuntu has automatic snapshots of virtual disks, why centos 7 does not have automatic snapshots of virtual disks, what do I need to do? | process | why are ubuntu and centos automatic snapshots different my ubuntu has automatic snapshots of virtual disks why centos does not have automatic snapshots of virtual disks what do i need to do | 1 |

21,141 | 28,111,846,866 | IssuesEvent | 2023-03-31 07:50:45 | Open-EO/openeo-processes | https://api.github.com/repos/Open-EO/openeo-processes | closed | load_stac_collection | new process | **Proposed Process ID:** load_stac_collection

## Context

An openEO backend now only supports predefined collections, but properly configured STAC collections can also be loaded. This avoids the need for static config, and simplifies use cases like running an openEO backend out of the box.

There's a few bigger qu... | 1.0 | load_stac_collection - **Proposed Process ID:** load_stac_collection

## Context

An openEO backend now only supports predefined collections, but properly configured STAC collections can also be loaded. This avoids the need for static config, and simplifies use cases like running an openEO backend out of the box.

... | process | load stac collection proposed process id load stac collection context an openeo backend now only supports predefined collections but properly configured stac collections can also be loaded this avoids the need for static config and simplifies use cases like running an openeo backend out of the box ... | 1 |

306,769 | 26,494,028,796 | IssuesEvent | 2023-01-18 02:46:52 | microsoft/AzureStorageExplorer | https://api.github.com/repos/microsoft/AzureStorageExplorer | closed | The toolbar and editor for one app configuration are not localized | :heavy_check_mark: merged 🧪 testing :globe_with_meridians: localization :gear: app-config | **Storage Explorer Version:** 1.28.0-dev

**Build Number:** 20221226.3

**Branch:** main

**Platform/OS:** Windows 10/Linux Ubuntu 22.04/MacOS Ventura 13.1 (Apple M1 Pro)

**Reproduce Languages:** All

**Architecture:** ia32/x64

**How Found:** From running test cases

**Regression From:** Not a regression

## Steps ... | 1.0 | The toolbar and editor for one app configuration are not localized - **Storage Explorer Version:** 1.28.0-dev

**Build Number:** 20221226.3

**Branch:** main

**Platform/OS:** Windows 10/Linux Ubuntu 22.04/MacOS Ventura 13.1 (Apple M1 Pro)

**Reproduce Languages:** All

**Architecture:** ia32/x64

**How Found:** From r... | non_process | the toolbar and editor for one app configuration are not localized storage explorer version dev build number branch main platform os windows linux ubuntu macos ventura apple pro reproduce languages all architecture how found from running test cases ... | 0 |

436,380 | 12,550,374,565 | IssuesEvent | 2020-06-06 10:53:18 | EBIvariation/trait-curation | https://api.github.com/repos/EBIvariation/trait-curation | opened | Main page: implement “Export as spreadsheet” button | Priority: Low Scope: Main page | This should function in a way similar to #18. Note that this is low priority and should only be implemented after all other UI components are done & tested, unless we identify a use case which makes this more important.

== 2` and tak... | 1.0 | do not automatically create *_set_auxiliary_variables.m on preprocessor run - As `*_set_auxiliary_variables.m` is sometimes empty, it does not make sense to create it on every run. Only create it if something will be written to it.

This change requires either a flag in `M_` to be tested every time the function is ca... | process | do not automatically create set auxiliary variables m on preprocessor run as set auxiliary variables m is sometimes empty it does not make sense to create it on every run only create it if something will be written to it this change requires either a flag in m to be tested every time the function is ca... | 1 |

242,375 | 20,244,890,914 | IssuesEvent | 2022-02-14 12:50:37 | commercialhaskell/stackage | https://api.github.com/repos/commercialhaskell/stackage | closed | hspec-core-2.8.4 | failure: test-suite | ```

Test suite failure for package hspec-core-2.8.4

spec: exited with: ExitFailure 1

Failures:

test/Test/Hspec/Core/Config/OptionsSpec.hs:41:7:

1) Test.Hspec.Core.Config.Options.parseOptions, wi... | 1.0 | hspec-core-2.8.4 - ```

Test suite failure for package hspec-core-2.8.4

spec: exited with: ExitFailure 1

Failures:

test/Test/Hspec/Core/Config/OptionsSpec.hs:41:7:

1) Test.Hspec.Core.Config.Optio... | non_process | hspec core test suite failure for package hspec core spec exited with exitfailure failures test test hspec core config optionsspec hs test hspec core config option... | 0 |

4,935 | 7,795,874,294 | IssuesEvent | 2018-06-08 09:35:05 | StrikeNP/trac_test | https://api.github.com/repos/StrikeNP/trac_test | closed | Update README in directory output_scripts/twp_ice (Trac #168) | Migrated from Trac enhancement nielsenb@uwm.edu post_processing | In r3915, you've added files used to verify the .nc output for submission to the TWP-ICE intercomparison. Thanks for this.

Could you also please update the README in that same directory in order to describe each of the new files? Perhaps you could augment what you wrote in the svn commit:

". . . verification plots ... | 1.0 | Update README in directory output_scripts/twp_ice (Trac #168) - In r3915, you've added files used to verify the .nc output for submission to the TWP-ICE intercomparison. Thanks for this.

Could you also please update the README in that same directory in order to describe each of the new files? Perhaps you could augme... | process | update readme in directory output scripts twp ice trac in you ve added files used to verify the nc output for submission to the twp ice intercomparison thanks for this could you also please update the readme in that same directory in order to describe each of the new files perhaps you could augment wha... | 1 |

153,188 | 5,886,972,232 | IssuesEvent | 2017-05-17 05:35:08 | CovertJaguar/Railcraft | https://api.github.com/repos/CovertJaguar/Railcraft | closed | multiblock not forming on login | bug cannot reproduce needs verification old version priority-low | This happens whenever I log into the server in a chunk close to the coke ovens or tanks.

https://youtu.be/lowsT3twtTY

Railcraft_1.7.10-9.12.2.0.jar

Forge 10.13.4.1566

| 1.0 | multiblock not forming on login - This happens whenever I log into the server in a chunk close to the coke ovens or tanks.

https://youtu.be/lowsT3twtTY

Railcraft_1.7.10-9.12.2.0.jar

Forge 10.13.4.1566

| non_process | multiblock not forming on login this happens whenever i log into the server in a chuck close to the coke ovens or tanks railcraft jar forge | 0 |

16,832 | 22,062,010,103 | IssuesEvent | 2022-05-30 19:20:56 | tc39/proposal-regexp-v-flag | https://api.github.com/repos/tc39/proposal-regexp-v-flag | closed | Advance to Stage 3 | process | Criteria taken from [the TC39 process document](https://tc39.es/process-document/) minus those from previous stages:

> - [x] Complete spec text

https://github.com/tc39/proposal-regexp-set-notation#specification

> - [x] Designated reviewers have signed off on the current spec text

- [x] @waldemarhorwat: http... | 1.0 | Advance to Stage 3 - Criteria taken from [the TC39 process document](https://tc39.es/process-document/) minus those from previous stages:

> - [x] Complete spec text

https://github.com/tc39/proposal-regexp-set-notation#specification

> - [x] Designated reviewers have signed off on the current spec text

- [x] ... | process | advance to stage criteria taken from minus those from previous stages complete spec text designated reviewers have signed off on the current spec text waldemarhorwat msaboff all ecmascript editors have signed off on the current spec text ba... | 1 |

16,237 | 20,790,139,460 | IssuesEvent | 2022-03-17 00:28:50 | metabase/metabase | https://api.github.com/repos/metabase/metabase | closed | QP/MBQL: `[:relative-datetime :current]` doesn't work inside `[:between]` filter | Type:Bug Priority:P2 Querying/Processor .Backend | Combining the two types of syntactic sugar clauses doesn't work properly and results in an error. Should be an easy fix | 1.0 | QP/MBQL: `[:relative-datetime :current]` doesn't work inside `[:between]` filter - Combining the two types of syntactic sugar clauses doesn't work properly and results in an error. Should be an easy fix | process | qp mbql doesn t work inside filter combining the two types of syntactic sugar clauses doesn t work properly and results in an error should be an easy fix | 1 |

19,390 | 25,528,473,443 | IssuesEvent | 2022-11-29 05:52:21 | open-telemetry/opentelemetry-collector-contrib | https://api.github.com/repos/open-telemetry/opentelemetry-collector-contrib | closed | [servicegraphprocessor] Index out of range panic in updateDurationMetrics method | bug priority:p2 processor/servicegraph | ### What happened?

## Description

Index out of range panic in updateDurationMetrics method.

## Steps to Reproduce

After starting up with servicegraphprocessor configured and running for a while, the collector panicked.

### Collector version

v0.63.0

### Environment information

## Environment

OS: macos 12.3.1

Compiler(if ... | 1.0 | [servicegraphprocessor] Index out of range panic in updateDurationMetrics method - ### What happened?

## Description

Index out of range panic in updateDurationMetrics method.

## Steps to Reproduce

After starting up with servicegraphprocessor configured and running for a while, the collector panicked.

### Collector version

v... | process | index out of range panic in updatedurationmetrics method what happened description index out of range panic in updatedurationmetrics method steps to reproduce startting up with servicegraphprocessor configured for a while the collector got panic collector version environment ... | 1 |

4,382 | 7,273,083,873 | IssuesEvent | 2018-02-21 02:38:20 | GoogleCloudPlatform/google-cloud-python | https://api.github.com/repos/GoogleCloudPlatform/google-cloud-python | closed | Logging: Release new version of google-cloud-logging | api: logging type: process | Hello,

The latest release of google-cloud-logging (https://pypi.org/project/google-cloud-logging/#history) is from October 2017. We would like a PyPI release with the latest changes for creating sinks with `unique_writer_identity` set.

We really would prefer not using the latest google-cloud module as we wa... | 1.0 | Logging: Release new version of google-cloud-logging - Hello,

The latest release of google-cloud-logging (https://pypi.org/project/google-cloud-logging/#history) is from October 2017. We would like a PyPI release with the latest changes for creating sinks with `unique_writer_identity` set.

We really would p... | process | logging release new version of google cloud logging hello the latest version of google cloud logging is in october we wanted a pypi release with the latest changes with respect to creating sinks with unique writer identity set we really would prefer not using the latest google cloud module as we want... | 1 |

258,653 | 8,178,616,979 | IssuesEvent | 2018-08-28 14:17:07 | Theophilix/event-table-edit | https://api.github.com/repos/Theophilix/event-table-edit | closed | Frontend: Enable stack view also for large screens | enhancement low priority | When stack view is chosen, the view stays in toggle mode until browser width is <640px. Enable stack view also for large screen. | 1.0 | Frontend: Enable stack view also for large screens - When stack view is chosen, the view stays in toggle mode until browser width is <640px. Enable stack view also for large screen. | non_process | frontend enable stack view also for large screens when stack view is chosen the view stays in toggle mode until browser width is enable stack view also for large screen | 0 |

94,720 | 10,851,448,412 | IssuesEvent | 2019-11-13 10:47:18 | geosolutions-it/MapStore2 | https://api.github.com/repos/geosolutions-it/MapStore2 | reopened | GeoStory User Guide | Documentation GeoStory Priority: High Task User Guide | ### Description

Proper documentation for the GeoStory tool must be provided for users in the user guide.

### Other useful information (optional):

This task must be accomplished within a dedicated branch of mapstore to be merged with master as soon as the first version of the tool is completed.

| 1.0 | GeoStory User Guide - ### Description

Proper documentation for the GeoStory tool must be provided for users in the user guide.

### Other useful information (optional):

This task must be accomplished within a dedicated branch of mapstore to be merged with master as soon as the first version of the tool is complet... | non_process | geostory user guide description a proper documentation for the geostory tool must be provided for users in the user guide other useful information optional this task must be accomplished within a dedicated branch of mapstore to be merged with master as soon as the first version of the tool is complet... | 0 |

4,370 | 7,260,515,712 | IssuesEvent | 2018-02-18 10:54:27 | qgis/QGIS-Documentation | https://api.github.com/repos/qgis/QGIS-Documentation | closed | [FEATURE] New algorithms to add Z/M values to existing geometries | Automatic new feature Processing | Original commit: https://github.com/qgis/QGIS/commit/340cf93f93fb28e1aa9e23b2d80a7c38e6a89d6c by nyalldawson

Allows upgrading geometries to include these dimensions, or

overwriting any existing Z/M values with a new value.

Intended mostly as a test run for QgsProcessingFeatureBasedAlgorithm | 1.0 | [FEATURE] New algorithms to add Z/M values to existing geometries - Original commit: https://github.com/qgis/QGIS/commit/340cf93f93fb28e1aa9e23b2d80a7c38e6a89d6c by nyalldawson

Allows upgrading geometries to include these dimensions, or

overwriting any existing Z/M values with a new value.

Intended mostly as a test r... | process | new algorithms to add z m values to existing geometries original commit by nyalldawson allows upgrading geometries to include these dimensions or overwriting any existing z m values with a new value intended mostly as a test run for qgsprocessingfeaturebasedalgorithm | 1 |

40,078 | 6,797,338,956 | IssuesEvent | 2017-11-01 22:24:59 | glpi-project/php-library-glpi | https://api.github.com/repos/glpi-project/php-library-glpi | closed | Update README | documentation | Hi, @Naylin15

Please, replace this URL in the README file:

https://dev.flyve.org/glpi/apirest.php

To this one:

https://github.com/glpi-project/glpi/blob/master/apirest.md

And the new install command is:

`composer require glpi-project/php-library-glpi`

Thank you. | 1.0 | Update README - Hi, @Naylin15

Please, replace this URL in the README file:

https://dev.flyve.org/glpi/apirest.php

To this one:

https://github.com/glpi-project/glpi/blob/master/apirest.md

And the new install command is:

`composer require glpi-project/php-library-glpi`

Thank you. | non_process | update readme hi please replace this url in the readme file to this one and the new install command is composer require glpi project php library glpi thank you | 0 |

15,735 | 19,910,273,748 | IssuesEvent | 2022-01-25 16:30:20 | input-output-hk/high-assurance-legacy | https://api.github.com/repos/input-output-hk/high-assurance-legacy | closed | Add an automatic proof method that shows a given set of possible transitions to be complete | type: enhancement reason: wontfix language: isabelle topic: process calculus | When proving that a certain relation is a simulation, one often needs to consider all forms of transitions possible from a process of a certain shape. Applying case distinction repeatedly for this purpose is laborious and leads to complicated proofs.

In an informal proof, one would typically state the ultimate cases... | 1.0 | Add an automatic proof method that shows a given set of possible transitions to be complete - When proving that a certain relation is a simulation, one often needs to consider all forms of transitions possible from a process of a certain shape. Applying case distinction repeatedly for this purpose is laborious and lead... | process | add an automatic proof method that shows a given set of possible transitions to be complete when proving that a certain relation is a simulation one often needs to consider all forms of transitions possible from a process of a certain shape applying case distinction repeatedly for this purpose is laborious and lead... | 1 |

10,280 | 13,132,054,149 | IssuesEvent | 2020-08-06 18:10:04 | googleapis/code-suggester | https://api.github.com/repos/googleapis/code-suggester | closed | Framework-core: handle existing PRs and existing branches | type: process | - [x] When there is an existing PR on an up-stream repository and a PR from the same branch and same down-stream repository is opened, there will be an error thrown. Ensure that the latest version is made into a PR.

- [x] When there is an existing branch and someone tries to apply changes onto an existing branch, opti... | 1.0 | Framework-core: handle existing PRs and existing branches - - [x] When there is an existing PR on an up-stream repository and a PR from the same branch and same down-stream repository is opened, there will be an error thrown. Ensure that the latest version is made into a PR.

- [x] When there is an existing branch and ... | process | framework core handle existing prs and existing branches when there is an existing pr on an up stream repository and a pr from the same branch and same down stream repository is opened there will be an error thrown ensure that the latest version is made into a pr when there is an existing branch and some... | 1 |

21,553 | 29,868,206,783 | IssuesEvent | 2023-06-20 06:32:10 | allinurl/goaccess | https://api.github.com/repos/allinurl/goaccess | closed | Automatically parse log files in folder. | question log-processing | Hi

Love your work with GoAccess.

One thing, would it be possible to have it automatically parse log files in a defined folder?

That way, log rotation will not mess up parsing, and you would not have to change the config every time you add a new log from a system. You would have to tell whatever system it may... | 1.0 | Automatically parse log files in folder. - Hi

Love your work with GoAccess.

One thing, would it be possible to have it automatically parse log files in a defined folder?

That way, log rotation will not mess up parsing, and you would not have to change the config every time you add a new log from a system. You wo... | process | automatically parse log files in folder hi love your work with goaccess one thing would it be possible to have it automatically parse log files in a defined folder that way log rotating will not mess up parsing and you would not have to change the config every time you add a new log from a system you wo... | 1 |

22,015 | 11,660,553,571 | IssuesEvent | 2020-03-03 03:44:26 | cityofaustin/atd-geospatial | https://api.github.com/repos/cityofaustin/atd-geospatial | closed | 1:1 GIS Training - M. Alonso | Service: Geo Type: IT Support Workgroup: OSE | Maria would like to improve her GIS skills for queries and using AMANDA data. I am going to work with her 1:1 since she has specific needs with using GIS. Meeting Thursday 2/27 1pm-3pm at OTC. | 1.0 | 1:1 GIS Training - M. Alonso - Maria would like to improve her GIS skills for queries and using AMANDA data. I am going to work with her 1:1 since she has specific needs with using GIS. Meeting Thursday 2/27 1pm-3pm at OTC. | non_process | gis training m alonso maria would like to improve her gis skills for queries and using amanda data i am going to work with her since she has specific needs with using gis meeting thursday at otc | 0 |

19,617 | 25,970,716,728 | IssuesEvent | 2022-12-19 11:00:14 | deepset-ai/haystack | https://api.github.com/repos/deepset-ai/haystack | closed | Incorporate LayoutLM for information extraction from PDFs | type:feature topic:preprocessing | Hugging Face recently [added](https://twitter.com/huggingface/status/1432717993637818383) LayoutLMv2 and published a [repo](https://github.com/NielsRogge/Transformers-Tutorials/tree/master/LayoutLMv2) with several nice tutorials on how to use them.

Could these models be useful for Haystack's handling of PDFs? | 1.0 | Incorporate LayoutLM for information extraction from PDFs - Hugging Face recently [added](https://twitter.com/huggingface/status/1432717993637818383) LayoutLMv2 and published a [repo](https://github.com/NielsRogge/Transformers-Tutorials/tree/master/LayoutLMv2) with several nice tutorials on how to use them.

Could th... | process | incorporate layoutlm for information extraction from pdfs hugging face recently and published a with several nice tutorials on how to use them could these models be useful for haystack s handling of pdfs | 1 |

261,913 | 19,750,880,849 | IssuesEvent | 2022-01-15 03:58:16 | UBC-MDS/bc_covid_simple_eda | https://api.github.com/repos/UBC-MDS/bc_covid_simple_eda | closed | Edit README | documentation | - [x] Add summary

- [x] Add `Role within Python Ecosystem` research

- [x] Add Function summary

| 1.0 | Edit README - - [x] Add summary

- [x] Add `Role within Python Ecosystem` research

- [x] Add Function summary

| non_process | edit readme add summary add role within python ecosystem research add function summary | 0 |

18,543 | 24,555,077,815 | IssuesEvent | 2022-10-12 15:16:33 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [IOS] [Standalone] Updated consent is not getting displayed in the following scenario | Bug P1 iOS Process: Fixed Process: Tested dev | **Description**

**Pre-condition:** Study should be created with Comprehension test questions in the Study builder and the study should be launched

**Steps:**

1. Sign up or sign in to the mobile app

2. Enroll in the study

3. Go to the study builder and update the consent for enrolled participants

4. Go to the ... | 2.0 | [IOS] [Standalone] Updated consent is not getting displayed in the following scenario - **Description**

**Pre-condition:** Study should be created with Comprehension test questions in the Study builder and the study should be launched

**Steps:**

1. Sign up or sign in to the mobile app

2. Enroll in the study

3.... | process | updated consent is not getting displayed in the following scenario description pre condition study should be created with comprehension test questions in the study builder and the study should be launched steps sign up or sign in to the mobile app enroll to the study go to the stud... | 1 |

418,321 | 28,114,390,839 | IssuesEvent | 2023-03-31 09:37:48 | natashatanyt/ped | https://api.github.com/repos/natashatanyt/ped | opened | Suggestions for UG | severity.VeryLow type.DocumentationBug | I feel like more could be done to improve the UG.

For example, more images could be included, or extracts from running the example commands, i.e. when you run `add 3 /of jackets` you will get xyz output.

Additionally, there could be a table of contents. Although the UG is short, it does help to give an overview of wh... | 1.0 | Suggestions for UG - I feel like more could be done to improve the UG.

For example, more images could be included, or extracts from running the example commands, i.e. when you run `add 3 /of jackets` you will get xyz output.

Additionally, there could be a table of contents. Although the UG is short, it does help to g... | non_process | suggestions for ug i feel like more could be done to improve the ug for example more images could be included or extracts from running the example commands ie when you run add of jackets you will get xyz output additionally there could be a table of contents although the ug is short it does help to g... | 0 |

5,879 | 8,701,865,878 | IssuesEvent | 2018-12-05 12:52:52 | dzhw/zofar | https://api.github.com/repos/dzhw/zofar | opened | information about massmailing used by FDZ | category: service.processes prio: ? status: discussion | FDZ uses a massmailing system for their newsletter - maybe an alternative for zofar ? | 1.0 | information about massmailing used by FDZ - FDZ uses a massmailing system for their newsletter - maybe an alternative for zofar ? | process | information about massmailing used by fdz fdz uses a massmailing system for their newsletter maybe an alternative for zofar | 1 |

92,365 | 18,843,841,635 | IssuesEvent | 2021-11-11 12:48:40 | appsmithorg/appsmith | https://api.github.com/repos/appsmithorg/appsmith | closed | [Feature]: Allow user to dynamically input spreadsheet url | Enhancement Actions Pod Google Sheets BE Coders Pod | ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Summary

Option to add spreadsheet url dynamically. Ex - A user can enter the googlesheet URL via an input widget and then google API can show the data based on the input widget.

### Why should this be worked on?

Improves the GSh... | 1.0 | [Feature]: Allow user to dynamically input spreadsheet url - ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Summary

Option to add spreadsheet url dynamically. Ex - A user can enter the googlesheet URL via an input widget and then google API can show the data based on the input... | non_process | allow user to dynamically input spreadsheet url is there an existing issue for this i have searched the existing issues summary option to add spreadsheet url dynamically ex a user can enter the googlesheet url via an input widget and then google api can show the data based on the input widget ... | 0 |

4,113 | 7,058,177,552 | IssuesEvent | 2018-01-04 19:18:08 | log2timeline/plaso | https://api.github.com/repos/log2timeline/plaso | closed | OS detection failing on dean-mac test image | preprocessing | The Dean-mac test image is not being detected as OSX correctly, and all parsers are being enabled. | 1.0 | OS detection failing on dean-mac test image - The Dean-mac test image is not being detected as OSX correctly, and all parsers are being enabled. | process | os detection failing on dean mac test image the dean mac test image is not being detecting as osx correctly and all parsers are being enabled | 1 |

650,161 | 21,336,847,801 | IssuesEvent | 2022-04-18 15:32:05 | CCAFS/MARLO | https://api.github.com/repos/CCAFS/MARLO | closed | [KT] (AICCRA) Update AICCRA Roadmap | Priority - High Type -Task AICCRA | The AICCRA roadmap is located at:

https://docs.google.com/presentation/d/1HYk3q4wsAv8mg2U5XjPbZzpYpaOZ3IJbC548vAbve2Q/edit#slide=id.gc7a83534ac_6_178

- [x] Review previous items

- [x] Add new and missing tasks

**Deliverable:**

**Move to Review when:** The information is complete

**Move to Closed when:** Be reviewed b... | 1.0 | [KT] (AICCRA) Update AICCRA Roadmap - The AICCRA roadmap is located at:

https://docs.google.com/presentation/d/1HYk3q4wsAv8mg2U5XjPbZzpYpaOZ3IJbC548vAbve2Q/edit#slide=id.gc7a83534ac_6_178

- [x] Review previous items

- [x] Add new and missing tasks

**Deliverable:**

**Move to Review when:** The information is complete

... | non_process | aiccra update aiccra roadmap the aiccra roadmap is located at review previous items add new and missing tasks deliverable move to review when the information is complete move to closed when be reviewed by hector | 0 |

1,718 | 6,574,482,976 | IssuesEvent | 2017-09-11 13:03:30 | ansible/ansible-modules-core | https://api.github.com/repos/ansible/ansible-modules-core | closed | pip module doesn't use proper pip executable from virtualenv | affects_2.2 bug_report waiting_on_maintainer | ##### ISSUE TYPE

- Bug Report

##### COMPONENT NAME

pip_module

##### ANSIBLE VERSION

```

ansible 2.2.0.0

config file = /code/ansible.cfg

configured module search path = Default w/o overrides

```

Could affect 2.1 also

##### OS / ENVIRONMENT

CentOS 7

##### SUMMARY

pip module uses `/bin/pip2` ins... | True | pip module doesn't use proper pip executable from virtualenv - ##### ISSUE TYPE

- Bug Report

##### COMPONENT NAME

pip_module

##### ANSIBLE VERSION

```

ansible 2.2.0.0

config file = /code/ansible.cfg

configured module search path = Default w/o overrides

```

Could affect 2.1 also

##### OS / ENVIRONM... | non_process | pip module doesn t use proper pip executable from virtualenv issue type bug report component name pip module ansible version ansible config file code ansible cfg configured module search path default w o overrides could affect also os environm... | 0 |

6,405 | 9,487,304,253 | IssuesEvent | 2019-04-22 16:28:20 | allinurl/goaccess | https://api.github.com/repos/allinurl/goaccess | closed | Goaccess takes long time to quit | command-line options log-processing | There is a considerable time delay before goaccess closes once I send SIGINT to it. Is there any way to skip whatever it is doing throughout that time (other than killing it)? I need to start a new instance on the same port as soon as possible. Right now I use a slight time delay, around 2 minutes just to be sure.

I... | 1.0 | Goaccess takes long time to quit - There is a considerable time delay before goaccess closes once I send SIGINT to it. Is there any way to skip whatever it is doing throughout that time (other than killing it)? I need to start a new instance on the same port as soon as possible. Right now I use a slight time delay, aro... | process | goaccess takes long time to quit there is a considerable time delay before goaccess closes once i send sigint to it is there any way to skip whatever it is doing throughout that time other than killing it i need to start a new instance on the same port as soon as possible right now i use a slight time delay aro... | 1 |

7,091 | 10,238,826,654 | IssuesEvent | 2019-08-19 16:45:09 | cncf/sig-security | https://api.github.com/repos/cncf/sig-security | closed | security reviewers must not have a conflict of interest | assessment-process help wanted | We are practicing this, but we need some language that describes exactly what we believe represents a conflict of interest.

For starters:

- no one who is on the core team of the project should be a security reviewer

- if someone is a user of the project or has contributed a PR, that is fine (and would be positiv... | 1.0 | security reviewers must not have a conflict of interest - We are practicing this, but we need some language that describes exactly what we believe represents a conflict of interest.

For starters:

- no one who is on the core team of the project should be a security reviewer

- if someone is a user of the project o... | process | security reviewers must not have a conflict of interest we are practicing this but we need some language that describes exactly what we believe represents a conflict of interest for starters no one who is on the core team of the project should be a security reviewer if someone is a user of the project o... | 1 |

369,808 | 25,869,076,913 | IssuesEvent | 2022-12-14 00:13:38 | strapi/strapi | https://api.github.com/repos/strapi/strapi | closed | Swagger plugin issue on GCP - Documentation | source: plugin:documentation status: pending reproduction | ## Bug report

### Describe the bug

When accessing the `/documentation` page from the Strapi admin panel on a GCP App Engine (Standard) instance, I am seeing the below errors in GCP logs and Page not found with a 404 error.

`error Error: EROFS: read-only file system, open '/workspace/extensions/documentation/pu... | 1.0 | Swagger plugin issue on GCP - Documentation - ## Bug report

### Describe the bug

When accessing the `/documentation` page from the Strapi admin panel on a GCP App Engine (Standard) instance, I am seeing the below errors in GCP logs and Page not found with a 404 error.

`error Error: EROFS: read-only file system... | non_process | swagger plugin issue on gcp documentation bug report describe the bug when accessing the documentation page from the strapi admin panel on a gcp app engine standard instance i am seeing the below errors in gcp logs and page not found with a error error error erofs read only file system ... | 0 |

648,339 | 21,183,343,638 | IssuesEvent | 2022-04-08 10:06:20 | open62541/open62541 | https://api.github.com/repos/open62541/open62541 | closed | Error when building package that uses open62541 | Priority: Low Status: Pending Component: Server | ## Description

I have written my OPC UA client (opcua_bridge) using open62541. However, when I try and build it (using colcon build) I get the following error:

```

/usr/bin/ld: cannot find -lmbedtls

/usr/bin/ld: cannot find -lmbedx509

/usr/bin/ld: cannot find -lmbedcrypto

collect2: error: ld returned 1 exit sta... | 1.0 | Error when building package that uses open62541 - ## Description

I have written my OPC UA client (opcua_bridge) using open62541. However, when I try and build it (using colcon build) I get the following error:

```

/usr/bin/ld: cannot find -lmbedtls

/usr/bin/ld: cannot find -lmbedx509

/usr/bin/ld: cannot find -lm... | non_process | error when building package that uses description i have written my opc ua client opcua bridge using however when i try and build it using colcon build i get the following error usr bin ld cannot find lmbedtls usr bin ld cannot find usr bin ld cannot find lmbedcrypto error ld ... | 0 |

7,114 | 10,266,108,356 | IssuesEvent | 2019-08-22 20:34:05 | automotive-edge-computing-consortium/AECC | https://api.github.com/repos/automotive-edge-computing-consortium/AECC | opened | Missing technical item capture process | priority:High status:Open type:Process | Need a formal method for capturing missing technical items. Need to review and prioritize items. Need to review periodically. Need to be prepared to close/archive issues | 1.0 | Missing technical item capture process - Need a formal method for capturing missing technical items. Need to review and prioritize items. Need to review periodically. Need to be prepared to close/archive issues | process | missing technical item capture process need a formal method for capturing missing technical items need to review and prioritize items need to review periodically need to be prepared to close archive issues | 1 |

91,144 | 26,282,925,428 | IssuesEvent | 2023-01-07 14:06:07 | skypjack/uvw | https://api.github.com/repos/skypjack/uvw | opened | Failing workflows as github changed several compilers from ubuntu machines. | build system | I'm working on a PR and it's impossible to rely on the CI as several are failing on build tools installation.

Reference: https://github.com/actions/runner-images/issues/3235 | 1.0 | Failing workflows as github changed several compilers from ubuntu machines. - I'm working on a PR and it's impossible to rely on the CI as several are failing on build tools installation.

Reference: https://github.com/actions/runner-images/issues/3235 | non_process | failing workflows as github changed several compilers from ubuntu machines i m working on a pr and it s impossible to rely on the ci as several are failing on build tools installation reference | 0 |

15,590 | 19,715,423,597 | IssuesEvent | 2022-01-13 10:30:14 | chef/chef-oss-practices | https://api.github.com/repos/chef/chef-oss-practices | closed | We should migrate and default to the main branch | Development Process | We're currently using the master branch everywhere

GitHub now defaults to main everywhere, we should follow this standard where possible | 1.0 | We should migrate and default to the main branch - We're currently using the master branch everywhere

GitHub now defaults to main everywhere, we should follow this standard where possible | process | we should migrate and default to the main branch we re currently using the master branch everywhere github now defaults to main everywhere we should follow this standard where possible | 1 |

30,948 | 6,370,257,810 | IssuesEvent | 2017-08-01 13:50:36 | PowerDNS/pdns | https://api.github.com/repos/PowerDNS/pdns | closed | dnsdist: add setStaleCacheEntriesTTL to console autocomplete | defect dnsdist | <!-- Tell us what is issue is about -->

- Program: dnsdist <!-- delete the ones that do not apply -->

- Issue type: Bug report <!-- delete the one that does not apply -->

### Short description

setStaleCacheEntriesTTL is not available in dnsdist console autocompletion as discussed in IRC some time ago. Turns ou... | 1.0 | dnsdist: add setStaleCacheEntriesTTL to console autocomplete - <!-- Tell us what is issue is about -->

- Program: dnsdist <!-- delete the ones that do not apply -->

- Issue type: Bug report <!-- delete the one that does not apply -->

### Short description

setStaleCacheEntriesTTL is not available in dnsdist con... | non_process | dnsdist add setstalecacheentriesttl to console autocomplete program dnsdist issue type bug report short description setstalecacheentriesttl is not available in dnsdist console autocompletion as discussed in irc some time ago turns out to be a simple oversight please add | 0 |

5,037 | 7,853,646,247 | IssuesEvent | 2018-06-20 18:06:14 | aspnet/IISIntegration | https://api.github.com/repos/aspnet/IISIntegration | closed | Profile ANCM for performance | Task cost: M in-process invalid | I have been prioritizing correctness over performance for the implementation so far. Once we get the in-process mode to a more correct state, we need to do a sweep to reduce allocations. @davidfowl | 1.0 | Profile ANCM for performance - I have been prioritizing correctness over performance for the implementation so far. Once we get the in-process mode to a more correct state, we need to do a sweep to reduce allocations. @davidfowl | process | profile ancm for performance i have been prioritizing correctness over performance for the implementation so far once we get the in process mode to a more correct state we need to do a sweep to reduce allocations davidfowl | 1 |

20,426 | 27,089,518,549 | IssuesEvent | 2023-02-14 19:45:42 | googleapis/gapic-generator-java | https://api.github.com/repos/googleapis/gapic-generator-java | opened | Add configuration validation for renovate.json | type: process priority: p3 | Look into a way to add check to verify `renovate.json` in presubmit, so things like #1349's missed comma can be caught ahead of renovate bot [issue](https://github.com/googleapis/gapic-generator-java/issues/1352). | 1.0 | Add configuration validation for renovate.json - Look into a way to add check to verify `renovate.json` in presubmit, so things like #1349's missed comma can be caught ahead of renovate bot [issue](https://github.com/googleapis/gapic-generator-java/issues/1352). | process | add configuration validation for renovate json look into a way to add check to verify renovate json in presubmit so things like s missed comma can be caught ahead of renovate bot | 1 |

51,820 | 10,729,726,924 | IssuesEvent | 2019-10-28 16:05:05 | elastic/kibana | https://api.github.com/repos/elastic/kibana | closed | Failed Tests: X-Pack Jest Tests.x-pack/plugins/code/server/lsp.passive launcher can start and end a process | Team:Code failed-test | ```

Stacktrace

Error: expect(received).toBe(expected) // Object.is equality

Expected: "process started"

Received: "socket connected"

at toBe (/var/lib/jenkins/workspace/elastic+kibana+pull-request/JOB/x-pack-intake/node/immutable/kibana/x-pack/plugins/code/server/lsp/abstract_launcher.test.ts:196:42)... | 1.0 | Failed Tests: X-Pack Jest Tests.x-pack/plugins/code/server/lsp.passive launcher can start and end a process - ```

Stacktrace

Error: expect(received).toBe(expected) // Object.is equality

Expected: "process started"

Received: "socket connected"

at toBe (/var/lib/jenkins/workspace/elastic+kibana+pull-re... | non_process | failed tests x pack jest tests x pack plugins code server lsp passive launcher can start and end a process stacktrace error expect received tobe expected object is equality expected process started received socket connected at tobe var lib jenkins workspace elastic kibana pull re... | 0 |

68,701 | 29,482,265,529 | IssuesEvent | 2023-06-02 06:58:12 | hashicorp/terraform-provider-azurerm | https://api.github.com/repos/hashicorp/terraform-provider-azurerm | closed | Cannot disable all storage account bypass exceptions | service/storage | ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Community Note

<!--- Please keep this note for the community --->

* Please vote on this issue by adding a :thumbsup: [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the orig... | 1.0 | Cannot disable all storage account bypass exceptions - ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Community Note

<!--- Please keep this note for the community --->

* Please vote on this issue by adding a :thumbsup: [reaction](https://blog.github.com/2016-03-10-add-react... | non_process | cannot disable all storage account bypass exceptions is there an existing issue for this i have searched the existing issues community note please vote on this issue by adding a thumbsup to the original issue to help the community and maintainers prioritize this request please do not l... | 0 |

21,334 | 29,041,383,781 | IssuesEvent | 2023-05-13 02:00:07 | lizhihao6/get-daily-arxiv-noti | https://api.github.com/repos/lizhihao6/get-daily-arxiv-noti | opened | New submissions for Fri, 12 May 23 | event camera white balance isp compression image signal processing image signal process raw raw image events camera color contrast events AWB | ## Keyword: events

### HyperE2VID: Improving Event-Based Video Reconstruction via Hypernetworks

- **Authors:** Burak Ercan, Onur Eker, Canberk Saglam, Aykut Erdem, Erkut Erdem

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2305.06382

- **Pdf link:** https://a... | 2.0 | New submissions for Fri, 12 May 23 - ## Keyword: events

### HyperE2VID: Improving Event-Based Video Reconstruction via Hypernetworks

- **Authors:** Burak Ercan, Onur Eker, Canberk Saglam, Aykut Erdem, Erkut Erdem

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/... | process | new submissions for fri may keyword events improving event based video reconstruction via hypernetworks authors burak ercan onur eker canberk saglam aykut erdem erkut erdem subjects computer vision and pattern recognition cs cv arxiv link pdf link abstrac... | 1 |

21,520 | 29,804,442,877 | IssuesEvent | 2023-06-16 10:31:36 | bitfocus/companion-module-requests | https://api.github.com/repos/bitfocus/companion-module-requests | opened | Electro-Voice N8000 NetMax 300 MIPS Digital Matrix Controller | NOT YET PROCESSED | - [x] **I have researched the list of existing Companion modules and requests and have determined this has not yet been requested**

- YES

The name of the device, hardware, or software you would like to control:

- Electro-Voice N8000 NetMax 300 MIPS Digital Matrix Controller

What you would like to be able to... | 1.0 | Electro-Voice N8000 NetMax 300 MIPS Digital Matrix Controller - - [x] **I have researched the list of existing Companion modules and requests and have determined this has not yet been requested**

- YES

The name of the device, hardware, or software you would like to control:

- Electro-Voice N8000 NetMax 300 MIP... | process | electro voice netmax mips digital matrix controller i have researched the list of existing companion modules and requests and have determined this has not yet been requested yes the name of the device hardware or software you would like to control electro voice netmax mips digital matr... | 1 |

68,629 | 21,769,783,847 | IssuesEvent | 2022-05-13 07:55:12 | vector-im/element-web | https://api.github.com/repos/vector-im/element-web | closed | Can't navigate long topics | T-Defect S-Minor A-Room-View Help Wanted Z-Visibility-1 Z-Impact-2 O-Uncommon good first issue Z-IA Z-Labs Z-WTF Team: Delight Z-NewUserJourney | I'm in a room with a long topic, and I can't read it all. Specifically, I'm trying to click on a link which is clipped out of bounds, and it's not clickable when viewing room settings making it even more frustrating.

Should we implement a mechanic that reveals more of the room topic? Either by making it reveal more ... | 1.0 | Can't navigate long topics - I'm in a room with a long topic, and I can't read it all. Specifically, I'm trying to click on a link which is clipped out of bounds, and it's not clickable when viewing room settings making it even more frustrating.

Should we implement a mechanic that reveals more of the room topic? Eit... | non_process | can t navigate long topics i m in a room with a long topic and i can t read it all specifically i m trying to click on a link which is clipped out of bounds and it s not clickable when viewing room settings making it even more frustrating should we implement a mechanic that reveals more of the room topic eit... | 0 |

8,676 | 11,809,680,792 | IssuesEvent | 2020-03-19 15:19:29 | MicrosoftDocs/vsts-docs | https://api.github.com/repos/MicrosoftDocs/vsts-docs | closed | Disable all stages at start by default so that only those required can be selected manually | Pri1 devops-cicd-process/tech devops/prod doc-bug | We would like to provide a pipeline that has various build configurations as different stages but depending on what build configuration they want they need to select appropriate stages.

Currently this is possible if they individually disable stages one by one before starting but by default all stages are always en... | 1.0 | Disable all stages at start by default so that only those required can be selected manually - We would like to provide a pipeline that has various build configurations as different stages but depending on what build configuration they want they need to select appropriate stages.

Currently this is possible if they i... | process | disable all stages at start by default so that only those required can be selected manually we would like to provide a pipeline that has various build configurations as different stages but depending on what build configuration they want they need to select appropriate stages currently this is possible if they i... | 1 |

7,497 | 10,583,898,105 | IssuesEvent | 2019-10-08 14:31:20 | prisma/studio | https://api.github.com/repos/prisma/studio | closed | Pressing Tab while editing a cell should not focus the browser's address bar | bug/2-confirmed kind/bug process/candidate | Instead, exit the edit mode and move to the next cell horizontally | 1.0 | Pressing Tab while editing a cell should not focus the browser's address bar - Instead, exit the edit mode and move to the next cell horizontally | process | pressing tab while editing a cell should not focus the browser s address bar instead exit the edit mode and move to the next cell horizontally | 1 |

1,717 | 2,665,174,183 | IssuesEvent | 2015-03-20 18:46:37 | phetsims/arch | https://api.github.com/repos/phetsims/arch | closed | code review for potential merge into master | code review | This is a consolidation of https://github.com/phetsims/axon/issues/34, https://github.com/phetsims/joist/issues/167 and https://github.com/phetsims/sun/issues/120.

Review arch and the 'arch' branches of axon, joist and sun. Sim examples are in the 'arch' branches of balancing-act and fluid-pressure-and-flow.

Eval... | 1.0 | code review for potential merge into master - This is a consolidation of https://github.com/phetsims/axon/issues/34, https://github.com/phetsims/joist/issues/167 and https://github.com/phetsims/sun/issues/120.

Review arch and the 'arch' branches of axon, joist and sun. Sim examples are in the 'arch' branches of bala... | non_process | code review for potential merge into master this is a consolidation of and review arch and the arch branches of axon joist and sun sim examples are in the arch branches of balancing act and fluid pressure and flow evaluate whether this approach to data collection is a suitable for merging into ma... | 0 |

21,665 | 30,110,942,025 | IssuesEvent | 2023-06-30 07:42:05 | MicrosoftDocs/azure-devops-docs | https://api.github.com/repos/MicrosoftDocs/azure-devops-docs | closed | pipelines conditions.md example error | doc-bug Pri1 azure-devops-pipelines/svc azure-devops-pipelines-process/subsvc | The `if eq` from this block results in an error in ADO pipelines.

```yaml
# parameters.yml
parameters:
- name: doThing
  default: true # value passed to the condition
  type: boolean

jobs:
  - job: B
    steps:
    - script: echo I did a thing
    condition: ${{ if eq(parameters.doThing, true) }}
```

... | 1.0 | pipelines conditions.md example error - The `if eq` from this block results in an error in ADO pipelines.

```yaml

# parameters.yml

parameters: