Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 7 112 | repo_url stringlengths 36 141 | action stringclasses 3 values | title stringlengths 1 744 | labels stringlengths 4 574 | body stringlengths 9 211k | index stringclasses 10 values | text_combine stringlengths 96 211k | label stringclasses 2 values | text stringlengths 96 188k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

3,188 | 6,259,322,003 | IssuesEvent | 2017-07-14 17:47:41 | PeaceGeeksSociety/salesforce | https://api.github.com/repos/PeaceGeeksSociety/salesforce | opened | Identify engagement level of contacts | Behavioural Data Collection Community Processes | We would like to be able to determine/qualify/quantify someone's engagement level at a glance and filter them to ultimately automate this qualification/quantification.

This would allow us to identify contacts based on their engagement level and tailor our communications with them based on these levels.

We would l... | 1.0 | Identify engagement level of contacts - We would like to be able to determine/qualify/quantify someone's engagement level at a glance and filter them to ultimately automate this qualification/quantification.

This would allow us to identify contacts based on their engagement level and tailor our communications with t... | process | identify engagement level of contacts we would like to be able to determine qualify quantify someone s engagement level at a glance and filter them to ultimately automate this qualification quantification this would allow us to identify contacts based on their engagement level and tailor our communications with t... | 1 |

287,648 | 8,817,934,629 | IssuesEvent | 2018-12-31 07:09:33 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | photos.google.com - see bug description | browser-firefox-mobile priority-critical | <!-- @browser: Firefox Mobile 66.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 9; Mobile; rv:66.0) Gecko/66.0 Firefox/66.0 -->

<!-- @reported_with: mobile-reporter -->

**URL**: https://photos.google.com/

**Browser / Version**: Firefox Mobile 66.0

**Operating System**: Android

**Tested Another Browser**: Yes

**Problem ... | 1.0 | photos.google.com - see bug description - <!-- @browser: Firefox Mobile 66.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 9; Mobile; rv:66.0) Gecko/66.0 Firefox/66.0 -->

<!-- @reported_with: mobile-reporter -->

**URL**: https://photos.google.com/

**Browser / Version**: Firefox Mobile 66.0

**Operating System**: Android

*... | non_process | photos google com see bug description url browser version firefox mobile operating system android tested another browser yes problem type something else description unsupported browser redirect steps to reproduce redirects to unsupported browser page without optio... | 0 |

9,391 | 12,394,138,598 | IssuesEvent | 2020-05-20 16:27:35 | googleapis/python-secret-manager | https://api.github.com/repos/googleapis/python-secret-manager | closed | 4/13/2020: release to GA | api: secretmanager type: process | *28 days since last release on: April 13, 2020*

Current release: **beta**

Proposed release: **GA**

## Instructions

Check the lists below, adding tests / documentation as required. Once all the "required" boxes are ticked, please create a release and close this issue.

## Required

- [x] 28 days elapsed ... | 1.0 | 4/13/2020: release to GA - *28 days since last release on: April 13, 2020*

Current release: **beta**

Proposed release: **GA**

## Instructions

Check the lists below, adding tests / documentation as required. Once all the "required" boxes are ticked, please create a release and close this issue.

## Require... | process | release to ga days since last release on april current release beta proposed release ga instructions check the lists below adding tests documentation as required once all the required boxes are ticked please create a release and close this issue required ... | 1 |

5,036 | 18,293,825,311 | IssuesEvent | 2021-10-05 18:11:44 | CDCgov/prime-reportstream | https://api.github.com/repos/CDCgov/prime-reportstream | opened | Change All-In-One-Schema | sender-automation | ## Problem statement

OBX.24.3 thru OBX.24.5 - Performing Lab city, state and zip is wrong.

## Acceptance criteria

- [x] OBX.24.3 thru OBX.24.5 - Performing Lab city, state and zip must match Columns D, E and F from the CSV file.

## To do

- [ ] Correct the Schema | 1.0 | Change All-In-One-Schema - ## Problem statement

OBX.24.3 thru OBX.24.5 - Performing Lab city, state and zip is wrong.

## Acceptance criteria

- [x] OBX.24.3 thru OBX.24.5 - Performing Lab city, state and zip must match Columns D, E and F from the CSV file.

## To do

- [ ] Correct the Schema | non_process | change all in one schema problem statement obx thru obx performing lab city state and zip is wrong acceptance criteria obx thru obx performing lab city state and zip must match columns d e and f from the csv file to do correct the schema | 0 |

12,957 | 15,339,443,058 | IssuesEvent | 2021-02-27 02:01:32 | DevExpress/testcafe-hammerhead | https://api.github.com/repos/DevExpress/testcafe-hammerhead | closed | Check that all overriden constructors emulate native behavior on calling without a 'new' keyword | AREA: client STATE: Stale SYSTEM: client side processing TYPE: enhancement | ```js

window.Worker();

window.Image();

etc.

``` | 1.0 | Check that all overriden constructors emulate native behavior on calling without a 'new' keyword - ```js

window.Worker();

window.Image();

etc.

``` | process | check that all overriden constructors emulate native behavior on calling without a new keyword js window worker window image etc | 1 |

75,074 | 25,514,367,811 | IssuesEvent | 2022-11-28 15:18:14 | department-of-veterans-affairs/va.gov-cms | https://api.github.com/repos/department-of-veterans-affairs/va.gov-cms | opened | CMS: VAMC System Banner Alert with Situation Updates do not work for Lovell systems | Defect ⭐️ Facilities VA Lovell | ## Describe the defect

The existing logic to handle the placement of Banner Alerts with Situation Updates does not work with Lovell. When we attempt to create one of these nodes we do not have the option to display the Banner on the Lovell - VA or Lovell - TRICARE subsystems. These systems are not listed as options ( ... | 1.0 | CMS: VAMC System Banner Alert with Situation Updates do not work for Lovell systems - ## Describe the defect

The existing logic to handle the placement of Banner Alerts with Situation Updates does not work with Lovell. When we attempt to create one of these nodes we do not have the option to display the Banner on the ... | non_process | cms vamc system banner alert with situation updates do not work for lovell systems describe the defect the existing logic to handle the placement of banner alerts with situation updates does not work with lovell when we attempt to create one of these nodes we do not have the option to display the banner on the ... | 0 |

254,158 | 27,357,116,967 | IssuesEvent | 2023-02-27 13:37:10 | bturtu405/TestDev | https://api.github.com/repos/bturtu405/TestDev | reopened | webpack-dev-server-1.16.5.tgz: 2 vulnerabilities (highest severity is: 7.8) | security vulnerability | <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>webpack-dev-server-1.16.5.tgz</b></p></summary>

<p>Serves a webpack app. Updates the browser on changes.</p>

<p>Library home page: <a href="https://registry.npmjs.org... | True | webpack-dev-server-1.16.5.tgz: 2 vulnerabilities (highest severity is: 7.8) - <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>webpack-dev-server-1.16.5.tgz</b></p></summary>

<p>Serves a webpack app. Updates the bro... | non_process | webpack dev server tgz vulnerabilities highest severity is vulnerable library webpack dev server tgz serves a webpack app updates the browser on changes library home page a href path to dependency file package json path to vulnerable library node modules webpack dev s... | 0 |

207,267 | 23,436,058,189 | IssuesEvent | 2022-08-15 09:57:32 | Gal-Doron/Baragon-test-3 | https://api.github.com/repos/Gal-Doron/Baragon-test-3 | opened | jetty-server-9.4.18.v20190429.jar: 4 vulnerabilities (highest severity is: 5.3) | security vulnerability | <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jetty-server-9.4.18.v20190429.jar</b></p></summary>

<p>The core jetty server artifact.</p>

<p>Library home page: <a href="http://www.eclipse.org/jetty">http://www.ecl... | True | jetty-server-9.4.18.v20190429.jar: 4 vulnerabilities (highest severity is: 5.3) - <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jetty-server-9.4.18.v20190429.jar</b></p></summary>

<p>The core jetty server artifac... | non_process | jetty server jar vulnerabilities highest severity is vulnerable library jetty server jar the core jetty server artifact library home page a href path to dependency file baragonagentservice pom xml path to vulnerable library sitory org eclipse jetty jetty server ... | 0 |

20,561 | 27,222,207,747 | IssuesEvent | 2023-02-21 06:45:52 | sebastianbergmann/phpunit | https://api.github.com/repos/sebastianbergmann/phpunit | opened | @runClassInSeparateProcess has the same effect as @runTestsInSeparateProcesses | type/bug feature/test-runner feature/process-isolation version/9 version/10 | As previously reported in #3258, `@runClassInSeparateProcess` has the same effect as `@runTestsInSeparateProcesses`.

Unless I am mistaken (which I may well be), this can be proven by applying ...

```patch

diff --git a/tests/end-to-end/regression/2724/SeparateClassRunMethodInNewProcessTest.php b/tests/end-to-end/... | 1.0 | @runClassInSeparateProcess has the same effect as @runTestsInSeparateProcesses - As previously reported in #3258, `@runClassInSeparateProcess` has the same effect as `@runTestsInSeparateProcesses`.

Unless I am mistaken (which I may well be), this can be proven by applying ...

```patch

diff --git a/tests/end-to-e... | process | runclassinseparateprocess has the same effect as runtestsinseparateprocesses as previously reported in runclassinseparateprocess has the same effect as runtestsinseparateprocesses unless i am mistaken which i may well be this can be proven by applying patch diff git a tests end to end ... | 1 |

10,246 | 13,101,768,756 | IssuesEvent | 2020-08-04 04:51:37 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | qgis_process incorrectly lists GRASS algorithms even if GRASS is not installed | Bug Processing | <!--

Bug fixing and feature development is a community responsibility, and not the responsibility of the QGIS project alone.

If this bug report or feature request is high-priority for you, we suggest engaging a QGIS developer or support organisation and financially sponsoring a fix

Checklist before submitting

-... | 1.0 | qgis_process incorrectly lists GRASS algorithms even if GRASS is not installed - <!--

Bug fixing and feature development is a community responsibility, and not the responsibility of the QGIS project alone.

If this bug report or feature request is high-priority for you, we suggest engaging a QGIS developer or support ... | process | qgis process incorrectly lists grass algorithms even if grass is not installed bug fixing and feature development is a community responsibility and not the responsibility of the qgis project alone if this bug report or feature request is high priority for you we suggest engaging a qgis developer or support ... | 1 |

201,097 | 15,173,741,706 | IssuesEvent | 2021-02-13 15:28:53 | tomfrenken/kassen-system | https://api.github.com/repos/tomfrenken/kassen-system | closed | Create JUnit Test for productList.addProduct() | model tests | AC:

- Read the task again since we have to create special "Äquivalenzklassen" for our documentation

- Create a JUnit test for "Preis" | 1.0 | Create JUnit Test for productList.addProduct() - AC:

- Read the task again since we have to create special "Äquivalenzklassen" for our documentation

- Create a JUnit test for "Preis" | non_process | create junit test for productlist addproduct ac read the task again since we have to create special äquivalenzklassen for our documentation create a junit test for preis | 0 |

11,803 | 14,627,059,664 | IssuesEvent | 2020-12-23 11:31:26 | darktable-org/darktable | https://api.github.com/repos/darktable-org/darktable | closed | Haze module and other modules that rely on dt_box_* cause image deterioration or crash when enabled | bug: pending priority: high scope: image processing | WSL Ubuntu 20.04.1 LTS

Master : darktable 3.5.0+111~g3cca160f9

Compressed history to original.

Activate Haze module.

Image goes black.

Any image that was previously edited and has an active ha... | 1.0 | Haze module and other modules that rely on dt_box_* cause image deterioration or crash when enabled - WSL Ubuntu 20.04.1 LTS

Master : darktable 3.5.0+111~g3cca160f9

Compressed history to original.

Activate Haze module.

Image goes black.

for howto, this is just a checklist version:

- [x] Spring clean

- [x] Re-fetch tooling

- [x] Configure and build

- [x] Pass all tests

- [x] Cut release

- [x] Push tag to master

- Update website

- [x] Copy changelo... | 1.0 | v0.12.5 release checklist - See full [release checklist](https://github.com/sile-typesetter/sile/wiki/Release-Checklist) for howto, this is just a checklist version:

- [x] Spring clean

- [x] Re-fetch tooling

- [x] Configure and build

- [x] Pass all tests

- [x] Cut release

- [x] Push tag to master

- Update webs... | non_process | release checklist see full for howto this is just a checklist version spring clean re fetch tooling configure and build pass all tests cut release push tag to master update website copy changelog and prefix with a summary as a blog post copy and post ma... | 0 |

664,703 | 22,285,285,439 | IssuesEvent | 2022-06-11 14:22:23 | magento/magento2 | https://api.github.com/repos/magento/magento2 | closed | [Issue] Change description of NewRelic configuration | Issue: Confirmed Reproduced on 2.4.x Progress: PR in progress Priority: P2 stale issue Reported on 2.4.x Area: UI Framework | This issue is automatically created based on existing pull request: magento/magento2#31944: Change description of NewRelic configuration

---------

### Description (*)

Change description of NewRelic configuration as it is not representing real feature.

Currently extension created own APP for each Area code if this... | 1.0 | [Issue] Change description of NewRelic configuration - This issue is automatically created based on existing pull request: magento/magento2#31944: Change description of NewRelic configuration

---------

### Description (*)

Change description of NewRelic configuration as it is not representing real feature.

Curren... | non_process | change description of newrelic configuration this issue is automatically created based on existing pull request magento change description of newrelic configuration description change description of newrelic configuration as it is not representing real feature currently extension cre... | 0 |

12,117 | 14,740,649,581 | IssuesEvent | 2021-01-07 09:25:13 | kdjstudios/SABillingGitlab | https://api.github.com/repos/kdjstudios/SABillingGitlab | closed | SCBS report error | anc-process anp-1 ant-bug has attachment | In GitLab by @kdjstudios on Nov 23, 2018, 13:26

**Submitted by:** "Richard Soltoff" <richard.soltoff@answernet.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2018-11-23-86134/conversation

**Server:** Internal

**Client/Site:** Fairlawn

**Account:** NA

**Issue:**

Getting error message whe... | 1.0 | SCBS report error - In GitLab by @kdjstudios on Nov 23, 2018, 13:26

**Submitted by:** "Richard Soltoff" <richard.soltoff@answernet.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2018-11-23-86134/conversation

**Server:** Internal

**Client/Site:** Fairlawn

**Account:** NA

**Issue:**

Getti... | process | scbs report error in gitlab by kdjstudios on nov submitted by richard soltoff helpdesk server internal client site fairlawn account na issue getting error message when trying to export this report in csv we re sorry but something went wrong uploads im... | 1 |

165,604 | 26,198,708,332 | IssuesEvent | 2023-01-03 15:36:35 | Mbed-TLS/mbedtls | https://api.github.com/repos/Mbed-TLS/mbedtls | opened | Bignum: Agree on an approach for scalars | enhancement needs-design-approval component-crypto size-s | ### Context

Scalars in EC modules are both used in point operations as scalars and modular operations modulo the group order. The original approach was to represent them as an `mpi_mod_residue`. The representation of `mpi_mod_residue` is opaque and direct bit manipulation operations might not yield correct results. ... | 1.0 | Bignum: Agree on an approach for scalars - ### Context

Scalars in EC modules are both used in point operations as scalars and modular operations modulo the group order. The original approach was to represent them as an `mpi_mod_residue`. The representation of `mpi_mod_residue` is opaque and direct bit manipulation o... | non_process | bignum agree on an approach for scalars context scalars in ec modules are both used in point operations as scalars and modular operations modulo the group order the original approach was to represent them as an mpi mod residue the representation of mpi mod residue is opaque and direct bit manipulation o... | 0 |

23,757 | 2,663,138,778 | IssuesEvent | 2015-03-20 01:24:52 | jaischeema/lesswrong-issues | https://api.github.com/repos/jaischeema/lesswrong-issues | closed | Stateful summary marker | bug Contributions-Welcome imported invalid Priority-Medium | _From [wjmo...@gmail.com](https://code.google.com/u/117567618910921056910/) on 2009-01-28T17:38:03Z_

Add statefulness to the 'end summary ' marker in TinyMCE.

_Original issue: http://code.google.com/p/lesswrong/issues/detail?id=2_ | 1.0 | Stateful summary marker - _From [wjmo...@gmail.com](https://code.google.com/u/117567618910921056910/) on 2009-01-28T17:38:03Z_

Add statefulness to the 'end summary ' marker in TinyMCE.

_Original issue: http://code.google.com/p/lesswrong/issues/detail?id=2_ | non_process | stateful summary marker from on add statefulness to the end summary marker in tinymce original issue | 0 |

151,984 | 23,900,876,654 | IssuesEvent | 2022-09-08 18:39:38 | microsoft/azuredatastudio | https://api.github.com/repos/microsoft/azuredatastudio | closed | Provide ability to create columnstore index in table designer | Enhancement Pri: 1 Triage: Done Area - Designer | **Is your feature request related to a problem? Please describe.**

The table designer does not provide a way to create columnstore indexes.

**Describe the solution or feature you'd like**

Provide the ability to create either a clustered columnstore, or nonclustered columnstore, index in the table designer.

**De... | 1.0 | Provide ability to create columnstore index in table designer - **Is your feature request related to a problem? Please describe.**

The table designer does not provide a way to create columnstore indexes.

**Describe the solution or feature you'd like**

Provide the ability to create either a clustered columnstore, o... | non_process | provide ability to create columnstore index in table designer is your feature request related to a problem please describe the table designer does not provide a way to create columnstore indexes describe the solution or feature you d like provide the ability to create either a clustered columnstore o... | 0 |

13,248 | 15,716,794,445 | IssuesEvent | 2021-03-28 09:10:19 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | Export to PostgreSQL (available connections) broken | Bug Processing Regression |

When I try to upload layers to a PostGIS database using the GDAL algorithm 'Export to PostgreSQL (available connections) ' in the Model Builder it fails. I get the message 'FAILURE: Unable to open datasource'. If I try the same process using the GDAL algorithm 'Export to PostgreSQL (new connection)' it works fine wit... | 1.0 | Export to PostgreSQL (available connections) broken -

When I try to upload layers to a PostGIS database using the GDAL algorithm 'Export to PostgreSQL (available connections) ' in the Model Builder it fails. I get the message 'FAILURE: Unable to open datasource'. If I try the same process using the GDAL algorithm 'Ex... | process | export to postgresql available connections broken when i try to upload layers to a postgis database using the gdal algorithm export to postgresql available connections in the model builder it fails i get the message failure unable to open datasource if i try the same process using the gdal algorithm ex... | 1 |

2,545 | 5,301,717,075 | IssuesEvent | 2017-02-10 10:31:50 | codurance/site | https://api.github.com/repos/codurance/site | closed | Migrate Jenkins configuration to be stored in the repo fully | improve-process | There are some pieces of the configuration, e.g. which scripts to launch and in which order, stored in Jenkins itself. If there are changes done to this configuration it will affect all branches. It would be good imho to have this done per-branch, which new Jenkins can do I believe. Previously it was done by the Pipeli... | 1.0 | Migrate Jenkins configuration to be stored in the repo fully - There are some pieces of the configuration, e.g. which scripts to launch and in which order, stored in Jenkins itself. If there are changes done to this configuration it will affect all branches. It would be good imho to have this done per-branch, which new... | process | migrate jenkins configuration to be stored in the repo fully there are some pieces of the configuration e g which scripts to launch and in which order stored in jenkins itself if there are changes done to this configuration it will affect all branches it would be good imho to have this done per branch which new... | 1 |

188 | 2,590,796,582 | IssuesEvent | 2015-02-18 21:07:12 | arduino/Arduino | https://api.github.com/repos/arduino/Arduino | closed | transformation .ino to .cpp fails with multi-line macro definition | Component: Preprocessor | This code is not properly transformed from .ino to .cpp; the line containing "0" is erroneously emitted right after the #include "Arduino.h" statement resulting in a diagnostic such as:

buggy.ino:5: error: expected unqualified-id before numeric constant

Rewriting the macro "abc" so it fits on one line avoids th... | 1.0 | transformation .ino to .cpp fails with multi-line macro definition - This code is not properly transformed from .ino to .cpp; the line containing "0" is erroneously emitted right after the #include "Arduino.h" statement resulting in a diagnostic such as:

buggy.ino:5: error: expected unqualified-id before numeric c... | process | transformation ino to cpp fails with multi line macro definition this code is not properly transformed from ino to cpp the line containing is erroneously emitted right after the include arduino h statement resulting in a diagnostic such as buggy ino error expected unqualified id before numeric c... | 1 |

13,012 | 15,369,227,743 | IssuesEvent | 2021-03-02 07:02:02 | mt-ag/apex-flowsforapex | https://api.github.com/repos/mt-ag/apex-flowsforapex | opened | Process Plugin to call complete_step | enhancement process-plugin | Create a process plugin which makes it even easier to integrate Flows for APEX.

Single responsibility should be taking process_id and subflow_id from application / page item and calling complete_step. | 1.0 | Process Plugin to call complete_step - Create a process plugin which makes it even easier to integrate Flows for APEX.

Single responsibility should be taking process_id and subflow_id from application / page item and calling complete_step. | process | process plugin to call complete step create a process plugin which makes it even easier to integrate flows for apex single responsibility should be taking process id and subflow id from application page item and calling complete step | 1 |

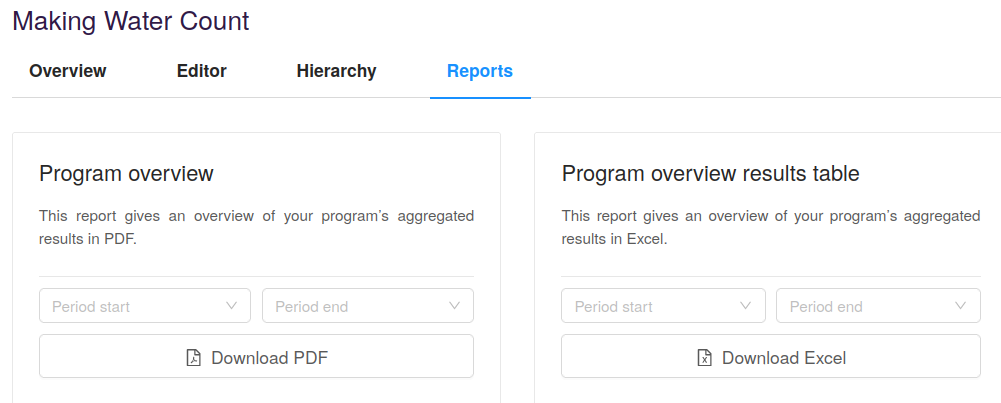

768,183 | 26,957,319,357 | IssuesEvent | 2023-02-08 15:43:47 | akvo/akvo-rsr | https://api.github.com/repos/akvo/akvo-rsr | closed | Bug: Cumulative indicator program report does not have correct aggregation | Bug Priority: High | ### What were you doing?

Downloaded program report for "[Making Water Count](https://rsr.akvo.org/my-rsr/programs/9062/reports)"

It the aggregated actual value for the Result - Indicator "1- Improved acc... | 1.0 | Bug: Cumulative indicator program report does not have correct aggregation - ### What were you doing?

Downloaded program report for "[Making Water Count](https://rsr.akvo.org/my-rsr/programs/9062/reports)"

... | non_process | bug cumulative indicator program report does not have correct aggregation what were you doing downloaded program report for it the aggregated actual value for the result indicator improved access to safe water sanitation and iwrm services products total number of people reached wit... | 0 |

1,440 | 4,005,703,799 | IssuesEvent | 2016-05-12 12:36:56 | DevExpress/testcafe-hammerhead | https://api.github.com/repos/DevExpress/testcafe-hammerhead | closed | Wrong processing of the script | !IMPORTANT! AREA: server SYSTEM: resource processing TYPE: bug | Let's consider the following script:

```javascript

script.src += params.join("&");

```

It is processed correctly:

```javascript

__set$(script, "src", __get$(script, "src") + params.join("&"));

```

But if we add a condition to this script:

```javascript

if (1) { } script.src += params.join("&");

```

What w... | 1.0 | Wrong processing of the script - Let's consider the following script:

```javascript

script.src += params.join("&");

```

It is processed correctly:

```javascript

__set$(script, "src", __get$(script, "src") + params.join("&"));

```

But if we add a condition to this script:

```javascript

if (1) { } script.src ... | process | wrong processing of the script let s consider the following script javascript script src params join it is processed correctly javascript set script src get script src params join but if we add a condition to this script javascript if script src ... | 1 |

210,542 | 16,107,491,691 | IssuesEvent | 2021-04-27 16:35:00 | newrelic/newrelic-php-agent | https://api.github.com/repos/newrelic/newrelic-php-agent | opened | 9.17.1: Performance Testing | testing | Simple Performance, throughput per second over five runs, using cross_version_arena docker image:

```

PHP 7.2.13 (cli) (built: Dec 10 2018 14:26:50) ( NTS )

Copyright (c) 1997-2018 The PHP Group

Zend Engine v3.2.0, Copyright (c) 1998-2018 Zend Technologies

======================================================... | 1.0 | 9.17.1: Performance Testing - Simple Performance, throughput per second over five runs, using cross_version_arena docker image:

```

PHP 7.2.13 (cli) (built: Dec 10 2018 14:26:50) ( NTS )

Copyright (c) 1997-2018 The PHP Group

Zend Engine v3.2.0, Copyright (c) 1998-2018 Zend Technologies

========================... | non_process | performance testing simple performance throughput per second over five runs using cross version arena docker image php cli built dec nts copyright c the php group zend engine copyright c zend technologies ... | 0 |

66,033 | 16,527,409,063 | IssuesEvent | 2021-05-26 22:17:45 | spack/spack | https://api.github.com/repos/spack/spack | opened | Installation issue: gdal | build-error | ### Steps to reproduce the issue

<!-- Fill in the exact spec you are trying to build and the relevant part of the error message -->

```console

$ spack install gdal

...

>> 232 checking for shared library run path origin... /bin/sh: /var/tmp/d

ahlgren/spack-stage/spack-stage-gdal-3.3.0-t26ewxar... | 1.0 | Installation issue: gdal - ### Steps to reproduce the issue

<!-- Fill in the exact spec you are trying to build and the relevant part of the error message -->

```console

$ spack install gdal

...

>> 232 checking for shared library run path origin... /bin/sh: /var/tmp/d

ahlgren/spack-stage/spac... | non_process | installation issue gdal steps to reproduce the issue console spack install gdal checking for shared library run path origin bin sh var tmp d ahlgren spack stage spack stage gdal zgmctzfvq spack src config rpath no such file or directory ... | 0 |

15,559 | 19,703,503,771 | IssuesEvent | 2022-01-12 19:08:01 | googleapis/java-video-transcoder | https://api.github.com/repos/googleapis/java-video-transcoder | opened | Your .repo-metadata.json file has a problem 🤒 | type: process repo-metadata: lint | You have a problem with your .repo-metadata.json file:

Result of scan 📈:

* release_level must be equal to one of the allowed values in .repo-metadata.json

* api_shortname 'video-transcoder' invalid in .repo-metadata.json

☝️ Once you correct these problems, you can close this issue.

Reach out to **go/github-automa... | 1.0 | Your .repo-metadata.json file has a problem 🤒 - You have a problem with your .repo-metadata.json file:

Result of scan 📈:

* release_level must be equal to one of the allowed values in .repo-metadata.json

* api_shortname 'video-transcoder' invalid in .repo-metadata.json

☝️ Once you correct these problems, you can c... | process | your repo metadata json file has a problem 🤒 you have a problem with your repo metadata json file result of scan 📈 release level must be equal to one of the allowed values in repo metadata json api shortname video transcoder invalid in repo metadata json ☝️ once you correct these problems you can c... | 1 |

11,325 | 14,140,777,735 | IssuesEvent | 2020-11-10 11:42:25 | MarcElrick/level-4-individual-project | https://api.github.com/repos/MarcElrick/level-4-individual-project | closed | Chop test files to be run quickly in unit test suite. | data processing testing | Develop shorter versions of test files to be quickly run by unit tests. Also, find the best-practice place to store these files. | 1.0 | Chop test files to be run quickly in unit test suite. - Develop shorter versions of test files to be quickly run by unit tests. Also, find the best-practice place to store these files. | process | chop test files to be run quickly in unit test suite develop shorter versions of test files to be quickly run by unit tests also find the best practice place to store these files | 1 |

9,509 | 8,655,605,928 | IssuesEvent | 2018-11-27 16:19:49 | kyma-project/kyma | https://api.github.com/repos/kyma-project/kyma | closed | REB wait for dependent services | area/service-catalog enhancement | Right now pod is restarting constantly and when finally SC is ready REB pod is not restarted immediately.

See:

```

kyma-system core-remote-environment-broker-57fd5b957-l9l48 1/1 Running 7 9m

```

AC:

- Wait for SC resources, fail after 10min timeout (nice log if timeout)

- ta... | 1.0 | REB wait for dependent services - Right now pod is restarting constantly and when finally SC is ready REB pod is not restarted immediately.

See:

```

kyma-system core-remote-environment-broker-57fd5b957-l9l48 1/1 Running 7 9m

```

AC:

- Wait for SC resources, fail after 10min t... | non_process | reb wait for dependent services right now pod is restarting constantly and when finally sc is ready reb pod is not restarted immediately see kyma system core remote environment broker running ac wait for sc resources fail after timeout nice log ... | 0 |

13,773 | 16,528,931,963 | IssuesEvent | 2021-05-27 01:30:03 | kubernetes/minikube | https://api.github.com/repos/kubernetes/minikube | closed | Add time-to-k8s benchmark generation to minikube | kind/process priority/important-soon | The idea is to add a script to minikube what will run the [time-to-k8s](https://github.com/tstromberg/time-to-k8s) benchmarks against it and then generate a graph and commit the graph to the site.

This should also be automated to run on release so no one has to manually run the job. | 1.0 | Add time-to-k8s benchmark generation to minikube - The idea is to add a script to minikube what will run the [time-to-k8s](https://github.com/tstromberg/time-to-k8s) benchmarks against it and then generate a graph and commit the graph to the site.

This should also be automated to run on release so no one has to manu... | process | add time to benchmark generation to minikube the idea is to add a script to minikube what will run the benchmarks against it and then generate a graph and commit the graph to the site this should also be automated to run on release so no one has to manually run the job | 1 |

21,943 | 30,446,799,731 | IssuesEvent | 2023-07-15 19:28:46 | h4sh5/pypi-auto-scanner | https://api.github.com/repos/h4sh5/pypi-auto-scanner | opened | pyutils 0.0.1b3 has 2 GuardDog issues | guarddog typosquatting silent-process-execution | https://pypi.org/project/pyutils

https://inspector.pypi.io/project/pyutils

```{

"dependency": "pyutils",

"version": "0.0.1b3",

"result": {

"issues": 2,

"errors": {},

"results": {

"typosquatting": "This package closely ressembles the following package names, and might be a typosquatting attempt: ... | 1.0 | pyutils 0.0.1b3 has 2 GuardDog issues - https://pypi.org/project/pyutils

https://inspector.pypi.io/project/pyutils

```{

"dependency": "pyutils",

"version": "0.0.1b3",

"result": {

"issues": 2,

"errors": {},

"results": {

"typosquatting": "This package closely ressembles the following package names... | process | pyutils has guarddog issues dependency pyutils version result issues errors results typosquatting this package closely ressembles the following package names and might be a typosquatting attempt python utils pytils silen... | 1 |

8,890 | 11,985,628,470 | IssuesEvent | 2020-04-07 17:50:53 | googleapis/google-cloud-python | https://api.github.com/repos/googleapis/google-cloud-python | closed | Docs for autogen libraries are minimal, unsat for GA | api: automl api: bigquerydatatransfer api: cloudasset api: cloudiot api: cloudkms api: cloudtasks api: dlp api: oslogin api: redis api: texttospeech api: websecurityscanner type: process | There is nothing like usage docs for any of these libraries.

/cc @theacodes | 1.0 | Docs for autogen libraries are minimal, unsat for GA - There is nothing like usage docs for any of these libraries.

/cc @theacodes | process | docs for autogen libraries are minimal unsat for ga there is nothing like usage docs for any of these libraries cc theacodes | 1 |

8,219 | 11,406,730,867 | IssuesEvent | 2020-01-31 14:52:58 | kubeflow/website | https://api.github.com/repos/kubeflow/website | opened | Publish v1-0 version of the docs | area/docs kind/process priority/p0 | We'd like to do the following

1. Start publishing master on v1-0.kubeflow.org

1. Have www.kubeflow.org continue to direct to the v0.7 version of the docs

1. When the docs on master are ready redirect www.kubeflow.org to the v1 version of the docs.

I think doing the above should be fairly straightforward based o... | 1.0 | Publish v1-0 version of the docs - We'd like to do the following

1. Start publishing master on v1-0.kubeflow.org

1. Have www.kubeflow.org continue to direct to the v0.7 version of the docs

1. When the docs on master are ready redirect www.kubeflow.org to the v1 version of the docs.

I think doing the above shoul... | process | publish version of the docs we d like to do the following start publishing master on kubeflow org have continue to direct to the version of the docs when the docs on master are ready redirect to the version of the docs i think doing the above should be fairly straightforward based ... | 1 |

19,621 | 25,975,265,186 | IssuesEvent | 2022-12-19 14:25:26 | apache/arrow-rs | https://api.github.com/repos/apache/arrow-rs | closed | Coverage is Failing on Master | bug development-process | **Describe the bug**

<!--

A clear and concise description of what the bug is.

-->

Coverage is failing on master with

```

Error: "Failed to get test coverage! Error: Failed to run tests: Error: Timed out waiting for test response"

```

https://github.com/apache/arrow-rs/actions/runs/3651786788/jobs/61694121... | 1.0 | Coverage is Failing on Master - **Describe the bug**

<!--

A clear and concise description of what the bug is.

-->

Coverage is failing on master with

```

Error: "Failed to get test coverage! Error: Failed to run tests: Error: Timed out waiting for test response"

```

https://github.com/apache/arrow-rs/actio... | process | coverage is failing on master describe the bug a clear and concise description of what the bug is coverage is failing on master with error failed to get test coverage error failed to run tests error timed out waiting for test response to reproduce steps to... | 1 |

14,229 | 17,149,379,742 | IssuesEvent | 2021-07-13 18:21:55 | googleapis/sphinx-docfx-yaml | https://api.github.com/repos/googleapis/sphinx-docfx-yaml | closed | Allow testing the plugin through the Kokoro job without running through Nox sessions | priority: p1 type: process | The current Kokoro job that runs `sphinx-build` for all repositories relies on using Nox, as we also use this job to refresh the docs. We should be able to test the plugin (and perhaps Sphinx as well) with newer versions without much needed manual work or a release having to be done.

We could also do [pre-releases](... | 1.0 | Allow testing the plugin through the Kokoro job without running through Nox sessions - The current Kokoro job that runs `sphinx-build` for all repositories relies on using Nox, as we also use this job to refresh the docs. We should be able to test the plugin (and perhaps Sphinx as well) with newer versions without much... | process | allow testing the plugin through the kokoro job without running through nox sessions the current kokoro job that runs sphinx build for all repositories relies on using nox as we also use this job to refresh the docs we should be able to test the plugin and perhaps sphinx as well with newer versions without much... | 1 |

22,698 | 32,007,362,030 | IssuesEvent | 2023-09-21 15:36:27 | X-Sharp/XSharpPublic | https://api.github.com/repos/X-Sharp/XSharpPublic | closed | Preprocessor bug in WP (XBase++ dialect) | bug Preprocessor | **Describe the bug**

Hello. Preprocessor version 2.17.0.3 (release) still does not process [WP](https://github.com/X-Sharp/XSharpPublic/issues/1288) correctly.

I duplicated the ticket, since it was closed a long time ago, and I was only able to test it recently. I also have suspicions that notifications about message... | 1.0 | Preprocessor bug in WP (XBase++ dialect) - **Describe the bug**

Hello. Preprocessor version 2.17.0.3 (release) still does not process [WP](https://github.com/X-Sharp/XSharpPublic/issues/1288) correctly.

I duplicated the ticket, since it was closed a long time ago, and I was only able to test it recently. I also have ... | process | preprocessor bug in wp xbase dialect describe the bug hello preprocessor version release still does not process correctly i duplicated the ticket since it was closed a long time ago and i was only able to test it recently i also have suspicions that notifications about messages in closed ... | 1 |

51,794 | 6,548,875,522 | IssuesEvent | 2017-09-05 02:32:32 | ValerioLyndon/MAL-Public-List-Designs | https://api.github.com/repos/ValerioLyndon/MAL-Public-List-Designs | closed | T| Anime status is visible below the expanded tags. | problem with design | The text pokes out to the left. Find a way to make this look better. *(add a border to the :before element?)*

| 1.0 | T| Anime status is visible below the expanded tags. - The text pokes out to the left. Find a way to make this look better. *(add a border to the :before element?)*

| non_process | t anime status is visible below the expanded tags the text pokes out to the left find a way to make this look better add a border to the before element | 0 |

7,056 | 10,212,191,424 | IssuesEvent | 2019-08-14 18:48:47 | material-components/material-components-ios | https://api.github.com/repos/material-components/material-components-ios | closed | [BottomNavigation] Meet with design to show largeContentSizeImage behavior and gather feedback. | [BottomNavigation] type:Process | This was filed as an internal issue. If you are a Googler, please visit [b/127495112](http://b/127495112) for more details.

<!-- Auto-generated content below, do not modify -->

---

#### Internal data

- Associated internal bug: [b/127495112](http://b/127495112) | 1.0 | [BottomNavigation] Meet with design to show largeContentSizeImage behavior and gather feedback. - This was filed as an internal issue. If you are a Googler, please visit [b/127495112](http://b/127495112) for more details.

<!-- Auto-generated content below, do not modify -->

---

#### Internal data

- Associated interna... | process | meet with design to show largecontentsizeimage behavior and gather feedback this was filed as an internal issue if you are a googler please visit for more details internal data associated internal bug | 1 |

12,278 | 3,062,240,653 | IssuesEvent | 2015-08-16 11:34:52 | ELENA-LANG/elena-lang | https://api.github.com/repos/ELENA-LANG/elena-lang | opened | #define statement | Design Idea Discussion | _#define_ statement name is misleading, probable it should be renamed into _#using_ or _#include_ | 1.0 | #define statement - _#define_ statement name is misleading, probable it should be renamed into _#using_ or _#include_ | non_process | define statement define statement name is misleading probable it should be renamed into using or include | 0 |

16,338 | 20,996,982,298 | IssuesEvent | 2022-03-29 14:15:03 | sjmog/smartflix | https://api.github.com/repos/sjmog/smartflix | opened | Render shows to the homepage | Rails/File processing Rails/Haml | WW91IGhhdmUganVzdCBzZXQgdXAgYSBSYWlscyBhcHBsaWNhdGlvbiB3aXRo

IGEgdGVzdC1kcml2ZW4gZHVtbXkgdmlldyEg8J+OiQoKSW4gdGhpcyBjaGFs

bGVuZ2UsIHlvdSB3aWxsIHVwZGF0ZSB0aGUgYXBwbGljYXRpb24gc28gdGhl

IHJvb3Qgcm91dGUgcmVuZGVycyB0aGUgc2hvd3MgZnJvbSB0aGUgW3Byb3Zp

ZGVkIENTViBmaWxlXSguLi90cmFpbmluZy1kYXRhL25ldGZsaXhfdGl0bGVz

LnppcCkuCgpIZXJ... | 1.0 | Render shows to the homepage - WW91IGhhdmUganVzdCBzZXQgdXAgYSBSYWlscyBhcHBsaWNhdGlvbiB3aXRo

IGEgdGVzdC1kcml2ZW4gZHVtbXkgdmlldyEg8J+OiQoKSW4gdGhpcyBjaGFs

bGVuZ2UsIHlvdSB3aWxsIHVwZGF0ZSB0aGUgYXBwbGljYXRpb24gc28gdGhl

IHJvb3Qgcm91dGUgcmVuZGVycyB0aGUgc2hvd3MgZnJvbSB0aGUgW3Byb3Zp

ZGVkIENTViBmaWxlXSguLi90cmFpbmluZy1kYXRhL25ld... | process | render shows to the homepage | 1 |

8,254 | 11,423,088,648 | IssuesEvent | 2020-02-03 15:19:10 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | closed | NTR: positive regulation of GO:0002221 | New term request multi-species process |

I need a "positive regulation" of this signalling pathway for a plant protein (TPR1) which acts upstream of the receptor.

The (unknown) receptor(s) recognise chitin and the chitinase. form the pathogen

A pathogen chitinase acts as a receptor decoy/sequesters chitin

A plant TPR protein (TPR1) binds to the p... | 1.0 | NTR: positive regulation of GO:0002221 -

I need a "positive regulation" of this signalling pathway for a plant protein (TPR1) which acts upstream of the receptor.

The (unknown) receptor(s) recognise chitin and the chitinase. form the pathogen

A pathogen chitinase acts as a receptor decoy/sequesters chitin

... | process | ntr positive regulation of go i need a positive regulation of this signalling pathway for a plant protein which acts upstream of the receptor the unknown receptor s recognise chitin and the chitinase form the pathogen a pathogen chitinase acts as a receptor decoy sequesters chitin a plant t... | 1 |

21,985 | 30,482,365,767 | IssuesEvent | 2023-07-17 21:30:40 | h4sh5/pypi-auto-scanner | https://api.github.com/repos/h4sh5/pypi-auto-scanner | opened | roblox-pyc 1.16.34 has 3 GuardDog issues | guarddog silent-process-execution | https://pypi.org/project/roblox-pyc

https://inspector.pypi.io/project/roblox-pyc

```{

"dependency": "roblox-pyc",

"version": "1.16.34",

"result": {

"issues": 3,

"errors": {},

"results": {

"silent-process-execution": [

{

"location": "roblox-pyc-1.16.34/src/robloxpy.py:86",

... | 1.0 | roblox-pyc 1.16.34 has 3 GuardDog issues - https://pypi.org/project/roblox-pyc

https://inspector.pypi.io/project/roblox-pyc

```{

"dependency": "roblox-pyc",

"version": "1.16.34",

"result": {

"issues": 3,

"errors": {},

"results": {

"silent-process-execution": [

{

"location": "ro... | process | roblox pyc has guarddog issues dependency roblox pyc version result issues errors results silent process execution location roblox pyc src robloxpy py code subprocess call stdo... | 1 |

198,439 | 22,659,617,889 | IssuesEvent | 2022-07-02 01:05:29 | kxxt/kxxt-website | https://api.github.com/repos/kxxt/kxxt-website | opened | CVE-2022-31108 (Medium) detected in mermaid-8.13.8.tgz | security vulnerability | ## CVE-2022-31108 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>mermaid-8.13.8.tgz</b></p></summary>

<p>Markdownish syntax for generating flowcharts, sequence diagrams, class diagr... | True | CVE-2022-31108 (Medium) detected in mermaid-8.13.8.tgz - ## CVE-2022-31108 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>mermaid-8.13.8.tgz</b></p></summary>

<p>Markdownish syntax ... | non_process | cve medium detected in mermaid tgz cve medium severity vulnerability vulnerable library mermaid tgz markdownish syntax for generating flowcharts sequence diagrams class diagrams gantt charts and git graphs library home page a href path to dependency file package js... | 0 |

33,783 | 7,753,965,707 | IssuesEvent | 2018-05-31 03:53:47 | pywbem/pywbem | https://api.github.com/repos/pywbem/pywbem | closed | Add mock capability to wbemcli | area: code resolution: fixed type: enhancement | A new option on wbemcli that allows it to execute the mock wbemconnection with a defined input file. | 1.0 | Add mock capability to wbemcli - A new option on wbemcli that allows it to execute the mock wbemconnection with a defined input file. | non_process | add mock capability to wbemcli a new option on wbemcli that allows it to execute the mock wbemconnection with a defined input file | 0 |

362,736 | 10,731,457,900 | IssuesEvent | 2019-10-28 19:35:56 | Sage-Bionetworks/dccvalidator | https://api.github.com/repos/Sage-Bionetworks/dccvalidator | closed | Track User Feedback | medium priority | It would be beneficial to the end user to provide them a mechanism in the app where they can:

1. file bugs

2. request new annotation keys or values

3. provide general feedback | 1.0 | Track User Feedback - It would be beneficial to the end user to provide them a mechanism in the app where they can:

1. file bugs

2. request new annotation keys or values

3. provide general feedback | non_process | track user feedback it would be beneficial to the end user to provide them a mechanism in the app where they can file bugs request new annotation keys or values provide general feedback | 0 |

391,759 | 26,906,792,994 | IssuesEvent | 2023-02-06 19:47:31 | osism/issues | https://api.github.com/repos/osism/issues | closed | Lag of documentation vGPU | documentation | If you want to use vGPU and pci-passthrough in Openstack, documentation is refering to add configurations to /etc/modules and /etc/modprobe.d/vfio.conf

As in Ubuntu 20.04, vfio-pci is part of the kernel. So configuration in modprobe is not working any more.

We managed to get it running by adding configurations to g... | 1.0 | Lag of documentation vGPU - If you want to use vGPU and pci-passthrough in Openstack, documentation is refering to add configurations to /etc/modules and /etc/modprobe.d/vfio.conf

As in Ubuntu 20.04, vfio-pci is part of the kernel. So configuration in modprobe is not working any more.

We managed to get it running b... | non_process | lag of documentation vgpu if you want to use vgpu and pci passthrough in openstack documentation is refering to add configurations to etc modules and etc modprobe d vfio conf as in ubuntu vfio pci is part of the kernel so configuration in modprobe is not working any more we managed to get it running by ... | 0 |

101,471 | 21,698,828,401 | IssuesEvent | 2022-05-10 00:08:20 | WordPress/openverse-api | https://api.github.com/repos/WordPress/openverse-api | opened | Command to re-send validation emails | 🟥 priority: critical 🛠 goal: fix 💻 aspect: code 🐍 tech: python 🔧 tech: django | ## Description

<!-- Concisely describe the bug. Compare your experience with what you expected to happen. -->

<!-- For example: "I clicked the 'submit' button and instead of seeing a thank you message, I saw a blank page." -->

As a result of https://github.com/WordPress/openverse-api/releases/tag/v2.5.0, API token requ... | 1.0 | Command to re-send validation emails - ## Description

<!-- Concisely describe the bug. Compare your experience with what you expected to happen. -->

<!-- For example: "I clicked the 'submit' button and instead of seeing a thank you message, I saw a blank page." -->

As a result of https://github.com/WordPress/openverse-... | non_process | command to re send validation emails description as a result of api token requests will now appropriately create validation emails however we need to perform this process for the existing applications sarayourfriend has suggested a django command that could be run on a production box which would send ou... | 0 |

5,350 | 8,179,391,963 | IssuesEvent | 2018-08-28 16:17:51 | cypress-io/cypress-documentation | https://api.github.com/repos/cypress-io/cypress-documentation | closed | Document our custom tags for Hexo | process: internal docs | We have added a few custom tags, like url and contributor, need to document them (in addition to the link to https://hexo.io/docs/tag-plugins.html) | 1.0 | Document our custom tags for Hexo - We have added a few custom tags, like url and contributor, need to document them (in addition to the link to https://hexo.io/docs/tag-plugins.html) | process | document our custom tags for hexo we have added a few custom tags like url and contributor need to document them in addition to the link to | 1 |

54,752 | 13,445,660,144 | IssuesEvent | 2020-09-08 11:46:49 | gradle/gradle | https://api.github.com/repos/gradle/gradle | closed | Maven2Gradle conversion support targeting Kotlin DSL | a:feature from:contributor good first issue in:build-init-plugin | I had a short chat with @bamboo about this idea at Kotlin Conf.

Currently the `init` task which invokes the [Maven2Gradle](https://github.com/gradle/gradle/blob/master/subprojects/build-init/src/main/groovy/org/gradle/buildinit/plugins/internal/maven/Maven2Gradle.groovy) converter only supports generating groovy bas... | 1.0 | Maven2Gradle conversion support targeting Kotlin DSL - I had a short chat with @bamboo about this idea at Kotlin Conf.

Currently the `init` task which invokes the [Maven2Gradle](https://github.com/gradle/gradle/blob/master/subprojects/build-init/src/main/groovy/org/gradle/buildinit/plugins/internal/maven/Maven2Gradl... | non_process | conversion support targeting kotlin dsl i had a short chat with bamboo about this idea at kotlin conf currently the init task which invokes the converter only supports generating groovy based dsl expected behavior ability to convert a maven project to the gradle kotlin dsl or gradle groovy dsl ... | 0 |

721 | 3,207,023,639 | IssuesEvent | 2015-10-05 08:06:03 | pwittchen/ReactiveBeacons | https://api.github.com/repos/pwittchen/ReactiveBeacons | closed | Release 0.1.0 | release process | **Initial release notes**:

- added `Filter` class providing methods, which can be used with `filter(...)` method from RxJava inside specific subscription. These methods can be used for filtering stream of Beacons by Proximity, distance, device names and MAC addresses.

- added missing `reactivebeacons` package to li... | 1.0 | Release 0.1.0 - **Initial release notes**:

- added `Filter` class providing methods, which can be used with `filter(...)` method from RxJava inside specific subscription. These methods can be used for filtering stream of Beacons by Proximity, distance, device names and MAC addresses.

- added missing `reactivebeacon... | process | release initial release notes added filter class providing methods which can be used with filter method from rxjava inside specific subscription these methods can be used for filtering stream of beacons by proximity distance device names and mac addresses added missing reactivebeacon... | 1 |

11,589 | 14,446,895,730 | IssuesEvent | 2020-12-08 02:24:50 | RezanTuran/MossensOnlinePizza | https://api.github.com/repos/RezanTuran/MossensOnlinePizza | opened | Vecka 6 Buggar och förberedelser (40 timmar) | Test process | 1. Testa om allting fungerar som det ska. Testa i olika webbläsare, olika skärm storlekar (10 timmar)

2. Skriva Rapport (20 timmar)

3. Fixa en powerpoint för presentera projektet för förbereda sig (10 timmar) | 1.0 | Vecka 6 Buggar och förberedelser (40 timmar) - 1. Testa om allting fungerar som det ska. Testa i olika webbläsare, olika skärm storlekar (10 timmar)

2. Skriva Rapport (20 timmar)

3. Fixa en powerpoint för presentera projektet för förbereda sig (10 timmar) | process | vecka buggar och förberedelser timmar testa om allting fungerar som det ska testa i olika webbläsare olika skärm storlekar timmar skriva rapport timmar fixa en powerpoint för presentera projektet för förbereda sig timmar | 1 |

14,224 | 4,848,325,311 | IssuesEvent | 2016-11-10 17:11:54 | ghoshnirmalya/hub-client | https://api.github.com/repos/ghoshnirmalya/hub-client | closed | Implementing the profile update mechanism | code enhancement | Add functionality from where a user can update his profile. | 1.0 | Implementing the profile update mechanism - Add functionality from where a user can update his profile. | non_process | implementing the profile update mechanism add functionality from where a user can update his profile | 0 |

399,410 | 11,748,056,434 | IssuesEvent | 2020-03-12 14:37:39 | oslc-op/oslc-specs | https://api.github.com/repos/oslc-op/oslc-specs | closed | OSLC Core 3.0 Discovery does not describe the results of GET on a QueryCapability queryBase URL. | Core: Main Spec Core: Query Priority: Medium Xtra: Jira | OSLC Core 3.0 Discovery defines QueryCapability and oslc:queryBase: The base URI to use for queries. Queries are invoked via HTTP GET on a query URI formed by appending a key=value pair to the base URI, as described in Query Capabilities section. But there is no Query Capabilities section in the Discovery document that... | 1.0 | OSLC Core 3.0 Discovery does not describe the results of GET on a QueryCapability queryBase URL. - OSLC Core 3.0 Discovery defines QueryCapability and oslc:queryBase: The base URI to use for queries. Queries are invoked via HTTP GET on a query URI formed by appending a key=value pair to the base URI, as described in Qu... | non_process | oslc core discovery does not describe the results of get on a querycapability querybase url oslc core discovery defines querycapability and oslc querybase the base uri to use for queries queries are invoked via http get on a query uri formed by appending a key value pair to the base uri as described in qu... | 0 |

92,045 | 18,763,699,193 | IssuesEvent | 2021-11-05 19:53:07 | cycleplanet/cycle-planet | https://api.github.com/repos/cycleplanet/cycle-planet | closed | Give NiceDate and NiceDate2 descriptive names | help wanted good first issue average priority code-quality | At the moment we have two Vue components named "NiceDate":

[NiceDate](https://github.com/cycleplanet/cycle-planet/tree/main/src/components/Shared/Modals/NiceDate.vue)

[NiceDate2](https://github.com/cycleplanet/cycle-planet/tree/main/src/components/Shared/Modals/NiceDate2.vue)

These have to be given better name... | 1.0 | Give NiceDate and NiceDate2 descriptive names - At the moment we have two Vue components named "NiceDate":

[NiceDate](https://github.com/cycleplanet/cycle-planet/tree/main/src/components/Shared/Modals/NiceDate.vue)

[NiceDate2](https://github.com/cycleplanet/cycle-planet/tree/main/src/components/Shared/Modals/NiceDa... | non_process | give nicedate and descriptive names at the moment we have two vue components named nicedate these have to be given better names that describe the differences between them also we should check if in fact they can be combined into one component that can be parameterised for the desired behaviour ... | 0 |

148,305 | 23,338,623,231 | IssuesEvent | 2022-08-09 12:17:15 | mapasculturais/mapasculturais | https://api.github.com/repos/mapasculturais/mapasculturais | closed | [Oportunidades] Desenho de arquiteturas de informação do perfil de Agente proponente | Design / UX Modernização da Interface | Desenhar arquiteturas de informação do módulo de oportunidades do perfil de Agente proponente

> Link do figma: https://www.figma.com/file/GhzUhEhVOUVi9TT3xti56U/Jornadas-ideais-e-Arquiteturas-de-informa%C3%A7%C3%A3o?node-id=0%3A1

- [x] Inscrição em oportunidade

- [x] Acompanhamento de inscrição

- [x] Fluxo de prestaç... | 1.0 | [Oportunidades] Desenho de arquiteturas de informação do perfil de Agente proponente - Desenhar arquiteturas de informação do módulo de oportunidades do perfil de Agente proponente

> Link do figma: https://www.figma.com/file/GhzUhEhVOUVi9TT3xti56U/Jornadas-ideais-e-Arquiteturas-de-informa%C3%A7%C3%A3o?node-id=0%3A1

-... | non_process | desenho de arquiteturas de informação do perfil de agente proponente desenhar arquiteturas de informação do módulo de oportunidades do perfil de agente proponente link do figma inscrição em oportunidade acompanhamento de inscrição fluxo de prestação de contas | 0 |

695,117 | 23,845,284,685 | IssuesEvent | 2022-09-06 13:36:36 | pendulum-chain/spacewalk | https://api.github.com/repos/pendulum-chain/spacewalk | opened | Create 'Issue' pallet | priority:medium type:feature | Create the pallet that handles spacewalk's 'issue' requests.

Tightly coupled to the (new) 'spacewalk' and 'vault_registry' pallets. (Tight coupling should be done by making the pallet's `Config` trait extend the `spacewalk::Config` so that one can use the functions of other pallets)

### Storage

- IssueRequests: `M... | 1.0 | Create 'Issue' pallet - Create the pallet that handles spacewalk's 'issue' requests.

Tightly coupled to the (new) 'spacewalk' and 'vault_registry' pallets. (Tight coupling should be done by making the pallet's `Config` trait extend the `spacewalk::Config` so that one can use the functions of other pallets)

### Stor... | non_process | create issue pallet create the pallet that handles spacewalk s issue requests tightly coupled to the new spacewalk and vault registry pallets tight coupling should be done by making the pallet s config trait extend the spacewalk config so that one can use the functions of other pallets stor... | 0 |

19,943 | 26,416,643,423 | IssuesEvent | 2023-01-13 16:28:29 | zammad/zammad | https://api.github.com/repos/zammad/zammad | opened | Email processing of messages with a lot of empty space results in a timeout | bug verified mail processing | ### Used Zammad Version

5.3

### Environment

- Installation method: any

- Operating system: MacOS 13.1

- Database + version: PostgreSQL 10.21

- Elasticsearch version: 7.14.2

- Browser + version: any

### Actual behaviour

When trying to import [test.eml.txt](https://github.com/zammad/zammad/files/10413385/test.e... | 1.0 | Email processing of messages with a lot of empty space results in a timeout - ### Used Zammad Version

5.3

### Environment

- Installation method: any

- Operating system: MacOS 13.1

- Database + version: PostgreSQL 10.21

- Elasticsearch version: 7.14.2

- Browser + version: any

### Actual behaviour

When trying t... | process | email processing of messages with a lot of empty space results in a timeout used zammad version environment installation method any operating system macos database version postgresql elasticsearch version browser version any actual behaviour when trying to im... | 1 |

19,263 | 13,211,298,570 | IssuesEvent | 2020-08-15 22:08:14 | icecube-trac/tix4 | https://api.github.com/repos/icecube-trac/tix4 | opened | HitSpool interface: variable HsSender location (Trac #945) | Incomplete Migration Migrated from Trac enhancement infrastructure | <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/945">https://code.icecube.wisc.edu/projects/icecube/ticket/945</a>, reported by dheeremanand owned by dheereman</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2015-08-10T22:35:41",

"_ts": "... | 1.0 | HitSpool interface: variable HsSender location (Trac #945) - <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/945">https://code.icecube.wisc.edu/projects/icecube/ticket/945</a>, reported by dheeremanand owned by dheereman</em></summary>

<p>

```json

{

"status": "c... | non_process | hitspool interface variable hssender location trac migrated from json status closed changetime ts description nit would be nice to have an option on where the hssender should run standard is on sps but in case we need to switch e g to ... | 0 |

792 | 3,274,410,580 | IssuesEvent | 2015-10-26 10:44:30 | dita-ot/dita-ot | https://api.github.com/repos/dita-ot/dita-ot | closed | Behavior of the force-unique flag | bug in progress P2 preprocess | Since DITA-OT version 2.0 there is a new option to automatically generate `copy-to` attributes to duplicate `<topicref>` elements.

This is an interesting feature and I gave it a try. However this feature has undesired side-effects for DITA maps that contain a relationship table. Topics part of relational links will ... | 1.0 | Behavior of the force-unique flag - Since DITA-OT version 2.0 there is a new option to automatically generate `copy-to` attributes to duplicate `<topicref>` elements.

This is an interesting feature and I gave it a try. However this feature has undesired side-effects for DITA maps that contain a relationship table. T... | process | behavior of the force unique flag since dita ot version there is a new option to automatically generate copy to attributes to duplicate elements this is an interesting feature and i gave it a try however this feature has undesired side effects for dita maps that contain a relationship table topics par... | 1 |

376,111 | 26,186,135,500 | IssuesEvent | 2023-01-03 00:41:51 | mrlucciola/proof-of-stake | https://api.github.com/repos/mrlucciola/proof-of-stake | closed | Add sequence diagram and tests for `Block` module | documentation m-block | Go thru `ledger/blocks.rs` in the `Blocks` module and see all references to it in documentation and create mapping for it.

Document all properties, associated functions and methods within `Block` and all references to those properties, fxns and methods in all modules.

As of commit `2d78f5263a4f94c3ded0335da36370d1a90... | 1.0 | Add sequence diagram and tests for `Block` module - Go thru `ledger/blocks.rs` in the `Blocks` module and see all references to it in documentation and create mapping for it.

Document all properties, associated functions and methods within `Block` and all references to those properties, fxns and methods in all modules... | non_process | add sequence diagram and tests for block module go thru ledger blocks rs in the blocks module and see all references to it in documentation and create mapping for it document all properties associated functions and methods within block and all references to those properties fxns and methods in all modules... | 0 |

11,881 | 14,679,195,829 | IssuesEvent | 2020-12-31 06:14:00 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | participant manager user invitation email fails to send | Bug P1 Participant manager Process: Fixed Process: Tested dev | UI shows a success message, there are no error logs on participant-manager-datastore and yet no email gets sent | 2.0 | participant manager user invitation email fails to send - UI shows a success message, there are no error logs on participant-manager-datastore and yet no email gets sent | process | participant manager user invitation email fails to send ui shows a success message there are no error logs on participant manager datastore and yet no email gets sent | 1 |

75,619 | 9,879,125,061 | IssuesEvent | 2019-06-24 09:17:36 | quirc-bot/QuIRC | https://api.github.com/repos/quirc-bot/QuIRC | closed | Rebase Beta1 with Master | Documentation good first issue | Add

Security

Attribution/*

GitHub/*

And any other change made straight to master to the beta update branch | 1.0 | Rebase Beta1 with Master - Add

Security

Attribution/*

GitHub/*

And any other change made straight to master to the beta update branch | non_process | rebase with master add security attribution github and any other change made straight to master to the beta update branch | 0 |

14,581 | 17,703,488,368 | IssuesEvent | 2021-08-25 03:07:56 | tdwg/dwc | https://api.github.com/repos/tdwg/dwc | closed | Change term - associatedOccurrences | Term - change Class - Occurrence Class - Organism Class - ResourceRelationship non-normative Process - complete | ## Change term

* Submitter: John Wieczorek @tucotuco

* Justification (why is this change necessary?): Inconsistency between definition and term organization

* Proponents (who needs this change): Everyone, for clarity

Current Term definition: https://dwc.tdwg.org/terms/#dwc:associatedOccurrences

Proposed new... | 1.0 | Change term - associatedOccurrences - ## Change term

* Submitter: John Wieczorek @tucotuco

* Justification (why is this change necessary?): Inconsistency between definition and term organization

* Proponents (who needs this change): Everyone, for clarity

Current Term definition: https://dwc.tdwg.org/terms/#dwc... | process | change term associatedoccurrences change term submitter john wieczorek tucotuco justification why is this change necessary inconsistency between definition and term organization proponents who needs this change everyone for clarity current term definition proposed new attributes of... | 1 |

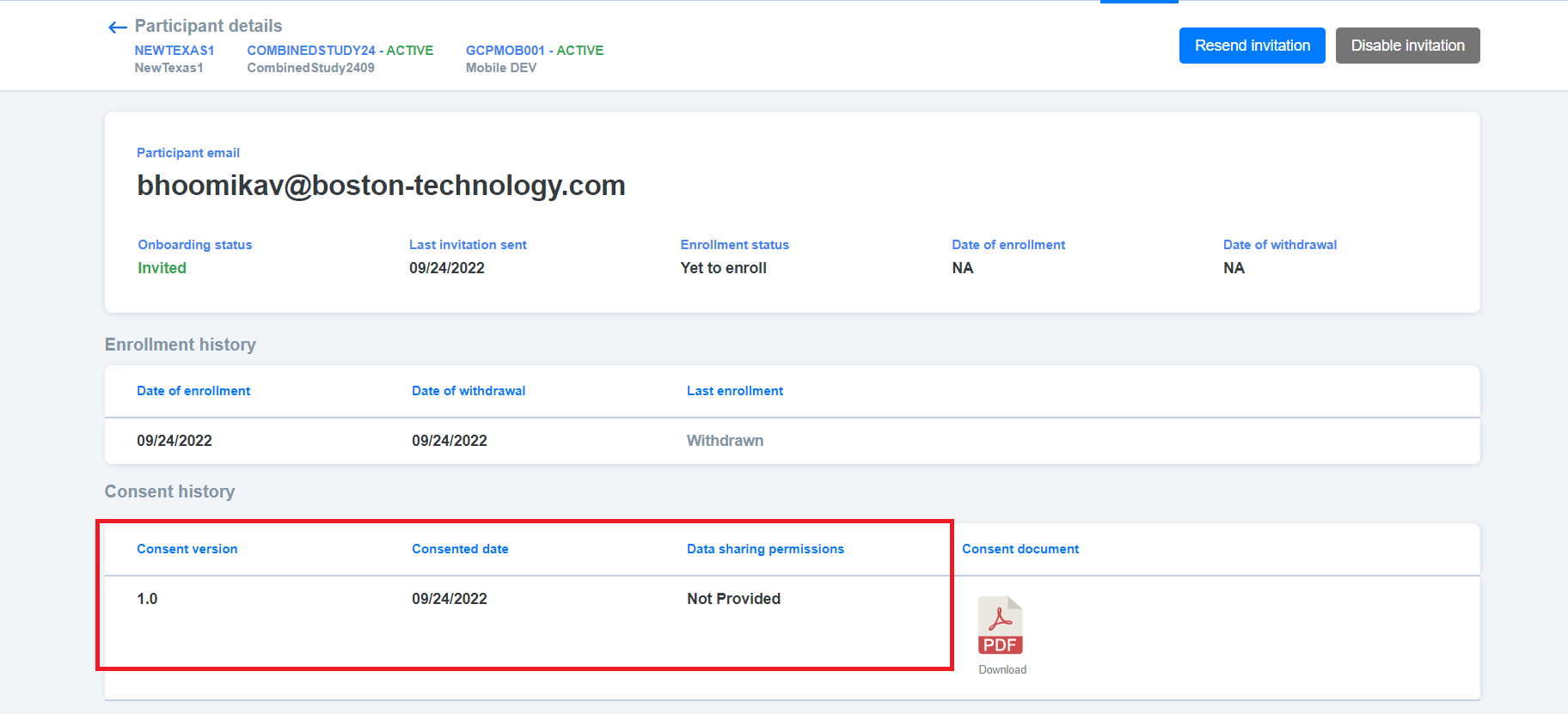

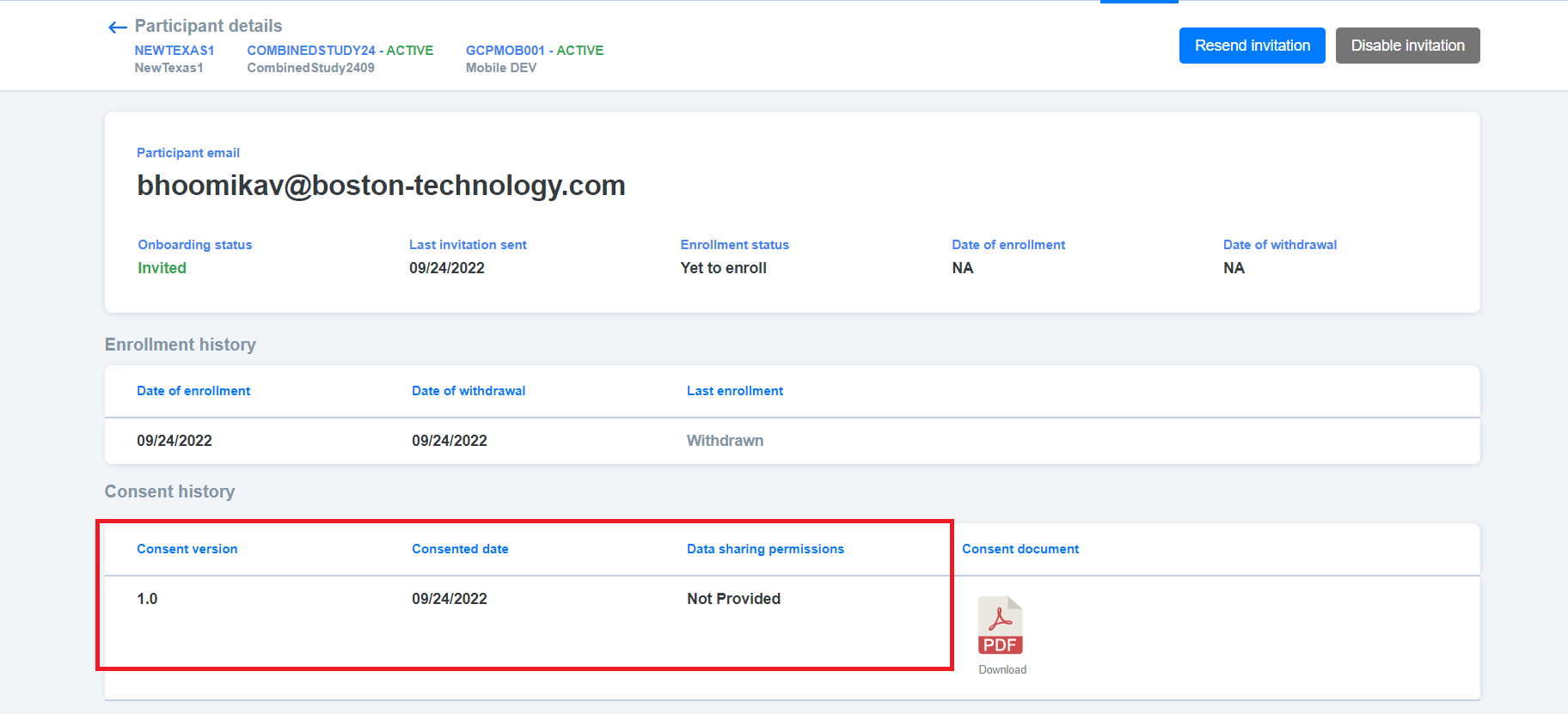

18,465 | 24,549,733,416 | IssuesEvent | 2022-10-12 11:39:12 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [VAPT] [PM] Participant details > Consent history > Consent history values should be aligned in the center | Bug P2 Participant manager Process: Fixed Process: Tested QA Process: Tested dev | Participant details > Consent history > Consent history values should be aligned in the center

| 3.0 | [VAPT] [PM] Participant details > Consent history > Consent history values should be aligned in the center - Participant details > Consent history > Consent history values should be aligned in the center

| process | participant details consent history consent history values should be aligned in the center participant details consent history consent history values should be aligned in the center | 1 |

2,684 | 3,004,278,617 | IssuesEvent | 2015-07-25 19:30:55 | Mottie/tablesorter | https://api.github.com/repos/Mottie/tablesorter | closed | Build table widget - JSON - headers - translation error | Demo How To... Widget Widget-Build | When using the build table widget for JSON, the headers are translated into HTML cells incorrectly - each letter of each word is translated into a th

example:

"headers":["URL","Grade","Price","Features","Count","status"]

converts to:

<img width="1306" alt="ford_comparison_tool_and__build_static_data_ford_... | 1.0 | Build table widget - JSON - headers - translation error - When using the build table widget for JSON, the headers are translated into HTML cells incorrectly - each letter of each word is translated into a th

example:

"headers":["URL","Grade","Price","Features","Count","status"]

converts to:

<img width="13... | non_process | build table widget json headers translation error when using the build table widget for json the headers are translated into html cells incorrectly each letter of each word is translated into a th example headers converts to img width alt ford comparison tool and build static data ... | 0 |

47,954 | 13,264,900,718 | IssuesEvent | 2020-08-21 05:08:18 | istio/istio | https://api.github.com/repos/istio/istio | closed | dns certificate support: stop creating Secrets | area/security lifecycle/stale | Now that we have Istiod, we have no need to persist DNS certificates to Secrets. This is outside the scope of Istio, and should be handled by systems like cert-manager.

This issue involves removing this feature, and removing the associated doc: https://istio.io/docs/tasks/security/dns-cert/ and tests: TestDNSCertif... | True | dns certificate support: stop creating Secrets - Now that we have Istiod, we have no need to persist DNS certificates to Secrets. This is outside the scope of Istio, and should be handled by systems like cert-manager.

This issue involves removing this feature, and removing the associated doc: https://istio.io/docs/... | non_process | dns certificate support stop creating secrets now that we have istiod we have no need to persist dns certificates to secrets this is outside the scope of istio and should be handled by systems like cert manager this issue involves removing this feature and removing the associated doc and tests testdnsce... | 0 |

214,692 | 16,605,993,681 | IssuesEvent | 2021-06-02 03:55:50 | rancher/dashboard | https://api.github.com/repos/rancher/dashboard | closed | Auth Provider Config: Steps should be grouped as per the Ember UI | [zube]: To Test | When configuring an auth provider (e.g. GitHub) - we show a single box with 3 groups of list items. You can't really tell they're in 3 groups, other than spacing looking different between them.

In Ember, we had nice numbering on the steps and the UI was nice and clear.

We should update the presentation to number ... | 1.0 | Auth Provider Config: Steps should be grouped as per the Ember UI - When configuring an auth provider (e.g. GitHub) - we show a single box with 3 groups of list items. You can't really tell they're in 3 groups, other than spacing looking different between them.

In Ember, we had nice numbering on the steps and the UI... | non_process | auth provider config steps should be grouped as per the ember ui when configuring an auth provider e g github we show a single box with groups of list items you can t really tell they re in groups other than spacing looking different between them in ember we had nice numbering on the steps and the ui... | 0 |

214,649 | 7,275,315,815 | IssuesEvent | 2018-02-21 13:11:24 | datahq/datahub-qa | https://api.github.com/repos/datahq/datahub-qa | closed | data init throws an error when try to assign dataset name with numeric value | Priority ★★ Severity: Major | Tried to package file, but got the following error on assigning dataset `name` property

```

? Enter Data Package name (scratchpad) : small-dataset-100kb

>> Must consist only of lowercase alphanumeric characters plus ".", "-" and "_"

```

## How to reproduce

- run data init sample.csv

- when it asks for `name`... | 1.0 | data init throws an error when try to assign dataset name with numeric value - Tried to package file, but got the following error on assigning dataset `name` property

```

? Enter Data Package name (scratchpad) : small-dataset-100kb

>> Must consist only of lowercase alphanumeric characters plus ".", "-" and "_"

```

... | non_process | data init throws an error when try to assign dataset name with numeric value tried to package file but got the following error on assigning dataset name property enter data package name scratchpad small dataset must consist only of lowercase alphanumeric characters plus and ... | 0 |

74,489 | 15,349,991,673 | IssuesEvent | 2021-03-01 01:03:26 | jgeraigery/mongo-csfl-encryption-java-demo | https://api.github.com/repos/jgeraigery/mongo-csfl-encryption-java-demo | opened | CVE-2021-20328 (Medium) detected in mongodb-driver-3.11.2.jar | security vulnerability | ## CVE-2021-20328 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>mongodb-driver-3.11.2.jar</b></p></summary>

<p>The MongoDB Driver uber-artifact that combines mongodb-driver-sync an... | True | CVE-2021-20328 (Medium) detected in mongodb-driver-3.11.2.jar - ## CVE-2021-20328 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>mongodb-driver-3.11.2.jar</b></p></summary>

<p>The M... | non_process | cve medium detected in mongodb driver jar cve medium severity vulnerability vulnerable library mongodb driver jar the mongodb driver uber artifact that combines mongodb driver sync and the legacy driver library home page a href path to dependency file mongo csfl encrypt... | 0 |

12,073 | 14,739,893,066 | IssuesEvent | 2021-01-07 08:07:51 | prisma/prisma | https://api.github.com/repos/prisma/prisma | opened | Create Query Engine N-API bridge | process/candidate team/client | As already has been shown in https://github.com/pimeys/napi-test it's possible to connect from Node.js to the query engine via the n-api. This would be a big step forward in protocol integration between Node.js and the Rust Query Engine. | 1.0 | Create Query Engine N-API bridge - As already has been shown in https://github.com/pimeys/napi-test it's possible to connect from Node.js to the query engine via the n-api. This would be a big step forward in protocol integration between Node.js and the Rust Query Engine. | process | create query engine n api bridge as already has been shown in it s possible to connect from node js to the query engine via the n api this would be a big step forward in protocol integration between node js and the rust query engine | 1 |

10,062 | 13,044,161,787 | IssuesEvent | 2020-07-29 03:47:26 | tikv/tikv | https://api.github.com/repos/tikv/tikv | closed | UCP: Migrate scalar function `AddDateStringDecimal` from TiDB | challenge-program-2 component/coprocessor difficulty/easy sig/coprocessor |

## Description

Port the scalar function `AddDateStringDecimal` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @sticnarf

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/tikv/tikv/tree/master/components/tidb_query/s... | 2.0 | UCP: Migrate scalar function `AddDateStringDecimal` from TiDB -

## Description

Port the scalar function `AddDateStringDecimal` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @sticnarf

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- ... | process | ucp migrate scalar function adddatestringdecimal from tidb description port the scalar function adddatestringdecimal from tidb to coprocessor score mentor s sticnarf recommended skills rust programming learning materials already implemented expressions ported from tidb ... | 1 |

19,774 | 26,150,856,571 | IssuesEvent | 2022-12-30 13:22:33 | prisma/prisma | https://api.github.com/repos/prisma/prisma | opened | Error in migration engine. Reason: entered unreachable code | bug/1-unconfirmed kind/bug process/candidate topic: error reporting team/schema | <!-- If required, please update the title to be clear and descriptive -->

Command: `prisma db push`

Version: `4.7.1`

Binary Version: `272861e07ab64f234d3ffc4094e32bd61775599c`

Report: https://prisma-errors.netlify.app/report/14481

OS: `arm64 darwin 22.1.0`

JS Stacktrace:

```

Error: Error in migration engin... | 1.0 | Error in migration engine. Reason: entered unreachable code - <!-- If required, please update the title to be clear and descriptive -->

Command: `prisma db push`

Version: `4.7.1`

Binary Version: `272861e07ab64f234d3ffc4094e32bd61775599c`

Report: https://prisma-errors.netlify.app/report/14481

OS: `arm64 darwin ... | process | error in migration engine reason entered unreachable code command prisma db push version binary version report os darwin js stacktrace error error in migration engine reason internal error entered unreachable code rust stacktrace starting mig... | 1 |