Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 classes) | text_combine (string, length 96 to 211k) | label (string, 2 classes) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

19,651 | 26,009,696,425 | IssuesEvent | 2022-12-20 23:38:24 | hashgraph/hedera-mirror-node | https://api.github.com/repos/hashgraph/hedera-mirror-node | closed | Release production workflow fails to publish helm chart | bug process | ### Description

When triggered by tag `v0.71.0-beta3`, the release production workflow failed at the job `Publish helm chart`:

```

remote: Permission to hashgraph/hedera-mirror-node.git denied to github-actions[bot].

fatal: unable to access 'https://github.com/hashgraph/hedera-mirror-node/': The requested URL ret... | 1.0 | Release production workflow fails to publish helm chart - ### Description

When triggered by tag `v0.71.0-beta3`, the release production workflow failed at the job `Publish helm chart`:

```

remote: Permission to hashgraph/hedera-mirror-node.git denied to github-actions[bot].

fatal: unable to access 'https://github... | process | release production workflow fails to publish helm chart description when triggered by tag the release production workflow failed at the job publish helm chart remote permission to hashgraph hedera mirror node git denied to github actions fatal unable to access the requested url retu... | 1 |

12,714 | 15,088,769,322 | IssuesEvent | 2021-02-06 02:09:03 | metabase/metabase | https://api.github.com/repos/metabase/metabase | closed | Sandboxing on tables with remapped FK (Display Values) causes query to fail | .Regression .Reproduced Administration/Data Sandboxes Priority:P1 Querying/Nested Queries Querying/Processor Type:Bug | **Describe the bug**

When using column sandboxing on a table with remapped FK (Display Values), then the sandboxed query fails on 1.38.0-rc4.

This works in 1.37.8.

**To Reproduce**

1. Admin > Data Model > Sample Dataset > Reviews > Product ID :gear: > Display Values = Use foreign key: `Products.Title`

causes query to fail - **Describe the bug**

When using column sandboxing on a table with remapped FK (Display Values), then the sandboxed query fails on 1.38.0-rc4.

This works in 1.37.8.

**To Reproduce**

1. Admin > Data Model > Sample Dataset > Reviews > Prod... | process | sandboxing on tables with remapped fk display values causes query to fail describe the bug when using column sandboxing on a table with remapped fk display values then the sandboxed query fails on this works in to reproduce admin data model sample dataset reviews product ... | 1 |

16,583 | 21,626,639,997 | IssuesEvent | 2022-05-05 03:41:42 | camunda/zeebe | https://api.github.com/repos/camunda/zeebe | closed | Multiple triggered interrupting boundary events can deadlock process instance | kind/bug scope/broker area/reliability team/process-automation | **Describe the bug**

<!-- A clear and concise description of what the bug is. -->

When multiple interrupting boundary events are triggered simultaneously for a process instance, the process instance may not be able to finish terminating. Such an instance can no longer be canceled from outside either.

This was ... | 1.0 | Multiple triggered interrupting boundary events can deadlock process instance - **Describe the bug**

<!-- A clear and concise description of what the bug is. -->

When multiple interrupting boundary events are triggered simultaneously for a process instance, the process instance may not be able to finish terminati... | process | multiple triggered interrupting boundary events can deadlock process instance describe the bug when multiple interrupting boundary events are triggered simultaneously for a process instance the process instance may not be able to finish terminating such an instance can no longer be canceled from outside... | 1 |

256,981 | 19,483,891,121 | IssuesEvent | 2021-12-26 00:23:13 | KinsonDigital/Velaptor | https://api.github.com/repos/KinsonDigital/Velaptor | opened | 🚧Update copyright in project file to 2022 | documentation good first issue high priority preview | ### I have done the items below . . .

- [X] I have updated the title by replacing the '**_<title_**>' section.

### Description

Update the copyright in the project file from `2020` to `2022`

### Acceptance Criteria

**This issue is finished when:**

- [ ] Code documentation added if required

~Unit tests added~

~Al... | 1.0 | 🚧Update copyright in project file to 2022 - ### I have done the items below . . .

- [X] I have updated the title by replacing the '**_<title_**>' section.

### Description

Update the copyright in the project file from `2020` to `2022`

### Acceptance Criteria

**This issue is finished when:**

- [ ] Code documentati... | non_process | 🚧update copyright in project file to i have done the items below i have updated the title by replacing the section description update the copyright in the project file from to acceptance criteria this issue is finished when code documentation added if required ... | 0 |

665 | 3,135,929,990 | IssuesEvent | 2015-09-10 17:27:43 | wekan/wekan | https://api.github.com/repos/wekan/wekan | closed | Release v0.9 | Meta:Release-process | I've released the [first release candidate](https://github.com/wekan/wekan/releases/tag/v0.9.0-rc1) of Wekan v0.9:

* [Release notes](https://github.com/wekan/wekan/blob/master/History.md#next--v09)

* [Official docker image](https://hub.docker.com/r/mquandalle/wekan/)

* [Official sandstorm package](https://apps.san... | 1.0 | Release v0.9 - I've released the [first release candidate](https://github.com/wekan/wekan/releases/tag/v0.9.0-rc1) of Wekan v0.9:

* [Release notes](https://github.com/wekan/wekan/blob/master/History.md#next--v09)

* [Official docker image](https://hub.docker.com/r/mquandalle/wekan/)

* [Official sandstorm package](h... | process | release i ve released the of wekan go download and test it bugs that i plan to fix before the final release are tracked using the corresponding as it introduces a new user interface this release changes quite a bit of displayed text and thus requires some translati... | 1 |

15,684 | 19,847,823,638 | IssuesEvent | 2022-01-21 08:55:08 | ooi-data/CE04OSSM-RID26-07-NUTNRB000-recovered_inst-suna_instrument_recovered | https://api.github.com/repos/ooi-data/CE04OSSM-RID26-07-NUTNRB000-recovered_inst-suna_instrument_recovered | opened | 🛑 Processing failed: ValueError | process | ## Overview

`ValueError` found in `processing_task` task during run ended on 2022-01-21T08:55:07.736867.

## Details

Flow name: `CE04OSSM-RID26-07-NUTNRB000-recovered_inst-suna_instrument_recovered`

Task name: `processing_task`

Error type: `ValueError`

Error message: not enough values to unpack (expected 3, got 0)

... | 1.0 | 🛑 Processing failed: ValueError - ## Overview

`ValueError` found in `processing_task` task during run ended on 2022-01-21T08:55:07.736867.

## Details

Flow name: `CE04OSSM-RID26-07-NUTNRB000-recovered_inst-suna_instrument_recovered`

Task name: `processing_task`

Error type: `ValueError`

Error message: not enough valu... | process | 🛑 processing failed valueerror overview valueerror found in processing task task during run ended on details flow name recovered inst suna instrument recovered task name processing task error type valueerror error message not enough values to unpack expected got ... | 1 |

7,718 | 10,822,041,060 | IssuesEvent | 2019-11-08 20:11:17 | bazelbuild/vscode-bazel | https://api.github.com/repos/bazelbuild/vscode-bazel | closed | `npm outdated`: Unpin/updated some deps? | type: process | Current setup seems to have a few things listed:

`npm outdated` -

```

Package Current Wanted Latest Location

@types/node 6.14.2 6.14.4 11.13.4 vscode-bazel

tslint 5.11.0 5.15.0 5.15.0 vscode-bazel

typescript 3.1.6 3.4.3 3.4.3 vscode-bazel

... | 1.0 | `npm outdated`: Unpin/updated some deps? - Current setup seems to have a few things listed:

`npm outdated` -

```

Package Current Wanted Latest Location

@types/node 6.14.2 6.14.4 11.13.4 vscode-bazel

tslint 5.11.0 5.15.0 5.15.0 vscode-bazel

typescript ... | process | npm outdated unpin updated some deps current setup seems to have a few things listed npm outdated package current wanted latest location types node vscode bazel tslint vscode bazel typescript ... | 1 |

4,470 | 7,333,611,953 | IssuesEvent | 2018-03-05 19:58:10 | hashicorp/packer | https://api.github.com/repos/hashicorp/packer | closed | metadata.json contains vmware_desktop instead of vmware_fusion | bug good first issue post-processor/vagrant | Version: 1.2.0

Platform: OS X 10.12.6

Using the templates found here https://github.com/boxcutter/windows a vmware box built on OSX will have `{"provider":"vmware_desktop"}` in its metadata.json instead of `vmware_fusion` which makes vagrant barf when you try to bring up the box with `vmware_fusion` as the provider... | 1.0 | metadata.json contains vmware_desktop instead of vmware_fusion - Version: 1.2.0

Platform: OS X 10.12.6

Using the templates found here https://github.com/boxcutter/windows a vmware box built on OSX will have `{"provider":"vmware_desktop"}` in its metadata.json instead of `vmware_fusion` which makes vagrant barf when... | process | metadata json contains vmware desktop instead of vmware fusion version platform os x using the templates found here a vmware box built on osx will have provider vmware desktop in its metadata json instead of vmware fusion which makes vagrant barf when you try to bring up the box with vm... | 1 |

166,375 | 20,718,465,481 | IssuesEvent | 2022-03-13 01:48:33 | ghc-dev/Randy-Weber | https://api.github.com/repos/ghc-dev/Randy-Weber | opened | nancy.1.4.3.nupkg: 1 vulnerabilities (highest severity is: 9.8) | security vulnerability | <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>nancy.1.4.3.nupkg</b></p></summary>

<p>Nancy is a lightweight web framework for the .Net platform, inspired by Sinatra. Nancy aim at delive...</p>

<p>Library home pag... | True | nancy.1.4.3.nupkg: 1 vulnerabilities (highest severity is: 9.8) - <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>nancy.1.4.3.nupkg</b></p></summary>

<p>Nancy is a lightweight web framework for the .Net platform, i... | non_process | nancy nupkg vulnerabilities highest severity is vulnerable library nancy nupkg nancy is a lightweight web framework for the net platform inspired by sinatra nancy aim at delive library home page a href path to dependency file csproj path to vulnerable library t ... | 0 |

17,495 | 23,305,507,996 | IssuesEvent | 2022-08-07 23:50:04 | lynnandtonic/nestflix.fun | https://api.github.com/repos/lynnandtonic/nestflix.fun | closed | Add Brazzos (2022) from "Only Murders in the Building" (Screenshots and Poster Added) | suggested title in process | Please add as much of the following info as you can:

Title: Brazzos (2022)

Type (film/tv show): TV series - detective drama

Film or show in which it appears: Only Murders in the Building

Is the parent film/show streaming anywhere? Yes - Hulu

About when in the parent film/show does it appear? Ep. 2x06 - ... | 1.0 | Add Brazzos (2022) from "Only Murders in the Building" (Screenshots and Poster Added) - Please add as much of the following info as you can:

Title: Brazzos (2022)

Type (film/tv show): TV series - detective drama

Film or show in which it appears: Only Murders in the Building

Is the parent film/show streamin... | process | add brazzos from only murders in the building screenshots and poster added please add as much of the following info as you can title brazzos type film tv show tv series detective drama film or show in which it appears only murders in the building is the parent film show streaming anyw... | 1 |

19,629 | 25,986,244,980 | IssuesEvent | 2022-12-20 00:40:25 | devssa/onde-codar-em-salvador | https://api.github.com/repos/devssa/onde-codar-em-salvador | closed | SYSTEMS ANALYST at [AVANSYS] | SALVADOR WORDPRESS HELP WANTED GEOPROCESSAMENTO ARCGIS JOOMLA Stale | <!--

==================================================

PLEASE ONLY POST IF THE JOB IS IN SALVADOR OR NEIGHBORING CITIES!

Use: "Desenvolvedor Front-end" instead of

"Front-End Developer" \o/

Example: `[JAVASCRIPT] [MYSQL] [NODE.JS] Desenvolvedor Front-End na [NOME DA EMPRESA]`

========================... | 1.0 | SYSTEMS ANALYST at [AVANSYS] - <!--

==================================================

PLEASE ONLY POST IF THE JOB IS IN SALVADOR OR NEIGHBORING CITIES!

Use: "Desenvolvedor Front-end" instead of

"Front-End Developer" \o/

Example: `[JAVASCRIPT] [MYSQL] [NODE.JS] Desenvolvedor Front-End na [NOME DA... | process | systems analyst at please only post if the job is in salvador or neighboring cities use desenvolvedor front end instead of front end developer o example desenvolvedor front end na ... | 1 |

61,424 | 25,518,311,625 | IssuesEvent | 2022-11-28 18:10:51 | cityofaustin/atd-data-tech | https://api.github.com/repos/cityofaustin/atd-data-tech | closed | [Enhancement] Show the dev environment alert banner in all environments except production | Service: Dev Type: Enhancement Product: Moped Type: Snackoo 🍫 Project: Moped v2.0 | To stay on the safe side, we should always show the alert banner indicating when you're not in the production Moped environment.

[Discussion](https://austininnovation.slack.com/archives/CNUEPKLB1/p1668562704235619). | 1.0 | [Enhancement] Show the dev environment alert banner in all environments except production - To stay on the safe side, we should always show the alert banner indicating when you're not in the production Moped environment.

[Discussion](https://austininnovation.slack.com/archives/CNUEPKLB1/p1668562704235619). | non_process | show the dev environment alert banner in all environments except production to stay on the safe side we should always show the alert banner indicating when you re not in the production moped environment | 0 |

13,658 | 16,373,579,537 | IssuesEvent | 2021-05-15 16:43:47 | bridgetownrb/bridgetown | https://api.github.com/repos/bridgetownrb/bridgetown | closed | Add /bin/false to netlify configuration | process | Currently Netlify routinely fails to build the site due to it thinking there are no changes to the website, even though we want it to build due to other underlying code or data changes. Apparently we can fix this by adding to the `netlify.toml` file:

```

[build]

ignore = "/bin/false"

``` | 1.0 | Add /bin/false to netlify configuration - Currently Netlify routinely fails to build the site due to it thinking there are no changes to the website, even though we want it to build due to other underlying code or data changes. Apparently we can fix this by adding to the `netlify.toml` file:

```

[build]

ignore =... | process | add bin false to netlify configuration currently netlify routinely fails to build the site due to it thinking there are no changes to the website even though we want it to build due to other underlying code or data changes apparently we can fix this by adding to the netlify toml file ignore bin... | 1 |

213,522 | 24,007,142,565 | IssuesEvent | 2022-09-14 15:36:13 | centrica-engineering/muon | https://api.github.com/repos/centrica-engineering/muon | closed | CVE-2022-25858 (High) detected in terser-5.12.1.tgz, terser-4.8.0.tgz - autoclosed | security vulnerability | ## CVE-2022-25858 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>terser-5.12.1.tgz</b>, <b>terser-4.8.0.tgz</b></p></summary>

<p>

<details><summary><b>terser-5.12.1.tgz</b></p></sum... | True | CVE-2022-25858 (High) detected in terser-5.12.1.tgz, terser-4.8.0.tgz - autoclosed - ## CVE-2022-25858 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>terser-5.12.1.tgz</b>, <b>terser... | non_process | cve high detected in terser tgz terser tgz autoclosed cve high severity vulnerability vulnerable libraries terser tgz terser tgz terser tgz javascript parser mangler compressor and beautifier toolkit for library home page a href path ... | 0 |

8,490 | 11,647,041,824 | IssuesEvent | 2020-03-01 13:00:32 | processing/processing | https://api.github.com/repos/processing/processing | closed | enum & interface after a bad line logs an error | preprocessor | ## Description

Entering (just pasting) the following code throws a long error in the console:

```java

void f (int) {}

enum e {a}

interface i {}

```

does strange stuff.

## Expected Behavior

Some error should appear in the errors tab about the unnamed parameter `int`

## Current Behavior

This is written t... | 1.0 | enum & interface after a bad line logs an error - ## Description

Entering (just pasting) the following code throws a long error in the console:

```java

void f (int) {}

enum e {a}

interface i {}

```

does strange stuff.

## Expected Behavior

Some error should appear in the errors tab about the unnamed paramet... | process | enum interface after a bad line logs an error description entering just pasting the following code throws a long error in the console java void f int enum e a interface i does strange stuff expected behavior some error should appear in the errors tab about the unnamed paramet... | 1 |

160,183 | 13,783,358,924 | IssuesEvent | 2020-10-08 19:05:29 | ni/niveristand-scan-engine-ethercat-custom-device | https://api.github.com/repos/ni/niveristand-scan-engine-ethercat-custom-device | closed | Not correct "Add-On Installation Instructions" | bug documentation | **Describe the bug**

Repository don`t have "Scan Engine" folder.

**Desktop (please complete the following information):**

- OS: Windows 10

- Veristand: 2019

- LabVIEW: 2019

**Additional context**

If i put folder "Custom Device Source" to "<Public Documents>\National Instruments\NI VeriStand 20xx\Custom Dev... | 1.0 | Not correct "Add-On Installation Instructions" - **Describe the bug**

Repository don`t have "Scan Engine" folder.

**Desktop (please complete the following information):**

- OS: Windows 10

- Veristand: 2019

- LabVIEW: 2019

**Additional context**

If i put folder "Custom Device Source" to "<Public Documents>\... | non_process | not correct add on installation instructions describe the bug repository don t have scan engine folder desktop please complete the following information os windows veristand labview additional context if i put folder custom device source to national instruments ni ... | 0 |

317,529 | 9,666,374,045 | IssuesEvent | 2019-05-21 10:38:57 | DigitalCampus/oppia-mobile-android | https://api.github.com/repos/DigitalCampus/oppia-mobile-android | closed | Points activity graph page - not showing description correctly | Medium priority bug | The description in the points list is showing "Description 1" etc rather than the actual description of the points (eg "for completing activity xxxx"), see:

| 1.0 | Points activity graph page - not showing description correctly - The description in the points list is showing "Description 1" etc rather than the actual description of the points (eg "for completing activity xxxx"), see:

, with a breakpoint at

https://githu... | 1.0 | Inconsistencies between radiance calculations, unexpected impact of changing ε - Differences between radiances calculated directly and through the symbolic measurement equation are too large when either emissivity or non-linearity are switched off individually. With a codebase that includes a15aeed and 9e67af7 (such t... | non_process | inconsistencies between radiance calculations unexpected impact of changing ε differences between radiances calculated directly and through the symbolic measurement equation are too large when either emissivity or non linearity are switched off individually with a codebase that includes and such that shoul... | 0 |

3,337 | 6,472,857,751 | IssuesEvent | 2017-08-17 14:47:37 | uvacw/inca | https://api.github.com/repos/uvacw/inca | closed | Sentiment analysis | 2017_CorpCom_PG_brainstorm CorpCom PersCom PROCESSORS | PersCom: Hanneke: Automatic coding/help with coding this content. Things that could be coded automatically are likes/comments/tone of comments | 1.0 | Sentiment analysis - PersCom: Hanneke: Automatic coding/help with coding this content. Things that could be coded automatically are likes/comments/tone of comments | process | sentiment analysis perscom hanneke automatic coding help with coding this content things that could be coded automatically are likes comments tone of comments | 1 |

2,974 | 10,708,108,267 | IssuesEvent | 2019-10-24 18:56:40 | 18F/cg-product | https://api.github.com/repos/18F/cg-product | closed | Fix Kubernetes pod not running false alarms | contractor-3-maintainability operations | Starting in August or September, we started getting alerts in Prometheus for "Kubernetes pod not running" that seem to be for pods that either are running, or have been deleted and replaced. These alerts seem to never clear, making it difficult to tell when there are real issues with kubernetes.

[Slack thread about ... | True | Fix Kubernetes pod not running false alarms - Starting in August or September, we started getting alerts in Prometheus for "Kubernetes pod not running" that seem to be for pods that either are running, or have been deleted and replaced. These alerts seem to never clear, making it difficult to tell when there are real i... | non_process | fix kubernetes pod not running false alarms starting in august or september we started getting alerts in prometheus for kubernetes pod not running that seem to be for pods that either are running or have been deleted and replaced these alerts seem to never clear making it difficult to tell when there are real i... | 0 |

46,944 | 24,794,622,649 | IssuesEvent | 2022-10-24 16:10:55 | iree-org/iree | https://api.github.com/repos/iree-org/iree | closed | Add `hal_inline` dialect/module for tiny environments. | runtime performance ⚡ | For environments where the execution model is known to be exclusively local and inline (embedded systems) we can have a paired down HAL that pretty much only contains executable support. The idea is to still use HAL executable translation in the compiler but lowering the stream dialect to a new lightweight dialect that... | True | Add `hal_inline` dialect/module for tiny environments. - For environments where the execution model is known to be exclusively local and inline (embedded systems) we can have a paired down HAL that pretty much only contains executable support. The idea is to still use HAL executable translation in the compiler but lowe... | non_process | add hal inline dialect module for tiny environments for environments where the execution model is known to be exclusively local and inline embedded systems we can have a paired down hal that pretty much only contains executable support the idea is to still use hal executable translation in the compiler but lowe... | 0 |

600,891 | 18,361,298,959 | IssuesEvent | 2021-10-09 08:48:36 | AY2122S1-CS2103T-W11-2/tp | https://api.github.com/repos/AY2122S1-CS2103T-W11-2/tp | closed | Adding and removing fields from Staff class | type.Task priority.High severity.High | To remove: Tags

To add: Role, Status, Index, Schedule | 1.0 | Adding and removing fields from Staff class - To remove: Tags

To add: Role, Status, Index, Schedule | non_process | adding and removing fields from staff class to remove tags to add role status index schedule | 0 |

52,679 | 7,780,068,631 | IssuesEvent | 2018-06-05 18:49:48 | openmpf/openmpf | https://api.github.com/repos/openmpf/openmpf | closed | Update online docs with Darknet component | documentation release 2.1.0 | The Darknet component needs to be added to the table on [this page](https://openmpf.github.io/docs/site/) and the bulleted list on [this page](https://openmpf.github.io). | 1.0 | Update online docs with Darknet component - The Darknet component needs to be added to the table on [this page](https://openmpf.github.io/docs/site/) and the bulleted list on [this page](https://openmpf.github.io). | non_process | update online docs with darknet component the darknet component needs to be added to the table on and the bulleted list on | 0 |

17,834 | 23,773,303,651 | IssuesEvent | 2022-09-01 18:21:05 | Azure/azure-sdk-tools | https://api.github.com/repos/Azure/azure-sdk-tools | opened | Onboarding Step: Cadl | Cadl Engagement Experience WS: Process Tools & Automation | The purpose of this Epic is to define the gaps on the onboarding process inside the Azure SDK Release process affected by Cadl.

Some general parts on the step that need to be accounted for:

- New Service Setup:

- Get Started with Cadl documentation

- Necessary permissions for tooling around Cadl

- Existing... | 1.0 | Onboarding Step: Cadl - The purpose of this Epic is to define the gaps on the onboarding process inside the Azure SDK Release process affected by Cadl.

Some general parts on the step that need to be accounted for:

- New Service Setup:

- Get Started with Cadl documentation

- Necessary permissions for tooling ... | process | onboarding step cadl the purpose of this epic is to define the gaps on the onboarding process inside the azure sdk release process affected by cadl some general parts on the step that need to be accounted for new service setup get started with cadl documentation necessary permissions for tooling ... | 1 |

2,625 | 5,399,567,119 | IssuesEvent | 2017-02-27 19:46:31 | metabase/metabase | https://api.github.com/repos/metabase/metabase | closed | Wrong format for BigQuery timestamp in filters | Bug Database/BigQuery Parameters/Variables Query Processor | - Chrome 55

- Windows 7

- BigQuery

- Metabase 0.21.1

- Metabase hosting environment: Google App Engine

- Metabase internal database: Google SQL

When using a custom SQL request with a date type filter :

```

SELECT foo

FROM bar

WHERE timestamp >= {{startdate}}

```

I have the error... | 1.0 | Wrong format for BigQuery timestamp in filters - - Chrome 55

- Windows 7

- BigQuery

- Metabase 0.21.1

- Metabase hosting environment: Google App Engine

- Metabase internal database: Google SQL

When using a custom SQL request with a date type filter :

```

SELECT foo

FROM bar

WHERE t... | process | wrong format for bigquery timestamp in filters chrome windows bigquery metabase metabase hosting environment google app engine metabase internal database google sql when using a custom sql request with a date type filter select foo from bar where tim... | 1 |

16,189 | 20,626,811,925 | IssuesEvent | 2022-03-07 23:45:53 | bartvanosnabrugge/summaWorkflow_public | https://api.github.com/repos/bartvanosnabrugge/summaWorkflow_public | opened | 4b_remapping/2_forcing/3_temperature_lapsing_and_datastep | function conversion model agnostic processing | - [ ] copy/ change and adjust code to work as python function

- [ ] make sure to add docstring in the sphinx format (see for example util/util.py - read_summa_workflow_control_file or data_specific_processing/merit.py)

- [ ] Add to the description parts of the readme.rst file that come with the summa-cwarhm script th... | 1.0 | 4b_remapping/2_forcing/3_temperature_lapsing_and_datastep - - [ ] copy/ change and adjust code to work as python function

- [ ] make sure to add docstring in the sphinx format (see for example util/util.py - read_summa_workflow_control_file or data_specific_processing/merit.py)

- [ ] Add to the description parts of t... | process | remapping forcing temperature lapsing and datastep copy change and adjust code to work as python function make sure to add docstring in the sphinx format see for example util util py read summa workflow control file or data specific processing merit py add to the description parts of the read... | 1 |

9,959 | 12,991,950,199 | IssuesEvent | 2020-07-23 05:28:56 | GoogleCloudPlatform/stackdriver-sandbox | https://api.github.com/repos/GoogleCloudPlatform/stackdriver-sandbox | closed | [docs] Add section to User Guide with ./destroy.sh instructions | priority: p2 type: process | In order for users to complete their use of Stackdriver Sandbox without incurring additional billing, it is important for the users to run the ./destroy.sh script at the end of their use.

However, the User Guide does not currently have a section instructing users to do so. A section near the end of the User Guide (b... | 1.0 | [docs] Add section to User Guide with ./destroy.sh instructions - In order for users to complete their use of Stackdriver Sandbox without incurring additional billing, it is important for the users to run the ./destroy.sh script at the end of their use.

However, the User Guide does not currently have a section instr... | process | add section to user guide with destroy sh instructions in order for users to complete their use of stackdriver sandbox without incurring additional billing it is important for the users to run the destroy sh script at the end of their use however the user guide does not currently have a section instructin... | 1 |

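118,153 | 25,262,564,247 | IssuesEvent | 2022-11-16 00:28:22 | vektor-inc/vk-blocks-pro | https://api.github.com/repos/vektor-inc/vk-blocks-pro | closed | Fix how register_setting parameters are retrieved | Code Quality | https://developer.wordpress.org/reference/functions/register_setting/

Retire the approach of calling get_properties and get_defaults through options_scheme

While building the format settings ( #1057 #1367 ) and the block manager (#1047 ), I ran into a problem: setting properties and defaults from one combined scheme cannot cope with an array nested inside an array. I therefore plan to define properties and defaults separately.

Somewhat verbose as that may be, properties and defaul... | 1.0 | Fix how register_setting parameters are retrieved - https://developer.wordpress.org/reference/functions/register_setting/

Retire the approach of calling get_properties and get_defaults through options_scheme

While building the format settings ( #1057 #1367 ) and the block manager (#1047 ), I ran into a problem: setting properties and defaults from one combined scheme cannot cope with an array nested inside an array. I therefore plan to define properties and defaults separately.

Somewhat... | non_process | fix how register setting parameters are retrieved retire the approach of calling get properties and get defaults through options scheme while building the format settings and the block manager i ran into a problem setting properties and defaults from one combined scheme cannot cope with an array nested inside an array i therefore plan to define properties and defaults separately somewhat verbose as that may be it lets properties and defaults be defined flexibly so i think the result will be easier to understand any... | 0 |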

431,268 | 30,225,651,716 | IssuesEvent | 2023-07-06 00:05:57 | Requisitos-de-Software/2023.1-Simplenote | https://api.github.com/repos/Requisitos-de-Software/2023.1-Simplenote | closed | Fix project documents based on group 4's evaluation | documentation Revisão Correção Escrita | ## Description

Fix project documents based on group 4's evaluation

## Tasks

- Planning

- [x] Rich picture

- [x] App

- [x] Schedule

- [x] Actual schedule

- [x] Tools

- [x] Methodologies

- Elicitation

- [x] Introspection

- [x] Brainstorming

- [x] Intervi... | 1.0 | Fix project documents based on group 4's evaluation - ## Description

Fix project documents based on group 4's evaluation

## Tasks

- Planning

- [x] Rich picture

- [x] App

- [x] Schedule

- [x] Actual schedule

- [x] Tools

- [x] Methodologies

- Elicitatio... | non_process | fix project documents based on group s evaluation description fix project documents based on group s evaluation tasks planning rich picture app schedule actual schedule tools methodologies elicitation in... | 0 |

1,335 | 3,899,642,871 | IssuesEvent | 2016-04-17 21:19:58 | kerubistan/kerub | https://api.github.com/repos/kerubistan/kerub | closed | allow user to upload disk image | component:data processing enhancement priority: normal | user should be able to upload a disk image as a file, the file must be stored on a host | 1.0 | allow user to upload disk image - user should be able to upload a disk image as a file, the file must be stored on a host | process | allow user to upload disk image user should be able to upload a disk image as a file the file must be stored on a host | 1 |

22,226 | 30,775,503,814 | IssuesEvent | 2023-07-31 06:02:13 | h4sh5/npm-auto-scanner | https://api.github.com/repos/h4sh5/npm-auto-scanner | opened | electron-mocha 12.0.1 has 1 guarddog issues | npm-silent-process-execution | ```{"npm-silent-process-execution":[{"code":" const child = spawn(process.execPath, ['cleanup.js', userData], {\n detached: true,\n stdio: 'ignore',\n env: { ELECTRON_RUN_AS_NODE: 1 },\n cwd: __dirname\n })","location":"package/lib/main.js:53","message":"This package is silently executing another executab... | 1.0 | electron-mocha 12.0.1 has 1 guarddog issues - ```{"npm-silent-process-execution":[{"code":" const child = spawn(process.execPath, ['cleanup.js', userData], {\n detached: true,\n stdio: 'ignore',\n env: { ELECTRON_RUN_AS_NODE: 1 },\n cwd: __dirname\n })","location":"package/lib/main.js:53","message":"This ... | process | electron mocha has guarddog issues npm silent process execution n detached true n stdio ignore n env electron run as node n cwd dirname n location package lib main js message this package is silently executing another executable | 1 |

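11,891 | 14,686,116,231 | IssuesEvent | 2021-01-01 13:17:10 | yuta252/startlens_web_backend | https://api.github.com/repos/yuta252/startlens_web_backend | closed | Add JWT-based user authentication and user features | dev process | ## Overview

Implement user authentication with JWT tokens so that the user's login state can be managed when the frontend calls the API. Implement the processing needed for new user registration and login.

## Changes

---

- [x] In TokensController, generate a JWT token at login and return it in the response

- [x] In UsersController, create the user and its associated profile at registration

- [x] Change JSON responses from the default snake_case to camelCase

- [x] Authorization header JWT... | 1.0 | Add JWT-based user authentication and user features - ## Overview

Implement user authentication with JWT tokens so that the user's login state can be managed when the frontend calls the API. Implement the processing needed for new user registration and login.

## Changes

---

- [x] In TokensController, generate a JWT token at login and return it in the response

- [x] In UsersController, create the user and its associated profile at registration

- [x] Change JSON responses from the default snake_case to camelCase

... | process | add jwt based user authentication and user features overview implement user authentication with jwt tokens so that the user s login state can be managed when the frontend calls the api implement the processing needed for new user registration and login changes in tokenscontroller generate a jwt token at login and return it in the response in userscontroller create the user and its associated profile at registration change json responses from the default snake case to camelcase a... | 1 |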

225,524 | 24,855,885,702 | IssuesEvent | 2022-10-27 02:12:10 | hackforla/ops | https://api.github.com/repos/hackforla/ops | opened | Split the project-leads user group into developer-group, ops-group, and project-leads group | feature: AWS IAM feature: administrative feature: security | ### Overview

AWS best practice is to give minimum permission to users [only what's needed for the task]. Some users currently have more than that.

### Action Items

- [ ] Create a developer-group

- [ ] Create an ops-group

- [ ] Add relevant permissions to developer-group and ops-group

- [ ] Reassign groups t... | True | Split the project-leads user group into developer-group, ops-group, and project-leads group - ### Overview

AWS best practice is to give minimum permission to users [only what's needed for the task]. Some users currently have more than that.

### Action Items

- [ ] Create a developer-group

- [ ] Create an ops-g... | non_process | split the project leads user group into developer group ops group and project leads group overview aws best practice is to give minimum permission to users some users currently have more than that action items create a developer group create an ops group add relevant permissions t... | 0 |

20,819 | 27,578,844,541 | IssuesEvent | 2023-03-08 14:54:02 | ukri-excalibur/excalibur-tests | https://api.github.com/repos/ukri-excalibur/excalibur-tests | opened | Investigate what other environment variables are needed for postprocessing | postprocessing | ... in addition to the fields in the perflogs - things like compilers and their versions, MPI implementations, the benchmark app version, etc.

- Could any of them be fished out of the perflog fields?

- Should we just ask users to add whatever they need logged as an environment variable? | 1.0 | Investigate what other environment variables are needed for postprocessing - ... in addition to the fields in the perflogs - things like compilers and their versions, MPI implementations, the benchmark app version, etc.

- Could any of them be fished out of the perflog fields?

- Should we just ask users to add whatev... | process | investigate what other environment variables are needed for postprocessing in addition to the fields in the perflogs things like compilers and their versions mpi implementations the benchmark app version etc could any of them be fished out of the perflog fields should we just ask users to add whatev... | 1 |

97,344 | 8,653,058,953 | IssuesEvent | 2018-11-27 09:51:17 | humera987/FXLabs-Test-Automation | https://api.github.com/repos/humera987/FXLabs-Test-Automation | closed | new tested 27 : ApiV1IssuesProjectIdIdGetQueryParamPagesizeDdos | new tested 27 | Project : new tested 27

Job : UAT

Env : UAT

Region : US_WEST

Result : fail

Status Code : 404

Headers : {X-Content-Type-Options=[nosniff], X-XSS-Protection=[1; mode=block], Cache-Control=[no-cache, no-store, max-age=0, must-revalidate], Pragma=[no-cache], Expires=[0], X-Frame-Options=[DENY], Set-Cook... | 1.0 | new tested 27 : ApiV1IssuesProjectIdIdGetQueryParamPagesizeDdos - Project : new tested 27

Job : UAT

Env : UAT

Region : US_WEST

Result : fail

Status Code : 404

Headers : {X-Content-Type-Options=[nosniff], X-XSS-Protection=[1; mode=block], Cache-Control=[no-cache, no-store, max-age=0, must-revalidate]... | non_process | new tested project new tested job uat env uat region us west result fail status code headers x content type options x xss protection cache control pragma expires x frame options set cookie content type transfer encoding date endpoint r... | 0 |

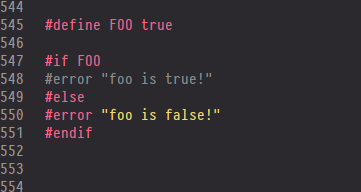

14,996 | 18,676,778,517 | IssuesEvent | 2021-10-31 17:43:14 | slynch8/10x | https://api.github.com/repos/slynch8/10x | closed | Preprocessor doesn't recognise "true" | bug Priority 3 trivial preprocessor |

If I use this instead:

`#define FOO 1`

Then the correct branch is used. | 1.0 | Preprocessor doesn't recognise "true" -

If I use this instead:

`#define FOO 1`

Then the correct branch is used. | process | preprocessor doesn t recognise true if i use this instead define foo then the correct branch is used | 1 |

6,662 | 9,782,046,282 | IssuesEvent | 2019-06-07 21:46:29 | googleapis/google-cloud-java | https://api.github.com/repos/googleapis/google-cloud-java | closed | Dependency convergence errors in datastore | type: process | It's not immediately obvious to me why I see this and the checks in this project's own pom.xml don't; but I definitely see this in a project that does nothing but import the datastore dependency from the google-cloud-java BOM and check dependency convergence:

```

[INFO] Scanning for projects...

[INFO]

[INFO] ---... | 1.0 | Dependency convergence errors in datastore - It's not immediately obvious to me why I see this and the checks in this project's own pom.xml don't; but I definitely see this in a project that does nothing but import the datastore dependency from the google-cloud-java BOM and check dependency convergence:

```

[INFO] ... | process | dependency convergence errors in datastore it s not immediately obvious to me why i see this and the checks in this project s own pom xml don t but i definitely see this in a project that does nothing but import the datastore dependency from the google cloud java bom and check dependency convergence scann... | 1 |

31,183 | 6,443,910,982 | IssuesEvent | 2017-08-12 02:29:03 | opendatakit/opendatakit | https://api.github.com/repos/opendatakit/opendatakit | closed | Error Display when Redirect to IP Public | Aggregate Priority-Medium Type-Defect | Originally reported on Google Code with ID 1124

```

What steps will reproduce the problem?

1.I Just Deploy the ODK Aggregate on Windows Server 2012 R2

2.Install MYSQL

3. Install ODK

What is the expected output? What do you see instead?

setting up a localhost odk aggregate + mysql + tomcat.

Instead, I still can not di... | 1.0 | Error Display when Redirect to IP Public - Originally reported on Google Code with ID 1124

```

What steps will reproduce the problem?

1.I Just Deploy the ODK Aggregate on Windows Server 2012 R2

2.Install MYSQL

3. Install ODK

What is the expected output? What do you see instead?

setting up a localhost odk aggregate + m... | non_process | error display when redirect to ip public originally reported on google code with id what steps will reproduce the problem i just deploy the odk aggregate on windows server install mysql install odk what is the expected output what do you see instead setting up a localhost odk aggregate mysql ... | 0 |

9,918 | 2,616,010,381 | IssuesEvent | 2015-03-02 00:53:53 | jasonhall/bwapi | https://api.github.com/repos/jasonhall/bwapi | closed | BWAPI fails to load AIModule or crashes | auto-migrated Type-Defect | ```

When I build the ExampleAIModule in Debug mode BWAPI fails to load it.

The LoadLibrary call in BWAPI Returns NULL and GetLastError says 998:

Invalid memory access. either something is wrong with dynamic linking or

the BWAPI::Init() call in the DLL makes problems:

http://support.microsoft.com/kb/196069

Sometimes t... | 1.0 | BWAPI fails to load AIModule or crashes - ```

When I build the ExampleAIModule in Debug mode BWAPI fails to load it.

The LoadLibrary call in BWAPI Returns NULL and GetLastError says 998:

Invalid memory access. either something is wrong with dynamic linking or

the BWAPI::Init() call in the DLL makes problems:

http://su... | non_process | bwapi fails to load aimodule or crashes when i build the exampleaimodule in debug mode bwapi fails to load it the loadlibrary call in bwapi returns null and getlasterror says invalid memory access either something is wrong with dynamic linking or the bwapi init call in the dll makes problems sometime... | 0 |

18,862 | 24,783,515,237 | IssuesEvent | 2022-10-24 07:55:12 | solid/specification | https://api.github.com/repos/solid/specification | closed | Strategy for test-suite | status: Needs Process Help | Creating this issue to bring to attention the need for a test suite strategy. Note that there was quite a bit of progress made some months ago by @kjetilk on https://github.com/solid/test-suite, but there hasn't been much activity of late, or use by others working in various areas of the specification and/or implementa... | 1.0 | Strategy for test-suite - Creating this issue to bring to attention the need for a test suite strategy. Note that there was quite a bit of progress made some months ago by @kjetilk on https://github.com/solid/test-suite, but there hasn't been much activity of late, or use by others working in various areas of the speci... | process | strategy for test suite creating this issue to bring to attention the need for a test suite strategy note that there was quite a bit of progress made some months ago by kjetilk on but there hasn t been much activity of late or use by others working in various areas of the specification and or implementations ... | 1 |

275,568 | 20,925,901,642 | IssuesEvent | 2022-03-24 22:52:50 | capactio/capact | https://api.github.com/repos/capactio/capact | closed | Implement Go Template storage backend service | enhancement area/hub area/documentation | ## Description

In Capact manifests, Content Developer is able to render `config` based on other TypeInstances data, see:

https://github.com/capactio/hub-manifests/blob/9987550e2341cc761c8613cc4e6bb716d5a328f1/manifests/implementation/gcp/cloudsql/postgresql/install-0.2.0.yaml#L102-L108

This is not supported dir... | 1.0 | Implement Go Template storage backend service - ## Description

In Capact manifests, Content Developer is able to render `config` based on other TypeInstances data, see:

https://github.com/capactio/hub-manifests/blob/9987550e2341cc761c8613cc4e6bb716d5a328f1/manifests/implementation/gcp/cloudsql/postgresql/install-0... | non_process | implement go template storage backend service description in capact manifests content developer is able to render config based on other typeinstances data see this is not supported directly in new external backends we want to have a dedicated backend which will do this projection read more on ... | 0 |

128,682 | 18,070,022,569 | IssuesEvent | 2021-09-21 01:02:15 | hugh-whitesource/NodeGoat-1 | https://api.github.com/repos/hugh-whitesource/NodeGoat-1 | closed | WS-2019-0231 (Medium) detected in adm-zip-0.4.4.tgz - autoclosed | security vulnerability | ## WS-2019-0231 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>adm-zip-0.4.4.tgz</b></p></summary>

<p>A Javascript implementation of zip for nodejs. Allows user to create or extract... | True | WS-2019-0231 (Medium) detected in adm-zip-0.4.4.tgz - autoclosed - ## WS-2019-0231 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>adm-zip-0.4.4.tgz</b></p></summary>

<p>A Javascript... | non_process | ws medium detected in adm zip tgz autoclosed ws medium severity vulnerability vulnerable library adm zip tgz a javascript implementation of zip for nodejs allows user to create or extract zip files both in memory or to from disk library home page a href dependency h... | 0 |

17,166 | 22,743,192,477 | IssuesEvent | 2022-07-07 06:43:34 | camunda/zeebe | https://api.github.com/repos/camunda/zeebe | opened | Remove LogStream writers from Engine | kind/toil team/distributed team/process-automation area/maintainability | **Description**

Part of #9600

In the current state the TypedProcessors (which might become the Engine later) and other entities, like [JobTimeoutTrigger](https://github.com/camunda/zeebe/blob/2d4901c0e516b7fa8b1cf3408b06708c1644ca57/engine/src/main/java/io/camunda/zeebe/engine/processing/job/JobTimeoutTrigger.ja... | 1.0 | Remove LogStream writers from Engine - **Description**

Part of #9600

In the current state the TypedProcessors (which might become the Engine later) and other entities, like [JobTimeoutTrigger](https://github.com/camunda/zeebe/blob/2d4901c0e516b7fa8b1cf3408b06708c1644ca57/engine/src/main/java/io/camunda/zeebe/eng... | process | remove logstream writers from engine description part of in the current state the typedprocessors which might become the engine later and other entities like deploymentdistributor etc have knowledge about how to write records to the logstream abstraction ideally they shouldn t care about that ... | 1 |

18,718 | 5,696,544,882 | IssuesEvent | 2017-04-16 13:00:17 | javaparser/javaparser | https://api.github.com/repos/javaparser/javaparser | closed | Generators should sort properties (minor) | Feature request Metamodel/code generation | In this way we would not have irrelevant changes in generated code | 1.0 | Generators should sort properties (minor) - In this way we would not have irrelevant changes in generated code | non_process | generators should sort properties minor in this way we would not have irrelevant changes in generated code | 0 |

13,377 | 15,838,344,615 | IssuesEvent | 2021-04-06 22:18:03 | 90301/TextReplace | https://api.github.com/repos/90301/TextReplace | closed | Line Instance Count | Log Processor | LineMatchCount function:

- Text to match

- Min number of instances per line

- [Optional] > or <

If a match is found:

Return the line.

Possibly add another that counts the instances per line and returns the count.

Ex:

LineMatchCount( [ , 2 )

this[1][2] <---- would trigger

this[1] <----- would not trig... | 1.0 | Line Instance Count - LineMatchCount function:

- Text to match

- Min number of instances per line

- [Optional] > or <

If a match is found:

Return the line.

Possibly add another that counts the instances per line and returns the count.

Ex:

LineMatchCount( [ , 2 )

this[1][2] <---- would trigger

this[1] ... | process | line instance count linematchcount function text to match min number of instances per line or if a match is found return the line possibly add another that counts the instances per line and returns the count ex linematchcount this would trigger this would n... | 1 |
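
The LineMatchCount request above can be read as a small line filter. The following is only a minimal Python sketch of one possible reading, not the TextReplace implementation: the names `line_match_count` and `line_match_counts` are hypothetical, and treating the default comparison as "at least the minimum" is an assumption based on the `this[1][2]` example.

```python
import operator

# Hypothetical mapping for the optional comparison argument described in the issue.
_COMPARISONS = {">=": operator.ge, ">": operator.gt, "<": operator.lt}


def line_match_count(lines, text, min_count, comparison=">="):
    """Return the lines whose number of occurrences of `text` satisfies the comparison.

    Assumption: with no comparison given, a line matches when it contains
    at least `min_count` occurrences of `text`.
    """
    compare = _COMPARISONS[comparison]
    return [line for line in lines if compare(line.count(text), min_count)]


def line_match_counts(lines, text):
    """Variant mentioned in the issue: return each line with its occurrence count."""
    return [(line, line.count(text)) for line in lines]
```

Under these assumptions, `line_match_count(["this[1][2]", "this[1]"], "[", 2)` keeps only the first line, which matches the example given in the issue.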

126,091 | 26,778,635,947 | IssuesEvent | 2023-01-31 19:11:16 | SAST-org/SAST-Test-Repo-a8102749-e741-4799-bcc4-443eaad2f363 | https://api.github.com/repos/SAST-org/SAST-Test-Repo-a8102749-e741-4799-bcc4-443eaad2f363 | opened | Code Security Report: 24 high severity findings, 27 total findings | code security findings | # Code Security Report

**Latest Scan:** 2023-01-31 07:10pm

**Total Findings:** 27

**Tested Project Files:** 117

**Detected Programming Languages:** 2

<!-- SAST-MANUAL-SCAN-START -->

- [ ] Check this box to manually trigger a scan

<!-- SAST-MANUAL-SCAN-END -->

## Language: JavaScript / Node.js

> No vulnerability find... | 1.0 | Code Security Report: 24 high severity findings, 27 total findings - # Code Security Report

**Latest Scan:** 2023-01-31 07:10pm

**Total Findings:** 27

**Tested Project Files:** 117

**Detected Programming Languages:** 2

<!-- SAST-MANUAL-SCAN-START -->

- [ ] Check this box to manually trigger a scan

<!-- SAST-MANUAL-SCA... | non_process | code security report high severity findings total findings code security report latest scan total findings tested project files detected programming languages check this box to manually trigger a scan language javascript node js no vulnerability findings ... | 0 |

218,147 | 7,330,614,761 | IssuesEvent | 2018-03-05 10:31:50 | NCEAS/metacat | https://api.github.com/repos/NCEAS/metacat | closed | automatically insert pubDate on registry submission | Category: registry Component: Bugzilla-Id Priority: Normal Status: Resolved Tracker: Bug | ---

Author Name: **Callie Bowdish** (Callie Bowdish)

Original Redmine Issue: 2211, https://projects.ecoinformatics.org/ecoinfo/issues/2211

Original Date: 2005-09-28

Original Assignee: Saurabh Garg

---

We need to automatically insert pubDate on registry submission. The 'pubDate'

field represents the date that the r... | 1.0 | automatically insert pubDate on registry submission - ---

Author Name: **Callie Bowdish** (Callie Bowdish)

Original Redmine Issue: 2211, https://projects.ecoinformatics.org/ecoinfo/issues/2211

Original Date: 2005-09-28

Original Assignee: Saurabh Garg

---

We need to automatically insert pubDate on registry submissio... | non_process | automatically insert pubdate on registry submission author name callie bowdish callie bowdish original redmine issue original date original assignee saurabh garg we need to automatically insert pubdate on registry submission the pubdate field represents the date that the resource ... | 0 |

2,796 | 5,724,417,765 | IssuesEvent | 2017-04-20 14:30:02 | openvstorage/framework | https://api.github.com/repos/openvstorage/framework | reopened | Add timeout parameter to the SSHClient | process_duplicate type_feature | Can you add a timeout parameter in SSHClient?

We use the SSHClient run command to verify some stuff for the external arakoons but if one node is down we receive a timeout error.

It takes 248 sec before the timeout error appears.

```

externalArakoons : Install config Arakoons ---------------------------- 248.39s

... | 1.0 | Add timeout parameter to the SSHClient - Can you add a timeout parameter in SSHClient?

We use the SSHClient run command to verify some stuff for the external arakoons but if one node is down we receive a timeout error.

It takes 248 sec before the timeout error appears.

```

externalArakoons : Install config Arakoo... | process | add timeout parameter to the sshclient can you add a timeout parameter in sshclient we use the sshclient run command to verify some stuff for the external arakoons but if one node is down we receive a timeout error it takes sec before the timeout error appears externalarakoons install config arakoons... | 1 |
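
The effect of the requested timeout parameter can be illustrated in isolation. The sketch below does not use the framework's SSHClient, whose API is not shown in this issue; it is a stand-in built on Python's standard `subprocess` module, and the helper name `run_with_timeout` is hypothetical. It shows how a caller could fail fast instead of waiting the full 248 seconds.

```python
import subprocess
import sys


def run_with_timeout(command, timeout_seconds):
    """Run a command and give up after `timeout_seconds`.

    Returns (succeeded, stdout). A timed-out command is reported as
    (False, "") rather than blocking the caller.
    """
    try:
        completed = subprocess.run(
            command,
            capture_output=True,
            text=True,
            timeout=timeout_seconds,
        )
    except subprocess.TimeoutExpired:
        # The child process is killed by subprocess.run before this is raised.
        return False, ""
    return completed.returncode == 0, completed.stdout


if __name__ == "__main__":
    # Stand-in for an unreachable node: a command that sleeps longer than the timeout.
    print(run_with_timeout([sys.executable, "-c", "import time; time.sleep(5)"], 0.5))
```

A real SSH client would more likely expose the timeout on connection setup and on command execution separately; that split is a design choice this sketch does not cover.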

82,080 | 3,602,667,322 | IssuesEvent | 2016-02-03 16:23:02 | rh-lab-q/rpg | https://api.github.com/repos/rh-lab-q/rpg | opened | c plugin (and any other compiled lang) plugin should uncheck BuildArch: noarch option | low_priority | It should be checked by default, and if any plugin finds a compilable file, it marks that in the Spec object. | 1.0 | c plugin (and any other compiled lang) plugin should uncheck BuildArch: noarch option - It should be checked by default, and if any plugin finds a compilable file, it marks that in the Spec object. | non_process | c plugin and any other compiled lang plugin should uncheck buildarch noarch option it should be checked by default and if any plugin finds a compilable file it marks that in the spec object | 0 |

14,068 | 16,890,535,793 | IssuesEvent | 2021-06-23 08:43:13 | arcus-azure/arcus.messaging | https://api.github.com/repos/arcus-azure/arcus.messaging | closed | Provide more flexible message parsing in message pump | area:message-processing feature | **Is your feature request related to a problem? Please describe.**

When adding new properties to the messaging model we do not always remember to update message consumers' projects

Because of that, the messages are no longer being processed by the defined message handler because it shows the following verbose level... | 1.0 | Provide more flexible message parsing in message pump - **Is your feature request related to a problem? Please describe.**

When adding new properties to the messaging model we do not always remember to update message consumers' projects

Because of that, the messages are no longer being processed by the defined mess... | process | provide more flexible message parsing in message pump is your feature request related to a problem please describe when adding new properties to the messaging model we do not always remember to update message consumers projects because of that the messages are no longer being processed by the defined mess... | 1 |

156,950 | 24,627,563,716 | IssuesEvent | 2022-10-16 18:09:35 | dotnet/efcore | https://api.github.com/repos/dotnet/efcore | closed | Backing field is null when lazy loading hasmany collection | closed-by-design customer-reported | Noticed that backing field collection is null if the property is configured to be lazy loaded. Once you access the property then the backing field gets loaded.

```C#

public class Blog

{

protected Blog() { }

public int BlogId { get; private set; }

public string BlogName { get; private set; }

p... | 1.0 | Backing field is null when lazy loading hasmany collection - Noticed that backing field collection is null if the property is configured to be lazy loaded. Once you access the property then the backing field gets loaded.

```C#

public class Blog

{

protected Blog() { }

public int BlogId { get; private set;... | non_process | backing field is null when lazy loading hasmany collection noticed that backing field collection is null if the property is configured to be lazy loaded once you access the property then the backing field gets loaded c public class blog protected blog public int blogid get private set ... | 0 |

17,277 | 23,067,845,172 | IssuesEvent | 2022-07-25 15:17:29 | open-telemetry/opentelemetry-collector-contrib | https://api.github.com/repos/open-telemetry/opentelemetry-collector-contrib | closed | ec2 resourcedetectionprocessor: Support configuring the http client used | processor/resourcedetection | **Is your feature request related to a problem? Please describe.**

The resource detection processors don't support configuring the http client they use while other exporters and receivers do. This is an issue when you want to configure settings like the http client timeout for processors.

**Describe the solution yo... | 1.0 | ec2 resourcedetectionprocessor: Support configuring the http client used - **Is your feature request related to a problem? Please describe.**

The resource detection processors don't support configuring the http client they use while other exporters and receivers do. This is an issue when you want to configure settings... | process | resourcedetectionprocessor support configuring the http client used is your feature request related to a problem please describe the resource detection processors don t support configuring the http client they use while other exporters and receivers do this is an issue when you want to configure settings l... | 1 |

17,870 | 23,814,254,681 | IssuesEvent | 2022-09-05 04:01:27 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | A validity time period is not correct at "Update a webhook" | automation/svc triaged cxp doc-enhancement process-automation/subsvc Pri2 | It is "1 year" not "10 years" at section "Update a webhook". Could you fix it?

_When a webhook is created, it has a validity time period of 10 years, after which it automatically expires_

https://docs.microsoft.com/en-us/azure/automation/automation-webhooks?tabs=portal#update-a-webhook

---

#### Document Details... | 1.0 | A validity time period is not correct at "Update a webhook" - It is "1 year" not "10 years" at section "Update a webhook". Could you fix it?

_When a webhook is created, it has a validity time period of 10 years, after which it automatically expires_

https://docs.microsoft.com/en-us/azure/automation/automation-webho... | process | a validity time period is not correct at update a webhook it is year not years at section update a webhook could you fix it when a webhook is created it has a validity time period of years after which it automatically expires document details ⚠ do not edit this section it i... | 1 |

48,654 | 12,227,178,887 | IssuesEvent | 2020-05-03 14:14:30 | stitchEm/stitchEm | https://api.github.com/repos/stitchEm/stitchEm | closed | Jetson Nano cmake Error | Build | I'm attempting to get StitchEm Studio running on a Nvidia Jetson Nano. I'm running into it complaining that several variables are used in the project but they are not set:

CMake Error: The following variables are used in this project, but they are set to NOTFOUND.

Please set them or make sure they are set and teste... | 1.0 | Jetson Nano cmake Error - I'm attempting to get StitchEm Studio running on a Nvidia Jetson Nano. I'm running into it complaining that several variables are used in the project but they are not set:

CMake Error: The following variables are used in this project, but they are set to NOTFOUND.

Please set them or make s... | non_process | jetson nano cmake error i m attempting to get stitchem studio running on a nvidia jetson nano i m running into it complaining that several variables are used in the project but they are not set cmake error the following variables are used in this project but they are set to notfound please set them or make s... | 0 |

4,062 | 6,994,135,421 | IssuesEvent | 2017-12-15 14:18:57 | symfony/symfony | https://api.github.com/repos/symfony/symfony | closed | [Process] ContextErrorException | Array to string conversion | Bug Process Status: Needs Review Status: Waiting feedback | | Q | A

| ---------------- | -----

| Bug report? | yes

| Feature request? | no

| BC Break report? | yes

| RFC? | no

| Symfony version | 3.4.2

Environment variables passed to `proc_open()` in https://github.com/symfony/symfony/blob/v3.4.2/src/Symfony/Component/Process/Process.... | 1.0 | [Process] ContextErrorException | Array to string conversion - | Q | A

| ---------------- | -----

| Bug report? | yes

| Feature request? | no

| BC Break report? | yes

| RFC? | no

| Symfony version | 3.4.2

Environment variables passed to `proc_open()` in https://github.com/sym... | process | contexterrorexception array to string conversion q a bug report yes feature request no bc break report yes rfc no symfony version environment variables passed to proc open in throw a contexterrorexcepti... | 1 |

3,274 | 6,359,073,793 | IssuesEvent | 2017-07-31 05:41:04 | dzhw/zofar | https://api.github.com/repos/dzhw/zofar | closed | create Wiki | category: service.processes prio: 1 status: testing type: backlog.task | Create the Wiki @ repository

- [x] Def. of Done

- [x] Sprint History

- [x] Refinement | 1.0 | create Wiki - Create the Wiki @ repository

- [x] Def. of Done

- [x] Sprint History

- [x] Refinement | process | create wiki create the wiki repository def of done sprint history refinement | 1 |

284,575 | 8,743,983,870 | IssuesEvent | 2018-12-12 20:49:26 | spacetelescope/jwql | https://api.github.com/repos/spacetelescope/jwql | closed | Provide some RESTful services/API in web app | Medium Priority Web Application enhancement | It would be useful to build in a RESTful API into our web application, so that users can easily get data programmatically. It could even be useful to us developers when we are in need of static data, and could cut down on the amount of code that we need to write in `views.py` and `data_containers.py`.

#### A simple ... | 1.0 | Provide some RESTful services/API in web app - It would be useful to build in a RESTful API into our web application, so that users can easily get data programmatically. It could even be useful to us developers when we are in need of static data, and could cut down on the amount of code that we need to write in `views.... | non_process | provide some restful services api in web app it would be useful to build in a restful api into our web application so that users can easily get data programmatically it could even be useful to us developers when we are in need of static data and could cut down on the amount of code that we need to write in views ... | 0 |

260,150 | 19,659,495,528 | IssuesEvent | 2022-01-10 15:41:19 | Nautilus-Cyberneering/nautilus-librarian | https://api.github.com/repos/Nautilus-Cyberneering/nautilus-librarian | closed | Improve docs about running commands locally | documentation | For some complex commands like the "Gold Image Processing", it could be convenient to explain how you can run tests locally. We have an explanation on the docs:

https://nautilus-cyberneering.github.io/nautilus-librarian/development/#run-workflows-locally

but it's deprecated and besides it only explains how to run... | 1.0 | Improve docs about running commands locally - For some complex commands like the "Gold Image Processing", it could be convenient to explain how you can run tests locally. We have an explanation on the docs:

https://nautilus-cyberneering.github.io/nautilus-librarian/development/#run-workflows-locally

but it's depr... | non_process | improve docs about running commands locally for some complex commands like the gold image processing it could be convenient to explain how you can run tests locally we have an explanation on the docs but it s deprecated and besides it only explains how to run github workflows you can also execute direct... | 0 |

20,549 | 27,210,425,419 | IssuesEvent | 2023-02-20 16:07:00 | cse442-at-ub/project_s23-iweatherify | https://api.github.com/repos/cse442-at-ub/project_s23-iweatherify | closed | Creating PHP Documentation | Processing Task Sprint 1 | **Task Tests**

*Test 1*

1) Open the Google Document called “PHP Documentation”

https://docs.google.com/document/d/1f2W6JGzp1QbLZvDrYjKrBlevV2UbyYYYefAmMZBztbA/edit

2) Read the “Getting Started with PHP” section

3) Read the “Recommended PHP Resources” section

4) Read the “Connecting to UB Servers” section

5) Complete t... | 1.0 | Creating PHP Documentation - **Task Tests**

*Test 1*

1) Open the Google Document called “PHP Documentation”

https://docs.google.com/document/d/1f2W6JGzp1QbLZvDrYjKrBlevV2UbyYYYefAmMZBztbA/edit

2) Read the “Getting Started with PHP” section

3) Read the “Recommended PHP Resources” section

4) Read the “Connecting to UB S... | process | creating php documentation task tests test open the google document called “php documentation” read the “getting started with php” section read the “recommended php resources” section read the “connecting to ub servers” section complete the tutorial | 1 |

77,564 | 14,883,743,415 | IssuesEvent | 2021-01-20 13:44:38 | JosefPihrt/Roslynator | https://api.github.com/repos/JosefPihrt/Roslynator | closed | [Question] Enable refactorings in vscode (RRxxxx) | VS Code | **Product and Version Used**:

IDE: vscode latest

config: `.editorconfig` (not rulesets)

**Steps to Reproduce**:

I add the three [nuget packages](https://github.com/JosefPihrt/Roslynator#nuget-analyzers) to the project.

The RCS analyzers work automatically.

How do I enable the refactorings (RRxxxx)?

e.g.... | 1.0 | [Question] Enable refactorings in vscode (RRxxxx) - **Product and Version Used**:

IDE: vscode latest

config: `.editorconfig` (not rulesets)

**Steps to Reproduce**:

I add the three [nuget packages](https://github.com/JosefPihrt/Roslynator#nuget-analyzers) to the project.

The RCS analyzers work automatically.

... | non_process | enable refactorings in vscode rrxxxx product and version used ide vscode latest config editorconfig not rulesets steps to reproduce i add the three to the project the rcs analyzers work automatically how do i enable the refactorings rrxxxx e g i tried dotnet diagnostic ... | 0 |

794,228 | 28,027,015,132 | IssuesEvent | 2023-03-28 09:47:36 | kubernetes/ingress-nginx | https://api.github.com/repos/kubernetes/ingress-nginx | closed | controller goes into CrashLoopBackOff if controller.publishService.pathOverride is invalid | needs-kind needs-triage needs-priority | **What happened**:

Deploying the controller with an invalid value for controller.publishService.pathOverride results in the controller exiting immediately after starting. This happened due to a typo in the configuration.

Similar reports in https://github.com/kubernetes/ingress-nginx/issues/7770.

Logs:

```

I0... | 1.0 | controller goes into CrashLoopBackOff if controller.publishService.pathOverride is invalid - **What happened**:

Deploying the controller with an invalid value for controller.publishService.pathOverride results in the controller exiting immediately after starting. This happened due to a typo in the configuration.

... | non_process | controller goes into crashloopbackoff if controller publishservice pathoverride is invalid what happened deploying the controller with a invalid value for controller publishservice pathoverride results in the controller exiting immediately after starting this happened due to a typo in the configuration ... | 0 |

460,977 | 13,221,599,214 | IssuesEvent | 2020-08-17 14:17:37 | clowdr-app/clowdr-web-app | https://api.github.com/repos/clowdr-app/clowdr-web-app | opened | Add Lobby to list of text chats | Medium priority | We've eliminated almost all of the session-level text chats, but there are still a number of places where the Lobby chat shows, and I think this is something we want to keep. But it is then a bit confusing that people can't find it in the usual list of text chats.

Not critical for ICFP, if not easy.

| 1.0 | Add Lobby to list of text chats - We've eliminated almost all of the session-level text chats, but there are still a number of places where the Lobby chat shows, and I think this is something we want to keep. But it is then a bit confusing that people can't find it in the usual list of text chats.

Not critical for ... | non_process | add lobby to list of text chats we ve eliminated almost all of the session level text chats but there are still a number of places where the lobby chat shows and i think this is something we want to keep but it is then a bit confusing that people can t find it in the usual list of text chats not critical for ... | 0 |

3,262 | 3,385,077,850 | IssuesEvent | 2015-11-27 09:25:22 | coreos/rkt | https://api.github.com/repos/coreos/rkt | closed | stage0: Can't use paths with a + in them in volumes | area/usability dependency/appc spec kind/bug | ```

sudo ./rkt run --mds-register=false --volume html,kind=host,source=/tmp/h+ml aci.gonyeo.com/nginx

...

Failed to stat /tmp/h ml: No such file or directory

``` | True | stage0: Can't use paths with a + in them in volumes - ```

sudo ./rkt run --mds-register=false --volume html,kind=host,source=/tmp/h+ml aci.gonyeo.com/nginx

...

Failed to stat /tmp/h ml: No such file or directory

``` | non_process | can t use paths with a in them in volumes sudo rkt run mds register false volume html kind host source tmp h ml aci gonyeo com nginx failed to stat tmp h ml no such file or directory | 0 |
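The `h+ml` to `h ml` change in the report above is what URL query-string decoding does to a `+`. Whether rkt actually parses `--volume` values that way is an assumption here, not something the issue confirms. The sketch below only illustrates the decoding behavior in Python; `parse_volume_params` is a hypothetical helper, not rkt code.

```python
from urllib.parse import parse_qs, quote


def parse_volume_params(raw: str) -> dict:
    """Hypothetical helper: treat 'k=v,k=v' as a URL query string."""
    # Assumption: comma-separated pairs are mapped onto '&'-separated pairs.
    query = raw.replace(",", "&")
    # parse_qs decodes '+' as a space, as in form-encoded data.
    return {key: values[0] for key, values in parse_qs(query).items()}


# The '+' in the path is silently turned into a space.
decoded = parse_volume_params("kind=host,source=/tmp/h+ml")

# Percent-encoding the '+' as %2B before parsing preserves it.
escaped_source = quote("/tmp/h+ml", safe="/")
escaped = parse_volume_params("kind=host,source=" + escaped_source)
```

If the cause is indeed query-style decoding, escaping the `+` as `%2B` on the command line might serve as a workaround, though that is untested against rkt itself.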

11,768 | 14,598,225,422 | IssuesEvent | 2020-12-20 23:51:58 | ewen-lbh/portfolio | https://api.github.com/repos/ewen-lbh/portfolio | opened | Add subline for made with | processing | See prototype: there's an additional subline over the main tech name (ie _Adobe_ Photoshop). Implement that with a `Tag.Author` on the go struct. | 1.0 | Add subline for made with - See prototype: there's an additional subline over the main tech name (ie _Adobe_ Photoshop). Implement that with a `Tag.Author` on the go struct. | process | add subline for made with see prototype there s an additional subline over the main tech name ie adobe photoshop implement that with a tag author on the go struct | 1 |

7,636 | 10,733,298,071 | IssuesEvent | 2019-10-29 00:49:04 | AlmuraDev/SGCraft | https://api.github.com/repos/AlmuraDev/SGCraft | closed | Event Horizon isn't destroying blocks | bug in process | Blocks within the gate ring itself are not being destroyed.

SGCraft 2.0.2 | 1.0 | Event Horizon isn't destroying blocks - Blocks within the gate ring itself are not being destroyed.

SGCraft 2.0.2 | process | event horizon isn t destroying blocks blocks within the gate ring itself are not being destroyed sgcraft | 1 |

450,770 | 13,019,014,878 | IssuesEvent | 2020-07-26 20:15:00 | aaugustin/websockets | https://api.github.com/repos/aaugustin/websockets | closed | Add assertions to validate fail_connection requirements | enhancement low priority | In https://github.com/aaugustin/websockets/issues/465#issuecomment-434479748 I said:

> Don't call fail_connection() with the default code (1006) unless:

>

> a. you changed the connection state to CLOSING, typically by writing a Close frame, or

> b. you know that the connection is dead already, typically because... | 1.0 | Add assertions to validate fail_connection requirements - In https://github.com/aaugustin/websockets/issues/465#issuecomment-434479748 I said:

> Don't call fail_connection() with the default code (1006) unless:

>

> a. you changed the connection state to CLOSING, typically by writing a Close frame, or

> b. you k... | non_process | add assertions to validate fail connection requirements in i said don t call fail connection with the default code unless a you changed the connection state to closing typically by writing a close frame or b you know that the connection is dead already typically because you hit connecti... | 0 |

20,066 | 26,555,710,110 | IssuesEvent | 2023-01-20 11:49:59 | firebase/firebase-cpp-sdk | https://api.github.com/repos/firebase/firebase-cpp-sdk | closed | [C++] Nightly Integration Testing Report for Firestore | type: process nightly-testing |

<hidden value="integration-test-status-comment"></hidden>

### [build against repo] Integration test with FLAKINESS (succeeded after retry)

Requested by @sunmou99 on commit 45f8e3268c2adbabca165ed0a937835f18930d2f

Last updated: Thu Jan 19 04:03 PST 2023

**[View integration test log & download artifacts](https://gith... | 1.0 | [C++] Nightly Integration Testing Report for Firestore -

<hidden value="integration-test-status-comment"></hidden>

### [build against repo] Integration test with FLAKINESS (succeeded after retry)

Requested by @sunmou99 on commit 45f8e3268c2adbabca165ed0a937835f18930d2f

Last updated: Thu Jan 19 04:03 PST 2023

**[Vie... | process | nightly integration testing report for firestore integration test with flakiness succeeded after retry requested by on commit last updated thu jan pst failures configs firestore failed tests nbsp nbsp crash timeout add flaky te... | 1 |

22,384 | 31,142,284,448 | IssuesEvent | 2023-08-16 01:44:07 | cypress-io/cypress | https://api.github.com/repos/cypress-io/cypress | closed | Flaky test: by deleting query member | stage: backlog process: flaky test topic: flake ❄️ priority: low stage: flake topic: net_stubbing.cy.ts stale | ### Link to dashboard or CircleCI failure

https://dashboard.cypress.io/projects/ypt4pf/runs/38102/test-results/f7e0ef07-6232-450b-9b29-b9728b276a8f

### Link to failing test in GitHub

https://github.com/cypress-io/cypress/blob/develop/packages/driver/cypress/e2e/commands/net_stubbing.cy.ts#L1836

### Analysis... | 1.0 | Flaky test: by deleting query member - ### Link to dashboard or CircleCI failure

https://dashboard.cypress.io/projects/ypt4pf/runs/38102/test-results/f7e0ef07-6232-450b-9b29-b9728b276a8f

### Link to failing test in GitHub

https://github.com/cypress-io/cypress/blob/develop/packages/driver/cypress/e2e/commands/n... | process | flaky test by deleting query member link to dashboard or circleci failure link to failing test in github analysis img width alt screen shot at pm src cypress version other search for this issue number in the codebase to find the test s ... | 1 |

21,922 | 30,446,459,805 | IssuesEvent | 2023-07-15 18:31:22 | h4sh5/pypi-auto-scanner | https://api.github.com/repos/h4sh5/pypi-auto-scanner | opened | pyutils 0.0.1b5 has 2 GuardDog issues | guarddog typosquatting silent-process-execution | https://pypi.org/project/pyutils

https://inspector.pypi.io/project/pyutils

```{

"dependency": "pyutils",

"version": "0.0.1b5",

"result": {

"issues": 2,

"errors": {},

"results": {

"typosquatting": "This package closely ressembles the following package names, and might be a typosquatting attempt: ... | 1.0 | pyutils 0.0.1b5 has 2 GuardDog issues - https://pypi.org/project/pyutils

https://inspector.pypi.io/project/pyutils

```{

"dependency": "pyutils",

"version": "0.0.1b5",

"result": {

"issues": 2,

"errors": {},

"results": {

"typosquatting": "This package closely ressembles the following package names... | process | pyutils has guarddog issues dependency pyutils version result issues errors results typosquatting this package closely ressembles the following package names and might be a typosquatting attempt pytils python utils silen... | 1 |

11,082 | 13,923,922,100 | IssuesEvent | 2020-10-21 14:57:56 | timberio/vector | https://api.github.com/repos/timberio/vector | closed | New `split` remap function | domain: processing domain: remap type: feature | The `split` remap function splits a string with the provided pattern

## Example

Given this event for all examples:

```js

{

"message": "This is a long string"

}

```

### Default

By default, the `split` function splits on all occurrences of the passed pattern:

```

.message = split(.message, / /)

`... | 1.0 | New `split` remap function - The `split` remap function splits a string with the provided pattern

## Example

Given this event for all examples:

```js

{

"message": "This is a long string"

}

```

### Default

By default, the `split` function splits on all occurrences of the passed pattern:

```

.mess... | process | new split remap function the split remap function splits a string with the provided pattern example given this event for all examples js message this is a long string default by default the split function splits on all occurences of the passed pattern mess... | 1 |
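The default behavior described in the issue above (split on every occurrence of the pattern) can be sketched outside the remap language. This is a rough Python analogue using `re.split`, not Vector's implementation; the Python function name `split` and the event dictionary are illustrative assumptions that mirror the issue's example.

```python
import re


def split(value: str, pattern: str) -> list:
    """Illustrative analogue: split on every match of a regex pattern."""
    return re.split(pattern, value)


# Mirrors the example event from the issue.
event = {"message": "This is a long string"}

# Rough equivalent of `.message = split(.message, / /)`.
event["message"] = split(event["message"], r" ")
```

With the example event, the message field becomes a list of the five words in the sentence.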

10,855 | 13,630,314,025 | IssuesEvent | 2020-09-24 16:15:51 | eddieantonio/predictive-text-studio | https://api.github.com/repos/eddieantonio/predictive-text-studio | opened | Update to actual kmp.json format 😫 | bug data-backing data-processing enhancement worker | I was wrong about the format of a `kmp.json` file. It actually follows this spec:

Documentation: https://help.keyman.com/developer/11.0/reference/file-types/metadata

(Outdated) Documentation: https://github.com/keymanapp/keyman/wiki/KMP-Metadata-File-(kmp.inf-and-kmp.json)#fields

JSON Schema: https://api.keyman.c... | 1.0 | Update to actual kmp.json format 😫 - I was wrong about the format of a `kmp.json` file. It actually follows this spec:

Documentation: https://help.keyman.com/developer/11.0/reference/file-types/metadata