| Unnamed: 0 | id | type | created_at | repo | repo_url | action | title | labels | body | index | text_combine | label | text | binary_label |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

410,637 | 27,795,493,546 | IssuesEvent | 2023-03-17 12:13:42 | mindsdb/mindsdb | https://api.github.com/repos/mindsdb/mindsdb | reopened | [Docs] Update a title of the `MySQL Question Answering` tutorial | help wanted good first issue documentation first-timers-only | ## Instructions :page_facing_up:

Here are the step-by-step instructions:

1. Go to the file `/docs/nlp/question-answering-inside-mysql-with-openai.mdx`.

2. Change the values of `title` and `sidebarTitle` to read as follows:

```

---

title: Question Answering with MindsDB and OpenAI using SQL

sidebarTitle: ... | 1.0 | [Docs] Update a title of the `MySQL Question Answering` tutorial - ## Instructions :page_facing_up:

Here are the step-by-step instructions:

1. Go to the file `/docs/nlp/question-answering-inside-mysql-with-openai.mdx`.

2. Change the values of `title` and `sidebarTitle` to read as follows:

```

---

title: Q... | non_process | update a title of the mysql question answering tutorial instructions page facing up here are the step by step instructions go to the file docs nlp question answering inside mysql with openai mdx change the values of title and sidebartitle to read as follows title questi... | 0 |

624,131 | 19,687,437,674 | IssuesEvent | 2022-01-12 00:31:33 | apcountryman/picolibrary | https://api.github.com/repos/apcountryman/picolibrary | opened | Add unit testing pseudo-random value generation | priority-normal status-awaiting_development type-feature | Add unit testing pseudo-random value generation (`::picolibrary::Testing::Unit::random()`).

- [ ] The `random()` function should be defined in the `include/picolibrary/testing/unit/random.h`/`source/picolibrary/testing/unit/random.cc` header/source file pair

- [ ] The `random()` function should have the following ove... | 1.0 | Add unit testing pseudo-random value generation - Add unit testing pseudo-random value generation (`::picolibrary::Testing::Unit::random()`).

- [ ] The `random()` function should be defined in the `include/picolibrary/testing/unit/random.h`/`source/picolibrary/testing/unit/random.cc` header/source file pair

- [ ] The... | non_process | add unit testing pseudo random value generation add unit testing pseudo random value generation picolibrary testing unit random the random function should be defined in the include picolibrary testing unit random h source picolibrary testing unit random cc header source file pair the ra... | 0 |

14,224 | 17,145,268,054 | IssuesEvent | 2021-07-13 14:01:33 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | qgis:executesql cannot find input layer if it is a temporary layer with accentuated characters | Bug Processing | Hi,

When using qgis:executesql algorithm from processing toolbox, if input layer is a temporary layer created by another algorithm with accentuated characters, such as "Reprojeté" created by reprojectlayer algorithm in french, I get the following error message :

<p><b>virtual:</b> Cannot find layer Reprojet%C3%A9_e83... | 1.0 | qgis:executesql cannot find input layer if it is a temporary layer with accentuated characters - Hi,

When using qgis:executesql algorithm from processing toolbox, if input layer is a temporary layer created by another algorithm with accentuated characters, such as "Reprojeté" created by reprojectlayer algorithm in fre... | process | qgis executesql cannot find input layer if it is a temporary layer with accentuated characters hi when using qgis executesql algorithm from processing toolbox if input layer is a temporary layer created by another algorithm with accentuated characters such as reprojeté created by reprojectlayer algorithm in fre... | 1 |
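
The layer name in the error message of this report, `Reprojet%C3%A9`, is the percent-encoded UTF-8 form of `Reprojeté`. A minimal Python sketch of that round trip, using only the standard library; it illustrates the encoding only and makes no claim about how QGIS resolves layer names internally, which the report does not show:

```python
from urllib.parse import quote, unquote

# Accented layer name taken from the report.
layer_name = "Reprojeté"

# Percent-encode the UTF-8 bytes of the name ("é" becomes %C3%A9).
encoded = quote(layer_name)

# Decoding restores the original name.
decoded = unquote(encoded)

print(encoded)                # Reprojet%C3%A9
print(decoded == layer_name)  # True
```

A lookup that compares the encoded form against the stored, unencoded name would fail to match, which is consistent with the "Cannot find layer" message, though the actual cause is not confirmed here.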

17,564 | 23,377,430,485 | IssuesEvent | 2022-08-11 05:44:52 | ClickHouse/ClickHouse | https://api.github.com/repos/ClickHouse/ClickHouse | closed | Rows processed by the right side of IN operator are not counted | st-fixed unexpected behaviour comp-processors | The first query uses a subselect for IN, and the second one just uses constants (disregard weird coding with `materialize` etc, this is to work around other bugs). Note that the number of processed rows is the same, despite the fact that the subselect processes another 81k rows.

```

/4/ :)

SELECT UserID

FROM hit... | 1.0 | Rows processed by the right side of IN operator are not counted - The first query uses a subselect for IN, and the second one just uses constants (disregard weird coding with `materialize` etc, this is to work around other bugs). Note that the number of processed rows is the same, despite the fact that the subselect pr... | process | rows processed by the right side of in operator are not counted the first query uses a subselect for in and the second one just uses constants disregard weird coding with materialize etc this is to work around other bugs note that the number of processed rows is the same despite the fact that the subselect pr... | 1 |

3,195 | 6,261,571,104 | IssuesEvent | 2017-07-15 01:13:36 | PHPSocialNetwork/phpfastcache | https://api.github.com/repos/PHPSocialNetwork/phpfastcache | closed | performance regression with v6 | 6.0 [-_-] In Process ^_^ enhancement ^_^ Fixed | ### Configuration:

PhpFastCache version: 6.0.1 / 5.0.16

PHP version: 7.0

Operating system: Linux / Windows

#### Issue description:

I've updated a project to phpfastcache v6 and noticed a performance regression.

The project is running on windows using the wincache driver with php 7.0. The performance regressio... | 1.0 | performance regression with v6 - ### Configuration:

PhpFastCache version: 6.0.1 / 5.0.16

PHP version: 7.0

Operating system: Linux / Windows

#### Issue description:

I've updated a project to phpfastcache v6 and noticed a performance regression.

The project is running on windows using the wincache driver with ... | process | performance regression with configuration phpfastcache version php version operating system linux windows issue description i ve updated a project to phpfastcache and noticed a performance regression the project is running on windows using the wincache driver with php... | 1 |

15,220 | 19,088,283,017 | IssuesEvent | 2021-11-29 09:14:14 | opensafely-core/job-server | https://api.github.com/repos/opensafely-core/job-server | closed | Add Section Links to ApplicationDetail in Staff Area | application-process | Mirroring the confirmation page a User sees we need to add `Change` links to each section of the ApplicationDetail page to let a Staff user jump to that section. | 1.0 | Add Section Links to ApplicationDetail in Staff Area - Mirroring the confirmation page a User sees we need to add `Change` links to each section of the ApplicationDetail page to let a Staff user jump to that section. | process | add section links to applicationdetail in staff area mirroring the confirmation page a user sees we need to add change links to each section of the applicationdetail page to let a staff user jump to that section | 1 |

35,433 | 31,279,520,126 | IssuesEvent | 2023-08-22 08:42:17 | arduino/arduino-fwuploader | https://api.github.com/repos/arduino/arduino-fwuploader | closed | :open_umbrella: Umbrella: migrate `Nina` to plugin system | type: enhancement topic: infrastructure | ## Phase 1

- [x] #188

- [x] #191

- [x] #196

- [x] #193

## Phase 2

- [x] #195

## Phase 3

- [x] #202

- [ ] Make a new release of `arduino-fwuploader` | 1.0 | :open_umbrella: Umbrella: migrate `Nina` to plugin system - ## Phase 1

- [x] #188

- [x] #191

- [x] #196

- [x] #193

## Phase 2

- [x] #195

## Phase 3

- [x] #202

- [ ] Make a new release of `arduino-fwuploader` | non_process | open umbrella umbrella migrate nina to plugin system phase phase phase make a new release of arduino fwuploader | 0 |

14,750 | 18,020,485,077 | IssuesEvent | 2021-09-16 18:45:09 | googleapis/gax-go | https://api.github.com/repos/googleapis/gax-go | closed | v2.1.0 is released but Version is still "2.0.5" ? | type: process priority: p2 | https://pkg.go.dev/github.com/googleapis/gax-go/v2

shows `Version: v2.1.0 Latest`

but

```

const Version = "2.0.5"

```

tag 2.1.0 is unexpected one? | 1.0 | v2.1.0 is released but Version is still "2.0.5" ? - https://pkg.go.dev/github.com/googleapis/gax-go/v2

shows `Version: v2.1.0 Latest`

but

```

const Version = "2.0.5"

```

tag 2.1.0 is unexpected one? | process | is released but version is still shows version latest but const version tag is unexpected one | 1 |

5,990 | 8,805,374,729 | IssuesEvent | 2018-12-26 19:14:03 | dita-ot/dita-ot | https://api.github.com/repos/dita-ot/dita-ot | closed | Pre-processing of map <navtitle> elements different in DITA-OT >=2.0 | bug preprocess priority/medium stale | Pre-processing of map `<navtitle>` elements different in DITA-OT >=2.0

After a couple of hours deep-dive I'm pretty sure that the pre-processing behaviour of populating the `<navtitle>` elements inside the pre-processed DITA maps during mappull has changed. I'm almost sure that this is not intentional behaviour and h... | 1.0 | Pre-processing of map <navtitle> elements different in DITA-OT >=2.0 - Pre-processing of map `<navtitle>` elements different in DITA-OT >=2.0

After a couple of hours deep-dive I'm pretty sure that the pre-processing behaviour of populating the `<navtitle>` elements inside the pre-processed DITA maps during mappull ha... | process | pre processing of map elements different in dita ot pre processing of map elements different in dita ot after a couple of hours deep dive i m pretty sure that the pre processing behaviour of populating the elements inside the pre processed dita maps during mappull has changed i m almost sure ... | 1 |

122,662 | 10,229,005,419 | IssuesEvent | 2019-08-17 08:32:44 | Joystream/joystream | https://api.github.com/repos/Joystream/joystream | opened | Rome: Live Milestones | release release-plan rome-testnet | ## Live Milestones

The Rome Release Plan contains a set of Milestones, with a target date for each. Experience has shown that:

- we might not be able to follow these

- we may want to add or remove certain items

- we may want to adjust the scope of each milestone

This approach, like with `Tracking Issues`, giv... | 1.0 | Rome: Live Milestones - ## Live Milestones

The Rome Release Plan contains a set of Milestones, with a target date for each. Experience has shown that:

- we might not be able to follow these

- we may want to add or remove certain items

- we may want to adjust the scope of each milestone

This approach, like wit... | non_process | rome live milestones live milestones the rome release plan contains a set of milestones with a target date for each experience has shown that we might not be able to follow these we may want to add or remove certain items we may want to adjust the scope of each milestone this approach like wit... | 0 |

61,344 | 3,144,707,336 | IssuesEvent | 2015-09-14 14:40:41 | andresriancho/w3af | https://api.github.com/repos/andresriancho/w3af | closed | TypeError: gdbm key must be string, not unicode | bug gui priority:medium | ## Version Information

```

Python version: 2.7.10 (default, Jul 13 2015, 12:05:58) [GCC 4.2.1 Compatible Apple LLVM 6.1.0 (clang-602.0.53)]

GTK version: 2.24.28

PyGTK version: 2.24.0

w3af version:

w3af - Web Application Attack and Audit Framework

Version: 1.7.6

Revision: 6e48526969 - 12 Ağu 2015 11:... | 1.0 | TypeError: gdbm key must be string, not unicode - ## Version Information

```

Python version: 2.7.10 (default, Jul 13 2015, 12:05:58) [GCC 4.2.1 Compatible Apple LLVM 6.1.0 (clang-602.0.53)]

GTK version: 2.24.28

PyGTK version: 2.24.0

w3af version:

w3af - Web Application Attack and Audit Framework

Version... | non_process | typeerror gdbm key must be string not unicode version information python version default jul gtk version pygtk version version web application attack and audit framework version revision ağu branch master local chang... | 0 |
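
The error in this report's title arises when a text (`unicode`) key is passed where the gdbm binding expects a byte string. A hedged sketch of one common workaround, encoding keys before they reach the store; `to_byte_key` is a hypothetical helper that is not part of w3af, the UTF-8 encoding is an assumption, and a plain dict stands in for the gdbm handle:

```python
def to_byte_key(key, encoding="utf-8"):
    """Return the key as a byte string, encoding text keys first."""
    if isinstance(key, bytes):
        # Already a byte string: pass it through unchanged.
        return key
    # Text key: encode it so a bytes-only store accepts it.
    return key.encode(encoding)


# Stand-in for a gdbm handle; a real handle would be indexed the same way.
store = {}
store[to_byte_key(u"revision:Ağu")] = b"value"
```

Normalising at the boundary keeps the rest of the code free to use text keys, at the cost of fixing one encoding for every key.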

677,612 | 23,167,734,880 | IssuesEvent | 2022-07-30 07:50:12 | ObsidianMC/Obsidian | https://api.github.com/repos/ObsidianMC/Obsidian | closed | Implement new chat message/command changes (1.19 branch) | enhancement help wanted good first issue priority: high networking | As the title suggests I'm opening up this issue for someone who wants to do this before I get to it myself.

Helpful Links:

https://wiki.vg/images/f/f4/MinecraftChat.drawio4.png

https://wiki.vg/Chat#Processing_chat

Packets that should be looked at (Classes for these packets are created already)

https://wiki.vg/... | 1.0 | Implement new chat message/command changes (1.19 branch) - As the title suggests I'm opening up this issue for someone who wants to do this before I get to it myself.

Helpful Links:

https://wiki.vg/images/f/f4/MinecraftChat.drawio4.png

https://wiki.vg/Chat#Processing_chat

Packets that should be looked at (Class... | non_process | implement new chat message command changes branch as the title suggests i m opening up this issue for someone who wants to do this before i get to it myself helpful links packets that should be looked at classes for these packets are created already this should work reliably with onl... | 0 |

20,624 | 27,295,616,569 | IssuesEvent | 2023-02-23 20:04:00 | googleapis/google-cloudevents-python | https://api.github.com/repos/googleapis/google-cloudevents-python | opened | Warning: a recent release failed | type: process | The following release PRs may have failed:

* #187 - The release job was triggered, but has not reported back success.

* #185 - The release job was triggered, but has not reported back success.

* #183 - The release job was triggered, but has not reported back success.

* #181 - The release job was triggered, but has not... | 1.0 | Warning: a recent release failed - The following release PRs may have failed:

* #187 - The release job was triggered, but has not reported back success.

* #185 - The release job was triggered, but has not reported back success.

* #183 - The release job was triggered, but has not reported back success.

* #181 - The rel... | process | warning a recent release failed the following release prs may have failed the release job was triggered but has not reported back success the release job was triggered but has not reported back success the release job was triggered but has not reported back success the release job... | 1 |

117,270 | 15,085,387,511 | IssuesEvent | 2021-02-05 18:35:31 | uswds/uswds-team | https://api.github.com/repos/uswds/uswds-team | closed | Valentine's- draft comms plan and graphic updates | outreach skill: communications skill: ux design | Pre-existing designed cards will be pushed socially for Valentine's Day: Feb 10, 11, and 12. Graphics need to be updated, comms plan developed.

Materials for approval located [here ](https://drive.google.com/drive/folders/1aFmUfT7uTGuZu8EhMNtA13b5dMgRqHys?usp=sharing). posted for [review ](https://gsa-tts.slack.com/a... | 1.0 | Valentine's- draft comms plan and graphic updates - Pre-existing designed cards will be pushed socially for Valentine's Day: Feb 10, 11, and 12. Graphics need to be updated, comms plan developed.

Materials for approval located [here ](https://drive.google.com/drive/folders/1aFmUfT7uTGuZu8EhMNtA13b5dMgRqHys?usp=sharin... | non_process | valentine s draft comms plan and graphic updates pre existing designed cards will be pushed socially for valentine s day feb and graphics need to be updated comms plan developed materials for approval located posted for tasks to complete per ammie design k carr to update... | 0 |

45,430 | 13,112,516,799 | IssuesEvent | 2020-08-05 02:27:00 | ElliotChen/spring_boot_example | https://api.github.com/repos/ElliotChen/spring_boot_example | opened | CVE-2019-8331 (Medium) detected in bootstrap-3.3.6.min.js, bootstrap-3.3.6.js | security vulnerability | ## CVE-2019-8331 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>bootstrap-3.3.6.min.js</b>, <b>bootstrap-3.3.6.js</b></p></summary>

<p>

<details><summary><b>bootstrap-3.3.6.min.js... | True | CVE-2019-8331 (Medium) detected in bootstrap-3.3.6.min.js, bootstrap-3.3.6.js - ## CVE-2019-8331 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>bootstrap-3.3.6.min.js</b>, <b>boots... | non_process | cve medium detected in bootstrap min js bootstrap js cve medium severity vulnerability vulnerable libraries bootstrap min js bootstrap js bootstrap min js the most popular front end framework for developing responsive mobile first projects on the ... | 0 |

5,231 | 8,030,837,037 | IssuesEvent | 2018-07-27 21:16:05 | brucemiller/LaTeXML | https://api.github.com/repos/brucemiller/LaTeXML | closed | bold and italic markup is sometimes lost in index terms | bug postprocessing | When converting

```tex

\documentclass{article}

\begin{document}

\textbf{BOLD\index{NORMAL}}

\textbf{BOLD\index{\textbf{BOLD}}}

\textbf{BOLD\index{\textit{ITALIC}}}

\textit{ITALIC\index{NORMAL}}

\textit{ITALIC\index{\textbf{BOLD}}}

\textit{ITALIC\index{\textit{ITALIC}}}

{NORMAL\index{NORMAL}}

... | 1.0 | bold and italic markup is sometimes lost in index terms - When converting

```tex

\documentclass{article}

\begin{document}

\textbf{BOLD\index{NORMAL}}

\textbf{BOLD\index{\textbf{BOLD}}}

\textbf{BOLD\index{\textit{ITALIC}}}

\textit{ITALIC\index{NORMAL}}

\textit{ITALIC\index{\textbf{BOLD}}}

\textit{IT... | process | bold and italic markup is sometimes lost in index terms when converting tex documentclass article begin document textbf bold index normal textbf bold index textbf bold textbf bold index textit italic textit italic index normal textit italic index textbf bold textit it... | 1 |

264,540 | 23,123,697,585 | IssuesEvent | 2022-07-28 01:51:57 | milvus-io/milvus | https://api.github.com/repos/milvus-io/milvus | closed | [Bug]: [benchmark][cluster]Milvus 2 replicas concurrently with 2 clients, search, query, load latency becomes higher | kind/bug priority/critical-urgent needs-triage test/benchmark | ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Environment

```markdown

- Milvus version:2.1.0-20220723-1e038a75

- Deployment mode(standalone or cluster): cluster

- SDK version(e.g. pymilvus v2.0.0rc2):pymilvus 2.1.0dev103

- OS(Ubuntu or CentOS):

- CPU/Memory:

- GPU:

-... | 1.0 | [Bug]: [benchmark][cluster]Milvus 2 replicas concurrently with 2 clients, search, query, load latency becomes higher - ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Environment

```markdown

- Milvus version:2.1.0-20220723-1e038a75

- Deployment mode(standalone or cluster): clu... | non_process | milvus replicas concurrently with clients search query load latency becomes higher is there an existing issue for this i have searched the existing issues environment markdown milvus version deployment mode standalone or cluster cluster sdk version e g pymilvus ... | 0 |

20,903 | 27,742,619,298 | IssuesEvent | 2023-03-15 15:10:35 | toggl/track-windows-feedback | https://api.github.com/repos/toggl/track-windows-feedback | closed | Memory leak related to the UI (300-850MB+ RAM usage) | bug processed | **Describe the bug**

RAM isn't being flushed. `webFrame.clearCache()` every screen change might solve.

**Steps to reproduce**

Moving around the UI fast and repeatedly makes the RAM usage increase and never goes down to its base level of about 130MB.

- About 5 GB of free system RAM when I was running, so, probably... | 1.0 | Memory leak related to the UI (300-850MB+ RAM usage) - **Describe the bug**

RAM isn't being flushed. `webFrame.clearCache()` every screen change might solve.

**Steps to reproduce**

Moving around the UI fast and repeatedly makes the RAM usage increase and never goes down to its base level of about 130MB.

- About 5... | process | memory leak related to the ui ram usage describe the bug ram isn t being flushed webframe clearcache every screen change might solve steps to reproduce moving around the ui fast and repeatedly makes the ram usage increase and never goes down to its base level of about about gb of fre... | 1 |

36,347 | 7,894,198,096 | IssuesEvent | 2018-06-28 20:38:24 | pyroscope/rtorrent-ps | https://api.github.com/repos/pyroscope/rtorrent-ps | closed | Possible defect in xmlrpc logging or log.xmlrpc re-open | P1 defect in progress | Recently, had fragments of XMLRPC payloads in a file on disk, which hints at memory overwrites ‘somewhere’.

```

………………ponse>

<params>

<param><value><i4>0</i4></value></param>

</params>

</methodResponse>

---

```

The problem is new since a few days. A *recent* related change was https://github.com/pyroscop... | 1.0 | Possible defect in xmlrpc logging or log.xmlrpc re-open - Recently, had fragments of XMLRPC payloads in a file on disk, which hints at memory overwrites ‘somewhere’.

```

………………ponse>

<params>

<param><value><i4>0</i4></value></param>

</params>

</methodResponse>

---

```

The problem is new since a few days.... | non_process | possible defect in xmlrpc logging or log xmlrpc re open recently had fragments of xmlrpc payloads in a file on disk which hints at memory overwrites ‘somewhere’ ………………ponse the problem is new since a few days a recent related change was a functional difference is the ... | 0 |

7,694 | 10,780,331,740 | IssuesEvent | 2019-11-04 12:45:17 | prisma/photonjs | https://api.github.com/repos/prisma/photonjs | opened | Implement batching protocol | process/candidate | As described in https://github.com/prisma/specs/issues/242#issuecomment-548382431

This issue only tracks the implementation of the batching protocol both on the query-engine and Photon.js side.

The underlying optimization of "merging multiple findOne" queries is a separate issue. | 1.0 | Implement batching protocol - As described in https://github.com/prisma/specs/issues/242#issuecomment-548382431

This issue only tracks the implementation of the batching protocol both on the query-engine and Photon.js side.

The underlying optimization of "merging multiple findOne" queries is a separate issue. | process | implement batching protocol as described in this issue only tracks the implementation of the batching protocol both on the query engine and photon js side the underlying optimization of merging multiple findone queries is a separate issue | 1 |

166,883 | 20,725,611,305 | IssuesEvent | 2022-03-14 01:14:25 | jgeraigery/FHIR | https://api.github.com/repos/jgeraigery/FHIR | opened | CVE-2021-37713 (High) detected in tar-2.2.2.tgz | security vulnerability | ## CVE-2021-37713 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tar-2.2.2.tgz</b></p></summary>

<p>tar for node</p>

<p>Library home page: <a href="https://registry.npmjs.org/tar/-/ta... | True | CVE-2021-37713 (High) detected in tar-2.2.2.tgz - ## CVE-2021-37713 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tar-2.2.2.tgz</b></p></summary>

<p>tar for node</p>

<p>Library home ... | non_process | cve high detected in tar tgz cve high severity vulnerability vulnerable library tar tgz tar for node library home page a href path to dependency file docs package json path to vulnerable library docs node modules tar package json dependency hierarchy gatsb... | 0 |

49,959 | 13,187,300,122 | IssuesEvent | 2020-08-13 02:58:36 | icecube-trac/tix3 | https://api.github.com/repos/icecube-trac/tix3 | opened | [docs] Missing `cd ../build` on "Parasitic metaprojects" documentation (Trac #2372) | Incomplete Migration Migrated from Trac cmake defect | <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/ticket/2372">https://code.icecube.wisc.edu/ticket/2372</a>, reported by icecube and owned by dgillcrist</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2020-06-24T12:31:42",

"description": "The documentation loca... | 1.0 | [docs] Missing `cd ../build` on "Parasitic metaprojects" documentation (Trac #2372) - <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/ticket/2372">https://code.icecube.wisc.edu/ticket/2372</a>, reported by icecube and owned by dgillcrist</em></summary>

<p>

```json

{

"status": "closed",... | non_process | missing cd build on parasitic metaprojects documentation trac migrated from json status closed changetime description the documentation located at has a missing command in the tutorial there should be a line build right before svn co ... | 0 |

569,246 | 17,009,313,495 | IssuesEvent | 2021-07-02 00:07:49 | waldronlab/BugSigDB | https://api.github.com/repos/waldronlab/BugSigDB | closed | Reproducible installation of bugsigdb.org | major priority | Want a containerized installation or instructions for otherwise easily reproducible installation of the full bugsigdb.org. | 1.0 | Reproducible installation of bugsigdb.org - Want a containerized installation or instructions for otherwise easily reproducible installation of the full bugsigdb.org. | non_process | reproducible installation of bugsigdb org want a containerized installation or instructions for otherwise easily reproducible installation of the full bugsigdb org | 0 |

442,779 | 30,856,216,876 | IssuesEvent | 2023-08-02 20:51:25 | gravitational/teleport | https://api.github.com/repos/gravitational/teleport | opened | Add a prominent link to the Teleport labs within the docs | documentation | ## Applies To

The `/docs/` landing page, probably.

## Details

This is a request from Developer Relations. Add a link to the [Teleport labs](https://goteleport.com/labs/) from the docs so readers can try Teleport without spinning up their own environment.

## How will we know this is resolved?

There is a li... | 1.0 | Add a prominent link to the Teleport labs within the docs - ## Applies To

The `/docs/` landing page, probably.

## Details

This is a request from Developer Relations. Add a link to the [Teleport labs](https://goteleport.com/labs/) from the docs so readers can try Teleport without spinning up their own environmen... | non_process | add a prominent link to the teleport labs within the docs applies to the docs landing page probably details this is a request from developer relations add a link to the from the docs so readers can try teleport without spinning up their own environment how will we know this is resolved... | 0 |

145,018 | 5,557,157,151 | IssuesEvent | 2017-03-24 11:11:30 | kubernetes-incubator/kompose | https://api.github.com/repos/kubernetes-incubator/kompose | closed | Improving `down` to handle Volumes | priority/P1 | In 7349dc9 i'm proposing adding --emptyvols to the up/down operations. This raises a bigger question on whether we should enable passing the other **convert** options so that they can be used with up/down - this can be useful, but maybe not something we want to do.

| 1.0 | Improving `down` to handle Volumes - In 7349dc9 i'm proposing adding --emptyvols to the up/down operations. This raises a bigger question on whether we should enable passing the other **convert** options so that they can be used with up/down - this can be useful, but maybe not something we want to do.

| non_process | improving down to handle volumes in i m proposing adding emptyvols to the up down operations this raises a bigger question on whether we should enable passing the other convert options so that they can be used with up down this can be useful but maybe not something we want to do | 0 |

15,485 | 19,694,693,566 | IssuesEvent | 2022-01-12 10:52:01 | googleapis/python-bigtable | https://api.github.com/repos/googleapis/python-bigtable | closed | Fix samples CI runs under Python 3.9 / 3.10. | api: bigtable type: process samples | From PR #476, see [3.9](https://source.cloud.google.com/results/invocations/09b02d64-075e-4dd6-a81f-794b27bbc967) and [3.10](https://source.cloud.google.com/results/invocations/04364ec0-9ef4-42d7-affc-745cf7d7481b) samples run failures.

The generated `noxfile.py` files in `samples/` don't have those sessions. I bel... | 1.0 | Fix samples CI runs under Python 3.9 / 3.10. - From PR #476, see [3.9](https://source.cloud.google.com/results/invocations/09b02d64-075e-4dd6-a81f-794b27bbc967) and [3.10](https://source.cloud.google.com/results/invocations/04364ec0-9ef4-42d7-affc-745cf7d7481b) samples run failures.

The generated `noxfile.py` files ... | process | fix samples ci runs under python from pr see and samples run failures the generated noxfile py files in samples don t have those sessions i believe the correct fix is to add noxfile config py to each sample directory overriding the generated set of python versions to run | 1 |

379,803 | 11,235,843,623 | IssuesEvent | 2020-01-09 09:15:28 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | thetechnicaltraders.us6.list-manage.com - site is not usable | browser-firefox engine-gecko priority-important | <!-- @browser: Firefox 72.0.1 -->

<!-- @ua_header: Mozilla/5.0 (Macintosh; Intel Mac OS X 10.13; rv:71.0) Gecko/20100101 Firefox/71.0 -->

<!-- @reported_with: -->

**URL**: https://thetechnicaltraders.us6.list-manage.com/track/click?u=333a7c6a2dc511d85228db7b6&id=25afa799ed&e=390f881618

**Browser / Version**: Firefox... | 1.0 | thetechnicaltraders.us6.list-manage.com - site is not usable - <!-- @browser: Firefox 72.0.1 -->

<!-- @ua_header: Mozilla/5.0 (Macintosh; Intel Mac OS X 10.13; rv:71.0) Gecko/20100101 Firefox/71.0 -->

<!-- @reported_with: -->

**URL**: https://thetechnicaltraders.us6.list-manage.com/track/click?u=333a7c6a2dc511d85228d... | non_process | thetechnicaltraders list manage com site is not usable url browser version firefox operating system mac os x tested another browser yes problem type site is not usable description the video does not load after i login firefox opens a blank page and displays... | 0 |

8,889 | 11,985,070,741 | IssuesEvent | 2020-04-07 16:51:54 | kubeflow/code-intelligence | https://api.github.com/repos/kubeflow/code-intelligence | opened | [label bot] code duplication among notebooks | area/labelbot kind/feature kind/process priority/p2 | I'm noticing a lot of code duplication between the various notebooks. Which makes it hard to identify which notebook to use. This is probably tech debt as a result of us creating new copies of code rather than refactoring and reusing. We should try to clean this up.

1. Code shared between notebooks should be moved ... | 1.0 | [label bot] code duplication among notebooks - I'm noticing a lot of code duplication between the various notebooks. Which makes it hard to identify which notebook to use. This is probably tech debt as a result of us creating new copies of code rather than refactoring and reusing. We should try to clean this up.

1.... | process | code duplication among notebooks i m noticing a lot of code duplication between the various notebooks which makes it hard to identify which notebook to use this is probably tech debt as a result of us creating new copies of code rather than refactoring and reusing we should try to clean this up code shar... | 1 |

30,580 | 24,940,236,660 | IssuesEvent | 2022-10-31 18:17:45 | dotnet/aspnetcore | https://api.github.com/repos/dotnet/aspnetcore | closed | Microsoft Dev Box integration with dotnet/aspnetcore | area-infrastructure feature-infrastructure-improvements | The [Microsoft Dev Box](https://techcommunity.microsoft.com/t5/azure-developer-community-blog/introducing-microsoft-dev-box/ba-p/3412063) was recently announced.

> Dev teams preconfigure Dev Boxes for specific projects and tasks, enabling devs to get started quickly with an environment that’s ready to build and run... | 2.0 | Microsoft Dev Box integration with dotnet/aspnetcore - The [Microsoft Dev Box](https://techcommunity.microsoft.com/t5/azure-developer-community-blog/introducing-microsoft-dev-box/ba-p/3412063) was recently announced.

> Dev teams preconfigure Dev Boxes for specific projects and tasks, enabling devs to get started qu... | non_process | microsoft dev box integration with dotnet aspnetcore the was recently announced dev teams preconfigure dev boxes for specific projects and tasks enabling devs to get started quickly with an environment that’s ready to build and run their app in minutes this issue tracks setting up the dotnet aspnet... | 0 |

14,671 | 17,788,802,478 | IssuesEvent | 2021-08-31 14:05:58 | Leviatan-Analytics/LA-data-processing | https://api.github.com/repos/Leviatan-Analytics/LA-data-processing | closed | Document new ways to add value with the project [3] | Data Processing Week 4 Sprint 3 | Add to research document all the different feature ideas that can be useful to the end users. | 1.0 | Document new ways to add value with the project [3] - Add to research document all the different feature ideas that can be useful to the end users. | process | document new ways to add value with the project add to research document all the different feature ideas that can be useful to the end users | 1 |

34,988 | 4,958,787,131 | IssuesEvent | 2016-12-02 10:58:22 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | github.com/cockroachdb/cockroach/pkg/security: TestUseCerts failed under stress | Robot test-failure | SHA: https://github.com/cockroachdb/cockroach/commits/318358c6b5af6ef5769fbcf5ed7903b8c35eea13

Parameters:

```

COCKROACH_PROPOSER_EVALUATED_KV=true

TAGS=stress

GOFLAGS=

```

Stress build found a failed test: https://teamcity.cockroachdb.com/viewLog.html?buildId=73959&tab=buildLog

```

I161202 07:40:48.552599 21 gossip... | 1.0 | github.com/cockroachdb/cockroach/pkg/security: TestUseCerts failed under stress - SHA: https://github.com/cockroachdb/cockroach/commits/318358c6b5af6ef5769fbcf5ed7903b8c35eea13

Parameters:

```

COCKROACH_PROPOSER_EVALUATED_KV=true

TAGS=stress

GOFLAGS=

```

Stress build found a failed test: https://teamcity.cockroachdb.... | non_process | github com cockroachdb cockroach pkg security testusecerts failed under stress sha parameters cockroach proposer evaluated kv true tags stress goflags stress build found a failed test gossip gossip go initial resolvers gossip gossip go no resolvers found u... | 0 |

10,726 | 9,081,625,256 | IssuesEvent | 2019-02-17 03:28:50 | AlwaysBeCrafting/Mysticell | https://api.github.com/repos/AlwaysBeCrafting/Mysticell | opened | Migrate redux to data hooks | enhancement service-web | ## Prerequisites

- #10 Migrate to React v16.8 / React Hooks

## Summary

Mysticell is a good place to demo and iterate on the data hooks pattern (see [`useStore()` and Data Hooks](https://gist.github.com/Alexander-Prime/7b7f2ef07fb3fb3587672bb775ac3140#usestore-and-data-hooks)). Currently we use `redux`, along with ... | 1.0 | Migrate redux to data hooks - ## Prerequisites

- #10 Migrate to React v16.8 / React Hooks

## Summary

Mysticell is a good place to demo and iterate on the data hooks pattern (see [`useStore()` and Data Hooks](https://gist.github.com/Alexander-Prime/7b7f2ef07fb3fb3587672bb775ac3140#usestore-and-data-hooks)). Current... | non_process | migrate redux to data hooks prerequisites migrate to react react hooks summary mysticell is a good place to demo and iterate on the data hooks pattern see currently we use redux along with react redux s cumbersome connect function to inject store data into component props data hoo... | 0 |

338,822 | 10,237,920,661 | IssuesEvent | 2019-08-19 14:50:21 | adamcaudill/yawast | https://api.github.com/repos/adamcaudill/yawast | opened | Connection error in check_local_ip_disclosure | bug high-priority | User reported issue:

```

Completed (Elapsed: 6:20:51.327802 - Peak Memory: 3.14GB)

Traceback (most recent call last):

File "/usr/local/bin/yawast", line 42, in <module>

main.main()

File "/usr/local/lib/python3.7/site-packages/yawast/main.py", line 76, in main

args.func(args, urls)

File "/... | 1.0 | Connection error in check_local_ip_disclosure - User reported issue:

```

Completed (Elapsed: 6:20:51.327802 - Peak Memory: 3.14GB)

Traceback (most recent call last):

File "/usr/local/bin/yawast", line 42, in <module>

main.main()

File "/usr/local/lib/python3.7/site-packages/yawast/main.py", line 7... | non_process | connection error in check local ip disclosure user reported issue completed elapsed peak memory traceback most recent call last file usr local bin yawast line in main main file usr local lib site packages yawast main py line in main args func... | 0 |

21,427 | 29,359,594,056 | IssuesEvent | 2023-05-28 00:37:18 | devssa/onde-codar-em-salvador | https://api.github.com/repos/devssa/onde-codar-em-salvador | closed | [QA Automation] [NODE.JS] QA Automation at @YellowIpe | TESTE PJ JAVASCRIPT SCRUM NODE.JS REQUISITOS SELENIUM REMOTO PROCESSOS GITHUB KANBAN UMA C QUALIDADE ESPRESSO PADRÕ QA TESTES AUTOMATIZADOS METODOLOGIAS ÁGEIS HELP WANTED ESPECIALISTA UI XCTEST AUTOMAÇÃO DE TESTES Stale | <!-- IMPORTANT:

1. YOU MAY BE BLOCKED from posting job openings if you take actions that generate spam, such as:

1.1. Opening several issues for the same job opening within a short period of time.

1.2. Closing any issue.

If an issue you opened refers to a job opening that is no longer available, change the title of the iss... | 1.0 | [QA Automation] [NODE.JS] QA Automation at @YellowIpe - <!-- IMPORTANT:

1. YOU MAY BE BLOCKED from posting job openings if you take actions that generate spam, such as:

1.1. Opening several issues for the same job opening within a short period of time.

1.2. Closing any issue.

If an issue you opened refers to a... | process | qa automation at yellowipe important you may be blocked from posting job openings if you take actions that generate spam such as opening several issues for the same job opening within a short period of time closing any issue if an issue you opened refers to a job opening that is no longer... | 1 |

289,470 | 21,784,775,072 | IssuesEvent | 2022-05-14 01:16:28 | Equipment-and-Tool-Institute/j1939-84 | https://api.github.com/repos/Equipment-and-Tool-Institute/j1939-84 | closed | Support DEF Used This Operating Cycle in Table A-1 | documentation Mtask8 DR | Support DEF Used This Operating Cycle in Table A-1

DEF Used This Operating Cycle in Table A-1 is needed for 2024 MY engines | 1.0 | Support DEF Used This Operating Cycle in Table A-1 - Support DEF Used This Operating Cycle in Table A-1

DEF Used This Operating Cycle in Table A-1 is needed for 2024 MY engines | non_process | support def used this operating cycle in table a support def used this operating cycle in table a def used this operating cycle in table a is needed for my engines | 0 |

16,401 | 21,184,277,099 | IssuesEvent | 2022-04-08 11:02:55 | paul-buerkner/brms | https://api.github.com/repos/paul-buerkner/brms | closed | error-adding-loo-criterion-to-brms-ordinal-model-with-link-cloglog | bug post-processing | https://discourse.mc-stan.org/t/error-adding-loo-criterion-to-brms-ordinal-model-with-link-cloglog/22600

The model looks fine but when I try to add loo or waic criterion I get the error

`validate_ll(x) : All input values must be finite`

I'm not convinced that the `cloglog` link is appropriate. I get fairly go... | 1.0 | error-adding-loo-criterion-to-brms-ordinal-model-with-link-cloglog - https://discourse.mc-stan.org/t/error-adding-loo-criterion-to-brms-ordinal-model-with-link-cloglog/22600

The model looks fine but when I try to add loo or waic criterion I get the error

`validate_ll(x) : All input values must be finite`

I'm ... | process | error adding loo criterion to brms ordinal model with link cloglog the model looks fine but when i try to add loo or waic criterion i get the error validate ll x all input values must be finite i m not convinced that the cloglog link is appropriate i get fairly good results using the logit link ... | 1 |

679,040 | 23,219,572,438 | IssuesEvent | 2022-08-02 16:51:16 | KinsonDigital/Infrastructure | https://api.github.com/repos/KinsonDigital/Infrastructure | closed | 🚧Create re-usable workflow to resolve project files | high priority workflow | ### I have done the items below . . .

- [X] I have updated the title without removing the 🚧 emoji.

### Description

Create a workflow with the name `resolve-charp-proj-file.yml` that can be used to resolve the path to a C# project file.

### Acceptance Criteria

**This issue is finished when:**

- [x] Work... | 1.0 | 🚧Create re-usable workflow to resolve project files - ### I have done the items below . . .

- [X] I have updated the title without removing the 🚧 emoji.

### Description

Create a workflow with the name `resolve-charp-proj-file.yml` that can be used to resolve the path to a C# project file.

### Acceptance C... | non_process | 🚧create re usable workflow to resolve project files i have done the items below i have updated the title without removing the 🚧 emoji description create a workflow with the name resolve charp proj file yml that can be used to resolve the path to a c project file acceptance cri... | 0 |
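
The row above asks for a reusable workflow that resolves the path to a C# project file. The workflow file itself is not shown in this dump, so the following is only a minimal sketch of the lookup step such a workflow might perform. The function name `resolve_csproj`, the `repo_root` and `project_name` parameters, and the `MyApp` project are hypothetical and do not come from the Infrastructure repository.

```python
from pathlib import Path
import tempfile


def resolve_csproj(repo_root, project_name):
    """Return the single '<project_name>.csproj' file found under repo_root.

    Hypothetical helper: raises if the project file is missing or ambiguous.
    """
    matches = sorted(Path(repo_root).rglob(f"{project_name}.csproj"))
    if not matches:
        raise FileNotFoundError(f"no {project_name}.csproj under {repo_root}")
    if len(matches) > 1:
        raise ValueError(f"ambiguous project name, found: {matches}")
    return matches[0]


# Demo on a throwaway directory tree (the layout is an assumption).
with tempfile.TemporaryDirectory() as root:
    project_dir = Path(root) / "src" / "MyApp"
    project_dir.mkdir(parents=True)
    (project_dir / "MyApp.csproj").write_text("<Project />")
    resolved = resolve_csproj(root, "MyApp")
```

Failing loudly on zero or multiple matches keeps a later build step from silently picking the wrong project.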

7,063 | 10,219,207,715 | IssuesEvent | 2019-08-15 17:58:37 | toggl/mobileapp | https://api.github.com/repos/toggl/mobileapp | closed | Write localization framework doc | process | This doc should explain how the whole translation workflow works.

It should:

- explain the idea of the translations being crowdsourced

- explain how we'll use a project(s) for the translations

- mention the translation guide from #4834

- how we organize our `Resource.<language-code>.resx` files (and comments ins... | 1.0 | Write localization framework doc - This doc should explain how the whole translation workflow works.

It should:

- explain the idea of the translations being crowdsourced

- explain how we'll use a project(s) for the translations

- mention the translation guide from #4834

- how we organize our `Resource.<language-... | process | write localization framework doc this doc should explain how the whole translation workflow works it should explain the idea of the translations being crowdsourced explain how we ll use a project s for the translations mention the translation guide from how we organize our resource resx files... | 1 |

13,321 | 15,786,571,084 | IssuesEvent | 2021-04-01 17:56:36 | hasura/ask-me-anything | https://api.github.com/repos/hasura/ask-me-anything | closed | What's the history behind the devil looking logo of Hasura? | processing-for-shortvid question | The company name Hasura is a portmanteau of Haskell and Asura.

* [Haskell](https://en.wikipedia.org/wiki/Haskell_%28programming_language%29) being the language stack that Hasura is built in.

* [Asura](https://en.wikipedia.org/wiki/Asura) in Hindu mythology, is a class of beings defined by their opposition to the dev... | 1.0 | What's the history behind the devil looking logo of Hasura? - The company name Hasura is a portmanteau of Haskell and Asura.

* [Haskell](https://en.wikipedia.org/wiki/Haskell_%28programming_language%29) being the language stack that Hasura is built in.

* [Asura](https://en.wikipedia.org/wiki/Asura) in Hindu mytholog... | process | what s the history behind the devil looking logo of hasura the company name hasura is a portmanteu of haskell and asura being the language stack that hasura is built in in hindu mythology is a class of beings defined by their opposition to the devas or suras | 1 |

333,373 | 29,577,979,663 | IssuesEvent | 2023-06-07 01:39:54 | Joystream/pioneer | https://api.github.com/repos/Joystream/pioneer | closed | Verified status: no visual representation in Pioneer UI | enhancement high-prio community-dev qa-tested-ready-for-prod release:1.5.0 | Currently there is no visual representation of a user having the `verified: true` flag on their membership on Pioneer, this includes:

- Member directory

- Forums

- Proposals

I wouldn't say this is a high-priority thing to fix, but given the coming new Staking UI/UX and the fact that `jsgenesis` and `Gleev` member... | 1.0 | Verified status: no visual representation in Pioneer UI - Currently there is no visual representation of a user having the `verified: true` flag on their membership on Pioneer, this includes:

- Member directory

- Forums

- Proposals

I wouldn't say this is a high-priority thing to fix, but given the coming new Stak... | non_process | verified status no visual representation in pioneer ui currently there is no visual representation of a user having the verified true flag on their membership on pioneer this includes member directory forums proposals i wouldn t say this is a high priority thing to fix but given the coming new stak... | 0 |

29,868 | 13,179,720,779 | IssuesEvent | 2020-08-12 11:29:13 | terraform-providers/terraform-provider-aws | https://api.github.com/repos/terraform-providers/terraform-provider-aws | closed | Terraform does not support event_bus_name in aws_cloudwatch_event_rule | enhancement service/cloudwatch service/cloudwatchevents service/eventbridge | <!--- Please keep this note for the community --->

### Community Note

* Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original issue to help the community and maintainers prioritize this request

* Please do not l... | 3.0 | Terraform does not support event_bus_name in aws_cloudwatch_event_rule - <!--- Please keep this note for the community --->

### Community Note

* Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original issue to help... | non_process | terraform does not support event bus name in aws cloudwatch event rule community note please vote on this issue by adding a 👍 to the original issue to help the community and maintainers prioritize this request please do not leave or other comments that do not add relevant new information or... | 0 |

135,434 | 10,987,084,746 | IssuesEvent | 2019-12-02 08:26:34 | dzhw/SLC-IntEr | https://api.github.com/repos/dzhw/SLC-IntEr | closed | Survey entry on mobile devices | Hohe Priorität bug testing | On mobile devices, entering the token currently takes the user directly to the page st0001.html. The entire first part of the survey (Einstieg/beg) and the calendar module are skipped.

| 1.0 | Survey entry on mobile devices - On mobile devices, entering the token currently takes the user directly to the page st0001.html. The entire first part of the survey (Einstieg/beg) and the calendar module are skipped.

| non_process | survey entry on mobile devices on mobile devices entering the token currently takes the user directly to the page html the entire first part of the survey einstieg beg and the calendar module are skipped | 0 |

5,927 | 8,751,241,809 | IssuesEvent | 2018-12-13 21:41:43 | de-ai/designengine.ai | https://api.github.com/repos/de-ai/designengine.ai | closed | [Processing] Processing is processing the wrong file IF it was not closed correctly | Processing | example...

http://earlyaccess.designengine.ai/proj/2/design-engine-v11

This file isn't the file I uploaded | 1.0 | [Processing] Processing is processing the wrong file IF it was not closed correctly - example...

http://earlyaccess.designengine.ai/proj/2/design-engine-v11

This file isn't the file I uploaded | process | processing is processing the wrong file if it was not closed correctly example this file isn t the file i uploaded | 1 |

12,191 | 14,742,299,477 | IssuesEvent | 2021-01-07 12:03:12 | kdjstudios/SABillingGitlab | https://api.github.com/repos/kdjstudios/SABillingGitlab | closed | Orlando 045- Missing Payment | anc-process anp-0.5 ant-enhancement | In GitLab by @kdjstudios on Apr 3, 2019, 11:50

**Submitted by:** "David Difo" <david.difo@answernet.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2019-04-03-25939/conversation

**Server:** Internal

**Client/Site:** Orlando

**Account:**

**Issue:**

We have client Ficurma 045-S8762 who ... | 1.0 | Orlando 045- Missing Payment - In GitLab by @kdjstudios on Apr 3, 2019, 11:50

**Submitted by:** "David Difo" <david.difo@answernet.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2019-04-03-25939/conversation

**Server:** Internal

**Client/Site:** Orlando

**Account:**

**Issue:**

We have... | process | orlando missing payment in gitlab by kdjstudios on apr submitted by david difo helpdesk server internal client site orlando account issue we have client ficurma who has reached out stating e check payment processed on does not show under bank stat... | 1 |

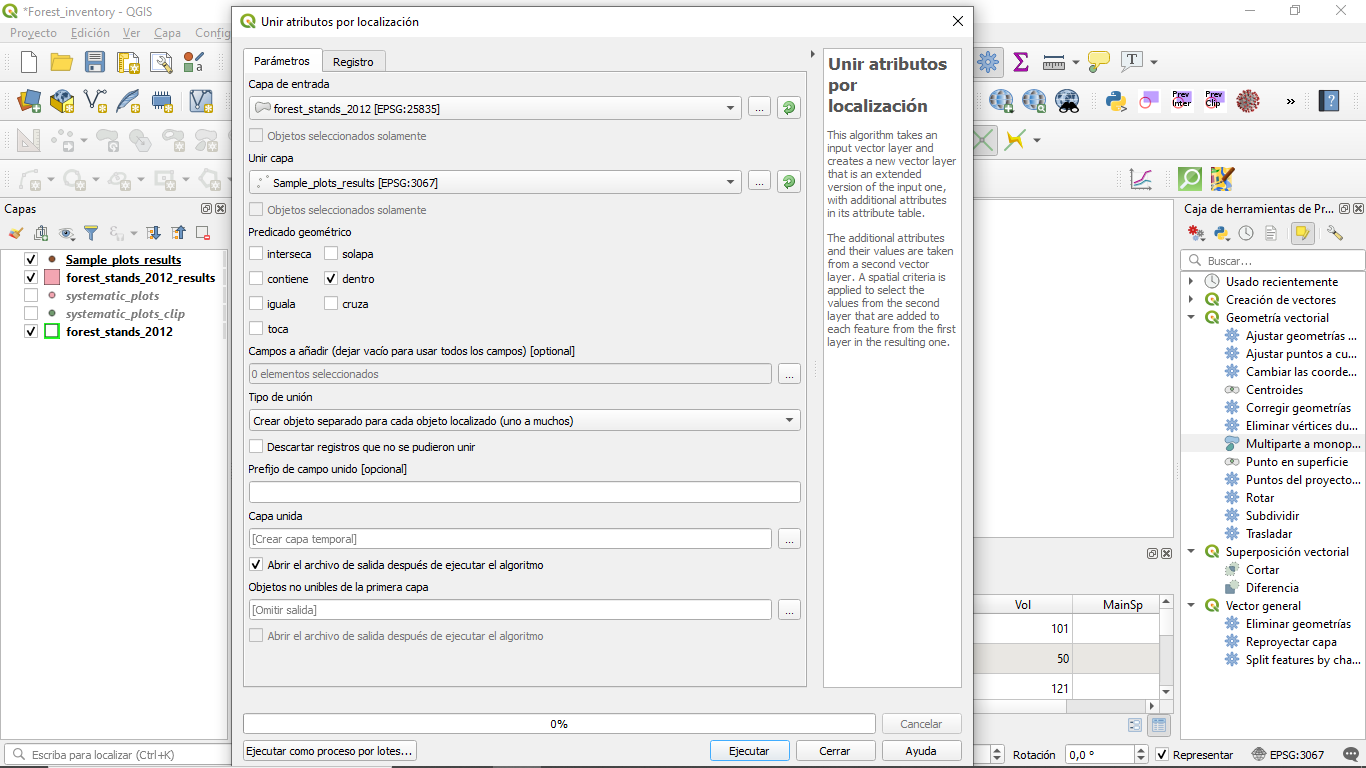

12,571 | 14,986,015,927 | IssuesEvent | 2021-01-28 20:41:35 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | summary statistics not found in "join attributes by location" function in QGIS 3.10.5 version | Bug Feedback Processing |

| 1.0 | summary statistics not found in "join attributes by location" function in QGIS 3.10.5 version -

detected in bootstrap-3.3.5.min.js, bootstrap-3.3.5.js - autoclosed | Mend: dependency security vulnerability | ## CVE-2018-14042 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>bootstrap-3.3.5.min.js</b>, <b>bootstrap-3.3.5.js</b></p></summary>

<p>

<details><summary><b>bootstrap-3.3.5.min.j... | True | CVE-2018-14042 (Medium) detected in bootstrap-3.3.5.min.js, bootstrap-3.3.5.js - autoclosed - ## CVE-2018-14042 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>bootstrap-3.3.5.min.j... | non_process | cve medium detected in bootstrap min js bootstrap js autoclosed cve medium severity vulnerability vulnerable libraries bootstrap min js bootstrap js bootstrap min js the most popular front end framework for developing responsive mobile first pro... | 0 |

474,965 | 13,685,163,147 | IssuesEvent | 2020-09-30 06:38:21 | shahednasser/sbuttons | https://api.github.com/repos/shahednasser/sbuttons | opened | Change "btn-disabled" to "disabled-btn" | Hacktoberfest Priority: Medium buttons enhancement good first issue help wanted up-for-grabs | Now we have a new button type "Disabled" but its class name does not follow the convention we are using for the website. We just need to change the class name from "btn-disabled" to "disabled-btn" | 1.0 | Change "btn-disabled" to "disabled-btn" - Now we have a new button type "Disabled" but its class name does not follow the convention we are using for the website. We just need to change the class name from "btn-disabled" to "disabled-btn" | non_process | change btn disabled to disabled btn now we have a new button type disabled but its class name does not follow the convention we are using for the website we just need to change the class name from btn disabled to disabled btn | 0 |

20,209 | 26,796,273,489 | IssuesEvent | 2023-02-01 12:08:18 | threefoldtech/tfchain | https://api.github.com/repos/threefoldtech/tfchain | closed | Add disks field to nodes | process_wontfix type_feature | To accommodate https://github.com/threefoldtech/zos/issues/1830, TF Chain's node object will need a data field that stores a list of disks. Something like:

```

node {

disks = [disk, ...]

}

disk {

type = "SSD" or "HDD"

size = capacity

}

``` | 1.0 | Add disks field to nodes - To accommodate https://github.com/threefoldtech/zos/issues/1830, TF Chain's node object will need a data field that stores a list of disks. Something like:

```

node {

disks = [disk, ...]

}

disk {

type = "SSD" or "HDD"

size = capacity

}

``` | process | add disks field to nodes to accommodate tf chain s node object will need a data field that stores a list of disks something like node disks disk type ssd or hdd size capacity | 1 |
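
The pseudocode in the row above (a `node` holding a list of `disk` entries, each with a `type` and a `size`) can be sketched as typed records. This is only an illustration of the proposed shape, not TF Chain's actual node type. The class names, the `capacity_by_type` helper, and the choice of bytes as the unit for `size` are assumptions.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List


class DiskType(Enum):
    SSD = "SSD"
    HDD = "HDD"


@dataclass
class Disk:
    type: DiskType
    size: int  # capacity; the unit (bytes) is an assumption


@dataclass
class Node:
    disks: List[Disk] = field(default_factory=list)

    def capacity_by_type(self, disk_type):
        """Sum the capacity of all disks of the given type (hypothetical helper)."""
        return sum(d.size for d in self.disks if d.type is disk_type)


node = Node(disks=[
    Disk(DiskType.SSD, 500),
    Disk(DiskType.HDD, 2000),
    Disk(DiskType.SSD, 250),
])
ssd_total = node.capacity_by_type(DiskType.SSD)
```

Storing each disk individually, rather than only per-type totals, keeps the totals derivable while preserving the per-disk detail the issue asks for.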

21,937 | 30,446,798,339 | IssuesEvent | 2023-07-15 19:28:29 | h4sh5/pypi-auto-scanner | https://api.github.com/repos/h4sh5/pypi-auto-scanner | opened | pyutils 0.0.1a1 has 2 GuardDog issues | guarddog typosquatting silent-process-execution | https://pypi.org/project/pyutils

https://inspector.pypi.io/project/pyutils

```{

"dependency": "pyutils",

"version": "0.0.1a1",

"result": {

"issues": 2,

"errors": {},

"results": {

"typosquatting": "This package closely ressembles the following package names, and might be a typosquatting attempt: ... | 1.0 | pyutils 0.0.1a1 has 2 GuardDog issues - https://pypi.org/project/pyutils

https://inspector.pypi.io/project/pyutils

```{

"dependency": "pyutils",

"version": "0.0.1a1",

"result": {

"issues": 2,

"errors": {},

"results": {

"typosquatting": "This package closely ressembles the following package names... | process | pyutils has guarddog issues dependency pyutils version result issues errors results typosquatting this package closely ressembles the following package names and might be a typosquatting attempt python utils pytils silen... | 1 |

14,002 | 16,773,267,617 | IssuesEvent | 2021-06-14 17:22:04 | googleapis/python-cloud-core | https://api.github.com/repos/googleapis/python-cloud-core | closed | Add 'black' to nox for consistency | type: process | The codebase is not in line with our current standards: it does not use `black` to ensure consistent code style. | 1.0 | Add 'black' to nox for consistency - The codebase is not in line with our current standards: it does not use `black` to ensure consistent code style. | process | add black to nox for consistency the codebase is not in line with our current standards it does not use black to ensure consistent code style | 1 |

216,360 | 24,278,525,331 | IssuesEvent | 2022-09-28 15:28:30 | billmcchesney1/davinci | https://api.github.com/repos/billmcchesney1/davinci | reopened | CVE-2020-7656 (Medium) detected in jquery-1.7.1.min.js | security vulnerability | ## CVE-2020-7656 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.7.1.min.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library home page: <a href="htt... | True | CVE-2020-7656 (Medium) detected in jquery-1.7.1.min.js - ## CVE-2020-7656 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.7.1.min.js</b></p></summary>

<p>JavaScript library ... | non_process | cve medium detected in jquery min js cve medium severity vulnerability vulnerable library jquery min js javascript library for dom operations library home page a href path to dependency file node modules sockjs examples express x index html path to vulnerable libra... | 0 |

411,397 | 27,821,180,296 | IssuesEvent | 2023-03-19 08:54:40 | jbloomAus/DecisionTransformerInterpretability | https://api.github.com/repos/jbloomAus/DecisionTransformerInterpretability | opened | Write much better tutorials for the repo. | documentation | I've explained how to use the repo in the docs, but this could be way better.

Some examples of pages that might be useful to add to the sphinx docs.

1. A page explaining what Minigrid is, and where to look at each MiniGrid environment (maybe include a table of environments by row with columns for different models ... | 1.0 | Write much better tutorials for the repo. - I've explained how to use the repo in the docs, but this could be way better.

Some examples of pages that might be useful to add to the sphinx docs.

1. A page explaining what Minigrid is, and where to look at each MiniGrid environment (maybe include a table of environme... | non_process | write much better tutorials for the repo i ve explained how to use the repo in the docs but this could be way better some examples of pages what might be useful to add to the sphinx docs a page explaining what minigrid is and where to look at each minigrid environment maybe include a table of environme... | 0 |

747,806 | 26,099,228,408 | IssuesEvent | 2022-12-27 03:33:36 | simonbaird/tiddlyhost | https://api.github.com/repos/simonbaird/tiddlyhost | closed | Site backups and "restore previous version" functionality | priority-high | As I'm now beginning to use TH more seriously to develop stuff, I need a backup feature like in TS. Maybe I'm just not seeing something in front of my nose? IMO the most suitable place for it would be Your sites page or inside the Settings. | 1.0 | Site backups and "restore previous version" functionality - As I'm now beginning to use TH more seriously to develop stuff, I need a backup feature like in TS. Maybe I'm just not seeing something in front of my nose? IMO the most suitable place for it would be Your sites page or inside the Settings. | non_process | site backups and restore previous version functionality as i m now beginning to use th more seriously to develop stuff i need a backup feature like in ts maybe i m just not seeing something in front of my nose imo the most suitable place for it would be your sites page or inside the settings | 0 |

9,807 | 12,819,943,821 | IssuesEvent | 2020-07-06 04:06:54 | asyml/forte | https://api.github.com/repos/asyml/forte | closed | StanfordNLP sentence segmenter bug | bug priority: medium topic: processors | While trying to find sentence boundaries, the technique to find the sentence ending can fail.

We are using `find` which gives the first occurrence of a word in a sentence. This will definitely fail when there are 2 duplicate words in a sentence.

https://github.com/asyml/forte/blob/master/forte/processors/stanford... | 1.0 | StanfordNLP sentence segmenter bug - While trying to find sentence boundaries, the technique to find the sentence ending can fail.

We are using `find` which gives the first occurrence of a word in a sentence. This will definitely fail when there are 2 duplicate words in a sentence.

https://github.com/asyml/forte/... | process | stanfordnlp sentence segmenter bug while trying to find sentence boundaries the technique to find the sentence ending can fail we are using find which gives the first occurrence of a word in a sentence this will definitely fail when there are duplicate words in a sentence | 1 |
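
The pitfall described in the row above, where `find` returns the first occurrence of a word and so goes wrong when a word repeats, can be reproduced in a few lines. The sketch below is not the Forte processor code (the linked source is truncated in this dump). The function names and the sample sentence are illustrative assumptions; the only library call used is the standard `str.find(sub, start)`.

```python
def token_spans_naive(text, tokens):
    """Locate each token by searching from the start of the text (wrong for repeats)."""
    return [(text.find(tok), text.find(tok) + len(tok)) for tok in tokens]


def token_spans_with_cursor(text, tokens):
    """Locate each token by searching from the end of the previous match."""
    spans = []
    cursor = 0
    for tok in tokens:
        start = text.find(tok, cursor)
        if start == -1:
            raise ValueError(f"token {tok!r} not found after offset {cursor}")
        end = start + len(tok)
        spans.append((start, end))
        cursor = end  # the next search starts after this token
    return spans


text = "the cat saw the dog"
tokens = ["the", "cat", "saw", "the", "dog"]
naive = token_spans_naive(text, tokens)
fixed = token_spans_with_cursor(text, tokens)
```

In the naive version the second "the" maps back to offset 0, so any sentence boundary derived from it would land at the wrong place. Advancing a cursor past each match resolves the repeat to offset 12.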

143,542 | 11,568,861,940 | IssuesEvent | 2020-02-20 16:33:42 | RPTools/maptool | https://api.github.com/repos/RPTools/maptool | closed | input() PROPS option no longer works. | bug feature macro changes tested | **Describe the bug**

I'm getting an error in alpha 6 that I don't get in 1.5.12. Apparently the PROPS option no longer works. Gives null pointer exception error.

**To Reproduce**

[input("test|one=1;two=2;|Test|props")]

**Expected behavior**

Should give me fields "one" and "two" with their values.

**MapTool ... | 1.0 | input() PROPS option no longer works. - **Describe the bug**

I'm getting an error in alpha 6 that I don't get in 1.5.12. Apparently the PROPS option no longer works. Gives null pointer exception error.

**To Reproduce**

[input("test|one=1;two=2;|Test|props")]

**Expected behavior**

Should give me fields "one" an... | non_process | input props option no longer works describe the bug i m getting an error in alpha that i don t get in apparently the props option no longer works gives null pointer exception error to reproduce expected behavior should give me fields one and two with their values maptool... | 0 |

6,437 | 9,539,163,714 | IssuesEvent | 2019-04-30 16:15:45 | material-components/material-components-ios | https://api.github.com/repos/material-components/material-components-ios | closed | Internal issue:: b/130723794 | type:Process | This was filed as an internal issue. If you are a Googler, please visit [b/130723794](http://b/130723794) for more details.

<!-- Auto-generated content below, do not modify -->

---

#### Internal data

- Associated internal bug: [b/130723794](http://b/130723794) | 1.0 | Internal issue:: b/130723794 - This was filed as an internal issue. If you are a Googler, please visit [b/130723794](http://b/130723794) for more details.

<!-- Auto-generated content below, do not modify -->

---

#### Internal data

- Associated internal bug: [b/130723794](http://b/130723794) | process | internal issue b this was filed as an internal issue if you are a googler please visit for more details internal data associated internal bug | 1 |

276,857 | 30,557,551,707 | IssuesEvent | 2023-07-20 12:42:53 | nagyesta/abort-mission-maven-plugin | https://api.github.com/repos/nagyesta/abort-mission-maven-plugin | closed | jszip-3.7.1.js: 1 vulnerabilities (highest severity is: 7.3) | Mend: dependency security vulnerability | <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jszip-3.7.1.js</b></p></summary>

<p>Create, read and edit .zip files with Javascript http://stuartk.com/jszip</p>

<p>Library home page: <a href="https://cdnjs.cloudfl... | True | jszip-3.7.1.js: 1 vulnerabilities (highest severity is: 7.3) - <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jszip-3.7.1.js</b></p></summary>

<p>Create, read and edit .zip files with Javascript http://stuartk.com... | non_process | jszip js vulnerabilities highest severity is vulnerable library jszip js create read and edit zip files with javascript library home page a href path to vulnerable library target apidocs jquery jszip dist jszip js found in head commit a href vulnerabilitie... | 0 |

69,818 | 13,347,081,462 | IssuesEvent | 2020-08-29 11:37:34 | codeisscience/code-is-science | https://api.github.com/repos/codeisscience/code-is-science | closed | journal page - make it include the header include file [interesting mix of R and hugo templating] | code-task help wanted | So, the site is built largely using [hugo](https://gohugo.io/) with the exception of the journals page, which is rendered using [knitr](https://yihui.name/knitr/) R-related wizardry. @alokpant wisely suggested that the journals page should use the same header, which is entirely sane. Offhand I'm not sure how to mate th... | 1.0 | journal page - make it include the header include file [interesting mix of R and hugo templating] - So, the site is built largely using [hugo](https://gohugo.io/) with the exception of the journals page, which is rendered using [knitr](https://yihui.name/knitr/) R-related wizardry. @alokpant wisely suggested that the j... | non_process | journal page make it include the header include file so the site is built largely using with the exception of the journals page which is rendered using r related wizardry alokpant wisely suggested that the journals page should use the same header which is entirely sane offhand i m not sure how to ma... | 0 |

200,840 | 22,916,001,210 | IssuesEvent | 2022-07-17 01:05:54 | nanopathi/frameworks_av_AOSP10_r33_CVE-2020-0241 | https://api.github.com/repos/nanopathi/frameworks_av_AOSP10_r33_CVE-2020-0241 | closed | CVE-2020-0160 (High) detected in avandroid-10.0.0_r44 - autoclosed | security vulnerability | ## CVE-2020-0160 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>avandroid-10.0.0_r44</b></p></summary>

<p>

<p>Library home page: <a href=https://android.googlesource.com/platform/fram... | True | CVE-2020-0160 (High) detected in avandroid-10.0.0_r44 - autoclosed - ## CVE-2020-0160 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>avandroid-10.0.0_r44</b></p></summary>

<p>

<p>Libr... | non_process | cve high detected in avandroid autoclosed cve high severity vulnerability vulnerable library avandroid library home page a href found in head commit a href found in base branch master vulnerable source files media extractors s... | 0 |

725,756 | 24,974,793,086 | IssuesEvent | 2022-11-02 06:35:51 | matrixorigin/matrixone | https://api.github.com/repos/matrixorigin/matrixone | opened | [Enhancement]: error handling on reading and writing io entries | kind/enhancement priority/p0.5 | ### Is there an existing issue for enhancement?

- [X] I have checked the existing issues.

### What would you like to be added ?

```Markdown

error handling on reading and writing io entries

```

### Why is this needed ?

1. Timeout

2. Duplicated

3. Not found

4. Unexpected

### Additional information

_No response... | 1.0 | [Enhancement]: error handling on reading and writing io entries - ### Is there an existing issue for enhancement?

- [X] I have checked the existing issues.

### What would you like to be added ?

```Markdown

error handling on reading and writing io entries

```

### Why is this needed ?

1. Timeout

2. Duplicated

3. N... | non_process | error handling on reading and writing io entries is there an existing issue for enhancement i have checked the existing issues what would you like to be added markdown error handling on reading and writing io entries why is this needed timeout duplicated not found u... | 0 |

239,352 | 19,848,909,853 | IssuesEvent | 2022-01-21 10:03:13 | zephyrproject-rtos/test_results | https://api.github.com/repos/zephyrproject-rtos/test_results | closed |

tests-ci : can: isotp: conformance test failed

| bug area: Tests |

**Describe the bug**

conformance test is failed on v2.7.99-3293-g93b0ea978293 on mimxrt1060_evk

see logs for details

**To Reproduce**

1.

```

scripts/twister --device-testing --device-serial /dev/ttyACM0 -p mimxrt1060_evk --sub-test can.isotp

```

2. See error

**Expected behavior**

test pass

**Impact**

**Logs an... | 1.0 |

tests-ci : can: isotp: conformance test failed

-

**Describe the bug**

conformance test is failed on v2.7.99-3293-g93b0ea978293 on mimxrt1060_evk

see logs for details

**To Reproduce**

1.

```

scripts/twister --device-testing --device-serial /dev/ttyACM0 -p mimxrt1060_evk --sub-test can.isotp

```

2. See error

**Ex... | non_process | tests ci can isotp conformance test failed describe the bug conformance test is failed on on evk see logs for details to reproduce scripts twister device testing device serial dev p evk sub test can isotp see error expected behavior test pass impact ... | 0 |

9,032 | 12,129,836,723 | IssuesEvent | 2020-04-22 23:42:22 | GoogleCloudPlatform/python-docs-samples | https://api.github.com/repos/GoogleCloudPlatform/python-docs-samples | closed | remove gcp-devrel-py-tools from datastore/cloud-client/requirements-test.txt | priority: p2 remove-gcp-devrel-py-tools type: process | remove gcp-devrel-py-tools from datastore/cloud-client/requirements-test.txt | 1.0 | remove gcp-devrel-py-tools from datastore/cloud-client/requirements-test.txt - remove gcp-devrel-py-tools from datastore/cloud-client/requirements-test.txt | process | remove gcp devrel py tools from datastore cloud client requirements test txt remove gcp devrel py tools from datastore cloud client requirements test txt | 1 |

10,138 | 13,044,162,437 | IssuesEvent | 2020-07-29 03:47:32 | tikv/tikv | https://api.github.com/repos/tikv/tikv | closed | UCP: Migrate scalar function `JsonMergePreserveSig` from TiDB | challenge-program-2 component/coprocessor difficulty/easy sig/coprocessor |

## Description

Port the scalar function `JsonMergePreserveSig` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @mapleFU

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- https://github.com/tikv/tikv/tree/master/components/tidb_query/sr... | 2.0 | UCP: Migrate scalar function `JsonMergePreserveSig` from TiDB -

## Description

Port the scalar function `JsonMergePreserveSig` from TiDB to coprocessor.

## Score

* 50

## Mentor(s)

* @mapleFU

## Recommended Skills

* Rust programming

## Learning Materials

Already implemented expressions ported from TiDB

- h... | process | ucp migrate scalar function jsonmergepreservesig from tidb description port the scalar function jsonmergepreservesig from tidb to coprocessor score mentor s maplefu recommended skills rust programming learning materials already implemented expressions ported from tidb ... | 1 |

260,827 | 19,686,807,026 | IssuesEvent | 2022-01-11 23:25:18 | corretto/amazon-corretto-crypto-provider | https://api.github.com/repos/corretto/amazon-corretto-crypto-provider | closed | Document all system properties | Documentation | All system properties defined by ACCP need to be documented. This must include the target audience for each property and how long we commit to supporting it. | 1.0 | Document all system properties - All system properties defined by ACCP need to be documented. This must include the target audience for each property and how long we commit to supporting it. | non_process | document all system properties all system properties defined by accp need to be documented this must include the target audience for each property and how long we commit to supporting it | 0 |

337,887 | 30,269,726,165 | IssuesEvent | 2023-07-07 14:28:30 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | ccl/changefeedccl: TestChangefeedNemeses failed | C-test-failure O-robot A-cdc branch-master T-cdc | ccl/changefeedccl.TestChangefeedNemeses [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Ci_TestsAwsLinuxArm64_UnitTests/9120744?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Ci_TestsAwsLinuxArm64_UnitTests/9120744?buildTab=artifacts#/) on master @ [8... | 1.0 | ccl/changefeedccl: TestChangefeedNemeses failed - ccl/changefeedccl.TestChangefeedNemeses [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Ci_TestsAwsLinuxArm64_UnitTests/9120744?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Ci_TestsAwsLinuxArm64_Unit... | non_process | ccl changefeedccl testchangefeednemeses failed ccl changefeedccl testchangefeednemeses with on master run testchangefeednemeses test log scope go test logs captured to artifacts tmp tmp test log scope go use show logs to present logs inline cont testchangefeedneme... | 0 |

69,334 | 8,393,888,115 | IssuesEvent | 2018-10-09 22:00:08 | LetsEatCo/Restaurants | https://api.github.com/repos/LetsEatCo/Restaurants | opened | ✨ Add Kiosk | priority: high 🔥 scope: back-office scope: design scope: functional type: feature ✨ | **Is your feature request related to a problem ? Please describe.**

A logged in store should be able to add a kiosk

**Describe the solution you'd like**

CS

**Describe alternatives you've considered**

None

**Additional context**

None | 1.0 | ✨ Add Kiosk - **Is your feature request related to a problem ? Please describe.**

A logged in store should be able to add a kiosk

**Describe the solution you'd like**

CS

**Describe alternatives you've considered**

None

**Additional context**

None | non_process | ✨ add kiosk is your feature request related to a problem please describe a logged in store should be able to add a kiosk describe the solution you d like cs describe alternatives you ve considered none additional context none | 0 |

190,625 | 22,106,875,277 | IssuesEvent | 2022-06-01 17:40:52 | BCDevOps/developer-experience | https://api.github.com/repos/BCDevOps/developer-experience | opened | KeyCloak CSS STRA Review and Updates | security | - [x] Review STRA completed by Bruce and

- [x] fix-up/update

- [ ] Complete SoAR

- [ ] Review w/ KeyCloak team

| True | KeyCloak CSS STRA Review and Updates - - [x] Review STRA completed by Bruce and

- [x] fix-up/update

- [ ] Complete SoAR

- [ ] Review w/ KeyCloak team

| non_process | keycloak css stra review and updates review stra completed by bruce and fix up update complete soar review w keycloak team | 0 |

283,787 | 24,562,799,560 | IssuesEvent | 2022-10-12 22:11:07 | yugabyte/yugabyte-db | https://api.github.com/repos/yugabyte/yugabyte-db | opened | [YSQL] flaky test: undefined behavior in PgLibPqTest.DBCatalogVersion | area/ysql kind/failing-test priority/high status/awaiting-triage | ### Description

https://detective-gcp.dev.yugabyte.com/stability/test?branch=master&build_type=all&class=PgLibPqTest&fail_tag=undefined_behavior&name=DBCatalogVersion&platform=linux

```

[ts-1] ../../../../../../../src/postgres/src/backend/catalog/yb_catalog/yb_catalog_version.c:472:5: runtime error: null pointer p... | 1.0 | [YSQL] flaky test: undefined behavior in PgLibPqTest.DBCatalogVersion - ### Description

https://detective-gcp.dev.yugabyte.com/stability/test?branch=master&build_type=all&class=PgLibPqTest&fail_tag=undefined_behavior&name=DBCatalogVersion&platform=linux

```

[ts-1] ../../../../../../../src/postgres/src/backend/cata... | non_process | flaky test undefined behavior in pglibpqtest dbcatalogversion description src postgres src backend catalog yb catalog yb catalog version c runtime error null pointer passed as argument which is declared to never be null usr include stdlib h note nonnull attr... | 0 |

22,564 | 7,190,669,633 | IssuesEvent | 2018-02-02 18:05:43 | Great-Hill-Corporation/quickBlocks | https://api.github.com/repos/Great-Hill-Corporation/quickBlocks | closed | Useful information for geth support. | build-testing status-inprocess type-enhancement | https://github.com/ethereum/go-ethereum/wiki/Management-APIs | 1.0 | Useful information for geth support. - https://github.com/ethereum/go-ethereum/wiki/Management-APIs | non_process | useful information for geth support h t t p s g i t h u b com ethereum go ethereum wiki management apis | 0 |

38,230 | 2,842,441,988 | IssuesEvent | 2015-05-28 09:21:03 | hydrosolutions/imomo-hydromet-client | https://api.github.com/repos/hydrosolutions/imomo-hydromet-client | closed | Make it clear what a station being ready for processing means | enhancement Priority low question | When using a station that has not all the required initial data, the user gets an error message but it is not clear what is needed from the user. | 1.0 | Make it clear what a station being ready for processing means - When using a station that has not all the required initial data, the user gets an error message but it is not clear what is needed from the user. | non_process | make it clear what a station being ready for processing means when using a station that has not all the required initial data the user gets an error message but it is not clear what is needed from the user | 0 |

365,428 | 25,535,396,607 | IssuesEvent | 2022-11-29 11:34:51 | Quozul/template | https://api.github.com/repos/Quozul/template | closed | Add security policy | documentation | Using the following guide, please add a security policy to the repository.

https://docs.github.com/en/code-security/getting-started/adding-a-security-policy-to-your-repository | 1.0 | Add security policy - Using the following guide, please add a security policy to the repository.

https://docs.github.com/en/code-security/getting-started/adding-a-security-policy-to-your-repository | non_process | add security policy using the following guide please add a security policy to the repository | 0 |