Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 classes) | text_combine (string, length 96 to 211k) | label (string, 2 classes) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

9,956 | 12,979,256,640 | IssuesEvent | 2020-07-22 01:30:53 | googleapis/python-bigquery | https://api.github.com/repos/googleapis/python-bigquery | closed | release 1.26.0 | api: bigquery type: process | Any chance we could get a release? I'd like #170 in a released version to support a tool I'm building on my current team. | 1.0 | release 1.26.0 - Any chance we could get a release? I'd like #170 in a released version to support a tool I'm building on my current team. | process | release any chance we could get a release i d like in a released version to support a tool i m building on my current team | 1 |

3,615 | 6,061,817,407 | IssuesEvent | 2017-06-14 07:49:54 | aditya00j/Griffin | https://api.github.com/repos/aditya00j/Griffin | opened | Create Simulation instance and test | requirement | Create a Simulation instance with 1 DynamicObject and test. This entails the following:

1. Properly instantiating the Simulation class, with inputs, states, and outputs.

2. Integrating DynamicObject interactions.

3. ODE solver.

4. Positive testing required. Negative testing not required. | 1.0 | Create Simulation instance and test - Create a Simulation instance with 1 DynamicObject and test. This entails the following:

1. Properly instantiating the Simulation class, with inputs, states, and outputs.

2. Integrating DynamicObject interactions.

3. ODE solver.

4. Positive testing required. Negative testing n... | non_process | create simulation instance and test create a simulation instance with dynamicobject and test this entails the following properly instantiating the simulation class with inputs states and outputs integrating dynamicobject interactions ode solver positive testing required negative testing n... | 0 |

636,307 | 20,596,943,256 | IssuesEvent | 2022-03-05 16:57:33 | AY2122S2-CS2103-F09-4/tp | https://api.github.com/repos/AY2122S2-CS2103-F09-4/tp | closed | As a home baker that has multiple customers, I can look at all my customers | type.story priority.high | ... so that I can access the information for different customers | 1.0 | As a home baker that has multiple customers, I can look at all my customers - ... so that I can access the information for different customers | non_process | as a home baker that has multiple customers i can look at all my customers so that i can access the information for different customers | 0 |

102,019 | 16,543,129,244 | IssuesEvent | 2021-05-27 19:36:34 | RG4421/grafana | https://api.github.com/repos/RG4421/grafana | opened | CVE-2020-7753 (High) detected in trim-0.0.1.tgz | security vulnerability | ## CVE-2020-7753 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>trim-0.0.1.tgz</b></p></summary>

<p>Trim string whitespace</p>

<p>Library home page: <a href="https://registry.npmjs.or... | True | CVE-2020-7753 (High) detected in trim-0.0.1.tgz - ## CVE-2020-7753 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>trim-0.0.1.tgz</b></p></summary>

<p>Trim string whitespace</p>

<p>Lib... | non_process | cve high detected in trim tgz cve high severity vulnerability vulnerable library trim tgz trim string whitespace library home page a href path to dependency file grafana package json path to vulnerable library grafana node modules trim dependency hierarchy ... | 0 |

274,541 | 30,052,891,798 | IssuesEvent | 2023-06-28 03:02:33 | temporalio/samples-java | https://api.github.com/repos/temporalio/samples-java | opened | h2-2.1.214.jar: 1 vulnerabilities (highest severity is: 7.8) | Mend: dependency security vulnerability | <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>h2-2.1.214.jar</b></p></summary>

<p>H2 Database Engine</p>

<p>Library home page: <a href="https://h2database.com">https://h2database.com</a></p>

<p>Path to dependency... | True | h2-2.1.214.jar: 1 vulnerabilities (highest severity is: 7.8) - <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>h2-2.1.214.jar</b></p></summary>

<p>H2 Database Engine</p>

<p>Library home page: <a href="https://h2dat... | non_process | jar vulnerabilities highest severity is vulnerable library jar database engine library home page a href path to dependency file springboot build gradle path to vulnerable library home wss scanner gradle caches modules files com jar ... | 0 |

449,864 | 12,976,152,270 | IssuesEvent | 2020-07-21 18:15:32 | google/ground-platform | https://api.github.com/repos/google/ground-platform | closed | [Side panel] Routing from one feature to another does not change contents of side panel | priority: p1 type: bug | **Describe the bug**

Routing from one feature to another changes the side panel, but the color is not correct (still the previous color)

**To Reproduce**

Steps to reproduce the behavior:

1. Go to home page.

2. Click on a feature.

3. Shows the feature in side panel.

4. Click on a feature from a different layer.... | 1.0 | [Side panel] Routing from one feature to another does not change contents of side panel - **Describe the bug**

Routing from one feature to another changes the side panel, but the color is not correct (still the previous color)

**To Reproduce**

Steps to reproduce the behavior:

1. Go to home page.

2. Click on a fe... | non_process | routing from one feature to another does not change contents of side panel describe the bug routing from one feature to another changes the side panel but the color is not correct still the previous color to reproduce steps to reproduce the behavior go to home page click on a feature ... | 0 |

9,655 | 12,625,149,758 | IssuesEvent | 2020-06-14 10:22:00 | Arch666Angel/mods | https://api.github.com/repos/Arch666Angel/mods | opened | Take bio out of beta | Angels Bio Processing Impact: Enhancement | **Describe the bug**

Seems like bio is more or less to a stable state, maybe still some tweaking needed, but content wise seems to be stable, so we should take it out of beta? | 1.0 | Take bio out of beta - **Describe the bug**

Seems like bio is more or less to a stable state, maybe still some tweaking needed, but content wise seems to be stable, so we should take it out of beta? | process | take bio out of beta describe the bug seems like bio is more or less to a stable state maybe still some tweaking needed but content wise seems to be stable so we should take it out of beta | 1 |

11,649 | 4,270,894,315 | IssuesEvent | 2016-07-13 09:04:28 | joomla/joomla-cms | https://api.github.com/repos/joomla/joomla-cms | closed | [com_media] media form field gives javascript error | No Code Attached Yet | #### Steps to reproduce the issue

On a clean installation of Joomla 3.6.0 beta 2 with sample data:

1) Go to administrator

2) Component Banners -> Banners

3) Select any of the banners (for example Shop 1)

4) On details tab remove the current value of image

5) Select a new image on the modal window and insert

... | 1.0 | [com_media] media form field gives javascript error - #### Steps to reproduce the issue

On a clean installation of Joomla 3.6.0 beta 2 with sample data:

1) Go to administrator

2) Component Banners -> Banners

3) Select any of the banners (for example Shop 1)

4) On details tab remove the current value of image

... | non_process | media form field gives javascript error steps to reproduce the issue on a clean installation of joomla beta with sample data go to administrator component banners banners select any of the banners for example shop on details tab remove the current value of image select ... | 0 |

270,380 | 20,599,018,095 | IssuesEvent | 2022-03-06 00:31:31 | intelligent-environments-lab/bevo_iaq | https://api.github.com/repos/intelligent-environments-lab/bevo_iaq | opened | BEVO Beacon Log: 46 | documentation | # BEVO Beacon 46

### Sensors/Connections

| Module | Included | Condition | Connections |

| ---| --- | --- | --- |

| CO | 🟢 |Original | Factory |

| CO2 | 🟢 |Original | Factory |

| Fan | 🟢 |Original/Cleaned | Original |

| Light | 🟢 |Original | Factory |

| NO2 | 🔴 |- | - |

| PM | 🟢 |Original | Factory |... | 1.0 | BEVO Beacon Log: 46 - # BEVO Beacon 46

### Sensors/Connections

| Module | Included | Condition | Connections |

| ---| --- | --- | --- |

| CO | 🟢 |Original | Factory |

| CO2 | 🟢 |Original | Factory |

| Fan | 🟢 |Original/Cleaned | Original |

| Light | 🟢 |Original | Factory |

| NO2 | 🔴 |- | - |

| PM | 🟢... | non_process | bevo beacon log bevo beacon sensors connections module included condition connections co 🟢 original factory 🟢 original factory fan 🟢 original cleaned original light 🟢 original factory 🔴 pm 🟢 orig... | 0 |

22,210 | 30,762,150,656 | IssuesEvent | 2023-07-29 21:06:32 | apache/arrow-rs | https://api.github.com/repos/apache/arrow-rs | reopened | Prototype ArrayView Types | arrow enhancement development-process | **Is your feature request related to a problem or challenge? Please describe what you are trying to do.**

<!--

A clear and concise description of what the problem is. Ex. I'm always frustrated when [...]

(This section helps Arrow developers understand the context and *why* for this feature, in addition to the *wha... | 1.0 | Prototype ArrayView Types - **Is your feature request related to a problem or challenge? Please describe what you are trying to do.**

<!--

A clear and concise description of what the problem is. Ex. I'm always frustrated when [...]

(This section helps Arrow developers understand the context and *why* for this featu... | process | prototype arrayview types is your feature request related to a problem or challenge please describe what you are trying to do a clear and concise description of what the problem is ex i m always frustrated when this section helps arrow developers understand the context and why for this feature ... | 1 |

14,117 | 17,014,188,556 | IssuesEvent | 2021-07-02 09:37:04 | bazelbuild/bazel | https://api.github.com/repos/bazelbuild/bazel | opened | Status of Bazel 5.0.0-pre.20210623.2 | P1 release team-XProduct type: process | - Expected release date: July 2nd

Task list:

- [x] Pick release baseline: 8b453331163378071f1cfe0ae7c74d551c21b834 with cherrypick 223113c9202e8f338b183d1736d97327d28241ea

- [ ] Create release candidate:

- [ ] Post-submit:

- [ ] Push the release:

- [ ] Update the [release page](https://github.com/bazelbuil... | 1.0 | Status of Bazel 5.0.0-pre.20210623.2 - - Expected release date: July 2nd

Task list:

- [x] Pick release baseline: 8b453331163378071f1cfe0ae7c74d551c21b834 with cherrypick 223113c9202e8f338b183d1736d97327d28241ea

- [ ] Create release candidate:

- [ ] Post-submit:

- [ ] Push the release:

- [ ] Update the [rel... | process | status of bazel pre expected release date july task list pick release baseline with cherrypick create release candidate post submit push the release update the | 1 |

909 | 3,371,993,215 | IssuesEvent | 2015-11-23 21:31:38 | dotnet/corefx | https://api.github.com/repos/dotnet/corefx | closed | Perf_Process.Kill test failed in CI on Windows | 2 - In Progress System.Diagnostics.Process | http://dotnet-ci.cloudapp.net/job/dotnet_corefx_windows_debug_prtest/6046/console

```

System.InvalidOperationException : Cannot process request because the process (10824) has exited.

Stack Trace:

d:\j\workspace\dotnet_corefx_windows_debug_prtest\src\System.Diagnostics.Process\src\System\Diagnost... | 1.0 | Perf_Process.Kill test failed in CI on Windows - http://dotnet-ci.cloudapp.net/job/dotnet_corefx_windows_debug_prtest/6046/console

```

System.InvalidOperationException : Cannot process request because the process (10824) has exited.

Stack Trace:

d:\j\workspace\dotnet_corefx_windows_debug_prtest\s... | process | perf process kill test failed in ci on windows system invalidoperationexception cannot process request because the process has exited stack trace d j workspace dotnet corefx windows debug prtest src system diagnostics process src system diagnostics process windows cs at sys... | 1 |

9,158 | 12,217,582,740 | IssuesEvent | 2020-05-01 17:30:29 | GoogleCloudPlatform/python-docs-samples | https://api.github.com/repos/GoogleCloudPlatform/python-docs-samples | closed | Create sample scripts for verifying HSM certificates and attestations | api: kms type: process | Scripts to verify HSM certificates and attestations need to be added to https://cloud.google.com/kms/docs/attest-key#script_for_verifying_an_attestation, but they should be hosted on Github rather than in the documentation. | 1.0 | Create sample scripts for verifying HSM certificates and attestations - Scripts to verify HSM certificates and attestations need to be added to https://cloud.google.com/kms/docs/attest-key#script_for_verifying_an_attestation, but they should be hosted on Github rather than in the documentation. | process | create sample scripts for verifying hsm certificates and attestations scripts to verify hsm certificates and attestations need to be added to but they should be hosted on github rather than in the documentation | 1 |

10,322 | 13,161,677,907 | IssuesEvent | 2020-08-10 20:01:33 | MicrosoftDocs/azure-devops-docs | https://api.github.com/repos/MicrosoftDocs/azure-devops-docs | closed | ACR 'endpoint myprivate.azurecr.io' not possible w/out preview | Pri1 devops-cicd-process/tech devops/prod doc-bug | Add notice that accessing private ACR repositories requires an endpoint that is in preview currently.

I was unpleasantly surprised by this and am now having to wait for support to give me access.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

... | 1.0 | ACR 'endpoint myprivate.azurecr.io' not possible w/out preview - Add notice that accessing private ACR repositories requires an endpoint that is in preview currently.

I was unpleasantly surprised by this and am now having to wait for support to give me access.

---

#### Document Details

⚠ *Do not edit this section... | process | acr endpoint myprivate azurecr io not possible w out preview add notice that accessing private acr repositories requires an endpoint that is in preview currently i was unpleasantly surprised by this and am now having to wait for support to give me access document details ⚠ do not edit this section... | 1 |

19,461 | 25,753,179,015 | IssuesEvent | 2022-12-08 14:38:31 | googleapis/google-cloud-dotnet | https://api.github.com/repos/googleapis/google-cloud-dotnet | closed | Enable UseSelfSignedJwts by default in Storage/Translation | api: storage api: translation type: process | Once GAX 2.3.0 is released, we should modify StorageClientBuilder and TranslationClientBuilder (and AdvancedTranslationClientBuilder) to enable the use of self-signed JWTs with scopes by default.

We can't do this *just* by default, as BigQuery doesn't appear to support self-signed JWTs with scopes at the moment, and... | 1.0 | Enable UseSelfSignedJwts by default in Storage/Translation - Once GAX 2.3.0 is released, we should modify StorageClientBuilder and TranslationClientBuilder (and AdvancedTranslationClientBuilder) to enable the use of self-signed JWTs with scopes by default.

We can't do this *just* by default, as BigQuery doesn't appe... | process | enable useselfsignedjwts by default in storage translation once gax is released we should modify storageclientbuilder and translationclientbuilder and advancedtranslationclientbuilder to enable the use of self signed jwts with scopes by default we can t do this just by default as bigquery doesn t appe... | 1 |

17,462 | 23,286,430,702 | IssuesEvent | 2022-08-05 17:01:17 | googleapis/google-cloud-php | https://api.github.com/repos/googleapis/google-cloud-php | opened | Warning: a recent release failed | type: process | The following release PRs may have failed:

* #5427 - The release job is 'autorelease: tagged', but expected 'autorelease: published'.

* #5370 - The release job is 'autorelease: tagged', but expected 'autorelease: published'.

* #5347 - The release job is 'autorelease: tagged', but expected 'autorelease: published'. | 1.0 | Warning: a recent release failed - The following release PRs may have failed:

* #5427 - The release job is 'autorelease: tagged', but expected 'autorelease: published'.

* #5370 - The release job is 'autorelease: tagged', but expected 'autorelease: published'.

* #5347 - The release job is 'autorelease: tagged', but exp... | process | warning a recent release failed the following release prs may have failed the release job is autorelease tagged but expected autorelease published the release job is autorelease tagged but expected autorelease published the release job is autorelease tagged but expected au... | 1 |

39,250 | 12,651,379,026 | IssuesEvent | 2020-06-17 00:07:50 | rhari26/RailsDemo | https://api.github.com/repos/rhari26/RailsDemo | opened | CVE-2019-16770 (High) detected in puma-3.6.2.gem | security vulnerability | ## CVE-2019-16770 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>puma-3.6.2.gem</b></p></summary>

<p>Puma is a simple, fast, threaded, and highly concurrent HTTP 1.1 server for Ruby/R... | True | CVE-2019-16770 (High) detected in puma-3.6.2.gem - ## CVE-2019-16770 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>puma-3.6.2.gem</b></p></summary>

<p>Puma is a simple, fast, threade... | non_process | cve high detected in puma gem cve high severity vulnerability vulnerable library puma gem puma is a simple fast threaded and highly concurrent http server for ruby rack applications puma is intended for use in both development and production environments in order to get... | 0 |

21,084 | 28,038,948,132 | IssuesEvent | 2023-03-28 16:59:26 | metabase/metabase | https://api.github.com/repos/metabase/metabase | closed | When Sandbox is granted to linked table column, but no access to the linked table, then "dirty" queries fail | Type:Bug Priority:P3 Querying/Processor Administration/Permissions Querying/Nested Queries Difficulty:Easy .Backend .Reproduced Administration/Data Sandboxes .Product Input Needed .Team/FunPolice :police_officer: | **Describe the bug**

If sandbox grant access is set to a linked table column, but without granting access to that table explicitly, then all "dirty" queries will result in `You do not have permissions to run this query` until the query is saved (and refreshed query/browser).

**To Reproduce**

1. Admin > People > cr... | 1.0 | When Sandbox is granted to linked table column, but no access to the linked table, then "dirty" queries fail - **Describe the bug**

If sandbox grant access is set to a linked table column, but without granting access to that table explicitly, then all "dirty" queries will result in `You do not have permissions to run ... | process | when sandbox is granted to linked table column but no access to the linked table then dirty queries fail describe the bug if sandbox grant access is set to a linked table column but without granting access to that table explicitly then all dirty queries will result in you do not have permissions to run ... | 1 |

14,410 | 17,462,336,329 | IssuesEvent | 2021-08-06 12:21:12 | arcus-azure/arcus.messaging | https://api.github.com/repos/arcus-azure/arcus.messaging | opened | Provide access to the message router options via the message pump options for custom message router implementations | enhancement area:message-processing | **Is your feature request related to a problem? Please describe.**

When implementing a custom `IAzureServiceBusMessageRouter`, we can't use the same `configureMessagePump` function (message router options are part of the message pump options in this case), because the message router options are `internal` in the messa... | 1.0 | Provide access to the message router options via the message pump options for custom message router implementations - **Is your feature request related to a problem? Please describe.**

When implementing a custom `IAzureServiceBusMessageRouter`, we can't use the same `configureMessagePump` function (message router opti... | process | provide access to the message router options via the message pump options for custom message router implementations is your feature request related to a problem please describe when implementing a custom iazureservicebusmessagerouter we can t use the same configuremessagepump function message router opti... | 1 |

125,590 | 12,263,597,232 | IssuesEvent | 2020-05-07 01:31:55 | rerost/issue-creator | https://api.github.com/repos/rerost/issue-creator | closed | [08/21/2019-08/28/2019] Sample | documentation | issue-creator

Create new issue from this issue

```

issue-creator create https://github.com/rerost/issue-creator/issues/1

```

Create new issue from this issue by every monday

```

issue-creator schedule apply '0 0 * * 1' https://github.com/rerost/issue-creator/issues/1

```

last issue: https://github.com/re... | 1.0 | [08/21/2019-08/28/2019] Sample - issue-creator

Create new issue from this issue

```

issue-creator create https://github.com/rerost/issue-creator/issues/1

```

Create new issue from this issue by every monday

```

issue-creator schedule apply '0 0 * * 1' https://github.com/rerost/issue-creator/issues/1

```

... | non_process | sample issue creator create new issue from this issue issue creator create create new issue from this issue by every monday issue creator schedule apply last issue created from by | 0 |

19,526 | 25,837,160,001 | IssuesEvent | 2022-12-12 20:38:16 | microsoft/vscode | https://api.github.com/repos/microsoft/vscode | closed | Failed to split the terminal created by "open in integrated terminal" | bug *not-reproducible remote terminal-process | <!-- ⚠️⚠️ Do Not Delete This! bug_report_template ⚠️⚠️ -->

<!-- Please read our Rules of Conduct: https://opensource.microsoft.com/codeofconduct/ -->

<!-- 🕮 Read our guide about submitting issues: https://github.com/microsoft/vscode/wiki/Submitting-Bugs-and-Suggestions -->

<!-- 🔎 Search existing issues to avoid cr... | 1.0 | Failed to split the terminal created by "open in integrated terminal" - <!-- ⚠️⚠️ Do Not Delete This! bug_report_template ⚠️⚠️ -->

<!-- Please read our Rules of Conduct: https://opensource.microsoft.com/codeofconduct/ -->

<!-- 🕮 Read our guide about submitting issues: https://github.com/microsoft/vscode/wiki/Submitt... | process | failed to split the terminal created by open in integrated terminal does this issue occur when all extensions are disabled i don t know i need the remote development extension and extension bisect seems not to support test with only one extension enabled report issue dialog can... | 1 |

18,268 | 24,347,508,682 | IssuesEvent | 2022-10-02 14:14:22 | Ultimate-Hosts-Blacklist/whitelist | https://api.github.com/repos/Ultimate-Hosts-Blacklist/whitelist | closed | [FALSE-POSITIVE?] ccsu.edu | whitelisting process | **Domains or links**

```

web.ccsu.edu

www.ccsu.edu

www1.ccsu.edu

www2.ccsu.edu

chortle.ccsu.edu

```

**Example url**

`https://chortle.ccsu.edu/finiteautomata/Section07/sect07_12.html`

**More Information**

domain blocked by UHB dns.

**Have you requested removal from other sources?**

No, as does... | 1.0 | [FALSE-POSITIVE?] ccsu.edu - **Domains or links**

```

web.ccsu.edu

www.ccsu.edu

www1.ccsu.edu

www2.ccsu.edu

chortle.ccsu.edu

```

**Example url**

`https://chortle.ccsu.edu/finiteautomata/Section07/sect07_12.html`

**More Information**

domain blocked by UHB dns.

**Have you requested removal from ot... | process | ccsu edu domains or links web ccsu edu ccsu edu ccsu edu chortle ccsu edu example url more information domain blocked by uhb dns have you requested removal from other sources no as does not belong to any blacklist | 1 |

87,525 | 15,779,928,756 | IssuesEvent | 2021-04-01 09:18:49 | AlexRogalskiy/gradle-java-sample | https://api.github.com/repos/AlexRogalskiy/gradle-java-sample | closed | CVE-2021-21350 (High) detected in xstream-1.4.10.jar - autoclosed | security vulnerability | ## CVE-2021-21350 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>xstream-1.4.10.jar</b></p></summary>

<p>XStream is a serialization library from Java objects to XML and back.</p>

<p>L... | True | CVE-2021-21350 (High) detected in xstream-1.4.10.jar - autoclosed - ## CVE-2021-21350 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>xstream-1.4.10.jar</b></p></summary>

<p>XStream is... | non_process | cve high detected in xstream jar autoclosed cve high severity vulnerability vulnerable library xstream jar xstream is a serialization library from java objects to xml and back library home page a href path to dependency file gradle java sample buildsrc build gradle ... | 0 |

17,865 | 23,812,322,729 | IssuesEvent | 2022-09-04 23:26:53 | bisq-network/proposals | https://api.github.com/repos/bisq-network/proposals | closed | Have a clearly defined process for how users with accepted DAO reimbursement requests can trade with Burning Man | was:approved a:proposal re:processes | > _This is a Bisq Network proposal. Please familiarize yourself with the [submission and review process](https://bisq.wiki/Proposals)._

<!-- Please do not remove the text above. -->

## Background

About 12 months ago @refund-agent2 started to [partially reimburse high volume trades](https://github.com/bisq-netw... | 1.0 | Have a clearly defined process for how users with accepted DAO reimbursement requests can trade with Burning Man - > _This is a Bisq Network proposal. Please familiarize yourself with the [submission and review process](https://bisq.wiki/Proposals)._

<!-- Please do not remove the text above. -->

## Background

... | process | have a clearly defined process for how users with accepted dao reimbursement requests can trade with burning man this is a bisq network proposal please familiarize yourself with the background about months ago refund started to this was done to reduce the risk of human error eg an... | 1 |

578,690 | 17,150,121,982 | IssuesEvent | 2021-07-13 19:21:52 | brave/brave-browser | https://api.github.com/repos/brave/brave-browser | opened | Storybook: Build out Send Tab UI | OS/Desktop QA/No feature/wallet priority/P3 release-notes/exclude | <!-- Have you searched for similar issues? Before submitting this issue, please check the open issues and add a note before logging a new issue.

PLEASE USE THE TEMPLATE BELOW TO PROVIDE INFORMATION ABOUT THE ISSUE.

INSUFFICIENT INFO WILL GET THE ISSUE CLOSED. IT WILL ONLY BE REOPENED AFTER SUFFICIENT INFO IS PROV... | 1.0 | Storybook: Build out Send Tab UI - <!-- Have you searched for similar issues? Before submitting this issue, please check the open issues and add a note before logging a new issue.

PLEASE USE THE TEMPLATE BELOW TO PROVIDE INFORMATION ABOUT THE ISSUE.

INSUFFICIENT INFO WILL GET THE ISSUE CLOSED. IT WILL ONLY BE REO... | non_process | storybook build out send tab ui have you searched for similar issues before submitting this issue please check the open issues and add a note before logging a new issue please use the template below to provide information about the issue insufficient info will get the issue closed it will only be reo... | 0 |

138,892 | 20,740,673,403 | IssuesEvent | 2022-03-14 17:22:38 | cryptic-game/frontend | https://api.github.com/repos/cryptic-game/frontend | closed | Base Components without Styling | wontfix enhancement design | ## Description

Add Base Components without Styling.

## Problem

No consistent design.

## Important

Do not merge for now, so that no new interaction elements can be created later. | 1.0 | Base Components without Styling - ## Description

Add Base Components without Styling.

## Problem

No consistent design.

## Important

Do not merge for now, so that no new interaction elements can be created later. | non_process | base components without styling description add base components without styling problem no consistent design important do not merge for now so that no new interaction elements can be created later | 0 |

1,032 | 3,489,289,899 | IssuesEvent | 2016-01-03 19:16:26 | Forket/connect2sa.co.za_01 | https://api.github.com/repos/Forket/connect2sa.co.za_01 | opened | Rating on thumbnail | In process | Rating on thumbnail

http://themes.themegoods2.com/rigel/demo/?rigelstyle=15

On the original design the rating for every post is displayed in the corner of thumbnails. Although we have changed our rating/review system, we’d like to display the ratings on the thumbnails in the same way. Can you please develop that for ... | 1.0 | Rating on thumbnail - Rating on thumbnail

http://themes.themegoods2.com/rigel/demo/?rigelstyle=15

On the original design the rating for every post is displayed in the corner of thumbnails. Although we have changed our rating/review system, we’d like to display the ratings on the thumbnails in the same way. Can you pl... | process | rating on thumbnail rating on thumbnail on the original design the rating for every post is displayed in the corner of thumbnails although we have changed our rating review system we’d like to display the ratings on the thumbnails in the same way can you please develop that for us | 1 |

270,899 | 8,474,475,107 | IssuesEvent | 2018-10-24 16:14:50 | syndesisio/syndesis.io | https://api.github.com/repos/syndesisio/syndesis.io | opened | Improve/update information and documentation | bug enhancement high priority | Similar, but separate, effort to #88 . This is to update the information that currently exists on the website (and is likely outdated), or lack thereof. | 1.0 | Improve/update information and documentation - Similar, but separate, effort to #88 . This is to update the information that currently exists on the website (and is likely outdated), or lack thereof. | non_process | improve update information and documentation similar but separate effort to this is to update the information that currently exists on the website and is likely outdated or lack thereof | 0 |

39,825 | 12,704,316,921 | IssuesEvent | 2020-06-23 01:01:44 | mpulsemobile/doccano | https://api.github.com/repos/mpulsemobile/doccano | opened | CVE-2020-13822 (High) detected in elliptic-6.4.0.tgz | security vulnerability | ## CVE-2020-13822 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>elliptic-6.4.0.tgz</b></p></summary>

<p>EC cryptography</p>

<p>Library home page: <a href="https://registry.npmjs.org/... | True | CVE-2020-13822 (High) detected in elliptic-6.4.0.tgz - ## CVE-2020-13822 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>elliptic-6.4.0.tgz</b></p></summary>

<p>EC cryptography</p>

<p>... | non_process | cve high detected in elliptic tgz cve high severity vulnerability vulnerable library elliptic tgz ec cryptography library home page a href path to dependency file tmp ws scm doccano app server static package json path to vulnerable library tmp ws scm doccano app se... | 0 |

17,869 | 9,941,490,216 | IssuesEvent | 2019-07-03 11:45:13 | JuliaReach/LazySets.jl | https://api.github.com/repos/JuliaReach/LazySets.jl | closed | Pass solver to removevredundancy of polytopes | performance | `Polyhedra.removevredundancy` has improved, but we are not using it correctly. We should pass an (LP) solver. | True | Pass solver to removevredundancy of polytopes - `Polyhedra.removevredundancy` has improved, but we are not using it correctly. We should pass an (LP) solver. | non_process | pass solver to removevredundancy of polytopes polyhedra removevredundancy has improved but we are not using it correctly we should pass an lp solver | 0 |

11,361 | 14,175,663,259 | IssuesEvent | 2020-11-12 21:59:51 | googleapis/python-storage | https://api.github.com/repos/googleapis/python-storage | closed | New test for 'Blob._do_multipart_upload' w/ metadata flakes | api: storage priority: p1 type: process | The [flaky test](https://source.cloud.google.com/results/invocations/26bf2df0-2ddb-4699-bc41-2430500326e9/targets/cloud-devrel%2Fclient-libraries%2Fpython%2Fgoogleapis%2Fpython-storage%2Fpresubmit%2Fpresubmit/log) was [introduced in #298](https://github.com/googleapis/python-storage/pull/298/files#diff-e4a098aa380a5eea... | 1.0 | New test for 'Blob._do_multipart_upload' w/ metadata flakes - The [flaky test](https://source.cloud.google.com/results/invocations/26bf2df0-2ddb-4699-bc41-2430500326e9/targets/cloud-devrel%2Fclient-libraries%2Fpython%2Fgoogleapis%2Fpython-storage%2Fpresubmit%2Fpresubmit/log) was [introduced in #298](https://github.com/... | process | new test for blob do multipart upload w metadata flakes the was the issue is that the code which constructs the expected payload for the request is flaky given python s python blob data b name blob name r n if metadata blob data b... | 1 |

148,513 | 23,356,237,980 | IssuesEvent | 2022-08-10 07:41:38 | DouyinFE/semi-design | https://api.github.com/repos/DouyinFE/semi-design | closed | [Input] Align pressed-state bg color with Select | 💄 Design PR Welcome | ### Which Component has the bug

- Input

### semi-ui version

- latest

### Expected result

### Actual result

.

We should get back to a place where we have a good integration test. | 1.0 | tests: we no longer have a good integration test - Our samples tests recently began failing, due to a collision on resource name [see](https://github.com/googleapis/nodejs-phishing-protection/pull/190).

We should get back to a place where we have a good integration test. | process | tests we no longer have a good integration test our samples tests recently began failing due to a collision on resource name we should get back to a place where we have a good integration test | 1 |

5,881 | 8,705,216,601 | IssuesEvent | 2018-12-05 21:43:15 | googleapis/google-cloud-python | https://api.github.com/repos/googleapis/google-cloud-python | closed | Trace: please do a new release | api: cloudtrace type: process | This package seems to be forgotten and the last release is from ~1y ago. | 1.0 | Trace: please do a new release - This package seems to be forgotten and the last release is from ~1y ago. | process | trace please do a new release this package seems to be forgotten and the last release is from ago | 1 |

196,485 | 22,441,937,126 | IssuesEvent | 2022-06-21 02:21:03 | arielorn/goalert | https://api.github.com/repos/arielorn/goalert | opened | CVE-2022-33987 (Medium) detected in got-7.1.0.tgz, got-8.3.2.tgz | security vulnerability | ## CVE-2022-33987 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>got-7.1.0.tgz</b>, <b>got-8.3.2.tgz</b></p></summary>

<p>

<details><summary><b>got-7.1.0.tgz</b></p></summary>

<p... | True | CVE-2022-33987 (Medium) detected in got-7.1.0.tgz, got-8.3.2.tgz - ## CVE-2022-33987 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>got-7.1.0.tgz</b>, <b>got-8.3.2.tgz</b></p></sum... | non_process | cve medium detected in got tgz got tgz cve medium severity vulnerability vulnerable libraries got tgz got tgz got tgz simplified http requests library home page a href path to dependency file web src package json path to vulnerable libr... | 0 |

115,195 | 24,732,261,542 | IssuesEvent | 2022-10-20 18:39:13 | nagios-plugins/nagios-plugins | https://api.github.com/repos/nagios-plugins/nagios-plugins | closed | check_disk.c: build failure with upcoming clang-16 | Code Quality | clang-15 tried to enable the following by default:

* `-Werror=implicit-function-declaration`

* `-Werror=implicit-int`

* `-Werror=strict-prototypes`

This caused some breakage, so they're delaying it until clang-16. But it's still coming. With those flags, nagios-plugins fails to build:

```

check_disk.c:392:2... | 1.0 | check_disk.c: build failure with upcoming clang-16 - clang-15 tried to enable the following by default:

* `-Werror=implicit-function-declaration`

* `-Werror=implicit-int`

* `-Werror=strict-prototypes`

This caused some breakage, so they're delaying it until clang-16. But it's still coming. With those flags, nagi... | non_process | check disk c build failure with upcoming clang clang tried to enable the following by default werror implicit function declaration werror implicit int werror strict prototypes this caused some breakage so they re delaying it until clang but it s still coming with those flags nagios ... | 0 |

32,592 | 6,088,352,864 | IssuesEvent | 2017-06-18 20:59:53 | uccser/cs-unplugged | https://api.github.com/repos/uccser/cs-unplugged | closed | Update authors in the docs | documentation | `authors` currently lists a few of our names, this should be changed to "University of Canterbury Computer Science Education Research Group" :) | 1.0 | Update authors in the docs - `authors` currently lists a few of our names, this should be changed to "University of Canterbury Computer Science Education Research Group" :) | non_process | update authors in the docs authors currently lists a few of our names this should be changed to university of canterbury computer science education research group | 0 |

118,154 | 17,576,869,117 | IssuesEvent | 2021-08-15 19:34:52 | ghc-dev/Ronald-Lynch | https://api.github.com/repos/ghc-dev/Ronald-Lynch | opened | CVE-2017-18077 (High) detected in brace-expansion-1.1.6.tgz | security vulnerability | ## CVE-2017-18077 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>brace-expansion-1.1.6.tgz</b></p></summary>

<p>Brace expansion as known from sh/bash</p>

<p>Library home page: <a href... | True | CVE-2017-18077 (High) detected in brace-expansion-1.1.6.tgz - ## CVE-2017-18077 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>brace-expansion-1.1.6.tgz</b></p></summary>

<p>Brace exp... | non_process | cve high detected in brace expansion tgz cve high severity vulnerability vulnerable library brace expansion tgz brace expansion as known from sh bash library home page a href path to dependency file ronald lynch package json path to vulnerable library ronald lynch no... | 0 |

364,414 | 25,490,130,854 | IssuesEvent | 2022-11-27 00:11:25 | Zsupi/VolumeRendering | https://api.github.com/repos/Zsupi/VolumeRendering | closed | Documentation | documentation framework volume rendering | # Task:

## Create documentation for the project

- [ ] Volume Rendering

- [ ] Physic

- [ ] Framework | 1.0 | Documentation - # Task:

## Create documentation for the project

- [ ] Volume Rendering

- [ ] Physic

- [ ] Framework | non_process | documentation task create documentation for the project volume rendering physic framework | 0 |

318,757 | 9,696,956,641 | IssuesEvent | 2019-05-25 12:38:35 | yalla-coop/earwig | https://api.github.com/repos/yalla-coop/earwig | opened | I cannot see images uploaded to Worksite profiles | bug priority-1 |

https://www.loom.com/share/ee34cffff2514322adbc911bfa2e6363 |

This isn't rendering the photos when you look at the review in the view section | 1.0 | I cannot see images uploaded to Worksite profiles -

https://www.loom.com/share/ee34cffff2514322adbc911bfa2e6363 |

This isn't rendering the photos when you look at the review in the view section

| non_process | i cannot see images uploaded to worksite profiles this isn t rendering the photos when you look at the review in the view section | 0 |

175,052 | 21,300,718,535 | IssuesEvent | 2022-04-15 02:28:41 | YaronSpawn/NodeGoat | https://api.github.com/repos/YaronSpawn/NodeGoat | opened | CVE-2021-44906 (High) detected in multiple libraries | security vulnerability | ## CVE-2021-44906 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>minimist-0.0.8.tgz</b>, <b>minimist-0.0.10.tgz</b>, <b>minimist-1.2.0.tgz</b></p></summary>

<p>

<details><summary><b... | True | CVE-2021-44906 (High) detected in multiple libraries - ## CVE-2021-44906 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>minimist-0.0.8.tgz</b>, <b>minimist-0.0.10.tgz</b>, <b>minimis... | non_process | cve high detected in multiple libraries cve high severity vulnerability vulnerable libraries minimist tgz minimist tgz minimist tgz minimist tgz parse argument options library home page a href path to dependency file package json path to vu... | 0 |

205,850 | 15,691,938,154 | IssuesEvent | 2021-03-25 18:29:19 | M-Davies/eye-of-horus | https://api.github.com/repos/M-Davies/eye-of-horus | opened | Consider making lock gesture optional | bug gesture testing | Mainly thinking of scenarios where a user cannot log themselves out (although that could also be handled with timeouts) | 1.0 | Consider making lock gesture optional - Mainly thinking of scenarios where a user cannot log themselves out (although that could also be handled with timeouts) | non_process | consider making lock gesture optional mainly thinking of scenarios where a user cannot log themselves out although that could also be handled with timeouts | 0 |

2,657 | 5,434,174,072 | IssuesEvent | 2017-03-05 03:34:55 | coala/documentation | https://api.github.com/repos/coala/documentation | reopened | Docs: Add short links on the pages that have them | difficulty/low difficulty/newcomer process/pending review | Some presentation had direct links to the newcomer guide.

We have some "URL shortener":

https://coala.io/newcomer -> http://docs.coala.io/en/latest/Developers/Newcomers_Guide.html

https://coala.io/new -> https://github.com/issues?utf8=%E2%9C%93&q=is%3Aopen+is%3Aissue+user%3Acoala+label%3Adifficulty%2Fnewcomer++no%3A... | 1.0 | Docs: Add short links on the pages that have them - Some presentation had direct links to the newcomer guide.

We have some "URL shortener":

https://coala.io/newcomer -> http://docs.coala.io/en/latest/Developers/Newcomers_Guide.html

https://coala.io/new -> https://github.com/issues?utf8=%E2%9C%93&q=is%3Aopen+is%3Aiss... | process | docs add short links on the pages that have them some presentation had direct links to the newcomer guide we have some url shortener etc we should add the url shortened link on the page getting shortened in the documentation so people browsing around the docs know there s a short url point... | 1 |

820,715 | 30,784,649,758 | IssuesEvent | 2023-07-31 12:29:07 | PHI-base/PHI5_web_display | https://api.github.com/repos/PHI-base/PHI5_web_display | opened | Don't show 'Annotation extension term' in Advanced Search | medium priority | In the Advanced Search page, there is an option for 'Annotation extension term' that was meant to search based on the values of annotation extensions.

This search option currently doesn't do anything, and in issue #94 we decided that this search option probably won't be useful for most users of PHI-base 5.

So, we... | 1.0 | Don't show 'Annotation extension term' in Advanced Search - In the Advanced Search page, there is an option for 'Annotation extension term' that was meant to search based on the values of annotation extensions.

This search option currently doesn't do anything, and in issue #94 we decided that this search option prob... | non_process | don t show annotation extension term in advanced search in the advanced search page there is an option for annotation extension term that was meant to search based on the values of annotation extensions this search option currently doesn t do anything and in issue we decided that this search option proba... | 0 |

115,204 | 14,703,595,089 | IssuesEvent | 2021-01-04 15:16:13 | dusk-network/plonk | https://api.github.com/repos/dusk-network/plonk | opened | Implement min constant generics | API-design area:cryptography constraint_system type:feature | Currently, the api allows the user to build two different arity lookup tables. Either 3 or 4.

The former is for witness of single operations types for lookups and the latter is for concatenated lookups. Instead of having both structs, we should implement min constant generics to have rules that permeates both use c... | 1.0 | Implement min constant generics - Currently, the api allows the user to build two different arity lookup tables. Either 3 or 4.

The former is for witness of single operations types for lookups and the latter is for concatenated lookups. Instead of having both structs, we should implement min constant generics to ha... | non_process | implement min constant generics currently the api allows the user to build two different arity lookup tables either or the former is for witness of single operations types for lookups and the latter is for concatenated lookups instead of having both structs we should implement min constant generics to ha... | 0 |

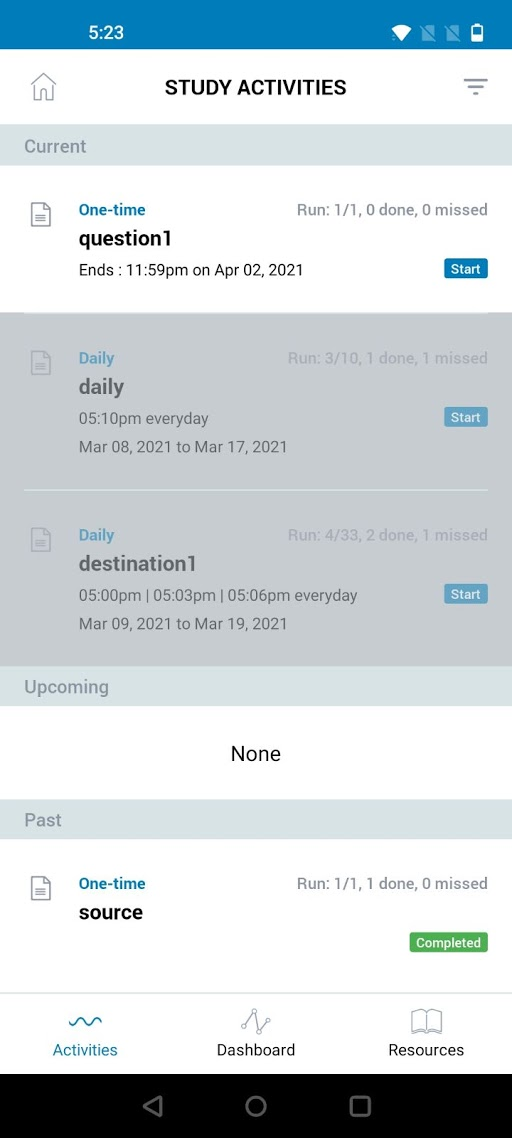

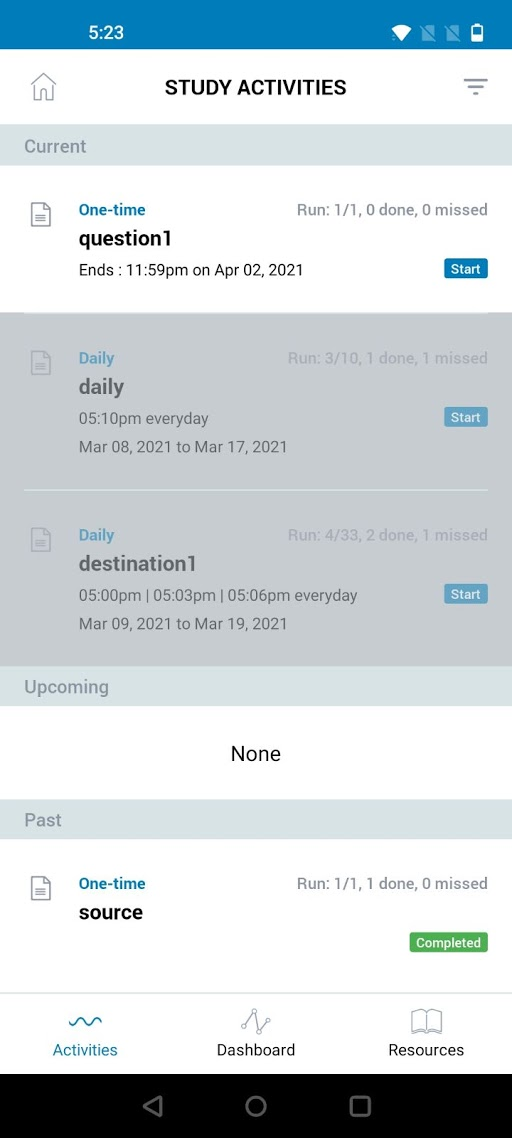

13,390 | 15,865,857,526 | IssuesEvent | 2021-04-08 15:08:46 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [Android] Study Activities > Need to correct the format to display the activity runs | Android P2 Process: Enhancement Process: Fixed Process: Tested dev |

A/R:- **Run: 4/33, 3 done, 1 missed**

E/R:- **Run 4 of 33, 3 done, 1 missed** | 3.0 | [Android] Study Activities > Need to correct the format to display the activity runs -

A/R:- **Run: 4/33, 3 done, 1 missed**

E/R:- **Run 4 of 33, 3 done, 1 missed** | process | study activities need to correct the format to display the activity runs a r run done missed e r run of done missed | 1 |

15,667 | 19,847,154,340 | IssuesEvent | 2022-01-21 08:07:58 | ooi-data/CE02SHSM-SBD12-08-FDCHPA000-recovered_host-fdchp_a_dcl_instrument_recovered | https://api.github.com/repos/ooi-data/CE02SHSM-SBD12-08-FDCHPA000-recovered_host-fdchp_a_dcl_instrument_recovered | opened | 🛑 Processing failed: ValueError | process | ## Overview

`ValueError` found in `processing_task` task during run ended on 2022-01-21T08:07:57.895380.

## Details

Flow name: `CE02SHSM-SBD12-08-FDCHPA000-recovered_host-fdchp_a_dcl_instrument_recovered`

Task name: `processing_task`

Error type: `ValueError`

Error message: not enough values to unpack (expected 3, go... | 1.0 | 🛑 Processing failed: ValueError - ## Overview

`ValueError` found in `processing_task` task during run ended on 2022-01-21T08:07:57.895380.

## Details

Flow name: `CE02SHSM-SBD12-08-FDCHPA000-recovered_host-fdchp_a_dcl_instrument_recovered`

Task name: `processing_task`

Error type: `ValueError`

Error message: not enou... | process | 🛑 processing failed valueerror overview valueerror found in processing task task during run ended on details flow name recovered host fdchp a dcl instrument recovered task name processing task error type valueerror error message not enough values to unpack expected go... | 1 |

4,515 | 7,360,184,040 | IssuesEvent | 2018-03-10 15:59:35 | ODiogoSilva/assemblerflow | https://api.github.com/repos/ODiogoSilva/assemblerflow | opened | Add definition of compute resources to Process classes | enhancement process | Add the `cpu` and `ram` attributes to the Process base class. These attributes will be used to build the nextflow configuration file based on the preset values. They can be later edited in the nextflow config as usual. | 1.0 | Add definition of compute resources to Process classes - Add the `cpu` and `ram` attributes to the Process base class. These attributes will be used to build the nextflow configuration file based on the preset values. They can be later edited in the nextflow config as usual. | process | add definition of compute resources to process classes add the cpu and ram attributes to the process base class these attributes will be used to build the nextflow configuration file based on the preset values they can be later edited in the nextflow config as usual | 1 |

24,406 | 12,291,937,766 | IssuesEvent | 2020-05-10 12:28:45 | Bantr/Spawn | https://api.github.com/repos/Bantr/Spawn | opened | useWhyDidYouUpdate | Performance | This hook makes it easy to see which prop changes are causing a component to re-render. If a function is particularly expensive to run | True | useWhyDidYouUpdate - This hook makes it easy to see which prop changes are causing a component to re-render. If a function is particularly expensive to run | non_process | usewhydidyouupdate this hook makes it easy to see which prop changes are causing a component to re render if a function is particularly expensive to run | 0 |

13,160 | 15,589,713,407 | IssuesEvent | 2021-03-18 08:25:59 | Ultimate-Hosts-Blacklist/whitelist | https://api.github.com/repos/Ultimate-Hosts-Blacklist/whitelist | opened | [FALSE-POSITIVE?] boards-api.greenhouse.io | whitelisting process | Greenhouse is an applicant tracking system and recruiting software that is designed to help make companies great at hiring and hire for what's next.

Many companies use it, and it shouldn't be blocked. Example of broken page: https://www.knock.com/careers#current-openings | 1.0 | [FALSE-POSITIVE?] boards-api.greenhouse.io - Greenhouse is an applicant tracking system and recruiting software that is designed to help make companies great at hiring and hire for what's next.

Many companies use it, and it shouldn't be blocked. Example of broken page: https://www.knock.com/careers#current-openings | process | boards api greenhouse io greenhouse is an applicant tracking system and recruiting software that is designed to help make companies great at hiring and hire for what s next many companies use it and it shouldn t be blocked example of broken page | 1 |

22,053 | 30,571,748,330 | IssuesEvent | 2023-07-20 23:11:42 | h4sh5/pypi-auto-scanner | https://api.github.com/repos/h4sh5/pypi-auto-scanner | opened | roblox-pyc 1.19.72 has 2 GuardDog issues | guarddog silent-process-execution | https://pypi.org/project/roblox-pyc

https://inspector.pypi.io/project/roblox-pyc

```{

"dependency": "roblox-pyc",

"version": "1.19.72",

"result": {

"issues": 2,

"errors": {},

"results": {

"silent-process-execution": [

{

"location": "roblox-pyc-1.19.72/src/robloxpy.py:134",

... | 1.0 | roblox-pyc 1.19.72 has 2 GuardDog issues - https://pypi.org/project/roblox-pyc

https://inspector.pypi.io/project/roblox-pyc

```{

"dependency": "roblox-pyc",

"version": "1.19.72",

"result": {

"issues": 2,

"errors": {},

"results": {

"silent-process-execution": [

{

"location": "ro... | process | roblox pyc has guarddog issues dependency roblox pyc version result issues errors results silent process execution location roblox pyc src robloxpy py code subprocess call ... | 1 |

36,535 | 9,819,964,006 | IssuesEvent | 2019-06-14 00:12:30 | Exawind/nalu-wind | https://api.github.com/repos/Exawind/nalu-wind | closed | Compilation failure with nalu-wind VOTD and Trilinos dev VOTD | build-issues question | Using NaluWind SHA 32994aff27c5 and Trilinos dev 8a82b322ba, I'm seeing this error. Is my Trilinos just too far ahead of NaluWind?

```

/opt/cray/pe/craype/2.5.15/bin/CC -DHYPRE_COGMRES -DNALU_USES_HYPRE -I/global/project/projectdirs/m2853/jjhu/build-try/source/nalu-wind/include -Iinclude -isystem /global/project/... | 1.0 | Compilation failure with nalu-wind VOTD and Trilinos dev VOTD - Using NaluWind SHA 32994aff27c5 and Trilinos dev 8a82b322ba, I'm seeing this error. Is my Trilinos just too far ahead of NaluWind?

```

/opt/cray/pe/craype/2.5.15/bin/CC -DHYPRE_COGMRES -DNALU_USES_HYPRE -I/global/project/projectdirs/m2853/jjhu/build-... | non_process | compilation failure with nalu wind votd and trilinos dev votd using naluwind sha and trilinos dev i m seeing this error is my trilinos just too far ahead of naluwind opt cray pe craype bin cc dhypre cogmres dnalu uses hypre i global project projectdirs jjhu build try source nalu wind incl... | 0 |

82,099 | 10,270,335,939 | IssuesEvent | 2019-08-23 11:20:05 | primefaces/primefaces | https://api.github.com/repos/primefaces/primefaces | opened | Documentation: Add AJAX events | documentation | Based on this Stack Overflow: https://stackoverflow.com/questions/57616538/what-are-the-possible-ajax-events-for-a-primefaces-inputtext

I think our docs pages should have an AJAX Events table for each component that simply has...

AJAX Events

**Default Event:** valueChange;

**Events:** [blur, change, valueChange... | 1.0 | Documentation: Add AJAX events - Based on this Stack Overflow: https://stackoverflow.com/questions/57616538/what-are-the-possible-ajax-events-for-a-primefaces-inputtext

I think our docs pages should have an AJAX Events table for each component that simply has...

AJAX Events

**Default Event:** valueChange;

**Eve... | non_process | documentation add ajax events based on this stack overflow i think our docs pages should have an ajax events table for each component that simply has ajax events default event valuechange events | 0 |

16,027 | 20,188,243,255 | IssuesEvent | 2022-02-11 01:21:05 | savitamittalmsft/WAS-SEC-TEST | https://api.github.com/repos/savitamittalmsft/WAS-SEC-TEST | opened | Establish a SecOps team and monitor security related events | WARP-Import WAF FEB 2021 Security Performance and Scalability Capacity Management Processes Health Modeling & Monitoring Application Level Monitoring | <a href="https://docs.microsoft.com/azure/architecture/framework/security/monitor-security-operations#incident-response">Establish a SecOps team and monitor security related events</a>

<p><b>Why Consider This?</b></p>

Is the organization effectively monitoring security posture across workloads, with a central S... | 1.0 | Establish a SecOps team and monitor security related events - <a href="https://docs.microsoft.com/azure/architecture/framework/security/monitor-security-operations#incident-response">Establish a SecOps team and monitor security related events</a>

<p><b>Why Consider This?</b></p>

Is the organization effectively ... | process | establish a secops team and monitor security related events why consider this is the organization effectively monitoring security posture across workloads with a central secops team monitoring security related telemetry data and investigating possible security breaches communication investigation an... | 1 |

10,544 | 13,326,325,571 | IssuesEvent | 2020-08-27 11:26:55 | GoogleCloudPlatform/cloud-opensource-java | https://api.github.com/repos/GoogleCloudPlatform/cloud-opensource-java | opened | Check kokoro scripts in google3 for JDK choice | process | audit the various release scripts and kokoro configs in google3 to check whether they explicitly specify a JDK. java 8 or Java 11, this should be a deliberate choice, not an accident. | 1.0 | Check kokoro scripts in google3 for JDK choice - audit the various release scripts and kokoro configs in google3 to check whether they explicitly specify a JDK. java 8 or Java 11, this should be a deliberate choice, not an accident. | process | check kokoro scripts in for jdk choice audit the various release scripts and kokoro configs in to check whether they explicitly specify a jdk java or java this should be a deliberate choice not an accident | 1 |

395,854 | 11,697,386,468 | IssuesEvent | 2020-03-06 11:42:25 | codacy/codacy-meta | https://api.github.com/repos/codacy/codacy-meta | opened | Support new PHPMD configuration files | Medium Priority | > Was wondering why Codacy could not detect my phpmd.dist.xml file, and then noticed you are expecting one that doesn't appear to be documented as the default by phpmd. Ideally, you would use the same file as standard, so that phpmd config can be shared across multiple consumers.

Both `phpmd.xml` and `phpmd.xml.dist` ... | 1.0 | Support new PHPMD configuration files - > Was wondering why Codacy could not detect my phpmd.dist.xml file, and then noticed you are expecting one that doesn't appear to be documented as the default by phpmd. Ideally, you would use the same file as standard, so that phpmd config can be shared across multiple consumers.... | non_process | support new phpmd configuration files was wondering why codacy could not detect my phpmd dist xml file and then noticed you are expecting one that doesn t appear to be documented as the default by phpmd ideally you would use the same file as standard so that phpmd config can be shared across multiple consumers ... | 0 |

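186,715 | 6,742,250,084 | IssuesEvent | 2017-10-20 06:48:02 | RSPluto/Web-UI | https://api.github.com/repos/RSPluto/Web-UI | closed | Real-time monitoring - after saving device positions in the floor plan edit area, the saved result is not visible after leaving and re-entering | bug Fixed High Priority | Test steps:

1. Go to "Real-time monitoring" -> "Switch to floor plan";

2. Select an area;

3. Click "Edit", then select some devices in the area and drag them to new positions;

4. Click "Save"

5. Click "Switch to list", then repeat steps 1 and 2 to check what was just saved.

Expected result:

5. The dragged device positions are saved successfully and displayed;

Actual result:

5. The devices are not displayed at their new dragged positions; they remain where they were before. | 1.0 | Real-time monitoring - after saving device positions in the floor plan edit area, the saved result is not visible after leaving and re-entering - Test steps:

1. Go to "Real-time monitoring" -> "Switch to floor plan";

2. Select an area;

3. Click "Edit", then select some devices in the area and drag them to new positions;

4. Click "Save"

5. Click "Switch to list", then repeat steps 1 and 2 to check what was just saved.

Expected result:

5. The dragged device positions are saved successfully and displayed;

Actual result:

5. The devices are not displayed at their new dragged positions; they remain where they were before. | non_process | real time monitoring after saving device positions in the floor plan edit area the saved result is not visible after leaving and re entering test steps go to real time monitoring switch to floor plan select an area click edit then select some devices in the area and drag them to new positions click save click switch to list then repeat steps and to check what was just saved expected result the dragged device positions are saved successfully and displayed actual result the devices are not displayed at their new dragged positions they remain where they were before | 0 |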

29,805 | 8,410,174,328 | IssuesEvent | 2018-10-12 09:43:47 | PowerShell/PowerShell | https://api.github.com/repos/PowerShell/PowerShell | closed | Ubuntu: Software updater and apt-get update to 6.1.0-preview despite pre-release option being not ticked | Area-Build Issue-Enhancement OS-Linux | Steps to reproduce

------------------

Run the Ubuntu Software updater GUI or `sudo apt-get update; sudo apt-get upgrade`

Expected behavior

-----------------

When `pre-releases` are not ticked then the updater should not suggest an upgrade to preview versions.

Actual behavior

---------------

It suggest... | 1.0 | Ubuntu: Software updater and apt-get update to 6.1.0-preview despite pre-release option being not ticked - Steps to reproduce

------------------

Run the Ubuntu Software updater GUI or `sudo apt-get update; sudo apt-get upgrade`

Expected behavior

-----------------

When `pre-releases` are not ticked then the u... | non_process | ubuntu software updater and apt get update to preview despite pre release option being not ticked steps to reproduce run the ubuntu software updater gui or sudo apt get update sudo apt get upgrade expected behavior when pre releases are not ticked then the u... | 0 |

5,019 | 7,845,527,818 | IssuesEvent | 2018-06-19 13:12:44 | openvstorage/volumedriver | https://api.github.com/repos/openvstorage/volumedriver | closed | Volume migration times out due to slow volume shutdown | process_duplicate type_bug | * source:

```

2017-09-08 04:20:02 957916 -0400 - NY1SRV0008 - 5111/0x00007f79787f8700 - volumedriverfs/VFSLocalNode - 000000000420a9dc - info - transfer: Volume-d65df314-cebe-4d6b-97d4-fb6bae85e055: target_node data-ny1-02roLR7yTDk9hMdPGK

2017-09-08 04:20:02 958025 -0400 - NY1SRV0008 - 5111/0x00007f79787f8700 - volu... | 1.0 | Volume migration times out due to slow volume shutdown - * source:

```

2017-09-08 04:20:02 957916 -0400 - NY1SRV0008 - 5111/0x00007f79787f8700 - volumedriverfs/VFSLocalNode - 000000000420a9dc - info - transfer: Volume-d65df314-cebe-4d6b-97d4-fb6bae85e055: target_node data-ny1-02roLR7yTDk9hMdPGK

2017-09-08 04:20:02 9... | process | volume migration times out due to slow volume shutdown source volumedriverfs vfslocalnode info transfer volume cebe target node data volumedriverfs vfslocalnode info destroy cebe trying to sync to the backend ... | 1 |

17,139 | 22,677,761,367 | IssuesEvent | 2022-07-04 07:03:29 | microsoft/vscode | https://api.github.com/repos/microsoft/vscode | opened | Adopt utility process for shared process and set `app.enableSandbox()` | plan-item shared-process sandbox | This is a follow up from https://github.com/microsoft/vscode/issues/92164 and covers remaining work to eventually enable sandboxed renderers fully in Electron.

This means that our shared process has to move away from a node.js enabled browser window to the new utility process. Breaking down the usages today:

* exte... | 1.0 | Adopt utility process for shared process and set `app.enableSandbox()` - This is a follow up from https://github.com/microsoft/vscode/issues/92164 and covers remaining work to eventually enable sandboxed renderers fully in Electron.

This means that our shared process has to move away from a node.js enabled browser w... | process | adopt utility process for shared process and set app enablesandbox this is a follow up from and covers remaining work to eventually enable sandboxed renderers fully in electron this means that our shared process has to move away from a node js enabled browser window to the new utility process breaking down... | 1 |

72,352 | 8,723,533,540 | IssuesEvent | 2018-12-09 22:39:36 | hypnospinner/Project-Focus | https://api.github.com/repos/hypnospinner/Project-Focus | closed | Create the back-end solution and set up the infrastructure | design groundwork important | The back-end application will use .NET Core 2.1 microservices. F# is the most advantageous choice of language (algebraic data types for commands and events will make the code easier to read and speed up development). Mongo DB is proposed as the DBMS (the structure of the stored data types will change significantly... | 1.0 | Create the back-end solution and set up the infrastructure - The back-end application will use .NET Core 2.1 microservices. F# is the most advantageous choice of language (algebraic data types for commands and events will make the code easier to read and speed up development). Mongo DB is proposed as the DBMS (... | non_process | create the back end solution and set up the infrastructure the back end application will use net core microservices f is the most advantageous choice of language algebraic data types for commands and events will make the code easier to read and speed up development mongo db is proposed as the dbms ... | 0 |

201,990 | 15,818,277,975 | IssuesEvent | 2021-04-05 15:47:58 | linrunner/TLP | https://api.github.com/repos/linrunner/TLP | closed | Docs vs manpage on battery thresholds | committed documentation change | On the latest docs version (1.3) for `setcharge` it [says](https://linrunner.de/tlp/usage/tlp.html#change-battery-charge-thresholds-to-temporary-values),

> Without parameters the configured settings for the main battery (BAT0) are applied. **Upon reboot, thresholds are reset to the configured settings.**

and late... | 1.0 | Docs vs manpage on battery thresholds - On the latest docs version (1.3) for `setcharge` it [says](https://linrunner.de/tlp/usage/tlp.html#change-battery-charge-thresholds-to-temporary-values),

> Without parameters the configured settings for the main battery (BAT0) are applied. **Upon reboot, thresholds are reset t... | non_process | docs vs manpage on battery thresholds on the latest docs version for setcharge it without parameters the configured settings for the main battery are applied upon reboot thresholds are reset to the configured settings and latest tlp manpage says configured thresholds are... | 0 |

12,377 | 14,897,108,338 | IssuesEvent | 2021-01-21 11:17:24 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [Hydra - Mobile apps] [Audit Logs] "appVersion" is displayed null for the events | Bug Hydra P2 Process: Fixed Process: Tested dev | **Events:**

1. SIGNIN_SUCCEEDED

2. SIGNIN_FAILED_UNREGISTERED_USER

3. SIGNIN_FAILED_INVALID_PASSWORD

4. ACCOUNT_LOCKED

and other events in Hydra for mobile

Sample snippet for PASSWORD_RESET_EMAIL_SENT_FOR_LOCKED_ACCOUNT event

```

{

"insertId": "1sxm6f8g1bpho96",

"jsonPayload": {

"userIp": "5... | 2.0 | [Hydra - Mobile apps] [Audit Logs] "appVersion" is displayed null for the events - **Events:**

1. SIGNIN_SUCCEEDED

2. SIGNIN_FAILED_UNREGISTERED_USER

3. SIGNIN_FAILED_INVALID_PASSWORD

4. ACCOUNT_LOCKED

and other events in Hydra for mobile

Sample snippet for PASSWORD_RESET_EMAIL_SENT_FOR_LOCKED_ACCOUNT even... | process | appversion is displayed null for the events events signin succeeded signin failed unregistered user signin failed invalid password account locked and other events in hydra for mobile sample snippet for password reset email sent for locked account event insertid ... | 1 |

614,225 | 19,161,554,192 | IssuesEvent | 2021-12-03 01:10:31 | yukiHaga/regex-hunting | https://api.github.com/repos/yukiHaga/regex-hunting | opened | Set up SPA routing | Priority: high Type: new feature | ## Overview

Set up routing for the SPA.

## To do

- [ ] AccountSettings.jsx: define the component.

- [ ] Games.jsx: define the component.

- [ ] LandingPages.jsx: define the component.

- [ ] MyPages.jsx: define the component.

- [ ] PasswordResets.jsx: define the component.

- [ ] PasswordUpdates.jsx: define the component.

- [ ] PrivacyPolicies.jsx: define the component.

- [ ] Rankings.jsx... | 1.0 | Set up SPA routing - ## Overview

Set up routing for the SPA.

## To do

- [ ] AccountSettings.jsx: define the component.

- [ ] Games.jsx: define the component.

- [ ] LandingPages.jsx: define the component.

- [ ] MyPages.jsx: define the component.

- [ ] PasswordResets.jsx: define the component.

- [ ] PasswordUpdates.jsx: define the component.

- [ ] PrivacyPolicies.jsx: define the component.

-... | non_process | set up spa routing overview set up routing for the spa to do accountsettings jsx define the component games jsx define the component landingpages jsx define the component mypages jsx define the component passwordresets jsx define the component passwordupdates jsx define the component privacypolicies jsx define the component rankings js... | 0 |

670 | 3,143,384,302 | IssuesEvent | 2015-09-14 06:25:31 | e-government-ua/i | https://api.github.com/repos/e-government-ua/i | closed | In the dashboard, fix the erroneous appearance of the "Не обран шаблон для друку" ("No print template selected") dialog | active hi priority In process of testing test | This happens when a print template is selected in the combo box and "Роздрукувати" ("Print") is then clicked, after which you close the dialog and switch to another task

| 1.0 | In the dashboard, fix the erroneous appearance of the "Не обран шаблон для друку" ("No print template selected") dialog - This happens when a print template is selected in the combo box and "Роздрукувати" ("Print") is then clicked, after which you close the dialog and switch to another task

| process | in the dashboard fix the erroneous appearance of the не обран шаблон для друку no print template selected dialog this happens when a print template is selected in the combo box and роздрукувати print is then clicked after which you close the dialog and switch to another task | 1 |

10,625 | 13,439,391,072 | IssuesEvent | 2020-09-07 20:56:37 | timberio/vector | https://api.github.com/repos/timberio/vector | opened | New URL functions for the remap syntax | domain: mapping domain: processing needs: requirements type: feature | Similar to #3761, I wanted to open an issue to represent a set of URL related functions. | 1.0 | New URL functions for the remap syntax - Similar to #3761, I wanted to open an issue to represent a set of URL related functions. | process | new url functions for the remap syntax similar to i wanted to open an issue to represent a set of url related functions | 1 |

18,667 | 24,583,055,247 | IssuesEvent | 2022-10-13 17:10:53 | keras-team/keras-cv | https://api.github.com/repos/keras-team/keras-cv | closed | Add RandomFog preprocessing layer | preprocessing | ## Weather Augmentation

One of the real-world scenarios that pose challenges for training neural networks of Autonomous vehicles

Impl. Ref

- https://github.com/UjjwalSaxena/Automold--Road-Augm... | 1.0 | Add RandomFog preprocessing layer - ## Weather Augmentation

One of the real-world scenarios that pose challenges for training neural networks of Autonomous vehicles

Impl. Ref

- https://github.... | process | add randomfog preprocessing layer weather augmentation one of the real world scenarios that pose challenges for training neural networks of autonomous vehicles impl ref | 1 |

11,560 | 14,438,665,966 | IssuesEvent | 2020-12-07 13:22:32 | prisma/prisma | https://api.github.com/repos/prisma/prisma | closed | Expand --version command | kind/improvement process/candidate team/client topic: binary topic: cli | Currently the version command just returns the CLI version and one commit hash for the binary which is hardcoded in the package.json - not the _actual_ hash that the binary returns when asked for it.

Ideally the CLI would request the version from all the binaries version endpoints and output something like this:

... | 1.0 | Expand --version command - Currently the version command just returns the CLI version and one commit hash for the binary which is hardcoded in the package.json - not the _actual_ hash that the binary returns when asked for it.

Ideally the CLI would request the version from all the binaries version endpoints and outp... | process | expand version command currently the version command just returns the cli version and one commit hash for the binary which is hardcoded in the package json not the actual hash that the binary returns when asked for it ideally the cli would request the version from all the binaries version endpoints and outp... | 1 |

16,930 | 22,274,358,745 | IssuesEvent | 2022-06-10 15:09:32 | python/cpython | https://api.github.com/repos/python/cpython | closed | Library multiprocess leaks named resources. | performance stdlib 3.11 3.10 3.9 3.8 expert-multiprocessing | BPO | [46391](https://bugs.python.org/issue46391)

--- | :---

Nosy | @pitrou, @benjaminp, @1st1, @applio, @arhadthedev, @jxdabc, @BarkingBad

PRs | <li>python/cpython#30617</li>

Files | <li>[screen.png](https://bugs.python.org/file50580/screen.png "Uploaded as image/png at 2022-01-24.09:31:44 by @jxdabc"): screenshot</li... | 1.0 | Library multiprocess leaks named resources. - BPO | [46391](https://bugs.python.org/issue46391)

--- | :---

Nosy | @pitrou, @benjaminp, @1st1, @applio, @arhadthedev, @jxdabc, @BarkingBad

PRs | <li>python/cpython#30617</li>

Files | <li>[screen.png](https://bugs.python.org/file50580/screen.png "Uploaded as image/png at 20... | process | library multiprocess leaks named resources bpo nosy pitrou benjaminp applio arhadthedev jxdabc barkingbad prs python cpython files uploaded as image png at by jxdabc screenshot note these values reflect the state of the issue at the time it was mig... | 1 |

557,721 | 16,517,037,583 | IssuesEvent | 2021-05-26 10:46:25 | woocommerce/woocommerce-ios | https://api.github.com/repos/woocommerce/woocommerce-ios | closed | [Mobile Payments] Connecting a reader from payments leaves the app in an odd state and sometimes crashes | feature: mobile payments priority: critical type: bug type: crash | I'm not sure how to explain what's going on, but I recorded a video. When you connect to a reader directly from an Order details, there are a few issues:

1. The list of connected readers with the option to disconnect is briefly visible after connecting, before the modal is dismissed [00:16, 00:48]. Possibly related ... | 1.0 | [Mobile Payments] Connecting a reader from payments leaves the app in an odd state and sometimes crashes - I'm not sure how to explain what's going on, but I recorded a video. When you connect to a reader directly from an Order details, there are a few issues:

1. The list of connected readers with the option to disc... | non_process | connecting a reader from payments leaves the app in an odd state and sometimes crashes i m not sure how to explain what s going on but i recorded a video when you connect to a reader directly from an order details there are a few issues the list of connected readers with the option to disconnect is briefl... | 0 |

109,457 | 13,774,599,744 | IssuesEvent | 2020-10-08 06:32:27 | PostHog/posthog.com | https://api.github.com/repos/PostHog/posthog.com | opened | Standardize .com design & make code modular | design enhancement | After speaking with @berntgl and @jamesefhawkins and looking through posthog.com + code, it seems like there are some key areas we can improve:

- More design consistency throughout different pages

- Make this easy with a component-based design system in Figma

- Figure out where we're at –– review & docu... | 1.0 | Standardize .com design & make code modular - After speaking with @berntgl and @jamesefhawkins and looking through posthog.com + code, it seems like there are some key areas we can improve:

- More design consistency throughout different pages

- Make this easy with a component-based design system in Figma

... | non_process | standardize com design make code modular after speaking with berntgl and jamesefhawkins and looking through posthog com code it seems like there are some key areas we can improve more design consistency throughout different pages make this easy with a component based design system in figma ... | 0 |

9,547 | 12,512,085,379 | IssuesEvent | 2020-06-02 21:53:56 | googleapis/google-cloud-ruby | https://api.github.com/repos/googleapis/google-cloud-ruby | closed | Add Ruby 2.7 to the CI | type: process | The Ruby 2.7 compatible build of google-protobuf (version 3.12.0) landed a few days ago. We should be able to add Ruby 2.7 to the CI now. | 1.0 | Add Ruby 2.7 to the CI - The Ruby 2.7 compatible build of google-protobuf (version 3.12.0) landed a few days ago. We should be able to add Ruby 2.7 to the CI now. | process | add ruby to the ci the ruby compatible build of google protobuf version landed a few days ago we should be able to add ruby to the ci now | 1 |

18,786 | 24,690,980,309 | IssuesEvent | 2022-10-19 08:31:48 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | closed | BP refactoring: homeostatic process: amino acid homeostasis process is_a cellular homeostatic process | cellular processes | - [x] GO:0080144 'amino acid homeostasis' -> changed label to 'cellular amino acid homeostasis', changed parent to GO:0019725 cellular homeostasis & definition to "Any process involved in the maintenance of an internal steady state of amino acid within a cell." (done in #24218)

- [x] Change labels and definitions for a... | 1.0 | BP refactoring: homeostatic process: amino acid homeostasis process is_a cellular homeostatic process - - [x] GO:0080144 'amino acid homeostasis' -> changed label to 'cellular amino acid homeostasis', changed parent to GO:0019725 cellular homeostasis & definition to "Any process involved in the maintenance of an interna... | process | bp refactoring homeostatic process amino acid homeostasis process is a cellular homeostatic process go amino acid homeostasis changed label to cellular amino acid homeostasis changed parent to go cellular homeostasis definition to any process involved in the maintenance of an internal steady state... | 1 |