| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 classes) | text_combine (string, length 96 to 211k) | label (string, 2 classes) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

13,091 | 15,440,018,606 | IssuesEvent | 2021-03-08 02:08:54 | DevExpress/testcafe-hammerhead | https://api.github.com/repos/DevExpress/testcafe-hammerhead | closed | Clarify `console` methods arguments representation | AREA: client STATE: Stale SYSTEM: client side processing TYPE: enhancement | Now we have simply `String(arg)`/`'object'` representation (src/client/sandbox/console.js):

```js

_toString (obj) {

try {

return String(obj);

}

catch (e) {

return 'object';

}

}

```

**Native behavior:**

```js

const tst = Object.create(null);

tst.key = 'val';

console.log... | 1.0 | Clarify `console` methods arguments representation - Now we have simply `String(arg)`/`'object'` representation (src/client/sandbox/console.js):

```js

_toString (obj) {

try {

return String(obj);

}

catch (e) {

return 'object';

}

}

```

**Native behavior:**

```js

const tst = ... | process | clarify console methods arguments representation now we have simply string arg object representation src client sandbox console js js tostring obj try return string obj catch e return object native behavior js const tst ... | 1 |

12,605 | 15,008,141,354 | IssuesEvent | 2021-01-31 08:41:17 | panther-labs/panther | https://api.github.com/repos/panther-labs/panther | closed | Parser: NGINX JSON | enhancement logs p2 team:data processing | ### Describe the ideal solution

Support for NGINX Access logs in JSON format

### References

http://nginx.org/en/docs/http/ngx_http_log_module.html#log_format

NGINX can also be installed locally with brew for end-to-end validation | 1.0 | Parser: NGINX JSON - ### Describe the ideal solution

Support for NGINX Access logs in JSON format

### References

http://nginx.org/en/docs/http/ngx_http_log_module.html#log_format

NGINX can also be installed locally with brew for end-to-end validation | process | parser nginx json describe the ideal solution support for nginx access logs in json format references nginx can also be installed locally with brew for end to end validation | 1 |

200,156 | 22,739,462,609 | IssuesEvent | 2022-07-07 01:16:08 | scrapedia/scrapy-cookies | https://api.github.com/repos/scrapedia/scrapy-cookies | opened | CVE-2022-31116 (High) detected in ujson-2.0.3-cp27-cp27mu-manylinux1_x86_64.whl | security vulnerability | ## CVE-2022-31116 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ujson-2.0.3-cp27-cp27mu-manylinux1_x86_64.whl</b></p></summary>

<p>Ultra fast JSON encoder and decoder for Python</p>

... | True | CVE-2022-31116 (High) detected in ujson-2.0.3-cp27-cp27mu-manylinux1_x86_64.whl - ## CVE-2022-31116 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ujson-2.0.3-cp27-cp27mu-manylinux1_x8... | non_process | cve high detected in ujson whl cve high severity vulnerability vulnerable library ujson whl ultra fast json encoder and decoder for python library home page a href path to dependency file tmp ws scm scrapy cookies path to vulnerable library scra... | 0 |

120,942 | 10,142,799,919 | IssuesEvent | 2019-08-04 05:29:23 | motlabs/awesome-ml-demos-with-ios | https://api.github.com/repos/motlabs/awesome-ml-demos-with-ios | opened | Change performance table format | enhancement performance test | Example:

## Inference Time(ms)

| Repo | Model | XS | XS<br>Max | XR | X | 8 | 8+ | 7 | 7+ | 6S+ | 6+ |

| ----- | ----: | :----: | :----: | :----: | :----: | :----: | :----: | :----: | :----: | :----: | :----: |

| PoseEstimation-CoreML | cpm | - | 27 | 27 | 32 | 31 | 31 | 39 | 37 | 44 | 115 |

| PoseEstimatio... | 1.0 | Change performance table format - Example:

## Inference Time(ms)

| Repo | Model | XS | XS<br>Max | XR | X | 8 | 8+ | 7 | 7+ | 6S+ | 6+ |

| ----- | ----: | :----: | :----: | :----: | :----: | :----: | :----: | :----: | :----: | :----: | :----: |

| PoseEstimation-CoreML | cpm | - | 27 | 27 | 32 | 31 | 31 | 39 |... | non_process | change performance table format example inference time ms repo model xs xs max xr x poseestimation coreml cpm ... | 0 |

157,863 | 12,393,958,899 | IssuesEvent | 2020-05-20 16:11:27 | spack/spack | https://api.github.com/repos/spack/spack | closed | Bug/tests: three relocate.py tests fail with additional gcc libs | bug tests | I ran `spack test` on an LLNL LC machine and three relocate tests fail. I confirmed this is the case with commit `c50b586`. The bug appears to be related to a too-specific check of the RPATHs.

### Error Message

```

___________________________ test_replace_prefix_bin ____________________________

hello_world = <... | 1.0 | Bug/tests: three relocate.py tests fail with additional gcc libs - I ran `spack test` on an LLNL LC machine and three relocate tests fail. I confirmed this is the case with commit `c50b586`. The bug appears to be related to a too-specific check of the RPATHs.

### Error Message

```

___________________________ t... | non_process | bug tests three relocate py tests fail with additional gcc libs i ran spack test on an llnl lc machine and three relocate tests fail i confirmed this is the case with commit the bug appears to be related to a too specific check of the rpaths error message test re... | 0 |

19,504 | 25,812,565,446 | IssuesEvent | 2022-12-12 00:22:05 | esmero/strawberryfield | https://api.github.com/repos/esmero/strawberryfield | closed | Trigger a parent Node (or parent parent) Index tracker update on last sequence of a SBF + Computed field | enhancement Drupal Views JSON Postprocessors Property Keys Providers Events and Subscriber Typed Data and Search Strawberry Flavor | # What?

We need to aggregate Strawberry Flavors at the ADO level for unified search to happen (performance reasons means a subquery is a bad idea given how Drupal Views work related to Search API functionality...)

The idea is basic. An ADO Solr Document is ready way before all their Flavor Children are ready. So ... | 1.0 | Trigger a parent Node (or parent parent) Index tracker update on last sequence of a SBF + Computed field - # What?

We need to aggregate Strawberry Flavors at the ADO level for unified search to happen (performance reasons means a subquery is a bad idea given how Drupal Views work related to Search API functionality.... | process | trigger a parent node or parent parent index tracker update on last sequence of a sbf computed field what we need to aggregate strawberry flavors at the ado level for unified search to happen performance reasons means a subquery is a bad idea given how drupal views work related to search api functionality ... | 1 |

8,603 | 11,761,334,129 | IssuesEvent | 2020-03-13 21:37:43 | kubernetes/minikube | https://api.github.com/repos/kubernetes/minikube | closed | release_update_brew: access_token: unbound variable | help wanted kind/process packaging/brew priority/important-soon | minikube migrated from cask to brew, but the automation has not been updated to use `bump-formula-pr.rb` to trigger updates: https://github.com/Linuxbrew/brew/blob/master/docs/How-To-Open-a-Homebrew-Pull-Request.md

Here is where the automation should go:

https://github.com/kubernetes/minikube/blob/9be404689b6860f... | 1.0 | release_update_brew: access_token: unbound variable - minikube migrated from cask to brew, but the automation has not been updated to use `bump-formula-pr.rb` to trigger updates: https://github.com/Linuxbrew/brew/blob/master/docs/How-To-Open-a-Homebrew-Pull-Request.md

Here is where the automation should go:

https... | process | release update brew access token unbound variable minikube migrated from cask to brew but the automation has not been updated to use bump formula pr rb to trigger updates here is where the automation should go | 1 |

81,490 | 15,630,052,062 | IssuesEvent | 2021-03-22 01:12:33 | benchabot/react-native-maps | https://api.github.com/repos/benchabot/react-native-maps | opened | CVE-2020-7754 (High) detected in npm-user-validate-1.0.0.tgz, npm-user-validate-0.1.5.tgz | security vulnerability | ## CVE-2020-7754 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>npm-user-validate-1.0.0.tgz</b>, <b>npm-user-validate-0.1.5.tgz</b></p></summary>

<p>

<details><summary><b>npm-user-v... | True | CVE-2020-7754 (High) detected in npm-user-validate-1.0.0.tgz, npm-user-validate-0.1.5.tgz - ## CVE-2020-7754 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>npm-user-validate-1.0.0.tg... | non_process | cve high detected in npm user validate tgz npm user validate tgz cve high severity vulnerability vulnerable libraries npm user validate tgz npm user validate tgz npm user validate tgz user validations for npm library home page a href path t... | 0 |

19,264 | 25,455,863,274 | IssuesEvent | 2022-11-24 14:08:44 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [IDP] [PM] Admin is able to sign in , even when same user is disabled in the organizational directory | Bug P0 Participant manager Process: Fixed Process: Tested QA Process: Tested dev | **Steps:**

1. Login to PM

2. Click on 'Admins' tab

3. Click on 'Add admin' button

4. Add organizational user in the email dropdown

5. After receiving the link to set up your participant manager account

6. Deactivate the same user in identity platform

7. Now, Click on the link to set up your participant manager... | 3.0 | [IDP] [PM] Admin is able to sign in , even when same user is disabled in the organizational directory - **Steps:**

1. Login to PM

2. Click on 'Admins' tab

3. Click on 'Add admin' button

4. Add organizational user in the email dropdown

5. After receiving the link to set up your participant manager account

6. De... | process | admin is able to sign in even when same user is disabled in the organizational directory steps login to pm click on admins tab click on add admin button add organizational user in the email dropdown after receiving the link to set up your participant manager account deactivat... | 1 |

15,125 | 18,869,551,051 | IssuesEvent | 2021-11-13 00:41:45 | 2i2c-org/team-compass | https://api.github.com/repos/2i2c-org/team-compass | opened | Create a 2i2c sustainability team | type: enhancement :label: strategy :label: team-process :label: business development | ### Description

Right now there are several issues related to sustainability that are largely being spearheaded by Chris. Because he is also working on many other parts of 2i2c (see https://github.com/2i2c-org/meta/issues/256 among other conversations), there are likely sub-optimal decisions being made and balls being... | 1.0 | Create a 2i2c sustainability team - ### Description

Right now there are several issues related to sustainability that are largely being spearheaded by Chris. Because he is also working on many other parts of 2i2c (see https://github.com/2i2c-org/meta/issues/256 among other conversations), there are likely sub-optimal ... | process | create a sustainability team description right now there are several issues related to sustainability that are largely being spearheaded by chris because he is also working on many other parts of see among other conversations there are likely sub optimal decisions being made and balls being dropped mo... | 1 |

10,000 | 13,042,376,094 | IssuesEvent | 2020-07-28 22:21:05 | hashicorp/packer | https://api.github.com/repos/hashicorp/packer | closed | Vsphere postprocessor hangs on ssl fingerprint verification | bug post-processor/vsphere | Packer 0.12.0 on linux. Ovftools 4.10, vcenter 5.10

The postprocessor vsphere hangs forever without any message (even with PACKER_LOG=1)

```javascript

"post-processors": [{

"type": "vsphere",

"disk_mode": "thin",

"host": "{{user `vcenter_host`}}",

"datastore": "{{user `vcenter_datastore`}}",... | 1.0 | Vsphere postprocessor hangs on ssl fingerprint verification - Packer 0.12.0 on linux. Ovftools 4.10, vcenter 5.10

The postprocessor vsphere hangs forever without any message (even with PACKER_LOG=1)

```javascript

"post-processors": [{

"type": "vsphere",

"disk_mode": "thin",

"host": "{{user `vcent... | process | vsphere postprocessor hangs on ssl fingerprint verification packer on linux ovftools vcenter the postprocessor vsphere hangs forever without any message even with packer log javascript post processors type vsphere disk mode thin host user vcenter ... | 1 |

15,933 | 20,158,933,300 | IssuesEvent | 2022-02-09 19:16:37 | 2i2c-org/team-compass | https://api.github.com/repos/2i2c-org/team-compass | opened | Use GitHub as a CDN for our images / GIFs in documentation | :label: team-process | ### Description of problem and opportunity to address it

**Context to understand the problem**

It is common for us to include images and GIFs as a part of our documentation. Currently, we just check those into git like any other file.

However, binary files like these will beef up our git history by quite a lot, es... | 1.0 | Use GitHub as a CDN for our images / GIFs in documentation - ### Description of problem and opportunity to address it

**Context to understand the problem**

It is common for us to include images and GIFs as a part of our documentation. Currently, we just check those into git like any other file.

However, binary fil... | process | use github as a cdn for our images gifs in documentation description of problem and opportunity to address it context to understand the problem it is common for us to include images and gifs as a part of our documentation currently we just check those into git like any other file however binary fil... | 1 |

17,653 | 23,472,139,697 | IssuesEvent | 2022-08-16 23:33:03 | brucemiller/LaTeXML | https://api.github.com/repos/brucemiller/LaTeXML | closed | download button in listings data broken in EPUB3 | bug packages postprocessing | When clicking on the download button of a `\begin{listings}` environment, some EPUB readers (Apple Books!) navigate to the file but do not offer a way back. In the case of Apple Books, one needs to trigger a reflow by resizing the window to resume reading. This is arguably a bug in Apple Books. This happens even if I a... | 1.0 | download button in listings data broken in EPUB3 - When clicking on the download button of a `\begin{listings}` environment, some EPUB readers (Apple Books!) navigate to the file but do not offer a way back. In the case of Apple Books, one needs to trigger a reflow by resizing the window to resume reading. This is argu... | process | download button in listings data broken in when clicking on the download button of a begin listings environment some epub readers apple books navigate to the file but do not offer a way back in the case of apple books one needs to trigger a reflow by resizing the window to resume reading this is arguably... | 1 |

11,556 | 7,293,676,986 | IssuesEvent | 2018-02-25 16:34:22 | eclipse/dirigible | https://api.github.com/repos/eclipse/dirigible | closed | Schema Modeler | component-ide component-workspace enhancement usability web-ide | An editor for database schema model. It has to provide a generic definition of tables with relations as well as the supported column attributes by the current data structure model. | True | Schema Modeler - An editor for database schema model. It has to provide a generic definition of tables with relations as well as the supported column attributes by the current data structure model. | non_process | schema modeler an editor for database schema model it has to provide a generic definition of tables with relations as well as the supported column attributes by the current data structure model | 0 |

8,149 | 11,354,729,456 | IssuesEvent | 2020-01-24 18:19:17 | googleapis/java-cloudbuild | https://api.github.com/repos/googleapis/java-cloudbuild | closed | Promote to GA | type: process | Package name: **google-cloud-build**

Current release: **beta**

Proposed release: **GA**

## Instructions

Check the lists below, adding tests / documentation as required. Once all the "required" boxes are ticked, please create a release and close this issue.

## Required

- [ ] 28 days elapsed since las... | 1.0 | Promote to GA - Package name: **google-cloud-build**

Current release: **beta**

Proposed release: **GA**

## Instructions

Check the lists below, adding tests / documentation as required. Once all the "required" boxes are ticked, please create a release and close this issue.

## Required

- [ ] 28 days e... | process | promote to ga package name google cloud build current release beta proposed release ga instructions check the lists below adding tests documentation as required once all the required boxes are ticked please create a release and close this issue required days elap... | 1 |

799 | 3,097,399,655 | IssuesEvent | 2015-08-28 01:29:32 | cucyberdefense/defense-hackpack | https://api.github.com/repos/cucyberdefense/defense-hackpack | opened | Service management in SysV init | services | Examples of how to manage services on Linux using `/etc/init.d/*` | 1.0 | Service management in SysV init - Examples of how to manage services on Linux using `/etc/init.d/*` | non_process | service management in sysv init examples of how to manage services on linux using etc init d | 0 |

20,304 | 26,944,137,913 | IssuesEvent | 2023-02-08 06:18:17 | bobocode-blyznytsia/bring-framework | https://api.github.com/repos/bobocode-blyznytsia/bring-framework | closed | Implement RawBeanProcessor | bean-post-processor | ### Description

The `RawBeanProcessor` is responsible for the construction and initialization logic of `Bean`.

### Solution

In context of this story the `RawBeanProcessor` should be implemented.

`RawBeanProcessor` creates beans and puts them into the `Map<String, Object> rawBeanMap`. Injecting of dependencies, ... | 1.0 | Implement RawBeanProcessor - ### Description

The `RawBeanProcessor` is responsible for the construction and initialization logic of `Bean`.

### Solution

In context of this story the `RawBeanProcessor` should be implemented.

`RawBeanProcessor` creates beans and puts them into the `Map<String, Object> rawBeanMap`... | process | implement rawbeanprocessor description the rawbeanprocessor is responsible for the construction and initialization logic of bean solution in context of this story the rawbeanprocessor should be implemented rawbeanprocessor creates beans and puts them into the map rawbeanmap injecting of ... | 1 |

10,337 | 13,165,465,500 | IssuesEvent | 2020-08-11 06:39:30 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | closed | NTR: effector-mediated supression of host host salicylic acid-mediated signal transduction pathway | multi-species process |

NTR: effector-mediated suppression of host salicylic acid-mediated host innate immune signalling.

A process mediated by a molecule secreted by a symbiont that results in the suppression of host salicylic acid-mediated host innate immune signalling.

descendant of

GO:0052003 suppression by symbiont of defense-r... | 1.0 | NTR: effector-mediated supression of host host salicylic acid-mediated signal transduction pathway -

NTR: effector-mediated suppression of host salicylic acid-mediated host innate immune signalling.

A process mediated by a molecule secreted by a symbiont that results in the suppression of host salicylic acid-media... | process | ntr effector mediated supression of host host salicylic acid mediated signal transduction pathway ntr effector mediated suppression of host salicylic acid mediated host innate immune signalling a process mediated by a molecule secreted by a symbiont that results in the suppression of host salicylic acid media... | 1 |

9,543 | 12,510,250,221 | IssuesEvent | 2020-06-02 18:18:46 | MicrosoftDocs/azure-devops-docs | https://api.github.com/repos/MicrosoftDocs/azure-devops-docs | closed | workspace: clean: all doesn't work for deployment jobs | Pri1 devops-cicd-process/tech devops/prod doc-bug | The docs regarding cleaning a workspace don't work for deployment jobs. Either the docs are wrong or this is a bug. See [these other people](https://developercommunity.visualstudio.com/content/problem/614016/there-is-no-way-how-to-clean-workspace-in-deployme.html) also having problems. Please update appropriately.

... | 1.0 | workspace: clean: all doesn't work for deployment jobs - The docs regarding cleaning a workspace don't work for deployment jobs. Either the docs are wrong or this is a bug. See [these other people](https://developercommunity.visualstudio.com/content/problem/614016/there-is-no-way-how-to-clean-workspace-in-deployme.html... | process | workspace clean all doesn t work for deployment jobs the docs regarding cleaning a workspace don t work for deployment jobs either the docs are wrong or this is a bug see also having problems please update appropriately document details ⚠ do not edit this section it is required for docs ... | 1 |

7,913 | 11,092,955,772 | IssuesEvent | 2019-12-15 22:31:27 | shirou/gopsutil | https://api.github.com/repos/shirou/gopsutil | closed | [process][darwin] Process names are truncated to 16 characters | os:darwin os:freebsd os:openbsd package:process | **Describe the bug**

Process names are truncated to 16 characters

**To Reproduce**

```go

p, _ := process.NewProcess(pidOfProcessWithLongName)

pName, _ := p.Name() // pName is truncated

```

**Expected behavior**

Process names should be returned in full.

**Environment (please complete the following informa... | 1.0 | [process][darwin] Process names are truncated to 16 characters - **Describe the bug**

Process names are truncated to 16 characters

**To Reproduce**

```go

p, _ := process.NewProcess(pidOfProcessWithLongName)

pName, _ := p.Name() // pName is truncated

```

**Expected behavior**

Process names should be returned... | process | process names are truncated to characters describe the bug process names are truncated to characters to reproduce go p process newprocess pidofprocesswithlongname pname p name pname is truncated expected behavior process names should be returned in full en... | 1 |

4,705 | 7,544,127,913 | IssuesEvent | 2018-04-17 17:28:54 | UnbFeelings/unb-feelings-docs | https://api.github.com/repos/UnbFeelings/unb-feelings-docs | closed | GQM endpoint metric | Processo invalid question | In your GQM there is a documented metric, "Percentage of documented endpoints" [url](https://github.com/UnbFeelings/unb-feelings-docs/wiki/Processo-de-Garantia-da-Qualidade#122-garantir-a-manuteabilidade), but in the analysis plan section [here](https://github.com/UnbFeelings/unb-feelings-docs/wiki/Processo-de... | 1.0 | GQM endpoint metric - In your GQM there is a documented metric, "Percentage of documented endpoints" [url](https://github.com/UnbFeelings/unb-feelings-docs/wiki/Processo-de-Garantia-da-Qualidade#122-garantir-a-manuteabilidade), but in the analysis plan section [here](https://github.com/UnbFeelings/unb-... | process | gqm endpoint metric in your gqm there is a documented metric percentage of documented endpoints but in the analysis plan section it is listed as percentage of tested endpoints i could not quite understand this metric | 1 |

6,051 | 8,872,284,764 | IssuesEvent | 2019-01-11 15:03:06 | kiwicom/orbit-components | https://api.github.com/repos/kiwicom/orbit-components | closed | Support React Component in Select label prop | Enhancement Processing | I have a `Select` component and want to add a label. The label should use component `Text` from nitro for translations. But `label` prop accepts only string.

Extend `label` prop to accept also `React.Node`.

Is this valid solution? If yes, I would create a PR.

| 1.0 | Support React Component in Select label prop - I have a `Select` component and want to add a label. The label should use component `Text` from nitro for translations. But `label` prop accepts only string.

Extend `label` prop to accept also `React.Node`.

Is this valid solution? If yes, I would create a PR.

| process | support react component in select label prop i have a select component and want to add a label the label should use component text from nitro for translations but label prop accepts only string extend label prop to accept also react node is this valid solution if yes i would create a pr | 1 |

7,983 | 11,170,752,554 | IssuesEvent | 2019-12-28 15:11:21 | bisq-network/bisq | https://api.github.com/repos/bisq-network/bisq | closed | Trade with serious bug | an:investigation in:trade-process was:dropped | <img width="1148" alt="Captura de pantalla 2019-08-25 a las 15 25 47" src="https://user-images.githubusercontent.com/52173515/63654440-97e7d000-c77a-11e9-88d7-8c152caae453.png">

Trade "aikOA" with a very weird bug.

the 3 transactions aren't related:

maker tx - b4b25063df8e060348d7f859e8f6dc1ea9d068c6a58072c18ac4fe... | 1.0 | Trade with serious bug - <img width="1148" alt="Captura de pantalla 2019-08-25 a las 15 25 47" src="https://user-images.githubusercontent.com/52173515/63654440-97e7d000-c77a-11e9-88d7-8c152caae453.png">

Trade "aikOA" with a very weird bug.

the 3 transactions aren't related:

maker tx - b4b25063df8e060348d7f859e8f6d... | process | trade with serious bug img width alt captura de pantalla a las src trade aikoa with a very weird bug the transactions aren t related maker tx taker tx deposit tx the btc seller received the payment and released the btc to the buyer but the payout was never made to the... | 1 |

408,154 | 11,942,272,778 | IssuesEvent | 2020-04-02 19:59:19 | guilds-plugin/Guilds | https://api.github.com/repos/guilds-plugin/Guilds | closed | [Feature Request] Guild Guards | Priority: Low Type: Feature | Possibility of having guards defending their guild from enemies and being upgradeable, this feature would be useful to people because so while you are offline there is someone to defend.

Sorry if my english is bad, but, i'm italian. | 1.0 | [Feature Request] Guild Guards - Possibility of having guards defending their guild from enemies and being upgradeable, this feature would be useful to people because so while you are offline there is someone to defend.

Sorry if my english is bad, but, i'm italian. | non_process | guild guards possibility of having guards defending their guild from enemies and being upgradeable this feature would be useful to people because so while you are offline there is someone to defend sorry if my english is bad but i m italian | 0 |

30,640 | 11,842,011,206 | IssuesEvent | 2020-03-23 22:00:51 | Mohib-hub/karate | https://api.github.com/repos/Mohib-hub/karate | opened | CVE-2019-20445 (High) detected in netty-codec-http-4.1.32.Final.jar | security vulnerability | ## CVE-2019-20445 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>netty-codec-http-4.1.32.Final.jar</b></p></summary>

<p>Netty is an asynchronous event-driven network application frame... | True | CVE-2019-20445 (High) detected in netty-codec-http-4.1.32.Final.jar - ## CVE-2019-20445 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>netty-codec-http-4.1.32.Final.jar</b></p></summar... | non_process | cve high detected in netty codec http final jar cve high severity vulnerability vulnerable library netty codec http final jar netty is an asynchronous event driven network application framework for rapid development of maintainable high performance protocol servers and c... | 0 |

568,921 | 16,990,595,407 | IssuesEvent | 2021-06-30 19:53:35 | ita-social-projects/TeachUA | https://api.github.com/repos/ita-social-projects/TeachUA | closed | [Гуртки] First page with the 'Гуртки' list is displayed all the time after pressing 'Back' navigation button | Priority: High bug | Environment: Windows 7, Service Pack 1, Google Chrome, 90.0.4430.212 (Розробка) (64-розрядна версія).

Reproducible: always

Build found: the last build

Steps to reproduce

1. Go to 'https://speak-ukrainian.org.ua/dev'

2. Click on 'Гуртки' tab

3. Scroll down to pagination items below the club's list

4. Choose any... | 1.0 | [Гуртки] First page with the 'Гуртки' list is displayed all the time after pressing 'Back' navigation button - Environment: Windows 7, Service Pack 1, Google Chrome, 90.0.4430.212 (Розробка) (64-розрядна версія).

Reproducible: always

Build found: the last build

Steps to reproduce

1. Go to 'https://speak-ukrainian... | non_process | first page with the гуртки list is displayed all the time after pressing back navigation button environment windows service pack google chrome розробка розрядна версія reproducible always build found the last build steps to reproduce go to click on гутрки tab scrol... | 0 |

12,723 | 15,093,988,206 | IssuesEvent | 2021-02-07 03:42:10 | rdoddanavar/hpr-sim | https://api.github.com/repos/rdoddanavar/hpr-sim | closed | src/preproc: preproc_model module --> prop model | pre-processing | Proposed `preproc_model.py` that defines parsing routines specific to model input files; ex. aero model, prop model, etc.

Engine model file format: http://www.thrustcurve.org/raspformat.shtml

Module should create data suitable for initializing prop model | 1.0 | src/preproc: preproc_model module --> prop model - Proposed `preproc_model.py` that defines parsing routines specific to model input files; ex. aero model, prop model, etc.

Engine model file format: http://www.thrustcurve.org/raspformat.shtml

Module should create data suitable for initializing prop model | process | src preproc preproc model module prop model proposed preproc model py that defines parsing routines specific to model input files ex aero model prop model etc engine model file format module should create data suitable for initializing prop model | 1 |

10,212 | 13,069,247,880 | IssuesEvent | 2020-07-31 06:03:18 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | Processing Modeler: "Load layer into project", ignoring loaded layer name | Bug Modeller Processing Regression | **Describe the bug**

When using the processing modeler algorithm "Load layer into project", there's an option called Loaded layer name.

In 3.4 the loaded layer name applies to the loaded file.

In 3.10 the loaded layer name is ignored, instead using the actual filename.

**How to Reproduce**

Creating a new mod... | 1.0 | Processing Modeler: "Load layer into project", ignoring loaded layer name - **Describe the bug**

When using the processing modeler algorithm "Load layer into project", there's an option called Loaded layer name.

In 3.4 the loaded layer name applies to the loaded file.

In 3.10 the loaded layer name is ignored, inst... | process | processing modeler load layer into project ignoring loaded layer name describe the bug when using the processing modeler algorithm load layer into project there s an option called loaded layer name in the loaded layer name applies to the loaded file in the loaded layer name is ignored inste... | 1 |

351,707 | 10,522,447,431 | IssuesEvent | 2019-09-30 08:46:15 | wso2/docs-ei | https://api.github.com/repos/wso2/docs-ei | closed | Register Ballerina language for highlighting | Priority/Highest Type/Docs Type/UX ballerina | Need to highlight code and syntax for Ballerina. This code can be found here:

The code for this can be found here: https://github.com/ballerina-platform/ballerina-www/blob/master/website/main-pages/ballerina.io-website-theme/js/ballerina-common.js#L79-L156 | 1.0 | Register Ballerina language for highlighting - Need to highlight code and syntax for Ballerina. This code can be found here:

The code for this can be found here: https://github.com/ballerina-platform/ballerina-www/blob/master/website/main-pages/ballerina.io-website-theme/js/ballerina-common.js#L79-L156 | non_process | register ballerina language for highlighting need to highlight code and syntax for ballerina this code can be found here the code for this can be found here | 0 |

198,601 | 15,713,197,687 | IssuesEvent | 2021-03-27 15:13:13 | nim-lang/Nim | https://api.github.com/repos/nim-lang/Nim | closed | Newruntime: incomplete treatment of `owned` non-`ref`'s, including documentation... | Documentation New runtime | Although recently updated and improved, [the documentation for the newruntime destructors](https://github.com/nim-lang/Nim/blob/devel/doc/destructors.rst) still doesn't mention that the `owned` modifier can also be applied to any type and not just `ref`'s and what that implies.

It is not documented that currently, n... | 1.0 | Newruntime: incomplete treatment of `owned` non-`ref`'s, including documentation... - Although recently updated and improved, [the documentation for the newruntime destructors](https://github.com/nim-lang/Nim/blob/devel/doc/destructors.rst) still doesn't mention that the `owned` modifier can also be applied to any type... | non_process | newruntime incomplete treatment of owned non ref s including documentation although recently updated and improved still doesn t mention that the owned modifier can also be applied to any type and not just ref s and what that implies it is not documented that currently non global proc s which... | 0 |

71,947 | 23,865,977,400 | IssuesEvent | 2022-09-07 11:03:33 | matrix-org/synapse | https://api.github.com/repos/matrix-org/synapse | closed | Enable cancellation for `POST /_matrix/client/v3/keys/query` | S-Major T-Defect | `POST /_matrix/client/v3/keys/query` can take a long time.

When clients retry the request, the new request gets queued behind the previous one by `E2eKeysHandler._query_devices_linearizer`, so retrying a request that timed out only makes response times worse.

```

...

2022-05-26 14:34:57,053 - synapse.access.htt... | 1.0 | Enable cancellation for `POST /_matrix/client/v3/keys/query` - `POST /_matrix/client/v3/keys/query` can take a long time.

When clients retry the request, the new request gets queued behind the previous one by `E2eKeysHandler._query_devices_linearizer`, so retrying a request that timed out only makes response times w... | non_process | enable cancellation for post matrix client keys query post matrix client keys query can take a long time when clients retry the request the new request gets queued behind the previous one by query devices linearizer so retrying a request that timed out only makes response times worse ... | 0 |

2,035 | 4,847,360,866 | IssuesEvent | 2016-11-10 14:46:23 | Alfresco/alfresco-ng2-components | https://api.github.com/repos/Alfresco/alfresco-ng2-components | opened | Right hand side of form not displayed within form attached to start event | browser: all bug comp: activiti-processList | **activiti**

**component**

| 1.0 | Right hand side of form not displayed within form attached to start event - **activiti**

**component**

Response: Code 200. {status: "OK"} | 1.0 | API processing service - Define http endpoints:

* **POST** /v1/action/{enum:type} any JSON.

Pass this JSON to waterpipe. Action type should be passed with a request to waterpipe.

Response: Code 202. NO BODY;

* Actuator with health (GET /actuator/health)

Response: Code 200. {status: "OK"} | process | api processing service define http endpoints post action enum type any json pass this json to waterpipe action type should be passed with a request to waterpipe response code no body actuator with health get actuator health response code status ok | 1 |

6,678 | 9,795,442,250 | IssuesEvent | 2019-06-11 03:43:40 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | GDAL Warp (Reproject) tool: make the target CRS parameter optional | Easy fix Feature Request Processing | Author Name: **Mike Taves** (Mike Taves)

Original Redmine Issue: [21571](https://issues.qgis.org/issues/21571)

Redmine category:processing/gdal

Assignee: Giovanni Manghi

---

With QGIS 3.6, From Raster > Projections > Warp (Reproject), the dialog has a few options with a few of the defaults set in.

Source CRS is op... | 1.0 | GDAL Warp (Reproject) tool: make the target CRS parameter optional - Author Name: **Mike Taves** (Mike Taves)

Original Redmine Issue: [21571](https://issues.qgis.org/issues/21571)

Redmine category:processing/gdal

Assignee: Giovanni Manghi

---

With QGIS 3.6, From Raster > Projections > Warp (Reproject), the dialog has... | process | gdal warp reproject tool make the target crs parameter optional author name mike taves mike taves original redmine issue redmine category processing gdal assignee giovanni manghi with qgis from raster projections warp reproject the dialog has a few options with a few of the defaults ... | 1 |

6,682 | 9,799,414,361 | IssuesEvent | 2019-06-11 14:22:24 | googleapis/cloud-bigtable-client | https://api.github.com/repos/googleapis/cloud-bigtable-client | closed | Add static analysis to find bugs sooner | type: process | Static analysis tools enable finding and avoiding bugs at compile time, before they become issues at runtime, and become much harder (and hence, costlier) to find and fix.

There are tools that can be run offline, such as SpotBugs (successor to FindBugs) and [ErrorProne](http://errorprone.info/), or via online servic... | 1.0 | Add static analysis to find bugs sooner - Static analysis tools enable finding and avoiding bugs at compile time, before they become issues at runtime, and become much harder (and hence, costlier) to find and fix.

There are tools that can be run offline, such as SpotBugs (successor to FindBugs) and [ErrorProne](http... | process | add static analysis to find bugs sooner static analysis tools enable finding and avoiding bugs at compile time before they become issues at runtime and become much harder and hence costlier to find and fix there are tools that can be run offline such as spotbugs successor to findbugs and or via online... | 1 |

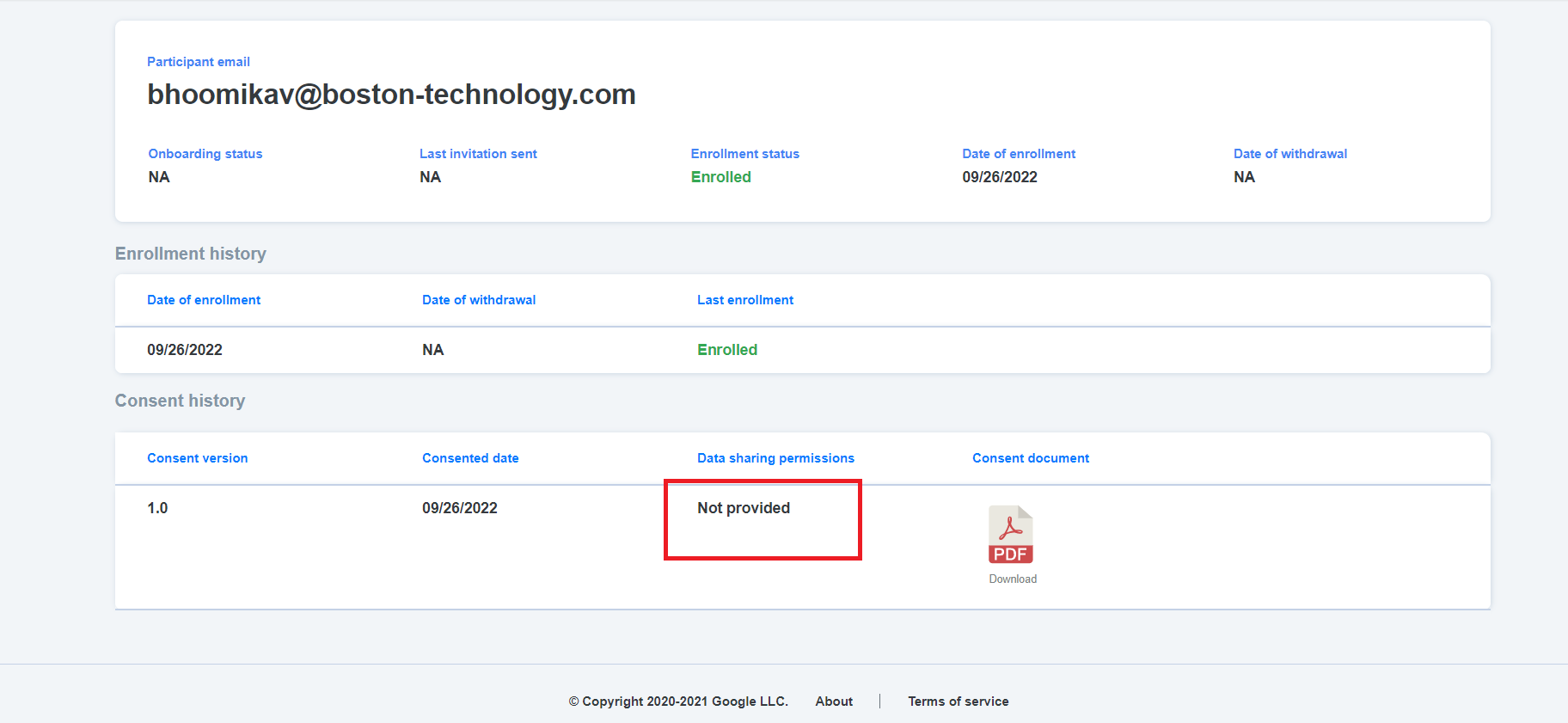

18,463 | 24,549,702,590 | IssuesEvent | 2022-10-12 11:37:45 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [PM] Status is getting displayed as 'Not provided' even when there is no data sharing permission | Bug P1 Participant manager Process: Fixed Process: Tested QA Process: Tested dev | **AR:** Status is getting displayed as 'Not provided' even when there is no data sharing permission

**ER:** Status should get displayed as 'Not applicable' , when there is no data sharing permission

| 3.0 | [PM] Status is getting displayed as 'Not provided' even when there is no data sharing permission - **AR:** Status is getting displayed as 'Not provided' even when there is no data sharing permission

**ER:** Status should get displayed as 'Not applicable' , when there is no data sharing permission

- Use the *Preview* tab to see what your issue will actually look like.

- We may ask some questions or ask you to provide addition information after you placed your... | 1.0 | FirstDeliveryDate returns NULL causing issues when creating slips/labels - ### Submitting issues through Github

## Please follow the guide below

- Put an `x` into all the boxes [ ] relevant to your *issue* (like this: `[x]`)

- Use the *Preview* tab to see what your issue will actually look like.

- We may ask some... | process | firstdeliverydate returns null causing issues when creating slips labels submitting issues through github please follow the guide below put an x into all the boxes relevant to your issue like this use the preview tab to see what your issue will actually look like we may ask some que... | 1 |

20,451 | 27,113,326,265 | IssuesEvent | 2023-02-15 16:44:50 | googleapis/testing-infra-docker | https://api.github.com/repos/googleapis/testing-infra-docker | closed | WARNING: JAVA - DO NOT UPDATE to maven 3.8.2 | type: process priority: p3 | maven 3.8.2 breaks many of the java client library builds. 3.8.3 should fix this. | 1.0 | WARNING: JAVA - DO NOT UPDATE to maven 3.8.2 - maven 3.8.2 breaks many of the java client library builds. 3.8.3 should fix this. | process | warning java do not update to maven maven breaks many of the java client library builds should fix this | 1 |

2,755 | 5,681,063,372 | IssuesEvent | 2017-04-13 04:26:39 | inasafe/inasafe-realtime | https://api.github.com/repos/inasafe/inasafe-realtime | closed | EQ Realtime - feedback on Indonesian disclaimer on the report page | bug ready realtime processor | @adelebearcrozier, Anjar and Nugi have supplied feedback on the Indonesian disclaimer on the report page for EQ Realtime.

See original ticket at https://github.com/inasafe/inasafe/issues/2698 for further discussion. | 1.0 | EQ Realtime - feedback on Indonesian disclaimer on the report page - @adelebearcrozier, Anjar and Nugi have supplied feedback on the Indonesian disclaimer on the report page for EQ Realtime.

See original ticket at https://github.com/inasafe/inasafe/issues/2698 for further discussion. | process | eq realtime feedback on indonesian disclaimer on the report page adelebearcrozier anjar and nugi have supplied feedback on the indonesian disclaimer on the report page for eq realtime see original ticket at for further discussion | 1 |

10,210 | 13,068,153,212 | IssuesEvent | 2020-07-31 02:42:15 | nion-software/nionswift | https://api.github.com/repos/nion-software/nionswift | opened | Processing asking for new data shapes should allow user to enter percentages | f - inspector f - processing type - enhancement | Resize, reshape, re-bin, etc. | 1.0 | Processing asking for new data shapes should allow user to enter percentages - Resize, reshape, re-bin, etc. | process | processing asking for new data shapes should allow user to enter percentages resize reshape re bin etc | 1 |

63,385 | 12,310,938,954 | IssuesEvent | 2020-05-12 11:31:10 | GTNewHorizons/GT-New-Horizons-Modpack | https://api.github.com/repos/GTNewHorizons/GT-New-Horizons-Modpack | closed | Storage Interface on Super/QuantumChests does not work properly | AE2 issue Need Code changes bugMinor reminder | #### Which modpack version are you using?

2.0.3.1Dev

Storage Interface on Super/QuantumChests does not work properly | 1.0 | Storage Interface on Super/QuantumChests does not work properly - #### Which modpack version are you using?

2.0.3.1Dev

Storage Interface on Super/QuantumChests does not work properly | non_process | storage interface on super quantumchests does not work properly which modpack version are you using storage interface on super quantumchests does not work properly | 0 |

10,869 | 13,640,422,981 | IssuesEvent | 2020-09-25 12:43:53 | timberio/vector | https://api.github.com/repos/timberio/vector | closed | New `strip_whitespace` remap function | domain: mapping domain: processing type: feature | The `strip_whitespace` remap function strips leading and trailing whitespace

## Example

Given this event:

```js

{

"message": "\t\tThis string has whitespace around it "

}

```

And this remap instruction set:

```

.message = strip_whitespace(.message)

```

Would result in:

```js

{

"messa... | 1.0 | New `strip_whitespace` remap function - The `strip_whitespace` remap function strips leading and trailing whitespace

## Example

Given this event:

```js

{

"message": "\t\tThis string has whitespace around it "

}

```

And this remap instruction set:

```

.message = strip_whitespace(.message)

```

... | process | new strip whitespace remap function the strip whitespace remap function strips leading and trailing whitespace example given this event js message t tthis string has whitespace around it and this remap instruction set message strip whitespace message ... | 1 |

68 | 2,523,409,328 | IssuesEvent | 2015-01-20 10:16:16 | Graylog2/graylog2-server | https://api.github.com/repos/Graylog2/graylog2-server | closed | Grok support for extractors | processing | When it comes to parsing complex (read:crappy) log formats Jordan Sissel hit a homerun with the concepts and ideas behind Grok.

I have tried plain regex'es and drools but neither can match the ease, speed, and maintainability of grok patterns.

As a bonus it would enable a lot of transparency between Logstash and ... | 1.0 | Grok support for extractors - When it comes to parsing complex (read:crappy) log formats Jordan Sissel hit a homerun with the concepts and ideas behind Grok.

I have tried plain regex'es and drools but neither can match the ease, speed, and maintainability of grok patterns.

As a bonus it would enable a lot of tran... | process | grok support for extractors when it comes to parsing complex read crappy log formats jordan sissel hit a homerun with the concepts and ideas behind grok i have tried plain regex es and drools but neither can match the ease speed and maintainability of grok patterns as a bonus it would enable a lot of tran... | 1 |

20,748 | 27,453,767,583 | IssuesEvent | 2023-03-02 19:30:19 | pfmc-assessments/canary_2023 | https://api.github.com/repos/pfmc-assessments/canary_2023 | opened | Compile data - Foreign Catches | Data obtaining Data processing | The Rogers_foreign_catch (2003) PDF in the Google Drive contains the estimates of canary for foreign fleets. Need to add within our landings script.

| 1.0 | Compile data - Foreign Catches - The Rogers_foreign_catch (2003) PDF in the Google Drive contains the estimates of canary for foreign fleets. Need to add within our landings script.

| process | compile data foreign catches the rogers foreign catch pdf in the google drive contains the estimates of canary for foreign fleets need to add within our landings script | 1 |

378 | 2,823,564,930 | IssuesEvent | 2015-05-21 09:36:31 | austundag/testing | https://api.github.com/repos/austundag/testing | closed | Patient header allergies refresh issues needs to be investigated/fixed | enhancement in process | When user adds/cancels a new/old allergy the patient header allergies should be updated. | 1.0 | Patient header allergies refresh issues needs to be investigated/fixed - When user adds/cancels a new/old allergy the patient header allergies should be updated. | process | patient header allergies refresh issues needs to be investigated fixed when user adds cancels a new old allergy the patient header allergies should be updated | 1 |

136,325 | 19,760,729,956 | IssuesEvent | 2022-01-16 11:19:33 | TeamHavit/Havit-iOS | https://api.github.com/repos/TeamHavit/Havit-iOS | closed | [FEAT] Implement EmptyView layout and branching logic | 🗂 수연 🟣 Category 🖍 Design | ## 💡 Issue

<!-- Describe the issue. -->

Build the EmptyView UI shown on the blank screen when the category view has no categories.

## 📝 todo

<!-- List the tasks to be done. -->

- [x] Build and lay out the EmptyView UI

- [x] Set it to hidden by default, then set the hidden property to false when there are no categories | 1.0 | [FEAT] Implement EmptyView layout and branching logic - ## 💡 Issue

<!-- Describe the issue. -->

Build the EmptyView UI shown on the blank screen when the category view has no categories.

## 📝 todo

<!-- List the tasks to be done. -->

- [x] Build and lay out the EmptyView UI

- [x] Set it to hidden by default, then set the hidden property to false when there are no categories | non_process | implement emptyview layout and branching logic 💡 issue build the emptyview ui shown on the blank screen when the category view has no categories 📝 todo build and lay out the emptyview ui set it to hidden by default then set the hidden property to false when there are no categories | 0 |

17,439 | 23,265,835,868 | IssuesEvent | 2022-08-04 17:16:54 | MPMG-DCC-UFMG/C01 | https://api.github.com/repos/MPMG-DCC-UFMG/C01 | opened | Transparency - Collector details/Extract Links and Download Files | [1] Requisito [0] Desenvolvimento [2] Média Prioridade [3] Processamento Dinâmico | ## Expected Behavior

The `Extrair links` and `Baixar arquivos` settings are expected to also apply to collections that use dynamic processing.

## Current Behavior

When a dynamic collector is configured with this tool, the links extracted are basically those that can be obtained through the process... | 1.0 | Transparency - Collector details/Extract Links and Download Files - ## Expected Behavior

The `Extrair links` and `Baixar arquivos` settings are expected to also apply to collections that use dynamic processing.

## Current Behavior

When a dynamic collector is configured with this tool, the links ex... | process | transparency collector details extract links and download files expected behavior the extrair links and baixar arquivos settings are expected to also apply to collections that use dynamic processing current behavior when a dynamic collector is configured with this tool the links ex... | 1 |

16,735 | 21,899,891,479 | IssuesEvent | 2022-05-20 12:26:10 | camunda/zeebe-process-test | https://api.github.com/repos/camunda/zeebe-process-test | opened | Zeebe Test engine should start on a different port | kind/feature team/process-automation | **Description**

When I started a local docker-compose for dev, I cannot start the tests and get an error message: `Port 26500 already in use`.

It is very inconvenient for a process automation developer to stop the runtime, run the tests, start the runtime, do the integration test, develop the next feature...

The... | 1.0 | Zeebe Test engine should start on a different port - **Description**

When I started a local docker-compose for dev, I cannot start the tests and get an error message: `Port 26500 already in use`.

It is very inconvenient for a process automation developer to stop the runtime, run the tests, start the runtime, do... | process | zeebe test engine should start on a different port description when i started a local docker compose for dev i cannot start the tests and get an error message port already in use it is very inconvenient for a process automation developer to stop the runtime run the tests start the runtime do the... | 1 |

30,762 | 4,215,013,409 | IssuesEvent | 2016-06-30 01:14:55 | apapadimoulis/what-bugs | https://api.github.com/repos/apapadimoulis/what-bugs | closed | Cannot reply with highlighted quote to necro topic | weird but by design | Go to a necro topic.

Highlight something.

Click REPLY.

Get "OMFG WHY U NECRO" popup, and click "Look, asshole, I want to necro this thread"

Composes appears, but no quoted reply. | 1.0 | Cannot reply with highlighted quote to necro topic - Go to a necro topic.

Highlight something.

Click REPLY.

Get "OMFG WHY U NECRO" popup, and click "Look, asshole, I want to necro this thread"

Composes appears, but no quoted reply. | non_process | cannot reply with highlighted quote to necro topic go to a necro topic highlight something click reply get omfg why u necro popup and click look asshole i want to necro this thread composes appears but no quoted reply | 0 |

5,811 | 8,648,530,597 | IssuesEvent | 2018-11-26 16:48:47 | googlegenomics/gcp-variant-transforms | https://api.github.com/repos/googlegenomics/gcp-variant-transforms | opened | Setup CPU profiling and optimize code | P2 process | We have not really spent too much time optimizing our code, but it seems like there may be opportunities to gain performance. For instance, a small change in PR #417 resulted in 50% speedup in one of the PTransforms (~10% overall speedup; or more depending on the size of the data).

However, rather than trying 'rando... | 1.0 | Setup CPU profiling and optimize code - We have not really spent too much time optimizing our code, but it seems like there may be opportunities to gain performance. For instance, a small change in PR #417 resulted in 50% speedup in one of the PTransforms (~10% overall speedup; or more depending on the size of the data... | process | setup cpu profiling and optimize code we have not really spent too much time optimizing our code but it seems like there may be opportunities to gain performance for instance a small change in pr resulted in speedup in one of the ptransforms overall speedup or more depending on the size of the data ... | 1 |

227 | 2,495,730,037 | IssuesEvent | 2015-01-06 14:16:00 | firebug/firebug.next | https://api.github.com/repos/firebug/firebug.next | closed | Computed side panel is empty | bug inspector platform test-needed | Firebug.next seems to produce an empty computed properties panel when run on Nightly and DevEd.

Changing themes does not seem to help. If however, I run Nightly without fir... | 1.0 | Computed side panel is empty - Firebug.next seems to produce an empty computed properties panel when run on Nightly and DevEd.

Changing themes does not seem to help. If how... | non_process | computed side panel is empty firebug next seems to produce an empty computed properties panel when run on nightly and deved changing themes does not seem to help if however i run nightly without firebug then devtools does produce results | 0 |

13,002 | 15,361,166,485 | IssuesEvent | 2021-03-01 17:48:09 | ORNL-AMO/AMO-Tools-Desktop | https://api.github.com/repos/ORNL-AMO/AMO-Tools-Desktop | opened | small psychometric calc | Calculator Process Cooling Quick Fix | dropdown has "gas dew point" as an option. change to just "dew point" or "air dew point" | 1.0 | small psychometric calc - dropdown has "gas dew point" as an option. change to just "dew point" or "air dew point" | process | small psychometric calc dropdown has gas dew point as an option change to just dew point or air dew point | 1 |

6,824 | 9,967,829,164 | IssuesEvent | 2019-07-08 14:23:27 | AnalyticalGraphicsInc/cesium | https://api.github.com/repos/AnalyticalGraphicsInc/cesium | opened | Make bloom post process check for selected feature | category - post-processing good first issue type - enhancement | Right now you can apply certain post process effects to just one feature. The bloom shader doesn't do any check to allow you to apply it to just to the selected feature as you would expect.

This is potentially pretty easy, you just need to take the check from another post process like the black and white one: https:... | 1.0 | Make bloom post process check for selected feature - Right now you can apply certain post process effects to just one feature. The bloom shader doesn't do any check to allow you to apply it to just to the selected feature as you would expect.

This is potentially pretty easy, you just need to take the check from anot... | process | make bloom post process check for selected feature right now you can apply certain post process effects to just one feature the bloom shader doesn t do any check to allow you to apply it to just to the selected feature as you would expect this is potentially pretty easy you just need to take the check from anot... | 1 |

31,313 | 14,930,942,682 | IssuesEvent | 2021-01-25 04:27:56 | iterative/dvc | https://api.github.com/repos/iterative/dvc | opened | -R collects stage from all of the repo | optimize p1-important performance | # Bug Report

We are building `repo.graph` and using `path` to search for stages in the graph when `-R` instead of just reading files from the `path` directory.

### Context

https://groups.google.com/a/iterative.ai/g/support/c/H_c36GuAsPM/m/6mLNdPIRAgAJ | True | -R collects stage from all of the repo - # Bug Report

We are building `repo.graph` and using `path` to search for stages in the graph when `-R` instead of just reading files from the `path` directory.

### Context

https://groups.google.com/a/iterative.ai/g/support/c/H_c36GuAsPM/m/6mLNdPIRAgAJ | non_process | r collects stage from all of the repo bug report we are building repo graph and using path to search for stages in the graph when r instead of just reading files from the path directory context | 0 |

13,013 | 15,369,907,214 | IssuesEvent | 2021-03-02 08:04:56 | prisma/prisma | https://api.github.com/repos/prisma/prisma | closed | prisma migrate not working with basic example `Reason: [libs/sql-schema-describer/src/getters.rs:42:14] called `Result::unwrap()` on an `Err` value: "Getting non_unique from Resultrow ResultRow { columns: [\"index_name\", \"non_unique\", \"column_name\", \"seq_in_index\", \"table_name\"], values: [Text(Some(\"PRIMARY\"... | bug/0-needs-info kind/bug process/candidate team/migrations | Hi Prisma Team! Prisma Migrate just crashed.

## Versions

| Name | Version |

|-------------|--------------------|

| Platform | darwin |

| Node | v14.15.0 |

| Prisma CLI | 2.15.0 |

| Binary | e51dc3b5a9ee790a07104bec1c9477d51740fe54|... | 1.0 | prisma migrate not working with basic example `Reason: [libs/sql-schema-describer/src/getters.rs:42:14] called `Result::unwrap()` on an `Err` value: "Getting non_unique from Resultrow ResultRow { columns: [\"index_name\", \"non_unique\", \"column_name\", \"seq_in_index\", \"table_name\"], values: [Text(Some(\"PRIMARY\"... | process | prisma migrate not working with basic example reason called result unwrap on an err value getting non unique from resultrow resultrow columns values as bool failed hi prisma team prisma migrate just crashed versions name version ... | 1 |

181,864 | 21,664,457,514 | IssuesEvent | 2022-05-07 01:24:52 | phunware/react-select | https://api.github.com/repos/phunware/react-select | opened | CVE-2022-29167 (High) detected in hawk-3.1.3.tgz | security vulnerability | ## CVE-2022-29167 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>hawk-3.1.3.tgz</b></p></summary>

<p>HTTP Hawk Authentication Scheme</p>

<p>Library home page: <a href="https://registr... | True | CVE-2022-29167 (High) detected in hawk-3.1.3.tgz - ## CVE-2022-29167 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>hawk-3.1.3.tgz</b></p></summary>

<p>HTTP Hawk Authentication Scheme... | non_process | cve high detected in hawk tgz cve high severity vulnerability vulnerable library hawk tgz http hawk authentication scheme library home page a href path to dependency file package json path to vulnerable library node modules hawk package json dependency hierarch... | 0 |

303,460 | 26,209,260,106 | IssuesEvent | 2023-01-04 03:51:34 | phetsims/number-play | https://api.github.com/repos/phetsims/number-play | closed | CT cannot read properties of undefined | type:automated-testing | ```

number-play : fuzz : built

https://bayes.colorado.edu/continuous-testing/ct-snapshots/1637662989114/number-play/build/phet/number-play_en_phet.html?continuousTest=%7B%22test%22%3A%5B%22number-play%22%2C%22fuzz%22%2C%22built%22%5D%2C%22snapshotName%22%3A%22snapshot-1637662989114%22%2C%22timestamp%22%3A163767462890... | 1.0 | CT cannot read properties of undefined - ```

number-play : fuzz : built

https://bayes.colorado.edu/continuous-testing/ct-snapshots/1637662989114/number-play/build/phet/number-play_en_phet.html?continuousTest=%7B%22test%22%3A%5B%22number-play%22%2C%22fuzz%22%2C%22built%22%5D%2C%22snapshotName%22%3A%22snapshot-16376629... | non_process | ct cannot read properties of undefined number play fuzz built query fuzz memorylimit uncaught typeerror cannot read properties of undefined reading x typeerror cannot read properties of undefined reading x at hi distancesquared at hi distance at at at vt at at at ... | 0 |

19,919 | 26,380,461,608 | IssuesEvent | 2023-01-12 08:11:48 | zammad/zammad | https://api.github.com/repos/zammad/zammad | closed | Error while processing S/MIME signed emails when the sender name is different than CN | bug verified mail processing smime | ### Used Zammad Version

5.4.x (git)

### Environment

- Installation method: any

- Operating system: MacOS 13.1

- Database + version: PostgreSQL 10.21

- Elasticsearch version: any

- Browser + version: any

### Actual behaviour

When an S/MIME signed email is being processed, the following error is logge... | 1.0 | Error while processing S/MIME signed emails when the sender name is different than CN - ### Used Zammad Version

5.4.x (git)

### Environment

- Installation method: any

- Operating system: MacOS 13.1

- Database + version: PostgreSQL 10.21

- Elasticsearch version: any

- Browser + version: any

### Actual b... | process | error while processing s mime signed emails when the sender name is different than cn used zammad version x git environment installation method any operating system macos database version postgresql elasticsearch version any browser version any actual beha... | 1 |

279 | 6,001,197,266 | IssuesEvent | 2017-06-05 08:25:58 | datacite/datacite | https://api.github.com/repos/datacite/datacite | opened | Announce planned outages | data center member reliability | As a data center manager (or member), I want to know when there is planned outages in order to alert my users so they don’t get mad at me. | True | Announce planned outages - As a data center manager (or member), I want to know when there is planned outages in order to alert my users so they don’t get mad at me. | non_process | announce planned outages as a data center manager or member i want to know when there is planned outages in order to alert my users so they don’t get mad at me | 0 |

224,229 | 24,769,725,982 | IssuesEvent | 2022-10-23 01:17:07 | ncorejava/moment | https://api.github.com/repos/ncorejava/moment | opened | CVE-2022-37598 (High) detected in uglify-js-3.13.0.tgz | security vulnerability | ## CVE-2022-37598 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>uglify-js-3.13.0.tgz</b></p></summary>

<p>JavaScript parser, mangler/compressor and beautifier toolkit</p>

<p>Library ... | True | CVE-2022-37598 (High) detected in uglify-js-3.13.0.tgz - ## CVE-2022-37598 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>uglify-js-3.13.0.tgz</b></p></summary>

<p>JavaScript parser, ... | non_process | cve high detected in uglify js tgz cve high severity vulnerability vulnerable library uglify js tgz javascript parser mangler compressor and beautifier toolkit library home page a href path to dependency file package json path to vulnerable library node modules ug... | 0 |

521,247 | 15,106,256,336 | IssuesEvent | 2021-02-08 14:05:29 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.reddit.com - site is not usable | browser-fenix engine-gecko priority-critical | <!-- @browser: Firefox Mobile 87.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 10; Mobile; rv:87.0) Gecko/87.0 Firefox/87.0 -->

<!-- @reported_with: android-components-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/66763 -->

<!-- @extra_labels: browser-fenix -->

**URL**: https://www.reddit.c... | 1.0 | www.reddit.com - site is not usable - <!-- @browser: Firefox Mobile 87.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 10; Mobile; rv:87.0) Gecko/87.0 Firefox/87.0 -->

<!-- @reported_with: android-components-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/66763 -->

<!-- @extra_labels: browser-fe... | non_process | site is not usable url browser version firefox mobile operating system android tested another browser yes chrome problem type site is not usable description buttons or links not working steps to reproduce can t upload images to reddit doesn t work on chro... | 0 |

20,626 | 27,298,796,496 | IssuesEvent | 2023-02-23 23:02:10 | TUM-Dev/NavigaTUM | https://api.github.com/repos/TUM-Dev/NavigaTUM | closed | [Entry] [2930.EG.001]: Edit coordinate | entry webform delete-after-processing | Hello, I would like to add this coordinate to the Roomfinder:

```yaml

"2930.EG.001": { lat: 48.88517446990414, lon: 12.571829694610074 }

``` | 1.0 | [Entry] [2930.EG.001]: Edit coordinate - Hello, I would like to add this coordinate to the Roomfinder:

```yaml

"2930.EG.001": { lat: 48.88517446990414, lon: 12.571829694610074 }

``` | process | edit coordinate hello i would like to add this coordinate to the roomfinder yaml eg lat lon | 1 |

16,905 | 22,217,491,812 | IssuesEvent | 2022-06-08 04:17:18 | bazelbuild/bazel | https://api.github.com/repos/bazelbuild/bazel | closed | Bazel traverses generated symlink and fails to build | more data needed type: support / not a bug (process) team-ExternalDeps | <!--

ATTENTION! Please read and follow:

- if this is a _question_ about how to build / test / query / deploy using Bazel, or a _discussion starter_, send it to bazel-discuss@googlegroups.com

- if this is a _bug_ or _feature request_, fill the form below as best as you can.

-->

### Description of the problem / fe... | 1.0 | Bazel traverses generated symlink and fails to build - <!--

ATTENTION! Please read and follow:

- if this is a _question_ about how to build / test / query / deploy using Bazel, or a _discussion starter_, send it to bazel-discuss@googlegroups.com

- if this is a _bug_ or _feature request_, fill the form below as best ... | process | bazel traverses generated symlink and fails to build attention please read and follow if this is a question about how to build test query deploy using bazel or a discussion starter send it to bazel discuss googlegroups com if this is a bug or feature request fill the form below as best ... | 1 |

59,711 | 24,853,876,407 | IssuesEvent | 2022-10-26 23:06:21 | microsoft/vscode-cpptools | https://api.github.com/repos/microsoft/vscode-cpptools | closed | Default C_Cpp.codeAnalysis.clangTidy.headerFilter doesn't work on Windows in 1.13.2 | bug Language Service fixed (release pending) quick fix regression insiders | There's a regression causing the headerFilter to be capitalized.

| 1.0 | Default C_Cpp.codeAnalysis.clangTidy.headerFilter doesn't work on Windows in 1.13.2 - There's a regression causing the headerFilter to be capitalized.

| non_process | default c cpp codeanalysis clangtidy headerfilter doesn t work on windows in there s a regression causing the headerfilter to be capitalized | 0 |

49,018 | 10,314,929,752 | IssuesEvent | 2019-08-30 05:51:39 | Mtaethefarmer/My-Interactive-Story | https://api.github.com/repos/Mtaethefarmer/My-Interactive-Story | closed | As a player I should be able to adjust settings of the camera to match my desired motion sensitivity | code design | - [x] Expose player variables to the options menu

| 1.0 | As a player I should be able to adjust settings of the camera to match my desired motion sensitivity - - [x] Expose player variables to the options menu

| non_process | as a player i should be able to adjust settings of the camera to match my desired motion sensitivity expose player variables to the options menu | 0 |

111,771 | 17,033,496,594 | IssuesEvent | 2021-07-05 01:26:04 | attesch/hackazon | https://api.github.com/repos/attesch/hackazon | opened | CVE-2019-11358 (Medium) detected in jquery-1.9.1.min.js | security vulnerability | ## CVE-2019-11358 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.9.1.min.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library home page: <a href="ht... | True | CVE-2019-11358 (Medium) detected in jquery-1.9.1.min.js - ## CVE-2019-11358 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.9.1.min.js</b></p></summary>

<p>JavaScript librar... | non_process | cve medium detected in jquery min js cve medium severity vulnerability vulnerable library jquery min js javascript library for dom operations library home page a href path to vulnerable library hackazon vendor phpunit php code coverage php codecoverage report html rende... | 0 |

51,968 | 12,831,704,885 | IssuesEvent | 2020-07-07 06:06:16 | jorgicio/jorgicio-gentoo-overlay | https://api.github.com/repos/jorgicio/jorgicio-gentoo-overlay | closed | x11-misc/optimus-manager: can't work in Gentoo | ebuild fail missing file | I'm using lightdm and i3. optimus-manager can't switch gpu.

```

➜ optimus-manager --status

ERROR: a GPU setup was initiated but Xorg post-start hook did not run.

Log at /var/log/optimus-manager/switch/switch-20200624T234730.log

If your login manager is GDM, make sure to follow those instructions:

https://github... | 1.0 | x11-misc/optimus-manager: can't work in Gentoo - I'm using lightdm and i3. optimus-manager can't switch gpu.

```

➜ optimus-manager --status

ERROR: a GPU setup was initiated but Xorg post-start hook did not run.

Log at /var/log/optimus-manager/switch/switch-20200624T234730.log

If your login manager is GDM, make s... | non_process | misc optimus manager can t work in gentoo i m using lightdm and optimus manager can t switch gpu ➜ optimus manager status error a gpu setup was initiated but xorg post start hook did not run log at var log optimus manager switch switch log if your login manager is gdm make sure to follow tho... | 0 |

232,167 | 25,565,380,569 | IssuesEvent | 2022-11-30 13:57:23 | hygieia/hygieia-whitesource-collector | https://api.github.com/repos/hygieia/hygieia-whitesource-collector | closed | CVE-2020-36188 (High) detected in jackson-databind-2.8.11.3.jar - autoclosed | wontfix security vulnerability | ## CVE-2020-36188 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.8.11.3.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core strea... | True | CVE-2020-36188 (High) detected in jackson-databind-2.8.11.3.jar - autoclosed - ## CVE-2020-36188 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.8.11.3.jar</b></p></s... | non_process | cve high detected in jackson databind jar autoclosed cve high severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to dependency file pom xml pat... | 0 |

14,042 | 16,849,534,958 | IssuesEvent | 2021-06-20 08:00:36 | log2timeline/plaso | https://api.github.com/repos/log2timeline/plaso | closed | Change preprocessor to handle localized time zone on Windows | enhancement preprocessing | Preprocessor currently unable to handle localized time zones for Windows

```

[INFO] [PreProcess] Set attribute: time_zone_str to West-Europa (standaardtijd)

[INFO] Parser filter expression changed to: winxp

[INFO] Setting timezone to: West-Europa (standaardtijd)

[WARNING] Unable to automatically configure timezo... | 1.0 | Change preprocessor to handle localized time zone on Windows - Preprocessor currently unable to handle localized time zones for Windows

```

[INFO] [PreProcess] Set attribute: time_zone_str to West-Europa (standaardtijd)

[INFO] Parser filter expression changed to: winxp

[INFO] Setting timezone to: West-Europa (sta... | process | change preprocessor to handle localized time zone on windows preprocessor currently unable to handle localized time zones for windows set attribute time zone str to west europa standaardtijd parser filter expression changed to winxp setting timezone to west europa standaardtijd unable to a... | 1 |

174,738 | 14,497,570,786 | IssuesEvent | 2020-12-11 14:24:34 | oncleben31/cookiecutter-homeassistant-custom-component | https://api.github.com/repos/oncleben31/cookiecutter-homeassistant-custom-component | closed | Synchronize with latest changes in blueprint. | documentation enhancement | 2020.11.16 is synchronized with blueprint commit from Oct 2, 2020

Add a way in the documentation to show with which version the cookiecutter is synchronized. | 1.0 | Synchronize with latest changes in blueprint. - 2020.11.16 is synchronized with blueprint commit from Oct 2, 2020

Add a way in the documentation to show with which version the cookiecutter is synchronized. | non_process | synchronize with latest changes in blueprint is synchronized with blueprint commit from oct add a way in the documentation to show with which version the cookiecutter is synchronized | 0 |

12,599 | 14,996,574,167 | IssuesEvent | 2021-01-29 15:46:07 | ORNL-AMO/AMO-Tools-Desktop | https://api.github.com/repos/ORNL-AMO/AMO-Tools-Desktop | closed | Several PH Calcs Fuel Use | Calculator Process Heating bug | I think the Fuel Use calculation is a problem for other calculators too. (not the gross loss, that seems to be working)

This seems to be related to multiple losses. I'm guessing it is not using the right operating hours for the second loss?

Is a problem for: Wall, Opening, Gas Leak, Atmo

Charge Materials is okay and it... | 1.0 | Several PH Calcs Fuel Use - I think the Fuel Use calculation is a problem for other calculators too. (not the gross loss, that seems to be working)

This seems to be related to multiple losses. I'm guessing it is not using the right operating hours for the second loss?

Is a problem for: Wall, Opening, Gas Leak, Atmo

Cha... | process | several ph calcs fuel use i think the fuel use calculation is a problem for other calculators too not the gross loss that seems to be working this seems to be related to multiple losses i m guessing it is not using the right operating hours for the second loss is a problem for wall opening gas leak atmo cha... | 1 |

5,495 | 8,362,898,528 | IssuesEvent | 2018-10-03 18:10:26 | cityofaustin/techstack | https://api.github.com/repos/cityofaustin/techstack | closed | Process Page Content | Content type: Process Page Department: Animal Center Department: EMS Foster Application Team: Content | Finalize Process Page Content that I believe is close to done so it can be added to the Alpha site. (still being added via yaml files). Let me know if you need the structure of the Process data model to do this.

- [ ] Foster Content

- [ ] EMS Content | 1.0 | Process Page Content - Finalize Process Page Content that I believe is close to done so it can be added to the Alpha site. (still being added via yaml files). Let me know if you need the structure of the Process data model to do this.

- [ ] Foster Content

- [ ] EMS Content | process | process page content finalize process page content that i believe is close to done so it can be added to the alpha site still being added via yaml files let me know if you need the structure of the process data model to do this foster content ems content | 1 |

658,649 | 21,899,264,527 | IssuesEvent | 2022-05-20 11:49:41 | mozilla/addons-frontend | https://api.github.com/repos/mozilla/addons-frontend | closed | Addon detail and /blocked-addon/ pages return a 404 when accessed with a `guid` in format `{6eeb4879-...}` | priority: p2 type: regression type: prod_bug | ### Describe the problem and steps to reproduce it:

Open the following detail page - `https://addons.allizom.org/en-US/firefox/{6eeb4879-9c55-4c50-bc21-ad9af693e6ae}/`

### What happened?

A 404 is received

### What did you expect to happen?

The page should redirect to this addon - https://addons.allizom.org/en-... | 1.0 | Addon detail and /blocked-addon/ pages return a 404 when accessed with a `guid` in format `{6eeb4879-...}` - ### Describe the problem and steps to reproduce it:

Open the following detail page - `https://addons.allizom.org/en-US/firefox/{6eeb4879-9c55-4c50-bc21-ad9af693e6ae}/`

### What happened?

A 404 is received

... | non_process | addon detail and blocked addon pages return a when accessed with a guid in format describe the problem and steps to reproduce it open the following detail page what happened a is received what did you expect to happen the page should redirect to this addon any... | 0 |

484,184 | 13,935,789,353 | IssuesEvent | 2020-10-22 12:03:29 | StargateMC/IssueTracker | https://api.github.com/repos/StargateMC/IssueTracker | closed | Improvements to NPC Spawn logic | 1.12.2 Coding High Priority feature | I'd suggest the following changes to spawn logic:

- [ ] Only allowing NPCs to spawn within 25 blocks of sea level on a world, and only when there is nothing above them.

This will prevent NPCs spawning inside buildings, under trees or in caves and provide guaranteed safe areas - eg: high in the mountains or unde... | 1.0 | Improvements to NPC Spawn logic - I'd suggest the following changes to spawn logic:

- [ ] Only allowing NPCs to spawn within 25 blocks of sea level on a world, and only when there is nothing above them.