Unnamed: 0 | id | type | created_at | repo | repo_url | action | title | labels | body | index | text_combine | label | text | binary_label |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

10,823 | 3,436,596,271 | IssuesEvent | 2015-12-12 14:39:16 | lintool/warcbase | https://api.github.com/repos/lintool/warcbase | closed | Redo Documentation to Account for getContentString, getContentBytes, etc. | documentation | I've just redone the ["Analysis of Site Link Structure"](http://lintool.github.io/warcbase-docs/Spark-Analysis-of-Site-Link-Structure/) walkthrough in the docs to account for our API revisions. Currently, all these scripts will crash as they're referring to deprecated code.

Will double-check that it works and can d... | 1.0 | Redo Documentation to Account for getContentString, getContentBytes, etc. - I've just redone the ["Analysis of Site Link Structure"](http://lintool.github.io/warcbase-docs/Spark-Analysis-of-Site-Link-Structure/) walkthrough in the docs to account for our API revisions. Currently, all these scripts will crash as they're... | non_process | redo documentation to account for getcontentstring getcontentbytes etc i ve just redone the walkthrough in the docs to account for our api revisions currently all these scripts will crash as they re referring to deprecated code will double check that it works and can do others such as | 0 |

123,930 | 26,357,838,033 | IssuesEvent | 2023-01-11 11:03:47 | vegaprotocol/specs | https://api.github.com/repos/vegaprotocol/specs | closed | 0059-STKG-simple_staking_and_delegating - spec needs updating to align with implementation | ac-code-remediation | The spec seems out of date and requires a full review against what has been delivered

Once completed we can review again for missing ACs | 1.0 | 0059-STKG-simple_staking_and_delegating - spec needs updating to align with implementation - The spec seems out of date and requires a full review against what has been delivered

Once completed we can review again for missing ACs | non_process | stkg simple staking and delegating spec needs updating to align with implementation the spec seems out of date and requires a full review against what has been delivered once completed we can review again for missing acs | 0 |

116,387 | 4,701,053,082 | IssuesEvent | 2016-10-12 20:24:29 | Innovate-Inc/CRS | https://api.github.com/repos/Innovate-Inc/CRS | opened | Review CRS ArcGIS Server Environment | CRS Review GIS Team MVP Priority: Medium | Set up a time to test and finish configuring ArcGIS Server and SQL Server connectivity. | 1.0 | Review CRS ArcGIS Server Environment - Set up a time to test and finish configuring ArcGIS Server and SQL Server connectivity. | non_process | review crs arcgis server environment set up a time to test and finish configuring arcgis server and sql server connectivity | 0 |

6,109 | 4,155,302,782 | IssuesEvent | 2016-06-16 14:33:22 | sixeco/ProjectAlbatross | https://api.github.com/repos/sixeco/ProjectAlbatross | opened | Relevance Assessment | usability user story | As a usability expert, I want to carry out a relevance assessment to see how well my search ranks. | True | Relevance Assessment - As a usability expert, I want to carry out a relevance assessment to see how well my search ranks. | non_process | relevance assessment as a usability expert i want to carry out a relevance assessment to see how well my search ranks | 0 |

2,726 | 5,612,442,533 | IssuesEvent | 2017-04-03 05:11:30 | AllenFang/react-bootstrap-table | https://api.github.com/repos/AllenFang/react-bootstrap-table | closed | Insertrow modal placeholders have commas when dynamically generating the table. | enhancement inprocess | This is probably because the <TableHeaderColumn/> gets its child through a map operation.

`this.props.keys.map( (key) => <TableHeaderColumn key={key} width={key==="sno"?"50px":"150px"} dataField={key}> {key.toUpperCase()} </TableHeaderColumn> )`

=> <TableHeaderColumn key={key} width={key==="sno"?"50px":"150px"} dataField={key}> {key.toUpperCase()} </TableHeade... | process | insertrow modal placeholders have commas when dynamically generating the table this is probably because the gets its child through a map operation this props keys map key key touppercase | 1 |

21,076 | 28,019,955,588 | IssuesEvent | 2023-03-28 04:02:59 | 0xPolygonMiden/miden-vm | https://api.github.com/repos/0xPolygonMiden/miden-vm | closed | Replace `mtree_cwm` instruction with `mtree_merge` | assembly processor | Once we integrate `MerkleStore` into the `AdviceProvider` (#774) `mtree_cwm` instruction will no longer be necessary - so, we should remove it.

At the same time, we need to introduce a new instruction which would merge two nodes in the advice provider using [merge_roots()](https://github.com/0xPolygonMiden/crypto/bl... | 1.0 | Replace `mtree_cwm` instruction with `mtree_merge` - Once we integrate `MerkleStore` into the `AdviceProvider` (#774) `mtree_cwm` instruction will no longer be necessary - so, we should remove it.

At the same time, we need to introduce a new instruction which would merge two nodes in the advice provider using [merge... | process | replace mtree cwm instruction with mtree merge once we integrate merklestore into the adviceprovider mtree cwm instruction will no longer be necessary so we should remove it at the same time we need to introduce a new instruction which would merge two nodes in the advice provider using func... | 1 |

90,310 | 3,814,366,915 | IssuesEvent | 2016-03-28 12:52:54 | minetest/minetest | https://api.github.com/repos/minetest/minetest | closed | Incomplete mapblocks being saved to database | @ Mapgen Can't fix Low priority | How to reproduce: (with a Lua mapgen)

1) Manipulate the mapgen, so it throws an error while building the terrain

2) Fix the error

3) Rejoin and enjoy the TNT-effect

Basically, the database should not save incomplete mapblocks, but in this case, it does. | 1.0 | Incomplete mapblocks being saved to database - How to reproduce: (with a Lua mapgen)

1) Manipulate the mapgen, so it throws an error while building the terrain

2) Fix the error

3) Rejoin and enjoy the TNT-effect

Basically, the database should not save incomplete mapblocks, but in this case, it does. | non_process | incomplete mapblocks being saved to database how to reproduce with a lua mapgen manipulate the mapgen so it throws an error while building the terrain fix the error rejoin and enjoy the tnt effect basically the database should not save incomplete mapblocks but in this case it does | 0 |

60,231 | 8,409,312,424 | IssuesEvent | 2018-10-12 06:49:17 | bio-phys/MDBenchmark | https://api.github.com/repos/bio-phys/MDBenchmark | opened | Update documentation for 2.0 | documentation good first issue | We just merged the documentation into `develop` (PR #101). Before releasing version 2.0, we should update the documentation to reflect the new functionality. This should actually be only minor changes, because the biggest part was to write the initial documentation in the first place. | 1.0 | Update documentation for 2.0 - We just merged the documentation into `develop` (PR #101). Before releasing version 2.0, we should update the documentation to reflect the new functionality. This should actually be only minor changes, because the biggest part was to write the initial documentation in the first place. | non_process | update documentation for we just merged the documentation into develop pr before releasing version we should update the documentation to reflect the new functionality this should actually be only minor changes because the biggest part was to write the initial documentation in the first place | 0 |

202,976 | 15,863,576,389 | IssuesEvent | 2021-04-08 12:56:39 | cornellius-gp/gpytorch | https://api.github.com/repos/cornellius-gp/gpytorch | reopened | How to use Adam optimizer instead of SGD in the example given in the document SVDKL | documentation | Hi,

I am using the [example](https://docs.gpytorch.ai/en/v1.2.1/examples/06_PyTorch_NN_Integration_DKL/Deep_Kernel_Learning_DenseNet_CIFAR_Tutorial.html) and whilst using the SGD optimizer, my accuracy is stuck at 50% on my custom dataset. I would like to use Adam instead, but I know from the [link](https://docs.gpytorch.ai/... | 1.0 | How to use Adam optimizer instead of SGD in the example given in the document SVDKL - Hi,

I am using the [example](https://docs.gpytorch.ai/en/v1.2.1/examples/06_PyTorch_NN_Integration_DKL/Deep_Kernel_Learning_DenseNet_CIFAR_Tutorial.html) and whilst using the SGD optimizer, my accuracy is stuck at 50% on my custom dataset.... | non_process | how to use adam optimizer instead of sgd in the example given in the document svdkl hi i am using the and whilst using the sgd optimizer my accuracy is stuck at on my custom dataset i would like to use adam instead but i know from the that adam can be used for hyper parameters so how can i use it in deep ke... | 0 |

137,546 | 11,140,629,213 | IssuesEvent | 2019-12-21 15:52:37 | bitfocus/companion-module-requests | https://api.github.com/repos/bitfocus/companion-module-requests | closed | H2R Support | Needs testers Software Stale | Module Support for a H2R that does cut and fill and Chroma key messages and lower thirds

https://heretorecord.com/graphics#download

https://heretorecord.com/graphics/docs#osc-control

Just adding OSC support in a module | 1.0 | H2R Support - Module Support for a H2R that does cut and fill and Chroma key messages and lower thirds

https://heretorecord.com/graphics#download

https://heretorecord.com/graphics/docs#osc-control

Just adding OSC support in a module | non_process | support module support for a that does cut and fill and chroma key messages and lower thirds just adding osc support in a module | 0 |

203,409 | 15,366,343,815 | IssuesEvent | 2021-03-02 01:08:08 | gardners/surveysystem | https://api.github.com/repos/gardners/surveysystem | closed | backend, extend test_serialiser | Priority: MEDIUM backend tests | housekeeping: `test_serialiser.c` can be used for unit tests in general, rename and improve

This is the first step to better unit testing | 1.0 | backend, extend test_serialiser - housekeeping: `test_serialiser.c` can be used for unit tests in general, rename and improve

This is the first step to better unit testing | non_process | backend extend test serialiser housekeeping test serialiser c can be used for unit tests in general rename and improve this is the first step to better unit testing | 0 |

622 | 3,089,032,404 | IssuesEvent | 2015-08-25 19:32:08 | dita-ot/dita-ot | https://api.github.com/repos/dita-ot/dita-ot | closed | Can no longer publish to XHTML image with data protocol [DOT 2.x develop branch] | bug P2 preprocess | In my DITA topic I refer to an embedded image like:

```xml

<image href="data:image/png;base64,iVBORw0KGgoAAAANSUhEUgAAAjUAAAC9CAIAAADECxtTAAAAAXNSR0IArs4c6QAAAARnQU1BAACxjwv8YQUAAAAJcEhZcwAAEnQAABJ0Ad5mH3gAABp8SURBVHhe7Zxbjlw3kkC9K6nWI6n20EsQ5K7f+ehNGLBRCxkDPXZ9CGiggQa0APV4ZgBPkEHyksHHfWRmJbPyHARuJ8lgRJCXjKgsVfuHPwEA... | 1.0 | Can no longer publish to XHTML image with data protocol [DOT 2.x develop branch] - In my DITA topic I refer to an embedded image like:

```xml

<image href="data:image/png;base64,iVBORw0KGgoAAAANSUhEUgAAAjUAAAC9CAIAAADECxtTAAAAAXNSR0IArs4c6QAAAARnQU1BAACxjwv8YQUAAAAJcEhZcwAAEnQAABJ0Ad5mH3gAABp8SURBVHhe7Zxbjlw3kkC9K6nWI... | process | can no longer publish to xhtml image with data protocol in my dita topic i refer to an embedded image like xml publishing to xhtml works with dita ot but publishing with dita ot x fails build failed d projects exml frameworks dita dita x build xml the following error occurred while ... | 1 |

18,016 | 24,032,773,087 | IssuesEvent | 2022-09-15 16:18:26 | googleapis/java-beyondcorp-appconnections | https://api.github.com/repos/googleapis/java-beyondcorp-appconnections | opened | Your .repo-metadata.json file has a problem 🤒 | type: process repo-metadata: lint | You have a problem with your .repo-metadata.json file:

Result of scan 📈:

* api_shortname 'beyondcorp-appconnections' invalid in .repo-metadata.json

☝️ Once you address these problems, you can close this issue.

### Need help?

* [Schema definition](https://github.com/googleapis/repo-automation-bots/blob/main/packag... | 1.0 | Your .repo-metadata.json file has a problem 🤒 - You have a problem with your .repo-metadata.json file:

Result of scan 📈:

* api_shortname 'beyondcorp-appconnections' invalid in .repo-metadata.json

☝️ Once you address these problems, you can close this issue.

### Need help?

* [Schema definition](https://github.com... | process | your repo metadata json file has a problem 🤒 you have a problem with your repo metadata json file result of scan 📈 api shortname beyondcorp appconnections invalid in repo metadata json ☝️ once you address these problems you can close this issue need help lists valid options for each field... | 1 |

18,419 | 10,962,706,858 | IssuesEvent | 2019-11-27 17:52:15 | microsoft/BotFramework-Composer | https://api.github.com/repos/microsoft/BotFramework-Composer | closed | Initialize Property for metadata usage in QNA maker | Bot Services customer-replied-to customer-reported | Is there any way currently to initialize the property as the metadata from QNA to retain context. Without composer I would use the Post json response of qna to retain context.

This holds good for Follow-up prompts as well. Any suggestions? Other way I tried is by using HTTP request rather than QNA to have post base... | 1.0 | Initialize Property for metadata usage in QNA maker - Is there any way currently to initialize the property as the metadata from QNA to retain context. Without composer I would use the Post json response of qna to retain context.

This holds good for Follow-up prompts as well. Any suggestions? Other way I tried is b... | non_process | initialize property for metadata usage in qna maker is there any way currently to initialize the property as the metadata from qna to retain context without composer i would use the post json response of qna to retain context this holds good for follow up prompts as well any suggestions other way i tried is b... | 0 |

16,197 | 20,681,978,884 | IssuesEvent | 2022-03-10 14:41:16 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | GDAL Dissolve with temporal layers misses geometries | Feedback Processing Bug | ### What is the bug or the crash?

When doing a Dissolve using the GDAL library, the process misses some geometries. It is reproduced with a temporal layer, but if you save it in a Shapefile, it works well.

### Steps to reproduce the issue

[Data.zip](https://github.com/qgis/QGIS/files/8222662/Data.zip)

1) We do a... | 1.0 | GDAL Dissolve with temporal layers misses geometries - ### What is the bug or the crash?

When doing a Dissolve using the GDAL library, the process misses some geometries. It is reproduced with a temporal layer, but if you save it in a Shapefile, it works well.

### Steps to reproduce the issue

[Data.zip](https://git... | process | gdal dissolve with temporal layers misses geometries what is the bug or the crash when doing a dissolve using the gdal library the process misses some geometries it is reproduced with a temporal layer but if you save it in a shapefile it works well steps to reproduce the issue we do an int... | 1 |

74,029 | 14,169,751,921 | IssuesEvent | 2020-11-12 13:40:02 | mentaLwz/gitblogOfMental | https://api.github.com/repos/mentaLwz/gitblogOfMental | opened | 922. Sort Array By Parity II | Leetcode2020 | ```go

// sortArrayByParityII returns a new slice in which even values sit at
// even indices and odd values sit at odd indices.
func sortArrayByParityII(A []int) []int {
	l := len(A)
	e := 0 // next even index to fill
	o := 1 // next odd index to fill
	a := make([]int, l)
	for i := 0; i < l; i++ {
		if A[i]%2 == 0 {
			a[e] = A[i]
			e += 2
		} else {
			a[o] = A[i]
			o += 2
		}
	}
	return a
}

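```... | 1.0 | 922. Sort Array By Parity II - ```go

// sortArrayByParityII returns a new slice in which even values sit at
// even indices and odd values sit at odd indices.
func sortArrayByParityII(A []int) []int {
	l := len(A)
	e := 0 // next even index to fill
	o := 1 // next odd index to fill
	a := make([]int, l)
	for i := 0; i < l; i++ {
		if A[i]%2 == 0 {
			a[e] = A[i]
			e += 2
		} else {
			a[o] = A[i]
			o += 2
		}
	}
	return a
}

```... | non_process | sort array by parity ii go func sortarraybyparityii a int int l len a e o a make int l for i i l i if a a a e else a a o return a ... | 0 |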
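
The solution in the row above fills a second slice. As a minimal sketch of an alternative, and not something taken from the issue itself, the same result can be reached by swapping in place with two index cursors. The function name `sortArrayByParityIIInPlace` is hypothetical, and the sketch assumes, as the problem statement does, that exactly half of the values are even.

```go
package main

import "fmt"

// sortArrayByParityIIInPlace (hypothetical name) rearranges a so that even
// indices hold even values and odd indices hold odd values, without
// allocating a second slice. It assumes exactly half of the values are even.
func sortArrayByParityIIInPlace(a []int) []int {
	odd := 1 // lowest odd index that may still hold an even value
	for even := 0; even < len(a); even += 2 {
		if a[even]%2 == 0 {
			continue // this even index is already correct
		}
		// a[even] holds an odd value: advance to an odd index that
		// holds an even value, then swap the two.
		for a[odd]%2 == 1 || a[odd]%2 == -1 {
			odd += 2
		}
		a[even], a[odd] = a[odd], a[even]
	}
	return a
}

func main() {
	fmt.Println(sortArrayByParityIIInPlace([]int{4, 2, 5, 7})) // prints [4 5 2 7]
}
```

The trade-off is that the input slice is modified, whereas the original version leaves it untouched.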

4,852 | 7,742,888,913 | IssuesEvent | 2018-05-29 11:00:10 | ethereumjs/ethereumjs-client | https://api.github.com/repos/ethereumjs/ethereumjs-client | opened | Early on VM tests | Block Processing / VM External | #### Description

Thanks to the work of @vpulim the ``ethereumjs-blockchain`` library is now compatible with Geth chain DBs starting with the [v3.x](https://github.com/ethereumjs/ethereumjs-blockchain/releases/tag/v3.0.0) release series.

This can (and should 😛) be used to run the VM on a post-Byzantium synced Get... | 1.0 | Early on VM tests - #### Description

Thanks to the work of @vpulim the ``ethereumjs-blockchain`` library is now compatible with Geth chain DBs starting with the [v3.x](https://github.com/ethereumjs/ethereumjs-blockchain/releases/tag/v3.0.0) release series.

This can (and should 😛) be used to run the VM on a post-... | process | early on vm tests description thanks to the work of vpulim the ethereumjs blockchain library is now compatible with geth chain dbs starting with the release series this can and should 😛 be used to run the vm on a post byzantium synced geth chain db and process actual mainnet transactions to se... | 1 |

97,605 | 16,236,396,570 | IssuesEvent | 2021-05-07 01:38:05 | michaeldotson/auth-app | https://api.github.com/repos/michaeldotson/auth-app | opened | CVE-2020-8164 (High) detected in actionpack-5.2.2.gem | security vulnerability | ## CVE-2020-8164 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>actionpack-5.2.2.gem</b></p></summary>

<p>Web apps on Rails. Simple, battle-tested conventions for building and testing... | True | CVE-2020-8164 (High) detected in actionpack-5.2.2.gem - ## CVE-2020-8164 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>actionpack-5.2.2.gem</b></p></summary>

<p>Web apps on Rails. Si... | non_process | cve high detected in actionpack gem cve high severity vulnerability vulnerable library actionpack gem web apps on rails simple battle tested conventions for building and testing mvc web applications works with any rack compatible server library home page a href path ... | 0 |

128,306 | 17,475,859,579 | IssuesEvent | 2021-08-08 05:28:19 | reconness/reconness-frontend | https://api.github.com/repos/reconness/reconness-frontend | closed | Delete pipeline | story points: 3 discussion design | We need an option to delete a pipeline from the Pipeline list (in Mosaic and List mode) and from a Pipeline Details page.

When the user clicks the Delete option, a confirmation popup will appear.

Confirmation popup will be similar to but the reference will be for a Pipeline

and from a Pipeline Details page.

When the user clicks the Delete option, a confirmation popup will appear.

Confirmation popup will be similar to but the reference will be for a Pipeline

Not urgent | 1.0 | PD-Datepicker Error - If the PD-Datepicker is set to not have user_input the error state is not correctly displayed (no red border)

Not urgent | non_process | pd datepicker error if the pd datepicker is set to not have user input the error state is not correctly displayed no red border not urgent | 0 |

18,980 | 24,968,680,959 | IssuesEvent | 2022-11-01 21:58:56 | hashgraph/hedera-mirror-node | https://api.github.com/repos/hashgraph/hedera-mirror-node | opened | Update OS packages in image | enhancement security process | ### Problem

The container images frequently contain out-of-date packages from the base image that may contain vulnerabilities. We should keep them up to date even if there's not an updated base image to consume.

### Solution

* Update base image for all mirror node components

* Add an extra command to update all OS... | 1.0 | Update OS packages in image - ### Problem

The container images frequently contain out-of-date packages from the base image that may contain vulnerabilities. We should keep them up to date even if there's not an updated base image to consume.

### Solution

* Update base image for all mirror node components

* Add an ... | process | update os packages in image problem the container images frequently contain out of date packages from the base image that may contain vulnerabilities we should keep them up to date even if there s not an updated base image to consume solution update base image for all mirror node components add an ... | 1 |

73,401 | 8,871,796,987 | IssuesEvent | 2019-01-11 13:45:49 | bitpay/copay | https://api.github.com/repos/bitpay/copay | closed | Improve delete wallet view design | Design Needed | _From @bitjson on October 18, 2016 22:45_

Needs love:

_Copied from original issue: bitpay/bitpay-wallet#562_

| 1.0 | Improve delete wallet view design - _From @bitjson on October 18, 2016 22:45_

Needs love:

_Copied from original issue: bitpay/bitpay-wallet#562_

| non_process | improve delete wallet view design from bitjson on october needs love copied from original issue bitpay bitpay wallet | 0 |

176,217 | 28,045,023,192 | IssuesEvent | 2023-03-28 21:54:12 | MozillaFoundation/foundation.mozilla.org | https://api.github.com/repos/MozillaFoundation/foundation.mozilla.org | closed | Roadmap and Prioritize Site Improvement Recommendations | design | following up issue #6303

The recommendations from the IA refresh have been labeled and categorized on a spreadsheet https://docs.google.com/spreadsheets/d/14XlcxPYT5qJFPnMUsPmDk512lMzTuLx0OJeSwGbmAQM/edit?usp=sharing

These will need to be prioritized so 'complexity for implementation' and 'impact' need to be scoped.... | 1.0 | Roadmap and Prioritize Site Improvement Recommendations - following up issue #6303

The recommendations from the IA refresh have been labeled and categorized on a spreadsheet https://docs.google.com/spreadsheets/d/14XlcxPYT5qJFPnMUsPmDk512lMzTuLx0OJeSwGbmAQM/edit?usp=sharing

These will need to be prioritized so 'comp... | non_process | roadmap and prioritize site improvement recommendations following up issue the recommendations from the ia refresh have been labeled and categorized on a spreadsheet these will need to be prioritized so complexity for implementation and impact need to be scoped the action items can then be roadmapped to ... | 0 |

150,577 | 11,967,347,977 | IssuesEvent | 2020-04-06 06:27:53 | ubtue/DatenProbleme | https://api.github.com/repos/ubtue/DatenProbleme | closed | ISSN 1573-0697 Journal of business ethics: mix of Online First and standard articles | Zotero_AUTO_RSS blocked ready for testing | The data contain both articles that are assigned to an issue and articles without an issue assignment | 1.0 | ISSN 1573-0697 Journal of business ethics: mix of Online First and standard articles - The data contain both articles that are assigned to an issue and articles without an issue assignment | non_process | issn journal of business ethics mix of online first and standard articles the data contain both articles that are assigned to an issue and articles without an issue assignment | 0 |

71,688 | 15,207,902,600 | IssuesEvent | 2021-02-17 01:17:40 | billmcchesney1/hadoop | https://api.github.com/repos/billmcchesney1/hadoop | opened | CVE-2020-36189 (Medium) detected in jackson-databind-2.9.10.1.jar | security vulnerability | ## CVE-2020-36189 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.10.1.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core str... | True | CVE-2020-36189 (Medium) detected in jackson-databind-2.9.10.1.jar - ## CVE-2020-36189 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.9.10.1.jar</b></p></summary>

... | non_process | cve medium detected in jackson databind jar cve medium severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to vulnerable library hadoop hadoop yarn p... | 0 |

8,415 | 11,580,353,798 | IssuesEvent | 2020-02-21 19:57:11 | usgpo/bill-status | https://api.github.com/repos/usgpo/bill-status | closed | Incorrect Senate Homeland Subcommittee Name | file reprocessed | In the bulk data source on govinfo.gov, some Senate bills have the incorrect subcommittee name for the Homeland Security and Governmental Affairs Subcommittee on Regulatory Affairs and Federal Management:

https://www.govinfo.gov/bulkdata/BILLSTATUS/116/s/BILLSTATUS-116s1120.xml

```

<subcommittees>

<item>

<syst... | 1.0 | Incorrect Senate Homeland Subcommittee Name - In the bulk data source on govinfo.gov, some Senate bills have the incorrect subcommittee name for the Homeland Security and Governmental Affairs Subcommittee on Regulatory Affairs and Federal Management:

https://www.govinfo.gov/bulkdata/BILLSTATUS/116/s/BILLSTATUS-116s1... | process | incorrect senate homeland subcommittee name in the bulk data source on govinfo gov some senate bills have the incorrect subcommittee name for the homeland security and governmental affairs subcommittee on regulatory affairs and federal management and federal management subcommittee ... | 1 |

511,356 | 14,858,727,212 | IssuesEvent | 2021-01-18 17:14:31 | weaveworks/eksctl | https://api.github.com/repos/weaveworks/eksctl | closed | Ability to specify egress/ingress rules for cluster shared security group | area/nodegroup kind/feature priority/backlog stale | **Why do you want this feature?**

See background in https://github.com/weaveworks/eksctl/issues/1773

Managing security groups outside of `eksctl` just to customize egress/ingress adds inordinate complexity

**What feature/behavior/change do you want?**

> Ideally that would be accomplished through a feature whe... | 1.0 | Ability to specify egress/ingress rules for cluster shared security group - **Why do you want this feature?**

See background in https://github.com/weaveworks/eksctl/issues/1773

Managing security groups outside of `eksctl` just to customize egress/ingress adds inordinate complexity

**What feature/behavior/chang... | non_process | ability to specify egress ingress rules for cluster shared security group why do you want this feature see background in managing security groups outside of eksctl just to customize egress ingress adds inordinate complexity what feature behavior change do you want ideally that would be accom... | 0 |

101,047 | 11,211,974,826 | IssuesEvent | 2020-01-06 16:32:47 | project-koku/koku | https://api.github.com/repos/project-koku/koku | opened | Update nise documentation | developer productivity documentation | ## User Story

As a user of nise (especially now that its on pypi) I want proper documentation so that I can use the tool.

## Assumptions

- We can keep our readme (or some form of it)

- nise does more than when it was first created, we can create a docs folder and add more structured documentation there

- We can ... | 1.0 | Update nise documentation - ## User Story

As a user of nise (especially now that its on pypi) I want proper documentation so that I can use the tool.

## Assumptions

- We can keep our readme (or some form of it)

- nise does more than when it was first created, we can create a docs folder and add more structured do... | non_process | update nise documentation user story as a user of nise especially now that its on pypi i want proper documentation so that i can use the tool assumptions we can keep our readme or some form of it nise does more than when it was first created we can create a docs folder and add more structured do... | 0 |

153,339 | 13,503,385,993 | IssuesEvent | 2020-09-13 13:19:39 | geek-engineer-future/podcast | https://api.github.com/repos/geek-engineer-future/podcast | closed | [2020-09-18] Recording Document | documentation |

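## Theme

hoge

## Content

hoge

## appendix

hoge

# ---

- Write any recent topics that caught your attention in the comments! (For technical topics, include the title, URL, and a summary as well.)

- If you have a theme you could talk about this week, write it in the comments!

| 1.0 | [2020-09-18] Recording Document - 

## Theme

hoge

## Content

hoge

## appendix

hoge

# ---

- Write any recent topics that caught your attention in the comments! (For technical topics, include the title, URL, and a summary as well.)

- If you have a theme you could talk about this week, write it in the comments!

| non_process | recording document theme hoge content hoge appendix hoge write any recent topics that caught your attention in the comments for technical topics include the title url and a summary as well if you have a theme you could talk about this week write it in the comments | 0 |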

43,499 | 9,449,984,941 | IssuesEvent | 2019-04-16 04:30:01 | sourcegraph/sourcegraph | https://api.github.com/repos/sourcegraph/sourcegraph | opened | Filing issues with multiple batched code locations ("bookmarks") | code-nav feature-request | As a user who is reviewing code for mistakes, I want to be able to "bookmark" multiple locations of code and then create a batch issue (eg on Jira) with all of the locations. I want anyone viewing the code on Sourcegraph to be able to see the issue I filed, and I want anyone viewing the issue on Jira to be able to go t... | 1.0 | Filing issues with multiple batched code locations ("bookmarks") - As a user who is reviewing code for mistakes, I want to be able to "bookmark" multiple locations of code and then create a batch issue (eg on Jira) with all of the locations. I want anyone viewing the code on Sourcegraph to be able to see the issue I fi... | non_process | filing issues with multiple batched code locations bookmarks as a user who is reviewing code for mistakes i want to be able to bookmark multiple locations of code and then create a batch issue eg on jira with all of the locations i want anyone viewing the code on sourcegraph to be able to see the issue i fi... | 0 |

899 | 2,594,288,805 | IssuesEvent | 2015-02-20 01:31:09 | BALL-Project/ball | https://api.github.com/repos/BALL-Project/ball | closed | BALLView does not report any progress when exporting VRML file | C: VIEW P: minor R: fixed T: defect | **Reported by odin on 9 Jul 39586499 03:33 UTC**

It should at least write a "done" message to the log window. Currently, the only way to know the export has finished is to watch the file size grow. | 1.0 | BALLView does not report any progress when exporting VRML file - **Reported by odin on 9 Jul 39586499 03:33 UTC**

It should at least write a "done" message to the log window. Currently, the only way to know the export has finished is to watch the file size grow. | non_process | ballview does not report any progress when exporting vrml file reported by odin on jul utc it should at least write a done message to the log window currently the only way to know the export has finished is to watch the file size grow | 0 |

1,753 | 4,445,924,236 | IssuesEvent | 2016-08-20 10:26:01 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | Issue passing file descriptors in OS X | child_process net os x | <!--

Thank you for reporting an issue.

Please fill in as much of the template below as you're able.

Version: output of `node -v`

Platform: output of `uname -a` (UNIX), or version and 32 or 64-bit (Windows)

Subsystem: if known, please specify affected core module name

If possible, please provide code that demo... | 1.0 | Issue passing file descriptors in OS X - <!--

Thank you for reporting an issue.

Please fill in as much of the template below as you're able.

Version: output of `node -v`

Platform: output of `uname -a` (UNIX), or version and 32 or 64-bit (Windows)

Subsystem: if known, please specify affected core module name

I... | process | issue passing file descriptors in os x thank you for reporting an issue please fill in as much of the template below as you re able version output of node v platform output of uname a unix or version and or bit windows subsystem if known please specify affected core module name if ... | 1 |

816,250 | 30,595,163,827 | IssuesEvent | 2023-07-21 21:06:06 | dotnet/aspnetcore | https://api.github.com/repos/dotnet/aspnetcore | closed | Auto switching for interactive components | enhancement area-blazor Priority:1 feature-full-stack-web-ui | In scope:

* Use Server by default while loading WebAssembly runtime in the background

* If WebAssembly files are cached, use WebAssembly mode

Out of scope:

* Customizing how the auto mode makes its decision (#48756)

* Shutting down Server circuits eagerly once WebAssembly files are downloaded (instead, j... | 1.0 | Auto switching for interactive components - In scope:

* Use Server by default while loading WebAssembly runtime in the background

* If WebAssembly files are cached, use WebAssembly mode

Out of scope:

* Customizing how the auto mode makes its decision (#48756)

* Shutting down Server circuits eagerly once ... | non_process | auto switching for interactive components in scope use server by default while loading webassembly runtime in the background if webassembly files are cached use webassembly mode out of scope customizing how the auto mode makes its decision shutting down server circuits eagerly once weba... | 0 |

21,562 | 29,922,573,555 | IssuesEvent | 2023-06-22 00:38:08 | devssa/onde-codar-em-salvador | https://api.github.com/repos/devssa/onde-codar-em-salvador | closed | [Remote] Scrum Master at Coodesh | SALVADOR PJ BIG DATA PHP JAVA SCRUM AGILE MOBILE REQUISITOS REMOTO PROCESSOS GITHUB KANBAN CI UMA ANALYTICS ENGENHARIA DE SOFTWARE Stale | ## Job description:

This is an opening from a partner of the Coodesh platform; when you apply you will have access to full information about the company and benefits.

Watch for the redirect that will take you to a URL [https://coodesh.com](https://coodesh.com/jobs/scrum-master-144141746?utm_source=gith... | 1.0 | [Remote] Scrum Master at Coodesh - ## Job description:

This is an opening from a partner of the Coodesh platform; when you apply you will have access to full information about the company and benefits.

Watch for the redirect that will take you to a URL [https://coodesh.com](https://coodesh.com/jobs/scr... | process | scrum master at coodesh job description this is an opening from a partner of the coodesh platform when you apply you will have access to full information about the company and benefits watch for the redirect that will take you to a url with the personalized application pop up 👋 a log... | 1 |

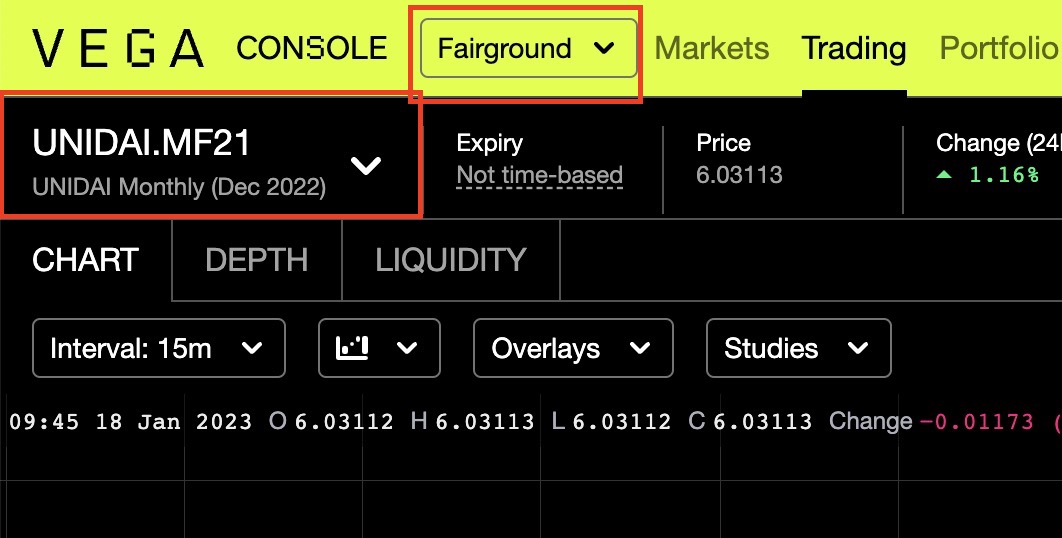

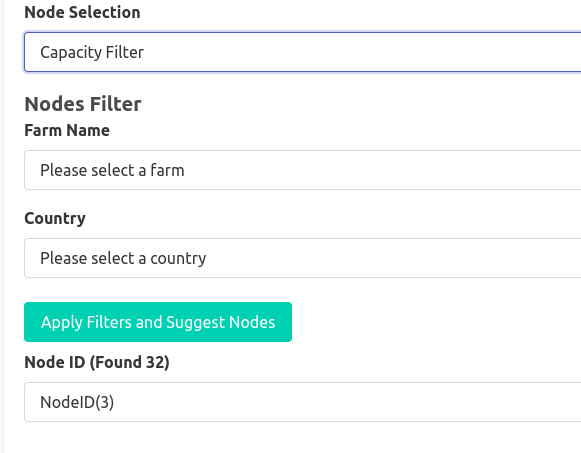

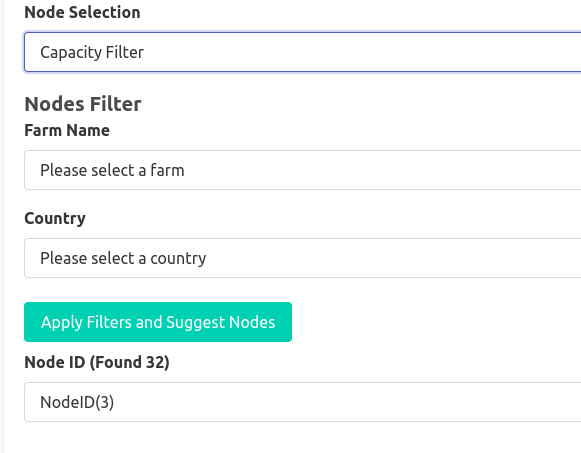

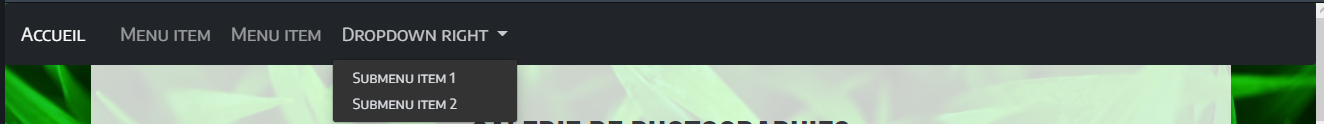

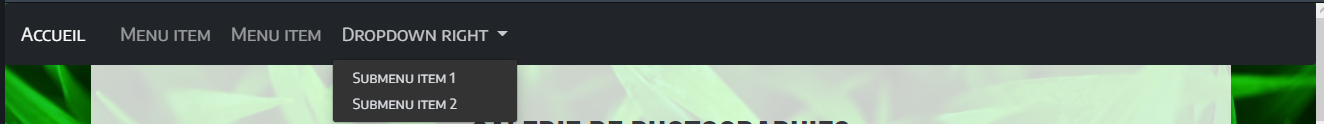

167,584 | 26,517,867,958 | IssuesEvent | 2023-01-18 22:32:32 | vegaprotocol/frontend-monorepo | https://api.github.com/repos/vegaprotocol/frontend-monorepo | opened | Replace dropdown icon with custom version | Trading ux-and-visual-design chore common | ## The Chore

Currently using a blueprint version of the icon:

vega.xyz uses a different icon for dropdowns:

vega.xyz uses a different icon for dropdowns:

![Screenshot 2023-01-... | non_process | replace dropdown icon with custom version the chore currently using a blueprint version of the icon vega xyz uses a different icon for dropdowns tasks consider design options use vega xyz pattern or add a new icon for the apps design system add to design system dev | 0 |

17,013 | 22,386,217,717 | IssuesEvent | 2022-06-17 00:51:38 | figlesias221/ProyectoDevOps_Grupo3_IglesiasPerezMolinoloJuan | https://api.github.com/repos/figlesias221/ProyectoDevOps_Grupo3_IglesiasPerezMolinoloJuan | closed | Review frontend: add charging points | task process | Effort in HS-P:

Estimated: 1

Actual: 1 (@mperezjodal ), 1 (@andrujuanoo ) | 1.0 | Review frontend: add charging points - Effort in HS-P:

Estimated: 1

Actual: 1 (@mperezjodal ), 1 (@andrujuanoo ) | process | review frontend add charging points effort in hs p estimated actual mperezjodal andrujuanoo | 1 |

74,769 | 20,366,507,576 | IssuesEvent | 2022-02-21 06:33:23 | pandres95/ndi.js | https://api.github.com/repos/pandres95/ndi.js | closed | Error 403 while installing module | bug build | An error `403` is encountered whilst attempting to install the package from https://ndijs.s3.us-east-2.amazonaws.com/ndi/v1.0.5/Release/linux-x64.tar.gz

If you're wondering, I'm using WSL and tried Node 16.14.0 and Node 17.5.0

| 1.0 | Error 403 while installing module - An error `403` is encountered whilst attempting to install the package from https://ndijs.s3.us-east-2.amazonaws.com/ndi/v1.0.5/Release/linux-x64.tar.gz

If you're wondering, I'm using WSL and tried Node 16.14.0 and Node 17.5.0

| non_process | error while installing module an error is encountered whilst attempting to install the package from if you re wondering i m using wsl and tried node and node | 0 |

33,669 | 7,743,208,769 | IssuesEvent | 2018-05-29 12:07:13 | guirisan/arrelaires | https://api.github.com/repos/guirisan/arrelaires | closed | Send email to admins when a new user or collaboration is registered | code things | Added an `admins` key with the admins' addresses in `app/config/mail.php`

### REGISTRATION

We create the `NewUserNotification` mail and the view `/resources/views/emails/admin/new-user-notification.blade.php`

In RegistersUsers@register we add `\Mail::to(config('mail.admins'))->send(new NewUserNotification($user));`

##... | 1.0 | Send email to admins when a new user or collaboration is registered - Added an `admins` key with the admins' addresses in `app/config/mail.php`

### REGISTRATION

We create the `NewUserNotification` mail and the view `/resources/views/emails/admin/new-user-notification.blade.php`

In RegistersUsers@register we add `\Mail::to(c... | non_process | send email to admins when a new user or collaboration is registered added an admins key with the admins addresses in app config mail php registration we create the newusernotification mail and the view resources views emails admin new user notification blade php in registersusers register we add mail to c... | 0 |

140,372 | 5,400,755,063 | IssuesEvent | 2017-02-27 22:52:56 | kubernetes/kubernetes | https://api.github.com/repos/kubernetes/kubernetes | closed | [k8s.io] Empty [Feature:Empty] does nothing {Kubernetes e2e suite} | kind/flake priority/P2 | https://k8s-gubernator.appspot.com/build/kubernetes-jenkins/logs/ci-kubernetes-kubemark-5-gce/1946/

Failed: [k8s.io] Empty [Feature:Empty] does nothing {Kubernetes e2e suite}

```

/go/src/k8s.io/kubernetes/_output/dockerized/go/src/k8s.io/kubernetes/test/e2e/framework/framework.go:143

Dec 9 12:38:16.486: Couldn't del... | 1.0 | [k8s.io] Empty [Feature:Empty] does nothing {Kubernetes e2e suite} - https://k8s-gubernator.appspot.com/build/kubernetes-jenkins/logs/ci-kubernetes-kubemark-5-gce/1946/

Failed: [k8s.io] Empty [Feature:Empty] does nothing {Kubernetes e2e suite}

```

/go/src/k8s.io/kubernetes/_output/dockerized/go/src/k8s.io/kubernetes/... | non_process | empty does nothing kubernetes suite failed empty does nothing kubernetes suite go src io kubernetes output dockerized go src io kubernetes test framework framework go dec couldn t delete ns tests empty client etcd cluster is unavailable or misconfigured errors... | 0 |

21,757 | 30,276,364,266 | IssuesEvent | 2023-07-07 20:04:57 | gsoft-inc/ov-igloo-ui | https://api.github.com/repos/gsoft-inc/ov-igloo-ui | closed | [Bug]: ActionMenu with disablePortal has a weird behaviour | bug in process | ### Contact Details

_No response_

### What happened?

When I play with the component, it sometimes render at a random place (the top and left css properties are not good) :

Also, as you can hardly see at ... | 1.0 | [Bug]: ActionMenu with disablePortal has a weird behaviour - ### Contact Details

_No response_

### What happened?

When I play with the component, it sometimes render at a random place (the top and left css properties are not good) :

The "website" field only accepts domains. It needs to... | 1.0 | Minor fixes to the registration form - A "name entered is not correct" flag appears when the "Username" field is selected, and it does not go away even if the name is valid

The "webs... | non_process | minor fixes to the registration form a name entered is not correct flag appears when the username field is selected and it does not go away even if the name is valid the website field only accepts domains it needs to accept page addresses too because producers might want to li... | 0 |

690,497 | 23,661,863,445 | IssuesEvent | 2022-08-26 16:21:30 | TheYellowArchitect/doubledamnation | https://api.github.com/repos/TheYellowArchitect/doubledamnation | opened | Secret Ending 1 Alternative Intro Voiceline Swap | low priority | There are five endings in the game. This is the second hardest.

The camera of the intro is flipped/inverted, to signify the difference, but imo, all voicelines should be swapped ("Abandoned by the gods" should be spoken by P2/Mage, not P1/Warrior) | 1.0 | Secret Ending 1 Alternative Intro Voiceline Swap - There are five endings in the game. This is the second hardest.

The camera of the intro is flipped/inverted, to signify the difference, but imo, all voicelines should be swapped ("Abandoned by the gods" should be spoken by P2/Mage, not P1/Warrior) | non_process | secret ending alternative intro voiceline swap there are five endings in the game this is the second hardest the camera of the intro is flipped inverted to signify the difference but imo all voicelines should be swapped abandoned by the gods should be spoken by mage not warrior | 0 |

690,608 | 23,665,571,490 | IssuesEvent | 2022-08-26 20:28:39 | googleapis/doc-pipeline | https://api.github.com/repos/googleapis/doc-pipeline | closed | generate: docfx-python-assuredworkloads-0.2.0.tar.gz failed | type: bug priority: p1 flakybot: issue | This test failed!

To configure my behavior, see [the Flaky Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/main/packages/flakybot).

If I'm commenting on this issue too often, add the `flakybot: quiet` label and

I will stop commenting.

---

commit: d542fc9c171ed5d1f13eca605ad9f516637aaef6

b... | 1.0 | generate: docfx-python-assuredworkloads-0.2.0.tar.gz failed - This test failed!

To configure my behavior, see [the Flaky Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/main/packages/flakybot).

If I'm commenting on this issue too often, add the `flakybot: quiet` label and

I will stop commen... | non_process | generate docfx python assuredworkloads tar gz failed this test failed to configure my behavior see if i m commenting on this issue too often add the flakybot quiet label and i will stop commenting commit buildurl status failed | 0 |

2,051 | 4,861,287,471 | IssuesEvent | 2016-11-14 08:09:28 | triplea-game/triplea | https://api.github.com/repos/triplea-game/triplea | opened | Non-Compatible Changes - Next release branch? | Discussion Process | I'm concerned/thinking about the next PR that breaks serialization/RMI and would require a major release.

- I would like for us to ask PR submitters to test version compatibility. This means logging in to the lobby and seeing if a game can launch and chat works (this tests java RMI for the most part). Ideally we'd a... | 1.0 | Non-Compatible Changes - Next release branch? - I'm concerned/thinking about the next PR that breaks serialization/RMI and would require a major release.

- I would like for us to ask PR submitters to test version compatibility. This means logging in to the lobby and seeing if a game can launch and chat works (this t... | process | non compatible changes next release branch i m concerned thinking about the next pr that breaks serialization rmi and would require a major release i would like for us to ask pr submitters to test version compatibility this means logging in to the lobby and seeing if a game can launch and chat works this t... | 1 |

14,328 | 17,362,458,021 | IssuesEvent | 2021-07-29 23:17:53 | googleapis/google-auth-library-java | https://api.github.com/repos/googleapis/google-auth-library-java | closed | Setup end-to-end integration tests | type: process | We should be testing this library in different execution environments (GCE, GAE, etc) | 1.0 | Setup end-to-end integration tests - We should be testing this library in different execution environments (GCE, GAE, etc) | process | setup end to end integration tests we should be testing this library in different execution environments gce gae etc | 1 |

8,977 | 12,093,585,419 | IssuesEvent | 2020-04-19 20:14:21 | Pretronic/PretronicLibraries | https://api.github.com/repos/Pretronic/PretronicLibraries | closed | Copy utility | In processing global-utility | Create a copy utility based on reflections.

- [x] Copy normal classes

- [x] Deep Copy option

- [x] Annotations for extra handlers

- [x] CopyAble and DeepCopyAble interface (Self copy method)

- [ ] Adapters for special objects

- [ ] Default Adapter for List

- [ ] Default Adapter for Map

- [ ] Default Adapter for Collect... | 1.0 | Copy utility - Create a copy utility based on reflections.

- [x] Copy normal classes

- [x] Deep Copy option

- [x] Annotations for extra handlers

- [x] CopyAble and DeepCopyAble interface (Self copy method)

- [ ] Adapters for special objects

- [ ] Default Adapter for List

- [ ] Default Adapter for Map

- [ ] Default Adap... | process | copy utility create a copy utility based on reflections copy normal classes deep copy option annotations for extra handlers copyable and deepcopyable interface self copy method adapters for special objects default adapter for list default adapter for map default adapter for collecti... | 1 |

570,245 | 17,023,071,492 | IssuesEvent | 2021-07-03 00:15:28 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | ability to not show landsat in the applet | Component: applet Priority: minor Resolution: wontfix Type: enhancement | **[Submitted to the original trac issue database at 3.19pm, Thursday, 10th November 2005]**

simplest would be to request the WMS layers seperately and show/hide them? | 1.0 | ability to not show landsat in the applet - **[Submitted to the original trac issue database at 3.19pm, Thursday, 10th November 2005]**

simplest would be to request the WMS layers seperately and show/hide them? | non_process | ability to not show landsat in the applet simplest would be to request the wms layers seperately and show hide them | 0 |

21,102 | 28,056,452,302 | IssuesEvent | 2023-03-29 09:41:12 | camunda/issues | https://api.github.com/repos/camunda/issues | opened | Support OIDC for Elasticsearch in Self-Managed | component:distribution component:operate component:optimize component:tasklist component:zeebe component:zeebe-process-automation public kind:epic potential:8.3 | ### Value Proposition Statement

Secure connections to Elasticsearch using OpenIDConnect in Self-Managed

### User Problem

Today connection between Webapps & Zeebe Elastic Exporter can only use basic authentication.

Nowadays organizations have often policies that forbid using Basic Authentication and that rely on... | 1.0 | Support OIDC for Elasticsearch in Self-Managed - ### Value Proposition Statement

Secure connections to Elasticsearch using OpenIDConnect in Self-Managed

### User Problem

Today connection between Webapps & Zeebe Elastic Exporter can only use basic authentication.

Nowadays organizations have often policies that f... | process | support oidc for elasticsearch in self managed value proposition statement secure connections to elasticsearch using openidconnect in self managed user problem today connection between webapps zeebe elastic exporter can only use basic authentication nowadays organizations have often policies that f... | 1 |

296,076 | 22,287,757,962 | IssuesEvent | 2022-06-11 22:46:10 | CR6Community/CR-6-touchscreen | https://api.github.com/repos/CR6Community/CR-6-touchscreen | closed | Strange screen is displayed on Touch Screen firmware flashing. | documentation | I just ordered an additional touch screen for the CR-6 SE and it was delivered.

While flashing the new CR-6 SE's touch screen using the 61F_RC_290422_v1 released by CR6Community/CR-6-touchscreen, near completion, a white noise-like screen appears.

| Feature: Processor/x86 Type: Enhancement | Version 9.1 of Ghidra has switched to Intel's 325383-60US manual for the instruction set reference. Thank you. This is still available on Intel's website. However they appear to have issued 325383-70US in May of this year. It is what you get if you start here: https://software.intel.com/en-us/articles/intel-sdm. T... | 1.0 | Support current Intel x86/x64 manuals (again) - Version 9.1 of Ghidra has switched to Intel's 325383-60US manual for the instruction set reference. Thank you. This is still available on Intel's website. However they appear to have issued 325383-70US in May of this year. It is what you get if you start here: https:/... | process | support current intel manuals again version of ghidra has switched to intel s manual for the instruction set reference thank you this is still available on intel s website however they appear to have issued in may of this year it is what you get if you start here the two manuals are off ... | 1 |

8,682 | 11,811,459,158 | IssuesEvent | 2020-03-19 18:14:41 | googleapis/java-mediatranslation | https://api.github.com/repos/googleapis/java-mediatranslation | opened | Switch samples/snippets/pom.xml to use libraries-bom | type: process | We cannot suggest using the libraries-bom here until this library is included in the libraries-bom | 1.0 | Switch samples/snippets/pom.xml to use libraries-bom - We cannot suggest using the libraries-bom here until this library is included in the libraries-bom | process | switch samples snippets pom xml to use libraries bom we cannot suggest using the libraries bom here until this library is included in the libraries bom | 1 |

348,434 | 10,442,372,910 | IssuesEvent | 2019-09-18 12:57:40 | getkirby/kirby | https://api.github.com/repos/getkirby/kirby | closed | Json::encode escapes unicode entities | priority: low 🐌 type: enhancement ✨ | **To Reproduce**

Steps to reproduce the behavior:

1. Put this in a template:

```php

echo \Kirby\Data\Json::encode('здравей');

```

2. Echoed string is:

```

\u0437\u0434\u0440\u0430\u0432\u0435\u0439

```

**Expected behavior**

The encoded string should be `здравей`

**Kirby Version**

3.2.3

**Additional ... | 1.0 | Json::encode escapes unicode entities - **To Reproduce**

Steps to reproduce the behavior:

1. Put this in a template:

```php

echo \Kirby\Data\Json::encode('здравей');

```

2. Echoed string is:

```

\u0437\u0434\u0440\u0430\u0432\u0435\u0439

```

**Expected behavior**

The encoded string should be `здравей`

*... | non_process | json encode escapes unicode entities to reproduce steps to reproduce the behavior put this in a template php echo kirby data json encode здравей echoed string is expected behavior the encoded string should be здравей kirby version ... | 0 |

11,113 | 13,957,681,439 | IssuesEvent | 2020-10-24 08:07:25 | alexanderkotsev/geoportal | https://api.github.com/repos/alexanderkotsev/geoportal | opened | DE: request regarding XML schema validation of metadata records | DE - Germany Geoportal Harvesting process | Related to issue #3563 note-47 to note-50

Dear Daniele,

We are planning to change our schema-validation-file for the harvest process (from apiso.xsd version 1.0.0 to apiso.xsd version 1.0.1). To be on the save site and don't "loose" any records during the harvest process for the INSPIRE Geoportal, it is i... | 1.0 | DE: request regarding XML schema validation of metadata records - Related to issue #3563 note-47 to note-50

Dear Daniele,

We are planning to change our schema-validation-file for the harvest process (from apiso.xsd version 1.0.0 to apiso.xsd version 1.0.1). To be on the save site and don't "loose" any rec... | process | de request regarding xml schema validation of metadata records related to issue note to note dear daniele we are planning to change our schema validation file for the harvest process from apiso xsd version to apiso xsd version to be on the save site and don t quot loose quot any records d... | 1 |

344,787 | 10,349,640,108 | IssuesEvent | 2019-09-04 23:18:11 | oslc-op/jira-migration-landfill | https://api.github.com/repos/oslc-op/jira-migration-landfill | closed | literal_value of the oslc_where syntax is not well-defined | Core: Query Priority: High Xtra: Jira | The spec is not clear on how to interpret the literals w/o the xsd data type.

E.g.

The terms boolean and decimal are short forms for typed literals. For example, true is a short form for "true"^^xsd:boolean, 42 is a short form for "42"^^xsd:integer and 3.14159 is a short form for "3.14159"^^xsd:decimal.

does not sp... | 1.0 | literal_value of the oslc_where syntax is not well-defined - The spec is not clear on how to interpret the literals w/o the xsd data type.

E.g.

The terms boolean and decimal are short forms for typed literals. For example, true is a short form for "true"^^xsd:boolean, 42 is a short form for "42"^^xsd:integer and 3.... | non_process | literal value of the oslc where syntax is not well defined the spec is not clear on how to interpret the literals w o the xsd data type e g the terms boolean and decimal are short forms for typed literals for example true is a short form for true xsd boolean is a short form for xsd integer and ... | 0 |

11,592 | 14,447,380,996 | IssuesEvent | 2020-12-08 03:37:45 | A01731346/5a | https://api.github.com/repos/A01731346/5a | closed | fill_size_estimating_template | process-dashboard | - Fill in the lines-of-code estimation template in Process Dashboard

- Run the PROBE Wizard | 1.0 | fill_size_estimating_template - - Fill in the lines-of-code estimation template in Process Dashboard

- Run the PROBE Wizard | process | fill size estimating template fill in the lines of code estimation template in process dashboard run the probe wizard | 1 |

301,800 | 26,101,935,283 | IssuesEvent | 2022-12-27 08:18:30 | wazuh/wazuh | https://api.github.com/repos/wazuh/wazuh | opened | Release 4.4.0 - Alpha 2 - E2E UX tests - Wazuh Indexer | type/test/manual release test/4.4.0 | The following issue aims to run the specified test for the current release candidate, report the results, and open new issues for any encountered errors.

## Test information

| | |

|-------------------------|-----------------------------------------... | 2.0 | Release 4.4.0 - Alpha 2 - E2E UX tests - Wazuh Indexer - The following issue aims to run the specified test for the current release candidate, report the results, and open new issues for any encountered errors.

## Test information

| | |

|----------... | non_process | release alpha ux tests wazuh indexer the following issue aims to run the specified test for the current release candidate report the results and open new issues for any encountered errors test information ... | 0 |

13,867 | 16,623,137,571 | IssuesEvent | 2021-06-03 05:57:28 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | Refactor incorrectly converts from int64 to int8 | Bug Processing |

<!--

Bug fixing and feature development is a community responsibility, and not the responsibility of the QGIS project alone.

If this bug report or feature request is high-priority for you, we suggest engaging a QGIS developer or support organisation and financially sponsoring a fix

Checklist before submitting

... | 1.0 | Refactor incorrectly converts from int64 to int8 -

<!--

Bug fixing and feature development is a community responsibility, and not the responsibility of the QGIS project alone.

If this bug report or feature request is high-priority for you, we suggest engaging a QGIS developer or support organisation and financially... | process | refactor incorrectly converts from to bug fixing and feature development is a community responsibility and not the responsibility of the qgis project alone if this bug report or feature request is high priority for you we suggest engaging a qgis developer or support organisation and financially sponso... | 1 |

278,349 | 21,075,277,652 | IssuesEvent | 2022-04-02 03:39:20 | Shopify/shopify-cli | https://api.github.com/repos/Shopify/shopify-cli | closed | Update the blog regarding CLI | area:documentation no-issue-activity | Per feedback provided on #762

> Finding the install instructions was a bit of a maze

> The blog leads you to shopify.dev which doesn't actually mention CLI but refers to "tools" down the page - OK, click on that - there is a link to the CLI source on GitHub, with a documentation link in the readme — click — then c... | 1.0 | Update the blog regarding CLI - Per feedback provided on #762

> Finding the install instructions was a bit of a maze

> The blog leads you to shopify.dev which doesn't actually mention CLI but refers to "tools" down the page - OK, click on that - there is a link to the CLI source on GitHub, with a documentation lin... | non_process | update the blog regarding cli per feedback provided on finding the install instructions was a bit of a maze the blog leads you to shopify dev which doesn t actually mention cli but refers to tools down the page ok click on that there is a link to the cli source on github with a documentation link ... | 0 |

231,417 | 7,632,155,584 | IssuesEvent | 2018-05-05 11:56:28 | pzahemszky/sudoku | https://api.github.com/repos/pzahemszky/sudoku | opened | Separate primary and secondary peers | enhancement good first issue low priority | There could be a simple two-level hierarchy between peers: those operations that are more likely to be successful should be placed in front of the secondary operations in the queue of `remove_rearrange`.

In particular, for the triple `(dig, row, col)` and corresponding `row_slice` and `col_slice` objects the below o... | 1.0 | Separate primary and secondary peers - There could be a simple two-level hierarchy between peers: those operations that are more likely to be successful should be placed in front of the secondary operations in the queue of `remove_rearrange`.

In particular, for the triple `(dig, row, col)` and corresponding `row_sli... | non_process | separate primary and secondary peers there could be a simple two level hierarchy between peers those operations that are more likely to be successful should be placed in front of the secondary operations in the queue of remove rearrange in particular for the triple dig row col and corresponding row sli... | 0 |

242,465 | 18,545,121,060 | IssuesEvent | 2021-10-21 20:59:20 | ReznikovRoman/airbnb-clone | https://api.github.com/repos/ReznikovRoman/airbnb-clone | opened | [FEATURE] Improve documentation | documentation feature cleanup/optimization | **Description**

- Change README.md file: makefile.env file is required (used by pre-commit)

- Add docker-compose.yml file: populate database with fake data, run tests, etc.

- Add guidelines.md file: specify project style guide:

- Code style

- Naming conventions

- Project structure (models, views, services... | 1.0 | [FEATURE] Improve documentation - **Description**

- Change README.md file: makefile.env file is required (used by pre-commit)

- Add docker-compose.yml file: populate database with fake data, run tests, etc.

- Add guidelines.md file: specify project style guide:

- Code style

- Naming conventions

- Project ... | non_process | improve documentation description change readme md file makefile env file is required used by pre commit add docker compose yml file populate database with fake data run tests etc add guidelines md file specify project style guide code style naming conventions project structur... | 0 |

22,510 | 31,562,740,230 | IssuesEvent | 2023-09-03 12:57:05 | nextflow-io/nextflow | https://api.github.com/repos/nextflow-io/nextflow | closed | make inputs read-only | lang/processes good first issue | I've run into several hard-to-trace pipeline bugs caused by tasks inadvertently modifying input files that were staged in as symlinks or hardlinks. It would be good if Nextflow could make such inputs read-only before task execution, and restore their mode afterwards. | 1.0 | make inputs read-only - I've run into several hard-to-trace pipeline bugs caused by tasks inadvertently modifying input files that were staged in as symlinks or hardlinks. It would be good if Nextflow could make such inputs read-only before task execution, and restore their mode afterwards. | process | make inputs read only i ve run into several hard to trace pipeline bugs caused by tasks inadvertently modifying input files that were staged in as symlinks or hardlinks it would be good if nextflow could make such inputs read only before task execution and restore their mode afterwards | 1 |

1,410 | 3,971,742,637 | IssuesEvent | 2016-05-04 13:10:47 | openvstorage/openvstorage-health-check | https://api.github.com/repos/openvstorage/openvstorage-health-check | closed | Exception in halted volumes when volume is detached/unreachable | priority_critical process_duplicate type_bug | ```

[INFO] Checking vPool 'env1newvpool':

Traceback (most recent call last):

File "<string>", line 1, in <module>

File "/usr/lib/python2.7/dist-packages/celery/local.py", line 167, in <lambda>

__call__ = lambda x, *a, **kw: x._get_current_object()(*a, **kw)

File "/usr/lib/python2.7/dist-packages/celery... | 1.0 | Exception in halted volumes when volume is detached/unreachable - ```

[INFO] Checking vPool 'env1newvpool':

Traceback (most recent call last):

File "<string>", line 1, in <module>

File "/usr/lib/python2.7/dist-packages/celery/local.py", line 167, in <lambda>

__call__ = lambda x, *a, **kw: x._get_current_o... | process | exception in halted volumes when volume is detached unreachable checking vpool traceback most recent call last file line in file usr lib dist packages celery local py line in call lambda x a kw x get current object a kw file usr lib dist ... | 1 |

13,730 | 5,435,976,267 | IssuesEvent | 2017-03-05 21:20:26 | docker/docker | https://api.github.com/repos/docker/docker | closed | Possible docker 1.12.5 problem with Centos 7.3 upgrade | area/builder area/distribution area/runtime status/more-info-needed version/1.12 | **Description**

After running yum upgrade (to Centos 7.3) the Centos distribution docker 1.12.5 is unable to complete a docker build due to an oci runtime error apparently due to a missing /var/lib/docker/devicemapper/mnt/..../rootfs directory.

Reverting back to a VM snapshot from ~ two weeks ago when docker was wo... | 1.0 | Possible docker 1.12.5 problem with Centos 7.3 upgrade - **Description**

After running yum upgrade (to Centos 7.3) the Centos distribution docker 1.12.5 is unable to complete a docker build due to an oci runtime error apparently due to a missing /var/lib/docker/devicemapper/mnt/..../rootfs directory.

Reverting back... | non_process | possible docker problem with centos upgrade description after running yum upgrade to centos the centos distribution docker is unable to complete a docker build due to an oci runtime error apparently due to a missing var lib docker devicemapper mnt rootfs directory reverting back t... | 0 |

1,533 | 4,119,268,774 | IssuesEvent | 2016-06-08 14:25:27 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | opened | NTR: epidermal growth factor receptor signaling pathway involved in heart process | BHF-UCL miRNA New term request RNA processes signaling | Dear Editors,

I'd like to request a new term re: PMID:23069713

In this study inhibition of epidermal growth factor receptor (EGFR) was shown to promote cardiogenic differentiation of human Mesenchymal Stem Cells (hMSCs) and the transplantation of hMSCs, in which EGFR was inhibited, resulted in enhancement of heart ... | 1.0 | NTR: epidermal growth factor receptor signaling pathway involved in heart process - Dear Editors,

I'd like to request a new term re: PMID:23069713

In this study inhibition of epidermal growth factor receptor (EGFR) was shown to promote cardiogenic differentiation of human Mesenchymal Stem Cells (hMSCs) and the tran... | process | ntr epidermal growth factor receptor signaling pathway involved in heart process dear editors i d like to request a new term re pmid in this study inhibition of epidermal growth factor receptor egfr was shown to promote cardiogenic differentiation of human mesenchymal stem cells hmscs and the transplanta... | 1 |

473,413 | 13,641,998,933 | IssuesEvent | 2020-09-25 14:55:16 | OpenNebula/one | https://api.github.com/repos/OpenNebula/one | closed | create cli flags for install_gems | Category: Packages Community Priority: Low Status: Accepted Type: Backlog | ---

Author Name: **Rogier Mars** (Rogier Mars)

Original Redmine Issue: 4980, https://dev.opennebula.org/issues/4980

Original Date: 2017-01-12

---

Hi,

Would it be possible to create flags to set the OS and make the script non-interactive? This would make it easier to run the script from configmanagement like an... | 1.0 | create cli flags for install_gems - ---

Author Name: **Rogier Mars** (Rogier Mars)

Original Redmine Issue: 4980, https://dev.opennebula.org/issues/4980

Original Date: 2017-01-12

---

Hi,

Would it be possible to create flags to set the OS and make the script non-interactive? This would make it easier to run the ... | non_process | create cli flags for install gems author name rogier mars rogier mars original redmine issue original date hi would it be possible to create flags to set the os and make the script non interactive this would make it easier to run the script from configmanagement like ansible no... | 0 |

13,815 | 16,577,454,616 | IssuesEvent | 2021-05-31 07:19:30 | paul-buerkner/brms | https://api.github.com/repos/paul-buerkner/brms | closed | loo moment_match crashes R if save_all_pars not specified | bug post-processing | As mentioned in #1126 loo with moment matching doesn't work without `save_all_pars=save_pars(all = TRUE)`. But it seems worse than just not having an appropriate warning message, as it can crash R if tried without the parameter.

Example:

With `save_all_pars`

```{r}

library(brms)

m <- brm(yield ~ N*P*K, npk, sa... | 1.0 | loo moment_match crashes R if save_all_pars not specified - As mentioned in #1126 loo with moment matching doesn't work without `save_all_pars=save_pars(all = TRUE)`. But it seems worse than just not having an appropriate warning message, as it can crash R if tried without the parameter.

Example:

With `save_all_par... | process | loo moment match crashes r if save all pars not specified as mentioned in loo with moment matching doesn t work without save all pars save pars all true but it seems worse than just not having an appropriate warning message as it can crash r if tried without the parameter example with save all pars ... | 1 |

10,967 | 3,152,375,513 | IssuesEvent | 2015-09-16 13:35:36 | galenframework/galen | https://api.github.com/repos/galenframework/galen | closed | Add COUNT spec for multiple objects | c1 enhancement p2 ready for test | When using multiple object identification, would be great to have a COUNT spec

Example:

```

=====================================

menu-items-* css .menuitem

=====================================

menu-items-*

count: 5

``` | 1.0 | Add COUNT spec for multiple objects - When using multiple object identification, would be great to have a COUNT spec

Example:

```

=====================================

menu-items-* css .menuitem

=====================================

menu-items-*

count: 5

``` | non_process | add count spec for multiple objects when using multiple object identification would be great to have a count spec example menu items css menuitem menu items count | 0 |

75,229 | 9,829,284,390 | IssuesEvent | 2019-06-15 19:17:24 | paul-buerkner/brms | https://api.github.com/repos/paul-buerkner/brms | closed | Number of multiple imputation in "missing values" vignette | documentation | In the vignette "Handle Missing Values with brms", section "Imputation before model fitting", there are m = 5 multiply imputed datasets used for mixing their posterior draws. However, according to the paper cited below, m = 5 is not enough for reliable posterior inferences. The authors recommend to choose a larger numb... | 1.0 | Number of multiple imputation in "missing values" vignette - In the vignette "Handle Missing Values with brms", section "Imputation before model fitting", there are m = 5 multiply imputed datasets used for mixing their posterior draws. However, according to the paper cited below, m = 5 is not enough for reliable poster... | non_process | number of multiple imputation in missing values vignette in the vignette handle missing values with brms section imputation before model fitting there are m multiply imputed datasets used for mixing their posterior draws however according to the paper cited below m is not enough for reliable poster... | 0 |

332,059 | 10,083,740,195 | IssuesEvent | 2019-07-25 14:16:54 | getkirby/kirby | https://api.github.com/repos/getkirby/kirby | closed | KirbyTag gets escaped twice when using escape() | missing: discussion 🗣 missing: information ❓ priority: minor 🔜 | **Describe the bug**

When using `escape()` on a KirbyText, KirbyTags get escaped twice.

**To Reproduce**

Given a field text with a textarea and the following content:

```

foo & bar

(link: http://example.com text: foo & bar)

```

In my template/snippet I am using the following line:

`<?= $page->tex... | 1.0 | KirbyTag gets escaped twice when using escape() - **Describe the bug**

When using `escape()` on a KirbyText, KirbyTags get escaped twice.

**To Reproduce**

Given a field text with a textarea and the following content:

```

foo & bar

(link: http://example.com text: foo & bar)

```

In my template/snippet... | non_process | kirbytag gets escaped twice when using escape describe the bug when using escape on a kirbytext kirbytags get escaped twice to reproduce given a field text with a textarea and the following content foo bar link text foo bar in my template snippet i am using the f... | 0 |

10,423 | 13,215,849,266 | IssuesEvent | 2020-08-17 01:25:55 | nion-software/nionswift | https://api.github.com/repos/nion-software/nionswift | opened | Add ability to designate dependent/source data to be used in displays, computations | f - processing f - user-interface feature stage - planning type - enhancement | For example, if background subtraction is applied to a line plot, the user could process the original data (re-binning or smoothing, for example) and then designate the resulting data to be used anywhere the original data is used (computations, displays, maybe more).

Other ideas are: if the designated replacement is... | 1.0 | Add ability to designate dependent/source data to be used in displays, computations - For example, if background subtraction is applied to a line plot, the user could process the original data (re-binning or smoothing, for example) and then designate the resulting data to be used anywhere the original data is used (com... | process | add ability to designate dependent source data to be used in displays computations for example if background subtraction is applied to a line plot the user could process the original data re binning or smoothing for example and then designate the resulting data to be used anywhere the original data is used com... | 1 |

7,120 | 10,266,291,252 | IssuesEvent | 2019-08-22 21:02:10 | automotive-edge-computing-consortium/AECC | https://api.github.com/repos/automotive-edge-computing-consortium/AECC | opened | Good place to keep the Issues list | priority:High status:Open type:Process | Looking for a good place to store this issue list. Perhaps this is part of a ticketing system.

Currently this issue list doesn't include sensitive items. But it will have sensitive information, such as launching new SIGs. So it is better stored in a workspace where limited people (chairs or sponsor members) have access rig... | 1.0 | Good place to keep the Issues list - Looking for a good place to store this issue list. Perhaps this is part of a ticketing system.

Currently this issue list doesn't include sensitive items. But it will have sensitive information, such as launching new SIGs. So it is better stored in a workspace where limited people (chair... | process | good place to keep the issues list looking for a good place to store this issue list perhaps this is part of a ticketing system currently this issue list doesn t include sensitive items but it will have sensitive information such as launching new sigs so it is better stored in a workspace where limited people chair... | 1 |

189,861 | 14,525,777,071 | IssuesEvent | 2020-12-14 13:24:10 | fourMs/MGT-python | https://api.github.com/repos/fourMs/MGT-python | closed | Make sure the ffmpeg commands always use the -y flag | bug testing | Because if not, and the destination file happens to exist already, the process will just quit without doing anything. | 1.0 | Make sure the ffmpeg commands always use the -y flag - Because if not, and the destination file happens to exist already, the process will just quit without doing anything. | non_process | make sure the ffmpeg commands always use the y flag because if not and the destination file happens to exist already the process will just quit without doing anything | 0 |
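
Editorial sketch for the ffmpeg record above (not part of the original issue): one way to guarantee the `-y` flag is to build every ffmpeg command through a single helper. The names `build_ffmpeg_command` and `run_ffmpeg` are hypothetical and are not taken from MGT-python; the only assumption about ffmpeg itself is that `-y` is its global "overwrite output files without asking" option.

```python
import subprocess


def build_ffmpeg_command(source, destination, extra_args=()):
    """Return an ffmpeg argument list that always overwrites the destination.

    Hypothetical helper for illustration; not part of MGT-python.
    """
    # -y is a global ffmpeg option: overwrite output files without asking.
    # Putting it in one place means no caller can forget it.
    return ["ffmpeg", "-y", "-i", str(source), *extra_args, str(destination)]


def run_ffmpeg(source, destination, extra_args=()):
    """Run ffmpeg and raise CalledProcessError if it exits with an error."""
    command = build_ffmpeg_command(source, destination, extra_args)
    subprocess.run(command, check=True)
```

Using `check=True` is a design choice in this sketch: if ffmpeg still fails for another reason, the caller gets an exception instead of a silent exit.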

20,843 | 27,612,216,142 | IssuesEvent | 2023-03-09 16:43:24 | influxdata/telegraf | https://api.github.com/repos/influxdata/telegraf | closed | processors.converter - convert time | help wanted feature request plugin/processor size/m | ### Use Case

The idea is to improve `processors.converter` and allow it to manage timestamps.

Currently, there is no "easy" way to override/set the timestamp of a point by getting it from a tag/field, the only option is to use `processors.starlark`.

This is a rare use case, but still, I think it's worth having it

... | 1.0 | processors.converter - convert time - ### Use Case

The idea is to improve `processors.converter` and allow it to manage timestamps.

Currently, there is no "easy" way to override/set the timestamp of a point by getting it from a tag/field, the only option is to use `processors.starlark`.

This is a rare use case, b... | process | processors converter convert time use case the idea is to improve processors converter and allow it to manage timestamps currently there is no easy way to override set the timestamp of a point by getting it from a tag field the only option is to use processors starlark this is a rare use case b... | 1 |

521,769 | 15,115,337,783 | IssuesEvent | 2021-02-09 04:12:47 | openmsupply/mobile | https://api.github.com/repos/openmsupply/mobile | opened | Add cumulative breach calculation logic to BreachManager | Docs: not needed Feature Module: vaccines Priority: high | ## Is your feature request related to a problem? Please describe.

Add cumulative breach calculation logic to mobile app

## Describe the solution you'd like

- Add methods to BreachManager to calculate cumulative duration above/below configured thresholds

- When consecutive breach calculation is performed, call abo... | 1.0 | Add cumulative breach calculation logic to BreachManager - ## Is your feature request related to a problem? Please describe.

Add cumulative breach calculation logic to mobile app

## Describe the solution you'd like

- Add methods to BreachManager to calculate cumulative duration above/below configured thresholds

-... | non_process | add cumulative breach calculation logic to breachmanager is your feature request related to a problem please describe add cumulative breach calculation logic to mobile app describe the solution you d like add methods to breachmanager to calculate cumulative duration above below configured thresholds ... | 0 |

5,712 | 8,567,916,927 | IssuesEvent | 2018-11-10 16:33:43 | Great-Hill-Corporation/quickBlocks | https://api.github.com/repos/Great-Hill-Corporation/quickBlocks | closed | Wrong phone number on website / Web Form does not deliver mail | status-inprocess type-bug website-general | <img width="467" alt="screen shot 2018-07-06 at 4 39 24 pm" src="https://user-images.githubusercontent.com/5417918/43462686-c5509e8c-94a4-11e8-9d51-71aafb574283.png">

| 1.0 | Wrong phone number on website / Web Form does not deliver mail - <img width="467" alt="screen shot 2018-07-06 at 4 39 24 pm" src="https://user-images.githubusercontent.com/5417918/43462686-c5509e8c-94a4-11e8-9d51-71aafb574283.png">

| process | wrong phone number on website web form does not deliver mail img width alt screen shot at pm src | 1 |

33,204 | 4,818,098,226 | IssuesEvent | 2016-11-04 15:29:37 | infiniteautomation/ma-core-public | https://api.github.com/repos/infiniteautomation/ma-core-public | closed | Log4j data source - ALL level doesn't work | Bug Ready for Testing | The ALL level fails to match any messages whether using Regex or not.