| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 classes) | text_combine (string, length 96 to 211k) | label (string, 2 classes) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

29,202

| 23,796,487,306

|

IssuesEvent

|

2022-09-02 20:23:51

|

dotnet/runtime

|

https://api.github.com/repos/dotnet/runtime

|

opened

|

Mono Bionic Android arm64 CI failure - Failed to get device's property SupportedArchitectures / Device unauthorized / $ADB_VENDOR_KEYS is not set

|

area-Infrastructure-mono test-failure arm64

|

Found in a release/7.0 PR: https://github.com/dotnet/runtime/pull/74926

- Queue: `net7.0-Linux-Release-arm64-Mono_Release_LinuxBionic-Windows.10.Amd64.Android.Open`

- Artifacts: https://dev.azure.com/dnceng-public/public/_build/results?buildId=1392&view=ms.vss-test-web.build-test-results-tab&runId=19052&paneView=dotnet-dnceng.dnceng-anon-build-release-tasks.helix-anon-test-information-tab&resultId=180803

- Affected the `System.Diagnostics.Contracts.Tests` work item.

Log file: https://helixre107v0xdeko0k025g8.blob.core.windows.net/dotnet-runtime-refs-pull-74926-merge-0416eef15c6a45a1a6/System.Diagnostics.Contracts.Tests/1/console.fa330ef5.log?helixlogtype=result

Callstack:

```

[11:56:41] fail: Failed to get device's property SupportedArchitectures. Check if a device is attached / emulator is started

error: device unauthorized.

This adb server's $ADB_VENDOR_KEYS is not set

Try 'adb kill-server' if that seems wrong.

Otherwise check for a confirmation dialog on your device.

[11:56:41] fail: No attached device supports one of required architectures arm64-v8a

[11:56:41] dbug: No suitable devices found

[11:56:41] crit: Failed to find compatible device: arm64-v8a

```

|

1.0

|

Mono Bionic Android arm64 CI failure - Failed to get device's property SupportedArchitectures / Device unauthorized / $ADB_VENDOR_KEYS is not set - Found in a release/7.0 PR: https://github.com/dotnet/runtime/pull/74926

- Queue: `net7.0-Linux-Release-arm64-Mono_Release_LinuxBionic-Windows.10.Amd64.Android.Open`

- Artifacts: https://dev.azure.com/dnceng-public/public/_build/results?buildId=1392&view=ms.vss-test-web.build-test-results-tab&runId=19052&paneView=dotnet-dnceng.dnceng-anon-build-release-tasks.helix-anon-test-information-tab&resultId=180803

- Affected the `System.Diagnostics.Contracts.Tests` work item.

Log file: https://helixre107v0xdeko0k025g8.blob.core.windows.net/dotnet-runtime-refs-pull-74926-merge-0416eef15c6a45a1a6/System.Diagnostics.Contracts.Tests/1/console.fa330ef5.log?helixlogtype=result

Callstack:

```

[11:56:41] fail: Failed to get device's property SupportedArchitectures. Check if a device is attached / emulator is started

error: device unauthorized.

This adb server's $ADB_VENDOR_KEYS is not set

Try 'adb kill-server' if that seems wrong.

Otherwise check for a confirmation dialog on your device.

[11:56:41] fail: No attached device supports one of required architectures arm64-v8a

[11:56:41] dbug: No suitable devices found

[11:56:41] crit: Failed to find compatible device: arm64-v8a

```

|

non_process

|

mono bionic android ci failure failed to get device s property supportedarchitectures device unauthorized adb vendor keys is not set found in a release pr queue linux release mono release linuxbionic windows android open artifacts affected the system diagnostics contracts tests work item log file callstack fail failed to get device s property supportedarchitectures check if a device is attached emulator is started error device unauthorized this adb server s adb vendor keys is not set try adb kill server if that seems wrong otherwise check for a confirmation dialog on your device fail no attached device supports one of required architectures dbug no suitable devices found crit failed to find compatible device

| 0

|

8,342

| 11,497,807,619

|

IssuesEvent

|

2020-02-12 10:43:11

|

18F/tts-tech-portfolio

|

https://api.github.com/repos/18F/tts-tech-portfolio

|

closed

|

TTS Tech Portfolio agile approach -- in depth discussion

|

epic: internal workflow/procedures needs grooming workflow: process

|

## Background information

https://github.com/18F/tts-tech-portfolio/issues/282

## User stories

<!-- one or more -->

- As a ..., I want ... so that ...

- As a ..., I want ... so that ...

## Implementation

- [ ] [first small task]

- [ ] [another small task]

## Acceptance criteria

- [ ] size labeling

- [ ] do we need Entrance Criteria and Exit Criteria for columns, or could we consolidate to one?

- [ ] want deadlines or specifying a finish-by date?

- [ ] Consider making a theme of the week/sprint/month

|

1.0

|

TTS Tech Portfolio agile approach -- in depth discussion - ## Background information

https://github.com/18F/tts-tech-portfolio/issues/282

## User stories

<!-- one or more -->

- As a ..., I want ... so that ...

- As a ..., I want ... so that ...

## Implementation

- [ ] [first small task]

- [ ] [another small task]

## Acceptance criteria

- [ ] size labeling

- [ ] do we need Entrance Criteria and Exit Criteria for columns, or could we consolidate to one?

- [ ] want deadlines or specifying a finish-by date?

- [ ] Consider making a theme of the week/sprint/month

|

process

|

tts tech portfolio agile approach in depth discussion background information user stories as a i want so that as a i want so that implementation acceptance criteria size labeling do we need entrance criteria and exit criteria for columns or could we consolidate to one want deadlines or specifying a finish by date consider making a theme of the week sprint month

| 1

|

7,673

| 10,760,662,277

|

IssuesEvent

|

2019-10-31 19:04:35

|

googleapis/google-cloud-python

|

https://api.github.com/repos/googleapis/google-cloud-python

|

opened

|

Testing: rationalize / normalize VPCSC environment detection in systests

|

api: automl api: cloudasset api: dlp api: monitoring api: storage api: translation api: videointelligence testing type: process

|

We need a clear pattern for how to test whether the appropriate environment variables are set for VPCSC, and ways to skip tests when they are missing. I believe the constraints should be:

- Never set the environment variables in `noxfile.py`.

- If the inside / outside project variables are missing, skip the affected testcases cleanly.

I'm proposing to create a new module, `test_utils/test_utils/vpcsc_config.py`, which centralizes all this policy. Usage from systests would look like:

```python
from test_utils.vpcsc_config import vpcsc_config

@vpcsc_config.skip_if_no_inside_project
def test_requiring_inside_project():
    do_something_with(vpcsc_config.project_inside)

@vpcsc_config.skip_if_no_outside_project
def test_requiring_outside_project():
    do_something_with(vpcsc_config.project_outside)

@vpcsc_config.skip_if_no_inside_project
@vpcsc_config.skip_if_no_outside_project
def test_requiring_inside_and_outside_projects():
    if vpcsc_config.inside_vpcsc:
        do_something_with(vpcsc_config.project_inside, vpcsc_config.project_outside)
```

|

1.0

|

Testing: rationalize / normalize VPCSC environment detection in systests - We need a clear pattern for how to test whether the appropriate environment variables are set for VPCSC, and ways to skip tests when they are missing. I believe the constraints should be:

- Never set the environment variables in `noxfile.py`.

- If the inside / outside project variables are missing, skip the affected testcases cleanly.

I'm proposing to create a new module, `test_utils/test_utils/vpcsc_config.py`, which centralizes all this policy. Usage from systests would look like:

```python
from test_utils.vpcsc_config import vpcsc_config

@vpcsc_config.skip_if_no_inside_project
def test_requiring_inside_project():
    do_something_with(vpcsc_config.project_inside)

@vpcsc_config.skip_if_no_outside_project
def test_requiring_outside_project():
    do_something_with(vpcsc_config.project_outside)

@vpcsc_config.skip_if_no_inside_project
@vpcsc_config.skip_if_no_outside_project
def test_requiring_inside_and_outside_projects():
    if vpcsc_config.inside_vpcsc:
        do_something_with(vpcsc_config.project_inside, vpcsc_config.project_outside)
```

|

process

|

testing rationalize normalize vpcsc environment detection in systests we need a clear pattern for how to test whether the appropriate environment variables are set for vpcsc and ways to skip tests when they are missing i believe the constraints should be never set the environment variables in noxfile py if the inside outside project variables are missing skip the affected testcases cleanly i m proposing to create a new module test utils test utils vpcsc config py which centralizes all this policy usage from systests would look like python from test utils vpcsc config import vpcsc config vpcsc config skip if no inside project def test requiring inside project do something with vpcsc config project inside vpcsc config skip if no outside project def test requiring outside project do something with vpcsc config project outside vpcsc config skip if no inside project vpcsc config skip if no outside project def test requiring inside and outside projects if vpcsc config inside vpcsc do something with vpcsc config project inside vpcsc config project outside

| 1

|

7,517

| 10,596,006,065

|

IssuesEvent

|

2019-10-09 20:15:41

|

geneontology/go-ontology

|

https://api.github.com/repos/geneontology/go-ontology

|

closed

|

translocation into host/entry into host

|

multi-species process

|

responding to

https://github.com/geneontology/go-ontology/issues/17045

made me realise there are some unnecessary and confusing grouping terms under

GO:0044403 symbiont process

~For example

GO:0051824 recognition of other organism involved in symbiotic interaction

with a symbiont and host split~ see

https://github.com/geneontology/go-ontology/issues/17977

GO:0051836 translocation of molecules into other organism involved in symbiotic interaction

with a symbiont and host split

(The processes under here represent movement of the symbiont into the host, and movement of nutrients into the symbiont. The grouping term is biologically meaningless here; these processes aren't related at all.)

~These terms often give pairs of descendants which are not biologically related at all, or any more than similar processes in no symbionts, they would be better grouped under~

~1 "host colonization" or similar

and

2. a suitable grouping term for the symbiont if required for terms which do not fit under such a term~

|

1.0

|

translocation into host/entry into host - responding to

https://github.com/geneontology/go-ontology/issues/17045

made me realise there are some unnecessary and confusing grouping terms under

GO:0044403 symbiont process

~For example

GO:0051824 recognition of other organism involved in symbiotic interaction

with a symbiont and host split~ see

https://github.com/geneontology/go-ontology/issues/17977

GO:0051836 translocation of molecules into other organism involved in symbiotic interaction

with a symbiont and host split

(The processes under here represent movement of the symbiont into the host, and movement of nutrients into the symbiont. The grouping term is biologically meaningless here; these processes aren't related at all.)

~These terms often give pairs of descendants which are not biologically related at all, or any more than similar processes in no symbionts, they would be better grouped under~

~1 "host colonization" or similar

and

2. a suitable grouping term for the symbiont if required for terms which do not fit under such a term~

|

process

|

translocation into host entry into host responding to made me realise there are some unnecessary and confusing grouping terms under go symbiont process for example go recognition of other organism involved in symbiotic interaction with a symbiont and host split see go translocation of molecules into other organism involved in symbiotic interaction with a symbiont and host split the processes under here represent movement of the symbiont into the host and movement of nutrients into the symbiont the grouping term is biologically meaningless here these processes aren t related at all these terms often give pairs of descendants which are not biologically related at all or any more than similar processes in no symbionts they would be better grouped under host colonization or similar and a suitable grouping term for the symbiont if required for terms which do not fit under such a term

| 1

|

20,840

| 27,610,490,456

|

IssuesEvent

|

2023-03-09 15:40:41

|

Sebastian009w/hyper-burguer

|

https://api.github.com/repos/Sebastian009w/hyper-burguer

|

opened

|

Components

|

process

|

- [ ] Form

- [ ] Button

- [ ] Card

- [ ] TableContent

- [ ] Logo

- [ ] Title

- [ ] Hours

- [ ] Booking

|

1.0

|

Components - - [ ] Form

- [ ] Button

- [ ] Card

- [ ] TableContent

- [ ] Logo

- [ ] Title

- [ ] Hours

- [ ] Booking

|

process

|

components form button card tablecontent logo title hours booking

| 1

|

16,705

| 10,554,634,366

|

IssuesEvent

|

2019-10-03 19:55:48

|

microsoft/BotBuilder-Samples

|

https://api.github.com/repos/microsoft/BotBuilder-Samples

|

closed

|

Running nodeJs nlp-with-dispatch BotBuilder sample behind corporate proxy

|

Bot Services customer-replied-to customer-reported

|

I'm trying to run the sample "14.nlp-with-dispatch" on my local machine and test it with the emulator. I have LUIS and QnA Azure services. I have modified some code under node_modules to add proxy configuration (just to run the example on my local machine): this works for my LUIS service, but I couldn't find where to add proxy configuration for the QnA service. I have tried the npm packages "global-tunnel" and "global-tunnel-ng", but they didn't work for me because my Node version is 12.9.1.

Has anyone experienced this?

Is there any other way to add proxy configuration for these two services?

|

1.0

|

Running nodeJs nlp-with-dispatch BotBuilder sample behind corporate proxy - I'm trying to run the sample "14.nlp-with-dispatch" on my local machine and test it with the emulator. I have LUIS and QnA Azure services. I have modified some code under node_modules to add proxy configuration (just to run the example on my local machine): this works for my LUIS service, but I couldn't find where to add proxy configuration for the QnA service. I have tried the npm packages "global-tunnel" and "global-tunnel-ng", but they didn't work for me because my Node version is 12.9.1.

Has anyone experienced this?

Is there any other way to add proxy configuration for these two services?

|

non_process

|

running nodejs nlp with dispatch botbuilder sample behind corporate proxy i m trying to run the sample nlp with dispatch on my local machine and test it with the emulator i have luis and qna azure services i have modified some code under node modules to add proxy configuration just to run the example on my local machine this works for my luis service but i couldn t find where to add proxy configuration for the qna service i have tried the npm packages global tunnel and global tunnel ng but they didn t work for me because my node version is has anyone experienced this is there any other way to add proxy configuration for these two services

| 0

|

3,685

| 6,715,898,957

|

IssuesEvent

|

2017-10-14 00:09:55

|

HelpyTeam/HelpyWeb

|

https://api.github.com/repos/HelpyTeam/HelpyWeb

|

closed

|

Update Conversation View

|

Front-end In Process priority/1

|

# Overview

Update front-end task

# Target

- [x] Set height for conversation view

- [x] Create scroll-bar for conversation view _(Ref: https://www.w3schools.com/howto/howto_css_menu_horizontal_scroll.asp)_

- ~Find textarea format and apply to the front-end _(Ref: https://github.com/sstur/react-rte)_~

- ~Change current "Loading..." text into loading button. _(Ref: http://www.material-ui.com/#/components/refresh-indicator)_~

|

1.0

|

Update Conversation View - # Overview

Update front-end task

# Target

- [x] Set height for conversation view

- [x] Create scroll-bar for conversation view _(Ref: https://www.w3schools.com/howto/howto_css_menu_horizontal_scroll.asp)_

- ~Find textarea format and apply to the front-end _(Ref: https://github.com/sstur/react-rte)_~

- ~Change current "Loading..." text into loading button. _(Ref: http://www.material-ui.com/#/components/refresh-indicator)_~

|

process

|

update conversation view overview update front end task target set height for conversation view create scroll bar for conversation view ref find textarea format and apply to the front end ref change current loading text into loading button ref

| 1

|

183,266

| 31,240,191,585

|

IssuesEvent

|

2023-08-20 19:19:09

|

HUSTLE-UMC/HUSTLE_web

|

https://api.github.com/repos/HUSTLE-UMC/HUSTLE_web

|

closed

|

[Refactor] Modify login screen

|

Refactor 데이브/김원준 🎨Design

|

### Issue

> Modify login screen

### ToDoList

- [ ] Modify Kakao login button

### Additional options (Optional)

|

1.0

|

[Refactor] Modify login screen - ### Issue

> Modify login screen

### ToDoList

- [ ] Modify Kakao login button

### Additional options (Optional)

|

non_process

|

modify login screen issue modify login screen todolist modify kakao login button additional options optional

| 0

|

249,125

| 26,887,011,761

|

IssuesEvent

|

2023-02-06 04:46:04

|

MendDemo/PoiMakia

|

https://api.github.com/repos/MendDemo/PoiMakia

|

opened

|

jsoup-1.9.2.jar: 2 vulnerabilities (highest severity is: 7.5)

|

security vulnerability

|

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jsoup-1.9.2.jar</b></p></summary>

<p>jsoup HTML parser</p>

<p>Path to dependency file: /pom.xml</p>

<p>Path to vulnerable library: /home/wss-scanner/.m2/repository/org/jsoup/jsoup/1.9.2/jsoup-1.9.2.jar,/lib/org/jsoup/jsoup/1.9.2/jsoup-1.9.2.jar</p>

<p>

<p>Found in HEAD commit: <a href="https://github.com/MendDemo/PoiMakia/commit/47d070b80133c8fe80f3796674688596a612e8dd">47d070b80133c8fe80f3796674688596a612e8dd</a></p></details>

## Vulnerabilities

| CVE | Severity | <img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS | Dependency | Type | Fixed in (jsoup version) | Remediation Available |

| ------------- | ------------- | ----- | ----- | ----- | ------------- | --- |

| [CVE-2021-37714](https://www.mend.io/vulnerability-database/CVE-2021-37714) | <img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> High | 7.5 | jsoup-1.9.2.jar | Direct | 1.14.2 | ❌ |

| [CVE-2022-36033](https://www.mend.io/vulnerability-database/CVE-2022-36033) | <img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Medium | 6.1 | jsoup-1.9.2.jar | Direct | 1.15.3 | ❌ |

## Details

<details>

<summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> CVE-2021-37714</summary>

### Vulnerable Library - <b>jsoup-1.9.2.jar</b></p>

<p>jsoup HTML parser</p>

<p>Path to dependency file: /pom.xml</p>

<p>Path to vulnerable library: /home/wss-scanner/.m2/repository/org/jsoup/jsoup/1.9.2/jsoup-1.9.2.jar,/lib/org/jsoup/jsoup/1.9.2/jsoup-1.9.2.jar</p>

<p>

Dependency Hierarchy:

- :x: **jsoup-1.9.2.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/MendDemo/PoiMakia/commit/47d070b80133c8fe80f3796674688596a612e8dd">47d070b80133c8fe80f3796674688596a612e8dd</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

<p></p>

### Vulnerability Details

<p>

jsoup is a Java library for working with HTML. Those using jsoup versions prior to 1.14.2 to parse untrusted HTML or XML may be vulnerable to DOS attacks. If the parser is run on user supplied input, an attacker may supply content that causes the parser to get stuck (loop indefinitely until cancelled), to complete more slowly than usual, or to throw an unexpected exception. This effect may support a denial of service attack. The issue is patched in version 1.14.2. There are a few available workarounds. Users may rate limit input parsing, limit the size of inputs based on system resources, and/or implement thread watchdogs to cap and timeout parse runtimes.

<p>Publish Date: 2021-08-18

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2021-37714>CVE-2021-37714</a></p>

</p>

<p></p>

### CVSS 3 Score Details (<b>7.5</b>)

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

<p></p>

### Suggested Fix

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://jsoup.org/news/release-1.14.2">https://jsoup.org/news/release-1.14.2</a></p>

<p>Release Date: 2021-08-18</p>

<p>Fix Resolution: 1.14.2</p>

</p>

<p></p>

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

</details><details>

<summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> CVE-2022-36033</summary>

### Vulnerable Library - <b>jsoup-1.9.2.jar</b></p>

<p>jsoup HTML parser</p>

<p>Path to dependency file: /pom.xml</p>

<p>Path to vulnerable library: /home/wss-scanner/.m2/repository/org/jsoup/jsoup/1.9.2/jsoup-1.9.2.jar,/lib/org/jsoup/jsoup/1.9.2/jsoup-1.9.2.jar</p>

<p>

Dependency Hierarchy:

- :x: **jsoup-1.9.2.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/MendDemo/PoiMakia/commit/47d070b80133c8fe80f3796674688596a612e8dd">47d070b80133c8fe80f3796674688596a612e8dd</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

<p></p>

### Vulnerability Details

<p>

jsoup is a Java HTML parser, built for HTML editing, cleaning, scraping, and cross-site scripting (XSS) safety. jsoup may incorrectly sanitize HTML including `javascript:` URL expressions, which could allow XSS attacks when a reader subsequently clicks that link. If the non-default `SafeList.preserveRelativeLinks` option is enabled, HTML including `javascript:` URLs that have been crafted with control characters will not be sanitized. If the site that this HTML is published on does not set a Content Security Policy, an XSS attack is then possible. This issue is patched in jsoup 1.15.3. Users should upgrade to this version. Additionally, as the unsanitized input may have been persisted, old content should be cleaned again using the updated version. To remediate this issue without immediately upgrading: - disable `SafeList.preserveRelativeLinks`, which will rewrite input URLs as absolute URLs - ensure an appropriate [Content Security Policy](https://developer.mozilla.org/en-US/docs/Web/HTTP/CSP) is defined. (This should be used regardless of upgrading, as a defence-in-depth best practice.)

<p>Publish Date: 2022-08-29

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2022-36033>CVE-2022-36033</a></p>

</p>

<p></p>

### CVSS 3 Score Details (<b>6.1</b>)

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Changed

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: Low

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

<p></p>

### Suggested Fix

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/jhy/jsoup/security/advisories/GHSA-gp7f-rwcx-9369">https://github.com/jhy/jsoup/security/advisories/GHSA-gp7f-rwcx-9369</a></p>

<p>Release Date: 2022-08-29</p>

<p>Fix Resolution: 1.15.3</p>

</p>

<p></p>

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

</details>

|

True

|

jsoup-1.9.2.jar: 2 vulnerabilities (highest severity is: 7.5) - <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jsoup-1.9.2.jar</b></p></summary>

<p>jsoup HTML parser</p>

<p>Path to dependency file: /pom.xml</p>

<p>Path to vulnerable library: /home/wss-scanner/.m2/repository/org/jsoup/jsoup/1.9.2/jsoup-1.9.2.jar,/lib/org/jsoup/jsoup/1.9.2/jsoup-1.9.2.jar</p>

<p>

<p>Found in HEAD commit: <a href="https://github.com/MendDemo/PoiMakia/commit/47d070b80133c8fe80f3796674688596a612e8dd">47d070b80133c8fe80f3796674688596a612e8dd</a></p></details>

## Vulnerabilities

| CVE | Severity | <img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS | Dependency | Type | Fixed in (jsoup version) | Remediation Available |

| ------------- | ------------- | ----- | ----- | ----- | ------------- | --- |

| [CVE-2021-37714](https://www.mend.io/vulnerability-database/CVE-2021-37714) | <img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> High | 7.5 | jsoup-1.9.2.jar | Direct | 1.14.2 | ❌ |

| [CVE-2022-36033](https://www.mend.io/vulnerability-database/CVE-2022-36033) | <img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Medium | 6.1 | jsoup-1.9.2.jar | Direct | 1.15.3 | ❌ |

## Details

<details>

<summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> CVE-2021-37714</summary>

### Vulnerable Library - <b>jsoup-1.9.2.jar</b></p>

<p>jsoup HTML parser</p>

<p>Path to dependency file: /pom.xml</p>

<p>Path to vulnerable library: /home/wss-scanner/.m2/repository/org/jsoup/jsoup/1.9.2/jsoup-1.9.2.jar,/lib/org/jsoup/jsoup/1.9.2/jsoup-1.9.2.jar</p>

<p>

Dependency Hierarchy:

- :x: **jsoup-1.9.2.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/MendDemo/PoiMakia/commit/47d070b80133c8fe80f3796674688596a612e8dd">47d070b80133c8fe80f3796674688596a612e8dd</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

<p></p>

### Vulnerability Details

<p>

jsoup is a Java library for working with HTML. Those using jsoup versions prior to 1.14.2 to parse untrusted HTML or XML may be vulnerable to DOS attacks. If the parser is run on user supplied input, an attacker may supply content that causes the parser to get stuck (loop indefinitely until cancelled), to complete more slowly than usual, or to throw an unexpected exception. This effect may support a denial of service attack. The issue is patched in version 1.14.2. There are a few available workarounds. Users may rate limit input parsing, limit the size of inputs based on system resources, and/or implement thread watchdogs to cap and timeout parse runtimes.

<p>Publish Date: 2021-08-18

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2021-37714>CVE-2021-37714</a></p>

</p>

<p></p>

### CVSS 3 Score Details (<b>7.5</b>)

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

<p></p>

### Suggested Fix

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://jsoup.org/news/release-1.14.2">https://jsoup.org/news/release-1.14.2</a></p>

<p>Release Date: 2021-08-18</p>

<p>Fix Resolution: 1.14.2</p>

</p>

<p></p>

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

</details><details>

<summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> CVE-2022-36033</summary>

### Vulnerable Library - <b>jsoup-1.9.2.jar</b></p>

<p>jsoup HTML parser</p>

<p>Path to dependency file: /pom.xml</p>

<p>Path to vulnerable library: /home/wss-scanner/.m2/repository/org/jsoup/jsoup/1.9.2/jsoup-1.9.2.jar,/lib/org/jsoup/jsoup/1.9.2/jsoup-1.9.2.jar</p>

<p>

Dependency Hierarchy:

- :x: **jsoup-1.9.2.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/MendDemo/PoiMakia/commit/47d070b80133c8fe80f3796674688596a612e8dd">47d070b80133c8fe80f3796674688596a612e8dd</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

<p></p>

### Vulnerability Details

<p>

jsoup is a Java HTML parser, built for HTML editing, cleaning, scraping, and cross-site scripting (XSS) safety. jsoup may incorrectly sanitize HTML including `javascript:` URL expressions, which could allow XSS attacks when a reader subsequently clicks that link. If the non-default `SafeList.preserveRelativeLinks` option is enabled, HTML including `javascript:` URLs that have been crafted with control characters will not be sanitized. If the site that this HTML is published on does not set a Content Security Policy, an XSS attack is then possible. This issue is patched in jsoup 1.15.3. Users should upgrade to this version. Additionally, as the unsanitized input may have been persisted, old content should be cleaned again using the updated version. To remediate this issue without immediately upgrading: - disable `SafeList.preserveRelativeLinks`, which will rewrite input URLs as absolute URLs - ensure an appropriate [Content Security Policy](https://developer.mozilla.org/en-US/docs/Web/HTTP/CSP) is defined. (This should be used regardless of upgrading, as a defence-in-depth best practice.)

<p>Publish Date: 2022-08-29

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2022-36033>CVE-2022-36033</a></p>

</p>

<p></p>

### CVSS 3 Score Details (<b>6.1</b>)

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Changed

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: Low

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

<p></p>

### Suggested Fix

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/jhy/jsoup/security/advisories/GHSA-gp7f-rwcx-9369">https://github.com/jhy/jsoup/security/advisories/GHSA-gp7f-rwcx-9369</a></p>

<p>Release Date: 2022-08-29</p>

<p>Fix Resolution: 1.15.3</p>

</p>

<p></p>

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

</details>

|

non_process

|

jsoup jar vulnerabilities highest severity is vulnerable library jsoup jar jsoup html parser path to dependency file pom xml path to vulnerable library home wss scanner repository org jsoup jsoup jsoup jar lib org jsoup jsoup jsoup jar found in head commit a href vulnerabilities cve severity cvss dependency type fixed in jsoup version remediation available high jsoup jar direct medium jsoup jar direct details cve vulnerable library jsoup jar jsoup html parser path to dependency file pom xml path to vulnerable library home wss scanner repository org jsoup jsoup jsoup jar lib org jsoup jsoup jsoup jar dependency hierarchy x jsoup jar vulnerable library found in head commit a href found in base branch master vulnerability details jsoup is a java library for working with html those using jsoup versions prior to to parse untrusted html or xml may be vulnerable to dos attacks if the parser is run on user supplied input an attacker may supply content that causes the parser to get stuck loop indefinitely until cancelled to complete more slowly than usual or to throw an unexpected exception this effect may support a denial of service attack the issue is patched in version there are a few available workarounds users may rate limit input parsing limit the size of inputs based on system resources and or implement thread watchdogs to cap and timeout parse runtimes publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution step up your open source security game with mend cve vulnerable library jsoup jar jsoup html parser path to dependency file pom xml path to vulnerable library home wss scanner repository org jsoup jsoup jsoup jar lib org 
jsoup jsoup jsoup jar dependency hierarchy x jsoup jar vulnerable library found in head commit a href found in base branch master vulnerability details jsoup is a java html parser built for html editing cleaning scraping and cross site scripting xss safety jsoup may incorrectly sanitize html including javascript url expressions which could allow xss attacks when a reader subsequently clicks that link if the non default safelist preserverelativelinks option is enabled html including javascript urls that have been crafted with control characters will not be sanitized if the site that this html is published on does not set a content security policy an xss attack is then possible this issue is patched in jsoup users should upgrade to this version additionally as the unsanitized input may have been persisted old content should be cleaned again using the updated version to remediate this issue without immediately upgrading disable safelist preserverelativelinks which will rewrite input urls as absolute urls ensure an appropriate is defined this should be used regardless of upgrading as a defence in depth best practice publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction required scope changed impact metrics confidentiality impact low integrity impact low availability impact none for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution step up your open source security game with mend

| 0

|

18,564

| 24,555,759,759

|

IssuesEvent

|

2022-10-12 15:44:44

|

GoogleCloudPlatform/fda-mystudies

|

https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies

|

closed

|

[Mobile apps] Study activities screen > UI issue

|

Bug P2 iOS Android Process: Fixed Process: Tested QA Process: Tested dev

|

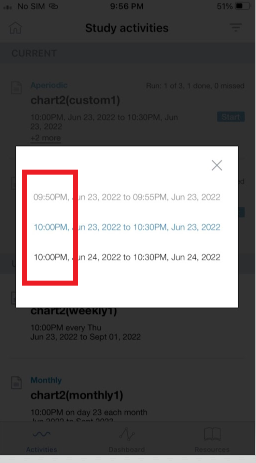

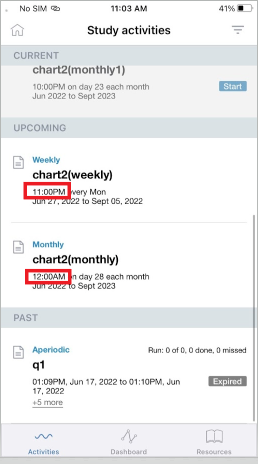

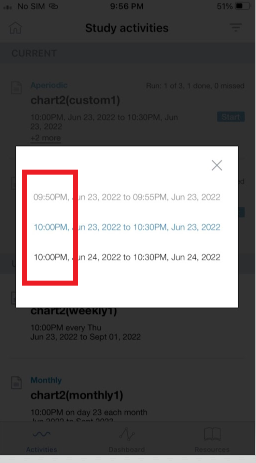

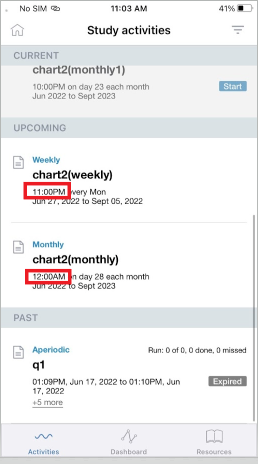

Study activities screen > UI issue > There should be a gap between time and time unit (AM or PM)

|

3.0

|

[Mobile apps] Study activities screen > UI issue - Study activities screen > UI issue > There should be a gap between time and time unit (AM or PM)

|

process

|

study activities screen ui issue study activities screen ui issue there should be a gap between time and time unit am or pm

| 1

|

2,607

| 5,367,273,329

|

IssuesEvent

|

2017-02-22 03:23:44

|

jlm2017/jlm-video-subtitles

|

https://api.github.com/repos/jlm2017/jlm-video-subtitles

|

closed

|

[subtitles] [fr] « La France est le pays européen qui utilise le plus de pesticides »

|

Language: French Process: [6] Approved

|

# Video title

« La France est le pays européen qui utilise le plus de pesticides »

# URL

https://www.youtube.com/watch?v=Ju3vITxmjQs&t=4s

# Youtube subtitles language

French

# Duration

1:09

# Subtitles URL

https://www.youtube.com/timedtext_editor?bl=vmp&lang=fr&ref=player&action_mde_edit_form=1&ui=hd&v=Ju3vITxmjQs&tab=captions

|

1.0

|

[subtitles] [fr] « La France est le pays européen qui utilise le plus de pesticides » - # Video title

« La France est le pays européen qui utilise le plus de pesticides »

# URL

https://www.youtube.com/watch?v=Ju3vITxmjQs&t=4s

# Youtube subtitles language

French

# Duration

1:09

# Subtitles URL

https://www.youtube.com/timedtext_editor?bl=vmp&lang=fr&ref=player&action_mde_edit_form=1&ui=hd&v=Ju3vITxmjQs&tab=captions

|

process

|

« la france est le pays européen qui utilise le plus de pesticides » video title « la france est le pays européen qui utilise le plus de pesticides » url youtube subtitles language french duration subtitles url

| 1

|

27,660

| 5,075,252,152

|

IssuesEvent

|

2016-12-27 18:36:14

|

phingofficial/phing

|

https://api.github.com/repos/phingofficial/phing

|

closed

|

Repeated prompt when Input task is used within Then block (Trac #1006)

|

defect Migrated from Trac phing-core

|

Using an input task within the `then` block of an `if` statement causes the text or message to be printed four times instead of one.

Some exploration indicates that the addText method is being called four times, so it appends the text to itself.
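
The accumulation described above can be illustrated with a toy model. This is a Python sketch rather than Phing's PHP source: `ToyInputTask`, `add_text`, and `build_prompt` are hypothetical names, and the only behaviour assumed is what the report states, namely that each call appends to the stored message.

```python
class ToyInputTask:
    """Hypothetical stand-in for an input task whose text handler appends."""

    def __init__(self):
        self.message = ""

    def add_text(self, text):
        # Appending (rather than assigning) means every extra call
        # repeats the prompt text once more.
        self.message += text

    def prompt(self, valid_args, default):
        return f"{self.message}({'/'.join(valid_args)}) [{default}]"


def build_prompt(calls):
    """Simulate the text handler being invoked `calls` times."""
    task = ToyInputTask()
    for _ in range(calls):
        task.add_text("Phing?")
    return task.prompt(["yes", "no"], "no")
```

With one call the sketch yields the expected prompt; with four calls it yields the repeated prompt shown under "Actual" below.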

---

build.xml:

``` text

<?xml version="1.0"?>

<project name="test" default="test">

<target name="test">

<if>

<equals arg1="true" arg2="true" />

<then>

<input propertyname="test" defaultValue="no" validargs="yes,no">Phing?</input>

</then>

</if>

</target>

</project>

```

Expected:

``` text

Phing?(yes/no) [no]

```

Actual:

``` text

Phing?Phing?Phing?Phing?(yes/no) [no]

```

Migrated from https://www.phing.info/trac/ticket/1006

``` json

{

"status": "new",

"changetime": "2013-06-14T23:43:47",

"description": "Using an input task within the `then` block of an `if` statement causes the text or message to be printed four times instead of one.\n\nSome exploration indicates that the addText method is being called four times, so it appends the text to itself.\n\n----\nbuild.xml:\n{{{\n<?xml version=\"1.0\"?>\n<project name=\"test\" default=\"test\">\n\n\t<target name=\"test\">\n\t\t<if>\n\t\t\t<equals arg1=\"true\" arg2=\"true\" />\n\t\t\t<then>\n\t\t\t\t<input propertyname=\"test\" defaultValue=\"no\" validargs=\"yes,no\">Phing?</input>\n\t\t\t</then>\n\t\t</if>\n\t</target>\n\n</project>\n}}}\n\nExpected:\n{{{\nPhing?(yes/no) [no]\n}}}\n\nActual:\n{{{\nPhing?Phing?Phing?Phing?(yes/no) [no]\n}}}",

"reporter": "doug.fitzmaurice@ents24.com",

"cc": "",

"resolution": "",

"_ts": "1371253427358917",

"component": "phing-core",

"summary": "Repeated prompt when Input task is used within Then block",

"priority": "tbd",

"keywords": "",

"version": "2.5.0",

"time": "2013-04-19T12:42:56",

"milestone": "",

"owner": "mrook",

"type": "defect"

}

```

|

1.0

|

Repeated prompt when Input task is used within Then block (Trac #1006) - Using an input task within the `then` block of an `if` statement causes the text or message to be printed four times instead of one.

Some exploration indicates that the addText method is being called four times, so it appends the text to itself.

---

build.xml:

``` text

<?xml version="1.0"?>

<project name="test" default="test">

<target name="test">

<if>

<equals arg1="true" arg2="true" />

<then>

<input propertyname="test" defaultValue="no" validargs="yes,no">Phing?</input>

</then>

</if>

</target>

</project>

```

Expected:

``` text

Phing?(yes/no) [no]

```

Actual:

``` text

Phing?Phing?Phing?Phing?(yes/no) [no]

```

Migrated from https://www.phing.info/trac/ticket/1006

``` json

{

"status": "new",

"changetime": "2013-06-14T23:43:47",

"description": "Using an input task within the `then` block of an `if` statement causes the text or message to be printed four times instead of one.\n\nSome exploration indicates that the addText method is being called four times, so it appends the text to itself.\n\n----\nbuild.xml:\n{{{\n<?xml version=\"1.0\"?>\n<project name=\"test\" default=\"test\">\n\n\t<target name=\"test\">\n\t\t<if>\n\t\t\t<equals arg1=\"true\" arg2=\"true\" />\n\t\t\t<then>\n\t\t\t\t<input propertyname=\"test\" defaultValue=\"no\" validargs=\"yes,no\">Phing?</input>\n\t\t\t</then>\n\t\t</if>\n\t</target>\n\n</project>\n}}}\n\nExpected:\n{{{\nPhing?(yes/no) [no]\n}}}\n\nActual:\n{{{\nPhing?Phing?Phing?Phing?(yes/no) [no]\n}}}",

"reporter": "doug.fitzmaurice@ents24.com",

"cc": "",

"resolution": "",

"_ts": "1371253427358917",

"component": "phing-core",

"summary": "Repeated prompt when Input task is used within Then block",

"priority": "tbd",

"keywords": "",

"version": "2.5.0",

"time": "2013-04-19T12:42:56",

"milestone": "",

"owner": "mrook",

"type": "defect"

}

```

|

non_process

|

repeated prompt when input task is used within then block trac using an input task within the then block of an if statement causes the text or message to be printed four times instead of one some exploration indicates that the addtext method is being called four times so it appends the text to itself build xml text phing expected text phing yes no actual text phing phing phing phing yes no migrated from json status new changetime description using an input task within the then block of an if statement causes the text or message to be printed four times instead of one n nsome exploration indicates that the addtext method is being called four times so it appends the text to itself n n nbuild xml n n n n n t n t t n t t t n t t t n t t t t phing n t t t n t t n t n n n n nexpected n nphing yes no n n nactual n nphing phing phing phing yes no n reporter doug fitzmaurice com cc resolution ts component phing core summary repeated prompt when input task is used within then block priority tbd keywords version time milestone owner mrook type defect

| 0

|

233,485

| 7,698,238,764

|

IssuesEvent

|

2018-05-18 22:06:29

|

trailofbits/echidna

|

https://api.github.com/repos/trailofbits/echidna

|

opened

|

Inconsistent test results in a simple contract (2)

|

bug help wanted high-priority

|

In this simple contract:

```solidity

contract A {}

contract B {

function f() {

return;

}

}

contract TEST {

A[] private xs;

B b = new B();

function g() public {

return;

}

//function add() {

// xs.push(new A());

//}

function echidna_true() returns (bool) {

b.f();

return true;

}

}

```

the `echidna_true` test cannot fail, and Echidna works as expected. However, it reports a failed test **when you uncomment the `add()` function**. For instance:

```bash

$ echidna-test test.sol test.sol:TEST

━━━ test.sol ━━━

✗ "echidna_true" failed after 2 tests.

│ Call sequence: add();

✗ 1 failed.

```

|

1.0

|

Inconsistent test results in a simple contract (2) - In this simple contract:

```solidity

contract A {}

contract B {

function f() {

return;

}

}

contract TEST {

A[] private xs;

B b = new B();

function g() public {

return;

}

//function add() {

// xs.push(new A());

//}

function echidna_true() returns (bool) {

b.f();

return true;

}

}

```

the `echidna_true` test cannot fail, and Echidna works as expected. However, it reports a failed test **when you uncomment the `add()` function**. For instance:

```bash

$ echidna-test test.sol test.sol:TEST

━━━ test.sol ━━━

✗ "echidna_true" failed after 2 tests.

│ Call sequence: add();

✗ 1 failed.

```

|

non_process

|

inconsistent test results in a simple contract in this simple contract solidity contract a contract b function f return contract test a private xs b b new b function g public return function add xs push new a function echidna true returns bool b f return true the echidna true test cannot fail and echida works as expected however it reports a failed test when you uncomment the add function for instance bash echidna test test sol test sol test ━━━ test sol ━━━ ✗ echidna true failed after tests │ call sequence add ✗ failed

| 0

|

17,080

| 12,219,420,058

|

IssuesEvent

|

2020-05-01 21:41:28

|

enarx/enarx

|

https://api.github.com/repos/enarx/enarx

|

opened

|

Investigate a 'cargo-make' feature that tests all combinations of features

|

infrastructure research

|

Some of our crates conditionally compile code depending on whether a feature flag is enabled.

`cargo-make` can enable all features or certain features, but can it test all different combinations of features automatically?
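
Whatever tool ends up driving it, the enumeration itself is small. The following Python sketch is not a `cargo-make` feature; it only shows what "all combinations" means in practice. The helper names are hypothetical, and it assumes each crate's feature list is supplied by hand. The `--no-default-features` and `--features` flags are standard `cargo test` options.

```python
from itertools import combinations


def feature_combinations(features):
    """Return every subset of `features`, from the empty set to all of them."""
    ordered = sorted(features)
    subsets = []
    for size in range(len(ordered) + 1):
        subsets.extend(combinations(ordered, size))
    return subsets


def cargo_test_commands(features):
    """Build one `cargo test` argument list per feature subset."""
    commands = []
    for subset in feature_combinations(features):
        cmd = ["cargo", "test", "--no-default-features"]
        if subset:
            cmd += ["--features", ",".join(subset)]
        commands.append(cmd)
    return commands
```

The number of commands doubles with every added feature (2^n for n features), so this is only practical for crates with a handful of flags.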

|

1.0

|

Investigate a 'cargo-make' feature that tests all combinations of features - Some of our crates conditionally compile code depending on whether a feature flag is enabled.

`cargo-make` can enable all features or certain features, but can it test all different combinations of features automatically?

|

non_process

|

investigate a cargo make feature that tests all combinations of features some of our crates conditionally compile code depending on if a feature flag is enabled cargo make can enable all features or certain features but can it test all different combinations of features automatically

| 0

|

9,489

| 12,483,529,912

|

IssuesEvent

|

2020-05-30 09:52:46

|

darktable-org/darktable

|

https://api.github.com/repos/darktable-org/darktable

|

closed

|

export size not correct

|

bug: pending difficulty: hard priority: high reproduce: confirmed scope: image processing

|

<!-- IMPORTANT

Bug reports that do not make an effort to help the developers will be closed without notice.

Make sure that this bug has not already been opened and/or closed by searching the issues on GitHub, as duplicate bug reports will be closed.

A bug report simply stating that Darktable crashes is unhelpful, so please fill in most of the items below and provide detailed information.

-->

**Describe the bug**

An attempt to fix this issue has been made in #5022. But this has introduced a regression.

**To Reproduce**

$ cd src/tests/integration

$ darktable-cli --width 2048 --height 2048 --hq true images/mire1.cr2 0001-exposure/exposure.xmp output.png

$ exiv2 output.png | grep -i "Image size"

Image size : 2049 x 1365

**Expected behavior**

After reverting c12e3d3 and d881b85,

the export size is correct: 2048 x 1364

$ exiv2 output.png | grep -i "Image size"

Image size : 2048 x 1364
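
The property being violated is that neither output dimension may exceed the requested `--width`/`--height`. The Python sketch below is not darktable's code; `fit_within` is a hypothetical helper and the 6000 x 4000 source size is made up. It shows one way to guarantee that property by using integer floor division. It does not model crops or other pipeline steps, so it will not necessarily reproduce the exact 1364 height reported here.

```python
def fit_within(src_w, src_h, max_w, max_h):
    """Scale (src_w, src_h) to fit inside (max_w, max_h), keeping aspect ratio.

    Integer floor division is used so that rounding can never push either
    dimension past the requested limit.
    """
    # Compare the scale factors max_w/src_w and max_h/src_h without floats.
    if max_w * src_h <= max_h * src_w:
        # Width is the limiting side.
        out_w = max_w
        out_h = max(1, (src_h * max_w) // src_w)
    else:
        # Height is the limiting side.
        out_h = max_h
        out_w = max(1, (src_w * max_h) // src_h)
    return out_w, out_h
```

Note that this sketch also upscales images smaller than the box; a real exporter would need a separate decision about that case.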

**Platform (please complete the following information):**

- Darktable Version: master

- OS: GNU/Linux

**Additional context**

We should either revert commits c12e3d3 and d881b85 or have a fix for 3.2.

|

1.0

|

export size not correct - <!-- IMPORTANT

Bug reports that do not make an effort to help the developers will be closed without notice.

Make sure that this bug has not already been opened and/or closed by searching the issues on GitHub, as duplicate bug reports will be closed.

A bug report simply stating that Darktable crashes is unhelpful, so please fill in most of the items below and provide detailed information.

-->

**Describe the bug**

An attempt to fix this issue has been made in #5022. But this has introduced a regression.

**To Reproduce**

$ cd src/tests/integration

$ darktable-cli --width 2048 --height 2048 --hq true images/mire1.cr2 0001-exposure/exposure.xmp output.png

$ exiv2 output.png | grep -i "Image size"

Image size : 2049 x 1365

**Expected behavior**

After reverting c12e3d3 and d881b85,

the export size is correct: 2048 x 1364

$ exiv2 output.png | grep -i "Image size"

Image size : 2048 x 1364

**Platform (please complete the following information):**

- Darktable Version: master

- OS: GNU/Linux

**Additional context**

We should either revert commits c12e3d3 and d881b85 or have a fix for 3.2.

|

process

|

export size not correct important bug reports that do not make an effort to help the developers will be closed without notice make sure that this bug has not already been opened and or closed by searching the issues on github as duplicate bug reports will be closed a bug report simply stating that darktable crashes is unhelpful so please fill in most of the items below and provide detailed information describe the bug an attempt to fix this issue has been made in but this has introduced a regression to reproduce cd src tests integration darktable cli width height hq true images exposure exposure xmp output png output png grep i image size image size x expected behavior reverting and the export size is correct and x output png grep i image size image size x platform please complete the following information darktable version master os gnu linux additional context we should either revert commits and or have a fix for

| 1

|

1,418

| 2,514,194,332

|

IssuesEvent

|

2015-01-15 09:10:15

|

georchestra/georchestra

|

https://api.github.com/repos/georchestra/georchestra

|

opened

|

doc - native libs

|

1 - Ready enhancement priority-top

|

Quoting gaston:

```

10:06 < gaston> finalement entre https://github.com/georchestra/georchestra/blob/master/geoserver/NATIVE_LIBS.md et

https://github.com/georchestra/georchestra/blob/master/doc/setup/native_libs.md...

10:07 < gaston> faut suivre lequel ? :)

```

|

1.0

|

doc - native libs - Quoting gaston:

```

10:06 < gaston> finalement entre https://github.com/georchestra/georchestra/blob/master/geoserver/NATIVE_LIBS.md et

https://github.com/georchestra/georchestra/blob/master/doc/setup/native_libs.md...

10:07 < gaston> faut suivre lequel ? :)

```

|

non_process

|

doc native libs quoting gaston finalement entre et faut suivre lequel

| 0

|

5,962

| 8,785,529,783

|

IssuesEvent

|

2018-12-20 13:17:37

|

lutraconsulting/qgis-crayfish-plugin

|

https://api.github.com/repos/lutraconsulting/qgis-crayfish-plugin

|

closed

|

Export mesh&datasets to vector format

|

enhancement processing

|

We need to add a processing algorithm to the Crayfish plugin to be able to export raw MDAL data to GeoPackage.

MDAL supports data defined on vertices and faces (https://github.com/qgis/QGIS-Enhancement-Proposals/issues/119). It also defines scalar data and vector data (x,y).

relevant docs: https://github.com/qgis/QGIS/blob/master/src/core/mesh/qgsmeshlayer.h

https://github.com/qgis/QGIS/blob/master/src/core/mesh/qgsmeshdataprovider.h

test data: https://github.com/lutraconsulting/MDAL/tree/master/tests (2dm + ascii_dat)

a) For face datasets, one feature in the vector layer will be one face. The attributes of the face will be the dataset values at a particular timestep. For example, if we have the datasets "Depth" and "Velocity",

the attributes will be

Depth = 1

Velocity_x = 1.0

Velocity_y = 2.0

....

b) For vertex datasets, the attributes will be the same, but the exported features will be nodes (vertices).

Note: the user will be able to export just one (selected) timestep. To export more or all timesteps, it is best to run this algorithm in batch mode. Some datasets have just one value (e.g. Depth/Maximums); in this case it does not matter which timestep the user selects, as the dataset is always exported.

-------

As for graphical side, one will be able to select

- INPUT: mesh layer

- INPUT: dataset groups (multichoice, ideally "all/none" button)

- INPUT: a timestep (combo box)

and

- OUTPUT: geopackage filename where to put export or "in-memory" option

The input/output UX should be in line with the most used processing algorithms so the user knows where to click.

-----

```

m = iface.activeLayer()

dp = m.dataProvider()

dp

<qgis._core.QgsMeshDataProvider object at 0x12d194678>

mesh = QgsMesh()

dp.populateMesh(mesh)

mesh.faceCount()

1600

mesh.face(0)

[34, 33, 0, 1]

mesh.vertexCount()

1683

mesh.vertex(0)

<QgsPoint: PointZ (20 10 0)>

dp.datasetGroupCount()

1

groupMeta = dp.datasetGroupMetadata(0)

groupMeta.isScalar()

False

dp.datasetCount(QgsMeshDatasetIndex(0))

27

values = dp.datasetValues(QgsMeshDatasetIndex(0, 2), 0, 300)

value = values.value(0)

value

<qgis._core.QgsMeshDatasetValue object at 0x12d194948>

value.x()

nan

value.y()

nan

```
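
The attribute layout described in (a) and (b) can be sketched without QGIS at all. The Python below is an illustration only: `feature_attributes` and the shape of `groups` are hypothetical, and it assumes the values for the selected timestep have already been read from the data provider (for example with calls like those in the console session above).

```python
def feature_attributes(groups, index):
    """Build the attribute dict for one exported feature (face or vertex).

    `groups` maps a dataset group name to a dict with:
      - "is_scalar": bool
      - "values": list of floats (scalar) or (x, y) tuples (vector),
        already taken from the selected timestep.
    """
    attrs = {}
    for name, group in groups.items():
        value = group["values"][index]
        if group["is_scalar"]:
            # Scalar groups map to a single field named after the group.
            attrs[name] = value
        else:
            # Vector groups are split into <name>_x and <name>_y fields.
            x, y = value
            attrs[f"{name}_x"] = x
            attrs[f"{name}_y"] = y
    return attrs


example_groups = {
    "Depth": {"is_scalar": True, "values": [1.0, 0.5]},
    "Velocity": {"is_scalar": False, "values": [(1.0, 2.0), (0.0, 0.3)]},
}
```

Calling `feature_attributes(example_groups, 0)` gives the `Depth`, `Velocity_x`, and `Velocity_y` fields from the example above; the same function serves faces and vertices, since only the geometry differs.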

|

1.0

|

Export mesh&datasets to vector format - We need to add a processing algorithm to the Crayfish plugin to be able to export raw MDAL data to GeoPackage.

MDAL supports data defined on vertices and faces (https://github.com/qgis/QGIS-Enhancement-Proposals/issues/119). It also defines scalar data and vector data (x,y).

relevant docs: https://github.com/qgis/QGIS/blob/master/src/core/mesh/qgsmeshlayer.h

https://github.com/qgis/QGIS/blob/master/src/core/mesh/qgsmeshdataprovider.h

test data: https://github.com/lutraconsulting/MDAL/tree/master/tests (2dm + ascii_dat)

a) For face datasets, one feature in the vector layer will be one face. The attributes of the face will be the dataset values at a particular timestep. For example, if we have the datasets "Depth" and "Velocity",

the attributes will be

Depth = 1

Velocity_x = 1.0

Velocity_y = 2.0

....

b) For vertex datasets, the attributes will be the same, but the exported features will be nodes (vertices).

Note: the user will be able to export just one (selected) timestep. To export more or all timesteps, it is best to run this algorithm in batch mode. Some datasets have just one value (e.g. Depth/Maximums); in this case it does not matter which timestep the user selects, as the dataset is always exported.

-------

As for graphical side, one will be able to select

- INPUT: mesh layer

- INPUT: dataset groups (multichoice, ideally "all/none" button)

- INPUT: a timestep (combo box)

and

- OUTPUT: geopackage filename where to put export or "in-memory" option

The input/output UX should be in line with the most used processing algorithms so the user knows where to click.

-----

```

m = iface.activeLayer()

dp = m.dataProvider()

dp

<qgis._core.QgsMeshDataProvider object at 0x12d194678>

mesh = QgsMesh()

dp.populateMesh(mesh)

mesh.faceCount()

1600

mesh.face(0)

[34, 33, 0, 1]

mesh.vertexCount()

1683

mesh.vertex(0)

<QgsPoint: PointZ (20 10 0)>

dp.datasetGroupCount()

1

groupMeta = dp.datasetGroupMetadata(0)

groupMeta.isScalar()

False

dp.datasetCount(QgsMeshDatasetIndex(0))

27

values = dp.datasetValues(QgsMeshDatasetIndex(0, 2), 0, 300)

value = values.value(0)

value

<qgis._core.QgsMeshDatasetValue object at 0x12d194948>

value.x()

nan

value.y()

nan

```

|

process

|

export mesh datasets to vector format we need to add processing algorithm in crayfish plugin to be able to export raw mdal data to geopackage mdal supports data defined on vertices and faces also it defines scalar data and vector data x y relevant docs test data ascii dat a for face datasets one feature in the vector layer will be one face attributes of the face will be dataset values in particular timestep e g let say we have dataset depth and velocity so attributes will be depth velocity x velocity y b for vertex datasets the attributes will be the same but the exported features will be nodes vertices note user will be able to export just one selected timestep if one wants to export more all best to run this algorithm in batch mode note that some datasets have just value e g depth maximums in this case it does not matter which timestep user selects it is always exported as for graphical side one will be able to select input mesh layer input dataset groups multichoice ideally all none button input a timestep combo box and output geopackage filename where to put export or in memory option the input output ux should be in line with most used processing algorithms so user knows where to click m iface activelayer dp m dataprovider dp mesh qgsmesh dp populatemesh mesh mesh facecount mesh face mesh vertexcount mesh vertex dp datasetgroupcount groupmeta dp datasetgroupmetadata groupmeta isscalar false dp datasetcount qgsmeshdatasetindex values dp datasetvalues qgsmeshdatasetindex value values value value value x nan value y nan

| 1

|

313,420

| 9,561,330,726

|

IssuesEvent

|

2019-05-03 22:45:07

|

AugurProject/augur

|

https://api.github.com/repos/AugurProject/augur

|

closed

|

Update v1 docs with recent Augur Node changes

|

Chore Priority: Medium

|

This is mostly for changes made to `getUserTradingPositions` and `getReportingFees`:

https://github.com/AugurProject/augur-node/pull/821

https://github.com/AugurProject/augur-node/pull/834

https://github.com/AugurProject/augur-node/pull/840

https://github.com/AugurProject/augur-node/pull/846

https://github.com/AugurProject/augur-node/pull/854

Also includes a few other miscellaneous recent changes to Augur Node.

|

1.0

|

Update v1 docs with recent Augur Node changes - This is mostly for changes made to `getUserTradingPositions` and `getReportingFees`:

https://github.com/AugurProject/augur-node/pull/821

https://github.com/AugurProject/augur-node/pull/834

https://github.com/AugurProject/augur-node/pull/840

https://github.com/AugurProject/augur-node/pull/846

https://github.com/AugurProject/augur-node/pull/854

Also includes a few other miscellaneous recent changes to Augur Node.

|

non_process

|

update docs with recent augur node changes this is mostly for changes made to getusertradingpositions and getreportingfees also includes a few other miscellaneous recent changes to augur node

| 0

|

59,680

| 24,849,392,240

|

IssuesEvent

|

2022-10-26 18:38:50

|

cityofaustin/atd-data-tech

|

https://api.github.com/repos/cityofaustin/atd-data-tech

|

closed

|

[Enhancement] Address UI shortcomings with status updates

|

Type: Bug Report Service: Dev Need: 2-Should Have Type: Enhancement Product: Moped Project: Moped v2.0

|

- [x] add linebreaks to project types and project partners

- [x] add border radius to highlighted div

- [x] autofocus inputs

- [x] Set cursor to "pointer" when hovering over editable field

- [x] fix up status update highlight to not highlight the label

- [x] Display ` - ` instead of `None` when a field is blank

<img width="1451" alt="Screen Shot 2022-10-06 at 2 55 23 PM" src="https://user-images.githubusercontent.com/14793120/194395770-4951a601-0aa4-4f46-8464-037653125c2c.png">

|

1.0

|

[Enhancement] Address UI shortcomings with status updates - - [x] add linebreaks to project types and project partners

- [x] add border radius to highlighted div

- [x] autofocus inputs

- [x] Set cursor to "pointer" when hovering over editable field

- [x] fix up status update highlight to not highlight the label

- [x] Display ` - ` instead of `None` when a field is blank

<img width="1451" alt="Screen Shot 2022-10-06 at 2 55 23 PM" src="https://user-images.githubusercontent.com/14793120/194395770-4951a601-0aa4-4f46-8464-037653125c2c.png">

|

non_process

|

address ui shortcomings with status updates add linebreaks to project types and project partners add border radius to highlighted div autofocus inputs set cursor to pointer when hovering over editable field fix up status update highlight to not highlight the label display instead of none when a field is blank img width alt screen shot at pm src

| 0

|

825,212

| 31,279,090,152

|

IssuesEvent

|

2023-08-22 08:26:44

|

bitcoin-dev-project/sim-ln

|

https://api.github.com/repos/bitcoin-dev-project/sim-ln

|

opened

|

Feature: Generate Activity From Graph Topology

|

feature Medium Priority

|

Rather than require a user to provide a description of activity, provide a mode that will randomly generate activity based on the graph + some heuristics, eg:

* Number of channels a node has

* Size of channels

This is primarily aimed to serve the "regtest" case where the person running the simulation has access to all of the nodes, and can just easily spin up the simulation to run random activity.
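
As a rough illustration of the two heuristics listed above, here is a Python sketch. It is not sim-ln code: `pick_payment` and the `nodes` layout are hypothetical, and it assumes the graph has already been reduced to a list of channel capacities per node.

```python
import random


def pick_payment(nodes, rng):
    """Pick (source, destination, amount_sat) using simple topology heuristics.

    `nodes` maps a node name to a list of its channel capacities in satoshis.
    Requires at least two nodes, each with at least one channel.
    """
    names = list(nodes)
    # Heuristic 1: nodes with more channels send payments more often.
    weights = [len(nodes[name]) for name in names]
    source = rng.choices(names, weights=weights, k=1)[0]
    # Any other node may receive the payment.
    destination = rng.choice([name for name in names if name != source])
    # Heuristic 2: the amount is capped by the source's largest channel.
    amount_sat = rng.randint(1, max(nodes[source]))
    return source, destination, amount_sat
```

Passing a seeded `random.Random` instance as `rng` keeps a simulation run reproducible, which is useful when comparing regtest runs.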

|

1.0

|

Feature: Generate Activity From Graph Topology - Rather than require a user to provide a description of activity, provide a mode that will randomly generate activity based on the graph + some heuristics, eg:

* Number of channels a node has

* Size of channels

This is primarily aimed to serve the "regtest" case where the person running the simulation has access to all of the nodes, and can just easily spin up the simulation to run random activity.

|

non_process

|

feature generate activity from graph topology rather than require a user to provide a description of activity provide a mode that will randomly generate activity based on the graph some heuristics eg number of channels a node has size of channels this is primarily aimed to serve the regtest case where the person running the simulation has access to all of the nodes and can just easily spin up the simulation to run random activity

| 0

|

2,805

| 5,738,493,411

|

IssuesEvent

|

2017-04-23 04:47:19

|

g8os/core0

|

https://api.github.com/repos/g8os/core0

|

closed

|

Installer for G8OS

|

process_wontfix

|

We need an installer that prepares the disks and filesystem on the host machine.

The reason is that the G8OS FS needs to reserve some disk space to store its backend files.

Also, now that we will have to include extra tools beyond those in the initramfs (qemu/kvm, libvirt), a dedicated space on disk for core0 to store these files is also required.

|

1.0

|

Installer for G8OS - We need an installer that prepares the disks and filesystem on the host machine.

The reason is that the G8OS FS needs to reserve some disk space to store its backend files.

Also, now that we will have to include extra tools beyond those in the initramfs (qemu/kvm, libvirt), a dedicated space on disk for core0 to store these files is also required.

|

process

|

installer for we need an installer that prepare the disks and filesystem on the host machine reason for this is the fs needs to reserve some disk space to store its backend files also now that we will have to include extra tools then the one from the initramfs qemu kvm libvirt having a dedicated space for the on a disk to store these file is also required

| 1

|

168,524

| 14,163,644,763

|

IssuesEvent

|

2020-11-12 02:54:20

|

pi-fatec-bd/semaforo-consumidor

|

https://api.github.com/repos/pi-fatec-bd/semaforo-consumidor

|

closed

|

How the Score Is Calculated

|

documentation front-end

|

The user should be able to understand how the score is calculated from the home page.

**Related requirement:** R19

**Branch name:** explicar-score

|

1.0

|

How the Score Is Calculated - The user should be able to understand how the score is calculated from the home page.

**Related requirement:** R19

**Branch name:** explicar-score

|

non_process

|

como é o calculo do score o usuário deve entender como é calculado o score através da página inicial requisito relacionado nome da branch explicar score

| 0

|

445,763

| 31,280,890,117

|

IssuesEvent

|

2023-08-22 09:30:40

|

openstack-k8s-operators/install_yamls

|

https://api.github.com/repos/openstack-k8s-operators/install_yamls

|

closed

|

Getting kubectl: command not found error

|

bug documentation

|

I get the error below when running the `make openstack` command using CRC:

~/install_yamls/out/operator/baremetal-operator

~/install_yamls/out/operator/baremetal-operator ~/install_yamls/out/operator/baremetal-operator

tools/deploy.sh: line 213: kubectl: command not found

make: *** [Makefile:384: crc_bmo_setup] Error 127

|

1.0

|

Getting kubectl: command not found error - I get the error below when running the `make openstack` command using CRC:

~/install_yamls/out/operator/baremetal-operator

~/install_yamls/out/operator/baremetal-operator ~/install_yamls/out/operator/baremetal-operator

tools/deploy.sh: line 213: kubectl: command not found

make: *** [Makefile:384: crc_bmo_setup] Error 127

|

non_process

|

getting kubectl command not found error getting below issue when running make openstack command using crc install yamls out operator baremetal operator install yamls out operator baremetal operator install yamls out operator baremetal operator tools deploy sh line kubectl command not found make error

| 0

|

13,635

| 16,255,150,816

|

IssuesEvent

|

2021-05-08 03:06:57

|

tikv/tikv

|

https://api.github.com/repos/tikv/tikv

|

closed

|

copr: Roadmap to chunk-based Enum/Set support in TiKV

|

sig/coprocessor type/enhancement

|

## Goal

Implement RFC https://github.com/tikv/rfcs/pull/57

## Roadmap

- [x] Add Enum/Set type #8849

- [x] Add ChunkedVecEnum/ChunkedVecSet type #8948 #8988

- [x] Add enum/set into ScalarValue and VectorValue #9021

- [x] Implement enum/set related copr functions #9133

- [x] Implement enum/set related aggr functions

- [x] Count #9143

- [x] First #9135

- [x] Sum #9148 #9184

- [x] Avg #9186

- [x] Max/Min #9146 #9184

---

- [ ] Add `elems` in FieldType (tipb)

- [ ] Export `elems` from parser to tipb (tidb)

- [ ] Extract `elems` from FieldType to construct ChunkedVecEnum/ChunkedVecSet

|

1.0

|

copr: Roadmap to chunk-based Enum/Set support in TiKV - ## Goal

Implement RFC https://github.com/tikv/rfcs/pull/57

## Roadmap

- [x] Add Enum/Set type #8849

- [x] Add ChunkedVecEnum/ChunkedVecSet type #8948 #8988

- [x] Add enum/set into ScalarValue and VectorValue #9021

- [x] Implement enum/set related copr functions #9133

- [x] Implement enum/set related aggr functions

- [x] Count #9143

- [x] First #9135

- [x] Sum #9148 #9184

- [x] Avg #9186

- [x] Max/Min #9146 #9184

---

- [ ] Add `elems` in FieldType (tipb)

- [ ] Export `elems` from parser to tipb (tidb)

- [ ] Extract `elems` from FieldType to construct ChunkedVecEnum/ChunkedVecSet

|

process

|

copr roadmap to chunk based enum set support in tikv goal implement rfc roadmap add enum set type add chunkedvecenum chunkedvecset type add enum set into scalarvalue and vectorvalue implement enum set related copr functions implement enum set related aggr functions count first sum avg max min add elems in fieldtype tipb export elems from parser to tipb tidb extract elems from fieldtype to construct chunkedvecenum chunkedvecset

| 1

|

4,836

| 7,726,701,323

|

IssuesEvent

|

2018-05-24 22:14:46

|

nion-software/nionswift

|

https://api.github.com/repos/nion-software/nionswift

|

opened

|

Improve error messages when operations are applied to invalid inputs.

|

f - processing level - easy p2 - high type - bug w4 - ready

|

The "error message" right now is to do nothing.

An alert? Or a notification? Something in the output window?

|

1.0

|

Improve error messages when operations are applied to invalid inputs. - The "error message" right now is to do nothing.

An alert? Or a notification? Something in the output window?

|

process

|

improve error messages when operations are applied to invalid inputs the error message right now is to do nothing an alert or a notification something in the output window

| 1

|

16,490

| 5,240,878,633

|

IssuesEvent

|

2017-01-31 14:22:54

|

jOOQ/jOOQ

|

https://api.github.com/repos/jOOQ/jOOQ

|

closed

|

Oracle types in package are not generated

|

C: Code Generation C: DB: Oracle P: High R: Worksforme T: Defect

|

The jOOQ generator does not generate classes for types inside a package if only the package name is given.

Given a package with the name "per_per". If I tell jOOQ to include this package in the code-generation with

```xml

<includes>per_per</includes>

```

It will create classes for all Stored Procedures inside the package. But the types in the package are ignored.

This behavior stays exactly the same if I use this:

```xml

<includes>per_per(\..*)?</includes>

```

Only if I add each type with name then jOOQ will generate classes.

```xml

<includes>per_per

| TYPE_NAME1

| TYPE_NAME2

|...

</includes>

```

I think it would be a great improvement if I only had to specify the package name and the jOOQ generator included the types and the procedures inside the package.
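
To make the second pattern concrete, here is a minimal sketch of which names `per_per(\..*)?` would cover if the whole name has to match. It is written in Python purely to illustrate the regular expression; jOOQ evaluates include patterns as Java regular expressions, and the candidate names below (including how package-level types might be qualified) are assumptions, not taken from the generator.

```python
import re

# Pattern copied from the issue text. This only illustrates the regex itself,
# not jOOQ's actual matching logic.
INCLUDES = re.compile(r"per_per(\..*)?")

# Hypothetical names: how the generator qualifies package-level types
# is an assumption made for this example.
candidates = [
    "per_per",
    "per_per.TYPE_NAME1",
    "per_per.some_procedure",
    "TYPE_NAME1",
    "other_pkg.TYPE_NAME1",
]


def is_included(name: str) -> bool:
    """Return True when the pattern matches the whole name."""
    return INCLUDES.fullmatch(name) is not None


matched = [name for name in candidates if is_included(name)]
print(matched)
```

Under these assumptions, a type would only be covered if it is presented to the pattern under a name that starts with `per_per.`; a bare name such as `TYPE_NAME1` falls outside the pattern. Whether that is what happens in the generator is not confirmed by this issue.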

|

1.0

|

Oracle types in package are not generated - The jOOQ generator does not generate classes for types inside a package if only the package name is given.

Given a package with the name "per_per". If I tell jOOQ to include this package in the code-generation with

```xml

<includes>per_per</includes>

```

It will create classes for all Stored Procedures inside the package. But the types in the package are ignored.

This behavior stays exactly the same if I use this:

```xml

<includes>per_per(\..*)?</includes>

```

Only if I add each type with name then jOOQ will generate classes.

```xml

<includes>per_per

| TYPE_NAME1

| TYPE_NAME2

|...

</includes>

```

I think it would be a great improvement if I only had to specify the package name and the jOOQ generator included the types and the procedures inside the package.

|

non_process

|

oracle types in package are not generated the jooq generator does not generate classes for types inside a package if only the package name is given given a package with the name per per if i tell jooq to include this package in the code generation with xml per per it will create classes for all stored procedures inside the package but the types in the package are ignored this behavior stays exactly the same if i use this xml per per only if i add each type with name then jooq will generate classes xml per per type type i think it would be a great improvement if i only had to specify the package name and the jooq generator included the types and the procedures inside the package

| 0

|

108,627

| 16,796,206,980

|

IssuesEvent

|

2021-06-16 04:10:41

|

Techini/WebGoat

|

https://api.github.com/repos/Techini/WebGoat

|

opened

|

WS-2020-0293 (Medium) detected in spring-security-web-5.2.0.RELEASE.jar

|

security vulnerability

|

## WS-2020-0293 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>spring-security-web-5.2.0.RELEASE.jar</b></p></summary>

<p>spring-security-web</p>

<p>Library home page: <a href="http://spring.io/spring-security">http://spring.io/spring-security</a></p>

<p>Path to dependency file: WebGoat/webgoat-integration-tests/pom.xml</p>

<p>Path to vulnerable library: /home/wss-scanner/.m2/repository/org/springframework/security/spring-security-web/5.2.0.RELEASE/spring-security-web-5.2.0.RELEASE.jar</p>

<p>

Dependency Hierarchy:

- webwolf-v8.0.0-SNAPSHOT.jar (Root Library)

- spring-boot-starter-security-2.2.0.RELEASE.jar

- :x: **spring-security-web-5.2.0.RELEASE.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/Techini/WebGoat/commit/d33cc0e32a0d1b949ff1b85af16890cd452276f8">d33cc0e32a0d1b949ff1b85af16890cd452276f8</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Spring Security before 5.2.9, 5.3.7, and 5.4.3 vulnerable to side-channel attacks. Vulnerable versions of Spring Security don't use constant time comparisons for CSRF tokens.

<p>Publish Date: 2020-12-17

<p>URL: <a href=https://github.com/spring-projects/spring-security/commit/40e027c56d11b9b4c5071360bfc718165c937784>WS-2020-0293</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.9</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: None

- User Interaction: None