Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 classes) | text_combine (string, length 96 to 211k) | label (string, 2 classes) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

8,800 | 11,908,256,757 | IssuesEvent | 2020-03-31 00:25:35 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | Define how many features to read in a processing model | Feature Request Processing | Author Name: **Magnus Nilsson** (Magnus Nilsson)

Original Redmine Issue: [20952](https://issues.qgis.org/issues/20952)

Redmine category:processing/modeller

---

In some cases, I have large data sets (millions of features or more) and when creating a processing model, I would like to be able to set a (temporary) limit... | 1.0 | Define how many features to read in a processing model - Author Name: **Magnus Nilsson** (Magnus Nilsson)

Original Redmine Issue: [20952](https://issues.qgis.org/issues/20952)

Redmine category:processing/modeller

---

In some cases, I have large data sets (millions of features or more) and when creating a processing ... | process | define how many features to read in a processing model author name magnus nilsson magnus nilsson original redmine issue redmine category processing modeller in some cases i have large data sets millions of features or more and when creating a processing model i would like to be able to set a t... | 1 |

4,532 | 7,372,788,466 | IssuesEvent | 2018-03-13 15:35:26 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | Resizing root partition | cxp in-process product-question triaged virtual-machines-linux | How do I resize the size of /dev/sda1 mounted on /? I can't easily umount it, since it is always being used when the VM is started.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 79e96564-edb3-8f68-63a4-960954f5d25b

* Version Independent ID... | 1.0 | Resizing root partition - How do I resize the size of /dev/sda1 mounted on /? I can't easily umount it, since it is always being used when the VM is started.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 79e96564-edb3-8f68-63a4-960954f5d25... | process | resizing root partition how do i resize the size of dev mounted on i can t easily umount it since it is always being used when the vm is started document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id version independent id ... | 1 |

1,690 | 2,517,618,217 | IssuesEvent | 2015-01-16 16:04:07 | pydio/pydio-sync | https://api.github.com/repos/pydio/pydio-sync | closed | Console does not close on Ctrl-C | priority:high | Running:

python -m pydio.main -s http://foo.bar -d c:\foo\bar -w workspace -u user -p pass

After a while (when the sync threads start), the process cannot be closed from the console and has to be killed via the process manager. | 1.0 | Console does not close on Ctrl-C - Running:

python -m pydio.main -s http://foo.bar -d c:\foo\bar -w workspace -u user -p pass

After a while (when the sync threads start), the process cannot be closed from the console and has to be killed via the process manager. | non_process | console does not close on ctrl c running python m pydio main s d c foo bar w workspace u user p pass after a while when sync threads start process does not allow to close it self from console have to be killed via process manager | 0 |
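
One common cause of the Ctrl-C symptom described in this row is non-daemon worker threads that never check a shutdown flag. The following is a minimal sketch of a cooperative shutdown pattern, not pydio-sync's actual code; `run_workers`, `main`, and the polling interval are hypothetical names and values chosen for illustration.

```python
import threading
import time


def run_workers(stop_event, count=2, poll_interval=0.05):
    """Start `count` hypothetical sync workers that exit once stop_event is set."""

    def worker():
        # Poll the event instead of blocking forever, so shutdown is prompt.
        while not stop_event.is_set():
            stop_event.wait(poll_interval)

    # daemon=True means a stuck worker cannot keep the interpreter alive.
    threads = [threading.Thread(target=worker, daemon=True) for _ in range(count)]
    for t in threads:
        t.start()
    return threads


def main():
    stop_event = threading.Event()
    threads = run_workers(stop_event)
    try:
        # Sleep in short slices so the main thread can receive KeyboardInterrupt.
        while True:
            time.sleep(0.1)
    except KeyboardInterrupt:
        stop_event.set()
        for t in threads:
            t.join(timeout=1.0)


if __name__ == "__main__":
    main()
```

The design choice here is that the main thread never blocks indefinitely, and every worker observes the same `threading.Event`, so a single Ctrl-C is enough to wind everything down.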

595,529 | 18,068,140,054 | IssuesEvent | 2021-09-20 21:48:42 | cormas/cormas | https://api.github.com/repos/cormas/cormas | closed | Apply scenario settings button should provide feedback | enhancement UI priority 2 | Once the Apply button was hit, it would be nice to have some kind of visual confirmation that the settings were applied. The information dialog should not be modal or require user interaction.

| 1.0 | Apply scenario settings button should provide feedback - Once the Apply button was hit, it would be nice to have some kind of visual confirmation that the settings were applied. The information dialog should not be modal or require user interaction.

| non_process | apply scenario settings button should provide feedback once the apply button was hit it would be nice to have some kind of visual confirmation that the settings were applied the information dialog should not be modal or require user interaction | 0 |

126,593 | 17,947,249,657 | IssuesEvent | 2021-09-12 02:52:27 | corbantjoyce/website | https://api.github.com/repos/corbantjoyce/website | closed | CVE-2020-28498 (Medium) detected in elliptic-6.5.2.tgz - autoclosed | security vulnerability | ## CVE-2020-28498 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>elliptic-6.5.2.tgz</b></p></summary>

<p>EC cryptography</p>

<p>Library home page: <a href="https://registry.npmjs.or... | True | CVE-2020-28498 (Medium) detected in elliptic-6.5.2.tgz - autoclosed - ## CVE-2020-28498 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>elliptic-6.5.2.tgz</b></p></summary>

<p>EC cry... | non_process | cve medium detected in elliptic tgz autoclosed cve medium severity vulnerability vulnerable library elliptic tgz ec cryptography library home page a href path to dependency file website package json path to vulnerable library website node modules elliptic package j... | 0 |

7,428 | 10,546,438,245 | IssuesEvent | 2019-10-02 21:29:01 | openPMD/openPMD-projects | https://api.github.com/repos/openPMD/openPMD-projects | opened | Add GAPD | data source post-processing | Hi @franzpoeschel & @ejcjason,

do you want to provide a PR to add GAPD to the list of openPMD-enabled projects in this repo? :sparkles: :rocket:

All the best,

Axel | 1.0 | Add GAPD - Hi @franzpoeschel & @ejcjason,

do you want to provide a PR to add GAPD to the list of openPMD-enabled projects in this repo? :sparkles: :rocket:

All the best,

Axel | process | add gapd hi franzpoeschel ejcjason do you want to provide a pr to add gapd to the list of openpmd enabled projects in this repo sparkles rocket all the best axel | 1 |

12,446 | 9,661,140,817 | IssuesEvent | 2019-05-20 17:12:12 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | Is this supported with the REST version of Speech-to-text ? | cognitive-services/svc cxp product-question speech-service/subsvc triaged | This page only lists examples for the SDK version and there are no mentions of this feature on the REST API page. I really need this feature with the REST version, is it supported ?

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 6c3e4d66-e6... | 2.0 | Is this supported with the REST version of Speech-to-text ? - This page only lists examples for the SDK version and there are no mentions of this feature on the REST API page. I really need this feature with the REST version, is it supported ?

---

#### Document Details

⚠ *Do not edit this section. It is required for ... | non_process | is this supported with the rest version of speech to text this page only lists examples for the sdk version and there are no mentions of this feature on the rest api page i really need this feature with the rest version is it supported document details ⚠ do not edit this section it is required for ... | 0 |

88,922 | 3,787,539,497 | IssuesEvent | 2016-03-21 11:06:27 | kubernetes/dashboard | https://api.github.com/repos/kubernetes/dashboard | closed | Inconsistent behavior on logs page for different k8s master versions | area/usability kind/bug priority/P2 | #### Issue details

I'm using `local-up-cluster` with the newest Kubernetes HEAD. After deploying a card with a wrong `containerImage`, the pod stays in the pending state with errors. The logs page shows `Internal error(500)`. On an older cluster we see a normal log entry saying that the pod is not started and is in the pending state.

##### ... | 1.0 | Inconsistent behavior on logs page for different k8s master versions - #### Issue details

I'm using `local-up-cluster` with newest kubernetes HEAD. After deploying some card with wrong `containerImage` pod stays in pending state with errors. Logs page show `Internal error(500)`. On older cluster we can see normal log ... | non_process | inconsistent behavior on logs page for different master versions issue details i m using local up cluster with newest kubernetes head after deploying some card with wrong containerimage pod stays in pending state with errors logs page show internal error on older cluster we can see normal log entr... | 0 |

18,133 | 24,174,691,436 | IssuesEvent | 2022-09-22 23:22:47 | qgis/QGIS-Documentation | https://api.github.com/repos/qgis/QGIS-Documentation | closed | Outputs of processing algorithms should honor default setting | Processing Feedback Feature Request Stale | ### Feature description

It seems that in all processing algorithms the output is stated as a default 'temporary layer' , for instance check the buffer part

https://docs.qgis.org/testing/en/docs/user_manual/processing_algs/qgis/vectorgeometry.html#buffer

Now in theory when an optional parameter is not provided the ... | 1.0 | Outputs of processing algorithms should honor default setting - ### Feature description

It seems that in all processing algorithms the output is stated as a default 'temporary layer' , for instance check the buffer part

https://docs.qgis.org/testing/en/docs/user_manual/processing_algs/qgis/vectorgeometry.html#buffer

... | process | outputs of processing algorithms should honor default setting feature description it seems that in all processing algorithms the output is stated as a default temporary layer for instance check the buffer part now in theory when an optional parameter is not provided the default value is to be used or ... | 1 |

21,261 | 28,434,726,608 | IssuesEvent | 2023-04-15 06:48:43 | GoogleCloudPlatform/pgadapter | https://api.github.com/repos/GoogleCloudPlatform/pgadapter | closed | Test failure for random date tests | type: process priority: p3 | ```

AbortedMockServerTest.testRandomResults:876->assertEqual:931 expected:<1582-10-12> but was:<1582-10-22>

```

The random results tests can fail if a random date during the julian/gregorian cut-off is selected. | 1.0 | Test failure for random date tests - ```

AbortedMockServerTest.testRandomResults:876->assertEqual:931 expected:<1582-10-12> but was:<1582-10-22>

```

The random results tests can fail if a random date during the julian/gregorian cut-off is selected. | process | test failure for random date tests abortedmockservertest testrandomresults assertequal expected but was the random results tests can fail if a random date during the julian gregorian cut off is selected | 1 |
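
The expected and actual values in this row differ by exactly ten days, which is consistent with a hybrid Julian/Gregorian calendar where 5 to 14 October 1582 do not exist. The following is a minimal sketch, not PGAdapter's actual fix, of how a random date generator could skip that gap; `in_cutover_gap` and `random_date_outside_gap` are hypothetical helpers, and the gap boundaries assume the default 1582 cut-over.

```python
import datetime
import random

# Assumed gap for the default cut-over: 4 Oct 1582 (Julian) is followed by
# 15 Oct 1582 (Gregorian), so these ten calendar dates never occurred.
GAP_START = datetime.date(1582, 10, 5)
GAP_END = datetime.date(1582, 10, 14)


def in_cutover_gap(d):
    """Return True if `d` falls inside the skipped date range."""
    return GAP_START <= d <= GAP_END


def random_date_outside_gap(rng,
                            start=datetime.date(1, 1, 1),
                            end=datetime.date(9999, 12, 31)):
    """Draw a uniformly random date in [start, end], redrawing gap dates."""
    while True:
        ordinal = rng.randint(start.toordinal(), end.toordinal())
        candidate = datetime.date.fromordinal(ordinal)
        if not in_cutover_gap(candidate):
            return candidate
```

Note that Python's `datetime.date` uses the proleptic Gregorian calendar and so does not itself shift these dates; the filter only matters when the generated value is later handled by a hybrid-calendar API.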

14,451 | 2,812,163,966 | IssuesEvent | 2015-05-18 06:27:27 | minux/go-tour | https://api.github.com/repos/minux/go-tour | closed | ui: block page change on tab change in Firefox | auto-migrated Priority-Medium Type-Defect | ```

>What steps will reproduce the problem?

1. Start go-tour in a tabbed browser with at least one other tab open

2. Press Ctrl+page up to switch to a different tab

3. Press Ctrl+page down to switch back

>What is the expected output? What do you see instead?

Expect to stay on same slide in go-tour; instead, go-tour p... | 1.0 | ui: block page change on tab change in Firefox - ```

>What steps will reproduce the problem?

1. Start go-tour in a tabbed browser with at least one other tab open

2. Press Ctrl+page up to switch to a different tab

3. Press Ctrl+page down to switch back

>What is the expected output? What do you see instead?

Expect to ... | non_process | ui block page change on tab change in firefox what steps will reproduce the problem start go tour in a tabbed browser with at least one other tab open press ctrl page up to switch to a different tab press ctrl page down to switch back what is the expected output what do you see instead expect to ... | 0 |

252,835 | 19,073,632,805 | IssuesEvent | 2021-11-27 11:02:34 | arcanaktepe/swe5732021fall | https://api.github.com/repos/arcanaktepe/swe5732021fall | closed | Requirements validation questions | documentation enhancement | Requirements validation questions should be updated with new ones | 1.0 | Requirements validation questions - Requirements validation questions should be updated with new ones | non_process | requirements validation questions requirements validation questions should be updated with new ones | 0 |

25,149 | 12,501,513,604 | IssuesEvent | 2020-06-02 01:35:38 | tensorflow/tensorflow | https://api.github.com/repos/tensorflow/tensorflow | opened | Model fit incredibly slow | type:performance | Hi, I've been trying to fit the following model for the last 3 hours and the the only output displayed by the model is 'Epoch 1/5'. I noticed when others fitted their models, the output would also display 'Train on X samples, Validate on X samples' and thought maybe the lack of seeing that display and the model hanging... | True | Model fit incredibly slow - Hi, I've been trying to fit the following model for the last 3 hours and the the only output displayed by the model is 'Epoch 1/5'. I noticed when others fitted their models, the output would also display 'Train on X samples, Validate on X samples' and thought maybe the lack of seeing that ... | non_process | model fit incredibly slow hi i ve been trying to fit the following model for the last hours and the the only output displayed by the model is epoch i noticed when others fitted their models the output would also display train on x samples validate on x samples and thought maybe the lack of seeing that ... | 0 |

8,282 | 7,324,877,288 | IssuesEvent | 2018-03-03 01:41:44 | dotnet/corefx | https://api.github.com/repos/dotnet/corefx | closed | [uap] CoreFx build is failing since 'RemotelyInvokable' does not contain a definition for 'LongWait' | area-Infrastructure | https://devdiv.visualstudio.com/DefaultCollection/DevDiv/_build?_a=summary&buildId=1436514

```text

2018-03-02T21:25:47.5255290Z Build FAILED.

2018-03-02T21:25:47.5268140Z

2018-03-02T21:25:47.5269301Z ProcessTestBase.Uap.cs(68,57): error CS0117: 'RemotelyInvokable' does not contain a definition for 'LongWait' [E:\A... | 1.0 | [uap] CoreFx build is failing since 'RemotelyInvokable' does not contain a definition for 'LongWait' - https://devdiv.visualstudio.com/DefaultCollection/DevDiv/_build?_a=summary&buildId=1436514

```text

2018-03-02T21:25:47.5255290Z Build FAILED.

2018-03-02T21:25:47.5268140Z

2018-03-02T21:25:47.5269301Z ProcessTestB... | non_process | corefx build is failing since remotelyinvokable does not contain a definition for longwait text build failed processtestbase uap cs error remotelyinvokable does not contain a definition for longwait warning s er... | 0 |

136,929 | 5,290,518,337 | IssuesEvent | 2017-02-08 20:05:45 | urbit/urbit | https://api.github.com/repos/urbit/urbit | closed | eyre crashes with no ;head; | %eyre difficulty low priority low | With

```

;html

;body

;pre:'''

hey

kids

'''

==

==

```

as a `hymn.hook` I produce a crash in eyre:

```

/~bud/home/~2015.6.3..20.24.40..d933/arvo/eyre/:<[190 3].[194 68]>

```

Which by the look of it is where we inject the charset. I guess this is right because a well formed html do... | 1.0 | eyre crashes with no ;head; - With

```

;html

;body

;pre:'''

hey

kids

'''

==

==

```

as a `hymn.hook` I produce a crash in eyre:

```

/~bud/home/~2015.6.3..20.24.40..d933/arvo/eyre/:<[190 3].[194 68]>

```

Which by the look of it is where we inject the charset. I guess this is right... | non_process | eyre crashes with no head with html body pre hey kids as a hymn hook i produce a crash in eyre bud home arvo eyre which by the look of it is where we inject the charset i guess this is right because a well formed htm... | 0 |

275,321 | 8,575,571,830 | IssuesEvent | 2018-11-12 17:39:11 | aowen87/TicketTester | https://api.github.com/repos/aowen87/TicketTester | closed | Exodus reader not serving up quadratic elements when present. | Bug Likelihood: 2 - Rare Priority: Normal Severity: 4 - Crash / Wrong Results | A customer has an exodus file with a 10-node tet element. The Exodus reader serves it up as a VTK_TETRA instead of VTK_QUADRATIC_TETRA.

Standard plots seem to render okay, but the Slice operator causes havoc.

I've attached the provided sample file.

Looking at the reader's source it should be a simple enough cha... | 1.0 | Exodus reader not serving up quadratic elements when present. - A customer has an exodus file with a 10-node tet element. The Exodus reader serves it up as a VTK_TETRA instead of VTK_QUADRATIC_TETRA.

Standard plots seem to render okay, but the Slice operator causes havoc.

I've attached the provided sample file.

... | non_process | exodus reader not serving up quadratic elements when present a customer has an exodus file with a node tet element the exodus reader serves it up as a vtk tetra instead of vtk quadratic tetra standard plots seem to render okay but the slice operator causes havoc i ve attached the provided sample file ... | 0 |

19,379 | 10,416,896,137 | IssuesEvent | 2019-09-14 17:10:52 | BattletechModders/ModTek | https://api.github.com/repos/BattletechModders/ModTek | closed | Request: Improve loading performance by not reading un-munged files | performance | InnerSphereMap loads more than 3K .json files. This adds significant time to the system load, due to the ExpandManifest() calls. When this line is invoked:

` var childModEntry = new ModEntry(modEntry, path, InferIDFromFile(filePath));`

The JSON files are parsed and loaded into a cache. This takes between 30-200... | True | Request: Improve loading performance by not reading un-munged files - InnerSphereMap loads more than 3K .json files. This adds significant time to the system load, due to the ExpandManifest() calls. When this line is invoked:

` var childModEntry = new ModEntry(modEntry, path, InferIDFromFile(filePath));`

The JS... | non_process | request improve loading performance by not reading un munged files innerspheremap loads more than json files this adds significant time to the system load due to the expandmanifest calls when this line is invoked var childmodentry new modentry modentry path inferidfromfile filepath the jso... | 0 |

15,461 | 19,678,442,243 | IssuesEvent | 2022-01-11 14:39:10 | plazi/community | https://api.github.com/repos/plazi/community | closed | to be processed | process request | * please process this including holotype, and GBIF

* please remove first (researchgate) page.

*

[Jouault_Nel_2021_Tyrannomecia_Menat.pdf](https://github.com/plazi/community/files/7835665/Jouault_Nel_2021_Tyrannomecia_Menat.pdf)

e | 1.0 | to be processed - * please process this including holotype, and GBIF

* please remove first (researchgate) page.

*

[Jouault_Nel_2021_Tyrannomecia_Menat.pdf](https://github.com/plazi/community/files/7835665/Jouault_Nel_2021_Tyrannomecia_Menat.pdf)

e | process | to be processed please process this including holotype and gbif please remove first researchgate page e | 1 |

1,007 | 3,474,612,828 | IssuesEvent | 2015-12-25 00:16:23 | DoSomething/MessageBroker-PHP | https://api.github.com/repos/DoSomething/MessageBroker-PHP | closed | Process loggingQueue to create loggingGatewayQueue entries | mbc-logging-processor | Create new script `mbc-logging-processot_userTransactionals` to process messages in `loggingQueue` to create entries in `loggingGatewayQueue`.

- [ ] Add `log-type` = `transactional`

**Related**:

- https://github.com/DoSomething/MessageBroker-PHP/issues/13

**Note**:

This is a transition to ultimately point tr... | 1.0 | Process loggingQueue to create loggingGatewayQueue entries - Create new script `mbc-logging-processot_userTransactionals` to process messages in `loggingQueue` to create entries in `loggingGatewayQueue`.

- [ ] Add `log-type` = `transactional`

**Related**:

- https://github.com/DoSomething/MessageBroker-PHP/issues... | process | process loggingqueue to create logginggatewayqueue entries create new script mbc logging processot usertransactionals to process messages in loggingqueue to create entries in logginggatewayqueue add log type transactional related note this is a transition to ultimately point ... | 1 |

440,126 | 12,693,648,805 | IssuesEvent | 2020-06-22 04:07:33 | wso2/product-apim | https://api.github.com/repos/wso2/product-apim | opened | Improvement to customise the auth failure message headers description | Priority/Normal Type/Improvement | ### Describe your problem(s)

Needs to have an improvement while we are getting auth failures by customizing the error response message.

### How will you implement it

This improvement is already available in the latest WUM updated APIM 2.6.0 | 1.0 | Improvement to customise the auth failure message headers description - ### Describe your problem(s)

Needs to have an improvement while we are getting auth failures by customizing the error response message.

### How will you implement it

This improvement is already available in the latest WUM updated APIM 2.6.0 | non_process | improvement to customise the auth failure message headers description describe your problem s needs to have an improvement while we are getting auth failures by customizing the error response message how will you implement it this improvement is already available in the latest wum updated apim | 0 |

21,468 | 29,503,166,069 | IssuesEvent | 2023-06-03 02:03:28 | cypress-io/cypress | https://api.github.com/repos/cypress-io/cypress | closed | Unable to import `PreprocessorOptions` from types | type: typings npm: @cypress/webpack-preprocessor stale | Regression from https://github.com/cypress-io/cypress-webpack-preprocessor/pull/83/files

### Current behavior:

```js

const webpack = require('@cypress/webpack-preprocessor');

// Error:

// Namespace '_default' has no exported member 'PreprocessorOptions'

/** @type {webpack.PreprocessorOptions} */

const optio... | 1.0 | Unable to import `PreprocessorOptions` from types - Regression from https://github.com/cypress-io/cypress-webpack-preprocessor/pull/83/files

### Current behavior:

```js

const webpack = require('@cypress/webpack-preprocessor');

// Error:

// Namespace '_default' has no exported member 'PreprocessorOptions'

/** ... | process | unable to import preprocessoroptions from types regression from current behavior js const webpack require cypress webpack preprocessor error namespace default has no exported member preprocessoroptions type webpack preprocessoroptions const options webpackop... | 1 |

2,482 | 5,255,760,923 | IssuesEvent | 2017-02-02 16:18:49 | CGAL/cgal | https://api.github.com/repos/CGAL/cgal | closed | Polyhedron demo: bug in point selection tool | bug CGAL 3D demo Pkg::Point_set_processing_3 | ## Issue Details

When doing a rectangle selection of N points the N point are drawn in red.

But when I create a point set item from this selection it contains N-1 points.

## Environment

master | 1.0 | Polyhedron demo: bug in point selection tool - ## Issue Details

When doing a rectangle selection of N points the N point are drawn in red.

But when I create a point set item from this selection it contains N-1 points.

## Environment

master | process | polyhedron demo bug in point selection tool issue details when doing a rectangle selection of n points the n point are drawn in red but when i create a point set item from this selection it contains n points environment master | 1 |

111,512 | 9,533,436,911 | IssuesEvent | 2019-04-29 21:17:25 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | sql: speed-of-light benchmark for lower-level projections | A-testing C-enhancement | - less copies between C++ memory and Go - how much does that help?

- less deserialization from bytes into `roachpb.KeyValue` - how much does that help?

- what's the quickest we can read just one column or column family out of many?

- compare speed between an MVCCScan that retrieves 2 needed and 8 unneeded columns vs... | 1.0 | sql: speed-of-light benchmark for lower-level projections - - less copies between C++ memory and Go - how much does that help?

- less deserialization from bytes into `roachpb.KeyValue` - how much does that help?

- what's the quickest we can read just one column or column family out of many?

- compare speed between a... | non_process | sql speed of light benchmark for lower level projections less copies between c memory and go how much does that help less deserialization from bytes into roachpb keyvalue how much does that help what s the quickest we can read just one column or column family out of many compare speed between a... | 0 |

184,414 | 31,885,045,208 | IssuesEvent | 2023-09-16 21:08:22 | pulumi/ci-mgmt | https://api.github.com/repos/pulumi/ci-mgmt | closed | Workflow failure: Update GH workflows, native providers (auto-pr) | p1 kind/engineering resolution/by-design | ## Workflow Failure

[Update GH workflows, native providers (auto-pr)](https://github.com/pulumi/ci-mgmt/blob/master/.github/workflows/update-native-provider-workflows.yml) has failed. See the list of failures below:

- [2023-09-16T05:02:03.000Z](https://github.com/pulumi/ci-mgmt/actions/runs/6205404124) | 1.0 | Workflow failure: Update GH workflows, native providers (auto-pr) - ## Workflow Failure

[Update GH workflows, native providers (auto-pr)](https://github.com/pulumi/ci-mgmt/blob/master/.github/workflows/update-native-provider-workflows.yml) has failed. See the list of failures below:

- [2023-09-16T05:02:03.000Z](https... | non_process | workflow failure update gh workflows native providers auto pr workflow failure has failed see the list of failures below | 0 |

13,972 | 16,744,831,307 | IssuesEvent | 2021-06-11 14:19:46 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [iOS] Push notifications are not triggered to iOS on disabling and re-enabling the 'Receive Push Notifications?' option | Bug P2 Process: Fixed Process: Tested QA Process: Tested dev iOS | **Steps:**

1. Login/Signup into mobile

2. Navigate to my account

3. Disable 'Receive Push Notifications?'

4. Send push notification from SB. Push notifications are not triggered to iOS

5. Enable the option 'Receive Push Notifications?' again

6. Send push notification from SB and Observe

**Actual:** Push noti... | 3.0 | [iOS] Push notifications are not triggered to iOS on disabling and re-enabling the 'Receive Push Notifications?' option - **Steps:**

1. Login/Signup into mobile

2. Navigate to my account

3. Disable 'Receive Push Notifications?'

4. Send push notification from SB. Push notifications are not triggered to iOS

5. Ena... | process | push notifications are not triggered to ios on disabling and re enabling the receive push notifications option steps login signup into mobile navigate to my account disable receive push notifications send push notification from sb push notifications are not triggered to ios enable ... | 1 |

1,920 | 4,756,474,355 | IssuesEvent | 2016-10-24 14:06:42 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | doc: `process` formatting issues | doc good first contribution process | * **Version**: master, v6.x

* **Platform**: n/a

* **Subsystem**: doc

I noticed there are some markdown formatting issues with the `process` documentation:

* There is a missing backtick in the description for [`process.setuid()`](https://github.com/nodejs/node/blob/50be885285c602c2aa1eb9c6010cb26fe7d186ff/doc/ap... | 1.0 | doc: `process` formatting issues - * **Version**: master, v6.x

* **Platform**: n/a

* **Subsystem**: doc

I noticed there are some markdown formatting issues with the `process` documentation:

* There is a missing backtick in the description for [`process.setuid()`](https://github.com/nodejs/node/blob/50be885285c6... | process | doc process formatting issues version master x platform n a subsystem doc i noticed there are some markdown formatting issues with the process documentation there is a missing backtick in the description for after process setuid id there are some missing link referen... | 1 |

8,768 | 11,885,536,640 | IssuesEvent | 2020-03-27 19:49:32 | googleapis/google-cloud-cpp | https://api.github.com/repos/googleapis/google-cloud-cpp | closed | Consider: Which CI tests should run with -fno-exceptions | type: process | We [decided](https://github.com/googleapis/google-cloud-cpp/blob/master/doc/adr/2019-01-04-error-reporting-with-statusor.md) that our libraries will not require exceptions to work. That is, a user should be able to compile with `-fno-exceptions` and still be able to use our library just fine. All of our error reporting... | 1.0 | Consider: Which CI tests should run with -fno-exceptions - We [decided](https://github.com/googleapis/google-cloud-cpp/blob/master/doc/adr/2019-01-04-error-reporting-with-statusor.md) that our libraries will not require exceptions to work. That is, a user should be able to compile with `-fno-exceptions` and still be ab... | process | consider which ci tests should run with fno exceptions we that our libraries will not require exceptions to work that is a user should be able to compile with fno exceptions and still be able to use our library just fine all of our error reporting is done via status or statusor objects instead of thr... | 1 |

18,993 | 24,986,710,305 | IssuesEvent | 2022-11-02 15:33:51 | nextflow-io/nextflow | https://api.github.com/repos/nextflow-io/nextflow | closed | List of length 1 is converted to path | lang/processes | ## Bug report

When creating a list with a single element containing a path, this list gets automatically converted to path object.

### Expected behavior and actual behavior

I would expect that list will always be passed to processes as list regardless of number of elements stored in it.

### Steps to reproduce ... | 1.0 | List of length 1 is converted to path - ## Bug report

When creating a list with a single element containing a path, this list gets automatically converted to path object.

### Expected behavior and actual behavior

I would expect that list will always be passed to processes as list regardless of number of elements ... | process | list of length is converted to path bug report when creating a list with a single element containing a path this list gets automatically converted to path object expected behavior and actual behavior i would expect that list will always be passed to processes as list regardless of number of elements ... | 1 |

18,185 | 24,235,793,156 | IssuesEvent | 2022-09-26 23:04:51 | ethereum/EIPs | https://api.github.com/repos/ethereum/EIPs | closed | Rollback EIP-712 from "final" to "draft" OR deprecate EIP-712 with a new one | w-stale question r-process | EIP-1 mandates a "final" status to be terminal. But the current snapshot of EIP-712 is flawed in various ways, as discussed in #5457

Moreover, it has two "specification" sections.

We need to make the following decision:

Option 1: rollback EIP-712 status from FINAL back to DRAFT to allow massive update, a one... | 1.0 | Rollback EIP-712 from "final" to "draft" OR deprecate EIP-712 with a new one - EIP-1 mandates a "final" status to be terminal. But the current snapshot of EIP-712 is flawed in various ways, as discussed in #5457

Moreover, it has two "specification" sections.

We need to make the following decision:

Option 1: ... | process | rollback eip from final to draft or deprecate eip with a new one eip mandates a final status to be terminal but the current snapshot of eip is flawed in various ways as discussed in more over it has two specification“ sections we need to make the following decision option rollback ... | 1 |

24,211 | 7,467,424,155 | IssuesEvent | 2018-04-02 15:15:01 | zurb/foundation-sites | https://api.github.com/repos/zurb/foundation-sites | closed | build: use Husky for Git hooks | build | It makes sense to run some checks when working with the develop branch.

We should use [husky](https://github.com/typicode/husky) for this.

* [ ] eslint

* [ ] sasslint / stylelint

* [x] unit tests

* [ ] ...

Can we adapt airbnb coding guidelines for Foundation Sites? https://github.com/zurb/foundation-sites/pul... | 1.0 | build: use Husky for Git hooks - It makes sense to run some checks when working with the develop branch.

We should use [husky](https://github.com/typicode/husky) for this.

* [ ] eslint

* [ ] sasslint / stylelint

* [x] unit tests

* [ ] ...

Can we adapt airbnb coding guidelines for Foundation Sites? https://git... | non_process | build use husky for git hooks it makes sense to run some checks when working with the develop branch we should use for this eslint sasslint stylelint unit tests can we adapt airbnb coding guidelines for foundation sites | 0 |

54,696 | 13,886,161,125 | IssuesEvent | 2020-10-18 23:22:37 | ascott18/TellMeWhen | https://api.github.com/repos/ascott18/TellMeWhen | closed | [Shadowlands] [Bug] Crash when trying to edit text icons' displays | S: cantfix T: defect V: retail | **What version of TellMeWhen are you using? **

<!-- Found in-game at the top of TMW's configuration window. "The latest" is not a version. -->

9.0 PTR

**What steps will reproduce the problem?**

1.When the mouse moves to the box under the text display scheme, the game will exit directly

2.

3.

<!-- Add more step... | 1.0 | [Shadowlands] [Bug] Crash when trying to edit text icons' displays - **What version of TellMeWhen are you using? **

<!-- Found in-game at the top of TMW's configuration window. "The latest" is not a version. -->

9.0 PTR

**What steps will reproduce the problem?**

1.When the mouse moves to the box under the text di... | non_process | crash when trying to edit text icons displays what version of tellmewhen are you using ptr what steps will reproduce the problem when the mouse moves to the box under the text display scheme the game will exit directly what do you expect to happen what happens instead... | 0 |

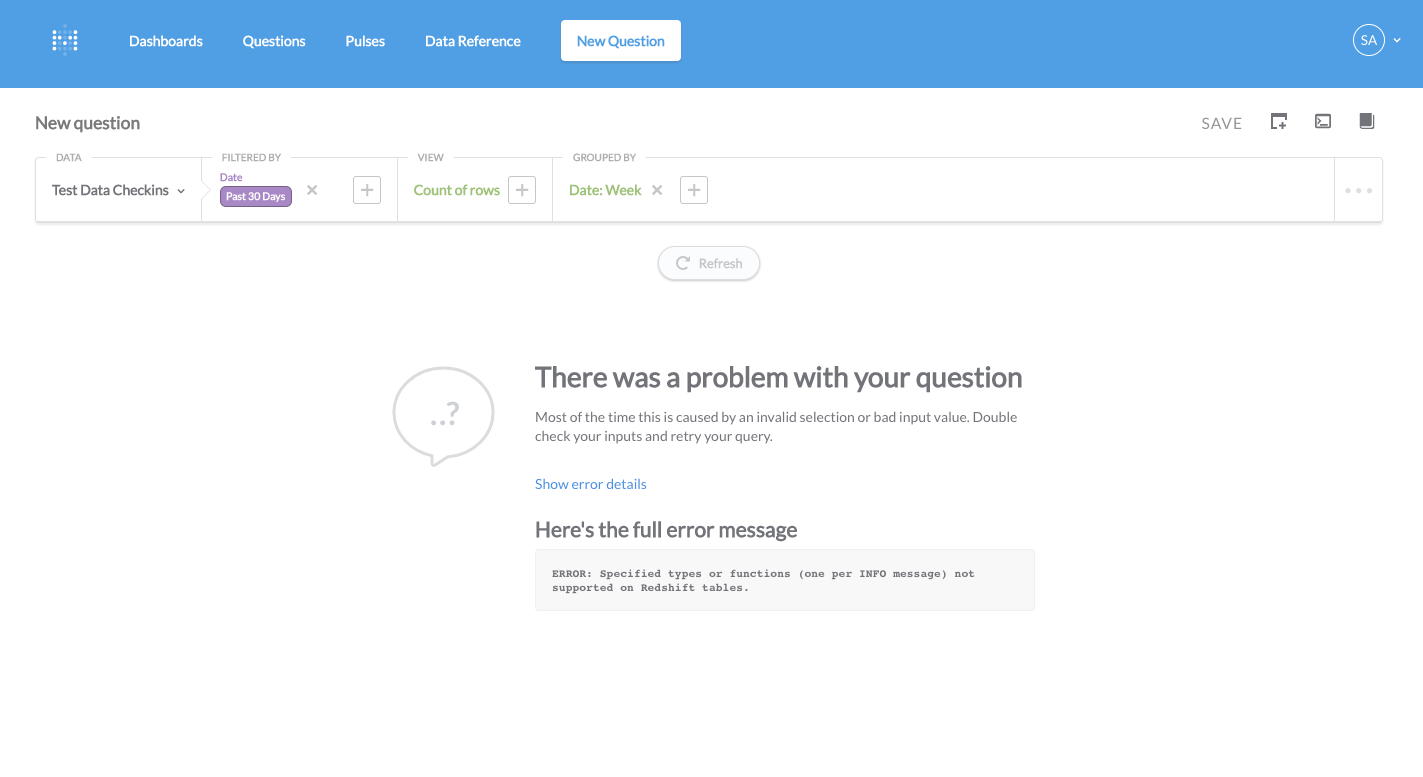

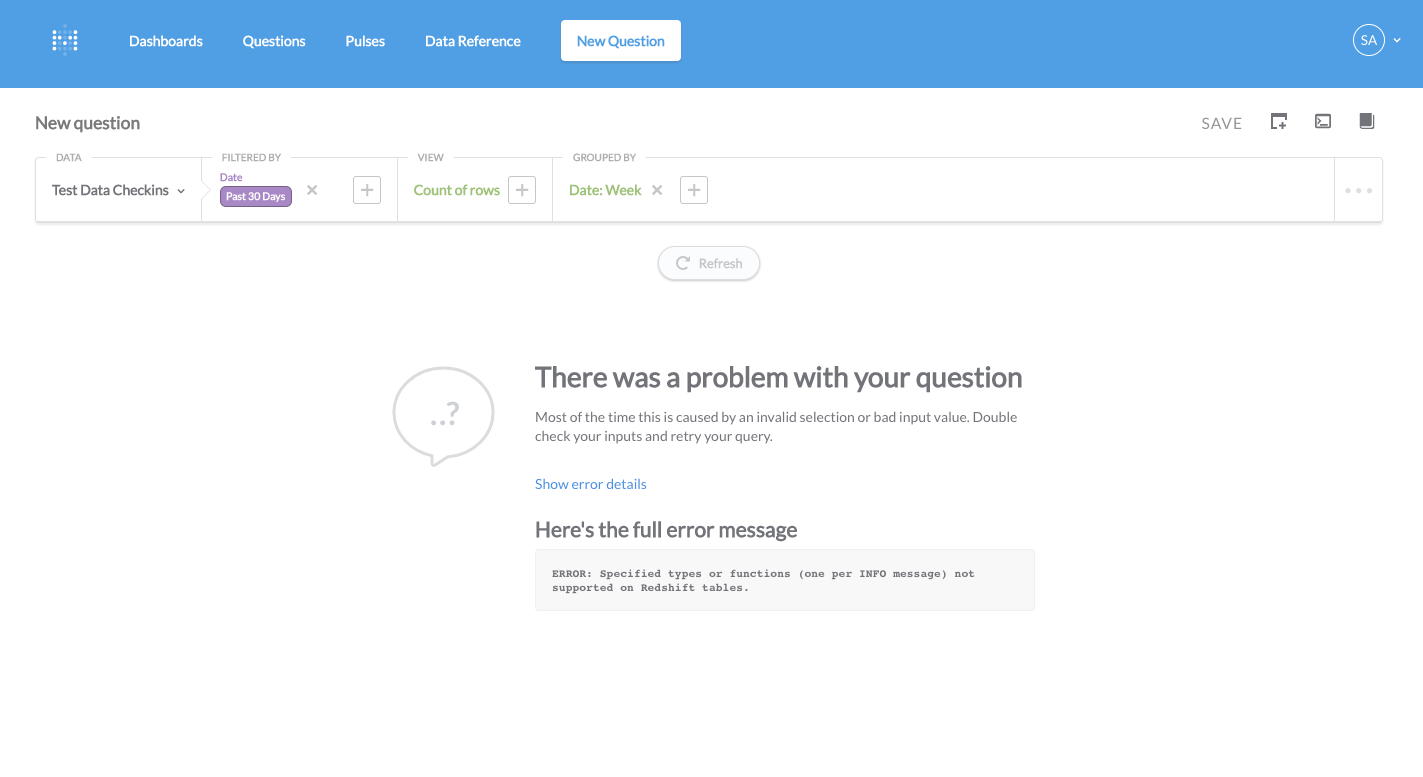

4,042 | 6,973,256,188 | IssuesEvent | 2017-12-11 19:50:46 | metabase/metabase | https://api.github.com/repos/metabase/metabase | closed | Error filtering on time buckets on redshift. | Bug Database/Redshift Priority/P2 Query Processor | A count of rows by week limited to the past 30 days blows up on our redshift CI instance.

| 1.0 | Error filtering on time buckets on redshift. - A count of rows by week limited to the past 30 days blows up on our redshift CI instance.

| process | error filtering on time buckets on redshift a count of rows by week limited to the past days blows up on our redshift ci instance | 1 |

544,862 | 15,930,393,674 | IssuesEvent | 2021-04-14 00:46:04 | Xmetalfanx/website | https://api.github.com/repos/Xmetalfanx/website | closed | loading="lazy" change | Priority enhancement | start doing this on SELECTED images ... keep the lazysizes.js file in place but un"link" it from the template | 1.0 | loading="lazy" change - start doing this on SELECTED images ... keep the lazysizes.js file in place but un"link" it from the template | non_process | loading lazy change start doing this on selected images keep the lazysizes js file in place but un link it from the template | 0 |

4,337 | 7,243,838,267 | IssuesEvent | 2018-02-14 13:15:58 | bounswe/bounswe2018group1 | https://api.github.com/repos/bounswe/bounswe2018group1 | closed | Searching Good Repositories | Position: In-Process Priority: High Who: Personal | Due Date: 12.02.2018

The first week's homework is about git and some repositories that we like. After determining the repos, everyone can create a Google Docs file and update it. Then everyone should send the link to the file that he/she has created.

* [x] Cemal Aytekin

* [x] Ahmet Yasin Alp

* [x] Ece Ata

* [x]... | 1.0 | Searching Good Repositories - Due Date: 12.02.2018

The first week's homework is about git and some repositories that we like. After determining the repos, everyone can create a Google Docs file and update it. Then everyone should send the link to the file that he/she has created.

* [x] Cemal Aytekin

* [x] Ahmet Y... | process | searching good repositories due date the first week homework is about git and some repositories that we like after determining repos everyone can create a google docs file and update it then everyone should send the link of the file that he she have created cemal aytekin ahmet yasin alp ... | 1 |

22,691 | 31,994,001,792 | IssuesEvent | 2023-09-21 08:03:31 | dotnet/csharpstandard | https://api.github.com/repos/dotnet/csharpstandard | closed | TC49-TG2 dead link to HTML version of C# standard | type: process | **Describe the bug**

At <https://www.ecma-international.org/task-groups/tc49-tg2/?tab=activities>, there is a dead link to an HTML version of C#.

**Example**

```HTML

<p>Jon Jagger has made an <a href="http://www.jaggersoft.com/csharp_standard/index.html">html</a> version of C#.</p>

```

**Expected behavior... | 1.0 | TC49-TG2 dead link to HTML version of C# standard - **Describe the bug**

At <https://www.ecma-international.org/task-groups/tc49-tg2/?tab=activities>, there is a dead link to an HTML version of C#.

**Example**

```HTML

<p>Jon Jagger has made an <a href="http://www.jaggersoft.com/csharp_standard/index.html">htm... | process | dead link to html version of c standard describe the bug at there is a dead link to an html version of c example html jon jagger has made an expected behavior delete the link perhaps link to this github repository instead additional context the link red... | 1 |

202,558 | 23,077,509,360 | IssuesEvent | 2022-07-26 02:06:46 | idmarinas/lotgd-modules | https://api.github.com/repos/idmarinas/lotgd-modules | opened | CVE-2021-35065 (High) detected in glob-parent-5.1.2.tgz, glob-parent-3.1.0.tgz | security vulnerability | ## CVE-2021-35065 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>glob-parent-5.1.2.tgz</b>, <b>glob-parent-3.1.0.tgz</b></p></summary>

<p>

<details><summary><b>glob-parent-5.1.2.tgz... | True | CVE-2021-35065 (High) detected in glob-parent-5.1.2.tgz, glob-parent-3.1.0.tgz - ## CVE-2021-35065 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>glob-parent-5.1.2.tgz</b>, <b>glob-p... | non_process | cve high detected in glob parent tgz glob parent tgz cve high severity vulnerability vulnerable libraries glob parent tgz glob parent tgz glob parent tgz extract the non magic parent path from a glob string library home page a href path to... | 0 |

115,180 | 4,652,809,470 | IssuesEvent | 2016-10-03 15:02:53 | ArctosDB/arctos | https://api.github.com/repos/ArctosDB/arctos | opened | blacklist | Priority-Critical | Nobody seems to be watching blacklist objection emails when DLM is out. There are lots, including from traveling UAM Curators, someone with a unm.edu email, etc. | 1.0 | blacklist - Nobody seems to be watching blacklist objection emails when DLM is out. There are lots, including from traveling UAM Curators, someone with a unm.edu email, etc. | non_process | blacklist nobody seems to be watching blacklist objection emails when dlm is out there are lots including from traveling uam curators someone with a unm edu email etc | 0 |

11,870 | 14,672,562,363 | IssuesEvent | 2020-12-30 10:55:31 | heim-rs/heim | https://api.github.com/repos/heim-rs/heim | closed | Replace bundled Duration::as_secs_f64 implementation with a real one | A-cpu A-process C-enhancement C-good-first-issue P-low | This one code block at `heim-process` crate: https://github.com/heim-rs/heim/blob/58d7110f803f94fb186a95a55000edea49ace7bd/heim-process/src/process/cpu_usage.rs#L27-L31 and its copy introduced in #295 (see ` heim-cpu/src/usage.rs`) should be replaced with `Duration::as_secs_f64`.

MSRV was bumped to 1.45 and it is sa... | 1.0 | Replace bundled Duration::as_secs_f64 implementation with a real one - This one code block at `heim-process` crate: https://github.com/heim-rs/heim/blob/58d7110f803f94fb186a95a55000edea49ace7bd/heim-process/src/process/cpu_usage.rs#L27-L31 and its copy introduced in #295 (see ` heim-cpu/src/usage.rs`) should be replace... | process | replace bundled duration as secs implementation with a real one this one code block at heim process crate and its copy introduced in see heim cpu src usage rs should be replaced with duration as secs msrv was bumped to and it is safe to do it now | 1 |

2,457 | 5,240,680,192 | IssuesEvent | 2017-01-31 13:51:28 | opentrials/opentrials | https://api.github.com/repos/opentrials/opentrials | closed | Normalize takeda and gsk identifiers where possible | bug Launch? Processors | # Overview

See corresponding re:dash query. Also for identifiers outside of the core set (`nct/euctr/jprn/isrctn/actrn/who`) should be improved regexps in `get_clean_identifiers` function.

| 1.0 | Normalize takeda and gsk identifiers where possible - # Overview

See corresponding re:dash query. Also for identifiers outside of the core set (`nct/euctr/jprn/isrctn/actrn/who`) should be improved regexps in `get_clean_identifiers` function.

| process | normalize takeda and gsk identifiers where possible overview see corresponding re dash query also for identifiers outside of the core set nct euctr jprn isrctn actrn who should be improved regexps in get clean identifiers function | 1 |
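
As a rough illustration of the regexp approach this issue mentions, the sketch below matches two of the core registry formats. It assumes NCT identifiers are `NCT` followed by eight digits and ISRCTN identifiers are `ISRCTN` followed by eight digits. The name `find_registry_identifiers` is hypothetical and is not the project's `get_clean_identifiers`. The issue does not give patterns for Takeda or GSK study IDs, so none are guessed here.

```python
import re

# Assumed formats (not taken from the issue): "NCT" + 8 digits, "ISRCTN" + 8 digits.
PATTERNS = {
    "nct": re.compile(r"\bNCT\d{8}\b", re.IGNORECASE),
    "isrctn": re.compile(r"\bISRCTN\d{8}\b", re.IGNORECASE),
}


def find_registry_identifiers(text):
    """Return {source: identifier} for the first match of each known pattern."""
    found = {}
    for source, pattern in PATTERNS.items():
        match = pattern.search(text)
        if match:
            # Normalize case so "nct01234567" and "NCT01234567" compare equal.
            found[source] = match.group(0).upper()
    return found
```

Anchoring each pattern with `\b` keeps a nine-digit run from being accepted as a truncated eight-digit identifier.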

14,112 | 17,012,004,104 | IssuesEvent | 2021-07-02 06:39:13 | beyondhb1079/s4us | https://api.github.com/repos/beyondhb1079/s4us | opened | PSA Email: Site is in beta | process | The site is polished up and we've added a bunch of scholarships we're aware. Please click around, file bugs, and add any scholarships we missed! | 1.0 | PSA Email: Site is in beta - The site is polished up and we've added a bunch of scholarships we're aware. Please click around, file bugs, and add any scholarships we missed! | process | psa email site is in beta the site is polished up and we ve added a bunch of scholarships we re aware please click around file bugs and add any scholarships we missed | 1 |

330,949 | 28,497,385,692 | IssuesEvent | 2023-04-18 15:02:59 | unifyai/ivy | https://api.github.com/repos/unifyai/ivy | reopened | Fix gradients.test_random_uniform | Sub Task Failing Test | | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/4320387980/jobs/7540583857" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-failure-red></a>

|torch|None

|numpy|None

|jax|None

<details>

<summary>Not found</summary>

Not found

</details>

... | 1.0 | Fix gradients.test_random_uniform - | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/4320387980/jobs/7540583857" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-failure-red></a>

|torch|None

|numpy|None

|jax|None

<details>

<summary>Not found... | non_process | fix gradients test random uniform tensorflow img src torch none numpy none jax none not found not found | 0 |

100,358 | 16,489,865,849 | IssuesEvent | 2021-05-25 01:02:55 | billmcchesney1/rcloud | https://api.github.com/repos/billmcchesney1/rcloud | opened | CVE-2020-28500 (Medium) detected in lodash-4.17.14.tgz | security vulnerability | ## CVE-2020-28500 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>lodash-4.17.14.tgz</b></p></summary>

<p>Lodash modular utilities.</p>

<p>Library home page: <a href="https://registr... | True | CVE-2020-28500 (Medium) detected in lodash-4.17.14.tgz - ## CVE-2020-28500 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>lodash-4.17.14.tgz</b></p></summary>

<p>Lodash modular util... | non_process | cve medium detected in lodash tgz cve medium severity vulnerability vulnerable library lodash tgz lodash modular utilities library home page a href path to dependency file rcloud package json path to vulnerable library rcloud node modules lodash package json dep... | 0 |

100,958 | 21,560,087,782 | IssuesEvent | 2022-05-01 03:01:01 | DS-13-Dev-Team/DS13 | https://api.github.com/repos/DS-13-Dev-Team/DS13 | closed | Suggestions and Ideas for Outbreak Escalation IV | Suggestion Type: Code | Suggestion:

Make necros softer in health and damage but give them more planning tools like vents, hiding spots and/or some sort of "dead disguise".

What do you think it'd add:

The necros actually are playing swarm tactics in the game, they need to play more clever and will add more intensity to the game. Also t... | 1.0 | Suggestions and Ideas for Outbreak Escalation IV - Suggestion:

Make necros softer in health and damage but give them more planning tools like vents, hiding spots and/or some sort of "dead disguise".

What do you think it'd add:

The necros actually are playing swarm tactics in the game, they need to play more cle... | non_process | suggestions and ideas for outbreak escalation iv suggestion make necros softer in health and damage but give them more planning tools like vents hidding spots and or some sort of dead disguise what do you think it d add the necros actually are playing swarm tactics in the game they need to play more cle... | 0 |

4,603 | 7,451,432,214 | IssuesEvent | 2018-03-29 02:55:46 | shobrook/BitVision | https://api.github.com/repos/shobrook/BitVision | closed | Run some checks | low priority model preprocessing | Firstly, we should check Quandl for other candidate Blockchain-related datasets and, if we find any, test them out. Secondly, I'm not sure if the specificity and sensitivity calculations are correct in the analysis module––this needs to be checked. Lastly, let's check a random sample from each TA-Lib output to ensure t... | 1.0 | Run some checks - Firstly, we should check Quandl for other candidate Blockchain-related datasets and, if we find any, test them out. Secondly, I'm not sure if the specificity and sensitivity calculations are correct in the analysis module––this needs to be checked. Lastly, let's check a random sample from each TA-Lib ... | process | run some checks firstly we should check quandl for other candidate blockchain related datasets and if we find any test them out secondly i m not sure if the specificity and sensitivity calculations are correct in the analysis module––this needs to be checked lastly let s check a random sample from each ta lib ... | 1 |
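
One way to check the specificity and sensitivity figures this issue is unsure about is to recompute them from raw labels and compare. The sketch below is not the project's analysis module; it assumes binary labels encoded as 0 and 1 and uses the standard definitions sensitivity = TP / (TP + FN) and specificity = TN / (TN + FP). The function name is hypothetical.

```python
def sensitivity_specificity(y_true, y_pred):
    """Return (sensitivity, specificity) for binary labels encoded as 0/1."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # true positives
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)  # true negatives
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # false positives
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # false negatives

    # Guard against a class that never occurs in y_true.
    sensitivity = tp / (tp + fn) if (tp + fn) else float("nan")
    specificity = tn / (tn + fp) if (tn + fp) else float("nan")
    return sensitivity, specificity
```

Running this on a small hand-labelled sample and comparing against the module's output would show whether the two rates have been swapped or computed over the wrong denominator.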

146,340 | 5,619,444,618 | IssuesEvent | 2017-04-04 01:38:12 | smartcatdev/support-system | https://api.github.com/repos/smartcatdev/support-system | closed | Filters return tickets for multiple users on non privileged accounts | bug Important Priority | ## Steps to Reproduce

- Create a user as either a subscriber or a customer

- Create some tickets in the admin interface (As an admin user)

- As the newly created user, change the filters so that they will return the newly created ticket

## Expected Result

The filter should only return tickets that have been cr... | 1.0 | Filters return tickets for multiple users on non privileged accounts - ## Steps to Reproduce

- Create a user as either a subscriber or a customer

- Create some tickets in the admin interface (As an admin user)

- As the newly created user, change the filters so that they will return the newly created ticket

## E... | non_process | filters return tickets for multiple users on non privileged accounts steps to reproduce create a user as either a subscriber or a customer create some tickets in the admin interface as an admin user as the newly created user change the filters so that they will return the newly created ticket e... | 0 |

63,075 | 3,193,931,190 | IssuesEvent | 2015-09-30 09:06:41 | fusioninventory/fusioninventory-for-glpi | https://api.github.com/repos/fusioninventory/fusioninventory-for-glpi | closed | Dynamic agent crash for netdiscovery | Category: Tasks Component: For junior contributor Component: Found in version Priority: High Status: Closed Tracker: Bug | ---

Author Name: **David Durieux** (@ddurieux)

Original Redmine Issue: 1560, http://forge.fusioninventory.org/issues/1560

Original Date: 2012-04-10

Original Assignee: David Durieux

---

None

| 1.0 | Dynamic agent crash for netdiscovery - ---

Author Name: **David Durieux** (@ddurieux)

Original Redmine Issue: 1560, http://forge.fusioninventory.org/issues/1560

Original Date: 2012-04-10

Original Assignee: David Durieux

---

None

| non_process | dynamic agent crash for netdiscovery author name david durieux ddurieux original redmine issue original date original assignee david durieux none | 0 |

13,913 | 16,674,232,623 | IssuesEvent | 2021-06-07 14:26:18 | ESMValGroup/ESMValCore | https://api.github.com/repos/ESMValGroup/ESMValCore | opened | cf-units=2.1.5 for OSX still preserves older version behaviour (<2.1.4) | preprocessor testing | `cf-units=2.1.5` installed in the esmvaltool conda env on OSX as seen from the [GA test](https://github.com/ESMValGroup/ESMValCore/runs/2759882084?check_suite_focus=true) preserves the behavior of `num2date` from an older version, <2.1.4. We need to raise this with the `cf-units` develsm @bjlittle this is a SciTools pa... | 1.0 | cf-units=2.1.5 for OSX still preserves older version behaviour (<2.1.4) - `cf-units=2.1.5` installed in the esmvaltool conda env on OSX as seen from the [GA test](https://github.com/ESMValGroup/ESMValCore/runs/2759882084?check_suite_focus=true) preserves the behavior of `num2date` from an older version, <2.1.4. We need... | process | cf units for osx still preserves older version behaviour cf units installed in the esmvaltool conda env on osx as seen from the preserves the behavior of from an older version we need to raise this with the cf units develsm bjlittle this is a scitools package would you be a... | 1 |

8,528 | 11,704,841,582 | IssuesEvent | 2020-03-07 12:12:48 | 3wcircus/DamnThePotHolesBackend | https://api.github.com/repos/3wcircus/DamnThePotHolesBackend | opened | Setup/enforce Git Team activities | Process | Setup Git Team Skeleton and share with Team

- Enforce pull/merge requests

- Add users to project

- Educate others | 1.0 | Setup/enforce Git Team activities - Setup Git Team Skeleton and share with Team

- Enforce pull/merge requests

- Add users to project

- Educate others | process | setup enforce git team activities setup git team skeleton and share with team enforce pull merge requests add users to project educate others | 1 |

159,864 | 20,085,918,962 | IssuesEvent | 2022-02-05 01:12:20 | DavidSpek/kale | https://api.github.com/repos/DavidSpek/kale | opened | CVE-2021-41216 (High) detected in tensorflow-1.0.0-cp27-cp27mu-manylinux1_x86_64.whl, tensorflow-2.1.0-cp27-cp27mu-manylinux2010_x86_64.whl | security vulnerability | ## CVE-2021-41216 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>tensorflow-1.0.0-cp27-cp27mu-manylinux1_x86_64.whl</b>, <b>tensorflow-2.1.0-cp27-cp27mu-manylinux2010_x86_64.whl</b><... | True | CVE-2021-41216 (High) detected in tensorflow-1.0.0-cp27-cp27mu-manylinux1_x86_64.whl, tensorflow-2.1.0-cp27-cp27mu-manylinux2010_x86_64.whl - ## CVE-2021-41216 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> V... | non_process | cve high detected in tensorflow whl tensorflow whl cve high severity vulnerability vulnerable libraries tensorflow whl tensorflow whl tensorflow whl tensorflow is an open source machine learning fra... | 0 |

4,369 | 5,025,493,888 | IssuesEvent | 2016-12-15 09:23:43 | asciidoctor/asciidoctor | https://api.github.com/repos/asciidoctor/asciidoctor | closed | Upgrade to Nokogiri 1.6.x | infrastructure | Upgrade to Nokogiri 1.6.x to coincide with dropping support for Ruby 1.8.

| 1.0 | Upgrade to Nokogiri 1.6.x - Upgrade to Nokogiri 1.6.x to coincide with dropping support for Ruby 1.8.

| non_process | upgrade to nokogiri x upgrade to nokogiri x to coincide with dropping support for ruby | 0 |

17,171 | 22,745,012,944 | IssuesEvent | 2022-07-07 08:24:56 | camunda/zeebe | https://api.github.com/repos/camunda/zeebe | opened | Clean up StreamingPlatform / Engine | kind/toil team/distributed team/process-automation | **Description**

There are multiple things we can remove or clean up during #9600. Some of these might only be possible at the end, but some are already possible at the beginning.

**Todo:**

- [ ] Remove the position from the TypedRecordProcessor#process see #9602

- [ ] Remove ProxyWriter from the ProcessingContext ... | 1.0 | Clean up StreamingPlatform / Engine - **Description**

There are multiple things we can remove or clean up during #9600. Some of these might only be possible at the end, but some are already possible at the beginning.

**Todo:**

- [ ] Remove the position from the TypedRecordProcessor#process see #9602

- [ ] Remove P... | process | clean up streamingplatform engine description there a multiple things we can remove or clean up during some of these might be only possible at the end but some are already possible at the begin todo remove the position from the typedrecordprocessor process see remove proxywriter... | 1 |

232,859 | 7,681,065,920 | IssuesEvent | 2018-05-16 05:40:30 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.xfinity.com - Xfinity splash screen is not displayed | Re-triaged browser-firefox priority-normal | <!-- @browser: Firefox Mobile Nightly 55.0a1 (2017-04-07) -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; WOW64; rv:52.0) Gecko/20100101 Firefox/52.0 -->

<!-- @reported_with: addon-reporter-firefox -->

**URL**: www.xfinity.com

**Browser / Version**: Firefox Mobile Nightly 55.0a1 (2017-04-07)

**Operating Syste... | 1.0 | www.xfinity.com - Xfinity splash screen is not displayed - <!-- @browser: Firefox Mobile Nightly 55.0a1 (2017-04-07) -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; WOW64; rv:52.0) Gecko/20100101 Firefox/52.0 -->

<!-- @reported_with: addon-reporter-firefox -->

**URL**: www.xfinity.com

**Browser / Version**: F... | non_process | xfinity splash screen is not displayed url browser version firefox mobile nightly operating system android problem type something else i ll add details below steps to reproduce navigate to observe behavior expected behavior xfi... | 0 |

33,744 | 12,216,887,480 | IssuesEvent | 2020-05-01 16:03:16 | tomdgl397/goof | https://api.github.com/repos/tomdgl397/goof | opened | CVE-2020-11022 (Medium) detected in jquery-1.7.1.min.js | security vulnerability | ## CVE-2020-11022 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.7.1.min.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library home page: <a href="ht... | True | CVE-2020-11022 (Medium) detected in jquery-1.7.1.min.js - ## CVE-2020-11022 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.7.1.min.js</b></p></summary>

<p>JavaScript librar... | non_process | cve medium detected in jquery min js cve medium severity vulnerability vulnerable library jquery min js javascript library for dom operations library home page a href path to dependency file tmp ws scm goof node modules vm browserify example run index html path to vu... | 0 |

5,058 | 7,861,207,729 | IssuesEvent | 2018-06-21 23:01:42 | StrikeNP/trac_test | https://api.github.com/repos/StrikeNP/trac_test | closed | GABLS2 rtm, rtp2, thlm, and thlp2 are set to zero when plotgen is run manually (but not for the nightly tests) (Trac #24) | Migrated from Trac enhancement post_processing senkbeil@uwm.edu | Some time ago, in order to test CLUBB's scalars, Brandon changed plotgen so that it outputs scalars in place of rtm and thlm. The nightly plots work great.

However, if CLUBB is run manually without outputting scalars, and then plotgen is executed manually, then rtm, thlm, rtp2, and thlp2 are set to zero. For manual ... | 1.0 | GABLS2 rtm, rtp2, thlm, and thlp2 are set to zero when plotgen is run manually (but not for the nightly tests) (Trac #24) - Some time ago, in order to test CLUBB's scalars, Brandon changed plotgen so that it outputs scalars in place of rtm and thlm. The nightly plots work great.

However, if CLUBB is run manually with... | process | rtm thlm and are set to zero when plotgen is run manually but not for the nightly tests trac some time ago in order to test clubb s scalars brandon changed plotgen so that it outputs scalars in place of rtm and thlm the nightly plots work great however if clubb is run manually without outputtin... | 1 |

77,690 | 27,109,984,831 | IssuesEvent | 2023-02-15 14:43:15 | vector-im/element-android | https://api.github.com/repos/vector-im/element-android | closed | I cant login from FtueAuthCombinedLoginFragment! | T-Defect | ### Steps to reproduce

I changed the line `true -> FtueAuthSplashCarouselFragment::class.java` to `true -> FtueAuthCombinedLoginFragment::class.java` in the `FtueAuthVariant` class, but when I use the app I get the error below:

Invalid username or password

But I login from `FtueAuthSplashCarouselFragment` ... | 1.0 | I cant login from FtueAuthCombinedLoginFragment! - ### Steps to reproduce

I changed the line `true -> FtueAuthSplashCarouselFragment::class.java` to `true -> FtueAuthCombinedLoginFragment::class.java` in the `FtueAuthVariant` class, but when I use the app I get the error below:

Invalid username or password

... | non_process | i cant login from ftueauthcombinedloginfragment steps to reproduce i am changing this line true ftueauthsplashcarouselfragment class java to true ftueauthcombinedloginfragment class java in ftueauthvariant class but when i am using from app get me bellow error invalid username or password ... | 0 |

392,412 | 11,590,972,334 | IssuesEvent | 2020-02-24 08:21:46 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | support.mozilla.org - see bug description | browser-firefox engine-gecko form-v2-experiment priority-important | <!-- @browser: Firefox 74.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:74.0) Gecko/20100101 Firefox/74.0 -->

<!-- @reported_with: desktop-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/48826 -->

<!-- @extra_labels: form-v2-experiment -->

**URL**: https://support.mozi... | 1.0 | support.mozilla.org - see bug description - <!-- @browser: Firefox 74.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:74.0) Gecko/20100101 Firefox/74.0 -->

<!-- @reported_with: desktop-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/48826 -->

<!-- @extra_labels: form-v2-e... | non_process | support mozilla org see bug description url browser version firefox operating system windows tested another browser no problem type something else description scroll down problem steps to reproduce scroll down not coming in this browser view the screensh... | 0 |

19,581 | 25,904,878,355 | IssuesEvent | 2022-12-15 09:27:16 | UserOfficeProject/user-office-project-issue-tracker | https://api.github.com/repos/UserOfficeProject/user-office-project-issue-tracker | closed | Extract PIs and Co-Is to SharePoint Experiment Team list for ISIS Direct | origin: project type: process ops: comms area: uop/stfc | We need to run a one time export of PIs and Co-Is to the SharePoint Experiment Team list for ISIS Direct. This is to allow time for the scheduler team to do https://github.com/isisbusapps/ERA/issues/1352.

We should update S&O once this is released so that they can update any messages. | 1.0 | Extract PIs and Co-Is to SharePoint Experiment Team list for ISIS Direct - We need to run a one time export of PIs and Co-Is to the SharePoint Experiment Team list for ISIS Direct. This is to allow time for the scheduler team to do https://github.com/isisbusapps/ERA/issues/1352.

We should update S&O once this is rel... | process | extract pis and co is to sharepoint experiment team list for isis direct we need to run a one time export of pis and co is to the sharepoint experiment team list for isis direct this is to allow time for the scheduler team to do we should update s o once this is released so that they can update any messages | 1 |

172,826 | 21,054,870,119 | IssuesEvent | 2022-04-01 01:24:39 | peterwkc85/Spring_petclinic | https://api.github.com/repos/peterwkc85/Spring_petclinic | opened | CVE-2022-22965 (High) detected in spring-beans-5.1.6.RELEASE.jar | security vulnerability | ## CVE-2022-22965 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>spring-beans-5.1.6.RELEASE.jar</b></p></summary>

<p>Spring Beans</p>

<p>Library home page: <a href="https://github.com... | True | CVE-2022-22965 (High) detected in spring-beans-5.1.6.RELEASE.jar - ## CVE-2022-22965 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>spring-beans-5.1.6.RELEASE.jar</b></p></summary>

<p... | non_process | cve high detected in spring beans release jar cve high severity vulnerability vulnerable library spring beans release jar spring beans library home page a href path to dependency file spring petclinic master spring petclinic master pom xml path to vulnerable library ... | 0 |

20,982 | 27,846,576,202 | IssuesEvent | 2023-03-20 15:51:03 | hashgraph/hedera-mirror-node | https://api.github.com/repos/hashgraph/hedera-mirror-node | closed | Snyk code check failing | bug security process | ### Description

The snyk code workflow is [failing](https://github.com/hashgraph/hedera-mirror-node/actions/runs/4452221619/jobs/7819675560).

```

✗ [High] Cross-site Scripting (XSS)

Path: hedera-mirror-rest/monitoring/monitor_apis/server.js, line 86

Info: Unsanitized input from an HTTP parameter flows... | 1.0 | Snyk code check failing - ### Description

The snyk code workflow is [failing](https://github.com/hashgraph/hedera-mirror-node/actions/runs/4452221619/jobs/7819675560).

```

✗ [High] Cross-site Scripting (XSS)

Path: hedera-mirror-rest/monitoring/monitor_apis/server.js, line 86

Info: Unsanitized input fr... | process | snyk code check failing description the snyk code workflow is ✗ cross site scripting xss path hedera mirror rest monitoring monitor apis server js line info unsanitized input from an http parameter flows into send where it is used to render an html page returned to the user th... | 1 |
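
The finding above concerns a JavaScript file, but the general remedy for this class of issue (escaping user-controlled values before they are placed into HTML) can be sketched in a few lines. The example below is in Python purely for illustration and uses the standard library's `html.escape`. The function name and the page fragment are made up, and it does not reflect how `server.js` is or should be written.

```python
import html


def render_message(user_value):
    """Build an HTML fragment with the user-supplied value escaped."""
    # Escape &, <, > and quotes so the value is rendered as text, not markup.
    safe_value = html.escape(user_value, quote=True)
    return "<p>Result for: {}</p>".format(safe_value)
```

With escaping in place, a parameter such as `<script>` is shown to the user literally instead of being interpreted by the browser.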

8,194 | 11,393,928,941 | IssuesEvent | 2020-01-30 08:08:17 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | closed | ntr: effector-mediated modulation of host process by symbiont | multi-species process |

I also need an additional parent to

GO:0140404 JSON

effector-mediated modulation of host immune response by symbiont

effector-mediated modulation of host process by symbiont

my collaborators pointed out that many effectors are not manipulating defenses (for example, effectors inducing ribotoxic stress) | 1.0 | ntr: effector-mediated modulation of host process by symbiont -

I also need an additional parent to

GO:0140404 JSON

effector-mediated modulation of host immune response by symbiont

effector-mediated modulation of host process by symbiont

my collaborators pointed out that many effectors are not manipulat... | process | ntr effector mediated modulation of host process by symbiont i also need an additional parent to go json effector mediated modulation of host immune response by symbiont effector mediated modulation of host process by symbiont my collaborators pointed out that many effectors are not manipulating de... | 1 |

11,331 | 14,144,527,413 | IssuesEvent | 2020-11-10 16:33:25 | MicrosoftDocs/azure-devops-docs | https://api.github.com/repos/MicrosoftDocs/azure-devops-docs | closed | Dynamically evaluating conditions | Pri2 devops-cicd-process/tech devops/prod product-question | Hi,

No issue with the article, just a question regarding the evaluation of conditions.

In Azure DevOps, you have conditions like the following:

`condition: and(contains(variables['build.sourceBranch'], 'refs/heads/master'), succeeded())`

I'm looking to implement something similar to this in a project and wonde... | 1.0 | Dynamically evaluating conditions - Hi,

No issue with the article, just a question regarding the evaluation of conditions.

In Azure DevOps, you have conditions like the following:

`condition: and(contains(variables['build.sourceBranch'], 'refs/heads/master'), succeeded())`

I'm looking to implement something si... | process | dynamically evaluating conditions hi no issue with the article just a question regarding the evaluation of conditions in azure devops you have conditions like the following condition and contains variables refs heads master succeeded i m looking to implement something similar to this in a pr... | 1 |

31,958 | 7,470,353,267 | IssuesEvent | 2018-04-03 04:25:37 | City-Bureau/city-scrapers | https://api.github.com/repos/City-Bureau/city-scrapers | closed | Board of Education Description Field | code: bug report good first issue help wanted | The Chicago Board of Education spider currently doesn't have description info coming through—can we add this text to that field?

"The Chicago Board of Education is responsible for the governance, organizational and financial oversight of Chicago Public Schools (CPS), the third largest school district in the United S... | 1.0 | Board of Education Description Field - The Chicago Board of Education spider currently doesn't have description info coming through—can we add this text to that field?

"The Chicago Board of Education is responsible for the governance, organizational and financial oversight of Chicago Public Schools (CPS), the third ... | non_process | board of education description field the chicago board of education spider currently doesn t have description info coming through—can we add this text to that field the chicago board of education is responsible for the governance organizational and financial oversight of chicago public schools cps the third ... | 0 |

20,080 | 26,576,186,448 | IssuesEvent | 2023-01-21 21:10:43 | serai-dex/serai | https://api.github.com/repos/serai-dex/serai | opened | Use Ethereum as a checkpointing system | feature improvement cryptography processor node | Right now, for a multisig key K, K is supposed to be chain-bound by the processor. Ideally, we do the chain binding before publication to Substrate so clients don't need to replicate it locally.

If after we publish it to Substrate, we then apply an additive offset of the block confirming the key, publishing that to ... | 1.0 | Use Ethereum as a checkpointing system - Right now, for a multisig key K, K is supposed to be chain-bound by the processor. Ideally, we do the chain binding before publication to Substrate so clients don't need to replicate it locally.

If after we publish it to Substrate, we then apply an additive offset of the bloc... | process | use ethereum as a checkpointing system right now for a multisig key k k is supposed to be chain bound by the processor ideally we do the chain binding before publication to substrate so clients don t need to replicate it locally if after we publish it to substrate we then apply an additive offset of the bloc... | 1 |

164,997 | 12,825,259,737 | IssuesEvent | 2020-07-06 14:42:35 | Oldes/Rebol-issues | https://api.github.com/repos/Oldes/Rebol-issues | closed | RANDOM/only returns NONE for series not at the HEAD | CC.resolved Status.important Test.written Type.bug | _Submitted by:_ **abolka**

RANDOM/only erratically returns NONE when passed a series which is not positioned at the head.

Originally discovered by Gregg Irwin.

``` rebol

;; showing the general effect

>> unique collect [loop 100 [keep random/only next [1 2 3]]]

== [none 3]

;; a minimal demonstration, which always re... | 1.0 | RANDOM/only returns NONE for series not at the HEAD - _Submitted by:_ **abolka**

RANDOM/only erratically returns NONE when passed a series which is not positioned at the head.

Originally discovered by Gregg Irwin.

``` rebol

;; showing the general effect

>> unique collect [loop 100 [keep random/only next [1 2 3]]]

==... | non_process | random only returns none for series not at the head submitted by abolka random only erratically returns none when passed a series which is not positioned at the head originally discovered by gregg irwin rebol showing the general effect unique collect a minimal demonstration which a... | 0 |

4,791 | 7,674,626,119 | IssuesEvent | 2018-05-15 05:16:36 | qgis/QGIS-Documentation | https://api.github.com/repos/qgis/QGIS-Documentation | closed | State of layer reprojection operations before using Processing algorithms | Processing User Manual question | https://docs.qgis.org/testing/en/docs/user_manual/processing/toolbox.html#a-note-on-projections explains that layers should be reprojected to the same crs before processing any algorithm.

I remember some discussion (or PR) mentioning that some algorithms no longer need it.

As I could not find any information on this in ... | 1.0 | State of layer reprojection operations before using Processing algorithms - https://docs.qgis.org/testing/en/docs/user_manual/processing/toolbox.html#a-note-on-projections explains that layers should be reprojected to the same crs before processing any algorithm.

I remember some discussion (or PR) that mentions that ... | process | state of layer reprojection operations before using processing algorithms explains that layers should be reprojected to the same crs before processing any algorithm i remember some discussion or pr that mentions that some algs no more needs it as i could not find any information on this in the repo i d like ... | 1 |

69,730 | 9,330,228,890 | IssuesEvent | 2019-03-28 06:06:25 | s-fleck/lgr | https://api.github.com/repos/s-fleck/lgr | closed | logger threshold per logger | documentation invalid | Sorry, maybe I'm missing something again, but I think it would be beneficial to be able to specify per-logger thresholds. Right now it seems the root logger amends individual loggers' thresholds.

Reproduce:

```r

# remotes::install_github('dselivanov/rsparse')

library(rsparse)

logger = lgr::get_logger('rsparse')

x = matrix(r... | 1.0 | logger threshold per logger - Sorry, maybe I'm missing something again, but I think it would be beneficial to be able to specify per-logger thresholds. Right now it seems the root logger amends individual loggers' thresholds.

Reproduce:

```r

# remotes::install_github('dselivanov/rsparse')

library(rsparse)

logger = lgr::get_l... | non_process | logger threshold per logger sorry may be i m missing something again but i think it will be beneficial to be able to specify per logger thresholds now it seems root logger amend individual loggers threshold reproduce r remotes install github dselivanov rsparse library rsparse logger lgr get l... | 0 |

67,070 | 3,265,968,961 | IssuesEvent | 2015-10-22 18:34:23 | der-On/XPlane2Blender | https://api.github.com/repos/der-On/XPlane2Blender | closed | Fix Bone matrices | priority high | here are the steps to get the simple bone animation test case working:

`python tests.py --filter bone_animations --debug`

Resulting `test_bone_animations.obj` will be written to `/tests/tmp`

Source blender file is under `/tests/animations/bone_animations.test.blend`

Relevant code can be found from here on:

... | 1.0 | Fix Bone matrices - here are the steps to get the simple bone animation test case working:

`python tests.py --filter bone_animations --debug`

Resulting `test_bone_animations.obj` will be written to `/tests/tmp`

Source blender file is under `/tests/animations/bone_animations.test.blend`

Relevant code can be ... | non_process | fix bone matrices here are the steps to get the simple bone animation test case working python tests py filter bone animations debug resulting test bone animations obj will be written to tests tmp source blender file is under tests animations bone animations test blend relevant code can be ... | 0 |

16,380 | 21,102,548,788 | IssuesEvent | 2022-04-04 15:38:32 | googleapis/python-bigquery-pandas | https://api.github.com/repos/googleapis/python-bigquery-pandas | closed | document pandas-gbq vision and roadmap | type: process api: bigquery | Both pandas-gbq and [google-cloud-bigquery](https://github.com/GoogleCloudPlatform/google-cloud-python/tree/master/bigquery) are doing many of the same things, and increasingly so (e.g. `.to_dataframe()` in google-cloud-bigquery)

- Are there different use cases? Can we define those?

- Should we focus development on... | 1.0 | document pandas-gbq vision and roadmap - Both pandas-gbq and [google-cloud-bigquery](https://github.com/GoogleCloudPlatform/google-cloud-python/tree/master/bigquery) are doing many of the same things, and increasingly so (e.g. `.to_dataframe()` in google-cloud-bigquery)

- Are there different use cases? Can we define... | process | document pandas gbq vision and roadmap both pandas gbq and are doing many of the same things and increasingly so e g to dataframe in google cloud bigquery are there different use cases can we define those should we focus development on one and wrap the other even if not wholly for a subset of... | 1 |

46,322 | 9,923,253,881 | IssuesEvent | 2019-07-01 06:40:02 | petershirley/raytracinginoneweekend | https://api.github.com/repos/petershirley/raytracinginoneweekend | closed | Unused variable p in main.cc | book code | p is never used in main.cc

89: vec3 p = r.point_at_parameter(2.0);

90: col += color(r, world,0);

Should the r on line 90 be p?

| 1.0 | Unused variable p in main.cc - p is never used in main.cc

89: vec3 p = r.point_at_parameter(2.0);

90: col += color(r, world,0);

Should the r on line 90 be p?

| non_process | unused variable p in main cc p is never used in main cc p r point at parameter col color r world should the r in be p | 0 |

5,384 | 8,211,414,690 | IssuesEvent | 2018-09-04 13:46:17 | openvstorage/framework | https://api.github.com/repos/openvstorage/framework | closed | Work around volumedriver lockup when removing MDS slaves of removed volumes | process_wontfix | https://github.com/openvstorage/volumedriver-ee/issues/79

After volume removal MDS slaves self-destruct once the absence of the backend namespace is detected (during the periodic backend poll). If at the same time an MDS slave is removed via the Python API there's a potential for a lockup. | 1.0 | Work around volumedriver lockup when removing MDS slaves of removed volumes - https://github.com/openvstorage/volumedriver-ee/issues/79

After volume removal MDS slaves self-destruct once the absence of the backend namespace is detected (during the periodic backend poll). If at the same time an MDS slave is removed v... | process | work around volumedriver lockup when removing mds slaves of removed volumes after volume removal mds slaves self destruct once the absence of the backend namespace is detected during the periodic backend poll if at the same time an mds slave is removed via the python api there s a potential for a lockup | 1 |