| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 7 to 112) | repo_url (stringlengths, 36 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 744) | labels (stringlengths, 4 to 574) | body (stringlengths, 9 to 211k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96 to 211k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

4,535

| 7,373,325,266

|

IssuesEvent

|

2018-03-13 16:58:00

|

haskell-api-discussions/haskell-api-discussions

|

https://api.github.com/repos/haskell-api-discussions/haskell-api-discussions

|

opened

|

Difficult to run 'foo > /dev/null' and throw an exception on non-zero exit code

|

process

|

With `process`, it's hard to spawn an external process in IO, re-throw its non-zero exit code as a Haskell exception, and also redirect its output. Here's what I came up with:

```haskell
withFile "/dev/null" WriteMode $ \h -> do
  (_, _, _, ph) <- createProcess_ "" (shell "foo") { std_out = UseHandle h }
  code <- waitForProcess ph
  when (code /= ExitSuccess) $
    throwIO code
```

If I didn't want to redirect output to a handle, this would be a one-liner using:

```haskell

callCommand :: String -> IO ()

```

This makes me think the API is warty, because it has a bunch of convenience functions that sit atop one tediously verbose master run function. Anything slightly out of the ordinary and you're forced to use it.

I wonder if there's a better design for `createProcess` overall. I don't have one off the top of my head, just the tedium of accomplishing this simple task using `process` made me want to complain about it here ;)

|

1.0

|

Difficult to run 'foo > /dev/null' and throw an exception on non-zero exit code - With `process`, it's hard to spawn an external process in IO, re-throw its non-zero exit code as a Haskell exception, and also redirect its output. Here's what I came up with:

```haskell
withFile "/dev/null" WriteMode $ \h -> do
  (_, _, _, ph) <- createProcess_ "" (shell "foo") { std_out = UseHandle h }
  code <- waitForProcess ph
  when (code /= ExitSuccess) $
    throwIO code
```

If I didn't want to redirect output to a handle, this would be a one-liner using:

```haskell

callCommand :: String -> IO ()

```

This makes me think the API is warty, because it has a bunch of convenience functions that sit atop one tediously verbose master run function. Anything slightly out of the ordinary and you're forced to use it.

I wonder if there's a better design for `createProcess` overall. I don't have one off the top of my head, just the tedium of accomplishing this simple task using `process` made me want to complain about it here ;)

|

process

|

difficult to run foo dev null and throw an exception on non zero exit code with process it s hard to spawn an external process in io re throw its non zero exit code as a haskell exception and also redirect its output here s what i came up with haskell withfile dev null writemode h ph createprocess shell foo std out usehandle h code waitforprocess ph when code exitsuccess throwio code if i didn t want to redirect output to a handle this would be a one liner using haskell callcommand string io this makes me think the api is warty because it has a bunch of convenience functions that sit atop one tediously verbose master run function anything slightly out of the ordinary and you re forced to use it i wonder if there s a better design for createprocess overall i don t have one off the top of my head just the tedium of accomplishing this simple task using process made me want to complain about it here

| 1

|

21,945

| 3,768,666,286

|

IssuesEvent

|

2016-03-16 06:40:49

|

hollyjoke/33HU6POQKFJUS6K4M6BXQISV

|

https://api.github.com/repos/hollyjoke/33HU6POQKFJUS6K4M6BXQISV

|

closed

|

FOuPTQaQmLunnfNrw3IxjeYBCCvb4b/3o1OO3bNI/Ec42NECJO3VUtARIZ9gIZDl3JdRQUwcGy1dx/iYH+bRbU01ZOUDjRbqjlk1cKg7seV8h0OSNssiP/uf1k2G9GVwaggLPgn0ikHxRauvTk6fX8jkc2xTvm1CDN0qiAu6G9w=

|

design

|

DCDMcDY05vKF1+fb9v68FsRXICuHuAk6XZTR+RgQr1+m8uF7kLAkIca3o1J2Xot51JlXC9sD/SJ38VtiNl2wqZY962BWwPQEzjhZptT9Jjr949zkktXoLYFAE5kWSS4cKbOy/Eb25KnoM9oYPZ+TGSPQvSYZM2xgWzVDl8VRQnqWM3tBYESI9Ti4EMJIr2ERDiRC8Pt2MuhnE6GJc6Bfcxqm/XRqi3fpUtGb62EX6HNyhHgb872NIPow6wTNnYbByzp5204s/LMGdKrqbNZQjqPwnMFA0dSUmb/DUimhDSUhZqmGy3m1GZYduztYRyxC++m9DVgoHGdQHzi6ucrS58GPbzeY0qHNXkVMLTGcs3ip1gFT2rILYrIUKHAtV8eeqxNFCVGrsTfcWF7GqIs9VTPCYzFMh5d9g3BvWa8K1BL6KGi5Gggn33UWeIuPPVVXN/svxn06AyGxEbPMDLrrsiGwllFIQo3iqAFoFq8u7FrOUHjQ6WCZZoLsOZfbJ11CkBaKdmNiYgGifat822R7orc1N4ugvYaa0GMYtURBVxF7krL28nBFZwV3SPMuMlKqdM4SeX6KPnQa1dI7+HR+SWuD9f/XNPPnWnetAUWWQxcDo0xsgEGUI9Dqwrh8bcXB5pGFKNHQ4klCItW/bn98/gpRT+jH/Njg2YZumAX6UP83yLfL7x7VzJ3/watlNBJbgnFJ7ZdhzDVyn7HQAFx+aiNK7/2PnhPQcozfrP5vrlH8PDOjieeNkDMInfIkcbdUvzZvdPz/Vfk/q9+P2U6QlagdN1up05vv+c4Y4+5cwTcqkvi4+1KDr1gspy6JiJDp6gcJitDEDk34CfJYNgU0ayUwnnsaLpqUvmkN1+giHMG1VxANqZidSgmvik5nJcyexEFKsBE/QUQH2uH1Aw+AT9mtOn73vpYDBut9Cj1eLT99jNtIhpwwbcDl8yAzbPNyPXvyPfNBkmB66Br+t+cbZ2WoHveXuA4qiOBO/IW9GZ0icaEuzACcp1BzS/F7BAummcic1u7Y60u44QRSkJiSOIHlWobIiAQFTpH7QkTh8N5+2tG1rUuMKSM9x6+ZBvKD7qKqbT2OfCbyV2KEtbaorVaWWjz+Wa5yYo46ji/iwf4RR/7pxbjXkDDioEBTLAFbFLb9yVGmSCF4KSvAEMqaSux80stoH68Z099kRv7vpgZ+B8GBUwIymQYTgBHePm/thmW3kx1nocUc2bToROrkneFcJh/5ykJ1unHUr2+6cqNyhoz0GQ1rkjTgrsgUaeEkJMTN4xwlE0+0PTFreTUFPuEegsvbirjFcd8hivrfGjYv1L0DWbP0nciX/zczw0W6hHBX6VFY7WUPpEt1CZfGJLo4pob3jEdtGZjTAjDEwQ5JCuR3xufaxohBAx+AtodRg5Dlh8hk9hrizPbqoH8753SiWmQnOJHOTkji7eWyOXcrSml2Gul9MKsp4wYgH804YWmdaRfwt3xwnDMhGB1BWMih53NK8h0Pp1Kkxphim/fy1kezX7Da17Gs4rS3nEbdNQltQvFqL5iI0p05O4qG5kkqWw8a6JwylrwCrYjcLTb05O8yzJR9jyX1dYlQe5e/Qma3vNR6Y+yOQ0vlOlIxvxdlQaBRnEdXaILQs5JRr5rNFatP1FFrCGsM5cCNMOHPF3Y+rtlmP2t2iTA7dAXFePu67dldKT+NdBjM42asHcnNzJRD71OrkWh34jACX1Pb9tbdqG+MTXI91oqPbJrYUajIuzGSeFuR01tNNqmSsfnfCo9gZBX7cV427zysI2VQIJK1Jv8Im3TYFooYQ+Se5NXOS0MwCVyAW9HtLH0dAx+rE0UJUauxN9xYXsaoiz1VRG6d5gq45pu1+a/zrYD1jwCxVNpAwX3uZdoIKJAPbq4zrOYircynHao6L9cLI4xAuRYyheoDsHRm1oGOZ4HpDNgXxrzYlkuuJFUzAteGDLglCNzzHkJxAMSJlYP7eFY2q4vjbOYAk4SAtxE1
FAuC6fRvxWubACGNjPKnii3avHgAUrkZXEUzNl7mEX69MXGfBfRQHB59SNBzGGslh19icKPmthPQ6NBMWoKui/pXx+9VQnDYhvk1Hm7O73N7f+N+6JH9WubYUwiR13jsrQunlU9prwUk1mVdHHBFbU4SNDw6fNoRhP+oetTtTxEfeL+Tq+WKyR5ihWJDe1C0UwSUgzOUfMPoF4btYV6MEYbCDeyGLjDROnL1LioORaygV01QOhC3Uy4zWYM/O7dZhGg3+3LdG17xuzmAnNkmTec1ncmDs4Zvtf9dOXnBdSk9c+DD/EPJ52blruyoKERd6udenba83sZMRVg2Oz5c8FfEXz4LimYaXlguDByKdW5e8y7Ke5oUxltFNePEUL+YgpJMxWGpQyCjUPgYrHqc4SlMRQprEMvmZkG3enM2UY1tdVWYqxNFCVGrsTfcWF7GqIs9Vee9lPZbtDfOOMpkwYxXnt5xnrhlwbSjTS1ZXF+OCnwyLa7ofXZ23KvE8F43zh6Q3GctiHt6FQxMk+dtlCC5KYFqIVKMvvf81kLU8wPFbAEi+4Z5Zv6k5CZRMoB1t8iZFnbcDhhguTnnKrDeH9m94trNzJRD71OrkWh34jACX1PbHqBZJ5WudwPMumiszKQspClYBMbHHfFIj94w4S4oX8p7mhTGW0U148RQv5iCkkzF8Zb/NzOSxcRzjN7PFjd2KayKwKsNcicLqesNG1dr20YIdPvuicuI2+5iNX6pY8T8X1ixR13s5Qf2mpBj0Hz5pTmCfO0s0uiyTy1v2uHjlFW58wwLb6VWqE/YMYQ5VmiN0n++qm70vHVeZVu+L3brZz3E14BICaUl+r/alkCh1IcWDvuk6H6e993LBiOwR3YBqgRKAZGD2GeFHmluCuKQt7O4AVhFssoG8pAlGUKlez+nNXwejgTlHr3m2bcYaRohkiVSGFNRB9bHu2yoOUCHM84oCRaZz/SXBKNODOG08vZUUSJ3Rnd5iqq3lNkuwy5Z13PuI+0mbFeZVPq3oRFLNKam+tdnJyMedDt8usyKtOZwosK9JJRtvm9tTe1o+FIxfvWc0jXeT6cE5ZhrgiuCRRSIWgQ5MlPfIR3otS905WOPRcpBCQwBGL17hkW+bEjO2MVGkTe5XcguwG0VuXxlYsieFFlW/ahDQaxk5Yx9HyWKcPAtk7nsXucvEV82MKVjZtfpHf6H3ozPCGUGlEbyFlicmKFKvYXFJVRmzzvgFXuzmrQYj3AzV4EHWr9JbZETVMl4xRZjmrT1ePgbWhc1aeXPcHpb5xoVhip0nTNFN2PFnR2A4Fmu1uFb4I/uiaCgHM2einE2zOTe3YmL04Isq7wRTXzBsaMmJddPItOdfKuP5KHjquq4pC+kij3ojAVeEYhAuM0H36s4jBzsj/7G8C8z4fNvE2kCHCNICIn+Ghy5QqMyc3jUXShsgD4IUinKoReD6kzeq7ceGMqfYdel+aaTnCCLdQxn433l26GYjtnJhdAlQOG8gUi520Gi5MM8+SWV9S0mkexL8MujtM0ig+QznksoJqcwJ8LuKxAEUGjcn3Rw3PCyIB70mvZqcRP+gb6lH94Ja30+uAvSYzln8Wze9/6Kj2myYExP8B7VqFx8vvifivu/n5LNm8/VJCcp89eAdhAWydamfDQhnVwFGe+5Q3Sr017ZP9gyVkwMzkcczZ6KcTbM5N7diYvTgiyrcnZOJc+5292/1GWq+6TbyUk39Qie7xmEHWS3MiqTl0zTMh1lIsxybJedM5LbIkm/d/gSV+cwWVObOu+YhM/0H83MlEPvU6uRaHfiMAJfU9voOGO97wkl4sPB2UPoVGoz7pcQ2DnUBmOCEfHwl7VYyDpqpNH3/3QwqBtQr08aktib1yMt+5HfVvNCu8hMhVFUYTaBG1mkaEH5EV2q1Nja1VvB2MpdEOD7FAGe7rSE7TeEcFfpUVjtZQ+kS3UJl8YkZhLdJDIqauqCx4FrUNm2oOeq2PDALGHg
4VOlL/klZ2iEcFfpUVjtZQ+kS3UJl8YkQJkT+EYsBm2r9psbAqMQA9Xbxy3X1uDqvMQS6rOvW8erE0UJUauxN9xYXsaoiz1V/EEFHfNXEb5OXfxJYQtuARp9NmR4EH8EIfI9qyyGR6ESCGaS1SMXbocJ2gsWianNKLPySD+iLRrbY1tVmC8xhCvp7qqwk4bY081MXNLZObJ2ZU/2Ii26VZt3Dpp8Z/RJTKxwQY66h/K7RIa3IXYW5OFCrGMJUImsX/7qxQhq+ouBx5hUbZZWvuoqAZjaJaaSoFF6dn/Q+jTpjqZlyZihOnv9Zwim5g9NuRS9f08orUo=

|

1.0

|

FOuPTQaQmLunnfNrw3IxjeYBCCvb4b/3o1OO3bNI/Ec42NECJO3VUtARIZ9gIZDl3JdRQUwcGy1dx/iYH+bRbU01ZOUDjRbqjlk1cKg7seV8h0OSNssiP/uf1k2G9GVwaggLPgn0ikHxRauvTk6fX8jkc2xTvm1CDN0qiAu6G9w= - DCDMcDY05vKF1+fb9v68FsRXICuHuAk6XZTR+RgQr1+m8uF7kLAkIca3o1J2Xot51JlXC9sD/SJ38VtiNl2wqZY962BWwPQEzjhZptT9Jjr949zkktXoLYFAE5kWSS4cKbOy/Eb25KnoM9oYPZ+TGSPQvSYZM2xgWzVDl8VRQnqWM3tBYESI9Ti4EMJIr2ERDiRC8Pt2MuhnE6GJc6Bfcxqm/XRqi3fpUtGb62EX6HNyhHgb872NIPow6wTNnYbByzp5204s/LMGdKrqbNZQjqPwnMFA0dSUmb/DUimhDSUhZqmGy3m1GZYduztYRyxC++m9DVgoHGdQHzi6ucrS58GPbzeY0qHNXkVMLTGcs3ip1gFT2rILYrIUKHAtV8eeqxNFCVGrsTfcWF7GqIs9VTPCYzFMh5d9g3BvWa8K1BL6KGi5Gggn33UWeIuPPVVXN/svxn06AyGxEbPMDLrrsiGwllFIQo3iqAFoFq8u7FrOUHjQ6WCZZoLsOZfbJ11CkBaKdmNiYgGifat822R7orc1N4ugvYaa0GMYtURBVxF7krL28nBFZwV3SPMuMlKqdM4SeX6KPnQa1dI7+HR+SWuD9f/XNPPnWnetAUWWQxcDo0xsgEGUI9Dqwrh8bcXB5pGFKNHQ4klCItW/bn98/gpRT+jH/Njg2YZumAX6UP83yLfL7x7VzJ3/watlNBJbgnFJ7ZdhzDVyn7HQAFx+aiNK7/2PnhPQcozfrP5vrlH8PDOjieeNkDMInfIkcbdUvzZvdPz/Vfk/q9+P2U6QlagdN1up05vv+c4Y4+5cwTcqkvi4+1KDr1gspy6JiJDp6gcJitDEDk34CfJYNgU0ayUwnnsaLpqUvmkN1+giHMG1VxANqZidSgmvik5nJcyexEFKsBE/QUQH2uH1Aw+AT9mtOn73vpYDBut9Cj1eLT99jNtIhpwwbcDl8yAzbPNyPXvyPfNBkmB66Br+t+cbZ2WoHveXuA4qiOBO/IW9GZ0icaEuzACcp1BzS/F7BAummcic1u7Y60u44QRSkJiSOIHlWobIiAQFTpH7QkTh8N5+2tG1rUuMKSM9x6+ZBvKD7qKqbT2OfCbyV2KEtbaorVaWWjz+Wa5yYo46ji/iwf4RR/7pxbjXkDDioEBTLAFbFLb9yVGmSCF4KSvAEMqaSux80stoH68Z099kRv7vpgZ+B8GBUwIymQYTgBHePm/thmW3kx1nocUc2bToROrkneFcJh/5ykJ1unHUr2+6cqNyhoz0GQ1rkjTgrsgUaeEkJMTN4xwlE0+0PTFreTUFPuEegsvbirjFcd8hivrfGjYv1L0DWbP0nciX/zczw0W6hHBX6VFY7WUPpEt1CZfGJLo4pob3jEdtGZjTAjDEwQ5JCuR3xufaxohBAx+AtodRg5Dlh8hk9hrizPbqoH8753SiWmQnOJHOTkji7eWyOXcrSml2Gul9MKsp4wYgH804YWmdaRfwt3xwnDMhGB1BWMih53NK8h0Pp1Kkxphim/fy1kezX7Da17Gs4rS3nEbdNQltQvFqL5iI0p05O4qG5kkqWw8a6JwylrwCrYjcLTb05O8yzJR9jyX1dYlQe5e/Qma3vNR6Y+yOQ0vlOlIxvxdlQaBRnEdXaILQs5JRr5rNFatP1FFrCGsM5cCNMOHPF3Y+rtlmP2t2iTA7dAXFePu67dldKT+NdBjM42asHcnNzJRD71OrkWh34jACX1Pb9tbdqG+MTXI91oqPbJrYUajIuzGSeFuR01tNNqmSsfnfCo9gZBX7cV427zysI2VQIJK1Jv8Im3TYFooYQ+Se5NXOS0MwCVyAW
9HtLH0dAx+rE0UJUauxN9xYXsaoiz1VRG6d5gq45pu1+a/zrYD1jwCxVNpAwX3uZdoIKJAPbq4zrOYircynHao6L9cLI4xAuRYyheoDsHRm1oGOZ4HpDNgXxrzYlkuuJFUzAteGDLglCNzzHkJxAMSJlYP7eFY2q4vjbOYAk4SAtxE1FAuC6fRvxWubACGNjPKnii3avHgAUrkZXEUzNl7mEX69MXGfBfRQHB59SNBzGGslh19icKPmthPQ6NBMWoKui/pXx+9VQnDYhvk1Hm7O73N7f+N+6JH9WubYUwiR13jsrQunlU9prwUk1mVdHHBFbU4SNDw6fNoRhP+oetTtTxEfeL+Tq+WKyR5ihWJDe1C0UwSUgzOUfMPoF4btYV6MEYbCDeyGLjDROnL1LioORaygV01QOhC3Uy4zWYM/O7dZhGg3+3LdG17xuzmAnNkmTec1ncmDs4Zvtf9dOXnBdSk9c+DD/EPJ52blruyoKERd6udenba83sZMRVg2Oz5c8FfEXz4LimYaXlguDByKdW5e8y7Ke5oUxltFNePEUL+YgpJMxWGpQyCjUPgYrHqc4SlMRQprEMvmZkG3enM2UY1tdVWYqxNFCVGrsTfcWF7GqIs9Vee9lPZbtDfOOMpkwYxXnt5xnrhlwbSjTS1ZXF+OCnwyLa7ofXZ23KvE8F43zh6Q3GctiHt6FQxMk+dtlCC5KYFqIVKMvvf81kLU8wPFbAEi+4Z5Zv6k5CZRMoB1t8iZFnbcDhhguTnnKrDeH9m94trNzJRD71OrkWh34jACX1PbHqBZJ5WudwPMumiszKQspClYBMbHHfFIj94w4S4oX8p7mhTGW0U148RQv5iCkkzF8Zb/NzOSxcRzjN7PFjd2KayKwKsNcicLqesNG1dr20YIdPvuicuI2+5iNX6pY8T8X1ixR13s5Qf2mpBj0Hz5pTmCfO0s0uiyTy1v2uHjlFW58wwLb6VWqE/YMYQ5VmiN0n++qm70vHVeZVu+L3brZz3E14BICaUl+r/alkCh1IcWDvuk6H6e993LBiOwR3YBqgRKAZGD2GeFHmluCuKQt7O4AVhFssoG8pAlGUKlez+nNXwejgTlHr3m2bcYaRohkiVSGFNRB9bHu2yoOUCHM84oCRaZz/SXBKNODOG08vZUUSJ3Rnd5iqq3lNkuwy5Z13PuI+0mbFeZVPq3oRFLNKam+tdnJyMedDt8usyKtOZwosK9JJRtvm9tTe1o+FIxfvWc0jXeT6cE5ZhrgiuCRRSIWgQ5MlPfIR3otS905WOPRcpBCQwBGL17hkW+bEjO2MVGkTe5XcguwG0VuXxlYsieFFlW/ahDQaxk5Yx9HyWKcPAtk7nsXucvEV82MKVjZtfpHf6H3ozPCGUGlEbyFlicmKFKvYXFJVRmzzvgFXuzmrQYj3AzV4EHWr9JbZETVMl4xRZjmrT1ePgbWhc1aeXPcHpb5xoVhip0nTNFN2PFnR2A4Fmu1uFb4I/uiaCgHM2einE2zOTe3YmL04Isq7wRTXzBsaMmJddPItOdfKuP5KHjquq4pC+kij3ojAVeEYhAuM0H36s4jBzsj/7G8C8z4fNvE2kCHCNICIn+Ghy5QqMyc3jUXShsgD4IUinKoReD6kzeq7ceGMqfYdel+aaTnCCLdQxn433l26GYjtnJhdAlQOG8gUi520Gi5MM8+SWV9S0mkexL8MujtM0ig+QznksoJqcwJ8LuKxAEUGjcn3Rw3PCyIB70mvZqcRP+gb6lH94Ja30+uAvSYzln8Wze9/6Kj2myYExP8B7VqFx8vvifivu/n5LNm8/VJCcp89eAdhAWydamfDQhnVwFGe+5Q3Sr017ZP9gyVkwMzkcczZ6KcTbM5N7diYvTgiyrcnZOJc+5292/1GWq+6TbyUk39Qie7xmEHWS3MiqTl0zTMh1lIsxybJedM5LbIkm/d/gSV+cwWVObOu+YhM/0H83MlEPvU6uRaHfiMAJfU9voOGO97
wkl4sPB2UPoVGoz7pcQ2DnUBmOCEfHwl7VYyDpqpNH3/3QwqBtQr08aktib1yMt+5HfVvNCu8hMhVFUYTaBG1mkaEH5EV2q1Nja1VvB2MpdEOD7FAGe7rSE7TeEcFfpUVjtZQ+kS3UJl8YkZhLdJDIqauqCx4FrUNm2oOeq2PDALGHg4VOlL/klZ2iEcFfpUVjtZQ+kS3UJl8YkQJkT+EYsBm2r9psbAqMQA9Xbxy3X1uDqvMQS6rOvW8erE0UJUauxN9xYXsaoiz1V/EEFHfNXEb5OXfxJYQtuARp9NmR4EH8EIfI9qyyGR6ESCGaS1SMXbocJ2gsWianNKLPySD+iLRrbY1tVmC8xhCvp7qqwk4bY081MXNLZObJ2ZU/2Ii26VZt3Dpp8Z/RJTKxwQY66h/K7RIa3IXYW5OFCrGMJUImsX/7qxQhq+ouBx5hUbZZWvuoqAZjaJaaSoFF6dn/Q+jTpjqZlyZihOnv9Zwim5g9NuRS9f08orUo=

|

non_process

|

iyh hr gprt jh vfk t a pxx n oettttxefel tq dd r d gsv cwwvobou yhm q

| 0

|

21,133

| 28,105,553,594

|

IssuesEvent

|

2023-03-31 00:05:31

|

pfmc-assessments/canary_2023

|

https://api.github.com/repos/pfmc-assessments/canary_2023

|

closed

|

Convert Washington rec landings from numbers of fish to MT

|

Data processing

|

Washington recreational data is provided in numbers. Stock synthesis can handle this, however having a mix of numbers and weights for fleets when making projections is complicated. Previous assessments have used numbers but converted numbers to weight for the projections (2017 yellowtail rockfish and 2017 lingcod) or iteratively solved for entry in numbers to obtain the desired ACL in weight (2021 copper rockfish and 2021 quillback rockfish) or converted catch in numbers to catch in weight prior to input into stock synthesis.

As was done for 2021 lingcod ([see their issue #30](https://github.com/pfmc-assessments/lingcod/issues/30)) and in consultation with @tsoutt, we plan to convert numbers to weight by using the average length from recreational bio data of retained fish, then convert average length to average weight using a WL relationship. Whether to use RecFIN's (as provided by Theresa L-W: a = 1.04058E-08 b = 3.084136662) or the survey relationship likely does not matter as WL relationships are pretty robust. We plan to use the one provided by Theresa.
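
The conversion described above can be sketched as follows. This is a minimal illustration, not the assessment's actual code: the function names are hypothetical, and the units (length in mm, weight in kg) are an assumption that should be checked against the RecFIN documentation before use. Only the coefficients `a` and `b` come from the text above.

```python
# Weight-length coefficients quoted above (RecFIN, as provided by Theresa L-W).
A = 1.04058e-08
B = 3.084136662


def mean_weight_kg(mean_length_mm: float, a: float = A, b: float = B) -> float:
    """Average weight from average length via W = a * L**b.

    ASSUMPTION: length is in millimetres and weight in kilograms.
    """
    return a * mean_length_mm ** b


def landings_mt(n_fish: float, mean_length_mm: float) -> float:
    """Convert a catch in numbers of fish to metric tons (1 MT = 1000 kg)."""
    return n_fish * mean_weight_kg(mean_length_mm) / 1000.0


# Hypothetical inputs, for illustration only.
print(landings_mt(n_fish=10_000, mean_length_mm=400.0))
```

Keeping the coefficients as default arguments makes it easy to rerun the conversion with the survey relationship and compare the two.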

|

1.0

|

Convert Washington rec landings from numbers of fish to MT - Washington recreational data is provided in numbers. Stock synthesis can handle this, however having a mix of numbers and weights for fleets when making projections is complicated. Previous assessments have used numbers but converted numbers to weight for the projections (2017 yellowtail rockfish and 2017 lingcod) or iteratively solved for entry in numbers to obtain the desired ACL in weight (2021 copper rockfish and 2021 quillback rockfish) or converted catch in numbers to catch in weight prior to input into stock synthesis.

As was done for 2021 lingcod ([see their issue #30](https://github.com/pfmc-assessments/lingcod/issues/30)) and in consultation with @tsoutt, we plan to convert numbers to weight by using the average length from recreational bio data of retained fish, then convert average length to average weight using a WL relationship. Whether to use RecFIN's (as provided by Theresa L-W: a = 1.04058E-08 b = 3.084136662) or the survey relationship likely does not matter as WL relationships are pretty robust. We plan to use the one provided by Theresa.

|

process

|

convert washington rec landings from numbers of fish to mt washington recreational data is provided in numbers stock synthesis can handle this however having a mix of numbers and weights for fleets when making projections is complicated previous assessments have used numbers but converted numbers to weight for the projections yellowtail rockfish and lingcod or iteratively solved for entry in numbers to obtain the desired acl in weight copper rockfish and quillback rockfish or converted catch in numbers to catch in weight prior to input into stock synthesis as was done for lingcod and in consultation with tsoutt we plan to convert numbers to weight by using the average length from recreational bio data of retained fish then convert average length to average weight using a wl relationship whether to use recfin s as provided by theresa l w a b or the survey relationship likely does not matter as wl relationships are pretty robust we plan to use the one provided by theresa

| 1

|

691,003

| 23,680,673,451

|

IssuesEvent

|

2022-08-28 18:56:47

|

google/ground-platform

|

https://api.github.com/repos/google/ground-platform

|

closed

|

[Feature list] Show feature list for each layer

|

type: feature request web ux needed priority: p1

|

@gauravchalana1 :

As an initial prototype:

- [x] On layer click in the layer list, replace layer list with "feature list" side panel (similar to feature details panel). To do this you'll need to update the URL via NavigationService. URL format might be `#fl=layerId`.

- [ ] Show scrolling list of all features in that panel. We don't labels for features yet - @parulraheja98 to provide code for this soon. (Gentle ping :) For now just show uuid to test.

- [ ] Clicking on a feature in the list open the feature details panel for that feature. (use `NavigationService`).

For now we can load all the features in a layer at once. In the future we can implement incremental load on scroll.

We don't have mocks or proper designs for this yet; @jacobmclaws is this something you can work on? We basically need to show a list of features for a particular layer. The header might show the layer name and "Features" heading. In the list we'll show the feature name (generated from imported ID or label) or user defined. Wdyt?

|

1.0

|

[Feature list] Show feature list for each layer - @gauravchalana1 :

As an initial prototype:

- [x] On layer click in the layer list, replace layer list with "feature list" side panel (similar to feature details panel). To do this you'll need to update the URL via NavigationService. URL format might be `#fl=layerId`.

- [ ] Show scrolling list of all features in that panel. We don't labels for features yet - @parulraheja98 to provide code for this soon. (Gentle ping :) For now just show uuid to test.

- [ ] Clicking on a feature in the list open the feature details panel for that feature. (use `NavigationService`).

For now we can load all the features in a layer at once. In the future we can implement incremental load on scroll.

We don't have mocks or proper designs for this yet; @jacobmclaws is this something you can work on? We basically need to show a list of features for a particular layer. The header might show the layer name and "Features" heading. In the list we'll show the feature name (generated from imported ID or label) or user defined. Wdyt?

|

non_process

|

show feature list for each layer as an initial prototype on layer click in the layer list replace layer list with feature list side panel similar to feature details panel to do this you ll need to update the url via navigationservice url format might be fl layerid show scrolling list of all features in that panel we don t labels for features yet to provide code for this soon gentle ping for now just show uuid to test clicking on a feature in the list open the feature details panel for that feature use navigationservice for now we can load all the features in a layer at once in the future we can implement incremental load on scroll we don t have mocks or proper designs for this yet jacobmclaws is this something you can work on we basically need to show a list of features for a particular layer the header might show the layer name and features heading in the list we ll show the feature name generated from imported id or label or user defined wdyt

| 0

|

6,041

| 8,853,413,862

|

IssuesEvent

|

2019-01-08 21:17:42

|

googleapis/google-cloud-python

|

https://api.github.com/repos/googleapis/google-cloud-python

|

closed

|

Firestore: 'test_watch_query' systest flakes

|

api: firestore flaky testing type: process

|

Similar to #6605. See: https://source.cloud.google.com/results/invocations/57661644-6fd2-4203-a515-4c3bf9edfcd5/targets/cloud-devrel%2Fclient-libraries%2Fgoogle-cloud-python%2Fpresubmit%2Ffirestore/log

```python
_______________________________ test_watch_query _______________________________

client = <google.cloud.firestore_v1beta1.client.Client object at 0x7f61dc7705f8>
cleanup = <built-in method append of list object at 0x7f61d83f3688>

    def test_watch_query(client, cleanup):
        db = client
        doc_ref = db.collection(u"users").document(u"alovelace" + unique_resource_id())
        query_ref = db.collection(u"users").where("first", "==", u"Ada")

        # Initial setting
        doc_ref.set({u"first": u"Jane", u"last": u"Doe", u"born": 1900})

        sleep(1)

        # Setup listener
        def on_snapshot(docs, changes, read_time):
            on_snapshot.called_count += 1

            # A snapshot should return the same thing as if a query ran now.
            query_ran = db.collection(u"users").where("first", "==", u"Ada").get()
            assert len(docs) == len([i for i in query_ran])

        on_snapshot.called_count = 0

        query_ref.on_snapshot(on_snapshot)

        # Alter document
        doc_ref.set({u"first": u"Ada", u"last": u"Lovelace", u"born": 1815})

        for _ in range(10):
            if on_snapshot.called_count == 1:
                return
            sleep(1)

        if on_snapshot.called_count != 1:
            raise AssertionError(
                "Failed to get exactly one document change: count: "
>               + str(on_snapshot.called_count)
            )
E           AssertionError: Failed to get exactly one document change: count: 0

tests/system.py:795: AssertionError
```

|

1.0

|

Firestore: 'test_watch_query' systest flakes - Similar to #6605. See: https://source.cloud.google.com/results/invocations/57661644-6fd2-4203-a515-4c3bf9edfcd5/targets/cloud-devrel%2Fclient-libraries%2Fgoogle-cloud-python%2Fpresubmit%2Ffirestore/log

```python
_______________________________ test_watch_query _______________________________

client = <google.cloud.firestore_v1beta1.client.Client object at 0x7f61dc7705f8>
cleanup = <built-in method append of list object at 0x7f61d83f3688>

    def test_watch_query(client, cleanup):
        db = client
        doc_ref = db.collection(u"users").document(u"alovelace" + unique_resource_id())
        query_ref = db.collection(u"users").where("first", "==", u"Ada")

        # Initial setting
        doc_ref.set({u"first": u"Jane", u"last": u"Doe", u"born": 1900})

        sleep(1)

        # Setup listener
        def on_snapshot(docs, changes, read_time):
            on_snapshot.called_count += 1

            # A snapshot should return the same thing as if a query ran now.
            query_ran = db.collection(u"users").where("first", "==", u"Ada").get()
            assert len(docs) == len([i for i in query_ran])

        on_snapshot.called_count = 0

        query_ref.on_snapshot(on_snapshot)

        # Alter document
        doc_ref.set({u"first": u"Ada", u"last": u"Lovelace", u"born": 1815})

        for _ in range(10):
            if on_snapshot.called_count == 1:
                return
            sleep(1)

        if on_snapshot.called_count != 1:
            raise AssertionError(
                "Failed to get exactly one document change: count: "
>               + str(on_snapshot.called_count)
            )
E           AssertionError: Failed to get exactly one document change: count: 0

tests/system.py:795: AssertionError
```

|

process

|

firestore test watch query systest flakes similar to see python test watch query client cleanup def test watch query client cleanup db client doc ref db collection u users document u alovelace unique resource id query ref db collection u users where first u ada initial setting doc ref set u first u jane u last u doe u born sleep setup listener def on snapshot docs changes read time on snapshot called count a snapshot should return the same thing as if a query ran now query ran db collection u users where first u ada get assert len docs len on snapshot called count query ref on snapshot on snapshot alter document doc ref set u first u ada u last u lovelace u born for in range if on snapshot called count return sleep if on snapshot called count raise assertionerror failed to get exactly one document change count str on snapshot called count e assertionerror failed to get exactly one document change count tests system py assertionerror

| 1

|

64,830

| 18,942,885,868

|

IssuesEvent

|

2021-11-18 06:29:43

|

vector-im/element-android

|

https://api.github.com/repos/vector-im/element-android

|

opened

|

Voice and video calls connectivity issue using mobile data

|

T-Defect

|

### Steps to reproduce

1. We need two smartphones, at least one needs to be Xiaomi device (Mi9 was used to reproduce) and at least one needs to use mobile data (other can be on WiFi);

2. Direction of the call does not matter. Try establish voice or video call from one device to the other.

3. After call pick up, there will be "Connecting..." message hanging indefinitely.

### Outcome

#### What did you expect?

Call should went through no matter what kind of connection a device is using.

#### What happened instead?

Connection was not established.

### Your phone model

OnePlus 7T

### Operating system version

Android 11

### Application version and app store

1.3.7, 1.3.8 GPlay, GITHub

### Homeserver

mozilla.org

### Will you send logs?

Yes

|

1.0

|

Voice and video calls connectivity issue using mobile data - ### Steps to reproduce

1. We need two smartphones, at least one needs to be Xiaomi device (Mi9 was used to reproduce) and at least one needs to use mobile data (other can be on WiFi);

2. Direction of the call does not matter. Try establish voice or video call from one device to the other.

3. After call pick up, there will be "Connecting..." message hanging indefinitely.

### Outcome

#### What did you expect?

Call should went through no matter what kind of connection a device is using.

#### What happened instead?

Connection was not established.

### Your phone model

OnePlus 7T

### Operating system version

Android 11

### Application version and app store

1.3.7, 1.3.8 GPlay, GITHub

### Homeserver

mozilla.org

### Will you send logs?

Yes

|

non_process

|

voice and video calls connectivity issue using mobile data steps to reproduce we need two smartphones at least one needs to be xiaomi device was used to reproduce and at least one needs to use mobile data other can be on wifi direction of the call does not matter try establish voice or video call from one device to the other after call pick up there will be connecting message hanging indefinitely outcome what did you expect call should went through no matter what kind of connection a device is using what happened instead connection was not established your phone model oneplus operating system version android application version and app store gplay github homeserver mozilla org will you send logs yes

| 0

|

11,704

| 14,545,349,883

|

IssuesEvent

|

2020-12-15 19:31:01

|

allinurl/goaccess

|

https://api.github.com/repos/allinurl/goaccess

|

closed

|

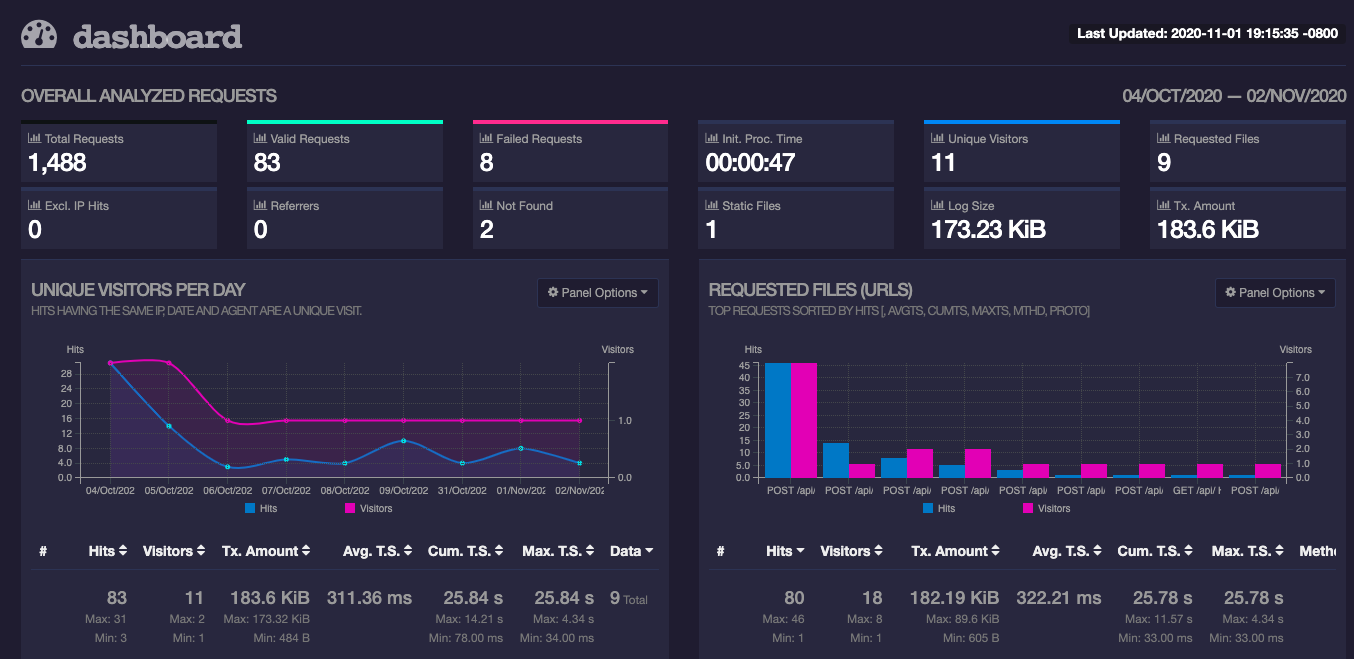

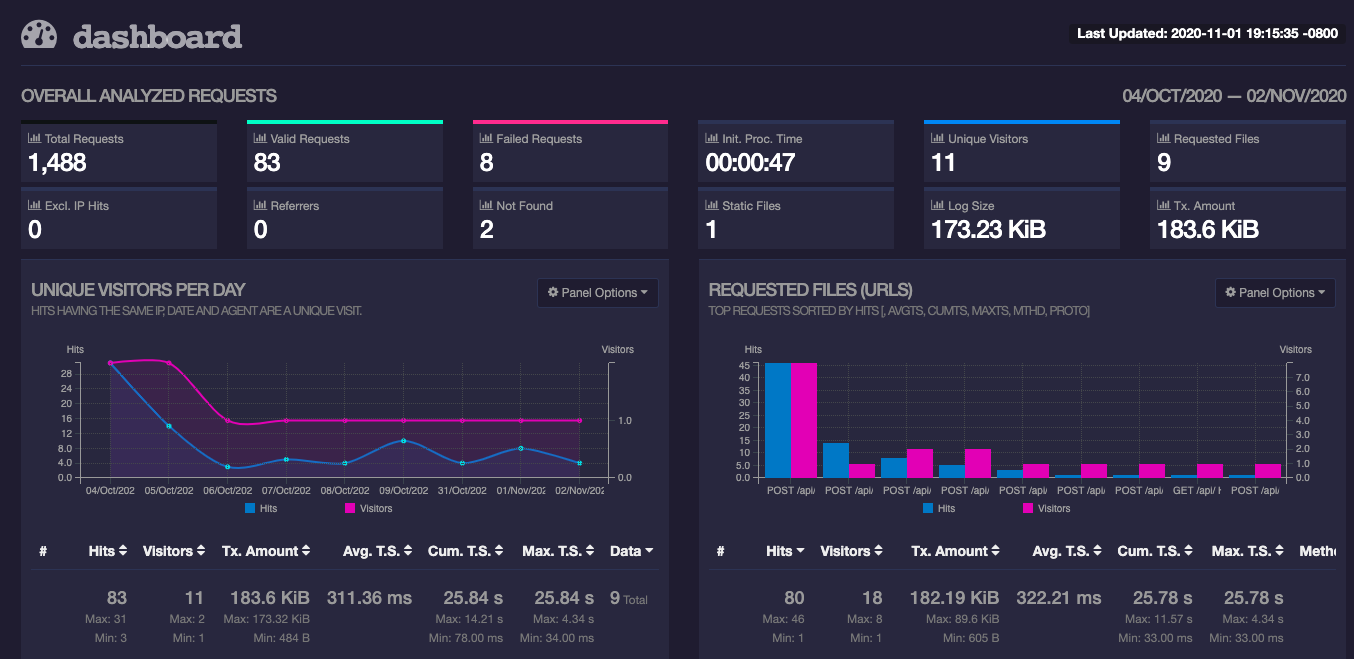

Total Requests != Valid Requests + Failed Requests

|

log-processing

|

I have started using goaccess recently (v1.4). I don't understand why the Total Requests are not the same as sum of Valid Requests and Failed Requests. The Total Requests match the count in the log file. Clearly, my lack of undersatnding. Please help.

Thanks,

vs

This is how I am calling goaccess:

`goaccess jetty/app-base/logs/*.request.log -o /var/www/html/report.html --geoip-database geolitedb/GeoLite2-City_20201006/GeoLite2-City.mmdb --real-time-html --ws-url=localhost`

|

1.0

|

Total Requests != Valid Requests + Failed Requests - I have started using goaccess recently (v1.4). I don't understand why the Total Requests are not the same as sum of Valid Requests and Failed Requests. The Total Requests match the count in the log file. Clearly, my lack of undersatnding. Please help.

Thanks,

vs

This is how I am calling goaccess:

`goaccess jetty/app-base/logs/*.request.log -o /var/www/html/report.html --geoip-database geolitedb/GeoLite2-City_20201006/GeoLite2-City.mmdb --real-time-html --ws-url=localhost`

|

process

|

total requests valid requests failed requests i have started using goaccess recently i don t understand why the total requests are not the same as sum of valid requests and failed requests the total requests match the count in the log file clearly my lack of undersatnding please help thanks vs this is how i am calling goaccess goaccess jetty app base logs request log o var www html report html geoip database geolitedb city city mmdb real time html ws url localhost

| 1

|

8,092

| 11,270,095,282

|

IssuesEvent

|

2020-01-14 10:13:18

|

hashicorp/packer

|

https://api.github.com/repos/hashicorp/packer

|

closed

|

Vagrant post-processor literally has "panic" placeholder in Packer 1.5.x in HCL2 mode

|

bug hcl2 post-processor/vagrant

|

#### Overview of the Issue

PR #8423 breaks Vagrant post-processor with panic instruction. Haven't seen other issue tracking this so I thought it's worth to open a separate one.

https://github.com/hashicorp/packer/blob/c3c2622204fbc4358f1761d38ba99616bf1eba33/post-processor/vagrant/post-processor.go#L63

#### Reproduction Steps

Run following buildfile with .pkr.hcl extension, e.g. `packer build file.pkr.hcl`

### Packer version

1.5.1

### Simplified Packer Buildfile

```
source "virtualbox-iso" "packer-debian-10-amd64" {
  iso_urls = [
    "https://cdimage.debian.org/debian-cd/current/amd64/iso-cd/debian-10.2.0-amd64-netinst.iso"
  ]
  iso_checksum = "e43fef979352df15056ac512ad96a07b515cb8789bf0bfd86f99ed0404f885f5"
  ssh_username = "vagrant"
}

build {
  sources = [
    "source.virtualbox-iso.packer-debian-10-amd64"
  ]

  post-processor "vagrant" {
    output = "ephemeral/builds/vagrant-debian10.box"
  }
}
```

### Operating system and Environment details

OS X Catalina

### Log Fragments and crash.log files

```

$ packer build base_debian_vagrant.pkr.hcl

panic: not implemented yet

2020/01/08 15:47:57 packer-post-processor-vagrant plugin:

2020/01/08 15:47:57 packer-post-processor-vagrant plugin: goroutine 71 [running]:

2020/01/08 15:47:57 packer-post-processor-vagrant plugin: github.com/hashicorp/packer/post-processor/vagrant.(*PostProcessor).ConfigSpec(0xc00015e010, 0xc00001a630)

2020/01/08 15:47:57 packer-post-processor-vagrant plugin: /private/tmp/packer-20191222-94130-1yh62h9/post-processor/vagrant/post-processor.go:63 +0x39

2020/01/08 15:47:57 packer-post-processor-vagrant plugin: github.com/hashicorp/packer/packer/rpc.(*commonServer).ConfigSpec(0xc0000aba98, 0x0, 0x0, 0xc0002e6ec0, 0x0, 0x0)

2020/01/08 15:47:57 packer-post-processor-vagrant plugin: /private/tmp/packer-20191222-94130-1yh62h9/packer/rpc/common.go:56 +0x38

2020/01/08 15:47:57 packer-post-processor-vagrant plugin: reflect.Value.call(0xc0005dc5a0, 0xc000010768, 0x13, 0x7c7258a, 0x4, 0xc000093f18, 0x3, 0x3, 0x0, 0x0, ...)

2020/01/08 15:47:57 packer-post-processor-vagrant plugin: /usr/local/Cellar/go/1.13.5/libexec/src/reflect/value.go:460 +0x5f6

2020/01/08 15:47:57 packer-post-processor-vagrant plugin: reflect.Value.Call(0xc0005dc5a0, 0xc000010768, 0x13, 0xc000080f18, 0x3, 0x3, 0x0, 0x0, 0x0)

2020/01/08 15:47:57 packer-post-processor-vagrant plugin: /usr/local/Cellar/go/1.13.5/libexec/src/reflect/value.go:321 +0xb4

2020/01/08 15:47:57 packer-post-processor-vagrant plugin: net/rpc.(*service).call(0xc0000abac0, 0xc0000ee820, 0xc0005b03c0, 0xc0005b03d0, 0xc000136380, 0xc0002e6e20, 0x71f50e0, 0xc0002aa3e0, 0x194, 0x6ef1da0, ...)

2020/01/08 15:47:57 packer-post-processor-vagrant plugin: /usr/local/Cellar/go/1.13.5/libexec/src/net/rpc/server.go:377 +0x16f

2020/01/08 15:47:57 packer-post-processor-vagrant plugin: created by net/rpc.(*Server).ServeCodec

2020/01/08 15:47:57 packer-post-processor-vagrant plugin: /usr/local/Cellar/go/1.13.5/libexec/src/net/rpc/server.go:474 +0x42b

2020/01/08 15:47:57 ConfigSpec failed: unexpected EOF

2020/01/08 15:47:57 waiting for all plugin processes to complete...

2020/01/08 15:47:57 /usr/local/bin/packer: plugin process exited

2020/01/08 15:47:57 /usr/local/bin/packer: plugin process exited

panic: ConfigSpec failed: unexpected EOF [recovered]

panic: ConfigSpec failed: unexpected EOF

```

|

1.0

|

Vagrant post-processor literally has "panic" placeholder in Packer 1.5.x in HCL2 mode - #### Overview of the Issue

PR #8423 breaks the Vagrant post-processor with a panic instruction. I haven't seen another issue tracking this, so I thought it was worth opening a separate one.

https://github.com/hashicorp/packer/blob/c3c2622204fbc4358f1761d38ba99616bf1eba33/post-processor/vagrant/post-processor.go#L63

#### Reproduction Steps

Run the following buildfile with a .pkr.hcl extension, e.g. `packer build file.pkr.hcl`

### Packer version

1.5.1

### Simplified Packer Buildfile

```
source "virtualbox-iso" "packer-debian-10-amd64" {
  iso_urls = [
    "https://cdimage.debian.org/debian-cd/current/amd64/iso-cd/debian-10.2.0-amd64-netinst.iso"
  ]
  iso_checksum = "e43fef979352df15056ac512ad96a07b515cb8789bf0bfd86f99ed0404f885f5"
  ssh_username = "vagrant"
}

build {
  sources = [
    "source.virtualbox-iso.packer-debian-10-amd64"
  ]

  post-processor "vagrant" {
    output = "ephemeral/builds/vagrant-debian10.box"
  }
}
```

### Operating system and Environment details

OS X Catalina

### Log Fragments and crash.log files

```

$ packer build base_debian_vagrant.pkr.hcl

panic: not implemented yet

2020/01/08 15:47:57 packer-post-processor-vagrant plugin:

2020/01/08 15:47:57 packer-post-processor-vagrant plugin: goroutine 71 [running]:

2020/01/08 15:47:57 packer-post-processor-vagrant plugin: github.com/hashicorp/packer/post-processor/vagrant.(*PostProcessor).ConfigSpec(0xc00015e010, 0xc00001a630)

2020/01/08 15:47:57 packer-post-processor-vagrant plugin: /private/tmp/packer-20191222-94130-1yh62h9/post-processor/vagrant/post-processor.go:63 +0x39

2020/01/08 15:47:57 packer-post-processor-vagrant plugin: github.com/hashicorp/packer/packer/rpc.(*commonServer).ConfigSpec(0xc0000aba98, 0x0, 0x0, 0xc0002e6ec0, 0x0, 0x0)

2020/01/08 15:47:57 packer-post-processor-vagrant plugin: /private/tmp/packer-20191222-94130-1yh62h9/packer/rpc/common.go:56 +0x38

2020/01/08 15:47:57 packer-post-processor-vagrant plugin: reflect.Value.call(0xc0005dc5a0, 0xc000010768, 0x13, 0x7c7258a, 0x4, 0xc000093f18, 0x3, 0x3, 0x0, 0x0, ...)

2020/01/08 15:47:57 packer-post-processor-vagrant plugin: /usr/local/Cellar/go/1.13.5/libexec/src/reflect/value.go:460 +0x5f6

2020/01/08 15:47:57 packer-post-processor-vagrant plugin: reflect.Value.Call(0xc0005dc5a0, 0xc000010768, 0x13, 0xc000080f18, 0x3, 0x3, 0x0, 0x0, 0x0)

2020/01/08 15:47:57 packer-post-processor-vagrant plugin: /usr/local/Cellar/go/1.13.5/libexec/src/reflect/value.go:321 +0xb4

2020/01/08 15:47:57 packer-post-processor-vagrant plugin: net/rpc.(*service).call(0xc0000abac0, 0xc0000ee820, 0xc0005b03c0, 0xc0005b03d0, 0xc000136380, 0xc0002e6e20, 0x71f50e0, 0xc0002aa3e0, 0x194, 0x6ef1da0, ...)

2020/01/08 15:47:57 packer-post-processor-vagrant plugin: /usr/local/Cellar/go/1.13.5/libexec/src/net/rpc/server.go:377 +0x16f

2020/01/08 15:47:57 packer-post-processor-vagrant plugin: created by net/rpc.(*Server).ServeCodec

2020/01/08 15:47:57 packer-post-processor-vagrant plugin: /usr/local/Cellar/go/1.13.5/libexec/src/net/rpc/server.go:474 +0x42b

2020/01/08 15:47:57 ConfigSpec failed: unexpected EOF

2020/01/08 15:47:57 waiting for all plugin processes to complete...

2020/01/08 15:47:57 /usr/local/bin/packer: plugin process exited

2020/01/08 15:47:57 /usr/local/bin/packer: plugin process exited

panic: ConfigSpec failed: unexpected EOF [recovered]

panic: ConfigSpec failed: unexpected EOF

```

|

process

|

vagrant post processor literally has panic placeholder in packer x in mode overview of the issue pr breaks vagrant post processor with panic instruction haven t seen other issue tracking this so i thought it s worth to open a separate one reproduction steps run following buildfile with pkr hcl extension e g packer build file pkr hcl packer version simplified packer buildfile source virtualbox iso packer debian iso urls iso checksum ssh username vagrant build sources source virtualbox iso packer debian post processor vagrant output ephemeral builds vagrant box operating system and environment details os x catalina log fragments and crash log files packer build base debian vagrant pkr hcl panic not implemented yet packer post processor vagrant plugin packer post processor vagrant plugin goroutine packer post processor vagrant plugin github com hashicorp packer post processor vagrant postprocessor configspec packer post processor vagrant plugin private tmp packer post processor vagrant post processor go packer post processor vagrant plugin github com hashicorp packer packer rpc commonserver configspec packer post processor vagrant plugin private tmp packer packer rpc common go packer post processor vagrant plugin reflect value call packer post processor vagrant plugin usr local cellar go libexec src reflect value go packer post processor vagrant plugin reflect value call packer post processor vagrant plugin usr local cellar go libexec src reflect value go packer post processor vagrant plugin net rpc service call packer post processor vagrant plugin usr local cellar go libexec src net rpc server go packer post processor vagrant plugin created by net rpc server servecodec packer post processor vagrant plugin usr local cellar go libexec src net rpc server go configspec failed unexpected eof waiting for all plugin processes to complete usr local bin packer plugin process exited usr local bin packer plugin process exited panic configspec failed unexpected eof panic 
configspec failed unexpected eof

| 1

|

6,705

| 9,814,928,825

|

IssuesEvent

|

2019-06-13 11:23:49

|

qgis/QGIS

|

https://api.github.com/repos/qgis/QGIS

|

closed

|

Processing: merge vector layers produces duplicated fid

|

Bug Processing

|

Author Name: **Jérôme Guélat** (Jérôme Guélat)

Original Redmine Issue: [19758](https://issues.qgis.org/issues/19758)

Affected QGIS version: 3.2.2

Redmine category:processing/core

---

Here's how to reproduce the bug:

1. Add the 3 layers from the attached GeoPackage (pol.gpkg)

2. Open the merge vector layers tool in Processing, choose the 3 layers and save to a temporary layer

3. The resulting layer has features with the same fid, and hence can't be saved to a new GeoPackage without manually editing the fid values

4. Alternatively if you save directly to GeoPackage (instead of using a temporary layer), the tool produces a wrong output

A similar bug happens with other tools (see #27533). This fid problem makes Processing almost unusable with GeoPackages.
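
The symptom described above can be illustrated without QGIS. The following is only a sketch in plain Python, not the Processing implementation: features are modelled as dictionaries, and the helper names `merge_layers`, `duplicated_fids` and `renumber_fids` are hypothetical. It shows how concatenating layers while keeping each layer's own fid values produces duplicates, and how renumbering removes them.

```python
from collections import Counter


def merge_layers(layers):
    """Concatenate features from several layers, keeping their original fid values."""
    return [dict(feature) for layer in layers for feature in layer]


def duplicated_fids(features):
    """Return the sorted fid values that occur more than once."""
    counts = Counter(f["fid"] for f in features)
    return sorted(fid for fid, n in counts.items() if n > 1)


def renumber_fids(features):
    """Assign fresh, consecutive fid values starting at 1."""
    return [{**f, "fid": i} for i, f in enumerate(features, start=1)]


# Three made-up layers, each numbering its own features from 1.
layers = [
    [{"fid": 1, "name": "a"}, {"fid": 2, "name": "b"}],
    [{"fid": 1, "name": "c"}],
    [{"fid": 1, "name": "d"}, {"fid": 2, "name": "e"}],
]

merged = merge_layers(layers)
print(duplicated_fids(merged))                 # fids shared by several features
print(duplicated_fids(renumber_fids(merged)))  # empty once fids are reassigned
```

Renumbering is what the manual workaround in step 3 amounts to; whether the tool should renumber or drop the fid field is a design decision this sketch does not settle.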

---

- [pol.gpkg](https://issues.qgis.org/attachments/download/13236/pol.gpkg) (Jérôme Guélat)

---

Related issue(s): #27533 (relates), #27820 (relates)

Redmine related issue(s): [19708](https://issues.qgis.org/issues/19708), [19998](https://issues.qgis.org/issues/19998)

---

|

1.0

|

Processing: merge vector layers produces duplicated fid - Author Name: **Jérôme Guélat** (Jérôme Guélat)

Original Redmine Issue: [19758](https://issues.qgis.org/issues/19758)

Affected QGIS version: 3.2.2

Redmine category:processing/core

---

Here's how to reproduce the bug:

1. Add the 3 layers from the attached GeoPackage (pol.gpkg)

2. Open the merge vector layers tool in Processing, choose the 3 layers and save to a temporary layer

3. The resulting layer has features with the same fid, and hence can't be saved to a new GeoPackage without manually editing the fid values

4. Alternatively if you save directly to GeoPackage (instead of using a temporary layer), the tool produces a wrong output

A similar bug happens with other tools (see #27533). This fid problem makes Processing almost unusable with GeoPackages.

---

- [pol.gpkg](https://issues.qgis.org/attachments/download/13236/pol.gpkg) (Jérôme Guélat)

---

Related issue(s): #27533 (relates), #27820 (relates)

Redmine related issue(s): [19708](https://issues.qgis.org/issues/19708), [19998](https://issues.qgis.org/issues/19998)

---

|

process

|

processing merge vector layers produces duplicated fid author name jérôme guélat jérôme guélat original redmine issue affected qgis version redmine category processing core here s how to reproduce the bug add the layers from the attached geopackage pol gpkg open the merge vector layers tool in processing choose the layers and save to a temporary layer the resulting layer has features with the same fid and hence can t be saved to a new geopackage without manually editing the fid values alternatively if you save directly to geopackage instead of using a temporary layer the tool produces a wrong output a similar bug happens with other tools see this fid problem makes processing almost unusable with geopackages jérôme guélat related issue s relates relates redmine related issue s

| 1

|

215,332

| 24,164,855,436

|

IssuesEvent

|

2022-09-22 14:20:10

|

SmartBear/ready-aws-plugin

|

https://api.github.com/repos/SmartBear/ready-aws-plugin

|

closed

|

CVE-2020-36180 (High) detected in jackson-databind-2.3.0.jar - autoclosed

|

security vulnerability

|

## CVE-2020-36180 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.3.0.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streaming API</p>

<p>Path to dependency file: /pom.xml</p>

<p>Path to vulnerable library: /home/wss-scanner/.m2/repository/com/fasterxml/jackson/core/jackson-databind/2.3.0/jackson-databind-2.3.0.jar</p>

<p>

Dependency Hierarchy:

- ready-api-soapui-pro-1.3.0.jar (Root Library)

- keen-client-api-java-2.0.2.jar

- :x: **jackson-databind-2.3.0.jar** (Vulnerable Library)

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

FasterXML jackson-databind 2.x before 2.9.10.8 mishandles the interaction between serialization gadgets and typing, related to org.apache.commons.dbcp2.cpdsadapter.DriverAdapterCPDS.

<p>Publish Date: 2021-01-07

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-36180>CVE-2020-36180</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>8.1</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Release Date: 2021-01-07</p>

<p>Fix Resolution: com.fasterxml.jackson.core:jackson-databind:2.9.10.8</p>

</p>

</details>

<p></p>

|

True

|

CVE-2020-36180 (High) detected in jackson-databind-2.3.0.jar - autoclosed - ## CVE-2020-36180 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.3.0.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streaming API</p>

<p>Path to dependency file: /pom.xml</p>

<p>Path to vulnerable library: /home/wss-scanner/.m2/repository/com/fasterxml/jackson/core/jackson-databind/2.3.0/jackson-databind-2.3.0.jar</p>

<p>

Dependency Hierarchy:

- ready-api-soapui-pro-1.3.0.jar (Root Library)

- keen-client-api-java-2.0.2.jar

- :x: **jackson-databind-2.3.0.jar** (Vulnerable Library)

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

FasterXML jackson-databind 2.x before 2.9.10.8 mishandles the interaction between serialization gadgets and typing, related to org.apache.commons.dbcp2.cpdsadapter.DriverAdapterCPDS.

<p>Publish Date: 2021-01-07

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-36180>CVE-2020-36180</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>8.1</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Release Date: 2021-01-07</p>

<p>Fix Resolution: com.fasterxml.jackson.core:jackson-databind:2.9.10.8</p>

</p>

</details>

<p></p>

|

non_process

|

cve high detected in jackson databind jar autoclosed cve high severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api path to dependency file pom xml path to vulnerable library home wss scanner repository com fasterxml jackson core jackson databind jackson databind jar dependency hierarchy ready api soapui pro jar root library keen client api java jar x jackson databind jar vulnerable library found in base branch master vulnerability details fasterxml jackson databind x before mishandles the interaction between serialization gadgets and typing related to org apache commons cpdsadapter driveradaptercpds publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity high privileges required none user interaction none scope unchanged impact metrics confidentiality impact high integrity impact high availability impact high for more information on scores click a href suggested fix type upgrade version release date fix resolution com fasterxml jackson core jackson databind

| 0

|

444,461

| 12,813,108,023

|

IssuesEvent

|

2020-07-04 10:53:27

|

debops/debops

|

https://api.github.com/repos/debops/debops

|

closed

|

apt__conf: Unable to generate additional configuration file

|

meta priority: medium

|

I am new to Ansible and DebOps, so if I am doing something terribly wrong, please forgive and advise ;)

I already have an apt-cacher running in the local network, which was also used for the initial setup of the host and therefore left an entry in /etc/apt/apt.conf. This file is deleted by debops to avoid side effects. Now I want to re-introduce the proxy configuration with

apt.yml

```
---
apt__conf:
  - name: apt-cacher
    priority: '02'
    content: |
      Acquire::HTTP {
        Proxy "http://apt-cacher.{{ ansible-domain }}:9999";
      };
```

which resulted in doing nothing (task being skipped) after running

$:~/projects/debops/debops/first_project$ debops service/apt -l majestix

To make sure the task is executed, I removed the when clause from the task for debugging purposes and ran it with the debugger activated

TASK [apt : Generate additionnal APT configuration files] ***********************************************************************************************************************************************************************************

fatal: [majestix]: FAILED! =>

msg: '''ansible'' is undefined'

[majestix] TASK: apt : Generate additionnal APT configuration files (debug)> p

***SyntaxError:SyntaxError('unexpected EOF while parsing', ('<string>', 0, 0, ''))

I tried with different entries, also using 'src': /path/to/some/file, but with no success.

What to do next to debug the issue?
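
One possible reading of the `'ansible' is undefined` message, offered as a guess rather than a confirmed diagnosis: Jinja2 expressions use Python-like syntax, so `ansible-domain` is read as `ansible` minus `domain` instead of as a single variable name, whereas the Ansible fact is spelled `ansible_domain`. The sketch below uses Python's own parser as a stand-in for Jinja2 (an assumption made for illustration; it is not how Ansible evaluates templates), and `describe` is a hypothetical helper.

```python
import ast


def describe(expr):
    """Return how a Python-style expression parser reads `expr`."""
    node = ast.parse(expr, mode="eval").body
    if isinstance(node, ast.Name):
        return f"variable {node.id!r}"
    if isinstance(node, ast.BinOp) and isinstance(node.op, ast.Sub):
        # Collect the plain names on both sides of the minus sign.
        names = [n.id for n in (node.left, node.right) if isinstance(n, ast.Name)]
        return "subtraction of " + " and ".join(names)
    return type(node).__name__


print(describe("ansible-domain"))   # hyphen is treated as a minus operator
print(describe("ansible_domain"))   # underscore keeps it a single name
```

If this reading is right, the first operand `ansible` is looked up on its own, which would match the error text.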

|

1.0

|

apt__conf: Unable to generate additional configuration file - I am new to Ansible and DebOps, so if I am doing something terribly wrong, please forgive and advise ;)

I already have an apt-cacher running in the local network, which was also used for the initial setup of the host and therefore left an entry in /etc/apt/apt.conf. This file is deleted by debops to avoid side effects. Now I want to re-introduce the proxy configuration with

apt.yml

```
---
apt__conf:
  - name: apt-cacher
    priority: '02'
    content: |
      Acquire::HTTP {
        Proxy "http://apt-cacher.{{ ansible-domain }}:9999";
      };
```

which resulted in doing nothing (task being skipped) after running

$:~/projects/debops/debops/first_project$ debops service/apt -l majestix

To make sure the task is executed, I removed the when clause from the task for debugging purposes and ran it with the debugger activated

TASK [apt : Generate additionnal APT configuration files] ***********************************************************************************************************************************************************************************

fatal: [majestix]: FAILED! =>

msg: '''ansible'' is undefined'

[majestix] TASK: apt : Generate additionnal APT configuration files (debug)> p

***SyntaxError:SyntaxError('unexpected EOF while parsing', ('<string>', 0, 0, ''))

I tried with different entries, also using 'src': /path/to/some/file, but with no success.

What to do next to debug the issue?

|

non_process

|

apt conf unable to generate additional configuration file i am just new to ansible and debops so if i am doing something terribly wrong please forgive and advise i have already an apt cacher running in the local network that also was used for the initial setup of the host thus resulting in an entry in etc apt apt conf this file is deleted by debops to avoid side effects now i wanted to re introduce the proxy configuration with apt yml apt conf name apt cacher priority content acquire http proxy ansible domain which resulted in doing nothing task being skipped after running projects debops debops first project debops service apt l majestix to be safe that the task is executed i removed for debugging purposes the when clause from the task and started with the debugger activated task fatal failed msg ansible is undefined task apt generate additionnal apt configuration files debug p syntaxerror syntaxerror unexpected eof while parsing i tried with different entries also using src path to some file but with no success what to do next to debug the issue

| 0

|

661,447

| 22,054,887,636

|

IssuesEvent

|

2022-05-30 12:00:48

|

owncloud/web

|

https://api.github.com/repos/owncloud/web

|

reopened

|

Upload of folder with same name shows error

|

Type:Bug Priority:p2-high

|

# Steps to reproduce

1. Log in to https://ocis.ocis-wopi.released.owncloud.works/

2. Create a new folder "Folder" on the ocis instance and on your local drive.

3. Upload your local "Folder" folder.

4. The upload does not start and shows the error "Folder "Folder" already exists."

# Expected behaviour

System should offer me alternative options to upload the folder.

The appropriate dialogues are consistently shown in case of conflicting resources, e.g. in the context of:

- Drag'n drop upload

- upload via upload-button

- move-dialog

- copy-dialog

- trashbin-restore

- etc...

## General rule

**User gets asked what to do in case of conflicting resources**, as there is no common pattern across different services/platforms (OneDrive, Google Drive, Box, Windows, Mac, etc. - all different).

**Note** This dialog concept is also meant to be applied to https://github.com/owncloud/web/issues/1753

## Dialogs

## Behaviour

1. **Replace** will replace the existing file (and implicitly create a new version).

2. **Keep both** will create a new resource with an appended number "(xx)", counting up if, e.g., (2) already exists.

3. **Cancel** will skip the current resource and, if applicable, show the next conflict.

4. **Merge** will combine folders with the same name; if they contain conflicting files, the user gets asked what to do (the dialog for files shows up).

5. **Do this for all XX conflicts** will apply the selected option to all XX upcoming conflicts. It is only shown if there are at least 2 conflicts.

6. **generally applicable:** The
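
The **Keep both** rule above could be sketched as follows. This is a minimal illustration, not the ownCloud Web implementation: the function name `keep_both_name` is hypothetical, and starting the counter at 2 and placing the number before a file extension are assumptions that the text does not specify.

```python
import os


def keep_both_name(name, existing):
    """Return `name`, or `name (n)` with the smallest n >= 2 not already in `existing`."""
    if name not in existing:
        return name
    stem, ext = os.path.splitext(name)
    n = 2
    # Count up until a free name is found.
    while f"{stem} ({n}){ext}" in existing:
        n += 1
    return f"{stem} ({n}){ext}"


print(keep_both_name("Folder", {"Folder"}))
print(keep_both_name("Folder", {"Folder", "Folder (2)"}))
```

The same helper would serve uploads, move, copy and restore, which is one way to keep the conflict behaviour consistent across those entry points.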

## Userflows

____________

**solves:**

https://github.com/owncloud/web/issues/3751

https://github.com/owncloud/web/issues/5761

https://github.com/owncloud/web/issues/5106

https://github.com/owncloud/web/issues/6546

**groundwork for**

https://github.com/owncloud/web/issues/1753

|

1.0

|

Upload of folder with same name shows error - # Steps to reproduce

1. Log in to https://ocis.ocis-wopi.released.owncloud.works/

2. Create a new folder "Folder" on the ocis instance and on your local drive.

3. Upload your local "Folder" folder.

4. The upload does not start and shows the error "Folder "Folder" already exists."

# Expected behaviour

System should offer me alternative options to upload the folder.

The appropriate dialogues are consistently shown in case of conflicting resources, e.g. in the context of:

- Drag'n drop upload

- upload via upload-button

- move-dialog

- copy-dialog

- trashbin-restore

- etc...

## General rule

**User gets asked what to do in case of conflicting resources**, as there is no common pattern across different services/platforms (OneDrive, Google Drive, Box, Windows, Mac, etc. - all different).

**Note** This dialog concept is also meant to be applied to https://github.com/owncloud/web/issues/1753

## Dialogs

## Behaviour

1. **Replace** will replace the existing file (and implicitly create a new version).

2. **Keep both** will create a new resource with an appended number "(xx)", counting up if, e.g., (2) already exists.

3. **Cancel** will skip the current resource and, if applicable, show the next conflict.

4. **Merge** will combine folders with the same name; if they contain conflicting files, the user gets asked what to do (the dialog for files shows up).

5. **Do this for all XX conflicts** will apply the selected option to all XX upcoming conflicts. It is only shown if there are at least 2 conflicts.

6. **generally applicable:** The

## Userflows

____________

**solves:**

https://github.com/owncloud/web/issues/3751

https://github.com/owncloud/web/issues/5761

https://github.com/owncloud/web/issues/5106

https://github.com/owncloud/web/issues/6546

**groundwork for**

https://github.com/owncloud/web/issues/1753

|

non_process

|

upload of folder with same name shows error steps to reproduce login to create a new folder folder on the ocis instance and on your local drive upload your local folder folder upload does not start shows error folder folder already exists expected behaviour system should offer me alternative options to upload the folder the appropriate dialogues are consistently shown in case of conflicting resources eg in context of drag n drop upload upload via upload button move dialog copy dialog trashbin restore etc general rule user gets asked what to do in case of conflicting resources as there is no common pattern across different services platforms onedrive google drive box windows mac etc all different note this dialog concept is also meant to be applied to dialogs behaviour replace will replace the existing file and creates a new version implicitly keep both will create a new resource with an appended number xx counting up if ex already exists cancel will skip the current resource if applicable shows the next conflict merge will combine folders with the same name if they contain conflicting files user gets asked what to do dialog for files shows up do this for all xx conflicts will apply the selected option to all xx upcoming conflicts is only shown if there are at least conflicts generally applicable the userflows solves groundwork for

| 0

|

19,907

| 26,361,831,587

|

IssuesEvent

|

2023-01-11 13:57:18

|

scverse/spatialdata

|

https://api.github.com/repos/scverse/spatialdata

|

closed

|

mypy installation fails

|

bug CI dev process

|

Mypy checks pass offline, but the github action fails because the env can't be found.

I'll still merge two prs that have been approved to main, the red mark being due to this issue.

<img width="794" alt="Screenshot 2023-01-04 at 17 15 17" src="https://user-images.githubusercontent.com/2664412/210600204-f8a34648-3d08-4740-a323-55a29c582afc.png">

|

1.0

|

mypy installation fails - Mypy checks pass offline, but the github action fails because the env can't be found.

I'll still merge two prs that have been approved to main, the red mark being due to this issue.

<img width="794" alt="Screenshot 2023-01-04 at 17 15 17" src="https://user-images.githubusercontent.com/2664412/210600204-f8a34648-3d08-4740-a323-55a29c582afc.png">

|

process

|

mypy installation fails mypy checks pass offline but the github action fails because the env can t be found i ll still merge two prs that have been approved to main the red mark being due to this issue img width alt screenshot at src

| 1

|

117,473

| 11,947,613,193

|

IssuesEvent

|

2020-04-03 10:14:38

|

petermr/openVirus

|

https://api.github.com/repos/petermr/openVirus

|

closed

|

Add a LICENSE file

|

documentation

|

perhaps separately for

- software

- data

- text

- images and other media derived from the above

|

1.0

|

Add a LICENSE file - perhaps separately for

- software

- data

- text

- images and other media derived from the above

|

non_process

|

add a license file perhaps separately for software data text images and other media derived from the above

| 0

|

14,134

| 17,025,077,645

|

IssuesEvent

|

2021-07-03 10:16:39

|

Arch666Angel/mods

|

https://api.github.com/repos/Arch666Angel/mods

|

closed

|

The struggle with Red/Green wires in Angels+Bobs

|

Angels Bio Processing Impact: Enhancement

|

There is a lot of information here, and I'll try to make it coherent. Please ask me to clarify or expand if something isn't clear.

With Angels, wood is difficult to reproduce in quantity. Which I get. The path to rubber and cellulose fiber...

The primary hurdle is the need for rare trees to make the seed generators. These are limited and thus limit the rate at which you can make more wood. Ultimately you can duplicate the rare trees too, but that needs a path down animal and vegetable farming to generate quantities of APLS.

In the combination of Angels + Bobs (without Bobs greenhouses) this hurts. The lower-tech pain points that do not have alternate paths to scale are primarily red/green circuit wires and, to a lesser extent, splitters and undergrounds.

The splitters and undergrounds of the basic (grey) belts require wood directly. By default, next tier requires previous tier, so all splitters and undergrounds require wood. Red/Green wires require rubber (at this tech level via resin via wood).

In pure Angels (industry only, or industry with components) Red/Green wires do not require rubber. Undergrounds and splitters do not require wood either.

In pure Bobs, you can use Bobs greenhouses, or from heavy oil just a bit later. The cost of wood -> resin -> rubber is also a lot lower than it is in Angels. In pure Bobs, 1K hand-chopped wood will get you 1000 undergrounds, or 250 splitters, or 2000 red/green wires (1 wood -> 1 resin -> 1 rubber -> 2 insulated wires -> 2 red/green wires == 0.5 wood -> 1 red/green wire). In Angels, none of those things depend on wood.

*** This is the first pain point -- cost of wires is crazy high ***

In Angels + Bobs, that same 1K hand chopped wood will get you 1000 undergrounds, 250 splitters, or 66 red/green wires (30 wood -> 3 resin -> 1 rubber -> 2 insulated wires -> 2 red/green == 15 wood -> 1 red/green wire)
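
The two chains quoted above can be checked with a few lines of arithmetic. This is just a worked example using the ratios stated in this post; the helper names `wood_per_wire` and `wires_from_wood` are made up for illustration.

```python
from fractions import Fraction


def wood_per_wire(wood, wires):
    """Wood consumed per red/green wire for a chain that turns `wood` into `wires`."""
    return Fraction(wood, wires)


def wires_from_wood(wood_available, wood, wires):
    """Whole wires obtainable from `wood_available` pieces of hand-chopped wood."""
    return int(wood_available / wood_per_wire(wood, wires))


# Pure Bobs: 1 wood -> 1 resin -> 1 rubber -> 2 insulated wires -> 2 red/green wires
bobs = (1, 2)
# Angels + Bobs: 30 wood -> 3 resin -> 1 rubber -> 2 insulated wires -> 2 red/green wires
angels_bobs = (30, 2)

print(wood_per_wire(*bobs), wires_from_wood(1000, *bobs))
print(wood_per_wire(*angels_bobs), wires_from_wood(1000, *angels_bobs))
```

That is a factor of 30 in wood per wire between the two setups.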

Thus, while not directly dependent on higher-level tech, red/green wires at any scalable level need large-scale wood reproduction, which does...

Since science colors are different in Bobs, I'll use S1 and S2 here.

S1 - Angels Red - Bobs Yellow - Automation

S2 - Angels Green - Bobs Red - Transport

In pure bobs, creating bobs greenhouses only requires S1. There are a total of 7 S1 techs needed and on S2 tech.

Pure Angels doesn't need rubber, but grey boards which can come from automated paper. In industry only you need 3 S1 and 1 S2 tech.

Angels components needs 6 S1 techs and 1 S2 tech.

*** This is the pain on top of the cost of wires pain above ***

Angels Bobs requires 9 S1 techs, and 1 S2 tech to make the circuits. Another 7 S1 techs to make wood -- assuming you have collected special trees to make the seed generators. Then another 5 S1 and 4 S2 and an APLS research to make special trees to make seed generators -- at which point you've unlocked bio-resin and liquid resin kinda making the exercise moot. And you still need to make the APLS as an ingredient for the special trees.

Until you can create wood, every underground and splitter will come at the cost of chopping down trees... assuming your map isn't an arid desert.

I see / suggest a few paths to help this out...

Have the tree seed recipe use the seed-extractor, not the (special tree)-seed-generator. This keeps the tech level in line with the other iterations, keeps the wood cost high for rubber, and still more complicated than bob greenhouses. The seeds can only be used to create generic trees that get cut into wood.

Alter the insulated wire recipe. 30 tinned, 1 rubber -> 30 insulated wire in 15 seconds? Still twice as expensive (1:1 vs 1:2 - wood:wire) as pure bobs wire.

Add a recipe for insulated wire that uses cellulose fiber instead of rubber a la "Copper Cable Harness" in Angels component mode.

Or a combination of the above...

|

1.0

|

The struggle with Red/Green wires in Angels+Bobs - There is a lot of information here, and I'll try to make it coherent. Please ask me to clarify or expand if something isn't clear.

With Angels, wood is difficult to reproduce in quantity. Which I get. The path to rubber and cellulose fiber...

The primary hurdle is the need for rare trees to make the seed generators. These are limited and thus limit the rate at which you can make more wood. Ultimately you can duplicate the rare trees too, but that needs a path down animal and vegetable farming to generate quantities of APLS.

In the combination of Angels + Bobs (without Bobs greenhouses) this hurts. The lower-tech pain points that do not have alternate paths to scale are primarily red/green circuit wires and, to a lesser extent, splitters and undergrounds.

The splitters and undergrounds of the basic (grey) belts require wood directly. By default, next tier requires previous tier, so all splitters and undergrounds require wood. Red/Green wires require rubber (at this tech level via resin via wood).

In pure Angels (industry only, or industry with components) Red/Green wires do not require rubber. Undergrounds and splitters do not require wood either.

In pure Bobs, you can use Bobs greenhouses, or from heavy oil just a bit later. The cost of wood -> resin -> rubber is also a lot lower than it is in Angels. In pure Bobs, 1K hand-chopped wood will get you 1000 undergrounds, or 250 splitters, or 2000 red/green wires (1 wood -> 1 resin -> 1 rubber -> 2 insulated wires -> 2 red/green wires == 0.5 wood -> 1 red/green wire). In Angels, none of those things depend on wood.

*** This is the first pain point -- cost of wires is crazy high ***

In Angels + Bobs, that same 1K hand chopped wood will get you 1000 undergrounds, 250 splitters, or 66 red/green wires (30 wood -> 3 resin -> 1 rubber -> 2 insulated wires -> 2 red/green == 15 wood -> 1 red/green wire)

Thus, while not directly dependent on higher level tech, red/green wires at any scalable level needs large scale wood reproduction which does...

Since science colors are different in bobs I'll use S1 and S2 here.

S1 - Angels Red - Bobs Yellow - Automation

S2 - Angels Green - Bobs Red - Transport

In pure Bobs, creating Bobs greenhouses only requires S1. There are a total of 7 S1 techs needed and one S2 tech.

Pure Angels doesn't need rubber, but grey boards which can come from automated paper. In industry only you need 3 S1 and 1 S2 tech.

Angels components needs 6 S1 techs and 1 S2 tech.

*** This is the pain on top of the cost of wires pain above ***

Angels Bobs requires 9 S1 techs, and 1 S2 tech to make the circuits. Another 7 S1 techs to make wood -- assuming you have collected special trees to make the seed generators. Then another 5 S1 and 4 S2 and an APLS research to make special trees to make seed generators -- at which point you've unlocked bio-resin and liquid resin kinda making the exercise moot. And you still need to make the APLS as an ingredient for the special trees.

Until you can create wood, every underground and splitter will come at the cost of chopping down trees... assuming your map isn't an arid desert.

I see / suggest a few paths to help this out...

Have the tree seed recipe use the seed-extractor, not the (special tree)-seed-generator. This keeps the tech level in line with the other iterations, keeps the wood cost high for rubber, and still more complicated than bob greenhouses. The seeds can only be used to create generic trees that get cut into wood.

Alter the insulated wire recipe. 30 tinned, 1 rubber -> 30 insulated wire in 15 seconds? Still twice as expensive (1:1 vs 1:2 - wood:wire) as pure bobs wire.

Add a recipe for insulated wire that uses cellulose fiber instead of rubber a la "Copper Cable Harness" in Angels component mode.

Or a combination of the above...
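
For anyone who wants to check the wood-to-wire numbers, here is a rough sketch of the arithmetic. It only uses the ratios quoted above, assumes rubber is crafted in whole units, and ignores the cost of the tinned copper. The function and dictionary names are made up for illustration.

```python
def wires_from_wood(wood, wood_per_rubber, wires_per_rubber):
    """Whole red/green wires obtainable from `wood` hand chopped wood."""
    rubber = wood // wood_per_rubber  # rubber is crafted in whole units
    return rubber * wires_per_rubber  # insulated wire -> red/green wire is 1:1


# (wood per rubber, wires per rubber), taken from the chains quoted above.
CHAINS = {
    "pure Bobs": (1, 2),         # 1 wood -> 1 resin -> 1 rubber -> 2 wires
    "Angels + Bobs": (30, 2),    # 30 wood -> 3 resin -> 1 rubber -> 2 wires
    "proposed recipe": (30, 30), # 30 wood -> 1 rubber -> 30 wires (suggestion)
}

for name, (wood_per_rubber, wires_per_rubber) in CHAINS.items():
    wires = wires_from_wood(1000, wood_per_rubber, wires_per_rubber)
    ratio = wood_per_rubber / wires_per_rubber
    print(f"{name}: {wires} wires from 1000 wood ({ratio:g} wood per wire)")
```

With the proposed recipe the cost comes out at 1 wood per wire, which is twice the 0.5 wood per wire of pure Bobs, as stated in the suggestion.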

|

process

|

the struggle with red green wires in angels bobs there is a lot of information here and i ll try to make it coherent please ask me to clear up or expand is something isn t clear with angles wood is difficult to reproduce in quantity which i get the path to rubber and cellulose fiber the primary hurdle is the need for rare trees to make the seed generators these are limited thus limit the rate at which you can make more wood ultimately you can duplicate the rare trees too but that needs a path down animal and vegetable farming to generate quantities of apls in the combination of angels bobs without bobs green houses this hurts the lower tech pain points that do not have alternate paths to scale are primarily red green circuit wires to a lesser extent splitters and undergrounds the splitters and undergrounds of the basic grey belts require wood directly by default next tier requires previous tier so all splitters and undergrounds require wood red green wires require rubber at this tech level via resin via wood in pure angels industry only or industry with components red green wires do not require rubber undergrounds and splitters do not require wood either in pure bobs you can use bobs greenhouses or from heavy oil just a bit later the cost of wood resin rubber is a lot lower compared to what it is in angels too in pure bobs hand chopped wood will get you undergrounds or splitters or red green wires wood resin rubber insulated wires red green wires wood red green wire in angels none of those things depend on wood this is the first pain point cost of wires is crazy high in angels bobs that same hand chopped wood will get you undergrounds splitters or red green wires wood resin rubber insulated wires red green wood red green wire thus while not directly dependent on higher level tech red green wires at any scalable level needs large scale wood reproduction which does since science colors are different in bobs i ll use and here angles red bobs yellow automation angles 
green bobs red transport in pure bobs creating bobs greenhouses only requires there are a total of techs needed and on tech pure angels doesn t need rubber but grey boards which can come from automated paper in industry only you need and tech angels components needs techs and tech this is the pain on top of the cost of wires pain above angels bobs requires techs and tech to make the circuits another techs to make wood assuming you have collected special trees to make the seed generators then another and and an apls research to make special trees to make seed generators at which point you ve unlocked bio resin and liquid resin kinda making the exercise moot and you still need to make the apls as an ingredient for the special trees until you can create wood every underground and splitter will come at the cost of chopping down trees assuming you re map isn t an arid desert i see suggest a few paths to help this out have the tree seed recipe use the seed extractor not the special tree seed generator this keeps the tech level in line with the other iterations keeps the wood cost high for rubber and still more complicated than bob greenhouses the seeds can only be used to create generic trees that get cut into wood alter the insulated wire recipe tinned rubber insulated wire in seconds still twice as expensive vs wood wire as pure bobs wire add a recipe for insulated wire that uses cellulose fiber instead of rubber a la copper cable harness in angels component mode or a combination of the above

| 1

|

89,169

| 17,792,727,904

|

IssuesEvent

|

2021-08-31 18:08:54

|

ClickHouse/ClickHouse

|

https://api.github.com/repos/ClickHouse/ClickHouse

|

closed

|

S3 table engine doesn't support SETTINGS

|

unfinished code comp-s3

|

**Describe the unexpected behaviour**

It should be possible to pass input_format related settings to S3 table engine. (like for URL table engine)

**How to reproduce**

ClickHouse server 21.9

```

CREATE TABLE table_with_range (name String, value UInt32) ENGINE = S3('https://storage.yandexcloud.net/my-test-bucket-768/{some,another}_prefix/some_file_{1..3}', 'CSV') SETTINGS input_format_with_names_use_header=0;

CREATE TABLE table_with_range

(

`name` String,

`value` UInt32

)

ENGINE = S3('https://storage.yandexcloud.net/my-test-bucket-768/{some,another}_prefix/some_file_{1..3}', 'CSV')

SETTINGS input_format_with_names_use_header = 0

0 rows in set. Elapsed: 0.011 sec.

Received exception from server (version 21.9.1):

Code: 36. DB::Exception: Received from localhost:9000. DB::Exception: Engine S3 doesn't support SETTINGS clause. Currently only the following engines have support for the feature: [RabbitMQ, Kafka, MySQL, MaterializedPostgreSQL, Join, Set, MergeTree, Memory, URL, ReplicatedVersionedCollapsingMergeTree, ReplacingMergeTree, ReplicatedSummingMergeTree, ReplicatedAggregatingMergeTree, ReplicatedCollapsingMergeTree, File, ReplicatedGraphiteMergeTree, ReplicatedMergeTree, ReplicatedReplacingMergeTree, VersionedCollapsingMergeTree, SummingMergeTree, Distributed, TinyLog, GraphiteMergeTree, CollapsingMergeTree, AggregatingMergeTree, StripeLog, Log]. (BAD_ARGUMENTS)

```

|

1.0

|

S3 table engine doesn't support SETTINGS - **Describe the unexpected behaviour**

It should be possible to pass input_format related settings to S3 table engine. (like for URL table engine)

**How to reproduce**

ClickHouse server 21.9

```

CREATE TABLE table_with_range (name String, value UInt32) ENGINE = S3('https://storage.yandexcloud.net/my-test-bucket-768/{some,another}_prefix/some_file_{1..3}', 'CSV') SETTINGS input_format_with_names_use_header=0;

CREATE TABLE table_with_range

(

`name` String,

`value` UInt32

)

ENGINE = S3('https://storage.yandexcloud.net/my-test-bucket-768/{some,another}_prefix/some_file_{1..3}', 'CSV')

SETTINGS input_format_with_names_use_header = 0

0 rows in set. Elapsed: 0.011 sec.

Received exception from server (version 21.9.1):

Code: 36. DB::Exception: Received from localhost:9000. DB::Exception: Engine S3 doesn't support SETTINGS clause. Currently only the following engines have support for the feature: [RabbitMQ, Kafka, MySQL, MaterializedPostgreSQL, Join, Set, MergeTree, Memory, URL, ReplicatedVersionedCollapsingMergeTree, ReplacingMergeTree, ReplicatedSummingMergeTree, ReplicatedAggregatingMergeTree, ReplicatedCollapsingMergeTree, File, ReplicatedGraphiteMergeTree, ReplicatedMergeTree, ReplicatedReplacingMergeTree, VersionedCollapsingMergeTree, SummingMergeTree, Distributed, TinyLog, GraphiteMergeTree, CollapsingMergeTree, AggregatingMergeTree, StripeLog, Log]. (BAD_ARGUMENTS)

```

|

non_process

|

table engine doesn t support settings describe the unexpected behaviour it should be possible to pass input format related settings to table engine like for url table engine how to reproduce clickhouse server create table table with range name string value engine csv settings input format with names use header create table table with range name string value engine csv settings input format with names use header rows in set elapsed sec received exception from server version code db exception received from localhost db exception engine doesn t support settings clause currently only the following engines have support for the feature bad arguments

| 0

|

284,827

| 21,472,300,834

|

IssuesEvent

|

2022-04-26 10:36:27

|

sonofmagic/weapp-tailwindcss-webpack-plugin

|

https://api.github.com/repos/sonofmagic/weapp-tailwindcss-webpack-plugin

|

closed

|

Using the uniapp demo, disabled does not take effect

|

documentation question

|

Using the uniapp demo, compiled to a mini program, the disabled class does not take effect

`<button class="bg-green-500 text-white disabled:opacity-50" disabled>disable</button>`

<img width="326" alt="image" src="https://user-images.githubusercontent.com/30710337/165274535-8752bcc3-f7fd-4dac-bb09-f6dbed3d0217.png">

<img width="863" alt="image" src="https://user-images.githubusercontent.com/30710337/165275451-62aa4fd0-323f-4437-adc9-45220d5854aa.png">

Expected result

<img width="762" alt="image" src="https://user-images.githubusercontent.com/30710337/165277755-26a57c64-3b95-45f3-8780-a9337c13e8dc.png">

|

1.0

|

Using the uniapp demo, disabled does not take effect - Using the uniapp demo, compiled to a mini program, the disabled class does not take effect

`<button class="bg-green-500 text-white disabled:opacity-50" disabled>disable</button>`