| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 7 to 112) | repo_url (stringlengths, 36 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 744) | labels (stringlengths, 4 to 574) | body (stringlengths, 9 to 211k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96 to 211k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 188k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

9,553

| 12,514,584,126

|

IssuesEvent

|

2020-06-03 05:42:49

|

qgis/QGIS

|

https://api.github.com/repos/qgis/QGIS

|

opened

|

Improve processing framework for mesh layers

|

Feature Request Mesh Processing

|

related to https://github.com/lutraconsulting/qgis-crayfish-plugin/issues/448

see https://github.com/qgis/QGIS/blob/3adbed503d5c1621d4dc8e4e217ce37835eceaae/src/core/processing/qgsprocessing.h#L44

We have to change TypeMesh to multiple enums like `TypeMeshEdges, TypeMeshVectices, TypeMeshFaces, TypeMeshAnyElementType` so in the processing algorithms we can select which type of mesh data we want to handle

|

1.0

|

Improve processing framework for mesh layers - related to https://github.com/lutraconsulting/qgis-crayfish-plugin/issues/448

see https://github.com/qgis/QGIS/blob/3adbed503d5c1621d4dc8e4e217ce37835eceaae/src/core/processing/qgsprocessing.h#L44

We have to change TypeMesh to multiple enums like `TypeMeshEdges, TypeMeshVectices, TypeMeshFaces, TypeMeshAnyElementType` so in the processing algorithms we can select which type of mesh data we want to handle

|

process

|

improve processing framework for mesh layers related to see we have to change typemesh to multiple enums like typemeshedges typemeshvectices typemeshfaces typemeshanyelementtype so in the processing algorithms we can select which type of mesh data we want to handle

| 1

|

17,208

| 22,793,151,761

|

IssuesEvent

|

2022-07-10 10:05:58

|

giacomorebecchi/food-pricing

|

https://api.github.com/repos/giacomorebecchi/food-pricing

|

opened

|

Empty description text is translated as "nan"

|

preprocessing

|

We should either add a custom word (e.g. "EMPTY_DESCRIPTION"), or leave it empty.

|

1.0

|

Empty description text is translated as "nan" - We should either add a custom word (e.g. "EMPTY_DESCRIPTION"), or leave it empty.

|

process

|

empty description text is translated as nan we should either add a custom word e g empty description or leave it empty

| 1

|

508,684

| 14,704,586,614

|

IssuesEvent

|

2021-01-04 16:43:16

|

GemsTracker/gemstracker-library

|

https://api.github.com/repos/GemsTracker/gemstracker-library

|

closed

|

Extend length if gla_remote_ip

|

bug impact major priority urgent

|

Extend the length of gla_remote_ip to allow storage of more complex $request->getClientIp() results, e.g. when working from behind a firewall.

|

1.0

|

Extend length if gla_remote_ip - Extend the length of gla_remote_ip to allow storage of more complex $request->getClientIp() results, e.g. when working from behind a firewall.

|

non_process

|

extend length if gla remote ip extend the length of gla remote ip to allow storage of more complex request getclientip results e g when working from behind a firewall

| 0

|

9,077

| 12,148,979,467

|

IssuesEvent

|

2020-04-24 15:24:58

|

MicrosoftDocs/azure-docs

|

https://api.github.com/repos/MicrosoftDocs/azure-docs

|

closed

|

Alternative to downloading locally

|

Pri2 cxp doc-enhancement machine-learning/svc team-data-science-process/subsvc triaged

|

It would be helpful to know if/how one can avoid downloading locally for serverless apps.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 2f45a6b5-0fea-7fbb-5d4d-37e0e2583fd7

* Version Independent ID: 7be6f792-09f8-c22f-86b6-d0f690e9b3a4

* Content: [Explore data in Azure blob storage with pandas - Team Data Science Process](https://docs.microsoft.com/en-us/azure/machine-learning/team-data-science-process/explore-data-blob#feedback)

* Content Source: [articles/machine-learning/team-data-science-process/explore-data-blob.md](https://github.com/Microsoft/azure-docs/blob/master/articles/machine-learning/team-data-science-process/explore-data-blob.md)

* Service: **machine-learning**

* Sub-service: **team-data-science-process**

* GitHub Login: @marktab

* Microsoft Alias: **tdsp**

|

1.0

|

Alternative to downloading locally - It would be helpful to know if/how one can avoid downloading locally for serverless apps.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 2f45a6b5-0fea-7fbb-5d4d-37e0e2583fd7

* Version Independent ID: 7be6f792-09f8-c22f-86b6-d0f690e9b3a4

* Content: [Explore data in Azure blob storage with pandas - Team Data Science Process](https://docs.microsoft.com/en-us/azure/machine-learning/team-data-science-process/explore-data-blob#feedback)

* Content Source: [articles/machine-learning/team-data-science-process/explore-data-blob.md](https://github.com/Microsoft/azure-docs/blob/master/articles/machine-learning/team-data-science-process/explore-data-blob.md)

* Service: **machine-learning**

* Sub-service: **team-data-science-process**

* GitHub Login: @marktab

* Microsoft Alias: **tdsp**

|

process

|

alternative to downloading locally it would be helpful to know if how one can avoid downloading locally for serverless apps document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id version independent id content content source service machine learning sub service team data science process github login marktab microsoft alias tdsp

| 1

|

21,282

| 28,455,615,831

|

IssuesEvent

|

2023-04-17 06:48:33

|

sap-tutorials/sap-build-process-automation

|

https://api.github.com/repos/sap-tutorials/sap-build-process-automation

|

closed

|

Run the Business Process

|

tutorial:spa-run-process

|

Tutorials: https://developers.sap.com/tutorials/spa-run-process.html

--------------------------

Hi,

during the deployment of the order process. I'm getting asked to enter run variables and to enter a trigger. Both options are not mentioned on the tutorial page. I guess it makes sense to add at least a hint to these panels. Moreover, instead of Undeploy a Redeploy button is shown.

BR,

Ingo

|

1.0

|

Run the Business Process - Tutorials: https://developers.sap.com/tutorials/spa-run-process.html

--------------------------

Hi,

during the deployment of the order process. I'm getting asked to enter run variables and to enter a trigger. Both options are not mentioned on the tutorial page. I guess it makes sense to add at least a hint to these panels. Moreover, instead of Undeploy a Redeploy button is shown.

BR,

Ingo

|

process

|

run the business process tutorials hi during the deployment of the order process i m getting asked to enter run variables and to enter a trigger both options are not mentioned on the tutorial page i guess it makes sense to add at least a hint to these panels moreover instead of undeploy a redeploy button is shown br ingo

| 1

|

42,190

| 6,969,592,257

|

IssuesEvent

|

2017-12-11 06:30:25

|

benwiley4000/react-responsive-audio-player

|

https://api.github.com/repos/benwiley4000/react-responsive-audio-player

|

opened

|

Remove propType annotations from AudioPlayer.js

|

documentation

|

Maybe we'll bring this back if we do some jsdoc type thing in the future, but for now it's too much to maintain multiple descriptions of the proptypes accepted by `AudioPlayer`.

|

1.0

|

Remove propType annotations from AudioPlayer.js - Maybe we'll bring this back if we do some jsdoc type thing in the future, but for now it's too much to maintain multiple descriptions of the proptypes accepted by `AudioPlayer`.

|

non_process

|

remove proptype annotations from audioplayer js maybe we ll bring this back if we do some jsdoc type thing in the future but for now it s too much to maintain multiple descriptions of the proptypes accepted by audioplayer

| 0

|

59,140

| 8,338,297,858

|

IssuesEvent

|

2018-09-28 13:56:34

|

coenvalk/conductAR

|

https://api.github.com/repos/coenvalk/conductAR

|

closed

|

Usage Documentation for Music Playback

|

documentation

|

Create documents to explain usage for music playback scripts.

|

1.0

|

Usage Documentation for Music Playback - Create documents to explain usage for music playback scripts.

|

non_process

|

usage documentation for music playback create documents to explain usage for music playback scripts

| 0

|

17,130

| 22,649,071,951

|

IssuesEvent

|

2022-07-01 11:41:56

|

PyCQA/pylint

|

https://api.github.com/repos/PyCQA/pylint

|

closed

|

Different results with -j4 and -j8

|

Bug :beetle: topic-multiprocessing

|

I'm not sure if this is a duplicate of #2573 or #374 because I don't know if those are particular to the specific checks they mention.

### Steps to reproduce

1. `git clone https://github.com/nedbat/coveragepy; cd coveragepy`

2. `git checkout 50cc68846f`

3. `pip install tox`

4. `tox -e lint --notest`

5. `.tox/lint/bin/python -m pylint --notes= -j 4 coverage tests doc ci igor.py setup.py __main__.py`

6. No violations are reported

7. `.tox/lint/bin/python -m pylint --notes= -j 8 coverage tests doc ci igor.py setup.py __main__.py`

```

************* Module coverage.pytracer

coverage/pytracer.py:140 E: self.should_start_context is not callable (not-callable)

coverage/pytracer.py:165 E: self.should_trace is not callable (not-callable)

coverage/pytracer.py:166 E: 'self.should_trace_cache' does not support item assignment (unsupported-assignment-operation)

coverage/pytracer.py:171 E: Value 'self.data' doesn't support membership test (unsupported-membership-test)

coverage/pytracer.py:172 E: 'self.data' does not support item assignment (unsupported-assignment-operation)

coverage/pytracer.py:173 E: Value 'self.data' is unsubscriptable (unsubscriptable-object)

coverage/pytracer.py:259 E: self.warn is not callable (not-callable)

```

A different manifestation of what might be the same underlying cause: in my CI, running -j4, I get occasional failures with these same violations reported.

### Expected behavior

The number of processes shouldn't change the results, only the performance.

### pylint --version output

Result of `pylint --version` output:

```

$ .tox/lint/bin/python -m pylint --version

pylint 2.7.4

astroid 2.5.3

Python 3.8.8 (default, Mar 21 2021, 13:50:20)

[Clang 12.0.0 (clang-1200.0.32.29)]

```

<!--

Additional dependencies:

```

pandas==0.23.2

marshmallow==3.10.0

...

```

-->

|

1.0

|

Different results with -j4 and -j8 - I'm not sure if this is a duplicate of #2573 or #374 because I don't know if those are particular to the specific checks they mention.

### Steps to reproduce

1. `git clone https://github.com/nedbat/coveragepy; cd coveragepy`

2. `git checkout 50cc68846f`

3. `pip install tox`

4. `tox -e lint --notest`

5. `.tox/lint/bin/python -m pylint --notes= -j 4 coverage tests doc ci igor.py setup.py __main__.py`

6. No violations are reported

7. `.tox/lint/bin/python -m pylint --notes= -j 8 coverage tests doc ci igor.py setup.py __main__.py`

```

************* Module coverage.pytracer

coverage/pytracer.py:140 E: self.should_start_context is not callable (not-callable)

coverage/pytracer.py:165 E: self.should_trace is not callable (not-callable)

coverage/pytracer.py:166 E: 'self.should_trace_cache' does not support item assignment (unsupported-assignment-operation)

coverage/pytracer.py:171 E: Value 'self.data' doesn't support membership test (unsupported-membership-test)

coverage/pytracer.py:172 E: 'self.data' does not support item assignment (unsupported-assignment-operation)

coverage/pytracer.py:173 E: Value 'self.data' is unsubscriptable (unsubscriptable-object)

coverage/pytracer.py:259 E: self.warn is not callable (not-callable)

```

A different manifestation of what might be the same underlying cause: in my CI, running -j4, I get occasional failures with these same violations reported.

### Expected behavior

The number of processes shouldn't change the results, only the performance.

### pylint --version output

Result of `pylint --version` output:

```

$ .tox/lint/bin/python -m pylint --version

pylint 2.7.4

astroid 2.5.3

Python 3.8.8 (default, Mar 21 2021, 13:50:20)

[Clang 12.0.0 (clang-1200.0.32.29)]

```

<!--

Additional dependencies:

```

pandas==0.23.2

marshmallow==3.10.0

...

```

-->

|

process

|

different results with and i m not sure if this is a duplicate of or because i don t know if those are particular to the specific checks they mention steps to reproduce git clone cd coveragepy git checkout pip install tox tox e lint notest tox lint bin python m pylint notes j coverage tests doc ci igor py setup py main py no violations are reported tox lint bin python m pylint notes j coverage tests doc ci igor py setup py main py module coverage pytracer coverage pytracer py e self should start context is not callable not callable coverage pytracer py e self should trace is not callable not callable coverage pytracer py e self should trace cache does not support item assignment unsupported assignment operation coverage pytracer py e value self data doesn t support membership test unsupported membership test coverage pytracer py e self data does not support item assignment unsupported assignment operation coverage pytracer py e value self data is unsubscriptable unsubscriptable object coverage pytracer py e self warn is not callable not callable a different manifestation of what might be the same underlying cause in my ci running i get occasional failures with these same violations reported expected behavior the number of processes shouldn t change the results only the performance pylint version output result of pylint version output tox lint bin python m pylint version pylint astroid python default mar additional dependencies pandas marshmallow

| 1

|

17,894

| 23,868,683,822

|

IssuesEvent

|

2022-09-07 13:15:18

|

streamnative/flink

|

https://api.github.com/repos/streamnative/flink

|

closed

|

[BUG] SQL Connector key fields with avro format returns null schema

|

compute/data-processing type/bug

|

When running the PulsarTableITCase with the key and partial value, the tests failed because for the keySerailization the returned schema was null. I loooked into the code it should not return null. It might be a bug in the flink-avro format and we need to find out why if we want to use the format for key + value.

|

1.0

|

[BUG] SQL Connector key fields with avro format returns null schema -

When running the PulsarTableITCase with the key and partial value, the tests failed because for the keySerailization the returned schema was null. I loooked into the code it should not return null. It might be a bug in the flink-avro format and we need to find out why if we want to use the format for key + value.

|

process

|

sql connector key fields with avro format returns null schema when running the pulsartableitcase with the key and partial value the tests failed because for the keyserailization the returned schema was null i loooked into the code it should not return null it might be a bug in the flink avro format and we need to find out why if we want to use the format for key value

| 1

|

215,813

| 24,196,526,426

|

IssuesEvent

|

2022-09-24 01:12:41

|

haeli05/source

|

https://api.github.com/repos/haeli05/source

|

opened

|

CVE-2020-36604 (Medium) detected in hoek-8.2.4.tgz

|

security vulnerability

|

## CVE-2020-36604 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>hoek-8.2.4.tgz</b></p></summary>

<p>General purpose node utilities</p>

<p>Library home page: <a href="https://registry.npmjs.org/@hapi/hoek/-/hoek-8.2.4.tgz">https://registry.npmjs.org/@hapi/hoek/-/hoek-8.2.4.tgz</a></p>

<p>Path to dependency file: /FrontEnd/package.json</p>

<p>Path to vulnerable library: /FrontEnd/node_modules/@hapi/hoek/package.json</p>

<p>

Dependency Hierarchy:

- react-scripts-3.1.1.tgz (Root Library)

- workbox-webpack-plugin-4.3.1.tgz

- workbox-build-4.3.1.tgz

- joi-15.1.1.tgz

- :x: **hoek-8.2.4.tgz** (Vulnerable Library)

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

hoek before 8.5.1 and 9.x before 9.0.3 allows prototype poisoning in the clone function.

<p>Publish Date: 2022-09-23

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-36604>CVE-2020-36604</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Release Date: 2022-09-23</p>

<p>Fix Resolution (@hapi/hoek): 8.5.1</p>

<p>Direct dependency fix Resolution (react-scripts): 3.1.2</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2020-36604 (Medium) detected in hoek-8.2.4.tgz - ## CVE-2020-36604 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>hoek-8.2.4.tgz</b></p></summary>

<p>General purpose node utilities</p>

<p>Library home page: <a href="https://registry.npmjs.org/@hapi/hoek/-/hoek-8.2.4.tgz">https://registry.npmjs.org/@hapi/hoek/-/hoek-8.2.4.tgz</a></p>

<p>Path to dependency file: /FrontEnd/package.json</p>

<p>Path to vulnerable library: /FrontEnd/node_modules/@hapi/hoek/package.json</p>

<p>

Dependency Hierarchy:

- react-scripts-3.1.1.tgz (Root Library)

- workbox-webpack-plugin-4.3.1.tgz

- workbox-build-4.3.1.tgz

- joi-15.1.1.tgz

- :x: **hoek-8.2.4.tgz** (Vulnerable Library)

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

hoek before 8.5.1 and 9.x before 9.0.3 allows prototype poisoning in the clone function.

<p>Publish Date: 2022-09-23

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-36604>CVE-2020-36604</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Release Date: 2022-09-23</p>

<p>Fix Resolution (@hapi/hoek): 8.5.1</p>

<p>Direct dependency fix Resolution (react-scripts): 3.1.2</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_process

|

cve medium detected in hoek tgz cve medium severity vulnerability vulnerable library hoek tgz general purpose node utilities library home page a href path to dependency file frontend package json path to vulnerable library frontend node modules hapi hoek package json dependency hierarchy react scripts tgz root library workbox webpack plugin tgz workbox build tgz joi tgz x hoek tgz vulnerable library vulnerability details hoek before and x before allows prototype poisoning in the clone function publish date url a href cvss score details base score metrics exploitability metrics attack vector local attack complexity low privileges required none user interaction required scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version release date fix resolution hapi hoek direct dependency fix resolution react scripts step up your open source security game with mend

| 0

|

410,553

| 11,993,566,094

|

IssuesEvent

|

2020-04-08 12:15:20

|

woocommerce/woocommerce

|

https://api.github.com/repos/woocommerce/woocommerce

|

closed

|

[4.1 beta 1]: Can't create manual order

|

bug component: order priority: high

|

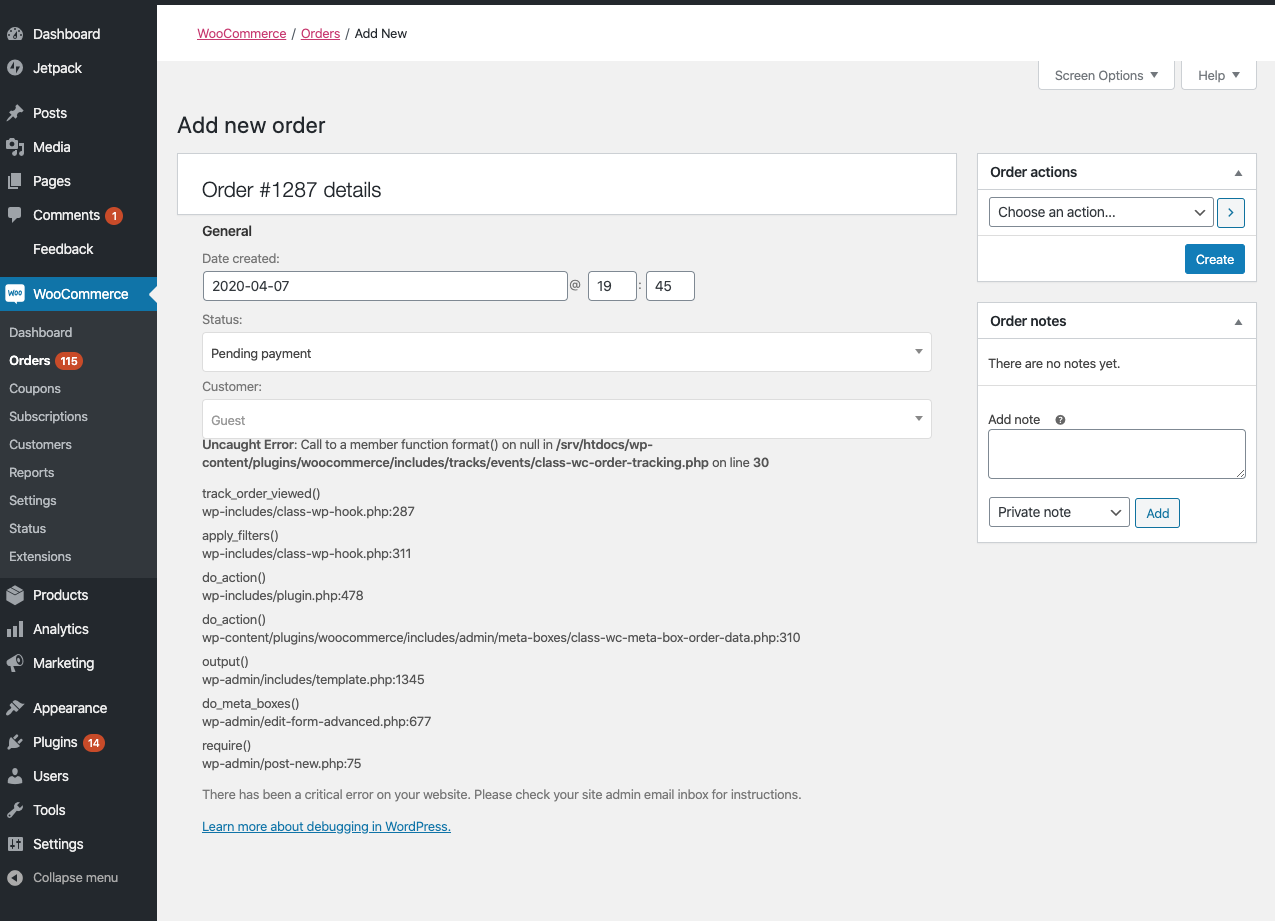

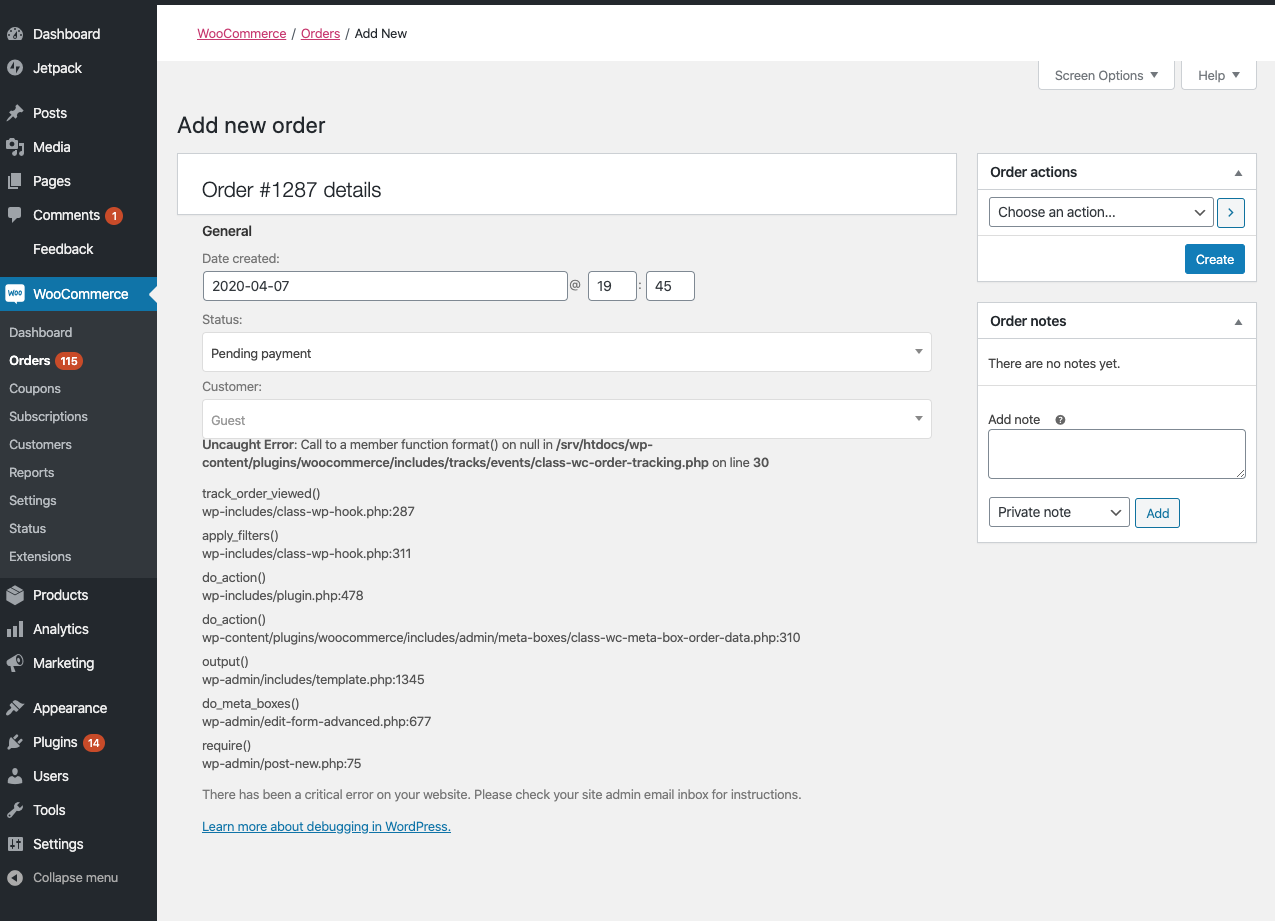

**Describe the bug**

Can't seem to create manual order while testing 4.1 beta 1. Tried on 2 Pressable test sites.

**To Reproduce**

Steps to reproduce the behavior:

1. Create any product on the site;

2. Try creating manual order;

3. See error:

Error:

```

Uncaught Error: Call to a member function format() on null in /srv/htdocs/wp-content/plugins/woocommerce/includes/tracks/events/class-wc-order-tracking.php on line 30

track_order_viewed()

wp-includes/class-wp-hook.php:287

apply_filters()

wp-includes/class-wp-hook.php:311

do_action()

wp-includes/plugin.php:478

do_action()

wp-content/plugins/woocommerce/includes/admin/meta-boxes/class-wc-meta-box-order-data.php:310

output()

wp-admin/includes/template.php:1345

do_meta_boxes()

wp-admin/edit-form-advanced.php:677

require()

wp-admin/post-new.php:75

```

**Screenshots**

See above.

**Expected behavior**

I expected to be able to create manual order without any issues.

**Isolating the problem (mark completed items with an [x]):**

- [x] I have deactivated other plugins and confirmed this bug occurs when only WooCommerce plugin is active.

- [x] This bug happens with a default WordPress theme active, or [Storefront](https://woocommerce.com/storefront/).

- [x] I can reproduce this bug consistently using the steps above.

**WordPress Environment**

<details>

```

`

### WordPress Environment ###

WordPress address (URL): https://jamosova2.mystagingwebsite.com

Site address (URL): https://jamosova2.mystagingwebsite.com

WC Version: 4.1.0

REST API Version: ✔ 1.0.7

WC Blocks Version: ✔ 2.5.16

Action Scheduler Version: ✔ 3.1.4

WC Admin Version: ✔ 1.1.0

Log Directory Writable: ✔

WP Version: 5.4

WP Multisite: –

WP Memory Limit: 256 MB

WP Debug Mode: –

WP Cron: ✔

Language: en_US

External object cache: ✔

### Server Environment ###

Server Info: nginx

PHP Version: 7.4.4

PHP Post Max Size: 2 GB

PHP Time Limit: 1200

PHP Max Input Vars: 6144

cURL Version: 7.69.1

OpenSSL/1.1.0l

SUHOSIN Installed: –

MySQL Version: 5.5.5-10.3.21-MariaDB-log

Max Upload Size: 2 GB

Default Timezone is UTC: ✔

fsockopen/cURL: ✔

SoapClient: ✔

DOMDocument: ✔

GZip: ✔

Multibyte String: ✔

Remote Post: ✔

Remote Get: ✔

### Database ###

WC Database Version: 4.1.0

WC Database Prefix: wp_

Total Database Size: 11.61MB

Database Data Size: 6.47MB

Database Index Size: 5.14MB

wp_woocommerce_sessions: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_woocommerce_api_keys: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_woocommerce_attribute_taxonomies: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_woocommerce_downloadable_product_permissions: Data: 0.02MB + Index: 0.06MB + Engine InnoDB

wp_woocommerce_order_items: Data: 0.05MB + Index: 0.02MB + Engine InnoDB

wp_woocommerce_order_itemmeta: Data: 0.25MB + Index: 0.25MB + Engine InnoDB

wp_woocommerce_tax_rates: Data: 0.02MB + Index: 0.06MB + Engine InnoDB

wp_woocommerce_tax_rate_locations: Data: 0.02MB + Index: 0.05MB + Engine InnoDB

wp_woocommerce_shipping_zones: Data: 0.02MB + Index: 0.00MB + Engine InnoDB

wp_woocommerce_shipping_zone_locations: Data: 0.02MB + Index: 0.05MB + Engine InnoDB

wp_woocommerce_shipping_zone_methods: Data: 0.02MB + Index: 0.00MB + Engine InnoDB

wp_woocommerce_payment_tokens: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_woocommerce_payment_tokenmeta: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_woocommerce_log: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_actionscheduler_actions: Data: 0.31MB + Index: 0.36MB + Engine InnoDB

wp_actionscheduler_claims: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_actionscheduler_groups: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_actionscheduler_logs: Data: 0.31MB + Index: 0.25MB + Engine InnoDB

wp_commentmeta: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_comments: Data: 0.06MB + Index: 0.09MB + Engine InnoDB

wp_links: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_ms_snippets: Data: 0.02MB + Index: 0.00MB + Engine InnoDB

wp_options: Data: 1.17MB + Index: 0.09MB + Engine InnoDB

wp_postmeta: Data: 1.52MB + Index: 1.88MB + Engine InnoDB

wp_posts: Data: 1.30MB + Index: 0.22MB + Engine InnoDB

wp_snippets: Data: 0.02MB + Index: 0.00MB + Engine InnoDB

wp_termmeta: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_terms: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_term_relationships: Data: 0.08MB + Index: 0.05MB + Engine InnoDB

wp_term_taxonomy: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_usermeta: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_users: Data: 0.02MB + Index: 0.05MB + Engine InnoDB

wp_wc_admin_notes: Data: 0.02MB + Index: 0.00MB + Engine InnoDB

wp_wc_admin_note_actions: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_wc_category_lookup: Data: 0.02MB + Index: 0.00MB + Engine InnoDB

wp_wc_customer_lookup: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_wc_deposits_payment_plans: Data: 0.02MB + Index: 0.00MB + Engine InnoDB

wp_wc_deposits_payment_plans_schedule: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_wc_download_log: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_wc_order_coupon_lookup: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_wc_order_product_lookup: Data: 0.02MB + Index: 0.06MB + Engine InnoDB

wp_wc_order_stats: Data: 0.02MB + Index: 0.05MB + Engine InnoDB

wp_wc_order_tax_lookup: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_wc_product_meta_lookup: Data: 0.06MB + Index: 0.09MB + Engine InnoDB

wp_wc_reserved_stock: Data: 0.02MB + Index: 0.00MB + Engine InnoDB

wp_wc_tax_rate_classes: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_wc_webhooks: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_woocommerce_gzd_dhl_labelmeta: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_woocommerce_gzd_dhl_labels: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_woocommerce_gzd_shipmentmeta: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_woocommerce_gzd_shipments: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_woocommerce_gzd_shipment_itemmeta: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_woocommerce_gzd_shipment_items: Data: 0.02MB + Index: 0.06MB + Engine InnoDB

wp_woocommerce_gzd_shipping_provider: Data: 0.02MB + Index: 0.00MB + Engine InnoDB

wp_woocommerce_gzd_shipping_providermeta: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_wsal_metadata: Data: 0.39MB + Index: 0.61MB + Engine InnoDB

wp_wsal_occurrences: Data: 0.05MB + Index: 0.03MB + Engine InnoDB

wp_wsal_options: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

### Post Type Counts ###

attachment: 204

jetpack_migration: 2

jp_img_sitemap: 4

jp_sitemap: 4

jp_sitemap_master: 4

nav_menu_item: 11

page: 9

post: 23

product: 259

product_variation: 188

revision: 45

shop_coupon: 6

shop_order: 150

shop_order_refund: 18

shop_subscription: 2

### Security ###

Secure connection (HTTPS): ✔

Hide errors from visitors: ✔

### Active Plugins (5) ###

Query Monitor: by John Blackbourn – 3.5.2

Akismet Anti-Spam: by Automattic – 4.1.4

Jetpack by WordPress.com: by Automattic – 8.4.1

WooCommerce Beta Tester: by WooCommerce – 2.0.1 – Installed version not tested with active version of WooCommerce 4.1.0

WooCommerce: by Automattic – 4.1.0-beta.1

### Inactive Plugins (30) ###

Advanced Custom Fields: by Elliot Condon – 5.8.7

Email Templates: by Damian Logghe – 1.3.1.2

Facebook for WooCommerce: by Facebook – 1.10.0 – Installed version not tested with active version of WooCommerce 4.1.0

Germanized for WooCommerce: by Vendidero – 3.1.4 – Installed version not tested with active version of WooCommerce 4.1.0

Gutenberg: by Gutenberg Team – 7.1.0

Loco Translate: by Tim Whitlock – 2.3.1

Payment Gateway Based Fees and Discounts for WooCommerce: by Tyche Softwares – 2.6 – Installed version not tested with active version of WooCommerce 4.1.0

Storefront Powerpack: by WooCommerce – 1.5.0

SVG Support: by Benbodhi – 2.3.15

WooCommerce Admin: by WooCommerce – 0.24.0 – Installed version not tested with active version of WooCommerce 4.1.0

WooCommerce Blocks: by Automattic – 2.5.2 – Installed version not tested with active version of WooCommerce 4.1.0

WooCommerce Customer/Order CSV Export: by SkyVerge – 4.3.5 – Installed version not tested with active version of WooCommerce 4.1.0

WooCommerce Deposits: by Automattic – 1.3.3 – Installed version not tested with active version of WooCommerce 4.1.0

WooCommerce Dynamic Pricing: by Lucas Stark – 3.1.10 – Installed version not tested with active version of WooCommerce 4.1.0

WooCommerce PayPal Checkout Gateway: by WooCommerce – 1.6.18 (update to version 1.6.20 is available) – Installed version not tested with active version of WooCommerce 4.1.0

WooCommerce PayPal Powered by Braintree Gateway: by WooCommerce – 2.0.3 (update to version 2.3.8 is available) – Installed version not tested with active version of WooCommerce 4.1.0

WooCommerce Print Invoices/Packing Lists: by SkyVerge – 3.6.1 – Installed version not tested with active version of WooCommerce 4.1.0

WooCommerce Product Add-ons: by WooCommerce – 2.9.7 – Installed version not tested with active version of WooCommerce 4.1.0

WooCommerce Sequential Order Numbers Pro: by SkyVerge – 1.11.2 – Installed version not tested with active version of WooCommerce 4.1.0

WooCommerce Services: by Automattic – 1.22.2 (update to version 1.22.5 is available) – Installed version not tested with active version of WooCommerce 4.1.0

WooCommerce Stamps.com API integration: by WooCommerce – 1.3.15 – Installed version not tested with active version of WooCommerce 4.1.0

WooCommerce Stamps.com Export Suite: by SkyVerge – 2.10.1 – Installed version not tested with active version of WooCommerce 4.1.0

WooCommerce Store Catalog PDF Download: by WooCommerce – 1.0.8 – Installed version not tested with active version of WooCommerce 4.1.0

WooCommerce Stripe Gateway: by WooCommerce – 4.3.2 – Installed version not tested with active version of WooCommerce 4.1.0

WooCommerce Subscriptions: by WooCommerce – 3.0.1 – Installed version not tested with active version of WooCommerce 4.1.0

WooCommerce UPS Shipping: by WooCommerce – 3.2.19 – Installed version not tested with active version of WooCommerce 4.1.0

WooCommerce Wholesale Prices: by Rymera Web Co – 1.11.1 – Installed version not tested with active version of WooCommerce 4.1.0

WP Crontrol: by John Blackbourn & crontributors – 1.7.1

WP Migrate DB: by Delicious Brains – 0.9.2

WP Security Audit Log: by WP White Security – 4.0.1

### Dropin Plugins (3) ###

advanced-cache.php: advanced-cache.php

db.php: Query Monitor Database Class

object-cache.php: Memcached

### Settings ###

API Enabled: ✔

Force SSL: ✔

Currency: USD ($)

Currency Position: left

Thousand Separator: ,

Decimal Separator: .

Number of Decimals: 2

Taxonomies: Product Types: external (external)

grouped (grouped)

simple (simple)

subscription (subscription)

variable (variable)

variable subscription (variable-subscription)

Taxonomies: Product Visibility: exclude-from-catalog (exclude-from-catalog)

exclude-from-search (exclude-from-search)

featured (featured)

outofstock (outofstock)

rated-1 (rated-1)

rated-2 (rated-2)

rated-3 (rated-3)

rated-4 (rated-4)

rated-5 (rated-5)

Connected to WooCommerce.com: –

### WC Pages ###

Shop base: #4 - /shop/

Cart: #5 - /cart/

Checkout: #6 - /checkout/

My account: #7 - /my-account/

Terms and conditions: ❌ Page not set

### Theme ###

Name: Storefront

Version: 2.5.5

Author URL: https://woocommerce.com/

Child Theme: ❌ – If you are modifying WooCommerce on a parent theme that you did not build personally we recommend using a child theme. See: How to create a child theme

WooCommerce Support: ✔

### Templates ###

Overrides: –

### Action Scheduler ###

Complete: 1,047

Oldest: 2020-03-09 06:51:33 -0400

Newest: 2020-04-07 19:52:08 -0400

Pending: 4

Oldest: 2020-04-07 19:52:14 -0400

Newest: 2020-05-06 09:06:20 -0400

`

```

</details>

|

1.0

|

[4.1 beta 1]: Can't create manual order - **Describe the bug**

Can't seem to create a manual order while testing 4.1 beta 1. Tried on 2 Pressable test sites.

**To Reproduce**

Steps to reproduce the behavior:

1. Create any product on the site;

2. Try creating a manual order;

3. See error:

Error:

```

Uncaught Error: Call to a member function format() on null in /srv/htdocs/wp-content/plugins/woocommerce/includes/tracks/events/class-wc-order-tracking.php on line 30

track_order_viewed()

wp-includes/class-wp-hook.php:287

apply_filters()

wp-includes/class-wp-hook.php:311

do_action()

wp-includes/plugin.php:478

do_action()

wp-content/plugins/woocommerce/includes/admin/meta-boxes/class-wc-meta-box-order-data.php:310

output()

wp-admin/includes/template.php:1345

do_meta_boxes()

wp-admin/edit-form-advanced.php:677

require()

wp-admin/post-new.php:75

```

**Screenshots**

See above.

**Expected behavior**

I expected to be able to create a manual order without any issues.

**Isolating the problem (mark completed items with an [x]):**

- [x] I have deactivated other plugins and confirmed this bug occurs when only WooCommerce plugin is active.

- [x] This bug happens with a default WordPress theme active, or [Storefront](https://woocommerce.com/storefront/).

- [x] I can reproduce this bug consistently using the steps above.

**WordPress Environment**

<details>

```

`

### WordPress Environment ###

WordPress address (URL): https://jamosova2.mystagingwebsite.com

Site address (URL): https://jamosova2.mystagingwebsite.com

WC Version: 4.1.0

REST API Version: ✔ 1.0.7

WC Blocks Version: ✔ 2.5.16

Action Scheduler Version: ✔ 3.1.4

WC Admin Version: ✔ 1.1.0

Log Directory Writable: ✔

WP Version: 5.4

WP Multisite: –

WP Memory Limit: 256 MB

WP Debug Mode: –

WP Cron: ✔

Language: en_US

External object cache: ✔

### Server Environment ###

Server Info: nginx

PHP Version: 7.4.4

PHP Post Max Size: 2 GB

PHP Time Limit: 1200

PHP Max Input Vars: 6144

cURL Version: 7.69.1

OpenSSL/1.1.0l

SUHOSIN Installed: –

MySQL Version: 5.5.5-10.3.21-MariaDB-log

Max Upload Size: 2 GB

Default Timezone is UTC: ✔

fsockopen/cURL: ✔

SoapClient: ✔

DOMDocument: ✔

GZip: ✔

Multibyte String: ✔

Remote Post: ✔

Remote Get: ✔

### Database ###

WC Database Version: 4.1.0

WC Database Prefix: wp_

Total Database Size: 11.61MB

Database Data Size: 6.47MB

Database Index Size: 5.14MB

wp_woocommerce_sessions: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_woocommerce_api_keys: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_woocommerce_attribute_taxonomies: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_woocommerce_downloadable_product_permissions: Data: 0.02MB + Index: 0.06MB + Engine InnoDB

wp_woocommerce_order_items: Data: 0.05MB + Index: 0.02MB + Engine InnoDB

wp_woocommerce_order_itemmeta: Data: 0.25MB + Index: 0.25MB + Engine InnoDB

wp_woocommerce_tax_rates: Data: 0.02MB + Index: 0.06MB + Engine InnoDB

wp_woocommerce_tax_rate_locations: Data: 0.02MB + Index: 0.05MB + Engine InnoDB

wp_woocommerce_shipping_zones: Data: 0.02MB + Index: 0.00MB + Engine InnoDB

wp_woocommerce_shipping_zone_locations: Data: 0.02MB + Index: 0.05MB + Engine InnoDB

wp_woocommerce_shipping_zone_methods: Data: 0.02MB + Index: 0.00MB + Engine InnoDB

wp_woocommerce_payment_tokens: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_woocommerce_payment_tokenmeta: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_woocommerce_log: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_actionscheduler_actions: Data: 0.31MB + Index: 0.36MB + Engine InnoDB

wp_actionscheduler_claims: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_actionscheduler_groups: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_actionscheduler_logs: Data: 0.31MB + Index: 0.25MB + Engine InnoDB

wp_commentmeta: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_comments: Data: 0.06MB + Index: 0.09MB + Engine InnoDB

wp_links: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_ms_snippets: Data: 0.02MB + Index: 0.00MB + Engine InnoDB

wp_options: Data: 1.17MB + Index: 0.09MB + Engine InnoDB

wp_postmeta: Data: 1.52MB + Index: 1.88MB + Engine InnoDB

wp_posts: Data: 1.30MB + Index: 0.22MB + Engine InnoDB

wp_snippets: Data: 0.02MB + Index: 0.00MB + Engine InnoDB

wp_termmeta: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_terms: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_term_relationships: Data: 0.08MB + Index: 0.05MB + Engine InnoDB

wp_term_taxonomy: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_usermeta: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_users: Data: 0.02MB + Index: 0.05MB + Engine InnoDB

wp_wc_admin_notes: Data: 0.02MB + Index: 0.00MB + Engine InnoDB

wp_wc_admin_note_actions: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_wc_category_lookup: Data: 0.02MB + Index: 0.00MB + Engine InnoDB

wp_wc_customer_lookup: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_wc_deposits_payment_plans: Data: 0.02MB + Index: 0.00MB + Engine InnoDB

wp_wc_deposits_payment_plans_schedule: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_wc_download_log: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_wc_order_coupon_lookup: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_wc_order_product_lookup: Data: 0.02MB + Index: 0.06MB + Engine InnoDB

wp_wc_order_stats: Data: 0.02MB + Index: 0.05MB + Engine InnoDB

wp_wc_order_tax_lookup: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_wc_product_meta_lookup: Data: 0.06MB + Index: 0.09MB + Engine InnoDB

wp_wc_reserved_stock: Data: 0.02MB + Index: 0.00MB + Engine InnoDB

wp_wc_tax_rate_classes: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_wc_webhooks: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

wp_woocommerce_gzd_dhl_labelmeta: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_woocommerce_gzd_dhl_labels: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_woocommerce_gzd_shipmentmeta: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_woocommerce_gzd_shipments: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_woocommerce_gzd_shipment_itemmeta: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_woocommerce_gzd_shipment_items: Data: 0.02MB + Index: 0.06MB + Engine InnoDB

wp_woocommerce_gzd_shipping_provider: Data: 0.02MB + Index: 0.00MB + Engine InnoDB

wp_woocommerce_gzd_shipping_providermeta: Data: 0.02MB + Index: 0.03MB + Engine InnoDB

wp_wsal_metadata: Data: 0.39MB + Index: 0.61MB + Engine InnoDB

wp_wsal_occurrences: Data: 0.05MB + Index: 0.03MB + Engine InnoDB

wp_wsal_options: Data: 0.02MB + Index: 0.02MB + Engine InnoDB

### Post Type Counts ###

attachment: 204

jetpack_migration: 2

jp_img_sitemap: 4

jp_sitemap: 4

jp_sitemap_master: 4

nav_menu_item: 11

page: 9

post: 23

product: 259

product_variation: 188

revision: 45

shop_coupon: 6

shop_order: 150

shop_order_refund: 18

shop_subscription: 2

### Security ###

Secure connection (HTTPS): ✔

Hide errors from visitors: ✔

### Active Plugins (5) ###

Query Monitor: by John Blackbourn – 3.5.2

Akismet Anti-Spam: by Automattic – 4.1.4

Jetpack by WordPress.com: by Automattic – 8.4.1

WooCommerce Beta Tester: by WooCommerce – 2.0.1 – Installed version not tested with active version of WooCommerce 4.1.0

WooCommerce: by Automattic – 4.1.0-beta.1

### Inactive Plugins (30) ###

Advanced Custom Fields: by Elliot Condon – 5.8.7

Email Templates: by Damian Logghe – 1.3.1.2

Facebook for WooCommerce: by Facebook – 1.10.0 – Installed version not tested with active version of WooCommerce 4.1.0

Germanized for WooCommerce: by Vendidero – 3.1.4 – Installed version not tested with active version of WooCommerce 4.1.0

Gutenberg: by Gutenberg Team – 7.1.0

Loco Translate: by Tim Whitlock – 2.3.1

Payment Gateway Based Fees and Discounts for WooCommerce: by Tyche Softwares – 2.6 – Installed version not tested with active version of WooCommerce 4.1.0

Storefront Powerpack: by WooCommerce – 1.5.0

SVG Support: by Benbodhi – 2.3.15

WooCommerce Admin: by WooCommerce – 0.24.0 – Installed version not tested with active version of WooCommerce 4.1.0

WooCommerce Blocks: by Automattic – 2.5.2 – Installed version not tested with active version of WooCommerce 4.1.0

WooCommerce Customer/Order CSV Export: by SkyVerge – 4.3.5 – Installed version not tested with active version of WooCommerce 4.1.0

WooCommerce Deposits: by Automattic – 1.3.3 – Installed version not tested with active version of WooCommerce 4.1.0

WooCommerce Dynamic Pricing: by Lucas Stark – 3.1.10 – Installed version not tested with active version of WooCommerce 4.1.0

WooCommerce PayPal Checkout Gateway: by WooCommerce – 1.6.18 (update to version 1.6.20 is available) – Installed version not tested with active version of WooCommerce 4.1.0

WooCommerce PayPal Powered by Braintree Gateway: by WooCommerce – 2.0.3 (update to version 2.3.8 is available) – Installed version not tested with active version of WooCommerce 4.1.0

WooCommerce Print Invoices/Packing Lists: by SkyVerge – 3.6.1 – Installed version not tested with active version of WooCommerce 4.1.0

WooCommerce Product Add-ons: by WooCommerce – 2.9.7 – Installed version not tested with active version of WooCommerce 4.1.0

WooCommerce Sequential Order Numbers Pro: by SkyVerge – 1.11.2 – Installed version not tested with active version of WooCommerce 4.1.0

WooCommerce Services: by Automattic – 1.22.2 (update to version 1.22.5 is available) – Installed version not tested with active version of WooCommerce 4.1.0

WooCommerce Stamps.com API integration: by WooCommerce – 1.3.15 – Installed version not tested with active version of WooCommerce 4.1.0

WooCommerce Stamps.com Export Suite: by SkyVerge – 2.10.1 – Installed version not tested with active version of WooCommerce 4.1.0

WooCommerce Store Catalog PDF Download: by WooCommerce – 1.0.8 – Installed version not tested with active version of WooCommerce 4.1.0

WooCommerce Stripe Gateway: by WooCommerce – 4.3.2 – Installed version not tested with active version of WooCommerce 4.1.0

WooCommerce Subscriptions: by WooCommerce – 3.0.1 – Installed version not tested with active version of WooCommerce 4.1.0

WooCommerce UPS Shipping: by WooCommerce – 3.2.19 – Installed version not tested with active version of WooCommerce 4.1.0

WooCommerce Wholesale Prices: by Rymera Web Co – 1.11.1 – Installed version not tested with active version of WooCommerce 4.1.0

WP Crontrol: by John Blackbourn & crontributors – 1.7.1

WP Migrate DB: by Delicious Brains – 0.9.2

WP Security Audit Log: by WP White Security – 4.0.1

### Dropin Plugins (3) ###

advanced-cache.php: advanced-cache.php

db.php: Query Monitor Database Class

object-cache.php: Memcached

### Settings ###

API Enabled: ✔

Force SSL: ✔

Currency: USD ($)

Currency Position: left

Thousand Separator: ,

Decimal Separator: .

Number of Decimals: 2

Taxonomies: Product Types: external (external)

grouped (grouped)

simple (simple)

subscription (subscription)

variable (variable)

variable subscription (variable-subscription)

Taxonomies: Product Visibility: exclude-from-catalog (exclude-from-catalog)

exclude-from-search (exclude-from-search)

featured (featured)

outofstock (outofstock)

rated-1 (rated-1)

rated-2 (rated-2)

rated-3 (rated-3)

rated-4 (rated-4)

rated-5 (rated-5)

Connected to WooCommerce.com: –

### WC Pages ###

Shop base: #4 - /shop/

Cart: #5 - /cart/

Checkout: #6 - /checkout/

My account: #7 - /my-account/

Terms and conditions: ❌ Page not set

### Theme ###

Name: Storefront

Version: 2.5.5

Author URL: https://woocommerce.com/

Child Theme: ❌ – If you are modifying WooCommerce on a parent theme that you did not build personally we recommend using a child theme. See: How to create a child theme

WooCommerce Support: ✔

### Templates ###

Overrides: –

### Action Scheduler ###

Complete: 1,047

Oldest: 2020-03-09 06:51:33 -0400

Newest: 2020-04-07 19:52:08 -0400

Pending: 4

Oldest: 2020-04-07 19:52:14 -0400

Newest: 2020-05-06 09:06:20 -0400

`

```

</details>

|

non_process

|

can t create manual order describe the bug can t seem to create manual order while testing beta tried on pressable test sites to reproduce steps to reproduce the behavior create any product on the site try creating manual order see error error uncaught error call to a member function format on null in srv htdocs wp content plugins woocommerce includes tracks events class wc order tracking php on line track order viewed wp includes class wp hook php apply filters wp includes class wp hook php do action wp includes plugin php do action wp content plugins woocommerce includes admin meta boxes class wc meta box order data php output wp admin includes template php do meta boxes wp admin edit form advanced php require wp admin post new php screenshots see above expected behavior i expected to be able to create manual order without any issues isolating the problem mark completed items with an i have deactivated other plugins and confirmed this bug occurs when only woocommerce plugin is active this bug happens with a default wordpress theme active or i can reproduce this bug consistently using the steps above wordpress environment wordpress environment wordpress address url site address url wc version rest api version ✔ wc blocks version ✔ action scheduler version ✔ wc admin version ✔ log directory writable ✔ wp version wp multisite – wp memory limit mb wp debug mode – wp cron ✔ language en us external object cache ✔ server environment server info nginx php version php post max size gb php time limit php max input vars curl version openssl suhosin installed – mysql version mariadb log max upload size gb default timezone is utc ✔ fsockopen curl ✔ soapclient ✔ domdocument ✔ gzip ✔ multibyte string ✔ remote post ✔ remote get ✔ database wc database version wc database prefix wp total database size database data size database index size wp woocommerce sessions data index engine innodb wp woocommerce api keys data index engine innodb wp woocommerce attribute taxonomies data 
index engine innodb wp woocommerce downloadable product permissions data index engine innodb wp woocommerce order items data index engine innodb wp woocommerce order itemmeta data index engine innodb wp woocommerce tax rates data index engine innodb wp woocommerce tax rate locations data index engine innodb wp woocommerce shipping zones data index engine innodb wp woocommerce shipping zone locations data index engine innodb wp woocommerce shipping zone methods data index engine innodb wp woocommerce payment tokens data index engine innodb wp woocommerce payment tokenmeta data index engine innodb wp woocommerce log data index engine innodb wp actionscheduler actions data index engine innodb wp actionscheduler claims data index engine innodb wp actionscheduler groups data index engine innodb wp actionscheduler logs data index engine innodb wp commentmeta data index engine innodb wp comments data index engine innodb wp links data index engine innodb wp ms snippets data index engine innodb wp options data index engine innodb wp postmeta data index engine innodb wp posts data index engine innodb wp snippets data index engine innodb wp termmeta data index engine innodb wp terms data index engine innodb wp term relationships data index engine innodb wp term taxonomy data index engine innodb wp usermeta data index engine innodb wp users data index engine innodb wp wc admin notes data index engine innodb wp wc admin note actions data index engine innodb wp wc category lookup data index engine innodb wp wc customer lookup data index engine innodb wp wc deposits payment plans data index engine innodb wp wc deposits payment plans schedule data index engine innodb wp wc download log data index engine innodb wp wc order coupon lookup data index engine innodb wp wc order product lookup data index engine innodb wp wc order stats data index engine innodb wp wc order tax lookup data index engine innodb wp wc product meta lookup data index engine innodb wp wc reserved stock data 
index engine innodb wp wc tax rate classes data index engine innodb wp wc webhooks data index engine innodb wp woocommerce gzd dhl labelmeta data index engine innodb wp woocommerce gzd dhl labels data index engine innodb wp woocommerce gzd shipmentmeta data index engine innodb wp woocommerce gzd shipments data index engine innodb wp woocommerce gzd shipment itemmeta data index engine innodb wp woocommerce gzd shipment items data index engine innodb wp woocommerce gzd shipping provider data index engine innodb wp woocommerce gzd shipping providermeta data index engine innodb wp wsal metadata data index engine innodb wp wsal occurrences data index engine innodb wp wsal options data index engine innodb post type counts attachment jetpack migration jp img sitemap jp sitemap jp sitemap master nav menu item page post product product variation revision shop coupon shop order shop order refund shop subscription security secure connection https ✔ hide errors from visitors ✔ active plugins query monitor by john blackbourn – akismet anti spam by automattic – jetpack by wordpress com by automattic – woocommerce beta tester by woocommerce – – installed version not tested with active version of woocommerce woocommerce by automattic – beta inactive plugins advanced custom fields by elliot condon – email templates by damian logghe – facebook for woocommerce by facebook – – installed version not tested with active version of woocommerce germanized for woocommerce by vendidero – – installed version not tested with active version of woocommerce gutenberg by gutenberg team – loco translate by tim whitlock – payment gateway based fees and discounts for woocommerce by tyche softwares – – installed version not tested with active version of woocommerce storefront powerpack by woocommerce – svg support by benbodhi – woocommerce admin by woocommerce – – installed version not tested with active version of woocommerce woocommerce blocks by automattic – – installed version not tested with 
active version of woocommerce woocommerce customer order csv export by skyverge – – installed version not tested with active version of woocommerce woocommerce deposits by automattic – – installed version not tested with active version of woocommerce woocommerce dynamic pricing by lucas stark – – installed version not tested with active version of woocommerce woocommerce paypal checkout gateway by woocommerce – update to version is available – installed version not tested with active version of woocommerce woocommerce paypal powered by braintree gateway by woocommerce – update to version is available – installed version not tested with active version of woocommerce woocommerce print invoices packing lists by skyverge – – installed version not tested with active version of woocommerce woocommerce product add ons by woocommerce – – installed version not tested with active version of woocommerce woocommerce sequential order numbers pro by skyverge – – installed version not tested with active version of woocommerce woocommerce services by automattic – update to version is available – installed version not tested with active version of woocommerce woocommerce stamps com api integration by woocommerce – – installed version not tested with active version of woocommerce woocommerce stamps com export suite by skyverge – – installed version not tested with active version of woocommerce woocommerce store catalog pdf download by woocommerce – – installed version not tested with active version of woocommerce woocommerce stripe gateway by woocommerce – – installed version not tested with active version of woocommerce woocommerce subscriptions by woocommerce – – installed version not tested with active version of woocommerce woocommerce ups shipping by woocommerce – – installed version not tested with active version of woocommerce woocommerce wholesale prices by rymera web co – – installed version not tested with active version of woocommerce wp crontrol by john blackbourn 
crontributors – wp migrate db by delicious brains – wp security audit log by wp white security – dropin plugins advanced cache php advanced cache php db php query monitor database class object cache php memcached settings api enabled ✔ force ssl ✔ currency usd currency position left thousand separator decimal separator number of decimals taxonomies product types external external grouped grouped simple simple subscription subscription variable variable variable subscription variable subscription taxonomies product visibility exclude from catalog exclude from catalog exclude from search exclude from search featured featured outofstock outofstock rated rated rated rated rated rated rated rated rated rated connected to woocommerce com – wc pages shop base shop cart cart checkout checkout my account my account terms and conditions ❌ page not set theme name storefront version author url child theme ❌ – if you are modifying woocommerce on a parent theme that you did not build personally we recommend using a child theme see how to create a child theme woocommerce support ✔ templates overrides – action scheduler complete oldest newest pending oldest newest

| 0

|

64,105

| 6,892,926,140

|

IssuesEvent

|

2017-11-22 23:38:00

|

null511/PiServerLite

|

https://api.github.com/repos/null511/PiServerLite

|

closed

|

HTTPS Support

|

enhancement Testing

|

Need an option to enable strict use of HTTPS.

- When enabled, automatically redirect **all** incoming _HTTP_ requests to _HTTPS_.

- Support non-standard port settings, i.e. any port other than _443_.
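
The redirect rule described above can be sketched as a small URL rewrite. This is only an illustration in Python, not PiServerLite's actual code; the function name `https_redirect_url` and the `https_port` parameter are hypothetical.

```python
from urllib.parse import urlsplit, urlunsplit


def https_redirect_url(http_url: str, https_port: int = 443) -> str:
    """Return the HTTPS target for an incoming HTTP URL.

    Hypothetical helper: the port is only written into the URL when it
    differs from the HTTPS default (443). Any userinfo in the original
    URL is dropped.
    """
    parts = urlsplit(http_url)
    host = parts.hostname or ""
    # IPv6 literals must be bracketed again after urlsplit strips the brackets.
    if ":" in host:
        host = f"[{host}]"
    netloc = host if https_port == 443 else f"{host}:{https_port}"
    # Keep path, query, and fragment; default to "/" when the path is empty.
    return urlunsplit(("https", netloc, parts.path or "/", parts.query, parts.fragment))
```

A server would send the returned value in the `Location` header of a redirect response whenever the strict-HTTPS option is enabled.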

|

1.0

|

HTTPS Support - Need an option to enable strict use of HTTPS.

- When enabled, automatically redirect **all** incoming _HTTP_ requests to _HTTPS_.

- Support non-standard port settings, i.e. any port other than _443_.

|

non_process

|

https support need an option to enable strict use of https when enabled automatically redirect all incoming http requests to https support non standard port settings ie

| 0

|

10,291

| 13,145,268,188

|

IssuesEvent

|

2020-08-08 02:30:44

|

elastic/beats

|

https://api.github.com/repos/elastic/beats

|

closed

|

Add_locale processor not refreshing timezone info.

|

:Processors Stalled enhancement libbeat needs_team

|

- Version: 6.3.1

- Operating System: Win 10 x64 (maybe all?)

- Discuss Forum URL: [https://discuss.elastic.co/t/add-locale-processor-not-refreshing-timezone-info/141397](https://discuss.elastic.co/t/add-locale-processor-not-refreshing-timezone-info/141397)

- Steps to Reproduce:

- Add locale processor

- Start a filebeat

- Change system timezone

- Events will be emitted with original timezone until beat restart

From Discuss Forum:

> Recently added the add_locale processor to a Filebeat instance and it's adding the current timezone offset to logs correctly. However, if I change the timezone without restarting Filebeat, the timezone offset reported is the old one. This appears to happen with Winlogbeat as well. For timezone changes to take effect in Beats, does a beat need to be restarted, or is something bugged in the way the timezone is determined?

> I could see this being a problem with long-running beats when the timezone changes from standard time to daylight saving time.
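
The behavior reported above is what one would expect if the offset were computed once at startup and then reused. A minimal sketch of the alternative, looking the offset up per event, is shown below in Python. It is not the Beats implementation (Beats is written in Go), and both function names are hypothetical.

```python
import time


def format_utc_offset(offset_seconds: int) -> str:
    """Format an offset in seconds east of UTC as +HH:MM or -HH:MM."""
    sign = "+" if offset_seconds >= 0 else "-"
    hours, remainder = divmod(abs(offset_seconds), 3600)
    return f"{sign}{hours:02d}:{remainder // 60:02d}"


def current_utc_offset() -> str:
    """Read the local offset at call time instead of caching it at startup."""
    return format_utc_offset(time.localtime().tm_gmtoff)
```

Calling `current_utc_offset()` for each event avoids a stale cached value across a daylight saving transition. Whether a running process also notices a manual change of the system timezone is platform-dependent and is not addressed by this sketch.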

|

1.0

|

Add_locale processor not refreshing timezone info. - - Version: 6.3.1

- Operating System: Win 10 x64 (maybe all?)

- Discuss Forum URL: [https://discuss.elastic.co/t/add-locale-processor-not-refreshing-timezone-info/141397](https://discuss.elastic.co/t/add-locale-processor-not-refreshing-timezone-info/141397)

- Steps to Reproduce:

- Add locale processor

- Start a filebeat

- Change system timezone

- Events will be emitted with original timezone until beat restart

From Discuss Forum:

> Recently added the add_locale processor to a Filebeat instance and it's adding the current timezone offset to logs correctly. However, if I change the timezone without restarting Filebeat, the timezone offset reported is the old one. This appears to happen with Winlogbeat as well. For timezone changes to take effect in Beats, does a beat need to be restarted, or is something bugged in the way the timezone is determined?

> I could see this being a problem with long-running beats when the timezone changes from standard time to daylight saving time.

|

process

|

add locale processor not refreshing timezone info version operating system win maybe all discuss forum url steps to reproduce add locale processor start a filebeat change system timezone events will be emitted with original timezone until beat restart from discuss forum recently added the add locale processor to a filebeat instance and its adding the current timezone offset to logs correctly however if i change the timezone without restarting filebeat the timezone offset reported is the old one this appears to happen with winlogbeat as well for timezone changes to take effect in beats does a beat need to be restarted or is something bugged in the way the timezone is determined i could see this being a problem with long running beats when a timezone change to standard time to daylight savings

| 1

|

19,191

| 25,318,760,776

|

IssuesEvent

|

2022-11-18 00:44:49

|

devssa/onde-codar-em-salvador

|

https://api.github.com/repos/devssa/onde-codar-em-salvador

|

closed

|

SENIOR SYSTEMS ANALYST at [MAXIFROTA]

|

SALVADOR BACK-END DESENVOLVIMENTO DE SOFTWARE GESTÃO DE PROJETOS JAVA SENIOR SQL MOBILE REQUISITOS GRAILS GROOVY PROCESSOS INOVAÇÃO Stale

|

<!--

==================================================

PLEASE ONLY POST IF THE JOB IS FOR SALVADOR AND NEIGHBORING CITIES!

Use: "Desenvolvedor Front-end" instead of

"Front-End Developer" \o/

Example: `[JAVASCRIPT] [MYSQL] [NODE.JS] Desenvolvedor Front-End na [NOME DA EMPRESA]`

==================================================

-->

## SENIOR SYSTEMS ANALYST

- Gather requirements and specifications for system testing and improvements.

- Develop systems and reports.

- Apply technical knowledge, using new tools and technologies to improve the department's processes.

- Run database queries and make the results available to users. Verify that the company's system and application routines work correctly, reporting to the responsible departments.

- Prepare and carry out surveys of information and data for the study and deployment of systems.

- Evaluate and identify needs for technology solutions, preparing project plans and understanding business and customer needs.

- Provide support to system users.

- Support the supervisors and collect the department's performance indicators.

## Location

Salvador - Bahia

## Benefits

- Health plan

- Dental plan

- Grocery allowance

- Meal allowance

- Transportation allowance

## Requirements

**Required:**

- At least 3 years of experience in user support and systems development in a web environment, with knowledge of mobile tools

- Education: a degree in Information Technology, Informatics, Computer Science, or a related field.

- Java

- Grails

- Groovy

- SQL

**Nice to have:**

- A postgraduate degree in the field

- Database experience

- Technical leadership of development teams

## MAXIFROTA

Through products that combine payment methods and management systems, we offer an integrated, intelligent solution that acts directly on fueling and vehicle maintenance costs, the main expenses and challenges in fleet management.

## MaxiFrota People

If you are interested in joining our team, fill out the form below. Your résumé will be forwarded to the responsible department and, if there are openings that match your profile, we will contact you.

https://www.maxifrota.com.br/trabalhe-conosco

|

1.0

|

SENIOR SYSTEMS ANALYST at [MAXIFROTA] - <!--

==================================================

PLEASE ONLY POST IF THE JOB IS FOR SALVADOR AND NEIGHBORING CITIES!

Use: "Desenvolvedor Front-end" instead of

"Front-End Developer" \o/

Example: `[JAVASCRIPT] [MYSQL] [NODE.JS] Desenvolvedor Front-End na [NOME DA EMPRESA]`

==================================================

-->

## SENIOR SYSTEMS ANALYST

- Gather requirements and specifications for system testing and improvements.

- Develop systems and reports.

- Apply technical knowledge, using new tools and technologies to improve the department's processes.

- Run database queries and make the results available to users. Verify that the company's system and application routines work correctly, reporting to the responsible departments.

- Prepare and carry out surveys of information and data for the study and deployment of systems.

- Evaluate and identify needs for technology solutions, preparing project plans and understanding business and customer needs.

- Provide support to system users.

- Support the supervisors and collect the department's performance indicators.

## Location

Salvador - Bahia

## Benefits

- Health plan

- Dental plan

- Grocery allowance

- Meal allowance

- Transportation allowance

## Requirements

**Required:**

- At least 3 years of experience in user support and systems development in a web environment, with knowledge of mobile tools

- Education: a degree in Information Technology, Informatics, Computer Science, or a related field.

- Java

- Grails

- Groovy

- SQL

**Nice to have:**

- A postgraduate degree in the field

- Database experience

- Technical leadership of development teams

## MAXIFROTA

Through products that combine payment methods and management systems, we offer an integrated, intelligent solution that acts directly on fueling and vehicle maintenance costs, the main expenses and challenges in fleet management.

## MaxiFrota People

If you are interested in joining our team, fill out the form below. Your résumé will be forwarded to the responsible department and, if there are openings that match your profile, we will contact you.

https://www.maxifrota.com.br/trabalhe-conosco

|

process

|

senior systems analyst na please only post if the position is for salvador and neighboring cities use desenvolvedor front end instead of front end developer for example desenvolvedor front end na senior systems analyst gather requirements and specifications for testing and improving systems develop systems and reports apply technical knowledge using new tools and technologies to improve the processes of the area run database queries and make the results available to users verify that the routines of the company systems and applications work correctly reporting to the responsible areas prepare and carry out surveys of information and data for the study and deployment of systems evaluate and identify needs for technological solutions preparing project plans and understanding the needs of the business and of clients support system users support supervision and gather indicators for the area location salvador bahia benefits medical assistance dental assistance food allowance meal allowance transportation allowance mandatory requirements minimum experience of years with user support and systems development in a web environment with knowledge of mobile tools education higher education in information technology informatics computer science or related fields java grails groovy sql desirable desirable postgraduate degree in the field of work experience with databases technical leadership of development teams maxifrota through products that combine means of payment and management systems we offer an integrated and intelligent solution that acts directly on the costs of fueling and maintaining vehicles the main expenses and challenges in fleet management gente maxifrota if you are interested in joining our team fill out the form below your resume will be forwarded to the responsible department and if there are openings matching your profile 
we will get in touch

| 1

|

2,998

| 5,988,167,687

|

IssuesEvent

|

2017-06-02 03:11:41

|

P0cL4bs/WiFi-Pumpkin

|

https://api.github.com/repos/P0cL4bs/WiFi-Pumpkin

|

closed

|

Error bs4 python module

|

in process priority solved

|

Hello, this is the error:

~# wifi-pumpkin

Traceback (most recent call last):

File "wifi-pumpkin.py", line 39, in <module>

from core.loaders.checker.networkmanager import CLI_NetworkManager,UI_NetworkManager

File "/usr/share/WiFi-Pumpkin/core/loaders/checker/networkmanager.py", line 4, in <module>

from core.main import Initialize

File "/usr/share/WiFi-Pumpkin/core/main.py", line 32, in <module>

from core.widgets.tabmodels import (

File "/usr/share/WiFi-Pumpkin/core/widgets/tabmodels.py", line 7, in <module>

from core.utility.threads import ThreadPopen

File "/usr/share/WiFi-Pumpkin/core/utility/threads.py", line 19, in <module>

from core.servers.proxy.http.controller.handler import MasterHandler

File "/usr/share/WiFi-Pumpkin/core/servers/proxy/http/controller/handler.py", line 1, in <module>

from plugins.extension import *

File "/usr/share/WiFi-Pumpkin/plugins/extension/html_inject.py", line 2, in <module>

from bs4 import BeautifulSoup

File "build/bdist.linux-x86_64/egg/bs4/__init__.py", line 30, in <module>

File "build/bdist.linux-x86_64/egg/bs4/builder/__init__.py", line 314, in <module>

File "build/bdist.linux-x86_64/egg/bs4/builder/_html5lib.py", line 70, in <module>

AttributeError: 'module' object has no attribute '_base'

I have updated html5lib.py, but the error is the same.

Please help me

Thanks

Network adapter TpLink wn722n

no vmachine

Kali linux rolling

wifi pumpkin latest version

|

1.0

|

Error bs4 python module - Hello, this is the error:

~# wifi-pumpkin

Traceback (most recent call last):

File "wifi-pumpkin.py", line 39, in <module>

from core.loaders.checker.networkmanager import CLI_NetworkManager,UI_NetworkManager

File "/usr/share/WiFi-Pumpkin/core/loaders/checker/networkmanager.py", line 4, in <module>

from core.main import Initialize

File "/usr/share/WiFi-Pumpkin/core/main.py", line 32, in <module>

from core.widgets.tabmodels import (

File "/usr/share/WiFi-Pumpkin/core/widgets/tabmodels.py", line 7, in <module>

from core.utility.threads import ThreadPopen

File "/usr/share/WiFi-Pumpkin/core/utility/threads.py", line 19, in <module>

from core.servers.proxy.http.controller.handler import MasterHandler

File "/usr/share/WiFi-Pumpkin/core/servers/proxy/http/controller/handler.py", line 1, in <module>

from plugins.extension import *

File "/usr/share/WiFi-Pumpkin/plugins/extension/html_inject.py", line 2, in <module>

from bs4 import BeautifulSoup

File "build/bdist.linux-x86_64/egg/bs4/__init__.py", line 30, in <module>

File "build/bdist.linux-x86_64/egg/bs4/builder/__init__.py", line 314, in <module>

File "build/bdist.linux-x86_64/egg/bs4/builder/_html5lib.py", line 70, in <module>

AttributeError: 'module' object has no attribute '_base'

I have updated html5lib.py, but the error is the same.

Please help me

Thanks

Network adapter TpLink wn722n

no vmachine

Kali linux rolling

wifi pumpkin latest version

|

process

|

error python module hello this is error wifi pumpkin traceback most recent call last file wifi pumpkin py line in from core loaders checker networkmanager import cli networkmanager ui networkmanager file usr share wifi pumpkin core loaders checker networkmanager py line in from core main import initialize file usr share wifi pumpkin core main py line in from core widgets tabmodels import file usr share wifi pumpkin core widgets tabmodels py line in from core utility threads import threadpopen file usr share wifi pumpkin core utility threads py line in from core servers proxy http controller handler import masterhandler file usr share wifi pumpkin core servers proxy http controller handler py line in from plugins extension import file usr share wifi pumpkin plugins extension html inject py line in from import beautifulsoup file build bdist linux egg init py line in file build bdist linux egg builder init py line in file build bdist linux egg builder py line in attributeerror module object has no attribute base i have update py but the error is the same please help me thanks network adapter tplink no vmachine kali linux rolling wifi pumpkin latest version

| 1

|

106,686

| 4,282,380,749

|

IssuesEvent

|

2016-07-15 08:55:02

|

opencv/opencv

|

https://api.github.com/repos/opencv/opencv

|

reopened

|

BFMatcher SigSegv (OpenCL implementation)

|

affected: master bug category: t-api priority: normal

|

My application very rarely segfaults around BFMatcher.knnMatch (OpenCL via the transparent API).

After investigation I created sample code that also segfaults:

```cpp

#include <opencv2/features2d.hpp>
#include <opencv2/imgcodecs.hpp>
#include <opencv2/opencv.hpp>
#include <opencv2/core/ocl.hpp>
#include <vector>
#include <iostream>
#include <stdio.h>
#include <tuple>

using namespace std;
using namespace cv;

void print_ocl_device_name() {
    vector<ocl::PlatformInfo> platforms;
    ocl::getPlatfomsInfo(platforms);

    for (size_t i = 0; i < platforms.size(); i++) {
        const cv::ocl::PlatformInfo* platform = &platforms[i];
        cout << "Platform Name: " << platform->name().c_str() << "\n";
        for (int j = 0; j < platform->deviceNumber(); j++) {
            cv::ocl::Device current_device;
            platform->getDevice(current_device, j);
            int deviceType = current_device.type();
            cout << "Device " << j << ": " << current_device.name() << "\n";
        }
    }

    ocl::Device default_device = ocl::Device::getDefault();
    cout << "Used device: " << default_device.name() << "\n";
}

int main(void) {
    print_ocl_device_name();

    RNG r(239);
    Mat desc1(5100, 64, CV_32F);
    Mat desc2(5046, 64, CV_32F);
    r.fill(desc1, RNG::UNIFORM, Scalar::all(0.0), Scalar::all(1.0));
    r.fill(desc2, RNG::UNIFORM, Scalar::all(0.0), Scalar::all(1.0));

    for (int i = 0; i < 1000 * 1000; i++) {
        BFMatcher matcher(NORM_L2);
        UMat udesc1, udesc2;
        desc1.copyTo(udesc1);
        desc2.copyTo(udesc2);
        vector< vector<DMatch> > nn_matches;
        matcher.knnMatch(udesc1, udesc2, nn_matches, 2);
        printf("%d\n", i);
    }
    return 0;
}

```

**Note**: this happens very rarely. I see it after tens of thousands of iterations (tens of minutes on a fast GPU).

## Environment

OpenCV version: **3.0.0**

I have segfaults on Nvidia GTX 680 (**OpenCL 1.1**):

```

Device name: GeForce GTX 680

Nvidia driver version: 331.113

Platform Version: OpenCL 1.1 CUDA 6.0.1

```

The same behaviour occurs on an Nvidia GTX 580 with the 352.30 driver (**OpenCL 1.1**).

I also tried a CPU device (not the CPU implementation; here I mean the CPU used as an OpenCL device), and so far there have been no segfaults. I think this is only because of the speed difference between CPU and GPU: on the GPU it takes tens of minutes or even more, and the CPU is one to two orders of magnitude slower. It could also be explained by driver implementation details, or by the fact that the CPU device's RAM is the same as the host RAM.

**GDB backtraces**:

```