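| Column | Type | Range |
|---|---|---|
| Unnamed: 0 | int64 | 0 to 832k |
| id | float64 | 2.49B to 32.1B |
| type | string (categorical) | 1 value |
| created_at | string | length 19 |
| repo | string | length 7 to 112 |
| repo_url | string | length 36 to 141 |
| action | string (categorical) | 3 values |
| title | string | length 1 to 744 |
| labels | string | length 4 to 574 |
| body | string | length 9 to 211k |
| index | string (categorical) | 10 values |
| text_combine | string | length 96 to 211k |
| label | string (categorical) | 2 values |
| text | string | length 96 to 188k |
| binary_label | int64 | 0 to 1 |

| Unnamed: 0 | id | type | created_at | repo | repo_url | action | title | labels | body | index | text_combine | label | text | binary_label |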
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

17,684

| 9,858,878,150

|

IssuesEvent

|

2019-06-20 08:16:14

|

galaxyproject/galaxy

|

https://api.github.com/repos/galaxyproject/galaxy

|

opened

|

Sharing history is slow with a lot of items

|

area/performance help wanted kind/enhancement

|

Maybe we could optimise the query. For a history with 70k items, attempting to share (and make all objects accessible) took ~200 seconds. My user gave up initially and I ended up doing it in the database:

```

delete from dataset_permissions where dataset_id in (select dataset_id from history_dataset_association where history_id = 129258) and action = 'access';

```

which was quite fast. I'm guessing it iterates over all items? Perhaps we could do something more efficient here?

|

True

|

Sharing history is slow with a lot of items - Maybe we could optimise the query. For a history with 70k items, attempting to share (and make all objects accessible) took ~200 seconds. My user gave up initially and I ended up doing it in the database:

```

delete from dataset_permissions where dataset_id in (select dataset_id from history_dataset_association where history_id = 129258) and action = 'access';

```

which was quite fast. I'm guessing it iterates over all items? Perhaps we could do something more efficient here?

|

non_process

|

sharing history is slow with a lot of items maybe we could optimise the query for a history with items attempting to share and make all objects accessible took seconds my user gave up initially and i ended up doing it in the database delete from dataset permissions where dataset id in select dataset id from history dataset association where history id and action access which was quite fast i m guessing it iterates over all items perhaps we could do something more efficient here

| 0

|

19,205

| 25,338,864,056

|

IssuesEvent

|

2022-11-18 19:26:10

|

bazelbuild/bazel

|

https://api.github.com/repos/bazelbuild/bazel

|

closed

|

[bazel.build] Problem with /install/ubuntu

|

type: support / not a bug (process) untriaged team-OSS

|

When attempting a fresh install on both Debian and Ubuntu, I'm unable to install Bazel onto the stock image of a Google Cloud VM.

When I run `sudo apt update && sudo apt install bazel` from the instructions I get:

Hit:1 http://us-west1.gce.archive.ubuntu.com/ubuntu bionic InRelease

Get:2 http://us-west1.gce.archive.ubuntu.com/ubuntu bionic-updates InRelease [88.7 kB]

Get:3 http://us-west1.gce.archive.ubuntu.com/ubuntu bionic-backports InRelease [83.3 kB]

Hit:4 http://security.ubuntu.com/ubuntu bionic-security InRelease

Fetched 172 kB in 0s (499 kB/s)

Reading package lists... Done

Building dependency tree

Reading state information... Done

19 packages can be upgraded. Run 'apt list --upgradable' to see them.

Reading package lists... Done

Building dependency tree

Reading state information... Done

E: Unable to locate package bazel

|

1.0

|

[bazel.build] Problem with /install/ubuntu - When attempting a fresh install on both Debian and Ubuntu, I'm unable to install Bazel onto the stock image of a Google Cloud VM.

When I run `sudo apt update && sudo apt install bazel` from the instructions I get:

Hit:1 http://us-west1.gce.archive.ubuntu.com/ubuntu bionic InRelease

Get:2 http://us-west1.gce.archive.ubuntu.com/ubuntu bionic-updates InRelease [88.7 kB]

Get:3 http://us-west1.gce.archive.ubuntu.com/ubuntu bionic-backports InRelease [83.3 kB]

Hit:4 http://security.ubuntu.com/ubuntu bionic-security InRelease

Fetched 172 kB in 0s (499 kB/s)

Reading package lists... Done

Building dependency tree

Reading state information... Done

19 packages can be upgraded. Run 'apt list --upgradable' to see them.

Reading package lists... Done

Building dependency tree

Reading state information... Done

E: Unable to locate package bazel

|

process

|

problem with install ubuntu trying to install a fresh install on both debian and ubuntu i m unable to install bazel onto the stock image of a cloud vm from google when i run sudo apt update sudo apt install bazel from the instructions i get hit bionic inrelease get bionic updates inrelease get bionic backports inrelease hit bionic security inrelease fetched kb in kb s reading package lists done building dependency tree reading state information done packages can be upgraded run apt list upgradable to see them reading package lists done building dependency tree reading state information done e unable to locate package bazel

| 1

|

20,828

| 27,585,433,402

|

IssuesEvent

|

2023-03-08 19:21:51

|

bazelbuild/bazel

|

https://api.github.com/repos/bazelbuild/bazel

|

opened

|

Release 6.2.0 - June 2022

|

P1 type: process release team-OSS

|

# Status of Bazel 6.2.0

- Expected release date: 2020-06-01

- [List of release blockers](https://github.com/bazelbuild/bazel/milestone/48)

To report a release-blocking bug, please add a comment with the text `@bazel-io flag` to the issue. A release manager will triage it and add it to the milestone.

To cherry-pick a mainline commit into 6.2.0, simply send a PR against the `release-6.2.0` branch.

Task list:

- [x] [Create draft release announcement](https://docs.google.com/document/d/1pu2ARPweOCTxPsRR8snoDtkC9R51XWRyBXeiC6Ql5so/edit?usp=sharing)

- [x] Send for review the release announcement PR

- [x] Push the release, notify package maintainers

- [x] Update the documentation

- [x] Update the [release page](https://github.com/bazelbuild/bazel/releases/)

|

1.0

|

Release 6.2.0 - June 2022 - # Status of Bazel 6.2.0

- Expected release date: 2020-06-01

- [List of release blockers](https://github.com/bazelbuild/bazel/milestone/48)

To report a release-blocking bug, please add a comment with the text `@bazel-io flag` to the issue. A release manager will triage it and add it to the milestone.

To cherry-pick a mainline commit into 6.2.0, simply send a PR against the `release-6.2.0` branch.

Task list:

- [x] [Create draft release announcement](https://docs.google.com/document/d/1pu2ARPweOCTxPsRR8snoDtkC9R51XWRyBXeiC6Ql5so/edit?usp=sharing)

- [x] Send for review the release announcement PR

- [x] Push the release, notify package maintainers

- [x] Update the documentation

- [x] Update the [release page](https://github.com/bazelbuild/bazel/releases/)

|

process

|

release june status of bazel expected release date to report a release blocking bug please add a comment with the text bazel io flag to the issue a release manager will triage it and add it to the milestone to cherry pick a mainline commit into simply send a pr against the release branch task list send for review the release announcement pr push the release notify package maintainers update the documentation update the

| 1

|

601,228

| 18,391,868,148

|

IssuesEvent

|

2021-10-12 06:56:01

|

magento/magento2

|

https://api.github.com/repos/magento/magento2

|

opened

|

[Issue] Replace repetitive actions with Action Groups in StorefrontProductNameWithDoubleQuoteTest

|

Priority: P2

|

This issue is automatically created based on existing pull request: magento/magento2#34256: Replace repetitive actions with Action Groups in StorefrontProductNameWithDoubleQuoteTest

---------

<!---

Thank you for contributing to Magento.

To help us process this pull request we recommend that you add the following information:

- Summary of the pull request,

- Issue(s) related to the changes made,

- Manual testing scenarios

Fields marked with (*) are required. Please don't remove the template.

-->

<!--- Please provide a general summary of the Pull Request in the Title above -->

### Description (*)

<!---

Please provide a description of the changes proposed in the pull request.

Letting us know what has changed and why it needed changing will help us validate this pull request.

-->

### Related Pull Requests

<!-- related pull request placeholder -->

### Fixed Issues (if relevant)

Test is refactored according to the best practices followed by MFTF.

### Manual testing scenarios (*)

### Questions or comments

<!---

If relevant, here you can ask questions or provide comments on your pull request for the reviewer

For example if you need assistance with writing tests or would like some feedback on one of your development ideas

-->

### Contribution checklist (*)

- [ ] Pull request has a meaningful description of its purpose

- [ ] All commits are accompanied by meaningful commit messages

- [ ] All new or changed code is covered with unit/integration tests (if applicable)

- [ ] README.md files for modified modules are updated and included in the pull request if any [README.md predefined sections](https://github.com/magento/devdocs/wiki/Magento-module-README.md) require an update

- [ ] All automated tests passed successfully (all builds are green)

|

1.0

|

[Issue] Replace repetitive actions with Action Groups in StorefrontProductNameWithDoubleQuoteTest - This issue is automatically created based on existing pull request: magento/magento2#34256: Replace repetitive actions with Action Groups in StorefrontProductNameWithDoubleQuoteTest

---------

<!---

Thank you for contributing to Magento.

To help us process this pull request we recommend that you add the following information:

- Summary of the pull request,

- Issue(s) related to the changes made,

- Manual testing scenarios

Fields marked with (*) are required. Please don't remove the template.

-->

<!--- Please provide a general summary of the Pull Request in the Title above -->

### Description (*)

<!---

Please provide a description of the changes proposed in the pull request.

Letting us know what has changed and why it needed changing will help us validate this pull request.

-->

### Related Pull Requests

<!-- related pull request placeholder -->

### Fixed Issues (if relevant)

Test is refactored according to the best practices followed by MFTF.

### Manual testing scenarios (*)

### Questions or comments

<!---

If relevant, here you can ask questions or provide comments on your pull request for the reviewer

For example if you need assistance with writing tests or would like some feedback on one of your development ideas

-->

### Contribution checklist (*)

- [ ] Pull request has a meaningful description of its purpose

- [ ] All commits are accompanied by meaningful commit messages

- [ ] All new or changed code is covered with unit/integration tests (if applicable)

- [ ] README.md files for modified modules are updated and included in the pull request if any [README.md predefined sections](https://github.com/magento/devdocs/wiki/Magento-module-README.md) require an update

- [ ] All automated tests passed successfully (all builds are green)

|

non_process

|

replace repetitive actions with action groups in storefrontproductnamewithdoublequotetest this issue is automatically created based on existing pull request magento replace repetitive actions with action groups in storefrontproductnamewithdoublequotetest thank you for contributing to magento to help us process this pull request we recommend that you add the following information summary of the pull request issue s related to the changes made manual testing scenarios fields marked with are required please don t remove the template description please provide a description of the changes proposed in the pull request letting us know what has changed and why it needed changing will help us validate this pull request related pull requests fixed issues if relevant test is refactored according to the best practices followed by mftf manual testing scenarios questions or comments if relevant here you can ask questions or provide comments on your pull request for the reviewer for example if you need assistance with writing tests or would like some feedback on one of your development ideas contribution checklist pull request has a meaningful description of its purpose all commits are accompanied by meaningful commit messages all new or changed code is covered with unit integration tests if applicable readme md files for modified modules are updated and included in the pull request if any require an update all automated tests passed successfully all builds are green

| 0

|

209,376

| 16,018,449,748

|

IssuesEvent

|

2021-04-20 19:08:25

|

totvs/tds-vscode

|

https://api.github.com/repos/totvs/tds-vscode

|

closed

|

Apply Patch causes DBGCpyFile error

|

awaiting user test

|

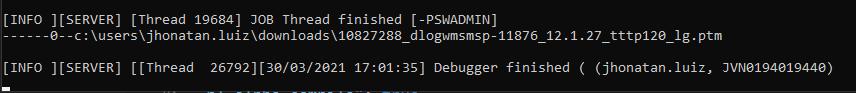

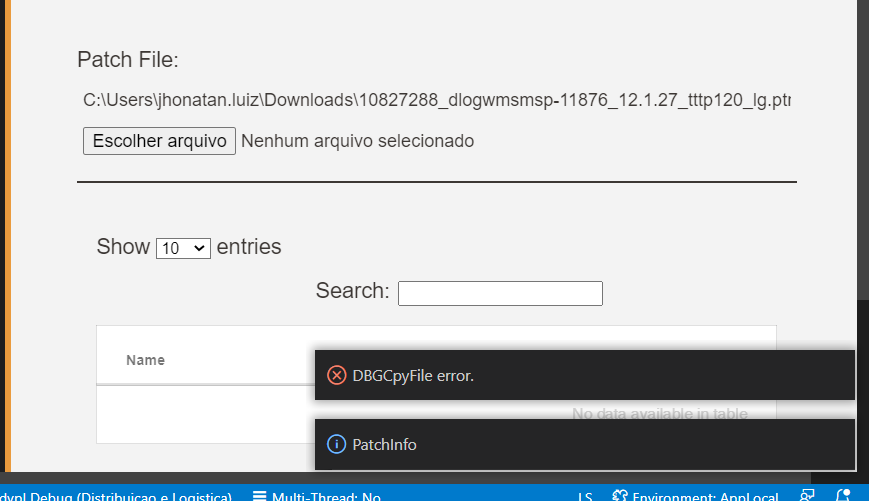

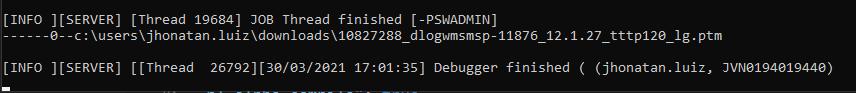

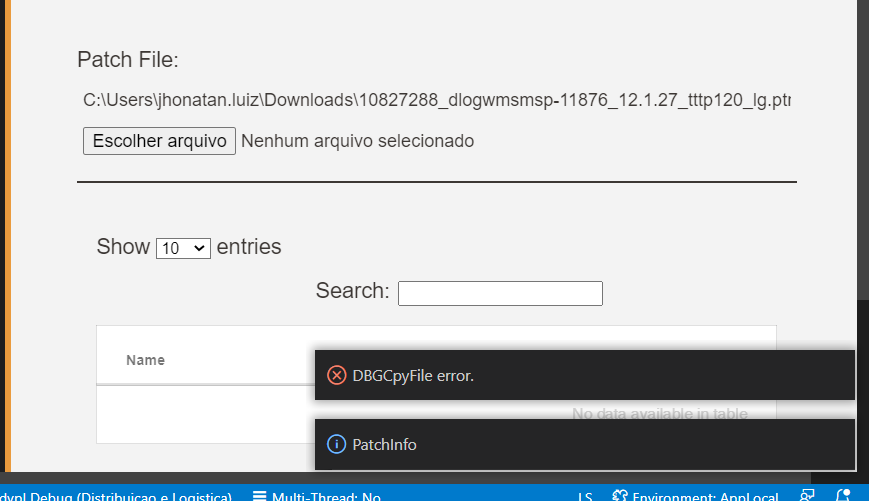

**Describe the bug**

When trying to apply a patch through the extension in VS Code, the DBGCpyFile error appears. This was tested by applying patch 10827288_dlogwmsmsp-11876_12.1.27_tttp120_lg and patch P12_SetupRobo_por_lobo_guara.

**To Reproduce**

Steps to reproduce the behavior:

1. In the extension, go to Apply Patch and select the desired patch.

2. Click Apply

**Expected behavior**

The patch is applied and success is reported in the app server terminal.

**Screenshots**

App Server

When selecting Patch Info

**Desktop (please complete the following information):**

- OS/Architecture: Windows 10 64 bit

**Appserver (please complete the following information):**

- Build (with date): Build 7.00.191205P - Feb 20 2020 - 17:32:02

- OS/Architecture: Windows 10 64 bit

- Build Version 19.3.0.2

|

1.0

|

Apply Patch causes DBGCpyFile error - **Describe the bug**

When trying to apply a patch through the extension in VS Code, the DBGCpyFile error appears. This was tested by applying patch 10827288_dlogwmsmsp-11876_12.1.27_tttp120_lg and patch P12_SetupRobo_por_lobo_guara.

**To Reproduce**

Steps to reproduce the behavior:

1. In the extension, go to Apply Patch and select the desired patch.

2. Click Apply

**Expected behavior**

The patch is applied and success is reported in the app server terminal.

**Screenshots**

App Server

When selecting Patch Info

**Desktop (please complete the following information):**

- OS/Architecture: Windows 10 64 bit

**Appserver (please complete the following information):**

- Build (with date): Build 7.00.191205P - Feb 20 2020 - 17:32:02

- OS/Architecture: Windows 10 64 bit

- Build Version 19.3.0.2

|

non_process

|

apply patch causes dbgcpyfile error describe the bug when trying to apply a patch through the extension in vs code the dbgcpyfile error appears this was tested by applying patch dlogwmsmsp lg and patch setuprobo por lobo guara to reproduce steps to reproduce the behavior in the extension go to apply patch and select the desired patch click apply expected behavior the patch is applied and success is reported in the app server terminal screenshots app server when selecting patch info desktop please complete the following information os architecture windows bit appserver please complete the following information build with date build feb os architecture windows bit build version

| 0

|

218,626

| 7,331,906,956

|

IssuesEvent

|

2018-03-05 14:55:37

|

enviroCar/enviroCar-app

|

https://api.github.com/repos/enviroCar/enviroCar-app

|

closed

|

User Story: app produces correct measurements

|

0 - Backlog Priority - 1 - High User Story

|

We need to make sure that the measurements produced by the app and uploaded to the server are correct.

There seem to be wrong fuel consumption / CO2 measurements; see:

https://github.com/enviroCar/enviroCar-www/issues/41

The process of creating the measurements needs to be understood and well documented (also on the website).

<!---

@huboard:{"order":1.94580078125}

-->

|

1.0

|

User Story: app produces correct measurements - We need to make sure that the measurements produced by the app and uploaded to the server are correct.

There seem to be wrong fuel consumption / CO2 measurements; see:

https://github.com/enviroCar/enviroCar-www/issues/41

The process of creating the measurements needs to be understood and well documented (also on the website).

<!---

@huboard:{"order":1.94580078125}

-->

|

non_process

|

user story app produces correct measurements we need to make sure that the measurements produced by the app and uploaded to the server are correct there seem to be wrong fuel consumption measurements see the process of creating the measurements needs to be understood and well documented also on website huboard order

| 0

|

3,953

| 6,892,281,480

|

IssuesEvent

|

2017-11-22 20:20:54

|

PWRFLcreative/Lightwork-Mapper

|

https://api.github.com/repos/PWRFLcreative/Lightwork-Mapper

|

opened

|

Network Connect button stops working on failed connection

|

Processing

|

Reviewed code, looks like it disposes of the network object correctly.

Need to investigate the case that's breaking it further.

|

1.0

|

Network Connect button stops working on failed connection - Reviewed code, looks like it disposes of the network object correctly.

Need to investigate the case that's breaking it further.

|

process

|

network connect button stops working on failed connection reviewed code looks like it disposes of the network object correctly need to investigate the case that s breaking it further

| 1

|

22,094

| 30,614,491,553

|

IssuesEvent

|

2023-07-24 00:58:01

|

pytorch/pytorch

|

https://api.github.com/repos/pytorch/pytorch

|

closed

|

DISABLED test_fd_pool (__main__.TestMultiprocessing)

|

high priority triage review module: multiprocessing module: flaky-tests skipped

|

This test has been determined to be flaky through reruns in CI, and its instances are reported in our flaky_tests table here: https://metrics.pytorch.org/d/L0r6ErGnk/github-status?orgId=1&from=1636426818307&to=1639018818307&viewPanel=57.

It is our second flakiest test in the last 15 days with 28 failed instances.

```

======================================================================
FAIL [4.720s]: test_fd_pool (__main__.TestMultiprocessing)
----------------------------------------------------------------------
Traceback (most recent call last):
  File "test_multiprocessing.py", line 341, in test_fd_pool
    self._test_pool(repeat=TEST_REPEATS)
  File "test_multiprocessing.py", line 327, in _test_pool
    do_test()
  File "test_multiprocessing.py", line 206, in __exit__
    self.test_case.assertFalse(self.has_shm_files())
AssertionError: True is not false

```

Please look at the table for details from the past 30 days such as

* number of failed instances

* an example url

* which platforms it failed on

* the number of times it failed on trunk vs on PRs.

cc @ezyang @gchanan @zou3519 @bdhirsh @jbschlosser @VitalyFedyunin

|

1.0

|

DISABLED test_fd_pool (__main__.TestMultiprocessing) - This test has been determined to be flaky through reruns in CI, and its instances are reported in our flaky_tests table here: https://metrics.pytorch.org/d/L0r6ErGnk/github-status?orgId=1&from=1636426818307&to=1639018818307&viewPanel=57.

It is our second flakiest test in the last 15 days with 28 failed instances.

```

======================================================================
FAIL [4.720s]: test_fd_pool (__main__.TestMultiprocessing)
----------------------------------------------------------------------
Traceback (most recent call last):
  File "test_multiprocessing.py", line 341, in test_fd_pool
    self._test_pool(repeat=TEST_REPEATS)
  File "test_multiprocessing.py", line 327, in _test_pool
    do_test()
  File "test_multiprocessing.py", line 206, in __exit__
    self.test_case.assertFalse(self.has_shm_files())
AssertionError: True is not false

```

Please look at the table for details from the past 30 days such as

* number of failed instances

* an example url

* which platforms it failed on

* the number of times it failed on trunk vs on PRs.

cc @ezyang @gchanan @zou3519 @bdhirsh @jbschlosser @VitalyFedyunin

|

process

|

disabled test fd pool main testmultiprocessing this test has been determined flaky through reruns in ci and its instances are reported in our flaky tests table here it is our second flakiest test in the last days with failed instances fail test fd pool main testmultiprocessing traceback most recent call last file test multiprocessing py line in test fd pool self test pool repeat test repeats file test multiprocessing py line in test pool do test file test multiprocessing py line in exit self test case assertfalse self has shm files assertionerror true is not false please look at the table for details from the past days such as number of failed instances an example url which platforms it failed on the number of times it failed on trunk vs on prs cc ezyang gchanan bdhirsh jbschlosser vitalyfedyunin

| 1

|

21,529

| 29,810,891,808

|

IssuesEvent

|

2023-06-16 14:58:55

|

bazelbuild/bazel

|

https://api.github.com/repos/bazelbuild/bazel

|

closed

|

Release X.Y.Z - $MONTH $YEAR

|

P1 type: process release team-OSS

|

# Status of Bazel X.Y.Z

- Expected first release candidate date: [date]

- Expected release date: [date]

- [List of release blockers](link-to-milestone)

To report a release-blocking bug, please add a comment with the text `@bazel-io flag` to the issue. A release manager will triage it and add it to the milestone.

To cherry-pick a mainline commit into X.Y.Z, simply send a PR against the `release-X.Y.Z` branch.

**Task list:**

<!-- The first item is only needed for major releases (X.0.0) -->

- [ ] Pick release baseline: [link to base commit]

- [ ] Create release candidate: X.Y.Zrc1

- [ ] Check downstream projects

- [ ] Create [draft release announcement](https://docs.google.com/document/d/1pu2ARPweOCTxPsRR8snoDtkC9R51XWRyBXeiC6Ql5so/edit) <!-- Note that there should be a new Bazel Release Announcement document for every major release. For minor and patch releases, use the latest open doc. -->

- [ ] Send the release announcement PR for review: [link to bazel-blog PR] <!-- Only for major releases. -->

- [ ] Push the release and notify package maintainers: [link to comment notifying package maintainers]

- [ ] Update the documentation

- [ ] Push the blog post: [link to blog post] <!-- Only for major releases. -->

- [ ] Update the [release page](https://github.com/bazelbuild/bazel/releases/)

|

1.0

|

Release X.Y.Z - $MONTH $YEAR - # Status of Bazel X.Y.Z

- Expected first release candidate date: [date]

- Expected release date: [date]

- [List of release blockers](link-to-milestone)

To report a release-blocking bug, please add a comment with the text `@bazel-io flag` to the issue. A release manager will triage it and add it to the milestone.

To cherry-pick a mainline commit into X.Y.Z, simply send a PR against the `release-X.Y.Z` branch.

**Task list:**

<!-- The first item is only needed for major releases (X.0.0) -->

- [ ] Pick release baseline: [link to base commit]

- [ ] Create release candidate: X.Y.Zrc1

- [ ] Check downstream projects

- [ ] Create [draft release announcement](https://docs.google.com/document/d/1pu2ARPweOCTxPsRR8snoDtkC9R51XWRyBXeiC6Ql5so/edit) <!-- Note that there should be a new Bazel Release Announcement document for every major release. For minor and patch releases, use the latest open doc. -->

- [ ] Send the release announcement PR for review: [link to bazel-blog PR] <!-- Only for major releases. -->

- [ ] Push the release and notify package maintainers: [link to comment notifying package maintainers]

- [ ] Update the documentation

- [ ] Push the blog post: [link to blog post] <!-- Only for major releases. -->

- [ ] Update the [release page](https://github.com/bazelbuild/bazel/releases/)

|

process

|

release x y z month year status of bazel x y z expected first release candidate date expected release date link to milestone to report a release blocking bug please add a comment with the text bazel io flag to the issue a release manager will triage it and add it to the milestone to cherry pick a mainline commit into x y z simply send a pr against the release x y z branch task list pick release baseline create release candidate x y check downstream projects create send the release announcement pr for review push the release and notify package maintainers update the documentation push the blog post update the

| 1

|

3,506

| 6,559,856,291

|

IssuesEvent

|

2017-09-07 06:52:07

|

inasafe/inasafe-realtime

|

https://api.github.com/repos/inasafe/inasafe-realtime

|

closed

|

Realtime earthquake translation in Bahasa Indonesia

|

earthquake feature request in progress realtime processor web page

|

Problem

Similar to the InaSAFE Realtime flood translation issue (#3291), InaSAFE Realtime Earthquake is currently not yet 100% translated into Bahasa Indonesia.

See original ticket at https://github.com/inasafe/inasafe/issues/3714 for further discussion.

|

1.0

|

Realtime earthquake translation in Bahasa Indonesia - Problem

Similar to the InaSAFE Realtime flood translation issue (#3291), InaSAFE Realtime Earthquake is currently not yet 100% translated into Bahasa Indonesia.

See original ticket at https://github.com/inasafe/inasafe/issues/3714 for further discussion.

|

process

|

realtime earthquake translation in bahasa indonesia problem similar issue with inasafe realtime flood translation currently inasafe realtime earthquake in bahasa indonesia is not translated yet see original ticket at for further discussion

| 1

|

519,822

| 15,057,636,451

|

IssuesEvent

|

2021-02-03 22:00:36

|

zephyrproject-rtos/zephyr

|

https://api.github.com/repos/zephyrproject-rtos/zephyr

|

closed

|

intel_adsp_cavs15:running tests/kernel/sched/schedule_api failed

|

bug priority: medium

|

Describe the bug

Running tests/kernel/sched/schedule_api fails with an assertion failure.

To Reproduce

Steps to reproduce the behavior:

sanitycheck -W -p intel_adsp_cavs15 --device-testing -T tests/kernel/sched/ --west-flash="/home/ztest/work/zephyrproject/zephyr/boards/xtensa/up_squared_adsp/tools/up_squared_adsp_flash.sh, /home/ztest/work/sof/rimage/keys/otc_private_key.pem" --device-serial-pty="/home/ztest/work/zephyrproject/zephyr/boards/xtensa/intel_adsp_cavs15/tools/dump_trace.py" -x=CONFIG_IPM=y -x=CONFIG_CONSOLE=y -x=CONFIG_LOG_PRINTK=n

see error:

START - test_slice_reset

Assertion failed at WEST_TOPDIR/zephyr/tests/kernel/sched/schedule_api/src/test_sched_timeslice_reset.c:91: thread_time_slice: (t <= expected_slice_max is false)

timeslice too big, expected 3840384 got 3840934

Assertion failed at WEST_TOPDIR/zephyr/tests/kernel/sched/schedule_api/src/test_sched_timeslice_reset.c:88: thread_time_slice: (t >= expected_slice_min is false)

timeslice too small, expected 3839616 got 15295

FAIL - test_slice_reset

Environment (please complete the following information):

OS: Fedora28

Toolchain: Zephyr-sdk-0.11.4

Commit ID: 90d06cff

|

1.0

|

intel_adsp_cavs15:running tests/kernel/sched/schedule_api failed - Describe the bug

Running tests/kernel/sched/schedule_api fails with an assertion failure.

To Reproduce

Steps to reproduce the behavior:

sanitycheck -W -p intel_adsp_cavs15 --device-testing -T tests/kernel/sched/ --west-flash="/home/ztest/work/zephyrproject/zephyr/boards/xtensa/up_squared_adsp/tools/up_squared_adsp_flash.sh, /home/ztest/work/sof/rimage/keys/otc_private_key.pem" --device-serial-pty="/home/ztest/work/zephyrproject/zephyr/boards/xtensa/intel_adsp_cavs15/tools/dump_trace.py" -x=CONFIG_IPM=y -x=CONFIG_CONSOLE=y -x=CONFIG_LOG_PRINTK=n

see error:

START - test_slice_reset

Assertion failed at WEST_TOPDIR/zephyr/tests/kernel/sched/schedule_api/src/test_sched_timeslice_reset.c:91: thread_time_slice: (t <= expected_slice_max is false)

timeslice too big, expected 3840384 got 3840934

Assertion failed at WEST_TOPDIR/zephyr/tests/kernel/sched/schedule_api/src/test_sched_timeslice_reset.c:88: thread_time_slice: (t >= expected_slice_min is false)

timeslice too small, expected 3839616 got 15295

FAIL - test_slice_reset

Environment (please complete the following information):

OS: Fedora28

Toolchain: Zephyr-sdk-0.11.4

Commit ID: 90d06cff

|

non_process

|

intel adsp running tests kernel sched schedule api failed describe the bug running tests kernel sched schedule api error it showed assertion failed to reproduce steps to reproduce the behavior sanitycheck w p intel adsp device testing t tests kernel sched west flash home ztest work zephyrproject zephyr boards xtensa up squared adsp tools up squared adsp flash sh home ztest work sof rimage keys otc private key pem device serial pty home ztest work zephyrproject zephyr boards xtensa intel adsp tools dump trace py x config ipm y x config console y x config log printk n see error start test slice reset assertion failed at west topdir zephyr tests kernel sched schedule api src test sched timeslice reset c thread time slice t expected slice max is false timeslice too big expected got assertion failed at west topdir zephyr tests kernel sched schedule api src test sched timeslice reset c thread time slice t expected slice min is false timeslice too small expected got fail test slice reset environment please complete the following information os toolchain zephyr sdk commit id

| 0

|

405,999

| 11,885,873,617

|

IssuesEvent

|

2020-03-27 20:34:11

|

microsoftgraph/microsoft-graph-toolkit

|

https://api.github.com/repos/microsoftgraph/microsoft-graph-toolkit

|

closed

|

Update all components to leverage new features from mgt-flyout

|

Priority: 0 State: In Review feature-request

|

This issue tracks the progress of updating components to leverage the new updates to mgt-flyout in #351 and #344. The following components need to be updated:

- [x] person

- [x] login

- [x] people picker

- [x] tasks

- [x] person card

Updates include (might not apply to all components):

* moving the anchor element inside the flyout so the flyout can properly render from the bottom

* using the light-dismiss logic from the flyout and removing that logic from each component

* leveraging the new `--mgt-flyout-set-width` property to resize the flyout to fit the screen when the window is too narrow

|

1.0

|

Update all components to leverage new features from mgt-flyout - This issue tracks the progress of updating components to leverage the new updates to mgt-flyout in #351 and #344. The following components need to be updated:

- [x] person

- [x] login

- [x] people picker

- [x] tasks

- [x] person card

Updates include (might not apply to all components):

* moving the anchor element inside the flyout so the flyout can properly render from the bottom

* using the light-dismiss logic from the flyout and removing that logic from each component

* leveraging the new `--mgt-flyout-set-width` property to resize the flyout to fit the screen when the window is too narrow

|

non_process

|

update all components to leverage new features from mgt flyout this issue tracks the progress of updating components to leverage the new updates to mgt flyout in and the following components need to be updated person login people picker tasks person card updates include might not apply to all components moving the anchor element inside the flyout so the flyout can properly render from bottom using light dismiss logic from the flyout and remove logic from each component leverage the new mgt flyout set width property to resize the flyout to fit the screen when window is too narrow

| 0

|

8,222

| 11,410,592,725

|

IssuesEvent

|

2020-02-01 00:03:15

|

parcel-bundler/parcel

|

https://api.github.com/repos/parcel-bundler/parcel

|

closed

|

Postcss-modules config broken with plugins array syntax

|

:bug: Bug CSS Preprocessing Stale

|

# 🐛 bug report

Postcss config supports plugins set as an array (`{plugins: []}`), but doing so prevents being able to set custom `postcss-modules` config. The trouble here is that I use a shared postcss-config file, but even if I didn't, the plugins array syntax is more explicit, not depending on js's unsupported object key order.

## 🤔 Expected Behavior

`postcss-modules` config should be detected.

## 😯 Current Behavior

`postcss-modules` config is not detected.

## 💁 Possible Solution

Either parcel should search the array for module names matching `postcss-modules`, or you should be able to use the top-level modules key to a config object (`{modules: {}, plugins: []}`).

|

1.0

|

Postcss-modules config broken with plugins array syntax - # 🐛 bug report

Postcss config supports plugins set as an array (`{plugins: []}`), but doing so prevents being able to set custom `postcss-modules` config. The trouble here is that I use a shared postcss-config file, but even if I didn't, the plugins array syntax is more explicit, not depending on js's unsupported object key order.

## 🤔 Expected Behavior

`postcss-modules` config should be detected.

## 😯 Current Behavior

`postcss-modules` config is not detected.

## 💁 Possible Solution

Either parcel should search the array for module names matching `postcss-modules`, or you should be able to use the top-level modules key to a config object (`{modules: {}, plugins: []}`).

|

process

|

postcss modules config broken with plugins array syntax 🐛 bug report postcss config support plugins set as an array plugins but doing so prevents being able to set custom postcss modules config the trouble here is that i use a shared postcss config file but even if i didn t the plugins array syntax is more explicit not depending on js s unsupported object key order 🤔 expected behavior postcss modules config should be detected 😯 current behavior postcss modules config is not detected 💁 possible solution either parcel should search the array for module names matching postcss modules or you should be able to use the top level modules key to a config object modules plugins

| 1

|

424,582

| 12,313,293,988

|

IssuesEvent

|

2020-05-12 15:06:47

|

mozilla/addons-server

|

https://api.github.com/repos/mozilla/addons-server

|

closed

|

Handle moved/deleted files better during git extraction

|

component: git priority: p3

|

We should do something like we did for code-search.

|

1.0

|

Handle moved/deleted files better during git extraction - We should do something like we did for code-search.

|

non_process

|

handle moved deleted files better during git extraction we should do something like we did for code search

| 0

|

3,016

| 6,023,158,656

|

IssuesEvent

|

2017-06-07 23:04:38

|

dotnet/corefx

|

https://api.github.com/repos/dotnet/corefx

|

closed

|

Avoid HWND functions in UWP Process class

|

area-System.Diagnostics.Process

|

HWND related functions are not meaningful in an app. When bringing back API for the Process class, anything that relies on these should throw PNSE with a nice message.

```

EnumWindows

GetWindow

GetWindowLong

GetWindowText

GetWindowTextLength

GetWindowThreadProcessId

IsWindowVisible

PostMessage

SendMessageTimeout

WaitForInputIdle

GetKeyState

```

|

1.0

|

Avoid HWND functions in UWP Process class - HWND related functions are not meaningful in an app. When bringing back API for the Process class, anything that relies on these should throw PNSE with a nice message.

```

EnumWindows

GetWindow

GetWindowLong

GetWindowText

GetWindowTextLength

GetWindowThreadProcessId

IsWindowVisible

PostMessage

SendMessageTimeout

WaitForInputIdle

GetKeyState

```

|

process

|

avoid hwnd functions in uwp process class hwnd related functions are not meaningful in an app when bringing back api for the process class anything that relies on these should throw pnse with a nice message enumwindows getwindow getwindowlong getwindowtext getwindowtextlength getwindowthreadprocessid iswindowvisible postmessage sendmessagetimeout waitforinputidle getkeystate

| 1

|

334,932

| 10,147,379,119

|

IssuesEvent

|

2019-08-05 10:24:58

|

ahmedkaludi/accelerated-mobile-pages

|

https://api.github.com/repos/ahmedkaludi/accelerated-mobile-pages

|

closed

|

If title is loading then only its markup should load otherwise not

|

NEED FAST REVIEW [Priority: HIGH] bug

|

Help Scout Link: https://secure.helpscout.net/conversation/908191818/74764?folderId=2770545

If the title is loading, then its markup (`<h1>` tag) should load; otherwise it should not.

User is waiting for this so need to push it soon.

|

1.0

|

If title is loading then only its markup should load otherwise not - Help Scout Link: https://secure.helpscout.net/conversation/908191818/74764?folderId=2770545

If the title is loading, then its markup (`<h1>` tag) should load; otherwise it should not.

User is waiting for this so need to push it soon.

|

non_process

|

if title is loading then only its markup should load otherwise not help scout link if the title is loading then its markup tag should load else not user is waiting for this so need to push it soon

| 0

|

12,744

| 15,106,934,298

|

IssuesEvent

|

2021-02-08 14:52:21

|

apache/incubator-flagon-useralejs

|

https://api.github.com/repos/apache/incubator-flagon-useralejs

|

opened

|

Update Readme to be consistent with Incubator NPM Release Policies

|

Process

|

https://incubator.apache.org/guides/distribution.html#npm

Our release process is compliant, except for the "full disclaimer" at the bottom of the README.

|

1.0

|

Update Readme to be consistent with Incubator NPM Release Policies - https://incubator.apache.org/guides/distribution.html#npm

Our release process is compliant, except for the "full disclaimer" at the bottom of the README.

|

process

|

update readme to be consistent with incubator npm release policies our release process is compliant save the full disclaimer at the bottom of readme

| 1

|

4,595

| 7,433,361,689

|

IssuesEvent

|

2018-03-26 07:13:17

|

pwittchen/ReactiveBus

|

https://api.github.com/repos/pwittchen/ReactiveBus

|

closed

|

release 0.0.5

|

release process

|

**Initial release notes**:

- Improved builder pattern in the `Event` class - PR #10

**things to do**:

- [x] bump library version

- [x] publish artifact to sonatype

- [x] close and release artifact on sonatype

- [x] update changelog after maven sync

- [x] update download section after maven sync

- [x] publish new release on GitHub

|

1.0

|

release 0.0.5 - **Initial release notes**:

- Improved builder pattern in the `Event` class - PR #10

**things to do**:

- [x] bump library version

- [x] publish artifact to sonatype

- [x] close and release artifact on sonatype

- [x] update changelog after maven sync

- [x] update download section after maven sync

- [x] publish new release on GitHub

|

process

|

release initial release notes improved builder pattern in the event class pr things to do bump library version publish artifact to sonatype close and release artifact on sonatype update changelog after maven sync update download section after maven sync publish new release on github

| 1

|

22,246

| 30,801,443,677

|

IssuesEvent

|

2023-08-01 02:00:08

|

lizhihao6/get-daily-arxiv-noti

|

https://api.github.com/repos/lizhihao6/get-daily-arxiv-noti

|

opened

|

New submissions for Tue, 1 Aug 23

|

event camera white balance isp compression image signal processing image signal process raw raw image events camera color contrast events AWB

|

## Keyword: events

### Seeing Behind Dynamic Occlusions with Event Cameras

- **Authors:** Rong Zou, Manasi Muglikar, Niko Messikommer, Davide Scaramuzza

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2307.15829

- **Pdf link:** https://arxiv.org/pdf/2307.15829

- **Abstract**

Unwanted camera occlusions, such as debris, dust, rain-drops, and snow, can severely degrade the performance of computer-vision systems. Dynamic occlusions are particularly challenging because of the continuously changing pattern. Existing occlusion-removal methods currently use synthetic aperture imaging or image inpainting. However, they face issues with dynamic occlusions as these require multiple viewpoints or user-generated masks to hallucinate the background intensity. We propose a novel approach to reconstruct the background from a single viewpoint in the presence of dynamic occlusions. Our solution relies for the first time on the combination of a traditional camera with an event camera. When an occlusion moves across a background image, it causes intensity changes that trigger events. These events provide additional information on the relative intensity changes between foreground and background at a high temporal resolution, enabling a truer reconstruction of the background content. We present the first large-scale dataset consisting of synchronized images and event sequences to evaluate our approach. We show that our method outperforms image inpainting methods by 3dB in terms of PSNR on our dataset.

### CMDA: Cross-Modality Domain Adaptation for Nighttime Semantic Segmentation

- **Authors:** Ruihao Xia, Chaoqiang Zhao, Meng Zheng, Ziyan Wu, Qiyu Sun, Yang Tang

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2307.15942

- **Pdf link:** https://arxiv.org/pdf/2307.15942

- **Abstract**

Most nighttime semantic segmentation studies are based on domain adaptation approaches and image input. However, limited by the low dynamic range of conventional cameras, images fail to capture structural details and boundary information in low-light conditions. Event cameras, as a new form of vision sensors, are complementary to conventional cameras with their high dynamic range. To this end, we propose a novel unsupervised Cross-Modality Domain Adaptation (CMDA) framework to leverage multi-modality (Images and Events) information for nighttime semantic segmentation, with only labels on daytime images. In CMDA, we design the Image Motion-Extractor to extract motion information and the Image Content-Extractor to extract content information from images, in order to bridge the gap between different modalities (Images to Events) and domains (Day to Night). Besides, we introduce the first image-event nighttime semantic segmentation dataset. Extensive experiments on both the public image dataset and the proposed image-event dataset demonstrate the effectiveness of our proposed approach. We open-source our code, models, and dataset at https://github.com/XiaRho/CMDA.

### Fully $1\times1$ Convolutional Network for Lightweight Image Super-Resolution

- **Authors:** Gang Wu, Junjun Jiang, Kui Jiang, Xianming Liu

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV); Artificial Intelligence (cs.AI)

- **Arxiv link:** https://arxiv.org/abs/2307.16140

- **Pdf link:** https://arxiv.org/pdf/2307.16140

- **Abstract**

Deep models have achieved significant process on single image super-resolution (SISR) tasks, in particular large models with large kernel ($3\times3$ or more). However, the heavy computational footprint of such models prevents their deployment in real-time, resource-constrained environments. Conversely, $1\times1$ convolutions bring substantial computational efficiency, but struggle with aggregating local spatial representations, an essential capability to SISR models. In response to this dichotomy, we propose to harmonize the merits of both $3\times3$ and $1\times1$ kernels, and exploit a great potential for lightweight SISR tasks. Specifically, we propose a simple yet effective fully $1\times1$ convolutional network, named Shift-Conv-based Network (SCNet). By incorporating a parameter-free spatial-shift operation, it equips the fully $1\times1$ convolutional network with powerful representation capability while impressive computational efficiency. Extensive experiments demonstrate that SCNets, despite its fully $1\times1$ convolutional structure, consistently matches or even surpasses the performance of existing lightweight SR models that employ regular convolutions.

## Keyword: event camera

### Seeing Behind Dynamic Occlusions with Event Cameras

- **Authors:** Rong Zou, Manasi Muglikar, Niko Messikommer, Davide Scaramuzza

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2307.15829

- **Pdf link:** https://arxiv.org/pdf/2307.15829

- **Abstract**

Unwanted camera occlusions, such as debris, dust, rain-drops, and snow, can severely degrade the performance of computer-vision systems. Dynamic occlusions are particularly challenging because of the continuously changing pattern. Existing occlusion-removal methods currently use synthetic aperture imaging or image inpainting. However, they face issues with dynamic occlusions as these require multiple viewpoints or user-generated masks to hallucinate the background intensity. We propose a novel approach to reconstruct the background from a single viewpoint in the presence of dynamic occlusions. Our solution relies for the first time on the combination of a traditional camera with an event camera. When an occlusion moves across a background image, it causes intensity changes that trigger events. These events provide additional information on the relative intensity changes between foreground and background at a high temporal resolution, enabling a truer reconstruction of the background content. We present the first large-scale dataset consisting of synchronized images and event sequences to evaluate our approach. We show that our method outperforms image inpainting methods by 3dB in terms of PSNR on our dataset.

### CMDA: Cross-Modality Domain Adaptation for Nighttime Semantic Segmentation

- **Authors:** Ruihao Xia, Chaoqiang Zhao, Meng Zheng, Ziyan Wu, Qiyu Sun, Yang Tang

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2307.15942

- **Pdf link:** https://arxiv.org/pdf/2307.15942

- **Abstract**

Most nighttime semantic segmentation studies are based on domain adaptation approaches and image input. However, limited by the low dynamic range of conventional cameras, images fail to capture structural details and boundary information in low-light conditions. Event cameras, as a new form of vision sensors, are complementary to conventional cameras with their high dynamic range. To this end, we propose a novel unsupervised Cross-Modality Domain Adaptation (CMDA) framework to leverage multi-modality (Images and Events) information for nighttime semantic segmentation, with only labels on daytime images. In CMDA, we design the Image Motion-Extractor to extract motion information and the Image Content-Extractor to extract content information from images, in order to bridge the gap between different modalities (Images to Events) and domains (Day to Night). Besides, we introduce the first image-event nighttime semantic segmentation dataset. Extensive experiments on both the public image dataset and the proposed image-event dataset demonstrate the effectiveness of our proposed approach. We open-source our code, models, and dataset at https://github.com/XiaRho/CMDA.

## Keyword: events camera

There is no result

## Keyword: white balance

There is no result

## Keyword: color contrast

There is no result

## Keyword: AWB

There is no result

## Keyword: ISP

### Implementing Edge Based Object Detection For Microplastic Debris

- **Authors:** Amardeep Singh, Prof. Charles Jia, Prof. Donald Kirk

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV); Artificial Intelligence (cs.AI)

- **Arxiv link:** https://arxiv.org/abs/2307.16289

- **Pdf link:** https://arxiv.org/pdf/2307.16289

- **Abstract**

Plastic has imbibed itself as an indispensable part of our day to day activities, becoming a source of problems due to its non-biodegradable nature and cheaper production prices. With these problems, comes the challenge of mitigating and responding to the aftereffects of disposal or the lack of proper disposal which leads to waste concentrating in locations and disturbing ecosystems for both plants and animals. As plastic debris levels continue to rise with the accumulation of waste in garbage patches in landfills and more hazardously in natural water bodies, swift action is necessary to plug or cease this flow. While manual sorting operations and detection can offer a solution, they can be augmented using highly advanced computer imagery linked with robotic appendages for removing wastes. The primary application of focus in this report are the much-discussed Computer Vision and Open Vision which have gained novelty for their light dependence on internet and ability to relay information in remote areas. These applications can be applied to the creation of edge-based mobility devices that can as a counter to the growing problem of plastic debris in oceans and rivers, demanding little connectivity and still offering the same results with reasonably timed maintenance. The principal findings of this project cover the various methods that were tested and deployed to detect waste in images, as well as comparing them against different waste types. The project has been able to produce workable models that can perform on time detection of sampled images using an augmented CNN approach. Latter portions of the project have also achieved a better interpretation of the necessary preprocessing steps required to arrive at the best accuracies, including the best hardware for expanding waste detection studies to larger environments.

### DRAW: Defending Camera-shooted RAW against Image Manipulation

- **Authors:** Xiaoxiao Hu, Qichao Ying, Zhenxing Qian, Sheng Li, Xinpeng Zhang

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV); Multimedia (cs.MM); Image and Video Processing (eess.IV)

- **Arxiv link:** https://arxiv.org/abs/2307.16418

- **Pdf link:** https://arxiv.org/pdf/2307.16418

- **Abstract**

RAW files are the initial measurement of scene radiance widely used in most cameras, and the ubiquitously-used RGB images are converted from RAW data through Image Signal Processing (ISP) pipelines. Nowadays, digital images are risky of being nefariously manipulated. Inspired by the fact that innate immunity is the first line of body defense, we propose DRAW, a novel scheme of defending images against manipulation by protecting their sources, i.e., camera-shooted RAWs. Specifically, we design a lightweight Multi-frequency Partial Fusion Network (MPF-Net) friendly to devices with limited computing resources by frequency learning and partial feature fusion. It introduces invisible watermarks as protective signal into the RAW data. The protection capability can not only be transferred into the rendered RGB images regardless of the applied ISP pipeline, but also is resilient to post-processing operations such as blurring or compression. Once the image is manipulated, we can accurately identify the forged areas with a localization network. Extensive experiments on several famous RAW datasets, e.g., RAISE, FiveK and SIDD, indicate the effectiveness of our method. We hope that this technique can be used in future cameras as an option for image protection, which could effectively restrict image manipulation at the source.

### Digging Into Uncertainty-based Pseudo-label for Robust Stereo Matching

- **Authors:** Zhelun Shen, Xibin Song, Yuchao Dai, Dingfu Zhou, Zhibo Rao, Liangjun Zhang

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2307.16509

- **Pdf link:** https://arxiv.org/pdf/2307.16509

- **Abstract**

Due to the domain differences and unbalanced disparity distribution across multiple datasets, current stereo matching approaches are commonly limited to a specific dataset and generalize poorly to others. Such domain shift issue is usually addressed by substantial adaptation on costly target-domain ground-truth data, which cannot be easily obtained in practical settings. In this paper, we propose to dig into uncertainty estimation for robust stereo matching. Specifically, to balance the disparity distribution, we employ a pixel-level uncertainty estimation to adaptively adjust the next stage disparity searching space, in this way driving the network progressively prune out the space of unlikely correspondences. Then, to solve the limited ground truth data, an uncertainty-based pseudo-label is proposed to adapt the pre-trained model to the new domain, where pixel-level and area-level uncertainty estimation are proposed to filter out the high-uncertainty pixels of predicted disparity maps and generate sparse while reliable pseudo-labels to align the domain gap. Experimentally, our method shows strong cross-domain, adapt, and joint generalization and obtains \textbf{1st} place on the stereo task of Robust Vision Challenge 2020. Additionally, our uncertainty-based pseudo-labels can be extended to train monocular depth estimation networks in an unsupervised way and even achieves comparable performance with the supervised methods. The code will be available at https://github.com/gallenszl/UCFNet.

### Multi-Spectral Image Stitching via Spatial Graph Reasoning

- **Authors:** Zhiying Jiang, Zengxi Zhang, Jinyuan Liu, Xin Fan, Risheng Liu

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2307.16741

- **Pdf link:** https://arxiv.org/pdf/2307.16741

- **Abstract**

Multi-spectral image stitching leverages the complementarity between infrared and visible images to generate a robust and reliable wide field-of-view (FOV) scene. The primary challenge of this task is to explore the relations between multi-spectral images for aligning and integrating multi-view scenes. Capitalizing on the strengths of Graph Convolutional Networks (GCNs) in modeling feature relationships, we propose a spatial graph reasoning based multi-spectral image stitching method that effectively distills the deformation and integration of multi-spectral images across different viewpoints. To accomplish this, we embed multi-scale complementary features from the same view position into a set of nodes. The correspondence across different views is learned through powerful dense feature embeddings, where both inter- and intra-correlations are developed to exploit cross-view matching and enhance inner feature disparity. By introducing long-range coherence along spatial and channel dimensions, the complementarity of pixel relations and channel interdependencies aids in the reconstruction of aligned multi-view features, generating informative and reliable wide FOV scenes. Moreover, we release a challenging dataset named ChaMS, comprising both real-world and synthetic sets with significant parallax, providing a new option for comprehensive evaluation. Extensive experiments demonstrate that our method surpasses the state-of-the-arts.

## Keyword: image signal processing

### DRAW: Defending Camera-shooted RAW against Image Manipulation

- **Authors:** Xiaoxiao Hu, Qichao Ying, Zhenxing Qian, Sheng Li, Xinpeng Zhang

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV); Multimedia (cs.MM); Image and Video Processing (eess.IV)

- **Arxiv link:** https://arxiv.org/abs/2307.16418

- **Pdf link:** https://arxiv.org/pdf/2307.16418

- **Abstract**

RAW files are the initial measurement of scene radiance widely used in most cameras, and the ubiquitously-used RGB images are converted from RAW data through Image Signal Processing (ISP) pipelines. Nowadays, digital images are risky of being nefariously manipulated. Inspired by the fact that innate immunity is the first line of body defense, we propose DRAW, a novel scheme of defending images against manipulation by protecting their sources, i.e., camera-shooted RAWs. Specifically, we design a lightweight Multi-frequency Partial Fusion Network (MPF-Net) friendly to devices with limited computing resources by frequency learning and partial feature fusion. It introduces invisible watermarks as protective signal into the RAW data. The protection capability can not only be transferred into the rendered RGB images regardless of the applied ISP pipeline, but also is resilient to post-processing operations such as blurring or compression. Once the image is manipulated, we can accurately identify the forged areas with a localization network. Extensive experiments on several famous RAW datasets, e.g., RAISE, FiveK and SIDD, indicate the effectiveness of our method. We hope that this technique can be used in future cameras as an option for image protection, which could effectively restrict image manipulation at the source.

## Keyword: image signal process

### DRAW: Defending Camera-shooted RAW against Image Manipulation

- **Authors:** Xiaoxiao Hu, Qichao Ying, Zhenxing Qian, Sheng Li, Xinpeng Zhang

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV); Multimedia (cs.MM); Image and Video Processing (eess.IV)

- **Arxiv link:** https://arxiv.org/abs/2307.16418

- **Pdf link:** https://arxiv.org/pdf/2307.16418

- **Abstract**

RAW files are the initial measurement of scene radiance widely used in most cameras, and the ubiquitously-used RGB images are converted from RAW data through Image Signal Processing (ISP) pipelines. Nowadays, digital images are risky of being nefariously manipulated. Inspired by the fact that innate immunity is the first line of body defense, we propose DRAW, a novel scheme of defending images against manipulation by protecting their sources, i.e., camera-shooted RAWs. Specifically, we design a lightweight Multi-frequency Partial Fusion Network (MPF-Net) friendly to devices with limited computing resources by frequency learning and partial feature fusion. It introduces invisible watermarks as protective signal into the RAW data. The protection capability can not only be transferred into the rendered RGB images regardless of the applied ISP pipeline, but also is resilient to post-processing operations such as blurring or compression. Once the image is manipulated, we can accurately identify the forged areas with a localization network. Extensive experiments on several famous RAW datasets, e.g., RAISE, FiveK and SIDD, indicate the effectiveness of our method. We hope that this technique can be used in future cameras as an option for image protection, which could effectively restrict image manipulation at the source.

## Keyword: compression

### InfoStyler: Disentanglement Information Bottleneck for Artistic Style Transfer

- **Authors:** Yueming Lyu, Yue Jiang, Bo Peng, Jing Dong

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2307.16227

- **Pdf link:** https://arxiv.org/pdf/2307.16227

- **Abstract**

Artistic style transfer aims to transfer the style of an artwork to a photograph while maintaining its original overall content. Many prior works focus on designing various transfer modules to transfer the style statistics to the content image. Although effective, ignoring the clear disentanglement of the content features and the style features from the first beginning, they have difficulty in balancing between content preservation and style transferring. To tackle this problem, we propose a novel information disentanglement method, named InfoStyler, to capture the minimal sufficient information for both content and style representations from the pre-trained encoding network. InfoStyler formulates the disentanglement representation learning as an information compression problem by eliminating style statistics from the content image and removing the content structure from the style image. Besides, to further facilitate disentanglement learning, a cross-domain Information Bottleneck (IB) learning strategy is proposed by reconstructing the content and style domains. Extensive experiments demonstrate that our InfoStyler can synthesize high-quality stylized images while balancing content structure preservation and style pattern richness.

### DRAW: Defending Camera-shooted RAW against Image Manipulation

- **Authors:** Xiaoxiao Hu, Qichao Ying, Zhenxing Qian, Sheng Li, Xinpeng Zhang

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV); Multimedia (cs.MM); Image and Video Processing (eess.IV)

- **Arxiv link:** https://arxiv.org/abs/2307.16418

- **Pdf link:** https://arxiv.org/pdf/2307.16418

- **Abstract**

RAW files are the initial measurement of scene radiance widely used in most cameras, and the ubiquitously-used RGB images are converted from RAW data through Image Signal Processing (ISP) pipelines. Nowadays, digital images are risky of being nefariously manipulated. Inspired by the fact that innate immunity is the first line of body defense, we propose DRAW, a novel scheme of defending images against manipulation by protecting their sources, i.e., camera-shooted RAWs. Specifically, we design a lightweight Multi-frequency Partial Fusion Network (MPF-Net) friendly to devices with limited computing resources by frequency learning and partial feature fusion. It introduces invisible watermarks as protective signal into the RAW data. The protection capability can not only be transferred into the rendered RGB images regardless of the applied ISP pipeline, but also is resilient to post-processing operations such as blurring or compression. Once the image is manipulated, we can accurately identify the forged areas with a localization network. Extensive experiments on several famous RAW datasets, e.g., RAISE, FiveK and SIDD, indicate the effectiveness of our method. We hope that this technique can be used in future cameras as an option for image protection, which could effectively restrict image manipulation at the source.

## Keyword: RAW

### DRAW: Defending Camera-shooted RAW against Image Manipulation

- **Authors:** Xiaoxiao Hu, Qichao Ying, Zhenxing Qian, Sheng Li, Xinpeng Zhang

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV); Multimedia (cs.MM); Image and Video Processing (eess.IV)

- **Arxiv link:** https://arxiv.org/abs/2307.16418

- **Pdf link:** https://arxiv.org/pdf/2307.16418

- **Abstract**

RAW files are the initial measurement of scene radiance widely used in most cameras, and the ubiquitously-used RGB images are converted from RAW data through Image Signal Processing (ISP) pipelines. Nowadays, digital images are risky of being nefariously manipulated. Inspired by the fact that innate immunity is the first line of body defense, we propose DRAW, a novel scheme of defending images against manipulation by protecting their sources, i.e., camera-shooted RAWs. Specifically, we design a lightweight Multi-frequency Partial Fusion Network (MPF-Net) friendly to devices with limited computing resources by frequency learning and partial feature fusion. It introduces invisible watermarks as protective signal into the RAW data. The protection capability can not only be transferred into the rendered RGB images regardless of the applied ISP pipeline, but also is resilient to post-processing operations such as blurring or compression. Once the image is manipulated, we can accurately identify the forged areas with a localization network. Extensive experiments on several famous RAW datasets, e.g., RAISE, FiveK and SIDD, indicate the effectiveness of our method. We hope that this technique can be used in future cameras as an option for image protection, which could effectively restrict image manipulation at the source.

### Towards General Low-Light Raw Noise Synthesis and Modeling

- **Authors:** Feng Zhang, Bin Xu, Zhiqiang Li, Xinran Liu, Qingbo Lu, Changxin Gao, Nong Sang

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV); Multimedia (cs.MM); Image and Video Processing (eess.IV)

- **Arxiv link:** https://arxiv.org/abs/2307.16508

- **Pdf link:** https://arxiv.org/pdf/2307.16508

- **Abstract**

Modeling and synthesizing low-light raw noise is a fundamental problem for computational photography and image processing applications. Although most recent works have adopted physics-based models to synthesize noise, the signal-independent noise in low-light conditions is far more complicated and varies dramatically across camera sensors, which these models cannot describe. To address this issue, we introduce a new perspective: synthesizing the signal-independent noise with a generative model. Specifically, we synthesize the signal-dependent and signal-independent noise in a physics-based and a learning-based manner, respectively. In this way, our method can be considered a general model: it can simultaneously learn different noise characteristics for different ISO levels and generalize to various sensors. Subsequently, we present an effective multi-scale discriminator termed Fourier transformer discriminator (FTD) to distinguish the noise distribution accurately. Additionally, we collect a new low-light raw denoising (LRD) dataset for training and benchmarking. Qualitative validation shows that the noise generated by our proposed noise model is highly similar in distribution to real noise. Furthermore, extensive denoising experiments demonstrate that our method performs favorably against state-of-the-art methods on different sensors. The source code and dataset can be found at https://github.com/fengzhang427/LRD.
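The abstract splits raw noise into a signal-dependent part (modeled physically) and a signal-independent part (learned by a generative model). As a minimal sketch of the physics-based half only, assuming the common Poisson shot-noise model with a hypothetical system gain `gain` (DN per electron), the code below adds shot noise to clean raw values. The Gaussian `read_sigma` term is a hypothetical placeholder for the signal-independent noise, which the paper learns instead of assuming.

```python
import math
import random
from typing import List, Sequence


def sample_poisson(lam: float, rng: random.Random) -> int:
    """Draw a Poisson sample with Knuth's multiplication method.

    Adequate for the small photon counts of low-light scenes; exp(-lam)
    underflows for very large lam, so this is not a general-purpose sampler.
    """
    if lam <= 0:
        return 0
    threshold = math.exp(-lam)
    k, p = 0, 1.0
    while True:
        p *= rng.random()
        if p <= threshold:
            return k
        k += 1


def synthesize_raw_noise(clean: Sequence[float], gain: float,
                         read_sigma: float, seed: int = 0) -> List[float]:
    """Add signal-dependent shot noise plus a Gaussian placeholder for the
    signal-independent noise to clean raw values given in DN.

    `gain` and `read_sigma` are hypothetical parameters for illustration.
    """
    rng = random.Random(seed)
    noisy = []
    for x in clean:
        electrons = max(x, 0.0) / gain                # expected photo-electrons
        shot = sample_poisson(electrons, rng) * gain  # Poisson count back to DN
        read = rng.gauss(0.0, read_sigma)             # placeholder: learned in the paper
        noisy.append(shot + read)
    return noisy
```

The variance of the shot term is `gain * x`, so it grows with the signal, which is what makes it signal-dependent; the placeholder read term has the same variance at every pixel value.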

### Echoes Beyond Points: Unleashing the Power of Raw Radar Data in Multi-modality Fusion

- **Authors:** Yang Liu, Feng Wang, Naiyan Wang, Zhaoxiang Zhang

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2307.16532

- **Pdf link:** https://arxiv.org/pdf/2307.16532

- **Abstract**

Radar is ubiquitous in autonomous driving systems due to its low cost and good adaptability to bad weather. Nevertheless, radar detection performance is usually inferior because its point cloud is sparse and inaccurate due to poor azimuth and elevation resolution. Moreover, point cloud generation algorithms already drop weak signals to reduce false targets, which may be suboptimal for deep fusion. In this paper, we propose a novel method named EchoFusion that skips the existing radar signal processing pipeline and instead incorporates the raw radar data with other sensors. Specifically, we first generate Bird's Eye View (BEV) queries and then take the corresponding spectrum features from radar to fuse with other sensors. With this approach, our method can utilize both rich, lossless distance and speed clues from radar echoes and rich semantic clues from images, allowing it to surpass all existing methods on the RADIal dataset and approach the performance of LiDAR. Code will be available upon acceptance.

## Keyword: raw image

There are no results.

New submissions for Tue, 1 Aug 23

## Keyword: events

### Seeing Behind Dynamic Occlusions with Event Cameras

- **Authors:** Rong Zou, Manasi Muglikar, Niko Messikommer, Davide Scaramuzza

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2307.15829

- **Pdf link:** https://arxiv.org/pdf/2307.15829

- **Abstract**

Unwanted camera occlusions, such as debris, dust, raindrops, and snow, can severely degrade the performance of computer-vision systems. Dynamic occlusions are particularly challenging because of their continuously changing pattern. Existing occlusion-removal methods use synthetic aperture imaging or image inpainting. However, they struggle with dynamic occlusions because they require multiple viewpoints or user-generated masks to hallucinate the background intensity. We propose a novel approach to reconstruct the background from a single viewpoint in the presence of dynamic occlusions. Our solution is the first to rely on combining a traditional camera with an event camera. When an occlusion moves across a background image, it causes intensity changes that trigger events. These events provide additional information on the relative intensity changes between foreground and background at a high temporal resolution, enabling a truer reconstruction of the background content. We present the first large-scale dataset consisting of synchronized images and event sequences to evaluate our approach. We show that our method outperforms image inpainting methods by 3 dB in terms of PSNR on our dataset.
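The approach depends on moving occluders changing pixel intensity and thereby triggering events. A minimal sketch of the idealized event-generation model commonly used for event cameras, assuming a single noise-free pixel and a hypothetical contrast threshold `threshold`: an event of polarity +1 or -1 fires each time the log intensity moves by at least the threshold away from the last reference level. Real sensors add noise, refractory periods, and per-pixel threshold variation, none of which is modeled here.

```python
import math
from typing import List, Sequence, Tuple

Event = Tuple[float, int]  # (timestamp, polarity)


def generate_events(times: Sequence[float], intensities: Sequence[float],
                    threshold: float = 0.2) -> List[Event]:
    """Emit (t, polarity) events for one pixel using the idealized
    log-intensity contrast-threshold model."""
    eps = 1e-6  # avoid log(0) for fully dark samples
    ref = math.log(intensities[0] + eps)
    events: List[Event] = []
    for t, value in zip(times[1:], intensities[1:]):
        level = math.log(value + eps)
        # A large change can cross the threshold several times: one event per crossing.
        while level - ref >= threshold:
            ref += threshold
            events.append((t, +1))
        while ref - level >= threshold:
            ref -= threshold
            events.append((t, -1))
    return events
```

For example, doubling the intensity with `threshold=0.2` yields three positive events, since ln 2 is about 0.69; the leftover change below the threshold is carried forward in `ref`.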

### CMDA: Cross-Modality Domain Adaptation for Nighttime Semantic Segmentation

- **Authors:** Ruihao Xia, Chaoqiang Zhao, Meng Zheng, Ziyan Wu, Qiyu Sun, Yang Tang

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2307.15942

- **Pdf link:** https://arxiv.org/pdf/2307.15942

- **Abstract**

Most nighttime semantic segmentation studies are based on domain adaptation approaches and image input. However, limited by the low dynamic range of conventional cameras, images fail to capture structural details and boundary information in low-light conditions. Event cameras, as a new form of vision sensors, are complementary to conventional cameras with their high dynamic range. To this end, we propose a novel unsupervised Cross-Modality Domain Adaptation (CMDA) framework to leverage multi-modality (Images and Events) information for nighttime semantic segmentation, with only labels on daytime images. In CMDA, we design the Image Motion-Extractor to extract motion information and the Image Content-Extractor to extract content information from images, in order to bridge the gap between different modalities (Images to Events) and domains (Day to Night). Besides, we introduce the first image-event nighttime semantic segmentation dataset. Extensive experiments on both the public image dataset and the proposed image-event dataset demonstrate the effectiveness of our proposed approach. We open-source our code, models, and dataset at https://github.com/XiaRho/CMDA.

### Fully $1\times1$ Convolutional Network for Lightweight Image Super-Resolution

- **Authors:** Gang Wu, Junjun Jiang, Kui Jiang, Xianming Liu

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV); Artificial Intelligence (cs.AI)

- **Arxiv link:** https://arxiv.org/abs/2307.16140

- **Pdf link:** https://arxiv.org/pdf/2307.16140

- **Abstract**

Deep models have achieved significant progress on single image super-resolution (SISR) tasks, in particular large models with large kernels ($3\times3$ or more). However, the heavy computational footprint of such models prevents their deployment in real-time, resource-constrained environments. Conversely, $1\times1$ convolutions bring substantial computational efficiency, but struggle to aggregate local spatial representations, an essential capability for SISR models. In response to this dichotomy, we propose to harmonize the merits of both $3\times3$ and $1\times1$ kernels and exploit their potential for lightweight SISR tasks. Specifically, we propose a simple yet effective fully $1\times1$ convolutional network, named Shift-Conv-based Network (SCNet). By incorporating a parameter-free spatial-shift operation, it equips the fully $1\times1$ convolutional network with powerful representation capability while maintaining impressive computational efficiency. Extensive experiments demonstrate that SCNets, despite their fully $1\times1$ convolutional structure, consistently match or even surpass the performance of existing lightweight SR models that employ regular convolutions.
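The central idea is that a parameter-free spatial shift lets a $1\times1$ convolution see neighboring pixels. A minimal pure-Python sketch, assuming a feature map stored as `[channel][row][col]` lists and channels split into five groups (shifted up, down, left, right, and left in place) with zero padding. This grouping is a common shift-conv design and an assumption here, not necessarily SCNet's exact scheme.

```python
from typing import List

Grid = List[List[float]]
FeatureMap = List[Grid]  # [channel][row][col]


def shift(grid: Grid, dy: int, dx: int) -> Grid:
    """Shift a 2-D grid by (dy, dx) with zero padding: out[y][x] = grid[y - dy][x - dx]."""
    h, w = len(grid), len(grid[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            sy, sx = y - dy, x - dx
            if 0 <= sy < h and 0 <= sx < w:
                out[y][x] = grid[sy][sx]
    return out


def spatial_shift(fmap: FeatureMap) -> FeatureMap:
    """Parameter-free shift: split channels into 5 groups and move each group
    one pixel up, down, left, or right; the last group stays in place."""
    offsets = [(-1, 0), (1, 0), (0, -1), (0, 1), (0, 0)]
    group = max(len(fmap) // 5, 1)
    shifted = []
    for i, grid in enumerate(fmap):
        dy, dx = offsets[min(i // group, 4)]
        shifted.append(shift(grid, dy, dx))
    return shifted


def conv1x1(fmap: FeatureMap, weights: List[List[float]]) -> FeatureMap:
    """1x1 convolution: each output pixel is a weighted sum over input channels
    at the same location. weights[out_channel][in_channel]."""
    h, w = len(fmap[0]), len(fmap[0][0])
    return [
        [[sum(wc * fmap[c][y][x] for c, wc in enumerate(row)) for x in range(w)]
         for y in range(h)]
        for row in weights
    ]
```

Feeding five copies of a centered impulse through `spatial_shift` and then `conv1x1` with all weights set to 1 produces a plus-shaped response: the $1\times1$ convolution now mixes each pixel with its four neighbors, recovering spatial aggregation without adding parameters.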

## Keyword: event camera

### Seeing Behind Dynamic Occlusions with Event Cameras

- **Authors:** Rong Zou, Manasi Muglikar, Niko Messikommer, Davide Scaramuzza

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2307.15829

- **Pdf link:** https://arxiv.org/pdf/2307.15829

- **Abstract**

Unwanted camera occlusions, such as debris, dust, raindrops, and snow, can severely degrade the performance of computer-vision systems. Dynamic occlusions are particularly challenging because of their continuously changing pattern. Existing occlusion-removal methods use synthetic aperture imaging or image inpainting. However, they struggle with dynamic occlusions because they require multiple viewpoints or user-generated masks to hallucinate the background intensity. We propose a novel approach to reconstruct the background from a single viewpoint in the presence of dynamic occlusions. Our solution is the first to rely on combining a traditional camera with an event camera. When an occlusion moves across a background image, it causes intensity changes that trigger events. These events provide additional information on the relative intensity changes between foreground and background at a high temporal resolution, enabling a truer reconstruction of the background content. We present the first large-scale dataset consisting of synchronized images and event sequences to evaluate our approach. We show that our method outperforms image inpainting methods by 3 dB in terms of PSNR on our dataset.

### CMDA: Cross-Modality Domain Adaptation for Nighttime Semantic Segmentation

- **Authors:** Ruihao Xia, Chaoqiang Zhao, Meng Zheng, Ziyan Wu, Qiyu Sun, Yang Tang

- **Subjects:** Computer Vision and Pattern Recognition (cs.CV)

- **Arxiv link:** https://arxiv.org/abs/2307.15942

- **Pdf link:** https://arxiv.org/pdf/2307.15942

- **Abstract**