| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 7 to 112) | repo_url (stringlengths, 36 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 744) | labels (stringlengths, 4 to 574) | body (stringlengths, 9 to 211k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96 to 211k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 188k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

189,677

| 6,800,668,521

|

IssuesEvent

|

2017-11-02 14:39:54

|

orange-alliance/the-orange-alliance

|

https://api.github.com/repos/orange-alliance/the-orange-alliance

|

opened

|

Allow API Key in the Header

|

enhancement High Priority

|

Allow the API Read Keys in the Header

DO NOT ADD TO THE WRITE PATHS

|

1.0

|

Allow API Key in the Header - Allow the API Read Keys in the Header

DO NOT ADD TO THE WRITE PATHS

|

non_process

|

allow api key in the header allow the api read keys in the header do not add to the write paths

| 0

|

3,090

| 6,106,524,094

|

IssuesEvent

|

2017-06-21 04:39:28

|

kerubistan/kerub

|

https://api.github.com/repos/kerubistan/kerub

|

closed

|

add a UI feature to add bmc info to the host

|

component:data processing component:ui enhancement

|

* type (ipmi/redfish)

* address

* authentication

|

1.0

|

add a UI feature to add bmc info to the host - * type (ipmi/redfish)

* address

* authentication

|

process

|

add a ui feature to add bmc info to the host type ipmi redfish address authentication

| 1

|

104,263

| 16,613,566,306

|

IssuesEvent

|

2021-06-02 14:15:35

|

Thanraj/linux-4.1.15

|

https://api.github.com/repos/Thanraj/linux-4.1.15

|

opened

|

CVE-2018-7191 (Medium) detected in linux-stable-rtv4.1.33, linuxlinux-4.1.17

|

security vulnerability

|

## CVE-2018-7191 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>linux-stable-rtv4.1.33</b>, <b>linuxlinux-4.1.17</b></p></summary>

<p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

In the tun subsystem in the Linux kernel before 4.13.14, dev_get_valid_name is not called before register_netdevice. This allows local users to cause a denial of service (NULL pointer dereference and panic) via an ioctl(TUNSETIFF) call with a dev name containing a / character. This is similar to CVE-2013-4343.

<p>Publish Date: 2019-05-17

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2018-7191>CVE-2018-7191</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2018-7191">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2018-7191</a></p>

<p>Release Date: 2019-05-17</p>

<p>Fix Resolution: v4.14-rc6</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2018-7191 (Medium) detected in linux-stable-rtv4.1.33, linuxlinux-4.1.17 - ## CVE-2018-7191 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>linux-stable-rtv4.1.33</b>, <b>linuxlinux-4.1.17</b></p></summary>

<p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

In the tun subsystem in the Linux kernel before 4.13.14, dev_get_valid_name is not called before register_netdevice. This allows local users to cause a denial of service (NULL pointer dereference and panic) via an ioctl(TUNSETIFF) call with a dev name containing a / character. This is similar to CVE-2013-4343.

<p>Publish Date: 2019-05-17

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2018-7191>CVE-2018-7191</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2018-7191">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2018-7191</a></p>

<p>Release Date: 2019-05-17</p>

<p>Fix Resolution: v4.14-rc6</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_process

|

cve medium detected in linux stable linuxlinux cve medium severity vulnerability vulnerable libraries linux stable linuxlinux vulnerability details in the tun subsystem in the linux kernel before dev get valid name is not called before register netdevice this allows local users to cause a denial of service null pointer dereference and panic via an ioctl tunsetiff call with a dev name containing a character this is similar to cve publish date url a href cvss score details base score metrics exploitability metrics attack vector local attack complexity low privileges required low user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution step up your open source security game with whitesource

| 0

|

19,867

| 26,278,141,214

|

IssuesEvent

|

2023-01-07 02:04:19

|

rusefi/rusefi_documentation

|

https://api.github.com/repos/rusefi/rusefi_documentation

|

closed

|

What's the plan for wiki2-human https://github.com/rusefi/rusefi/wiki

|

wiki location & process change

|

Once we move to https://wiki.rusefi.com/ do we want to remove or adjust https://github.com/rusefi/rusefi/wiki in order to reduce potential confusion between https://github.com/rusefi/rusefi/wiki and https://wiki.rusefi.com/?

To some extent, that depends on whether we can easily support both.

|

1.0

|

What's the plan for wiki2-human https://github.com/rusefi/rusefi/wiki - Once we move to https://wiki.rusefi.com/ do we want to remove or adjust https://github.com/rusefi/rusefi/wiki in order to reduce potential confusion between https://github.com/rusefi/rusefi/wiki and https://wiki.rusefi.com/?

To some extent, that depends on whether we can easily support both.

|

process

|

what s the plan for human once we move to do we want to remove or adjust in order to reduce potential confusion between and to some expend that depends on if we can easily support both or not

| 1

|

409,487

| 27,740,366,356

|

IssuesEvent

|

2023-03-15 13:58:56

|

eic/EICrecon

|

https://api.github.com/repos/eic/EICrecon

|

closed

|

jana-generate plugin quibbles

|

part:documentation

|

### Environment: (where does this bug occur, have you tried other environments)

- Which branch (often `main` for latest released): All

- Which version (or `HEAD` for the most recent on git):all

- Any specific OS or system where the issue occurs?no

- Any special versions of ROOT or Geant4?no

### Steps to reproduce: (give a step by step account of how to trigger the bug)

1. jana-generate.py Plugin

### Expected Result: (what do you expect when you execute the steps above)

I expect skeleton code containing only the lines of code that are needed (commented-out examples are fine).

### Actual Result: (what do you get when you execute the steps above)

I see a defined string "genfit", which may not be necessary, and a TFile/TDirectory, which may not be needed. The example histogram is actually there. Init does many things, including defining the histogram. In process, the filling of this histogram is commented out. I'd expect it to be either commented out everywhere or left in everywhere. An unobservant user could compile this skeleton and run it, and might be shocked to see it produce an empty ROOT file (but wait, the histogram is defined).

|

1.0

|

jana-generate plugin quibbles - ### Environment: (where does this bug occur, have you tried other environments)

- Which branch (often `main` for latest released): All

- Which version (or `HEAD` for the most recent on git):all

- Any specific OS or system where the issue occurs?no

- Any special versions of ROOT or Geant4?no

### Steps to reproduce: (give a step by step account of how to trigger the bug)

1. jana-generate.py Plugin

### Expected Result: (what do you expect when you execute the steps above)

I expect skeleton code containing only the lines of code that are needed (commented-out examples are fine).

### Actual Result: (what do you get when you execute the steps above)

I see a defined string "genfit", which may not be necessary, and a TFile/TDirectory, which may not be needed. The example histogram is actually there. Init does many things, including defining the histogram. In process, the filling of this histogram is commented out. I'd expect it to be either commented out everywhere or left in everywhere. An unobservant user could compile this skeleton and run it, and might be shocked to see it produce an empty ROOT file (but wait, the histogram is defined).

|

non_process

|

jana generate plugin quibbles environment where does this bug occur have you tried other environments which branch often main for latest released all which version or head for the most recent on git all any specific os or system where the issue occurs no any special versions of root or no steps to reproduce give a step by step account of how to trigger the bug jana generate py plugin expected result what do you expect when you execute the steps above i expect skeleton code with only lines of code that are needed in commented out examples are fine actual result what do you get when you execute the steps above i see a defined string genfit which ma not be necessary and a tfile tdirectory which may not e needed the example histogram is actually there init does many things including define the histogram in process the filling of this histogram is commented out i d expect either it is commented out everywhere or in everywhere you could have an unobservant user who compiles this skeleton and run it it might be shocking to to see it produce an empty root file but wait the histogram is defined

| 0

|

215,672

| 16,614,424,830

|

IssuesEvent

|

2021-06-02 15:03:59

|

RedisBloom/redisbloom-go

|

https://api.github.com/repos/RedisBloom/redisbloom-go

|

opened

|

Count-Min Sketch creation, ingestion, and querying command examples

|

documentation

|

Sample example that can be used as reference:

https://github.com/RedisBloom/redisbloom-go/blob/master/example_client_test.go#L11

Further reference for commands:

https://oss.redislabs.com/redisbloom/CountMinSketch_Commands/

To check the documentation changes locally:

```

# start http service

godoc -http=:6060

# open browser tab with

http://localhost:6060/pkg/github.com/RedisBloom/redisbloom-go/

```

|

1.0

|

Count-Min Sketch creation, ingestion, and querying command examples - Sample example that can be used as reference:

https://github.com/RedisBloom/redisbloom-go/blob/master/example_client_test.go#L11

Further reference for commands:

https://oss.redislabs.com/redisbloom/CountMinSketch_Commands/

To check the documentation changes locally:

```

# start http service

godoc -http=:6060

# open browser tab with

http://localhost:6060/pkg/github.com/RedisBloom/redisbloom-go/

```

|

non_process

|

count min sketch creation ingestion and querying command examples sample example that can be used as reference further reference for commands to check locally the documentation effects start http service godoc http open browser tab with

| 0

|

31,216

| 25,448,846,523

|

IssuesEvent

|

2022-11-24 08:53:28

|

dotnet/roslyn

|

https://api.github.com/repos/dotnet/roslyn

|

closed

|

[Automated] PRs inserted in VS build main-33123.395

|

Area-Infrastructure untriaged vs-insertion

|

[View Complete Diff of Changes](https://github.com/dotnet/roslyn/compare/3f65b818a94d74f2230bda40382ea755702fe674...413319eb370210c93296ea90aa73987e41ee8521?w=1)

- [Add asserts for debug only test failures and fix tests (65565)](https://github.com/dotnet/roslyn/pull/65565)

- [Make sure the container syntax node is not GlobalStatements (65570)](https://github.com/dotnet/roslyn/pull/65570)

- [Increase CodeCleanUp test value (65575)](https://github.com/dotnet/roslyn/pull/65575)

- [Move to multi-column primary keys for our sqlite database. (65553)](https://github.com/dotnet/roslyn/pull/65553)

- [Update to latest version of protocol with new pull diagnostic types (65514)](https://github.com/dotnet/roslyn/pull/65514)

- [Semantic Snippets - Fix snippet priority in completion list (65103)](https://github.com/dotnet/roslyn/pull/65103)

- [[EnC] Allow reordering of top level statements (65560)](https://github.com/dotnet/roslyn/pull/65560)

- [Fix handling blocks in top level code. (65557)](https://github.com/dotnet/roslyn/pull/65557)

- [Add additional cases whre 'use coalesce expression' can simplify code. (65371)](https://github.com/dotnet/roslyn/pull/65371)

- [Fix MEF composition used for the LSIF generator (65512)](https://github.com/dotnet/roslyn/pull/65512)

- [Results of running the arrow-placement analyzer on roslyn (65475)](https://github.com/dotnet/roslyn/pull/65475)

- [Fix null ref in add-import (65549)](https://github.com/dotnet/roslyn/pull/65549)

- [Simplify how options are checked in the tagger. (65543)](https://github.com/dotnet/roslyn/pull/65543)

- [Fix spelling mistake (65541)](https://github.com/dotnet/roslyn/pull/65541)

- [Add experimental formatting option to control placement of `=>` (65476)](https://github.com/dotnet/roslyn/pull/65476)

- [[Port] Gracefully handle additional locations in DiagnosticData.Create (65532)](https://github.com/dotnet/roslyn/pull/65532)

- [Delete duplicate "SystemThreadingChannelsVersion" (65539)](https://github.com/dotnet/roslyn/pull/65539)

- [Fix bug in partial solutions after a document was removed and added (65349)](https://github.com/dotnet/roslyn/pull/65349)

- [Fix a NullReferenceException in Signature help (65372)](https://github.com/dotnet/roslyn/pull/65372)

- [Avoid ordering dependency in active statement map (65525)](https://github.com/dotnet/roslyn/pull/65525)

- [Ensure lvalue struct receivers are not copied by value due to interpolated string handler rewrite (65505)](https://github.com/dotnet/roslyn/pull/65505)

- [Do not import the VSWorkspace during Roslyn package initialization (65492)](https://github.com/dotnet/roslyn/pull/65492)

- [Optimize codegen for tuple swap scenarios (65327)](https://github.com/dotnet/roslyn/pull/65327)

- [Improve `NormalizeWhitespace` for object initializers (65249)](https://github.com/dotnet/roslyn/pull/65249)

- [Move context-sensitive parsing to context-free parsing. (65480)](https://github.com/dotnet/roslyn/pull/65480)

- [Add A/B test to allow us to turn off background compilation/parsing (65489)](https://github.com/dotnet/roslyn/pull/65489)

- [Run the conditional-token-placement fixer on roslyn (65469)](https://github.com/dotnet/roslyn/pull/65469)

- [Check type parameter index bounds when parsing `DocumentationCommentId` (65457)](https://github.com/dotnet/roslyn/pull/65457)

- [Convert parser warning into a binding warning. (65440)](https://github.com/dotnet/roslyn/pull/65440)

- [View Call Hierarchy - fix thread block (65452)](https://github.com/dotnet/roslyn/pull/65452)

- [Enhance rule metadata and suppression info in SARIF V2 errorlog (64277)](https://github.com/dotnet/roslyn/pull/64277)

- [Move IDS_FeatureXXX checks out of the parser (65413)](https://github.com/dotnet/roslyn/pull/65413)

- [Warn for unused parameters in source (65466)](https://github.com/dotnet/roslyn/pull/65466)

- [Pass in HostServices when creating the LSP server (65384)](https://github.com/dotnet/roslyn/pull/65384)

|

1.0

|

[Automated] PRs inserted in VS build main-33123.395 - [View Complete Diff of Changes](https://github.com/dotnet/roslyn/compare/3f65b818a94d74f2230bda40382ea755702fe674...413319eb370210c93296ea90aa73987e41ee8521?w=1)

- [Add asserts for debug only test failures and fix tests (65565)](https://github.com/dotnet/roslyn/pull/65565)

- [Make sure the container syntax node is not GlobalStatements (65570)](https://github.com/dotnet/roslyn/pull/65570)

- [Increase CodeCleanUp test value (65575)](https://github.com/dotnet/roslyn/pull/65575)

- [Move to multi-column primary keys for our sqlite database. (65553)](https://github.com/dotnet/roslyn/pull/65553)

- [Update to latest version of protocol with new pull diagnostic types (65514)](https://github.com/dotnet/roslyn/pull/65514)

- [Semantic Snippets - Fix snippet priority in completion list (65103)](https://github.com/dotnet/roslyn/pull/65103)

- [[EnC] Allow reordering of top level statements (65560)](https://github.com/dotnet/roslyn/pull/65560)

- [Fix handling blocks in top level code. (65557)](https://github.com/dotnet/roslyn/pull/65557)

- [Add additional cases whre 'use coalesce expression' can simplify code. (65371)](https://github.com/dotnet/roslyn/pull/65371)

- [Fix MEF composition used for the LSIF generator (65512)](https://github.com/dotnet/roslyn/pull/65512)

- [Results of running the arrow-placement analyzer on roslyn (65475)](https://github.com/dotnet/roslyn/pull/65475)

- [Fix null ref in add-import (65549)](https://github.com/dotnet/roslyn/pull/65549)

- [Simplify how options are checked in the tagger. (65543)](https://github.com/dotnet/roslyn/pull/65543)

- [Fix spelling mistake (65541)](https://github.com/dotnet/roslyn/pull/65541)

- [Add experimental formatting option to control placement of `=>` (65476)](https://github.com/dotnet/roslyn/pull/65476)

- [[Port] Gracefully handle additional locations in DiagnosticData.Create (65532)](https://github.com/dotnet/roslyn/pull/65532)

- [Delete duplicate "SystemThreadingChannelsVersion" (65539)](https://github.com/dotnet/roslyn/pull/65539)

- [Fix bug in partial solutions after a document was removed and added (65349)](https://github.com/dotnet/roslyn/pull/65349)

- [Fix a NullReferenceException in Signature help (65372)](https://github.com/dotnet/roslyn/pull/65372)

- [Avoid ordering dependency in active statement map (65525)](https://github.com/dotnet/roslyn/pull/65525)

- [Ensure lvalue struct receivers are not copied by value due to interpolated string handler rewrite (65505)](https://github.com/dotnet/roslyn/pull/65505)

- [Do not import the VSWorkspace during Roslyn package initialization (65492)](https://github.com/dotnet/roslyn/pull/65492)

- [Optimize codegen for tuple swap scenarios (65327)](https://github.com/dotnet/roslyn/pull/65327)

- [Improve `NormalizeWhitespace` for object initializers (65249)](https://github.com/dotnet/roslyn/pull/65249)

- [Move context-sensitive parsing to context-free parsing. (65480)](https://github.com/dotnet/roslyn/pull/65480)

- [Add A/B test to allow us to turn off background compilation/parsing (65489)](https://github.com/dotnet/roslyn/pull/65489)

- [Run the conditional-token-placement fixer on roslyn (65469)](https://github.com/dotnet/roslyn/pull/65469)

- [Check type parameter index bounds when parsing `DocumentationCommentId` (65457)](https://github.com/dotnet/roslyn/pull/65457)

- [Convert parser warning into a binding warning. (65440)](https://github.com/dotnet/roslyn/pull/65440)

- [View Call Hierarchy - fix thread block (65452)](https://github.com/dotnet/roslyn/pull/65452)

- [Enhance rule metadata and suppression info in SARIF V2 errorlog (64277)](https://github.com/dotnet/roslyn/pull/64277)

- [Move IDS_FeatureXXX checks out of the parser (65413)](https://github.com/dotnet/roslyn/pull/65413)

- [Warn for unused parameters in source (65466)](https://github.com/dotnet/roslyn/pull/65466)

- [Pass in HostServices when creating the LSP server (65384)](https://github.com/dotnet/roslyn/pull/65384)

|

non_process

|

prs inserted in vs build main allow reordering of top level statements gracefully handle additional locations in diagnosticdata create

| 0

|

18,637

| 25,953,548,394

|

IssuesEvent

|

2022-12-17 23:12:57

|

ikemen-engine/Ikemen-GO

|

https://api.github.com/repos/ikemen-engine/Ikemen-GO

|

closed

|

Different handling of stage zooming

|

bug compatibility

|

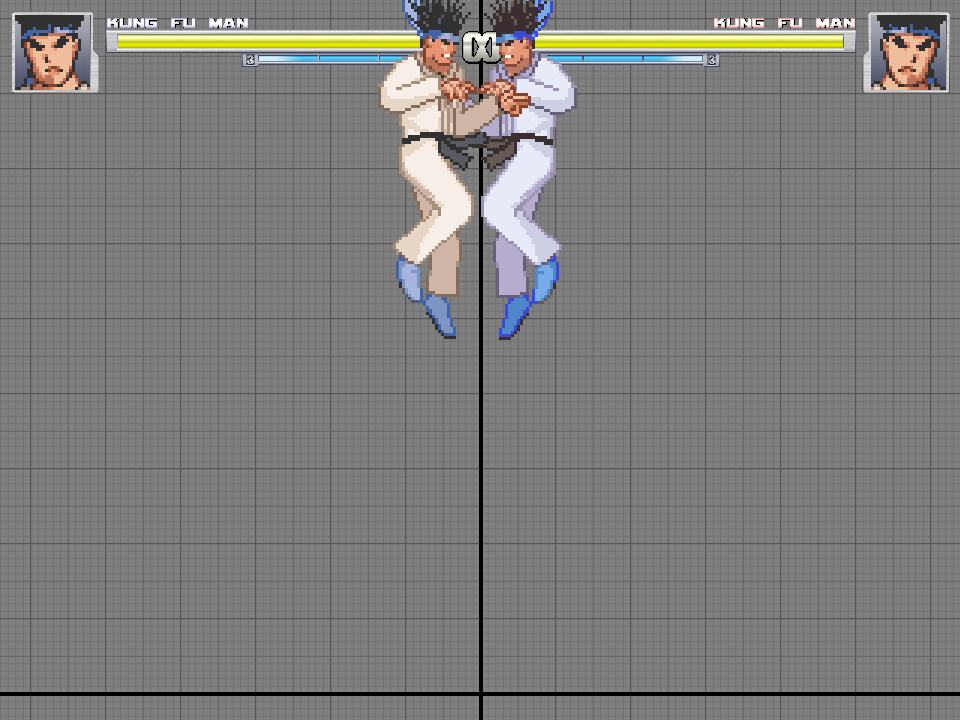

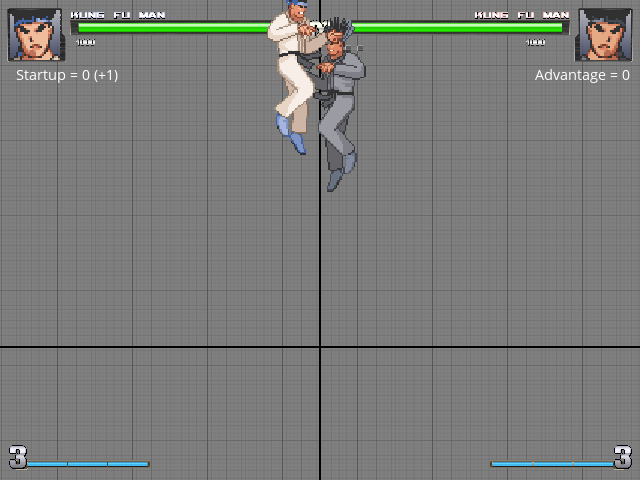

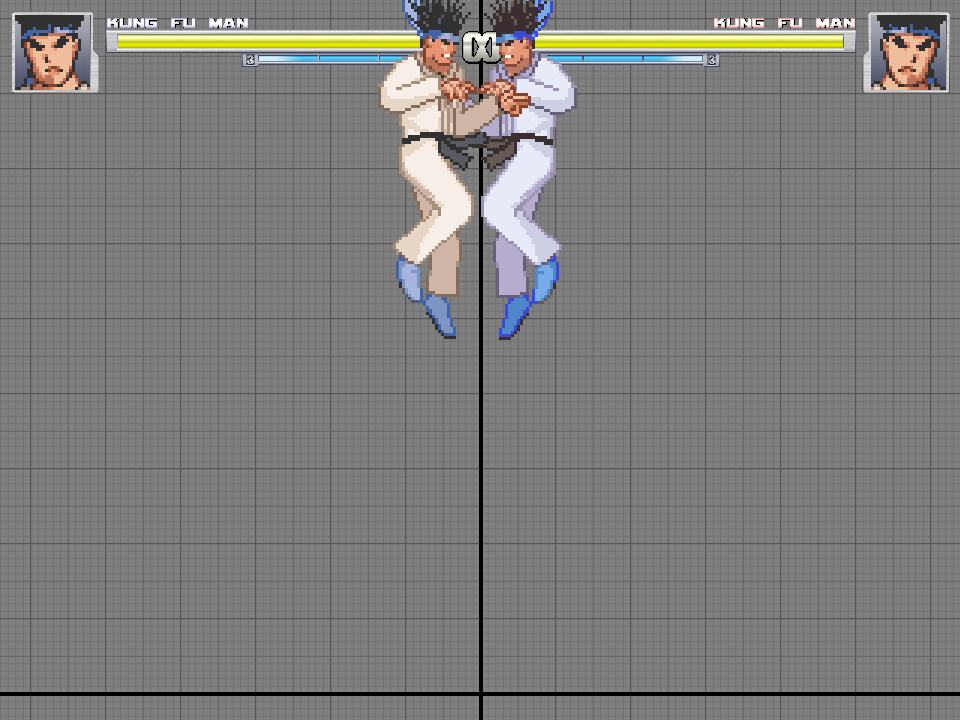

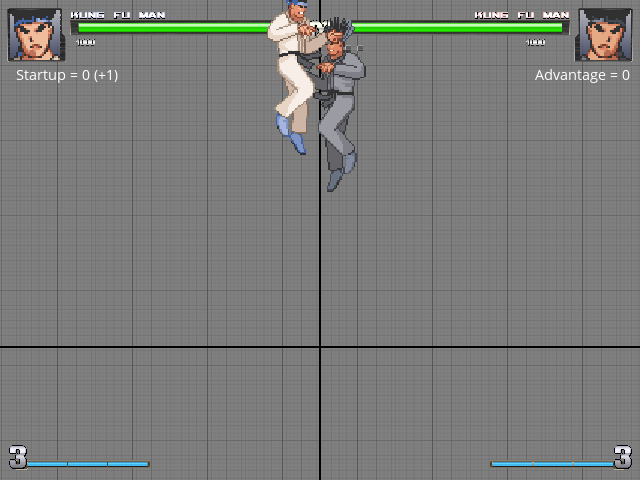

I've just noticed that stages that use tensionhigh and tensionlow have a different zoom behavior compared to Mugen 1.1. In Mugen, the stage will only zoom out if the characters are far apart, whereas in Ikemen it zooms out regardless of that.

Here's Mugen 1.1:

The characters are high above but the camera doesn't zoom out, because they are close to each other.

Ikemen GO (current repo):

Same scenario but the camera zooms out.

Here's the stage used:

[stage.zip](https://github.com/ikemen-engine/Ikemen-GO/files/9946463/stage.zip)

|

True

|

Different handling of stage zooming - I've just noticed that stages that use tensionhigh and tensionlow have a different zoom behavior compared to Mugen 1.1. In Mugen, the stage will only zoom out if the characters are far apart, whereas in Ikemen it zooms out regardless of that.

Here's Mugen 1.1:

The characters are high above but the camera doesn't zoom out, because they are close to each other.

Ikemen GO (current repo):

Same scenario but the camera zooms out.

Here's the stage used:

[stage.zip](https://github.com/ikemen-engine/Ikemen-GO/files/9946463/stage.zip)

|

non_process

|

different handling of stage zooming i ve just noticed that stages that use tensionhigh and tensionlow have a different zoom behavior compared to mugen in mugen the stage will only zoom out if the characters are far apart whereas in ikemen it zooms out regardless of that here s mugen the characters are high above but the camera doesn t zoom out because they are close to each other ikemen go current repo same scenario but the camera zooms out here s the stage used

| 0

|

17,596

| 23,424,464,549

|

IssuesEvent

|

2022-08-14 07:08:36

|

Battle-s/battle-school-backend

|

https://api.github.com/repos/Battle-s/battle-school-backend

|

closed

|

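[FEAT] Create school entity

|

feature :computer: processing :hourglass_flowing_sand:

|

## Description

## Checklist

- [ ] Design and write the core school-related logic

- [ ] Apply to the sign-up logic

## References

## Related discussion

|

1.0

|

[FEAT] Create school entity - ## Description

## Checklist

- [ ] Design and write the core school-related logic

- [ ] Apply to the sign-up logic

## References

## Related discussion

|

process

|

create school entity description checklist design and write the core school related logic apply to the sign up logic references related discussion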

| 1

|

63,639

| 12,359,948,475

|

IssuesEvent

|

2020-05-17 13:16:37

|

home-assistant/brands

|

https://api.github.com/repos/home-assistant/brands

|

closed

|

Foursquare is missing brand images

|

domain-missing has-codeowner

|

## The problem

The Foursquare integration does not have brand images in

this repository.

We recently started this Brands repository, to create a centralized storage of all brand-related images. These images are used on our website and the Home Assistant frontend.

The following images are missing and would ideally be added:

- `src/foursquare/icon.png`

- `src/foursquare/logo.png`

- `src/foursquare/icon@2x.png`

- `src/foursquare/logo@2x.png`

For image specifications and requirements, please see [README.md](https://github.com/home-assistant/brands/blob/master/README.md).

## Updating the documentation repository

Our documentation repository already has a logo for this integration, however, it does not meet the image requirements of this new Brands repository.

If adding images to this repository, please open up a PR to the documentation repository as well, removing the `logo: foursquare.png` line from this file:

<https://github.com/home-assistant/home-assistant.io/blob/current/source/_integrations/foursquare.markdown>

**Note**: The documentation PR needs to be opened against the `current` branch.

**Note2**: Please leave the actual logo file in the documentation repository. It will be cleaned up differently.

## Additional information

For more information about this repository, read the [README.md](https://github.com/home-assistant/brands/blob/master/README.md) file of this repository. It contains information on how this repository works, and image specification and requirements.

## Codeowner mention

Hi there, @robbiet480! Mind taking a look at this issue as it is with an integration (foursquare) you are listed as a [codeowner](https://github.com/home-assistant/core/blob/dev/homeassistant/components/foursquare/manifest.json) for? Thanks!

Resolving this issue is not limited to codeowners! If you want to help us out, feel free to resolve this issue! Thanks already!

|

1.0

|

Foursquare is missing brand images -

## The problem

The Foursquare integration does not have brand images in

this repository.

We recently started this Brands repository, to create a centralized storage of all brand-related images. These images are used on our website and the Home Assistant frontend.

The following images are missing and would ideally be added:

- `src/foursquare/icon.png`

- `src/foursquare/logo.png`

- `src/foursquare/icon@2x.png`

- `src/foursquare/logo@2x.png`

For image specifications and requirements, please see [README.md](https://github.com/home-assistant/brands/blob/master/README.md).

## Updating the documentation repository

Our documentation repository already has a logo for this integration, however, it does not meet the image requirements of this new Brands repository.

If adding images to this repository, please open up a PR to the documentation repository as well, removing the `logo: foursquare.png` line from this file:

<https://github.com/home-assistant/home-assistant.io/blob/current/source/_integrations/foursquare.markdown>

**Note**: The documentation PR needs to be opened against the `current` branch.

**Note2**: Please leave the actual logo file in the documentation repository. It will be cleaned up differently.

## Additional information

For more information about this repository, read the [README.md](https://github.com/home-assistant/brands/blob/master/README.md) file of this repository. It contains information on how this repository works, and image specification and requirements.

## Codeowner mention

Hi there, @robbiet480! Mind taking a look at this issue as it is with an integration (foursquare) you are listed as a [codeowner](https://github.com/home-assistant/core/blob/dev/homeassistant/components/foursquare/manifest.json) for? Thanks!

Resolving this issue is not limited to codeowners! If you want to help us out, feel free to resolve this issue! Thanks already!

|

non_process

|

foursquare is missing brand images the problem the foursquare integration does not have brand images in this repository we recently started this brands repository to create a centralized storage of all brand related images these images are used on our website and the home assistant frontend the following images are missing and would ideally be added src foursquare icon png src foursquare logo png src foursquare icon png src foursquare logo png for image specifications and requirements please see updating the documentation repository our documentation repository already has a logo for this integration however it does not meet the image requirements of this new brands repository if adding images to this repository please open up a pr to the documentation repository as well removing the logo foursquare png line from this file note the documentation pr needs to be opened against the current branch please leave the actual logo file in the documentation repository it will be cleaned up differently additional information for more information about this repository read the file of this repository it contains information on how this repository works and image specification and requirements codeowner mention hi there mind taking a look at this issue as it is with an integration foursquare you are listed as a for thanks resolving this issue is not limited to codeowners if you want to help us out feel free to resolve this issue thanks already

| 0

|

275,974

| 8,582,861,629

|

IssuesEvent

|

2018-11-13 18:06:02

|

sunjun-group/Ziyuan

|

https://api.github.com/repos/sunjun-group/Ziyuan

|

opened

|

Program Stuck

|

high priority

|

Hi Lyly,

Please check this: the program gets stuck on the following method:

org.apache.commons.math.analysis.integration.SimpsonIntegrator.integrate.70

|

1.0

|

Program Stuck - Hi Lyly,

Please check this: the program gets stuck on the following method:

org.apache.commons.math.analysis.integration.SimpsonIntegrator.integrate.70

|

non_process

|

program stuck hi lyly please check this the program stuck for the following method org apache commons math analysis integration simpsonintegrator integrate

| 0

|

435,139

| 30,487,264,873

|

IssuesEvent

|

2023-07-18 04:03:18

|

wehs7661/ensemble_md

|

https://api.github.com/repos/wehs7661/ensemble_md

|

closed

|

Enable coordinate modification in the EEXE framework

|

documentation enhancement

|

To expand the usage of EEXE, we want to enable coordinate manipulation at exchanges between replicas, which is most likely to be useful for estimating the free energy of multiple serial mutations using expanded ensemble simulations, such as mutating methane into ethane and then propane.

For example, we can have an EEXE simulation composed of two replicas mutating methane into ethane and ethane into propane, respectively, and only exchange the coordinates between replicas when they are at the end states, i.e., replica 1 being at λ=1 and replica 2 being at λ=0. In this example, we will have the following end states:

- Replica 1: Mutating methane to ethane

- State a: At λ = 0, we have methane with a dummy methyl group.

- State b: At λ = 1, we have ethane with a dummy H atom.

- Replica 2: Mutating ethane to propane

- State c: At λ = 0, we have ethane with a dummy methyl group.

- State d: At λ = 1, we have propane with a dummy H atom.

At exchanges, we will have two output `gro` files, one each from replicas 1 and 2, namely `rep1.gro` (state b, ethane with a dummy H atom at the first carbon) and `rep2.gro` (state c, ethane with a dummy methyl group at the second carbon).

Note that in EEXE, each replica is bound to the transformation for its assigned alchemical range. In our case, this means that replica 1 will only be responsible for the mutation of a methane to an ethane, and replica 2 will only be responsible for mutating an ethane to a propane. Normally, we would just swap the `gro` files as is, so in the next iteration, replica 1 will be initialized with `rep2.gro` and sample the intermediate states along the mutation path between methane and ethane. However, `rep2.gro` is an ethane with a dummy methyl group, not an ethane with a dummy H atom that we need for such sampling. The same thing would happen when trying to initialize the next iteration of replica 2 using `rep1.gro`.

To address this issue, we can modify `rep2.gro` as follows and use it to proceed to the next iteration of replica 1:

- Remove the dummy methyl group at the second carbon atom from `rep2.gro`.

- Attach a dummy H atom to the first carbon atom in `rep2.gro`. Specifically, the coordinate of the dummy H atom can just be the coordinates of the second carbon atom. There won't be clashes since the dummy H atoms have no interactions with the rest of the system.

Similarly, we can modify `rep1.gro` as follows for the next iteration of replica 2:

- Remove the dummy H atom at the first carbon from `rep1.gro`.

- Attach a dummy methyl group at the second carbon atom in `rep1.gro`. Specifically, we can take the internal coordinates of the methyl group in `rep2.gro`, treat the group as rigid, rotate, and attach the group to the second carbon atom in `rep1.gro`.

Importantly, we can make the two modified `gro` files have the same potential energy, so the proposed exchange will always be adopted.

Here, we are not going to implement coordinate-manipulation functions in EEXE itself. Instead, we will modify the CLI `run_EEXE` (and the function `run_grompp` in `ensemble_EXE.py`, if necessary) so that it can call a user-defined coordinate-manipulation function from an input Python module.
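One possible way to call a function from a user-supplied module is sketched below. This is only an illustration under stated assumptions: the helper name `load_user_function` and its two arguments (a path to the module and the name of the function in it) are hypothetical and are not part of the existing `run_EEXE` interface.

```python
import importlib.util
from pathlib import Path


def load_user_function(module_path, function_name):
    """Load and return `function_name` from the Python file at `module_path`.

    Hypothetical helper: neither the name nor the signature comes from EEXE.
    """
    path = Path(module_path)
    spec = importlib.util.spec_from_file_location(path.stem, path)
    if spec is None or spec.loader is None:
        raise ImportError(f"Cannot load a module from {path}")
    module = importlib.util.module_from_spec(spec)
    spec.loader.exec_module(module)  # runs the user's module so its functions are defined
    func = getattr(module, function_name, None)
    if not callable(func):
        raise AttributeError(
            f"{path} does not define a callable named {function_name!r}"
        )
    return func
```

The CLI could then call the returned function on the two `gro` files at each exchange, leaving the details of the coordinate manipulation entirely to the user.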

|

1.0

|

Enable coordinate modification in the EEXE framework - To expand the usage of EEXE, we want to enable coordinate manipulation at exchanges between replicas, which is most likely to be useful for estimating the free energy of multiple serial mutations using expanded ensemble simulations, such as mutating methane into ethane and then propane.

For example, we can have an EEXE simulation composed of two replicas mutating methane into ethane and ethane into propane, respectively, and only exchange the coordinates between replicas when they are at the end states, i.e., replica 1 being at λ=1 and replica 2 being at λ=0. In this example, we will have the following end states:

- Replica 1: Mutating methane to ethane

- State a: At λ = 0, we have methane with a dummy methyl group.

- State b: At λ = 1, we have ethane with a dummy H atom.

- Replica 2: Mutating ethane to propane

- State c: At λ = 0, we have ethane with a dummy methyl group.

- State d: At λ = 1, we have propane with a dummy H atom.

At exchanges, we will have two output `gro` files, one from each replica: `rep1.gro` (state b, ethane with a dummy H atom at the first carbon) and `rep2.gro` (state c, ethane with a dummy methyl group at the second carbon).

Note that in EEXE, each replica is bound to the transformation for its assigned alchemical range. In our case, this means that replica 1 will only be responsible for the mutation of a methane to an ethane, and replica 2 will only be responsible for mutating an ethane to a propane. Normally, we would just swap the `gro` files as is, so in the next iteration, replica 1 will be initialized with `rep2.gro` and sample the intermediate states along the mutation path between methane and ethane. However, `rep2.gro` is an ethane with a dummy methyl group, not an ethane with a dummy H atom that we need for such sampling. The same thing would happen when trying to initialize the next iteration of replica 2 using `rep1.gro`.

To address this issue, we can modify `rep2.gro` as follows and use it to proceed to the next iteration of replica 1:

- Remove the dummy methyl group at the second carbon atom from `rep2.gro`.

- Attach a dummy H atom to the first carbon atom in `rep2.gro`. Specifically, the coordinate of the dummy H atom can just be the coordinates of the second carbon atom. There won't be clashes since the dummy H atoms have no interactions with the rest of the system.

Similarly, we can modify `rep1.gro` as follows for the next iteration of replica 2:

- Remove the dummy H atom at the first carbon from `rep1.gro`.

- Attach a dummy methyl group at the second carbon atom in `rep1.gro`. Specifically, we can take the internal coordinates of the methyl group in `rep2.gro`, treat the group as rigid, rotate, and attach the group to the second carbon atom in `rep1.gro`.

Importantly, we can make the two modified `gro` files have the same potential energy, so the proposed exchange will always be adopted.

Here, we are not going to implement coordinate-manipulation functions in EEXE itself. Instead, we will modify the CLI `run_EEXE` (and the function `run_grompp` in `ensemble_EXE.py`, if necessary) so that it can call a user-defined coordinate-manipulation function from an input Python module.

|

non_process

|

enable coordinate modification in the eexe framework to expand the usage of eexe we want to enable coordinate manipulation at exchanges between replicas which is most likely to be useful for estimating the free energy of multiple serial mutations using expanded ensemble simulations such as mutating methane into ethane and then propane for example we can have an eexe simulation composed of two replicas mutating methane into ethane and ethane into propane respectively and only exchange the coordinates between replicas when they are at the end states i e replica being at λ and replica being at λ in this example we will have the following end states replica mutating methane to ethane state a at λ we have methane with a dummy methyl group state b at λ we have ethane with a dummy h atom replica mutating ethane to propane state c at λ we have ethane with a dummy methyl group state d at λ we have propane with a dummy h atom at exchanges we will have two output gro files respectively from replicas and namely gro state b ethane with a dummy h atom at the first carbon and gro state c ethane with a dummy ethyl group at the second carbon note that in eexe each replica is bound to the transformation for its assigned alchemical range in our case this means that replica will only be responsible for the mutation of a methane to an ethane and replica will only be responsible for mutating an ethane to a propane normally we would just swap the gro files as is so in the next iteration replica will be initialized with gro and sample the intermediate states along the mutation path between methane and ethane however gro is an ethane with a dummy methyl group not an ethane with a dummy h atom that we need for such sampling the same thing would happen when trying to initialize the next iteration of replica using gro to address this issue we can modify gro as follows and use it to proceed to the next iteration of replica remove the dummy methyl group at the second carbon atom from gro attach 
a dummy h atom to the first carbon atom in gro specifically the coordinate of the dummy h atom can just be the coordinates of the second carbon atom there won t be clashes since the dummy h atoms have no interactions with the rest of the system similarly we can modify gro as follows for the next iteration of replica remove the dummy h atom at the first carbon from gro attach a dummy methyl group at the second carbon atom in gro specifically we can take the internal coordinates of the methyl group in gro treat the group as rigid rotate and attach the group to the second carbon atom in gro importantly we can make the two modified gro files have the same potential energy so the proposed exchange will always be adopted here we are not going to implement functions for coordinate manipulation in eexe but modify the cli run eexe and the function run grompp in ensemble exe py if necessary to allow the flexibility of calling a user defined function for coordinate manipulation from an input python module where the user defined function is defined

| 0

|

4,858

| 7,746,517,683

|

IssuesEvent

|

2018-05-29 22:02:05

|

AppFolioOnboarding/image-sharer-ChaoHuangAtAppfolio

|

https://api.github.com/repos/AppFolioOnboarding/image-sharer-ChaoHuangAtAppfolio

|

closed

|

Image Index

|

in process

|

#### As a user I want the homepage to display all saved images.

__Story__:

Now that we are saving images for our users, we want them to be able to view

the list of images in the system. You might be thinking, "what if my users only

want to view images of cats", or "what if my users only want to see the images

they uploaded". Those are great questions. Maybe you will have the opportunity

to add those features in a future story. For now, we will keep it simple and

quickly deliver a little more value to our users.

__Acceptance criteria__:

- [ ] New images that are added show up on the homepage.

- [ ] These images are persisted if the browser is closed or even if the

server is restarted.

- [ ] Images are not displayed wider than 400px.

- [ ] Newest images appear first.

__Dependencies__:

- Save Image Link

|

1.0

|

Image Index - #### As a user I want the homepage to display all saved images.

__Story__:

Now that we are saving images for our users, we want them to be able to view

the list of images in the system. You might be thinking, "what if my users only

want to view images of cats", or "what if my users only want to see the images

they uploaded". Those are great questions. Maybe you will have the opportunity

to add those features in a future story. For now, we will keep it simple and

quickly deliver a little more value to our users.

__Acceptance criteria__:

- [ ] New images that are added show up on the homepage.

- [ ] These images are persisted if the browser is closed or even if the

server is restarted.

- [ ] Images are not displayed wider than 400px.

- [ ] Newest images appear first.

__Dependencies__:

- Save Image Link

|

process

|

image index as a user i want the homepage to display all saved images story now that we are saving images for our users we want them to be able to view the list of images in the system you might be thinking what if my users only want to view images of cats or what if my users only want to see the images they uploaded those are great questions maybe you will have the opportunity to add those features in a future story for now we will keep it simple and quickly deliver a little more value to our users acceptance criteria new images that are added show up on the homepage these images are persisted if the browser is closed or even if the server is restarted images are not displayed wider than newest images appear first dependencies save image link

| 1

|

268,113

| 8,403,618,993

|

IssuesEvent

|

2018-10-11 10:17:23

|

webcompat/web-bugs

|

https://api.github.com/repos/webcompat/web-bugs

|

closed

|

gtc.lm.com - desktop site instead of mobile site

|

browser-firefox priority-normal

|

<!-- @browser: Firefox 63.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 6.3; Win64; x64; rv:63.0) Gecko/20100101 Firefox/63.0 -->

<!-- @reported_with: desktop-reporter -->

**URL**: https://gtc.lm.com/LM/Security/login?Jwt=/rL3cPEzRImDYcQqSxP5+j+sCq7T21DltWy8WKZq7E397TBPTruGYSiGZ86MQ2y8T40BPVJZOw4UrLX5ZgHQQwlIvw19HzJt5zhn2LgtEg7qdvPurNvUztiUlXoCZWyJNfToeEUKLn0Jxw0WqollxFKwjSKLR3N/nYJFT0uu1CGlSG+zo/U1INeJcCn5S/rbtpMy7+kZ4evWiW29pa3fewZgyHNkPS4hGcEEGYG4uUcNGRBUz7xzXCP2Sfelz5dRFM3KqR+MVBjgkd+Es2R2TwBW2cWaTwSi5TzktPwAC9fQ14cycs0N0IoWDxC3QyzPsqhN5lcM0Hy8bV1+qEFbdOlMghyeeqdB0I/V/iVEFV8=

**Browser / Version**: Firefox 63.0

**Operating System**: Windows 8.1

**Tested Another Browser**: Yes

**Problem type**: Desktop site instead of mobile site

**Description**: It's not redirecting correctly

**Steps to Reproduce**:

I tried several servers

<details>

<summary>Browser Configuration</summary>

<ul>

<li>mixed active content blocked: false</li><li>buildID: 20181004174654</li><li>tracking content blocked: false</li><li>gfx.webrender.blob-images: true</li><li>gfx.webrender.all: false</li><li>mixed passive content blocked: false</li><li>gfx.webrender.enabled: false</li><li>image.mem.shared: true</li><li>channel: beta</li>

</ul>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_

|

1.0

|

gtc.lm.com - desktop site instead of mobile site - <!-- @browser: Firefox 63.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 6.3; Win64; x64; rv:63.0) Gecko/20100101 Firefox/63.0 -->

<!-- @reported_with: desktop-reporter -->

**URL**: https://gtc.lm.com/LM/Security/login?Jwt=/rL3cPEzRImDYcQqSxP5+j+sCq7T21DltWy8WKZq7E397TBPTruGYSiGZ86MQ2y8T40BPVJZOw4UrLX5ZgHQQwlIvw19HzJt5zhn2LgtEg7qdvPurNvUztiUlXoCZWyJNfToeEUKLn0Jxw0WqollxFKwjSKLR3N/nYJFT0uu1CGlSG+zo/U1INeJcCn5S/rbtpMy7+kZ4evWiW29pa3fewZgyHNkPS4hGcEEGYG4uUcNGRBUz7xzXCP2Sfelz5dRFM3KqR+MVBjgkd+Es2R2TwBW2cWaTwSi5TzktPwAC9fQ14cycs0N0IoWDxC3QyzPsqhN5lcM0Hy8bV1+qEFbdOlMghyeeqdB0I/V/iVEFV8=

**Browser / Version**: Firefox 63.0

**Operating System**: Windows 8.1

**Tested Another Browser**: Yes

**Problem type**: Desktop site instead of mobile site

**Description**: It's not redirecting correctly

**Steps to Reproduce**:

I tried several servers

<details>

<summary>Browser Configuration</summary>

<ul>

<li>mixed active content blocked: false</li><li>buildID: 20181004174654</li><li>tracking content blocked: false</li><li>gfx.webrender.blob-images: true</li><li>gfx.webrender.all: false</li><li>mixed passive content blocked: false</li><li>gfx.webrender.enabled: false</li><li>image.mem.shared: true</li><li>channel: beta</li>

</ul>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_

|

non_process

|

gtc lm com desktop site instead of mobile site url browser version firefox operating system windows tested another browser yes problem type desktop site instead of mobile site description its not redirecting right steps to reproduce i try several severs browser configuration mixed active content blocked false buildid tracking content blocked false gfx webrender blob images true gfx webrender all false mixed passive content blocked false gfx webrender enabled false image mem shared true channel beta from with ❤️

| 0

|

20,956

| 27,817,074,295

|

IssuesEvent

|

2023-03-18 20:11:03

|

cse442-at-ub/project_s23-cinco

|

https://api.github.com/repos/cse442-at-ub/project_s23-cinco

|

closed

|

Connect database to react app.

|

Processing Task Sprint 2

|

Test

Navigate to https://www-student.cse.buffalo.edu/tools/db/phpmyadmin

Login to your account using your UBIT and UB person number

Go to CSE442 database "cse442_2023_spring_team_b_db", select Users table

Verify you can see username and password entries in database.

Prerequisite:

If you are not on campus and under UB wifi, you have to connect through VPN to access UB servers. Download AnyConnect here

https://www.buffalo.edu/ubit/service-guides/software/downloading/macintosh-software/managing-mac-software/anyconnect.html

and connect to vpn.buffalo.edu/UBVPN.

Test

Run Apache webserver using XAMPP.

Go to the root directory of the demo app and run "(sudo) npm start".

You are connected! Sadly, I don't think I can print out that you actually connected because echoing anything in the php file will assume it is the post response.

|

1.0

|

Connect database to react app. - Test

Navigate to https://www-student.cse.buffalo.edu/tools/db/phpmyadmin

Login to your account using your UBIT and UB person number

Go to CSE442 database "cse442_2023_spring_team_b_db", select Users table

Verify you can see username and password entries in database.

Prerequisite:

If you are not on campus and under UB wifi, you have to connect through VPN to access UB servers. Download AnyConnect here

https://www.buffalo.edu/ubit/service-guides/software/downloading/macintosh-software/managing-mac-software/anyconnect.html

and connect to vpn.buffalo.edu/UBVPN.

Test

Run Apache webserver using XAMPP.

Go to the root directory of the demo app and run "(sudo) npm start".

You are connected! Sadly, I don't think I can print out that you actually connected because echoing anything in the php file will assume it is the post response.

|

process

|

connect database to react app test navigate to login to your account using your ubit and ub person number go to database spring team b db select users table verify you can see username and password entries in database prerequisite if you are not on campus and under ub wifi you have to connect through vpn to access ub servers download anyconnect here and connect to vpn buffalo edu ubvpn test run apache webserver using xampp go to the root directory of the demo app and run sudo npm start you are connected sadly i don t think i can print out that you actually connected because echoing anything in the php file will assume it is the post response

| 1

|

22,010

| 30,513,683,550

|

IssuesEvent

|

2023-07-18 23:48:49

|

h4sh5/pypi-auto-scanner

|

https://api.github.com/repos/h4sh5/pypi-auto-scanner

|

opened

|

roblox-pyc 1.16.47 has 2 GuardDog issues

|

guarddog silent-process-execution

|

https://pypi.org/project/roblox-pyc

https://inspector.pypi.io/project/roblox-pyc

```json
{
  "dependency": "roblox-pyc",
  "version": "1.16.47",
  "result": {
    "issues": 2,
    "errors": {},
    "results": {
      "silent-process-execution": [
        {
          "location": "roblox-pyc-1.16.47/src/robloxpy.py:115",
          "code": " subprocess.call([\"luarocks\", \"--version\"], stdout=subprocess.DEVNULL, stderr=subprocess.DEVNULL, stdin=subprocess.DEVNULL)",
          "message": "This package is silently executing an external binary, redirecting stdout, stderr and stdin to /dev/null"
        },
        {
          "location": "roblox-pyc-1.16.47/src/robloxpy.py:122",
          "code": " subprocess.call([\"moonc\", \"--version\"], stdout=subprocess.DEVNULL, stderr=subprocess.DEVNULL, stdin=subprocess.DEVNULL)",
          "message": "This package is silently executing an external binary, redirecting stdout, stderr and stdin to /dev/null"
        }
      ]
    },
    "path": "/tmp/tmp_ppbq5ad/roblox-pyc"
  }
}
```

|

1.0

|

roblox-pyc 1.16.47 has 2 GuardDog issues - https://pypi.org/project/roblox-pyc

https://inspector.pypi.io/project/roblox-pyc

```json
{
  "dependency": "roblox-pyc",
  "version": "1.16.47",
  "result": {
    "issues": 2,
    "errors": {},
    "results": {
      "silent-process-execution": [
        {
          "location": "roblox-pyc-1.16.47/src/robloxpy.py:115",
          "code": " subprocess.call([\"luarocks\", \"--version\"], stdout=subprocess.DEVNULL, stderr=subprocess.DEVNULL, stdin=subprocess.DEVNULL)",
          "message": "This package is silently executing an external binary, redirecting stdout, stderr and stdin to /dev/null"
        },
        {
          "location": "roblox-pyc-1.16.47/src/robloxpy.py:122",
          "code": " subprocess.call([\"moonc\", \"--version\"], stdout=subprocess.DEVNULL, stderr=subprocess.DEVNULL, stdin=subprocess.DEVNULL)",
          "message": "This package is silently executing an external binary, redirecting stdout, stderr and stdin to /dev/null"
        }
      ]
    },
    "path": "/tmp/tmp_ppbq5ad/roblox-pyc"
  }
}
```

|

process

|

roblox pyc has guarddog issues dependency roblox pyc version result issues errors results silent process execution location roblox pyc src robloxpy py code subprocess call stdout subprocess devnull stderr subprocess devnull stdin subprocess devnull message this package is silently executing an external binary redirecting stdout stderr and stdin to dev null location roblox pyc src robloxpy py code subprocess call stdout subprocess devnull stderr subprocess devnull stdin subprocess devnull message this package is silently executing an external binary redirecting stdout stderr and stdin to dev null path tmp tmp roblox pyc

| 1

|

20,461

| 10,757,341,964

|

IssuesEvent

|

2019-10-31 13:06:49

|

humhub/humhub

|

https://api.github.com/repos/humhub/humhub

|

opened

|

Cache loaded ContentContainer

|

Kind:Enhancement Topic:API Topic:Performance

|

Sometimes there are many `$model->content->container` calls for different models. We should cache already loaded containers by `contentcontainer_id` in order to prevent reloading already fetched containers.
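The idea can be sketched as a small per-request cache keyed by container ID. The sketch is in Python purely for illustration (HumHub itself is PHP), and the `ContainerCache` class and its `loader` callback are hypothetical names, not existing HumHub API.

```python
class ContainerCache:
    """Keeps already loaded containers so each ID is fetched at most once.

    Illustrative only: `loader` stands in for whatever performs the
    database lookup by `contentcontainer_id`.
    """

    def __init__(self, loader):
        self._loader = loader
        self._loaded = {}

    def get(self, container_id):
        # Only hit the loader on the first request for a given ID.
        if container_id not in self._loaded:
            self._loaded[container_id] = self._loader(container_id)
        return self._loaded[container_id]
```

Under this design, repeated container lookups for models that share a container would trigger a single load; invalidation when a container changes is left out of the sketch.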

|

True

|

Cache loaded ContentContainer - Sometimes there are many `$model->content->container` calls for different models. We should cache already loaded containers by `contentcontainer_id` in order to prevent reloading already fetched containers.

|

non_process

|

cache loaded contentcontainer sometimes there are many model content container calls for different models we should cache already loaded containers by contentcontainer id in order to prevent reloading already fetched containers

| 0

|

4,664

| 7,497,255,108

|

IssuesEvent

|

2018-04-08 17:56:27

|

UnbFeelings/unb-feelings-GQA

|

https://api.github.com/repos/UnbFeelings/unb-feelings-GQA

|

opened

|

Analyze Process and Artifacts

|

document process wiki

|

Analyze the process defined by the [process team][e-processo] to identify which parts of this process and which artifacts will be audited by the GQA team. I recommend that the choice of these artifacts and processes have some justification, whether that is the organizational objectives, product quality, or because it is required for the course.

|

1.0

|

Analyze Process and Artifacts - Analyze the process defined by the [process team][e-processo] to identify which parts of this process and which artifacts will be audited by the GQA team. I recommend that the choice of these artifacts and processes have some justification, whether that is the organizational objectives, product quality, or because it is required for the course.

|

process

|

analyze process and artifacts analyze the process defined by the to identify which parts of this process and which artifacts will be audited by the gqa team i recommend that the choice of these artifacts and processes have some justification whether that is the organizational objectives product quality or because it is required for the course

| 1

|

6,696

| 9,813,846,162

|

IssuesEvent

|

2019-06-13 08:55:53

|

cropmapteam/Scotland-crop-map

|

https://api.github.com/repos/cropmapteam/Scotland-crop-map

|

closed

|

Check / Edit digitised RFI polygon shapefiles

|

GIS process

|

The script (#31) that masks out manually digitised RFI locations provided as shapefiles runs some validation before masking with rasterio, and it detected problems with some of the shapefiles and with some of the records in them.

There were 51 shapefiles provided. 43 images have been processed, setting to nodata all pixels that fell within the polygons held in the corresponding shapefile.

Why the difference of 8?

This seems to be due to the following 3 issues with the digitised RFI polygon shapefiles, which need to be looked at, confirmed, and fixed.

**Issue 1:** there are multiple (2) digitised RFI polygons shapefiles associated with a single image.

3 tiff files seemed to each have 2 shapefiles associated with them. In some cases duplicate RFI features have been captured, one in each shapefile as shown here:

The script assumes 1 shapefile per image, so processing was skipped.

So for the image S1B_20180120_132_asc_174936_175001_DV_Gamma-0_GB_OSGB_RCTK_SpkRL.tif, there are these 2 shapefiles:

rfi/RFI_Interference_Shapefiles_Chrissy/S1B_20180120_132_asc_174936_175001_DV_Gamma-0_GB_OSGB_RCTK_SpkRL.shp

rfi/RFI_Interference_Shapefiles_Richard/S1B_20180120_132_asc_174936_175001_DV_Gamma-0_GB_OSGB_RCTK_SpkRL.shp

So for the image S1A_20180308_30_asc_175835_175900_DV_Gamma-0_GB_OSGB_RCTK_SpkRL.tif, there are these 2 shapefiles:

rfi/RFI_Interference_Shapefiles_Zara/S1A_20180308_30_asc_175835_175900_DV_Gamma-0_GB_OSGB_RCTK_SpkRL.shp

rfi/RFI_Interference_Shapefiles_Chrissy/S1A_20180308_30_asc_175835_175900_DV_Gamma-0_GB_OSGB_RCTK_SpkRL.shp

So for the image S1A_20180107_30_asc_175836_175901_DV_Gamma-0_GB_OSGB_RCTK_SpkRL.tif, there are these 2 shapefiles:

rfi/RFI_Interference_Shapefiles_Chrissy/S1A_20180107_30_asc_175836_175901_DV_Gamma-0_GB_OSGB_RCTK_SpkRL.shp

rfi/RFI_Interference_Shapefiles_Richard/S1A_20180107_30_asc_175836_175901_DV_Gamma-0_GB_OSGB_RCTK_SpkRL.shp

Solution - in each case the shapefiles need to be checked. Where duplicate RFI regions have been captured, a decision needs to be made on which is best, and the features from the 2 shapefiles then merged into a single shapefile.
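As a rough aid for finding such cases, the sketch below groups shapefile paths by file name and reports any image name that has more than one shapefile. The function names are hypothetical and this is not the code in the masking script; it assumes, as in the examples above, that a shapefile shares its base name with the image it belongs to.

```python
from collections import defaultdict
from pathlib import PurePosixPath


def group_shapefiles_by_image(shapefile_paths):
    """Map each image base name to the list of .shp paths that share it."""
    groups = defaultdict(list)
    for raw in shapefile_paths:
        path = PurePosixPath(raw.strip())
        if path.suffix.lower() == ".shp":
            # Strip the stem as well, in case a stray space crept into the name.
            groups[path.stem.strip()].append(str(path))
    return dict(groups)


def find_duplicates(shapefile_paths):
    """Return only the image base names that have more than one shapefile."""
    groups = group_shapefiles_by_image(shapefile_paths)
    return {stem: paths for stem, paths in groups.items() if len(paths) > 1}
```

Running `find_duplicates` over the full list of provided shapefiles before masking would flag the images whose shapefiles need merging first.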

**Issue 2:** Some of the digitised RFI polygons shapefiles contain no records at all or all the records contain invalid geometries.

For these images, either the associated shapefile contained no records at all or the records it did contain all had invalid geometries. Processing was skipped.

So for image S1B_20180309_132_asc_174935_175000_DV_Gamma-0_GB_OSGB_RCTK_SpkRL.tif the shapefile rfi/RFI_Interference_Shapefiles_Zara/S1B_20180309_132_asc_174935_175000_DV_Gamma-0_GB_OSGB_RCTK_SpkRL.shp seems to have no records

So for image S1B_20180618_30_asc_175812_175837_DV_Gamma-0_GB_OSGB_RCTK_SpkRL.tif the shapefile rfi/RFI_Interference_Shapefiles_Chrissy/S1B_20180618_30_asc_175812_175837_DV_Gamma-0_GB_OSGB_RCTK_SpkRL.shp seems to have no records with valid geometries

Solution - can the shapefiles / digitised polygons within them be checked?

**Issue 3:** Some of the digitised RFI polygons shapefiles contain features with invalid geometries.

These seem to mostly be self-intersections like this:

In such cases, the pixels associated with polygons with valid geometries were masked out as normal and a new image created. The pixels associated with polygons with invalid geometries were skipped and the pixels retained in the new image.

This can be seen in this screenshot:

The pixels inside the valid polygons have been masked out. The pixels inside the invalid polygon have been retained. The following shapefiles were detected as containing features with invalid geometries:

Found invalid polygon with id 8 in rfi/RFI_Interference_Shapefiles_Chrissy/S1B_20180113_30_asc_175813_175838_DV_Gamma-0_GB_OSGB_RCTK_SpkRL.shp

Found invalid polygon with id 1 in rfi/RFI_Interference_Shapefiles_Zara/S1A_20180303_132_asc_175017_175042_DV_Gamma-0_GB_OSGB_RCTK_SpkRL.shp

Found invalid polygon with id 10 in rfi/RFI_Interference_Shapefiles_Chrissy/S1A_20180119_30_asc_175836_175901_DV_Gamma-0_GB_OSGB_RCTK_SpkRL.shp

Solution - can the digitised polygons in these shapefiles be checked? Once done, the images can be re-processed to mask out all the RFI regions.
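To illustrate what a self-intersection means here, the following pure-Python sketch tests whether any two non-adjacent edges of a polygon ring cross. It is not the validation used by the masking script, and it only detects proper crossings (edges that merely touch or overlap along a line are not reported); a geometry library such as Shapely, via a geometry's `is_valid` attribute, gives a fuller validity check.

```python
def _orient(p, q, r):
    """Sign of the cross product (q - p) x (r - p): 1, -1, or 0."""
    val = (q[0] - p[0]) * (r[1] - p[1]) - (q[1] - p[1]) * (r[0] - p[0])
    return (val > 0) - (val < 0)


def _segments_cross(a, b, c, d):
    """True if segments ab and cd cross at a single interior point."""
    return (
        _orient(a, b, c) * _orient(a, b, d) < 0
        and _orient(c, d, a) * _orient(c, d, b) < 0
    )


def ring_self_intersects(ring):
    """True if any two non-adjacent edges of the (x, y) ring cross."""
    pts = list(ring)
    if len(pts) > 1 and pts[0] == pts[-1]:
        pts = pts[:-1]  # drop the repeated closing vertex
    n = len(pts)
    edges = [(pts[i], pts[(i + 1) % n]) for i in range(n)]
    for i in range(n):
        for j in range(i + 1, n):
            # Adjacent edges share a vertex and cannot properly cross.
            if j == i + 1 or (i == 0 and j == n - 1):
                continue
            if _segments_cross(*edges[i], *edges[j]):
                return True
    return False
```

A "bowtie" ring such as `[(0, 0), (2, 2), (2, 0), (0, 2)]` is the typical shape this catches; it usually arises when vertices are digitised out of order.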

|

1.0

|

Check / Edit digitised RFI polygon shapefiles - The script (#31) that masks out manually digitised RFI locations provided as shapefiles runs some validation before masking with rasterio, and it detected problems with some of the shapefiles and with some of the records in them.

There were 51 shapefiles provided. 43 images have been processed, setting to nodata all pixels that fell within the polygons held in the corresponding shapefile.

Why the difference of 8?

This seems to be due to the following 3 issues with the digitised RFI polygon shapefiles, which need to be looked at, confirmed, and fixed.

**Issue 1:** there are multiple (2) digitised RFI polygons shapefiles associated with a single image.

3 tiff files seemed to each have 2 shapefiles associated with them. In some cases duplicate RFI features have been captured, one in each shapefile as shown here:

The script assumes 1 shapefile per image, so processing was skipped.

So for the image S1B_20180120_132_asc_174936_175001_DV_Gamma-0_GB_OSGB_RCTK_SpkRL.tif, there are these 2 shapefiles:

rfi/RFI_Interference_Shapefiles_Chrissy/S1B_20180120_132_asc_174936_175001_DV_Gamma-0_GB_OSGB_RCTK_SpkRL.shp

rfi/RFI_Interference_Shapefiles_Richard/S1B_20180120_132_asc_174936_175001_DV_Gamma-0_GB_OSGB_RCTK_SpkRL.shp

So for the image S1A_20180308_30_asc_175835_175900_DV_Gamma-0_GB_OSGB_RCTK_SpkRL.tif, there are these 2 shapefiles:

rfi/RFI_Interference_Shapefiles_Zara/S1A_20180308_30_asc_175835_175900_DV_Gamma-0_GB_OSGB_RCTK_SpkRL.shp

rfi/RFI_Interference_Shapefiles_Chrissy/S1A_20180308_30_asc_175835_175900_DV_Gamma-0_GB_OSGB_RCTK_SpkRL.shp

So for the image S1A_20180107_30_asc_175836_175901_DV_Gamma-0_GB_OSGB_RCTK_SpkRL.tif, there are these 2 shapefiles:

rfi/RFI_Interference_Shapefiles_Chrissy/S1A_20180107_30_asc_175836_175901_DV_Gamma-0_GB_OSGB_RCTK_SpkRL.shp

rfi/RFI_Interference_Shapefiles_Richard/S1A_20180107_30_asc_175836_175901_DV_Gamma-0_GB_OSGB_RCTK_SpkRL.shp

Solution - in each case the shapefiles need to be checked. Where duplicate RFI regions have been captured, a decision needs to be made on which is best, and the features from the 2 shapefiles then merged into a single shapefile.

**Issue 2:** Some of the digitised RFI polygons shapefiles contain no records at all or all the records contain invalid geometries.

For these images, either the associated shapefile contained no records at all or the records it did contain all had invalid geometries. Processing was skipped.

So for image S1B_20180309_132_asc_174935_175000_DV_Gamma-0_GB_OSGB_RCTK_SpkRL.tif the shapefile rfi/RFI_Interference_Shapefiles_Zara/S1B_20180309_132_asc_174935_175000_DV_Gamma-0_GB_OSGB_RCTK_SpkRL.shp seems to have no records

So for image S1B_20180618_30_asc_175812_175837_DV_Gamma-0_GB_OSGB_RCTK_SpkRL.tif the shapefile rfi/RFI_Interference_Shapefiles_Chrissy/S1B_20180618_30_asc_175812_175837_DV_Gamma-0_GB_OSGB_RCTK_SpkRL.shp seems to have no records with valid geometries

Solution - can the shapefiles / digitised polygons within them be checked?

**Issue 3:** Some of the digitised RFI polygons shapefiles contain features with invalid geometries.

These seem to mostly be self-intersections like this:

In such cases, the pixels associated with polygons with valid geometries were masked out as normal and a new image created. The pixels associated with polygons with invalid geometries were skipped and the pixels retained in the new image.

This can be seen in this screenshot:

The pixels inside the valid polygons have been masked out. The pixels inside the invalid polygon have been retained. The following shapefiles were detected as containing features with invalid geometries:

Found invalid polygon with id 8 in rfi/RFI_Interference_Shapefiles_Chrissy/S1B_20180113_30_asc_175813_175838_DV_Gamma-0_GB_OSGB_RCTK_SpkRL.shp

Found invalid polygon with id 1 in rfi/RFI_Interference_Shapefiles_Zara/S1A_20180303_132_asc_175017_175042_DV_Gamma-0_GB_OSGB_RCTK_SpkRL.shp

Found invalid polygon with id 10 in rfi/RFI_Interference_Shapefiles_Chrissy/S1A_20180119_30_asc_175836_175901_DV_Gamma-0_GB_OSGB_RCTK_SpkRL.shp

Solution - can the digitised polygons in these shapefiles be checked? Once done, the images can be re-processed to mask out all the RFI regions.

|

process

|

check edit digitised rfi polygon shapefiles the script which does the masking out of manually digitised rfi locations provided as shapefiles does some validation before doing the masking in rasterio and detected some problems with some of the shapefiles some of the records in some of the shapefiles there were shapefiles provided images have been processed to set to nodata all pixels that fell within the polygons held in each shapefile why the difference of this seems to be due to the following issues with the digitised rfi polygon shapefiles which need to be looked at confirmed and fixed issue there are multiple digitised rfi polygons shapefiles associated with a single image tiff files seemed to each have shapefiles associated with them in some cases duplicate rfi features have been captured one in each shapefile as shown here the script assumes shapefile per image so processing was skipped so for the image asc dv gamma gb osgb rctk spkrl tif there are these shapefiles rfi rfi interference shapefiles chrissy asc dv gamma gb osgb rctk spkrl shp rfi rfi interference shapefiles richard asc dv gamma gb osgb rctk spkrl shp so for the image asc dv gamma gb osgb rctk spkrl tif there are these shapefiles rfi rfi interference shapefiles zara asc dv gamma gb osgb rctk spkrl shp rfi rfi interference shapefiles chrissy asc dv gamma gb osgb rctk spkrl shp so for the image asc dv gamma gb osgb rctk spkrl tif there are these shapefiles rfi rfi interference shapefiles chrissy asc dv gamma gb osgb rctk spkrl shp rfi rfi interference shapefiles richard asc dv gamma gb osgb rctk spkrl shp solution in each case the shapefiles need to be checked and where duplicate rfi regions captured a decision be made on which is best and the features from each of the shapefiles then merged into a single shapefile issue some of the digitised rfi polygons shapefiles contain no records at all or all the records contain invalid geometries for these images either the associated shapefile contained no 
records at all or the records it did contain all had invalid geometries processing was skipped so for image asc dv gamma gb osgb rctk spkrl tif the shapefile rfi rfi interference shapefiles zara asc dv gamma gb osgb rctk spkrl shp seems to have no records so for image asc dv gamma gb osgb rctk spkrl tif the shapefile rfi rfi interference shapefiles chrissy asc dv gamma gb osgb rctk spkrl shp seems to have no records with valid geometries solution can the shapefiles digitised polygons within them be checked some of the digitised rfi polygons shapefiles contain features with invalid geometries these seem to mostly be self intersections like this in such cases the pixels associated with polygons with valid geometries were masked out as normal and a new image created the pixels associated with polygons with invalid geometries were skipped and the pixels retained in the new image this can be seen in this screenshot the pixels inside the valid polygons have been masked out the pixels inside the invalid polygon have been retained the following shapefiles were detected as containing features with invalid geometries found invalid polygon with id in rfi rfi interference shapefiles chrissy asc dv gamma gb osgb rctk spkrl shp found invalid polygon with id in rfi rfi interference shapefiles zara asc dv gamma gb osgb rctk spkrl shp found invalid polygon with id in rfi rfi interference shapefiles chrissy asc dv gamma gb osgb rctk spkrl shp solution can the digitised polygons be checked in these shapefiles once done the image can be re processed to mask out all the rfi regions

| 1

|

456,190

| 13,146,666,951

|

IssuesEvent

|

2020-08-08 11:16:27

|

kubernetes/website

|

https://api.github.com/repos/kubernetes/website

|

closed

|

kubectl generated docs show path of user running generation tools

|

lifecycle/rotten priority/backlog sig/release

|

**This is a Bug Report**

<!--Required Information-->

**Problem:**

Spotted as part of review for #18010

@daminisatya generated docs which ended up containing:

```html

<tr>

<td colspan="2">--cache-dir string Default: "/Users/dsatya/.kube/http-cache"</td>

</tr>

```

whereas the docs should really show something like:

```html

<tr>

<td colspan="2">--cache-dir string Default: ~/.kube/http-cache</td>

</tr>

```

**Proposed Solution:** This needs fixing either upstream in the generation code, or maybe in the [wrapper tooling](https://kubernetes.io/docs/contribute/generate-ref-docs/kubernetes-components/).

Alternatively, clearly document a manual cleanup step to happen during the release process.

**Page to Update:**

https://kubernetes.io/docs/reference/kubectl/kubectl/

**Kubernetes Version**: v1.17 (barely!)

**Additional Information**:

Closed issue #10081 may also be relevant
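
A minimal sketch of what the manual cleanup step could look like, assuming the generated HTML is post-processed as plain text. The function name `scrub_home_paths` and the regular expression are hypothetical and are not part of the Kubernetes doc-generation tooling. The sketch only handles home directories under `/Users/<name>` or `/home/<name>`, and it leaves the surrounding quotes in place.

```python
import re

# Assumption: the leaked path starts with /Users/<name> (macOS) or /home/<name> (Linux).
# The lookbehind skips matches inside URLs or longer paths, e.g. https://example.com/home/foo.
HOME_PREFIX = re.compile(r'(?<![\w./])/(?:Users|home)/[^/\s"<]+')


def scrub_home_paths(html: str) -> str:
    """Replace an absolute home-directory prefix with ~ in generated docs."""
    return HOME_PREFIX.sub("~", html)


if __name__ == "__main__":
    row = '<td colspan="2">--cache-dir string Default: "/Users/dsatya/.kube/http-cache"</td>'
    print(scrub_home_paths(row))
```

A text-level substitution like this may also rewrite legitimate paths that happen to start with `/home/`, so the output would still need a quick review before release.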

|

1.0

|

kubectl generated docs show path of user running generation tools - **This is a Bug Report**

<!--Required Information-->

**Problem:**

Spotted as part of review for #18010

@daminisatya generated docs which ended up containing:

```html

<tr>

<td colspan="2">--cache-dir string Default: "/Users/dsatya/.kube/http-cache"</td>

</tr>

```

whereas the docs should really show something like:

```html

<tr>

<td colspan="2">--cache-dir string Default: ~/.kube/http-cache</td>

</tr>

```

**Proposed Solution:** This needs fixing either upstream in the generation code, or maybe in the [wrapper tooling](https://kubernetes.io/docs/contribute/generate-ref-docs/kubernetes-components/).

Alternatively, clearly document a manual cleanup step to happen during the release process.

**Page to Update:**

https://kubernetes.io/docs/reference/kubectl/kubectl/

**Kubernetes Version**: v1.17 (barely!)

**Additional Information**:

Closed issue #10081 may also be relevant

|

non_process

|

kubectl generated docs show path of user running generation tools this is a bug report problem spotted as part of review for daminisatya generated docs which ended up containing html cache dir string nbsp nbsp nbsp nbsp nbsp default users dsatya kube http cache whereas the docs should really show something like html cache dir string nbsp nbsp nbsp nbsp nbsp default kube http cache proposed solution this needs fixing either upstream in the generation code or maybe in the alternatively clearly document a manual cleanup step to happen during the release process page to update kubernetes version barely additional information closed issue may also be relevant

| 0

|

326,783

| 9,961,045,081

|

IssuesEvent

|

2019-07-06 23:06:30

|

kubeflow/kubeflow

|

https://api.github.com/repos/kubeflow/kubeflow

|

closed

|

[kfctl] better platform isolation

|

area/kfctl lifecycle/stale priority/p2

|

Currently bootstrap/pkg/utils contains code for multiple platforms. We need better platform isolation: move GCP-only code to pkg/kfapp/gcp/...

|

1.0

|

[kfctl] better platform isolation - Currently bootstrap/pkg/utils contains code for multiple platforms. We need better platform isolation: move GCP-only code to pkg/kfapp/gcp/...

|

non_process

|

better platform isolation currently in bootstrap pkg utils we have multiple platforms included need to make platform isolation better move gcp only stuffs to pkg kfapp gcp

| 0

|

482,732

| 13,912,396,545

|

IssuesEvent

|

2020-10-20 18:48:37

|

cds-snc/report-a-cybercrime

|

https://api.github.com/repos/cds-snc/report-a-cybercrime

|

closed

|

Extra space after Personal information (in mobile version)

|

bug medium priority

|

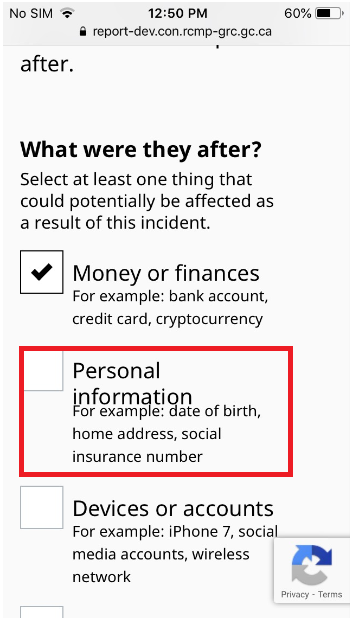

## Summary

Extra space is needed after the Personal information field on the "What do you think could be affected?" page when the report is viewed on a phone.

## Steps to reproduce

> How exactly can the bug be reproduced? Be very specific.

## Unresolved questions

> Are there any related issues you consider out of scope for this issue that could be addressed in the future?

|

1.0

|

Extra space after Personal information (in mobile version) - ## Summary

Extra space is needed after the Personal information field on the "What do you think could be affected?" page when the report is viewed on a phone.

## Steps to reproduce

> How exactly can the bug be reproduced? Be very specific.

## Unresolved questions

> Are there any related issues you consider out of scope for this issue that could be addressed in the future?

|

non_process

|

extra space after personal information in mobile version summary the extra space is needed after personal information field in what do you think could be affected page when the report is viewed on phone steps to reproduce how exactly can the bug be reproduced be very specific unresolved questions are there any related issues you consider out of scope for this issue that could be addressed in the future

| 0

|

825,512

| 31,393,174,645

|

IssuesEvent

|

2023-08-26 15:49:13

|

tcet-opensource/erp-backend

|

https://api.github.com/repos/tcet-opensource/erp-backend

|

closed

|

[Feat]: [Create a model for exam]

|

enhancement good first issue Issue Size: 1 Priority: Medium Models

|

## Description

Create an exam model.

The model will be named exam.js.

Create a schema of key-value pairs with appropriate conditions.

Models should be added to the model folder.

## Proposed Solution

```

date: date

startTime: time

duration: int

supervisor: Faculty

infrastructure: Infrastructure

course: Course

```

|

1.0

|

[Feat]: [Create a model for exam] - ## Description

Create an exam model.

The model will be named exam.js.

Create a schema of key-value pairs with appropriate conditions.

Models should be added to the model folder.

## Proposed Solution

```

date: date

startTime: time

duration: int

supervisor: Faculty

infrastructure: Infrastructure

course: Course

```

|

non_process

|

description create an exam model this model will be named exam js create a schema with key and value pairs with appropriate conditions models should be added to the model folder proposed solution date date starttime time duration int supervisor faculty infrastructure infrastructure course course

| 0

|

5,486

| 8,359,255,328

|

IssuesEvent

|

2018-10-03 07:34:43

|

bitshares/bitshares-community-ui

|

https://api.github.com/repos/bitshares/bitshares-community-ui

|

closed

|

Authentication question

|

Login Signup process question

|