Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 1 to 744) | labels (string, length 4 to 574) | body (string, length 9 to 211k) | index (string, 10 classes) | text_combine (string, length 96 to 211k) | label (string, 2 classes) | text (string, length 96 to 188k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

8,213

| 11,405,306,143

|

IssuesEvent

|

2020-01-31 11:46:33

|

ESMValGroup/ESMValCore

|

https://api.github.com/repos/ESMValGroup/ESMValCore

|

closed

|

Several unit tests involving masked arrays are not implemented correctly ?

|

bug preprocessor

|

**Describe the bug**

While working on #392 I found out that the numpy testing function `assert_array_equal` operates on `masked arrays` differently than what one might expect.

MWE:

```

import numpy as np

from numpy.testing import assert_array_equal

a = np.ma.masked_array(np.array([1,2]),mask=[True, True])

b = np.ma.masked_array(np.array([1,2]),mask=[True, False])

assert_array_equal(a,b) # doesn't raise !

```

Correct testing should check the mask and the data separately to assert equality.

```

assert_array_equal(a.data, b.data)

assert_array_equal(a.mask, b.mask)

```

Several functions in `test_mask.py` are impacted, e.g.:

https://github.com/ESMValGroup/ESMValCore/blob/7d682894d872bd00909c040aee435857bbb9768f/tests/unit/preprocessor/_mask/test_mask.py#L94-L98
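
The two separate checks above could be wrapped in one test helper. This is only a sketch: the helper names `assert_masked_array_equal` and `masked_arrays_equal` are hypothetical and are not part of NumPy or ESMValCore. It assumes that values hidden under the mask should also match, as the snippet above implies.

```python
import numpy as np
from numpy.testing import assert_array_equal


def assert_masked_array_equal(actual, expected):
    """Assert that two masked arrays have identical masks and identical data."""
    # getmaskarray always returns a full boolean array,
    # even when the mask is np.ma.nomask.
    assert_array_equal(np.ma.getmaskarray(actual), np.ma.getmaskarray(expected))
    # Compare the underlying data, including values hidden by the mask.
    assert_array_equal(np.ma.getdata(actual), np.ma.getdata(expected))


def masked_arrays_equal(actual, expected):
    """Boolean form of the same check (hypothetical convenience wrapper)."""
    try:
        assert_masked_array_equal(actual, expected)
    except AssertionError:
        return False
    return True
```

With the `a` and `b` from the MWE, `assert_masked_array_equal(a, b)` fails on the mask comparison because the second mask entry differs.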

|

1.0

|

Several unit tests involving masked arrays are not implemented correctly ? - **Describe the bug**

While working on #392 I found out that the numpy testing function `assert_array_equal` operates on `masked arrays` differently than what one might expect.

MWE:

```

import numpy as np

from numpy.testing import assert_array_equal

a = np.ma.masked_array(np.array([1,2]),mask=[True, True])

b = np.ma.masked_array(np.array([1,2]),mask=[True, False])

assert_array_equal(a,b) # doesn't raise !

```

Correct testing should check the mask and the data separately to assert equality.

```

assert_array_equal(a.data, b.data)

assert_array_equal(a.mask, b.mask)

```

Several functions in `test_mask.py` are impacted, e.g.:

https://github.com/ESMValGroup/ESMValCore/blob/7d682894d872bd00909c040aee435857bbb9768f/tests/unit/preprocessor/_mask/test_mask.py#L94-L98

|

process

|

several unit tests involving masked arrays are not implemented correctly describe the bug while working on i found out that the numpy testing function assert array equal operates on masked arrays differently than what one might expect mwe import numpy as np from numpy testing import assert array equal a np ma masked array np array mask b np ma masked array np array mask assert array equal a b doesn t raise correct testing should check the mask and the data separately to assert equality assert array equal a data b data assert array equal a mask b mask several functions in test mask py are impacted e g

| 1

|

11,673

| 3,214,309,334

|

IssuesEvent

|

2015-10-07 00:42:23

|

broadinstitute/hellbender

|

https://api.github.com/repos/broadinstitute/hellbender

|

closed

|

use SmallBamWriter in dataflow tests

|

Dataflow tests

|

dataflow tests should use SmallBamWriter and compare the resulting bam files rather than outputting a text file with reads encoded as json

|

1.0

|

use SmallBamWriter in dataflow tests - dataflow tests should use SmallBamWriter and compare the resulting bam files rather than outputting a text file with reads encoded as json

|

non_process

|

use smallbamwriter in dataflow tests dataflow tests should use smallbamwriter and compare the resulting bam files rather than outputting a text file with reads encoded as json

| 0

|

17,962

| 23,973,740,276

|

IssuesEvent

|

2022-09-13 09:49:56

|

Open-Data-Product-Initiative/open-data-product-spec

|

https://api.github.com/repos/Open-Data-Product-Initiative/open-data-product-spec

|

opened

|

Prepayment option to Data Pricing

|

enhancement unprocessed

|

In the API world, the [prepayment option](https://thenewstack.io/how-developers-monetize-apis-prepay-emerges-as-new-option/) is emerging as one of the options in pricing.

It might make sense to add such an option to ODPS https://opendataproducts.org/#data-pricing as an option in _unit._

**option suggestion name:** _prepayment_

**option description:** A prepayment of credits into the platform in such a way that should it go low enough, automatically, we can refill that account. Once it goes to zero, no more service until the account is pre-paid again — just like the Starbucks app.

|

1.0

|

Prepayment option to Data Pricing - In the API world, the [prepayment option](https://thenewstack.io/how-developers-monetize-apis-prepay-emerges-as-new-option/) is emerging as one of the options in pricing.

It might make sense to add such an option to ODPS https://opendataproducts.org/#data-pricing as an option in _unit._

**option suggestion name:** _prepayment_

**option description:** A prepayment of credits into the platform in such a way that should it go low enough, automatically, we can refill that account. Once it goes to zero, no more service until the account is pre-paid again — just like the Starbucks app.

|

process

|

prepayment option to data pricing in the is emerging as one of the options in pricing it might make sense to add such an option to odps as an option in unit option suggestion name prepayment option description a prepayment of credits into the platform in such a way that should it go low enough automatically we can refill that account once it goes to zero no more service until the account is pre paid again — just like the starbucks app

| 1

|

70,228

| 8,513,738,192

|

IssuesEvent

|

2018-10-31 16:45:45

|

cockpit-project/cockpit

|

https://api.github.com/repos/cockpit-project/cockpit

|

closed

|

How much RAM do I have?

|

needsdesign

|

So one thing that I've been asking myself while looking at the memory performance graph on my home server is "Sure, 1.5 GB of Memory used, but 1.5 out of what now again?"

If we had this number somewhere in the UI, it would help an admin tremendously in deciding if it's time to buy more RAM for the server or not.

|

1.0

|

How much RAM do I have? - So one thing that I've been asking myself while looking at the memory performance graph on my home server is "Sure, 1.5 GB of Memory used, but 1.5 out of what now again?"

If we had this number somewhere in the UI, it would help an admin tremendously in deciding if it's time to buy more RAM for the server or not.

|

non_process

|

how much ram do i have so one thing that i ve been asking myself while looking at the memory performance graph on my home server is sure gb of memory used but out of what now again if we had this number somewhere in the ui it would help an admin tremendously in deciding if it s time to buy more ram for the server or not

| 0

|

274,401

| 23,837,310,420

|

IssuesEvent

|

2022-09-06 07:20:08

|

wazuh/wazuh-qa

|

https://api.github.com/repos/wazuh/wazuh-qa

|

closed

|

E2E tests: Research Emotet test failures

|

team/qa subteam/qa-hurricane test/e2e

|

## Description

After the debugging and testing done in https://github.com/wazuh/wazuh-qa/issues/3166, we saw that test_emotet was failing, so we must find the reason for the failure and a solution for it.

### Executions

- https://github.com/wazuh/wazuh-qa/issues/3166#issuecomment-1228250975

- https://github.com/wazuh/wazuh-qa/issues/3166#issuecomment-1228386460

|

1.0

|

E2E tests: Research Emotet test failures - ## Description

After the debugging and testing done in https://github.com/wazuh/wazuh-qa/issues/3166, we saw that test_emotet was failing, so we must find the reason for the failure and a solution for it.

### Executions

- https://github.com/wazuh/wazuh-qa/issues/3166#issuecomment-1228250975

- https://github.com/wazuh/wazuh-qa/issues/3166#issuecomment-1228386460

|

non_process

|

tests research emotet test failures description after the debugging and testing achieved in we could see that test emotet was failing so we must find the reason for the failure and a solution for it executions

| 0

|

204,603

| 23,259,548,186

|

IssuesEvent

|

2022-08-04 12:23:54

|

elastic/kibana

|

https://api.github.com/repos/elastic/kibana

|

opened

|

[Security Solution] Only Full screen icon is showing for empty message under Execution events

|

bug triage_needed impact:low Team: SecuritySolution v8.4.0

|

**Describe the bug**

Only Full screen icon is showing for empty message under Execution events

**Build info**

```

VERSION : 8.4.0 BC1

Build: 54999

COMMIT: 58f7eaf0f8dc3c43cbfcd393e587f155e97b3d0d

```

**Preconditions**

1. Kibana should be running

2. Execution events tab should be enabled

3. Rule should be created

**Steps to Reproduce**

1. Navigate to security > Rules page

2. Click on above created rule

3. Click on Execution events tab under rule details page

4. Expand the log that does not have any message

5. Observe that Full screen icon is showing for message

6. Click on full screen icon

7. Observe that blank screen is displaying

**Actual Result**

Full screen icon is shown even though the message under Execution events has no data

**Expected Result**

- Full screen icon should not be displayed for empty message under Execution events

- Hyphen should be displayed for empty message

**Screen-cast**

https://user-images.githubusercontent.com/61860752/182845772-8db72ffc-7672-4043-9fa4-159c187bcae5.mp4

|

True

|

[Security Solution] Only Full screen icon is showing for empty message under Execution events - **Describe the bug**

Only Full screen icon is showing for empty message under Execution events

**Build info**

```

VERSION : 8.4.0 BC1

Build: 54999

COMMIT: 58f7eaf0f8dc3c43cbfcd393e587f155e97b3d0d

```

**Preconditions**

1. Kibana should be running

2. Execution events tab should be enabled

3. Rule should be created

**Steps to Reproduce**

1. Navigate to security > Rules page

2. Click on above created rule

3. Click on Execution events tab under rule details page

4. Expand the log that does not have any message

5. Observe that Full screen icon is showing for message

6. Click on full screen icon

7. Observe that blank screen is displaying

**Actual Result**

Full screen icon is shown even though the message under Execution events has no data

**Expected Result**

- Full screen icon should not be displayed for empty message under Execution events

- Hyphen should be displayed for empty message

**Screen-cast**

https://user-images.githubusercontent.com/61860752/182845772-8db72ffc-7672-4043-9fa4-159c187bcae5.mp4

|

non_process

|

only full screen icon is showing for empty message under execution events describe the bug only full screen icon is showing for empty message under execution events build info version build commit preconditions kibana should be running execution events tab should be enabled rule should be created steps to reproduce navigate to security rules page click on above created rule click on execution events tab under rule details page expand the log that doesnot have any message observe that full screen icon is showing for message click on full screen icon observe that blank screen is displaying actual result full screen icon is showing of no data in message under execution events expected result full screen icon should not be displayed for empty message under execution events hyphen should be displayed for empty message screen cast

| 0

|

16,248

| 20,798,555,620

|

IssuesEvent

|

2022-03-17 11:43:56

|

ltechkorea/mlperf-inference

|

https://api.github.com/repos/ltechkorea/mlperf-inference

|

closed

|

Run Benchmark

|

speech to text medical imaging Recommendation natural language processing object detection image classification pre-submit

|

### Run Benchmark

- [ ] Image Classification

- [ ] Object Detection

- [ ] Natural Language Processing

- [ ] Recommendation

- [ ] Medical Imaging

- [ ] Speech to Text

|

1.0

|

Run Benchmark - ### Run Benchmark

- [ ] Image Classification

- [ ] Object Detection

- [ ] Natural Language Processing

- [ ] Recommendation

- [ ] Medical Imaging

- [ ] Speech to Text

|

process

|

run benchmark run benchmark image classification object detection netural language processing recommendation medical imaging speech to text

| 1

|

15,304

| 19,343,736,284

|

IssuesEvent

|

2021-12-15 08:38:45

|

qgis/QGIS-Documentation

|

https://api.github.com/repos/qgis/QGIS-Documentation

|

closed

|

[feature][processing] Allow saving outputs direct to other database

destinations

|

Automatic new feature Processing 3.14

|

Original commit: https://github.com/qgis/QGIS/commit/c2161638d16c954186f0ecc4769bc7645636f01f by nyalldawson

Previously outputs could only be written direct to postgres databases.

With this change, this functionality has been made more flexible and

now supports direct writing to any database provider which implements

the connections API (currently postgres, geopackage, spatialite and

sql server)

Ultimately this exposes the new ability to directly save outputs

to SQL Server or Spatialite databases (alongside the previous

GPKG+Postgres options which already existed)

(As soon as oracle, db2, ... have the connections API implemented

we'll instantly gain direct write support for those too!)

|

1.0

|

[feature][processing] Allow saving outputs direct to other database

destinations - Original commit: https://github.com/qgis/QGIS/commit/c2161638d16c954186f0ecc4769bc7645636f01f by nyalldawson

Previously outputs could only be written direct to postgres databases.

With this change, this functionality has been made more flexible and

now supports direct writing to any database provider which implements

the connections API (currently postgres, geopackage, spatialite and

sql server)

Ultimately this exposes the new ability to directly save outputs

to SQL Server or Spatialite databases (alongside the previous

GPKG+Postgres options which already existed)

(As soon as oracle, db2, ... have the connections API implemented

we'll instantly gain direct write support for those too!)

|

process

|

allow saving outputs direct to other database destinations original commit by nyalldawson previously outputs could only be written direct to postgres databases with this change this functionality has been made more flexible and now supports direct writing to any database provider which implements the connections api currently postgres geopackage spatialite and sql server ultimately this exposes the new ability to directly save outputs to sql server or spatialite databases alongside the previous gpkg postgres options which already existed as soon as oracle have the connections api implemented we ll instantly gain direct write support for those too

| 1

|

5,661

| 8,531,480,731

|

IssuesEvent

|

2018-11-04 12:49:31

|

magit/magit

|

https://api.github.com/repos/magit/magit

|

closed

|

Show progress messages in magit-process buffer during fetch

|

feature request process

|

When doing a fetch using magit I'd like to be able to see how far it's got, but all I see in the ```*magit-process*``` buffer is the following:

```

run git … fetch origin

remote: Counting objects: 160, done.

remote: Compressing objects: 100% (78/78), done.

```

Then nothing else until the fetch completes. I'm currently waiting for >700MiB to download, but have no idea how far it's got. When I run ```git fetch``` from the command line I can see output like this:

```

Receiving objects: 20% (28/136), 1.34 MiB | 195.00 KiB/s

```

But magit hides this output. Please could it not hide this output?

Thanks,

Mark

|

1.0

|

Show progress messages in magit-process buffer during fetch - When doing a fetch using magit I'd like to be able to see how far it's got, but all I see in the ```*magit-process*``` buffer is the following:

```

run git … fetch origin

remote: Counting objects: 160, done.

remote: Compressing objects: 100% (78/78), done.

```

Then nothing else until the fetch completes. I'm currently waiting for >700MiB to download, but have no idea how far it's got. When I run ```git fetch``` from the command line I can see output like this:

```

Receiving objects: 20% (28/136), 1.34 MiB | 195.00 KiB/s

```

But magit hides this output. Please could it not hide this output?

Thanks,

Mark

|

process

|

show progress messages in magit process buffer during fetch when doing a fetch using magit i d like to be able to see how far it s got but all i see in the magit process buffer is the following run git … fetch origin remote counting objects done remote compressing objects done then nothing else until the fetch completes i m currently waiting for to download but have no idea how far it s got when i run git fetch from the command line i can see output like this receiving objects mib kib s but magit hides this output please could it not hide this output thanks mark

| 1

|

109,148

| 4,381,283,109

|

IssuesEvent

|

2016-08-06 04:46:41

|

WalkBikeCupertino/v2.0

|

https://api.github.com/repos/WalkBikeCupertino/v2.0

|

opened

|

Need walking section

|

enhancement P1 - Medium Priority

|

[Larry 7/30/2016] Walking Section: The city will conduct an effort this year to generate a Cupertino Pedestrian Plan. As this develops in the community this year, I’d like to see a section focused on that…. Your thoughts and suggestions are welcomed.

[Jennifer 8/3/2016] This might be a good idea for a box at the bottom (replacing the other content in time), but I would think that the overall content (long-term) would fit into one of our existing categories. We don’t want to come across as a Bike site, with a sprinkling of pedestrian content. It should probably be integrated.

|

1.0

|

Need walking section - [Larry 7/30/2016] Walking Section: The city will conduct an effort this year to generate a Cupertino Pedestrian Plan. As this develops in the community this year, I’d like to see a section focused on that…. Your thoughts and suggestions are welcomed.

[Jennifer 8/3/2016] This might be a good idea for a box at the bottom (replacing the other content in time), but I would think that the overall content (long-term) would fit into one of our existing categories. We don’t want to come across as a Bike site, with a sprinkling of pedestrian content. It should probably be integrated.

|

non_process

|

need walking section walking section the city will conduct an effort this year to generate a cupertino pedestrian plan as this develops in the community this year i’d like to see a section focused on that… your thoughts and suggestions are welcomed this might be good idea for a box at the bottom replacing the other content in time but i would think that the overall content long term would fit into one of our existing categories we don’t want to come across as a bike site with a sprinkling of pedestrian content it should probably be integrated

| 0

|

9,975

| 13,019,092,256

|

IssuesEvent

|

2020-07-26 20:39:09

|

GeorgesOatesLarsen/Physics-GRE-Testgen

|

https://api.github.com/repos/GeorgesOatesLarsen/Physics-GRE-Testgen

|

opened

|

ETS2017 Problem 5

|

PROBLEM: PLEASE PROCESS Trivia

|

> By definition the electric displacement current through a surface S is proportional to the

> magnetic flux through S

> rate of change of the magnetic flux through S

> time integral of the magnetic flux through S

> electric flux through S

> rate of change of the electric flux through S

|

1.0

|

ETS2017 Problem 5 - > By definition the electric displacement current through a surface S is proportional to the

> magnetic flux through S

> rate of change of the magnetic flux through S

> time integral of the magnetic flux through S

> electric flux through S

> rate of change of the electric flux through S

|

process

|

problem by definition the electric displacement current through a surface s is proportional to the magnetic flux through s rate of change of the magnetic flux through s time integral of the magnetic flux through s electric flux through s rate of change of the electric flux through s

| 1

|

62,035

| 12,197,360,329

|

IssuesEvent

|

2020-04-29 20:38:20

|

kwk/test-llvm-bz-import-5

|

https://api.github.com/repos/kwk/test-llvm-bz-import-5

|

closed

|

clang++ with -fno-elide-constructors generates incorrect code

|

BZ-BUG-STATUS: RESOLVED BZ-RESOLUTION: FIXED clang/LLVM Codegen dummy import from bugzilla

|

This issue was imported from Bugzilla https://bugs.llvm.org/show_bug.cgi?id=12208.

|

1.0

|

clang++ with -fno-elide-constructors generates incorrect code - This issue was imported from Bugzilla https://bugs.llvm.org/show_bug.cgi?id=12208.

|

non_process

|

clang with fno elide constructors generates incorrect code this issue was imported from bugzilla

| 0

|

285,359

| 8,757,814,851

|

IssuesEvent

|

2018-12-14 22:47:43

|

pravega/pravega

|

https://api.github.com/repos/pravega/pravega

|

closed

|

Metric Naming Problems

|

area/metrics kind/enhancement priority/P2 status/needs-attention

|

**Problem description**

We have a set of filters to break up the stats coming from pravega and create tags for them. There are new metrics in Pravega that no longer match those filters. Here are our current telegraf filters:

```

"*.*.*.pravega.*.* ....source.measurement pravega=1",

"*.*.*.pravega.*.*.* ....source.measurement.field pravega=1",

"*.*.*.pravega.*.*.*.*.* ....source.measurement.scope.stream.field pravega=1",

"*.*.*.pravega.*.*.*.*.*.* ....source.measurement.scope.stream.segment.field pravega=1",

```

We can add a new filter to catch the case where there are 4 items after pravega:

```

"*.*.*.pravega.*.* ....source.measurement pravega=1",

"*.*.*.pravega.*.*.* ....source.measurement.field pravega=1",

"*.*.*.pravega.*.*.*.* ....source.measurement.container.field pravega=1",

"*.*.*.pravega.*.*.*.*.* ....source.measurement.scope.stream.field pravega=1",

"*.*.*.pravega.*.*.*.*.*.* ....source.measurement.scope.stream.segment.field pravega=1",

```

Even with this change, we'll have a couple of problems:

1. These items do not have a source:

```

active_segments

bookkeeper_leger_count

```

2. There are some container ids that have the format of:

```

0-fail

1-fail

2-fail

```

**Problem location**

Metrics

**Suggestions for an improvement**

Add a source for the active_segments and bookkeeper_leger_count and move the "-fail" suffix to the measurement name and not the container id.
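
To make the proposed five-rule set easier to reason about, here is a simplified Python model of how such rules split a dotted metric name. It is a sketch under stated assumptions, not Telegraf's actual matcher: it assumes a filter matches only when it has the same number of segments as the metric name, and that an empty template slot skips a segment. The function names and the sample metric names are hypothetical.

```python
RULES = [
    "*.*.*.pravega.*.* ....source.measurement pravega=1",
    "*.*.*.pravega.*.*.* ....source.measurement.field pravega=1",
    "*.*.*.pravega.*.*.*.* ....source.measurement.container.field pravega=1",
    "*.*.*.pravega.*.*.*.*.* ....source.measurement.scope.stream.field pravega=1",
    "*.*.*.pravega.*.*.*.*.*.* ....source.measurement.scope.stream.segment.field pravega=1",
]


def parse_rule(rule):
    """Split a 'filter template tag=value' rule into its three parts."""
    filter_, template, *extra = rule.split()
    tags = dict(item.split("=", 1) for item in extra)
    return filter_.split("."), template.split("."), tags


def apply_rules(metric, rules=RULES):
    """Return (measurement, field, tags) for the first matching rule, else None.

    Assumption: a filter matches only a metric with the same segment count.
    """
    parts = metric.split(".")
    for rule in rules:
        pattern, template, extra_tags = parse_rule(rule)
        if len(pattern) != len(parts):
            continue
        if any(p != "*" and p != s for p, s in zip(pattern, parts)):
            continue
        measurement, field, tags = None, None, dict(extra_tags)
        for name, value in zip(template, parts):
            if name == "":
                continue  # empty template slot: skip this segment
            if name == "measurement":
                measurement = value
            elif name == "field":
                field = value
            else:
                tags[name] = value  # any other name becomes a tag
        return measurement, field, tags
    return None
```

Under this model, a name with four items after `pravega` is picked up by the new third rule, and a container segment such as `0-fail` ends up verbatim in the `container` tag, which is the second problem described above.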

|

1.0

|

Metric Naming Problems - **Problem description**

We have a set of filters to break up the stats coming from pravega and create tags for them. There are new metrics in Pravega that no longer match those filters. Here are our current telegraf filters:

```

"*.*.*.pravega.*.* ....source.measurement pravega=1",

"*.*.*.pravega.*.*.* ....source.measurement.field pravega=1",

"*.*.*.pravega.*.*.*.*.* ....source.measurement.scope.stream.field pravega=1",

"*.*.*.pravega.*.*.*.*.*.* ....source.measurement.scope.stream.segment.field pravega=1",

```

We can add a new filter to catch the case where there are 4 items after pravega:

```

"*.*.*.pravega.*.* ....source.measurement pravega=1",

"*.*.*.pravega.*.*.* ....source.measurement.field pravega=1",

"*.*.*.pravega.*.*.*.* ....source.measurement.container.field pravega=1",

"*.*.*.pravega.*.*.*.*.* ....source.measurement.scope.stream.field pravega=1",

"*.*.*.pravega.*.*.*.*.*.* ....source.measurement.scope.stream.segment.field pravega=1",

```

Even with this change, we'll have a couple of problems:

1. These items do not have a source:

```

active_segments

bookkeeper_leger_count

```

2. There are some container ids that have the format of:

```

0-fail

1-fail

2-fail

```

**Problem location**

Metrics

**Suggestions for an improvement**

Add a source for the active_segments and bookkeeper_leger_count and move the "-fail" suffix to the measurement name and not the container id.

|

non_process

|

metric naming problems problem description we have a set of filters to break up the stats coming from pravega and create tags for them there are new metrics in pravega that no longer match those filters here are our current telegraf filters pravega source measurement pravega pravega source measurement field pravega pravega source measurement scope stream field pravega pravega source measurement scope stream segment field pravega we can add a new filter to catch the case where there are items after pravega pravega source measurement pravega pravega source measurement field pravega pravega source measurement container field pravega pravega source measurement scope stream field pravega pravega source measurement scope stream segment field pravega even with this change we ll have a couple of problems these items do not have a source active segments bookkeeper leger count there are some container ids that have the format of fail fail fail problem location metrics suggestions for an improvement add a source for the active segments and bookkeeper leger count and move the fail suffix to the measurement name and not the container id

| 0

|

619,454

| 19,526,320,844

|

IssuesEvent

|

2021-12-30 08:32:17

|

ita-social-projects/TeachUA

|

https://api.github.com/repos/ita-social-projects/TeachUA

|

closed

|

Check Put method for User Component

|

bug Backend Priority: High Task API

|

Oleksandr

Hi everyone. I'm working on the service for editing data in the users component of the admin panel and ran into the following problem: when the PUT method is executed, the backend prepends the digits 38 to the phone number field, which is not editable. The field then becomes invalid because it no longer meets the requirement of exactly 10 digits, and the user can no longer be edited, precisely because of the invalid phone number field. Can this be fixed somehow?

|

1.0

|

Check Put method for User Component - Oleksandr

Hi everyone. I'm working on the service for editing data in the users component of the admin panel and ran into the following problem: when the PUT method is executed, the backend prepends the digits 38 to the phone number field, which is not editable. The field then becomes invalid because it no longer meets the requirement of exactly 10 digits, and the user can no longer be edited, precisely because of the invalid phone number field. Can this be fixed somehow?

|

non_process

|

check put method for user component oleksandr hi everyone i m working on the service for editing data in the users component of the admin panel and ran into the following problem when the put method is executed the backend prepends the digits to the phone number field which is not editable the field then becomes invalid because it no longer meets the requirement of exactly digits and the user can no longer be edited precisely because of the invalid phone number field can this be fixed somehow

| 0

|

12,058

| 14,739,543,827

|

IssuesEvent

|

2021-01-07 07:25:30

|

kdjstudios/SABillingGitlab

|

https://api.github.com/repos/kdjstudios/SABillingGitlab

|

closed

|

Create new SAB Code for VCC Switch

|

anc-process anp-1 ant-enhancement

|

In GitLab by @kdjstudios on Sep 6, 2018, 10:02

**Submitted by:** "Cori Bartlett" <cori.bartlett@answernet.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2018-09-06-68787/conversation

**Server:** Internal

**Client/Site:** NA

**Account:** NA

**Issue:**

Would you please create new VCC Activity code:

VCC Total Agent Time 55099 =

In_calls_time_agent_talk (7006) +

out_calls_time_talk (7010) +

patch_time_withagent (7014) +

check_in_calls_time (7017) +

agent_work_time (7030)

Please make available to all AnswerNet VCC Sites. Thanks! Cori
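
The requested code is a plain sum of five existing activity codes. A minimal sketch of that arithmetic, assuming activity times arrive as a mapping from activity code to seconds (the mapping shape and the function name are hypothetical and not taken from SAB):

```python
# Activity codes listed in the request.
COMPONENT_CODES = (7006, 7010, 7014, 7017, 7030)
TOTAL_AGENT_TIME_CODE = 55099


def total_agent_time(activity_seconds):
    """Sum the five component activity times; missing codes count as zero."""
    return sum(activity_seconds.get(code, 0) for code in COMPONENT_CODES)
```

Codes outside the five listed ones are ignored, so unrelated activity does not inflate the total.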

|

1.0

|

Create new SAB Code for VCC Switch - In GitLab by @kdjstudios on Sep 6, 2018, 10:02

**Submitted by:** "Cori Bartlett" <cori.bartlett@answernet.com>

**Helpdesk:** http://www.servicedesk.answernet.com/profiles/ticket/2018-09-06-68787/conversation

**Server:** Internal

**Client/Site:** NA

**Account:** NA

**Issue:**

Would you please create new VCC Activity code:

VCC Total Agent Time 55099 =

In_calls_time_agent_talk (7006) +

out_calls_time_talk (7010) +

patch_time_withagent (7014) +

check_in_calls_time (7017) +

agent_work_time (7030)

Please make available to all AnswerNet VCC Sites. Thanks! Cori

|

process

|

create new sab code for vcc switch in gitlab by kdjstudios on sep submitted by cori bartlett helpdesk server internal client site na account na issue would you please create new vcc activity code vcc total agent time in calls time agent talk out calls time talk patch time withagent check in calls time agent work time please make available to all answernet vcc sites thanks cori

| 1

|

5,409

| 8,235,669,783

|

IssuesEvent

|

2018-09-09 07:47:53

|

pwittchen/neurosky-android-sdk

|

https://api.github.com/repos/pwittchen/neurosky-android-sdk

|

closed

|

Release 0.0.2

|

release process

|

**Release notes**:

- updating value of the type of the mid gamma brain wave signal -> https://github.com/pwittchen/neurosky-android-sdk/commit/45b8e292faeea0b2291d1a95f9a89b8c769fbf8f

|

1.0

|

Release 0.0.2 - **Release notes**:

- updating value of the type of the mid gamma brain wave signal -> https://github.com/pwittchen/neurosky-android-sdk/commit/45b8e292faeea0b2291d1a95f9a89b8c769fbf8f

|

process

|

release release notes updating value of the type of the mid gamma brain wave signal

| 1

|

43,958

| 9,526,389,151

|

IssuesEvent

|

2019-04-28 19:37:21

|

thirtybees/thirtybees

|

https://api.github.com/repos/thirtybees/thirtybees

|

opened

|

Get rid of the SemVer dependency

|

Code Quality Enhancement

|

After SemVer (introduced with #149) turned out to be incompatible with 4-part version numbers (see #915), its only remaining usage is comparing a module's thirty bees version requirement in `Module::checkCompliancy()`. Allowing slightly more fancy version ranges in one place doesn't justify a whole dependency, IMHO.

Suggested replacement: replace `$this->tb_versions_compliancy` in the module's main file with `$this->tb_min_version`, a simple version string.

No _tb_max_version_, because a module developer can't know when a module becomes incompatible before it actually happens. Looking at the usage of _tb_versions_compliancy_ in all the thirty bees modules, none of them defines an upper version number.

The replacement needs a proper deprecation period, of course.
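
To illustrate how little logic a minimum-version check needs, here is a language-neutral sketch in Python. It is not thirty bees code; the function names are hypothetical, and it assumes purely numeric, dot-separated version strings. In PHP, the built-in `version_compare()` would likely serve the same purpose.

```python
def version_tuple(version):
    """Turn '1.0.8.1' into (1, 0, 8, 1); assumes numeric parts only."""
    return tuple(int(part) for part in version.split("."))


def meets_min_version(current, minimum):
    """Return True if `current` is at least `minimum`.

    Shorter versions are padded with zeros, so 3-part and 4-part
    version numbers compare cleanly.
    """
    cur, req = version_tuple(current), version_tuple(minimum)
    width = max(len(cur), len(req))
    cur += (0,) * (width - len(cur))
    req += (0,) * (width - len(req))
    return cur >= req
```

Because the comparison is done on padded integer tuples, a 4-part core version is handled the same way as a 3-part one.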

|

1.0

|

Get rid of the SemVer dependency - After SemVer (introduced with #149) turned out to be incompatible with 4-part version numbers (see #915), its only remaining usage is comparing a module's thirty bees version requirement in `Module::checkCompliancy()`. Allowing slightly more fancy version ranges in one place doesn't justify a whole dependency, IMHO.

Suggested replacement: replace `$this->tb_versions_compliancy` in the module's main file with `$this->tb_min_version`, a simple version string.

No _tb_max_version_, because a module developer can't know when a module becomes incompatible before it actually happens. Looking at the usage of _tb_versions_compliancy_ in all the thirty bees modules, none of them defines an upper version number.

The replacement needs a proper deprecation period, of course.

|

non_process

|

get rid of the semver dependency after semver introduced with turned out to be incompatible with part version numbers see it s only remaining usage is comparing a module s thirty bees version requirement in module checkcompliancy allowing slightly more fancy version ranges in one place doesn t justify a whole dependency imho suggested replacement replace this tb versions compliancy in the module s main file with this tb min version a simple version string no tb max version because a module developer can t know when a module becomes incompatible before it actually happens looking at the usage of tb versions compliancy in all the thirty bees modules none of them defines an upper version number the replacement needs a proper deprecation period of course

| 0

|

91,595

| 26,431,931,441

|

IssuesEvent

|

2023-01-14 23:00:18

|

Leafwing-Studios/Emergence

|

https://api.github.com/repos/Leafwing-Studios/Emergence

|

opened

|

Fix `Cargo.lock` creation in CI sometimes failing

|

bug build-system

|

Sometimes the `cargo update` command that the CI workflows run to create a `Cargo.lock` file for proper caching seems to fail:

<https://github.com/Leafwing-Studios/Emergence/actions/runs/3918987400/jobs/6699708119#step:4:10>

```

error: failed to get `bevy-trait-query` as a dependency of package `emergence_lib v0.1.0 (/home/runner/work/Emergence/Emergence/emergence_lib)`

Caused by:

failed to load source for dependency `bevy-trait-query`

Caused by:

Unable to update https://github.com/Leafwing-Studios/bevy-trait-query?rev=65533bf8680753a3f998056e1719b826652f3b69

Caused by:

revspec '65533bf8680753a3f998056e1719b826652f3b69' not found; class=Reference (4); code=NotFound (-3)

```

It seems like it can't find the specified commit hash.

Searching for the commit on GitHub [finds it](https://github.com/Leafwing-Studios/bevy-trait-query/commit/65533bf8680753a3f998056e1719b826652f3b69), but GitHub shows the following warning:

> This commit does not belong to any branch on this repository, and may belong to a fork outside of the repository.

We can try to change the commit rev to attempt to fix this.

|

1.0

|

Fix `Cargo.lock` creation in CI sometimes failing - Sometimes the `cargo update` command that the CI workflows run to create a `Cargo.lock` file for proper caching seems to fail:

<https://github.com/Leafwing-Studios/Emergence/actions/runs/3918987400/jobs/6699708119#step:4:10>

```

error: failed to get `bevy-trait-query` as a dependency of package `emergence_lib v0.1.0 (/home/runner/work/Emergence/Emergence/emergence_lib)`

Caused by:

failed to load source for dependency `bevy-trait-query`

Caused by:

Unable to update https://github.com/Leafwing-Studios/bevy-trait-query?rev=65533bf8680753a3f998056e1719b826652f3b69

Caused by:

revspec '65533bf8680753a3f998056e1719b826652f3b69' not found; class=Reference (4); code=NotFound (-3)

```

It seems like it can't find the specified commit hash.

Searching for the commit on GitHub [finds it](https://github.com/Leafwing-Studios/bevy-trait-query/commit/65533bf8680753a3f998056e1719b826652f3b69), but GitHub shows the following warning:

> This commit does not belong to any branch on this repository, and may belong to a fork outside of the repository.

We can try to change the commit rev to attempt to fix this.

|

non_process

|

fix cargo lock creation in ci sometimes failing sometimes the cargo update command in the ci workflows to create a cargo lock file for proper caching seems to fail error failed to get bevy trait query as a dependency of package emergence lib home runner work emergence emergence emergence lib caused by failed to load source for dependency bevy trait query caused by unable to update caused by revspec not found class reference code notfound it seems like it can t find the specified commit hash if we search for the commit on github but gives the following warning this commit does not belong to any branch on this repository and may belong to a fork outside of the repository we can try to change the commit rev to attempt to fix this

| 0

|

8,259

| 11,425,424,769

|

IssuesEvent

|

2020-02-03 19:48:32

|

geneontology/go-ontology

|

https://api.github.com/repos/geneontology/go-ontology

|

closed

|

regulation of poly(A)-specific ribonuclease activity

|

New term request PomBase RNA processes community curation regulation

|

id: GO:new1

name: regulation of poly(A)-specific ribonuclease activity

namespace: biological_process

def: "Any process that modulates the rate, frequency or extent of the catalysis of the exonucleolytic cleavage of poly(A) to 5'-AMP." [GOC:mah,PMID:29932902]

intersection_of: GO:0008150 ! biological_process

intersection_of: regulates GO:0004535 ! poly(A)-specific ribonuclease activity

if you also need asserted links, they would be:

is_a: GO:1901917 ! regulation of exoribonuclease activity

relationship: regulates GO:0004535 ! poly(A)-specific ribonuclease activity

and + and - subtypes, following same pattern

id: GO:new2

name: negative regulation of poly(A)-specific ribonuclease activity

id: GO:new3

name: positive regulation of poly(A)-specific ribonuclease activity

to use to annotate effect of S. pombe Pabp RNA binding on Ccr4 and Caf1 nuclease activities in the context of removing poly(A) tails

|

1.0

|

regulation of poly(A)-specific ribonuclease activity - id: GO:new1

name: regulation of poly(A)-specific ribonuclease activity

namespace: biological_process

def: "Any process that modulates the rate, frequency or extent of the catalysis of the exonucleolytic cleavage of poly(A) to 5'-AMP." [GOC:mah,PMID:29932902]

intersection_of: GO:0008150 ! biological_process

intersection_of: regulates GO:0004535 ! poly(A)-specific ribonuclease activity

if you also need asserted links, they would be:

is_a: GO:1901917 ! regulation of exoribonuclease activity

relationship: regulates GO:0004535 ! poly(A)-specific ribonuclease activity

and + and - subtypes, following same pattern

id: GO:new2

name: negative regulation of poly(A)-specific ribonuclease activity

id: GO:new3

name: positive regulation of poly(A)-specific ribonuclease activity

to use to annotate effect of S. pombe Pabp RNA binding on Ccr4 and Caf1 nuclease activities in the context of removing poly(A) tails

|

process

|

regulation of poly a specific ribonuclease activity id go name regulation of poly a specific ribonuclease activity namespace biological process def any process that modulates the rate frequency or extent of the catalysis of the exonucleolytic cleavage of poly a to amp intersection of go biological process intersection of regulates go poly a specific ribonuclease activity if you also need asserted links they would be is a go regulation of exoribonuclease activity relationship regulates go poly a specific ribonuclease activity and and subtypes following same pattern id go name negative regulation of poly a specific ribonuclease activity id go name positive regulation of poly a specific ribonuclease activity to use to annotate effect of s pombe pabp rna binding on and nuclease activities in the context of removing poly a tails

| 1

|

629,017

| 20,021,208,142

|

IssuesEvent

|

2022-02-01 16:33:06

|

dbbs-lab/bsb

|

https://api.github.com/repos/dbbs-lab/bsb

|

closed

|

Release 4.0

|

priority.urgent

|

Since some of the changes, such as #137, only make sense in the context of v4.0, we should aim to have v4 released before the publication of the paper. This means that all the design issues will need to be solved and all breaking changes need to be implemented; all non-breaking changes can then be postponed to minor releases (4.1, 4.2, ...). We can try to pack as many features as possible into 4.0, but release whenever the publication comes around.

All features of v4.0 should however be:

* Completely tested

* Completely documented

* Sensibly commented

## Module rework progress

### Configuration

* Completely reworked

* Amazing dynamic attribute based system

* Multi-document JSON parser

### Core

* `Scaffold` object cleanup, most functions will need to be moved into one of the storage interfaces.

### CLI

* [x] Reworked to allow the following:

* [x] Make pluggable

* [ ] Projects

* [ ] Audits

* [x] Include/Exclude/partials

### Placement

* [x] Parallel reconstruction (#139) (parallelism established with #223)

* [x] Assign morphologies during placement, not per connection type

* [x] Placement independent of cell types

* [x] Fix ParticlePlacement (overlap between cell types in same layer; strategy grouping)

* [ ] Add tests for topology

* [ ] Add docs for topology

* [ ] Make more regions and partitions (atlas, mesh, ...)

* [ ] Add tests for `after` and ordering of strats

* [ ] Fix the multi-partition placement for ParticlePlacement & Satellite.

* [x] Update PlacementSet properties to `load_*` functions

### Morphologies

* We have the new structure but there's a lot of legacy code that needs to be audited (#125)

* Compartments have been stripped from the codebase, so all of that code should now visibly error out when used.

### Connectivity

* [x] Adapted to v4 structure

* [x] Parallel connectivity

* ~~Labels & Filters API not completed yet~~ Filters replaced by `RegionOfInterest`

* Labels will be dealt with by `PlacementSet` like #310 did for v3

* [ ] Testing and documentation

* [ ] Rewrite the out of the box connectivity strategies (will overlap with #125)

### Plugins

Seem to be in a good place since the slight rework and documentation they just underwent.

* [ ] Make plugin overview command (https://github.com/dbbs-lab/bsb/issues/268)

### Simulation

I don't expect a lot of changes here, except for decoupling the `Simulation` from the new `SimulatorAdapter` (#97) (no longer a `SimulationAdapter`) and capturing our output in the Neo format (#93) (which can then be exported to SONATA, for example). We'll have to look at specific issues on a per-adapter basis.

#### NEST

We need to upgrade to NEST 3 and maintain our extension modules. The output also needs to be captured a bit better.

* Entire adapter still needs to be documented, and how we pass the config params into NEST and our relationship with their models should be perfectly clear.

#### NEURON

Lots of features are missing like weights, overwriting variables on the models before instantiating them, having cells target relays (only devices atm).

* Entire adapter still needs to be documented, specifically all the targeting mechanisms (cell & section) and devices.

* Solve the NEURON & MPI conflict once and for all (will require changes to Patch as well)

* Straighten out the responsibilities of Patch/Arborize and scaffold (I think the BSB shouldn't have any responsibilities except for initialising the models and asking the model to `create_synapses/receivers/transmitters`)

#### Arbor

Pretty much the best adapter now. Still also needs a way to distribute properties on individual cells.

### Storage

We're on a very good track here, we just need to expand the `Storage` interfaces as we go along reworking the other modules and can pin down exactly what data needs to be saved. I think attempting to define all that beforehand will lead to changing specifications and premature optimization.

### Reporting

Still a bit unclear. I'd like to work with the listener-pattern and register printing as the default listener. I'd also like to be able to specify `modes` or even richer metadata with a message object so that each listener can decide how to handle for example a progress bar, a progress map or plain report messages (this can differ greatly between for example a log and a terminal)
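
A rough sketch of how the listener pattern described above could look, assuming nothing about the eventual BSB API: `Message`, `Reporter`, `print_listener` and the `mode`/`meta` fields are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List


@dataclass
class Message:
    """A report message plus metadata that listeners may interpret freely."""
    text: str
    mode: str = "report"  # hypothetical modes: "report", "progress", ...
    meta: Dict[str, Any] = field(default_factory=dict)


Listener = Callable[[Message], None]


def print_listener(message: Message) -> None:
    """Default listener: plain printing, with a simple progress prefix."""
    if message.mode == "progress":
        done = message.meta.get("done", "?")
        total = message.meta.get("total", "?")
        print(f"[{done}/{total}] {message.text}")
    else:
        print(message.text)


class Reporter:
    """Dispatches every message to all registered listeners."""

    def __init__(self) -> None:
        # Printing is registered as the default listener.
        self._listeners: List[Listener] = [print_listener]

    def add_listener(self, listener: Listener) -> None:
        self._listeners.append(listener)

    def report(self, text: str, mode: str = "report", **meta: Any) -> None:
        message = Message(text, mode, meta)
        for listener in self._listeners:
            listener(message)
```

A log-file listener and a terminal listener could then receive the same `Message` and render a progress update differently, e.g. `reporter.report("placing cells", mode="progress", done=1, total=4)`.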

### Postprocessing

Nothing needs to happen here; perhaps we could recycle the reconstruction parallelization here as well.

### Plotting

Plotting has been given zero love. It has always been programmed "just-in-time" to produce figures, so it's a Frankensteinian atrocity with at least 3 different paradigms (class based, decorated & functional elements), 0 coherence, kwargs thrown on everywhere and a terrible coupling between HDF5 result files and the plots.

Its state probably won't improve until we adopt something like Neo, and we might just use whatever visualisation tools are available for Neo rather than maintaining our own plotting library.

|

1.0

|

Release 4.0 - Since some of the changes, such as #137, only make sense in the context of v4.0, we should aim to have v4 released before the publication of the paper. This means that all the design issues will need to be solved and all breaking changes need to be implemented; all non-breaking changes can then be postponed to minor releases (4.1, 4.2, ...). We can try to pack as many features as possible into 4.0, but release whenever the publication comes around.

All features of v4.0 should however be:

* Completely tested

* Completely documented

* Sensibly commented

## Module rework progress

### Configuration

* Completely reworked

* Amazing dynamic attribute based system

* Multi-document JSON parser

### Core

* `Scaffold` object cleanup, most functions will need to be moved into one of the storage interfaces.

### CLI

* [x] Reworked to allow the following:

* [x] Make pluggable

* [ ] Projects

* [ ] Audits

* [x] Include/Exclude/partials

### Placement

* [x] Parallel reconstruction (#139) (parallelism established with #223)

* [x] Assign morphologies during placement, not per connection type

* [x] Placement independent of cell types

* [x] Fix ParticlePlacement (overlap between cell types in same layer; strategy grouping)

* [ ] Add tests for topology

* [ ] Add docs for topology

* [ ] Make more regions and partitions (atlas, mesh, ...)

* [ ] Add tests for `after` and ordering of strats

* [ ] Fix the multi-partition placement for ParticlePlacement & Satellite.

* [x] Update PlacementSet properties to `load_*` functions

### Morphologies

* We have the new structure but there's a lot of legacy code that needs to be audited (#125)

* Compartments have been stripped from the codebase, so all of that code should now visibly error out when used.

### Connectivity

* [x] Adapted to v4 structure

* [x] Parallel connectivity

* ~~Labels & Filters API not completed yet~~ Filters replaced by `RegionOfInterest`

* Labels will be dealt with by `PlacementSet` like #310 did for v3

* [ ] Testing and documentation

* [ ] Rewrite the out of the box connectivity strategies (will overlap with #125)

### Plugins

Seem to be in a good place since the slight rework and documentation they just underwent.

* [ ] Make plugin overview command (https://github.com/dbbs-lab/bsb/issues/268)

### Simulation

I don't expect a lot of changes here, except for decoupling the `Simulation` from the new `SimulatorAdapter` (#97) (no longer a `SimulationAdapter`) and capturing our output in the Neo format (#93) (which can then be exported to SONATA, for example). We'll have to look at specific issues on a per-adapter basis.

#### NEST

We need to upgrade to NEST 3 and maintain our extension modules. The output also needs to be captured a bit better.

* Entire adapter still needs to be documented, and how we pass the config params into NEST and our relationship with their models should be perfectly clear.

#### NEURON

Lots of features are missing like weights, overwriting variables on the models before instantiating them, having cells target relays (only devices atm).

* Entire adapter still needs to be documented, specifically all the targeting mechanisms (cell & section) and devices.

* Solve the NEURON & MPI conflict once and for all (will require changes to Patch as well)

* Straighten out the responsibilities of Patch/Arborize and scaffold (I think the BSB shouldn't have any responsibilities except for initialising the models and asking the model to `create_synapses/receivers/transmitters`)

#### Arbor

Pretty much the best adapter now. Still also needs a way to distribute properties on individual cells.

### Storage

We're on a very good track here, we just need to expand the `Storage` interfaces as we go along reworking the other modules and can pin down exactly what data needs to be saved. I think attempting to define all that beforehand will lead to changing specifications and premature optimization.

### Reporting

Still a bit unclear. I'd like to work with the listener-pattern and register printing as the default listener. I'd also like to be able to specify `modes` or even richer metadata with a message object so that each listener can decide how to handle for example a progress bar, a progress map or plain report messages (this can differ greatly between for example a log and a terminal)

### Postprocessing

Nothing needs to happen here; perhaps we could recycle the reconstruction parallelization here as well.

### Plotting

Plotting has been given zero love. It has always been programmed "just-in-time" to produce figures, so it's a Frankensteinian atrocity with at least 3 different paradigms (class based, decorated & functional elements), 0 coherence, kwargs thrown on everywhere and a terrible coupling between HDF5 result files and the plots.

Its state probably won't improve until we adopt something like Neo, and we might just use whatever visualisation tools are available for Neo rather than maintaining our own plotting library.

|

non_process

|

release since some of the changes such as only make sense in the context of we should aim to have released before the publication of the paper this means that all the design issues will need to be solved and all breaking changes need to be implemented all non breaking changes can then be postponed to minor releases and we can just try to pack as many features as possible into but release whenever the publication comes around all features of should however be completely tested completely documented sensibly commented module rework progress configuration completely reworked amazing dynamic attribute based system multi document json parser core scaffold object cleanup most functions will need to be moved into one of the storage interfaces cli reworked to allow the following make pluggable projects audits include exclude partials placement parallel reconstruction parallelism established with assign morphologies during placement not per connection type placement independent of cell types fix particleplacement overlap between cell types in same layer strategy grouping add tests for topology add docs for topology make more regions and partitions atlas mesh add tests for after and ordering of strats fix the multi partition placement for particleplacement satellite update placementset properties to load functions morphologies we have the new structure but there s a lot of legacy code that needs to be audited compartments have been stripped from the codebase so all of that code should now visibly error out when used connectivity adapted to structure parallel connectivity labels filters api not completed yet filters replaced by regionofinterest labels will be dealt with by placementset like did for testing and documentation rewrite the out of the box connectivity strategies will overlap with plugins seem to be in a good place since the slight rework and documentation they just underwent make plugin overview command simulation i don t expect a lot of changes here except for 
decoupling the simulation from the new simulatoradapter no longer a simulationadapter and capturing our output in the neo format which will be able to be exported to sonata for example we ll have to see for specific issues on a per adapter basis nest we need to upgrade to nest and maintain our extension modules the output also needs to be captured a bit better entire adapter still needs to be documented and how we pass the config params into nest and our relationship with their models should be perfectly clear neuron lots of features are missing like weights overwriting variables on the models before instantiating them having cells target relays only devices atm entire adapter still needs to be documented specifically all the targetting mechanisms cell section and devices solve the neuron mpi conflict once and for all will require changes to patch aswell straighten out the responsibilities of patch arborize and scaffold i think the bsb shouldn t have any responsibilities except for initialising the models and asking the model to create synapses receivers transmitters arbor pretty much the best adapter now still also needs a way to distribute properties on individual cells storage we re on a very good track here we just need to expand the storage interfaces as we go along reworking the other modules and can pin down exactly what data needs to be saved i think attempting to define all that beforehand will lead to changing specifications and premature optimization reporting still a bit unclear i d like to work with the listener pattern and register printing as the default listener i d also like to be able to specify modes or even richer metadata with a message object so that each listener can decide how to handle for example a progress bar a progress map or plain report messages this can differ greatly between for example a log and a terminal postprocessing nothing needs to happen here perhaps we could recycle the reconstruction parallelization here aswell plotting 
plotting has been given zero love it has always been programmed just in time to produce figures so it s a frankensteinian atrocity with at least different paradigms class based decorated functional elements used and coherence with kwargs thrown on everywhere and a terrible coupling between result files and the plots it s state probably won t improve until we adopt something like neo and we might just use whatever visualisation tools that are available for neo rather than maintaining our own plotting library

| 0

|

246,130

| 20,824,486,788

|

IssuesEvent

|

2022-03-18 19:00:22

|

ChainSafe/ui-monorepo

|

https://api.github.com/repos/ChainSafe/ui-monorepo

|

opened

|

Add ui test coverage for file copying to share folder

|

Testing

|

As described, automate the below scenarios:

- User can share a file to a new shared folder and delete original file

- User can share a file to a new shared folder and keep original file

- User can share a file to existing shared folder and delete original file

- User can share a file to existing shared folder and keep original file

|

1.0

|

Add ui test coverage for file copying to share folder - As described, automate the below scenarios:

- User can share a file to a new shared folder and delete original file

- User can share a file to a new shared folder and keep original file

- User can share a file to existing shared folder and delete original file

- User can share a file to existing shared folder and keep original file

|

non_process

|

add ui test coverage for file copying to share folder as described automate the below scenarios user can share a file to a new shared folder and delete original file user can share a file to a new shared folder and keep original file user can share a file to existing shared folder and delete original file user can share a file to existing shared folder and keep original file

| 0

|

8,998

| 12,109,124,567

|

IssuesEvent

|

2020-04-21 08:14:40

|

AmboVent-1690-108/AmboVent

|

https://api.github.com/repos/AmboVent-1690-108/AmboVent

|

closed

|

Add a pull request template in .github/pull_request_template.md

|

process-improvement

|

Add a pull request template in .github/pull_request_template.md. See here: https://help.github.com/en/github/building-a-strong-community/creating-a-pull-request-template-for-your-repository

Tell the user to run `./run_clang-format.sh` before submitting a PR, and have them check the box:

[x] I have run the auto-formatter: `./run_clang-format.sh`

Note that markdown comments in the template can be added with:

<!---comment--->

<!--- this is a comment --->

|

1.0

|

Add a pull request template in .github/pull_request_template.md - Add a pull request template in .github/pull_request_template.md. See here: https://help.github.com/en/github/building-a-strong-community/creating-a-pull-request-template-for-your-repository

Tell the user to run `./run_clang-format.sh` before submitting a PR, and have them check the box:

[x] I have run the auto-formatter: `./run_clang-format.sh`

Note that markdown comments in the template can be added with:

<!---comment--->

<!--- this is a comment --->

|

process

|

add a pull request template in github pull request template md add a pull request template in github pull request template md see here tell the user to run run clang format sh before submitting a pr and have them check the box i have run the auto formatter run clang format sh note that markdown comments in the template can be added with

| 1

|

2,638

| 5,413,716,308

|

IssuesEvent

|

2017-03-01 17:20:30

|

jlm2017/jlm-video-subtitles

|

https://api.github.com/repos/jlm2017/jlm-video-subtitles

|

closed

|

Pas vu à la télé (4) Panama papers et Société Générale

|

Language: French Process: [6] Approved

|

# Video title

Pas vu à la télé (4) Panama papers et Société Générale

# URL

https://www.youtube.com/watch?v=5exxOVSOBps

# Youtube subtitles language

French

# Duration

56:36

# Subtitles URL

https://www.youtube.com/timedtext_editor?action_mde_edit_form=1&ref=player&lang=fr&v=5exxOVSOBps&tab=captions&bl=vmp

|

1.0

|

Pas vu à la télé (4) Panama papers et Société Générale - # Video title

Pas vu à la télé (4) Panama papers et Société Générale

# URL

https://www.youtube.com/watch?v=5exxOVSOBps

# Youtube subtitles language

French

# Duration

56:36

# Subtitles URL

https://www.youtube.com/timedtext_editor?action_mde_edit_form=1&ref=player&lang=fr&v=5exxOVSOBps&tab=captions&bl=vmp

|

process

|

pas vu à la télé panama papers et société générale video title pas vu à la télé panama papers et société générale url youtube subtitles language french duration subtitles url

| 1

|

8,237

| 11,417,546,182

|

IssuesEvent

|

2020-02-03 00:03:09

|

parcel-bundler/parcel

|

https://api.github.com/repos/parcel-bundler/parcel

|

closed

|

Parcel sometimes fails to start due to LESS sourcemaps error

|

:bug: Bug CSS Preprocessing Stale

|

# 🐛 bug report

Parcel **sometimes** fails to start due to [a LESS sourcemaps error](https://i.imgur.com/bjnGMr1.png) (no files changed between those `npm start` instances). It seems to happen randomly; sometimes I have to re-run the script 3 times before it happens.

Editing any .LESS file (so that parcel rebuilds the changes) or restarting Parcel seems to solve it.

My hunch is that it is due to the plugins that the LESS files (Bootstrap 4 LESS adaptation) use, in this case.

## 🎛 Configuration (.babelrc, package.json, cli command)

A demo project to reproduce the issue plus more configuration info [can be found here](https://github.com/mirkea/parcel-less-bug)

## 🤔 Expected Behavior

Parcel should finish the build all the time, or fail all the time.

## 😯 Current Behavior

Parcel sometimes fails to build:

> /mnt/e/Work/CO/Parcel LESS bug demo/src/components/core/Grid/Grid.less: Cannot read property 'substring' of undefined

>at SourceMapOutput.add (/mnt/e/Work/CO/Parcel LESS bug demo/node_modules/less/lib/less/source-map-output.js:72:39)

## 💁 Possible Solution

Running with no sourcemaps works (as a workaround)

## 🔦 Context

I had to remove sourcemaps entirely (not just the CSS ones, which only affect me, but the JS ones as well, which the other devs working on the project might need).

## 💻 Code Sample

[Demo project](https://github.com/mirkea/parcel-less-bug)

## 🌍 Your Environment

| Software | Version(s) |

| ---------------- | ---------- |

| Parcel | 1.12.3

| Node | v8.10.0

| npm/Yarn | 6.9.0

| Operating System | Ubuntu (linux subsystem) on Windows 10

|

1.0

|

Parcel sometimes fails to start due to LESS sourcemaps error - # 🐛 bug report

Parcel **sometimes** fails to start due to [a LESS sourcemaps error](https://i.imgur.com/bjnGMr1.png) (no files changed between those `npm start` instances). It seems to happen randomly; sometimes I have to re-run the script 3 times before it happens.

Editing any .LESS file (so that parcel rebuilds the changes) or restarting Parcel seems to solve it.

My hunch is that it is due to the plugins that the LESS files (Bootstrap 4 LESS adaptation) use, in this case.

## 🎛 Configuration (.babelrc, package.json, cli command)

A demo project to reproduce the issue plus more configuration info [can be found here](https://github.com/mirkea/parcel-less-bug)

## 🤔 Expected Behavior

Parcel should finish the build all the time, or fail all the time.

## 😯 Current Behavior

Parcel sometimes fails to build:

> /mnt/e/Work/CO/Parcel LESS bug demo/src/components/core/Grid/Grid.less: Cannot read property 'substring' of undefined

>at SourceMapOutput.add (/mnt/e/Work/CO/Parcel LESS bug demo/node_modules/less/lib/less/source-map-output.js:72:39)

## 💁 Possible Solution

Running with no sourcemaps works (as a workaround)

## 🔦 Context

I had to remove sourcemaps entirely (not just the CSS ones, which only affect me, but the JS ones as well, which the other devs working on the project might need).

## 💻 Code Sample

[Demo project](https://github.com/mirkea/parcel-less-bug)

## 🌍 Your Environment

| Software | Version(s) |

| ---------------- | ---------- |

| Parcel | 1.12.3

| Node | v8.10.0

| npm/Yarn | 6.9.0

| Operating System | Ubuntu (linux subsystem) on Windows 10

|

process

|

parcel sometimes fails to start due to less sourcemaps error 🐛 bug report parcel sometimes fails to start due to no files changed between those npm start instances it seems to happen randomly sometimes i have to re run the script times before it happens editing any less file so that parcel rebuilds the changes or restarting parcel seems to solve it my hunch is that is due to the plugins that the less files boostrap less adaptation use in this case 🎛 configuration babelrc package json cli command a demo project to reproduce the issue plus more configuration info 🤔 expected behavior parcel should finish the build all the time or fail all the time 😯 current behavior parcel fails to build sometimes mnt e work co parcel less bug demo src components core grid grid less cannot read property substring of undefined at sourcemapoutput add mnt e work co parcel less bug demo node modules less lib less source map output js 💁 possible solution running with no sourcemaps works as a workaround 🔦 context i had to remove sourcemaps entirely not just the css ones with only affect me but the js ones as well which the other devs working on the project might need 💻 code sample 🌍 your environment software version s parcel node npm yarn operating system ubuntu linux subsystem on windows

| 1

|

50,429

| 26,638,104,481

|

IssuesEvent

|

2023-01-25 00:24:22

|

aesim-tech/simba-project

|

https://api.github.com/repos/aesim-tech/simba-project

|

closed

|

NDETE V2 & Multi time Steps

|

enhancement epic performance

|

**Motivations**

*Next Discontinuity Event Time Estimator V2*

Today, we always surround discontinuities by two points separated by the "min time step". This is to guarantee the accuracy of the discontinuity event. However, the discontinuity event time of some events (like the PWM) is known accurately. If we discriminate the type of discontinuity event (accurate vs estimate), we can **improve the performance** (fewer simulated points) **and the accuracy** (discontinuity events exactly when they are supposed to happen).
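
To make the idea concrete, here is a small sketch of how time points could be chosen once events carry an accurate/estimate flag. It is an illustration under stated assumptions, not the solver's implementation: `Event` and `time_points` are hypothetical names, and centring the two bracketing points on the estimated time is an assumption (the text above only says they are separated by the min time step).

```python
from typing import Iterable, List, NamedTuple


class Event(NamedTuple):
    time: float     # event time in seconds
    accurate: bool  # True if the time is known exactly (e.g. a PWM edge)


def time_points(events: Iterable[Event], min_step: float) -> List[float]:
    """Return the simulation time points requested by the given events.

    Accurate events get a single point exactly at the event time.
    Estimated events are bracketed by two points `min_step` apart
    (assumed here to be centred on the estimate).
    """
    points = set()
    for event in events:
        if event.accurate:
            points.add(event.time)
        else:
            points.add(event.time - min_step / 2)
            points.add(event.time + min_step / 2)
    return sorted(points)
```

An accurate event costs one point instead of two, which is where both the performance gain and the exact event timing come from.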

*Multi time step*

Today, the control solver always iterates with the power solver. This is important because we have a variable time step and some power devices (like the controlled source) need to be synchronized with the control solver. However, in many cases, the user wants to build a control loop that behaves like a real digital controller: fixed sampling time & no iteration.

Also, we currently can't model digital devices such as a delay or a THD calculation block; this change will fix that.

**To-Do**

*Next Discontinuity Event Time Estimator V2*

- [x] Modify the discontinuous devices to discriminate the different types of discontinuity events (accurate / estimate)

- [x] Update the NDETE algorithm to calculate a time point exactly at the accurate discontinuity event time

- [x] Fix the issues related to the numerical precision and double number comparison

- [x] Tests

*Multi time step*

- [X] New sampling time setting on all control models

- [X] When the sampling time is set to "auto", the model behaves as it does today: Calculated at each time step and iterates with the power solver. The default value is "auto".

- [X] When the sampling time is set to a value, the model will be calculated at each sampling time and will not iterate with the power solver. It will be calculated at the end of a time step.

- [X] All devices with an "auto" sampling time that are connected to the output of a model with a defined sampling time will share that sampling time.

- [x] All models with the same sampling time step will be attached to the same control solver.

- [x] The predictive (master) time step solver calculates a point at each sample of the control solvers.

- [x] Update the scopes to manage data with different time steps

- [x] Check whether it is possible to parallelize the power and control solvers

- [x] Tests
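A rough sketch of the sampling-time rules listed above, using hypothetical helpers (`resolve_sampling_times`, `group_by_solver`) that are not part of the actual code base. Two assumptions are made here: inheritance through chains of "auto" models is transitive, and when an "auto" model is fed by several rates, the first one reached wins.

```python
from collections import defaultdict, deque

AUTO = "auto"


def resolve_sampling_times(sampling_time, connections):
    """Propagate defined sampling times to downstream "auto" models.

    sampling_time: dict mapping model name -> float or AUTO
    connections:   list of (source, destination) model-name pairs
    """
    resolved = dict(sampling_time)
    downstream = defaultdict(list)
    for src, dst in connections:
        downstream[src].append(dst)

    # Start from every model whose sampling time is explicitly defined.
    queue = deque(name for name, ts in resolved.items() if ts != AUTO)
    while queue:
        src = queue.popleft()
        for dst in downstream[src]:
            if resolved.get(dst) == AUTO:
                # Assumption: the first defined rate to reach a model wins.
                resolved[dst] = resolved[src]
                queue.append(dst)
    return resolved


def group_by_solver(resolved):
    """Group models by sampling time: one control solver per distinct value."""
    groups = defaultdict(list)
    for name, ts in resolved.items():
        groups[ts].append(name)
    return dict(groups)
```

Models left in the `AUTO` group keep today's behavior and iterate with the power solver; every other group would map to its own fixed-rate control solver.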

|

True

|

NDETE V2 & Multi time Steps - **Motivations**

*Next Discontinuity Event Time Estimator V2*

Today, we always surround discontinuities with two points separated by the "min time step". This guarantees the accuracy of the discontinuity event. However, the time of some discontinuity events (like the PWM) is known accurately. If we discriminate between the types of discontinuity event (accurate vs. estimate), we can **improve the performance** (fewer simulated points) **and the accuracy** (discontinuity events exactly when they are supposed to happen).

*Multi time step*

Today, the control solver always iterates with the power solver. This is important because we have a variable time step and some power devices (like the controlled source) need to be synchronized with the control solver. However, in many cases, the user wants to build a control loop that behaves like a real digital controller: fixed sampling time & no iteration.

Also, we currently can't model digital devices such as a delay or a THD calculation block; multi time step support will fix this.

**To-Do**

*Next Discontinuity Event Time Estimator V2*

- [x] Modify the discontinuous devices to discriminate the different types of discontinuity events (accurate / estimate)

- [x] Update the NDETE algorithm to calculate a time point exactly at the accurate discontinuity event time

- [x] Fix the issues related to the numerical precision and double number comparison

- [x] Tests

*Multi time step*

- [X] New sampling time setting on all control models

- [X] When the sampling time is set to "auto", the model behaves as it does today: Calculated at each time step and iterates with the power solver. The default value is "auto".

- [X] When the sampling time is set to a value, the model will be calculated at each sampling time and will not iterate with the power solver. It will be calculated at the end of a time step.

- [X] All devices with an "auto" sampling time that are connected to the output of a model with a defined sampling time will share that sampling time.

- [x] All models with the same sampling time step will be attached to the same control solver.

- [x] The predictive (master) time step solver calculates a point at each sample of the control solvers.

- [x] Update the scopes to manage data with different time steps

- [x] Check whether it is possible to parallelize the power and control solvers

- [x] Tests

|

non_process

|

ndete multi time steps motivations next discontinuity event time estimator today we always surround discontinuities by two points separated by the min time step this is to guarantee the accuracy of the discontinuity event however the discontinuity event time of some events like the pwm is known accurately if we discriminate the type of discontinuity event accurate vs estimate we can improve the performance less simulated points and the accuracy discontinuity events exactly when they are supposed to happen multi time step today the control solver always iterates with the power solver this is important because we have a variable time step and some power devices like the controlled source needs to be synchronized with the control solver however in many cases the user wants to build a control loop that behaves like a real digital controller fixed sampled time no iteration also we can t model digital devices like a delay or a thd calculation block and this will fix this to do next discontinuity event time estimator modify the discontinuous devices to discriminate the different types of discontinuity events accurate estimate update the ndete algorithm to calculate a time point exactly at the accurate discontinuity event time fix the issues related to the numerical precision and double number comparison tests multi time step new sampling time setting on all control models when the sampling time is set to auto the model behaves as it does today calculated at each time step and iterates with the power solver the default value is auto when the sampling time is set to a value the model will be calculated at each sampling time and will not iterate with the power solver it will be calculated at the end of a time step all the devices with an auto sampling time that are connected to the output of a model with a defined sampling time will share the same sampling time all models with the same sampling time step will be attached to the same control solver the predictive master time step solver calculates a point at each sample of the control solvers update the scopes to manage data with different time steps check if this is possible to parallelize the power and control solvers tests

| 0

|

20,844

| 27,615,193,464

|

IssuesEvent

|

2023-03-09 18:46:53

|

geneontology/go-ontology

|

https://api.github.com/repos/geneontology/go-ontology

|

closed

|

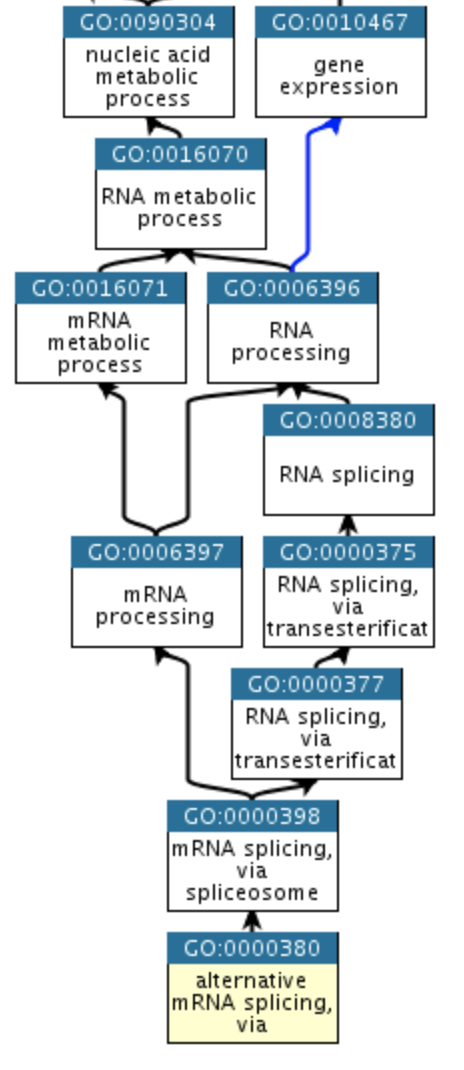

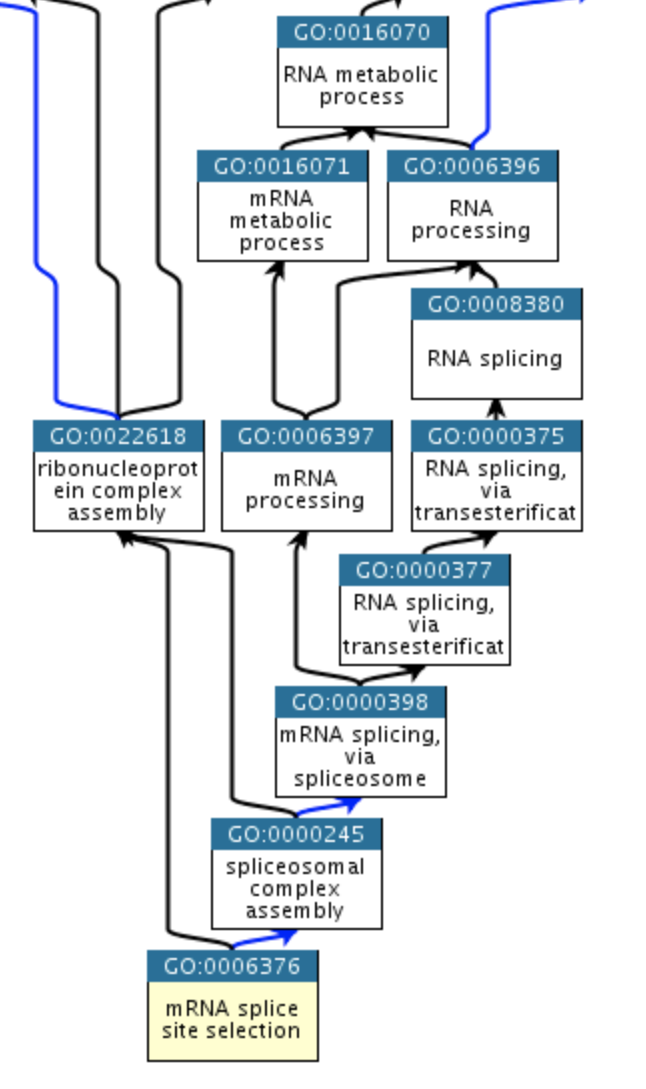

Review representation of alternative mRNA splicing, via spliceosome

|

RNA processes mini-project

|

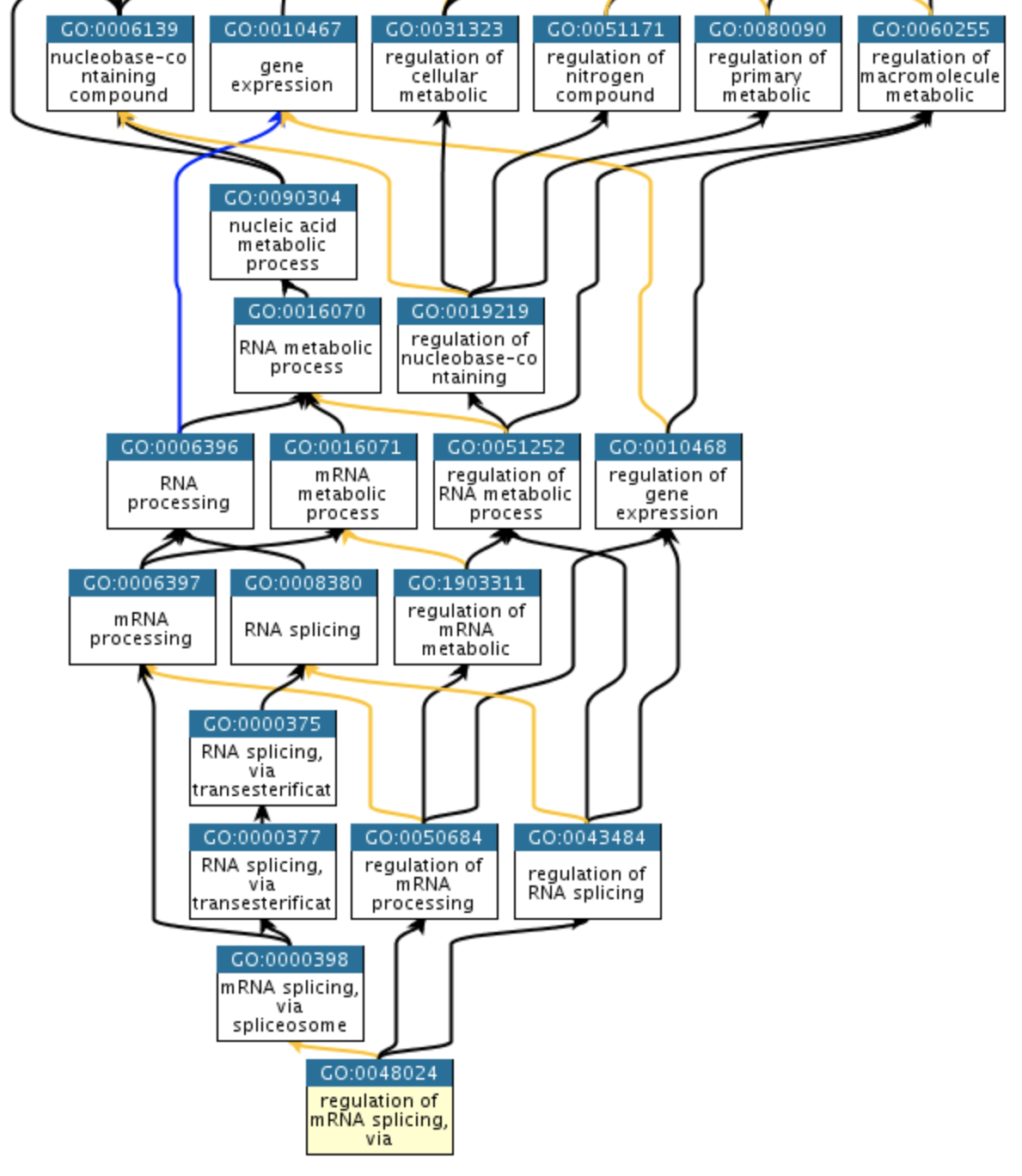

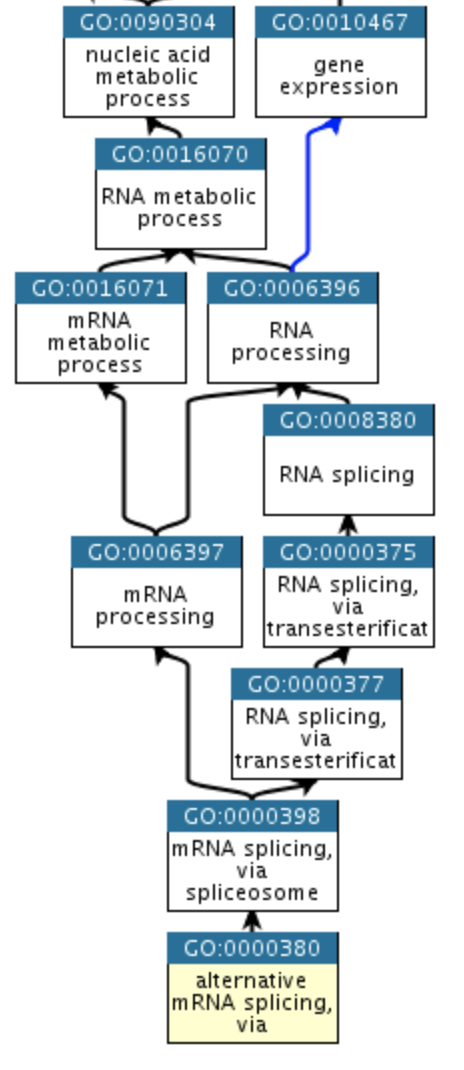

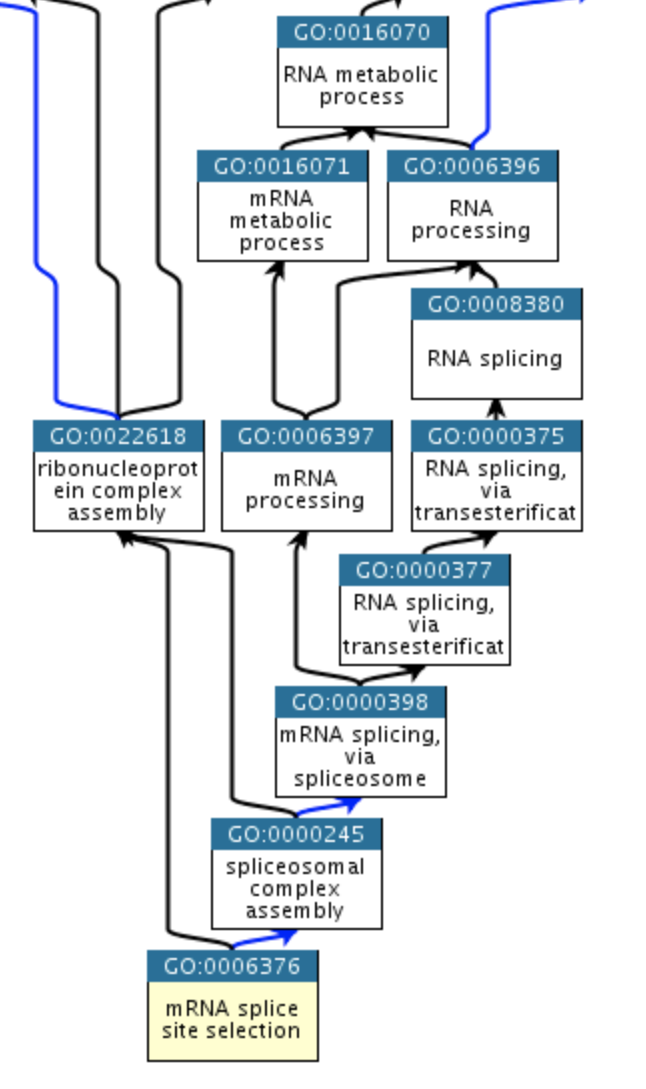

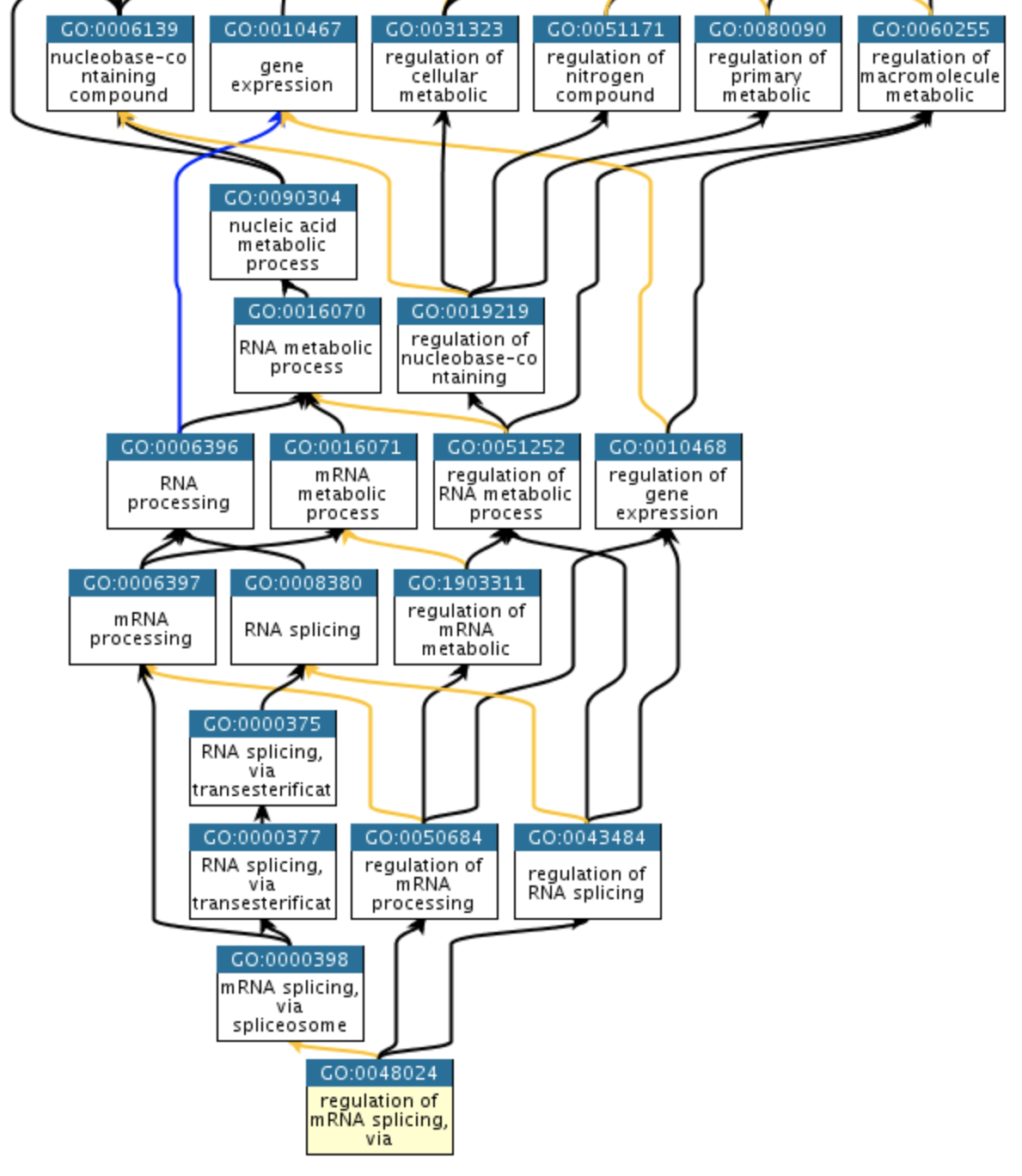

We need to review representation of alternative mRNA splicing, via spliceosome to clarify its relationship to mRNA splicing, via spliceosome and regulation of mRNA splicing, via spliceosome.

As part of this, we will need to review the associated sub-processes and molecular functions to ensure that we don't have redundant concepts here.

For reference:

|

1.0

|

Review representation of alternative mRNA splicing, via spliceosome - We need to review representation of alternative mRNA splicing, via spliceosome to clarify its relationship to mRNA splicing, via spliceosome and regulation of mRNA splicing, via spliceosome.

As part of this, we will need to review the associated sub-processes and molecular functions to ensure that we don't have redundant concepts here.

For reference:

|

process

|

review representation of alternative mrna splicing via spliceosome we need to review representation of alternative mrna splicing via spliceosome to clarify its relationship to mrna splicing via spliceosome and regulation of mrna splicing via spliceosome as part of this we will need to review the associated sub processes and molecular functions to ensure that we don t have redundant concepts here for reference

| 1

|

12,900

| 9,810,836,655

|

IssuesEvent

|

2019-06-12 21:31:48

|

Azure/azure-sdk-for-node

|

https://api.github.com/repos/Azure/azure-sdk-for-node

|

closed

|

SDK for CRUD operations on recovery services vault backup policies

|

Recovery Services Backup Service Attention customer-reported

|

Hi,

I can see there are list operations for listing backup policies: https://docs.microsoft.com/en-gb/javascript/api/azure-arm-recoveryservicesbackup/backuppolicies?view=azure-node-2.2.0

However, I can't find any way to get, create, update or delete a backup policy. Do these operations exist? In the past you have suggested calling APIs directly with `sendRequest`, so I'm wondering if that's an option here.

Also, are there SDK functions to attach backup policies to a VM?

Thanks,

Mike.

|

2.0

|

SDK for CRUD operations on recovery services vault backup policies - Hi,

I can see there are list operations for listing backup policies: https://docs.microsoft.com/en-gb/javascript/api/azure-arm-recoveryservicesbackup/backuppolicies?view=azure-node-2.2.0

However, I can't find any way to get, create, update or delete a backup policy. Do these operations exist? In the past you have suggested calling APIs directly with `sendRequest`, so I'm wondering if that's an option here.

Also, are there SDK functions to attach backup policies to a VM?

Thanks,

Mike.

|

non_process

|