Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 4 112 | repo_url stringlengths 33 141 | action stringclasses 3 values | title stringlengths 1 1.02k | labels stringlengths 4 1.54k | body stringlengths 1 262k | index stringclasses 17 values | text_combine stringlengths 95 262k | label stringclasses 2 values | text stringlengths 96 252k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

339,023 | 30,337,363,979 | IssuesEvent | 2023-07-11 10:24:57 | GSM-MSG/Hi-v2-BackEnd | https://api.github.com/repos/GSM-MSG/Hi-v2-BackEnd | opened | Representative delegation usecase testcode | ✅ Test | ### Describe

Write test code for the representative delegation use case.

### Additional

_No response_ | 1.0 | Representative delegation usecase testcode - ### Describe

Write test code for the representative delegation use case.

### Additional

_No response_ | test | representative delegation usecase testcode describe write test code for the representative delegation use case additional no response | 1 |

48,200 | 5,949,065,981 | IssuesEvent | 2017-05-26 13:21:11 | pixelhumain/co2 | https://api.github.com/repos/pixelhumain/co2 | closed | Short description creation - counter does not decrease | to test | Creating an organization (a company in my case). The character counter for the short description does not decrease.

 | 1.0 | Short description creation - counter does not decrease - Creating an organization (a company in my case). The character counter for the short description does not decrease.

 | test | short description creation counter does not decrease creating an organization a company in my case the character counter for the short description does not decrease | 1 |

774,913 | 27,215,002,831 | IssuesEvent | 2023-02-20 20:36:57 | ascheid/itsg33-pbmm-issue-gen | https://api.github.com/repos/ascheid/itsg33-pbmm-issue-gen | closed | SA-4 ACQUISITION PROCESS | Priority: P3 | (A) The organization includes the following requirements, descriptions, and criteria, explicitly or by reference, in the acquisition contract for the information system, system component, or information system service in accordance with applicable GC legislation and TBS policies, directives and standards, and organizational mission/business needs:

(a) Security functional requirements;

(b) Security strength requirements;

(c) Security assurance requirements;

(d) Security-related documentation requirements;

(e) Requirements for protecting security-related documentation;

(f) Description of the information system development environment and environment in which the system is intended to operate; and

(g) Acceptance criteria.

(AA) The organization includes security-related documentation, requirements and/or specifications, explicitly or by reference, in information system acquisition contracts based on an assessment of risk and in accordance with the TBS Security and Contracting Management Standard [Reference 25].

(BB) The organization includes the development and evaluation-related requirements and/or specifications, explicitly or by reference, in information system acquisition contracts based on an assessment of risk and in accordance with applicable GC legislation and TBS policies, directives and standards. | 1.0 | SA-4 ACQUISITION PROCESS - (A) The organization includes the following requirements, descriptions, and criteria, explicitly or by reference, in the acquisition contract for the information system, system component, or information system service in accordance with applicable GC legislation and TBS policies, directives and standards, and organizational mission/business needs:

(a) Security functional requirements;

(b) Security strength requirements;

(c) Security assurance requirements;

(d) Security-related documentation requirements;

(e) Requirements for protecting security-related documentation;

(f) Description of the information system development environment and environment in which the system is intended to operate; and

(g) Acceptance criteria.

(AA) The organization includes security-related documentation, requirements and/or specifications, explicitly or by reference, in information system acquisition contracts based on an assessment of risk and in accordance with the TBS Security and Contracting Management Standard [Reference 25].

(BB) The organization includes the development and evaluation-related requirements and/or specifications, explicitly or by reference, in information system acquisition contracts based on an assessment of risk and in accordance with applicable GC legislation and TBS policies, directives and standards. | non_test | sa acquisition process a the organization includes the following requirements descriptions and criteria explicitly or by reference in the acquisition contract for the information system system component or information system service in accordance with applicable gc legislation and tbs policies directives and standards and organizational mission business needs a security functional requirements b security strength requirements c security assurance requirements d security related documentation requirements e requirements for protecting security related documentation f description of the information system development environment and environment in which the system is intended to operate and g acceptance criteria aa the organization includes security related documentation requirements and or specifications explicitly or by reference in information system acquisition contracts based on an assessment of risk and in accordance with the tbs security and contracting management standard bb the organization includes the development and evaluation related requirements and or specifications explicitly or by reference in information system acquisition contracts based on an assessment of risk and in accordance with applicable gc legislation and tbs policies directives and standards | 0 |

138,865 | 11,220,387,331 | IssuesEvent | 2020-01-07 15:43:10 | appsody/appsody | https://api.github.com/repos/appsody/appsody | closed | Code coverage analysis for stack_create.go | testing | - [x] if len (args) <1

test stack create without argument and verify error

- [x] if config.Dryrun

test stack create in dry run mode enabled and verify output

- [x] if dry run in unzip

test unzip with dry run mode enabled and verify output

test unzip with illegal file path, illegal dest

- [x] if runtime.GOOS == "windows"

test unzip on windows | 1.0 | Code coverage analysis for stack_create.go - - [x] if len (args) <1

test stack create without argument and verify error

- [x] if config.Dryrun

test stack create in dry run mode enabled and verify output

- [x] if dry run in unzip

test unzip with dry run mode enabled and verify output

test unzip with illegal file path, illegal dest

- [x] if runtime.GOOS == "windows"

test unzip on windows | test | code coverage analysis for stack create go if len args test stack create without argument and verify error if config dryrun test stack create in dry run mode enabled and verify output if dry run in unzip test unzip with dry run mode enabled and verify output test unzip with illegal file path illegal dest if runtime goos windows test unzip on windows | 1 |

4,509 | 2,730,490,715 | IssuesEvent | 2015-04-16 15:09:09 | uscensusbureau/citysdk | https://api.github.com/repos/uscensusbureau/citysdk | closed | Defining a batch call by lat/long + geographic scope + geographic resolution (e.g., give me all the blocks within *this* county). | bus-4 test | As a developer, I want to define a scope boundary so that a user can batch-request variables and geographies within it, located by a lat/long.

E.g., "I want all the *block-groups*(geo resolution) within *this*(lat/long) *county*(geo scope)" | 1.0 | Defining a batch call by lat/long + geographic scope + geographic resolution (e.g., give me all the blocks within *this* county). - As a developer, I want to define a scope boundary so that a user can batch-request variables and geographies within it, located by a lat/long.

E.g., "I want all the *block-groups*(geo resolution) within *this*(lat/long) *county*(geo scope)" | test | defining a batch call by lat long geographic scope geographic resolution e g give me all the blocks within this county as a developer i want to define a scope boundary which would allow a user to batch request variables geographies within by a lat long e g i want all the block groups geo resolution within this lat long county geo scope | 1 |

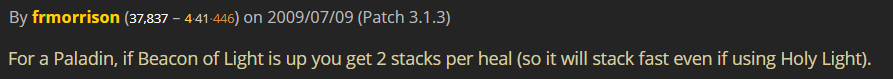

176,031 | 13,624,262,429 | IssuesEvent | 2020-09-24 07:47:32 | WoWManiaUK/Redemption | https://api.github.com/repos/WoWManiaUK/Redemption | closed | [Item] Meteorite Crystal | Fix - Tester Confirmed | **Links:** http://www.wow-mania.com/armory?item=46051

**What is Happening:** If Beacon of Light is cast on someone and heals are done while the trinket is active, the stacks still build up only one at a time.

In addition, Holy Shock spell does not trigger stacks at all. I would assume this is because of the ability being heal/damage at the same time.

**What Should happen:** Casting heals while beacon is present should award 2 stacks each. Holy Shock should award stacks as well

One of many comments from https://www.wowhead.com/item=46051/meteorite-crystal#comments:id=772039 | 1.0 | [Item] Meteorite Crystal - **Links:** http://www.wow-mania.com/armory?item=46051

**What is Happening:** If Beacon of Light is cast on someone and heals are done while the trinket is active, the stacks still build up only one at a time.

In addition, Holy Shock spell does not trigger stacks at all. I would assume this is because of the ability being heal/damage at the same time.

**What Should happen:** Casting heals while beacon is present should award 2 stacks each. Holy Shock should award stacks as well

One of many comments from https://www.wowhead.com/item=46051/meteorite-crystal#comments:id=772039 | test | meteorite crystal links what is happening if there is a beacon of light casted on someone and heals are done while the trinket is activated the stacks will still build up only by in addition holy shock spell does not trigger stacks at all i would assume this is because of the ability being heal damage at the same time what should happen casting heals while beacon is present should award stacks each holy shock should award stacks as well one of many comments from | 1 |

57,991 | 6,564,757,667 | IssuesEvent | 2017-09-08 04:00:12 | USEPA/E-Enterprise-Portal | https://api.github.com/repos/USEPA/E-Enterprise-Portal | closed | Build BWI response modal dynamically from service | EE-1941 Ready To Test Sprint 36 - TBD Technical task | Read list of contaminants from xml and build form ~ 8hrs

Build response dynamically from service ~ 13hrs | 1.0 | Build BWI response modal dynamically from service - Read list of contaminants from xml and build form ~ 8hrs

Build response dynamically from service ~ 13hrs | test | build bwi response modal dynamically from service read list of contaminants from xml and build form build response dynamically from service | 1 |

97,545 | 8,659,598,511 | IssuesEvent | 2018-11-28 06:50:39 | shahkhan40/shantestrep | https://api.github.com/repos/shahkhan40/shantestrep | closed | testing FX841 : ApiV1ProjectsIdProjectChecksumsGetQueryParamPagesizeInvalidDatatype | testing FX841 | Project : testing FX841

Job : UAT

Env : UAT

Region : US_WEST

Result : fail

Status Code : 404

Headers : {X-Content-Type-Options=[nosniff], X-XSS-Protection=[1; mode=block], Cache-Control=[no-cache, no-store, max-age=0, must-revalidate], Pragma=[no-cache], Expires=[0], X-Frame-Options=[DENY], Set-Cookie=[SESSION=ZWJmYmUyMDUtYzZkZC00N2FkLWJkYjgtZmE4YmQ5NTcxNmU5; Path=/; HttpOnly], Content-Type=[application/json;charset=UTF-8], Transfer-Encoding=[chunked], Date=[Wed, 28 Nov 2018 06:49:17 GMT]}

Endpoint : http://13.56.210.25/api/v1/api/v1/projects/OBMcxyZJ/project-checksums?pageSize=nZC5GW

Request :

Response :

{

"timestamp" : "2018-11-28T06:49:18.068+0000",

"status" : 404,

"error" : "Not Found",

"message" : "No message available",

"path" : "/api/v1/api/v1/projects/OBMcxyZJ/project-checksums"

}

Logs :

Assertion [@StatusCode != 401] resolved-to [404 != 401] result [Passed]Assertion [@StatusCode != 404] resolved-to [404 != 404] result [Failed]

--- FX Bot --- | 1.0 | testing FX841 : ApiV1ProjectsIdProjectChecksumsGetQueryParamPagesizeInvalidDatatype - Project : testing FX841

Job : UAT

Env : UAT

Region : US_WEST

Result : fail

Status Code : 404

Headers : {X-Content-Type-Options=[nosniff], X-XSS-Protection=[1; mode=block], Cache-Control=[no-cache, no-store, max-age=0, must-revalidate], Pragma=[no-cache], Expires=[0], X-Frame-Options=[DENY], Set-Cookie=[SESSION=ZWJmYmUyMDUtYzZkZC00N2FkLWJkYjgtZmE4YmQ5NTcxNmU5; Path=/; HttpOnly], Content-Type=[application/json;charset=UTF-8], Transfer-Encoding=[chunked], Date=[Wed, 28 Nov 2018 06:49:17 GMT]}

Endpoint : http://13.56.210.25/api/v1/api/v1/projects/OBMcxyZJ/project-checksums?pageSize=nZC5GW

Request :

Response :

{

"timestamp" : "2018-11-28T06:49:18.068+0000",

"status" : 404,

"error" : "Not Found",

"message" : "No message available",

"path" : "/api/v1/api/v1/projects/OBMcxyZJ/project-checksums"

}

Logs :

Assertion [@StatusCode != 401] resolved-to [404 != 401] result [Passed]Assertion [@StatusCode != 404] resolved-to [404 != 404] result [Failed]

--- FX Bot --- | test | testing project testing job uat env uat region us west result fail status code headers x content type options x xss protection cache control pragma expires x frame options set cookie content type transfer encoding date endpoint request response timestamp status error not found message no message available path api api projects obmcxyzj project checksums logs assertion resolved to result assertion resolved to result fx bot | 1 |
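
The endpoint in the record above repeats the `/api/v1` prefix (`/api/v1/api/v1/projects/...`), which may be why the request returned 404. The sketch below shows one way to join a base URL and a path without doubling a shared prefix. It is only an illustration: `join_api_url` and the `example.test` host are hypothetical names, not part of the tool that produced the report, and the actual cause of the 404 is not confirmed by the record.

```python
from urllib.parse import urlsplit, urlunsplit


def join_api_url(base_url: str, path: str) -> str:
    """Join base_url and path, dropping a leading path prefix that
    base_url already ends with (hypothetical helper, for illustration)."""
    parts = urlsplit(base_url)
    base_path = parts.path.rstrip("/")   # e.g. "/api/v1"
    rel = "/" + path.lstrip("/")         # normalise to a single leading slash
    # If the path repeats the base prefix, keep only the remainder.
    if base_path and rel.startswith(base_path + "/"):
        rel = rel[len(base_path):]
    # Query string and fragment are left empty in this sketch.
    return urlunsplit((parts.scheme, parts.netloc, base_path + rel, "", ""))


if __name__ == "__main__":
    print(join_api_url("http://example.test/api/v1",
                       "/api/v1/projects/OBMcxyZJ/project-checksums"))
```

A path that does not repeat the prefix is simply appended, so the helper is safe to apply to both kinds of input.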

134,687 | 10,927,054,063 | IssuesEvent | 2019-11-22 15:54:50 | elastic/elasticsearch | https://api.github.com/repos/elastic/elasticsearch | closed | [CI] DatafeedJobsIT.testRealtime_GivenProcessIsKilled fails to stop process | :ml >test-failure | *Original comment by @dakrone:*

Seems like it failed to stop the process:

```

FAILURE 335s | DatafeedJobsIT.testRealtime_GivenProcessIsKilled <<< FAILURES!

> Throwable LINK REDACTED: java.lang.AssertionError:

> Expected: <stopped>

> but: was <started>

> at __randomizedtesting.SeedInfo.seed([F8EED5748A2F4CE7:AA558501F4BAB52D]:0)

> at org.hamcrest.MatcherAssert.assertThat(MatcherAssert.java:20)

> at org.elasticsearch.xpack.ml.integration.DatafeedJobsIT.lambda$testRealtime_GivenProcessIsKilled$6(DatafeedJobsIT.java:235)

> at org.elasticsearch.test.ESTestCase.assertBusy(ESTestCase.java:732)

> at org.elasticsearch.test.ESTestCase.assertBusy(ESTestCase.java:706)

> at org.elasticsearch.xpack.ml.integration.DatafeedJobsIT.testRealtime_GivenProcessIsKilled(DatafeedJobsIT.java:232)

> at java.lang.Thread.run(Thread.java:748)

> Suppressed: java.lang.AssertionError:

```

I was not able to reproduce this

```

./gradlew :x-pack-elasticsearch:qa:ml-native-tests:integTestRunner -Dtests.seed=F8EED5748A2F4CE7 -Dtests.class=org.elasticsearch.xpack.ml.integration.DatafeedJobsIT -Dtests.method="testRealtime_GivenProcessIsKilled" -Dtests.security.manager=true -Dtests.locale=de-GR -Dtests.timezone=Pacific/Majuro

```

LINK REDACTED | 1.0 | [CI] DatafeedJobsIT.testRealtime_GivenProcessIsKilled fails to stop process - *Original comment by @dakrone:*

Seems like it failed to stop the process:

```

FAILURE 335s | DatafeedJobsIT.testRealtime_GivenProcessIsKilled <<< FAILURES!

> Throwable LINK REDACTED: java.lang.AssertionError:

> Expected: <stopped>

> but: was <started>

> at __randomizedtesting.SeedInfo.seed([F8EED5748A2F4CE7:AA558501F4BAB52D]:0)

> at org.hamcrest.MatcherAssert.assertThat(MatcherAssert.java:20)

> at org.elasticsearch.xpack.ml.integration.DatafeedJobsIT.lambda$testRealtime_GivenProcessIsKilled$6(DatafeedJobsIT.java:235)

> at org.elasticsearch.test.ESTestCase.assertBusy(ESTestCase.java:732)

> at org.elasticsearch.test.ESTestCase.assertBusy(ESTestCase.java:706)

> at org.elasticsearch.xpack.ml.integration.DatafeedJobsIT.testRealtime_GivenProcessIsKilled(DatafeedJobsIT.java:232)

> at java.lang.Thread.run(Thread.java:748)

> Suppressed: java.lang.AssertionError:

```

I was not able to reproduce this

```

./gradlew :x-pack-elasticsearch:qa:ml-native-tests:integTestRunner -Dtests.seed=F8EED5748A2F4CE7 -Dtests.class=org.elasticsearch.xpack.ml.integration.DatafeedJobsIT -Dtests.method="testRealtime_GivenProcessIsKilled" -Dtests.security.manager=true -Dtests.locale=de-GR -Dtests.timezone=Pacific/Majuro

```

LINK REDACTED | test | datafeedjobsit testrealtime givenprocessiskilled fails to stop process original comment by dakrone seems like it failed to stop the process failure datafeedjobsit testrealtime givenprocessiskilled failures throwable link redacted java lang assertionerror expected but was at randomizedtesting seedinfo seed at org hamcrest matcherassert assertthat matcherassert java at org elasticsearch xpack ml integration datafeedjobsit lambda testrealtime givenprocessiskilled datafeedjobsit java at org elasticsearch test estestcase assertbusy estestcase java at org elasticsearch test estestcase assertbusy estestcase java at org elasticsearch xpack ml integration datafeedjobsit testrealtime givenprocessiskilled datafeedjobsit java at java lang thread run thread java suppressed java lang assertionerror i was not able to reproduce this gradlew x pack elasticsearch qa ml native tests integtestrunner dtests seed dtests class org elasticsearch xpack ml integration datafeedjobsit dtests method testrealtime givenprocessiskilled dtests security manager true dtests locale de gr dtests timezone pacific majuro link redacted | 1 |

98,874 | 30,208,812,705 | IssuesEvent | 2023-07-05 11:20:47 | audacity/audacity | https://api.github.com/repos/audacity/audacity | closed | Building VST3SDK with Conan and gcc 12.2.0 fails | bug Build / CI | ### Bug description

_No response_

### Steps to reproduce

1. Download the latest release of Audacity: https://github.com/audacity/audacity/releases/tag/Audacity-3.2.3

2. Try to build it with CMake and Conan.

### Expected behavior

The program should compile.

### Actual behavior

Compilation fails when Conan tries to build vst3sdk. The error message is:

```

/tmp/.conan/data/vst3sdk/3.7.3/_/_/build/e7c1133d61ad7d5d0234a08d33a88035fe98bd68/vst3sdk/pluginterfaces/base/funknown.cpp: In function 'Steinberg::int32 Steinberg::FUnknownPrivate::atomicAdd(Steinberg::int32&, Steinberg::int32)':

/tmp/.conan/data/vst3sdk/3.7.3/_/_/build/e7c1133d61ad7d5d0234a08d33a88035fe98bd68/vst3sdk/pluginterfaces/base/funknown.cpp:91:51: error: 'atomic_int_least32_t' does not name a type

91 | return atomic_fetch_add (reinterpret_cast<atomic_int_least32_t*> (&var), d) + d;

| ^~~~~~~~~~~~~~~~~~~~

/tmp/.conan/data/vst3sdk/3.7.3/_/_/build/e7c1133d61ad7d5d0234a08d33a88035fe98bd68/vst3sdk/pluginterfaces/base/funknown.cpp:91:71: error: expected '>' before '*' token

91 | return atomic_fetch_add (reinterpret_cast<atomic_int_least32_t*> (&var), d) + d;

| ^

/tmp/.conan/data/vst3sdk/3.7.3/_/_/build/e7c1133d61ad7d5d0234a08d33a88035fe98bd68/vst3sdk/pluginterfaces/base/funknown.cpp:91:71: error: expected '(' before '*' token

91 | return atomic_fetch_add (reinterpret_cast<atomic_int_least32_t*> (&var), d) + d;

| ^

| (

/tmp/.conan/data/vst3sdk/3.7.3/_/_/build/e7c1133d61ad7d5d0234a08d33a88035fe98bd68/vst3sdk/pluginterfaces/base/funknown.cpp:91:72: error: expected primary-expression before '>' token

91 | return atomic_fetch_add (reinterpret_cast<atomic_int_least32_t*> (&var), d) + d;

```

Based on [this thread on forums.steinberg.net](https://forums.steinberg.net/t/pluginterfaces-lib-compilation-error-win-10-vs-2022/768976/8), it seems like defining `SMTG_USE_STDATOMIC_H=OFF` is the solution, but I'm not familiar enough with audacity's build system to figure out where to put this to get it to compile on my system.

### Audacity Version

latest stable version (from audacityteam.org/download)

### Operating system

Linux

### Additional context

My system has gcc v12.2.0 which I think is relevant to this issue as noted in the forum thread linked above.

The specific configure arguments I used are:

```

-Daudacity_use_ffmpeg=loaded

-Daudacity_lib_preference=system

-DCMAKE_BUILD_TYPE=Release

-Daudacity_conan_enabled=On

-DCMAKE_INSTALL_PREFIX=/usr

``` | 1.0 | Building VST3SDK with Conan and gcc 12.2.0 fails - ### Bug description

_No response_

### Steps to reproduce

1. Download the latest release of Audacity: https://github.com/audacity/audacity/releases/tag/Audacity-3.2.3

2. Try to build it with CMake and Conan.

### Expected behavior

The program should compile.

### Actual behavior

Compilation fails when Conan tries to build vst3sdk. The error message is:

```

/tmp/.conan/data/vst3sdk/3.7.3/_/_/build/e7c1133d61ad7d5d0234a08d33a88035fe98bd68/vst3sdk/pluginterfaces/base/funknown.cpp: In function 'Steinberg::int32 Steinberg::FUnknownPrivate::atomicAdd(Steinberg::int32&, Steinberg::int32)':

/tmp/.conan/data/vst3sdk/3.7.3/_/_/build/e7c1133d61ad7d5d0234a08d33a88035fe98bd68/vst3sdk/pluginterfaces/base/funknown.cpp:91:51: error: 'atomic_int_least32_t' does not name a type

91 | return atomic_fetch_add (reinterpret_cast<atomic_int_least32_t*> (&var), d) + d;

| ^~~~~~~~~~~~~~~~~~~~

/tmp/.conan/data/vst3sdk/3.7.3/_/_/build/e7c1133d61ad7d5d0234a08d33a88035fe98bd68/vst3sdk/pluginterfaces/base/funknown.cpp:91:71: error: expected '>' before '*' token

91 | return atomic_fetch_add (reinterpret_cast<atomic_int_least32_t*> (&var), d) + d;

| ^

/tmp/.conan/data/vst3sdk/3.7.3/_/_/build/e7c1133d61ad7d5d0234a08d33a88035fe98bd68/vst3sdk/pluginterfaces/base/funknown.cpp:91:71: error: expected '(' before '*' token

91 | return atomic_fetch_add (reinterpret_cast<atomic_int_least32_t*> (&var), d) + d;

| ^

| (

/tmp/.conan/data/vst3sdk/3.7.3/_/_/build/e7c1133d61ad7d5d0234a08d33a88035fe98bd68/vst3sdk/pluginterfaces/base/funknown.cpp:91:72: error: expected primary-expression before '>' token

91 | return atomic_fetch_add (reinterpret_cast<atomic_int_least32_t*> (&var), d) + d;

```

Based on [this thread on forums.steinberg.net](https://forums.steinberg.net/t/pluginterfaces-lib-compilation-error-win-10-vs-2022/768976/8), it seems like defining `SMTG_USE_STDATOMIC_H=OFF` is the solution, but I'm not familiar enough with audacity's build system to figure out where to put this to get it to compile on my system.

### Audacity Version

latest stable version (from audacityteam.org/download)

### Operating system

Linux

### Additional context

My system has gcc v12.2.0 which I think is relevant to this issue as noted in the forum thread linked above.

The specific configure arguments I used are:

```

-Daudacity_use_ffmpeg=loaded

-Daudacity_lib_preference=system

-DCMAKE_BUILD_TYPE=Release

-Daudacity_conan_enabled=On

-DCMAKE_INSTALL_PREFIX=/usr

``` | non_test | building with conan and gcc fails bug description no response steps to reproduce download the latest release of audacity try to build it with cmake and conan expected behavior the program should complie actual behavior compilation fails when conan tries to build the error message is tmp conan data build pluginterfaces base funknown cpp in function steinberg steinberg funknownprivate atomicadd steinberg steinberg tmp conan data build pluginterfaces base funknown cpp error atomic int t does not name a type return atomic fetch add reinterpret cast var d d tmp conan data build pluginterfaces base funknown cpp error expected before token return atomic fetch add reinterpret cast var d d tmp conan data build pluginterfaces base funknown cpp error expected before token return atomic fetch add reinterpret cast var d d tmp conan data build pluginterfaces base funknown cpp error expected primary expression before token return atomic fetch add reinterpret cast var d d based on it seems like defining smtg use stdatomic h off is the solution but i m not familiar enough with audacity s build system to figure out where to put this to get it to compile on my system audacity version latest stable version from audacityteam org download operating system linux additional context my system has gcc which i think is relevant to this issue as noted in the forum thread linked above the specific configure arguments i used are daudacity use ffmpeg loaded daudacity lib preference system dcmake build type release daudacity conan enabled on dcmake install prefix usr | 0 |

322,394 | 27,598,331,560 | IssuesEvent | 2023-03-09 08:20:09 | unifyai/ivy | https://api.github.com/repos/unifyai/ivy | opened | Fix jax_numpy_math.test_jax_numpy_log2 | JAX Frontend Sub Task Failing Test | | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/4369130149/jobs/7642569725" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-failure-red></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/runs/4369130149/jobs/7642569725" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-failure-red></a>

|numpy|<a href="https://github.com/unifyai/ivy/actions/runs/4369130149/jobs/7642569725" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-failure-red></a>

|jax|<a href="https://github.com/unifyai/ivy/actions/runs/4369130149/jobs/7642569725" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-failure-red></a>

<details>

<summary>FAILED ivy_tests/test_ivy/test_frontends/test_jax/test_jax_numpy_math.py::test_jax_numpy_log2[cpu-ivy.functional.backends.jax-False-False]</summary>

2023-03-08T23:15:11.5396484Z E jax._src.traceback_util.UnfilteredStackTrace: TypeError: log2() got some positional-only arguments passed as keyword arguments: 'x'

2023-03-08T23:15:11.5396835Z E

2023-03-08T23:15:11.5397122Z E The stack trace below excludes JAX-internal frames.

2023-03-08T23:15:11.5397447Z E The preceding is the original exception that occurred, unmodified.

2023-03-08T23:15:11.5397701Z E

2023-03-08T23:15:11.5397919Z E --------------------

2023-03-08T23:15:11.5401095Z E TypeError: log2() got some positional-only arguments passed as keyword arguments: 'x'

2023-03-08T23:15:11.5401426Z E Falsifying example: test_jax_numpy_log2(

2023-03-08T23:15:11.5401773Z E dtype_and_x=(['float16'], [array(-1., dtype=float16)]),

2023-03-08T23:15:11.5402069Z E test_flags=FrontendFunctionTestFlags(

2023-03-08T23:15:11.5403110Z E num_positional_args=0,

2023-03-08T23:15:11.5403336Z E with_out=False,

2023-03-08T23:15:11.5403547Z E inplace=False,

2023-03-08T23:15:11.5403764Z E as_variable=[False],

2023-03-08T23:15:11.5403988Z E native_arrays=[False],

2023-03-08T23:15:11.5404193Z E ),

2023-03-08T23:15:11.5404523Z E fn_tree='ivy.functional.frontends.jax.numpy.log2',

2023-03-08T23:15:11.5404828Z E on_device='cpu',

2023-03-08T23:15:11.5405067Z E frontend='jax',

2023-03-08T23:15:11.5405243Z E )

2023-03-08T23:15:11.5405411Z E

2023-03-08T23:15:11.5405902Z E You can reproduce this example by temporarily adding @reproduce_failure('6.68.2', b'AXicY2AAAkYGCADTAAAkAAM=') as a decorator on your test case

</details>
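
The `TypeError` in the log above is the message Python raises when a parameter declared positional-only is passed by keyword. The falsifying example shows `num_positional_args=0`, which would be consistent with `x` being passed as a keyword. A minimal sketch that reproduces the same message, assuming a stand-in `log2` with a positional-only `x` (this is not the ivy or JAX implementation):

```python
import math


def log2(x, /):
    """Stand-in with a positional-only parameter (everything before '/')."""
    return math.log2(x)


def call_with_keyword():
    """Call log2 with x as a keyword and return the resulting error text."""
    try:
        log2(x=8.0)
    except TypeError as exc:
        return str(exc)
    return None


if __name__ == "__main__":
    print(log2(8.0))            # positional call works
    print(call_with_keyword())  # keyword call raises the TypeError
```

Under that assumption, the failure would go away if the argument were passed positionally.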

| 1.0 | Fix jax_numpy_math.test_jax_numpy_log2 - | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/4369130149/jobs/7642569725" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-failure-red></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/runs/4369130149/jobs/7642569725" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-failure-red></a>

|numpy|<a href="https://github.com/unifyai/ivy/actions/runs/4369130149/jobs/7642569725" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-failure-red></a>

|jax|<a href="https://github.com/unifyai/ivy/actions/runs/4369130149/jobs/7642569725" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-failure-red></a>

<details>

<summary>FAILED ivy_tests/test_ivy/test_frontends/test_jax/test_jax_numpy_math.py::test_jax_numpy_log2[cpu-ivy.functional.backends.jax-False-False]</summary>

2023-03-08T23:15:11.5396484Z E jax._src.traceback_util.UnfilteredStackTrace: TypeError: log2() got some positional-only arguments passed as keyword arguments: 'x'

2023-03-08T23:15:11.5396835Z E

2023-03-08T23:15:11.5397122Z E The stack trace below excludes JAX-internal frames.

2023-03-08T23:15:11.5397447Z E The preceding is the original exception that occurred, unmodified.

2023-03-08T23:15:11.5397701Z E

2023-03-08T23:15:11.5397919Z E --------------------

2023-03-08T23:15:11.5401095Z E TypeError: log2() got some positional-only arguments passed as keyword arguments: 'x'

2023-03-08T23:15:11.5401426Z E Falsifying example: test_jax_numpy_log2(

2023-03-08T23:15:11.5401773Z E dtype_and_x=(['float16'], [array(-1., dtype=float16)]),

2023-03-08T23:15:11.5402069Z E test_flags=FrontendFunctionTestFlags(

2023-03-08T23:15:11.5403110Z E num_positional_args=0,

2023-03-08T23:15:11.5403336Z E with_out=False,

2023-03-08T23:15:11.5403547Z E inplace=False,

2023-03-08T23:15:11.5403764Z E as_variable=[False],

2023-03-08T23:15:11.5403988Z E native_arrays=[False],

2023-03-08T23:15:11.5404193Z E ),

2023-03-08T23:15:11.5404523Z E fn_tree='ivy.functional.frontends.jax.numpy.log2',

2023-03-08T23:15:11.5404828Z E on_device='cpu',

2023-03-08T23:15:11.5405067Z E frontend='jax',

2023-03-08T23:15:11.5405243Z E )

2023-03-08T23:15:11.5405411Z E

2023-03-08T23:15:11.5405902Z E You can reproduce this example by temporarily adding @reproduce_failure('6.68.2', b'AXicY2AAAkYGCADTAAAkAAM=') as a decorator on your test case

</details>

| test | fix jax numpy math test jax numpy tensorflow img src torch img src numpy img src jax img src failed ivy tests test ivy test frontends test jax test jax numpy math py test jax numpy e jax src traceback util unfilteredstacktrace typeerror got some positional only arguments passed as keyword arguments x e e the stack trace below excludes jax internal frames e the preceding is the original exception that occurred unmodified e e e typeerror got some positional only arguments passed as keyword arguments x e falsifying example test jax numpy e dtype and x e test flags frontendfunctiontestflags e num positional args e with out false e inplace false e as variable e native arrays e e fn tree ivy functional frontends jax numpy e on device cpu e frontend jax e e e you can reproduce this example by temporarily adding reproduce failure b as a decorator on your test case failed ivy tests test ivy test frontends test jax test jax numpy math py test jax numpy e jax src traceback util unfilteredstacktrace typeerror got some positional only arguments passed as keyword arguments x e e the stack trace below excludes jax internal frames e the preceding is the original exception that occurred unmodified e e e typeerror got some positional only arguments passed as keyword arguments x e falsifying example test jax numpy e dtype and x e test flags frontendfunctiontestflags e num positional args e with out false e inplace false e as variable e native arrays e e fn tree ivy functional frontends jax numpy e on device cpu e frontend jax e e e you can reproduce this example by temporarily adding reproduce failure b as a decorator on your test case failed ivy tests test ivy test frontends test jax test jax numpy math py test jax numpy e jax src traceback util unfilteredstacktrace typeerror got some positional only arguments passed as keyword arguments x e e the stack trace below excludes jax internal frames e the preceding is the original exception that occurred unmodified e e e 
typeerror got some positional only arguments passed as keyword arguments x e falsifying example test jax numpy e dtype and x e test flags frontendfunctiontestflags e num positional args e with out false e inplace false e as variable e native arrays e e fn tree ivy functional frontends jax numpy e on device cpu e frontend jax e e e you can reproduce this example by temporarily adding reproduce failure b as a decorator on your test case failed ivy tests test ivy test frontends test jax test jax numpy math py test jax numpy e jax src traceback util unfilteredstacktrace typeerror got some positional only arguments passed as keyword arguments x e e the stack trace below excludes jax internal frames e the preceding is the original exception that occurred unmodified e e e typeerror got some positional only arguments passed as keyword arguments x e falsifying example test jax numpy e dtype and x e test flags frontendfunctiontestflags e num positional args e with out false e inplace false e as variable e native arrays e e fn tree ivy functional frontends jax numpy e on device cpu e frontend jax e e e you can reproduce this example by temporarily adding reproduce failure b as a decorator on your test case | 1 |
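The `TypeError` in the log above is what Python raises when a parameter declared positional-only is passed by keyword. The falsifying example has `num_positional_args=0`, which suggests the harness passed `x` as a keyword. The following is a minimal sketch in plain Python, not the Ivy or JAX code: `log2` here is a toy stand-in, and `call_with_flags` is a hypothetical helper that only imitates how a test harness might split arguments.

```python
import math


def log2(x, /):
    """Toy stand-in with a positional-only parameter (not the real frontend function)."""
    return math.log2(x)


def call_with_flags(fn, value, num_positional_args):
    """Hypothetical helper: pass `value` positionally or as keyword `x`."""
    if num_positional_args >= 1:
        # Positional call: accepted by a positional-only signature.
        return fn(value)
    # Keyword call: rejected with TypeError when `x` is positional-only.
    return fn(x=value)


if __name__ == "__main__":
    print(call_with_flags(log2, 8.0, num_positional_args=1))
    try:
        call_with_flags(log2, 8.0, num_positional_args=0)
    except TypeError as exc:
        print(f"TypeError: {exc}")
```

If this is indeed the cause, one possible direction is to make sure the test always passes at least one positional argument, or to drop the positional-only marker from the frontend signature. Which of the two fits the project is not stated in the issue.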

41,513 | 10,728,915,917 | IssuesEvent | 2019-10-28 14:44:46 | xamarin/xamarin-android | https://api.github.com/repos/xamarin/xamarin-android | opened | App builds hang when executing task LinkAssemblies if linking enabled | Area: App+Library Build | ### Steps to Reproduce

1. Build app with AndroidLinkMode=Full or AndroidLinkMode=SdkOnly

### Expected Behavior

App builds successfully.

### Actual Behavior

Build hangs

### Version Information

Microsoft Visual Studio Enterprise 2019

Version 16.3.6

VisualStudio.16.Release/16.3.6+29418.71

Microsoft .NET Framework

Version 4.8.03752

Installed Version: Enterprise

Visual C++ 2019 00435-60000-00000-AA197

Microsoft Visual C++ 2019

ADL Tools Service Provider 1.0

This package contains services used by Data Lake tools

ASP.NET and Web Tools 2019 16.3.286.43615

ASP.NET and Web Tools 2019

ASP.NET Web Frameworks and Tools 2019 16.3.286.43615

For additional information, visit https://www.asp.net/

Azure App Service Tools v3.0.0 16.3.286.43615

Azure App Service Tools v3.0.0

Azure Data Lake Node 1.0

This package contains the Data Lake integration nodes for Server Explorer.

Azure Data Lake Tools for Visual Studio 2.4.2000.0

Microsoft Azure Data Lake Tools for Visual Studio

Azure Functions and Web Jobs Tools 16.3.286.43615

Azure Functions and Web Jobs Tools

Azure Logic Apps Tools for Visual Studio 1.0

Add-in for the Azure Resource Group project to support the Logic App Designer and template creation.

Azure Stream Analytics Tools for Visual Studio 2.4.2000.0

Microsoft Azure Stream Analytics Tools for Visual Studio

C# Tools 3.3.1-beta3-19461-02+2fd12c210e22f7d6245805c60340f6a34af6875b

C# components used in the IDE. Depending on your project type and settings, a different version of the compiler may be used.

Common Azure Tools 1.10

Provides common services for use by Azure Mobile Services and Microsoft Azure Tools.

Cookiecutter 16.3.19252.1

Provides tools for finding, instantiating and customizing templates in cookiecutter format.

EditorConfig Language Service 1.17.260

Language service for .editorconfig files.

EditorConfig helps developers define and maintain consistent coding styles between different editors and IDEs.

Extensibility Message Bus 1.2.0 (d16-2@8b56e20)

Provides common messaging-based MEF services for loosely coupled Visual Studio extension components communication and integration.

Fabric.DiagnosticEvents 1.0

Fabric Diagnostic Events

File Icons 2.7

Adds icons for files that are not recognized by Solution Explorer

GitHub.VisualStudio 2.10.8.8132

A Visual Studio Extension that brings the GitHub Flow into Visual Studio.

IntelliCode Extension 1.0

IntelliCode Visual Studio Extension Detailed Info

Markdown Editor 1.12.236

A full featured Markdown editor with live preview and syntax highlighting. Supports GitHub flavored Markdown.

Microsoft Azure HDInsight Azure Node 2.4.2000.0

HDInsight Node under Azure Node

Microsoft Azure Hive Query Language Service 2.4.2000.0

Language service for Hive query

Microsoft Azure Service Fabric Tools for Visual Studio 16.0

Microsoft Azure Service Fabric Tools for Visual Studio

Microsoft Azure Stream Analytics Language Service 2.4.2000.0

Language service for Azure Stream Analytics

Microsoft Azure Stream Analytics Node 1.0

Azure Stream Analytics Node under Azure Node

Microsoft Azure Tools 2.9

Microsoft Azure Tools for Microsoft Visual Studio 0x10 - v2.9.20816.1

Microsoft Continuous Delivery Tools for Visual Studio 0.4

Simplifying the configuration of Azure DevOps pipelines from within the Visual Studio IDE.

Microsoft JVM Debugger 1.0

Provides support for connecting the Visual Studio debugger to JDWP compatible Java Virtual Machines

Microsoft Library Manager 2.0.83+gbc8a4b23ec

Install client-side libraries easily to any web project

Microsoft MI-Based Debugger 1.0

Provides support for connecting Visual Studio to MI compatible debuggers

Microsoft Visual C++ Wizards 1.0

Microsoft Visual C++ Wizards

Microsoft Visual Studio Tools for Containers 1.1

Develop, run, validate your ASP.NET Core applications in the target environment. F5 your application directly into a container with debugging, or CTRL + F5 to edit & refresh your app without having to rebuild the container.

Microsoft Visual Studio VC Package 1.0

Microsoft Visual Studio VC Package

Mono Debugging for Visual Studio 16.3.7 (9d260c5)

Support for debugging Mono processes with Visual Studio.

NuGet Package Manager 5.3.1

NuGet Package Manager in Visual Studio. For more information about NuGet, visit https://docs.nuget.org/

Open Command Line 2.4.226

2.4.226

PowerShell Pro Tools for Visual Studio 1.0

A set of tools for developing and debugging PowerShell scripts and modules in Visual Studio.

Productivity Power Tools 2017/2019 16.0

Installs the individual extensions of Productivity Power Tools 2017/2019

Project File Tools 1.0.1

Provides Intellisense and other tooling for XML based project files such as .csproj and .vbproj files.

ProjectServicesPackage Extension 1.0

ProjectServicesPackage Visual Studio Extension Detailed Info

Python 16.3.19252.1

Provides IntelliSense, projects, templates, debugging, interactive windows, and other support for Python developers.

Python - Conda support 16.3.19252.1

Conda support for Python projects.

Python - Django support 16.3.19252.1

Provides templates and integration for the Django web framework.

Python - IronPython support 16.3.19252.1

Provides templates and integration for IronPython-based projects.

Python - Profiling support 16.3.19252.1

Profiling support for Python projects.

Redgate SQL Prompt 9.5.20.11737

Write, format, and refactor SQL effortlessly

Snapshot Debugging Extension 1.0

Snapshot Debugging Visual Studio Extension Detailed Info

SQL Server Data Tools 16.0.61908.27190

Microsoft SQL Server Data Tools

Test Adapter for Boost.Test 1.0

Enables Visual Studio's testing tools with unit tests written for Boost.Test. The use terms and Third Party Notices are available in the extension installation directory.

Test Adapter for Google Test 1.0

Enables Visual Studio's testing tools with unit tests written for Google Test. The use terms and Third Party Notices are available in the extension installation directory.

ToolWindowHostedEditor 1.0

Hosting json editor into a tool window

TypeScript Tools 16.0.10821.2002

TypeScript Tools for Microsoft Visual Studio

Visual Basic Tools 3.3.1-beta3-19461-02+2fd12c210e22f7d6245805c60340f6a34af6875b

Visual Basic components used in the IDE. Depending on your project type and settings, a different version of the compiler may be used.

Visual C++ for Cross Platform Mobile Development (Android) 16.0.29230.54

Visual C++ for Cross Platform Mobile Development (Android)

Visual F# Tools 10.4 for F# 4.6 16.3.0-beta.19455.1+0422ff293bb2cc722fe5021b85ef50378a9af823

Microsoft Visual F# Tools 10.4 for F# 4.6

Visual Studio Code Debug Adapter Host Package 1.0

Interop layer for hosting Visual Studio Code debug adapters in Visual Studio

Visual Studio Tools for CMake 1.0

Visual Studio Tools for CMake

Visual Studio Tools for CMake 1.0

Visual Studio Tools for CMake

Visual Studio Tools for Containers 1.0

Visual Studio Tools for Containers

Visual Studio Tools for Kubernetes 1.0

Visual Studio Tools for Kubernetes

VisualStudio.Mac 1.0

Mac Extension for Visual Studio

Xamarin 16.3.0.277 (d16-3@c0fcab7)

Visual Studio extension to enable development for Xamarin.iOS and Xamarin.Android.

Xamarin Designer 16.3.0.246 (remotes/origin/d16-3@bd2f86892)

Visual Studio extension to enable Xamarin Designer tools in Visual Studio.

Xamarin Templates 16.3.565 (27e9746)

Templates for building iOS, Android, and Windows apps with Xamarin and Xamarin.Forms.

Xamarin.Android SDK 10.0.3.0 (d16-3/4d45b41)

Xamarin.Android Reference Assemblies and MSBuild support.

Mono: mono/mono/2019-06@5608fe0abb3

Java.Interop: xamarin/java.interop/d16-3@5836f58

LibZipSharp: grendello/LibZipSharp/d16-3@71f4a94

LibZip: nih-at/libzip/rel-1-5-1@b95cf3fd

ProGuard: xamarin/proguard/master@905836d

SQLite: xamarin/sqlite/3.27.1@8212a2d

Xamarin.Android Tools: xamarin/xamarin-android-tools/d16-3@cb41333

Xamarin.iOS and Xamarin.Mac SDK 13.4.0.2 (e37549b)

Xamarin.iOS and Xamarin.Mac Reference Assemblies and MSBuild support.

| 1.0 | App builds hang when executing task LinkAssemblies if linking enabled - ### Steps to Reproduce

1. Build app with AndroidLinkMode=Full or AndroidLinkMode=SdkOnly

### Expected Behavior

App builds successfully.

### Actual Behavior

Build hangs

### Version Information

Microsoft Visual Studio Enterprise 2019

Version 16.3.6

VisualStudio.16.Release/16.3.6+29418.71

Microsoft .NET Framework

Version 4.8.03752

Installed Version: Enterprise

Visual C++ 2019 00435-60000-00000-AA197

Microsoft Visual C++ 2019

ADL Tools Service Provider 1.0

This package contains services used by Data Lake tools

ASP.NET and Web Tools 2019 16.3.286.43615

ASP.NET and Web Tools 2019

ASP.NET Web Frameworks and Tools 2019 16.3.286.43615

For additional information, visit https://www.asp.net/

Azure App Service Tools v3.0.0 16.3.286.43615

Azure App Service Tools v3.0.0

Azure Data Lake Node 1.0

This package contains the Data Lake integration nodes for Server Explorer.

Azure Data Lake Tools for Visual Studio 2.4.2000.0

Microsoft Azure Data Lake Tools for Visual Studio

Azure Functions and Web Jobs Tools 16.3.286.43615

Azure Functions and Web Jobs Tools

Azure Logic Apps Tools for Visual Studio 1.0

Add-in for the Azure Resource Group project to support the Logic App Designer and template creation.

Azure Stream Analytics Tools for Visual Studio 2.4.2000.0

Microsoft Azure Stream Analytics Tools for Visual Studio

C# Tools 3.3.1-beta3-19461-02+2fd12c210e22f7d6245805c60340f6a34af6875b

C# components used in the IDE. Depending on your project type and settings, a different version of the compiler may be used.

Common Azure Tools 1.10

Provides common services for use by Azure Mobile Services and Microsoft Azure Tools.

Cookiecutter 16.3.19252.1

Provides tools for finding, instantiating and customizing templates in cookiecutter format.

EditorConfig Language Service 1.17.260

Language service for .editorconfig files.

EditorConfig helps developers define and maintain consistent coding styles between different editors and IDEs.

Extensibility Message Bus 1.2.0 (d16-2@8b56e20)

Provides common messaging-based MEF services for loosely coupled Visual Studio extension components communication and integration.

Fabric.DiagnosticEvents 1.0

Fabric Diagnostic Events

File Icons 2.7

Adds icons for files that are not recognized by Solution Explorer

GitHub.VisualStudio 2.10.8.8132

A Visual Studio Extension that brings the GitHub Flow into Visual Studio.

IntelliCode Extension 1.0

IntelliCode Visual Studio Extension Detailed Info

Markdown Editor 1.12.236

A full featured Markdown editor with live preview and syntax highlighting. Supports GitHub flavored Markdown.

Microsoft Azure HDInsight Azure Node 2.4.2000.0

HDInsight Node under Azure Node

Microsoft Azure Hive Query Language Service 2.4.2000.0

Language service for Hive query

Microsoft Azure Service Fabric Tools for Visual Studio 16.0

Microsoft Azure Service Fabric Tools for Visual Studio

Microsoft Azure Stream Analytics Language Service 2.4.2000.0

Language service for Azure Stream Analytics

Microsoft Azure Stream Analytics Node 1.0

Azure Stream Analytics Node under Azure Node

Microsoft Azure Tools 2.9

Microsoft Azure Tools for Microsoft Visual Studio 0x10 - v2.9.20816.1

Microsoft Continuous Delivery Tools for Visual Studio 0.4

Simplifying the configuration of Azure DevOps pipelines from within the Visual Studio IDE.

Microsoft JVM Debugger 1.0

Provides support for connecting the Visual Studio debugger to JDWP compatible Java Virtual Machines

Microsoft Library Manager 2.0.83+gbc8a4b23ec

Install client-side libraries easily to any web project

Microsoft MI-Based Debugger 1.0

Provides support for connecting Visual Studio to MI compatible debuggers

Microsoft Visual C++ Wizards 1.0

Microsoft Visual C++ Wizards

Microsoft Visual Studio Tools for Containers 1.1

Develop, run, validate your ASP.NET Core applications in the target environment. F5 your application directly into a container with debugging, or CTRL + F5 to edit & refresh your app without having to rebuild the container.

Microsoft Visual Studio VC Package 1.0

Microsoft Visual Studio VC Package

Mono Debugging for Visual Studio 16.3.7 (9d260c5)

Support for debugging Mono processes with Visual Studio.

NuGet Package Manager 5.3.1

NuGet Package Manager in Visual Studio. For more information about NuGet, visit https://docs.nuget.org/

Open Command Line 2.4.226

2.4.226

PowerShell Pro Tools for Visual Studio 1.0

A set of tools for developing and debugging PowerShell scripts and modules in Visual Studio.

Productivity Power Tools 2017/2019 16.0

Installs the individual extensions of Productivity Power Tools 2017/2019

Project File Tools 1.0.1

Provides Intellisense and other tooling for XML based project files such as .csproj and .vbproj files.

ProjectServicesPackage Extension 1.0

ProjectServicesPackage Visual Studio Extension Detailed Info

Python 16.3.19252.1

Provides IntelliSense, projects, templates, debugging, interactive windows, and other support for Python developers.

Python - Conda support 16.3.19252.1

Conda support for Python projects.

Python - Django support 16.3.19252.1

Provides templates and integration for the Django web framework.

Python - IronPython support 16.3.19252.1

Provides templates and integration for IronPython-based projects.

Python - Profiling support 16.3.19252.1

Profiling support for Python projects.

Redgate SQL Prompt 9.5.20.11737

Write, format, and refactor SQL effortlessly

Snapshot Debugging Extension 1.0

Snapshot Debugging Visual Studio Extension Detailed Info

SQL Server Data Tools 16.0.61908.27190

Microsoft SQL Server Data Tools

Test Adapter for Boost.Test 1.0

Enables Visual Studio's testing tools with unit tests written for Boost.Test. The use terms and Third Party Notices are available in the extension installation directory.

Test Adapter for Google Test 1.0

Enables Visual Studio's testing tools with unit tests written for Google Test. The use terms and Third Party Notices are available in the extension installation directory.

ToolWindowHostedEditor 1.0

Hosting json editor into a tool window

TypeScript Tools 16.0.10821.2002

TypeScript Tools for Microsoft Visual Studio

Visual Basic Tools 3.3.1-beta3-19461-02+2fd12c210e22f7d6245805c60340f6a34af6875b

Visual Basic components used in the IDE. Depending on your project type and settings, a different version of the compiler may be used.

Visual C++ for Cross Platform Mobile Development (Android) 16.0.29230.54

Visual C++ for Cross Platform Mobile Development (Android)

Visual F# Tools 10.4 for F# 4.6 16.3.0-beta.19455.1+0422ff293bb2cc722fe5021b85ef50378a9af823

Microsoft Visual F# Tools 10.4 for F# 4.6

Visual Studio Code Debug Adapter Host Package 1.0

Interop layer for hosting Visual Studio Code debug adapters in Visual Studio

Visual Studio Tools for CMake 1.0

Visual Studio Tools for CMake

Visual Studio Tools for CMake 1.0

Visual Studio Tools for CMake

Visual Studio Tools for Containers 1.0

Visual Studio Tools for Containers

Visual Studio Tools for Kubernetes 1.0

Visual Studio Tools for Kubernetes

VisualStudio.Mac 1.0

Mac Extension for Visual Studio

Xamarin 16.3.0.277 (d16-3@c0fcab7)

Visual Studio extension to enable development for Xamarin.iOS and Xamarin.Android.

Xamarin Designer 16.3.0.246 (remotes/origin/d16-3@bd2f86892)

Visual Studio extension to enable Xamarin Designer tools in Visual Studio.

Xamarin Templates 16.3.565 (27e9746)

Templates for building iOS, Android, and Windows apps with Xamarin and Xamarin.Forms.

Xamarin.Android SDK 10.0.3.0 (d16-3/4d45b41)

Xamarin.Android Reference Assemblies and MSBuild support.

Mono: mono/mono/2019-06@5608fe0abb3

Java.Interop: xamarin/java.interop/d16-3@5836f58

LibZipSharp: grendello/LibZipSharp/d16-3@71f4a94

LibZip: nih-at/libzip/rel-1-5-1@b95cf3fd

ProGuard: xamarin/proguard/master@905836d

SQLite: xamarin/sqlite/3.27.1@8212a2d

Xamarin.Android Tools: xamarin/xamarin-android-tools/d16-3@cb41333

Xamarin.iOS and Xamarin.Mac SDK 13.4.0.2 (e37549b)

Xamarin.iOS and Xamarin.Mac Reference Assemblies and MSBuild support.

| non_test | app builds hang when executing task linkassemblies if linking enabled steps to reproduce build app with androidlinkmode full or androidlinkmode sdkonly if you have a repro project you may drag drop the zip etc onto the issue editor to attach it expected behavior app builds successfully actual behavior build hangs version information microsoft visual studio enterprise version visualstudio release microsoft net framework version installed version enterprise visual c microsoft visual c adl tools service provider this package contains services used by data lake tools asp net and web tools asp net and web tools asp net web frameworks and tools for additional information visit azure app service tools azure app service tools azure data lake node this package contains the data lake integration nodes for server explorer azure data lake tools for visual studio microsoft azure data lake tools for visual studio azure functions and web jobs tools azure functions and web jobs tools azure logic apps tools for visual studio add in for the azure resource group project to support the logic app designer and template creation azure stream analytics tools for visual studio microsoft azure stream analytics tools for visual studio c tools c components used in the ide depending on your project type and settings a different version of the compiler may be used common azure tools provides common services for use by azure mobile services and microsoft azure tools cookiecutter provides tools for finding instantiating and customizing templates in cookiecutter format editorconfig language service language service for editorconfig files editorconfig helps developers define and maintain consistent coding styles between different editors and ides extensibility message bus provides common messaging based mef services for loosely coupled visual studio extension components communication and integration fabric diagnosticevents fabric diagnostic events file icons adds icons for files that 
are not recognized by solution explorer github visualstudio a visual studio extension that brings the github flow into visual studio intellicode extension intellicode visual studio extension detailed info markdown editor a full featured markdown editor with live preview and syntax highlighting supports github flavored markdown microsoft azure hdinsight azure node hdinsight node under azure node microsoft azure hive query language service language service for hive query microsoft azure service fabric tools for visual studio microsoft azure service fabric tools for visual studio microsoft azure stream analytics language service language service for azure stream analytics microsoft azure stream analytics node azure stream analytics node under azure node microsoft azure tools microsoft azure tools for microsoft visual studio microsoft continuous delivery tools for visual studio simplifying the configuration of azure devops pipelines from within the visual studio ide microsoft jvm debugger provides support for connecting the visual studio debugger to jdwp compatible java virtual machines microsoft library manager install client side libraries easily to any web project microsoft mi based debugger provides support for connecting visual studio to mi compatible debuggers microsoft visual c wizards microsoft visual c wizards microsoft visual studio tools for containers develop run validate your asp net core applications in the target environment your application directly into a container with debugging or ctrl to edit refresh your app without having to rebuild the container microsoft visual studio vc package microsoft visual studio vc package mono debugging for visual studio support for debugging mono processes with visual studio nuget package manager nuget package manager in visual studio for more information about nuget visit open command line powershell pro tools for visual studio a set of tools for developing and debugging powershell scripts and modules in visual studio 
productivity power tools installs the individual extensions of productivity power tools project file tools provides intellisense and other tooling for xml based project files such as csproj and vbproj files projectservicespackage extension projectservicespackage visual studio extension detailed info python provides intellisense projects templates debugging interactive windows and other support for python developers python conda support conda support for python projects python django support provides templates and integration for the django web framework python ironpython support provides templates and integration for ironpython based projects python profiling support profiling support for python projects redgate sql prompt write format and refactor sql effortlessly snapshot debugging extension snapshot debugging visual studio extension detailed info sql server data tools microsoft sql server data tools test adapter for boost test enables visual studio s testing tools with unit tests written for boost test the use terms and third party notices are available in the extension installation directory test adapter for google test enables visual studio s testing tools with unit tests written for google test the use terms and third party notices are available in the extension installation directory toolwindowhostededitor hosting json editor into a tool window typescript tools typescript tools for microsoft visual studio visual basic tools visual basic components used in the ide depending on your project type and settings a different version of the compiler may be used visual c for cross platform mobile development android visual c for cross platform mobile development android visual f tools for f beta microsoft visual f tools for f visual studio code debug adapter host package interop layer for hosting visual studio code debug adapters in visual studio visual studio tools for cmake visual studio tools for cmake visual studio tools for cmake visual studio tools for cmake 
visual studio tools for containers visual studio tools for containers visual studio tools for kubernetes visual studio tools for kubernetes visualstudio mac mac extension for visual studio xamarin visual studio extension to enable development for xamarin ios and xamarin android xamarin designer remotes origin visual studio extension to enable xamarin designer tools in visual studio xamarin templates templates for building ios android and windows apps with xamarin and xamarin forms xamarin android sdk xamarin android reference assemblies and msbuild support mono mono mono java interop xamarin java interop libzipsharp grendello libzipsharp libzip nih at libzip rel proguard xamarin proguard master sqlite xamarin sqlite xamarin android tools xamarin xamarin android tools xamarin ios and xamarin mac sdk xamarin ios and xamarin mac reference assemblies and msbuild support | 0 |

267,380 | 23,296,674,483 | IssuesEvent | 2022-08-06 17:34:03 | python/cpython | https://api.github.com/repos/python/cpython | closed | Similar to `test_grp` in `test_pwd`, add a test with null value in name | tests | Both getpwnam(name) and getgrnam(name) should raise ValueError if the name contains a null character.

test_grp already tests this case, but test_pwd does not.

Is it ok to write a PR that adds that test? | 1.0 | Similar to `test_grp` in `test_pwd`, add a test with null value in name - Both getpwnam(name) and getgrnam(name) should raise ValueError if the name contains a null character.

test_grp already tests this case, but test_pwd does not.

Is it ok to write a PR that adds that test? | test | similar to test grp in test pwd add a test with null value in name both getpwnam name and getgrnam name should return valueerr if null is entered in the name value in test grp the part is being tested but in test pwd the part is not being tested is it ok to write a pr that adds that test | 1 |
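
A minimal sketch of the test proposed in the record above, modeled on the kind of check the issue says `test_grp` already has. The class and method names are hypothetical, and it assumes a POSIX platform where the `pwd` and `grp` modules are available.

```python
import grp
import pwd
import unittest


class NullInNameTest(unittest.TestCase):
    """Hypothetical test case; the names here are illustrative only."""

    def test_getpwnam_rejects_embedded_null(self):
        # A name containing a null character cannot be handed to the C
        # lookup, so ValueError is expected instead of a database entry.
        self.assertRaises(ValueError, pwd.getpwnam, 'a\x00b')

    def test_getgrnam_rejects_embedded_null(self):
        # Equivalent check for the group database.
        self.assertRaises(ValueError, grp.getgrnam, 'a\x00b')
```

Using `assertRaises` with the callable and its argument keeps each check to a single line, which suits a small addition to an existing test module.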

158,480 | 12,416,730,646 | IssuesEvent | 2020-05-22 18:53:10 | homebridge-xiaomi-roborock-vacuum/homebridge-xiaomi-roborock-vacuum | https://api.github.com/repos/homebridge-xiaomi-roborock-vacuum/homebridge-xiaomi-roborock-vacuum | closed | Report two issues with S5 FW 3.5.7 | enhancement help wanted please test | Hi,

Thanks for the plugin, works very well except for two minor issues with my S5 FW 3.5.7:

1) Pause doesn't seem to work. While cleaning, I can pause it, but the switch flips back within 2 seconds, so I can't resume afterwards.

2) I can't seem to enable Gentle mode. Reading the code, the S5 with FW 3.5.7 uses the same speeds as Gen3, but Gen3 doesn't have a mopping function; maybe Gen4 is more correct?

gen3: [

// 0% = Off / Aus

{ homekitTopLevel: 0, miLevel: 0, name: "Off" },

// 1-38% = "Quiet / Leise"

{ homekitTopLevel: 38, miLevel: 101, name: "Quiet" },

// 39-60% = "Balanced / Standard"

{ homekitTopLevel: 60, miLevel: 102, name: "Balanced" },

// 61-77% = "Turbo / Stark"

{ homekitTopLevel: 77, miLevel: 103, name: "Turbo" },

// 78-100% = "Full Speed / Max Speed / Max"

{ homekitTopLevel: 100, miLevel: 104, name: "Max" }

],

One more suggestion:

Instead of allowing any percentage of fan speed, only allow speeds like 0, 20, 40, 60, 80, 100 for models with 5 speeds, and 0, 25, 50, 75, 100 for models with 4 speeds. Something like:

that.fanService.getCharacteristic(Characteristic.RotationSpeed).setProps({

minStep: 20

}).on('get', that.getSpeed.bind(that)).on('set', that.setSpeed.bind(that));

Thank you! | 1.0 | Report two issues with S5 FW 3.5.7 - Hi,

Thanks for the plugin, works very well except for two minor issues with my S5 FW 3.5.7:

1) Pause doesn't seem to work. While cleaning, I can pause it, but the switch flips back within 2 seconds, so I can't resume afterwards.

2) I can't seem to enable Gentle mode. Reading the code, the S5 with FW 3.5.7 uses the same speeds as Gen3, but Gen3 doesn't have a mopping function; maybe Gen4 is more correct?

gen3: [

// 0% = Off / Aus

{ homekitTopLevel: 0, miLevel: 0, name: "Off" },

// 1-38% = "Quiet / Leise"

{ homekitTopLevel: 38, miLevel: 101, name: "Quiet" },

// 39-60% = "Balanced / Standard"

{ homekitTopLevel: 60, miLevel: 102, name: "Balanced" },

// 61-77% = "Turbo / Stark"

{ homekitTopLevel: 77, miLevel: 103, name: "Turbo" },

// 78-100% = "Full Speed / Max Speed / Max"

{ homekitTopLevel: 100, miLevel: 104, name: "Max" }

],

One more suggestion:

Instead of allowing any percentage of fan speed, only allow speeds like 0, 20, 40, 60, 80, 100 for models with 5 speeds, and 0, 25, 50, 75, 100 for models with 4 speeds. Something like:

that.fanService.getCharacteristic(Characteristic.RotationSpeed).setProps({

minStep: 20

}).on('get', that.getSpeed.bind(that)).on('set', that.setSpeed.bind(that));

Thank you! | test | report two issues with fw hi thanks for the plugin works very well except for two minor issues with my fw looks like pause doesn t work when in cleaning i can pause it but the switch then flips back in seconds so i can t continue afterwards i can t seem to be able to enable gentle mode reading the code with use the same speeds as but doesn t have mopping function maybe is more correct off aus homekittoplevel milevel name off quiet leise homekittoplevel milevel name quiet balanced standard homekittoplevel milevel name balanced turbo stark homekittoplevel milevel name turbo full speed max speed max homekittoplevel milevel name max one more suggestion instead of allow any percentage of fan speed only allow the speeds like for models with speeds and for models with speeds something like that fanservice getcharacteristic characteristic rotationspeed setprops minstep on get that getspeed bind that on set that setspeed bind that thank you | 1 |
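
A rough sketch of how the `gen3` table quoted in the record above could be read, written in Python rather than the plugin's JavaScript. It assumes each `homekitTopLevel` is the inclusive upper bound of a percentage band, as the inline comments suggest. `GEN3_SPEEDS`, `level_for_percentage` and `snap_to_step` are hypothetical names, not the plugin's API; `snap_to_step` only imitates what the suggested `minStep` of 20 is meant to achieve.

```python
# Speed table copied from the issue: (homekitTopLevel, miLevel, name).
GEN3_SPEEDS = [
    (0, 0, "Off"),
    (38, 101, "Quiet"),
    (60, 102, "Balanced"),
    (77, 103, "Turbo"),
    (100, 104, "Max"),
]


def level_for_percentage(percentage):
    """Return (miLevel, name) for the first band whose top covers percentage."""
    if not 0 <= percentage <= 100:
        raise ValueError("percentage must be between 0 and 100")
    for top, mi_level, name in GEN3_SPEEDS:
        if percentage <= top:
            return mi_level, name
    raise AssertionError("unreachable: the last band tops out at 100")


def snap_to_step(percentage, step=20):
    """Round an integer percentage to the nearest multiple of step, capped at 100."""
    return min(100, step * ((percentage + step // 2) // step))
```

With evenly spaced steps, every slider position corresponds to exactly one named speed, which is the behaviour the reporter asks for.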

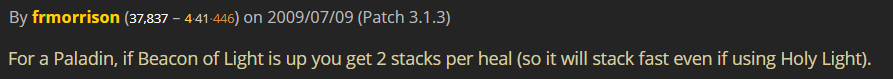

400,318 | 11,772,911,026 | IssuesEvent | 2020-03-16 05:41:27 | omou-org/mainframe | https://api.github.com/repos/omou-org/mainframe | closed | Create unpaid sessions endpoint | 3 hours enhancement priority | ## Background

This will be on the Admin tab, providing a list of students' names, # of sessions taken, course name, and $ amount owed. The user will be able to identify at a glance all students who owe tuition payment, for which course, and the amount each student owes.

(There will be some wording adjustments)

~~In our database, we should be tracking paid sessions with an `is_paid` attribute. This will be complete when #55 is done.~~

REMINDER: enrollment - a student + course relationship. Refer to swagger for more detailed description.

## Development

We first need to identify the number of paid sessions left for an enrollment. Take the following steps to identify the paid sessions left:

1. Count the number of present-day and future sessions whose "is_paid" status is true.

2. If no present-day or future sessions have "is_paid" set to true, check whether any past sessions are unpaid.

3. Count the number of sessions where "is_paid" is false and "is_confirmed" is true.

Next, we need to identify the amount due. If there are paid sessions left, no amount is due. If there are no paid sessions left and there is at least 1 unpaid session, calculate the total amount due as follows:

1. Calculate the amount due per session: take the duration of each past session where "is_paid" is false and "is_confirmed" is true and multiply it by the course's hourly rate.

2. Sum the amounts due per session.

This 2-step process is required in case a past session where "is_paid" is false and "is_confirmed" is true had an extended duration.
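
The steps above could be sketched roughly as follows. This is an illustration under stated assumptions, not the project's implementation: `Session`, `paid_sessions_left` and `amount_due` are hypothetical names, durations are taken to be in hours, and "present day + future" is read as sessions dated today or later.

```python
from dataclasses import dataclass
from datetime import date
from decimal import Decimal


@dataclass
class Session:
    """Hypothetical stand-in for one session of an enrollment."""
    day: date
    duration_hours: Decimal
    is_paid: bool
    is_confirmed: bool


def paid_sessions_left(sessions, today):
    """Count sessions dated today or later that are already paid."""
    return sum(1 for s in sessions if s.day >= today and s.is_paid)


def amount_due(sessions, hourly_rate, today):
    """Sum duration * hourly rate over past unpaid, confirmed sessions.

    Nothing is due while paid sessions remain.
    """
    if paid_sessions_left(sessions, today) > 0:
        return Decimal("0")
    return sum(
        (s.duration_hours * hourly_rate
         for s in sessions
         if s.day < today and not s.is_paid and s.is_confirmed),
        Decimal("0"),
    )
```

Pricing each session separately, instead of multiplying a session count by a fixed price, is what lets an extended session contribute its longer duration to the total.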

We need to calculate this for each tutoring/small group enrollment. If the enrollment has less than or equal to 3 sessions left, we want to notify the receptionist.

## Request + Response

The endpoint should be something like `/payments/list-of-unpaid-students`

The expected response will be a JSON object keyed by student_id; for each student_id key, there will be an array of objects describing the enrollment payment status. Only include enrollments that have at most 3 paid sessions left. Example:

```

{

[student_id]: [

{

student_id: //int,

paid_sessions: //int <- this should be at most 3,

amount_due: //double <- sum total of unpaid tuitions if paid_sessions < 0,

course_id: //int,

},

...

],

....

}

```

| 1.0 | Create unpaid sessions endpoint - ## Background

This will be on the Admin tab, providing a list of students' names, # of sessions taken, course name, and $ amount owed. The user will be able to identify at a glance all students who owe tuition payment, for which course, and the amount each student owes.

(There will be some wording adjustments)

~~In our database, we should be tracking paid sessions with an `is_paid` attribute. This will be complete when #55 is done.~~

REMINDER: enrollment - a student + course relationship. Refer to swagger for more detailed description.

## Development

We first need to identify the number of paid sessions left for an enrollment. Take the following steps to identify the paid sessions left:

1. Count the number of present-day and future sessions whose "is_paid" status is true.

2. If no present-day or future sessions have "is_paid" set to true, check whether any past sessions are unpaid.

3. Count the number of sessions where "is_paid" is false and "is_confirmed" is true.

Next, we need to identify the amount due. If there are paid sessions left, no amount is due. If there are no paid sessions left and there is at least 1 unpaid session, calculate the total amount due as follows:

1. Calculate the amount due per session: take the duration of each past session where "is_paid" is false and "is_confirmed" is true and multiply it by the course's hourly rate.

2. Sum the amounts due per session.