Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 4 to 112) | repo_url (string, length 33 to 141) | action (string, 3 classes) | title (string, length 1 to 1.02k) | labels (string, length 4 to 1.54k) | body (string, length 1 to 262k) | index (string, 17 classes) | text_combine (string, length 95 to 262k) | label (string, 2 classes) | text (string, length 96 to 252k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

51,845 | 3,014,617,585 | IssuesEvent | 2015-07-29 15:36:00 | jpchanson/BeSeenium | https://api.github.com/repos/jpchanson/BeSeenium | closed | do jetty proof of concept | Core functionality High Priority | get jetty to my controller test,

get jetty to display some stuff on the screen. | 1.0 | do jetty proof of concept - get jetty to my controller test,

get jetty to display some stuff on the screen. | non_test | do jetty proof of concept get jetty to my controller test get jetty to display some stuff on the screen | 0 |

1,868 | 4,697,449,435 | IssuesEvent | 2016-10-12 09:24:13 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | degrading performance after using child_process | child_process confirmed-bug lts-watch-v4.x os x performance | After running a child process ( using exec or spawn ) I have found that the performance of my node.js application decreases by a factor of 10. Below is a contrived example and output.

```

var exec = require('child_process').exec;

function runExpensiveOperation(times) {

while(times > 0) {

console.time('expensiveOperation');

var str = 'lorem';

for ( var i=0;i< 10000000; i++) {

// string concatenation

str = str.length < 1000 ? str + str : '';

// math operation

i * i * i;

}

console.timeEnd('expensiveOperation');

times--;

}

}

console.log('PRE EXEC');

runExpensiveOperation(10);

exec('echo "hello"');

console.log('POST EXEC');

runExpensiveOperation(10);

```

Output:

```

PRE EXEC

expensiveOperation: 66.458ms

expensiveOperation: 65.735ms

expensiveOperation: 69.237ms

expensiveOperation: 65.269ms

expensiveOperation: 69.133ms

expensiveOperation: 65.639ms

expensiveOperation: 67.944ms

expensiveOperation: 63.595ms

expensiveOperation: 64.153ms

expensiveOperation: 65.093ms

POST EXEC

expensiveOperation: 715.861ms

expensiveOperation: 739.671ms

expensiveOperation: 714.546ms

expensiveOperation: 714.845ms

expensiveOperation: 745.719ms

expensiveOperation: 743.240ms

expensiveOperation: 716.481ms

expensiveOperation: 732.916ms

expensiveOperation: 736.576ms

expensiveOperation: 742.416ms

```

In addition, this problem only occurs if the string concatenation AND math operation are run in the expensiveOperation - if either are commented out then there is no issue.

* **Version**: 5.8.0

* **Platform**: Darwin Kernel Version 15.3.0: Thu Dec 10 18:40:58 PST 2015; root:xnu-3248.30.4~1/RELEASE_X86_64 x86_64 ( Macbook Air OS X El Capitan )

* **Subsystem**: child_process

| 1.0 | degrading performance after using child_process - After running a child process ( using exec or spawn ) I have found that the performance of my node.js application decreases by a factor of 10. Below is a contrived example and output.

```

var exec = require('child_process').exec;

function runExpensiveOperation(times) {

while(times > 0) {

console.time('expensiveOperation');

var str = 'lorem';

for ( var i=0;i< 10000000; i++) {

// string concatenation

str = str.length < 1000 ? str + str : '';

// math operation

i * i * i;

}

console.timeEnd('expensiveOperation');

times--;

}

}

console.log('PRE EXEC');

runExpensiveOperation(10);

exec('echo "hello"');

console.log('POST EXEC');

runExpensiveOperation(10);

```

Output:

```

PRE EXEC

expensiveOperation: 66.458ms

expensiveOperation: 65.735ms

expensiveOperation: 69.237ms

expensiveOperation: 65.269ms

expensiveOperation: 69.133ms

expensiveOperation: 65.639ms

expensiveOperation: 67.944ms

expensiveOperation: 63.595ms

expensiveOperation: 64.153ms

expensiveOperation: 65.093ms

POST EXEC

expensiveOperation: 715.861ms

expensiveOperation: 739.671ms

expensiveOperation: 714.546ms

expensiveOperation: 714.845ms

expensiveOperation: 745.719ms

expensiveOperation: 743.240ms

expensiveOperation: 716.481ms

expensiveOperation: 732.916ms

expensiveOperation: 736.576ms

expensiveOperation: 742.416ms

```

In addition, this problem only occurs if the string concatenation AND math operation are run in the expensiveOperation - if either are commented out then there is no issue.

* **Version**: 5.8.0

* **Platform**: Darwin Kernel Version 15.3.0: Thu Dec 10 18:40:58 PST 2015; root:xnu-3248.30.4~1/RELEASE_X86_64 x86_64 ( Macbook Air OS X El Capitan )

* **Subsystem**: child_process

| non_test | degrading performance after using child process after running a child process using exec or spawn i have found that the performance of my node js application decreases by a factor of below is a contrived example and output var exec require child process exec function runexpensiveoperation times while times console time expensiveoperation var str lorem for var i i i string concatenation str str length str str math operation i i i console timeend expensiveoperation times console log pre exec runexpensiveoperation exec echo hello console log post exec runexpensiveoperation output pre exec expensiveoperation expensiveoperation expensiveoperation expensiveoperation expensiveoperation expensiveoperation expensiveoperation expensiveoperation expensiveoperation expensiveoperation post exec expensiveoperation expensiveoperation expensiveoperation expensiveoperation expensiveoperation expensiveoperation expensiveoperation expensiveoperation expensiveoperation expensiveoperation in addition this problem only occurs if the string concatenation and math operation are run in the expensiveoperation if either are commented out then there is no issue version platform darwin kernel version thu dec pst root xnu release macbook air os x el capitan subsystem child process | 0 |
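
As a rough check of the "factor of 10" claim in the record above, the reported timings can simply be averaged. The sketch below is only arithmetic over the numbers quoted in the issue output; the variable names are illustrative and nothing here reproduces or explains the slowdown itself.

```python
from statistics import mean

# Timings (ms) copied from the "PRE EXEC" block of the issue output.
pre_exec_ms = [66.458, 65.735, 69.237, 65.269, 69.133,
               65.639, 67.944, 63.595, 64.153, 65.093]

# Timings (ms) copied from the "POST EXEC" block of the issue output.
post_exec_ms = [715.861, 739.671, 714.546, 714.845, 745.719,
                743.240, 716.481, 732.916, 736.576, 742.416]

# Ratio of the mean run time after exec() to the mean run time before it.
slowdown = mean(post_exec_ms) / mean(pre_exec_ms)

print(f"mean before: {mean(pre_exec_ms):.1f} ms")
print(f"mean after:  {mean(post_exec_ms):.1f} ms")
print(f"slowdown:    {slowdown:.1f}x")
```

The ratio of the two means comes out at about 11, which is consistent with the reporter's description.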

270,507 | 28,962,277,549 | IssuesEvent | 2023-05-10 04:19:23 | nidhi7598/external_curl_AOSP10_r33 | https://api.github.com/repos/nidhi7598/external_curl_AOSP10_r33 | opened | CVE-2022-32205 (Medium) detected in multiple libraries | Mend: dependency security vulnerability | ## CVE-2022-32205 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>curlcurl-7_64_1</b>, <b>curlcurl-7_64_1</b>, <b>curlcurl-7_64_1</b>, <b>curlcurl-7_64_1</b>, <b>curlcurl-7_64_1</b>, <b>curlcurl-7_64_1</b>, <b>curlcurl-7_64_1</b>, <b>curlcurl-7_64_1</b>, <b>curlcurl-7_64_1</b></p></summary>

<p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png?' width=19 height=20> Vulnerability Details</summary>

<p>

A malicious server can serve excessive amounts of `Set-Cookie:` headers in a HTTP response to curl and curl < 7.84.0 stores all of them. A sufficiently large amount of (big) cookies make subsequent HTTP requests to this, or other servers to which the cookies match, create requests that become larger than the threshold that curl uses internally to avoid sending crazy large requests (1048576 bytes) and instead returns an error.This denial state might remain for as long as the same cookies are kept, match and haven't expired. Due to cookie matching rules, a server on `foo.example.com` can set cookies that also would match for `bar.example.com`, making it it possible for a "sister server" to effectively cause a denial of service for a sibling site on the same second level domain using this method.

<p>Publish Date: 2022-07-07

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2022-32205>CVE-2022-32205</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>4.3</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: Low

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Release Date: 2022-07-07</p>

<p>Fix Resolution: curl-7_71_0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2022-32205 (Medium) detected in multiple libraries - ## CVE-2022-32205 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>curlcurl-7_64_1</b>, <b>curlcurl-7_64_1</b>, <b>curlcurl-7_64_1</b>, <b>curlcurl-7_64_1</b>, <b>curlcurl-7_64_1</b>, <b>curlcurl-7_64_1</b>, <b>curlcurl-7_64_1</b>, <b>curlcurl-7_64_1</b>, <b>curlcurl-7_64_1</b></p></summary>

<p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png?' width=19 height=20> Vulnerability Details</summary>

<p>

A malicious server can serve excessive amounts of `Set-Cookie:` headers in a HTTP response to curl and curl < 7.84.0 stores all of them. A sufficiently large amount of (big) cookies make subsequent HTTP requests to this, or other servers to which the cookies match, create requests that become larger than the threshold that curl uses internally to avoid sending crazy large requests (1048576 bytes) and instead returns an error.This denial state might remain for as long as the same cookies are kept, match and haven't expired. Due to cookie matching rules, a server on `foo.example.com` can set cookies that also would match for `bar.example.com`, making it it possible for a "sister server" to effectively cause a denial of service for a sibling site on the same second level domain using this method.

<p>Publish Date: 2022-07-07

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2022-32205>CVE-2022-32205</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>4.3</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: Low

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Release Date: 2022-07-07</p>

<p>Fix Resolution: curl-7_71_0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_test | cve medium detected in multiple libraries cve medium severity vulnerability vulnerable libraries curlcurl curlcurl curlcurl curlcurl curlcurl curlcurl curlcurl curlcurl curlcurl vulnerability details a malicious server can serve excessive amounts of set cookie headers in a http response to curl and curl stores all of them a sufficiently large amount of big cookies make subsequent http requests to this or other servers to which the cookies match create requests that become larger than the threshold that curl uses internally to avoid sending crazy large requests bytes and instead returns an error this denial state might remain for as long as the same cookies are kept match and haven t expired due to cookie matching rules a server on foo example com can set cookies that also would match for bar example com making it it possible for a sister server to effectively cause a denial of service for a sibling site on the same second level domain using this method publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction required scope unchanged impact metrics confidentiality impact none integrity impact none availability impact low for more information on scores click a href suggested fix type upgrade version release date fix resolution curl step up your open source security game with mend | 0 |
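
The size threshold quoted in the advisory above can be illustrated with a deliberately simplified model. This is a sketch under stated assumptions, not curl's actual implementation: `cookie_header` and `exceeds_limit` are hypothetical helpers, and the only value taken from the record is the 1048576-byte threshold.

```python
# Threshold quoted in the advisory text (bytes).
MAX_REQUEST_BYTES = 1048576


def cookie_header(cookies):
    """Build a single Cookie request header line from a name -> value mapping."""
    pairs = "; ".join(f"{name}={value}" for name, value in cookies.items())
    return "Cookie: " + pairs


def exceeds_limit(cookies, other_bytes=0):
    """Return True if the Cookie header plus other request bytes passes the threshold.

    Simplified model: real clients also apply per-cookie and per-domain limits.
    """
    header_size = len(cookie_header(cookies).encode("utf-8"))
    return header_size + other_bytes > MAX_REQUEST_BYTES


# Hypothetical example: 300 stored cookies with 4000-byte values each.
many_big_cookies = {f"c{i}": "x" * 4000 for i in range(300)}
print(exceeds_limit(many_big_cookies))   # the header alone is over 1.2 MB
print(exceeds_limit({"session": "abc"}))
```

In this model, once enough large cookies match a host, every later request to that host is rejected until the cookies expire or are removed, which is the denial state the advisory describes.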

686,881 | 23,507,525,505 | IssuesEvent | 2022-08-18 13:49:55 | twisted/twisted | https://api.github.com/repos/twisted/twisted | closed | TypeError: 'DelayedCall' object is not iterable | core bug priority-normal new | |<img alt="allenap's avatar" src="https://avatars.githubusercontent.com/u/0?s=50" width="50" height="50">| allenap reported|

|-|-|

|Trac ID|trac#8307|

|Type|defect|

|Created|2016-04-26 16:06:50Z|

```

Python 3.5.1+ (default, Mar 30 2016, 22:46:26)

[GCC 5.3.1 20160330] on linux

Type "help", "copyright", "credits" or "license" for more information.

>>> from twisted.internet.base import DelayedCall

>>> dc = DelayedCall(1, lambda: None, (), {}, lambda dc: None, lambda dc: None)

>>> dc.debug = True

>>> dc.cancel()

Traceback (most recent call last):

File "<stdin>", line 1, in <module>

File "/home/gavin/GitHub/twisted/twisted/internet/base.py", line 94, in cancel

self._str = bytes(self)

TypeError: 'DelayedCall' object is not iterable

```

```

$ tail -n1 twisted/_version.py

version = versions.Version('twisted', 16, 1, 1)

```

<details><summary>Searchable metadata</summary>

```

trac-id__8307 8307

type__defect defect

reporter__allenap allenap

priority__normal normal

milestone__None None

branch__

branch_author__

status__new new

resolution__None None

component__core core

keywords__None None

time__1461686810312194 1461686810312194

changetime__1462290490013595 1462290490013595

version__None None

owner__None None

```

</details>

| 1.0 | TypeError: 'DelayedCall' object is not iterable - |<img alt="allenap's avatar" src="https://avatars.githubusercontent.com/u/0?s=50" width="50" height="50">| allenap reported|

|-|-|

|Trac ID|trac#8307|

|Type|defect|

|Created|2016-04-26 16:06:50Z|

```

Python 3.5.1+ (default, Mar 30 2016, 22:46:26)

[GCC 5.3.1 20160330] on linux

Type "help", "copyright", "credits" or "license" for more information.

>>> from twisted.internet.base import DelayedCall

>>> dc = DelayedCall(1, lambda: None, (), {}, lambda dc: None, lambda dc: None)

>>> dc.debug = True

>>> dc.cancel()

Traceback (most recent call last):

File "<stdin>", line 1, in <module>

File "/home/gavin/GitHub/twisted/twisted/internet/base.py", line 94, in cancel

self._str = bytes(self)

TypeError: 'DelayedCall' object is not iterable

```

```

$ tail -n1 twisted/_version.py

version = versions.Version('twisted', 16, 1, 1)

```

<details><summary>Searchable metadata</summary>

```

trac-id__8307 8307

type__defect defect

reporter__allenap allenap

priority__normal normal

milestone__None None

branch__

branch_author__

status__new new

resolution__None None

component__core core

keywords__None None

time__1461686810312194 1461686810312194

changetime__1462290490013595 1462290490013595

version__None None

owner__None None

```

</details>

| non_test | typeerror delayedcall object is not iterable allenap reported trac id trac type defect created python default mar on linux type help copyright credits or license for more information from twisted internet base import delayedcall dc delayedcall lambda none lambda dc none lambda dc none dc debug true dc cancel traceback most recent call last file line in file home gavin github twisted twisted internet base py line in cancel self str bytes self typeerror delayedcall object is not iterable tail twisted version py version versions version twisted searchable metadata trac id type defect defect reporter allenap allenap priority normal normal milestone none none branch branch author status new new resolution none none component core core keywords none none time changetime version none none owner none none | 0 |
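
The traceback in the record above comes down to how Python 3 treats `bytes(obj)`: unlike Python 2, where `bytes` is an alias for `str`, it does not fall back to `__str__`. A minimal sketch of that behaviour follows, using a hypothetical stand-in class `Call` rather than Twisted's real `DelayedCall`; the `snapshot` helper shows one possible alternative and is not claimed to be the fix Twisted adopted.

```python
class Call:
    """Hypothetical stand-in: defines __str__ but not __bytes__ or __iter__."""

    def __str__(self):
        return "<Call pending>"


def try_bytes(obj):
    """Return the exception type raised by bytes(obj), or None if it succeeds."""
    try:
        bytes(obj)
    except TypeError as exc:
        return type(exc)
    return None


def snapshot(obj):
    """Capture a text representation with str(), which does use __str__."""
    return str(obj)


call = Call()
print(try_bytes(call))  # bytes() rejects the object on Python 3
print(snapshot(call))
```

The exact wording of the `TypeError` message differs between Python 3 versions, so the sketch checks only the exception type.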

15,767 | 3,974,562,812 | IssuesEvent | 2016-05-04 22:46:02 | LigaData/Kamanja | https://api.github.com/repos/LigaData/Kamanja | closed | The documentation for creating I/O adapters on the website is not complete. | Bug (Documentation) P3 Verify | We have a document that can be put there. Some examples are necessary, as well. | 1.0 | The documentation for creating I/O adapters on the website is not complete. - We have a document that can be put there. Some examples are necessary, as well. | non_test | the documentation for creating i o adapters on the website is not complete we have a document that can be put there some examples are necessary as well | 0 |

261,929 | 22,781,153,799 | IssuesEvent | 2022-07-08 19:55:55 | NuGet/Home | https://api.github.com/repos/NuGet/Home | closed | Remove temporary Newtonsoft.Json workaround in dotnet integration tests | Type:Engineering Type:Test Pipeline:Backlog | Our dotnet integration tests copy a working .NET SDK folder, then patch the NuGet assemblies, and use this copy/patched .NET SDK to run tests.

We're upgrading to a version of newtonsoft.json higher than what the .NET SDK currently has (we're both upgrading at the same time), but until our CI pipeline start using builds of .NET SDK with a high enough version, we need to copy it ourselves.

Here's what needs to be removed:

https://github.com/NuGet/NuGet.Client/pull/4167/files/0e2f6a70900839b1f8670f8fd471d36bf1974df0#diff-8a81e906729d9dc1d11f635564b6eed54b859ab8aaacb3713fc29b19189f0f3b

| 1.0 | Remove temporary Newtonsoft.Json workaround in dotnet integration tests - Our dotnet integration tests copy a working .NET SDK folder, then patch the NuGet assemblies, and use this copy/patched .NET SDK to run tests.

We're upgrading to a version of newtonsoft.json higher than what the .NET SDK currently has (we're both upgrading at the same time), but until our CI pipeline start using builds of .NET SDK with a high enough version, we need to copy it ourselves.

Here's what needs to be removed:

https://github.com/NuGet/NuGet.Client/pull/4167/files/0e2f6a70900839b1f8670f8fd471d36bf1974df0#diff-8a81e906729d9dc1d11f635564b6eed54b859ab8aaacb3713fc29b19189f0f3b

| test | remove temporary newtonsoft json workaround in dotnet integration tests our dotnet integration tests copy a working net sdk folder then patch the nuget assemblies and use this copy patched net sdk to run tests we re upgrading to a version of newtonsoft json higher than what the net sdk currently has we re both upgrading at the same time but until our ci pipeline start using builds of net sdk with a high enough version we need to copy it ourselves here s what needs to be removed | 1 |

708,683 | 24,350,088,695 | IssuesEvent | 2022-10-02 20:47:38 | IAmTamal/Milan | https://api.github.com/repos/IAmTamal/Milan | closed | [DOCS] Readme + License changes 🛠 | 📄 aspect: text ✨ goal: improvement 🟨 priority: medium 🛠 status : under development hacktoberfest | ### Description

Hello!

I would like to help with the ReadMe documentation for the Milan project by making changes in the ReadMe for grammar and sentence formation as well as would like to brainstorm for anything that can be added that can make the project better along with linking the License in the file as well!

Would enjoy if you can assign this issue to me for hacktoberfest!

Thank you!

### Screenshots

_No response_

### Additional information

_No response_ | 1.0 | [DOCS] Readme + License changes 🛠 - ### Description

Hello!

I would like to help with the ReadMe documentation for the Milan project by making changes in the ReadMe for grammar and sentence formation as well as would like to brainstorm for anything that can be added that can make the project better along with linking the License in the file as well!

Would enjoy if you can assign this issue to me for hacktoberfest!

Thank you!

### Screenshots

_No response_

### Additional information

_No response_ | non_test | readme license changes 🛠 description hello i would like to help with the readme documentation for the milan project by making changes in the readme for grammar and sentence formation as well as would like to brainstorm for anything that can be added that can make the project better along with linking the license in the file as well would enjoy if you can assign this issue to me for hacktoberfest thank you screenshots no response additional information no response | 0 |

22,559 | 11,746,204,837 | IssuesEvent | 2020-03-12 11:14:58 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | No mention of the Docker Compose YAML 4000 character limit | Pri2 app-service/svc cxp doc-enhancement triaged | As discussed here https://social.msdn.microsoft.com/Forums/azure/en-US/ff353717-fcb0-42d8-8237-5891e998c1d2/error-on-creating-web-app-with-docker-compose?forum=windowsazurewebsitespreview .

This limits quite basic docker compose setups.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: ad0cae44-bfc2-c879-d406-23ab0b756ab9

* Version Independent ID: 5d812d22-559c-2c9f-7676-c62d7f5c980a

* Content: [Quickstart: Create a multi-container app - Azure App Service](https://docs.microsoft.com/en-us/azure/app-service/containers/quickstart-multi-container#feedback)

* Content Source: [articles/app-service/containers/quickstart-multi-container.md](https://github.com/Microsoft/azure-docs/blob/master/articles/app-service/containers/quickstart-multi-container.md)

* Service: **app-service**

* GitHub Login: @msangapu-msft

* Microsoft Alias: **msangapu** | 1.0 | No mention of the Docker Compose YAML 4000 character limit - As discussed here https://social.msdn.microsoft.com/Forums/azure/en-US/ff353717-fcb0-42d8-8237-5891e998c1d2/error-on-creating-web-app-with-docker-compose?forum=windowsazurewebsitespreview .

This limits quite basic docker compose setups.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: ad0cae44-bfc2-c879-d406-23ab0b756ab9

* Version Independent ID: 5d812d22-559c-2c9f-7676-c62d7f5c980a

* Content: [Quickstart: Create a multi-container app - Azure App Service](https://docs.microsoft.com/en-us/azure/app-service/containers/quickstart-multi-container#feedback)

* Content Source: [articles/app-service/containers/quickstart-multi-container.md](https://github.com/Microsoft/azure-docs/blob/master/articles/app-service/containers/quickstart-multi-container.md)

* Service: **app-service**

* GitHub Login: @msangapu-msft

* Microsoft Alias: **msangapu** | non_test | no mention of the docker compose yaml character limit as discussed here this limits quite basic docker compose setups document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id version independent id content content source service app service github login msangapu msft microsoft alias msangapu | 0 |

61,328 | 6,733,262,256 | IssuesEvent | 2017-10-18 14:20:15 | ThePenguin1140/OpenCVObstructionTracking | https://api.github.com/repos/ThePenguin1140/OpenCVObstructionTracking | opened | Split video and upload pieces | help wanted testing | I created a test video as part of #4 but it's too big to put on github so I think the best thing might be split it into a few different 'test scenarios' and then upload the pieces?

The video file can be found here:

https://drive.google.com/open?id=0B55XddbN7M0zRG5PTE5VdzhIRzA

| 1.0 | Split video and upload pieces - I created a test video as part of #4 but it's too big to put on github so I think the best thing might be split it into a few different 'test scenarios' and then upload the pieces?

The video file can be found here:

https://drive.google.com/open?id=0B55XddbN7M0zRG5PTE5VdzhIRzA

| test | split video and upload pieces i created a test video as part of but it s too big to put on github so i think the best thing might be split it into a few different test scenarios and then upload the pieces the video file can be found here | 1 |

301,882 | 26,107,190,512 | IssuesEvent | 2022-12-27 14:40:52 | QubesOS/updates-status | https://api.github.com/repos/QubesOS/updates-status | closed | core-agent-linux v4.2.3 (r4.2) | r4.2-vm-bullseye-cur-test r4.2-vm-bookworm-cur-test r4.2-vm-fc37-cur-test r4.2-vm-fc36-cur-test r4.2-vm-centos-stream8-cur-test | Update of core-agent-linux to v4.2.3 for Qubes r4.2, see comments below for details and build status.

From commit: https://github.com/QubesOS/qubes-core-agent-linux/commit/e248fae8e4b7cc89dc6f1b26e68b114fabbdf78a

[Changes since previous version](https://github.com/QubesOS/qubes-core-agent-linux/compare/v4.2.2...v4.2.3):

QubesOS/qubes-core-agent-linux@e248fae8 version 4.2.3

QubesOS/qubes-core-agent-linux@f2bd5c5e firewall: remove debug print

QubesOS/qubes-core-agent-linux@f7f7a026 Merge remote-tracking branch 'origin/pr/399'

QubesOS/qubes-core-agent-linux@765661af ci: fix uploading coverage to codecov

QubesOS/qubes-core-agent-linux@f2db11ae archlinux: update example repo to r4.2 too

QubesOS/qubes-core-agent-linux@90478b0b Revert "temporarily pretend to be 4.1"

QubesOS/qubes-core-agent-linux@292a8ac1 Add purging of no longer allowed connections from conntrack

QubesOS/qubes-core-agent-linux@119eb3ac qubes-rpc/nautilus: Execute external commands asynchronously

QubesOS/qubes-core-agent-linux@0f7f0d6f qubes-rpc/nautilus: Add support for Nautilus API 4.0 The get_file_items method of Nautilus.MenuProvider no longer take the window argument.

Referenced issues:

QubesOS/qubes-issues#7916

QubesOS/qubes-issues#4141

If you're release manager, you can issue GPG-inline signed command:

* `Upload-component r4.2 core-agent-linux e248fae8e4b7cc89dc6f1b26e68b114fabbdf78a current all` (available 5 days from now)

* `Upload-component r4.2 core-agent-linux e248fae8e4b7cc89dc6f1b26e68b114fabbdf78a security-testing`

You can choose subset of distributions like:

* `Upload-component r4.2 core-agent-linux e248fae8e4b7cc89dc6f1b26e68b114fabbdf78a current vm-bookworm,vm-fc37` (available 5 days from now)

Above commands will work only if packages in current-testing repository were built from given commit (i.e. no new version superseded it).

For more information on how to test this update, please take a look at https://www.qubes-os.org/doc/testing/#updates.

| 5.0 | core-agent-linux v4.2.3 (r4.2) - Update of core-agent-linux to v4.2.3 for Qubes r4.2, see comments below for details and build status.

From commit: https://github.com/QubesOS/qubes-core-agent-linux/commit/e248fae8e4b7cc89dc6f1b26e68b114fabbdf78a

[Changes since previous version](https://github.com/QubesOS/qubes-core-agent-linux/compare/v4.2.2...v4.2.3):

QubesOS/qubes-core-agent-linux@e248fae8 version 4.2.3

QubesOS/qubes-core-agent-linux@f2bd5c5e firewall: remove debug print

QubesOS/qubes-core-agent-linux@f7f7a026 Merge remote-tracking branch 'origin/pr/399'

QubesOS/qubes-core-agent-linux@765661af ci: fix uploading coverage to codecov

QubesOS/qubes-core-agent-linux@f2db11ae archlinux: update example repo to r4.2 too

QubesOS/qubes-core-agent-linux@90478b0b Revert "temporarily pretend to be 4.1"

QubesOS/qubes-core-agent-linux@292a8ac1 Add purging of no longer allowed connections from conntrack

QubesOS/qubes-core-agent-linux@119eb3ac qubes-rpc/nautilus: Execute external commands asynchronously

QubesOS/qubes-core-agent-linux@0f7f0d6f qubes-rpc/nautilus: Add support for Nautilus API 4.0 The get_file_items method of Nautilus.MenuProvider no longer take the window argument.

Referenced issues:

QubesOS/qubes-issues#7916

QubesOS/qubes-issues#4141

If you're release manager, you can issue GPG-inline signed command:

* `Upload-component r4.2 core-agent-linux e248fae8e4b7cc89dc6f1b26e68b114fabbdf78a current all` (available 5 days from now)

* `Upload-component r4.2 core-agent-linux e248fae8e4b7cc89dc6f1b26e68b114fabbdf78a security-testing`

You can choose subset of distributions like:

* `Upload-component r4.2 core-agent-linux e248fae8e4b7cc89dc6f1b26e68b114fabbdf78a current vm-bookworm,vm-fc37` (available 5 days from now)

Above commands will work only if packages in current-testing repository were built from given commit (i.e. no new version superseded it).

For more information on how to test this update, please take a look at https://www.qubes-os.org/doc/testing/#updates.

| test | core agent linux update of core agent linux to for qubes see comments below for details and build status from commit qubesos qubes core agent linux version qubesos qubes core agent linux firewall remove debug print qubesos qubes core agent linux merge remote tracking branch origin pr qubesos qubes core agent linux ci fix uploading coverage to codecov qubesos qubes core agent linux archlinux update example repo to too qubesos qubes core agent linux revert temporarily pretend to be qubesos qubes core agent linux add purging of no longer allowed connections from conntrack qubesos qubes core agent linux qubes rpc nautilus execute external commands asynchronously qubesos qubes core agent linux qubes rpc nautilus add support for nautilus api the get file items method of nautilus menuprovider no longer take the window argument referenced issues qubesos qubes issues qubesos qubes issues if you re release manager you can issue gpg inline signed command upload component core agent linux current all available days from now upload component core agent linux security testing you can choose subset of distributions like upload component core agent linux current vm bookworm vm available days from now above commands will work only if packages in current testing repository were built from given commit i e no new version superseded it for more information on how to test this update please take a look at | 1 |

325,575 | 27,944,834,556 | IssuesEvent | 2023-03-24 01:34:24 | WiIIiam278/HuskHomes2 | https://api.github.com/repos/WiIIiam278/HuskHomes2 | closed | Teleport strange behaviour with tabcomplete name event | status: needs testing | Version: 3.0.4 from [spigot](https://www.spigotmc.org/resources/%E2%AD%90-huskhomes-1-16-1-19-%E2%AD%90-simple-intuitive-teleportation-suite-with-cross-server-support.83767/updates)

Using REDIS as messanger_type.

With this messenger_type we found a strange behaviour in tabcompleting names.

1. /tp and /tpa are ok if you are in two separate servers

2. /tp and /tpa player list is taken from the global player list instead of the player list from the servers with REDIS cache (I think it's normal because of this

` public CompletableFuture<List<String>> updatePlayerListCache(@NotNull HuskHomes plugin, @NotNull OnlineUser requester) {

if (plugin.getSettings().crossServer) {

return plugin.getNetworkMessenger().getOnlinePlayerNames(requester).thenApply(returnedPlayerList -> {

players.clear();

players.addAll(List.of(returnedPlayerList));

return players;

});

} else {

players.clear();

players.addAll(plugin.getOnlinePlayers()

.stream()

.filter(player -> !player.isVanished())

.map(onlineUser -> onlineUser.username)

.toList());

System.out.println(String.join(", ", players));

return CompletableFuture.completedFuture(players);

}

}`

3. The biggest problem comes when you are both in the same server: /tp and /tpa doesn't tab complete the player names in the same server. Only if you first write his name and teleport to him, the second time both commands work as defined.

| 1.0 | Teleport strange behaviour with tabcomplete name event - Version: 3.0.4 from [spigot](https://www.spigotmc.org/resources/%E2%AD%90-huskhomes-1-16-1-19-%E2%AD%90-simple-intuitive-teleportation-suite-with-cross-server-support.83767/updates)

Using REDIS as messenger_type.

With this messenger_type we found a strange behaviour in tabcompleting names.

1. /tp and /tpa are ok if you are in two separate servers

2. /tp and /tpa player list is taken from the global player list instead of the player list from the servers with REDIS cache (I think it's normal because of this

` public CompletableFuture<List<String>> updatePlayerListCache(@NotNull HuskHomes plugin, @NotNull OnlineUser requester) {

if (plugin.getSettings().crossServer) {

return plugin.getNetworkMessenger().getOnlinePlayerNames(requester).thenApply(returnedPlayerList -> {

players.clear();

players.addAll(List.of(returnedPlayerList));

return players;

});

} else {

players.clear();

players.addAll(plugin.getOnlinePlayers()

.stream()

.filter(player -> !player.isVanished())

.map(onlineUser -> onlineUser.username)

.toList());

System.out.println(String.join(", ", players));

return CompletableFuture.completedFuture(players);

}

}`

3. The biggest problem occurs when both players are on the same server: /tp and /tpa don't tab-complete the names of players on that server. Only after you type the player's name manually and teleport to them once do both commands complete the name as expected.

| test | teleport strange behaviour with tabcomplete name event version from using redis as messanger type with this messenger type we found a strange behaviour in tabcompleting names tp and tpa are ok if you are in two separate servers tp and tpa player list is taken from the global player list instead of the player list from the servers with redis cache i think it s normal because of this public completablefuture updateplayerlistcache notnull huskhomes plugin notnull onlineuser requester if plugin getsettings crossserver return plugin getnetworkmessenger getonlineplayernames requester thenapply returnedplayerlist players clear players addall list of returnedplayerlist return players else players clear players addall plugin getonlineplayers stream filter player player isvanished map onlineuser onlineuser username tolist system out println string join players return completablefuture completedfuture players the biggest problem comes when you are both in the same server tp and tpa doesn t tab complete the player names in the same server only if you first write his name and teleport to him the second time both commands work as defined | 1 |

228,382 | 18,173,514,805 | IssuesEvent | 2021-09-27 22:55:09 | microsoft/vscode | https://api.github.com/repos/microsoft/vscode | opened | Test Autocomplete Preselection Powered By Inline Suggestions | testplan-item | Refs: https://github.com/microsoft/vscode/issues/131940

- [ ] anyOS

- [ ] anyOS

Complexity: 3

[Create Issue](https://github.com/microsoft/vscode/issues/new?body=Testing+%23133906%0A%0A&assignees=hediet)

---

# Preparation

* Enable suggest preview: `"editor.suggest.preview": true,`.

* Install Copilot

# Tasks

* Create a TypeScript file and write `// Write an error to the console` followed by a new line and `console` to prompt copilot.

* Type `.` to trigger autocomplete.

* Verify that the inline suggestion stays and becomes non-italic

* Verify that an autocomplete item is preselected that is a prefix of the inline suggestion

| 1.0 | Test Autocomplete Preselection Powered By Inline Suggestions - Refs: https://github.com/microsoft/vscode/issues/131940

- [ ] anyOS

- [ ] anyOS

Complexity: 3

[Create Issue](https://github.com/microsoft/vscode/issues/new?body=Testing+%23133906%0A%0A&assignees=hediet)

---

# Preparation

* Enable suggest preview: `"editor.suggest.preview": true,`.

* Install Copilot

# Tasks

* Create a TypeScript file and write `// Write an error to the console` followed by a new line and `console` to prompt copilot.

* Type `.` to trigger autocomplete.

* Verify that the inline suggestion stays and becomes non-italic

* Verify that an autocomplete item is preselected that is a prefix of the inline suggestion

| test | test autocomplete preselection powered by inline suggestions refs anyos anyos complexity preparation enable suggest preview editor suggest preview true install copilot tasks create a typescript file and write write an error to the console followed by a new line and console to prompt copilot type to trigger autocomplete verify that the inline suggestion stays and becomes non italic verify that an autocomplete item is preselected that is a prefix of the inline suggestion | 1 |

13,624 | 3,350,354,496 | IssuesEvent | 2015-11-17 14:24:25 | servo/servo | https://api.github.com/repos/servo/servo | closed | Look through old layout bugs and write reftests | A-testing E-less easy | The reftest suite was neglected for a while. We should have more regression tests for bugs we fixed during that time. | 1.0 | Look through old layout bugs and write reftests - The reftest suite was neglected for a while. We should have more regression tests for bugs we fixed during that time. | test | look through old layout bugs and write reftests the reftest suite was neglected for a while we should have more regression tests for bugs we fixed during that time | 1 |

339,802 | 30,476,929,857 | IssuesEvent | 2023-07-17 17:12:59 | rancher/highlander | https://api.github.com/repos/rancher/highlander | opened | Migration of clusters between different Rancher instances | area/testing area/eks kind/qa area/hosted-providers | The Rancher migration procedure (migrating clusters to a 'replacement' Rancher) needs better testing, esp. for regressions.

Apparently migration for EKS clusters behaves differently compared to other downstream clusters.

| 1.0 | Migration of clusters between different Rancher instances - The Rancher migration procedure (migrating clusters to a 'replacement' Rancher) needs better testing, esp. for regressions.

Apparently migration for EKS clusters behaves differently compared to other downstream clusters.

| test | migration of clusters between different rancher instances the rancher migration procedure migrating clusters to a replacement rancher needs better testing esp for regressions apparently migration for eks clusters behaves differently compared to other downstream clusters | 1 |

101,241 | 8,782,994,040 | IssuesEvent | 2018-12-20 03:10:35 | EasyRPG/Player | https://api.github.com/repos/EasyRPG/Player | closed | Pictures won't load when loading savefile in a project with title screen disabled. | Patch available Testcase available Window/Scenes | When you have an RM2k3 >=1.10 project without the Title screen enabled in the database and you load a savefile that has pictures in game, the pictures are not loaded (and are not shown on screen). However, if you enable the Title Screen in the Database editor, the images are shown without issue.

#### Player platform:

Tested in 0.5.4 and Master (Dec 15th).

#### Test case

Here's a test case:

[imgBug.zip](https://github.com/EasyRPG/Player/files/2683010/imgBug.zip)

Web: https://easyrpg.org/play/master/?game=issue-1571&engine=rpg2k3e (ignore the missing FaceSets)

An RM2k3 1.12a project with title screen disabled. It goes directly to an almost empty map with an automatic event that shows a dummy image and then deletes itself, plus a helper event for calling save/load.

- Save the current progress (with debug features or with the helper event).

- Then load the savefile.

#### Expected behaviour (RPG_RT 1.12a):

The dummy image is being shown when the map loads.

#### Current behaviour (Player 0.5.4 , Master):

No image is shown.

Talking with @fdelapena , maybe this bug can be related with https://github.com/EasyRPG/Player/issues/1524 | 1.0 | Pictures won't load when loading savefile in a project with title screen disabled. - When you have an RM2k3 >=1.10 project without the Title screen enabled in the database and you load a savefile that has pictures in game, the pictures are not loaded (and are not shown on screen). However, if you enable the Title Screen in the Database editor, the images are shown without issue.

#### Player platform:

Tested in 0.5.4 and Master (Dec 15th).

#### Test case

Here's a test case:

[imgBug.zip](https://github.com/EasyRPG/Player/files/2683010/imgBug.zip)

Web: https://easyrpg.org/play/master/?game=issue-1571&engine=rpg2k3e (ignore the missing FaceSets)

An RM2k3 1.12a project with title screen disabled. It goes directly to an almost empty map with an automatic event that shows a dummy image and then deletes itself, plus a helper event for calling save/load.

- Save the current progress (with debug features or with the helper event).

- Then load the savefile.

#### Expected behaviour (RPG_RT 1.12a):

The dummy image is being shown when the map loads.

#### Current behaviour (Player 0.5.4 , Master):

No image is shown.

Talking with @fdelapena , maybe this bug can be related with https://github.com/EasyRPG/Player/issues/1524 | test | pictures won t load when loading savefile in a project with title screen disabled when you have a project without ttitle screen enabled in database when you try to load a savefile with pictures in game these won t are being loaded and didn t shown in screen however if you enable title screen in database editor the images are shown without issue player platform tested in and master dec test case here s a test case web ignore the missing facesets a project with title screen disabled it goes directly to a almost empty map with a automatic event that shows a dummy image then it deletes itself and a helper event for save load calling save the current progress with debug features or with the helper event then load the savefile expected behaviour rpg rt the dummy image is being shown when the map loads current behaviour player master no image is shown talking with fdelapena maybe this bug can be related with | 1 |

67,391 | 12,952,869,085 | IssuesEvent | 2020-07-19 22:15:20 | eucalypto/eucalyptapp | https://api.github.com/repos/eucalypto/eucalyptapp | opened | Replace DataBindingUtil in GameWonFragment | code enhancement | The use of DataBindingUtil is deprecated and should be replaced by the generated Class for the specific Fragment. | 1.0 | Replace DataBindingUtil in GameWonFragment - The use of DataBindingUtil is deprecated and should be replaced by the generated Class for the specific Fragment. | non_test | replace databindingutil in gamewonfragment the use of databindingutil is deprecated and should be replaced by the generated class for the specific fragment | 0 |

62,241 | 3,179,650,107 | IssuesEvent | 2015-09-25 03:21:07 | cjfields/redmine-test | https://api.github.com/repos/cjfields/redmine-test | opened | Bio::Seq object loses sequence data when blessed as Bio::Seq::Meta::Array | Category: Core Components Priority: Normal Status: New Tracker: Bug | ---

Author Name: **Roy Chaudhuri** (Roy Chaudhuri)

Original Redmine Issue: 2262, https://redmine.open-bio.org/issues/2262

Original Date: 2007-04-04

Original Assignee: Bioperl Guts

---

When I bless a Bio::Seq object as a Bio::Seq::Meta::Array (as instructed by the POD) it loses the primary sequence information (and other PrimarySeqI information such as length and accession). Features and annotation are not affected.

Bio::Seq::Meta suffers from a worse bug- Bio::SeqIO warns that the Bio::Seq::Meta object is not SeqI compliant. This seems to be due to the omission of Bio::Seq from the use base line in Bio::Seq::Meta (but not Bio::Seq::Meta::Array). When I add Bio::Seq into the use base line the behaviour is the same as for Bio::Seq::Meta::Array.

The following code demonstrates the problem:

\#!/usr/bin/perl

use warnings;

use strict;

use Bio::SeqIO;

use Bio::Seq::Meta::Array;

my $seq=Bio::SeqIO-\>new(-fh=\>\\\*ARGV, -format=\>'genbank')-\>next\_seq;

bless $seq, 'Bio::Seq::Meta::Array';

Bio::SeqIO-\>new(-format=\>'genbank')-\>write\_seq($seq);

| 1.0 | Bio::Seq object loses sequence data when blessed as Bio::Seq::Meta::Array - ---

Author Name: **Roy Chaudhuri** (Roy Chaudhuri)

Original Redmine Issue: 2262, https://redmine.open-bio.org/issues/2262

Original Date: 2007-04-04

Original Assignee: Bioperl Guts

---

When I bless a Bio::Seq object as a Bio::Seq::Meta::Array (as instructed by the POD) it loses the primary sequence information (and other PrimarySeqI information such as length and accession). Features and annotation are not affected.

Bio::Seq::Meta suffers from a worse bug- Bio::SeqIO warns that the Bio::Seq::Meta object is not SeqI compliant. This seems to be due to the omission of Bio::Seq from the use base line in Bio::Seq::Meta (but not Bio::Seq::Meta::Array). When I add Bio::Seq into the use base line the behaviour is the same as for Bio::Seq::Meta::Array.

The following code demonstrates the problem:

\#!/usr/bin/perl

use warnings;

use strict;

use Bio::SeqIO;

use Bio::Seq::Meta::Array;

my $seq=Bio::SeqIO-\>new(-fh=\>\\\*ARGV, -format=\>'genbank')-\>next\_seq;

bless $seq, 'Bio::Seq::Meta::Array';

Bio::SeqIO-\>new(-format=\>'genbank')-\>write\_seq($seq);

| non_test | bio seq object loses sequence data when blessed as bio seq meta array author name roy chaudhuri roy chaudhuri original redmine issue original date original assignee bioperl guts when i bless a bio seq object as a bio seq meta array as instructed by the pod it loses the primary sequence information and other primaryseqi information such as length and accession features and annotation are not affected bio seq meta suffers from a worse bug bio seqio warns that the bio seq meta object is not seqi compliant this seems to be due to the omission of bio seq from the use base line in bio seq meta but not bio seq meta array when i add bio seq into the use base line the behaviour is the same as for bio seq meta array the following code demonstrates the problem usr bin perl use warnings use strict use bio seqio use bio seq meta array my seq bio seqio new fh argv format ’genbank’ next seq bless seq ‘bio seq meta array’ bio seqio new format ’genbank’ write seq seq | 0 |

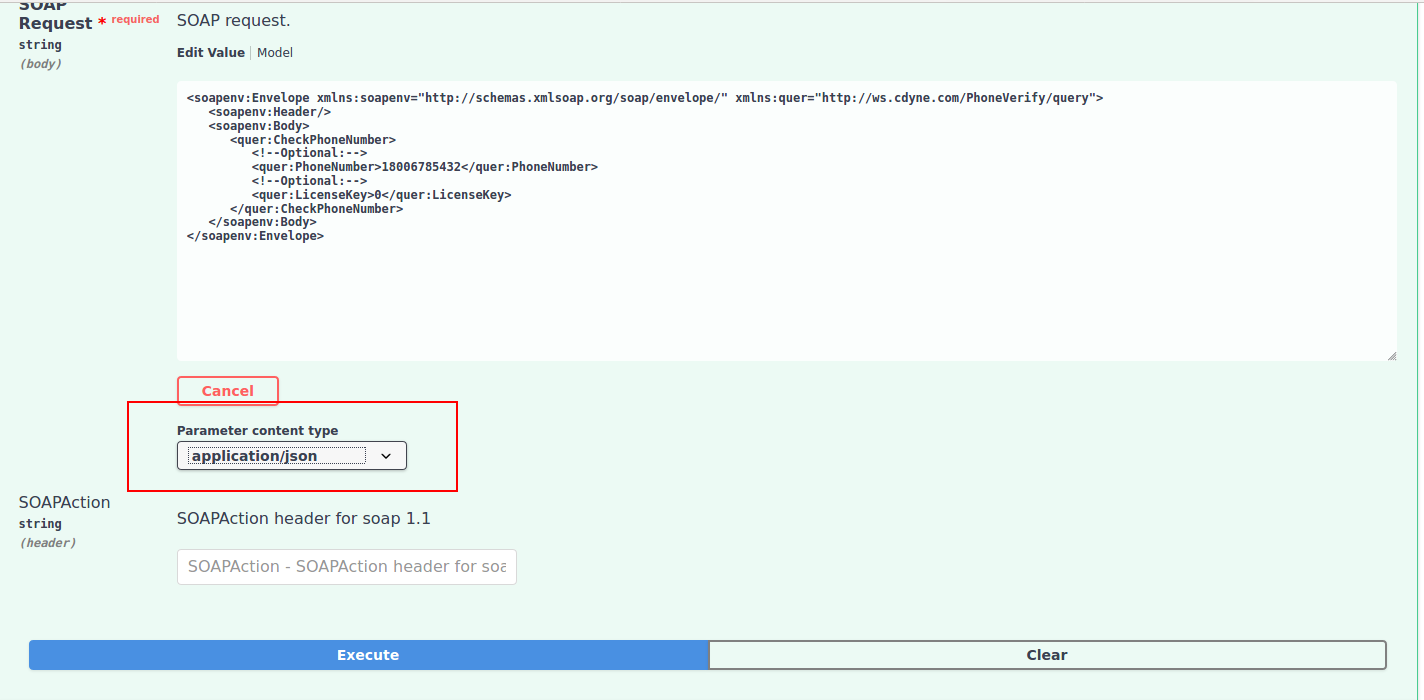

390,436 | 11,543,724,741 | IssuesEvent | 2020-02-18 10:07:15 | wso2/product-apim | https://api.github.com/repos/wso2/product-apim | opened | Issue in invoking SOAP APIs | Priority/Normal Type/Bug | ### Description:

The following issues occurred when invoking a pass-through API created with a SOAP back end.

1. NPE when invoking:

```

ERROR - ServerWorker Error processing POST request for : /through/1.

java.lang.NullPointerException: null

at org.wso2.carbon.apimgt.gateway.utils.OpenAPIUtils.getPathItemSecurityScopes_aroundBody10(OpenAPIUtils.java:205) ~[org.wso2.carbon.apimgt.gateway_6.6.64.jar:?]

at org.wso2.carbon.apimgt.gateway.utils.OpenAPIUtils.getPathItemSecurityScopes(OpenAPIUtils.java:191) ~[org.wso2.carbon.apimgt.gateway_6.6.64.jar:?]

at org.wso2.carbon.apimgt.gateway.utils.OpenAPIUtils.getScopesOfResource_aroundBody2(OpenAPIUtils.java:77) ~[org.wso2.carbon.apimgt.gateway_6.6.64.jar:?]

at org.wso2.carbon.apimgt.gateway.utils.OpenAPIUtils.getScopesOfResource(OpenAPIUtils.java:71) ~[org.wso2.carbon.apimgt.gateway_6.6.64.jar:?]

at org.wso2.carbon.apimgt.gateway.handlers.security.jwt.JWTValidator.validateScopes_aroundBody8(JWTValidator.java:542) ~[org.wso2.carbon.apimgt.gateway_6.6.64.jar:?]

at org.wso2.carbon.apimgt.gateway.handlers.security.jwt.JWTValidator.validateScopes(JWTValidator.java:530) ~[org.wso2.carbon.apimgt.gateway_6.6.64.jar:?]

at org.wso2.carbon.apimgt.gateway.handlers.security.jwt.JWTValidator.authenticate_aroundBody0(JWTValidator.java:230) ~[org.wso2.carbon.apimgt.gateway_6.6.64.jar:?]

at org.wso2.carbon.apimgt.gateway.handlers.security.jwt.JWTValidator.authenticate(JWTValidator.java:91) ~[org.wso2.carbon.apimgt.gateway_6.6.64.jar:?]

at org.wso2.carbon.apimgt.gateway.handlers.security.oauth.OAuthAuthenticator.authenticate_aroundBody4(OAuthAuthenticator.java:333) ~[org.wso2.carbon.apimgt.gateway_6.6.64.jar:?]

at org.wso2.carbon.apimgt.gateway.handlers.security.oauth.OAuthAuthenticator.authenticate(OAuthAuthenticator.java:109) ~[org.wso2.carbon.apimgt.gateway_6.6.64.jar:?]

at org.wso2.carbon.apimgt.gateway.handlers.security.APIAuthenticationHandler.isAuthenticate_aroundBody42(APIAuthenticationHandler.java:419) ~[org.wso2.carbon.apimgt.gateway_6.6.64.jar:?]

at org.wso2.carbon.apimgt.gateway.handlers.security.APIAuthenticationHandler.isAuthenticate(APIAuthenticationHandler.java:413) ~[org.wso2.carbon.apimgt.gateway_6.6.64.jar:?]

at org.wso2.carbon.apimgt.gateway.handlers.security.APIAuthenticationHandler.handleRequest_aroundBody36(APIAuthenticationHandler.java:349) ~[org.wso2.carbon.apimgt.gateway_6.6.64.jar:?]

at org.wso2.carbon.apimgt.gateway.handlers.security.APIAuthenticationHandler.handleRequest(APIAuthenticationHandler.java:320) ~[org.wso2.carbon.apimgt.gateway_6.6.64.jar:?]

at org.apache.synapse.rest.API.process(API.java:367) ~[synapse-core_2.1.7.wso2v143.jar:2.1.7-wso2v143]

at org.apache.synapse.rest.RESTRequestHandler.apiProcessNonDefaultStrategy(RESTRequestHandler.java:149) ~[synapse-core_2.1.7.wso2v143.jar:2.1.7-wso2v143]

at org.apache.synapse.rest.RESTRequestHandler.dispatchToAPI(RESTRequestHandler.java:95) ~[synapse-core_2.1.7.wso2v143.jar:2.1.7-wso2v143]

at org.apache.synapse.rest.RESTRequestHandler.process(RESTRequestHandler.java:71) ~[synapse-core_2.1.7.wso2v143.jar:2.1.7-wso2v143]

at org.apache.synapse.core.axis2.Axis2SynapseEnvironment.injectMessage(Axis2SynapseEnvironment.java:327) ~[synapse-core_2.1.7.wso2v143.jar:2.1.7-wso2v143]

at org.apache.synapse.core.axis2.SynapseMessageReceiver.receive(SynapseMessageReceiver.java:98) ~[synapse-core_2.1.7.wso2v143.jar:2.1.7-wso2v143]

at org.apache.axis2.engine.AxisEngine.receive(AxisEngine.java:180) ~[axis2_1.6.1.wso2v40.jar:?]

at org.apache.synapse.transport.passthru.ServerWorker.processNonEntityEnclosingRESTHandler(ServerWorker.java:368) [synapse-nhttp-transport_2.1.7.wso2v143.jar:?]

at org.apache.synapse.transport.passthru.ServerWorker.processEntityEnclosingRequest(ServerWorker.java:427) [synapse-nhttp-transport_2.1.7.wso2v143.jar:?]

at org.apache.synapse.transport.passthru.ServerWorker.run(ServerWorker.java:182) [synapse-nhttp-transport_2.1.7.wso2v143.jar:?]

at org.apache.axis2.transport.base.threads.NativeWorkerPool$1.run(NativeWorkerPool.java:172) [axis2_1.6.1.wso2v40.jar:?]

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149) [?:1.8.0_201]

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624) [?:1.8.0_201]

at java.lang.Thread.run(Thread.java:748) [?:1.8.0_201]

```

2. The generated Swagger definition does not specify the content type of the input (the default content type should be **text/xml**). Hence, it is not possible to select the content type in the Swagger UI in the Dev Portal. Refer to the following screenshot:

| 1.0 | Issue in invoking SOAP APIs - ### Description:

The following issues occurred when invoking a pass-through API created with a SOAP back end.

1. NPE when invoking:

```

ERROR - ServerWorker Error processing POST request for : /through/1.

java.lang.NullPointerException: null

at org.wso2.carbon.apimgt.gateway.utils.OpenAPIUtils.getPathItemSecurityScopes_aroundBody10(OpenAPIUtils.java:205) ~[org.wso2.carbon.apimgt.gateway_6.6.64.jar:?]

at org.wso2.carbon.apimgt.gateway.utils.OpenAPIUtils.getPathItemSecurityScopes(OpenAPIUtils.java:191) ~[org.wso2.carbon.apimgt.gateway_6.6.64.jar:?]

at org.wso2.carbon.apimgt.gateway.utils.OpenAPIUtils.getScopesOfResource_aroundBody2(OpenAPIUtils.java:77) ~[org.wso2.carbon.apimgt.gateway_6.6.64.jar:?]

at org.wso2.carbon.apimgt.gateway.utils.OpenAPIUtils.getScopesOfResource(OpenAPIUtils.java:71) ~[org.wso2.carbon.apimgt.gateway_6.6.64.jar:?]

at org.wso2.carbon.apimgt.gateway.handlers.security.jwt.JWTValidator.validateScopes_aroundBody8(JWTValidator.java:542) ~[org.wso2.carbon.apimgt.gateway_6.6.64.jar:?]

at org.wso2.carbon.apimgt.gateway.handlers.security.jwt.JWTValidator.validateScopes(JWTValidator.java:530) ~[org.wso2.carbon.apimgt.gateway_6.6.64.jar:?]

at org.wso2.carbon.apimgt.gateway.handlers.security.jwt.JWTValidator.authenticate_aroundBody0(JWTValidator.java:230) ~[org.wso2.carbon.apimgt.gateway_6.6.64.jar:?]

at org.wso2.carbon.apimgt.gateway.handlers.security.jwt.JWTValidator.authenticate(JWTValidator.java:91) ~[org.wso2.carbon.apimgt.gateway_6.6.64.jar:?]

at org.wso2.carbon.apimgt.gateway.handlers.security.oauth.OAuthAuthenticator.authenticate_aroundBody4(OAuthAuthenticator.java:333) ~[org.wso2.carbon.apimgt.gateway_6.6.64.jar:?]

at org.wso2.carbon.apimgt.gateway.handlers.security.oauth.OAuthAuthenticator.authenticate(OAuthAuthenticator.java:109) ~[org.wso2.carbon.apimgt.gateway_6.6.64.jar:?]

at org.wso2.carbon.apimgt.gateway.handlers.security.APIAuthenticationHandler.isAuthenticate_aroundBody42(APIAuthenticationHandler.java:419) ~[org.wso2.carbon.apimgt.gateway_6.6.64.jar:?]

at org.wso2.carbon.apimgt.gateway.handlers.security.APIAuthenticationHandler.isAuthenticate(APIAuthenticationHandler.java:413) ~[org.wso2.carbon.apimgt.gateway_6.6.64.jar:?]

at org.wso2.carbon.apimgt.gateway.handlers.security.APIAuthenticationHandler.handleRequest_aroundBody36(APIAuthenticationHandler.java:349) ~[org.wso2.carbon.apimgt.gateway_6.6.64.jar:?]

at org.wso2.carbon.apimgt.gateway.handlers.security.APIAuthenticationHandler.handleRequest(APIAuthenticationHandler.java:320) ~[org.wso2.carbon.apimgt.gateway_6.6.64.jar:?]

at org.apache.synapse.rest.API.process(API.java:367) ~[synapse-core_2.1.7.wso2v143.jar:2.1.7-wso2v143]

at org.apache.synapse.rest.RESTRequestHandler.apiProcessNonDefaultStrategy(RESTRequestHandler.java:149) ~[synapse-core_2.1.7.wso2v143.jar:2.1.7-wso2v143]

at org.apache.synapse.rest.RESTRequestHandler.dispatchToAPI(RESTRequestHandler.java:95) ~[synapse-core_2.1.7.wso2v143.jar:2.1.7-wso2v143]

at org.apache.synapse.rest.RESTRequestHandler.process(RESTRequestHandler.java:71) ~[synapse-core_2.1.7.wso2v143.jar:2.1.7-wso2v143]

at org.apache.synapse.core.axis2.Axis2SynapseEnvironment.injectMessage(Axis2SynapseEnvironment.java:327) ~[synapse-core_2.1.7.wso2v143.jar:2.1.7-wso2v143]

at org.apache.synapse.core.axis2.SynapseMessageReceiver.receive(SynapseMessageReceiver.java:98) ~[synapse-core_2.1.7.wso2v143.jar:2.1.7-wso2v143]

at org.apache.axis2.engine.AxisEngine.receive(AxisEngine.java:180) ~[axis2_1.6.1.wso2v40.jar:?]

at org.apache.synapse.transport.passthru.ServerWorker.processNonEntityEnclosingRESTHandler(ServerWorker.java:368) [synapse-nhttp-transport_2.1.7.wso2v143.jar:?]

at org.apache.synapse.transport.passthru.ServerWorker.processEntityEnclosingRequest(ServerWorker.java:427) [synapse-nhttp-transport_2.1.7.wso2v143.jar:?]

at org.apache.synapse.transport.passthru.ServerWorker.run(ServerWorker.java:182) [synapse-nhttp-transport_2.1.7.wso2v143.jar:?]

at org.apache.axis2.transport.base.threads.NativeWorkerPool$1.run(NativeWorkerPool.java:172) [axis2_1.6.1.wso2v40.jar:?]

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149) [?:1.8.0_201]

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624) [?:1.8.0_201]

at java.lang.Thread.run(Thread.java:748) [?:1.8.0_201]

```

2. The generated Swagger definition does not specify the content type of the input (the default content type should be **text/xml**). Hence, it is not possible to select the content type in the Swagger UI in the Dev Portal. Refer to the following screenshot:

| non_test | issue in invoking soap apis description following issue occurred when invoking pass through api created using soap back end npe when invoking error serverworker error processing post request for through java lang nullpointerexception null at org carbon apimgt gateway utils openapiutils getpathitemsecurityscopes openapiutils java at org carbon apimgt gateway utils openapiutils getpathitemsecurityscopes openapiutils java at org carbon apimgt gateway utils openapiutils getscopesofresource openapiutils java at org carbon apimgt gateway utils openapiutils getscopesofresource openapiutils java at org carbon apimgt gateway handlers security jwt jwtvalidator validatescopes jwtvalidator java at org carbon apimgt gateway handlers security jwt jwtvalidator validatescopes jwtvalidator java at org carbon apimgt gateway handlers security jwt jwtvalidator authenticate jwtvalidator java at org carbon apimgt gateway handlers security jwt jwtvalidator authenticate jwtvalidator java at org carbon apimgt gateway handlers security oauth oauthauthenticator authenticate oauthauthenticator java at org carbon apimgt gateway handlers security oauth oauthauthenticator authenticate oauthauthenticator java at org carbon apimgt gateway handlers security apiauthenticationhandler isauthenticate apiauthenticationhandler java at org carbon apimgt gateway handlers security apiauthenticationhandler isauthenticate apiauthenticationhandler java at org carbon apimgt gateway handlers security apiauthenticationhandler handlerequest apiauthenticationhandler java at org carbon apimgt gateway handlers security apiauthenticationhandler handlerequest apiauthenticationhandler java at org apache synapse rest api process api java at org apache synapse rest restrequesthandler apiprocessnondefaultstrategy restrequesthandler java at org apache synapse rest restrequesthandler dispatchtoapi restrequesthandler java at org apache synapse rest restrequesthandler process restrequesthandler java at org apache 
synapse core injectmessage java at org apache synapse core synapsemessagereceiver receive synapsemessagereceiver java at org apache engine axisengine receive axisengine java at org apache synapse transport passthru serverworker processnonentityenclosingresthandler serverworker java at org apache synapse transport passthru serverworker processentityenclosingrequest serverworker java at org apache synapse transport passthru serverworker run serverworker java at org apache transport base threads nativeworkerpool run nativeworkerpool java at java util concurrent threadpoolexecutor runworker threadpoolexecutor java at java util concurrent threadpoolexecutor worker run threadpoolexecutor java at java lang thread run thread java generated swagger does not have the content type of input the default content type should be text xml hence it is not possible to select the content type in swagger ui in dev portal refer the following screenshot | 0 |

258,038 | 22,272,201,756 | IssuesEvent | 2022-06-10 13:25:23 | mozilla-mobile/fenix | https://api.github.com/repos/mozilla-mobile/fenix | opened | Intermittent UI test failure - TabbedBrowsingTest.closeTabTest | eng:ui-test | ### Firebase Test Run:

https://console.firebase.google.com/u/0/project/moz-fenix/testlab/histories/bh.66b7091e15d53d45/matrices/6696243506005561227/executions/bs.6f49200709fbc39c

### Stacktrace:

`java.lang.RuntimeException: Error while connecting UiAutomation@3740472[id=-1, flags=0]

at android.app.UiAutomation.connect(UiAutomation.java:259)

at android.app.Instrumentation.getUiAutomation(Instrumentation.java:2176)

at androidx.test.uiautomator.UiDevice.getUiAutomation(UiDevice.java:1129)

at androidx.test.uiautomator.QueryController.<init>(QueryController.java:95)

at androidx.test.uiautomator.UiDevice.<init>(UiDevice.java:109)

at androidx.test.uiautomator.UiDevice.getInstance(UiDevice.java:261)

at org.mozilla.fenix.ui.TabbedBrowsingTest.<init>(TabbedBrowsingTest.kt:43)`

### Build:

on main 6/9

Known issue: https://github.com/mozilla-mobile/fenix/issues/25132 | 1.0 | Intermittent UI test failure - TabbedBrowsingTest.closeTabTest - ### Firebase Test Run:

https://console.firebase.google.com/u/0/project/moz-fenix/testlab/histories/bh.66b7091e15d53d45/matrices/6696243506005561227/executions/bs.6f49200709fbc39c

### Stacktrace:

`java.lang.RuntimeException: Error while connecting UiAutomation@3740472[id=-1, flags=0]

at android.app.UiAutomation.connect(UiAutomation.java:259)

at android.app.Instrumentation.getUiAutomation(Instrumentation.java:2176)

at androidx.test.uiautomator.UiDevice.getUiAutomation(UiDevice.java:1129)

at androidx.test.uiautomator.QueryController.<init>(QueryController.java:95)

at androidx.test.uiautomator.UiDevice.<init>(UiDevice.java:109)

at androidx.test.uiautomator.UiDevice.getInstance(UiDevice.java:261)

at org.mozilla.fenix.ui.TabbedBrowsingTest.<init>(TabbedBrowsingTest.kt:43)`

### Build:

on main 6/9

Known issue: https://github.com/mozilla-mobile/fenix/issues/25132 | test | intermittent ui test failure tabbedbrowsingtest closetabtest firebase test run stacktrace java lang runtimeexception error while connecting uiautomation at android app uiautomation connect uiautomation java at android app instrumentation getuiautomation instrumentation java at androidx test uiautomator uidevice getuiautomation uidevice java at androidx test uiautomator querycontroller querycontroller java at androidx test uiautomator uidevice uidevice java at androidx test uiautomator uidevice getinstance uidevice java at org mozilla fenix ui tabbedbrowsingtest tabbedbrowsingtest kt build on main known issue | 1 |

174,691 | 13,505,085,402 | IssuesEvent | 2020-09-13 21:04:22 | thexerteproject/xerteonlinetoolkits | https://api.github.com/repos/thexerteproject/xerteonlinetoolkits | closed | Refactor Xenith engine code | Needs testing New feature XOT template enhancement | Xenith engine has got rather huge and spaghetti like and it's really hard for me to follow (having not been around the code for a while) so it must be impossible for anyone new coming in and it's only going to get more difficult for us to support unless we try and modernise it, group related code and refactor. It is getting on 8 years old now!

Anyway, i've run this past @FayCross a while ago but didn't have the time to implement any of it but with this self-isolation thing i've got all the time in the world!!

To start i'm going to refactor:

- Variables code (that's a good 600 lines alone)

- Glossary

- Menu

- Dialog

I'll leave them in xenith.js for now (xenith will get bigger just now but will eventually have the code blocks separated out to separate files) but I also have a loader which can load on the fly each of the blocks, when needed, but need to work on that to make sure it works offline and in scorm.

I know there are probably other bugs and developments that need doing, but i'm hoping this refactor will reduce the complication of tracing and fixing those issues.

I've done VARIABLES and it's working fine as far as I can see. So i'll start committing them as separate commits and tag this issue so we can keep track of them.

Hope you guys are all safe! | 1.0 | Refactor Xenith engine code - Xenith engine has got rather huge and spaghetti like and it's really hard for me to follow (having not been around the code for a while) so it must be impossible for anyone new coming in and it's only going to get more difficult for us to support unless we try and modernise it, group related code and refactor. It is getting on 8 years old now!

Anyway, i've run this past @FayCross a while ago but didn't have the time to implement any of it but with this self-isolation thing i've got all the time in the world!!

To start i'm going to refactor:

- Variables code (that's a good 600 lines alone)

- Glossary

- Menu

- Dialog

I'll leave them in xenith.js for now (xenith will get bigger just now but will eventually have the code blocks separated out to separate files) but I also have a loader which can load on the fly each of the blocks, when needed, but need to work on that to make sure it works offline and in scorm.

I know there are probably other bugs and developments that need doing, but i'm hoping this refactor will reduce the complication of tracing and fixing those issues.

I've done VARIABLES and it's working fine as far as I can see. So i'll start committing them as separate commits and tag this issue so we can keep track of them.

Hope you guys are all safe! | test | refactor xenith engine code xenith engine has got rather huge and spaghetti like and it s really hard for me to follow having not been around the code for a while so it must be impossible for anyone new coming in and it s only going to get more difficult for us to support unless we try and modernise it group related code and refactor it is getting on years old now anyway i ve run this past faycross a while ago but didn t have the time to implement any of it but with this self isolation thing i ve got all the time in the world to start i m going to refactor variables code that s a good lines alone glossary menu dialog i ll leave them in xenith js for now xenith will get bigger just now but will eventually have the code blocks separated out to separate files but i also have a loader which can load on the fly each of the blocks when needed but need to work on that to make sure it works offline and in scorm i know there are probably other bugs and developments that need done but i m hope this refactor will reduce the complication of tracing and fixing those issues i ve done variables and it s working fine as far as i can see so i ll start committing them as separate commits and tag this issue so we can keep track of them hope you guys are all safe | 1 |

202,484 | 15,286,694,431 | IssuesEvent | 2021-02-23 14:58:55 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | roachtest: kv95/enc=false/nodes=3/cpu=32/seq failed | C-test-failure O-roachtest O-robot branch-release-20.1 release-blocker | [(roachtest).kv95/enc=false/nodes=3/cpu=32/seq failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=2661561&tab=buildLog) on [release-20.1@90f78268f3b5b08ba838ac3ad164821d2f5a5362](https://github.com/cockroachdb/cockroach/commits/90f78268f3b5b08ba838ac3ad164821d2f5a5362):

```

| github.com/cockroachdb/cockroach/vendor/golang.org/x/sync/errgroup.(*Group).Go.func1

| /home/agent/work/.go/src/github.com/cockroachdb/cockroach/vendor/golang.org/x/sync/errgroup/errgroup.go:57

| runtime.goexit

| /usr/local/go/src/runtime/asm_amd64.s:1374

Wraps: (2) 2 safe details enclosed

Wraps: (3) output in run_074156.709_n4_workload_run_kv

Wraps: (4) /home/agent/work/.go/src/github.com/cockroachdb/cockroach/bin/roachprod run teamcity-2661561-1612941349-20-n4cpu32:4 -- ./workload run kv --init --histograms=perf/stats.json --concurrency=192 --duration=10m0s --read-percent=95 --sequential {pgurl:1-3} returned

| stderr:

| ./workload: error while loading shared libraries: libncurses.so.6: cannot open shared object file: No such file or directory

| Error: COMMAND_PROBLEM: exit status 127

| (1) COMMAND_PROBLEM

| Wraps: (2) Node 4. Command with error:

| | ```

| | ./workload run kv --init --histograms=perf/stats.json --concurrency=192 --duration=10m0s --read-percent=95 --sequential {pgurl:1-3}

| | ```

| Wraps: (3) exit status 127

| Error types: (1) errors.Cmd (2) *hintdetail.withDetail (3) *exec.ExitError

|

| stdout:

Wraps: (5) exit status 20

Error types: (1) *withstack.withStack (2) *safedetails.withSafeDetails (3) *errutil.withMessage (4) *main.withCommandDetails (5) *exec.ExitError

cluster.go:2628,kv.go:96,kv.go:183,test_runner.go:749: monitor failure: monitor task failed: t.Fatal() was called

(1) attached stack trace

| main.(*monitor).WaitE

| /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/cluster.go:2616

| main.(*monitor).Wait

| /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/cluster.go:2624

| main.registerKV.func2

| /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/kv.go:96

| main.registerKV.func3

| /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/kv.go:183

| main.(*testRunner).runTest.func2

| /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/test_runner.go:749

Wraps: (2) monitor failure

Wraps: (3) attached stack trace

| main.(*monitor).wait.func2

| /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/cluster.go:2672

Wraps: (4) monitor task failed

Wraps: (5) attached stack trace

| main.init

| /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/cluster.go:2586

| runtime.doInit

| /usr/local/go/src/runtime/proc.go:5652

| runtime.main

| /usr/local/go/src/runtime/proc.go:191

| runtime.goexit

| /usr/local/go/src/runtime/asm_amd64.s:1374

Wraps: (6) t.Fatal() was called

Error types: (1) *withstack.withStack (2) *errutil.withMessage (3) *withstack.withStack (4) *errutil.withMessage (5) *withstack.withStack (6) *errors.errorString

```

<details><summary>More</summary><p>

Artifacts: [/kv95/enc=false/nodes=3/cpu=32/seq](https://teamcity.cockroachdb.com/viewLog.html?buildId=2661561&tab=artifacts#/kv95/enc=false/nodes=3/cpu=32/seq)

Related:

- #60224 roachtest: kv95/enc=false/nodes=3/cpu=32/seq failed [C-test-failure](https://api.github.com/repos/cockroachdb/cockroach/labels/C-test-failure) [O-roachtest](https://api.github.com/repos/cockroachdb/cockroach/labels/O-roachtest) [O-robot](https://api.github.com/repos/cockroachdb/cockroach/labels/O-robot) [branch-60149](https://api.github.com/repos/cockroachdb/cockroach/labels/branch-60149) [release-blocker](https://api.github.com/repos/cockroachdb/cockroach/labels/release-blocker)

- #60077 roachtest: kv95/enc=false/nodes=3/cpu=32/seq failed [C-test-failure](https://api.github.com/repos/cockroachdb/cockroach/labels/C-test-failure) [O-roachtest](https://api.github.com/repos/cockroachdb/cockroach/labels/O-roachtest) [O-robot](https://api.github.com/repos/cockroachdb/cockroach/labels/O-robot) [branch-release-20.2](https://api.github.com/repos/cockroachdb/cockroach/labels/branch-release-20.2) [release-blocker](https://api.github.com/repos/cockroachdb/cockroach/labels/release-blocker)

- #59924 roachtest: kv95/enc=false/nodes=3/cpu=32/seq failed [C-test-failure](https://api.github.com/repos/cockroachdb/cockroach/labels/C-test-failure) [O-roachtest](https://api.github.com/repos/cockroachdb/cockroach/labels/O-roachtest) [O-robot](https://api.github.com/repos/cockroachdb/cockroach/labels/O-robot) [branch-master](https://api.github.com/repos/cockroachdb/cockroach/labels/branch-master) [release-blocker](https://api.github.com/repos/cockroachdb/cockroach/labels/release-blocker)

[See this test on roachdash](https://roachdash.crdb.dev/?filter=status%3Aopen+t%3A.%2Akv95%2Fenc%3Dfalse%2Fnodes%3D3%2Fcpu%3D32%2Fseq.%2A&sort=title&restgroup=false&display=lastcommented+project)

<sub>powered by [pkg/cmd/internal/issues](https://github.com/cockroachdb/cockroach/tree/master/pkg/cmd/internal/issues)</sub></p></details>

| 2.0 | roachtest: kv95/enc=false/nodes=3/cpu=32/seq failed - [(roachtest).kv95/enc=false/nodes=3/cpu=32/seq failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=2661561&tab=buildLog) on [release-20.1@90f78268f3b5b08ba838ac3ad164821d2f5a5362](https://github.com/cockroachdb/cockroach/commits/90f78268f3b5b08ba838ac3ad164821d2f5a5362):

```

| github.com/cockroachdb/cockroach/vendor/golang.org/x/sync/errgroup.(*Group).Go.func1

| /home/agent/work/.go/src/github.com/cockroachdb/cockroach/vendor/golang.org/x/sync/errgroup/errgroup.go:57

| runtime.goexit

| /usr/local/go/src/runtime/asm_amd64.s:1374

Wraps: (2) 2 safe details enclosed

Wraps: (3) output in run_074156.709_n4_workload_run_kv

Wraps: (4) /home/agent/work/.go/src/github.com/cockroachdb/cockroach/bin/roachprod run teamcity-2661561-1612941349-20-n4cpu32:4 -- ./workload run kv --init --histograms=perf/stats.json --concurrency=192 --duration=10m0s --read-percent=95 --sequential {pgurl:1-3} returned

| stderr:

| ./workload: error while loading shared libraries: libncurses.so.6: cannot open shared object file: No such file or directory

| Error: COMMAND_PROBLEM: exit status 127

| (1) COMMAND_PROBLEM

| Wraps: (2) Node 4. Command with error:

| | ```

| | ./workload run kv --init --histograms=perf/stats.json --concurrency=192 --duration=10m0s --read-percent=95 --sequential {pgurl:1-3}

| | ```

| Wraps: (3) exit status 127

| Error types: (1) errors.Cmd (2) *hintdetail.withDetail (3) *exec.ExitError

|

| stdout:

Wraps: (5) exit status 20

Error types: (1) *withstack.withStack (2) *safedetails.withSafeDetails (3) *errutil.withMessage (4) *main.withCommandDetails (5) *exec.ExitError

cluster.go:2628,kv.go:96,kv.go:183,test_runner.go:749: monitor failure: monitor task failed: t.Fatal() was called

(1) attached stack trace

| main.(*monitor).WaitE

| /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/cluster.go:2616

| main.(*monitor).Wait

| /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/cluster.go:2624

| main.registerKV.func2

| /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/kv.go:96

| main.registerKV.func3

| /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/kv.go:183

| main.(*testRunner).runTest.func2

| /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/test_runner.go:749

Wraps: (2) monitor failure

Wraps: (3) attached stack trace

| main.(*monitor).wait.func2

| /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/cluster.go:2672

Wraps: (4) monitor task failed

Wraps: (5) attached stack trace

| main.init

| /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/cluster.go:2586

| runtime.doInit

| /usr/local/go/src/runtime/proc.go:5652

| runtime.main

| /usr/local/go/src/runtime/proc.go:191

| runtime.goexit

| /usr/local/go/src/runtime/asm_amd64.s:1374

Wraps: (6) t.Fatal() was called

Error types: (1) *withstack.withStack (2) *errutil.withMessage (3) *withstack.withStack (4) *errutil.withMessage (5) *withstack.withStack (6) *errors.errorString

```

<details><summary>More</summary><p>

Artifacts: [/kv95/enc=false/nodes=3/cpu=32/seq](https://teamcity.cockroachdb.com/viewLog.html?buildId=2661561&tab=artifacts#/kv95/enc=false/nodes=3/cpu=32/seq)

Related:

- #60224 roachtest: kv95/enc=false/nodes=3/cpu=32/seq failed [C-test-failure](https://api.github.com/repos/cockroachdb/cockroach/labels/C-test-failure) [O-roachtest](https://api.github.com/repos/cockroachdb/cockroach/labels/O-roachtest) [O-robot](https://api.github.com/repos/cockroachdb/cockroach/labels/O-robot) [branch-60149](https://api.github.com/repos/cockroachdb/cockroach/labels/branch-60149) [release-blocker](https://api.github.com/repos/cockroachdb/cockroach/labels/release-blocker)

- #60077 roachtest: kv95/enc=false/nodes=3/cpu=32/seq failed [C-test-failure](https://api.github.com/repos/cockroachdb/cockroach/labels/C-test-failure) [O-roachtest](https://api.github.com/repos/cockroachdb/cockroach/labels/O-roachtest) [O-robot](https://api.github.com/repos/cockroachdb/cockroach/labels/O-robot) [branch-release-20.2](https://api.github.com/repos/cockroachdb/cockroach/labels/branch-release-20.2) [release-blocker](https://api.github.com/repos/cockroachdb/cockroach/labels/release-blocker)

- #59924 roachtest: kv95/enc=false/nodes=3/cpu=32/seq failed [C-test-failure](https://api.github.com/repos/cockroachdb/cockroach/labels/C-test-failure) [O-roachtest](https://api.github.com/repos/cockroachdb/cockroach/labels/O-roachtest) [O-robot](https://api.github.com/repos/cockroachdb/cockroach/labels/O-robot) [branch-master](https://api.github.com/repos/cockroachdb/cockroach/labels/branch-master) [release-blocker](https://api.github.com/repos/cockroachdb/cockroach/labels/release-blocker)

[See this test on roachdash](https://roachdash.crdb.dev/?filter=status%3Aopen+t%3A.%2Akv95%2Fenc%3Dfalse%2Fnodes%3D3%2Fcpu%3D32%2Fseq.%2A&sort=title&restgroup=false&display=lastcommented+project)

<sub>powered by [pkg/cmd/internal/issues](https://github.com/cockroachdb/cockroach/tree/master/pkg/cmd/internal/issues)</sub></p></details>