Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 4 112 | repo_url stringlengths 33 141 | action stringclasses 3 values | title stringlengths 1 1.02k | labels stringlengths 4 1.54k | body stringlengths 1 262k | index stringclasses 17 values | text_combine stringlengths 95 262k | label stringclasses 2 values | text stringlengths 96 252k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

199,571 | 15,049,249,810 | IssuesEvent | 2021-02-03 11:13:44 | elastic/elasticsearch | https://api.github.com/repos/elastic/elasticsearch | closed | [CI] TimeSeriesDataStreamsIT.testShrinkAfterRollover | :Core/Features/ILM+SLM >test-failure Team:Core/Features v8.0.0 | <!--

Please fill out the following information, and ensure you have attempted

to reproduce locally

-->

**Build scan**:

https://gradle-enterprise.elastic.co/s/nqwhqniws3pp2

**Repro line**:

```

./gradlew ':x-pack:plugin:ilm:qa:multi-node:javaRestTest' --tests "org.elasticsearch.xpack.ilm.TimeSeriesDataStreamsIT.testShrinkAfterRollover" -Dtests.seed=1DCA2402DADCCD33 -Dtests.security.manager=true -Dtests.locale=es-AR -Dtests.timezone=America/Grand_Turk -Druntime.java=11

```

**Reproduces locally?**:

Not exactly - it failed for me locally with `the rollover action created the rollover index`.

**Applicable branches**:

`master`

**Failure history**:

https://gradle-enterprise.elastic.co/scans/tests?search.relativeStartTime=P7D&search.timeZoneId=Europe/London&tests.container=org.elasticsearch.xpack.ilm.TimeSeriesDataStreamsIT&tests.sortField=FAILED&tests.test=testShrinkAfterRollover&tests.unstableOnly=true

**Failure excerpt**:

```

java.lang.AssertionError: the shrunken index was deleted by the delete action

at org.elasticsearch.xpack.ilm.TimeSeriesDataStreamsIT.lambda$testShrinkAfterRollover$12(TimeSeriesDataStreamsIT.java:127)

at org.elasticsearch.xpack.ilm.TimeSeriesDataStreamsIT.testShrinkAfterRollover(TimeSeriesDataStreamsIT.java:127)

```

| 1.0 | [CI] TimeSeriesDataStreamsIT.testShrinkAfterRollover - <!--

Please fill out the following information, and ensure you have attempted

to reproduce locally

-->

**Build scan**:

https://gradle-enterprise.elastic.co/s/nqwhqniws3pp2

**Repro line**:

```

./gradlew ':x-pack:plugin:ilm:qa:multi-node:javaRestTest' --tests "org.elasticsearch.xpack.ilm.TimeSeriesDataStreamsIT.testShrinkAfterRollover" -Dtests.seed=1DCA2402DADCCD33 -Dtests.security.manager=true -Dtests.locale=es-AR -Dtests.timezone=America/Grand_Turk -Druntime.java=11

```

**Reproduces locally?**:

Not exactly - it failed for me locally with `the rollover action created the rollover index`.

**Applicable branches**:

`master`

**Failure history**:

https://gradle-enterprise.elastic.co/scans/tests?search.relativeStartTime=P7D&search.timeZoneId=Europe/London&tests.container=org.elasticsearch.xpack.ilm.TimeSeriesDataStreamsIT&tests.sortField=FAILED&tests.test=testShrinkAfterRollover&tests.unstableOnly=true

**Failure excerpt**:

```

java.lang.AssertionError: the shrunken index was deleted by the delete action

at org.elasticsearch.xpack.ilm.TimeSeriesDataStreamsIT.lambda$testShrinkAfterRollover$12(TimeSeriesDataStreamsIT.java:127)

at org.elasticsearch.xpack.ilm.TimeSeriesDataStreamsIT.testShrinkAfterRollover(TimeSeriesDataStreamsIT.java:127)

```

| test | timeseriesdatastreamsit testshrinkafterrollover please fill out the following information and ensure you have attempted to reproduce locally build scan repro line gradlew x pack plugin ilm qa multi node javaresttest tests org elasticsearch xpack ilm timeseriesdatastreamsit testshrinkafterrollover dtests seed dtests security manager true dtests locale es ar dtests timezone america grand turk druntime java reproduces locally not exactly it failed for me locally with the rollover action created the rollover index applicable branches master failure history failure excerpt java lang assertionerror the shrunken index was deleted by the delete action at org elasticsearch xpack ilm timeseriesdatastreamsit lambda testshrinkafterrollover timeseriesdatastreamsit java at org elasticsearch xpack ilm timeseriesdatastreamsit testshrinkafterrollover timeseriesdatastreamsit java | 1 |

25,033 | 4,128,253,667 | IssuesEvent | 2016-06-10 04:49:32 | openshift/source-to-image | https://api.github.com/repos/openshift/source-to-image | closed | Build test-images test flake | area/tests priority/P3 | Sometimes our test end with `You do not have necessary test images, be sure to run 'hack/build-test-images.sh' beforehand.` This is one example of such error: https://ci.openshift.redhat.com/jenkins/job/merge_pull_requests_sti/247/console . It would be good to nail down the reason for that. | 1.0 | Build test-images test flake - Sometimes our test end with `You do not have necessary test images, be sure to run 'hack/build-test-images.sh' beforehand.` This is one example of such error: https://ci.openshift.redhat.com/jenkins/job/merge_pull_requests_sti/247/console . It would be good to nail down the reason for that. | test | build test images test flake sometimes our test end with you do not have necessary test images be sure to run hack build test images sh beforehand this is one example of such error it would be good to nail down the reason for that | 1 |

50,655 | 6,418,969,681 | IssuesEvent | 2017-08-08 20:09:46 | Automattic/wp-calypso | https://api.github.com/repos/Automattic/wp-calypso | closed | Activity log: Handling large quantities of activities | API Design Jetpack Activity Log [Status] In Progress [Type] Question | STUB

I want to start some conversation about how we'll deal with activity data. | 1.0 | Activity log: Handling large quantities of activities - STUB

I want to start some conversation about how we'll deal with activity data. | non_test | activity log handling large quantities of activities stub i want to start some conversation about how we ll deal with activity data | 0 |

6,270 | 14,075,970,981 | IssuesEvent | 2020-11-04 09:49:01 | dusk-network/rusk | https://api.github.com/repos/dusk-network/rusk | opened | Refactor DUSK Contract circuits | area:architecture area:cryptography type:refactor | As per recently modified [specs](https://app.gitbook.com/@dusk-network/s/specs/specifications/smart-contracts/genenesis-contracts/dusk-contract/methods), there is a need to change the circuits in the DUSK contract.

These changes include:

- Using Schnorr gadget over secret key gadget

- Replacing preimage gadget

- Implementing crossover | 1.0 | Refactor DUSK Contract circuits - As per recently modified [specs](https://app.gitbook.com/@dusk-network/s/specs/specifications/smart-contracts/genenesis-contracts/dusk-contract/methods), there is a need to change the circuits in the DUSK contract.

These changes include:

- Using Schnorr gadget over secret key gadget

- Replacing preimage gadget

- Implementing crossover | non_test | refactor dusk contract circuits as per recently modified there is a need to change the circuits in the dusk contract these changes include using schnorr gadget over secret key gadget replacing preimage gadget implementing crossover | 0 |

57,415 | 7,055,840,251 | IssuesEvent | 2018-01-04 10:08:31 | MantaRayMedia/eahealth | https://api.github.com/repos/MantaRayMedia/eahealth | opened | Home page | needs design | ### Done criteria

- [ ] ?

- [ ] ?

---

### Wireframes

- [ ] https://projects.invisionapp.com/d/main#/console/12443475/271624627/preview

---

### Designs

- [ ] ?

- [ ] ?

| 1.0 | Home page - ### Done criteria

- [ ] ?

- [ ] ?

---

### Wireframes

- [ ] https://projects.invisionapp.com/d/main#/console/12443475/271624627/preview

---

### Designs

- [ ] ?

- [ ] ?

| non_test | home page done criteria wireframes designs | 0 |

53,252 | 6,713,301,196 | IssuesEvent | 2017-10-13 12:59:04 | SAP/techne | https://api.github.com/repos/SAP/techne | closed | Design Tool bar | backlog batch 2 design UX | goal: design and improve the toolbar

### Current v1.5.x

<img width="970" alt="screen shot 2017-07-13 at 12 38 37" src="https://user-images.githubusercontent.com/22662903/28162679-3a9416de-67c8-11e7-94ab-01f6e7ba065d.png">

### Tasks

- [x] 1. Define use cases

- [x] 1. Define **Filtering**

- [ ] 2. Define **Search**

- [x] 3. Define **Pagination**

- [x] 4. Define **Views**

- [x] 5. Define **Actions** - new

- [x] Define UX Elements for 1

- [x] Visual Design

- [x] Document results

| 1.0 | Design Tool bar - goal: design and improve the toolbar

### Current v1.5.x

<img width="970" alt="screen shot 2017-07-13 at 12 38 37" src="https://user-images.githubusercontent.com/22662903/28162679-3a9416de-67c8-11e7-94ab-01f6e7ba065d.png">

### Tasks

- [x] 1. Define use cases

- [x] 1. Define **Filtering**

- [ ] 2. Define **Search**

- [x] 3. Define **Pagination**

- [x] 4. Define **Views**

- [x] 5. Define **Actions** - new

- [x] Define UX Elements for 1

- [x] Visual Design

- [x] Document results

| non_test | design tool bar goal design and improve the toolbar current x img width alt screen shot at src tasks define use cases define filtering define search define pagination define views define actions new define ux elements for visual design document results | 0 |

92,674 | 8,375,629,180 | IssuesEvent | 2018-10-05 17:02:32 | elastic/eui | https://api.github.com/repos/elastic/eui | closed | Add guide to writing visual regression tests to README.md | test | In #630, the request was made to add a style guide/best practices guide for writing visual UI Tests.

This issue will track that work.

- [ ] Best Practices for Writing VR Tets

- [ ] Page Objects

- [ ] Navigation

- [ ] Hook Usage | 1.0 | Add guide to writing visual regression tests to README.md - In #630, the request was made to add a style guide/best practices guide for writing visual UI Tests.

This issue will track that work.

- [ ] Best Practices for Writing VR Tets

- [ ] Page Objects

- [ ] Navigation

- [ ] Hook Usage | test | add guide to writing visual regression tests to readme md in the request was made to add a style guide best practices guide for writing visual ui tests this issue will track that work best practices for writing vr tets page objects navigation hook usage | 1 |

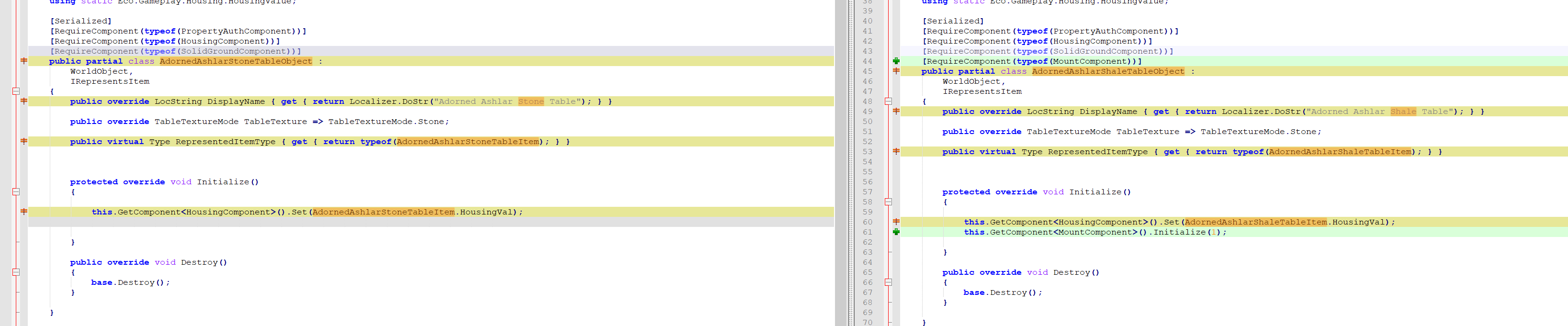

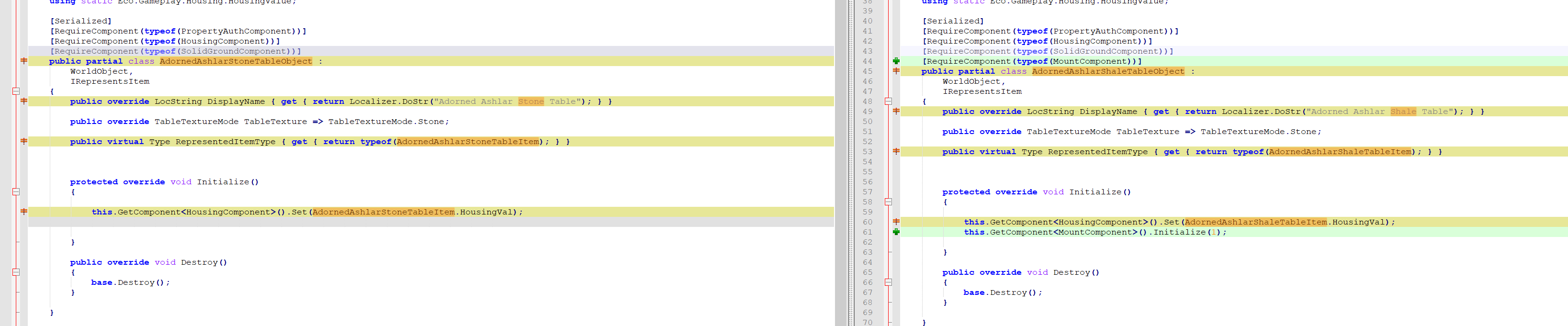

559,907 | 16,580,612,515 | IssuesEvent | 2021-05-31 11:16:08 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | 9.2.0 1897 AshlarStoneTable and AdornedAshlarStoneTable missing "mount" component | Category: Gameplay Priority: Low Squad: Redwood Status: Fixed | AshlarStoneTable and AdornedAshlarStoneTable are missing the "mount" components

also missing the bit just below porotected override void when comparing them

| 1.0 | 9.2.0 1897 AshlarStoneTable and AdornedAshlarStoneTable missing "mount" component - AshlarStoneTable and AdornedAshlarStoneTable are missing the "mount" components

also missing the bit just below porotected override void when comparing them

| non_test | ashlarstonetable and adornedashlarstonetable missing mount component ashlarstonetable and adornedashlarstonetable are missing the mount components also missing the bit just below porotected override void when comparing them | 0 |

3,154 | 2,659,168,233 | IssuesEvent | 2015-03-18 19:25:50 | DynamoRIO/drmemory | https://api.github.com/repos/DynamoRIO/drmemory | closed | app_suite.strcasecmp fails because expected locales are not available | Component-Tests OpSys-Linux Type-Bug | The test fails if en_US.iso88591 and en_US.iso885915 are not available. | 1.0 | app_suite.strcasecmp fails because expected locales are not available - The test fails if en_US.iso88591 and en_US.iso885915 are not available. | test | app suite strcasecmp fails because expected locales are not available the test fails if en us and en us are not available | 1 |

671,145 | 22,744,430,235 | IssuesEvent | 2022-07-07 07:55:06 | tektoncd/pipeline | https://api.github.com/repos/tektoncd/pipeline | closed | Tasks are failing when "init" is the first argument followed by two or more other arguments. | kind/bug priority/critical-urgent | # Expected Behavior

Given the following task

```yaml

apiVersion: tekton.dev/v1beta1

kind: Task

metadata:

name: tkn-arg-test

labels:

app.kubernetes.io/version: "0.4"

annotations:

tekton.dev/pipelines.minVersion: "0.22.0"

tekton.dev/tags: cli

spec:

description: >-

Test consuming args

params:

- name: ARGS

description: The terraform cli commands to tun

type: array

default:

- "--help"

- name: USER_HOME

description: Override home directory to /tekton/home

type: string

default: "/tekton/home"

steps:

- name: echo-cli

image: registry.access.redhat.com/ubi9/ubi-minimal:9.0.0-1580

workingDir: /tekton/home

args:

- "$(params.ARGS)"

command: ["echo"]

resources:

limits:

cpu: 250m

memory: 1Gi

requests:

cpu: 250m

memory: 500Mi

env:

- name: "HOME"

value: $(params.USER_HOME)

```

The following command should work:

```bash

$ tkn task start tkn-arg-test "-p=ARGS=init,two" --use-param-defaults --showlog

# Works…

[echo-cli] init two

$ tkn task start tkn-arg-test "-p=ARGS=init,two,three" --use-param-defaults --showlog

# Doesn't work

[echo-cli] 2022/07/05 17:05:42 init error: open two: no such file or directory

```

# Actual Behavior

It fails as written above

# Steps to Reproduce the Problem

- Create the task

- Issue the `tkn` commands

# Additional Info

This affect any Pipeline release that contains the follow change :

https://github.com/tektoncd/pipeline/pull/4826. This means it affects 0.32 to 0.37 and the fix should be backport in all.

The main reason why it fails is the way the `entrypoint` binary is written and the way `flag` acts. To simplify a tiny bit, the command created on a task looks like `/ko-app/entrypoint --entrypoint echo -- command and args here` (with a bunch of flags before `--` ommited here). With the above example, it becomes `/ko-app/entrypoint --entrypoint echo -- init foo bar`. The `flag` package, **in that particular case** ignores the `--` and tells the rest of the code that the arguments are `init foo bar`, which is similar to `/ko-app/entrypoint --entrypoint echo init foo bar`. And *this*, in terms, goes into the `init` subcommand.

/assign

/priority critical-urgent | 1.0 | Tasks are failing when "init" is the first argument followed by two or more other arguments. - # Expected Behavior

Given the following task

```yaml

apiVersion: tekton.dev/v1beta1

kind: Task

metadata:

name: tkn-arg-test

labels:

app.kubernetes.io/version: "0.4"

annotations:

tekton.dev/pipelines.minVersion: "0.22.0"

tekton.dev/tags: cli

spec:

description: >-

Test consuming args

params:

- name: ARGS

description: The terraform cli commands to tun

type: array

default:

- "--help"

- name: USER_HOME

description: Override home directory to /tekton/home

type: string

default: "/tekton/home"

steps:

- name: echo-cli

image: registry.access.redhat.com/ubi9/ubi-minimal:9.0.0-1580

workingDir: /tekton/home

args:

- "$(params.ARGS)"

command: ["echo"]

resources:

limits:

cpu: 250m

memory: 1Gi

requests:

cpu: 250m

memory: 500Mi

env:

- name: "HOME"

value: $(params.USER_HOME)

```

The following command should work:

```bash

$ tkn task start tkn-arg-test "-p=ARGS=init,two" --use-param-defaults --showlog

# Works…

[echo-cli] init two

$ tkn task start tkn-arg-test "-p=ARGS=init,two,three" --use-param-defaults --showlog

# Doesn't work

[echo-cli] 2022/07/05 17:05:42 init error: open two: no such file or directory

```

# Actual Behavior

It fails as written above

# Steps to Reproduce the Problem

- Create the task

- Issue the `tkn` commands

# Additional Info

This affect any Pipeline release that contains the follow change :

https://github.com/tektoncd/pipeline/pull/4826. This means it affects 0.32 to 0.37 and the fix should be backport in all.

The main reason why it fails is the way the `entrypoint` binary is written and the way `flag` acts. To simplify a tiny bit, the command created on a task looks like `/ko-app/entrypoint --entrypoint echo -- command and args here` (with a bunch of flags before `--` ommited here). With the above example, it becomes `/ko-app/entrypoint --entrypoint echo -- init foo bar`. The `flag` package, **in that particular case** ignores the `--` and tells the rest of the code that the arguments are `init foo bar`, which is similar to `/ko-app/entrypoint --entrypoint echo init foo bar`. And *this*, in terms, goes into the `init` subcommand.

/assign

/priority critical-urgent | non_test | tasks are failing when init is the first argument followed by two or more other arguments expected behavior given the following task yaml apiversion tekton dev kind task metadata name tkn arg test labels app kubernetes io version annotations tekton dev pipelines minversion tekton dev tags cli spec description test consuming args params name args description the terraform cli commands to tun type array default help name user home description override home directory to tekton home type string default tekton home steps name echo cli image registry access redhat com ubi minimal workingdir tekton home args params args command resources limits cpu memory requests cpu memory env name home value params user home the following command should work bash tkn task start tkn arg test p args init two use param defaults showlog works… init two tkn task start tkn arg test p args init two three use param defaults showlog doesn t work init error open two no such file or directory actual behavior it fails as written above steps to reproduce the problem create the task issue the tkn commands additional info this affect any pipeline release that contains the follow change this means it affects to and the fix should be backport in all the main reason why it fails is the way the entrypoint binary is written and the way flag acts to simplify a tiny bit the command created on a task looks like ko app entrypoint entrypoint echo command and args here with a bunch of flags before ommited here with the above example it becomes ko app entrypoint entrypoint echo init foo bar the flag package in that particular case ignores the and tells the rest of the code that the arguments are init foo bar which is similar to ko app entrypoint entrypoint echo init foo bar and this in terms goes into the init subcommand assign priority critical urgent | 0 |

175,831 | 6,554,350,106 | IssuesEvent | 2017-09-06 05:14:22 | kubernetes/kubernetes | https://api.github.com/repos/kubernetes/kubernetes | closed | [upgrade test failure][sig-api-machinery] Initializers will be set to nil if a patch removes the last pending initializer | kind/bug priority/critical-urgent release-blocker sig/api-machinery | Seeing this test failure pretty consistently with kops, to the extent that we can't merge e.g. https://k8s-gubernator.appspot.com/build/kubernetes-jenkins/pr-logs/pull/kops/3288/pull-kops-e2e-kubernetes-aws/2743/

Based on timing, seems likely to be #51082, although something odd happened in that this failure occurred on that PR but it still merged: https://github.com/kubernetes/kubernetes/pull/51082#issuecomment-325180686

cc @kubernetes/sig-api-machinery-bugs | 1.0 | [upgrade test failure][sig-api-machinery] Initializers will be set to nil if a patch removes the last pending initializer - Seeing this test failure pretty consistently with kops, to the extent that we can't merge e.g. https://k8s-gubernator.appspot.com/build/kubernetes-jenkins/pr-logs/pull/kops/3288/pull-kops-e2e-kubernetes-aws/2743/

Based on timing, seems likely to be #51082, although something odd happened in that this failure occurred on that PR but it still merged: https://github.com/kubernetes/kubernetes/pull/51082#issuecomment-325180686

cc @kubernetes/sig-api-machinery-bugs | non_test | initializers will be set to nil if a patch removes the last pending initializer seeing this test failure pretty consistently with kops to the extent that we can t merge e g based on timing seems likely to be although something odd happened in that this failure occurred on that pr but it still merged cc kubernetes sig api machinery bugs | 0 |

102,274 | 8,823,837,712 | IssuesEvent | 2019-01-02 15:04:18 | NativeScript/nativescript-cli | https://api.github.com/repos/NativeScript/nativescript-cli | closed | Fresh project build error on ios | backlog os: ios ready for test | @hamdiwanis commented on [Sun Dec 16 2018](https://github.com/NativeScript/NativeScript/issues/6715)

**Environment**

- CLI: 5.1.0

- Cross-platform modules: 5.1.0

- Android Runtime: 5.1.0

- iOS Runtime: 5.1.0

- Plugin(s): none

**Describe the bug**

i get this error with ios build

```

linking ObjC for iOS Simulator, but dylib (/Volumes/project/platforms/ios/internal/NativeScript.framework/NativeScript) was compiled for MacOSX

ld: framework not found CoreServices for architecture i386

```

**To Reproduce**

```

tns create test

cd test

npm i

tns run ios

```

---

@NickIliev commented on [Mon Dec 17 2018](https://github.com/NativeScript/NativeScript/issues/6715#issuecomment-447735889)

@hamdiwanis what is the simulator/device you are running on?

| 1.0 | Fresh project build error on ios - @hamdiwanis commented on [Sun Dec 16 2018](https://github.com/NativeScript/NativeScript/issues/6715)

**Environment**

- CLI: 5.1.0

- Cross-platform modules: 5.1.0

- Android Runtime: 5.1.0

- iOS Runtime: 5.1.0

- Plugin(s): none

**Describe the bug**

i get this error with ios build

```

linking ObjC for iOS Simulator, but dylib (/Volumes/project/platforms/ios/internal/NativeScript.framework/NativeScript) was compiled for MacOSX

ld: framework not found CoreServices for architecture i386

```

**To Reproduce**

```

tns create test

cd test

npm i

tns run ios

```

---

@NickIliev commented on [Mon Dec 17 2018](https://github.com/NativeScript/NativeScript/issues/6715#issuecomment-447735889)

@hamdiwanis what is the simulator/device you are running on?

| test | fresh project build error on ios hamdiwanis commented on environment cli cross platform modules android runtime ios runtime plugin s none describe the bug i get this error with ios build linking objc for ios simulator but dylib volumes project platforms ios internal nativescript framework nativescript was compiled for macosx ld framework not found coreservices for architecture to reproduce tns create test cd test npm i tns run ios nickiliev commented on hamdiwanis what is the simulator device you are running on | 1 |

174,729 | 13,508,126,361 | IssuesEvent | 2020-09-14 07:13:03 | knative/serving | https://api.github.com/repos/knative/serving | closed | Debug TestActivatorHAGraceful and stabilize it | area/autoscale area/test-and-release kind/bug | <!--

Pro-tip: You can leave this block commented, and it still works!

Select the appropriate areas for your issue:

/area autoscale

/area test-and-release

Classify what kind of issue this is:

/kind bug

-->

We kind of optimistically added `TestActivatorHAGraceful` assuming it should always pass. It doesn't. The test will be skipped but we should really look at what's wrong there and triage if the activator really can't be gracefully shutdown without losing traffic occasionally.

| 1.0 | Debug TestActivatorHAGraceful and stabilize it - <!--

Pro-tip: You can leave this block commented, and it still works!

Select the appropriate areas for your issue:

/area autoscale

/area test-and-release

Classify what kind of issue this is:

/kind bug

-->

We kind of optimistically added `TestActivatorHAGraceful` assuming it should always pass. It doesn't. The test will be skipped but we should really look at what's wrong there and triage if the activator really can't be gracefully shutdown without losing traffic occasionally.

| test | debug testactivatorhagraceful and stabilize it pro tip you can leave this block commented and it still works select the appropriate areas for your issue area autoscale area test and release classify what kind of issue this is kind bug we kind of optimistically added testactivatorhagraceful assuming it should always pass it doesn t the test will be skipped but we should really look at what s wrong there and triage if the activator really can t be gracefully shutdown without losing traffic occasionally | 1 |

151,701 | 12,056,085,592 | IssuesEvent | 2020-04-15 13:57:58 | ekmett/lens | https://api.github.com/repos/ekmett/lens | closed | Figure out what to do about the language-haskell-extract dependency | packaging test-suite third-party | I am not yet able to run `lens`' test suite + GHC 8.10.1 on Travis CI without the use of `head.hackage`. Why, you ask? It is because various test suites depend on `test-framework-th`, which in turn depends on `language-haskell-extract`. Sadly, `language-haskell-extract` [does not build with `template-haskell-2.16.*`](https://github.com/finnsson/template-helper/issues/12), and the maintainer of the library has not been active on GitHub for about two years. Bottom line: `language-haskell-extract` is likely abandonware.

This poses a problem for `lens`. I can see two ways forward:

1. Beg someone to do a package takeover of `language-haskell-extract` and upload a new version.

2. Remove `lens`' dependency on `test-framework-th`. As far as I can tell, that package only provides a mild syntactic convenience in the form of automatic test discovery, so removing it shouldn't be too difficult. | 1.0 | Figure out what to do about the language-haskell-extract dependency - I am not yet able to run `lens`' test suite + GHC 8.10.1 on Travis CI without the use of `head.hackage`. Why, you ask? It is because various test suites depend on `test-framework-th`, which in turn depends on `language-haskell-extract`. Sadly, `language-haskell-extract` [does not build with `template-haskell-2.16.*`](https://github.com/finnsson/template-helper/issues/12), and the maintainer of the library has not been active on GitHub for about two years. Bottom line: `language-haskell-extract` is likely abandonware.

This poses a problem for `lens`. I can see two ways forward:

1. Beg someone to do a package takeover of `language-haskell-extract` and upload a new version.

2. Remove `lens`' dependency on `test-framework-th`. As far as I can tell, that package only provides a mild syntactic convenience in the form of automatic test discovery, so removing it shouldn't be too difficult. | test | figure out what to do about the language haskell extract dependency i am not yet able to run lens test suite ghc on travis ci without the use of head hackage why you ask it is because various test suites depend on test framework th which in turn depends on language haskell extract sadly language haskell extract and the maintainer of the library has not been active on github for about two years bottom line language haskell extract is likely abandonware this poses a problem for lens i can see two ways forward beg someone to do a package takeover of language haskell extract and upload a new version remove lens dependency on test framework th as far as i can tell that package only provides a mild syntactic convenience in the form of automatic test discovery so removing it shouldn t be too difficult | 1 |

262,456 | 22,841,318,045 | IssuesEvent | 2022-07-12 22:18:40 | mapbox/mapbox-gl-js | https://api.github.com/repos/mapbox/mapbox-gl-js | closed | `fog/terrain/sky-composition` render test is flaky | testing :100: | `render-tests/fog/terrain/sky-composition` failed once on a completely unrelated change. We should fix it | 1.0 | `fog/terrain/sky-composition` render test is flaky - `render-tests/fog/terrain/sky-composition` failed once on a completely unrelated change. We should fix it | test | fog terrain sky composition render test is flaky render tests fog terrain sky composition failed once on a completely unrelated change we should fix it | 1 |

209,826 | 23,730,862,402 | IssuesEvent | 2022-08-31 01:29:04 | arohablue/salesforce-a.kumar-ap06 | https://api.github.com/repos/arohablue/salesforce-a.kumar-ap06 | opened | CVE-2020-11022 (Medium) detected in jquery-3.4.1.js | security vulnerability | ## CVE-2020-11022 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-3.4.1.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library home page: <a href="https://cdnjs.cloudflare.com/ajax/libs/jquery/3.4.1/jquery.js">https://cdnjs.cloudflare.com/ajax/libs/jquery/3.4.1/jquery.js</a></p>

<p>Path to vulnerable library: /force-app/main/default/staticresources/Jquery.js</p>

<p>

Dependency Hierarchy:

- :x: **jquery-3.4.1.js** (Vulnerable Library)

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

In jQuery versions greater than or equal to 1.2 and before 3.5.0, passing HTML from untrusted sources - even after sanitizing it - to one of jQuery's DOM manipulation methods (i.e. .html(), .append(), and others) may execute untrusted code. This problem is patched in jQuery 3.5.0.

<p>Publish Date: 2020-04-29

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-11022>CVE-2020-11022</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.1</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Changed

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: Low

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://blog.jquery.com/2020/04/10/jquery-3-5-0-released/">https://blog.jquery.com/2020/04/10/jquery-3-5-0-released/</a></p>

<p>Release Date: 2020-04-29</p>

<p>Fix Resolution: jQuery - 3.5.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2020-11022 (Medium) detected in jquery-3.4.1.js - ## CVE-2020-11022 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-3.4.1.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library home page: <a href="https://cdnjs.cloudflare.com/ajax/libs/jquery/3.4.1/jquery.js">https://cdnjs.cloudflare.com/ajax/libs/jquery/3.4.1/jquery.js</a></p>

<p>Path to vulnerable library: /force-app/main/default/staticresources/Jquery.js</p>

<p>

Dependency Hierarchy:

- :x: **jquery-3.4.1.js** (Vulnerable Library)

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

In jQuery versions greater than or equal to 1.2 and before 3.5.0, passing HTML from untrusted sources - even after sanitizing it - to one of jQuery's DOM manipulation methods (i.e. .html(), .append(), and others) may execute untrusted code. This problem is patched in jQuery 3.5.0.

<p>Publish Date: 2020-04-29

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-11022>CVE-2020-11022</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.1</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Changed

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: Low

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.
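
As a worked check, the 6.1 base score can be reproduced from the metrics above using the CVSS v3.0 base equations for Scope: Changed. The metric weights used here come from the v3.0 specification, not from the scanner output: AV:N = 0.85, AC:L = 0.77, PR:N = 0.85, UI:R = 0.62, C/I:L = 0.22, and A:N = 0. Treat this as an illustration only. Roundup rounds up to one decimal place.

```math
\begin{aligned}
\text{ISC}_{\text{Base}} &= 1 - (1 - 0.22)(1 - 0.22)(1 - 0) = 0.3916 \\
\text{Impact} &= 7.52\,(0.3916 - 0.029) - 3.25\,(0.3916 - 0.02)^{15} \approx 2.7268 \\
\text{Exploitability} &= 8.22 \times 0.85 \times 0.77 \times 0.85 \times 0.62 \approx 2.8353 \\
\text{Base} &= \operatorname{Roundup}\bigl(\min(1.08\,(2.7268 + 2.8353),\ 10)\bigr) = \operatorname{Roundup}(6.007) = 6.1
\end{aligned}
```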


</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://blog.jquery.com/2020/04/10/jquery-3-5-0-released/">https://blog.jquery.com/2020/04/10/jquery-3-5-0-released/</a></p>

<p>Release Date: 2020-04-29</p>

<p>Fix Resolution: jQuery - 3.5.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_test | cve medium detected in jquery js cve medium severity vulnerability vulnerable library jquery js javascript library for dom operations library home page a href path to vulnerable library force app main default staticresources jquery js dependency hierarchy x jquery js vulnerable library vulnerability details in jquery versions greater than or equal to and before passing html from untrusted sources even after sanitizing it to one of jquery s dom manipulation methods i e html append and others may execute untrusted code this problem is patched in jquery publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction required scope changed impact metrics confidentiality impact low integrity impact low availability impact none for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution jquery step up your open source security game with mend | 0 |

292,924 | 25,250,906,735 | IssuesEvent | 2022-11-15 14:37:41 | hazelcast/hazelcast | https://api.github.com/repos/hazelcast/hazelcast | closed | com.hazelcast.map.impl.query.QueryIndexMigrationTest.testQueryWithIndexDuringJoin | Team: Core Type: Test-Failure Source: Internal Module: IMap Module: Query | master, rev afbee062bba410a246fd2b865360fe789824867a

Failed on Oracle JDK 11, Linux.

http://jenkins.hazelcast.com/view/Official%20Builds/job/Hazelcast-master-sonar/937/testReport/com.hazelcast.map.impl.query/QueryIndexMigrationTest/

Stacktrace:

```

org.junit.runners.model.TestTimedOutException: test timed out after 60000 milliseconds

at java.base@11/java.lang.Thread.sleep(Native Method)

at java.base@11/java.lang.Thread.sleep(Thread.java:339)

at java.base@11/java.util.concurrent.TimeUnit.sleep(TimeUnit.java:446)

at app//com.hazelcast.test.HazelcastTestSupport.sleepMillis(HazelcastTestSupport.java:366)

at app//com.hazelcast.test.HazelcastTestSupport.assertTrueEventually(HazelcastTestSupport.java:1270)

at app//com.hazelcast.test.HazelcastTestSupport.assertTrueEventually(HazelcastTestSupport.java:1279)

at app//com.hazelcast.test.HazelcastTestSupport.waitAllForSafeState(HazelcastTestSupport.java:746)

at app//com.hazelcast.test.HazelcastTestSupport.waitAllForSafeState(HazelcastTestSupport.java:742)

at app//com.hazelcast.map.impl.query.QueryIndexMigrationTest.testQueryWithIndexDuringJoin(QueryIndexMigrationTest.java:249)

at java.base@11/jdk.internal.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at java.base@11/jdk.internal.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at java.base@11/jdk.internal.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.base@11/java.lang.reflect.Method.invoke(Method.java:566)

at app//org.junit.runners.model.FrameworkMethod$1.runReflectiveCall(FrameworkMethod.java:59)

at app//org.junit.internal.runners.model.ReflectiveCallable.run(ReflectiveCallable.java:12)

at app//org.junit.runners.model.FrameworkMethod.invokeExplosively(FrameworkMethod.java:56)

at app//org.junit.internal.runners.statements.InvokeMethod.evaluate(InvokeMethod.java:17)

at app//com.hazelcast.test.FailOnTimeoutStatement$CallableStatement.call(FailOnTimeoutStatement.java:115)

at app//com.hazelcast.test.FailOnTimeoutStatement$CallableStatement.call(FailOnTimeoutStatement.java:107)

at java.base@11/java.util.concurrent.FutureTask.run(FutureTask.java:264)

at java.base@11/java.lang.Thread.run(Thread.java:834)

```

Standard output:

```

Finished Running Test: testQueryWithIndexesWhileMigrating[copyBehavior: COPY_ON_READ] in 16.605 seconds.

03:17:44,813 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-1 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:44,815 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-7 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:44,913 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-3 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:44,915 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-2 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:45,014 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-3 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:45,015 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-7 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:45,117 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-2 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:45,117 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-3 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:45,217 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-11 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:45,218 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-9 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:45,318 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-9 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:45,318 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-4 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:45,418 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-4 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:45,419 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-11 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:45,519 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-9 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:45,519 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-3 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:45,619 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-4 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:45,619 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-16 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:45,719 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-7 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:45,719 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-15 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:45,820 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-3 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:45,820 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-2 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:45,921 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-2 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:45,921 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-9 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:46,021 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-4 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:46,021 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-9 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:46,121 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-4 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:46,121 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-3 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:46,222 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-11 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:46,222 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-2 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:46,322 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-8 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:46,323 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-2 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:46,422 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-8 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:46,423 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-2 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:46,510 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-15 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:46,523 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-15 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:46,523 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-11 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:46,624 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-4 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:46,624 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-9 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:46,709 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-7 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:46,724 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-8 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:46,724 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-2 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:46,824 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-9 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:46,825 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-12 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:46,924 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-15 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:46,925 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-12 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:47,025 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-8 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:47,025 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-2 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:47,125 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-15 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:47,126 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-4 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:47,225 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-11 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:47,227 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-4 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:47,325 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-11 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:47,327 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-16 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:47,426 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-7 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:47,428 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-16 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:47,527 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-4 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:47,528 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-4 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:47,627 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-4 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:47,628 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-12 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:47,728 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-2 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:47,728 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-12 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:47,828 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-2 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:47,829 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-11 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:47,928 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-2 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:47,929 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-16 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:48,029 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-9 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:48,029 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-16 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:48,129 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-15 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:48,130 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-7 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:48,224 INFO |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [HealthMonitor] hz.sad_rubin.HealthMonitor - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] processors=8, physical.memory.total=755.6G, physical.memory.free=693.5G, swap.space.total=4.0G, swap.space.free=4.0G, heap.memory.used=1.4G, heap.memory.free=623.2M, heap.memory.total=2.0G, heap.memory.max=2.0G, heap.memory.used/total=69.57%, heap.memory.used/max=69.57%, minor.gc.count=7487, minor.gc.time=73256ms, major.gc.count=6, major.gc.time=2181ms, load.process=87.50%, load.system=19.53%, load.systemAverage=15.29, thread.count=1062, thread.peakCount=2648, cluster.timeDiff=0, event.q.size=0, executor.q.async.size=0, executor.q.client.size=0, executor.q.client.query.size=0, executor.q.client.blocking.size=0, executor.q.query.size=0, executor.q.scheduled.size=0, executor.q.io.size=0, executor.q.system.size=0, executor.q.operations.size=0, executor.q.priorityOperation.size=0, operations.completed.count=4715, executor.q.mapLoad.size=0, executor.q.mapLoadAllKeys.size=0, executor.q.cluster.size=0, executor.q.response.size=0, operations.running.count=0, operations.pending.invocations.percentage=0.00%, operations.pending.invocations.count=0, proxy.count=1, clientEndpoint.count=0, connection.active.count=0, client.connection.count=0, connection.count=0

03:17:48,316 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-1 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:48,319 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-2 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:48,416 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-7 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:48,420 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-8 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:48,510 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-9 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:48,517 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-7 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:48,521 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-9 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:48,617 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-4 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:48,621 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-7 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:48,709 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-4 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:48,712 INFO |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [HealthMonitor] hz.cool_rubin.HealthMonitor - [127.0.0.1]:5705 [dev] [5.0-SNAPSHOT] processors=8, physical.memory.total=755.6G, physical.memory.free=693.5G, swap.space.total=4.0G, swap.space.free=4.0G, heap.memory.used=1.4G, heap.memory.free=593.4M, heap.memory.total=2.0G, heap.memory.max=2.0G, heap.memory.used/total=71.02%, heap.memory.used/max=71.02%, minor.gc.count=7487, minor.gc.time=73256ms, major.gc.count=6, major.gc.time=2181ms, load.process=18.13%, load.system=17.72%, load.systemAverage=15.29, thread.count=1062, thread.peakCount=2648, cluster.timeDiff=-4, event.q.size=0, executor.q.async.size=0, executor.q.client.size=0, executor.q.client.query.size=0, executor.q.client.blocking.size=0, executor.q.query.size=0, executor.q.scheduled.size=0, executor.q.io.size=0, executor.q.system.size=0, executor.q.operations.size=0, executor.q.priorityOperation.size=0, operations.completed.count=743, executor.q.mapLoad.size=0, executor.q.mapLoadAllKeys.size=0, executor.q.cluster.size=0, executor.q.response.size=0, operations.running.count=0, operations.pending.invocations.percentage=0.00%, operations.pending.invocations.count=0, proxy.count=1, clientEndpoint.count=0, connection.active.count=0, client.connection.count=0, connection.count=0

03:17:48,713 INFO |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [HealthMonitor] hz.lucid_rubin.HealthMonitor - [127.0.0.1]:5704 [dev] [5.0-SNAPSHOT] processors=8, physical.memory.total=755.6G, physical.memory.free=693.5G, swap.space.total=4.0G, swap.space.free=4.0G, heap.memory.used=1.4G, heap.memory.free=593.0M, heap.memory.total=2.0G, heap.memory.max=2.0G, heap.memory.used/total=71.04%, heap.memory.used/max=71.04%, minor.gc.count=7487, minor.gc.time=73256ms, major.gc.count=6, major.gc.time=2181ms, load.process=60.00%, load.system=0.00%, load.systemAverage=15.29, thread.count=1062, thread.peakCount=2648, cluster.timeDiff=-3, event.q.size=0, executor.q.async.size=0, executor.q.client.size=0, executor.q.client.query.size=0, executor.q.client.blocking.size=0, executor.q.query.size=0, executor.q.scheduled.size=0, executor.q.io.size=0, executor.q.system.size=0, executor.q.operations.size=0, executor.q.priorityOperation.size=0, operations.completed.count=861, executor.q.mapLoad.size=0, executor.q.mapLoadAllKeys.size=0, executor.q.cluster.size=0, executor.q.response.size=0, operations.running.count=0, operations.pending.invocations.percentage=0.00%, operations.pending.invocations.count=0, proxy.count=1, clientEndpoint.count=0, connection.active.count=0, client.connection.count=0, connection.count=0

03:17:48,714 INFO |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [HealthMonitor] hz.stupefied_rubin.HealthMonitor - [127.0.0.1]:5703 [dev] [5.0-SNAPSHOT] processors=8, physical.memory.total=755.6G, physical.memory.free=693.5G, swap.space.total=4.0G, swap.space.free=4.0G, heap.memory.used=1.4G, heap.memory.free=592.7M, heap.memory.total=2.0G, heap.memory.max=2.0G, heap.memory.used/total=71.06%, heap.memory.used/max=71.06%, minor.gc.count=7487, minor.gc.time=73256ms, major.gc.count=6, major.gc.time=2181ms, load.process=75.00%, load.system=71.43%, load.systemAverage=15.29, thread.count=1062, thread.peakCount=2648, cluster.timeDiff=-3, event.q.size=0, executor.q.async.size=0, executor.q.client.size=0, executor.q.client.query.size=0, executor.q.client.blocking.size=0, executor.q.query.size=0, executor.q.scheduled.size=0, executor.q.io.size=0, executor.q.system.size=0, executor.q.operations.size=0, executor.q.priorityOperation.size=0, operations.completed.count=759, executor.q.mapLoad.size=0, executor.q.mapLoadAllKeys.size=0, executor.q.cluster.size=0, executor.q.response.size=0, operations.running.count=1, operations.pending.invocations.percentage=0.00%, operations.pending.invocations.count=0, proxy.count=1, clientEndpoint.count=0, connection.active.count=0, client.connection.count=0, connection.count=0

03:17:48,715 INFO |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [HealthMonitor] hz.vigorous_rubin.HealthMonitor - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] processors=8, physical.memory.total=755.6G, physical.memory.free=693.5G, swap.space.total=4.0G, swap.space.free=4.0G, heap.memory.used=1.4G, heap.memory.free=592.7M, heap.memory.total=2.0G, heap.memory.max=2.0G, heap.memory.used/total=71.06%, heap.memory.used/max=71.06%, minor.gc.count=7487, minor.gc.time=73256ms, major.gc.count=6, major.gc.time=2181ms, load.process=50.00%, load.system=50.00%, load.systemAverage=15.29, thread.count=1062, thread.peakCount=2648, cluster.timeDiff=-2, event.q.size=0, executor.q.async.size=0, executor.q.client.size=0, executor.q.client.query.size=0, executor.q.client.blocking.size=0, executor.q.query.size=0, executor.q.scheduled.size=0, executor.q.io.size=0, executor.q.system.size=0, executor.q.operations.size=0, executor.q.priorityOperation.size=0, operations.completed.count=778, executor.q.mapLoad.size=0, executor.q.mapLoadAllKeys.size=0, executor.q.cluster.size=0, executor.q.response.size=0, operations.running.count=0, operations.pending.invocations.percentage=0.00%, operations.pending.invocations.count=0, proxy.count=1, clientEndpoint.count=0, connection.active.count=0, client.connection.count=0, connection.count=0

03:17:48,717 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-4 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:48,721 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-7 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:48,815 INFO |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [HealthMonitor] hz.reverent_rubin.HealthMonitor - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] processors=8, physical.memory.total=755.6G, physical.memory.free=693.5G, swap.space.total=4.0G, swap.space.free=4.0G, heap.memory.used=1.4G, heap.memory.free=582.3M, heap.memory.total=2.0G, heap.memory.max=2.0G, heap.memory.used/total=71.56%, heap.memory.used/max=71.56%, minor.gc.count=7487, minor.gc.time=73256ms, major.gc.count=6, major.gc.time=2181ms, load.process=20.26%, load.system=18.23%, load.systemAverage=15.29, thread.count=1062, thread.peakCount=2648, cluster.timeDiff=-5, event.q.size=0, executor.q.async.size=0, executor.q.client.size=0, executor.q.client.query.size=0, executor.q.client.blocking.size=0, executor.q.query.size=0, executor.q.scheduled.size=0, executor.q.io.size=0, executor.q.system.size=0, executor.q.operations.size=0, executor.q.priorityOperation.size=0, operations.completed.count=601, executor.q.mapLoad.size=0, executor.q.mapLoadAllKeys.size=0, executor.q.cluster.size=0, executor.q.response.size=0, operations.running.count=2, operations.pending.invocations.percentage=0.00%, operations.pending.invocations.count=0, proxy.count=1, clientEndpoint.count=0, connection.active.count=0, client.connection.count=0, connection.count=0

03:17:48,820 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-16 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:48,822 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-8 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:48,920 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-16 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:48,922 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-8 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:49,021 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-16 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:49,023 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-8 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:49,121 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-4 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:49,123 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-8 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:49,134 INFO |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [HealthMonitor] hz.competent_rubin.HealthMonitor - [127.0.0.1]:5703 [dev] [5.0-SNAPSHOT] processors=8, physical.memory.total=755.6G, physical.memory.free=693.5G, swap.space.total=4.0G, swap.space.free=4.0G, heap.memory.used=1.5G, heap.memory.free=554.7M, heap.memory.total=2.0G, heap.memory.max=2.0G, heap.memory.used/total=72.91%, heap.memory.used/max=72.91%, minor.gc.count=7487, minor.gc.time=73256ms, major.gc.count=6, major.gc.time=2181ms, load.process=61.54%, load.system=57.14%, load.systemAverage=15.29, thread.count=1062, thread.peakCount=2648, cluster.timeDiff=-3, event.q.size=0, executor.q.async.size=0, executor.q.client.size=0, executor.q.client.query.size=0, executor.q.client.blocking.size=0, executor.q.query.size=0, executor.q.scheduled.size=0, executor.q.io.size=0, executor.q.system.size=0, executor.q.operations.size=0, executor.q.priorityOperation.size=0, operations.completed.count=583, executor.q.mapLoad.size=0, executor.q.mapLoadAllKeys.size=0, executor.q.cluster.size=0, executor.q.response.size=0, operations.running.count=2, operations.pending.invocations.percentage=0.00%, operations.pending.invocations.count=0, proxy.count=1, clientEndpoint.count=0, connection.active.count=0, client.connection.count=0, connection.count=0

03:17:49,221 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-8 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:49,223 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-12 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:49,322 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-7 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:49,324 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-12 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:49,422 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-7 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:49,424 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-4 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:49,524 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-7 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:49,525 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-2 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:49,625 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-4 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:49,626 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-4 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:49,726 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-16 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:49,727 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-14 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:49,826 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-7 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:49,827 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-14 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:49,927 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-7 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:49,929 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-14 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:50,027 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-16 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:50,029 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-14 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:50,127 DEBUG |testQueryWithIndexDuringJoin[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.sad_rubin.cached.thread-1 - [127.0.0.1]:5702 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:50,130 DEBUG |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [JobCoordinationService] hz.focused_rubin.cached.thread-14 - [127.0.0.1]:5701 [dev] [5.0-SNAPSHOT] Not starting jobs because partition replication is not in safe state, but in REPLICA_NOT_OWNED

03:17:50,130 INFO |testIndexCleanupOnMigration[copyBehavior: COPY_ON_READ]| - [HealthMonitor] hz.admiring_rubin.HealthMonitor - [127.0.0.1]:5704 [dev] [5.0-SNAPSHOT] processors=8, physical.memory.total=755.6G, physical.memory.free=693.5G, swap.space.total=4.0G, swap.space.free=4.0G, heap.memory.used=1.5G, heap.memory.free=488.7M, heap.memory.total=2.0G, heap.memory.max=2.0G, heap.memory.used/total=76.13%, heap.memory.used/max=76.13%, minor.gc.count=7487, minor.gc.time=73256ms, major.gc.count=6, major.gc.time=2181ms, load.process=66.67%, load.system=75.00%, load.systemAverage=15.29, thread.count=1062, thread.peakCount=2648, cluster.timeDiff=-3, event.q.size=0, executor.q.async.size=0, executor.q.client.size=0, executor.q.client.query.size=0, executor.q.client.blocking.size=0, executor.q.query.size=0, executor.q.scheduled.size=0, executor.q.io.size=0, executor.q.system.size=0, executor.q.operations.size=0, executor.q.priorityOperation.size=0, operations.completed.count=625, executor.q.mapLoad.size=0, executor.q.mapLoadAllKeys.size=0, executor.q.cluster.size=0, executor.q.response.size=0, operations.running.count=0, operations.pending.invocations.percentage=0.00%, operations.pending.invocations.count=0, proxy.count=1, clientEndpoint.count=0, connection.active.count=0, client.connection.count=0, connection.count=0