Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 4 112 | repo_url stringlengths 33 141 | action stringclasses 3 values | title stringlengths 1 1.02k | labels stringlengths 4 1.54k | body stringlengths 1 262k | index stringclasses 17 values | text_combine stringlengths 95 262k | label stringclasses 2 values | text stringlengths 96 252k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

490,428 | 14,121,065,105 | IssuesEvent | 2020-11-09 00:41:24 | kubeflow/kubeflow | https://api.github.com/repos/kubeflow/kubeflow | closed | Installation guide for GCP is incomplete and it's impossible to deploy new Kubeflow cluster | area/docs kind/bug platform/gcp priority/p1 | /kind bug

**What steps did you take and what happened:**

I've carefully followed the GCP Kubeflow deployment guide **twice** (starting from scratch), without success.

https://www.kubeflow.org/docs/gke/deploy/

My `set-values` from Makefile (the one from https://www.kubeflow.org/docs/gke/deploy/deploy-cli/#fetch-packages-using-kpt):

```makefile

set-values:

kpt cfg set ./instance gke.private false

kpt cfg set ./instance mgmt-ctxt kf-mgmnt

kpt cfg set ./upstream/manifests/gcp name kf

kpt cfg set ./upstream/manifests/gcp gcloud.core.project XXX

kpt cfg set ./upstream/manifests/gcp gcloud.compute.zone europe-west4

kpt cfg set ./upstream/manifests/gcp location europe-west4-b

kpt cfg set ./upstream/manifests/gcp log-firewalls false

kpt cfg set ./upstream/manifests/stacks/gcp name kf

kpt cfg set ./upstream/manifests/stacks/gcp gcloud.core.project XXX

kpt cfg set ./instance name kf

kpt cfg set ./instance location europe-west4-b

kpt cfg set ./instance gcloud.core.project XXX

kpt cfg set ./instance email X@XX

```

Final error I get after running `make apply` in step https://www.kubeflow.org/docs/gke/deploy/deploy-cli/#deploy-kubeflow is:

```bash

kubectl --context=kf-mgmnt wait --for=condition=Ready --timeout=600s containercluster kf

error: timed out waiting for the condition on containerclusters/kf

```

**What did you expect to happen:**

Installation is seamless and completes without errors, installation guide is complete.

**Anything else you would like to add:**

Current deployment documentation seems to be neglected and there are a lot of missing pieces there that waste people's time on digging through many GitHub issues.

**Issues I've had to deal with manually / questions:**

1. When management cluster has long name, like **kubeflow-management**, the setup https://www.kubeflow.org/docs/gke/deploy/management-setup/ cannot be completed due to violation of some max characters limit for service account names

1. The guide https://www.kubeflow.org/docs/gke/deploy/ does not mention that you need kustomize IN SPECIFIC VERSION (https://github.com/kubeflow/manifests/issues/1490#issuecomment-674092679), istioctl IN GOOGLE-PROVIDED version (https://github.com/kubeflow/manifests/issues/1490#issuecomment-673729937)

1. There is a strong assumption that cluster needs to have the namespace exactly the same as the project? Why is that?

1. Why Kubeflow needs a separate "management" cluster now?

**Environment:**

- Kubeflow version: n/a

- kfctl version: (use `kfctl version`): not installed

- Kubernetes platform: GCP

- Kubernetes version:

```

Client Version: version.Info{Major:"1", Minor:"16+", GitVersion:"v1.16.13-dispatcher", GitCommit:"fd22db44e150011eccc8729db223945384460143", GitTreeState:"clean", BuildDate:"2020-07-24T07:48:37Z", GoVersion:"go1.13.9", Compiler:"gc", Platform:"darwin/amd64"}

Server Version: version.Info{Major:"1", Minor:"16+", GitVersion:"v1.16.13-gke.1", GitCommit:"688c6543aa4b285355723f100302d80431e411cc", GitTreeState:"clean", BuildDate:"2020-07-21T02:37:26Z", GoVersion:"go1.13.9b4", Compiler:"gc", Platform:"linux/amd64"}

```

- OS: macOS 10.15.1

| 1.0 | Installation guide for GCP is incomplete and it's impossible to deploy new Kubeflow cluster - /kind bug

**What steps did you take and what happened:**

I've carefully followed the GCP Kubeflow deployment guide **twice** (starting from scratch), without success.

https://www.kubeflow.org/docs/gke/deploy/

My `set-values` from Makefile (the one from https://www.kubeflow.org/docs/gke/deploy/deploy-cli/#fetch-packages-using-kpt):

```makefile

set-values:

kpt cfg set ./instance gke.private false

kpt cfg set ./instance mgmt-ctxt kf-mgmnt

kpt cfg set ./upstream/manifests/gcp name kf

kpt cfg set ./upstream/manifests/gcp gcloud.core.project XXX

kpt cfg set ./upstream/manifests/gcp gcloud.compute.zone europe-west4

kpt cfg set ./upstream/manifests/gcp location europe-west4-b

kpt cfg set ./upstream/manifests/gcp log-firewalls false

kpt cfg set ./upstream/manifests/stacks/gcp name kf

kpt cfg set ./upstream/manifests/stacks/gcp gcloud.core.project XXX

kpt cfg set ./instance name kf

kpt cfg set ./instance location europe-west4-b

kpt cfg set ./instance gcloud.core.project XXX

kpt cfg set ./instance email X@XX

```

Final error I get after running `make apply` in step https://www.kubeflow.org/docs/gke/deploy/deploy-cli/#deploy-kubeflow is:

```bash

kubectl --context=kf-mgmnt wait --for=condition=Ready --timeout=600s containercluster kf

error: timed out waiting for the condition on containerclusters/kf

```

**What did you expect to happen:**

Installation is seamless and completes without errors, installation guide is complete.

**Anything else you would like to add:**

Current deployment documentation seems to be neglected and there are a lot of missing pieces there that waste people's time on digging through many GitHub issues.

**Issues I've had to deal with manually / questions:**

1. When management cluster has long name, like **kubeflow-management**, the setup https://www.kubeflow.org/docs/gke/deploy/management-setup/ cannot be completed due to violation of some max characters limit for service account names

1. The guide https://www.kubeflow.org/docs/gke/deploy/ does not mention that you need kustomize IN SPECIFIC VERSION (https://github.com/kubeflow/manifests/issues/1490#issuecomment-674092679), istioctl IN GOOGLE-PROVIDED version (https://github.com/kubeflow/manifests/issues/1490#issuecomment-673729937)

1. There is a strong assumption that cluster needs to have the namespace exactly the same as the project? Why is that?

1. Why Kubeflow needs a separate "management" cluster now?

**Environment:**

- Kubeflow version: n/a

- kfctl version: (use `kfctl version`): not installed

- Kubernetes platform: GCP

- Kubernetes version:

```

Client Version: version.Info{Major:"1", Minor:"16+", GitVersion:"v1.16.13-dispatcher", GitCommit:"fd22db44e150011eccc8729db223945384460143", GitTreeState:"clean", BuildDate:"2020-07-24T07:48:37Z", GoVersion:"go1.13.9", Compiler:"gc", Platform:"darwin/amd64"}

Server Version: version.Info{Major:"1", Minor:"16+", GitVersion:"v1.16.13-gke.1", GitCommit:"688c6543aa4b285355723f100302d80431e411cc", GitTreeState:"clean", BuildDate:"2020-07-21T02:37:26Z", GoVersion:"go1.13.9b4", Compiler:"gc", Platform:"linux/amd64"}

```

- OS: macOS 10.15.1

| non_test | installation guide for gcp is incomplete and it s impossible to deploy new kubeflow cluster kind bug what steps did you take and what happened i ve carefully followed the gcp kubeflow deployment guide twice starting from scratch without success my set values from makefile the one from makefile set values kpt cfg set instance gke private false kpt cfg set instance mgmt ctxt kf mgmnt kpt cfg set upstream manifests gcp name kf kpt cfg set upstream manifests gcp gcloud core project xxx kpt cfg set upstream manifests gcp gcloud compute zone europe kpt cfg set upstream manifests gcp location europe b kpt cfg set upstream manifests gcp log firewalls false kpt cfg set upstream manifests stacks gcp name kf kpt cfg set upstream manifests stacks gcp gcloud core project xxx kpt cfg set instance name kf kpt cfg set instance location europe b kpt cfg set instance gcloud core project xxx kpt cfg set instance email x xx final error i get after running make apply in step is bash kubectl context kf mgmnt wait for condition ready timeout containercluster kf error timed out waiting for the condition on containerclusters kf what did you expect to happen installation is seamless and completes without errors installation guide is complete anything else you would like to add current deployment documentation seems to be neglected and there are a lot of missing pieces there that waste people s time on digging through many github issues issues i ve had to deal with manually questions when management cluster has long name like kubeflow management the setup cannot be completed due to violation of some max characters limit for service account names the guide does not mention that you need kustomize in specific version istioctl in google provided version there is a strong assumption that cluster needs to have the namespace exactly the same as the project why is that why kubeflow needs a separate management cluster now environment kubeflow version n a kfctl version use kfctl version 
not installed kubernetes platform gcp kubernetes version client version version info major minor gitversion dispatcher gitcommit gittreestate clean builddate goversion compiler gc platform darwin server version version info major minor gitversion gke gitcommit gittreestate clean builddate goversion compiler gc platform linux os macos | 0 |
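
The first question in the Kubeflow issue above (a management cluster name such as `kubeflow-management` hitting a character limit on service account names) can be checked ahead of time. The following is a minimal sketch, not the Kubeflow tooling itself. It relies on Google Cloud's documented rule that a service account ID is 6 to 30 characters of lowercase letters, digits, and dashes. The `-cnrm-system` suffix and the `derived_account_id` helper are assumptions made for illustration; the issue does not say how the account name is derived from the cluster name.

```python
import re

# Google Cloud service account IDs are 6-30 characters long: a lowercase
# letter first, then lowercase letters, digits, or dashes, and a letter or
# digit at the end.
SERVICE_ACCOUNT_ID = re.compile(r"[a-z][a-z0-9-]{4,28}[a-z0-9]")


def is_valid_service_account_id(account_id: str) -> bool:
    """Return True if account_id satisfies the length and character rules."""
    return SERVICE_ACCOUNT_ID.fullmatch(account_id) is not None


def derived_account_id(cluster_name: str, suffix: str = "-cnrm-system") -> str:
    """Hypothetical derivation: cluster name plus a fixed suffix.

    The suffix is an assumption for illustration only; check the real
    deployment scripts for the actual naming scheme.
    """
    return cluster_name + suffix


if __name__ == "__main__":
    for name in ("kf-mgmnt", "kubeflow-management"):
        account_id = derived_account_id(name)
        status = "ok" if is_valid_service_account_id(account_id) else "too long or invalid"
        print(f"{account_id} ({len(account_id)} chars): {status}")
```

Under the assumed suffix, a short cluster name stays inside the limit while `kubeflow-management` goes one character over it, which would be consistent with the failure the reporter describes.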

145,326 | 11,685,310,415 | IssuesEvent | 2020-03-05 08:51:12 | MorphCast/Studio | https://api.github.com/repos/MorphCast/Studio | closed | Deleting a video timeline item, exiting timeline, reentering and undoing the delete will crash | bug ready for testing | The "recursive" application of the locallydeleted commands for the timeline item and its "childrens" will create issues both when the timeline item is firstly locallydeleted or lastly. It's not a matter of that change, but instead a matter of creating a TimelineBox for each media item inside the timeline item instead of linking it to the front one. | 1.0 | Deleting a video timeline item, exiting timeline, reentering and undoing the delete will crash - The "recursive" application of the locallydeleted commands for the timeline item and its "childrens" will create issues both when the timeline item is firstly locallydeleted or lastly. It's not a matter of that change, but instead a matter of creating a TimelineBox for each media item inside the timeline item instead of linking it to the front one. | test | deleting a video timeline item exiting timeline reentering and undoing the delete will crash the recursive application of the locallydeleted commands for the timeline item and its childrens will create issues both when the timeline item is firstly locallydeleted or lastly it s not a matter of that change but instead a matter of creating a timelinebox for each media item inside the timeline item instead of linking it to the front one | 1 |

27,850 | 5,114,074,401 | IssuesEvent | 2017-01-06 17:16:04 | edno/kleis | https://api.github.com/repos/edno/kleis | closed | Field Group displayed whilst creating Super Admin | defect | When a field validation error occurs, then the field Group is displayed whilst creating Super Admin account. The field is empty (no values available), and it does not block the creation.

The field Group should be displayed only for Local Admin.

Steps to reproduce:

- Create a new Super Admin user with incorrect password format | 1.0 | Field Group displayed whilst creating Super Admin - When a field validation error occurs, then the field Group is displayed whilst creating Super Admin account. The field is empty (no values available), and it does not block the creation.

The field Group should be displayed only for Local Admin.

Steps to reproduce:

- Create a new Super Admin user with incorrect password format | non_test | field group displayed whilst creating super admin when a field validation error occurs then the field group is displayed whilst creating super admin account the field is empty no values available and it does not block the creation the field group should be displayed only for local admin steps to reproduce create a new super admin user with incorrect password format | 0 |

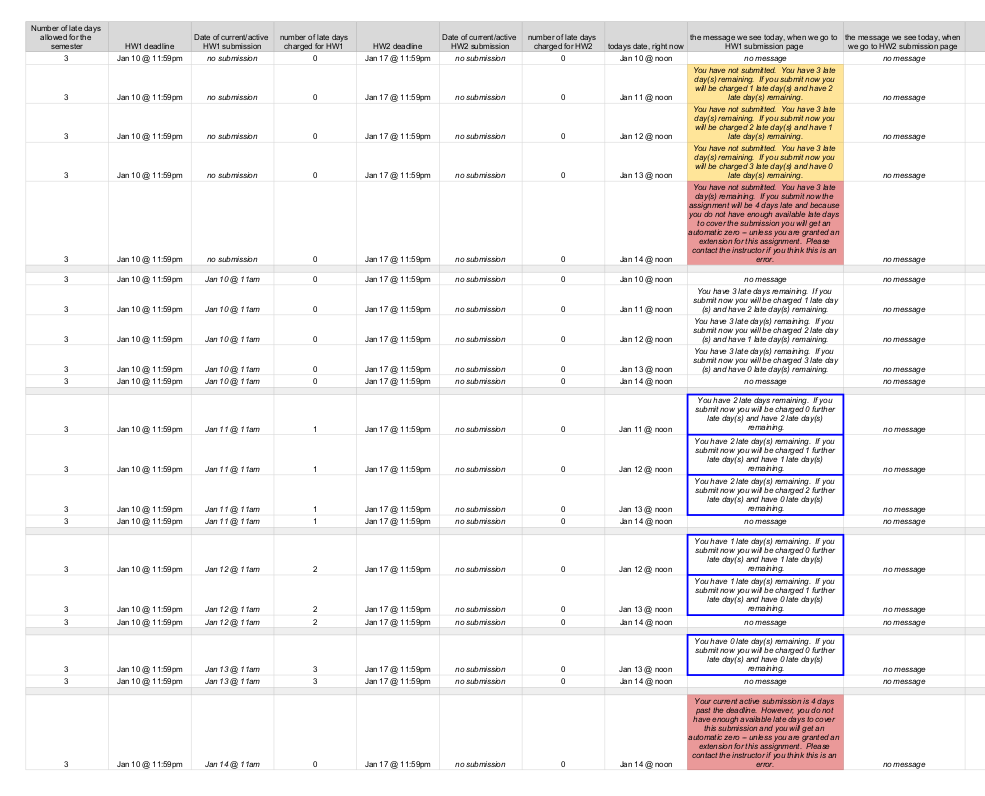

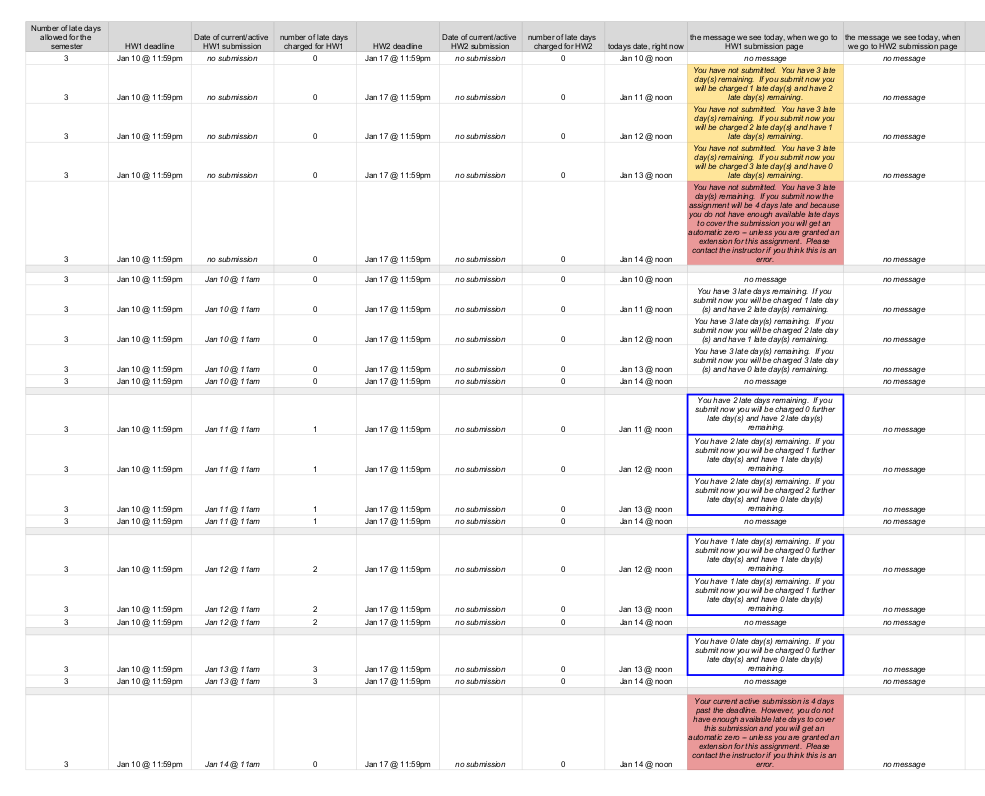

126,763 | 10,434,829,342 | IssuesEvent | 2019-09-17 15:57:54 | Submitty/Submitty | https://api.github.com/repos/Submitty/Submitty | closed | Add testing for late days | Testing / Continuous Integration (CI) | **What problem are you trying to solve with Submitty**

Add unit tests for the different types of late day states

| 1.0 | Add testing for late days - **What problem are you trying to solve with Submitty**

Add unit tests for the different types of late day states

| test | add testing for late days what problem are you trying to solve with submitty add unit tests for the different types of late day states | 1 |

348,118 | 31,468,224,035 | IssuesEvent | 2023-08-30 05:01:53 | gear-tech/gear | https://api.github.com/repos/gear-tech/gear | closed | Write tests for each send signal case (with asserting appropriate signal code) | C1-feature D4-test | ### Problem to Solve

/ .. /

### Possible Solution

/ .. /

### Notes

_No response_ | 1.0 | Write tests for each send signal case (with asserting appropriate signal code) - ### Problem to Solve

/ .. /

### Possible Solution

/ .. /

### Notes

_No response_ | test | write tests for each send signal case with asserting appropriate signal code problem to solve possible solution notes no response | 1 |

252,515 | 21,582,207,046 | IssuesEvent | 2022-05-02 20:02:21 | damccorm/test-migration-target | https://api.github.com/repos/damccorm/test-migration-target | opened | beam_PostCommit_XVR_GoUsingJava_Dataflow fails on some test transforms | bug test-failures cross-language sdk-go P2 | Example failure: https://ci-beam.apache.org/job/beam_PostCommit_XVR_GoUsingJava_Dataflow/7/

I couldn't find accurate details about why the tests are failing, but TestXLang_Prefix, TestXLang_Multi, and TestXLang_Partition are failing while running for some reason. Investigating the Dataflow logs, we can see SDK harnesses are failing to connect for some reason. For example:

`noformat`

"getPodContainerStatuses for pod "df-go-testxlang-multi-03300551-62xv-harness-3msv_default(a7f1d8dfb2c3d2b4e80f5d92c1728787)" failed: rpc error: code = Unknown desc = Error: No such container: bea0d9bde42bf890f6fe1d4f589932471037a5948fb9588d01a06425cd14c177"

`noformat`

However I haven't been able to find any further details showing why the harness fails, and the tests keep running beyond that for a while with other errors that are also pretty inscrutable.

Imported from Jira [BEAM-14214](https://issues.apache.org/jira/browse/BEAM-14214). Original Jira may contain additional context.

Reported by: danoliveira. | 1.0 | beam_PostCommit_XVR_GoUsingJava_Dataflow fails on some test transforms - Example failure: https://ci-beam.apache.org/job/beam_PostCommit_XVR_GoUsingJava_Dataflow/7/

I couldn't find accurate details about why the tests are failing, but TestXLang_Prefix, TestXLang_Multi, and TestXLang_Partition are failing while running for some reason. Investigating the Dataflow logs, we can see SDK harnesses are failing to connect for some reason. For example:

`noformat`

"getPodContainerStatuses for pod "df-go-testxlang-multi-03300551-62xv-harness-3msv_default(a7f1d8dfb2c3d2b4e80f5d92c1728787)" failed: rpc error: code = Unknown desc = Error: No such container: bea0d9bde42bf890f6fe1d4f589932471037a5948fb9588d01a06425cd14c177"

`noformat`

However I haven't been able to find any further details showing why the harness fails, and the tests keep running beyond that for a while with other errors that are also pretty inscrutable.

Imported from Jira [BEAM-14214](https://issues.apache.org/jira/browse/BEAM-14214). Original Jira may contain additional context.

Reported by: danoliveira. | test | beam postcommit xvr gousingjava dataflow fails on some test transforms example failure i couldn t find accurate details about why the tests are failing but testxlang prefix testxlang multi and testxlang partition are failing while running for some reason investigating the dataflow logs we can see sdk harnesses are failing to connect for some reason for example noformat getpodcontainerstatuses for pod df go testxlang multi harness default failed rpc error code unknown desc error no such container noformat however i haven t been able to find any further details showing why the harness fails and the tests keep running beyond that for a while with other errors that are also pretty inscrutable imported from jira original jira may contain additional context reported by danoliveira | 1 |

627,472 | 19,905,632,970 | IssuesEvent | 2022-01-25 12:30:21 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.derstandard.at - site is not usable | browser-firefox-mobile priority-normal type-tracking-protection-strict engine-gecko | <!-- @browser: Firefox Mobile 68.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 9; Mobile; rv:68.0) Gecko/68.0 Firefox/68.0 -->

<!-- @reported_with: mobile-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/98670 -->

<!-- @extra_labels: type-tracking-protection-strict -->

**URL**: https://www.derstandard.at/story/2000132199542/indische-regierung-nimmt-mutter-teresa-in-der-mangel?ref=rss

**Browser / Version**: Firefox Mobile 68.0

**Operating System**: Android 9

**Tested Another Browser**: Yes Chrome

**Problem type**: Site is not usable

**Description**: Missing items

**Steps to Reproduce**:

The community comments are not displayed.

<details>

<summary>View the screenshot</summary>

<img alt="Screenshot" src="https://webcompat.com/uploads/2022/1/bd1e895c-5908-4752-a4f0-6474f3df8776.jpeg">

</details>

<details>

<summary>Browser Configuration</summary>

<ul>

<li>gfx.webrender.all: false</li><li>gfx.webrender.blob-images: true</li><li>gfx.webrender.enabled: false</li><li>image.mem.shared: true</li><li>buildID: 20200204012329</li><li>channel: default</li><li>hasTouchScreen: true</li><li>mixed active content blocked: false</li><li>mixed passive content blocked: false</li><li>tracking content blocked: true (strict)</li>

</ul>

</details>

[View console log messages](https://webcompat.com/console_logs/2022/1/dd9dfc87-cfaa-4e00-b8d0-e184b5249f92)

_From [webcompat.com](https://webcompat.com/) with ❤️_ | 1.0 | www.derstandard.at - site is not usable - <!-- @browser: Firefox Mobile 68.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 9; Mobile; rv:68.0) Gecko/68.0 Firefox/68.0 -->

<!-- @reported_with: mobile-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/98670 -->

<!-- @extra_labels: type-tracking-protection-strict -->

**URL**: https://www.derstandard.at/story/2000132199542/indische-regierung-nimmt-mutter-teresa-in-der-mangel?ref=rss

**Browser / Version**: Firefox Mobile 68.0

**Operating System**: Android 9

**Tested Another Browser**: Yes Chrome

**Problem type**: Site is not usable

**Description**: Missing items

**Steps to Reproduce**:

The community comments are not displayed.

<details>

<summary>View the screenshot</summary>

<img alt="Screenshot" src="https://webcompat.com/uploads/2022/1/bd1e895c-5908-4752-a4f0-6474f3df8776.jpeg">

</details>

<details>

<summary>Browser Configuration</summary>

<ul>

<li>gfx.webrender.all: false</li><li>gfx.webrender.blob-images: true</li><li>gfx.webrender.enabled: false</li><li>image.mem.shared: true</li><li>buildID: 20200204012329</li><li>channel: default</li><li>hasTouchScreen: true</li><li>mixed active content blocked: false</li><li>mixed passive content blocked: false</li><li>tracking content blocked: true (strict)</li>

</ul>

</details>

[View console log messages](https://webcompat.com/console_logs/2022/1/dd9dfc87-cfaa-4e00-b8d0-e184b5249f92)

_From [webcompat.com](https://webcompat.com/) with ❤️_ | non_test | site is not usable url browser version firefox mobile operating system android tested another browser yes chrome problem type site is not usable description missing items steps to reproduce the community comments are not displayed view the screenshot img alt screenshot src browser configuration gfx webrender all false gfx webrender blob images true gfx webrender enabled false image mem shared true buildid channel default hastouchscreen true mixed active content blocked false mixed passive content blocked false tracking content blocked true strict from with ❤️ | 0 |

164,746 | 12,812,898,687 | IssuesEvent | 2020-07-04 09:30:29 | aliasrobotics/RVD | https://api.github.com/repos/aliasrobotics/RVD | closed | RVD#2918: CWE-120 (buffer), Does not check for buffer overflows when concatenating to destination ... @ tforms/posix/src/main.cpp:500 | CWE-120 bug components software flawfinder flawfinder_level_4 mitigated robot component: PX4 static analysis testing triage version: v1.8.0 | ```yaml

id: 2918

title: 'RVD#2918: CWE-120 (buffer), Does not check for buffer overflows when concatenating

to destination ... @ tforms/posix/src/main.cpp:500'

type: bug

description: 'Does not check for buffer overflows when concatenating to destination

[MS-banned] (CWE-120). Consider using strcat_s, strncat, strlcat, or snprintf (warning:

strncat is easily misused). . Happening @ ...tforms/posix/src/main.cpp:500'

cwe:

- CWE-120

cve: None

keywords:

- flawfinder

- flawfinder_level_4

- static analysis

- testing

- triage

- CWE-120

- bug

- 'version: v1.8.0'

- 'robot component: PX4'

- components software

system: ./Firmware/platforms/posix/src/main.cpp:500:18

vendor: null

severity:

rvss-score: 0

rvss-vector: ''

severity-description: ''

cvss-score: 0

cvss-vector: ''

links:

- https://github.com/aliasrobotics/RVD/issues/2918

flaw:

phase: testing

specificity: subject-specific

architectural-location: application-specific

application: N/A

subsystem: N/A

package: N/A

languages: None

date-detected: 2020-06-29 (15:49)

detected-by: Alias Robotics

detected-by-method: testing static

date-reported: 2020-06-29 (15:49)

reported-by: Alias Robotics

reported-by-relationship: automatic

issue: https://github.com/aliasrobotics/RVD/issues/2918

reproducibility: always

trace: (context) \t\tif (nullptr == strcat(pwd_path, folderpath)) {

reproduction: See artifacts below (if available)

reproduction-image: gitlab.com/aliasrobotics/offensive/alurity/pipelines/active/pipeline_px4/-/jobs/615986299/artifacts/download

exploitation:

description: ''

exploitation-image: ''

exploitation-vector: ''

exploitation-recipe: ''

mitigation:

description: 'Consider using strcat_s, strncat, strlcat, or snprintf (warning: strncat

is easily misused)'

pull-request: ''

date-mitigation: ''

``` | 1.0 | RVD#2918: CWE-120 (buffer), Does not check for buffer overflows when concatenating to destination ... @ tforms/posix/src/main.cpp:500 - ```yaml

id: 2918

title: 'RVD#2918: CWE-120 (buffer), Does not check for buffer overflows when concatenating

to destination ... @ tforms/posix/src/main.cpp:500'

type: bug

description: 'Does not check for buffer overflows when concatenating to destination

[MS-banned] (CWE-120). Consider using strcat_s, strncat, strlcat, or snprintf (warning:

strncat is easily misused). . Happening @ ...tforms/posix/src/main.cpp:500'

cwe:

- CWE-120

cve: None

keywords:

- flawfinder

- flawfinder_level_4

- static analysis

- testing

- triage

- CWE-120

- bug

- 'version: v1.8.0'

- 'robot component: PX4'

- components software

system: ./Firmware/platforms/posix/src/main.cpp:500:18

vendor: null

severity:

rvss-score: 0

rvss-vector: ''

severity-description: ''

cvss-score: 0

cvss-vector: ''

links:

- https://github.com/aliasrobotics/RVD/issues/2918

flaw:

phase: testing

specificity: subject-specific

architectural-location: application-specific

application: N/A

subsystem: N/A

package: N/A

languages: None

date-detected: 2020-06-29 (15:49)

detected-by: Alias Robotics

detected-by-method: testing static

date-reported: 2020-06-29 (15:49)

reported-by: Alias Robotics

reported-by-relationship: automatic

issue: https://github.com/aliasrobotics/RVD/issues/2918

reproducibility: always

trace: (context) \t\tif (nullptr == strcat(pwd_path, folderpath)) {

reproduction: See artifacts below (if available)

reproduction-image: gitlab.com/aliasrobotics/offensive/alurity/pipelines/active/pipeline_px4/-/jobs/615986299/artifacts/download

exploitation:

description: ''

exploitation-image: ''

exploitation-vector: ''

exploitation-recipe: ''

mitigation:

description: 'Consider using strcat_s, strncat, strlcat, or snprintf (warning: strncat

is easily misused)'

pull-request: ''

date-mitigation: ''

``` | test | rvd cwe buffer does not check for buffer overflows when concatenating to destination tforms posix src main cpp yaml id title rvd cwe buffer does not check for buffer overflows when concatenating to destination tforms posix src main cpp type bug description does not check for buffer overflows when concatenating to destination cwe consider using strcat s strncat strlcat or snprintf warning strncat is easily misused happening tforms posix src main cpp cwe cwe cve none keywords flawfinder flawfinder level static analysis testing triage cwe bug version robot component components software system firmware platforms posix src main cpp vendor null severity rvss score rvss vector severity description cvss score cvss vector links flaw phase testing specificity subject specific architectural location application specific application n a subsystem n a package n a languages none date detected detected by alias robotics detected by method testing static date reported reported by alias robotics reported by relationship automatic issue reproducibility always trace context t tif nullptr strcat pwd path folderpath reproduction see artifacts below if available reproduction image gitlab com aliasrobotics offensive alurity pipelines active pipeline jobs artifacts download exploitation description exploitation image exploitation vector exploitation recipe mitigation description consider using strcat s strncat strlcat or snprintf warning strncat is easily misused pull request date mitigation | 1 |

318,293 | 27,297,075,801 | IssuesEvent | 2023-02-23 21:21:23 | nucleus-security/Test-repo | https://api.github.com/repos/nucleus-security/Test-repo | closed | Nucleus - [High] - 440057 | Test | Source: QUALYS

Finding Description: CentOS has released security update for kernel to fix the vulnerabilities. Affected Products: centos 6

Impact: Successful exploitation allows attacker to compromise the system.

Target(s): Asset name: 192.168.56.103

Solution: To resolve this issue, upgrade to the latest packages which contain a patch. Refer to CentOS advisory centos 6 (https://lists.centos.org/pipermail/centos-announce/2016-July/021977.html) for updates and patch information.

Patch:

Following are links for downloading patches to fix the vulnerabilities:

CESA-2016:1406: centos 6 (https://lists.centos.org/pipermail/centos-announce/2016-July/021977.html)

References:

QID:440057

CVE:CVE-2016-4565

Category:CentOS

PCI Flagged:yes

Vendor References:CESA-2016:1406 centos 6

Bugtraq IDs:90301

Severity: High

Date Discovered: 2022-11-12 08:04:44

Nucleus Notification Rules Triggered: Rule GitHub

Project Name: 6716

Please see Nucleus for more information on these vulnerabilities:https://192.168.56.101/nucleus/public/app/index.html#vuln/201000007/NDQwMDU3/UVVBTFlT/VnVsbg--/false/MjAxMDAwMDA3/c3VtbWFyeQ--/false | 1.0 | Nucleus - [High] - 440057 - Source: QUALYS

Finding Description: CentOS has released security update for kernel to fix the vulnerabilities. Affected Products: centos 6

Impact: Successful exploitation allows attacker to compromise the system.

Target(s): Asset name: 192.168.56.103

Solution: To resolve this issue, upgrade to the latest packages which contain a patch. Refer to CentOS advisory centos 6 (https://lists.centos.org/pipermail/centos-announce/2016-July/021977.html) for updates and patch information.

Patch:

Following are links for downloading patches to fix the vulnerabilities:

CESA-2016:1406: centos 6 (https://lists.centos.org/pipermail/centos-announce/2016-July/021977.html)

References:

QID:440057

CVE:CVE-2016-4565

Category:CentOS

PCI Flagged:yes

Vendor References:CESA-2016:1406 centos 6

Bugtraq IDs:90301

Severity: High

Date Discovered: 2022-11-12 08:04:44

Nucleus Notification Rules Triggered: Rule GitHub

Project Name: 6716

Please see Nucleus for more information on these vulnerabilities:https://192.168.56.101/nucleus/public/app/index.html#vuln/201000007/NDQwMDU3/UVVBTFlT/VnVsbg--/false/MjAxMDAwMDA3/c3VtbWFyeQ--/false | test | nucleus source qualys finding description centos has released security update for kernel to fix the vulnerabilities affected products centos impact successful exploitation allows attacker to compromise the system target s asset name solution to resolve this issue upgrade to the latest packages which contain a patch refer to centos advisory centos for updates and patch information patch following are links for downloading patches to fix the vulnerabilities cesa centos references qid cve cve category centos pci flagged yes vendor references cesa centos bugtraq ids severity high date discovered nucleus notification rules triggered rule github project name please see nucleus for more information on these vulnerabilities | 1 |

65,486 | 16,371,664,725 | IssuesEvent | 2021-05-15 08:43:09 | lettre/lettre | https://api.github.com/repos/lettre/lettre | closed | High-level methods for attachments and embedded files | component:builder component:docs status:in progress type:bug type:feature | Looks like the only way to add an attachment in v.0.10 at the moment is to manually create a multipart message.

1. It's too much hassle

2. I couldn't even make it work with the examples provided

I noticed this comment `// TODO: High-level methods for attachments and embedded files` in https://github.com/lettre/lettre/blob/13b48b656d916308c26680db896695034fdec1d9/src/message/mod.rs#L374

Since I now have to make it work for our project I'm happy to make an extra effort and implement adding attachments as high level methods.

#### Questions:

1. Can you make a simple example of having a plain text part with an attachment using multipart or singlepart to help me get started?

Both https://github.com/lettre/lettre/blob/master/src/message/mod.rs#L85 and https://github.com/lettre/lettre/issues/492#issuecomment-716111178 examples panic over a non-existing boundary with `called Option::unwrap() on a None value, /home/ubuntu/.cargo/git/checkouts/lettre-53652803723a9045/86763cc/src/message/mimebody.rs:402:63`

2. Did you plan to replicate the file attaching interface from the previous version or do something completely different?

| 1.0 | High-level methods for attachments and embedded files - Looks like the only way to add an attachment in v.0.10 at the moment is to manually create a multipart message.

1. It's too much hassle

2. I couldn't even make it work with the examples provided

I noticed this comment `// TODO: High-level methods for attachments and embedded files` in https://github.com/lettre/lettre/blob/13b48b656d916308c26680db896695034fdec1d9/src/message/mod.rs#L374

Since I now have to make it work for our project I'm happy to make an extra effort and implement adding attachments as high level methods.

#### Questions:

1. Can you make a simple example of having a plain text part with an attachment using multipart or singlepart to help me get started?

Both https://github.com/lettre/lettre/blob/master/src/message/mod.rs#L85 and https://github.com/lettre/lettre/issues/492#issuecomment-716111178 examples panic over a non-existing boundary with `called Option::unwrap() on a None value, /home/ubuntu/.cargo/git/checkouts/lettre-53652803723a9045/86763cc/src/message/mimebody.rs:402:63`

2. Did you plan to replicate the file attaching interface from the previous version or do something completely different?

| non_test | high level methods for attachments and embedded files looks like the only way to add an attachment in v at the moment is to manually create a multipart message it s too much hassle i couldn t even make it work with the examples provided i noticed this comment todo high level methods for attachments and embedded files in since i now have to make it work for our project i m happy to make an extra effort and implement adding attachments as high level methods questions can you make a simple example of having a plain text part with an attachment using multipart or singlepart to help me get started both and examples panic over a non existing boundary with called option unwrap on a none value home ubuntu cargo git checkouts lettre src message mimebody rs did you plan to replicate the file attaching interface from the previous version or do something completely different | 0 |

305,968 | 26,423,890,835 | IssuesEvent | 2023-01-14 00:27:59 | cosmos/cosmos-sdk | https://api.github.com/repos/cosmos/cosmos-sdk | closed | e2e has flaky CLI tests (TestCLIMultisignSortSignatures, TestEditValidatorMoniker and TestCLISignBatch) | good first issue T: Tests | <!-- < < < < < < < < < < < < < < < < < < < < < < < < < < < < < < < < < ☺

v ✰ Thanks for opening an issue! ✰

v Before smashing the submit button please review the template.

v Please also ensure that this is not a duplicate issue :)

☺ > > > > > > > > > > > > > > > > > > > > > > > > > > > > > > > > > -->

<!--

IMPORTANT: Prior to opening a bug report, check if it affects one of the core modules

and if it's eligible for a bug bounty on `SECURITY.md`. Bugs that are not submitted

through the appropriate channels won't receive any bounty.

-->

## Summary of Bug

I've seen 3 new flaky tests:

```

--- FAIL: TestIntegrationTestSuite/TestCLIMultisignSortSignatures (3.37s)

suite.go:1024:

Error Trace: /home/ubuntu/actions-runner/_work/cosmos-sdk/cosmos-sdk/tests/e2e/auth/suite.go:1024

Error: Not equal:

expected: math.Int{i:(*big.Int)(0xc0043df100)}

actual : math.Int{i:(*big.Int)(0xc002243280)}

Diff:

--- Expected

+++ Actual

@@ -3,5 +3,3 @@

neg: (bool) false,

- abs: (big.nat) (len=1) {

- (big.Word) 10

- }

+ abs: (big.nat) <nil>

})

Test: TestIntegrationTestSuite/TestCLIMultisignSortSignatures

```

and

```

--- FAIL: TestIntegrationTestSuite (109.64s)

suite.go:42: setting up integration test suite

network.go:289: acquiring test network lock

network.go:299: preparing test network with chain-id "chain-dzqeIU"

network.go:568: starting test network...

network.go:574: started validator 0

network.go:574: started validator 1

network.go:582: started test network at height: 0

--- FAIL: TestIntegrationTestSuite/TestEditValidatorMoniker (2.19s)

suite.go:1538:

Error Trace: /home/ubuntu/actions-runner/_work/cosmos-sdk/cosmos-sdk/tests/e2e/staking/client/testutil/suite.go:1538

Error: Not equal:

expected: "node0"

actual : "testing"

Diff:

--- Expected

+++ Actual

@@ -1 +1 @@

-node0

+testing

Test: TestIntegrationTestSuite/TestEditValidatorMoniker

```

and

```

--- FAIL: TestIntegrationTestSuite/TestCLISignBatch (4.69s)

suite.go:320:

Error Trace: /home/runner/work/cosmos-sdk/cosmos-sdk/tests/e2e/auth/suite.go:320

Error: Not equal:

expected: 0x9

actual : 0x8

Test: TestIntegrationTestSuite/TestCLISignBatch

```

| 1.0 | e2e has flaky CLI tests (TestCLIMultisignSortSignatures, TestEditValidatorMoniker and TestCLISignBatch) - <!-- < < < < < < < < < < < < < < < < < < < < < < < < < < < < < < < < < ☺

v ✰ Thanks for opening an issue! ✰

v Before smashing the submit button please review the template.

v Please also ensure that this is not a duplicate issue :)

☺ > > > > > > > > > > > > > > > > > > > > > > > > > > > > > > > > > -->

<!--

IMPORTANT: Prior to opening a bug report, check if it affects one of the core modules

and if it's eligible for a bug bounty on `SECURITY.md`. Bugs that are not submitted

through the appropriate channels won't receive any bounty.

-->

## Summary of Bug

I've seen 3 new flaky tests:

```

--- FAIL: TestIntegrationTestSuite/TestCLIMultisignSortSignatures (3.37s)

suite.go:1024:

Error Trace: /home/ubuntu/actions-runner/_work/cosmos-sdk/cosmos-sdk/tests/e2e/auth/suite.go:1024

Error: Not equal:

expected: math.Int{i:(*big.Int)(0xc0043df100)}

actual : math.Int{i:(*big.Int)(0xc002243280)}

Diff:

--- Expected

+++ Actual

@@ -3,5 +3,3 @@

neg: (bool) false,

- abs: (big.nat) (len=1) {

- (big.Word) 10

- }

+ abs: (big.nat) <nil>

})

Test: TestIntegrationTestSuite/TestCLIMultisignSortSignatures

```

and

```

--- FAIL: TestIntegrationTestSuite (109.64s)

suite.go:42: setting up integration test suite

network.go:289: acquiring test network lock

network.go:299: preparing test network with chain-id "chain-dzqeIU"

network.go:568: starting test network...

network.go:574: started validator 0

network.go:574: started validator 1

network.go:582: started test network at height: 0

--- FAIL: TestIntegrationTestSuite/TestEditValidatorMoniker (2.19s)

suite.go:1538:

Error Trace: /home/ubuntu/actions-runner/_work/cosmos-sdk/cosmos-sdk/tests/e2e/staking/client/testutil/suite.go:1538

Error: Not equal:

expected: "node0"

actual : "testing"

Diff:

--- Expected

+++ Actual

@@ -1 +1 @@

-node0

+testing

Test: TestIntegrationTestSuite/TestEditValidatorMoniker

```

and

```

--- FAIL: TestIntegrationTestSuite/TestCLISignBatch (4.69s)

suite.go:320:

Error Trace: /home/runner/work/cosmos-sdk/cosmos-sdk/tests/e2e/auth/suite.go:320

Error: Not equal:

expected: 0x9

actual : 0x8

Test: TestIntegrationTestSuite/TestCLISignBatch

```

| test | has flaky cli tests testclimultisignsortsignatures testeditvalidatormoniker and testclisignbatch ☺ v ✰ thanks for opening an issue ✰ v before smashing the submit button please review the template v please also ensure that this is not a duplicate issue ☺ important prior to opening a bug report check if it affects one of the core modules and if its elegible for a bug bounty on security md bugs that are not submitted through the appropriate channels won t receive any bounty summary of bug i ve seen new flaky tests fail testintegrationtestsuite testclimultisignsortsignatures suite go error trace home ubuntu actions runner work cosmos sdk cosmos sdk tests auth suite go error not equal expected math int i big int actual math int i big int diff expected actual neg bool false abs big nat len big word abs big nat test testintegrationtestsuite testclimultisignsortsignatures and fail testintegrationtestsuite suite go setting up integration test suite network go acquiring test network lock network go preparing test network with chain id chain dzqeiu network go starting test network network go started validator network go started validator network go started test network at height fail testintegrationtestsuite testeditvalidatormoniker suite go error trace home ubuntu actions runner work cosmos sdk cosmos sdk tests staking client testutil suite go error not equal expected actual testing diff expected actual testing test testintegrationtestsuite testeditvalidatormoniker and fail testintegrationtestsuite testclisignbatch suite go error trace home runner work cosmos sdk cosmos sdk tests auth suite go error not equal expected actual test testintegrationtestsuite testclisignbatch | 1 |

64,174 | 15,818,670,032 | IssuesEvent | 2021-04-05 16:21:27 | ns1labs/pktvisor | https://api.github.com/repos/ns1labs/pktvisor | opened | statically linked binaries | build-system | generate statically linked versions of `pktvisord`, `pktvisor-pcap` for various platforms | 1.0 | statically linked binaries - generate statically linked versions of `pktvisord`, `pktvisor-pcap` for various platforms | non_test | statically linked binaries generate statically linked versions of pktvisord pktvisor pcap for various platforms | 0 |

12,227 | 7,811,372,643 | IssuesEvent | 2018-06-12 09:54:09 | crate/crate | https://api.github.com/repos/crate/crate | closed | Bad join performance with no possible matches | feature: performance feature: sql: joins | Hello,

I have created two "clone" tables named people3 and people4:

cr> select table_name, column_name, data_type

from information_schema.columns

where table_name like 'people%';

+------------+-------------+-----------+

| table_name | column_name | data_type |

+------------+-------------+-----------+

| people3 | birthdate | timestamp |

| people3 | name | string |

| people4 | birthdate | timestamp |

| people4 | name | string |

+------------+-------------+-----------+

And added many rows:

cr> select count(*) from people3;

+----------+

| count(*) |

+----------+

| 3449535 |

+----------+

| True | Bad join performance with no possible matches - Hello,

I have created two "clone" tables named people3 and people4:

cr> select table_name, column_name, data_type

from information_schema.columns

where table_name like 'people%';

+------------+-------------+-----------+

| table_name | column_name | data_type |

+------------+-------------+-----------+

| people3 | birthdate | timestamp |

| people3 | name | string |

| people4 | birthdate | timestamp |

| people4 | name | string |

+------------+-------------+-----------+

And added many rows:

cr> select count(*) from people3;

+----------+

| count(*) |

+----------+

| 3449535 |

+----------+

| non_test | bad join performance with no possible matches hello i have create clones tables named and people cr select table name column name data type from information schema columns where table name like people table name column name data type birthdate timestamp name string birthdate timestamp name string and add many rows cr select count from count | 0 |

194,681 | 15,437,620,646 | IssuesEvent | 2021-03-07 17:22:21 | microsoft/azure-pipelines-task-lib | https://api.github.com/repos/microsoft/azure-pipelines-task-lib | closed | Documentation | documentation stale |

### Environment

azure-pipelines-task-lib version: current

### Issue Description

There is no documentation on how to use the node lib for common scenarios.

### Expected behaviour

There is clear documentation with examples for the most common (or all) commands.

1. For example, the `tl.exec()` command

2. Getting `connectedService` secrets, and handling and using them

3. General construction of the UI and its features

Just pointing to working code or config files is not documentation.

I am sorry if something like this already exists, but I was unable to find it. It's late over here so please point to the right links. | 1.0 | Documentation -

### Environment

azure-pipelines-task-lib version: current

### Issue Description

There is no documentation on how to use the node lib for common scenarios.

### Expected behaviour

There is clear documentation with examples for the most common (or all) commands.

1. For example, the `tl.exec()` command

2. Getting `connectedService` secrets, and handling and using them

3. General construction of the UI and its features

Just pointing to working code or config files is not documentation.

I am sorry if something like this already exists, but I was unable to find it. It's late over here so please point to the right links. | non_test | documentation environment azure pipelines task lib version current issue description there is no documentation on how to use the node lib for common scenarios expected behaviour there is clear documentation with examples for the most common or all commands for example the tl exec command for getting connectedservice secrets handling and using them general construction of the ui and its features just pointing to working code or config files is not documentation i am sorry if something like this already exists but i was unable to find it it s late over here so please point to the right links | 0 |

38,116 | 12,528,264,883 | IssuesEvent | 2020-06-04 09:17:44 | ckauhaus/nixpkgs | https://api.github.com/repos/ckauhaus/nixpkgs | opened | Vulnerability roundup 4: advancecomp-2.1: 1 advisory | 1.severity: security | [search](https://search.nix.gsc.io/?q=advancecomp&i=fosho&repos=NixOS-nixpkgs), [files](https://github.com/NixOS/nixpkgs/search?utf8=%E2%9C%93&q=advancecomp+in%3Apath&type=Code)

* [ ] [CVE-2019-9210](https://nvd.nist.gov/vuln/detail/CVE-2019-9210) CVSSv3=7.8 (nixos-19.03)

Scanned versions: nixos-19.03: 34c7eb7545d. May contain false positives.

| True | Vulnerability roundup 4: advancecomp-2.1: 1 advisory - [search](https://search.nix.gsc.io/?q=advancecomp&i=fosho&repos=NixOS-nixpkgs), [files](https://github.com/NixOS/nixpkgs/search?utf8=%E2%9C%93&q=advancecomp+in%3Apath&type=Code)

* [ ] [CVE-2019-9210](https://nvd.nist.gov/vuln/detail/CVE-2019-9210) CVSSv3=7.8 (nixos-19.03)

Scanned versions: nixos-19.03: 34c7eb7545d. May contain false positives.

| non_test | vulnerability roundup advancecomp advisory nixos scanned versions nixos may contain false positives | 0 |

244,992 | 20,736,789,504 | IssuesEvent | 2022-03-14 14:23:00 | dnd-side-project/dnd-6th-5-backend | https://api.github.com/repos/dnd-side-project/dnd-6th-5-backend | closed | test: write integration and unit tests for the patchUserNickname API | test | - [x] Install the node-mocks-http, supertest, and jest modules

- [x] Add a tsconfig setting for importing JSON files

- [x] Add error-handling middleware

- [x] Update the test script

- [x] Add test DB settings to ormconfig.js

- [x] Also add the js extension to transform in jest.config.js

- [x] Set allowJs to true in tsconfig.json so the jest config file, which has a .js extension, can be read

- [x] Write unit test code for the patchUserNickname controller

- [x] Write integration test code for the patchUserNickname controller | 1.0 | test: write integration and unit tests for the patchUserNickname API - - [x] Install the node-mocks-http, supertest, and jest modules

- [x] Add a tsconfig setting for importing JSON files

- [x] Add error-handling middleware

- [x] Update the test script

- [x] Add test DB settings to ormconfig.js

- [x] Also add the js extension to transform in jest.config.js

- [x] Set allowJs to true in tsconfig.json so the jest config file, which has a .js extension, can be read

- [x] Write unit test code for the patchUserNickname controller

- [x] Write integration test code for the patchUserNickname controller | test | test write integration and unit tests for the patchusernickname api install the node mocks http supertest and jest modules add a tsconfig setting for importing json files add error handling middleware update the test script add test db settings to ormconfig js also add the js extension to transform in jest config js set allowjs to true in tsconfig json so the jest config file which has a js extension can be read write unit test code for the patchusernickname controller write integration test code for the patchusernickname controller | 1 |

315,804 | 27,107,822,811 | IssuesEvent | 2023-02-15 13:23:40 | ventoy/Ventoy | https://api.github.com/repos/ventoy/Ventoy | closed | [Success Image Report]: Tiny 11 ( by NTDEV ) based on Windows 11 Pro 22H2 | 【Tested Image Report】 | ### Official Website List

- [X] I have checked the list in official website and the image file is not listed there.

### Ventoy Version

1.0.88

### BIOS Mode

Both

### Partition Style

MBR

### Image file name

tiny11 b1.iso

### Image file checksum type

MD5

### Image file checksum value

efd53d1bd51854ee57391ea3a4700cbf
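For anyone who wants to confirm they tested the same image, a minimal sketch of checking a downloaded ISO against the MD5 value reported above. The helper names `md5_of_file` and `matches_reported_md5` are made up for illustration, and the file path in the usage note is an assumption about where the ISO was saved.

```python
import hashlib


def md5_of_file(path, chunk_size=1024 * 1024):
    """Return the hex MD5 digest of a file, reading it in chunks
    so that a multi-gigabyte ISO does not have to fit in memory."""
    digest = hashlib.md5()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_reported_md5(path, expected_hex):
    """Compare a file's MD5 with a reported value, ignoring case
    and surrounding whitespace in the reported string."""
    return md5_of_file(path) == expected_hex.strip().lower()
```

Usage would look like `matches_reported_md5("tiny11 b1.iso", "efd53d1bd51854ee57391ea3a4700cbf")`, which should return `True` only if the local file is byte-identical to the one in this report.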

### Image file download link (if applicable)

https://archive.org/download/tiny-11-NTDEV/tiny11%20b1.iso

### Test environment

Lenovo ThinkPad X230 Laptop

### More Details?

This image file booted successfully in Ventoy and no errors were seen.

Laptop Specs: - Intel(R) Core(TM) i5-3320M CPU @ 2.60GHz, 2601 Mhz, 2 Core(s), 4 Logical Processor(s)

x64-based PC

8.00 GB RAM

500 GB SSD | 1.0 | [Success Image Report]: Tiny 11 ( by NTDEV ) based on Windows 11 Pro 22H2 - ### Official Website List

- [X] I have checked the list in official website and the image file is not listed there.

### Ventoy Version

1.0.88

### BIOS Mode

Both

### Partition Style

MBR

### Image file name

tiny11 b1.iso

### Image file checksum type

MD5

### Image file checksum value

efd53d1bd51854ee57391ea3a4700cbf

### Image file download link (if applicable)

https://archive.org/download/tiny-11-NTDEV/tiny11%20b1.iso

### Test environment

Lenovo ThinkPad X230 Laptop

### More Details?

This image file booted successfully in Ventoy and no errors were seen.

Laptop Specs: - Intel(R) Core(TM) i5-3320M CPU @ 2.60GHz, 2601 Mhz, 2 Core(s), 4 Logical Processor(s)

x64-based PC

8.00 GB RAM

500 GB SSD | test | tiny by ntdev based on windows pro official website list i have checked the list in official website and the image file is not listed there ventoy version bios mode both partition style mbr image file name iso image file checksum type image file checksum value image file download link if applicable test environment lenovo thinkpad laptop more details this image file booted successfully in ventoy and no errors were seen laptop specs intel r core tm cpu mhz core s logical processor s based pc gb ram gb ssd | 1 |

219,049 | 24,436,717,553 | IssuesEvent | 2022-10-06 12:02:06 | pazhanivel07/tcpdump-4.9.2 | https://api.github.com/repos/pazhanivel07/tcpdump-4.9.2 | opened | CVE-2018-14470 (High) detected in tcpdumptcpdump-4.9.2 | security vulnerability | ## CVE-2018-14470 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tcpdumptcpdump-4.9.2</b></p></summary>

<p>

<p>the TCPdump network dissector</p>

<p>Library home page: <a href=https://github.com/the-tcpdump-group/tcpdump.git>https://github.com/the-tcpdump-group/tcpdump.git</a></p>

<p>Found in HEAD commit: <a href="https://github.com/pazhanivel07/tcpdump-4.9.2/commit/761aa8f39eabb1228c1d03e3a55c861d76c46817">761aa8f39eabb1228c1d03e3a55c861d76c46817</a></p>

<p>Found in base branch: <b>master</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (1)</summary>

<p></p>

<p>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/print-babel.c</b>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The Babel parser in tcpdump before 4.9.3 has a buffer over-read in print-babel.c:babel_print_v2().

<p>Publish Date: 2019-10-03

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2018-14470>CVE-2018-14470</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>
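As a worked check of the 7.5 base score, here is a minimal sketch that applies the published CVSS v3 base-score formula to the metrics listed above. It only covers the Scope: Unchanged case, the function names are made up for illustration, and the rounding helper follows the integer-based round-up described in the later v3.1 revision to avoid floating-point surprises.

```python
import math

# CVSS v3 metric weights (Scope: Unchanged variants only).
ATTACK_VECTOR = {"N": 0.85, "A": 0.62, "L": 0.55, "P": 0.2}
ATTACK_COMPLEXITY = {"L": 0.77, "H": 0.44}
PRIVILEGES_REQUIRED = {"N": 0.85, "L": 0.62, "H": 0.27}
USER_INTERACTION = {"N": 0.85, "R": 0.62}
CIA_IMPACT = {"H": 0.56, "L": 0.22, "N": 0.0}


def round_up_1dp(value):
    """Round up to one decimal place using integer arithmetic."""
    scaled = int(round(value * 100000))
    if scaled % 10000 == 0:
        return scaled / 100000.0
    return (math.floor(scaled / 10000) + 1) / 10.0


def base_score_scope_unchanged(av, ac, pr, ui, c, i, a):
    """Base score for a vector whose Scope metric is Unchanged."""
    iss = 1 - (1 - CIA_IMPACT[c]) * (1 - CIA_IMPACT[i]) * (1 - CIA_IMPACT[a])
    impact = 6.42 * iss
    exploitability = (8.22 * ATTACK_VECTOR[av] * ATTACK_COMPLEXITY[ac]
                      * PRIVILEGES_REQUIRED[pr] * USER_INTERACTION[ui])
    if impact <= 0:
        return 0.0
    return round_up_1dp(min(impact + exploitability, 10))


# Metrics from this report: AV:N / AC:L / PR:N / UI:N / S:U / C:N / I:N / A:H
print(base_score_scope_unchanged("N", "L", "N", "N", "N", "N", "H"))  # 7.5
```

With these metrics the impact sub-score is about 3.60 and the exploitability sub-score about 3.89, so the sum of roughly 7.48 rounds up to 7.5.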

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2018-14470">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2018-14470</a></p>

<p>Release Date: 2019-10-03</p>

<p>Fix Resolution: 4.9.3</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2018-14470 (High) detected in tcpdumptcpdump-4.9.2 - ## CVE-2018-14470 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tcpdumptcpdump-4.9.2</b></p></summary>

<p>

<p>the TCPdump network dissector</p>

<p>Library home page: <a href=https://github.com/the-tcpdump-group/tcpdump.git>https://github.com/the-tcpdump-group/tcpdump.git</a></p>

<p>Found in HEAD commit: <a href="https://github.com/pazhanivel07/tcpdump-4.9.2/commit/761aa8f39eabb1228c1d03e3a55c861d76c46817">761aa8f39eabb1228c1d03e3a55c861d76c46817</a></p>

<p>Found in base branch: <b>master</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (1)</summary>

<p></p>

<p>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/print-babel.c</b>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The Babel parser in tcpdump before 4.9.3 has a buffer over-read in print-babel.c:babel_print_v2().

<p>Publish Date: 2019-10-03

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2018-14470>CVE-2018-14470</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2018-14470">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2018-14470</a></p>

<p>Release Date: 2019-10-03</p>

<p>Fix Resolution: 4.9.3</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_test | cve high detected in tcpdumptcpdump cve high severity vulnerability vulnerable library tcpdumptcpdump the tcpdump network dissector library home page a href found in head commit a href found in base branch master vulnerable source files print babel c vulnerability details the babel parser in tcpdump before has a buffer over read in print babel c babel print publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution step up your open source security game with mend | 0 |

199,387 | 15,036,594,904 | IssuesEvent | 2021-02-02 15:25:25 | apache/buildstream | https://api.github.com/repos/apache/buildstream | closed | Run CI as non-root user | enhancement tests | [See original issue on GitLab](https://gitlab.com/BuildStream/buildstream/-/issues/102)

In GitLab by [[Gitlab user @tlater]](https://gitlab.com/tlater) on Oct 3, 2017, 14:31

Currently, all tests run as root, which is unlikely to reflect the actual user environment and may mask permission issues. On Linux, CI tests should run as a separate, non-root user.
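One way to make the requirement enforceable is a guard that fails fast when the suite is started as root. This is only a sketch, not something taken from the BuildStream code base: `current_euid` and `require_non_root` are hypothetical names, and the check relies on `os.geteuid()`, which exists only on POSIX platforms.

```python
import os


def current_euid():
    """Effective user ID of this process (POSIX only)."""
    return os.geteuid()


def require_non_root(get_euid=current_euid):
    """Raise if the effective user is root (UID 0).

    The lookup is injectable so the guard itself can be tested
    without actually changing users.
    """
    if get_euid() == 0:
        raise RuntimeError(
            "test suite is running as root; use an unprivileged user"
        )
```

A call to `require_non_root()` at the start of a test session would turn an accidental root run into an immediate, visible failure instead of a silently masked permission problem.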

Simple `su` calls don't appear to work in the container. | 1.0 | Run CI as non-root user - [See original issue on GitLab](https://gitlab.com/BuildStream/buildstream/-/issues/102)

In GitLab by [[Gitlab user @tlater]](https://gitlab.com/tlater) on Oct 3, 2017, 14:31

Currently, all tests run as root, which is unlikely to reflect the actual user environment and may mask permission issues. On Linux, CI tests should run as a separate, non-root user.

Simple `su` calls don't appear to work in the container. | test | run ci as non root user in gitlab by on oct currently all tests are run as root which is not likely to reflect the actual user environment and may mask permission issues ci tests should run as a separate user for the linux platform simple su calls don t appear to work in the container | 1 |

130,471 | 10,617,051,333 | IssuesEvent | 2019-10-12 16:16:32 | unsuitable001/memoryJS | https://api.github.com/repos/unsuitable001/memoryJS | opened | [unit testing] Add coverage check using CODEBEAT | enhancement hacktoberfest help wanted testing up-for-grabs | [unit testing] Add coverage check using CODEBEAT.

You will need to write the tests and fix any errors that occur.

Refer - https://hub.codebeat.co/docs/test-coverage-reports#section-setting-up-for-javascript-typescript | 1.0 | [unit testing] Add coverage check using CODEBEAT - [unit testing] Add coverage check using CODEBEAT.

You will need to write the tests and fix any errors that occur.

Refer - https://hub.codebeat.co/docs/test-coverage-reports#section-setting-up-for-javascript-typescript | test | add coverage check using codebeat add coverage check using codebeat you have to write the tests try to fix if any error occurs refer | 1 |

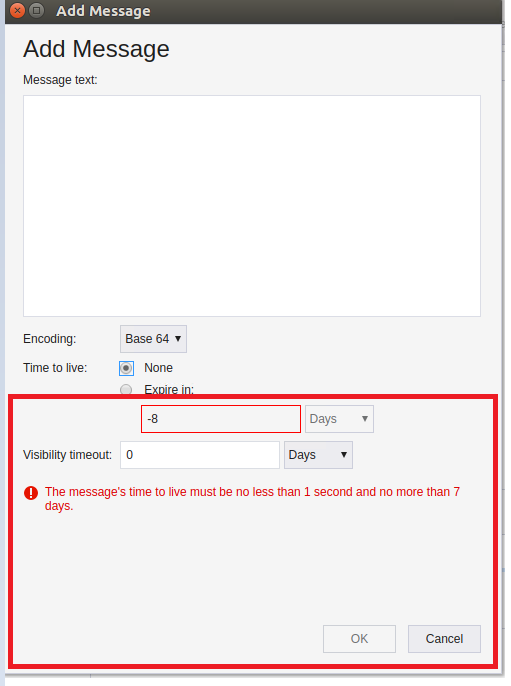

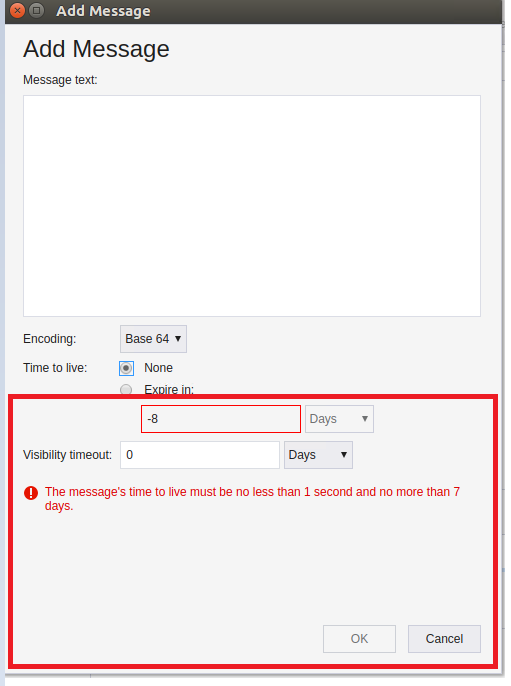

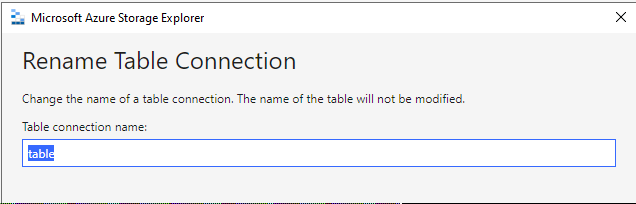

158,072 | 12,401,453,792 | IssuesEvent | 2020-05-21 09:54:17 | microsoft/AzureStorageExplorer | https://api.github.com/repos/microsoft/AzureStorageExplorer | opened | An invalid time warning still occurs when switching to 'None' in add message dialog | :gear: queues 🧪 testing | **Storage Explorer Version:** 1.14.0-dev

**Build**: [20200521.3](https://devdiv.visualstudio.com/DevDiv/_build/results?buildId=3746638&view=results)

**Branch:** master

**Platform/OS:** Windows 10/ Linux Ubuntu 16.04/ macOS Mojave

**Architecture:** ia32/x64

**Regression From:** Not a regression

**Steps to reproduce:**

1. Expand one storage account -> Queues.

2. Create a new queue -> Click 'Add Message'.

3. Check 'Expire in:' -> Input an invalid string -> Switch to 'None'.

4. Check the result.

**Expected Experience:**

No warning occurs.

**Actual Experience:**

The warning still occurs.
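The expected behaviour can be sketched as a validation rule that is skipped entirely once 'None' is selected. This is an illustration only and not Storage Explorer's code: the function name, the mode strings, the warning text, and the assumption that the expiry is entered as a positive whole number of seconds are all hypothetical.

```python
def expiry_warning(mode, raw_value):
    """Return a warning string, or None when the input is acceptable.

    mode is either "none" (no expiry requested) or "expire_in".
    """
    if mode == "none":
        # Nothing to validate: whatever was typed earlier is ignored.
        return None
    try:
        seconds = int(raw_value)
    except (TypeError, ValueError):
        return "Invalid time"
    if seconds <= 0:
        return "Invalid time"
    return None
```

The bug described above corresponds to the stale warning from the "expire_in" branch still being shown after the mode changes, so the check would need to be re-run (or the warning cleared) on every mode switch.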

| 1.0 | An invalid time warning still occurs when switching to 'None' in add message dialog - **Storage Explorer Version:** 1.14.0-dev

**Build**: [20200521.3](https://devdiv.visualstudio.com/DevDiv/_build/results?buildId=3746638&view=results)

**Branch:** master

**Platform/OS:** Windows 10/ Linux Ubuntu 16.04/ macOS Mojave

**Architecture:** ia32/x64

**Regression From:** Not a regression

**Steps to reproduce:**

1. Expand one storage account -> Queues.

2. Create a new queue -> Click 'Add Message'.

3. Check 'Expire in:' -> Input an invalid string -> Switch to 'None'.

4. Check the result.

**Expected Experience:**

No warning occurs.

**Actual Experience:**

The warning still occurs.

| test | an invalid time warning still occurs when switching to none in add message dialog storage explorer version dev build branch master platform os windows linux ubuntu macos mojave architecture regression from not a regression steps to reproduce expand one storage account queues create a new queue click add message check expire in input an invalid string switch to none check the result expect experience no warning occurs actual experience the warning still occurs | 1 |

22,342 | 2,648,808,102 | IssuesEvent | 2015-03-14 08:37:31 | gunmetalbackupgooglecode/opendbg | https://api.github.com/repos/gunmetalbackupgooglecode/opendbg | closed | tracer doesn't have some functions for work with GUI | imported OpSys-Windows Priority-Critical Type-Review wontfix | _From [d1mk4nah@gmail.com](https://code.google.com/u/d1mk4nah@gmail.com/) on September 13, 2008 06:07:57_

We should have thunks for some functions (you can see which ones when you try to compile tracer) on the tracer or kernel side.

_Original issue: http://code.google.com/p/opendbg/issues/detail?id=1_ | 1.0 | tracer doesn't have some functions for work with GUI - _From [d1mk4nah@gmail.com](https://code.google.com/u/d1mk4nah@gmail.com/) on September 13, 2008 06:07:57_

We should have thunks for some functions (you can see which ones when you try to compile tracer) on the tracer or kernel side.

_Original issue: http://code.google.com/p/opendbg/issues/detail?id=1_ | non_test | tracer doesn t have some functions for work with gui from on september we should have thunks for some functions you can look at it when you try compile tracer at tracer or kernel side original issue | 0 |

53,098 | 6,300,485,965 | IssuesEvent | 2017-07-21 03:58:41 | yarnpkg/yarn | https://api.github.com/repos/yarnpkg/yarn | closed | yarn-e2e/label=docker,os=ubuntu-14.04 #258 failed | failure test | Build 'yarn-e2e/label=docker,os=ubuntu-14.04' is failing!

Last 50 lines of build output:

```

Started by upstream project "yarn-e2e" build number 258

originally caused by:

Started by timer

Building on master in workspace /var/lib/jenkins/workspace/yarn-e2e/label/docker/os/ubuntu-14.04

Cloning the remote Git repository

Cloning repository https://github.com/yarnpkg/yarn.git

> git init /var/lib/jenkins/workspace/yarn-e2e/label/docker/os/ubuntu-14.04 # timeout=10

Fetching upstream changes from https://github.com/yarnpkg/yarn.git

> git --version # timeout=10

> git fetch --tags --progress https://github.com/yarnpkg/yarn.git +refs/heads/*:refs/remotes/origin/*

ERROR: Error cloning remote repo 'origin'

hudson.plugins.git.GitException: Command "git fetch --tags --progress https://github.com/yarnpkg/yarn.git +refs/heads/*:refs/remotes/origin/*" returned status code 128:

stdout:

stderr: remote: Counting objects: 45503, done.

remote: Compressing objects: 1% (1/76) remote: Compressing objects: 2% (2/76) remote: Compressing objects: 3% (3/76) remote: Compressing objects: 5% (4/76) remote: Compressing objects: 6% (5/76) remote: Compressing objects: 7% (6/76) remote: Compressing objects: 9% (7/76) remote: Compressing objects: 10% (8/76) remote: Compressing objects: 11% (9/76) remote: Compressing objects: 13% (10/76) remote: Compressing objects: 14% (11/76) remote: Compressing objects: 15% (12/76) remote: Compressing objects: 17% (13/76) remote: Compressing objects: 18% (14/76) remote: Compressing objects: 19% (15/76) remote: Compressing objects: 21% (16/76) remote: Compressing objects: 22% (17/76) remote: Compressing objects: 23% (18/76) remote: Compressing objects: 25% (19/76) remote: Compressing objects: 26% (20/76) remote: Compressing objects: 27% (21/76) remote: Compressing objects: 28% (22/76) remote: Compressing objects: 30% (23/76) remote: Compressing objects: 31% (24/76) remote: Compressing objects: 32% (25/76) remote: Compressing objects: 34% (26/76) remote: Compressing objects: 35% (27/76) remote: Compressing objects: 36% (28/76) remote: Compressing objects: 38% (29/76) remote: Compressing objects: 39% (30/76) remote: Compressing objects: 40% (31/76) remote: Compressing objects: 42% (32/76) remote: Compressing objects: 43% (33/76) remote: Compressing objects: 44% (34/76) remote: Compressing objects: 46% (35/76) remote: Compressing objects: 47% (36/76) remote: Compressing objects: 48% (37/76) remote: Compressing objects: 50% (38/76) remote: Compressing objects: 51% (39/76) remote: Compressing objects: 52% (40/76) remote: Compressing objects: 53% (41/76) remote: Compressing objects: 55% (42/76) remote: Compressing objects: 56% (43/76) remote: Compressing objects: 57% (44/76) remote: Compressing objects: 59% (45/76) remote: Compressing objects: 60% (46/76) remote: Compressing objects: 61% (47/76) remote: Compressing objects: 63% (48/76) remote: Compressing objects: 64% (49/76) 
remote: Compressing objects: 65% (50/76) remote: Compressing objects: 67% (51/76) remote: Compressing objects: 68% (52/76) remote: Compressing objects: 69% (53/76) remote: Compressing objects: 71% (54/76) remote: Compressing objects: 72% (55/76) remote: Compressing objects: 73% (56/76) remote: Compressing objects: 75% (57/76) remote: Compressing objects: 76% (58/76) remote: Compressing objects: 77% (59/76) remote: Compressing objects: 78% (60/76) remote: Compressing objects: 80% (61/76) remote: Compressing objects: 81% (62/76) remote: Compressing objects: 82% (63/76) remote: Compressing objects: 84% (64/76) remote: Compressing objects: 85% (65/76) remote: Compressing objects: 86% (66/76) remote: Compressing objects: 88% (67/76) remote: Compressing objects: 89% (68/76) remote: Compressing objects: 90% (69/76) remote: Compressing objects: 92% (70/76) remote: Compressing objects: 93% (71/76) remote: Compressing objects: 94% (72/76) remote: Compressing objects: 96% (73/76) remote: Compressing objects: 97% (74/76) remote: Compressing objects: 98% (75/76) remote: Compressing objects: 100% (76/76) remote: Compressing objects: 100% (76/76), done.

Receiving objects: 7% (3186/45503)

error: RPC failed; curl 56 GnuTLS recv error (-54): Error in the pull function.

fatal: The remote end hung up unexpectedly

fatal: early EOF

fatal: index-pack failed

at org.jenkinsci.plugins.gitclient.CliGitAPIImpl.launchCommandIn(CliGitAPIImpl.java:1903)

at org.jenkinsci.plugins.gitclient.CliGitAPIImpl.launchCommandWithCredentials(CliGitAPIImpl.java:1622)

at org.jenkinsci.plugins.gitclient.CliGitAPIImpl.access$300(CliGitAPIImpl.java:71)

at org.jenkinsci.plugins.gitclient.CliGitAPIImpl$1.execute(CliGitAPIImpl.java:348)

at org.jenkinsci.plugins.gitclient.CliGitAPIImpl$2.execute(CliGitAPIImpl.java:545)

at hudson.plugins.git.GitSCM.retrieveChanges(GitSCM.java:1070)

at hudson.plugins.git.GitSCM.checkout(GitSCM.java:1110)

at hudson.scm.SCM.checkout(SCM.java:495)

at hudson.model.AbstractProject.checkout(AbstractProject.java:1276)

at hudson.model.AbstractBuild$AbstractBuildExecution.defaultCheckout(AbstractBuild.java:560)

at jenkins.scm.SCMCheckoutStrategy.checkout(SCMCheckoutStrategy.java:86)

at hudson.model.AbstractBuild$AbstractBuildExecution.run(AbstractBuild.java:485)

at hudson.model.Run.execute(Run.java:1735)

at hudson.matrix.MatrixRun.run(MatrixRun.java:146)

at hudson.model.ResourceController.execute(ResourceController.java:97)

at hudson.model.Executor.run(Executor.java:405)

ERROR: Error cloning remote repo 'origin'

```

Changes since last successful build:

No changes

[View full output](https://build.dan.cx/job/yarn-e2e/label=docker,os=ubuntu-14.04/258/)

cc @Daniel15 | 1.0 | yarn-e2e/label=docker,os=ubuntu-14.04 #258 failed - Build 'yarn-e2e/label=docker,os=ubuntu-14.04' is failing!

Last 50 lines of build output:

```

Started by upstream project "yarn-e2e" build number 258

originally caused by:

Started by timer

Building on master in workspace /var/lib/jenkins/workspace/yarn-e2e/label/docker/os/ubuntu-14.04

Cloning the remote Git repository

Cloning repository https://github.com/yarnpkg/yarn.git

> git init /var/lib/jenkins/workspace/yarn-e2e/label/docker/os/ubuntu-14.04 # timeout=10

Fetching upstream changes from https://github.com/yarnpkg/yarn.git

> git --version # timeout=10

> git fetch --tags --progress https://github.com/yarnpkg/yarn.git +refs/heads/*:refs/remotes/origin/*

ERROR: Error cloning remote repo 'origin'

hudson.plugins.git.GitException: Command "git fetch --tags --progress https://github.com/yarnpkg/yarn.git +refs/heads/*:refs/remotes/origin/*" returned status code 128:

stdout:

stderr: remote: Counting objects: 45503, done.

remote: Compressing objects: 100% (76/76), done.

Receiving objects: 7% (3186/45503)

error: RPC failed; curl 56 GnuTLS recv error (-54): Error in the pull function.

fatal: The remote end hung up unexpectedly

fatal: early EOF

fatal: index-pack failed

at org.jenkinsci.plugins.gitclient.CliGitAPIImpl.launchCommandIn(CliGitAPIImpl.java:1903)

at org.jenkinsci.plugins.gitclient.CliGitAPIImpl.launchCommandWithCredentials(CliGitAPIImpl.java:1622)

at org.jenkinsci.plugins.gitclient.CliGitAPIImpl.access$300(CliGitAPIImpl.java:71)

at org.jenkinsci.plugins.gitclient.CliGitAPIImpl$1.execute(CliGitAPIImpl.java:348)

at org.jenkinsci.plugins.gitclient.CliGitAPIImpl$2.execute(CliGitAPIImpl.java:545)

at hudson.plugins.git.GitSCM.retrieveChanges(GitSCM.java:1070)

at hudson.plugins.git.GitSCM.checkout(GitSCM.java:1110)

at hudson.scm.SCM.checkout(SCM.java:495)

at hudson.model.AbstractProject.checkout(AbstractProject.java:1276)

at hudson.model.AbstractBuild$AbstractBuildExecution.defaultCheckout(AbstractBuild.java:560)

at jenkins.scm.SCMCheckoutStrategy.checkout(SCMCheckoutStrategy.java:86)

at hudson.model.AbstractBuild$AbstractBuildExecution.run(AbstractBuild.java:485)

at hudson.model.Run.execute(Run.java:1735)

at hudson.matrix.MatrixRun.run(MatrixRun.java:146)

at hudson.model.ResourceController.execute(ResourceController.java:97)

at hudson.model.Executor.run(Executor.java:405)

ERROR: Error cloning remote repo 'origin'

```

Changes since last successful build:

No changes

[View full output](https://build.dan.cx/job/yarn-e2e/label=docker,os=ubuntu-14.04/258/)