Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 4 112 | repo_url stringlengths 33 141 | action stringclasses 3 values | title stringlengths 1 1.02k | labels stringlengths 4 1.54k | body stringlengths 1 262k | index stringclasses 17 values | text_combine stringlengths 95 262k | label stringclasses 2 values | text stringlengths 96 252k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

19,762 | 26,135,599,237 | IssuesEvent | 2022-12-29 11:40:54 | firebase/firebase-cpp-sdk | https://api.github.com/repos/firebase/firebase-cpp-sdk | closed | [C++] Nightly Integration Testing Report for Firestore | type: process nightly-testing |

<hidden value="integration-test-status-comment"></hidden>

### [build against repo] Integration test with FLAKINESS (succeeded after retry)

Requested by @sunmou99 on commit b07793ae015b4a69f2ec68e1c8f46206f9fac0c7

Last updated: Wed Dec 28 10:56 PST 2022

**[View integration test log & download artifacts](https://github.com/firebase/firebase-cpp-sdk/actions/runs/3793209772)**

| Failures | Configs |
|----------|---------|
| firestore | [TEST] [FLAKINESS] [Android] [1/3 os: macos] [1/4 android_device: android_latest]<details><summary>(1 failed tests)</summary> NumericTransformsTest.DoubleIncrementWithExistingDouble</details> |

Add flaky tests to **[go/fpl-cpp-flake-tracker](http://go/fpl-cpp-flake-tracker)**

<hidden value="integration-test-status-comment"></hidden>

***

### ✅ [build against SDK] Integration test succeeded!

Requested by @firebase-workflow-trigger[bot] on commit b07793ae015b4a69f2ec68e1c8f46206f9fac0c7

Last updated: Wed Dec 28 10:56 PST 2022

**[View integration test log & download artifacts](https://github.com/firebase/firebase-cpp-sdk/actions/runs/3793839941)**

<hidden value="integration-test-status-comment"></hidden>

***

### ✅ [build against tip] Integration test succeeded!

Requested by @sunmou99 on commit b07793ae015b4a69f2ec68e1c8f46206f9fac0c7

Last updated: Wed Dec 28 10:55 PST 2022

**[View integration test log & download artifacts](https://github.com/firebase/firebase-cpp-sdk/actions/runs/3793510600)**

| 1.0 | [C++] Nightly Integration Testing Report for Firestore -

<hidden value="integration-test-status-comment"></hidden>

### [build against repo] Integration test with FLAKINESS (succeeded after retry)

Requested by @sunmou99 on commit b07793ae015b4a69f2ec68e1c8f46206f9fac0c7

Last updated: Wed Dec 28 10:56 PST 2022

**[View integration test log & download artifacts](https://github.com/firebase/firebase-cpp-sdk/actions/runs/3793209772)**

| Failures | Configs |
|----------|---------|
| firestore | [TEST] [FLAKINESS] [Android] [1/3 os: macos] [1/4 android_device: android_latest]<details><summary>(1 failed tests)</summary> NumericTransformsTest.DoubleIncrementWithExistingDouble</details> |

Add flaky tests to **[go/fpl-cpp-flake-tracker](http://go/fpl-cpp-flake-tracker)**

<hidden value="integration-test-status-comment"></hidden>

***

### ✅ [build against SDK] Integration test succeeded!

Requested by @firebase-workflow-trigger[bot] on commit b07793ae015b4a69f2ec68e1c8f46206f9fac0c7

Last updated: Wed Dec 28 10:56 PST 2022

**[View integration test log & download artifacts](https://github.com/firebase/firebase-cpp-sdk/actions/runs/3793839941)**

<hidden value="integration-test-status-comment"></hidden>

***

### ✅ [build against tip] Integration test succeeded!

Requested by @sunmou99 on commit b07793ae015b4a69f2ec68e1c8f46206f9fac0c7

Last updated: Wed Dec 28 10:55 PST 2022

**[View integration test log & download artifacts](https://github.com/firebase/firebase-cpp-sdk/actions/runs/3793510600)**

| non_test | nightly integration testing report for firestore integration test with flakiness succeeded after retry requested by on commit last updated wed dec pst failures configs firestore failed tests nbsp nbsp numerictransformstest doubleincrementwithexistingdouble add flaky tests to ✅ nbsp integration test succeeded requested by firebase workflow trigger on commit last updated wed dec pst ✅ nbsp integration test succeeded requested by on commit last updated wed dec pst | 0 |

388,476 | 26,767,984,371 | IssuesEvent | 2023-01-31 12:06:30 | readthedocs/readthedocs.org | https://api.github.com/repos/readthedocs/readthedocs.org | opened | Docs: explain how the "standard build outputs" work | Needed: documentation Accepted | After implementing #9888 we have improved how our build process works. We need to expand the documentation to reflect these changes.

There are good examples in https://github.com/readthedocs/readthedocs.org/issues/1939#issuecomment-1410230381 and other issues.

Also, we have to update our "Downloadable documentation" page: https://docs.readthedocs.io/en/stable/downloadable-documentation.html

| 1.0 | Docs: explain how the "standard build outputs" work - After implementing #9888 we have improved how our build process works. We need to expand the documentation to reflect these changes.

There are good examples in https://github.com/readthedocs/readthedocs.org/issues/1939#issuecomment-1410230381 and other issues.

Also, we have to update our "Downloadable documentation" page: https://docs.readthedocs.io/en/stable/downloadable-documentation.html

| non_test | docs explain how the standard build outputs work after implementing we have improved how our build process works we need to expand the documentation to reflect these changes there are good examples in and other issues also we have to update our downloadable documentation page | 0 |

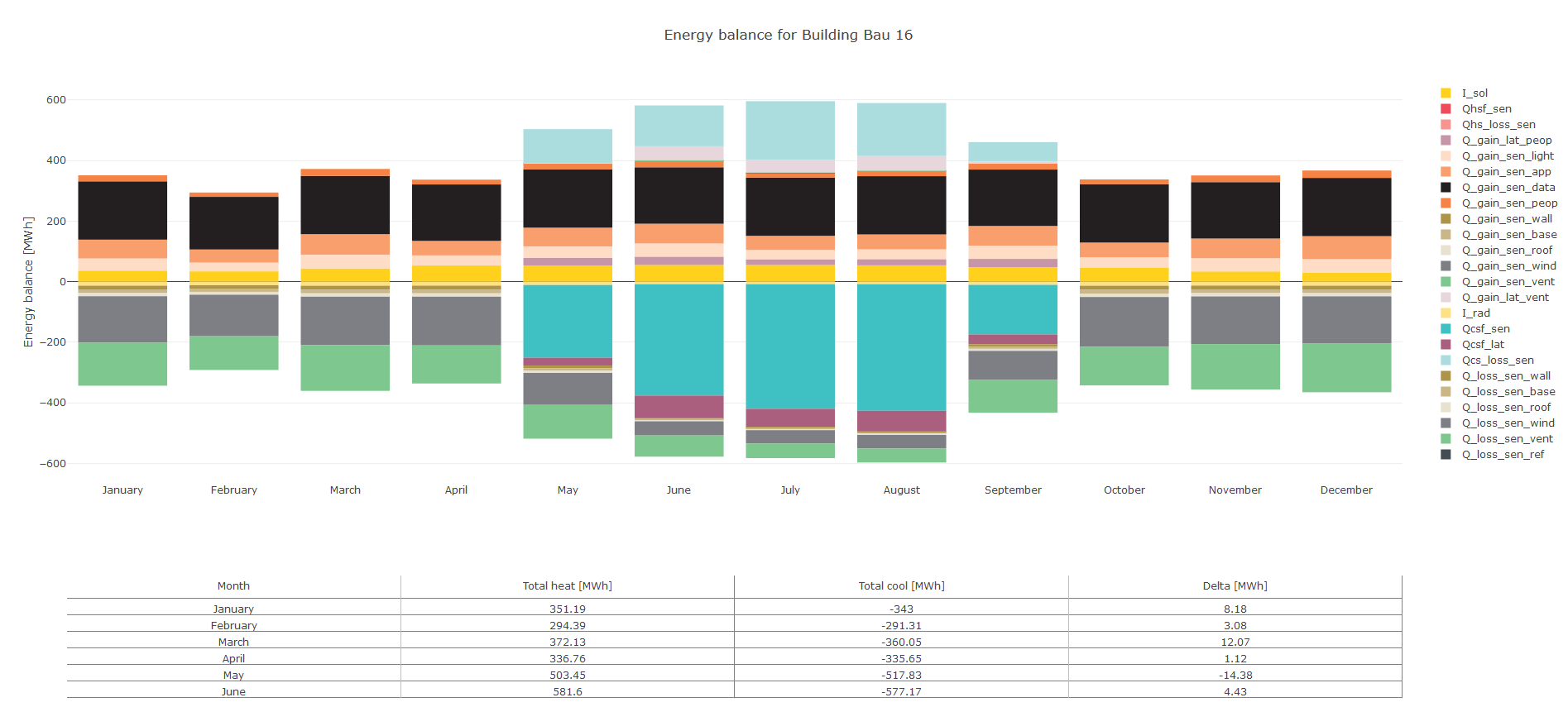

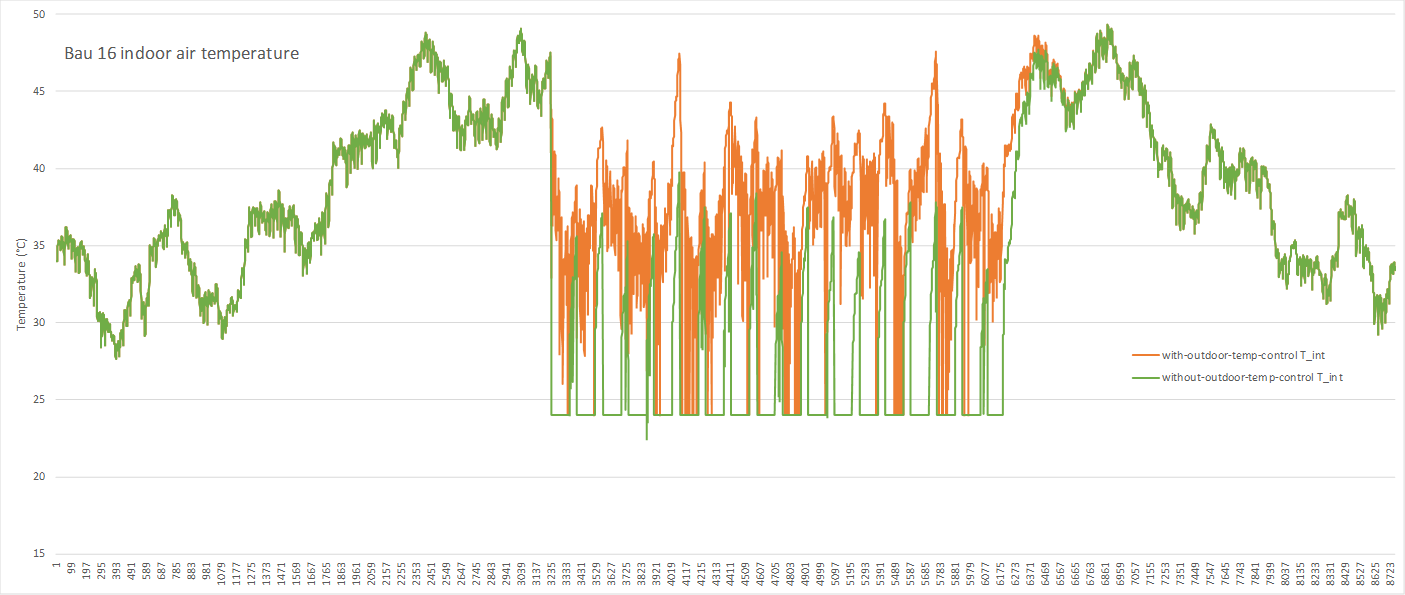

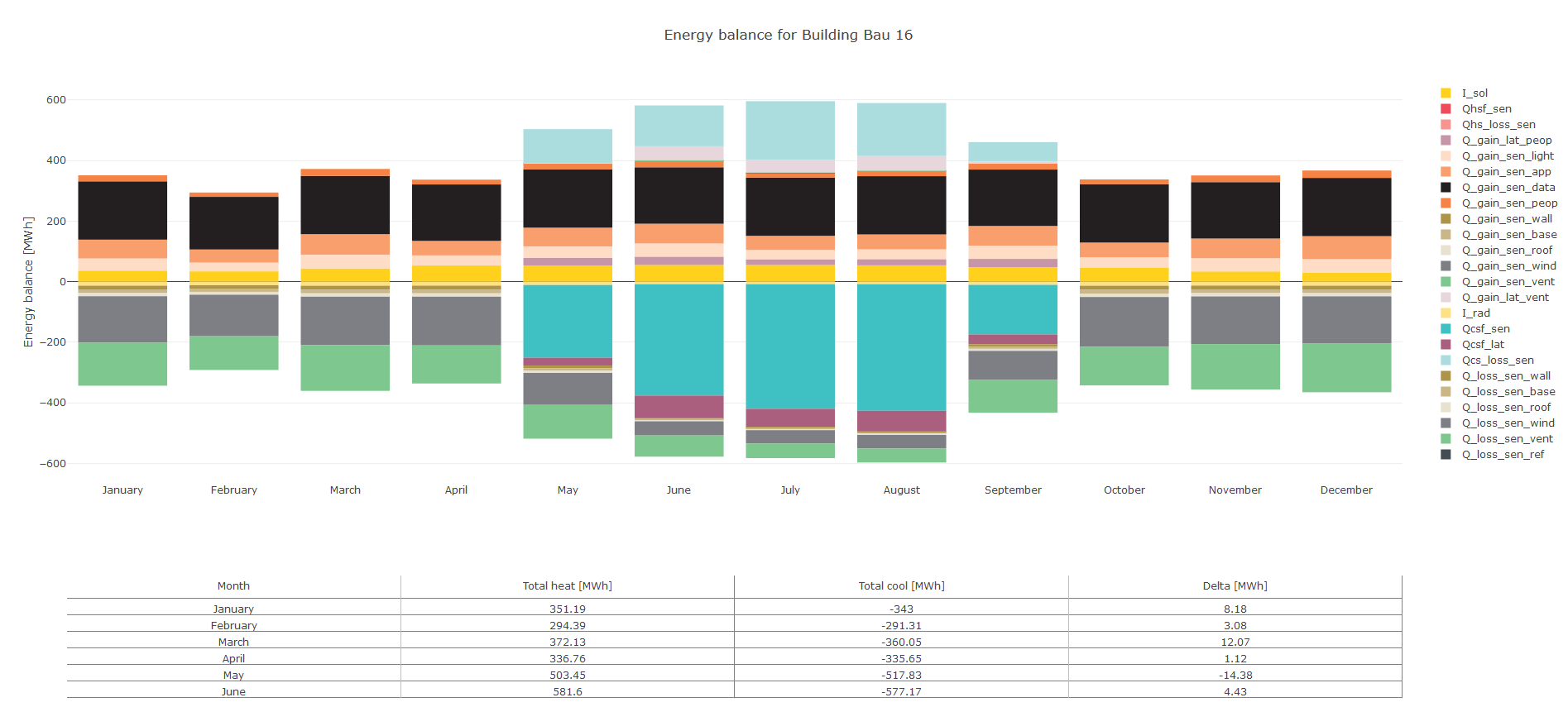

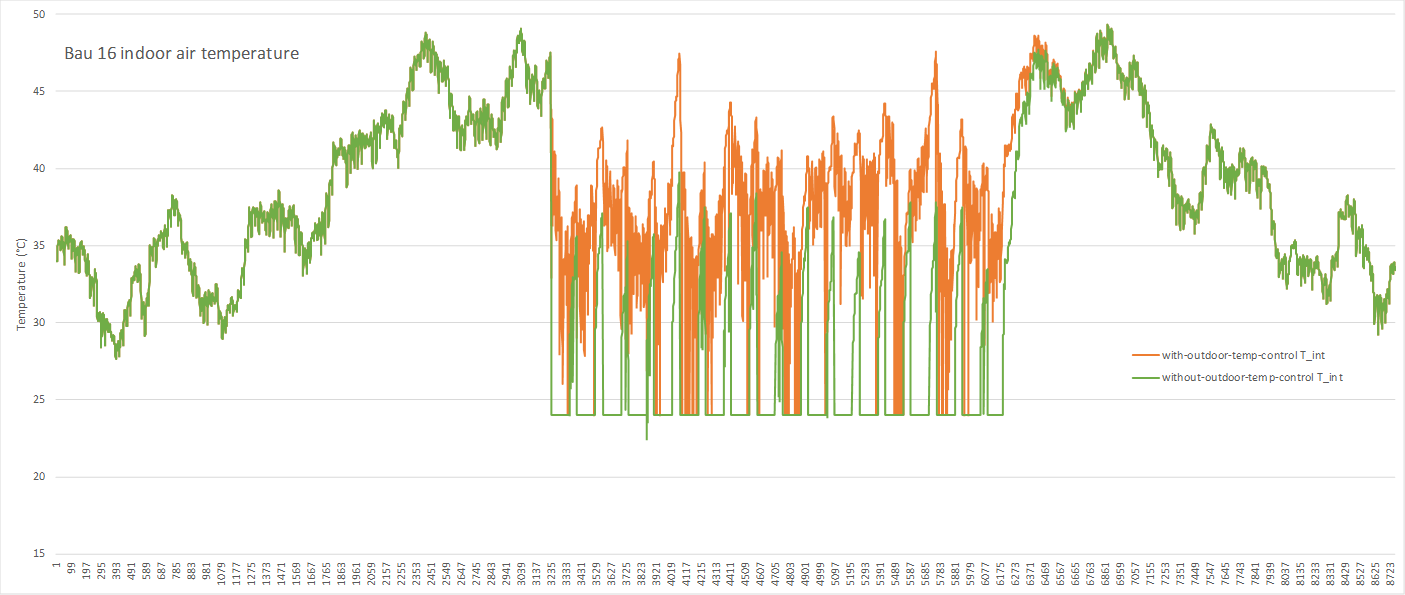

248,718 | 7,935,293,824 | IssuesEvent | 2018-07-09 04:04:15 | architecture-building-systems/CityEnergyAnalyst | https://api.github.com/repos/architecture-building-systems/CityEnergyAnalyst | closed | Maybe a BUG: electricity demand for datacenter might be too high | Priority 1 not a bug but... | The 500 W/m2 power for `SERVERRROM` in the archetypes DB might be too high.

It causes the BAU 16 in the Zug case study to overheat already in winter.

Heating is not needed at all in that building.

See also the comments in #1189

@JIMENOFONSECA can I assign this to you?

Or does @martin-mosteiro have any measured values from ETH?

| 1.0 | Maybe a BUG: electricity demand for datacenter might be too high - The 500 W/m2 power for `SERVERRROM` in the archetypes DB might be too high.

It causes the BAU 16 in the Zug case study to overheat already in winter.

Heating is not needed at all in that building.

See also the comments in #1189

@JIMENOFONSECA can I assign this to you?

Or does @martin-mosteiro have any measured values from ETH?

| non_test | maybe a bug electricity demand for datacenter might be too high the w power for serverrrom in the archetypes db might be too high it causes the bau in the zug case study to overheat already in winter heating is not needed at all in that building see also the comments in jimenofonseca can i assign this to you or does martin mosteiro have any measured values from eth | 0 |

209,867 | 16,064,173,549 | IssuesEvent | 2021-04-23 16:25:26 | godotengine/godot | https://api.github.com/repos/godotengine/godot | closed | Software crash when merging one Kinematicbody into another scene | bug crash needs testing topic:editor |

**Godot version:**

stable 3.2.3

**OS/device including version:**

Windows 10 Professional

Device name DESKTOP-T3MH083

Prozessor AMD Ryzen 5 2600 Six-Core Processor 3.40 GHz

Installed RAM 16.0 GB

Device ID CE860F4C-F151-429D-B0F8-52E69AF2E314

Product ID 00330-50000-00000-AAOEM

System type 64-bit operating system, x64-based processor

Pen and touch input No pen or touch input is available for this display.

**Issue description:**

When I wanted to add a 3D Kinematicbody with some subnodes to another scene via the "merge from scene" function, Godot suddenly crashed. This has happened many times lately.

| 1.0 | Software crash when merging one Kinematicbody into another scene -

**Godot version:**

stable 3.2.3

**OS/device including version:**

Windows 10 Professional

Device name DESKTOP-T3MH083

Processor AMD Ryzen 5 2600 Six-Core Processor 3.40 GHz

Installed RAM 16.0 GB

Device ID CE860F4C-F151-429D-B0F8-52E69AF2E314

Product ID 00330-50000-00000-AAOEM

System type 64-bit operating system, x64-based processor

Pen and touch input No pen or touch input is available for this display.

**Issue description:**

When I wanted to add a 3D Kinematicbody with some subnodes to another scene via the "merge from scene" function, Godot suddenly crashed. This has happened many times lately.

| test | software crash when merging one kinematicbody into another scene godot version stable os device including version windows professional device name desktop prozessor amd ryzen six core processor ghz installed ram gb device id product id aaoem system type bit operating system based processor pen and touch input no pen or touch input is available for this display issue description when i wanted to add a kinematicbody with some subnodes to another scene via the merge from scene function godot suddenly crashed this has happened many times lately | 1 |

21,917 | 11,425,095,422 | IssuesEvent | 2020-02-03 19:07:38 | tensorflow/tensorflow | https://api.github.com/repos/tensorflow/tensorflow | closed | Training loop freezes and then stops when try to run Cycle-GAN on multi-GPU system on Google GCP using 'tf.distribute.MirroredStrategy()' . | TF 2.0 comp:dist-strat type:performance | <em>Please make sure that this is a bug. As per our [GitHub Policy](https://github.com/tensorflow/tensorflow/blob/master/ISSUES.md), we only address code/doc bugs, performance issues, feature requests and build/installation issues on GitHub. tag:bug_template</em>

**System information**

- Have I written custom code (as opposed to using a stock example script provided in TensorFlow):Yes

- OS Platform and Distribution (e.g., Linux Ubuntu 16.04): Linux Ubuntu 18.04

- Mobile device (e.g. iPhone 8, Pixel 2, Samsung Galaxy) if the issue happens on mobile device:NaN

- TensorFlow installed from (source or binary): source

- TensorFlow version (use command below): 2.0.0

- Python version:3.6

- Bazel version (if compiling from source): NaN

- GCC/Compiler version (if compiling from source): NaN

- CUDA/cuDNN version: 10

- GPU model and memory: 4 Nvidia Tesla K80's of 11GB each

You can collect some of this information using our environment capture [script](https://github.com/tensorflow/tensorflow/tree/master/tools/tf_env_collect.sh)

You can also obtain the TensorFlow version with: 1. TF 1.0: `python -c "import tensorflow as tf; print(tf.GIT_VERSION, tf.VERSION)"` 2. TF 2.0: `python -c "import tensorflow as tf; print(tf.version.GIT_VERSION, tf.version.VERSION)"`

**Describe the current behavior**

The training loop stops after a few steps in the first epoch.

**Describe the expected behavior**

Training loop should run smoothly.

**Other info / logs**

The link below points to a zip file containing the Python scripts I used to train this model on multiple GPUs with 'tf.distribute.MirroredStrategy()'.

[The python scripts can be found here](https://drive.google.com/file/d/17TBNfs1h0Lnolq5ZaomqE9pTzdoGjEWb/view?usp=sharing)

> Running 'download.py' will download the dataset that I used.

> 'model.py' contains code for the architecture of the generator and the discriminator that I used for training this Cycle-GAN

> 'utils.py' contains code that I used for preprocessing the images

> 'main.py' contains the code in which I used 'tf.distribute.MirroredStrategy()' for multi-GPU training but it stops after few steps in the first epoch. | True | Training loop freezes and then stops when try to run Cycle-GAN on multi-GPU system on Google GCP using 'tf.distribute.MirroredStrategy()' . - <em>Please make sure that this is a bug. As per our [GitHub Policy](https://github.com/tensorflow/tensorflow/blob/master/ISSUES.md), we only address code/doc bugs, performance issues, feature requests and build/installation issues on GitHub. tag:bug_template</em>

**System information**

- Have I written custom code (as opposed to using a stock example script provided in TensorFlow):Yes

- OS Platform and Distribution (e.g., Linux Ubuntu 16.04): Linux Ubuntu 18.04

- Mobile device (e.g. iPhone 8, Pixel 2, Samsung Galaxy) if the issue happens on mobile device:NaN

- TensorFlow installed from (source or binary): source

- TensorFlow version (use command below): 2.0.0

- Python version:3.6

- Bazel version (if compiling from source): NaN

- GCC/Compiler version (if compiling from source): NaN

- CUDA/cuDNN version: 10

- GPU model and memory: 4 Nvidia Tesla K80's of 11GB each

You can collect some of this information using our environment capture [script](https://github.com/tensorflow/tensorflow/tree/master/tools/tf_env_collect.sh)

You can also obtain the TensorFlow version with: 1. TF 1.0: `python -c "import tensorflow as tf; print(tf.GIT_VERSION, tf.VERSION)"` 2. TF 2.0: `python -c "import tensorflow as tf; print(tf.version.GIT_VERSION, tf.version.VERSION)"`

**Describe the current behavior**

The training loop stops after a few steps in the first epoch.

**Describe the expected behavior**

Training loop should run smoothly.

**Other info / logs**

The link below points to a zip file containing the Python scripts I used to train this model on multiple GPUs with 'tf.distribute.MirroredStrategy()'.

[The python scripts can be found here](https://drive.google.com/file/d/17TBNfs1h0Lnolq5ZaomqE9pTzdoGjEWb/view?usp=sharing)

> Running 'download.py' will download the dataset that I used.

> 'model.py' contains code for the architecture of the generator and the discriminator that I used for training this Cycle-GAN

> 'utils.py' contains code that I used for preprocessing the images

> 'main.py' contains the code in which I used 'tf.distribute.MirroredStrategy()' for multi-GPU training but it stops after few steps in the first epoch. | non_test | training loop freezes and then stops when try to run cycle gan on multi gpu system on google gcp using tf distribute mirroredstrategy please make sure that this is a bug as per our we only address code doc bugs performance issues feature requests and build installation issues on github tag bug template system information have i written custom code as opposed to using a stock example script provided in tensorflow yes os platform and distribution e g linux ubuntu linux ubuntu mobile device e g iphone pixel samsung galaxy if the issue happens on mobile device nan tensorflow installed from source or binary source tensorflow version use command below python version bazel version if compiling from source nan gcc compiler version if compiling from source nan cuda cudnn version gpu model and memory nvidia tesla s of each you can collect some of this information using our environment capture you can also obtain the tensorflow version with tf python c import tensorflow as tf print tf git version tf version tf python c import tensorflow as tf print tf version git version tf version version describe the current behavior training loop stops after few steps in the first epoch itself describe the expected behavior training loop should run smoothly other info logs the link below contains a zip file in which the python scripts which i used to train this model on multi gpu using tf distribute mirroredstrategy is stored running download py will download the dataset that i used model py contains code for the architecture of the generator and the discriminator that i used for training this cycle gan utils py contains code that i used for preprocessing the images main py contains the code in which i used tf distribute mirroredstrategy for multi gpu training but it stops after few steps in the first epoch | 0 |

205,494 | 23,340,552,684 | IssuesEvent | 2022-08-09 13:43:22 | elastic/kibana | https://api.github.com/repos/elastic/kibana | closed | [Security Solution]Add HTTP header toggle remains enabled with zero header key value pair | bug impact:medium Team:Threat Hunting Team: SecuritySolution Team:Threat Hunting:Explore | **Describe the bug**

`Add HTTP header` toggle remains enabled with zero header key value pair

**Build Details:**

```

Version:8.4.0 BC1

Commit:58f7eaf0f8dc3c43cbfcd393e587f155e97b3d0d

Build:54999

```

**Steps**

- Go to Stack Management

- Select Webhook-Case Management connector

- Fill in below details

- connector name

- enable authentication and fill in the correct auth

- enable HTTP header toggle and add one header key value pair

- content-type:application/json

- now remove this by clicking on minus icon

- Observed that Add HTTP header toggle remains enabled with zero key value pair and also user can navigate to next step of connector form

-

Suggestion:

- Either restrict user to have at least one key value pair when toggle is enabled and disable the minus icon for that first key value field

- Turn Off the `Add HTTP Header` toggle as soon as user deletes all the header key value pair

**Screen-Cast**

https://user-images.githubusercontent.com/59917825/182149124-9d7c5e3b-e60b-4c20-ae58-daa476928897.mp4

| True | [Security Solution]Add HTTP header toggle remains enabled with zero header key value pair - **Describe the bug**

`Add HTTP header` toggle remains enabled with zero header key value pair

**Build Details:**

```

Version:8.4.0 BC1

Commit:58f7eaf0f8dc3c43cbfcd393e587f155e97b3d0d

Build:54999

```

**Steps**

- Go to Stack Management

- Select Webhook-Case Management connector

- Fill in below details

- connector name

- enable authentication and fill in the correct auth

- enable HTTP header toggle and add one header key value pair

- content-type:application/json

- now remove this by clicking on minus icon

- Observed that Add HTTP header toggle remains enabled with zero key value pair and also user can navigate to next step of connector form

-

Suggestion:

- Either restrict user to have at least one key value pair when toggle is enabled and disable the minus icon for that first key value field

- Turn Off the `Add HTTP Header` toggle as soon as user deletes all the header key value pair

**Screen-Cast**

https://user-images.githubusercontent.com/59917825/182149124-9d7c5e3b-e60b-4c20-ae58-daa476928897.mp4

| non_test | add http header toggle remains enabled with zero header key value pair describe the bug add http header toggle remains enabled with zero header key value pair build details version commit build steps go to stack management select webhook case management connector fill in below details connector name enable authentication and fill in the correct auth enable http header toggle and add one header key value pair content type application json now remove this by clicking on minus icon observed that add http header toggle remains enabled with zero key value pair and also user can navigate to next step of connector form suggestion either restrict user to have at least one key value pair when toggle is enabled and disable the minus icon for that first key value field turn off the add http header toggle as soon as user deletes all the header key value pair scre en cast | 0 |

126,758 | 26,909,537,172 | IssuesEvent | 2023-02-06 22:09:18 | MetaMask/design-tokens | https://api.github.com/repos/MetaMask/design-tokens | opened | [Ext] Insight Report: Tooltip | code design-system | ### **Description**

Fill out the `Tooltip` insight report from your findings from the audit

The insight report will be part of our decision-making framework and is intended to:

- Document all findings from the component audit

- Confirm as many component details as possible

- Mitigate component inconsistencies across Figma, Mobile and Extension

Include your thoughts on component name, description, API, and any comments or topics to discuss relating to the component or its makeup. We will review the audit and insight report in our Wednesday technical sync to finalize the details of the component for all platforms.

### **Technical Details**

The insight report should include the following for the component

- name

- description

- variants/props

- requirements (optional)

- discussion/questions (optional)

### **Acceptance Criteria**

- name, description, and variants/props are filled out and match other platforms where possible

### **References**

- [FigJam](https://www.figma.com/file/KP10I7OHiuUsGZ53xAgZTj/Popover-Audit?node-id=0%3A1&t=u3rGtzERyA5zkSPu-1)

- Read exercises `#05 Identify Existing Paradigms in Design and Code` and `#06 Identify Emerging and Interesting Paradigms in Design and Code` in the Design System in 90 Days workbook

| 1.0 | [Ext] Insight Report: Tooltip - ### **Description**

Fill out the `Tooltip` insight report from your findings from the audit

The insight report will be part of our decision-making framework and is intended to:

- Document all findings from the component audit

- Confirm as many component details as possible

- Mitigate component inconsistencies across Figma, Mobile and Extension

Include your thoughts on component name, description, API, and any comments or topics to discuss relating to the component or its makeup. We will review the audit and insight report in our Wednesday technical sync to finalize the details of the component for all platforms.

### **Technical Details**

The insight report should include the following for the component

- name

- description

- variants/props

- requirements (optional)

- discussion/questions (optional)

### **Acceptance Criteria**

- name, description, and variants/props are filled out and match other platforms where possible

### **References**

- [FigJam](https://www.figma.com/file/KP10I7OHiuUsGZ53xAgZTj/Popover-Audit?node-id=0%3A1&t=u3rGtzERyA5zkSPu-1)

- Read exercises `#05 Identify Existing Paradigms in Design and Code` and `#06 Identify Emerging and Interesting Paradigms in Design and Code` in the Design System in 90 Days workbook

| non_test | insight report tooltip description fill out the tooltip insight report from your findings from the audit the insight report will be part of our decision making framework and is intend to document all findings from the component audit confirm as many component details as possible mitigate component inconsistencies across figma mobile and extension include your thoughts on component name description api and any comments or topic to discuss relating to the component or it s make up we will review the audit and insight report in our wednesday technical sync to finalize the details of the component for all platforms technical details the insight report should include the following for the component name description variants props requirements optional discussion questions optional acceptance criteria name description variants props is filled out and matches with other platforms where possible references read exercised identify existing paradigms in design and code and identifyemergingandinteresting paradigms in design and code in the design system in days workbook | 0 |

820,802 | 30,789,669,914 | IssuesEvent | 2023-07-31 15:20:01 | RobotLocomotion/drake | https://api.github.com/repos/RobotLocomotion/drake | closed | System::Clone has incorrect SystemIDs for the ports | type: bug priority: medium component: system framework | ### What happened?

Initially reported by @AlexandreAmice ... the following test

```

GTEST_TEST(PendulumPlantTest, Clone) {
  const PendulumPlant<double> plant;
  auto clone = plant.Clone();
  auto context = clone->CreateDefaultContext();
  clone->get_input_port(0).FixValue(context.get(), Vector1d::Zero());
}

```

fails with

```

C++ exception with description "InputPort: The Context given as an argument was not created for this InputPort[0] (tau) of System ::_ (PendulumPlant<double>)" thrown in the test body.

```

Digging around in lldb (with a breakpoint on the last line), I see

```

(lldb) p plant.get_system_id()

(drake::systems::internal::SystemId) $5 = (value_ = 6)

(lldb) p clone->get_system_id()

(drake::systems::internal::SystemId) $6 = (value_ = 9)

(lldb) p plant.CreateDefaultContext()->get_system_id()

(drake::systems::internal::SystemId) $7 = (value_ = 6)

(lldb) p clone.CreateDefaultContext()->get_system_id()

(drake::systems::internal::SystemId) $8 = (value_ = 9)

(lldb) p clone->CreateDefaultContext()->get_system_id()

(drake::systems::internal::SystemId) $11 = (value_ = 9)

(lldb) p clone->get_input_port(0).owning_system_id_

(const drake::systems::internal::SystemId) $12 = (value_ = 8)

```

Stepping into the code, I believe that the problem probably happens [here](https://github.com/RobotLocomotion/drake/blob/8b25bb8cd378802e1b819d66b4fba724d4b2fcc9/systems/framework/system.cc#L42): it looks like the system's ID gets reset, but the ports' IDs do not.
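
To make the suspected mechanism concrete, here is a minimal, self-contained sketch of the same class of mismatch. It is a toy model only: `ToySystem`, `ToyPort`, `CloneWithStalePorts`, `CloneWithUpdatedPorts`, and `PortAcceptsOwnContext` are hypothetical names invented for illustration and are not Drake's classes or its actual clone implementation. In this toy the stale port ID equals the original system's ID, whereas the lldb session above shows a third value (8), so the sketch illustrates the kind of inconsistency rather than the exact sequence of events.

```cpp
#include <cassert>
#include <vector>

// Hypothetical toy model of the failure mode; none of these names are Drake's.
struct ToyPort {
  int owning_system_id;  // ID of the system this port believes it belongs to.
};

class ToySystem {
 public:
  ToySystem() : id_(NextId()) { ports_.push_back(ToyPort{id_}); }

  // Buggy clone: copies the ports verbatim, then assigns a fresh system ID,
  // so every copied port still carries a stale owner ID.
  ToySystem CloneWithStalePorts() const {
    ToySystem copy(*this);
    copy.id_ = NextId();
    return copy;
  }

  // Fixed clone: after assigning the fresh ID, re-stamp each port with it.
  ToySystem CloneWithUpdatedPorts() const {
    ToySystem copy = CloneWithStalePorts();
    for (ToyPort& port : copy.ports_) port.owning_system_id = copy.id_;
    return copy;
  }

  int id() const { return id_; }
  const ToyPort& port(int index) const { return ports_.at(index); }

  // Stand-in for the validation that threw: a context created by this system
  // carries id(), and a port accepts it only if the port's owner ID matches.
  bool PortAcceptsOwnContext(int index) const {
    return port(index).owning_system_id == id_;
  }

 private:
  // Hands out a new, unique ID on every call.
  static int NextId() {
    static int counter = 0;
    return ++counter;
  }

  int id_;
  std::vector<ToyPort> ports_;
};

// Exercises both clone variants.
void RunCloneDemo() {
  const ToySystem plant;
  assert(plant.PortAcceptsOwnContext(0));

  const ToySystem stale = plant.CloneWithStalePorts();
  assert(stale.id() != plant.id());
  assert(!stale.PortAcceptsOwnContext(0));  // Same class of mismatch as above.

  const ToySystem fixed = plant.CloneWithUpdatedPorts();
  assert(fixed.PortAcceptsOwnContext(0));
}
```

Calling `RunCloneDemo()` from a `main` exercises both paths. If the real cause matches this sketch, a fix would presumably need to refresh the owner ID on every port whenever the system's own ID is reset, but that is an assumption about the framework internals rather than something confirmed here.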

### Version

_No response_

### What operating system are you using?

_No response_

### What installation option are you using?

_No response_

### Relevant log output

_No response_ | 1.0 | System::Clone has incorrect SystemIDs for the ports - ### What happened?

Initially reported by @AlexandreAmice ... the following test

```

GTEST_TEST(PendulumPlantTest, Clone) {
  const PendulumPlant<double> plant;
  auto clone = plant.Clone();
  auto context = clone->CreateDefaultContext();
  clone->get_input_port(0).FixValue(context.get(), Vector1d::Zero());
}

```

fails with

```

C++ exception with description "InputPort: The Context given as an argument was not created for this InputPort[0] (tau) of System ::_ (PendulumPlant<double>)" thrown in the test body.

```

Digging around in lldb (with a breakpoint on the last line), I see

```

(lldb) p plant.get_system_id()

(drake::systems::internal::SystemId) $5 = (value_ = 6)

(lldb) p clone->get_system_id()

(drake::systems::internal::SystemId) $6 = (value_ = 9)

(lldb) p plant.CreateDefaultContext()->get_system_id()

(drake::systems::internal::SystemId) $7 = (value_ = 6)

(lldb) p clone.CreateDefaultContext()->get_system_id()

(drake::systems::internal::SystemId) $8 = (value_ = 9)

(lldb) p clone->CreateDefaultContext()->get_system_id()

(drake::systems::internal::SystemId) $11 = (value_ = 9)

(lldb) p clone->get_input_port(0).owning_system_id_

(const drake::systems::internal::SystemId) $12 = (value_ = 8)

```

Stepping into the code, I believe that the problem probably happens [here](https://github.com/RobotLocomotion/drake/blob/8b25bb8cd378802e1b819d66b4fba724d4b2fcc9/systems/framework/system.cc#L42): it looks like the system's ID gets reset, but the ports' IDs do not.

### Version

_No response_

### What operating system are you using?

_No response_

### What installation option are you using?

_No response_

### Relevant log output

_No response_ | non_test | system clone has incorrect systemids for the ports what happened initially reported by alexandreamice the following test gtest test pendulumplanttest clone const pendulumplant plant auto clone plant clone auto context clone createdefaultcontext clone get input port fixvalue context get zero fails with c exception with description inputport the context given as an argument was not created for this inputport tau of system pendulumplant thrown in the test body digging around in lldb with a breakpoint on the last line i see lldb p plant get system id drake systems internal systemid value lldb p clone get system id drake systems internal systemid value lldb p plant createdefaultcontext get system id drake systems internal systemid value lldb p clone createdefaultcontext get system id drake systems internal systemid value lldb p clone createdefaultcontext get system id drake systems internal systemid value lldb p clone get input port owning system id const drake systems internal systemid value stepping into the code i believe that the problem probably happens it looks like the system s id gets reset but the port s ids do not version no response what operating system are you using no response what installation option are you using no response relevant log output no response | 0 |

306,892 | 9,412,570,838 | IssuesEvent | 2019-04-10 04:42:40 | metabase/metabase | https://api.github.com/repos/metabase/metabase | closed | Metabase hangs with unknown reason, CPU usage full, saying "The database(H2) has been closed" | Bug Priority/P1 Running Metabase | - Your databases: (e.x. MariaDB)

- Metabase version: (e.x. 0.31.2)

- Metabase hosting environment: (e.x. Debian 9)

- Metabase internal database: (e.x. H2)

- *Repeatable steps to reproduce the issue*

After running for a long time, Metabase hangs and CPU usage is maxed out (400% on a 4-core CPU).

`kill` doesn't work, so I had to use `kill -9` to force termination. Sorry, I forgot to capture a `jstack` dump.

```

02-21 08:02:32 DEBUG sync.util :: STARTING: step 'sync-fks' for mysql Database 2 'xxxxxxx'

02-21 08:43:12 DEBUG metabase.middleware :: GET /api/user/current 200 (29 mins) (2 DB calls). Jetty threads: 8/50 (34 busy, 2 idle, 0 queued)

02-21 08:43:27 ERROR jdbcjobstore.JobStoreTX :: Couldn't rollback jdbc connection. The database has been closed [90098-197]

org.h2.jdbc.JdbcSQLException: The database has been closed [90098-197]

at org.h2.message.DbException.getJdbcSQLException(DbException.java:357)

at org.h2.message.DbException.get(DbException.java:179)

at org.h2.message.DbException.get(DbException.java:155)

at org.h2.message.DbException.get(DbException.java:144)

at org.h2.engine.Session.getTransaction(Session.java:1686)

at org.h2.engine.Session.getStatementSavepoint(Session.java:1696)

at org.h2.engine.Session.setSavepoint(Session.java:859)

at org.h2.command.Command.executeUpdate(Command.java:255)

at org.h2.jdbc.JdbcConnection.rollbackInternal(JdbcConnection.java:1558)

at org.h2.jdbc.JdbcConnection.rollback(JdbcConnection.java:518)

at com.mchange.v2.c3p0.impl.NewProxyConnection.rollback(NewProxyConnection.java:1033)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.quartz.impl.jdbcjobstore.AttributeRestoringConnectionInvocationHandler.invoke(AttributeRestoringConnectionInvocationHandler.java:73)

at com.sun.proxy.$Proxy12.rollback(Unknown Source)

at org.quartz.impl.jdbcjobstore.JobStoreSupport.rollbackConnection(JobStoreSupport.java:3639)

at org.quartz.impl.jdbcjobstore.JobStoreSupport.doCheckin(JobStoreSupport.java:3264)

at org.quartz.impl.jdbcjobstore.JobStoreSupport$ClusterManager.manage(JobStoreSupport.java:3857)

at org.quartz.impl.jdbcjobstore.JobStoreSupport$ClusterManager.run(JobStoreSupport.java:3894)

02-21 08:43:31 WARN server.HttpChannel :: /api/collection/root

org.h2.jdbc.JdbcSQLException: The database has been closed [90098-197]

at org.h2.message.DbException.getJdbcSQLException(DbException.java:357)

at org.h2.message.DbException.get(DbException.java:179)

at org.h2.message.DbException.get(DbException.java:155)

at org.h2.message.DbException.get(DbException.java:144)

at org.h2.engine.Database.checkPowerOff(Database.java:536)

at org.h2.command.Command.executeQuery(Command.java:228)

at org.h2.jdbc.JdbcPreparedStatement.executeQuery(JdbcPreparedStatement.java:114)

at com.mchange.v2.c3p0.impl.NewProxyPreparedStatement.executeQuery(NewProxyPreparedStatement.java:353)

at clojure.java.jdbc$execute_query_with_params.invokeStatic(jdbc.clj:1002)

at clojure.java.jdbc$execute_query_with_params.invoke(jdbc.clj:996)

at clojure.java.jdbc$db_query_with_resultset_STAR_.invokeStatic(jdbc.clj:1025)

at clojure.java.jdbc$db_query_with_resultset_STAR_.invoke(jdbc.clj:1005)

at clojure.java.jdbc$query.invokeStatic(jdbc.clj:1099)

at clojure.java.jdbc$query.invoke(jdbc.clj:1056)

at toucan.db$query.invokeStatic(db.clj:275)

at toucan.db$query.doInvoke(db.clj:271)

at clojure.lang.RestFn.invoke(RestFn.java:410)

at toucan.db$simple_select.invokeStatic(db.clj:379)

at toucan.db$simple_select.invoke(db.clj:368)

at toucan.db$simple_select_one.invokeStatic(db.clj:405)

at toucan.db$simple_select_one.invoke(db.clj:394)

at toucan.db$select_one.invokeStatic(db.clj:606)

at toucan.db$select_one.doInvoke(db.clj:599)

at clojure.lang.RestFn.invoke(RestFn.java:516)

at metabase.middleware$session_with_id.invokeStatic(middleware.clj:73)

at metabase.middleware$session_with_id.invoke(middleware.clj:70)

at metabase.middleware$current_user_info_for_session.invokeStatic(middleware.clj:94)

at metabase.middleware$current_user_info_for_session.invoke(middleware.clj:90)

at metabase.middleware$add_current_user_info.invokeStatic(middleware.clj:100)

at metabase.middleware$add_current_user_info.invoke(middleware.clj:99)

at clojure.core$comp$fn__5529.invoke(core.clj:2561)

at clojure.core$comp$fn__5529.invoke(core.clj:2561)

at clojure.core$comp$fn__5529.invoke(core.clj:2561)

at metabase.middleware$maybe_set_site_url$fn__56239.invoke(middleware.clj:290)

at puppetlabs.i18n.core$locale_negotiator$fn__124.invoke(core.clj:357)

at ring.middleware.cookies$wrap_cookies$fn__62401.invoke(cookies.clj:175)

at ring.middleware.session$wrap_session$fn__62658.invoke(session.clj:108)

at metabase.middleware$add_content_type$fn__56232.invoke(middleware.clj:262)

at ring.middleware.gzip$wrap_gzip$fn__62432.invoke(gzip.clj:65)

at ring.adapter.jetty$proxy_handler$fn__62260.invoke(jetty.clj:25)

at ring.adapter.jetty.proxy$org.eclipse.jetty.server.handler.AbstractHandler$ff19274a.handle(Unknown Source)

at org.eclipse.jetty.server.handler.HandlerWrapper.handle(HandlerWrapper.java:132)

at org.eclipse.jetty.server.Server.handle(Server.java:531)

at org.eclipse.jetty.server.HttpChannel.handle(HttpChannel.java:352)

at org.eclipse.jetty.server.HttpConnection.onFillable(HttpConnection.java:260)

at org.eclipse.jetty.io.AbstractConnection$ReadCallback.succeeded(AbstractConnection.java:281)

at org.eclipse.jetty.io.FillInterest.fillable(FillInterest.java:102)

at org.eclipse.jetty.io.ChannelEndPoint$2.run(ChannelEndPoint.java:118)

at org.eclipse.jetty.util.thread.QueuedThreadPool.runJob(QueuedThreadPool.java:762)

at org.eclipse.jetty.util.thread.QueuedThreadPool$2.run(QueuedThreadPool.java:680)

at java.lang.Thread.run(Thread.java:748)

02-21 08:43:41 ERROR jdbcjobstore.JobStoreTX :: Couldn't rollback jdbc connection. The database has been closed [90098-197]

org.h2.jdbc.JdbcSQLException: The database has been closed [90098-197]

at org.h2.message.DbException.getJdbcSQLException(DbException.java:357)

at org.h2.message.DbException.get(DbException.java:179)

at org.h2.message.DbException.get(DbException.java:155)

at org.h2.message.DbException.get(DbException.java:144)

at org.h2.engine.Session.getTransaction(Session.java:1686)

at org.h2.engine.Session.getStatementSavepoint(Session.java:1696)

at org.h2.engine.Session.setSavepoint(Session.java:859)

at org.h2.command.Command.executeUpdate(Command.java:255)

at org.h2.jdbc.JdbcConnection.rollbackInternal(JdbcConnection.java:1558)

at org.h2.jdbc.JdbcConnection.rollback(JdbcConnection.java:518)

at com.mchange.v2.c3p0.impl.NewProxyConnection.rollback(NewProxyConnection.java:1033)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.quartz.impl.jdbcjobstore.AttributeRestoringConnectionInvocationHandler.invoke(AttributeRestoringConnectionInvocationHandler.java:73)

at com.sun.proxy.$Proxy12.rollback(Unknown Source)

at org.quartz.impl.jdbcjobstore.JobStoreSupport.rollbackConnection(JobStoreSupport.java:3639)

at org.quartz.impl.jdbcjobstore.JobStoreSupport.doRecoverMisfires(JobStoreSupport.java:3183)

at org.quartz.impl.jdbcjobstore.JobStoreSupport$MisfireHandler.manage(JobStoreSupport.java:3934)

at org.quartz.impl.jdbcjobstore.JobStoreSupport$MisfireHandler.run(JobStoreSupport.java:3955)

02-21 08:43:41 WARN server.HttpChannel :: /api/dashboard/1

org.h2.jdbc.JdbcSQLException: The database has been closed [90098-197]

at org.h2.message.DbException.getJdbcSQLException(DbException.java:357)

at org.h2.message.DbException.get(DbException.java:179)

at org.h2.message.DbException.get(DbException.java:155)

at org.h2.message.DbException.get(DbException.java:144)

at org.h2.engine.Database.checkPowerOff(Database.java:536)

at org.h2.command.Command.executeQuery(Command.java:228)

at org.h2.jdbc.JdbcPreparedStatement.executeQuery(JdbcPreparedStatement.java:114)

at com.mchange.v2.c3p0.impl.NewProxyPreparedStatement.executeQuery(NewProxyPreparedStatement.java:353)

at clojure.java.jdbc$execute_query_with_params.invokeStatic(jdbc.clj:1002)

at clojure.java.jdbc$execute_query_with_params.invoke(jdbc.clj:996)

at clojure.java.jdbc$db_query_with_resultset_STAR_.invokeStatic(jdbc.clj:1025)

at clojure.java.jdbc$db_query_with_resultset_STAR_.invoke(jdbc.clj:1005)

at clojure.java.jdbc$query.invokeStatic(jdbc.clj:1099)

at clojure.java.jdbc$query.invoke(jdbc.clj:1056)

at toucan.db$query.invokeStatic(db.clj:275)

at toucan.db$query.doInvoke(db.clj:271)

at clojure.lang.RestFn.invoke(RestFn.java:410)

at toucan.db$simple_select.invokeStatic(db.clj:379)

at toucan.db$simple_select.invoke(db.clj:368)

at toucan.db$simple_select_one.invokeStatic(db.clj:405)

at toucan.db$simple_select_one.invoke(db.clj:394)

at toucan.db$select_one.invokeStatic(db.clj:606)

at toucan.db$select_one.doInvoke(db.clj:599)

at clojure.lang.RestFn.invoke(RestFn.java:516)

at metabase.middleware$session_with_id.invokeStatic(middleware.clj:73)

at metabase.middleware$session_with_id.invoke(middleware.clj:70)

at metabase.middleware$current_user_info_for_session.invokeStatic(middleware.clj:94)

at metabase.middleware$current_user_info_for_session.invoke(middleware.clj:90)

at metabase.middleware$add_current_user_info.invokeStatic(middleware.clj:100)

at metabase.middleware$add_current_user_info.invoke(middleware.clj:99)

at clojure.core$comp$fn__5529.invoke(core.clj:2561)

at clojure.core$comp$fn__5529.invoke(core.clj:2561)

at clojure.core$comp$fn__5529.invoke(core.clj:2561)

at metabase.middleware$maybe_set_site_url$fn__56239.invoke(middleware.clj:290)

at puppetlabs.i18n.core$locale_negotiator$fn__124.invoke(core.clj:357)

at ring.middleware.cookies$wrap_cookies$fn__62401.invoke(cookies.clj:175)

at ring.middleware.session$wrap_session$fn__62658.invoke(session.clj:108)

at metabase.middleware$add_content_type$fn__56232.invoke(middleware.clj:262)

at ring.middleware.gzip$wrap_gzip$fn__62432.invoke(gzip.clj:65)

at ring.adapter.jetty$proxy_handler$fn__62260.invoke(jetty.clj:25)

at ring.adapter.jetty.proxy$org.eclipse.jetty.server.handler.AbstractHandler$ff19274a.handle(Unknown Source)

at org.eclipse.jetty.server.handler.HandlerWrapper.handle(HandlerWrapper.java:132)

at org.eclipse.jetty.server.Server.handle(Server.java:531)

at org.eclipse.jetty.server.HttpChannel.handle(HttpChannel.java:352)

at org.eclipse.jetty.server.HttpConnection.onFillable(HttpConnection.java:260)

at org.eclipse.jetty.io.AbstractConnection$ReadCallback.succeeded(AbstractConnection.java:281)

at org.eclipse.jetty.io.FillInterest.fillable(FillInterest.java:102)

at org.eclipse.jetty.io.ChannelEndPoint$2.run(ChannelEndPoint.java:118)

at org.eclipse.jetty.util.thread.QueuedThreadPool.runJob(QueuedThreadPool.java:762)

at org.eclipse.jetty.util.thread.QueuedThreadPool$2.run(QueuedThreadPool.java:680)

at java.lang.Thread.run(Thread.java:748)

```

| 1.0 | Metabase hangs with unknown reason, CPU usage full, saying "The database(H2) has been closed" - - Your databases: (e.x. MariaDB)

- Metabase version: (e.x. 0.31.2)

- Metabase hosting environment: (e.x. Debian 9)

- Metabase internal database: (e.x. H2)

- *Repeatable steps to reproduce the issue*

After running for a long time, Metabase hangs and CPU usage is maxed out (400% on a 4-core CPU).

`kill` doesn't work, so I have to use `kill -9` to force termination. Sorry, I forgot to run `jstack`.

```

02-21 08:02:32 DEBUG sync.util :: STARTING: step 'sync-fks' for mysql Database 2 'xxxxxxx'

02-21 08:43:12 DEBUG metabase.middleware :: GET /api/user/current 200 (29 mins) (2 DB calls). Jetty threads: 8/50 (34 busy, 2 idle, 0 queued)

02-21 08:43:27 ERROR jdbcjobstore.JobStoreTX :: Couldn't rollback jdbc connection. The database has been closed [90098-197]

org.h2.jdbc.JdbcSQLException: The database has been closed [90098-197]

at org.h2.message.DbException.getJdbcSQLException(DbException.java:357)

at org.h2.message.DbException.get(DbException.java:179)

at org.h2.message.DbException.get(DbException.java:155)

at org.h2.message.DbException.get(DbException.java:144)

at org.h2.engine.Session.getTransaction(Session.java:1686)

at org.h2.engine.Session.getStatementSavepoint(Session.java:1696)

at org.h2.engine.Session.setSavepoint(Session.java:859)

at org.h2.command.Command.executeUpdate(Command.java:255)

at org.h2.jdbc.JdbcConnection.rollbackInternal(JdbcConnection.java:1558)

at org.h2.jdbc.JdbcConnection.rollback(JdbcConnection.java:518)

at com.mchange.v2.c3p0.impl.NewProxyConnection.rollback(NewProxyConnection.java:1033)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.quartz.impl.jdbcjobstore.AttributeRestoringConnectionInvocationHandler.invoke(AttributeRestoringConnectionInvocationHandler.java:73)

at com.sun.proxy.$Proxy12.rollback(Unknown Source)

at org.quartz.impl.jdbcjobstore.JobStoreSupport.rollbackConnection(JobStoreSupport.java:3639)

at org.quartz.impl.jdbcjobstore.JobStoreSupport.doCheckin(JobStoreSupport.java:3264)

at org.quartz.impl.jdbcjobstore.JobStoreSupport$ClusterManager.manage(JobStoreSupport.java:3857)

at org.quartz.impl.jdbcjobstore.JobStoreSupport$ClusterManager.run(JobStoreSupport.java:3894)

02-21 08:43:31 WARN server.HttpChannel :: /api/collection/root

org.h2.jdbc.JdbcSQLException: The database has been closed [90098-197]

at org.h2.message.DbException.getJdbcSQLException(DbException.java:357)

at org.h2.message.DbException.get(DbException.java:179)

at org.h2.message.DbException.get(DbException.java:155)

at org.h2.message.DbException.get(DbException.java:144)

at org.h2.engine.Database.checkPowerOff(Database.java:536)

at org.h2.command.Command.executeQuery(Command.java:228)

at org.h2.jdbc.JdbcPreparedStatement.executeQuery(JdbcPreparedStatement.java:114)

at com.mchange.v2.c3p0.impl.NewProxyPreparedStatement.executeQuery(NewProxyPreparedStatement.java:353)

at clojure.java.jdbc$execute_query_with_params.invokeStatic(jdbc.clj:1002)

at clojure.java.jdbc$execute_query_with_params.invoke(jdbc.clj:996)

at clojure.java.jdbc$db_query_with_resultset_STAR_.invokeStatic(jdbc.clj:1025)

at clojure.java.jdbc$db_query_with_resultset_STAR_.invoke(jdbc.clj:1005)

at clojure.java.jdbc$query.invokeStatic(jdbc.clj:1099)

at clojure.java.jdbc$query.invoke(jdbc.clj:1056)

at toucan.db$query.invokeStatic(db.clj:275)

at toucan.db$query.doInvoke(db.clj:271)

at clojure.lang.RestFn.invoke(RestFn.java:410)

at toucan.db$simple_select.invokeStatic(db.clj:379)

at toucan.db$simple_select.invoke(db.clj:368)

at toucan.db$simple_select_one.invokeStatic(db.clj:405)

at toucan.db$simple_select_one.invoke(db.clj:394)

at toucan.db$select_one.invokeStatic(db.clj:606)

at toucan.db$select_one.doInvoke(db.clj:599)

at clojure.lang.RestFn.invoke(RestFn.java:516)

at metabase.middleware$session_with_id.invokeStatic(middleware.clj:73)

at metabase.middleware$session_with_id.invoke(middleware.clj:70)

at metabase.middleware$current_user_info_for_session.invokeStatic(middleware.clj:94)

at metabase.middleware$current_user_info_for_session.invoke(middleware.clj:90)

at metabase.middleware$add_current_user_info.invokeStatic(middleware.clj:100)

at metabase.middleware$add_current_user_info.invoke(middleware.clj:99)

at clojure.core$comp$fn__5529.invoke(core.clj:2561)

at clojure.core$comp$fn__5529.invoke(core.clj:2561)

at clojure.core$comp$fn__5529.invoke(core.clj:2561)

at metabase.middleware$maybe_set_site_url$fn__56239.invoke(middleware.clj:290)

at puppetlabs.i18n.core$locale_negotiator$fn__124.invoke(core.clj:357)

at ring.middleware.cookies$wrap_cookies$fn__62401.invoke(cookies.clj:175)

at ring.middleware.session$wrap_session$fn__62658.invoke(session.clj:108)

at metabase.middleware$add_content_type$fn__56232.invoke(middleware.clj:262)

at ring.middleware.gzip$wrap_gzip$fn__62432.invoke(gzip.clj:65)

at ring.adapter.jetty$proxy_handler$fn__62260.invoke(jetty.clj:25)

at ring.adapter.jetty.proxy$org.eclipse.jetty.server.handler.AbstractHandler$ff19274a.handle(Unknown Source)

at org.eclipse.jetty.server.handler.HandlerWrapper.handle(HandlerWrapper.java:132)

at org.eclipse.jetty.server.Server.handle(Server.java:531)

at org.eclipse.jetty.server.HttpChannel.handle(HttpChannel.java:352)

at org.eclipse.jetty.server.HttpConnection.onFillable(HttpConnection.java:260)

at org.eclipse.jetty.io.AbstractConnection$ReadCallback.succeeded(AbstractConnection.java:281)

at org.eclipse.jetty.io.FillInterest.fillable(FillInterest.java:102)

at org.eclipse.jetty.io.ChannelEndPoint$2.run(ChannelEndPoint.java:118)

at org.eclipse.jetty.util.thread.QueuedThreadPool.runJob(QueuedThreadPool.java:762)

at org.eclipse.jetty.util.thread.QueuedThreadPool$2.run(QueuedThreadPool.java:680)

at java.lang.Thread.run(Thread.java:748)

02-21 08:43:41 ERROR jdbcjobstore.JobStoreTX :: Couldn't rollback jdbc connection. The database has been closed [90098-197]

org.h2.jdbc.JdbcSQLException: The database has been closed [90098-197]

at org.h2.message.DbException.getJdbcSQLException(DbException.java:357)

at org.h2.message.DbException.get(DbException.java:179)

at org.h2.message.DbException.get(DbException.java:155)

at org.h2.message.DbException.get(DbException.java:144)

at org.h2.engine.Session.getTransaction(Session.java:1686)

at org.h2.engine.Session.getStatementSavepoint(Session.java:1696)

at org.h2.engine.Session.setSavepoint(Session.java:859)

at org.h2.command.Command.executeUpdate(Command.java:255)

at org.h2.jdbc.JdbcConnection.rollbackInternal(JdbcConnection.java:1558)

at org.h2.jdbc.JdbcConnection.rollback(JdbcConnection.java:518)

at com.mchange.v2.c3p0.impl.NewProxyConnection.rollback(NewProxyConnection.java:1033)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.quartz.impl.jdbcjobstore.AttributeRestoringConnectionInvocationHandler.invoke(AttributeRestoringConnectionInvocationHandler.java:73)

at com.sun.proxy.$Proxy12.rollback(Unknown Source)

at org.quartz.impl.jdbcjobstore.JobStoreSupport.rollbackConnection(JobStoreSupport.java:3639)

at org.quartz.impl.jdbcjobstore.JobStoreSupport.doRecoverMisfires(JobStoreSupport.java:3183)

at org.quartz.impl.jdbcjobstore.JobStoreSupport$MisfireHandler.manage(JobStoreSupport.java:3934)

at org.quartz.impl.jdbcjobstore.JobStoreSupport$MisfireHandler.run(JobStoreSupport.java:3955)

02-21 08:43:41 WARN server.HttpChannel :: /api/dashboard/1

org.h2.jdbc.JdbcSQLException: The database has been closed [90098-197]

at org.h2.message.DbException.getJdbcSQLException(DbException.java:357)

at org.h2.message.DbException.get(DbException.java:179)

at org.h2.message.DbException.get(DbException.java:155)

at org.h2.message.DbException.get(DbException.java:144)

at org.h2.engine.Database.checkPowerOff(Database.java:536)

at org.h2.command.Command.executeQuery(Command.java:228)

at org.h2.jdbc.JdbcPreparedStatement.executeQuery(JdbcPreparedStatement.java:114)

at com.mchange.v2.c3p0.impl.NewProxyPreparedStatement.executeQuery(NewProxyPreparedStatement.java:353)

at clojure.java.jdbc$execute_query_with_params.invokeStatic(jdbc.clj:1002)

at clojure.java.jdbc$execute_query_with_params.invoke(jdbc.clj:996)

at clojure.java.jdbc$db_query_with_resultset_STAR_.invokeStatic(jdbc.clj:1025)

at clojure.java.jdbc$db_query_with_resultset_STAR_.invoke(jdbc.clj:1005)

at clojure.java.jdbc$query.invokeStatic(jdbc.clj:1099)

at clojure.java.jdbc$query.invoke(jdbc.clj:1056)

at toucan.db$query.invokeStatic(db.clj:275)

at toucan.db$query.doInvoke(db.clj:271)

at clojure.lang.RestFn.invoke(RestFn.java:410)

at toucan.db$simple_select.invokeStatic(db.clj:379)

at toucan.db$simple_select.invoke(db.clj:368)

at toucan.db$simple_select_one.invokeStatic(db.clj:405)

at toucan.db$simple_select_one.invoke(db.clj:394)

at toucan.db$select_one.invokeStatic(db.clj:606)

at toucan.db$select_one.doInvoke(db.clj:599)

at clojure.lang.RestFn.invoke(RestFn.java:516)

at metabase.middleware$session_with_id.invokeStatic(middleware.clj:73)

at metabase.middleware$session_with_id.invoke(middleware.clj:70)

at metabase.middleware$current_user_info_for_session.invokeStatic(middleware.clj:94)

at metabase.middleware$current_user_info_for_session.invoke(middleware.clj:90)

at metabase.middleware$add_current_user_info.invokeStatic(middleware.clj:100)

at metabase.middleware$add_current_user_info.invoke(middleware.clj:99)

at clojure.core$comp$fn__5529.invoke(core.clj:2561)

at clojure.core$comp$fn__5529.invoke(core.clj:2561)

at clojure.core$comp$fn__5529.invoke(core.clj:2561)

at metabase.middleware$maybe_set_site_url$fn__56239.invoke(middleware.clj:290)

at puppetlabs.i18n.core$locale_negotiator$fn__124.invoke(core.clj:357)

at ring.middleware.cookies$wrap_cookies$fn__62401.invoke(cookies.clj:175)

at ring.middleware.session$wrap_session$fn__62658.invoke(session.clj:108)

at metabase.middleware$add_content_type$fn__56232.invoke(middleware.clj:262)

at ring.middleware.gzip$wrap_gzip$fn__62432.invoke(gzip.clj:65)

at ring.adapter.jetty$proxy_handler$fn__62260.invoke(jetty.clj:25)

at ring.adapter.jetty.proxy$org.eclipse.jetty.server.handler.AbstractHandler$ff19274a.handle(Unknown Source)

at org.eclipse.jetty.server.handler.HandlerWrapper.handle(HandlerWrapper.java:132)

at org.eclipse.jetty.server.Server.handle(Server.java:531)

at org.eclipse.jetty.server.HttpChannel.handle(HttpChannel.java:352)

at org.eclipse.jetty.server.HttpConnection.onFillable(HttpConnection.java:260)

at org.eclipse.jetty.io.AbstractConnection$ReadCallback.succeeded(AbstractConnection.java:281)

at org.eclipse.jetty.io.FillInterest.fillable(FillInterest.java:102)

at org.eclipse.jetty.io.ChannelEndPoint$2.run(ChannelEndPoint.java:118)

at org.eclipse.jetty.util.thread.QueuedThreadPool.runJob(QueuedThreadPool.java:762)

at org.eclipse.jetty.util.thread.QueuedThreadPool$2.run(QueuedThreadPool.java:680)

at java.lang.Thread.run(Thread.java:748)

```

| non_test | metabase hangs with unknown reason cpu usage full saying the database has been closed your databases e x mariadb metabase version e x metabase hosting environment e x debian metabase internal database e x repeatable steps to reproduce the issue after long time run the metabase hangs and cpu is full at a core cpu kill doesn t work and i have to do kill to force terminating sorry i forgot to do jstack debug sync util starting step sync fks for mysql database xxxxxxx debug metabase middleware get api user current mins db calls jetty threads busy idle queued error jdbcjobstore jobstoretx couldn t rollback jdbc connection the database has been closed org jdbc jdbcsqlexception the database has been closed at org message dbexception getjdbcsqlexception dbexception java at org message dbexception get dbexception java at org message dbexception get dbexception java at org message dbexception get dbexception java at org engine session gettransaction session java at org engine session getstatementsavepoint session java at org engine session setsavepoint session java at org command command executeupdate command java at org jdbc jdbcconnection rollbackinternal jdbcconnection java at org jdbc jdbcconnection rollback jdbcconnection java at com mchange impl newproxyconnection rollback newproxyconnection java at sun reflect nativemethodaccessorimpl native method at sun reflect nativemethodaccessorimpl invoke nativemethodaccessorimpl java at sun reflect delegatingmethodaccessorimpl invoke delegatingmethodaccessorimpl java at java lang reflect method invoke method java at org quartz impl jdbcjobstore attributerestoringconnectioninvocationhandler invoke attributerestoringconnectioninvocationhandler java at com sun proxy rollback unknown source at org quartz impl jdbcjobstore jobstoresupport rollbackconnection jobstoresupport java at org quartz impl jdbcjobstore jobstoresupport docheckin jobstoresupport java at org quartz impl jdbcjobstore jobstoresupport clustermanager 
manage jobstoresupport java at org quartz impl jdbcjobstore jobstoresupport clustermanager run jobstoresupport java warn server httpchannel api collection root org jdbc jdbcsqlexception the database has been closed at org message dbexception getjdbcsqlexception dbexception java at org message dbexception get dbexception java at org message dbexception get dbexception java at org message dbexception get dbexception java at org engine database checkpoweroff database java at org command command executequery command java at org jdbc jdbcpreparedstatement executequery jdbcpreparedstatement java at com mchange impl newproxypreparedstatement executequery newproxypreparedstatement java at clojure java jdbc execute query with params invokestatic jdbc clj at clojure java jdbc execute query with params invoke jdbc clj at clojure java jdbc db query with resultset star invokestatic jdbc clj at clojure java jdbc db query with resultset star invoke jdbc clj at clojure java jdbc query invokestatic jdbc clj at clojure java jdbc query invoke jdbc clj at toucan db query invokestatic db clj at toucan db query doinvoke db clj at clojure lang restfn invoke restfn java at toucan db simple select invokestatic db clj at toucan db simple select invoke db clj at toucan db simple select one invokestatic db clj at toucan db simple select one invoke db clj at toucan db select one invokestatic db clj at toucan db select one doinvoke db clj at clojure lang restfn invoke restfn java at metabase middleware session with id invokestatic middleware clj at metabase middleware session with id invoke middleware clj at metabase middleware current user info for session invokestatic middleware clj at metabase middleware current user info for session invoke middleware clj at metabase middleware add current user info invokestatic middleware clj at metabase middleware add current user info invoke middleware clj at clojure core comp fn invoke core clj at clojure core comp fn invoke core clj at clojure core comp 
fn invoke core clj at metabase middleware maybe set site url fn invoke middleware clj at puppetlabs core locale negotiator fn invoke core clj at ring middleware cookies wrap cookies fn invoke cookies clj at ring middleware session wrap session fn invoke session clj at metabase middleware add content type fn invoke middleware clj at ring middleware gzip wrap gzip fn invoke gzip clj at ring adapter jetty proxy handler fn invoke jetty clj at ring adapter jetty proxy org eclipse jetty server handler abstracthandler handle unknown source at org eclipse jetty server handler handlerwrapper handle handlerwrapper java at org eclipse jetty server server handle server java at org eclipse jetty server httpchannel handle httpchannel java at org eclipse jetty server httpconnection onfillable httpconnection java at org eclipse jetty io abstractconnection readcallback succeeded abstractconnection java at org eclipse jetty io fillinterest fillable fillinterest java at org eclipse jetty io channelendpoint run channelendpoint java at org eclipse jetty util thread queuedthreadpool runjob queuedthreadpool java at org eclipse jetty util thread queuedthreadpool run queuedthreadpool java at java lang thread run thread java error jdbcjobstore jobstoretx couldn t rollback jdbc connection the database has been closed org jdbc jdbcsqlexception the database has been closed at org message dbexception getjdbcsqlexception dbexception java at org message dbexception get dbexception java at org message dbexception get dbexception java at org message dbexception get dbexception java at org engine session gettransaction session java at org engine session getstatementsavepoint session java at org engine session setsavepoint session java at org command command executeupdate command java at org jdbc jdbcconnection rollbackinternal jdbcconnection java at org jdbc jdbcconnection rollback jdbcconnection java at com mchange impl newproxyconnection rollback newproxyconnection java at sun reflect 
nativemethodaccessorimpl native method at sun reflect nativemethodaccessorimpl invoke nativemethodaccessorimpl java at sun reflect delegatingmethodaccessorimpl invoke delegatingmethodaccessorimpl java at java lang reflect method invoke method java at org quartz impl jdbcjobstore attributerestoringconnectioninvocationhandler invoke attributerestoringconnectioninvocationhandler java at com sun proxy rollback unknown source at org quartz impl jdbcjobstore jobstoresupport rollbackconnection jobstoresupport java at org quartz impl jdbcjobstore jobstoresupport dorecovermisfires jobstoresupport java at org quartz impl jdbcjobstore jobstoresupport misfirehandler manage jobstoresupport java at org quartz impl jdbcjobstore jobstoresupport misfirehandler run jobstoresupport java warn server httpchannel api dashboard org jdbc jdbcsqlexception the database has been closed at org message dbexception getjdbcsqlexception dbexception java at org message dbexception get dbexception java at org message dbexception get dbexception java at org message dbexception get dbexception java at org engine database checkpoweroff database java at org command command executequery command java at org jdbc jdbcpreparedstatement executequery jdbcpreparedstatement java at com mchange impl newproxypreparedstatement executequery newproxypreparedstatement java at clojure java jdbc execute query with params invokestatic jdbc clj at clojure java jdbc execute query with params invoke jdbc clj at clojure java jdbc db query with resultset star invokestatic jdbc clj at clojure java jdbc db query with resultset star invoke jdbc clj at clojure java jdbc query invokestatic jdbc clj at clojure java jdbc query invoke jdbc clj at toucan db query invokestatic db clj at toucan db query doinvoke db clj at clojure lang restfn invoke restfn java at toucan db simple select invokestatic db clj at toucan db simple select invoke db clj at toucan db simple select one invokestatic db clj at toucan db simple select one invoke 
db clj at toucan db select one invokestatic db clj at toucan db select one doinvoke db clj at clojure lang restfn invoke restfn java at metabase middleware session with id invokestatic middleware clj at metabase middleware session with id invoke middleware clj at metabase middleware current user info for session invokestatic middleware clj at metabase middleware current user info for session invoke middleware clj at metabase middleware add current user info invokestatic middleware clj at metabase middleware add current user info invoke middleware clj at clojure core comp fn invoke core clj at clojure core comp fn invoke core clj at clojure core comp fn invoke core clj at metabase middleware maybe set site url fn invoke middleware clj at puppetlabs core locale negotiator fn invoke core clj at ring middleware cookies wrap cookies fn invoke cookies clj at ring middleware session wrap session fn invoke session clj at metabase middleware add content type fn invoke middleware clj at ring middleware gzip wrap gzip fn invoke gzip clj at ring adapter jetty proxy handler fn invoke jetty clj at ring adapter jetty proxy org eclipse jetty server handler abstracthandler handle unknown source at org eclipse jetty server handler handlerwrapper handle handlerwrapper java at org eclipse jetty server server handle server java at org eclipse jetty server httpchannel handle httpchannel java at org eclipse jetty server httpconnection onfillable httpconnection java at org eclipse jetty io abstractconnection readcallback succeeded abstractconnection java at org eclipse jetty io fillinterest fillable fillinterest java at org eclipse jetty io channelendpoint run channelendpoint java at org eclipse jetty util thread queuedthreadpool runjob queuedthreadpool java at org eclipse jetty util thread queuedthreadpool run queuedthreadpool java at java lang thread run thread java | 0 |

114,281 | 14,544,513,273 | IssuesEvent | 2020-12-15 18:17:22 | cloudfour/cloudfour.com-patterns | https://api.github.com/repos/cloudfour/cloudfour.com-patterns | opened | Button group pattern(s) | size:2 🎨 design | We could use a layout object for aligning a group of related buttons. This can be seen in our post-article notification area:

<img width="793" alt="Screen Shot 2020-12-15 at 10 15 08 AM" src="https://user-images.githubusercontent.com/69633/102255222-9fc30a80-3ebe-11eb-8695-ed47d764ede9.png">

And when replying to comments:

<img width="321" alt="Screen Shot 2020-12-15 at 10 15 59 AM" src="https://user-images.githubusercontent.com/69633/102255242-a782af00-3ebe-11eb-9de5-9608bc3428b6.png">

| 1.0 | Button group pattern(s) - We could use a layout object for aligning a group of related buttons. This can be seen in our post-article notification area:

<img width="793" alt="Screen Shot 2020-12-15 at 10 15 08 AM" src="https://user-images.githubusercontent.com/69633/102255222-9fc30a80-3ebe-11eb-8695-ed47d764ede9.png">

And when replying to comments:

<img width="321" alt="Screen Shot 2020-12-15 at 10 15 59 AM" src="https://user-images.githubusercontent.com/69633/102255242-a782af00-3ebe-11eb-9de5-9608bc3428b6.png">

| non_test | button group pattern s we could use a layout object for aligning a group of related buttons this can be seen in our post article notification area img width alt screen shot at am src and when replying to comments img width alt screen shot at am src | 0 |

134,840 | 12,627,960,990 | IssuesEvent | 2020-06-15 00:22:57 | tamasfe/taplo | https://api.github.com/repos/tamasfe/taplo | closed | Documentation | documentation | Proper documentation must be written before releasing 1.0.0.

This issue is just simply a reminder and an entry in the checklist of the milestone. | 1.0 | Documentation - Proper documentation must be written before releasing 1.0.0.

This issue is just simply a reminder and an entry in the checklist of the milestone. | non_test | documentation proper documentation must be written before releasing this issue is just simply a reminder and an entry in the checklist of the milestone | 0 |

248,198 | 21,002,517,685 | IssuesEvent | 2022-03-29 18:54:57 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | opened | roachtest: psycopg failed | C-test-failure O-robot O-roachtest branch-master release-blocker | roachtest.psycopg [failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=4718770&tab=buildLog) with [artifacts](https://teamcity.cockroachdb.com/viewLog.html?buildId=4718770&tab=artifacts#/psycopg) on master @ [327f886758e64973e9e6ed221688622b6e1bde69](https://github.com/cockroachdb/cockroach/commits/327f886758e64973e9e6ed221688622b6e1bde69):

```
The test failed on branch=master, cloud=gce:
test artifacts and logs in: /artifacts/psycopg/run_1
orm_helpers.go:190,orm_helpers.go:116,psycopg.go:144,psycopg.go:156,test_runner.go:875:
Tests run on Cockroach v22.1.0-alpha.4-449-g327f886758
Tests run against psycopg 2_8_6
453 Total Tests Run
451 tests passed
2 tests failed
319 tests skipped
3 tests ignored
0 tests passed unexpectedly
1 test failed unexpectedly
0 tests expected failed but skipped
0 tests expected failed but not run
---
--- FAIL: tests.test_async.AsyncTests.test_error (unexpected)
For a full summary look at the psycopg artifacts
An updated blocklist (psycopgBlockList22_1) is available in the artifacts' psycopg log
```

<details><summary>Help</summary>

<p>

See: [roachtest README](https://github.com/cockroachdb/cockroach/blob/master/pkg/cmd/roachtest/README.md)

See: [How To Investigate \(internal\)](https://cockroachlabs.atlassian.net/l/c/SSSBr8c7)

</p>

</details>

/cc @cockroachdb/sql-experience

<sub>

[This test on roachdash](https://roachdash.crdb.dev/?filter=status:open%20t:.*psycopg.*&sort=title+created&display=lastcommented+project) | [Improve this report!](https://github.com/cockroachdb/cockroach/tree/master/pkg/cmd/internal/issues)

</sub>

| 2.0 | roachtest: psycopg failed - roachtest.psycopg [failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=4718770&tab=buildLog) with [artifacts](https://teamcity.cockroachdb.com/viewLog.html?buildId=4718770&tab=artifacts#/psycopg) on master @ [327f886758e64973e9e6ed221688622b6e1bde69](https://github.com/cockroachdb/cockroach/commits/327f886758e64973e9e6ed221688622b6e1bde69):

```
The test failed on branch=master, cloud=gce:
test artifacts and logs in: /artifacts/psycopg/run_1
orm_helpers.go:190,orm_helpers.go:116,psycopg.go:144,psycopg.go:156,test_runner.go:875:
Tests run on Cockroach v22.1.0-alpha.4-449-g327f886758
Tests run against psycopg 2_8_6
453 Total Tests Run
451 tests passed
2 tests failed
319 tests skipped
3 tests ignored
0 tests passed unexpectedly
1 test failed unexpectedly
0 tests expected failed but skipped
0 tests expected failed but not run
---
--- FAIL: tests.test_async.AsyncTests.test_error (unexpected)
For a full summary look at the psycopg artifacts
An updated blocklist (psycopgBlockList22_1) is available in the artifacts' psycopg log
```

<details><summary>Help</summary>

<p>

See: [roachtest README](https://github.com/cockroachdb/cockroach/blob/master/pkg/cmd/roachtest/README.md)

See: [How To Investigate \(internal\)](https://cockroachlabs.atlassian.net/l/c/SSSBr8c7)

</p>

</details>

/cc @cockroachdb/sql-experience

<sub>

[This test on roachdash](https://roachdash.crdb.dev/?filter=status:open%20t:.*psycopg.*&sort=title+created&display=lastcommented+project) | [Improve this report!](https://github.com/cockroachdb/cockroach/tree/master/pkg/cmd/internal/issues)

</sub>

| test | roachtest psycopg failed roachtest psycopg with on master the test failed on branch master cloud gce test artifacts and logs in artifacts psycopg run orm helpers go orm helpers go psycopg go psycopg go test runner go tests run on cockroach alpha tests run against psycopg total tests run tests passed tests failed tests skipped tests ignored tests passed unexpectedly test failed unexpectedly tests expected failed but skipped tests expected failed but not run fail tests test async asynctests test error unexpected for a full summary look at the psycopg artifacts an updated blocklist is available in the artifacts psycopg log help see see cc cockroachdb sql experience | 1 |

327,303 | 28,052,073,572 | IssuesEvent | 2023-03-29 06:46:10 | ALTA-LapakUMKM-Group-2/LapakUMKM-APITesting | https://api.github.com/repos/ALTA-LapakUMKM-Group-2/LapakUMKM-APITesting | closed | [User-A026] POST Update Photo Profile With Valid Key | Manual Api Testing | **Given** Post update photo profile with valid json data

**When** User tick on body key

**Then** Send post update data

**And** API Should be 200 OK | 1.0 | [User-A026] POST Update Photo Profile With Valid Key - **Given** Post update photo profile with valid json data

**When** User tick on body key

**Then** Send post update data

**And** API Should be 200 OK | test | post update photo profile with valid key given post update photo profile with valid json data when user tick on body key then send post update data and api should be ok | 1 |

262,898 | 23,019,001,009 | IssuesEvent | 2022-07-22 01:48:59 | sigp/lighthouse | https://api.github.com/repos/sigp/lighthouse | closed | Add transactions to EE integration tests | test improvement bellatrix | ## Description

In https://github.com/sigp/lighthouse/pull/3157 we removed finalized payloads from the database in favour of reconstructing them from the execution layer. Our reconstruction depends on the correctness of the `ethers` library's transaction encoding, and it would be good to ensure this is correct in CI.

To do this we need to add some transactions to the execution blocks in our EE integration tests. This will involve creating and signing transactions, preferably of several different types (EIP-1559 and legacy), publishing them to the EE, ensuring they get included in payloads and that these payloads can be reconstructed.

Deeper testing could involve fuzzing, or live network testing (e.g. trying to reconstruct every block on Kiln)

| 1.0 | Add transactions to EE integration tests - ## Description

In https://github.com/sigp/lighthouse/pull/3157 we removed finalized payloads from the database in favour of reconstructing them from the execution layer. Our reconstruction depends on the correctness of the `ethers` library's transaction encoding, and it would be good to ensure this is correct in CI.

To do this we need to add some transactions to the execution blocks in our EE integration tests. This will involve creating and signing transactions, preferably of several different types (EIP-1559 and legacy), publishing them to the EE, ensuring they get included in payloads and that these payloads can be reconstructed.

Deeper testing could involve fuzzing, or live network testing (e.g. trying to reconstruct every block on Kiln)

| test | add transactions to ee integration tests description in we removed finalized payloads from the database in favour of reconstructing them from the execution layer our reconstruction depends on the correctness of the ethers library s transaction encoding and it would be good to ensure this is correct in ci to do this we need to add some transactions to the execution blocks in our ee integration tests this will involve creating and signing transactions preferably of several different types eip and legacy publishing them to the ee ensuring they get included in payloads and that these payloads can be reconstructed deeper testing could involve fuzzing or live network testing e g trying to reconstruct every block on kiln | 1 |

188,828 | 14,476,420,350 | IssuesEvent | 2020-12-10 04:05:02 | openshift/odo | https://api.github.com/repos/openshift/odo | closed | Namespaces creation in parallel are not handled properly by the test script | area/testing kind/bug lifecycle/rotten | /kind bug

<!--

Welcome! - We kindly ask you to:

1. Fill out the issue template below

2. Use the Google group if you have a question rather than a bug or feature request.

The group is at: https://groups.google.com/forum/#!forum/odo-users

Thanks for understanding, and for contributing to the project!

-->

## What versions of software are you using?

**Operating System:**

All supported

**Output of `odo version`:**

master

## How did you run odo exactly?

Running tests on Travis CI.

## Actual behavior

```

Creating a new project: bivsrltawh

Running odo with args [odo project create bivsrltawh -w -v4]

[odo] • Waiting for project to come up ...

[odo] I0425 14:55:05.512561 9447 occlient.go:531] Status of creation of project bivsrltawh is Active

[odo] I0425 14:55:05.512642 9447 occlient.go:536] Project bivsrltawh now exists

[odo] I0425 14:55:05.528385 9447 occlient.go:571] Status of creation of service account &ServiceAccount{ObjectMeta:k8s_io_apimachinery_pkg_apis_meta_v1.ObjectMeta{Name:default,GenerateName:,Namespace:bivsrltawh,SelfLink:/api/v1/namespaces/bivsrltawh/serviceaccounts/default,UID:9ad08575-62da-48b5-af5f-bf30f409df1a,ResourceVersion:22723,Generation:0,CreationTimestamp:2020-04-25 14:55:04 +0000 UTC,DeletionTimestamp:<nil>,DeletionGracePeriodSeconds:nil,Labels:map[string]string{},Annotations:map[string]string{},OwnerReferences:[],Finalizers:[],ClusterName:,Initializers:nil,},Secrets:[{ default-token-w5gvn } { default-dockercfg-w62w8 }],ImagePullSecrets:[{default-dockercfg-w62w8}],AutomountServiceAccountToken:nil,} is ready

[odo]

✓ Waiting for project to come up [2s]

[odo] ✓ Project 'bivsrltawh' is ready for use

[odo] ✓ New project created and now using project: bivsrltawh

[odo] I0425 14:55:05.546426 9447 odo.go:80] Could not get the latest release information in time. Never mind, exiting gracefully :)

Running odo with args [odo create nodejs --context /tmp/220268031 --project bivsrltawh jjpzrc --ref master --git https://github.com/openshift/nodejs-ex --port 8080,8000]

[odo] Validation

[odo] • Validating component ...

[odo]

✓ Validating component [4ms]

[odo]

[odo] Please use `odo push` command to create the component with source deployed

Running odo with args [odo push --context /tmp/220268031]

[odo] ✗ projectrequests.project.openshift.io is forbidden: User "system:serviceaccount:ci-op-jkg9fswi:default" cannot create projectrequests.project.openshift.io at the cluster scope: no RBAC policy matched

Deleting project: bivsrltawh

Running odo with args [odo project delete bivsrltawh -f]

[odo] ✗ The project bivsrltawh does not exist. Please check the list of projects using `odo project list`

• Failure [2.313 seconds]

odo url command tests

/go/src/github.com/openshift/odo/tests/integration/cmd_url_test.go:15

Listing urls

/go/src/github.com/openshift/odo/tests/integration/cmd_url_test.go:41

should list appropriate URLs and push message [It]

/go/src/github.com/openshift/odo/tests/integration/cmd_url_test.go:42

No future change is possible. Bailing out early after 0.132s.

Running odo with args [odo push --context /tmp/220268031]

Expected

<int>: 1

to match exit code:

<int>: 0

```

We observe this failure more prominently when running on 4 test nodes.

## Expected behavior

The test should create the namespace successfully and then run ```odo push```.

## Any logs, error output, etc?

Logs : https://prow.svc.ci.openshift.org/view/gcs/origin-ci-test/pr-logs/pull/openshift_odo/2965/pull-ci-openshift-odo-master-v4.4-integration-e2e-benchmark/431#1:build-log.txt%3A429

| 1.0 | Namespaces creation in parallel are not handled properly by the test script - /kind bug

<!--

Welcome! - We kindly ask you to:

1. Fill out the issue template below

2. Use the Google group if you have a question rather than a bug or feature request.

The group is at: https://groups.google.com/forum/#!forum/odo-users

Thanks for understanding, and for contributing to the project!

-->

## What versions of software are you using?

**Operating System:**

All supported

**Output of `odo version`:**

master

## How did you run odo exactly?

Running tests on Travis CI.

## Actual behavior

```

Creating a new project: bivsrltawh

Running odo with args [odo project create bivsrltawh -w -v4]

[odo] • Waiting for project to come up ...

[odo] I0425 14:55:05.512561 9447 occlient.go:531] Status of creation of project bivsrltawh is Active

[odo] I0425 14:55:05.512642 9447 occlient.go:536] Project bivsrltawh now exists