Unnamed: 0 (int64, 0–832k) | id (float64, 2.49B–32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 4–112) | repo_url (string, length 33–141) | action (string, 3 classes) | title (string, length 1–1.02k) | labels (string, length 4–1.54k) | body (string, length 1–262k) | index (string, 17 classes) | text_combine (string, length 95–262k) | label (string, 2 classes) | text (string, length 96–252k) | binary_label (int64, 0–1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

112,423 | 9,566,938,083 | IssuesEvent | 2019-05-06 00:24:29 | zulip/zulip | https://api.github.com/repos/zulip/zulip | opened | Fix broken LDAP test when running tests in reverse order | area: authentication area: testing-coverage bug priority: high | We had to disabled one of our LDAP tests in https://github.com/zulip/zulip/commit/b45a7a828ec914d64694db20c44ef7a36e1bfe1b, because it was broken; we should fix it so we can re-enable the test. | 1.0 | Fix broken LDAP test when running tests in reverse order - We had to disabled one of our LDAP tests in https://github.com/zulip/zulip/commit/b45a7a828ec914d64694db20c44ef7a36e1bfe1b, because it was broken; we should fix it so we can re-enable the test. | test | fix broken ldap test when running tests in reverse order we had to disabled one of our ldap tests in because it was broken we should fix it so we can re enable the test | 1 |

620,489 | 19,563,402,607 | IssuesEvent | 2022-01-03 19:38:24 | bounswe/2021SpringGroup1 | https://api.github.com/repos/bounswe/2021SpringGroup1 | closed | Converting all Backend functions to Json-LD format | Type: Enhancement Priority: Medium Platform: Backend | Previously, necessary changes were made to return it in Json-LD format for Community functions. Now this format should be added to other functions as well.

Here, relatively static fields such as "@context", "@type" should be added first. If there is no problem here, necessary changes should be made on the database and the field names in the Models. | 1.0 | Converting all Backend functions to Json-LD format - Previously, necessary changes were made to return it in Json-LD format for Community functions. Now this format should be added to other functions as well.

Here, relatively static fields such as "@context", "@type" should be added first. If there is no problem here, necessary changes should be made on the database and the field names in the Models. | non_test | converting all backend functions to json ld format previously necessary changes were made to return it in json ld format for community functions now this format should be added to other functions as well here relatively static fields such as context type should be added first if there is no problem here necessary changes should be made on the database and the field names in the models | 0 |

750,631 | 26,208,912,167 | IssuesEvent | 2023-01-04 03:15:58 | open-telemetry/opentelemetry-collector | https://api.github.com/repos/open-telemetry/opentelemetry-collector | closed | Retry link checking on network failures | enhancement Stale priority:p3 release:after-ga area:miscellaneous | The CI currently checks for links by going over the network to check if the destination exists. The problem is that the network isn't reliable ([fallacy 1](https://en.wikipedia.org/wiki/Fallacies_of_distributed_computing)).

Failure:

```

[✖] https://godoc.org/google.golang.org/grpc#WithInsecure → Status: 0 Error: ESOCKETTIMEDOUT

```

https://app.circleci.com/pipelines/github/open-telemetry/opentelemetry-collector/4166/workflows/f4d8eb48-9c89-4032-b08e-b7fbba3da81f/jobs/47015 | 1.0 | Retry link checking on network failures - The CI currently checks for links by going over the network to check if the destination exists. The problem is that the network isn't reliable ([fallacy 1](https://en.wikipedia.org/wiki/Fallacies_of_distributed_computing)).

Failure:

```

[✖] https://godoc.org/google.golang.org/grpc#WithInsecure → Status: 0 Error: ESOCKETTIMEDOUT

```

https://app.circleci.com/pipelines/github/open-telemetry/opentelemetry-collector/4166/workflows/f4d8eb48-9c89-4032-b08e-b7fbba3da81f/jobs/47015 | non_test | retry link checking on network failures the ci currently checks for links by going over the network to check if the destination exists the problem is that the network isn t reliable failure → status error esockettimedout | 0 |

90,332 | 18,108,697,851 | IssuesEvent | 2021-09-22 22:50:04 | aiekick/ImGuiFileDialog | https://api.github.com/repos/aiekick/ImGuiFileDialog | reopened | Windows OS can't display Chinese filename, also can't select those file | bug unicode | OS: Windows 10 Chinese version

ImGui 1.80

ImFileDialog git master

Can NOT display Chinese file name(I already added Chinese fonts, and can show Chinese char will on other interface)

Can NOT select Chinese file name or folder name. | 1.0 | Windows OS can't display Chinese filename, also can't select those file - OS: Windows 10 Chinese version

ImGui 1.80

ImFileDialog git master

Can NOT display Chinese file name(I already added Chinese fonts, and can show Chinese char will on other interface)

Can NOT select Chinese file name or folder name. | non_test | windows os can t display chinese filename also can t select those file os windows chinese version imgui imfiledialog git master can not display chinese file name i already added chinese fonts and can show chinese char will on other interface can not select chinese file name or folder name | 0 |

114,263 | 9,694,704,304 | IssuesEvent | 2019-05-24 19:47:07 | eclipse/openj9 | https://api.github.com/repos/eclipse/openj9 | closed | Test quick start instructions do not work for Java 8 | comp:test | Looking at the Java 8 build page, https://www.eclipse.org/openj9/oj9_build.html, it refers to the test quick start guide https://github.com/eclipse/openj9/blob/master/test/README.md

- These instructions do not default to Java 8, and do not specify the JAVA_VERSION. Attempting to follow the instructions after finding them from the Java 8 build page does not work, the "make compile" step fails.

- as Java 9 is out of support, the tests should default to something else. https://github.com/eclipse/openj9/blob/master/test/docs/OpenJ9TestUserGuide.md shows the default as SE90.

- after setting JAVA_VERSION=SE80, I reran the configure step and make compile and got an error because I had set JAVA_BIN to the j2sdk-image/bin directory and the compile was looking for j2sdk-image/bin/../../bin/javac, which doesn't work. | 1.0 | Test quick start instructions do not work for Java 8 - Looking at the Java 8 build page, https://www.eclipse.org/openj9/oj9_build.html, it refers to the test quick start guide https://github.com/eclipse/openj9/blob/master/test/README.md

- These instructions do not default to Java 8, and do not specify the JAVA_VERSION. Attempting to follow the instructions after finding them from the Java 8 build page does not work, the "make compile" step fails.

- as Java 9 is out of support, the tests should default to something else. https://github.com/eclipse/openj9/blob/master/test/docs/OpenJ9TestUserGuide.md shows the default as SE90.

- after setting JAVA_VERSION=SE80, I reran the configure step and make compile and got an error because I had set JAVA_BIN to the j2sdk-image/bin directory and the compile was looking for j2sdk-image/bin/../../bin/javac, which doesn't work. | test | test quick start instructions do not work for java looking at the java build page it refers to the test quick start guide these instructions do not default to java and do not specify the java version attempting to follow the instructions after finding them from the java build page does not work the make compile step fails as java is out of support the tests should default to something else shows the default as after setting java version i reran the configure step and make compile and got an error because i had set java bin to the image bin directory and the compile was looking for image bin bin javac which doesn t work | 1 |

280,222 | 24,285,141,456 | IssuesEvent | 2022-09-28 21:14:58 | Northeastern-Electric-Racing/FinishLine | https://api.github.com/repos/Northeastern-Electric-Racing/FinishLine | opened | Backend - Test addProposedSolution (part 2) | back-end straightforward testing | ### Description

Write unit tests for some of the cases of the addProposedSolution endpoint. To work on this ticket, you will need to read through the endpoint to learn how it works.

### Acceptance Criteria

The following cases should be covered:

* the associated scope cr does not exist

* it works as intended

### Proposed Solution

Check out this epic https://github.com/Northeastern-Electric-Racing/FinishLine/issues/238 for resources and tips on how to write these tests.

### Mocks

_No response_ | 1.0 | Backend - Test addProposedSolution (part 2) - ### Description

Write unit tests for some of the cases of the addProposedSolution endpoint. To work on this ticket, you will need to read through the endpoint to learn how it works.

### Acceptance Criteria

The following cases should be covered:

* the associated scope cr does not exist

* it works as intended

### Proposed Solution

Check out this epic https://github.com/Northeastern-Electric-Racing/FinishLine/issues/238 for resources and tips on how to write these tests.

### Mocks

_No response_ | test | backend test addproposedsolution part description write unit tests for some of the cases of the addproposedsolution endpoint to work on this ticket you will need to read through the endpoint to learn how it works acceptance criteria the following cases should be covered the associated scope cr does not exist it works as intended proposed solution check out this epic for resources and tips on how to write these tests mocks no response | 1 |

220,286 | 17,185,569,807 | IssuesEvent | 2021-07-16 00:58:20 | elastic/kibana | https://api.github.com/repos/elastic/kibana | opened | [test-failed]: Chrome X-Pack UI Functional Tests1.x-pack/test/functional/apps/monitoring/elasticsearch/index_detail_mb·js - Monitoring app Elasticsearch index detail mb Active Indices "before all" hook for "should have an index summary with green status index with full shard allocation" | failed-test test-cloud | **Version: 7.14.0**

**Class: Chrome X-Pack UI Functional Tests1.x-pack/test/functional/apps/monitoring/elasticsearch/index_detail_mb·js**

**Stack Trace:**

```

Error: retry.try timeout: TimeoutError: Waiting for element to be located By(css selector, [data-test-subj="alerts-modal-button"])

Wait timed out after 10044ms

at /var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp4/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/node_modules/selenium-webdriver/lib/webdriver.js:842:17

at runMicrotasks (<anonymous>)

at processTicksAndRejections (internal/process/task_queues.js:95:5)

at onFailure (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp4/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/common/services/retry/retry_for_success.ts:17:9)

at retryForSuccess (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp4/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/common/services/retry/retry_for_success.ts:57:13)

at RetryService.try (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp4/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/common/services/retry/retry.ts:31:12)

at Proxy.clickByCssSelector (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp4/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/functional/services/common/find.ts:360:5)

at TestSubjects.click (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp4/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/functional/services/common/test_subjects.ts:105:5)

at Context.<anonymous> (test/functional/apps/monitoring/elasticsearch/index_detail_mb.js:35:9)

at Object.apply (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp4/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/node_modules/@kbn/test/target_node/functional_test_runner/lib/mocha/wrap_function.js:87:16)

```

**Other test failures:**

_Test Report: https://internal-ci.elastic.co/view/Stack%20Tests/job/elastic+estf-cloud-kibana-tests/2041/testReport/_ | 2.0 | [test-failed]: Chrome X-Pack UI Functional Tests1.x-pack/test/functional/apps/monitoring/elasticsearch/index_detail_mb·js - Monitoring app Elasticsearch index detail mb Active Indices "before all" hook for "should have an index summary with green status index with full shard allocation" - **Version: 7.14.0**

**Class: Chrome X-Pack UI Functional Tests1.x-pack/test/functional/apps/monitoring/elasticsearch/index_detail_mb·js**

**Stack Trace:**

```

Error: retry.try timeout: TimeoutError: Waiting for element to be located By(css selector, [data-test-subj="alerts-modal-button"])

Wait timed out after 10044ms

at /var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp4/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/node_modules/selenium-webdriver/lib/webdriver.js:842:17

at runMicrotasks (<anonymous>)

at processTicksAndRejections (internal/process/task_queues.js:95:5)

at onFailure (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp4/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/common/services/retry/retry_for_success.ts:17:9)

at retryForSuccess (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp4/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/common/services/retry/retry_for_success.ts:57:13)

at RetryService.try (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp4/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/common/services/retry/retry.ts:31:12)

at Proxy.clickByCssSelector (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp4/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/functional/services/common/find.ts:360:5)

at TestSubjects.click (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp4/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/functional/services/common/test_subjects.ts:105:5)

at Context.<anonymous> (test/functional/apps/monitoring/elasticsearch/index_detail_mb.js:35:9)

at Object.apply (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp4/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/node_modules/@kbn/test/target_node/functional_test_runner/lib/mocha/wrap_function.js:87:16)

```

**Other test failures:**

_Test Report: https://internal-ci.elastic.co/view/Stack%20Tests/job/elastic+estf-cloud-kibana-tests/2041/testReport/_ | test | chrome x pack ui functional x pack test functional apps monitoring elasticsearch index detail mb·js monitoring app elasticsearch index detail mb active indices before all hook for should have an index summary with green status index with full shard allocation version class chrome x pack ui functional x pack test functional apps monitoring elasticsearch index detail mb·js stack trace error retry try timeout timeouterror waiting for element to be located by css selector wait timed out after at var lib jenkins workspace elastic estf cloud kibana tests job task saas run kibana tests node ess testing ci cloud common build kibana node modules selenium webdriver lib webdriver js at runmicrotasks at processticksandrejections internal process task queues js at onfailure var lib jenkins workspace elastic estf cloud kibana tests job task saas run kibana tests node ess testing ci cloud common build kibana test common services retry retry for success ts at retryforsuccess var lib jenkins workspace elastic estf cloud kibana tests job task saas run kibana tests node ess testing ci cloud common build kibana test common services retry retry for success ts at retryservice try var lib jenkins workspace elastic estf cloud kibana tests job task saas run kibana tests node ess testing ci cloud common build kibana test common services retry retry ts at proxy clickbycssselector var lib jenkins workspace elastic estf cloud kibana tests job task saas run kibana tests node ess testing ci cloud common build kibana test functional services common find ts at testsubjects click var lib jenkins workspace elastic estf cloud kibana tests job task saas run kibana tests node ess testing ci cloud common build kibana test functional services common test subjects ts at context test functional apps monitoring elasticsearch index detail mb js at object apply var lib jenkins 
workspace elastic estf cloud kibana tests job task saas run kibana tests node ess testing ci cloud common build kibana node modules kbn test target node functional test runner lib mocha wrap function js other test failures test report | 1 |

161,539 | 13,852,142,670 | IssuesEvent | 2020-10-15 05:53:39 | odoo/odoo | https://api.github.com/repos/odoo/odoo | closed | The Javascript API Doc page has not been ported to 13.0 | 13.0 Documentation | Impacted versions:

13.0 Documentation

Steps to reproduce:

go to https://www.odoo.com/documentation/13.0/reference/javascript_api.html#

Expected behavior:

https://www.odoo.com/documentation/12.0/reference/javascript_api.html# | 1.0 | The Javascript API Doc page has not been ported to 13.0 - Impacted versions:

13.0 Documentation

Steps to reproduce:

go to https://www.odoo.com/documentation/13.0/reference/javascript_api.html#

Expected behavior:

https://www.odoo.com/documentation/12.0/reference/javascript_api.html# | non_test | the javascript api doc page has not been ported to impacted versions documentation steps to reproduce go to expected behavior | 0 |

60,198 | 6,675,712,964 | IssuesEvent | 2017-10-05 00:01:45 | Indemnity83/solder | https://api.github.com/repos/Indemnity83/solder | closed | Browser testing fails on Travis-CI | PRs plz! type: test | There doesn't seem to be a clear solution to getting chrome working on Travis-CI build environments. There's either a lot of extra steps that need to be added to the test script (seems fragile) or users just simply changing to a different driver (like Firefox or PhantomJS).

I'd really like to get the tests working with Chrome since it works so seamlessly in local development so for now I'm disabling the automated tests and inviting the community to help me troubleshoot getting the automated tests running so I can concentrate on finishing the GUI instead of spinning my wheels debugging Travis. | 1.0 | Browser testing fails on Travis-CI - There doesn't seem to be a clear solution to getting chrome working on Travis-CI build environments. There's either a lot of extra steps that need to be added to the test script (seems fragile) or users just simply changing to a different driver (like Firefox or PhantomJS).

I'd really like to get the tests working with Chrome since it works so seamlessly in local development so for now I'm disabling the automated tests and inviting the community to help me troubleshoot getting the automated tests running so I can concentrate on finishing the GUI instead of spinning my wheels debugging Travis. | test | browser testing fails on travis ci there doesn t seem to be a clear solution to getting chrome working on travis ci build environments there s either a lot of extra steps that need to be added to the test script seems fragile or users just simply changing to a different driver like firefox or phantomjs i d really like to get the tests working with chrome since it works so seamlessly in local development so for now i m disabling the automated tests and inviting the community to help me troubleshoot getting the automated tests running so i can concentrate on finishing the gui instead of spinning my wheels debugging travis | 1 |

64,855 | 6,924,959,277 | IssuesEvent | 2017-11-30 14:34:08 | dwyl/bestevidence | https://api.github.com/repos/dwyl/bestevidence | closed | Bug - Filtering by evidence type no longer working | bug please-test priority-1 T4h | The dropdown menu, that enables users to filter by evidence type is not working on the app. I tested it when it was first developed and it worked but that functionality appears to have dropped off in the production version. This needs to be fixed urgently as it is pretty basic to the use of the app. | 1.0 | Bug - Filtering by evidence type no longer working - The dropdown menu, that enables users to filter by evidence type is not working on the app. I tested it when it was first developed and it worked but that functionality appears to have dropped off in the production version. This needs to be fixed urgently as it is pretty basic to the use of the app. | test | bug filtering by evidence type no longer working the dropdown menu that enables users to filter by evidence type is not working on the app i tested it when it was first developed and it worked but that functionality appears to have dropped off in the production version this needs to be fixed urgently as it is pretty basic to the use of the app | 1 |

108,148 | 9,276,397,669 | IssuesEvent | 2019-03-20 02:45:01 | dotnet/roslyn | https://api.github.com/repos/dotnet/roslyn | opened | Deadlock in RoamingVisualStudioProfileOptionPersister | Area-IDE Bug Integration-Test help wanted | **Version Used**:

Stacks:

```

[Managed to Native Transition]

mscorlib.dll!System.Threading.Monitor.Enter(object obj, ref bool lockTaken) Line 62 C#

> Microsoft.VisualStudio.LanguageServices.dll!Microsoft.VisualStudio.LanguageServices.Implementation.Options.RoamingVisualStudioProfileOptionPersister.RecordObservedValueToWatchForChanges(Microsoft.CodeAnalysis.Options.OptionKey optionKey, string storageKey) Line 222 C#

Microsoft.VisualStudio.LanguageServices.dll!Microsoft.VisualStudio.LanguageServices.Implementation.Options.RoamingVisualStudioProfileOptionPersister.GetFirstOrDefaultValue(Microsoft.CodeAnalysis.Options.OptionKey optionKey, System.Collections.Generic.IEnumerable<Microsoft.CodeAnalysis.Options.RoamingProfileStorageLocation> roamingSerializations) Line 99 C#

Microsoft.VisualStudio.LanguageServices.dll!Microsoft.VisualStudio.LanguageServices.Implementation.Options.RoamingVisualStudioProfileOptionPersister.TryFetch(Microsoft.CodeAnalysis.Options.OptionKey optionKey, out object value) Line 128 C#

Microsoft.CodeAnalysis.Workspaces.dll!Microsoft.CodeAnalysis.Options.GlobalOptionService.LoadOptionFromSerializerOrGetDefault(Microsoft.CodeAnalysis.Options.OptionKey optionKey) Line 48 C#

Microsoft.CodeAnalysis.Workspaces.dll!Microsoft.CodeAnalysis.Options.GlobalOptionService.GetOption(Microsoft.CodeAnalysis.Options.OptionKey optionKey) Line 83 C#

Microsoft.CodeAnalysis.Workspaces.dll!Microsoft.CodeAnalysis.Options.OptionServiceFactory.OptionService.GetOption(Microsoft.CodeAnalysis.Options.OptionKey optionKey) Line 121 C#

Microsoft.CodeAnalysis.Workspaces.dll!Microsoft.CodeAnalysis.Options.WorkspaceOptionSet.GetOption(Microsoft.CodeAnalysis.Options.OptionKey optionKey) Line 39 C#

Microsoft.CodeAnalysis.Workspaces.dll!Microsoft.CodeAnalysis.Options.WorkspaceOptionSet.WithChangedOption(Microsoft.CodeAnalysis.Options.OptionKey optionAndLanguage, object value) Line 46 C#

Microsoft.VisualStudio.IntegrationTest.Utilities.dll!Microsoft.VisualStudio.IntegrationTest.Utilities.InProcess.VisualStudioWorkspace_InProc.SetPerLanguageOption(string optionName, string feature, string language, object value) Line 82 C#

[Native to Managed Transition]

[Managed to Native Transition]

mscorlib.dll!System.Runtime.Remoting.Messaging.StackBuilderSink.SyncProcessMessage(System.Runtime.Remoting.Messaging.IMessage msg) Line 189 C#

mscorlib.dll!System.Runtime.Remoting.Messaging.ServerObjectTerminatorSink.SyncProcessMessage(System.Runtime.Remoting.Messaging.IMessage reqMsg) Line 780 C#

mscorlib.dll!System.Runtime.Remoting.Messaging.ServerContextTerminatorSink.SyncProcessMessage(System.Runtime.Remoting.Messaging.IMessage reqMsg) Line 616 C#

mscorlib.dll!System.Runtime.Remoting.Channels.CrossContextChannel.SyncProcessMessageCallback(object[] args) Line 102 C#

mscorlib.dll!System.Runtime.Remoting.Channels.ChannelServices.DispatchMessage(System.Runtime.Remoting.Channels.IServerChannelSinkStack sinkStack, System.Runtime.Remoting.Messaging.IMessage msg, out System.Runtime.Remoting.Messaging.IMessage replyMsg) Line 767 C#

mscorlib.dll!System.Runtime.Remoting.Channels.DispatchChannelSink.ProcessMessage(System.Runtime.Remoting.Channels.IServerChannelSinkStack sinkStack, System.Runtime.Remoting.Messaging.IMessage requestMsg, System.Runtime.Remoting.Channels.ITransportHeaders requestHeaders, System.IO.Stream requestStream, out System.Runtime.Remoting.Messaging.IMessage responseMsg, out System.Runtime.Remoting.Channels.ITransportHeaders responseHeaders, out System.IO.Stream responseStream) Line 77 C#

System.Runtime.Remoting.dll!System.Runtime.Remoting.Channels.BinaryServerFormatterSink.ProcessMessage(System.Runtime.Remoting.Channels.IServerChannelSinkStack sinkStack, System.Runtime.Remoting.Messaging.IMessage requestMsg, System.Runtime.Remoting.Channels.ITransportHeaders requestHeaders, System.IO.Stream requestStream, out System.Runtime.Remoting.Messaging.IMessage responseMsg, out System.Runtime.Remoting.Channels.ITransportHeaders responseHeaders, out System.IO.Stream responseStream) Unknown

System.Runtime.Remoting.dll!System.Runtime.Remoting.Channels.Ipc.IpcServerTransportSink.ServiceRequest(object state) Unknown

System.Runtime.Remoting.dll!System.Runtime.Remoting.Channels.SocketHandler.ProcessRequestNow() Unknown

System.Runtime.Remoting.dll!System.Runtime.Remoting.Channels.RequestQueue.ProcessNextRequest(System.Runtime.Remoting.Channels.SocketHandler sh) Unknown

System.Runtime.Remoting.dll!System.Runtime.Remoting.Channels.SocketHandler.BeginReadMessageCallback(System.IAsyncResult ar) Unknown

System.Runtime.Remoting.dll!System.Runtime.Remoting.Channels.Ipc.IpcPort.AsyncFSCallback(uint errorCode, uint numBytes, System.Threading.NativeOverlapped* pOverlapped) Unknown

mscorlib.dll!System.Threading._IOCompletionCallback.PerformIOCompletionCallback(uint errorCode, uint numBytes, System.Threading.NativeOverlapped* pOVERLAP) Line 135 C#

[Native to Managed Transition]

```

```

[Managed to Native Transition]

mscorlib.dll!System.Threading.Monitor.Enter(object obj, ref bool lockTaken) Line 62 C#

Microsoft.CodeAnalysis.Workspaces.dll!Microsoft.CodeAnalysis.Options.GlobalOptionService.RefreshOption(Microsoft.CodeAnalysis.Options.OptionKey optionKey, object newValue) Line 141 C#

> Microsoft.VisualStudio.LanguageServices.dll!Microsoft.VisualStudio.LanguageServices.Implementation.Options.RoamingVisualStudioProfileOptionPersister.OnSettingChangedAsync(object sender, System.ComponentModel.PropertyChangedEventArgs args) Line 76 C#

Microsoft.VisualStudio.Utilities.dll!Microsoft.VisualStudio.Settings.SettingsManager.AsyncHandler.Invoke(Microsoft.VisualStudio.Settings.SettingsManager sender, System.ComponentModel.PropertyChangedEventArgs args) Unknown

Microsoft.VisualStudio.Utilities.dll!Microsoft.VisualStudio.Settings.SettingsManager.FireLocalSettingChangeEventAsync(System.ComponentModel.PropertyChangedEventArgs args, System.Collections.Generic.List<Microsoft.VisualStudio.Settings.SettingsManager.ScopedEventHandler> handlers) Unknown

mscorlib.dll!System.Runtime.CompilerServices.AsyncMethodBuilderCore.MoveNextRunner.InvokeMoveNext(object stateMachine) Line 1090 C#

mscorlib.dll!System.Threading.ExecutionContext.RunInternal(System.Threading.ExecutionContext executionContext, System.Threading.ContextCallback callback, object state, bool preserveSyncCtx) Line 954 C#

mscorlib.dll!System.Threading.ExecutionContext.Run(System.Threading.ExecutionContext executionContext, System.Threading.ContextCallback callback, object state, bool preserveSyncCtx) Line 902 C#

mscorlib.dll!System.Runtime.CompilerServices.AsyncMethodBuilderCore.MoveNextRunner.Run() Line 1070 C#

mscorlib.dll!System.Threading.Tasks.AwaitTaskContinuation.System.Threading.IThreadPoolWorkItem.ExecuteWorkItem() Line 715 C#

mscorlib.dll!System.Threading.ThreadPoolWorkQueue.Dispatch() Line 820 C#

mscorlib.dll!System.Threading._ThreadPoolWaitCallback.PerformWaitCallback() Line 1161 C#

[Native to Managed Transition]

``` | 1.0 | Deadlock in RoamingVisualStudioProfileOptionPersister - **Version Used**:

Stacks:

```

[Managed to Native Transition]

mscorlib.dll!System.Threading.Monitor.Enter(object obj, ref bool lockTaken) Line 62 C#

> Microsoft.VisualStudio.LanguageServices.dll!Microsoft.VisualStudio.LanguageServices.Implementation.Options.RoamingVisualStudioProfileOptionPersister.RecordObservedValueToWatchForChanges(Microsoft.CodeAnalysis.Options.OptionKey optionKey, string storageKey) Line 222 C#

Microsoft.VisualStudio.LanguageServices.dll!Microsoft.VisualStudio.LanguageServices.Implementation.Options.RoamingVisualStudioProfileOptionPersister.GetFirstOrDefaultValue(Microsoft.CodeAnalysis.Options.OptionKey optionKey, System.Collections.Generic.IEnumerable<Microsoft.CodeAnalysis.Options.RoamingProfileStorageLocation> roamingSerializations) Line 99 C#

Microsoft.VisualStudio.LanguageServices.dll!Microsoft.VisualStudio.LanguageServices.Implementation.Options.RoamingVisualStudioProfileOptionPersister.TryFetch(Microsoft.CodeAnalysis.Options.OptionKey optionKey, out object value) Line 128 C#

Microsoft.CodeAnalysis.Workspaces.dll!Microsoft.CodeAnalysis.Options.GlobalOptionService.LoadOptionFromSerializerOrGetDefault(Microsoft.CodeAnalysis.Options.OptionKey optionKey) Line 48 C#

Microsoft.CodeAnalysis.Workspaces.dll!Microsoft.CodeAnalysis.Options.GlobalOptionService.GetOption(Microsoft.CodeAnalysis.Options.OptionKey optionKey) Line 83 C#

Microsoft.CodeAnalysis.Workspaces.dll!Microsoft.CodeAnalysis.Options.OptionServiceFactory.OptionService.GetOption(Microsoft.CodeAnalysis.Options.OptionKey optionKey) Line 121 C#

Microsoft.CodeAnalysis.Workspaces.dll!Microsoft.CodeAnalysis.Options.WorkspaceOptionSet.GetOption(Microsoft.CodeAnalysis.Options.OptionKey optionKey) Line 39 C#

Microsoft.CodeAnalysis.Workspaces.dll!Microsoft.CodeAnalysis.Options.WorkspaceOptionSet.WithChangedOption(Microsoft.CodeAnalysis.Options.OptionKey optionAndLanguage, object value) Line 46 C#

Microsoft.VisualStudio.IntegrationTest.Utilities.dll!Microsoft.VisualStudio.IntegrationTest.Utilities.InProcess.VisualStudioWorkspace_InProc.SetPerLanguageOption(string optionName, string feature, string language, object value) Line 82 C#

[Native to Managed Transition]

[Managed to Native Transition]

mscorlib.dll!System.Runtime.Remoting.Messaging.StackBuilderSink.SyncProcessMessage(System.Runtime.Remoting.Messaging.IMessage msg) Line 189 C#

mscorlib.dll!System.Runtime.Remoting.Messaging.ServerObjectTerminatorSink.SyncProcessMessage(System.Runtime.Remoting.Messaging.IMessage reqMsg) Line 780 C#

mscorlib.dll!System.Runtime.Remoting.Messaging.ServerContextTerminatorSink.SyncProcessMessage(System.Runtime.Remoting.Messaging.IMessage reqMsg) Line 616 C#

mscorlib.dll!System.Runtime.Remoting.Channels.CrossContextChannel.SyncProcessMessageCallback(object[] args) Line 102 C#

mscorlib.dll!System.Runtime.Remoting.Channels.ChannelServices.DispatchMessage(System.Runtime.Remoting.Channels.IServerChannelSinkStack sinkStack, System.Runtime.Remoting.Messaging.IMessage msg, out System.Runtime.Remoting.Messaging.IMessage replyMsg) Line 767 C#

mscorlib.dll!System.Runtime.Remoting.Channels.DispatchChannelSink.ProcessMessage(System.Runtime.Remoting.Channels.IServerChannelSinkStack sinkStack, System.Runtime.Remoting.Messaging.IMessage requestMsg, System.Runtime.Remoting.Channels.ITransportHeaders requestHeaders, System.IO.Stream requestStream, out System.Runtime.Remoting.Messaging.IMessage responseMsg, out System.Runtime.Remoting.Channels.ITransportHeaders responseHeaders, out System.IO.Stream responseStream) Line 77 C#

System.Runtime.Remoting.dll!System.Runtime.Remoting.Channels.BinaryServerFormatterSink.ProcessMessage(System.Runtime.Remoting.Channels.IServerChannelSinkStack sinkStack, System.Runtime.Remoting.Messaging.IMessage requestMsg, System.Runtime.Remoting.Channels.ITransportHeaders requestHeaders, System.IO.Stream requestStream, out System.Runtime.Remoting.Messaging.IMessage responseMsg, out System.Runtime.Remoting.Channels.ITransportHeaders responseHeaders, out System.IO.Stream responseStream) Unknown

System.Runtime.Remoting.dll!System.Runtime.Remoting.Channels.Ipc.IpcServerTransportSink.ServiceRequest(object state) Unknown

System.Runtime.Remoting.dll!System.Runtime.Remoting.Channels.SocketHandler.ProcessRequestNow() Unknown

System.Runtime.Remoting.dll!System.Runtime.Remoting.Channels.RequestQueue.ProcessNextRequest(System.Runtime.Remoting.Channels.SocketHandler sh) Unknown

System.Runtime.Remoting.dll!System.Runtime.Remoting.Channels.SocketHandler.BeginReadMessageCallback(System.IAsyncResult ar) Unknown

System.Runtime.Remoting.dll!System.Runtime.Remoting.Channels.Ipc.IpcPort.AsyncFSCallback(uint errorCode, uint numBytes, System.Threading.NativeOverlapped* pOverlapped) Unknown

mscorlib.dll!System.Threading._IOCompletionCallback.PerformIOCompletionCallback(uint errorCode, uint numBytes, System.Threading.NativeOverlapped* pOVERLAP) Line 135 C#

[Native to Managed Transition]

```

```

[Managed to Native Transition]

mscorlib.dll!System.Threading.Monitor.Enter(object obj, ref bool lockTaken) Line 62 C#

Microsoft.CodeAnalysis.Workspaces.dll!Microsoft.CodeAnalysis.Options.GlobalOptionService.RefreshOption(Microsoft.CodeAnalysis.Options.OptionKey optionKey, object newValue) Line 141 C#

> Microsoft.VisualStudio.LanguageServices.dll!Microsoft.VisualStudio.LanguageServices.Implementation.Options.RoamingVisualStudioProfileOptionPersister.OnSettingChangedAsync(object sender, System.ComponentModel.PropertyChangedEventArgs args) Line 76 C#

Microsoft.VisualStudio.Utilities.dll!Microsoft.VisualStudio.Settings.SettingsManager.AsyncHandler.Invoke(Microsoft.VisualStudio.Settings.SettingsManager sender, System.ComponentModel.PropertyChangedEventArgs args) Unknown

Microsoft.VisualStudio.Utilities.dll!Microsoft.VisualStudio.Settings.SettingsManager.FireLocalSettingChangeEventAsync(System.ComponentModel.PropertyChangedEventArgs args, System.Collections.Generic.List<Microsoft.VisualStudio.Settings.SettingsManager.ScopedEventHandler> handlers) Unknown

mscorlib.dll!System.Runtime.CompilerServices.AsyncMethodBuilderCore.MoveNextRunner.InvokeMoveNext(object stateMachine) Line 1090 C#

mscorlib.dll!System.Threading.ExecutionContext.RunInternal(System.Threading.ExecutionContext executionContext, System.Threading.ContextCallback callback, object state, bool preserveSyncCtx) Line 954 C#

mscorlib.dll!System.Threading.ExecutionContext.Run(System.Threading.ExecutionContext executionContext, System.Threading.ContextCallback callback, object state, bool preserveSyncCtx) Line 902 C#

mscorlib.dll!System.Runtime.CompilerServices.AsyncMethodBuilderCore.MoveNextRunner.Run() Line 1070 C#

mscorlib.dll!System.Threading.Tasks.AwaitTaskContinuation.System.Threading.IThreadPoolWorkItem.ExecuteWorkItem() Line 715 C#

mscorlib.dll!System.Threading.ThreadPoolWorkQueue.Dispatch() Line 820 C#

mscorlib.dll!System.Threading._ThreadPoolWaitCallback.PerformWaitCallback() Line 1161 C#

[Native to Managed Transition]

``` | test | deadlock in roamingvisualstudioprofileoptionpersister version used stacks mscorlib dll system threading monitor enter object obj ref bool locktaken line c microsoft visualstudio languageservices dll microsoft visualstudio languageservices implementation options roamingvisualstudioprofileoptionpersister recordobservedvaluetowatchforchanges microsoft codeanalysis options optionkey optionkey string storagekey line c microsoft visualstudio languageservices dll microsoft visualstudio languageservices implementation options roamingvisualstudioprofileoptionpersister getfirstordefaultvalue microsoft codeanalysis options optionkey optionkey system collections generic ienumerable roamingserializations line c microsoft visualstudio languageservices dll microsoft visualstudio languageservices implementation options roamingvisualstudioprofileoptionpersister tryfetch microsoft codeanalysis options optionkey optionkey out object value line c microsoft codeanalysis workspaces dll microsoft codeanalysis options globaloptionservice loadoptionfromserializerorgetdefault microsoft codeanalysis options optionkey optionkey line c microsoft codeanalysis workspaces dll microsoft codeanalysis options globaloptionservice getoption microsoft codeanalysis options optionkey optionkey line c microsoft codeanalysis workspaces dll microsoft codeanalysis options optionservicefactory optionservice getoption microsoft codeanalysis options optionkey optionkey line c microsoft codeanalysis workspaces dll microsoft codeanalysis options workspaceoptionset getoption microsoft codeanalysis options optionkey optionkey line c microsoft codeanalysis workspaces dll microsoft codeanalysis options workspaceoptionset withchangedoption microsoft codeanalysis options optionkey optionandlanguage object value line c microsoft visualstudio integrationtest utilities dll microsoft visualstudio integrationtest utilities inprocess visualstudioworkspace inproc setperlanguageoption string optionname string 
feature string language object value line c mscorlib dll system runtime remoting messaging stackbuildersink syncprocessmessage system runtime remoting messaging imessage msg line c mscorlib dll system runtime remoting messaging serverobjectterminatorsink syncprocessmessage system runtime remoting messaging imessage reqmsg line c mscorlib dll system runtime remoting messaging servercontextterminatorsink syncprocessmessage system runtime remoting messaging imessage reqmsg line c mscorlib dll system runtime remoting channels crosscontextchannel syncprocessmessagecallback object args line c mscorlib dll system runtime remoting channels channelservices dispatchmessage system runtime remoting channels iserverchannelsinkstack sinkstack system runtime remoting messaging imessage msg out system runtime remoting messaging imessage replymsg line c mscorlib dll system runtime remoting channels dispatchchannelsink processmessage system runtime remoting channels iserverchannelsinkstack sinkstack system runtime remoting messaging imessage requestmsg system runtime remoting channels itransportheaders requestheaders system io stream requeststream out system runtime remoting messaging imessage responsemsg out system runtime remoting channels itransportheaders responseheaders out system io stream responsestream line c system runtime remoting dll system runtime remoting channels binaryserverformattersink processmessage system runtime remoting channels iserverchannelsinkstack sinkstack system runtime remoting messaging imessage requestmsg system runtime remoting channels itransportheaders requestheaders system io stream requeststream out system runtime remoting messaging imessage responsemsg out system runtime remoting channels itransportheaders responseheaders out system io stream responsestream unknown system runtime remoting dll system runtime remoting channels ipc ipcservertransportsink servicerequest object state unknown system runtime remoting dll system runtime remoting channels 
sockethandler processrequestnow unknown system runtime remoting dll system runtime remoting channels requestqueue processnextrequest system runtime remoting channels sockethandler sh unknown system runtime remoting dll system runtime remoting channels sockethandler beginreadmessagecallback system iasyncresult ar unknown system runtime remoting dll system runtime remoting channels ipc ipcport asyncfscallback uint errorcode uint numbytes system threading nativeoverlapped poverlapped unknown mscorlib dll system threading iocompletioncallback performiocompletioncallback uint errorcode uint numbytes system threading nativeoverlapped poverlap line c mscorlib dll system threading monitor enter object obj ref bool locktaken line c microsoft codeanalysis workspaces dll microsoft codeanalysis options globaloptionservice refreshoption microsoft codeanalysis options optionkey optionkey object newvalue line c microsoft visualstudio languageservices dll microsoft visualstudio languageservices implementation options roamingvisualstudioprofileoptionpersister onsettingchangedasync object sender system componentmodel propertychangedeventargs args line c microsoft visualstudio utilities dll microsoft visualstudio settings settingsmanager asynchandler invoke microsoft visualstudio settings settingsmanager sender system componentmodel propertychangedeventargs args unknown microsoft visualstudio utilities dll microsoft visualstudio settings settingsmanager firelocalsettingchangeeventasync system componentmodel propertychangedeventargs args system collections generic list handlers unknown mscorlib dll system runtime compilerservices asyncmethodbuildercore movenextrunner invokemovenext object statemachine line c mscorlib dll system threading executioncontext runinternal system threading executioncontext executioncontext system threading contextcallback callback object state bool preservesyncctx line c mscorlib dll system threading executioncontext run system threading executioncontext 
executioncontext system threading contextcallback callback object state bool preservesyncctx line c mscorlib dll system runtime compilerservices asyncmethodbuildercore movenextrunner run line c mscorlib dll system threading tasks awaittaskcontinuation system threading ithreadpoolworkitem executeworkitem line c mscorlib dll system threading threadpoolworkqueue dispatch line c mscorlib dll system threading threadpoolwaitcallback performwaitcallback line c | 1 |
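
The two stacks in the deadlock report above look like a lock-order inversion: one thread appears to enter the persister from inside `GlobalOptionService.GetOption`, while the other enters `GlobalOptionService.RefreshOption` from the persister's change handler, and both are parked in `Monitor.Enter`. Which locks each thread actually holds is not visible in the traces, so that reading is an assumption. A minimal Python sketch of one common remedy, never calling into the other component while holding your own lock, might look like the following. The class and method names are hypothetical stand-ins, not Roslyn's API and not necessarily the fix that was applied.

```python
import threading


class OptionService:
    """Hypothetical stand-in for an option cache guarded by its own lock."""

    def __init__(self):
        self._gate = threading.Lock()
        self._values = {}
        self.persister = None  # wired up after construction

    def get_option(self, key):
        # Look in the cache under the lock, but release it before calling out.
        with self._gate:
            if key in self._values:
                return self._values[key]
        # No service lock is held while the persister takes its own lock.
        value = self.persister.try_fetch(key)
        with self._gate:
            # Keep a value that a concurrent refresh may already have stored.
            return self._values.setdefault(key, value)

    def refresh_option(self, key, value):
        with self._gate:
            self._values[key] = value


class Persister:
    """Hypothetical stand-in for a settings store that pushes changes."""

    def __init__(self, service, store):
        self._gate = threading.Lock()
        self._service = service
        self._store = store
        self._watched = set()

    def try_fetch(self, key):
        with self._gate:
            self._watched.add(key)
            return self._store.get(key)

    def on_setting_changed(self, key, value):
        # Update local state under the lock, then notify after releasing it.
        with self._gate:
            self._store[key] = value
            watched = key in self._watched
        if watched:
            self._service.refresh_option(key, value)
```

With this shape neither object ever waits for the other's lock while holding its own, so the circular wait suggested by the two stacks cannot form. The cost is that `get_option` has to tolerate a refresh arriving between the fetch and the cache write, which `setdefault` handles here.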

126,084 | 10,382,913,924 | IssuesEvent | 2019-09-10 08:33:40 | magento/async-import | https://api.github.com/repos/magento/async-import | closed | Create api-functional tests for DELETE source | Contribution Day Progress: dev in progress Topic: Asynchronous Import good first issue tests | ### Summary (*)

We need to cover the DELETE source functionality with tests:

Related PR:

https://github.com/magento/async-import/pull/88

### Proposed solution

Please create Integration-Api tests for Delete source endpoint | 1.0 | Create api-functional tests for DELETE source - ### Summary (*)

We need to cover the DELETE source functionality with tests:

Related PR:

https://github.com/magento/async-import/pull/88

### Proposed solution

Please create Integration-Api tests for Delete source endpoint | test | create api functional tests for delete source summary we need to cover with tests delete source functionality related pr proposed solution please create integration api tests for delete source endpoint | 1 |

63,410 | 12,313,274,714 | IssuesEvent | 2020-05-12 15:05:18 | phetsims/build-a-molecule | https://api.github.com/repos/phetsims/build-a-molecule | closed | Common prefix name for source files | dev:code-review | From the code review in https://github.com/phetsims/build-a-molecule/issues/173:

> Do filenames use an appropriate prefix? Some filenames may be prefixed with the repository name, e.g. MolarityConstants.js in molarity. If the repository name is long, the developer may choose to abbreviate the repository name, e.g. EEConstants.js in expression-exchange. If the abbreviation is already used by another repository, then the full name must be used. For example, if the "EE" abbreviation is already used by expression-exchange, then it should not be used in equality-explorer. Whichever convention is used, it should be used consistently within a repository - don't mix abbreviations and full names.

It looks like BAM is the prefix, but we have BuildAMoleculeQueryParameters... can we rename that to be consistent? | 1.0 | Common prefix name for source files - From the code review in https://github.com/phetsims/build-a-molecule/issues/173:

> Do filenames use an appropriate prefix? Some filenames may be prefixed with the repository name, e.g. MolarityConstants.js in molarity. If the repository name is long, the developer may choose to abbreviate the repository name, e.g. EEConstants.js in expression-exchange. If the abbreviation is already used by another repository, then the full name must be used. For example, if the "EE" abbreviation is already used by expression-exchange, then it should not be used in equality-explorer. Whichever convention is used, it should be used consistently within a repository - don't mix abbreviations and full names.

It looks like BAM is the prefix, but we have BuildAMoleculeQueryParameters... can we rename that to be consistent? | non_test | common prefix name for source files from the code review in do filenames use an appropriate prefix some filenames may be prefixed with the repository name e g molarityconstants js in molarity if the repository name is long the developer may choose to abbreviate the repository name e g eeconstants js in expression exchange if the abbreviation is already used by another respository then the full name must be used for example if the ee abbreviation is already used by expression exchange then it should not be used in equality explorer whichever convention is used it should be used consistently within a repository don t mix abbreviations and full names it looks like bam is the prefix but we have buildamoleculequeryparameters can we rename that to be consistent | 0 |

178,036 | 13,758,386,558 | IssuesEvent | 2020-10-06 23:54:20 | rancher/rke2 | https://api.github.com/repos/rancher/rke2 | closed | --data-dir flag is ignored | [zube]: To Test | Starting rke2 with the --data-dir option does not alter the data directory. It always uses the default value of /var/lib/rancher/rke2. | 1.0 | --data-dir flag is ignored - Starting rke2 with the --data-dir option does not alter the data directory. It always uses the default value of /var/lib/rancher/rke2. | test | data dir flag is ignored starting with the data dir option does not alter the data directory it always uses the default value of var lib rancher | 1 |

216,702 | 16,796,145,761 | IssuesEvent | 2021-06-16 04:02:33 | AleoHQ/leo | https://api.github.com/repos/AleoHQ/leo | closed | [Tests] more tests for characters and strings | tests | ## 🐛 Bug Report

There is no separate issue label for tests that are needed, so I will call this a bug.

There should be more tests for characters and strings.

In `leo/tests/parser/expression/literal/char.leo`

more kinds of literal characters should be tested.

The principle here is to test at least one kind of each special category.

Take a look at `rfc/001-initial-strings.md` to see what is special.

The following currently need to be added:

- '\'', '\\', '\n', '\u{..}' with 1, 2, or 3 hex digits in the braces;

- 'å' (or any unicode character that has a 2-byte utf-8 encoding,

in this example it is C3 A5 and the name is LATIN SMALL LETTER A WITH RING ABOVE);

- '😭' (or any unicode character that has a 4-byte utf-8 encoding,

in this example it is F0 9F 98 AD and the name is LOUDLY CRYING FACE);

- for the \x and \u{} escapes, add tests that use lowercase hex digits

- Also, test all the nonprinting ascii characters as utf-8 chars.

In other words, literal chars with code points 0 through 31.

(If any of these are not supported, that should be documented.)

In `leo/tests/compiler/char/`

every special case that you see in `parser/expression/literal/char.leo`

should be tested:

* as an input literal

* as a literal within the program

* as an output literal (make sure the right thing shows up in the output)

* sent to console.log()

For string tests, coverage of literals should be at least as good as it is

for characters, both under tests/parser and tests/compiler.

There should be more failure tests, too.

- Any backslash-<something> that is not valid should have a must-fail test.

For example, \? where ? stands for any byte other than ascii \"'trn0xu

should all fail. It would be good to test all such bytes, but especially

important are ones that have an obvious reason to fail, like 1TRNXU

- \x followed by anything other than 0-7 should fail

- \x? followed by anything other than 0-9a-fA-F should fail

- \u followed by anything other than { should fail

- \u{ followed by anything other than 0-9a-fA-F should fail

- \u{? followed by anything other than 0-9a-fA-F} should fail

- \u{xxxxxx if the exes are all hex digits, then anything following other than } should fail

- Sequences of bytes in literal chars and strings that are invalid UTF-8 should fail.

To get good coverage of invalid UTF-8 byte sequences you can look at the

well-formed sequences in table 3.7 of the unicode specification:

https://www.unicode.org/versions/Unicode13.0.0/ch03.pdf#G7404

to see where the ill-formed sequences are.

For example, here are some that should fail (where brackets represent the

char or string quotes, spaces are ignored, and the hex values are the byte values):

[80], [C1], [C2 7F], [DF C0], [E0 9F], etc.
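
The escape rules and byte sequences listed above can be turned into a small checker that helps enumerate must-fail cases. The sketch below is in Python rather than Leo or Rust, the function names are hypothetical, and the escape grammar is only a reading of the bullets in this issue, not a statement of what `rfc/001-initial-strings.md` specifies.

```python
import re

# Escapes as read from the bullets above (an assumption, not Leo's grammar):
#   \\  \"  \'  \t  \r  \n  \0
#   \xHH      where the first digit is 0-7 and the second is any hex digit
#   \u{H...}  with one to six hex digits inside the braces
ESCAPE = re.compile(
    r"""\\(?:[\\"'trn0]|x[0-7][0-9a-fA-F]|u\{[0-9a-fA-F]{1,6}\})"""
)


def is_valid_escape(text: str) -> bool:
    """Return True if text is exactly one escape under the rules above."""
    return ESCAPE.fullmatch(text) is not None


def is_valid_utf8(raw: bytes) -> bool:
    """Return True if raw decodes as strict UTF-8."""
    try:
        raw.decode("utf-8")
        return True
    except UnicodeDecodeError:
        return False


# The ill-formed byte sequences named in the issue, written as hex.
ILL_FORMED = ["80", "C1", "C2 7F", "DF C0", "E0 9F"]
```

Every input for which one of these checkers returns `False` is a candidate for a must-fail test under tests/parser; the byte strings can be built with `bytes.fromhex`.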

For tests that should fail parsing, it might be OK to test them only under

tests/parser and not bother with tests/compiler. Although there is the issue

of how the compiler handles parse tests, that might not need to be demonstrated

for every kind of parse failure.

## Your Environment

Leo 1.5.0

rustc 1.51.0

linux mint 19.3 (on ubuntu 18.04)

| 1.0 | [Tests] more tests for characters and strings - ## 🐛 Bug Report

There is no separate issue label for tests that are needed, so I will call this a bug.

There should be more tests for characters and strings.

In `leo/tests/parser/expression/literal/char.leo`

more kinds of literal characters should be tested.

The principle here is to test at least one kind of each special category.

Take a look at `rfc/001-initial-strings.md` to see what is special.

The following currently need to be added:

- '\'', '\\', '\n', '\u{..}' with 1, 2, or 3 hex digits in the braces;

- 'å' (or any unicode character that has a 2-byte utf-8 encoding,

in this example it is C3 A5 and the name is LATIN SMALL LETTER A WITH RING ABOVE);

- '😭' (or any unicode character that has a 4-byte utf-8 encoding,

in this example it is F0 9F 98 AD and the name is LOUDLY CRYING FACE);

- for the \x and \u{} escapes, add tests that use lowercase hex digits

- Also, test all the nonprinting ascii characters as utf-8 chars.

In other words, literal chars with code points 0 through 31.

(If any of these are not supported, that should be documented.)

In `leo/tests/compiler/char/`

every special case that you see in `parser/expression/literal/char.leo`

should be tested:

* as an input literal

* as a literal within the program

* as an output literal (make sure the right thing shows up in the output)

* sent to console.log()

For string tests, coverage of literals should be at least as good as it is

for characters, both under tests/parser and tests/compiler.

There should be more failure tests, too.

- Any backslash-<something> that is not valid should have a must-fail test.

For example, \? where ? stands for any byte other than ascii \"'trn0xu

should all fail. It would be good to test all such bytes, but especially

important are ones that have an obvious reason to fail, like 1TRNXU

- \x followed by anything other than 0-7 should fail

- \x? followed by anything other than 0-9a-fA-F should fail

- \u followed by anything other than { should fail

- \u{ followed by anything other than 0-9a-fA-F should fail

- \u{? followed by anything other than 0-9a-fA-F} should fail

- \u{xxxxxx if the exes are all hex digits, then anything following other than } should fail

- Sequences of bytes in literal chars and strings that are invalid UTF-8 should fail.

To get good coverage of invalid UTF-8 byte sequences you can look at the

well-formed sequences in table 3.7 of the unicode specification:

https://www.unicode.org/versions/Unicode13.0.0/ch03.pdf#G7404

to see where the ill-formed sequences are.

For example, here are some that should fail (where brackets represent the

char or string quotes, spaces are ignored, and the hex values are the byte values):

[80], [C1], [C2 7F], [DF C0], [E0 9F], etc.

For tests that should fail parsing, it might be OK to test them only under

tests/parser and not bother with tests/compiler. Although there is the issue

of how the compiler handles parse tests, that might not need to be demonstrated

for every kind of parse failure.

## Your Environment

Leo 1.5.0

rustc 1.51.0

linux mint 19.3 (on ubuntu 18.04)

| test | more tests for characters and strings 🐛 bug report there is no separate issue label for tests that are needed so i will call this a bug there should be more tests for characters and strings in leo tests parser expression literal char leo more kinds of literal characters should be tested the principle here is to test at least one kind of each special category take a look at rfc initial strings md to see what is special currently need to be added are n u with or hex digits in the braces å or any unicode character that has a byte utf encoding in this example it is and the name is latin small letter a with ring above 😭 or any unicode character that has a byte utf encoding in this example it is ad and the name is loudly crying face for the x and u escapes add tests test lowercase letter hex digits also test all the nonprinting ascii characters as utf chars in other words literal chars with code points through if any of these are not supported that should be documented in leo tests compiler char every special case that you see in parser expression literal char leo should be tested as an input literal as a literal within the program as an output literal make sure the right thing shows up in the output sent to console log for string tests there should be at least as good coverage of literals that there is with characters both under tests parser and tests compiler there should be more failure tests too any backslash that is not valid should have a must fail test for example where stands for any byte other than ascii should all fail it would be good to test all such bytes but especially important are ones that have an obvious reason to fail like x followed by anything other than should fail x followed by anything other than fa f should fail u followed by anything other than should fail u followed by anything other than fa f should fail u followed by anything other than fa f should fail u xxxxxx if the exes are all hex digits then anything following other than should 
fail sequences of bytes in literal chars and strings that are invalid utf should fail to get good coverage of invalid utf byte sequences you can look at the well formed sequences in table of the unicode specification to see where the ill formed sequences are for example here are some that should fail where brackets represent the char or string quotes spaces are ignored and the hex values are the byte values etc for tests that should fail parsing it might be ok to test them only under tests parser and not bother with tests compiler although there is the issue of how the compiler handles parse tests that might not need to be demonstrated or every kind of parse failure your environment leo rustc linux mint on ubuntu | 1 |

246,387 | 20,862,339,690 | IssuesEvent | 2022-03-22 00:57:13 | backend-br/vagas | https://api.github.com/repos/backend-br/vagas | closed | [Remote] Back-end Developer Ruby on Rails @Red Pill Pontomais | CLT Pleno TDD Ruby AWS Testes automatizados MongoDB Redis SQL Rest Stale | Red Pill, together with Pontomais, is looking for a new Ruby on Rails Developer.

As a Back-End Developer, your mission will be to build the product technically with the best user experience in mind, maintaining stability, SLAs, and quality deliveries while following coding best practices.

In your day-to-day work you will:

Develop applications using software engineering best practices, with a product focus, in line with company processes, and involving several teams throughout execution, including delivery;

Ensure code is developed with quality and automated test coverage;

Implement features and automations using approved tools that will bring value to our customers and our operation;

Ensure product stability in order to meet the CES SLA;

Do code reviews;

Contribute to achieving the company's OKRs, as broken down for the area.

What you need to have:

Professional experience of 3 years or more;

Software development using databases and Ruby on Rails;

A source code version control tool;

REST APIs;

End-to-end deployment;

It would be nice if you have basic knowledge of:

Test automation;

TDD-oriented development;

AWS - EC2, SQS, SNS, S3;

Knowledge of NoSQL databases such as Redis and MongoDB;

PostgreSQL;

Benefits

Check this out, there's a lot of good stuff:

Meal voucher (Vale Refeição) of R$30 per day;

Food voucher (Vale Alimentação) of R$300 (you still receive the VA when you go on vacation). VR and VA are not deducted from your paycheck;

Health plan with no monthly fee;

Dental plan with no monthly fee;

Ambulance service for medical emergencies;

Pharmacy voucher;

Totall pass - in keeping with the health theme, we have this additional benefit for you;

R$100.00 home office allowance;

Career, position, and salary plan;

PAE - Legal, Psychological, Social Security Assistance Program and more;

Extended maternity and paternity leave;

Training and workshops (internal and external);

Partnerships with educational institutions;

Day off on one day in your birthday month - because it's a special day and you deserve the treat of choosing which day to take off in your birthday month (just don't forget to bring us a slice of cake!);

CLT

Mid-level (Pleno)

Range of 10k

Send résumés to crystal.g@redpillrh.com.br or message via WhatsApp at 51985593215. | 1.0 | [Remote] Back-end Developer Ruby on Rails @Red Pill Pontomais - Red Pill, together with Pontomais, is looking for a new Ruby on Rails Developer.

As a Back-End Developer, your mission will be to build the product technically with the best user experience in mind, maintaining stability, SLAs, and quality deliveries while following coding best practices.

In your day-to-day work you will:

Develop applications using software engineering best practices, with a product focus, in line with company processes, and involving several teams throughout execution, including delivery;

Ensure code is developed with quality and automated test coverage;

Implement features and automations using approved tools that will bring value to our customers and our operation;

Ensure product stability in order to meet the CES SLA;

Do code reviews;

Contribute to achieving the company's OKRs, as broken down for the area.

What you need to have:

Professional experience of 3 years or more;

Software development using databases and Ruby on Rails;

A source code version control tool;

REST APIs;

End-to-end deployment;

It would be nice if you have basic knowledge of:

Test automation;

TDD-oriented development;

AWS - EC2, SQS, SNS, S3;

Knowledge of NoSQL databases such as Redis and MongoDB;

PostgreSQL;

Benefits

Check this out, there's a lot of good stuff:

Meal voucher (Vale Refeição) of R$30 per day;

Food voucher (Vale Alimentação) of R$300 (you still receive the VA when you go on vacation). VR and VA are not deducted from your paycheck;

Health plan with no monthly fee;

Dental plan with no monthly fee;

Ambulance service for medical emergencies;

Pharmacy voucher;

Totall pass - in keeping with the health theme, we have this additional benefit for you;

R$100.00 home office allowance;

Career, position, and salary plan;

PAE - Legal, Psychological, Social Security Assistance Program and more;

Extended maternity and paternity leave;

Training and workshops (internal and external);

Partnerships with educational institutions;

Day off on one day in your birthday month - because it's a special day and you deserve the treat of choosing which day to take off in your birthday month (just don't forget to bring us a slice of cake!);

CLT

Mid-level (Pleno)

Range of 10k

Send résumés to crystal.g@redpillrh.com.br or message via WhatsApp at 51985593215. | test | back end developer ruby on rails red pill pontomais red pill together with pontomais is looking for a new ruby on rails developer as a back end developer your mission will be to build the product technically with the best user experience in mind maintaining stability slas and quality deliveries while following coding best practices in your day to day work you will develop applications using software engineering best practices with a product focus in line with company processes and involving several teams throughout execution including delivery ensure code is developed with quality and automated test coverage implement features and automations using approved tools that will bring value to our customers and our operation ensure product stability in order to meet the ces sla do code reviews contribute to achieving the company s okrs as broken down for the area what you need to have professional experience of years or more software development using databases and ruby on rails a source code version control tool rest apis end to end deployment it would be nice if you have basic knowledge of test automation tdd oriented development aws sqs sns knowledge of nosql databases such as redis and mongodb postgresql benefits check this out there s a lot of good stuff meal voucher vale refeição of r per day food voucher vale alimentação of r you still receive the va when you go on vacation vr and va are not deducted from your paycheck health plan with no monthly fee dental plan with no monthly fee ambulance service for medical emergencies pharmacy voucher totall pass in keeping with the health theme we have this additional benefit for you r home office allowance career position and salary plan pae legal psychological social security assistance program and
more extended maternity and paternity leave training and workshops internal and external partnerships with educational institutions day off on one day in your birthday month because it s a special day and you deserve the treat of choosing which day to take off in your birthday month just don t forget to bring us a slice of cake clt mid level pleno range of send résumés to crystal g redpillrh com br or message via whatsapp at | 1 |

66,055 | 6,987,327,493 | IssuesEvent | 2017-12-14 08:48:46 | nodejs/node | https://api.github.com/repos/nodejs/node | closed | make test triggers "node quit unexpectedly" on Fedora 27 | question test |

* **Version**: master

* **Platform**: Linux 4.14.3-300.fc27.x86_64 #1 SMP Mon Dec 4 17:18:27 UTC 2017 x86_64 x86_64 x86_64 GNU/Linux

* **Subsystem**: test

<!-- Enter your issue details below this comment. -->

Last week I formatted my main SSD and did a fresh installation of Fedora 27. Now, when I run `make test`, there are always three crashes reported by the system as "node quit unexpectedly". This did not happen with my old installation.

It seems those are part of the tests, but I wonder if it's possible to avoid having them caught by the system?

/cc @bnoordhuis ? | 1.0 | make test triggers "node quit unexpectedly" on Fedora 27 -

* **Version**: master

* **Platform**: Linux 4.14.3-300.fc27.x86_64 #1 SMP Mon Dec 4 17:18:27 UTC 2017 x86_64 x86_64 x86_64 GNU/Linux

* **Subsystem**: test

<!-- Enter your issue details below this comment. -->

Last week I formatted my main SSD and did a fresh installation of Fedora 27. Now, when I run `make test`, there are always three crashes reported by the system as "node quit unexpectedly". This did not happen with my old installation.

It seems those are part of the tests, but I wonder if it's possible to avoid having them caught by the system?

/cc @bnoordhuis ? | test | make test triggers node quit unexpectedly on fedora version master platform linux smp mon dec utc gnu linux subsystem test last week i formatted my main ssd and did a fresh installation of fedora now when i run make test there are always three crashes reported by the system as node quit unexpectedly this did not happen with my old installation it seems those are part of the tests but i wonder if it s possible to avoid having them caught by the system cc bnoordhuis | 1 |

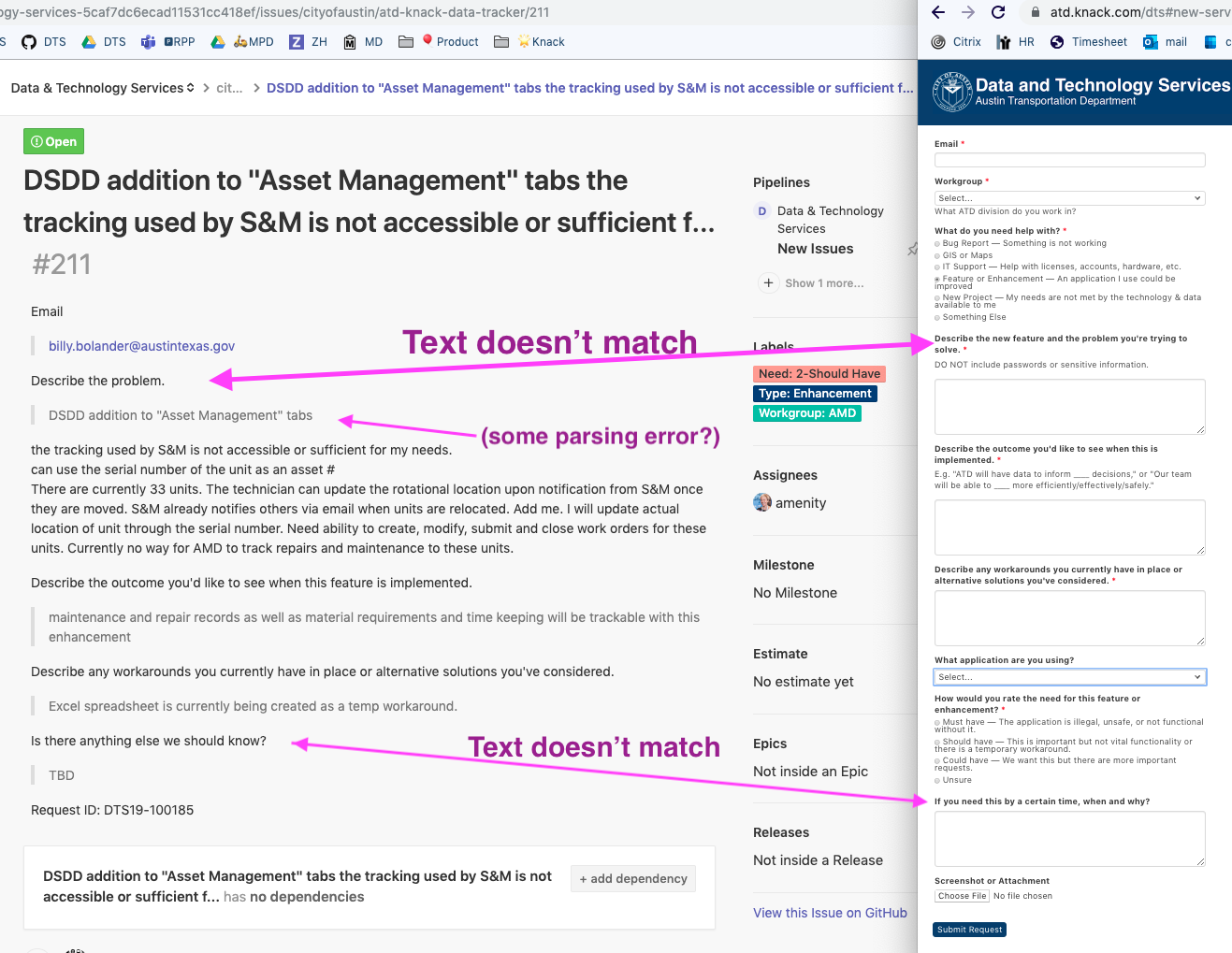

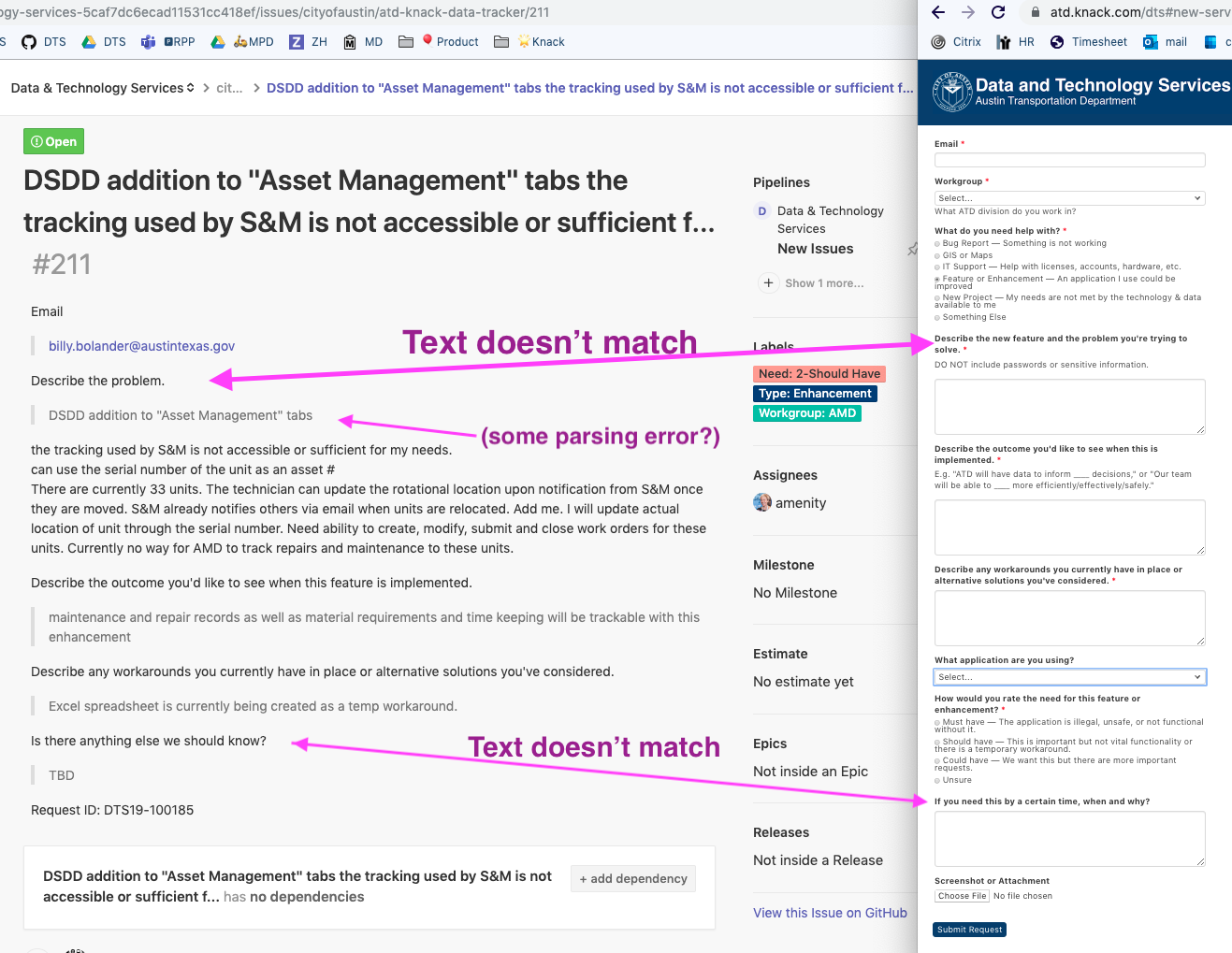

386,564 | 11,441,083,129 | IssuesEvent | 2020-02-05 10:55:42 | craftercms/craftercms | https://api.github.com/repos/craftercms/craftercms | closed | [studio] Error Site context is not valid anymore when creating first site in Studio | bug priority: high | ## Describe the bug

On a fresh install, or after you delete the `data` folder, creating the very first site in Studio logs an error saying that the site context is not valid anymore:

```

[ERROR] 2020-01-06T11:11:16,696 [http-nio-8080-exec-6] [] [context.SiteContextManager] | Site context SiteContext{siteName='newsite', context=FileSystemContext{id='977d6174f06786497c2d88f76bf315b0', rootFolderPath='file:/Users/vita/temp/test3/craftercms/crafter-authoring/data/repos/sites/newsite/sandbox/'}, fallback=false, staticAssetsPath='/static-assets', templatesPath='/', restScriptsPath='/scripts/rest', controllerScriptsPath='/scripts/controllers'} is not valid anymore

```

## To Reproduce

Steps to reproduce the behavior:

1. Clone craftercms, or delete the `crafter-authoring/data` folder

2. Start Studio

3. Create a site using the Website Editorial blueprint

4. Watch the logs as the site is being created and notice that, after your site is created, the site preview is not available; instead you get a `Could not resolve site for the current request.` error

## Expected behavior

There should be no errors when creating the first site in Studio, and the site preview should be available right after creation.

## Screenshots

{{If applicable, add screenshots to help explain your problem.}}

## Logs

```

[ERROR] 2020-01-06T11:11:16,696 [http-nio-8080-exec-6] [] [context.SiteContextManager] | Site context SiteContext{siteName='newsite', context=FileSystemContext{id='977d6174f06786497c2d88f76bf315b0', rootFolderPath='file:/Users/vita/temp/test3/craftercms/crafter-authoring/data/repos/sites/newsite/sandbox/'}, fallback=false, staticAssetsPath='/static-assets', templatesPath='/', restScriptsPath='/scripts/rest', controllerScriptsPath='/scripts/controllers'} is not valid anymore

[INFO] 2020-01-06T11:11:16,696 [http-nio-8080-exec-6] [] [context.SiteContextManager] | ==================================================

[INFO] 2020-01-06T11:11:16,696 [http-nio-8080-exec-6] [] [context.SiteContextManager] | <Destroying site context: newsite>

[INFO] 2020-01-06T11:11:16,697 [http-nio-8080-exec-6] [] [context.SiteContextManager] | ==================================================

[INFO] 2020-01-06T11:11:16,697 [http-nio-8080-exec-6] [] [support.GenericApplicationContext] | Closing org.springframework.context.support.GenericApplicationContext@441fa52e: startup date [Mon Jan 06 11:11:15 EST 2020]; parent: Root WebApplicationContext

[INFO] 2020-01-06T11:11:16,698 [http-nio-8080-exec-6] [] [context.SiteContextManager] | Site context destroyed: SiteContext{siteName='newsite', context=FileSystemContext{id='977d6174f06786497c2d88f76bf315b0', rootFolderPath='file:/Users/vita/temp/test3/craftercms/crafter-authoring/data/repos/sites/newsite/sandbox/'}, fallback=false, staticAssetsPath='/static-assets', templatesPath='/', restScriptsPath='/scripts/rest', controllerScriptsPath='/scripts/controllers'}

[INFO] 2020-01-06T11:11:16,698 [http-nio-8080-exec-6] [] [context.SiteContextManager] | ==================================================

[INFO] 2020-01-06T11:11:16,699 [http-nio-8080-exec-6] [] [context.SiteContextManager] | </Destroying site context: newsite>

[INFO] 2020-01-06T11:11:16,699 [http-nio-8080-exec-6] [] [context.SiteContextManager] | ==================================================

[ERROR] 2020-01-06T11:11:16,699 [http-nio-8080-exec-6] [] [filter.SiteContextResolvingFilter] | Error while resolving site context for current request

java.lang.IllegalStateException: Unable to resolve context for site name 'newsite'

at org.craftercms.engine.service.context.SiteContextResolverImpl.getContext(SiteContextResolverImpl.java:81) ~[classes/:3.1.5-SNAPSHOT]

at org.craftercms.engine.servlet.filter.SiteContextResolvingFilter.getContext(SiteContextResolvingFilter.java:95) [classes/:3.1.5-SNAPSHOT]

at org.craftercms.engine.servlet.filter.SiteContextResolvingFilter.doFilter(SiteContextResolvingFilter.java:79) [classes/:3.1.5-SNAPSHOT]

at org.springframework.web.filter.DelegatingFilterProxy.invokeDelegate(DelegatingFilterProxy.java:347) [spring-web-4.3.18.RELEASE.jar:4.3.18.RELEASE]

at org.springframework.web.filter.DelegatingFilterProxy.doFilter(DelegatingFilterProxy.java:263) [spring-web-4.3.18.RELEASE.jar:4.3.18.RELEASE]

at org.apache.catalina.core.ApplicationFilterChain.internalDoFilter(ApplicationFilterChain.java:193) [catalina.jar:8.5.24]

at org.apache.catalina.core.ApplicationFilterChain.doFilter(ApplicationFilterChain.java:166) [catalina.jar:8.5.24]

at org.craftercms.commons.http.RequestContextBindingFilter.doFilter(RequestContextBindingFilter.java:79) [crafter-commons-utilities-3.1.5-SNAPSHOT.jar:3.1.5-SNAPSHOT]

at org.apache.catalina.core.ApplicationFilterChain.internalDoFilter(ApplicationFilterChain.java:193) [catalina.jar:8.5.24]

at org.apache.catalina.core.ApplicationFilterChain.doFilter(ApplicationFilterChain.java:166) [catalina.jar:8.5.24]

at org.springframework.web.filter.CharacterEncodingFilter.doFilterInternal(CharacterEncodingFilter.java:197) [spring-web-4.3.18.RELEASE.jar:4.3.18.RELEASE]

at org.springframework.web.filter.OncePerRequestFilter.doFilter(OncePerRequestFilter.java:107) [spring-web-4.3.18.RELEASE.jar:4.3.18.RELEASE]

at org.apache.catalina.core.ApplicationFilterChain.internalDoFilter(ApplicationFilterChain.java:193) [catalina.jar:8.5.24]

at org.apache.catalina.core.ApplicationFilterChain.doFilter(ApplicationFilterChain.java:166) [catalina.jar:8.5.24]

at org.apache.logging.log4j.web.Log4jServletFilter.doFilter(Log4jServletFilter.java:71) [log4j-web-2.11.2.jar:2.11.2]

at org.apache.catalina.core.ApplicationFilterChain.internalDoFilter(ApplicationFilterChain.java:193) [catalina.jar:8.5.24]

at org.apache.catalina.core.ApplicationFilterChain.doFilter(ApplicationFilterChain.java:166) [catalina.jar:8.5.24]

at org.apache.catalina.core.StandardWrapperValve.invoke(StandardWrapperValve.java:198) [catalina.jar:8.5.24]

at org.apache.catalina.core.StandardContextValve.invoke(StandardContextValve.java:96) [catalina.jar:8.5.24]

at org.apache.catalina.authenticator.AuthenticatorBase.invoke(AuthenticatorBase.java:504) [catalina.jar:8.5.24]

at org.apache.catalina.core.StandardHostValve.invoke(StandardHostValve.java:140) [catalina.jar:8.5.24]

at org.apache.catalina.valves.ErrorReportValve.invoke(ErrorReportValve.java:81) [catalina.jar:8.5.24]

at org.apache.catalina.valves.AbstractAccessLogValve.invoke(AbstractAccessLogValve.java:650) [catalina.jar:8.5.24]

at org.apache.catalina.core.StandardEngineValve.invoke(StandardEngineValve.java:87) [catalina.jar:8.5.24]

at org.apache.catalina.connector.CoyoteAdapter.service(CoyoteAdapter.java:342) [catalina.jar:8.5.24]

at org.apache.coyote.http11.Http11Processor.service(Http11Processor.java:803) [tomcat-coyote.jar:8.5.24]

at org.apache.coyote.AbstractProcessorLight.process(AbstractProcessorLight.java:66) [tomcat-coyote.jar:8.5.24]

at org.apache.coyote.AbstractProtocol$ConnectionHandler.process(AbstractProtocol.java:790) [tomcat-coyote.jar:8.5.24]

at org.apache.tomcat.util.net.NioEndpoint$SocketProcessor.doRun(NioEndpoint.java:1459) [tomcat-coyote.jar:8.5.24]

at org.apache.tomcat.util.net.SocketProcessorBase.run(SocketProcessorBase.java:49) [tomcat-coyote.jar:8.5.24]

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149) [?:1.8.0_162]

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624) [?:1.8.0_162]

at org.apache.tomcat.util.threads.TaskThread$WrappingRunnable.run(TaskThread.java:61) [tomcat-util.jar:8.5.24]

at java.lang.Thread.run(Thread.java:748) [?:1.8.0_162]

```

## Specs

### Version

Studio Version Number: 3.1.5-SNAPSHOT-189958

Build Number: 189958a33a56a46094df29c2c9675f709331ceba

Build Date/Time: 01-06-2020 11:02:57 -0500

### OS

OS X

### Browser

Chrome browser

## Additional context

The error only appears the first time you create a site. Also, after getting the error `Could not resolve site for the current request.` in the UI, if you click on `Main Menu` -> `Sites` then click on your site, the site is now available for preview.

| 1.0 | [studio] Error Site context is not valid anymore when creating first site in Studio - ## Describe the bug

On a fresh install, or after you delete the `data` folder, creating the very first site in Studio logs an error saying that the site context is not valid anymore:

```

[ERROR] 2020-01-06T11:11:16,696 [http-nio-8080-exec-6] [] [context.SiteContextManager] | Site context SiteContext{siteName='newsite', context=FileSystemContext{id='977d6174f06786497c2d88f76bf315b0', rootFolderPath='file:/Users/vita/temp/test3/craftercms/crafter-authoring/data/repos/sites/newsite/sandbox/'}, fallback=false, staticAssetsPath='/static-assets', templatesPath='/', restScriptsPath='/scripts/rest', controllerScriptsPath='/scripts/controllers'} is not valid anymore

```

## To Reproduce

Steps to reproduce the behavior:

1. Clone craftercms, or delete the `crafter-authoring/data` folder

2. Start Studio

3. Create a site using the Website Editorial blueprint

4. Watch the logs as the site is being created and notice that, after your site is created, the site preview is not available; instead you get a `Could not resolve site for the current request.` error

## Expected behavior

There should be no errors when creating the first site in Studio, and the site preview should be available right after creation.

## Screenshots

{{If applicable, add screenshots to help explain your problem.}}

## Logs

```

[ERROR] 2020-01-06T11:11:16,696 [http-nio-8080-exec-6] [] [context.SiteContextManager] | Site context SiteContext{siteName='newsite', context=FileSystemContext{id='977d6174f06786497c2d88f76bf315b0', rootFolderPath='file:/Users/vita/temp/test3/craftercms/crafter-authoring/data/repos/sites/newsite/sandbox/'}, fallback=false, staticAssetsPath='/static-assets', templatesPath='/', restScriptsPath='/scripts/rest', controllerScriptsPath='/scripts/controllers'} is not valid anymore

[INFO] 2020-01-06T11:11:16,696 [http-nio-8080-exec-6] [] [context.SiteContextManager] | ==================================================

[INFO] 2020-01-06T11:11:16,696 [http-nio-8080-exec-6] [] [context.SiteContextManager] | <Destroying site context: newsite>

[INFO] 2020-01-06T11:11:16,697 [http-nio-8080-exec-6] [] [context.SiteContextManager] | ==================================================

[INFO] 2020-01-06T11:11:16,697 [http-nio-8080-exec-6] [] [support.GenericApplicationContext] | Closing org.springframework.context.support.GenericApplicationContext@441fa52e: startup date [Mon Jan 06 11:11:15 EST 2020]; parent: Root WebApplicationContext

[INFO] 2020-01-06T11:11:16,698 [http-nio-8080-exec-6] [] [context.SiteContextManager] | Site context destroyed: SiteContext{siteName='newsite', context=FileSystemContext{id='977d6174f06786497c2d88f76bf315b0', rootFolderPath='file:/Users/vita/temp/test3/craftercms/crafter-authoring/data/repos/sites/newsite/sandbox/'}, fallback=false, staticAssetsPath='/static-assets', templatesPath='/', restScriptsPath='/scripts/rest', controllerScriptsPath='/scripts/controllers'}

[INFO] 2020-01-06T11:11:16,698 [http-nio-8080-exec-6] [] [context.SiteContextManager] | ==================================================

[INFO] 2020-01-06T11:11:16,699 [http-nio-8080-exec-6] [] [context.SiteContextManager] | </Destroying site context: newsite>

[INFO] 2020-01-06T11:11:16,699 [http-nio-8080-exec-6] [] [context.SiteContextManager] | ==================================================

[ERROR] 2020-01-06T11:11:16,699 [http-nio-8080-exec-6] [] [filter.SiteContextResolvingFilter] | Error while resolving site context for current request

java.lang.IllegalStateException: Unable to resolve context for site name 'newsite'

at org.craftercms.engine.service.context.SiteContextResolverImpl.getContext(SiteContextResolverImpl.java:81) ~[classes/:3.1.5-SNAPSHOT]

at org.craftercms.engine.servlet.filter.SiteContextResolvingFilter.getContext(SiteContextResolvingFilter.java:95) [classes/:3.1.5-SNAPSHOT]

at org.craftercms.engine.servlet.filter.SiteContextResolvingFilter.doFilter(SiteContextResolvingFilter.java:79) [classes/:3.1.5-SNAPSHOT]

at org.springframework.web.filter.DelegatingFilterProxy.invokeDelegate(DelegatingFilterProxy.java:347) [spring-web-4.3.18.RELEASE.jar:4.3.18.RELEASE]