Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 4 to 112) | repo_url (string, length 33 to 141) | action (string, 3 classes) | title (string, length 1 to 1.02k) | labels (string, length 4 to 1.54k) | body (string, length 1 to 262k) | index (string, 17 classes) | text_combine (string, length 95 to 262k) | label (string, 2 classes) | text (string, length 96 to 252k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

8,714 | 3,004,049,298 | IssuesEvent | 2015-07-25 14:43:35 | IntellectualSites/PlotSquared | https://api.github.com/repos/IntellectualSites/PlotSquared | closed | NPE | [!] bug [?] needs testing | [23:36:03] [Server thread/WARN]: [PlotSquared] Task #6 for PlotSquared v2.12.15 generated an exception

java.lang.NullPointerException

at com.intellectualcrafters.plot.listeners.PlotPlusListener$1.run(PlotPlusListener.java:90) ~[?:?]

at org.bukkit.craftbukkit.v1_8_R3.scheduler.CraftTask.run(CraftTask.java:71) ~[creative.jar:git-PaperSpigot-4d70f42-b105298]

at org.bukkit.craftbukkit.v1_8_R3.scheduler.CraftScheduler.mainThreadHeartbeat(CraftScheduler.java:350) [creative.jar:git-PaperSpigot-4d70f42-b105298]

at net.minecraft.server.v1_8_R3.MinecraftServer.B(MinecraftServer.java:774) [creative.jar:git-PaperSpigot-4d70f42-b105298]

at net.minecraft.server.v1_8_R3.DedicatedServer.B(DedicatedServer.java:378) [creative.jar:git-PaperSpigot-4d70f42-b105298]

at net.minecraft.server.v1_8_R3.MinecraftServer.A(MinecraftServer.java:705) [creative.jar:git-PaperSpigot-4d70f42-b105298]

at net.minecraft.server.v1_8_R3.MinecraftServer.run(MinecraftServer.java:608) [creative.jar:git-PaperSpigot-4d70f42-b105298]

at java.lang.Thread.run(Thread.java:745) [?:1.8.0_45]

| 1.0 | NPE - [23:36:03] [Server thread/WARN]: [PlotSquared] Task #6 for PlotSquared v2.12.15 generated an exception

java.lang.NullPointerException

at com.intellectualcrafters.plot.listeners.PlotPlusListener$1.run(PlotPlusListener.java:90) ~[?:?]

at org.bukkit.craftbukkit.v1_8_R3.scheduler.CraftTask.run(CraftTask.java:71) ~[creative.jar:git-PaperSpigot-4d70f42-b105298]

at org.bukkit.craftbukkit.v1_8_R3.scheduler.CraftScheduler.mainThreadHeartbeat(CraftScheduler.java:350) [creative.jar:git-PaperSpigot-4d70f42-b105298]

at net.minecraft.server.v1_8_R3.MinecraftServer.B(MinecraftServer.java:774) [creative.jar:git-PaperSpigot-4d70f42-b105298]

at net.minecraft.server.v1_8_R3.DedicatedServer.B(DedicatedServer.java:378) [creative.jar:git-PaperSpigot-4d70f42-b105298]

at net.minecraft.server.v1_8_R3.MinecraftServer.A(MinecraftServer.java:705) [creative.jar:git-PaperSpigot-4d70f42-b105298]

at net.minecraft.server.v1_8_R3.MinecraftServer.run(MinecraftServer.java:608) [creative.jar:git-PaperSpigot-4d70f42-b105298]

at java.lang.Thread.run(Thread.java:745) [?:1.8.0_45]

| test | npe task for plotsquared generated an exception java lang nullpointerexception at com intellectualcrafters plot listeners plotpluslistener run plotpluslistener java at org bukkit craftbukkit scheduler crafttask run crafttask java at org bukkit craftbukkit scheduler craftscheduler mainthreadheartbeat craftscheduler java at net minecraft server minecraftserver b minecraftserver java at net minecraft server dedicatedserver b dedicatedserver java at net minecraft server minecraftserver a minecraftserver java at net minecraft server minecraftserver run minecraftserver java at java lang thread run thread java | 1 |

128,212 | 12,367,140,713 | IssuesEvent | 2020-05-18 11:46:25 | ponylang/ponyup | https://api.github.com/repos/ponylang/ponyup | opened | Document ponyup macOS/brew libressl connection | documentation help wanted | #117 was caused by this.

macOS, it's all dynamic linking.

`brew install libressl` will periodically change the version it installs as they switch to a newer version of libressl. This is rare, but does happen. When that happens, older versions of ponyup will stop working once libressl is updated.

We need to document the expected error that users would see and tell them to reinstall ponyup via the init script as that will download the most recent nightly version. Within 24 hours of a libressl change, it will work again.

This also means that if they eventually update to a different version of ponyup, it might be using a different libressl than the one they have installed and will fail. | 1.0 | Document ponyup macOS/brew libressl connection - #117 was caused by this.

macOS, it's all dynamic linking.

`brew install libressl` will periodically change the version it installs as they switch to a newer version of libressl. This is rare, but does happen. When that happens, older versions of ponyup will stop working once libressl is updated.

We need to document the expected error that users would see and tell them to reinstall ponyup via the init script as that will download the most recent nightly version. Within 24 hours of a libressl change, it will work again.

This also means that if they eventually update to a different version of ponyup, it might be using a different libressl than the one they have installed and will fail. | non_test | document ponyup macos brew libressl connection was caused by this macos it s all dynamic linking brew install libressl will periodically change the version it installs as they switch to a newer version of libressl this is rare but does happen when that happens older versions of ponyup will stop working once libressl is updated we need to document the expected error that users would see and tell them to reinstall ponyup via the init script as that will download the most recent nightly version within hours of a libressl change it will work again this also means that eventually if they update to a different version of ponyup that it might be using a different libressl than they have installed and will fail | 0 |

214,295 | 16,580,204,319 | IssuesEvent | 2021-05-31 10:41:11 | blynkkk/blynk_Issues | https://api.github.com/repos/blynkkk/blynk_Issues | closed | Web dashboard ignores empty space | bug ready to test web | On the web dashboard, when you leave empty space above a widget, the widget moves up after you save the dashboard. It does not allow white space in the UI design. (macOS 11.3.1, Firefox 88.0.1)

This is the editing page.

This is after you save.

| 1.0 | Web dashboard ignores empty space - On the web dashboard, when you leave empty space above a widget, the widget moves up after you save the dashboard. It does not allow white space in the UI design. (macOS 11.3.1, Firefox 88.0.1)

This is the editing page.

This is after you save.

| test | web dashboard ignores empty space on web dashboard when you leave a space on top of a widget after you save the dashboard it goes up it does not allow white space in ui design mac os firefox this is editing page this is after you save | 1 |

78,647 | 7,657,016,347 | IssuesEvent | 2018-05-10 18:13:42 | couchbase/couchbase-lite-ios | https://api.github.com/repos/couchbase/couchbase-lite-ios | closed | latest iOS cbl 2.1.0 builds are crashing | functional-test-blocker ready | ### Version

CBL - 2.1.0-150

sg version -> 2.1.0-55

### Issue caused

1. Running the iOS functional tests on Jenkins crashes the app, and a bunch of tests fail.

I ran tests with 2.1.0-126 and that looks good

If I run tests individually, it works fine.

### Logs:

[CBLTestServer-iOS_2018-04-30-154305-1_Dans-Test-MacBook-Pro.crash.zip](https://github.com/couchbase/couchbase-lite-ios/files/1968363/CBLTestServer-iOS_2018-04-30-154305-1_Dans-Test-MacBook-Pro.crash.zip)

| 1.0 | latest iOS cbl 2.1.0 builds are crashing - ### Version

CBL - 2.1.0-150

sg version -> 2.1.0-55

### Issue caused

1. Running the iOS functional tests on Jenkins crashes the app, and a bunch of tests fail.

I ran tests with 2.1.0-126 and that looks good

If I run tests individually, it works fine.

### Logs:

[CBLTestServer-iOS_2018-04-30-154305-1_Dans-Test-MacBook-Pro.crash.zip](https://github.com/couchbase/couchbase-lite-ios/files/1968363/CBLTestServer-iOS_2018-04-30-154305-1_Dans-Test-MacBook-Pro.crash.zip)

| test | latest ios cbl builds are crashing version cbl sg version issue caused running ios functional tests on jenkins having crash on the app and bunch of tests failing i ran tests with and that looks good if i run tests individually it works fine logs | 1 |

231,484 | 17,690,791,027 | IssuesEvent | 2021-08-24 09:40:33 | owncloud/ocis | https://api.github.com/repos/owncloud/ocis | reopened | Write documentation for roles & permissions concept | Topic:Documentation | Write documentation for roles & permissions concept. This is intended to be a living document and it should reflect decisions made during the development process. The end result being a document in a state that mirrors the settings service functionality regarding roles and permissions. | 1.0 | Write documentation for roles & permissions concept - Write documentation for roles & permissions concept. This is intended to be a living document and it should reflect decisions made during the development process. The end result being a document in a state that mirrors the settings service functionality regarding roles and permissions. | non_test | write documentation for roles permissions concept write documentation for roles permissions concept this is intended to be a living document and it should reflect decisions made during the development process the end result being a document in a state that mirrors the settings service functionality regarding roles and permissions | 0 |

251,817 | 27,211,188,498 | IssuesEvent | 2023-02-20 16:40:23 | ZSBRybnik/frontend | https://api.github.com/repos/ZSBRybnik/frontend | closed | node-jq-2.3.3.tgz: 3 vulnerabilities (highest severity is: 7.5) - autoclosed | security vulnerability | <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>node-jq-2.3.3.tgz</b></p></summary>

<p></p>

<p>

<p>Found in HEAD commit: <a href="https://github.com/ZSBRybnik/frontend/commit/273a134394edfb54991ff74097965c8f3cac3de7">273a134394edfb54991ff74097965c8f3cac3de7</a></p></details>

## Vulnerabilities

| CVE | Severity | <img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS | Dependency | Type | Fixed in (node-jq version) | Remediation Available |

| ------------- | ------------- | ----- | ----- | ----- | ------------- | --- |

| [CVE-2022-25881](https://www.mend.io/vulnerability-database/CVE-2022-25881) | <img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> High | 7.5 | http-cache-semantics-3.8.1.tgz | Transitive | N/A* | ❌ |

| [CVE-2023-25166](https://www.mend.io/vulnerability-database/CVE-2023-25166) | <img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Medium | 6.5 | formula-3.0.0.tgz | Transitive | N/A* | ❌ |

| [CVE-2022-33987](https://www.mend.io/vulnerability-database/CVE-2022-33987) | <img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Medium | 5.3 | detected in multiple dependencies | Transitive | N/A* | ❌ |

<p>*For some transitive vulnerabilities, there is no version of direct dependency with a fix. Check the section "Details" below to see if there is a version of transitive dependency where vulnerability is fixed.</p>

## Details

<details>

<summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> CVE-2022-25881</summary>

### Vulnerable Library - <b>http-cache-semantics-3.8.1.tgz</b></p>

<p>Parses Cache-Control and other headers. Helps building correct HTTP caches and proxies</p>

<p>Library home page: <a href="https://registry.npmjs.org/http-cache-semantics/-/http-cache-semantics-3.8.1.tgz">https://registry.npmjs.org/http-cache-semantics/-/http-cache-semantics-3.8.1.tgz</a></p>

<p>

Dependency Hierarchy:

- node-jq-2.3.3.tgz (Root Library)

- download-8.0.0.tgz

- got-8.3.2.tgz

- cacheable-request-2.1.4.tgz

- :x: **http-cache-semantics-3.8.1.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/ZSBRybnik/frontend/commit/273a134394edfb54991ff74097965c8f3cac3de7">273a134394edfb54991ff74097965c8f3cac3de7</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

<p></p>

### Vulnerability Details

<p>

This affects versions of the package http-cache-semantics before 4.1.1. The issue can be exploited via malicious request header values sent to a server, when that server reads the cache policy from the request using this library.

<p>Publish Date: 2023-01-31

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2022-25881>CVE-2022-25881</a></p>

</p>

<p></p>

### CVSS 3 Score Details (<b>7.5</b>)

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

<p></p>

### Suggested Fix

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.cve.org/CVERecord?id=CVE-2022-25881">https://www.cve.org/CVERecord?id=CVE-2022-25881</a></p>

<p>Release Date: 2023-01-31</p>

<p>Fix Resolution: http-cache-semantics - 4.1.1</p>

</p>

<p></p>

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

</details><details>

<summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> CVE-2023-25166</summary>

### Vulnerable Library - <b>formula-3.0.0.tgz</b></p>

<p>Math and string formula parser.</p>

<p>Library home page: <a href="https://registry.npmjs.org/@sideway/formula/-/formula-3.0.0.tgz">https://registry.npmjs.org/@sideway/formula/-/formula-3.0.0.tgz</a></p>

<p>

Dependency Hierarchy:

- node-jq-2.3.3.tgz (Root Library)

- joi-17.6.0.tgz

- :x: **formula-3.0.0.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/ZSBRybnik/frontend/commit/273a134394edfb54991ff74097965c8f3cac3de7">273a134394edfb54991ff74097965c8f3cac3de7</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

<p></p>

### Vulnerability Details

<p>

formula is a math and string formula parser. In versions prior to 3.0.1 crafted user-provided strings to formula's parser might lead to polynomial execution time and a denial of service. Users should upgrade to 3.0.1+. There are no known workarounds for this vulnerability.

<p>Publish Date: 2023-02-08

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2023-25166>CVE-2023-25166</a></p>

</p>

<p></p>

### CVSS 3 Score Details (<b>6.5</b>)

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

<p></p>

### Suggested Fix

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.cve.org/CVERecord?id=CVE-2023-25166">https://www.cve.org/CVERecord?id=CVE-2023-25166</a></p>

<p>Release Date: 2023-02-08</p>

<p>Fix Resolution: @sideway/formula - 3.0.1</p>

</p>

<p></p>

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

</details><details>

<summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> CVE-2022-33987</summary>

### Vulnerable Libraries - <b>got-8.3.2.tgz</b>, <b>got-7.1.0.tgz</b></p>

<p>

### <b>got-8.3.2.tgz</b></p>

<p>Simplified HTTP requests</p>

<p>Library home page: <a href="https://registry.npmjs.org/got/-/got-8.3.2.tgz">https://registry.npmjs.org/got/-/got-8.3.2.tgz</a></p>

<p>

Dependency Hierarchy:

- node-jq-2.3.3.tgz (Root Library)

- download-8.0.0.tgz

- :x: **got-8.3.2.tgz** (Vulnerable Library)

### <b>got-7.1.0.tgz</b></p>

<p>Simplified HTTP requests</p>

<p>Library home page: <a href="https://registry.npmjs.org/got/-/got-7.1.0.tgz">https://registry.npmjs.org/got/-/got-7.1.0.tgz</a></p>

<p>

Dependency Hierarchy:

- node-jq-2.3.3.tgz (Root Library)

- bin-build-3.0.0.tgz

- download-6.2.5.tgz

- :x: **got-7.1.0.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/ZSBRybnik/frontend/commit/273a134394edfb54991ff74097965c8f3cac3de7">273a134394edfb54991ff74097965c8f3cac3de7</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

<p></p>

### Vulnerability Details

<p>

The got package before 12.1.0 (also fixed in 11.8.5) for Node.js allows a redirect to a UNIX socket.

<p>Publish Date: 2022-06-18

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2022-33987>CVE-2022-33987</a></p>

</p>

<p></p>

### CVSS 3 Score Details (<b>5.3</b>)

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: Low

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

<p></p>

### Suggested Fix

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2022-33987">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2022-33987</a></p>

<p>Release Date: 2022-06-18</p>

<p>Fix Resolution: got - 11.8.5,12.1.0</p>

</p>

<p></p>

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

</details> | True | node-jq-2.3.3.tgz: 3 vulnerabilities (highest severity is: 7.5) - autoclosed - <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>node-jq-2.3.3.tgz</b></p></summary>

<p></p>

<p>

<p>Found in HEAD commit: <a href="https://github.com/ZSBRybnik/frontend/commit/273a134394edfb54991ff74097965c8f3cac3de7">273a134394edfb54991ff74097965c8f3cac3de7</a></p></details>

## Vulnerabilities

| CVE | Severity | <img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS | Dependency | Type | Fixed in (node-jq version) | Remediation Available |

| ------------- | ------------- | ----- | ----- | ----- | ------------- | --- |

| [CVE-2022-25881](https://www.mend.io/vulnerability-database/CVE-2022-25881) | <img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> High | 7.5 | http-cache-semantics-3.8.1.tgz | Transitive | N/A* | ❌ |

| [CVE-2023-25166](https://www.mend.io/vulnerability-database/CVE-2023-25166) | <img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Medium | 6.5 | formula-3.0.0.tgz | Transitive | N/A* | ❌ |

| [CVE-2022-33987](https://www.mend.io/vulnerability-database/CVE-2022-33987) | <img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Medium | 5.3 | detected in multiple dependencies | Transitive | N/A* | ❌ |

<p>*For some transitive vulnerabilities, there is no version of direct dependency with a fix. Check the section "Details" below to see if there is a version of transitive dependency where vulnerability is fixed.</p>

## Details

<details>

<summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> CVE-2022-25881</summary>

### Vulnerable Library - <b>http-cache-semantics-3.8.1.tgz</b></p>

<p>Parses Cache-Control and other headers. Helps building correct HTTP caches and proxies</p>

<p>Library home page: <a href="https://registry.npmjs.org/http-cache-semantics/-/http-cache-semantics-3.8.1.tgz">https://registry.npmjs.org/http-cache-semantics/-/http-cache-semantics-3.8.1.tgz</a></p>

<p>

Dependency Hierarchy:

- node-jq-2.3.3.tgz (Root Library)

- download-8.0.0.tgz

- got-8.3.2.tgz

- cacheable-request-2.1.4.tgz

- :x: **http-cache-semantics-3.8.1.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/ZSBRybnik/frontend/commit/273a134394edfb54991ff74097965c8f3cac3de7">273a134394edfb54991ff74097965c8f3cac3de7</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

<p></p>

### Vulnerability Details

<p>

This affects versions of the package http-cache-semantics before 4.1.1. The issue can be exploited via malicious request header values sent to a server, when that server reads the cache policy from the request using this library.

<p>Publish Date: 2023-01-31

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2022-25881>CVE-2022-25881</a></p>

</p>

<p></p>

### CVSS 3 Score Details (<b>7.5</b>)

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

<p></p>

### Suggested Fix

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.cve.org/CVERecord?id=CVE-2022-25881">https://www.cve.org/CVERecord?id=CVE-2022-25881</a></p>

<p>Release Date: 2023-01-31</p>

<p>Fix Resolution: http-cache-semantics - 4.1.1</p>

</p>

<p></p>

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

</details><details>

<summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> CVE-2023-25166</summary>

### Vulnerable Library - <b>formula-3.0.0.tgz</b></p>

<p>Math and string formula parser.</p>

<p>Library home page: <a href="https://registry.npmjs.org/@sideway/formula/-/formula-3.0.0.tgz">https://registry.npmjs.org/@sideway/formula/-/formula-3.0.0.tgz</a></p>

<p>

Dependency Hierarchy:

- node-jq-2.3.3.tgz (Root Library)

- joi-17.6.0.tgz

- :x: **formula-3.0.0.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/ZSBRybnik/frontend/commit/273a134394edfb54991ff74097965c8f3cac3de7">273a134394edfb54991ff74097965c8f3cac3de7</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

<p></p>

### Vulnerability Details

<p>

formula is a math and string formula parser. In versions prior to 3.0.1 crafted user-provided strings to formula's parser might lead to polynomial execution time and a denial of service. Users should upgrade to 3.0.1+. There are no known workarounds for this vulnerability.

<p>Publish Date: 2023-02-08

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2023-25166>CVE-2023-25166</a></p>

</p>

<p></p>

### CVSS 3 Score Details (<b>6.5</b>)

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

<p></p>

### Suggested Fix

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.cve.org/CVERecord?id=CVE-2023-25166">https://www.cve.org/CVERecord?id=CVE-2023-25166</a></p>

<p>Release Date: 2023-02-08</p>

<p>Fix Resolution: @sideway/formula - 3.0.1</p>

</p>

<p></p>

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

</details><details>

<summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> CVE-2022-33987</summary>

### Vulnerable Libraries - <b>got-8.3.2.tgz</b>, <b>got-7.1.0.tgz</b></p>

<p>

### <b>got-8.3.2.tgz</b></p>

<p>Simplified HTTP requests</p>

<p>Library home page: <a href="https://registry.npmjs.org/got/-/got-8.3.2.tgz">https://registry.npmjs.org/got/-/got-8.3.2.tgz</a></p>

<p>

Dependency Hierarchy:

- node-jq-2.3.3.tgz (Root Library)

- download-8.0.0.tgz

- :x: **got-8.3.2.tgz** (Vulnerable Library)

### <b>got-7.1.0.tgz</b></p>

<p>Simplified HTTP requests</p>

<p>Library home page: <a href="https://registry.npmjs.org/got/-/got-7.1.0.tgz">https://registry.npmjs.org/got/-/got-7.1.0.tgz</a></p>

<p>

Dependency Hierarchy:

- node-jq-2.3.3.tgz (Root Library)

- bin-build-3.0.0.tgz

- download-6.2.5.tgz

- :x: **got-7.1.0.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/ZSBRybnik/frontend/commit/273a134394edfb54991ff74097965c8f3cac3de7">273a134394edfb54991ff74097965c8f3cac3de7</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

<p></p>

### Vulnerability Details

<p>

The got package before 12.1.0 (also fixed in 11.8.5) for Node.js allows a redirect to a UNIX socket.

<p>Publish Date: 2022-06-18

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2022-33987>CVE-2022-33987</a></p>

</p>

<p></p>

### CVSS 3 Score Details (<b>5.3</b>)

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: Low

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

<p></p>

### Suggested Fix

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2022-33987">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2022-33987</a></p>

<p>Release Date: 2022-06-18</p>

<p>Fix Resolution: got - 11.8.5,12.1.0</p>

</p>

<p></p>

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

</details> | non_test | node jq tgz vulnerabilities highest severity is autoclosed vulnerable library node jq tgz found in head commit a href vulnerabilities cve severity cvss dependency type fixed in node jq version remediation available high http cache semantics tgz transitive n a medium formula tgz transitive n a medium detected in multiple dependencies transitive n a for some transitive vulnerabilities there is no version of direct dependency with a fix check the section details below to see if there is a version of transitive dependency where vulnerability is fixed details cve vulnerable library http cache semantics tgz parses cache control and other headers helps building correct http caches and proxies library home page a href dependency hierarchy node jq tgz root library download tgz got tgz cacheable request tgz x http cache semantics tgz vulnerable library found in head commit a href found in base branch master vulnerability details this affects versions of the package http cache semantics before the issue can be exploited via malicious request header values sent to a server when that server reads the cache policy from the request using this library publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution http cache semantics step up your open source security game with mend cve vulnerable library formula tgz math and string formula parser library home page a href dependency hierarchy node jq tgz root library joi tgz x formula tgz vulnerable library found in head commit a href found in base branch master vulnerability details formula is a math and string formula parser in versions prior to crafted user provided 
strings to formula s parser might lead to polynomial execution time and a denial of service users should upgrade to there are no known workarounds for this vulnerability publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required low user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution sideway formula step up your open source security game with mend cve vulnerable libraries got tgz got tgz got tgz simplified http requests library home page a href dependency hierarchy node jq tgz root library download tgz x got tgz vulnerable library got tgz simplified http requests library home page a href dependency hierarchy node jq tgz root library bin build tgz download tgz x got tgz vulnerable library found in head commit a href found in base branch master vulnerability details the got package before also fixed in for node js allows a redirect to a unix socket publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact low availability impact none for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution got step up your open source security game with mend | 0 |

23,527 | 10,894,847,044 | IssuesEvent | 2019-11-19 09:31:21 | elikkatzgit/quantumsim | https://api.github.com/repos/elikkatzgit/quantumsim | closed | CVE-2015-0220 (Medium) detected in Django-1.3.tar.gz | security vulnerability | ## CVE-2015-0220 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>Django-1.3.tar.gz</b></p></summary>

<p>A high-level Python Web framework that encourages rapid development and clean, pragmatic design.</p>

<p>Library home page: <a href="https://files.pythonhosted.org/packages/f5/d5/6722d3091946734194ffcfe8ef074f63e8acdd1ff51dfcfc87c2c194fd3f/Django-1.3.tar.gz">https://files.pythonhosted.org/packages/f5/d5/6722d3091946734194ffcfe8ef074f63e8acdd1ff51dfcfc87c2c194fd3f/Django-1.3.tar.gz</a></p>

<p>Path to dependency file: /tmp/ws-scm/quantumsim/requirements.txt</p>

<p>Path to vulnerable library: /quantumsim/requirements.txt</p>

<p>

Dependency Hierarchy:

- :x: **Django-1.3.tar.gz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/elikkatzgit/quantumsim/commit/d6624156203bb0fc439915ed3fc47432b9cbbeb5">d6624156203bb0fc439915ed3fc47432b9cbbeb5</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The django.utils.http.is_safe_url function in Django before 1.4.18, 1.6.x before 1.6.10, and 1.7.x before 1.7.3 does not properly handle leading whitespace, which allows remote attackers to conduct cross-site scripting (XSS) attacks via a crafted URL, related to redirect URLs, as demonstrated by a "\njavascript:" URL.

<p>Publish Date: 2015-01-16

<p>URL: <a href=https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2015-0220>CVE-2015-0220</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 2 Score Details (<b>4.3</b>)</summary>

<p>

Base Score Metrics not available</p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://nvd.nist.gov/vuln/detail/CVE-2015-0220">https://nvd.nist.gov/vuln/detail/CVE-2015-0220</a></p>

<p>Release Date: 2015-01-16</p>

<p>Fix Resolution: 1.4.18,1.6.10,1.7.3</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":false,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"Python","packageName":"Django","packageVersion":"1.3","isTransitiveDependency":false,"dependencyTree":"Django:1.3","isMinimumFixVersionAvailable":true,"minimumFixVersion":"1.4.18,1.6.10,1.7.3"}],"vulnerabilityIdentifier":"CVE-2015-0220","vulnerabilityDetails":"The django.util.http.is_safe_url function in Django before 1.4.18, 1.6.x before 1.6.10, and 1.7.x before 1.7.3 does not properly handle leading whitespaces, which allows remote attackers to conduct cross-site scripting (XSS) attacks via a crafted URL, related to redirect URLs, as demonstrated by a \"\\njavascript:\" URL.","vulnerabilityUrl":"https://cve.mitre.org/cgi-bin/cvename.cgi?name\u003dCVE-2015-0220","cvss2Severity":"medium","cvss2Score":"4.3","extraData":{}}</REMEDIATE> --> | True | CVE-2015-0220 (Medium) detected in Django-1.3.tar.gz - ## CVE-2015-0220 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>Django-1.3.tar.gz</b></p></summary>

<p>A high-level Python Web framework that encourages rapid development and clean, pragmatic design.</p>

<p>Library home page: <a href="https://files.pythonhosted.org/packages/f5/d5/6722d3091946734194ffcfe8ef074f63e8acdd1ff51dfcfc87c2c194fd3f/Django-1.3.tar.gz">https://files.pythonhosted.org/packages/f5/d5/6722d3091946734194ffcfe8ef074f63e8acdd1ff51dfcfc87c2c194fd3f/Django-1.3.tar.gz</a></p>

<p>Path to dependency file: /tmp/ws-scm/quantumsim/requirements.txt</p>

<p>Path to vulnerable library: /quantumsim/requirements.txt</p>

<p>

Dependency Hierarchy:

- :x: **Django-1.3.tar.gz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/elikkatzgit/quantumsim/commit/d6624156203bb0fc439915ed3fc47432b9cbbeb5">d6624156203bb0fc439915ed3fc47432b9cbbeb5</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The django.util.http.is_safe_url function in Django before 1.4.18, 1.6.x before 1.6.10, and 1.7.x before 1.7.3 does not properly handle leading whitespaces, which allows remote attackers to conduct cross-site scripting (XSS) attacks via a crafted URL, related to redirect URLs, as demonstrated by a "\njavascript:" URL.

<p>Publish Date: 2015-01-16

<p>URL: <a href=https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2015-0220>CVE-2015-0220</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 2 Score Details (<b>4.3</b>)</summary>

<p>

Base Score Metrics not available</p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://nvd.nist.gov/vuln/detail/CVE-2015-0220">https://nvd.nist.gov/vuln/detail/CVE-2015-0220</a></p>

<p>Release Date: 2015-01-16</p>

<p>Fix Resolution: 1.4.18,1.6.10,1.7.3</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":false,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"Python","packageName":"Django","packageVersion":"1.3","isTransitiveDependency":false,"dependencyTree":"Django:1.3","isMinimumFixVersionAvailable":true,"minimumFixVersion":"1.4.18,1.6.10,1.7.3"}],"vulnerabilityIdentifier":"CVE-2015-0220","vulnerabilityDetails":"The django.util.http.is_safe_url function in Django before 1.4.18, 1.6.x before 1.6.10, and 1.7.x before 1.7.3 does not properly handle leading whitespaces, which allows remote attackers to conduct cross-site scripting (XSS) attacks via a crafted URL, related to redirect URLs, as demonstrated by a \"\\njavascript:\" URL.","vulnerabilityUrl":"https://cve.mitre.org/cgi-bin/cvename.cgi?name\u003dCVE-2015-0220","cvss2Severity":"medium","cvss2Score":"4.3","extraData":{}}</REMEDIATE> --> | non_test | cve medium detected in django tar gz cve medium severity vulnerability vulnerable library django tar gz a high level python web framework that encourages rapid development and clean pragmatic design library home page a href path to dependency file tmp ws scm quantumsim requirements txt path to vulnerable library quantumsim requirements txt dependency hierarchy x django tar gz vulnerable library found in head commit a href vulnerability details the django util http is safe url function in django before x before and x before does not properly handle leading whitespaces which allows remote attackers to conduct cross site scripting xss attacks via a crafted url related to redirect urls as demonstrated by a njavascript url publish date url a href cvss score details base score metrics not available suggested fix type upgrade version origin a href release date fix resolution isopenpronvulnerability false ispackagebased true isdefaultbranch true packages vulnerabilityidentifier cve vulnerabilitydetails the django util http is safe url function in django before x before and x before does not properly handle 
leading whitespaces which allows remote attackers to conduct cross site scripting xss attacks via a crafted url related to redirect urls as demonstrated by a njavascript url vulnerabilityurl | 0 |

372,395 | 11,013,717,793 | IssuesEvent | 2019-12-04 21:05:27 | dmwm/WMCore | https://api.github.com/repos/dmwm/WMCore | closed | Break closure of phedex blocks into smaller chunks | BUG High Priority Need wmagent branch WMAgent | **Impact of the bug**

WMAgent (PhEDExInjector)

**Describe the bug**

Apparently we put too much data into the same PhEDEx call to close blocks here:

https://github.com/dmwm/WMCore/blob/master/src/python/WMComponent/PhEDExInjector/PhEDExInjectorPoller.py#L375

it's one call per location, and that location might have many many blocks from multiple datasets.

The problem is, if there is a problem with any of those blocks (e.g., the current state of vocms0253 which has a **dataset** closed in PhEDEx, see [1]), the whole request would fail and no blocks can be closed...

**How to reproduce it**

none

**Expected behavior**

My suggestion is to break the http requests per location and dataset, something like

    for location in locations:
        for dataset in datasets:
            make a phedex request

Yes, it will increase the amount of PhEDEx calls, but still it's going to be 1 call per block that we close, which should be just fine for the phedex data-service.
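The per-location, per-dataset split suggested above could look roughly like the following. This is a sketch under assumptions, not the actual `PhEDExInjectorPoller` code: the `(location, dataset, block)` tuple shape, the helper names `group_blocks` and `close_blocks`, and the `send_request` callable are all hypothetical stand-ins for whatever the component really uses.

```python
from collections import defaultdict


def group_blocks(blocks):
    """Group (location, dataset, block_name) tuples by (location, dataset).

    The tuple shape is an assumption made for this sketch.
    """
    grouped = defaultdict(list)
    for location, dataset, block in blocks:
        grouped[(location, dataset)].append(block)
    return dict(grouped)


def close_blocks(blocks, send_request):
    """Call send_request once per (location, dataset) and collect failures.

    send_request is a hypothetical callable standing in for the PhEDEx call;
    a failure for one dataset no longer prevents the others from being closed.
    """
    failures = {}
    for (location, dataset), names in group_blocks(blocks).items():
        try:
            send_request(location, dataset, names)
        except Exception as exc:  # a real poller would catch the specific HTTP error
            failures[(location, dataset)] = str(exc)
    return failures
```

With this shape, a closed dataset such as the one in the log below would only show up as one entry in `failures`, while the remaining requests still go through.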

**Additional context and error message**

[1]

```

2019-11-27 22:23:08,945:140168429426432:WARNING:Service:The cachefile /data/srv/wmagent/v1.2.6.patch1/install/wmagent/PhEDExInjector/.wmcore_cache/.wmcore_cache_31961/requests/cmsweb.cern.ch:8443/-7685143179613178687_POST_inject does not exist and the service at https://cmsweb.cern.ch:8443/phedex/datasvc/json/prod/inject is unavailable - it returned 400 because Bad Request with result: injectData error: dataset /RadionToWW_narrow_M-6500_TuneCUETP8M1_13TeV-madgraph-pythia8/RunIISummer16MiniAODv3-PUMoriond17_94X_mcRun2_asymptotic_v3-v1/MINIAODSIM is closed\n

2019-11-27 22:23:08,946:140168429426432:ERROR:PhEDExInjectorPoller:PhEDEx block close failed with HTTPException: 400 injectData error: dataset /RadionToWW_narrow_M-6500_TuneCUETP8M1_13TeV-madgraph-pythia8/RunIISummer16MiniAODv3-PUMoriond17_94X_mcRun2_asymptotic_v3-v1/MINIAODSIM is closed\n

``` | 1.0 | Break closure of phedex blocks into smaller chunks - **Impact of the bug**

WMAgent (PhEDExInjector)

**Describe the bug**

Apparently we put too much data into the same PhEDEx call to close blocks here:

https://github.com/dmwm/WMCore/blob/master/src/python/WMComponent/PhEDExInjector/PhEDExInjectorPoller.py#L375

it's one call per location, and that location might have many many blocks from multiple datasets.

The problem is, if there is a problem with any of those blocks (e.g., the current state of vocms0253 which has a **dataset** closed in PhEDEx, see [1]), the whole request would fail and no blocks can be closed...

**How to reproduce it**

none

**Expected behavior**

My suggestion is to break the http requests per location and dataset, something like

    for location in locations:
        for dataset in datasets:
            make a phedex request

Yes, it will increase the amount of PhEDEx calls, but still it's going to be 1 call per block that we close, which should be just fine for the phedex data-service.

**Additional context and error message**

[1]

```

2019-11-27 22:23:08,945:140168429426432:WARNING:Service:The cachefile /data/srv/wmagent/v1.2.6.patch1/install/wmagent/PhEDExInjector/.wmcore_cache/.wmcore_cache_31961/requests/cmsweb.cern.ch:8443/-7685143179613178687_POST_inject does not exist and the service at https://cmsweb.cern.ch:8443/phedex/datasvc/json/prod/inject is unavailable - it returned 400 because Bad Request with result: injectData error: dataset /RadionToWW_narrow_M-6500_TuneCUETP8M1_13TeV-madgraph-pythia8/RunIISummer16MiniAODv3-PUMoriond17_94X_mcRun2_asymptotic_v3-v1/MINIAODSIM is closed\n

2019-11-27 22:23:08,946:140168429426432:ERROR:PhEDExInjectorPoller:PhEDEx block close failed with HTTPException: 400 injectData error: dataset /RadionToWW_narrow_M-6500_TuneCUETP8M1_13TeV-madgraph-pythia8/RunIISummer16MiniAODv3-PUMoriond17_94X_mcRun2_asymptotic_v3-v1/MINIAODSIM is closed\n

``` | non_test | break closure of phedex blocks into smaller chunks impact of the bug wmagent phedexinjector describe the bug apparently we put too much data into the same phedex call to close blocks here it s one call per location and that location might have many many blocks from multiple datasets the problem is if there is a problem with any of those blocks e g the current state of which has a dataset closed in phedex see the whole request would fail and no blocks can be closed how to reproduce it none expected behavior my suggestion is to break the http requests per location and dataset something like for location in locations for dataset in datasets make a phedex request yes it will increase the amount of phedex calls but still it s going to be call per block that we close which should be just fine for the phedex data service additional context and error message warning service the cachefile data srv wmagent install wmagent phedexinjector wmcore cache wmcore cache requests cmsweb cern ch post inject does n ot exist and the service at is unavailable it returned because bad request with result injectdata error dataset radiontoww narrow m madgraph p asymptotic miniaodsim is closed n error phedexinjectorpoller phedex block close failed with httpexception injectdata error dataset radiontoww narrow m madgraph pumorio asymptotic miniaodsim is closed n | 0 |

249,612 | 21,179,721,071 | IssuesEvent | 2022-04-08 06:34:23 | microsoft/vscode | https://api.github.com/repos/microsoft/vscode | opened | Terminal flaky tests | smoke-test-failure | Lately terminal tests became flaky with:

```

1) VSCode Smoke Tests (Web)

Terminal

Terminal Editors

should update color of the tab:

Error: Timeout: is active element '.quick-input-widget .quick-input-box input' after 20 seconds.

at Code.poll (D:\a\_work\1\s\test\automation\src\code.ts:296:11)

at Code.waitForActiveElement (D:\a\_work\1\s\test\automation\src\code.ts:232:3)

at QuickInput.waitForQuickInputOpened (D:\a\_work\1\s\test\automation\src\quickinput.ts:20:3)

at Terminal.runCommandWithValue (D:\a\_work\1\s\test\automation\src\terminal.ts:88:3)

at Context.<anonymous> (src\areas\terminal\terminal-editors.test.ts:21:4)

```

This test uses `runCommandWithValue` and there is a somewhat questionable line here:

https://github.com/microsoft/vscode/blob/921264bfe3ffbbfe5ec8c4b08214b88e2148fa3e/test/automation/src/terminal.ts#L83-L88

Fyi we had updated playwright to latest.

I will go ahead and skip tests for now that use this method. | 1.0 | Terminal flaky tests - Lately terminal tests became flaky with:

```

1) VSCode Smoke Tests (Web)

Terminal

Terminal Editors

should update color of the tab:

Error: Timeout: is active element '.quick-input-widget .quick-input-box input' after 20 seconds.

at Code.poll (D:\a\_work\1\s\test\automation\src\code.ts:296:11)

at Code.waitForActiveElement (D:\a\_work\1\s\test\automation\src\code.ts:232:3)

at QuickInput.waitForQuickInputOpened (D:\a\_work\1\s\test\automation\src\quickinput.ts:20:3)

at Terminal.runCommandWithValue (D:\a\_work\1\s\test\automation\src\terminal.ts:88:3)

at Context.<anonymous> (src\areas\terminal\terminal-editors.test.ts:21:4)

```

This test uses `runCommandWithValue` and there is a somewhat questionable line here:

https://github.com/microsoft/vscode/blob/921264bfe3ffbbfe5ec8c4b08214b88e2148fa3e/test/automation/src/terminal.ts#L83-L88

Fyi we had updated playwright to latest.

I will go ahead and skip tests for now that use this method. | test | terminal flaky tests lately terminal tests became flaky with vscode smoke tests web terminal terminal editors should update color of the tab error timeout is active element quick input widget quick input box input after seconds at code poll d a work s test automation src code ts at code waitforactiveelement d a work s test automation src code ts at quickinput waitforquickinputopened d a work s test automation src quickinput ts at terminal runcommandwithvalue d a work s test automation src terminal ts at context src areas terminal terminal editors test ts this tests uses runcommandwithvalue and there is a somewhat questionable line here fyi we had updated playwright to latest i will go ahead and skip tests for now that use this method | 1 |

296,736 | 25,572,522,611 | IssuesEvent | 2022-11-30 18:56:16 | MD-Anderson-Bioinformatics/NG-CHM | https://api.github.com/repos/MD-Anderson-Bioinformatics/NG-CHM | closed | Pubmed linkouts need to be opened in new frame. | bug linkouts passed retest 2.21.3 | If linkout frame opened, Pubmed linkouts do not work.

Refused to display 'https://pubmed.ncbi.nlm.nih.gov/' in a frame because it set 'X-Frame-Options' to 'deny'. | 1.0 | Pubmed linkouts need to be opened in new frame. - If linkout frame opened, Pubmed linkouts do not work.

Refused to display 'https://pubmed.ncbi.nlm.nih.gov/' in a frame because it set 'X-Frame-Options' to 'deny'. | test | pubmed linkouts need to be opened in new frame if linkout frame opened pubmed linkouts do not work refused to display in a frame because it set x frame options to deny | 1 |

293,607 | 8,998,093,882 | IssuesEvent | 2019-02-02 18:33:57 | Beep6581/RawTherapee | https://api.github.com/repos/Beep6581/RawTherapee | opened | Segfault in lmmse demosaic | Priority-Critical bug | There's a really hard to reproduce segfault in lmmse demosaic.

Searching..... | 1.0 | Segfault in lmmse demosaic - There's a really hard to reproduce segfault in lmmse demosaic.

Searching..... | non_test | segfault in lmmse demosaic there s a really hard to reproduce segfault in lmmse demosaic searching | 0 |

58,511 | 24,468,752,529 | IssuesEvent | 2022-10-07 17:31:38 | valor-software/valor-software.github.io | https://api.github.com/repos/valor-software/valor-software.github.io | closed | Design | Service page | service page | To create a Design page that should have a path:

Home > Services > Design

It can be accessible via:

https://valor-software.com/services

https://valor-software.com/

main menu

Design: https://www.figma.com/file/StpiCGh7YZyAPRjtD5gJBo/Valor-Site-Design-2021?node-id=9450%3A82643 | 1.0 | Design | Service page - To create a Design page that should have a path:

Home > Services > Design

It can be accessible via:

https://valor-software.com/services

https://valor-software.com/

main menu

Design: https://www.figma.com/file/StpiCGh7YZyAPRjtD5gJBo/Valor-Site-Design-2021?node-id=9450%3A82643 | non_test | design service page to create a design page that should have a path home services design it can be accessible via main menu design | 0 |

272,205 | 20,737,252,094 | IssuesEvent | 2022-03-14 14:42:46 | dj-stripe/dj-stripe | https://api.github.com/repos/dj-stripe/dj-stripe | closed | Ability to create express account attached to auth user | documentation | Hi. Does this package have a way to create an express account and attach it to the django auth user (onetoonefield) or does this have to be done manually?

| 1.0 | Ability to create express account attached to auth user - Hi. Does this package have a way to create an express account and attach it to the django auth user (onetoonefield) or does this have to be done manually?

| non_test | ability to create express account attached to auth user hi is does this package have a way to create an express account and attach it to the django auth user onetoonefield or does this have to be done manually | 0 |

60,590 | 6,711,005,623 | IssuesEvent | 2017-10-13 00:49:29 | ansible/galaxy-issues | https://api.github.com/repos/ansible/galaxy-issues | closed | No error when adding a new role/container project that has the same name as an existing project | bug ready for testing | ## Steps to Reproduce

1. Visit 'roleadd' on Ansible Galaxy (https://galaxy.ansible.com/roleadd#/)

2. Click the 'enable' toggle next to a role named `username/xyz`

3. Click the configure widget, and verify the role name is `xyz`

4. Wait for role import to succeed (green dot next to 'Succeeded')

5. Click the 'enable' toggle next to another role named `username/something-else`

6. Click the configure widget, and change role name to `xyz`

7. Click 'Save' and observe the result.

## Expected Result

* I should see an error message alerting me that a role with that name already exists in my Galaxy namespace.

## Actual Result

The 'Running' status widget keeps spinning forever, and nothing seems to happen. After a while, if you refresh the page, you can see the project was imported as `something-else` and not `xyz` (I think, if I remember correctly).

This was discovered when I was adding a `geerlingguy.solr` ansible-container project, while I already had a `geerlingguy.solr` ansible role in my user namespace. See: https://github.com/ansible/ansible-container/issues/629 | 1.0 | No error when adding a new role/container project that has the same name as an existing project - ## Steps to Reproduce

1. Visit 'roleadd' on Ansible Galaxy (https://galaxy.ansible.com/roleadd#/)

2. Click the 'enable' toggle next to a role named `username/xyz`

3. Click the configure widget, and verify the role name is `xyz`

4. Wait for role import to succeed (green dot next to 'Succeeded')

5. Click the 'enable' toggle next to another role named `username/something-else`

6. Click the configure widget, and change role name to `xyz`

7. Click 'Save' and observe the result.

## Expected Result

* I should see an error message alerting me that a role with that name already exists in my Galaxy namespace.

## Actual Result

The 'Running' status widget keeps spinning forever, and nothing seems to happen. After a while, if you refresh the page, you can see the project was imported as `something-else` and not `xyz` (I think, if I remember correctly).

This was discovered when I was adding a `geerlingguy.solr` ansible-container project, while I already had a `geerlingguy.solr` ansible role in my user namespace. See: https://github.com/ansible/ansible-container/issues/629 | test | no error when adding a new role container project that has the same name as an existing project steps to reproduce visit roleadd on ansible galaxy click the enable toggle next to a role named username xyz click the configure widget and verify the role name is xyz wait for role import to succeed green dot next to succeeded click the enable toggle next to another role named username something else click the configure widget and change role name to xyz click save and observe the result expected result i should see an error message alerting me that a role with that name already exists in my galaxy namespace actual result the running status widget keeps spinning forever and nothing seems to happen after a while if you refresh the page you can see the project was imported as something else and not xyz i think if i remember correctly this was discovered when i was adding a geerlingguy solr ansible container project while i already had a geerlingguy solr ansible role in my user namespace see | 1 |

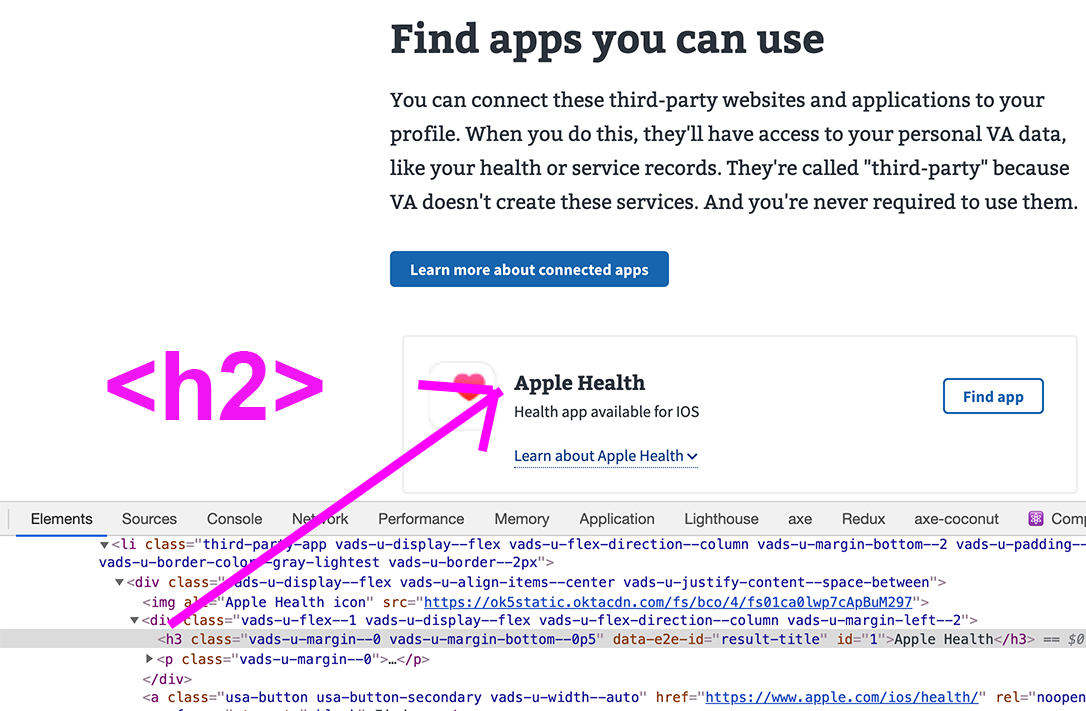

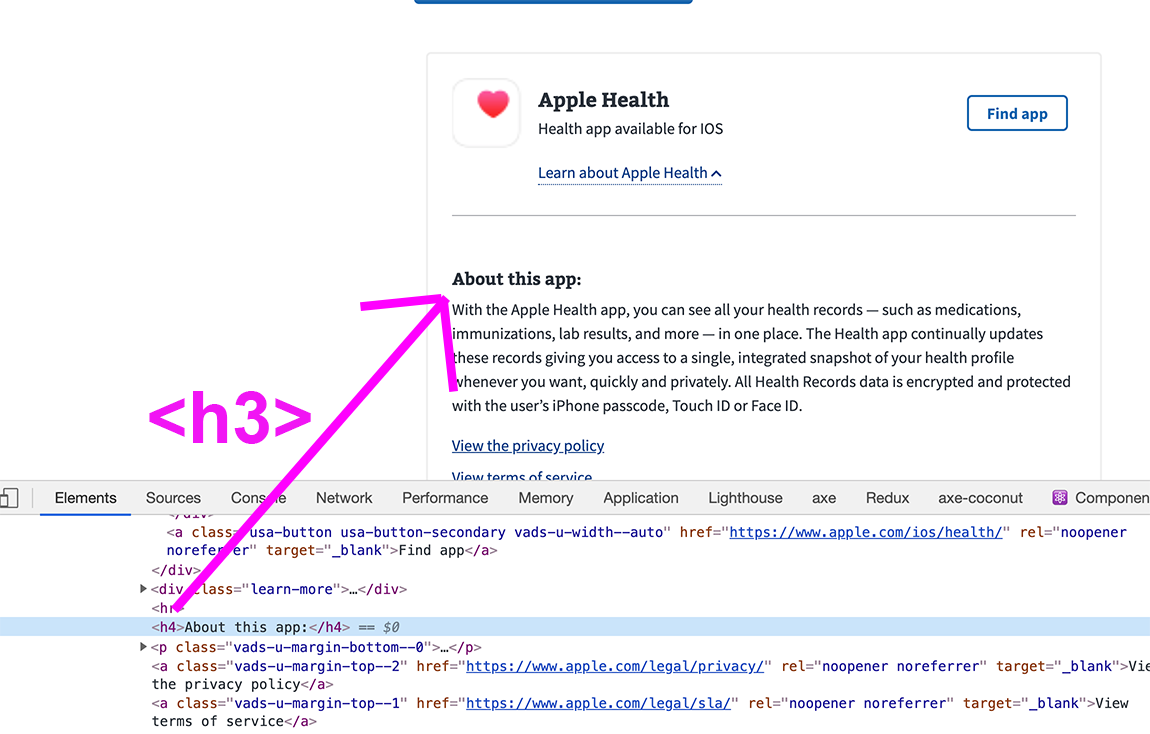

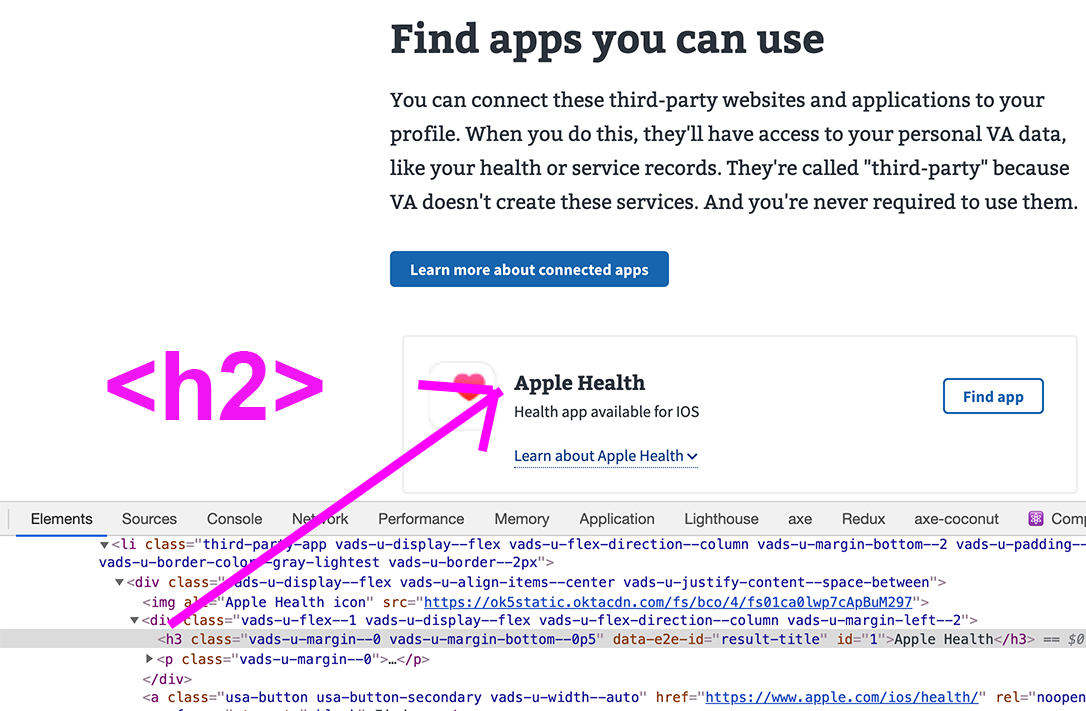

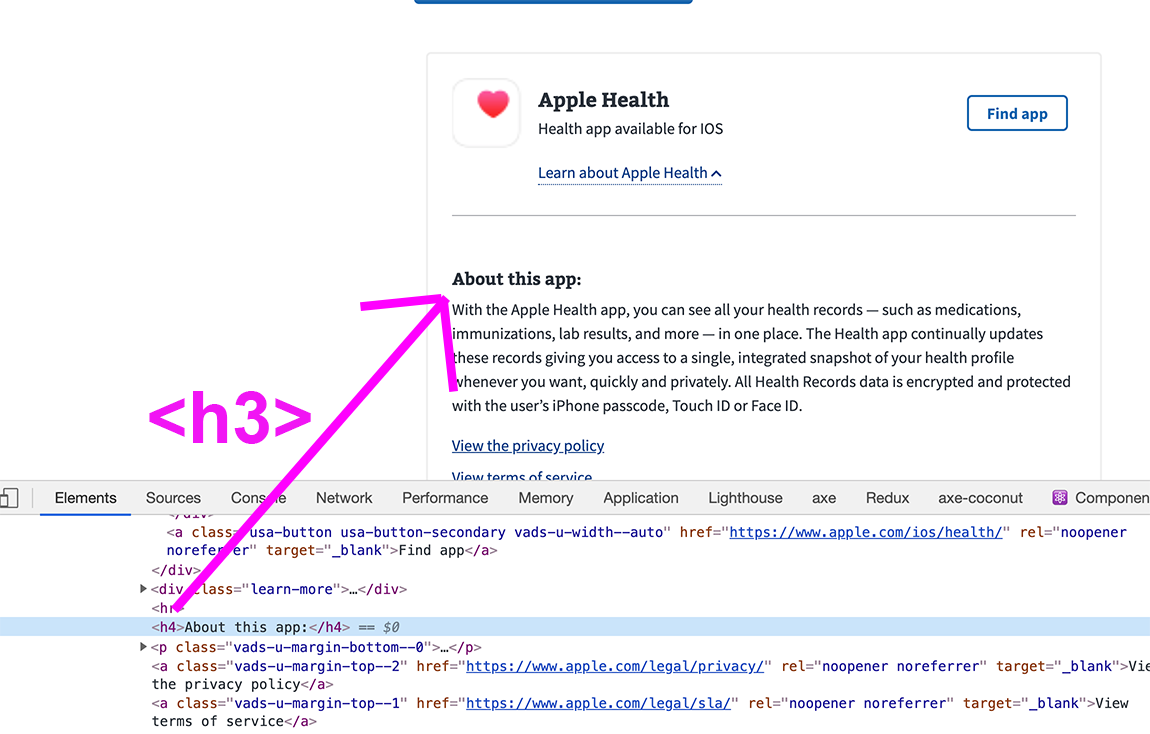

55,529 | 14,533,130,997 | IssuesEvent | 2020-12-14 23:51:30 | department-of-veterans-affairs/va.gov-team | https://api.github.com/repos/department-of-veterans-affairs/va.gov-team | closed | 508-defect-2 [AXE-CORE]: App Directory - Heading levels should increase by one | 508-defect-2 508-issue-headings 508/Accessibility | # [508-defect-2](https://github.com/department-of-veterans-affairs/va.gov-team/blob/master/platform/accessibility/guidance/defect-severity-rubric.md#508-defect-2)

<!--

Enter an issue title using the format [ERROR TYPE]: Brief description of the problem

---

[SCREENREADER]: Edit buttons need aria-label for context

[KEYBOARD]: Add another user link will not receive keyboard focus

[AXE-CORE]: Heading levels should increase by one

[COGNITION]: Error messages should be more specific

[COLOR]: Blue button on blue background does not have sufficient contrast ratio

---

-->

<!-- It's okay to delete the instructions above, but leave the link to the 508 defect severity level for your issue. -->

## Feedback framework

- **❗️ Must** for if the feedback must be applied

- **⚠️ Should** if the feedback is best practice

- **✔️ Consider** for suggestions/enhancements

## Definition of done

1. Review and acknowledge feedback.

1. Fix and/or document decisions made.

1. Accessibility specialist will close ticket after reviewing documented decisions / validating fix.

## Point of Contact

<!-- If this issue is being opened by a VFS team member, please add a point of contact. Usually this is the same person who enters the issue ticket. -->

**VFS Point of Contact:** _Trevor_

## User Story or Problem Statement

<!-- Example: As a user with cognitive considerations, I expect to see a label and input pairing consistently styled as throughout the rest of the site, with the label just above the text/email/search input or to the right of a radio/checkbox input, so that I am clearly able to understand what entry is expected. -->

As an assistive tech user, I want to hear headings read out in the correct nesting order.

## Details

<!-- This is a detailed description of the issue. It should include a restatement of the title, and provide more background information. -->

Our app headings and sub-headings inside the accordions need to be H2 and H3 headings respectively. Screen shots attached below.

## Acceptance Criteria

- [ ] Axe browser plugin doesn't report a heading nesting best practice warning on future runs

- [ ] Current visual styles are maintained

## WCAG or Vendor Guidance (optional)

* [Heading levels should only increase by one](https://dequeuniversity.com/rules/axe/4.0/heading-order)
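The rule linked above can be approximated with a short script. This is a rough sketch, not how axe-core implements its `heading-order` check; the function name `heading_order_violations` and the regex-based parsing are assumptions chosen for brevity, and a real audit should still rely on the axe browser plugin named in the acceptance criteria.

```python
import re


def heading_order_violations(html: str) -> list[tuple[int, int]]:
    """Return (previous_level, current_level) pairs where a heading skips a level.

    Headings are found with a simple regex over opening tags h1..h6, which is
    an assumption of this sketch and ignores ARIA roles and hidden content.
    """
    levels = [int(m) for m in re.findall(r"<h([1-6])\b", html, flags=re.IGNORECASE)]
    violations = []
    for prev, cur in zip(levels, levels[1:]):
        # Going deeper by more than one level (e.g. h2 -> h4) is a violation;
        # moving back up to any shallower level is allowed.
        if cur > prev + 1:
            violations.append((prev, cur))
    return violations
```

For the accordion case described in the Details section, feeding the rendered markup to this function before and after the fix would show whether the app headings and sub-headings now step from H2 to H3 without a gap.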

## Screenshots or Trace Logs

<!-- Drop any screenshots or error logs that might be useful for debugging -->

---

| 1.0 | 508-defect-2 [AXE-CORE]: App Directory - Heading levels should increase by one - # [508-defect-2](https://github.com/department-of-veterans-affairs/va.gov-team/blob/master/platform/accessibility/guidance/defect-severity-rubric.md#508-defect-2)

<!--

Enter an issue title using the format [ERROR TYPE]: Brief description of the problem

---

[SCREENREADER]: Edit buttons need aria-label for context

[KEYBOARD]: Add another user link will not receive keyboard focus

[AXE-CORE]: Heading levels should increase by one

[COGNITION]: Error messages should be more specific

[COLOR]: Blue button on blue background does not have sufficient contrast ratio

---

-->

<!-- It's okay to delete the instructions above, but leave the link to the 508 defect severity level for your issue. -->

## Feedback framework

- **❗️ Must** for if the feedback must be applied

- **⚠️ Should** if the feedback is best practice

- **✔️ Consider** for suggestions/enhancements

## Definition of done

1. Review and acknowledge feedback.

1. Fix and/or document decisions made.

1. Accessibility specialist will close ticket after reviewing documented decisions / validating fix.

## Point of Contact

<!-- If this issue is being opened by a VFS team member, please add a point of contact. Usually this is the same person who enters the issue ticket. -->

**VFS Point of Contact:** _Trevor_

## User Story or Problem Statement

<!-- Example: As a user with cognitive considerations, I expect to see a label and input pairing consistently styled as throughout the rest of the site, with the label just above the text/email/search input or to the right of a radio/checkbox input, so that I am clearly able to understand what entry is expected. -->

As an assistive tech user, I want to hear headings read out in the correct nesting order.

## Details

<!-- This is a detailed description of the issue. It should include a restatement of the title, and provide more background information. -->

Our app headings and sub-headings inside the accordions need to be H2 and H3 headings respectively. Screen shots attached below.

## Acceptance Criteria

- [ ] Axe browser plugin doesn't report a heading nesting best practice warning on future runs

- [ ] Current visual styles are maintained

## WCAG or Vendor Guidance (optional)

* [Heading levels should only increase by one](https://dequeuniversity.com/rules/axe/4.0/heading-order)

## Screenshots or Trace Logs

<!-- Drop any screenshots or error logs that might be useful for debugging -->

---

| non_test | defect app directory heading levels should increase by one enter an issue title using the format brief description of the problem edit buttons need aria label for context add another user link will not receive keyboard focus heading levels should increase by one error messages should be more specific blue button on blue background does not have sufficient contrast ratio feedback framework ❗️ must for if the feedback must be applied ⚠️ should if the feedback is best practice ✔️ consider for suggestions enhancements definition of done review and acknowledge feedback fix and or document decisions made accessibility specialist will close ticket after reviewing documented decisions validating fix point of contact vfs point of contact trevor user story or problem statement as an assistive tech user i want to hear headings read out in the correct nesting order details our app headings and sub headings inside the accordions need to be and headings respectively screen shots attached below acceptance criteria axe browser plugin doesn t report a heading nesting best practice warning on future runs current visual styles are maintained wcag or vendor guidance optional screenshots or trace logs | 0 |

280,984 | 24,352,632,114 | IssuesEvent | 2022-10-03 02:42:31 | ECP-WarpX/WarpX | https://api.github.com/repos/ECP-WarpX/WarpX | reopened | oneAPI 2022.2.0 Hangs in CI | bug component: tests install component: third party bug: affects latest release backend: dpc++ workaround | Since the update 1 week ago from `2022.1.0` to `2022.2.0`, most CI runs using either ICX (host) or DPC++ (device) compiles hang.

It looks like this is from the linking part: https://github.com/ECP-WarpX/WarpX/pull/3421#issuecomment-1261311343

The same problem appears in the [AMReX](https://github.com/AMReX-Codes/amrex/) CI.

Open this issue for triage and tracking.

cc @rscohn2 | 2.0 | oneAPI 2022.2.0 Hangs in CI - Since the update 1 week ago from `2022.1.0` to `2022.2.0`, most CI runs using either ICX (host) or DPC++ (device) compiles hang.

It looks like this is from the linking part: https://github.com/ECP-WarpX/WarpX/pull/3421#issuecomment-1261311343

The same problem appears in the [AMReX](https://github.com/AMReX-Codes/amrex/) CI.

Open this issue for triage and tracking.

cc @rscohn2 | test | oneapi hangs in ci since the update week ago from to most ci runs using either icx host or dpc device compiles hang it looks like this is from the linking part the same problem appears in the ci open this issue for triage and tracking cc | 1 |

64,744 | 16,021,378,649 | IssuesEvent | 2021-04-21 00:14:04 | jmuelbert/jmbde-QT | https://api.github.com/repos/jmuelbert/jmbde-QT | closed | Workflow: CD: RPM - openSUSE TW | build ci dependencies github_actions no-issue-activity | ## Building the RPM is not really implemented

The dependencies are missing here. | 1.0 | Workflow: CD: RPM - openSUSE TW - ## Building the RPM is not really implemented

The dependencies are missing here. | non_test | workflow cd rpm opensuse tw build the rpm is not really implemented here is missing the dependencies | 0 |

318,385 | 27,300,039,562 | IssuesEvent | 2023-02-24 00:37:24 | devssa/onde-codar-em-salvador | https://api.github.com/repos/devssa/onde-codar-em-salvador | closed | [REMOTO] [JAVA] [KAFKA] [AWS] [GIT] Pessoa Desenvolvedora Java Especialista na [INVILLIA] | HOME OFFICE JAVA SPRING SQL NOSQL AWS REMOTO JENKINS KAFKA GITFLOW TESTES UNITARIOS HELP WANTED ESPECIALISTA SPRING DATA SPRING BOOT Stale | <!--

==================================================

PLEASE ONLY POST IF THE JOB IS FOR SALVADOR AND NEIGHBORING CITIES!

Use: "Desenvolvedor Front-end" instead of

"Front-End Developer" \o/

Example: `[JAVASCRIPT] [MYSQL] [NODE.JS] Desenvolvedor Front-End na [NOME DA EMPRESA]`

==================================================

-->

## Job description

- Come closer. Invillia has not only transformed the way the world's most revolutionary companies create and develop digital strategies, businesses, and products.

- It has also innovated the way people who are passionate about technology, anywhere on the planet, can interact and evolve, more connected than ever.

- For Invillia, it does not matter where you are. Whether it is a big country. Or a small town. What matters is your drive. Your ideas. Your potential.

- The size of your talent.

**Responsibilities and duties:**

- The professional will be responsible for providing technical solutions for new features and for giving the necessary support to existing features; after all, it is not all roses.

- We also expect this person to help other team members with technical questions, without forgetting to deliver the best solution for the business.

- Something we value highly is quality, which includes clean, readable code (clean code).

- It is also desirable that this person have an intrapreneurial profile, with goals aligned with the company's goals; after all, we are very proud of what we do here!

## Location

- Home Office

## Benefits

- Information available directly from the person responsible for the opening/recruiter.

## Requirements

**Mandatory:**

- Experience developing with Java;

- Architecture definition, acting as the Technical Reference;

- Experience developing with Spring (Boot, Data, Cache, etc.);

- Knowledge of Java 8 (minimum);

- Knowledge of queues (Rabbit);

- Knowledge of Kafka;

- Knowledge of AWS (SNS, SQS, S3);

- Knowledge of Git and Git-Flow;

- Experience with SQL and NoSQL databases;

- Quality-focused development: unit tests and Sonar (metrics);

- Experience with microservices and concurrent systems;

- Continuous delivery (Jenkins);

## Hiring

- to be agreed

## Our company

- Invillia is a global company that has been revolutionizing the way game-changers expand their power to innovate, implement cutting-edge technologies, and develop new strategies, products, and digital services.

- No other company in the world operates like Invillia.

- And what makes our Global Growth Framework so unique and powerful?

- First, we dissolve the boundaries between the physical and the virtual to have the best talent on the planet on our team.

- We create countless practices and methodologies so that each squad is highly customized and engaged with each client's culture and challenges.

- We love using agile tools, metrics, and data intelligence day to day, so that ideas and improvements multiply.

- But we believe it is in continuous education and in a more human, collaborative approach that the magic happens.

- New opportunities arise. And innovation never stops. Infinite Digital Power.

## How to apply

- [Click here to apply](https://invillia.gupy.io/jobs/571873?jobBoardSource=gupy_public_page)

| 1.0 | [REMOTE] [JAVA] [KAFKA] [AWS] [GIT] Java Specialist Developer at [INVILLIA] - <!--

==================================================

PLEASE ONLY POST IF THE POSITION IS IN SALVADOR OR NEIGHBORING CITIES!

Use: "Desenvolvedor Front-end" instead of

"Front-End Developer" \o/

Example: `[JAVASCRIPT] [MYSQL] [NODE.JS] Desenvolvedor Front-End na [NOME DA EMPRESA]`

==================================================

-->

## Job description

- Come closer. Invillia has not only transformed the way the world's most revolutionary companies create and develop strategies, businesses, and digital products.

- It has also reinvented the way people passionate about technology, from anywhere on the planet, can interact and grow, more connected than ever.

- For Invillia, it doesn't matter where you are, whether in a large country or a small town. What matters is your drive, your ideas, your potential.

- The size of your talent.

**Responsibilities and duties:**

- The professional will be responsible for providing technical solutions for new features and for giving the necessary support to existing features; after all, it's not all roses.

- We also expect this person to help the other team members with technical questions, without forgetting to deliver the best solution for the business.

- Something we value highly is quality, which includes clean, readable code (clean code).

- It is also desirable that the person have an intrapreneurial profile, with goals aligned with the company's goals; after all, we are very proud of what we do here!

## Location

- Home Office

## Benefits

- Information available directly from the person responsible for the position/recruiter.

## Requirements

**Mandatory:**

- Experience developing with Java;

- Architecture definition, acting as the Technical Reference;

- Experience developing with Spring (Boot, Data, Cache, etc.);

- Knowledge of Java 8 (minimum);

- Knowledge of queues (Rabbit);

- Knowledge of Kafka;

- Knowledge of AWS (SNS, SQS, S3);

- Knowledge of Git and Git-Flow;

- Experience with SQL and NoSQL databases;

- Quality-focused development: unit tests and Sonar (metrics);

- Experience with microservices and concurrent systems;

- Continuous delivery (Jenkins);

## Hiring

- to be agreed

## Our company

- Invillia is a global company that has been revolutionizing the way game-changers expand their power to innovate, implement cutting-edge technologies, and develop new strategies, products, and digital services.

- No other company in the world operates like Invillia.

- And what makes our Global Growth Framework so unique and powerful?

- First, we dissolve the boundaries between the physical and the virtual to have the best talent on the planet on our team.

- We create countless practices and methodologies so that each squad is highly customized and engaged with each client's culture and challenges.

- We love using agile tools, metrics, and data intelligence day to day, so that ideas and improvements multiply.

- But we believe it is in continuous education and in a more human, collaborative approach that the magic happens.

- New opportunities arise. And innovation never stops. Infinite Digital Power.

## How to apply

- [Click here to apply](https://invillia.gupy.io/jobs/571873?jobBoardSource=gupy_public_page)

| test | java specialist developer at please only post if the position is in salvador or neighboring cities use desenvolvedor front end instead of front end developer o example desenvolvedor front end na job description come closer invillia has not only transformed the way the world s most revolutionary companies create and develop strategies businesses and digital products it has also reinvented the way people passionate about technology from anywhere on the planet can interact and grow more connected than ever for invillia it doesn t matter where you are whether in a large country or a small town what matters is your drive your ideas your potential the size of your talent responsibilities and duties the professional will be responsible for providing technical solutions for new features and for giving the necessary support to existing features after all it s not all roses we also expect this person to help the other team members with technical questions without forgetting to deliver the best solution for the business something we value highly is quality which includes clean readable code clean code it is also desirable that the person have an intrapreneurial profile with goals aligned with the company s goals after all we are very proud of what we do here location home office benefits information available directly from the person responsible for the position recruiter requirements mandatory experience developing with java architecture definition acting as the technical reference experience developing with spring boot data cache etc knowledge of java minimum knowledge of queues rabbit knowledge of kafka knowledge of aws sns sqs knowledge of git and git flow experience with sql and nosql databases quality focused development unit tests and sonar metrics experience with microservices and concurrent systems continuous delivery jenkins hiring to be agreed our company invillia is a global company 
that has been revolutionizing the way game changers expand their power to innovate implement cutting edge technologies and develop new strategies products and digital services no other company in the world operates like invillia and what makes our global growth framework so unique and powerful first we dissolve the boundaries between the physical and the virtual to have the best talent on the planet on our team we create countless practices and methodologies so that each squad is highly customized and engaged with each client s culture and challenges we love using agile tools metrics and data intelligence day to day so that ideas and improvements multiply but we believe it is in continuous education and in a more human collaborative approach that the magic happens new opportunities arise and innovation never stops infinite digital power how to apply | 1 |

158,802 | 24,899,512,456 | IssuesEvent | 2022-10-28 19:15:10 | vegaprotocol/vegawallet-desktop | https://api.github.com/repos/vegaprotocol/vegawallet-desktop | closed | Revoke permissions | feature desktop-wallet backend ux-and-visual-design refine | Revoking permissions between wallet and hostname should automatically shut down the connection between these two entities, if any. | 1.0 | Revoke permissions - Revoking permissions between wallet and hostname should automatically shut down the connection between these two entities, if any. | non_test | revoke permissions revoking permissions between wallet and hostname should automatically shut down the connection between these two entities if any | 0 |

281,182 | 21,315,383,327 | IssuesEvent | 2022-04-16 07:15:19 | putaojuice/pe | https://api.github.com/repos/putaojuice/pe | opened | Unclear use case for sort task in DG | severity.Low type.DocumentationBug | In the DG, step 1 of the MSS for the sort task use case says only to sort the tasks by a certain property. This could have been elaborated and stated more clearly, because the UG covers so many properties.

**DG UC11**

**UG** `sort`

<!--session: 1650088126549-fc759982-4493-4e69-bd46-1702e0a9f91f-->

<!--Version: Web v3.4.2--> | 1.0 | Unclear use case for sort task in DG - In the DG, step 1 of the MSS for the sort task use case says only to sort the tasks by a certain property. This could have been elaborated and stated more clearly, because the UG covers so many properties.

**DG UC11**

**UG** `sort`

<!--session: 1650088126549-fc759982-4493-4e69-bd46-1702e0a9f91f-->

<!--Version: Web v3.4.2--> | non_test | unclear use case for sort task in dg in dg the sort task use case step of mss states that sort the task by certain property i feel like this could have been elaborated and stated clearer because in the ug there are so many properties covered dg ug sort | 0 |

252,178 | 21,561,161,074 | IssuesEvent | 2022-05-01 07:10:34 | prestodb/presto | https://api.github.com/repos/prestodb/presto | closed | Flaky TestJdbcWarnings.testLongRunningStatement | tests stale | Build failed with

```

[ERROR] testLongRunningStatement(com.facebook.presto.jdbc.TestJdbcWarnings) Time elapsed: 0.172 s <<< FAILURE!

java.lang.NullPointerException: throwable is null

at java.util.Objects.requireNonNull(Objects.java:228)

at com.facebook.presto.jdbc.TestJdbcWarnings$WarningEntry.<init>(TestJdbcWarnings.java:305)

at com.facebook.presto.jdbc.TestJdbcWarnings.testLongRunningStatement(TestJdbcWarnings.java:150)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.testng.internal.MethodInvocationHelper.invokeMethod(MethodInvocationHelper.java:104)

at org.testng.internal.Invoker.invokeMethod(Invoker.java:645)

at org.testng.internal.Invoker.invokeTestMethod(Invoker.java:851)

at org.testng.internal.Invoker.invokeTestMethods(Invoker.java:1177)

at org.testng.internal.TestMethodWorker.invokeTestMethods(TestMethodWorker.java:129)

at org.testng.internal.TestMethodWorker.run(TestMethodWorker.java:112)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)

at java.lang.Thread.run(Thread.java:748)

```

The relevant part of the test is

```

while (statement.getWarnings() == null) {
    Thread.sleep(100);
}

SQLWarning warning = statement.getWarnings();

Set<WarningEntry> currentWarnings = new HashSet<>();

assertTrue(currentWarnings.add(new WarningEntry(warning)));

```

It seems the warnings are being cleared between the two `getWarnings()` calls, so the second call returns null. | 1.0 | Flaky TestJdbcWarnings.testLongRunningStatement - Build failed with

```

[ERROR] testLongRunningStatement(com.facebook.presto.jdbc.TestJdbcWarnings) Time elapsed: 0.172 s <<< FAILURE!

java.lang.NullPointerException: throwable is null

at java.util.Objects.requireNonNull(Objects.java:228)

at com.facebook.presto.jdbc.TestJdbcWarnings$WarningEntry.<init>(TestJdbcWarnings.java:305)

at com.facebook.presto.jdbc.TestJdbcWarnings.testLongRunningStatement(TestJdbcWarnings.java:150)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)