Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 4 112 | repo_url stringlengths 33 141 | action stringclasses 3 values | title stringlengths 1 1.02k | labels stringlengths 4 1.54k | body stringlengths 1 262k | index stringclasses 17 values | text_combine stringlengths 95 262k | label stringclasses 2 values | text stringlengths 96 252k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

203,242 | 15,875,519,143 | IssuesEvent | 2021-04-09 07:06:32 | curl/curl | https://api.github.com/repos/curl/curl | closed | -F for emails lacks documentation | SMTP cmdline tool documentation | I have searched the entire internet for this.

I post it here because it literally doesn't work.

Manpage reference: [-F, --form <name=content>](https://curl.se/docs/manpage.html#-F)

Example: the following command sends an SMTP mime e-mail consisting in an inline part in two alternative formats: plain text and HTML. It attaches a text file:

```

curl -F '=(;type=multipart/alternative' \

-F '=plain text message' \

-F '= <body>HTML message</body>;type=text/html' \

-F '=)' -F '=@textfile.txt' ... smtp://example.com

```

How in heaven's name do you do this? No matter what I try, it says file not found.

Honestly, at this point it can even be a text/plain single part email.

I seriously doubt ANYONE can get this to work. | 1.0 | -F for emails lacks documentation - I have searched the entire internet for this.

I post it here because it literally doesn't work.

Manpage reference: [-F, --form <name=content>](https://curl.se/docs/manpage.html#-F)

Example: the following command sends an SMTP mime e-mail consisting in an inline part in two alternative formats: plain text and HTML. It attaches a text file:

```

curl -F '=(;type=multipart/alternative' \

-F '=plain text message' \

-F '= <body>HTML message</body>;type=text/html' \

-F '=)' -F '=@textfile.txt' ... smtp://example.com

```

How in heaven's name do you do this? No matter what I try, it says file not found.

Honestly, at this point it can even be a text/plain single part email.

I seriously doubt ANYONE can get this to work. | non_test | f for emails lacks documentation i have searched the entire internet for this i post it here because it literally doesn t work manpage reference example the following command sends an smtp mime e mail consisting in an inline part in two alternative formats plain text and html it attaches a text file curl f type multipart alternative f plain text message f html message type text html f f textfile txt smtp example com how in heavens name do you do this no matter what i try it says file not found honestly at this point it can even be a text plain single part email i seriously doubt anyone can get this work | 0 |

42,846 | 5,478,031,190 | IssuesEvent | 2017-03-12 14:33:00 | perl6/whateverable | https://api.github.com/repos/perl6/whateverable | reopened | Bisectable and builds with no perl6 executable | bisectable testneeded | When Bisectable stumbles upon a build that has no ``perl6`` executable (there are some builds like this), it just drops the whole thing.

Here is an example:

https://irclog.perlgeek.de/perl6-dev/2017-03-01#i_14185671 | 1.0 | Bisectable and builds with no perl6 executable - When Bisectable stumbles upon a build that has no ``perl6`` executable (there are some builds like this), it just drops the whole thing.

Here is an example:

https://irclog.perlgeek.de/perl6-dev/2017-03-01#i_14185671 | test | bisectable and builds with no executable when bisectable stumbles upon a build that has no executable there are some builds like this it just drops the whole thing here is an example | 1 |
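
For the Bisectable report above, one possible way to avoid dropping the whole bisection is to mark such builds as untestable instead of failing on them. The sketch below assumes a workflow driven by `git bisect run`, where an exit status of 125 tells git to skip the current commit; whether Bisectable itself works this way is not stated in the issue. The names `bisect_status`, `executable_path` and `run_test` are hypothetical.

```python
import os

# Exit status that `git bisect run` interprets as "this commit cannot be tested".
SKIP = 125


def bisect_status(executable_path, run_test):
    """Return the exit status a `git bisect run` script could report.

    executable_path: where the build's executable is expected (assumption).
    run_test: callable taking the path and returning True for a "good" build.
    """
    # A build without a usable executable is skipped, not treated as bad.
    if not (os.path.isfile(executable_path) and os.access(executable_path, os.X_OK)):
        return SKIP
    # 0 marks the commit as good, 1 marks it as bad.
    return 0 if run_test(executable_path) else 1
```

A wrapper script would pass the returned value to `sys.exit`, so commits without a `perl6` executable are skipped and the bisection continues with the remaining builds.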

53,656 | 6,340,475,071 | IssuesEvent | 2017-07-27 10:59:50 | FlightControl-Master/MOOSE | https://api.github.com/repos/FlightControl-Master/MOOSE | closed | DESIGNATE: Spam Message "Can't Mark.." | enhancement implemented ready for testing | When I gave the order to start lasing a group of enemy ground units, the spam message "Can't Mark.." appeared for every unit which the Recce can't detect. | 1.0 | DESIGNATE: Spam Message "Can't Mark.." - When I gave the order to start lasing a group of enemy ground units, the spam message "Can't Mark.." appeared for every unit which the Recce can't detect. | test | designate spam message can t mark when i ordered to start to lase a group of enemy ground units spam message can t mark appear for every units wich the recce can t detect | 1 |

12,998 | 21,628,939,762 | IssuesEvent | 2022-05-05 07:37:41 | renovatebot/renovate | https://api.github.com/repos/renovatebot/renovate | opened | Support Nexus repository manager for RubyGems | type:feature status:requirements priority-5-triage | ### What would you like Renovate to be able to do?

Nexus repository manager doesn't support the '/api/v1/' pattern for RubyGem discovery; however, `bundler` does support installing Gems when the Nexus URL is used as the `source` in the `Gemfile`.

Following discussion here: https://github.com/renovatebot/renovate/discussions/15451

### If you have any ideas on how this should be implemented, please tell us here.

The `source` used for `bundler` is just a URL link to the Gem group rather than an API endpoint. That should probably be taken into account.

### Is this a feature you are interested in implementing yourself?

No | 1.0 | Support Nexus repository manager for RubyGems - ### What would you like Renovate to be able to do?

Nexus repository manager doesn't support the '/api/v1/' pattern for RubyGem discovery; however, `bundler` does support installing Gems when the Nexus URL is used as the `source` in the `Gemfile`.

Following discussion here: https://github.com/renovatebot/renovate/discussions/15451

### If you have any ideas on how this should be implemented, please tell us here.

The `source` used for `bundler` is just a URL link to the Gem group rather than an API endpoint. That should probably be taken into account.

### Is this a feature you are interested in implementing yourself?

No | non_test | support nexus repository manager for rubygems what would you like renovate to be able to do nexus repository manager doesn t support the api pattern for rubygem discovery however bundler does support installing gems when the nexus url is used as the source in the gemfile following discussion here if you have any ideas on how this should be implemented please tell us here the source used for bundler is just a url link to the gem group rather than an api endpoint should maybe take that into account is this a feature you are interested in implementing yourself no | 0 |

233,795 | 19,061,010,105 | IssuesEvent | 2021-11-26 07:44:29 | thelfer/tfel | https://api.github.com/repos/thelfer/tfel | closed | [mtest] Imposed inner radius evolution in pipe modelling | enhancement mtest | Dear Thomas,

I would like to impose via the module `PTest` the evolution of the inner radius of a pipe.

Is it possible to do this with the module `PTest` in `MTest` ?

Best regards,

Fabien | 1.0 | [mtest] Imposed inner radius evolution in pipe modelling - Dear Thomas,

I would like to impose via the module `PTest` the evolution of the inner radius of a pipe.

Is it possible to do this with the module `PTest` in `MTest` ?

Best regards,

Fabien | test | imposed inner radius evolution in pipe modelling dear thomas i would like to impose via the module ptest the evolution of the inner radius of a pipe is it possible to do this with the module ptest in mtest best regards fabien | 1 |

158,146 | 12,404,304,693 | IssuesEvent | 2020-05-21 15:19:08 | dotnet/roslyn | https://api.github.com/repos/dotnet/roslyn | closed | ArgumentOutOfRangeException thrown in InteractiveWindow_InProc.GetLastReplOutput | Area-IDE Flaky Integration-Test | ```

System.ArgumentOutOfRangeException : StartIndex cannot be less than zero.

Parameter name: startIndex

Server stack trace:

at System.String.Substring(Int32 startIndex, Int32 length)

at Microsoft.VisualStudio.IntegrationTest.Utilities.InProcess.InteractiveWindow_InProc.GetLastReplOutput()

at Microsoft.VisualStudio.IntegrationTest.Utilities.InProcess.InteractiveWindow_InProc.WaitForPredicate(Func`1 getValue, Func`2 isExpectedValue)

at Microsoft.VisualStudio.IntegrationTest.Utilities.InProcess.InteractiveWindow_InProc.WaitForLastReplOutputContains(String outputText)

at System.Runtime.Remoting.Messaging.StackBuilderSink._PrivateProcessMessage(IntPtr md, Object[] args, Object server, Object[]& outArgs)

at System.Runtime.Remoting.Messaging.StackBuilderSink.SyncProcessMessage(IMessage msg)

Exception rethrown at [0]:

at System.Runtime.Remoting.Proxies.RealProxy.HandleReturnMessage(IMessage reqMsg, IMessage retMsg)

at System.Runtime.Remoting.Proxies.RealProxy.PrivateInvoke(MessageData& msgData, Int32 type)

at Microsoft.VisualStudio.IntegrationTest.Utilities.InProcess.InteractiveWindow_InProc.WaitForLastReplOutputContains(String outputText)

at Microsoft.VisualStudio.IntegrationTest.Utilities.OutOfProcess.InteractiveWindow_OutOfProc.WaitForLastReplOutputContains(String outputText) in /_/src/VisualStudio/IntegrationTest/TestUtilities/OutOfProcess/InteractiveWindow_OutOfProc.cs:line 76

at Roslyn.VisualStudio.IntegrationTests.CSharp.CSharpInteractive.ForStatement() in /_/src/VisualStudio/IntegrationTest/IntegrationTests/CSharp/CSharpInteractive.cs:line 40

```

There are a couple of other open issues for this test, but the failures there do not appear to be related to this stack. Failed in https://github.com/dotnet/roslyn/pull/43962.

https://dev.azure.com/dnceng/public/_build/results?buildId=630588&view=ms.vss-test-web.build-test-results-tab&runId=19673644&resultId=100468&paneView=debug | 1.0 | ArgumentOutOfRangeException thrown in InteractiveWindow_InProc.GetLastReplOutput - ```

System.ArgumentOutOfRangeException : StartIndex cannot be less than zero.

Parameter name: startIndex

Server stack trace:

at System.String.Substring(Int32 startIndex, Int32 length)

at Microsoft.VisualStudio.IntegrationTest.Utilities.InProcess.InteractiveWindow_InProc.GetLastReplOutput()

at Microsoft.VisualStudio.IntegrationTest.Utilities.InProcess.InteractiveWindow_InProc.WaitForPredicate(Func`1 getValue, Func`2 isExpectedValue)

at Microsoft.VisualStudio.IntegrationTest.Utilities.InProcess.InteractiveWindow_InProc.WaitForLastReplOutputContains(String outputText)

at System.Runtime.Remoting.Messaging.StackBuilderSink._PrivateProcessMessage(IntPtr md, Object[] args, Object server, Object[]& outArgs)

at System.Runtime.Remoting.Messaging.StackBuilderSink.SyncProcessMessage(IMessage msg)

Exception rethrown at [0]:

at System.Runtime.Remoting.Proxies.RealProxy.HandleReturnMessage(IMessage reqMsg, IMessage retMsg)

at System.Runtime.Remoting.Proxies.RealProxy.PrivateInvoke(MessageData& msgData, Int32 type)

at Microsoft.VisualStudio.IntegrationTest.Utilities.InProcess.InteractiveWindow_InProc.WaitForLastReplOutputContains(String outputText)

at Microsoft.VisualStudio.IntegrationTest.Utilities.OutOfProcess.InteractiveWindow_OutOfProc.WaitForLastReplOutputContains(String outputText) in /_/src/VisualStudio/IntegrationTest/TestUtilities/OutOfProcess/InteractiveWindow_OutOfProc.cs:line 76

at Roslyn.VisualStudio.IntegrationTests.CSharp.CSharpInteractive.ForStatement() in /_/src/VisualStudio/IntegrationTest/IntegrationTests/CSharp/CSharpInteractive.cs:line 40

```

There are a couple of other open issues for this test, but the failures there do not appear to be related to this stack. Failed in https://github.com/dotnet/roslyn/pull/43962.

https://dev.azure.com/dnceng/public/_build/results?buildId=630588&view=ms.vss-test-web.build-test-results-tab&runId=19673644&resultId=100468&paneView=debug | test | argumentoutofrangeexception thrown in interactivewindow inproc getlastreploutput system argumentoutofrangeexception startindex cannot be less than zero parameter name startindex server stack trace at system string substring startindex length at microsoft visualstudio integrationtest utilities inprocess interactivewindow inproc getlastreploutput at microsoft visualstudio integrationtest utilities inprocess interactivewindow inproc waitforpredicate func getvalue func isexpectedvalue at microsoft visualstudio integrationtest utilities inprocess interactivewindow inproc waitforlastreploutputcontains string outputtext at system runtime remoting messaging stackbuildersink privateprocessmessage intptr md object args object server object outargs at system runtime remoting messaging stackbuildersink syncprocessmessage imessage msg exception rethrown at at system runtime remoting proxies realproxy handlereturnmessage imessage reqmsg imessage retmsg at system runtime remoting proxies realproxy privateinvoke messagedata msgdata type at microsoft visualstudio integrationtest utilities inprocess interactivewindow inproc waitforlastreploutputcontains string outputtext at microsoft visualstudio integrationtest utilities outofprocess interactivewindow outofproc waitforlastreploutputcontains string outputtext in src visualstudio integrationtest testutilities outofprocess interactivewindow outofproc cs line at roslyn visualstudio integrationtests csharp csharpinteractive forstatement in src visualstudio integrationtest integrationtests csharp csharpinteractive cs line there are a couple of other open issues for this test but the failures there do not appear to be related to this stack failed in | 1 |
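
The exception at the top of the trace above is raised by `String.Substring`, which rejects a negative `startIndex`. A common way for a start index to become negative is a search such as `LastIndexOf` returning -1 when the marker is absent, but the trace does not show whether that is what happens inside `GetLastReplOutput`. The Python sketch below only illustrates the guard under that assumption; `last_output_after` and the `"> "` prompt marker are hypothetical. Python slicing would not raise in this situation, so the check has to be explicit.

```python
def last_output_after(text, prompt="> "):
    """Return the text following the last prompt marker, or None if absent."""
    start = text.rfind(prompt)  # -1 when the marker is not present
    if start < 0:
        # Report "no output yet" so the caller can poll again,
        # instead of slicing from an invalid position.
        return None
    return text[start + len(prompt):]
```

A polling helper in the style of `WaitForPredicate` could then treat `None` as "not ready" and retry, which may be enough to remove the flakiness if the assumption holds.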

150,399 | 11,959,092,794 | IssuesEvent | 2020-04-04 20:33:20 | forseti-security/forseti-security | https://api.github.com/repos/forseti-security/forseti-security | opened | Migrate model use test to pytest | module: testing priority: p3 triaged: yes | As part of the migration from InSpec to pytest, replace the model use test with a pytest equivalent. Set up the test so that other client tests can be added gradually. | 1.0 | Migrate model use test to pytest - As part of the migration from InSpec to pytest, replace the model use test with a pytest equivalent. Set up the test so that other client tests can be added gradually. | test | migrate model use test to pytest as part of migration from inspec to pytest replace the model use test with a pytest equivalent setup the test so that other client tests can be gradually added | 1 |

328,093 | 28,101,067,190 | IssuesEvent | 2023-03-30 19:32:44 | DevelopingSpace/starchart | https://api.github.com/repos/DevelopingSpace/starchart | closed | Test for CRUD operations in models | category: testing | As part of #97, we need to have some tests for CRUD functions in PR #228 | 1.0 | Test for CRUD operations in models - As part of #97, we need to have some tests for CRUD functions in PR #228 | test | test for crud operations in models as part of we need to have some tests for crud functions in pr | 1 |

42,331 | 22,499,890,105 | IssuesEvent | 2022-06-23 10:49:57 | tarantool/tarantool | https://api.github.com/repos/tarantool/tarantool | closed | sql: Analyze works incorrectly | bug sql performance | https://github.com/tarantool/tarantool/commit/e3ec3d47a0ec520206fbb33d3f16ce448e3ad279?diff=unified#diff-8dc039e198a8fe0a2d70c89a7aca8a18R1105

This is a strange change.

It seems that rows in `_sql_stat4` should be unique, and `analyze` should not replace rows that it has just created itself.

Here are some useful snippets:

Source to reproduce the duplicate key in `_sql_stat4`:

```

CREATE TABLE t1(a primary key ,b);

CREATE INDEX t1b ON t1(b);

insert into t1 values(1,2);

insert into t1 values(2,2);

analyze;

```

Part of analyze vdbe:

```

101> 108 String8 0 50 0 T1B 00 r[50]='T1B'; Analysis for T1.T1B [480/1903]

101> 109 OpenRead 6 526337 0 k(1,) 00 root=526337; T1B

101> 110 Count 6 48 0 00 r[48]=count()

101> 111 Integer 1 46 0 00 r[46]=1

101> 112 Integer 1 47 0 00 r[47]=1

101> 113 Function0 0 46 45 stat_init(3) 03 r[45]=func(r[46..48])

101> 114 Rewind 6 146 0 00

101> 115 Integer 0 46 0 00 r[46]=0

101> 116 Goto 0 122 0 00

101> 117 Integer 0 46 0 00 r[46]=0

101> 118 Column 6 1 48 00 r[48]=

101> 119 Ne 48 122 52 (BINARY) 80 if r[52]!=r[48] goto 122

101> 120 Integer 1 46 0 00 r[46]=1

101> 121 Goto 0 123 0 00

101> 122 Column 6 1 52 00 r[52]=

101> 123 Column 6 0 54 00 r[54]=A

101> 124 MakeRecord 54 1 47 00 r[47]=mkrec(r[54])

101> 125 Function0 1 45 48 stat_push(3) 03 r[48]=func(r[45..47])

101> 126 Next 6 117 0 00

```

Pay particular attention to lines 118, 119 and 122 | True | sql: Analyze works incorrectly - https://github.com/tarantool/tarantool/commit/e3ec3d47a0ec520206fbb33d3f16ce448e3ad279?diff=unified#diff-8dc039e198a8fe0a2d70c89a7aca8a18R1105

This is a strange change.

It seems that rows in `_sql_stat4` should be unique, and `analyze` should not replace rows that it has just created itself.

Here are some useful snippets:

Source to reproduce the duplicate key in `_sql_stat4`:

```

CREATE TABLE t1(a primary key ,b);

CREATE INDEX t1b ON t1(b);

insert into t1 values(1,2);

insert into t1 values(2,2);

analyze;

```

Part of analyze vdbe:

```

101> 108 String8 0 50 0 T1B 00 r[50]='T1B'; Analysis for T1.T1B [480/1903]

101> 109 OpenRead 6 526337 0 k(1,) 00 root=526337; T1B

101> 110 Count 6 48 0 00 r[48]=count()

101> 111 Integer 1 46 0 00 r[46]=1

101> 112 Integer 1 47 0 00 r[47]=1

101> 113 Function0 0 46 45 stat_init(3) 03 r[45]=func(r[46..48])

101> 114 Rewind 6 146 0 00

101> 115 Integer 0 46 0 00 r[46]=0

101> 116 Goto 0 122 0 00

101> 117 Integer 0 46 0 00 r[46]=0

101> 118 Column 6 1 48 00 r[48]=

101> 119 Ne 48 122 52 (BINARY) 80 if r[52]!=r[48] goto 122

101> 120 Integer 1 46 0 00 r[46]=1

101> 121 Goto 0 123 0 00

101> 122 Column 6 1 52 00 r[52]=

101> 123 Column 6 0 54 00 r[54]=A

101> 124 MakeRecord 54 1 47 00 r[47]=mkrec(r[54])

101> 125 Function0 1 45 48 stat_push(3) 03 r[48]=func(r[45..47])

101> 126 Next 6 117 0 00

```

Pay particular attention to lines 118, 119 and 122 | non_test | sql analyze works incorrectly this is a strange change it seems that rows in sql should be unique and analyze should not replace rows which were created by itself just before here are some useful snippets src to repeat key duplicate in sql create table a primary key b create index on b insert into values insert into values analyze part of analyze vdbe r analysis for openread k root count r count integer r integer r stat init r func r rewind integer r goto integer r column r ne binary if r r goto integer r goto column r column r a makerecord r mkrec r stat push r func r next draw extra attention to lines and | 0 |

53,312 | 13,153,224,763 | IssuesEvent | 2020-08-10 02:27:45 | linagora/james-project | https://api.github.com/repos/linagora/james-project | closed | Fasten CI | build-time | Here are the maven modules that takes the most time to test.

We need to find a way to make things faster...

```

[INFO] Apache James :: Mailbox :: Tools :: Indexer ........ SUCCESS [02:02 min]

[INFO] Apache James :: Server :: MailRepository :: Cassandra SUCCESS [02:13 min]

[INFO] Apache James :: Server :: Web Admin :: mailbox ..... SUCCESS [02:41 min]

[INFO] Apache James :: Server :: Cassandra/Ldap with RabbitMQ - guice injection SUCCESS [03:05 min]

[INFO] apache-james-backends-es ........................... SUCCESS [03:06 min]

[INFO] Apache James :: Server :: Web Admin server integration tests :: Memory SUCCESS [03:19 min]

[INFO] Apache James :: Server :: Mailets Integration Testing SUCCESS [03:30 min]

[INFO] Apache James :: Server :: Data :: Cassandra Persistence SUCCESS [04:18 min]

[INFO] Apache James RabbitMQ backend ...................... SUCCESS [05:09 min]

[INFO] Apache James :: Server :: JMAP (draft) :: Cassandra Integration testing SUCCESS [05:11 min]

[INFO] Apache James :: Server :: Cassandra - guice injection SUCCESS [05:48 min]

[INFO] Apache James :: Server :: Blob :: Cassandra ........ SUCCESS [06:12 min]

[INFO] Apache James MPT Imap Mailbox - Cassandra .......... SUCCESS [07:02 min]

[INFO] Apache James :: Server :: JMAP (draft) :: Memory Integration testing SUCCESS [07:54 min]

[INFO] Apache James :: Server :: JMAP (draft) :: RabbitMQ + Object Store + Cassandra Integration testing SUCCESS [08:37 min]

[INFO] Apache James :: Server :: Task :: Distributed ...... SUCCESS [09:22 min]

[INFO] Apache James :: Mailbox :: Cassandra ............... SUCCESS [10:17 min]

[INFO] Apache James :: Server :: Cassandra with RabbitMQ - guice injection SUCCESS [10:19 min]

[INFO] Apache James :: Server :: Web Admin server integration tests :: Distributed SUCCESS [11:51 min]

[INFO] Apache James :: Server :: Blob :: Object storage ... SUCCESS [12:31 min]

[INFO] Apache James :: Server :: Mail Queue :: RabbitMQ ... SUCCESS [15:17 min]

[INFO] Apache James :: Mailbox :: Event :: RabbitMQ implementation SUCCESS [28:36 min]

[INFO] ------------------------------------------------------------------------

[INFO] BUILD SUCCESS

[INFO] ------------------------------------------------------------------------

[INFO] Total time: 03:45 h

[INFO] Finished at: 2020-07-24T15:41:30Z

[INFO] ------------------------------------------------------------------------

``` | 1.0 | Fasten CI - Here are the Maven modules that take the most time to test.

We need to find a way to make things faster...

```

[INFO] Apache James :: Mailbox :: Tools :: Indexer ........ SUCCESS [02:02 min]

[INFO] Apache James :: Server :: MailRepository :: Cassandra SUCCESS [02:13 min]

[INFO] Apache James :: Server :: Web Admin :: mailbox ..... SUCCESS [02:41 min]

[INFO] Apache James :: Server :: Cassandra/Ldap with RabbitMQ - guice injection SUCCESS [03:05 min]

[INFO] apache-james-backends-es ........................... SUCCESS [03:06 min]

[INFO] Apache James :: Server :: Web Admin server integration tests :: Memory SUCCESS [03:19 min]

[INFO] Apache James :: Server :: Mailets Integration Testing SUCCESS [03:30 min]

[INFO] Apache James :: Server :: Data :: Cassandra Persistence SUCCESS [04:18 min]

[INFO] Apache James RabbitMQ backend ...................... SUCCESS [05:09 min]

[INFO] Apache James :: Server :: JMAP (draft) :: Cassandra Integration testing SUCCESS [05:11 min]

[INFO] Apache James :: Server :: Cassandra - guice injection SUCCESS [05:48 min]

[INFO] Apache James :: Server :: Blob :: Cassandra ........ SUCCESS [06:12 min]

[INFO] Apache James MPT Imap Mailbox - Cassandra .......... SUCCESS [07:02 min]

[INFO] Apache James :: Server :: JMAP (draft) :: Memory Integration testing SUCCESS [07:54 min]

[INFO] Apache James :: Server :: JMAP (draft) :: RabbitMQ + Object Store + Cassandra Integration testing SUCCESS [08:37 min]

[INFO] Apache James :: Server :: Task :: Distributed ...... SUCCESS [09:22 min]

[INFO] Apache James :: Mailbox :: Cassandra ............... SUCCESS [10:17 min]

[INFO] Apache James :: Server :: Cassandra with RabbitMQ - guice injection SUCCESS [10:19 min]

[INFO] Apache James :: Server :: Web Admin server integration tests :: Distributed SUCCESS [11:51 min]

[INFO] Apache James :: Server :: Blob :: Object storage ... SUCCESS [12:31 min]

[INFO] Apache James :: Server :: Mail Queue :: RabbitMQ ... SUCCESS [15:17 min]

[INFO] Apache James :: Mailbox :: Event :: RabbitMQ implementation SUCCESS [28:36 min]

[INFO] ------------------------------------------------------------------------

[INFO] BUILD SUCCESS

[INFO] ------------------------------------------------------------------------

[INFO] Total time: 03:45 h

[INFO] Finished at: 2020-07-24T15:41:30Z

[INFO] ------------------------------------------------------------------------

``` | non_test | fasten ci here are the maven modules that takes the most time to test we need to find a way to make things faster apache james mailbox tools indexer success apache james server mailrepository cassandra success apache james server web admin mailbox success apache james server cassandra ldap with rabbitmq guice injection success apache james backends es success apache james server web admin server integration tests memory success apache james server mailets integration testing success apache james server data cassandra persistence success apache james rabbitmq backend success apache james server jmap draft cassandra integration testing success apache james server cassandra guice injection success apache james server blob cassandra success apache james mpt imap mailbox cassandra success apache james server jmap draft memory integration testing success apache james server jmap draft rabbitmq object store cassandra integration testing success apache james server task distributed success apache james mailbox cassandra success apache james server cassandra with rabbitmq guice injection success apache james server web admin server integration tests distributed success apache james server blob object storage success apache james server mail queue rabbitmq success apache james mailbox event rabbitmq implementation success build success total time h finished at | 0 |
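
To decide where to start with the build log above, the reactor summary can be ranked automatically. The sketch below is one way to do that, assuming the bracketed `min` durations are minutes and seconds and that only `SUCCESS` lines matter; lines reported in other units (for example the `h` total) are ignored. The names `LINE` and `slowest_modules` are hypothetical.

```python
import re

# Matches reactor-summary lines such as
# "[INFO] Some Module ....... SUCCESS [02:02 min]".
# The dotted filler between the name and the status is optional.
LINE = re.compile(r"^\[INFO\]\s+(.+?)\s+\.*\s*SUCCESS\s+\[(\d+):(\d+) min\]\s*$")


def slowest_modules(log_text, top=5):
    """Return the `top` slowest modules as (name, seconds), slowest first."""
    timings = []
    for line in log_text.splitlines():
        match = LINE.match(line.strip())
        if match:
            name, minutes, seconds = match.groups()
            timings.append((name, int(minutes) * 60 + int(seconds)))
    # Sort by duration in seconds, longest first, and keep the top entries.
    return sorted(timings, key=lambda item: item[1], reverse=True)[:top]
```

Feeding the summary through `slowest_modules` gives a short list of candidates for parallelisation or test trimming without reading the whole log by hand.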

122,551 | 12,155,304,813 | IssuesEvent | 2020-04-25 12:32:46 | mykeels/crypto-dip-alert | https://api.github.com/repos/mykeels/crypto-dip-alert | closed | There should be a reference to Coincap.io for their API | bug documentation good first issue | # Bug Description

The software in this repo depends on Coincap.io's free and open API. There is no mention of this, except in the code.

## Expected Behaviour

It should be mentioned in the root README.

| 1.0 | There should be a reference to Coincap.io for their API - # Bug Description

The software in this repo depends on Coincap.io's free and open API. There is no mention of this, except in the code.

## Expected Behaviour

It should be mentioned in the root README.

| non_test | there should be a reference to coincap io for their api bug description the software in this repo depends on coincap io s free and open api there is no mention of this except in the code expected behaviour it should be mentioned in the root readme | 0 |

383,087 | 26,531,923,993 | IssuesEvent | 2023-01-19 13:09:11 | pik-piam/mrdrivers | https://api.github.com/repos/pik-piam/mrdrivers | opened | No citable source for GDP data. | bug documentation | The documentation of [`calcGDP()`](https://github.com/pik-piam/mrdrivers/blob/eb1da620d641ff8df83dbe8861a0e320400f3527/R/calcGDP.R) does not contain any citable source for GDP data. Like one would need for _doing science_.

- https://github.com/pik-piam/mrdrivers/blob/eb1da620d641ff8df83dbe8861a0e320400f3527/R/calcGDP.R#L49

And what happens if `GDPCalib` is `NULL`?

- https://github.com/pik-piam/mrdrivers/blob/eb1da620d641ff8df83dbe8861a0e320400f3527/R/calcGDP.R#L65

And what happens if `GDPPast` is `NULL`?

- https://github.com/pik-piam/mrdrivers/blob/eb1da620d641ff8df83dbe8861a0e320400f3527/R/calcGDP.R#L82

And what happens if `GDPFuture` is `NULL`?

- https://github.com/pik-piam/mrdrivers/blob/eb1da620d641ff8df83dbe8861a0e320400f3527/R/calcGDP.R#L85

Besides being completely insufficient as a source, the link is also dead.

I would really like to know how https://github.com/pik-piam/mrdrivers/commit/ec02c71c7599a5bd2025ef8866d68390214399be was supposed to provide anything citable.

-----

https://github.com/pik-piam/mrdrivers/blob/eb1da620d641ff8df83dbe8861a0e320400f3527/R/calcGDP.R#L25-L28

At least somebody had fun.

| 1.0 | No citable source for GDP data. - The documentation of [`calcGDP()`](https://github.com/pik-piam/mrdrivers/blob/eb1da620d641ff8df83dbe8861a0e320400f3527/R/calcGDP.R) does not contain any citable source for GDP data. Like one would need for _doing science_.

- https://github.com/pik-piam/mrdrivers/blob/eb1da620d641ff8df83dbe8861a0e320400f3527/R/calcGDP.R#L49

And what happens if `GDPCalib` is `NULL`?

- https://github.com/pik-piam/mrdrivers/blob/eb1da620d641ff8df83dbe8861a0e320400f3527/R/calcGDP.R#L65

And what happens if `GDPPast` is `NULL`?

- https://github.com/pik-piam/mrdrivers/blob/eb1da620d641ff8df83dbe8861a0e320400f3527/R/calcGDP.R#L82

And what happens if `GDPFuture` is `NULL`?

- https://github.com/pik-piam/mrdrivers/blob/eb1da620d641ff8df83dbe8861a0e320400f3527/R/calcGDP.R#L85

Besides being completely insufficient as a source, the link is also dead.

I would really like to know how https://github.com/pik-piam/mrdrivers/commit/ec02c71c7599a5bd2025ef8866d68390214399be was supposed to provide anything citable.

-----

https://github.com/pik-piam/mrdrivers/blob/eb1da620d641ff8df83dbe8861a0e320400f3527/R/calcGDP.R#L25-L28

At least somebody had fun.

| non_test | no citable source for gdp data the documentation of does not contain any citable source for gdp data like one would need for doing science and what happens if gdpcalib is null and what happens if gdppast is null and what happens if gdpfuture is null besides being completely insufficient as a source the link is also dead i would really like to know how was supposed to provide anything citable at least somebody had fun | 0 |

223,495 | 17,603,223,677 | IssuesEvent | 2021-08-17 14:12:07 | cseelhoff/RimThreaded | https://api.github.com/repos/cseelhoff/RimThreaded | closed | "Replace Stuff" pretty frequent errors | Bug Reproducible Accepted For Testing Mod Incompatibility Confirmed 1.3.X.X 2.0.X.X 2.2.X.X 2.3.X.X | **Describe the bug**

IMPORTANT: Please first search existing bugs to ensure you are not creating a duplicate bug report.

errors with some frequency

**To Reproduce (VERY IMPORTANT)**

Steps to reproduce the behavior:

1. load any save

2. hit play

3. see errors

**Error Log**

```

Exception ticking SteamGeyser255415 (at (258, 0, 198)): System.InvalidOperationException: Collection was modified; enumeration operation may not execute.

at System.ThrowHelper.ThrowInvalidOperationException (System.ExceptionResource resource) [0x0000b] in <567df3e0919241ba98db88bec4c6696f>:0

at System.Collections.Generic.List`1+Enumerator[T].MoveNextRare () [0x00013] in <567df3e0919241ba98db88bec4c6696f>:0

at System.Collections.Generic.List`1+Enumerator[T].MoveNext () [0x0004a] in <567df3e0919241ba98db88bec4c6696f>:0

at Replace_Stuff.NewThing.NewThingReplacement.IsNewThingReplacement (Verse.ThingDef newDef, Verse.IntVec3 pos, Verse.Rot4 rotation, Verse.Map map, Verse.Thing& oldThing) [0x0004c] in <dd5f6b372d7741358d6491f16fb78d1f>:0

at Replace_Stuff.NewThing.TransferSettings.Prefix (Verse.Thing newThing, Verse.IntVec3 loc, Verse.Map map, Verse.Rot4 rot, System.Boolean respawningAfterLoad, Verse.Thing& __state) [0x00009] in <dd5f6b372d7741358d6491f16fb78d1f>:0

at (wrapper dynamic-method) Verse.GenSpawn.Verse.GenSpawn.Spawn_Patch2(Verse.Thing,Verse.IntVec3,Verse.Map,Verse.Rot4,Verse.WipeMode,bool)

at Verse.GenSpawn.Spawn (Verse.Thing newThing, Verse.IntVec3 loc, Verse.Map map, Verse.WipeMode wipeMode) [0x00008] in <d72310b4d8f64d25aee502792b58549f>:0

at RimWorld.MoteMaker.ThrowAirPuffUp (UnityEngine.Vector3 loc, Verse.Map map) [0x00086] in <d72310b4d8f64d25aee502792b58549f>:0

at RimWorld.IntermittentSteamSprayer.SteamSprayerTick () [0x0003c] in <d72310b4d8f64d25aee502792b58549f>:0

at RimWorld.Building_SteamGeyser.Tick () [0x00008] in <d72310b4d8f64d25aee502792b58549f>:0

at RimThreaded.RimThreaded.ExecuteTicks () [0x00105] in <08216f895b4b4c66b9b6902b448df752>:0

Verse.Log:Error(String, Boolean)

RimThreaded.RimThreaded:ExecuteTicks()

RimThreaded.RimThreaded:ProcessTicks(ThreadInfo)

RimThreaded.RimThreaded:InitializeThread(ThreadInfo)

RimThreaded.<>c__DisplayClass80_0:<CreateWorkerThread>b__0()

System.Threading.ThreadHelper:ThreadStart_Context(Object)

System.Threading.ExecutionContext:RunInternal(ExecutionContext, ContextCallback, Object, Boolean)

System.Threading.ExecutionContext:Run(ExecutionContext, ContextCallback, Object, Boolean)

System.Threading.ExecutionContext:Run(ExecutionContext, ContextCallback, Object)

System.Threading.ThreadHelper:ThreadStart()

```

https://gist.github.com/42ef0429766400500ba3a1d859319fce

**Mod List**

see log

https://steamcommunity.com/sharedfiles/filedetails/?id=1372003680&searchtext=replace

**Screenshots**

NA

| 1.0 | "Replace Stuff" pretty frequent errors - **Describe the bug**

IMPORTANT: Please first search existing bugs to ensure you are not creating a duplicate bug report.

errors with some frequency

**To Reproduce (VERY IMPORTANT)**

Steps to reproduce the behavior:

1. load any save

2. hit play

3. see errors

**Error Log**

```

Exception ticking SteamGeyser255415 (at (258, 0, 198)): System.InvalidOperationException: Collection was modified; enumeration operation may not execute.

at System.ThrowHelper.ThrowInvalidOperationException (System.ExceptionResource resource) [0x0000b] in <567df3e0919241ba98db88bec4c6696f>:0

at System.Collections.Generic.List`1+Enumerator[T].MoveNextRare () [0x00013] in <567df3e0919241ba98db88bec4c6696f>:0

at System.Collections.Generic.List`1+Enumerator[T].MoveNext () [0x0004a] in <567df3e0919241ba98db88bec4c6696f>:0

at Replace_Stuff.NewThing.NewThingReplacement.IsNewThingReplacement (Verse.ThingDef newDef, Verse.IntVec3 pos, Verse.Rot4 rotation, Verse.Map map, Verse.Thing& oldThing) [0x0004c] in <dd5f6b372d7741358d6491f16fb78d1f>:0

at Replace_Stuff.NewThing.TransferSettings.Prefix (Verse.Thing newThing, Verse.IntVec3 loc, Verse.Map map, Verse.Rot4 rot, System.Boolean respawningAfterLoad, Verse.Thing& __state) [0x00009] in <dd5f6b372d7741358d6491f16fb78d1f>:0

at (wrapper dynamic-method) Verse.GenSpawn.Verse.GenSpawn.Spawn_Patch2(Verse.Thing,Verse.IntVec3,Verse.Map,Verse.Rot4,Verse.WipeMode,bool)

at Verse.GenSpawn.Spawn (Verse.Thing newThing, Verse.IntVec3 loc, Verse.Map map, Verse.WipeMode wipeMode) [0x00008] in <d72310b4d8f64d25aee502792b58549f>:0

at RimWorld.MoteMaker.ThrowAirPuffUp (UnityEngine.Vector3 loc, Verse.Map map) [0x00086] in <d72310b4d8f64d25aee502792b58549f>:0

at RimWorld.IntermittentSteamSprayer.SteamSprayerTick () [0x0003c] in <d72310b4d8f64d25aee502792b58549f>:0

at RimWorld.Building_SteamGeyser.Tick () [0x00008] in <d72310b4d8f64d25aee502792b58549f>:0

at RimThreaded.RimThreaded.ExecuteTicks () [0x00105] in <08216f895b4b4c66b9b6902b448df752>:0

Verse.Log:Error(String, Boolean)

RimThreaded.RimThreaded:ExecuteTicks()

RimThreaded.RimThreaded:ProcessTicks(ThreadInfo)

RimThreaded.RimThreaded:InitializeThread(ThreadInfo)

RimThreaded.<>c__DisplayClass80_0:<CreateWorkerThread>b__0()

System.Threading.ThreadHelper:ThreadStart_Context(Object)

System.Threading.ExecutionContext:RunInternal(ExecutionContext, ContextCallback, Object, Boolean)

System.Threading.ExecutionContext:Run(ExecutionContext, ContextCallback, Object, Boolean)

System.Threading.ExecutionContext:Run(ExecutionContext, ContextCallback, Object)

System.Threading.ThreadHelper:ThreadStart()

```

https://gist.github.com/42ef0429766400500ba3a1d859319fce

**Mod List**

see log

https://steamcommunity.com/sharedfiles/filedetails/?id=1372003680&searchtext=replace

**Screenshots**

NA

| test | replace stuff pretty frequent errors describe the bug important please first search existing bugs to ensure you are not creating a duplicate bug report errors with some frequency to reproduce very important steps to reproduce the behavior load any save hit play see errors error log exception ticking at system invalidoperationexception collection was modified enumeration operation may not execute at system throwhelper throwinvalidoperationexception system exceptionresource resource in at system collections generic list enumerator movenextrare in at system collections generic list enumerator movenext in at replace stuff newthing newthingreplacement isnewthingreplacement verse thingdef newdef verse pos verse rotation verse map map verse thing oldthing in at replace stuff newthing transfersettings prefix verse thing newthing verse loc verse map map verse rot system boolean respawningafterload verse thing state in at wrapper dynamic method verse genspawn verse genspawn spawn verse thing verse verse map verse verse wipemode bool at verse genspawn spawn verse thing newthing verse loc verse map map verse wipemode wipemode in at rimworld motemaker throwairpuffup unityengine loc verse map map in at rimworld intermittentsteamsprayer steamsprayertick in at rimworld building steamgeyser tick in at rimthreaded rimthreaded executeticks in verse log error string boolean rimthreaded rimthreaded executeticks rimthreaded rimthreaded processticks threadinfo rimthreaded rimthreaded initializethread threadinfo rimthreaded c b system threading threadhelper threadstart context object system threading executioncontext runinternal executioncontext contextcallback object boolean system threading executioncontext run executioncontext contextcallback object boolean system threading executioncontext run executioncontext contextcallback object system threading threadhelper threadstart mod list see log screenshots na | 1 |

309,219 | 26,657,138,345 | IssuesEvent | 2023-01-25 17:44:12 | PalisadoesFoundation/talawa | https://api.github.com/repos/PalisadoesFoundation/talawa | closed | Complete Code Coverage for View Models | unapproved test parent | The Talawa code base needs to be 100% reliable. This means we need to have 100% unittest code coverage. This is a parent issue for all View Model unittest issues.

We will be creating one issue per related file with the expectation that widgets referenced in these files must also have unittests.

- We'll only assign the child issues.

- Please comment on one of the child issues for assignment.

- Only one child issue will be assigned to a person at any one time.

Parent Issue:

- https://github.com/PalisadoesFoundation/talawa/issues/1124

Child Issues:

- https://github.com/PalisadoesFoundation/talawa/issues/1002

- https://github.com/PalisadoesFoundation/talawa/issues/1003

- https://github.com/PalisadoesFoundation/talawa/issues/1023

- https://github.com/PalisadoesFoundation/talawa/issues/1018

- https://github.com/PalisadoesFoundation/talawa/issues/1119

| 1.0 | Complete Code Coverage for View Models - The Talawa code base needs to be 100% reliable. This means we need to have 100% unittest code coverage. This is a parent issue for all View Model unittest issues.

We will be creating one issue per related file with the expectation that widgets referenced in these files must also have unittests.

- We'll only assign the child issues.

- Please comment on one of the child issues for assignment.

- Only one child issue will be assigned to a person at any one time.

Parent Issue:

- https://github.com/PalisadoesFoundation/talawa/issues/1124

Child Issues:

- https://github.com/PalisadoesFoundation/talawa/issues/1002

- https://github.com/PalisadoesFoundation/talawa/issues/1003

- https://github.com/PalisadoesFoundation/talawa/issues/1023

- https://github.com/PalisadoesFoundation/talawa/issues/1018

- https://github.com/PalisadoesFoundation/talawa/issues/1119

| test | complete code coverage for view models the talawa code base needs to be reliable this means we need to have we unittest code coverage this is a parent issue for all view model unittest issues we will be creating one issue per related file with the expectation that widgets referenced in these files must also have unittests we ll only assign the child issues please comment on one of the child issues for assignment only one child issue will be assigned to a person at any one time parent issue child issues | 1 |

298,171 | 25,794,562,013 | IssuesEvent | 2022-12-10 12:11:56 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | opened | roachtest: sequelize failed | C-test-failure O-robot O-roachtest release-blocker branch-release-21.2 | roachtest.sequelize [failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=7909414&tab=buildLog) with [artifacts](https://teamcity.cockroachdb.com/viewLog.html?buildId=7909414&tab=artifacts#/sequelize) on release-21.2 @ [14dff4d7f9832f50b4844ac1a79c96e4b06f521c](https://github.com/cockroachdb/cockroach/commits/14dff4d7f9832f50b4844ac1a79c96e4b06f521c):

```

The test failed on branch=release-21.2, cloud=gce:

test artifacts and logs in: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/artifacts/sequelize/run_1

sequelize.go:138,sequelize.go:158,test_runner.go:777: all attempts failed for install dependencies due to error: output in run_121131.636286404_n1_cd: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/bin/roachprod run teamcity-7909414-1670655128-72-n1cpu4:1 -- cd /mnt/data1/sequelize && sudo npm i returned: exit status 20

```

<details><summary>Reproduce</summary>

<p>

See: [roachtest README](https://github.com/cockroachdb/cockroach/blob/master/pkg/cmd/roachtest/README.md)

</p>

</details>

<details><summary>Same failure on other branches</summary>

<p>

- #91663 roachtest: sequelize failed [C-test-failure O-roachtest O-robot T-sql-experience deprecated-branch-release-22.2.0]

</p>

</details>

/cc @cockroachdb/sql-experience

<sub>

[This test on roachdash](https://roachdash.crdb.dev/?filter=status:open%20t:.*sequelize.*&sort=title+created&display=lastcommented+project) | [Improve this report!](https://github.com/cockroachdb/cockroach/tree/master/pkg/cmd/internal/issues)

</sub>

| 2.0 | roachtest: sequelize failed - roachtest.sequelize [failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=7909414&tab=buildLog) with [artifacts](https://teamcity.cockroachdb.com/viewLog.html?buildId=7909414&tab=artifacts#/sequelize) on release-21.2 @ [14dff4d7f9832f50b4844ac1a79c96e4b06f521c](https://github.com/cockroachdb/cockroach/commits/14dff4d7f9832f50b4844ac1a79c96e4b06f521c):

```

The test failed on branch=release-21.2, cloud=gce:

test artifacts and logs in: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/artifacts/sequelize/run_1

sequelize.go:138,sequelize.go:158,test_runner.go:777: all attempts failed for install dependencies due to error: output in run_121131.636286404_n1_cd: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/bin/roachprod run teamcity-7909414-1670655128-72-n1cpu4:1 -- cd /mnt/data1/sequelize && sudo npm i returned: exit status 20

```

<details><summary>Reproduce</summary>

<p>

See: [roachtest README](https://github.com/cockroachdb/cockroach/blob/master/pkg/cmd/roachtest/README.md)

</p>

</details>

<details><summary>Same failure on other branches</summary>

<p>

- #91663 roachtest: sequelize failed [C-test-failure O-roachtest O-robot T-sql-experience deprecated-branch-release-22.2.0]

</p>

</details>

/cc @cockroachdb/sql-experience

<sub>

[This test on roachdash](https://roachdash.crdb.dev/?filter=status:open%20t:.*sequelize.*&sort=title+created&display=lastcommented+project) | [Improve this report!](https://github.com/cockroachdb/cockroach/tree/master/pkg/cmd/internal/issues)

</sub>

| test | roachtest sequelize failed roachtest sequelize with on release the test failed on branch release cloud gce test artifacts and logs in home agent work go src github com cockroachdb cockroach artifacts sequelize run sequelize go sequelize go test runner go all attempts failed for install dependencies due to error output in run cd home agent work go src github com cockroachdb cockroach bin roachprod run teamcity cd mnt sequelize sudo npm i returned exit status reproduce see same failure on other branches roachtest sequelize failed cc cockroachdb sql experience | 1 |

20,693 | 6,916,523,547 | IssuesEvent | 2017-11-29 03:04:15 | opencv/opencv | https://api.github.com/repos/opencv/opencv | opened | MacOSX: LAPACK support detection doesn't work | bug category: build/install category: ios/osx | Since this [build](http://pullrequest.opencv.org/buildbot/builders/master_noOCL-mac/builds/10266):

```

-- A library with BLAS API found.

-- Looking for cheev_

-- Looking for cheev_ - found

-- A library with LAPACK API found.

-- LAPACK(LAPACK/Apple): LAPACK_LIBRARIES: /Applications/Xcode.app/Contents/Developer/Platforms/MacOSX.platform/Developer/SDKs/MacOSX10.12.sdk/System/Library/Frameworks/Accelerate.framework;/Applications/Xcode.app/Contents/Developer/Platforms/MacOSX.platform/Developer/SDKs/MacOSX10.12.sdk/System/Library/Frameworks/Accelerate.framework

-- Looking for Accelerate/Accelerate.h

-- Looking for Accelerate/Accelerate.h - found

-- Looking for Accelerate/Accelerate.h

-- Looking for Accelerate/Accelerate.h - found

-- LAPACK(LAPACK/Apple): Can't build LAPACK check code. This LAPACK version is not supported.

-- LAPACK(Apple): LAPACK_LIBRARIES: -framework Accelerate

-- Looking for Accelerate/Accelerate.h

-- Looking for Accelerate/Accelerate.h - found

-- Looking for Accelerate/Accelerate.h

-- Looking for Accelerate/Accelerate.h - found

-- LAPACK(Apple): Can't build LAPACK check code. This LAPACK version is not supported.

``` | 1.0 | MacOSX: LAPACK support detection doesn't work - Since this [build](http://pullrequest.opencv.org/buildbot/builders/master_noOCL-mac/builds/10266):

```

-- A library with BLAS API found.

-- Looking for cheev_

-- Looking for cheev_ - found

-- A library with LAPACK API found.

-- LAPACK(LAPACK/Apple): LAPACK_LIBRARIES: /Applications/Xcode.app/Contents/Developer/Platforms/MacOSX.platform/Developer/SDKs/MacOSX10.12.sdk/System/Library/Frameworks/Accelerate.framework;/Applications/Xcode.app/Contents/Developer/Platforms/MacOSX.platform/Developer/SDKs/MacOSX10.12.sdk/System/Library/Frameworks/Accelerate.framework

-- Looking for Accelerate/Accelerate.h

-- Looking for Accelerate/Accelerate.h - found

-- Looking for Accelerate/Accelerate.h

-- Looking for Accelerate/Accelerate.h - found

-- LAPACK(LAPACK/Apple): Can't build LAPACK check code. This LAPACK version is not supported.

-- LAPACK(Apple): LAPACK_LIBRARIES: -framework Accelerate

-- Looking for Accelerate/Accelerate.h

-- Looking for Accelerate/Accelerate.h - found

-- Looking for Accelerate/Accelerate.h

-- Looking for Accelerate/Accelerate.h - found

-- LAPACK(Apple): Can't build LAPACK check code. This LAPACK version is not supported.

``` | non_test | macosx lapack support detection doesn t work since this a library with blas api found looking for cheev looking for cheev found a library with lapack api found lapack lapack apple lapack libraries applications xcode app contents developer platforms macosx platform developer sdks sdk system library frameworks accelerate framework applications xcode app contents developer platforms macosx platform developer sdks sdk system library frameworks accelerate framework looking for accelerate accelerate h looking for accelerate accelerate h found looking for accelerate accelerate h looking for accelerate accelerate h found lapack lapack apple can t build lapack check code this lapack version is not supported lapack apple lapack libraries framework accelerate looking for accelerate accelerate h looking for accelerate accelerate h found looking for accelerate accelerate h looking for accelerate accelerate h found lapack apple can t build lapack check code this lapack version is not supported | 0 |

346,182 | 30,871,843,626 | IssuesEvent | 2023-08-03 11:55:15 | PerfectFit-project/virtual-coach-issues | https://api.github.com/repos/PerfectFit-project/virtual-coach-issues | closed | Check activity benefits in db | bug testing ticket | From here: https://github.com/PerfectFit-project/testing-tickets/issues/52.

It is important that the benefits of the activities do not start with "om", because the start of the sentence about benefits already contains "om": https://github.com/PerfectFit-project/virtual-coach-rasa/blob/main/Rasa_Bot/actions/definitions.py. | 1.0 | Check activity benefits in db - From here: https://github.com/PerfectFit-project/testing-tickets/issues/52.

It is important that the benefits of the activities do not start with "om", because the start of the sentence about benefits already contains "om": https://github.com/PerfectFit-project/virtual-coach-rasa/blob/main/Rasa_Bot/actions/definitions.py. | test | check activity benefits in db from here it is important that the benefits of the activities do not start with om because the start of the sentence about benefits already contains om | 1 |
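The check described in this issue could be sketched roughly as follows. This is only an illustration: the function name and the sample Dutch strings are hypothetical, and the real benefit texts live in the project's database, which is not shown here. The sketch flags any benefit whose first word is "om", since the sentence template already supplies that word.

```python
def benefits_starting_with_om(benefits):
    """Return the benefit strings whose first word is "om" (case-insensitive)."""
    flagged = []
    for benefit in benefits:
        words = benefit.strip().split()
        # Compare only the first whole word, so "omgaan ..." is not flagged.
        if words and words[0].lower() == "om":
            flagged.append(benefit)
    return flagged


# Hypothetical sample data, not taken from the actual database.
sample = [
    "om fitter te worden",
    "meer energie krijgen",
    "Om beter te slapen",
    "omgaan met stress",
]
print(benefits_starting_with_om(sample))
```

Comparing the first whole word rather than using a plain prefix test avoids false positives on words that merely begin with the letters "om".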

324,873 | 27,826,467,729 | IssuesEvent | 2023-03-19 20:26:44 | SuperCowPowers/sageworks | https://api.github.com/repos/SuperCowPowers/sageworks | closed | Pandas To Feature Set Test Code | transform feature_set testing | Right now the test code for `pandas_to_features.py` is mostly disabled. After the class is finished, go back and enable the rest of the tests.

```

# FIXME: This rest if this test is disabled for now

``` | 1.0 | Pandas To Feature Set Test Code - Right now the test code for `pandas_to_features.py` is mostly disabled. After the class is finished, go back and enable the rest of the tests.

```

# FIXME: This rest if this test is disabled for now

``` | test | pandas to feature set test code right now the test code for pandas to features py is mostly disabled after the class is finished go back and enable the rest of the tests fixme this rest if this test is disabled for now | 1 |

79,500 | 10,130,285,009 | IssuesEvent | 2019-08-01 16:34:31 | bd1887/wine-reviews | https://api.github.com/repos/bd1887/wine-reviews | closed | Django Documentation | documentation | # Create a README explaining the following:

* How to clone the repository

* How to create a virtual environment

* How to install requirements.txt

* How to migrate the database

| 1.0 | Django Documentation - # Create a README explaining the following:

* How to clone the repository

* How to create a virtual environment

* How to install requirements.txt

* How to migrate the database

| non_test | django documentation create a readme explaining the following how to clone the repository how to create a virtual environment how to install requirements txt how to migrate the database | 0 |

159,415 | 12,475,070,369 | IssuesEvent | 2020-05-29 10:50:33 | aliasrobotics/RVD | https://api.github.com/repos/aliasrobotics/RVD | closed | RVD#2035: Use of insecure MD2, MD4, MD5, or SHA1 hash function., /opt/ros_noetic_ws/src/ros/rosbuild/core/rosbuild/bin/download_checkmd5.py:81 | bandit bug static analysis testing triage | ```yaml

{

"id": 2035,

"title": "RVD#2035: Use of insecure MD2, MD4, MD5, or SHA1 hash function., /opt/ros_noetic_ws/src/ros/rosbuild/core/rosbuild/bin/download_checkmd5.py:81",

"type": "bug",

"description": "HIGH confidence of MEDIUM severity bug. Use of insecure MD2, MD4, MD5, or SHA1 hash function. at /opt/ros_noetic_ws/src/ros/rosbuild/core/rosbuild/bin/download_checkmd5.py:81 See links for more info on the bug.",

"cwe": "None",

"cve": "None",

"keywords": [

"bandit",

"bug",

"static analysis",

"testing",

"triage",

"bug"

],

"system": "",

"vendor": null,

"severity": {

"rvss-score": 0,

"rvss-vector": "",

"severity-description": "",

"cvss-score": 0,

"cvss-vector": ""

},

"links": [

"https://github.com/aliasrobotics/RVD/issues/2035",

"https://bandit.readthedocs.io/en/latest/blacklists/blacklist_calls.html#b303-md5"

],

"flaw": {

"phase": "testing",

"specificity": "subject-specific",

"architectural-location": "application-specific",

"application": "N/A",

"subsystem": "N/A",

"package": "N/A",

"languages": "None",

"date-detected": "2020-05-29 (09:20)",

"detected-by": "Alias Robotics",

"detected-by-method": "testing static",

"date-reported": "2020-05-29 (09:20)",

"reported-by": "Alias Robotics",

"reported-by-relationship": "automatic",

"issue": "https://github.com/aliasrobotics/RVD/issues/2035",

"reproducibility": "always",

"trace": "/opt/ros_noetic_ws/src/ros/rosbuild/core/rosbuild/bin/download_checkmd5.py:81",

"reproduction": "See artifacts below (if available)",

"reproduction-image": ""

},

"exploitation": {

"description": "",

"exploitation-image": "",

"exploitation-vector": ""

},

"mitigation": {

"description": "",

"pull-request": "",

"date-mitigation": ""

}

}

``` | 1.0 | RVD#2035: Use of insecure MD2, MD4, MD5, or SHA1 hash function., /opt/ros_noetic_ws/src/ros/rosbuild/core/rosbuild/bin/download_checkmd5.py:81 - ```yaml

{

"id": 2035,

"title": "RVD#2035: Use of insecure MD2, MD4, MD5, or SHA1 hash function., /opt/ros_noetic_ws/src/ros/rosbuild/core/rosbuild/bin/download_checkmd5.py:81",

"type": "bug",

"description": "HIGH confidence of MEDIUM severity bug. Use of insecure MD2, MD4, MD5, or SHA1 hash function. at /opt/ros_noetic_ws/src/ros/rosbuild/core/rosbuild/bin/download_checkmd5.py:81 See links for more info on the bug.",

"cwe": "None",

"cve": "None",

"keywords": [

"bandit",

"bug",

"static analysis",

"testing",

"triage",

"bug"

],

"system": "",

"vendor": null,

"severity": {

"rvss-score": 0,

"rvss-vector": "",

"severity-description": "",

"cvss-score": 0,

"cvss-vector": ""

},

"links": [

"https://github.com/aliasrobotics/RVD/issues/2035",

"https://bandit.readthedocs.io/en/latest/blacklists/blacklist_calls.html#b303-md5"

],

"flaw": {

"phase": "testing",

"specificity": "subject-specific",

"architectural-location": "application-specific",

"application": "N/A",

"subsystem": "N/A",

"package": "N/A",

"languages": "None",

"date-detected": "2020-05-29 (09:20)",

"detected-by": "Alias Robotics",

"detected-by-method": "testing static",

"date-reported": "2020-05-29 (09:20)",

"reported-by": "Alias Robotics",

"reported-by-relationship": "automatic",

"issue": "https://github.com/aliasrobotics/RVD/issues/2035",

"reproducibility": "always",

"trace": "/opt/ros_noetic_ws/src/ros/rosbuild/core/rosbuild/bin/download_checkmd5.py:81",

"reproduction": "See artifacts below (if available)",

"reproduction-image": ""

},

"exploitation": {

"description": "",

"exploitation-image": "",

"exploitation-vector": ""

},

"mitigation": {

"description": "",

"pull-request": "",

"date-mitigation": ""

}

}

``` | test | rvd use of insecure or hash function opt ros noetic ws src ros rosbuild core rosbuild bin download py yaml id title rvd use of insecure or hash function opt ros noetic ws src ros rosbuild core rosbuild bin download py type bug description high confidence of medium severity bug use of insecure or hash function at opt ros noetic ws src ros rosbuild core rosbuild bin download py see links for more info on the bug cwe none cve none keywords bandit bug static analysis testing triage bug system vendor null severity rvss score rvss vector severity description cvss score cvss vector links flaw phase testing specificity subject specific architectural location application specific application n a subsystem n a package n a languages none date detected detected by alias robotics detected by method testing static date reported reported by alias robotics reported by relationship automatic issue reproducibility always trace opt ros noetic ws src ros rosbuild core rosbuild bin download py reproduction see artifacts below if available reproduction image exploitation description exploitation image exploitation vector mitigation description pull request date mitigation | 1 |
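For context on the finding above, a common response to Bandit's B303 warning is to verify files with a SHA-2 digest instead of MD5. The sketch below is not the code of `download_checkmd5.py`; the function names are hypothetical, and it assumes the expected checksum is published as a SHA-256 hex string, which the record above does not confirm.

```python
import hashlib


def file_sha256(path, chunk_size=65536):
    """Return the SHA-256 hex digest of the file at `path`, read in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        # iter() with a sentinel stops when read() returns an empty bytes object.
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def checksum_matches(path, expected_hex):
    """Compare the file's digest with an expected hex string (assumed SHA-256)."""
    return file_sha256(path) == expected_hex.strip().lower()
```

Reading in fixed-size chunks keeps memory use flat for large downloads. Whether switching algorithms is practical depends on whether the upstream source can publish SHA-256 sums alongside, or instead of, the existing MD5 ones.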

225,905 | 17,929,496,553 | IssuesEvent | 2021-09-10 07:16:08 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | opened | roachtest: scbench/randomload/nodes=3/ops=10000/conc=20 failed | C-test-failure O-robot O-roachtest branch-release-21.2 | roachtest.scbench/randomload/nodes=3/ops=10000/conc=20 [failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=3421825&tab=buildLog) with [artifacts](https://teamcity.cockroachdb.com/viewLog.html?buildId=3421825&tab=artifacts#/scbench/randomload/nodes=3/ops=10000/conc=20) on release-21.2 @ [99a4816fc272228a63df20dae3cc41d235e705f3](https://github.com/cockroachdb/cockroach/commits/99a4816fc272228a63df20dae3cc41d235e705f3):

```

| }

| {

| "workerId": 0,

| "clientTimestamp": "07:15:46.030643",

| "ops": [

| "BEGIN",

| "DROP SEQUENCE public.seq573"

| ],

| "expectedExecErrors": "42P01",

| "expectedCommitErrors": "",

| "message": "ROLLBACK; Successfully got expected execution error: ERROR: relation \"public.seq573\" does not exist (SQLSTATE 42P01)"

| }

| {

| "workerId": 0,

| "clientTimestamp": "07:15:46.135861",

| "ops": [

| "BEGIN",

| "DROP TABLE IF EXISTS public.table550 CASCADE",

| "ALTER DATABASE schemachange ADD REGION \"us-east1\""

| ],

| "expectedExecErrors": "42710",

| "expectedCommitErrors": "",

| "message": "ROLLBACK; Successfully got expected execution error: ERROR: region \"us-east1\" already added to database (SQLSTATE 42710)"

| }

| {

| "workerId": 0,

| "clientTimestamp": "07:15:46.068299",

| "ops": [

| "BEGIN",

| "CREATE TABLE IF NOT EXISTS public.table603 (col603_604 NAME NULL, col603_605 GEOMETRY, col603_606 UUID, col603_607 FLOAT4 NULL, col603_608 TIMESTAMPTZ, col603_609 JSONB NULL, col603_610 FLOAT4, col603_611 \"char\" NULL, col603_612 INTERVAL NULL, col603_613 NAME NOT NULL, col603_614 REGCLASS NULL, col603_615 REGROLE NOT NULL, col603_616 REGPROCEDURE NOT NULL, col603_617 FLOAT4 NULL AS (col603_607 + col603_610) VIRTUAL, col603_618 STRING NULL AS (lower(col603_604)) STORED, col603_619 FLOAT4 NULL AS (col603_607 + col603_610) VIRTUAL, col603_620 FLOAT4 AS (col603_610 + col603_607) VIRTUAL, col603_621 STRING AS (lower(CAST(col603_605 AS STRING))) STORED, col603_622 STRING NOT NULL AS (lower(CAST(col603_616 AS STRING))) STORED, UNIQUE (lower(CAST(col603_614 AS STRING)), col603_621 ASC, col603_604, col603_619 ASC, col603_607 ASC, col603_622 ASC, col603_618 DESC), UNIQUE (col603_611), FAMILY (col603_612, col603_606), FAMILY (col603_607, col603_621), FAMILY (col603_610), FAMILY (col603_605, col603_618, col603_616), FAMILY (col603_615, col603_609), FAMILY (col603_608), FAMILY (col603_622), FAMILY (col603_611), FAMILY (col603_614, col603_613), FAMILY (col603_604))",

| "DROP SCHEMA \"schema625\" CASCADE"

| ],

| "expectedExecErrors": "3F000",

| "expectedCommitErrors": "",

| "message": "ROLLBACK; Successfully got expected execution error: ERROR: unknown schema \"schema625\" (SQLSTATE 3F000)"

| }

| {

| "workerId": 0,

| "clientTimestamp": "07:15:46.126758",

| "ops": [

| "BEGIN",

| "DROP VIEW IF EXISTS public.view593",

| "DROP TABLE IF EXISTS public.table550 CASCADE"

| ],

| "expectedExecErrors": "",

| "expectedCommitErrors": "",

| "message": "***UNEXPECTED ERROR; Failed to generate a random operation: ERROR: relation \"public.table550\" does not exist (SQLSTATE 42P01)"

| }

Wraps: (4) exit status 20

Error types: (1) *withstack.withStack (2) *errutil.withPrefix (3) *cluster.WithCommandDetails (4) *exec.ExitError

```

<details><summary>Reproduce</summary>

<p>

See: [roachtest README](https://github.com/cockroachdb/cockroach/blob/master/pkg/cmd/roachtest/README.md)

</p>

</details>

<details><summary>Same failure on other branches</summary>

<p>

- #63496 roachtest: scbench/randomload/nodes=3/ops=10000/conc=20 failed [C-test-failure O-roachtest O-robot T-sql-schema branch-63484]

- #63373 roachtest: scbench/randomload/nodes=3/ops=10000/conc=20 failed [C-test-failure O-roachtest O-robot T-sql-schema branch-release-20.2]

- #61688 roachtest: scbench/randomload/nodes=3/ops=10000/conc=20 failed [C-test-failure O-roachtest O-robot T-sql-schema branch-release-21.1]

- #56230 roachtest: scbench/randomload/nodes=3/ops=10000/conc=20 failed [C-test-failure O-roachtest O-robot T-sql-schema branch-master]

</p>

</details>

/cc @cockroachdb/sql-schema

<sub>

[This test on roachdash](https://roachdash.crdb.dev/?filter=status:open%20t:.*scbench/randomload/nodes=3/ops=10000/conc=20.*&sort=title+created&display=lastcommented+project) | [Improve this report!](https://github.com/cockroachdb/cockroach/tree/master/pkg/cmd/internal/issues)

</sub>

| 2.0 | roachtest: scbench/randomload/nodes=3/ops=10000/conc=20 failed - roachtest.scbench/randomload/nodes=3/ops=10000/conc=20 [failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=3421825&tab=buildLog) with [artifacts](https://teamcity.cockroachdb.com/viewLog.html?buildId=3421825&tab=artifacts#/scbench/randomload/nodes=3/ops=10000/conc=20) on release-21.2 @ [99a4816fc272228a63df20dae3cc41d235e705f3](https://github.com/cockroachdb/cockroach/commits/99a4816fc272228a63df20dae3cc41d235e705f3):

```

| }

| {

| "workerId": 0,

| "clientTimestamp": "07:15:46.030643",

| "ops": [

| "BEGIN",

| "DROP SEQUENCE public.seq573"

| ],

| "expectedExecErrors": "42P01",

| "expectedCommitErrors": "",

| "message": "ROLLBACK; Successfully got expected execution error: ERROR: relation \"public.seq573\" does not exist (SQLSTATE 42P01)"

| }

| {

| "workerId": 0,

| "clientTimestamp": "07:15:46.135861",

| "ops": [

| "BEGIN",

| "DROP TABLE IF EXISTS public.table550 CASCADE",

| "ALTER DATABASE schemachange ADD REGION \"us-east1\""

| ],

| "expectedExecErrors": "42710",

| "expectedCommitErrors": "",

| "message": "ROLLBACK; Successfully got expected execution error: ERROR: region \"us-east1\" already added to database (SQLSTATE 42710)"

| }

| {

| "workerId": 0,

| "clientTimestamp": "07:15:46.068299",

| "ops": [

| "BEGIN",

| "CREATE TABLE IF NOT EXISTS public.table603 (col603_604 NAME NULL, col603_605 GEOMETRY, col603_606 UUID, col603_607 FLOAT4 NULL, col603_608 TIMESTAMPTZ, col603_609 JSONB NULL, col603_610 FLOAT4, col603_611 \"char\" NULL, col603_612 INTERVAL NULL, col603_613 NAME NOT NULL, col603_614 REGCLASS NULL, col603_615 REGROLE NOT NULL, col603_616 REGPROCEDURE NOT NULL, col603_617 FLOAT4 NULL AS (col603_607 + col603_610) VIRTUAL, col603_618 STRING NULL AS (lower(col603_604)) STORED, col603_619 FLOAT4 NULL AS (col603_607 + col603_610) VIRTUAL, col603_620 FLOAT4 AS (col603_610 + col603_607) VIRTUAL, col603_621 STRING AS (lower(CAST(col603_605 AS STRING))) STORED, col603_622 STRING NOT NULL AS (lower(CAST(col603_616 AS STRING))) STORED, UNIQUE (lower(CAST(col603_614 AS STRING)), col603_621 ASC, col603_604, col603_619 ASC, col603_607 ASC, col603_622 ASC, col603_618 DESC), UNIQUE (col603_611), FAMILY (col603_612, col603_606), FAMILY (col603_607, col603_621), FAMILY (col603_610), FAMILY (col603_605, col603_618, col603_616), FAMILY (col603_615, col603_609), FAMILY (col603_608), FAMILY (col603_622), FAMILY (col603_611), FAMILY (col603_614, col603_613), FAMILY (col603_604))",

| "DROP SCHEMA \"schema625\" CASCADE"

| ],

| "expectedExecErrors": "3F000",

| "expectedCommitErrors": "",

| "message": "ROLLBACK; Successfully got expected execution error: ERROR: unknown schema \"schema625\" (SQLSTATE 3F000)"

| }

| {

| "workerId": 0,

| "clientTimestamp": "07:15:46.126758",

| "ops": [

| "BEGIN",

| "DROP VIEW IF EXISTS public.view593",

| "DROP TABLE IF EXISTS public.table550 CASCADE"

| ],

| "expectedExecErrors": "",

| "expectedCommitErrors": "",

| "message": "***UNEXPECTED ERROR; Failed to generate a random operation: ERROR: relation \"public.table550\" does not exist (SQLSTATE 42P01)"

| }

Wraps: (4) exit status 20

Error types: (1) *withstack.withStack (2) *errutil.withPrefix (3) *cluster.WithCommandDetails (4) *exec.ExitError

```

<details><summary>Reproduce</summary>

<p>

See: [roachtest README](https://github.com/cockroachdb/cockroach/blob/master/pkg/cmd/roachtest/README.md)

</p>

</details>

<details><summary>Same failure on other branches</summary>

<p>

- #63496 roachtest: scbench/randomload/nodes=3/ops=10000/conc=20 failed [C-test-failure O-roachtest O-robot T-sql-schema branch-63484]

- #63373 roachtest: scbench/randomload/nodes=3/ops=10000/conc=20 failed [C-test-failure O-roachtest O-robot T-sql-schema branch-release-20.2]

- #61688 roachtest: scbench/randomload/nodes=3/ops=10000/conc=20 failed [C-test-failure O-roachtest O-robot T-sql-schema branch-release-21.1]

- #56230 roachtest: scbench/randomload/nodes=3/ops=10000/conc=20 failed [C-test-failure O-roachtest O-robot T-sql-schema branch-master]

</p>

</details>

/cc @cockroachdb/sql-schema

<sub>

[This test on roachdash](https://roachdash.crdb.dev/?filter=status:open%20t:.*scbench/randomload/nodes=3/ops=10000/conc=20.*&sort=title+created&display=lastcommented+project) | [Improve this report!](https://github.com/cockroachdb/cockroach/tree/master/pkg/cmd/internal/issues)

</sub>

| test | roachtest scbench randomload nodes ops conc failed roachtest scbench randomload nodes ops conc with on release workerid clienttimestamp ops begin drop sequence public expectedexecerrors expectedcommiterrors message rollback successfully got expected execution error error relation public does not exist sqlstate workerid clienttimestamp ops begin drop table if exists public cascade alter database schemachange add region us expectedexecerrors expectedcommiterrors message rollback successfully got expected execution error error region us already added to database sqlstate workerid clienttimestamp ops begin create table if not exists public name null geometry uuid null timestamptz jsonb null char null interval null name not null regclass null regrole not null regprocedure not null null as virtual string null as lower stored null as virtual as virtual string as lower cast as string stored string not null as lower cast as string stored unique lower cast as string asc asc asc asc desc unique family family family family family family family family family family drop schema cascade expectedexecerrors expectedcommiterrors message rollback successfully got expected execution error error unknown schema sqlstate workerid clienttimestamp ops begin drop view if exists public drop table if exists public cascade expectedexecerrors expectedcommiterrors message unexpected error failed to generate a random operation error relation public does not exist sqlstate wraps exit status error types withstack withstack errutil withprefix cluster withcommanddetails exec exiterror reproduce see same failure on other branches roachtest scbench randomload nodes ops conc failed roachtest scbench randomload nodes ops conc failed roachtest scbench randomload nodes ops conc failed roachtest scbench randomload nodes ops conc failed cc cockroachdb sql schema | 1 |

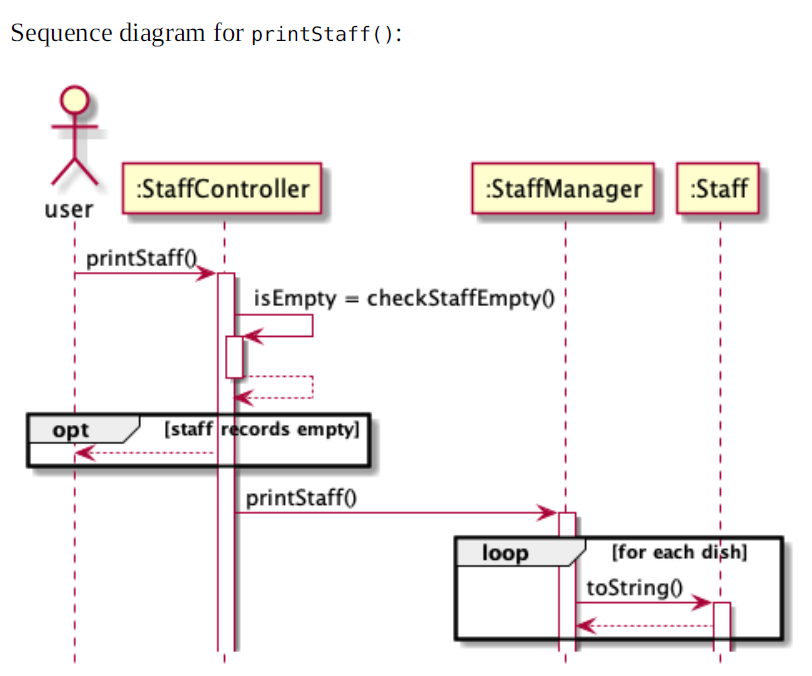

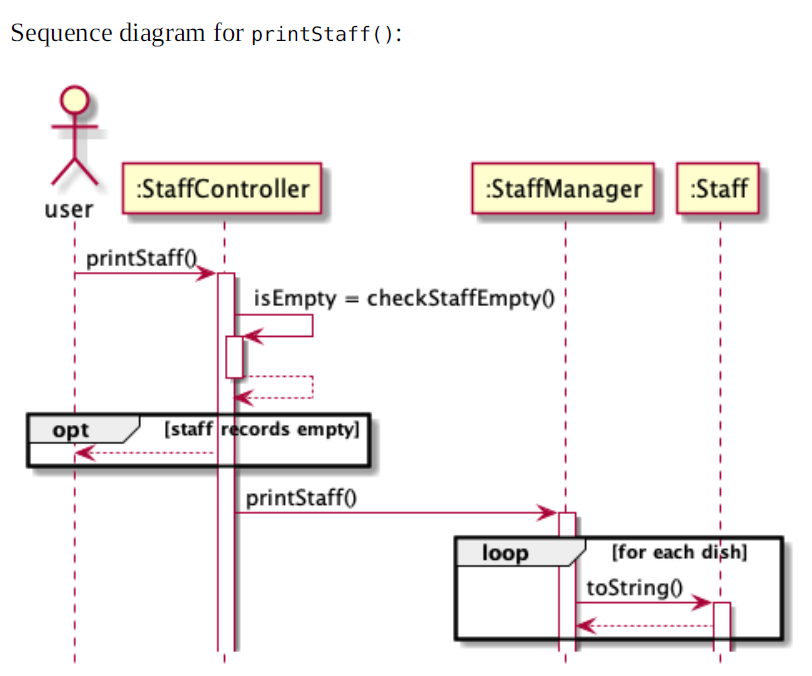

283,115 | 21,316,042,022 | IssuesEvent | 2022-04-16 09:40:06 | allyfern72/pe | https://api.github.com/repos/allyfern72/pe | opened | [DG] Typo in `printStaff()` sequence diagram | severity.VeryLow type.DocumentationBug | It looks like it should have been "for each staff" instead of "for each dish" in the loop frame.

<!--session: 1650096024879-64fa4b51-68b4-4157-8052-f142c2406b7b-->

<!--Version: Web v3.4.2--> | 1.0 | [DG] Typo in `printStaff()` sequence diagram - It looks like it should have been "for each staff" instead of "for each dish" in the loop frame.

<!--session: 1650096024879-64fa4b51-68b4-4157-8052-f142c2406b7b-->

<!--Version: Web v3.4.2--> | non_test | typo in printstaff sequence diagram it looks like it should have been for each staff instead of for each dish in the loop frame | 0 |

23,046 | 11,837,205,410 | IssuesEvent | 2020-03-23 13:50:29 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | Built into a bot? | Pri1 cognitive-services/svc cxp product-question qna-maker/subsvc triaged | Is this something that's built into the default bot for Q&A? How do I enable rating suggestions in the bot?

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: fcd7d96a-7def-6b8b-2753-2904737ac743

* Version Independent ID: e71a1b3f-da7c-e79e-8bdf-54a5ecf8d8ca

* Content: [Active learning suggestions - QnA Maker - Azure Cognitive Services](https://docs.microsoft.com/en-us/azure/cognitive-services/qnamaker/concepts/active-learning-suggestions)

* Content Source: [articles/cognitive-services/QnAMaker/Concepts/active-learning-suggestions.md](https://github.com/Microsoft/azure-docs/blob/master/articles/cognitive-services/QnAMaker/Concepts/active-learning-suggestions.md)

* Service: **cognitive-services**

* Sub-service: **qna-maker**

* GitHub Login: @diberry

* Microsoft Alias: **diberry** | 1.0 | Built into a bot? - Is this something that's built into the default bot for Q&A? How do I enable rating suggestions in the bot?

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: fcd7d96a-7def-6b8b-2753-2904737ac743

* Version Independent ID: e71a1b3f-da7c-e79e-8bdf-54a5ecf8d8ca

* Content: [Active learning suggestions - QnA Maker - Azure Cognitive Services](https://docs.microsoft.com/en-us/azure/cognitive-services/qnamaker/concepts/active-learning-suggestions)

* Content Source: [articles/cognitive-services/QnAMaker/Concepts/active-learning-suggestions.md](https://github.com/Microsoft/azure-docs/blob/master/articles/cognitive-services/QnAMaker/Concepts/active-learning-suggestions.md)

* Service: **cognitive-services**

* Sub-service: **qna-maker**

* GitHub Login: @diberry

* Microsoft Alias: **diberry** | non_test | built into a bot is this something that s built into the default bot for q a how do i enable rating suggestions in the bot document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id version independent id content content source service cognitive services sub service qna maker github login diberry microsoft alias diberry | 0 |

5,111 | 3,510,141,865 | IssuesEvent | 2016-01-09 07:31:06 | SouthAfricaDigitalScience/python-deploy | https://api.github.com/repos/SouthAfricaDigitalScience/python-deploy | closed | python 3 check build fails on u1404 | build failures | The python 3 build fails on u1404 with gcc-4 :

It seems that python installation is not found :

```

Our python is

/usr/bin/python

```

There is indeed `python3`... not sure if this needs to be fixed. | 1.0 | python 3 check build fails on u1404 - The python 3 build fails on u1404 with gcc-4 :

It seems that python installation is not found :

```

Our python is

/usr/bin/python

```

There is indeed `python3`... not sure if this needs to be fixed. | non_test | python check build fails on the python build fails on with gcc it seems that python installation is not found our python is usr bin python there is indeed not sure if this needs to be fixed | 0 |

154,650 | 19,751,327,195 | IssuesEvent | 2022-01-15 04:56:24 | turkdevops/atom | https://api.github.com/repos/turkdevops/atom | closed | CVE-2021-3807 (High) detected in ansi-regex-4.1.0.tgz, ansi-regex-3.0.0.tgz - autoclosed | security vulnerability | ## CVE-2021-3807 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>ansi-regex-4.1.0.tgz</b>, <b>ansi-regex-3.0.0.tgz</b></p></summary>

<p>

<details><summary><b>ansi-regex-4.1.0.tgz</b></p></summary>

<p>Regular expression for matching ANSI escape codes</p>

<p>Library home page: <a href="https://registry.npmjs.org/ansi-regex/-/ansi-regex-4.1.0.tgz">https://registry.npmjs.org/ansi-regex/-/ansi-regex-4.1.0.tgz</a></p>

<p>Path to dependency file: /script/package.json</p>

<p>Path to vulnerable library: /script/node_modules/inquirer/node_modules/strip-ansi/node_modules/ansi-regex/package.json,/script/node_modules/table/node_modules/ansi-regex/package.json</p>

<p>

Dependency Hierarchy:

- eslint-5.16.0.tgz (Root Library)

- inquirer-6.3.1.tgz

- strip-ansi-5.2.0.tgz

- :x: **ansi-regex-4.1.0.tgz** (Vulnerable Library)

</details>

<details><summary><b>ansi-regex-3.0.0.tgz</b></p></summary>

<p>Regular expression for matching ANSI escape codes</p>

<p>Library home page: <a href="https://registry.npmjs.org/ansi-regex/-/ansi-regex-3.0.0.tgz">https://registry.npmjs.org/ansi-regex/-/ansi-regex-3.0.0.tgz</a></p>

<p>Path to dependency file: /script/package.json</p>

<p>Path to vulnerable library: /script/node_modules/npm/node_modules/string-width/node_modules/ansi-regex/package.json,/packages/update-package-dependencies/node_modules/table/node_modules/ansi-regex/package.json,/script/node_modules/@wdio/logger/node_modules/ansi-regex/package.json,/script/node_modules/eslint/node_modules/ansi-regex/package.json,/script/node_modules/inquirer/node_modules/ansi-regex/package.json,/script/node_modules/stylelint/node_modules/ansi-regex/package.json,/script/node_modules/npm/node_modules/cliui/node_modules/ansi-regex/package.json,/packages/one-light-ui/node_modules/ansi-regex/package.json,/packages/one-dark-ui/node_modules/ansi-regex/package.json,/packages/grammar-selector/node_modules/table/node_modules/ansi-regex/package.json</p>

<p>

Dependency Hierarchy:

- stylelint-9.3.0.tgz (Root Library)

- string-width-2.1.1.tgz

- strip-ansi-4.0.0.tgz

- :x: **ansi-regex-3.0.0.tgz** (Vulnerable Library)

</details>

<p>Found in HEAD commit: <a href="https://github.com/turkdevops/atom/commit/1eff9f4173420e33aa6739fdce981e7651e8f212">1eff9f4173420e33aa6739fdce981e7651e8f212</a></p>

<p>Found in base branch: <b>electron-upgrade</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

ansi-regex is vulnerable to Inefficient Regular Expression Complexity

<p>Publish Date: 2021-09-17

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-3807>CVE-2021-3807</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://huntr.dev/bounties/5b3cf33b-ede0-4398-9974-800876dfd994/">https://huntr.dev/bounties/5b3cf33b-ede0-4398-9974-800876dfd994/</a></p>

<p>Release Date: 2021-09-17</p>

<p>Fix Resolution: ansi-regex - 5.0.1,6.0.1</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2021-3807 (High) detected in ansi-regex-4.1.0.tgz, ansi-regex-3.0.0.tgz - autoclosed - ## CVE-2021-3807 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>ansi-regex-4.1.0.tgz</b>, <b>ansi-regex-3.0.0.tgz</b></p></summary>

<p>

<details><summary><b>ansi-regex-4.1.0.tgz</b></p></summary>

<p>Regular expression for matching ANSI escape codes</p>

<p>Library home page: <a href="https://registry.npmjs.org/ansi-regex/-/ansi-regex-4.1.0.tgz">https://registry.npmjs.org/ansi-regex/-/ansi-regex-4.1.0.tgz</a></p>

<p>Path to dependency file: /script/package.json</p>

<p>Path to vulnerable library: /script/node_modules/inquirer/node_modules/strip-ansi/node_modules/ansi-regex/package.json,/script/node_modules/table/node_modules/ansi-regex/package.json</p>

<p>

Dependency Hierarchy:

- eslint-5.16.0.tgz (Root Library)

- inquirer-6.3.1.tgz

- strip-ansi-5.2.0.tgz

- :x: **ansi-regex-4.1.0.tgz** (Vulnerable Library)

</details>

<details><summary><b>ansi-regex-3.0.0.tgz</b></p></summary>

<p>Regular expression for matching ANSI escape codes</p>

<p>Library home page: <a href="https://registry.npmjs.org/ansi-regex/-/ansi-regex-3.0.0.tgz">https://registry.npmjs.org/ansi-regex/-/ansi-regex-3.0.0.tgz</a></p>

<p>Path to dependency file: /script/package.json</p>