Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 4 112 | repo_url stringlengths 33 141 | action stringclasses 3 values | title stringlengths 1 1.02k | labels stringlengths 4 1.54k | body stringlengths 1 262k | index stringclasses 17 values | text_combine stringlengths 95 262k | label stringclasses 2 values | text stringlengths 96 252k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

680,535 | 23,275,581,958 | IssuesEvent | 2022-08-05 06:48:02 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.oregon.gov - see bug description | browser-firefox priority-normal type-no-css engine-gecko | <!-- @browser: Firefox 103.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:103.0) Gecko/20100101 Firefox/103.0 -->

<!-- @reported_with: unknown -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/108526 -->

**URL**: https://www.oregon.gov/DEQ/Pages/index.aspx?utm_source=pocket_mylist

**Browser / Version**: Firefox 103.0

**Operating System**: Windows 10

**Tested Another Browser**: Yes Edge

**Problem type**: Something else

**Description**: on English pages in large font, I see this A LOT "arrow_drop_down"

**Steps to Reproduce**:

I opened my firefox browser and went to a website and saw "arrow_drop_down" here and there on the websites I visit. It's clearly not supposed to be there.

<details>

<summary>View the screenshot</summary>

<img alt="Screenshot" src="https://webcompat.com/uploads/2022/8/db7c01ae-5e10-4be0-83e8-f66ef16a1044.jpg">

</details>

<details>

<summary>Browser Configuration</summary>

<ul>

<li>None</li>

</ul>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_ | 1.0 | www.oregon.gov - see bug description - <!-- @browser: Firefox 103.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:103.0) Gecko/20100101 Firefox/103.0 -->

<!-- @reported_with: unknown -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/108526 -->

**URL**: https://www.oregon.gov/DEQ/Pages/index.aspx?utm_source=pocket_mylist

**Browser / Version**: Firefox 103.0

**Operating System**: Windows 10

**Tested Another Browser**: Yes Edge

**Problem type**: Something else

**Description**: on English pages in large font, I see this A LOT "arrow_drop_down"

**Steps to Reproduce**:

I opened my firefox browser and went to a website and saw "arrow_drop_down" here and there on the websites I visit. It's clearly not supposed to be there.

<details>

<summary>View the screenshot</summary>

<img alt="Screenshot" src="https://webcompat.com/uploads/2022/8/db7c01ae-5e10-4be0-83e8-f66ef16a1044.jpg">

</details>

<details>

<summary>Browser Configuration</summary>

<ul>

<li>None</li>

</ul>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_ | non_test | see bug description url browser version firefox operating system windows tested another browser yes edge problem type something else description on english pages in large font i see this a lot arrow drop down steps to reproduce i opened my firefox browser and went to a website and saw arrow drop down here and there on the websites i visit it s clearly not supposed to be there view the screenshot img alt screenshot src browser configuration none from with ❤️ | 0 |

469,919 | 13,527,949,149 | IssuesEvent | 2020-09-15 16:03:20 | wso2/product-apim | https://api.github.com/repos/wso2/product-apim | opened | Missing link in 3.2 doc | Docs/Has Impact Priority/Normal Type/Docs | ### Description:

Link on the following page

https://apim.docs.wso2.com/en/latest/develop/extending-api-manager/extending-workflows/configuring-http-redirection-for-workflows/

on last paragraf

Invoking the API Manager¶

To invoke the API Manager from a third party entity, see **Invoking the API Manager from the BPEL Engine** .

link is not working

https://apim.docs.wso2.com/en/latest/develop/extending-api-manager/extending-workflows/configuring-http-redirection-for-workflows/invoking-the-api-manager-from-the-bpel-engine

| 1.0 | Missing link in 3.2 doc - ### Description:

Link on the following page

https://apim.docs.wso2.com/en/latest/develop/extending-api-manager/extending-workflows/configuring-http-redirection-for-workflows/

on last paragraf

Invoking the API Manager¶

To invoke the API Manager from a third party entity, see **Invoking the API Manager from the BPEL Engine** .

link is not working

https://apim.docs.wso2.com/en/latest/develop/extending-api-manager/extending-workflows/configuring-http-redirection-for-workflows/invoking-the-api-manager-from-the-bpel-engine

| non_test | missing link in doc description link on the following page on last paragraf invoking the api manager¶ to invoke the api manager from a third party entity see invoking the api manager from the bpel engine link is not working | 0 |

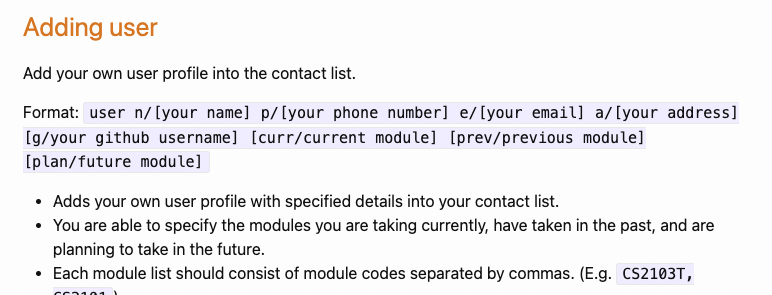

346,126 | 24,886,567,819 | IssuesEvent | 2022-10-28 08:17:59 | craeyeons/ped | https://api.github.com/repos/craeyeons/ped | opened | Misuse of Square Brackets in UG | type.DocumentationBug severity.Low |

For example in the "adding user" command, name is not an optional field, however square brackets are used to surround it which contradicts the instructions of the user guide.

<!--session: 1666944929838-03c33bcc-827d-4101-bd42-4c0ffb008d04-->

<!--Version: Web v3.4.4--> | 1.0 | Misuse of Square Brackets in UG -

For example in the "adding user" command, name is not an optional field, however square brackets are used to surround it which contradicts the instructions of the user guide.

<!--session: 1666944929838-03c33bcc-827d-4101-bd42-4c0ffb008d04-->

<!--Version: Web v3.4.4--> | non_test | misuse of square brackets in ug for example in the adding user command name is not an optional field however square brackets are used to surround it which contradicts the instructions of the user guide | 0 |

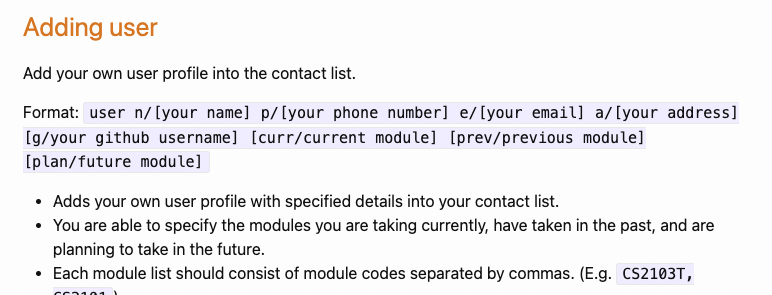

238,186 | 19,701,966,777 | IssuesEvent | 2022-01-12 17:29:35 | WordPress/gutenberg | https://api.github.com/repos/WordPress/gutenberg | closed | Removing title support will partially hide the first block floating menu | Needs Testing | ### Description

When registering a new custom post type through [register_post_type()](https://developer.wordpress.org/reference/functions/register_post_type/), and setting the `support` parameter, removing the `title` core feature will partially hide the first page block floating menu.

### Step-by-step reproduction instructions

1. Remove support for the `title` feature from a post type.

```php

<?php

add_action( 'init', function () {

remove_post_type_support( 'post', 'title' );

} );

```

2. Add a new post.

3. The first block floating menu is partially hidden.

### Screenshots, screen recording, code snippet

### Environment info

- Wordpress 5.8.2, matching Gutenberg on default theme.

- Chrome Version 96.0.4664.110 (Build officiel) (64 bits)

- Desktop Windows 10

### Please confirm that you have searched existing issues in the repo.

Yes

### Please confirm that you have tested with all plugins deactivated except Gutenberg.

Yes | 1.0 | Removing title support will partially hide the first block floating menu - ### Description

When registering a new custom post type through [register_post_type()](https://developer.wordpress.org/reference/functions/register_post_type/), and setting the `support` parameter, removing the `title` core feature will partially hide the first page block floating menu.

### Step-by-step reproduction instructions

1. Remove support for the `title` feature from a post type.

```php

<?php

add_action( 'init', function () {

remove_post_type_support( 'post', 'title' );

} );

```

2. Add a new post.

3. The first block floating menu is partially hidden.

### Screenshots, screen recording, code snippet

### Environment info

- Wordpress 5.8.2, matching Gutenberg on default theme.

- Chrome Version 96.0.4664.110 (Build officiel) (64 bits)

- Desktop Windows 10

### Please confirm that you have searched existing issues in the repo.

Yes

### Please confirm that you have tested with all plugins deactivated except Gutenberg.

Yes | test | removing title support will partially hide the first block floating menu description when registering a new custom post type through and setting the support parameter removing the title core feature will partially hide the first page block floating menu step by step reproduction instructions remove support for the title feature from a post type php php add action init function remove post type support post title add a new post the first block floating menu is partially hidden screenshots screen recording code snippet environment info wordpress matching gutenberg on default theme chrome version build officiel bits desktop windows please confirm that you have searched existing issues in the repo yes please confirm that you have tested with all plugins deactivated except gutenberg yes | 1 |

124,711 | 16,638,290,754 | IssuesEvent | 2021-06-04 03:53:30 | PostHog/posthog | https://api.github.com/repos/PostHog/posthog | opened | Legend key in chart | UI/UX core-experience design enhancement | ## Is your feature request related to a problem?

Currently we show the legend table describing each graph series at the bottom of the graph. This is not ideal because a) you have to know it's there, b) you have to scroll which makes visualization more difficult and c) doesn't work well for screenshots.

## Describe the solution you'd like

I'd like us to introduce a legend key within the graph. See one example below.

<img width="578" alt="Screen Shot 2021-06-03 at 8 43 36 PM" src="https://user-images.githubusercontent.com/25164963/120729143-6736dd80-c4ac-11eb-93ec-3a4c6b35713d.png">

## Describe alternatives you've considered

Keep only the legend table at the bottom.

## Additional context

Related to #4492.

#### *Thank you* for your feature request – we love each and every one!

| 1.0 | Legend key in chart - ## Is your feature request related to a problem?

Currently we show the legend table describing each graph series at the bottom of the graph. This is not ideal because a) you have to know it's there, b) you have to scroll which makes visualization more difficult and c) doesn't work well for screenshots.

## Describe the solution you'd like

I'd like us to introduce a legend key within the graph. See one example below.

<img width="578" alt="Screen Shot 2021-06-03 at 8 43 36 PM" src="https://user-images.githubusercontent.com/25164963/120729143-6736dd80-c4ac-11eb-93ec-3a4c6b35713d.png">

## Describe alternatives you've considered

Keep only the legend table at the bottom.

## Additional context

Related to #4492.

#### *Thank you* for your feature request – we love each and every one!

| non_test | legend key in chart is your feature request related to a problem currently we show the legend table describing each graph series at the bottom of the graph this is not ideal because a you have to know it s there b you have to scroll which makes visualization more difficult and c doesn t work well for screenshots describe the solution you d like i d like us to introduce a legend key within the graph see one example below img width alt screen shot at pm src describe alternatives you ve considered keep only the legend table at the bottom additional context related to thank you for your feature request – we love each and every one | 0 |

203,510 | 15,372,903,736 | IssuesEvent | 2021-03-02 11:52:57 | chartjs/Chart.js | https://api.github.com/repos/chartjs/Chart.js | closed | chart.js:7 Uncaught (in promise) TypeError: Cannot set property '_index' of undefined | status: needs test case type: bug | ## Environment

Chart.js 2.9.4

Google Chrome Version 88.0.4324.190 (Official Build) (64-bit)

```

function update(unit, window) {

unit = unit || this.root.find(`.controllers .units .active`).attr('value');

window = window || this.root.find(`.controllers .windows .active`).attr('value');

if (unit && window) {

this.currentUnit = unit;

this.currentWindow = window;

for (const metal of this.keys) {

const metalDatasetIndex = this.lineChart.datasetIndexes[metal];

if (metalDatasetIndex != undefined && metalDatasetIndex != null) {

const dataset = this.lineChart.data.datasets[metalDatasetIndex];

if (dataset) {

dataset.data = getData(metal, unit, window);

if (dataset.data.length > 600) dataset.pointRadius = 0;

else dataset.pointRadius = 1;

}

}

}

}

this.lineChart.update(); // error happens here

//this.lineChart.resetZoom();

}

```

Looks like something with my data but I can't debug it. | 1.0 | chart.js:7 Uncaught (in promise) TypeError: Cannot set property '_index' of undefined - ## Environment

Chart.js 2.9.4

Google Chrome Version 88.0.4324.190 (Official Build) (64-bit)

```

function update(unit, window) {

unit = unit || this.root.find(`.controllers .units .active`).attr('value');

window = window || this.root.find(`.controllers .windows .active`).attr('value');

if (unit && window) {

this.currentUnit = unit;

this.currentWindow = window;

for (const metal of this.keys) {

const metalDatasetIndex = this.lineChart.datasetIndexes[metal];

if (metalDatasetIndex != undefined && metalDatasetIndex != null) {

const dataset = this.lineChart.data.datasets[metalDatasetIndex];

if (dataset) {

dataset.data = getData(metal, unit, window);

if (dataset.data.length > 600) dataset.pointRadius = 0;

else dataset.pointRadius = 1;

}

}

}

}

this.lineChart.update(); // error happens here

//this.lineChart.resetZoom();

}

```

Looks like something with my data but I can't debug it. | test | chart js uncaught in promise typeerror cannot set property index of undefined environment chart js google chrome version official build bit function update unit window unit unit this root find controllers units active attr value window window this root find controllers windows active attr value if unit window this currentunit unit this currentwindow window for const metal of this keys const metaldatasetindex this linechart datasetindexes if metaldatasetindex undefined metaldatasetindex null const dataset this linechart data datasets if dataset dataset data getdata metal unit window if dataset data length dataset pointradius else dataset pointradius this linechart update error happens here this linechart resetzoom looks like something with my data but i can t debug it | 1 |

25,305 | 12,236,714,990 | IssuesEvent | 2020-05-04 16:48:13 | cityofaustin/atd-data-tech | https://api.github.com/repos/cityofaustin/atd-data-tech | opened | Changes and Reports Section Access Rules | Impact: 3-Minor Need: 3-Could Have Product: Vision Zero Crash Data System Service: Dev Type: Enhancement Workgroup: VZ | Make the Changes and Reports sections in VZE only accessible to Admins and IT Supervisors | 1.0 | Changes and Reports Section Access Rules - Make the Changes and Reports sections in VZE only accessible to Admins and IT Supervisors | non_test | changes and reports section access rules make the changes and reports sections in vze only accessible to admins and it supervisors | 0 |

1,953 | 2,579,451,029 | IssuesEvent | 2015-02-13 10:21:01 | ajency/Foodstree | https://api.github.com/repos/ajency/Foodstree | closed | Error while creating a seller if the 'Check if billing address same as registered address' is checked | bug Pushed to live site Pushed to test site | Steps:

1 Create a new seller

2.Check the field 'Check if billing address same as registered address' field

3. Enter the passwords such that there is mismatch

Current behaviour: On entering the correct password the field 'Check if billing address same as registered address' stays checked and the fields below it are also displayed

Expected behaviour:The fields under shipping address should not be displayed if 'Check if billing address same as registered address' is checked.

| 1.0 | Error while creating a seller if the 'Check if billing address same as registered address' is checked - Steps:

1 Create a new seller

2.Check the field 'Check if billing address same as registered address' field

3. Enter the passwords such that there is mismatch

Current behaviour: On entering the correct password the field 'Check if billing address same as registered address' stays checked and the fields below it are also displayed

Expected behaviour:The fields under shipping address should not be displayed if 'Check if billing address same as registered address' is checked.

| test | error while creating a seller if the check if billing address same as registered address is checked steps create a new seller check the field check if billing address same as registered address field enter the passwords such that there is mismatch current behaviour on entering the correct password the field check if billing address same as registered address stays checked and the fields below it are also displayed expected behaviour the fields under shipping address should not be displayed if check if billing address same as registered address is checked | 1 |

379,055 | 11,212,590,711 | IssuesEvent | 2020-01-06 17:57:29 | googleapis/nodejs-logging-bunyan | https://api.github.com/repos/googleapis/nodejs-logging-bunyan | closed | NodeJS 10: Async bunyan logging crashes the cloud function if await is not used while logging | priority: p2 type: bug | Issue:

- nodeJS 10 Cloud function runs but results in a crash while using bunyan logs and publishing to another topic!

## Environment details

- OS: Google cloud function

- Node.js version: v10.14.2

- npm version: 6.4.1

- `@google-cloud/logging-bunyan` version: 2.0.0

#### Steps to reproduce

1. Create a cloud function using nodeJS 10 runtime with pubsub as trigger

2. use bunyan logging and [redirect logs](https://github.com/googleapis/nodejs-logging-bunyan/issues/291#issuecomment-526758642) to cloud functions.

3. Try and publish to a sample topic and use bunyan logs right after publish

Error reported:

```

Ignoring extra callback call

```

```

Function execution took 1852 ms, finished with status: 'crash'

```

```

{

insertId: "000000-811aac99-2369-4f5b-801f-19364c10437c"

labels: {…}

logName: "projects/some-project/logs/cloudfunctions.googleapis.com%2Fcloud-functions"

receiveTimestamp: "2019-12-03T18:22:41.491846254Z"

resource: {

labels: {

function_name: "test-logger-lib-publish-duplicate"

project_id: "some-project"

region: "us-central1"

}

type: "cloud_function"

}

severity: "ERROR"

textPayload: "Error: Could not load the default credentials. Browse to https://cloud.google.com/docs/authentication/getting-started for more information.

at GoogleAuth.getApplicationDefaultAsync (/srv/functions/node_modules/google-auth-library/build/src/auth/googleauth.js:161:19)

at process._tickCallback (internal/process/next_tick.js:68:7)"

timestamp: "2019-12-03T18:22:40.664Z"

trace: "projects/some-project/traces/ce459298d8da7569d7fb40b07785d594"

}

```

Sample Code:

index.js

```const { PubSub } = require('@google-cloud/pubsub');

const bunyan = require('bunyan');

const { LoggingBunyan } = require('@google-cloud/logging-bunyan');

const loggingBunyan = new LoggingBunyan({

logName: process.env.LOG_NAME

});

function getLogger(logginglevel) {

let logger = bunyan.createLogger({

name: process.env.FUNCTION_TARGET,

level: logginglevel,

streams: [

loggingBunyan.stream()

]

});

logger.debug(`${process.env.FUNCTION_TARGET} :: ${process.env.LOG_NAME}`);

return logger;

}

const pubsub = new PubSub({

projectId: 'some-project'

});

exports.helloPubSub = async(event, context) => {

console.log('I am in!');

const logger = getLogger('debug');

const test_topic = pubsub.topic('logger-topic');

logger.debug('debug level test');

const data = Buffer.from('interesting', 'utf8');

await test_topic.publish(data);

logger.error('error level test');

logger.info('I am out');

};

```

package.json

```{

"name": "sample-pubsub",

"version": "0.0.1",

"dependencies": {

"@google-cloud/pubsub": "^1.0.0",

"bunyan": "^1.8.12",

"@google-cloud/logging-bunyan": "^2.0.0"

}

}

```

Note:

- The same code works fine for nodejs 8 runtime! This issue is only with nodejs 10 runtime.

- In the above index.js file, if I use await on the last logger.info('I am out') or all the logger calls, the function works like a charm!

Could anyone help me with what's wrong here?

Reference issues:

- [Async logging nodeJS 10](https://github.com/googleapis/nodejs-logging-bunyan/issues/304)

- [Redirect logs explicitly to cloud functions nodeJS 10](https://github.com/googleapis/nodejs-logging-bunyan/issues/291#issuecomment-526758642) | 1.0 | NodeJS 10: Async bunyan logging crashes the cloud function if await is not used while logging - Issue:

- nodeJS 10 Cloud function runs but results in a crash while using bunyan logs and publishing to another topic!

## Environment details

- OS: Google cloud function

- Node.js version: v10.14.2

- npm version: 6.4.1

- `@google-cloud/logging-bunyan` version: 2.0.0

#### Steps to reproduce

1. Create a cloud function using nodeJS 10 runtime with pubsub as trigger

2. use bunyan logging and [redirect logs](https://github.com/googleapis/nodejs-logging-bunyan/issues/291#issuecomment-526758642) to cloud functions.

3. Try and publish to a sample topic and use bunyan logs right after publish

Error reported:

```

Ignoring extra callback call

```

```

Function execution took 1852 ms, finished with status: 'crash'

```

```

{

insertId: "000000-811aac99-2369-4f5b-801f-19364c10437c"

labels: {…}

logName: "projects/some-project/logs/cloudfunctions.googleapis.com%2Fcloud-functions"

receiveTimestamp: "2019-12-03T18:22:41.491846254Z"

resource: {

labels: {

function_name: "test-logger-lib-publish-duplicate"

project_id: "some-project"

region: "us-central1"

}

type: "cloud_function"

}

severity: "ERROR"

textPayload: "Error: Could not load the default credentials. Browse to https://cloud.google.com/docs/authentication/getting-started for more information.

at GoogleAuth.getApplicationDefaultAsync (/srv/functions/node_modules/google-auth-library/build/src/auth/googleauth.js:161:19)

at process._tickCallback (internal/process/next_tick.js:68:7)"

timestamp: "2019-12-03T18:22:40.664Z"

trace: "projects/some-project/traces/ce459298d8da7569d7fb40b07785d594"

}

```

Sample Code:

index.js

```const { PubSub } = require('@google-cloud/pubsub');

const bunyan = require('bunyan');

const { LoggingBunyan } = require('@google-cloud/logging-bunyan');

const loggingBunyan = new LoggingBunyan({

logName: process.env.LOG_NAME

});

function getLogger(logginglevel) {

let logger = bunyan.createLogger({

name: process.env.FUNCTION_TARGET,

level: logginglevel,

streams: [

loggingBunyan.stream()

]

});

logger.debug(`${process.env.FUNCTION_TARGET} :: ${process.env.LOG_NAME}`);

return logger;

}

const pubsub = new PubSub({

projectId: 'some-project'

});

exports.helloPubSub = async(event, context) => {

console.log('I am in!');

const logger = getLogger('debug');

const test_topic = pubsub.topic('logger-topic');

logger.debug('debug level test');

const data = Buffer.from('interesting', 'utf8');

await test_topic.publish(data);

logger.error('error level test');

logger.info('I am out');

};

```

package.json

```{

"name": "sample-pubsub",

"version": "0.0.1",

"dependencies": {

"@google-cloud/pubsub": "^1.0.0",

"bunyan": "^1.8.12",

"@google-cloud/logging-bunyan": "^2.0.0"

}

}

```

Note:

- The same code works fine for nodejs 8 runtime! This issue is only with nodejs 10 runtime.

- In the above index.js file, if I use await on the last logger.info('I am out') or all the logger calls, the function works like a charm!

Could anyone help me with what's wrong here?

Reference issues:

- [Async logging nodeJS 10](https://github.com/googleapis/nodejs-logging-bunyan/issues/304)

- [Redirect logs explicitly to cloud functions nodeJS 10](https://github.com/googleapis/nodejs-logging-bunyan/issues/291#issuecomment-526758642) | non_test | nodejs async bunyan logging crashes the cloud function if await is not used while logging issue nodejs cloud function runs but results in a crash while using bunyan logs and publishing to another topic environment details os google cloud function node js version npm version google cloud logging bunyan version steps to reproduce create a cloud function using nodejs runtime with pubsub as trigger use bunyan logging and to cloud functions try and publish to a sample topic and use bunyan logs right after publish error reported ignoring extra callback call function execution took ms finished with status crash insertid labels … logname projects some project logs cloudfunctions googleapis com functions receivetimestamp resource labels function name test logger lib publish duplicate project id some project region us type cloud function severity error textpayload error could not load the default credentials browse to for more information at googleauth getapplicationdefaultasync srv functions node modules google auth library build src auth googleauth js at process tickcallback internal process next tick js timestamp trace projects some project traces sample code index js const pubsub require google cloud pubsub const bunyan require bunyan const loggingbunyan require google cloud logging bunyan const loggingbunyan new loggingbunyan logname process env log name function getlogger logginglevel let logger bunyan createlogger name process env function target level logginglevel streams loggingbunyan stream logger debug process env function target process env log name return logger const pubsub new pubsub projectid some project exports hellopubsub async event context console log i am in const logger getlogger debug const test topic pubsub topic logger topic logger debug debug level test const data buffer from interesting await 
test topic publish data logger error error level test logger info i am out package json name sample pubsub version dependencies google cloud pubsub bunyan google cloud logging bunyan note the same code works fine for nodejs runtime this issue is only with nodejs runtime in the above index js file if i use await on the last logger info i am out or all the logger calls the function works like a charm could anyone help me with what s wrong here reference issues | 0 |

189,048 | 14,484,090,977 | IssuesEvent | 2020-12-10 15:55:27 | kalexmills/github-vet-tests-dec2020 | https://api.github.com/repos/kalexmills/github-vet-tests-dec2020 | closed | gowroc/meetups: slices_and_strings/slices_iteration_benchmark/slices_interation_test.go; 6 LoC | fresh test tiny |

Found a possible issue in [gowroc/meetups](https://www.github.com/gowroc/meetups) at [slices_and_strings/slices_iteration_benchmark/slices_interation_test.go](https://github.com/gowroc/meetups/blob/2204bf02b581a3d700b5bb3e75eb7ded9e2ba538/slices_and_strings/slices_iteration_benchmark/slices_interation_test.go#L26-L31)

Below is the message reported by the analyzer for this snippet of code. Beware that the analyzer only reports the first

issue it finds, so please do not limit your consideration to the contents of the below message.

> function call at line 30 may store a reference to b

[Click here to see the code in its original context.](https://github.com/gowroc/meetups/blob/2204bf02b581a3d700b5bb3e75eb7ded9e2ba538/slices_and_strings/slices_iteration_benchmark/slices_interation_test.go#L26-L31)

<details>

<summary>Click here to show the 6 line(s) of Go which triggered the analyzer.</summary>

```go

for i, b := range bigs {

if i > n {

return

}

accept(&b)

}

```

</details>

Leave a reaction on this issue to contribute to the project by classifying this instance as a **Bug** :-1:, **Mitigated** :+1:, or **Desirable Behavior** :rocket:

See the descriptions of the classifications [here](https://github.com/github-vet/rangeclosure-findings#how-can-i-help) for more information.

commit ID: 2204bf02b581a3d700b5bb3e75eb7ded9e2ba538

| 1.0 | gowroc/meetups: slices_and_strings/slices_iteration_benchmark/slices_interation_test.go; 6 LoC -

Found a possible issue in [gowroc/meetups](https://www.github.com/gowroc/meetups) at [slices_and_strings/slices_iteration_benchmark/slices_interation_test.go](https://github.com/gowroc/meetups/blob/2204bf02b581a3d700b5bb3e75eb7ded9e2ba538/slices_and_strings/slices_iteration_benchmark/slices_interation_test.go#L26-L31)

Below is the message reported by the analyzer for this snippet of code. Beware that the analyzer only reports the first

issue it finds, so please do not limit your consideration to the contents of the below message.

> function call at line 30 may store a reference to b

[Click here to see the code in its original context.](https://github.com/gowroc/meetups/blob/2204bf02b581a3d700b5bb3e75eb7ded9e2ba538/slices_and_strings/slices_iteration_benchmark/slices_interation_test.go#L26-L31)

<details>

<summary>Click here to show the 6 line(s) of Go which triggered the analyzer.</summary>

```go

for i, b := range bigs {

if i > n {

return

}

accept(&b)

}

```

</details>

Leave a reaction on this issue to contribute to the project by classifying this instance as a **Bug** :-1:, **Mitigated** :+1:, or **Desirable Behavior** :rocket:

See the descriptions of the classifications [here](https://github.com/github-vet/rangeclosure-findings#how-can-i-help) for more information.

commit ID: 2204bf02b581a3d700b5bb3e75eb7ded9e2ba538

| test | gowroc meetups slices and strings slices iteration benchmark slices interation test go loc found a possible issue in at below is the message reported by the analyzer for this snippet of code beware that the analyzer only reports the first issue it finds so please do not limit your consideration to the contents of the below message function call at line may store a reference to b click here to show the line s of go which triggered the analyzer go for i b range bigs if i n return accept b leave a reaction on this issue to contribute to the project by classifying this instance as a bug mitigated or desirable behavior rocket see the descriptions of the classifications for more information commit id | 1 |
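The analyzer finding quoted in the row above concerns taking the address of a range loop variable (`accept(&b)`). As a minimal sketch, and not the repository's actual code: before Go 1.22 the variable `b` was reused across iterations, so a stored `&b` would end up pointing at whatever the last iteration wrote; from Go 1.22 onward each iteration gets its own `b`, but `&b` still refers to a copy rather than the slice element. Indexing the slice sidesteps both cases. The `big` type and `collectByIndex` helper below are hypothetical names chosen for illustration, and the report does not show whether `accept` actually retains the pointer.

```go
package main

import "fmt"

// big is a hypothetical stand-in for the large struct iterated over in the
// benchmark; its fields are assumptions made for this sketch.
type big struct {
	id      int
	payload [64]byte
}

// collectByIndex keeps pointers to the elements at indices 0..n (matching the
// `if i > n` cut-off in the reported loop). It takes the address of the slice
// element itself instead of the range variable, so every pointer is distinct
// and aliases the original data on any Go version.
func collectByIndex(bigs []big, n int) []*big {
	var kept []*big
	for i := range bigs {
		if i > n {
			break
		}
		kept = append(kept, &bigs[i])
	}
	return kept
}

func main() {
	bigs := []big{{id: 10}, {id: 20}, {id: 30}}
	kept := collectByIndex(bigs, 1)
	kept[0].id = 99 // writes through to bigs[0]
	fmt.Println(len(kept), bigs[0].id, kept[1].id) // prints: 2 99 20
}
```

If the callee only reads the value during the call and never stores the pointer, the original loop is harmless, which is presumably what the "Mitigated" classification in the issue template is for.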

327,290 | 28,051,999,939 | IssuesEvent | 2023-03-29 06:42:16 | ALTA-LapakUMKM-Group-2/LapakUMKM-APITesting | https://api.github.com/repos/ALTA-LapakUMKM-Group-2/LapakUMKM-APITesting | closed | [User-A013] GET User With Invalid Parameter | Manual Api Testing | **Given** Get list users with invalid parameter

**And** Send Get users

**Then** API should return respon 404 Not Found | 1.0 | [User-A013] GET User With Invalid Parameter - **Given** Get list users with invalid parameter

**And** Send Get users

**Then** API should return respon 404 Not Found | test | get user with invalid parameter given get list users with invalid parameter and send get users then api should return respon not found | 1 |

303,485 | 26,213,059,809 | IssuesEvent | 2023-01-04 08:38:30 | ballerina-platform/ballerina-lang | https://api.github.com/repos/ballerina-platform/ballerina-lang | closed | [Improvement]: Provide the Graal VM native support for function mocking | Type/Improvement Team/DevTools Area/TestFramework | ### Description

$subject

Related to #37690

### Describe your problem(s)

_No response_

### Describe your solution(s)

_No response_

### Related area

-> Test Framework

### Related issue(s) (optional)

_No response_

### Suggested label(s) (optional)

_No response_

### Suggested assignee(s) (optional)

_No response_ | 1.0 | [Improvement]: Provide the Graal VM native support for function mocking - ### Description

$subject

Related to #37690

### Describe your problem(s)

_No response_

### Describe your solution(s)

_No response_

### Related area

-> Test Framework

### Related issue(s) (optional)

_No response_

### Suggested label(s) (optional)

_No response_

### Suggested assignee(s) (optional)

_No response_ | test | provide the graal vm native support for function mocking description subject related to describe your problem s no response describe your solution s no response related area test framework related issue s optional no response suggested label s optional no response suggested assignee s optional no response | 1 |

391,722 | 26,905,300,387 | IssuesEvent | 2023-02-06 18:33:26 | DD2480-group30/CI | https://api.github.com/repos/DD2480-group30/CI | closed | Document the headers from GitHub's webhooks | documentation | Add documentation about how the HTTP POST request is formed from GitHub.

If such documentation is available online, add a link to it. Otherwise, write our own documentation. | 1.0 | Document the headers from GitHub's webhooks - Add documentation about how the HTTP POST request is formed from GitHub.

If such documentation is available online, add a link to it. Otherwise, write our own documentation. | non_test | document the headers from github s webhooks add documentation about how the http post request is formed from github if such documentation is available online add a link to it otherwise write our own documentation | 0 |

7,620 | 18,670,903,799 | IssuesEvent | 2021-10-30 17:36:48 | jtkaufman737/CMSC-495 | https://api.github.com/repos/jtkaufman737/CMSC-495 | closed | Deployment options | discussion-needed backend architecture | I was revisiting AWS stuff the other day briefly and they do have some "Free forever" tier options. The main thing I was hoping to get in a deployment option would be that it is free forever so if any group member wants to include it as part of a professional portfolio, there are no worries about it going offline when a free trial expires.

Unfortunately I think all the cloud providers (azure, GCP, AWS maaaaybe although I need to look more at the free forever tier) have some trip point in time where you would have to pay for deployments. Maybe one task can be looking into that.

There are also things like pythonanywhere and heroku which could definitely be used free indefinitely | 1.0 | Deployment options - I was revisiting AWS stuff the other day briefly and they do have some "Free forever" tier options. The main thing I was hoping to get in a deployment option would be that it is free forever so if any group member wants to include it as part of a professional portfolio, there are no worries about it going offline when a free trial expires.

Unfortunately I think all the cloud providers (azure, GCP, AWS maaaaybe although I need to look more at the free forever tier) have some trip point in time where you would have to pay for deployments. Maybe one task can be looking into that.

There are also things like pythonanywhere and heroku which could definitely be used free indefinitely | non_test | deployment options i was revisiting aws stuff the other day briefly and they do have some free forever tier options the main thing i was hoping to get in a deployment option would be that it is free forever so if any group member wants to include it as part of a professional portfolio there are no worries about it going offline when a free trial expires unfortunately i think all the cloud providers azure gcp aws maaaaybe although i need to look more at the free forever tier have some trip point in time where you would have to pay for deployments maybe one task can be looking into that there are also things like pythonanywhere and heroku which could definitely be used free indefinitely | 0 |

164,253 | 20,364,418,332 | IssuesEvent | 2022-02-21 02:45:18 | faizulho/sanity-gatsby-blog | https://api.github.com/repos/faizulho/sanity-gatsby-blog | opened | CVE-2022-0512 (High) detected in url-parse-1.4.7.tgz | security vulnerability | ## CVE-2022-0512 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>url-parse-1.4.7.tgz</b></p></summary>

<p>Small footprint URL parser that works seamlessly across Node.js and browser environments</p>

<p>Library home page: <a href="https://registry.npmjs.org/url-parse/-/url-parse-1.4.7.tgz">https://registry.npmjs.org/url-parse/-/url-parse-1.4.7.tgz</a></p>

<p>Path to dependency file: /studio/package.json</p>

<p>Path to vulnerable library: /studio/node_modules/url-parse/package.json,/web/node_modules/url-parse/package.json</p>

<p>

Dependency Hierarchy:

- gatsby-source-sanity-7.0.7.tgz (Root Library)

- :x: **url-parse-1.4.7.tgz** (Vulnerable Library)

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Authorization Bypass Through User-Controlled Key in NPM url-parse prior to 1.5.6.

<p>Publish Date: 2022-02-14

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2022-0512>CVE-2022-0512</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>8.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2022-0512">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2022-0512</a></p>

<p>Release Date: 2022-02-14</p>

<p>Fix Resolution: url-parse - 1.5.6</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2022-0512 (High) detected in url-parse-1.4.7.tgz - ## CVE-2022-0512 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>url-parse-1.4.7.tgz</b></p></summary>

<p>Small footprint URL parser that works seamlessly across Node.js and browser environments</p>

<p>Library home page: <a href="https://registry.npmjs.org/url-parse/-/url-parse-1.4.7.tgz">https://registry.npmjs.org/url-parse/-/url-parse-1.4.7.tgz</a></p>

<p>Path to dependency file: /studio/package.json</p>

<p>Path to vulnerable library: /studio/node_modules/url-parse/package.json,/web/node_modules/url-parse/package.json</p>

<p>

Dependency Hierarchy:

- gatsby-source-sanity-7.0.7.tgz (Root Library)

- :x: **url-parse-1.4.7.tgz** (Vulnerable Library)

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Authorization Bypass Through User-Controlled Key in NPM url-parse prior to 1.5.6.

<p>Publish Date: 2022-02-14

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2022-0512>CVE-2022-0512</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>8.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2022-0512">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2022-0512</a></p>

<p>Release Date: 2022-02-14</p>

<p>Fix Resolution: url-parse - 1.5.6</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_test | cve high detected in url parse tgz cve high severity vulnerability vulnerable library url parse tgz small footprint url parser that works seamlessly across node js and browser environments library home page a href path to dependency file studio package json path to vulnerable library studio node modules url parse package json web node modules url parse package json dependency hierarchy gatsby source sanity tgz root library x url parse tgz vulnerable library found in base branch master vulnerability details authorization bypass through user controlled key in npm url parse prior to publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required low user interaction none scope unchanged impact metrics confidentiality impact high integrity impact high availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution url parse step up your open source security game with whitesource | 0 |

336,876 | 30,225,480,014 | IssuesEvent | 2023-07-05 23:50:45 | unifyai/ivy | https://api.github.com/repos/unifyai/ivy | closed | Examine `test_jax_numpy_statistical` tests | JAX Frontend Testing Test Sweep ToDo_internal | Ensure a comprehensive examination of all tests to confirm their alignment with the current test writing policy. Ensure the tests follow the following guidelines, among others:

1. Avoid restricting the use of `get_dtypes` to specific kinds (`float`, `int`) unless absolutely necessary; the default value should be used when possible.

2. Avoid the use of `assume` to bypass specific data types.

3. Unless necessary, avoid restricting the generation of numbers with `min_value` or `max_value`. For example, it is acceptable to set a maximum value for the `pad` function if the `pad_value` value can halt the test.

4. Ensure proper use of strategies.

5. Verify that all tests are executed rather than skipped by `test_values=False`, unless the test logic is implemented within the test body itself.

6. Confirm that testing is comprehensive and complete, meaning that all possible combinations of inputs are tested.

7. Avoid manually setting test parameters, including native array flags, container flags, the number of positional arguments, etc.

8. Ensure that no parameters are skipped during testing.

> Always refer to the documentation for **Ivy Tests** and **Ivy Frontend Tests** for complete test writing policy.

If a test function does not require update, simply mark the function in the list as completed, otherwise create an issue for that test function.

- [x] test_jax_numpy_einsum

- [x] test_jax_numpy_mean

- [x] test_jax_numpy_var

- [x] #16277

- [x] test_jax_numpy_argmin

- [x] test_jax_numpy_bincount

- [x] test_jax_numpy_cumprod

- [ ] #16285

- [x] test_jax_numpy_sum

- [x] test_jax_numpy_min

- [x] test_jax_numpy_max

- [x] test_jax_numpy_average

- [x] #16276

- [x] #16278

- [x] test_numpy_nanmin

| 2.0 | Examine `test_jax_numpy_statistical` tests - Ensure a comprehensive examination of all tests to confirm their alignment with the current test writing policy. Ensure the tests follow the following guidelines, among others:

1. Avoid restricting the use of `get_dtypes` to specific kinds (`float`, `int`) unless absolutely necessary; the default value should be used when possible.

2. Avoid the use of `assume` to bypass specific data types.

3. Unless necessary, avoid restricting the generation of numbers with `min_value` or `max_value`. For example, it is acceptable to set a maximum value for the `pad` function if the `pad_value` value can halt the test.

4. Ensure proper use of strategies.

5. Verify that all tests are executed rather than skipped by `test_values=False`, unless the test logic is implemented within the test body itself.

6. Confirm that testing is comprehensive and complete, meaning that all possible combinations of inputs are tested.

7. Avoid manually setting test parameters, including native array flags, container flags, the number of positional arguments, etc.

8. Ensure that no parameters are skipped during testing.

> Always refer to the documentation for **Ivy Tests** and **Ivy Frontend Tests** for complete test writing policy.

If a test function does not require update, simply mark the function in the list as completed, otherwise create an issue for that test function.

- [x] test_jax_numpy_einsum

- [x] test_jax_numpy_mean

- [x] test_jax_numpy_var

- [x] #16277

- [x] test_jax_numpy_argmin

- [x] test_jax_numpy_bincount

- [x] test_jax_numpy_cumprod

- [ ] #16285

- [x] test_jax_numpy_sum

- [x] test_jax_numpy_min

- [x] test_jax_numpy_max

- [x] test_jax_numpy_average

- [x] #16276

- [x] #16278

- [x] test_numpy_nanmin

| test | examine test jax numpy statistical tests ensure a comprehensive examination of all tests to confirm their alignment with the current test writing policy ensure the tests follow the following guidelines among others avoid restricting the use of get dtypes to specific kinds float int unless absolutely necessary the default value should be used when possible avoid the use of assume to bypass specific data types unless necessary avoid restricting the generation of numbers with min value or max value for example it is acceptable to set a maximum value for the pad function if the pad value value can halt the test ensure proper use of strategies verify that all tests are executed rather than skipped by test values false unless the test logic is implemented within the test body itself confirm that testing is comprehensive and complete meaning that all possible combinations of inputs are tested avoid manually setting test parameters including native array flags container flags the number of positional arguments etc ensure that no parameters are skipped during testing always refer to the documentation for ivy tests and ivy frontend tests for complete test writing policy if a test function does not require update simply mark the function in the list as completed otherwise create an issue for that test function test jax numpy einsum test jax numpy mean test jax numpy var test jax numpy argmin test jax numpy bincount test jax numpy cumprod test jax numpy sum test jax numpy min test jax numpy max test jax numpy average test numpy nanmin | 1 |

224,022 | 17,651,923,438 | IssuesEvent | 2021-08-20 14:16:52 | elastic/kibana | https://api.github.com/repos/elastic/kibana | opened | [test-failed]: Chrome X-Pack UI Functional Tests1.x-pack/test/functional/apps/graph/feature_controls/graph_security·ts - graph app feature controls security global graph read-only privileges "before all" hook for "shows graph navlink" | failed-test test-cloud | **Version: 7.15.0**

**Class: Chrome X-Pack UI Functional Tests1.x-pack/test/functional/apps/graph/feature_controls/graph_security·ts**

**Stack Trace:**

```

Error: timed out waiting for logout button visible -- last error: Error: retry.try timeout: Error: expected testSubject(userMenu) to exist

at TestSubjects.existOrFail (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/functional/services/common/test_subjects.ts:45:13)

at /var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/x-pack/test/functional/services/user_menu.js:48:9

at runAttempt (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/common/services/retry/retry_for_success.ts:27:15)

at retryForSuccess (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/common/services/retry/retry_for_success.ts:66:21)

at RetryService.try (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/common/services/retry/retry.ts:31:12)

at UserMenu._ensureMenuOpen (test/functional/services/user_menu.js:46:7)

at UserMenu.logoutLinkExists (test/functional/services/user_menu.js:28:7)

at /var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/x-pack/test/functional/page_objects/security_page.ts:249:19

at runAttempt (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/common/services/retry/retry_for_success.ts:27:15)

at retryForSuccess (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/common/services/retry/retry_for_success.ts:66:21)

at retryForTruthy (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/common/services/retry/retry_for_truthy.ts:27:3)

at RetryService.waitFor (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/common/services/retry/retry.ts:59:5)

at SecurityPageObject.login (test/functional/page_objects/security_page.ts:247:5)

at Context.<anonymous> (test/functional/apps/graph/feature_controls/graph_security.ts:116:9)

at Object.apply (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/node_modules/@kbn/test/target_node/functional_test_runner/lib/mocha/wrap_function.js:87:16)

at onFailure (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/common/services/retry/retry_for_success.ts:17:9)

at retryForSuccess (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/common/services/retry/retry_for_success.ts:57:13)

at RetryService.try (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/common/services/retry/retry.ts:31:12)

at UserMenu._ensureMenuOpen (test/functional/services/user_menu.js:46:7)

at UserMenu.logoutLinkExists (test/functional/services/user_menu.js:28:7)

at /var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/x-pack/test/functional/page_objects/security_page.ts:249:19

at runAttempt (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/common/services/retry/retry_for_success.ts:27:15)

at retryForSuccess (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/common/services/retry/retry_for_success.ts:66:21)

at retryForTruthy (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/common/services/retry/retry_for_truthy.ts:27:3)

at RetryService.waitFor (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/common/services/retry/retry.ts:59:5)

at SecurityPageObject.login (test/functional/page_objects/security_page.ts:247:5)

at Context.<anonymous> (test/functional/apps/graph/feature_controls/graph_security.ts:116:9)

at Object.apply (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/node_modules/@kbn/test/target_node/functional_test_runner/lib/mocha/wrap_function.js:87:16)

at onFailure (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/common/services/retry/retry_for_truthy.ts:39:13)

at retryForSuccess (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/common/services/retry/retry_for_success.ts:57:13)

at retryForTruthy (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/common/services/retry/retry_for_truthy.ts:27:3)

at RetryService.waitFor (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/common/services/retry/retry.ts:59:5)

at SecurityPageObject.login (test/functional/page_objects/security_page.ts:247:5)

at Context.<anonymous> (test/functional/apps/graph/feature_controls/graph_security.ts:116:9)

at Object.apply (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/node_modules/@kbn/test/target_node/functional_test_runner/lib/mocha/wrap_function.js:87:16)

```

**Other test failures:**

- graph app feature controls security no graph privileges "before all" hook for "doesn't show graph navlink"

_Test Report: https://internal-ci.elastic.co/view/Stack%20Tests/job/elastic+estf-cloud-kibana-tests/2193/testReport/_ | 2.0 | [test-failed]: Chrome X-Pack UI Functional Tests1.x-pack/test/functional/apps/graph/feature_controls/graph_security·ts - graph app feature controls security global graph read-only privileges "before all" hook for "shows graph navlink" - **Version: 7.15.0**

**Class: Chrome X-Pack UI Functional Tests1.x-pack/test/functional/apps/graph/feature_controls/graph_security·ts**

**Stack Trace:**

```

Error: timed out waiting for logout button visible -- last error: Error: retry.try timeout: Error: expected testSubject(userMenu) to exist

at TestSubjects.existOrFail (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/functional/services/common/test_subjects.ts:45:13)

at /var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/x-pack/test/functional/services/user_menu.js:48:9

at runAttempt (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/common/services/retry/retry_for_success.ts:27:15)

at retryForSuccess (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/common/services/retry/retry_for_success.ts:66:21)

at RetryService.try (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/common/services/retry/retry.ts:31:12)

at UserMenu._ensureMenuOpen (test/functional/services/user_menu.js:46:7)

at UserMenu.logoutLinkExists (test/functional/services/user_menu.js:28:7)

at /var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/x-pack/test/functional/page_objects/security_page.ts:249:19

at runAttempt (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/common/services/retry/retry_for_success.ts:27:15)

at retryForSuccess (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/common/services/retry/retry_for_success.ts:66:21)

at retryForTruthy (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/common/services/retry/retry_for_truthy.ts:27:3)

at RetryService.waitFor (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/common/services/retry/retry.ts:59:5)

at SecurityPageObject.login (test/functional/page_objects/security_page.ts:247:5)

at Context.<anonymous> (test/functional/apps/graph/feature_controls/graph_security.ts:116:9)

at Object.apply (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/node_modules/@kbn/test/target_node/functional_test_runner/lib/mocha/wrap_function.js:87:16)

at onFailure (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/common/services/retry/retry_for_success.ts:17:9)

at retryForSuccess (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/common/services/retry/retry_for_success.ts:57:13)

at RetryService.try (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/common/services/retry/retry.ts:31:12)

at UserMenu._ensureMenuOpen (test/functional/services/user_menu.js:46:7)

at UserMenu.logoutLinkExists (test/functional/services/user_menu.js:28:7)

at /var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/x-pack/test/functional/page_objects/security_page.ts:249:19

at runAttempt (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/common/services/retry/retry_for_success.ts:27:15)

at retryForSuccess (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/common/services/retry/retry_for_success.ts:66:21)

at retryForTruthy (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/common/services/retry/retry_for_truthy.ts:27:3)

at RetryService.waitFor (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/common/services/retry/retry.ts:59:5)

at SecurityPageObject.login (test/functional/page_objects/security_page.ts:247:5)

at Context.<anonymous> (test/functional/apps/graph/feature_controls/graph_security.ts:116:9)

at Object.apply (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/node_modules/@kbn/test/target_node/functional_test_runner/lib/mocha/wrap_function.js:87:16)

at onFailure (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/common/services/retry/retry_for_truthy.ts:39:13)

at retryForSuccess (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/common/services/retry/retry_for_success.ts:57:13)

at retryForTruthy (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/common/services/retry/retry_for_truthy.ts:27:3)

at RetryService.waitFor (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/test/common/services/retry/retry.ts:59:5)

at SecurityPageObject.login (test/functional/page_objects/security_page.ts:247:5)

at Context.<anonymous> (test/functional/apps/graph/feature_controls/graph_security.ts:116:9)

at Object.apply (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp2/TASK/saas_run_kibana_tests/node/ess-testing/ci/cloud/common/build/kibana/node_modules/@kbn/test/target_node/functional_test_runner/lib/mocha/wrap_function.js:87:16)

```

**Other test failures:**

- graph app feature controls security no graph privileges "before all" hook for "doesn't show graph navlink"

_Test Report: https://internal-ci.elastic.co/view/Stack%20Tests/job/elastic+estf-cloud-kibana-tests/2193/testReport/_ | test | chrome x pack ui functional x pack test functional apps graph feature controls graph security·ts graph app feature controls security global graph read only privileges before all hook for shows graph navlink version class chrome x pack ui functional x pack test functional apps graph feature controls graph security·ts stack trace error timed out waiting for logout button visible last error error retry try timeout error expected testsubject usermenu to exist at testsubjects existorfail var lib jenkins workspace elastic estf cloud kibana tests job task saas run kibana tests node ess testing ci cloud common build kibana test functional services common test subjects ts at var lib jenkins workspace elastic estf cloud kibana tests job task saas run kibana tests node ess testing ci cloud common build kibana x pack test functional services user menu js at runattempt var lib jenkins workspace elastic estf cloud kibana tests job task saas run kibana tests node ess testing ci cloud common build kibana test common services retry retry for success ts at retryforsuccess var lib jenkins workspace elastic estf cloud kibana tests job task saas run kibana tests node ess testing ci cloud common build kibana test common services retry retry for success ts at retryservice try var lib jenkins workspace elastic estf cloud kibana tests job task saas run kibana tests node ess testing ci cloud common build kibana test common services retry retry ts at usermenu ensuremenuopen test functional services user menu js at usermenu logoutlinkexists test functional services user menu js at var lib jenkins workspace elastic estf cloud kibana tests job task saas run kibana tests node ess testing ci cloud common build kibana x pack test functional page objects security page ts at runattempt var lib jenkins workspace elastic estf cloud kibana tests job task saas run kibana tests 
node ess testing ci cloud common build kibana test common services retry retry for success ts at retryforsuccess var lib jenkins workspace elastic estf cloud kibana tests job task saas run kibana tests node ess testing ci cloud common build kibana test common services retry retry for success ts at retryfortruthy var lib jenkins workspace elastic estf cloud kibana tests job task saas run kibana tests node ess testing ci cloud common build kibana test common services retry retry for truthy ts at retryservice waitfor var lib jenkins workspace elastic estf cloud kibana tests job task saas run kibana tests node ess testing ci cloud common build kibana test common services retry retry ts at securitypageobject login test functional page objects security page ts at context test functional apps graph feature controls graph security ts at object apply var lib jenkins workspace elastic estf cloud kibana tests job task saas run kibana tests node ess testing ci cloud common build kibana node modules kbn test target node functional test runner lib mocha wrap function js at onfailure var lib jenkins workspace elastic estf cloud kibana tests job task saas run kibana tests node ess testing ci cloud common build kibana test common services retry retry for success ts at retryforsuccess var lib jenkins workspace elastic estf cloud kibana tests job task saas run kibana tests node ess testing ci cloud common build kibana test common services retry retry for success ts at retryservice try var lib jenkins workspace elastic estf cloud kibana tests job task saas run kibana tests node ess testing ci cloud common build kibana test common services retry retry ts at usermenu ensuremenuopen test functional services user menu js at usermenu logoutlinkexists test functional services user menu js at var lib jenkins workspace elastic estf cloud kibana tests job task saas run kibana tests node ess testing ci cloud common build kibana x pack test functional page objects security page ts at runattempt 
var lib jenkins workspace elastic estf cloud kibana tests job task saas run kibana tests node ess testing ci cloud common build kibana test common services retry retry for success ts at retryforsuccess var lib jenkins workspace elastic estf cloud kibana tests job task saas run kibana tests node ess testing ci cloud common build kibana test common services retry retry for success ts at retryfortruthy var lib jenkins workspace elastic estf cloud kibana tests job task saas run kibana tests node ess testing ci cloud common build kibana test common services retry retry for truthy ts at retryservice waitfor var lib jenkins workspace elastic estf cloud kibana tests job task saas run kibana tests node ess testing ci cloud common build kibana test common services retry retry ts at securitypageobject login test functional page objects security page ts at context test functional apps graph feature controls graph security ts at object apply var lib jenkins workspace elastic estf cloud kibana tests job task saas run kibana tests node ess testing ci cloud common build kibana node modules kbn test target node functional test runner lib mocha wrap function js at onfailure var lib jenkins workspace elastic estf cloud kibana tests job task saas run kibana tests node ess testing ci cloud common build kibana test common services retry retry for truthy ts at retryforsuccess var lib jenkins workspace elastic estf cloud kibana tests job task saas run kibana tests node ess testing ci cloud common build kibana test common services retry retry for success ts at retryfortruthy var lib jenkins workspace elastic estf cloud kibana tests job task saas run kibana tests node ess testing ci cloud common build kibana test common services retry retry for truthy ts at retryservice waitfor var lib jenkins workspace elastic estf cloud kibana tests job task saas run kibana tests node ess testing ci cloud common build kibana test common services retry retry ts at securitypageobject login test functional 
page objects security page ts at context test functional apps graph feature controls graph security ts at object apply var lib jenkins workspace elastic estf cloud kibana tests job task saas run kibana tests node ess testing ci cloud common build kibana node modules kbn test target node functional test runner lib mocha wrap function js other test failures graph app feature controls security no graph privileges before all hook for doesn t show graph navlink test report | 1 |

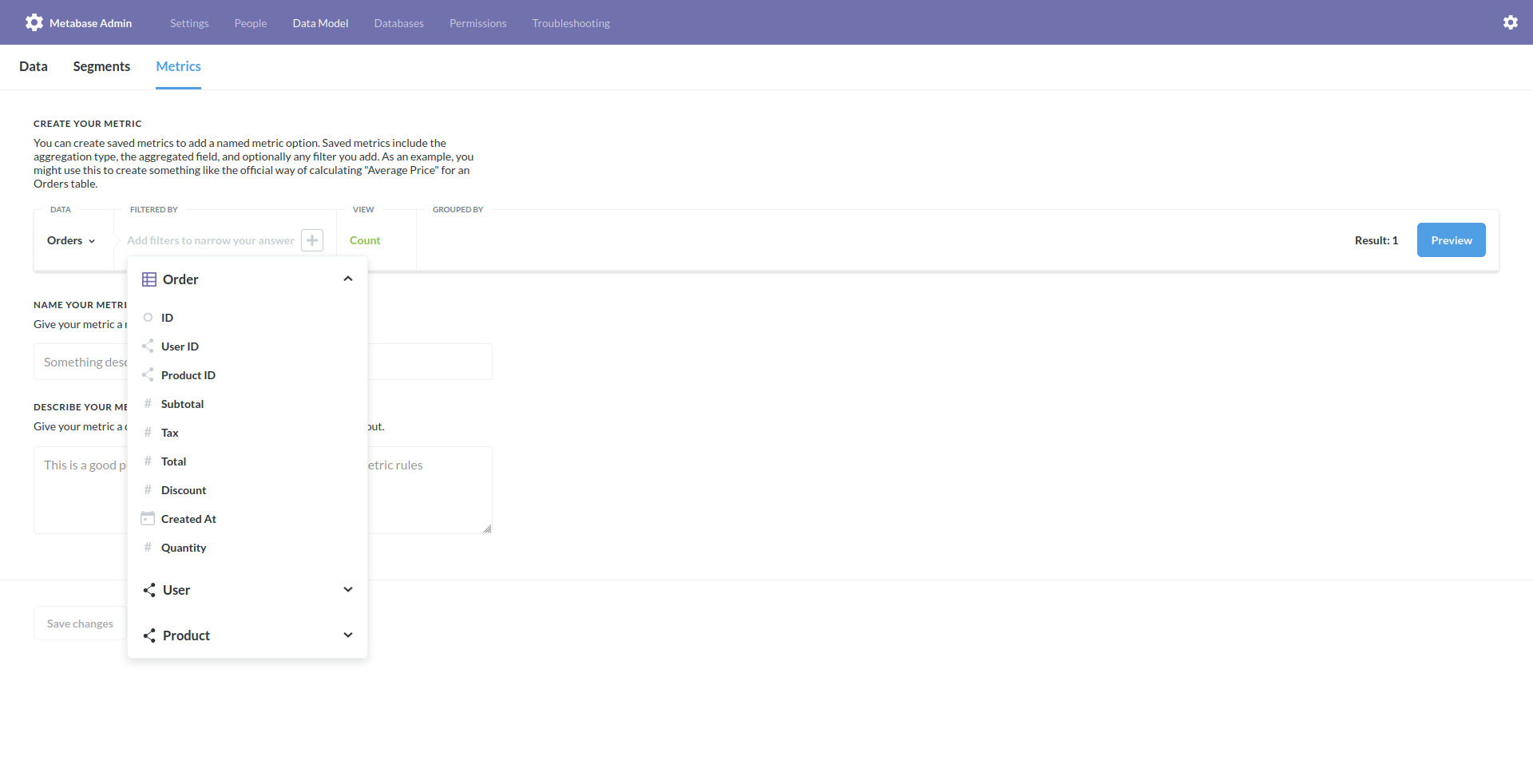

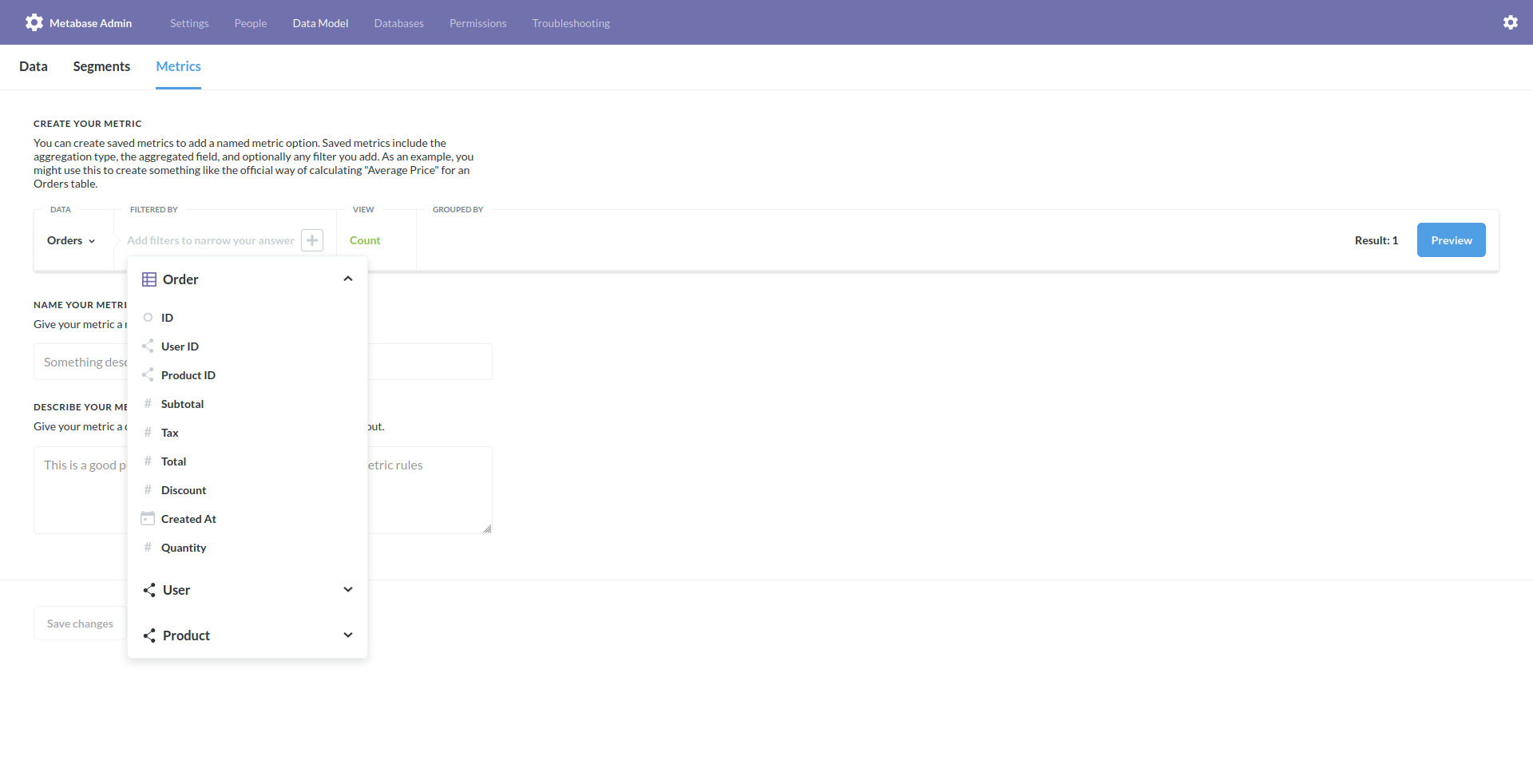

650,878 | 21,427,824,648 | IssuesEvent | 2022-04-23 00:24:26 | metabase/metabase | https://api.github.com/repos/metabase/metabase | closed | Custom Expression not available in filters of Segment or Metric | Type:Bug Priority:P2 Administration/Metrics & Segments .Reproduced .Regression Querying/Notebook/Custom Expression | **Describe the bug**

Custom Expression not available in filters of Segment or Metric

**To Reproduce**

Steps to reproduce the behavior:

1. Go to Admin > Data Model > Segment/Metric > Create new or edit one of the existing

2. Click "Filtered by", doesn't show Custom Expression

**Information about your Metabase Installation:**

Metabase 0.36.0, 0.36.3 - it does exist on 0.35.4

**Additional context**

Related to #4038 and #12899 | 1.0 | Custom Expression not available in filters of Segment or Metric - **Describe the bug**

Custom Expression not available in filters of Segment or Metric

**To Reproduce**

Steps to reproduce the behavior:

1. Go to Admin > Data Model > Segment/Metric > Create new or edit one of the existing

2. Click "Filtered by", doesn't show Custom Expression

**Information about your Metabase Installation:**

Metabase 0.36.0, 0.36.3 - it does exist on 0.35.4

**Additional context**

Related to #4038 and #12899 | non_test | custom expression not available in filters of segment or metric describe the bug custom expression not available in filters of segment or metric to reproduce steps to reproduce the behavior go to admin data model segment metric create new or edit one of the existing click filtered by doesn t show custom expression information about your metabase installation metabase it does exist on additional context related to and | 0 |

23,877 | 4,050,217,727 | IssuesEvent | 2016-05-23 17:24:13 | phetsims/capacitor-lab-basics | https://api.github.com/repos/phetsims/capacitor-lab-basics | closed | Plate charge density is too high | status:fixed-pending-testing type:bug | It looks like the plate charge density is a bit higher than it was in previous dev versions. For comparison, here is the capacitor at 1.5 V connected to the battery in version 18:

Here is the capacitor connected to the battery at 1.5 V in version 21:

| 1.0 | Plate charge density is too high - It looks like the plate charge density is a bit higher than it was in previous dev versions. For comparison, here is the capacitor at 1.5 V connected to the battery in version 18:

Here is the capacitor connected to the battery at 1.5 V in version 21:

| test | plate charge density is too high it looks like the plate charge density is a bit higher than it was in previous dev versions for comparison here is the capacitor at v connected to the battery in version here is the capacitor connected to the battery at v in version | 1 |

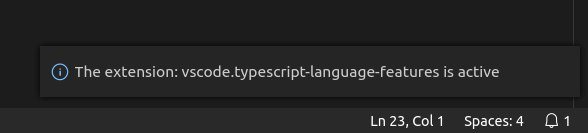

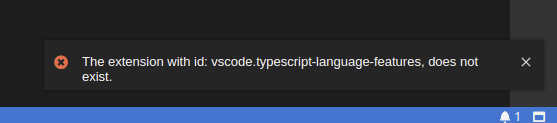

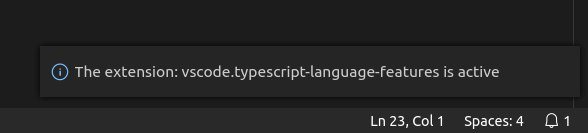

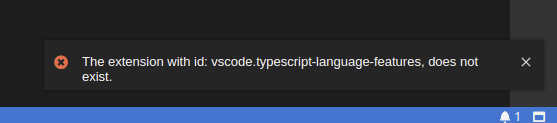

52,242 | 10,790,734,900 | IssuesEvent | 2019-11-05 15:28:52 | eclipse-theia/theia | https://api.github.com/repos/eclipse-theia/theia | opened | [vscode] builtin extensions not recognized | plug-in system vscode | **Description**

I've created a custom VS Code [`plugin`](https://github.com/vince-fugnitto/ts-tools-plugin) which simply verifies that the `vscode-builtin-typescript-language-features` builtin extension correctly works. The plugin itself works perfectly in VS Code while in Theia it fails to find the extension.

**Screenshots**

_VS Code:_

<div align='center'>

</div>

_Theia:_

<div align='center'>

</div>

**Setup**

The following updates were made to the example-browser [package.json](https://github.com/eclipse-theia/theia/blob/master/examples/browser/package.json):

_Additions:_

```json

"@theia/vscode-builtin-typescript": "0.2.1",

"@theia/vscode-builtin-typescript-language-features": "0.2.1",

```

_Deletions:_

```json

"@theia/typescript": "^0.12.0"

```

---

Am I doing something wrong when attempting to consume builtin extensions in Theia? | 1.0 | [vscode] builtin extensions not recognized - **Description**

I've created a custom VS Code [`plugin`](https://github.com/vince-fugnitto/ts-tools-plugin) which simply verifies that the `vscode-builtin-typescript-language-features` builtin extension correctly works. The plugin itself works perfectly in VS Code while in Theia it fails to find the extension.

**Screenshots**

_VS Code:_

<div align='center'>

</div>

_Theia:_

<div align='center'>

</div>

**Setup**

The following updates were made to the example-browser [package.json](https://github.com/eclipse-theia/theia/blob/master/examples/browser/package.json):

_Additions:_

```json

"@theia/vscode-builtin-typescript": "0.2.1",

"@theia/vscode-builtin-typescript-language-features": "0.2.1",

```

_Deletions:_

```json

"@theia/typescript": "^0.12.0"

```

---

Am I doing something wrong when attempting to consume builtin extensions in Theia? | non_test | builtin extensions not recognized description i ve created a custom vs code which simply verifies that the vscode builtin typescript language features builtin extension correctly works the plugin itself works perfectly in vs code while in theia it fails to find the extension screenshots vs code theia setup the following updates were made to the example browser additions json theia vscode builtin typescript theia vscode builtin typescript language features deletions json theia typescript am i doing something wrong when attempting to consume builtin extensions in theia | 0 |

95,207 | 8,552,705,132 | IssuesEvent | 2018-11-07 21:54:35 | aeternity/elixir-node | https://api.github.com/repos/aeternity/elixir-node | opened | Channel compatibility tests - Contracts offchain | blocked channel latest-compatibility | Channels contracts updates offchain compatibility should be tested and tests created. This excludes force progress.

Blocked by #762 | 1.0 | Channel compatibility tests - Contracts offchain - Channels contracts updates offchain compatibility should be tested and tests created. This excludes force progress.

Blocked by #762 | test | channel compatibility tests contracts offchain channels contracts updates offchain compatibility should be tested and tests created this excludes force progress blocked by | 1 |

769,268 | 26,998,998,093 | IssuesEvent | 2023-02-10 05:27:37 | infor-design/enterprise-ng | https://api.github.com/repos/infor-design/enterprise-ng | closed | Update SohoDataGrid dataset removes SohoToolbarFlex | type: bug :bug: type: patch [2] type: regression bug :leftwards_arrow_with_hook: priority: high | **Describe the bug**

For some reason the toolbar disappears when being interacted with (buttons are pressed)

**To Reproduce**

Steps to reproduce the behavior:

1. Go to https://stackblitz.com/edit/ids-quick-start-1480-1t7bj3?file=src/app/app.component.ts

2. Click on the toolbar button

3. See error -> toolbar disappears

**Expected behavior**

Should not disappear, and it didn't in the previous version.

**Version**

- ids-enterprise-ng: 15.0.1

**Additional context**

- Issue described [here]

- Narrowed it down to setting `toolbar: { keywordFilter: true }` | 1.0 | Update SohoDataGrid dataset removes SohoToolbarFlex - **Describe the bug**

For some reason the toolbar disappears when being interacted with (buttons are pressed)

**To Reproduce**

Steps to reproduce the behavior:

1. Go to https://stackblitz.com/edit/ids-quick-start-1480-1t7bj3?file=src/app/app.component.ts

2. Click on the toolbar button

3. See error -> toolbar disappears

**Expected behavior**

Should not disappear, and it didn't in the previous version.

**Version**

- ids-enterprise-ng: 15.0.1

**Additional context**

- Issue described [here]

- Narrowed it down to setting `toolbar: { keywordFilter: true }` | non_test | update sohodatagrid dataset removes sohotoolbarflex describe the bug for some reason the toolbar disappears when being interacted with buttons are pressed to reproduce steps to reproduce the behavior go to click on the toolbar button see error toolbar disappears expected behavior should not disappear and it didnt in the previous version version ids enterprise ng additional context issue described narrowed it down to setting toolbar keywordfilter true | 0 |