Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 4 112 | repo_url stringlengths 33 141 | action stringclasses 3 values | title stringlengths 1 1.02k | labels stringlengths 4 1.54k | body stringlengths 1 262k | index stringclasses 17 values | text_combine stringlengths 95 262k | label stringclasses 2 values | text stringlengths 96 252k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

140,130 | 18,895,235,951 | IssuesEvent | 2021-11-15 17:08:30 | bgoonz/searchAwesome | https://api.github.com/repos/bgoonz/searchAwesome | closed | CVE-2020-7774 (High) detected in y18n-3.2.1.tgz, y18n-4.0.0.tgz | security vulnerability | ## CVE-2020-7774 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>y18n-3.2.1.tgz</b>, <b>y18n-4.0.0.tgz</b></p></summary>

<p>

<details><summary><b>y18n-3.2.1.tgz</b></p></summary>

<p>the bare-bones internationalization library used by yargs</p>

<p>Library home page: <a href="https://registry.npmjs.org/y18n/-/y18n-3.2.1.tgz">https://registry.npmjs.org/y18n/-/y18n-3.2.1.tgz</a></p>

<p>Path to dependency file: searchAwesome/clones/awesome-stacks/package.json</p>

<p>Path to vulnerable library: searchAwesome/clones/awesome-stacks/node_modules/y18n/package.json</p>

<p>

Dependency Hierarchy:

- gatsby-cli-2.5.7.tgz (Root Library)

- yargs-12.0.5.tgz

- :x: **y18n-3.2.1.tgz** (Vulnerable Library)

</details>

<details><summary><b>y18n-4.0.0.tgz</b></p></summary>

<p>the bare-bones internationalization library used by yargs</p>

<p>Library home page: <a href="https://registry.npmjs.org/y18n/-/y18n-4.0.0.tgz">https://registry.npmjs.org/y18n/-/y18n-4.0.0.tgz</a></p>

<p>Path to dependency file: searchAwesome/clones/awesome-wpo/website/package.json</p>

<p>Path to vulnerable library: searchAwesome/clones/awesome-wpo/website/node_modules/y18n/package.json,searchAwesome/clones/awesome-stacks/node_modules/cacache/node_modules/y18n/package.json</p>

<p>

Dependency Hierarchy:

- gatsby-2.1.19.tgz (Root Library)

- terser-webpack-plugin-1.2.3.tgz

- cacache-11.3.2.tgz

- :x: **y18n-4.0.0.tgz** (Vulnerable Library)

</details>

<p>Found in HEAD commit: <a href="https://github.com/bgoonz/searchAwesome/commit/cb1b8421c464b43b24d4816929e575612a00cd49">cb1b8421c464b43b24d4816929e575612a00cd49</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

This affects the package y18n before 3.2.2, 4.0.1 and 5.0.5. PoC by po6ix: const y18n = require('y18n')(); y18n.setLocale('__proto__'); y18n.updateLocale({polluted: true}); console.log(polluted); // true

<p>Publish Date: 2020-11-17

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-7774>CVE-2020-7774</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.3</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: Low

- Availability Impact: Low

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.npmjs.com/advisories/1654">https://www.npmjs.com/advisories/1654</a></p>

<p>Release Date: 2020-11-17</p>

<p>Fix Resolution: 3.2.2, 4.0.1, 5.0.5</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2020-7774 (High) detected in y18n-3.2.1.tgz, y18n-4.0.0.tgz - ## CVE-2020-7774 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>y18n-3.2.1.tgz</b>, <b>y18n-4.0.0.tgz</b></p></summary>

<p>

<details><summary><b>y18n-3.2.1.tgz</b></p></summary>

<p>the bare-bones internationalization library used by yargs</p>

<p>Library home page: <a href="https://registry.npmjs.org/y18n/-/y18n-3.2.1.tgz">https://registry.npmjs.org/y18n/-/y18n-3.2.1.tgz</a></p>

<p>Path to dependency file: searchAwesome/clones/awesome-stacks/package.json</p>

<p>Path to vulnerable library: searchAwesome/clones/awesome-stacks/node_modules/y18n/package.json</p>

<p>

Dependency Hierarchy:

- gatsby-cli-2.5.7.tgz (Root Library)

- yargs-12.0.5.tgz

- :x: **y18n-3.2.1.tgz** (Vulnerable Library)

</details>

<details><summary><b>y18n-4.0.0.tgz</b></p></summary>

<p>the bare-bones internationalization library used by yargs</p>

<p>Library home page: <a href="https://registry.npmjs.org/y18n/-/y18n-4.0.0.tgz">https://registry.npmjs.org/y18n/-/y18n-4.0.0.tgz</a></p>

<p>Path to dependency file: searchAwesome/clones/awesome-wpo/website/package.json</p>

<p>Path to vulnerable library: searchAwesome/clones/awesome-wpo/website/node_modules/y18n/package.json,searchAwesome/clones/awesome-stacks/node_modules/cacache/node_modules/y18n/package.json</p>

<p>

Dependency Hierarchy:

- gatsby-2.1.19.tgz (Root Library)

- terser-webpack-plugin-1.2.3.tgz

- cacache-11.3.2.tgz

- :x: **y18n-4.0.0.tgz** (Vulnerable Library)

</details>

<p>Found in HEAD commit: <a href="https://github.com/bgoonz/searchAwesome/commit/cb1b8421c464b43b24d4816929e575612a00cd49">cb1b8421c464b43b24d4816929e575612a00cd49</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

This affects the package y18n before 3.2.2, 4.0.1 and 5.0.5. PoC by po6ix: const y18n = require('y18n')(); y18n.setLocale('__proto__'); y18n.updateLocale({polluted: true}); console.log(polluted); // true

<p>Publish Date: 2020-11-17

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-7774>CVE-2020-7774</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.3</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: Low

- Availability Impact: Low

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.npmjs.com/advisories/1654">https://www.npmjs.com/advisories/1654</a></p>

<p>Release Date: 2020-11-17</p>

<p>Fix Resolution: 3.2.2, 4.0.1, 5.0.5</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_test | cve high detected in tgz tgz cve high severity vulnerability vulnerable libraries tgz tgz tgz the bare bones internationalization library used by yargs library home page a href path to dependency file searchawesome clones awesome stacks package json path to vulnerable library searchawesome clones awesome stacks node modules package json dependency hierarchy gatsby cli tgz root library yargs tgz x tgz vulnerable library tgz the bare bones internationalization library used by yargs library home page a href path to dependency file searchawesome clones awesome wpo website package json path to vulnerable library searchawesome clones awesome wpo website node modules package json searchawesome clones awesome stacks node modules cacache node modules package json dependency hierarchy gatsby tgz root library terser webpack plugin tgz cacache tgz x tgz vulnerable library found in head commit a href found in base branch master vulnerability details this affects the package before and poc by const require setlocale proto updatelocale polluted true console log polluted true publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact low integrity impact low availability impact low for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution step up your open source security game with whitesource | 0 |
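
The advisory in the row above says y18n is affected before 3.2.2, 4.0.1 and 5.0.5, which reads as one fixed release per major line. A minimal Python sketch of that check follows. The helper names `parse_version` and `is_vulnerable_y18n` are hypothetical, and the handling of major lines the advisory does not name is an assumption made for illustration, not something the WhiteSource report states.

```python
# Fixed releases per major line, taken from the "Fix Resolution" field above.
FIXED = {3: (3, 2, 2), 4: (4, 0, 1), 5: (5, 0, 5)}


def parse_version(version):
    """Turn a plain 'X.Y.Z' string into a tuple of ints (no pre-release tags)."""
    return tuple(int(part) for part in version.split("."))


def is_vulnerable_y18n(version):
    """Hypothetical helper: True if `version` predates the fix for its major line.

    Assumption: major lines below 3 are treated as vulnerable and lines
    above 5 as fixed; the advisory only names 3.x, 4.x and 5.x explicitly.
    """
    parsed = parse_version(version)
    major = parsed[0]
    if major in FIXED:
        # Tuple comparison orders versions component by component.
        return parsed < FIXED[major]
    return major < min(FIXED)
```

Under these assumptions, both versions flagged in the report (3.2.1 and 4.0.0) come out as vulnerable, while each listed fix resolution does not.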

37,684 | 6,626,474,070 | IssuesEvent | 2017-09-22 19:43:12 | Optum/ChaoSlingr | https://api.github.com/repos/Optum/ChaoSlingr | closed | Figure out flow for outside contributions | documentation | Figure out how submitting request from outside contributors works | 1.0 | Figure out flow for outside contributions - Figure out how submitting request from outside contributors works | non_test | figure out flow for outside contributions figure out how submitting request from outside contributors works | 0 |

337,336 | 30,247,489,684 | IssuesEvent | 2023-07-06 17:39:29 | unifyai/ivy | https://api.github.com/repos/unifyai/ivy | reopened | Fix indexing_slicing_joining_mutating_ops.test_torch_transpose | PyTorch Frontend Sub Task Failing Test | | | |

|---|---|

|jax|<a href="https://github.com/unifyai/ivy/actions/runs/5478319447"><img src=https://img.shields.io/badge/-success-success></a>

|numpy|<a href="https://github.com/unifyai/ivy/actions/runs/5467750133/jobs/9954465931"><img src=https://img.shields.io/badge/-success-success></a>

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/5478319447"><img src=https://img.shields.io/badge/-failure-red></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/runs/5478319447"><img src=https://img.shields.io/badge/-success-success></a>

|paddle|<a href="https://github.com/unifyai/ivy/actions/runs/5467750133/jobs/9954465931"><img src=https://img.shields.io/badge/-success-success></a>

| 1.0 | Fix indexing_slicing_joining_mutating_ops.test_torch_transpose - | | |

|---|---|

|jax|<a href="https://github.com/unifyai/ivy/actions/runs/5478319447"><img src=https://img.shields.io/badge/-success-success></a>

|numpy|<a href="https://github.com/unifyai/ivy/actions/runs/5467750133/jobs/9954465931"><img src=https://img.shields.io/badge/-success-success></a>

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/5478319447"><img src=https://img.shields.io/badge/-failure-red></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/runs/5478319447"><img src=https://img.shields.io/badge/-success-success></a>

|paddle|<a href="https://github.com/unifyai/ivy/actions/runs/5467750133/jobs/9954465931"><img src=https://img.shields.io/badge/-success-success></a>

| test | fix indexing slicing joining mutating ops test torch transpose jax a href src numpy a href src tensorflow a href src torch a href src paddle a href src | 1 |

205,779 | 16,008,933,276 | IssuesEvent | 2021-04-20 08:09:22 | ptarmiganlabs/butler-sos | https://api.github.com/repos/ptarmiganlabs/butler-sos | closed | Document dependency on InfluxDB 1.x | documentation | **Describe the bug**

While InfluxDB 2.x is a mighty fine database, it is not entirely backwards compatible with v1.x (which Butler still uses).

Document this dependency and stress the need to use, for example, InfluxDB 1.8.4 (which is the latest as of this writing).

| 1.0 | Document dependency on InfluxDB 1.x - **Describe the bug**

While InfluxDB 2.x is a mighty fine database, it is not entirely backwards compatible with v1.x (which Butler still uses).

Document this dependency and stress the need to use, for example, InfluxDB 1.8.4 (which is the latest as of this writing).

| non_test | document dependency on influxdb x describe the bug while influxdb x is a mighty fine database it is not entirely backwards compatible with x which butler still uses document this dependency and stress the need to use for example influxdb which is latest as of this writing | 0 |

53,832 | 13,262,361,571 | IssuesEvent | 2020-08-20 21:40:15 | icecube-trac/tix4 | https://api.github.com/repos/icecube-trac/tix4 | closed | On wimpsim_reader (Trac #2156) | Migrated from Trac combo simulation defect | Found when running this script:

```text

from I3Tray import *

from icecube import icetray, dataclasses, dataio

from icecube import simclasses, wimpsim_reader, phys_services

from icecube.wimpsim_reader import WimpSimReaderEarth

tray = I3Tray()

tray.AddService("I3SPRNGRandomServiceFactory","Random",

NStreams = 2,

Seed = 42,

StreamNum = 1,

InstallServiceAs = "I3RandomService")

tray.AddSegment(WimpSimReaderEarth,"EarthWimpsim-reader",

Infile = '/data/user/grenzi/data/EarthWimpData/Earth/we-m50-ch11-earth.010010.000100.dat',

#GCDFileName = '/data/user/grenzi/data/EarthWimpData/gcd/GeoCalibDetectorStatus_IC86.55697_V2.i3.gz'

StartMJD=55555,

EndMJD=55666,

)

def prettyprint(frame):

icetray.logging.log_info("=====================")

icetray.logging.log_info(str(frame["I3EventHeader"]))

icetray.logging.log_info(str(frame["WIMP_params"]))

icetray.logging.log_info(str(frame["I3MCTree"]))

tray.AddModule(prettyprint, "print",

Streams = [icetray.I3Frame.Physics, icetray.I3Frame.DAQ])

tray.AddModule( 'I3Writer', 'EventWriter',

Filename = "/data/user/grenzi/data/EarthWimpData/Earth/we-m50-ch11-earth.010010.000100.i3.bz2",

#Streams = [icetray.I3Frame.Physics, icetray.I3Frame.DAQ],

DropOrphanStreams = [icetray.I3Frame.DAQ]

)

tray.Execute()

del tray

```

The segments in this submodule: http://code.icecube.wisc.edu/projects/icecube/browser/IceCube/projects/wimpsim-reader/trunk/python/wimpsimreader.py

are configured with unacceptable values of ```InjectionRadius```

Both:

```text

29 InjectionRadius = 0*I3Units.meter , #default 0*I3Units.meter

```

```text

74 InjectionRadius = 0*I3Units.meter , #default 0*I3Units.meter

```

While in /IceCube/projects/wimpsim-reader/trunk/private/wimpsim-reader/I3WimpSimReader.cxx we have:

```text

180 if (radius_<=0.)

181 log_fatal("Injection radius must be positive and not zero");

```

Hence, running these segments with any inputs gives the error:

```text

RuntimeError: Injection radius must be positive and not zero (in virtual void I3WimpSimReader::Configure())

```

Also, the code comment says the default is 0, but the log message at run time is

```text

InjectionRadius

Description : If >0, events will be injected in cylinder with zmin, zmax height

Default : nan

Configured : 0.0

```

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/2156">https://code.icecube.wisc.edu/projects/icecube/ticket/2156</a>, reported by grenzi and owned by mjl5147</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2019-02-13T14:15:23",

"_ts": "1550067323910946",

"description": "Found when running this script:\n\n{{{\nfrom I3Tray import *\nfrom icecube import icetray, dataclasses, dataio\nfrom icecube import simclasses, wimpsim_reader, phys_services\nfrom icecube.wimpsim_reader import WimpSimReaderEarth\n\ntray = I3Tray()\n\ntray.AddService(\"I3SPRNGRandomServiceFactory\",\"Random\",\n NStreams = 2,\n Seed = 42,\n StreamNum = 1,\n InstallServiceAs = \"I3RandomService\")\n\ntray.AddSegment(WimpSimReaderEarth,\"EarthWimpsim-reader\",\n Infile = '/data/user/grenzi/data/EarthWimpData/Earth/we-m50-ch11-earth.010010.000100.dat',\n #GCDFileName = '/data/user/grenzi/data/EarthWimpData/gcd/GeoCalibDetectorStatus_IC86.55697_V2.i3.gz'\n StartMJD=55555,\n EndMJD=55666,\n )\n\ndef prettyprint(frame):\n icetray.logging.log_info(\"=====================\")\n icetray.logging.log_info(str(frame[\"I3EventHeader\"]))\n icetray.logging.log_info(str(frame[\"WIMP_params\"]))\n icetray.logging.log_info(str(frame[\"I3MCTree\"]))\ntray.AddModule(prettyprint, \"print\",\n Streams = [icetray.I3Frame.Physics, icetray.I3Frame.DAQ])\n\ntray.AddModule( 'I3Writer', 'EventWriter',\n Filename = \"/data/user/grenzi/data/EarthWimpData/Earth/we-m50-ch11-earth.010010.000100.i3.bz2\",\n #Streams = [icetray.I3Frame.Physics, icetray.I3Frame.DAQ],\n DropOrphanStreams = [icetray.I3Frame.DAQ]\n )\n\ntray.Execute()\n\ndel tray\n\n}}}\n\nThe segments in this submodule: http://code.icecube.wisc.edu/projects/icecube/browser/IceCube/projects/wimpsim-reader/trunk/python/wimpsimreader.py\n\nAre set with not acceptable values of {{{InjectionRadius}}}\n\nBoth:\n\n{{{\n29\t InjectionRadius = 0*I3Units.meter , #default 0*I3Units.meter\n}}}\n\n{{{\n74\t InjectionRadius = 0*I3Units.meter , #default 0*I3Units.meter\n}}}\n\nWhile in /IceCube/projects/wimpsim-reader/trunk/private/wimpsim-reader/I3WimpSimReader.cxx we have:\n\n{{{\n180\t if (radius_<=0.)\n181\t log_fatal(\"Injection radius must be positive and not zero\");\n}}}\n\nHence, running this segments with whatever the inputs 
gives the error:\n\n{{{\nRuntimeError: Injection radius must be positive and not zero (in virtual void I3WimpSimReader::Configure())\n}}}\n\nAlso, it is commented that default is 0, but the log message when running is \n{{{\nInjectionRadius\n Description : If >0, events will be injected in cylinder with zmin, zmax height\n Default : nan\n Configured : 0.0\n}}} \n\n",

"reporter": "grenzi",

"cc": "",

"resolution": "fixed",

"time": "2018-05-17T15:55:29",

"component": "combo simulation",

"summary": "On wimpsim_reader",

"priority": "normal",

"keywords": "",

"milestone": "",

"owner": "mjl5147",

"type": "defect"

}

```

</p>

</details>

| 1.0 | On wimpsim_reader (Trac #2156) - Found when running this script:

```text

from I3Tray import *

from icecube import icetray, dataclasses, dataio

from icecube import simclasses, wimpsim_reader, phys_services

from icecube.wimpsim_reader import WimpSimReaderEarth

tray = I3Tray()

tray.AddService("I3SPRNGRandomServiceFactory","Random",

NStreams = 2,

Seed = 42,

StreamNum = 1,

InstallServiceAs = "I3RandomService")

tray.AddSegment(WimpSimReaderEarth,"EarthWimpsim-reader",

Infile = '/data/user/grenzi/data/EarthWimpData/Earth/we-m50-ch11-earth.010010.000100.dat',

#GCDFileName = '/data/user/grenzi/data/EarthWimpData/gcd/GeoCalibDetectorStatus_IC86.55697_V2.i3.gz'

StartMJD=55555,

EndMJD=55666,

)

def prettyprint(frame):

icetray.logging.log_info("=====================")

icetray.logging.log_info(str(frame["I3EventHeader"]))

icetray.logging.log_info(str(frame["WIMP_params"]))

icetray.logging.log_info(str(frame["I3MCTree"]))

tray.AddModule(prettyprint, "print",

Streams = [icetray.I3Frame.Physics, icetray.I3Frame.DAQ])

tray.AddModule( 'I3Writer', 'EventWriter',

Filename = "/data/user/grenzi/data/EarthWimpData/Earth/we-m50-ch11-earth.010010.000100.i3.bz2",

#Streams = [icetray.I3Frame.Physics, icetray.I3Frame.DAQ],

DropOrphanStreams = [icetray.I3Frame.DAQ]

)

tray.Execute()

del tray

```

The segments in this submodule: http://code.icecube.wisc.edu/projects/icecube/browser/IceCube/projects/wimpsim-reader/trunk/python/wimpsimreader.py

are configured with unacceptable values of ```InjectionRadius```

Both:

```text

29 InjectionRadius = 0*I3Units.meter , #default 0*I3Units.meter

```

```text

74 InjectionRadius = 0*I3Units.meter , #default 0*I3Units.meter

```

While in /IceCube/projects/wimpsim-reader/trunk/private/wimpsim-reader/I3WimpSimReader.cxx we have:

```text

180 if (radius_<=0.)

181 log_fatal("Injection radius must be positive and not zero");

```

Hence, running these segments with any inputs gives the error:

```text

RuntimeError: Injection radius must be positive and not zero (in virtual void I3WimpSimReader::Configure())

```

Also, the code comment says the default is 0, but the log message at run time is

```text

InjectionRadius

Description : If >0, events will be injected in cylinder with zmin, zmax height

Default : nan

Configured : 0.0

```

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/2156">https://code.icecube.wisc.edu/projects/icecube/ticket/2156</a>, reported by grenzi and owned by mjl5147</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2019-02-13T14:15:23",

"_ts": "1550067323910946",

"description": "Found when running this script:\n\n{{{\nfrom I3Tray import *\nfrom icecube import icetray, dataclasses, dataio\nfrom icecube import simclasses, wimpsim_reader, phys_services\nfrom icecube.wimpsim_reader import WimpSimReaderEarth\n\ntray = I3Tray()\n\ntray.AddService(\"I3SPRNGRandomServiceFactory\",\"Random\",\n NStreams = 2,\n Seed = 42,\n StreamNum = 1,\n InstallServiceAs = \"I3RandomService\")\n\ntray.AddSegment(WimpSimReaderEarth,\"EarthWimpsim-reader\",\n Infile = '/data/user/grenzi/data/EarthWimpData/Earth/we-m50-ch11-earth.010010.000100.dat',\n #GCDFileName = '/data/user/grenzi/data/EarthWimpData/gcd/GeoCalibDetectorStatus_IC86.55697_V2.i3.gz'\n StartMJD=55555,\n EndMJD=55666,\n )\n\ndef prettyprint(frame):\n icetray.logging.log_info(\"=====================\")\n icetray.logging.log_info(str(frame[\"I3EventHeader\"]))\n icetray.logging.log_info(str(frame[\"WIMP_params\"]))\n icetray.logging.log_info(str(frame[\"I3MCTree\"]))\ntray.AddModule(prettyprint, \"print\",\n Streams = [icetray.I3Frame.Physics, icetray.I3Frame.DAQ])\n\ntray.AddModule( 'I3Writer', 'EventWriter',\n Filename = \"/data/user/grenzi/data/EarthWimpData/Earth/we-m50-ch11-earth.010010.000100.i3.bz2\",\n #Streams = [icetray.I3Frame.Physics, icetray.I3Frame.DAQ],\n DropOrphanStreams = [icetray.I3Frame.DAQ]\n )\n\ntray.Execute()\n\ndel tray\n\n}}}\n\nThe segments in this submodule: http://code.icecube.wisc.edu/projects/icecube/browser/IceCube/projects/wimpsim-reader/trunk/python/wimpsimreader.py\n\nAre set with not acceptable values of {{{InjectionRadius}}}\n\nBoth:\n\n{{{\n29\t InjectionRadius = 0*I3Units.meter , #default 0*I3Units.meter\n}}}\n\n{{{\n74\t InjectionRadius = 0*I3Units.meter , #default 0*I3Units.meter\n}}}\n\nWhile in /IceCube/projects/wimpsim-reader/trunk/private/wimpsim-reader/I3WimpSimReader.cxx we have:\n\n{{{\n180\t if (radius_<=0.)\n181\t log_fatal(\"Injection radius must be positive and not zero\");\n}}}\n\nHence, running this segments with whatever the inputs 
gives the error:\n\n{{{\nRuntimeError: Injection radius must be positive and not zero (in virtual void I3WimpSimReader::Configure())\n}}}\n\nAlso, it is commented that default is 0, but the log message when running is \n{{{\nInjectionRadius\n Description : If >0, events will be injected in cylinder with zmin, zmax height\n Default : nan\n Configured : 0.0\n}}} \n\n",

"reporter": "grenzi",

"cc": "",

"resolution": "fixed",

"time": "2018-05-17T15:55:29",

"component": "combo simulation",

"summary": "On wimpsim_reader",

"priority": "normal",

"keywords": "",

"milestone": "",

"owner": "mjl5147",

"type": "defect"

}

```

</p>

</details>

| non_test | on wimpsim reader trac found when running this script text from import from icecube import icetray dataclasses dataio from icecube import simclasses wimpsim reader phys services from icecube wimpsim reader import wimpsimreaderearth tray tray addservice random nstreams seed streamnum installserviceas tray addsegment wimpsimreaderearth earthwimpsim reader infile data user grenzi data earthwimpdata earth we earth dat gcdfilename data user grenzi data earthwimpdata gcd geocalibdetectorstatus gz startmjd endmjd def prettyprint frame icetray logging log info icetray logging log info str frame icetray logging log info str frame icetray logging log info str frame tray addmodule prettyprint print streams tray addmodule eventwriter filename data user grenzi data earthwimpdata earth we earth streams droporphanstreams tray execute del tray the segments in this submodule are set with not acceptable values of injectionradius both text injectionradius meter default meter text injectionradius meter default meter while in icecube projects wimpsim reader trunk private wimpsim reader cxx we have text if radius log fatal injection radius must be positive and not zero hence running this segments with whatever the inputs gives the error text runtimeerror injection radius must be positive and not zero in virtual void configure also it is commented that default is but the log message when running is text injectionradius description if events will be injected in cylinder with zmin zmax height default nan configured migrated from json status closed changetime ts description found when running this script n n nfrom import nfrom icecube import icetray dataclasses dataio nfrom icecube import simclasses wimpsim reader phys services nfrom icecube wimpsim reader import wimpsimreaderearth n ntray n ntray addservice random n nstreams n seed n streamnum n installserviceas n ntray addsegment wimpsimreaderearth earthwimpsim reader n infile data user grenzi data earthwimpdata earth we 
earth dat n gcdfilename data user grenzi data earthwimpdata gcd geocalibdetectorstatus gz n startmjd n endmjd n n ndef prettyprint frame n icetray logging log info n icetray logging log info str frame n icetray logging log info str frame n icetray logging log info str frame ntray addmodule prettyprint print n streams n ntray addmodule eventwriter n filename data user grenzi data earthwimpdata earth we earth n streams n droporphanstreams n n ntray execute n ndel tray n n n nthe segments in this submodule set with not acceptable values of injectionradius n nboth n n t injectionradius meter default meter n n n t injectionradius meter default meter n n nwhile in icecube projects wimpsim reader trunk private wimpsim reader cxx we have n n t if radius events will be injected in cylinder with zmin zmax height n default nan n configured n n n reporter grenzi cc resolution fixed time component combo simulation summary on wimpsim reader priority normal keywords milestone owner type defect | 0 |
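
The ticket above reports that the segments pass `InjectionRadius = 0` while the module rejects any radius `<= 0`. The sketch below mirrors that comparison in plain Python to show why 0 is refused. It also shows that a NaN value is not caught by the same comparison, which would be consistent with the logged default of `nan` not triggering the error, although how the real module handles NaN afterwards is not stated in the ticket. The function names are hypothetical stand-ins, not part of the IceTray API.

```python
def configure_injection_radius(radius):
    """Hypothetical stand-in for the check quoted from I3WimpSimReader.cxx.

    Raises RuntimeError for radius <= 0, analogous to the log_fatal call
    shown in the ticket.
    """
    if radius <= 0.0:
        raise RuntimeError("Injection radius must be positive and not zero")
    return radius


def radius_is_rejected(radius):
    """Return True if the sketch above refuses this radius."""
    try:
        configure_injection_radius(radius)
    except RuntimeError:
        return True
    return False
```

With this sketch, `radius_is_rejected(0.0)` is true, whereas a NaN input passes the check because every ordered comparison with NaN evaluates to false.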

323,302 | 27,714,499,132 | IssuesEvent | 2023-03-14 16:06:28 | unifyai/ivy | https://api.github.com/repos/unifyai/ivy | opened | Fix math.test_tensorflow_add_n | TensorFlow Frontend Sub Task Failing Test | | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/4415955275/jobs/7739604896" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-failure-red></a>

|torch|None

|numpy|None

|jax|None

<details>

<summary>FAILED ivy_tests/test_ivy/test_frontends/test_tensorflow/test_math.py::test_tensorflow_add_n[cpu-ivy.functional.backends.tensorflow-False-False]</summary>

2023-03-14T13:11:15.8387508Z E AttributeError: module 'ivy.functional.backends.tensorflow' has no attribute 'add_n'

2023-03-14T13:11:15.8388123Z E Falsifying example: test_tensorflow_add_n(

2023-03-14T13:11:15.8388708Z E dtype_and_x=(['float64'], [array(-1.)]),

2023-03-14T13:11:15.8389209Z E test_flags=FrontendFunctionTestFlags(

2023-03-14T13:11:15.8389690Z E num_positional_args=0,

2023-03-14T13:11:15.8390102Z E with_out=False,

2023-03-14T13:11:15.8390516Z E inplace=False,

2023-03-14T13:11:15.8390932Z E as_variable=[False],

2023-03-14T13:11:15.8391346Z E native_arrays=[False],

2023-03-14T13:11:15.8391741Z E ),

2023-03-14T13:11:15.8392333Z E fn_tree='ivy.functional.frontends.tensorflow.math.add_n',

2023-03-14T13:11:15.8393723Z E on_device='cpu',

2023-03-14T13:11:15.8394123Z E frontend='tensorflow',

2023-03-14T13:11:15.8394428Z E )

2023-03-14T13:11:15.8394700Z E

2023-03-14T13:11:15.8395409Z E You can reproduce this example by temporarily adding @reproduce_failure('6.68.2', b'AXicY2AAAkYGCIDQAAAnAAM=') as a decorator on your test case

</details>

| 1.0 | Fix math.test_tensorflow_add_n - | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/4415955275/jobs/7739604896" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-failure-red></a>

|torch|None

|numpy|None

|jax|None

<details>

<summary>FAILED ivy_tests/test_ivy/test_frontends/test_tensorflow/test_math.py::test_tensorflow_add_n[cpu-ivy.functional.backends.tensorflow-False-False]</summary>

2023-03-14T13:11:15.8387508Z E AttributeError: module 'ivy.functional.backends.tensorflow' has no attribute 'add_n'

2023-03-14T13:11:15.8388123Z E Falsifying example: test_tensorflow_add_n(

2023-03-14T13:11:15.8388708Z E dtype_and_x=(['float64'], [array(-1.)]),

2023-03-14T13:11:15.8389209Z E test_flags=FrontendFunctionTestFlags(

2023-03-14T13:11:15.8389690Z E num_positional_args=0,

2023-03-14T13:11:15.8390102Z E with_out=False,

2023-03-14T13:11:15.8390516Z E inplace=False,

2023-03-14T13:11:15.8390932Z E as_variable=[False],

2023-03-14T13:11:15.8391346Z E native_arrays=[False],

2023-03-14T13:11:15.8391741Z E ),

2023-03-14T13:11:15.8392333Z E fn_tree='ivy.functional.frontends.tensorflow.math.add_n',

2023-03-14T13:11:15.8393723Z E on_device='cpu',

2023-03-14T13:11:15.8394123Z E frontend='tensorflow',

2023-03-14T13:11:15.8394428Z E )

2023-03-14T13:11:15.8394700Z E

2023-03-14T13:11:15.8395409Z E You can reproduce this example by temporarily adding @reproduce_failure('6.68.2', b'AXicY2AAAkYGCIDQAAAnAAM=') as a decorator on your test case

</details>

| test | fix math test tensorflow add n tensorflow img src torch none numpy none jax none failed ivy tests test ivy test frontends test tensorflow test math py test tensorflow add n e attributeerror module ivy functional backends tensorflow has no attribute add n e falsifying example test tensorflow add n e dtype and x e test flags frontendfunctiontestflags e num positional args e with out false e inplace false e as variable e native arrays e e fn tree ivy functional frontends tensorflow math add n e on device cpu e frontend tensorflow e e e you can reproduce this example by temporarily adding reproduce failure b as a decorator on your test case | 1 |
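
The failure above is an `AttributeError`: the TensorFlow backend module has no `add_n` attribute, so the frontend test cannot resolve the function. For readers unfamiliar with the operation, `add_n` sums a list of same-shaped inputs element-wise. The following is a plain-Python sketch of that behaviour on flat lists, offered only as an illustration under that assumption; it is not the Ivy implementation and does not use Ivy or TensorFlow.

```python
def add_n(inputs):
    """Element-wise sum of equally sized flat lists (illustrative only)."""
    if not inputs:
        raise ValueError("inputs must contain at least one sequence")
    length = len(inputs[0])
    if any(len(seq) != length for seq in inputs):
        raise ValueError("all inputs must have the same length")
    # zip(*inputs) groups the i-th element of every input together.
    return [sum(values) for values in zip(*inputs)]
```

For the falsifying example in the log, a single input holding `-1.0`, this sketch simply returns that value unchanged.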

68,096 | 7,087,693,496 | IssuesEvent | 2018-01-11 18:44:18 | hazelcast/hazelcast | https://api.github.com/repos/hazelcast/hazelcast | opened | `OperationServiceImpl_invokeOnPartitionsTest` | Team: Core Team: QuSP Type: Test-Failure | `com.hazelcast.spi.impl.operationservice.impl.OperationServiceImpl_invokeOnPartitionsTest.testLongRunning`

```java

com.hazelcast.nio.serialization.HazelcastSerializationException: Problem while reading DataSerializable, namespace: 0, ID: 0, class: 'com.hazelcast.spi.impl.operationservice.impl.OperationServiceImpl_invokeOnPartitionsTest$SlowOperationFactoryImpl$1', exception: com.hazelcast.spi.impl.operationservice.impl.OperationServiceImpl_invokeOnPartitionsTest$SlowOperationFactoryImpl$1.<init>()

```

https://hazelcast-l337.ci.cloudbees.com/job/new-lab-fast-pr/12761/testReport/junit/com.hazelcast.spi.impl.operationservice.impl/OperationServiceImpl_invokeOnPartitionsTest/testLongRunning/ | 1.0 | `OperationServiceImpl_invokeOnPartitionsTest` - `com.hazelcast.spi.impl.operationservice.impl.OperationServiceImpl_invokeOnPartitionsTest.testLongRunning`

```java

com.hazelcast.nio.serialization.HazelcastSerializationException: Problem while reading DataSerializable, namespace: 0, ID: 0, class: 'com.hazelcast.spi.impl.operationservice.impl.OperationServiceImpl_invokeOnPartitionsTest$SlowOperationFactoryImpl$1', exception: com.hazelcast.spi.impl.operationservice.impl.OperationServiceImpl_invokeOnPartitionsTest$SlowOperationFactoryImpl$1.<init>()

```

https://hazelcast-l337.ci.cloudbees.com/job/new-lab-fast-pr/12761/testReport/junit/com.hazelcast.spi.impl.operationservice.impl/OperationServiceImpl_invokeOnPartitionsTest/testLongRunning/ | test | operationserviceimpl invokeonpartitionstest com hazelcast spi impl operationservice impl operationserviceimpl invokeonpartitionstest testlongrunning java com hazelcast nio serialization hazelcastserializationexception problem while reading dataserializable namespace id class com hazelcast spi impl operationservice impl operationserviceimpl invokeonpartitionstest slowoperationfactoryimpl exception com hazelcast spi impl operationservice impl operationserviceimpl invokeonpartitionstest slowoperationfactoryimpl | 1 |

365,775 | 10,797,591,855 | IssuesEvent | 2019-11-06 08:13:59 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | subtech.g.hatena.ne.jp - Page content is not displayed | browser-firefox engine-gecko priority-important severity-important | <!-- @browser: Firefox 72.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:72.0) Gecko/20100101 Firefox/72.0 -->

<!-- @reported_with: desktop-reporter -->

**URL**: http://subtech.g.hatena.ne.jp/

**Browser / Version**: Firefox 72.0

**Operating System**: Windows 10

**Tested Another Browser**: Yes

**Problem type**: Design is broken

**Description**: Elements with style "zoom: 0" are invisible

**Steps to Reproduce**:

Some body text that should be displayed below the headings is not displayed.

The site has a CSS rule "zoom: 0".

In IE, Edge, and Chrome, "zoom: 0" seems to be ignored.

In Firefox 72, "zoom: 0" makes the element invisible.

Firefox 72 supports CSS zoom property via transform property https://bugzilla.mozilla.org/show_bug.cgi?id=1589766 .

[](https://webcompat.com/uploads/2019/10/bcbaf893-3f91-40cc-b52e-21d359dfdbcc.jpeg)

<details>

<summary>Browser Configuration</summary>

<ul>

<li>gfx.webrender.all: false</li><li>gfx.webrender.blob-images: true</li><li>gfx.webrender.enabled: false</li><li>image.mem.shared: true</li><li>buildID: 20191027212548</li><li>channel: nightly</li><li>hasTouchScreen: false</li><li>mixed active content blocked: false</li><li>mixed passive content blocked: false</li><li>tracking content blocked: false</li>

</ul>

<p>Console Messages:</p>

<pre>

[{'level': 'warn', 'log': ['This page uses the non standard property zoom. Consider using calc() in the relevant property values, or using transform along with transform-origin: 0 0.'], 'uri': 'http://subtech.g.hatena.ne.jp/', 'pos': '0:0'}, {'level': 'warn', 'log': ['The script from https://www.otsuka.co.jp/soy/entertainment/ichigobp/ichigobp.js was loaded even though its MIME type (text/html) is not a valid JavaScript MIME type.'], 'uri': 'http://subtech.g.hatena.ne.jp/', 'pos': '0:0'}, {'level': 'warn', 'log': ['Loading failed for the <script> with source http://www.otsuka.co.jp/soy/entertainment/ichigobp/ichigobp.js.'], 'uri': 'http://subtech.g.hatena.ne.jp/', 'pos': '701:1'}, {'level': 'error', 'log': ["SyntaxError: expected expression, got ';'"], 'uri': 'http://subtech.g.hatena.ne.jp/', 'pos': '886:31'}, {'level': 'warn', 'log': ['Request to access cookie or storage on http://stats.g.doubleclick.net/dc.js was blocked because it came from a tracker and content blocking is enabled.'], 'uri': 'http://subtech.g.hatena.ne.jp/', 'pos': '0:0'}, {'level': 'warn', 'log': ['Request to access cookie or storage on https://stats.g.doubleclick.net/dc.js was blocked because it came from a tracker and content blocking is enabled.'], 'uri': 'http://subtech.g.hatena.ne.jp/', 'pos': '0:0'}, {'level': 'warn', 'log': ['Use of Mutation Events is deprecated. Use MutationObserver instead.'], 'uri': 'moz-extension://72fbe179-9ca6-4837-be8e-94e0ddd8bca7/content/widget_embedder.js', 'pos': '106:21'}, {'level': 'warn', 'log': ['onmozfullscreenchange is deprecated.'], 'uri': 'http://subtech.g.hatena.ne.jp/', 'pos': '0:0'}, {'level': 'warn', 'log': ['onmozfullscreenerror is deprecated.'], 'uri': 'http://subtech.g.hatena.ne.jp/', 'pos': '0:0'}]

</pre>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_ | 1.0 | subtech.g.hatena.ne.jp - Page content is not displayed - <!-- @browser: Firefox 72.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:72.0) Gecko/20100101 Firefox/72.0 -->

<!-- @reported_with: desktop-reporter -->

**URL**: http://subtech.g.hatena.ne.jp/

**Browser / Version**: Firefox 72.0

**Operating System**: Windows 10

**Tested Another Browser**: Yes

**Problem type**: Design is broken

**Description**: Elements with style "zoom: 0" are invisible

**Steps to Reproduce**:

Some body text that should be displayed below the headings is not displayed.

The site has a CSS rule "zoom: 0".

In IE, Edge, and Chrome, "zoom: 0" seems to be ignored.

In Firefox 72, "zoom: 0" makes the element invisible.

Firefox 72 supports CSS zoom property via transform property https://bugzilla.mozilla.org/show_bug.cgi?id=1589766 .

[](https://webcompat.com/uploads/2019/10/bcbaf893-3f91-40cc-b52e-21d359dfdbcc.jpeg)

<details>

<summary>Browser Configuration</summary>

<ul>

<li>gfx.webrender.all: false</li><li>gfx.webrender.blob-images: true</li><li>gfx.webrender.enabled: false</li><li>image.mem.shared: true</li><li>buildID: 20191027212548</li><li>channel: nightly</li><li>hasTouchScreen: false</li><li>mixed active content blocked: false</li><li>mixed passive content blocked: false</li><li>tracking content blocked: false</li>

</ul>

<p>Console Messages:</p>

<pre>

[{'level': 'warn', 'log': ['This page uses the non standard property zoom. Consider using calc() in the relevant property values, or using transform along with transform-origin: 0 0.'], 'uri': 'http://subtech.g.hatena.ne.jp/', 'pos': '0:0'}, {'level': 'warn', 'log': ['The script from https://www.otsuka.co.jp/soy/entertainment/ichigobp/ichigobp.js was loaded even though its MIME type (text/html) is not a valid JavaScript MIME type.'], 'uri': 'http://subtech.g.hatena.ne.jp/', 'pos': '0:0'}, {'level': 'warn', 'log': ['Loading failed for the <script> with source http://www.otsuka.co.jp/soy/entertainment/ichigobp/ichigobp.js.'], 'uri': 'http://subtech.g.hatena.ne.jp/', 'pos': '701:1'}, {'level': 'error', 'log': ["SyntaxError: expected expression, got ';'"], 'uri': 'http://subtech.g.hatena.ne.jp/', 'pos': '886:31'}, {'level': 'warn', 'log': ['Request to access cookie or storage on http://stats.g.doubleclick.net/dc.js was blocked because it came from a tracker and content blocking is enabled.'], 'uri': 'http://subtech.g.hatena.ne.jp/', 'pos': '0:0'}, {'level': 'warn', 'log': ['Request to access cookie or storage on https://stats.g.doubleclick.net/dc.js was blocked because it came from a tracker and content blocking is enabled.'], 'uri': 'http://subtech.g.hatena.ne.jp/', 'pos': '0:0'}, {'level': 'warn', 'log': ['Use of Mutation Events is deprecated. Use MutationObserver instead.'], 'uri': 'moz-extension://72fbe179-9ca6-4837-be8e-94e0ddd8bca7/content/widget_embedder.js', 'pos': '106:21'}, {'level': 'warn', 'log': ['onmozfullscreenchange is deprecated.'], 'uri': 'http://subtech.g.hatena.ne.jp/', 'pos': '0:0'}, {'level': 'warn', 'log': ['onmozfullscreenerror is deprecated.'], 'uri': 'http://subtech.g.hatena.ne.jp/', 'pos': '0:0'}]

</pre>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_ | non_test | subtech g hatena ne jp page content is not displayed url browser version firefox operating system windows tested another browser yes problem type design is broken description elements with style zoom are invisible steps to reproduce some body texts that should be displayed below headings are not displayed the site has a css rule zoom in ie edge and chrome zoom seems to be ignored in firefox zoom makes the element invisible firefox supports css zoom property via transform property browser configuration gfx webrender all false gfx webrender blob images true gfx webrender enabled false image mem shared true buildid channel nightly hastouchscreen false mixed active content blocked false mixed passive content blocked false tracking content blocked false console messages uri pos level warn log uri pos level warn log uri pos level error log uri pos level warn log uri pos level warn log uri pos level warn log uri moz extension content widget embedder js pos level warn log uri pos level warn log uri pos from with ❤️ | 0 |

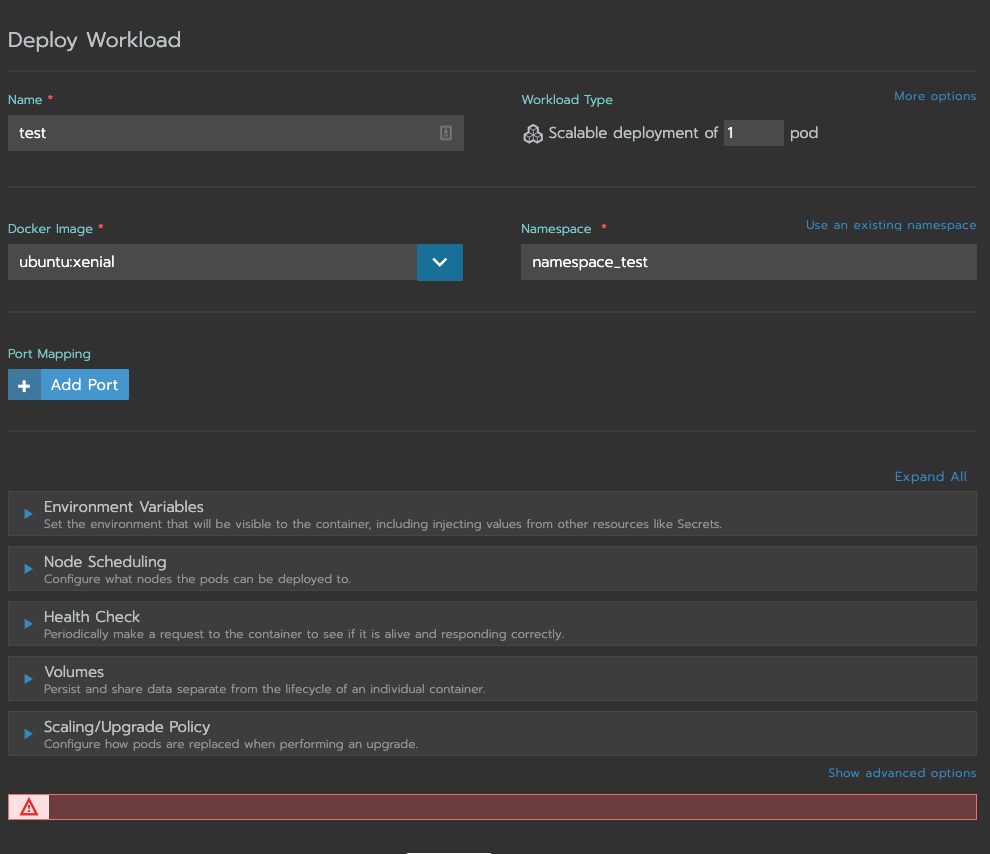

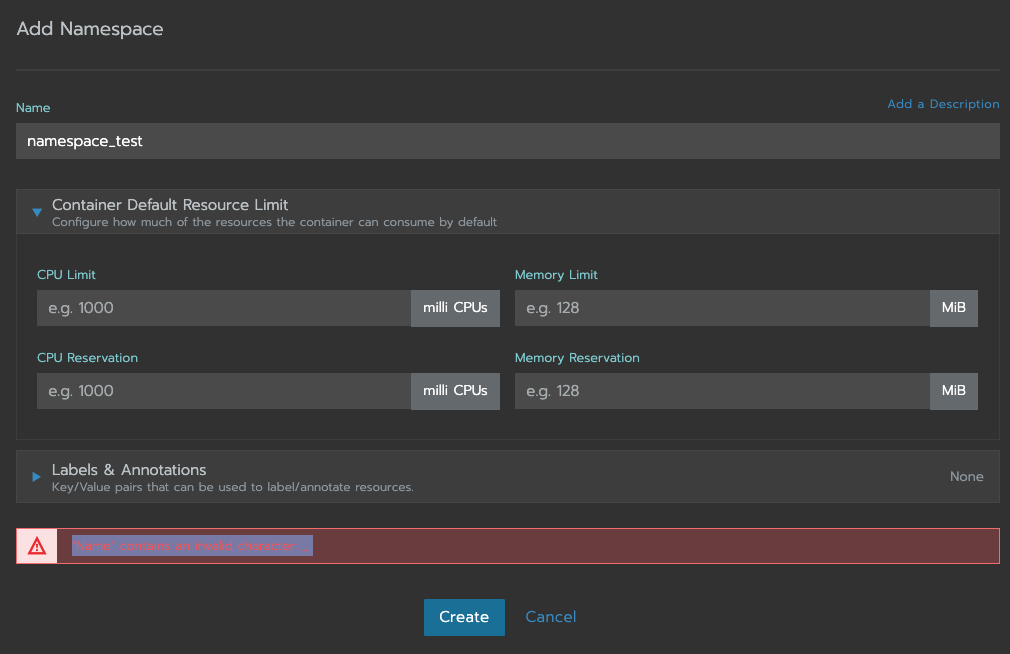

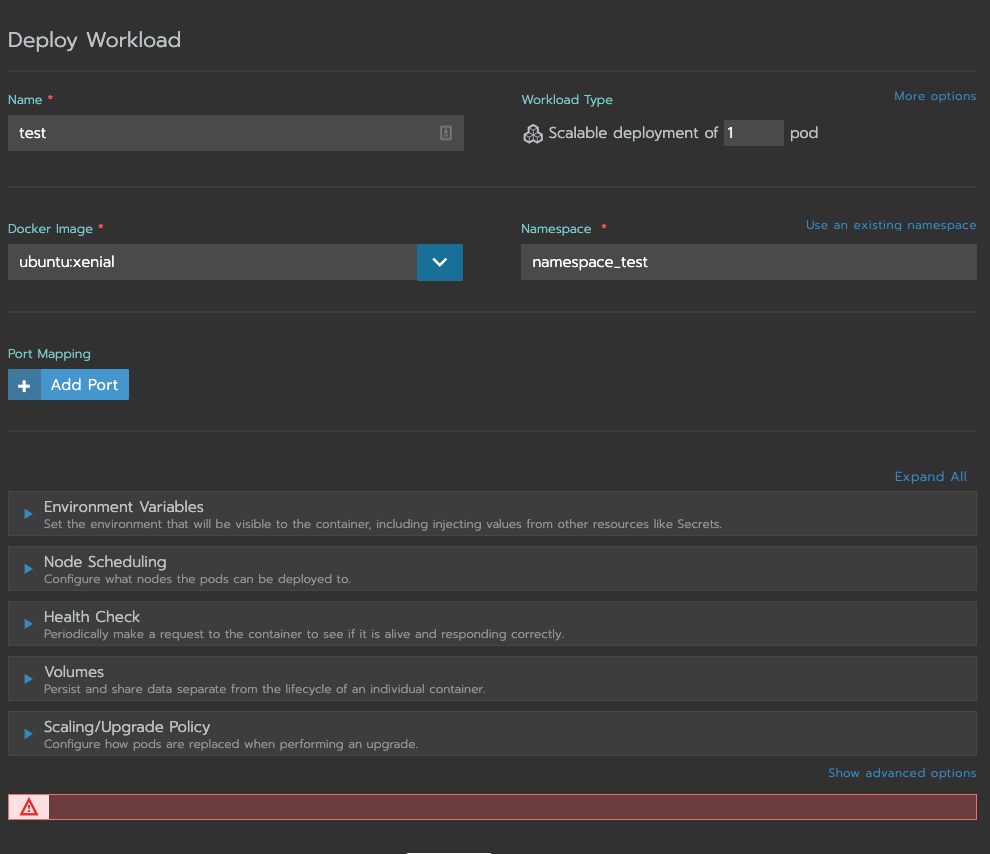

299,274 | 25,892,569,683 | IssuesEvent | 2022-12-14 19:15:54 | rancher/dashboard | https://api.github.com/repos/rancher/dashboard | closed | "Deploy Workload" should print an error when creating namespace containing an underscore | kind/bug [zube]: To Test internal QA/XS kind/enhancement team/area2 size/2 ember area/form-validation JIRA | **What kind of request is this (question/bug/enhancement/feature request):**

Bug

**Steps to reproduce (least amount of steps as possible):**

1. Head to the `Cluster` -> `Project` -> `Workloads` view.

2. Click `Deploy`

3. Attempt to deploy a workload.

* Name: test

* Docker Image: Anything

* Namespace: Select `Add to a new namespace`. For namespace, add something that contains an underscore, such as `namespace_test`

* Hit `Launch`

**Result:**

Rancher will return a red error box at the bottom with no error message:

**Other details that may be helpful:**

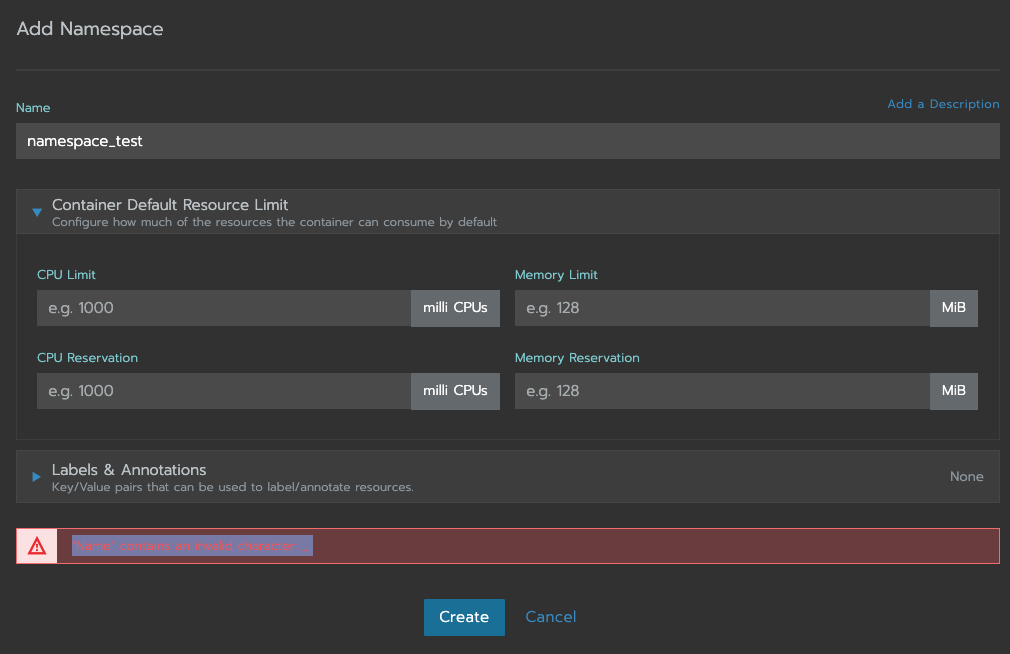

If I create a namespace another way, Rancher warns me that underscores are not allowed in namespaces.

1. Head to the `Cluster` -> `Project` view (not workload)

2. Click `Add Namespace`

3. Attempt to add a namespace

* Name: add something that contains an underscore, such as `namespace_test`

4. Hit `Create`

Rancher will print the error `"Name" contains an invalid character: _`
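
For reference, the check the `Add Namespace` form appears to apply can be sketched as below. This is only an illustration, not Rancher's actual validation code: it assumes the rule is the Kubernetes RFC 1123 DNS label rule for namespace names (lowercase alphanumerics and `-`, starting and ending with an alphanumeric, at most 63 characters), and the function name `is_valid_namespace` is hypothetical.

```python
import re

# Assumption: namespace names follow the Kubernetes RFC 1123 DNS label rule.
# Lowercase letters, digits and '-', must start and end with a letter or digit.
_DNS_LABEL = re.compile(r"[a-z0-9]([-a-z0-9]*[a-z0-9])?")
_MAX_LABEL_LENGTH = 63


def is_valid_namespace(name: str) -> bool:
    """Return True if `name` looks like a valid namespace name (hypothetical helper)."""
    if not name or len(name) > _MAX_LABEL_LENGTH:
        return False
    # fullmatch avoids accepting a trailing newline, which `$` would allow.
    return _DNS_LABEL.fullmatch(name) is not None


if __name__ == "__main__":
    for candidate in ("namespace_test", "namespace-test"):
        print(candidate, is_valid_namespace(candidate))
```

A check like this on the `Deploy Workload` form would let it show the same kind of message as the `Add Namespace` form instead of an empty error box.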

**Environment information**

- Rancher version: v2.4.5

- Rancher User interface: v2.4.28

- Installation option (single install/HA): HA

<!--

If the reported issue is regarding a created cluster, please provide requested info below

-->

**Cluster information**

- Cluster type (Hosted/Infrastructure Provider/Custom/Imported): Custom

- Machine type (cloud/VM/metal) and specifications (CPU/memory): VMware VMs. Specs n/a

- Kubernetes version (use `kubectl version`):

```

Client Version: version.Info{Major:"1", Minor:"17", GitVersion:"v1.17.9", GitCommit:"4fb7ed12476d57b8437ada90b4f93b17ffaeed99", GitTreeState:"clean", BuildDate:"2020-07-15T16:18:16Z", GoVersion:"go1.13.9", Compiler:"gc", Platform:"linux/amd64"}

Server Version: version.Info{Major:"1", Minor:"17", GitVersion:"v1.17.9", GitCommit:"4fb7ed12476d57b8437ada90b4f93b17ffaeed99", GitTreeState:"clean", BuildDate:"2020-07-15T16:10:45Z", GoVersion:"go1.13.9", Compiler:"gc", Platform:"linux/amd64"}

```

- Docker version (use `docker version`):

```

Client:

Version: 18.09.9

API version: 1.39

Go version: go1.11.13

Git commit: 039a7df9ba

Built: Wed Sep 4 16:57:28 2019

OS/Arch: linux/amd64

Experimental: false

Server: Docker Engine - Community

Engine:

Version: 18.09.9

API version: 1.39 (minimum version 1.12)

Go version: go1.11.13

Git commit: 039a7df

Built: Wed Sep 4 16:19:38 2019

OS/Arch: linux/amd64

Experimental: false

```

gz#14010 | 1.0 | "Deploy Workload" should print an error when creating namespace containing an underscore - **What kind of request is this (question/bug/enhancement/feature request):**

Bug

**Steps to reproduce (least amount of steps as possible):**

1. Head to the `Cluster` -> `Project` -> `Workloads` view.

2. Click `Deploy`

3. Attempt to deploy a workload.

* Name: test

* Docker Image: Anything

* Namespace: Select `Add to a new namespace`. For namespace, add something that contains an underscore, such as `namespace_test`

* Hit `Launch`

**Result:**

Rancher will return a red error box at the bottom with no error message:

**Other details that may be helpful:**

If I create a namespace another way, Rancher warns me that underscores are not allowed in namespaces.

1. Head to the `Cluster` -> `Project` view (not workload)

2. Click `Add Namespace`

3. Attempt to add a namespace

* Name: add something that contains an underscore, such as `namespace_test`

4. Hit `Create`

Rancher will print the error `"Name" contains an invalid character: _`

**Environment information**

- Rancher version: v2.4.5

- Rancher User interface: v2.4.28

- Installation option (single install/HA): HA

<!--

If the reported issue is regarding a created cluster, please provide requested info below

-->

**Cluster information**

- Cluster type (Hosted/Infrastructure Provider/Custom/Imported): Custom

- Machine type (cloud/VM/metal) and specifications (CPU/memory): VMware VMs. Specs n/a

- Kubernetes version (use `kubectl version`):

```

Client Version: version.Info{Major:"1", Minor:"17", GitVersion:"v1.17.9", GitCommit:"4fb7ed12476d57b8437ada90b4f93b17ffaeed99", GitTreeState:"clean", BuildDate:"2020-07-15T16:18:16Z", GoVersion:"go1.13.9", Compiler:"gc", Platform:"linux/amd64"}

Server Version: version.Info{Major:"1", Minor:"17", GitVersion:"v1.17.9", GitCommit:"4fb7ed12476d57b8437ada90b4f93b17ffaeed99", GitTreeState:"clean", BuildDate:"2020-07-15T16:10:45Z", GoVersion:"go1.13.9", Compiler:"gc", Platform:"linux/amd64"}

```

- Docker version (use `docker version`):

```

Client:

Version: 18.09.9

API version: 1.39

Go version: go1.11.13

Git commit: 039a7df9ba

Built: Wed Sep 4 16:57:28 2019

OS/Arch: linux/amd64

Experimental: false

Server: Docker Engine - Community

Engine:

Version: 18.09.9

API version: 1.39 (minimum version 1.12)

Go version: go1.11.13

Git commit: 039a7df

Built: Wed Sep 4 16:19:38 2019

OS/Arch: linux/amd64

Experimental: false

```

gz#14010 | test | deploy workload should print an error when creating namespace containing an underscore what kind of request is this question bug enhancement feature request bug steps to reproduce least amount of steps as possible head to the cluster project workloads view click deploy attempt to deploy a workload name test dock image anything namespace select add to a new namespace for namespace add something that contains an underscore such as namespace test hit launch result rancher will return a red error box at the bottom with no error other details that may be helpful if i create a namespace another way rancher warns me that underscores are not allowed in namespaces head to the cluster project view not workload click add namespace attempt to add a namespace name add something that contains an underscore such as namespace test hit create rancher will print the error name contains an invalid character environment information rancher version rancher user interface installation option single install ha ha if the reported issue is regarding a created cluster please provide requested info below cluster information cluster type hosted infrastructure provider custom imported custom machine type cloud vm metal and specifications cpu memory vmware vms specs n a kubernetes version use kubectl version client version version info major minor gitversion gitcommit gittreestate clean builddate goversion compiler gc platform linux server version version info major minor gitversion gitcommit gittreestate clean builddate goversion compiler gc platform linux docker version use docker version client version api version go version git commit built wed sep os arch linux experimental false server docker engine community engine version api version minimum version go version git commit built wed sep os arch linux experimental false gz | 1 |

211,229 | 16,191,162,415 | IssuesEvent | 2021-05-04 08:41:28 | lutraconsulting/qgis-mergin-plugin-manual-tests | https://api.github.com/repos/lutraconsulting/qgis-mergin-plugin-manual-tests | opened | Mergin plugin test plan | test plan | ## Test plan for mergin plugin

| Test environment | Value |

|---|---|

| Mergin Version: | 2021.5.1 |

| Mergin URL: <> | public.cloudmergin.com |

| QGIS Version: | 3.16 LTR |

| Mergin plugin Version: | 2021.2.1 |

| Date of Execution: | 4.5.2021 |

---

### Test Cases

- [X] ( #1 ) TC 01: Installation

- [X] ( #2 ) TC 02: Configuration

- [X] ( #2 ) TC 03: New project

- [X] ( #4 ) TC 04: Project management

- [X] ( #5 ) TC 05: Project permissions

- [X] ( #6 ) TC 06: Project tree

---

| Test Execution Outcome | |

|---|---|

| Issues Created During Testing: | https://github.com/lutraconsulting/qgis-mergin-plugin/issues/231 |

| | https://github.com/lutraconsulting/qgis-mergin-plugin/issues/229 |

| | https://github.com/lutraconsulting/qgis-mergin-plugin/issues/228 |

**Success** / **Bugs Created** (erase one) | 1.0 | Mergin plugin test plan - ## Test plan for mergin plugin

| Test environment | Value |

|---|---|

| Mergin Version: | 2021.5.1 |

| Mergin URL: <> | public.cloudmergin.com |

| QGIS Version: | 3.16 LTR |

| Mergin plugin Version: | 2021.2.1 |

| Date of Execution: | 4.5.2021 |

---

### Test Cases

- [X] ( #1 ) TC 01: Installation

- [X] ( #2 ) TC 02: Configuration

- [X] ( #2 ) TC 03: New project

- [X] ( #4 ) TC 04: Project management

- [X] ( #5 ) TC 05: Project permissions

- [X] ( #6 ) TC 06: Project tree

---

| Test Execution Outcome | |

|---|---|

| Issues Created During Testing: | https://github.com/lutraconsulting/qgis-mergin-plugin/issues/231 |

| | https://github.com/lutraconsulting/qgis-mergin-plugin/issues/229 |

| | https://github.com/lutraconsulting/qgis-mergin-plugin/issues/228 |

**Success** / **Bugs Created** (erase one) | test | mergin plugin test plan test plan for mergin plugin test environment value mergin version mergin url public cloudmergin com qgis version lte mergin plugin version date of execution test cases tc installation tc configuration tc new project tc project management tc project permissions tc project tree test execution outcome issues created during testing success bugs created erase one | 1 |

293,768 | 22,087,724,733 | IssuesEvent | 2022-06-01 01:35:59 | supabase/gotrue | https://api.github.com/repos/supabase/gotrue | closed | Enable Discussions | documentation | I don't know what "JAM projects" means, was hoping to ask in the discussions... but it doesn't look like it's set up. Would you consider enabling discussions for these kinds of questions? | 1.0 | Enable Discussions - I don't know what "JAM projects" means, was hoping to ask in the discussions... but it doesn't look like it's set up. Would you consider enabling discussions for these kinds of questions? | non_test | enable discussions i don t know what jam projects means was hoping to ask in the discussions but it doesn t look like it s set up would you consider enabling discussions for these kinds of questions | 0 |

207,246 | 15,798,782,111 | IssuesEvent | 2021-04-02 19:25:59 | hashicorp/terraform-provider-aws | https://api.github.com/repos/hashicorp/terraform-provider-aws | closed | Panic at Terraform 0.15-beta: can't use ElementIterator on unknown value | bug crash prerelease-tf-testing terraform-0.15 tests upstream-terraform | <!---

Please note the following potential times when an issue might be in Terraform core:

* [Configuration Language](https://www.terraform.io/docs/configuration/index.html) or resource ordering issues

* [State](https://www.terraform.io/docs/state/index.html) and [State Backend](https://www.terraform.io/docs/backends/index.html) issues

* [Provisioner](https://www.terraform.io/docs/provisioners/index.html) issues

* [Registry](https://registry.terraform.io/) issues

* Spans resources across multiple providers

If you are running into one of these scenarios, we recommend opening an issue in the [Terraform core repository](https://github.com/hashicorp/terraform/) instead.

--->

<!--- Please keep this note for the community --->

### Community Note

* Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original issue to help the community and maintainers prioritize this request

* Please do not leave "+1" or other comments that do not add relevant new information or questions, they generate extra noise for issue followers and do not help prioritize the request

* If you are interested in working on this issue or have submitted a pull request, please leave a comment

<!--- Thank you for keeping this note for the community --->

### Terraform CLI and Terraform AWS Provider Version

Terraform v0.15.0-beta2

AWS provider v3.32.0

### Affected Resources/Data Sources

<!--- Please list the affected resources and data sources. --->

* ds/aws_elasticsearch_domain

* r/aws_appsync_datasource

* r/aws_elasticsearch_domain_policy

* r/aws_kinesis_firehose_delivery_stream

* r/aws_opsworks_application

* r/aws_opsworks_custom_layer

* r/aws_opsworks_ganglia_layer

* r/aws_opsworks_haproxy_layer

* r/aws_opsworks_instance

* r/aws_opsworks_java_app_layer

* r/aws_opsworks_memcached_layer

* r/aws_opsworks_mysql_layer

* r/aws_opsworks_nodejs_app_layer

* r/aws_opsworks_permission

* r/aws_opsworks_php_app_layer

* r/aws_opsworks_rails_app_layer

* r/aws_opsworks_rds_db_instance

* r/aws_opsworks_static_web_layer

### Failing tests

1. TestAccAwsAppsyncDatasource_ElasticsearchConfig_Region

1. TestAccAwsAppsyncDatasource_Type_Elasticsearch

1. TestAccAWSDataElasticsearchDomain_basic

1. TestAccAWSElasticSearchDomainPolicy_basic

1. TestAccAWSKinesisFirehoseDeliveryStream_ElasticsearchConfigEndpointUpdates

1. TestAccAWSKinesisFirehoseDeliveryStream_ElasticsearchConfigUpdates

1. TestAccAWSKinesisFirehoseDeliveryStream_ElasticsearchWithVpcConfigUpdates

1. TestAccAWSOpsworksApplication_basic

1. TestAccAWSOpsworksCustomLayer_basic

1. TestAccAWSOpsworksCustomLayer_noVPC

1. TestAccAWSOpsworksCustomLayer_tags

1. TestAccAWSOpsworksGangliaLayer_basic

1. TestAccAWSOpsworksGangliaLayer_tags

1. TestAccAWSOpsworksHAProxyLayer_basic

1. TestAccAWSOpsworksHAProxyLayer_tags

1. TestAccAWSOpsworksInstance_basic

1. TestAccAWSOpsworksInstance_UpdateHostNameForceNew

1. TestAccAWSOpsworksJavaAppLayer_basic

1. TestAccAWSOpsworksJavaAppLayer_tags

1. TestAccAWSOpsworksMemcachedLayer_basic

1. TestAccAWSOpsworksMemcachedLayer_tags

1. TestAccAWSOpsworksMysqlLayer_basic

1. TestAccAWSOpsworksMysqlLayer_tags

1. TestAccAWSOpsworksNodejsAppLayer_basic

1. TestAccAWSOpsworksNodejsAppLayer_tags

1. TestAccAWSOpsworksPermission_basic

1. TestAccAWSOpsworksPermission_Self

1. TestAccAWSOpsworksPhpAppLayer_basic

1. TestAccAWSOpsworksPhpAppLayer_tags

1. TestAccAWSOpsworksRailsAppLayer_basic

1. TestAccAWSOpsworksRailsAppLayer_tags

1. TestAccAWSOpsworksRdsDbInstance_basic

1. TestAccAWSOpsworksStaticWebLayer_basic

1. TestAccAWSOpsworksStaticWebLayer_tags

### Terraform Configuration Files

See individual tests.

### Panic Output

```

data_source_aws_elasticsearch_domain_test.go:17: Step 1/1 error: Error running pre-apply refresh: exit status 2

panic: can't use ElementIterator on unknown value

goroutine 120 [running]:

github.com/zclconf/go-cty/cty.Value.ElementIterator(0x2c020b8, 0xc001896e40, 0x23a6120, 0x3caae20, 0xc00069a2a0, 0x19)

/go/pkg/mod/github.com/zclconf/go-cty@v1.8.1/cty/value_ops.go:1113 +0x13b

github.com/hashicorp/terraform/terraform.getValMarks(0xc00124c330, 0x2c02128, 0xc001897370, 0x23cfa00, 0xc002a1adb0, 0x0, 0x0, 0x0, 0x4010100, 0x0, ...)

/home/circleci/project/project/terraform/evaluate.go:992 +0x6c5

github.com/hashicorp/terraform/terraform.markProviderSensitiveAttributes(0xc00124c330, 0x2c02128, 0xc001897370, 0x23cfa00, 0xc002a1adb0, 0x2c02128, 0xc001897370, 0x23cfa00, 0xc002a1adb0)

/home/circleci/project/project/terraform/evaluate.go:959 +0x6e

github.com/hashicorp/terraform/terraform.(*evaluationStateData).GetResource(0xc000456fc0, 0x4d, 0xc000058708, 0x18, 0xc0001a69c8, 0x4, 0xc0000581e0, 0x18, 0x3d, 0x11, ...)

/home/circleci/project/project/terraform/evaluate.go:762 +0xbd5

github.com/hashicorp/terraform/lang.(*Scope).evalContext(0xc001eb1f90, 0xc0017dc3f0, 0x1, 0x1, 0x0, 0x0, 0x0, 0x0, 0x0, 0x30)

/home/circleci/project/project/lang/eval.go:360 +0x206d

github.com/hashicorp/terraform/lang.(*Scope).EvalContext(...)

/home/circleci/project/project/lang/eval.go:238

github.com/hashicorp/terraform/lang.(*Scope).EvalBlock(0xc001eb1f90, 0x2c00bb8, 0xc002997470, 0xc0017221b0, 0x1, 0x1, 0x0, 0x0, 0x0, 0x0, ...)

/home/circleci/project/project/lang/eval.go:51 +0xf3

github.com/hashicorp/terraform/terraform.(*BuiltinEvalContext).EvaluateBlock(0xc001144b60, 0x2c00e58, 0xc002997470, 0xc0017221b0, 0x0, 0x0, 0x0, 0x0, 0x0, 0x0, ...)

/home/circleci/project/project/terraform/eval_context_builtin.go:273 +0x1ad

github.com/hashicorp/terraform/terraform.(*NodeAbstractResourceInstance).planDataSource(0xc000452000, 0x2c37770, 0xc001144b60, 0x0, 0x1, 0x1, 0x0, 0x0, 0x0)

/home/circleci/project/project/terraform/node_resource_abstract_instance.go:1350 +0x515

github.com/hashicorp/terraform/terraform.(*NodePlannableResourceInstance).dataResourceExecute(0xc0018964e0, 0x2c37770, 0xc001144b60, 0xc000000001, 0xc0007a3118, 0xc002565c80)

/home/circleci/project/project/terraform/node_resource_plan_instance.go:73 +0x478

github.com/hashicorp/terraform/terraform.(*NodePlannableResourceInstance).Execute(0xc0018964e0, 0x2c37770, 0xc001144b60, 0xc000180002, 0xc002565d18, 0x40bb05, 0x2418a80)

/home/circleci/project/project/terraform/node_resource_plan_instance.go:43 +0x10d

github.com/hashicorp/terraform/terraform.(*ContextGraphWalker).Execute(0xc00039fc80, 0x2c37770, 0xc001144b60, 0x7f1682310508, 0xc0018964e0, 0x0, 0x0, 0x0)

/home/circleci/project/project/terraform/graph_walk_context.go:127 +0xbf

github.com/hashicorp/terraform/terraform.(*Graph).walk.func1(0x2745d00, 0xc0018964e0, 0x0, 0x0, 0x0)

/home/circleci/project/project/terraform/graph.go:59 +0xba2

github.com/hashicorp/terraform/dag.(*Walker).walkVertex(0xc001149ec0, 0x2745d00, 0xc0018964e0, 0xc001267900)

/home/circleci/project/project/dag/walk.go:381 +0x288

created by github.com/hashicorp/terraform/dag.(*Walker).Update

/home/circleci/project/project/dag/walk.go:304 +0x1246

--- FAIL: TestAccAWSDataElasticsearchDomain_basic (3.37s)

```

### References

<!---

Information about referencing Github Issues: https://help.github.com/articles/basic-writing-and-formatting-syntax/#referencing-issues-and-pull-requests

Are there any other GitHub issues (open or closed) or pull requests that should be linked here? Vendor documentation? For example:

--->

* hashicorp/terraform#28180

| 2.0 | Panic at Terraform 0.15-beta: can't use ElementIterator on unknown value - <!---

Please note the following potential times when an issue might be in Terraform core:

* [Configuration Language](https://www.terraform.io/docs/configuration/index.html) or resource ordering issues

* [State](https://www.terraform.io/docs/state/index.html) and [State Backend](https://www.terraform.io/docs/backends/index.html) issues

* [Provisioner](https://www.terraform.io/docs/provisioners/index.html) issues

* [Registry](https://registry.terraform.io/) issues

* Spans resources across multiple providers

If you are running into one of these scenarios, we recommend opening an issue in the [Terraform core repository](https://github.com/hashicorp/terraform/) instead.

--->

<!--- Please keep this note for the community --->

### Community Note

* Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original issue to help the community and maintainers prioritize this request

* Please do not leave "+1" or other comments that do not add relevant new information or questions, they generate extra noise for issue followers and do not help prioritize the request

* If you are interested in working on this issue or have submitted a pull request, please leave a comment

<!--- Thank you for keeping this note for the community --->

### Terraform CLI and Terraform AWS Provider Version

Terraform v0.15.0-beta2

AWS provider v3.32.0

### Affected Resources/Data Sources

<!--- Please list the affected resources and data sources. --->

* ds/aws_elasticsearch_domain

* r/aws_appsync_datasource

* r/aws_elasticsearch_domain_policy

* r/aws_kinesis_firehose_delivery_stream

* r/aws_opsworks_application

* r/aws_opsworks_custom_layer

* r/aws_opsworks_ganglia_layer

* r/aws_opsworks_haproxy_layer

* r/aws_opsworks_instance

* r/aws_opsworks_java_app_layer

* r/aws_opsworks_memcached_layer

* r/aws_opsworks_mysql_layer

* r/aws_opsworks_nodejs_app_layer

* r/aws_opsworks_permission

* r/aws_opsworks_php_app_layer

* r/aws_opsworks_rails_app_layer

* r/aws_opsworks_rds_db_instance

* r/aws_opsworks_static_web_layer

### Failing tests

1. TestAccAwsAppsyncDatasource_ElasticsearchConfig_Region

1. TestAccAwsAppsyncDatasource_Type_Elasticsearch

1. TestAccAWSDataElasticsearchDomain_basic

1. TestAccAWSElasticSearchDomainPolicy_basic

1. TestAccAWSKinesisFirehoseDeliveryStream_ElasticsearchConfigEndpointUpdates

1. TestAccAWSKinesisFirehoseDeliveryStream_ElasticsearchConfigUpdates

1. TestAccAWSKinesisFirehoseDeliveryStream_ElasticsearchWithVpcConfigUpdates

1. TestAccAWSOpsworksApplication_basic

1. TestAccAWSOpsworksCustomLayer_basic

1. TestAccAWSOpsworksCustomLayer_noVPC

1. TestAccAWSOpsworksCustomLayer_tags

1. TestAccAWSOpsworksGangliaLayer_basic

1. TestAccAWSOpsworksGangliaLayer_tags

1. TestAccAWSOpsworksHAProxyLayer_basic

1. TestAccAWSOpsworksHAProxyLayer_tags

1. TestAccAWSOpsworksInstance_basic

1. TestAccAWSOpsworksInstance_UpdateHostNameForceNew

1. TestAccAWSOpsworksJavaAppLayer_basic

1. TestAccAWSOpsworksJavaAppLayer_tags

1. TestAccAWSOpsworksMemcachedLayer_basic

1. TestAccAWSOpsworksMemcachedLayer_tags

1. TestAccAWSOpsworksMysqlLayer_basic

1. TestAccAWSOpsworksMysqlLayer_tags

1. TestAccAWSOpsworksNodejsAppLayer_basic

1. TestAccAWSOpsworksNodejsAppLayer_tags

1. TestAccAWSOpsworksPermission_basic

1. TestAccAWSOpsworksPermission_Self

1. TestAccAWSOpsworksPhpAppLayer_basic

1. TestAccAWSOpsworksPhpAppLayer_tags

1. TestAccAWSOpsworksRailsAppLayer_basic

1. TestAccAWSOpsworksRailsAppLayer_tags

1. TestAccAWSOpsworksRdsDbInstance_basic

1. TestAccAWSOpsworksStaticWebLayer_basic

1. TestAccAWSOpsworksStaticWebLayer_tags

### Terraform Configuration Files

See individual tests.

### Panic Output

```

data_source_aws_elasticsearch_domain_test.go:17: Step 1/1 error: Error running pre-apply refresh: exit status 2

panic: can't use ElementIterator on unknown value

goroutine 120 [running]:

github.com/zclconf/go-cty/cty.Value.ElementIterator(0x2c020b8, 0xc001896e40, 0x23a6120, 0x3caae20, 0xc00069a2a0, 0x19)

/go/pkg/mod/github.com/zclconf/go-cty@v1.8.1/cty/value_ops.go:1113 +0x13b

github.com/hashicorp/terraform/terraform.getValMarks(0xc00124c330, 0x2c02128, 0xc001897370, 0x23cfa00, 0xc002a1adb0, 0x0, 0x0, 0x0, 0x4010100, 0x0, ...)

/home/circleci/project/project/terraform/evaluate.go:992 +0x6c5

github.com/hashicorp/terraform/terraform.markProviderSensitiveAttributes(0xc00124c330, 0x2c02128, 0xc001897370, 0x23cfa00, 0xc002a1adb0, 0x2c02128, 0xc001897370, 0x23cfa00, 0xc002a1adb0)

/home/circleci/project/project/terraform/evaluate.go:959 +0x6e

github.com/hashicorp/terraform/terraform.(*evaluationStateData).GetResource(0xc000456fc0, 0x4d, 0xc000058708, 0x18, 0xc0001a69c8, 0x4, 0xc0000581e0, 0x18, 0x3d, 0x11, ...)

/home/circleci/project/project/terraform/evaluate.go:762 +0xbd5

github.com/hashicorp/terraform/lang.(*Scope).evalContext(0xc001eb1f90, 0xc0017dc3f0, 0x1, 0x1, 0x0, 0x0, 0x0, 0x0, 0x0, 0x30)

/home/circleci/project/project/lang/eval.go:360 +0x206d

github.com/hashicorp/terraform/lang.(*Scope).EvalContext(...)

/home/circleci/project/project/lang/eval.go:238

github.com/hashicorp/terraform/lang.(*Scope).EvalBlock(0xc001eb1f90, 0x2c00bb8, 0xc002997470, 0xc0017221b0, 0x1, 0x1, 0x0, 0x0, 0x0, 0x0, ...)

/home/circleci/project/project/lang/eval.go:51 +0xf3

github.com/hashicorp/terraform/terraform.(*BuiltinEvalContext).EvaluateBlock(0xc001144b60, 0x2c00e58, 0xc002997470, 0xc0017221b0, 0x0, 0x0, 0x0, 0x0, 0x0, 0x0, ...)

/home/circleci/project/project/terraform/eval_context_builtin.go:273 +0x1ad

github.com/hashicorp/terraform/terraform.(*NodeAbstractResourceInstance).planDataSource(0xc000452000, 0x2c37770, 0xc001144b60, 0x0, 0x1, 0x1, 0x0, 0x0, 0x0)

/home/circleci/project/project/terraform/node_resource_abstract_instance.go:1350 +0x515

github.com/hashicorp/terraform/terraform.(*NodePlannableResourceInstance).dataResourceExecute(0xc0018964e0, 0x2c37770, 0xc001144b60, 0xc000000001, 0xc0007a3118, 0xc002565c80)

/home/circleci/project/project/terraform/node_resource_plan_instance.go:73 +0x478

github.com/hashicorp/terraform/terraform.(*NodePlannableResourceInstance).Execute(0xc0018964e0, 0x2c37770, 0xc001144b60, 0xc000180002, 0xc002565d18, 0x40bb05, 0x2418a80)

/home/circleci/project/project/terraform/node_resource_plan_instance.go:43 +0x10d

github.com/hashicorp/terraform/terraform.(*ContextGraphWalker).Execute(0xc00039fc80, 0x2c37770, 0xc001144b60, 0x7f1682310508, 0xc0018964e0, 0x0, 0x0, 0x0)

/home/circleci/project/project/terraform/graph_walk_context.go:127 +0xbf

github.com/hashicorp/terraform/terraform.(*Graph).walk.func1(0x2745d00, 0xc0018964e0, 0x0, 0x0, 0x0)

/home/circleci/project/project/terraform/graph.go:59 +0xba2

github.com/hashicorp/terraform/dag.(*Walker).walkVertex(0xc001149ec0, 0x2745d00, 0xc0018964e0, 0xc001267900)

/home/circleci/project/project/dag/walk.go:381 +0x288

created by github.com/hashicorp/terraform/dag.(*Walker).Update

/home/circleci/project/project/dag/walk.go:304 +0x1246

--- FAIL: TestAccAWSDataElasticsearchDomain_basic (3.37s)

```

### References

<!---

Information about referencing Github Issues: https://help.github.com/articles/basic-writing-and-formatting-syntax/#referencing-issues-and-pull-requests

Are there any other GitHub issues (open or closed) or pull requests that should be linked here? Vendor documentation? For example:

--->

* hashicorp/terraform#28180

| test | panic at terraform beta can t use elementiterator on unknown value please note the following potential times when an issue might be in terraform core or resource ordering issues and issues issues issues spans resources across multiple providers if you are running into one of these scenarios we recommend opening an issue in the instead community note please vote on this issue by adding a 👍 to the original issue to help the community and maintainers prioritize this request please do not leave or other comments that do not add relevant new information or questions they generate extra noise for issue followers and do not help prioritize the request if you are interested in working on this issue or have submitted a pull request please leave a comment terraform cli and terraform aws provider version terraform aws provider affected resources data sources ds aws elasticsearch domain r aws appsync datasource r aws elasticsearch domain policy r aws kinesis firehose delivery stream r aws opsworks application r aws opsworks custom layer r aws opsworks ganglia layer r aws opsworks haproxy layer r aws opsworks instance r aws opsworks java app layer r aws opsworks memcached layer r aws opsworks mysql layer r aws opsworks nodejs app layer r aws opsworks permission r aws opsworks php app layer r aws opsworks rails app layer r aws opsworks rds db instance r aws opsworks static web layer failing tests testaccawsappsyncdatasource elasticsearchconfig region testaccawsappsyncdatasource type elasticsearch testaccawsdataelasticsearchdomain basic testaccawselasticsearchdomainpolicy basic testaccawskinesisfirehosedeliverystream elasticsearchconfigendpointupdates testaccawskinesisfirehosedeliverystream elasticsearchconfigupdates testaccawskinesisfirehosedeliverystream elasticsearchwithvpcconfigupdates testaccawsopsworksapplication basic testaccawsopsworkscustomlayer basic testaccawsopsworkscustomlayer novpc testaccawsopsworkscustomlayer tags testaccawsopsworksganglialayer basic 
testaccawsopsworksganglialayer tags testaccawsopsworkshaproxylayer basic testaccawsopsworkshaproxylayer tags testaccawsopsworksinstance basic testaccawsopsworksinstance updatehostnameforcenew testaccawsopsworksjavaapplayer basic testaccawsopsworksjavaapplayer tags testaccawsopsworksmemcachedlayer basic testaccawsopsworksmemcachedlayer tags testaccawsopsworksmysqllayer basic testaccawsopsworksmysqllayer tags testaccawsopsworksnodejsapplayer basic testaccawsopsworksnodejsapplayer tags testaccawsopsworkspermission basic testaccawsopsworkspermission self testaccawsopsworksphpapplayer basic testaccawsopsworksphpapplayer tags testaccawsopsworksrailsapplayer basic testaccawsopsworksrailsapplayer tags testaccawsopsworksrdsdbinstance basic testaccawsopsworksstaticweblayer basic testaccawsopsworksstaticweblayer tags terraform configuration files see individual tests panic output data source aws elasticsearch domain test go step error error running pre apply refresh exit status panic can t use elementiterator on unknown value goroutine github com zclconf go cty cty value elementiterator go pkg mod github com zclconf go cty cty value ops go github com hashicorp terraform terraform getvalmarks home circleci project project terraform evaluate go github com hashicorp terraform terraform markprovidersensitiveattributes home circleci project project terraform evaluate go github com hashicorp terraform terraform evaluationstatedata getresource home circleci project project terraform evaluate go github com hashicorp terraform lang scope evalcontext home circleci project project lang eval go github com hashicorp terraform lang scope evalcontext home circleci project project lang eval go github com hashicorp terraform lang scope evalblock home circleci project project lang eval go github com hashicorp terraform terraform builtinevalcontext evaluateblock home circleci project project terraform eval context builtin go github com hashicorp terraform terraform nodeabstractresourceinstance 
plandatasource home circleci project project terraform node resource abstract instance go github com hashicorp terraform terraform nodeplannableresourceinstance dataresourceexecute home circleci project project terraform node resource plan instance go github com hashicorp terraform terraform nodeplannableresourceinstance execute home circleci project project terraform node resource plan instance go github com hashicorp terraform terraform contextgraphwalker execute home circleci project project terraform graph walk context go github com hashicorp terraform terraform graph walk home circleci project project terraform graph go github com hashicorp terraform dag walker walkvertex home circleci project project dag walk go created by github com hashicorp terraform dag walker update home circleci project project dag walk go fail testaccawsdataelasticsearchdomain basic references information about referencing github issues are there any other github issues open or closed or pull requests that should be linked here vendor documentation for example hashicorp terraform | 1 |

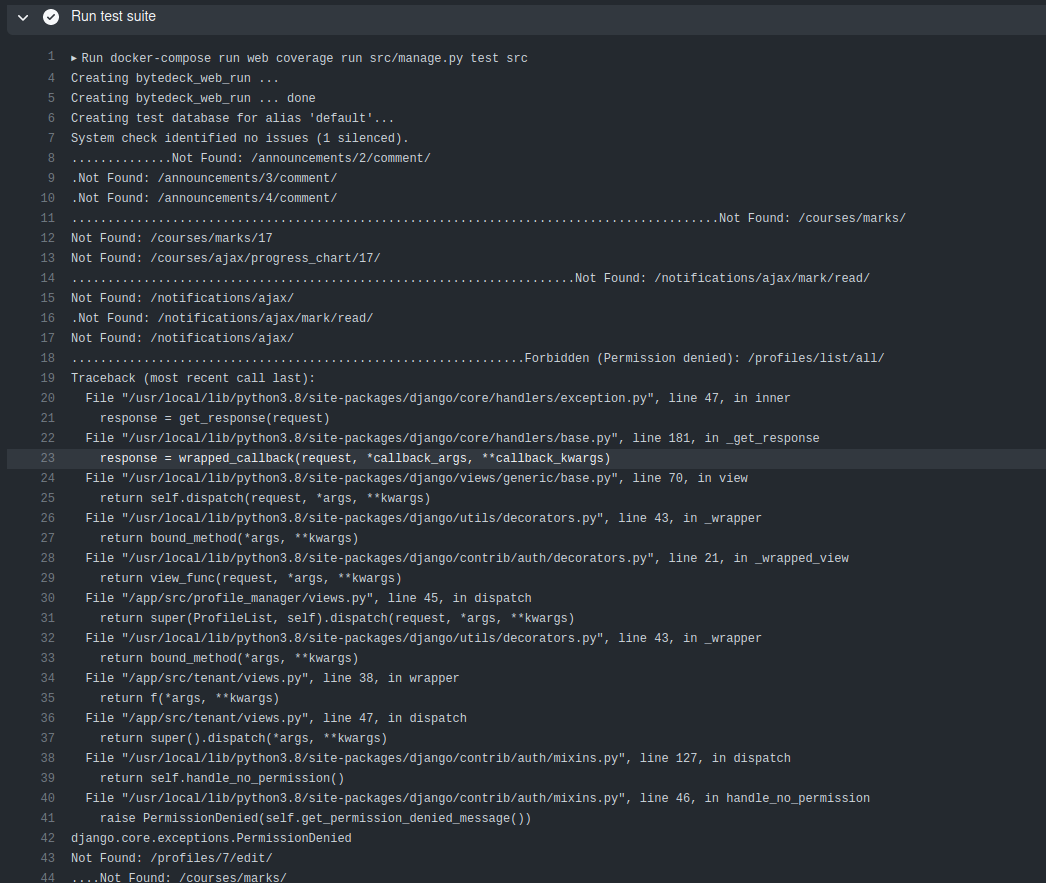

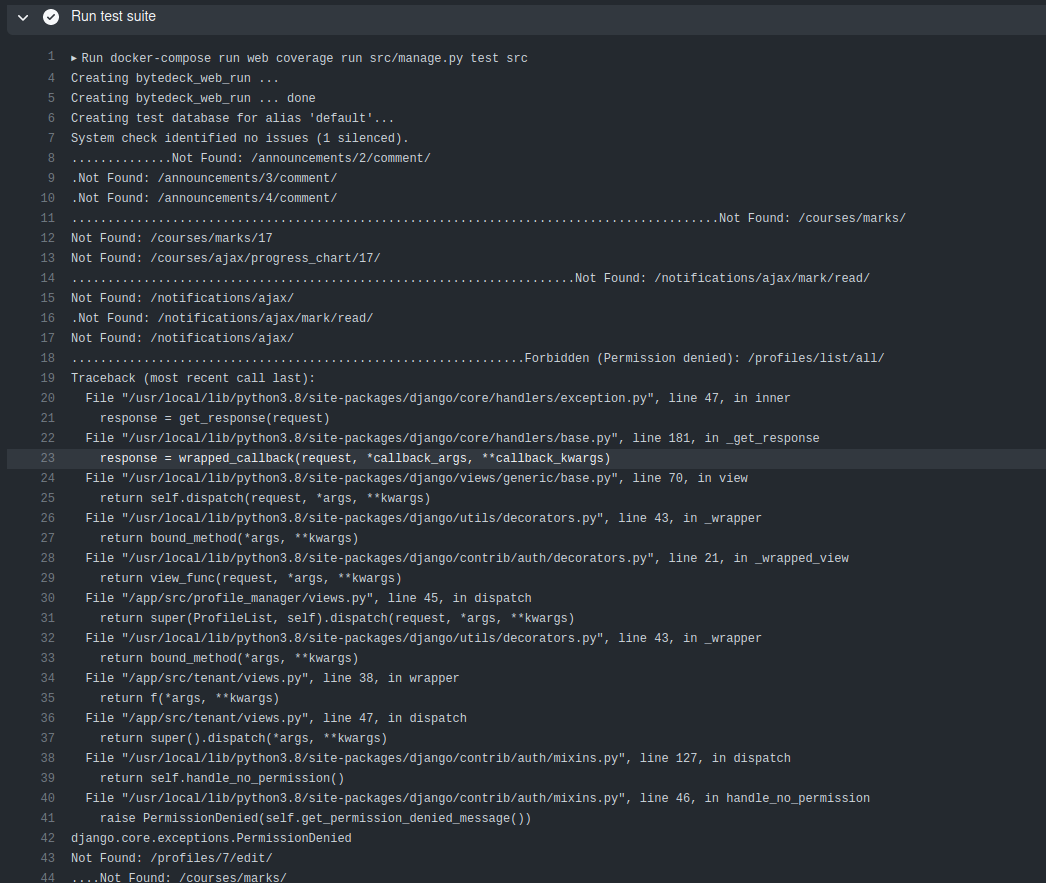

266,996 | 23,272,596,095 | IssuesEvent | 2022-08-05 01:58:14 | bytedeck/bytedeck | https://api.github.com/repos/bytedeck/bytedeck | closed | Pages not found and PermissionDenied exception in tests | testing devops | This has been going on as far back as I can check old test runs. Note that I was not getting these missing pages or permission errors locally until I deleted and recreated my venv, so it's possible there is some problem with a version of a module we are using in our requirements.txt ?

This is the oldest run that still has data, and it's showing the same thing (Click run test suite to view it)

https://github.com/bytedeck/bytedeck/runs/6438655994?check_suite_focus=true

| 1.0 | Pages not found and PermissionDenied exception in tests - This has been going on as far back as I can check old test runs. Note that I was not getting these missing pages or permission errors locally until I deleted and recreated my venv, so it's possible there is some problem with a version of a module we are using in our requirements.txt ?

This is the oldest run that still has data, and it's showing the same thing (Click run test suite to view it)

https://github.com/bytedeck/bytedeck/runs/6438655994?check_suite_focus=true

| test | pages not found and permissiondenied exception in tests this has been going on as far back as i can check old test runs note that i was not getting these missing pages or permission errors locally until i deleted and recreated my venv so it s possible there is some problem with a version of a module we are using in our requirements txt this is the oldest run that still has data and it s showing the same thing click run test suite to view it | 1 |

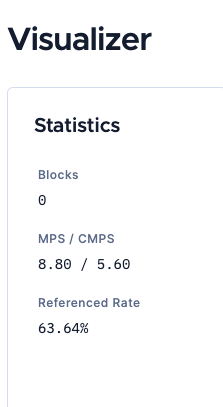

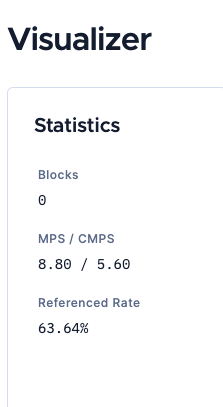

262,804 | 22,960,919,320 | IssuesEvent | 2022-07-19 15:18:25 | iotaledger/explorer | https://api.github.com/repos/iotaledger/explorer | opened | [Task]: Update Visualizer info labels | network:testnet network:shimmer context:visualizer | ### Task description

On Shimmer Visualiser the stats still show M as in Message in MPS / CMPS.

We should update it to B as in Block for stardust Networks.

### Requirements

N/A

### Acceptance criteria

N/A

### Creation checklist

- [X] I have assigned this task to the correct people

- [X] I have added the most appropriate labels

- [X] I have linked the correct milestone and/or project | 1.0 | [Task]: Update Visualizer info labels - ### Task description

On Shimmer Visualiser the stats still show M as in Message in MPS / CMPS.

We should update it to B as in Block for stardust Networks.

### Requirements

N/A

### Acceptance criteria

N/A

### Creation checklist

- [X] I have assigned this task to the correct people

- [X] I have added the most appropriate labels

- [X] I have linked the correct milestone and/or project | test | update visualizer info labels task description on shimmer visualiser the stats still show m as in message in mps cmps we should update it to b as in block for stardust networks requirements n a acceptance criteria n a creation checklist i have assigned this task to the correct people i have added the most appropriate labels i have linked the correct milestone and or project | 1 |

74,762 | 7,440,498,548 | IssuesEvent | 2018-03-27 10:16:30 | kettanaito/react-advanced-form | https://api.github.com/repos/kettanaito/react-advanced-form | closed | Integration: Set fast type speed and see form serialization | tests | ## Environment

* **react-advanced-form:** 1.0.7

## What

See issue's title.

## Why

Selenium tests show that sometimes a form is serialized with only the first characters entered into the field. That needs to be investigated. | 1.0 | Integration: Set fast type speed and see form serialization - ## Environment

* **react-advanced-form:** 1.0.7

## What

See issue's title.

## Why

Selenium tests show that sometimes a form serialized with only the first characters entered into the field. That needs to be investigated. | test | integration set fast type speed and see form serialization environment react advanaced form what see issue s title why selenium tests show that sometimes a form serialized with only the first characters entered into the field that needs to be investigated | 1 |

155,889 | 12,281,106,513 | IssuesEvent | 2020-05-08 15:15:54 | d-r-q/qbit | https://api.github.com/repos/d-r-q/qbit | opened | Add child to parent tree test | api choose enhancement refactoring research tests | Requires API change to allow user to persist several entities in single call

Add test for factoring of tree of

```kotlin

data class ChildToParent(val id: Long?, val parent: ChildToParent?)

``` | 1.0 | Add child to parent tree test - Requires API change to allow user to persist several entities in single call

Add test for factoring of tree of

```kotlin

data class ChildToParent(val id: Long?, val parent: ChildToParent?)