Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 4 to 112) | repo_url (string, length 33 to 141) | action (string, 3 classes) | title (string, length 1 to 1.02k) | labels (string, length 4 to 1.54k) | body (string, length 1 to 262k) | index (string, 17 classes) | text_combine (string, length 95 to 262k) | label (string, 2 classes) | text (string, length 96 to 252k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

29,909 | 5,705,517,578 | IssuesEvent | 2017-04-18 08:45:49 | orbisgis/orbisgis | https://api.github.com/repos/orbisgis/orbisgis | closed | Wiki for the WPS process creation | Documentation Orbistoolbox WPS | Explain in the wiki how to build its own WPS script with the annotation, example ...

| 1.0 | Wiki for the WPS process creation - Explain in the wiki how to build its own WPS script with the annotation, example ...

| non_test | wiki for the wps process creation explain in the wiki how to build its own wps script with the annotation example | 0 |

24,344 | 11,031,190,574 | IssuesEvent | 2019-12-06 17:12:33 | brunobuzzi/BpmFlow | https://api.github.com/repos/brunobuzzi/BpmFlow | closed | Improve script analyzer for BpmScripts | enhancement security | The problem now is that a script can send message to any class then at runtime the execution of a script will be only limited by the security policy at GemStone level.

There should be a way to define valid and invalid classes to have more control of scripts execution.

Maybe also valid and invalid selectors. | True | Improve script analyzer for BpmScripts - The problem now is that a script can send message to any class then at runtime the execution of a script will be only limited by the security policy at GemStone level.

There should be a way to define valid and invalid classes to have more control of scripts execution.

Maybe also valid and invalid selectors. | non_test | improve script analyzer for bpmscripts the problem now is that a script can send message to any class then at runtime the execution of a script will be only limited by the security policy at gemstone level there should be a way to define valid and invalid classes to have more control of scripts execution maybe also valid and invalid selectors | 0 |
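The request in the record above (valid and invalid classes, and possibly selectors, for script execution) can be illustrated with a minimal sketch. It assumes that a script's message sends have already been extracted as (receiver class, selector) pairs. The function name, the pair representation, and the sample class and selector names are all hypothetical and are not part of BpmFlow or GemStone.

```python
from typing import Iterable, List, Set, Tuple

# Hypothetical representation: one (receiver class name, selector) pair per message send.
MessageSend = Tuple[str, str]


def find_violations(
    sends: Iterable[MessageSend],
    allowed_classes: Set[str],
    forbidden_selectors: Set[str],
) -> List[Tuple[str, str, str]]:
    """Return (class, selector, reason) for every send the policy rejects."""
    violations = []
    for class_name, selector in sends:
        if class_name not in allowed_classes:
            # Class allowlist: anything not explicitly permitted is rejected.
            violations.append((class_name, selector, "class not allowed"))
        elif selector in forbidden_selectors:
            # Selector denylist: applied only to sends whose class passed the allowlist.
            violations.append((class_name, selector, "selector not allowed"))
    return violations


# Illustrative policy and script; every name here is made up for the example.
sample_sends = [("Invoice", "total"), ("FileStore", "deleteAll"), ("Invoice", "purge")]
sample_violations = find_violations(
    sample_sends,
    allowed_classes={"Invoice"},
    forbidden_selectors={"purge"},
)
```

An allowlist for classes combined with a denylist for selectors is only one possible policy; the issue leaves the exact rules open.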

326,355 | 27,986,153,379 | IssuesEvent | 2023-03-26 18:13:17 | Sars9588/mywebclass-simulation | https://api.github.com/repos/Sars9588/mywebclass-simulation | closed | Testing clicking Privacy Policy Button in navigation bar | Test | Name of Test Developer: Meet

Test Name: clicking Privacy Policy Button in the navigation bar on the home page

Test Type: Button Click

| 1.0 | Testing clicking Privacy Policy Button in navigation bar - Name of Test Developer: Meet

Test Name: clicking Privacy Policy Button in the navigation bar on the home page

Test Type: Button Click

| test | testing clicking privacy policy button in navigation bar name of test developer meet test name clicking privacy policy button in the navigation bar on the home page test type button click | 1 |

197,067 | 14,907,005,596 | IssuesEvent | 2021-01-22 02:02:22 | gladiatorsprogramming1591/InfiniteRecharge2020-PBot | https://api.github.com/repos/gladiatorsprogramming1591/InfiniteRecharge2020-PBot | closed | Make sure migration retained all robot functionality. | testing | Test the code from e6c5af7af73cc670943f71f7a5f838027e767a31 to make sure it is the same as 15419c0fc4bee5ee55ce0a58bc204429e94149d4 (last commit on 2020 WPILib). | 1.0 | Make sure migration retained all robot functionality. - Test the code from e6c5af7af73cc670943f71f7a5f838027e767a31 to make sure it is the same as 15419c0fc4bee5ee55ce0a58bc204429e94149d4 (last commit on 2020 WPILib). | test | make sure migration retained all robot functionality test the code from to make sure it is the same as last commit on wpilib | 1 |

113,089 | 11,787,522,438 | IssuesEvent | 2020-03-17 14:10:28 | nest/nest-simulator | https://api.github.com/repos/nest/nest-simulator | opened | Create template for documentation issues | C: Documentation I: No breaking change P: Pending S: Low T: Enhancement | **Is your feature request related to a problem? Please describe.**

On https://github.com/nest/nest-simulator/issues/new/choose we currently have templates for bugs, feature requests and vulnerabilities, but not for errors or weaknesses related to documentation. This is problematic, since the other templates do not fit documentation very well.

**Describe the solution you'd like**

Create a template tailored to documentation-related issues.

| 1.0 | Create template for documentation issues - **Is your feature request related to a problem? Please describe.**

On https://github.com/nest/nest-simulator/issues/new/choose we currently have templates for bugs, feature requests and vulnerabilities, but not for errors or weaknesses related to documentation. This is problematic, since the other templates do not fit documentation very well.

**Describe the solution you'd like**

Create a template tailored to documentation-related issues.

| non_test | create template for documentation issues is your feature request related to a problem please describe on we currently have templates for bugs feature requests and vulnerabilities but not for errors or weaknesses related to documentation this is problematic since the other templates do not fit documentation very well describe the solution you d like create a template tailored to documentation related issues | 0 |

11,324 | 16,984,358,566 | IssuesEvent | 2021-06-30 12:51:14 | streamlink/streamlink | https://api.github.com/repos/streamlink/streamlink | closed | Streams kept buffering and ending on VLC for no reason | does not meet requirements more info required question | <!--

Thanks for reporting a bug!

USE THE TEMPLATE. Otherwise your bug report may be rejected.

First, see the contribution guidelines:

https://github.com/streamlink/streamlink/blob/master/CONTRIBUTING.md#contributing-to-streamlink

Bugs are the result of broken functionality within Streamlink's main code base. Use the plugin issue template if your report is about a broken plugin.

Also check the list of open and closed bug reports:

https://github.com/streamlink/streamlink/issues?q=is%3Aissue+label%3A%22bug%22

Please see the text preview to avoid unnecessary formatting errors.

-->

## Bug Report

<!-- Replace the space character between the square brackets with an x in order to check the boxes -->

- [ ] This is a bug report and I have read the contribution guidelines.

- [ ] I am using the latest development version from the master branch.

### Description

Most of the streams I'm trying to watch through VLC kept lagging and closing for no reason, and this isn't just with the plugin, but also in the Twitch GUI.

### Expected / Actual behavior

I expect the streams to play normally in VLC. But they've been lagging a lot and then they stopped playing and ended, and it's very annoying to no end.

### Reproduction steps / Explicit stream URLs to test

<!-- How can we reproduce this? Please note the exact steps below using the list format supplied. If you need more steps please add them. -->

1. ...

2. ...

3. ...

### Log output

<!--

DEBUG LOG OUTPUT IS REQUIRED for a bug report!

INCLUDE THE ENTIRE COMMAND LINE and make sure to **remove usernames and passwords**

Use the `--loglevel debug` parameter and avoid using parameters which suppress log output.

Debug log includes important details about your platform. Don't remove it.

https://streamlink.github.io/latest/cli.html#cmdoption-loglevel

You can copy the output to https://gist.github.com/ or paste it below.

Don't post screenshots of the log output and instead copy the text from your terminal application.

-->

```

REPLACE THIS TEXT WITH THE LOG OUTPUT

All log output should go between two blocks of triple backticks (grave accents) for proper formatting.

```

### Additional comments, etc.

[Love Streamlink? Please consider supporting our collective. Thanks!](https://opencollective.com/streamlink/donate)

| 1.0 | Streams kept buffering and ending on VLC for no reason - <!--

Thanks for reporting a bug!

USE THE TEMPLATE. Otherwise your bug report may be rejected.

First, see the contribution guidelines:

https://github.com/streamlink/streamlink/blob/master/CONTRIBUTING.md#contributing-to-streamlink

Bugs are the result of broken functionality within Streamlink's main code base. Use the plugin issue template if your report is about a broken plugin.

Also check the list of open and closed bug reports:

https://github.com/streamlink/streamlink/issues?q=is%3Aissue+label%3A%22bug%22

Please see the text preview to avoid unnecessary formatting errors.

-->

## Bug Report

<!-- Replace the space character between the square brackets with an x in order to check the boxes -->

- [ ] This is a bug report and I have read the contribution guidelines.

- [ ] I am using the latest development version from the master branch.

### Description

Most of the streams I'm trying to watch through VLC kept lagging and closing for no reason, and this isn't just with the plugin, but also in the Twitch GUI.

### Expected / Actual behavior

I expect the streams to play normally in VLC. But they've been lagging a lot and then they stopped playing and ended, and it's very annoying to no end.

### Reproduction steps / Explicit stream URLs to test

<!-- How can we reproduce this? Please note the exact steps below using the list format supplied. If you need more steps please add them. -->

1. ...

2. ...

3. ...

### Log output

<!--

DEBUG LOG OUTPUT IS REQUIRED for a bug report!

INCLUDE THE ENTIRE COMMAND LINE and make sure to **remove usernames and passwords**

Use the `--loglevel debug` parameter and avoid using parameters which suppress log output.

Debug log includes important details about your platform. Don't remove it.

https://streamlink.github.io/latest/cli.html#cmdoption-loglevel

You can copy the output to https://gist.github.com/ or paste it below.

Don't post screenshots of the log output and instead copy the text from your terminal application.

-->

```

REPLACE THIS TEXT WITH THE LOG OUTPUT

All log output should go between two blocks of triple backticks (grave accents) for proper formatting.

```

### Additional comments, etc.

[Love Streamlink? Please consider supporting our collective. Thanks!](https://opencollective.com/streamlink/donate)

| non_test | streams kept buffering and ending on vlc for no reason thanks for reporting a bug use the template otherwise your bug report may be rejected first see the contribution guidelines bugs are the result of broken functionality within streamlink s main code base use the plugin issue template if your report is about a broken plugin also check the list of open and closed bug reports please see the text preview to avoid unnecessary formatting errors bug report this is a bug report and i have read the contribution guidelines i am using the latest development version from the master branch description most of the streams i m trying to watch through vlc kept lagging and closing for no reason and this isn t just with the plugin but also in the twitch gui expected actual behavior i expect the streams to play normally in vlc but they ve been lagging a lot and then they stopped playing and ended and it s very annoying to no end reproduction steps explicit stream urls to test log output debug log output is required for a bug report include the entire command line and make sure to remove usernames and passwords use the loglevel debug parameter and avoid using parameters which suppress log output debug log includes important details about your platform don t remove it you can copy the output to or paste it below don t post screenshots of the log output and instead copy the text from your terminal application replace this text with the log output all log output should go between two blocks of triple backticks grave accents for proper formatting additional comments etc | 0 |

28,334 | 4,387,805,826 | IssuesEvent | 2016-08-08 16:53:08 | red/red | https://api.github.com/repos/red/red | closed | "Internal error: stack overflow" when parsing big string data | status.built status.tested type.bug | Using xml parser from https://github.com/Zamlox/red-tools/blob/master/xml/xml.red on file http://filebin.ca/2qrYaePtAywP/test.xml will give following error:

```

*** Internal Error: stack overflow

*** Where: copy

```

Sequence to reproduce:

```

content: read %test.xml

probe xml/to-block content

``` | 1.0 | "Internal error: stack overflow" when parsing big string data - Using xml parser from https://github.com/Zamlox/red-tools/blob/master/xml/xml.red on file http://filebin.ca/2qrYaePtAywP/test.xml will give following error:

```

*** Internal Error: stack overflow

*** Where: copy

```

Sequence to reproduce:

```

content: read %test.xml

probe xml/to-block content

``` | test | internal error stack overflow when parsing big string data using xml parser from on file will give following error internal error stack overflow where copy sequence to reproduce content read test xml probe xml to block content | 1 |

139,811 | 11,286,359,089 | IssuesEvent | 2020-01-16 00:22:00 | eventespresso/event-espresso-core | https://api.github.com/repos/eventespresso/event-espresso-core | closed | Write Tests for Application Hooks | EDTR Prototype category:unit-tests | - [ ] application/hooks/useIfMounted

- [ ] application/hooks/useTimeZoneTime | 1.0 | Write Tests for Application Hooks - - [ ] application/hooks/useIfMounted

- [ ] application/hooks/useTimeZoneTime | test | write tests for application hooks application hooks useifmounted application hooks usetimezonetime | 1 |

23,441 | 7,329,998,637 | IssuesEvent | 2018-03-05 08:17:17 | moment/moment | https://api.github.com/repos/moment/moment | closed | Missing files when installing with JSPM 0.17.0-beta.14 | Build/Release | When installing Moment.js via JSPM v0.17.0-beta.14 by `jspm install moment` I miss the following files in the downloaded package:

```

moment.d.ts

package.json

```

and the `min` folder's empty.

I suggest to add these files to `package.json`:

```

"jspm": {

"buildConfig": {

"uglify": true

},

"files": [

"package.json",

"moment.js",

"moment.d.ts",

"locale",

"min"

],

"map": {

"moment": "./moment"

}

},

```

Thanks!

P.S.: PR?

| 1.0 | Missing files when installing with JSPM 0.17.0-beta.14 - When installing Moment.js via JSPM v0.17.0-beta.14 by `jspm install moment` I miss the following files in the downloaded package:

```

moment.d.ts

package.json

```

and the `min` folder's empty.

I suggest to add these files to `package.json`:

```

"jspm": {

"buildConfig": {

"uglify": true

},

"files": [

"package.json",

"moment.js",

"moment.d.ts",

"locale",

"min"

],

"map": {

"moment": "./moment"

}

},

```

Thanks!

P.S.: PR?

| non_test | missing files when installing with jspm beta when installing moment js via jspm beta by jspm install moment i miss the following files in the downloaded package moment d ts package json and the min folder s empty i suggest to add these files to package json jspm buildconfig uglify true files package json moment js moment d ts locale min map moment moment thanks p s pr | 0 |

224,053 | 17,657,824,304 | IssuesEvent | 2021-08-21 00:05:31 | nasa/cFE | https://api.github.com/repos/nasa/cFE | closed | SB coverage test - need to verify MsgSendErrorCounter increments when msg too big | unit-test | **Is your feature request related to a problem? Please describe.**

Missing verification of MsgSendErrorCounter increment on message too big (requirement)

**Describe the solution you'd like**

Add verification

**Describe alternatives you've considered**

None

**Additional context**

None

**Requester Info**

Jacob Hageman - NASA/GSFC

| 1.0 | SB coverage test - need to verify MsgSendErrorCounter increments when msg too big - **Is your feature request related to a problem? Please describe.**

Missing verification of MsgSendErrorCounter increment on message too big (requirement)

**Describe the solution you'd like**

Add verification

**Describe alternatives you've considered**

None

**Additional context**

None

**Requester Info**

Jacob Hageman - NASA/GSFC

| test | sb coverage test need to verify msgsenderrorcounter increments when msg too big is your feature request related to a problem please describe missing verification of msgsenderrorcounter increment on message too big requirement describe the solution you d like add verification describe alternatives you ve considered none additional context none requester info jacob hageman nasa gsfc | 1 |

2,653 | 5,430,470,388 | IssuesEvent | 2017-03-03 21:20:29 | dotnet/corefx | https://api.github.com/repos/dotnet/corefx | closed | Ubuntu 16.04 outerloop debug - System.Diagnostics.Tests.ProcessWaitingTests.WaitChain failed with "Xunit.Sdk.EqualException" | area-System.Diagnostics.Process test bug test-run-core | Failed test: System.Diagnostics.Tests.ProcessWaitingTests.WaitChain

Detail: https://ci.dot.net/job/dotnet_corefx/job/master/job/outerloop_ubuntu16.04_debug/92/consoleText

Message:

~~~

System.Diagnostics.Tests.ProcessWaitingTests.WaitChain [FAIL]

Assert.Equal() Failure

Expected: 42

Actual: 145

~~~

Stack Trace:

~~~

/mnt/j/workspace/dotnet_corefx/master/outerloop_ubuntu16.04_debug/src/System.Diagnostics.Process/tests/ProcessWaitingTests.cs(190,0): at System.Diagnostics.Tests.ProcessWaitingTests.WaitChain()

~~~

Configuration:

OuterLoop_Ubuntu16.04_debug (build#92) | 1.0 | Ubuntu 16.04 outerloop debug - System.Diagnostics.Tests.ProcessWaitingTests.WaitChain failed with "Xunit.Sdk.EqualException" - Failed test: System.Diagnostics.Tests.ProcessWaitingTests.WaitChain

Detail: https://ci.dot.net/job/dotnet_corefx/job/master/job/outerloop_ubuntu16.04_debug/92/consoleText

Message:

~~~

System.Diagnostics.Tests.ProcessWaitingTests.WaitChain [FAIL]

Assert.Equal() Failure

Expected: 42

Actual: 145

~~~

Stack Trace:

~~~

/mnt/j/workspace/dotnet_corefx/master/outerloop_ubuntu16.04_debug/src/System.Diagnostics.Process/tests/ProcessWaitingTests.cs(190,0): at System.Diagnostics.Tests.ProcessWaitingTests.WaitChain()

~~~

Configuration:

OuterLoop_Ubuntu16.04_debug (build#92) | non_test | ubuntu outerloop debug system diagnostics tests processwaitingtests waitchain failed with xunit sdk equalexception failed test system diagnostics tests processwaitingtests waitchain detail message system diagnostics tests processwaitingtests waitchain assert equal failure expected actual stack trace mnt j workspace dotnet corefx master outerloop debug src system diagnostics process tests processwaitingtests cs at system diagnostics tests processwaitingtests waitchain configuration outerloop debug build | 0 |

690,878 | 23,675,767,170 | IssuesEvent | 2022-08-28 03:36:26 | angelside/zebra-password-changer-cli-py | https://api.github.com/repos/angelside/zebra-password-changer-cli-py | closed | 🔖 Invalid password message enhancement | priority: low status: pending type: enhancement | From

`"Please enter a 4 digit number!"`

to

`"Password is invalid! Please enter a 4 digit number."` | 1.0 | 🔖 Invalid password message enhancement - From

`"Please enter a 4 digit number!"`

to

`"Password is invalid! Please enter a 4 digit number."` | non_test | 🔖 invalid password message enhancement from please enter a digit number to password is invalid please enter a digit number | 0 |
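As a rough illustration of the message change requested in the record above, the sketch below validates a 4-digit password and returns the proposed message on failure. The function and constant names are assumptions made for this example and do not come from the zebra-password-changer-cli-py code base.

```python
import re
from typing import Optional

# Message text taken from the issue; the constant name is hypothetical.
INVALID_PASSWORD_MESSAGE = "Password is invalid! Please enter a 4 digit number."


def validate_password(candidate: str) -> Optional[str]:
    """Return None when the candidate is exactly four ASCII digits, else the error message."""
    if re.fullmatch(r"[0-9]{4}", candidate):
        return None
    return INVALID_PASSWORD_MESSAGE
```

Returning the message instead of printing it keeps the check easy to test; a CLI could print the returned string and ask for input again.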

75,764 | 15,495,568,909 | IssuesEvent | 2021-03-11 01:05:38 | ignatandrei/SimpleBookRental | https://api.github.com/repos/ignatandrei/SimpleBookRental | opened | CVE-2020-7693 (Medium) detected in sockjs-0.3.19.tgz | security vulnerability | ## CVE-2020-7693 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>sockjs-0.3.19.tgz</b></p></summary>

<p>SockJS-node is a server counterpart of SockJS-client a JavaScript library that provides a WebSocket-like object in the browser. SockJS gives you a coherent, cross-browser, Javascript API which creates a low latency, full duplex, cross-domain communication</p>

<p>Library home page: <a href="https://registry.npmjs.org/sockjs/-/sockjs-0.3.19.tgz">https://registry.npmjs.org/sockjs/-/sockjs-0.3.19.tgz</a></p>

<p>Path to dependency file: SimpleBookRental/src/WebDashboard/book-dashboard/package.json</p>

<p>Path to vulnerable library: SimpleBookRental/src/WebDashboardNG/book-dash/node_modules/sockjs/package.json,SimpleBookRental/src/WebDashboardNG/book-dash/node_modules/sockjs/package.json</p>

<p>

Dependency Hierarchy:

- react-scripts-3.3.0.tgz (Root Library)

- webpack-dev-server-3.9.0.tgz

- :x: **sockjs-0.3.19.tgz** (Vulnerable Library)

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Incorrect handling of Upgrade header with the value websocket leads in crashing of containers hosting sockjs apps. This affects the package sockjs before 0.3.20.

<p>Publish Date: 2020-07-09

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-7693>CVE-2020-7693</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.3</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: Low

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/sockjs/sockjs-node/pull/265">https://github.com/sockjs/sockjs-node/pull/265</a></p>

<p>Release Date: 2020-07-09</p>

<p>Fix Resolution: sockjs - 0.3.20</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2020-7693 (Medium) detected in sockjs-0.3.19.tgz - ## CVE-2020-7693 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>sockjs-0.3.19.tgz</b></p></summary>

<p>SockJS-node is a server counterpart of SockJS-client a JavaScript library that provides a WebSocket-like object in the browser. SockJS gives you a coherent, cross-browser, Javascript API which creates a low latency, full duplex, cross-domain communication</p>

<p>Library home page: <a href="https://registry.npmjs.org/sockjs/-/sockjs-0.3.19.tgz">https://registry.npmjs.org/sockjs/-/sockjs-0.3.19.tgz</a></p>

<p>Path to dependency file: SimpleBookRental/src/WebDashboard/book-dashboard/package.json</p>

<p>Path to vulnerable library: SimpleBookRental/src/WebDashboardNG/book-dash/node_modules/sockjs/package.json,SimpleBookRental/src/WebDashboardNG/book-dash/node_modules/sockjs/package.json</p>

<p>

Dependency Hierarchy:

- react-scripts-3.3.0.tgz (Root Library)

- webpack-dev-server-3.9.0.tgz

- :x: **sockjs-0.3.19.tgz** (Vulnerable Library)

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Incorrect handling of Upgrade header with the value websocket leads in crashing of containers hosting sockjs apps. This affects the package sockjs before 0.3.20.

<p>Publish Date: 2020-07-09

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-7693>CVE-2020-7693</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.3</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: Low

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/sockjs/sockjs-node/pull/265">https://github.com/sockjs/sockjs-node/pull/265</a></p>

<p>Release Date: 2020-07-09</p>

<p>Fix Resolution: sockjs - 0.3.20</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_test | cve medium detected in sockjs tgz cve medium severity vulnerability vulnerable library sockjs tgz sockjs node is a server counterpart of sockjs client a javascript library that provides a websocket like object in the browser sockjs gives you a coherent cross browser javascript api which creates a low latency full duplex cross domain communication library home page a href path to dependency file simplebookrental src webdashboard book dashboard package json path to vulnerable library simplebookrental src webdashboardng book dash node modules sockjs package json simplebookrental src webdashboardng book dash node modules sockjs package json dependency hierarchy react scripts tgz root library webpack dev server tgz x sockjs tgz vulnerable library vulnerability details incorrect handling of upgrade header with the value websocket leads in crashing of containers hosting sockjs apps this affects the package sockjs before publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact low for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution sockjs step up your open source security game with whitesource | 0 |

87,580 | 17,332,103,223 | IssuesEvent | 2021-07-28 04:50:39 | BeccaLyria/discord-bot | https://api.github.com/repos/BeccaLyria/discord-bot | closed | [OTHER] - D-API V8 | 💻 aspect: code 🚧 status: blocked | # Other Issue

## Describe the issue

`discord.js` uses `v6` of the Discord API. This is fine, for the time being. However, at some point next year `v6` will be deprecated and the bot will need to transition to `v8`. (There's a story behind v7 being skipped...)

For now, **NO ACTION IS NEEDED** on this issue. I'm creating this as a reminder to keep an eye out for the next `major` release of `discord.js`, as this will be an upgrade to `v8`.

An important thing to keep an eye on will be the mandatory `intents`, which need to be declared in the connection string.

## Additional information

<!--Add any other context about the problem here.--> | 1.0 | [OTHER] - D-API V8 - # Other Issue

## Describe the issue

`discord.js` uses `v6` of the Discord API. This is fine, for the time being. However, at some point next year `v6` will be deprecated and the bot will need to transition to `v8`. (There's a story behind v7 being skipped...)

For now, **NO ACTION IS NEEDED** on this issue. I'm creating this as a reminder to keep an eye out for the next `major` release of `discord.js`, as this will be an upgrade to `v8`.

An important thing to keep an eye on will be the mandatory `intents`, which need to be declared in the connection string.

## Additional information

<!--Add any other context about the problem here.--> | non_test | d api other issue describe the issue discord js uses of the discord api this is fine for the time being however at some point next year will be deprecated and the bot will need to transition to there s a story behind being skipped for now no action is needed on this issue i m creating this as a reminder to keep an eye out for the next major release of discord js as this will be an upgrade to an important thing to keep an eye on will be the mandatory intents which need to be declared in the connection string additional information | 0 |

44,327 | 5,796,453,793 | IssuesEvent | 2017-05-02 19:30:28 | phetsims/states-of-matter | https://api.github.com/repos/phetsims/states-of-matter | closed | Items to Include in Teacher Tips | design:teaching-resources priority:2-high | From the Java Tips (some rewording necessary)

- [x] For solid water, the sim simplifies the model emphasizing that there is space between the

molecules. A resource for the most common visual for ice structure is

http://www1.lsbu.ac.uk/water/hexagonal_ice.html

- [x] The phase diagrams are shown qualitatively in the sim, to help students get a general

understanding of phase diagrams. Quantitative phase diagrams are shown for water, neon,

argon and oxygen on page 2 of these Tips. (Elaborate on this to address https://github.com/phetsims/states-of-matter/issues/168#issuecomment-264053333.)

- [x] The sim is not designed to be used as a comprehensive tool for learning about phase

diagrams, instead the focus is on phases of matter. The small number of particles shown and

the simplicity of the underlying models makes it difficult to map accurately the exact phase to

the correct regions of the phase diagram. However, we felt there would be some benefit to

students being exposed to a simplified phase diagram. In the sim, the diagram marker remains

on the coexistence line between liquid/gas or solid/gas (and is extrapolated into the critical

region). If this approximation does not fit your specific learning goals, and you are concerned

this might cause confusion, you can encourage your students to keep the phase diagram

closed.

Additional model simplifications

- [x] Pressure will be zero at 0K because zero motion = zero momentum transfers between the particles and the container walls = zero pressure #154

- [x] It is possible to reach absolute zero, but the rate of temperature change slows down quite substantially as 0K is approached. This is intentional, since it is very difficult to make a system of molecules this cold. True absolute zero is impossible to achieve, so this should be thought of as rounding down from anything below 0.5K.

- [x] A note that we do not include Plasma on purpose (even though it is considered a state of matter).

- [x] Some amount of gravity is simulated, but it is minimal - just enough to keep the solid forms of the substances on the floor of the container. For this reason, substances in their liquid form don't always spread out along the bottom of the container, as, say, water does in a glass.

- [x] The model works best when there are at least (roughly) 15 particles in the container. It is possible to create situations where there are only a few particles in the container and, in these situations, users may observe some odd behaviors. One example is occasional visible changes to the velocity of individual particles. If students observe such things, they should be told that this is a due to the limitations of the model, and doesn't represent "real world" phenomena.

- [x] Equilibrium states -- https://github.com/phetsims/states-of-matter/issues/168#issuecomment-264053333

- [ ] Limits of quantitative comparisons -- https://github.com/phetsims/states-of-matter/issues/168#issuecomment-264053333

- [x] Latent heat is not really being addressed (but could discuss the breaking of the crystal lattice order or such) -- https://github.com/phetsims/states-of-matter/issues/168#issuecomment-264054515

- [x] Temperature is allowed to change when particles are injected, based on the velocity of the particles -- #182

Other things to note

- [x] Bicycle pump indicator bars #167

- [x] A bit of explanation of the "Return Lid" behavior might be useful, since it might not be completely obvious: basically, it captures the particles in the container at the time, and the pump is "refilled" to a level that allows the max number of particles (I believe).

- [x] For younger students, it may be important to explain that the hand and the container are not at all to scale, since in the real world they too are made of atoms and molecules. | 1.0 | Items to Include in Teacher Tips - From the Java Tips (some rewording necessary)

- [x] For solid water, the sim simplifies the model emphasizing that there is space between the

molecules. A resource for the most common visual for ice structure is

http://www1.lsbu.ac.uk/water/hexagonal_ice.html

- [x] The phase diagrams are shown qualitatively in the sim, to help students get a general

understanding of phase diagrams. Quantitative phase diagrams are shown for water, neon,

argon and oxygen on page 2 of these Tips. (Elaborate on this to address https://github.com/phetsims/states-of-matter/issues/168#issuecomment-264053333.)

- [x] The sim is not designed to be used as a comprehensive tool for learning about phase

diagrams, instead the focus is on phases of matter. The small number of particles shown and

the simplicity of the underlying models makes it difficult to map accurately the exact phase to

the correct regions of the phase diagram. However, we felt there would be some benefit to

students being exposed to a simplified phase diagram. In the sim, the diagram marker remains

on the coexistence line between liquid/gas or solid/gas (and is extrapolated into the critical

region). If this approximation does not fit your specific learning goals, and you are concerned

this might cause confusion, you can encourage your students to keep the phase diagram

closed.

Additional model simplifications

- [x] Pressure will be zero at 0K because zero motion = zero momentum transfers between the particles and the container walls = zero pressure #154

- [x] It is possible to reach absolute zero, but the rate of temperature change slows down quite substantially as 0K is approached. This is intentional, since it is very difficult to make a system of molecules this cold. True absolute zero is impossible to achieve, so this should be thought of as rounding down from anything below 0.5K.

- [x] A note that we do not include Plasma on purpose (even though it is considered a state of matter).

- [x] Some amount of gravity is simulated, but it is minimal - just enough to keep the solid forms of the substances on the floor of the container. For this reason, substances in their liquid form don't always spread out along the bottom of the container, as, say, water does in a glass.

- [x] The model works best when there are at least (roughly) 15 particles in the container. It is possible to create situations where there are only a few particles in the container and, in these situations, users may observe some odd behaviors. One example is occasional visible changes to the velocity of individual particles. If students observe such things, they should be told that this is due to the limitations of the model and doesn't represent "real world" phenomena.

- [x] Equilibrium states -- https://github.com/phetsims/states-of-matter/issues/168#issuecomment-264053333

- [ ] Limits of quantitative comparisons -- https://github.com/phetsims/states-of-matter/issues/168#issuecomment-264053333

- [x] Latent heat is not really being addressed (but could discuss the breaking of the crystal lattice order or such) -- https://github.com/phetsims/states-of-matter/issues/168#issuecomment-264054515

- [x] Temperature is allowed to change when particles are injected, based on the velocity of the particles -- #182

Other things to note

- [x] Bicycle pump indicator bars #167

- [x] A bit of explanation of the "Return Lid" behavior might be useful, since it might not be completely obvious: basically, it captures the particles in the container at the time, and the pump is "refilled" to a level that allows the max number of particles (I believe).

- [x] For younger students, it may be important to explain that the hand and the container are not at all to scale, since in the real world they too are made of atoms and molecules. | non_test | items to include in teacher tips from the java tips some rewording necessary for solid water the sim simplifies the model emphasizing that there is space between the molecules a resource for the most common visual for ice structure is the phase diagrams are shown qualitatively in the sim to help students get a general understanding of phase diagrams quantitative phase diagrams are shown for water neon argon and oxygen on page of these tips elaborate on this to address the sim is not designed to be used as a comprehensive tool for learning about phase diagrams instead the focus is on phases of matter the small number of particles shown and the simplicity of the underlying models makes it difficult to map accurately the exact phase to the correct regions of the phase diagram however we felt there would be some benefit to students being exposed to a simplified phase diagram in the sim the diagram marker remains on the coexistence line between liquid gas or solid gas and is extrapolated into the critical region if this approximation does not fit your specific learning goals and you are concerned this might cause confusion you can encourage your students to keep the phase diagram closed additional model simplifications pressure will be zero at because zero motion zero momentum transfers between the particles and the container walls zero pressure it is possible to reach absolute zero but the rate of temperature change slows down quite substantially as is approached this is intentional since it is very difficult to make a system of molecules this cold true absolute zero is impossible to achieve so this should be thought of as rounding down from anything below a note that we do not include plasma on purpose even though it is considered a state of matter some amount of gravity is 
simulated but it is minimal just enough to keep the solid forms of the substances on the floor of the container for this reason substances in their liquid form don t always spread out along the bottom of the container as say water does in a glass the model works best when there are at least roughly particles in the container it is possible to create situations where there are only a few particles in the container and in these situations users may observe some odd behaviors one example is occasional visible changes to the velocity of individual particles if students observe such things they should be told that this is a due to the limitations of the model and doesn t represent real world phenomena equilibrium states limits of quantitative comparisons latent heat is not really being addressed but could discuss the breaking of the crystal lattice order or such temperature is allowed to change when particles are injected based on the velocity of the particles other things to note bicycle pump indicator bars a bit of explanation of return lid behavior might be useful since it might not be completely obvious basically that it captures the particles in the container at the time and the pump is refilled to a level that allows the max number of particles i believe for younger students it may be important to explain that the hand and the container are not at all to scale since in the real world they too are made of atoms and molecules | 0 |
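
The zero-pressure and absolute-zero notes in the issue above can be illustrated with a small calculation. This is only a sketch under stated assumptions: it uses the ideal-gas relation P = N·k_B·T / V rather than the sim's actual model, and the function names and the 0.5 K display threshold parameter are hypothetical, taken from the wording of the issue.

```python
K_B = 1.380649e-23  # Boltzmann constant in J/K


def ideal_gas_pressure(n_particles, temperature_k, volume_m3):
    """Pressure of an ideal gas, P = N * k_B * T / V.

    Assumption: ideal gas, not the sim's particle model. With no thermal
    motion (T = 0 K) no momentum reaches the walls, so the pressure is zero.
    """
    if temperature_k < 0 or volume_m3 <= 0:
        raise ValueError("temperature must be >= 0 K and volume must be > 0")
    return n_particles * K_B * temperature_k / volume_m3


def displayed_temperature(temperature_k, threshold_k=0.5):
    """Hypothetical display rule: anything below the threshold shows as 0 K."""
    return 0.0 if temperature_k < threshold_k else temperature_k
```

For example, `ideal_gas_pressure(100, 0.0, 1e-24)` gives zero pressure regardless of the particle count, which is the behavior described for the 0 K case.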

2,844 | 8,392,143,210 | IssuesEvent | 2018-10-09 16:46:19 | qTox/qTox | https://api.github.com/repos/qTox/qTox | opened | Store per profile settings in the database | I-architecture | Currently we have a per profile *.ini file as well as a sqlite database. To reduce complexity we should move everything stored in the *.ini file to the database.

The switch has the following advantages:

- fewer files that the user has to back up

- probably easier settings upgrades

Disadvantages:

- some work

- Need to find a good db schema that allows future upgrades and allows us to change datatypes later

@Diadlo @sphaerophoria what do you think? | 1.0 | Store per profile settings in the database - Currently we have a per profile *.ini file as well as a sqlite database. To reduce complexity we should move everything stored in the *.ini file to the database.

The switch has the following advantages:

- fewer files that the user has to back up

- probably easier settings upgrades

Disadvantages:

- some work

- Need to find a good db schema that allows future upgrades and allows us to change datatypes later

@Diadlo @sphaerophoria what do you think? | non_test | store per profile settings in the database currently we have a per profile ini file as well as a sqlite database to reduce complexity we should move everything stored in the ini file to the database the switch has the following advantages less files that the user has to backup probably easier settings upgrades disadvantages some work need to find a good db schema that allows future upgrades and allows us to change datatypes later diadlo sphaerophoria what do you think | 0 |
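
One possible shape for the schema question raised in the issue above is a key-value table with a schema version, sketched here in Python with the standard `sqlite3` module. This is an assumption for illustration, not qTox's actual design: the table name `settings`, the helper names, and the JSON encoding of values are all hypothetical. Storing values as JSON text is one way to let a setting change datatype later without a table migration, and `PRAGMA user_version` gives a place to record the schema version for future upgrades.

```python
import json
import sqlite3

SCHEMA_VERSION = 1  # hypothetical version number, bumped on each schema change


def open_settings_db(path=":memory:"):
    """Open the database and create the (hypothetical) settings table."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS settings ("
        "key TEXT PRIMARY KEY, value TEXT NOT NULL)"
    )
    # PRAGMA values cannot be bound as parameters; the constant is an int.
    conn.execute(f"PRAGMA user_version = {SCHEMA_VERSION}")
    conn.commit()
    return conn


def set_setting(conn, key, value):
    """Store any JSON-serializable value under the given key."""
    conn.execute(
        "INSERT OR REPLACE INTO settings (key, value) VALUES (?, ?)",
        (key, json.dumps(value)),
    )
    conn.commit()


def get_setting(conn, key, default=None):
    """Return the decoded value, or the default if the key is absent."""
    row = conn.execute(
        "SELECT value FROM settings WHERE key = ?", (key,)
    ).fetchone()
    return default if row is None else json.loads(row[0])
```

The trade-off of this design is that the database cannot enforce per-setting types; a column-per-setting table would, at the cost of a migration for every new or changed setting.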

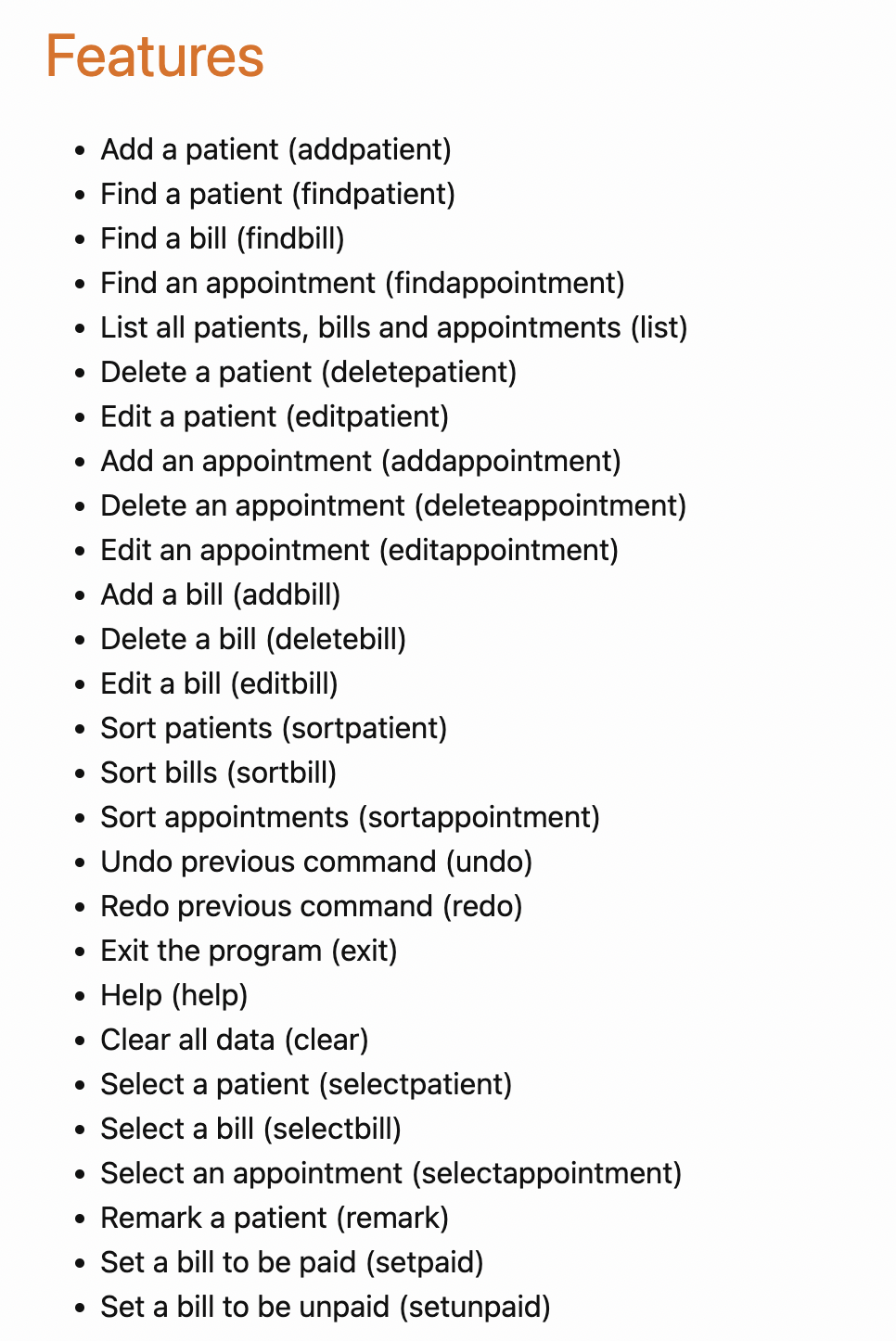

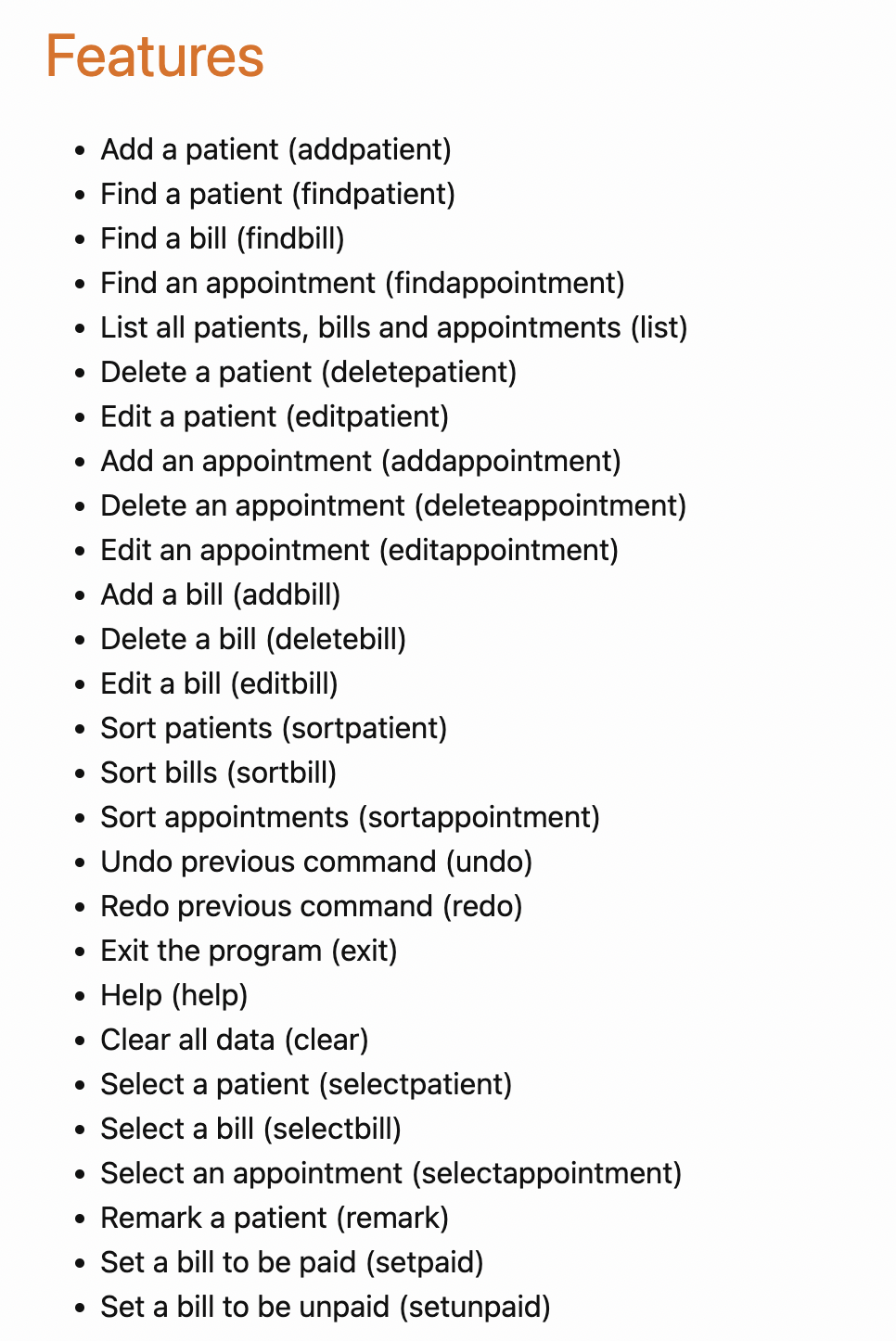

723,833 | 24,908,908,228 | IssuesEvent | 2022-10-29 16:08:23 | AY2223S1-CS2103T-W08-1/tp | https://api.github.com/repos/AY2223S1-CS2103T-W08-1/tp | closed | [PE-D][Tester F] User guide has a feature in the top overview that is unimplemented | low priority | - Select bill unimplemented

<!--session: 1666945444121-db087f50-d06e-4bb8-900f-4a9e5dacf5ba--><!--Version: Web v3.4.4-->

-------------

Labels: `type.DocumentationBug` `severity.Medium`

original: yunruu/ped#7 | 1.0 | [PE-D][Tester F] User guide has a feature in the top overview that is unimplemented - - Select bill unimplemented

<!--session: 1666945444121-db087f50-d06e-4bb8-900f-4a9e5dacf5ba--><!--Version: Web v3.4.4-->

-------------

Labels: `type.DocumentationBug` `severity.Medium`

original: yunruu/ped#7 | non_test | user guide has a feature in the top overview that is unimplemented select bill unimplemented labels type documentationbug severity medium original yunruu ped | 0 |

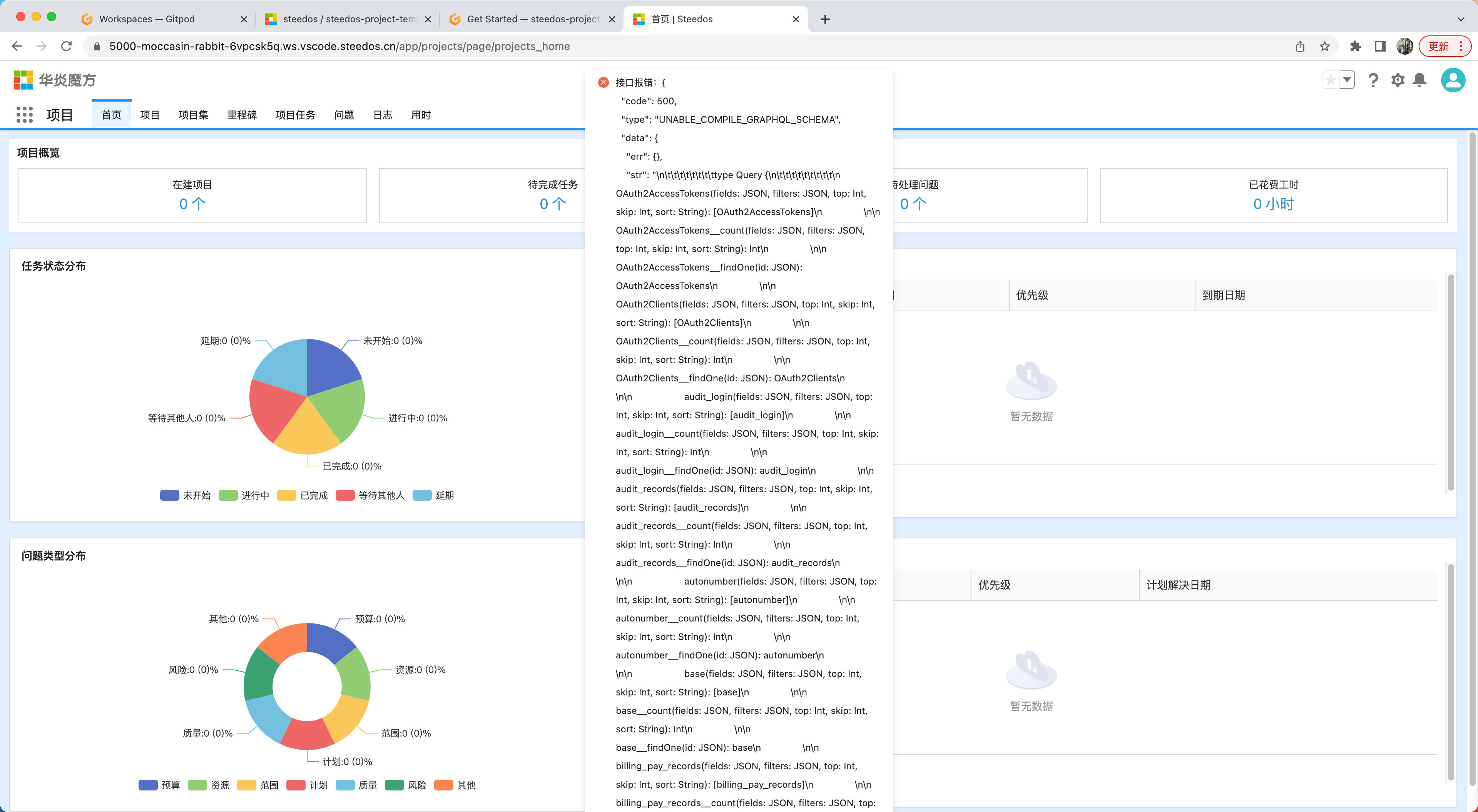

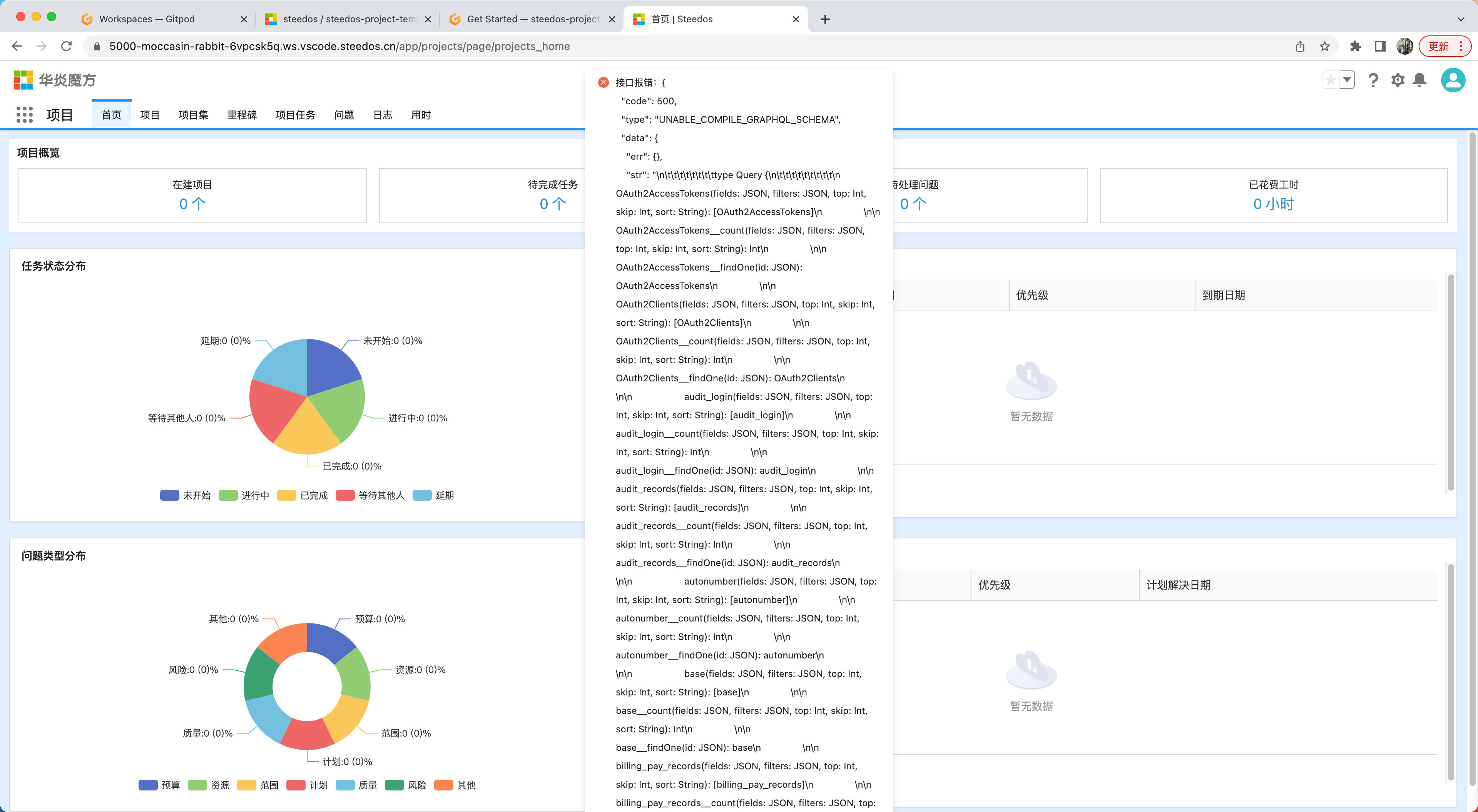

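745,002 | 25,965,250,032 | IssuesEvent | 2022-12-19 06:04:25 | steedos/steedos-platform | https://api.github.com/repos/steedos/steedos-platform | opened | Template project: package data initialization errors when initializing an environment / switching to a new database | priority: High | - [ ] Initializing a newly created environment

- [ ] Switching to a new database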

import seal error Unable to read from a snapshot due to pending collection catalog changes; please retry the operation. Snapshot timestamp is Timestamp(1671429612, 19). Collection minimum is Timestamp(1671429613, 92)

import finance_receive error Unable to read from a snapshot due to pending collection catalog changes; please retry the operation. Snapshot timestamp is Timestamp(1671429618, 4). Collection minimum is Timestamp(1671429618, 49)

import measurement_unit error Unable to read from a snapshot due to pending collection catalog changes; please retry the operation. Snapshot timestamp is Timestamp(1671429618, 56). Collection minimum is Timestamp(1671429618, 74)

import okr_objective error Unable to read from a snapshot due to pending collection catalog changes; please retry the operation. Snapshot timestamp is Timestamp(1671429619, 17). Collection minimum is Timestamp(1671429619, 53)

import contract_types error Unable to read from a snapshot due to pending collection catalog changes; please retry the operation. Snapshot timestamp is Timestamp(1671429619, 117). Collection minimum is Timestamp(1671429621, 15)

import contract_types error Unable to read from a snapshot due to pending collection catalog changes; please retry the operation. Snapshot timestamp is Timestamp(1671429621, 17). Collection minimum is Timestamp(1671429621, 22)

import meeting error Unable to read from a snapshot due to pending collection catalog changes; please retry the operation. Snapshot timestamp is Timestamp(1671429621, 54). Collection minimum is Timestamp(1671429622, 9)

import project_log error Unable to read from a snapshot due to pending collection catalog changes; please retry the operation. Snapshot timestamp is Timestamp(1671429622, 28). Collection minimum is Timestamp(1671429622, 70)

import cost_business_reimburse_detail error Unable to read from a snapshot due to pending collection catalog changes; please retry the operation. Snapshot timestamp is Timestamp(1671429623, 2). Collection minimum is Timestamp(1671429623, 15)

import finance_payment error Unable to read from a snapshot due to pending collection catalog changes; please retry the operation. Snapshot timestamp is Timestamp(1671429623, 40). Collection minimum is Timestamp(1671429623, 65)

import events error Unable to read from a snapshot due to pending collection catalog changes; please retry the operation. Snapshot timestamp is Timestamp(1671429623, 89). Collection minimum is Timestamp(1671429624, 31)

import cost_itinerary_information error Unable to read from a snapshot due to pending collection catalog changes; please retry the operation. Snapshot timestamp is Timestamp(1671429624, 38). Collection minimum is Timestamp(1671429624, 60)

import tax_rates error Unable to read from a snapshot due to pending collection catalog changes; please retry the operation. Snapshot timestamp is Timestamp(1671429624, 76). Collection minimum is Timestamp(1671429624, 101)

import currency error Unable to read from a snapshot due to pending collection catalog changes; please retry the operation. Snapshot timestamp is Timestamp(1671429625, 60). Collection minimum is Timestamp(1671429626, 13)

import currency error Unable to read from a snapshot due to pending collection catalog changes; please retry the operation. Snapshot timestamp is Timestamp(1671429626, 15). Collection minimum is Timestamp(1671429626, 16)

import cost_schedule_reimburse_detail error Unable to read from a snapshot due to pending collection catalog changes; please retry the operation. Snapshot timestamp is Timestamp(1671429626, 56). Collection minimum is Timestamp(1671429626, 71)

import project_task error Unable to read from a snapshot due to pending collection catalog changes; please retry the operation. Snapshot timestamp is Timestamp(1671429626, 96). Collection minimum is Timestamp(1671429627, 92)

This error originated either by throwing inside of an async function without a catch block, or by rejecting a promise which was not handled with .catch(). The promise rejected with the reason:

Error: [not-authorized]

at MethodInvocation.apply (meteor://💻app/packages/steedos_base/server/methods/object_workflows.coffee:8:19)

at maybeAuditArgumentChecks (meteor://💻app/packages/ddp-server/livedata_server.js:1771:12)

at meteor://💻app/packages/ddp-server/livedata_server.js:1689:15

at Meteor.EnvironmentVariable.withValue (packages/meteor.js:1234:12)

at meteor://💻app/packages/ddp-server/livedata_server.js:1687:36

at new Promise (<anonymous>)

at Server.applyAsync (meteor://💻app/packages/ddp-server/livedata_server.js:1686:12)

at Server.apply (meteor://💻app/packages/ddp-server/livedata_server.js:1625:26)

at Server.call (meteor://💻app/packages/ddp-server/livedata_server.js:1607:17)

at /workspace/steedos-project-template/node_modules/@steedos/service-ui/main/default/routes/bootstrap.router.js:159:50

at Generator.next (<anonymous>)

at fulfilled (/workspace/steedos-project-template/node_modules/tslib/tslib.js:115:62)

at /workspace/steedos-project-template/node_modules/meteor-promise/fiber_pool.js:43:39

=> awaited here:

at Promise.await (/workspace/steedos-project-template/node_modules/meteor-promise/promise_server.js:60:12)

at Server.apply (meteor://💻app/packages/ddp-server/livedata_server.js:1638:22)

at Server.call (meteor://💻app/packages/ddp-server/livedata_server.js:1607:17)

at /workspace/steedos-project-template/node_modules/@steedos/service-ui/main/default/routes/bootstrap.router.js:159:50

at Generator.next (<anonymous>)

at fulfilled (/workspace/steedos-project-template/node_modules/tslib/tslib.js:115:62)

at /workspace/steedos-project-template/node_modules/meteor-promise/fiber_pool.js:43:39

^C

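gitpod /workspace/steedos-project-template (master) $ [SIGINT]服务已停止: namespace: steedos, nodeID: ws-4fb57439-602a-4c70-a089-05d18628d988-2564 | 1.0 | Template project: package data initialization errors when initializing an environment / switching to a new database - - [ ] Initializing a newly created environment

- [ ] Switching to a new database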

import seal error Unable to read from a snapshot due to pending collection catalog changes; please retry the operation. Snapshot timestamp is Timestamp(1671429612, 19). Collection minimum is Timestamp(1671429613, 92)

import finance_receive error Unable to read from a snapshot due to pending collection catalog changes; please retry the operation. Snapshot timestamp is Timestamp(1671429618, 4). Collection minimum is Timestamp(1671429618, 49)

import measurement_unit error Unable to read from a snapshot due to pending collection catalog changes; please retry the operation. Snapshot timestamp is Timestamp(1671429618, 56). Collection minimum is Timestamp(1671429618, 74)

import okr_objective error Unable to read from a snapshot due to pending collection catalog changes; please retry the operation. Snapshot timestamp is Timestamp(1671429619, 17). Collection minimum is Timestamp(1671429619, 53)

import contract_types error Unable to read from a snapshot due to pending collection catalog changes; please retry the operation. Snapshot timestamp is Timestamp(1671429619, 117). Collection minimum is Timestamp(1671429621, 15)

import contract_types error Unable to read from a snapshot due to pending collection catalog changes; please retry the operation. Snapshot timestamp is Timestamp(1671429621, 17). Collection minimum is Timestamp(1671429621, 22)

import meeting error Unable to read from a snapshot due to pending collection catalog changes; please retry the operation. Snapshot timestamp is Timestamp(1671429621, 54). Collection minimum is Timestamp(1671429622, 9)

import project_log error Unable to read from a snapshot due to pending collection catalog changes; please retry the operation. Snapshot timestamp is Timestamp(1671429622, 28). Collection minimum is Timestamp(1671429622, 70)

import cost_business_reimburse_detail error Unable to read from a snapshot due to pending collection catalog changes; please retry the operation. Snapshot timestamp is Timestamp(1671429623, 2). Collection minimum is Timestamp(1671429623, 15)

import finance_payment error Unable to read from a snapshot due to pending collection catalog changes; please retry the operation. Snapshot timestamp is Timestamp(1671429623, 40). Collection minimum is Timestamp(1671429623, 65)

import events error Unable to read from a snapshot due to pending collection catalog changes; please retry the operation. Snapshot timestamp is Timestamp(1671429623, 89). Collection minimum is Timestamp(1671429624, 31)

import cost_itinerary_information error Unable to read from a snapshot due to pending collection catalog changes; please retry the operation. Snapshot timestamp is Timestamp(1671429624, 38). Collection minimum is Timestamp(1671429624, 60)

import tax_rates error Unable to read from a snapshot due to pending collection catalog changes; please retry the operation. Snapshot timestamp is Timestamp(1671429624, 76). Collection minimum is Timestamp(1671429624, 101)

import currency error Unable to read from a snapshot due to pending collection catalog changes; please retry the operation. Snapshot timestamp is Timestamp(1671429625, 60). Collection minimum is Timestamp(1671429626, 13)

import currency error Unable to read from a snapshot due to pending collection catalog changes; please retry the operation. Snapshot timestamp is Timestamp(1671429626, 15). Collection minimum is Timestamp(1671429626, 16)

import cost_schedule_reimburse_detail error Unable to read from a snapshot due to pending collection catalog changes; please retry the operation. Snapshot timestamp is Timestamp(1671429626, 56). Collection minimum is Timestamp(1671429626, 71)

import project_task error Unable to read from a snapshot due to pending collection catalog changes; please retry the operation. Snapshot timestamp is Timestamp(1671429626, 96). Collection minimum is Timestamp(1671429627, 92)

This error originated either by throwing inside of an async function without a catch block, or by rejecting a promise which was not handled with .catch(). The promise rejected with the reason:

Error: [not-authorized]

at MethodInvocation.apply (meteor://💻app/packages/steedos_base/server/methods/object_workflows.coffee:8:19)

at maybeAuditArgumentChecks (meteor://💻app/packages/ddp-server/livedata_server.js:1771:12)

at meteor://💻app/packages/ddp-server/livedata_server.js:1689:15

at Meteor.EnvironmentVariable.withValue (packages/meteor.js:1234:12)

at meteor://💻app/packages/ddp-server/livedata_server.js:1687:36

at new Promise (<anonymous>)

at Server.applyAsync (meteor://💻app/packages/ddp-server/livedata_server.js:1686:12)

at Server.apply (meteor://💻app/packages/ddp-server/livedata_server.js:1625:26)

at Server.call (meteor://💻app/packages/ddp-server/livedata_server.js:1607:17)

at /workspace/steedos-project-template/node_modules/@steedos/service-ui/main/default/routes/bootstrap.router.js:159:50

at Generator.next (<anonymous>)

at fulfilled (/workspace/steedos-project-template/node_modules/tslib/tslib.js:115:62)

at /workspace/steedos-project-template/node_modules/meteor-promise/fiber_pool.js:43:39

=> awaited here:

at Promise.await (/workspace/steedos-project-template/node_modules/meteor-promise/promise_server.js:60:12)

at Server.apply (meteor://💻app/packages/ddp-server/livedata_server.js:1638:22)

at Server.call (meteor://💻app/packages/ddp-server/livedata_server.js:1607:17)

at /workspace/steedos-project-template/node_modules/@steedos/service-ui/main/default/routes/bootstrap.router.js:159:50

at Generator.next (<anonymous>)

at fulfilled (/workspace/steedos-project-template/node_modules/tslib/tslib.js:115:62)

at /workspace/steedos-project-template/node_modules/meteor-promise/fiber_pool.js:43:39

^C

gitpod /workspace/steedos-project-template (master) $ [SIGINT]服务已停止: namespace: steedos, nodeID: ws-4fb57439-602a-4c70-a089-05d18628d988-2564 | non_test | template模板项目环境初始化 换新库软件包数据初始化报错 新开环境初始化 换新库 import seal error unable to read from a snapshot due to pending collection catalog changes please retry the operation snapshot timestamp is timestamp collection minimum is timestamp import finance receive error unable to read from a snapshot due to pending collection catalog changes please retry the operation snapshot timestamp is timestamp collection minimum is timestamp import measurement unit error unable to read from a snapshot due to pending collection catalog changes please retry the operation snapshot timestamp is timestamp collection minimum is timestamp import okr objective error unable to read from a snapshot due to pending collection catalog changes please retry the operation snapshot timestamp is timestamp collection minimum is timestamp import contract types error unable to read from a snapshot due to pending collection catalog changes please retry the operation snapshot timestamp is timestamp collection minimum is timestamp import contract types error unable to read from a snapshot due to pending collection catalog changes please retry the operation snapshot timestamp is timestamp collection minimum is timestamp import meeting error unable to read from a snapshot due to pending collection catalog changes please retry the operation snapshot timestamp is timestamp collection minimum is timestamp import project log error unable to read from a snapshot due to pending collection catalog changes please retry the operation snapshot timestamp is timestamp collection minimum is timestamp import cost business reimburse detail error unable to read from a snapshot due to pending collection catalog changes please retry the operation snapshot timestamp is timestamp collection minimum is timestamp import finance payment error unable to read from a snapshot due to pending 
collection catalog changes please retry the operation snapshot timestamp is timestamp collection minimum is timestamp import events error unable to read from a snapshot due to pending collection catalog changes please retry the operation snapshot timestamp is timestamp collection minimum is timestamp import cost itinerary information error unable to read from a snapshot due to pending collection catalog changes please retry the operation snapshot timestamp is timestamp collection minimum is timestamp import tax rates error unable to read from a snapshot due to pending collection catalog changes please retry the operation snapshot timestamp is timestamp collection minimum is timestamp import currency error unable to read from a snapshot due to pending collection catalog changes please retry the operation snapshot timestamp is timestamp collection minimum is timestamp import currency error unable to read from a snapshot due to pending collection catalog changes please retry the operation snapshot timestamp is timestamp collection minimum is timestamp import cost schedule reimburse detail error unable to read from a snapshot due to pending collection catalog changes please retry the operation snapshot timestamp is timestamp collection minimum is timestamp import project task error unable to read from a snapshot due to pending collection catalog changes please retry the operation snapshot timestamp is timestamp collection minimum is timestamp this error originated either by throwing inside of an async function without a catch block or by rejecting a promise which was not handled with catch the promise rejected with the reason error at methodinvocation apply meteor 💻app packages steedos base server methods object workflows coffee at maybeauditargumentchecks meteor 💻app packages ddp server livedata server js at meteor 💻app packages ddp server livedata server js at meteor environmentvariable withvalue packages meteor js at meteor 💻app packages ddp server livedata server 
js at new promise at server applyasync meteor 💻app packages ddp server livedata server js at server apply meteor 💻app packages ddp server livedata server js at server call meteor 💻app packages ddp server livedata server js at workspace steedos project template node modules steedos service ui main default routes bootstrap router js at generator next at fulfilled workspace steedos project template node modules tslib tslib js at workspace steedos project template node modules meteor promise fiber pool js awaited here at promise await workspace steedos project template node modules meteor promise promise server js at server apply meteor 💻app packages ddp server livedata server js at server call meteor 💻app packages ddp server livedata server js at workspace steedos project template node modules steedos service ui main default routes bootstrap router js at generator next at fulfilled workspace steedos project template node modules tslib tslib js at workspace steedos project template node modules meteor promise fiber pool js c gitpod workspace steedos project template master 服务已停止 namespace steedos nodeid ws | 0 |

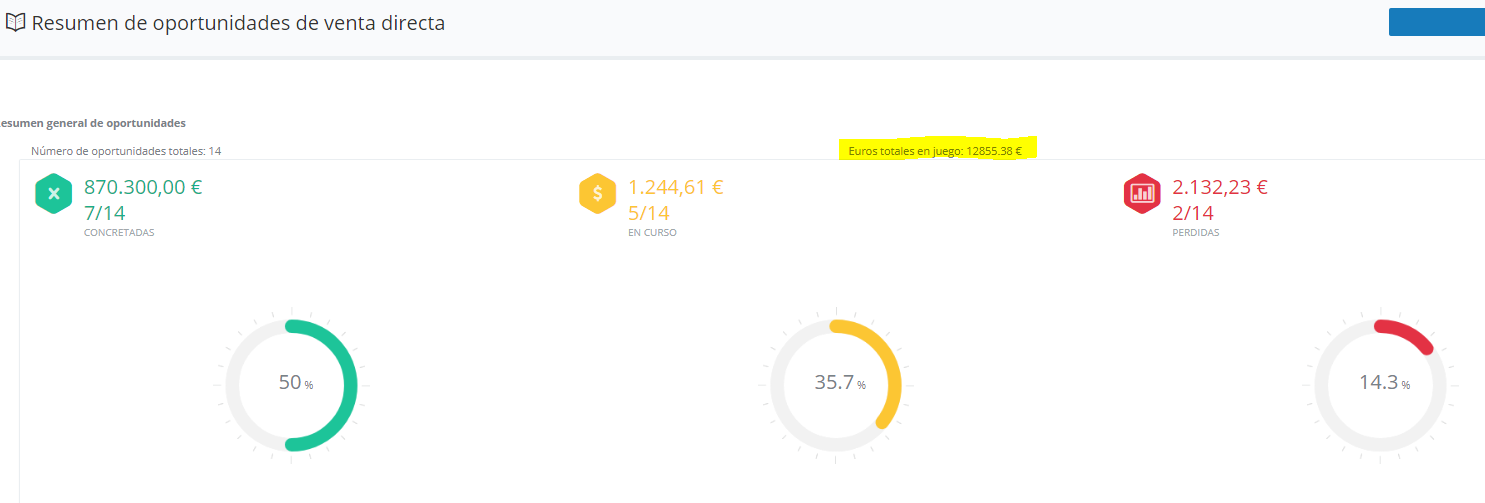

162,452 | 6,153,597,911 | IssuesEvent | 2017-06-28 10:21:39 | BinPar/PRM | https://api.github.com/repos/BinPar/PRM | opened | PRM VD: TOTAL EUROS AT STAKE, WHAT DOES IT REFER TO? | Priority: Low |

The amount in euros should have this format: ##.###,## €

@CristianBinpar @minigoBinpar @Al3xBinpar | 1.0 | PRM VD: TOTAL EUROS AT STAKE, WHAT DOES IT REFER TO? -

The amount in euros should have this format: ##.###,## €

@CristianBinpar @minigoBinpar @Al3xBinpar | non_test | prm vd euros totales en juego ¿a qué se refiere el importe en euros debiera tener este formato € cristianbinpar minigobinpar | 0 |
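
The requested amount format (`##.###,## €`, with a dot as thousands separator and a comma as decimal separator) can be sketched as below. This is a hedged illustration in Python, not code from the PRM project; the function name `format_euros` and the half-up rounding rule are assumptions.

```python
from decimal import Decimal, ROUND_HALF_UP


def format_euros(amount):
    """Format an amount as '##.###,## €' (hypothetical helper).

    Assumption: amounts are rounded half-up to two decimal places.
    """
    value = Decimal(str(amount)).quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)
    text = f"{value:,.2f}"  # English-style grouping, e.g. "12,345.67"
    # Swap the two separators in one pass to get the requested style.
    swapped = text.translate(str.maketrans(",.", ".,"))
    return f"{swapped} €"
```

Swapping the separators by hand avoids depending on which locales happen to be installed on the server, which `locale.setlocale` would require.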

59,059 | 6,627,438,611 | IssuesEvent | 2017-09-23 02:43:37 | istio/istio | https://api.github.com/repos/istio/istio | reopened | ingress error in shared cluster | bug flaky-test help wanted oncall test-failure | W0913 05:50:45.201] E0913 05:50:45.199935 892 framework.go:197] Failed to complete Init. Error unable to find ingress ip

happens sporadically (probably related to a test finishing when one is starting)

e.g.

https://k8s-gubernator.appspot.com/build/istio-prow/pull/istio_istio/739/e2e-suite-rbac-auth/524/ | 2.0 | ingress error in shared cluster - W0913 05:50:45.201] E0913 05:50:45.199935 892 framework.go:197] Failed to complete Init. Error unable to find ingress ip

happens sporadically (probably related to a test finishing when one is starting)

e.g.

https://k8s-gubernator.appspot.com/build/istio-prow/pull/istio_istio/739/e2e-suite-rbac-auth/524/ | test | ingress error in shared cluster framework go failed to complete init error unable to find ingress ip happens sporadically probably related to a test finishing when one is starting e g | 1 |

26,639 | 4,236,987,361 | IssuesEvent | 2016-07-05 20:16:23 | bireme/bvs-noticias | https://api.github.com/repos/bireme/bvs-noticias | reopened | When level 2 is not used, the content/text should fill the whole page | task testing / validating | - To make better use of the space.

- Improves navigation and viewing of the content;

- Level 2 must be kept in case the user wants to use it.

| 1.0 | When level 2 is not used, the content/text should fill the whole page - - To make better use of the space.

- Improves navigation and viewing of the content;

- Level 2 must be kept in case the user wants to use it.

| test | quando o nível não for utilizado o conteúdo texto deve ocupar toda a página para ter um melhor aproveitamento do espaço melhora a navageção e visualização do conteúdo o nível deve ser mantido caso o usuário queira utilizá lo | 1 |

623,820 | 19,680,736,971 | IssuesEvent | 2022-01-11 16:30:57 | input-output-hk/cardano-node | https://api.github.com/repos/input-output-hk/cardano-node | closed | [FR] - Add CLI command to display the leadership schedule | enhancement priority medium cli revision API&CLI-Backlog | **Describe the feature you'd like**

I would like to know my leadership schedule, at least the nearest upcoming leadership event, so I can plan my node maintenance and/or KES rotation. | 1.0 | [FR] - Add CLI command to display the leadership schedule - **Describe the feature you'd like**

I would like to know my leadership schedule, at least the nearest upcoming leadership event, so I can plan my node maintenance and/or KES rotation. | non_test | add cli command to display the leadership schedule describe the feature you d like i would like to know my leadership schedule at least the nearest upcoming leadership event so i can plan my node maintenance or and kes rotating | 0 |

186,542 | 15,075,602,314 | IssuesEvent | 2021-02-05 02:29:25 | Solobrosco/site-update | https://api.github.com/repos/Solobrosco/site-update | closed | Fix tailwind dependencies | bug documentation | Tailwind deprecated some of their features.

Update the application and start it with no warnings

npm may need some updates too... | 1.0 | Fix tailwind dependencies - Tailwind deprecated some of their features.

Update the application and start it with no warnings

npm may need some updates too... | non_test | fix tailwind dependencies tailwind depreciated some of their features update the application and start it with no warnings npm may need some updates too | 0 |

82,024 | 31,858,990,869 | IssuesEvent | 2023-09-15 09:31:26 | jOOQ/jOOQ | https://api.github.com/repos/jOOQ/jOOQ | closed | Fix DefaultRecordMapper Javadoc to reflect actual behaviour | T: Defect C: Documentation P: Medium E: All Editions | A few "recent" changes aren't reflected correctly in the [`DefaultRecordMapper` Javadoc](https://www.jooq.org/javadoc/latest/org.jooq/org/jooq/impl/DefaultRecordMapper.html):

- `ValueTypeMapper` isn't based on whether the user type `E` is a built-in type, but on whether the `ConverterProvider` can convert between `T1` (from `Record1<T1>`) and `E`

- `RecordToRecordMapper` has a higher priority than `ValueTypeMapper` (see also: https://github.com/jOOQ/jOOQ/issues/11148)

- `ProxyMapper` is listed way too far down

----

See also:

https://groups.google.com/g/jooq-user/c/XMD2Rc0kEqQ | 1.0 | Fix DefaultRecordMapper Javadoc to reflect actual behaviour - A few "recent" changes aren't reflected correctly in the [`DefaultRecordMapper` Javadoc](https://www.jooq.org/javadoc/latest/org.jooq/org/jooq/impl/DefaultRecordMapper.html):

- `ValueTypeMapper` isn't based on whether the user type `E` is a built-in type, but on whether the `ConverterProvider` can convert between `T1` (from `Record1<T1>`) and `E`

- `RecordToRecordMapper` has a higher priority than `ValueTypeMapper` (see also: https://github.com/jOOQ/jOOQ/issues/11148)

- `ProxyMapper` is listed way too far down

----

See also:

https://groups.google.com/g/jooq-user/c/XMD2Rc0kEqQ | non_test | fix defaultrecordmapper javadoc to reflect actual behaviour a few recent changes aren t reflected correctly in the valuetypemapper isn t based on whether the user type e is a built in type but on whether the converterprovider can convert between from and e recordtorecordmapper has a higher priority than valuetypemapper see also proxymapper is listed way too far down see also | 0 |

180,184 | 13,925,153,826 | IssuesEvent | 2020-10-21 16:27:40 | EnterpriseDB/edb-ansible | https://api.github.com/repos/EnterpriseDB/edb-ansible | closed | Move with_dict servers in the roles to manage and iteration of roles' tasks | In_Testing REL_1_0_1 enhancement | Currently, users need to add with_dict as given below in the playbook to use the roles.

```

- name: Iterate through repo role with items from hosts file
  include_role:
    name: setup_repo
  with_dict: "{{ servers }}"

```

It will be simpler if we can skip using with_dict in the playbook. Something like given below:

```

roles:
  - setup_repo

``` | 1.0 | Move with_dict servers in the roles to manage and iteration of roles' tasks - Currently, users need to add with_dict as given below in the playbook to use the roles.

```

- name: Iterate through repo role with items from hosts file
  include_role:
    name: setup_repo
  with_dict: "{{ servers }}"

```

It will be simpler if we can skip using with_dict in the playbook. Something like given below:

```

roles:
  - setup_repo

``` | test | move with dict servers in the roles to manage and iteration of roles tasks currently users need to add with dict as given below in the playbook to use the roles name iterate through repo role with items from hosts file include role name setup repo with dict servers it will be simpler if we can skip using with dict in the playbook something like given below roles setup repo | 1 |

132,188 | 12,500,389,738 | IssuesEvent | 2020-06-01 22:09:42 | ibm-garage-cloud/planning | https://api.github.com/repos/ibm-garage-cloud/planning | closed | Rename account administrator to account manager | Persona: SysAdmin documentation enhancement | One of the three access groups is called account administrator, created by the `terraform/scripts/acp-acct` script. Rename this role from account administrator to account manager so as to better distinguish it from the environment administrator role and to align it with the name of the main IAM policy role for this user, the `account-management` role.

Requires:

- [ ] Update docs to refer to new name

- [ ] Rename script to new name

| 1.0 | Rename account administrator to account manager - One of the three access groups is called account administrator, created by the `terraform/scripts/acp-acct` script. Rename this role from account administrator to account manager so as to better distinguish it from the environment administrator role and to align it with the name of the main IAM policy role for this user, the `account-management` role.

Requires:

- [ ] Update docs to refer to new name

- [ ] Rename script to new name

| non_test | rename account administrator to account manager one of the three access groups is called account administrator created by the terraform scripts acp acct script rename this role from account administrator to account manager so as to better distinguish it from the environment administrator role and to align it with the name of the main iam policy role for this user the account management role requires update docs to refer to new name rename script to new name | 0 |

114,442 | 17,209,458,700 | IssuesEvent | 2021-07-19 00:12:57 | turkdevops/javascript-sdk | https://api.github.com/repos/turkdevops/javascript-sdk | opened | WS-2019-0183 (Medium) detected in lodash.defaultsdeep-4.3.2.tgz | security vulnerability | ## WS-2019-0183 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>lodash.defaultsdeep-4.3.2.tgz</b></p></summary>

<p>The lodash method `_.defaultsDeep` exported as a module.</p>

<p>Library home page: <a href="https://registry.npmjs.org/lodash.defaultsdeep/-/lodash.defaultsdeep-4.3.2.tgz">https://registry.npmjs.org/lodash.defaultsdeep/-/lodash.defaultsdeep-4.3.2.tgz</a></p>

<p>Path to dependency file: javascript-sdk/package.json</p>

<p>Path to vulnerable library: javascript-sdk/node_modules/lodash.defaultsdeep/package.json</p>

<p>

Dependency Hierarchy:

- nightwatch-0.9.21.tgz (Root Library)

- :x: **lodash.defaultsdeep-4.3.2.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/turkdevops/javascript-sdk/commit/2ed96566365ee89d8a9b1250ccd7c049281ed09c">2ed96566365ee89d8a9b1250ccd7c049281ed09c</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

lodash.defaultsdeep before 4.6.1 is vulnerable to prototype pollution. The function mergeWith() may allow a malicious user to modify the prototype of Object via {constructor: {prototype: {...}}} causing the addition or modification of an existing property that will exist on all objects.

<p>Publish Date: 2019-08-14

<p>URL: <a href=https://github.com/lodash/lodash/compare/4.6.0...4.6.1>WS-2019-0183</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>4.0</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: N/A

- Attack Complexity: N/A

- Privileges Required: N/A

- User Interaction: N/A

- Scope: N/A

- Impact Metrics:

- Confidentiality Impact: N/A

- Integrity Impact: N/A

- Availability Impact: N/A

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.npmjs.com/advisories/1068">https://www.npmjs.com/advisories/1068</a></p>

<p>Release Date: 2019-08-14</p>

<p>Fix Resolution: 4.6.1</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | WS-2019-0183 (Medium) detected in lodash.defaultsdeep-4.3.2.tgz - ## WS-2019-0183 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>lodash.defaultsdeep-4.3.2.tgz</b></p></summary>

<p>The lodash method `_.defaultsDeep` exported as a module.</p>

<p>Library home page: <a href="https://registry.npmjs.org/lodash.defaultsdeep/-/lodash.defaultsdeep-4.3.2.tgz">https://registry.npmjs.org/lodash.defaultsdeep/-/lodash.defaultsdeep-4.3.2.tgz</a></p>

<p>Path to dependency file: javascript-sdk/package.json</p>

<p>Path to vulnerable library: javascript-sdk/node_modules/lodash.defaultsdeep/package.json</p>

<p>

Dependency Hierarchy:

- nightwatch-0.9.21.tgz (Root Library)

- :x: **lodash.defaultsdeep-4.3.2.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/turkdevops/javascript-sdk/commit/2ed96566365ee89d8a9b1250ccd7c049281ed09c">2ed96566365ee89d8a9b1250ccd7c049281ed09c</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

lodash.defaultsdeep before 4.6.1 is vulnerable to prototype pollution. The function mergeWith() may allow a malicious user to modify the prototype of Object via {constructor: {prototype: {...}}} causing the addition or modification of an existing property that will exist on all objects.

<p>Publish Date: 2019-08-14

<p>URL: <a href=https://github.com/lodash/lodash/compare/4.6.0...4.6.1>WS-2019-0183</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>4.0</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: N/A

- Attack Complexity: N/A

- Privileges Required: N/A

- User Interaction: N/A

- Scope: N/A

- Impact Metrics:

- Confidentiality Impact: N/A

- Integrity Impact: N/A

- Availability Impact: N/A

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.npmjs.com/advisories/1068">https://www.npmjs.com/advisories/1068</a></p>

<p>Release Date: 2019-08-14</p>

<p>Fix Resolution: 4.6.1</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_test | ws medium detected in lodash defaultsdeep tgz ws medium severity vulnerability vulnerable library lodash defaultsdeep tgz the lodash method defaultsdeep exported as a module library home page a href path to dependency file javascript sdk package json path to vulnerable library javascript sdk node modules lodash defaultsdeep package json dependency hierarchy nightwatch tgz root library x lodash defaultsdeep tgz vulnerable library found in head commit a href found in base branch master vulnerability details lodash defaultsdeep before is vulnerable to prototype pollution the function mergewith may allow a malicious user to modify the prototype of object via constructor prototype causing the addition or modification of an existing property that will exist on all objects publish date url a href cvss score details base score metrics exploitability metrics attack vector n a attack complexity n a privileges required n a user interaction n a scope n a impact metrics confidentiality impact n a integrity impact n a availability impact n a for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution step up your open source security game with whitesource | 0 |

198,862 | 6,978,497,817 | IssuesEvent | 2017-12-12 17:42:46 | JKGDevs/JediKnightGalaxies | https://api.github.com/repos/JKGDevs/JediKnightGalaxies | opened | Animation freezes after being kicked | bug priority:medium |

If another player kicks you, after getting up your animation will freeze and you'll be unable to move or aim. However, you can get out of the "freeze" by switching to melee and then performing a kick. This will resume the animation and unstuck you. | 1.0 | Animation freezes after being kicked -