| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths 19 to 19) | repo (stringlengths 4 to 112) | repo_url (stringlengths 33 to 141) | action (stringclasses, 3 values) | title (stringlengths 1 to 1.02k) | labels (stringlengths 4 to 1.54k) | body (stringlengths 1 to 262k) | index (stringclasses, 17 values) | text_combine (stringlengths 95 to 262k) | label (stringclasses, 2 values) | text (stringlengths 96 to 252k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

321,295 | 27,520,368,428 | IssuesEvent | 2023-03-06 14:40:48 | wazuh/wazuh-qa | https://api.github.com/repos/wazuh/wazuh-qa | closed | Add Cisco Umbrella integration tests | team/framework test/integration type/test-development role/qa-deprecaped feature/aws | As part of https://github.com/wazuh/wazuh-qa/issues/3333 we need to Implement the integration test cases related to `Cisco Umbrella` defined in https://github.com/wazuh/wazuh-qa/issues/3334.

# Test cases list

## Tier 0

- <details><summary>Umbrella integration works properly using the default configuration</sum... | 2.0 | Add Cisco Umbrella integration tests - As part of https://github.com/wazuh/wazuh-qa/issues/3333 we need to Implement the integration test cases related to `Cisco Umbrella` defined in https://github.com/wazuh/wazuh-qa/issues/3334.

# Test cases list

## Tier 0

- <details><summary>Umbrella integration works proper... | test | add cisco umbrella integration tests as part of we need to implement the integration test cases related to cisco umbrella defined in test cases list tier umbrella integration works properly using the default configuration pre conditions there are already configured crede... | 1 |

205,654 | 15,652,380,394 | IssuesEvent | 2021-03-23 11:19:29 | elastic/elasticsearch-net | https://api.github.com/repos/elastic/elasticsearch-net | closed | BulkApiTests sometimes result in process_cluster_event_timeout_exception from server | Flakey test | Per #5288 - Error below found on three tests:

- ReturnsExpectedResponse

- ReturnsExpectedIsValid

- ReturnsExpectedStatusCode

Expected response.IsValid to be true because Failed to set up pipeline named 'pipeline' required for bulk Invalid NEST response built from a unsuccessful (503) low level call on PUT: /_in... | 1.0 | BulkApiTests sometimes result in process_cluster_event_timeout_exception from server - Per #5288 - Error below found on three tests:

- ReturnsExpectedResponse

- ReturnsExpectedIsValid

- ReturnsExpectedStatusCode

Expected response.IsValid to be true because Failed to set up pipeline named 'pipeline' required for... | test | bulkapitests sometimes result in process cluster event timeout exception from server per error below found on three tests returnsexpectedresponse returnsexpectedisvalid returnsexpectedstatuscode expected response isvalid to be true because failed to set up pipeline named pipeline required for bu... | 1 |

346,062 | 30,863,931,978 | IssuesEvent | 2023-08-03 06:34:28 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | opened | roachtest: network_logging failed | C-test-failure O-robot O-roachtest branch-master release-blocker T-observability-inf | roachtest.network_logging [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/11169499?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/11169499?buildTab=artifacts#/network_logging) on ma... | 2.0 | roachtest: network_logging failed - roachtest.network_logging [failed](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/11169499?buildTab=log) with [artifacts](https://teamcity.cockroachdb.com/buildConfiguration/Cockroach_Nightlies_RoachtestNightlyGceBazel/11169499?buildT... | test | roachtest network logging failed roachtest network logging with on master monitor go wait monitor failure monitor task failed output in run cockroach workload r cockroach workload run kv concurrency duration postgres root sslcert certs root crt sslkey certs ... | 1 |

332,718 | 29,491,356,806 | IssuesEvent | 2023-06-02 13:41:50 | MPMG-DCC-UFMG/F01 | https://api.github.com/repos/MPMG-DCC-UFMG/F01 | closed | Teste de generalizacao para a tag Obras Públicas: Dados para acompanhamento - Santa Juliana | generalization test development template - GRP (27) tag - Obras Públicas subtag - Dados para acompanhamento | DoD: Realizar o teste de Generalização do validador da tag Obras Públicas: Dados para acompanhamento para o Município de Santa Juliana. | 1.0 | Teste de generalizacao para a tag Obras Públicas: Dados para acompanhamento - Santa Juliana - DoD: Realizar o teste de Generalização do validador da tag Obras Públicas: Dados para acompanhamento para o Município de Santa Juliana. | test | teste de generalizacao para a tag obras públicas dados para acompanhamento santa juliana dod realizar o teste de generalização do validador da tag obras públicas dados para acompanhamento para o município de santa juliana | 1 |

142,241 | 11,459,976,077 | IssuesEvent | 2020-02-07 08:44:27 | robotology/assistive-rehab | https://api.github.com/repos/robotology/assistive-rehab | closed | Verify temporal metric | ✅ test | We want to be sure that the computed time is not affected by disturbances (such as questions during the test). | 1.0 | Verify temporal metric - We want to be sure that the computed time is not affected by disturbances (such as questions during the test). | test | verify temporal metric we want to be sure that the computed time is not affected by disturbances such as questions during the test | 1 |

271,590 | 23,616,566,051 | IssuesEvent | 2022-08-24 16:21:59 | gradle/gradle | https://api.github.com/repos/gradle/gradle | closed | Named argument notation not supported for dependencies in test suite plugin | a:feature @core in:dependency-declarations in:test-suites | ### Expected Behavior

When declaring [dependencies of a test suite](https://docs.gradle.org/current/userguide/jvm_test_suite_plugin.html#configure_dependencies_of_a_test_suite), it should be possible to use map notation like in top level dependency declarations (e.g. `group = "org.junit.jupiter", name = "junit-jupiter... | 1.0 | Named argument notation not supported for dependencies in test suite plugin - ### Expected Behavior

When declaring [dependencies of a test suite](https://docs.gradle.org/current/userguide/jvm_test_suite_plugin.html#configure_dependencies_of_a_test_suite), it should be possible to use map notation like in top level dep... | test | named argument notation not supported for dependencies in test suite plugin expected behavior when declaring it should be possible to use map notation like in top level dependency declarations e g group org junit jupiter name junit jupiter version rather than be forced to use string... | 1 |

227,307 | 18,054,565,542 | IssuesEvent | 2021-09-20 06:06:33 | elastic/kibana | https://api.github.com/repos/elastic/kibana | closed | Failing test: X-Pack API Integration Tests.x-pack/test/api_integration/apis/ml/anomaly_detectors/close_with_spaces·ts - apis Machine Learning anomaly detectors POST anomaly_detectors _close with spaces "before all" hook for "should close job from same space" | :ml failed-test | A test failed on a tracked branch

```

Error: [POST http://elastic:changeme@localhost:61191/api/kibana/settings] request failed (attempt=3/3): Request failed with status code 503 -- and ran out of retries

at KbnClientRequester.request (/dev/shm/workspace/parallel/19/kibana/node_modules/@kbn/test/target_node/kbn_cli... | 1.0 | Failing test: X-Pack API Integration Tests.x-pack/test/api_integration/apis/ml/anomaly_detectors/close_with_spaces·ts - apis Machine Learning anomaly detectors POST anomaly_detectors _close with spaces "before all" hook for "should close job from same space" - A test failed on a tracked branch

```

Error: [POST http://... | test | failing test x pack api integration tests x pack test api integration apis ml anomaly detectors close with spaces·ts apis machine learning anomaly detectors post anomaly detectors close with spaces before all hook for should close job from same space a test failed on a tracked branch error request fai... | 1 |

271,289 | 23,593,578,273 | IssuesEvent | 2022-08-23 17:12:05 | MPMG-DCC-UFMG/F01 | https://api.github.com/repos/MPMG-DCC-UFMG/F01 | closed | Teste de generalizacao para a tag Obras públicas - Dados para acompanhamento - Cataguases | generalization test development template - Betha tag - Obras Públicas subtag - Dados para acompanhamento | DoD: Realizar o teste de Generalização do validador da tag Obras públicas - Dados para acompanhamento para o Município de Cataguases. | 1.0 | Teste de generalizacao para a tag Obras públicas - Dados para acompanhamento - Cataguases - DoD: Realizar o teste de Generalização do validador da tag Obras públicas - Dados para acompanhamento para o Município de Cataguases. | test | teste de generalizacao para a tag obras públicas dados para acompanhamento cataguases dod realizar o teste de generalização do validador da tag obras públicas dados para acompanhamento para o município de cataguases | 1 |

335,522 | 30,038,108,227 | IssuesEvent | 2023-06-27 13:57:36 | elastic/kibana | https://api.github.com/repos/elastic/kibana | closed | Failing test: Jest Tests.x-pack/plugins/cases/public/components/case_view - CaseViewPage Tabs renders the activity tab when the query parameter tabId has an unknown value | failed-test skipped-test Team:ResponseOps Feature:Cases | A test failed on a tracked branch

```

TestingLibraryElementError: Unable to find an element by: [data-test-subj="case-view-tab-content-activity"]

Ignored nodes: comments, script, style

<body>

<div />

</body>

at Object.getElementError (/var/lib/buildkite-agent/builds/kb-n2-4-spot-3f2434e6d3ddd216/elastic/kibana-... | 2.0 | Failing test: Jest Tests.x-pack/plugins/cases/public/components/case_view - CaseViewPage Tabs renders the activity tab when the query parameter tabId has an unknown value - A test failed on a tracked branch

```

TestingLibraryElementError: Unable to find an element by: [data-test-subj="case-view-tab-content-activity"]

... | test | failing test jest tests x pack plugins cases public components case view caseviewpage tabs renders the activity tab when the query parameter tabid has an unknown value a test failed on a tracked branch testinglibraryelementerror unable to find an element by ignored nodes comments script style ... | 1 |

411,766 | 27,830,449,666 | IssuesEvent | 2023-03-20 04:03:13 | 1C-Company/v8-code-style | https://api.github.com/repos/1C-Company/v8-code-style | opened | Добавить описание doc-comment-description-ends-on-dot.md | documentation | ## Раздел документации или код проверки

<!-- Путь к разделу документации: -->

`doc-comment-description-ends-on-dot.md`

## Что необходимо улучшить

<!-- Кратко опишите, что нужно улучшить, исправить. -->

Добавить описание

| 1.0 | Добавить описание doc-comment-description-ends-on-dot.md - ## Раздел документации или код проверки

<!-- Путь к разделу документации: -->

`doc-comment-description-ends-on-dot.md`

## Что необходимо улучшить

<!-- Кратко опишите, что нужно улучшить, исправить. -->

Добавить описание

| non_test | добавить описание doc comment description ends on dot md раздел документации или код проверки doc comment description ends on dot md что необходимо улучшить добавить описание | 0 |

12,860 | 15,108,285,034 | IssuesEvent | 2021-02-08 16:27:25 | Conjurinc-workato-dev/evoke | https://api.github.com/repos/Conjurinc-workato-dev/evoke | opened | j2g bug | Bugtype/Compatibility ONYX-6615 severity/low team/A Team | ##description

Steps to reproduce:

Current Results:ddddd

dsa

ads

a

sdas

Expected Results:

Error Messages:

Logs:

Other Symptoms:

Tenant ID / Pod Number:

##Found in version

10.8

##Workaround Complexity

NA

##Workaround Description

##Link to JIRA bug

https://ca-il-jira-test.il.cyber-ark.co... | True | j2g bug - ##description

Steps to reproduce:

Current Results:ddddd

dsa

ads

a

sdas

Expected Results:

Error Messages:

Logs:

Other Symptoms:

Tenant ID / Pod Number:

##Found in version

10.8

##Workaround Complexity

NA

##Workaround Description

##Link to JIRA bug

https://ca-il-jira-test.il.cy... | non_test | bug description steps to reproduce current results ddddd dsa ads a sdas expected results error messages logs other symptoms tenant id pod number found in version workaround complexity na workaround description link to jira bug | 0 |

26,269 | 4,212,770,789 | IssuesEvent | 2016-06-29 17:10:31 | ngageoint/hootenanny-ui | https://api.github.com/repos/ngageoint/hootenanny-ui | closed | Limit how far the user can expand the conflation area | Category: UI in progress Priority: Medium Status: Ready for Test | Currently, the dataset area can be expanded to cover the entire screen. This should be limited to at max, half the screen

Example:

| 1.0 | Limit how far the user can expand the conflation area - Currently, the dataset area can be expanded to cover the entire screen. This should be limited to at max, half the screen

Example:

| test | limit how far the user can expand the conflation area currently the dataset area can be expanded to cover the entire screen this should be limited to at max half the screen example | 1 |

88,199 | 8,134,643,198 | IssuesEvent | 2018-08-19 18:12:16 | YACS-RCOS/yacs-admin | https://api.github.com/repos/YACS-RCOS/yacs-admin | opened | Perform accessibility audit | accessibility testing | We need to perform an accessibility audit to make sure our application is fully accessible to people with disabilities.

Ideally, our project should meet the [WCAG 2.1](https://www.w3.org/WAI/WCAG21/quickref/) guidelines.

If we find any issues during the audit, we should create GitHub issues for each issue we fin... | 1.0 | Perform accessibility audit - We need to perform an accessibility audit to make sure our application is fully accessible to people with disabilities.

Ideally, our project should meet the [WCAG 2.1](https://www.w3.org/WAI/WCAG21/quickref/) guidelines.

If we find any issues during the audit, we should create GitHu... | test | perform accessibility audit we need to perform an accessibility audit to make sure our application is fully accessible to people with disabilities ideally our project should meet the guidelines if we find any issues during the audit we should create github issues for each issue we find and tag it with... | 1 |

3,289 | 4,310,915,316 | IssuesEvent | 2016-07-21 20:49:37 | mozilla/testpilot | https://api.github.com/repos/mozilla/testpilot | opened | Upgrade insecure Django dependency | security status: planned | This says our Django dependency is insecure:

https://requires.io/github/mozilla/testpilot/requirements/?branch=master

We should upgrade ASAP. | True | Upgrade insecure Django dependency - This says our Django dependency is insecure:

https://requires.io/github/mozilla/testpilot/requirements/?branch=master

We should upgrade ASAP. | non_test | upgrade insecure django dependency this says our django dependency is insecure we should upgrade asap | 0 |

193,378 | 14,648,480,852 | IssuesEvent | 2020-12-27 03:16:45 | github-vet/rangeloop-pointer-findings | https://api.github.com/repos/github-vet/rangeloop-pointer-findings | closed | zhuowei/go-1-2-haiku: src/pkg/runtime/race/testdata/mop_test.go; 12 LoC | fresh small test |

Found a possible issue in [zhuowei/go-1-2-haiku](https://www.github.com/zhuowei/go-1-2-haiku) at [src/pkg/runtime/race/testdata/mop_test.go](https://github.com/zhuowei/go-1-2-haiku/blob/c86129514c33070e68041753a5365fdbed1e0eed/src/pkg/runtime/race/testdata/mop_test.go#L327-L338)

Below is the message reported by the a... | 1.0 | zhuowei/go-1-2-haiku: src/pkg/runtime/race/testdata/mop_test.go; 12 LoC -

Found a possible issue in [zhuowei/go-1-2-haiku](https://www.github.com/zhuowei/go-1-2-haiku) at [src/pkg/runtime/race/testdata/mop_test.go](https://github.com/zhuowei/go-1-2-haiku/blob/c86129514c33070e68041753a5365fdbed1e0eed/src/pkg/runtime/ra... | test | zhuowei go haiku src pkg runtime race testdata mop test go loc found a possible issue in at below is the message reported by the analyzer for this snippet of code beware that the analyzer only reports the first issue it finds so please do not limit your consideration to the contents of the below m... | 1 |

53,384 | 28,111,506,372 | IssuesEvent | 2023-03-31 07:34:42 | bevyengine/bevy | https://api.github.com/repos/bevyengine/bevy | closed | Event microbenchmarks | C-Enhancement A-ECS C-Performance A-Diagnostics | ## What problem does this solve or what need does it fill?

We currently don't have performance benchmarks for ECS events, and are driving blind when making any performance related decision for them.

## What solution would you like?

Basic benchmarks for writing and reading events, varying based on total number of e... | True | Event microbenchmarks - ## What problem does this solve or what need does it fill?

We currently don't have performance benchmarks for ECS events, and are driving blind when making any performance related decision for them.

## What solution would you like?

Basic benchmarks for writing and reading events, varying ba... | non_test | event microbenchmarks what problem does this solve or what need does it fill we currently don t have performance benchmarks for ecs events and are driving blind when making any performance related decision for them what solution would you like basic benchmarks for writing and reading events varying ba... | 0 |

91,711 | 8,317,039,983 | IssuesEvent | 2018-09-25 10:48:55 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | teamcity: failed tests on release-2.1: testrace/TestChangefeedTimestamps, test/TestChangefeedTimestamps | C-test-failure O-robot | The following tests appear to have failed:

[#881965](https://teamcity.cockroachdb.com/viewLog.html?buildId=881965):

```

--- FAIL: testrace/TestChangefeedTimestamps (2.860s)

sql_runner.go:82: error scanning '&{<nil> 0xc4210a8a00}': pq: restart transaction: HandledRetryableTxnError: TransactionRetryError: retry txn (R... | 1.0 | teamcity: failed tests on release-2.1: testrace/TestChangefeedTimestamps, test/TestChangefeedTimestamps - The following tests appear to have failed:

[#881965](https://teamcity.cockroachdb.com/viewLog.html?buildId=881965):

```

--- FAIL: testrace/TestChangefeedTimestamps (2.860s)

sql_runner.go:82: error scanning '&{<n... | test | teamcity failed tests on release testrace testchangefeedtimestamps test testchangefeedtimestamps the following tests appear to have failed fail testrace testchangefeedtimestamps sql runner go error scanning pq restart transaction handledretryabletxnerror transactionretrye... | 1 |

281,836 | 24,424,154,471 | IssuesEvent | 2022-10-06 00:06:20 | aws/amazon-vpc-cni-k8s | https://api.github.com/repos/aws/amazon-vpc-cni-k8s | closed | Scenario-based e2e testing | testing stale | Add parameterization for e2e testing to allow permutations/scenarios for:

* MTU

* routing behaviour

* num ENIs, IPs, instance types, etc | 1.0 | Scenario-based e2e testing - Add parameterization for e2e testing to allow permutations/scenarios for:

* MTU

* routing behaviour

* num ENIs, IPs, instance types, etc | test | scenario based testing add parameterization for testing to allow permutations scenarios for mtu routing behaviour num enis ips instance types etc | 1 |

65,135 | 7,857,842,448 | IssuesEvent | 2018-06-21 12:12:15 | toggl/mobileapp | https://api.github.com/repos/toggl/mobileapp | closed | Add haptic feedback to the iOS app | ios needs-design | This is a minor feature I want to add - many other iOS apps (facebook, twitter, ...) have this kind of feedback for the pull-to-refresh gesture where the user gets a light vibration when he can stop pulling and the app will refresh. I think it makes the app feel a lot better.

I added a PR with an implementation and ... | 1.0 | Add haptic feedback to the iOS app - This is a minor feature I want to add - many other iOS apps (facebook, twitter, ...) have this kind of feedback for the pull-to-refresh gesture where the user gets a light vibration when he can stop pulling and the app will refresh. I think it makes the app feel a lot better.

I a... | non_test | add haptic feedback to the ios app this is a minor feature i want to add many other ios apps facebook twitter have this kind of feedback for the pull to refresh gesture where the user gets a light vibration when he can stop pulling and the app will refresh i think it makes the app feel a lot better i a... | 0 |

141,128 | 11,395,153,755 | IssuesEvent | 2020-01-30 10:48:28 | wazuh/wazuh-qa | https://api.github.com/repos/wazuh/wazuh-qa | opened | FIM System tests: Select required OS's and generate AMIs for them | fim-system-tests | It's required to generate AMIs from selected OS in order to freeze the machine in order to get a controlled environment that always generates the same alerts by default.

### Supported OS's

**Agent**:

- [ ] Redhat 7

- [ ] Redhat 8

- [ ] Centos 7

- [ ] Centos 8

- [ ] Ubuntu 16.04

- [ ] Ubuntu 18.0... | 1.0 | FIM System tests: Select required OS's and generate AMIs for them - It's required to generate AMIs from selected OS in order to freeze the machine in order to get a controlled environment that always generates the same alerts by default.

### Supported OS's

**Agent**:

- [ ] Redhat 7

- [ ] Redhat 8

- [ ] ... | test | fim system tests select required os s and generate amis for them it s required to generate amis from selected os in order to freeze the machine in order to get a controlled environment that always generates the same alerts by default supported os s agent redhat redhat centos... | 1 |

42,212 | 22,352,358,262 | IssuesEvent | 2022-06-15 13:08:20 | pytorch/pytorch | https://api.github.com/repos/pytorch/pytorch | closed | Performance drops after running tensor multiplication for 15 seconds on M1 MAX (Pytorch MPS). | module: performance triaged module: mps | ### 🐛 Describe the bug

Performance drops to half of the original performance after running tensor multiplication for 15 seconds on M1 MAX (Pytorch MPS).

Python version: 3.9.7

OS: macOS 12.4

Pytorch version: 1.13.0.dev20220612

The code below reproduces the error:

```

from tqdm import tqdm

import torch

... | True | Performance drops after running tensor multiplication for 15 seconds on M1 MAX (Pytorch MPS). - ### 🐛 Describe the bug

Performance drops to half of the original performance after running tensor multiplication for 15 seconds on M1 MAX (Pytorch MPS).

Python version: 3.9.7

OS: macOS 12.4

Pytorch version: 1.13.0.... | non_test | performance drops after running tensor multiplication for seconds on max pytorch mps 🐛 describe the bug performance drops to half of the original performance after running tensor multiplication for seconds on max pytorch mps python version os macos pytorch version t... | 0 |

38,552 | 15,727,676,612 | IssuesEvent | 2021-03-29 12:58:24 | kyma-project/kyma | https://api.github.com/repos/kyma-project/kyma | opened | Networking component | Epic area/installation area/service-mesh | **Description**

Introduce a new component that can configure and manage the network setup for Kyma depending on the provider, topology etc...

**Reasons**

Correctly setup the network dynamically for all possible cases.

| 1.0 | Networking component - **Description**

Introduce a new component that can configure and manage the network setup for Kyma depending on the provider, topology etc...

**Reasons**

Correctly setup the network dynamically for all possible cases.

| non_test | networking component description introduce a new component that can configure and manage the network setup for kyma depending on the provider topology etc reasons correctly setup the network dynamically for all possible cases | 0 |

183,214 | 14,933,997,520 | IssuesEvent | 2021-01-25 09:57:37 | biopython/biopython | https://api.github.com/repos/biopython/biopython | opened | Update Bio.Application examples in Tutorial to use subprocess directly | Documentation help wanted | See discussion on #2877, closed via #3344 declaring Bio.Application obsolete, suggesting using subprocess directly.

We need to update the Tutorial to translate the command line wrapper examples to use subprocess directly. Working on Linux/macOS only would be a reasonable first pass but ideally tested as working on W... | 1.0 | Update Bio.Application examples in Tutorial to use subprocess directly - See discussion on #2877, closed via #3344 declaring Bio.Application obsolete, suggesting using subprocess directly.

We need to update the Tutorial to translate the command line wrapper examples to use subprocess directly. Working on Linux/macOS... | non_test | update bio application examples in tutorial to use subprocess directly see discussion on closed via declaring bio application obsolete suggesting using subprocess directly we need to update the tutorial to translate the command line wrapper examples to use subprocess directly working on linux macos only ... | 0 |

16,851 | 3,567,738,507 | IssuesEvent | 2016-01-26 00:27:24 | d3athrow/vgstation13 | https://api.github.com/repos/d3athrow/vgstation13 | closed | (WEB REPORT BY: maddoscientisto REMOTE: 198.245.63.50:7777) Device analyzer can't unload items in the reverse engine | Needs Moar Testing | Revision: 0ab3a1198908b99f15d98e4b0a4b7f05f2b17978

>General description of the issue

I can't unload scanned items on the reverse engine neither from an analyzer nor from a pda, machine designs work fine but for example circuits don't.

Also the queue is empty and it's not doing any reversing.

>What you expected... | 1.0 | (WEB REPORT BY: maddoscientisto REMOTE: 198.245.63.50:7777) Device analyzer can't unload items in the reverse engine - Revision: 0ab3a1198908b99f15d98e4b0a4b7f05f2b17978

>General description of the issue

I can't unload scanned items on the reverse engine neither from an analyzer nor from a pda, machine designs wor... | test | web report by maddoscientisto remote device analyzer can t unload items in the reverse engine revision general description of the issue i can t unload scanned items on the reverse engine neither from an analyzer nor from a pda machine designs work fine but for example circuits don t also the... | 1 |

29,507 | 7,103,489,181 | IssuesEvent | 2018-01-16 05:26:19 | TornadoClientDev/Storm-Anticheat | https://api.github.com/repos/TornadoClientDev/Storm-Anticheat | reopened | 1 Block Step Bypass - Envy 1.6 | bug bypass code patching soon | 1 block step works on storm anti-cheat using the client Envy 1.6.

Envy 1.6 download: https://www.youtube.com/watch?v=OmqNHo2-9ag

Showcase: https://gyazo.com/403effddabc84a030f3e5db226dd60ec | 1.0 | 1 Block Step Bypass - Envy 1.6 - 1 block step works on storm anti-cheat using the client Envy 1.6.

Envy 1.6 download: https://www.youtube.com/watch?v=OmqNHo2-9ag

Showcase: https://gyazo.com/403effddabc84a030f3e5db226dd60ec | non_test | block step bypass envy block step works on storm anti cheat using the client envy envy download showcase | 0 |

351,783 | 32,026,698,775 | IssuesEvent | 2023-09-22 09:20:12 | project-codeflare/codeflare-operator | https://api.github.com/repos/project-codeflare/codeflare-operator | closed | e2e test support: Store test pod logs and events | testing triage/needs-triage | ### Name of Feature or Improvement

Pod logs and events for test namespaces should be stored to help identifying test issues.

### Description of Problem the Feature Should Solve

Right now the test failures can be hard to investigate as the logs of pods in test namespaces aren't stored anywhere. Also events in the n... | 1.0 | e2e test support: Store test pod logs and events - ### Name of Feature or Improvement

Pod logs and events for test namespaces should be stored to help identifying test issues.

### Description of Problem the Feature Should Solve

Right now the test failures can be hard to investigate as the logs of pods in test name... | test | test support store test pod logs and events name of feature or improvement pod logs and events for test namespaces should be stored to help identifying test issues description of problem the feature should solve right now the test failures can be hard to investigate as the logs of pods in test namesp... | 1 |

53,386 | 6,719,260,298 | IssuesEvent | 2017-10-15 22:07:16 | bnzk/djangocms-misc | https://api.github.com/repos/bnzk/djangocms-misc | closed | editmode_fallback: ordering and copy pasting not working... | bug design decision needed musthave | ordering and copy pasting is not working when done when fallback plugins are shown, as the plugin's placeholder id is the one from the original language the plugins where added. this is hard! monkey patch ahead :( | 1.0 | editmode_fallback: ordering and copy pasting not working... - ordering and copy pasting is not working when done when fallback plugins are shown, as the plugin's placeholder id is the one from the original language the plugins where added. this is hard! monkey patch ahead :( | non_test | editmode fallback ordering and copy pasting not working ordering and copy pasting is not working when done when fallback plugins are shown as the plugin s placeholder id is the one from the original language the plugins where added this is hard monkey patch ahead | 0 |

521,534 | 15,110,654,509 | IssuesEvent | 2021-02-08 19:31:20 | ansible/awx | https://api.github.com/repos/ansible/awx | closed | [ui_next] Remove User from Team's list | component:ui priority:high state:needs_devel type:feature | ##### ISSUE TYPE

- Feature Idea

##### SUMMARY

The Team's list shows a list of users under 'Access'. We should allow users to be removed from a Team in the same way that they're removed from an Organization

| 1.0 | [ui_next] Remove User from Team's list - ##### ISSUE TYPE

- Feature Idea

##### SUMMARY

The Team's list shows a list of users under 'Access'. We should allow users to be removed from a Team in the same way that they're removed from an Organization

| non_test | remove user from team s list issue type feature idea summary the team s list shows a list of users under access we should allow users to be removed from a team in the same way that they re removed from an organization | 0 |

31,905 | 6,016,962,719 | IssuesEvent | 2017-06-07 08:29:57 | owncloud/core | https://api.github.com/repos/owncloud/core | closed | can't disable app with occ command | documentation | <!--

Thanks for reporting issues back to ownCloud! This is the issue tracker of ownCloud, if you have any support question please check out https://owncloud.org/support

This is the bug tracker for the Server component. Find other components at https://github.com/owncloud/core/blob/master/.github/CONTRIBUTING.md#gui... | 1.0 | can't disable app with occ command - <!--

Thanks for reporting issues back to ownCloud! This is the issue tracker of ownCloud, if you have any support question please check out https://owncloud.org/support

This is the bug tracker for the Server component. Find other components at https://github.com/owncloud/core/bl... | non_test | can t disable app with occ command thanks for reporting issues back to owncloud this is the issue tracker of owncloud if you have any support question please check out this is the bug tracker for the server component find other components at for reporting potential security issues please see t... | 0 |

27,374 | 4,308,332,939 | IssuesEvent | 2016-07-21 12:38:25 | Imaginaerum/magento2-language-fr-fr | https://api.github.com/repos/Imaginaerum/magento2-language-fr-fr | closed | Error processing your request for french language | need tests | Magento gives me this error when I try to open the French Page:

```

a:4:{i:0;s:117:"Notice: Undefined offset: 1 in /var/www/html/magento/vendor/magento/framework/App/Language/Dictionary.php on line 196";i:1;s:9624:"#0 /var/www/html/magento/vendor/magento/framework/App/Language/Dictionary.php(196): Magento\Framework\A... | 1.0 | Error processing your request for french language - Magento gives me this error when I try to open the French Page:

```

a:4:{i:0;s:117:"Notice: Undefined offset: 1 in /var/www/html/magento/vendor/magento/framework/App/Language/Dictionary.php on line 196";i:1;s:9624:"#0 /var/www/html/magento/vendor/magento/framework/A... | test | error processing your request for french language magento gives me this error when i try to open the french page a i s notice undefined offset in var www html magento vendor magento framework app language dictionary php on line i s var www html magento vendor magento framework app lang... | 1 |

99,755 | 8,710,267,829 | IssuesEvent | 2018-12-06 16:01:06 | italia/spid | https://api.github.com/repos/italia/spid | closed | Verifica metadata Comune di Borgo San Dalmazzo | aggiornamento md test metadata | Buongiorno,

Per conto del Comune di Borgo San Dalmazzo

abbiamo predisposto i metadata e pubblicati all'URL

https://borgosandalmazzo.multeonline.it/serviziSPID/metadata.xml

i metadata sono stati aggiornati con l'aggiunta di un secondo SP

[metadata_borgosandalmazzo2SP_new-signed.zip](https://github.com/italia... | 1.0 | Verifica metadata Comune di Borgo San Dalmazzo - Buongiorno,

Per conto del Comune di Borgo San Dalmazzo

abbiamo predisposto i metadata e pubblicati all'URL

https://borgosandalmazzo.multeonline.it/serviziSPID/metadata.xml

i metadata sono stati aggiornati con l'aggiunta di un secondo SP

[metadata_borgosandal... | test | verifica metadata comune di borgo san dalmazzo buongiorno per conto del comune di borgo san dalmazzo abbiamo predisposto i metadata e pubblicati all url i metadata sono stati aggiornati con l aggiunta di un secondo sp cordiali saluti facondini stefano maggioli spa | 1 |

798,982 | 28,300,496,984 | IssuesEvent | 2023-04-10 05:22:18 | googleapis/google-cloud-ruby | https://api.github.com/repos/googleapis/google-cloud-ruby | closed | [Nightly CI Failures] Failures detected for google-cloud-recommender-v1 | type: bug priority: p1 nightly failure | At 2023-04-09 08:55:45 UTC, detected failures in google-cloud-recommender-v1 for: yard

report_key_b203859c45be229c6bf93346666a7a3d | 1.0 | [Nightly CI Failures] Failures detected for google-cloud-recommender-v1 - At 2023-04-09 08:55:45 UTC, detected failures in google-cloud-recommender-v1 for: yard

report_key_b203859c45be229c6bf93346666a7a3d | non_test | failures detected for google cloud recommender at utc detected failures in google cloud recommender for yard report key | 0 |

7,831 | 2,859,235,834 | IssuesEvent | 2015-06-03 09:23:34 | osakagamba/7GIQSKRNE5P3AVTZCEFXE3ON | https://api.github.com/repos/osakagamba/7GIQSKRNE5P3AVTZCEFXE3ON | closed | iZSlgSTuW3FRMA9yz7UXijsUSG/KRJ6/TVETrnWvWUkNJzkswUyiLfPFrh7VbrbEj5sfsxeTvGsa/Oa8GxNpaign7A6Coz5ae14rv+tWWi52l48VXNkrnOFkk5L8REpy9fwCGnYh2XkPvm5xC9IyvdajES8up8FlU+syhnN/c8U= | design | rBQKgP73P4u5KZfKwS2soWfb5zGicuy3cNM4BHOTJVjoAO1IsSYD3oY4iWeGJ84jizX6GxHehwIDrTpQos+3GWWWoZyjSr3PIj/7qkvlXXjB5CtDmfjlQhuYpmcaggw8NvWiRDK/VCUERMEkok9+FWQ/AyjaaAbcXtEXZlKgkG/DR6dREmQH8G+QfQXUI4DWi/cxzTH7FHVi1a1M/RPF+wAmw/CENeKHQMO9MrC3rlObDebNufa95slconUREJ/oetQttr5qw8C2Esfnxc06ea5rXuv2jLlZCpP3zr3oatTyfGeEhMrlklHgrZTi9YT7... | 1.0 | iZSlgSTuW3FRMA9yz7UXijsUSG/KRJ6/TVETrnWvWUkNJzkswUyiLfPFrh7VbrbEj5sfsxeTvGsa/Oa8GxNpaign7A6Coz5ae14rv+tWWi52l48VXNkrnOFkk5L8REpy9fwCGnYh2XkPvm5xC9IyvdajES8up8FlU+syhnN/c8U= - rBQKgP73P4u5KZfKwS2soWfb5zGicuy3cNM4BHOTJVjoAO1IsSYD3oY4iWeGJ84jizX6GxHehwIDrTpQos+3GWWWoZyjSr3PIj/7qkvlXXjB5CtDmfjlQhuYpmcaggw8NvWiRDK/VCUERMEko... | non_test | syhnn fwq ayjaaabcxtexzlkgkg rpf wamw | 0 |

204,764 | 15,530,955,603 | IssuesEvent | 2021-03-13 21:16:37 | isontheline/pro.webssh.net | https://api.github.com/repos/isontheline/pro.webssh.net | closed | SFTP : can’t refresh if short files list | bug testflight-release-needed | **Describe the feature**

If the files list doesn’t exceed the screen height size then we can’t use the pull to refresh. | 1.0 | SFTP : can’t refresh if short files list - **Describe the feature**

If the files list doesn’t exceed the screen height size then we can’t use the pull to refresh. | test | sftp can’t refresh if short files list describe the feature if the files list doesn’t exceed the screen height size then we can’t use the pull to refresh | 1 |

52,630 | 22,326,842,527 | IssuesEvent | 2022-06-14 11:25:15 | kyma-project/istio-operator | https://api.github.com/repos/kyma-project/istio-operator | closed | [POC] First implementation of kyma istio operator | area/service-mesh area/installation | <!-- Thank you for your contribution. Before you submit the issue:

1. Search open and closed issues for duplicates.

2. Read the contributing guidelines.

-->

**Description**

Implement kyma istio operator managaing kyma istio component. Kyma istio operator should be based on k8s operator watching kyma istio oper... | 1.0 | [POC] First implementation of kyma istio operator - <!-- Thank you for your contribution. Before you submit the issue:

1. Search open and closed issues for duplicates.

2. Read the contributing guidelines.

-->

**Description**

Implement kyma istio operator managaing kyma istio component. Kyma istio operator shou... | non_test | first implementation of kyma istio operator thank you for your contribution before you submit the issue search open and closed issues for duplicates read the contributing guidelines description implement kyma istio operator managaing kyma istio component kyma istio operator should b... | 0 |

202,816 | 15,302,366,661 | IssuesEvent | 2021-02-24 14:39:59 | infinispan/infinispan-operator | https://api.github.com/repos/infinispan/infinispan-operator | closed | CI test didn't stop execute tests if first step is fails | test | There are two portions of tests are running on Travis CI now with

* RUN_SA_OPERATOR=TRUE make test PARALLEL_COUNT=2

* make multinamespace-test PARALLEL_COUNT=2

even if first test is failed, second continuing to execute instead of stop CI job execution with fail state

This is default TravisCI behavior and should ... | 1.0 | CI test didn't stop execute tests if first step is fails - There are two portions of tests are running on Travis CI now with

* RUN_SA_OPERATOR=TRUE make test PARALLEL_COUNT=2

* make multinamespace-test PARALLEL_COUNT=2

even if first test is failed, second continuing to execute instead of stop CI job execution with... | test | ci test didn t stop execute tests if first step is fails there are two portions of tests are running on travis ci now with run sa operator true make test parallel count make multinamespace test parallel count even if first test is failed second continuing to execute instead of stop ci job execution with... | 1 |

180,224 | 13,926,744,788 | IssuesEvent | 2020-10-21 18:44:55 | department-of-veterans-affairs/va.gov-cms | https://api.github.com/repos/department-of-veterans-affairs/va.gov-cms | opened | Spike: E2E testing coverage | Automated testing | ## Description

The CMS team is interested in having E2E test coverage to ensure consistent function of our platform.

The Facilities team has E2E testing on their roadmap for Q4. As we discuss the CMS strategy for E2E, we need to consider whether and how we may combine our E2E efforts w/ that of the Facilities team ... | 1.0 | Spike: E2E testing coverage - ## Description

The CMS team is interested in having E2E test coverage to ensure consistent function of our platform.

The Facilities team has E2E testing on their roadmap for Q4. As we discuss the CMS strategy for E2E, we need to consider whether and how we may combine our E2E efforts w... | test | spike testing coverage description the cms team is interested in having test coverage to ensure consistent function of our platform the facilities team has testing on their roadmap for as we discuss the cms strategy for we need to consider whether and how we may combine our efforts w that of t... | 1 |

316,249 | 27,148,437,028 | IssuesEvent | 2023-02-16 22:09:16 | Iridescent-CM/technovation-app | https://api.github.com/repos/Iridescent-CM/technovation-app | opened | Address location tests | testing | The following tests should be updated/fixed, or removed:

```

Saving a location in a geopolitically sensitive area prompts the user with a choice when Israel is the country

# Temporarily skipped with xit

# ./spec/system/location/sensitive_locations_spec.rb:17

Saving a location in a geopolitically sensitive ar... | 1.0 | Address location tests - The following tests should be updated/fixed, or removed:

```

Saving a location in a geopolitically sensitive area prompts the user with a choice when Israel is the country

# Temporarily skipped with xit

# ./spec/system/location/sensitive_locations_spec.rb:17

Saving a location in a ge... | test | address location tests the following tests should be updated fixed or removed saving a location in a geopolitically sensitive area prompts the user with a choice when israel is the country temporarily skipped with xit spec system location sensitive locations spec rb saving a location in a geo... | 1 |

28,909 | 4,445,991,134 | IssuesEvent | 2016-08-20 11:31:21 | herculeshssj/orcamento | https://api.github.com/repos/herculeshssj/orcamento | opened | Fechar fatura tornando a fatura vencida | Teste | Verificar se houve clique duplo por parte do usuário, ou realmente está ocorrendo o fechamento e posterior mudança para o status de vencida da fatura. | 1.0 | Fechar fatura tornando a fatura vencida - Verificar se houve clique duplo por parte do usuário, ou realmente está ocorrendo o fechamento e posterior mudança para o status de vencida da fatura. | test | fechar fatura tornando a fatura vencida verificar se houve clique duplo por parte do usuário ou realmente está ocorrendo o fechamento e posterior mudança para o status de vencida da fatura | 1 |

177,077 | 13,683,012,424 | IssuesEvent | 2020-09-30 00:29:16 | MiqueasAmorim/Pedido | https://api.github.com/repos/MiqueasAmorim/Pedido | closed | CT01 (ProdutoTest) - O valor unitário do produto não pode ser 0 | test case | **Dados de entrada:**

- Nome : Borracha

- Valor unitário: 0

- Quantidade: 10

**Resultado esperado:**

- RuntimeException: "Valor inválido: 0.0"

| 1.0 | CT01 (ProdutoTest) - O valor unitário do produto não pode ser 0 - **Dados de entrada:**

- Nome : Borracha

- Valor unitário: 0

- Quantidade: 10

**Resultado esperado:**

- RuntimeException: "Valor inválido: 0.0"

| test | produtotest o valor unitário do produto não pode ser dados de entrada nome borracha valor unitário quantidade resultado esperado runtimeexception valor inválido | 1 |

191,812 | 14,596,491,044 | IssuesEvent | 2020-12-20 16:04:07 | github-vet/rangeloop-pointer-findings | https://api.github.com/repos/github-vet/rangeloop-pointer-findings | closed | hello-mr-code/terraform-oci: oci/apigateway_api_test.go; 16 LoC | fresh small test |

Found a possible issue in [hello-mr-code/terraform-oci](https://www.github.com/hello-mr-code/terraform-oci) at [oci/apigateway_api_test.go](https://github.com/hello-mr-code/terraform-oci/blob/2f6aa93ef8643328af454512a5fe78ab006697f0/oci/apigateway_api_test.go#L277-L292)

Below is the message reported by the analyzer f... | 1.0 | hello-mr-code/terraform-oci: oci/apigateway_api_test.go; 16 LoC -

Found a possible issue in [hello-mr-code/terraform-oci](https://www.github.com/hello-mr-code/terraform-oci) at [oci/apigateway_api_test.go](https://github.com/hello-mr-code/terraform-oci/blob/2f6aa93ef8643328af454512a5fe78ab006697f0/oci/apigateway_api_t... | test | hello mr code terraform oci oci apigateway api test go loc found a possible issue in at below is the message reported by the analyzer for this snippet of code beware that the analyzer only reports the first issue it finds so please do not limit your consideration to the contents of the below message ... | 1 |

177,984 | 14,656,908,385 | IssuesEvent | 2020-12-28 14:27:51 | beingmeta/kno | https://api.github.com/repos/beingmeta/kno | closed | PRINTOUT documentation | documentation | Describe basic model, use of return values and side-effects. Custom variants. | 1.0 | PRINTOUT documentation - Describe basic model, use of return values and side-effects. Custom variants. | non_test | printout documentation describe basic model use of return values and side effects custom variants | 0 |

278,854 | 30,702,412,739 | IssuesEvent | 2023-07-27 01:28:01 | pazhanivel07/linux_4.1.15 | https://api.github.com/repos/pazhanivel07/linux_4.1.15 | closed | CVE-2017-14156 (Medium) detected in linuxlinux-4.6 - autoclosed | Mend: dependency security vulnerability | ## CVE-2017-14156 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.6</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Library home page: <a href=https://mirrors.edge.kern... | True | CVE-2017-14156 (Medium) detected in linuxlinux-4.6 - autoclosed - ## CVE-2017-14156 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.6</b></p></summary>

<p>

<p>The Linux ... | non_test | cve medium detected in linuxlinux autoclosed cve medium severity vulnerability vulnerable library linuxlinux the linux kernel library home page a href found in head commit a href found in base branch master vulnerable source files dri... | 0 |

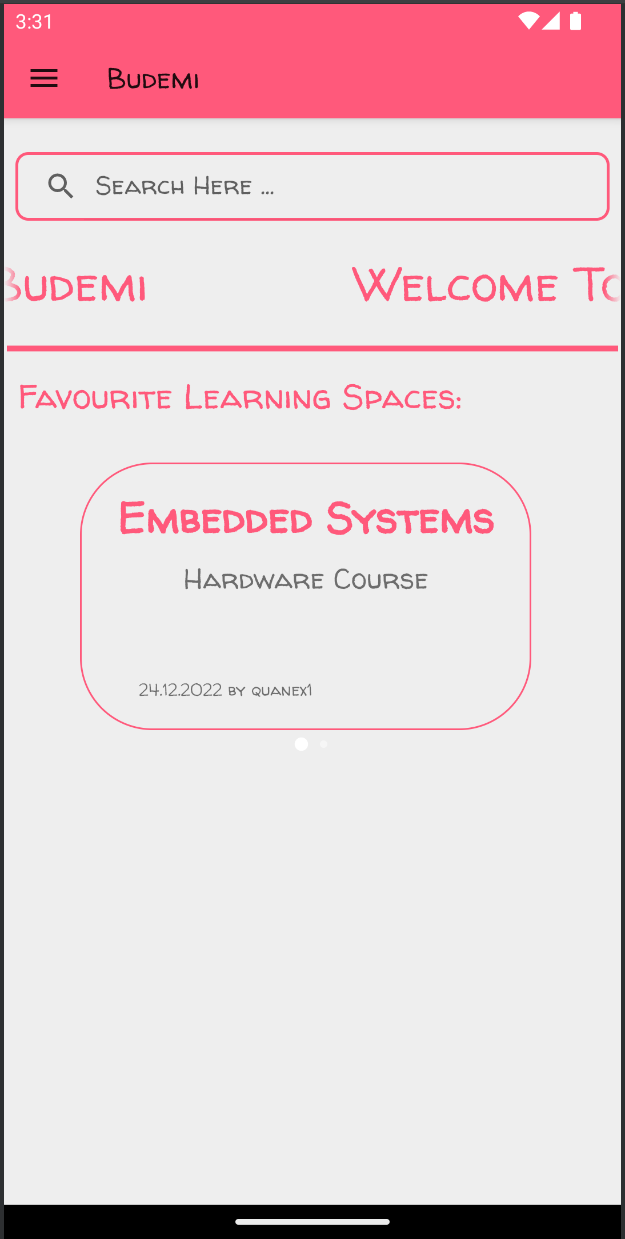

13,514 | 5,392,625,845 | IssuesEvent | 2017-02-26 13:03:13 | junit-team/junit5 | https://api.github.com/repos/junit-team/junit5 | closed | Upgrade to Gradle 3.4 | build enhancement up-for-grabs | ## Overview

The build fails after upgrading to Gradle 3.4 with following stacktrace:

```

...

:junit-platform-gradle-plugin:spotlessCheck

:junit-platform-gradle-plugin:compileTestJava NO-SOURCE

:junit-platform-gradle-plugin:compileTestGroovy

:junit-platform-gradle-plugin:processTestResources NO-SOURCE

:junit-p... | 1.0 | Upgrade to Gradle 3.4 - ## Overview

The build fails after upgrading to Gradle 3.4 with following stacktrace:

```

...

:junit-platform-gradle-plugin:spotlessCheck

:junit-platform-gradle-plugin:compileTestJava NO-SOURCE

:junit-platform-gradle-plugin:compileTestGroovy

:junit-platform-gradle-plugin:processTestResou... | non_test | upgrade to gradle overview the build fails after upgrading to gradle with following stacktrace junit platform gradle plugin spotlesscheck junit platform gradle plugin compiletestjava no source junit platform gradle plugin compiletestgroovy junit platform gradle plugin processtestresou... | 0 |

39,883 | 9,726,248,643 | IssuesEvent | 2019-05-30 10:56:28 | primefaces/primereact | https://api.github.com/repos/primefaces/primereact | closed | DataTable expanded rows collapse when modifying one property of a record | defect | **I'm submitting a ...**

```

[X] bug report

[ ] feature request

[ ] support request => Please do not submit support request here, instead see https://forum.primefaces.org/viewforum.php?f=57

``

**Current behavior**

If you are using the row expansion feature, and saving the expanded row data in the state (as demo... | 1.0 | DataTable expanded rows collapse when modifying one property of a record - **I'm submitting a ...**

```

[X] bug report

[ ] feature request

[ ] support request => Please do not submit support request here, instead see https://forum.primefaces.org/viewforum.php?f=57

``

**Current behavior**

If you are using the ro... | non_test | datatable expanded rows collapse when modifying one property of a record i m submitting a bug report feature request support request please do not submit support request here instead see current behavior if you are using the row expansion feature and saving the expanded row dat... | 0 |

279,696 | 30,730,680,103 | IssuesEvent | 2023-07-28 01:06:06 | Gal-Doron/Baragon-test-4 | https://api.github.com/repos/Gal-Doron/Baragon-test-4 | opened | aws-java-sdk-core-1.11.497.jar: 3 vulnerabilities (highest severity is: 7.5) | Mend: dependency security vulnerability | <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>aws-java-sdk-core-1.11.497.jar</b></p></summary>

<p></p>

<p>Path to dependency file: /BaragonServiceIntegrationTests/pom.xml</p>

<p>Path to vulnerable library: /home/... | True | aws-java-sdk-core-1.11.497.jar: 3 vulnerabilities (highest severity is: 7.5) - <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>aws-java-sdk-core-1.11.497.jar</b></p></summary>

<p></p>

<p>Path to dependency file: /B... | non_test | aws java sdk core jar vulnerabilities highest severity is vulnerable library aws java sdk core jar path to dependency file baragonserviceintegrationtests pom xml path to vulnerable library home wss scanner repository commons codec commons codec commons codec jar ... | 0 |

704,516 | 24,199,352,487 | IssuesEvent | 2022-09-24 10:31:27 | ModuSynth/meta | https://api.github.com/repos/ModuSynth/meta | opened | Segregate the synthesizers per user | Priority P1 feature | ## Context

Until now, every synthesizer created by any user will be regrouped under the same interface, and can be edited by whoever is on the interface. Until we decide to implement a collaborative way of editing synthesizers, only their creator should be able to modify them. To implement that, we need to implement... | 1.0 | Segregate the synthesizers per user - ## Context

Until now, every synthesizer created by any user will be regrouped under the same interface, and can be edited by whoever is on the interface. Until we decide to implement a collaborative way of editing synthesizers, only their creator should be able to modify them. T... | non_test | segregate the synthesizers per user context until now every synthesizer created by any user will be regrouped under the same interface and can be edited by whoever is on the interface until we decide to implement a collaborative way of editing synthesizers only their creator should be able to modify them t... | 0 |

278,415 | 24,152,376,272 | IssuesEvent | 2022-09-22 03:02:49 | Brain-Bones/skeleton | https://api.github.com/repos/Brain-Bones/skeleton | closed | Add "Copy to Clipboard" feature to Code Blocks | enhancement ready to test | Reference:

https://mantine.dev/core/code/

This seems like an obvious use case for the newly requested action. Though the action will need to exist before this is possible.

This is a blocking prerequisite:

https://github.com/Brain-Bones/skeleton/issues/199

| 1.0 | Add "Copy to Clipboard" feature to Code Blocks - Reference:

https://mantine.dev/core/code/

This seems like an obvious use case for the newly requested action. Though the action will need to exist before this is possible.

This is a blocking prerequisite:

https://github.com/Brain-Bones/skeleton/issues/199

| test | add copy to clipboard feature to code blocks reference this seems like an obvious use case for the newly requested action though the action will need to exist before this is possible this is a blocking prerequisite | 1 |

11,200 | 9,276,181,822 | IssuesEvent | 2019-03-20 01:46:00 | Microsoft/vscode-cpptools | https://api.github.com/repos/Microsoft/vscode-cpptools | opened | Using the `Build and Debug Active File` command causes an "undefined task type" warning to get logged. | Language Service bug | With 0.22.0.

It doesn't seem to cause any other issue. Not sure why we never noticed this earlier.

| 1.0 | Using the `Build and Debug Active File` command causes an "undefined task type" warning to get logged. - With 0.22.0.

It doesn't seem to cause any other issue. Not sure why we never noticed this earlier.

| non_test | using the build and debug active file command causes an undefined task type warning to get logged with it doesn t seem to cause any other issue not sure why we never noticed this earlier | 0 |

366,251 | 25,574,672,339 | IssuesEvent | 2022-11-30 20:54:49 | lightninglabs/taro | https://api.github.com/repos/lightninglabs/taro | opened | BIP updates | documentation bips spec | Need to make multiple Taro BIP updates to reflect spec changes made during implementation.

Non-interactive transfers ( from #159 , #172 , #176 ) :

- [ ] Unconditional use of split commitments for non-interactive transfers

- [ ] Zero-value root locators for full-value sends

- [ ] NUMs key use for full-value sends... | 1.0 | BIP updates - Need to make multiple Taro BIP updates to reflect spec changes made during implementation.

Non-interactive transfers ( from #159 , #172 , #176 ) :

- [ ] Unconditional use of split commitments for non-interactive transfers

- [ ] Zero-value root locators for full-value sends

- [ ] NUMs key use for fu... | non_test | bip updates need to make multiple taro bip updates to reflect spec changes made during implementation non interactive transfers from unconditional use of split commitments for non interactive transfers zero value root locators for full value sends nums key use for full value sen... | 0 |

350,547 | 31,925,494,505 | IssuesEvent | 2023-09-19 01:12:36 | AcalaNetwork/Acala | https://api.github.com/repos/AcalaNetwork/Acala | closed | broken e2e tests | T4-tests | https://github.com/AcalaNetwork/Acala/runs/7441526108?check_suite_focus=true

```

pthread lock: Invalid argument

error: test failed, to rerun pass '-p test-service --test standalone'

Caused by:

process didn't exit successfully: `/Acala/runner/work/Acala/Acala/target/release/deps/standalone-4010b5bae364c68f --in... | 1.0 | broken e2e tests - https://github.com/AcalaNetwork/Acala/runs/7441526108?check_suite_focus=true

```

pthread lock: Invalid argument

error: test failed, to rerun pass '-p test-service --test standalone'

Caused by:

process didn't exit successfully: `/Acala/runner/work/Acala/Acala/target/release/deps/standalone-40... | test | broken tests pthread lock invalid argument error test failed to rerun pass p test service test standalone caused by process didn t exit successfully acala runner work acala acala target release deps standalone include ignored skip test full node catching up skip simple balances te... | 1 |

658,529 | 21,895,982,931 | IssuesEvent | 2022-05-20 08:40:03 | StartsMercury/simply-no-shading | https://api.github.com/repos/StartsMercury/simply-no-shading | closed | [5.0.0] Smart Chunk Reload with Iris Shader is Active | enhancement low priority | This mod is useless when any shader is enabled. Therefore reloading chunks on changing the config is not necessary. | 1.0 | [5.0.0] Smart Chunk Reload with Iris Shader is Active - This mod is useless when any shader is enabled. Therefore reloading chunks on changing the config is not necessary. | non_test | smart chunk reload with iris shader is active this mod is useless when any shader is enabled therefore reloading chunks on changing the config is not necessary | 0 |

292,933 | 25,251,608,468 | IssuesEvent | 2022-11-15 15:04:18 | ava-labs/spacesvm-rs | https://api.github.com/repos/ava-labs/spacesvm-rs | opened | add back integration tests | tests | These were removed because the code base was changed very quickly before release. | 1.0 | add back integration tests - These were removed because the code base was changed very quickly before release. | test | add back integration tests these were removed because the code base was changed very quickly before release | 1 |

307,150 | 26,518,544,149 | IssuesEvent | 2023-01-18 23:18:57 | pytorch/pytorch | https://api.github.com/repos/pytorch/pytorch | closed | DISABLED test_dynamo_min_operator_with_shape_dynamic_shapes (torch._dynamo.testing.make_test_cls_with_patches.<locals>.DummyTestClass) | module: flaky-tests skipped module: unknown | Platforms: linux

This test was disabled because it is failing in CI. See [recent examples](https://hud.pytorch.org/failure/test_dynamo_min_operator_with_shape_dynamic_shapes) and the most recent trunk [workflow logs](https://github.com/pytorch/pytorch/runs/10713308472).

Over the past 72 hours, it has flakily failed i... | 1.0 | DISABLED test_dynamo_min_operator_with_shape_dynamic_shapes (torch._dynamo.testing.make_test_cls_with_patches.<locals>.DummyTestClass) - Platforms: linux

This test was disabled because it is failing in CI. See [recent examples](https://hud.pytorch.org/failure/test_dynamo_min_operator_with_shape_dynamic_shapes) and the... | test | disabled test dynamo min operator with shape dynamic shapes torch dynamo testing make test cls with patches dummytestclass platforms linux this test was disabled because it is failing in ci see and the most recent trunk over the past hours it has flakily failed in workflow s debugging instr... | 1 |

121,599 | 15,987,339,666 | IssuesEvent | 2021-04-19 00:11:48 | almac-775/sp21-cse110-lab3 | https://api.github.com/repos/almac-775/sp21-cse110-lab3 | closed | Change Display | design documentation | <strong>What is the current issue? Please describe in detail</strong>

Have to mess with display values....

<strong>How would you like us to improve on this issue?</strong>

Not sure. Have to experiment with listed display values on the writeup...

<strong>If possible, please submit a picture or source for referen... | 1.0 | Change Display - <strong>What is the current issue? Please describe in detail</strong>

Have to mess with display values....

<strong>How would you like us to improve on this issue?</strong>

Not sure. Have to experiment with listed display values on the writeup...

<strong>If possible, please submit a picture or s... | non_test | change display what is the current issue please describe in detail have to mess with display values how would you like us to improve on this issue not sure have to experiment with listed display values on the writeup if possible please submit a picture or source for reference | 0 |

181,957 | 14,085,429,848 | IssuesEvent | 2020-11-05 00:58:33 | GaloisInc/crucible | https://api.github.com/repos/GaloisInc/crucible | opened | crux-mir test suite failures | MIR testing | I can't figure out how to run the `crux-mir` test suite locally. When I run it, i get a cavalcade of similar errors.

```

Test suite test: RUNNING...

crux-mir

crux concrete

impl

simple: FAIL (0.25s)

Compiling and running oracle program (0.21s)

Oracle outp... | 1.0 | crux-mir test suite failures - I can't figure out how to run the `crux-mir` test suite locally. When I run it, i get a cavalcade of similar errors.

```

Test suite test: RUNNING...

crux-mir

crux concrete

impl

simple: FAIL (0.25s)

Compiling and running oracle program... | test | crux mir test suite failures i can t figure out how to run the crux mir test suite locally when i run it i get a cavalcade of similar errors test suite test running crux mir crux concrete impl simple fail compiling and running oracle program ... | 1 |

20,745 | 6,101,957,506 | IssuesEvent | 2017-06-20 15:32:48 | joomla/joomla-cms | https://api.github.com/repos/joomla/joomla-cms | closed | 404 Category not found when article added in backend after upgrade to Joomla! 3.7.2 | No Code Attached Yet | ### Steps to reproduce the issue

1. Login to Joomla! 3.7.2 backend.

2. Add new article.

3. Save article or save and close article.

### Expected result

Remain on the article edit page with the link modified to: ...**edit&id=article_id** or open the article list page.

### Actual result

Front end 404 Categ... | 1.0 | 404 Category not found when article added in backend after upgrade to Joomla! 3.7.2 - ### Steps to reproduce the issue

1. Login to Joomla! 3.7.2 backend.

2. Add new article.

3. Save article or save and close article.

### Expected result

Remain on the article edit page with the link modified to: ...**edit&id=a... | non_test | category not found when article added in backend after upgrade to joomla steps to reproduce the issue login to joomla backend add new article save article or save and close article expected result remain on the article edit page with the link modified to edit id art... | 0 |

15,147 | 3,927,180,808 | IssuesEvent | 2016-04-23 11:41:30 | MarlinFirmware/Marlin | https://api.github.com/repos/MarlinFirmware/Marlin | closed | Difference between zprobe_zoffset and Z home offset? | Documentation Issue Inactive | Does anyone know the difference between these two values? It seems they both serve the same purpose. If that is true, can one of them be removed? If that is not true could someone explain to me what the difference is? | 1.0 | Difference between zprobe_zoffset and Z home offset? - Does anyone know the difference between these two values? It seems they both serve the same purpose. If that is true, can one of them be removed? If that is not true could someone explain to me what the difference is? | non_test | difference between zprobe zoffset and z home offset does anyone know the difference between these two values it seems they both serve the same purpose if that is true can one of them be removed if that is not true could someone explain to me what the difference is | 0 |

41,632 | 5,379,112,423 | IssuesEvent | 2017-02-23 16:27:33 | learn-co-curriculum/redux-reducer | https://api.github.com/repos/learn-co-curriculum/redux-reducer | closed | Reducer Tests | Test | manageFriends reducer tests for entire state to be returned.

managePresents reducer tests only for state.presents but the default still tests for the entire state. Update for consistency to avoid confusion? | 1.0 | Reducer Tests - manageFriends reducer tests for entire state to be returned.

managePresents reducer tests only for state.presents but the default still tests for the entire state. Update for consistency to avoid confusion? | test | reducer tests managefriends reducer tests for entire state to be returned managepresents reducer tests only for state presents but the default still tests for the entire state update for consistency to avoid confusion | 1 |

180,173 | 30,456,132,622 | IssuesEvent | 2023-07-16 22:44:50 | limlabs/saleor-storefront-starter | https://api.github.com/repos/limlabs/saleor-storefront-starter | closed | Theme | Epic Design | ## Epic: [Theme]

### Description

[Provide a brief overview of the epic and its goals. Include any relevant background information or context.]

### Dependencies

- [Dependency 1]

- [Dependency 2]

- [Dependency 3]

### Links

- [Link 1]

- [Link 2]

- [Link 3]

### Tasks

- [Task 1]

- [Task 2]

- [Task 3]

... | 1.0 | Theme - ## Epic: [Theme]

### Description

[Provide a brief overview of the epic and its goals. Include any relevant background information or context.]

### Dependencies

- [Dependency 1]

- [Dependency 2]

- [Dependency 3]

### Links

- [Link 1]

- [Link 2]

- [Link 3]

### Tasks

- [Task 1]

- [Task 2]

- [T... | non_test | theme epic description dependencies links tasks acceptance criteria related issues additional notes considerations labels assignees milestone e... | 0 |

685,101 | 23,443,958,651 | IssuesEvent | 2022-08-15 17:37:43 | cds-snc/notification-planning | https://api.github.com/repos/cds-snc/notification-planning | opened | Delete client data when their account is archived | Medium Priority | Priorité moyenne Privacy | Vie privée Policy l Politique Data l Données | _Delete client data when their account is archived _

## Description

As a client of Notify, particularly PTM, I need to be able to have my data (name, phone number, email address) deleted from GC Notify's records and database so that I can have my privacy respected if my account has been archived, or if I am no longer... | 1.0 | Delete client data when their account is archived - _Delete client data when their account is archived _

## Description

As a client of Notify, particularly PTM, I need to be able to have my data (name, phone number, email address) deleted from GC Notify's records and database so that I can have my privacy respected ... | non_test | delete client data when their account is archived delete client data when their account is archived description as a client of notify particularly ptm i need to be able to have my data name phone number email address deleted from gc notify s records and database so that i can have my privacy respected ... | 0 |

70,292 | 15,079,827,269 | IssuesEvent | 2021-02-05 10:42:19 | mkevenaar/chocolatey-packages | https://api.github.com/repos/mkevenaar/chocolatey-packages | closed | CVE-2015-3227 (Medium) detected in activesupport-3.2.22.5.gem - autoclosed | security vulnerability | ## CVE-2015-3227 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>activesupport-3.2.22.5.gem</b></p></summary>

<p>A toolkit of support libraries and Ruby core extensions extracted fro... | True | CVE-2015-3227 (Medium) detected in activesupport-3.2.22.5.gem - autoclosed - ## CVE-2015-3227 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>activesupport-3.2.22.5.gem</b></p></summa... | non_test | cve medium detected in activesupport gem autoclosed cve medium severity vulnerability vulnerable library activesupport gem a toolkit of support libraries and ruby core extensions extracted from the rails framework rich support for multibyte strings internationalization ... | 0 |

14,633 | 11,028,688,972 | IssuesEvent | 2019-12-06 12:18:11 | killercup/cargo-edit | https://api.github.com/repos/killercup/cargo-edit | closed | Update CI to test on Linux, Mac, Windows | help wanted infrastructure | We should update our CI setup to test on all platforms. I recently saw that https://github.com/actions-rs/ has a collection of templates for Github actions that support testing and building Rust projects, so that might be an interesting alternative to travis.

My requirements:

- [ ] keep [bors](https://bors.tech/)... | 1.0 | Update CI to test on Linux, Mac, Windows - We should update our CI setup to test on all platforms. I recently saw that https://github.com/actions-rs/ has a collection of templates for Github actions that support testing and building Rust projects, so that might be an interesting alternative to travis.

My requirement... | non_test | update ci to test on linux mac windows we should update our ci setup to test on all platforms i recently saw that has a collection of templates for github actions that support testing and building rust projects so that might be an interesting alternative to travis my requirements keep test ... | 0 |


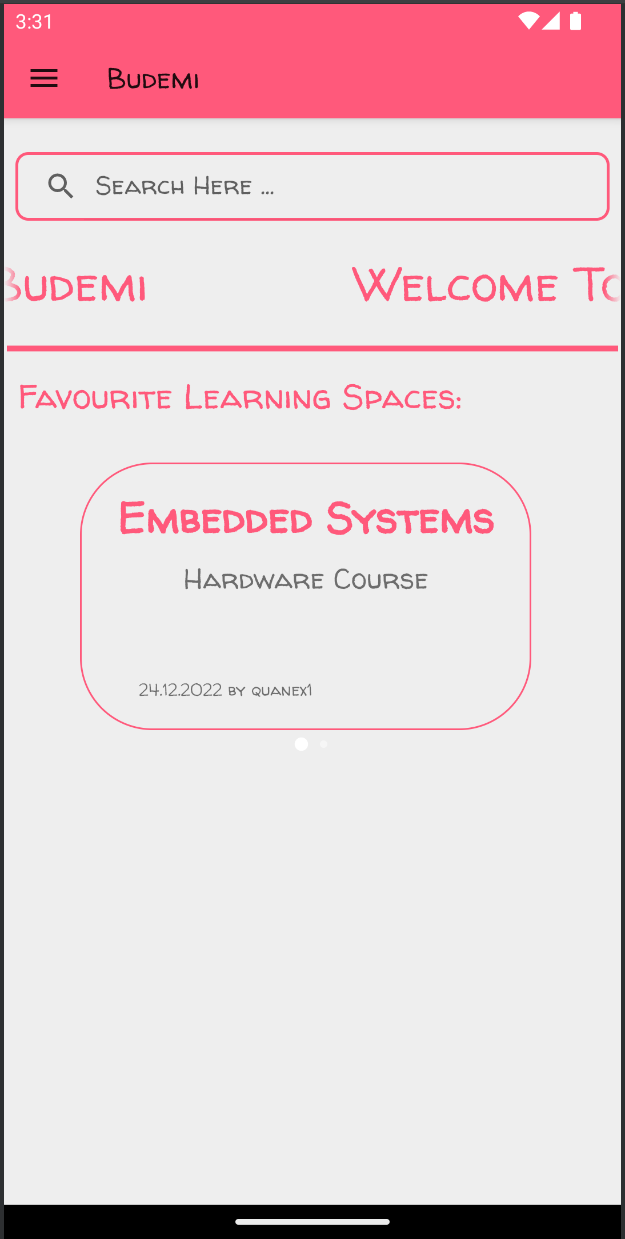

373,786 | 26,084,549,054 | IssuesEvent | 2022-12-25 23:15:11 | bounswe/bounswe2022group1 | https://api.github.com/repos/bounswe/bounswe2022group1 | closed | Favorite learning spaces added to the home page | Type: Documentation Priority: Medium Status: Completed Android | Favorite learning spaces section added to the home page.

API connections will be implemented.

Page design looks like this:

*Reviewer:*

@omerozdemir1

*Review Deadline:*

27.12.2022 | 1.0 | Favorite learning spaces added to the home page - Favorite learning spaces section added to the home page.

API connections will be implemented.

Page design looks like this:

*Reviewer:*

@omerozdemir1 ... | non_test | favorite learning spaces added to the home page favorite learning spaces section added to the home page api connections will be implemented page design looks like this reviewer review deadline | 0 |

279,751 | 24,252,594,201 | IssuesEvent | 2022-09-27 15:12:20 | microsoft/vscode | https://api.github.com/repos/microsoft/vscode | closed | Test badge on TreeView | testplan-item | Refs: https://github.com/microsoft/vscode/issues/62783

- [x] anyOS @meganrogge

- [x] anyOS @bpasero

Complexity: 4

Roles: Developer

[Create Issue](https://github.com/microsoft/vscode/issues/new?body=Testing+%23161780%0A%0A&assignees=alexr00)

---

We have newly finalized API for badges on TreeViews: https:... | 1.0 | Test badge on TreeView - Refs: https://github.com/microsoft/vscode/issues/62783

- [x] anyOS @meganrogge

- [x] anyOS @bpasero

Complexity: 4

Roles: Developer

[Create Issue](https://github.com/microsoft/vscode/issues/new?body=Testing+%23161780%0A%0A&assignees=alexr00)

---

We have newly finalized API for ba... | test | test badge on treeview refs anyos meganrogge anyos bpasero complexity roles developer we have newly finalized api for badges on treeviews this badge shows as a circle with a number on the view s view container to test read the inline documentation for the api and v... | 1 |

250,591 | 21,316,166,221 | IssuesEvent | 2022-04-16 10:07:11 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | opened | roachtest: django failed | C-test-failure O-robot O-roachtest release-blocker branch-release-21.2 | roachtest.django [failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=4907623&tab=buildLog) with [artifacts](https://teamcity.cockroachdb.com/viewLog.html?buildId=4907623&tab=artifacts#/django) on release-21.2 @ [0f0029653c25772d09adae5be308ce0c45f84f0a](https://github.com/cockroachdb/cockroach/commits/0f0029... | 2.0 | roachtest: django failed - roachtest.django [failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=4907623&tab=buildLog) with [artifacts](https://teamcity.cockroachdb.com/viewLog.html?buildId=4907623&tab=artifacts#/django) on release-21.2 @ [0f0029653c25772d09adae5be308ce0c45f84f0a](https://github.com/cockroach... | test | roachtest django failed roachtest django with on release the test failed on branch release cloud gce test artifacts and logs in home agent work go src github com cockroachdb cockroach artifacts django run orm helpers go orm helpers go django go django go test runner go ... | 1 |

1,906 | 4,046,950,467 | IssuesEvent | 2016-05-23 00:33:22 | itkpi/events-parser | https://api.github.com/repos/itkpi/events-parser | closed | Black list | enhancement (service) enhancement (what user see) | Випилювати будь-які:

- по title

- по title || agenda

- Слово "Курсы" в title & назва компанії в title/agenda

| 1.0 | Black list - Випилювати будь-які:

- по title

- по title || agenda

- Слово "Курсы" в title & назва компанії в title/agenda

| non_test | black list випилювати будь які по title по title agenda слово курсы в title назва компанії в title agenda | 0 |

102,212 | 8,821,982,921 | IssuesEvent | 2019-01-02 06:50:51 | humera987/FXScript-Test-Functions | https://api.github.com/repos/humera987/FXScript-Test-Functions | closed | testing 2 : ApiV1ProjectsIdAutoSuggestionsStatusGetPathParamStatusNullValue | testing 2 | Project : testing 2

Job : Default

Env : Default

Region : US_WEST

Result : fail

Status Code : 500

Headers : {}

Endpoint : http://13.56.210.25/api/v1/projects/{id}/auto-suggestions/null

Request :

Response :

Not enough variable values available to expand 'id'

Logs :

2019-01-02 06:44:45 ... | 1.0 | testing 2 : ApiV1ProjectsIdAutoSuggestionsStatusGetPathParamStatusNullValue - Project : testing 2

Job : Default

Env : Default

Region : US_WEST

Result : fail

Status Code : 500

Headers : {}

Endpoint : http://13.56.210.25/api/v1/projects/{id}/auto-suggestions/null

Request :

Response :

Not e... | test | testing project testing job default env default region us west result fail status code headers endpoint request response not enough variable values available to expand id logs debug url debug method ... | 1 |

5,894 | 8,710,249,559 | IssuesEvent | 2018-12-06 15:58:35 | Open-EO/openeo-api | https://api.github.com/repos/Open-EO/openeo-api | closed | Dedicated export processes in the process graph | job management processes vote | The definition of the process graph output format is located at the moment in the preview and job requests. However, IMHO it makes more sense to have export definitions in the process graph itself.

If you have complex process graphs you might want to export intermediate results and statistical analysis data. It is m... | 1.0 | Dedicated export processes in the process graph - The definition of the process graph output format is located at the moment in the preview and job requests. However, IMHO it makes more sense to have export definitions in the process graph itself.

If you have complex process graphs you might want to export intermedi... | non_test | dedicated export processes in the process graph the definition of the process graph output format is located at the moment in the preview and job requests however imho it makes more sense to have export definitions in the process graph itself if you have complex process graphs you might want to export intermedi... | 0 |

106,031 | 13,240,630,754 | IssuesEvent | 2020-08-19 06:45:32 | nikodemus/foolang | https://api.github.com/repos/nikodemus/foolang | opened | #min: and #max: confusing | design later quality-of-life sidetrack | Currently

```

x min: y

```

means `min(x,y)`, but reads as "x, or at minimum y", ie. max(x,y).

| 1.0 | #min: and #max: confusing - Currently

```

x min: y

```

means `min(x,y)`, but reads as "x, or at minimum y", ie. max(x,y).

| non_test | min and max confusing currently x min y means min x y but reads as x or at minimum y ie max x y | 0 |

102,523 | 32,035,462,366 | IssuesEvent | 2023-09-22 15:02:40 | statechannels/go-nitro | https://api.github.com/repos/statechannels/go-nitro | closed | The eth chain service should query for historic logs | :building_construction: Productionization | Right now the eth chain service calls [SubscribeFilterLogs](https://github.com/statechannels/go-nitro/blob/7cc2c812388313a57b996e9341cb6ae2ee535621/node/engine/chainservice/eth_chainservice.go#L292) to get contract events. However [SubscribeFilterLogs](https://github.com/ethereum/go-ethereum/issues/15063) only support... | 1.0 | The eth chain service should query for historic logs - Right now the eth chain service calls [SubscribeFilterLogs](https://github.com/statechannels/go-nitro/blob/7cc2c812388313a57b996e9341cb6ae2ee535621/node/engine/chainservice/eth_chainservice.go#L292) to get contract events. However [SubscribeFilterLogs](https://git... | non_test | the eth chain service should query for historic logs right now the eth chain service calls to get contract events however only supports new events it doesn t return any historic events that have happened that means if a nitro node misses a contract event due to being offline it will never catch up on... | 0 |

50,352 | 13,523,483,161 | IssuesEvent | 2020-09-15 10:01:47 | AbdelhakAj/react-typescript | https://api.github.com/repos/AbdelhakAj/react-typescript | opened | CVE-2018-19797 (Medium) detected in opennmsopennms-source-22.0.1-1, node-sass-4.14.1.tgz | security vulnerability | ## CVE-2018-19797 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>opennmsopennms-source-22.0.1-1</b>, <b>node-sass-4.14.1.tgz</b></p></summary>

<p>

<details><summary><b>node-sass-4... | True | CVE-2018-19797 (Medium) detected in opennmsopennms-source-22.0.1-1, node-sass-4.14.1.tgz - ## CVE-2018-19797 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>opennmsopennms-source-22... | non_test | cve medium detected in opennmsopennms source node sass tgz cve medium severity vulnerability vulnerable libraries opennmsopennms source node sass tgz node sass tgz wrapper around libsass library home page a href path to dependency file ... | 0 |

301,971 | 26,113,660,842 | IssuesEvent | 2022-12-28 01:12:33 | 17ANT/INATON | https://api.github.com/repos/17ANT/INATON | closed | [PostModify] 테스트 | ✨ Feature ✅ Test | ### 🌱 무엇을 하실 건지 설명해주세요!

- 오늘 작업 테스트 진행

### 🌱 구현방법 및 예상 동작

1. 게시글 수정

- 이미지 없는 게시글 수정 시 파일을 불러오지 못하는 이미지 출력X

- 게시글 수정 시 이미지 반영 여부

- 이미지 슬라이드 확인

2. 댓글 작성

- 댓글 입력시에만 게시 버튼 활성화

| 1.0 | [PostModify] 테스트 - ### 🌱 무엇을 하실 건지 설명해주세요!

- 오늘 작업 테스트 진행

### 🌱 구현방법 및 예상 동작

1. 게시글 수정

- 이미지 없는 게시글 수정 시 파일을 불러오지 못하는 이미지 출력X

- 게시글 수정 시 이미지 반영 여부

- 이미지 슬라이드 확인

2. 댓글 작성

- 댓글 입력시에만 게시 버튼 활성화

| test | 테스트 🌱 무엇을 하실 건지 설명해주세요 오늘 작업 테스트 진행 🌱 구현방법 및 예상 동작 게시글 수정 이미지 없는 게시글 수정 시 파일을 불러오지 못하는 이미지 출력x 게시글 수정 시 이미지 반영 여부 이미지 슬라이드 확인 댓글 작성 댓글 입력시에만 게시 버튼 활성화 | 1 |

93,218 | 8,405,314,286 | IssuesEvent | 2018-10-11 14:58:30 | chameleon-system/chameleon-system | https://api.github.com/repos/chameleon-system/chameleon-system | closed | Fix typos in XLIFF files | Status: Test Type: Bug | **Describe the bug**

There are some typos hiding in the XLIFF files.

**Affected version(s)**

6.2.0+

| 1.0 | Fix typos in XLIFF files - **Describe the bug**

There are some typos hiding in the XLIFF files.

**Affected version(s)**

6.2.0+