Unnamed: 0

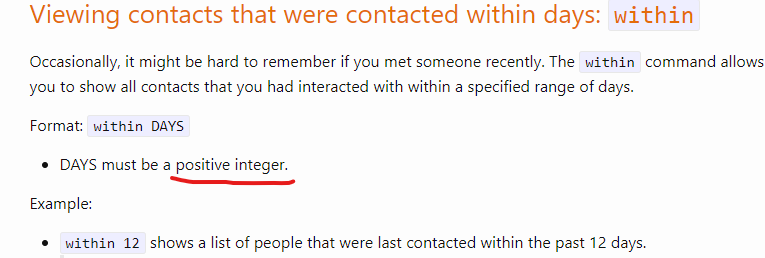

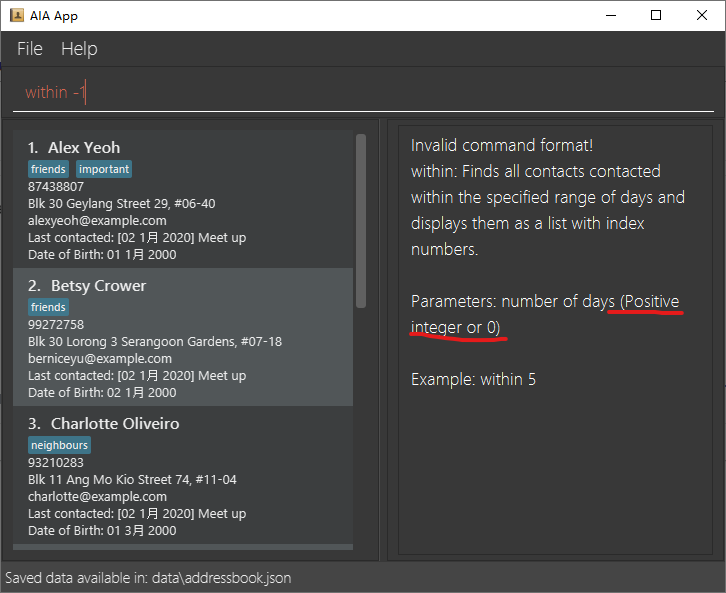

int64 0

832k

| id

float64 2.49B

32.1B

| type

stringclasses 1

value | created_at

stringlengths 19

19

| repo

stringlengths 4

112

| repo_url

stringlengths 33

141

| action

stringclasses 3

values | title

stringlengths 1

1.02k

| labels

stringlengths 4

1.54k

| body

stringlengths 1

262k

| index

stringclasses 17

values | text_combine

stringlengths 95

262k

| label

stringclasses 2

values | text

stringlengths 96

252k

| binary_label

int64 0

1

|

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

146,985

| 11,765,547,426

|

IssuesEvent

|

2020-03-14 17:54:23

|

IEMLdev/Intlekt-editor

|

https://api.github.com/repos/IEMLdev/Intlekt-editor

|

closed

|

after working for an hour, the dictionary editor froze again

|

bug to retest

|

Maybe it's normal because I made a lot of changes and I have to wait until tomorrow 😬

<img width="1386" alt="Screenshot 2019-10-19 14 47 04" src="https://user-images.githubusercontent.com/19435943/67150013-2743c800-f280-11e9-95cb-6d0648e8b152.png">

|

1.0

|

after working for an hour, the dictionary editor froze again - Maybe it's normal because I made a lot of changes and I have to wait until tomorrow 😬

<img width="1386" alt="Screenshot 2019-10-19 14 47 04" src="https://user-images.githubusercontent.com/19435943/67150013-2743c800-f280-11e9-95cb-6d0648e8b152.png">

|

test

|

after working for an hour the dictionary editor froze again maybe it s normal because i made a lot of changes and i have to wait until tomorrow 😬 img width alt screenshot src

| 1

|

257,516

| 27,563,796,894

|

IssuesEvent

|

2023-03-08 01:07:09

|

billmcchesney1/superagent

|

https://api.github.com/repos/billmcchesney1/superagent

|

opened

|

CVE-2022-21681 (High) detected in marked-1.2.7.tgz

|

security vulnerability

|

## CVE-2022-21681 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>marked-1.2.7.tgz</b></p></summary>

<p>A markdown parser built for speed</p>

<p>Library home page: <a href="https://registry.npmjs.org/marked/-/marked-1.2.7.tgz">https://registry.npmjs.org/marked/-/marked-1.2.7.tgz</a></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/marked/package.json</p>

<p>

Dependency Hierarchy:

- :x: **marked-1.2.7.tgz** (Vulnerable Library)

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Marked is a markdown parser and compiler. Prior to version 4.0.10, the regular expression `inline.reflinkSearch` may cause catastrophic backtracking against some strings and lead to a denial of service (DoS). Anyone who runs untrusted markdown through a vulnerable version of marked and does not use a worker with a time limit may be affected. This issue is patched in version 4.0.10. As a workaround, avoid running untrusted markdown through marked or run marked on a worker thread and set a reasonable time limit to prevent draining resources.

<p>Publish Date: 2022-01-14

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2022-21681>CVE-2022-21681</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/advisories/GHSA-5v2h-r2cx-5xgj">https://github.com/advisories/GHSA-5v2h-r2cx-5xgj</a></p>

<p>Release Date: 2022-01-14</p>

<p>Fix Resolution: 4.0.10</p>

</p>

</details>

<p></p>

***

:rescue_worker_helmet: Automatic Remediation is available for this issue

|

True

|

CVE-2022-21681 (High) detected in marked-1.2.7.tgz - ## CVE-2022-21681 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>marked-1.2.7.tgz</b></p></summary>

<p>A markdown parser built for speed</p>

<p>Library home page: <a href="https://registry.npmjs.org/marked/-/marked-1.2.7.tgz">https://registry.npmjs.org/marked/-/marked-1.2.7.tgz</a></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/marked/package.json</p>

<p>

Dependency Hierarchy:

- :x: **marked-1.2.7.tgz** (Vulnerable Library)

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Marked is a markdown parser and compiler. Prior to version 4.0.10, the regular expression `inline.reflinkSearch` may cause catastrophic backtracking against some strings and lead to a denial of service (DoS). Anyone who runs untrusted markdown through a vulnerable version of marked and does not use a worker with a time limit may be affected. This issue is patched in version 4.0.10. As a workaround, avoid running untrusted markdown through marked or run marked on a worker thread and set a reasonable time limit to prevent draining resources.

<p>Publish Date: 2022-01-14

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2022-21681>CVE-2022-21681</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/advisories/GHSA-5v2h-r2cx-5xgj">https://github.com/advisories/GHSA-5v2h-r2cx-5xgj</a></p>

<p>Release Date: 2022-01-14</p>

<p>Fix Resolution: 4.0.10</p>

</p>

</details>

<p></p>

***

:rescue_worker_helmet: Automatic Remediation is available for this issue

|

non_test

|

cve high detected in marked tgz cve high severity vulnerability vulnerable library marked tgz a markdown parser built for speed library home page a href path to dependency file package json path to vulnerable library node modules marked package json dependency hierarchy x marked tgz vulnerable library found in base branch master vulnerability details marked is a markdown parser and compiler prior to version the regular expression inline reflinksearch may cause catastrophic backtracking against some strings and lead to a denial of service dos anyone who runs untrusted markdown through a vulnerable version of marked and does not use a worker with a time limit may be affected this issue is patched in version as a workaround avoid running untrusted markdown through marked or run marked on a worker thread and set a reasonable time limit to prevent draining resources publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution rescue worker helmet automatic remediation is available for this issue

| 0

|

172,072

| 6,498,708,315

|

IssuesEvent

|

2017-08-22 18:29:54

|

FrogTheFrog/steam-rom-manager

|

https://api.github.com/repos/FrogTheFrog/steam-rom-manager

|

closed

|

Unsaved parser settings are lost when clicking "Test parser"

|

bug enhancement priority

|

1. Open a previously saved parser

1. Change settings such as ROMs directory and User's glob

1. Click the "Test parser" button.

1. Click back to the parser you were testing. All setting changes are gone.

I would expect the behavior would be to leave the modified settings in place. The primary reason I would ever hit "Test parser" would be to see if my new parser settings work well. I wouldn't want to have to save my parser settings before I got them right.

If you do allow navigating away without losing parsing settings, I can see how this bug report would actually turn into more of an enhancement. A more robust save system which shows each modified parser with a * next to its name so that you are aware it has been edited would then make it apparent that it is not yet saved.

|

1.0

|

Unsaved parser settings are lost when clicking "Test parser" - 1. Open a previously saved parser

1. Change settings such as ROMs directory and User's glob

1. Click the "Test parser" button.

1. Click back to the parser you were testing. All setting changes are gone.

I would expect the behavior would be to leave the modified settings in place. The primary reason I would ever hit "Test parser" would be to see if my new parser settings work well. I wouldn't want to have to save my parser settings before I got them right.

If you do allow navigating away without losing parsing settings, I can see how this bug report would actually turn into more of an enhancement. A more robust save system which shows each modified parser with a * next to its name so that you are aware it has been edited would then make it apparent that it is not yet saved.

|

non_test

|

unsaved parser settings are lost when clicking test parser open a previously saved parser change settings such as roms directory and user s glob click the test parser button click back to the parser you were testing all setting changes are gone i would expect the behavior would be to leave the modified settings in place the primary reason i would ever hit test parser would be to see if my new parser settings work well i wouldn t want to have to save my parser settings before i got them right if you do allow navigating away without losing parsing settings i can see how this bug report would actually turn into more of an enhancement a more robust save system which shows each modified parser with a next to its name so that you are aware it has been edited would then make it apparent that it is not yet saved

| 0

|

71,634

| 8,671,799,041

|

IssuesEvent

|

2018-11-29 20:11:06

|

poanetwork/blockscout

|

https://api.github.com/repos/poanetwork/blockscout

|

opened

|

Make the requirement for verifying a smart contract less harsh

|

design enhancement priority: medium team: developer

|

On the transaction details page, the user is prompted to verify a contract to view the decoded input data. This message is currently using the `danger` style. This should be changed to `info` to appear less like an error.

<img width="1111" alt="screen shot 2018-11-29 at 3 07 57 pm" src="https://user-images.githubusercontent.com/17620007/49248911-e5e8c680-f3e8-11e8-9335-9ce212de0537.png">

|

1.0

|

Make the requirement for verifying a smart contract less harsh - On the transaction details page, the user is prompted to verify a contract to view the decoded input data. This message is currently using the `danger` style. This should be changed to `info` to appear less like an error.

<img width="1111" alt="screen shot 2018-11-29 at 3 07 57 pm" src="https://user-images.githubusercontent.com/17620007/49248911-e5e8c680-f3e8-11e8-9335-9ce212de0537.png">

|

non_test

|

make the requirement for verifying a smart contract less harsh on the transaction details page the user is prompted to verify a contract to view the decoded input data this message is currently using the danger style this should be changed to info to appear less like an error img width alt screen shot at pm src

| 0

|

277,575

| 24,086,141,779

|

IssuesEvent

|

2022-09-19 11:07:08

|

streamnative/pulsar

|

https://api.github.com/repos/streamnative/pulsar

|

opened

|

ISSUE-17713: Flaky-test: SqliteJdbcSinkTest.tearDown

|

component/test flaky-tests

|

Original Issue: apache/pulsar#17713

---

### Search before asking

- [X] I searched in the [issues](https://github.com/apache/pulsar/issues) and found nothing similar.

### Example failure

https://github.com/apache/pulsar/actions/runs/3069039857/jobs/4977568132#step:10:4694

### Exception stacktrace

```
Error: Tests run: 19, Failures: 1, Errors: 0, Skipped: 11, Time elapsed: 4.036 s <<< FAILURE! - in org.apache.pulsar.io.jdbc.SqliteJdbcSinkTest
Error: tearDown(org.apache.pulsar.io.jdbc.SqliteJdbcSinkTest) Time elapsed: 0.11 s <<< FAILURE!
org.sqlite.SQLiteException: [SQLITE_ERROR] SQL error or missing database (cannot commit - no transaction is active)
at org.sqlite.core.DB.newSQLException(DB.java:1030)
at org.sqlite.core.DB.newSQLException(DB.java:1042)
at org.sqlite.core.DB.throwex(DB.java:1007)
at org.sqlite.core.DB.exec(DB.java:178)
at org.sqlite.SQLiteConnection.commit(SQLiteConnection.java:421)
at org.apache.pulsar.io.jdbc.JdbcAbstractSink.close(JdbcAbstractSink.java:141)
at org.apache.pulsar.io.jdbc.SqliteJdbcSinkTest.tearDown(SqliteJdbcSinkTest.java:122)
at java.base/jdk.internal.reflect.NativeMethodAccessorImpl.invoke0(Native Method)
at java.base/jdk.internal.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:77)
at java.base/jdk.internal.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)
at java.base/java.lang.reflect.Method.invoke(Method.java:568)
at org.testng.internal.MethodInvocationHelper.invokeMethod(MethodInvocationHelper.java:132)
at org.testng.internal.MethodInvocationHelper.invokeMethodConsideringTimeout(MethodInvocationHelper.java:61)
at org.testng.internal.ConfigInvoker.invokeConfigurationMethod(ConfigInvoker.java:366)
at org.testng.internal.ConfigInvoker.invokeConfigurations(ConfigInvoker.java:320)
at org.testng.internal.TestInvoker.runConfigMethods(TestInvoker.java:701)
at org.testng.internal.TestInvoker.runAfterGroupsConfigurations(TestInvoker.java:677)
at org.testng.internal.TestInvoker.invokeMethod(TestInvoker.java:661)
at org.testng.internal.TestInvoker.invokeTestMethod(TestInvoker.java:174)
at org.testng.internal.MethodRunner.runInSequence(MethodRunner.java:46)
at org.testng.internal.TestInvoker$MethodInvocationAgent.invoke(TestInvoker.java:822)
at org.testng.internal.TestInvoker.invokeTestMethods(TestInvoker.java:147)
at org.testng.internal.TestMethodWorker.invokeTestMethods(TestMethodWorker.java:146)
at org.testng.internal.TestMethodWorker.run(TestMethodWorker.java:128)
at java.base/java.util.ArrayList.forEach(ArrayList.java:1511)
at org.testng.TestRunner.privateRun(TestRunner.java:764)
at org.testng.TestRunner.run(TestRunner.java:585)
at org.testng.SuiteRunner.runTest(SuiteRunner.java:384)
at org.testng.SuiteRunner.runSequentially(SuiteRunner.java:378)
at org.testng.SuiteRunner.privateRun(SuiteRunner.java:337)
at org.testng.SuiteRunner.run(SuiteRunner.java:286)
at org.testng.SuiteRunnerWorker.runSuite(SuiteRunnerWorker.java:53)
at org.testng.SuiteRunnerWorker.run(SuiteRunnerWorker.java:96)
at org.testng.TestNG.runSuitesSequentially(TestNG.java:1218)
at org.testng.TestNG.runSuitesLocally(TestNG.java:1140)
at org.testng.TestNG.runSuites(TestNG.java:1069)
at org.testng.TestNG.run(TestNG.java:1037)
at org.apache.maven.surefire.testng.TestNGExecutor.run(TestNGExecutor.java:135)
at org.apache.maven.surefire.testng.TestNGDirectoryTestSuite.executeSingleClass(TestNGDirectoryTestSuite.java:112)
at org.apache.maven.surefire.testng.TestNGDirectoryTestSuite.executeLazy(TestNGDirectoryTestSuite.java:123)
at org.apache.maven.surefire.testng.TestNGDirectoryTestSuite.execute(TestNGDirectoryTestSuite.java:90)
at org.apache.maven.surefire.testng.TestNGProvider.invoke(TestNGProvider.java:146)
at org.apache.maven.surefire.booter.ForkedBooter.invokeProviderInSameClassLoader(ForkedBooter.java:384)
at org.apache.maven.surefire.booter.ForkedBooter.runSuitesInProcess(ForkedBooter.java:345)
at org.apache.maven.surefire.booter.ForkedBooter.execute(ForkedBooter.java:126)
at org.apache.maven.surefire.booter.ForkedBooter.main(ForkedBooter.java:418)
```

### Are you willing to submit a PR?

- [ ] I'm willing to submit a PR!

|

2.0

|

ISSUE-17713: Flaky-test: SqliteJdbcSinkTest.tearDown - Original Issue: apache/pulsar#17713

---

### Search before asking

- [X] I searched in the [issues](https://github.com/apache/pulsar/issues) and found nothing similar.

### Example failure

https://github.com/apache/pulsar/actions/runs/3069039857/jobs/4977568132#step:10:4694

### Exception stacktrace

```
Error: Tests run: 19, Failures: 1, Errors: 0, Skipped: 11, Time elapsed: 4.036 s <<< FAILURE! - in org.apache.pulsar.io.jdbc.SqliteJdbcSinkTest
Error: tearDown(org.apache.pulsar.io.jdbc.SqliteJdbcSinkTest) Time elapsed: 0.11 s <<< FAILURE!
org.sqlite.SQLiteException: [SQLITE_ERROR] SQL error or missing database (cannot commit - no transaction is active)
at org.sqlite.core.DB.newSQLException(DB.java:1030)
at org.sqlite.core.DB.newSQLException(DB.java:1042)
at org.sqlite.core.DB.throwex(DB.java:1007)
at org.sqlite.core.DB.exec(DB.java:178)
at org.sqlite.SQLiteConnection.commit(SQLiteConnection.java:421)
at org.apache.pulsar.io.jdbc.JdbcAbstractSink.close(JdbcAbstractSink.java:141)
at org.apache.pulsar.io.jdbc.SqliteJdbcSinkTest.tearDown(SqliteJdbcSinkTest.java:122)
at java.base/jdk.internal.reflect.NativeMethodAccessorImpl.invoke0(Native Method)
at java.base/jdk.internal.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:77)
at java.base/jdk.internal.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)
at java.base/java.lang.reflect.Method.invoke(Method.java:568)
at org.testng.internal.MethodInvocationHelper.invokeMethod(MethodInvocationHelper.java:132)
at org.testng.internal.MethodInvocationHelper.invokeMethodConsideringTimeout(MethodInvocationHelper.java:61)
at org.testng.internal.ConfigInvoker.invokeConfigurationMethod(ConfigInvoker.java:366)
at org.testng.internal.ConfigInvoker.invokeConfigurations(ConfigInvoker.java:320)
at org.testng.internal.TestInvoker.runConfigMethods(TestInvoker.java:701)
at org.testng.internal.TestInvoker.runAfterGroupsConfigurations(TestInvoker.java:677)
at org.testng.internal.TestInvoker.invokeMethod(TestInvoker.java:661)
at org.testng.internal.TestInvoker.invokeTestMethod(TestInvoker.java:174)
at org.testng.internal.MethodRunner.runInSequence(MethodRunner.java:46)
at org.testng.internal.TestInvoker$MethodInvocationAgent.invoke(TestInvoker.java:822)
at org.testng.internal.TestInvoker.invokeTestMethods(TestInvoker.java:147)
at org.testng.internal.TestMethodWorker.invokeTestMethods(TestMethodWorker.java:146)
at org.testng.internal.TestMethodWorker.run(TestMethodWorker.java:128)
at java.base/java.util.ArrayList.forEach(ArrayList.java:1511)
at org.testng.TestRunner.privateRun(TestRunner.java:764)
at org.testng.TestRunner.run(TestRunner.java:585)
at org.testng.SuiteRunner.runTest(SuiteRunner.java:384)
at org.testng.SuiteRunner.runSequentially(SuiteRunner.java:378)
at org.testng.SuiteRunner.privateRun(SuiteRunner.java:337)
at org.testng.SuiteRunner.run(SuiteRunner.java:286)
at org.testng.SuiteRunnerWorker.runSuite(SuiteRunnerWorker.java:53)
at org.testng.SuiteRunnerWorker.run(SuiteRunnerWorker.java:96)
at org.testng.TestNG.runSuitesSequentially(TestNG.java:1218)
at org.testng.TestNG.runSuitesLocally(TestNG.java:1140)
at org.testng.TestNG.runSuites(TestNG.java:1069)
at org.testng.TestNG.run(TestNG.java:1037)
at org.apache.maven.surefire.testng.TestNGExecutor.run(TestNGExecutor.java:135)
at org.apache.maven.surefire.testng.TestNGDirectoryTestSuite.executeSingleClass(TestNGDirectoryTestSuite.java:112)
at org.apache.maven.surefire.testng.TestNGDirectoryTestSuite.executeLazy(TestNGDirectoryTestSuite.java:123)
at org.apache.maven.surefire.testng.TestNGDirectoryTestSuite.execute(TestNGDirectoryTestSuite.java:90)
at org.apache.maven.surefire.testng.TestNGProvider.invoke(TestNGProvider.java:146)
at org.apache.maven.surefire.booter.ForkedBooter.invokeProviderInSameClassLoader(ForkedBooter.java:384)
at org.apache.maven.surefire.booter.ForkedBooter.runSuitesInProcess(ForkedBooter.java:345)
at org.apache.maven.surefire.booter.ForkedBooter.execute(ForkedBooter.java:126)
at org.apache.maven.surefire.booter.ForkedBooter.main(ForkedBooter.java:418)
```

### Are you willing to submit a PR?

- [ ] I'm willing to submit a PR!

|

test

|

issue flaky test sqlitejdbcsinktest teardown original issue apache pulsar search before asking i searched in the and found nothing similar example failure exception stacktrace error tests run failures errors skipped time elapsed s failure in org apache pulsar io jdbc sqlitejdbcsinktest error teardown org apache pulsar io jdbc sqlitejdbcsinktest time elapsed s failure org sqlite sqliteexception sql error or missing database cannot commit no transaction is active at org sqlite core db newsqlexception db java at org sqlite core db newsqlexception db java at org sqlite core db throwex db java at org sqlite core db exec db java at org sqlite sqliteconnection commit sqliteconnection java at org apache pulsar io jdbc jdbcabstractsink close jdbcabstractsink java at org apache pulsar io jdbc sqlitejdbcsinktest teardown sqlitejdbcsinktest java at java base jdk internal reflect nativemethodaccessorimpl native method at java base jdk internal reflect nativemethodaccessorimpl invoke nativemethodaccessorimpl java at java base jdk internal reflect delegatingmethodaccessorimpl invoke delegatingmethodaccessorimpl java at java base java lang reflect method invoke method java at org testng internal methodinvocationhelper invokemethod methodinvocationhelper java at org testng internal methodinvocationhelper invokemethodconsideringtimeout methodinvocationhelper java at org testng internal configinvoker invokeconfigurationmethod configinvoker java at org testng internal configinvoker invokeconfigurations configinvoker java at org testng internal testinvoker runconfigmethods testinvoker java at org testng internal testinvoker runaftergroupsconfigurations testinvoker java at org testng internal testinvoker invokemethod testinvoker java at org testng internal testinvoker invoketestmethod testinvoker java at org testng internal methodrunner runinsequence methodrunner java at org testng internal testinvoker methodinvocationagent invoke testinvoker java at org testng internal testinvoker 
invoketestmethods testinvoker java at org testng internal testmethodworker invoketestmethods testmethodworker java at org testng internal testmethodworker run testmethodworker java at java base java util arraylist foreach arraylist java at org testng testrunner privaterun testrunner java at org testng testrunner run testrunner java at org testng suiterunner runtest suiterunner java at org testng suiterunner runsequentially suiterunner java at org testng suiterunner privaterun suiterunner java at org testng suiterunner run suiterunner java at org testng suiterunnerworker runsuite suiterunnerworker java at org testng suiterunnerworker run suiterunnerworker java at org testng testng runsuitessequentially testng java at org testng testng runsuiteslocally testng java at org testng testng runsuites testng java at org testng testng run testng java at org apache maven surefire testng testngexecutor run testngexecutor java at org apache maven surefire testng testngdirectorytestsuite executesingleclass testngdirectorytestsuite java at org apache maven surefire testng testngdirectorytestsuite executelazy testngdirectorytestsuite java at org apache maven surefire testng testngdirectorytestsuite execute testngdirectorytestsuite java at org apache maven surefire testng testngprovider invoke testngprovider java at org apache maven surefire booter forkedbooter invokeproviderinsameclassloader forkedbooter java at org apache maven surefire booter forkedbooter runsuitesinprocess forkedbooter java at org apache maven surefire booter forkedbooter execute forkedbooter java at org apache maven surefire booter forkedbooter main forkedbooter java are you willing to submit a pr i m willing to submit a pr

| 1

|

313,192

| 26,908,518,082

|

IssuesEvent

|

2023-02-06 21:15:30

|

louis-langholtz/PlayRho

|

https://api.github.com/repos/louis-langholtz/PlayRho

|

closed

|

UnderlyingValue deprecated warnings in release 1.1.0 Testbed build

|

Bug Testbed

|

### Expected/Desired Behavior or Experience:

Testbed builds without warnings about `UnderlyingValue` being deprecated in release 1.1.*.

### Actual Behavior:

Seeing warnings like:

```
[ 91%] Building CXX object Testbed/CMakeFiles/Testbed.dir/Framework/Main.cpp.o
PlayRho/Testbed/Framework/Main.cpp:1182:28: warning: 'UnderlyingValue<unsigned short, playrho::BodyIdentifier>' is deprecated: Use to_underlying instead [-Wdeprecated-declarations]
ImGui::IdContext idCtx(UnderlyingValue(b));
^
PlayRho/PlayRho/Common/IndexingNamedType.hpp:142:3: note: 'UnderlyingValue<unsigned short, playrho::BodyIdentifier>' has been explicitly marked deprecated here
[[deprecated("Use to_underlying instead")]]
^
PlayRho/Testbed/Framework/Main.cpp:1353:28: warning: 'UnderlyingValue<unsigned short, playrho::FixtureIdentifier>' is deprecated: Use to_underlying instead [-Wdeprecated-declarations]
ImGui::IdContext idCtx(UnderlyingValue(fixture));
^
PlayRho/PlayRho/Common/IndexingNamedType.hpp:142:3: note: 'UnderlyingValue<unsigned short, playrho::FixtureIdentifier>' has been explicitly marked deprecated here
[[deprecated("Use to_underlying instead")]]
^
```

### Steps to Reproduce the Actual Behavior:

```sh
git clone --recurse-submodules --branch release-1.1.0 https://github.com/louis-langholtz/PlayRho.git
mkdir PlayRho-build
cd PlayRho-build
cmake -DPLAYRHO_BUILD_TESTBED=ON ../PlayRho
make
```

|

1.0

|

UnderlyingValue deprecated warnings in release 1.1.0 Testbed build - ### Expected/Desired Behavior or Experience:

Testbed builds without warnings about `UnderlyingValue` being deprecated in release 1.1.*.

### Actual Behavior:

Seeing warnings like:

```
[ 91%] Building CXX object Testbed/CMakeFiles/Testbed.dir/Framework/Main.cpp.o
PlayRho/Testbed/Framework/Main.cpp:1182:28: warning: 'UnderlyingValue<unsigned short, playrho::BodyIdentifier>' is deprecated: Use to_underlying instead [-Wdeprecated-declarations]
ImGui::IdContext idCtx(UnderlyingValue(b));
^
PlayRho/PlayRho/Common/IndexingNamedType.hpp:142:3: note: 'UnderlyingValue<unsigned short, playrho::BodyIdentifier>' has been explicitly marked deprecated here
[[deprecated("Use to_underlying instead")]]
^
PlayRho/Testbed/Framework/Main.cpp:1353:28: warning: 'UnderlyingValue<unsigned short, playrho::FixtureIdentifier>' is deprecated: Use to_underlying instead [-Wdeprecated-declarations]
ImGui::IdContext idCtx(UnderlyingValue(fixture));
^
PlayRho/PlayRho/Common/IndexingNamedType.hpp:142:3: note: 'UnderlyingValue<unsigned short, playrho::FixtureIdentifier>' has been explicitly marked deprecated here
[[deprecated("Use to_underlying instead")]]
^
```

### Steps to Reproduce the Actual Behavior:

```sh
git clone --recurse-submodules --branch release-1.1.0 https://github.com/louis-langholtz/PlayRho.git
mkdir PlayRho-build
cd PlayRho-build
cmake -DPLAYRHO_BUILD_TESTBED=ON ../PlayRho
make
```

|

test

|

underlyingvalue deprecated warnings in release testbed build expected desired behavior or experience testbed builds without warnings about underlyingvalue being deprecated in release actual behavior seeing warnings like building cxx object testbed cmakefiles testbed dir framework main cpp o playrho testbed framework main cpp warning underlyingvalue is deprecated use to underlying instead imgui idcontext idctx underlyingvalue b playrho playrho common indexingnamedtype hpp note underlyingvalue has been explicitly marked deprecated here playrho testbed framework main cpp warning underlyingvalue is deprecated use to underlying instead imgui idcontext idctx underlyingvalue fixture playrho playrho common indexingnamedtype hpp note underlyingvalue has been explicitly marked deprecated here steps to reproduce the actual behavior sh git clone recurse submodules branch release mkdir playrho build cd playrho build cmake dplayrho build testbed on playrho make

| 1

|

71,176

| 15,184,767,647

|

IssuesEvent

|

2021-02-15 10:01:38

|

devikab2b/whites

|

https://api.github.com/repos/devikab2b/whites

|

opened

|

CVE-2020-8908 (Low) detected in guava-16.0.1.jar

|

security vulnerability

|

## CVE-2020-8908 - Low Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>guava-16.0.1.jar</b></p></summary>

<p>Guava is a suite of core and expanded libraries that include utility classes, google's collections, io classes, and much much more. Guava has only one code dependency - javax.annotation, per the JSR-305 spec.</p>

<p>Library home page: <a href="http://code.google.com/p/guava-libraries">http://code.google.com/p/guava-libraries</a></p>

<p>Path to dependency file: whites/pom.xml</p>

<p>Path to vulnerable library: /home/wss-scanner/.m2/repository/com/google/guava/guava/16.0.1/guava-16.0.1.jar</p>

<p>

Dependency Hierarchy:

- spark-sql_2.12-3.0.1.jar (Root Library)

- spark-core_2.12-3.0.1.jar

- curator-recipes-2.7.1.jar

- :x: **guava-16.0.1.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/devikab2b/whites/commit/4c5e641103e08d86e4119a1d3808eea3ebca1665">4c5e641103e08d86e4119a1d3808eea3ebca1665</a></p>

<p>Found in base branch: <b>main</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/low_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

A temp directory creation vulnerability exists in Guava versions prior to 30.0, allowing an attacker with access to the machine to potentially access data in a temporary directory created by Guava's com.google.common.io.Files.createTempDir(). The permissions of the created directory default to the standard unix-like /tmp ones, leaving the files open. We recommend updating Guava to version 30.0 or later, updating to Java 7 or later, or, if neither is possible, explicitly changing the permissions after the directory is created.

<p>Publish Date: 2020-12-10

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-8908>CVE-2020-8908</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>3.3</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: None

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2020-8908">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2020-8908</a></p>

<p>Release Date: 2020-12-10</p>

<p>Fix Resolution: v30.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2020-8908 (Low) detected in guava-16.0.1.jar - ## CVE-2020-8908 - Low Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>guava-16.0.1.jar</b></p></summary>

<p>Guava is a suite of core and expanded libraries that include

utility classes, google's collections, io classes, and much

much more.

Guava has only one code dependency - javax.annotation,

per the JSR-305 spec.</p>

<p>Library home page: <a href="http://code.google.com/p/guava-libraries">http://code.google.com/p/guava-libraries</a></p>

<p>Path to dependency file: whites/pom.xml</p>

<p>Path to vulnerable library: /home/wss-scanner/.m2/repository/com/google/guava/guava/16.0.1/guava-16.0.1.jar</p>

<p>

Dependency Hierarchy:

- spark-sql_2.12-3.0.1.jar (Root Library)

- spark-core_2.12-3.0.1.jar

- curator-recipes-2.7.1.jar

- :x: **guava-16.0.1.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/devikab2b/whites/commit/4c5e641103e08d86e4119a1d3808eea3ebca1665">4c5e641103e08d86e4119a1d3808eea3ebca1665</a></p>

<p>Found in base branch: <b>main</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/low_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

A temp directory creation vulnerability exist in Guava versions prior to 30.0 allowing an attacker with access to the machine to potentially access data in a temporary directory created by the Guava com.google.common.io.Files.createTempDir(). The permissions granted to the directory created default to the standard unix-like /tmp ones, leaving the files open. We recommend updating Guava to version 30.0 or later, or update to Java 7 or later, or to explicitly change the permissions after the creation of the directory if neither are possible.

<p>Publish Date: 2020-12-10

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-8908>CVE-2020-8908</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>3.3</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: None

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2020-8908">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2020-8908</a></p>

<p>Release Date: 2020-12-10</p>

<p>Fix Resolution: v30.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_test

|

cve low detected in guava jar cve low severity vulnerability vulnerable library guava jar guava is a suite of core and expanded libraries that include utility classes google s collections io classes and much much more guava has only one code dependency javax annotation per the jsr spec library home page a href path to dependency file whites pom xml path to vulnerable library home wss scanner repository com google guava guava guava jar dependency hierarchy spark sql jar root library spark core jar curator recipes jar x guava jar vulnerable library found in head commit a href found in base branch main vulnerability details a temp directory creation vulnerability exist in guava versions prior to allowing an attacker with access to the machine to potentially access data in a temporary directory created by the guava com google common io files createtempdir the permissions granted to the directory created default to the standard unix like tmp ones leaving the files open we recommend updating guava to version or later or update to java or later or to explicitly change the permissions after the creation of the directory if neither are possible publish date url a href cvss score details base score metrics exploitability metrics attack vector local attack complexity low privileges required low user interaction none scope unchanged impact metrics confidentiality impact low integrity impact none availability impact none for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution step up your open source security game with whitesource

| 0

|

308,366

| 26,602,583,930

|

IssuesEvent

|

2023-01-23 16:47:23

|

microsoft/vscode-remote-release

|

https://api.github.com/repos/microsoft/vscode-remote-release

|

opened

|

Test: Unfiltered template list for Create Dev Container

|

testplan-item

|

Refs: https://github.com/microsoft/vscode-remote-release/issues/7629

- [ ] anyOS

Complexity: 1

---

Check that the `Create Dev Container` command does not filter templates. E.g., there is no `Show All Definitions` entry in the first QuickPick, but there is a `Python` entry.

|

1.0

|

Test: Unfiltered template list for Create Dev Container - Refs: https://github.com/microsoft/vscode-remote-release/issues/7629

- [ ] anyOS

Complexity: 1

---

Check that the `Create Dev Container` command does not filter templates. E.g., there is no `Show All Definitions` entry in the first QuickPick, but there is a `Python` entry.

|

test

|

test unfiltered template list for create dev container refs anyos complexity check that the create dev container command does not filter templates e g there is no show all definitions entry in the first quickpick but there is a python entry

| 1

|

258,798

| 22,347,997,042

|

IssuesEvent

|

2022-06-15 09:29:09

|

UserOfficeProject/user-office-project-issue-tracker

|

https://api.github.com/repos/UserOfficeProject/user-office-project-issue-tracker

|

closed

|

Create test ISIS Rapid questionnaire on dev

|

origin: project type: test ops: comms area: uop/stfc

|

We have an proposed questionnaire documented in the [project documentation](https://stfc365.sharepoint.com/:w:/r/sites/ISISProject-1102/_layouts/15/Doc.aspx?sourcedoc=%7B7C2AAE0A-BC21-4849-ADB0-964FCDDBBEF3%7D&file=Proposed+questionnaires+V+1.0.docx&action=default&mobileredirect=true&isSPOFile=1).

|

1.0

|

Create test ISIS Rapid questionnaire on dev - We have an proposed questionnaire documented in the [project documentation](https://stfc365.sharepoint.com/:w:/r/sites/ISISProject-1102/_layouts/15/Doc.aspx?sourcedoc=%7B7C2AAE0A-BC21-4849-ADB0-964FCDDBBEF3%7D&file=Proposed+questionnaires+V+1.0.docx&action=default&mobileredirect=true&isSPOFile=1).

|

test

|

create test isis rapid questionnaire on dev we have an proposed questionnaire documented in the

| 1

|

290,449

| 25,068,825,353

|

IssuesEvent

|

2022-11-07 10:28:10

|

airbytehq/airbyte

|

https://api.github.com/repos/airbytehq/airbyte

|

closed

|

Source AlloyDB for PostgreSQL: enable `high` test strictness level in SAT

|

type/enhancement area/connectors team/connectors-python test-strictness-level

|

## What

A `test_strictness_level` field was introduced to Source Acceptance Tests (SAT).

AlloyDB for PostgreSQL is a generally_available connector, we want it to have a `high` test strictness level.

This will help:

- maximize the SAT coverage on this connector.

- document its potential weaknesses in term of test coverage.

## How

1. Migrate the existing `acceptance-test-config.yml` file to the latest configuration format. (See instructions [here](https://github.com/airbytehq/airbyte/blob/master/airbyte-integrations/bases/source-acceptance-test/README.md#L61))

2. Enable `high` test strictness level in `acceptance-test-config.yml`. (See instructions [here](https://github.com/airbytehq/airbyte/blob/master/docs/connector-development/testing-connectors/source-acceptance-tests-reference.md#L240))

3. Commit changes on `acceptance-test-config.yml` and open a PR

4. Run SAT with the `/test` command on the branch.

5. If tests are failing please fix the failing test or use `bypass_reason` fields to explain why a specific test can't be run.

|

1.0

|

Source AlloyDB for PostgreSQL: enable `high` test strictness level in SAT - ## What

A `test_strictness_level` field was introduced to Source Acceptance Tests (SAT).

AlloyDB for PostgreSQL is a generally_available connector, we want it to have a `high` test strictness level.

This will help:

- maximize the SAT coverage on this connector.

- document its potential weaknesses in term of test coverage.

## How

1. Migrate the existing `acceptance-test-config.yml` file to the latest configuration format. (See instructions [here](https://github.com/airbytehq/airbyte/blob/master/airbyte-integrations/bases/source-acceptance-test/README.md#L61))

2. Enable `high` test strictness level in `acceptance-test-config.yml`. (See instructions [here](https://github.com/airbytehq/airbyte/blob/master/docs/connector-development/testing-connectors/source-acceptance-tests-reference.md#L240))

3. Commit changes on `acceptance-test-config.yml` and open a PR

4. Run SAT with the `/test` command on the branch.

5. If tests are failing please fix the failing test or use `bypass_reason` fields to explain why a specific test can't be run.

|

test

|

source alloydb for postgresql enable high test strictness level in sat what a test strictness level field was introduced to source acceptance tests sat alloydb for postgresql is a generally available connector we want it to have a high test strictness level this will help maximize the sat coverage on this connector document its potential weaknesses in term of test coverage how migrate the existing acceptance test config yml file to the latest configuration format see instructions enable high test strictness level in acceptance test config yml see instructions commit changes on acceptance test config yml and open a pr run sat with the test command on the branch if tests are failing please fix the failing test or use bypass reason fields to explain why a specific test can t be run

| 1

|

115,635

| 17,332,539,466

|

IssuesEvent

|

2021-07-28 05:45:23

|

panasalap/OpenJPEG-2.3.0_After-27841_27845_Fix

|

https://api.github.com/repos/panasalap/OpenJPEG-2.3.0_After-27841_27845_Fix

|

opened

|

CVE-2020-27841 (Medium) detected in openjpegv2.3.0

|

security vulnerability

|

## CVE-2020-27841 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>openjpegv2.3.0</b></p></summary>

<p>

<p>Official repository of the OpenJPEG project</p>

<p>Library home page: <a href=https://github.com/uclouvain/openjpeg.git>https://github.com/uclouvain/openjpeg.git</a></p>

<p>Found in HEAD commit: <a href="https://github.com/panasalap/OpenJPEG-2.3.0_After-27841_27845_Fix/commit/be713d2d2bd1d1324ebad976a00e4f9fef506436">be713d2d2bd1d1324ebad976a00e4f9fef506436</a></p>

<p>Found in base branch: <b>master</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (1)</summary>

<p></p>

<p>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

There's a flaw in openjpeg in versions prior to 2.4.0 in src/lib/openjp2/pi.c. When an attacker is able to provide crafted input to be processed by the openjpeg encoder, this could cause an out-of-bounds read. The greatest impact from this flaw is to application availability.

<p>Publish Date: 2021-01-05

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-27841>CVE-2020-27841</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://security.gentoo.org/glsa/202101-29">https://security.gentoo.org/glsa/202101-29</a></p>

<p>Fix Resolution: All OpenJPEG 2 users should upgrade to the latest version # emerge --sync

# emerge --ask --oneshot --verbose >=media-libs/openjpeg-2.4.02

Gentoo has discontinued support OpenJPEG 1.x and any dependent packages should now be using OpenJPEG 2 or have dropped support for the library. We recommend that users unmerge OpenJPEG 1.x # emerge --unmerge media-libs/openjpeg1 >= </p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2020-27841 (Medium) detected in openjpegv2.3.0 - ## CVE-2020-27841 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>openjpegv2.3.0</b></p></summary>

<p>

<p>Official repository of the OpenJPEG project</p>

<p>Library home page: <a href=https://github.com/uclouvain/openjpeg.git>https://github.com/uclouvain/openjpeg.git</a></p>

<p>Found in HEAD commit: <a href="https://github.com/panasalap/OpenJPEG-2.3.0_After-27841_27845_Fix/commit/be713d2d2bd1d1324ebad976a00e4f9fef506436">be713d2d2bd1d1324ebad976a00e4f9fef506436</a></p>

<p>Found in base branch: <b>master</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (1)</summary>

<p></p>

<p>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

There's a flaw in openjpeg in versions prior to 2.4.0 in src/lib/openjp2/pi.c. When an attacker is able to provide crafted input to be processed by the openjpeg encoder, this could cause an out-of-bounds read. The greatest impact from this flaw is to application availability.

<p>Publish Date: 2021-01-05

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-27841>CVE-2020-27841</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://security.gentoo.org/glsa/202101-29">https://security.gentoo.org/glsa/202101-29</a></p>

<p>Fix Resolution: All OpenJPEG 2 users should upgrade to the latest version # emerge --sync

# emerge --ask --oneshot --verbose >=media-libs/openjpeg-2.4.02

Gentoo has discontinued support OpenJPEG 1.x and any dependent packages should now be using OpenJPEG 2 or have dropped support for the library. We recommend that users unmerge OpenJPEG 1.x # emerge --unmerge media-libs/openjpeg1 >= </p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_test

|

cve medium detected in cve medium severity vulnerability vulnerable library official repository of the openjpeg project library home page a href found in head commit a href found in base branch master vulnerable source files vulnerability details there s a flaw in openjpeg in versions prior to in src lib pi c when an attacker is able to provide crafted input to be processed by the openjpeg encoder this could cause an out of bounds read the greatest impact from this flaw is to application availability publish date url a href cvss score details base score metrics exploitability metrics attack vector local attack complexity low privileges required none user interaction required scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href fix resolution all openjpeg users should upgrade to the latest version emerge sync emerge ask oneshot verbose media libs openjpeg gentoo has discontinued support openjpeg x and any dependent packages should now be using openjpeg or have dropped support for the library we recommend that users unmerge openjpeg x emerge unmerge media libs step up your open source security game with whitesource

| 0

|

257,557

| 22,194,358,938

|

IssuesEvent

|

2022-06-07 04:47:13

|

Azure/azure-sdk-for-net

|

https://api.github.com/repos/Azure/azure-sdk-for-net

|

closed

|

[Form Recognizer] StartRecognizeCustomFormsWithLabelsCanParseBlankPage failing in nightly runs

|

Client Cognitive - Form Recognizer test-reliability

|

Form Recognizer nightly test runs are failing with:

> Error message

> System.InvalidOperationException : The requested operation requires an element of type 'Number', but the target element has type 'String'.

>

>

> Stack trace

> at System.Text.Json.JsonDocument.TryGetValue(Int32 index, Single& value)

> at System.Text.Json.JsonElement.GetSingle()

> at Azure.AI.FormRecognizer.Models.FieldValue_internal.DeserializeFieldValue_internal(JsonElement element) in /mnt/vss/_work/1/s/sdk/formrecognizer/Azure.AI.FormRecognizer/src/Generated/Models/FieldValue_internal.Serialization.cs:line 171

> at Azure.AI.FormRecognizer.Models.DocumentResult.DeserializeDocumentResult(JsonElement element) in /mnt/vss/_work/1/s/sdk/formrecognizer/Azure.AI.FormRecognizer/src/Generated/Models/DocumentResult.Serialization.cs:line 72

> at Azure.AI.FormRecognizer.Models.V2AnalyzeResult.DeserializeV2AnalyzeResult(JsonElement element) in /mnt/vss/_work/1/s/sdk/formrecognizer/Azure.AI.FormRecognizer/src/Generated/Models/V2AnalyzeResult.Serialization.cs:line 70

> at Azure.AI.FormRecognizer.Models.AnalyzeOperationResult.DeserializeAnalyzeOperationResult(JsonElement element) in /mnt/vss/_work/1/s/sdk/formrecognizer/Azure.AI.FormRecognizer/src/Generated/Models/AnalyzeOperationResult.Serialization.cs:line 46

> at Azure.AI.FormRecognizer.FormRecognizerRestClient.GetAnalyzeFormResultAsync(Guid modelId, Guid resultId, CancellationToken cancellationToken) in /mnt/vss/_work/1/s/sdk/formrecognizer/Azure.AI.FormRecognizer/src/Generated/FormRecognizerRestClient.cs:line 397

> at Azure.AI.FormRecognizer.Models.RecognizeCustomFormsOperation.Azure.Core.IOperation<Azure.AI.FormRecognizer.Models.RecognizedFormCollection>.UpdateStateAsync(Boolean async, CancellationToken cancellationToken) in /mnt/vss/_work/1/s/sdk/formrecognizer/Azure.AI.FormRecognizer/src/FormRecognizerClient/RecognizeCustomFormsOperation.cs:line 187

> at Azure.Core.OperationInternal`1.UpdateStateAsync(Boolean async, CancellationToken cancellationToken) in /mnt/vss/_work/1/s/sdk/core/Azure.Core/src/Shared/OperationInternalOfT.cs:line 241

> at Azure.Core.OperationInternalBase.UpdateStatusAsync(Boolean async, CancellationToken cancellationToken) in /mnt/vss/_work/1/s/sdk/core/Azure.Core/src/Shared/OperationInternalBase.cs:line 201

> at Azure.Core.OperationInternalBase.UpdateStatusAsync(CancellationToken cancellationToken) in /mnt/vss/_work/1/s/sdk/core/Azure.Core/src/Shared/OperationInternalBase.cs:line 72

> at Azure.Core.OperationPoller.WaitForCompletionResponseAsync(UpdateStatusAsync updateStatusAsync, HasCompleted hasCompleted, GetRawResponse getRawResponse, Nullable`1 suggestedInterval, CancellationToken cancellationToken) in /mnt/vss/_work/1/s/sdk/core/Azure.Core/src/Shared/OperationPoller.cs:line 37

> at Azure.Core.OperationInternalBase.WaitForCompletionResponseAsync(CancellationToken cancellationToken) in /mnt/vss/_work/1/s/sdk/core/Azure.Core/src/Shared/OperationInternalBase.cs:line 111

> at Azure.Core.OperationInternal`1.WaitForCompletionAsync(CancellationToken cancellationToken) in /mnt/vss/_work/1/s/sdk/core/Azure.Core/src/Shared/OperationInternalOfT.cs:line 152

> at Azure.AI.FormRecognizer.Models.RecognizeCustomFormsOperation.WaitForCompletionAsync(CancellationToken cancellationToken) in /mnt/vss/_work/1/s/sdk/formrecognizer/Azure.AI.FormRecognizer/src/FormRecognizerClient/RecognizeCustomFormsOperation.cs:line 77

> at Azure.AI.FormRecognizer.Tests.RecognizeCustomFormsLiveTests.StartRecognizeCustomFormsWithLabelsCanParseBlankPage() in /mnt/vss/_work/1/s/sdk/formrecognizer/Azure.AI.FormRecognizer/tests/FormRecognizerClient/RecognizeCustomFormsLiveTests.cs:line 248

> at Azure.AI.FormRecognizer.Tests.RecognizeCustomFormsLiveTests.StartRecognizeCustomFormsWithLabelsCanParseBlankPage() in /mnt/vss/_work/1/s/sdk/formrecognizer/Azure.AI.FormRecognizer/tests/FormRecognizerClient/RecognizeCustomFormsLiveTests.cs:line 263

> at NUnit.Framework.Internal.TaskAwaitAdapter.GenericAdapter`1.BlockUntilCompleted()

> at NUnit.Framework.Internal.MessagePumpStrategy.NoMessagePumpStrategy.WaitForCompletion(AwaitAdapter awaiter)

> at NUnit.Framework.Internal.AsyncToSyncAdapter.Await(Func`1 invoke)

> at NUnit.Framework.Internal.Com

For more details check here:

- https://dev.azure.com/azure-sdk/internal/_build/results?buildId=1507193&view=results

@jsquire for notification.

|

1.0

|

[Form Recognizer] StartRecognizeCustomFormsWithLabelsCanParseBlankPage failing in nightly runs - Form Recognizer nightly test runs are failing with:

> Error message

> System.InvalidOperationException : The requested operation requires an element of type 'Number', but the target element has type 'String'.

>

>

> Stack trace

> at System.Text.Json.JsonDocument.TryGetValue(Int32 index, Single& value)

> at System.Text.Json.JsonElement.GetSingle()

> at Azure.AI.FormRecognizer.Models.FieldValue_internal.DeserializeFieldValue_internal(JsonElement element) in /mnt/vss/_work/1/s/sdk/formrecognizer/Azure.AI.FormRecognizer/src/Generated/Models/FieldValue_internal.Serialization.cs:line 171

> at Azure.AI.FormRecognizer.Models.DocumentResult.DeserializeDocumentResult(JsonElement element) in /mnt/vss/_work/1/s/sdk/formrecognizer/Azure.AI.FormRecognizer/src/Generated/Models/DocumentResult.Serialization.cs:line 72

> at Azure.AI.FormRecognizer.Models.V2AnalyzeResult.DeserializeV2AnalyzeResult(JsonElement element) in /mnt/vss/_work/1/s/sdk/formrecognizer/Azure.AI.FormRecognizer/src/Generated/Models/V2AnalyzeResult.Serialization.cs:line 70

> at Azure.AI.FormRecognizer.Models.AnalyzeOperationResult.DeserializeAnalyzeOperationResult(JsonElement element) in /mnt/vss/_work/1/s/sdk/formrecognizer/Azure.AI.FormRecognizer/src/Generated/Models/AnalyzeOperationResult.Serialization.cs:line 46

> at Azure.AI.FormRecognizer.FormRecognizerRestClient.GetAnalyzeFormResultAsync(Guid modelId, Guid resultId, CancellationToken cancellationToken) in /mnt/vss/_work/1/s/sdk/formrecognizer/Azure.AI.FormRecognizer/src/Generated/FormRecognizerRestClient.cs:line 397

> at Azure.AI.FormRecognizer.Models.RecognizeCustomFormsOperation.Azure.Core.IOperation<Azure.AI.FormRecognizer.Models.RecognizedFormCollection>.UpdateStateAsync(Boolean async, CancellationToken cancellationToken) in /mnt/vss/_work/1/s/sdk/formrecognizer/Azure.AI.FormRecognizer/src/FormRecognizerClient/RecognizeCustomFormsOperation.cs:line 187

> at Azure.Core.OperationInternal`1.UpdateStateAsync(Boolean async, CancellationToken cancellationToken) in /mnt/vss/_work/1/s/sdk/core/Azure.Core/src/Shared/OperationInternalOfT.cs:line 241

> at Azure.Core.OperationInternalBase.UpdateStatusAsync(Boolean async, CancellationToken cancellationToken) in /mnt/vss/_work/1/s/sdk/core/Azure.Core/src/Shared/OperationInternalBase.cs:line 201

> at Azure.Core.OperationInternalBase.UpdateStatusAsync(CancellationToken cancellationToken) in /mnt/vss/_work/1/s/sdk/core/Azure.Core/src/Shared/OperationInternalBase.cs:line 72

> at Azure.Core.OperationPoller.WaitForCompletionResponseAsync(UpdateStatusAsync updateStatusAsync, HasCompleted hasCompleted, GetRawResponse getRawResponse, Nullable`1 suggestedInterval, CancellationToken cancellationToken) in /mnt/vss/_work/1/s/sdk/core/Azure.Core/src/Shared/OperationPoller.cs:line 37

> at Azure.Core.OperationInternalBase.WaitForCompletionResponseAsync(CancellationToken cancellationToken) in /mnt/vss/_work/1/s/sdk/core/Azure.Core/src/Shared/OperationInternalBase.cs:line 111

> at Azure.Core.OperationInternal`1.WaitForCompletionAsync(CancellationToken cancellationToken) in /mnt/vss/_work/1/s/sdk/core/Azure.Core/src/Shared/OperationInternalOfT.cs:line 152

> at Azure.AI.FormRecognizer.Models.RecognizeCustomFormsOperation.WaitForCompletionAsync(CancellationToken cancellationToken) in /mnt/vss/_work/1/s/sdk/formrecognizer/Azure.AI.FormRecognizer/src/FormRecognizerClient/RecognizeCustomFormsOperation.cs:line 77

> at Azure.AI.FormRecognizer.Tests.RecognizeCustomFormsLiveTests.StartRecognizeCustomFormsWithLabelsCanParseBlankPage() in /mnt/vss/_work/1/s/sdk/formrecognizer/Azure.AI.FormRecognizer/tests/FormRecognizerClient/RecognizeCustomFormsLiveTests.cs:line 248

> at Azure.AI.FormRecognizer.Tests.RecognizeCustomFormsLiveTests.StartRecognizeCustomFormsWithLabelsCanParseBlankPage() in /mnt/vss/_work/1/s/sdk/formrecognizer/Azure.AI.FormRecognizer/tests/FormRecognizerClient/RecognizeCustomFormsLiveTests.cs:line 263

> at NUnit.Framework.Internal.TaskAwaitAdapter.GenericAdapter`1.BlockUntilCompleted()

> at NUnit.Framework.Internal.MessagePumpStrategy.NoMessagePumpStrategy.WaitForCompletion(AwaitAdapter awaiter)

> at NUnit.Framework.Internal.AsyncToSyncAdapter.Await(Func`1 invoke)

> at NUnit.Framework.Internal.Com

For more details check here:

- https://dev.azure.com/azure-sdk/internal/_build/results?buildId=1507193&view=results

@jsquire for notification.

|

test

|

startrecognizecustomformswithlabelscanparseblankpage failing in nightly runs form recognizer nightly test runs are failing with error message system invalidoperationexception the requested operation requires an element of type number but the target element has type string stack trace at system text json jsondocument trygetvalue index single value at system text json jsonelement getsingle at azure ai formrecognizer models fieldvalue internal deserializefieldvalue internal jsonelement element in mnt vss work s sdk formrecognizer azure ai formrecognizer src generated models fieldvalue internal serialization cs line at azure ai formrecognizer models documentresult deserializedocumentresult jsonelement element in mnt vss work s sdk formrecognizer azure ai formrecognizer src generated models documentresult serialization cs line at azure ai formrecognizer models jsonelement element in mnt vss work s sdk formrecognizer azure ai formrecognizer src generated models serialization cs line at azure ai formrecognizer models analyzeoperationresult deserializeanalyzeoperationresult jsonelement element in mnt vss work s sdk formrecognizer azure ai formrecognizer src generated models analyzeoperationresult serialization cs line at azure ai formrecognizer formrecognizerrestclient getanalyzeformresultasync guid modelid guid resultid cancellationtoken cancellationtoken in mnt vss work s sdk formrecognizer azure ai formrecognizer src generated formrecognizerrestclient cs line at azure ai formrecognizer models recognizecustomformsoperation azure core ioperation updatestateasync boolean async cancellationtoken cancellationtoken in mnt vss work s sdk formrecognizer azure ai formrecognizer src formrecognizerclient recognizecustomformsoperation cs line at azure core operationinternal updatestateasync boolean async cancellationtoken cancellationtoken in mnt vss work s sdk core azure core src shared operationinternaloft cs line at azure core operationinternalbase updatestatusasync boolean async cancellationtoken cancellationtoken in mnt vss work s sdk core azure core src shared operationinternalbase cs line at azure core operationinternalbase updatestatusasync cancellationtoken cancellationtoken in mnt vss work s sdk core azure core src shared operationinternalbase cs line at azure core operationpoller waitforcompletionresponseasync updatestatusasync updatestatusasync hascompleted hascompleted getrawresponse getrawresponse nullable suggestedinterval cancellationtoken cancellationtoken in mnt vss work s sdk core azure core src shared operationpoller cs line at azure core operationinternalbase waitforcompletionresponseasync cancellationtoken cancellationtoken in mnt vss work s sdk core azure core src shared operationinternalbase cs line at azure core operationinternal waitforcompletionasync cancellationtoken cancellationtoken in mnt vss work s sdk core azure core src shared operationinternaloft cs line at azure ai formrecognizer models recognizecustomformsoperation waitforcompletionasync cancellationtoken cancellationtoken in mnt vss work s sdk formrecognizer azure ai formrecognizer src formrecognizerclient recognizecustomformsoperation cs line at azure ai formrecognizer tests recognizecustomformslivetests startrecognizecustomformswithlabelscanparseblankpage in mnt vss work s sdk formrecognizer azure ai formrecognizer tests formrecognizerclient recognizecustomformslivetests cs line at azure ai formrecognizer tests recognizecustomformslivetests startrecognizecustomformswithlabelscanparseblankpage in mnt vss work s sdk formrecognizer azure ai formrecognizer tests formrecognizerclient recognizecustomformslivetests cs line at nunit framework internal taskawaitadapter genericadapter blockuntilcompleted at nunit framework internal messagepumpstrategy nomessagepumpstrategy waitforcompletion awaitadapter awaiter at nunit framework internal asynctosyncadapter await func invoke at nunit framework internal com for more details check here jsquire for notification

| 1

|

43,799

| 11,850,040,171

|

IssuesEvent

|

2020-03-24 16:04:02

|

hazelcast/hazelcast

|

https://api.github.com/repos/hazelcast/hazelcast

|

opened

|

Incorrect LocalCacheStats metrics computation

|

Type: Defect

|

`LocalCacheStatsImpl` states that it reports the following metrics in **milli**seconds:

```java
@Probe(name = CACHE_METRIC_AVERAGE_GET_TIME, unit = MS)
private float averageGetTime;

@Probe(name = CACHE_METRIC_AVERAGE_PUT_TIME, unit = MS)
private float averagePutTime;

@Probe(name = CACHE_METRIC_AVERAGE_REMOVAL_TIME, unit = MS)
private float averageRemoveTime;
```

It is instantiated from `CacheStatisticsImpl`, which computes them in **micro**seconds: https://github.com/hazelcast/hazelcast/blob/97ff57ba92dc1fbfa77720885e7db40757999080/hazelcast/src/main/java/com/hazelcast/cache/impl/CacheStatisticsImpl.java#L195

**Expected behavior**

Consistent units for the metrics above. (However, I assume we also need to check whether other metrics are computed in the correct time units.)

|

1.0

|

Incorrect LocalCacheStats metrics computation - `LocalCacheStatsImpl` states that it reports the following metrics in **milli**seconds:

```java
@Probe(name = CACHE_METRIC_AVERAGE_GET_TIME, unit = MS)
private float averageGetTime;

@Probe(name = CACHE_METRIC_AVERAGE_PUT_TIME, unit = MS)
private float averagePutTime;

@Probe(name = CACHE_METRIC_AVERAGE_REMOVAL_TIME, unit = MS)
private float averageRemoveTime;
```

It is instantiated from `CacheStatisticsImpl`, which computes them in **micro**seconds: https://github.com/hazelcast/hazelcast/blob/97ff57ba92dc1fbfa77720885e7db40757999080/hazelcast/src/main/java/com/hazelcast/cache/impl/CacheStatisticsImpl.java#L195

**Expected behavior**

Consistent units for the metrics above. (However, I assume we also need to check whether other metrics are computed in the correct time units.)

|

non_test

|

incorrect localcachestats metrics computation localcachestatsimpl states that it reports the following metrics in milli seconds java probe name cache metric average get time unit ms private float averagegettime probe name cache metric average put time unit ms private float averageputtime probe name cache metric average removal time unit ms private float averageremovetime it is instantiated from cachestatisticsimpl which computes them in micro seconds expected behavior consistent measurement of the above metric however i assume we need to check if other metrics are computed in the correct time units

| 0

|
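The mismatch described in the Hazelcast issue above comes down to the divisor used when turning accumulated nanosecond timings into an average. For reference, JCache (JSR-107) `CacheStatisticsMXBean` reports its average get/put/remove times in microseconds, which may be why `CacheStatisticsImpl` uses that unit. The sketch below is illustrative only: it assumes latencies are recorded as nanosecond durations, and the class `AverageLatency` and its methods are hypothetical, not part of Hazelcast's API.

```java
import java.util.concurrent.TimeUnit;

// Hypothetical helper illustrating the unit mismatch; not Hazelcast's API.
final class AverageLatency {
    private long totalNanos; // sum of observed latencies, in nanoseconds
    private long count;      // number of observed operations

    void record(long durationNanos) {
        totalNanos += durationNanos;
        count++;
    }

    // Average in microseconds (the unit the issue says CacheStatisticsImpl computes).
    float averageMicros() {
        return count == 0 ? 0f : (float) totalNanos / count / TimeUnit.MICROSECONDS.toNanos(1);
    }

    // Average in milliseconds (what a probe declared with unit = MS should expose).
    float averageMillis() {
        return count == 0 ? 0f : (float) totalNanos / count / TimeUnit.MILLISECONDS.toNanos(1);
    }
}
```

Recording durations of 2 ms and 4 ms gives `averageMicros() == 3000.0f` but `averageMillis() == 3.0f`; a value computed the first way and labelled `MS` is therefore off by a factor of 1,000. Exposing the millisecond value under the `MS` probe (or keeping microseconds and declaring a matching unit, if one is available) would make the value and its label agree.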

152,045

| 5,831,977,737

|

IssuesEvent

|

2017-05-08 20:40:52

|

openshift/origin

|

https://api.github.com/repos/openshift/origin

|

closed

|

[POST_REBASE] numeric ordering of `oc get pods` output

|

component/cli help-wanted kind/enhancement kind/post-rebase priority/P3

|

It would be nice to have numerically ordered output for `oc get pods`:

Here is the current output:

```
foobar-1-build
foobar-10-build
foobar-2-build
foobar-3-build
```

|

1.0

|

[POST_REBASE] numeric ordering of `oc get pods` output - It would be nice to have numerically ordered output for `oc get pods`:

Here is the current output:

```
foobar-1-build
foobar-10-build
foobar-2-build
foobar-3-build
```

|

non_test

|

numeric ordering of oc get pods output it will be nice to have a numerically ordered output for oc get pods here is the current output foobar build foobar build foobar build foobar build

| 0

|
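What the OpenShift issue above asks for is commonly called natural sort order: runs of digits are compared by numeric value rather than character by character, so `foobar-2-build` sorts before `foobar-10-build`. The following is a generic Java sketch for illustration. `NaturalOrder` is a hypothetical name, and this is not how `oc` itself sorts its output.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;

// Hypothetical natural-order comparator (illustrative, not part of oc/kubectl):
// runs of ASCII digits are compared by numeric value, everything else char by char.
final class NaturalOrder implements Comparator<String> {

    private static boolean isAsciiDigit(char c) {
        return c >= '0' && c <= '9';
    }

    // Drops leading zeros but keeps at least one digit ("007" -> "7", "000" -> "0").
    private static String stripZeros(String digits) {
        int k = 0;
        while (k < digits.length() - 1 && digits.charAt(k) == '0') {
            k++;
        }
        return digits.substring(k);
    }

    @Override
    public int compare(String a, String b) {
        int i = 0;
        int j = 0;
        while (i < a.length() && j < b.length()) {
            char ca = a.charAt(i);
            char cb = b.charAt(j);
            if (isAsciiDigit(ca) && isAsciiDigit(cb)) {
                // Find the end of the digit run in each string.
                int ei = i;
                int ej = j;
                while (ei < a.length() && isAsciiDigit(a.charAt(ei))) ei++;
                while (ej < b.length() && isAsciiDigit(b.charAt(ej))) ej++;
                String na = stripZeros(a.substring(i, ei));
                String nb = stripZeros(b.substring(j, ej));
                // More significant digits means a larger number (no overflow risk).
                if (na.length() != nb.length()) {
                    return Integer.compare(na.length(), nb.length());
                }
                // Same number of digits: lexicographic order equals numeric order.
                int cmp = na.compareTo(nb);
                if (cmp != 0) {
                    return cmp;
                }
                i = ei;
                j = ej;
            } else {
                if (ca != cb) {
                    return Character.compare(ca, cb);
                }
                i++;
                j++;
            }
        }
        // The string with fewer remaining characters sorts first.
        return Integer.compare(a.length() - i, b.length() - j);
    }

    // Returns a sorted copy, leaving the input list untouched.
    static List<String> sorted(List<String> names) {
        List<String> copy = new ArrayList<>(names);
        copy.sort(new NaturalOrder());
        return copy;
    }
}
```

Applied to the listing in the issue, `NaturalOrder.sorted(...)` yields `foobar-1-build`, `foobar-2-build`, `foobar-3-build`, `foobar-10-build`. Because digit runs are compared by length after stripping leading zeros, arbitrarily long numbers never overflow. Names that differ only in leading zeros (`pod-07` vs `pod-7`) compare as equal, so a stable sort keeps their input order.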

275,043

| 23,890,815,248

|

IssuesEvent

|

2022-09-08 11:19:47

|

stores-cedcommerce/HSL-Home-page-design

|

https://api.github.com/repos/stores-cedcommerce/HSL-Home-page-design

|

closed

|

On the address page, an error message appears when the phone number starts with 0.

|

Account pages Desktop Functional / bug Ready to test fixed

|

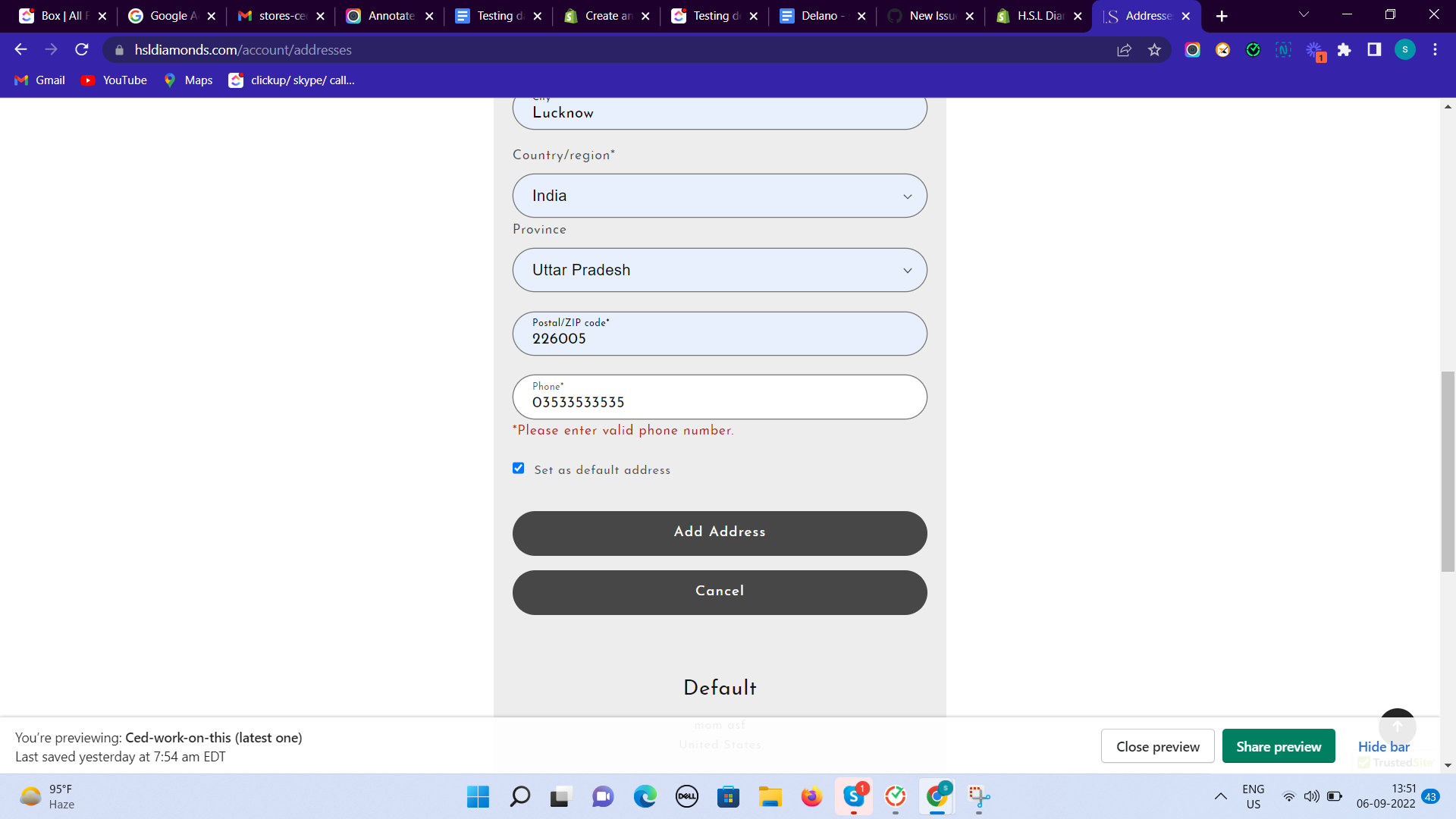

**Actual result:**

On the address page, an error message appears when the phone number starts with 0.

https://www.hsldiamonds.com/account/addresses

**Expected result:**

The phone number validation needs to be checked.

|

1.0

|

On the address page, an error message appears when the phone number starts with 0. - **Actual result:**

On the address page, an error message appears when the phone number starts with 0.

https://www.hsldiamonds.com/account/addresses

**Expected result:**

The phone number validation needs to be checked.

|

test

|

in address page when the phone number is starting from then the error message is coming actual result in address page when the phone number is starting from then the error message is coming expected result the phone number validation needed to be checked

| 1

|

564,757

| 16,740,459,523

|

IssuesEvent

|

2021-06-11 09:08:24

|

codetapacademy/codetap.academy

|

https://api.github.com/repos/codetapacademy/codetap.academy

|

closed

|

feat: display completed percentage on the home page

|

Priority: High Status: Available Type: Enhancement

|

display completed percentage on the home page

|

1.0

|

feat: display completed percentage on the home page - display completed percentage on the home page

|

non_test

|

feat display completed percentage in the home page display completed percentage in the home page

| 0

|

177,198

| 13,686,269,143

|

IssuesEvent

|

2020-09-30 08:27:26

|

photoprism/photoprism

|

https://api.github.com/repos/photoprism/photoprism

|

closed

|

Hidden settings still accessible through URL route

|

please-test todo

|

Apart from the actual topic of this issue, I'd like to thank all creators and maintainers of this fantastic project. Some years ago I searched for a well-thought-out, uncluttered self-hosted solution for my image and photo libraries, but unfortunately I didn't find anything at the time that matched my needs. I may have come across PhotoPrism back then, but it was still at too early a stage of development.

The development process of PhotoPrism reflects my personal workflow and is well structured, the documentation is extensive, including complete user and developer guides, and communicating the project goals in response to community requests is handled with care. And it is written in my favorite programming language of all time 😜 I will definitely try to contribute when I have a little more time again.

I've set PhotoPrism up on my server with [Traefik][] as a reverse proxy and [Authelia][] as an authenticator (eagerly awaiting #98) so I can host it for my family and friends. It works great: it imported ~100 GB astonishingly quickly, without any errors, and produced a perfectly indexed gallery.

Last thing I must note before going into the actual issue topic: I feel a little flattered that [my _Nord_ project is listed as design inspiration for the web UI][c]; it's nice to see it used in so many different projects and areas.

➜ **Start reading here to skip the off-topic content**

While setting up my PhotoPrism instance, I came across the `PHOTOPRISM_SETTINGS_HIDDEN` environment variable, which hides the “Settings” sidebar entry and also makes the settings read-only. This is a simple way to hide UI elements that are not relevant for “normal” users while there is no explicit user role management, but a user who knows about the `/settings` route can still open the settings directly. Even though they are read-only, I assume this is not intended and the route should not be served at all. If my assumption is wrong, this issue can be closed immediately, since it then works as designed.

[authelia]: https://www.authelia.com

[c]: https://docs.photoprism.org/developer-guide/frontend/design/#colors

[traefik]: https://containo.us/traefik

|

1.0

|

Hidden settings still accessible through URL route - Apart from the actual topic of this issue, I'd like to thank all creators and maintainers of this fantastic project. Some years ago I searched for a well-thought-out, uncluttered self-hosted solution for my image and photo libraries, but unfortunately I didn't find anything at the time that matched my needs. I may have come across PhotoPrism back then, but it was still at too early a stage of development.

The development process of PhotoPrism reflects my personal workflow and is well structured, the documentation is extensive, including complete user and developer guides, and communicating the project goals in response to community requests is handled with care. And it is written in my favorite programming language of all time 😜 I will definitely try to contribute when I have a little more time again.

I've set PhotoPrism up on my server with [Traefik][] as a reverse proxy and [Authelia][] as an authenticator (eagerly awaiting #98) so I can host it for my family and friends. It works great: it imported ~100 GB astonishingly quickly, without any errors, and produced a perfectly indexed gallery.

Last thing I must note before going into the actual issue topic: I feel a little flattered that [my _Nord_ project is listed as design inspiration for the web UI][c]; it's nice to see it used in so many different projects and areas.

➜ **Start reading here to skip the off-topic content**

While setting up my PhotoPrism instance, I came across the `PHOTOPRISM_SETTINGS_HIDDEN` environment variable, which hides the “Settings” sidebar entry and also makes the settings read-only. This is a simple way to hide UI elements that are not relevant for “normal” users while there is no explicit user role management, but a user who knows about the `/settings` route can still open the settings directly. Even though they are read-only, I assume this is not intended and the route should not be served at all. If my assumption is wrong, this issue can be closed immediately, since it then works as designed.

[authelia]: https://www.authelia.com

[c]: https://docs.photoprism.org/developer-guide/frontend/design/#colors

[traefik]: https://containo.us/traefik

|

test

|