| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 4 to 112) | repo_url (string, length 33 to 141) | action (string, 3 classes) | title (string, length 1 to 1.02k) | labels (string, length 4 to 1.54k) | body (string, length 1 to 262k) | index (string, 17 classes) | text_combine (string, length 95 to 262k) | label (string, 2 classes) | text (string, length 96 to 252k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

98,162

| 12,300,036,481

|

IssuesEvent

|

2020-05-11 13:23:35

|

Opentrons/opentrons

|

https://api.github.com/repos/Opentrons/opentrons

|

closed

|

[PD] Thermocycler Graph of Profile

|

WIP design protocol designer

|

# User Story

As a user, I should be able to reference a standard graph/visualization of the profile I’ve created which shows the profile’s temperature, time spans, and cycles.

# UI Design

_In progress_

# Acceptance Criteria

- [ ] The graph is made up of equal width/height blocks. These blocks contain:

  - [ ] A horizontal line, the height of which represents temperature
  - [ ] A temperature
  - [ ] A duration

- [ ] Blocks that are together in a cycle have an arrow underneath with the cycle # in it. These blocks also have a different fill than individual blocks.

- [ ] The horizontal lines in each block are connected to their neighbors by a line that spans the gutter between blocks

|

2.0

|

[PD] Thermocycler Graph of Profile - # User Story

As a user, I should be able to reference a standard graph/visualization of the profile I’ve created which shows the profile’s temperature, time spans, and cycles.

# UI Design

_In progress_

# Acceptance Criteria

- [ ] The graph is made up of equal width/height blocks. These blocks contain:

  - [ ] A horizontal line, the height of which represents temperature
  - [ ] A temperature
  - [ ] A duration

- [ ] Blocks that are together in a cycle have an arrow underneath with the cycle # in it. These blocks also have a different fill than individual blocks.

- [ ] The horizontal lines in each block are connected to their neighbors by a line that spans the gutter between blocks

|

non_test

|

thermocycler graph of profile user story as a user i should be able to reference a standard graph visualization of the profile i’ve created which shows the profile’s temperature time spans and cycles ui design in progress acceptance criteria the graph is made up of equal width height blocks these blocks contain a horizontal line the height of which represents temperature a temperature a duration blocks that are together in a cycle have an arrow underneath with the cycle in it these blocks also have a different fill than individual blocks the horizontal lines in each block are connected to their neighbors by a line that spans the gutter between blocks

| 0

|

346,012

| 10,383,016,236

|

IssuesEvent

|

2019-09-10 08:46:54

|

red-hat-storage/ocs-ci

|

https://api.github.com/repos/red-hat-storage/ocs-ci

|

opened

|

ocs_operator_storage_cluster_cr points to nonexisting url

|

High Priority bug

|

Installation of upstream version via operator is failing on following error:

```

E AssertionError: Couldn't load URL: https://raw.githubusercontent.com/openshift/ocs-operator/master/deploy/crds/ocs_v1alpha1_storagecluster_cr.yaml content! Status: 404.

```

The URL is set in default config [ocs_ci/framework/conf/default_config.yaml](https://github.com/red-hat-storage/ocs-ci/blob/master/ocs_ci/framework/conf/default_config.yaml#L44).

The original file does not exists any more, but it seems to be just renamed to `ocs_v1_storagecluster_cr.yaml`:

https://github.com/openshift/ocs-operator/tree/master/deploy/crds

|

1.0

|

ocs_operator_storage_cluster_cr points to nonexisting url - Installation of upstream version via operator is failing on following error:

```

E AssertionError: Couldn't load URL: https://raw.githubusercontent.com/openshift/ocs-operator/master/deploy/crds/ocs_v1alpha1_storagecluster_cr.yaml content! Status: 404.

```

The URL is set in default config [ocs_ci/framework/conf/default_config.yaml](https://github.com/red-hat-storage/ocs-ci/blob/master/ocs_ci/framework/conf/default_config.yaml#L44).

The original file does not exists any more, but it seems to be just renamed to `ocs_v1_storagecluster_cr.yaml`:

https://github.com/openshift/ocs-operator/tree/master/deploy/crds

|

non_test

|

ocs operator storage cluster cr points to nonexisting url installation of upstream version via operator is failing on following error e assertionerror couldn t load url content status the url is set in default config the original file does not exists any more but it seems to be just renamed to ocs storagecluster cr yaml

| 0

|

30,983

| 6,385,767,674

|

IssuesEvent

|

2017-08-03 09:23:27

|

bridgedotnet/Bridge

|

https://api.github.com/repos/bridgedotnet/Bridge

|

closed

|

Cannot cast null to nullable

|

defect

|

Cannot cast null to nullable.

### Steps To Reproduce

https://dev.deck.net/16c8630e00f0554844f810648e73f9e9/third

https://dotnetfiddle.net/FeYHFH

```c#

public class Program
{
    public static void Main()
    {
        Console.WriteLine((((object)null) as Int64?).HasValue ? "Failed" : "Passed");
    }
}

```

|

1.0

|

Cannot cast null to nullable - Cannot cast null to nullable.

### Steps To Reproduce

https://dev.deck.net/16c8630e00f0554844f810648e73f9e9/third

https://dotnetfiddle.net/FeYHFH

```c#

public class Program
{
    public static void Main()
    {
        Console.WriteLine((((object)null) as Int64?).HasValue ? "Failed" : "Passed");
    }
}

```

|

non_test

|

cannot cast null to nullable cannot cast null to nullable steps to reproduce c public class program public static void main console writeline object null as hasvalue failed passed

| 0

|

821,359

| 30,819,127,865

|

IssuesEvent

|

2023-08-01 15:11:40

|

opendatahub-io/data-science-pipelines-operator

|

https://api.github.com/repos/opendatahub-io/data-science-pipelines-operator

|

opened

|

Use openshift-goimports to sort go importants

|

triage/accepted priority/normal

|

### Feature description

We should follow openshift best practices when organizing our go imports, we should utilize [openshift go imports for this](https://github.com/openshift-eng/openshift-goimports).

Acceptance criteria:

* Figure out how best to include this as part of development workflow, (can this be part of pre-commit?)

* add a gh action to verify that PRs adhere to the imports via openshift go imports.

* Also add a documentation to dspo readme on how to develop/run go imports when developing.

### Describe alternatives you've considered

_No response_

### Anything else?

_No response_

|

1.0

|

Use openshift-goimports to sort go importants - ### Feature description

We should follow openshift best practices when organizing our go imports, we should utilize [openshift go imports for this](https://github.com/openshift-eng/openshift-goimports).

Acceptance criteria:

* Figure out how best to include this as part of development workflow, (can this be part of pre-commit?)

* add a gh action to verify that PRs adhere to the imports via openshift go imports.

* Also add a documentation to dspo readme on how to develop/run go imports when developing.

### Describe alternatives you've considered

_No response_

### Anything else?

_No response_

|

non_test

|

use openshift goimports to sort go importants feature description we should follow openshift best practices when organizing our go imports we should utilize acceptance criteria figure out how best to include this as part of development workflow can this be part of pre commit add a gh action to verify that prs adhere to the imports via openshift go imports also add a documentation to dspo readme on how to develop run go imports when developing describe alternatives you ve considered no response anything else no response

| 0

|

304,486

| 9,332,750,125

|

IssuesEvent

|

2019-03-28 12:59:57

|

mlibrary/heliotrope

|

https://api.github.com/repos/mlibrary/heliotrope

|

closed

|

Vanilla Fulcrum

|

EPIC low priority refactor systems

|

Separate Fulcrum from Heliotrope such that following the setup instructions in the README.md creates a generic 'Vanilla Fulcrum' application.

|

1.0

|

Vanilla Fulcrum - Separate Fulcrum from Heliotrope such that following the setup instructions in the README.md creates a generic 'Vanilla Fulcrum' application.

|

non_test

|

vanilla fulcrum separate fulcrum from heliotrope such that following the setup instructions in the readme md creates a generic vanilla fulcrum application

| 0

|

68,508

| 3,288,906,608

|

IssuesEvent

|

2015-10-29 16:51:42

|

INN/Largo

|

https://api.github.com/repos/INN/Largo

|

closed

|

Pulling .rst function documentation out of .php

|

priority: low type: question

|

#### What's currently working.

Using __[`doxphp`](https://github.com/avalanche123/doxphp)__ (a php phar), it's possible to generate a `.json` representation of documentation directly from our `.php` files.

From there, we can generate `*.rst` using the __`doxphp2sphinx`__ renderer supplied in the same phar.

    $ doxphp < functions.php | doxphp2sphinx > functions.rst

As a proof of concept, this is currently in our sphinx Makefile for a few select files [here](https://github.com/INN/Largo/blob/develop/docs/Makefile#L179-L185). With `make php`, It will generate an `.rst` files for each specified `.php` file. With `make html`, those files will compile to `.html` [like this](http://largo.readthedocs.org/api/inc/helpers.html). It requires you to have **`doxphp`** installed.

**tl;dr:** `.php > .json > .rst > .html`

We could modify this pipeline, writing either our own `.php > .json` parser (seems unnecessary) or sphinx extension to render the included `.json > .rst` in a different way. In theory, this shouldn't be too hard.

#### Questions.

1. Is organizing function documentation like this the best practice? (i.e is the way we organize our code is the best way to organize our function documentation?)

2. Not all files have functions that need documentation. I'd assume most of what should be included resides in the `./inc/` folder, but is there anything else that should be included? Is there anything in `./inc/` that shouldn't be included?

#### Others.

What others are doing

* [WordPress Codex](http://codex.wordpress.org/): Organizes function reference one function per page. I tend to like this format and think our users would be familiar with it. It also would allow us to add examples and longer form documentation for those functions that need it.

* [WooThemes](http://docs.woothemes.com/): Seems to have their documentation all over the place.

  - Their **WooCommerce** plugin uses [APIgen](http://www.apigen.org/) to generate documentation for their WooCommerce plugin. and keeps it separate from
  - **WooCodex** seems to mirror the structure of WordPress for some of their more [commonly used](http://docs.woothemes.com/documentation/woocodex/) functions ([like this](http://docs.woothemes.com/document/woocommerce_breadcrumb/)).

* Others?

|

1.0

|

Pulling .rst function documentation out of .php - #### What's currently working.

Using __[`doxphp`](https://github.com/avalanche123/doxphp)__ (a php phar), it's possible to generate a `.json` representation of documentation directly from our `.php` files.

From there, we can generate `*.rst` using the __`doxphp2sphinx`__ renderer supplied in the same phar.

    $ doxphp < functions.php | doxphp2sphinx > functions.rst

As a proof of concept, this is currently in our sphinx Makefile for a few select files [here](https://github.com/INN/Largo/blob/develop/docs/Makefile#L179-L185). With `make php`, It will generate an `.rst` files for each specified `.php` file. With `make html`, those files will compile to `.html` [like this](http://largo.readthedocs.org/api/inc/helpers.html). It requires you to have **`doxphp`** installed.

**tl;dr:** `.php > .json > .rst > .html`

We could modify this pipeline, writing either our own `.php > .json` parser (seems unnecessary) or sphinx extension to render the included `.json > .rst` in a different way. In theory, this shouldn't be too hard.

#### Questions.

1. Is organizing function documentation like this the best practice? (i.e is the way we organize our code is the best way to organize our function documentation?)

2. Not all files have functions that need documentation. I'd assume most of what should be included resides in the `./inc/` folder, but is there anything else that should be included? Is there anything in `./inc/` that shouldn't be included?

#### Others.

What others are doing

* [WordPress Codex](http://codex.wordpress.org/): Organizes function reference one function per page. I tend to like this format and think our users would be familiar with it. It also would allow us to add examples and longer form documentation for those functions that need it.

* [WooThemes](http://docs.woothemes.com/): Seems to have their documentation all over the place.

  - Their **WooCommerce** plugin uses [APIgen](http://www.apigen.org/) to generate documentation for their WooCommerce plugin. and keeps it separate from
  - **WooCodex** seems to mirror the structure of WordPress for some of their more [commonly used](http://docs.woothemes.com/documentation/woocodex/) functions ([like this](http://docs.woothemes.com/document/woocommerce_breadcrumb/)).

* Others?

|

non_test

|

pulling rst function documentation out of php what s currently working using a php phar it s possible to generate a json representation of documentation directly from our php files from there we can generate rst using the renderer supplied in the same phar doxphp functions rst as a proof of concept this is currently in our sphinx makefile for a few select files with make php it will generate an rst files for each specified php file with make html those files will compile to html it requires you to have doxphp installed tl dr php json rst html we could modify this pipeline writing either our own php json parser seems unnecessary or sphinx extension to render the included json rst in a different way in theory this shouldn t be too hard questions is organizing function documentation like this the best practice i e is the way we organize our code is the best way to organize our function documentation not all files have functions that need documentation i d assume most of what should be included resides in the inc folder but is there anything else that should be included is there anything in inc that shouldn t be included others what others are doing organizes function reference one function per page i tend to like this format and think our users would be familiar with it it also would allow us to add examples and longer form documentation for those functions that need it seems to have their documentation all over the place their woocommerce plugin uses to generate documentation for their woocommerce plugin and keeps it separate from woocodex seems to mirror the structure of wordpress for some of their more functions others

| 0

|

170,442

| 6,444,659,752

|

IssuesEvent

|

2017-08-12 15:16:17

|

drckf/paysage

|

https://api.github.com/repos/drckf/paysage

|

closed

|

Dropout RBMs

|

Priority: Medium

|

Dropout RBMs are discussed in the original dropout paper with results superior to normal RBMs.

|

1.0

|

Dropout RBMs - Dropout RBMs are discussed in the original dropout paper with results superior to normal RBMs.

|

non_test

|

dropout rbms dropout rbms are discussed in the original dropout paper with results superior to normal rbms

| 0

|

329,055

| 28,146,631,717

|

IssuesEvent

|

2023-04-02 15:03:16

|

unifyai/ivy

|

https://api.github.com/repos/unifyai/ivy

|

closed

|

Fix elementwise.test_count_nonzero

|

Sub Task Ivy API Experimental Failing Test

|

| | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/4588728136/jobs/8103151468" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-success-success></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/runs/4588728136/jobs/8103151468" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-success-success></a>

|numpy|<a href="https://github.com/unifyai/ivy/actions/runs/4588728136/jobs/8103151468" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-success-success></a>

|jax|<a href="https://github.com/unifyai/ivy/actions/runs/4588728136/jobs/8103151468" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-success-success></a>

|

1.0

|

Fix elementwise.test_count_nonzero - | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/4588728136/jobs/8103151468" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-success-success></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/runs/4588728136/jobs/8103151468" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-success-success></a>

|numpy|<a href="https://github.com/unifyai/ivy/actions/runs/4588728136/jobs/8103151468" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-success-success></a>

|jax|<a href="https://github.com/unifyai/ivy/actions/runs/4588728136/jobs/8103151468" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-success-success></a>

|

test

|

fix elementwise test count nonzero tensorflow img src torch img src numpy img src jax img src

| 1

|

613,759

| 19,097,793,857

|

IssuesEvent

|

2021-11-29 18:36:20

|

ballerina-platform/ballerina-lang

|

https://api.github.com/repos/ballerina-platform/ballerina-lang

|

closed

|

Able to push a package with a local repo dependancy to central

|

Type/Bug Priority/High Status/Blocked Team/DevTools SwanLakeDump Area/ProjectAPI

|

**Description:**

Even though a similar package name does or does not exist in central, should not be able to push a package with a local dependency to the central. Currently, it's allowed to push for both scenarios.

**Steps to reproduce:**

**Affected Versions:**

**OS, DB, other environment details and versions:**

**Related Issues (optional):**

<!-- Any related issues such as sub tasks, issues reported in other repositories (e.g component repositories), similar problems, etc. -->

**Suggested Labels (optional):**

<!-- Optional comma separated list of suggested labels. Non committers can’t assign labels to issues, so this will help issue creators who are not a committer to suggest possible labels-->

**Suggested Assignees (optional):**

<!--Optional comma separated list of suggested team members who should attend the issue. Non committers can’t assign issues to assignees, so this will help issue creators who are not a committer to suggest possible assignees-->

|

1.0

|

Able to push a package with a local repo dependancy to central - **Description:**

Even though a similar package name does or does not exist in central, should not be able to push a package with a local dependency to the central. Currently, it's allowed to push for both scenarios.

**Steps to reproduce:**

**Affected Versions:**

**OS, DB, other environment details and versions:**

**Related Issues (optional):**

<!-- Any related issues such as sub tasks, issues reported in other repositories (e.g component repositories), similar problems, etc. -->

**Suggested Labels (optional):**

<!-- Optional comma separated list of suggested labels. Non committers can’t assign labels to issues, so this will help issue creators who are not a committer to suggest possible labels-->

**Suggested Assignees (optional):**

<!--Optional comma separated list of suggested team members who should attend the issue. Non committers can’t assign issues to assignees, so this will help issue creators who are not a committer to suggest possible assignees-->

|

non_test

|

able to push a package with a local repo dependancy to central description even though a similar package name does or does not exist in central should not be able to push a package with a local dependency to the central currently it s allowed to push for both scenarios steps to reproduce affected versions os db other environment details and versions related issues optional suggested labels optional suggested assignees optional

| 0

|

516,420

| 14,981,892,391

|

IssuesEvent

|

2021-01-28 15:21:49

|

jetstack/cert-manager

|

https://api.github.com/repos/jetstack/cert-manager

|

closed

|

backup instructions in the docs are incorrect

|

kind/bug kind/feature priority/important-longterm

|

**Describe the bug**:

This page has instruction for backup up the certs and restoring them:

https://docs.cert-manager.io/en/latest/tasks/backup-restore-crds.html

The instruction don't work as is. The backup part work, but the restore won't work for many reasons.

```

status.conditions.lastTransitionTime in body must be of type string: "null"

```

a quick hack here is to replace `lastTransitionTime: null` with some valid date, but filtering the status part would be better. That fixed I get

```

Error from server (Conflict): Operation cannot be fulfilled on issuers.certmanager.k8s.io "letsencrypt-prod": the object has been modified; please apply your changes to the latest version and try again

Error from server (Conflict): Operation cannot be fulfilled on certificates.certmanager.k8s.io "cloud-master": the object has been modified; please apply your changes to the latest version and try again

```

**Expected behaviour**:

Restore should be tested and working

**Environment details:**:

I was upgrading from 0.5.2 to 0.6.7 (in order to upgrade further).

/kind bug

|

1.0

|

backup instructions in the docs are incorrect - **Describe the bug**:

This page has instruction for backup up the certs and restoring them:

https://docs.cert-manager.io/en/latest/tasks/backup-restore-crds.html

The instruction don't work as is. The backup part work, but the restore won't work for many reasons.

```

status.conditions.lastTransitionTime in body must be of type string: "null"

```

a quick hack here is to replace `lastTransitionTime: null` with some valid date, but filtering the status part would be better. That fixed I get

```

Error from server (Conflict): Operation cannot be fulfilled on issuers.certmanager.k8s.io "letsencrypt-prod": the object has been modified; please apply your changes to the latest version and try again

Error from server (Conflict): Operation cannot be fulfilled on certificates.certmanager.k8s.io "cloud-master": the object has been modified; please apply your changes to the latest version and try again

```

**Expected behaviour**:

Restore should be tested and working

**Environment details:**:

I was upgrading from 0.5.2 to 0.6.7 (in order to upgrade further).

/kind bug

|

non_test

|

backup instructions in the docs are incorrect describe the bug this page has instruction for backup up the certs and restoring them the instruction don t work as is the backup part work but the restore won t work for many reasons status conditions lasttransitiontime in body must be of type string null a quick hack here is to replace lasttransitiontime null with some valid date but filtering the status part would be better that fixed i get error from server conflict operation cannot be fulfilled on issuers certmanager io letsencrypt prod the object has been modified please apply your changes to the latest version and try again error from server conflict operation cannot be fulfilled on certificates certmanager io cloud master the object has been modified please apply your changes to the latest version and try again expected behaviour restore should be tested and working environment details i was upgrading from to in order to upgrade further kind bug

| 0

|

199,125

| 15,024,780,032

|

IssuesEvent

|

2021-02-01 20:07:48

|

CARTAvis/carta-backend

|

https://api.github.com/repos/CARTAvis/carta-backend

|

closed

|

extra channel info derived from header with ra-dec-stokes

|

awaiting testing bug

|

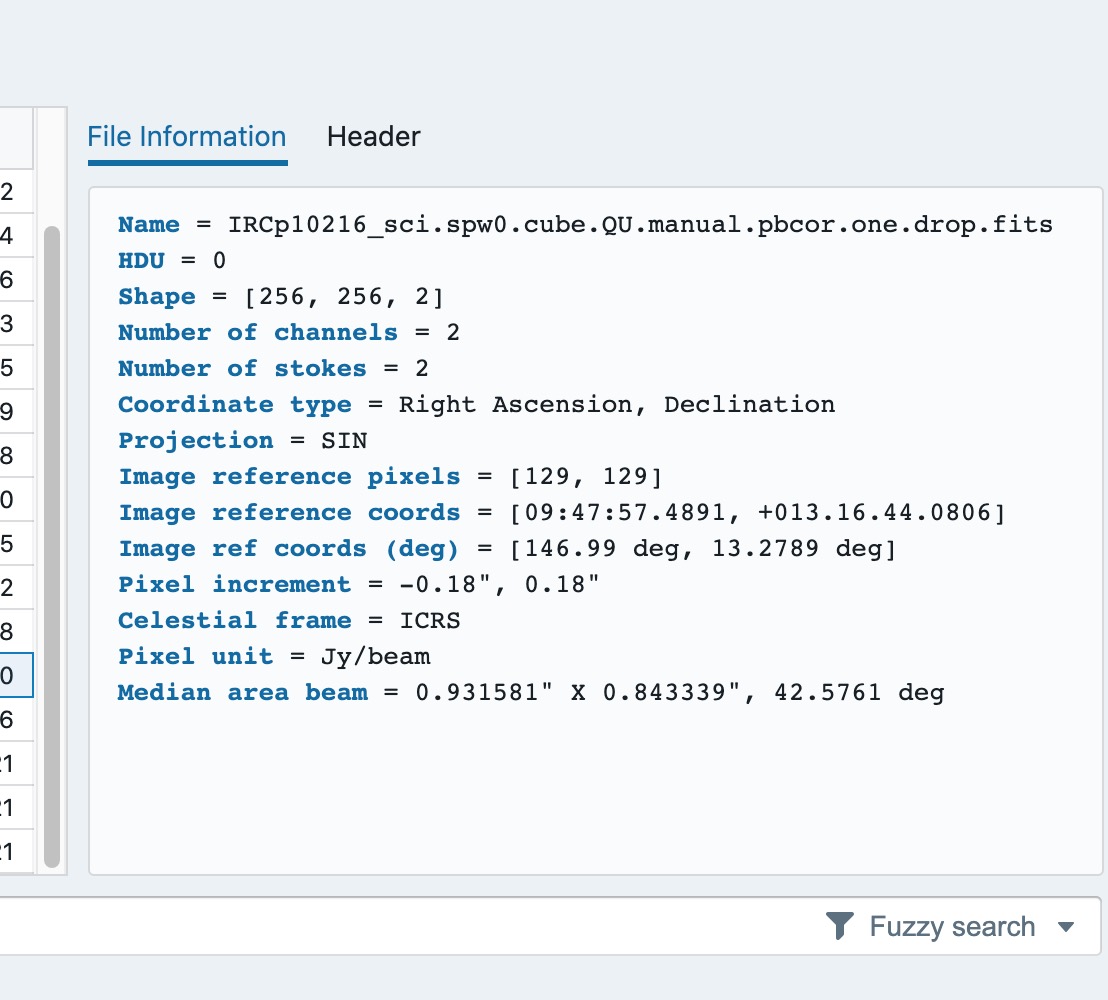

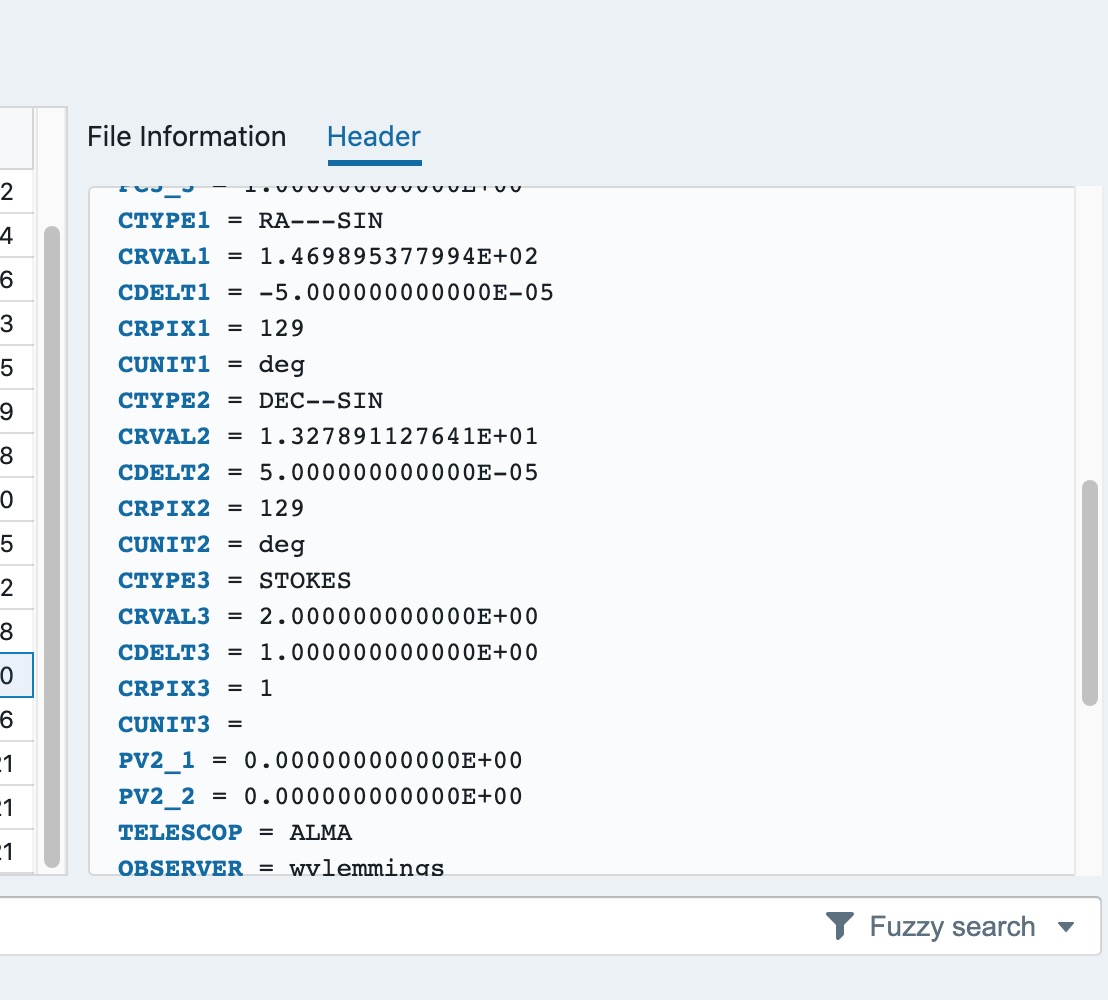

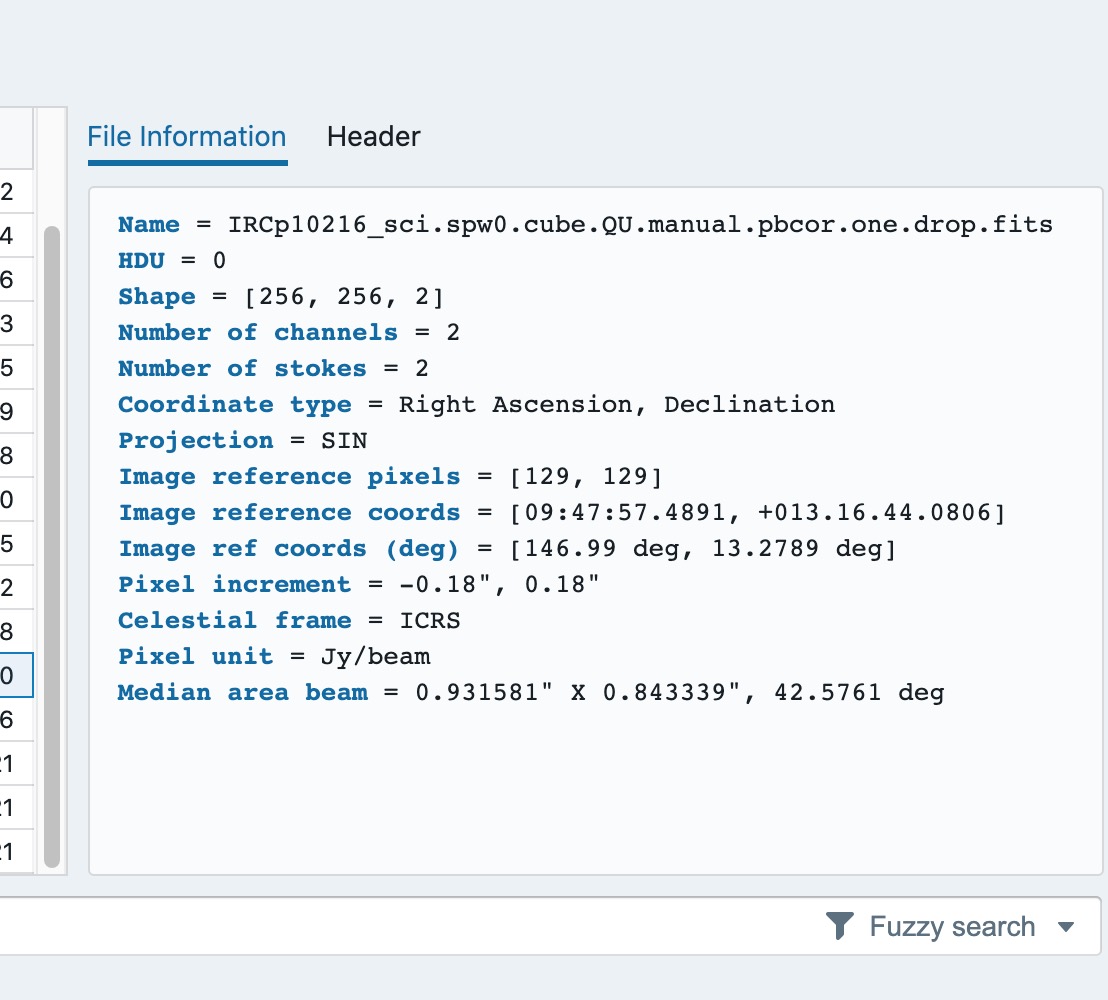

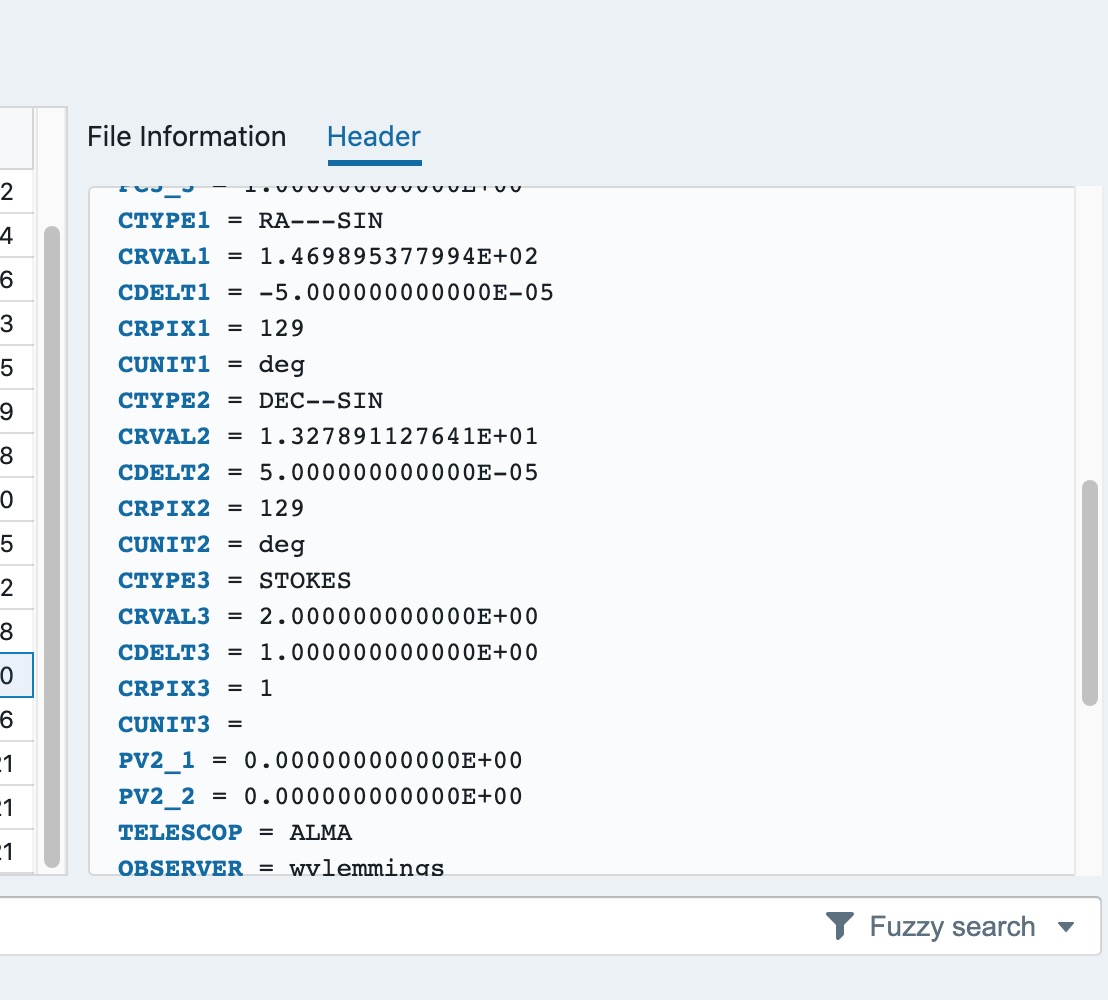

If the image has 3 axes as ra-dec-stokes, there is an extra channel info in the file info tab.

image from @zarda

|

1.0

|

extra channel info derived from header with ra-dec-stokes - If the image has 3 axes as ra-dec-stokes, there is an extra channel info in the file info tab.

image from @zarda

|

test

|

extra channel info derived from header with ra dec stokes if the image has axes as ra dec stokes there is an extra channel info in the file info tab image from zarda

| 1

|

147,582

| 13,210,679,832

|

IssuesEvent

|

2020-08-15 18:14:54

|

Gizra/og

|

https://api.github.com/repos/Gizra/og

|

closed

|

og_ungroup() documentation incorrectly informs about @return value.

|

Documentation Drupal 7

|

The current documentation of the og_ungroup() function states that an entity is returned but it's not currently the case:

```

/**
 * Delete an association (e.g. unsubscribe) of an entity to a group.
 *
 * @param $group_type
 *   The entity type (e.g. "node").
 * @param $gid
 *   The group entity object or ID, to ungroup.
 * @param $entity_type
 *   (optional) The entity type (e.g. "node" or "user").
 * @param $etid
 *   (optional) The entity object or ID, to ungroup.
 *
 * @return
 *   The entity with the fields updated.
 */
function og_ungroup($group_type, $gid, $entity_type = 'user', $etid = NULL) {
  if (is_object($gid)) {
    list($gid) = entity_extract_ids($group_type, $gid);
  }
  if ($entity_type == 'user' && empty($etid)) {
    global $user;
    $etid = $user->uid;
  }
  elseif (is_object($etid)) {
    list($etid) = entity_extract_ids($entity_type, $etid);
  }
  if ($og_membership = og_get_membership($group_type, $gid, $entity_type, $etid)) {
    $og_membership->delete();
  }
}

```

Not providing a patch / PR because I don't know if what needs to be corrected is the documentation to follow the code, or to change the code to return something (perhaps more usefull...)

|

1.0

|

og_ungroup() documentation incorrectly informs about @return value. - The current documentation of the og_ungroup() function states that an entity is returned but it's not currently the case:

```

/**
 * Delete an association (e.g. unsubscribe) of an entity to a group.
 *
 * @param $group_type
 *   The entity type (e.g. "node").
 * @param $gid
 *   The group entity object or ID, to ungroup.
 * @param $entity_type
 *   (optional) The entity type (e.g. "node" or "user").
 * @param $etid
 *   (optional) The entity object or ID, to ungroup.
 *
 * @return
 *   The entity with the fields updated.
 */
function og_ungroup($group_type, $gid, $entity_type = 'user', $etid = NULL) {
  if (is_object($gid)) {
    list($gid) = entity_extract_ids($group_type, $gid);
  }
  if ($entity_type == 'user' && empty($etid)) {
    global $user;
    $etid = $user->uid;
  }
  elseif (is_object($etid)) {
    list($etid) = entity_extract_ids($entity_type, $etid);
  }
  if ($og_membership = og_get_membership($group_type, $gid, $entity_type, $etid)) {
    $og_membership->delete();
  }
}

```

Not providing a patch / PR because I don't know if what needs to be corrected is the documentation to follow the code, or to change the code to return something (perhaps more usefull...)

|

non_test

|

og ungroup documentation incorrectly informs about return value the current documentation of the og ungroup function states that an entity is returned but it s not currently the case delete an association e g unsubscribe of an entity to a group param group type the entity type e g node param gid the group entity object or id to ungroup param entity type optional the entity type e g node or user param etid optional the entity object or id to ungroup return the entity with the fields updated function og ungroup group type gid entity type user etid null if is object gid list gid entity extract ids group type gid if entity type user empty etid global user etid user uid elseif is object etid list etid entity extract ids entity type etid if og membership og get membership group type gid entity type etid og membership delete not providing a patch pr because i don t know if what needs to be corrected is the documentation to follow the code or to change the code to return something perhaps more usefull

| 0

|

351,773

| 32,025,684,178

|

IssuesEvent

|

2023-09-22 08:40:25

|

unifyai/ivy

|

https://api.github.com/repos/unifyai/ivy

|

closed

|

Fix solving_equations_and_inverting_matrices.test_numpy_tensorinv

|

NumPy Frontend Sub Task Failing Test

|

| | |

|---|---|

|paddle|<a href="https://github.com/unifyai/ivy/actions/runs/6271730752"><img src=https://img.shields.io/badge/-success-success></a>

|numpy|<a href="https://github.com/unifyai/ivy/actions/runs/6265043606"><img src=https://img.shields.io/badge/-success-success></a>

|jax|<a href="https://github.com/unifyai/ivy/actions/runs/6265043606"><img src=https://img.shields.io/badge/-success-success></a>

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/6265043606"><img src=https://img.shields.io/badge/-success-success></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/runs/6265043606"><img src=https://img.shields.io/badge/-success-success></a>

|

1.0

|

Fix solving_equations_and_inverting_matrices.test_numpy_tensorinv - | | |

|---|---|

|paddle|<a href="https://github.com/unifyai/ivy/actions/runs/6271730752"><img src=https://img.shields.io/badge/-success-success></a>

|numpy|<a href="https://github.com/unifyai/ivy/actions/runs/6265043606"><img src=https://img.shields.io/badge/-success-success></a>

|jax|<a href="https://github.com/unifyai/ivy/actions/runs/6265043606"><img src=https://img.shields.io/badge/-success-success></a>

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/6265043606"><img src=https://img.shields.io/badge/-success-success></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/runs/6265043606"><img src=https://img.shields.io/badge/-success-success></a>

|

test

|

fix solving equations and inverting matrices test numpy tensorinv paddle a href src numpy a href src jax a href src tensorflow a href src torch a href src

| 1

|

13,294

| 2,750,721,889

|

IssuesEvent

|

2015-04-24 01:41:59

|

micheldumontier/semanticscience

|

https://api.github.com/repos/micheldumontier/semanticscience

|

closed

|

"is source of" is both property and class

|

auto-migrated Priority-Medium Type-Defect

|

```

http://semanticscience.org/resource/SIO_000219 'is source of' in sio-bio.owl

seems to be both a property and (probably incorrect) a class. 'drug regulatory

authority' is a subclass of this entity.

Viewed in TopBraid Composer Free Edition without inferencing.

```

Original issue reported on code.google.com by `matthias...@gmail.com` on 29 Jun 2012 at 9:22

|

1.0

|

"is source of" is both property and class - ```

http://semanticscience.org/resource/SIO_000219 'is source of' in sio-bio.owl

seems to be both a property and (probably incorrect) a class. 'drug regulatory

authority' is a subclass of this entity.

Viewed in TopBraid Composer Free Edition without inferencing.

```

Original issue reported on code.google.com by `matthias...@gmail.com` on 29 Jun 2012 at 9:22

|

non_test

|

is source of is both property and class is source of in sio bio owl seems to be both a property and probably incorrect a class drug regulatory authority is a subclass of this entity viewed in topbraid composer free edition without inferencing original issue reported on code google com by matthias gmail com on jun at

| 0

|

268,009

| 23,339,202,079

|

IssuesEvent

|

2022-08-09 12:44:53

|

elastic/kibana

|

https://api.github.com/repos/elastic/kibana

|

closed

|

Failing ES Promotion: Chrome X-Pack UI Functional Tests - ML anomaly_detection.x-pack/test/functional/apps/ml/anomaly_detection/anomaly_explorer·ts - machine learning - anomaly detection anomaly explorer with farequote based multi metric job renders View By swim lane

|

blocker :ml skipped-test failed-es-promotion Team:ML v7.17.6

|

**Chrome X-Pack UI Functional Tests - ML anomaly_detection**

**x-pack/test/functional/apps/ml/anomaly_detection/anomaly_explorer.ts**

**machine learning - anomaly detection anomaly explorer with farequote based multi metric job renders View By swim lane**

This failure is preventing the promotion of the current Elasticsearch nightly snapshot.

For more information on the Elasticsearch snapshot promotion process including how to reproduce using the unverified nightly ES build: https://www.elastic.co/guide/en/kibana/master/development-es-snapshots.html

* [Failed promotion job](https://buildkite.com/elastic/kibana-elasticsearch-snapshot-verify/builds/1539#01827dcc-e0f1-4a59-a15c-acc245fdaa48)

* [Test Failure](https://buildkite.com/organizations/elastic/pipelines/kibana-elasticsearch-snapshot-verify/builds/1539/jobs/01827dcc-e0f1-4a59-a15c-acc245fdaa48/artifacts/01827df9-0925-4dfb-8d8a-f996e6024ebf)

```

Error: Expected swim lane y labels to be AAL,VRD,EGF,SWR,AMX,JZA,TRS,ACA,BAW,ASA, got AAL,EGF,VRD,SWR,JZA,AMX,TRS,ACA,BAW,ASA

at Assertion.assert (node_modules/@kbn/expect/expect.js:100:11)

at Assertion.eql (node_modules/@kbn/expect/expect.js:244:8)

at Object.assertAxisLabels (x-pack/test/functional/services/ml/swim_lane.ts:88:31)

at runMicrotasks (<anonymous>)

at processTicksAndRejections (node:internal/process/task_queues:96:5)

at Context.<anonymous> (x-pack/test/functional/apps/ml/anomaly_detection/anomaly_explorer.ts:167:11)

at Object.apply (node_modules/@kbn/test/target_node/functional_test_runner/lib/mocha/wrap_function.js:87:16) {

actual: '[\n' +

' "AAL"\n' +

' "EGF"\n' +

' "VRD"\n' +

' "SWR"\n' +

' "JZA"\n' +

' "AMX"\n' +

' "TRS"\n' +

' "ACA"\n' +

' "BAW"\n' +

' "ASA"\n' +

']',

expected: '[\n' +

' "AAL"\n' +

' "VRD"\n' +

' "EGF"\n' +

' "SWR"\n' +

' "AMX"\n' +

' "JZA"\n' +

' "TRS"\n' +

' "ACA"\n' +

' "BAW"\n' +

' "ASA"\n' +

']',

showDiff: true

}

```

|

1.0

|

Failing ES Promotion: Chrome X-Pack UI Functional Tests - ML anomaly_detection.x-pack/test/functional/apps/ml/anomaly_detection/anomaly_explorer·ts - machine learning - anomaly detection anomaly explorer with farequote based multi metric job renders View By swim lane - **Chrome X-Pack UI Functional Tests - ML anomaly_detection**

**x-pack/test/functional/apps/ml/anomaly_detection/anomaly_explorer.ts**

**machine learning - anomaly detection anomaly explorer with farequote based multi metric job renders View By swim lane**

This failure is preventing the promotion of the current Elasticsearch nightly snapshot.

For more information on the Elasticsearch snapshot promotion process including how to reproduce using the unverified nightly ES build: https://www.elastic.co/guide/en/kibana/master/development-es-snapshots.html

* [Failed promotion job](https://buildkite.com/elastic/kibana-elasticsearch-snapshot-verify/builds/1539#01827dcc-e0f1-4a59-a15c-acc245fdaa48)

* [Test Failure](https://buildkite.com/organizations/elastic/pipelines/kibana-elasticsearch-snapshot-verify/builds/1539/jobs/01827dcc-e0f1-4a59-a15c-acc245fdaa48/artifacts/01827df9-0925-4dfb-8d8a-f996e6024ebf)

```

Error: Expected swim lane y labels to be AAL,VRD,EGF,SWR,AMX,JZA,TRS,ACA,BAW,ASA, got AAL,EGF,VRD,SWR,JZA,AMX,TRS,ACA,BAW,ASA

at Assertion.assert (node_modules/@kbn/expect/expect.js:100:11)

at Assertion.eql (node_modules/@kbn/expect/expect.js:244:8)

at Object.assertAxisLabels (x-pack/test/functional/services/ml/swim_lane.ts:88:31)

at runMicrotasks (<anonymous>)

at processTicksAndRejections (node:internal/process/task_queues:96:5)

at Context.<anonymous> (x-pack/test/functional/apps/ml/anomaly_detection/anomaly_explorer.ts:167:11)

at Object.apply (node_modules/@kbn/test/target_node/functional_test_runner/lib/mocha/wrap_function.js:87:16) {

actual: '[\n' +

' "AAL"\n' +

' "EGF"\n' +

' "VRD"\n' +

' "SWR"\n' +

' "JZA"\n' +

' "AMX"\n' +

' "TRS"\n' +

' "ACA"\n' +

' "BAW"\n' +

' "ASA"\n' +

']',

expected: '[\n' +

' "AAL"\n' +

' "VRD"\n' +

' "EGF"\n' +

' "SWR"\n' +

' "AMX"\n' +

' "JZA"\n' +

' "TRS"\n' +

' "ACA"\n' +

' "BAW"\n' +

' "ASA"\n' +

']',

showDiff: true

}

```

|

test

|

failing es promotion chrome x pack ui functional tests ml anomaly detection x pack test functional apps ml anomaly detection anomaly explorer·ts machine learning anomaly detection anomaly explorer with farequote based multi metric job renders view by swim lane chrome x pack ui functional tests ml anomaly detection x pack test functional apps ml anomaly detection anomaly explorer ts machine learning anomaly detection anomaly explorer with farequote based multi metric job renders view by swim lane this failure is preventing the promotion of the current elasticsearch nightly snapshot for more information on the elasticsearch snapshot promotion process including how to reproduce using the unverified nightly es build error expected swim lane y labels to be aal vrd egf swr amx jza trs aca baw asa got aal egf vrd swr jza amx trs aca baw asa at assertion assert node modules kbn expect expect js at assertion eql node modules kbn expect expect js at object assertaxislabels x pack test functional services ml swim lane ts at runmicrotasks at processticksandrejections node internal process task queues at context x pack test functional apps ml anomaly detection anomaly explorer ts at object apply node modules kbn test target node functional test runner lib mocha wrap function js actual n aal n egf n vrd n swr n jza n amx n trs n aca n baw n asa n expected n aal n vrd n egf n swr n amx n jza n trs n aca n baw n asa n showdiff true

| 1

|

121,884

| 10,197,017,424

|

IssuesEvent

|

2019-08-12 22:32:15

|

cockroachdb/cockroach

|

https://api.github.com/repos/cockroachdb/cockroach

|

opened

|

teamcity: failed test: gossip/restart

|

C-test-failure O-robot

|

The following tests appear to have failed on master (roachtest): acceptance/gossip/restart

You may want to check [for open issues](https://github.com/cockroachdb/cockroach/issues?q=is%3Aissue+is%3Aopen+gossip/restart).

[#1436098](https://teamcity.cockroachdb.com/viewLog.html?buildId=1436098):

```

acceptance/gossip/restart

--- FAIL: roachtest/acceptance/gossip/restart (26.519s)

test artifacts and logs in: artifacts/acceptance/gossip/restart/run_1

gossip.go:226,gossip.go:286,acceptance.go:69,test_runner.go:691: dial tcp 127.0.0.1:26261: connect: connection refused

test artifacts and logs in: artifacts/acceptance/gossip/restart/run_1

gossip.go:226,gossip.go:286,acceptance.go:69,test_runner.go:691: dial tcp 127.0.0.1:26261: connect: connection refused

```

Please assign, take a look and update the issue accordingly.

|

1.0

|

teamcity: failed test: gossip/restart - The following tests appear to have failed on master (roachtest): acceptance/gossip/restart

You may want to check [for open issues](https://github.com/cockroachdb/cockroach/issues?q=is%3Aissue+is%3Aopen+gossip/restart).

[#1436098](https://teamcity.cockroachdb.com/viewLog.html?buildId=1436098):

```

acceptance/gossip/restart

--- FAIL: roachtest/acceptance/gossip/restart (26.519s)

test artifacts and logs in: artifacts/acceptance/gossip/restart/run_1

gossip.go:226,gossip.go:286,acceptance.go:69,test_runner.go:691: dial tcp 127.0.0.1:26261: connect: connection refused

test artifacts and logs in: artifacts/acceptance/gossip/restart/run_1

gossip.go:226,gossip.go:286,acceptance.go:69,test_runner.go:691: dial tcp 127.0.0.1:26261: connect: connection refused

```

Please assign, take a look and update the issue accordingly.

|

test

|

teamcity failed test gossip restart the following tests appear to have failed on master roachtest acceptance gossip restart you may want to check acceptance gossip restart fail roachtest acceptance gossip restart test artifacts and logs in artifacts acceptance gossip restart run gossip go gossip go acceptance go test runner go dial tcp connect connection refused test artifacts and logs in artifacts acceptance gossip restart run gossip go gossip go acceptance go test runner go dial tcp connect connection refused please assign take a look and update the issue accordingly

| 1

|

90,532

| 15,856,201,240

|

IssuesEvent

|

2021-04-08 01:46:26

|

AnhaaD/hacknightvol4

|

https://api.github.com/repos/AnhaaD/hacknightvol4

|

opened

|

CVE-2019-20149 (High) detected in kind-of-6.0.2.tgz

|

security vulnerability

|

## CVE-2019-20149 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>kind-of-6.0.2.tgz</b></p></summary>

<p>Get the native type of a value.</p>

<p>Library home page: <a href="https://registry.npmjs.org/kind-of/-/kind-of-6.0.2.tgz">https://registry.npmjs.org/kind-of/-/kind-of-6.0.2.tgz</a></p>

<p>Path to dependency file: /hacknightvol4/package.json</p>

<p>Path to vulnerable library: hacknightvol4/node_modules/snapdragon-node/node_modules/kind-of/package.json</p>

<p>

Dependency Hierarchy:

- nodemon-1.17.5.tgz (Root Library)

- chokidar-2.1.6.tgz

- anymatch-2.0.0.tgz

- micromatch-3.1.10.tgz

- :x: **kind-of-6.0.2.tgz** (Vulnerable Library)

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

ctorName in index.js in kind-of v6.0.2 allows external user input to overwrite certain internal attributes via a conflicting name, as demonstrated by 'constructor': {'name':'Symbol'}. Hence, a crafted payload can overwrite this builtin attribute to manipulate the type detection result.

<p>Publish Date: 2019-12-30

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2019-20149>CVE-2019-20149</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: High

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="http://web.nvd.nist.gov/view/vuln/detail?vulnId=CVE-2019-20149">http://web.nvd.nist.gov/view/vuln/detail?vulnId=CVE-2019-20149</a></p>

<p>Release Date: 2019-12-30</p>

<p>Fix Resolution: 6.0.3</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2019-20149 (High) detected in kind-of-6.0.2.tgz - ## CVE-2019-20149 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>kind-of-6.0.2.tgz</b></p></summary>

<p>Get the native type of a value.</p>

<p>Library home page: <a href="https://registry.npmjs.org/kind-of/-/kind-of-6.0.2.tgz">https://registry.npmjs.org/kind-of/-/kind-of-6.0.2.tgz</a></p>

<p>Path to dependency file: /hacknightvol4/package.json</p>

<p>Path to vulnerable library: hacknightvol4/node_modules/snapdragon-node/node_modules/kind-of/package.json</p>

<p>

Dependency Hierarchy:

- nodemon-1.17.5.tgz (Root Library)

- chokidar-2.1.6.tgz

- anymatch-2.0.0.tgz

- micromatch-3.1.10.tgz

- :x: **kind-of-6.0.2.tgz** (Vulnerable Library)

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

ctorName in index.js in kind-of v6.0.2 allows external user input to overwrite certain internal attributes via a conflicting name, as demonstrated by 'constructor': {'name':'Symbol'}. Hence, a crafted payload can overwrite this builtin attribute to manipulate the type detection result.

<p>Publish Date: 2019-12-30

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2019-20149>CVE-2019-20149</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: High

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="http://web.nvd.nist.gov/view/vuln/detail?vulnId=CVE-2019-20149">http://web.nvd.nist.gov/view/vuln/detail?vulnId=CVE-2019-20149</a></p>

<p>Release Date: 2019-12-30</p>

<p>Fix Resolution: 6.0.3</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_test

|

cve high detected in kind of tgz cve high severity vulnerability vulnerable library kind of tgz get the native type of a value library home page a href path to dependency file package json path to vulnerable library node modules snapdragon node node modules kind of package json dependency hierarchy nodemon tgz root library chokidar tgz anymatch tgz micromatch tgz x kind of tgz vulnerable library vulnerability details ctorname in index js in kind of allows external user input to overwrite certain internal attributes via a conflicting name as demonstrated by constructor name symbol hence a crafted payload can overwrite this builtin attribute to manipulate the type detection result publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact high availability impact none for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution step up your open source security game with whitesource

| 0

|

264,340

| 8,307,891,840

|

IssuesEvent

|

2018-09-23 14:51:36

|

publiclab/plots2

|

https://api.github.com/repos/publiclab/plots2

|

opened

|

Digest email bug

|

bug priority

|

The digest emails are currently not being sent.

`ArgumentError: wrong number of arguments (given 0, expected 1)` is shown on Sidekiq dashboard (https://publiclab.org/sidekiq/retries).

|

1.0

|

Digest email bug - The digest emails are currently not being sent.

`ArgumentError: wrong number of arguments (given 0, expected 1)` is shown on Sidekiq dashboard (https://publiclab.org/sidekiq/retries).

|

non_test

|

digest email bug the digest emails are currently not being sent argumenterror wrong number of arguments given expected is shown on sidekiq dashboard

| 0

|

412,971

| 12,058,928,646

|

IssuesEvent

|

2020-04-15 18:20:39

|

zulip/zulip

|

https://api.github.com/repos/zulip/zulip

|

closed

|

Optimize rate_limiter performance for get_events queries

|

area: production in progress priority: high

|

See https://chat.zulip.org/#narrow/stream/3-backend/topic/profiling.20get_events/near/816860 for profiling details, but basically, currently a get_events request spends 1.4ms/request talking to redis for our rate limiter, which is somewhere between 15% and 50% of the total request runtime (my measurement technique is susceptible to issues like the first request on a code path being extra expensive). Since get_events is our most scalability-critical endpoint, this is a big deal.

We should do some rethinking of the redis internals for our rate limiter. I have a few ideas:

* Writing an alternative rate-limiter implementation for `get_events` specifically that's entirely in-process and would be basically instant. Since the Tornado system has a relatively strong constraint that a given user always connects to the same server, this might be fairly cheap to implement and would bring that 1.4ms down to probably 50us or less. (We would gate it on `RUNNING_INSIDE_TORNADO`.)

* Look at rewriting our redis transactions to be more efficient for the highest-traffic cases (e.g. the user is not close to the limit, or the user is way over the limit). For example, maybe `incr_ratelimit` should automatically return the `api_calls_left` result rather than requiring 2 transactions.

* Looking at https://github.com/popravich/python-redis-benchmark, there may be some alternative async IO redis clients we could consider migrating to, and possibly some that are just faster. Given how little code we have interacting with redis directly, this might be an easy port to do; I'm not sure whether or not it would help. (And unlike the in-process hack approach, this would have side benefits to non-Tornado endpoints).
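
To make the first idea more concrete, here is a minimal sketch of an in-process limiter, assuming a sliding-window policy. The class name `InProcessRateLimiter`, its parameters, and the `check_and_record` method are hypothetical and are not part of Zulip's actual code. It also returns the remaining call count from the same call, which is roughly the shape the second idea suggests for the redis path.

```python
import time
from collections import defaultdict, deque
from typing import Callable, Deque, Dict, Tuple


class InProcessRateLimiter:
    """Hypothetical sliding-window limiter kept entirely in process memory."""

    def __init__(
        self,
        max_calls: int,
        window_seconds: float,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.max_calls = max_calls
        self.window_seconds = window_seconds
        self.clock = clock  # injectable so the limiter can be tested without sleeping
        # Per-user timestamps of recently accepted requests.
        self.history: Dict[int, Deque[float]] = defaultdict(deque)

    def check_and_record(self, user_id: int) -> Tuple[bool, int]:
        """Return (limited, calls_left) in one step, with no network round trip."""
        now = self.clock()
        timestamps = self.history[user_id]
        # Drop requests that have aged out of the window.
        while timestamps and now - timestamps[0] >= self.window_seconds:
            timestamps.popleft()
        if len(timestamps) >= self.max_calls:
            return True, 0
        timestamps.append(now)
        return False, self.max_calls - len(timestamps)
```

This only works if each user's requests always land in the same process, which is the Tornado constraint mentioned above. The sketch does not evict idle users from `history`, so a real version would need some cleanup.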

|

1.0

|

Optimize rate_limiter performance for get_events queries - See https://chat.zulip.org/#narrow/stream/3-backend/topic/profiling.20get_events/near/816860 for profiling details, but basically, currently a get_events request spends 1.4ms/request talking to redis for our rate limiter, which is somewhere between 15% and 50% of the total request runtime (my measurement technique is susceptible to issues like the first request on a code path being extra expensive). Since get_events is our most scalability-critical endpoint, this is a big deal.

We should do some rethinking of the redis internals for our rate limiter. I have a few ideas:

* Writing an alternative rate-limiter implementation for `get_events` specifically that's entirely in-process and would be basically instant. Since the Tornado system has a relatively strong constraint that a given user always connects to the same server, this might be fairly cheap to implement and would bring that 1.4ms down to probably 50us or less. (We would gate it on `RUNNING_INSIDE_TORNADO`.)

* Look at rewriting our redis transactions to be more efficient for the highest-traffic cases (e.g. the user is not close to the limit, or the user is way over the limit). For example, maybe `incr_ratelimit` should automatically return the `api_calls_left` result rather than requiring 2 transactions.

* Looking at https://github.com/popravich/python-redis-benchmark, there may be some alternative async IO redis clients we could consider migrating to, and possibly some that are just faster. Given how little code we have interacting with redis directly, this might be an easy port to do; I'm not sure whether or not it would help. (And unlike the in-process hack approach, this would have side benefits to non-Tornado endpoints).

|

non_test

|

optimize rate limiter performance for get events queries see for profiling details but basically currently a get events request spends request talking to redis for our rate limiter which is somewhere between and of the total request runtime my measurement technique is susceptible to issues like the first request on a code path being extra expensive since get events is our most scalability critical endpoint this is a big deal we should do some rethinking of the redis internals for our rate limiter i have a few ideas writing an alternative rate limiter implementation for get events specifically that s entirely in process and would be basically instant since the tornado system has a relatively strong constraint that a given user always connect to the same server this might be fairly cheap to implement and would bring that to probably or less and gate it on running inside tornado look at rewriting our redis transactions to be more efficient for the highest traffic cases e g user is not close to limit or user is way over limit e g maybe incr rateimit should automatically return the api calls left result rather than requiring transactions looking at there may be some alternative async io redis clients we could consider migrating to and possibly some that are just faster given how little code we have interacting with redis directly this might be an easy port to do i m not sure whether or not it would help and unlike the in process hack approach this would have side benefits to non tornado endpoints

| 0

|

4,195

| 4,968,932,741

|

IssuesEvent

|

2016-12-05 11:33:47

|

core-wg/oscoap

|

https://api.github.com/repos/core-wg/oscoap

|

closed

|

Replay window is an input parameter?

|

core-object-security-00

|

Section 3.2 - Why is the replay window an input - this seems to be odd as you would not pre-fill part of the window. I assume this is really just the Replay Window Size.

|

True

|

Replay window is an input parameter? - Section 3.2 - Why is the replay window an input - this seems to be odd as you would not pre-fill part of the window. I assume this is really just the Replay Window Size.

|

non_test

|

replay window is an input parameter section why is the replay window an input this seems to be odd as you would not pre fill part of the window i assume this is really just the replay window size

| 0

|

138,488

| 11,202,492,452

|

IssuesEvent

|

2020-01-04 12:59:02

|

searchkit/searchkit

|

https://api.github.com/repos/searchkit/searchkit

|

closed

|

rangeFormatter function not used in filter

|

2.3.0-9 Ready For Testing stale

|

<img width="286" alt="screenshot 2017-06-13 15 59 49" src="https://user-images.githubusercontent.com/7115982/27072441-f190638e-5052-11e7-9b15-1a5bb8c7018d.png">

<img width="433" alt="screenshot 2017-06-13 15 59 53" src="https://user-images.githubusercontent.com/7115982/27072443-f29c5710-5052-11e7-837b-43106a0e66c8.png">

rangeFormatter function used in rangeFilter but not used in top filter

|

1.0

|

rangeFormatter function not used in filter - <img width="286" alt="screenshot 2017-06-13 15 59 49" src="https://user-images.githubusercontent.com/7115982/27072441-f190638e-5052-11e7-9b15-1a5bb8c7018d.png">

<img width="433" alt="screenshot 2017-06-13 15 59 53" src="https://user-images.githubusercontent.com/7115982/27072443-f29c5710-5052-11e7-837b-43106a0e66c8.png">

rangeFormatter function used in rangeFilter but not used in top filter

|

test

|

rangeformatter function not used in filter img width alt screenshot src img width alt screenshot src rangeformatter function used in rangefilter but not used in top filter

| 1

|

263,587

| 28,047,514,574

|

IssuesEvent

|

2023-03-29 01:03:58

|

tabacws-sandbox/juice-shop-checkPR

|

https://api.github.com/repos/tabacws-sandbox/juice-shop-checkPR

|

closed

|

check-dependencies-1.1.0.tgz: 1 vulnerabilities (highest severity is: 9.8) - autoclosed

|

Mend: dependency security vulnerability

|

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>check-dependencies-1.1.0.tgz</b></p></summary>

<p></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/set-value/package.json</p>

<p>

<p>Found in HEAD commit: <a href="https://github.com/tabacws-sandbox/juice-shop-checkPR/commit/898e55dce59f24513206f629f1dd595ca468b56f">898e55dce59f24513206f629f1dd595ca468b56f</a></p></details>

## Vulnerabilities

| CVE | Severity | <img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS | Dependency | Type | Fixed in (check-dependencies version) | Remediation Available |

| ------------- | ------------- | ----- | ----- | ----- | ------------- | --- |

| [CVE-2021-23440](https://www.mend.io/vulnerability-database/CVE-2021-23440) | <img src='https://whitesource-resources.whitesourcesoftware.com/critical_vul.png' width=19 height=20> Critical | 9.8 | set-value-2.0.1.tgz | Transitive | N/A* | ❌ |

<p>*For some transitive vulnerabilities, there is no version of direct dependency with a fix. Check the "Details" section below to see if there is a version of transitive dependency where vulnerability is fixed.</p>

## Details

<details>

<summary><img src='https://whitesource-resources.whitesourcesoftware.com/critical_vul.png' width=19 height=20> CVE-2021-23440</summary>

### Vulnerable Library - <b>set-value-2.0.1.tgz</b></p>

<p>Create nested values and any intermediaries using dot notation (`'a.b.c'`) paths.</p>

<p>Library home page: <a href="https://registry.npmjs.org/set-value/-/set-value-2.0.1.tgz">https://registry.npmjs.org/set-value/-/set-value-2.0.1.tgz</a></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/set-value/package.json</p>

<p>

Dependency Hierarchy:

- check-dependencies-1.1.0.tgz (Root Library)

- findup-sync-2.0.0.tgz

- micromatch-3.1.10.tgz

- snapdragon-0.8.2.tgz

- base-0.11.2.tgz

- cache-base-1.0.1.tgz

- :x: **set-value-2.0.1.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/tabacws-sandbox/juice-shop-checkPR/commit/898e55dce59f24513206f629f1dd595ca468b56f">898e55dce59f24513206f629f1dd595ca468b56f</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

<p></p>

### Vulnerability Details

<p>

This affects the package set-value before <2.0.1, >=3.0.0 <4.0.1. A type confusion vulnerability can lead to a bypass of CVE-2019-10747 when the user-provided keys used in the path parameter are arrays.

Mend Note: After conducting further research, Mend has determined that all versions of set-value up to version 4.0.0 are vulnerable to CVE-2021-23440.

<p>Publish Date: 2021-09-12

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2021-23440>CVE-2021-23440</a></p>

</p>

<p></p>

### CVSS 3 Score Details (<b>9.8</b>)

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

<p></p>

### Suggested Fix

<p>

<p>Type: Upgrade version</p>

<p>Release Date: 2021-09-12</p>

<p>Fix Resolution: set-value - 4.0.1

</p>

</p>

<p></p>

</details>

|

True

|

check-dependencies-1.1.0.tgz: 1 vulnerabilities (highest severity is: 9.8) - autoclosed - <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>check-dependencies-1.1.0.tgz</b></p></summary>

<p></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/set-value/package.json</p>

<p>

<p>Found in HEAD commit: <a href="https://github.com/tabacws-sandbox/juice-shop-checkPR/commit/898e55dce59f24513206f629f1dd595ca468b56f">898e55dce59f24513206f629f1dd595ca468b56f</a></p></details>

## Vulnerabilities

| CVE | Severity | <img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS | Dependency | Type | Fixed in (check-dependencies version) | Remediation Available |

| ------------- | ------------- | ----- | ----- | ----- | ------------- | --- |

| [CVE-2021-23440](https://www.mend.io/vulnerability-database/CVE-2021-23440) | <img src='https://whitesource-resources.whitesourcesoftware.com/critical_vul.png' width=19 height=20> Critical | 9.8 | set-value-2.0.1.tgz | Transitive | N/A* | ❌ |

<p>*For some transitive vulnerabilities, there is no version of direct dependency with a fix. Check the "Details" section below to see if there is a version of transitive dependency where vulnerability is fixed.</p>

## Details

<details>

<summary><img src='https://whitesource-resources.whitesourcesoftware.com/critical_vul.png' width=19 height=20> CVE-2021-23440</summary>

### Vulnerable Library - <b>set-value-2.0.1.tgz</b></p>

<p>Create nested values and any intermediaries using dot notation (`'a.b.c'`) paths.</p>

<p>Library home page: <a href="https://registry.npmjs.org/set-value/-/set-value-2.0.1.tgz">https://registry.npmjs.org/set-value/-/set-value-2.0.1.tgz</a></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/set-value/package.json</p>

<p>

Dependency Hierarchy:

- check-dependencies-1.1.0.tgz (Root Library)

- findup-sync-2.0.0.tgz

- micromatch-3.1.10.tgz

- snapdragon-0.8.2.tgz

- base-0.11.2.tgz

- cache-base-1.0.1.tgz

- :x: **set-value-2.0.1.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/tabacws-sandbox/juice-shop-checkPR/commit/898e55dce59f24513206f629f1dd595ca468b56f">898e55dce59f24513206f629f1dd595ca468b56f</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

<p></p>

### Vulnerability Details

<p>

This affects the package set-value before <2.0.1, >=3.0.0 <4.0.1. A type confusion vulnerability can lead to a bypass of CVE-2019-10747 when the user-provided keys used in the path parameter are arrays.

Mend Note: After conducting further research, Mend has determined that all versions of set-value up to version 4.0.0 are vulnerable to CVE-2021-23440.

<p>Publish Date: 2021-09-12

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2021-23440>CVE-2021-23440</a></p>

</p>

<p></p>

### CVSS 3 Score Details (<b>9.8</b>)

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

<p></p>

### Suggested Fix

<p>

<p>Type: Upgrade version</p>

<p>Release Date: 2021-09-12</p>

<p>Fix Resolution: set-value - 4.0.1

</p>

</p>

<p></p>

</details>

|

non_test

|

check dependencies tgz vulnerabilities highest severity is autoclosed vulnerable library check dependencies tgz path to dependency file package json path to vulnerable library node modules set value package json found in head commit a href vulnerabilities cve severity cvss dependency type fixed in check dependencies version remediation available critical set value tgz transitive n a for some transitive vulnerabilities there is no version of direct dependency with a fix check the details section below to see if there is a version of transitive dependency where vulnerability is fixed details cve vulnerable library set value tgz create nested values and any intermediaries using dot notation a b c paths library home page a href path to dependency file package json path to vulnerable library node modules set value package json dependency hierarchy check dependencies tgz root library findup sync tgz micromatch tgz snapdragon tgz base tgz cache base tgz x set value tgz vulnerable library found in head commit a href found in base branch master vulnerability details this affects the package set value before a type confusion vulnerability can lead to a bypass of cve when the user provided keys used in the path parameter are arrays mend note after conducting further research mend has determined that all versions of set value up to version are vulnerable to cve publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact high integrity impact high availability impact high for more information on scores click a href suggested fix type upgrade version release date fix resolution set value

| 0

|

38,842

| 8,554,684,441

|

IssuesEvent

|

2018-11-08 07:32:00

|

openshiftio/openshift.io

|

https://api.github.com/repos/openshiftio/openshift.io

|

closed

|

Support Quick fixes with codeAction in case of CVEs flagged from LSP

|

area/analytics env/vs-code status/in-progress team/analytics type/user-story

|

### Planner Link : https://openshift.io/openshiftio/Openshift_io/plan/detail/948

Currently, the VSCode extension flags CVEs for any core dependencies. The proposal is to offer quick fixes for them by enabling codeAction in the Language Server.

### Tasks:

- [x] Update language server and client version

- [x] Bind codeAction support in Language Server

- [x] Register command in client to listen and apply edits
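The tasks above could be sketched roughly as follows. This is only an illustration, not the extension's actual code: the interfaces are simplified local mirrors of the LSP `Diagnostic`, `TextEdit` and `CodeAction` shapes, and the `data.recommendedVersion` field and the `quickFixesFor` helper are hypothetical names introduced here. It also assumes the fix is delivered as a workspace edit attached to the code action, whereas the last task suggests a command registered in the client.

```typescript
// Simplified local mirrors of the LSP shapes; a real server would take
// these from its LSP library instead of redefining them.
interface Position { line: number; character: number; }
interface Range { start: Position; end: Position; }
interface TextEdit { range: Range; newText: string; }
interface Diagnostic {
  range: Range;
  message: string;
  // Hypothetical payload: the version the analysis recommends, if any.
  data?: { recommendedVersion?: string };
}
interface CodeAction {
  title: string;
  kind: string;
  diagnostics: Diagnostic[];
  edit: { changes: { [uri: string]: TextEdit[] } };
}

// Build one quick fix per CVE diagnostic that carries a recommended version.
function quickFixesFor(uri: string, diagnostics: Diagnostic[]): CodeAction[] {
  const actions: CodeAction[] = [];
  for (const diagnostic of diagnostics) {
    const version = diagnostic.data?.recommendedVersion;
    if (version === undefined) continue; // no known fix, so no action offered
    actions.push({
      title: `Switch to recommended version ${version}`,
      kind: "quickfix",
      diagnostics: [diagnostic],
      // Replace the flagged range (assumed to cover the version string).
      edit: { changes: { [uri]: [{ range: diagnostic.range, newText: version }] } },
    });
  }
  return actions;
}
```

A server would return the result of such a helper from its codeAction handler, and the client would then apply the attached edit when the user picks the quick fix.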

|

1.0

|

Support Quick fixes with codeAction in case of CVEs flagged from LSP - ### Planner Link : https://openshift.io/openshiftio/Openshift_io/plan/detail/948

Currently, the VSCode extension flags CVEs for any core dependencies. The proposal is to offer quick fixes for them by enabling codeAction in the Language Server.

### Tasks:

- [x] Update language server and client version

- [x] Bind codeAction support in Language Server

- [x] Register command in client to listen and apply edits

|

non_test

|

support quick fixes with codeaction in case of cves flagged from lsp planner link currently in vscode extension we flag cves for any core dependencies proposed would be to enable quick fixes to those with enabling codeaction from language server tasks update language server and client version bind codeaction supportin language server register command in client to listen and apply edits

| 0

|

245,192

| 20,751,961,035

|

IssuesEvent

|

2022-03-15 08:34:34

|

openvinotoolkit/openvino

|

https://api.github.com/repos/openvinotoolkit/openvino

|

closed

|

[Bug] (minor) test namespace ?

|

bug category: IE Tests support_request PSE

|

##### System information (version)

<!-- Example

- OpenVINO => 2020.4

- Operating System / Platform => Windows 64 Bit

- Compiler => Visual Studio 2017

- Problem classification: Model Conversion

- Framework: TensorFlow (if applicable)

- Model name: ResNet50 (if applicable)

-->

- OpenVINO=> :grey_question:

- Operating System / Platform => :grey_question:

- Compiler => :grey_question:

- Problem classification => :grey_question:

##### Detailed description

<!-- your description -->

I suppose this namespace was meant to be "LayerTest**s**Definitions", instead of "LayerTestDefinitions", to be aligned with the rest of the filters. I was getting an unexpected compile error and I barely noticed the missing 's':

https://github.com/openvinotoolkit/openvino/blob/e8d5cf43d0e153f4f52c7be133f71f65c9eb4512/src/tests/functional/shared_test_classes/include/shared_test_classes/single_layer/prior_box.hpp#L29

##### Steps to reproduce

<!--

Describe your problem and steps you've done before you got to this point.

to add code example fence it with triple backticks and optional file extension

```.cpp

// C++ code example

```

or attach as .txt or .zip file

-->

##### Issue submission checklist

- [x] I report the issue, it's not a question

<!--

OpenVINO team works with support forum, Stack Overflow and other communities

to discuss problems. Tickets with question without real issue statement will be

closed.

-->

- [ ] I checked the problem with documentation, FAQ, open issues, Stack Overflow, etc and have not found solution

<!--

Places to check:

* OpenVINO documentation: https://docs.openvinotoolkit.org/

* OpenVINO forum: https://community.intel.com/t5/Intel-Distribution-of-OpenVINO/bd-p/distribution-openvino-toolkit

* OpenVINO issue tracker: https://github.com/openvinotoolkit/openvino/issues?q=is%3Aissue

* Stack Overflow branch: https://stackoverflow.com/questions/tagged/openvino

-->

- [ ] There is reproducer code and related data files: images, videos, models, etc.

<!--

The best reproducer -- test case for OpenVINO that we can add to the library.

-->

|

1.0

|

[Bug] (minor) test namespace ? - ##### System information (version)

<!-- Example

- OpenVINO => 2020.4

- Operating System / Platform => Windows 64 Bit

- Compiler => Visual Studio 2017

- Problem classification: Model Conversion

- Framework: TensorFlow (if applicable)

- Model name: ResNet50 (if applicable)

-->

- OpenVINO=> :grey_question:

- Operating System / Platform => :grey_question:

- Compiler => :grey_question:

- Problem classification => :grey_question:

##### Detailed description

<!-- your description -->

I suppose this namespace was meant to be "LayerTest**s**Definitions", instead of "LayerTestDefinitions", to be aligned with the rest of the filters. I was getting an unexpected compile error and I barely noticed the missing 's':

https://github.com/openvinotoolkit/openvino/blob/e8d5cf43d0e153f4f52c7be133f71f65c9eb4512/src/tests/functional/shared_test_classes/include/shared_test_classes/single_layer/prior_box.hpp#L29

##### Steps to reproduce

<!--

Describe your problem and steps you've done before you got to this point.

to add code example fence it with triple backticks and optional file extension

```.cpp

// C++ code example

```

or attach as .txt or .zip file

-->

##### Issue submission checklist

- [x] I report the issue, it's not a question

<!--

OpenVINO team works with support forum, Stack Overflow and other communities

to discuss problems. Tickets with question without real issue statement will be

closed.

-->

- [ ] I checked the problem with documentation, FAQ, open issues, Stack Overflow, etc and have not found solution

<!--

Places to check:

* OpenVINO documentation: https://docs.openvinotoolkit.org/

* OpenVINO forum: https://community.intel.com/t5/Intel-Distribution-of-OpenVINO/bd-p/distribution-openvino-toolkit

* OpenVINO issue tracker: https://github.com/openvinotoolkit/openvino/issues?q=is%3Aissue

* Stack Overflow branch: https://stackoverflow.com/questions/tagged/openvino

-->

- [ ] There is reproducer code and related data files: images, videos, models, etc.

<!--

The best reproducer -- test case for OpenVINO that we can add to the library.

-->

|

test

|

minor test namespace system information version example openvino operating system platform windows bit compiler visual studio problem classification model conversion framework tensorflow if applicable model name if applicable openvino grey question operating system platform grey question compiler grey question problem classification grey question detailed description i suppose this namespace was meant to be layertest s definitions instead of layertestdefinitions to be aligned with the rest of the filters i was getting an unexpected compile error and i barely noticed the missing s steps to reproduce describe your problem and steps you ve done before you got to this point to add code example fence it with triple backticks and optional file extension cpp c code example or attach as txt or zip file issue submission checklist i report the issue it s not a question openvino team works with support forum stack overflow and other communities to discuss problems tickets with question without real issue statement will be closed i checked the problem with documentation faq open issues stack overflow etc and have not found solution places to check openvino documentation openvino forum openvino issue tracker stack overflow branch there is reproducer code and related data files images videos models etc the best reproducer test case for openvino that we can add to the library

| 1

|

654,339

| 21,648,352,441

|

IssuesEvent

|

2022-05-06 06:27:33

|

webcompat/web-bugs

|

https://api.github.com/repos/webcompat/web-bugs

|

closed

|

video.gazzetta.it - video or audio doesn't play

|

priority-normal browser-focus-geckoview engine-gecko

|

<!-- @browser: Firefox Mobile 100.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 11; Mobile; rv:100.0) Gecko/100.0 Firefox/100.0 -->

<!-- @reported_with: android-components-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/103955 -->