| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 4 to 112) | repo_url (stringlengths, 33 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 1.02k) | labels (stringlengths, 4 to 1.54k) | body (stringlengths, 1 to 262k) | index (stringclasses, 17 values) | text_combine (stringlengths, 95 to 262k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 252k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

381,994

| 11,299,254,814

|

IssuesEvent

|

2020-01-17 10:47:01

|

bryntum/support

|

https://api.github.com/repos/bryntum/support

|

closed

|

Cannot save unscheduled task with ENTER key

|

bug high-priority resolved

|

Await https://github.com/bryntum/bryntum-suite/pull/387 or try this in that branch (where duration field can be empty and still valid)

In basic demo, run:

```

const added = gantt.taskStore.rootNode.appendChild({ name : 'New' });

// run propagation to calculate new task fields

await gantt.project.propagate();

gantt.editTask(added);

```

Change name, ENTER key.

|

1.0

|

Cannot save unscheduled task with ENTER key - Await https://github.com/bryntum/bryntum-suite/pull/387 or try this in that branch (where duration field can be empty and still valid)

In basic demo, run:

```

const added = gantt.taskStore.rootNode.appendChild({ name : 'New' });

// run propagation to calculate new task fields

await gantt.project.propagate();

gantt.editTask(added);

```

Change name, ENTER key.

|

non_test

|

cannot save unscheduled task with enter key await or try this in that branch where duration field can be empty and still valid in basic demo run const added gantt taskstore rootnode appendchild name new run propagation to calculate new task fields await gantt project propagate gantt edittask added change name enter key

| 0

|

245,550

| 20,777,114,133

|

IssuesEvent

|

2022-03-16 11:32:20

|

zephyrproject-rtos/zephyr

|

https://api.github.com/repos/zephyrproject-rtos/zephyr

|

closed

|

tests: cmsis_dsp: rf16 and cf16 tests are not executed on Native POSIX

|

bug priority: low area: Tests area: CMSIS-DSP

|

**Describe the bug**

Following test scenarios:

* `libraries.cmsis_dsp.transform.cf16`

* `libraries.cmsis_dsp.transform.cf16.fpu`

* `libraries.cmsis_dsp.transform.rf16`

* `libraries.cmsis_dsp.transform.rf16.fpu`

From this directory:

`tests/lib/cmsis_dsp/transform/`

are not executed - only `PROJECT EXECUTION SUCCESSFUL` is printed.

Problem concerns only `native_posix` platform.

On `mps2_an521` and `mps2_an521_remote` platforms everything works properly.

**To Reproduce**

Steps to reproduce the behavior:

1. Run Twister by this command:

`./scripts/twister -p native_posix -T tests/lib/cmsis_dsp/transform/`

3. Analyze twister.log file - especially execution of `rf16` and `cf16` tests.

**Expected behavior**

Tests should be executed - not only printing of `PROJECT EXECUTION SUCCESSFUL` information.

**Impact**

At this moment those tests are marked as `PASS` what is misleading information, because they aren't even executed.

**Logs and console output**

```

2022-03-15 17:14:39,977 - twister - DEBUG - Spawning BinaryHandler Thread for native_posix/tests/lib/cmsis_dsp/transform/libraries.cmsis_dsp.transform.cf16.fpu

2022-03-15 17:14:39,978 - twister - DEBUG - OUTPUT: *** Booting Zephyr OS build zephyr-v3.0.0-884-gadc901aa6a39 ***

2022-03-15 17:14:39,978 - twister - DEBUG - OUTPUT: ===================================================================

2022-03-15 17:14:39,979 - twister - DEBUG - OUTPUT: PROJECT EXECUTION SUCCESSFUL

2022-03-15 17:14:39,979 - twister - DEBUG - OUTPUT:

```

**Environment (please complete the following information):**

- OS: Linux

- Toolchain: Zephyr SDK

- Commit SHA: adc901aa6a39caffd7971ff99456b26414cd3793

**Additional context**

Similar problem occurred here:

https://github.com/zephyrproject-rtos/zephyr/issues/42396

But I verified, that those tests do not work since the beginning - since they was added in those commits:

https://github.com/zephyrproject-rtos/zephyr/commit/600ca01464ef253097644f2fc4dd0f3f2a3f5087

https://github.com/zephyrproject-rtos/zephyr/commit/6547025bc19b0bfd6078b3e76ba54b1193de9196

So, the source of problem is probably not connected with changes introduced in ZTest API in October last year.

This problem was discovered during review of this PR:

https://github.com/zephyrproject-rtos/zephyr/pull/42482

Enhancement proposed in this PR could help to avoid this situation, due to the additional verification of printed test suite name.

If everything works properly on QEMU platforms, then perhaps it should be considered to remove support for Native POSIX platform for those tests?

|

1.0

|

tests: cmsis_dsp: rf16 and cf16 tests are not executed on Native POSIX - **Describe the bug**

Following test scenarios:

* `libraries.cmsis_dsp.transform.cf16`

* `libraries.cmsis_dsp.transform.cf16.fpu`

* `libraries.cmsis_dsp.transform.rf16`

* `libraries.cmsis_dsp.transform.rf16.fpu`

From this directory:

`tests/lib/cmsis_dsp/transform/`

are not executed - only `PROJECT EXECUTION SUCCESSFUL` is printed.

Problem concerns only `native_posix` platform.

On `mps2_an521` and `mps2_an521_remote` platforms everything works properly.

**To Reproduce**

Steps to reproduce the behavior:

1. Run Twister by this command:

`./scripts/twister -p native_posix -T tests/lib/cmsis_dsp/transform/`

3. Analyze twister.log file - especially execution of `rf16` and `cf16` tests.

**Expected behavior**

Tests should be executed - not only printing of `PROJECT EXECUTION SUCCESSFUL` information.

**Impact**

At this moment those tests are marked as `PASS` what is misleading information, because they aren't even executed.

**Logs and console output**

```

2022-03-15 17:14:39,977 - twister - DEBUG - Spawning BinaryHandler Thread for native_posix/tests/lib/cmsis_dsp/transform/libraries.cmsis_dsp.transform.cf16.fpu

2022-03-15 17:14:39,978 - twister - DEBUG - OUTPUT: *** Booting Zephyr OS build zephyr-v3.0.0-884-gadc901aa6a39 ***

2022-03-15 17:14:39,978 - twister - DEBUG - OUTPUT: ===================================================================

2022-03-15 17:14:39,979 - twister - DEBUG - OUTPUT: PROJECT EXECUTION SUCCESSFUL

2022-03-15 17:14:39,979 - twister - DEBUG - OUTPUT:

```

**Environment (please complete the following information):**

- OS: Linux

- Toolchain: Zephyr SDK

- Commit SHA: adc901aa6a39caffd7971ff99456b26414cd3793

**Additional context**

Similar problem occurred here:

https://github.com/zephyrproject-rtos/zephyr/issues/42396

But I verified, that those tests do not work since the beginning - since they was added in those commits:

https://github.com/zephyrproject-rtos/zephyr/commit/600ca01464ef253097644f2fc4dd0f3f2a3f5087

https://github.com/zephyrproject-rtos/zephyr/commit/6547025bc19b0bfd6078b3e76ba54b1193de9196

So, the source of problem is probably not connected with changes introduced in ZTest API in October last year.

This problem was discovered during review of this PR:

https://github.com/zephyrproject-rtos/zephyr/pull/42482

Enhancement proposed in this PR could help to avoid this situation, due to the additional verification of printed test suite name.

If everything works properly on QEMU platforms, then perhaps it should be considered to remove support for Native POSIX platform for those tests?

|

test

|

tests cmsis dsp and tests are not executed on native posix describe the bug following test scenarios libraries cmsis dsp transform libraries cmsis dsp transform fpu libraries cmsis dsp transform libraries cmsis dsp transform fpu from this directory tests lib cmsis dsp transform are not executed only project execution successful is printed problem concerns only native posix platform on and remote platforms everything works properly to reproduce steps to reproduce the behavior run twister by this command scripts twister p native posix t tests lib cmsis dsp transform analyze twister log file especially execution of and tests expected behavior tests should be executed not only printing of project execution successful information impact at this moment those tests are marked as pass what is misleading information because they aren t even executed logs and console output twister debug spawning binaryhandler thread for native posix tests lib cmsis dsp transform libraries cmsis dsp transform fpu twister debug output booting zephyr os build zephyr twister debug output twister debug output project execution successful twister debug output environment please complete the following information os linux toolchain zephyr sdk commit sha additional context similar problem occurred here but i verified that those tests do not work since the beginning since they was added in those commits so the source of problem is probably not connected with changes introduced in ztest api in october last year this problem was discovered during review of this pr enhancement proposed in this pr could help to avoid this situation due to the additional verification of printed test suite name if everything works properly on qemu platforms then perhaps it should be considered to remove support for native posix platform for those tests

| 1

|

41,910

| 5,408,907,166

|

IssuesEvent

|

2017-03-01 01:44:33

|

nwjs/nw.js

|

https://api.github.com/repos/nwjs/nw.js

|

closed

|

webview does not work correctly when "node" added to Chrome app manifest

|

bug P2 test-todo triaged

|

When "node" is added in the permissions in manifest.json in a Chrome App, a webview is not working immediately after the app has started. For example, the request handlers are undefined.

If you add a delay of say 500 ms, it works.

In the attached example it will print the stringified request handlers of the webview 1) just after startup and 2) after a delay. Only the delayed print will show the handlers.

If you remove node from the permissions, both are printed out correctly.

It is probably the whole UI that is delayed by adding node permission.

Also, the font changes when adding node to permissions. Why?

Sample tested in nwjs-sdk-v0.20.3-linux-x64 on Linux Mint 18.

[webviewtest.zip](https://github.com/nwjs/nw.js/files/801561/webviewtest.zip)

|

1.0

|

webview does not work correctly when "node" added to Chrome app manifest - When "node" is added in the permissions in manifest.json in a Chrome App, a webview is not working immediately after the app has started. For example, the request handlers are undefined.

If you add a delay of say 500 ms, it works.

In the attached example it will print the stringified request handlers of the webview 1) just after startup and 2) after a delay. Only the delayed print will show the handlers.

If you remove node from the permissions, both are printed out correctly.

It is probably the whole UI that is delayed by adding node permission.

Also, the font changes when adding node to permissions. Why?

Sample tested in nwjs-sdk-v0.20.3-linux-x64 on Linux Mint 18.

[webviewtest.zip](https://github.com/nwjs/nw.js/files/801561/webviewtest.zip)

|

test

|

webview does not work correctly when node added to chrome app manifest when node is added in the permissions in manifest json in a chrome app a webview is not working immediately after the app has started for example the request handlers are undefined if you add a delay of say ms it works in the attached example it will print the stringified request handlers of the webview just after startup and after a delay only the delayed print will show the handlers if you remove node from the permissions both are printed out correctly it is probably the whole ui that is delayed by adding node permission also the font changes when adding node to permissions why sample tested in nwjs sdk linux on linux mint

| 1

|

82,266

| 10,237,650,035

|

IssuesEvent

|

2019-08-19 14:19:20

|

Shopify/polaris-react

|

https://api.github.com/repos/Shopify/polaris-react

|

closed

|

[Button] Add “pressed” state

|

⚗️ Development 🎨 Design

|

## Problem

Sometimes buttons are used as a radio button, usually in a button group with segmented buttons but potentially on their own (as a toggle).

This has been implemented in polaris-rails: https://github.com/Shopify/polaris-ux/issues/58

## Examples

<img width="315" alt="screen shot 2017-10-12 at 6 21 06 pm" src="https://user-images.githubusercontent.com/804014/31557049-5590d798-b015-11e7-92a0-bc040d5d19af.png">

Re-created from the previous polaris-react repo.

|

1.0

|

[Button] Add “pressed” state - ## Problem

Sometimes buttons are used as a radio button, usually in a button group with segmented buttons but potentially on their own (as a toggle).

This has been implemented in polaris-rails: https://github.com/Shopify/polaris-ux/issues/58

## Examples

<img width="315" alt="screen shot 2017-10-12 at 6 21 06 pm" src="https://user-images.githubusercontent.com/804014/31557049-5590d798-b015-11e7-92a0-bc040d5d19af.png">

Re-created from the previous polaris-react repo.

|

non_test

|

add “pressed” state problem sometimes buttons are used as a radio button usually in a button group with segmented buttons but potentially on their own as a toggle this has been implemented in polaris rails examples img width alt screen shot at pm src re created from the previous polaris react repo

| 0

|

124,321

| 12,228,657,953

|

IssuesEvent

|

2020-05-03 20:22:23

|

brunoarueira/rss-hub-backend

|

https://api.github.com/repos/brunoarueira/rss-hub-backend

|

closed

|

Improve README

|

documentation

|

The README needs some attention to describe which is the purpose of this project, which technologies will be used and how someone can contribute to.

|

1.0

|

Improve README - The README needs some attention to describe which is the purpose of this project, which technologies will be used and how someone can contribute to.

|

non_test

|

improve readme the readme needs some attention to describe which is the purpose of this project which technologies will be used and how someone can contribute to

| 0

|

48,406

| 20,144,144,686

|

IssuesEvent

|

2022-02-09 04:37:13

|

Solemates-Turing2108/frontend

|

https://api.github.com/repos/Solemates-Turing2108/frontend

|

opened

|

service: API

|

service

|

A service to be used throughout the app to provide interfacing between the front end and back end.

AC:

- Functions/Methods are used to avoid components touching business logic

|

1.0

|

service: API - A service to be used throughout the app to provide interfacing between the front end and back end.

AC:

- Functions/Methods are used to avoid components touching business logic

|

non_test

|

service api a service to be used throughout the app to provide interfacing between the front end and back end ac functions methods are used to avoid components touching business logic

| 0

|

155,920

| 19,803,121,613

|

IssuesEvent

|

2022-01-19 01:31:11

|

ChoeMinji/HtmlUnit-2.37.0

|

https://api.github.com/repos/ChoeMinji/HtmlUnit-2.37.0

|

opened

|

CVE-2022-23307 (Medium) detected in log4j-1.2.12.jar

|

security vulnerability

|

## CVE-2022-23307 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>log4j-1.2.12.jar</b></p></summary>

<p></p>

<p>Path to vulnerable library: /src/test/resources/libraries/DWR/2.0.5/WEB-INF/lib/log4j-1.2.12.jar</p>

<p>

Dependency Hierarchy:

- :x: **log4j-1.2.12.jar** (Vulnerable Library)

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

CVE-2020-9493 identified a deserialization issue that was present in Apache Chainsaw. Prior to Chainsaw V2.0 Chainsaw was a component of Apache Log4j 1.2.x where the same issue exists.

<p>Publish Date: 2022-01-18

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2022-23307>CVE-2022-23307</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>9.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Changed

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: Low

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2022-23307 (Medium) detected in log4j-1.2.12.jar - ## CVE-2022-23307 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>log4j-1.2.12.jar</b></p></summary>

<p></p>

<p>Path to vulnerable library: /src/test/resources/libraries/DWR/2.0.5/WEB-INF/lib/log4j-1.2.12.jar</p>

<p>

Dependency Hierarchy:

- :x: **log4j-1.2.12.jar** (Vulnerable Library)

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

CVE-2020-9493 identified a deserialization issue that was present in Apache Chainsaw. Prior to Chainsaw V2.0 Chainsaw was a component of Apache Log4j 1.2.x where the same issue exists.

<p>Publish Date: 2022-01-18

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2022-23307>CVE-2022-23307</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>9.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Changed

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: Low

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_test

|

cve medium detected in jar cve medium severity vulnerability vulnerable library jar path to vulnerable library src test resources libraries dwr web inf lib jar dependency hierarchy x jar vulnerable library found in base branch master vulnerability details cve identified a deserialization issue that was present in apache chainsaw prior to chainsaw chainsaw was a component of apache x where the same issue exists publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction required scope changed impact metrics confidentiality impact low integrity impact low availability impact none for more information on scores click a href step up your open source security game with whitesource

| 0

|

311,131

| 26,770,005,942

|

IssuesEvent

|

2023-01-31 13:29:04

|

SUNET/eduid-front

|

https://api.github.com/repos/SUNET/eduid-front

|

closed

|

DASHBOARD NAV: notification tips text state for unit test

|

testing

|

#### Description of issue:

To ensure correct data for different conditions, we will add a DashboardNav-test

|

1.0

|

DASHBOARD NAV: notification tips text state for unit test - #### Description of issue:

To ensure correct data for different conditions, we will add a DashboardNav-test

|

test

|

dashboard nav notification tips text state for unit test description of issue to ensure correct data for different conditions we will add a dashboardnav test

| 1

|

83,183

| 10,329,920,510

|

IssuesEvent

|

2019-09-02 13:25:57

|

vector-im/riotX-android

|

https://api.github.com/repos/vector-im/riotX-android

|

opened

|

blacklist/unblacklist devices

|

feature:e2e legacy-feature need-design

|

In stabilization because it's a missing functionality regarding other Riot clients

|

1.0

|

blacklist/unblacklist devices - In stabilization because it's a missing functionality regarding other Riot clients

|

non_test

|

blacklist unblacklist devices in stabilization because it s a missing functionality regarding other riot clients

| 0

|

104,558

| 22,691,523,909

|

IssuesEvent

|

2022-07-04 21:12:28

|

vatro/svelthree

|

https://api.github.com/repos/vatro/svelthree

|

opened

|

`SvelthreeInteraction` properly type emitted `CustomEvents`

|

general interaction code quality

|

Currently those are just `CustomEvents` not revealing anything about the contents of the `detail` object.

|

1.0

|

`SvelthreeInteraction` properly type emitted `CustomEvents` - Currently those are just `CustomEvents` not revealing anything about the contents of the `detail` object.

|

non_test

|

svelthreeinteraction properly type emitted customevents currently those are just customevents not revealing anything about the contents of the detail object

| 0

|

148,771

| 11,864,417,190

|

IssuesEvent

|

2020-03-25 21:38:12

|

brave/brave-browser

|

https://api.github.com/repos/brave/brave-browser

|

opened

|

Netflix does not work due to Widevine Content Decryption Module error in brave://components

|

OS/Windows QA/Test-Plan-Specified QA/Yes intermittent-issue plugin/Widevine

|

Follow up to https://github.com/brave/brave-browser/issues/4646

We do not recover gracefully when `Widevine Content Decryption Module` fails to download.

Browser needs to be restarted to trigger the update.

## Steps to Reproduce

<!--Please add a series of steps to reproduce the issue-->

1. Clean profile

2. Navigate to netflix.com and login.

3. Make `Widevine Content Decryption Module` fail to download (TODO: Determine how)

4. Open `brave://components` and check `Widevine Content Decryption Module` status

5. Stream a video

## Actual result:

<!--Please add screenshots if needed-->

`Widevine Content Decryption Module` error and the module stays at version 0.0.0.0

## Expected result:

`Widevine Content Decryption Module`

## Reproduces how often:

<!--[Easily reproduced/Intermittent issue/No steps to reproduce]-->

10% repro rate

## Brave version (brave://version info)

<!--For installed build, please copy Brave, Revision and OS from brave://version and paste here. If building from source please mention it along with brave://version details-->

Brave | 1.8.36 Chromium: 81.0.4044.69 (Official Build) nightly (64-bit)

-- | --

Revision | 6813546031a4bc83f717a2ef7cd4ac6ec1199132-refs/branch-heads/4044@{#776}

OS | Windows 7 Service Pack 1 (Build 7601.24544)

cc @simonhong @brave/legacy_qa @bsclifton

|

1.0

|

Netflix does not work due to Widevine Content Decryption Module error in brave://components - Follow up to https://github.com/brave/brave-browser/issues/4646

We do not recover gracefully when `Widevine Content Decryption Module` fails to download.

Browser needs to be restarted to trigger the update.

## Steps to Reproduce

<!--Please add a series of steps to reproduce the issue-->

1. Clean profile

2. Navigate to netflix.com and login.

3. Make `Widevine Content Decryption Module` fail to download (TODO: Determine how)

4. Open `brave://components` and check `Widevine Content Decryption Module` status

5. Stream a video

## Actual result:

<!--Please add screenshots if needed-->

`Widevine Content Decryption Module` error and the module stays at version 0.0.0.0

## Expected result:

`Widevine Content Decryption Module`

## Reproduces how often:

<!--[Easily reproduced/Intermittent issue/No steps to reproduce]-->

10% repro rate

## Brave version (brave://version info)

<!--For installed build, please copy Brave, Revision and OS from brave://version and paste here. If building from source please mention it along with brave://version details-->

Brave | 1.8.36 Chromium: 81.0.4044.69 (Official Build) nightly (64-bit)

-- | --

Revision | 6813546031a4bc83f717a2ef7cd4ac6ec1199132-refs/branch-heads/4044@{#776}

OS | Windows 7 Service Pack 1 (Build 7601.24544)

cc @simonhong @brave/legacy_qa @bsclifton

|

test

|

netflix does not work due to widevine content decryption module error in brave components follow up to we do not recover gracefully when widevine content decryption module fails to download browser needs to be restarted to trigger the update steps to reproduce clean profile navigate to netflix com and login make widevine content decryption module fail to download todo determine how open brave components and check widevine content decryption module status stream a video actual result widevine content decryption module error and the module stays at version expected result widevine content decryption module reproduces how often repro rate brave version brave version info brave chromium official build nightly bit revision refs branch heads os windows service pack build cc simonhong brave legacy qa bsclifton

| 1

|

292,352

| 25,206,805,590

|

IssuesEvent

|

2022-11-13 19:35:45

|

MinhazMurks/Bannerlord.Tweaks

|

https://api.github.com/repos/MinhazMurks/Bannerlord.Tweaks

|

opened

|

Test Quality of Recruitment Balancing

|

testing

|

Test to see if tweak: "Quality of Recruitment Balancing" works

|

1.0

|

Test Quality of Recruitment Balancing - Test to see if tweak: "Quality of Recruitment Balancing" works

|

test

|

test quality of recruitment balancing test to see if tweak quality of recruitment balancing works

| 1

|

184,646

| 14,289,809,560

|

IssuesEvent

|

2020-11-23 19:51:46

|

github-vet/rangeclosure-findings

|

https://api.github.com/repos/github-vet/rangeclosure-findings

|

closed

|

barakmich/go_sse2: src/sync/atomic/value_test.go; 30 LoC

|

fresh small test

|

Found a possible issue in [barakmich/go_sse2](https://www.github.com/barakmich/go_sse2) at [src/sync/atomic/value_test.go](https://github.com/barakmich/go_sse2/blob/a6a26455d4f4f81cfe89b1ec3261da5b80fa96aa/src/sync/atomic/value_test.go#L92-L121)

The below snippet of Go code triggered static analysis which searches for goroutines and/or defer statements

which capture loop variables.

[Click here to see the code in its original context.](https://github.com/barakmich/go_sse2/blob/a6a26455d4f4f81cfe89b1ec3261da5b80fa96aa/src/sync/atomic/value_test.go#L92-L121)

<details>

<summary>Click here to show the 30 line(s) of Go which triggered the analyzer.</summary>

```go

for _, test := range tests {

var v Value

done := make(chan bool, p)

for i := 0; i < p; i++ {

go func() {

r := rand.New(rand.NewSource(rand.Int63()))

expected := true

loop:

for j := 0; j < N; j++ {

x := test[r.Intn(len(test))]

v.Store(x)

x = v.Load()

for _, x1 := range test {

if x == x1 {

continue loop

}

}

t.Logf("loaded unexpected value %+v, want %+v", x, test)

expected = false

break

}

done <- expected

}()

}

for i := 0; i < p; i++ {

if !<-done {

t.FailNow()

}

}

}

```

</details>

Leave a reaction on this issue to contribute to the project by classifying this instance as a **Bug** :-1:, **Mitigated** :+1:, or **Desirable Behavior** :rocket:

See the descriptions of the classifications [here](https://github.com/github-vet/rangeclosure-findings#how-can-i-help) for more information.

commit ID: a6a26455d4f4f81cfe89b1ec3261da5b80fa96aa

|

1.0

|

barakmich/go_sse2: src/sync/atomic/value_test.go; 30 LoC -

Found a possible issue in [barakmich/go_sse2](https://www.github.com/barakmich/go_sse2) at [src/sync/atomic/value_test.go](https://github.com/barakmich/go_sse2/blob/a6a26455d4f4f81cfe89b1ec3261da5b80fa96aa/src/sync/atomic/value_test.go#L92-L121)

The below snippet of Go code triggered static analysis which searches for goroutines and/or defer statements

which capture loop variables.

[Click here to see the code in its original context.](https://github.com/barakmich/go_sse2/blob/a6a26455d4f4f81cfe89b1ec3261da5b80fa96aa/src/sync/atomic/value_test.go#L92-L121)

<details>

<summary>Click here to show the 30 line(s) of Go which triggered the analyzer.</summary>

```go

for _, test := range tests {

var v Value

done := make(chan bool, p)

for i := 0; i < p; i++ {

go func() {

r := rand.New(rand.NewSource(rand.Int63()))

expected := true

loop:

for j := 0; j < N; j++ {

x := test[r.Intn(len(test))]

v.Store(x)

x = v.Load()

for _, x1 := range test {

if x == x1 {

continue loop

}

}

t.Logf("loaded unexpected value %+v, want %+v", x, test)

expected = false

break

}

done <- expected

}()

}

for i := 0; i < p; i++ {

if !<-done {

t.FailNow()

}

}

}

```

</details>

Leave a reaction on this issue to contribute to the project by classifying this instance as a **Bug** :-1:, **Mitigated** :+1:, or **Desirable Behavior** :rocket:

See the descriptions of the classifications [here](https://github.com/github-vet/rangeclosure-findings#how-can-i-help) for more information.

commit ID: a6a26455d4f4f81cfe89b1ec3261da5b80fa96aa

|

test

|

barakmich go src sync atomic value test go loc found a possible issue in at the below snippet of go code triggered static analysis which searches for goroutines and or defer statements which capture loop variables click here to show the line s of go which triggered the analyzer go for test range tests var v value done make chan bool p for i i p i go func r rand new rand newsource rand expected true loop for j j n j x test v store x x v load for range test if x continue loop t logf loaded unexpected value v want v x test expected false break done expected for i i p i if done t failnow leave a reaction on this issue to contribute to the project by classifying this instance as a bug mitigated or desirable behavior rocket see the descriptions of the classifications for more information commit id

| 1

|

5,144

| 2,762,960,904

|

IssuesEvent

|

2015-04-29 04:26:23

|

TricksterGuy/complx

|

https://api.github.com/repos/TricksterGuy/complx

|

opened

|

Write a LC3Test Xml Generator [for complx]

|

complx feature request job lc3test

|

This is actually two jobs.

1. A simple command line interface that just generates the test xml files.

Design it however you want some ideas

* You can read the .asm file and automatically determine what the input and output is via looking at the symbol table and then ask the user for test input and output values

* Just ask the user several questions (like input symbol name, what is the type of it, etc) and then ask for test input output values

2. Integrating this in complx

|

1.0

|

Write a LC3Test Xml Generator [for complx] - This is actually two jobs.

1. A simple command line interface that just generates the test xml files.

Design it however you want some ideas

* You can read the .asm file and automatically determine what the input and output is via looking at the symbol table and then ask the user for test input and output values

* Just ask the user several questions (like input symbol name, what is the type of it, etc) and then ask for test input output values

2. Integrating this in complx

|

test

|

write a xml generator this is actually two jobs a simple command line interface that just generates the test xml files design it however you want some ideas you can read the asm file and automatically determine what the input and output is via looking at the symbol table and then ask the user for test input and output values just ask the user several questions like input symbol name what is the type of it etc and then ask for test input output values integrating this in complx

| 1

|

181,288

| 14,014,296,971

|

IssuesEvent

|

2020-10-29 11:42:11

|

publiclab/image-sequencer

|

https://api.github.com/repos/publiclab/image-sequencer

|

closed

|

adding tests for CLI functionality - brainstorming

|

discussion help wanted testing

|

Trying to think through the best way to do better CLI testing. Right now we have only: https://github.com/publiclab/image-sequencer/blob/440c3e0ad0ab081bdcc7dfff0d000f52a0902035/test/core/cli.js which is pretty non-existent.

Noting that we encountered this in https://github.com/publiclab/image-sequencer/issues/659 but did not at that time implement tests.

Ok, so I had thought this was the way to go, installing https://github.com/sstephenson/bats (blog https://medium.com/@pimterry/testing-your-shell-scripts-with-bats-abfca9bdc5b9) and comparing images like perhaps:

```js

const looksSame = require('looks-same');

looksSame(process.argv[2], process.argv[3], function(error, {equal}) {

// equal will be true if the images look the same

console.log(equal ? 1 : 0);

});

```

But actually maybe it's just easier to use one of our existing JS tests and running our CLI tests from inside node, like this:

```js

const { exec } = require('child_process');

var yourscript = exec('sh hi.sh',

(error, stdout, stderr) => {

console.log(stdout);

console.log(stderr);

if (error !== null) {

console.log(`exec error: ${error}`);

}

});

```

https://stackoverflow.com/questions/44647778/how-to-run-shell-script-file-using-nodejs#44667294 has some guidance on this.

Is there a standard way to write CLI tests for `commander.js`?

UPDATE: yes, it looks like there are some good ways: https://github.com/tj/commander.js/issues/438
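A minimal sketch of the in-node approach, under stated assumptions: `runCli` is a hypothetical helper (not part of image-sequencer or commander.js), and the command being run is a stand-in one-line node script rather than the real CLI entry point. It uses `spawnSync` from `child_process` so the exit code and output can be asserted directly:

```js
const { spawnSync } = require('child_process');

// Hypothetical helper: run a command synchronously and collect what a test would assert on.
function runCli(command, args) {
  const result = spawnSync(command, args, { encoding: 'utf8' });
  return { status: result.status, stdout: result.stdout, stderr: result.stderr };
}

// Stand-in for the real CLI: a one-line node script that echoes its last argument.
// In a real test this would point at the project's CLI entry file instead.
const standInArgs = ['-e', 'console.log(process.argv[process.argv.length - 1])', 'invert'];

const result = runCli(process.execPath, standInArgs);
console.log(result.status, result.stdout.trim());
```

The same helper could be called from inside one of the existing JS tests, with the image comparison done on the files the CLI writes.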

|

1.0

|

adding tests for CLI functionality - brainstorming - Trying to think through the best way to do better CLI testing. Right now we have only: https://github.com/publiclab/image-sequencer/blob/440c3e0ad0ab081bdcc7dfff0d000f52a0902035/test/core/cli.js which is pretty non-existent.

Noting that we encountered this in https://github.com/publiclab/image-sequencer/issues/659 but did not at that time implement tests.

Ok, so I had thought this was the way to go, installing https://github.com/sstephenson/bats (blog https://medium.com/@pimterry/testing-your-shell-scripts-with-bats-abfca9bdc5b9) and comparing images like perhaps:

```js

const looksSame = require('looks-same');

looksSame(process.argv[2], process.argv[3], function(error, {equal}) {

// equal will be true if the images look the same

console.log(equal ? 1 : 0);

});

```

But actually maybe it's just easier to use one of our existing JS tests and running our CLI tests from inside node, like this:

```js

const { exec } = require('child_process');

var yourscript = exec('sh hi.sh',

(error, stdout, stderr) => {

console.log(stdout);

console.log(stderr);

if (error !== null) {

console.log(`exec error: ${error}`);

}

});

```

https://stackoverflow.com/questions/44647778/how-to-run-shell-script-file-using-nodejs#44667294 has some guidance on this.

Is there a standard way to write CLI tests for `commander.js`?

UPDATE: yes, it looks like there are some good ways: https://github.com/tj/commander.js/issues/438

|

test

|

adding tests for cli functionality brainstorming trying to think through the best way to do better cli testing right now we have only which is pretty non existent noting that we encountered this in but did not at that time implement tests ok so i had thought this was the way to go installing blog and comparing images like perhaps js lookssame require looks same lookssame process argv process argv function error equal equal will be true if images looks the same console log equal but actually maybe it s just easier to use one of our existing js tests and running our cli tests from inside node like this js const exec require child process var yourscript exec sh hi sh error stdout stderr console log stdout console log stderr if error null console log exec error error has some guidance on this is there a standard way to write cli tests for commander js update yes it looks like there are some good ways

| 1

|

140,311

| 11,309,157,740

|

IssuesEvent

|

2020-01-19 11:14:22

|

qmetry/qaf

|

https://api.github.com/repos/qmetry/qaf

|

closed

|

Random data and expression in variable interpolation support

|

configuration feature testdata

|

Up to 2.1.15, variable interpolation only looks up available properties. With this feature, it should also support random values and expressions as variables/parameters, in addition to properties.

Examples:

```

${rnd:aaa-aaa-aaa}

${rnd:99999}

${expr:java.time.Instant.now()}

${expr:java.lang.System.currentTimeMillis()}

${expr:java.util.UUID.randomUUID()}

${expr:com.qmetry.qaf.automation.util.DateUtil.getDate(0, 'MM/dd/yyyy')}

```

|

1.0

|

Random data and expression in variable interpolation support - Up to 2.1.15, variable interpolation only looks up available properties. With this feature, it should also support random values and expressions as variables/parameters, in addition to properties.

Examples:

```

${rnd:aaa-aaa-aaa}

${rnd:99999}

${expr:java.time.Instant.now()}

${expr:java.lang.System.currentTimeMillis()}

${expr:java.util.UUID.randomUUID()}

${expr:com.qmetry.qaf.automation.util.DateUtil.getDate(0, 'MM/dd/yyyy')}

```

|

test

|

random data and expression in variable interpolation support till variable interpolation looks up for available properties with this feature it should support random value and expression as variable parameter in addition to property as variable examples rnd aaa aaa aaa rnd expr java time instant now expr java lang system currenttimemillis expr java util uuid randomuuid expr com qmetry qaf automation util dateutil getdate mm dd yyyy

| 1

|

195,405

| 14,728,309,866

|

IssuesEvent

|

2021-01-06 09:47:08

|

OpenPaaS-Suite/esn-frontend-inbox

|

https://api.github.com/repos/OpenPaaS-Suite/esn-frontend-inbox

|

closed

|

As a user, I want to see my folders list in a tree view

|

QA:Testing enhancement

|

Use a feature flag so that the user can enable the tree view in the configuration. This feature flag is recorded in the browser's localStorage.
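A minimal sketch of such a flag, under stated assumptions: the key name `inbox.folderTreeView` and both helper functions are hypothetical, not taken from the esn-frontend-inbox code. The storage object is passed in, so the same code would work with `window.localStorage` in a browser and with an in-memory stand-in elsewhere:

```js
// Hypothetical storage key; the issue does not name one.
const TREE_VIEW_FLAG = 'inbox.folderTreeView';

// `storage` is anything exposing getItem/setItem, e.g. window.localStorage.
function isTreeViewEnabled(storage) {
  return storage.getItem(TREE_VIEW_FLAG) === 'true';
}

function setTreeViewEnabled(storage, enabled) {
  // Web Storage keeps strings only, so the boolean is serialized explicitly.
  storage.setItem(TREE_VIEW_FLAG, String(enabled));
}

// In-memory stand-in so the sketch also runs outside a browser.
function createMemoryStorage() {
  const items = new Map();
  return {
    getItem: (key) => (items.has(key) ? items.get(key) : null),
    setItem: (key, value) => { items.set(key, String(value)); },
  };
}

const storage = createMemoryStorage();
const before = isTreeViewEnabled(storage); // flag not set yet
setTreeViewEnabled(storage, true);
const after = isTreeViewEnabled(storage);
console.log(before, after);
```

Treating a missing key as disabled keeps the flat list as the default until the user opts in.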

|

1.0

|

As a user, I want to see my folders list in a tree view - Use a feature flag so that the user can enable the tree view in the configuration. This feature flag is recorded in the browser's localStorage.

|

test

|

as a user i want to see my folders list in a tree view use a feature flag and user can enable the tree view in configuration this feature flag is recorded in broswer s localstorage

| 1

|

261,395

| 22,743,253,716

|

IssuesEvent

|

2022-07-07 06:47:34

|

kubernetes-sigs/cluster-api

|

https://api.github.com/repos/kubernetes-sigs/cluster-api

|

closed

|

Adjust clusterctl upgrade jobs for v1.3

|

kind/feature area/testing

|

Once we start development of CAPI v1.3 we have to adjust our clusterctl upgrade test jobs.

The goal is to have jobs which test clusterctl upgrade from the latest release of each currently supported contract version (v1alpha3 (v0.3.x), v1alpha4 (v0.4.x) and v1beta1 (v1.2.x)).

We already have jobs for v0.3, v0.4 and v1.1. As we don't need the job for v1.1 anymore we can just change it for v1.2:

Job: [periodic-cluster-api-e2e-upgrade-v1-1-to-main](https://github.com/kubernetes/test-infra/blob/master/config/jobs/kubernetes-sigs/cluster-api/cluster-api-periodics-main.yaml#L100-L141)

* Change name to "periodic-cluster-api-e2e-upgrade-v1-2-to-main"

* INIT_WITH_BINARY should use v1.2.0

* Change testgrid-tab-name to "capi-e2e-upgrade-v1-2-to-main"

/kind feature

/area testing

|

1.0

|

Adjust clusterctl upgrade jobs for v1.3 - Once we start development of CAPI v1.3 we have to adjust our clusterctl upgrade test jobs.

The goal is to have jobs which test clusterctl upgrade from the latest release of each currently supported contract version (v1alpha3 (v0.3.x), v1alpha4 (v0.4.x) and v1beta1 (v1.2.x)).

We already have jobs for v0.3, v0.4 and v1.1. As we don't need the job for v1.1 anymore we can just change it for v1.2:

Job: [periodic-cluster-api-e2e-upgrade-v1-1-to-main](https://github.com/kubernetes/test-infra/blob/master/config/jobs/kubernetes-sigs/cluster-api/cluster-api-periodics-main.yaml#L100-L141)

* Change name to "periodic-cluster-api-e2e-upgrade-v1-2-to-main"

* INIT_WITH_BINARY should use v1.2.0

* Change testgrid-tab-name to "capi-e2e-upgrade-v1-2-to-main"

/kind feature

/area testing

|

test

|

adjust clusterctl upgrade jobs for once we start development of capi we have to adjust our clusterctl upgrade test jobs the goal is to have jobs which test clusterctl upgrade from the latest release of each currently supported contract version x x and x we already have jobs for and as we don t need the job for anymore we can just change it for job change name to periodic cluster api upgrade to main init with binary should use change testgrid tab name to capi upgrade to main kind feature area testing

| 1

|

3,984

| 6,813,769,202

|

IssuesEvent

|

2017-11-06 10:30:29

|

TEDxYouthJPIS/main

|

https://api.github.com/repos/TEDxYouthJPIS/main

|

reopened

|

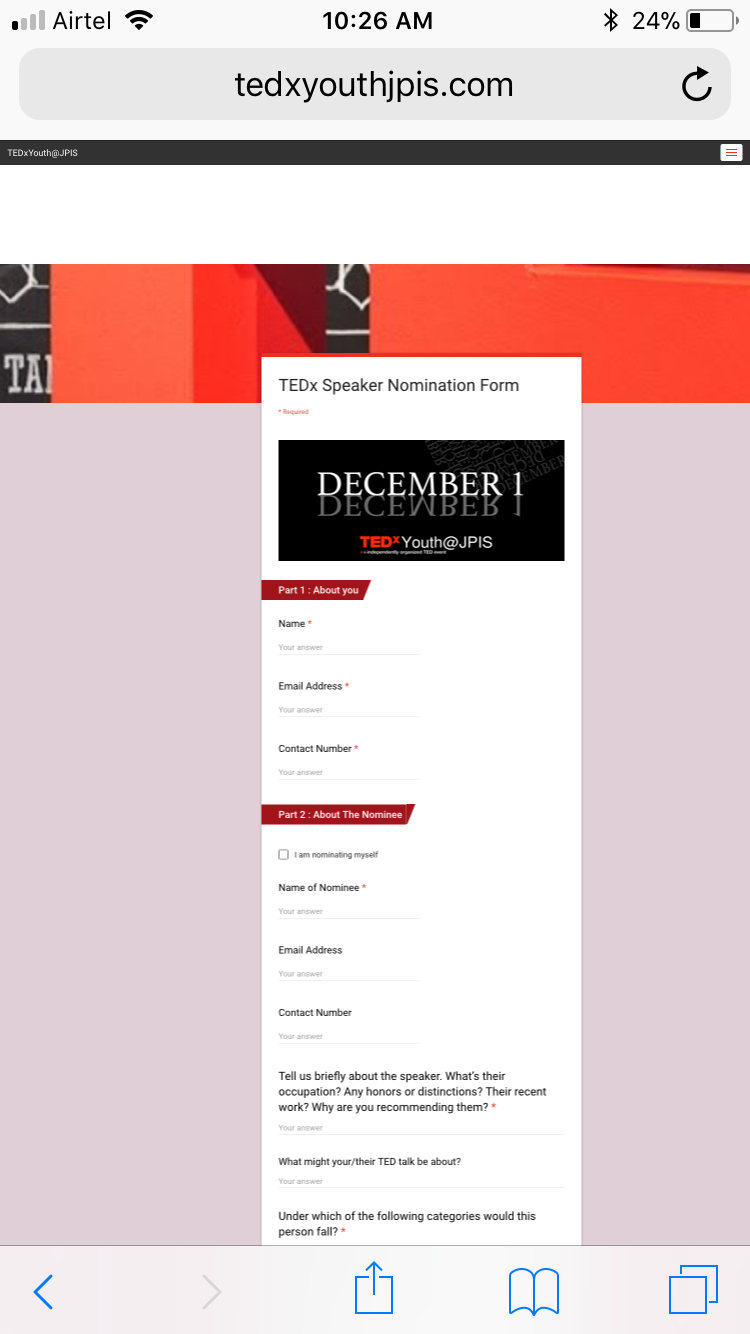

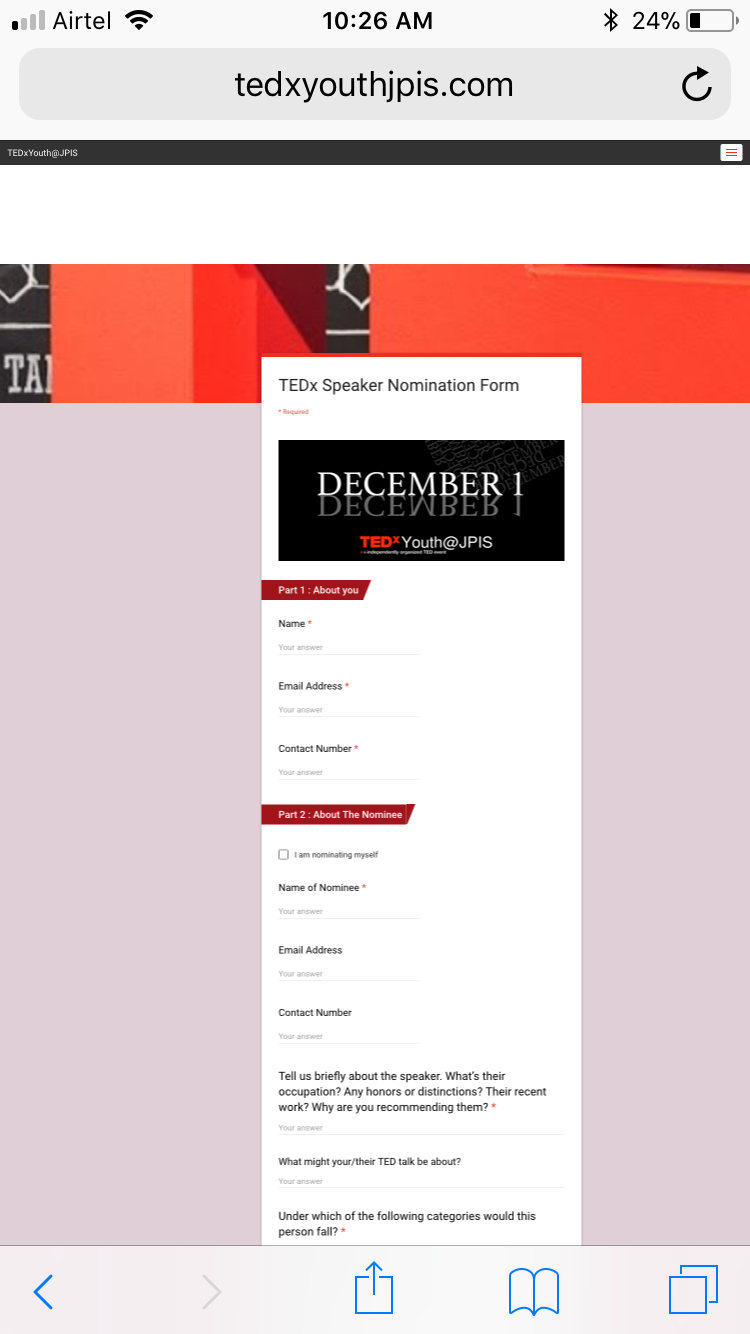

Nomination form display error on mobile

|

bug mobile-compatibility

|

The Google Form is not displayed at a smaller size on iPhone; it extends to the full limits. Change the code of the nomination HTML page. Look online for help with similar issues.

|

True

|

Nomination form display error on mobile - The Google Form is not displayed at a smaller size on iPhone; it extends to the full limits. Change the code of the nomination HTML page. Look online for help with similar issues.

|

non_test

|

nomination form display error on mobile the google form is not being seen in a smaller size on iphone it s extending to the full limits change the code of the nomination html page look for help online with similar issues

| 0

|

40,928

| 16,596,913,772

|

IssuesEvent

|

2021-06-01 14:28:00

|

Azure/azure-cli

|

https://api.github.com/repos/Azure/azure-cli

|

closed

|

az deployment group what-if is failing after release 2.24.0

|

ARM Service Attention

|

### **This is autogenerated. Please review and update as needed.**

## Describe the bug

**Command Name**

`az deployment group what-if`

**Errors:**

```

'str' object has no attribute 'value'

```

## To Reproduce:

Steps to reproduce the behavior. Note that argument values have been redacted, as they may contain sensitive information.

- _Put any pre-requisite steps here..._

- `az deployment group what-if -f {} -p {} -g {}`

## Expected Behavior

## Environment Summary

```

macOS-11.4-x86_64-i386-64bit

Python 3.8.10

Installer: HOMEBREW

azure-cli 2.24.0

Extensions:

aks-preview 0.5.14

azure-devops 0.18.0

```

## Additional Context

<!--Please don't remove this:-->

<!--auto-generated-->

|

1.0

|

az deployment group what-if is failing after release 2.24.0 - ### **This is autogenerated. Please review and update as needed.**

## Describe the bug

**Command Name**

`az deployment group what-if`

**Errors:**

```

'str' object has no attribute 'value'

```

## To Reproduce:

Steps to reproduce the behavior. Note that argument values have been redacted, as they may contain sensitive information.

- _Put any pre-requisite steps here..._

- `az deployment group what-if -f {} -p {} -g {}`

## Expected Behavior

## Environment Summary

```

macOS-11.4-x86_64-i386-64bit

Python 3.8.10

Installer: HOMEBREW

azure-cli 2.24.0

Extensions:

aks-preview 0.5.14

azure-devops 0.18.0

```

## Additional Context

<!--Please don't remove this:-->

<!--auto-generated-->

|

non_test

|

az deployment group what if is failing after release this is autogenerated please review and update as needed describe the bug command name az deployment group what if errors str object has no attribute value to reproduce steps to reproduce the behavior note that argument values have been redacted as they may contain sensitive information put any pre requisite steps here az deployment group what if f p g expected behavior environment summary macos python installer homebrew azure cli extensions aks preview azure devops additional context

| 0

|

66,763

| 7,017,574,895

|

IssuesEvent

|

2017-12-21 10:09:37

|

AdrianAntonGarcia/-TFG-UBUSetas

|

https://api.github.com/repos/AdrianAntonGarcia/-TFG-UBUSetas

|

closed

|

S14-17. Crear los test de integración de la actividad mostrar Información setas.

|

Android Test

|

Se crearán los test de integración de la actividad mostrar información setas para probar la funcionalidad de esta actividad.

|

1.0

|

S14-17. Crear los test de integración de la actividad mostrar Información setas. - Se crearán los test de integración de la actividad mostrar información setas para probar la funcionalidad de esta actividad.

|

test

|

crear los test de integración de la actividad mostrar información setas se crearán los test de integración de la actividad mostrar información setas para probar la funcionalidad de esta actividad

| 1

|

188,900

| 14,478,878,959

|

IssuesEvent

|

2020-12-10 09:03:14

|

kalexmills/github-vet-tests-dec2020

|

https://api.github.com/repos/kalexmills/github-vet-tests-dec2020

|

closed

|

code4pantanal/onibus.ms: internal/router/ponto_test.go; 3 LoC

|

fresh test tiny

|

Found a possible issue in [code4pantanal/onibus.ms](https://www.github.com/code4pantanal/onibus.ms) at [internal/router/ponto_test.go](https://github.com/code4pantanal/onibus.ms/blob/f771376df274eda5d44f4f298b4e1c4c670aab3e/internal/router/ponto_test.go#L43-L45)

Below is the message reported by the analyzer for this snippet of code. Beware that the analyzer only reports the first

issue it finds, so please do not limit your consideration to the contents of the below message.

> function call at line 44 may store a reference to ponto

[Click here to see the code in its original context.](https://github.com/code4pantanal/onibus.ms/blob/f771376df274eda5d44f4f298b4e1c4c670aab3e/internal/router/ponto_test.go#L43-L45)

<details>

<summary>Click here to show the 3 line(s) of Go which triggered the analyzer.</summary>

```go

for _, ponto := range pontos {

store.PontoStore().Insere(&ponto)

}

```

</details>

Leave a reaction on this issue to contribute to the project by classifying this instance as a **Bug** :-1:, **Mitigated** :+1:, or **Desirable Behavior** :rocket:

See the descriptions of the classifications [here](https://github.com/github-vet/rangeclosure-findings#how-can-i-help) for more information.

commit ID: f771376df274eda5d44f4f298b4e1c4c670aab3e

|

1.0

|

code4pantanal/onibus.ms: internal/router/ponto_test.go; 3 LoC -

Found a possible issue in [code4pantanal/onibus.ms](https://www.github.com/code4pantanal/onibus.ms) at [internal/router/ponto_test.go](https://github.com/code4pantanal/onibus.ms/blob/f771376df274eda5d44f4f298b4e1c4c670aab3e/internal/router/ponto_test.go#L43-L45)

Below is the message reported by the analyzer for this snippet of code. Beware that the analyzer only reports the first

issue it finds, so please do not limit your consideration to the contents of the below message.

> function call at line 44 may store a reference to ponto

[Click here to see the code in its original context.](https://github.com/code4pantanal/onibus.ms/blob/f771376df274eda5d44f4f298b4e1c4c670aab3e/internal/router/ponto_test.go#L43-L45)

<details>

<summary>Click here to show the 3 line(s) of Go which triggered the analyzer.</summary>

```go

for _, ponto := range pontos {

store.PontoStore().Insere(&ponto)

}

```

</details>

Leave a reaction on this issue to contribute to the project by classifying this instance as a **Bug** :-1:, **Mitigated** :+1:, or **Desirable Behavior** :rocket:

See the descriptions of the classifications [here](https://github.com/github-vet/rangeclosure-findings#how-can-i-help) for more information.

commit ID: f771376df274eda5d44f4f298b4e1c4c670aab3e

|

test

|

onibus ms internal router ponto test go loc found a possible issue in at below is the message reported by the analyzer for this snippet of code beware that the analyzer only reports the first issue it finds so please do not limit your consideration to the contents of the below message function call at line may store a reference to ponto click here to show the line s of go which triggered the analyzer go for ponto range pontos store pontostore insere ponto leave a reaction on this issue to contribute to the project by classifying this instance as a bug mitigated or desirable behavior rocket see the descriptions of the classifications for more information commit id

| 1

|

180,522

| 13,934,853,327

|

IssuesEvent

|

2020-10-22 10:37:32

|

kyma-project/console

|

https://api.github.com/repos/kyma-project/console

|

closed

|

UI Test for opening externally linked cluster microfrontends

|

area/console area/quality stale test-missing

|

**Description**

Implement an automated test that opens CMFs with the external link attribute (e.g. `Stats & Metrics`, `Tracing`)

**Reasons**

To increase e2e test coverage and deploy new features with confidence

|

1.0

|

UI Test for opening externally linked cluster microfrontends - **Description**

Implement an automated test that opens CMFs with the external link attribute (e.g. `Stats & Metrics`, `Tracing`)

**Reasons**

To increase e2e test coverage and deploy new features with confidence

|

test

|

ui test for opening externally linked cluster microfrontends description implement an automated test that will test opening cmfs with external link attribute i e stats metrics tracing reasons to increase test coverage and deploy new features with confidence

| 1

|

8,545

| 22,826,998,070

|

IssuesEvent

|

2022-07-12 09:27:38

|

arduino/arduino-cli

|

https://api.github.com/repos/arduino/arduino-cli

|

closed

|

panic: runtime error when running the CLI daemon on my Pi

|

os: linux architecture: armv7 type: imperfection topic: gRPC

|

## Bug Report

### Current behavior

<!-- Paste the full command you run -->

I am trying to use the Arduino CLI daemon on `armv7l` over gRPC. I had this error in the Pro IDE:

```

daemon INFO panic: runtime error: invalid memory address or nil pointer dereference

daemon INFO [signal SIGSEGV: segmentation violation code=0x1 addr=0x4 pc=0x12c28]

daemon INFO goroutine 1 [running]:

daemon INFO github.com/segmentio/stats/v4/prometheus.(*Handler).HandleMeasures(0xec44d8, 0x44dd7270, 0xbfba24fe, 0x1a71c0e, 0x0, 0xec44a0, 0x21f1300, 0x1, 0x1)

daemon INFO /github/home/go/pkg/mod/github.com/segmentio/stats/v4@v4.5.3/prometheus/handler.go:96 +0x1d8

daemon INFO github.com/segmentio/stats/v4.(*Engine).measure(0x21f11c0, 0x44dd7270, 0xbfba24fe, 0x1a71c0e, 0x0, 0xec44a0, 0x85f175, 0x6, 0x785f10, 0x985a50, ...)

daemon INFO /github/home/go/pkg/mod/github.com/segmentio/stats/v4@v4.5.3/engine.go:155 +0x2b4

daemon INFO github.com/segmentio/stats/v4.(*Engine).Add(0x21f11c0, 0x85f175, 0x6, 0x785f10, 0x985a50, 0x2155e80, 0x1, 0x1)

daemon INFO /github/home/go/pkg/mod/github.com/segmentio/stats/v4@v4.5.3/engine.go:94 +0x78

daemon INFO github.com/segmentio/stats/v4.(*Engine).Incr(...)

daemon INFO /github/home/go/pkg/mod/github.com/segmentio/stats/v4@v4.5.3/engine.go:84

daemon INFO github.com/segmentio/stats/v4.Incr(...)

daemon INFO /github/home/go/pkg/mod/github.com/segmentio/stats/v4@v4.5.3/engine.go:251

daemon INFO github.com/arduino/arduino-cli/cli/daemon.runDaemonCommand(0x21f74a0, 0x21ea630, 0x0, 0x5)

daemon INFO /__w/arduino-cli/arduino-cli/cli/daemon/daemon.go:65 +0x7e4

daemon INFO github.com/spf13/cobra.(*Command).execute(0x21f74a0, 0x21ea600, 0x5, 0x6, 0x21f74a0, 0x21ea600)

daemon INFO /github/home/go/pkg/mod/github.com/spf13/cobra@v1.0.0/command.go:846 +0x1f4

daemon INFO github.com/spf13/cobra.(*Command).ExecuteC(0x20ad760, 0x17, 0x0, 0x20000e0)

daemon INFO /github/home/go/pkg/mod/github.com/spf13/cobra@v1.0.0/command.go:950 +0x26c

daemon INFO github.com/spf13/cobra.(*Command).Execute(...)

daemon INFO /github/home/go/pkg/mod/github.com/spf13/cobra@v1.0.0/command.go:887

daemon INFO main.main()

daemon INFO /__w/arduino-cli/arduino-cli/main.go:31 +0x24

daemon INFO Failed to start the daemon.

daemon ERROR Error: panic: runtime error: invalid memory address or nil pointer dereference

[signal SIGSEGV: segmentation violation code=0x1 addr=0x4 pc=0x12c28]

goroutine 1 [running]:

github.com/segmentio/stats/v4/prometheus.(*Handler).HandleMeasures(0xec44d8, 0x44dd7270, 0xbfba24fe, 0x1a71c0e, 0x0, 0xec44a0, 0x21f1300, 0x1, 0x1)

/github/home/go/pkg/mod/github.com/segmentio/stats/v4@v4.5.3/prometheus/handler.go:96 +0x1d8

github.com/segmentio/stats/v4.(*Engine).measure(0x21f11c0, 0x44dd7270, 0xbfba24fe, 0x1a71c0e, 0x0, 0xec44a0, 0x85f175, 0x6, 0x785f10, 0x985a50, ...)

/github/home/go/pkg/mod/github.com/segmentio/stats/v4@v4.5.3/engine.go:155 +0x2b4

github.com/segmentio/stats/v4.(*Engine).Add(0x21f11c0, 0x85f175, 0x6, 0x785f10, 0x985a50, 0x2155e80, 0x1, 0x1)

/github/home/go/pkg/mod/github.com/segmentio/stats/v4@v4.5.3/engine.go:94 +0x78

github.com/segmentio/stats/v4.(*Engine).Incr(...)

/github/home/go/pkg/mod/github.com/segmentio/stats/v4@v4.5.3/engine.go:84

github.com/segmentio/stats/v4.Incr(...)

/github/home/go/pkg/mod/github.com/segmentio/stats/v4@v4.5.3/engine.go:251

github.com/arduino/arduino-cli/cli/daemon.runDaemonCommand(0x21f74a0, 0x21ea630, 0x0, 0x5)

/__w/arduino-cli/arduino-cli/cli/daemon/daemon.go:65 +0x7e4

github.com/spf13/cobra.(*Command).execute(0x21f74a0, 0x21ea600, 0x5, 0x6, 0x21f74a0, 0x21ea600)

/github/home/go/pkg/mod/github.com/spf13/cobra@v1.0.0/command.go:846 +0x1f4

github.com/spf13/cobra.(*Command).ExecuteC(0x20ad760, 0x17, 0x0, 0x20000e0)

/github/home/go/pkg/mod/github.com/spf13/cobra@v1.0.0/command.go:950 +0x26c

github.com/spf13/cobra.(*Command).Execute(...)

/github/home/go/pkg/mod/github.com/spf13/cobra@v1.0.0/command.go:887

main.main()

/__w/arduino-cli/arduino-cli/main.go:31 +0x24

```

Disabling the `telemetry` **did** help:

```

cat ~/.arduinoProIDE/arduino-cli.yaml

board_manager:

additional_urls: []

daemon:

port: "50051"

directories:

data: /home/pi/.arduino15

downloads: /home/pi/.arduino15/staging

user: /home/pi/Arduino

logging:

file: ""

format: text

level: info

telemetry:

addr: :9090

enabled: false

```

<!-- Add a clear and concise description of the behavior. -->

### Expected behavior

<!-- Add a clear and concise description of what you expected to happen. -->

### Environment

- CLI version (output of `arduino-cli version`): `arduino-cli Version: 0.11.0 Commit: 0296f4d`

- OS and platform: `Linux raspberrypi 4.19.118-v7l+ #1311 SMP Mon Apr 27 14:26:42 BST 2020 armv7l GNU/Linux`

### Additional context

<!-- (Optional) Add any other context about the problem here. -->

I used the https://downloads.arduino.cc/arduino-cli/arduino-cli_0.11.0_Linux_ARMv7.tar.gz URL to get the CLI.

Update: I have corrected my original description; disabling the `telemetry` helped to work around the problem. I can confirm, without the telemetry, the daemon runs on ARM.

|

1.0

|

panic: runtime error when running the CLI daemon on my Pi - ## Bug Report

### Current behavior

<!-- Paste the full command you run -->

I am trying to use the Arduino CLI daemon on `armv7l` over gRPC. I had this error in the Pro IDE:

```

daemon INFO panic: runtime error: invalid memory address or nil pointer dereference

daemon INFO [signal SIGSEGV: segmentation violation code=0x1 addr=0x4 pc=0x12c28]

daemon INFO goroutine 1 [running]:

daemon INFO github.com/segmentio/stats/v4/prometheus.(*Handler).HandleMeasures(0xec44d8, 0x44dd7270, 0xbfba24fe, 0x1a71c0e, 0x0, 0xec44a0, 0x21f1300, 0x1, 0x1)

daemon INFO /github/home/go/pkg/mod/github.com/segmentio/stats/v4@v4.5.3/prometheus/handler.go:96 +0x1d8

daemon INFO github.com/segmentio/stats/v4.(*Engine).measure(0x21f11c0, 0x44dd7270, 0xbfba24fe, 0x1a71c0e, 0x0, 0xec44a0, 0x85f175, 0x6, 0x785f10, 0x985a50, ...)

daemon INFO /github/home/go/pkg/mod/github.com/segmentio/stats/v4@v4.5.3/engine.go:155 +0x2b4

daemon INFO github.com/segmentio/stats/v4.(*Engine).Add(0x21f11c0, 0x85f175, 0x6, 0x785f10, 0x985a50, 0x2155e80, 0x1, 0x1)

daemon INFO /github/home/go/pkg/mod/github.com/segmentio/stats/v4@v4.5.3/engine.go:94 +0x78

daemon INFO github.com/segmentio/stats/v4.(*Engine).Incr(...)

daemon INFO /github/home/go/pkg/mod/github.com/segmentio/stats/v4@v4.5.3/engine.go:84

daemon INFO github.com/segmentio/stats/v4.Incr(...)

daemon INFO /github/home/go/pkg/mod/github.com/segmentio/stats/v4@v4.5.3/engine.go:251

daemon INFO github.com/arduino/arduino-cli/cli/daemon.runDaemonCommand(0x21f74a0, 0x21ea630, 0x0, 0x5)

daemon INFO /__w/arduino-cli/arduino-cli/cli/daemon/daemon.go:65 +0x7e4

daemon INFO github.com/spf13/cobra.(*Command).execute(0x21f74a0, 0x21ea600, 0x5, 0x6, 0x21f74a0, 0x21ea600)

daemon INFO /github/home/go/pkg/mod/github.com/spf13/cobra@v1.0.0/command.go:846 +0x1f4

daemon INFO github.com/spf13/cobra.(*Command).ExecuteC(0x20ad760, 0x17, 0x0, 0x20000e0)

daemon INFO /github/home/go/pkg/mod/github.com/spf13/cobra@v1.0.0/command.go:950 +0x26c

daemon INFO github.com/spf13/cobra.(*Command).Execute(...)

daemon INFO /github/home/go/pkg/mod/github.com/spf13/cobra@v1.0.0/command.go:887

daemon INFO main.main()

daemon INFO /__w/arduino-cli/arduino-cli/main.go:31 +0x24

daemon INFO Failed to start the daemon.

daemon ERROR Error: panic: runtime error: invalid memory address or nil pointer dereference

[signal SIGSEGV: segmentation violation code=0x1 addr=0x4 pc=0x12c28]

goroutine 1 [running]:

github.com/segmentio/stats/v4/prometheus.(*Handler).HandleMeasures(0xec44d8, 0x44dd7270, 0xbfba24fe, 0x1a71c0e, 0x0, 0xec44a0, 0x21f1300, 0x1, 0x1)

/github/home/go/pkg/mod/github.com/segmentio/stats/v4@v4.5.3/prometheus/handler.go:96 +0x1d8

github.com/segmentio/stats/v4.(*Engine).measure(0x21f11c0, 0x44dd7270, 0xbfba24fe, 0x1a71c0e, 0x0, 0xec44a0, 0x85f175, 0x6, 0x785f10, 0x985a50, ...)

/github/home/go/pkg/mod/github.com/segmentio/stats/v4@v4.5.3/engine.go:155 +0x2b4

github.com/segmentio/stats/v4.(*Engine).Add(0x21f11c0, 0x85f175, 0x6, 0x785f10, 0x985a50, 0x2155e80, 0x1, 0x1)

/github/home/go/pkg/mod/github.com/segmentio/stats/v4@v4.5.3/engine.go:94 +0x78

github.com/segmentio/stats/v4.(*Engine).Incr(...)

/github/home/go/pkg/mod/github.com/segmentio/stats/v4@v4.5.3/engine.go:84

github.com/segmentio/stats/v4.Incr(...)

/github/home/go/pkg/mod/github.com/segmentio/stats/v4@v4.5.3/engine.go:251

github.com/arduino/arduino-cli/cli/daemon.runDaemonCommand(0x21f74a0, 0x21ea630, 0x0, 0x5)

/__w/arduino-cli/arduino-cli/cli/daemon/daemon.go:65 +0x7e4

github.com/spf13/cobra.(*Command).execute(0x21f74a0, 0x21ea600, 0x5, 0x6, 0x21f74a0, 0x21ea600)

/github/home/go/pkg/mod/github.com/spf13/cobra@v1.0.0/command.go:846 +0x1f4

github.com/spf13/cobra.(*Command).ExecuteC(0x20ad760, 0x17, 0x0, 0x20000e0)

/github/home/go/pkg/mod/github.com/spf13/cobra@v1.0.0/command.go:950 +0x26c

github.com/spf13/cobra.(*Command).Execute(...)

/github/home/go/pkg/mod/github.com/spf13/cobra@v1.0.0/command.go:887

main.main()

/__w/arduino-cli/arduino-cli/main.go:31 +0x24

```

Disabling the `telemetry` **did** help:

```

cat ~/.arduinoProIDE/arduino-cli.yaml

board_manager:

additional_urls: []

daemon:

port: "50051"

directories:

data: /home/pi/.arduino15

downloads: /home/pi/.arduino15/staging

user: /home/pi/Arduino

logging:

file: ""

format: text

level: info

telemetry:

addr: :9090

enabled: false

```

<!-- Add a clear and concise description of the behavior. -->

### Expected behavior

<!-- Add a clear and concise description of what you expected to happen. -->

### Environment

- CLI version (output of `arduino-cli version`): `arduino-cli Version: 0.11.0 Commit: 0296f4d`

- OS and platform: `Linux raspberrypi 4.19.118-v7l+ #1311 SMP Mon Apr 27 14:26:42 BST 2020 armv7l GNU/Linux`

### Additional context

<!-- (Optional) Add any other context about the problem here. -->

I used the https://downloads.arduino.cc/arduino-cli/arduino-cli_0.11.0_Linux_ARMv7.tar.gz URL to get the CLI.

Update: I have corrected my original description; disabling the `telemetry` helped to work around the problem. I can confirm, without the telemetry, the daemon runs on ARM.

|

non_test

|

panic runtime error when running the cli daemon on my pi bug report current behavior i am trying to use the arduino cli daemon on over grpc i had this error in the pro ide daemon info panic runtime error invalid memory address or nil pointer dereference daemon info daemon info goroutine daemon info github com segmentio stats prometheus handler handlemeasures daemon info github home go pkg mod github com segmentio stats prometheus handler go daemon info github com segmentio stats engine measure daemon info github home go pkg mod github com segmentio stats engine go daemon info github com segmentio stats engine add daemon info github home go pkg mod github com segmentio stats engine go daemon info github com segmentio stats engine incr daemon info github home go pkg mod github com segmentio stats engine go daemon info github com segmentio stats incr daemon info github home go pkg mod github com segmentio stats engine go daemon info github com arduino arduino cli cli daemon rundaemoncommand daemon info w arduino cli arduino cli cli daemon daemon go daemon info github com cobra command execute daemon info github home go pkg mod github com cobra command go daemon info github com cobra command executec daemon info github home go pkg mod github com cobra command go daemon info github com cobra command execute daemon info github home go pkg mod github com cobra command go daemon info main main daemon info w arduino cli arduino cli main go daemon info failed to start the daemon daemon error error panic runtime error invalid memory address or nil pointer dereference goroutine github com segmentio stats prometheus handler handlemeasures github home go pkg mod github com segmentio stats prometheus handler go github com segmentio stats engine measure github home go pkg mod github com segmentio stats engine go github com segmentio stats engine add github home go pkg mod github com segmentio stats engine go github com segmentio stats engine incr github home go pkg mod github com 
segmentio stats engine go github com segmentio stats incr github home go pkg mod github com segmentio stats engine go github com arduino arduino cli cli daemon rundaemoncommand w arduino cli arduino cli cli daemon daemon go github com cobra command execute github home go pkg mod github com cobra command go github com cobra command executec github home go pkg mod github com cobra command go github com cobra command execute github home go pkg mod github com cobra command go main main w arduino cli arduino cli main go disabling the telemetry did help cat arduinoproide arduino cli yaml board manager additional urls daemon port directories data home pi downloads home pi staging user home pi arduino logging file format text level info telemetry addr enabled false expected behavior environment cli version output of arduino cli version arduino cli version commit os and platform linux raspberrypi smp mon apr bst gnu linux additional context i used the url to get the cli update i have corrected my original description disabling the telemetry helped to work around the problem i can confirm without the telemetry the daemon runs on arm

| 0

|

68,307

| 3,286,064,223

|

IssuesEvent

|

2015-10-28 23:42:33

|

dart-lang/sdk

|

https://api.github.com/repos/dart-lang/sdk

|

opened

|

Add .analysis_options support for `enableSuperMixins`.

|

Area-Analyzer Priority-Medium Type-Enhancement

|

Straw-man:

```

analyzer:

enableSuperMixins: true

```

@sethladd : are there other options we need while I'm at it?

|

1.0

|

Add .analysis_options support for `enableSuperMixins`. - Straw-man:

```

analyzer:

enableSuperMixins: true

```

@sethladd : are there other options we need while I'm at it?

|

non_test

|

add analysis options support for enablesupermixins straw man analyzer enablesupermixins true sethladd are there other options we need while i m at it

| 0

|

642,284

| 20,883,404,864

|

IssuesEvent

|

2022-03-23 00:30:24

|

mskcc/helix_filters_01

|

https://api.github.com/repos/mskcc/helix_filters_01

|

closed

|

need to run test cases inside container

|

enhancement low priority

|

Right now the test case runner recipes run in the current terminal session; they need to be modified so that they execute inside the Docker and Singularity containers instead.

|

1.0

|

need to run test cases inside container - Right now the test case runner recipes run in the current terminal session; they need to be modified so that they execute inside the Docker and Singularity containers instead.

|

non_test

|

need to run test cases inside container right now the test case runner recipes run in the current terminal session need to modify them so that they execute inside the docker and singularity containers instead

| 0

|

87,102

| 8,058,898,758

|

IssuesEvent

|

2018-08-02 20:02:40

|

rancher/rancher

|

https://api.github.com/repos/rancher/rancher

|

closed

|

Rancher Logging does not take custom Docker root dir into account

|

area/tools kind/bug priority/-1 status/resolved status/to-test team/cn version/2.0

|

Due to server storage restrictions, my team needs to store anything large on our servers in `/app`, including the docker installation and `/var/log` directories, and then symlink to them from the original location. This seems to cause issues when enabling cluster logging in rancher. I dug into the issue, and saw the following logs for the fluentd container on my agent for each of my containers:

1: `2018-05-04 19:51:34 +0000 [warn]: #0 /var/lib/rancher/rke/log/kubelet_2512018f1c0e124fe6356cb915db9973ec5e24f2a0e77536274f661d9335706c.log unreadable. It is excluded and would be examined next time.`

AND

2: `2018-05-04 20:09:10 +0000 [warn]: #0 /var/log/containers/redis-7bd4689f65-q8w7r_default_redis-6272889bf0dfdc2609c1d05a8fd53115fc4352e6fbfb9409f343488889422759.log unreadable. It is excluded and would be examined next time.`

After seeing this I went into the container and dug down to see why it was not able to read the file. For example 2 above, the end symlink was trying to point to `/app/docker/containers`.... (the correct location on my host of the docker install/log location). The issue with this is that `/app/docker/containers` is not bind mounted for the fluentd container, only `/var/lib/docker` is bind mounted.

My proposed fix is to add the docker root dir, found from running `docker info`, as a bind mount when spinning up the fluentd container.
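To make the proposal concrete, here is a minimal sketch of the idea in Python, not Rancher's actual implementation. It assumes `docker info --format '{{.DockerRootDir}}'` reports the real location of the Docker data (`/app/docker` in my case). The function names and the default mount list are hypothetical and only for illustration; the one mount I know of is `/var/lib/docker`.

```python
import posixpath
import subprocess
from typing import Iterable, List


def docker_root_dir() -> str:
    """Ask the local Docker daemon for its root directory via `docker info`."""
    result = subprocess.run(
        ["docker", "info", "--format", "{{.DockerRootDir}}"],
        check=True,
        capture_output=True,
        text=True,
    )
    return result.stdout.strip()


def is_covered(path: str, mounts: Iterable[str]) -> bool:
    """Return True if `path` equals, or lies under, one of the mounted dirs."""
    path = posixpath.normpath(path)
    for mount in mounts:
        mount = posixpath.normpath(mount)
        if path == mount or path.startswith(mount.rstrip("/") + "/"):
            return True
    return False


def fluentd_bind_mounts(
    root_dir: str,
    default_mounts: Iterable[str] = ("/var/lib/docker",),  # hypothetical default
) -> List[str]:
    """Add the Docker root dir to the mount list when it is not already visible."""
    mounts = list(default_mounts)
    if not is_covered(root_dir, mounts):
        mounts.append(posixpath.normpath(root_dir))
    return mounts
```

On an agent, `fluentd_bind_mounts(docker_root_dir())` would give the list of host paths to mount into the fluentd container. With a stock install the list stays unchanged, so the extra mount only appears when the root dir has actually been moved.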

Thoughts?

**Rancher versions:**

2.0.0

**Infrastructure Stack versions:**

kubernetes (if applicable): 1.10

**Docker version: (`docker version`,`docker info` preferred)**

17.03

**Operating system and kernel: (`cat /etc/os-release`, `uname -r` preferred)**

centos 7

**Type/provider of hosts: (VirtualBox/Bare-metal/AWS/GCE/DO)**

bare-metal

**Setup details: (single node rancher vs. HA rancher, internal DB vs. external DB)**

single server with 3 agents on a single cluster

**Environment Template: (Cattle/Kubernetes/Swarm/Mesos)**

k8

**Steps to Reproduce:**

on your agent:

- Install docker

- move the docker installation to another dir

- symlink /var/lib/docker to your new location

- start rancher agent as normal on that machine and add it to your cluster

- configure cluster logging in rancher UI to send to a valid kafka endpoint (other methods may work as well; not sure if this matters)

- view logs of fluentd container started on the agent

**Results:**

|

1.0

|

Rancher Logging does not take custom Docker root dir into account - Due to server storage restrictions, my team needs to store anything large on our servers in `/app`, including the docker installation and `/var/log` directories, and then symlink to them from the original location. This seems to cause issues when enabling cluster logging in rancher. I dug into the issue, and saw the following logs for the fluentd container on my agent for each of my containers:

1: `2018-05-04 19:51:34 +0000 [warn]: #0 /var/lib/rancher/rke/log/kubelet_2512018f1c0e124fe6356cb915db9973ec5e24f2a0e77536274f661d9335706c.log unreadable. It is excluded and would be examined next time.`

AND

2: `2018-05-04 20:09:10 +0000 [warn]: #0 /var/log/containers/redis-7bd4689f65-q8w7r_default_redis-6272889bf0dfdc2609c1d05a8fd53115fc4352e6fbfb9409f343488889422759.log unreadable. It is excluded and would be examined next time.`

After seeing this I went into the container and dug down to see why it was not able to read the file. For example 2 above, the end symlink was trying to point to `/app/docker/containers`.... (the correct location on my host of the docker install/log location). The issue with this is that `/app/docker/containers` is not bind mounted for the fluentd container, only `/var/lib/docker` is bind mounted.

My proposed fix is to add the docker root dir, found from running `docker info`, as a bind mount when spinning up the fluentd container.

Thoughts?

**Rancher versions:**

2.0.0

**Infrastructure Stack versions:**

kubernetes (if applicable): 1.10

**Docker version: (`docker version`,`docker info` preferred)**

17.03

**Operating system and kernel: (`cat /etc/os-release`, `uname -r` preferred)**

centos 7

**Type/provider of hosts: (VirtualBox/Bare-metal/AWS/GCE/DO)**

bare-metal

**Setup details: (single node rancher vs. HA rancher, internal DB vs. external DB)**

single server with 3 agents on a single cluster

**Environment Template: (Cattle/Kubernetes/Swarm/Mesos)**

k8

**Steps to Reproduce:**

on your agent:

- Install docker

- move the docker installation to another dir

- symlink /var/lib/docker to your new location

- start rancher agent as normal on that machine and add it to your cluster

- configure cluster logging in rancher UI to send to a valid kafka endpoint (other methods may work as well; not sure if this matters)

- view logs of fluentd container started on the agent

**Results:**

|

test

|