| Unnamed: 0 | id | type | created_at | repo | repo_url | action | title | labels | body | index | text_combine | label | text | binary_label |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

58,469

| 6,599,523,733

|

IssuesEvent

|

2017-09-16 20:53:27

|

nskins/goby

|

https://api.github.com/repos/nskins/goby

|

closed

|

Refactor tests to use `let` instead of instance variables

|

better test suite

|

I noticed that most of the tests use a `before` hook to assign instance variables that are then used throughout the tests. This is not ideal, for a couple of reasons:

1. Referencing an instance variable that was never assigned silently evaluates to `nil` instead of raising an error, so typos are easy to miss.

2. Objects are often instantiated for every test even when only some of the tests use them, which is unnecessary.

I suggest refactoring to use `let`. This will clean up the code, protect against inadvertent typos (a misspelled `let` name raises a `NameError`), and speed up run time: `let` is lazily evaluated, so an object is only instantiated in the tests that actually reference it.

For example, the Escape Spec would now look like this:

```ruby
# spec/goby/battle/escape_spec.rb

RSpec.describe Goby::Escape do
  let(:player) { Player.new }
  let(:monster) { Monster.new }
  let(:escape) { Escape.new }

  context "constructor" do
    it "has an appropriate default name" do
      expect(escape.name).to eq "Escape"
    end
  end

  context "run" do
    # The purpose of this test is to run the code without error.
    it "should return a usable result" do
      # Exercise both branches of this function w/ high probability.
      20.times do
        escape.run(player, monster)
        expect(player.escaped).to_not be nil
      end
    end
  end
end
```

This is a pretty big refactor; I'm happy to take it on if you think it's worth it.
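
A plain-Ruby sketch (no RSpec or Goby required) of the two behaviours this proposal leans on. Reading a misspelled instance variable quietly returns `nil`, whereas calling a misspelled helper method, which is what a `let` definition creates, raises `NameError`. A lazily built, memoized helper also constructs its object only on first use. `FakeExample` and its methods are hypothetical stand-ins for an RSpec example and `let(:player)`, not RSpec's actual implementation.

```ruby
# Hypothetical stand-in for an RSpec example; not RSpec's real internals.
class FakeExample
  attr_reader :builds

  def initialize
    @builds = 0
  end

  # Imitates `let(:player) { ... }`: built on first use, then memoized.
  # (||= would re-run the block if it returned nil or false.)
  def player
    @player ||= begin
      @builds += 1
      "player-object"
    end
  end

  # Reading a misspelled instance variable silently yields nil.
  def ivar_typo
    @playr
  end

  # Calling a misspelled helper method fails loudly with NameError.
  def method_typo
    playr
  end
end

example = FakeExample.new
puts example.builds # nothing built until the helper is referenced
example.player
example.player
puts example.builds # built once, then reused
p example.ivar_typo # nil
begin
  example.method_typo
rescue NameError => e
  puts "NameError for #{e.name}"
end
```

In real specs the same contrast shows up as `@playr` being `nil` in a `before`-based test versus an immediate `NameError` for `playr` in a `let`-based one.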

|

1.0

|

Refactor tests to use `let` instead of instance variables - I noticed that most of the tests use a `before` hook to assign instance variables that are then used throughout the tests. This is not ideal, for a couple of reasons:

1. Referencing an instance variable that was never assigned silently evaluates to `nil` instead of raising an error, so typos are easy to miss.

2. Objects are often instantiated for every test even when only some of the tests use them, which is unnecessary.

I suggest refactoring to use `let`. This will clean up the code, protect against inadvertent typos (a misspelled `let` name raises a `NameError`), and speed up run time: `let` is lazily evaluated, so an object is only instantiated in the tests that actually reference it.

For example, the Escape Spec would now look like this:

```ruby
# spec/goby/battle/escape_spec.rb

RSpec.describe Goby::Escape do
  let(:player) { Player.new }
  let(:monster) { Monster.new }
  let(:escape) { Escape.new }

  context "constructor" do
    it "has an appropriate default name" do
      expect(escape.name).to eq "Escape"
    end
  end

  context "run" do
    # The purpose of this test is to run the code without error.
    it "should return a usable result" do
      # Exercise both branches of this function w/ high probability.
      20.times do
        escape.run(player, monster)
        expect(player.escaped).to_not be nil
      end
    end
  end
end
```

This is a pretty big refactor; I'm happy to take it on if you think it's worth it.

|

test

|

refactor tests to use let instead of instance variables i noticed that most of the tests use a before action to assign instance variables that are used throughout the tests this is not great for a couple of reasons instance variables are created wherever they are referenced so you are more prone to typos oftentimes objects are being instantiated that are not being used in all of the tests which is unnecessary i suggest refactoring to use let this will clean up the code protect against inadvertent typos and speed up run time let is lazy loaded so you won t be instantiating every object for every test for example the escape spec would now look like this ruby spec goby battle escape spec rb rspec describe goby escape do let player player new let monster monster new let escape escape new context constructor do it has an appropriate default name do expect escape name to eq escape end end context run do the purpose of this test is to run the code without error it should return a usable result do exercise both branches of this function w high probability times do escape run player monster expect player escaped to not be nil end end end end this is a pretty big refactor i m happy to take it on if you think it s worth it

| 1

|

245,888

| 20,809,849,629

|

IssuesEvent

|

2022-03-18 00:27:40

|

brave/brave-browser

|

https://api.github.com/repos/brave/brave-browser

|

closed

|

Blocked count highlight doesn't cover entire number

|

bug feature/shields priority/P3 needs-discussion QA/Yes QA/Test-Plan-Specified feature/shields/panel OS/Desktop

|

<!-- Have you searched for similar issues? Before submitting this issue, please check the open issues and add a note before logging a new issue.

PLEASE USE THE TEMPLATE BELOW TO PROVIDE INFORMATION ABOUT THE ISSUE.

INSUFFICIENT INFO WILL GET THE ISSUE CLOSED. IT WILL ONLY BE REOPENED AFTER SUFFICIENT INFO IS PROVIDED-->

## Description

<!--Provide a brief description of the issue-->

Blocked count highlight doesn't cover entire number

## Steps to Reproduce

<!--Please add a series of steps to reproduce the issue-->

1. install `1.38.35`

2. launch Brave

3. enable `Shields V2` via `brave://flags`

4. restart Brave

5. sit on an XHR-happy page (any Facebook one will do)

6. check the Shields-blocked count via the icon-tip in the URL bar

7. once it reaches `99+`, click on it to expand the flyout panel

8. click to open its `Advanced controls` sub-panel

9. hover over the number for `Trackers & ads blocked (standard)`

10. note the highlight paint region

## Actual result:

<!--Please add screenshots if needed-->

<img width="1312" alt="Screen Shot 2022-03-11 at 12 41 01 AM" src="https://user-images.githubusercontent.com/387249/157833842-8933eca0-785e-4dae-afca-da0b4e72ab21.png">

## Expected result:

Should paint the entire number with the hover highlight

## Reproduces how often:

<!--[Easily reproduced/Intermittent issue/No steps to reproduce]-->

100%

## Brave version (brave://version info)

<!--For installed build, please copy Brave, Revision and OS from brave://version and paste here. If building from source please mention it along with brave://version details-->

Brave | 1.38.35 Chromium: 99.0.4844.51 (Official Build) nightly (arm64)
-- | --
Revision | d537ec02474b5afe23684e7963d538896c63ac77-refs/branch-heads/4844@{#875}
OS | macOS Version 11.6.4 (Build 20G417)

cc @nullhook @rebron @sri

|

1.0

|

Blocked count highlight doesn't cover entire number - <!-- Have you searched for similar issues? Before submitting this issue, please check the open issues and add a note before logging a new issue.

PLEASE USE THE TEMPLATE BELOW TO PROVIDE INFORMATION ABOUT THE ISSUE.

INSUFFICIENT INFO WILL GET THE ISSUE CLOSED. IT WILL ONLY BE REOPENED AFTER SUFFICIENT INFO IS PROVIDED-->

## Description

<!--Provide a brief description of the issue-->

Blocked count highlight doesn't cover entire number

## Steps to Reproduce

<!--Please add a series of steps to reproduce the issue-->

1. install `1.38.35`

2. launch Brave

3. enable `Shields V2` via `brave://flags`

4. restart Brave

5. sit on an XHR-happy page (any Facebook one will do)

6. check the Shields-blocked count via the icon-tip in the URL bar

7. once it reaches `99+`, click on it to expand the flyout panel

8. click to open its `Advanced controls` sub-panel

9. hover over the number for `Trackers & ads blocked (standard)`

10. note the highlight paint region

## Actual result:

<!--Please add screenshots if needed-->

<img width="1312" alt="Screen Shot 2022-03-11 at 12 41 01 AM" src="https://user-images.githubusercontent.com/387249/157833842-8933eca0-785e-4dae-afca-da0b4e72ab21.png">

## Expected result:

Should paint the entire number with the hover highlight

## Reproduces how often:

<!--[Easily reproduced/Intermittent issue/No steps to reproduce]-->

100%

## Brave version (brave://version info)

<!--For installed build, please copy Brave, Revision and OS from brave://version and paste here. If building from source please mention it along with brave://version details-->

Brave | 1.38.35 Chromium: 99.0.4844.51 (Official Build) nightly (arm64)
-- | --
Revision | d537ec02474b5afe23684e7963d538896c63ac77-refs/branch-heads/4844@{#875}
OS | macOS Version 11.6.4 (Build 20G417)

cc @nullhook @rebron @sri

|

test

|

blocked count highlight doesn t cover entire number have you searched for similar issues before submitting this issue please check the open issues and add a note before logging a new issue please use the template below to provide information about the issue insufficient info will get the issue closed it will only be reopened after sufficient info is provided description blocked count highlight doesn t cover entire number steps to reproduce install launch brave enable shields via brave flags restart brave sit on an xhr happy page any facebook one will do check the shields blocked count via the icon tip in the url bar once it reaches click on it to expand the flyout panel click to open its advanced controls sub panel hover over the number for trackers ads blocked standard note the highlight paint region actual result img width alt screen shot at am src expected result should paint the entire number with the hover highlight reproduces how often brave version brave version info brave chromium official build nightly revision refs branch heads os macos version build cc nullhook rebron sri

| 1

|

60,154

| 6,672,967,037

|

IssuesEvent

|

2017-10-04 13:39:55

|

w3c/web-platform-tests

|

https://api.github.com/repos/w3c/web-platform-tests

|

closed

|

sequential_async_test

|

infra testharness.js

|

Originally posted as https://github.com/w3c/testharness.js/issues/96 by @mvano on 09 Dec 2014, 14:16 UTC:

> I'd like to run multiple async tests from a single page, but execute them sequentially, not in parallel. The idea would be for later tests to delay execution until the previous ones are done.
>
> The goal would be to make tests that share state (across the tests, or in the object under test) be deterministic. Here's a contrived example:
>
> ```javascript
> var i = 0;
>
> sequential_async_test(function(test) {
>   setTimeout(function() {
>     assert_equals(i, 0);
>     i++;
>     test.done();
>   }, Math.round(Math.random() * 100));
> }, 'Async test 0');
>
> sequential_async_test(function(test) {
>   setTimeout(function() {
>     assert_equals(i, 1);
>     i++;
>     test.done();
>   }, Math.round(Math.random() * 100));
> }, 'Async test 1');
> ```
>
> I guess the alternative is to make separate pages, each with a single `async_test`. I'll probably do that for the time being.
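
The sequencing the request describes, where each test starts only after the previous one signals completion, amounts to chaining tests through their `done` callbacks. The following Ruby sketch illustrates that idea under stated assumptions: `SequentialRunner` is a hypothetical name, this is not testharness.js code, and `Thread`/`sleep` merely simulate the random `setTimeout` delays.

```ruby
# Hypothetical runner (not testharness.js): tests run strictly one after another.
class SequentialRunner
  def initialize
    @pending = []
    @finished = Queue.new # thread-safe signal that the last test called done
  end

  # Register a test body; it receives a `done` callback to call when finished.
  def add(name, &body)
    @pending << [name, body]
  end

  # Start the first test and block until the whole chain has completed.
  def run
    start_next
    @finished.pop
  end

  private

  def start_next
    if @pending.empty?
      @finished << :all_done
      return
    end
    _name, body = @pending.shift
    # The `done` lambda is what chains one test to the next.
    body.call(-> { start_next })
  end
end

i = 0
log = []
runner = SequentialRunner.new
2.times do |n|
  runner.add("Async test #{n}") do |done|
    Thread.new do
      sleep(rand * 0.1) # random delay, like Math.random() * 100 ms in the original
      log << "Async test #{n} saw i=#{i}"
      i += 1
      done.call
    end
  end
end
runner.run
p log
```

Because the second test is only started from inside the first test's `done` call, the shared counter is read in a fixed order regardless of the random delays.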

|

1.0

|

sequential_async_test - Originally posted as https://github.com/w3c/testharness.js/issues/96 by @mvano on 09 Dec 2014, 14:16 UTC:

> I'd like to run multiple async tests from a single page, but execute them sequentially, not in parallel. The idea would be for later tests to delay execution until the previous ones are done.
>
> The goal would be to make tests that share state (across the tests, or in the object under test) be deterministic. Here's a contrived example:
>
> ```javascript
> var i = 0;
>
> sequential_async_test(function(test) {
>   setTimeout(function() {
>     assert_equals(i, 0);
>     i++;
>     test.done();
>   }, Math.round(Math.random() * 100));
> }, 'Async test 0');
>
> sequential_async_test(function(test) {
>   setTimeout(function() {
>     assert_equals(i, 1);
>     i++;
>     test.done();
>   }, Math.round(Math.random() * 100));
> }, 'Async test 1');
> ```
>
> I guess the alternative is to make separate pages, each with a single `async_test`. I'll probably do that for the time being.

|

test

|

sequential async test originally posted as by mvano on dec utc i d like to run multiple async tests from a single page but execute them sequentially not in parallel the idea would be for later tests to delay execution until the previous ones are done the goal would be to make tests that share state across the tests or in the object under test be deterministic here s a contrived example javascript var i sequential async test function test settimeout function assert equals i i test done math round math random async test sequential async test function test settimeout function assert equals i i test done math round math random async test i guess the alternative is to make separate pages each with a single async test i ll probably do that for the time being

| 1

|

80,537

| 15,586,293,928

|

IssuesEvent

|

2021-03-18 01:36:51

|

saurockSaurav/weather-information-api

|

https://api.github.com/repos/saurockSaurav/weather-information-api

|

opened

|

CVE-2020-14061 (High) detected in jackson-databind-2.8.11.3.jar

|

security vulnerability

|

## CVE-2020-14061 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.8.11.3.jar</b></summary>

<p>General data-binding functionality for Jackson: works on core streaming API</p>

<p>Library home page: <a href="http://github.com/FasterXML/jackson">http://github.com/FasterXML/jackson</a></p>

<p>Path to dependency file: /weather-information-api/weather-rest-api-service/pom.xml</p>

<p>Path to vulnerable library: /root/.m2/repository/com/fasterxml/jackson/core/jackson-databind/2.8.11.3/jackson-databind-2.8.11.3.jar</p>

<p>

Dependency Hierarchy:

- spring-boot-starter-web-1.5.20.RELEASE.jar (Root Library)

- :x: **jackson-databind-2.8.11.3.jar** (Vulnerable Library)

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

FasterXML jackson-databind 2.x before 2.9.10.5 mishandles the interaction between serialization gadgets and typing, related to oracle.jms.AQjmsQueueConnectionFactory, oracle.jms.AQjmsXATopicConnectionFactory, oracle.jms.AQjmsTopicConnectionFactory, oracle.jms.AQjmsXAQueueConnectionFactory, and oracle.jms.AQjmsXAConnectionFactory (aka weblogic/oracle-aqjms).

<p>Publish Date: 2020-06-14</p>

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-14061>CVE-2020-14061</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>8.1</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2020-14061">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2020-14061</a></p>

<p>Release Date: 2020-06-14</p>

<p>Fix Resolution: com.fasterxml.jackson.core:jackson-databind:2.10.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)
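
The advisory's affected range ("2.x before 2.9.10.5") is why the 2.8.11.3 jar is flagged. A minimal Ruby sketch of that range check using `Gem::Version`, which compares dotted versions segment by segment; the helper name is hypothetical, and it only encodes the range as literally stated, not a full vulnerability scanner.

```ruby
require "rubygems" # Gem::Version ships with standard Ruby

# Hypothetical helper encoding the advisory's stated range: 2.x before 2.9.10.5.
def affected_by_cve_2020_14061?(version)
  v = Gem::Version.new(version)
  v >= Gem::Version.new("2.0") && v < Gem::Version.new("2.9.10.5")
end

puts affected_by_cve_2020_14061?("2.8.11.3") # true: the version flagged in this report
puts affected_by_cve_2020_14061?("2.10.0")   # false: outside the range as literally stated
```

Segment-wise comparison matters here: a plain string comparison would wrongly rank "2.10.0" below "2.9.10.5".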

|

True

|

CVE-2020-14061 (High) detected in jackson-databind-2.8.11.3.jar - ## CVE-2020-14061 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.8.11.3.jar</b></summary>

<p>General data-binding functionality for Jackson: works on core streaming API</p>

<p>Library home page: <a href="http://github.com/FasterXML/jackson">http://github.com/FasterXML/jackson</a></p>

<p>Path to dependency file: /weather-information-api/weather-rest-api-service/pom.xml</p>

<p>Path to vulnerable library: /root/.m2/repository/com/fasterxml/jackson/core/jackson-databind/2.8.11.3/jackson-databind-2.8.11.3.jar</p>

<p>

Dependency Hierarchy:

- spring-boot-starter-web-1.5.20.RELEASE.jar (Root Library)

- :x: **jackson-databind-2.8.11.3.jar** (Vulnerable Library)

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

FasterXML jackson-databind 2.x before 2.9.10.5 mishandles the interaction between serialization gadgets and typing, related to oracle.jms.AQjmsQueueConnectionFactory, oracle.jms.AQjmsXATopicConnectionFactory, oracle.jms.AQjmsTopicConnectionFactory, oracle.jms.AQjmsXAQueueConnectionFactory, and oracle.jms.AQjmsXAConnectionFactory (aka weblogic/oracle-aqjms).

<p>Publish Date: 2020-06-14</p>

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-14061>CVE-2020-14061</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>8.1</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2020-14061">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2020-14061</a></p>

<p>Release Date: 2020-06-14</p>

<p>Fix Resolution: com.fasterxml.jackson.core:jackson-databind:2.10.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_test

|

cve high detected in jackson databind jar cve high severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to dependency file weather information api weather rest api service pom xml path to vulnerable library root repository com fasterxml jackson core jackson databind jackson databind jar dependency hierarchy spring boot starter web release jar root library x jackson databind jar vulnerable library vulnerability details fasterxml jackson databind x before mishandles the interaction between serialization gadgets and typing related to oracle jms aqjmsqueueconnectionfactory oracle jms aqjmsxatopicconnectionfactory oracle jms aqjmstopicconnectionfactory oracle jms aqjmsxaqueueconnectionfactory and oracle jms aqjmsxaconnectionfactory aka weblogic oracle aqjms publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity high privileges required none user interaction none scope unchanged impact metrics confidentiality impact high integrity impact high availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution com fasterxml jackson core jackson databind step up your open source security game with whitesource

| 0

|

153,343

| 12,141,093,418

|

IssuesEvent

|

2020-04-23 21:45:07

|

rust-lang/rust

|

https://api.github.com/repos/rust-lang/rust

|

closed

|

Emit a warning when a codeblock is using "compile-fail" instead of "compile_fail"

|

A-doctests C-enhancement T-rustdoc

|

I just found out that a lot of error code explanations were using "compile-fail" instead of "compile_fail". I'm fixing those as part of a larger change, but I suspect this mistake is fairly common in Rust code, so rustdoc should warn people about it.

The bigger problem is that, because "compile-fail" is not a known attribute, rustdoc doesn't recognize the code block as Rust and therefore never tests it.
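
A hypothetical sketch of the kind of check requested: scan Markdown for fence lines whose info string contains a hyphenated spelling of a known rustdoc attribute. It is written in Ruby only for illustration; `KNOWN_ATTRS` is a deliberately small subset of rustdoc's code-block attributes and the function name is invented, so a real rustdoc lint may behave differently.

```ruby
# A small subset of rustdoc code-block attributes that use underscores.
KNOWN_ATTRS = %w[compile_fail should_panic no_run].freeze

# Returns [line_number, attribute, suggestion] for each fence attribute that
# is a hyphenated misspelling of a known attribute.
def misspelled_fence_attrs(markdown)
  findings = []
  markdown.each_line.with_index(1) do |line, lineno|
    # A fence line with an info string, e.g. three backticks + "rust,compile_fail".
    match = line.strip.match(/\A`{3}(.+)\z/)
    next unless match

    match[1].split(/[,\s]+/).each do |attr|
      fixed = attr.tr("-", "_")
      findings << [lineno, attr, fixed] if attr != fixed && KNOWN_ATTRS.include?(fixed)
    end
  end
  findings
end

fence = "`" * 3 # built at runtime so this example can live inside Markdown
doc = [
  "Erroneous code example:",
  "#{fence}compile-fail",
  "let x: u8 = 256;",
  fence,
  "#{fence}compile_fail,E0308",
  "let y: u8 = \"no\";",
  fence
].join("\n")

misspelled_fence_attrs(doc).each do |lineno, attr, fixed|
  puts "line #{lineno}: `#{attr}` is not a rustdoc attribute; did you mean `#{fixed}`?"
end
```

Only the hyphenated fence on line 2 is reported; the correctly spelled `compile_fail,E0308` block passes.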

|

1.0

|

Emit a warning when a codeblock is using "compile-fail" instead of "compile_fail" - I just found out that a lot of error code explanations were using "compile-fail" instead of "compile_fail". I'm fixing those as part of a larger change, but I suspect this mistake is fairly common in Rust code, so rustdoc should warn people about it.

The bigger problem is that, because "compile-fail" is not a known attribute, rustdoc doesn't recognize the code block as Rust and therefore never tests it.

|

test

|

emit a warning when a codeblock is using compile fail instead of compile fail i just found out that a lot of error code explanations were using compile fail instead of compile fail i m fixing this issue as part of something a bit bigger however i assume this error is pretty common in rust and that rustdoc should warn people about it the big issue here is that since it s not a known tag rustdoc doesn t recognize the codeblock as a rust one and therefore doesn t test it which is pretty bad

| 1

|

189,901

| 14,527,387,969

|

IssuesEvent

|

2020-12-14 15:17:49

|

navarrotheus/caramelo-tec-2-CK0236

|

https://api.github.com/repos/navarrotheus/caramelo-tec-2-CK0236

|

opened

|

Test SolicitationService

|

BACK TESTES

|

## Method `create`

- [ ] Success test: Creates the request successfully

- [ ] Error test: User with the given id does not exist

- [ ] Error test: Pet with the given id does not exist

## Method `update`

- [ ] Success test: Updates the request successfully

- [ ] Success test: If the request is accepted, creates an adoption with the requester and the pet, and sets the pet's availability to false

- [ ] Error test: User with the given id does not exist

## Method `delete`

- [ ] Success test: Deletes the request successfully

- [ ] Error test: User with the given id does not exist

## Method `search`

- [ ] Success test: Fetches the user's requests successfully

- [ ] Error test: User with the given id does not exist

## Method `searchPetSolicitations`

- [ ] Success test: Fetches the user's requests successfully

- [ ] Error test: User with the given id does not exist

- [ ] Error test: Pet with the given id does not exist

- [ ] Error test: User tries to fetch the requests for a pet that belongs to another user

|

1.0

|

Test SolicitationService - ## Method `create`

- [ ] Success test: Creates the request successfully

- [ ] Error test: User with the given id does not exist

- [ ] Error test: Pet with the given id does not exist

## Method `update`

- [ ] Success test: Updates the request successfully

- [ ] Success test: If the request is accepted, creates an adoption with the requester and the pet, and sets the pet's availability to false

- [ ] Error test: User with the given id does not exist

## Method `delete`

- [ ] Success test: Deletes the request successfully

- [ ] Error test: User with the given id does not exist

## Method `search`

- [ ] Success test: Fetches the user's requests successfully

- [ ] Error test: User with the given id does not exist

## Method `searchPetSolicitations`

- [ ] Success test: Fetches the user's requests successfully

- [ ] Error test: User with the given id does not exist

- [ ] Error test: Pet with the given id does not exist

- [ ] Error test: User tries to fetch the requests for a pet that belongs to another user

|

test

|

test solicitationservice method create success test creates the request successfully error test user with the given id does not exist error test pet with the given id does not exist method update success test updates the request successfully success test if the request is accepted creates an adoption with the requester and the pet and sets the pet s availability to false error test user with the given id does not exist method delete success test deletes the request successfully error test user with the given id does not exist method search success test fetches the user s requests successfully error test user with the given id does not exist method searchpetsolicitations success test fetches the user s requests successfully error test user with the given id does not exist error test pet with the given id does not exist error test user tries to fetch the requests for a pet that belongs to another user

| 1

|

605,103

| 18,724,969,978

|

IssuesEvent

|

2021-11-03 15:26:27

|

brave/brave-browser

|

https://api.github.com/repos/brave/brave-browser

|

closed

|

Details section is empty on Reject/Approve transaction screen

|

priority/P3 QA/No release-notes/exclude feature/wallet OS/Android

|

We should populate the transaction details there, if there are any.

|

1.0

|

Details section is empty on Reject/Approve transaction screen - We should populate the transaction details there, if there are any.

|

non_test

|

details section is empty on reject approve transaction screen we should fill tx details there if there are any

| 0

|

209,653

| 16,048,047,764

|

IssuesEvent

|

2021-04-22 15:41:35

|

input-output-hk/ouroboros-network

|

https://api.github.com/repos/input-output-hk/ouroboros-network

|

closed

|

Property test which checks that codecs produce a valid CBOR encoding

|

testing

|

[validFlatTerm](http://hackage.haskell.org/package/cborg-0.2.5.0/docs/Codec-CBOR-FlatTerm.html#v:validFlatTerm)

```haskell
validFlatTerm . toFlatTerm :: CBOR.Encoding -> Bool
```

|

1.0

|

Property test which checks that codecs produce a valid CBOR encoding - [validFlatTerm](http://hackage.haskell.org/package/cborg-0.2.5.0/docs/Codec-CBOR-FlatTerm.html#v:validFlatTerm)

```haskell
validFlatTerm . toFlatTerm :: CBOR.Encoding -> Bool
```

|

test

|

property test which checks that codecs produce a valid cbor encoding validflatterm toflatterm cbor encoding bool

| 1

|

623,348

| 19,665,663,607

|

IssuesEvent

|

2022-01-10 22:10:23

|

aesimpson/sama-sanity

|

https://api.github.com/repos/aesimpson/sama-sanity

|

closed

|

CSS Fix for Multiple Modules within a section

|

Priority:Moderate

|

At xxxxlg wide screens, the stacked modules don't wrap:

|

1.0

|

CSS Fix for Multiple Modules within a section - At xxxxlg wide screens, the stacked modules don't wrap:

|

non_test

|

css fix for multiple modules within a section at xxxxlg wide screens the stacked modules don t wrap

| 0

|

186,817

| 14,409,561,604

|

IssuesEvent

|

2020-12-04 02:33:39

|

elastic/kibana

|

https://api.github.com/repos/elastic/kibana

|

closed

|

[test-failed]: Chrome X-Pack UI Functional Tests1.x-pack/test/functional/apps/maps/embeddable/tooltip_filter_actions·js - maps app embeddable tooltip filter actions apply filter to current view "before all" hook for "should display create filter button when tooltip is locked"

|

Team:Geo failed-test test-cloud

|

**Version: 7.10.0**

**Class: Chrome X-Pack UI Functional Tests1.x-pack/test/functional/apps/maps/embeddable/tooltip_filter_actions·js**

**Stack Trace:**

```
Error: retry.try timeout: TypeError: Cannot read property 'clearValue' of undefined
    at retry.try (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp1/TASK/saas_run_kibana_tests/node/linux-immutable/ci/cloud/common/build/kibana/test/functional/services/listing_table.ts:107:28)
    at process._tickCallback (internal/process/next_tick.js:68:7)
    at onFailure (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp1/TASK/saas_run_kibana_tests/node/linux-immutable/ci/cloud/common/build/kibana/test/common/services/retry/retry_for_success.ts:28:9)
    at retryForSuccess (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp1/TASK/saas_run_kibana_tests/node/linux-immutable/ci/cloud/common/build/kibana/test/common/services/retry/retry_for_success.ts:68:13)
```

**Other test failures:**

- maps app embeddable tooltip filter actions panel actions "before all" hook for "should display more actions button when tooltip is locked"

_Test Report: https://internal-ci.elastic.co/view/Stack%20Tests/job/elastic+estf-cloud-kibana-tests/845/testReport/_

|

2.0

|

[test-failed]: Chrome X-Pack UI Functional Tests1.x-pack/test/functional/apps/maps/embeddable/tooltip_filter_actions·js - maps app embeddable tooltip filter actions apply filter to current view "before all" hook for "should display create filter button when tooltip is locked" - **Version: 7.10.0**

**Class: Chrome X-Pack UI Functional Tests1.x-pack/test/functional/apps/maps/embeddable/tooltip_filter_actions·js**

**Stack Trace:**

```
Error: retry.try timeout: TypeError: Cannot read property 'clearValue' of undefined
    at retry.try (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp1/TASK/saas_run_kibana_tests/node/linux-immutable/ci/cloud/common/build/kibana/test/functional/services/listing_table.ts:107:28)
    at process._tickCallback (internal/process/next_tick.js:68:7)
    at onFailure (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp1/TASK/saas_run_kibana_tests/node/linux-immutable/ci/cloud/common/build/kibana/test/common/services/retry/retry_for_success.ts:28:9)
    at retryForSuccess (/var/lib/jenkins/workspace/elastic+estf-cloud-kibana-tests/JOB/xpackGrp1/TASK/saas_run_kibana_tests/node/linux-immutable/ci/cloud/common/build/kibana/test/common/services/retry/retry_for_success.ts:68:13)
```

**Other test failures:**

- maps app embeddable tooltip filter actions panel actions "before all" hook for "should display more actions button when tooltip is locked"

_Test Report: https://internal-ci.elastic.co/view/Stack%20Tests/job/elastic+estf-cloud-kibana-tests/845/testReport/_

|

test

|

chrome x pack ui functional x pack test functional apps maps embeddable tooltip filter actions·js maps app embeddable tooltip filter actions apply filter to current view before all hook for should display create filter button when tooltip is locked version class chrome x pack ui functional x pack test functional apps maps embeddable tooltip filter actions·js stack trace error retry try timeout typeerror cannot read property clearvalue of undefined at retry try var lib jenkins workspace elastic estf cloud kibana tests job task saas run kibana tests node linux immutable ci cloud common build kibana test functional services listing table ts at process tickcallback internal process next tick js at onfailure var lib jenkins workspace elastic estf cloud kibana tests job task saas run kibana tests node linux immutable ci cloud common build kibana test common services retry retry for success ts at retryforsuccess var lib jenkins workspace elastic estf cloud kibana tests job task saas run kibana tests node linux immutable ci cloud common build kibana test common services retry retry for success ts other test failures maps app embeddable tooltip filter actions panel actions before all hook for should display more actions button when tooltip is locked test report

| 1

|

142,542

| 11,484,798,098

|

IssuesEvent

|

2020-02-11 05:16:42

|

proarc/proarc

|

https://api.github.com/repos/proarc/proarc

|

closed

|

RDflow - barcode is not propagated

|

6 k testování RDFlow Release-3.5.16

|

The barcode is in the metadata:

`<mods:identifier type="barcode">26001600877</mods:identifier>`

However, it is not displayed in the Bibliographic record table.

|

1.0

|

RDflow - barcode is not propagated - The barcode is in the metadata:

`<mods:identifier type="barcode">26001600877</mods:identifier>`

However, it is not displayed in the Bibliographic record table.

|

test

|

rdflow barcode is not propagated the barcode is present in the metadata but it is not displayed in the bibliographic record table

| 1

|

24,697

| 4,106,194,761

|

IssuesEvent

|

2016-06-06 07:38:34

|

Mr-Kumar-Abhishek/zuzeelik

|

https://api.github.com/repos/Mr-Kumar-Abhishek/zuzeelik

|

opened

|

Test builds from zuzeelik's pre-alpha version v0.0.0-0.5.0 on Windows

|

testing

|

Test builds from zuzeelik's pre-alpha version `v0.0.0-0.5.0` on Windows. There may be bugs in the built-in functions that handle *quotes*. On *nix systems, all built-in functions work fine.

|

1.0

|

Test builds from zuzeelik's pre-alpha version v0.0.0-0.5.0 on Windows - Test builds from zuzeelik's pre-alpha version `v0.0.0-0.5.0` on Windows. There may be bugs in the built-in functions that handle *quotes*. On *nix systems, all built-in functions work fine.

|

test

|

test builds from zuzeelik s pre alpha version in windows os test builds from zuzeelik s pre alpha version in windows os probably there could be some bugs with built in functions that handles quotes in nix systems all built in functions are working fine

| 1

|

2,057

| 2,873,078,819

|

IssuesEvent

|

2015-06-08 15:18:24

|

meumobi/sitebuilder

|

https://api.github.com/repos/meumobi/sitebuilder

|

closed

|

new user can't accept invites if another user is already logged in

|

bug sitebuilder

|

The new user can't validate, and the error message is: "You need to choose a valid language"

|

1.0

|

new user can't accept invites if another user is already logged in - The new user can't validate, and the error message is: "You need to choose a valid language"

|

non_test

|

new user can t accept invites if another user is already logged in the new user can t validade and the error message is you need to choose a valid language

| 0

|

152,600

| 12,121,608,652

|

IssuesEvent

|

2020-04-22 09:34:43

|

Students-of-the-city-of-Kostroma/Ray-of-hope

|

https://api.github.com/repos/Students-of-the-city-of-Kostroma/Ray-of-hope

|

closed

|

Test organization registration and authorization on the new server

|

AppServer LoginOrg O3 PR5 RegOrg Sprint 14 Testing

|

Epic #286 Task #287 #288

Test the functions that accept data from the client, process it, and return a response for compliance with the organization [registration](https://docs.google.com/document/d/1QkQMIYAaNvvknFlBldHHwvcq2iuWHP7E4QYHCFUZdy4) and [authorization](https://docs.google.com/document/d/1tc8xZATtXaF6GUWpDQYYTfyn8zvWJbQeJYwcmKPkdW4) specifications. File bugs as they are found.

|

1.0

|

Test organization registration and authorization on the new server - Epic #286 Task #287 #288

Test the functions that accept data from the client, process it, and return a response for compliance with the organization [registration](https://docs.google.com/document/d/1QkQMIYAaNvvknFlBldHHwvcq2iuWHP7E4QYHCFUZdy4) and [authorization](https://docs.google.com/document/d/1tc8xZATtXaF6GUWpDQYYTfyn8zvWJbQeJYwcmKPkdW4) specifications. File bugs as they are found.

|

test

|

test organization registration and authorization on the new server epic task test the functions that accept data from the client process it and return a response for compliance with the organization and specifications file bugs as they are found

| 1

|

269,843

| 23,471,396,035

|

IssuesEvent

|

2022-08-16 22:22:31

|

red/red

|

https://api.github.com/repos/red/red

|

closed

|

Deceptive error message from `set-quiet`

|

status.built status.tested type.bug

|

**Describe the bug**

```

>> o: object []

== make object! []

>> set-quiet in o 'x 1

*** Script Error: set-quiet does not allow word! for its word argument ;) what???

*** Where: set-quiet

*** Near : 1

*** Stack:

```

**To reproduce**

`set-quiet none 1`

**Expected behavior**

"Doesn't accept none for its word argument"

**Platform version**

```

red-view-14aug22-4eb8ad83f.exe

```

|

1.0

|

Deceptive error message from `set-quiet` - **Describe the bug**

```

>> o: object []

== make object! []

>> set-quiet in o 'x 1

*** Script Error: set-quiet does not allow word! for its word argument ;) what???

*** Where: set-quiet

*** Near : 1

*** Stack:

```

**To reproduce**

`set-quiet none 1`

**Expected behavior**

"Doesn't accept none for its word argument"

**Platform version**

```

red-view-14aug22-4eb8ad83f.exe

```

|

test

|

deceptive error message from set quiet describe the bug o object make object set quiet in o x script error set quiet does not allow word for its word argument what where set quiet near stack to reproduce set quiet none expected behavior doesn t accept none for it s word argument platform version red view exe

| 1

|

256,392

| 22,048,396,783

|

IssuesEvent

|

2022-05-30 06:04:16

|

cockroachdb/cockroach

|

https://api.github.com/repos/cockroachdb/cockroach

|

opened

|

ccl/changefeedccl: TestChangefeedBackfillCheckpoint failed

|

C-test-failure O-robot branch-release-22.1

|

ccl/changefeedccl.TestChangefeedBackfillCheckpoint [failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=5312529&tab=buildLog) with [artifacts](https://teamcity.cockroachdb.com/viewLog.html?buildId=5312529&tab=artifacts#/) on release-22.1 @ [34e6fcfdc8f3155831305ab4a78f960aaad3e7bc](https://github.com/cockroachdb/cockroach/commits/34e6fcfdc8f3155831305ab4a78f960aaad3e7bc):

```

=== RUN TestChangefeedBackfillCheckpoint

test_log_scope.go:79: test logs captured to: /artifacts/tmp/_tmp/a77002d7c9453d7cd2d382f907780e13/logTestChangefeedBackfillCheckpoint2134013902

test_log_scope.go:80: use -show-logs to present logs inline

=== CONT TestChangefeedBackfillCheckpoint

changefeed_test.go:5440: -- test log scope end --

--- FAIL: TestChangefeedBackfillCheckpoint (541.52s)

=== RUN TestChangefeedBackfillCheckpoint/enterprise-limit=100_B

changefeed_test.go:5420:

Error Trace: changefeed_test.go:5420

helpers_test.go:554

Error: Received unexpected error:

retrying txn failed after 50 attempts. original error: pq: restart transaction: TransactionRetryWithProtoRefreshError: TransactionRetryError: retry txn (RETRY_SERIALIZABLE - failed preemptive refresh due to a conflict: committed value on key /Table/109/1/"foo"/0/766181192725659648/0): "sql txn" meta={id=1ef45b68 key=/Table/109/1/"foo"/0/766179641595625472/0 pri=0.00000000 epo=50 ts=1653890353.444952019,2 min=1653890008.216986178,0 seq=8087} lock=true stat=PENDING rts=1653890344.066420091,0 wto=false gul=1653890008.716986178,0.

Test: TestChangefeedBackfillCheckpoint/enterprise-limit=100_B

--- FAIL: TestChangefeedBackfillCheckpoint/enterprise-limit=100_B (486.62s)

```

<details><summary>Help</summary>

<p>

See also: [How To Investigate a Go Test Failure \(internal\)](https://cockroachlabs.atlassian.net/l/c/HgfXfJgM)

Parameters in this failure:

- TAGS=bazel,gss,deadlock

</p>

</details>

/cc @cockroachdb/cdc

<sub>

[This test on roachdash](https://roachdash.crdb.dev/?filter=status:open%20t:.*TestChangefeedBackfillCheckpoint.*&sort=title+created&display=lastcommented+project) | [Improve this report!](https://github.com/cockroachdb/cockroach/tree/master/pkg/cmd/internal/issues)

</sub>

|

1.0

|

ccl/changefeedccl: TestChangefeedBackfillCheckpoint failed - ccl/changefeedccl.TestChangefeedBackfillCheckpoint [failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=5312529&tab=buildLog) with [artifacts](https://teamcity.cockroachdb.com/viewLog.html?buildId=5312529&tab=artifacts#/) on release-22.1 @ [34e6fcfdc8f3155831305ab4a78f960aaad3e7bc](https://github.com/cockroachdb/cockroach/commits/34e6fcfdc8f3155831305ab4a78f960aaad3e7bc):

```

=== RUN TestChangefeedBackfillCheckpoint

test_log_scope.go:79: test logs captured to: /artifacts/tmp/_tmp/a77002d7c9453d7cd2d382f907780e13/logTestChangefeedBackfillCheckpoint2134013902

test_log_scope.go:80: use -show-logs to present logs inline

=== CONT TestChangefeedBackfillCheckpoint

changefeed_test.go:5440: -- test log scope end --

--- FAIL: TestChangefeedBackfillCheckpoint (541.52s)

=== RUN TestChangefeedBackfillCheckpoint/enterprise-limit=100_B

changefeed_test.go:5420:

Error Trace: changefeed_test.go:5420

helpers_test.go:554

Error: Received unexpected error:

retrying txn failed after 50 attempts. original error: pq: restart transaction: TransactionRetryWithProtoRefreshError: TransactionRetryError: retry txn (RETRY_SERIALIZABLE - failed preemptive refresh due to a conflict: committed value on key /Table/109/1/"foo"/0/766181192725659648/0): "sql txn" meta={id=1ef45b68 key=/Table/109/1/"foo"/0/766179641595625472/0 pri=0.00000000 epo=50 ts=1653890353.444952019,2 min=1653890008.216986178,0 seq=8087} lock=true stat=PENDING rts=1653890344.066420091,0 wto=false gul=1653890008.716986178,0.

Test: TestChangefeedBackfillCheckpoint/enterprise-limit=100_B

--- FAIL: TestChangefeedBackfillCheckpoint/enterprise-limit=100_B (486.62s)

```

<details><summary>Help</summary>

<p>

See also: [How To Investigate a Go Test Failure \(internal\)](https://cockroachlabs.atlassian.net/l/c/HgfXfJgM)

Parameters in this failure:

- TAGS=bazel,gss,deadlock

</p>

</details>

/cc @cockroachdb/cdc

<sub>

[This test on roachdash](https://roachdash.crdb.dev/?filter=status:open%20t:.*TestChangefeedBackfillCheckpoint.*&sort=title+created&display=lastcommented+project) | [Improve this report!](https://github.com/cockroachdb/cockroach/tree/master/pkg/cmd/internal/issues)

</sub>

|

test

|

ccl changefeedccl testchangefeedbackfillcheckpoint failed ccl changefeedccl testchangefeedbackfillcheckpoint with on release run testchangefeedbackfillcheckpoint test log scope go test logs captured to artifacts tmp tmp test log scope go use show logs to present logs inline cont testchangefeedbackfillcheckpoint changefeed test go test log scope end fail testchangefeedbackfillcheckpoint run testchangefeedbackfillcheckpoint enterprise limit b changefeed test go error trace changefeed test go helpers test go error received unexpected error retrying txn failed after attempts original error pq restart transaction transactionretrywithprotorefresherror transactionretryerror retry txn retry serializable failed preemptive refresh due to a conflict committed value on key table foo sql txn meta id key table foo pri epo ts min seq lock true stat pending rts wto false gul test testchangefeedbackfillcheckpoint enterprise limit b fail testchangefeedbackfillcheckpoint enterprise limit b help see also parameters in this failure tags bazel gss deadlock cc cockroachdb cdc

| 1

|

65,588

| 14,740,878,706

|

IssuesEvent

|

2021-01-07 09:45:58

|

hiptest/ember-easy-datatable

|

https://api.github.com/repos/hiptest/ember-easy-datatable

|

opened

|

CVE-2018-16487 (Medium) detected in lodash-3.10.1.tgz

|

security vulnerability

|

## CVE-2018-16487 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>lodash-3.10.1.tgz</b></p></summary>

<p>The modern build of lodash modular utilities.</p>

<p>Library home page: <a href="https://registry.npmjs.org/lodash/-/lodash-3.10.1.tgz">https://registry.npmjs.org/lodash/-/lodash-3.10.1.tgz</a></p>

<p>Path to dependency file: ember-easy-datatable/package.json</p>

<p>Path to vulnerable library: ember-easy-datatable/node_modules/lodash/package.json</p>

<p>

Dependency Hierarchy:

- ember-cli-2.12.2.tgz (Root Library)

- broccoli-babel-transpiler-5.7.4.tgz

- babel-core-5.8.38.tgz

- :x: **lodash-3.10.1.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/hiptest/ember-easy-datatable/commit/174fc2ea19b9aaaa080440d9cb938d1e9a2d6120">174fc2ea19b9aaaa080440d9cb938d1e9a2d6120</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

A prototype pollution vulnerability was found in lodash <4.17.11 where the functions merge, mergeWith, and defaultsDeep can be tricked into adding or modifying properties of Object.prototype.

<p>Publish Date: 2019-02-01

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2018-16487>CVE-2018-16487</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.6</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: Low

- Availability Impact: Low

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://bugzilla.redhat.com/show_bug.cgi?id=CVE-2018-16487">https://bugzilla.redhat.com/show_bug.cgi?id=CVE-2018-16487</a></p>

<p>Release Date: 2019-02-01</p>

<p>Fix Resolution: 4.17.11</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":false,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"javascript/Node.js","packageName":"lodash","packageVersion":"3.10.1","isTransitiveDependency":true,"dependencyTree":"ember-cli:2.12.2;broccoli-babel-transpiler:5.7.4;babel-core:5.8.38;lodash:3.10.1","isMinimumFixVersionAvailable":true,"minimumFixVersion":"4.17.11"}],"vulnerabilityIdentifier":"CVE-2018-16487","vulnerabilityDetails":"A prototype pollution vulnerability was found in lodash \u003c4.17.11 where the functions merge, mergeWith, and defaultsDeep can be tricked into adding or modifying properties of Object.prototype.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2018-16487","cvss3Severity":"medium","cvss3Score":"5.6","cvss3Metrics":{"A":"Low","AC":"High","PR":"None","S":"Unchanged","C":"Low","UI":"None","AV":"Network","I":"Low"},"extraData":{}}</REMEDIATE> -->

|

True

|

CVE-2018-16487 (Medium) detected in lodash-3.10.1.tgz - ## CVE-2018-16487 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>lodash-3.10.1.tgz</b></p></summary>

<p>The modern build of lodash modular utilities.</p>

<p>Library home page: <a href="https://registry.npmjs.org/lodash/-/lodash-3.10.1.tgz">https://registry.npmjs.org/lodash/-/lodash-3.10.1.tgz</a></p>

<p>Path to dependency file: ember-easy-datatable/package.json</p>

<p>Path to vulnerable library: ember-easy-datatable/node_modules/lodash/package.json</p>

<p>

Dependency Hierarchy:

- ember-cli-2.12.2.tgz (Root Library)

- broccoli-babel-transpiler-5.7.4.tgz

- babel-core-5.8.38.tgz

- :x: **lodash-3.10.1.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/hiptest/ember-easy-datatable/commit/174fc2ea19b9aaaa080440d9cb938d1e9a2d6120">174fc2ea19b9aaaa080440d9cb938d1e9a2d6120</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

A prototype pollution vulnerability was found in lodash <4.17.11 where the functions merge, mergeWith, and defaultsDeep can be tricked into adding or modifying properties of Object.prototype.

<p>Publish Date: 2019-02-01

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2018-16487>CVE-2018-16487</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.6</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: Low

- Availability Impact: Low

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://bugzilla.redhat.com/show_bug.cgi?id=CVE-2018-16487">https://bugzilla.redhat.com/show_bug.cgi?id=CVE-2018-16487</a></p>

<p>Release Date: 2019-02-01</p>

<p>Fix Resolution: 4.17.11</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":false,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"javascript/Node.js","packageName":"lodash","packageVersion":"3.10.1","isTransitiveDependency":true,"dependencyTree":"ember-cli:2.12.2;broccoli-babel-transpiler:5.7.4;babel-core:5.8.38;lodash:3.10.1","isMinimumFixVersionAvailable":true,"minimumFixVersion":"4.17.11"}],"vulnerabilityIdentifier":"CVE-2018-16487","vulnerabilityDetails":"A prototype pollution vulnerability was found in lodash \u003c4.17.11 where the functions merge, mergeWith, and defaultsDeep can be tricked into adding or modifying properties of Object.prototype.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2018-16487","cvss3Severity":"medium","cvss3Score":"5.6","cvss3Metrics":{"A":"Low","AC":"High","PR":"None","S":"Unchanged","C":"Low","UI":"None","AV":"Network","I":"Low"},"extraData":{}}</REMEDIATE> -->

|

non_test

|

cve medium detected in lodash tgz cve medium severity vulnerability vulnerable library lodash tgz the modern build of lodash modular utilities library home page a href path to dependency file ember easy datatable package json path to vulnerable library ember easy datatable node modules lodash package json dependency hierarchy ember cli tgz root library broccoli babel transpiler tgz babel core tgz x lodash tgz vulnerable library found in head commit a href found in base branch master vulnerability details a prototype pollution vulnerability was found in lodash where the functions merge mergewith and defaultsdeep can be tricked into adding or modifying properties of object prototype publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity high privileges required none user interaction none scope unchanged impact metrics confidentiality impact low integrity impact low availability impact low for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution isopenpronvulnerability false ispackagebased true isdefaultbranch true packages vulnerabilityidentifier cve vulnerabilitydetails a prototype pollution vulnerability was found in lodash where the functions merge mergewith and defaultsdeep can be tricked into adding or modifying properties of object prototype vulnerabilityurl

| 0

|

249,856

| 21,195,374,409

|

IssuesEvent

|

2022-04-08 23:31:16

|

kubernetes/kubernetes

|

https://api.github.com/repos/kubernetes/kubernetes

|

closed

|

ci-cadvisor-e2e failed

|

priority/important-soon area/cadvisor sig/node kind/failing-test triage/accepted

|

### Which jobs are failing?

ci-cadvisor-e2e

### Which tests are failing?

```

W0322 22:57:59.666] 2022/03/22 22:57:59 main.go:331: Something went wrong: encountered 1 errors: [error during go run /go/src/k8s.io/kubernetes/test/e2e_node/runner/remote/run_remote.go --cleanup --logtostderr --vmodule=*=4 --ssh-env=gce --results-dir=/workspace/_artifacts --project=ci-cadvisor-e2e --zone=us-central1-f --ssh-user=prow --ssh-key=/workspace/.ssh/google_compute_engine --ginkgo-flags=--nodes=1 --test_args= --test-timeout=10m0s --image-config-file=/workspace/test-infra/jobs/e2e_node/containerd/image-config.yaml --test-suite=cadvisor: exit status 1]

W0322 22:57:59.673] Traceback (most recent call last):

W0322 22:57:59.674] File "/workspace/./test-infra/jenkins/../scenarios/kubernetes_e2e.py", line 723, in <module>

W0322 22:57:59.674] main(parse_args())

W0322 22:57:59.674] File "/workspace/./test-infra/jenkins/../scenarios/kubernetes_e2e.py", line 569, in main

W0322 22:57:59.674] mode.start(runner_args)

W0322 22:57:59.674] File "/workspace/./test-infra/jenkins/../scenarios/kubernetes_e2e.py", line 228, in start

W0322 22:57:59.675] check_env(env, self.command, *args)

W0322 22:57:59.675] File "/workspace/./test-infra/jenkins/../scenarios/kubernetes_e2e.py", line 111, in check_env

W0322 22:57:59.675] subprocess.check_call(cmd, env=env)

W0322 22:57:59.675] File "/usr/lib/python2.7/subprocess.py", line 190, in check_call

W0322 22:57:59.675] raise CalledProcessError(retcode, cmd)

W0322 22:57:59.676] subprocess.CalledProcessError: Command '('kubetest', '--dump=/workspace/_artifacts', '--gcp-service-account=/etc/service-account/service-account.json', '--up', '--down', '--test', '--deployment=node', '--provider=gce', '--cluster=bootstrap-e2e', '--gcp-network=bootstrap-e2e', '--gcp-project=ci-cadvisor-e2e', '--gcp-zone=us-central1-f', '--node-args=--image-config-file=/workspace/test-infra/jobs/e2e_node/containerd/image-config.yaml --test-suite=cadvisor', '--node-tests=true', '--test_args=--nodes=1', '--timeout=10m')' returned non-zero exit status 1

E0322 22:57:59.677] Command failed

I0322 22:57:59.678] process 339 exited with code 1 after 2.2m

E0322 22:57:59.678] FAIL: ci-cadvisor-e2e

```

### Since when has it been failing?

Since 2022-03-22.

Candidate changes that may be responsible:

- https://github.com/kubernetes/kubernetes/compare/dd604a0f9a2e015a869d01957297e33f2c0c7025...95e30f66c?

  - Candidate1: https://github.com/kubernetes/kubernetes/pull/108704 (though it targets Windows)

- https://github.com/kubernetes/test-infra/compare/ce1169a66...a6fe00c22

  - Candidate1: https://github.com/kubernetes/test-infra/pull/25727

  - Candidate2: https://github.com/kubernetes/test-infra/pull/25405

### Testgrid link

https://testgrid.k8s.io/sig-node-cadvisor#cadvisor-e2e

### Reason for failure (if possible)

_No response_

### Anything else we need to know?

_No response_

### Relevant SIG(s)

/sig node

/area cadvisor

|

1.0

|

ci-cadvisor-e2e failed - ### Which jobs are failing?

ci-cadvisor-e2e

### Which tests are failing?

```

W0322 22:57:59.666] 2022/03/22 22:57:59 main.go:331: Something went wrong: encountered 1 errors: [error during go run /go/src/k8s.io/kubernetes/test/e2e_node/runner/remote/run_remote.go --cleanup --logtostderr --vmodule=*=4 --ssh-env=gce --results-dir=/workspace/_artifacts --project=ci-cadvisor-e2e --zone=us-central1-f --ssh-user=prow --ssh-key=/workspace/.ssh/google_compute_engine --ginkgo-flags=--nodes=1 --test_args= --test-timeout=10m0s --image-config-file=/workspace/test-infra/jobs/e2e_node/containerd/image-config.yaml --test-suite=cadvisor: exit status 1]

W0322 22:57:59.673] Traceback (most recent call last):

W0322 22:57:59.674] File "/workspace/./test-infra/jenkins/../scenarios/kubernetes_e2e.py", line 723, in <module>

W0322 22:57:59.674] main(parse_args())

W0322 22:57:59.674] File "/workspace/./test-infra/jenkins/../scenarios/kubernetes_e2e.py", line 569, in main

W0322 22:57:59.674] mode.start(runner_args)

W0322 22:57:59.674] File "/workspace/./test-infra/jenkins/../scenarios/kubernetes_e2e.py", line 228, in start

W0322 22:57:59.675] check_env(env, self.command, *args)

W0322 22:57:59.675] File "/workspace/./test-infra/jenkins/../scenarios/kubernetes_e2e.py", line 111, in check_env

W0322 22:57:59.675] subprocess.check_call(cmd, env=env)

W0322 22:57:59.675] File "/usr/lib/python2.7/subprocess.py", line 190, in check_call

W0322 22:57:59.675] raise CalledProcessError(retcode, cmd)

W0322 22:57:59.676] subprocess.CalledProcessError: Command '('kubetest', '--dump=/workspace/_artifacts', '--gcp-service-account=/etc/service-account/service-account.json', '--up', '--down', '--test', '--deployment=node', '--provider=gce', '--cluster=bootstrap-e2e', '--gcp-network=bootstrap-e2e', '--gcp-project=ci-cadvisor-e2e', '--gcp-zone=us-central1-f', '--node-args=--image-config-file=/workspace/test-infra/jobs/e2e_node/containerd/image-config.yaml --test-suite=cadvisor', '--node-tests=true', '--test_args=--nodes=1', '--timeout=10m')' returned non-zero exit status 1

E0322 22:57:59.677] Command failed

I0322 22:57:59.678] process 339 exited with code 1 after 2.2m

E0322 22:57:59.678] FAIL: ci-cadvisor-e2e

```

### Since when has it been failing?

Since 2022-03-22.

Candidate changes that may be responsible:

- https://github.com/kubernetes/kubernetes/compare/dd604a0f9a2e015a869d01957297e33f2c0c7025...95e30f66c?

  - Candidate1: https://github.com/kubernetes/kubernetes/pull/108704 (though it targets Windows)

- https://github.com/kubernetes/test-infra/compare/ce1169a66...a6fe00c22

  - Candidate1: https://github.com/kubernetes/test-infra/pull/25727

  - Candidate2: https://github.com/kubernetes/test-infra/pull/25405

### Testgrid link

https://testgrid.k8s.io/sig-node-cadvisor#cadvisor-e2e

### Reason for failure (if possible)

_No response_

### Anything else we need to know?

_No response_

### Relevant SIG(s)

/sig node

/area cadvisor

|

test

|

ci cadvisor failed which jobs are failing ci cadvisor which tests are failing main go something went wrong encountered errors traceback most recent call last file workspace test infra jenkins scenarios kubernetes py line in main parse args file workspace test infra jenkins scenarios kubernetes py line in main mode start runner args file workspace test infra jenkins scenarios kubernetes py line in start check env env self command args file workspace test infra jenkins scenarios kubernetes py line in check env subprocess check call cmd env env file usr lib subprocess py line in check call raise calledprocesserror retcode cmd subprocess calledprocesserror command kubetest dump workspace artifacts gcp service account etc service account service account json up down test deployment node provider gce cluster bootstrap gcp network bootstrap gcp project ci cadvisor gcp zone us f node args image config file workspace test infra jobs node containerd image config yaml test suite cadvisor node tests true test args nodes timeout returned non zero exit status command failed process exited with code after fail ci cadvisor since when has it been failing since changes list that may (but for windows) testgrid link reason for failure if possible no response anything else we need to know no response relevant sig s sig node area cadvisor

| 1

|

51,145

| 13,190,289,230

|

IssuesEvent

|

2020-08-13 09:55:47

|

ESA-VirES/WebClient-Framework

|

https://api.github.com/repos/ESA-VirES/WebClient-Framework

|

opened

|

Broken server-side interpolation of the EEF data.

|

defect

|

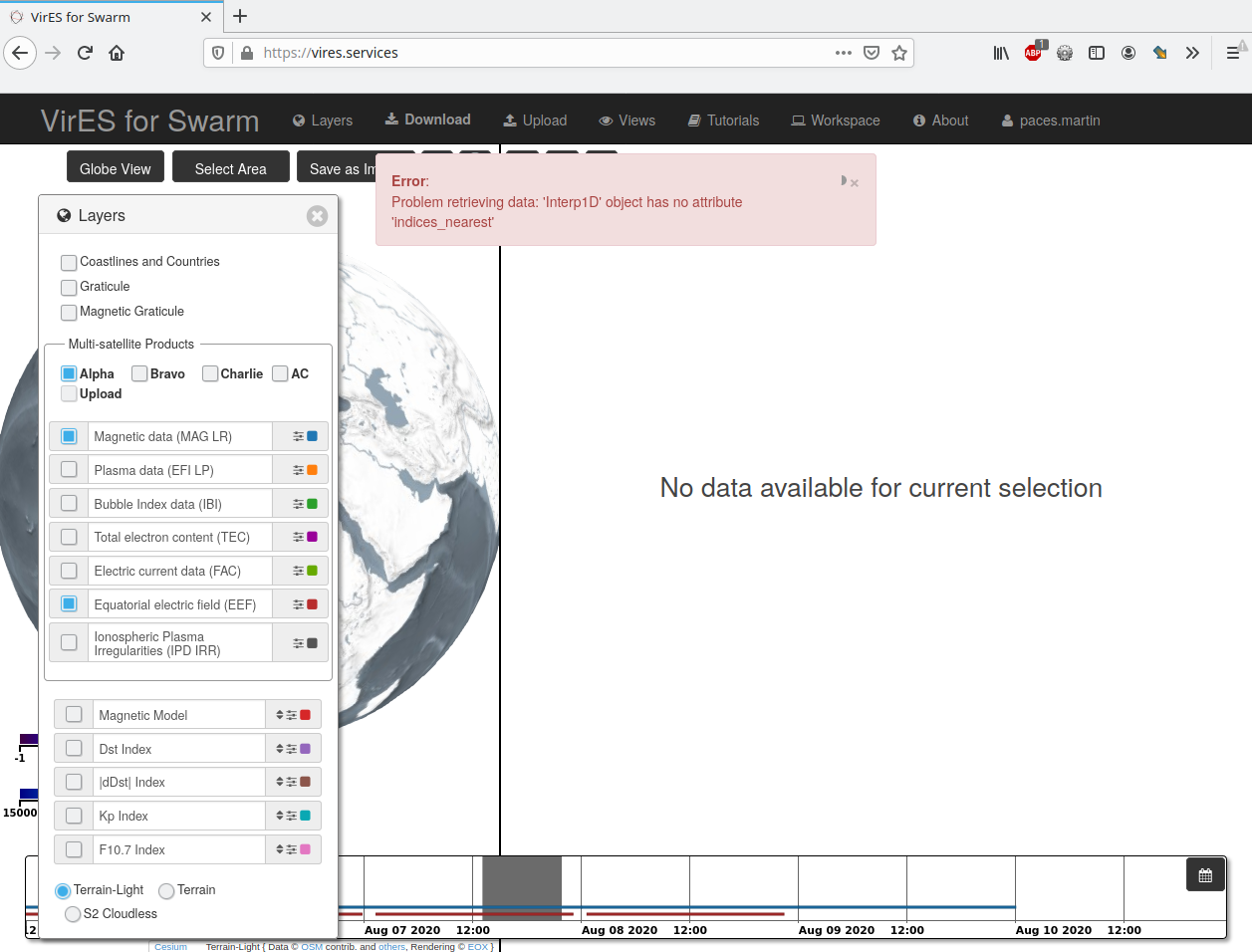

When MAG and EEF data are selected, the server responds with the following error:

```

Error: Problem retrieving data: 'Interp1D' object has no attribute 'indices_nearest'

```

This is a regression introduced in v3.3.0.

Observed on the production instance.

Already fixed on staging.

FAO @lmar76

|

1.0

|

Broken server-side interpolation of the EEF data. - When MAG and EEF data are selected, the server responds with the following error:

```

Error: Problem retrieving data: 'Interp1D' object has no attribute 'indices_nearest'

```

This is a regression introduced in v3.3.0.

Observed on the production instance.

Already fixed on staging.

FAO @lmar76

|

non_test

|

broken server side interpolation of the eef data when selecting mag and eef data the server responds with following error error problem retrieving data object has no attribute indices nearest this is a regression introduces in observed on the production instance already fixed on staging fao

| 0

|

21,665

| 3,911,689,155

|

IssuesEvent

|

2016-04-20 07:21:57

|

Legion-Expansion/Legion-Expansion

|

https://api.github.com/repos/Legion-Expansion/Legion-Expansion

|

reopened

|

Remove Icon Extensions and Icon Reloader as dependencies

|

needs testing pte

|

This will be needed for PTE release, but it will break icons on 89755.

|

1.0

|

Remove Icon Extensions and Icon Reloader as dependencies - This will be needed for PTE release, but it will break icons on 89755.

|

test

|

remove icon extensions and icon reloader as dependencies this will be needed for pte release but it will break icons on

| 1

|

275,534

| 23,921,258,929

|

IssuesEvent

|

2022-09-09 17:07:25

|

ECP-WarpX/WarpX

|

https://api.github.com/repos/ECP-WarpX/WarpX

|

opened

|

Invalid memory access when the moving window and timer-based load balancing are used

|

bug bug: affects latest release component: load balancing

|

I am opening this issue because I have observed an invalid memory access when the moving window and timer-based load balancing are used together.

Here I provide a small reproducer:

```

#################################

####### GENERAL PARAMETERS ######

#################################

max_step = 10

amr.n_cell = 64 64 64

amr.max_grid_size = 32

amr.blocking_factor = 32

amr.max_level = 0

geometry.dims = 3

geometry.prob_lo = -10.e-6 -10.e-6 -10.e-6 # physical domain

geometry.prob_hi = 10.e-6 10.e-6 10.e-6

algo.load_balance_intervals = 3::100

algo.load_balance_with_sfc = 0

algo.load_balance_costs_update = timers

warpx.do_moving_window = 1

warpx.moving_window_dir = z

warpx.moving_window_v = 1.0

warpx.start_moving_window_step = 2

#################################

####### Boundary condition ######

#################################

boundary.field_lo = pml pml pml

boundary.field_hi = pml pml pml

#################################

############ NUMERICS ###########

#################################

warpx.verbose = 1

warpx.cfl = 0.99

# Order of particle shape factors

algo.particle_shape = 3

#################################

############ PLASMA #############

#################################

particles.species_names = electrons

electrons.species_type = electron

electrons.injection_style = "NUniformPerCell"

electrons.num_particles_per_cell_each_dim = 1 1 2

electrons.profile = constant

electrons.density = 1.e25 # number of electrons per m^3

electrons.momentum_distribution_type = "gaussian"

electrons.ux_th = 0.01 # uth the std of the (unitless) momentum

electrons.uy_th = 0.01 # uth the std of the (unitless) momentum

electrons.uz_th = 0.01 # uth the std of the (unitless) momentum

```

When WarpX runs this input file (even without GPU or OpenMP support), `valgrind` reports the following issue:

```
STEP 3 starts ...
==41155== Invalid read of size 4
==41155==    at 0x55CFBD: Add<float> (AMReX_GpuAtomic.H:584)
==41155==    by 0x55CFBD: WarpX::shiftMF(amrex::MultiFab&, amrex::Geometry const&, int, int, int, float, bool, amrex::ParserExecutor<3> const&) (WarpXMovingWindow.cpp:435)
==41155==    by 0x55F8EF: WarpX::MoveWindow(int, bool) (WarpXMovingWindow.cpp:192)
==41155==    by 0x372D78: WarpX::Evolve(int) (WarpXEvolve.cpp:269)
==41155==    by 0x1BB863: main (main.cpp:67)
==41155==  Address 0xb8c925c is 4 bytes before a block of size 32 alloc'd
==41155==    at 0x4840F2F: operator new(unsigned long) (vg_replace_malloc.c:422)
==41155==    by 0x1F088A: allocate (new_allocator.h:127)
==41155==    by 0x1F088A: allocate (alloc_traits.h:464)
==41155==    by 0x1F088A: _M_allocate (stl_vector.h:346)
==41155==    by 0x1F088A: std::vector<float, std::allocator<float> >::_M_default_append(unsigned long) (vector.tcc:635)
==41155==    by 0x1D8EEB: define (AMReX_LayoutData.H:31)
==41155==    by 0x1D8EEB: LayoutData (AMReX_LayoutData.H:22)
==41155==    by 0x1D8EEB: make_unique<amrex::LayoutData<float>, const amrex::BoxArray&, const amrex::DistributionMapping&> (unique_ptr.h:962)
==41155==    by 0x1D8EEB: WarpX::AllocLevelMFs(int, amrex::BoxArray const&, amrex::DistributionMapping const&, amrex::IntVect const&, amrex::IntVect const&, amrex::IntVect const&, amrex::IntVect const&, amrex::IntVect const&, bool) (WarpX.cpp:2170)
==41155==    by 0x1DCEAB: WarpX::AllocLevelData(int, amrex::BoxArray const&, amrex::DistributionMapping const&) (WarpX.cpp:1680)
==41155==    by 0x1DCFC7: WarpX::MakeNewLevelFromScratch(int, float, amrex::BoxArray const&, amrex::DistributionMapping const&) (WarpX.cpp:1548)
==41155==    by 0x6D620D: amrex::AmrMesh::MakeNewGrids(float) (AMReX_AmrMesh.cpp:779)
==41155==    by 0x3DDC2F: InitFromScratch (WarpXInitData.cpp:472)
==41155==    by 0x3DDC2F: WarpX::InitData() (WarpXInitData.cpp:378)
==41155==    by 0x1BB856: main (main.cpp:65)
==41155==
==41155== Invalid write of size 4
==41155==    at 0x55CFC1: Add<float> (AMReX_GpuAtomic.H:584)
==41155==    by 0x55CFC1: WarpX::shiftMF(amrex::MultiFab&, amrex::Geometry const&, int, int, int, float, bool, amrex::ParserExecutor<3> const&) (WarpXMovingWindow.cpp:435)
==41155==    by 0x55F8EF: WarpX::MoveWindow(int, bool) (WarpXMovingWindow.cpp:192)
==41155==    by 0x372D78: WarpX::Evolve(int) (WarpXEvolve.cpp:269)
==41155==    by 0x1BB863: main (main.cpp:67)
==41155==  Address 0xb8c925c is 4 bytes before a block of size 32 alloc'd
==41155==    at 0x4840F2F: operator new(unsigned long) (vg_replace_malloc.c:422)
==41155==    by 0x1F088A: allocate (new_allocator.h:127)
==41155==    by 0x1F088A: allocate (alloc_traits.h:464)
==41155==    by 0x1F088A: _M_allocate (stl_vector.h:346)
==41155==    by 0x1F088A: std::vector<float, std::allocator<float> >::_M_default_append(unsigned long) (vector.tcc:635)
==41155==    by 0x1D8EEB: define (AMReX_LayoutData.H:31)
==41155==    by 0x1D8EEB: LayoutData (AMReX_LayoutData.H:22)
==41155==    by 0x1D8EEB: make_unique<amrex::LayoutData<float>, const amrex::BoxArray&, const amrex::DistributionMapping&> (unique_ptr.h:962)
==41155==    by 0x1D8EEB: WarpX::AllocLevelMFs(int, amrex::BoxArray const&, amrex::DistributionMapping const&, amrex::IntVect const&, amrex::IntVect const&, amrex::IntVect const&, amrex::IntVect const&, amrex::IntVect const&, bool) (WarpX.cpp:2170)
==41155==    by 0x1DCEAB: WarpX::AllocLevelData(int, amrex::BoxArray const&, amrex::DistributionMapping const&) (WarpX.cpp:1680)
==41155==    by 0x1DCFC7: WarpX::MakeNewLevelFromScratch(int, float, amrex::BoxArray const&, amrex::DistributionMapping const&) (WarpX.cpp:1548)
==41155==    by 0x6D620D: amrex::AmrMesh::MakeNewGrids(float) (AMReX_AmrMesh.cpp:779)
==41155==    by 0x3DDC2F: InitFromScratch (WarpXInitData.cpp:472)
==41155==    by 0x3DDC2F: WarpX::InitData() (WarpXInitData.cpp:378)
==41155==    by 0x1BB856: main (main.cpp:65)
==41155==
==41155== Invalid read of size 4
==41155==    at 0x55CFBD: Add<float> (AMReX_GpuAtomic.H:584)
==41155==    by 0x55CFBD: WarpX::shiftMF(amrex::MultiFab&, amrex::Geometry const&, int, int, int, float, bool, amrex::ParserExecutor<3> const&) (WarpXMovingWindow.cpp:435)
==41155==    by 0x55F92F: WarpX::MoveWindow(int, bool) (WarpXMovingWindow.cpp:193)
==41155==    by 0x372D78: WarpX::Evolve(int) (WarpXEvolve.cpp:269)
==41155==    by 0x1BB863: main (main.cpp:67)
==41155==  Address 0xb8c925c is 4 bytes before a block of size 32 alloc'd
==41155==    at 0x4840F2F: operator new(unsigned long) (vg_replace_malloc.c:422)
==41155==    by 0x1F088A: allocate (new_allocator.h:127)
==41155==    by 0x1F088A: allocate (alloc_traits.h:464)
==41155==    by 0x1F088A: _M_allocate (stl_vector.h:346)
==41155==    by 0x1F088A: std::vector<float, std::allocator<float> >::_M_default_append(unsigned long) (vector.tcc:635)
==41155==    by 0x1D8EEB: define (AMReX_LayoutData.H:31)
==41155==    by 0x1D8EEB: LayoutData (AMReX_LayoutData.H:22)
==41155==    by 0x1D8EEB: make_unique<amrex::LayoutData<float>, const amrex::BoxArray&, const amrex::DistributionMapping&> (unique_ptr.h:962)
==41155==    by 0x1D8EEB: WarpX::AllocLevelMFs(int, amrex::BoxArray const&, amrex::DistributionMapping const&, amrex::IntVect const&, amrex::IntVect const&, amrex::IntVect const&, amrex::IntVect const&, amrex::IntVect const&, bool) (WarpX.cpp:2170)
==41155==    by 0x1DCEAB: WarpX::AllocLevelData(int, amrex::BoxArray const&, amrex::DistributionMapping const&) (WarpX.cpp:1680)
==41155==    by 0x1DCFC7: WarpX::MakeNewLevelFromScratch(int, float, amrex::BoxArray const&, amrex::DistributionMapping const&) (WarpX.cpp:1548)
==41155==    by 0x6D620D: amrex::AmrMesh::MakeNewGrids(float) (AMReX_AmrMesh.cpp:779)
==41155==    by 0x3DDC2F: InitFromScratch (WarpXInitData.cpp:472)
==41155==    by 0x3DDC2F: WarpX::InitData() (WarpXInitData.cpp:378)
==41155==    by 0x1BB856: main (main.cpp:65)
==41155==
==41155== Invalid write of size 4
==41155==    at 0x55CFC1: Add<float> (AMReX_GpuAtomic.H:584)
==41155==    by 0x55CFC1: WarpX::shiftMF(amrex::MultiFab&, amrex::Geometry const&, int, int, int, float, bool, amrex::ParserExecutor<3> const&) (WarpXMovingWindow.cpp:435)
==41155==    by 0x55F92F: WarpX::MoveWindow(int, bool) (WarpXMovingWindow.cpp:193)
==41155==    by 0x372D78: WarpX::Evolve(int) (WarpXEvolve.cpp:269)
==41155==    by 0x1BB863: main (main.cpp:67)
==41155==  Address 0xb8c925c is 4 bytes before a block of size 32 alloc'd
==41155==    at 0x4840F2F: operator new(unsigned long) (vg_replace_malloc.c:422)
==41155==    by 0x1F088A: allocate (new_allocator.h:127)
==41155==    by 0x1F088A: allocate (alloc_traits.h:464)
==41155==    by 0x1F088A: _M_allocate (stl_vector.h:346)
==41155==    by 0x1F088A: std::vector<float, std::allocator<float> >::_M_default_append(unsigned long) (vector.tcc:635)
==41155==    by 0x1D8EEB: define (AMReX_LayoutData.H:31)
==41155==    by 0x1D8EEB: LayoutData (AMReX_LayoutData.H:22)
==41155==    by 0x1D8EEB: make_unique<amrex::LayoutData<float>, const amrex::BoxArray&, const amrex::DistributionMapping&> (unique_ptr.h:962)
==41155==    by 0x1D8EEB: WarpX::AllocLevelMFs(int, amrex::BoxArray const&, amrex::DistributionMapping const&, amrex::IntVect const&, amrex::IntVect const&, amrex::IntVect const&, amrex::IntVect const&, amrex::IntVect const&, bool) (WarpX.cpp:2170)
==41155==    by 0x1DCEAB: WarpX::AllocLevelData(int, amrex::BoxArray const&, amrex::DistributionMapping const&) (WarpX.cpp:1680)
==41155==    by 0x1DCFC7: WarpX::MakeNewLevelFromScratch(int, float, amrex::BoxArray const&, amrex::DistributionMapping const&) (WarpX.cpp:1548)
==41155==    by 0x6D620D: amrex::AmrMesh::MakeNewGrids(float) (AMReX_AmrMesh.cpp:779)
==41155==    by 0x3DDC2F: InitFromScratch (WarpXInitData.cpp:472)
==41155==    by 0x3DDC2F: WarpX::InitData() (WarpXInitData.cpp:378)
==41155==    by 0x1BB856: main (main.cpp:65)
==41155==
STEP 3 ends. TIME = 1.787413796e-15 DT = 5.958046162e-16
Evolve time = 44.5962677 s; This step = 13.71976852 s; Avg. per step = 14.86542225 s
```

|

1.0

|

Invalid memory access when moving window and timers-based load-balancing is used - I am opening this issue because I have observed an invalid memory access when the moving window and timer-based load balancing are used together.

Here I provide a small reproducer:

```
#################################
####### GENERAL PARAMETERS ######
#################################
max_step = 10
amr.n_cell = 64 64 64
amr.max_grid_size = 32
amr.blocking_factor = 32
amr.max_level = 0
geometry.dims = 3
geometry.prob_lo = -10.e-6 -10.e-6 -10.e-6 # physical domain
geometry.prob_hi = 10.e-6 10.e-6 10.e-6
algo.load_balance_intervals = 3::100
algo.load_balance_with_sfc = 0
algo.load_balance_costs_update = timers
warpx.do_moving_window = 1
warpx.moving_window_dir = z
warpx.moving_window_v = 1.0
warpx.start_moving_window_step = 2

#################################
####### Boundary condition ######
#################################
boundary.field_lo = pml pml pml
boundary.field_hi = pml pml pml

#################################
############ NUMERICS ###########
#################################
warpx.verbose = 1
warpx.cfl = 0.99
# Order of particle shape factors
algo.particle_shape = 3

#################################
############ PLASMA #############
#################################
particles.species_names = electrons
electrons.species_type = electron
electrons.injection_style = "NUniformPerCell"
electrons.num_particles_per_cell_each_dim = 1 1 2
electrons.profile = constant
electrons.density = 1.e25 # number of electrons per m^3
electrons.momentum_distribution_type = "gaussian"
electrons.ux_th = 0.01 # u_th: standard deviation of the (unitless) momentum