Unnamed: 0 int64 3 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 7 112 | repo_url stringlengths 36 141 | action stringclasses 3 values | title stringlengths 2 742 | labels stringlengths 4 431 | body stringlengths 5 239k | index stringclasses 10 values | text_combine stringlengths 96 240k | label stringclasses 2 values | text stringlengths 96 200k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

449,460 | 12,968,776,009 | IssuesEvent | 2020-07-21 06:31:44 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | accounts.firefox.com - design is broken | browser-firefox-mobile engine-gecko priority-normal | <!-- @browser: Firefox Mobile 79.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 5.1; Mobile; rv:79.0) Gecko/79.0 Firefox/79.0 -->

<!-- @reported_with: desktop-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/55632 -->

**URL**: https://accounts.firefox.com/reset_password_verified?uid=a3b218bdba884245895fcc0de0f81f3b&token=8f8f8e95fb953a243ad7cc876fcc95ba340217985fd275c62b0044a3a24b0f12&code=1636968044d6c63a6300d84b3769efba&email=osipovich.dim%40gmail.com&service=a2270f727f45f648&resume=eyJkZXZpY2VJZCI6ImNhZjRjYWVjODg0ODQzMDlhMjk0NDFhZmVlYzE4YmVlIiwiZW1haWwiOiJvc2lwb3ZpY2guZGltQGdtYWlsLmNvbSIsImVudHJ5cG9pbnQiOm51bGwsImVudHJ5cG9pbnRFeHBlcmltZW50IjpudWxsLCJlbnRyeXBvaW50VmFyaWF0aW9uIjpudWxsLCJmbG93QmVnaW4iOjE1OTUxNjMxMDA0NDEsImZsb3dJZCI6IjI1MDg2NzVhN2U5OThmNTIwMzY5OGRiMDk5NjI1MDliMWI2MzQ5NjE0NDM1ZGI1NTBlZDU0Yzc0YjdkOWIxMWEiLCJwbGFuSWQiOm51bGwsInByb2R1Y3RJZCI6bnVsbCwicmVzZXRQYXNzd29yZENvbmZpcm0iOnRydWUsInN0eWxlIjpudWxsLCJ1bmlxdWVVc2VySWQiOiI4YTlmODFmZi01MmE0LTQ3NzctOWI2OC03NTU2YWFkNTNmOWUiLCJ1dG1DYW1wYWlnbiI6bnVsbCwidXRtQ29udGVudCI6bnVsbCwidXRtTWVkaXVtIjpudWxsLCJ1dG1Tb3VyY2UiOm51bGwsInV0bVRlcm0iOm51bGx9&emailToHashWith=osipovich.dim%40gmail.com&utm_medium=email&utm_campaign=fx-forgot-password&utm_content=fx-reset-password

**Browser / Version**: Firefox Mobile 79.0

**Operating System**: Android 5.1

**Tested Another Browser**: Yes Chrome

**Problem type**: Design is broken

**Description**: Items are overlapped

**Steps to Reproduce**:

Web app not working.video no reload

<details>

<summary>Browser Configuration</summary>

<ul>

<li>gfx.webrender.all: false</li><li>gfx.webrender.blob-images: true</li><li>gfx.webrender.enabled: false</li><li>image.mem.shared: true</li><li>buildID: 20200713203149</li><li>channel: beta</li><li>hasTouchScreen: true</li>

</ul>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_ | 1.0 | accounts.firefox.com - design is broken - <!-- @browser: Firefox Mobile 79.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 5.1; Mobile; rv:79.0) Gecko/79.0 Firefox/79.0 -->

<!-- @reported_with: desktop-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/55632 -->

**URL**: https://accounts.firefox.com/reset_password_verified?uid=a3b218bdba884245895fcc0de0f81f3b&token=8f8f8e95fb953a243ad7cc876fcc95ba340217985fd275c62b0044a3a24b0f12&code=1636968044d6c63a6300d84b3769efba&email=osipovich.dim%40gmail.com&service=a2270f727f45f648&resume=eyJkZXZpY2VJZCI6ImNhZjRjYWVjODg0ODQzMDlhMjk0NDFhZmVlYzE4YmVlIiwiZW1haWwiOiJvc2lwb3ZpY2guZGltQGdtYWlsLmNvbSIsImVudHJ5cG9pbnQiOm51bGwsImVudHJ5cG9pbnRFeHBlcmltZW50IjpudWxsLCJlbnRyeXBvaW50VmFyaWF0aW9uIjpudWxsLCJmbG93QmVnaW4iOjE1OTUxNjMxMDA0NDEsImZsb3dJZCI6IjI1MDg2NzVhN2U5OThmNTIwMzY5OGRiMDk5NjI1MDliMWI2MzQ5NjE0NDM1ZGI1NTBlZDU0Yzc0YjdkOWIxMWEiLCJwbGFuSWQiOm51bGwsInByb2R1Y3RJZCI6bnVsbCwicmVzZXRQYXNzd29yZENvbmZpcm0iOnRydWUsInN0eWxlIjpudWxsLCJ1bmlxdWVVc2VySWQiOiI4YTlmODFmZi01MmE0LTQ3NzctOWI2OC03NTU2YWFkNTNmOWUiLCJ1dG1DYW1wYWlnbiI6bnVsbCwidXRtQ29udGVudCI6bnVsbCwidXRtTWVkaXVtIjpudWxsLCJ1dG1Tb3VyY2UiOm51bGwsInV0bVRlcm0iOm51bGx9&emailToHashWith=osipovich.dim%40gmail.com&utm_medium=email&utm_campaign=fx-forgot-password&utm_content=fx-reset-password

**Browser / Version**: Firefox Mobile 79.0

**Operating System**: Android 5.1

**Tested Another Browser**: Yes Chrome

**Problem type**: Design is broken

**Description**: Items are overlapped

**Steps to Reproduce**:

Web app not working.video no reload

<details>

<summary>Browser Configuration</summary>

<ul>

<li>gfx.webrender.all: false</li><li>gfx.webrender.blob-images: true</li><li>gfx.webrender.enabled: false</li><li>image.mem.shared: true</li><li>buildID: 20200713203149</li><li>channel: beta</li><li>hasTouchScreen: true</li>

</ul>

</details>

_From [webcompat.com](https://webcompat.com/) with ❤️_ | non_usab | accounts firefox com design is broken url browser version firefox mobile operating system android tested another browser yes chrome problem type design is broken description items are overlapped steps to reproduce web app not working video no reload browser configuration gfx webrender all false gfx webrender blob images true gfx webrender enabled false image mem shared true buildid channel beta hastouchscreen true from with ❤️ | 0 |

4,864 | 3,897,238,997 | IssuesEvent | 2016-04-16 09:02:42 | lionheart/openradar-mirror | https://api.github.com/repos/lionheart/openradar-mirror | opened | 15759378: Xcode 5.0.2: Using ⌘-D to duplicate a UITableViewController embedded in a UINavigationController in IB makes UINavigationItem of duplicate "unselectable" | classification:ui/usability reproducible:always status:open | #### Description

Summary:

Using ⌘-D to duplicate a UITableViewController embedded in a UINavigationController in IB makes UINavigationItem of duplicate "unselectable" in the canvas window until one closes and reopens the project.

Steps to Reproduce:

1. Open IB

2. Create a UITableViewController

3. Embed in a UINavigationController

4. Drag a bar button to the right button slot

5. Duplicate (⌘-D) the UITableViewController

6. Control-drag from the right bar button on the first controller to PUSH segue to the new controller

7. Drag a bar button to the right button slot on the new controller

Expected Results:

Similar to the working result after #4

Actual Results:

No visual indication of ability to drag and drop button. In fact, if one DOES drop the button it is placed in IB as a toolbar button, NOT a navbar button.

Notes:

Mavericks 10.9.1

Closing the project and re-opening works around the issue

-

Product Version: Xcode 5.0.2 (5A3005)

Created: 2014-01-07T02:28:24.447718

Originated: 2014-01-07T13:28:00

Open Radar Link: http://www.openradar.me/15759378 | True | 15759378: Xcode 5.0.2: Using ⌘-D to duplicate a UITableViewController embedded in a UINavigationController in IB makes UINavigationItem of duplicate "unselectable" - #### Description

Summary:

Using ⌘-D to duplicate a UITableViewController embedded in a UINavigationController in IB makes UINavigationItem of duplicate "unselectable" in the canvas window until one closes and reopens the project.

Steps to Reproduce:

1. Open IB

2. Create a UITableViewController

3. Embed in a UINavigationController

4. Drag a bar button to the right button slot

5. Duplicate (⌘-D) the UITableViewController

6. Control-drag from the right bar button on the first controller to PUSH segue to the new controller

7. Drag a bar button to the right button slot on the new controller

Expected Results:

Similar to the working result after #4

Actual Results:

No visual indication of ability to drag and drop button. In fact, if one DOES drop the button it is placed in IB as a toolbar button, NOT a navbar button.

Notes:

Mavericks 10.9.1

Closing the project and re-opening works around the issue

-

Product Version: Xcode 5.0.2 (5A3005)

Created: 2014-01-07T02:28:24.447718

Originated: 2014-01-07T13:28:00

Open Radar Link: http://www.openradar.me/15759378 | usab | xcode using ⌘ d to duplicate a uitableviewcontroller embedded in a uinavigationcontroller in ib makes uinavigationitem of duplicate unselectable description summary using ⌘ d to duplicate a uitableviewcontroller embedded in a uinavigationcontroller in ib makes uinavigationitem of duplicate unselectable in the canvas window until one closes and reopens the project steps to reproduce open ib create a uitableviewcontroller embed in a uinavigationcontroller drag a bar button to the right button slot duplicate ⌘ d the uitableviewcontroller control drag from the right bar button on the first controller to push segue to the new controller drag a bar button to the right button slot on the new controller expected results similar to the working result after actual results no visual indication of ability to drag and drop button in fact if one does drop the button it is placed in ib as a toolbar button not a navbar button notes mavericks closing the project and re opening works around the issue product version xcode created originated open radar link | 1 |

257,063 | 22,144,015,531 | IssuesEvent | 2022-06-03 09:56:48 | MohistMC/Mohist | https://api.github.com/repos/MohistMC/Mohist | closed | [1.16.5]black mod list option not work properly | 1.16.5 Wait Needs Testing | <!-- ISSUE_TEMPLATE_3 -> IMPORTANT: DO NOT DELETE THIS LINE.-->

<!-- Thank you for reporting ! Please note that issues can take a lot of time to be fixed and there is no eta.-->

<!-- If you don't know where to upload your logs and crash reports, you can use these websites : -->

<!-- https://gist.github.com (recommended) -->

<!-- https://mclo.gs -->

<!-- https://haste.mohistmc.com -->

<!-- https://pastebin.com -->

<!-- TO FILL THIS TEMPLATE, YOU NEED TO REPLACE THE {} BY WHAT YOU WANT -->

**Minecraft Version :** 1.16.5

**Mohist Version :** 1.16.5-997

**Operating System :** windows server 2022

**Logs :** none

**Mod list :**

• minecraft mohist-1.16.5-997-server.jar : minecraft (1.16.5) - 1

• maven_libs mohist-1.16.5-997-universal.jar : forge (36.2.35) - 1

**Plugin list :** none

**Description of issue :**

The `modsblacklist` option (in mohist.yml) does not seem to work properly when its `list` parameter holds more than one entry: the entries are not matched individually. I tried setting the parameter like this:

```
forge:
  modsblacklist:
    enable: true
    list: xray,autoattack
    kickmessage: Use of unauthorized mods
```

or

```
forge:
  modsblacklist:
    enable: true
    list:
      - xray
      - autoattack
    kickmessage: Use of unauthorized mods
```

or like this, which is what the file contains after a reload:

```
forge:
  modsblacklist:
    enable: true
    list: '[xray, autoattack]'
    kickmessage: Use of unauthorized mods
```

With any of these settings, a client that has only `xray` loaded (and not `autoattack`) passes the blacklist check and can join the server. What I expect is that a client with any mod listed in the config is blocked.

However, if I set the parameter to a single entry, as below:

```
forge:
  modsblacklist:
    enable: true
    list: xray
    kickmessage: Use of unauthorized mods
```

it seems to work properly: the server blocks any client that has the xray mod loaded.

So the question is: how can I make the blacklist comparison `contains any in list` rather than `equal all`? | 1.0 | [1.16.5]black mod list option not work properly - <!-- ISSUE_TEMPLATE_3 -> IMPORTANT: DO NOT DELETE THIS LINE.-->

<!-- Thank you for reporting ! Please note that issues can take a lot of time to be fixed and there is no eta.-->

<!-- If you don't know where to upload your logs and crash reports, you can use these websites : -->

<!-- https://gist.github.com (recommended) -->

<!-- https://mclo.gs -->

<!-- https://haste.mohistmc.com -->

<!-- https://pastebin.com -->

<!-- TO FILL THIS TEMPLATE, YOU NEED TO REPLACE THE {} BY WHAT YOU WANT -->

**Minecraft Version :** 1.16.5

**Mohist Version :** 1.16.5-997

**Operating System :** windows server 2022

**Logs :** none

**Mod list :**

• minecraft mohist-1.16.5-997-server.jar : minecraft (1.16.5) - 1

• maven_libs mohist-1.16.5-997-universal.jar : forge (36.2.35) - 1

**Plugin list :** none

**Description of issue :**

The `modsblacklist` option (in mohist.yml) does not seem to work properly when its `list` parameter holds more than one entry: the entries are not matched individually. I tried setting the parameter like this:

```
forge:
  modsblacklist:
    enable: true
    list: xray,autoattack
    kickmessage: Use of unauthorized mods
```

or

```
forge:
  modsblacklist:
    enable: true
    list:
      - xray
      - autoattack
    kickmessage: Use of unauthorized mods
```

or like this, which is what the file contains after a reload:

```
forge:
  modsblacklist:
    enable: true
    list: '[xray, autoattack]'
    kickmessage: Use of unauthorized mods
```

With any of these settings, a client that has only `xray` loaded (and not `autoattack`) passes the blacklist check and can join the server. What I expect is that a client with any mod listed in the config is blocked.

However, if I set the parameter to a single entry, as below:

```
forge:
  modsblacklist:
    enable: true
    list: xray
    kickmessage: Use of unauthorized mods
```

it seems to work properly: the server blocks any client that has the xray mod loaded.

So the question is: how can I make the blacklist comparison `contains any in list` rather than `equal all`? | non_usab | black mod list option not work properly important do not delete this line minecraft version mohist version operating system windows server logs none mod list • minecraft mohist server jar minecraft • maven libs mohist universal jar forge plugin list none description of issue the option of modsblacklist which is in the mohist yml may not work properly when the list parameter accept a list the element in the list can t be matched by single which i mean if i set the parameter like this forge modsblacklist enable true list xray autoattack kickmessage use of unauthorized mods or forge modsblacklist enable true list xray autoattact kickmessage use of unauthorized mods as well as this after reload forge modsblacklist enable true list kickmessage use of unauthorized mods when a client with only xray but no autoattack loaded it can pass the blacklist check and join the server but what i expect is that the client with any mod listed by config should be blocked and if i set the parameter as below forge modsblacklist enable true list xray kickmessage use of unauthorized mods it seems work properly that server could block any client with xray mod loaded so the problem is how can i set the blacklist compare method to contains any in list but not equal all | 0 |
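
The difference between the two comparison modes named in the report above (`contains any in list` versus `equal all`) can be sketched as follows. This is only an illustration in Python, not Mohist's actual implementation: the function names `parse_blacklist`, `is_blocked_any` and `is_blocked_all` are hypothetical, and the assumption that the `list` value may arrive as a real list, a comma-separated string, or a bracketed string is taken from the three config variants quoted in the issue.

```python
def parse_blacklist(value):
    """Normalize a blacklist setting into a set of lowercase mod ids.

    Hypothetical helper: accepts a real list, a comma-separated string
    ("xray,autoattack"), or a bracketed string ("[xray, autoattack]").
    """
    if isinstance(value, str):
        # Drop surrounding brackets, then split on commas.
        value = value.strip().strip("[]").split(",")
    return {str(item).strip().lower() for item in value if str(item).strip()}


def is_blocked_any(client_mods, blacklist):
    """Block when the client has at least one blacklisted mod."""
    return not blacklist.isdisjoint(m.lower() for m in client_mods)


def is_blocked_all(client_mods, blacklist):
    """Block only when the client has every blacklisted mod."""
    return bool(blacklist) and blacklist <= {m.lower() for m in client_mods}
```

With a blacklist of `xray` and `autoattack`, a client that loads only `xray` is rejected by `is_blocked_any` but accepted by `is_blocked_all`, which matches the behaviour the reporter describes versus the behaviour they expect.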

6,360 | 2,839,597,562 | IssuesEvent | 2015-05-27 14:30:20 | ScienceCommons/api | https://api.github.com/repos/ScienceCommons/api | closed | For now, do not display authors in search results | sloboda test | This will be cool down the road, but right now, because we still don't have a working author merge tool, there will most often be several different author names showing up each referring to the same author. This is very confusing for the user.

For example, https://www.curatescience.org/beta/#/query/pashler%20elderly yields 3 different authors for Hal R. Pashler (Hal Pashler, Harold Pashler, and Hal R. Pashler) | 1.0 | For now, do not display authors in search results - This will be cool down the road, but right now, because we still don't have a working author merge tool, there will most often be several different author names showing up each referring to the same author. This is very confusing for the user.

For example, https://www.curatescience.org/beta/#/query/pashler%20elderly yields 3 different authors for Hal R. Pashler (Hal Pashler, Harold Pashler, and Hal R. Pashler) | non_usab | for now do not display authors in search results this will be cool down the road but right now because we still don t have a working author merge tool there will most often be several different author names showing up each referring to the same author this is very confusing for the user for example yields different authors for hal r pashler hal pashler harold pashler and hal r pashler | 0 |

14,948 | 9,605,242,110 | IssuesEvent | 2019-05-10 22:58:45 | geneontology/amigo | https://api.github.com/repos/geneontology/amigo | closed | Update old "help" URLs to something functional | bug (B: affects usability) | Currently, all help URLs are set to something defunct (http://geneontology.org/form/contact-go); at least aim them somewhere that is not a deadend while other helpdesk issues are sorted out (http://help.geneontology.org).

| True | Update old "help" URLs to something functional - Currently, all help URLs are set to something defunct (http://geneontology.org/form/contact-go); at least aim them somewhere that is not a deadend while other helpdesk issues are sorted out (http://help.geneontology.org).

| usab | update old help urls to something functional currently all help urls are set to something defunct at least aim them somewhere that is not a deadend while other helpdesk issues are sorted out | 1 |

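260,613 | 19,678,513,581 | IssuesEvent | 2022-01-11 14:42:42 | Gourmet-Dev/gourmet-apis | https://api.github.com/repos/Gourmet-Dev/gourmet-apis | opened | Missing README file, Issue Template, and PR Template | documentation | ### Problem

* The README file does not exist

* When filing an issue, the template has to be written by hand

* When opening a pull request, the template has to be written by hand

---

### Expected behavior

* The repository should have basic Getting Started content so that the service can be run locally

* When filing an issue, the editor should be pre-filled with the predefined template

* When opening a pull request, the editor should be pre-filled with the predefined template

---

### Steps to reproduce

* No reproduction conditions | 1.0 | Missing README file, Issue Template, and PR Template - ### Problem

* The README file does not exist

* When filing an issue, the template has to be written by hand

* When opening a pull request, the template has to be written by hand

---

### Expected behavior

* The repository should have basic Getting Started content so that the service can be run locally

* When filing an issue, the editor should be pre-filled with the predefined template

* When opening a pull request, the editor should be pre-filled with the predefined template

---

### Steps to reproduce

* No reproduction conditions | non_usab | missing readme file issue template and pr template problem the readme file does not exist when filing an issue the template has to be written by hand when opening a pull request the template has to be written by hand expected behavior the repository should have basic getting started content so that the service can be run locally when filing an issue the editor should be pre filled with the predefined template when opening a pull request the editor should be pre filled with the predefined template steps to reproduce no reproduction conditions | 0 |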

12,077 | 7,686,967,162 | IssuesEvent | 2018-05-17 02:30:41 | MarkBind/markbind | https://api.github.com/repos/MarkBind/markbind | closed | Panels: go from minimized to expanded directly | a-ReaderUsability p.Low | Steps:

1. Minimize a panel using the `x` button

2. Click on the minimized panel

Actual: panel goes to collapsed mode.

Suggested: go direct to the expanded mode.

Reason: It is likely the reader wants to see the content of panel. The proposed improvement saves reader a click. | True | Panels: go from minimized to expanded directly - Steps:

1. Minimize a panel using the `x` button

2. Click on the minimized panel

Actual: panel goes to collapsed mode.

Suggested: go direct to the expanded mode.

Reason: It is likely the reader wants to see the content of panel. The proposed improvement saves reader a click. | usab | panels go from minimized to expanded directly steps minimize a panel using the x button click on the minimized panel actual panel goes to collapsed mode suggested go direct to the expanded mode reason it is likely the reader wants to see the content of panel the proposed improvement saves reader a click | 1 |

15,722 | 10,263,835,743 | IssuesEvent | 2019-08-22 15:06:36 | coala/coala-bears | https://api.github.com/repos/coala/coala-bears | closed | IndentationBear - Ignore doc comments/doc strings | area/genericbears area/usability difficulty/medium importance/medium | Doc comments don't usually follow standards, and we are trying to make the IndentationBear ignore them. Until then, it is best if the inline doc for the IndentationBear tells people to ignore the IndentationBear over doc comments.

| True | IndentationBear - Ignore doc comments/doc strings - Doc comments don't usually follow standards, and we are trying to make the IndentationBear ignore them. Until then, it is best if the inline doc for the IndentationBear tells people to ignore the IndentationBear over doc comments.

| usab | indentationbear ignore doc comments doc strings doc comments don t usually follow standards and we are trying to ignore doc comments within the indentationbear till then it is best if we tell people to ignore the indentationbear over doc comments in the inline doc for the indentationbear | 1 |

23,102 | 21,007,312,014 | IssuesEvent | 2022-03-30 00:41:47 | rabbitmq/rabbitmq-server | https://api.github.com/repos/rabbitmq/rabbitmq-server | closed | Consider making rabbitmqadmin available in PATH for Debian and RPM packages | usability pkg-rpm pkg-deb | I get that you may want to pre-configure it for the right URL, but it would be great if you could ship some kind of version of rabbitmqadmin suitable for config management packages to install or modify (even if it's a template of some sort, or only has default values, e.g., localhost / 15672 in default options).

For example, in Puppet, it has to do a curl of the admin interface using a username to connect in order to get the file, which seems like a really kludgy way to do it:

https://github.com/puppetlabs/puppetlabs-rabbitmq/blob/master/manifests/install/rabbitmqadmin.pp

https://tickets.puppetlabs.com/browse/MODULES-3098

| True | Consider making rabbitmqadmin available in PATH for Debian and RPM packages - I get that you may want to pre-configure it for the right URL, but it would be great if you could ship some kind of version of rabbitmqadmin suitable for config management packages to install or modify (even if it's a template of some sort, or only has default values, e.g., localhost / 15672 in default options).

For example, in Puppet, it has to do a curl of the admin interface using a username to connect in order to get the file, which seems like a really kludgy way to do it:

https://github.com/puppetlabs/puppetlabs-rabbitmq/blob/master/manifests/install/rabbitmqadmin.pp

https://tickets.puppetlabs.com/browse/MODULES-3098

| usab | consider making rabbitmqadmin available in path for debian and rpm packages i get that you may want to pre configure it for the right url but it would be great if you could ship some kind of version of rabbitmqadmin suitable for config management packages to install or modify even if it s a template of some sort or only has default values e g localhost in default options for example in puppet it has to do a curl of the admin interface using a username to connect in order to get the file which seems like a really kludgy way to do it | 1 |

15,198 | 9,851,898,854 | IssuesEvent | 2019-06-19 11:35:09 | virtualsatellite/VirtualSatellite4-Core | https://api.github.com/repos/virtualsatellite/VirtualSatellite4-Core | opened | Highlight changed elements after comparison / update | comfort/usability | There was a question in a project meeting whether it is possible to somehow show which components have changed when taking an update from SVN.

We see different use cases such as:

- Comparing to a fixed baseline revision, e.g. the version from this morning

- Comparing to see all the changes that just came in with the last update. This could be a preference setting

- Comparing to see all my uncommitted changes

The idea is different from VirSat3, which means that we don't want to check out an old revision but want to bend the URI handlers to load the information directly from SVN.

It will also need some good UI to spot the differences.

Idea: Comparison Editor

Investigate the possibility of implementing a comparison editor that can compare the model with a given revision from the repository.

Functionality could be similar to team->compare with.

Maybe the functionality needs to create a summary of changes in the whole model. Investigate the use of EMF Compare. | True | Highlight changed elements after comparison / update - There was a question in a project meeting whether it is possible to somehow show which components have changed when taking an update from SVN.

We see different use cases such as:

- Comparing to a fixed baseline revision, e.g. the version from this morning

- Comparing to see all the changes that just came in with the last update. This could be a preference setting

- Comparing to see all my uncommitted changes

The idea is different from VirSat3, which means that we don't want to check out an old revision but want to bend the URI handlers to load the information directly from SVN.

It will also need some good UI to spot the differences.

Idea: Comparison Editor

Investigate the possibility of implementing a comparison editor that can compare the model with a given revision from the repository.

Functionality could be similar to team->compare with.

Maybe the functionality needs to create a summary of changes in the whole model. Investigate the use of EMF Compare. | usab | highlight changed elements after comparison update there was a question in a project meeting whether it is possible to somehow show which components have changed when taking an update from svn we see different use cases such as comparing to a baseline revision that is fixed e g the version of today in the morning comparing to see al the changes that just came in with the last update this could eb apreference setting comarping to see all my uncommitted changes the idea is different to which means that we dont want to check out an old revision but want to bend the uri handlers to directly load the information from the svn it will also need some good ui to spot the differences idea comparison editor investigate possibility to implement a comparison editor that can comapre the model with a given revision from the repository functionality could be similar to team comapre with maybe the functionality needs to create asummary of changes in the whole model investigate the use of emf compare | 1 |

9,497 | 6,334,040,485 | IssuesEvent | 2017-07-26 15:51:14 | palantir/atlasdb | https://api.github.com/repos/palantir/atlasdb | opened | Unhelpful IllegalArgumentException on timelock revert | component: timelock component: usability | ```

Exception in thread "main" java.lang.IllegalArgumentException: array too small: 1 < 8

```

This comes up when you start up a node which was previously using timelock and then went back to embedded. It was implemented this way so that clients that don't even know that timelock exists can't accidentally violate the timestamp guarantee. See #1596 for context.

Probably unhelpful, and also pretty scary if one encounters this in the field without context.

We should catch this when creating the timestamp bound store, and rethrow something with a bit more explanation.

Internal reference: PDS-55189

(Also note, though, that this is kind of a win, in that our protection mechanism just prevented an instance of data corruption!) | True | Unhelpful IllegalArgumentException on timelock revert - ```

Exception in thread "main" java.lang.IllegalArgumentException: array too small: 1 < 8

```

This comes up when you start up a node which was previously using timelock and then went back to embedded. It was implemented this way so that clients that don't even know that timelock exists can't accidentally violate the timestamp guarantee. See #1596 for context.

Probably unhelpful, and also pretty scary if one encounters this in the field without context.

We should catch this when creating the timestamp bound store, and rethrow something with a bit more explanation.

Internal reference: PDS-55189

(Also note, though, that this is kind of a win, in that our protection mechanism just prevented an instance of data corruption!) | usab | unhelpful illegalargumentexception on timelock revert exception in thread main java lang illegalargumentexception array too small this comes up when you start up a node which was previously using timelock and then went back to embedded it was implemented in this way so that clients that don t even know that timelock doesn t exist can t accidentally violate the timestamp guarantee see for context probably unhelpful and also pretty scary if one encounters this in the field without context we should catch this when creating the timestamp bound store and rethrow something with a bit more explanation internal reference pds also note though that this is kind of a win in that our protection mechanism just prevented an instance of data corruption | 1 |
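
The "catch and rethrow with more explanation" idea from the report above can be sketched as follows. This is a language-neutral illustration written in Python, not AtlasDB's Java code: the exception type `TimestampBoundFormatError` and the function `decode_timestamp_bound` are hypothetical, and the assumption that the stored bound is an 8-byte big-endian integer is inferred only from the `1 < 8` in the quoted message.

```python
import struct


class TimestampBoundFormatError(RuntimeError):
    """Hypothetical error type carrying an operator-facing explanation."""


def decode_timestamp_bound(raw: bytes) -> int:
    """Decode a stored bound, assumed to be an 8-byte big-endian integer."""
    try:
        (bound,) = struct.unpack(">q", raw)
    except struct.error as exc:
        # Keep the low-level error as the cause, but lead with the context
        # an operator needs in order to understand what happened.
        raise TimestampBoundFormatError(
            f"Stored timestamp bound is {len(raw)} byte(s), expected 8. "
            "This can happen when a node that previously used timelock is "
            "started with an embedded timestamp service again; the stored "
            "value was left unreadable on purpose to protect the timestamp "
            "guarantee."
        ) from exc
    return bound
```

The point of the design is that the protection mechanism stays exactly as strict as before; only the message changes, so that someone meeting the failure in the field can tell it is deliberate.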

20,545 | 15,681,861,792 | IssuesEvent | 2021-03-25 06:15:58 | microsoft/win32metadata | https://api.github.com/repos/microsoft/win32metadata | closed | CreateIcon missing NativeArray attribute on byte* array parameters | usability | currently the metadata has:

```cs

[DllImport("USER32", ExactSpelling = true, SetLastError = true)]

public unsafe static extern HICON CreateIcon([Optional][In] HINSTANCE hInstance, [In] int nWidth, [In] int nHeight, [In] byte cPlanes, [In] byte cBitsPixel, [In][Const] byte* lpbANDbits, [In][Const] byte* lpbXORbits);

```

But the last two parameters should be arrays. | True | CreateIcon missing NativeArray attribute on byte* array parameters - currently the metadata has:

```cs

[DllImport("USER32", ExactSpelling = true, SetLastError = true)]

public unsafe static extern HICON CreateIcon([Optional][In] HINSTANCE hInstance, [In] int nWidth, [In] int nHeight, [In] byte cPlanes, [In] byte cBitsPixel, [In][Const] byte* lpbANDbits, [In][Const] byte* lpbXORbits);

```

But the last two parameters should be arrays. | usab | createicon missing nativearray attribute on byte array parameters currently the metadata has cs public unsafe static extern hicon createicon hinstance hinstance int nwidth int nheight byte cplanes byte cbitspixel byte lpbandbits byte lpbxorbits but the last two parameters should be arrays | 1 |

8,109 | 11,300,957,290 | IssuesEvent | 2020-01-17 14:40:55 | geneontology/go-ontology | https://api.github.com/repos/geneontology/go-ontology | closed | too many appressorium terms | multi-species process | GO:0075039 establishment of turgor in appressorium 1 annotations

GO:0075021 cAMP-mediated activation of appressorium formation

GO:0075016 appressorium formation on or near host 1 annotations

GO:0075003 adhesion of symbiont appressorium to host

GO:0075022 ethylene-mediated activation of appressorium formation

GO:0075023 MAPK-mediated regulation of appressorium formation

GO:0075040 regulation of establishment of turgor in appressorium

GO:0075043 maintenance of turgor in appressorium by melanization

GO:0075035 maturation of appressorium on or near host 1 annotations

GO:0075024 phospholipase C-mediated activation of appressorium formation

GO:0075025 initiation of appressorium on or near host

GO:0075020 calcium or calmodulin-mediated activation of appressorium formation

GO:0075017 regulation of appressorium formation on or near host

GO:0075041 positive regulation of establishment of turgor in appressorium

GO:0075042 negative regulation of establishment of turgor in appressorium

I am annotating a MAP kinase which regulates

appressorium formation

However, I don't want to use

GO:0075023 MAPK-mediated regulation of appressorium formation

I want to do just

regulation of appressorium formation

I think the other terms

GO:0075024 phospholipase C-mediated activation of appressorium formation

GO:0075020 calcium or calmodulin-mediated activation of appressorium formation

should also go because they are just other MFs in the signalling pathway.

also merge

GO:0075025 initiation of appressorium on or near host

into positive regulation

| 1.0 | too many appressorium terms - GO:0075039 establishment of turgor in appressorium 1 annotations

GO:0075021 cAMP-mediated activation of appressorium formation

GO:0075016 appressorium formation on or near host 1 annotations

GO:0075003 adhesion of symbiont appressorium to host

GO:0075022 ethylene-mediated activation of appressorium formation

GO:0075023 MAPK-mediated regulation of appressorium formation

GO:0075040 regulation of establishment of turgor in appressorium

GO:0075043 maintenance of turgor in appressorium by melanization

GO:0075035 maturation of appressorium on or near host 1 annotations

GO:0075024 phospholipase C-mediated activation of appressorium formation

GO:0075025 initiation of appressorium on or near host

GO:0075020 calcium or calmodulin-mediated activation of appressorium formation

GO:0075017 regulation of appressorium formation on or near host

GO:0075041 positive regulation of establishment of turgor in appressorium

GO:0075042 negative regulation of establishment of turgor in appressorium

I am annotating a MAP kinase which regulates

appressorium formation

However, I don't want to use

GO:0075023 MAPK-mediated regulation of appressorium formation

I want to do just

regulation of appressorium formation

I think the other terms

GO:0075024 phospholipase C-mediated activation of appressorium formation

GO:0075020 calcium or calmodulin-mediated activation of appressorium formation

should also go because they are just other MFs in the signalling pathway.

also merge

GO:0075025 initiation of appressorium on or near host

into positive regulation

| non_usab | too many appressorium terms go establishment of turgor in appressorium annotations go camp mediated activation of appressorium formation go appressorium formation on or near host annotations go adhesion of symbiont appressorium to host go ethylene mediated activation of appressorium formation go mapk mediated regulation of appressorium formation go regulation of establishment of turgor in appressorium go maintenance of turgor in appressorium by melanization go maturation of appressorium on or near host annotations go phospholipase c mediated activation of appressorium formation go initiation of appressorium on or near host go calcium or calmodulin mediated activation of appressorium formation go regulation of appressorium formation on or near host go positive regulation of establishment of turgor in appressorium go negative regulation of establishment of turgor in appressorium i am annotating a map kinease which regulates appressorium formation however i don t want to use go mapk mediated regulation of appressorium formation i want to do just regulation of appressorium formation i think the other terms go phospholipase c mediated activation of appressorium formation go calcium or calmodulin mediated activation of appressorium formation should also go because they are just other mfs in the signalling pathway also merge go initiation of appressorium on or near host into positive regulation | 0 |

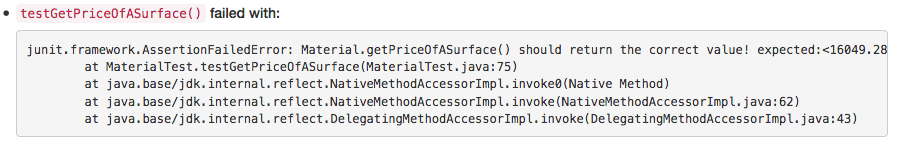

13,663 | 8,634,730,670 | IssuesEvent | 2018-11-22 18:06:00 | st-tu-dresden/inloop | https://api.github.com/repos/st-tu-dresden/inloop | closed | Display console output and exception messages in a user-friendlier way | enhancement usability | The most annoying problem is that long exception messages do not wrap around properly, which makes it difficult to see important message details, e.g., the expected vs. actual value.

| True | Display console output and exception messages in a user-friendlier way - The most annoying problem is that long exception messages do not wrap around properly, which makes it difficult to see important message details, e.g., the expected vs. actual value.

| usab | display console output and exception messages in a user friendlier way the most annoying problem is that long exception messages do not wrap around properly which makes it difficult to see important message details e g the expected vs actual value | 1 |

159,522 | 12,478,578,452 | IssuesEvent | 2020-05-29 16:42:55 | spel-uchile/SUCHAI-Flight-Software | https://api.github.com/repos/spel-uchile/SUCHAI-Flight-Software | closed | Sequence of 5 commands throws exit code -11 | Fuzz-Testing bug | The sequence is:

tm_parse_status 168850784532074374035732101058055169116 12826105913956939644 E0@2Ni*@ -162817515606114395225612514469063087299 -48916261179497125227303848440736194118 -6386859017823808887 r@F5uu -8152784359493193565

gssb_set_burn_config -285600039855062482800991264458719794343 -3143019222374379877 10778628039574652351 -219709885077994447318495468478095151127 FZG -322088856487707042825536300309208787826 w

drp_set_deployed

fp_del_cmd_unix ^TyCU& -243018170100445935 "4n3Kr/hr 80211873877148678249755549121640936724 1175049179

com_get_config h -7060649124262353519 2063750755 | 1.0 | Sequence of 5 commands throws exit code -11 - The sequence is:

tm_parse_status 168850784532074374035732101058055169116 12826105913956939644 E0@2Ni*@ -162817515606114395225612514469063087299 -48916261179497125227303848440736194118 -6386859017823808887 r@F5uu -8152784359493193565

gssb_set_burn_config -285600039855062482800991264458719794343 -3143019222374379877 10778628039574652351 -219709885077994447318495468478095151127 FZG -322088856487707042825536300309208787826 w

drp_set_deployed

fp_del_cmd_unix ^TyCU& -243018170100445935 "4n3Kr/hr 80211873877148678249755549121640936724 1175049179

com_get_config h -7060649124262353519 2063750755 | non_usab | sequence of commands throws exit code the sequence is tm parse status r gssb set burn config fzg w drp set deployed fp del cmd unix tycu hr com get config h | 0 |
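
A negative exit code such as -11 often means the process was killed by a signal rather than exiting on its own. The sketch below assumes the fuzzing harness reports exit codes the way Python's `subprocess` module does (negative N means "terminated by signal N"); the issue does not say how the code was obtained, so `describe_exit_code` is a hypothetical helper for illustration only.

```python
import signal


def describe_exit_code(code: int) -> str:
    """Describe a subprocess-style exit code in readable form.

    Assumption: negative values follow the Python subprocess convention,
    where -N means the process was terminated by signal N.
    """
    if code < 0:
        try:
            name = signal.Signals(-code).name
        except ValueError:
            # The number does not map to a signal known on this platform.
            name = "unknown signal"
        return f"terminated by signal {-code} ({name})"
    return f"exited normally with status {code}"


print(describe_exit_code(-11))
```

On Linux, signal 11 is SIGSEGV, so under this assumption the command sequence would be triggering an invalid memory access.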

103,755 | 4,184,965,399 | IssuesEvent | 2016-06-23 09:17:05 | Zenika/Zenika-Resume | https://api.github.com/repos/Zenika/Zenika-Resume | closed | Have a variable to define the period of an experience | Priority 1 | In an experience, it should be possible to define the period:

January 2015 - March 2016

Just a string, to allow as much freedom as possible | 1.0 | Have a variable to define the period of an experience - In an experience, it should be possible to define the period:

January 2015 - March 2016

Just a string, to allow as much freedom as possible | non_usab | have a variable to define the period of an experience in an experience it should be possible to define the period january march just a string to allow as much freedom as possible | 0 |

177,125 | 21,464,567,367 | IssuesEvent | 2022-04-26 01:23:15 | raindigi/site-landing | https://api.github.com/repos/raindigi/site-landing | closed | CVE-2021-33623 (High) detected in trim-newlines-1.0.0.tgz - autoclosed | security vulnerability | ## CVE-2021-33623 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>trim-newlines-1.0.0.tgz</b></p></summary>

<p>Trim newlines from the start and/or end of a string</p>

<p>Library home page: <a href="https://registry.npmjs.org/trim-newlines/-/trim-newlines-1.0.0.tgz">https://registry.npmjs.org/trim-newlines/-/trim-newlines-1.0.0.tgz</a></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/trim-newlines/package.json</p>

<p>

Dependency Hierarchy:

- gatsby-plugin-sharp-2.0.32.tgz (Root Library)

- imagemin-mozjpeg-8.0.0.tgz

- mozjpeg-6.0.1.tgz

- logalot-2.1.0.tgz

- squeak-1.3.0.tgz

- lpad-align-1.1.2.tgz

- meow-3.7.0.tgz

- :x: **trim-newlines-1.0.0.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/raindigi/site-landing/commit/bcba8b01c6ab60dc16fa75543eba31b8be7a461e">bcba8b01c6ab60dc16fa75543eba31b8be7a461e</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The trim-newlines package before 3.0.1 and 4.x before 4.0.1 for Node.js has an issue related to regular expression denial-of-service (ReDoS) for the .end() method.

<p>Publish Date: 2021-05-28

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-33623>CVE-2021-33623</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2021-33623">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2021-33623</a></p>

<p>Release Date: 2021-05-28</p>

<p>Fix Resolution: trim-newlines - 3.0.1, 4.0.1</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2021-33623 (High) detected in trim-newlines-1.0.0.tgz - autoclosed - ## CVE-2021-33623 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>trim-newlines-1.0.0.tgz</b></p></summary>

<p>Trim newlines from the start and/or end of a string</p>

<p>Library home page: <a href="https://registry.npmjs.org/trim-newlines/-/trim-newlines-1.0.0.tgz">https://registry.npmjs.org/trim-newlines/-/trim-newlines-1.0.0.tgz</a></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/trim-newlines/package.json</p>

<p>

Dependency Hierarchy:

- gatsby-plugin-sharp-2.0.32.tgz (Root Library)

- imagemin-mozjpeg-8.0.0.tgz

- mozjpeg-6.0.1.tgz

- logalot-2.1.0.tgz

- squeak-1.3.0.tgz

- lpad-align-1.1.2.tgz

- meow-3.7.0.tgz

- :x: **trim-newlines-1.0.0.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/raindigi/site-landing/commit/bcba8b01c6ab60dc16fa75543eba31b8be7a461e">bcba8b01c6ab60dc16fa75543eba31b8be7a461e</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The trim-newlines package before 3.0.1 and 4.x before 4.0.1 for Node.js has an issue related to regular expression denial-of-service (ReDoS) for the .end() method.

<p>Publish Date: 2021-05-28

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-33623>CVE-2021-33623</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2021-33623">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2021-33623</a></p>

<p>Release Date: 2021-05-28</p>

<p>Fix Resolution: trim-newlines - 3.0.1, 4.0.1</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_usab | cve high detected in trim newlines tgz autoclosed cve high severity vulnerability vulnerable library trim newlines tgz trim newlines from the start and or end of a string library home page a href path to dependency file package json path to vulnerable library node modules trim newlines package json dependency hierarchy gatsby plugin sharp tgz root library imagemin mozjpeg tgz mozjpeg tgz logalot tgz squeak tgz lpad align tgz meow tgz x trim newlines tgz vulnerable library found in head commit a href vulnerability details the trim newlines package before and x before for node js has an issue related to regular expression denial of service redos for the end method publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution trim newlines step up your open source security game with whitesource | 0 |
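
The advisory above attributes the problem to a regular expression used when trimming trailing newlines. As a rough illustration of how such trimming can be done without any regular expression, here is a minimal Python sketch; it is not the `trim-newlines` package's code (that package is JavaScript), and `trim_trailing_newlines` is a hypothetical name.

```python
def trim_trailing_newlines(text: str) -> str:
    """Remove trailing '\\r' and '\\n' characters in a single backward scan.

    Each character is inspected at most once, so the running time is linear
    in the length of the input and no regex backtracking can occur.
    """
    end = len(text)
    while end > 0 and text[end - 1] in "\r\n":
        end -= 1
    return text[:end]


print(repr(trim_trailing_newlines("report line\r\n\n")))
```

For the project in this report, the suggested fix remains upgrading the dependency to a patched version; the sketch only shows why a plain scan avoids the issue class.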

11,495 | 7,266,845,497 | IssuesEvent | 2018-02-20 00:40:38 | phan/phan | https://api.github.com/repos/phan/phan | closed | Finish support for Windows in daemon mode | daemon enhancement usability | 2 things look promising:

1. docker (may need to add config flag to change TCP listen interface to a custom value such as 0.0.0.0)

2. (Windows 10): "Ubuntu on Windows" https://www.hanselman.com/blog/DevelopersCanRunBashShellAndUsermodeUbuntuLinuxBinariesOnWindows10.aspx (Best performance)

I'm having problems getting the VM set up for https://developer.microsoft.com/en-us/windows/downloads/virtual-machines on virtualbox

(May be possible by installing php7.1 and php7.1-dev with pthread support (pcntl module), building php-ast, and running phan.) | True | Finish support for Windows in daemon mode - 2 things look promising:

1. docker (may need to add config flag to change TCP listen interface to a custom value such as 0.0.0.0)

2. (Windows 10): "Ubuntu on Windows" https://www.hanselman.com/blog/DevelopersCanRunBashShellAndUsermodeUbuntuLinuxBinariesOnWindows10.aspx (Best performance)

I'm having problems getting the VM set up for https://developer.microsoft.com/en-us/windows/downloads/virtual-machines on virtualbox

(May be possible by installing php7.1 and php7.1-dev with pthread support (pcntl module), building php-ast, and running phan.) | usab | finish support for windows in daemon mode things look promising docker may need to add config flag to change tcp listen interface to a custom value such as windows ubuntu on windows best performance i m having problems getting the vm set up for on virtualbox may be possible by installing and dev with pthread support pcntl module building php ast and running phan | 1 |

27,964 | 30,794,854,986 | IssuesEvent | 2023-07-31 19:01:05 | aws-amplify/amplify-hosting | https://api.github.com/repos/aws-amplify/amplify-hosting | closed | UNCOMPRESSED_CODE_SIZE_EXCEEDED (frequent issue) | usability response-requested closed-for-staleness compute | ### Before opening, please confirm:

- [X] I have checked to see if my question is addressed in the [FAQ](https://github.com/aws-amplify/amplify-hosting/blob/master/FAQ.md).

- [X] I have [searched for duplicate or closed issues](https://github.com/aws-amplify/amplify-hosting/issues?q=is%3Aissue+).

- [X] I have read the guide for [submitting bug reports](https://github.com/aws-amplify/amplify-hosting/blob/master/CONTRIBUTING.md).

- [X] I have done my best to include a minimal, self-contained set of instructions for consistently reproducing the issue.

- [X] I have removed any sensitive information from my code snippets and submission.

### App Id

d1nra4pyobb96k

### AWS Region

us-east-1

### Amplify Hosting feature

Frontend builds

### Frontend framework

Next.js

### Next.js version

13.4.7

### Next.js router

Pages Router

### Describe the bug

Builds frequently fail to deploy because they exceed the 120 MB limit. Getting under this limit, even with few dependencies, is virtually impossible. Is it possible to somehow increase this arbitrary storage limit?

We've also tried a few of the suggestions to compress the file size, to no avail.

### Expected behavior

Builds should deploy

### Reproduction steps

1. Deploy an application that goes slightly over the 120mb limit

2. The build fails to deploy

### Build Settings

```yaml
version: 1
frontend:
  phases:
    preBuild:
      commands:
        - yarn install --frozen-lockfile
        # - yarn install
    build:
      commands:
        - npm run build
        # - rm -rf .next/standalone/node_modules/@buidlerlabs/hashgraph-venin-js/node_modules/solc
        - du -h .next/standalone
        #- allfiles=$(ls -al ./.next/standalone/**/*.js)
        #- npx esbuild $allfiles --minify --outdir=.next/standalone --platform=node --target=node16 --format=cjs --allow-overwrite
  artifacts:
    baseDirectory: .next
    files:
      - '**/*'
  cache:
    paths:
      - node_modules/**/*
```

### Log output

<details>

```

# Put your logs below this line

```

</details>

### Additional information

_No response_ | True | UNCOMPRESSED_CODE_SIZE_EXCEEDED (frequent issue) - ### Before opening, please confirm:

- [X] I have checked to see if my question is addressed in the [FAQ](https://github.com/aws-amplify/amplify-hosting/blob/master/FAQ.md).

- [X] I have [searched for duplicate or closed issues](https://github.com/aws-amplify/amplify-hosting/issues?q=is%3Aissue+).

- [X] I have read the guide for [submitting bug reports](https://github.com/aws-amplify/amplify-hosting/blob/master/CONTRIBUTING.md).

- [X] I have done my best to include a minimal, self-contained set of instructions for consistently reproducing the issue.

- [X] I have removed any sensitive information from my code snippets and submission.

### App Id

d1nra4pyobb96k

### AWS Region

us-east-1

### Amplify Hosting feature

Frontend builds

### Frontend framework

Next.js

### Next.js version

13.4.7

### Next.js router

Pages Router

### Describe the bug

Builds frequently fail to deploy because they exceed the 120 MB limit. Getting under this limit, even with few dependencies, is virtually impossible. Is it possible to somehow increase this arbitrary storage limit?

We've also tried a few of the suggestions to compress the file size, to no avail.

### Expected behavior

Builds should deploy

### Reproduction steps

1. Deploy an application that goes slightly over the 120mb limit

2. The build fails to deploy

### Build Settings

```yaml
version: 1
frontend:
  phases:
    preBuild:
      commands:
        - yarn install --frozen-lockfile
        # - yarn install
    build:
      commands:
        - npm run build
        # - rm -rf .next/standalone/node_modules/@buidlerlabs/hashgraph-venin-js/node_modules/solc
        - du -h .next/standalone
        #- allfiles=$(ls -al ./.next/standalone/**/*.js)
        #- npx esbuild $allfiles --minify --outdir=.next/standalone --platform=node --target=node16 --format=cjs --allow-overwrite
  artifacts:
    baseDirectory: .next
    files:
      - '**/*'
  cache:
    paths:
      - node_modules/**/*
```

### Log output

<details>

```

# Put your logs below this line

```

</details>

### Additional information

_No response_ | usab | uncompressed code size exceeded frequent issue before opening please confirm i have checked to see if my question is addressed in the i have i have read the guide for i have done my best to include a minimal self contained set of instructions for consistently reproducing the issue i have removed any sensitive information from my code snippets and submission app id aws region us east amplify hosting feature frontend builds frontend framework next js next js version next js router pages router describe the bug frequently builds fail to deploy because they exceed the mb limit getting under this limit with few dependencies is virtually impossible is it possible to somehow increase this arbitrary storage limit we ve also tried a few of the suggestions to compress the file size to no avail expected behavior builds should deploy reproduction steps deploy an application that goes slightly over the limit the build fails to deploy build settings yaml version frontend phases prebuild commands yarn install frozen lockfile yarn install build commands npm run build rm rf next standalone node modules buidlerlabs hashgraph venin js node modules solc du h next standalone allfiles ls al next standalone js npx esbuild allfiles minify outdir next standalone platform node target format cjs allow overwrite artifacts basedirectory next files cache paths node modules log output put your logs below this line additional information no response | 1 |
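
The build settings above use `du -h .next/standalone` to eyeball the output size. A small script can make the same check explicit before deploying. This is only a sketch: it assumes the 120 MB limit is counted in bytes as 120 × 1024 × 1024 and that the relevant directory is `.next/standalone`, neither of which the report confirms; `directory_size` and `exceeds_limit` are hypothetical helper names.

```python
import os

# Assumption: "120 MB" is interpreted as 120 * 1024 * 1024 bytes.
LIMIT_BYTES = 120 * 1024 * 1024


def directory_size(path: str) -> int:
    """Return the total size in bytes of all regular files under path."""
    total = 0
    for root, _dirs, files in os.walk(path):
        for name in files:
            full_path = os.path.join(root, name)
            # Skip symlinks so linked files are not counted twice.
            if not os.path.islink(full_path):
                total += os.path.getsize(full_path)
    return total


def exceeds_limit(path: str, limit: int = LIMIT_BYTES) -> bool:
    """Report whether the directory's uncompressed size is above the limit."""
    return directory_size(path) > limit
```

Calling `exceeds_limit(".next/standalone")` at the end of the build phase would flag an oversized bundle before the deploy step rejects it.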

14,509 | 10,904,917,019 | IssuesEvent | 2019-11-20 09:43:22 | celo-org/celo-blockchain | https://api.github.com/repos/celo-org/celo-blockchain | opened | Alfajores node never syncs | bug celo-blockchain infrastructure | ### Expected Behavior

Running an Alfajores full node should sync the blockchain.

### Current Behavior

Alfajores nodes do not sync

Integration nodes sync fine

### Steps to reproduce

I am running celo nodes with the scripts from this PR:

https://github.com/celo-org/celo-blockchain/pull/613/files

like `$ ./run-node-docker.sh alajores 44782 us.gcr.io/celo-testnet/celo-node:alfajores 8547 8548 30304`

It always ends up with a log full of handshakes but never retrieves any chain data. This happens with both the Docker image from Google Cloud and a custom-built Docker image from the `celo-blockchain` master branch.

It looks like it is not able to reach the boot nodes.

Full log here:

```

sebastian:celo-blockchain/ (feature/node_runner✗) $ ./run-node-docker.sh alajores 44782 us.gcr.io/celo-testnet/celo-node:alfajores 8547 8548 30304

Using Celo address: 9bbc4fe69f24c70527bd083e78081ba0e2bf3984

INFO [11-20|09:33:47.480] Maximum peer count ETH=25 LES=99 total=124

INFO [11-20|09:33:47.487] Allocated cache and file handles database=/root/.celo/geth/chaindata cache=16 handles=16

INFO [11-20|09:33:47.564] Writing custom genesis block

INFO [11-20|09:33:47.571] Persisted trie from memory database nodes=103 size=17.78kB time=2.1954ms gcnodes=0 gcsize=0.00B gctime=0s livenodes=1 livesize=0.00B

INFO [11-20|09:33:47.581] HASH2 hash=92b2a5…86b4d6

INFO [11-20|09:33:47.581] Successfully wrote genesis state database=chaindata hash=92b2a5…86b4d6

INFO [11-20|09:33:47.581] Allocated cache and file handles database=/root/.celo/geth/lightchaindata cache=16 handles=16

INFO [11-20|09:33:47.604] Writing custom genesis block

INFO [11-20|09:33:47.610] Persisted trie from memory database nodes=103 size=17.78kB time=1.2841ms gcnodes=0 gcsize=0.00B gctime=0s livenodes=1 livesize=0.00B

INFO [11-20|09:33:47.615] HASH2 hash=92b2a5…86b4d6

INFO [11-20|09:33:47.615] Successfully wrote genesis state database=lightchaindata hash=92b2a5…86b4d6

INFO [11-20|09:33:47.615] Allocated cache and file handles database=/root/.celo/geth/ultralightchaindata cache=16 handles=16

INFO [11-20|09:33:47.642] Writing custom genesis block

INFO [11-20|09:33:47.646] Persisted trie from memory database nodes=103 size=17.78kB time=1.3452ms gcnodes=0 gcsize=0.00B gctime=0s livenodes=1 livesize=0.00B

INFO [11-20|09:33:47.653] HASH2 hash=92b2a5…86b4d6

INFO [11-20|09:33:47.653] Successfully wrote genesis state database=ultralightchaindata hash=92b2a5…86b4d6

INFO [11-20|09:33:50.471] Maximum peer count ETH=100 LES=1000 total=1100

WARN [11-20|09:33:50.491] Found deprecated node list file /root/.celo/static-nodes.json, please use the TOML config file instead.

INFO [11-20|09:33:50.501] Starting peer-to-peer node instance=Geth/v1.8.23-stable/linux-amd64/go1.11.13

INFO [11-20|09:33:50.502] Allocated cache and file handles database=/root/.celo/geth/chaindata cache=768 handles=524288

INFO [11-20|09:33:50.714] Initialised chain configuration config="{ChainID: 44785 Homestead: 0 DAO: <nil> DAOSupport: false EIP150: 0 EIP155: 0 EIP158: 0 Byzantium: 0 Constantinople: 0 ConstantinopleFix: 0 Engine: istanbul}"

INFO [11-20|09:33:50.715] Creating dir dir=/root/.celo/geth/istanbul

INFO [11-20|09:33:50.716] Initialising Ethereum protocol versions=[64] network=44782

INFO [11-20|09:33:50.720] Loaded most recent local header number=0 hash=92b2a5…86b4d6 td=1 age=1y2mo4w

INFO [11-20|09:33:50.720] Loaded most recent local full block number=0 hash=92b2a5…86b4d6 td=1 age=1y2mo4w

INFO [11-20|09:33:50.720] Loaded most recent local fast block number=0 hash=92b2a5…86b4d6 td=1 age=1y2mo4w

INFO [11-20|09:33:50.724] Regenerated local transaction journal transactions=0 accounts=0

INFO [11-20|09:33:50.766] UDP listener up net=enode://a9b0789b986d70e51c9aec376d28847fed05ee909e3d5ccf5cef57d57549bba938ab85c243ba83f6b9db331adee27f849aff27eaa40ae8b4ef98584da299a7b1@[::]:30303

INFO [11-20|09:33:50.772] New local node record seq=1 id=f82efd32270286a9 ip=127.0.0.1 udp=30303 tcp=30303

INFO [11-20|09:33:50.772] Started P2P networking self=enode://a9b0789b986d70e51c9aec376d28847fed05ee909e3d5ccf5cef57d57549bba938ab85c243ba83f6b9db331adee27f849aff27eaa40ae8b4ef98584da299a7b1@127.0.0.1:30303

INFO [11-20|09:33:50.777] Starting topic registration topic=LES2@92b2a5bbc9b1b519

INFO [11-20|09:33:50.780] IPC endpoint opened url=/root/.celo/geth.ipc

INFO [11-20|09:33:50.781] HTTP endpoint opened url=http://0.0.0.0:8545 cors= vhosts=localhost

INFO [11-20|09:33:51.098] Ethereum handshake HASH id=9da22b8e5c6122d2 conn=staticdial hash=92b2a5…86b4d6 genesis="&{header:0xc0000fefc0 uncles:[] transactions:[] randomness:0xc0000ce700 hash:{v:[146 178 165 187 201 177 181 25 121 173 213 235 110 121 112 126 10 177 62 45 65 179 190 108 230 148 125 87 157 134 180 214]} size:{v:<nil>} td:<nil> ReceivedAt:0001-01-01 00:00:00 +0000 UTC ReceivedFrom:<nil>}"

INFO [11-20|09:33:51.106] Ethereum handshake HASH id=947523bf1d9b8976 conn=staticdial hash=92b2a5…86b4d6 genesis="&{header:0xc0000fefc0 uncles:[] transactions:[] randomness:0xc0000ce700 hash:{v:[146 178 165 187 201 177 181 25 121 173 213 235 110 121 112 126 10 177 62 45 65 179 190 108 230 148 125 87 157 134 180 214]} size:{v:<nil>} td:<nil> ReceivedAt:0001-01-01 00:00:00 +0000 UTC ReceivedFrom:<nil>}"

INFO [11-20|09:33:51.111] Ethereum handshake HASH id=df431a8d3e701951 conn=staticdial hash=92b2a5…86b4d6 genesis="&{header:0xc0000fefc0 uncles:[] transactions:[] randomness:0xc0000ce700 hash:{v:[146 178 165 187 201 177 181 25 121 173 213 235 110 121 112 126 10 177 62 45 65 179 190 108 230 148 125 87 157 134 180 214]} size:{v:<nil>} td:<nil> ReceivedAt:0001-01-01 00:00:00 +0000 UTC ReceivedFrom:<nil>}"

INFO [11-20|09:33:51.115] Ethereum handshake HASH id=069c4c46b5929ece conn=staticdial hash=92b2a5…86b4d6 genesis="&{header:0xc0000fefc0 uncles:[] transactions:[] randomness:0xc0000ce700 hash:{v:[146 178 165 187 201 177 181 25 121 173 213 235 110 121 112 126 10 177 62 45 65 179 190 108 230 148 125 87 157 134 180 214]} size:{v:<nil>} td:<nil> ReceivedAt:0001-01-01 00:00:00 +0000 UTC ReceivedFrom:<nil>}"

INFO [11-20|09:33:51.116] Ethereum handshake HASH id=e5264af1f57e3026 conn=staticdial hash=92b2a5…86b4d6 genesis="&{header:0xc0000fefc0 uncles:[] transactions:[] randomness:0xc0000ce700 hash:{v:[146 178 165 187 201 177 181 25 121 173 213 235 110 121 112 126 10 177 62 45 65 179 190 108 230 148 125 87 157 134 180 214]} size:{v:<nil>} td:<nil> ReceivedAt:0001-01-01 00:00:00 +0000 UTC ReceivedFrom:<nil>}"

INFO [11-20|09:33:51.119] Ethereum handshake HASH id=2ef4d3aec48f17d3 conn=staticdial hash=92b2a5…86b4d6 genesis="&{header:0xc0000fefc0 uncles:[] transactions:[] randomness:0xc0000ce700 hash:{v:[146 178 165 187 201 177 181 25 121 173 213 235 110 121 112 126 10 177 62 45 65 179 190 108 230 148 125 87 157 134 180 214]} size:{v:<nil>} td:<nil> ReceivedAt:0001-01-01 00:00:00 +0000 UTC ReceivedFrom:<nil>}"

INFO [11-20|09:33:51.123] Ethereum handshake HASH id=bf12068ddde6912f conn=staticdial hash=92b2a5…86b4d6 genesis="&{header:0xc0000fefc0 uncles:[] transactions:[] randomness:0xc0000ce700 hash:{v:[146 178 165 187 201 177 181 25 121 173 213 235 110 121 112 126 10 177 62 45 65 179 190 108 230 148 125 87 157 134 180 214]} size:{v:<nil>} td:<nil> ReceivedAt:0001-01-01 00:00:00 +0000 UTC ReceivedFrom:<nil>}"

INFO [11-20|09:33:51.125] Ethereum handshake HASH id=d940b682c3bdf911 conn=staticdial hash=92b2a5…86b4d6 genesis="&{header:0xc0000fefc0 uncles:[] transactions:[] randomness:0xc0000ce700 hash:{v:[146 178 165 187 201 177 181 25 121 173 213 235 110 121 112 126 10 177 62 45 65 179 190 108 230 148 125 87 157 134 180 214]} size:{v:<nil>} td:<nil> ReceivedAt:0001-01-01 00:00:00 +0000 UTC ReceivedFrom:<nil>}"

INFO [11-20|09:33:51.126] Ethereum handshake HASH id=2392f7a01f53abd8 conn=staticdial hash=92b2a5…86b4d6 genesis="&{header:0xc0000fefc0 uncles:[] transactions:[] randomness:0xc0000ce700 hash:{v:[146 178 165 187 201 177 181 25 121 173 213 235 110 121 112 126 10 177 62 45 65 179 190 108 230 148 125 87 157 134 180 214]} size:{v:<nil>} td:<nil> ReceivedAt:0001-01-01 00:00:00 +0000 UTC ReceivedFrom:<nil>}"

INFO [11-20|09:33:51.128] Ethereum handshake HASH id=92f89d75a3379528 conn=staticdial hash=92b2a5…86b4d6 genesis="&{header:0xc0000fefc0 uncles:[] transactions:[] randomness:0xc0000ce700 hash:{v:[146 178 165 187 201 177 181 25 121 173 213 235 110 121 112 126 10 177 62 45 65 179 190 108 230 148 125 87 157 134 180 214]} size:{v:<nil>} td:<nil> ReceivedAt:0001-01-01 00:00:00 +0000 UTC ReceivedFrom:<nil>}"

INFO [11-20|09:34:23.070] Ethereum handshake HASH id=92f89d75a3379528 conn=staticdial hash=92b2a5…86b4d6 genesis="&{header:0xc0000fefc0 uncles:[] transactions:[] randomness:0xc0000ce700 hash:{v:[146 178 165 187 201 177 181 25 121 173 213 235 110 121 112 126 10 177 62 45 65 179 190 108 230 148 125 87 157 134 180 214]} size:{v:<nil>} td:<nil> ReceivedAt:0001-01-01 00:00:00 +0000 UTC ReceivedFrom:<nil>}"

INFO [11-20|09:34:23.082] Ethereum handshake HASH id=9da22b8e5c6122d2 conn=staticdial hash=92b2a5…86b4d6 genesis="&{header:0xc0000fefc0 uncles:[] transactions:[] randomness:0xc0000ce700 hash:{v:[146 178 165 187 201 177 181 25 121 173 213 235 110 121 112 126 10 177 62 45 65 179 190 108 230 148 125 87 157 134 180 214]} size:{v:<nil>} td:<nil> ReceivedAt:0001-01-01 00:00:00 +0000 UTC ReceivedFrom:<nil>}"

INFO [11-20|09:34:23.093] Ethereum handshake HASH id=df431a8d3e701951 conn=staticdial hash=92b2a5…86b4d6 genesis="&{header:0xc0000fefc0 uncles:[] transactions:[] randomness:0xc0000ce700 hash:{v:[146 178 165 187 201 177 181 25 121 173 213 235 110 121 112 126 10 177 62 45 65 179 190 108 230 148 125 87 157 134 180 214]} size:{v:<nil>} td:<nil> ReceivedAt:0001-01-01 00:00:00 +0000 UTC ReceivedFrom:<nil>}"

INFO [11-20|09:34:23.096] Ethereum handshake HASH id=d940b682c3bdf911 conn=staticdial hash=92b2a5…86b4d6 genesis="&{header:0xc0000fefc0 uncles:[] transactions:[] randomness:0xc0000ce700 hash:{v:[146 178 165 187 201 177 181 25 121 173 213 235 110 121 112 126 10 177 62 45 65 179 190 108 230 148 125 87 157 134 180 214]} size:{v:<nil>} td:<nil> ReceivedAt:0001-01-01 00:00:00 +0000 UTC ReceivedFrom:<nil>}"

INFO [11-20|09:34:23.099] Ethereum handshake HASH id=069c4c46b5929ece conn=staticdial hash=92b2a5…86b4d6 genesis="&{header:0xc0000fefc0 uncles:[] transactions:[] randomness:0xc0000ce700 hash:{v:[146 178 165 187 201 177 181 25 121 173 213 235 110 121 112 126 10 177 62 45 65 179 190 108 230 148 125 87 157 134 180 214]} size:{v:<nil>} td:<nil> ReceivedAt:0001-01-01 00:00:00 +0000 UTC ReceivedFrom:<nil>}"

INFO [11-20|09:34:23.103] Ethereum handshake HASH id=bf12068ddde6912f conn=staticdial hash=92b2a5…86b4d6 genesis="&{header:0xc0000fefc0 uncles:[] transactions:[] randomness:0xc0000ce700 hash:{v:[146 178 165 187 201 177 181 25 121 173 213 235 110 121 112 126 10 177 62 45 65 179 190 108 230 148 125 87 157 134 180 214]} size:{v:<nil>} td:<nil> ReceivedAt:0001-01-01 00:00:00 +0000 UTC ReceivedFrom:<nil>}"

INFO [11-20|09:34:23.107] Ethereum handshake HASH id=2392f7a01f53abd8 conn=staticdial hash=92b2a5…86b4d6 genesis="&{header:0xc0000fefc0 uncles:[] transactions:[] randomness:0xc0000ce700 hash:{v:[146 178 165 187 201 177 181 25 121 173 213 235 110 121 112 126 10 177 62 45 65 179 190 108 230 148 125 87 157 134 180 214]} size:{v:<nil>} td:<nil> ReceivedAt:0001-01-01 00:00:00 +0000 UTC ReceivedFrom:<nil>}"

INFO [11-20|09:34:23.109] Ethereum handshake HASH id=e5264af1f57e3026 conn=staticdial hash=92b2a5…86b4d6 genesis="&{header:0xc0000fefc0 uncles:[] transactions:[] randomness:0xc0000ce700 hash:{v:[146 178 165 187 201 177 181 25 121 173 213 235 110 121 112 126 10 177 62 45 65 179 190 108 230 148 125 87 157 134 180 214]} size:{v:<nil>} td:<nil> ReceivedAt:0001-01-01 00:00:00 +0000 UTC ReceivedFrom:<nil>}"

INFO [11-20|09:34:23.110] Ethereum handshake HASH id=947523bf1d9b8976 conn=staticdial hash=92b2a5…86b4d6 genesis="&{header:0xc0000fefc0 uncles:[] transactions:[] randomness:0xc0000ce700 hash:{v:[146 178 165 187 201 177 181 25 121 173 213 235 110 121 112 126 10 177 62 45 65 179 190 108 230 148 125 87 157 134 180 214]} size:{v:<nil>} td:<nil> ReceivedAt:0001-01-01 00:00:00 +0000 UTC ReceivedFrom:<nil>}"

INFO [11-20|09:34:23.112] Ethereum handshake HASH id=2ef4d3aec48f17d3 conn=staticdial hash=92b2a5…86b4d6 genesis="&{header:0xc0000fefc0 uncles:[] transactions:[] randomness:0xc0000ce700 hash:{v:[146 178 165 187 201 177 181 25 121 173 213 235 110 121 112 126 10 177 62 45 65 179 190 108 230 148 125 87 157 134 180 214]} size:{v:<nil>} td:<nil> ReceivedAt:0001-01-01 00:00:00 +0000 UTC ReceivedFrom:<nil>}"

``` | 1.0 | Alfajores node never syncs - ### Expected Behavior

When running an Alfajores full node, it should be able to sync the blockchain.

### Current Behavior

Alfajores nodes do not sync

Integration nodes sync fine

### Steps to reproduce

I am running Celo nodes with the scripts from this PR:

https://github.com/celo-org/celo-blockchain/pull/613/files

For example: `$ ./run-node-docker.sh alajores 44782 us.gcr.io/celo-testnet/celo-node:alfajores 8547 8548 30304`

The node always ends up logging lots of handshakes but never retrieves any chain data. This happens with both the Docker image from Google Cloud and a custom Docker image built from the `celo-blockchain` master branch.

It looks like it is not able to reach the boot nodes.
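One way to narrow this down is to ask the node itself how many peers it has and whether it reports a sync in progress. The following is a minimal sketch, not part of the scripts from the PR: it uses the standard Ethereum JSON-RPC methods `net_peerCount` and `eth_syncing`, and assumes that the node exposes them over HTTP and that the endpoint is reachable at `http://localhost:8545` (the host and port are assumptions; adjust them to whatever the container maps). The helper names are hypothetical.

```python
import json
import urllib.request

# Assumption: the node's HTTP JSON-RPC endpoint is reachable here.
# Adjust host and port to match your Docker port mapping.
RPC_URL = "http://localhost:8545"


def rpc_payload(method, params=None, request_id=1):
    """Build a JSON-RPC 2.0 request body for the given method."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": method,
        "params": params or [],
    }


def parse_peer_count(response):
    """net_peerCount returns a hex-encoded quantity such as "0xa"."""
    return int(response["result"], 16)


def parse_syncing(response):
    """eth_syncing returns False when idle, else an object of hex quantities."""
    result = response["result"]
    if result is False:
        return None
    keys = ("startingBlock", "currentBlock", "highestBlock")
    # Only decode the keys that are present in the response.
    return {k: int(result[k], 16) for k in keys if k in result}


def call(method, url=RPC_URL):
    """Send one JSON-RPC request over HTTP and return the decoded reply."""
    body = json.dumps(rpc_payload(method)).encode()
    req = urllib.request.Request(
        url, data=body, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(req, timeout=5) as resp:
        return json.load(resp)


if __name__ == "__main__":
    print("peers:", parse_peer_count(call("net_peerCount")))
    print("syncing:", parse_syncing(call("eth_syncing")))
```

A peer count of zero together with no sync in progress would be consistent with handshakes that never turn into established peers, while a non-zero peer count would point away from the boot nodes being unreachable.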

Full log here:

```

sebastian:celo-blockchain/ (feature/node_runner✗) $ ./run-node-docker.sh alajores 44782 us.gcr.io/celo-testnet/celo-node:alfajores 8547 8548 30304

Using Celo address: 9bbc4fe69f24c70527bd083e78081ba0e2bf3984

INFO [11-20|09:33:47.480] Maximum peer count ETH=25 LES=99 total=124

INFO [11-20|09:33:47.487] Allocated cache and file handles database=/root/.celo/geth/chaindata cache=16 handles=16

INFO [11-20|09:33:47.564] Writing custom genesis block

INFO [11-20|09:33:47.571] Persisted trie from memory database nodes=103 size=17.78kB time=2.1954ms gcnodes=0 gcsize=0.00B gctime=0s livenodes=1 livesize=0.00B

INFO [11-20|09:33:47.581] HASH2 hash=92b2a5…86b4d6

INFO [11-20|09:33:47.581] Successfully wrote genesis state database=chaindata hash=92b2a5…86b4d6

INFO [11-20|09:33:47.581] Allocated cache and file handles database=/root/.celo/geth/lightchaindata cache=16 handles=16

INFO [11-20|09:33:47.604] Writing custom genesis block

INFO [11-20|09:33:47.610] Persisted trie from memory database nodes=103 size=17.78kB time=1.2841ms gcnodes=0 gcsize=0.00B gctime=0s livenodes=1 livesize=0.00B

INFO [11-20|09:33:47.615] HASH2 hash=92b2a5…86b4d6

INFO [11-20|09:33:47.615] Successfully wrote genesis state database=lightchaindata hash=92b2a5…86b4d6

INFO [11-20|09:33:47.615] Allocated cache and file handles database=/root/.celo/geth/ultralightchaindata cache=16 handles=16

INFO [11-20|09:33:47.642] Writing custom genesis block

INFO [11-20|09:33:47.646] Persisted trie from memory database nodes=103 size=17.78kB time=1.3452ms gcnodes=0 gcsize=0.00B gctime=0s livenodes=1 livesize=0.00B

INFO [11-20|09:33:47.653] HASH2 hash=92b2a5…86b4d6

INFO [11-20|09:33:47.653] Successfully wrote genesis state database=ultralightchaindata hash=92b2a5…86b4d6

INFO [11-20|09:33:50.471] Maximum peer count ETH=100 LES=1000 total=1100

WARN [11-20|09:33:50.491] Found deprecated node list file /root/.celo/static-nodes.json, please use the TOML config file instead.

INFO [11-20|09:33:50.501] Starting peer-to-peer node instance=Geth/v1.8.23-stable/linux-amd64/go1.11.13

INFO [11-20|09:33:50.502] Allocated cache and file handles database=/root/.celo/geth/chaindata cache=768 handles=524288

INFO [11-20|09:33:50.714] Initialised chain configuration config="{ChainID: 44785 Homestead: 0 DAO: <nil> DAOSupport: false EIP150: 0 EIP155: 0 EIP158: 0 Byzantium: 0 Constantinople: 0 ConstantinopleFix: 0 Engine: istanbul}"

INFO [11-20|09:33:50.715] Creating dir dir=/root/.celo/geth/istanbul

INFO [11-20|09:33:50.716] Initialising Ethereum protocol versions=[64] network=44782

INFO [11-20|09:33:50.720] Loaded most recent local header number=0 hash=92b2a5…86b4d6 td=1 age=1y2mo4w

INFO [11-20|09:33:50.720] Loaded most recent local full block number=0 hash=92b2a5…86b4d6 td=1 age=1y2mo4w

INFO [11-20|09:33:50.720] Loaded most recent local fast block number=0 hash=92b2a5…86b4d6 td=1 age=1y2mo4w

INFO [11-20|09:33:50.724] Regenerated local transaction journal transactions=0 accounts=0

INFO [11-20|09:33:50.766] UDP listener up net=enode://a9b0789b986d70e51c9aec376d28847fed05ee909e3d5ccf5cef57d57549bba938ab85c243ba83f6b9db331adee27f849aff27eaa40ae8b4ef98584da299a7b1@[::]:30303

INFO [11-20|09:33:50.772] New local node record seq=1 id=f82efd32270286a9 ip=127.0.0.1 udp=30303 tcp=30303

INFO [11-20|09:33:50.772] Started P2P networking self=enode://a9b0789b986d70e51c9aec376d28847fed05ee909e3d5ccf5cef57d57549bba938ab85c243ba83f6b9db331adee27f849aff27eaa40ae8b4ef98584da299a7b1@127.0.0.1:30303

INFO [11-20|09:33:50.777] Starting topic registration topic=LES2@92b2a5bbc9b1b519

INFO [11-20|09:33:50.780] IPC endpoint opened url=/root/.celo/geth.ipc

INFO [11-20|09:33:50.781] HTTP endpoint opened url=http://0.0.0.0:8545 cors= vhosts=localhost

INFO [11-20|09:33:51.098] Ethereum handshake HASH id=9da22b8e5c6122d2 conn=staticdial hash=92b2a5…86b4d6 genesis="&{header:0xc0000fefc0 uncles:[] transactions:[] randomness:0xc0000ce700 hash:{v:[146 178 165 187 201 177 181 25 121 173 213 235 110 121 112 126 10 177 62 45 65 179 190 108 230 148 125 87 157 134 180 214]} size:{v:<nil>} td:<nil> ReceivedAt:0001-01-01 00:00:00 +0000 UTC ReceivedFrom:<nil>}"

INFO [11-20|09:33:51.106] Ethereum handshake HASH id=947523bf1d9b8976 conn=staticdial hash=92b2a5…86b4d6 genesis="&{header:0xc0000fefc0 uncles:[] transactions:[] randomness:0xc0000ce700 hash:{v:[146 178 165 187 201 177 181 25 121 173 213 235 110 121 112 126 10 177 62 45 65 179 190 108 230 148 125 87 157 134 180 214]} size:{v:<nil>} td:<nil> ReceivedAt:0001-01-01 00:00:00 +0000 UTC ReceivedFrom:<nil>}"

INFO [11-20|09:33:51.111] Ethereum handshake HASH id=df431a8d3e701951 conn=staticdial hash=92b2a5…86b4d6 genesis="&{header:0xc0000fefc0 uncles:[] transactions:[] randomness:0xc0000ce700 hash:{v:[146 178 165 187 201 177 181 25 121 173 213 235 110 121 112 126 10 177 62 45 65 179 190 108 230 148 125 87 157 134 180 214]} size:{v:<nil>} td:<nil> ReceivedAt:0001-01-01 00:00:00 +0000 UTC ReceivedFrom:<nil>}"

INFO [11-20|09:33:51.115] Ethereum handshake HASH id=069c4c46b5929ece conn=staticdial hash=92b2a5…86b4d6 genesis="&{header:0xc0000fefc0 uncles:[] transactions:[] randomness:0xc0000ce700 hash:{v:[146 178 165 187 201 177 181 25 121 173 213 235 110 121 112 126 10 177 62 45 65 179 190 108 230 148 125 87 157 134 180 214]} size:{v:<nil>} td:<nil> ReceivedAt:0001-01-01 00:00:00 +0000 UTC ReceivedFrom:<nil>}"

INFO [11-20|09:33:51.116] Ethereum handshake HASH id=e5264af1f57e3026 conn=staticdial hash=92b2a5…86b4d6 genesis="&{header:0xc0000fefc0 uncles:[] transactions:[] randomness:0xc0000ce700 hash:{v:[146 178 165 187 201 177 181 25 121 173 213 235 110 121 112 126 10 177 62 45 65 179 190 108 230 148 125 87 157 134 180 214]} size:{v:<nil>} td:<nil> ReceivedAt:0001-01-01 00:00:00 +0000 UTC ReceivedFrom:<nil>}"

INFO [11-20|09:33:51.119] Ethereum handshake HASH id=2ef4d3aec48f17d3 conn=staticdial hash=92b2a5…86b4d6 genesis="&{header:0xc0000fefc0 uncles:[] transactions:[] randomness:0xc0000ce700 hash:{v:[146 178 165 187 201 177 181 25 121 173 213 235 110 121 112 126 10 177 62 45 65 179 190 108 230 148 125 87 157 134 180 214]} size:{v:<nil>} td:<nil> ReceivedAt:0001-01-01 00:00:00 +0000 UTC ReceivedFrom:<nil>}"

INFO [11-20|09:33:51.123] Ethereum handshake HASH id=bf12068ddde6912f conn=staticdial hash=92b2a5…86b4d6 genesis="&{header:0xc0000fefc0 uncles:[] transactions:[] randomness:0xc0000ce700 hash:{v:[146 178 165 187 201 177 181 25 121 173 213 235 110 121 112 126 10 177 62 45 65 179 190 108 230 148 125 87 157 134 180 214]} size:{v:<nil>} td:<nil> ReceivedAt:0001-01-01 00:00:00 +0000 UTC ReceivedFrom:<nil>}"

INFO [11-20|09:33:51.125] Ethereum handshake HASH id=d940b682c3bdf911 conn=staticdial hash=92b2a5…86b4d6 genesis="&{header:0xc0000fefc0 uncles:[] transactions:[] randomness:0xc0000ce700 hash:{v:[146 178 165 187 201 177 181 25 121 173 213 235 110 121 112 126 10 177 62 45 65 179 190 108 230 148 125 87 157 134 180 214]} size:{v:<nil>} td:<nil> ReceivedAt:0001-01-01 00:00:00 +0000 UTC ReceivedFrom:<nil>}"

INFO [11-20|09:33:51.126] Ethereum handshake HASH id=2392f7a01f53abd8 conn=staticdial hash=92b2a5…86b4d6 genesis="&{header:0xc0000fefc0 uncles:[] transactions:[] randomness:0xc0000ce700 hash:{v:[146 178 165 187 201 177 181 25 121 173 213 235 110 121 112 126 10 177 62 45 65 179 190 108 230 148 125 87 157 134 180 214]} size:{v:<nil>} td:<nil> ReceivedAt:0001-01-01 00:00:00 +0000 UTC ReceivedFrom:<nil>}"

INFO [11-20|09:33:51.128] Ethereum handshake HASH id=92f89d75a3379528 conn=staticdial hash=92b2a5…86b4d6 genesis="&{header:0xc0000fefc0 uncles:[] transactions:[] randomness:0xc0000ce700 hash:{v:[146 178 165 187 201 177 181 25 121 173 213 235 110 121 112 126 10 177 62 45 65 179 190 108 230 148 125 87 157 134 180 214]} size:{v:<nil>} td:<nil> ReceivedAt:0001-01-01 00:00:00 +0000 UTC ReceivedFrom:<nil>}"

INFO [11-20|09:34:23.070] Ethereum handshake HASH id=92f89d75a3379528 conn=staticdial hash=92b2a5…86b4d6 genesis="&{header:0xc0000fefc0 uncles:[] transactions:[] randomness:0xc0000ce700 hash:{v:[146 178 165 187 201 177 181 25 121 173 213 235 110 121 112 126 10 177 62 45 65 179 190 108 230 148 125 87 157 134 180 214]} size:{v:<nil>} td:<nil> ReceivedAt:0001-01-01 00:00:00 +0000 UTC ReceivedFrom:<nil>}"

INFO [11-20|09:34:23.082] Ethereum handshake HASH id=9da22b8e5c6122d2 conn=staticdial hash=92b2a5…86b4d6 genesis="&{header:0xc0000fefc0 uncles:[] transactions:[] randomness:0xc0000ce700 hash:{v:[146 178 165 187 201 177 181 25 121 173 213 235 110 121 112 126 10 177 62 45 65 179 190 108 230 148 125 87 157 134 180 214]} size:{v:<nil>} td:<nil> ReceivedAt:0001-01-01 00:00:00 +0000 UTC ReceivedFrom:<nil>}"

INFO [11-20|09:34:23.093] Ethereum handshake HASH id=df431a8d3e701951 conn=staticdial hash=92b2a5…86b4d6 genesis="&{header:0xc0000fefc0 uncles:[] transactions:[] randomness:0xc0000ce700 hash:{v:[146 178 165 187 201 177 181 25 121 173 213 235 110 121 112 126 10 177 62 45 65 179 190 108 230 148 125 87 157 134 180 214]} size:{v:<nil>} td:<nil> ReceivedAt:0001-01-01 00:00:00 +0000 UTC ReceivedFrom:<nil>}"

INFO [11-20|09:34:23.096] Ethereum handshake HASH id=d940b682c3bdf911 conn=staticdial hash=92b2a5…86b4d6 genesis="&{header:0xc0000fefc0 uncles:[] transactions:[] randomness:0xc0000ce700 hash:{v:[146 178 165 187 201 177 181 25 121 173 213 235 110 121 112 126 10 177 62 45 65 179 190 108 230 148 125 87 157 134 180 214]} size:{v:<nil>} td:<nil> ReceivedAt:0001-01-01 00:00:00 +0000 UTC ReceivedFrom:<nil>}"

INFO [11-20|09:34:23.099] Ethereum handshake HASH id=069c4c46b5929ece conn=staticdial hash=92b2a5…86b4d6 genesis="&{header:0xc0000fefc0 uncles:[] transactions:[] randomness:0xc0000ce700 hash:{v:[146 178 165 187 201 177 181 25 121 173 213 235 110 121 112 126 10 177 62 45 65 179 190 108 230 148 125 87 157 134 180 214]} size:{v:<nil>} td:<nil> ReceivedAt:0001-01-01 00:00:00 +0000 UTC ReceivedFrom:<nil>}"

INFO [11-20|09:34:23.103] Ethereum handshake HASH id=bf12068ddde6912f conn=staticdial hash=92b2a5…86b4d6 genesis="&{header:0xc0000fefc0 uncles:[] transactions:[] randomness:0xc0000ce700 hash:{v:[146 178 165 187 201 177 181 25 121 173 213 235 110 121 112 126 10 177 62 45 65 179 190 108 230 148 125 87 157 134 180 214]} size:{v:<nil>} td:<nil> ReceivedAt:0001-01-01 00:00:00 +0000 UTC ReceivedFrom:<nil>}"