Unnamed: 0 | id | type | created_at | repo | repo_url | action | title | labels | body | index | text_combine | label | text | binary_label |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

69,874 | 8,468,592,480 | IssuesEvent | 2018-10-23 20:12:01 | JosefPihrt/Roslynator | https://api.github.com/repos/JosefPihrt/Roslynator | closed | RCS1179 False Negative | Area-Analyzers Resolution-By Design | Should RCS1179 offer to change this:

```csharp
namespace RCS1179_False_Negative
{
    static class Program
    {
        public static string Method()
        {
            string value = "1";
            if (System.Environment.Is64BitProcess)
            {
                value = "2";
            }
            return value;
        }
    }
}
```

To this?

```csharp
namespace RCS1179_False_Negative
{
    static class Program
    {
        public static string Method()
        {
            if (System.Environment.Is64BitProcess)
            {
                return "2";
            }
            return "1";
        }
    }
}
``` | 1.0 | RCS1179 False Negative - Should RCS1179 offer to change this:

```csharp
namespace RCS1179_False_Negative
{
    static class Program
    {
        public static string Method()
        {
            string value = "1";
            if (System.Environment.Is64BitProcess)
            {
                value = "2";
            }
            return value;
        }
    }
}
```

To this?

```csharp
namespace RCS1179_False_Negative
{
    static class Program
    {
        public static string Method()
        {
            if (System.Environment.Is64BitProcess)
            {
                return "2";
            }
            return "1";
        }
    }
}
``` | non_usab | false negative should offer to change this csharp namespace false negative static class program public static string method string value if system environment value return value to this csharp namespace false negative static class program public static string method if system environment return return | 0 |

21,999 | 18,263,089,114 | IssuesEvent | 2021-10-04 03:36:05 | SanderMertens/flecs | https://api.github.com/repos/SanderMertens/flecs | closed | Add meta components to core | enhancement usability | **Describe the problem you are trying to solve.**

Currently the flecs-meta module has components for describing types, and a set of macros to populate those types. Applications may want to use different reflection systems though, and in this case flecs cannot be made aware of the layout of types. This awareness can be useful in several scenarios, such as querying.

**Describe the solution you'd like**

Move components from flecs-meta to a core addon. Keep the code to populate the components in flecs-meta, so that different reflection front ends can populate the components.

| True | Add meta components to core - **Describe the problem you are trying to solve.**

Currently the flecs-meta module has components for describing types, and a set of macros to populate those types. Applications may want to use different reflection systems though, and in this case flecs cannot be made aware of the layout of types. This awareness can be useful in several scenarios, such as querying.

**Describe the solution you'd like**

Move components from flecs-meta to a core addon. Keep the code to populate the components in flecs-meta, so that different reflection front ends can populate the components.

| usab | add meta components to core describe the problem you are trying to solve currently the flecs meta module has components for describing types and a set of macro s to populate those types applications may want to use different reflection systems though and in this case flecs cannot be made aware of the layout of types this awareness can be useful in several scenarios such as querying describe the solution you d like move components from flecs meta to a core addon keep the code to populate the components in flecs meta so that different reflection front ends can populate the components | 1 |

27,659 | 30,037,393,839 | IssuesEvent | 2023-06-27 13:34:34 | haraldng/omnipaxos | https://api.github.com/repos/haraldng/omnipaxos | closed | Complete reconnection automation | enhancement usability | Together issue #61 and issue #67 enable us to discover and react to dropped `Prepare`, `AcceptSync`, `AcceptDecide`, and `Decide` messages rather than relying on the user to call reconnected().

They don't cover cases of dropped `AcceptStopSign`, `DecideStopSign`, `PrepareReq` messages. The absence of these messages can't be easily detected with sequence numbers. Instead they can be resent after some timeout (can for example use the same timeout as BLE). | True | Complete reconnection automation - Together issue #61 and issue #67 enable us to discover and react to dropped `Prepare`, `AcceptSync`, `AcceptDecide`, and `Decide` messages rather than relying on the user to call reconnected().

They don't cover cases of dropped `AcceptStopSign`, `DecideStopSign`, `PrepareReq` messages. The absence of these messages can't be easily detected with sequence numbers. Instead they can be resent after some timeout (can for example use the same timeout as BLE). | usab | complete reconnection automation together issue and issue enable us to discover and react to dropped prepare acceptsync acceptdecide and decide messages rather than relying on the user to call reconnected they don t cover cases of dropped acceptstopsign decidestopsign preparereq messages the absence of these messages can t be easily detected with sequence numbers instead they can be resent after some timeout can for example use the same timeout as ble | 1 |

115,362 | 4,663,779,773 | IssuesEvent | 2016-10-05 10:28:11 | Lakshman-LD/LetsMeetUp | https://api.github.com/repos/Lakshman-LD/LetsMeetUp | closed | render table in a different way for phones | enhancement High Priority | table in event page has to be rendered in a horizontal way | 1.0 | render table in a different way for phones - table in event page has to be rendered in a horizontal way | non_usab | render table in a different way for phones table in event page has to be rendered in a horizontal way | 0 |

679,666 | 23,241,243,431 | IssuesEvent | 2022-08-03 15:45:39 | fpdcc/ccfp-asset-dashboard | https://api.github.com/repos/fpdcc/ccfp-asset-dashboard | closed | production - Phase funding can not be deleted | bug high priority production | After adding a phase funding item it can not be deleted. Takes you to "page not found".

| 1.0 | production - Phase funding can not be deleted - After adding a phase funding item it can not be deleted. Takes you to "page not found".

| non_usab | production phase funding can not be deleted after adding a phase funding item it can not be deleted takes you to page not found | 0 |

50,191 | 10,467,394,503 | IssuesEvent | 2019-09-22 04:44:40 | evanplaice/evanplaice | https://api.github.com/repos/evanplaice/evanplaice | closed | Maintenance release for 'absurdum' | Code | Clean up everything, improve/add missing documentation, streamline CI/CD. | 1.0 | Maintenance release for 'absurdum' - Clean up everything, improve/add missing documentation, streamline CI/CD. | non_usab | maintenance release for absurdum clean up everything improve add missing documentation streamline ci cd | 0 |

176,506 | 28,104,445,354 | IssuesEvent | 2023-03-30 22:35:41 | telosnetwork/telos-wallet | https://api.github.com/repos/telosnetwork/telos-wallet | closed | Design EVM Wallet 0.1 for mobile | 🎨 Needs Design | ## Overview

After getting confirmation from the current design, we want to provide the mobile version to the developers.

## Acceptance criteria

- Design the landing page

- Design the wallet balance

- Design the wallet transactions

- Design the wallet send

- Design the wallet receive

- Design the wallet stake | 1.0 | Design EVM Wallet 0.1 for mobile - ## Overview

After getting confirmation from the current design, we want to provide the mobile version to the developers.

## Acceptance criteria

- Design the landing page

- Design the wallet balance

- Design the wallet transactions

- Design the wallet send

- Design the wallet receive

- Design the wallet stake | non_usab | design evm wallet for mobile overview after getting confirmation from the current design we want to provide the mobile version to the developers acceptance criteria design the landing page design the wallet balance design the wallet transactions design the wallet send design the wallet receive design the wallet stake | 0 |

28,265 | 4,086,920,956 | IssuesEvent | 2016-06-01 08:04:36 | RestComm/Restcomm-Connect | https://api.github.com/repos/RestComm/Restcomm-Connect | opened | RVD application log UI does not work | 1. Bug Visual App Designer | RVD application log UI does not display messages and the following exception is shown in server.log:

```

10:30:10,639 SEVERE [com.sun.jersey.spi.container.ContainerResponse] (http-/10.42.0.1:8080-20) The RuntimeException could not be mapped to a response, re-throwing to the HTTP container: java.lang.NullPointerException

at org.mobicents.servlet.restcomm.rvd.http.resources.SecuredRestService.secure(SecuredRestService.java:36) [classes:]

at org.mobicents.servlet.restcomm.rvd.http.resources.RvdController.appLog(RvdController.java:421) [classes:]

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method) [rt.jar:1.7.0_95]

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:57) [rt.jar:1.7.0_95]

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43) [rt.jar:1.7.0_95]

``` | 1.0 | RVD application log UI does not work - RVD application log UI does not display messages and the following exception is shown in server.log:

```

10:30:10,639 SEVERE [com.sun.jersey.spi.container.ContainerResponse] (http-/10.42.0.1:8080-20) The RuntimeException could not be mapped to a response, re-throwing to the HTTP container: java.lang.NullPointerException

at org.mobicents.servlet.restcomm.rvd.http.resources.SecuredRestService.secure(SecuredRestService.java:36) [classes:]

at org.mobicents.servlet.restcomm.rvd.http.resources.RvdController.appLog(RvdController.java:421) [classes:]

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method) [rt.jar:1.7.0_95]

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:57) [rt.jar:1.7.0_95]

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43) [rt.jar:1.7.0_95]

``` | non_usab | rvd application log ui does not work rvd application log ui does not display messages and the following exception is shown in server log severe http the runtimeexception could not be mapped to a response re throwing to the http container java lang nullpointerexception at org mobicents servlet restcomm rvd http resources securedrestservice secure securedrestservice java at org mobicents servlet restcomm rvd http resources rvdcontroller applog rvdcontroller java at sun reflect nativemethodaccessorimpl native method at sun reflect nativemethodaccessorimpl invoke nativemethodaccessorimpl java at sun reflect delegatingmethodaccessorimpl invoke delegatingmethodaccessorimpl java | 0 |

264,708 | 23,134,702,571 | IssuesEvent | 2022-07-28 13:28:14 | elastic/kibana | https://api.github.com/repos/elastic/kibana | closed | Failing ES Promotion: Chrome X-Pack UI Functional Tests - ML anomaly_detection.x-pack/test/functional/apps/ml/anomaly_detection/anomaly_explorer·ts - machine learning - anomaly detection anomaly explorer with farequote based multi metric job renders View By swim lane | blocker :ml skipped-test failed-es-promotion Team:ML v8.4.0 | **Chrome X-Pack UI Functional Tests - ML anomaly_detection**

**x-pack/test/functional/apps/ml/anomaly_detection/anomaly_explorer.ts**

**machine learning - anomaly detection anomaly explorer with farequote based multi metric job renders View By swim lane**

This failure is preventing the promotion of the current Elasticsearch nightly snapshot.

For more information on the Elasticsearch snapshot promotion process including how to reproduce using the unverified nightly ES build: https://www.elastic.co/guide/en/kibana/master/development-es-snapshots.html

* [Failed promotion job](https://buildkite.com/elastic/kibana-elasticsearch-snapshot-verify/builds/1486#01824005-72d9-4b2a-9d76-45c3be5e2b98)

* [Test Failure](https://buildkite.com/organizations/elastic/pipelines/kibana-elasticsearch-snapshot-verify/builds/1486/jobs/01824005-72d9-4b2a-9d76-45c3be5e2b98/artifacts/0182402d-8731-4fba-9424-26caabd1e1b5)

```

Error: Expected swim lane y labels to be AAL,VRD,EGF,SWR,AMX,JZA,TRS,ACA,BAW,ASA, got AAL,EGF,VRD,SWR,JZA,AMX,TRS,ACA,BAW,ASA

at Assertion.assert (node_modules/@kbn/expect/expect.js:100:11)

at Assertion.eql (node_modules/@kbn/expect/expect.js:244:8)

at Object.assertAxisLabels (x-pack/test/functional/services/ml/swim_lane.ts:88:31)

at runMicrotasks (<anonymous>)

at processTicksAndRejections (node:internal/process/task_queues:96:5)

at Context.<anonymous> (x-pack/test/functional/apps/ml/anomaly_detection/anomaly_explorer.ts:167:11)

at Object.apply (node_modules/@kbn/test/target_node/functional_test_runner/lib/mocha/wrap_function.js:87:16) {

actual: '[\n' +

' "AAL"\n' +

' "EGF"\n' +

' "VRD"\n' +

' "SWR"\n' +

' "JZA"\n' +

' "AMX"\n' +

' "TRS"\n' +

' "ACA"\n' +

' "BAW"\n' +

' "ASA"\n' +

']',

expected: '[\n' +

' "AAL"\n' +

' "VRD"\n' +

' "EGF"\n' +

' "SWR"\n' +

' "AMX"\n' +

' "JZA"\n' +

' "TRS"\n' +

' "ACA"\n' +

' "BAW"\n' +

' "ASA"\n' +

']',

showDiff: true

}

``` | 1.0 | Failing ES Promotion: Chrome X-Pack UI Functional Tests - ML anomaly_detection.x-pack/test/functional/apps/ml/anomaly_detection/anomaly_explorer·ts - machine learning - anomaly detection anomaly explorer with farequote based multi metric job renders View By swim lane - **Chrome X-Pack UI Functional Tests - ML anomaly_detection**

**x-pack/test/functional/apps/ml/anomaly_detection/anomaly_explorer.ts**

**machine learning - anomaly detection anomaly explorer with farequote based multi metric job renders View By swim lane**

This failure is preventing the promotion of the current Elasticsearch nightly snapshot.

For more information on the Elasticsearch snapshot promotion process including how to reproduce using the unverified nightly ES build: https://www.elastic.co/guide/en/kibana/master/development-es-snapshots.html

* [Failed promotion job](https://buildkite.com/elastic/kibana-elasticsearch-snapshot-verify/builds/1486#01824005-72d9-4b2a-9d76-45c3be5e2b98)

* [Test Failure](https://buildkite.com/organizations/elastic/pipelines/kibana-elasticsearch-snapshot-verify/builds/1486/jobs/01824005-72d9-4b2a-9d76-45c3be5e2b98/artifacts/0182402d-8731-4fba-9424-26caabd1e1b5)

```

Error: Expected swim lane y labels to be AAL,VRD,EGF,SWR,AMX,JZA,TRS,ACA,BAW,ASA, got AAL,EGF,VRD,SWR,JZA,AMX,TRS,ACA,BAW,ASA

at Assertion.assert (node_modules/@kbn/expect/expect.js:100:11)

at Assertion.eql (node_modules/@kbn/expect/expect.js:244:8)

at Object.assertAxisLabels (x-pack/test/functional/services/ml/swim_lane.ts:88:31)

at runMicrotasks (<anonymous>)

at processTicksAndRejections (node:internal/process/task_queues:96:5)

at Context.<anonymous> (x-pack/test/functional/apps/ml/anomaly_detection/anomaly_explorer.ts:167:11)

at Object.apply (node_modules/@kbn/test/target_node/functional_test_runner/lib/mocha/wrap_function.js:87:16) {

actual: '[\n' +

' "AAL"\n' +

' "EGF"\n' +

' "VRD"\n' +

' "SWR"\n' +

' "JZA"\n' +

' "AMX"\n' +

' "TRS"\n' +

' "ACA"\n' +

' "BAW"\n' +

' "ASA"\n' +

']',

expected: '[\n' +

' "AAL"\n' +

' "VRD"\n' +

' "EGF"\n' +

' "SWR"\n' +

' "AMX"\n' +

' "JZA"\n' +

' "TRS"\n' +

' "ACA"\n' +

' "BAW"\n' +

' "ASA"\n' +

']',

showDiff: true

}

``` | non_usab | failing es promotion chrome x pack ui functional tests ml anomaly detection x pack test functional apps ml anomaly detection anomaly explorer·ts machine learning anomaly detection anomaly explorer with farequote based multi metric job renders view by swim lane chrome x pack ui functional tests ml anomaly detection x pack test functional apps ml anomaly detection anomaly explorer ts machine learning anomaly detection anomaly explorer with farequote based multi metric job renders view by swim lane this failure is preventing the promotion of the current elasticsearch nightly snapshot for more information on the elasticsearch snapshot promotion process including how to reproduce using the unverified nightly es build error expected swim lane y labels to be aal vrd egf swr amx jza trs aca baw asa got aal egf vrd swr jza amx trs aca baw asa at assertion assert node modules kbn expect expect js at assertion eql node modules kbn expect expect js at object assertaxislabels x pack test functional services ml swim lane ts at runmicrotasks at processticksandrejections node internal process task queues at context x pack test functional apps ml anomaly detection anomaly explorer ts at object apply node modules kbn test target node functional test runner lib mocha wrap function js actual n aal n egf n vrd n swr n jza n amx n trs n aca n baw n asa n expected n aal n vrd n egf n swr n amx n jza n trs n aca n baw n asa n showdiff true | 0 |

22,183 | 18,817,423,070 | IssuesEvent | 2021-11-10 01:58:40 | tailscale/tailscale | https://api.github.com/repos/tailscale/tailscale | closed | Auth Keys and non-admin accounts access to admin console. | admin UI L3 Some users P2 Aggravating T5 Usability | The customer is working on a scenario where they want to provision developer infrastructure with Terraform and automatically log in and register the machine with Tailscale under the developer's account.

While this is doable with auth keys, non-admin accounts cannot access this interface right now unless they temporarily give admin access to the user.

Workaround:

Share the service account or support account access with developers.

<img src="https://frontapp.com/assets/img/favicons/favicon-32x32.png" height="16" width="16" alt="Front logo" /> [Front conversations](https://app.frontapp.com/open/top_3gglt) | True | Auth Keys and non-admin accounts access to admin console. - The customer is working on a scenario where they want to provision developer infrastructure with Terraform and automatically log in and register the machine with Tailscale under the developer's account.

While this is doable with auth keys, non-admin accounts cannot access this interface right now unless they temporarily give admin access to the user.

Workaround:

Share the service account or support account access with developers.

<img src="https://frontapp.com/assets/img/favicons/favicon-32x32.png" height="16" width="16" alt="Front logo" /> [Front conversations](https://app.frontapp.com/open/top_3gglt) | usab | auth keys and non admin accounts access to admin console the customer is working on a scenario where they want to provision developer infrastructure with terraform and automatically log in and register machine to tailscale under the developer s account while this is doable with auth keys non admin accounts cannot access this interface right now unless they temporarily give admin access to the user workaround share the service account or support account access with developers | 1 |

99,542 | 30,488,401,239 | IssuesEvent | 2023-07-18 05:29:32 | microsoft/onnxruntime | https://api.github.com/repos/microsoft/onnxruntime | closed | [Build] How to use cmake to compile and generate 'onnxruntime. dll' from source code in Windows | build platform:windows | ### Describe the issue

Compiling from source with CMake on Windows using the default options generates the following lib, but no 'onnxruntime.dll'.

### Urgency

_No response_

### Target platform

Windows

### Build script

Cmake 3.26.4+VS2019

### Error / output

no 'onnxruntime.dll' in the generated file

### Visual Studio Version

_No response_

### GCC / Compiler Version

_No response_ | 1.0 | [Build] How to use cmake to compile and generate 'onnxruntime. dll' from source code in Windows - ### Describe the issue

Compiling from source with CMake on Windows using the default options generates the following lib, but no 'onnxruntime.dll'.

### Urgency

_No response_

### Target platform

Windows

### Build script

Cmake 3.26.4+VS2019

### Error / output

no 'onnxruntime.dll' in the generated file

### Visual Studio Version

_No response_

### GCC / Compiler Version

_No response_ | non_usab | how to use cmake to compile and generate onnxruntime dll from source code in windows describe the issue compile from source code using cmake on windows using default options to generate the following lib but without onnxruntime dll “ urgency no response target platform windows build script cmake error output no onnxruntime dll in the generated file visual studio version no response gcc compiler version no response | 0 |

685,303 | 23,452,003,316 | IssuesEvent | 2022-08-16 04:29:47 | dnd-side-project/dnd-7th-7-backend | https://api.github.com/repos/dnd-side-project/dnd-7th-7-backend | opened | feat(route): Modify the return value of getByID API | Priority: High Type: Feature Status: In Progress | ## 🤷 Issue details

When the route is a main route, add the review list to the return value.

## 📸 Screenshot

<img width="187" alt="image" src="https://user-images.githubusercontent.com/89819254/184797917-348a241e-772e-492f-9ab1-c77298fb883e.png">

| 1.0 | feat(route): Modify the return value of getByID API - ## 🤷 Issue details

When the route is a main route, add the review list to the return value.

## 📸 Screenshot

<img width="187" alt="image" src="https://user-images.githubusercontent.com/89819254/184797917-348a241e-772e-492f-9ab1-c77298fb883e.png">

| non_usab | feat route modify the return value of getbyid api 🤷 issue details when the route is a main route add the review list to the return value 📸 screenshot img width alt image src | 0 |

152,501 | 5,848,202,621 | IssuesEvent | 2017-05-10 20:20:29 | samsung-cnct/k2 | https://api.github.com/repos/samsung-cnct/k2 | closed | Replace cloud-init coreos.units with write-file+runcmd | feature request K2 priority-p1 | coreos.units is specific to the modified cloud-init that coreos ships and will not work with other distros. Replace it.

We can use `write_files` to create the systemd.service files.

We can use `runcmd` to start and enable the service files once they're written. | 1.0 | Replace cloud-init coreos.units with write-file+runcmd - coreos.units is specific to the modified cloud-init that coreos ships and will not work with other distros. Replace it.

We can use `write_files` to create the systemd.service files.

We can use `runcmd` to start and enable the service files once they're written. | non_usab | replace cloud init coreos units with write file runcmd coreos units is specific to the modified cloud init that coreos ships and will not work with other distros replace it we can use write files to create the systemd service files we can use runcmd to start and enable the service files once they re written | 0 |

3,637 | 3,510,075,094 | IssuesEvent | 2016-01-09 06:02:27 | stamp-web/stamp-web-aurelia | https://api.github.com/repos/stamp-web/stamp-web-aurelia | closed | Setting catalogue number as active does not update live instance in page | bug usability | If you have a stamp, add a new active catalogue number, set it to active, and then edit the stamp, the old non-active number is now set as the active one. However, since this was not a re-activation, the old active catalogue number is not de-activated, resulting in two active numbers. This causes data corruption in the database (perhaps a constraint is needed there?), which has to be repaired.

Refreshing the page and then editing is a workaround, but due to the data corruption it cannot be repaired in the UI if the above steps are followed | True | Setting catalogue number as active does not update live instance in page - If you have a stamp, add a new active catalogue number, set it to active, and then edit the stamp, the old non-active number is now set as the active one. However, since this was not a re-activation, the old active catalogue number is not de-activated, resulting in two active numbers. This causes data corruption in the database (perhaps a constraint is needed there?), which has to be repaired.

Refreshing the page and then editing is a workaround, but due to data corruption it can not be repaired in the UI if the above steps are followed | usab | setting catalogue number as active does not update live instance in page if you have a stamp add a new active catalogue number set this to active and then edit the stamp the old non active number will be set now as the active however since it was not a re activatve the old active catalogue number is not de activated resulting in two active numbers this causes a data corruption in the database perhaps a constraint is needed there which has to be repaired refreshing the page and then editing is a workaround but due to data corruption it can not be repaired in the ui if the above steps are followed | 1 |

22,847 | 20,360,495,494 | IssuesEvent | 2022-02-20 16:08:55 | ClickHouse/ClickHouse | https://api.github.com/repos/ClickHouse/ClickHouse | opened | It's inconvenient that we require to specify `<port>` in `<remote_servers>` cluster configuration. | usability | **Describe the issue**

```

remote_servers.play.shard.replica.port

```

Should use server's TCP port by default. | True | It's inconvenient that we require to specify `<port>` in `<remote_servers>` cluster configuration. - **Describe the issue**

```

remote_servers.play.shard.replica.port

```

Should use server's TCP port by default. | usab | it s inconvenient that we require to specify in cluster configuration describe the issue remote servers play shard replica port should use server s tcp port by default | 1 |

61,336 | 8,514,502,268 | IssuesEvent | 2018-10-31 18:44:53 | craftercms/craftercms | https://api.github.com/repos/craftercms/craftercms | closed | [docs] Document new data sources: S3, WebDAV | documentation priority: medium | Much like the docs for Box and CMIS we need to document WebDAV and S3.

S3 is still minimal, so it won't be as elaborate as WebDAV and Box. It will be fully fleshed out once this ticket is completed: https://github.com/craftercms/craftercms/issues/2508 | 1.0 | [docs] Document new data sources: S3, WebDAV - Much like the docs for Box and CMIS we need to document WebDAV and S3.

S3 is still minimal, so it won't be as elaborate as WebDAV and Box. It will be fully fleshed out once this ticket is completed: https://github.com/craftercms/craftercms/issues/2508 | non_usab | document new data sources webdav much like the docs for box and cmis we need to document webdav and is still minimal so won t be as elaborate as webdav and box it will fully fleshed out once this ticket is completed | 0 |

497,870 | 14,395,787,959 | IssuesEvent | 2020-12-03 04:41:22 | hyphacoop/organizing | https://api.github.com/repos/hyphacoop/organizing | opened | Close Hypha office for holidays | [priority-★★☆] wg:operations | <sup>_This initial comment is collaborative and open to modification by all._</sup>

## Task Summary

🎟️ **Re-ticketed from:** #

🗣 **Loomio:** N/A

📅 **Due date:** Dec 18, 2020

🎯 **Success criteria:** ...

Make sure we're ready to close office for 2 weeks

## To Do

- [ ] Set up responder to hello@

- [ ] Record temporary VM greeting

- [ ] encourage members to add email responder? (provide draft text)

| 1.0 | Close Hypha office for holidays - <sup>_This initial comment is collaborative and open to modification by all._</sup>

## Task Summary

🎟️ **Re-ticketed from:** #

🗣 **Loomio:** N/A

📅 **Due date:** Dec 18, 2020

🎯 **Success criteria:** ...

Make sure we're ready to close office for 2 weeks

## To Do

- [ ] Set up responder to hello@

- [ ] Record temporary VM greeting

- [ ] encourage members to add email responder? (provide draft text)

| non_usab | close hypha office for holidays this initial comment is collaborative and open to modification by all task summary 🎟️ re ticketed from 🗣 loomio n a 📅 due date dec 🎯 success criteria make sure we re ready to close office for weeks to do set up responder to hello record temporary vm greeting encourage members to add email responder provide draft text | 0 |

111,862 | 9,544,664,071 | IssuesEvent | 2019-05-01 14:51:47 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | teamcity: failed test: TestLint | C-test-failure O-robot | The following tests appear to have failed on master (lint): TestLint, TestLint/TestVet: TestLint/TestVet/shadow, TestLint/TestVet

You may want to check [for open issues](https://github.com/cockroachdb/cockroach/issues?q=is%3Aissue+is%3Aopen+TestLint).

[#1262013](https://teamcity.cockroachdb.com/viewLog.html?buildId=1262013):

```

TestLint/TestVet

--- FAIL: lint/TestLint: TestLint/TestVet (842.530s)

------- Stdout: -------

=== PAUSE TestLint/TestVet

TestLint/TestVet: TestLint/TestVet/shadow

...

lint_test.go:1352:

/usr/local/go/src/cmd/compile/internal/gc/walk.go:2218 +0x146 fp=0xc0034bbe28 sp=0xc0034bbdb8 pc=0xba8596

lint_test.go:1352:

cmd/compile/internal/gc.walkexpr(0xc0013d1800, 0xc000c33e10, 0xc003ff9b00)

lint_test.go:1352:

/usr/local/go/src/cmd/compile/internal/gc/walk.go:1268 +0x8e98 fp=0xc0034bc4e0 sp=0xc0034bbe28 pc=0xba32a8

lint_test.go:1352:

cmd/compile/internal/gc.walkexpr(0xc0013d1a00, 0xc000c33e10, 0xc0013d1a00)

lint_test.go:1352:

/usr/local/go/src/cmd/compile/internal/gc/walk.go:724 +0x42f9 fp=0xc0034bcb98 sp=0xc0034bc4e0 pc=0xb9e709

lint_test.go:1352:

cmd/compile/internal/gc.slicelit(0x0, 0xc000c32e00, 0xc003ff9950, 0xc000c33e10)

lint_test.go:1352:

/usr/local/go/src/cmd/compile/internal/gc/sinit.go:866 +0x1db fp=0xc0034bcd80 sp=0xc0034bcb98 pc=0xb2efab

lint_test.go:1352:

cmd/compile/internal/gc.anylit(0xc000c32e00, 0xc003ff9950, 0xc000c33e10)

lint_test.go:1352:

/usr/local/go/src/cmd/compile/internal/gc/sinit.go:1130 +0xf2c fp=0xc0034bce58 sp=0xc0034bcd80 pc=0xb335ac

lint_test.go:1352:

cmd/compile/internal/gc.walkexpr(0xc000c32e00, 0xc000c33e10, 0xc000d62e10)

lint_test.go:1352:

/usr/local/go/src/cmd/compile/internal/gc/walk.go:1730 +0x2347 fp=0xc0034bd510 sp=0xc0034bce58 pc=0xb9c757

lint_test.go:1352:

cmd/compile/internal/gc.walkexprlist(0xc0005d7410, 0x2, 0x2, 0xc000c33e10)

lint_test.go:1352:

/usr/local/go/src/cmd/compile/internal/gc/walk.go:379 +0x50 fp=0xc0034bd540 sp=0xc0034bd510 pc=0xb99c50

lint_test.go:1352:

cmd/compile/internal/gc.walkstmt(0xc000c33e00, 0xc264c0)

lint_test.go:1352:

/usr/local/go/src/cmd/compile/internal/gc/walk.go:334 +0x83f fp=0xc0034bd6f8 sp=0xc0034bd540 pc=0xb98b6f

lint_test.go:1352:

cmd/compile/internal/gc.walkstmtlist(0xc003ff24d8, 0x1, 0x1)

lint_test.go:1352:

/usr/local/go/src/cmd/compile/internal/gc/walk.go:79 +0x46 fp=0xc0034bd720 sp=0xc0034bd6f8 pc=0xb980d6

lint_test.go:1352:

cmd/compile/internal/gc.walk(0xc0005eab00)

lint_test.go:1352:

/usr/local/go/src/cmd/compile/internal/gc/walk.go:63 +0x39e fp=0xc0034bd7e8 sp=0xc0034bd720 pc=0xb97e0e

lint_test.go:1352:

cmd/compile/internal/gc.compile(0xc0005eab00)

lint_test.go:1352:

/usr/local/go/src/cmd/compile/internal/gc/pgen.go:223 +0x6b fp=0xc0034bd838 sp=0xc0034bd7e8 pc=0xb02a9b

lint_test.go:1352:

cmd/compile/internal/gc.funccompile(0xc0005eab00)

lint_test.go:1352:

/usr/local/go/src/cmd/compile/internal/gc/pgen.go:209 +0xbd fp=0xc0034bd890 sp=0xc0034bd838 pc=0xb0293d

lint_test.go:1352:

cmd/compile/internal/gc.Main(0xcc51f8)

lint_test.go:1352:

/usr/local/go/src/cmd/compile/internal/gc/main.go:641 +0x265b fp=0xc0034bdf20 sp=0xc0034bd890 pc=0xadd1fb

lint_test.go:1352:

main.main()

lint_test.go:1352:

/usr/local/go/src/cmd/compile/main.go:51 +0x96 fp=0xc0034bdf98 sp=0xc0034bdf20 pc=0xbfaa36

lint_test.go:1352:

runtime.main()

lint_test.go:1352:

/usr/local/go/src/runtime/proc.go:201 +0x207 fp=0xc0034bdfe0 sp=0xc0034bdf98 pc=0x42c4a7

lint_test.go:1352:

runtime.goexit()

lint_test.go:1352:

/usr/local/go/src/runtime/asm_amd64.s:1333 +0x1 fp=0xc0034bdfe8 sp=0xc0034bdfe0 pc=0x457da1

TestLint

--- FAIL: lint/TestLint (253.190s)

```

Please assign, take a look and update the issue accordingly.

| 1.0 | teamcity: failed test: TestLint - The following tests appear to have failed on master (lint): TestLint, TestLint/TestVet: TestLint/TestVet/shadow, TestLint/TestVet

You may want to check [for open issues](https://github.com/cockroachdb/cockroach/issues?q=is%3Aissue+is%3Aopen+TestLint).

[#1262013](https://teamcity.cockroachdb.com/viewLog.html?buildId=1262013):

```

TestLint/TestVet

--- FAIL: lint/TestLint: TestLint/TestVet (842.530s)

------- Stdout: -------

=== PAUSE TestLint/TestVet

TestLint/TestVet: TestLint/TestVet/shadow

...

lint_test.go:1352:

/usr/local/go/src/cmd/compile/internal/gc/walk.go:2218 +0x146 fp=0xc0034bbe28 sp=0xc0034bbdb8 pc=0xba8596

lint_test.go:1352:

cmd/compile/internal/gc.walkexpr(0xc0013d1800, 0xc000c33e10, 0xc003ff9b00)

lint_test.go:1352:

/usr/local/go/src/cmd/compile/internal/gc/walk.go:1268 +0x8e98 fp=0xc0034bc4e0 sp=0xc0034bbe28 pc=0xba32a8

lint_test.go:1352:

cmd/compile/internal/gc.walkexpr(0xc0013d1a00, 0xc000c33e10, 0xc0013d1a00)

lint_test.go:1352:

/usr/local/go/src/cmd/compile/internal/gc/walk.go:724 +0x42f9 fp=0xc0034bcb98 sp=0xc0034bc4e0 pc=0xb9e709

lint_test.go:1352:

cmd/compile/internal/gc.slicelit(0x0, 0xc000c32e00, 0xc003ff9950, 0xc000c33e10)

lint_test.go:1352:

/usr/local/go/src/cmd/compile/internal/gc/sinit.go:866 +0x1db fp=0xc0034bcd80 sp=0xc0034bcb98 pc=0xb2efab

lint_test.go:1352:

cmd/compile/internal/gc.anylit(0xc000c32e00, 0xc003ff9950, 0xc000c33e10)

lint_test.go:1352:

/usr/local/go/src/cmd/compile/internal/gc/sinit.go:1130 +0xf2c fp=0xc0034bce58 sp=0xc0034bcd80 pc=0xb335ac

lint_test.go:1352:

cmd/compile/internal/gc.walkexpr(0xc000c32e00, 0xc000c33e10, 0xc000d62e10)

lint_test.go:1352:

/usr/local/go/src/cmd/compile/internal/gc/walk.go:1730 +0x2347 fp=0xc0034bd510 sp=0xc0034bce58 pc=0xb9c757

lint_test.go:1352:

cmd/compile/internal/gc.walkexprlist(0xc0005d7410, 0x2, 0x2, 0xc000c33e10)

lint_test.go:1352:

/usr/local/go/src/cmd/compile/internal/gc/walk.go:379 +0x50 fp=0xc0034bd540 sp=0xc0034bd510 pc=0xb99c50

lint_test.go:1352:

cmd/compile/internal/gc.walkstmt(0xc000c33e00, 0xc264c0)

lint_test.go:1352:

/usr/local/go/src/cmd/compile/internal/gc/walk.go:334 +0x83f fp=0xc0034bd6f8 sp=0xc0034bd540 pc=0xb98b6f

lint_test.go:1352:

cmd/compile/internal/gc.walkstmtlist(0xc003ff24d8, 0x1, 0x1)

lint_test.go:1352:

/usr/local/go/src/cmd/compile/internal/gc/walk.go:79 +0x46 fp=0xc0034bd720 sp=0xc0034bd6f8 pc=0xb980d6

lint_test.go:1352:

cmd/compile/internal/gc.walk(0xc0005eab00)

lint_test.go:1352:

/usr/local/go/src/cmd/compile/internal/gc/walk.go:63 +0x39e fp=0xc0034bd7e8 sp=0xc0034bd720 pc=0xb97e0e

lint_test.go:1352:

cmd/compile/internal/gc.compile(0xc0005eab00)

lint_test.go:1352:

/usr/local/go/src/cmd/compile/internal/gc/pgen.go:223 +0x6b fp=0xc0034bd838 sp=0xc0034bd7e8 pc=0xb02a9b

lint_test.go:1352:

cmd/compile/internal/gc.funccompile(0xc0005eab00)

lint_test.go:1352:

/usr/local/go/src/cmd/compile/internal/gc/pgen.go:209 +0xbd fp=0xc0034bd890 sp=0xc0034bd838 pc=0xb0293d

lint_test.go:1352:

cmd/compile/internal/gc.Main(0xcc51f8)

lint_test.go:1352:

/usr/local/go/src/cmd/compile/internal/gc/main.go:641 +0x265b fp=0xc0034bdf20 sp=0xc0034bd890 pc=0xadd1fb

lint_test.go:1352:

main.main()

lint_test.go:1352:

/usr/local/go/src/cmd/compile/main.go:51 +0x96 fp=0xc0034bdf98 sp=0xc0034bdf20 pc=0xbfaa36

lint_test.go:1352:

runtime.main()

lint_test.go:1352:

/usr/local/go/src/runtime/proc.go:201 +0x207 fp=0xc0034bdfe0 sp=0xc0034bdf98 pc=0x42c4a7

lint_test.go:1352:

runtime.goexit()

lint_test.go:1352:

/usr/local/go/src/runtime/asm_amd64.s:1333 +0x1 fp=0xc0034bdfe8 sp=0xc0034bdfe0 pc=0x457da1

TestLint

--- FAIL: lint/TestLint (253.190s)

```

Please assign, take a look and update the issue accordingly.

| non_usab | teamcity failed test testlint the following tests appear to have failed on master lint testlint testlint testvet testlint testvet shadow testlint testvet you may want to check testlint testvet fail lint testlint testlint testvet stdout pause testlint testvet testlint testvet testlint testvet shadow lint test go usr local go src cmd compile internal gc walk go fp sp pc lint test go cmd compile internal gc walkexpr lint test go usr local go src cmd compile internal gc walk go fp sp pc lint test go cmd compile internal gc walkexpr lint test go usr local go src cmd compile internal gc walk go fp sp pc lint test go cmd compile internal gc slicelit lint test go usr local go src cmd compile internal gc sinit go fp sp pc lint test go cmd compile internal gc anylit lint test go usr local go src cmd compile internal gc sinit go fp sp pc lint test go cmd compile internal gc walkexpr lint test go usr local go src cmd compile internal gc walk go fp sp pc lint test go cmd compile internal gc walkexprlist lint test go usr local go src cmd compile internal gc walk go fp sp pc lint test go cmd compile internal gc walkstmt lint test go usr local go src cmd compile internal gc walk go fp sp pc lint test go cmd compile internal gc walkstmtlist lint test go usr local go src cmd compile internal gc walk go fp sp pc lint test go cmd compile internal gc walk lint test go usr local go src cmd compile internal gc walk go fp sp pc lint test go cmd compile internal gc compile lint test go usr local go src cmd compile internal gc pgen go fp sp pc lint test go cmd compile internal gc funccompile lint test go usr local go src cmd compile internal gc pgen go fp sp pc lint test go cmd compile internal gc main lint test go usr local go src cmd compile internal gc main go fp sp pc lint test go main main lint test go usr local go src cmd compile main go fp sp pc lint test go runtime main lint test go usr local go src runtime proc go fp sp pc lint test go runtime goexit lint test go usr 
local go src runtime asm s fp sp pc testlint fail lint testlint please assign take a look and update the issue accordingly | 0 |

13,833 | 9,084,513,044 | IssuesEvent | 2019-02-18 04:00:52 | fieldenms/tg | https://api.github.com/repos/fieldenms/tg | opened | User Role: deactivation of roles should check for existence of active users | P2 Security User management | ### Description

It should not be possible to deactivate `UserRole` instances that are associated with active users.

`UserRole`s are associated with users via `UserAndRoleAssociation`, which models many-2-many association and is not activatable. A `user` is associated with a `role` if a corresponding instance `UserAndRoleAssociation` exists. The validation logic for deactivation of user roles should take this specificity into account.

### Expected outcome

User roles deactivation to be aligned with the use of `UserAndRoleAssociation` for managing user-role associations. | True | User Role: deactivation of roles should check for existence of active users - ### Description

It should not be possible to deactivate `UserRole` instances that are associated with active users.

`UserRole`s are associated with users via `UserAndRoleAssociation`, which models many-2-many association and is not activatable. A `user` is associated with a `role` if a corresponding instance `UserAndRoleAssociation` exists. The validation logic for deactivation of user roles should take this specificity into account.

### Expected outcome

User roles deactivation to be aligned with the use of `UserAndRoleAssociation` for managing user-role associations. | non_usab | user role deactivation of roles should check for existence of active users description it should not be possible to deactivate userrole instance that are associated with active users userrole s are associated with users via userandroleassociation which models many many association and is not activatable a user is associated with a role if a corresponding instance userandroleassociation exists the validation logic for deactivation of user roles should take this specificity into account expected outcome user roles deactivation to be aligned with the use of userandroleassociation for managing user role associations | 0 |
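
The validation described in the user-role issue above could look roughly like the following. This is a minimal Python sketch, not the project's code: the record shapes and the names `active_users_for` and `validate_deactivation` are assumptions made for illustration. Only the entity names `UserRole` and `UserAndRoleAssociation`, and the rule that the association has no active flag of its own, come from the issue text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class User:
    key: str
    active: bool


@dataclass(frozen=True)
class UserRole:
    key: str
    active: bool


@dataclass(frozen=True)
class UserAndRoleAssociation:
    # The association carries no "active" flag; its existence is the link.
    user: User
    role: UserRole


def active_users_for(role, associations):
    """Return the active users linked to `role` through any association."""
    return [a.user for a in associations if a.role == role and a.user.active]


def validate_deactivation(role, associations):
    """Return an error message if `role` may not be deactivated, else None."""
    blockers = active_users_for(role, associations)
    if blockers:
        names = ", ".join(sorted(u.key for u in blockers))
        return f"Role {role.key} is still assigned to active users: {names}"
    return None
```

Because the association is not activatable, the check has to look through it to the user's own active state; an association with an inactive user does not block deactivation in this sketch.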

14,023 | 2,789,855,588 | IssuesEvent | 2015-05-08 21:56:53 | google/google-visualization-api-issues | https://api.github.com/repos/google/google-visualization-api-issues | closed | Gauge is Broken | Priority-Medium Type-Defect | Original [issue 317](https://code.google.com/p/google-visualization-api-issues/issues/detail?id=317) created by orwant on 2010-06-16T18:14:44.000Z:

Look even at your own examples - the gauge does not fire correctly on first 'changeGauge' and on subsequent changes it jerks to the change, no longer smooth.

JS error - TypeError: Result of expression 'fe.ve' [undefined] is not an object. default.gauge.I.js:537 | 1.0 | Gauge is Broken - Original [issue 317](https://code.google.com/p/google-visualization-api-issues/issues/detail?id=317) created by orwant on 2010-06-16T18:14:44.000Z:

Look even at your own examples - the gauge does not fire correctly on first 'changeGauge' and on subsequent changes it jerks to the change, no longer smooth.

JS error - TypeError: Result of expression 'fe.ve' [undefined] is not an object. default.gauge.I.js:537 | non_usab | gauge is broken original created by orwant on look even at your own examples the gauge does not fire correctly on first changegauge and on subsequent changes it jerks to the change no longer smooth js error typeerror result of expression fe ve is not an object default gauge i js | 0 |

277,317 | 30,610,829,768 | IssuesEvent | 2023-07-23 15:33:20 | tyhal/tyhal.com | https://api.github.com/repos/tyhal/tyhal.com | closed | CVE-2018-11697 (High) detected in node-sassv4.13.1, CSS::Sassv3.6.0 | security vulnerability | ## CVE-2018-11697 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>node-sassv4.13.1</b>, <b>CSS::Sassv3.6.0</b></p></summary>

<p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

An issue was discovered in LibSass through 3.5.4. An out-of-bounds read of a memory region was found in the function Sass::Prelexer::exactly() which could be leveraged by an attacker to disclose information or manipulated to read from unmapped memory causing a denial of service.

<p>Publish Date: 2018-06-04

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2018-11697>CVE-2018-11697</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>8.1</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/sass/libsass/releases/tag/3.6.0">https://github.com/sass/libsass/releases/tag/3.6.0</a></p>

<p>Release Date: 2018-06-04</p>

<p>Fix Resolution: libsass - 3.6.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2018-11697 (High) detected in node-sassv4.13.1, CSS::Sassv3.6.0 - ## CVE-2018-11697 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>node-sassv4.13.1</b>, <b>CSS::Sassv3.6.0</b></p></summary>

<p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

An issue was discovered in LibSass through 3.5.4. An out-of-bounds read of a memory region was found in the function Sass::Prelexer::exactly() which could be leveraged by an attacker to disclose information or manipulated to read from unmapped memory causing a denial of service.

<p>Publish Date: 2018-06-04

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2018-11697>CVE-2018-11697</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>8.1</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/sass/libsass/releases/tag/3.6.0">https://github.com/sass/libsass/releases/tag/3.6.0</a></p>

<p>Release Date: 2018-06-04</p>

<p>Fix Resolution: libsass - 3.6.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_usab | cve high detected in node css cve high severity vulnerability vulnerable libraries node css vulnerability details an issue was discovered in libsass through an out of bounds read of a memory region was found in the function sass prelexer exactly which could be leveraged by an attacker to disclose information or manipulated to read from unmapped memory causing a denial of service publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction required scope unchanged impact metrics confidentiality impact high integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution libsass step up your open source security game with whitesource | 0 |

23,104 | 21,013,712,450 | IssuesEvent | 2022-03-30 09:04:44 | ClickHouse/ClickHouse | https://api.github.com/repos/ClickHouse/ClickHouse | opened | Openssl now work in Rhel | usability | Hello!

There is RHEL 8, Clickhouse 22.2.2.1

For openssl I generated and signed by the created CA. (method 2 from https://altinity.com/blog/2019/3/5/clickhouse-networking-part-2 )

**CA certificate added to trusted:**

cp ca.crt /etc/pki/ca-trust/anchors

update-ca-etrust extract

**Connection verification via openssl is successful:**

openssl s_client -connect $HOSTNAME:9440 < /dev/null

Verify return code: 0 (ok)

**But connecting via clickhouse-client I get error:**

"The certificate yielded the error: unable to get the issuer certificate" and

"... unable to verify the first certificate"

(you don't have to strictly follow this form)

**I try to add path to trust store certificates, but not help:**

<caConfig>/etc/pki/ca-trust/</caConfig>

<caConfig>/usr/share/pki/ca-trust-source</caConfig>

How can I configure openssl on RHEL?

It works fine in Ubuntu.

| True | Openssl now work in Rhel - Hello!

There is RHEL 8, Clickhouse 22.2.2.1

For openssl I generated and signed by the created CA. (method 2 from https://altinity.com/blog/2019/3/5/clickhouse-networking-part-2 )

**CA certificate added to trusted:**

cp ca.crt /etc/pki/ca-trust/anchors

update-ca-etrust extract

**Connection verification via openssl is successful:**

openssl s_client -connect $HOSTNAME:9440 < /dev/null

Verify return code: 0 (ok)

**But connecting via clickhouse-client I get error:**

"The certificate yielded the error: unable to get the issuer certificate" and

"... unable to verify the first certificate"

(you don't have to strictly follow this form)

**I try to add path to trust store certificates, but not help:**

<caConfig>/etc/pki/ca-trust/</caConfig>

<caConfig>/usr/share/pki/ca-trust-source</caConfig>

How can I configure openssl on RHEL?

It works fine in Ubuntu.

| usab | openssl now work in rhel hello there is rhel clickhouse for openssl i generated and signed by the created ca method from ca certificate added to trusted cp ca crt etc pki ca trust anchors update ca etrust extract connection verification via openssl is successful openssl s client connect hostname dev null verify return code ok but connecting via clickhouse client i get error the certificate yielded the error unable to get the issuer certificate and unable to verify the first certificate you don t have to strictly follow this form i try to add path to trust store certificates but not help etc pki ca trust usr share pki ca trust source how can i configure openssl on rhel it works fine in ubuntu | 1 |

6,342 | 4,228,765,028 | IssuesEvent | 2016-07-04 02:05:42 | tgstation/tgstation | https://api.github.com/repos/tgstation/tgstation | closed | Hotkey mode still doesn't stay the same when you switch mobs | Bug Usability | Althought the annoying issue of looking like it did is out, it still doesn't keep the preference when you enter a new mob. | True | Hotkey mode still doesn't stay the same when you switch mobs - Althought the annoying issue of looking like it did is out, it still doesn't keep the preference when you enter a new mob. | usab | hotkey mode still doesn t stay the same when you switch mobs althought the annoying issue of looking like it did is out it still doesn t keep the preference when you enter a new mob | 1 |

15,064 | 9,694,089,600 | IssuesEvent | 2019-05-24 17:56:17 | godotengine/godot | https://api.github.com/repos/godotengine/godot | closed | Scrolling a multiline text box scrolls the Inspector also | enhancement topic:editor usability | <!-- Please search existing issues for potential duplicates before filing yours:

https://github.com/godotengine/godot/issues?q=is%3Aissue

-->

**Godot version:**

<!-- Specify commit hash if non-official. -->

8fc92ae86faed72c402e7770246ed18d50b5c43b

**OS/device including version:**

<!-- Specify GPU model and drivers if graphics-related. -->

Linux

**Issue description:**

<!-- What happened, and what was expected. -->

When the mouse wheel is used to scroll a multiline text box, it will scroll both it and the Inspector. Ideally it should only scroll the text box when the mouse is hovering over it.

| True | Scrolling a multiline text box scrolls the Inspector also - <!-- Please search existing issues for potential duplicates before filing yours:

https://github.com/godotengine/godot/issues?q=is%3Aissue

-->

**Godot version:**

<!-- Specify commit hash if non-official. -->

8fc92ae86faed72c402e7770246ed18d50b5c43b

**OS/device including version:**

<!-- Specify GPU model and drivers if graphics-related. -->

Linux

**Issue description:**

<!-- What happened, and what was expected. -->

When the mouse wheel is used to scroll a multiline text box, it will scroll both it and the Inspector. Ideally it should only scroll the text box when the mouse is hovering over it.

| usab | scrolling a multiline text box scrolls the inspector also please search existing issues for potential duplicates before filing yours godot version os device including version linux issue description when the mouse wheel is used to scroll a multiline text box it will scroll both it and the inspector ideally it should only scroll the text box when the mouse is hovering over it | 1 |

9,421 | 6,288,975,274 | IssuesEvent | 2017-07-19 18:10:48 | FStarLang/FStar | https://api.github.com/repos/FStarLang/FStar | closed | `val` annotations yield ugly-looking signatures | enhancement pull-request usability | Contrast this:

```

val fold_left: ('a -> 'b -> ML 'a) -> 'a -> list 'b -> ML 'a

let rec fold_left f x y = match y with

| [] -> x

| hd::tl -> fold_left f (f x hd) tl

```

which yields this for `#info fold_left`:

```

fold_left: (uu___:(uu___:'a@1 -> uu___:'b@1 -> 'a@3) -> uu___:'a@2 -> uu___:(list 'b@2) -> 'a@4)

```

with this:

```

let rec fold_left (f: 'a -> 'b -> ML 'a) (x: 'a) (y: list 'b) : ML 'a = match y with

| [] -> x

| hd::tl -> fold_left f (f x hd) tl

```

which yields this for `#info fold_left`:

```

fold_left: (f:(uu___:'a@1 -> uu___:'b@1 -> 'a@3) -> x:'a@2 -> y:(list 'b@2) -> 'a@4)

```

It would be nice to preserve the names `f`, `x`, and `y` even when there is a `val`.

| True | `val` annotations yield ugly-looking signatures - Contrast this:

```

val fold_left: ('a -> 'b -> ML 'a) -> 'a -> list 'b -> ML 'a

let rec fold_left f x y = match y with

| [] -> x

| hd::tl -> fold_left f (f x hd) tl

```

which yields this for `#info fold_left`:

```

fold_left: (uu___:(uu___:'a@1 -> uu___:'b@1 -> 'a@3) -> uu___:'a@2 -> uu___:(list 'b@2) -> 'a@4)

```

with this:

```

let rec fold_left (f: 'a -> 'b -> ML 'a) (x: 'a) (y: list 'b) : ML 'a = match y with

| [] -> x

| hd::tl -> fold_left f (f x hd) tl

```

which yields this for `#info fold_left`:

```

fold_left: (f:(uu___:'a@1 -> uu___:'b@1 -> 'a@3) -> x:'a@2 -> y:(list 'b@2) -> 'a@4)

```

It would be nice to preserve the names `f`, `x`, and `y` even when there is a `val`.

| usab | val annotations yield ugly looking signatures contrast this val fold left a b ml a a list b ml a let rec fold left f x y match y with x hd tl fold left f f x hd tl which yields this for info fold left fold left uu uu a uu b a uu a uu list b a with this let rec fold left f a b ml a x a y list b ml a match y with x hd tl fold left f f x hd tl which yields this for info fold left fold left f uu a uu b a x a y list b a it would be nice t preserve the names f x and y even when there is a val | 1 |

19,731 | 4,441,997,699 | IssuesEvent | 2016-08-19 11:41:13 | coala-analyzer/coala | https://api.github.com/repos/coala-analyzer/coala | closed | Codestyle: Add continuation line policy | area/documentation difficulty/newcomer | Basically we are already enforcing a style, where multiple-line lists, dicts, tuples, function definitions, function calls, and any such structures either:

- stay in one line

- span multiple lines that list one parameter/ item each

Since this is not covered by PEP8 we should add a section to our [codestyle](https://github.com/coala-analyzer/coala/blob/master/docs/Getting_Involved/Codestyle.rst)

| 1.0 | Codestyle: Add continuation line policy - Basically we are already enforcing a style, where multiple-line lists, dicts, tuples, function definitions, function calls, and any such structures either:

- stay in one line

- span multiple lines that list one parameter/ item each

Since this is not covered by PEP8 we should add a section to our [codestyle](https://github.com/coala-analyzer/coala/blob/master/docs/Getting_Involved/Codestyle.rst)

| non_usab | codestyle add continuation line policy basically we are already enforcing a style where multiple line lists dicts tuples function definitions function calls and any such structures either stay in one line span multiple lines that list one parameter item each since this is not covered by we should add a section to our | 0 |
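
The two allowed forms from the continuation-line issue above can be illustrated with a short Python sketch. The names and values are made up for the example. The exact indentation of continuation lines (hanging versus aligned with the opening bracket) is an assumption, since the issue only states the one-item-per-line rule.

```python
# Fits on one line: keep it on one line.
point = {"x": 1, "y": 2}
total = sum([1, 2, 3])

# Does not fit on one line: list one item or argument per line.
settings = {
    "max_line_length": 79,
    "use_spaces": True,
    "tab_width": 4,
}


def describe(name,
             value,
             unit="",
             precision=2):
    """Format a labelled value; the signature lists one parameter per line."""
    return f"{name}: {value:.{precision}f}{unit}"


label = describe("width",
                 3.14159,
                 unit="cm",
                 precision=1)
```

What the policy rules out is the mixed form, where a structure spans several lines but packs more than one item onto some of them.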

19,350 | 13,901,941,958 | IssuesEvent | 2020-10-20 04:09:21 | pulumi/pulumi | https://api.github.com/repos/pulumi/pulumi | opened | Add `logout --all` option | impact/usability kind/enhancement | #### Enhancement

The pulumi CLI should have a `--all` option on `pulumi logout` that removes `~/.pulumi/credentials.json` from the user's machine to "hard reset" a user's credentials without requiring them to remove the file manually. | True | Add `logout --all` option - #### Enhancement

The pulumi CLI should have a `--all` option on `pulumi logout` that removes `~/.pulumi/credentials.json` from the user's machine to "hard reset" a user's credentials without requiring them to remove the file manually. | usab | add logout all option enhancement the pulumi cli should have a all option on pulumi logout that removes pulumi credentials json from the user s machine to hard reset a user s credentials without requiring them to remove the file manually | 1 |
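
The behaviour requested in the `logout --all` issue above amounts to deleting the credentials file. Below is a minimal Python sketch of that idea, not Pulumi's implementation: the function name `logout_all` and its optional path parameter are hypothetical, and only the default location `~/.pulumi/credentials.json` comes from the issue text.

```python
from pathlib import Path


def logout_all(credentials_path=None):
    """Delete the credentials file; return True if a file was removed."""
    if credentials_path is None:
        # Default location named in the issue.
        path = Path.home() / ".pulumi" / "credentials.json"
    else:
        path = Path(credentials_path)
    try:
        path.unlink()
        return True
    except FileNotFoundError:
        # Nothing to remove: the user is already fully logged out.
        return False
```

Treating a missing file as a no-op rather than an error keeps the command safe to run repeatedly, which fits the "hard reset" use described in the issue.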

18,666 | 13,152,292,872 | IssuesEvent | 2020-08-09 21:24:01 | greenlion/warp | https://api.github.com/repos/greenlion/warp | opened | Support clone plugin | enhancement usability | MySQL 8.0 can clone a server from another server. Right now only InnoDB is supported by the server for cloning. Cloning WARP based tables should be supported as well. This will require modifying the server and won't be pluggable so it is a semi-non-compatible change to upstream MySQL. WARP could still be loaded into a non WarpSQL server, but cloning would clone WARP tables as empty. | True | Support clone plugin - MySQL 8.0 can clone a server from another server. Right now only InnoDB is supported by the server for cloning. Cloning WARP based tables should be supported as well. This will require modifying the server and won't be pluggable so it is a semi-non-compatible change to upstream MySQL. WARP could still be loaded into a non WarpSQL server, but cloning would clone WARP tables as empty. | usab | support clone plugin mysql can clone a server from another server right now only innodb is supported by the server for cloning cloning warp based tables should be supported as well this will require modifying the server and won t be pluggable so it is a semi non compatible change to upstream mysql warp could still be loaded into a non warpsql server but cloning would clone warp tables as empty | 1 |

16,885 | 11,455,143,266 | IssuesEvent | 2020-02-06 18:29:28 | connectome-neuprint/neuPrintExplorer | https://api.github.com/repos/connectome-neuprint/neuPrintExplorer | closed | Option to copy collected results | enhancement in progress nicetohave usability | People often run a query just to put the results into another query. Add some way to take the format of a column as an input, like a built in collect function. Some check box where you can see and copy your results as a, b, c, d or a,b,c,d. | True | Option to copy collected results - People often run a query just to put the results into another query. Add some way to take the format of a column as an input, like a built in collect function. Some check box where you can see and copy your results as a, b, c, d or a,b,c,d. | usab | option to copy collected results people often run a query just to put the results into another query add some way to take the format of a column as an input like a built in collect function some check box where you can see and copy your results as a b c d or a b c d | 1 |

6,807 | 23,938,141,095 | IssuesEvent | 2022-09-11 14:26:00 | smcnab1/op-question-mark | https://api.github.com/repos/smcnab1/op-question-mark | closed | [BUG] Fix and re-enable HA Restart Notify | Status: Confirmed Type: Bug Priority: High For: Automations | Fix how often notified and only TTS during day then re-enable on HA UI | 1.0 | [BUG] Fix and re-enable HA Restart Notify - Fix how often notified and only TTS during day then re-enable on HA UI | non_usab | fix and re enable ha restart notify fix how often notified and only tts during day then re enable on ha ui | 0 |

11,737 | 7,423,183,064 | IssuesEvent | 2018-03-23 03:42:24 | matomo-org/matomo | https://api.github.com/repos/matomo-org/matomo | closed | Chrome: Selecting the tracking code with one click does not work anymore | Bug c: Usability | We have an angular directive `<pre piwik-select-on-focus>...</pre>` to select for example a code block with just one click. This is used for example when generating the tracking code. It is also used in other places for example by the Widgets screen to select a link or HTML, by Custom Dimensions, by plugins like A/B testing etc.

This is likely due to https://www.chromestatus.com/feature/6680566019653632

It likely still works for `<textarea piwik-select-on-focus>...</textarea>` but not for any other element. | True | Chrome: Selecting the tracking code with one click does not work anymore - We have an angular directive `<pre piwik-select-on-focus>...</pre>` to select for example a code block with just one click. This is used for example when generating the tracking code. It is also used in other places for example by the Widgets screen to select a link or HTML, by Custom Dimensions, by plugins like A/B testing etc.

This is likely due to https://www.chromestatus.com/feature/6680566019653632

It likely still works for `<textarea piwik-select-on-focus>...</textarea>` but not for any other element. | usab | chrome selecting the tracking code with one click does not work anymore we have an angular directive to select for example a code block with just one click this is used for example when generating the tracking code it is also used in other places for example by the widgets screen to select a link or html by custom dimensions by plugins like a b testing etc this is likely due to it likely still works for but not for any other element | 1 |

2,456 | 3,466,160,083 | IssuesEvent | 2015-12-22 01:02:00 | adobe-photoshop/spaces-design | https://api.github.com/repos/adobe-photoshop/spaces-design | opened | Document activation is slow | Performance | There are at least two problems:

1. We currently don't start updating the UI until after Photoshop finishes changing the document and updating the canvas. Usually, we should be able to perform these operations in parallel.

2. For documents that are already initialized, we shouldn't need to change more than one or two CSS classes because each document's panel structure is maintained separately in the DOM. I suspect it takes longer than this because our `shouldComponentUpdate` functions aren't sharp enough to limit re-rendering to the top-most components. | True | Document activation is slow - There are at least two problems:

1. We currently don't start updating the UI until after Photoshop finishes changing the document and updating the canvas. Usually, we should be able to perform these operations in parallel.

2. For documents that are already initialized, we shouldn't need to change more than one or two CSS classes because each document's panel structure is maintained separately in the DOM. I suspect it takes longer than this because our `shouldComponentUpdate` functions aren't sharp enough to limit re-rendering to the top-most components. | non_usab | document activation is slow there are at least two problems we currently don t start updating the ui until after photoshop finishes changing the document and updating the canvas usually we should be able to perform these operations in parallel for documents that are already initialized we shouldn t need to change more than one or two css classes because each document s panel structure is maintained separately in the dom i suspect it takes longer than this because our shouldcomponentupdate functions aren t sharp enough to limit re rendering to the top most components | 0 |

20,683 | 15,878,058,635 | IssuesEvent | 2021-04-09 10:28:07 | opengovsg/checkfirst | https://api.github.com/repos/opengovsg/checkfirst | closed | Make button unclickable when it can't be changed | usability enhancement | See screenshot below

<img width="469" alt="Screenshot 2021-03-22 at 1 52 00 PM" src="https://user-images.githubusercontent.com/23736580/111946149-ea839c80-8b15-11eb-86d7-783e0d59d534.png">

| True | Make button unclickable when it can't be changed - See screenshot below

<img width="469" alt="Screenshot 2021-03-22 at 1 52 00 PM" src="https://user-images.githubusercontent.com/23736580/111946149-ea839c80-8b15-11eb-86d7-783e0d59d534.png">

| usab | make button unclickable when it can t be changed see screenshot below img width alt screenshot at pm src | 1 |

12,009 | 3,562,008,042 | IssuesEvent | 2016-01-24 06:10:15 | CollaboratingPlatypus/PetaPoco | https://api.github.com/repos/CollaboratingPlatypus/PetaPoco | closed | Integration Testing Guidelines - Doc review | documentation review | Doc review request - [Integration testing guidelines](https://github.com/CollaboratingPlatypus/PetaPoco/wiki/Integration-Testing-Guidelines)

@CollaboratingPlatypus/petapoco-documentation

Community input welcome! | 1.0 | Integration Testing Guidelines - Doc review - Doc review request - [Integration testing guidelines](https://github.com/CollaboratingPlatypus/PetaPoco/wiki/Integration-Testing-Guidelines)

@CollaboratingPlatypus/petapoco-documentation

Community input welcome! | non_usab | integration testing guidelines doc review doc review request collaboratingplatypus petapoco documentation community input welcome | 0 |

28,374 | 12,834,703,495 | IssuesEvent | 2020-07-07 11:33:38 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | Change the word create to creates | Pri2 cxp doc-enhancement service-fabric/svc triaged | Not: create

But: creates

Then depending on that information the manager service create an instance of your actual contact-storage service just for that customer.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 9bb25acc-6868-7ae8-5b42-813eee29efa4

* Version Independent ID: ba88e4b7-f27c-6d0a-7550-6e04c6f36c4c

* Content: [Scalability of Service Fabric services - Azure Service Fabric](https://docs.microsoft.com/en-us/azure/service-fabric/service-fabric-concepts-scalability)

* Content Source: [articles/service-fabric/service-fabric-concepts-scalability.md](https://github.com/MicrosoftDocs/azure-docs/blob/master/articles/service-fabric/service-fabric-concepts-scalability.md)

* Service: **service-fabric**

* GitHub Login: @masnider

* Microsoft Alias: **masnider** | 1.0 | Change the word create to creates - Not: create

But: creates

Then depending on that information the manager service create an instance of your actual contact-storage service just for that customer.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 9bb25acc-6868-7ae8-5b42-813eee29efa4

* Version Independent ID: ba88e4b7-f27c-6d0a-7550-6e04c6f36c4c

* Content: [Scalability of Service Fabric services - Azure Service Fabric](https://docs.microsoft.com/en-us/azure/service-fabric/service-fabric-concepts-scalability)

* Content Source: [articles/service-fabric/service-fabric-concepts-scalability.md](https://github.com/MicrosoftDocs/azure-docs/blob/master/articles/service-fabric/service-fabric-concepts-scalability.md)

* Service: **service-fabric**

* GitHub Login: @masnider

* Microsoft Alias: **masnider** | non_usab | change the word create to creates not create but creates then depending on that information the manager service create an instance of your actual contact storage service just for that customer document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id version independent id content content source service service fabric github login masnider microsoft alias masnider | 0 |

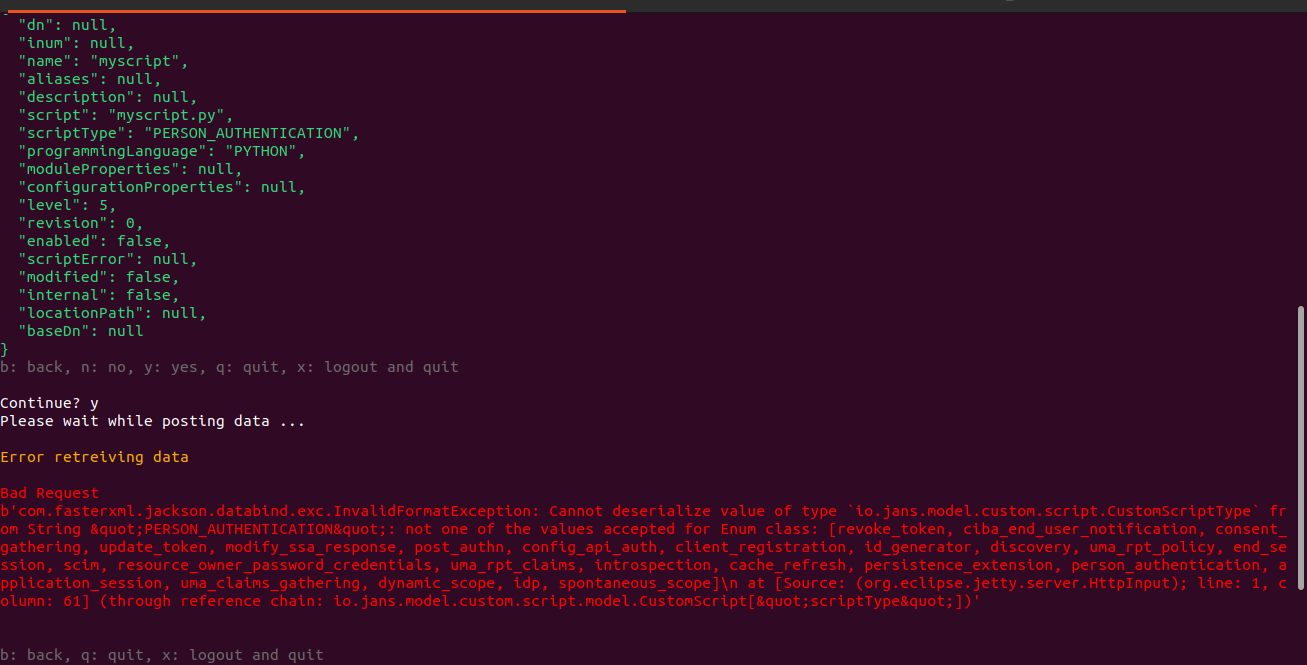

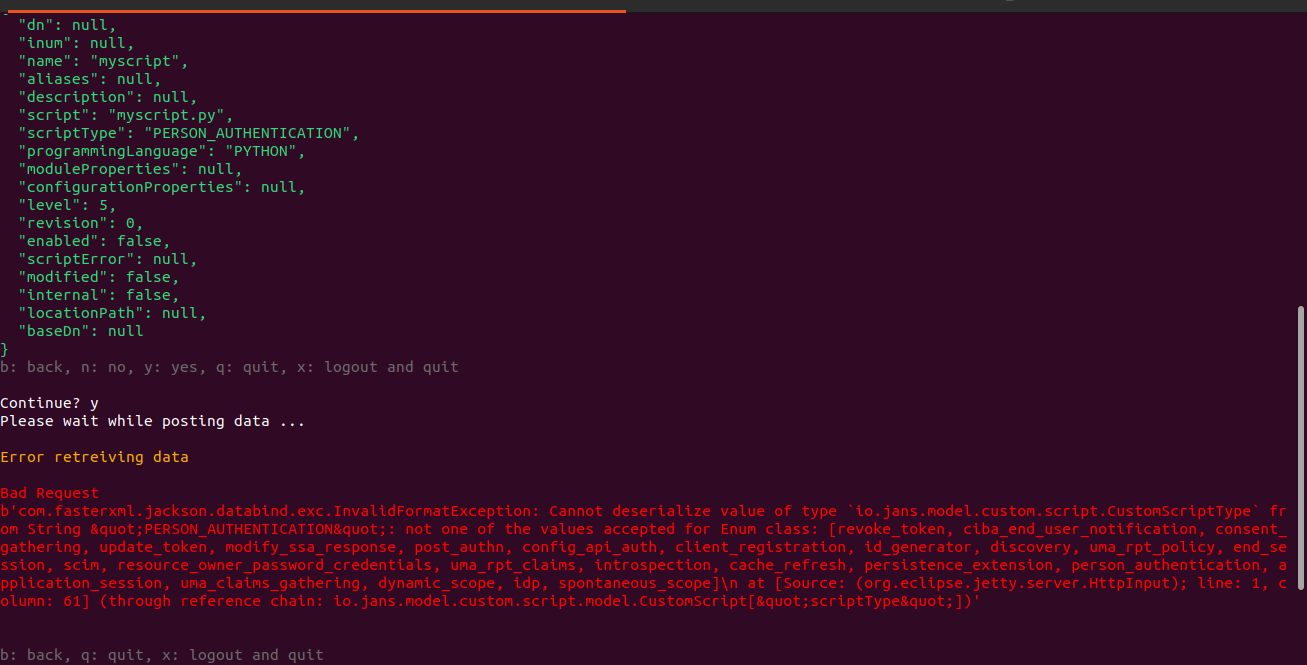

728,143 | 25,067,700,350 | IssuesEvent | 2022-11-07 09:40:04 | JanssenProject/jans | https://api.github.com/repos/JanssenProject/jans | opened | fix:(jans-cli) unable to add custom script with mendatory fields | kind-bug comp-jans-config-api priority-1 | **Describe the bug**

unable to add custom script with mendatory fields

**To Reproduce**

Steps to reproduce the behavior:

1. isntall jans 1.0.3 release

2. launch /opt/jans/jans-cli/config-cli.py

3. select 6 for custom script and 2 to add custom script

4. add required details

5. when prompted "Populate optional fields?" add n

6. continue? add y

7. see error

**Expected behavior**

should able to add custom script with mendatory fields

**Screenshots**

**Desktop (please complete the following information):**

- OS: ubuntu

- Browser [e.g. chrome, safari]

- Version 20.04

- DB: openDJ

**Smartphone (please complete the following information):**

- Device: [e.g. iPhone6]

- OS: [e.g. iOS8.1]

- Browser [e.g. stock browser, safari]

- Version [e.g. 22]

**Additional context**

Add any other context about the problem here.

| 1.0 | fix:(jans-cli) unable to add custom script with mendatory fields - **Describe the bug**

unable to add custom script with mendatory fields

**To Reproduce**

Steps to reproduce the behavior:

1. isntall jans 1.0.3 release

2. launch /opt/jans/jans-cli/config-cli.py

3. select 6 for custom script and 2 to add custom script

4. add required details

5. when prompted "Populate optional fields?" add n

6. continue? add y

7. see error

**Expected behavior**

should able to add custom script with mendatory fields

**Screenshots**

**Desktop (please complete the following information):**

- OS: ubuntu

- Browser [e.g. chrome, safari]

- Version 20.04

- DB: openDJ

**Smartphone (please complete the following information):**

- Device: [e.g. iPhone6]

- OS: [e.g. iOS8.1]

- Browser [e.g. stock browser, safari]

- Version [e.g. 22]

**Additional context**

Add any other context about the problem here.

| non_usab | fix jans cli unable to add custom script with mendatory fields describe the bug unable to add custom script with mendatory fields to reproduce steps to reproduce the behavior isntall jans release launch opt jans jans cli config cli py select for custom script and to add custom script add required details when prompted populate optional fields add n continue add y see error expected behavior should able to add custom script with mendatory fields screenshots desktop please complete the following information os ubuntu browser version db opendj smartphone please complete the following information device os browser version additional context add any other context about the problem here | 0 |

22,276 | 18,943,530,871 | IssuesEvent | 2021-11-18 07:29:28 | VirtusLab/git-machete | https://api.github.com/repos/VirtusLab/git-machete | closed | `github create-pr`: check if base branch for PR exists in remote | github usability | Attempt to create a pull request with base branch being already deleted from remote ends up with `Unprocessable Entity` error. (example in #332).

Proposed solution:

Perform `git fetch <remote>` at the beginning of `create-pr`. if base branch is not present in remote branches, perform `handle_untracked_branch` with relevant remote for missing base branch. | True | `github create-pr`: check if base branch for PR exists in remote - Attempt to create a pull request with base branch being already deleted from remote ends up with `Unprocessable Entity` error. (example in #332).

Proposed solution:

Perform `git fetch <remote>` at the beginning of `create-pr`. if base branch is not present in remote branches, perform `handle_untracked_branch` with relevant remote for missing base branch. | usab | github create pr check if base branch for pr exists in remote attempt to create a pull request with base branch being already deleted from remote ends up with unprocessable entity error example in proposed solution perform git fetch at the beginning of create pr if base branch is not present in remote branches perform handle untracked branch with relevant remote for missing base branch | 1 |

469,568 | 13,521,017,602 | IssuesEvent | 2020-09-15 06:14:19 | geocollections/sarv-edit | https://api.github.com/repos/geocollections/sarv-edit | closed | location table upload error | Difficulty: Hard Priority: High Source: API Status: Completed Type: Bug | API throws key error:

and suggests choices related to 'attachment_link' table | 1.0 | location table upload error - API throws key error:

and suggests choices related to 'attachment_link' table | non_usab | location table upload error api throws key error and suggests choices related to attachment link table | 0 |

50,781 | 21,409,800,686 | IssuesEvent | 2022-04-22 03:47:24 | Azure/azure-sdk-for-java | https://api.github.com/repos/Azure/azure-sdk-for-java | closed | [QUERY] ServiceBusQueueOperation and ServiceBusTopicOperation extends deprecated interface (SendOperation) | question Client customer-reported needs-team-attention azure-spring azure-spring-servicebus | **Query/Question**

* com.azure.spring.integration.servicebus.queue.ServiceBusQueueOperation

* com.azure.spring.integration.servicebus.topic.ServiceBusTopicOperation

I'm using these interfaces for sending to the Service Bus.

And I found that the interface (SendOperation) they inherit are deprecated.

Is there any alternative to avoid using deprecations?

**Setup (please complete the following information if applicable):**

- Library/Libraries: com.azure.spring:azure-spring-integration-servicebus:2.13.0 | 1.0 | [QUERY] ServiceBusQueueOperation and ServiceBusTopicOperation extends deprecated interface (SendOperation) - **Query/Question**

* com.azure.spring.integration.servicebus.queue.ServiceBusQueueOperation

* com.azure.spring.integration.servicebus.topic.ServiceBusTopicOperation

I'm using these interfaces for sending to the Service Bus.

And I found that the interface (SendOperation) they inherit are deprecated.

Is there any alternative to avoid using deprecations?

**Setup (please complete the following information if applicable):**

- Library/Libraries: com.azure.spring:azure-spring-integration-servicebus:2.13.0 | non_usab | servicebusqueueoperation and servicebustopicoperation extends deprecated interface sendoperation query question com azure spring integration servicebus queue servicebusqueueoperation com azure spring integration servicebus topic servicebustopicoperation i m using these interfaces for sending to the service bus and i found that the interface sendoperation they inherit are deprecated is there any alternative to avoid using deprecations setup please complete the following information if applicable library libraries com azure spring azure spring integration servicebus | 0 |

120,075 | 15,700,646,322 | IssuesEvent | 2021-03-26 10:07:37 | appsmithorg/appsmith | https://api.github.com/repos/appsmithorg/appsmith | opened | [Bug] UX : End Tour option must be displayed to user on the Tour Pop up | Bug Low Needs Design Needs Triaging | Steps to reproduce:

1) Navigate to application

2) Click on Welcome tour

3) Now navigate through the app

and observe the pop up

Observation:

End tour option is not displayed on the Pop up however this is placed on the header.

Expectation:

Since the user focus is on the Pop up that is helping him to navigate or travel through the app it would be great help to display the "End Tour" option on the Pop Up

| 1.0 | [Bug] UX : End Tour option must be displayed to user on the Tour Pop up - Steps to reproduce:

1) Navigate to application

2) Click on Welcome tour

3) Now navigate through the app

and observe the pop up

Observation:

End tour option is not displayed on the Pop up however this is placed on the header.

Expectation:

Since the user focus is on the Pop up that is helping him to navigate or travel through the app it would be great help to display the "End Tour" option on the Pop Up

| non_usab | ux end tour option must be displayed to user on the tour pop up steps to reproduce navigate to application click on welcome tour now navigate through the app and observe the pop up observation end tour option is not displayed on the pop up however this is placed on the header expectation since the user focus is on the pop up that is helping him to navigate or travel through the app it would be great help to display the end tour option on the pop up | 0 |