Unnamed: 0 (int64, 3 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 7 to 112) | repo_url (string, length 36 to 141) | action (string, 3 classes) | title (string, length 2 to 742) | labels (string, length 4 to 431) | body (string, length 5 to 239k) | index (string, 10 classes) | text_combine (string, length 96 to 240k) | label (string, 2 classes) | text (string, length 96 to 200k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

281,828 | 30,888,966,783 | IssuesEvent | 2023-08-04 02:04:16 | nidhi7598/linux-4.1.15_CVE-2019-10220 | https://api.github.com/repos/nidhi7598/linux-4.1.15_CVE-2019-10220 | reopened | CVE-2017-7542 (Medium) detected in linuxlinux-4.4.302 | Mend: dependency security vulnerability | ## CVE-2017-7542 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.4.302</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Library home page: <a href=https://mirrors.edge.kernel.org/pub/linux/kernel/v4.x/?wsslib=linux>https://mirrors.edge.kernel.org/pub/linux/kernel/v4.x/?wsslib=linux</a></p>

<p>Found in HEAD commit: <a href="https://github.com/nidhi7598/linux-4.1.15_CVE-2019-10220/commit/6a0d304d962ca933d73f507ce02157ef2791851c">6a0d304d962ca933d73f507ce02157ef2791851c</a></p>

<p>Found in base branch: <b>master</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (1)</summary>

<p></p>

<p>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/net/ipv6/output_core.c</b>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png?' width=19 height=20> Vulnerability Details</summary>

<p>

The ip6_find_1stfragopt function in net/ipv6/output_core.c in the Linux kernel through 4.12.3 allows local users to cause a denial of service (integer overflow and infinite loop) by leveraging the ability to open a raw socket.

<p>Publish Date: 2017-07-21

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2017-7542>CVE-2017-7542</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="http://web.nvd.nist.gov/view/vuln/detail?vulnId=CVE-2017-7542">http://web.nvd.nist.gov/view/vuln/detail?vulnId=CVE-2017-7542</a></p>

<p>Release Date: 2017-07-21</p>

<p>Fix Resolution: v4.13-rc2</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2017-7542 (Medium) detected in linuxlinux-4.4.302 - ## CVE-2017-7542 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.4.302</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Library home page: <a href=https://mirrors.edge.kernel.org/pub/linux/kernel/v4.x/?wsslib=linux>https://mirrors.edge.kernel.org/pub/linux/kernel/v4.x/?wsslib=linux</a></p>

<p>Found in HEAD commit: <a href="https://github.com/nidhi7598/linux-4.1.15_CVE-2019-10220/commit/6a0d304d962ca933d73f507ce02157ef2791851c">6a0d304d962ca933d73f507ce02157ef2791851c</a></p>

<p>Found in base branch: <b>master</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (1)</summary>

<p></p>

<p>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/net/ipv6/output_core.c</b>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png?' width=19 height=20> Vulnerability Details</summary>

<p>

The ip6_find_1stfragopt function in net/ipv6/output_core.c in the Linux kernel through 4.12.3 allows local users to cause a denial of service (integer overflow and infinite loop) by leveraging the ability to open a raw socket.

<p>Publish Date: 2017-07-21

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2017-7542>CVE-2017-7542</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="http://web.nvd.nist.gov/view/vuln/detail?vulnId=CVE-2017-7542">http://web.nvd.nist.gov/view/vuln/detail?vulnId=CVE-2017-7542</a></p>

<p>Release Date: 2017-07-21</p>

<p>Fix Resolution: v4.13-rc2</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_usab | cve medium detected in linuxlinux cve medium severity vulnerability vulnerable library linuxlinux the linux kernel library home page a href found in head commit a href found in base branch master vulnerable source files net output core c vulnerability details the find function in net output core c in the linux kernel through allows local users to cause a denial of service integer overflow and infinite loop by leveraging the ability to open a raw socket publish date url a href cvss score details base score metrics exploitability metrics attack vector local attack complexity low privileges required low user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution step up your open source security game with mend | 0 |

121,495 | 12,127,507,324 | IssuesEvent | 2020-04-22 18:50:54 | vmware-tanzu/velero | https://api.github.com/repos/vmware-tanzu/velero | closed | Velero site missing vSphere installation and configuration instructions | Documentation | **Describe the problem/challenge you have**

The Velero website doesn't include installation and configuration instructions for the vSphere provider

**Describe the solution you'd like**

A link on the provider page to installation and configuration instructions for vSphere

**Anything else you would like to add:**

Referencing the latest Velero page here

https://velero.io/docs/v1.3.1/supported-providers/

**Environment:**

N/A

- Velero version (use `velero version`):

- Kubernetes version (use `kubectl version`):

- Kubernetes installer & version:

- Cloud provider or hardware configuration:

- OS (e.g. from `/etc/os-release`):

| 1.0 | Velero site missing vSphere installation and configuration instructions - **Describe the problem/challenge you have**

The Velero website doesn't include installation and configuration instructions for the vSphere provider

**Describe the solution you'd like**

A link on the provider page to installation and configuration instructions for vSphere

**Anything else you would like to add:**

Referencing the latest Velero page here

https://velero.io/docs/v1.3.1/supported-providers/

**Environment:**

N/A

- Velero version (use `velero version`):

- Kubernetes version (use `kubectl version`):

- Kubernetes installer & version:

- Cloud provider or hardware configuration:

- OS (e.g. from `/etc/os-release`):

| non_usab | velero site missing vsphere installation and configuration instructions describe the problem challenge you have the velero website site doesn t include installation and configuration instructions for the vshpere provider describe the solution you d like a link in the provider page to install and configuration instructions for vsphere anything else you would like to add referencing the latest velero page here environment n a velero version use velero version kubernetes version use kubectl version kubernetes installer version cloud provider or hardware configuration os e g from etc os release | 0 |

86,526 | 17,019,954,468 | IssuesEvent | 2021-07-02 17:16:20 | TromboneDavies/PolarOps | https://api.github.com/repos/TromboneDavies/PolarOps | closed | Make collector.py store dates in better format (?) | code | Not sure if we actually want this, but it's hard on the eyes to look at the `.csv` file, or its `DataFrame` manifestation after `pd.read_csv()`'ing it, and see just a bunch of meaningless huge integers. It would be much nicer to see "5/13/2016 11:05PM" type stuff. | 1.0 | Make collector.py store dates in better format (?) - Not sure if we actually want this, but it's hard on the eyes to look at the `.csv` file, or its `DataFrame` manifestation after `pd.read_csv()`'ing it, and see just a bunch of meaningless huge integers. It would be much nicer to see "5/13/2016 11:05PM" type stuff. | non_usab | make collector py store dates in better format not sure if we actually want this but it s hard on the eyes to look at the csv file or its dataframe manifestation after pd read csv ing it and see just a bunch of meaningless huge integers it would be much nicer to see type stuff | 0 |
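
The request in this record (readable dates instead of large integers) can be sketched as follows. This is a minimal illustration, not the project's code: it assumes the integers are Unix epoch seconds in UTC, which the issue does not state, and `format_epoch` is a hypothetical helper name.

```python
from datetime import datetime, timezone


def format_epoch(seconds: int) -> str:
    """Render epoch seconds as e.g. '5/13/2016 11:05PM' (UTC assumed)."""
    dt = datetime.fromtimestamp(seconds, tz=timezone.utc)
    hour12 = dt.hour % 12 or 12          # map 0 -> 12 for the 12-hour clock
    suffix = "AM" if dt.hour < 12 else "PM"
    # Build the string by hand so there is no zero padding on month, day, or hour.
    return f"{dt.month}/{dt.day}/{dt.year} {hour12}:{dt.minute:02d}{suffix}"
```

If the data is already in a pandas `DataFrame`, `pd.to_datetime(column, unit="s")` is a likely alternative, again assuming the values are epoch seconds.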

1,957 | 3,025,718,246 | IssuesEvent | 2015-08-03 10:35:18 | lionheart/openradar-mirror | https://api.github.com/repos/lionheart/openradar-mirror | opened | 22112410: Mail.app doesn't always release file handles of files sent as attachments. | classification:ui/usability reproducible:always status:open | #### Description

Summary:

Compose an email with an image attachment. "Edit" the image with the Markup feature. Send email. Wait a few minutes (the mail needs to send). Put attachment in trash. Try and empty trash. Just try. I dare you.

Steps to Reproduce:

1. Open Mail.app

2. Compose new message.

3. Add image attachment.

4. Edit the attachment with "Markup".

5. Send message.

6. Trash image file.

7. Wait a few minutes.

8. Attempt to empty trash.

Expected Results:

That the trash is emptied.

Actual Results:

I can't empty Trash because the files I've attached to a mail message are still in use by Mail (open files). See attached screenshots.

Version:

10.10.4 (14E46)

Notes:

Configuration:

If you don't edit the file before sending, the file handle is not leaked.

-

Product Version: 10.10.4 (14E46)

Created: 2015-08-03T10:33:37.060070

Originated: 2015-08-03T00:00:00

Open Radar Link: http://www.openradar.me/22112410 | True | 22112410: Mail.app doesn't always release file handles of files sent as attachments. - #### Description

Summary:

Compose an email with an image attachment. "Edit" the image with the Markup feature. Send email. Wait a few minutes (the mail needs to send). Put attachment in trash. Try and empty trash. Just try. I dare you.

Steps to Reproduce:

1. Open Mail.app

2. Compose new message.

3. Add image attachment.

4. Edit the attachment with "Markup".

5. Send message.

6. Trash image file.

7. Wait a few minutes.

8. Attempt to empty trash.

Expected Results:

That the trash is emptied.

Actual Results:

I can't empty Trash because the files I've attached to a mail message are still in use by Mail (open files). See attached screenshots.

Version:

10.10.4 (14E46)

Notes:

Configuration:

If you don't edit the file before sending, the file handle is not leaked.

-

Product Version: 10.10.4 (14E46)

Created: 2015-08-03T10:33:37.060070

Originated: 2015-08-03T00:00:00

Open Radar Link: http://www.openradar.me/22112410 | usab | mail app doesn t always release file handles of files sent as attachments description summary compose an email with an image attachment edit the image with the markup feature send email wait a few minutes the mail needs to send put attachment in trash try and empty trash just try i dare you steps to reproduce open mail app compose new message add image attachment edit the attachment with markup send message trash image file wait a few minutes attempt to empty trash expected results that the trash is emptied actual results i can t empty trash because the files i ve attached to a mail message are still in use by mail open files see attached screenshots version notes configuration if you don t edit the file before sending the file handle is not leaked product version created originated open radar link | 1 |

96,319 | 16,129,615,662 | IssuesEvent | 2021-04-29 01:04:02 | RG4421/ampere-centos-kernel | https://api.github.com/repos/RG4421/ampere-centos-kernel | opened | CVE-2020-27815 (High) detected in https://source.codeaurora.org/quic/kernel/agross-msm/qcom-arm64-for-4.3 | security vulnerability | ## CVE-2020-27815 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>https://source.codeaurora.org/quic/kernel/agross-msm/qcom-arm64-for-4.3</b></p></summary>

<p>

<p>Library home page: <a href=https://source.codeaurora.org/quic/kernel/agross-msm/>https://source.codeaurora.org/quic/kernel/agross-msm/</a></p>

<p>Found in base branch: <b>amp-centos-8.0-kernel</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (3)</summary>

<p></p>

<p>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>ampere-centos-kernel/fs/jfs/jfs_dmap.h</b>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>ampere-centos-kernel/fs/jfs/jfs_dmap.h</b>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>ampere-centos-kernel/fs/jfs/jfs_dmap.h</b>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Linux kernel is vulnerable to Array index out of bounds access when setting extended attributes on journaling.

<p>Publish Date: 2020-10-27

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-27815>CVE-2020-27815</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.4</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: High

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

| True | CVE-2020-27815 (High) detected in https://source.codeaurora.org/quic/kernel/agross-msm/qcom-arm64-for-4.3 - ## CVE-2020-27815 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>https://source.codeaurora.org/quic/kernel/agross-msm/qcom-arm64-for-4.3</b></p></summary>

<p>

<p>Library home page: <a href=https://source.codeaurora.org/quic/kernel/agross-msm/>https://source.codeaurora.org/quic/kernel/agross-msm/</a></p>

<p>Found in base branch: <b>amp-centos-8.0-kernel</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (3)</summary>

<p></p>

<p>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>ampere-centos-kernel/fs/jfs/jfs_dmap.h</b>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>ampere-centos-kernel/fs/jfs/jfs_dmap.h</b>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>ampere-centos-kernel/fs/jfs/jfs_dmap.h</b>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

Linux kernel is vulnerable to Array index out of bounds access when setting extended attributes on journaling.

<p>Publish Date: 2020-10-27

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-27815>CVE-2020-27815</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.4</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: High

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

| non_usab | cve high detected in cve high severity vulnerability vulnerable library library home page a href found in base branch amp centos kernel vulnerable source files ampere centos kernel fs jfs jfs dmap h ampere centos kernel fs jfs jfs dmap h ampere centos kernel fs jfs jfs dmap h vulnerability details linux kernel is vulnerable to array index out of bounds access when setting extended attributes on journaling publish date url a href cvss score details base score metrics exploitability metrics attack vector local attack complexity high privileges required none user interaction none scope unchanged impact metrics confidentiality impact high integrity impact high availability impact high for more information on scores click a href | 0 |

14,005 | 8,782,785,530 | IssuesEvent | 2018-12-20 02:02:10 | dotnet/machinelearning | https://api.github.com/repos/dotnet/machinelearning | closed | Add confidence intervals to permutation feature importance | usability | `Permutation Feature Importance` (aka `PFI`) computes the importance of a feature to a model by permuting values for that feature, scoring it with the model, and comparing the new evaluation metrics to the original evaluation metrics. For speed, `PFI` uses only one permutation, and this leads to a bit of randomness in the predicted importances. For example, based on the random seed features can change orderings of importance and permutations can even end up showing to improve the model performance. These issues can be fixed by allowing the calculation of confidence intervals around the feature importance values. | True | Add confidence intervals to permutation feature importance - `Permutation Feature Importance` (aka `PFI`) computes the importance of a feature to a model by permuting values for that feature, scoring it with the model, and comparing the new evaluation metrics to the original evaluation metrics. For speed, `PFI` uses only one permutation, and this leads to a bit of randomness in the predicted importances. For example, based on the random seed features can change orderings of importance and permutations can even end up showing to improve the model performance. These issues can be fixed by allowing the calculation of confidence intervals around the feature importance values. 
| usab | add confidence intervals to permutation feature importance permutation feature importance aka pfi computes the importance of a feature to a model by permuting values for that feature scoring it with the model and comparing the new evaluation metrics to the original evaluation metrics for speed pfi uses only one permutation and this leads to a bit of randomness in the predicted importances for example based on the random seed features can change orderings of importance and permutations can even end up showing to improve the model performance these issues can be fixed by allowing the calculation of confidence intervals around the feature importance values | 1 |
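
The idea in this record, repeating the permutation several times and reporting an interval around the importance, can be sketched in plain Python. This is not ML.NET's API: the function names, the list-of-lists data layout, and the normal-approximation interval (`z = 1.96`) are all assumptions made for illustration.

```python
import random
import statistics


def neg_mse(y_true, y_pred):
    """Negative mean squared error, so that higher is better."""
    return -sum((a - b) ** 2 for a, b in zip(y_true, y_pred)) / len(y_true)


def permutation_importance_ci(predict, X, y, metric, feature,
                              n_repeats=30, seed=0, z=1.96):
    """Mean drop in `metric` when `feature` is shuffled, with an approximate CI.

    X is a list of rows (lists); `predict` maps one row to one prediction.
    """
    rng = random.Random(seed)
    baseline = metric(y, [predict(row) for row in X])
    drops = []
    for _ in range(n_repeats):
        column = [row[feature] for row in X]
        rng.shuffle(column)  # break the link between this feature and the target
        permuted = [row[:feature] + [v] + row[feature + 1:]
                    for row, v in zip(X, column)]
        score = metric(y, [predict(row) for row in permuted])
        drops.append(baseline - score)
    mean = statistics.mean(drops)
    sem = statistics.stdev(drops) / len(drops) ** 0.5  # standard error of the mean
    return mean, (mean - z * sem, mean + z * sem)
```

With a single permutation there is no spread to estimate, which is why the sketch requires several repeats; an interval that straddles zero suggests the feature's apparent importance may be noise.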

16,134 | 11,848,786,341 | IssuesEvent | 2020-03-24 14:18:38 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | Unable to build System.Runtime.WindowsRuntime | area-Infrastructure | I just did a clean, sync, and full rebuild. When I now try to build System.Runtime.WindowsRuntime, I get this error:

```

d:\repos\runtime\src\libraries\System.Runtime.WindowsRuntime\src>d:\repos\runtime\dotnet msbuild /t:rebuild

D:\repos\runtime\.dotnet

Microsoft (R) Build Engine version 16.6.0-preview-20126-02+13cfe7fc5 for .NET Core

Copyright (C) Microsoft Corporation. All rights reserved.

C:\Users\stoub\.nuget\packages\microsoft.dotnet.build.tasks.targetframework.sdk\5.0.0-beta.20167.1\build\Microsoft.DotNet.Build.Tasks.TargetFramework.Sdk.targets(84,5): error : Not able to find a compatible supported target framework for netstandard1.0 in Project System.Runtime.WindowsRuntime.csproj. The Supported Configurations are netcoreapp5.0, netstandard2.0 [d:\repos\runtime\src\libraries\System.Runtime.WindowsRuntime\src\System.Runtime.WindowsRuntime.csproj]

C:\Users\stoub\.nuget\packages\microsoft.dotnet.build.tasks.targetframework.sdk\5.0.0-beta.20167.1\build\Microsoft.DotNet.Build.Tasks.TargetFramework.Sdk.targets(84,5): error : Not able to find a compatible supported target framework for netstandard1.2 in Project System.Runtime.WindowsRuntime.csproj. The Supported Configurations are netcoreapp5.0, netstandard2.0 [d:\repos\runtime\src\libraries\System.Runtime.WindowsRuntime\src\System.Runtime.WindowsRuntime.csproj]

Restore completed in 42.75 ms for d:\repos\runtime\src\libraries\restore\winrt\winrt.depproj.

Restore completed in 8.25 ms for d:\repos\runtime\src\libraries\restore\winrt\winrt.depproj.

```

cc: @Anipik, @ViktorHofer | 1.0 | Unable to build System.Runtime.WindowsRuntime - I just did a clean, sync, and full rebuild. When I now try to build System.Runtime.WindowsRuntime, I get this error:

```

d:\repos\runtime\src\libraries\System.Runtime.WindowsRuntime\src>d:\repos\runtime\dotnet msbuild /t:rebuild

D:\repos\runtime\.dotnet

Microsoft (R) Build Engine version 16.6.0-preview-20126-02+13cfe7fc5 for .NET Core

Copyright (C) Microsoft Corporation. All rights reserved.

C:\Users\stoub\.nuget\packages\microsoft.dotnet.build.tasks.targetframework.sdk\5.0.0-beta.20167.1\build\Microsoft.DotNet.Build.Tasks.TargetFramework.Sdk.targets(84,5): error : Not able to find a compatible supported target framework for netstandard1.0 in Project System.Runtime.WindowsRuntime.csproj. The Supported Configurations are netcoreapp5.0, netstandard2.0 [d:\repos\runtime\src\libraries\System.Runtime.WindowsRuntime\src\System.Runtime.WindowsRuntime.csproj]

C:\Users\stoub\.nuget\packages\microsoft.dotnet.build.tasks.targetframework.sdk\5.0.0-beta.20167.1\build\Microsoft.DotNet.Build.Tasks.TargetFramework.Sdk.targets(84,5): error : Not able to find a compatible supported target framework for netstandard1.2 in Project System.Runtime.WindowsRuntime.csproj. The Supported Configurations are netcoreapp5.0, netstandard2.0 [d:\repos\runtime\src\libraries\System.Runtime.WindowsRuntime\src\System.Runtime.WindowsRuntime.csproj]

Restore completed in 42.75 ms for d:\repos\runtime\src\libraries\restore\winrt\winrt.depproj.

Restore completed in 8.25 ms for d:\repos\runtime\src\libraries\restore\winrt\winrt.depproj.

```

cc: @Anipik, @ViktorHofer | non_usab | unable to build system runtime windowsruntime i just did a clean sync and full rebuild when i now try to build system runtime windowsruntime i get this error d repos runtime src libraries system runtime windowsruntime src d repos runtime dotnet msbuild t rebuild d repos runtime dotnet microsoft r build engine version preview for net core copyright c microsoft corporation all rights reserved c users stoub nuget packages microsoft dotnet build tasks targetframework sdk beta build microsoft dotnet build tasks targetframework sdk targets error not able to find a compatible supported target framework for in project system runtime windowsruntime csproj the supported configurations are c users stoub nuget packages microsoft dotnet build tasks targetframework sdk beta build microsoft dotnet build tasks targetframework sdk targets error not able to find a compatible supported target framework for in project system runtime windowsruntime csproj the supported configurations are restore completed in ms for d repos runtime src libraries restore winrt winrt depproj restore completed in ms for d repos runtime src libraries restore winrt winrt depproj cc anipik viktorhofer | 0 |

30,482 | 4,622,528,399 | IssuesEvent | 2016-09-27 07:55:14 | Laravel-Backpack/Base | https://api.github.com/repos/Laravel-Backpack/Base | closed | Customise Registration Form | question testing or needs confirmation | Hi,

Just wondering what the best way to customise the auth templates would be?

I have added extra fields to my user model, so need them completed on registration - even for admin, so need to add these fields to the view and also Controller.

However because all of these live in /vendor/backpack etc they are outside of the regular version control.

Is there a standard way to handle this type of thing?

Cheers | 1.0 | Customise Registration Form - Hi,

Just wondering what the best way to customise the auth templates would be?

I have added extra fields to my user model, so need them completed on registration - even for admin, so need to add these fields to the view and also Controller.

However because all of these live in /vendor/backpack etc they are outside of the regular version control.

Is there a standard way to handle this type of thing?

Cheers | non_usab | customise registration form hi just wondering what the best way to customise the auth templates would be i have added extra fields to my user model so need them completed on registration even for admin so need to add these fields to the view and also controller however because all of these live in vendor backpack etc they are outside of the regular version control is there a standard way to handle this type of thing cheers | 0 |

6,818 | 4,553,622,423 | IssuesEvent | 2016-09-13 06:03:49 | godotengine/godot | https://api.github.com/repos/godotengine/godot | closed | Enhancement wish for "Create New Node" Window | enhancement topic:editor usability | **Old:**

**New:**

**Filter** is type of node.

- Node = everything

- CanvasItem = all Control node + all Node2D node

- Control = all Control node

and so on..

**Favorites** and **Recent** is like "Open a Resource/File" Window.

| True | Enhancement wish for "Create New Node" Window - **Old:**

**New:**

**Filter** is type of node.

- Node = everything

- CanvasItem = all Control node + all Node2D node

- Control = all Control node

and so on..

**Favorites** and **Recent** is like "Open a Resource/File" Window.

| usab | enhancement wish for create new node window old new filter is type of node node everything canvasitem all control node all node control all control node and so on favorites and recent is like open a resource file window | 1 |

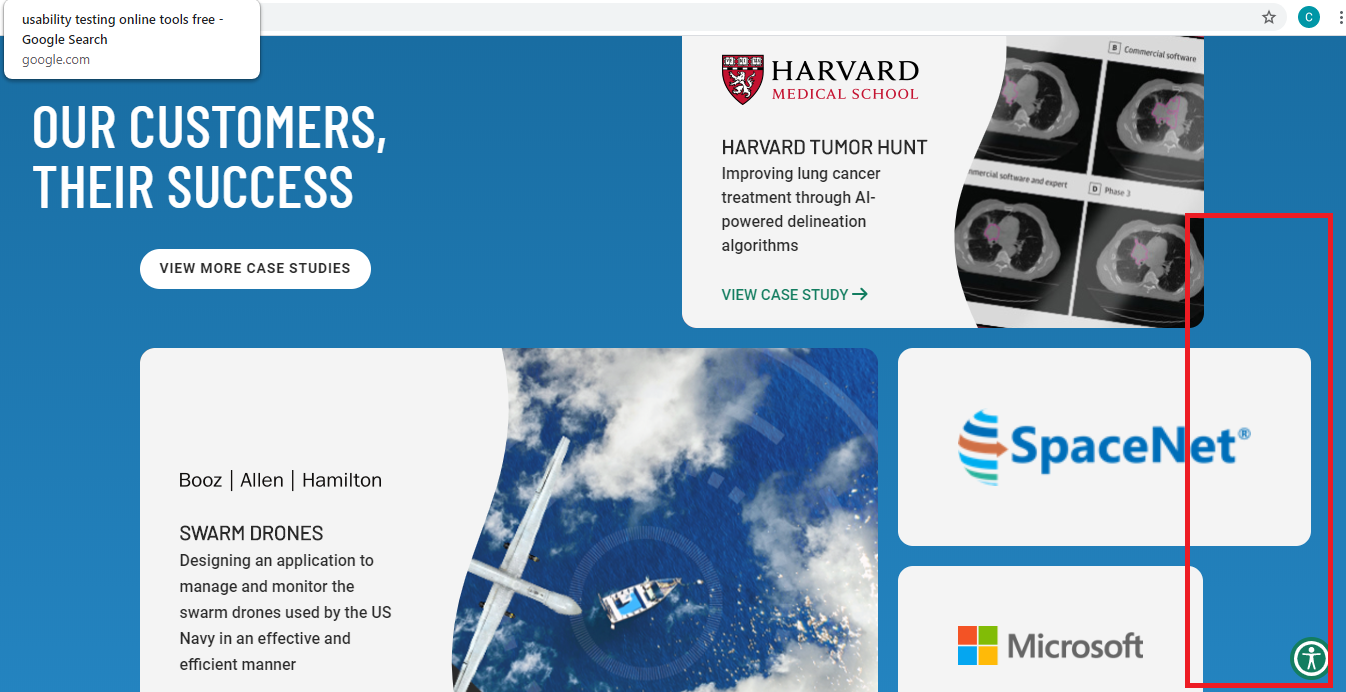

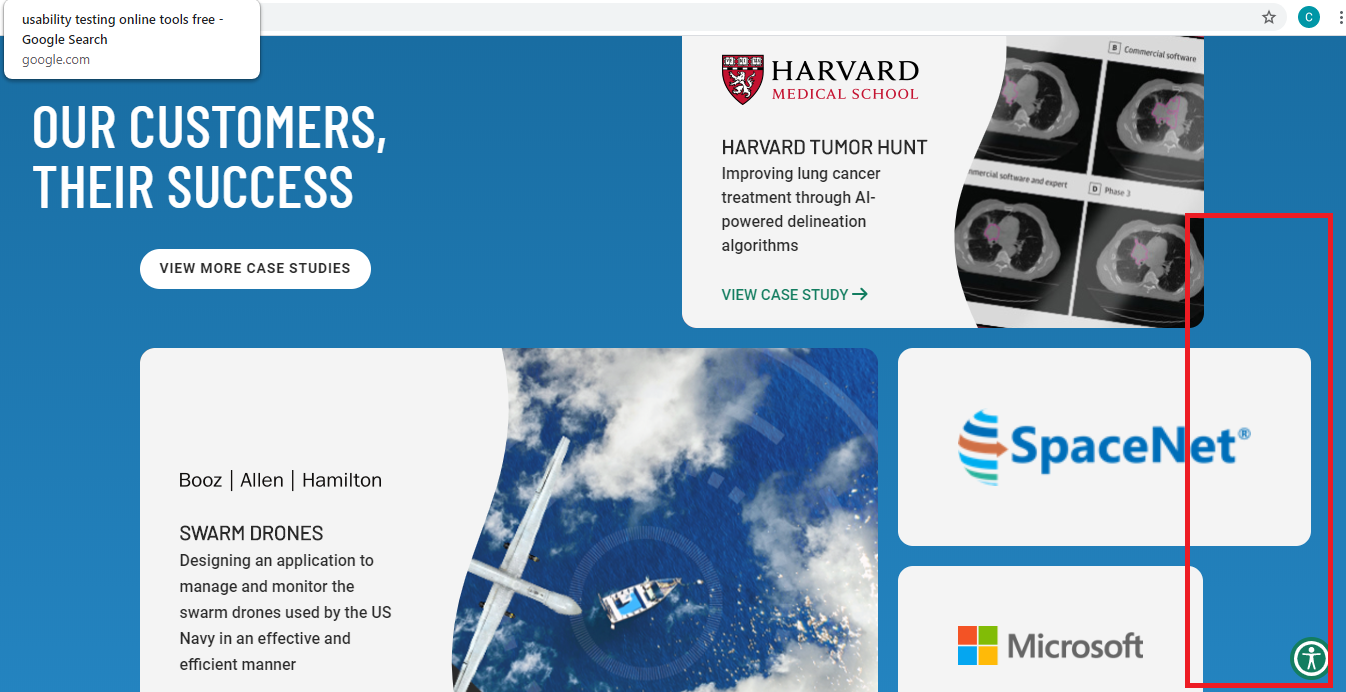

19,081 | 13,536,133,185 | IssuesEvent | 2020-09-16 08:36:40 | topcoder-platform/qa-fun | https://api.github.com/repos/topcoder-platform/qa-fun | closed | Images right side is not aligned with each other | UX/Usability | Steps :

1) Go to https://www.topcoder.com/

2) Scroll sown to section "OUR CUSTOMERS, THEIR SUCCESS"

Observe right side image alligment

Expected Result:

Images right side should be aligned.

Actual Result:

Images right side is not aligned with each other

Screenshots:

Device/OS/Browser Information:

Laptop Windows 10,

Chrome Version 81.0.4044.129 (Official Build) (64-bit) | True | Images right side is not aligned with each other - Steps :

1) Go to https://www.topcoder.com/

2) Scroll sown to section "OUR CUSTOMERS, THEIR SUCCESS"

Observe right side image alligment

Expected Result:

Images right side should be aligned.

Actual Result:

Images right side is not aligned with each other

Screenshots:

Device/OS/Browser Information:

Laptop Windows 10,

Chrome Version 81.0.4044.129 (Official Build) (64-bit) | usab | images right side is not aligned with each other steps go to scroll sown to section our customers their success observe right side image alligment expected result images right side should be aligned actual result images right side is not aligned with each other screenshots device os browser information laptop windows chrome version official build bit | 1 |

19,676 | 14,405,553,717 | IssuesEvent | 2020-12-03 18:52:59 | briansmith/ring | https://api.github.com/repos/briansmith/ring | closed | Add Visual Studio 2017 builds to AppVeyor | good-first-bug usability | [Note that Visual Studio “15” is the successor to Visual Studio 2015 and will probably have a different name; see https://www.visualstudio.com/en-us/news/releasenotes/vs15-relnotes.]

Let's support building the C/C++ components with Visual Studio “15” and using its linker.

The AppVeyor issue for Visual Studio “15” support is https://github.com/appveyor/ci/issues/753, where they say they'll wait at least until there's a beta release. But, we'll take patches to support it in *ring* before then. | True | Add Visual Studio 2017 builds to AppVeyor - [Note that Visual Studio “15” is the successor to Visual Studio 2015 and will probably have a different name; see https://www.visualstudio.com/en-us/news/releasenotes/vs15-relnotes.]

Let's support building the C/C++ components with Visual Studio “15” and using its linker.

The AppVeyor issue for Visual Studio “15” support is https://github.com/appveyor/ci/issues/753, where they say they'll wait at least until there's a beta release. But, we'll take patches to support it in *ring* before then. | usab | add visual studio builds to appveyor let s support building the c c components with visual studio “ ” and using its linker the appveyor issue for visual studio “ ” support is where they say they ll wait at least until there s a beta release but we ll take patches to support it in ring before then | 1 |

2,480 | 3,078,999,819 | IssuesEvent | 2015-08-21 14:02:37 | rabbitmq/rabbitmq-server | https://api.github.com/repos/rabbitmq/rabbitmq-server | opened | Reduce default QI (queue index) journal size | bug effort-tiny enhancement usability | See #227 for background.

Profiling suggests that the bottleneck in #227 is sequential folding over the QI journal. While we will look into parallelising it, the need to reduce I/O by having a larger journal seems a lot less relevant in 2015 than it was about 5 years ago.

Benchmarks and monitoring results from multiple people suggest that values such as `16K` or `8K` result in much more even throughput.

We've agreed to reduce default journal size from `65536` to `8192`. | True | Reduce default QI (queue index) journal size - See #227 for background.

Profiling suggests that the bottleneck in #227 is sequential folding over the QI journal. While we will look into parallelising it, the need to reduce I/O by having a larger journal seems a lot less relevant in 2015 than it was about 5 years ago.

Benchmarks and monitoring results from multiple people suggest that values such as `16K` or `8K` result in much more even throughput.

We've agreed to reduce default journal size from `65536` to `8192`. | usab | reduce default qi queue index journal size see for background profiling suggests that the bottleneck in is sequential folding over qi journal while we will look into parallelising it the need for reducing i o by having a larger journal seems a lot less relevant in than it was years ago benchmarks and monitoring results from multiple people suggests values such as or result in much more even throughput we ve agreed to reduce default journal size from to | 1 |

27,854 | 30,545,324,094 | IssuesEvent | 2023-07-20 03:02:06 | penrose/penrose | https://api.github.com/repos/penrose/penrose | closed | Run core functions on worker threads in `editor` and `edgeworth` | kind:usability | Running costly functions like `stepUntilConvergence` single-threaded blocks the main UI experience. Adding webworkers should be an easy change that'd improve the UX a lot. | True | Run core functions on worker threads in `editor` and `edgeworth` - Running costly functions like `stepUntilConvergence` single-threaded blocks the main UI experience. Adding webworkers should be an easy change that'd improve the UX a lot. | usab | run core functions on worker threads in editor and edgeworth running costly functions like stepuntilconvergence single threaded blocks the main ui experience adding webworkers should be an easy change that d improve the ux a lot | 1 |

286,923 | 24,794,758,116 | IssuesEvent | 2022-10-24 16:17:10 | near/nearcore | https://api.github.com/repos/near/nearcore | opened | #7839 breaks bunch of NayDuck tests | A-testing P-high | For example

```shell

$ git checkout 864cc29

$ cargo test -pintegration-tests --features test_features,expensive_tests -- --exact --nocapture tests::standard_cases::rpc::test_delete_key_testnet

→ fails

$ git checkout @~

$ cargo test -pintegration-tests --features test_features,expensive_tests -- --exact --nocapture tests::standard_cases::rpc::test_delete_key_testnet

→ passes

```

There are more tests. Compare https://nayduck.near.org/#/run/2727 and https://nayduck.near.org/#/run/2722.

| 1.0 | #7839 breaks bunch of NayDuck tests - For example

```shell

$ git checkout 864cc29

$ cargo test -pintegration-tests --features test_features,expensive_tests -- --exact --nocapture tests::standard_cases::rpc::test_delete_key_testnet

→ fails

$ git checkout @~

$ cargo test -pintegration-tests --features test_features,expensive_tests -- --exact --nocapture tests::standard_cases::rpc::test_delete_key_testnet

→ passes

```

There are more tests. Compare https://nayduck.near.org/#/run/2727 and https://nayduck.near.org/#/run/2722.

| non_usab | breaks bunch of nayduck tests for example shell git checkout cargo test pintegration tests features test features expensive tests exact nocapture tests standard cases rpc test delete key testnet → fails git checkout cargo test pintegration tests features test features expensive tests exact nocapture tests standard cases rpc test delete key testnet → passes there are more tests compare and | 0 |

10,537 | 6,793,976,942 | IssuesEvent | 2017-11-01 10:06:22 | godotengine/godot | https://api.github.com/repos/godotengine/godot | closed | No easy way to restore default editor settings | enhancement topic:editor usability | **Operating system or device - Godot version:**

Arch Linux, Godot 3 master

**Issue description:**

I noticed there is currently no easy way to restore the default settings (per setting or globally) within the editor and project settings other than deleting config files and reloading the editor. I think this might become especially problematic for new users, because it's easy to mess things up and get confused or lost when playing around with certain values without any way of telling what the original sane settings were.

| True | No easy way to restore default editor settings - **Operating system or device - Godot version:**

Arch Linux, Godot 3 master

**Issue description:**

I noticed there is currently no easy way to restore the default settings (per setting or globally) within the editor and project settings other than deleting config files and reloading the editor. I think this might become especially problematic for new users, because it's easy to mess things up and get confused or lost when playing around with certain values without any way of telling what the original sane settings were.

| usab | no easy way to restore default editor settings operating system or device godot version arch linux godot master issue description i noticed there is currently no easy way to restore the default settings per setting or globally within the editor and project settings other than deleting config files and reloading the editor i think this might become especially problematic for new users because it s easy to mess things up and get confused or lost when playing around with certain values without any way of telling what the original sane settings were | 1 |

12,090 | 7,693,113,246 | IssuesEvent | 2018-05-18 01:34:33 | coreos/bugs | https://api.github.com/repos/coreos/bugs | closed | Compatibility of HPE FlexFabric 10Gb 2-port 534FLB Adapter with coreos | area/usability component/kernel kind/enhancement low hanging fruit team/os | # Issue Report #

## Bug ##

For Fibre Channel storage (FCoE), I am using an HP FlexFabric 10Gb 2-Port 534FLB Adapter. It is a Broadcom converged network adapter.

I opened a discussion on the CoreOS user group about the compatibility of this adapter with CoreOS and found out that CoreOS currently doesn't have the kernel driver that supports FCoE offload (config option SCSI_BNX2X_FCOE).

**Link to the discussion:** https://groups.google.com/forum/#!topic/coreos-user/5v0FPUUd2to

**Output of `lspci -v` command:** [lspci.txt](https://github.com/coreos/bugs/files/1742631/lspci.txt)

### Container Linux Version ###

```

cat /etc/os-release

PRETTY_NAME="Debian GNU/Linux 8 (jessie)"

NAME="Debian GNU/Linux"

VERSION_ID="8"

VERSION="8 (jessie)"

ID=debian

HOME_URL="http://www.debian.org/"

SUPPORT_URL="http://www.debian.org/support"

BUG_REPORT_URL="https://bugs.debian.org/"

```

```

uname -r

4.14.16-coreos

```

### Environment ###

Baremetal

| True | Compatibility of HPE FlexFabric 10Gb 2-port 534FLB Adapter with coreos - # Issue Report #

## Bug ##

For Fibre Channel storage (FCoE), I am using an HP FlexFabric 10Gb 2-Port 534FLB Adapter. It is a Broadcom converged network adapter.

I opened a discussion on the CoreOS user group about the compatibility of this adapter with CoreOS and found out that CoreOS currently doesn't have the kernel driver that supports FCoE offload (config option SCSI_BNX2X_FCOE).

**Link to the discussion:** https://groups.google.com/forum/#!topic/coreos-user/5v0FPUUd2to

**Output of `lspci -v` command:** [lspci.txt](https://github.com/coreos/bugs/files/1742631/lspci.txt)

### Container Linux Version ###

```

cat /etc/os-release

PRETTY_NAME="Debian GNU/Linux 8 (jessie)"

NAME="Debian GNU/Linux"

VERSION_ID="8"

VERSION="8 (jessie)"

ID=debian

HOME_URL="http://www.debian.org/"

SUPPORT_URL="http://www.debian.org/support"

BUG_REPORT_URL="https://bugs.debian.org/"

```

```

uname -r

4.14.16-coreos

```

### Environment ###

Baremetal

| usab | compatibility of hpe flexfabric port adapter with coreos issue report bug for fibre channel storage fcoe i am using hp flexfabric port adapter it is a converged network broadcom adapter i opened discussion on coreos user group about the compatibility of this adapter with coreos and found out that currently coreos don t have the kernel driver that supports fcoe offload config option scsi fcoe link to the discussion output of lspci v command container linux version cat etc os release pretty name debian gnu linux jessie name debian gnu linux version id version jessie id debian home url support url bug report url uname r coreos environment baremetal | 1 |

104,112 | 22,591,891,983 | IssuesEvent | 2022-06-28 20:47:40 | phetsims/mean-share-and-balance | https://api.github.com/repos/phetsims/mean-share-and-balance | opened | Tandem name should match associated variable/property name | dev:code-review | For code review #41 ...

By PhET-iO convention, tandem names are typically supposed to match their associated variable/property name (with exceptions made for unusual cases). There are at least 5 violations of that in this sim, and none of them qualifies as "unusual".

For example, in MeanShareAndBalanceScreenView.ts:

```

this.syncDataButton = new RectangularPushButton( {

...

tandem: options.tandem.createTandem( 'syncRepresentationsButton' ),

```

I added comment "//REVIEW tandem name does not match" to all of the cases that I identified. (Not necessarily a complete list.)

| 1.0 | Tandem name should match associated variable/property name - For code review #41 ...

By PhET-iO convention, tandem names are typically supposed to match their associated variable/property name (with exceptions made for unusual cases). There are at least 5 violations of that in this sim, and none of them qualifies as "unusual".

For example, in MeanShareAndBalanceScreenView.ts:

```

this.syncDataButton = new RectangularPushButton( {

...

tandem: options.tandem.createTandem( 'syncRepresentationsButton' ),

```

I added comment "//REVIEW tandem name does not match" to all of the cases that I identified. (Not necessarily a complete list.)

| non_usab | tandem name should match associated variable property name for code review by phet io convention tandem names are typically supposed to match their associated variable property name with exceptions made for unusual cases there are at least violations of that in this sim and none of them qualifies as unusual for example in meanshareandbalancescreenview ts this syncdatabutton new rectangularpushbutton tandem options tandem createtandem syncrepresentationsbutton i added comment review tandem name does not match to all of the cases that i identified not necessarily a complete list | 0 |

266,047 | 28,298,879,317 | IssuesEvent | 2023-04-10 02:51:04 | nidhi7598/linux-4.19.72 | https://api.github.com/repos/nidhi7598/linux-4.19.72 | closed | CVE-2019-19079 (High) detected in linuxlinux-4.19.254 - autoclosed | Mend: dependency security vulnerability | ## CVE-2019-19079 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.19.254</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Library home page: <a href=https://mirrors.edge.kernel.org/pub/linux/kernel/v4.x/?wsslib=linux>https://mirrors.edge.kernel.org/pub/linux/kernel/v4.x/?wsslib=linux</a></p>

<p>Found in HEAD commit: <a href="https://github.com/nidhi7598/linux-4.19.72/commit/10a8c99e4f60044163c159867bc6f5452c1c36e5">10a8c99e4f60044163c159867bc6f5452c1c36e5</a></p>

<p>Found in base branch: <b>master</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (1)</summary>

<p></p>

<p>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

A memory leak in the qrtr_tun_write_iter() function in net/qrtr/tun.c in the Linux kernel before 5.3 allows attackers to cause a denial of service (memory consumption), aka CID-a21b7f0cff19.

<p>Publish Date: 2019-11-18

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2019-19079>CVE-2019-19079</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-19079">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-19079</a></p>

<p>Release Date: 2020-08-24</p>

<p>Fix Resolution: v5.3</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2019-19079 (High) detected in linuxlinux-4.19.254 - autoclosed - ## CVE-2019-19079 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.19.254</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Library home page: <a href=https://mirrors.edge.kernel.org/pub/linux/kernel/v4.x/?wsslib=linux>https://mirrors.edge.kernel.org/pub/linux/kernel/v4.x/?wsslib=linux</a></p>

<p>Found in HEAD commit: <a href="https://github.com/nidhi7598/linux-4.19.72/commit/10a8c99e4f60044163c159867bc6f5452c1c36e5">10a8c99e4f60044163c159867bc6f5452c1c36e5</a></p>

<p>Found in base branch: <b>master</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (1)</summary>

<p></p>

<p>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

A memory leak in the qrtr_tun_write_iter() function in net/qrtr/tun.c in the Linux kernel before 5.3 allows attackers to cause a denial of service (memory consumption), aka CID-a21b7f0cff19.

<p>Publish Date: 2019-11-18

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2019-19079>CVE-2019-19079</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-19079">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-19079</a></p>

<p>Release Date: 2020-08-24</p>

<p>Fix Resolution: v5.3</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_usab | cve high detected in linuxlinux autoclosed cve high severity vulnerability vulnerable library linuxlinux the linux kernel library home page a href found in head commit a href found in base branch master vulnerable source files vulnerability details a memory leak in the qrtr tun write iter function in net qrtr tun c in the linux kernel before allows attackers to cause a denial of service memory consumption aka cid publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution step up your open source security game with mend | 0 |

24,559 | 23,917,484,741 | IssuesEvent | 2022-09-09 13:55:56 | liquibase/liquibase | https://api.github.com/repos/liquibase/liquibase | closed | context property in maven plugin does not tolerate spaces | TypeBug Severity3 ImpactMedium ThemeUsability DBAll hacktoberfest | Sample:

`

<changeSet id="43765-1" author="gvw" context="(alvara or stest) and default">

`

works fine, but

`

<changeSet id="43765-1" author="gvw" context="( alvara or stest ) and default">

`

doesn't.

| True | context property in maven plugin does not tolerate spaces - Sample:

`

<changeSet id="43765-1" author="gvw" context="(alvara or stest) and default">

`

works fine, but

`

<changeSet id="43765-1" author="gvw" context="( alvara or stest ) and default">

`

doesn't.

| usab | context property in maven plugin does not tolerate spaces sample works fine but doesn t | 1 |
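
The report above implies that whitespace around parentheses should not change how a context expression is read. A minimal sketch of whitespace-tolerant tokenization, assuming only parentheses and bare words (the `tokenize_context` helper is hypothetical and is not Liquibase's actual parser; operators such as `!` and `,` are ignored here):

```python
import re

# A token is either a single parenthesis or a run of characters that
# contains no whitespace and no parentheses.
_TOKEN = re.compile(r"\(|\)|[^\s()]+")


def tokenize_context(expression: str) -> list:
    """Split a context expression into tokens, ignoring all whitespace."""
    return _TOKEN.findall(expression)


compact = tokenize_context("(alvara or stest) and default")
spaced = tokenize_context("( alvara or stest ) and default")
print(compact == spaced)
```

Because the tokenizer never treats whitespace as significant, both spellings from the issue produce the same token list, which is the behaviour the reporter expected.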

2,861 | 3,214,642,455 | IssuesEvent | 2015-10-07 04:06:31 | cloudera/ibis | https://api.github.com/repos/cloudera/ibis | closed | Convenience for specifying join keys that are shared in left and right tables | usability | From the mailing list

```python

joined = T1.join(T2, ['chrom', 'alt', 'pos'])

``` | True | Convenience for specifying join keys that are shared in left and right tables - From the mailing list

```python

joined = T1.join(T2, ['chrom', 'alt', 'pos'])

``` | usab | convenience for specifying join keys that are shared in left and right tables from the mailing list python joined join | 1 |
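
The convenience requested above amounts to treating each shared column name as an equality predicate between the left and right tables. A minimal sketch in plain Python, assuming tables are lists of dict rows (the `join_on_shared_keys` helper is hypothetical and is not part of the ibis API):

```python
from typing import Any, Dict, List, Sequence

Row = Dict[str, Any]


def join_on_shared_keys(left: List[Row], right: List[Row], keys: Sequence[str]) -> List[Row]:
    """Inner-join two lists of rows on columns that have the same name in both."""
    # Index the right-hand rows by the tuple of their key values.
    index: Dict[tuple, List[Row]] = {}
    for row in right:
        index.setdefault(tuple(row[k] for k in keys), []).append(row)

    joined: List[Row] = []
    for row in left:
        for match in index.get(tuple(row[k] for k in keys), []):
            merged = dict(match)
            merged.update(row)  # left-hand values win on name clashes
            joined.append(merged)
    return joined


t1 = [{"chrom": "1", "alt": "A", "pos": 10, "x": 1},
      {"chrom": "2", "alt": "C", "pos": 5, "x": 2}]
t2 = [{"chrom": "1", "alt": "A", "pos": 10, "y": 9}]
print(join_on_shared_keys(t1, t2, ["chrom", "alt", "pos"]))
```

Passing a list of names keeps the call site as short as the one proposed in the issue, while the helper expands each name into a `left[key] == right[key]` comparison internally.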

17,448 | 12,043,914,183 | IssuesEvent | 2020-04-14 13:14:37 | godotengine/godot | https://api.github.com/repos/godotengine/godot | opened | Can't use arrows in ItemList after pressing a letter | bug discussion topic:gui usability | **Godot version:**

3.2.1

**Issue description:**

When you click an ItemList, you can move through its elements with the arrow keys. After you press a letter to search for something, the arrow keys stop working; you need to click again or wait a few seconds.

After checking the code, it seems that incremental search has a separate path for arrow keys, but I don't really understand what it is for. In the end, it just blocks the arrow keys after searching.

**Steps to reproduce:**

1. Create ItemList with some elements

2. Press some letter

3. Try to use arrow keys | True | Can't use arrows in ItemList after pressing a letter - **Godot version:**

3.2.1

**Issue description:**

When you click an ItemList, you can move through its elements with the arrow keys. After you press a letter to search for something, the arrow keys stop working; you need to click again or wait a few seconds.

After checking the code, it seems that incremental search has a separate path for arrow keys, but I don't really understand what it is for. In the end, it just blocks the arrow keys after searching.

**Steps to reproduce:**

1. Create ItemList with some elements

2. Press some letter

3. Try to use arrow keys | usab | can t use arrows in itemlist after pressing a letter godot version issue description when you click itemlist you can move through elements with arrow keys but you can t anymore after pressing a letter to search something you need to click again or wait few secs after checking the code seems like incremental search has a separate path for arrow keys but i don t much understand what is it for in the end it just blocks arrow keys after searching steps to reproduce create itemlist with some elements press some letter try to use arrow keys | 1 |

40,078 | 12,746,037,998 | IssuesEvent | 2020-06-26 15:14:39 | RG4421/developers | https://api.github.com/repos/RG4421/developers | opened | CVE-2019-11358 (Medium) detected in jquery-2.1.4.min.js, jquery-1.9.1.js | security vulnerability | ## CVE-2019-11358 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>jquery-2.1.4.min.js</b>, <b>jquery-1.9.1.js</b></p></summary>

<p>

<details><summary><b>jquery-2.1.4.min.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library home page: <a href="https://cdnjs.cloudflare.com/ajax/libs/jquery/2.1.4/jquery.min.js">https://cdnjs.cloudflare.com/ajax/libs/jquery/2.1.4/jquery.min.js</a></p>

<p>Path to dependency file: /tmp/ws-scm/developers/node_modules/js-base64/.attic/test-moment/index.html</p>

<p>Path to vulnerable library: /developers/node_modules/js-base64/.attic/test-moment/index.html</p>

<p>

Dependency Hierarchy:

- :x: **jquery-2.1.4.min.js** (Vulnerable Library)

</details>

<details><summary><b>jquery-1.9.1.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library home page: <a href="https://cdnjs.cloudflare.com/ajax/libs/jquery/1.9.1/jquery.js">https://cdnjs.cloudflare.com/ajax/libs/jquery/1.9.1/jquery.js</a></p>

<p>Path to dependency file: /tmp/ws-scm/developers/node_modules/tinycolor2/test/index.html</p>

<p>Path to vulnerable library: /developers/node_modules/tinycolor2/test/../demo/jquery-1.9.1.js,/developers/node_modules/tinycolor2/demo/jquery-1.9.1.js</p>

<p>

Dependency Hierarchy:

- :x: **jquery-1.9.1.js** (Vulnerable Library)

</details>

<p>Found in HEAD commit: <a href="https://github.com/RG4421/developers/commit/09bff0d3b38e28079c6c900ddef39d33d88ab428">09bff0d3b38e28079c6c900ddef39d33d88ab428</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

jQuery before 3.4.0, as used in Drupal, Backdrop CMS, and other products, mishandles jQuery.extend(true, {}, ...) because of Object.prototype pollution. If an unsanitized source object contained an enumerable __proto__ property, it could extend the native Object.prototype.

<p>Publish Date: 2019-04-20

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2019-11358>CVE-2019-11358</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.1</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Changed

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: Low

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-11358">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-11358</a></p>

<p>Release Date: 2019-04-20</p>

<p>Fix Resolution: 3.4.0</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":true,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"JavaScript","packageName":"jquery","packageVersion":"2.1.4","isTransitiveDependency":false,"dependencyTree":"jquery:2.1.4","isMinimumFixVersionAvailable":true,"minimumFixVersion":"3.4.0"},{"packageType":"JavaScript","packageName":"jquery","packageVersion":"1.9.1","isTransitiveDependency":false,"dependencyTree":"jquery:1.9.1","isMinimumFixVersionAvailable":true,"minimumFixVersion":"3.4.0"}],"vulnerabilityIdentifier":"CVE-2019-11358","vulnerabilityDetails":"jQuery before 3.4.0, as used in Drupal, Backdrop CMS, and other products, mishandles jQuery.extend(true, {}, ...) because of Object.prototype pollution. If an unsanitized source object contained an enumerable __proto__ property, it could extend the native Object.prototype.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2019-11358","cvss3Severity":"medium","cvss3Score":"6.1","cvss3Metrics":{"A":"None","AC":"Low","PR":"None","S":"Changed","C":"Low","UI":"Required","AV":"Network","I":"Low"},"extraData":{}}</REMEDIATE> --> | True | CVE-2019-11358 (Medium) detected in jquery-2.1.4.min.js, jquery-1.9.1.js - ## CVE-2019-11358 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>jquery-2.1.4.min.js</b>, <b>jquery-1.9.1.js</b></p></summary>

<p>

<details><summary><b>jquery-2.1.4.min.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library home page: <a href="https://cdnjs.cloudflare.com/ajax/libs/jquery/2.1.4/jquery.min.js">https://cdnjs.cloudflare.com/ajax/libs/jquery/2.1.4/jquery.min.js</a></p>

<p>Path to dependency file: /tmp/ws-scm/developers/node_modules/js-base64/.attic/test-moment/index.html</p>

<p>Path to vulnerable library: /developers/node_modules/js-base64/.attic/test-moment/index.html</p>

<p>

Dependency Hierarchy:

- :x: **jquery-2.1.4.min.js** (Vulnerable Library)

</details>

<details><summary><b>jquery-1.9.1.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library home page: <a href="https://cdnjs.cloudflare.com/ajax/libs/jquery/1.9.1/jquery.js">https://cdnjs.cloudflare.com/ajax/libs/jquery/1.9.1/jquery.js</a></p>

<p>Path to dependency file: /tmp/ws-scm/developers/node_modules/tinycolor2/test/index.html</p>

<p>Path to vulnerable library: /developers/node_modules/tinycolor2/test/../demo/jquery-1.9.1.js,/developers/node_modules/tinycolor2/demo/jquery-1.9.1.js</p>

<p>

Dependency Hierarchy:

- :x: **jquery-1.9.1.js** (Vulnerable Library)

</details>

<p>Found in HEAD commit: <a href="https://github.com/RG4421/developers/commit/09bff0d3b38e28079c6c900ddef39d33d88ab428">09bff0d3b38e28079c6c900ddef39d33d88ab428</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

jQuery before 3.4.0, as used in Drupal, Backdrop CMS, and other products, mishandles jQuery.extend(true, {}, ...) because of Object.prototype pollution. If an unsanitized source object contained an enumerable __proto__ property, it could extend the native Object.prototype.

<p>Publish Date: 2019-04-20

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2019-11358>CVE-2019-11358</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.1</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Changed

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: Low

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-11358">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-11358</a></p>

<p>Release Date: 2019-04-20</p>

<p>Fix Resolution: 3.4.0</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":true,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"JavaScript","packageName":"jquery","packageVersion":"2.1.4","isTransitiveDependency":false,"dependencyTree":"jquery:2.1.4","isMinimumFixVersionAvailable":true,"minimumFixVersion":"3.4.0"},{"packageType":"JavaScript","packageName":"jquery","packageVersion":"1.9.1","isTransitiveDependency":false,"dependencyTree":"jquery:1.9.1","isMinimumFixVersionAvailable":true,"minimumFixVersion":"3.4.0"}],"vulnerabilityIdentifier":"CVE-2019-11358","vulnerabilityDetails":"jQuery before 3.4.0, as used in Drupal, Backdrop CMS, and other products, mishandles jQuery.extend(true, {}, ...) because of Object.prototype pollution. If an unsanitized source object contained an enumerable __proto__ property, it could extend the native Object.prototype.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2019-11358","cvss3Severity":"medium","cvss3Score":"6.1","cvss3Metrics":{"A":"None","AC":"Low","PR":"None","S":"Changed","C":"Low","UI":"Required","AV":"Network","I":"Low"},"extraData":{}}</REMEDIATE> --> | non_usab | cve medium detected in jquery min js jquery js cve medium severity vulnerability vulnerable libraries jquery min js jquery js jquery min js javascript library for dom operations library home page a href path to dependency file tmp ws scm developers node modules js attic test moment index html path to vulnerable library developers node modules js attic test moment index html dependency hierarchy x jquery min js vulnerable library jquery js javascript library for dom operations library home page a href path to dependency file tmp ws scm developers node modules test index html path to vulnerable library developers node modules test demo jquery js developers node modules demo jquery js dependency hierarchy x jquery js vulnerable library found in head commit a href vulnerability details jquery before as used in drupal backdrop cms and other 
products mishandles jquery extend true because of object prototype pollution if an unsanitized source object contained an enumerable proto property it could extend the native object prototype publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction required scope changed impact metrics confidentiality impact low integrity impact low availability impact none for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution isopenpronvulnerability true ispackagebased true isdefaultbranch true packages vulnerabilityidentifier cve vulnerabilitydetails jquery before as used in drupal backdrop cms and other products mishandles jquery extend true because of object prototype pollution if an unsanitized source object contained an enumerable proto property it could extend the native object prototype vulnerabilityurl | 0 |

21,842 | 17,873,079,503 | IssuesEvent | 2021-09-06 19:33:42 | bevyengine/bevy | https://api.github.com/repos/bevyengine/bevy | closed | Access Diagnostic::history field | C-Usability A-Diagnostics | ## What problem does this solve or what need does it fill?

I would like to access the history data of a diagnostic for drawing a graph using egui.

## What solution would you like?

The history field should be made public, or a function should be added to access the underlying history data.

## What alternative(s) have you considered?

Currently I'm storing the Diagnostic value in a separate resource, but this would be unnecessary if I could access the history stored with the diagnostic.

| True | Access Diagnostic::history field - ## What problem does this solve or what need does it fill?

I would like to access the history data of a diagnostic for drawing a graph using egui.

## What solution would you like?

The history field should be made public, or a function should be added to access the underlying history data.

## What alternative(s) have you considered?

Currently I'm storing the Diagnostic value in a separate resource, but this would be unnecessary if I could access the history stored with the diagnostic.

| usab | access diagnostic history field what problem does this solve or what need does it fill i would like to access the history data of a diagnostic for drawing a graph using egui what solution would you like the history field should be made public or a function to access the underlying history data what alternative s have you considered currently i m storing the diagnostic value in separate resource but this would be unnecessary if i could access the history stored with the diagnostic | 1 |

16,272 | 10,720,111,982 | IssuesEvent | 2019-10-26 15:22:22 | bronzehedwick/chrisdeluca | https://api.github.com/repos/bronzehedwick/chrisdeluca | closed | Create 2 column layout with nav | look + feel usability | Use a 2 column layout, using the new grid CSS module.

The left column should include the navigation, and be ordered above the footer on small screens. | True | Create 2 column layout with nav - Use a 2 column layout, using the new grid CSS module.

The left column should include the navigation, and be ordered above the footer on small screens. | usab | create column layout with nav use a column layout using the new grid css module the left column should include a the navigation and be ordered above the footer on small screens | 1 |

27,752 | 5,094,049,665 | IssuesEvent | 2017-01-03 09:49:53 | GoldenSoftwareLtd/gedemin | https://api.github.com/repos/GoldenSoftwareLtd/gedemin | closed | Product and quantity are shown on the invoice, but there is no price, neither purchase price nor price with VAT | Depot Priority-Critical Type-Defect | Originally reported on Google Code with ID 2991

```

Suppose we assembled some product via a processing act in the materials warehouse, set

the price and so on, and placed it in warehouse N. Then, when trying to release this product via

an external goods release (wholesale trade), the following error occurs: the product and quantity

are shown on the invoice, but there is no price, neither the purchase price nor the price with VAT. When trying to enter the price

manually, the following error is shown: "Insufficient stock balance for this inventory item".

Previously everything worked fine and the operation was performed more than once, but as soon as I pulled

your settings from the site and updated the trade settings, namely warehouse-trade (storage,

metadata, macros, data), everything stopped working. If the material is moved via

an internal transfer of materials, then the price, the VAT, and everything necessary are shown,

but when the product is again selected in an external goods release (wholesale trade),

the same thing happens as described above.

```

Reported by `gs1994` on 2012-12-08 13:10:45

| 1.0 | Product and quantity are shown on the invoice, but there is no price, neither purchase price nor price with VAT - Originally reported on Google Code with ID 2991

```

Suppose we assembled some product via a processing act in the materials warehouse, set

the price and so on, and placed it in warehouse N. Then, when trying to release this product via

an external goods release (wholesale trade), the following error occurs: the product and quantity

are shown on the invoice, but there is no price, neither the purchase price nor the price with VAT. When trying to enter the price

manually, the following error is shown: "Insufficient stock balance for this inventory item".

Previously everything worked fine and the operation was performed more than once, but as soon as I pulled

your settings from the site and updated the trade settings, namely warehouse-trade (storage,

metadata, macros, data), everything stopped working. If the material is moved via

an internal transfer of materials, then the price, the VAT, and everything necessary are shown,

but when the product is again selected in an external goods release (wholesale trade),

the same thing happens as described above.

```

Reported by `gs1994` on 2012-12-08 13:10:45

| non_usab | product and quantity are shown on the invoice but there is no price neither purchase price nor price with vat originally reported on google code with id suppose we assembled some product via a processing act in the materials warehouse set the price and so on and placed it in warehouse n then when trying to release this product via an external goods release wholesale trade the following error occurs the product and quantity are shown on the invoice but there is no price neither the purchase price nor the price with vat when trying to enter the price manually the following error is shown insufficient stock balance for this inventory item previously everything worked fine and the operation was performed more than once but as soon as i pulled your settings from the site and updated the trade settings namely warehouse trade storage metadata macros data everything stopped working if the material is moved via an internal transfer of materials then the price the vat and everything necessary are shown but when the product is again selected in an external goods release wholesale trade the same thing happens as described above reported by on | 0 |

17,643 | 12,222,200,019 | IssuesEvent | 2020-05-02 12:05:18 | tideflow-io/tideflow | https://api.github.com/repos/tideflow-io/tideflow | closed | Tasks settings sidebar [workflow's editor] | :gift: Rewarded on Issuehunt 🎨 usability & ui 🏆 epic | <!-- Issuehunt Badges -->

[<img alt="Issuehunt badges" src="https://img.shields.io/badge/IssueHunt-%24150%20Rewarded-%237E24E3.svg" />](https://issuehunt.io/r/tideflow-io/tideflow/issues/70)

<!-- /Issuehunt Badges -->

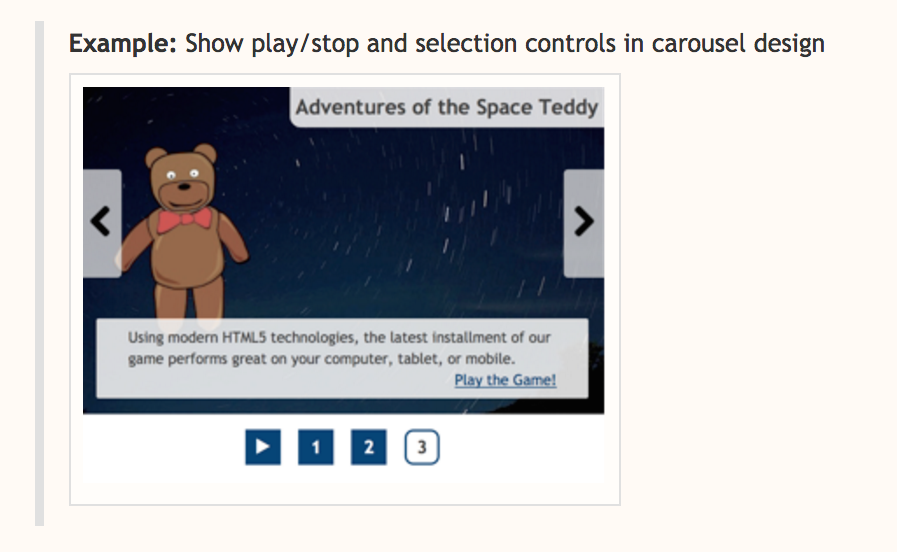

As a user, I can edit a task's settings by clicking "edit". The settings editor will appear in a new sticky, scrollable sidebar (instead of being shown in the task's card).

## Current settings editor

<img width="438" alt="Captura de pantalla 2020-04-05 a las 21 30 29" src="https://user-images.githubusercontent.com/6388629/78508112-acfef580-7784-11ea-8b9c-029dde49f998.png">

## Expected settings editor

<img width="762" alt="Captura de pantalla 2020-04-05 a las 21 30 38" src="https://user-images.githubusercontent.com/6388629/78508118-b4be9a00-7784-11ea-9e7a-d2afa1f95e52.png">

<!-- Issuehunt content -->

---

<details>

<summary>

<b>IssueHunt Summary</b>

</summary>

#### [<img src='https://avatars2.githubusercontent.com/u/332141?v=4' alt='bobthekingofegypt' width=24 height=24> bobthekingofegypt](https://issuehunt.io/u/bobthekingofegypt) has been rewarded.

### Backers (Total: $150.00)

- [<img src='https://avatars2.githubusercontent.com/u/6388629?v=4' alt='joseconstela' width=24 height=24> joseconstela](https://issuehunt.io/u/joseconstela) ($150.00)

### Submitted pull Requests

- [#72 Add sidebar for showing edit form](https://issuehunt.io/r/tideflow-io/tideflow/pull/72)

---

### Tips

- Check out the [Issuehunt explorer](https://issuehunt.io/r/tideflow-io/tideflow/) to discover more funded issues.

- Need some help from other developers? [Add your repositories](https://issuehunt.io/r/new) on IssueHunt to raise funds.

</details>

<!-- /Issuehunt content--> | True | Tasks settings sidebar [workflow's editor] - <!-- Issuehunt Badges -->

[<img alt="Issuehunt badges" src="https://img.shields.io/badge/IssueHunt-%24150%20Rewarded-%237E24E3.svg" />](https://issuehunt.io/r/tideflow-io/tideflow/issues/70)

<!-- /Issuehunt Badges -->

As a user, I can edit a task's settings by clicking "edit". The settings editor will appear in a new sticky, scrollable sidebar (instead of being shown in the task's card).

## Current settings editor

<img width="438" alt="Captura de pantalla 2020-04-05 a las 21 30 29" src="https://user-images.githubusercontent.com/6388629/78508112-acfef580-7784-11ea-8b9c-029dde49f998.png">

## Expected settings editor

<img width="762" alt="Captura de pantalla 2020-04-05 a las 21 30 38" src="https://user-images.githubusercontent.com/6388629/78508118-b4be9a00-7784-11ea-9e7a-d2afa1f95e52.png">

<!-- Issuehunt content -->

---

<details>

<summary>

<b>IssueHunt Summary</b>

</summary>

#### [<img src='https://avatars2.githubusercontent.com/u/332141?v=4' alt='bobthekingofegypt' width=24 height=24> bobthekingofegypt](https://issuehunt.io/u/bobthekingofegypt) has been rewarded.

### Backers (Total: $150.00)

- [<img src='https://avatars2.githubusercontent.com/u/6388629?v=4' alt='joseconstela' width=24 height=24> joseconstela](https://issuehunt.io/u/joseconstela) ($150.00)

### Submitted pull Requests

- [#72 Add sidebar for showing edit form](https://issuehunt.io/r/tideflow-io/tideflow/pull/72)

---

### Tips

- Check out the [Issuehunt explorer](https://issuehunt.io/r/tideflow-io/tideflow/) to discover more funded issues.

- Need some help from other developers? [Add your repositories](https://issuehunt.io/r/new) on IssueHunt to raise funds.

</details>

<!-- /Issuehunt content--> | usab | tasks settings sidebar as a user i can edit a task settings by clicking on edit the settings editor will appear in a new sticky scrollable sidebar instead of being shows in the task s card current settings editor img width alt captura de pantalla a las src expected settings editor img width alt captura de pantalla a las src issuehunt summary has been rewarded backers total submitted pull requests tips checkout the to discover more funded issues need some help from other developers on issuehunt to raise funds | 1 |

4,862 | 3,897,238,833 | IssuesEvent | 2016-04-16 09:02:33 | lionheart/openradar-mirror | https://api.github.com/repos/lionheart/openradar-mirror | opened | 15792060: redownload already owned movies | classification:usability reproducible:always status:open | #### Description

Summary:

Dear iTunes-Store-Team,

why isn't it possible to re-download already bought movies,

like it is possible with apps or music?

Steps to Reproduce:

buy and download a movie

(watch &) delete it

try to download it again

Expected Results:

the movie downloads again if it isn't present on my hard drive

(I only have a 512 ssd)

Actual Results:

a popup suggests that I might like to buy the movie a second time

(see attached image)

Version:

OSX, all Versions

Notes:

Configuration:

Attachments:

'Screen Shot 2014-01-10 at 15.51.01.png' was successfully uploaded.

-

Product Version: all

Created: 2014-01-10T15:02:10.722498

Originated: 2014-01-10T00:00:00

Open Radar Link: http://www.openradar.me/15792060 | True | 15792060: redownload already owned movies - #### Description

Summary:

Dear iTunes-Store-Team,

why isn't it possible to re-download already bought movies,

like it is possible with apps or music?

Steps to Reproduce:

buy and download a movie

(watch &) delete it

try to download it again

Expected Results:

the movie downloads again if it isn't present on my hard drive

(I only have a 512 ssd)

Actual Results:

a popup suggests that I might like to buy the movie a second time

(see attached image)

Version:

OSX, all Versions

Notes:

Configuration:

Attachments:

'Screen Shot 2014-01-10 at 15.51.01.png' was successfully uploaded.

-

Product Version: all

Created: 2014-01-10T15:02:10.722498

Originated: 2014-01-10T00:00:00