text | source |

|---|---|

Sparse dictionary learning is a feature learning method where a training example is represented as a linear combination of [basis functions](https://en.wikipedia.org/wiki/Basis_function "Basis function") and assumed to be a [sparse matrix](https://en.wikipedia.org/wiki/Sparse_matrix "Sparse matrix"). The method is [str... | ml.md_0_364 |

matrix"). The method is [strongly NP-hard](https://en.wikipedia.org/wiki/Strongly_NP-hard "Strongly NP-hard") and difficult to solve approximately.[[70]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-70) A popular [heuristic](https://en.wikipedia.org/wiki/Heuristic "Heuristic") method for sparse dictionary l... | ml.md_0_365 |

dictionary learning is the [_k_ -SVD](https://en.wikipedia.org/wiki/K-SVD "K-SVD") algorithm. Sparse dictionary learning has been applied in several contexts. In classification, the problem is to determine the class to which a previously unseen training example belongs. For a dictionary where each class has already bee... | ml.md_0_366 |

already been built, a new training example is associated with the class that is best sparsely represented by the corresponding dictionary. Sparse dictionary learning has also been applied in [image de-noising](https://en.wikipedia.org/wiki/Image_de-noising "Image de-noising"). The key idea is that a clean image patch c... | ml.md_0_367 |

can be sparsely represented by an image dictionary, but the noise cannot.[[71]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-71) | ml.md_0_368 |
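The idea of representing an example as a sparse linear combination of dictionary atoms can be sketched with a greedy matching pursuit. This is a minimal illustration, not the k-SVD algorithm itself: it assumes the dictionary has already been learned and that every atom has unit norm. The function name `matching_pursuit` and the toy dictionary are hypothetical.

```python
import math


def matching_pursuit(signal, atoms, n_nonzero):
    """Greedy sparse coding: approximate `signal` using at most `n_nonzero` atoms.

    `atoms` is a list of unit-norm vectors (an already-learned dictionary).
    Returns ({atom_index: coefficient}, residual).
    """
    residual = list(signal)
    coeffs = {}
    for _ in range(n_nonzero):
        # Correlation of every atom with the part of the signal not yet explained.
        scores = [sum(a * r for a, r in zip(atom, residual)) for atom in atoms]
        best = max(range(len(atoms)), key=lambda j: abs(scores[j]))
        if abs(scores[best]) < 1e-12:
            break  # nothing left to explain
        coeffs[best] = coeffs.get(best, 0.0) + scores[best]
        residual = [r - scores[best] * a for r, a in zip(residual, atoms[best])]
    return coeffs, residual


# Hypothetical 4-atom dictionary (orthonormal here, so the code is exact).
atoms = [
    [1.0, 0.0, 0.0, 0.0],
    [0.0, 1.0, 0.0, 0.0],
    [0.0, 0.0, 1.0, 0.0],
    [0.0, 0.0, 0.0, 1.0],
]
signal = [3.0, 0.0, -2.0, 0.0]
coeffs, residual = matching_pursuit(signal, atoms, n_nonzero=2)
residual_norm = math.sqrt(sum(r * r for r in residual))
```

For classification as described above, one could run the same routine against each class dictionary and pick the class whose dictionary leaves the smallest residual norm.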

#### Anomaly detection


Main article: [Anomaly detection](https://en.wikipedia.org/wiki/Anomaly_detection "Anomaly detection") | ml.md_0_369 |

In [data mining](https://en.wikipedia.org/wiki/Data_mining "Data mining"), anomaly detection, also known as outlier detection, is the identification of rare items, events or observations which raise suspicions by differing significantly from the majority of the data.[[72]](https://en.wikipedia.org/wiki/Machine_learning... | ml.md_0_370 |

Typically, the anomalous items represent an issue such as [bank fraud](https://en.wikipedia.org/wiki/Bank_fraud "Bank fraud"), a structural defect, medical problems or errors in a text. Anomalies are referred to as [outliers](https://en.wikipedia.org/wiki/Outlier "Outlier"), novelties, noise, deviations and | ml.md_0_371 |

novelties, noise, deviations and exceptions.[[73]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-73) | ml.md_0_372 |

In particular, in the context of abuse and network intrusion detection, the interesting objects are often not rare objects, but unexpected bursts of activity. This pattern does not adhere to the common statistical definition of an outlier as a rare object. Many outlier detection methods (in particular, unsupervised a... | ml.md_0_373 |

algorithms) will fail on such data unless aggregated appropriately. Instead, a cluster analysis algorithm may be able to detect the micro-clusters formed by these patterns.[[74]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-74) | ml.md_0_374 |

Three broad categories of anomaly detection techniques exist.[[75]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-ChandolaSurvey-75) Unsupervised anomaly detection techniques detect anomalies in an unlabelled test data set under the assumption that the majority of the instances in the data set are normal, by... | ml.md_0_375 |

by looking for instances that seem to fit the least to the remainder of the data set. Supervised anomaly detection techniques require a data set that has been labelled as "normal" and "abnormal" and involve training a classifier (the key difference from many other statistical classification problems is the inherently ... | ml.md_0_376 |

unbalanced nature of outlier detection). Semi-supervised anomaly detection techniques construct a model representing normal behaviour from a given normal training data set and then estimate the likelihood that a test instance was generated by the model. | ml.md_0_377 |
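The semi-supervised variant can be sketched as follows: a model of normal behaviour is fitted on normal data only, and test instances that are unlikely under that model are flagged. The sketch assumes a one-dimensional Gaussian model and a z-score threshold of 3; both choices, and the names `fit_normal_model` and `is_anomaly`, are illustrative assumptions.

```python
import statistics


def fit_normal_model(normal_values):
    """Summarise normal behaviour by its sample mean and standard deviation."""
    return statistics.mean(normal_values), statistics.stdev(normal_values)


def is_anomaly(value, model, threshold=3.0):
    """Flag values lying more than `threshold` standard deviations from the mean."""
    mean, std = model
    return abs(value - mean) / std > threshold


# Training data assumed to contain only normal observations.
normal_training = [10.1, 9.8, 10.0, 10.3, 9.9, 10.2, 9.7, 10.0]
model = fit_normal_model(normal_training)

test_values = [10.1, 9.6, 14.0]
flags = [is_anomaly(v, model) for v in test_values]
```

With this toy data only the last test value is far enough from the fitted mean to be flagged.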

#### Robot learning

| ml.md_0_378 |

[Robot learning](https://en.wikipedia.org/wiki/Robot_learning "Robot learning") is inspired by a multitude of machine learning methods, starting from supervised learning, reinforcement learning,[[76]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-76)[[77]](https://en.wikipedia.org/wiki/Machine_learning#cite_... | ml.md_0_379 |

and finally [meta-learning](https://en.wikipedia.org/wiki/Meta-learning_\(computer_science\) "Meta-learning \(computer science\)") (e.g. MAML). | ml.md_0_380 |

#### Association rules


Main article: [Association rule learning](https://en.wikipedia.org/wiki/Association_rule_learning "Association rule learning") | ml.md_0_381 |

See also: [Inductive logic programming](https://en.wikipedia.org/wiki/Inductive_logic_programming "Inductive logic programming") | ml.md_0_382 |

Association rule learning is a [rule-based machine learning](https://en.wikipedia.org/wiki/Rule-based_machine_learning "Rule-based machine learning") method for discovering relationships between variables in large databases. It is intended to identify strong rules discovered in databases using some measure of | ml.md_0_383 |

in databases using some measure of "interestingness".[[78]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-piatetsky-78) | ml.md_0_384 |

Rule-based machine learning is a general term for any machine learning method that identifies, learns, or evolves "rules" to store, manipulate or apply knowledge. The defining characteristic of a rule-based machine learning algorithm is the identification and utilisation of a set of relational rules that collectively r... | ml.md_0_385 |

represent the knowledge captured by the system. This is in contrast to other machine learning algorithms that commonly identify a singular model that can be universally applied to any instance in order to make a prediction.[[79]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-79) Rule-based machine learning a... | ml.md_0_386 |

approaches include [learning classifier systems](https://en.wikipedia.org/wiki/Learning_classifier_system "Learning classifier system"), association rule learning, and [artificial immune systems](https://en.wikipedia.org/wiki/Artificial_immune_system "Artificial immune system"). | ml.md_0_387 |

Based on the concept of strong rules, [Rakesh Agrawal](https://en.wikipedia.org/wiki/Rakesh_Agrawal_\(computer_scientist\) "Rakesh Agrawal \(computer scientist\)"), [Tomasz Imieliński](https://en.wikipedia.org/wiki/Tomasz_Imieli%C5%84ski "Tomasz Imieliński") and Arun Swami introduced association rules for discovering... | ml.md_0_388 |

discovering regularities between products in large-scale transaction data recorded by [point-of-sale](https://en.wikipedia.org/wiki/Point-of-sale "Point-of-sale") (POS) systems in supermarkets.[[80]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-mining-80) For example, the rule { o n i o n s , p o t a t o e... | ml.md_0_389 |

$\{\mathrm{onions, potatoes}\} \Rightarrow \{\mathrm{burger}\}$ found in the ... | ml.md_0_390 |

in the sales data of a supermarket would indicate that if a customer buys onions and potatoes together, they are likely to also buy hamburger meat. Such information can be used as the basis for decisions about marketing activities such as promotional [pricing](https://en.wikipedia.org/wiki/Pricing "Pricing") or [produc... | ml.md_0_391 |

"Pricing") or [product placements](https://en.wikipedia.org/wiki/Product_placement "Product placement"). In addition to [market basket analysis](https://en.wikipedia.org/wiki/Market_basket_analysis "Market basket analysis"), association rules are employed today in application areas including [Web usage | ml.md_0_392 |

areas including [Web usage mining](https://en.wikipedia.org/wiki/Web_usage_mining "Web usage mining"), [intrusion detection](https://en.wikipedia.org/wiki/Intrusion_detection "Intrusion detection"), [continuous production](https://en.wikipedia.org/wiki/Continuous_production "Continuous production"), and | ml.md_0_393 |

"Continuous production"), and [bioinformatics](https://en.wikipedia.org/wiki/Bioinformatics "Bioinformatics"). In contrast with [sequence mining](https://en.wikipedia.org/wiki/Sequence_mining "Sequence mining"), association rule learning typically does not consider the order of items either within a transaction or acro... | ml.md_0_394 |

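Two common measures of rule "interestingness" are support and confidence. The sketch below computes both for the rule {onions, potatoes} ⇒ {burger} on a small, made-up list of transactions. Transactions are modelled as sets, which reflects that item order is not considered. The data and the function names are hypothetical.

```python
def support(transactions, itemset):
    """Fraction of transactions that contain every item in `itemset`."""
    itemset = set(itemset)
    return sum(itemset <= t for t in transactions) / len(transactions)


def confidence(transactions, antecedent, consequent):
    """Estimated P(consequent | antecedent) over the transaction list."""
    both = set(antecedent) | set(consequent)
    return support(transactions, both) / support(transactions, antecedent)


# Made-up point-of-sale baskets for illustration only.
transactions = [
    {"onions", "potatoes", "burger"},
    {"onions", "potatoes", "burger", "milk"},
    {"onions", "potatoes"},
    {"milk", "bread"},
    {"potatoes", "burger"},
]

rule_support = support(transactions, {"onions", "potatoes", "burger"})
rule_confidence = confidence(transactions, {"onions", "potatoes"}, {"burger"})
```

Here the rule holds in 2 of 5 baskets (support 0.4), and in 2 of the 3 baskets that contain both onions and potatoes (confidence about 0.67).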
[Learning classifier systems](https://en.wikipedia.org/wiki/Learning_classifier_system "Learning classifier system") (LCS) are a family of rule-based machine learning algorithms that combine a discovery component, typically a [genetic algorithm](https://en.wikipedia.org/wiki/Genetic_algorithm "Genetic algorithm"), with... | ml.md_0_395 |

with a learning component, performing either [supervised learning](https://en.wikipedia.org/wiki/Supervised_learning "Supervised learning"), [reinforcement learning](https://en.wikipedia.org/wiki/Reinforcement_learning "Reinforcement learning"), or [unsupervised learning](https://en.wikipedia.org/wiki/Unsupervised_lear... | ml.md_0_396 |

"Unsupervised learning"). They seek to identify a set of context-dependent rules that collectively store and apply knowledge in a [piecewise](https://en.wikipedia.org/wiki/Piecewise "Piecewise") manner in order to make predictions.[[81]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-81) | ml.md_0_397 |

[Inductive logic programming](https://en.wikipedia.org/wiki/Inductive_logic_programming "Inductive logic programming") (ILP) is an approach to rule learning using [logic programming](https://en.wikipedia.org/wiki/Logic_programming "Logic programming") as a uniform representation for input examples, background knowledge... | ml.md_0_398 |

and hypotheses. Given an encoding of the known background knowledge and a set of examples represented as a logical database of facts, an ILP system will derive a hypothesized logic program that [entails](https://en.wikipedia.org/wiki/Entailment "Entailment") all positive and no negative examples. [Inductive | ml.md_0_399 |

no negative examples. [Inductive programming](https://en.wikipedia.org/wiki/Inductive_programming "Inductive programming") is a related field that considers any kind of programming language for representing hypotheses (and not only logic programming), such as [functional programs](https://en.wikipedia.org/wiki/Function... | ml.md_0_400 |

"Functional programming"). | ml.md_0_401 |

Inductive logic programming is particularly useful in [bioinformatics](https://en.wikipedia.org/wiki/Bioinformatics "Bioinformatics") and [natural language processing](https://en.wikipedia.org/wiki/Natural_language_processing "Natural language processing"). [Gordon Plotkin](https://en.wikipedia.org/wiki/Gordon_Plotkin ... | ml.md_0_402 |

"Gordon Plotkin") and [Ehud Shapiro](https://en.wikipedia.org/wiki/Ehud_Shapiro "Ehud Shapiro") laid the initial theoretical foundation for inductive machine learning in a logical | ml.md_0_403 |

machine learning in a logical setting.[[82]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-82)[[83]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-83)[[84]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-84) Shapiro built their first implementation (Model Inference System) in 1981: a Pro... | ml.md_0_404 |

a Prolog program that inductively inferred logic programs from positive and negative examples.[[85]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-85) The term _inductive_ here refers to [philosophical](https://en.wikipedia.org/wiki/Inductive_reasoning "Inductive reasoning") induction, suggesting a theory to... | ml.md_0_405 |

theory to explain observed facts, rather than [mathematical induction](https://en.wikipedia.org/wiki/Mathematical_induction "Mathematical induction"), proving a property for all members of a well-ordered set. | ml.md_0_406 |

## Models

| ml.md_0_407 |

A **machine learning model** is a type of [mathematical model](https://en.wikipedia.org/wiki/Mathematical_model "Mathematical model") that, once "trained" on a given dataset, can be used to make predictions or classifications on new data. During training, a learning algorithm iteratively adjusts the model's internal pa... | ml.md_0_408 |

parameters to minimise errors in its predictions.[[86]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-86) By extension, the term "model" can refer to several levels of specificity, from a general class of models and their associated learning algorithms to a fully trained model with all its internal parameter... | ml.md_0_409 |

with all its internal parameters tuned.[[87]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-87) | ml.md_0_410 |

Various types of models have been used and researched for machine learning systems; picking the best model for a task is called [model selection](https://en.wikipedia.org/wiki/Model_selection "Model selection").

### Artificial neural networks | ml.md_0_411 |

### Artificial neural networks


Main article: [Artificial neural network](https://en.wikipedia.org/wiki/Artificial_neural_network "Artificial neural network") | ml.md_0_412 |

See also: [Deep learning](https://en.wikipedia.org/wiki/Deep_learning "Deep learning") | ml.md_0_413 |

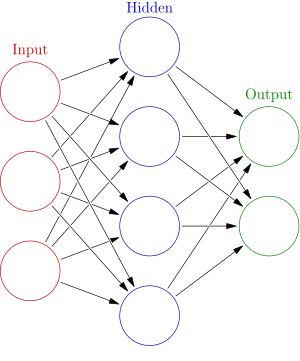

An artificial neural network is an interconnected group of nodes, akin to the vast network of [neurons](https://en.wikipedia.org/... | ml.md_0_414 |

"Neuron") in a [brain](https://en.wikipedia.org/wiki/Brain "Brain"). Here, each circular node represents an [artificial neuron](https://en.wikipedia.org/wiki/Artificial_neuron "Artificial neuron") and an arrow represents a connection from the output of one artificial neuron to the input of another. | ml.md_0_415 |

Artificial neural networks (ANNs), or [connectionist](https://en.wikipedia.org/wiki/Connectionism "Connectionism") systems, are computing systems vaguely inspired by the [biological neural networks](https://en.wikipedia.org/wiki/Biological_neural_network "Biological neural network") that constitute animal | ml.md_0_416 |

network") that constitute animal [brains](https://en.wikipedia.org/wiki/Brain "Brain"). Such systems "learn" to perform tasks by considering examples, generally without being programmed with any task-specific rules. | ml.md_0_417 |

An ANN is a model based on a collection of connected units or nodes called "[artificial neurons](https://en.wikipedia.org/wiki/Artificial_neuron "Artificial neuron")", which loosely model the [neurons](https://en.wikipedia.org/wiki/Neuron "Neuron") in a biological brain. Each connection, like the [synapses](https://en.... | ml.md_0_418 |

"Synapse") in a biological brain, can transmit information, a "signal", from one artificial neuron to another. An artificial neuron that receives a signal can process it and then signal additional artificial neurons connected to it. In common ANN implementations, the signal at a connection between artificial neurons is... | ml.md_0_419 |

artificial neurons is a [real number](https://en.wikipedia.org/wiki/Real_number "Real number"), and the output of each artificial neuron is computed by some non-linear function of the sum of its inputs. The connections between artificial neurons are called "edges". Artificial neurons and edges typically have a | ml.md_0_420 |

neurons and edges typically have a [weight](https://en.wikipedia.org/wiki/Weight_\(mathematics\) "Weight \(mathematics\)") that adjusts as learning proceeds. The weight increases or decreases the strength of the signal at a connection. Artificial neurons may have a threshold such that the signal is only sent if the agg... | ml.md_0_421 |

the aggregate signal crosses that threshold. Typically, artificial neurons are aggregated into layers. Different layers may perform different kinds of transformations on their inputs. Signals travel from the first layer (the input layer) to the last layer (the output layer), possibly after traversing the layers multipl... | ml.md_0_422 |
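The computation described above, in which each artificial neuron applies a non-linear function to the weighted sum of its inputs and signals pass from layer to layer, can be sketched in a few lines. The sigmoid activation, the weights and the two-layer shape are arbitrary assumptions chosen for illustration; no training is shown.

```python
import math


def sigmoid(z):
    """A common non-linear activation that squashes any real number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def neuron(inputs, weights, bias):
    """Output of one artificial neuron: activation of the weighted input sum."""
    return sigmoid(sum(w * x for w, x in zip(weights, inputs)) + bias)


def layer(inputs, weight_rows, biases):
    """A layer is a list of neurons that all read the same inputs."""
    return [neuron(inputs, w, b) for w, b in zip(weight_rows, biases)]


# Hypothetical network: 2 inputs -> 2 hidden neurons -> 1 output neuron.
hidden = layer([1.0, 0.0], [[0.5, -0.5], [-1.0, 1.0]], [0.0, 0.0])
output = layer(hidden, [[1.0, 1.0]], [0.0])
```

Learning would then consist of adjusting the entries of the weight lists so that `output` moves closer to the desired value for each training example.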

The original goal of the ANN approach was to solve problems in the same way that a [human brain](https://en.wikipedia.org/wiki/Human_brain "Human brain") would. However, over time, attention moved to performing specific tasks, leading to deviations from [biology](https://en.wikipedia.org/wiki/Biology "Biology"). Artifi... | ml.md_0_423 |

Artificial neural networks have been used on a variety of tasks, including [computer vision](https://en.wikipedia.org/wiki/Computer_vision "Computer vision"), [speech recognition](https://en.wikipedia.org/wiki/Speech_recognition "Speech recognition"), [machine translation](https://en.wikipedia.org/wiki/Machine_translat... | ml.md_0_424 |

"Machine translation"), [social network](https://en.wikipedia.org/wiki/Social_network "Social network") filtering, [playing board and video games](https://en.wikipedia.org/wiki/General_game_playing "General game playing") and [medical diagnosis](https://en.wikipedia.org/wiki/Medical_diagnosis "Medical diagnosis"). | ml.md_0_425 |

[Deep learning](https://en.wikipedia.org/wiki/Deep_learning "Deep learning") consists of multiple hidden layers in an artificial neural network. This approach tries to model the way the human brain processes light and sound into vision and hearing. Some successful applications of deep learning are computer vision and s... | ml.md_0_426 |

are computer vision and speech recognition.[[88]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-88) | ml.md_0_427 |

### Decision trees


Main article: [Decision tree learning](https://en.wikipedia.org/wiki/Decision_tree_learning "Decision tree learning") | ml.md_0_428 |

A decision tree showing survival probability of passengers on the [Titanic](https://en.wikipedia.org/wiki/Titanic "Titanic") | ml.md_0_429 |

Decision tree learning uses a [decision tree](https://en.wikipedia.org/wiki/Decision_tree "Decision tree") as a [predictive model](https://en.wikipedia.org/wiki/Predictive_modeling "Predictive modeling") to go from observations about an item (represented in the branches) to conclusions about the item's target value (re... | ml.md_0_430 |

(represented in the leaves). It is one of the predictive modelling approaches used in statistics, data mining, and machine learning. Tree models where the target variable can take a discrete set of values are called classification trees; in these tree structures, [leaves](https://en.wikipedia.org/wiki/Leaf_node "Leaf n... | ml.md_0_431 |

node") represent class labels, and branches represent [conjunctions](https://en.wikipedia.org/wiki/Logical_conjunction "Logical conjunction") of features that lead to those class labels. Decision trees where the target variable can take continuous values (typically [real numbers](https://en.wikipedia.org/wiki/Real_numb... | ml.md_0_432 |

"Real numbers")) are called regression trees. In decision analysis, a decision tree can be used to visually and explicitly represent decisions and [decision making](https://en.wikipedia.org/wiki/Decision_making "Decision making"). In data mining, a decision tree describes data, but the resulting classification tree can... | ml.md_0_433 |

tree can be an input for decision-making. | ml.md_0_434 |
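A classification tree can be sketched as nested nodes in which each branch applies a test to a feature and each leaf holds a class label. The tree below is hand-written and purely hypothetical: its features, thresholds and labels are not taken from the Titanic figure or from any fitted model.

```python
def classify(node, item):
    """Follow branches from the root until a leaf (a node with a label) is reached."""
    while "label" not in node:
        node = node["yes"] if node["test"](item) else node["no"]
    return node["label"]


# Hypothetical tree: internal nodes carry a test, leaves carry a class label.
toy_tree = {
    "test": lambda p: p["sex"] == "female",
    "yes": {"label": "survived"},
    "no": {
        "test": lambda p: p["age"] < 10,
        "yes": {"label": "survived"},
        "no": {"label": "died"},
    },
}

prediction_adult_male = classify(toy_tree, {"sex": "male", "age": 40})
prediction_young_boy = classify(toy_tree, {"sex": "male", "age": 6})
prediction_woman = classify(toy_tree, {"sex": "female", "age": 30})
```

The path to each leaf is a conjunction of the tests along the way; for example, the "died" leaf corresponds to "not female and age not below 10". A regression tree would store a number in each leaf instead of a label.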

### Random forest regression

| ml.md_0_435 |

Random forest regression (RFR) falls under the umbrella of decision [tree-based models](https://en.wikipedia.org/wiki/Tree-based_models "Tree-based models"). RFR is an ensemble learning method that builds multiple decision trees and averages their predictions to improve accuracy and to avoid overfitting. To build decision ... | ml.md_0_436 |

trees, RFR uses bootstrapped sampling; for instance, each decision tree is trained on a random sample of the training set. This random selection of training data enables the model to reduce biased predictions and improve accuracy. RFR generates independent decision trees, and it can work on single-output data as well as multiple... | ml.md_0_437 |

regressor task. This makes RFR suitable for use in various applications.[[89]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-89)[[90]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-90) | ml.md_0_438 |
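The two ingredients named above, bootstrapped sampling and averaging of tree predictions, can be sketched as below. This is a simplified illustration rather than a full random forest: each "tree" is a one-split regression stump, there is a single input feature, and no random feature subsampling is done. All names and the toy data are hypothetical.

```python
import random
import statistics


def fit_stump(xs, ys):
    """Fit a one-split regression tree by minimising the squared error."""
    best = None
    for threshold in sorted(set(xs)):
        left = [y for x, y in zip(xs, ys) if x <= threshold]
        right = [y for x, y in zip(xs, ys) if x > threshold]
        if not left or not right:
            continue
        lm, rm = statistics.mean(left), statistics.mean(right)
        error = sum((y - lm) ** 2 for y in left) + sum((y - rm) ** 2 for y in right)
        if best is None or error < best[0]:
            best = (error, threshold, lm, rm)
    if best is None:  # every sampled x was identical: predict the mean everywhere
        m = statistics.mean(ys)
        return (float("inf"), m, m)
    return best[1:]


def predict_stump(stump, x):
    threshold, left_mean, right_mean = stump
    return left_mean if x <= threshold else right_mean


def fit_forest(xs, ys, n_trees=25, seed=0):
    """Train each stump on its own bootstrap sample (drawn with replacement)."""
    rng = random.Random(seed)
    n = len(xs)
    forest = []
    for _ in range(n_trees):
        idx = [rng.randrange(n) for _ in range(n)]
        forest.append(fit_stump([xs[i] for i in idx], [ys[i] for i in idx]))
    return forest


def predict_forest(forest, x):
    """The ensemble prediction is the average of the individual predictions."""
    return statistics.mean(predict_stump(s, x) for s in forest)


# Toy step-shaped data: low targets for x <= 4, high targets for x > 4.
xs = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
ys = [1.0, 1.2, 0.9, 1.1, 5.0, 5.2, 4.9, 5.1]
forest = fit_forest(xs, ys)
low_prediction = predict_forest(forest, 1.0)
high_prediction = predict_forest(forest, 8.0)
```

Because each stump sees a different resample, individual stumps disagree slightly, and averaging smooths out those differences.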

### Support-vector machines


Main article: [Support-vector machine](https://en.wikipedia.org/wiki/Support-vector_machine "Support-vector machine") | ml.md_0_439 |

Support-vector machines (SVMs), also known as support-vector networks, are a set of related [supervised learning](https://en.wikipedia.org/wiki/Supervised_learning "Supervised learning") methods used for classification and regression. Given a set of training examples, each marked as belonging to one of two categories, ... | ml.md_0_440 |

an SVM training algorithm builds a model that predicts whether a new example falls into one category or the other.[[91]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-CorinnaCortes-91) An SVM training algorithm is a non-[probabilistic](https://en.wikipedia.org/wiki/Probabilistic_classification "Probabilistic classificati... | ml.md_0_441 |

"Probabilistic classification"), [binary](https://en.wikipedia.org/wiki/Binary_classifier "Binary classifier"), [linear classifier](https://en.wikipedia.org/wiki/Linear_classifier "Linear classifier"), although methods such as [Platt scaling](https://en.wikipedia.org/wiki/Platt_scaling "Platt scaling") exist to use SVM... | ml.md_0_442 |

to use SVM in a probabilistic classification setting. In addition to performing linear classification, SVMs can efficiently perform a non-linear classification using what is called the [kernel trick](https://en.wikipedia.org/wiki/Kernel_trick "Kernel trick"), implicitly mapping their inputs into high-dimensional featur... | ml.md_0_443 |
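The kernel trick can be checked numerically on a small case. The sketch below assumes a homogeneous degree-2 polynomial kernel on two-dimensional inputs, chosen only because its explicit feature map is short enough to write out. It shows that the kernel value equals a dot product in the higher-dimensional feature space, without that space being constructed when the kernel is used.

```python
import math


def poly_kernel(x, y):
    """Homogeneous degree-2 polynomial kernel: k(x, y) = (x . y) ** 2."""
    return (x[0] * y[0] + x[1] * y[1]) ** 2


def feature_map(x):
    """Explicit 3-dimensional feature map whose dot product equals poly_kernel."""
    return (x[0] ** 2, math.sqrt(2) * x[0] * x[1], x[1] ** 2)


x, y = (1.0, 2.0), (3.0, -1.0)

# Implicit: computed directly in the 2-dimensional input space.
implicit = poly_kernel(x, y)

# Explicit: map both points to 3 dimensions, then take the dot product.
explicit = sum(a * b for a, b in zip(feature_map(x), feature_map(y)))
```

Both values agree up to floating-point rounding. For kernels whose feature space is very high-dimensional, the explicit mapping becomes impractical while the kernel evaluation stays cheap.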

### Regression analysis


Main article: [Regression analysis](https://en.wikipedia.org/wiki/Regression_analysis "Regression analysis") | ml.md_0_444 |

Illustration of linear regression on a data set | ml.md_0_445 |

Regression analysis encompasses a large variety of statistical methods to estimate the relationship between input variables and their associated features. Its most common form is [linear regression](https://en.wikipedia.org/wiki/Linear_regression "Linear regression"), where a single line is drawn to best fit the given ... | ml.md_0_446 |

fit the given data according to a mathematical criterion such as [ordinary least squares](https://en.wikipedia.org/wiki/Ordinary_least_squares "Ordinary least squares"). The latter is often extended by [regularisation](https://en.wikipedia.org/wiki/Regularization_\(mathematics\) "Regularization \(mathematics\)") method... | ml.md_0_447 |

to mitigate overfitting and bias, as in [ridge regression](https://en.wikipedia.org/wiki/Ridge_regression "Ridge regression"). When dealing with non-linear problems, go-to models include [polynomial regression](https://en.wikipedia.org/wiki/Polynomial_regression "Polynomial regression") (for example, used for trendline... | ml.md_0_448 |

for trendline fitting in Microsoft Excel[[92]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-92)), [logistic regression](https://en.wikipedia.org/wiki/Logistic_regression "Logistic regression") (often used in [statistical classification](https://en.wikipedia.org/wiki/Statistical_classification "Statistical c... | ml.md_0_449 |

classification")) or even [kernel regression](https://en.wikipedia.org/wiki/Kernel_regression "Kernel regression"), which introduces non-linearity by taking advantage of the [kernel trick](https://en.wikipedia.org/wiki/Kernel_trick "Kernel trick") to implicitly map input variables to higher-dimensional space. | ml.md_0_450 |
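For a single input variable, the ordinary least squares line has a closed-form solution, and a ridge penalty on the slope only changes the denominator. The sketch below assumes one input variable and an unpenalised intercept; the function name `fit_line` and the `ridge` parameter are illustrative.

```python
def fit_line(xs, ys, ridge=0.0):
    """Fit y = slope * x + intercept by least squares.

    With ridge > 0 the slope is shrunk towards zero (ridge regression with an
    unpenalised intercept); ridge = 0 gives ordinary least squares.
    """
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / (sxx + ridge)
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Toy data lying exactly on y = 2x + 1.
xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.0, 3.0, 5.0, 7.0]

ols_slope, ols_intercept = fit_line(xs, ys)
ridge_slope, ridge_intercept = fit_line(xs, ys, ridge=5.0)
```

On this data ordinary least squares recovers slope 2 and intercept 1, while the ridge fit with penalty 5 halves the slope to 1, which illustrates how regularisation trades fit for smaller coefficients.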

[Multivariate linear regression](https://en.wikipedia.org/wiki/General_linear_model "General linear model") extends the concept of linear regression to handle multiple dependent variables simultaneously. This approach estimates the relationships between a set of input variables and several output variables by fitting a | ml.md_0_451 |

output variables by fitting a [multidimensional](https://en.wikipedia.org/wiki/Multidimensional_system "Multidimensional system") linear model. It is particularly useful in scenarios where outputs are interdependent or share underlying patterns, such as predicting multiple economic indicators or reconstructing | ml.md_0_452 |

indicators or reconstructing images,[[93]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-93) which are inherently multi-dimensional. | ml.md_0_453 |

### Bayesian networks


Main article: [Bayesian network](https://en.wikipedia.org/wiki/Bayesian_network "Bayesian network") | ml.md_0_454 |

A simple Bayesian network. Rain influences whether the sprinkler is activated, and both rain and the sprinkler influence whether the grass... | ml.md_0_455 |

A Bayesian network, belief network, or directed acyclic graphical model is a probabilistic [graphical model](https://en.wikipedia.org/wiki/Graphical_model "Graphical model") that represents a set of [random variables](https://en.wikipedia.org/wiki/Random_variables "Random variables") and their [conditional | ml.md_0_456 |

variables") and their [conditional independence](https://en.wikipedia.org/wiki/Conditional_independence "Conditional independence") with a [directed acyclic graph](https://en.wikipedia.org/wiki/Directed_acyclic_graph "Directed acyclic graph") (DAG). For example, a Bayesian network could represent the probabilistic rela... | ml.md_0_457 |

relationships between diseases and symptoms. Given symptoms, the network can be used to compute the probabilities of the presence of various diseases. Efficient algorithms exist that perform [inference](https://en.wikipedia.org/wiki/Bayesian_inference "Bayesian inference") and learning. Bayesian networks that model seq... | ml.md_0_458 |

model sequences of variables, like [speech signals](https://en.wikipedia.org/wiki/Speech_recognition "Speech recognition") or [protein sequences](https://en.wikipedia.org/wiki/Peptide_sequence "Peptide sequence"), are called [dynamic Bayesian networks](https://en.wikipedia.org/wiki/Dynamic_Bayesian_network "Dynamic Bay... | ml.md_0_459 |

"Dynamic Bayesian network"). Generalisations of Bayesian networks that can represent and solve decision problems under uncertainty are called [influence diagrams](https://en.wikipedia.org/wiki/Influence_diagram "Influence diagram"). | ml.md_0_460 |

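Inference in a small Bayesian network can be done by enumerating the joint distribution. The sketch below uses the rain and sprinkler structure from the figure caption, with a third variable assumed here to be "grass is wet". All probability values are illustrative assumptions, not figures from the source.

```python
from itertools import product

# Illustrative conditional probability tables (assumed values).
P_RAIN = 0.2
P_SPRINKLER_GIVEN_RAIN = {True: 0.01, False: 0.4}
P_WET_GIVEN = {  # keyed by (sprinkler, rain)
    (True, True): 0.99,
    (True, False): 0.9,
    (False, True): 0.8,
    (False, False): 0.0,
}


def joint(rain, sprinkler, wet):
    """P(rain, sprinkler, wet), factorised along the edges of the DAG."""
    p = P_RAIN if rain else 1 - P_RAIN
    p_s = P_SPRINKLER_GIVEN_RAIN[rain]
    p *= p_s if sprinkler else 1 - p_s
    p_w = P_WET_GIVEN[(sprinkler, rain)]
    p *= p_w if wet else 1 - p_w
    return p


def prob_rain_given_wet():
    """P(rain | wet) obtained by summing out the sprinkler variable."""
    numerator = sum(joint(True, s, True) for s in (True, False))
    evidence = sum(joint(r, s, True) for r, s in product((True, False), repeat=2))
    return numerator / evidence


posterior = prob_rain_given_wet()
total = sum(joint(r, s, w) for r, s, w in product((True, False), repeat=3))
```

With these assumed tables the posterior probability of rain given wet grass is about 0.36. Enumeration is exponential in the number of variables, so larger networks rely on more efficient inference algorithms.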
### Gaussian processes


Main article: [Gaussian processes](https://en.wikipedia.org/wiki/Gaussian_processes "Gaussian processes") | ml.md_0_461 |

An example of Gaussian Process Regression (prediction) compared with other regression models[[94]](https://en.wikipedia.org/wiki/Mac... | ml.md_0_462 |

A Gaussian process is a [stochastic process](https://en.wikipedia.org/wiki/Stochastic_process "Stochastic process") in which every finite collection of the random variables in the process has a [multivariate normal distribution](https://en.wikipedia.org/wiki/Multivariate_normal_distribution "Multivariate normal distrib... | ml.md_0_463 |
