text | source |

|---|---|

[Leo Breiman](https://en.wikipedia.org/wiki/Leo_Breiman "Leo Breiman") distinguished two statistical modelling paradigms: data model and algorithmic model,[[39]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-Cornell-University-Library-2001-39) wherein "algorithmic model" means more or less the machine learni... | ml.md_0_264 |

learning algorithms like [Random Forest](https://en.wikipedia.org/wiki/Random_forest "Random forest"). | ml.md_0_265 |

Some statisticians have adopted methods from machine learning, leading to a combined field that they call _statistical learning_.[[40]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-islr-40)

### Statistical physics | ml.md_0_266 |


Analytical and computational techniques derived from deep-rooted physics of disordered systems can be extended to large-scale problems, including machine learning, e.g., to analyse the weight space of [deep neural networks](https://en.wikipedia.org/wiki/Deep_neural_network "Deep neural | ml.md_0_268 |

"Deep neural network").[[41]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-SP_1-41) Statistical physics is thus finding applications in the area of [medical diagnostics](https://en.wikipedia.org/wiki/Medical_diagnostics "Medical diagnostics").[[42]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-S... | ml.md_0_269 |

## Theory | ml.md_0_270 |

Main articles: [Computational learning theory](https://en.wikipedia.org/wiki/Computational_learning_theory "Computational learning theory") and [Statistical learning theory](https://en.wikipedia.org/wiki/Statistical_learning_theory "Statistical learning theory") | ml.md_0_271 |

A core objective of a learner is to generalise from its experience.[[5]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-bishop2006-5)[[43]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-Mohri-2012-43) Generalisation in this context is the ability of a learning machine to perform accurately on new, ... | ml.md_0_272 |

new, unseen examples/tasks after having experienced a learning data set. The training examples come from some generally unknown probability distribution (considered representative of the space of occurrences) and the learner has to build a general model about this space that enables it to produce sufficiently accurate ... | ml.md_0_273 |

The computational analysis of machine learning algorithms and their performance is a branch of [theoretical computer science](https://en.wikipedia.org/wiki/Theoretical_computer_science "Theoretical computer science") known as [computational learning theory](https://en.wikipedia.org/wiki/Computational_learning_theory "C... | ml.md_0_274 |

"Computational learning theory") via the [probably approximately correct learning](https://en.wikipedia.org/wiki/Probably_approximately_correct_learning "Probably approximately correct learning") model. Because training sets are finite and the future is uncertain, learning theory usually does not yield guarantees of th... | ml.md_0_275 |

of the performance of algorithms. Instead, probabilistic bounds on the performance are quite common. The [bias–variance decomposition](https://en.wikipedia.org/wiki/Bias%E2%80%93variance_decomposition "Bias–variance decomposition") is one way to quantify generalisation [error](https://en.wikipedia.org/wiki/Errors_a... | ml.md_0_276 |

"Errors and residuals"). | ml.md_0_277 |

For the best performance in the context of generalisation, the complexity of the hypothesis should match the complexity of the function underlying the data. If the hypothesis is less complex than the function, then the model has underfitted the data. If the complexity of the model is increased in response, then the tr... | ml.md_0_278 |

the training error decreases. But if the hypothesis is too complex, then the model is subject to [overfitting](https://en.wikipedia.org/wiki/Overfitting "Overfitting") and generalisation will be poorer.[[44]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-alpaydin-44) | ml.md_0_279 |
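The relationship between hypothesis complexity and training error can be sketched with a toy experiment. Everything in it is an assumption made for illustration: the underlying function is a noisy sine wave, the hypothesis is a piecewise-constant model on [0, 1), and "complexity" is simply the number of bins. Because each bin count refines the previous one, the training error can only fall as bins are added; comparing it with the error on fresh samples is what reveals underfitting and overfitting.

```python
import math
import random

def fit_bins(xs, ys, n_bins):
    """Fit a piecewise-constant model on [0, 1): one mean per bin."""
    sums = [0.0] * n_bins
    counts = [0] * n_bins
    for x, y in zip(xs, ys):
        b = min(int(x * n_bins), n_bins - 1)
        sums[b] += y
        counts[b] += 1
    overall = sum(ys) / len(ys)
    # Bins that saw no training data fall back to the overall mean.
    return [s / c if c else overall for s, c in zip(sums, counts)]

def predict(model, x):
    n_bins = len(model)
    return model[min(int(x * n_bins), n_bins - 1)]

def mse(model, xs, ys):
    return sum((predict(model, x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

def sample(n, rng):
    # Hypothetical data-generating process: a sine wave plus Gaussian noise.
    xs = [rng.random() for _ in range(n)]
    ys = [math.sin(2 * math.pi * x) + rng.gauss(0, 0.3) for x in xs]
    return xs, ys

rng = random.Random(0)
train_x, train_y = sample(40, rng)
test_x, test_y = sample(1000, rng)

results = {}
for n_bins in (1, 4, 16, 256):
    model = fit_bins(train_x, train_y, n_bins)
    # Store (training error, error on unseen data) for each complexity level.
    results[n_bins] = (mse(model, train_x, train_y), mse(model, test_x, test_y))
    print(n_bins, results[n_bins])
```

The first number printed for each bin count is the training error; the second estimates the generalisation error on unseen examples.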

In addition to performance bounds, learning theorists study the time complexity and feasibility of learning. In computational learning theory, a computation is considered feasible if it can be done in [polynomial time](https://en.wikipedia.org/wiki/Time_complexity#Polynomial_time "Time complexity"). There are two kinds... | ml.md_0_280 |

There are two kinds of [time complexity](https://en.wikipedia.org/wiki/Time_complexity "Time complexity") results: Positive results show that a certain class of functions can be learned in polynomial time. Negative results show that certain classes cannot be learned in polynomial time. | ml.md_0_281 |

## Approaches | ml.md_0_282 |

In supervised learning, the training data is labelled with the expected answers, while in [... | ml.md_0_283 |

answers, while in [unsupervised learning](https://en.wikipedia.org/wiki/Unsupervised_learning "Unsupervised learning"), the model identifies patterns or structures in unlabelled data. | ml.md_0_284 |

Machine learning approaches are traditionally divided into three broad categories, which correspond to learning paradigms, depending on the nature of the "signal" or "feedback" available to the learning system: | ml.md_0_285 |

* [Supervised learning](https://en.wikipedia.org/wiki/Supervised_learning "Supervised learning"): The computer is presented with example inputs and their desired outputs, given by a "teacher", and the goal is to learn a general rule that [maps](https://en.wikipedia.org/wiki/Map_\(mathematics\) "Map \(mathematics\)") in... | ml.md_0_286 |

* [Unsupervised learning](https://en.wikipedia.org/wiki/Unsupervised_learning "Unsupervised learning"): No labels are given to the learning algorithm, leaving it on its own to find structure in its input. Unsupervised learning can be a goal in itself (discovering hidden patterns in data) or a means towards an end ([fea... | ml.md_0_287 |

a means towards an end ([feature learning](https://en.wikipedia.org/wiki/Feature_learning "Feature learning")). | ml.md_0_288 |

* [Reinforcement learning](https://en.wikipedia.org/wiki/Reinforcement_learning "Reinforcement learning"): A computer program interacts with a dynamic environment in which it must perform a certain goal (such as [driving a vehicle](https://en.wikipedia.org/wiki/Autonomous_car "Autonomous car") or playing a game against... | ml.md_0_289 |

a game against an opponent). As it navigates its problem space, the program is provided feedback that's analogous to rewards, which it tries to maximise.[[5]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-bishop2006-5) | ml.md_0_290 |

Although each algorithm has advantages and limitations, no single algorithm works for all problems.[[45]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-45)[[46]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-46)[[47]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-47)

### Supervised lear... | ml.md_0_291 |

### Supervised learning

Main article: [Supervised learning](https://en.wikipedia.org/wiki/Supervised_learning "Supervised learning") | ml.md_0_292 |

A [support-vector machine](https://en.wikipedia.org/wiki/Support-vector_machine "Support-vector m... | ml.md_0_293 |

machine") is a supervised learning model that divides the data into regions separated by a [linear boundary](https://en.wikipedia.org/wiki/Linear_classifier "Linear classifier"). Here, the linear boundary divides the black circles from the white. | ml.md_0_294 |

Supervised learning algorithms build a mathematical model of a set of data that contains both the inputs and the desired outputs.[[48]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-48) The data, known as [training data](https://en.wikipedia.org/wiki/Training_data "Training data"), consists of a set of train... | ml.md_0_295 |

training examples. Each training example has one or more inputs and the desired output, also known as a supervisory signal. In the mathematical model, each training example is represented by an [array](https://en.wikipedia.org/wiki/Array_data_structure "Array data structure") or vector, sometimes called a [feature | ml.md_0_296 |

sometimes called a [feature vector](https://en.wikipedia.org/wiki/Feature_vector "Feature vector"), and the training data is represented by a [matrix](https://en.wikipedia.org/wiki/Matrix_\(mathematics\) "Matrix \(mathematics\)"). Through [iterative | ml.md_0_297 |

Through [iterative optimisation](https://en.wikipedia.org/wiki/Mathematical_optimization#Computational_optimization_techniques "Mathematical optimization") of an [objective function](https://en.wikipedia.org/wiki/Loss_function "Loss function"), supervised learning algorithms learn a function that can be used to predict... | ml.md_0_298 |

predict the output associated with new inputs.[[49]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-49) An optimal function allows the algorithm to correctly determine the output for inputs that were not a part of the training data. An algorithm that improves the accuracy of its outputs or predictions over ti... | ml.md_0_299 |

time is said to have learned to perform that task.[[18]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-Mitchell-1997-18) | ml.md_0_300 |
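As a minimal sketch of iterative optimisation of an objective function, the following fits a straight line by gradient descent on the mean squared error. The model form, learning rate, step count, and training data are all assumptions chosen for illustration; real supervised learners use many other models and optimisers.

```python
def fit_linear(xs, ys, lr=0.05, steps=2000):
    """Fit y ~ w*x + b by gradient descent on the mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        # Prediction errors of the current model on the training examples.
        errors = [w * x + b - y for x, y in zip(xs, ys)]
        # Gradients of the objective with respect to w and b.
        grad_w = 2.0 / n * sum(e * x for e, x in zip(errors, xs))
        grad_b = 2.0 / n * sum(errors)
        # Move the parameters a small step against the gradient.
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

# Hypothetical training data generated from y = 2x + 1.
train_x = [0.0, 1.0, 2.0, 3.0, 4.0]
train_y = [2.0 * x + 1.0 for x in train_x]

w, b = fit_linear(train_x, train_y)
prediction = w * 10.0 + b  # an input that was not part of the training data
```

Each row of `train_x` plays the role of a (one-dimensional) feature vector, and `train_y` holds the supervisory signal.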

Types of supervised-learning algorithms include [active learning](https://en.wikipedia.org/wiki/Active_learning_\(machine_learning\) "Active learning \(machine learning\)"), [classification](https://en.wikipedia.org/wiki/Statistical_classification "Statistical classification") and [regression](https://en.wikipedia.org/... | ml.md_0_301 |

"Regression analysis").[[50]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-Alpaydin-2010-50) Classification algorithms are used when the outputs are restricted to a limited set of values, while regression algorithms are used when the outputs can take any numerical value within a range. For example, in a cla... | ml.md_0_302 |

in a classification algorithm that filters emails, the input is an incoming email, and the output is the folder in which to file the email. In contrast, regression is used for tasks such as predicting a person's height based on factors like age and genetics or forecasting future temperatures based on historical | ml.md_0_303 |

temperatures based on historical data.[[51]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-51) | ml.md_0_304 |
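The difference between the two output types can be sketched with a single neighbour-based method used both ways. The choice of k-nearest neighbours, the one-dimensional inputs, and the labels are assumptions made only for this illustration.

```python
from collections import Counter

def nearest(train, x, k):
    """Return the k training pairs (input, output) whose input is closest to x."""
    return sorted(train, key=lambda pair: abs(pair[0] - x))[:k]

def knn_classify(train, x, k=3):
    # Classification: the output is one label from a limited set (majority vote).
    labels = [label for _, label in nearest(train, x, k)]
    return Counter(labels).most_common(1)[0][0]

def knn_regress(train, x, k=3):
    # Regression: the output is a number (mean of the neighbours' values).
    values = [value for _, value in nearest(train, x, k)]
    return sum(values) / len(values)

# Hypothetical training sets sharing the same inputs.
labelled = [(1.0, "short"), (2.0, "short"), (3.0, "short"),
            (7.0, "long"), (8.0, "long"), (9.0, "long")]
numeric = [(1.0, 10.0), (2.0, 20.0), (3.0, 30.0),
           (7.0, 70.0), (8.0, 80.0), (9.0, 90.0)]

label = knn_classify(labelled, 2.5)  # a category
value = knn_regress(numeric, 2.5)    # a real number
```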

[Similarity learning](https://en.wikipedia.org/wiki/Similarity_learning "Similarity learning") is an area of supervised machine learning closely related to regression and classification, but the goal is to learn from examples using a similarity function that measures how similar or related two objects are. It has appli... | ml.md_0_305 |

are. It has applications in [ranking](https://en.wikipedia.org/wiki/Ranking "Ranking"), [recommendation systems](https://en.wikipedia.org/wiki/Recommender_system "Recommender system"), visual identity tracking, face verification, and speaker verification. | ml.md_0_306 |

### Unsupervised learning

Main article: [Unsupervised learning](https://en.wikipedia.org/wiki/Unsupervised_learning "Unsupervised learning") | ml.md_0_307 |

See also: [Cluster analysis](https://en.wikipedia.org/wiki/Cluster_analysis "Cluster analysis") | ml.md_0_308 |

Unsupervised learning algorithms find structures in data that has not been labelled, classified or categorised. Instead of responding to feedback, unsupervised learning algorithms identify commonalities in the data and react based on the presence or absence of such commonalities in each new piece of data. Central appli... | ml.md_0_309 |

applications of unsupervised machine learning include clustering, [dimensionality reduction](https://en.wikipedia.org/wiki/Dimensionality_reduction "Dimensionality reduction"),[[7]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-Friedman-1998-7) and [density estimation](https://en.wikipedia.org/wiki/Density_e... | ml.md_0_310 |

"Density estimation").[[52]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-JordanBishop2004-52) | ml.md_0_311 |

Cluster analysis is the assignment of a set of observations into subsets (called _clusters_) so that observations within the same cluster are similar according to one or more predesignated criteria, while observations drawn from different clusters are dissimilar. Different clustering techniques make different assumptio... | ml.md_0_312 |

on the structure of the data, often defined by some _similarity metric_ and evaluated, for example, by _internal compactness_, or the similarity between members of the same cluster, and _separation_, the difference between clusters. Other methods are based on _estimated density_ and _graph connectivity_. | ml.md_0_313 |
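One widely used clustering technique is k-means, which uses squared Euclidean distance as its similarity metric and tries to make each cluster internally compact. The sketch below is a simplified version under stated assumptions: the farthest-point initialisation and the fixed iteration count are choices made here to keep the example deterministic, not part of any particular library.

```python
def dist2(p, q):
    """Squared Euclidean distance between two points."""
    return sum((a - b) ** 2 for a, b in zip(p, q))

def kmeans(points, k, iters=20):
    # Farthest-point initialisation (an assumption, chosen to be deterministic).
    centroids = [points[0]]
    while len(centroids) < k:
        centroids.append(
            max(points, key=lambda p: min(dist2(p, c) for c in centroids)))
    labels = [0] * len(points)
    for _ in range(iters):
        # Assignment step: each point joins its nearest centroid.
        labels = [min(range(k), key=lambda j: dist2(p, centroids[j]))
                  for p in points]
        # Update step: each centroid moves to the mean of its members.
        for j in range(k):
            members = [p for p, l in zip(points, labels) if l == j]
            if members:  # keep the old centroid if a cluster is empty
                centroids[j] = tuple(sum(c) / len(members) for c in zip(*members))
    return labels, centroids

# Hypothetical observations forming two well-separated groups.
points = [(0.0, 0.0), (0.0, 1.0), (1.0, 0.0),
          (10.0, 10.0), (10.0, 11.0), (11.0, 10.0)]
labels, centroids = kmeans(points, k=2)
```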

A special type of unsupervised learning, called [self-supervised learning](https://en.wikipedia.org/wiki/Self-supervised_learning "Self-supervised learning"), involves training a model by generating the supervisory signal from the data | ml.md_0_314 |

supervisory signal from the data itself.[[53]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-53)[[54]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-54) | ml.md_0_315 |
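A minimal sketch of how a supervisory signal can be derived from unlabelled data: predicting the next token of a sequence from the tokens before it. Next-token prediction is only one possible pretext task, and the function name and context size are hypothetical.

```python
def next_token_pairs(tokens, context_size=2):
    """Turn an unlabelled sequence into (context, target) training pairs."""
    pairs = []
    for i in range(context_size, len(tokens)):
        context = tuple(tokens[i - context_size:i])
        target = tokens[i]  # the "label" is simply the next token in the data
        pairs.append((context, target))
    return pairs

tokens = "the cat sat on the mat".split()
pairs = next_token_pairs(tokens)
```

The resulting pairs can then be fed to any supervised learner, even though no human-provided labels were involved.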

### Semi-supervised learning

Main article: [Semi-supervised learning](https://en.wikipedia.org/wiki/Semi-supervised_learning "Semi-supervised learning") | ml.md_0_316 |

Semi-supervised learning falls between [unsupervised learning](https://en.wikipedia.org/wiki/Unsupervised_learning "Unsupervised learning") (without any labelled training data) and [supervised learning](https://en.wikipedia.org/wiki/Supervised_learning "Supervised learning") (with completely labelled training data). So... | ml.md_0_317 |

Some of the training examples are missing training labels, yet many machine-learning researchers have found that unlabelled data, when used in conjunction with a small amount of labelled data, can produce a considerable improvement in learning accuracy. | ml.md_0_318 |
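One simple way to combine a few labelled examples with many unlabelled ones is self-training (pseudo-labelling), sketched below with a nearest-centroid model. The technique, the model, and the data are assumptions chosen for illustration; the sketch shows the mechanism only and makes no claim about accuracy gains.

```python
def fit_centroids(examples):
    """Nearest-centroid model: one mean per label."""
    groups = {}
    for x, label in examples:
        groups.setdefault(label, []).append(x)
    return {label: sum(xs) / len(xs) for label, xs in groups.items()}

def predict(centroids, x):
    """Return the label whose centroid is closest to x."""
    return min(centroids, key=lambda label: abs(centroids[label] - x))

# Hypothetical data: two labelled examples and six unlabelled ones.
labelled = [(0.0, "a"), (10.0, "b")]
unlabelled = [1.0, 2.0, 3.0, 4.0, 8.0, 9.0]

# Step 1: train on the small labelled set only.
model = fit_centroids(labelled)
# Step 2: pseudo-label the unlabelled examples with that model.
pseudo = [(x, predict(model, x)) for x in unlabelled]
# Step 3: retrain on the labelled and pseudo-labelled data together.
model = fit_centroids(labelled + pseudo)
```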

In [weakly supervised learning](https://en.wikipedia.org/wiki/Weak_supervision "Weak supervision"), the training labels are noisy, limited, or imprecise; however, these labels are often cheaper to obtain, resulting in larger effective training sets.[[55]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-55)

###... | ml.md_0_319 |

### Reinforcement learning

Main article: [Reinforcement learning](https://en.wikipedia.org/wiki/Reinforcement_learning "Reinforcement learning") | ml.md_0_320 |

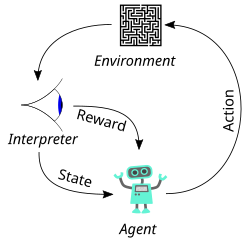

[](https://en.wikipedia.org/wiki/File:Reinforcement_learning_diagram.svg) | ml.md_0_321 |

Reinforcement learning is an area of machine learning concerned with how [software agents](https://en.wikipedia.org/wiki/Software_agent "Software agent") ought to take [actions](https://en.wikipedia.org/wiki/Action_selection "Action selection") in an environment so as to maximise some notion of cumulative reward. Due t... | ml.md_0_322 |

Due to its generality, the field is studied in many other disciplines, such as [game theory](https://en.wikipedia.org/wiki/Game_theory "Game theory"), [control theory](https://en.wikipedia.org/wiki/Control_theory "Control theory"), [operations research](https://en.wikipedia.org/wiki/Operations_research "Operations rese... | ml.md_0_323 |

research"), [information theory](https://en.wikipedia.org/wiki/Information_theory "Information theory"), [simulation-based optimisation](https://en.wikipedia.org/wiki/Simulation-based_optimisation "Simulation-based optimisation"), [multi-agent systems](https://en.wikipedia.org/wiki/Multi-agent_system "Multi-agent syste... | ml.md_0_324 |

"Multi-agent system"), [swarm intelligence](https://en.wikipedia.org/wiki/Swarm_intelligence "Swarm intelligence"), [statistics](https://en.wikipedia.org/wiki/Statistics "Statistics") and [genetic algorithms](https://en.wikipedia.org/wiki/Genetic_algorithm "Genetic algorithm"). In reinforcement learning, the environmen... | ml.md_0_325 |

is typically represented as a [Markov decision process](https://en.wikipedia.org/wiki/Markov_decision_process "Markov decision process") (MDP). Many reinforcement learning algorithms use [dynamic programming](https://en.wikipedia.org/wiki/Dynamic_programming "Dynamic programming") | ml.md_0_326 |

"Dynamic programming") techniques.[[56]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-56) Reinforcement learning algorithms do not assume knowledge of an exact mathematical model of the MDP and are used when exact models are infeasible. Reinforcement learning algorithms are used in autonomous vehicles or in... | ml.md_0_327 |

or in learning to play a game against a human opponent. | ml.md_0_328 |
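As an illustration of learning from reward without an explicit model of the MDP, here is a sketch of tabular Q-learning, one well-known reinforcement learning algorithm. The chain environment, the reward scheme, and all hyperparameters are hypothetical. The learner only samples transitions through `step`; it never inspects the transition rules directly.

```python
import random

N_STATES = 5      # states 0..4; state 4 is terminal
ACTIONS = (0, 1)  # 0 = left, 1 = right

def step(state, action):
    """Hypothetical chain environment: reward 1 only on reaching the last state."""
    nxt = max(0, state - 1) if action == 0 else state + 1
    done = nxt == N_STATES - 1
    return nxt, (1.0 if done else 0.0), done

def q_learning(episodes=500, alpha=0.5, gamma=0.9, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            action = rng.choice(ACTIONS)  # purely random exploration
            nxt, reward, done = step(state, action)
            # Bootstrapped target: reward plus discounted best future value.
            target = reward if done else reward + gamma * max(q[nxt])
            q[state][action] += alpha * (target - q[state][action])
            state = nxt
    return q

q = q_learning()
# Greedy policy for the non-terminal states, read off the learned values.
policy = [max(ACTIONS, key=lambda a: q[s][a]) for s in range(N_STATES - 1)]
```

Because Q-learning is off-policy, the random behaviour used during training does not prevent the greedy policy from favouring the rewarding direction.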

### Dimensionality reduction | ml.md_0_329 |

[Dimensionality reduction](https://en.wikipedia.org/wiki/Dimensionality_reduction "Dimensionality reduction") is a process of reducing the number of random variables under consideration by obtaining a set of principal variables.[[57]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-57) In other words, it is a ... | ml.md_0_330 |

it is a process of reducing the dimension of the [feature](https://en.wikipedia.org/wiki/Feature_\(machine_learning\) "Feature \(machine learning\)") set, also called the "number of features". Most of the dimensionality reduction techniques can be considered as either feature elimination or | ml.md_0_331 |

as either feature elimination or [extraction](https://en.wikipedia.org/wiki/Feature_extraction "Feature extraction"). One of the popular methods of dimensionality reduction is [principal component analysis](https://en.wikipedia.org/wiki/Principal_component_analysis "Principal component analysis") (PCA). PCA involves ch... | ml.md_0_332 |

changing higher-dimensional data (e.g., 3D) to a smaller space (e.g., 2D). The [manifold hypothesis](https://en.wikipedia.org/wiki/Manifold_hypothesis "Manifold hypothesis") proposes that high-dimensional data sets lie along low-dimensional [manifolds](https://en.wikipedia.org/wiki/Manifold "Manifold"), and many dimens... | ml.md_0_333 |

reduction techniques make this assumption, leading to the area of [manifold learning](https://en.wikipedia.org/wiki/Manifold_learning "Manifold learning") and [manifold regularisation](https://en.wikipedia.org/wiki/Manifold_regularisation "Manifold regularisation"). | ml.md_0_334 |
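The core of PCA can be sketched without any library: centre the data, form the covariance matrix, and find its leading eigenvector. The sketch below makes simplifying assumptions: it extracts only the first principal component, uses power iteration in place of a full eigendecomposition, and runs on hypothetical two-dimensional data that lies exactly on a line.

```python
import math

def first_principal_component(rows, iters=100):
    """Estimate the leading eigenvector of the covariance matrix by power iteration."""
    n, d = len(rows), len(rows[0])
    means = [sum(r[j] for r in rows) / n for j in range(d)]
    centred = [[r[j] - means[j] for j in range(d)] for r in rows]
    # Sample covariance matrix of the centred data.
    cov = [[sum(r[i] * r[j] for r in centred) / (n - 1) for j in range(d)]
           for i in range(d)]
    v = [1.0 / math.sqrt(d)] * d
    for _ in range(iters):
        # Multiply by the covariance matrix, then renormalise.
        w = [sum(cov[i][j] * v[j] for j in range(d)) for i in range(d)]
        norm = math.sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    return means, v

def project(row, means, component):
    """Reduce one d-dimensional row to a single coordinate."""
    return sum((x - m) * c for x, m, c in zip(row, means, component))

# Hypothetical data lying on the line y = 2x.
data = [(0.0, 0.0), (1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]
means, pc = first_principal_component(data)
reduced = [project(r, means, pc) for r in data]
```

Here each two-dimensional point is replaced by one number in `reduced`, its coordinate along the direction of greatest variance.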

### Other types | ml.md_0_335 |

Other approaches have been developed which do not fit neatly into this three-fold categorisation, and sometimes more than one is used by the same machine learning system. For example, [topic modelling](https://en.wikipedia.org/wiki/Topic_model "Topic model"), [meta-learning](https://en.wikipedia.org/wiki/Meta-learning_... | ml.md_0_336 |

"Meta-learning \(computer science\)").[[58]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-58) | ml.md_0_337 |

#### Self-learning | ml.md_0_338 |

Self-learning, as a machine learning paradigm, was introduced in 1982 along with a neural network capable of self-learning, named _crossbar adaptive array_ (CAA).[[59]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-59)[[60]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-60) It gives a solution to t... | ml.md_0_339 |

to the problem of learning without any external reward, by introducing emotion as an internal reward. Emotion is used as state evaluation of a self-learning agent. The CAA self-learning algorithm computes, in a crossbar fashion, both decisions about actions and emotions (feelings) about consequence situations. The system ... | ml.md_0_340 |

is driven by the interaction between cognition and emotion.[[61]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-61) The self-learning algorithm updates a memory matrix W = ||w(a,s)|| such that each iteration executes the following machine learning routine: | ml.md_0_341 |

1. in situation _s_ perform action _a_

2. receive a consequence situation _s'_

3. compute emotion of being in the consequence situation _v(s')_

4. update crossbar memory _w'(a,s) = w(a,s) + v(s')_ | ml.md_0_342 |
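The four steps above can be sketched as follows. This is a loose illustration rather than the original CAA: the line-shaped environment, the two actions, and above all the fixed emotion table `v` are assumptions (the text describes emotions as being computed in a crossbar fashion, which this sketch replaces with a simple lookup).

```python
N_SITUATIONS = 4
ACTIONS = (0, 1)  # hypothetical actions: 0 = move left, 1 = move right

# Assumed fixed emotions per situation: 0 is undesirable, 3 is desirable.
v = [-1.0, 0.0, 0.0, 1.0]

# Crossbar memory w[a][s], initially all zeros.
w = [[0.0] * N_SITUATIONS for _ in ACTIONS]

def consequence(s, a):
    """Hypothetical behavioural environment: a line of four situations."""
    return max(0, s - 1) if a == 0 else min(N_SITUATIONS - 1, s + 1)

def caa_iteration(s, a):
    s_next = consequence(s, a)  # steps 1-2: perform a in s, receive s'
    emotion = v[s_next]         # step 3: emotion of being in s'
    w[a][s] += emotion          # step 4: w'(a,s) = w(a,s) + v(s')
    return s_next

# Try every action once in the two interior situations.
for s in (1, 2):
    for a in ACTIONS:
        caa_iteration(s, a)
```

After this pass, the memory entry for moving right from situation 2 is positive and the entry for moving left from situation 1 is negative, so a rule that picks the action with the largest `w[a][s]` would steer towards the desirable situation.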

It is a system with only one input, situation, and only one output, action (or behaviour) a. There is neither a separate reinforcement input nor an advice input from the environment. The backpropagated value (secondary reinforcement) is the emotion toward the consequence situation. The CAA exists in two environments, o... | ml.md_0_343 |

one is the behavioural environment where it behaves, and the other is the genetic environment, wherefrom it initially and only once receives initial emotions about situations to be encountered in the behavioural environment. After receiving the genome (species) vector from the genetic environment, the CAA learns a goal... | ml.md_0_344 |

a goal-seeking behaviour, in an environment that contains both desirable and undesirable situations.[[62]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-62) | ml.md_0_345 |

#### Feature learning

Main article: [Feature learning](https://en.wikipedia.org/wiki/Feature_learning "Feature learning") | ml.md_0_346 |

Several learning algorithms aim at discovering better representations of the inputs provided during training.[[63]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-pami-63) Classic examples include [principal component analysis](https://en.wikipedia.org/wiki/Principal_component_analysis "Principal component an... | ml.md_0_347 |

component analysis") and cluster analysis. Feature learning algorithms, also called representation learning algorithms, often attempt to preserve the information in their input but also transform it in a way that makes it useful, often as a pre-processing step before performing classification or predictions. This techn... | ml.md_0_348 |

technique allows reconstruction of the inputs coming from the unknown data-generating distribution, while not being necessarily faithful to configurations that are implausible under that distribution. This replaces manual [feature engineering](https://en.wikipedia.org/wiki/Feature_engineering "Feature engineering"), an... | ml.md_0_349 |

and allows a machine to both learn the features and use them to perform a specific task. | ml.md_0_350 |

Feature learning can be either supervised or unsupervised. In supervised feature learning, features are learned using labelled input data. Examples include [artificial neural networks](https://en.wikipedia.org/wiki/Artificial_neural_network "Artificial neural network"), [multilayer perceptrons](https://en.wikipedia.org... | ml.md_0_351 |

"Multilayer perceptron"), and supervised [dictionary learning](https://en.wikipedia.org/wiki/Dictionary_learning "Dictionary learning"). In unsupervised feature learning, features are learned with unlabelled input data. Examples include dictionary learning, [independent component | ml.md_0_352 |

learning, [independent component analysis](https://en.wikipedia.org/wiki/Independent_component_analysis "Independent component analysis"), [autoencoders](https://en.wikipedia.org/wiki/Autoencoder "Autoencoder"), [matrix factorisation](https://en.wikipedia.org/wiki/Matrix_decomposition "Matrix | ml.md_0_353 |

"Matrix decomposition")[[64]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-64) and various forms of [clustering](https://en.wikipedia.org/wiki/Cluster_analysis "Cluster | ml.md_0_354 |

"Cluster analysis").[[65]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-coates2011-65)[[66]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-66)[[67]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-jurafsky-67) | ml.md_0_355 |

[Manifold learning](https://en.wikipedia.org/wiki/Manifold_learning "Manifold learning") algorithms attempt to do so under the constraint that the learned representation is low-dimensional. [Sparse coding](https://en.wikipedia.org/wiki/Sparse_coding "Sparse coding") algorithms attempt to do so under the constraint that... | ml.md_0_356 |

that the learned representation is sparse, meaning that the mathematical model has many zeros. [Multilinear subspace learning](https://en.wikipedia.org/wiki/Multilinear_subspace_learning "Multilinear subspace learning") algorithms aim to learn low-dimensional representations directly from [tensor](https://en.wikipedia.... | ml.md_0_357 |

"Tensor") representations for multidimensional data, without reshaping them into higher-dimensional vectors.[[68]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-68) [Deep learning](https://en.wikipedia.org/wiki/Deep_learning "Deep learning") algorithms discover multiple levels of representation, or a hierarc... | ml.md_0_358 |

or a hierarchy of features, with higher-level, more abstract features defined in terms of (or generating) lower-level features. It has been argued that an intelligent machine is one that learns a representation that disentangles the underlying factors of variation that explain the observed | ml.md_0_359 |

that explain the observed data.[[69]](https://en.wikipedia.org/wiki/Machine_learning#cite_note-69) | ml.md_0_360 |

Feature learning is motivated by the fact that machine learning tasks such as classification often require input that is mathematically and computationally convenient to process. However, real-world data such as images, video, and sensory data has not yielded to attempts to algorithmically define specific features. An alt... | ml.md_0_361 |

An alternative is to discover such features or representations through examination, without relying on explicit algorithms. | ml.md_0_362 |

#### Sparse dictionary learning

Main article: [Sparse dictionary learning](https://en.wikipedia.org/wiki/Sparse_dictionary_learning "Sparse dictionary learning") | ml.md_0_363 |
