repo_name (string, 9-75 chars) | topic (30 classes) | issue_number (int64, 1 to 203k) | title (string, 1-976 chars) | body (string, 0-254k chars) | state (2 classes) | created_at (string, 20 chars) | updated_at (string, 20 chars) | url (string, 38-105 chars) | labels (list, 0-9 items) | user_login (string, 1-39 chars) | comments_count (int64, 0-452) |
|---|---|---|---|---|---|---|---|---|---|---|---|

microsoft/JARVIS | deep-learning | 140 | Can an NVIDIA 4070 Ti run this model? | Is VRAM the only thing that matters? Can a 4070 Ti run JARVIS? Has anyone tried it? | open | 2023-04-13T04:50:08Z | 2023-04-18T06:41:50Z | https://github.com/microsoft/JARVIS/issues/140 | [] | Ryan2009 | 1 |

jmcnamara/XlsxWriter | pandas | 504 | worksheet.set_column() keyword argument names don't match documentation | Hi!

The keyword arguments are named `firstcol`, `lastcol` in worksheet.py but `first_col`, `last_col` in worksheet.html. | closed | 2018-04-09T10:42:26Z | 2018-04-11T22:35:15Z | https://github.com/jmcnamara/XlsxWriter/issues/504 | ["bug", "documentation", "short term"] | QJKX | 3 |

jina-ai/serve | machine-learning | 6,022 | Other threads are currently calling into gRPC, skipping fork() handlers | **Describe your proposal/problem**


After I receive a request in an Executor endpoint, I start a new process, run the model inference task asynchronously in that process, and then wrap the inference result into a Doc that meets the busine... | closed | 2023-08-08T04:22:45Z | 2023-08-08T08:37:58Z | https://github.com/jina-ai/serve/issues/6022 | [] | Song-Gy | 14 |

labmlai/annotated_deep_learning_paper_implementations | machine-learning | 66 | Small error in distillation code | Just a small but relevant nitpick, I think you mean to use `output` rather than using `large_logits` again here:

https://github.com/labmlai/annotated_deep_learning_paper_implementations/blob/master/labml_nn/distillation/__init__.py#L143 | closed | 2021-07-05T17:42:33Z | 2021-07-12T09:12:30Z | https://github.com/labmlai/annotated_deep_learning_paper_implementations/issues/66 | [] | jamespayor | 2 |
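A minimal sketch of why the reported line matters, assuming the distillation term is a KL divergence between temperature-softened teacher and student distributions (the function names and the temperature value are illustrative, not taken from the repository). Under that assumption, passing the teacher's `large_logits` as both arguments compares the teacher with itself, so the term is always zero and gives the student no training signal.

```python
import math
from typing import List, Sequence


def softmax(logits: Sequence[float], temperature: float = 1.0) -> List[float]:
    """Temperature-softened softmax, shifted by the max logit for numerical stability."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def kl_divergence(p: Sequence[float], q: Sequence[float]) -> float:
    """KL(p || q) for discrete distributions; terms with p_i == 0 contribute nothing."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def soft_target_loss(student_logits: Sequence[float],
                     teacher_logits: Sequence[float],
                     temperature: float = 2.0) -> float:
    """Distillation term: KL between the softened teacher and student distributions."""
    teacher = softmax(teacher_logits, temperature)
    student = softmax(student_logits, temperature)
    return kl_divergence(teacher, student)


teacher_logits = [2.0, 1.0, 0.1]   # plays the role of `large_logits`
student_logits = [0.5, 0.5, 0.5]   # plays the role of `output`

# Bug pattern: the teacher is compared with itself, so the loss is exactly zero.
print(soft_target_loss(teacher_logits, teacher_logits))      # 0.0
# Intended: the student is compared with the teacher, so the loss is positive.
print(soft_target_loss(student_logits, teacher_logits) > 0)  # True
```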

docarray/docarray | fastapi | 1,608 | Question: How to change `RuntimeConfig`. | The documentation does not make clear how `RuntimeConfig` should be used and configured in indexes.

It seems there is a `db.configure` method that allows changing it, but something seems off: the runtime configuration is applied at `__init__` time, so any later `configure` call has no effect.

This happens a... | closed | 2023-06-01T08:00:06Z | 2023-06-14T18:25:39Z | https://github.com/docarray/docarray/issues/1608 | [] | JoanFM | 1 |

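akfamily/akshare | data-science | 5,058 | AKShare API issue report | 1. Please first read the documentation carefully for how to use the corresponding API: https://akshare.akfamily.xyz

2. Operating system version (only 64-bit operating systems are currently supported)

Windows 11 Pro, 64-bit operating system, x64-based processor

4. Python version (only Python 3.8 or later is currently supported)

Python3.9

5. AKShare version (please upgrade to the latest version)

akshare-1.14.37

7. API name and the corresponding calling code

API name: stock_zh_b_daily_qfq_df

Calling code:

import akshare as ak

stock_zh_b_daily_qfq_df = ak.stock_zh_b_d... | closed | 2024-07-21T15:28:02Z | 2024-07-22T10:54:28Z | https://github.com/akfamily/akshare/issues/5058 | ["bug"] | Hellohistory | 1 |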

mwaskom/seaborn | data-science | 3,610 | Parameter fix in violinplot documentation | Please fix the `orient` parameter in the documentation. The example sets it to "y", but it only accepts "h" or "v".

`sns.violinplot(seaice, x="Extent", y="Decade", orient="y", fill=False)`

to

`sns.violinplot(seaice, x="Extent", y="Decade", orient="h", fill=False)`

Ref: https://seaborn.pyd... | closed | 2024-01-03T11:38:37Z | 2024-01-03T11:49:14Z | https://github.com/mwaskom/seaborn/issues/3610 | [] | HussamCheema | 1 |

influxdata/influxdb-client-python | jupyter | 345 | Invalid Date Error when attempting to use delete_api | I am assuming this is a fault on my end, but I cannot seem to figure out why the delete_api is throwing an invalid date error.

I am using the following versions:

Python - 3.9.7

influxdb-client - 1.21.0

Influxdb - 2.0.9

Sample Code:

```python

from influxdb_client import InfluxDBClient

from influxdb_client.c... | closed | 2021-10-14T15:37:26Z | 2021-10-15T18:06:30Z | https://github.com/influxdata/influxdb-client-python/issues/345 | ["question", "wontfix"] | rburchDev | 3 |

onnx/onnx | pytorch | 6,814 | CUDA inference on Azure's partial GPU | Hi, I am trying to run inference with ONNX Runtime on Azure using a Standard_NV6ads_A10_v5 VM, which has 1/6 of a GPU, and I get CUDA error 801 (cudaErrorNotSupported) when creating the InferenceSession. Everything works as expected on a VM with a full GPU (NCasT4_v3).

Is CUDA inference supported on these partial-GPU VMs? Do I ... | closed | 2025-03-14T08:37:37Z | 2025-03-14T11:49:21Z | https://github.com/onnx/onnx/issues/6814 | ["question"] | mkompanek | 1 |

albumentations-team/albumentations | machine-learning | 1,515 | [Bug] tests for test_serialization_v2 are failing | Tests in `test_serialization/test_serialization_v2` are failing.

The reason is unclear.

In the test, the transform is loaded from a file and applied to a randomly generated image.

The expected output is also loaded from a file and compared with the actual output of the transform.

What is strange is that the transform has Norma... | closed | 2024-02-15T20:56:57Z | 2024-02-16T01:53:04Z | https://github.com/albumentations-team/albumentations/issues/1515 | ["bug"] | ternaus | 1 |

plotly/dash-core-components | dash | 642 | support all input types? | We recently removed support for certain `type` attributes of `dcc.Input` as they don't have good cross browser support (e.g. `type="time"`).

Some users want them back: https://community.plot.ly/t/dash-timepicker/6541/6?u=chriddyp

Should we bring them back? Should we warn users? Or just document that they don't h... | open | 2019-09-12T15:04:29Z | 2022-05-11T13:49:19Z | https://github.com/plotly/dash-core-components/issues/642 | [] | chriddyp | 5 |

pyg-team/pytorch_geometric | pytorch | 9,660 | 27 tests fail | ### 🐛 Describe the bug

[log](https://freebsd.org/~yuri/py311-torch-geometric-2.6.0-test.log)

### Versions

torch-geometric-2.6.0

pytorch-2.4.0

Python-3.11

FreeBSD 14.1 | open | 2024-09-15T12:12:31Z | 2024-11-07T02:55:59Z | https://github.com/pyg-team/pytorch_geometric/issues/9660 | ["bug", "good first issue", "test"] | yurivict | 1 |

mwouts/itables | jupyter | 291 | Infinite loading of tables for a particular `polars` data frame | I have a data frame with 300 columns and 1000 rows. When I try to display it, it says "Loading ITables v2.1.1 from the `init_notebook_mode`".

The first thing I do after the imports is `itables.init_notebook_mode(all_interactive=True)`, and I can display any other DF normally. I'm not sure how to debug the problem. | closed | 2024-06-19T09:36:20Z | 2024-06-24T19:34:29Z | https://github.com/mwouts/itables/issues/291 | [] | jmakov | 10 |

collerek/ormar | sqlalchemy | 1,387 | Request ormar to support pydantic 2.6, 2.7, ... | Hi @collerek ,

I just want to check whether it's possible to support newer pydantic versions, or is there a reason ormar is pinned to 2.5?

BTW, thank you for this amazing tool. | closed | 2024-07-31T16:43:30Z | 2024-12-05T14:13:30Z | https://github.com/collerek/ormar/issues/1387 | [] | brunorpinho | 4 |

scanapi/scanapi | rest-api | 310 | Update poetry-publish version | poetry-publish v1.3 uses a pre-built Docker image instead of building it from the Dockerfile every time, which makes it execute much faster | closed | 2020-10-09T21:05:21Z | 2020-10-14T22:40:07Z | https://github.com/scanapi/scanapi/issues/310 | ["Automation", "Hacktoberfest"] | JRubics | 0 |

Esri/arcgis-python-api | jupyter | 1,773 | clone_items throws "IndexError: list index out of range" when there are nested repeats in the featureservice | **Describe the bug**


When I use the code to clone items for a feature service that has nested repeats, I get this error and the clone fails.

**To Reproduce**

I have a feature service with the following structure:

FeatureLayer (id=0)

Table 1(id=1) --> related to layer 0

Tabl... | open | 2024-03-13T18:43:33Z | 2024-09-26T14:59:22Z | https://github.com/Esri/arcgis-python-api/issues/1773 | ["bug"] | nojha-g | 3 |

chiphuyen/stanford-tensorflow-tutorials | tensorflow | 10 | chatbot code can't run with tensorflow 1.0? | I have trouble running the chatbot code after updating TensorFlow.

no module seq2seq.model_with_buckets

| open | 2017-03-22T09:47:31Z | 2017-03-29T17:04:13Z | https://github.com/chiphuyen/stanford-tensorflow-tutorials/issues/10 | [] | zentechthaingo | 1 |

junyanz/pytorch-CycleGAN-and-pix2pix | pytorch | 858 | D_loss drops fast and G_loss drops slowly | When I train on my dataset, I find that the discriminator loss drops very fast: after 3 epochs, loss_D is about 0.001, but the L1 loss is about 0.18 and the generator's results are bad. | closed | 2019-11-27T08:20:43Z | 2024-09-27T10:56:24Z | https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/858 | [] | KirtoXX | 5 |

RobertCraigie/prisma-client-py | asyncio | 968 | Audit Logs ? What is the best way to implement them | ## Problem

Is there a recommended way to implement audit logs with Prisma Py? I want to audit when certain actions are taken on a specific table.

- Example: a user edited a value in the `budgets` table. We need to store the value before, the value after, the time, and `updated_by`.

## Suggested solution

I'd like to do something ... | open | 2024-05-31T20:23:24Z | 2024-05-31T20:25:18Z | https://github.com/RobertCraigie/prisma-client-py/issues/968 | [] | ishaan-jaff | 0 |

DistrictDataLabs/yellowbrick | matplotlib | 651 | Fix second image in KElbowVisualizer documentation | The second image that was added to the `KElbowVisualizer` documentation in PR #635 is not rendering correctly because the `elbow_is_behind.png` file is not generated by the `elbow.py` file, but was added separately.

- [x] Expand `KElbowVisualizer` documentation in `elbow.rst`

- [x] Add example showing how to hide t... | closed | 2018-10-31T22:36:25Z | 2018-11-14T19:32:42Z | https://github.com/DistrictDataLabs/yellowbrick/issues/651 | ["type: documentation"] | Kautumn06 | 0 |

dsdanielpark/Bard-API | nlp | 59 | Feature to remember previous chats or responses | Hello, I am using your project and found one small thing: it does not save or remember previous responses, which would give better results. Can you provide anything related to this issue? Thanks | closed | 2023-06-10T08:24:42Z | 2024-01-18T15:55:07Z | https://github.com/dsdanielpark/Bard-API/issues/59 | [] | BabaYaga1221 | 4 |

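ymcui/Chinese-LLaMA-Alpaca | nlp | 115 | Training details | Regarding the training details section: could the data preprocessing code be open-sourced? I want to do incremental pre-training on my own data and would like to learn from your approach. | closed | 2023-04-10T12:39:07Z | 2023-05-14T22:02:31Z | https://github.com/ymcui/Chinese-LLaMA-Alpaca/issues/115 | ["stale"] | baketbek | 2 |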

keras-team/keras | tensorflow | 20,167 | Keras model deserialization issue | I trained a model using Keras and saved it, but when loading it back, I ran into a deserialization error.

```

from keras.models import Model

from keras.layers import Input, Conv1D, Dense, Dropout, Lambda, concatenate

from keras.optimizers import Adam

```

```

model.save('./0813debugmodel.keras')

```

model save r... | closed | 2024-08-26T18:42:32Z | 2024-09-09T23:05:19Z | https://github.com/keras-team/keras/issues/20167 | ["type:support", "stat:awaiting response from contributor"] | chenshenmsft | 5 |

ymcui/Chinese-LLaMA-Alpaca | nlp | 445 | Missing config file after merging models | *Tip: put an x inside [ ] to tick an option. Delete this line when submitting the question. Keep only the options that apply.*

Converting LLaMA to HF worked fine, and converting it jointly with the LoRA models using

python scripts/merge_llama_with_chinese_lora.py \

--base_model LLaMA_HF_13B \

--lora_model chinese_llama_plus_lora_13b,chinese_alpaca_plus_lora_13b \

--output_type pth \

--output_dir merge_weights also worked fine.

Then running the example code gives an error, saying that the converted model's path m... | closed | 2023-05-29T03:41:07Z | 2023-06-08T23:56:42Z | https://github.com/ymcui/Chinese-LLaMA-Alpaca/issues/445 | ["stale"] | sssssshf | 5 |

plotly/dash | jupyter | 3,075 | Delete button disappears upon use of page_action setting | Setting `page_action` disables the deletable-column functionality (no delete icon/button is shown).

Minimal modified example from the docs:

```

from dash import Dash, dash_table, dcc, html, Input, Output, State, callback

app = Dash(__name__)

app.layout = html.Div([

html.Div([

dash_table.DataTable(

id='editing-columns... | open | 2024-11-14T17:27:37Z | 2025-01-19T19:05:12Z | https://github.com/plotly/dash/issues/3075 | ["bug", "P2"] | CoranH | 1 |

nok/sklearn-porter | scikit-learn | 48 | MLPClassifier does not reset network value producing wrong predictions when doing continuous prediction | Hi, great work with Porter, it's really helpful!

The following is a small issue, but it took me a long time to debug, so I wanted to post it here as both a problem report and a possible solution that seems to work for me.

I have been porting an MLPClassifier to Android. Everything seemed fine, except that in Java desktop tests the... | open | 2019-02-14T12:24:59Z | 2019-08-10T13:24:27Z | https://github.com/nok/sklearn-porter/issues/48 | ["bug", "enhancement", "1.0.0"] | julian-ramos | 1 |

apachecn/ailearning | scikit-learn | 534 | A question about random forests | AiLearning/src/py2.x/ml/7.RandomForest/randomForest.py, line 111:

gini += float(size)/D * (proportion * (1.0 - proportion)) # My understanding: computes the cost; the more accurate the classification, the smaller gini

Isn't a factor of 2 missing? See below:

gini += float(size)/D * (2*proportion * (1.0 - proportion)) # My understanding: computes the cost; the more accurate the classification, the smaller gini | closed | 2019-07-08T13:30:04Z | 2021-09-07T17:45:41Z | https://github.com/apachecn/ailearning/issues/534 | [] | guohaoyuan | 1 |
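A quick check of the arithmetic, assuming (as in the common tutorial version of this function) that the inner loop visits every class value in each group. Because the class proportions sum to 1, the sum over classes of p(1 - p) equals 1 - Σp², which is the standard Gini impurity, and for two classes both equal 2p(1 - p). Under that assumption no factor of 2 is missing, and adding one would double the impurity. The function names below are illustrative.

```python
from typing import Hashable, List, Sequence


def gini_sum_form(labels: Sequence[Hashable]) -> float:
    """Sum over classes of p * (1 - p), the form used on line 111."""
    n = len(labels)
    total = 0.0
    for c in set(labels):
        p = list(labels).count(c) / n
        total += p * (1.0 - p)
    return total


def gini_standard(labels: Sequence[Hashable]) -> float:
    """Textbook Gini impurity: 1 - sum over classes of p**2."""
    n = len(labels)
    return 1.0 - sum((list(labels).count(c) / n) ** 2 for c in set(labels))


def weighted_gini(groups: List[Sequence[Hashable]]) -> float:
    """Size-weighted impurity of a split (the size/D factor), skipping empty groups."""
    D = sum(len(g) for g in groups)
    return sum(len(g) / D * gini_sum_form(g) for g in groups if len(g) > 0)


labels = [0, 0, 0, 1]            # p = 0.75 for class 0
print(gini_sum_form(labels))     # 0.375
print(gini_standard(labels))     # 0.375
print(2 * 0.75 * (1 - 0.75))     # 0.375 (binary case, 2p(1 - p))
```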

kizniche/Mycodo | automation | 1,276 | arm64 Influxdb 2.x selection on install | System: Rpi 3B+

OS: raspios bullseye arm64 lite

During installation, if you select "Install Influxdb 2.x", the install script responds with "You have chosen not to install Influxdb." etc. The issue looks to be the [value assigned to that selection in the setup.sh file](https://github.com/kizniche/Mycodo/blob/master/inst... | closed | 2023-02-10T23:27:06Z | 2023-04-06T16:20:30Z | https://github.com/kizniche/Mycodo/issues/1276 | ["Fixed and Committed"] | K1rdro | 1 |

sktime/sktime | scikit-learn | 7,897 | [DOC] Document or Fix Local ReadTheDocs Build Process | #### Describe the issue linked to the documentation


The process for building the documentation locally is unclear. @fkiraly mentioned that there used to be a local build process, but it is unclear whether it still works. I think it would be useful to ... | open | 2025-02-25T17:23:18Z | 2025-02-25T17:23:18Z | https://github.com/sktime/sktime/issues/7897 | ["documentation"] | Ankit-1204 | 0 |

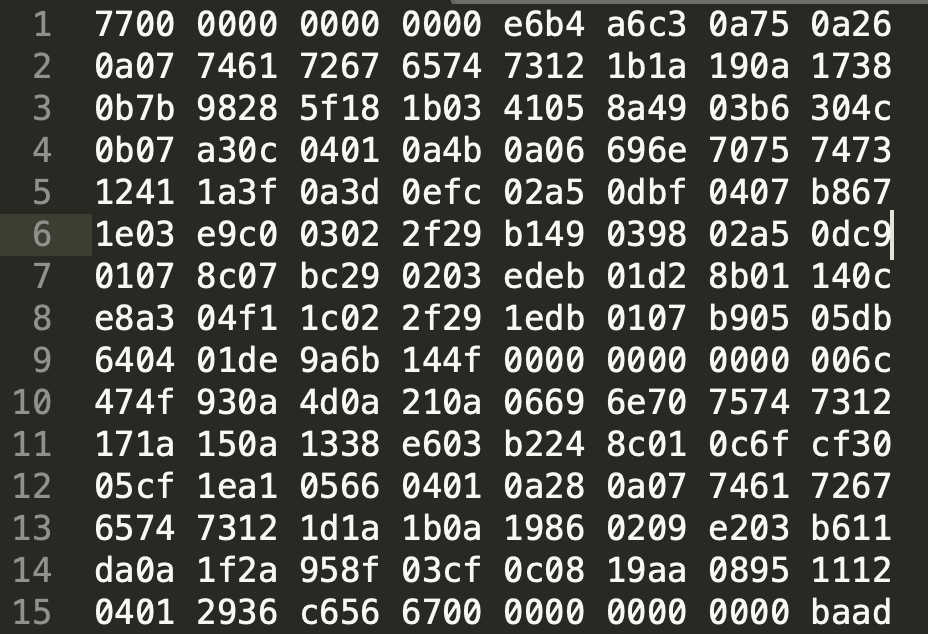

tensorflow/tensor2tensor | machine-learning | 1,499 | What should correct t2t-datagen files look like? | I'm quite confused about whether the files generated by t2t-datagen are correct or not.

Here is part of the file, opened in Sublime:

This is read by tf.data:

#table name is RCA.MyLogin

__tablename__ = 'RCA.MyLogin'

id = db.Column('ID', db.Integer, primary_key=True)

UName = db.Col... | closed | 2017-12-12T16:48:24Z | 2020-12-05T20:46:35Z | https://github.com/pallets-eco/flask-sqlalchemy/issues/571 | [] | ledikari | 3 |

serengil/deepface | deep-learning | 956 | cv2.dnn issue when using SSD in deepface.analyze() | I have the most recent opencv-python version, 4.9.0.80, and it seems to cause an issue when I try to run DeepFace.analyze():

```

DeepFace.analyze('Img.jpg', ['race'], detector_backend = 'ssd', enforce_detection= False, silent = False)

```

```

File ~/anaconda3/lib/python3.11/site-packages/deepface/detectors/SsdWr... | closed | 2024-01-15T19:36:38Z | 2024-01-18T21:49:55Z | https://github.com/serengil/deepface/issues/956 | ["bug"] | DianaDaInLee | 5 |

strawberry-graphql/strawberry | django | 2,988 | Support for query complexity | Hey folks, a while ago I wrote down some ideas on how we can add support for query complexity. For this we'll need two things:

1. An extension that calculates the cost of an operation

2. Support for customising the costs based on fields/arguments

For customising costs on fields and arguments I was thinki... | open | 2023-07-28T09:21:01Z | 2025-03-20T15:56:19Z | https://github.com/strawberry-graphql/strawberry/issues/2988 | ["feature-request"] | patrick91 | 19 |
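To make point 1 concrete, here is a minimal, library-independent sketch of cost calculation over an already-parsed operation. It assumes each field has a base cost (default 1) and that a pagination-style argument such as `first` multiplies the cost of the field's children. `FieldNode`, `operation_cost`, `check_cost`, and the multiplier rule are hypothetical illustrations, not Strawberry APIs; a real extension would walk the parsed GraphQL document instead.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, List, Optional


@dataclass
class FieldNode:
    """One selected field in an operation (hypothetical representation)."""
    name: str
    cost: int = 1                          # per-field cost, customisable per field
    multiplier_arg: Optional[str] = None   # argument that scales child cost, e.g. "first"
    args: Dict[str, Any] = field(default_factory=dict)
    children: List["FieldNode"] = field(default_factory=list)


def operation_cost(node: FieldNode) -> int:
    """Field cost plus the children's cost, scaled by the multiplier argument if present."""
    child_cost = sum(operation_cost(child) for child in node.children)
    multiplier = 1
    if node.multiplier_arg is not None:
        multiplier = int(node.args.get(node.multiplier_arg, 1))
    return node.cost + multiplier * child_cost


def check_cost(node: FieldNode, max_cost: int) -> int:
    """Reject operations whose estimated cost exceeds the configured limit."""
    cost = operation_cost(node)
    if cost > max_cost:
        raise ValueError(f"query cost {cost} exceeds limit {max_cost}")
    return cost


# { users(first: 10) { name posts(first: 5) { title } } }
query = FieldNode(
    "users", multiplier_arg="first", args={"first": 10},
    children=[
        FieldNode("name"),
        FieldNode("posts", multiplier_arg="first", args={"first": 5},
                  children=[FieldNode("title")]),
    ],
)
print(operation_cost(query))  # 71 = 1 + 10 * (1 + (1 + 5 * 1))
```

Keeping per-field costs and multiplier arguments as data means point 2 (customising costs on fields and arguments) reduces to setting `cost` and `multiplier_arg` when the schema is built.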

ibis-project/ibis | pandas | 10,441 | feat: method missing in ibis/expr/types/arrays.py | ### Is your feature request related to a problem?

The method mode() does not exist in ibis/expr/types/arrays.py, so it's not possible to apply it to a column of arrays.

However, the equivalent does exist in DuckDB: https://duckdb.org/docs/sql/functions/list#list_-rewrite-functions

### What is the motivatio... | open | 2024-11-05T16:12:21Z | 2024-11-06T09:33:57Z | https://github.com/ibis-project/ibis/issues/10441 | ["feature"] | mercelino | 0 |
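Until such a method exists, the intended per-row semantics can be pinned down with a plain-Python reference sketch (not ibis code). For each array in the column, it returns the most frequent non-null element, with `None` for null or empty arrays. The tie-breaking rule here (first occurrence wins) is an assumption; DuckDB's behavior for ties may differ.

```python
from collections import Counter
from typing import Hashable, List, Optional, Sequence


def array_mode(values: Optional[Sequence[Hashable]]) -> Optional[Hashable]:
    """Most frequent non-null element of one array; None for null or empty input."""
    if not values:
        return None
    counts = Counter(v for v in values if v is not None)
    if not counts:
        return None
    # Equal counts keep first-seen order, so ties resolve to the earliest element.
    return counts.most_common(1)[0][0]


def column_array_mode(column: Sequence[Optional[Sequence[Hashable]]]) -> List[Optional[Hashable]]:
    """Apply array_mode row by row, as a hypothetical ArrayValue.mode() might."""
    return [array_mode(arr) for arr in column]


print(column_array_mode([[1, 2, 2, 3], ["a", "b", "a"], [], None, [5, 6]]))
# [2, 'a', None, None, 5]
```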

RomelTorres/alpha_vantage | pandas | 130 | Canadian Equity Dividend Data not found | I'm not sure whether this is an Alpha Vantage issue or a wrapper issue, but I haven't received a response from Alpha Vantage, so I'm trying here. All four of the following tickers have a long history of quarterly dividends. The output is a pandas df column sum of the output from the monthly_adjusted time series. I get the same r... | closed | 2019-05-27T16:04:15Z | 2019-09-12T01:42:58Z | https://github.com/RomelTorres/alpha_vantage/issues/130 | [] | liamland | 1 |

google-research/bert | nlp | 392 | For training, each question should have exactly 1 answer | I can see that the SQuAD 2.0-related files are there.

When I run training for SQuAD 2.0, I get the following error:

```

Traceback (most recent call last):

File "run_squad.py", line 1283, in <module>

tf.app.run()

File "/usr/local/lib/python3.6/dist-packages/tensorflow/python/platform/app.py", line 125, in run

_s... | closed | 2019-01-23T10:57:04Z | 2019-06-18T11:28:35Z | https://github.com/google-research/bert/issues/392 | [] | ghost | 2 |

comfyanonymous/ComfyUI | pytorch | 7,135 | "Input type (torch.cuda.FloatTensor) and weight type (torch.FloatTensor) should be the same" [Since v0.3.15] | ### Expected Behavior

Running a simple Stable Cascade workflow. This worked fine in v0.3.14.

GPU: NVIDIA GeForce GTX 1660 SUPER

### Actual Behavior

Stage A crashes with "Input type (torch.cuda.FloatTensor) and weight type (torch.FloatTensor) should be the same"

### Steps to Reproduce

Running the attached workflow with S... | closed | 2025-03-08T15:34:30Z | 2025-03-09T15:44:50Z | https://github.com/comfyanonymous/ComfyUI/issues/7135 | ["Potential Bug"] | HostedDinner | 4 |

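PaddlePaddle/PaddleHub | nlp | 2,315 | How to fine-tune? | You are welcome to make suggestions for PaddleHub, and thank you very much for contributing to PaddleHub!

When leaving your suggestion, please also provide the following information:

- What new feature do you want to add?

- In what scenarios is this feature needed?

- Without this feature, can PaddleHub currently meet this need indirectly?

- Which parts of PaddleHub might need to change to add this feature?

- If possible, briefly describe your solution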

| open | 2023-12-12T06:05:55Z | 2025-01-02T11:00:49Z | https://github.com/PaddlePaddle/PaddleHub/issues/2315 | [] | lk2003atnet | 2 |

sherlock-project/sherlock | python | 2,384 | Add verify SSL cert option in requests to bypass WAF and Fix 8tracks false positive/negative | ### Description

This feature request proposes adding a new configuration option to the `data.json` file to support bypassing a WAF by disabling SSL certificate verification for specific targets. By setting `verifyCert` to `False`, the `requests` library will send requests with `verify=False`, ignoring SSL certificate ver... | open | 2024-12-27T08:37:29Z | 2025-02-17T05:51:33Z | https://github.com/sherlock-project/sherlock/issues/2384 | ["enhancement"] | JackJuly | 5 |
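A minimal sketch of how the proposed flag could be read. It assumes a site entry in `data.json` may carry an optional boolean `verifyCert` key (written as JSON `false`, not Python `False`) and that verification stays on when the key is absent, so only explicitly flagged sites skip it. `request_kwargs_for_site` and the sample entries are hypothetical. The resulting dict would be passed to a `requests` call such as `requests.get(url, **kwargs)`, where `verify` and `timeout` are standard `requests` keyword arguments.

```python
import json
from typing import Any, Dict


def request_kwargs_for_site(site_info: Dict[str, Any], timeout: float = 60.0) -> Dict[str, Any]:
    """Build per-site keyword arguments for requests, honouring an optional verifyCert flag."""
    verify = site_info.get("verifyCert", True)   # default: keep certificate verification on
    if not isinstance(verify, bool):
        raise ValueError("verifyCert must be a JSON boolean (true/false)")
    return {"verify": verify, "timeout": timeout}


# Illustrative data.json fragment; the entries and URLs are placeholders.
data = json.loads("""
{
  "8tracks": {"url": "https://8tracks.com/{}", "verifyCert": false},
  "GitHub":  {"url": "https://www.github.com/{}"}
}
""")

print(request_kwargs_for_site(data["8tracks"]))  # {'verify': False, 'timeout': 60.0}
print(request_kwargs_for_site(data["GitHub"]))   # {'verify': True, 'timeout': 60.0}
```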

huggingface/transformers | machine-learning | 35,977 | adalomo and deepspeed zero3 offload error | ### System Info

python==3.11.11

transformers==4.48.1

torch==2.5.1

deepspeed==0.16.3

### Who can help?

@muellerzr @SunMarc

### Information

- [x] The official example scripts

- [ ] My own modified scripts

### Tasks

- [x] An officially supported task in the `examples` folder (such as GLUE/SQuAD, ...)

- [ ] My own ... | closed | 2025-01-31T03:36:08Z | 2025-03-10T08:04:32Z | https://github.com/huggingface/transformers/issues/35977 | ["bug"] | YooSungHyun | 5 |

huggingface/datasets | pytorch | 6,651 | Slice splits support for datasets.load_from_disk | ### Feature request

Support for slice splits in `datasets.load_from_disk`, similar to how it's already supported for `datasets.load_dataset`.

### Motivation

Slice splits are convenient in a number of cases; adding support to `datasets.load_from_disk` would make working with local datasets easier and homogeniz... | open | 2024-02-09T08:00:21Z | 2024-06-14T14:42:46Z | https://github.com/huggingface/datasets/issues/6651 | ["enhancement"] | mhorlacher | 0 |
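Until `load_from_disk` accepts split strings, the slicing can be done after loading, for example with `Dataset.select(range(start, stop))`. The sketch below shows the parsing side in plain Python, assuming a simplified grammar of `name`, `name[start:stop]`, and percentage bounds such as `name[:10%]`. `select_split` is a hypothetical helper; it rounds percentages to the nearest row and does not cover every form that `load_dataset` accepts (such as `+`-joined splits).

```python
import re
from typing import Dict, Optional, Sequence

# name, optionally followed by [start:stop]; each bound may be an int or a percentage
_SPLIT_RE = re.compile(r"^(?P<name>\w+)(?:\[(?P<start>-?\d+%?)?:(?P<stop>-?\d+%?)?\])?$")


def _bound(token: Optional[str], n: int) -> Optional[int]:
    """Convert '10', '-5' or '10%' into a row index for a split of n rows."""
    if token is None:
        return None
    if token.endswith("%"):
        return round(int(token[:-1]) * n / 100)
    return int(token)


def select_split(splits: Dict[str, Sequence], spec: str) -> Sequence:
    """Return the rows of splits[name] selected by a spec like 'train[10:20]'."""
    m = _SPLIT_RE.match(spec)
    if m is None:
        raise ValueError(f"unsupported split spec: {spec!r}")
    rows = splits[m.group("name")]
    n = len(rows)
    return rows[slice(_bound(m.group("start"), n), _bound(m.group("stop"), n))]


splits = {"train": list(range(100)), "test": list(range(20))}
print(len(select_split(splits, "train[:10%]")))  # 10
print(select_split(splits, "test[-3:]"))         # [17, 18, 19]
```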

sunscrapers/djoser | rest-api | 815 | Djoser password email Typo | I am creating a login authentication system for my app using Django and React. I was able to successfully implement djoser login auth, but when I implement password/reset/confirm, it sends a wrong reset link to my email.

Here is my djoser configuration:

DJOSER ={

'LOGIN_FIELD' : 'username',

'USER_CREATE_PASSWORD_RET... | closed | 2024-04-13T20:33:56Z | 2024-04-17T15:02:39Z | https://github.com/sunscrapers/djoser/issues/815 | [] | shaikhsufian | 1 |

scrapy/scrapy | web-scraping | 5,818 | Allow LinkExtractor to extract all tags or attrs | <!--

Thanks for taking an interest in Scrapy!

If you have a question that starts with "How to...", please see the Scrapy Community page: https://scrapy.org/community/.

The GitHub issue tracker's purpose is to deal with bug reports and feature requests for the project itself.

Keep in mind that by filing an iss... | closed | 2023-01-31T03:44:21Z | 2023-01-31T06:37:13Z | https://github.com/scrapy/scrapy/issues/5818 | [] | NiuBlibing | 1 |

mwaskom/seaborn | matplotlib | 3,026 | rectangular cells of heatmap | Hello.

I wonder whether there is any way to make the heatmap cells rectangular, or in other words, suitable for showing the numbers (after specifying `annot=True`).

Thanks in advance. | closed | 2022-09-15T03:06:05Z | 2022-09-15T10:31:21Z | https://github.com/mwaskom/seaborn/issues/3026 | [] | zjq1011 | 1 |

Asabeneh/30-Days-Of-Python | flask | 519 | Python | open | 2024-04-30T19:23:33Z | 2024-05-14T08:39:46Z | https://github.com/Asabeneh/30-Days-Of-Python/issues/519 | [] | ColorMode | 3 | |

Gozargah/Marzban | api | 1,608 | Limit users to use specific clients | Add a feature to restrict users to specific clients, such as Windscribe, v2rayN, Streisand, etc.

| closed | 2025-01-16T07:02:05Z | 2025-01-16T07:44:18Z | https://github.com/Gozargah/Marzban/issues/1608 | [] | Neeqaque | 0 |

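akfamily/akshare | data-science | 5,640 | AKShare API issue report \| stock_zh_a_spot_em returns duplicates and omissions after the update | After this morning's update on 2-17 (version 1.15.95), stock_zh_a_spot_em went from returning 200 entries to 5000+.

However, every scraped result contains nearly a hundred duplicates, and the same number of entries are missing.

The duplicates also differ each time. Could it be a time lag between the paginated requests? | closed | 2025-02-17T02:18:08Z | 2025-02-17T07:26:17Z | https://github.com/akfamily/akshare/issues/5640 | ["bug"] | chopinic | 4 |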
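The time-lag hypothesis can be illustrated with a small simulation (plain Python, not AKShare code). If the server's sort order shifts between two page requests, offset-based pagination returns one row twice and skips another, which matches the duplicate-plus-omission pattern described above; whether this is the actual cause is unconfirmed. `fetch_page` and `merge_pages` are hypothetical helpers. Duplicates can be dropped by stock code, but omissions can only be detected against an expected total, such as a row count reported by the server (assumed here).

```python
from typing import Dict, Iterable, List, Tuple

Row = Dict[str, str]


def fetch_page(snapshot: List[Row], offset: int, size: int) -> List[Row]:
    """Offset-based page taken from whatever order the server has at request time."""
    return snapshot[offset:offset + size]


def merge_pages(pages: Iterable[List[Row]], key: str = "code") -> Tuple[List[Row], List[str]]:
    """Keep the first row seen for each key and collect keys that appear again."""
    seen: Dict[str, Row] = {}
    duplicates: List[str] = []
    for page in pages:
        for row in page:
            k = row[key]
            if k in seen:
                duplicates.append(k)
            else:
                seen[k] = row
    return list(seen.values()), duplicates


# Page 1 is fetched, then the ranking changes ("D" jumps to the top) before page 2.
before = [{"code": c} for c in "ABCD"]
after = [{"code": c} for c in "DABC"]
pages = [fetch_page(before, 0, 2), fetch_page(after, 2, 2)]

unique, dups = merge_pages(pages)
expected_total = 4  # e.g. a total row count reported by the server
print([r["code"] for r in unique], dups, expected_total - len(unique))
# ['A', 'B', 'C'] ['B'] 1
```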

floodsung/Deep-Learning-Papers-Reading-Roadmap | deep-learning | 88 | Faster RCNN pdf broken link | open | 2018-03-02T16:15:29Z | 2018-03-02T16:15:29Z | https://github.com/floodsung/Deep-Learning-Papers-Reading-Roadmap/issues/88 | [] | adrianstaniec | 0 | |

taverntesting/tavern | pytest | 387 | Would it be possible to set colors in console output? Let's say red for ERROR, blue for PASS | When I have a lot of test cases, it becomes difficult to find which test case failed.

Would colored output text make this easier?

In the current version of Tavern, can we achieve this? | closed | 2019-07-15T08:37:42Z | 2019-08-09T21:43:04Z | https://github.com/taverntesting/tavern/issues/387 | [] | ankanch | 2 |
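Tavern runs its tests through pytest, so pytest's own `--color=yes` option may already give colored pass/fail summaries. For custom output, a minimal sketch using standard ANSI escape codes follows. `colorize` and the status-to-color mapping (blue for PASS, red for ERROR, as requested) are hypothetical, and color is only applied when enabled, for example when stdout is a terminal.

```python
import sys

ANSI_COLORS = {"PASS": "\033[34m", "ERROR": "\033[31m"}  # blue, red
RESET = "\033[0m"


def colorize(status: str, text: str, enabled: bool = True) -> str:
    """Wrap text in the ANSI color for its status; leave it unchanged otherwise."""
    if not enabled or status not in ANSI_COLORS:
        return text
    return f"{ANSI_COLORS[status]}{text}{RESET}"


use_color = sys.stdout.isatty()  # avoid escape codes when output is redirected to a file
print(colorize("PASS", "test_login PASSED", use_color))
print(colorize("ERROR", "test_logout FAILED", use_color))
```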

LAION-AI/Open-Assistant | python | 3,290 | Training with DPO: Direct Preference Optimization | A new way of doing RLHF-style training directly, without an explicit reward model: https://arxiv.org/pdf/2305.18290.pdf

I wonder whether it could be used instead of the usual RM + PPO setup. This is an ML proposal. | closed | 2023-06-03T16:40:26Z | 2023-06-09T11:41:16Z | https://github.com/LAION-AI/Open-Assistant/issues/3290 | ["ml", "question"] | Forbu | 1 |
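For reference, the per-pair DPO objective from the linked paper is loss = -log σ(β · [(log π_θ(y_w|x) - log π_ref(y_w|x)) - (log π_θ(y_l|x) - log π_ref(y_l|x))]). Here y_w is the preferred response, y_l the rejected one, π_ref the frozen reference (SFT) model, and β controls how far the policy may drift from it. Below is a minimal plain-Python sketch of that formula, taking already-summed sequence log-probabilities as inputs; the function name and the β default are illustrative.

```python
import math


def dpo_loss(policy_chosen_logp: float, policy_rejected_logp: float,
             ref_chosen_logp: float, ref_rejected_logp: float,
             beta: float = 0.1) -> float:
    """-log(sigmoid(beta * (chosen log-ratio - rejected log-ratio))) for one preference pair."""
    chosen_ratio = policy_chosen_logp - ref_chosen_logp
    rejected_ratio = policy_rejected_logp - ref_rejected_logp
    z = beta * (chosen_ratio - rejected_ratio)
    # -log(sigmoid(z)) == log(1 + exp(-z)); branch on the sign to avoid overflow.
    if z >= 0:
        return math.log1p(math.exp(-z))
    return -z + math.log1p(math.exp(z))


# Policy identical to the reference: z = 0, so the loss is log(2).
print(abs(dpo_loss(-10.0, -12.0, -10.0, -12.0) - math.log(2)) < 1e-12)  # True
# Policy favours the chosen answer more than the reference does: the loss drops below log(2).
print(dpo_loss(-9.0, -13.0, -10.0, -12.0, beta=1.0) < math.log(2))       # True
```

Because only log-probabilities from the policy and a frozen reference are needed, this replaces both the separate reward-model training and the PPO loop with a single classification-style loss.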

ultralytics/ultralytics | python | 19,143 | Errors during Training | ### Search before asking

- [x] I have searched the Ultralytics YOLO [issues](https://github.com/ultralytics/ultralytics/issues) and found no similar bug report.

### Ultralytics YOLO Component

Train

### Bug

Error during training

YOLO command used for training:

!yolo task=detect mode=train model=yolo11x.pt data=/kaggle/wor... | closed | 2025-02-09T05:00:22Z | 2025-02-09T23:18:22Z | https://github.com/ultralytics/ultralytics/issues/19143 | ["bug", "fixed", "detect"] | ppraneth | 5 |

marcomusy/vedo | numpy | 566 | reconstruct a mesh by points or cells with vertices | Hi Marco,

I have some points with coordinates in a NumPy array, such as

points = np.array([[x1, y1, z1], [x2, y2, z2], [x3, y3, z3], [x4, y4, z4], ...])

and cells with their vertices, such as

cells = np.array([[x11, y11, z11],[[x12, y12, z12]... | closed | 2021-12-23T01:47:10Z | 2021-12-25T16:18:52Z | https://github.com/marcomusy/vedo/issues/566 | [] | MianMianMeow | 2 |
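As far as we know, vedo builds a mesh from `[vertices, faces]`, where each face lists vertex *indices* rather than coordinates (for example `vedo.Mesh([points, faces])`). If the cells are given as coordinates, they can first be mapped back to indices. Below is a minimal plain-Python sketch, assuming every cell vertex also appears in `points`. `cells_to_faces` is hypothetical; it rounds coordinates to `ndigits` so tiny floating-point noise does not break the lookup, and NumPy arrays can be passed after `.tolist()`.

```python
from typing import Dict, List, Sequence, Tuple

Key = Tuple[float, ...]


def _key(point: Sequence[float], ndigits: int) -> Key:
    """Rounded coordinate tuple usable as a dictionary key."""
    return tuple(round(float(c), ndigits) for c in point)


def cells_to_faces(points: Sequence[Sequence[float]],
                   cells: Sequence[Sequence[Sequence[float]]],
                   ndigits: int = 9) -> List[List[int]]:
    """Replace each cell's vertex coordinates with that vertex's index in `points`."""
    index: Dict[Key, int] = {}
    for i, p in enumerate(points):
        index.setdefault(_key(p, ndigits), i)   # first occurrence wins for duplicate points
    faces: List[List[int]] = []
    for cell in cells:
        face = []
        for vertex in cell:
            k = _key(vertex, ndigits)
            if k not in index:
                raise ValueError(f"cell vertex {k} is not in points")
            face.append(index[k])
        faces.append(face)
    return faces


points = [(0, 0, 0), (1, 0, 0), (0, 1, 0), (1, 1, 0)]
cells = [[(0, 0, 0), (1, 0, 0), (0, 1, 0)],
         [(1, 0, 0), (1, 1, 0), (0, 1, 0)]]
print(cells_to_faces(points, cells))  # [[0, 1, 2], [1, 3, 2]]
```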

huggingface/datasets | pytorch | 6,787 | TimeoutError in map | ### Describe the bug

```python

from datasets import Dataset

def worker(example):

while True:

continue

example['a'] = 100

return example

data = Dataset.from_list([{"a": 1}, {"a": 2}])

data = data.map(worker)

print(data[0])

```

I'm implementing a worker function whose runtime will de... | open | 2024-04-06T06:25:39Z | 2024-08-14T02:09:57Z | https://github.com/huggingface/datasets/issues/6787 | [] | Jiaxin-Wen | 7 |
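If the per-example work can be split into bounded steps, one workaround is a cooperative deadline inside the worker, so `map` never waits on a hung example. The sketch below is plain Python and assumes that step-wise structure; `with_deadline`, `step`, and the `timed_out` column are hypothetical. Both branches write the same columns, and the fallback should have the same type as the real result, so every example keeps a consistent schema. A call that blocks without returning cannot be interrupted this way and would need a process-level timeout instead.

```python
import time
from typing import Any, Callable, Dict

Example = Dict[str, Any]


def with_deadline(step: Callable[[Example], bool], budget_s: float,
                  fallback: Any, key: str = "a") -> Callable[[Example], Example]:
    """Wrap an iterative worker so it gives up after `budget_s` seconds per example.

    `step(example)` does one bounded unit of work and returns True when finished.
    """
    def worker(example: Example) -> Example:
        deadline = time.monotonic() + budget_s
        while not step(example):
            if time.monotonic() >= deadline:
                example[key] = fallback          # give up instead of hanging forever
                example["timed_out"] = True
                return example
        example["timed_out"] = False
        return example
    return worker


def never_finishes(example: Example) -> bool:
    return False                                 # mimics the `while True` loop above


def count_to_five(example: Example) -> bool:
    example["a"] += 1
    return example["a"] >= 5


print(with_deadline(never_finishes, 0.05, fallback=-1)({"a": 1}))  # {'a': -1, 'timed_out': True}
print(with_deadline(count_to_five, 1.0, fallback=-1)({"a": 1}))    # {'a': 5, 'timed_out': False}
```

With `datasets`, the wrapped function would be passed as usual, e.g. `data.map(with_deadline(my_step, 5.0, fallback=-1))`, where `my_step` stands in for your own step function.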

BeanieODM/beanie | asyncio | 283 | Syntax for finding document not passing flake8 | Hi,

I am trying to find a document using a boolean field.

Code:

```python

plan = await Plan.find_one(Plan.active == True)

```

But flake8 is showing `comparison to True should be 'if cond is True:' or 'if cond:'flake8(E712)`

If I change `==` to `is`, then I can't retrieve the document that matches th... | closed | 2022-06-09T23:00:23Z | 2023-01-21T02:29:35Z | https://github.com/BeanieODM/beanie/issues/283 | ["Stale"] | ponty33 | 3 |
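The reason `is` cannot work: `==` calls the overloadable `__eq__` method, which lets an ODM field return a query expression, while `is` is an identity check that cannot be overloaded and simply evaluates to `False` before the ODM ever sees it. The plain-Python sketch below uses a hypothetical `QueryField` class (not Beanie's implementation) to show the difference. In practice the usual options are keeping `==` with a `# noqa: E712` comment or, if your Beanie version provides it, an explicit operator such as `Eq(Plan.active, True)` from `beanie.operators`.

```python
from typing import Any, Dict


class QueryField:
    """Hypothetical stand-in for an ODM field such as `Plan.active`."""

    def __init__(self, name: str) -> None:
        self.name = name

    def __eq__(self, other: Any) -> Dict[str, Any]:  # type: ignore[override]
        # Instead of returning a bool, build a MongoDB-style filter document.
        return {self.name: other}

    __hash__ = object.__hash__  # defining __eq__ would otherwise disable hashing


active = QueryField("active")
print(active == True)  # noqa: E712
# {'active': True}
print(active is True)
# False (identity check; `is` cannot be overloaded)
```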

saulpw/visidata | pandas | 2,102 | [playback] Playback failing says existing column is missing | **Small description**

Playback failing says existing column is missing

**Expected result**

No errors due to columns that exist

**Actual result with screenshot**

https://asciinema.org/a/4Kop1pUil70qoHUWMpoCfvvBW

Using sample_data/benchmark.csv

No error other than in the status log:

- `no "Date" Colum... | closed | 2023-11-03T20:10:04Z | 2024-06-29T07:06:33Z | https://github.com/saulpw/visidata/issues/2102 | ["bug", "fixed"] | frosencrantz | 4 |

seleniumbase/SeleniumBase | pytest | 3,231 | UC Mode `driver.connect()` and `driver.reconnect()` sometimes connect to an invisible Chrome extension tab. | ### UC Mode `driver.connect()` and `driver.reconnect()` may connect to an invisible Chrome extension tab.

----

This is causing the issues seen since Chrome 130. (There was a workaround in place for Mac/Linux whereby the issue was avoided by creating a `user-data-dir` in advance, but that didn't help Windows users.... | closed | 2024-10-27T23:10:11Z | 2024-10-29T14:23:14Z | https://github.com/seleniumbase/SeleniumBase/issues/3231 | ["bug", "UC Mode / CDP Mode", "Fun"] | mdmintz | 3 |

sunscrapers/djoser | rest-api | 15 | Is authorization token expiration implemented? | The readme says, "In other words, users have been granted access to a specific resource for a fixed time period." Where is token expiration implemented?

| closed | 2015-02-17T00:14:47Z | 2021-03-22T23:32:34Z | https://github.com/sunscrapers/djoser/issues/15 | [] | stugots | 2 |

pyg-team/pytorch_geometric | deep-learning | 8,844 | TGN example gives CUDA error | ### 🐛 Describe the bug

When trying to run [this example](https://github.com/pyg-team/pytorch_geometric/blob/master/examples/tgn.py) in a fresh Google Colab environment, I get the following error:

Here's ... | closed | 2024-01-31T13:54:29Z | 2024-01-31T19:00:12Z | https://github.com/pyg-team/pytorch_geometric/issues/8844 | ["bug", "example"] | mb-v1 | 1 |

psf/black | python | 3,811 | support for auto-format on small devices |

Good day,

I want to raise the request for supporting formatting with tab indentation on small devices with low memory/limited resources.

I am aware that there are already some issues about this (all closed for comments), e.g. https://github.com/psf/black/issues/47

I kindly ask you to have a closer... | closed | 2023-07-24T16:53:34Z | 2023-07-26T07:28:25Z | https://github.com/psf/black/issues/3811 | ["T: enhancement"] | kr-g | 13 |

AutoViML/AutoViz | scikit-learn | 45 | Normed Histogram plot with negative y value? | Hi, all of my plots have negative y values. How should I interpret this?

<img width="519" alt="Screen Shot 2021-09-08 at 10 04 39 PM" src="https://user-images.githubusercontent.com/14266357/132610129-cde3e4db-6b4d-4421-abbc-6dcb821fb6b0.png">

I think the following code generates the plots.

sns.distplot(dft.loc[dft... | closed | 2021-09-09T02:05:54Z | 2021-11-22T19:43:33Z | https://github.com/AutoViML/AutoViz/issues/45 | [] | shuaiwang88 | 1 |

Sanster/IOPaint | pytorch | 202 | Need to keep exif data | Currently, after lama-cleaner finishes processing a picture, the EXIF data is cleared as well. | closed | 2023-02-06T03:18:10Z | 2023-04-28T06:41:34Z | https://github.com/Sanster/IOPaint/issues/202 | ["enhancement"] | linguowei | 5 |

tiangolo/uvicorn-gunicorn-fastapi-docker | fastapi | 30 | FastAPI on Alpine - smaller stack size? | closed | 2020-02-17T07:17:26Z | 2020-02-17T07:17:34Z | https://github.com/tiangolo/uvicorn-gunicorn-fastapi-docker/issues/30 | [] | matanos5 | 0 | |

sinaptik-ai/pandas-ai | data-science | 1,343 | Questions about the train function | Thanks for the great work.

I have several questions about training with instructions (the `train` function):

1. What does the vector DB do during training? Does it act as RAG?

2. After training, is there any way to save the trained model or related state? Or does the train function need to be called for the prompt every time?

3. For the... | closed | 2024-08-30T02:21:56Z | 2025-02-11T16:00:11Z | https://github.com/sinaptik-ai/pandas-ai/issues/1343 | [] | mrgreen3325 | 3 |

jupyter-incubator/sparkmagic | jupyter | 281 | Added new Endpoint, Executed SQL code, Not using existing created Session | Hi Experts,

I added an endpoint and created a session. It created a Spark application and returned the "application_1475230083909_12246" details. If I execute Spark code using the "%%spark" magic, it executes the code using the session created above.

However, if I use the "%%sql" magic and try to execute some SQL code,... | closed | 2016-10-04T03:05:35Z | 2016-10-05T17:27:44Z | https://github.com/jupyter-incubator/sparkmagic/issues/281 | [] | pkasinathan | 7 |

dgtlmoon/changedetection.io | web-scraping | 2,328 | [Bug] Docker container "restarting (132)" with image 0.45.18 or higher | **Describe the bug**

Image "ghcr.io/dgtlmoon/changedetection.io:0.45.18" or higher causes container status "Restarting (132)".

**Version**

Works fine up to version 0.45.17.

**To Reproduce**

Updating from a previous version or doing a new clean install produces the same result.

The hardware is an old Intel Celeron P4600 (2) ... | closed | 2024-04-22T16:52:20Z | 2024-05-15T08:54:44Z | https://github.com/dgtlmoon/changedetection.io/issues/2328 | ["help wanted", "triage", "upstream-bug"] | Nekrotza | 16 |
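A note on the status code: Docker, like POSIX shells, reports a process killed by signal N as exit status 128 + N, so 132 corresponds to signal 4, SIGILL (illegal instruction). That often means a binary or library in the image uses CPU instructions the processor does not support. Whether that applies to this older Celeron (for example, missing AVX) is an assumption to verify. A small plain-Python sketch for decoding the status and checking CPU flags on Linux follows; `signal_from_exit_code` and `cpu_flags` are hypothetical helpers.

```python
import signal
from typing import Optional, Set


def signal_from_exit_code(code: int) -> Optional[str]:
    """Name of the signal behind a 128 + N exit status, or None if it isn't one."""
    if code <= 128:
        return None
    try:
        return signal.Signals(code - 128).name
    except ValueError:
        return None


def cpu_flags(cpuinfo_text: str) -> Set[str]:
    """Parse the first 'flags' line of /proc/cpuinfo content into a set of flag names."""
    for line in cpuinfo_text.splitlines():
        if line.startswith("flags"):
            return set(line.split(":", 1)[1].split())
    return set()


print(signal_from_exit_code(132))  # SIGILL

sample = "processor\t: 0\nflags\t\t: fpu sse sse2 ssse3 sse4_1\n"
print("avx" in cpu_flags(sample))  # False
```

On the host itself, `cpu_flags(open("/proc/cpuinfo").read())` would show which instruction-set extensions are available.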

CorentinJ/Real-Time-Voice-Cloning | tensorflow | 1,167 | Real-time? | Wondering if this can be used in real-time, like speech-to-text-to-speech. If not, is there any other solution for this? | open | 2023-02-24T09:37:53Z | 2023-02-24T09:37:53Z | https://github.com/CorentinJ/Real-Time-Voice-Cloning/issues/1167 | [] | Maple38 | 0 |

jeffknupp/sandman2 | rest-api | 53 | does update_version.sh update setup.py? | [setup.py has a plain text version string](https://github.com/jeffknupp/sandman2/blob/47ac844be564f1390fc5177557b1907b65e7f453/setup.py#L39) which I don't think will be updated by [update_version.sh](https://github.com/jeffknupp/sandman2/blob/master/update_version.sh). It is very different in sandman2 vs sandman(1).

... | closed | 2016-11-27T16:25:40Z | 2016-12-08T11:06:48Z | https://github.com/jeffknupp/sandman2/issues/53 | [] | swharden | 1 |

exaloop/codon | numpy | 627 | This ticket was submitted by mistake prematurely. Please ignore it. | This ticket was submitted by mistake prematurely.

Please ignore it. | closed | 2025-02-10T15:25:41Z | 2025-02-10T15:31:13Z | https://github.com/exaloop/codon/issues/627 | [] | Yaakov-Belch | 0 |

fastapi/fastapi | asyncio | 13,440 | Validations in `Annotated` like `AfterValidator` do not work in FastAPI 0.115.10 |

### Discussed in https://github.com/fastapi/fastapi/discussions/13431

<div type='discussions-op-text'>

<sup>Originally posted by **amacfie-tc** February 28, 2025</sup>

### First Check

- [X] I added a very descriptive title here.

- [X] I used the GitHub search to find a similar question and didn't find it.

- [X] I s... | closed | 2025-03-01T17:19:44Z | 2025-03-01T22:40:52Z | https://github.com/fastapi/fastapi/issues/13440 | [

"bug"

] | tiangolo | 2 |

PaddlePaddle/PaddleNLP | nlp | 9,868 | [Question]: Error loading layoutlmv2-base-uncased: Missing model_state.pdparams file | ### Please ask your question

I'm trying to load the layoutlmv2-base-uncased model using PaddleNLP with the following code:

from paddlenlp.transformers import AutoModel

model = AutoModel.from_pretrained("layoutlmv2-base-uncased")

However, I get the following error:

OSError: Can't load the model for 'layoutlmv2-base-uncased'. If you w... | open | 2025-02-13T15:14:39Z | 2025-03-17T08:28:39Z | https://github.com/PaddlePaddle/PaddleNLP/issues/9868 | [

"question"

] | swaranM | 2 |

collerek/ormar | sqlalchemy | 817 | `DateTime` field ignores timezone parameter | datetime field ignores `timezone` parameter in __new__ mehod

Steps to reproduce the behavior:

Let's say we have this model:

import sqlalchemy

from databases import Database

from settings import settings

database = Database("postgresql+asyncpg://user:pass@localhost:5433/database")

me... | open | 2022-09-07T10:17:13Z | 2023-03-24T13:03:39Z | https://github.com/collerek/ormar/issues/817 | [

"bug"

] | nrd-bam | 1 |

CTFd/CTFd | flask | 2,673 | Import Issue | <!--

If this is a bug report please fill out the template below.

If this is a feature request please describe the behavior that you'd like to see.

-->

**Environment**: Ubuntu 24.04

- CTFd Version/Commit: 3.6.4

- Operating System: Ubuntu

- Web Browser and Version: Firefox 125.0.2

**What happened?**

I ha... | closed | 2024-12-01T13:42:14Z | 2024-12-31T15:43:25Z | https://github.com/CTFd/CTFd/issues/2673 | [] | chinu8005 | 1 |

serengil/deepface | machine-learning | 1,425 | [BUG]: Two completely different pictures are predicted as True | ### Before You Report a Bug, Please Confirm You Have Done The Following...

- [X] I have updated to the latest version of the packages.

- [X] I have searched for both [existing issues](https://github.com/serengil/deepface/issues) and [closed issues](https://github.com/serengil/deepface/issues?q=is%3Aissue+is%3Aclosed) ... | closed | 2025-01-14T07:21:26Z | 2025-01-14T07:27:18Z | https://github.com/serengil/deepface/issues/1425 | [

"bug",

"invalid"

] | jhluaa | 1 |

jupyter/nbgrader | jupyter | 1,034 | Document how to access the formgrader when it is a JupyterHub service | ### Operating system

FreeBSD 11.2 64 bit

### `nbgrader --version`

5.4

### `jupyterhub --version` (if used with JupyterHub)

0.9.4

### `jupyter notebook --version`

5.7

### database

postgresql 10.5

Hi,

I have created a course account and two other user accounts.

JupyterHub has been set up as a managed sh... | closed | 2018-10-26T20:13:35Z | 2019-06-11T02:00:46Z | https://github.com/jupyter/nbgrader/issues/1034 | [

"documentation"

] | jnak12 | 5 |

sunscrapers/djoser | rest-api | 579 | CREATE_SESSION_ON_LOGIN exists but is not documented | I am currently looking into ways to use normal cookie-based authentication in our SPA, and while looking at the source code, I realized that djoser has a "CREATE_SESSION_ON_LOGIN" setting that essentially does this; however, this is not properly documented as far as I could find.

Maybe you wan... | open | 2021-01-18T09:50:51Z | 2022-01-19T00:36:57Z | https://github.com/sunscrapers/djoser/issues/579 | [] | C0DK | 3 |

serengil/deepface | deep-learning | 954 | About yolov8n-face.pt | I want to use it on an Ascend 310B1, so I converted it to yolov8n-face.om. The model output for one picture is (1,20,8400). I think 8400 is the number of objects and 20 is the per-object parameters like x1,x2,y1,y2,score.... However, I don't know how to handle it without knowing the training config. I hope you can give some tips.

... | closed | 2024-01-15T10:41:52Z | 2024-01-15T10:45:24Z | https://github.com/serengil/deepface/issues/954 | [

"invalid"

] | zdjk104tan | 2 |

coqui-ai/TTS | python | 2,862 | [Bug] KeyError: 'avg_loss_1' crash when training model | ### Describe the bug

Occurs when running the script below.

### To Reproduce

Run the following script. I've tried it w/ the `LJSpeech-1.1` dataset and the same error occurs, so you can sub in the desired dataset.

```python

import os

from TTS.tts.configs.shared_configs import BaseAudioConfig, BaseDatasetConfig

f... | closed | 2023-08-12T00:16:10Z | 2023-08-15T16:42:38Z | https://github.com/coqui-ai/TTS/issues/2862 | [

"bug"

] | T145 | 2 |

elliotgao2/gain | asyncio | 49 | demo error | copy your basic demo code and run it:

error.

```

Traceback (most recent call last):

File "b.py", line 23, in <module>

MySpider.run()

File "/home/qyy/anaconda3/envs/sanic/lib/python3.6/site-packages/gain/spider.py", line 52, in run

loop.run_until_complete(cls.init_parse(semaphore))

File "uvloop... | open | 2019-04-08T07:09:28Z | 2019-04-08T07:13:01Z | https://github.com/elliotgao2/gain/issues/49 | [] | AaronConlon | 0 |

arnaudmiribel/streamlit-extras | streamlit | 171 | Error running app | Received error: "Error running app. If this keeps happening, please [contact support](https://github.com/arnaudmiribel/streamlit-extras/issues/new). "

When trying to visit [Streamlit Extras](https://extras.streamlit.app/App%20logo) | closed | 2023-08-30T16:29:48Z | 2023-09-05T13:32:27Z | https://github.com/arnaudmiribel/streamlit-extras/issues/171 | [] | chris-cannon90 | 0 |

zappa/Zappa | flask | 1,345 | Importing task decorator from zappa.asynchronous takes ~2.5s | As above, simply importing the task decorator adds >2 seconds(!!!) to application start time.

## Context

This is not a bug per se, but given the (ideally) snappy nature of lambda, a simple import from Zappa should not be adding this much overhead.

## Expected Behavior

Quick

## Actual Behavior

Slow

## Pos... | closed | 2024-08-13T06:30:53Z | 2024-11-23T04:21:38Z | https://github.com/zappa/Zappa/issues/1345 | [

"no-activity",

"auto-closed"

] | texonidas | 3 |

widgetti/solara | flask | 468 | disable scrolling in main content | How can I disable scrolling of the main content area and force the content to fill 100% of the view window? I tried adding `style='height: 100vh, overflow: hidden'` to the `solara.Column` but this didn't work.

```

import solara

import pathlib

def get_config():

""" create custom jdaviz configuration ... | closed | 2024-01-18T14:06:01Z | 2024-02-28T10:36:17Z | https://github.com/widgetti/solara/issues/468 | [] | havok2063 | 8 |

apify/crawlee-python | web-scraping | 526 | Implement/document a way how to pass extra configuration to json.dump() | There is useful configuration to `json.dump()` which I'd like to pass through `await crawler.export_data("export.json")`, but I see no way to do that:

- `ensure_ascii` - as someone living in a country using extended Latin, setting this to `False` prevents Python from encoding half of the characters as a weird mess

- `ind... | closed | 2024-09-15T17:47:00Z | 2024-10-31T16:34:14Z | https://github.com/apify/crawlee-python/issues/526 | [

"enhancement",

"t-tooling",

"hacktoberfest"

] | honzajavorek | 7 |

oegedijk/explainerdashboard | dash | 88 | Make "hide_popout" global parameter? | Would it be possible to add `hide_popout` to[ the list of globally toggle-able things](https://explainerdashboard.readthedocs.io/en/latest/custom.html?highlight=hide_poweredby#hiding-toggles-and-dropdowns-inside-components)? | closed | 2021-02-18T11:33:01Z | 2021-02-18T19:09:06Z | https://github.com/oegedijk/explainerdashboard/issues/88 | [] | hkoppen | 1 |

sigmavirus24/github3.py | rest-api | 702 | fetch a key by id return None | an example is worth 1000 words:

```python

>>> gh = github3.login(…)

>>> u2 =gh.user(gh.user().login)

>>> key_id = list(u.iter_keys())[0].id

>>> type(gh.key(key_id))

<class 'NoneType'>

```

as a solution I implemented that function using the `user.iter_keys` method:

```python

def get_key(self, key_id... | closed | 2017-05-06T18:48:25Z | 2017-05-06T19:48:23Z | https://github.com/sigmavirus24/github3.py/issues/702 | [] | guyzmo | 1 |

junyanz/pytorch-CycleGAN-and-pix2pix | deep-learning | 822 | Switch from GPU to CPU why, perceptual loss CycleGAN training | **Problem: model always switches from GPU to CPU**

Modifications done for adding the perceptual loss:

So if you want to use perceptual loss while using this amazing CycleGAN do the following:

In models.cycle_gan_model.py:

add at the beginning:

from torchvision import models

import torch.nn as nn

... | open | 2019-10-30T17:38:01Z | 2021-06-30T10:20:35Z | https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/822 | [] | Scienceseb | 7 |

capitalone/DataProfiler | pandas | 820 | `_assimilate_histogram` and `_regenerate_histogram` refactor into standalone functions | **Is your feature request related to a problem? Please describe.**

`_assimilate_histogram` and `_regenerate_histogram` functions are not using self for anything of substance and as a result can be separated into their own standalone static functions.

**Describe the outcome you'd like:**

Move these two function... | open | 2023-05-15T17:50:38Z | 2023-08-10T15:15:42Z | https://github.com/capitalone/DataProfiler/issues/820 | [

"New Feature"

] | ksneab7 | 11 |

robusta-dev/robusta | automation | 1,158 | [helm] implement Liveness and readiness for k8s pods | **Is your feature request related to a problem?**

On Robusta pods, liveness and readiness probes are not defined. Pods continue to live even if the service has problems.

For example, if automountServiceAccountToken is set to false, the runner will start and run but the service is not ready as it can't contact kub... | open | 2023-11-10T08:04:43Z | 2023-11-14T06:07:22Z | https://github.com/robusta-dev/robusta/issues/1158 | [

"needs-triage"

] | michMartineau | 0 |

graphql-python/gql | graphql | 334 | 3.6.8 Support Error. | **Describe the bug**

GQL does not support 3.6.8 because it has multidict as a dependency, and multidict is Python>=3.7 only.

**To Reproduce**

Steps to reproduce the behavior:

pip install gql on a python 3.6.8 environment

**Expected behavior**

Proper installation

**System info (please complete the following... | closed | 2022-06-13T11:40:05Z | 2022-07-03T18:48:59Z | https://github.com/graphql-python/gql/issues/334 | [

"type: invalid"

] | cjlaserna | 1 |

nvbn/thefuck | python | 1,477 | Installation as instructed for Mint fails | The output of `thefuck --version` (something like `The Fuck 3.1 using Python

3.5.0 and Bash 4.4.12(1)-release`):

Irrelevant - the problem is with installing the program in the first place.

Your system (Debian 7, ArchLinux, Windows, etc.):

Mint 22 Cinnamon. (Mint 22 is based upon Ubuntu 24.04, a.k.a. '... | open | 2024-10-22T01:49:16Z | 2025-01-07T07:02:31Z | https://github.com/nvbn/thefuck/issues/1477 | [] | LinuxOnTheDesktop | 12 |

vanna-ai/vanna | data-visualization | 569 | Vanna is not able to get data from local Postgres DB | I have modified streamlit app to use ollama and chromadb

**vanna_calls.py**

```

from vanna.ollama import Ollama

from vanna.chromadb import ChromaDB_VectorStore

class MyVanna(ChromaDB_VectorStore, Ollama):

def __init__(self, config=None):

ChromaDB_VectorStore.__init__(self, config=config)

... | closed | 2024-07-29T00:04:57Z | 2024-07-31T16:01:01Z | https://github.com/vanna-ai/vanna/issues/569 | [

"bug"

] | imranrazakhan | 1 |

schemathesis/schemathesis | pytest | 1,789 | Add convenience methods on `ParameterSet` | It could be useful in hooks, e.g. to check whether `APIOperation` contains some header (we leave case-insensitive for a while).

1. Add a new `contains` method to the [ParameterSet](https://github.com/schemathesis/schemathesis/blob/master/src/schemathesis/parameters.py#L55) class, the method should accept `name` of ... | closed | 2023-10-05T12:15:07Z | 2023-10-13T20:32:16Z | https://github.com/schemathesis/schemathesis/issues/1789 | [

"Priority: Low",

"Hacktoberfest",

"Difficulty: Beginner",

"Component: Hooks"

] | Stranger6667 | 1 |

plotly/dash-table | dash | 305 | How do I style my numbers to commas style, or 3 significant figures in a plotly table (without converting them to string) | Thanks for your interest in Plotly's Dash DataTable component!!

Note that GitHub issues in this repo are reserved for bug reports and feature

requests. Implementation questions should be discussed in our

[Dash Community Forum](https://community.plot.ly/c/dash).

Before opening a new issue, please search through ... | closed | 2018-12-18T05:50:09Z | 2018-12-18T14:05:33Z | https://github.com/plotly/dash-table/issues/305 | [

"dash-type-question"

] | kennethtiong | 1 |

deedy5/primp | web-scraping | 97 | `client.cookies` setter does not set cookies | closed | 2025-02-20T19:59:21Z | 2025-02-22T17:01:28Z | https://github.com/deedy5/primp/issues/97 | [

"bug"

] | deedy5 | 2 | |

aminalaee/sqladmin | asyncio | 767 | Use HTMX | # Use HTMX

At #678, we discussed using HTMX to do inline edits.

## Benefits:

- HTMX could ease the development of `create_modal`, `edit_modal`, and `details_modal` from Flask Admin.

- HTMX could ease the development of Redis Console from Flask Admin.

- HTMX is a UI Toolkit (Bootstrap, Tabler, Tailwind) agnos... | open | 2024-05-13T15:30:20Z | 2024-05-25T02:51:04Z | https://github.com/aminalaee/sqladmin/issues/767 | [] | hasansezertasan | 3 |

allure-framework/allure-python | pytest | 742 | Allure report can not get case result when run case with param `--forked` | Hi Team,

I am facing an issue getting case results when generating an Allure report with allure-pytest version 3.13.1.

Cli: python -m pytest --forked tests/function -q --alluredir=./__out__/results

#### I'm submitting a ...

bug report

#### What is the current behavior?

<img width="707" alt="image" src="https:/... | open | 2023-04-18T08:05:55Z | 2023-07-08T22:29:10Z | https://github.com/allure-framework/allure-python/issues/742 | [

"bug",

"theme:pytest"

] | pengdada00100 | 2 |

wkentaro/labelme | deep-learning | 509 | Could Shift+scroll wheel be added for left/right scrolling? | The current Alt+scroll wheel is really inconvenient | closed | 2019-11-09T13:36:42Z | 2020-01-27T01:47:12Z | https://github.com/wkentaro/labelme/issues/509 | [] | kyrosz7u | 0 |

piskvorky/gensim | machine-learning | 3,191 | Similarity Interface of Gensim giving low similarity score for exact same documents with TfIdf + LdaModel | <!--

**IMPORTANT**:

- Use the [Gensim mailing list](https://groups.google.com/forum/#!forum/gensim) to ask general or usage questions. Github issues are only for bug reports.

- Check [Recipes&FAQ](https://github.com/RaRe-Technologies/gensim/wiki/Recipes-&-FAQ) first for common answers.

Github bug reports that d... | open | 2021-07-13T15:41:06Z | 2021-07-13T15:41:06Z | https://github.com/piskvorky/gensim/issues/3191 | [] | Rutvik-Trivedi | 0 |

dask/dask | pandas | 11,200 | dask.delayed: Dataframe partition with map_overlap gets randomly converted to Pandas Dataframe or Pandas Series. | **Describe the issue**:

In recent versions of Dask, passing a Dataframe partition as an argument to a dask.delayed function gets randomly converted either into a Pandas DataFrame (expected), or a Pandas Series (not expected). This behavior is entirely non-reproducible, i.e. always happens randomly at different stages ... | open | 2024-06-24T20:21:20Z | 2025-01-22T17:53:56Z | https://github.com/dask/dask/issues/11200 | [

"bug",

"dask-expr"

] | rettigl | 4 |

fedspendingtransparency/usaspending-api | pytest | 3,645 | Ability to filter using office names/codes | New feature suggestion: Allow filtering on awarding_office_name/code and funding_office_name/code. I currently have to pull down a huge dataset and then filter afterwards for the specific office that I want. Adding an office filter parameter in the API filter objects (specifically for Award Download and Bulk Award Down... | open | 2022-11-18T14:34:00Z | 2022-11-18T14:34:00Z | https://github.com/fedspendingtransparency/usaspending-api/issues/3645 | [] | jnolandrive | 0 |
