repo_name stringlengths 9 75 | topic stringclasses 30

values | issue_number int64 1 203k | title stringlengths 1 976 | body stringlengths 0 254k | state stringclasses 2

values | created_at stringlengths 20 20 | updated_at stringlengths 20 20 | url stringlengths 38 105 | labels listlengths 0 9 | user_login stringlengths 1 39 | comments_count int64 0 452 |

|---|---|---|---|---|---|---|---|---|---|---|---|

stanfordnlp/stanza | nlp | 1,436 | Missing attributes start_char and end_char for certain tokens in Stanza pipeline output. | ### Describe the bug:

The output of the Stanza pipeline is missing start_char and end_char values for certain tokens. This issue can be observed in the following example, where the token 'It\"s' lacks start_char and end_char values, even though these fields are present for other tokens in the output.

### Steps to... | closed | 2024-11-26T22:19:54Z | 2024-12-03T11:25:55Z | https://github.com/stanfordnlp/stanza/issues/1436 | [

"bug"

] | al3xkras | 3 |

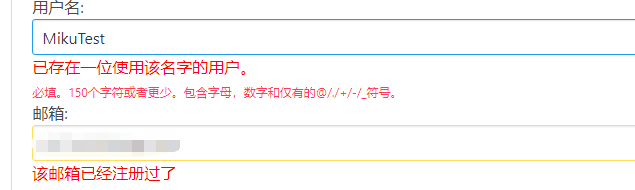

Ehco1996/django-sspanel | django | 159 | 在后台删除用户,数据库不会更新? | 我在后台删除了一个用户,然后再用这个昵称注册的时候提示已经被注册了。

后台看是确实只剩下一个了,但是数据库还有两个,而且用被删除的用户名登录可以登录上,输入用户名密码之后跳转500页面,然后点浏览器的返回,提示登录成功。虽然有些功能用不了,但是查看公告,联系站长,捐赠付费,充值界面,商品界面,购买记录,邀请管理,返利记录,这些功能都可以用。不知道算不算bug

![_201807221353... | closed | 2018-07-22T06:01:59Z | 2018-07-23T08:36:38Z | https://github.com/Ehco1996/django-sspanel/issues/159 | [] | Aruelius | 3 |

twopirllc/pandas-ta | pandas | 584 | Description for Each Indicator | I am very new to technical indicators, and there are over 200 indicators.

where can I find the documentation for each indicator with math? if documentation is not available, will this project accept my contribution? | closed | 2022-09-01T15:59:49Z | 2024-12-16T17:26:50Z | https://github.com/twopirllc/pandas-ta/issues/584 | [

"enhancement"

] | chandraveshchaudhari | 4 |

tortoise/tortoise-orm | asyncio | 1,462 | fields.TimeDeltaField in pydantic_model_creator raise Input should be None [type=none_required, input_value=datetime.timedelta(second...104, microseconds=48382), input_type=timedelta] | **Describe the bug**

with

tortoise-orm==0.20.0

pydantic==2.2.1

code like:

```

class Task(Model):

usage_time = fields.TimeDeltaField(null=True, blank=True, description='usgae')

class TaskListSchema(pydantic_model_creator(Task, name='TaskListSchema', exclude=('usage_time',))):

pass

TaskListSc... | open | 2023-08-23T07:16:43Z | 2023-08-23T10:02:17Z | https://github.com/tortoise/tortoise-orm/issues/1462 | [] | yinkh | 6 |

unit8co/darts | data-science | 2,256 | Wonder if there is any way to make a model predict more spread 'dynamic'? explain more in body | Hello! I have been trying alot to get a model to predict well on some electricity data. I have tried several models (but not all yet), and TCN is one of the ones that performs the best.

The model achieves a pretty good rmse and it outperforms the naive models substantially.

If i train and predcit on accumulated k... | closed | 2024-02-29T00:40:12Z | 2024-08-23T09:18:04Z | https://github.com/unit8co/darts/issues/2256 | [

"question"

] | Allena101 | 7 |

piskvorky/gensim | data-science | 3,177 | Incompatible types of unicode strings in `gensim.similarities.fastss.editdist` | #### Problem description

In #3146, a new algorithm for fast Levenshtein distance computation has been added. However, the algorithm currently cannot cope with different internal representations of Unicode strings, resulting in cryptic errors:

#### Steps/code/corpus to reproduce

``` python

$ pip install git+ht... | closed | 2021-06-21T18:25:05Z | 2021-06-29T05:23:41Z | https://github.com/piskvorky/gensim/issues/3177 | [] | Witiko | 3 |

PokeAPI/pokeapi | api | 306 | CORS Issue | This is a very specific question but I am trying to access the PokeAPI V1 and V2 and they seem to behave differently.

My code for both is

$.ajax({

type:"GET",

dataType: "jsonp",

url: (Uone or Utwo)

success: function(dataone)

{*somefunction

}})

. When I put in the url from the pokeAPI V1 it work... | closed | 2017-10-01T05:38:16Z | 2017-10-04T09:57:25Z | https://github.com/PokeAPI/pokeapi/issues/306 | [] | mbapna123 | 1 |

seleniumbase/SeleniumBase | web-scraping | 2,101 | Using uc option with proxy in multiprocessing repeats same ip |

Hi, using this code in multiprocessing and different proxy, repeats ip:

driver = Driver(uc=True, proxy=proxy_dict['http'], headless=headless, uc_cdp_events=api,incognito=True)

Thanks

| closed | 2023-09-12T16:23:10Z | 2023-09-15T16:18:30Z | https://github.com/seleniumbase/SeleniumBase/issues/2101 | [

"self-resolved",

"UC Mode / CDP Mode"

] | FranciscoPalomares | 11 |

httpie/cli | api | 822 | Fix simple typo: downland -> download | There is a small typo in httpie/downloads.py.

Should read download rather than downland.

| closed | 2019-12-04T11:08:59Z | 2019-12-04T12:32:09Z | https://github.com/httpie/cli/issues/822 | [] | timgates42 | 0 |

Neoteroi/BlackSheep | asyncio | 246 | Add missing article to exception messages | Add the missing articles to these error messages:

```python

def validate_source_path(source_folder: str) -> None:

source_folder_path = Path(source_folder)

if not source_folder_path.exists():

raise InvalidArgument("given root path does not exist")

if not source_folder_path.is_dir():

... | closed | 2022-03-25T19:48:22Z | 2022-04-26T20:26:22Z | https://github.com/Neoteroi/BlackSheep/issues/246 | [

"enhancement",

"fixed in branch"

] | RobertoPrevato | 0 |

ymcui/Chinese-BERT-wwm | nlp | 160 | 建议增加例子demo 方便快速入手 | 建议增加例子demo 方便快速入手 | closed | 2020-11-23T05:13:08Z | 2020-12-01T05:29:55Z | https://github.com/ymcui/Chinese-BERT-wwm/issues/160 | [

"stale"

] | zeng8280 | 2 |

KevinMusgrave/pytorch-metric-learning | computer-vision | 670 | Default normalization in distances is counterintuitive (or wrong) | Not a real bug, and maybe it's just personal preference, but I feel like the normalization in several distances is counterintuitive.

For example, the documentation for `CosineSimilarity`, says

> This class is equivalent to [DotProductSimilarity(normalize_embeddings=True)](https://kevinmusgrave.github.io/pytorch-m... | open | 2023-10-16T17:16:28Z | 2023-10-17T10:37:39Z | https://github.com/KevinMusgrave/pytorch-metric-learning/issues/670 | [

"bug",

"documentation"

] | mcschmitz | 1 |

flasgger/flasgger | rest-api | 281 | Add YAML in request body | I have an API that accepts YAML or JSON in body (according to what I sent in the other parameter)...

But even when I use `consume` tag, flasgger is only sending `"Content-Type: application/json"`

Here's the YAML doc string:

```

- in: body

name: yaml

consumes:

... | open | 2019-02-04T10:26:41Z | 2019-02-04T10:26:41Z | https://github.com/flasgger/flasgger/issues/281 | [] | AseedUsmani | 0 |

matplotlib/matplotlib | data-visualization | 29,492 | [Bug]: Face color of right-side table cells without right edge rendered wrongly | ### Bug summary

When the right edge of a colored right-most cell is removed, the rendering goes wrong, and a diagonal is created.

Here's an example where cell 4 is rendered wrongly, while cell 3 without its left edge is rendered correctly:

.cpu().numpy(),)

^^^^^^^^^^^^^^^^^^^^^^^^^^

TypeError: Got unsupported ScalarType ... | closed | 2024-06-25T14:43:26Z | 2024-06-25T16:04:00Z | https://github.com/huggingface/datasets/issues/7000 | [] | stoical07 | 3 |

TheAlgorithms/Python | python | 11,585 | Please like my code |

userInput = input('Enter 1 or 2: ')

if userInput == "1":

print ("Hello world")

print ("I love Python")

elif userInput == "2":

print ("python rocks")

print ("How are you?")

else:

print ("you did not enter a valid number")

| closed | 2024-09-26T09:31:43Z | 2024-09-30T10:01:19Z | https://github.com/TheAlgorithms/Python/issues/11585 | [] | Phyhoncoder13 | 2 |

OpenInterpreter/open-interpreter | python | 1,507 | Fails with "json.decoder.JSONDecodeError" trying to manipulate with screen using Antropic | ### Describe the bug

I've run "interpreter --os" to play with anthropic OS control feature and it crashed with "json.decoder.JSONDecodeError: Expecting value: line 1 column 1 (char 0)"

### Reproduce

I've run "interpreter --os", set a key and asked if it can hear me through the mic

it tried to open settings and fa... | open | 2024-10-29T08:57:57Z | 2024-11-04T14:30:26Z | https://github.com/OpenInterpreter/open-interpreter/issues/1507 | [

"Needs Verification"

] | artemu78 | 1 |

flairNLP/flair | pytorch | 3,292 | [Feature]: add BINDER | ### Problem statement

Extend the label verbalizer decoder to the [BINDER](https://openreview.net/forum?id=9EAQVEINuum) paper which is using a contrastive / similarity loss whereas the current implementation uses matrix multiplication.

### Solution

Write a new class that implements the BINDER paper.

### Additional C... | open | 2023-08-06T10:36:21Z | 2023-08-06T10:36:22Z | https://github.com/flairNLP/flair/issues/3292 | [

"feature"

] | whoisjones | 0 |

gradio-app/gradio | python | 10,572 | gr.Dataframe style is not working on gradio 5.16.0 | ### Describe the bug

As described in: https://www.gradio.app/guides/styling-the-gradio-dataframe, previously the style can display properly. But now it doesn't work in the FIRST run anymore. If run multiple times, the style can show properly.

Please check the code below and run it in https://www.gradio.app/playground... | closed | 2025-02-12T10:21:49Z | 2025-02-19T19:01:42Z | https://github.com/gradio-app/gradio/issues/10572 | [

"bug",

"Priority",

"💾 Dataframe",

"Regression"

] | jamie0725 | 2 |

ray-project/ray | deep-learning | 51,084 | [core] Cover cpplint for `/src/ray/object_manager` (excluding `plasma`) | ### Description

As part of the initiative to introduce cpplint into the pre-commit hook, we are gradually cleaning up C++ folders to ensure compliance with code style requirements. This issue focuses on cleaning up `src/ray/object_manager` (excluding `plasma`, as it's being covered through #50954).

### Goal

Ensure a... | open | 2025-03-05T01:47:39Z | 2025-03-05T04:48:13Z | https://github.com/ray-project/ray/issues/51084 | [

"enhancement",

"core"

] | elimelt | 2 |

gunthercox/ChatterBot | machine-learning | 2,038 | Erroneous chatterbot reponses | While running the following script:

`from chatterbot import ChatBot

bot = ChatBot(

'Maxine',

storage_adapter='chatterbot.storage.SQLStorageAdapter',

database_uri='sqlite:///database.sqlite3',

logic_adapters=[

{

'import_path': 'chatterbot.logic.BestMatch'... | open | 2020-08-30T16:45:26Z | 2020-09-03T14:07:32Z | https://github.com/gunthercox/ChatterBot/issues/2038 | [] | hankp46 | 1 |

scikit-hep/awkward | numpy | 2,968 | ak.to_parquet_row_groups | ### Description of new feature

Awkward 1.x had a secret back-door to `ak.to_parquet` that would interpret the first argument as an iterator of `ak.Arrays` to write as row groups, rather than a single `ak.Array` to write as a single row group, if its type was a `PartitionedArray`, which no longer exists in Awkward 2.x.... | closed | 2024-01-19T20:30:33Z | 2024-02-05T16:01:17Z | https://github.com/scikit-hep/awkward/issues/2968 | [

"feature"

] | jpivarski | 0 |

giotto-ai/giotto-tda | scikit-learn | 350 | Bindings externals wasserstein, input parameter q type | <!-- Instructions For Filing a Bug: https://github.com/giotto-ai/giotto-tda/blob/master/CONTRIBUTING.rst -->

#### Description

<!-- Example: Joblib Error thrown when calling fit on VietorisRipsPersistence

-->

Variable `q` in `wasserstein` bindings is of type [int](https://github.com/giotto-ai/giotto-tda/blob/adf... | closed | 2020-03-04T12:45:07Z | 2020-03-06T12:24:45Z | https://github.com/giotto-ai/giotto-tda/issues/350 | [] | MonkeyBreaker | 0 |

axnsan12/drf-yasg | django | 316 | sorting elements | Now elements are sorted by URL. method with tag auth and url 'other'

comes after the method with url 'next' without tag,

How to sort the list redoc-first element with tags, sorted by tags, elements without tags are located after tags?

'other' with tag auth comes before methods without tags. | closed | 2019-02-15T15:08:18Z | 2022-02-03T13:43:53Z | https://github.com/axnsan12/drf-yasg/issues/316 | [] | fenist19 | 3 |

streamlit/streamlit | machine-learning | 10,128 | Pre set selections for `st.dataframe` | ### Checklist

- [X] I have searched the [existing issues](https://github.com/streamlit/streamlit/issues) for similar feature requests.

- [X] I added a descriptive title and summary to this issue.

### Summary

Is there any way to set selection on dataframe or data_editor ?

### Why?

_No response_

### How?

_No respo... | open | 2025-01-08T10:19:34Z | 2025-02-12T04:20:14Z | https://github.com/streamlit/streamlit/issues/10128 | [

"type:enhancement",

"feature:st.dataframe"

] | StarDustEins | 6 |

piskvorky/gensim | machine-learning | 3,149 | Python int too large to convert to C long | import cv2

import numpy as np

import matplotlib.pyplot as plt

def make_coordinates(image, line_parameters):

slope, intercept = line_parameters

y1 = image.shape[0]

y2 = int(y1 * (3/5))

x1 = int((y1 - intercept)/slope)

x2 = int((y2 - intercept)/slope)

return np.array([x1, y1, x2, y2])

... | closed | 2021-05-16T10:11:08Z | 2021-05-16T10:20:44Z | https://github.com/piskvorky/gensim/issues/3149 | [] | aayushchimurkar7m | 1 |

JoeanAmier/TikTokDownloader | api | 227 | 作品有多个UID可能会出问题 | ERROR: type should be string, got " \r\nhttps://github.com/JoeanAmier/TikTokDownloader/blob/master/src/extract/extractor.py#L508\r\n\r\n默认使用**最后一个作品**的UID,但是某些情况下,一个账号会采集到多个UID,因此概率出现问题,获取到错误的UID,可使用当前作品中最多的UID\r\n\r\n### 一个作品多个UID的情况\r\n\r\n\r\n### 最多的情况下可累计7 、8 个UID,这是其中的一个示例\r\n\r\n\r\n\r\n\r\n```python\r\n\r\n uid_lst = [item['author']['uid'] for item in data]\r\n\r\n from collections import Counter\r\n counts = Counter(uid_lst)\r\n\r\n # 获取出现次数最多的元素及其索引\r\n most_common_element = counts.most_common(1)[0][0]\r\n most_common_index = uid_lst.index(most_common_element)\r\n true_data = data[most_common_index]\r\n\r\n self.log.info(f\"当前账号UID列表: { set(uid_lst) } , 最多的UID:{most_common_element}\")\r\n\r\n item = self.generate_data_object(true_data)\r\n```" | open | 2024-06-04T02:37:56Z | 2024-06-09T15:44:30Z | https://github.com/JoeanAmier/TikTokDownloader/issues/227 | [

"功能异常(bug)",

"确认问题(confirm)",

"处理完成(complete)"

] | sansi98h | 3 |

marimo-team/marimo | data-science | 4,150 | [Newbie Feedback] First glance at marimo | Great:

- youtube guides

- single python file

- can change column width easily

- dark mode

- AI integration

- scratchpad!

- detects changes made in VSCode

- lightning fast answers on github

Could be better:

- strange that auto-save isn't on by default

- scratchpad different for each file. Often I work on many files at... | closed | 2025-03-18T16:01:42Z | 2025-03-18T21:52:27Z | https://github.com/marimo-team/marimo/issues/4150 | [] | dentroai | 7 |

plotly/dash | plotly | 2,296 | [BUG] Component as Props inserted by callbacks not found with dynamic inputs/outputs callbacks. | **Describe your context**

```

dash 2.6.2

dash-core-components 2.0.0

dash-html-components 2.0.0

dash-table 5.0.0

```

**Describe the bug**

When the ` component properties` be updated, the added components won't trigger callback, only the initial components in the `component propertie... | closed | 2022-11-02T01:36:28Z | 2022-12-05T17:07:55Z | https://github.com/plotly/dash/issues/2296 | [

"bug"

] | CNFeffery | 1 |

albumentations-team/albumentations | deep-learning | 2,340 | RandomFog behaves differently in version 2.0.4 compared to previous versions | ## Describe the bug

The result of RandomFog in version 1.4.6 is completely different from the newest version 2.0.4

### To Reproduce

Steps to reproduce the behavior:

1. Environment (e.g., OS, Python version, Albumentations version, etc.)

OS: MacOS Sonoma 14.2 (23C64)

Python: 3.10.16

Albumentations version: 1.4.6 and... | closed | 2025-02-13T03:39:28Z | 2025-02-26T21:20:56Z | https://github.com/albumentations-team/albumentations/issues/2340 | [

"bug"

] | huuquan1994 | 5 |

mwaskom/seaborn | data-visualization | 2,748 | `histplot` isn't showing bar for a bin in certain distributions | For an example like this:

```

import numpy as np

import seaborn as sns

np.random.seed(42)

ZEROS=700

ONES=30000

dummy_data = np.hstack([np.zeros(ZEROS), 1 - (np.random.binomial(1000, 0.1, ONES) / 1000)])

sns.histplot(dummy_data)

```

We get a plot without any visible bar at x==0:

to do number plate recognition/ANPR?

Can we make the OCR capability faster by stripping out other languages or is this controlled by passing it the language as a parameter? I think this has great potential for number plate recognition... | closed | 2021-01-18T04:32:41Z | 2021-07-02T08:52:49Z | https://github.com/JaidedAI/EasyOCR/issues/351 | [] | Stevew7777 | 3 |

mljar/mljar-supervised | scikit-learn | 713 | Please document all preprocessing methods | Digging into the code, it looks like mljar tries various reprocessing techniques on the data, however none of the documentation covers what it attempts. | open | 2024-03-06T22:01:05Z | 2024-03-11T18:15:09Z | https://github.com/mljar/mljar-supervised/issues/713 | [

"docs"

] | gdevenyi | 4 |

davidsandberg/facenet | tensorflow | 477 | Windows Platform | Will this work on windows? | closed | 2017-10-08T07:42:58Z | 2018-04-01T21:08:04Z | https://github.com/davidsandberg/facenet/issues/477 | [] | elnasdgreat | 1 |

gee-community/geemap | streamlit | 277 | Python out of memory, kernel get restarted | <!-- Please search existing issues to avoid creating duplicates. -->

### Environment Information

- geemap version: 0.8.8

- Python version: 3.7

- Operating System: Ubuntu 20.04

### Description

I am trying to use open street shape files to load into earth engine map, but notebook kernel get restarted af... | closed | 2021-01-28T10:54:43Z | 2021-01-28T15:54:10Z | https://github.com/gee-community/geemap/issues/277 | [

"bug"

] | MISSEY | 1 |

holoviz/panel | jupyter | 6,870 | New widget combining Multichoice and AutocompleteInput | I would like to allow arbitrary text into Multichoice, along with the given option. I.e. the user is able to introduce new elements (which however do not enter the options list) always of the same type.

In the end I would like to have maybe an extension of AutocompleteInput into a list. IPywidgets already implement ... | closed | 2024-05-28T17:33:04Z | 2024-06-27T22:17:07Z | https://github.com/holoviz/panel/issues/6870 | [

"duplicate"

] | paoloalba | 3 |

bmoscon/cryptofeed | asyncio | 505 | is CANDLES interval only available as 1m? | I tried binance futures candles but I see only 1m intervals passing by, is it possible to have like 5m or do we need to compose it ourselves? | closed | 2021-05-30T14:15:25Z | 2021-05-30T15:55:03Z | https://github.com/bmoscon/cryptofeed/issues/505 | [

"question"

] | gigitalz | 3 |

wagtail/wagtail | django | 12,300 | JS slugify (with unicode enabled) leaves invalid characters in the slug | ### Issue Summary

The JS `slugify` function (used when cleaning a manually-entered slug) fails to strip out certain spacer/combining characters that are disallowed by Django slug validation.

### Steps to Reproduce

1. On a site with `WAGTAIL_ALLOW_UNICODE_SLUGS = True`, create a new page

2. Paste the text "উইক... | closed | 2024-09-11T11:26:40Z | 2024-09-19T14:22:12Z | https://github.com/wagtail/wagtail/issues/12300 | [

"type:Bug",

"good first issue"

] | gasman | 6 |

davidsandberg/facenet | computer-vision | 508 | Real time face recognition | Hello,

I am trying to understand how to use facenet for real time face recognition from the video stream.

I followed the steps from the wiki page Classifier training of inception resnet v1, but I am not sure how to use the results further and if I performed all the steps correctly.

1. I ran alignment and extrac... | open | 2017-10-30T09:16:56Z | 2019-05-11T11:46:23Z | https://github.com/davidsandberg/facenet/issues/508 | [] | mia-petkovic | 8 |

tensorpack/tensorpack | tensorflow | 903 | How many frames in one episode when evaluating DDQN? How to set the number? | Hi, nice to meet you.

I notice that in readme it is said that "An episode is limited to 10000 steps." However, in code ,i found

############################

env = AtariPlayer(ROM_FILE, frame_skip=ACTION_REPEAT, viz=viz,

live_lost_as_eoe=train, max_num_frames=60000)

######################... | closed | 2018-09-21T07:53:27Z | 2018-09-21T15:09:35Z | https://github.com/tensorpack/tensorpack/issues/903 | [

"examples"

] | silentobservers | 1 |

allure-framework/allure-python | pytest | 772 | allure-pytest 2.13.2 will merge parameterized use cases | #### Bug Description

When using @pytest.mark.parametrize() parametrization, the use cases are merged in the generated allure report, and it is not possible to generate an execution result record for each parametrized use case.

#### Steps to reproduce

action uses the `password_reset` permission from the settings:

``` py

def get_permissions(self):

...

elif self.action == "resend_activation... | open | 2023-09-07T12:28:54Z | 2024-12-11T09:27:00Z | https://github.com/sunscrapers/djoser/issues/761 | [

"help wanted"

] | 73VW | 2 |

scikit-optimize/scikit-optimize | scikit-learn | 1,010 | GPR with noise .fit bug | ## Bug description

Calling multiple times .fit(X, y) whenever self.noise exists causes self.kernel to add new WhiteKernel noise.

## Steps to reproduce

```python

from skopt.learning.gaussian_process import gpr, kernels #gpr is the package with the bug

import numpy as np

np.random.seed(0) # for reproduction

g... | open | 2021-03-16T11:41:00Z | 2021-03-16T23:48:07Z | https://github.com/scikit-optimize/scikit-optimize/issues/1010 | [] | PeraltaFede | 1 |

plotly/plotly.py | plotly | 4,127 | Plotly and tqdm in the same notebook cell causes blank plot | In a colab notebook, if a tqdm progress bar is being output in the same cell as a plotly plot, then rendering of the plotly plot fails. See [MRE colab notebook](https://colab.research.google.com/drive/1zZYwqRLuwSCvbg851g4szR_VxyiXoShT?usp=sharing).

In the chrome developer console I see "Uncaught ReferenceError: Plo... | open | 2023-03-28T12:49:36Z | 2024-09-28T18:34:00Z | https://github.com/plotly/plotly.py/issues/4127 | [

"bug",

"P3"

] | alimanfoo | 1 |

chiphuyen/stanford-tensorflow-tutorials | nlp | 14 | Encoding problem in "11_char_rnn_gist.py" example | Hi, I'm reading the code of `11_char_rnn_gist.py`, and I found the following problem:

In line 57, we encode the sequence `seq` with one-hot code with `depth=len(vocab)`.

However, `seq` is generated with `[vocab.index(x) + 1 for x in text if x in vocab]`, so the code of characters is between `1` to `len(vocab)`, ... | open | 2017-04-11T14:34:23Z | 2017-07-11T17:42:40Z | https://github.com/chiphuyen/stanford-tensorflow-tutorials/issues/14 | [] | alanwang93 | 1 |

TheAlgorithms/Python | python | 11,937 | would like to add travelling salesman problem under dynamic_programming | ### Feature description

adding of travelling salesman problem to learn and understand concepts and algorithms of dynamic programming | closed | 2024-10-10T14:13:52Z | 2024-10-10T14:15:40Z | https://github.com/TheAlgorithms/Python/issues/11937 | [

"enhancement"

] | OmMahajan29 | 0 |

PaddlePaddle/PaddleNLP | nlp | 9,693 | [Question]: 昇腾910B3,Paddle UIE 正常推理,但是训练过程loss=NaN | ### 请提出你的问题

PaddlePaddle==2.6.1

[PaddleCustomDevice](https://github.com/PaddlePaddle/PaddleCustomDevice)==2.6 编译安装

```

λ 5926b66120ca /app/output/PaddleNLP-2.6.1 python ./applications/information_extraction/text/finetune.py \

> --device npu \

--export_model_dir ./checkpoint/model_best \

--overwrite_ou... | closed | 2024-12-25T07:35:38Z | 2025-03-18T03:22:00Z | https://github.com/PaddlePaddle/PaddleNLP/issues/9693 | [

"question",

"stale"

] | modderBUG | 3 |

roboflow/supervision | deep-learning | 1,610 | How to define image size (dimensions) for detection inference while using sv.Detections.from_ultralytics() for images/videos? | ### Search before asking

- [X] I have searched the Supervision [issues](https://github.com/roboflow/supervision/issues) and found no similar feature requests.

### Question

How can I define specific image sizes while running inference with supervision ?

Whenever I am using `sv.Detections.from_ultralytics()` metho... | closed | 2024-10-20T21:04:17Z | 2024-10-21T02:29:57Z | https://github.com/roboflow/supervision/issues/1610 | [

"question"

] | tonmoy-TS | 1 |

MaartenGr/BERTopic | nlp | 2,114 | reduce_outliers and update_topics remove stop_words and ngram_range effects | ### Have you searched existing issues? 🔎

- [X] I have searched and found no existing issues

### Desribe the bug

After running `reduce_outliers` and `update_topics`, the effects of all specifications used in `vectorizer_model` (stop words, ngram) are gone. The results' representation words only show single wo... | open | 2024-08-06T17:36:32Z | 2024-08-07T09:10:17Z | https://github.com/MaartenGr/BERTopic/issues/2114 | [

"bug"

] | shj37 | 1 |

FlareSolverr/FlareSolverr | api | 953 | Unable to request using the first cookie, each request needs to be bypassed again | ### Have you checked our README?

- [X] I have checked the README

### Have you followed our Troubleshooting?

- [X] I have followed your Troubleshooting

### Is there already an issue for your problem?

- [X] I have checked older issues, open and closed

### Have you checked the discussions?

- [X] I hav... | closed | 2023-11-09T03:28:25Z | 2023-11-26T12:52:00Z | https://github.com/FlareSolverr/FlareSolverr/issues/953 | [

"more information needed"

] | aini123152011 | 1 |

pydantic/pydantic-settings | pydantic | 434 | Clarification Needed on pyproject_toml_table_header Logic in Pydantic Settings | # Issue Context

I tried to use pydantic-settings for project configuration management, but I couldn't understand why the pyproject_toml_table_header is restricted to a single block.

```

self.toml_table_header: tuple[str, ...] = settings_cls.model_config.get(

'pyproject_toml_table_header', ('... | closed | 2024-10-01T16:38:32Z | 2025-01-09T09:57:07Z | https://github.com/pydantic/pydantic-settings/issues/434 | [

"feature request"

] | py-mu | 9 |

vitalik/django-ninja | django | 869 | import error when using Ninja in Docker | I have an existing ,large Django application running in a Docker container, and added Ninja.

however, after restarting the container, I get an import error: ModuleNotFoundError: No module named 'ninja'

But then after making a change, Django reloads (while the container continues) and it gets imported just fine.

S... | closed | 2023-10-03T06:22:17Z | 2023-10-03T08:42:06Z | https://github.com/vitalik/django-ninja/issues/869 | [] | AlexDcm | 1 |

Nemo2011/bilibili-api | api | 831 | [漏洞] b站的一个域名撤掉了,导致probe_url检测时报错,无法上传文件 | **模块版本:"16.3.0"

**模块路径:** `bilibili_api.video_uploader.py`

**报错信息:**

```

[2024-10-17 21:29:35,235: WARNING/ForkPoolWorker-2] File "/usr/local/lib/python3.11/site-packages/bilibili_api/video_uploader.py", line 91, in _probe

httpx.post(f'https:{line["probe_url"]}', data=data, timeout=timeout)

```

**报错代码:... | open | 2024-10-18T01:48:10Z | 2024-10-23T02:25:40Z | https://github.com/Nemo2011/bilibili-api/issues/831 | [

"bug"

] | liaozd | 2 |

manrajgrover/halo | jupyter | 14 | Debug log on terminal | Hi,

when using Halo with boto3, I have a strange behaviour, all the debug log from boto are displayed

MacOS/Python3.6

halo (0.0.5)

boto3 (1.4.4)

Thanks | closed | 2017-10-03T13:21:14Z | 2017-10-06T16:37:45Z | https://github.com/manrajgrover/halo/issues/14 | [

"bug",

"up-for-grabs",

"hacktoberfest"

] | antoinerrr | 3 |

0b01001001/spectree | pydantic | 350 | bug: SecuritySchemeData root_validator conflicts with alias | ### Describe the bug

Impossible to create SecuritySchemeData with field_in field because of the alias="in" field on the pydantic field_in field

### To Reproduce

```

from spectree.models import InType, SecureType, SecuritySchemeData

if __name__ == "__main__":

foo = SecuritySchemeData(

type=Sec... | closed | 2023-10-03T11:30:12Z | 2024-10-30T09:24:55Z | https://github.com/0b01001001/spectree/issues/350 | [

"bug"

] | jelmerk | 2 |

aleju/imgaug | machine-learning | 743 | Would ElasticTransformation add a function only process keypoints? | same as title.

I have not an image, just keypoints.

I can use only keypoints aug in PiecewiseAffine, and i rewrite _augment_keypoints_by_samples in PiecewiseAffine like https://github.com/aleju/imgaug/issues/621

so i want to show the difference before ElasticTransformation and after. Do you have any advices? | open | 2021-02-01T07:52:36Z | 2021-02-01T07:52:36Z | https://github.com/aleju/imgaug/issues/743 | [] | merria28 | 0 |

nteract/papermill | jupyter | 119 | Review third party package for parameterizing notebooks (Python 3) | Mostly a note to self, as I'll be traveling for a few weeks and would like to check this out: https://github.com/hz-inova/run_jnb | closed | 2018-02-12T16:53:54Z | 2018-08-03T23:10:50Z | https://github.com/nteract/papermill/issues/119 | [] | willingc | 0 |

aleju/imgaug | machine-learning | 730 | 0.5.0 release? | Hi, just wondering when the next release will come out? I'm seeing a very intermittent, hard to reproduce bug in v0.4.0 (see below) that looks like it's probably been fixed in the current source.

Bug stack trace (snipped to last few lines) is below. Happy to post a separate issue for it, but probably unnecessary whe... | open | 2020-11-03T22:21:57Z | 2020-11-08T19:50:25Z | https://github.com/aleju/imgaug/issues/730 | [] | OliverColeman | 1 |

JaidedAI/EasyOCR | machine-learning | 1,109 | Any advice on how to improve performance on custom datasets? | The final goal is to increase the recognition rate of Korean and English in Korean, English, Chinese, etc. In the case of the character area, I know that it is recognized in units of sentences. Which do you think will be a better dataset for training, word units or sentence units? Also, is it possible to learn with a K... | open | 2023-08-10T04:08:52Z | 2023-09-25T22:15:22Z | https://github.com/JaidedAI/EasyOCR/issues/1109 | [] | Seoung-wook | 1 |

gevent/gevent | asyncio | 1,489 | Random crash on Windows when gevent.idle() and threadpool used | gevent version: 1.4.0

Python version: cPython 3.7.3 downloaded from python.org

Operating System: Win10

### Description:

The code bellow crashes on every second run.

Output:

```

Run #0 threadpool object num: 12

Run #1 threadpool object num: 13

Run #2 threadpool object num: 13

Run #3 threadpool object num... | closed | 2019-12-07T20:43:31Z | 2019-12-12T12:58:35Z | https://github.com/gevent/gevent/issues/1489 | [

"Type: Bug",

"Loop: libuv"

] | HelloZeroNet | 4 |

fastapi/sqlmodel | fastapi | 271 | No overload variant of "select" matches argument types | ### First Check

- [X] I added a very descriptive title to this issue.

- [X] I used the GitHub search to find a similar issue and didn't find it.

- [X] I searched the SQLModel documentation, with the integrated search.

- [X] I already searched in Google "How to X in SQLModel" and didn't find any information.

- [ ... | open | 2022-03-15T15:05:50Z | 2024-09-05T22:39:53Z | https://github.com/fastapi/sqlmodel/issues/271 | [

"question"

] | StefanBrand | 4 |

dynaconf/dynaconf | django | 922 | [bug] Integer like array is not cast to string even if said so. | **Describe the bug**

I am trying to have a number only string as a value in the dynaconf setting object, but the parser only treats it as a number.

**To Reproduce**

Steps to reproduce the behavior:

1. Having the following folder structure

<!-- Describe or use the command `$ tree -v` and paste below -->

<d... | closed | 2023-04-19T15:33:55Z | 2023-04-27T18:10:20Z | https://github.com/dynaconf/dynaconf/issues/922 | [

"bug"

] | FeryET | 3 |

rthalley/dnspython | asyncio | 748 | dns.resolver.NXDOMAIN problem | hello. The cname of the subdomain "images.mytech.walmart.com" appears to be "mytechcmsprod.azureedge.net". but "images.mytech.walmart.com" not has an A dns record. if the subdomain address does not have an "A" dns record, I wrote a function that tries to print the "CNAME" dns record to the screen.

```

def test_dns(... | closed | 2022-01-03T17:48:51Z | 2022-01-03T23:02:58Z | https://github.com/rthalley/dnspython/issues/748 | [] | Phoenix1112 | 8 |

521xueweihan/HelloGitHub | python | 1,857 | C++ | ## 项目推荐

- 项目地址:仅收录 GitHub 的开源项目,请填写 GitHub 的项目地址

- 类别:请从中选择(C、C#、C++、CSS、Go、Java、JS、Kotlin、Objective-C、PHP、Python、Ruby、Swift、其它、书籍、机器学习)

- 项目后续更新计划:

- 项目描述:

- 必写:这是个什么项目、能用来干什么、有什么特点或解决了什么痛点

- 可选:适用于什么场景、能够让初学者学到什么

- 描述长度(不包含示例代码): 10 - 256 个字符

- 推荐理由:令人眼前一亮的点是什么?解决了什么痛点?

- 示例代码:(可选)长度:1-20 行

... | closed | 2021-08-28T12:43:38Z | 2021-08-28T12:43:43Z | https://github.com/521xueweihan/HelloGitHub/issues/1857 | [

"恶意issue"

] | zcfloat | 1 |

ageitgey/face_recognition | machine-learning | 1,019 | ImportError: No module named face_recognition | * face_recognition version:

* Python version:

* Operating System:

### Description

I am trying to get started with face_recognition.

### What I Did

Running mac OS

Installed Anaconda - running python 3.7

pip 19.0.3 from /anaconda3/lib/python3.7/site-packages/pip (python 3.7)

pip installed face_recogniti... | open | 2020-01-04T11:58:11Z | 2022-11-01T07:43:14Z | https://github.com/ageitgey/face_recognition/issues/1019 | [] | leeadh | 6 |

plotly/dash | plotly | 2,447 | [BUG] Content-type of image type is application/json | **Context**

I'm using Dash 2.8.0 with Python 3.8.

I have a multi-page app using the default `use_pages=True` setup with pages defined in the `pages/` directory.

I also have a variety of static assets in the `assets/` directory. The folder structure is as follows:

```

assets/

- styles.css

- typography.cs... | closed | 2023-03-07T09:38:10Z | 2024-07-24T17:38:04Z | https://github.com/plotly/dash/issues/2447 | [] | rsewell97 | 4 |

iperov/DeepFaceLab | machine-learning | 857 | No preview appearing | no preview is showing. I have tried training mode and in every training mode no preview showed.

this error message also appears even though i am using H64. (specs: GTX1050 2gb, intel(R) Xeon(R), 12gb ram)

Starting. Press "Enter" to stop training and save model.

Error: OOM when allocating tensor with shape[3,3,5... | closed | 2020-08-10T14:03:29Z | 2023-06-11T07:42:19Z | https://github.com/iperov/DeepFaceLab/issues/857 | [] | JeezLoveJazzMusic | 3 |

ultralytics/yolov5 | pytorch | 12,522 | Inference Resolutions,Parameters and TensorRT Usage in 1280x1280 models | ### Search before asking

- [X] I have searched the YOLOv5 [issues](https://github.com/ultralytics/yolov5/issues) and [discussions](https://github.com/ultralytics/yolov5/discussions) and found no similar questions.

### Question

Hello. First of all, I have read the issues and documentation related to this topic. Alth... | closed | 2023-12-18T12:30:53Z | 2024-10-20T19:34:45Z | https://github.com/ultralytics/yolov5/issues/12522 | [

"question"

] | harunalperentoktas | 6 |

blb-ventures/strawberry-django-plus | graphql | 14 | Error when using relay module without Django | I'm interested in using the `relay` module in a non-Django project (keeping my eyes on how https://github.com/strawberry-graphql/strawberry/issues/1573 progresses).

I copied the `relay` and `aio` models out of this package and tried running your example on https://github.com/strawberry-graphql/strawberry/issues/157.... | closed | 2022-02-23T23:35:55Z | 2022-02-26T18:36:34Z | https://github.com/blb-ventures/strawberry-django-plus/issues/14 | [] | sloria | 3 |

dask/dask | numpy | 10,931 | combine_first: conditional type-cast to rhs's dtype | - XREF https://github.com/pandas-dev/pandas/pull/52532

`test_combine_first` is flaky. This is because the test randomly generates the test data, and if a chunk ends up being full of NaNs it will improperly trigger a FutureWarning in pandas that should only trigger when the whole Series is full of NaNs.

Reproducer... | open | 2024-02-16T12:34:12Z | 2024-02-16T16:30:06Z | https://github.com/dask/dask/issues/10931 | [

"dataframe",

"p3"

] | crusaderky | 6 |

mouredev/Hello-Python | fastapi | 568 | 网赌被黑的原因及解决方案 | 出黑咨询威:zdn200 飞机:@lc15688

切记,只要您赢钱了,遇到任何不给你提现的借口,基本表明您被黑了。

如果你出现以下这些情况,说明你已经被黑了:↓ ↓

【1】限制你账号的部分功能!出款和入款端口关闭,以及让你充值解开取款通道等等!

【2】客服找一些借口说什么系统维护,风控审核等等借口,就是不让取款!

【网赌被黑怎么办】【网赌赢了平台不给出款】【系统更新】【取款失败】【注单异常】【网络波动】【提交失败】

【单注为回归】【单注未更新】【出款通道维护】 【打双倍流水】 【充值同等的金额】

关于网上网赌娱乐平台赢钱了各种借口不给出款最新解决方法

切记,只要你赢钱了,遇到任何不给你提现的借口,基本表明你已经被黑了。... | closed | 2025-03-21T05:46:55Z | 2025-03-21T08:03:57Z | https://github.com/mouredev/Hello-Python/issues/568 | [] | zdn200dali | 0 |

jina-ai/clip-as-service | pytorch | 494 | Please add option to return tokenized text via http route /encode or a dedicated route /tokenize | Please add option to return tokenized text via http route /encode or a dedicated route /tokenize

[x] Are you running the latest `bert-as-service`?

[x] Did you follow [the installation](https://github.com/hanxiao/bert-as-service#install) and [the usage](https://github.com/hanxiao/bert-as-service#usage) instructions ... | open | 2019-12-19T18:49:57Z | 2019-12-19T18:52:36Z | https://github.com/jina-ai/clip-as-service/issues/494 | [] | michael-newsrx | 0 |

jazzband/django-oauth-toolkit | django | 976 | Ping Federate and Django Oauth Toolkit | <!-- What is your question? -->

Hello, I am trying to get the Django Oauth toolkit to work with my company's Ping Federate basic client credentials, but I am so lost. I want my users to sign in through our corporate sign in, and then grab their username and then take credentials from our admin.contrib

Am I in t... | closed | 2021-05-04T21:18:28Z | 2022-07-25T13:12:32Z | https://github.com/jazzband/django-oauth-toolkit/issues/976 | [

"question"

] | TimothyMalahy | 5 |

jupyterhub/zero-to-jupyterhub-k8s | jupyter | 3,054 | Not all resources support annotations configuration | ### Proposed change

Introduce `annotations` in Hub & CHP Deployment

### Alternative options

Introduce global `extraAnnotations ` for all possible generated resources

### Who would use this feature?

Would be useful for different k8s management solutions that use annotations for searching resources. | closed | 2023-03-09T11:20:39Z | 2023-03-12T13:16:33Z | https://github.com/jupyterhub/zero-to-jupyterhub-k8s/issues/3054 | [

"duplicate"

] | dev-dsp | 4 |

hatchet-dev/hatchet | fastapi | 785 | docs: timeouts page should say scheduling timeouts are for workflows, not steps | See https://github.com/hatchet-dev/hatchet/blame/69a7bc3b7553b324a51f72abb71918ef17b28d5c/frontend/docs/pages/home/features/timeouts.mdx#L9 | closed | 2024-08-14T12:58:29Z | 2025-02-15T00:20:08Z | https://github.com/hatchet-dev/hatchet/issues/785 | [] | wodow | 0 |

Nemo2011/bilibili-api | api | 597 | 函数名错误 | https://github.com/Nemo2011/bilibili-api/blob/11c33b16003f3111684421fb9ad4cbea5833ef18/bilibili_api/hot.py#L31

https://github.com/Nemo2011/bilibili-api/blob/11c33b16003f3111684421fb9ad4cbea5833ef18/bilibili_api/rank.py#L235

`weakly`应该更名为`weekly` | closed | 2023-12-15T20:06:58Z | 2023-12-15T23:35:30Z | https://github.com/Nemo2011/bilibili-api/issues/597 | [

"bug"

] | kaixinol | 1 |

keras-team/keras | tensorflow | 20,143 | TensorBoard Callback never updates internal step counter | Using the TensorBoard callback to generate time series data is currently not possible, since the internal `self._train_step` counter is never updated. Instead, looking at the time series tab only ever yields a single data point instead of a graph. This is caused by the fact that every call to `self.summary.scalar(...)`... | open | 2024-08-21T15:26:20Z | 2024-11-26T16:42:40Z | https://github.com/keras-team/keras/issues/20143 | [

"stat:awaiting keras-eng",

"type:Bug"

] | LarsKue | 5 |

apache/airflow | machine-learning | 47,778 | [Regression]Missing Asset Alias dependency graph | ### Apache Airflow version

3.0.0

### If "Other Airflow 2 version" selected, which one?

_No response_

### What happened?

In the current UI implementation, there is no way to see Asset Alias dependencies with DAG in Airflow 2.10.5, we were able to see that in the dependency graph.

**AF2**

<img width="694" alt="Ima... | closed | 2025-03-14T10:47:15Z | 2025-03-18T22:33:26Z | https://github.com/apache/airflow/issues/47778 | [

"kind:bug",

"priority:high",

"area:core",

"area:UI",

"area:datasets",

"affected_version:3.0.0beta"

] | vatsrahul1001 | 3 |

computationalmodelling/nbval | pytest | 180 | new pytest version throws deprecation warning | Earlier today, pytest v7.0.0 was released.

With this new pytest version installed, running `pytest --nbval my_notebook.ipynb` throws a deprecation warning:

```text

E pytest.PytestRemovedIn8Warning: The (fspath: py.path.local) argument to IPyNbFile is deprecated. Please use the (path: pathlib.Path) argument instead... | closed | 2022-02-04T20:39:18Z | 2023-02-17T22:26:02Z | https://github.com/computationalmodelling/nbval/issues/180 | [] | Jasha10 | 3 |

polakowo/vectorbt | data-visualization | 76 | portfolio.iloc crash if num_tests is too big | Hi,

I test the PortfolioOptimization.ipynb, always crash at `print(rb_portfolio.iloc[rb_best_asset_group].stats())`,

so I clone it to pycharm, get error `Process finished with exit code -1073741819 (0xC0000005)`

and is will crash in `portfolio.base._indexing_func`

```python

new_order_records = self._order... | closed | 2020-12-31T01:26:28Z | 2021-01-02T10:48:10Z | https://github.com/polakowo/vectorbt/issues/76 | [] | wukan1986 | 10 |

CorentinJ/Real-Time-Voice-Cloning | tensorflow | 842 | SV-EER calculation | Hi,

I'm trying to do the evaluation on test datasets. How can I calculate SV-EER for synthesizer. I did following steps, correct me if I'm wrong.

- run synthesizer on test data get melspectrums.

- did inverse melspectrum to get wav data

- get embeds for the wav data

- calculate similarity matrix and EER

Tha... | closed | 2021-09-09T08:44:12Z | 2021-09-14T20:42:00Z | https://github.com/CorentinJ/Real-Time-Voice-Cloning/issues/842 | [] | cnu1439 | 1 |

keras-team/autokeras | tensorflow | 1,650 | Big CSV files and reducing RAM requirement | I have about 80-160 GB CSV files (2000 - 10 000 features, including string, floats and ints) I am trying to run with Autokeras. However, after .fit() I see first RAM get filles (128 Gb) and then the swap file get used (1 TB). Finally, it crashes. RTX 3090, 128 GB RAM, 1 TB swap.

What are the ways to reduce RAM size... | open | 2021-11-21T10:54:45Z | 2021-11-26T19:03:31Z | https://github.com/keras-team/autokeras/issues/1650 | [] | torronen | 2 |

mwaskom/seaborn | data-science | 3,339 | Parameter `layout_rect` for PairGrid() | In some situations axis labels disappear outside the figure when using PairGrid().

Like this:

I think, this could be fixed by adding a parameter `layout_rect` (in the fashion of the existing parameter `l... | closed | 2023-04-24T17:04:13Z | 2023-04-25T10:54:08Z | https://github.com/mwaskom/seaborn/issues/3339 | [] | leoluecken | 2 |

pyeve/eve | flask | 621 | Cannot set auth_field | Hello @nicolaiarocci ,

Thank you so much for creating this project. I'm having a blast tinkering with it.

However I'm having an issue regarding the `auth_field`

I've created a `users` collection, and I want my users to be able to update their email, but only their account. However the only author reference I have in ... | closed | 2015-05-06T16:59:17Z | 2015-05-07T08:21:48Z | https://github.com/pyeve/eve/issues/621 | [] | hbarroso | 3 |

kornia/kornia | computer-vision | 2,910 | AttributeError: 'list' object has no attribute 'ndim' with RandomTransplantation and batch with keys | ### Describe the bug

I can run:

```python

import torch

import kornia.augmentation as K

from kornia.augmentation.container import AugmentationSequential

# Example batch with correct shapes

batch = {

"image": torch.randn(10, 3, 64, 64), # [N, C, H, W]

"mask": torch.randint(0, 2, (10, 64, 64)) # [N, H... | closed | 2024-05-16T14:03:08Z | 2024-05-17T13:50:50Z | https://github.com/kornia/kornia/issues/2910 | [

"help wanted"

] | robmarkcole | 2 |

nltk/nltk | nlp | 2,371 | Replace nltk.decorators.py with decorator library | From @Copper-Head,

Can we get rid of `decorators.py` by using the [decorator](https://pypi.org/project/decorator/) library? | open | 2019-08-19T01:56:13Z | 2019-08-19T01:56:34Z | https://github.com/nltk/nltk/issues/2371 | [

"nice idea",

"pythonic"

] | alvations | 0 |

deezer/spleeter | tensorflow | 868 | Nevermind, delete the issue | Nevermind, delete the issue | closed | 2023-08-25T02:04:30Z | 2023-08-26T21:54:32Z | https://github.com/deezer/spleeter/issues/868 | [

"question"

] | otro678 | 0 |

browser-use/browser-use | python | 875 | step endless loop | ### Bug Description

use gpt-4o,always endless loop,but Qwen2.5-72B-Instruct success

### Reproduction Steps

version:

python:3.12.9

browser-use:0.1.40

langchain-openai:0.3.1

langchain-core:0.3.37

openai:1.64.0

playwright:1.50.0

... | open | 2025-02-26T06:45:32Z | 2025-02-26T10:12:59Z | https://github.com/browser-use/browser-use/issues/875 | [

"bug"

] | shukuang-1 | 4 |

MaartenGr/BERTopic | nlp | 1,219 | Using topics as a predictor in regression or classification | Hi!

I am using BERTopic to 'cluster' descriptions of online orders. These descriptions can range between a single word ('shoes') or more lengthy ('5 boxes of local herbal tea'). I am wondering if I can use the output of BERTopic, the topics, as a feature/predictor, in a classification or regression model.

Are th... | closed | 2023-04-27T17:55:30Z | 2023-05-23T08:27:42Z | https://github.com/MaartenGr/BERTopic/issues/1219 | [] | corabola | 2 |

flasgger/flasgger | rest-api | 35 | Change "no content" response from 204 status | Hi,

is it possible to change the response body when the code is 204?

The only way that I found it was change the javascript code.

| closed | 2016-10-14T19:27:35Z | 2017-03-24T20:19:49Z | https://github.com/flasgger/flasgger/issues/35 | [

"enhancement",

"help wanted"

] | andryw | 1 |

pytest-dev/pytest-selenium | pytest | 32 | Selenium plugin listed twice | Hi,

When using pytest-selenium and running my test, I see this at the start:

``` sh

platform darwin -- Python 3.4.3 -- py-1.4.30 -- pytest-2.7.2

rootdir: /bokeh/bokeh, inifile: setup.cfg

plugins: html, selenium, selenium, variables

collected 410 items

```

I think it's disconcerting that the plugins line reads: `plu... | closed | 2015-09-10T23:39:05Z | 2015-09-11T20:54:37Z | https://github.com/pytest-dev/pytest-selenium/issues/32 | [] | birdsarah | 3 |

matterport/Mask_RCNN | tensorflow | 2,389 | Problem loading model using keras.models.load_model() | Hi. I am trying to make a program using the already pre-trained model mask_rcnn_balloons.h5, but get a problem when loadning the file.

how I load the model:

path = "E:/Dokumenter/Ikt450/Assignments/7/models/mask_rcnn_balloon.h5"

model = keras.models.load_model(path)

error:

Traceback (most recent call last):

... | open | 2020-10-13T07:14:32Z | 2020-10-13T07:14:32Z | https://github.com/matterport/Mask_RCNN/issues/2389 | [] | Mariussuv | 0 |

polarsource/polar | fastapi | 5,158 | Checkout: Store `attribution_id` & `utm_*` query params automatically in `metadata` | Allow setting attribution via query params for the checkout that we store as an `attribution` object on checkout and pass to the order(s) & subscription(s). Useful for affiliate partners and sellers in general to use in combination with built-in efforts, e.g checkout links.

Rough idea: We would support `attribution_id... | open | 2025-03-04T16:30:15Z | 2025-03-13T08:15:59Z | https://github.com/polarsource/polar/issues/5158 | [

"feature",

"changelog"

] | birkjernstrom | 0 |

PokemonGoF/PokemonGo-Bot | automation | 5,824 | New Pokemon Hunter | ### Short Description

The sniping functionality doesn't really work in new API (0.45) because of the new speed limitations. I propose the following idea for sniping/hunting (rare) pokemons.

### Possible solution

Create a new task (modified MoveToMapPokemon/PokemonHunter?) with following functionality:

1. Get JSON... | open | 2016-11-19T23:49:18Z | 2016-11-19T23:49:18Z | https://github.com/PokemonGoF/PokemonGo-Bot/issues/5824 | [] | nucl3x | 0 |

ymcui/Chinese-LLaMA-Alpaca-2 | nlp | 424 | 关于训练过程中'eval_loss'都是nan的问题,解决方法 | ### 提交前必须检查以下项目

- [X] 请确保使用的是仓库最新代码(git pull),一些问题已被解决和修复。

- [X] 我已阅读[项目文档](https://github.com/ymcui/Chinese-LLaMA-Alpaca-2/wiki)和[FAQ章节](https://github.com/ymcui/Chinese-LLaMA-Alpaca-2/wiki/常见问题)并且已在Issue中对问题进行了搜索,没有找到相似问题和解决方案。

- [X] 第三方插件问题:例如[llama.cpp](https://github.com/ggerganov/llama.cpp)、[LangChain](https://g... | closed | 2023-11-27T03:08:05Z | 2024-04-22T14:23:49Z | https://github.com/ymcui/Chinese-LLaMA-Alpaca-2/issues/424 | [

"stale"

] | 5663015 | 12 |

biolab/orange3 | pandas | 6,688 | FreeViz automatically runs when changing Gravity settings | FreeViz runs automatically when Gravity controls are changed in certain situations. I'm not sure if this is intended because every other setting is applied immediately, but the the fact that the optimization is stopped would suggest it should not start until I press Start. Either way, it still behaves in two different ... | open | 2024-01-01T16:06:22Z | 2024-01-05T09:09:48Z | https://github.com/biolab/orange3/issues/6688 | [

"wish",

"snack"

] | processo | 3 |

mlfoundations/open_clip | computer-vision | 674 | How can I decode the image feature to RGB-image | I want to use the image feature to do some downstream tasks (anomaly detection), could I decode the reconstructed feature to image like an autoencoder? I'm new to this and would really appreciate some simple guidance! | closed | 2023-10-15T12:38:02Z | 2023-10-21T21:34:18Z | https://github.com/mlfoundations/open_clip/issues/674 | [] | 1216537742 | 1 |

Subsets and Splits

No community queries yet

The top public SQL queries from the community will appear here once available.