repo_name (string, lengths 9–75) | topic (string, 30 classes) | issue_number (int64, 1–203k) | title (string, lengths 1–976) | body (string, lengths 0–254k) | state (string, 2 classes) | created_at (string, length 20) | updated_at (string, length 20) | url (string, lengths 38–105) | labels (list, lengths 0–9) | user_login (string, lengths 1–39) | comments_count (int64, 0–452) |

|---|---|---|---|---|---|---|---|---|---|---|---|

nerfstudio-project/nerfstudio | computer-vision | 2,589 | Unable to add new nerf methods to latest image | **Describe the bug**

I have built a docker image based off the Dockerfile in the current repo. When I try to install the default new method template (https://github.com/tauzn-clock/plane-nerf) I am greeted with an error.

**To Reproduce**

Steps to reproduce the behavior:

1. Build a Docker image from Dockerfile

2.... | open | 2023-11-06T21:03:55Z | 2023-11-06T21:03:55Z | https://github.com/nerfstudio-project/nerfstudio/issues/2589 | [] | tauzn-clock | 0 |

huggingface/datasets | deep-learning | 6,610 | cast_column to Sequence(subfeatures_dict) has err | ### Describe the bug

I am working with the following demo code:

```

from datasets import load_dataset

from datasets.features import Sequence, Value, ClassLabel, Features

ais_dataset = load_dataset("/data/ryan.gao/ais_dataset_cache/raw/1978/")

ais_dataset = ais_dataset["train"]

def add_class(example):

... | closed | 2024-01-23T09:32:32Z | 2024-01-25T02:15:23Z | https://github.com/huggingface/datasets/issues/6610 | [] | neiblegy | 2 |

FlareSolverr/FlareSolverr | api | 465 | [btschool] (updating) The cookies provided by FlareSolverr are not valid | **Please use the search bar** at the top of the page and make sure you are not creating an already submitted issue.

Check closed issues as well, because your issue may have already been fixed.

### How to enable debug and html traces

[Follow the instructions from this wiki page](https://github.com/FlareSolverr/Fl... | closed | 2022-08-13T02:32:56Z | 2022-08-13T22:43:39Z | https://github.com/FlareSolverr/FlareSolverr/issues/465 | [

"invalid"

] | vsin123 | 1 |

Farama-Foundation/PettingZoo | api | 911 | [Bug Report] GymV26Environment-v0 already in registry | ### Describe the bug

Not sure if this is an error with pettingzoo or just the env creation tutorial, but I think I have seen it previously in other testing. Putting an issue up in case it is a bug in the code.

```

/Users/elliottower/anaconda3/envs/PettingZoo/lib/python3.8/site-packages/gymnasium/envs/registration.... | closed | 2023-03-21T23:16:48Z | 2023-03-21T23:26:51Z | https://github.com/Farama-Foundation/PettingZoo/issues/911 | [

"bug"

] | elliottower | 2 |

mwaskom/seaborn | pandas | 3,192 | TypeError: ufunc 'isfinite' not supported with numpy 1.24.0 | This is the code that I ran

```python

import matplotlib.pyplot as plt

import seaborn as sns

fmri = sns.load_dataset("fmri")

fmri.info()

sns.set(style="darkgrid")

sns.lineplot(data=fmri, x="timepoint", y="signal", hue="region", style="event")

plt.show()

```

This is the error I got

```python

❯ python3... | closed | 2022-12-19T13:57:57Z | 2024-02-28T20:42:27Z | https://github.com/mwaskom/seaborn/issues/3192 | [

"upstream"

] | Rizwan-Hasan | 3 |

ARM-DOE/pyart | data-visualization | 1,387 | ENH: Move to ruff for linting | We should move to [ruff](https://github.com/charliermarsh/ruff) for Python linting - we did this with xradar and the speed improvement is quite dramatic. | closed | 2023-02-14T14:52:43Z | 2023-02-16T21:21:27Z | https://github.com/ARM-DOE/pyart/issues/1387 | [

"Enhancement"

] | mgrover1 | 0 |

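Johnserf-Seed/TikTokDownload | api | 369 | [BUG] Starting Server.py: the XB JS fails to execute with execjs._exceptions.ProgramError: Error: Cannot find module 'md5' | **Describe the bug**

When Server.py is started, the XB JS fails to execute with execjs._exceptions.ProgramError: Error: Cannot find module 'md5'

**To reproduce**

Steps to reproduce the behavior:

1. Start Server.py

2. Start TikTokDownload.py

3. Enter a douyin video url

**Screenshots**

If applicable, add screenshots to help explain your problem.

**Desktop (please complete the following information):**

- OS: Mac

- VPN/proxy: none

- Version: 13070

**Additional context**

I wrote a small sample in which Python runs JS code through execjs, require("... | closed | 2023-03-26T09:54:56Z | 2023-04-03T07:14:20Z | https://github.com/Johnserf-Seed/TikTokDownload/issues/369 | [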

"故障(bug)",

"额外求助(help wanted)",

"无效(invalid)"

] | sharkmao622 | 3 |

slackapi/bolt-python | fastapi | 464 | Not_authed after installing bot to workspace | #### The `slack_bolt` version

slack-bolt==1.6.0

slack-sdk==3.5.1

#### Python runtime version

Python 3.9.5

#### OS info

Kubuntu 21.04

#### Steps to reproduce:

I installed my bot to the workspace using `https://{my_domain}/slack/install`. After redirecting the new folder in the bot directory was creat... | closed | 2021-09-12T11:34:20Z | 2021-09-23T22:06:48Z | https://github.com/slackapi/bolt-python/issues/464 | [

"question",

"area:async",

"area:sync"

] | moffire | 2 |

gradio-app/gradio | data-visualization | 10,767 | Jittery animation when streaming to chat_interface.chatbot_value | ### Describe the bug

### Have you searched existing issues? 🔎

- [x] I have searched and found no existing issues

### Reproduction

```

async def chat_fn_hidden_input(history: list[dict] = [], input_graph_state: dict = {}, uu... | closed | 2025-03-09T03:09:17Z | 2025-03-12T11:54:24Z | https://github.com/gradio-app/gradio/issues/10767 | [

"bug",

"needs repro"

] | brycepg | 3 |

pallets-eco/flask-sqlalchemy | flask | 649 | expected update syntax can be too aggressive | Not a bug report, more of a question.

Because the docs don't provide examples of UPDATE queries, I experimented with what seemed to make the most sense from the SQLA docs. I found that performing an .update() on (what I thought was) a single record turned out to update every row in database. See code sample.

Is t... | closed | 2018-10-25T23:47:40Z | 2021-04-06T00:14:01Z | https://github.com/pallets-eco/flask-sqlalchemy/issues/649 | [] | 2x2xplz | 12 |

keras-rl/keras-rl | tensorflow | 309 | Use of mutable default arguments throughout project | The use of mutable values for default arguments is [well documented](https://docs.python-guide.org/writing/gotchas/#mutable-default-arguments) to cause problems.

Anything following this format

```

# Function with mutable argument as default

def func(self, var=[]):

```

Should be adapted to the following.

... | closed | 2019-04-15T22:26:19Z | 2019-07-21T23:10:26Z | https://github.com/keras-rl/keras-rl/issues/309 | [

"wontfix"

] | Qinusty | 1 |
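For illustration only (the replacement snippet in the issue body above is truncated, so this is not the author's code): a minimal sketch of the pitfall and of the common `None`-sentinel idiom. The function names `append_shared` and `append_fresh` are hypothetical and do not come from keras-rl.

```python
def append_shared(item, bucket=[]):
    # The default list is created once, when the function is defined,
    # so every call that omits `bucket` mutates the same object.
    bucket.append(item)
    return bucket


def append_fresh(item, bucket=None):
    # A None sentinel lets each call build its own new list.
    if bucket is None:
        bucket = []
    bucket.append(item)
    return bucket
```

Calling `append_shared` twice without a second argument accumulates items across calls, while `append_fresh` starts from an empty list each time.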

robinhood/faust | asyncio | 736 | Faust Application processes messages but does not commit offset after connection to kafka broker is reset | I see this issue when the connection to the kafka broker is reset.

In such a case, the faust application continues to process messages, but is not able to commit offset with the following error:

Could not send <class 'aiokafka.protocol.transaction.AddOffsetsToTxnRequest_v0'>: StaleMetadata('Broker id 8 not in curre... | open | 2021-08-24T18:07:28Z | 2021-09-04T15:24:44Z | https://github.com/robinhood/faust/issues/736 | [] | vinaygopalkrishnan | 1 |

huggingface/transformers | pytorch | 36,501 | TypeError: object of type 'IterableDataset' has no len() | ### System Info

When I tried to train an LLM using run_clm.py from the examples, I got the following error:

```

File "/workspace/train.py", line 611, in main

data_args.max_train_samples if data_args.max_train_samples is not None else len(train_dataset)

TypeError: object of type 'IterableDataset' has no len()

```

`len(train_dataset... | open | 2025-03-03T01:44:46Z | 2025-03-06T11:21:57Z | https://github.com/huggingface/transformers/issues/36501 | [

"bug"

] | mxjmtxrm | 6 |
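A minimal sketch of one way to guard a `len()` call like the one in the traceback above, assuming the caller can tolerate an unknown size. The helper `resolve_max_samples` and the class `StreamingStandIn` are hypothetical stand-ins written for this example; this is not the fix adopted in transformers.

```python
from collections.abc import Sized


def resolve_max_samples(dataset, max_samples=None):
    """Return a sample cap, or None when the dataset size is unknown."""
    if max_samples is not None:
        return max_samples
    if isinstance(dataset, Sized):
        # Only call len() on objects that actually define __len__.
        return len(dataset)
    return None


class StreamingStandIn:
    """Stand-in for a streaming dataset: iterable, but without __len__."""

    def __iter__(self):
        yield from range(3)
```

With this guard, a list-like dataset reports its length, while an object that only supports iteration yields `None` instead of raising `TypeError`.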

datadvance/DjangoChannelsGraphqlWs | graphql | 2 | Could you please provide a working example with Django and Channels 2 | I tried to use the one you provided in the README, but I could not get it to work. I am wondering if you can provide a working example in this project with Django and Channels 2.

Thanks a lot. | closed | 2018-06-28T22:09:38Z | 2019-04-20T01:31:46Z | https://github.com/datadvance/DjangoChannelsGraphqlWs/issues/2 | [

"enhancement"

] | junchiz | 5 |

cvat-ai/cvat | tensorflow | 9,057 | (Self Hosted) Annotation Download Fails Over HTTPS (but over http it works fine) | **Story**

When attempting to download a dataset in my CVAT deployment, a new tab briefly opens and closes without downloading anything. The issue seems to be related to mixed content, where CVAT is running over HTTPS, but dataset download URLs are served over HTTP. Modern browsers block such downloads for security reas... | closed | 2025-02-05T12:44:04Z | 2025-02-06T07:23:43Z | https://github.com/cvat-ai/cvat/issues/9057 | [

"need info"

] | osman-goni-cse | 5 |

mljar/mercury | jupyter | 114 | add preheated kernel for execution to speedup the computation | It should be possible to add preheated kernels for executing notebook. Please check the following discussions:

- https://github.com/jupyter/nbconvert/pull/852/

- https://github.com/jupyter/nbconvert/issues/1802

- https://github.com/jupyter/jupyter_client/issues/811

- https://github.com/jupyter/nbclient/issues/238 | closed | 2022-06-28T10:22:54Z | 2023-02-13T14:42:54Z | https://github.com/mljar/mercury/issues/114 | [

"enhancement"

] | pplonski | 2 |

seleniumbase/SeleniumBase | pytest | 2,350 | UC Mode Ineffective on Streamlit Cloud | I have deployed a web automation script on Streamlit Cloud, utilizing the `uc` mode to bypass the web scraping detection system. During local device testing, the script consistently circumvents the web scraping detection system. However, when executing on Streamlit Cloud, the `uc` mode appears to be ineffe... | closed | 2023-12-08T11:01:59Z | 2023-12-08T13:39:22Z | https://github.com/seleniumbase/SeleniumBase/issues/2350 | [

"external",

"UC Mode / CDP Mode"

] | LiPingYen | 1 |

rafsaf/minimal-fastapi-postgres-template | sqlalchemy | 2 | Question : Add Fastapi-users library for authentication ? | I was asking myself if this would be a good idea to use this library :

https://github.com/fastapi-users/fastapi-users

Since it supports a lot of features out of the box, including oauth ...

What do you think ? | closed | 2021-11-24T16:45:41Z | 2022-01-30T14:20:38Z | https://github.com/rafsaf/minimal-fastapi-postgres-template/issues/2 | [] | sorasful | 3 |

drivendataorg/cookiecutter-data-science | data-science | 107 | importing scripts from other folders | It would be great if the structure came along with default importing commands or files (__init__ files). | closed | 2018-04-13T19:24:48Z | 2018-04-13T19:33:39Z | https://github.com/drivendataorg/cookiecutter-data-science/issues/107 | [] | gulfemd | 1 |

schemathesis/schemathesis | graphql | 2,371 | [FEATURE] Filling missing examples for basic data types | ## Description of Problem

`--contrib-openapi-fill-missing-examples` not working as expected along with `--hypothesis-phases=explicit`

let us say i have a field `name`, and i use `--hypothesis-phases=explicit` and `--contrib-openapi-fill-missing-examples`

schemathesis generates `name: ''` which is not valid json

... | open | 2024-07-24T19:07:25Z | 2024-07-28T09:08:55Z | https://github.com/schemathesis/schemathesis/issues/2371 | [

"Type: Feature"

] | ravy | 1 |

thomaxxl/safrs | rest-api | 66 | Type coercion does not always work for JSON columns | The JSON type in `sqlalchemy` seems to be hard-coded to return `dict` as its `python_type`:

```python

@property

def python_type(self):

return dict

```

There are many ways valid JSON can be something other than a `dict`. In safrs 2.8.0, these columns fall through to the base case of being coerced to dictio... | closed | 2020-05-30T19:45:31Z | 2020-05-31T07:13:13Z | https://github.com/thomaxxl/safrs/issues/66 | [] | polyatail | 2 |
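To make the point concrete, here is a small sketch using only the standard-library `json` module (not safrs or SQLAlchemy). It shows the Python types that several valid JSON documents decode to; the sample strings are chosen for this example.

```python
import json

# Each string below is a valid JSON document.
samples = ['{"a": 1}', '[1, 2, 3]', '"text"', '42', 'true', 'null']

# Only the first one decodes to a dict.
decoded_types = [type(json.loads(s)).__name__ for s in samples]
print(decoded_types)
```

Any coercion that assumes `dict` would therefore mishandle lists, strings, numbers, booleans, and null.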

apache/airflow | python | 47,887 | Missing Dropdown in Grid View to Increase DAG Run Count | ### Apache Airflow version

main (development)

### If "Other Airflow 2 version" selected, which one?

_No response_

### What happened?

The Grid View does not seem to have a dropdown to increase the DAG run count when there are many runs. This makes it difficult to navigate and view a large number of DAG runs effici... | open | 2025-03-18T05:10:00Z | 2025-03-18T10:11:27Z | https://github.com/apache/airflow/issues/47887 | [

"kind:bug",

"kind:feature",

"priority:high",

"area:core",

"area:UI",

"affected_version:3.0.0beta"

] | vatsrahul1001 | 3 |

httpie/cli | python | 1,530 | werkzeug compatibility | There no longer appears to be an issue with werkzeug 2.1.0 as no test failures are produced using 2.2.3.

Allowing up to the latest release (2.3.7) of werkzeug would be preferable but that produces test failures.

I do not know in which project the test failures should be addressed.

[Test results](https://gist.github.... | open | 2023-09-10T19:05:41Z | 2024-05-18T05:31:40Z | https://github.com/httpie/cli/issues/1530 | [

"testing"

] | loqs | 0 |

geex-arts/django-jet | django | 424 | ValueError: Empty module name, using cookie cutter |

$ python manage.py migrate jet

Traceback (most recent call last):

File "manage.py", line 30, in <module>

execute_from_command_line(sys.argv)

File "/home/kapil/Documents/PycharmProjects/COOKIECUTTER/project_1_test/venv/lib/python3.6/site-packages/django/core/management/__init__.py", line 381, in execute_... | open | 2020-01-18T14:10:52Z | 2020-01-18T14:10:52Z | https://github.com/geex-arts/django-jet/issues/424 | [] | kp96-info | 0 |

nteract/papermill | jupyter | 431 | Cleanup phase before exiting when papermill is interrupted? | **Is there any common way to handle cases where one would need papermill to execute some cleanup cell(s) before papermill exits?**

This should happen regardless of why it's being terminated – due to an error, or because the papermill process was killed.

So it would be similar to the `finally` block of a `try-fina... | closed | 2019-09-13T23:41:34Z | 2019-09-17T20:51:20Z | https://github.com/nteract/papermill/issues/431 | [] | juhoautio | 2 |

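Evil0ctal/Douyin_TikTok_Download_API | fastapi | 247 | [BUG] Scraper proxy configured in a Docker environment does not work | Deployed in Docker, serving externally in host network mode. After configuring a proxy, Douyin videos can no longer be parsed, and I found no error logs; it seems the proxy is not taking effect.

Also, does this proxy setting support SOCKS5 proxies? Does it support user authentication?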

```

[Scraper] # scraper.py

# 是否使用代理(如果部署在IP受限国家需要开启默认为False关闭,请自行收集代理,下面代理仅作为示例不保证可用性)

# Whether to use proxy (if deployed in a country with IP restrictions, it needs to be turned on by default, False is closed. Please co... | closed | 2023-08-20T08:45:27Z | 2023-08-24T08:01:59Z | https://github.com/Evil0ctal/Douyin_TikTok_Download_API/issues/247 | [

"BUG"

] | lifei6671 | 1 |

explosion/spaCy | nlp | 13,482 | Macbook Pro M3 Max Install Issue: Cythonizing spacy/kb.pyx | I am posting this issue here after encountering it while trying to install a different program using it. The link to that other issue is below and the recommendation was that I file a report here. I will also reproduce the response from the other dev below my paste of my issue:

https://github.com/erew123/alltalk_t... | open | 2024-05-08T18:03:56Z | 2024-11-01T15:10:41Z | https://github.com/explosion/spaCy/issues/13482 | [

"install"

] | brianragle | 3 |

mljar/mercury | data-visualization | 67 | Allow embedding | Please add a parameter in the URL for embedding. The embedded version should hide the navbar and show a small logo at the bottom: "Powered by Mercury". | closed | 2022-03-15T07:54:14Z | 2022-03-15T09:03:31Z | https://github.com/mljar/mercury/issues/67 | [

"enhancement"

] | pplonski | 1 |

albumentations-team/albumentations | machine-learning | 1,946 | albucore 0.0.17 breaks albumentation | The following line imports `preserve_channel_dim` which has been deleted from `albucore/utils.py` on version 0.0.17

https://github.com/albumentations-team/albumentations/blob/b358a8826de3bbd853d55a672f0b4d20c59bf5ff/albumentations/augmentations/blur/functional.py#L9C68-L9C88

https://github.com/albumentations-team... | closed | 2024-09-21T08:14:38Z | 2024-09-22T20:44:25Z | https://github.com/albumentations-team/albumentations/issues/1946 | [] | gui-miotto | 2 |

Netflix/metaflow | data-science | 1,815 | R tests on Github fail with macos-latest runner | #1814 swapped R test runner to ubuntu, due to them failing with macos-latest runners since the macos 14 update.

Suggest looking into eventually fixing R tests so they can be run on macos-latest, as this should be a more common platform among users requiring the R support. | open | 2024-04-26T23:37:52Z | 2024-12-04T16:12:13Z | https://github.com/Netflix/metaflow/issues/1815 | [

"bug"

] | saikonen | 1 |

wagtail/wagtail | django | 12,618 | Page choosers no longer working on `main` | <!--

Found a bug? Please fill out the sections below. 👍

-->

### Issue Summary

The page chooser modal will not respond to any clicks when choosing a page, and an error is thrown in the console:

`Uncaught TypeError: Cannot read properties of undefined (reading 'toLowerCase')`

![Uncaught TypeError: Cannot read proper... | closed | 2024-11-22T11:13:19Z | 2024-11-22T16:22:30Z | https://github.com/wagtail/wagtail/issues/12618 | [

"type:Bug"

] | laymonage | 0 |

sinaptik-ai/pandas-ai | pandas | 1,330 | Expose pandas API on SmartDataframe | ### 🚀 The feature

Expose commonly used pandas API on SmartDataframe so that a smart dataframe can be used as if it is a normal pandas dataframe.

### Motivation, pitch

Continuous modification of a pandas dataframe is often needed. Is there a support for modifying an existing SmartDataframe after it has been construc... | closed | 2024-08-19T17:29:23Z | 2024-11-25T16:07:45Z | https://github.com/sinaptik-ai/pandas-ai/issues/1330 | [

"enhancement"

] | c3-yiminliu | 1 |

litestar-org/litestar | api | 3,781 | Unexpected `ContextVar` handling for lifespan context managers | ### Description

A `ContextVar` set within a `lifespan` context manager is not available inside request handlers. By contrast, a `ContextVar` set via an application-level dependency does appear set in the request handler.

### MCVE

```python

import asyncio

from collections.abc import AsyncIterator

from cont... | closed | 2024-10-09T02:07:27Z | 2025-03-20T15:54:57Z | https://github.com/litestar-org/litestar/issues/3781 | [

"Bug :bug:"

] | rmorshea | 8 |

zappa/Zappa | flask | 1,283 | Delayed asynchronous invocation using SFN | # Summary

I'd like to open a discussion on the feature of delayed asynchronous task invocation as in the following example:

```python

@task(delay_seconds=1800)

def make_pie():

    """ This task is invoked asynchronously 30 minutes after it is initially run. """

```

# History

I initially created a PR on the ... | closed | 2023-11-06T10:18:35Z | 2024-04-13T20:36:57Z | https://github.com/zappa/Zappa/issues/1283 | [

"no-activity",

"auto-closed"

] | oliviersels | 5 |

microsoft/nni | tensorflow | 4,918 | Retiarii - How To Get Final Model | I am following your tutorial at https://nni.readthedocs.io/en/latest/tutorials/hello_nas.html and it runs fine, but I don't understand how to get the final results unless I am watching the experiment while it is running. I checked the logs at /content/nni/ec90fnpg/log/nnimanager.log but they don't seem to show any ac... | open | 2022-06-07T11:54:28Z | 2024-06-12T07:35:27Z | https://github.com/microsoft/nni/issues/4918 | [

"user raised",

"NAS 2.0"

] | impulsecorp | 6 |

huggingface/datasets | pandas | 6,483 | Iterable Dataset: rename column clashes with remove column | ### Describe the bug

Suppose I have two iterable datasets, one with the features:

* `{"audio", "text", "column_a"}`

And the other with the features:

* `{"audio", "sentence", "column_b"}`

I want to combine both datasets using `interleave_datasets`, which requires me to unify the column names. I would typic... | closed | 2023-12-08T16:11:30Z | 2023-12-08T16:27:16Z | https://github.com/huggingface/datasets/issues/6483 | [

"streaming"

] | sanchit-gandhi | 4 |

streamlit/streamlit | data-science | 10,521 | Slow download of CSV file when using the built-in "download as CSV" function for tables displayed as dataframes in Edge browser | ### Checklist

- [x] I have searched the [existing issues](https://github.com/streamlit/streamlit/issues) for similar issues.

- [x] I added a very descriptive title to this issue.

- [x] I have provided sufficient information below to help reproduce this issue.

### Summary

Issue is only in MS Edge Browser:

When pressin... | open | 2025-02-26T08:43:56Z | 2025-03-03T11:49:27Z | https://github.com/streamlit/streamlit/issues/10521 | [

"type:bug",

"feature:st.dataframe",

"status:confirmed",

"priority:P3",

"feature:st.download_button",

"feature:st.data_editor"

] | LazerLars | 2 |

microsoft/unilm | nlp | 1,637 | Reproducing Differential Transformer. | I compared the training losses of the Transformer and Differential Transformer under my own setting but failed to reproduce the experimental results. I wonder whether the operation of "taking difference" and applying softmax should be reversed in official code.

[official code](https://github.com/microsoft/unilm/blob/09... | closed | 2024-10-14T05:21:16Z | 2024-10-14T14:37:57Z | https://github.com/microsoft/unilm/issues/1637 | [] | Zcchill | 4 |

TracecatHQ/tracecat | automation | 872 | Editing secrets wipes out previous keys | **Describe the bug**

When editing a secret, the previously set keys are not loaded as options to update. Adding a new key to the existing secret then wipes out the previous keys associated with the secret.

**To Reproduce**

- Create secret with multiple keys

- Edit the same secret to add or update existing key

- Existi... | closed | 2025-02-18T15:58:09Z | 2025-03-15T14:27:24Z | https://github.com/TracecatHQ/tracecat/issues/872 | [

"good first issue",

"frontend"

] | landonc | 4 |

axnsan12/drf-yasg | rest-api | 784 | get/put/patch id key type error | # Bug Report

## Description

`id` field is the primary key, and in url we set it to int like below,

```

path('api/v2/device/<int:id>', app_views.DeviceViewSet.as_view({

'get': 'retrieve',

'put': 'update',

'patch': 'partial_update',

})),

```

But in the swagger json, this field change to `str... | closed | 2022-03-21T02:44:58Z | 2022-03-21T04:02:21Z | https://github.com/axnsan12/drf-yasg/issues/784 | [] | cataglyphis | 1 |

scikit-learn/scikit-learn | python | 30,037 | Implement the two-parameter Box-Cox transform variant | ### Describe the workflow you want to enable

Currently, only the single-parameter Box-Cox transform is implemented in sklearn.preprocessing.power_transform

The two parameter variant is defined as

where both the paramete... | open | 2024-10-09T12:35:03Z | 2024-10-09T14:59:44Z | https://github.com/scikit-learn/scikit-learn/issues/30037 | [

"New Feature"

] | jachymb | 1 |
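The formula image in the issue body above is not recoverable here, so the following is a sketch of the standard textbook two-parameter Box-Cox transform, on the assumption that this is what the issue refers to. The function `box_cox_two_param` and its parameter names `lmbda1` (power) and `lmbda2` (shift) are hypothetical; this is not scikit-learn API.

```python
import math


def box_cox_two_param(x, lmbda1, lmbda2):
    """Two-parameter Box-Cox transform of a single value.

    y = ((x + lmbda2) ** lmbda1 - 1) / lmbda1   if lmbda1 != 0
    y = log(x + lmbda2)                         if lmbda1 == 0
    """
    shifted = x + lmbda2
    if shifted <= 0:
        # The transform is only defined for x + lmbda2 > 0.
        raise ValueError("x + lmbda2 must be positive")
    if lmbda1 == 0:
        return math.log(shifted)
    return (shifted ** lmbda1 - 1.0) / lmbda1
```

The shift parameter is what allows zero or negative inputs, as long as `x + lmbda2` stays positive; setting `lmbda2` to zero recovers the single-parameter form.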

skypilot-org/skypilot | data-science | 4,990 | [R2] Treat Cloudflare as a cloud | Every cloud except Cloudflare is implemented as subclass of clouds.Cloud. Cloudflare is an exception to the rule because unlike other clouds, Cloudflare does not offer compute and did not align well with compute-centric view of Skypilot. Once https://github.com/skypilot-org/skypilot/issues/4957 is resolved, Cloudflare ... | open | 2025-03-19T05:11:55Z | 2025-03-19T17:15:28Z | https://github.com/skypilot-org/skypilot/issues/4990 | [] | SeungjinYang | 0 |

huggingface/peft | pytorch | 2,255 | Is this the right way to check whether a model has been trained as expected? | I'd like to check whether my PEFT model has been trained as intended, i.e. whether the PEFT weights have changed, but not the base weights. The following code works, but I'm sure a PEFT specialist will suggest a better way.

```python

import tempfile

import torch

from datasets import load_dataset

from peft impo... | closed | 2024-12-03T17:36:00Z | 2024-12-04T12:01:37Z | https://github.com/huggingface/peft/issues/2255 | [] | qgallouedec | 5 |

xinntao/Real-ESRGAN | pytorch | 785 | How does inference_realesrgan.py work on multi-GPU? | inference_realesrgan.py has the argument `parser.add_argument('-g', '--gpu-id', type=int, default=None, help='gpu device to use (default=None) can be 0,1,2 for multi-gpu')`, but passing a comma-separated value raises: `error: argument -g/--gpu-id: invalid int value: '0,1'` | open | 2024-04-18T07:38:18Z | 2024-08-22T09:43:22Z | https://github.com/xinntao/Real-ESRGAN/issues/785 | [] | siestina | 2 |

jonaswinkler/paperless-ng | django | 1,474 | [Other] Rerun OCR on uploaded documents | Hi there!

Thanks for the wonderful project!

Just uploaded my first documents to paperless and found out that OCR seems to use the `eng` language, which is set as the default. I've changed the default language to `rus`, but I don't see an option to rerun OCR on the uploaded files. Is there a way to do this? | closed | 2021-12-10T11:40:05Z | 2022-01-16T07:50:16Z | https://github.com/jonaswinkler/paperless-ng/issues/1474 | [] | sprnza | 5 |

encode/databases | asyncio | 141 | Interval type column raises TypeError on record access | Hi

I found an issue when selecting from a table with an [`Interval`](https://docs.sqlalchemy.org/en/13/core/type_basics.html#sqlalchemy.types.Interval) column, this should parse to a `timedelta` object.

When used with databases, sqlalchemy raises a `TypeError` when trying to access the column in the result:

```p... | open | 2019-09-16T15:45:34Z | 2020-07-25T17:25:19Z | https://github.com/encode/databases/issues/141 | [] | steinitzu | 4 |

databricks/koalas | pandas | 1,492 | Pyspark functions vs Koalas methods | Hi, this is more of a question, but I was wondering why so many Koalas methods are explained as `pythonUDF`s?

I guess my questions are:

- Is this intended behavior? If so, could you explain why?

- What is the "optimal" pick here? Does this change when data is larger, smaller, etc?

Examples

**String method... | closed | 2020-05-13T09:11:50Z | 2020-06-16T12:08:24Z | https://github.com/databricks/koalas/issues/1492 | [

"question"

] | sebastianvermaas | 3 |

deezer/spleeter | deep-learning | 555 | spleeter-cpu.yaml file missing | In the Spleeter ZIP file (Windows version), the file "spleeter-cpu.yaml" is missing from the "conda" folder

so it is impossible to create a new environment in Anaconda | closed | 2021-01-10T11:09:45Z | 2021-01-11T11:50:30Z | https://github.com/deezer/spleeter/issues/555 | [

"bug",

"invalid",

"conda"

] | dextermaniac | 1 |

hankcs/HanLP | nlp | 632 | Would you consider releasing a Python version, or providing a Python interface? | Opening up to Python should be a good direction. | closed | 2017-09-20T05:34:51Z | 2017-09-25T03:33:33Z | https://github.com/hankcs/HanLP/issues/632 | [

"duplicated"

] | suparek | 6 |

microsoft/MMdnn | tensorflow | 879 | pytorch2keras: ModuleNotFoundError: No module named 'models' | Platform (like ubuntu 16.04/win10): ubuntu 18.04

Python version: 3.6.8

Source framework with version (like Tensorflow 1.4.1 with GPU): pytorch 1.15.1

Destination framework with version (like CNTK 2.3 with GPU): keras 2.2.4

Pre-trained model path (webpath or webdisk path):

Running scripts: mmconvert -sf... | closed | 2020-08-02T15:19:36Z | 2020-12-15T07:31:04Z | https://github.com/microsoft/MMdnn/issues/879 | [] | zhang-f | 3 |

deepfakes/faceswap | deep-learning | 1,407 | Future Development Plans for FaceSwap | Just wondering, are there any new features or updates or new releases coming soon, or is the project wrapping up and going into the archives soon?

| closed | 2024-11-07T16:14:37Z | 2024-11-09T14:56:15Z | https://github.com/deepfakes/faceswap/issues/1407 | [] | ruidazeng | 1 |

miguelgrinberg/python-socketio | asyncio | 1,143 | namespace in conjunction with room does not work | Greetings to all. I noticed a problem when, out of context, I use

`await sio.emit('new_message_response', data={'test':'tester'}, namespace='/chat', room='c50c277a-b3a8-49e8-84ae-ed2497ed6655')`

Nothing happens. If I remove the room, the message is sent. The room exists and there are people in it. | closed | 2023-02-26T08:08:38Z | 2023-02-26T16:01:01Z | https://github.com/miguelgrinberg/python-socketio/issues/1143 | [] | d1ksim | 0 |

sinaptik-ai/pandas-ai | data-science | 995 | AttributeError: 'GoogleGemini' object has no attribute 'google' | ### System Info

OS version: windows11

Python version: 3.11

The current version of pandasai being used: 2.0.3

### 🐛 Describe the bug

```

import pandas as pd

from pandasai.llm import GooglePalm, GoogleGemini

from pandasai import Agent

df = pd.DataFrame({

"country": [

"United States",

... | closed | 2024-03-05T05:20:50Z | 2024-03-15T16:19:25Z | https://github.com/sinaptik-ai/pandas-ai/issues/995 | [] | gDanzel | 2 |

inducer/pudb | pytest | 80 | Double stack entries with post_mortem | I can't figure out what is going on here. If you try [jedi](https://github.com/davidhalter/jedi), and use `./sith.py random code --pudb`, where you replace `code` with some random Python code (like try PuDB's own code base), the stack items appear twice (note, jedi is highly recursive, so the stack traces will tend to ... | open | 2013-07-26T00:25:24Z | 2013-08-09T04:27:25Z | https://github.com/inducer/pudb/issues/80 | [] | asmeurer | 1 |

miguelgrinberg/Flask-Migrate | flask | 312 | upgrade hangs forever with multiple database binds, probably deadlocked | Running `flask db upgrade` hangs forever, until I kill the process or stop the postgres server. I have 3 database binds including default. It's getting stuck when updating the version in `alembic_version` table. The migration change isn't related, even running an empty `pass` migration still hangs.

Probably, the fir... | closed | 2020-01-19T07:40:03Z | 2020-01-19T12:49:08Z | https://github.com/miguelgrinberg/Flask-Migrate/issues/312 | [] | alanhamlett | 2 |

ansible/ansible | python | 84,142 | ansible.builtin.unarchive fails to extract single file from .tar.gz archive with just this file | ### Summary

Running eg the following task

```yaml

- name: Extract archive

ansible.builtin.unarchive:

remote_src: yes

src: 'https://github.com/extrawurst/gitui/releases/download/v0.26.3/gitui-linux-x86_64.tar.gz'

dest: '{{ download_dir }}'

include:

- gitui

```

fails. Note that this ... | closed | 2024-10-18T15:57:25Z | 2024-11-03T14:00:01Z | https://github.com/ansible/ansible/issues/84142 | [

"module",

"bug",

"affects_2.16"

] | senarclens | 8 |

davidteather/TikTok-Api | api | 358 | Exception: Invalid Response | It worked well before, but now it reports this exception Exception: Invalid Response. Is my machine blocked? | closed | 2020-11-16T06:46:19Z | 2020-12-03T02:17:39Z | https://github.com/davidteather/TikTok-Api/issues/358 | [

"bug"

] | septfish | 10 |

ymcui/Chinese-BERT-wwm | tensorflow | 231 | Help: broken link | Hello Mr. Cui! When answering another user's question earlier, you provided the following link:

https://github.com/ymcui/CMRC2018-DRCD-BERT

However, this link no longer works. Which link should be used now? | closed | 2023-04-01T06:14:05Z | 2023-04-06T03:48:59Z | https://github.com/ymcui/Chinese-BERT-wwm/issues/231 | [

"stale"

] | Alternate-D | 2 |

CorentinJ/Real-Time-Voice-Cloning | pytorch | 1,045 | improve inference time | Does anyone have a clue on how to speed up inference? I know other vocoders have been tried, but they were not satisfactory... right?

| open | 2022-03-29T15:38:37Z | 2022-03-29T15:38:37Z | https://github.com/CorentinJ/Real-Time-Voice-Cloning/issues/1045 | [] | ireneb612 | 0 |

Kanaries/pygwalker | pandas | 510 | Would it be possible to walk it with Datatable.frame type in the future? | https://github.com/h2oai/datatable

It has higher performance reading and manipulating big csv data than pandas/polars/modin. But I can't walk this datatable.frame type. | open | 2024-04-01T17:59:46Z | 2024-04-02T06:55:41Z | https://github.com/Kanaries/pygwalker/issues/510 | [

"Vote if you want it",

"proposal"

] | plusmid | 1 |

qubvel-org/segmentation_models.pytorch | computer-vision | 234 | dice_loss - -ve, iou_score - -ve | Dear @qubvel

Thank you for this amazing library.

I tried to build a UNet model with ResNet152 as a backbone and 1 class 'person'.

I used input images of 512x512 as input and corresponding binary masks.

I used your sample code of CamVid notebook as a base and did some tuning in the same to make it work for my dat... | closed | 2020-07-16T09:12:55Z | 2022-02-20T01:53:51Z | https://github.com/qubvel-org/segmentation_models.pytorch/issues/234 | [

"Stale"

] | PareshKamble | 8 |

sammchardy/python-binance | api | 1,004 | futures_socket() doesn't work in testnet | I'm trying to get updates from the futures account in testnet using futures_socket() while opening-closing orders, however it generates no output on any actions.

```

api = ...

secret = ...

from binance import AsyncClient, BinanceSocketManager

import asyncio

async def order_trade(client,symbol):

order =... | closed | 2021-09-02T23:25:27Z | 2022-07-19T11:28:08Z | https://github.com/sammchardy/python-binance/issues/1004 | [] | wckr-t | 1 |

public-apis/public-apis | api | 3,210 | Public Api test validation for Microsoft Edge | Any suggestions or verified data on this topic?? | closed | 2022-06-29T21:21:02Z | 2022-07-15T21:28:06Z | https://github.com/public-apis/public-apis/issues/3210 | [] | Vmc43hub | 1 |

noirbizarre/flask-restplus | flask | 119 | Add ability to document response headers | The library has the ability to quickly document the expected [request headers](https://flask-restplus.readthedocs.org/en/stable/documenting.html#headers) but currently there is no great way to document the response headers. There was some stuff added in https://github.com/noirbizarre/flask-restplus/commit/a2b73da8d302... | closed | 2016-01-18T03:26:01Z | 2017-03-07T09:40:51Z | https://github.com/noirbizarre/flask-restplus/issues/119 | [

"bug",

"enhancement"

] | awiddersheim | 4 |

mwaskom/seaborn | matplotlib | 2,884 | Would it be possible to optionally use jax.gaussian_kde instead of scipy? | [Now that Jax has](https://github.com/google/jax/pull/11237) a GPU-accelerated version of `gaussian_kde`, would it be possible to use that instead of the scipy or the internal version of this function? | closed | 2022-06-29T05:12:10Z | 2022-06-29T21:30:10Z | https://github.com/mwaskom/seaborn/issues/2884 | [] | NeilGirdhar | 2 |

gunthercox/ChatterBot | machine-learning | 1,900 | Typo | closed | 2020-01-20T03:21:03Z | 2020-04-27T21:31:47Z | https://github.com/gunthercox/ChatterBot/issues/1900 | [

"invalid"

] | scottwedge | 0 | |

strawberry-graphql/strawberry | asyncio | 2,883 | GraphQL query timeouts | <!--- Provide a general summary of the changes you want in the title above. -->

On top of providing security via query complexity analysis and query depth analysis, I believe having query timeouts is an important feature.

<!--- This template is entirely optional and can be removed, but is here to help both you a... | open | 2023-06-23T00:22:35Z | 2025-03-20T15:56:15Z | https://github.com/strawberry-graphql/strawberry/issues/2883 | [] | XChikuX | 0 |

dask/dask | pandas | 11,149 | a tutorial for distributed text deduplication | Can you provide an example of distributed text deduplication based on dask, such as:

- https://github.com/xorbitsai/xorbits/blob/main/python/xorbits/experimental/dedup.py

- https://github.com/ChenghaoMou/text-dedup/blob/main/text_dedup/minhash_spark.py

- https://github.com/FlagOpen/FlagData/blob/main/flagdata/dedupl... | open | 2024-05-27T07:38:30Z | 2024-06-03T07:29:57Z | https://github.com/dask/dask/issues/11149 | [

"documentation"

] | simplew2011 | 5 |

deepset-ai/haystack | machine-learning | 8,319 | Add workflow to verify release notes are formatted correctly when added in PRs | The release process can fail if the release notes YAML files are not correctly formatted.

An example failure: https://github.com/deepset-ai/haystack/actions/runs/10669412195/job/29571286619#step:6:111

This is annoying as we usually discover this issue when drafting a new release, which slows down the whole process.

We ... | closed | 2024-09-02T15:47:01Z | 2024-09-04T15:37:33Z | https://github.com/deepset-ai/haystack/issues/8319 | [

"topic:CI",

"P2"

] | silvanocerza | 0 |

jupyter-widgets-contrib/ipycanvas | jupyter | 7 | MultiCanvas Importerror on Binder | On my Binder instance, in the notebook MultiCanvas.ipynb, the command `from ipycanvas import MultiCanvas` leads to

```

ImportError: cannot import name 'MultiCanvas' from 'ipycanvas' (/srv/conda/envs/notebook/lib/python3.7/site-packages/ipycanvas/__init__.py)

``` | closed | 2019-09-13T13:22:26Z | 2019-09-14T05:58:53Z | https://github.com/jupyter-widgets-contrib/ipycanvas/issues/7 | [] | lexnederbragt | 2 |

ageitgey/face_recognition | python | 1,542 | facial recognition wrong person | I created an actor database with hundreds of people and used a TV drama to extract faces for recognition. When I did face recognition on continuous frames of the same person, I found that the first few frames were correctly recognized, but then a mouth movement was recognized as another character, and the similarity wa... | open | 2023-12-01T09:36:34Z | 2023-12-01T09:36:34Z | https://github.com/ageitgey/face_recognition/issues/1542 | [] | zhouyong297137551 | 0 |

PablocFonseca/streamlit-aggrid | streamlit | 195 | how to get the details of selected rows in a grid with a grouped row when selecting the group | when using a rowGroup and selecting a grouped row, streamlit returns a dictionary that does not contain the details of the selected row inside the group (and not even the detail of group name).

Here is a reproducible example:

```python

import streamlit as st

from st_aggrid import AgGrid, ColumnsAutoSizeMode

im... | open | 2023-03-01T11:51:25Z | 2023-03-01T11:51:25Z | https://github.com/PablocFonseca/streamlit-aggrid/issues/195 | [] | pietroppeter | 0 |

holoviz/panel | matplotlib | 7,052 | AttributeError: 'CompositeWidget' object has no attribute '_composite' | panel==latest `main` branch

While trying to document the `CompositeWidget` in https://github.com/holoviz/panel/pull/7051 I discovered that it's not fully "robust" as you cannot set the `_composite_widget` in a method marked `on_init=True`:

```python

import param

import panel as pn

from panel.widgets import Comp... | closed | 2024-08-01T06:46:46Z | 2025-01-21T11:43:38Z | https://github.com/holoviz/panel/issues/7052 | [] | MarcSkovMadsen | 0 |

neuml/txtai | nlp | 881 | No Issue, just cool | closed | 2025-02-27T18:10:47Z | 2025-02-28T02:55:14Z | https://github.com/neuml/txtai/issues/881 | [] | leoProbisky | 1 | |

ansible/ansible | python | 84,327 | An error occurred (FilterLimitExceeded) when calling the DescribeInstances operation: The maximum length for a filter value is 255 characters | ### Summary

When I try to launch a new EC2 instance using the `ec2_instance` module:

```yaml

- name: launch ec2 instance

ec2_instance:

state: "running"

region: "{{ region }}"

key_name: "{{ keypair }}"

network:

assign_public_ip: true

groups: "{{ group }}"

... | closed | 2024-11-18T04:44:16Z | 2024-12-02T14:00:02Z | https://github.com/ansible/ansible/issues/84327 | [

"bug",

"bot_closed",

"affects_2.16"

] | icicimov | 6 |

pytorch/pytorch | python | 149,124 | `torch.multinomial` fails under multi-worker DataLoader with a CUDA error: `Assertion cumdist[size - 1] > 0` failed | ### 🐛 Describe the bug

When using `torch.multinomial` in a Dataset/IterableDataset within a `DataLoader` that has multiple workers (num_workers > 0), an assertion error is thrown from a CUDA kernel:

```bash

pytorch\aten\src\ATen\native\cuda\MultinomialKernel.cu:112: block: [0,0,0], thread: [0,0,0] Assertion `cumdist... | open | 2025-03-13T14:23:35Z | 2025-03-13T15:21:17Z | https://github.com/pytorch/pytorch/issues/149124 | [

"triaged",

"module: data",

"module: python frontend"

] | yewentao256 | 0 |

nltk/nltk | nlp | 3,154 | 3.8.1: sphinx warnings `reference target not found` | On building my packages I'm using `sphinx-build` command with `-n` switch which shows warnings about missing references. These are not critical issues.

You can peek at fixes for this kind of issue in other projects

https://github.com/RDFLib/rdflib-sqlalchemy/issues/95

https://github.com/RDFLib/rdflib/pull/2036

https... | open | 2023-05-16T16:51:57Z | 2023-05-17T21:18:01Z | https://github.com/nltk/nltk/issues/3154 | [

"documentation",

"enhancement"

] | kloczek | 1 |

JaidedAI/EasyOCR | machine-learning | 327 | Error occurs when i install | error: package directory 'libfuturize\tests' does not exist | closed | 2020-12-10T07:09:44Z | 2022-03-02T09:24:10Z | https://github.com/JaidedAI/EasyOCR/issues/327 | [] | AndyJMR | 3 |

torchbox/wagtail-grapple | graphql | 264 | `N*M + 1` problem when fetching image renditions | When we fetch multiple image renditions using the `srcSet` parameter on image queries, we end up having an `N * M + 1` situation, where `N` is the total number of images to retrieve renditions for and `M` is the number of renditions to generate.

I've opened a draft PR with a test which confirms this behaviour here: ... | closed | 2022-08-31T10:21:50Z | 2022-10-31T14:10:00Z | https://github.com/torchbox/wagtail-grapple/issues/264 | [] | Tijani-Dia | 1 |

deepfakes/faceswap | machine-learning | 1,365 | あ | closed | 2023-12-30T08:39:20Z | 2023-12-30T13:54:34Z | https://github.com/deepfakes/faceswap/issues/1365 | [] | alicerei | 0 | |

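2noise/ChatTTS | python | 279 | Are there any other recommended open-source TTS systems that support streaming or low-latency responses? ChatTTS is really slow | I run ChatTTS on an A100, and generating long text takes a very long time. I then tried splitting the text and generating it segment by segment, but the speed was still not good enough. I previously implemented speech synthesis based on PaddleSpeech; its streaming worked well, but the voice quality was poor. Can anyone recommend a TTS whose synthesis quality is not so poor and whose speed is acceptable? | closed | 2024-06-06T11:59:58Z | 2024-10-06T16:34:57Z | https://github.com/2noise/ChatTTS/issues/279 | [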

"ad"

] | chwljy | 6 |

skypilot-org/skypilot | data-science | 4,859 | [Tests] Client server API version compatibility tests | With the new client-server architecture, we need to add tests that verify functionality of client/servers running the same API version:

* New client, old server

* Old client, new server | open | 2025-02-28T23:08:57Z | 2025-02-28T23:08:57Z | https://github.com/skypilot-org/skypilot/issues/4859 | [] | romilbhardwaj | 0 |

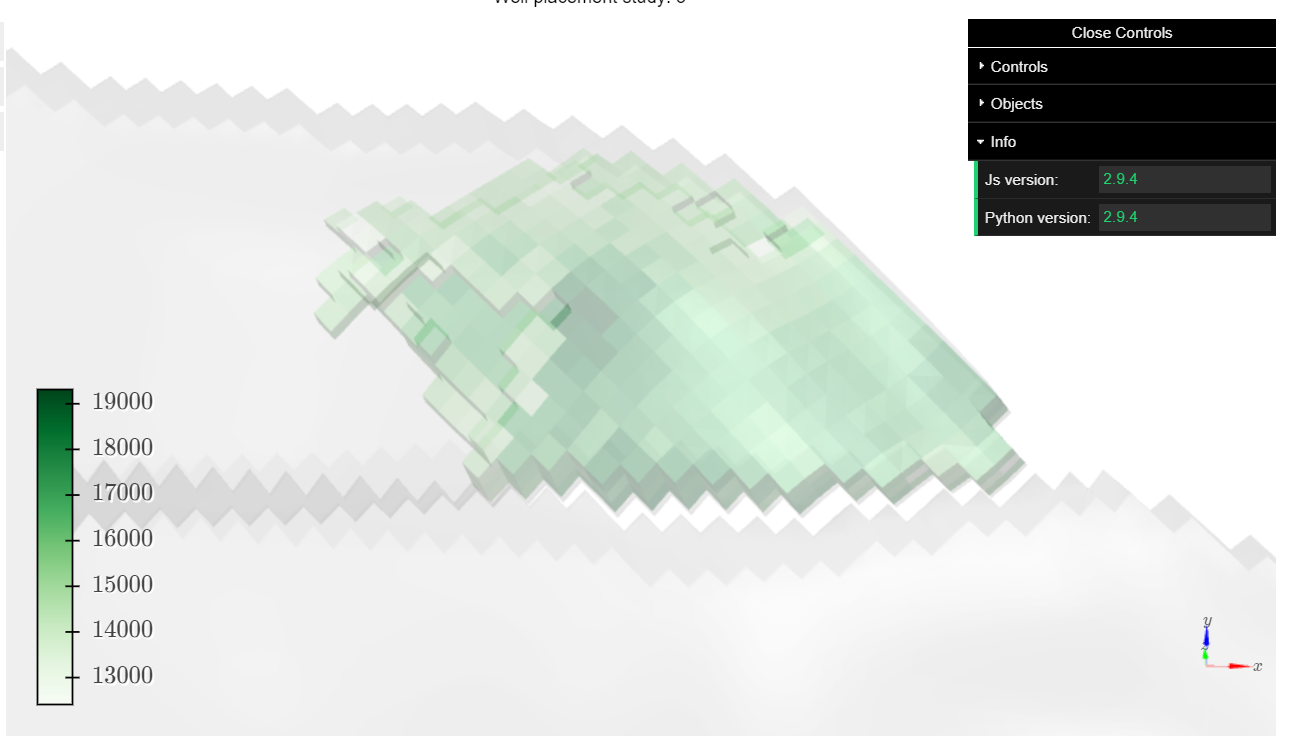

K3D-tools/K3D-jupyter | jupyter | 282 | Regression in 2.9.5 | There seems to be a regression in 2.9.5 about setting objects opacity. See below

**Expected: v2.9.4**

Green object opacity is set to 0.4

**2.9.5**

pervasively, it would help to eliminate the possibility... | closed | 2022-12-16T19:32:29Z | 2022-12-17T20:18:57Z | https://github.com/vitalik/django-ninja/issues/633 | [] | ghost | 1 |

omnilib/aiomultiprocess | asyncio | 5 | Check for existing asyncio event loops after forking | It seems there are some cases where there is already an existing, active event loop after forking, which causes an exception when the child process tries to create a new event loop. It should check for an existing event loop first, and only create one if there's no active loop available.

Target function: https://gi... | open | 2018-07-30T18:51:25Z | 2018-10-20T02:58:55Z | https://github.com/omnilib/aiomultiprocess/issues/5 | [

"enhancement",

"good first issue"

] | amyreese | 1 |

sepandhaghighi/samila | matplotlib | 77 | Add Notebook Examples | #### Description

We can have some `*.ipynb` files providing some examples of Samila usage. | closed | 2021-11-22T15:02:19Z | 2022-03-21T14:28:32Z | https://github.com/sepandhaghighi/samila/issues/77 | [

"enhancement"

] | sadrasabouri | 0 |

graphistry/pygraphistry | jupyter | 1 | Replace NaNs with nulls since node cannot parse JSON with NaNs | closed | 2015-06-23T21:06:19Z | 2015-08-06T13:54:24Z | https://github.com/graphistry/pygraphistry/issues/1 | [

"bug"

] | thibaudh | 1 | |

plotly/dash | plotly | 2,541 | [BUG] Inconsistent/buggy partial plot updates using Patch | **Example scenario / steps to reproduce:**

- App has several plots, all of which have the same x-axis coordinate units

- When one plot is rescaled (x-axis range modified, e.g. zoom in), all plots should rescale the same way

- Rather than rebuild each plot, we can now implement a callback using `Patch`. This way, t... | closed | 2023-05-25T01:16:45Z | 2024-07-24T17:08:29Z | https://github.com/plotly/dash/issues/2541 | [] | abehr | 2 |

ultralytics/yolov5 | machine-learning | 12,506 | Make number of channels in convolution configurable | ### Search before asking

- [X] I have searched the YOLOv5 [issues](https://github.com/ultralytics/yolov5/issues) and found no similar feature requests.

### Description

It would be great if the number of layer per convolution could be configurable. At the moment, it is hard-coded to 8.

Some ASICs might have a numb... | closed | 2023-12-14T13:33:36Z | 2024-01-14T01:14:42Z | https://github.com/ultralytics/yolov5/issues/12506 | [

"enhancement",

"Stale"

] | Corallo | 2 |

ray-project/ray | data-science | 51,280 | [Serve] slashes in deployment name cause actor failure | ### What happened + What you expected to happen

Creating a Serve deployment with a name containing a slash (such as `TextGenerationModel.options(name="huawei-noah/TinyBERT_General_4L_312D")`) leads to actor failures. The error occurs because the slash in the name is likely being used as a path separator in log fi... | open | 2025-03-11T23:05:06Z | 2025-03-11T23:05:06Z | https://github.com/ray-project/ray/issues/51280 | [

"bug",

"triage",

"serve"

] | crypdick | 0 |

ultralytics/ultralytics | deep-learning | 19,374 | Are pretrained models for classification, oriented bounding boxes, pose estimation, and instance segmentation available yet, and where can they be downloaded? | ### Search before asking

- [x] I have searched the Ultralytics [issues](https://github.com/ultralytics/ultralytics/issues) and found no similar feature requests.

### Description

Are pretrained models for classification, oriented bounding boxes, pose estimation, and instance segmentation available yet, and where can they be downloaded?

### Use case

_No response_

### Ad... | open | 2025-02-22T15:05:25Z | 2025-02-23T13:26:10Z | https://github.com/ultralytics/ultralytics/issues/19374 | [

"OBB",

"segment",

"classify",

"pose"

] | CachCheng | 3 |

simple-login/app | flask | 1,197 | Inconsistent Cache-Control header | Please note that this is only for bug report.

For help on your account, please reach out to us at hi[at]simplelogin.io. Please make sure to check out [our FAQ](https://simplelogin.io/faq/) that contains frequently asked questions.

For feature request, you can use our [forum](https://github.com/simple-login/app... | open | 2022-07-29T05:19:00Z | 2022-08-01T07:01:10Z | https://github.com/simple-login/app/issues/1197 | [] | proletarius101 | 1 |

jofpin/trape | flask | 145 | can i use trape without google api key? | open | 2019-04-03T19:05:44Z | 2019-04-04T14:31:52Z | https://github.com/jofpin/trape/issues/145 | [] | cybergost | 1 | |

python-visualization/folium | data-visualization | 1,661 | Not able to add multiple markers on the Map | **Describe the bug**

For some reason folium.Marker only creates one marker; it should create multiple markers when it is called inside the for loop. I have tried multiple variations of the code: saving index.html inside the for loop, using add_to(map), and creating a feature group and adding the markers to the group, but no... | closed | 2022-11-23T01:13:38Z | 2022-11-23T11:38:50Z | https://github.com/python-visualization/folium/issues/1661 | [] | Crimson-Zero | 1 |

plotly/plotly.py | plotly | 4,086 | 5.13.1: test suite is failing in `_plotly_utils/tests/validators/test_integer_validator.py` unit | I'm packaging your module as an rpm package so I'm using the typical PEP517 based build, install and test cycle used on building packages from non-root account.

- `python3 -sBm build -w --no-isolation`

- because I'm calling `build` with `--no-isolation` I'm using during all processes only locally installed modules

-... | closed | 2023-02-27T20:44:26Z | 2024-07-11T14:22:19Z | https://github.com/plotly/plotly.py/issues/4086 | [] | kloczek | 7 |

huggingface/datasets | pytorch | 7,387 | Dynamic adjusting dataloader sampling weight | Hi,

Thanks for your wonderful work! I'm wondering is there a way to dynamically adjust the sampling weight of each data in the dataset during training? Looking forward to your reply, thanks again. | open | 2025-02-10T03:18:47Z | 2025-03-07T14:06:54Z | https://github.com/huggingface/datasets/issues/7387 | [] | whc688 | 3 |

Guovin/iptv-api | api | 437 | After installing ffmpeg, version 1.4.9 still cannot filter channel sources |

May I ask why the channel sources still cannot be filtered even though ffmpeg is installed? | closed | 2024-10-22T10:52:42Z | 2024-10-22T12:26:36Z | https://github.com/Guovin/iptv-api/issues/437 | [

"invalid",

"question"

] | lzedwin | 4 |

hankcs/HanLP | nlp | 596 | Elasticsearch is hugely popular right now; could anyone provide a tokenizer plugin that combines Elasticsearch with HanLP? Thanks | closed | 2017-08-04T02:54:08Z | 2017-08-06T03:32:48Z | https://github.com/hankcs/HanLP/issues/596 | [

"duplicated"

] | hubiao1 | 1 | |

stanfordnlp/stanza | nlp | 599 | Create Amharic support | **Is your feature request related to a problem? Please describe.**

Request Amharic support which does not exist in stanza

**Describe the solution you'd like**

Add Amharic support

**Describe alternatives you've considered**

spaCy, which is still a work in progress for handling multi-word tokens.

https://github.com/explo... | closed | 2021-01-18T18:48:19Z | 2021-01-25T01:19:07Z | https://github.com/stanfordnlp/stanza/issues/599 | [

"enhancement",

"data issue"

] | yosiasz | 9 |

ranaroussi/yfinance | pandas | 1,234 | Uses HTTPX instead of Requests. | **Describe the problem**

Requests and other libraries without HTTP 2 support are not performing well, causing problems due to protections in some cases.

**Describe the solution**

Replacing Requests with HTTPX (along with some request header settings) can improve the approach and produce better results.

**Additi... | closed | 2022-12-11T11:42:49Z | 2023-02-02T18:49:17Z | https://github.com/ranaroussi/yfinance/issues/1234 | [] | bergginu | 1 |
