repo_name | topic | issue_number | title | body | state | created_at | updated_at | url | labels | user_login | comments_count |

|---|---|---|---|---|---|---|---|---|---|---|---|

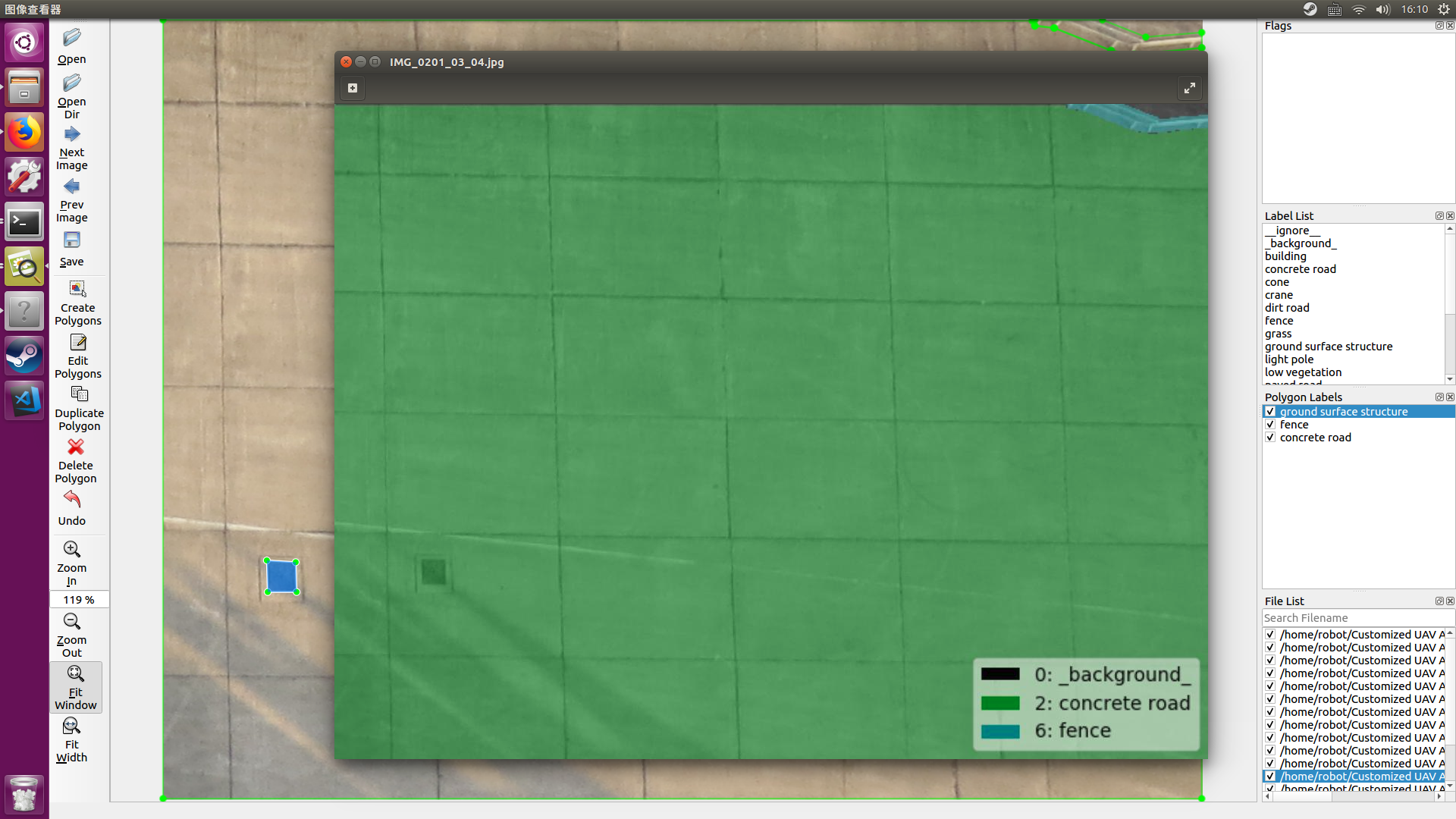

wkentaro/labelme | computer-vision | 329 | How to crop images from json annotations? | I would like to crop the images that I already have done the annotations, is this possible? | closed | 2019-02-21T17:56:39Z | 2019-04-27T01:58:32Z | https://github.com/wkentaro/labelme/issues/329 | [] | andrewsueg | 3 |

huggingface/peft | pytorch | 1,583 | cannot import name 'prepare_model_for_int8_training' from 'peft' | When I run the finetuning sample code from llama-recipes i.e. peft_finetuning.ipynb, I get this error. My code is , and python=3.9, peft=0.10.0

the error is

Has this method been deprecated? Or does my version not match? Thanks for the reply!

| closed | 2024-03-24T07:44:42Z | 2024-05-15T13:49:13Z | https://github.com/huggingface/peft/issues/1583 | [] | Eyict | 8 |

horovod/horovod | pytorch | 3,515 | Question: How can I run a distribution training using Horovod for a Regressor model | Question:

I have a regressor model that does not use libraries such as TensorFlow or PyTorch. Is there an example for that? | closed | 2022-04-20T14:14:41Z | 2022-04-20T14:50:14Z | https://github.com/horovod/horovod/issues/3515 | [] | mChowdhury-91 | 0 |

jupyter-incubator/sparkmagic | jupyter | 215 | Bad error messages when you forget arguments to magics | ```

%%delete

```

This throws a confusing exception rather than explaining to the user that they're missing a `-s` argument.

| closed | 2016-03-30T18:16:46Z | 2016-04-04T21:31:21Z | https://github.com/jupyter-incubator/sparkmagic/issues/215 | [

"kind:bug"

] | msftristew | 0 |

graphistry/pygraphistry | jupyter | 372 | Clear SSO exceptions | Clear exns that state the problem & where applicable, solution

- [ ] Old graphistry server

- [ ] Unknown idp

- [ ] Org without an idp | closed | 2022-07-09T00:34:12Z | 2022-09-02T02:15:53Z | https://github.com/graphistry/pygraphistry/issues/372 | [] | lmeyerov | 1 |

openapi-generators/openapi-python-client | rest-api | 557 | Types named datetime cause conflict with Python's datetime | **Describe the bug**

I am creating an OAS3 file from an existing API and one of the schemas includes a property named `datetime` which causes an Attribute exception when the generated model is imported.

**To Reproduce**

Steps to reproduce the behavior:

1. Convert an OAS3 file that contains a property named `datetime`:

2. Conversion succeeds with no errors or warnings

3. When executing generated code that has the offending model imported, an AttributeError will be thrown with message:

`'Unset' object has no attribute 'datetime'`

**Expected behavior**

A type named `datetime` should be renamed with an underscore suffix like other built-in types

**OpenAPI Spec File**

```
components:
  schemas:
    fubar:
      type: object
      properties:
        datetime:
          type: string
          format: date-time
```

**Desktop (please complete the following information):**

- OS: macOS 12.1

- Python Version: 3.9.6

- openapi-python-client version 0.10.8

**Additional context**

This looks like a simple fix. I'll work on a PR.

| closed | 2021-12-23T21:36:21Z | 2022-01-17T19:45:45Z | https://github.com/openapi-generators/openapi-python-client/issues/557 | [

"🐞bug"

] | kmray | 0 |
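
A minimal sketch of the kind of name shadowing that would produce the `'Unset' object has no attribute 'datetime'` error reported in openapi-python-client issue 557 above. The `Unset` class, the `UNSET` sentinel, and both `Fubar` classes are stand-ins written for illustration and are not the generator's actual output; the underscore-suffixed field name follows the renaming the issue proposes.

```python
import datetime


class Unset:
    """Stand-in for a sentinel type marking a field as not provided."""


UNSET = Unset()


def define_shadowed_model():
    # Inside a class body, a field named `datetime` hides the imported module
    # for every later statement in that body.
    class Fubar:
        datetime = UNSET
        # `datetime` now resolves to UNSET, so this raises AttributeError.
        created = datetime.datetime(2021, 12, 23)

    return Fubar


def define_renamed_model():
    # Renaming the field with a trailing underscore keeps the module reachable.
    class Fubar:
        datetime_ = UNSET
        created = datetime.datetime(2021, 12, 23)

    return Fubar


try:
    define_shadowed_model()
    shadow_error = None
except AttributeError as exc:
    shadow_error = str(exc)

renamed = define_renamed_model()
```

The first definition fails while the class body is being executed, which would be consistent with the error appearing as soon as the generated model is imported.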

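Johnserf-Seed/TikTokDownload | api | 265 | [BUG] One folder per image-album post | **Describe the bug**

Each image-album post gets its own folder

**To reproduce**

Steps to reproduce the behavior:

1. Occurs when downloading the posts from a user's profile page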

| closed | 2022-12-07T15:18:14Z | 2022-12-07T15:34:37Z | https://github.com/Johnserf-Seed/TikTokDownload/issues/265 | [

"故障(bug)",

"额外求助(help wanted)",

"无效(invalid)"

] | WYF03 | 1 |

huggingface/diffusers | pytorch | 10,722 | RuntimeError: The size of tensor a (4608) must match the size of tensor b (5120) at non-singleton dimension 2 during DreamBooth Training with Prior Preservation | ### Describe the bug

I am trying to run "train_dreambooth_lora_flux.py" on my dataset, but the error will happen if --with_prior_preservation is used.

**Who can help me? Thanks!**

### Reproduction

python ./examples/dreambooth/train_dreambooth_lora_flux.py \

--pretrained_model_name_or_path=$MODEL_NAME \

--instance_data_dir=$INSTANCE_DIR \

--output_dir=$OUTPUT_DIR \

--with_prior_preservation \

--class_data_dir="my_file" \

--class_prompt="A photo" \

--instance_prompt="A sks photo" \

--resolution=1024 \

--rank=32 \

--max_train_steps=5000 \

--checkpointing_steps=100 \

--seed="0" \

--mixed_precision="bf16" \

--train_batch_size=1 \

--guidance_scale=1 \

--gradient_accumulation_steps=4 \

--optimizer="prodigy" \

--learning_rate=1. \

--report_to="tensorboard" \

--lr_scheduler="constant" \

--lr_warmup_steps=0

### Logs

```shell

Traceback (most recent call last):

File "/data4/work/yinguowei/code/diffusers/./examples/dreambooth/train_dreambooth_lora_flux.py", line 1926, in <module>

main(args)

File "/data4/work/yinguowei/code/diffusers/./examples/dreambooth/train_dreambooth_lora_flux.py", line 1720, in main

model_pred = transformer(

File "/data/miniconda3/envs/diffusers_ygw/lib/python3.10/site-packages/torch/nn/modules/module.py", line 1736, in _wrapped_call_impl

return self._call_impl(*args, **kwargs)

File "/data/miniconda3/envs/diffusers_ygw/lib/python3.10/site-packages/torch/nn/modules/module.py", line 1747, in _call_impl

return forward_call(*args, **kwargs)

File "/data/miniconda3/envs/diffusers_ygw/lib/python3.10/site-packages/accelerate/utils/operations.py", line 819, in forward

return model_forward(*args, **kwargs)

File "/data/miniconda3/envs/diffusers_ygw/lib/python3.10/site-packages/accelerate/utils/operations.py", line 807, in __call__

return convert_to_fp32(self.model_forward(*args, **kwargs))

File "/data/miniconda3/envs/diffusers_ygw/lib/python3.10/site-packages/torch/amp/autocast_mode.py", line 44, in decorate_autocast

return func(*args, **kwargs)

File "/data4/work/yinguowei/code/diffusers/src/diffusers/models/transformers/transformer_flux.py", line 529, in forward

encoder_hidden_states, hidden_states = block(

File "/data/miniconda3/envs/diffusers_ygw/lib/python3.10/site-packages/torch/nn/modules/module.py", line 1736, in _wrapped_call_impl

return self._call_impl(*args, **kwargs)

File "/data/miniconda3/envs/diffusers_ygw/lib/python3.10/site-packages/torch/nn/modules/module.py", line 1747, in _call_impl

return forward_call(*args, **kwargs)

File "/data4/work/yinguowei/code/diffusers/src/diffusers/models/transformers/transformer_flux.py", line 188, in forward

attention_outputs = self.attn(

File "/data/miniconda3/envs/diffusers_ygw/lib/python3.10/site-packages/torch/nn/modules/module.py", line 1736, in _wrapped_call_impl

return self._call_impl(*args, **kwargs)

File "/data/miniconda3/envs/diffusers_ygw/lib/python3.10/site-packages/torch/nn/modules/module.py", line 1747, in _call_impl

return forward_call(*args, **kwargs)

File "/data4/work/yinguowei/code/diffusers/src/diffusers/models/attention_processor.py", line 595, in forward

return self.processor(

File "/data4/work/yinguowei/code/diffusers/src/diffusers/models/attention_processor.py", line 2325, in __call__

query = apply_rotary_emb(query, image_rotary_emb)

File "/data4/work/yinguowei/code/diffusers/src/diffusers/models/embeddings.py", line 1204, in apply_rotary_emb

out = (x.float() * cos + x_rotated.float() * sin).to(x.dtype)

RuntimeError: The size of tensor a (4608) must match the size of tensor b (5120) at non-singleton dimension 2

```

### System Info

- 🤗 Diffusers version: 0.33.0.dev0

- Platform: Linux-5.4.119-19.0009.44-x86_64-with-glibc2.28

- Running on Google Colab?: No

- Python version: 3.10.16

- PyTorch version (GPU?): 2.5.1+cu124 (True)

- Flax version (CPU?/GPU?/TPU?): not installed (NA)

- Jax version: not installed

- JaxLib version: not installed

- Huggingface_hub version: 0.27.1

- Transformers version: 4.48.1

- Accelerate version: 1.3.0

- PEFT version: 0.14.0

- Bitsandbytes version: not installed

- Safetensors version: 0.5.2

- xFormers version: not installed

- Accelerator: NVIDIA A800-SXM4-80GB, 81920 MiB

NVIDIA A800-SXM4-80GB, 81920 MiB

NVIDIA A800-SXM4-80GB, 81920 MiB

NVIDIA A800-SXM4-80GB, 81920 MiB

NVIDIA A800-SXM4-80GB, 81920 MiB

NVIDIA A800-SXM4-80GB, 81920 MiB

NVIDIA A800-SXM4-80GB, 81920 MiB

NVIDIA A800-SXM4-80GB, 81920 MiB

- Using GPU in script?: <fill in>

- Using distributed or parallel set-up in script?: <fill in>

### Who can help?

_No response_ | open | 2025-02-05T08:48:35Z | 2025-03-13T15:03:47Z | https://github.com/huggingface/diffusers/issues/10722 | [

"bug",

"stale"

] | yinguoweiOvO | 5 |

ultralytics/yolov5 | pytorch | 12,638 | Training is slow on the second step of each epoch | ### Search before asking

- [X] I have searched the YOLOv5 [issues](https://github.com/ultralytics/yolov5/issues) and [discussions](https://github.com/ultralytics/yolov5/discussions) and found no similar questions.

### Question

Hello, I recently built an ML rig to improve my training time. This allowed me to train on my datasets (around 150k images) 10 times faster than before. I currently train with batch size = 1000, and each epoch takes around 24 sec for the first step (versus ~7 min before) and around 1 min 30 for the second step.

From my experience, the second step was always faster than the first one, so I wonder why it now takes almost 4 times longer.

For the second step, the training time didn't improve that much and I don't really understand why. What does the second step do exactly? Do you have any idea how I can improve the training speed?

I also have the feeling that I am CPU-limited and that not all the cores are used during the training process. Is there a solution for that too?

### Additional

_No response_ | closed | 2024-01-16T11:24:15Z | 2024-10-20T19:37:30Z | https://github.com/ultralytics/yolov5/issues/12638 | [

"question",

"Stale"

] | Busterfake | 3 |

vanna-ai/vanna | data-visualization | 628 | a minor bug in azure ai search | In Azure AI Search, the similar-question SQL lookup extracts the question from `text`, but it should get it from `question`. | closed | 2024-09-04T08:19:06Z | 2024-09-12T14:41:40Z | https://github.com/vanna-ai/vanna/issues/628 | [

"bug"

] | Jaya-sys | 2 |

LibrePhotos/librephotos | django | 680 | 6gb+ docker image | **Describe the enhancement you'd like**

All of my many Docker images combined are <15 GB... one layer alone (00856e006cf8) of the librephotos image is 6 GB!

**Describe why this will benefit the LibrePhotos**

On a slow connection, 6 GB takes 'forever' to download, and excess/unused 'stuff' must be retained in that layer (given that apps far more complex than librephotos take up far less)

**Additional context**

```

Pulling image: reallibrephotos/singleton:latest

IMAGE ID [1965417284]: Pulling from reallibrephotos/singleton.

IMAGE ID [e96e057aae67]: Pulling fs layer. Downloading 100% of 29 MB. Verifying Checksum. Download complete. Extracting. Pull complete.

IMAGE ID [01eca18ab462]: Pulling fs layer. Downloading 100% of 60 MB. Verifying Checksum. Download complete. Extracting. Pull complete.

IMAGE ID [9207938ee730]: Pulling fs layer. Downloading 100% of 983 KB. Verifying Checksum. Download complete. Extracting. Pull complete.

IMAGE ID [ee2b68d68fec]: Pulling fs layer. Downloading 100% of 53 KB. Verifying Checksum. Download complete. Extracting. Pull complete.

IMAGE ID [b164cc3bd207]: Pulling fs layer. Downloading 100% of 106 KB. Download complete. Extracting. Pull complete.

IMAGE ID [dc5062a40050]: Pulling fs layer. Downloading 100% of 67 KB. Verifying Checksum. Download complete. Extracting. Pull complete.

IMAGE ID [4f4fb700ef54]: Pulling fs layer. Downloading 100% of 32 B. Verifying Checksum. Download complete. Extracting. Pull complete.

IMAGE ID [00856e006cf8]: Pulling fs layer. Downloading 13% of 6 GB.

IMAGE ID [17ec9298135e]: Pulling fs layer. Downloading 100% of 845 KB. Verifying Checksum. Download complete.

IMAGE ID [9b9b1629d62f]: Pulling fs layer. Downloading 100% of 1 MB. Verifying Checksum. Download complete.

IMAGE ID [4ceafbd41d85]: Pulling fs layer. Downloading 100% of 122 B.

IMAGE ID [570003f2242e]: Pulling fs layer. Downloading 100% of 5 KB. Verifying Checksum. Download complete.

IMAGE ID [504f653315c2]: Pulling fs layer. Downloading 100% of 530 B. Verifying Checksum. Download complete.

IMAGE ID [8bd51bdb2792]: Pulling fs layer. Downloading 100% of 5 KB. Verifying Checksum. Download complete.

IMAGE ID [84af761819c0]: Pulling fs layer. Downloading 100% of 174 B. Verifying Checksum. Download complete

``` | closed | 2022-11-21T11:43:13Z | 2023-01-22T14:44:28Z | https://github.com/LibrePhotos/librephotos/issues/680 | [

"enhancement"

] | techie2000 | 6 |

mljar/mljar-supervised | scikit-learn | 751 | warning in test: tests/tests_automl/test_dir_change.py::AutoMLDirChangeTest::test_compute_predictions_after_dir_change | ```

============================= test session starts ==============================

platform linux -- Python 3.12.3, pytest-8.3.2, pluggy-1.5.0 -- /home/adas/mljar/mljar-supervised/venv/bin/python3

cachedir: .pytest_cache

rootdir: /home/adas/mljar/mljar-supervised

configfile: pytest.ini

plugins: cov-5.0.0

collecting ... collected 1 item

tests/tests_automl/test_dir_change.py::AutoMLDirChangeTest::test_compute_predictions_after_dir_change AutoML directory: automl_testing_A/automl_testing

The task is regression with evaluation metric rmse

AutoML will use algorithms: ['Baseline', 'Linear', 'Decision Tree', 'Random Forest', 'Xgboost', 'Neural Network']

AutoML will ensemble available models

AutoML steps: ['simple_algorithms', 'default_algorithms', 'ensemble']

* Step simple_algorithms will try to check up to 3 models

1_Baseline rmse 141.234462 trained in 0.25 seconds

2_DecisionTree rmse 89.485706 trained in 0.24 seconds

3_Linear rmse 0.0 trained in 0.25 seconds

* Step default_algorithms will try to check up to 3 models

4_Default_Xgboost rmse 55.550016 trained in 0.33 seconds

5_Default_NeuralNetwork rmse 9.201656 trained in 0.29 seconds

6_Default_RandomForest rmse 82.006449 trained in 0.57 seconds

* Step ensemble will try to check up to 1 model

Ensemble rmse 0.0 trained in 0.17 seconds

AutoML fit time: 5.97 seconds

AutoML best model: 3_Linear

FAILED

=================================== FAILURES ===================================

________ AutoMLDirChangeTest.test_compute_predictions_after_dir_change _________

cls = <class '_pytest.runner.CallInfo'>

func = <function call_and_report.<locals>.<lambda> at 0x7d808995b2e0>

when = 'call'

reraise = (<class '_pytest.outcomes.Exit'>, <class 'KeyboardInterrupt'>)

@classmethod

def from_call(

cls,

func: Callable[[], TResult],

when: Literal["collect", "setup", "call", "teardown"],

reraise: type[BaseException] | tuple[type[BaseException], ...] | None = None,

) -> CallInfo[TResult]:

"""Call func, wrapping the result in a CallInfo.

:param func:

The function to call. Called without arguments.

:type func: Callable[[], _pytest.runner.TResult]

:param when:

The phase in which the function is called.

:param reraise:

Exception or exceptions that shall propagate if raised by the

function, instead of being wrapped in the CallInfo.

"""

excinfo = None

start = timing.time()

precise_start = timing.perf_counter()

try:

> result: TResult | None = func()

venv/lib/python3.12/site-packages/_pytest/runner.py:341:

_ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _

venv/lib/python3.12/site-packages/_pytest/runner.py:242: in <lambda>

lambda: runtest_hook(item=item, **kwds), when=when, reraise=reraise

venv/lib/python3.12/site-packages/pluggy/_hooks.py:513: in __call__

return self._hookexec(self.name, self._hookimpls.copy(), kwargs, firstresult)

venv/lib/python3.12/site-packages/pluggy/_manager.py:120: in _hookexec

return self._inner_hookexec(hook_name, methods, kwargs, firstresult)

venv/lib/python3.12/site-packages/_pytest/threadexception.py:92: in pytest_runtest_call

yield from thread_exception_runtest_hook()

venv/lib/python3.12/site-packages/_pytest/threadexception.py:68: in thread_exception_runtest_hook

yield

venv/lib/python3.12/site-packages/_pytest/unraisableexception.py:95: in pytest_runtest_call

yield from unraisable_exception_runtest_hook()

_ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _ _

def unraisable_exception_runtest_hook() -> Generator[None, None, None]:

with catch_unraisable_exception() as cm:

try:

yield

finally:

if cm.unraisable:

if cm.unraisable.err_msg is not None:

err_msg = cm.unraisable.err_msg

else:

err_msg = "Exception ignored in"

msg = f"{err_msg}: {cm.unraisable.object!r}\n\n"

msg += "".join(

traceback.format_exception(

cm.unraisable.exc_type,

cm.unraisable.exc_value,

cm.unraisable.exc_traceback,

)

)

> warnings.warn(pytest.PytestUnraisableExceptionWarning(msg))

E pytest.PytestUnraisableExceptionWarning: Exception ignored in: <_io.FileIO [closed]>

E

E Traceback (most recent call last):

E File "/home/adas/mljar/mljar-supervised/supervised/base_automl.py", line 218, in load

E self._data_info = json.load(open(data_info_path))

E ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

E ResourceWarning: unclosed file <_io.TextIOWrapper name='automl_testing_B/automl_testing/data_info.json' mode='r' encoding='UTF-8'>

venv/lib/python3.12/site-packages/_pytest/unraisableexception.py:85: PytestUnraisableExceptionWarning

=========================== short test summary info ============================

FAILED tests/tests_automl/test_dir_change.py::AutoMLDirChangeTest::test_compute_predictions_after_dir_change

============================== 1 failed in 8.06s ===============================

``` | closed | 2024-08-23T09:19:11Z | 2024-08-29T08:16:42Z | https://github.com/mljar/mljar-supervised/issues/751 | [] | a-szulc | 1 |
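
The `ResourceWarning` in the log above points at `json.load(open(data_info_path))`, which leaves the file handle for the garbage collector to close. A minimal sketch of the usual remedy, a `with` block, follows. The `load_data_info` helper and the temporary file are hypothetical and are not taken from the mljar-supervised code base.

```python
import json
import os
import tempfile


def load_data_info(data_info_path):
    """Read a JSON file and close the handle deterministically."""
    with open(data_info_path, encoding="utf-8") as fin:
        return json.load(fin)


# Usage with a throwaway file standing in for data_info.json.
with tempfile.TemporaryDirectory() as tmp_dir:
    path = os.path.join(tmp_dir, "data_info.json")
    with open(path, "w", encoding="utf-8") as fout:
        json.dump({"columns": ["a", "b"]}, fout)
    data_info = load_data_info(path)
```

Because the handle is closed when the `with` block exits, no unclosed-file warning should be raised for pytest to turn into a failure.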

skypilot-org/skypilot | data-science | 4,876 | [Python API] Add `asyncio` support | Many frameworks (e.g., temporal) are asyncio native and it would be much easier to integrate with them if SkyPilot APIs returned await-able coroutines. For example, `request_id = sky.launch(); sky.get(request_id)` should be replaceable with an `await sky.launch()`.

Example of how ray works with asyncio: https://docs.ray.io/en/latest/ray-core/actors/async_api.html#asyncio-for-actors

Current workaround is to use `asyncio.to_thread` to call `sky.get`. | open | 2025-03-05T00:54:44Z | 2025-03-05T00:54:44Z | https://github.com/skypilot-org/skypilot/issues/4876 | [] | romilbhardwaj | 0 |
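
A minimal sketch of the `asyncio.to_thread` workaround mentioned above. `blocking_get` is a hypothetical stand-in for a blocking call such as `sky.get(request_id)`; no SkyPilot API is used or assumed here.

```python
import asyncio
import time


def blocking_get(request_id):
    """Hypothetical stand-in for a blocking client call; sleeps, then returns."""
    time.sleep(0.05)
    return f"result-for-{request_id}"


async def wait_for_request(request_id):
    # Run the blocking call in a worker thread so the event loop stays free.
    return await asyncio.to_thread(blocking_get, request_id)


async def main():
    # Several requests can be awaited concurrently.
    return await asyncio.gather(*(wait_for_request(i) for i in range(3)))


results = asyncio.run(main())
```

`asyncio.to_thread` requires Python 3.9 or later. It keeps the caller's code `await`-able, but each pending call still occupies a thread, which is presumably why the issue asks for native coroutine support.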

google-deepmind/graph_nets | tensorflow | 77 | How to use modules.SelfAttention | Hi

I tried to use modules.SelfAttention like this:

`graph_network = modules.SelfAttention()`

`input_graphs = utils_tf.data_dicts_to_graphs_tuple(graph_dicts)`

`output_graphs = graph_network(input_graphs)`

Then, I get this error:

`TypeError: _build() missing 3 required positional arguments: 'node_keys', 'node_queries', and 'attention_graph'`

I wonder if there's a notebook explaining how to use the modules.SelfAttention.

Best regards,

Ron

| closed | 2019-05-24T07:04:25Z | 2019-05-28T08:27:07Z | https://github.com/google-deepmind/graph_nets/issues/77 | [] | ronsoohyeong | 2 |

donnemartin/system-design-primer | python | 198 | Can you share with us the technic you used to create the design images? | Can you share what app you used to create the design images and the source files?

( opening study_guide.graffle in omnigraffle doesn't look like your pictures.)

Thanks.

(and great knowledge source, btw) | closed | 2018-08-11T16:12:01Z | 2018-08-12T03:02:47Z | https://github.com/donnemartin/system-design-primer/issues/198 | [] | yperry | 1 |

allure-framework/allure-python | pytest | 299 | Highlight test step | Hello,

Is there a way to highlight a step in allure report (generated by Robotframework).

I mean something like blue font or adding an icon ...

| closed | 2018-10-09T07:10:18Z | 2018-11-20T07:05:59Z | https://github.com/allure-framework/allure-python/issues/299 | [] | ric79 | 1 |

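svc-develop-team/so-vits-svc | pytorch | 125 | [Help]: Running on Google Colab, an error occurs at the inference step: ModuleNotFoundError: No module named 'torchcrepe' | ### Please check the confirmation boxes below.

- [X] I have carefully read [README.md](https://github.com/svc-develop-team/so-vits-svc/blob/4.0/README_zh_CN.md) and the [Quick solution in the wiki](https://github.com/svc-develop-team/so-vits-svc/wiki/Quick-solution).

- [X] I have investigated the problem through various search engines; the problem I am raising is not a common one.

- [X] I am not using a one-click package/environment package provided by a third-party user.

### System platform version

win10

### GPU model

525.85.12

### Python version

Python 3.9.16

### PyTorch version

2.0.0+cu118

### sovits branch

4.0 (default)

### Dataset source (used to judge dataset quality)

VTuber livestream audio processed with UVR

### Step or command where the problem occurs

Inference

### Problem description

The following error occurs at the inference step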

Traceback (most recent call last):

File "/content/so-vits-svc/inference_main.py", line 11, in <module>

from inference import infer_tool

File "/content/so-vits-svc/inference/infer_tool.py", line 20, in <module>

import utils

File "/content/so-vits-svc/utils.py", line 20, in <module>

from modules.crepe import CrepePitchExtractor

File "/content/so-vits-svc/modules/crepe.py", line 8, in <module>

import torchcrepe

ModuleNotFoundError: No module named 'torchcrepe'

### 日志

```python

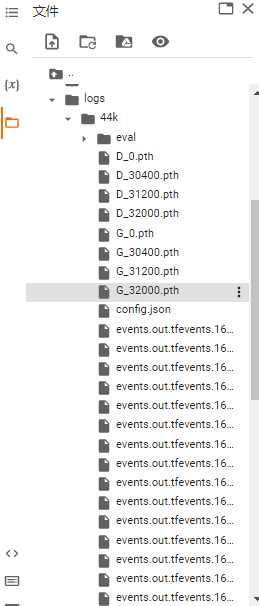

#@title 合成音频(推理)

#@markdown 需要将音频上传到so-vits-svc/raw 文件夹下, 然后设置模型路径、配置文件路径、合成的音频名称

!python inference_main.py -m "logs/44k/G_32000.pth" -c "configs/config.json" -n "live1.wav" -s qq

Traceback (most recent call last):

File "/content/so-vits-svc/inference_main.py", line 11, in <module>

from inference import infer_tool

File "/content/so-vits-svc/inference/infer_tool.py", line 20, in <module>

import utils

File "/content/so-vits-svc/utils.py", line 20, in <module>

from modules.crepe import CrepePitchExtractor

File "/content/so-vits-svc/modules/crepe.py", line 8, in <module>

import torchcrepe

ModuleNotFoundError: No module named 'torchcrepe'

```

### Screenshot the `so-vits-svc` and `logs/44k` folders and paste them here

### Additional notes

_No response_ | closed | 2023-04-05T14:49:38Z | 2023-04-09T05:14:42Z | https://github.com/svc-develop-team/so-vits-svc/issues/125 | [

"help wanted"

] | Asgardloki233 | 1 |

statsmodels/statsmodels | data-science | 9,232 | How to get in touch regarding a security concern | Hello 👋

I run a security community that finds and fixes vulnerabilities in OSS. A researcher (@tvnnn) has found a potential issue, which I would be eager to share with you.

Could you add a `SECURITY.md` file with an e-mail address for me to send further details to? GitHub [recommends](https://docs.github.com/en/code-security/getting-started/adding-a-security-policy-to-your-repository) a security policy to ensure issues are responsibly disclosed, and it would help direct researchers in the future.

Looking forward to hearing from you 👍

(cc @huntr-helper) | open | 2024-04-24T09:19:55Z | 2024-07-10T07:59:42Z | https://github.com/statsmodels/statsmodels/issues/9232 | [] | psmoros | 10 |

mithi/hexapod-robot-simulator | dash | 47 | Improve code quality of 4 specific modules | - [x] `hexapod.models`

- [x] `hexapod.linkage`

- [x] `hexapod.ik_solver.ik_solver`

- [x] `hexapod.ground_contact_solver`

https://github.com/mithi/hexapod-robot-simulator/blob/master/hexapod/models.py

https://github.com/mithi/hexapod-robot-simulator/blob/master/hexapod/linkage.py

https://github.com/mithi/hexapod-robot-simulator/blob/master/hexapod/ground_contact_solver.py

https://github.com/mithi/hexapod-robot-simulator/blob/master/hexapod/ik_solver/ik_solver.py | closed | 2020-04-13T21:27:01Z | 2020-04-14T12:02:58Z | https://github.com/mithi/hexapod-robot-simulator/issues/47 | [

"PRIORITY",

"code quality"

] | mithi | 2 |

milesmcc/shynet | django | 122 | Ignore inactive browser tabs | Sometimes it happens that users that visit my site leave their tab open while not actually visiting the site anymore. Currently I have a session that's been going on for 11 hours now, and this is also affecting my average session duration.

Maybe we should send the `document.visibilityState` in each heartbeat, and have a configurable option per site to ignore or not ignore heartbeats when this value is `hidden`.

> The Document of the top level browsing context can be in one of the following visibility states:

> `hidden`

> The Document is not visible at all on any screen.

> `visible`

> The Document is at least partially visible on at least one screen. This is the same condition under which the hidden attribute is set to false.

| closed | 2021-04-22T11:01:32Z | 2021-04-22T18:26:18Z | https://github.com/milesmcc/shynet/issues/122 | [] | CasperVerswijvelt | 3 |

SciTools/cartopy | matplotlib | 1,734 | Plot etopo | I'd like to suggest a new function for cartopy. As far as I'm aware, plotting an etopo bathymetry map with cartopy isn't easy. Basemap has a convenient function to do this: https://basemaptutorial.readthedocs.io/en/latest/backgrounds.html#etopo.

It would be great if cartopy has something similar! | open | 2021-02-18T18:08:07Z | 2021-03-12T21:32:03Z | https://github.com/SciTools/cartopy/issues/1734 | [

"Type: Enhancement",

"Experience-needed: low",

"Component: Raster source",

"Component: Feature source"

] | yz3062 | 0 |

graphistry/pygraphistry | jupyter | 475 | [BUG] donations demo fails midway | More merge branch testing:

1. umap() calls print memoization failure warnings

Ex:

```python

g = graphistry.nodes(ndf).bind(point_title='Category')

g2 = g.umap(X=['Why?'], y = ['Category'],

min_words=50000, # encode as topic model by setting min_words high

n_topics_target=4, # turn categories into a 4dim vector of regressive targets

n_topics=21, # latent embedding size

cardinality_threshold_target=2, # make sure that we throw targets into topic model over targets

)

```

=>

```

! Failed umap speedup attempt. Continuing without memoization speedups.* Ignoring target column of shape (285, 4) in UMAP fit, as it is not one dimensional

```

2. Failure starting with this cell:

```python

# pretend you have a minibatch of new data -- transform under the fit from the above

new_df, new_y = ndf.sample(5), ndf.sample(5) # pd.DataFrame({'Category': ndf['Category'].sample(5)})

a, b = g2.transform(new_df, new_y, kind='nodes')

a

```

=>

```

---------------------------------------------------------------------------

TypeError Traceback (most recent call last)

Cell In[20], line 3

1 # pretend you have a minibatch of new data -- transform under the fit from the above

2 new_df, new_y = ndf.sample(5), ndf.sample(5) # pd.DataFrame({'Category': ndf['Category'].sample(5)})

----> 3 a, b = g2.transform(new_df, new_y, kind='nodes')

4 a

TypeError: cannot unpack non-iterable Plotter object

``` | closed | 2023-05-01T06:21:32Z | 2023-05-26T23:29:48Z | https://github.com/graphistry/pygraphistry/issues/475 | [

"bug"

] | lmeyerov | 12 |

smarie/python-pytest-cases | pytest | 222 | Make the CI workflow execute the tests based on installed package, not source | As pointed out in https://github.com/smarie/python-pytest-cases/issues/220

The nox and CI build currently run the tests against the source, not the installed package. This is not nice, as it could miss some packaging issues.

In order to make this move, we would need the `tests/` folder to be outside of the `pytest_cases` folder, otherwise an import issue happens as noted by @kloczek in #220. This is due to the fact that `pytest` has a package auto-detection mechanism recursively climbing up the folders above `conftest.py` and stopping at the last `__init__.py`.

Let's take some time to figure out if this is the **ONLY** way to go ?

| closed | 2021-06-16T14:44:57Z | 2021-11-09T09:10:14Z | https://github.com/smarie/python-pytest-cases/issues/222 | [

"packaging (rpm/apt/...)"

] | smarie | 2 |

matterport/Mask_RCNN | tensorflow | 2,138 | 0 bbox&mask_loss when training on large images with plentiful annotation | Hi guys,

I ran into a problem: when training on large images (4000x2000) with plentiful annotations (100~300 annotated masks), the

mrcnn_bbox_loss

mrcnn_mask_loss

val_mrcnn_bbox_loss

val_mrcnn_mask_loss

are always 0.0000e+00

And the trained model cannot detect any instances in the test images.

Training on small images (200x200) with just a few annotations (1~5 annotated masks) works fine and gives a good result.

Any ideas? I would really appreciate it.

| open | 2020-04-22T00:27:55Z | 2020-04-23T19:13:19Z | https://github.com/matterport/Mask_RCNN/issues/2138 | [] | yhc1994 | 1 |

nteract/papermill | jupyter | 468 | Workflows: Executing notebooks as a DAG? | **Note:** This should be tagged as question / suggestion but I don't think I can do that myself.

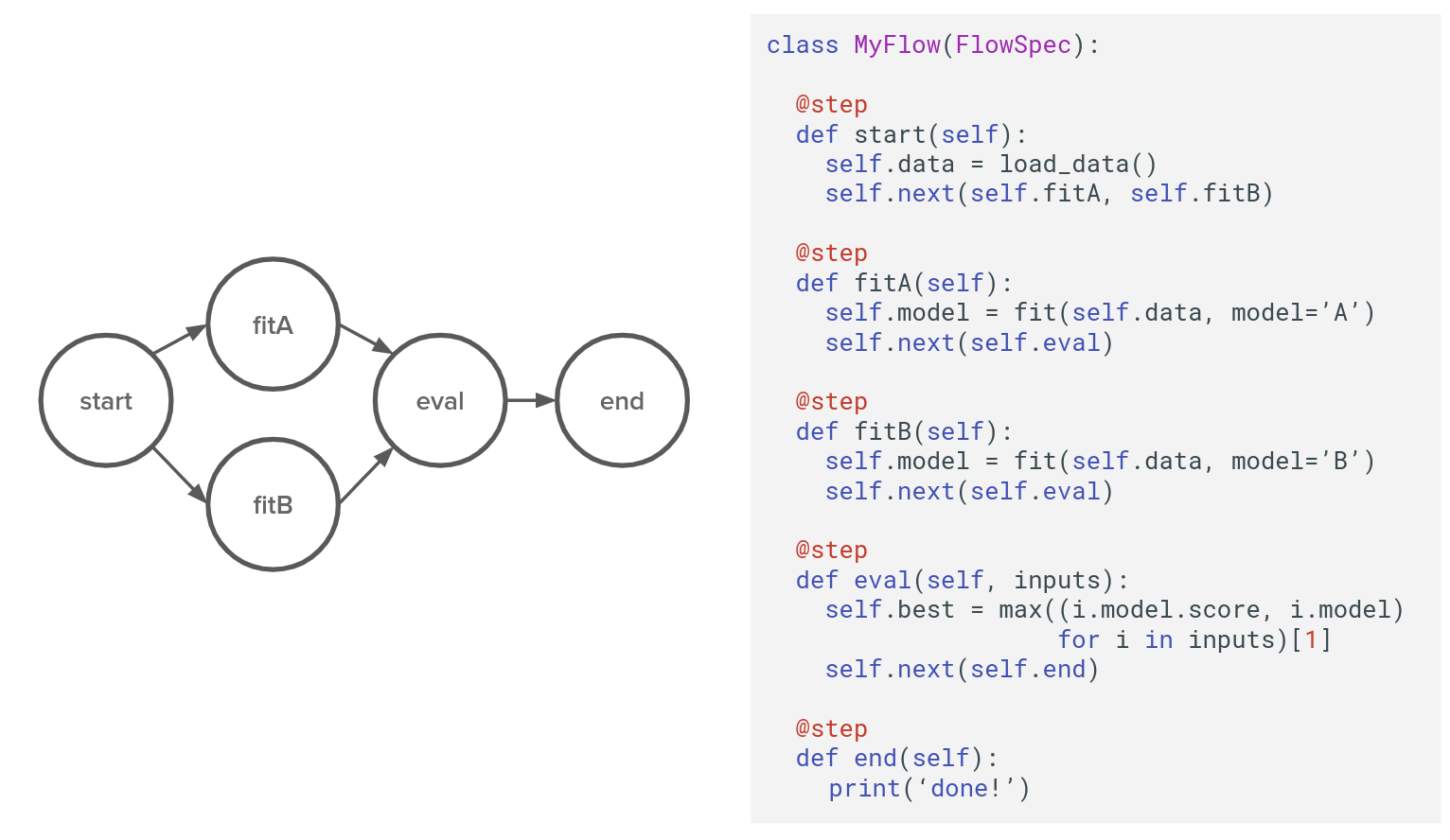

I have a use case where I want to enable data scientists to execute the notebooks they create as a DAG. This would be part of their development workflow, in order to ensure a set of notebooks work as an integrated pipeline before it is scheduled in a production environment using something like Airflow.

My question; Is there currently a way to achieve this using papermill? The Readme mentions workflows, but papermill engines are the closest thing I can find to a workflow (interpreted as a pipeline of notebooks)

And if there's no such functionality, what would be the best way to integrate this with papermill, bookstore, scrapbook etc?

@MSeal , Am particularly interested in feedback if you have the time.

Some very high level details about the environment this is situated in;

- Data scientists author individual Jupyter notebooks

- When moved to production, these are scheduled as a DAG using Airflow

- Each Airflow task spawns its own papermill kernel on a remote compute cluster, which is responsible for executing only the notebook described by the task instance.

- nteract-scrapbook collects logs and provides feedback to the task scheduler.

What I'm working on is a way for the data scientists to formally define their set of notebooks as a DAG and execute it during their development workflow, hopefully producing a better integrated pipeline of notebooks without requiring the data scientist to work with (or have knowledge of) the task scheduler (Airflow) and compute cluster internals.

This is similar to this component of Metaflow by Netflix;

| closed | 2020-02-02T19:07:19Z | 2021-06-11T11:07:11Z | https://github.com/nteract/papermill/issues/468 | [

"question"

] | matthiasdv | 3 |

davidteather/TikTok-Api | api | 176 | Give likes or follow user | Thanks to the use of puppeteer is it possible that we can program it to click on the like or follow button in the profile page of an user or tiktok?

I have no experience with puppeteer so I don't know if it's possible.

Btw, great work!

| closed | 2020-07-11T08:59:57Z | 2020-07-11T14:56:14Z | https://github.com/davidteather/TikTok-Api/issues/176 | [] | elblogbruno | 2 |

tensorflow/datasets | numpy | 8,140 | Xxxx | /!\ PLEASE INCLUDE THE FULL STACKTRACE AND CODE SNIPPET

**Short description**

Description of the bug.

**Environment information**

* Operating System: <os>

* Python version: <version>

* `tensorflow-datasets`/`tfds-nightly` version: <package and version>

* `tensorflow`/`tf-nightly` version: <package and version>

* Does the issue still exists with the last `tfds-nightly` package (`pip install --upgrade tfds-nightly`) ?

**Reproduction instructions**

```python

<put a code snippet, a link to a gist or a colab here>

```

If you share a colab, make sure to update the permissions to share it.

**Link to logs**

If applicable, <link to gist with logs, stack trace>

**Expected behavior**

What you expected to happen.

**Additional context**

Add any other context about the problem here. | closed | 2024-11-25T17:51:02Z | 2024-12-09T14:26:23Z | https://github.com/tensorflow/datasets/issues/8140 | [

"bug"

] | Maxeboi1 | 0 |

ageitgey/face_recognition | python | 612 | Fails on Chinese people | * face_recognition version:

* Python version: 3.5.2

* Operating System: Ubuntu 16.04

### The library can't separate Chinese people

I tried finding

https://www.google.com/url?sa=i&rct=j&q=&esrc=s&source=images&cd=&cad=rja&uact=8&ved=2ahUKEwjKwbLM6JTdAhWGMewKHekXBIYQjRx6BAgBEAU&url=https%3A%2F%2Flaotiantimes.com%2F2016%2F09%2F05%2Fchinese-prime-minister-to-visit-laos%2F&psig=AOvVaw0jNXiklPM4YaxtrqIEOjQe&ust=1535719348182900

in

https://www.google.com/url?sa=i&rct=j&q=&esrc=s&source=images&cd=&cad=rja&uact=8&ved=2ahUKEwiWwOmD55TdAhVNMewKHaGnBo0QjRx6BAgBEAU&url=https%3A%2F%2Fwww.japantimes.co.jp%2Fnews%2F2015%2F01%2F09%2Fnational%2Fhistory%2Fchina-brings-sex-slave-issue-spotlight%2F&psig=AOvVaw0jNXiklPM4YaxtrqIEOjQe&ust=1535719348182900

image and it identified the first person as the target person. Thus it can't accurately compare faces. | open | 2018-08-30T12:54:10Z | 2018-08-30T18:17:59Z | https://github.com/ageitgey/face_recognition/issues/612 | [] | f3uplift | 1 |

horovod/horovod | pytorch | 3,691 | NVTabular Docker image does not build | The `docker/horovod-nvtabular/Dockerfile` Docker image does not build in master any more:

https://github.com/horovod/horovod/runs/8261962616?check_suite_focus=true#step:8:2828

```

#27 40.96 Traceback (most recent call last):

#27 40.96 File "<string>", line 1, in <module>

#27 40.96 File "/root/miniconda3/lib/python3.8/site-packages/tensorflow/__init__.py", line 37, in <module>

#27 40.96 from tensorflow.python.tools import module_util as _module_util

#27 40.96 File "/root/miniconda3/lib/python3.8/site-packages/tensorflow/python/__init__.py", line 37, in <module>

#27 40.96 from tensorflow.python.eager import context

#27 40.96 File "/root/miniconda3/lib/python3.8/site-packages/tensorflow/python/eager/context.py", line 29, in <module>

#27 40.96 from tensorflow.core.framework import function_pb2

#27 40.96 File "/root/miniconda3/lib/python3.8/site-packages/tensorflow/core/framework/function_pb2.py", line 7, in <module>

#27 40.96 from google.protobuf import descriptor as _descriptor

#27 40.96 File "/root/miniconda3/lib/python3.8/site-packages/google/protobuf/descriptor.py", line 40, in <module>

#27 40.96 from google.protobuf.internal import api_implementation

#27 40.96 File "/root/miniconda3/lib/python3.8/site-packages/google/protobuf/internal/api_implementation.py", line 104, in <module>

#27 40.96 from google.protobuf.pyext import _message

#27 40.96 TypeError: bases must be types

```

It is likely that conflicting dependencies break tensorflow (e.g. #3684). Try to compare versions installed earlier when the image used to build with versions now being installed. | closed | 2022-09-09T06:00:42Z | 2022-09-15T09:41:56Z | https://github.com/horovod/horovod/issues/3691 | [

"bug"

] | EnricoMi | 4 |

slackapi/bolt-python | fastapi | 380 | Document about the ways to write unit tests for Bolt apps | I am looking to write unit tests for my Bolt API, which consists of an app with several handlers for events / messages. The handlers are both _decorated_ using the `app.event` decorator, _and_ make use of the `app` object to access things like the `db` connection that has been put on it. For example:

```python

# in main.py
app = App(
    token=APP_TOKEN,
    signing_secret=SIGNING_SECRET,
    process_before_response=True,
    token_verification_enabled=token_verification_enabled,
)
app.db = db

# in api.py:
from .main import app

@app.command("/slashcommand")
def slash_command(ack, respond):
    ack()
    results = app.db.do_query()
    respond(...)

```

The thing is, I cannot find any framework pointers, or documentation, on how I would write reasonable unit tests for this. Presumably ones where the kwargs like `ack` and `respond` are replaced by test doubles. How do I do this?
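One possible shape for such a test, as a sketch rather than anything taken from the Bolt documentation: keep the handler logic in a plain function that receives `ack`, `respond`, and the database object as arguments, and replace all three with `unittest.mock.MagicMock` doubles. The names `handle_slash_command` and the reply text are hypothetical and exist only for illustration.

```python
from unittest.mock import MagicMock


def handle_slash_command(ack, respond, db):
    """Hypothetical handler body, kept free of the Bolt decorator so it can be called directly."""
    ack()
    results = db.do_query()
    respond(f"Found {len(results)} results")


def test_slash_command():
    # Test doubles stand in for the callables Bolt would normally inject.
    ack = MagicMock()
    respond = MagicMock()
    db = MagicMock()
    db.do_query.return_value = ["a", "b"]

    handle_slash_command(ack, respond, db)

    # The handler must acknowledge once and reply with the query summary.
    ack.assert_called_once_with()
    db.do_query.assert_called_once_with()
    respond.assert_called_once_with("Found 2 results")


test_slash_command()
```

With this split, the decorated function registered on `app` only needs to forward its arguments (plus `app.db`) to the plain function, so the part that needs a real Slack app stays very small.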

### The page URLs

* https://slack.dev/bolt-python/

## Requirements

Please read the [Contributing guidelines](https://github.com/slackapi/bolt-python/blob/main/.github/contributing.md) and [Code of Conduct](https://slackhq.github.io/code-of-conduct) before creating this issue or pull request. By submitting, you are agreeing to those rules.

| open | 2021-06-15T21:30:22Z | 2025-03-22T19:18:06Z | https://github.com/slackapi/bolt-python/issues/380 | [

"docs",

"enhancement",

"area:async",

"area:sync"

] | offbyone | 19 |

httpie/cli | api | 1,010 | Output not properly displayed in UTF-8 | Hi,

I'm using httpie v2.3.0, and it's really wonderful! While playing around with my website today I encountered a strange effect when displaying the output. Special characters such as € (EUR) or Ä/Ö/Ü are not shown properly; it looks like an encoding error. `curl`, on the other hand, does the job correctly.

Here are the headers:

```

# http -F --headers stocksport1.at:

HTTP/1.1 200 OK

accept-ranges: bytes

age: 39

content-encoding: gzip

content-length: 854

content-type: text/html

date: Sun, 03 Jan 2021 11:20:03 GMT

etag: "6cb-5b0869f17f6b5"

last-modified: Wed, 30 Sep 2020 11:58:44 GMT

server: Apache

vary: Accept-Encoding

# curl stocksport1.at -L -D -

HTTP/1.1 200 OK

accept-ranges: bytes

age: 39

content-encoding: gzip

content-length: 854

content-type: text/html

date: Sun, 03 Jan 2021 11:20:03 GMT

etag: "6cb-5b0869f17f6b5"

last-modified: Wed, 30 Sep 2020 11:58:44 GMT

server: Apache

vary: Accept-Encoding

```

The charset is defined in the `<head>` of the HTML file, but I guess this isn't interpreted by httpie. So, what am I doing wrong, or what is httpie doing wrong?
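A small illustration of how this kind of garbling can arise, assuming (this is an assumption, not something the headers above confirm) that the client falls back to ISO-8859-1 when `content-type` carries no `charset` parameter while the page body is actually UTF-8:

```python
# Characters mentioned in the report.
text = "€ Ä Ö Ü"

# Bytes as a server would send them for a UTF-8 encoded page.
raw = text.encode("utf-8")

# Decoding with the assumed fallback charset produces mojibake.
wrong = raw.decode("iso-8859-1")

# Decoding with the charset the page actually uses restores the text.
right = raw.decode("utf-8")

print(repr(wrong))
print(right)
```

If this is what happens, the bytes on the wire are fine and only the decoding step differs between the two tools.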

Many thanks in advance, regards, Thomas

| closed | 2021-01-03T11:30:56Z | 2021-02-19T21:00:24Z | https://github.com/httpie/cli/issues/1010 | [] | thmsklngr | 3 |

deepfakes/faceswap | deep-learning | 1,198 | Add the ability to select multiple faces in the manual tool UI | The GUI is wonderful, but I can't select multiple faces that I want to delete;

I have to delete them one by one.

So, is it possible to add this function so we can quickly select many faces that we want to delete? | closed | 2021-12-11T13:47:27Z | 2021-12-12T03:02:21Z | https://github.com/deepfakes/faceswap/issues/1198 | [] | leeso2021 | 1 |

fugue-project/fugue | pandas | 503 | [COMPATIBILITY] Deprecate python 3.7 support | Python 3.7 end of life happened in June 2023, 0.8.6 is our last Fugue version to support python 3.7. It has several major bug fixes, and after this release, there will be breaking changes that will not work with Python 3.7. | closed | 2023-08-16T05:30:52Z | 2023-08-16T07:28:51Z | https://github.com/fugue-project/fugue/issues/503 | [

"python deprecation",

"compatibility"

] | goodwanghan | 0 |

iperov/DeepFaceLab | deep-learning | 5,608 | Business proposal | Good afternoon, Ivan. My name is Andrey. I launched the projects Antivirus Grizzly Pro and online-ocenka.ru (valuations for notaries), and I have a business proposal for you. Please get in touch with me; I could not find a way to write to you. Telegram: @andrei_kratkiy.

Sorry for spamming the thread. | open | 2023-01-09T09:48:08Z | 2023-06-08T23:08:15Z | https://github.com/iperov/DeepFaceLab/issues/5608 | [] | Andreypetrov10 | 1 |

aleju/imgaug | machine-learning | 5 | Changes : Review documentation style | I have added (some) documentation for the Augmenter and Noop classes in the augmenters.py file.

Review the changes in [My fork](https://github.com/SarthakYadav/imgaug) and make suggestions.

This is just a glimpse. Much work will be needed on documentation (as more often than not I am not quite sure what a parameter signifies, etc). | closed | 2016-12-11T12:26:10Z | 2016-12-11T18:34:25Z | https://github.com/aleju/imgaug/issues/5 | [] | SarthakYadav | 5 |

tflearn/tflearn | tensorflow | 624 | Bug using batch_norm in combination with tf==1.0.0 | I am experiencing very strange `batch_norm` behaviour, probably caused by the commit https://github.com/tflearn/tflearn/commit/3cfc4f471a6184f9acd36b50b239329911de5ab2 by @WHAAAT

The `except` branch in that code is always executed, because the `try` branch always raises the following exception (which is very strange):

```

File "/Users/janzikes/anaconda2/envs/research/lib/python2.7/site-packages/tflearn/layers/conv.py", line 1147, in residual_block

resnet = tflearn.batch_normalization(resnet)

File "/Users/janzikes/anaconda2/envs/research/lib/python2.7/site-packages/tflearn/layers/normalization.py", line 79, in batch_normalization

trainable=False, restore=restore)

File "/Users/janzikes/anaconda2/envs/research/lib/python2.7/site-packages/tensorflow/contrib/framework/python/ops/arg_scope.py", line 177, in func_with_args

return func(*args, **current_args)

File "/Users/janzikes/anaconda2/envs/research/lib/python2.7/site-packages/tflearn/variables.py", line 66, in variable

validate_shape=validate_shape)

File "/Users/janzikes/anaconda2/envs/research/lib/python2.7/site-packages/tensorflow/python/ops/variable_scope.py", line 988, in get_variable

custom_getter=custom_getter)

File "/Users/janzikes/anaconda2/envs/research/lib/python2.7/site-packages/tensorflow/python/ops/variable_scope.py", line 890, in get_variable

custom_getter=custom_getter)

File "/Users/janzikes/anaconda2/envs/research/lib/python2.7/site-packages/tensorflow/python/ops/variable_scope.py", line 348, in get_variable

validate_shape=validate_shape)

File "/Users/janzikes/anaconda2/envs/research/lib/python2.7/site-packages/tensorflow/python/ops/variable_scope.py", line 333, in _true_getter

caching_device=caching_device, validate_shape=validate_shape)

File "/Users/janzikes/anaconda2/envs/research/lib/python2.7/site-packages/tensorflow/python/ops/variable_scope.py", line 684, in _get_single_variable

validate_shape=validate_shape)

File "/Users/janzikes/anaconda2/envs/research/lib/python2.7/site-packages/tensorflow/python/ops/variables.py", line 226, in __init__

expected_shape=expected_shape)

File "/Users/janzikes/anaconda2/envs/research/lib/python2.7/site-packages/tensorflow/python/ops/variables.py", line 303, in _init_from_args

initial_value(), name="initial_value", dtype=dtype)

File "/Users/janzikes/anaconda2/envs/research/lib/python2.7/site-packages/tensorflow/python/ops/variable_scope.py", line 673, in <lambda>

shape.as_list(), dtype=dtype, partition_info=partition_info)

TypeError: __init__() got multiple values for keyword argument 'dtype'

```

so the code in the `except` block is always run.

This causes the following problems for me:

1. I am unable to load old tflearn models; loading fails with the following error:

```

.....

model.load(model_file)

File "/Users/janzikes/anaconda2/envs/research/lib/python2.7/site-packages/tflearn/models/dnn.py", line 278, in load

self.trainer.restore(model_file, weights_only, **optargs)

File "/Users/janzikes/anaconda2/envs/research/lib/python2.7/site-packages/tflearn/helpers/trainer.py", line 449, in restore

self.restorer.restore(self.session, model_file)

File "/Users/janzikes/anaconda2/envs/research/lib/python2.7/site-packages/tensorflow/python/training/saver.py", line 1439, in restore

{self.saver_def.filename_tensor_name: save_path})

File "/Users/janzikes/anaconda2/envs/research/lib/python2.7/site-packages/tensorflow/python/client/session.py", line 767, in run

run_metadata_ptr)

File "/Users/janzikes/anaconda2/envs/research/lib/python2.7/site-packages/tensorflow/python/client/session.py", line 965, in _run

feed_dict_string, options, run_metadata)

File "/Users/janzikes/anaconda2/envs/research/lib/python2.7/site-packages/tensorflow/python/client/session.py", line 1015, in _do_run

target_list, options, run_metadata)

File "/Users/janzikes/anaconda2/envs/research/lib/python2.7/site-packages/tensorflow/python/client/session.py", line 1035, in _do_call

raise type(e)(node_def, op, message)

tensorflow.python.framework.errors_impl.NotFoundError: Tensor name "BatchNormalization/moving_mean_1" not found in checkpoint files mymodel/model.ckpt-20

[[Node: save_1/RestoreV2_2 = RestoreV2[dtypes=[DT_FLOAT], _device="/job:localhost/replica:0/task:0/cpu:0"](_recv_save_1/Const_0, save_1/RestoreV2_2/tensor_names, save_1/RestoreV2_2/shape_and_slices)]]

Caused by op u'save_1/RestoreV2_2', defined at:

....

File "/Users/janzikes/anaconda2/envs/research/lib/python2.7/site-packages/tflearn/models/dnn.py", line 64, in __init__

best_val_accuracy=best_val_accuracy)

File "/Users/janzikes/anaconda2/envs/research/lib/python2.7/site-packages/tflearn/helpers/trainer.py", line 145, in __init__

keep_checkpoint_every_n_hours=keep_checkpoint_every_n_hours)

File "/Users/janzikes/anaconda2/envs/research/lib/python2.7/site-packages/tensorflow/python/training/saver.py", line 1051, in __init__

self.build()

File "/Users/janzikes/anaconda2/envs/research/lib/python2.7/site-packages/tensorflow/python/training/saver.py", line 1081, in build

restore_sequentially=self._restore_sequentially)

File "/Users/janzikes/anaconda2/envs/research/lib/python2.7/site-packages/tensorflow/python/training/saver.py", line 675, in build

restore_sequentially, reshape)

File "/Users/janzikes/anaconda2/envs/research/lib/python2.7/site-packages/tensorflow/python/training/saver.py", line 402, in _AddRestoreOps

tensors = self.restore_op(filename_tensor, saveable, preferred_shard)

File "/Users/janzikes/anaconda2/envs/research/lib/python2.7/site-packages/tensorflow/python/training/saver.py", line 242, in restore_op

[spec.tensor.dtype])[0])

File "/Users/janzikes/anaconda2/envs/research/lib/python2.7/site-packages/tensorflow/python/ops/gen_io_ops.py", line 668, in restore_v2

dtypes=dtypes, name=name)

File "/Users/janzikes/anaconda2/envs/research/lib/python2.7/site-packages/tensorflow/python/framework/op_def_library.py", line 763, in apply_op

op_def=op_def)

File "/Users/janzikes/anaconda2/envs/research/lib/python2.7/site-packages/tensorflow/python/framework/ops.py", line 2395, in create_op

original_op=self._default_original_op, op_def=op_def)

File "/Users/janzikes/anaconda2/envs/research/lib/python2.7/site-packages/tensorflow/python/framework/ops.py", line 1264, in __init__

self._traceback = _extract_stack()

NotFoundError (see above for traceback): Tensor name "BatchNormalization/moving_mean_1" not found in checkpoint files mymodel/model.ckpt-20

[[Node: save_1/RestoreV2_2 = RestoreV2[dtypes=[DT_FLOAT], _device="/job:localhost/replica:0/task:0/cpu:0"](_recv_save_1/Const_0, save_1/RestoreV2_2/tensor_names, save_1/RestoreV2_2/shape_and_slices)]]

```

2. An interesting thing happens when I change the code in tflearn from:

```
try:
    moving_mean = vs.variable('moving_mean', input_shape[-1:], initializer=tf.zeros_initializer,
                              trainable=False, restore=restore)
except:
    moving_mean = vs.variable('moving_mean', input_shape[-1:], initializer=tf.zeros_initializer(),
                              trainable=False, restore=restore)
```

to the original state:

```
moving_mean = vs.variable('moving_mean', input_shape[-1:], initializer=tf.zeros_initializer(),
                          trainable=False, restore=restore)
```

This loads the old model for me, but unfortunately it predicts different (worse) results than it did with `tensorflow==0.11` and the older tflearn (before the 0.3.0 commit).

3. During the training phase everything works well, except that it always ends up in the except block, the code in the try block always fails with the exception `TypeError: __init__() got multiple values for keyword argument 'dtype'`.
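The `TypeError` in the `try` block is what one would expect if the initializer is a class and the class itself, rather than an instance, is passed as `initializer`. The sketch below reproduces that mechanism in plain Python; `ZerosInitializer` and `make_variable` are hypothetical stand-ins written for illustration, not TensorFlow or tflearn code.

```python
class ZerosInitializer:
    """Hypothetical initializer class: configured at construction, called with a shape later."""

    def __init__(self, dtype="float32"):
        self.dtype = dtype

    def __call__(self, shape, dtype=None):
        return [0.0] * shape[0]


def make_variable(initializer, shape, dtype="float32"):
    # Mimics a framework that calls the initializer with the shape and a dtype keyword.
    return initializer(shape, dtype=dtype)


def error_when_passing_class():
    try:
        # Passing the class: the call becomes ZerosInitializer(shape, dtype=...),
        # so `shape` lands in the `dtype` slot and `dtype` is then given twice.
        make_variable(ZerosInitializer, [3])
    except TypeError as exc:
        return str(exc)
    return None


error_message = error_when_passing_class()

# Passing an instance works: the call goes to __call__(shape, dtype=...).
values = make_variable(ZerosInitializer(), [3])

print(error_message)
print(values)
```

Under that reading, the `try` branch can never succeed with this TensorFlow version, which matches the observation that the `except` branch always runs.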

My Python packages are the following: `tflearn==0.3.0` and `tensorflow==1.0.0`. | closed | 2017-02-23T13:07:59Z | 2017-02-27T15:20:27Z | https://github.com/tflearn/tflearn/issues/624 | [] | ziky90 | 4 |

ultralytics/ultralytics | machine-learning | 18,832 | Segmentation mask error in training batch when using multiprocess | ### Search before asking

- [x] I have searched the Ultralytics YOLO [issues](https://github.com/ultralytics/ultralytics/issues) and found no similar bug report.

### Ultralytics YOLO Component

Train

### Bug

I've noticed a very strange bug that occurs when starting YOLOv8 training in a separate process (each training run is started in its own process via multiprocessing):

The generated training batch images (for some reason always batches 2 and 4) show wrong segmentation masks. I'm not sure whether these masks are actually applied during training or only appear in the training batch images. It happens with all YOLOv8 versions.

At first I thought it was connected to the albumentations library, but I was wrong.

I've attached the generated training batches as files.

kfold2_train_batch1_wrong:

has almost ALL masks wrong except images 0755

[](https://github.com/user-attachments/assets/e4b81136-f607-43e0-be52-77ebcbb331ad)

as a comparison I've uploaded kfold kfold4_train_batch_0, there you can clearly see image 0938 (top left ish) has the correct mask compared to kfold2_train_batch1_wrong.

[](https://github.com/user-attachments/assets/b5a8c3a3-004b-42f9-bc4c-d9d9b31df487)

the other training batch with same problem is kfold3_train_batch2, here only 2 images appear to be wrong (0691 and 0660)

[](https://github.com/user-attachments/assets/bd4bfcf5-05f8-4ffc-80b4-37bee938174b)

I can guarantee that the mask conversion from the original dataset to the YOLOv8 annotation format is correct and is not the problem. This only happens when using multiprocessing.

Is there an alternative that behaves like multiprocessing? I really need to start the training in a separate process, get the results, and then close the process.

### Environment

```

Ultralytics 8.3.65 🚀 Python-3.11.8 torch-2.5.1+cu124 CUDA:0 (Tesla V100-SXM2-16GB, 16144MiB)

Setup complete ✅ (80 CPUs, 503.8 GB RAM, 379.5/439.0 GB disk)

OS Linux-5.15.0-1065-nvidia-x86_64-with-glibc2.35

Environment Linux

Python 3.11.8

Install pip

RAM 503.76 GB

Disk 379.5/439.0 GB

CPU Intel Xeon E5-2698 v4 2.20GHz

CPU count 80

GPU Tesla V100-SXM2-16GB, 16144MiB

GPU count 1

CUDA 12.4

numpy ✅ 1.26.4>=1.23.0

numpy ✅ 1.26.4<2.0.0; sys_platform == "darwin"

matplotlib ✅ 3.10.0>=3.3.0

opencv-python ✅ 4.11.0.86>=4.6.0

pillow ✅ 11.1.0>=7.1.2

pyyaml ✅ 6.0.2>=5.3.1

requests ✅ 2.32.3>=2.23.0

scipy ✅ 1.15.1>=1.4.1

torch ✅ 2.5.1>=1.8.0

torch ✅ 2.5.1!=2.4.0,>=1.8.0; sys_platform == "win32"

torchvision ✅ 0.20.1>=0.9.0

tqdm ✅ 4.67.1>=4.64.0

psutil ✅ 6.1.1

py-cpuinfo ✅ 9.0.0

pandas ✅ 2.2.3>=1.1.4

seaborn ✅ 0.13.2>=0.11.0

ultralytics-thop ✅ 2.0.14>=2.0.0

```

### Minimal Reproducible Example

```

import os

import yaml
import torch.multiprocessing as mp
from multiprocessing import Queue

from ultralytics import YOLO

ROOT_DIR = os.getcwd()
DATA_DIR = os.path.join(ROOT_DIR, 'data')
TRAINING_DIR = os.path.join(ROOT_DIR, 'training')


def init_yaml(kfold_number=1):
    # Write the dataset config for the given k-fold split
    data = {
        'path': f'{DATA_DIR}/{kfold_number}',
        'train': 'train/images',
        'val': 'val/images',
        'names': {
            0: 'wound'
        }
    }
    with open(os.path.join(ROOT_DIR, "config.yaml"), "w") as f:
        yaml.dump(data, f, default_flow_style=False)


def train_model_function(i, result_queue):
    # Runs inside the child process: train with all augmentations disabled
    model = YOLO('yolov8x-seg.pt')
    results_training = model.train(data=os.path.join(ROOT_DIR, "config.yaml"), project=TRAINING_DIR, name="train_test_" + str(i),
                                   epochs=1, imgsz=512, batch=16, deterministic=True, plots=True, seed=1401,
                                   close_mosaic=0, augment=False, hsv_h=0, hsv_s=0, hsv_v=0, degrees=0, translate=0, scale=0,
                                   shear=0.0, perspective=0, flipud=0, fliplr=0, bgr=0, mosaic=0, mixup=0, copy_paste=0, erasing=0, crop_fraction=0)
    r = {
        'train_map': results_training.seg.map,
        'train_map50': results_training.seg.map50,
        'train_map75': results_training.seg.map75,
        'train_precision': float(results_training.seg.p[0]),
        'train_recall': float(results_training.seg.r[0]),
        'train_f1': float(results_training.seg.f1[0]),
    }
    result_queue.put((r))


def start_training_in_process(i):
    # Start one training run in its own process and wait for its metrics
    result_queue = Queue()
    train_process = mp.Process(
        target=train_model_function,
        args=(i, result_queue)
    )
    train_process.name = "TrainingProcess"
    train_process.start()
    train_process.join()
    if not result_queue.empty():
        r = result_queue.get()
        return r
    else:
        print("No results returned from process")
        return None


if __name__ == '__main__':
    # loop through different kfolds of dataset
    for i in range(1, 6):
        init_yaml(i)
        start_training_in_process(i)

```

### Additional

_No response_

### Are you willing to submit a PR?

- [ ] Yes I'd like to help by submitting a PR! | open | 2025-01-22T23:49:08Z | 2025-02-26T06:34:19Z | https://github.com/ultralytics/ultralytics/issues/18832 | [

"bug",

"segment"

] | armanivers | 37 |

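yihong0618/running_page | data-visualization | 560 | After switching from Nike to Strava, how do I delete duplicated historical data? | After switching from Nike to Strava, my historical data contains duplicates, probably because Strava uploaded the earlier Nike records a second time (they are not exactly identical; there are small differences). I'm using GitHub Actions. How can I remove the duplicates? Thanks!

My site:

https://schenxia.github.io/running_page/ | closed | 2023-12-04T05:29:57Z | 2023-12-05T16:40:47Z | https://github.com/yihong0618/running_page/issues/560 | [] | schenxia | 2 |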

plotly/plotly.py | plotly | 4,415 | Empty Facets on partially filled MultiIndex DataFrame with datetime.date in bar plot | I have a pandas dataframe with a multicolumn index consisting of three levels, which I want to plot using a plotly.express.bar. First dimension `Station` (str) goes into the facets, the second dimension `Night` (datetime.date) goes onto the x-axis and the third dimension `Limit` (int) is going to be the stacked bars.

The bug is, that the resulting figure does not show any data on facets which do only have data at one `Night`. The expected outcome would be that even though data exists only on one date this data is presented.

A reproducible example looks like this:

```python

import datetime

import pandas as pd

import plotly.express as px

df = pd.DataFrame([

["station-01", datetime.date(2023,10,1), 50, 0.1],

["station-01", datetime.date(2023,10,1), 100, 0.15],

["station-01", datetime.date(2023,10,2), 50, 0.2],

["station-01", datetime.date(2023,10,2), 100, 0.22],

["station-01", datetime.date(2023,10,3), 50, 0.05],

["station-01", datetime.date(2023,10,3), 100, 0.02],

["station-02", datetime.date(2023,10,1), 50, 0.5],

["station-02", datetime.date(2023,10,1), 100, 0.2],

["station-03", datetime.date(2023,10,1), 50, 0.5],

["station-03", datetime.date(2023,10,1), 100, 0.5],

], columns=["Station", "Night", "Limit", "Relative Duration"])

df = df.set_index(["Station", "Night", "Limit"])

px.bar(

df,

x=df.index.get_level_values("Night"),

y="Relative Duration",

range_y=[0, 1],

color=df.index.get_level_values("Limit"),

facet_col=df.index.get_level_values("Station"),

)

```

The figure created looks like this:

When replacing the `datetime.date` type by a string the figure looks like expected:

```python

import datetime

import pandas as pd

import plotly.express as px

df = pd.DataFrame([

["station-01", "101", 50, 0.1],

["station-01", "101", 100, 0.15],

["station-01", "102", 50, 0.2],

["station-01", "102", 100, 0.22],

["station-01", "103", 50, 0.05],

["station-01", "103", 100, 0.02],

["station-02", "101", 50, 0.5],

["station-02", "101", 100, 0.2],

["station-03", "101", 50, 0.5],

["station-03", "101", 100, 0.3],

], columns=["Station", "Night", "Limit", "Relative Duration"])

df = df.set_index(["Station", "Night", "Limit"])

px.bar(

df,

x=df.index.get_level_values("Night"),

y="Relative Duration",

range_y=[0, 1],

color=df.index.get_level_values("Limit"),

facet_col=df.index.get_level_values("Station"),

)

```

Due to this behavior I suspect the plot yielded from the initial data frame is false and there is a bug inside of the plotly.express code.

Thanks for helping out. | open | 2023-11-06T13:59:49Z | 2024-08-12T13:41:39Z | https://github.com/plotly/plotly.py/issues/4415 | [

"bug",

"sev-2",

"P3"

] | jonashoechst | 0 |

CorentinJ/Real-Time-Voice-Cloning | python | 411 | Poor performance compared to the main paper? | Hi,

For those of you who are working with this repo to synthesize different voices;

Have you noticed a huge difference between the voices generated by this repo and the samples released by the main paper [here](https://google.github.io/tacotron/publications/speaker_adaptation/)?

If yes, let's discuss and find out the reason(s). | closed | 2020-07-09T09:11:52Z | 2020-07-22T21:41:48Z | https://github.com/CorentinJ/Real-Time-Voice-Cloning/issues/411 | [] | amintavakol | 6 |

tflearn/tflearn | tensorflow | 951 | Save model and load it on another model with same structure | I have two DNN-models with the same structure and they are trained independently, but I want to transfer the weights of the first model to the second model at runtime.

```

import tflearn
from tflearn.layers.core import input_data, fully_connected
from tflearn.layers.estimator import regression
#import copy


def create_model(shape):
    fc = fully_connected(shape, n_units=12, activation="relu")
    fc2 = fully_connected(fc, n_units=2, activation="softmax")
    regressor = regression(fc2)
    return tflearn.DNN(regressor)


shape = input_data([None, 2])

model1 = create_model(shape) #first model
model2 = create_model(shape) #second model

"""
...
train model1
...
"""

path = "models/test_model.tfl"

model1.save(path)
model2.load(path, weights_only=True) #transfer the weights of the first model

#model2 = copy.deepcopy(model1) throws Error because of thread.Lock Object

```

If I run this I get the following Error:

```

2017-11-05 17:42:47.978858: W C:\tf_jenkins\home\workspace\rel-win\M\windows\PY\36\tensorflow\core\framework\op_kernel.cc:1192] Not found: Key FullyConnected_3/b not found in checkpoint

2017-11-05 17:42:47.981882: W C:\tf_jenkins\home\workspace\rel-win\M\windows\PY\36\tensorflow\core\framework\op_kernel.cc:1192] Not found: Key FullyConnected_3/W not found in checkpoint

2017-11-05 17:42:47.982010: W C:\tf_jenkins\home\workspace\rel-win\M\windows\PY\36\tensorflow\core\framework\op_kernel.cc:1192] Not found: Key FullyConnected_2/b not found in checkpoint

2017-11-05 17:42:47.982181: W C:\tf_jenkins\home\workspace\rel-win\M\windows\PY\36\tensorflow\core\framework\op_kernel.cc:1192] Not found: Key FullyConnected_2/W not found in checkpoint

Traceback (most recent call last):

File "D:\Python\Python36\lib\site-packages\tensorflow\python\client\session.py", line 1323, in _do_call

return fn(*args)

File "D:\Python\Python36\lib\site-packages\tensorflow\python\client\session.py", line 1302, in _run_fn

status, run_metadata)

File "D:\Python\Python36\lib\site-packages\tensorflow\python\framework\errors_impl.py", line 473, in __exit__

c_api.TF_GetCode(self.status.status))

tensorflow.python.framework.errors_impl.NotFoundError: Key FullyConnected_3/b not found in checkpoint

[[Node: save_6/RestoreV2_7 = RestoreV2[dtypes=[DT_FLOAT], _device="/job:localhost/replica:0/task:0/device:CPU:0"](_arg_save_6/Const_0_0, save_6/RestoreV2_7/tensor_names, save_6/RestoreV2_7/shape_and_slices)]]

During handling of the above exception, another exception occurred:

Traceback (most recent call last):

File "C:/Users/Jonas/PycharmProjects/ReinforcementLearning/TflearnSaveLoadTest.py", line 20, in <module>

model2.load(path, weights_only=True)

File "D:\Python\Python36\lib\site-packages\tflearn\models\dnn.py", line 308, in load

self.trainer.restore(model_file, weights_only, **optargs)

File "D:\Python\Python36\lib\site-packages\tflearn\helpers\trainer.py", line 492, in restore

self.restorer_trainvars.restore(self.session, model_file)

File "D:\Python\Python36\lib\site-packages\tensorflow\python\training\saver.py", line 1666, in restore

{self.saver_def.filename_tensor_name: save_path})

File "D:\Python\Python36\lib\site-packages\tensorflow\python\client\session.py", line 889, in run

run_metadata_ptr)

File "D:\Python\Python36\lib\site-packages\tensorflow\python\client\session.py", line 1120, in _run

feed_dict_tensor, options, run_metadata)

File "D:\Python\Python36\lib\site-packages\tensorflow\python\client\session.py", line 1317, in _do_run

options, run_metadata)

File "D:\Python\Python36\lib\site-packages\tensorflow\python\client\session.py", line 1336, in _do_call

raise type(e)(node_def, op, message)

tensorflow.python.framework.errors_impl.NotFoundError: Key FullyConnected_3/b not found in checkpoint

[[Node: save_6/RestoreV2_7 = RestoreV2[dtypes=[DT_FLOAT], _device="/job:localhost/replica:0/task:0/device:CPU:0"](_arg_save_6/Const_0_0, save_6/RestoreV2_7/tensor_names, save_6/RestoreV2_7/shape_and_slices)]]

Caused by op 'save_6/RestoreV2_7', defined at:

File "C:/Users/Jonas/PycharmProjects/ReinforcementLearning/TflearnSaveLoadTest.py", line 16, in <module>

model2 = create_model(shape)

File "C:/Users/Jonas/PycharmProjects/ReinforcementLearning/TflearnSaveLoadTest.py", line 11, in create_model

return tflearn.DNN(regressor)

File "D:\Python\Python36\lib\site-packages\tflearn\models\dnn.py", line 65, in __init__

best_val_accuracy=best_val_accuracy)

File "D:\Python\Python36\lib\site-packages\tflearn\helpers\trainer.py", line 155, in __init__

allow_empty=True)

File "D:\Python\Python36\lib\site-packages\tensorflow\python\training\saver.py", line 1218, in __init__

self.build()

File "D:\Python\Python36\lib\site-packages\tensorflow\python\training\saver.py", line 1227, in build

self._build(self._filename, build_save=True, build_restore=True)

File "D:\Python\Python36\lib\site-packages\tensorflow\python\training\saver.py", line 1263, in _build

build_save=build_save, build_restore=build_restore)

File "D:\Python\Python36\lib\site-packages\tensorflow\python\training\saver.py", line 751, in _build_internal

restore_sequentially, reshape)

File "D:\Python\Python36\lib\site-packages\tensorflow\python\training\saver.py", line 427, in _AddRestoreOps

tensors = self.restore_op(filename_tensor, saveable, preferred_shard)

File "D:\Python\Python36\lib\site-packages\tensorflow\python\training\saver.py", line 267, in restore_op

[spec.tensor.dtype])[0])

File "D:\Python\Python36\lib\site-packages\tensorflow\python\ops\gen_io_ops.py", line 1020, in restore_v2

shape_and_slices=shape_and_slices, dtypes=dtypes, name=name)

File "D:\Python\Python36\lib\site-packages\tensorflow\python\framework\op_def_library.py", line 787, in _apply_op_helper

op_def=op_def)

File "D:\Python\Python36\lib\site-packages\tensorflow\python\framework\ops.py", line 2956, in create_op

op_def=op_def)

File "D:\Python\Python36\lib\site-packages\tensorflow\python\framework\ops.py", line 1470, in __init__

self._traceback = self._graph._extract_stack() # pylint: disable=protected-access

NotFoundError (see above for traceback): Key FullyConnected_3/b not found in checkpoint

[[Node: save_6/RestoreV2_7 = RestoreV2[dtypes=[DT_FLOAT], _device="/job:localhost/replica:0/task:0/device:CPU:0"](_arg_save_6/Const_0_0, save_6/RestoreV2_7/tensor_names, save_6/RestoreV2_7/shape_and_slices)]]

```
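The missing keys in the log above (`FullyConnected_2/W`, `FullyConnected_3/b`, and so on) are consistent with both models living in the same graph, so that the second model's layers receive fresh, numbered names that the checkpoint written for the first model does not contain. The following plain-Python sketch illustrates that naming effect only; `NameScope` and `build_model` are hypothetical helpers, not tflearn or TensorFlow API, and the assumption that names are made unique by appending a counter is inferred from the log.

```python
from collections import defaultdict


class NameScope:
    """Hypothetical stand-in for a graph that makes layer names unique."""

    def __init__(self):
        self._counts = defaultdict(int)

    def unique_name(self, base):
        # First use keeps the bare name; later uses get a numeric suffix.
        n = self._counts[base]
        self._counts[base] += 1
        return base if n == 0 else f"{base}_{n}"


def build_model(scope, num_layers=2):
    # Returns the variable keys a model with `num_layers` dense layers would own.
    keys = []
    for _ in range(num_layers):
        layer = scope.unique_name("FullyConnected")
        keys += [f"{layer}/W", f"{layer}/b"]
    return keys


scope = NameScope()
model1_keys = build_model(scope)  # what a checkpoint of the first model would hold
model2_keys = build_model(scope)  # what the second model would try to restore

missing = [key for key in model2_keys if key not in model1_keys]

print(model1_keys)
print(missing)
```

If this is the cause, the two models need matching variable names (for example by building each one in its own graph) before a checkpoint from one can be restored into the other.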

I've also tried to use the deepcopy function of the copy module to duplicate the model, but that doesn't seem to work:

`TypeError: can't pickle _thread.lock objects` | open | 2017-11-05T17:13:42Z | 2017-11-07T06:28:13Z | https://github.com/tflearn/tflearn/issues/951 | [] | TheRealfanibu | 1 |

plotly/plotly.py | plotly | 4,317 | Inconsistent Behaviour Between PlotlyJS and Plotly Python | If you take the figure object, the formatting string: '.2%f' will format values as percents when created with the Javascript library, but not with the Python library. '.2%', however, works with both libraries. | closed | 2023-08-11T16:15:15Z | 2023-08-11T17:51:34Z | https://github.com/plotly/plotly.py/issues/4317 | [] | msillz | 2 |

hack4impact/flask-base | sqlalchemy | 228 | ModuleNotFoundError: No module named 'flask.ext' | I have cloned the repo and followed the README.md to start the app.

But after running the command ```honcho start -e config.env -f Local```

I got the error below; it seems the module is deprecated.

```(.flask) user@flask:~/flask-base$ python -m honcho start -e config.env -f Local

15:44:52 system | redis.1 started (pid=3830)

15:44:53 system | web.1 started (pid=3834)

15:44:53 system | worker.1 started (pid=3835)

15:44:53 redis.1 | 3833:C 07 Mar 2024 15:44:53.027 # oO0OoO0OoO0Oo Redis is starting oO0OoO0OoO0Oo

15:44:53 redis.1 | 3833:C 07 Mar 2024 15:44:53.032 # Redis version=7.0.15, bits=64, commit=00000000, modified=0, pid=3833, just started

15:44:53 redis.1 | 3833:C 07 Mar 2024 15:44:53.033 # Warning: no config file specified, using the default config. In order to specify a config file use redis-server /path/to/redis.conf

15:44:53 redis.1 | 3833:M 07 Mar 2024 15:44:53.035 * Increased maximum number of open files to 10032 (it was originally set to 1024).

15:44:53 redis.1 | 3833:M 07 Mar 2024 15:44:53.036 * monotonic clock: POSIX clock_gettime

15:44:53 redis.1 | 3833:M 07 Mar 2024 15:44:53.037 * Running mode=standalone, port=6379.

15:44:53 redis.1 | 3833:M 07 Mar 2024 15:44:53.040 # Server initialized

15:44:53 redis.1 | 3833:M 07 Mar 2024 15:44:53.041 * Ready to accept connections

15:44:53 worker.1 | Traceback (most recent call last):

15:44:53 worker.1 | File "userflask-base/manage.py", line 5, in <module>

15:44:53 worker.1 | from flask_migrate import Migrate, MigrateCommand

15:44:53 worker.1 | File "/home/user/.flask/lib/python3.11/site-packages/flask_migrate/__init__.py", line 3, in <module>

15:44:53 worker.1 | from flask.ext.script import Manager

15:44:53 worker.1 | ModuleNotFoundError: No module named 'flask.ext'

15:44:53 web.1 | Traceback (most recent call last):

15:44:53 web.1 | File "/home/user/flask-base/manage.py", line 5, in <module>

15:44:53 web.1 | from flask_migrate import Migrate, MigrateCommand

15:44:53 web.1 | File "/home/user/.flask/lib/python3.11/site-packages/flask_migrate/__init__.py", line 3, in <module>

15:44:53 web.1 | from flask.ext.script import Manager

15:44:53 web.1 | ModuleNotFoundError: No module named 'flask.ext'

15:44:53 system | worker.1 stopped (rc=1)

15:44:53 system | sending SIGTERM to redis.1 (pid 3830)

15:44:53 system | sending SIGTERM to web.1 (pid 3834)

15:44:53 redis.1 | 3833:signal-handler (1709826293) Received SIGTERM scheduling shutdown...

15:44:53 system | web.1 stopped (rc=-15)

15:44:53 redis.1 | 3833:M 07 Mar 2024 15:44:53.444 # User requested shutdown...

15:44:53 redis.1 | 3833:M 07 Mar 2024 15:44:53.444 * Saving the final RDB snapshot before exiting.

15:44:53 redis.1 | 3833:M 07 Mar 2024 15:44:53.447 * DB saved on disk

15:44:53 redis.1 | 3833:M 07 Mar 2024 15:44:53.447 # Redis is now ready to exit, bye bye...

15:44:53 system | redis.1 stopped (rc=-15)``` | open | 2024-03-07T16:01:03Z | 2024-05-10T17:31:19Z | https://github.com/hack4impact/flask-base/issues/228 | [] | sathish-sign | 1 |
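
The traceback points at `from flask.ext.script import Manager` inside the installed `flask_migrate`. The `flask.ext` import namespace was removed in Flask 1.0, and extensions are imported by their own package names instead (for example `flask_script`), which suggests (an assumption, not verified here) that the installed Flask-Migrate release predates that change. The helper below is a hypothetical illustration of the naming convention only; it is not part of Flask or of this repository.

```python
def modern_import_name(legacy_name):
    """Map a legacy 'flask.ext.<name>' module path to 'flask_<name>'.

    Names that do not use the legacy prefix are returned unchanged.
    """
    prefix = "flask.ext."
    if legacy_name.startswith(prefix):
        return "flask_" + legacy_name[len(prefix):]
    return legacy_name


print(modern_import_name("flask.ext.script"))
```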

cobrateam/splinter | automation | 515 | Suggested updates to the `FlaskClient` driver | Hi - first off thank you for this wonderful library. I'm using it in conjunction with **pytest-bdd** and **Flask** and for the most part it's been plain sailing, however I am currently using a custom `FlaskClient` that overrides the `_do_method` to resolve 2 issues/traits I came across.

### `302` and `303` behaviour

By default most browsers (correctly or incorrectly) use the `GET` method when redirecting based on a `302` response, and `303`s (I believe) specifically address this confusion by declaring that a `303` response to a `POST` request should be followed with a `GET`.

At the moment using the `FlaskClient` all `30X` responses retain the current method, so if a `303` response is received from a `POST` request then the `_do_method` will make another request using the `post` not the intended `get` method.
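
The redirect rule described above can be sketched as a small pure function. This is an illustration with a hypothetical name (`method_after_redirect`), not splinter's or Flask's API, and it covers only the `302`/`303` case discussed here.

```python
def method_after_redirect(status_code, method):
    """Return the HTTP method to use when following a redirect.

    302 and 303 responses are followed with GET (HEAD stays HEAD);
    every other status keeps the original method.
    """
    method = method.upper()
    if status_code in (302, 303) and method != "HEAD":
        return "GET"
    return method


print(method_after_redirect(303, "post"))
print(method_after_redirect(307, "post"))
```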

### `args` and `form` data

**Flask** provides access to data transmitted via `POST` or `PUT` through `request.form`, alternatively data transmitted in the URL (e.g via `GET`) is available through `request.args` (there's also `request.values` which allows you to access data in either but that's not important here).

Using the `FlaskClient`, when you submit a form with a `method` attribute of `GET` (e.g. `<form method="GET" ...>`), the request is made using the `GET` method, but the data is sent as if `POST`ed and ends up in the `request.form` attribute. As you can imagine, this causes issues because the view being called looks for the submitted data in `request.args`.

The cause of the issue is that data is always sent in `_do_method` using the `data` keyword argument when calling `func_method`, but for a `GET` request (I believe) the `data` keyword should be sent as `None` and the data should be set against the URL as a query.

### Fixing these issues

As I mentioned at the start of the issue I'm currently using a patched driver based on the existing `FlaskClient` to resolve these issues/traits. I've included the code below in case others come across the same issues in the short-term and as a suggestion as to how they might be fixed. If you'd like me to contribute the changes as a pull request I'm more than happy to have a look at doing so over the weekend.

> **Note:** My patch is using Python3.4+, hence `from urllib import parse` so would need to be made compatible with 2.7+ though I think that would be a relatively easy task.

``` python
from urllib import parse

from splinter.browser import _DRIVERS
from splinter.driver import flaskclient

__all__ = ['FlaskClient']


class FlaskClient(flaskclient.FlaskClient):
    """
    A patched `FlaskClient` driver that implements more standard `302`/`303`
    behaviour and that sets data for `GET` requests against the URL.
    """

    driver_name = 'flask'

    def _do_method(self, method, url, data=None):

        # Set the initial URL and client/HTTP method
        self._url = url
        func_method = getattr(self._browser, method.lower())

        # Continue to make requests until a non-30X response is received
        while True:
            self._last_urls.append(url)

            # If we're making a GET request set the data against the URL as a
            # query.
            if method.lower() == 'get':

                # Parse the existing URL and its query
                url_parts = parse.urlparse(url)
                url_params = parse.parse_qs(url_parts.query)

                # Update any existing query dictionary with the `data` argument
                url_params.update(data or {})
                url_parts = url_parts._replace(
                    query=parse.urlencode(url_params, doseq=True))

                # Rebuild the URL
                url = parse.urlunparse(url_parts)

                # As the `data` argument will be passed as a keyword argument to
                # the `func_method` we set it to `None` to prevent it populating
                # `flask.request.form` on `GET` requests.
                data = None

            # Call the flask client
            self._response = func_method(
                url,
                headers=self._custom_headers,
                data=data,
                follow_redirects=False
            )

            # Implement more standard `302`/`303` behaviour
            if self._response.status_code in (302, 303):
                func_method = getattr(self._browser, 'get')

            # If the response was not in the `30X` range we're done
            if self._response.status_code not in (301, 302, 303, 305, 307):
                break

            # If the response was in the `30X` range get the next URL to request
            url = self._response.headers['Location']

        self._url = self._last_urls[-1]
        self._post_load()


# Patch the default `FlaskClient` driver
_DRIVERS['flask'] = FlaskClient
```

| closed | 2016-09-20T16:37:13Z | 2020-03-08T14:54:24Z | https://github.com/cobrateam/splinter/issues/515 | [] | anthonyjb | 9 |

KevinMusgrave/pytorch-metric-learning | computer-vision | 37 | Question: Usage of model with multiple inputs | Hello,

I want to train a pre-trained BERT model, which accepts three arguments (token_ids, token_types, attention_mask), all of which are tensors (N x L). As far as I understand from your source code, during training with a `trainer` instance, I can only pass one tensor into the model.

What would you recommend: 1. wrap the model in another model that accepts stacked tensors and splits them, 2. edit the source code, or 3. something else? Or maybe I am wrong; if so, please correct me. | closed | 2020-04-08T16:59:29Z | 2020-04-09T16:36:19Z | https://github.com/KevinMusgrave/pytorch-metric-learning/issues/37 | [

"Frequently Asked Questions"

] | lebionick | 4 |

custom-components/pyscript | jupyter | 32 | bug with list comprehension since 0.3 | Updating to 0.3 I suddenly see this message in my error-logs

`SyntaxError: no binding for nonlocal 'entity_id' found`

Reproduce with:

```python

def white_or_cozy(group_entity_id):
    entity_ids = state.get_attr(group_entity_id)['entity_id']
    attrs = [state.get_attr(entity_id) for entity_id in entity_ids]

``` | closed | 2020-10-09T08:45:29Z | 2020-10-09T17:28:27Z | https://github.com/custom-components/pyscript/issues/32 | [] | basnijholt | 2 |

recommenders-team/recommenders | data-science | 2,064 | [BUG] NameError in ImplicitCF | ### Description

<!--- Describe your issue/bug/request in detail -->

```

2024-02-19T18:34:57.2553239Z @pytest.mark.gpu

2024-02-19T18:34:57.2568702Z def test_model_lightgcn(deeprec_resource_path, deeprec_config_path):

2024-02-19T18:34:57.2569997Z data_path = os.path.join(deeprec_resource_path, "dkn")

2024-02-19T18:34:57.2571489Z yaml_file = os.path.join(deeprec_config_path, "lightgcn.yaml")

2024-02-19T18:34:57.2572675Z user_file = os.path.join(data_path, r"user_embeddings.csv")

2024-02-19T18:34:57.2573837Z item_file = os.path.join(data_path, r"item_embeddings.csv")

2024-02-19T18:34:57.2574736Z

2024-02-19T18:34:57.2575330Z df = movielens.load_pandas_df(size="100k")

2024-02-19T18:34:57.2576298Z train, test = python_stratified_split(df, ratio=0.75)

2024-02-19T18:34:57.2577150Z

2024-02-19T18:34:57.2577757Z > data = ImplicitCF(train=train, test=test)

2024-02-19T18:34:57.2578784Z E NameError: name 'ImplicitCF' is not defined

2024-02-19T18:34:57.2579411Z

2024-02-19T18:34:57.2580002Z tests/smoke/recommenders/recommender/test_deeprec_model.py:251: NameError

```

### In which platform does it happen?


### How do we replicate the issue?


https://github.com/recommenders-team/recommenders/actions/runs/7963399372/job/21738878728

### Expected behavior (i.e. solution)


### Other Comments

FYI @SimonYansenZhao this is similar to the issue we got in #2022 | closed | 2024-02-19T19:30:03Z | 2024-04-05T14:07:32Z | https://github.com/recommenders-team/recommenders/issues/2064 | [

"bug"

] | miguelgfierro | 3 |
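
One common way a `NameError` like this arises in a test module is an import wrapped in `try`/`except ImportError` that fails silently, so the name is never bound. Whether that is the cause here is an assumption; the log above does not show the import. A minimal sketch with a deliberately missing, hypothetical package name:

```python
try:
    # Hypothetical package that is not installed; the import fails quietly.
    from package_that_is_not_installed_xyz import ImplicitCF
except ImportError:
    pass


def build_dataset():
    # The name was never bound, so this raises NameError at call time.
    return ImplicitCF(train=None, test=None)


def name_error_message():
    """Return the NameError text raised by build_dataset, or None."""
    try:
        build_dataset()
    except NameError as exc:
        return str(exc)
    return None


print(name_error_message())
```

In this pattern the failure only surfaces when the test body runs, far from the import that actually failed.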