repo_name stringlengths 9 75 | topic stringclasses 30 values | issue_number int64 1 203k | title stringlengths 1 976 | body stringlengths 0 254k | state stringclasses 2 values | created_at stringlengths 20 20 | updated_at stringlengths 20 20 | url stringlengths 38 105 | labels listlengths 0 9 | user_login stringlengths 1 39 | comments_count int64 0 452 |

|---|---|---|---|---|---|---|---|---|---|---|---|

slackapi/python-slack-sdk | asyncio | 1,218 | SlackObjectFormationError: options attribute must have between 2 and 5 items whereas API allows a single option | ### Reproducible in:

#### The Slack SDK version

slack-sdk==3.16.1

#### Python runtime version

Python 3.10.3

#### OS info

ProductName: macOS

ProductVersion: 12.3.1

BuildVersion: 21E258

Darwin Kernel Version 21.4.0: Fri Mar 18 00:46:32 PDT 2022; root:xnu-8020.101.4~15/RELEASE_ARM64_T6000

#### Steps to reproduce:

```py

Message(

text="placeholder",

blocks=[

SectionBlock(

text="placeholder",

accessory=OverflowMenuElement(

options=[Option(value="placeholder", text="placeholder")]

),

)

],

).to_dict()

```

^ This will fail with `slack_sdk.errors.SlackObjectFormationError: options attribute must have between 2 and 5 item`

It used to be that the API required a minimum of two option entries in the overflow menu, but for a number of years now it has permitted just a single one.
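To make the requested behavior concrete, here is a minimal sketch of a length check that accepts a single option. It is not the SDK's actual validator: `MIN_OPTIONS`, `MAX_OPTIONS`, and `options_length_ok` are hypothetical names used only for illustration. The upper bound of 5 is taken from the error message, and the lower bound of 1 is what this issue asks for.

```python
from typing import Sequence

# Hypothetical bounds for illustration only. The upper bound comes from the
# error message; the lower bound of 1 is the behavior requested in this issue.
MIN_OPTIONS = 1
MAX_OPTIONS = 5


def options_length_ok(options: Sequence[object]) -> bool:
    """Return True when the number of overflow options is within bounds."""
    return MIN_OPTIONS <= len(options) <= MAX_OPTIONS


if __name__ == "__main__":
    # A single option passes, an empty list and six options do not.
    print(options_length_ok(["only one"]))
    print(options_length_ok([]))
    print(options_length_ok(list(range(6))))
```

With a check like this, the single-option `OverflowMenuElement` from the reproduction steps would no longer be rejected on length alone.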

### Expected result:

No error is thrown and the object is converted to a dict.

Block kit builder screenshot to confirm that the API allows a single option:

<img width="1316" alt="image" src="https://user-images.githubusercontent.com/7718702/170281869-ce3b3f66-29b8-4bc5-9860-2445efd366f2.png">

```json

{

"blocks": [

{

"type": "section",

"text": {

"type": "mrkdwn",

"text": "This is a section block with an overflow menu with a single option."

},

"accessory": {

"type": "overflow",

"options": [

{

"text": {

"type": "plain_text",

"text": "A single option",

"emoji": true

}

}

]

}

}

]

}

```

### Actual result:

An error is thrown: `slack_sdk.errors.SlackObjectFormationError: options attribute must have between 2 and 5 item`

| closed | 2022-05-25T14:08:33Z | 2022-05-27T04:44:44Z | https://github.com/slackapi/python-slack-sdk/issues/1218 | [

"bug",

"web-client",

"Version: 3x"

] | wilhelmklopp | 2 |

OthersideAI/self-operating-computer | automation | 133 | 'source' is not recognized as an internal or external command, operable program or batch file. | 'source' is not recognized as an internal or external command,

operable program or batch file.

| closed | 2024-01-13T19:18:50Z | 2024-01-14T13:53:26Z | https://github.com/OthersideAI/self-operating-computer/issues/133 | [] | theexpeert | 4 |

ultralytics/ultralytics | pytorch | 19,253 | (HUB) Export HMZ-Compatible .onnx or .hef | ### Search before asking

- [x] I have searched the Ultralytics [issues](https://github.com/ultralytics/ultralytics/issues) and found no similar feature requests.

### Description

The Hailo model zoo compiler expects specific names for Yolo output layers. Add support for hailo-compatible onnx or hailo-optimized .hef models.

Unfortunately, you cannot simply export an ultralytics onnx model and compile it for Hailo 8 at the moment.

### Use case

Quickly training models for edge devices integrating Hailo-8

### Additional

_No response_

### Are you willing to submit a PR?

- [ ] Yes I'd like to help by submitting a PR! | open | 2025-02-14T20:50:09Z | 2025-02-15T10:29:50Z | https://github.com/ultralytics/ultralytics/issues/19253 | [

"enhancement",

"HUB",

"embedded",

"exports"

] | bhjelstrom | 2 |

microsoft/nni | tensorflow | 5,607 | Error on creating custom trial | **Describe the issue**:

Hi, I have a problem when adding a custom trial to an experiment from the web UI. After clicking the "customized trial" button in the trial jobs list, I create a new trial and it starts running, but shortly afterwards the experiment fails because the trial's parameter_id is null. Is there a solution for this? Thank you.

**Environment**:

- NNI version: 2.10.1

- Training service (local|remote|pai|aml|etc): local

- Client OS: Ubuntu 22.04.2 LTS

- Server OS (for remote mode only):

- Python version: 3.10.11

- PyTorch/TensorFlow version: PyTorch 1.13.1

- Is conda/virtualenv/venv used?: conda

- Is running in Docker?: No

**Configuration**:

```

search_space:

n_rec:

_type: choice

_value: [ 64, 128, 256 ]

lr:

_type: loguniform

_value: [ 0.000001, 0.1 ]

thr:

_type: uniform

_value: [ 0.0, 10.0 ]

n_ref:

_type: loguniform

_value: [ 0.0001, 0.5 ]

tau_v:

_type: loguniform

_value: [ 3.0, 1000. ]

tau_a:

_type: loguniform

_value: [ 3.0, 1000. ]

tau_o:

_type: loguniform

_value: [ 3.0, 1000. ]

theta:

_type: uniform

_value: [ 0.0, 10.0 ]

sg_scale:

_type: uniform

_value: [ 0.03, 1.0 ]

freq:

_type: uniform

_value: [ 10.0, 100.0 ]

trial_command: python nni_experiment.py

trial_code_directory: .

trial_concurrency: 1

max_trial_number: 100

assessor:

name: Medianstop

class_args:

start_step: 50

tuner:

name: TPE

class_args:

optimize_mode: maximize

training_service:

platform: local

```

**Log message**:

- nnimanager.log:

```

[2023-06-12 11:03:46] INFO (main) Start NNI manager

[2023-06-12 11:03:46] INFO (NNIDataStore) Datastore initialization done

[2023-06-12 11:03:46] INFO (RestServer) Starting REST server at port 8080, URL prefix: "/"

[2023-06-12 11:03:46] INFO (RestServer) REST server started.

[2023-06-12 11:03:46] INFO (NNIManager) Starting experiment: c1qxbaym

[2023-06-12 11:03:47] INFO (NNIManager) Setup training service...

[2023-06-12 11:03:47] INFO (LocalTrainingService) Construct local machine training service.

[2023-06-12 11:03:47] INFO (NNIManager) Setup tuner...

[2023-06-12 11:03:47] INFO (NNIManager) Change NNIManager status from: INITIALIZED to: RUNNING

[2023-06-12 11:03:48] INFO (NNIManager) Add event listeners

[2023-06-12 11:03:48] INFO (LocalTrainingService) Run local machine training service.

[2023-06-12 11:03:48] INFO (NNIManager) NNIManager received command from dispatcher: ID,

[2023-06-12 11:03:48] INFO (NNIManager) NNIManager received command from dispatcher: TR, {"parameter_id": 0, "parameter_source": "algorithm", "parameters": {"n_rec": 256, "lr": 0.011448074517379067, "thr": 3.597306519090515, "n_ref": 0.008715046131089057, "tau_v": 7.573031603546667, "tau_a": 29.990669571487363, "tau_o": 128.18680794623737, "theta": 2.102562278413914, "sg_scale": 0.9624626013431545, "freq": 45.56603200372938}, "parameter_index": 0}

[2023-06-12 11:03:48] INFO (NNIManager) NNIManager received command from dispatcher: TR, {"parameter_id": 1, "parameter_source": "algorithm", "parameters": {"n_rec": 256, "lr": 3.3218768175937776e-05, "thr": 7.576678438876874, "n_ref": 0.4766486382392336, "tau_v": 4.566249658132258, "tau_a": 34.182390708234216, "tau_o": 3.093741894351405, "theta": 8.826553595697263, "sg_scale": 0.5791697231669481, "freq": 68.55767486427999}, "parameter_index": 0}

[2023-06-12 11:03:53] INFO (NNIManager) submitTrialJob: form: {

sequenceId: 0,

hyperParameters: {

value: '{"parameter_id": 0, "parameter_source": "algorithm", "parameters": {"n_rec": 256, "lr": 0.011448074517379067, "thr": 3.597306519090515, "n_ref": 0.008715046131089057, "tau_v": 7.573031603546667, "tau_a": 29.990669571487363, "tau_o": 128.18680794623737, "theta": 2.102562278413914, "sg_scale": 0.9624626013431545, "freq": 45.56603200372938}, "parameter_index": 0}',

index: 0

},

placementConstraint: { type: 'None', gpus: [] }

}

[2023-06-12 11:03:53] INFO (NNIManager) submitTrialJob: form: {

sequenceId: 1,

hyperParameters: {

value: '{"parameter_id": 1, "parameter_source": "algorithm", "parameters": {"n_rec": 256, "lr": 3.3218768175937776e-05, "thr": 7.576678438876874, "n_ref": 0.4766486382392336, "tau_v": 4.566249658132258, "tau_a": 34.182390708234216, "tau_o": 3.093741894351405, "theta": 8.826553595697263, "sg_scale": 0.5791697231669481, "freq": 68.55767486427999}, "parameter_index": 0}',

index: 0

},

placementConstraint: { type: 'None', gpus: [] }

}

[2023-06-12 11:03:58] INFO (NNIManager) Trial job ExCBx status changed from WAITING to RUNNING

[2023-06-12 11:03:58] INFO (NNIManager) Trial job ciNRn status changed from WAITING to RUNNING

[2023-06-12 11:14:50] INFO (NNITensorboardManager) tensorboard: ExCBx,ciNRn /home/sic/nni-experiments/c1qxbaym/trials/ExCBx/tensorboard,/home/sic/nni-experiments/c1qxbaym/trials/ciNRn/tensorboard

[2023-06-12 11:14:50] INFO (NNITensorboardManager) tensorboard start command: tensorboard,--bind_all --logdir_spec=0-ExCBx:/home/sic/nni-experiments/c1qxbaym/trials/ExCBx/tensorboard,1-ciNRn:/home/sic/nni-experiments/c1qxbaym/trials/ciNRn/tensorboard,--port=6006

[2023-06-12 11:14:50] INFO (NNITensorboardManager) tensorboard task id: zSWdn

[2023-06-12 11:14:50] INFO (NNIRestHandler) TensorboardTaskDetail {

id: 'zSWdn',

status: 'RUNNING',

trialJobIdList: [ 'ExCBx', 'ciNRn' ],

trialLogDirectoryList: [

'/home/sic/nni-experiments/c1qxbaym/trials/ExCBx/tensorboard',

'/home/sic/nni-experiments/c1qxbaym/trials/ciNRn/tensorboard'

],

pid: 2519812,

port: '6006'

}

[2023-06-12 11:14:50] INFO (NNITensorboardManager) tensorboardTask zSWdn status update: RUNNING to DOWNLOADING_DATA

[2023-06-12 11:14:50] INFO (NNITensorboardManager) tensorboardTask zSWdn status update: DOWNLOADING_DATA to RUNNING

[2023-06-12 11:17:08] INFO (NNIManager) User cancelTrialJob: ExCBx

[2023-06-12 11:17:09] INFO (NNIManager) Trial job ExCBx status changed from RUNNING to USER_CANCELED

[2023-06-12 11:17:09] INFO (NNIManager) submitTrialJob: form: {

sequenceId: 2,

hyperParameters: {

value: '{"parameter_id":null,"parameter_source":"customized","parameters":{"n_rec":256,"lr":0.0005,"thr":1,"n_ref":0.05,"tau_v":1000,"tau_a":1000,"tau_o":1000,"theta":5,"sg_scale":0.3,"freq":100}}',

index: 0

}

}

[2023-06-12 11:17:09] INFO (NNIManager) NNIManager received command from dispatcher: TR, {"parameter_id": 2, "parameter_source": "algorithm", "parameters": {"n_rec": 256, "lr": 2.228695710339134e-05, "thr": 2.6451070628903253, "n_ref": 0.002383796963784488, "tau_v": 21.571986754085653, "tau_a": 3.8068845699351344, "tau_o": 3.970901210844971, "theta": 5.8263479076965226, "sg_scale": 0.7507240392417996, "freq": 30.6873476711282}, "parameter_index": 0}

[2023-06-12 11:17:14] INFO (NNIManager) Trial job SjU2n status changed from WAITING to RUNNING

[2023-06-12 11:17:32] INFO (NNITensorboardManager) Forced stopping all tensorboard task.

[2023-06-12 11:17:32] INFO (NNITensorboardManager) tensorboardTask zSWdn status update: RUNNING to STOPPING

[2023-06-12 11:17:32] INFO (NNITensorboardManager) tensorboardTask zSWdn status update: STOPPING to STOPPED

[2023-06-12 11:17:32] INFO (NNITensorboardManager) All tensorboard task stopped.

[2023-06-12 11:19:15] ERROR (tuner_command_channel.WebSocketChannel) Error: Error: tuner_command_channel: Tuner closed connection

at WebSocket.handleWsClose (/home/sic/anaconda3/envs/ENV/lib/python3.10/site-packages/nni_node/core/tuner_command_channel/websocket_channel.js:83:26)

at WebSocket.emit (node:events:538:35)

at WebSocket.emitClose (/home/sic/anaconda3/envs/ENV/lib/python3.10/site-packages/nni_node/node_modules/express-ws/node_modules/ws/lib/websocket.js:246:10)

at Socket.socketOnClose (/home/sic/anaconda3/envs/ENV/lib/python3.10/site-packages/nni_node/node_modules/express-ws/node_modules/ws/lib/websocket.js:1127:15)

at Socket.emit (node:events:526:28)

at TCP.<anonymous> (node:net:687:12)

```

- dispatcher.log:

```

[2023-06-12 11:03:48] INFO (nni.tuner.tpe/MainThread) Using random seed 606063535

[2023-06-12 11:03:48] INFO (nni.runtime.msg_dispatcher_base/MainThread) Dispatcher started

[2023-06-12 11:17:09] WARNING (medianstop_Assessor/Thread-2 (command_queue_worker)) trial_end: trial_job_id does not exist in running_history

[2023-06-12 11:18:34] ERROR (nni.runtime.msg_dispatcher_base/Thread-2 (command_queue_worker)) '<=' not supported between instances of 'NoneType' and 'int'

Traceback (most recent call last):

File "/home/sic/anaconda3/envs/ENV/lib/python3.10/site-packages/nni/runtime/msg_dispatcher_base.py", line 109, in command_queue_worker

self.process_command(command, data)

File "/home/sic/anaconda3/envs/ENV/lib/python3.10/site-packages/nni/runtime/msg_dispatcher_base.py", line 155, in process_command

command_handlers[command](data)

File "/home/sic/anaconda3/envs/ENV/lib/python3.10/site-packages/nni/runtime/msg_dispatcher.py", line 135, in handle_report_metric_data

if self.is_created_in_previous_exp(data['parameter_id']):

File "/home/sic/anaconda3/envs/ENV/lib/python3.10/site-packages/nni/recoverable.py", line 47, in is_created_in_previous_exp

return param_id <= self.recovered_max_param_id

TypeError: '<=' not supported between instances of 'NoneType' and 'int'

[2023-06-12 11:19:15] INFO (nni.runtime.msg_dispatcher_base/MainThread) Dispatcher exiting...

[2023-06-12 11:19:15] INFO (nni.runtime.msg_dispatcher_base/MainThread) Dispatcher terminiated

```
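The traceback above fails on the comparison `param_id <= self.recovered_max_param_id` when `param_id` is `None`, which matches the customized trial being submitted with `"parameter_id": null`. A minimal sketch of a None-safe comparison is shown below. It is a standalone function written for illustration, not NNI's code or its actual fix, and it assumes that a trial without a parameter ID should be treated as not coming from a previous experiment.

```python
from typing import Optional


def is_created_in_previous_exp(
    param_id: Optional[int], recovered_max_param_id: int
) -> bool:
    """Hypothetical None-safe variant of the comparison in the traceback."""
    if param_id is None:
        # Assumption: customized trials carry no parameter ID, so they are
        # treated as belonging to the current experiment.
        return False
    return param_id <= recovered_max_param_id


if __name__ == "__main__":
    print(is_created_in_previous_exp(None, 5))  # customized trial
    print(is_created_in_previous_exp(3, 5))     # tuner-generated trial
```

Whether returning `False` is the right policy for customized trials depends on how the dispatcher is expected to report their metrics to the tuner.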

- nnictl stdout and stderr:

nnictl_stderr.log

```

--------------------------------------------------------------------------------

Experiment c1qxbaym start: 2023-06-12 11:03:45.935189

--------------------------------------------------------------------------------

node:events:504

throw er; // Unhandled 'error' event

^

Error: tuner_command_channel: Tuner closed connection

at WebSocket.handleWsClose (/home/sic/anaconda3/envs/ENV/lib/python3.10/site-packages/nni_node/core/tuner_command_channel/websocket_channel.js:83:26)

at WebSocket.emit (node:events:538:35)

at WebSocket.emitClose (/home/sic/anaconda3/envs/ENV/lib/python3.10/site-packages/nni_node/node_modules/express-ws/node_modules/ws/lib/websocket.js:246:10)

at Socket.socketOnClose (/home/sic/anaconda3/envs/ENV/lib/python3.10/site-packages/nni_node/node_modules/express-ws/node_modules/ws/lib/websocket.js:1127:15)

at Socket.emit (node:events:526:28)

at TCP.<anonymous> (node:net:687:12)

Emitted 'error' event at:

at WebSocketChannelImpl.handleError (/home/sic/anaconda3/envs/ENV/lib/python3.10/site-packages/nni_node/core/tuner_command_channel/websocket_channel.js:135:22)

at WebSocket.handleWsClose (/home/sic/anaconda3/envs/ENV/lib/python3.10/site-packages/nni_node/core/tuner_command_channel/websocket_channel.js:83:14)

at WebSocket.emit (node:events:538:35)

[... lines matching original stack trace ...]

at TCP.<anonymous> (node:net:687:12)

Thrown at:

at handleWsClose (/home/sic/anaconda3/envs/ENV/lib/python3.10/site-packages/nni_node/core/tuner_command_channel/websocket_channel.js:83:26)

at emit (node:events:538:35)

at emitClose (/home/sic/anaconda3/envs/ENV/lib/python3.10/site-packages/nni_node/node_modules/express-ws/node_modules/ws/lib/websocket.js:246:10)

at socketOnClose (/home/sic/anaconda3/envs/ENV/lib/python3.10/site-packages/nni_node/node_modules/express-ws/node_modules/ws/lib/websocket.js:1127:15)

at emit (node:events:526:28)

at node:net:687:12

```

nnictl_stdout.log

```

--------------------------------------------------------------------------------

Experiment c1qxbaym start: 2023-06-12 11:03:45.935189

--------------------------------------------------------------------------------

{'n_rec': {'_type': 'choice', '_value': [64, 128, 256]}, 'lr': {'_type': 'loguniform', '_value': [1e-06, 0.1]}, 'thr': {'_type': 'uniform', '_value': [0, 10]}, 'n_ref': {'_type': 'loguniform', '_value': [0.0001, 0.5]}, 'tau_v': {'_type': 'loguniform', '_value': [3, 1000]}, 'tau_a': {'_type': 'loguniform', '_value': [3, 1000]}, 'tau_o': {'_type': 'loguniform', '_value': [3, 1000]}, 'theta': {'_type': 'uniform', '_value': [0, 10]}, 'sg_scale': {'_type': 'uniform', '_value': [0.03, 1]}, 'freq': {'_type': 'uniform', '_value': [10, 100]}}

2

{'parameter_id': 0, 'trial_job_id': 'ExCBx', 'type': 'PERIODICAL', 'sequence': 0, 'value': '0.04779411852359772'}

{'parameter_id': 1, 'trial_job_id': 'ciNRn', 'type': 'PERIODICAL', 'sequence': 0, 'value': '0.04779411852359772'}

{'parameter_id': 0, 'trial_job_id': 'ExCBx', 'type': 'PERIODICAL', 'sequence': 1, 'value': '0.048253677785396576'}

{'parameter_id': 1, 'trial_job_id': 'ciNRn', 'type': 'PERIODICAL', 'sequence': 1, 'value': '0.048253677785396576'}

{'parameter_id': 0, 'trial_job_id': 'ExCBx', 'type': 'PERIODICAL', 'sequence': 2, 'value': '0.04641544073820114'}

{'parameter_id': 1, 'trial_job_id': 'ciNRn', 'type': 'PERIODICAL', 'sequence': 2, 'value': '0.04641544073820114'}

{'parameter_id': 0, 'trial_job_id': 'ExCBx', 'type': 'PERIODICAL', 'sequence': 3, 'value': '0.048713237047195435'}

{'parameter_id': 1, 'trial_job_id': 'ciNRn', 'type': 'PERIODICAL', 'sequence': 3, 'value': '0.048713237047195435'}

{'parameter_id': 0, 'trial_job_id': 'ExCBx', 'type': 'PERIODICAL', 'sequence': 4, 'value': '0.048253677785396576'}

{'parameter_id': 1, 'trial_job_id': 'ciNRn', 'type': 'PERIODICAL', 'sequence': 4, 'value': '0.048253677785396576'}

{'parameter_id': 0, 'trial_job_id': 'ExCBx', 'type': 'PERIODICAL', 'sequence': 5, 'value': '0.049172792583703995'}

{'parameter_id': 1, 'trial_job_id': 'ciNRn', 'type': 'PERIODICAL', 'sequence': 5, 'value': '0.049172792583703995'}

{'parameter_id': 0, 'trial_job_id': 'ExCBx', 'type': 'PERIODICAL', 'sequence': 6, 'value': '0.04963235184550285'}

{'parameter_id': 1, 'trial_job_id': 'ciNRn', 'type': 'PERIODICAL', 'sequence': 6, 'value': '0.04963235184550285'}

{'parameter_id': 0, 'trial_job_id': 'ExCBx', 'type': 'PERIODICAL', 'sequence': 7, 'value': '0.046875'}

{'parameter_id': 1, 'trial_job_id': 'ciNRn', 'type': 'PERIODICAL', 'sequence': 7, 'value': '0.046875'}

{'parameter_id': 0, 'trial_job_id': 'ExCBx', 'type': 'PERIODICAL', 'sequence': 8, 'value': '0.04779411852359772'}

{'parameter_id': 1, 'trial_job_id': 'ciNRn', 'type': 'PERIODICAL', 'sequence': 8, 'value': '0.04779411852359772'}

{'parameter_id': 0, 'trial_job_id': 'ExCBx', 'type': 'PERIODICAL', 'sequence': 9, 'value': '0.048253677785396576'}

{'parameter_id': 1, 'trial_job_id': 'ciNRn', 'type': 'PERIODICAL', 'sequence': 9, 'value': '0.048253677785396576'}

{'parameter_id': 0, 'trial_job_id': 'ExCBx', 'type': 'PERIODICAL', 'sequence': 10, 'value': '0.046875'}

{'parameter_id': 1, 'trial_job_id': 'ciNRn', 'type': 'PERIODICAL', 'sequence': 10, 'value': '0.046875'}

{'trial_job_id': 'ExCBx', 'event': 'USER_CANCELED', 'hyper_params': '{"parameter_id": 0, "parameter_source": "algorithm", "parameters": {"n_rec": 256, "lr": 0.011448074517379067, "thr": 3.597306519090515, "n_ref": 0.008715046131089057, "tau_v": 7.573031603546667, "tau_a": 29.990669571487363, "tau_o": 128.18680794623737, "theta": 2.102562278413914, "sg_scale": 0.9624626013431545, "freq": 45.56603200372938}, "parameter_index": 0}'}

1

{'parameter_id': 1, 'trial_job_id': 'ciNRn', 'type': 'PERIODICAL', 'sequence': 11, 'value': '0.048713237047195435'}

{'parameter_id': None, 'trial_job_id': 'SjU2n', 'type': 'PERIODICAL', 'sequence': 0, 'value': '0.04779411852359772'}

``` | closed | 2023-06-12T09:52:39Z | 2023-06-13T14:54:54Z | https://github.com/microsoft/nni/issues/5607 | [] | ferqui | 2 |

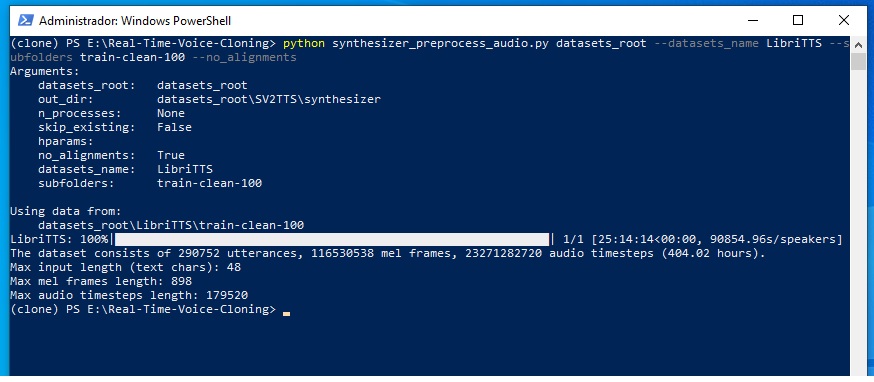

CorentinJ/Real-Time-Voice-Cloning | python | 768 | Error training in Spanish | Hello, I am trying to train in Spanish, I don't know how to solve this error.

Windows 10, RTX 3090. I use a dataset structured like LibriTTS, modified the synthesizer's utils symbols file for Spanish, and changed the cleaner in hparams.py to basic_cleaners.

The first step ran fine, I think; it did not produce any errors.

In this step I had to modify the texts because an uppercase accented vowel such as "Á" at the beginning of a word caused an error, while the lowercase "á" worked fine.
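The manual text change described above could be automated roughly as follows. This is only a sketch under assumptions: `normalize_transcript` is a hypothetical helper, not part of the repository, and it assumes that lowercasing every transcript is acceptable for the symbol set in use.

```python
import unicodedata


def normalize_transcript(text: str) -> str:
    """Lowercase a transcript so accented capitals such as "Á" become "á"."""
    # Compose characters first so that "A" followed by a combining acute
    # accent is handled the same way as the single code point "Á".
    composed = unicodedata.normalize("NFC", text)
    return composed.lower()


if __name__ == "__main__":
    print(normalize_transcript("Ávila está en España"))
```

Running the transcripts through such a helper before preprocessing avoids editing each file by hand.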

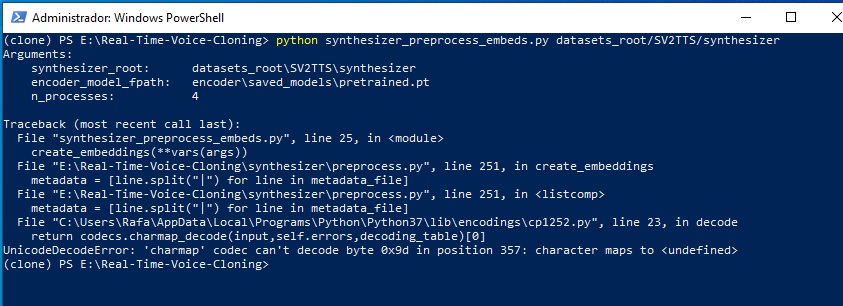

In the second step, running: python synthesizer_preprocess_embeds.py datasets_root/SV2TTS/synthesizer

I get the following error:

I am a self-taught beginner in programming; I spent hours on Google and could not find how to solve it.

| closed | 2021-06-05T22:34:05Z | 2021-06-06T12:06:13Z | https://github.com/CorentinJ/Real-Time-Voice-Cloning/issues/768 | [] | necrcon | 2 |

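OFA-Sys/Chinese-CLIP | nlp | 280 | When fine-tuning on the three image-text retrieval datasets, did you use a two-stage fine-tuning approach? | I trained directly with the muge_finetune_vit-b-16_rbt-base.sh script from the latest master, with freeze_vision="", one V100, and all other parameters unchanged. After fine-tuning, I submitted the results to the official website, and the evaluation results were lower than zero-shot.

zero-shot: Recall@1=52.16, Recall@5=76.22, Recall@10=83.97, Mean Recall=70.78

finetune: Recall@1=48.82, Recall@5=75.8, Recall@10=84.59, Mean Recall=69.74

If you used two-stage fine-tuning, did you adjust any parameters, such as the learning rate or the number of epochs?

Also, have you recently pre-trained any other image-text matching models, such as ALBEF or BLIP2? | open | 2024-03-27T07:06:20Z | 2024-04-21T11:16:02Z | https://github.com/OFA-Sys/Chinese-CLIP/issues/280 | [] | xiuxiuxius | 6 |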

HIT-SCIR/ltp | nlp | 484 | No function named chomp in src/utils/strutils.hpp:54 | src/parser/conllreader.h:54 calls the function chomp(line), but no function named chomp exists in any of the source files.

In fact, trim should be called here.

c++ ltp-version=3.4.0 | open | 2021-01-26T06:37:45Z | 2021-01-26T06:38:48Z | https://github.com/HIT-SCIR/ltp/issues/484 | [] | dooothink | 0 |

PrefectHQ/prefect | data-science | 17,085 | Prefect Worker logging concurrency empty queue error | ### Bug summary

We've run into a logging error which occurs in our Prefect worker deployed in AWS EKS. This is only a logging issue. The worker seems to work fine and flows can run to completion as expected. This logging error occurs with or without flows running.

Traceback with `PREFECT_DEBUG_MODE=1`

```

│ 21:29:53.117 | DEBUG | QueueingSpanExporterThread | prefect._internal.concurrency - Running call get(timeout=1.999997219 │

│ 999841) in thread 'QueueingSpanExporterThread' │

│ 21:29:53.117 | DEBUG | QueueingSpanExporterThread | prefect._internal.concurrency - <WatcherThreadCancelScope, name='get │

│ ' RUNNING, runtime=0.00> entered │

│ 21:29:53.338 | DEBUG | QueueingLogExporterThread | prefect._internal.concurrency - <WatcherThreadCancelScope, name='get' │

│ COMPLETED, runtime=2.00> exited │

│ 21:29:53.338 | DEBUG | QueueingLogExporterThread | prefect._internal.concurrency - Encountered exception in call get(<dr │

│ opped>) │

│ Traceback (most recent call last): │

│ File "/usr/local/lib/python3.11/site-packages/prefect/_internal/concurrency/calls.py", line 363, in _run_sync │

│ result = self.fn(*self.args, **self.kwargs) │

│ ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^ │

│ File "/usr/local/lib/python3.11/queue.py", line 179, in get │

│ raise Empty │

│ _queue.Empty

```
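For context on the traceback above: in the Python standard library, `queue.Queue.get(timeout=...)` raises `queue.Empty` whenever no item arrives within the timeout, so the exception by itself only indicates an idle queue. The sketch below demonstrates that standard-library behavior. It is not Prefect's implementation, and `poll_once` is a hypothetical helper name.

```python
import queue
from typing import Any, Optional


def poll_once(q: "queue.Queue[Any]", timeout: float) -> Optional[Any]:
    """Wait up to `timeout` seconds for an item; return None if none arrives."""
    try:
        return q.get(timeout=timeout)
    except queue.Empty:
        # Nothing was queued within the timeout: an idle poll, not a failure.
        return None


if __name__ == "__main__":
    q: "queue.Queue[str]" = queue.Queue()
    print(poll_once(q, 0.01))  # queue is empty
    q.put("log record")
    print(poll_once(q, 0.01))  # item is available
```

This is consistent with the observation that the worker keeps functioning and the message appears with or without flows running.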

### Version info

```Text

# Prefect version

I’ve tried various Prefect + Python versions for our Prefect worker. The Docker images tried are:

`prefecthq/prefect:3.1.15-python3.10-kubernetes`

`prefecthq/prefect:3.1.15-python3.11-kubernetes`

`prefecthq/prefect:3.1.15-python3.12-kubernetes`

# AWS EKS information

* AWS EKS v1.3.1 or v1.3.2 (bug exists in both versions)

* EKS cluster has stable, typical addons: kube-proxy, coredns, etc.

* EKS default Managed Nodegroups or Managed Nodepools (bug occurs in both compute environments)

* AWS EKS AutoMode (bug exists even with AWS managed EKS services such as kube-proxy, coredns, etc)

# HELM Information

helm version:

version.BuildInfo{Version:"v3.14.4", GitCommit:"81c902a123462fd4052bc5e9aa9c513c4c8fc142", GitTreeState:"clean", GoVersion:"go1.22.2

helm chart version info:

NAME NAMESPACE REVISION UPDATED STATUS CHART APP VERSION

prefect-worker-3 prefect 1 2025-01-31 08:09:41.422611 -0700 MST deployed

```

### Additional context

My `values.yaml` ends up being

```

## Common parameters

# -- partially overrides common.names.name

nameOverride: ""

# -- fully override common.names.fullname

fullnameOverride: "prefect-worker-3"

# -- fully override common.names.namespace

namespaceOverride: "prefect"

# -- labels to add to all deployed objects

commonLabels: {}

# -- annotations to add to all deployed objects

commonAnnotations: {}

## Deployment Configuration

worker:

autoscaling:

# -- enable autoscaling for the worker

enabled: false

# -- minimum number of replicas to scale down to

minReplicas: 1

# -- maximum number of replicas to scale up to

maxReplicas: 1

# -- target CPU utilization percentage for scaling the worker

targetCPUUtilizationPercentage: 80

# -- target memory utilization percentage for scaling the worker

targetMemoryUtilizationPercentage: 80

# -- unique cluster identifier, if none is provided this value will be infered at time of helm install

clusterUid: ""

initContainer:

# -- the resource specifications for the sync-base-job-template initContainer

# Defaults to the resources defined for the worker container

resources: {}

# -- the requested resources for the sync-base-job-template initContainer

# requests:

# memory: 256Mi

# cpu: 100m

# -- the requested limits for the sync-base-job-template initContainer

# limits:

# memory: 1Gi

# cpu: 1000m

## ref: https://kubernetes.io/docs/tasks/configure-pod-container/security-context/#set-the-security-context-for-a-container

# -- security context for the sync-base-job-template initContainer

containerSecurityContext:

# -- set init containers' security context runAsUser

runAsUser: 1001

# -- set init containers' security context runAsNonRoot

runAsNonRoot: true

# -- set init containers' security context readOnlyRootFilesystem

readOnlyRootFilesystem: true

# -- set init containers' security context allowPrivilegeEscalation

allowPrivilegeEscalation: false

# -- set init container's security context capabilities

capabilities: {}

image:

# -- worker image repository

repository: prefecthq/prefect

## prefect tag is pinned to the latest available image tag at packaging time. Update the value here to

## override pinned tag

# -- prefect image tag (immutable tags are recommended)

prefectTag: 3.1.15-python3.11-kubernetes

# -- worker image pull policy

pullPolicy: IfNotPresent

## Optionally specify an array of imagePullSecrets.

## Secrets must be manually created in the namespace.

## ref: https://kubernetes.io/docs/tasks/configure-pod-container/pull-image-private-registry/

## e.g:

## pullSecrets:

## - myRegistryKeySecretName

# -- worker image pull secrets

pullSecrets: []

# -- enable worker image debug mode

debug: false

## general configuration of the worker

config:

# -- the work pool that your started worker will poll.

workPool: "jupiter-eos-prefect-3"

# -- one or more work queue names for the worker to pull from. if not provided, the worker will pull from all work queues in the work pool

workQueues: [jupiter-eos-prefect-3]

# -- how often the worker will query for runs

queryInterval: 5

# -- when querying for runs, how many seconds in the future can they be scheduled

prefetchSeconds: 10

# -- connect using HTTP/2 if the server supports it (experimental)

http2: true

## You can set the worker type here.

## The default image includes only the type "kubernetes".

## Custom workers must be properly registered with the prefect cli.

## See the guide here: https://docs.prefect.io/2.11.3/guides/deployment/developing-a-new-worker-type/

# -- specify the worker type

type: kubernetes

## one of 'always', 'if-not-present', 'never', 'prompt'

# -- install policy to use workers from Prefect integration packages.

installPolicy: prompt

# -- the name to give to the started worker. If not provided, a unique name will be generated.

name: null

# -- maximum number of flow runs to start simultaneously (default: unlimited)

limit: null

## If unspecified, Prefect will use the default base job template for the given worker type. If the work pool already exists, this will be ignored.

## e.g.:

## baseJobTemplate:

## configuration: |

## {

## "variables": {

## ...

## },

## "job_configuration": {

## ...

## }

## }

## OR

## baseJobTemplate:

## existingConfigMapName: "my-existing-config-map"

baseJobTemplate:

# -- the name of an existing ConfigMap containing a base job template. NOTE - the key must be 'baseJobTemplate.json'

existingConfigMapName: ""

# -- JSON formatted base job template. If data is provided here, the chart will generate a configmap and mount it to the worker pod

configuration: null

# -- optionally override the default name of the generated configmap

# name: ""

## connection settings

# -- one of 'cloud', 'selfHosted', or 'server'

apiConfig: "cloud"

cloudApiConfig:

# -- prefect account ID

accountId: "824f4d2f-31c4-4b2a-a025-42b8433da9fe"

# -- prefect workspace ID

workspaceId: "38c82ec2-e6c7-47ca-8218-d3173a4b4667"

apiKeySecret:

# -- prefect API secret name

name: prefect-api-key

# -- prefect API secret key

key: api_key

# -- prefect cloud API url; the full URL is constructed as https://cloudUrl/accounts/accountId/workspaces/workspaceId

cloudUrl: https://api.prefect.cloud/api

# selfHostedCloudApiConfig:

# # -- prefect API url (PREFECT_API_URL)

# apiUrl: ""

# # -- prefect account ID

# accountId: ""

# # -- prefect workspace ID

# workspaceId: ""

apiKeySecret:

# -- prefect API secret name

name: prefect-api-key

# -- prefect API secret key

key: api_key

# -- self hosted UI url

# uiUrl: ""

serverApiConfig:

# If the prefect server is located external to this cluster, set a fully qualified domain name as the apiUrl

# If the prefect server pod is deployed to this cluster, use the cluster DNS endpoint: http://<prefect-server-service-name>.<namespace>.svc.cluster.local:<prefect-server-port>/api

# -- prefect API url (PREFECT_API_URL)

apiUrl: ""

# -- prefect UI url

uiUrl: http://localhost:4201

# -- the number of old ReplicaSets to retain to allow rollback

revisionHistoryLimit: 10

# -- number of worker replicas to deploy

replicaCount: 1

resources:

# -- the requested resources for the worker container

requests:

memory: 256Mi

cpu: 100m

# -- the requested limits for the worker container

limits:

memory: 1Gi

cpu: 1000m

# ref: https://kubernetes.io/docs/tasks/configure-pod-container/configure-liveness-readiness-startup-probes/

livenessProbe:

enabled: false

config:

# -- The number of seconds to wait before starting the first probe.

initialDelaySeconds: 10

# -- The number of seconds to wait between consecutive probes.

periodSeconds: 10

# -- The number of seconds to wait for a probe response before considering it as failed.

timeoutSeconds: 5

# -- The number of consecutive failures allowed before considering the probe as failed.

failureThreshold: 3

# -- The minimum consecutive successes required to consider the probe successful.

successThreshold: 1

## ref: https://kubernetes.io/docs/tasks/configure-pod-container/security-context/#set-the-security-context-for-a-pod

podSecurityContext:

# -- set worker pod's security context runAsUser

runAsUser: 1001

# -- set worker pod's security context runAsNonRoot

runAsNonRoot: true

# -- set worker pod's security context fsGroup

fsGroup: 1001

# ref: https://kubernetes.io/docs/concepts/scheduling-eviction/pod-priority-preemption/#priorityclass

# -- priority class name to use for the worker pods; if the priority class is empty or doesn't exist, the worker pods are scheduled without a priority class

priorityClassName: ""

## ref: https://kubernetes.io/docs/tasks/configure-pod-container/security-context/#set-the-security-context-for-a-container

containerSecurityContext:

# -- set worker containers' security context runAsUser

runAsUser: 1001

# -- set worker containers' security context runAsNonRoot

runAsNonRoot: true

# -- set worker containers' security context readOnlyRootFilesystem

readOnlyRootFilesystem: true

# -- set worker containers' security context allowPrivilegeEscalation

allowPrivilegeEscalation: false

# -- set worker container's security context capabilities

capabilities: {}

## ref: https://kubernetes.io/docs/concepts/overview/working-with-objects/labels/

# -- extra labels for worker pod

podLabels: {}

## ref: https://kubernetes.io/docs/concepts/overview/working-with-objects/annotations/

# -- extra annotations for worker pod

podAnnotations: {}

## ref: https://kubernetes.io/docs/concepts/configuration/assign-pod-node/#affinity-and-anti-affinity

# -- affinity for worker pods assignment

affinity: {}

## ref: https://kubernetes.io/docs/user-guide/node-selection/

# -- node labels for worker pods assignment

nodeSelector: {}

## ref: https://kubernetes.io/docs/concepts/configuration/taint-and-toleration/

# -- tolerations for worker pods assignment

tolerations: []

## List of extra env vars

## e.g:

## extraEnvVars:

## - name: FOO

## value: "bar"

# -- array with extra environment variables to add to worker nodes

extraEnvVars: []

# -- name of existing ConfigMap containing extra env vars to add to worker nodes

extraEnvVarsCM: ""

# -- name of existing Secret containing extra env vars to add to worker nodes

extraEnvVarsSecret: ""

# -- additional sidecar containers

extraContainers: []

# -- array with extra volumes for the worker pod

extraVolumes: []

# -- array with extra volumeMounts for the worker pod

extraVolumeMounts: []

# -- array with extra Arguments for the worker container to start with

extraArgs: []

## ServiceAccount configuration

serviceAccount:

# -- specifies whether a ServiceAccount should be created

create: false

# -- the name of the ServiceAccount to use. if not set and create is true, a name is generated using the common.names.fullname template

name: ""

# -- additional service account annotations (evaluated as a template)

annotations: {}

## Role configuration

role:

# -- specifies whether a Role should be created

create: true

## List of extra role permissions

## e.g:

extraPermissions:

- apiGroups: [""]

resources: ["pods", "services"]

verbs: ["get", "list", "watch", "create", "update", "patch", "delete"]

# -- array with extra permissions to add to the worker role

# extraPermissions: []

## RoleBinding configuration

rolebinding:

# -- specifies whether a RoleBinding should be created

create: true

```

We’ve tried setting `PREFECT_CLOUD_ENABLE_ORCHESTRATION_TELEMETRY:false` but the logging error persists with a slightly different traceback (`APILogWorkerThread` instead of `QueueingLogExporterThread`)

```

│ 15:46:49.701 | DEBUG | APILogWorkerThread | prefect._internal.concurrency - <WatcherThreadCancelScope, name='get' │

│ 15:46:49.701 | DEBUG | APILogWorkerThread | prefect._internal.concurrency - Encountered exception in call get(<dro │

│ Traceback (most recent call last): │

│ File "/usr/local/lib/python3.11/site-packages/prefect/_internal/concurrency/calls.py", line 364, in _run_sync │

│ result = self.fn(*self.args, **self.kwargs) │

│ ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^ │

│ File "/usr/local/lib/python3.11/queue.py", line 179, in get │

│ raise Empty │

│ _queue.Empty │

│ 15:46:49.702 | DEBUG | APILogWorkerThread | prefect._internal.concurrency - Running call get(timeout=1.99996715999

``` | closed | 2025-02-11T00:31:04Z | 2025-02-11T21:45:56Z | https://github.com/PrefectHQ/prefect/issues/17085 | [

"bug"

] | JupiterPucciarelli | 2 |

widgetti/solara | jupyter | 406 | Discord link broken | The discord link is invalid. | closed | 2023-11-30T19:22:57Z | 2023-11-30T19:51:33Z | https://github.com/widgetti/solara/issues/406 | [] | langestefan | 2 |

aio-libs-abandoned/aioredis-py | asyncio | 638 | Connection pool is idle for a long time, resulting in unreachable redis | When the connection pool has been idle for a long time (about 10 minutes) and I then request a connection from it, my program blocks for about 15 minutes and then raises an exception: connection time out.

What is the cause of this?

I hope I can get your help. Thank you.

| closed | 2019-09-19T07:13:08Z | 2021-03-18T23:55:33Z | https://github.com/aio-libs-abandoned/aioredis-py/issues/638 | [

"need investigation",

"resolved-via-latest"

] | Zhao-Panpan | 0 |

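ymcui/Chinese-LLaMA-Alpaca | nlp | 308 | How to set batch_size according to each GPU's memory size |

### Detailed description of the problem

I have three NVIDIA Tesla V100 cards, one with 16G and two with 32G. How should I configure things so that all three cards are fully used? I would appreciate it if someone could help with this; I have tried several times without success. The best I can do right now is run on the two 32G cards, while the 16G card sits idle.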

| closed | 2023-05-11T05:38:40Z | 2023-05-23T22:02:33Z | https://github.com/ymcui/Chinese-LLaMA-Alpaca/issues/308 | [

"stale"

] | heshuguo | 6 |

geopandas/geopandas | pandas | 2,566 | ENH: Improve error message for attempting to write multiple geometry columns using `to_file` | xref #2565

```python

In [16]: gdf = gpd.GeoDataFrame({"a":[1,2]}, geometry=[Point(1,1), Point(1,2)])

In [17]: gdf['geom2'] = gdf.geometry

In [18]: gdf.to_file("tmp.gpkg")

-----------------------------------

File ...\venv\lib\site-packages\geopandas\io\file.py:596, in infer_schema.<locals>.convert_type(column, in_type)

594 out_type = types[str(in_type)]

595 else:

--> 596 out_type = type(np.zeros(1, in_type).item()).__name__

597 if out_type == "long":

598 out_type = "int"

TypeError: Cannot interpret '<geopandas.array.GeometryDtype object at 0x0000025FA88908E0>' as a data type

```

| closed | 2022-09-25T12:14:04Z | 2023-07-16T22:40:57Z | https://github.com/geopandas/geopandas/issues/2566 | [

"enhancement",

"good first issue"

] | m-richards | 8 |

pytest-dev/pytest-cov | pytest | 78 | mark/fixture to turn coverage recording off | I don't know how feasible this is, but it'd be nice to have a mark and/or contextmanager-fixture to turn coverage collection off:

``` python

@pytest.mark.coverage_disabled
def test_foo():
    # do some integration test or whatever
    pass

def test_bar(coverage):
    with coverage.disabled():
        pass

```

| closed | 2015-08-12T15:47:05Z | 2017-11-24T22:34:13Z | https://github.com/pytest-dev/pytest-cov/issues/78 | [] | The-Compiler | 12 |

pallets-eco/flask-sqlalchemy | flask | 451 | order_by descending | Hello,

I have the following model

```

class Server(db.Model):
    serverId = db.Column(db.Integer, primary_key=True)
    serverName = db.Column(db.String(20))
    serverIP = db.Column(db.String(20))
    serverNote = db.Column(db.String(200))
    serverStatus = db.Column(db.String(10))
    serverBlNr = db.Column(db.Integer)
    lastChecked = db.Column(db.DateTime(), default=datetime.utcnow, onupdate=datetime.utcnow)

    # BlackNumber is accepted as a parameter; it was referenced but undefined before
    def __init__(self, Name, IP, Note, Status, BlackNumber=None):
        self.serverName = Name
        self.serverIP = IP
        self.serverNote = Note
        self.serverStatus = Status
        self.serverBlNr = BlackNumber

```

And I've written the following route:

```

@app.route('/api/servers/', methods=['GET'])
def api_get_servers():
    server_list = Server.query.order_by(Server.lastChecked).limit(10)
    data = {}
    for srv in server_list:
        data[srv.serverId] = {
            'serverName' : srv.serverName,
            'serverIP' : srv.serverIP,
            'serverStatus' : srv.serverStatus,
            'serverNote' : srv.serverNote,
            'lastChecked' : srv.lastChecked
        }
    return jsonify({'servers': data})

```

Is this the correct way to get the oldest 10 results?

```

server_list = Server.query.order_by(Server.lastChecked).limit(10)

```
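As a rough check of the ordering question, here is a minimal sketch that uses the standard-library `sqlite3` module instead of Flask-SQLAlchemy. The table layout, the helper name `oldest_and_newest`, and the sample timestamps are made up for illustration. Ascending order on `lastChecked` returns the oldest rows first and descending order returns the newest first; in SQLAlchemy the descending form would be written `order_by(Server.lastChecked.desc())`.

```python
import sqlite3


def oldest_and_newest(timestamps, limit=2):
    """Return (oldest, newest) lists of `limit` timestamps via ORDER BY ... LIMIT."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE server (serverId INTEGER PRIMARY KEY, lastChecked TEXT)"
    )
    conn.executemany(
        "INSERT INTO server (lastChecked) VALUES (?)",
        [(ts,) for ts in timestamps],
    )
    # Ascending order: smallest (oldest) timestamps come first.
    oldest = [
        row[0]
        for row in conn.execute(
            "SELECT lastChecked FROM server ORDER BY lastChecked ASC LIMIT ?",
            (limit,),
        )
    ]
    # Descending order: largest (newest) timestamps come first.
    newest = [
        row[0]
        for row in conn.execute(
            "SELECT lastChecked FROM server ORDER BY lastChecked DESC LIMIT ?",
            (limit,),
        )
    ]
    conn.close()
    return oldest, newest
```

The sketch stores ISO-8601 strings, which sort chronologically as plain text; a real `DateTime` column is compared by the database in the same ascending or descending sense.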

| closed | 2016-12-12T11:58:07Z | 2016-12-13T01:46:04Z | https://github.com/pallets-eco/flask-sqlalchemy/issues/451 | [] | tuwid | 4 |

scikit-learn-contrib/metric-learn | scikit-learn | 127 | tests failing due to scikit-learn update | Some tests are failing in master, due to scikit-learn update v0.20.0. There are two types of tests that are failing:

- Tests that use the iris dataset: this is probably because the iris dataset changed in scikit-learn v0.20: cf. http://scikit-learn.org/stable/whats_new.html#sklearn-datasets

For now maybe we can do a quick fix to make the tests pass, but in the future we should probably replace these tests that check hard-coded numerical values?

- Tests using sklearn's check_estimator: they fail on the check `check_fit2d_1sample`, which was improved in v0.20 to check the actual error message that is returned: see the diff between lines 594-595 of v0.19.0 and lines 843-845 of v0.20 [here](https://github.com/scikit-learn/scikit-learn/compare/0.19.0...0.20.0) under Files changed, `sklearn/utils/estimator_checks.py` (the git diff didn't really work here, so these lines are not aligned). They can be fixed by setting `ensure_min_samples=2` in the checks that are done at fit, but we should probably think more about the minimum number of samples each algorithm needs to work (scikit-learn only tests that this error is thrown for 1 sample, but an algorithm may not work for 2 samples either and would then return the same unhelpful error message as before, which is not ideal...)

| closed | 2018-10-10T13:24:00Z | 2019-09-03T08:06:01Z | https://github.com/scikit-learn-contrib/metric-learn/issues/127 | [] | wdevazelhes | 1 |

dynaconf/dynaconf | fastapi | 662 | [bug] Lazy validation fails with TypeError: __call__() takes 2 positional arguments but 3 were given | **Describe the bug**

Tried to introduce Lazy Validators as described in https://www.dynaconf.com/validation/#computed-values i.e.

```

from dynaconf.utils.parse_conf import empty, Lazy

Validator("FOO", default=Lazy(empty, formatter=my_function))

```

First bug (documentation): The above fails with

` ImportError: cannot import name 'empty' from 'dynaconf.utils.parse_conf'`

--> "empty" seems to be now in `dynaconf.utils.functional.empty`

Second bug:

Lazy Validator fails with

` TypeError: __call__() takes 2 positional arguments but 3 were given`

**To Reproduce**

Steps to reproduce the behavior:

```

from dynaconf import Dynaconf, Validator  # Validator was used below but not imported
from dynaconf.utils.functional import empty
from dynaconf.utils.parse_conf import Lazy

def lazy_foobar(s, v):
    return "foobar"

s = Dynaconf(
    validators=[Validator("FOOBAR", default=Lazy(empty, formatter=lazy_foobar)),],
)

print(s.FOOBAR)

```

stacktrace:

```

print(s.FOOBAR)

File "c:\ta\virtualenv\dconf\lib\site-packages\dynaconf\base.py", line 113, in __getattr__

self._setup()

File "c:\ta\virtualenv\dconf\lib\site-packages\dynaconf\base.py", line 164, in _setup

settings_module=settings_module, **self._kwargs

File "c:\ta\virtualenv\dconf\lib\site-packages\dynaconf\base.py", line 236, in __init__

only=self._validate_only, exclude=self._validate_exclude

File "c:\ta\virtualenv\dconf\lib\site-packages\dynaconf\validator.py", line 417, in validate

validator.validate(self.settings, only=only, exclude=exclude)

File "c:\ta\virtualenv\dconf\lib\site-packages\dynaconf\validator.py", line 198, in validate

settings, settings.current_env, only=only, exclude=exclude

File "c:\ta\virtualenv\dconf\lib\site-packages\dynaconf\validator.py", line 227, in _validate_items

if callable(self.default)

TypeError: __call__() takes 2 positional arguments but 3 were given

```

**Expected behavior**

Work as documented in https://www.dynaconf.com/validation/#computed-values

**Environment (please complete the following information):**

- OS: Windows 10 Pro

- Dynaconf Version: 3.1.7 (also 3.1.4)

- Frameworks in use: N/A

**Additional context**

Add any other context about the problem here.

| closed | 2021-10-04T13:54:00Z | 2021-10-30T08:52:50Z | https://github.com/dynaconf/dynaconf/issues/662 | [

"bug",

"hacktoberfest"

] | yahman72 | 7 |

RobertCraigie/prisma-client-py | asyncio | 63 | Improve experience working with Json fields | ## Problem

Currently the following code will generate a slew of type errors.

```py

user = await client.user.find_unique(where={'id': 'abc'})

print(user.extra['pets'][0]['name'])

```

While I do think that naively working with JSON objects should raise type errors, it should be easy to cast the data to the expected types.

For example, the above code written in a type safe manner would look like this:

```py

from typing import List, TypedDict, cast

class Extra(TypedDict, total=False):
    pets: List['Pet']

class Pet(TypedDict):
    name: str

user = await client.user.find_unique(where={'id': 'abc'})
extra = cast(Extra, user.extra)
pets = extra.get('pets')
if pets:
    print(pets[0]['name'])

```

However there are a few problems with this:

- There is no guarantee that the data at runtime will match the types which nullifies lots of the benefits of static type checking

- Types are not persisted, i.e. the whole dance to cast the type will have to be done every time
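
To illustrate the first point, a runtime check can sit next to the `cast`. The following is only a sketch: `validate_extra` is a hypothetical helper written for this example, not part of Prisma Client Python, and it checks only the `pets`/`name` shape used above.

```python
from typing import Any, List, TypedDict, cast


class Pet(TypedDict):
    name: str


class Extra(TypedDict, total=False):
    pets: List[Pet]


def validate_extra(value: Any) -> Extra:
    """Check at runtime that `value` has the shape described by `Extra`."""
    if not isinstance(value, dict):
        raise TypeError("extra must be a JSON object")
    pets = value.get("pets", [])
    if not isinstance(pets, list):
        raise TypeError("extra['pets'] must be a list")
    for pet in pets:
        if not isinstance(pet, dict) or not isinstance(pet.get("name"), str):
            raise TypeError("each pet must be an object with a string 'name'")
    # The shape has been verified, so the cast is now backed by a runtime check.
    return cast(Extra, value)
```

With a helper like this, `extra = validate_extra(user.extra)` would fail loudly on malformed data instead of passing the type checker and breaking later.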

## Suggested solution

Once #59 is implemented, this would be easier, for example, this _should_ work:

```py

class User(prisma.models.User):
    extra: Extra

user = await User.prisma().find_unique(where={'id': 'abc'})
pets = user.extra.get('pets')
if pets:
    print(pets[0]['name'])

```

And for client based access, #24 would make this easier, for example:

```py

user = await client.user.find_unique(where={'id': 'abc'})
extra = prisma.validate(Extra, user.extra)
pets = extra.get('pets')
if pets:
    print(pets[0]['name'])

```

The purpose of this issue is for tracking the aforementioned issues and documentation.

| open | 2021-09-07T18:15:12Z | 2022-11-20T19:58:13Z | https://github.com/RobertCraigie/prisma-client-py/issues/63 | [

"kind/improvement",

"topic: types",

"priority/medium",

"level/unknown"

] | RobertCraigie | 2 |

jofpin/trape | flask | 54 | 500 Internal Server Error | Linux Version >/`Linux Galaxy 4.17.0-kali1-amd64 #1 SMP Debian 4.17.8-1kali1 (2018-07-24) x86_64 GNU/Linux`

Python Version >/`Python 2.7.15`

Pip Version > /`pip 9.0.1 from /usr/lib/python2.7/dist-packages (python 2.7)`

Error >/

`Traceback (most recent call last):

File "/usr/local/lib/python2.7/dist-packages/flask/app.py", line 1982, in wsgi_app

response = self.full_dispatch_request()

File "/usr/local/lib/python2.7/dist-packages/flask/app.py", line 1614, in full_dispatch_request

rv = self.handle_user_exception(e)

File "/usr/local/lib/python2.7/dist-packages/flask/app.py", line 1517, in handle_user_exception

reraise(exc_type, exc_value, tb)

File "/usr/local/lib/python2.7/dist-packages/flask/app.py", line 1612, in full_dispatch_request

rv = self.dispatch_request()

File "/usr/local/lib/python2.7/dist-packages/flask/app.py", line 1598, in dispatch_request

return self.view_functions[rule.endpoint](**req.view_args)

File "/root/Desktop/trape/core/victim.py", line 96, in redirectVictim

html = victim_inject_code(opener.open(url).read(), 'vscript')

File "/usr/lib/python2.7/urllib2.py", line 421, in open

protocol = req.get_type()

File "/usr/lib/python2.7/urllib2.py", line 283, in get_type

raise ValueError, "unknown url type: %s" % self.__original

ValueError: unknown url type: www.facebook.com

[2018-09-19 11:49:48,855] ERROR in app: Exception on /redv [GET]

`
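
The last frame of the traceback (`unknown url type: www.facebook.com`) suggests the URL reached the opener without a scheme. A minimal sketch of normalising such input is shown below; it is written for Python 3's `urllib.parse` rather than the Python 2.7 `urllib2` in the report, and `ensure_scheme` is a hypothetical helper, not part of trape.

```python
from urllib.parse import urlsplit


def ensure_scheme(url, default_scheme="http"):
    """Prepend a scheme when the URL lacks one, e.g. 'www.facebook.com'."""
    if urlsplit(url).scheme in ("http", "https"):
        return url
    return f"{default_scheme}://{url}"
```

Passing `http://www.facebook.com` instead of `www.facebook.com` as the URL would likely avoid the error in the first place.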

| closed | 2018-09-19T15:55:00Z | 2018-09-19T16:26:04Z | https://github.com/jofpin/trape/issues/54 | [] | V3rB0se | 1 |

babysor/MockingBird | deep-learning | 161 | Does this support multi-GPU training? | I'm not very familiar with PyTorch... | open | 2021-10-20T09:27:16Z | 2021-11-06T14:44:55Z | https://github.com/babysor/MockingBird/issues/161 | [] | atiyit | 3 |

Kludex/mangum | asyncio | 322 | mangum.io Domain Expired | I know this might not be the right place for this, but the docs site domain has expired and the site is down.

edit: this implies that this project may have been abandoned. For others looking for the doc site and finding this go here https://github.com/jordaneremieff/mangum/blob/main/docs/index.md | closed | 2024-05-14T16:06:38Z | 2024-09-23T03:17:50Z | https://github.com/Kludex/mangum/issues/322 | [] | jglien | 10 |

abhiTronix/vidgear | dash | 40 | WriteGear Bare-Minimum example (Non-Compression) not working | ## Description

1. I followed the demo here: https://github.com/abhiTronix/vidgear/wiki/Non-Compression-Mode:-OpenCV#1-writegear-bare-minimum-examplenon-compression-mode

2. Run the code

3. The following error showed:

```

Compression Mode is disabled, Activating OpenCV In-built Writer!

InputFrame => Height:360 Width:640 Channels:1

FILE_PATH: /******/Output.mp4, FOURCC = 1196444237, FPS = 30.0, WIDTH = 640, HEIGHT = 360, BACKEND =

OpenCV: FFMPEG: tag 0x47504a4d/'MJPG' is not supported with codec id 7 and format 'mp4 / MP4 (MPEG-4 Part 14)'

OpenCV: FFMPEG: fallback to use tag 0x7634706d/'mp4v'

Warning: RGBA and 16-bit grayscale video frames are not supported by OpenCV yet, switch to `compression_mode` to use them!

Traceback (most recent call last):

File "cam_demo.py", line 31, in <module>

writer.write(gray)

File "/Users/*****/lib/python3.7/site-packages/vidgear/gears/writegear.py", line 221, in write

raise ValueError('All frames in a video should have same size')

ValueError: All frames in a video should have same size

```

### Acknowledgment

- [x] A brief but descriptive Title of your issue

- [x] I have searched the [issues](https://github.com/abhiTronix/vidgear/issues) for my issue and found nothing related or helpful.

- [x] I have read the [FAQ](https://github.com/abhiTronix/vidgear/wiki/FAQ-&-Troubleshooting).

- [x] I have read the [Wiki](https://github.com/abhiTronix/vidgear/wiki#vidgear).

- [x] I have read the [Contributing Guidelines](https://github.com/abhiTronix/vidgear/blob/master/contributing.md).

### Environment

* VidGear version: 0.1.5

* Branch: PyPi

* Python version: 3.7.3

* pip version: 19.1.1

* Operating System and version: macOS 10.14.3

### Expected Behavior

Write frame to file.

### Actual Behavior

Frames in different sizes.

### Possible Fix

WriteGear specify the output frame size?

### Steps to reproduce

(Write your steps here:)

See description.

### Optional

| closed | 2019-07-27T19:49:44Z | 2019-07-30T07:13:44Z | https://github.com/abhiTronix/vidgear/issues/40 | [

"INVALID :stop_sign:"

] | iflyingboots | 2 |

xzkostyan/clickhouse-sqlalchemy | sqlalchemy | 26 | Database addition error with superset | Unable to add a new database; it shows this error:

<img width="1188" alt="screen shot 2018-08-16 at 12 28 30 pm" src="https://user-images.githubusercontent.com/11755543/44193272-eddd6d00-a14f-11e8-8662-8eb86abc74f9.png">

No exception is thrown on the server, and there is no other error response. | closed | 2018-08-16T07:00:02Z | 2022-06-13T10:54:06Z | https://github.com/xzkostyan/clickhouse-sqlalchemy/issues/26 | [] | hemantjadon | 5 |

deepspeedai/DeepSpeed | deep-learning | 6,985 | [BUG] Invalidate trace cache warning | **Describe the bug**

During training I receive the following warning multiple times per epoch:

`Invalidate trace cache @ step 1 and module 1: cache has only 1 modules`

This is a bit of an odd message. I have done some digging, and these trace warnings seem to be a common issue that people report here, but none quite like this one. Is there any input from the developers about what could be at the root of it? I spent quite some time on it but couldn't really figure it out. Any help would be appreciated!

Also, it would be really nice to have an option to suppress this warning in case it's not relevant. The way it's coded, this is quite difficult.
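
As a stopgap, one possible workaround is to filter the line out of standard output. This is only a sketch under an assumption: it presumes the message is written to stdout with a plain `print`, which has not been verified here; if it goes through `logging` instead, a `logging.Filter` would be the analogous tool. `LineFilter` is a hypothetical helper, not a DeepSpeed API.

```python
import contextlib
import io
import sys


class LineFilter:
    """File-like wrapper that drops complete lines containing `needle`."""

    def __init__(self, target, needle):
        self._target = target
        self._needle = needle
        self._buffer = ""

    def write(self, text):
        # print() may deliver a line in several chunks, so buffer until newline.
        self._buffer += text
        while "\n" in self._buffer:
            line, self._buffer = self._buffer.split("\n", 1)
            if self._needle not in line:
                self._target.write(line + "\n")
        return len(text)

    def flush(self):
        # Partial lines stay buffered until their newline arrives.
        self._target.flush()
```

It could be used as `with contextlib.redirect_stdout(LineFilter(sys.stdout, "Invalidate trace cache")): ...` around the training loop. It only affects the process in which it is installed and hides the symptom without addressing the cause.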

**ds_report output**

```

[2025-01-30 13:15:38,204] [INFO] [real_accelerator.py:222:get_accelerator] Setting ds_accelerator to cuda (auto detect)

Warning: The cache directory for DeepSpeed Triton autotune, /cluster/home/michaes/.triton/autotune, appears to be on an NFS system. While this is generally acceptable, if you experience slowdowns or hanging when DeepSpeed exits, it is recommended to set the TRITON_CACHE_DIR environment variable to a non-NFS path.

--------------------------------------------------

DeepSpeed C++/CUDA extension op report

--------------------------------------------------

NOTE: Ops not installed will be just-in-time (JIT) compiled at

runtime if needed. Op compatibility means that your system

meet the required dependencies to JIT install the op.

--------------------------------------------------

JIT compiled ops requires ninja

ninja .................. [OKAY]

--------------------------------------------------

op name ................ installed .. compatible

--------------------------------------------------

[WARNING] async_io requires the dev libaio .so object and headers but these were not found.

[WARNING] async_io: please install the libaio-dev package with apt

[WARNING] If libaio is already installed (perhaps from source), try setting the CFLAGS and LDFLAGS environment variables to where it can be found.

async_io ............... [NO] ....... [NO]

fused_adam ............. [NO] ....... [OKAY]

cpu_adam ............... [NO] ....... [OKAY]

cpu_adagrad ............ [NO] ....... [OKAY]

cpu_lion ............... [NO] ....... [OKAY]

[WARNING] Please specify the CUTLASS repo directory as environment variable $CUTLASS_PATH

evoformer_attn ......... [NO] ....... [NO]

[WARNING] FP Quantizer is using an untested triton version (3.1.0), only 2.3.(0, 1) and 3.0.0 are known to be compatible with these kernels

fp_quantizer ........... [NO] ....... [NO]

fused_lamb ............. [NO] ....... [OKAY]

fused_lion ............. [NO] ....... [OKAY]

[WARNING] gds requires the dev libaio .so object and headers but these were not found.

[WARNING] gds: please install the libaio-dev package with apt

[WARNING] If libaio is already installed (perhaps from source), try setting the CFLAGS and LDFLAGS environment variables to where it can be found.

gds .................... [NO] ....... [NO]

transformer_inference .. [NO] ....... [OKAY]

inference_core_ops ..... [NO] ....... [OKAY]

cutlass_ops ............ [NO] ....... [OKAY]

quantizer .............. [NO] ....... [OKAY]

ragged_device_ops ...... [NO] ....... [OKAY]

ragged_ops ............. [NO] ....... [OKAY]

random_ltd ............. [NO] ....... [OKAY]

[WARNING] sparse_attn requires a torch version >= 1.5 and < 2.0 but detected 2.5

[WARNING] using untested triton version (3.1.0), only 1.0.0 is known to be compatible

sparse_attn ............ [NO] ....... [NO]

spatial_inference ...... [NO] ....... [OKAY]

transformer ............ [NO] ....... [OKAY]

stochastic_transformer . [NO] ....... [OKAY]

--------------------------------------------------

DeepSpeed general environment info:

torch install path ............... ['/cluster/home/michaes/.miniforge/envs/hyperion/lib/python3.11/site-packages/torch']

torch version .................... 2.5.0+cu121

deepspeed install path ........... ['/cluster/home/michaes/.miniforge/envs/hyperion/lib/python3.11/site-packages/deepspeed']

deepspeed info ................... 0.16.3, unknown, unknown

torch cuda version ............... 12.1

torch hip version ................ None

nvcc version ..................... 12.1

deepspeed wheel compiled w. ...... torch 0.0, cuda 0.0

shared memory (/dev/shm) size .... 250.73 GB

```

| closed | 2025-01-30T12:29:36Z | 2025-02-18T20:49:54Z | https://github.com/deepspeedai/DeepSpeed/issues/6985 | [

"bug",

"training"

] | leachim | 1 |

coqui-ai/TTS | deep-learning | 3,754 | [Bug] Anyway to run this as docker-compose ? | ### Describe the bug

Anyway to run this as docker-compose ?

### To Reproduce

Anyway to run this as docker-compose ?

### Expected behavior

Anyway to run this as docker-compose ?

### Logs

```shell

Anyway to run this as docker-compose ?

```

### Environment

```shell

Anyway to run this as docker-compose ?

```

### Additional context

Anyway to run this as docker-compose ? | closed | 2024-05-21T14:01:47Z | 2024-07-18T23:47:03Z | https://github.com/coqui-ai/TTS/issues/3754 | [

"bug",

"wontfix"

] | PeterTucker | 3 |

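newpanjing/simpleui | django | 52 | Problems encountered when using Django 2.2.3 | **Bug description**

Briefly describe the bug you encountered:

1. The add and delete pages are not compatible with Google Chrome

2. After adding content, you have to click Back several times to return to the corresponding list page

3. Using SimpleListFilter in list_filter raises:

Exception: object has no attribute 'field'

Exception Location: \venv\lib\site-packages\simpleui\templatetags\simpletags.py in load_dates, line 40

**Environment**

1. Operating system:

2. Python version: 3.7.3

3. Django version: 2.2.3

4. simpleui version: 2.1

**Other notes**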

| closed | 2019-05-22T09:51:01Z | 2019-05-23T07:39:37Z | https://github.com/newpanjing/simpleui/issues/52 | [

"bug"

] | yanlianhanlin | 4 |

plotly/dash-core-components | dash | 327 | styling tabs via css classes | Hi Plotly Team,

I can't get styling of the tabs component via css classes to function properly. Without knowing much about it, a guess could be it has to do with inconsistent naming of the `selected_className` property in the Tabs and Tab component.

I placed the following css file in the assets folder.

```css

.tab-style {

width: inherit;

border: none;

background: white;

padding-top: 0;

padding-bottom: 0;

height: 42px;

}

.selected-tab-style {

width: inherit;

box-shadow: none;

border-left: none;

border-right: none;

border-top: none;

border-bottom: 2px #004A96 solid;

background: white;

padding-top: 0;

padding-bottom: 0;

height: 42px;

}

```

and used the following app to reproduce the behavior.

```python

import dash

import dash_core_components as dcc

import dash_html_components as html

TAB_STYLE = {

'width': 'inherit',

'border': 'none',

'boxShadow': 'inset 0px -1px 0px 0px lightgrey',

'background': 'white',

'paddingTop': 0,

'paddingBottom': 0,

'height': '42px',

}

SELECTED_STYLE = {

'width': 'inherit',

'boxShadow': 'none',

'borderLeft': 'none',

'borderRight': 'none',

'borderTop': 'none',

'borderBottom': '2px #004A96 solid',

'background': 'white',

'paddingTop': 0,

'paddingBottom': 0,

'height': '42px',

}

app = dash.Dash()

app.layout = html.Div([

dcc.Tabs(

id='tabs-1',

value='tab-1',

children=[

dcc.Tab(

label='Tab 1',

value='tab-1',

style=TAB_STYLE,

selected_style=SELECTED_STYLE,

),

dcc.Tab(

label='Tab 2',

value='tab-2',

style=TAB_STYLE,

selected_style=SELECTED_STYLE,

),

]

),

html.Br(),

dcc.Tabs(

id='tabs-2',

value='tab-1',

children=[

dcc.Tab(

label='Tab 1',

value='tab-1',

className='tab-style',

selected_className='selected-tab-style',

),

dcc.Tab(

label='Tab 2',

value='tab-2',

className='tab-style',

selected_className='selected-tab-style',

),

]

)

])

app.css.config.serve_locally = True

app.scripts.config.serve_locally = True

if __name__ == '__main__':
    app.run_server(debug=True)

```

Any insight on this is greatly appreciated. | closed | 2018-10-14T18:12:05Z | 2022-10-10T10:10:00Z | https://github.com/plotly/dash-core-components/issues/327 | [] | roeap | 4 |

biolab/orange3 | numpy | 6,642 | OCR (optical character recognition) in images | **What's your use case?**

I would like to extract text from image/PDF documents.

**What's your proposed solution?**

An OCR module in the image add-on to extract text. Some Python modules exist for that. There is some documentation here (sadly, in French: https://www.aranacorp.com/fr/reconnaissance-de-texte-avec-python/), and my understanding is that pytesseract could be used.

**Are there any alternative solutions?**

No

| closed | 2023-11-19T09:03:37Z | 2023-11-26T18:51:23Z | https://github.com/biolab/orange3/issues/6642 | [] | simonaubertbd | 2 |

ultralytics/yolov5 | pytorch | 13,332 | WARNING ⚠️ NMS time limit 0.340s exceeded | ### Search before asking

- [X] I have searched the YOLOv5 [issues](https://github.com/ultralytics/yolov5/issues) and found no similar bug report.

### YOLOv5 Component

_No response_

### Bug

Hi YOLO community. I'm running training on my CPU and I hit this problem. Note that I've already checked the previous similar issues, where I found this:

time_limit = 0.1 + 0.02 * bs # seconds to quit after

I applied it, but the issue is still there.
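
As a sanity check on the formula quoted above, the sketch below reproduces the 0.340s shown in the warning. It assumes a validation batch size of 12, which is an inference from the log (230 validation images processed in 20 batches), not something stated in the report; `nms_time_limit` is a hypothetical helper name.

```python
import math


def nms_time_limit(batch_size, base=0.1, per_image=0.02):
    """Seconds allowed for NMS on one batch, using the formula quoted above."""
    return base + per_image * batch_size


val_batch_size = 12  # assumption: 230 validation images over 20 batches
assert math.ceil(230 / val_batch_size) == 20
print(f"{nms_time_limit(val_batch_size):.3f}s")  # 0.340s
```

If that assumption holds, the edited limit is already in effect and NMS on the CPU simply takes longer than 0.34 seconds per batch, so larger constants in the formula would give more headroom.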

raidhani@raidhani-All-Series:~/catkin_ws/src/yolov5$ python3 train.py --img 640 --batch 6 --epochs 100 --data /home/raidhani/catkin_ws/src/data/data.yaml --weights yolov5s.pt

train: weights=yolov5s.pt, cfg=, data=/home/raidhani/catkin_ws/src/data/data.yaml, hyp=data/hyps/hyp.scratch-low.yaml, epochs=100, batch_size=6, imgsz=640, rect=False, resume=False, nosave=False, noval=False, noautoanchor=False, noplots=False, evolve=None, evolve_population=data/hyps, resume_evolve=None, bucket=, cache=None, image_weights=False, device=, multi_scale=False, single_cls=False, optimizer=SGD, sync_bn=False, workers=8, project=runs/train, name=exp, exist_ok=False, quad=False, cos_lr=False, label_smoothing=0.0, patience=100, freeze=[0], save_period=-1, seed=0, local_rank=-1, entity=None, upload_dataset=False, bbox_interval=-1, artifact_alias=latest, ndjson_console=False, ndjson_file=False

github: up to date with https://github.com/ultralytics/yolov5 ✅

YOLOv5 🚀 v7.0-368-gb163ff8d Python-3.8.10 torch-1.11.0+cpu CPU

hyperparameters: lr0=0.01, lrf=0.01, momentum=0.937, weight_decay=0.0005, warmup_epochs=3.0, warmup_momentum=0.8, warmup_bias_lr=0.1, box=0.05, cls=0.5, cls_pw=1.0, obj=1.0, obj_pw=1.0, iou_t=0.2, anchor_t=4.0, fl_gamma=0.0, hsv_h=0.015, hsv_s=0.7, hsv_v=0.4, degrees=0.0, translate=0.1, scale=0.5, shear=0.0, perspective=0.0, flipud=0.0, fliplr=0.5, mosaic=1.0, mixup=0.0, copy_paste=0.0

Comet: run 'pip install comet_ml' to automatically track and visualize YOLOv5 🚀 runs in Comet

TensorBoard: Start with 'tensorboard --logdir runs/train', view at http://localhost:6006/

Overriding model.yaml nc=80 with nc=10

from n params module arguments

0 -1 1 3520 models.common.Conv [3, 32, 6, 2, 2]

1 -1 1 18560 models.common.Conv [32, 64, 3, 2]

2 -1 1 18816 models.common.C3 [64, 64, 1]

3 -1 1 73984 models.common.Conv [64, 128, 3, 2]

4 -1 2 115712 models.common.C3 [128, 128, 2]

5 -1 1 295424 models.common.Conv [128, 256, 3, 2]

6 -1 3 625152 models.common.C3 [256, 256, 3]

7 -1 1 1180672 models.common.Conv [256, 512, 3, 2]

8 -1 1 1182720 models.common.C3 [512, 512, 1]

9 -1 1 656896 models.common.SPPF [512, 512, 5]

10 -1 1 131584 models.common.Conv [512, 256, 1, 1]

11 -1 1 0 torch.nn.modules.upsampling.Upsample [None, 2, 'nearest']

12 [-1, 6] 1 0 models.common.Concat [1]

13 -1 1 361984 models.common.C3 [512, 256, 1, False]

14 -1 1 33024 models.common.Conv [256, 128, 1, 1]

15 -1 1 0 torch.nn.modules.upsampling.Upsample [None, 2, 'nearest']

16 [-1, 4] 1 0 models.common.Concat [1]

17 -1 1 90880 models.common.C3 [256, 128, 1, False]

18 -1 1 147712 models.common.Conv [128, 128, 3, 2]

19 [-1, 14] 1 0 models.common.Concat [1]

20 -1 1 296448 models.common.C3 [256, 256, 1, False]

21 -1 1 590336 models.common.Conv [256, 256, 3, 2]

22 [-1, 10] 1 0 models.common.Concat [1]

23 -1 1 1182720 models.common.C3 [512, 512, 1, False]

24 [17, 20, 23] 1 40455 models.yolo.Detect [10, [[10, 13, 16, 30, 33, 23], [30, 61, 62, 45, 59, 119], [116, 90, 156, 198, 373, 326]], [128, 256, 512]]

Model summary: 214 layers, 7046599 parameters, 7046599 gradients, 16.0 GFLOPs

Transferred 343/349 items from yolov5s.pt

optimizer: SGD(lr=0.01) with parameter groups 57 weight(decay=0.0), 60 weight(decay=0.000515625), 60 bias

train: Scanning /home/raidhani/catkin_ws/src/data/train/labels.cache... 1008 images, 120 backgrounds, 0 corrupt: 100%|█████████

val: Scanning /home/raidhani/catkin_ws/src/data/valid/labels.cache... 230 images, 31 backgrounds, 0 corrupt: 100%|██████████| 2

AutoAnchor: 4.51 anchors/target, 0.997 Best Possible Recall (BPR). Current anchors are a good fit to dataset ✅

Plotting labels to runs/train/exp11/labels.jpg...

Image sizes 640 train, 640 val

Using 6 dataloader workers

Logging results to runs/train/exp11

Starting training for 100 epochs...

Epoch GPU_mem box_loss obj_loss cls_loss Instances Size

0/99 0G 0.1032 0.06545 0.05506 64 640: 100%|██████████| 169/169 [07:32<00:00, 2.68s/it

Class Images Instances P R mAP50 mAP50-95: 0%| | 0/20 [00:00<?, ?it/sWARNING ⚠️ NMS time limit 0.340s exceeded

Class Images Instances P R mAP50 mAP50-95: 5%|▌ | 1/20 [00:01<00:29, WARNING ⚠️ NMS time limit 0.340s exceeded

Class Images Instances P R mAP50 mAP50-95: 10%|█ | 2/20 [00:03<00:27, WARNING ⚠️ NMS time limit 0.340s exceeded

Class Images Instances P R mAP50 mAP50-95: 15%|█▌ | 3/20 [00:04<00:26, WARNING ⚠️ NMS time limit 0.340s exceeded

Class Images Instances P R mAP50 mAP50-95: 20%|██ | 4/20 [00:06<00:24, WARNING ⚠️ NMS time limit 0.340s exceeded

Class Images Instances P R mAP50 mAP50-95: 25%|██▌ | 5/20 [00:07<00:24, Class Images Instances P R mAP50 mAP50-95: 25%|██▌ | 5/20 [00:08<00:24,

Traceback (most recent call last):

File "train.py", line 986, in <module>

### Environment

YOLOv5 🚀 v7.0-368-gb163ff8d Python-3.8.10 torch-1.11.0+cpu CPU

### Minimal Reproducible Example

python3 train.py --img 640 --batch 6 --epochs 100 --data /home/raidhani/catkin_ws/src/data/data.yaml --weights yolov5s.pt

### Additional

_No response_

### Are you willing to submit a PR?

- [ ] Yes I'd like to help by submitting a PR! | open | 2024-09-25T01:24:00Z | 2024-11-09T14:46:48Z | https://github.com/ultralytics/yolov5/issues/13332 | [

"bug"

] | haniraid | 2 |

influxdata/influxdb-client-python | jupyter | 379 | query_data_frame returns object data type for all columns | <!--

Thank you for reporting a bug.

* Please add a :+1: or comment on a similar existing bug report instead of opening a new one.

* https://github.com/influxdata/influxdb-client-python/issues?utf8=%E2%9C%93&q=is%3Aissue+is%3Aopen+is%3Aclosed+sort%3Aupdated-desc+label%3Abug+

* Please check whether the bug can be reproduced with the latest release.

* The fastest way to fix a bug is to open a Pull Request.

* https://github.com/influxdata/influxdb-client-python/pulls

-->

__Steps to reproduce:__

1. Query data of different types (numeric, string, etc.) into a DataFrame.

__Expected behavior:__

The DataFrame returned by __query_api__ should keep the data types of the data stored in the database.

__Actual behavior:__

When I run the following query with the __query_data_frame__ method of the __query_api__, all columns except _time are returned with the object dtype, even though the data stored in the database is not string data.

```console

query_api = influxdb_client.query_api()

bucket, measurement, start, stop ="test", "test", "0", "now()"

query = 'import \"influxdata/influxdb/schema\"' \

f' from(bucket:\"{bucket}\")' \

f' |> range(start: {start}, stop: {stop})' \

f' |> filter(fn: (r) => r._measurement == \"{measurement}\")' \

f' |> drop(columns: ["_start", "_stop","_measurement"])' \

f' |> schema.fieldsAsCols()'

result = query_api.query_data_frame(query=query)

print(result.dtypes)

_time datetime64[ns, UTC]

attribute_1 object

attribute_2 object

attribute_3 object

```

When I convert the result to JSON with the code below, I can see that the values are numeric, not objects. The data saved in the database is as follows:

```console

result = json.loads(result.to_json(orient='records'))

print(result)

[{

"_time": 1638959688782,

"attribute_1": 1,

"attribute_2": 2,

"attribute_3": "test_message",

}]

```
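A possible client-side workaround, sketched with plain pandas rather than anything from influxdb-client: if the object columns hold real Python numbers (as the JSON output above suggests), `DataFrame.infer_objects()` can recover the numeric dtypes. The frame below is a hypothetical stand-in for the result of `query_data_frame`, not actual query output.

```python
import pandas as pd

# Hypothetical stand-in for the frame returned by query_data_frame:
# every column is forced to the object dtype, as in the report above.
df = pd.DataFrame(
    {
        "attribute_1": pd.Series([1], dtype=object),
        "attribute_2": pd.Series([2], dtype=object),
        "attribute_3": pd.Series(["test_message"], dtype=object),
    }
)

# infer_objects() attempts a soft conversion of object columns to a
# more specific dtype; columns that hold text stay non-numeric.
converted = df.infer_objects()

print(df.dtypes)
print(converted.dtypes)
```

If the columns held numeric strings such as `"1"` instead, `infer_objects()` would leave them unchanged and `pd.to_numeric` on the affected columns would be the closer fit.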

__Specifications:__

- Client Version: 1.23.0

- InfluxDB Version: 2.2.1

- Platform: Windows 10 & Python3.8.11 (running on Docker)

| closed | 2021-12-08T08:12:25Z | 2021-12-14T13:31:37Z | https://github.com/influxdata/influxdb-client-python/issues/379 | [

"bug"

] | buraketmen | 1 |

fastapi-admin/fastapi-admin | fastapi | 5 | No model with name 'User' registered in app 'diff_models' | After installing (from the dev branch) and setting up fastapi-admin, I attempted to run `aerich migrate`, which failed with the error:

```

tortoise.exceptions.ConfigurationError: No model with name 'User' registered in app 'diff_models'.

```

But I didn't register any app named `diff_models`, and I don't see it in the code for this repo... so I'm pretty stumped as to how to fix this. If you could please point me in the right direction, that would be amazing. | closed | 2020-07-01T19:57:47Z | 2020-07-02T14:33:16Z | https://github.com/fastapi-admin/fastapi-admin/issues/5 | [] | mosheduminer | 4 |

vitalik/django-ninja | pydantic | 428 | properly name functions | **Is your feature request related to a problem? Please describe.**

I am using django-prometheus for statistics.

The view call counters are grouped by function name, so all my API calls are reported under the name `GET /api-1.0.0:ninja.operation._sync_view`.

I would prefer to have the original function's name or the API name.
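To illustrate the kind of naming being asked for, here is a minimal sketch that does not use django-ninja at all. `make_view` and `list_items` are hypothetical names; the wrapper only mimics a framework-generated view that would otherwise report itself as `_sync_view`.

```python
def make_view(operation_func):
    """Hypothetical stand-in for a framework-generated view wrapper."""

    def _sync_view(request, *args, **kwargs):
        # Delegate to the user's original function.
        return operation_func(request, *args, **kwargs)

    # Copy the identity of the wrapped function so that tools which
    # group by module and name see the original function, not the wrapper.
    _sync_view.__name__ = operation_func.__name__
    _sync_view.__module__ = operation_func.__module__
    return _sync_view


def list_items(request):
    """Example API function (an assumption for illustration)."""
    return ["a", "b"]


view = make_view(list_items)
print(f"{view.__module__}.{view.__name__}")
```

`functools.wraps` from the standard library would copy these attributes (plus `__qualname__` and `__doc__`) in one step; the explicit assignments are shown only to make the two attributes visible.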

**Describe the solution you'd like**

https://github.com/vitalik/django-ninja/blob/3d745e7ad7b3cf4d92644143a9e342bdbc986273/ninja/operation.py#L314-L322

we could set `func.__module__` and `func.__name__` here | open | 2022-04-22T06:04:48Z | 2024-08-19T23:45:07Z | https://github.com/vitalik/django-ninja/issues/428 | [

"help wanted"

] | hiaselhans | 6 |

plotly/dash | data-visualization | 3,003 | Temporary failure in name resolution | This is occurring in latest Dash 2.18.1.

Calling `app.run_server(host='0.0.0.0')` had no effect.

This looks like the old issue https://github.com/plotly/dash/issues/1480: Dash appears to use the HOST environment variable that is set under conda, and passing the `host` parameter does not override it. | open | 2024-09-14T22:49:33Z | 2024-10-01T14:31:18Z | https://github.com/plotly/dash/issues/3003 | [

"regression",

"bug",

"P1"

] | summa-code | 5 |

ray-project/ray | data-science | 51,497 | CI test windows://python/ray/tests:test_basic is consistently_failing | CI test **windows://python/ray/tests:test_basic** is consistently_failing. Recent failures:

- https://buildkite.com/ray-project/postmerge/builds/8965#0195aaf1-9737-4a02-a7f8-1d7087c16fb1

- https://buildkite.com/ray-project/postmerge/builds/8965#0195aa03-5c4f-4156-97c5-9793049512c1

- https://buildkite.com/ray-project/postmerge/builds/8932#01959723-61ec-4376-b407-ff595262d8dc

DataCaseName-windows://python/ray/tests:test_basic-END

Managed by OSS Test Policy | closed | 2025-03-19T00:05:56Z | 2025-03-19T21:52:20Z | https://github.com/ray-project/ray/issues/51497 | [

"bug",

"triage",

"core",

"flaky-tracker",

"ray-test-bot",

"ci-test",

"weekly-release-blocker",

"stability"

] | can-anyscale | 3 |

FlareSolverr/FlareSolverr | api | 1,118 | [1337x] (testing) Exception (1337x): FlareSolverr was unable to process the request, please check FlareSolverr logs. Message: Error: Error solving the challenge. Timeout after 55.0 seconds.: FlareSolverr was unable to process the request, please check FlareSolverr logs. Message: Error: Error solving the challenge. Timeout after 55.0 seconds. | ### Have you checked our README?

- [X] I have checked the README

### Have you followed our Troubleshooting?

- [X] I have followed your Troubleshooting

### Is there already an issue for your problem?

- [X] I have checked older issues, open and closed

### Have you checked the discussions?

- [X] I have read the Discussions

### Environment

```markdown

- FlareSolverr version:3.3.13

- Last working FlareSolverr version:

- Operating system: Ubuntu Server 22.04 (Linux-5.15.0-100-generic-x86_64-with-glibc2.35)

- Are you using Docker: no

- FlareSolverr User-Agent (see log traces or / endpoint): Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/115.0.0.0 Safari/537.36

- Are you using a VPN: no

- Are you using a Proxy: no

- Are you using Captcha Solver: no

- If using captcha solver, which one:

- URL to test this issue: https://x1337x.eu/

```

### Description

FlareSolverr throws the following error when testing "https://x1337x.eu/" in Jackett:

`Error: Error solving the challenge. Timeout after 55.0 seconds`

Torrent[CORE] & iDope also throw timeout errors. TorrentQQ works as expected.

### Logged Error Messages

```text

INFO ReqId 140435798140480 Incoming request => POST /v1 body: {'maxTimeout': 55000, 'cmd': 'request.get', 'url': 'https://x1337x.eu/cat/Movies/time/desc/1/'}

DEBUG ReqId 140435798140480 Launching web browser...

DEBUG ReqId 140435798140480 Started executable: `/var/lib/deluge/.local/share/undetected_chromedriver/chromedriver` in a child process with pid: 3082135

DEBUG ReqId 140435798140480 New instance of webdriver has been created to perform the request

DEBUG ReqId 140435431355968 Navigating to... https://x1337x.eu/cat/Movies/time/desc/1/

INFO ReqId 140435431355968 Challenge detected. Title found: Just a moment...

DEBUG ReqId 140435431355968 Waiting for title (attempt 1): Just a moment...

DEBUG ReqId 140435431355968 Timeout waiting for selector

DEBUG ReqId 140435431355968 Try to find the Cloudflare verify checkbox...

DEBUG ReqId 140435431355968 Cloudflare verify checkbox not found on the page.

DEBUG ReqId 140435431355968 Try to find the Cloudflare 'Verify you are human' button...

DEBUG ReqId 140435431355968 The Cloudflare 'Verify you are human' button not found on the page.

ERROR ReqId 140435798140480 Error: Error solving the challenge. Timeout after 55.0 seconds.

DEBUG ReqId 140435798140480 Response => POST /v1 body: {'status': 'error', 'message': 'Error: Error solving the challenge. Timeout after 55.0 seconds.', 'startTimestamp': 1710100791025, 'endTimestamp': 1710100846760, 'version': '3.3.13'}

INFO ReqId 140435798140480 Response in 55.735 s

INFO ReqId 140435798140480 127.0.0.1 POST http://localhost:8191/v1 500 Internal Server Error

```
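To reproduce this outside Jackett, the request from the first log line can be rebuilt roughly as follows. This is a minimal sketch using only the Python standard library. The endpoint and body fields are taken from the log above, while `build_request` is a hypothetical helper name and the `Content-Type` header is an assumption about what the server expects.

```python
import json
import urllib.request

# Endpoint as it appears in the log above.
FLARESOLVERR_URL = "http://localhost:8191/v1"


def build_request(target_url, max_timeout_ms=55000):
    """Build (but do not send) a POST /v1 request like the logged one."""
    payload = {
        "cmd": "request.get",
        "url": target_url,
        "maxTimeout": max_timeout_ms,
    }
    return urllib.request.Request(
        FLARESOLVERR_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


req = build_request("https://x1337x.eu/cat/Movies/time/desc/1/")
print(req.get_method(), req.full_url)
print(req.data.decode("utf-8"))

# Sending requires a running FlareSolverr instance. A 500 response,
# as in the log, makes urlopen raise urllib.error.HTTPError:
# with urllib.request.urlopen(req, timeout=60) as resp:
#     print(json.load(resp)["status"])
```

Building the request separately from sending it makes it easy to compare the payload with the logged body before contacting the server.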

### Screenshots

_No response_ | closed | 2024-03-10T20:01:59Z | 2024-03-11T06:34:16Z | https://github.com/FlareSolverr/FlareSolverr/issues/1118 | [

"duplicate"

] | fcmircea | 6 |