| repo_name (string, length 9-75) | topic (string, 30 classes) | issue_number (int64, 1-203k) | title (string, length 1-976) | body (string, length 0-254k) | state (string, 2 classes) | created_at (string, length 20) | updated_at (string, length 20) | url (string, length 38-105) | labels (list, length 0-9) | user_login (string, length 1-39) | comments_count (int64, 0-452) |
|---|---|---|---|---|---|---|---|---|---|---|---|

junyanz/pytorch-CycleGAN-and-pix2pix | pytorch | 1,054 | For Training KITTI dataset | Hello, it's really brilliant code! Thank you for releasing.

I want to train on the KITTI dataset to generate night scenes (based on the night scenes in the nuScenes dataset).

The KITTI dataset contains only day scenes.

So where do I have to change your code? Can you briefly explain this? Thank you very much | open | 2020-06-03T10:18:10Z | 2025-01-07T07:25:08Z | https://github.com/junyanz/pytorch-CycleGAN-and-pix2pix/issues/1054 | [] | skyphix | 5 |

ymcui/Chinese-LLaMA-Alpaca | nlp | 301 | Stage 1 fine-tuning does not run on a single machine with multiple GPUs | closed | 2023-05-11T01:17:21Z | 2023-07-04T06:44:53Z | https://github.com/ymcui/Chinese-LLaMA-Alpaca/issues/301 | [] | yuemengrui | 4 | |

dgtlmoon/changedetection.io | web-scraping | 2,703 | [feature] (UI) Add autocomplete OR dropdown selector for watch tag. | **Version and OS**

0.46.04, termux

**Is your feature request related to a problem? Please describe.**

Currently one has to type the watch tag manually each time (for each new watch)

**Describe the solution you'd like**

Autocomplete fills it in for you after the first few letters (with tab)

**Describe the use-case and give co... | closed | 2024-10-12T01:16:32Z | 2024-10-25T21:52:19Z | https://github.com/dgtlmoon/changedetection.io/issues/2703 | [

"enhancement"

] | gety9 | 2 |

nolar/kopf | asyncio | 842 | Does kopf depend on k8s version? | ### Keywords

k8s version

### Problem

Does kopf depend on the k8s version? My k8s version is 1.13. | open | 2021-09-30T07:45:15Z | 2021-09-30T09:10:40Z | https://github.com/nolar/kopf/issues/842 | [

"question"

] | wyw64962771 | 1 |

sinaptik-ai/pandas-ai | data-science | 1,395 | docker compose up error 16/10/2024 | ### System Info

latest

ubuntu 22

3.11

### 🐛 Describe the bug

felipe@grupovanti:~/pandas-ai$ docker compose up

WARN[0000] The "cxwR1S88WmYVOw0BFpo4vuJ0Od5zrNXevkZcFt65wf5eTdMbGFMr6" variable is not set. Defaulting to a blank string.

[+] Running 9/9

✔ postgresql Pulled ... | closed | 2024-10-16T12:03:05Z | 2024-10-29T17:36:33Z | https://github.com/sinaptik-ai/pandas-ai/issues/1395 | [

"bug"

] | johnfelipe | 5 |

OpenInterpreter/open-interpreter | python | 708 | Need to pre-load a model somehow to avoid long start up times on Cloud Run | ### Describe the bug

When using a Cloud Run instance, the model is newly installed each time, but we have to download the start up files each time:

<img width="1047" alt="image" src="https://github.com/KillianLucas/open-interpreter/assets/3155884/94a46636-5fef-4049-bf44-5ce135eb3509">

Is it possible to load th... | closed | 2023-10-27T16:30:44Z | 2023-11-05T16:18:26Z | https://github.com/OpenInterpreter/open-interpreter/issues/708 | [

"Bug",

"External"

] | MarkEdmondson1234 | 5 |

ultralytics/yolov5 | deep-learning | 12,574 | About how the test results obtained by detect.py are evaluated | ### Search before asking

- [X] I have searched the YOLOv5 [issues](https://github.com/ultralytics/yolov5/issues) and [discussions](https://github.com/ultralytics/yolov5/discussions) and found no similar questions.

### Question

I used detect.py to get the test results and then wrote my own script to calculate the pr... | closed | 2024-01-03T08:04:31Z | 2024-10-20T19:36:00Z | https://github.com/ultralytics/yolov5/issues/12574 | [

"question",

"Stale"

] | Jiase | 7 |

huggingface/datasets | pandas | 6,571 | Make DatasetDict.column_names return a list instead of dict | Currently, `DatasetDict.column_names` returns a dict, with each split name as keys and the corresponding list of column names as values.

However, by construction, all splits have the same column names.

I think it makes more sense to return a single list with the column names, which is the same for all the split k... | open | 2024-01-09T10:45:17Z | 2024-01-09T10:45:17Z | https://github.com/huggingface/datasets/issues/6571 | [

"enhancement"

] | albertvillanova | 0 |
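
The proposal in the row above can be illustrated without the library itself. This is a minimal sketch, assuming a plain `dict` that stands in for the per-split mapping the issue describes; `unified_column_names` is a hypothetical helper, not part of the `datasets` API.

```python
from typing import Dict, List


def unified_column_names(per_split: Dict[str, List[str]]) -> List[str]:
    """Collapse a {split name: column names} mapping into a single list.

    Hypothetical helper for illustration only. It raises ValueError if the
    splits disagree, since the proposal relies on all splits sharing columns.
    """
    names: List[str] = []
    first = True
    for split, columns in per_split.items():
        if first:
            names = list(columns)
            first = False
        elif list(columns) != names:
            raise ValueError(f"split {split!r} has different columns: {columns}")
    return names


# Stand-in for the dict shape described in the issue.
per_split = {"train": ["text", "label"], "test": ["text", "label"]}
print(unified_column_names(per_split))
```

The check for mismatched splits is a design choice of this sketch; the issue itself only states that the columns are identical by construction.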

horovod/horovod | tensorflow | 4,043 | NVIDIA CUDA TOOLKIT version to run Horovod in Conda Environment | Hi Developers

I wish to install Horovod inside a Conda environment, for which I require NCCL from the NVIDIA CUDA Toolkit installed on the system. Which version of the NVIDIA CUDA Toolkit is required to build Horovod inside a Conda environment to run the PyTorch library?

Many Thanks

Pushkar | open | 2024-05-10T06:56:06Z | 2025-01-31T23:14:47Z | https://github.com/horovod/horovod/issues/4043 | [

"wontfix"

] | ppandit95 | 2 |

JaidedAI/EasyOCR | deep-learning | 1,313 | Bangla text issue | Bangla text works poorly | open | 2024-10-06T19:13:11Z | 2024-10-06T19:13:11Z | https://github.com/JaidedAI/EasyOCR/issues/1313 | [] | Automatorbd | 0 |

Lightning-AI/pytorch-lightning | data-science | 20,356 | Type annotation for `BasePredictionWriter` subclass | ### Bug description

Subclassing the `BasePredictionWriter` for custom functionality results in Pylance complaining about incorrect type.

### What version are you seeing the problem on?

v2.4

### How to reproduce the bug

```python

from __future__ import annotations

from pathlib import Path

from typing... | open | 2024-10-22T13:42:32Z | 2024-10-22T14:14:02Z | https://github.com/Lightning-AI/pytorch-lightning/issues/20356 | [

"bug",

"needs triage",

"ver: 2.4.x"

] | saiden89 | 0 |

httpie/cli | python | 1,480 | I would like the option to disable the DNS Cache and do name resolution on every request | ## Checklist

- [ ] I've searched for similar feature requests.

---

## Enhancement request

…

---

## Problem it solves

E.g. “I'm always frustrated when […]”, “I’m trying to do […] so that […]”.

---

## Additional information, screenshots, or code examples

…

| open | 2023-02-16T00:34:13Z | 2023-02-16T00:34:13Z | https://github.com/httpie/cli/issues/1480 | [

"enhancement",

"new"

] | kahirokunn | 0 |

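hankcs/HanLP | nlp | 750 | "来张北京的车票" is segmented as "张北" "京" | <!--

The notes and version number are required; otherwise there will be no reply. To get a reply as quickly as possible, please fill in the template carefully. Thank you for your cooperation.

-->

## Notes

Please confirm the following:

* I have carefully read the following documents and found no answer in any of them:

- [Home page documentation](https://github.com/hankcs/HanLP)

- [wiki](https://github.com/hankcs/HanLP/wiki)

- [FAQ](https://github.com/hankcs/HanLP/wiki/FAQ)

* I have already used [Google](https://www.google.com/#newwindow=1&q=HanLP) and the [issue area sear... | closed | 2018-01-17T09:45:37Z | 2018-01-17T09:49:47Z | https://github.com/hankcs/HanLP/issues/750 | [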

"improvement"

] | whynogo | 1 |

microsoft/unilm | nlp | 807 | StopIteration when continuing to train InfoXLM from XLM-R | I am trying to continue training an InfoXLM model from XLM-R on my own dataset.

After initializing the conda environment and preparing the training data, I ran the training command below, but it throws a StopIteration error.

The command I used is:

`python src-infoxlm/train.py ${MLM_DATA_DIR} \

--task infoxlm --criterion xlco ... | closed | 2022-07-28T08:33:40Z | 2022-10-15T03:19:34Z | https://github.com/microsoft/unilm/issues/807 | [] | SAI990323 | 5 |

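akfamily/akshare | data-science | 5,798 | AKShare API issue report | import akshare as ak

# Note: in the data returned by this API, only the most recent trading day has an opening price; the opening price for other dates is 0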

stock_zh_a_hist_min_em_df = ak.stock_zh_a_hist_min_em(symbol="000001", start_date="2025-03-07 09:30:00", end_date="2024-03-07 15:00:00", period="1", adjust="")

print(stock_zh_a_hist_min_em_df)

-----------------------------------------------------------------... | closed | 2025-03-08T10:41:26Z | 2025-03-09T10:56:40Z | https://github.com/akfamily/akshare/issues/5798 | [

"bug"

] | hifigecko | 1 |

Farama-Foundation/PettingZoo | api | 541 | $400 bounty for fixing and learning near optimal policy with Stable Baselines 3 in Waterworld environment | Hey,

If anyone is able to provide me Stable Baselines 3 based learning code that can learn near optimal policies (e.g. solve) the [Waterworld](https://www.pettingzoo.ml/sisl/waterworld) environment once it's fixed enough to be a reasonable environment that learning works in, I will pay you a bounty of $400. Example ... | closed | 2021-11-14T03:41:02Z | 2021-12-30T22:34:03Z | https://github.com/Farama-Foundation/PettingZoo/issues/541 | [] | jkterry1 | 6 |

LAION-AI/Open-Assistant | python | 3,429 | Download links in sft training folder | Please add links or cite to the opensource data in sft training. | closed | 2023-06-14T06:04:57Z | 2023-06-14T08:14:46Z | https://github.com/LAION-AI/Open-Assistant/issues/3429 | [] | lucasjinreal | 1 |

wandb/wandb | data-science | 9,549 | [Feature]: Support prefix glob for `Run.define_metric` | ### Description

I name all of my metrics as `metric_name/train/batch` or `metric_name/valid/epoch`, and I want to configure a default x-axis like `num_examples/train/batch` using [`Run.define_metric`](https://docs.wandb.ai/ref/python/run/#define_metric) (i.e. how many examples has the model seen up to that point, so t... | open | 2025-03-03T05:34:57Z | 2025-03-03T17:45:49Z | https://github.com/wandb/wandb/issues/9549 | [

"ty:feature"

] | ringohoffman | 1 |

erdewit/ib_insync | asyncio | 405 | News_api | Hey, I have subscribed to the Dow Jones API but am unable to fetch tick data from it. If anyone has code for the news API for Interactive Brokers, please let me know | closed | 2021-10-16T18:14:31Z | 2021-11-04T19:54:24Z | https://github.com/erdewit/ib_insync/issues/405 | [] | sudhanshu8833 | 3 |

pyqtgraph/pyqtgraph | numpy | 2,578 | SpinBox _updateHeight has a type error | PyQtGraph v0.11.0 and v0.12.3, in SpinBox.py line 577 should be

```

self.setMaximumHeight(int(1e6))

```

not

```

self.setMaximumHeight(1e6)

```

The existing line causes a type error when we set `opts['compactHeight'] = False`, because `1e6` is a float, not an int.

Currently my workaround is to keep `o... | closed | 2023-01-08T01:25:33Z | 2023-01-08T02:23:29Z | https://github.com/pyqtgraph/pyqtgraph/issues/2578 | [

"good first issue"

] | jaxankey | 1 |

Miserlou/Zappa | flask | 1,377 | support AWS Lex Bot events | ## Context

Currently Zappa supports executing Django code in response to [AWS events](https://github.com/Miserlou/Zappa#executing-in-response-to-aws-events). It doesn't recognise the Lex bot event. It explicitly checks for `'Records'` inside the request. I want to organise all Lex bot functions inside the Django app itself. So that I will... | closed | 2018-02-06T06:22:35Z | 2018-02-08T18:27:34Z | https://github.com/Miserlou/Zappa/issues/1377 | [] | jnoortheen | 0 |

mwaskom/seaborn | pandas | 3,524 | How can I use despine in seaborn 0.13? | I want to use the `despine` function in seaborn 0.13 to remove the top and right axis lines. How should I change my code? Thank you!

import seaborn.objects as so

import seaborn as sns

from seaborn import axes_style,plotting_context

from seaborn import despine

so.Plot.config.theme.update(axe... | closed | 2023-10-18T09:14:18Z | 2023-10-18T11:12:22Z | https://github.com/mwaskom/seaborn/issues/3524 | [] | z626093820 | 1 |

mherrmann/helium | web-scraping | 31 | Set chromedriver path in start_chrome | It would be nice if you could set the path to chromedriver in start_chrome, similar to the way you can pass options to it, for use in a container. (I spent most of the day yesterday trying to figure out why selenium can't find chromedriver even if it's in the path, and all I found was that it happens and people work ar... | closed | 2020-06-24T12:30:45Z | 2020-09-14T12:16:50Z | https://github.com/mherrmann/helium/issues/31 | [] | shalonwoodchl | 3 |

plotly/dash-table | dash | 659 | Built-in heatmap-style cell background colours | > May 1, 2020 Update by @chriddyp - This is now possible with conditional formatting. See https://dash.plotly.com/datatable/conditional-formatting & https://community.plotly.com/t/datatable-conditional-formatting-documentation/38763.

> We're keeping this issue open for built-in heatmap formatting that doesn't require ... | open | 2019-12-06T15:22:30Z | 2023-02-02T07:06:45Z | https://github.com/plotly/dash-table/issues/659 | [

"dash-type-enhancement"

] | interrogator | 5 |

bmoscon/cryptofeed | asyncio | 519 | add support huobi usdt perpetual contract. | Add support for Huobi USDT perpetual contract | open | 2021-06-13T13:30:51Z | 2021-06-14T22:20:26Z | https://github.com/bmoscon/cryptofeed/issues/519 | [

"Feature Request"

] | yfjelley | 1 |

sinaptik-ai/pandas-ai | pandas | 806 | Error with Custom prompt | ### System Info

Python 3.11.3

Pandasai 1.5.5

### 🐛 Describe the bug

Hi @gventuri, I am trying to use a custom prompt for Python code generation. I am using agents, and looking at the log file I can see that the prompt that was used is the default prompt. Here is the code to replicate the issue and attach... | closed | 2023-12-08T06:10:06Z | 2024-06-01T00:20:53Z | https://github.com/sinaptik-ai/pandas-ai/issues/806 | [] | kumarnavn | 0 |

Kludex/mangum | asyncio | 202 | Allow Mangum to remove certain AWS response headers from the API Gateway response | Problem:

Currently `Mangum` injects the following headers in the api gateway response for an aws lambda integration

```

x-amz-apigw-id

x-amzn-requestid

x-amzn-trace-id

```

This exposes additional information that the client doesn't need to know.

Proposal:

Allow Mangum to take optional parameter say `e... | closed | 2021-09-30T17:53:01Z | 2022-11-24T09:44:13Z | https://github.com/Kludex/mangum/issues/202 | [

"improvement"

] | amieka | 2 |

hack4impact/flask-base | sqlalchemy | 15 | Find easier way to create first admin | See discussion at https://github.com/hack4impact/women-veterans-rock/pull/1

| closed | 2015-10-21T03:14:59Z | 2016-07-07T17:32:52Z | https://github.com/hack4impact/flask-base/issues/15 | [

"enhancement"

] | sandlerben | 3 |

jupyter-book/jupyter-book | jupyter | 1,764 | Issue on page /LSA.html | open | 2022-06-22T16:08:50Z | 2022-06-22T16:12:54Z | https://github.com/jupyter-book/jupyter-book/issues/1764 | [] | Romali-040 | 1 | |

jazzband/django-oauth-toolkit | django | 1,254 | Using JWT for access and refresh tokens | <!-- What is your question? -->

Many of the standard implementations of OIDC specs use JWT as standard for access and refresh tokens. They also have the benefit of not relying on token persistence (in database) for the token introspection process.

Is there a plan for this feature in any future milestone? | closed | 2023-03-08T06:46:11Z | 2023-10-17T16:59:48Z | https://github.com/jazzband/django-oauth-toolkit/issues/1254 | [

"question"

] | mainakchhari | 7 |

yihong0618/running_page | data-visualization | 659 | v | closed | 2024-04-16T12:17:36Z | 2024-05-06T14:03:59Z | https://github.com/yihong0618/running_page/issues/659 | [] | Jeffg121 | 1 | |

plotly/dash-table | dash | 948 | Save input text in Input field inside Dash Editable Datatable without pressing 'Enter' key? | Hi All,

On entering a value in an input field inside an editable datatable, the user must press 'Enter' key to save it. Instead, if the user simply clicks outside of this cell, the value provided is not saved and the previous state is visible.

Is there a way by which we can save the input provided when focus moves ... | open | 2022-05-18T21:34:33Z | 2022-06-03T22:30:34Z | https://github.com/plotly/dash-table/issues/948 | [] | AnSohal | 3 |

modelscope/modelscope | nlp | 425 | ASR inference problem | OS: [e.g. linux]

Python/C++ Version: 3.7.16

Package Version: pytorch=1.11.0, torchaudio=0.11.0, modelscope=1.7.1, funasr=0.7.1

Model: speech_paraformer-large-vad-punc_asr_nat-zh-cn-16k-common-vocab8404-pytorch

Command:

Details: ASR transcription

Error log:

Traceback (most recent call last):

File "/home/xiaoguo/.conda/envs/model... | closed | 2023-07-28T03:29:27Z | 2023-08-09T01:47:39Z | https://github.com/modelscope/modelscope/issues/425 | [] | Stonexiao | 2 |

localstack/localstack | python | 11,786 | bug: S3 on Resource Browser doesn't show Object folder named '/' | I've noticed recently that the LocalStack Resource Browser web app does not show object folders named '/'.

**Actual**

- LocalStack Resource Browser

**Expected**

- awslocal-cli for LocalStack instance

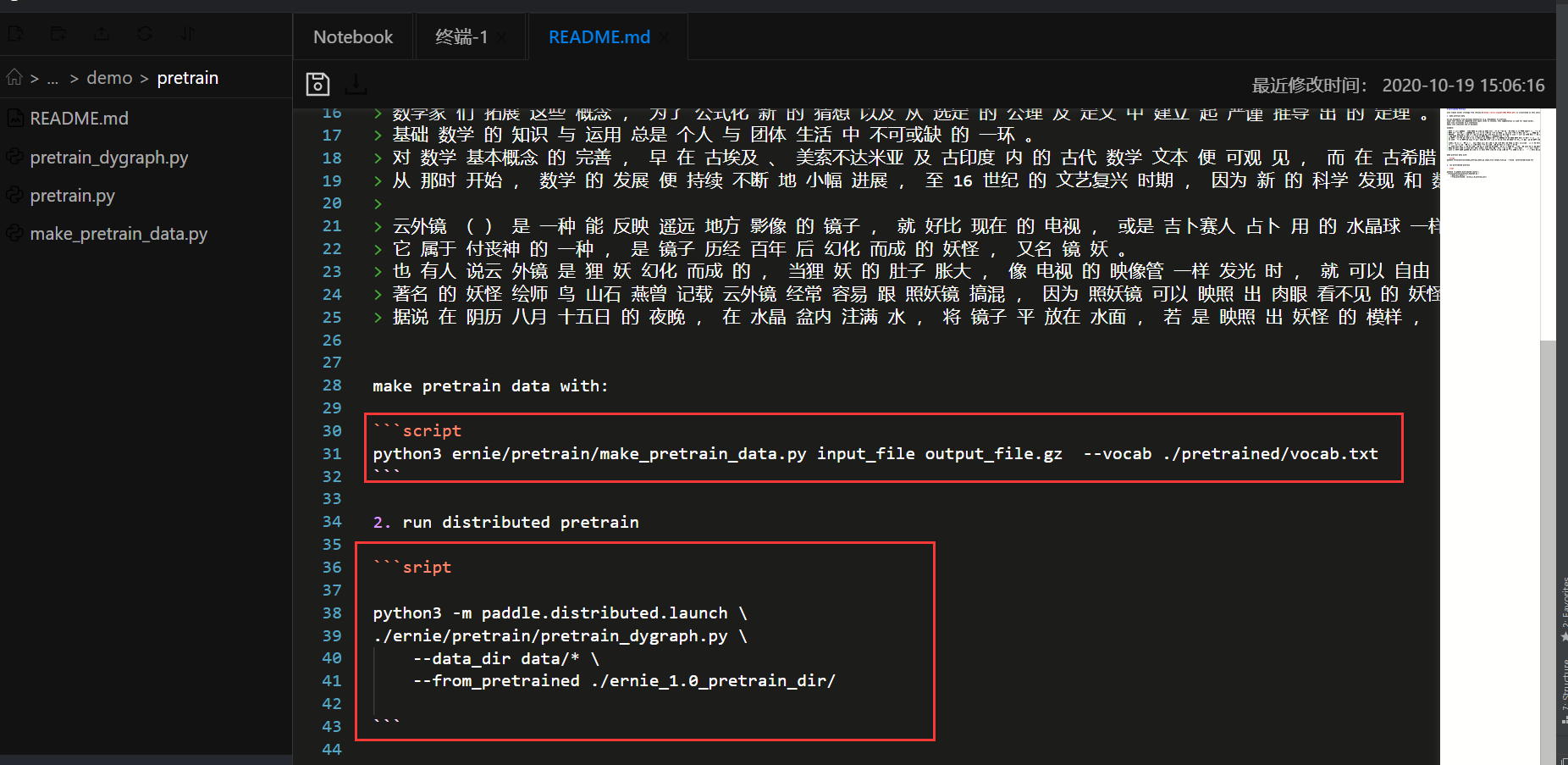

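when running the README file

Many bugs appear

First, for the first step of creating the pretraining data, what is needed is a paddlepaddle-gpu 1.7 environment and paddle-propeller==0.3.1de... | closed | 2020-11-05T06:35:33Z | 2021-01-22T04:10:46Z | https://github.com/PaddlePaddle/ERNIE/issues/585 | [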

"wontfix"

] | luming159 | 2 |

CorentinJ/Real-Time-Voice-Cloning | tensorflow | 319 | Short Phrase Workaround? location of specific words in seconds | So as other people have mentioned here, the spectrogram synthesis struggles with short phrases. One workaround is that if you lengthen your text to something that takes 6 seconds or so to say, you can do much better, then manually crop out the filler text. However, has anyone had luck doing that in an automated way? (... | closed | 2020-04-12T14:37:56Z | 2020-06-01T18:24:53Z | https://github.com/CorentinJ/Real-Time-Voice-Cloning/issues/319 | [] | sunnybala | 3 |

kubeflow/katib | scikit-learn | 1,837 | AWS EKS Kubeflow Katib Examples stuck in running (2/3 Ready) | /kind bug

**What steps did you take and what happened:**

I have been installing and uninstalling kubeflow multiple times now in different ways trying to get Katib to function on the basic examples. I am simply running the base examples, I tried multiple and all have the same issue. This most recent try was with the... | closed | 2022-03-22T00:01:23Z | 2022-03-25T16:54:34Z | https://github.com/kubeflow/katib/issues/1837 | [

"kind/bug"

] | charlescurt | 10 |

roboflow/supervision | deep-learning | 1,562 | Connect Oriented Bounding Box to Metrics | # Connect Oriented Bounding Box to Metrics

> [!TIP]

> [Hacktoberfest](https://hacktoberfest.com/) is calling! Whether it's your first PR or your 50th, you’re helping shape the future of open source. Help us build the most reliable and user-friendly computer vision library out there! 🌱

---

Several new feature... | closed | 2024-10-03T11:50:31Z | 2024-11-01T09:45:43Z | https://github.com/roboflow/supervision/issues/1562 | [

"good first issue",

"hacktoberfest"

] | LinasKo | 20 |

koxudaxi/datamodel-code-generator | pydantic | 2,010 | Remove linters from package dependency | Would it be possible to move the code formatting tools to a dedicated poetry group such as `[tool.poetry.group.dev.dependencies]` or are they required for the package to work?

https://github.com/koxudaxi/datamodel-code-generator/blob/28be37d7c2a0b0bce21b0719ffb732df36ebce74/pyproject.toml#L54-L55 | closed | 2024-06-19T14:43:39Z | 2024-07-04T23:40:37Z | https://github.com/koxudaxi/datamodel-code-generator/issues/2010 | [

"answered"

] | PythonFZ | 1 |

tox-dev/tox | automation | 3,127 | TOX_OVERRIDES for testenv.pass_env are processed inconsistently | ## Issue

After adding TOX_OVERRIDEs to my projects, releases have started failing when the TWINE_PASSWORD is missing from passenv.

## Environment

Provide at least:

- OS: macOS, Linux

<details open>

<summary>Output of <code>pip list</code> of the host Python, where <code>tox</code> is installed</summary>... | open | 2023-09-18T15:03:37Z | 2024-03-05T22:15:14Z | https://github.com/tox-dev/tox/issues/3127 | [

"help:wanted"

] | jaraco | 1 |

huggingface/datasets | numpy | 6,584 | np.fromfile not supported | How to do np.fromfile to use it like np.load

```python

def xnumpy_fromfile(filepath_or_buffer, *args, download_config: Optional[DownloadConfig] = None, **kwargs):

import numpy as np

if hasattr(filepath_or_buffer, "read"):

return np.fromfile(filepath_or_buffer, *args, **kwargs)

else:

... | open | 2024-01-12T09:46:17Z | 2024-01-15T05:20:50Z | https://github.com/huggingface/datasets/issues/6584 | [] | d710055071 | 6 |

huggingface/transformers | nlp | 36,931 | Clarification on Commercial License Impact of LayoutLMv3ImageProcessor within UdopProcessor | Hi team,

I have a question regarding licensing and commercial usage.

Since UdopProcessor internally uses LayoutLMv3ImageProcessor (as part of resizing, rescaling, normalizing document images, and applying OCR), and given that LayoutLMv3 itself is not licensed for commercial use, I would like to clarify:

➡️ If I use ... | open | 2025-03-24T15:34:24Z | 2025-03-24T15:34:24Z | https://github.com/huggingface/transformers/issues/36931 | [] | Arjunexperion | 0 |

google-research/bert | nlp | 886 | accent character | hello,

in BERT tokenization.py, why are accents stripped away? However, in the vocab file of multi_cased_model, which supports multiple languages, there are many accented characters.

Thanks, | open | 2019-10-25T09:09:17Z | 2020-03-30T19:31:16Z | https://github.com/google-research/bert/issues/886 | [] | lytum | 1 |

jupyterlab/jupyter-ai | jupyter | 838 | Server-side error on Python 3.8 | ## Description

```py

File "python3.8/site-packages/jupyter_ai/__init__.py", line 3, in <module>

from jupyter_ai_magics import load_ipython_extension, unload_ipython_extension

File "python3.8/site-packages/jupyter_ai_magics/__init__.py", line 4, in <module>

from .embedding_providers im... | closed | 2024-06-19T14:10:03Z | 2024-06-19T21:39:04Z | https://github.com/jupyterlab/jupyter-ai/issues/838 | [

"bug"

] | krassowski | 1 |

ultralytics/ultralytics | machine-learning | 18,904 | Benchmark gives NaN for exportable models | ### Search before asking

- [x] I have searched the Ultralytics YOLO [issues](https://github.com/ultralytics/ultralytics/issues) and found no similar bug report.

### Ultralytics YOLO Component

Other

### Bug

I am getting this result from benchmark:

```

1300.2s 2103 Benchmarks complete for best.pt on face-detection-... | closed | 2025-01-26T15:12:52Z | 2025-01-28T18:16:28Z | https://github.com/ultralytics/ultralytics/issues/18904 | [

"bug",

"fixed",

"exports"

] | EmmanuelMess | 8 |

psf/black | python | 4,300 | line-length is not working as intended | I am using black version `24.3.0` and have set `line-length = 88` in my config file, however running `black .` command doesn't modify the lines of code that are over 88 characters.

Ex:

Before running black

```str = "this is the longest text in the history of mankind that I have seen in the world for all the good ... | closed | 2024-04-05T21:24:13Z | 2024-04-06T11:39:38Z | https://github.com/psf/black/issues/4300 | [

"T: bug"

] | gjambaisivanandham | 1 |

Farama-Foundation/Gymnasium | api | 733 | [Bug Report] UserWarning occurring after every call of the env. | ### Describe the bug

```

UserWarning: WARN: env.shape to get variables from other wrappers is deprecated and will be removed in v1.0, to get this variable you can do `env.unwrapped.shape` for environment variables or `env.get_wrapper_attr('shape')` that will search the reminding wrappers.

logger.warn(

```

the ... | closed | 2023-10-05T21:07:24Z | 2023-11-09T16:27:22Z | https://github.com/Farama-Foundation/Gymnasium/issues/733 | [

"bug"

] | davidireland3 | 3 |

dmlc/gluon-nlp | numpy | 849 | Fix all Pad() calls | Currently, for every `Pad()` call without `pad_val` set, users see a warning that `pad_val` is set to the default value 0. This may confuse people who use existing scripts and wonder whether the warning indicates a problem in their setup. We should fix all these usages. | closed | 2019-07-26T21:21:31Z | 2019-10-08T23:46:38Z | https://github.com/dmlc/gluon-nlp/issues/849 | [

"enhancement"

] | eric-haibin-lin | 1 |

jonaswinkler/paperless-ng | django | 1,347 | [BUG] Importing large file results in: RecursionError: maximum recursion depth exceeded | **Describe the bug**

I'm scanning a 90-page PDF that has been scanned using NAPS2 and is therefore already OCRed. When I want to import the file to paperless-ng the following error is produced.

**To Reproduce**

1. I don't know if this happens only for me. Try and upload a 90-pages or so PDF.

**Expected beha... | open | 2021-09-26T20:48:08Z | 2021-09-26T20:58:53Z | https://github.com/jonaswinkler/paperless-ng/issues/1347 | [] | ghost | 0 |

laurentS/slowapi | fastapi | 88 | Redis Version Conflict: install failed when redis > 4.0 | The conflict is caused by:

The user requested redis==4.2.0rc3

slowapi 0.1.5 depends on redis<4.0.0 and >=3.4.1 | closed | 2022-03-25T03:23:01Z | 2023-04-12T08:20:32Z | https://github.com/laurentS/slowapi/issues/88 | [] | a-yangyi | 9 |

python-visualization/folium | data-visualization | 1,453 | How to integrate and plot both heatmap and quivers/arrows for different timestamps using folium? | I want to plot wind velocity heatmap and wind direction quivers together for different timestamps on top of a map using folium. I could not find any plugin for this integrated operation. Can you please help me sort this out? | closed | 2021-02-14T02:25:55Z | 2022-11-18T14:30:15Z | https://github.com/python-visualization/folium/issues/1453 | [] | tasfia | 1 |

PaddlePaddle/ERNIE | nlp | 513 | ernie-tiny fine-tuning on GPU | When fine-tuning on GPU, it reports importError: libcublas.so cannot open shared object file...

However, libcublas.so.9.0 does exist in the CUDA lib directory. What should I do in this case? | closed | 2020-07-06T08:13:39Z | 2020-09-12T03:29:31Z | https://github.com/PaddlePaddle/ERNIE/issues/513 | [

"wontfix",

"Paddle-Issue"

] | kennyLSN | 3 |

xinntao/Real-ESRGAN | pytorch | 211 | Seams after upscaling | Whenever i input a seamless image i get an image with seams all around the edges, is there a way to patch the seams? | closed | 2022-01-03T13:23:17Z | 2024-05-15T14:38:33Z | https://github.com/xinntao/Real-ESRGAN/issues/211 | [] | industdev | 3 |

python-visualization/folium | data-visualization | 1,963 | Add support to map ruler based on configured projection | It would be great if we had the possibility to show a ruler around the map. This ruler should change as the projection changes, and the frequency of the ticks should be configurable as well (e.g. show the latitude every 10km and longitude every 5km if we are dealing with a UTM projection or latitude every 2º and... | open | 2024-06-04T13:47:17Z | 2024-06-14T15:15:37Z | https://github.com/python-visualization/folium/issues/1963 | [] | barcelosleo | 3 |

numpy/numpy | numpy | 28,157 | BUG: `StringDType`: `na_object` ignored in `full` | ### Describe the issue:

Creating a `StringDType` ndarray with `na_object` using `full` (and `full_like`) coerces the `nan` sentinel to a string.

I can work around this using `arr[:] = np.nan`, but think the behavior is unexpected.

### Reproduce the code example:

```python

import numpy as np

arr1 = np.full((1,), f... | closed | 2025-01-15T15:07:14Z | 2025-01-27T19:45:23Z | https://github.com/numpy/numpy/issues/28157 | [

"00 - Bug",

"component: numpy.strings"

] | mathause | 4 |

marimo-team/marimo | data-visualization | 3,781 | KaTeX Macro Support | ### Description

As outlined in https://github.com/marimo-team/marimo/discussions/1941, I would love to be able to have some Macro Support for KaTeX.

This would come in handy for repeated use of convoluted symbols and would really help us for teaching and presenting our stuff in notebooks.

Our current workflow for jup... | closed | 2025-02-13T12:51:39Z | 2025-02-14T07:18:32Z | https://github.com/marimo-team/marimo/issues/3781 | [

"enhancement"

] | claudiushaag | 3 |

itamarst/eliot | numpy | 456 | Testing infrastructure (@capture_logging) can't use custom JSON encoders | If you have a custom JSON encoder, and you try to test your log messages, your tests will fail because the `MemoryLogger` code path always encodes with the plain-vanilla JSON encoder.

Given `FileDestination` supports a custom JSON encoder, this is a problem. | closed | 2020-11-03T15:00:20Z | 2020-12-15T19:09:24Z | https://github.com/itamarst/eliot/issues/456 | [

"bug"

] | itamarst | 0 |

alpacahq/alpaca-trade-api-python | rest-api | 367 | Using paper trading, bars request returns 403 Forbidden | I've been running paper trading for the last few days without problem. Today for some reason, when I go to look up prices using the bars API, I am getting a 403 forbidden error.

I have version 0.51.0 installed, which from what I can tell on pip is the latest version.

Here's a simple example.

```python

impor... | closed | 2021-01-14T19:33:11Z | 2021-03-23T15:22:19Z | https://github.com/alpacahq/alpaca-trade-api-python/issues/367 | [] | joshterrill | 2 |

gee-community/geemap | streamlit | 445 | Publish Maps Notebook 24 - Datapane - Invalid version 'unknown' | Thanks for these lessons and videos. A great resource.

### Environment Information

- geemap version: 0.8.14

- Python version: 3.9.2

- Operating System: macOS 10.15.7

### Description

I am trying to run the Example notebook 24 Publish Maps https://github.com/giswqs/geemap/blob/master/examples/noteboo... | closed | 2021-04-26T21:43:53Z | 2021-11-13T06:28:25Z | https://github.com/gee-community/geemap/issues/445 | [

"bug"

] | nygeog | 1 |

davidsandberg/facenet | tensorflow | 306 | How to train my model? | closed | 2017-06-02T08:18:41Z | 2017-06-05T00:55:03Z | https://github.com/davidsandberg/facenet/issues/306 | [] | ouyangbei | 6 | |

pywinauto/pywinauto | automation | 694 | move_window not available on dialog | According to the documentation, move_window should be available on all controls and it seems like I have a valid dialog object. But when I try to use this method on the main window of my application, I get an error. I'm assuming that I am actually doing it wrong, rather than a bug, but any help would be appreciated. I ... | open | 2019-03-25T16:04:54Z | 2019-03-25T22:55:18Z | https://github.com/pywinauto/pywinauto/issues/694 | [

"duplicate"

] | alteryx-sezell | 1 |

xonsh/xonsh | data-science | 5,120 | Is the tutorial_ptk correct (maybe need to be fixed)? | 1) Is the tutorial_ptk correct?

I am a beginner in Python, and I wrote my .xonshrc as shown here: https://xon.sh/tutorial_ptk.html

```

@events.on_ptk_create

def custom_keybindings(bindings, **kw):

@handler(Keys.ControlP)

def run_ls(event):

ls -l

event.cli.renderer.erase()

```

But error:

```

... | open | 2023-04-19T16:25:08Z | 2023-04-21T14:39:40Z | https://github.com/xonsh/xonsh/issues/5120 | [

"docs",

"prompt-toolkit"

] | pigasus55 | 1 |

PokeAPI/pokeapi | graphql | 1,045 | Pokemons rename in species request | The names of the pokemons are different, taking into account the urls `https://pokeapi.co/api/v2/pokemon/{name}` and `https://pokeapi.co/api/v2/pokemon-species/{name}`, generating errors.

For example, in the `https://pokeapi.co/api/v2/pokemon/892` request, the name of the pokemon is **urshifu-single-strike**, howeve... | closed | 2024-02-15T16:02:46Z | 2024-02-21T18:32:11Z | https://github.com/PokeAPI/pokeapi/issues/1045 | [] | aristofany-herderson | 2 |

flairNLP/fundus | web-scraping | 159 | Generate/Link xpath/css documentation | This is important, since most of the work in adding a parser consists of xpath/css. We should ease this part of the contribution. | closed | 2023-04-06T12:22:09Z | 2023-08-22T17:58:05Z | https://github.com/flairNLP/fundus/issues/159 | [

"documentation"

] | Weyaaron | 2 |

ploomber/ploomber | jupyter | 276 | Document how to send custom parameters to nbconvert | We use the official nbconvert package to export notebooks to different formats. Each output format has some extra options that users can set via the `nbconvert_export_kwargs` parameter in `NotebookRunner` (such as hiding input cells). However, these details are hidden in the Python API docs, and even there, they aren't... | closed | 2020-11-04T19:41:33Z | 2020-11-16T02:36:47Z | https://github.com/ploomber/ploomber/issues/276 | [] | edublancas | 2 |

strawberry-graphql/strawberry | django | 2,943 | Allow other GraphiQL interfaces | I think we should allow users to choose between GraphiQL and other interfaces (like the Apollo Explorer).

This might not be too difficult to implement now that we have a base view, but maybe we need some tweaks for Django, or we should at least consider to (keep) support(ing) overriding the playground using template... | closed | 2023-07-12T14:52:52Z | 2025-03-20T15:56:18Z | https://github.com/strawberry-graphql/strawberry/issues/2943 | [

"feature-request"

] | patrick91 | 5 |

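QingdaoU/OnlineJudge | django | 402 | Question about JavaScript support in the Docker deployment |

I installed following OnlineJudgeDeploy, but my overseas machine has trouble reaching Alibaba Cloud and the resources could not be downloaded, so I installed from the Docker Hub image `qduoj/judge-server` instead.

After installation I added a problem, but there was no JavaScript option. Is the image version rather old? If so, could it be updated to the latest?

Thanks a lot~ | open | 2022-01-25T06:49:26Z | 2022-01-26T03:30:06Z | https://github.com/QingdaoU/OnlineJudge/issues/402 | [] | akira-cn | 1 |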

CorentinJ/Real-Time-Voice-Cloning | python | 934 | File structure for training (encoder, synthesizer (vocoder)) | I want to train my own model on the mozilla common voice dataset.

All .mp3s are delivered in one folder with accompanying .tsv lists. I understand that the corresponding .txt has to reside next to each utterance.

But what about the folder structure? Can I leave all .mp3s in that one folder or do I have to split them into ... | open | 2021-12-02T06:38:49Z | 2022-09-01T14:43:28Z | https://github.com/CorentinJ/Real-Time-Voice-Cloning/issues/934 | [] | Dannypeja | 24 |

Kanaries/pygwalker | plotly | 555 | Issue with Pygwalker | This is the error I am getting while trying to execute this code:

walker=pyg.walk(df)

Error:

[Open Browser Console for more detailed log - Double click to close this message]

Failed to load model class 'BoxModel' from module '@jupyter-widgets/controls'

Error: Module @jupyter-widgets/controls, version ^1.5.0 is n... | closed | 2024-05-18T11:24:03Z | 2024-05-19T06:36:26Z | https://github.com/Kanaries/pygwalker/issues/555 | [] | Aditi-Gupta2001 | 4 |

quantmind/pulsar | asyncio | 225 | JsonProxy is not using utf-8 | The following line does not work as intended as it does not send requests encoded as utf-8:

`json.dumps(data).encode('utf-8')`

According to the docs I think you should set `ensure_ascii=False`:

`json.dumps(data, ensure_ascii=False).encode('utf-8')`

> If ensure_ascii is True (the default), all non-ASCII characters in... | closed | 2016-06-15T14:03:14Z | 2016-06-23T09:38:46Z | https://github.com/quantmind/pulsar/issues/225 | [] | wilddom | 3 |
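
A small sketch of the difference described in this issue, using only the standard library `json` module. The sample payload is a made-up assumption and is not taken from pulsar. With the default `ensure_ascii=True`, a non-ASCII character is written as a `\uXXXX` escape, so the encoded bytes are plain ASCII; with `ensure_ascii=False` the character is kept and `.encode('utf-8')` produces its raw UTF-8 bytes. Both forms decode back to the same object.

```python
import json

# Hypothetical payload; any non-ASCII text shows the same effect.
data = {"name": "Zoë"}

# Default (ensure_ascii=True): non-ASCII characters become \uXXXX escapes,
# so the encoded bytes contain only ASCII.
escaped = json.dumps(data).encode("utf-8")

# ensure_ascii=False: characters are kept as-is and encoded as UTF-8 bytes.
raw = json.dumps(data, ensure_ascii=False).encode("utf-8")

# Both payloads parse back to the same dictionary.
same = json.loads(escaped.decode("utf-8")) == json.loads(raw.decode("utf-8"))
```

Which form a receiving server expects is a separate question; the sketch only shows what each call puts on the wire.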

onnx/onnx | scikit-learn | 5,799 | Verify implementation of BatchNormalization-9 | In the reference implementation of BatchNormalization-9, `_batchnorm_test_mode` is used twice. All the other implementations use both `_batchnorm_test_mode` and `_batchnorm_train_mode`. I'm trying to implement this operator in [GONNX](https://github.com/AdvancedClimateSystems/gonnx), and I'm wondering if this is correct.... | closed | 2023-12-10T18:45:26Z | 2025-01-03T06:44:36Z | https://github.com/onnx/onnx/issues/5799 | [

"stale"

] | Swopper050 | 3 |

geex-arts/django-jet | django | 90 | Duplicate app label name with django-oscar dashboard | OK, I found this error when installing `django-oscar` and `django-jet` in the same project.

``` bash

./manage.py runserver

Unhandled exception in thread started by <function check_errors.<locals>.wrapper at 0x7f... | open | 2016-07-25T16:27:43Z | 2018-05-28T09:17:46Z | https://github.com/geex-arts/django-jet/issues/90 | [] | SalahAdDin | 10 |

cupy/cupy | numpy | 8,213 | Typecasting issue | ### Description

It seems that CuPy is incorrectly typecasting data. The data type should remain unchanged if the user explicitly specifies it during tensor creation. However, the data type is being changed from float16 to float32, even though it was manually set to float16. Please refer to the output and code for deta... | closed | 2024-02-27T03:47:22Z | 2024-02-27T06:33:03Z | https://github.com/cupy/cupy/issues/8213 | [

"issue-checked"

] | rajagond | 2 |

BayesWitnesses/m2cgen | scikit-learn | 331 | Maybe not supported: LightGBM classifier (lightgbm==2.3.0) when tree['tree_structure']['decision_type'] is '==' | AssertionError: Unexpected comparison op

I find that my model.booster_.dump_model()['tree_info'][0]['tree_structure']['decision_type'] is '==',

but the code is assert op == ast.CompOpType.LTE, "Unexpected comparison op"

and ast.CompOpType.LTE is '<='.

I changed the code to assert op == ast.CompOpType.LTE or op == ast.Comp... | closed | 2020-12-22T11:00:36Z | 2020-12-23T08:04:28Z | https://github.com/BayesWitnesses/m2cgen/issues/331 | [] | Sherlockgg | 1 |

andfanilo/streamlit-echarts | streamlit | 6 | echarts 5 support | Still waiting for https://github.com/hustcc/echarts-for-react/issues/388

A user wanted a demo of [gauge ring](https://echarts.apache.org/next/examples/en/editor.html?c=gauge-ring) in Streamlit | closed | 2020-12-11T07:48:52Z | 2021-04-16T19:46:51Z | https://github.com/andfanilo/streamlit-echarts/issues/6 | [] | andfanilo | 2 |

plotly/dash | flask | 2,911 | Inconsistent behavior with dcc.Store initial values and prevent_initial_call |

```

dash 2.16.1

dash-bootstrap-components 1.6.0

dash-core-components 2.0.0

dash-html-components 2.0.0

dash-iconify 0.1.2

dash-mantine-components 0.12.1

dash-table 5.... | open | 2024-07-03T16:42:36Z | 2024-08-30T15:13:07Z | https://github.com/plotly/dash/issues/2911 | [

"bug",

"P3"

] | shimon-l | 1 |

tensorpack/tensorpack | tensorflow | 1,150 | Running multi-pod with multi-GPU, each pod containing four or more GPU cards: following your guideline all pods run, but a problem occurs; versions of each component listed | ### 1. Running Multi-Pod With Multi-GPUS:

(1) **Multi-Pods Running Four Or More GPUS,Each Pod Contains Four GPUS**

(2) **Running Script For Python(imagenet-xxx.py) From Your Project**

(3) **Parameter Is The Same To Your Example**

### 2. I observed:

(1) **Communication between GPUs does not work**

(2) **... | closed | 2019-04-16T13:47:00Z | 2019-04-23T07:26:07Z | https://github.com/tensorpack/tensorpack/issues/1150 | [

"unrelated"

] | perrynzhou | 2 |

microsoft/qlib | deep-learning | 1,819 | Will you consider improving and enhancing the functionality and examples of Reinforcement Learning? | Will you consider improving and enhancing the functionality and examples of Reinforcement Learning?

The current example runs slowly and has not been updated for a long time.

-------

Will you consider improving and enhancing the reinforcement learning part's... | open | 2024-07-01T12:53:23Z | 2024-09-28T07:09:33Z | https://github.com/microsoft/qlib/issues/1819 | [

"question"

] | ghyzx | 1 |

pykaldi/pykaldi | numpy | 102 | there is a problem between in fbank_feature.shape and pitch_feature.shape. | 1.fbank_feature:

from kaldi.feat.wave import WaveData

from kaldi.base._iostream import *

from kaldi.util.io import *

wavedata=WaveData()

inp=Input("BAC009S0912W0121.wav")

wavedata.read(inp.stream())

s3=wavedata.data()

s3 = s3[:,::int(wavedata.samp_freq / sf_fbank)]

m3 = SubVector(mean(s3, axis=0))

f3=fbank.co... | closed | 2019-03-25T08:57:32Z | 2019-03-26T03:09:08Z | https://github.com/pykaldi/pykaldi/issues/102 | [] | liuchenbaidu | 2 |

tiangolo/uvicorn-gunicorn-fastapi-docker | pydantic | 76 | Root path is applied 2 times when using root_path | Here is error:

Python 3.7.8

fastapi 0.63.0

Run configuration:

`uvicorn.run("app:app", host="0.0.0.0", port=port, reload=True)`

I think the error is in the path. Here is the console log:

`127.0.0.1:549... | closed | 2021-02-25T15:46:02Z | 2022-11-25T00:24:13Z | https://github.com/tiangolo/uvicorn-gunicorn-fastapi-docker/issues/76 | [

"answered"

] | mindej | 2 |

healthchecks/healthchecks | django | 384 | Add a curl example in PHP example section | Suggested here: https://twitter.com/smknstd/status/1272818956076810247

Aside from the code sample, it will need some accompanying information–

- is curl support typically built in with PHP, or does it... | closed | 2020-06-17T07:29:54Z | 2020-07-07T18:21:32Z | https://github.com/healthchecks/healthchecks/issues/384 | [] | cuu508 | 6 |

nvbn/thefuck | python | 1,500 | No fucks given for homebrew update command | ```console

% brew update go

Error: This command updates brew itself, and does not take formula names.

Use `brew upgrade go` instead.

% fuck

No fucks given

```

Thefuck should run the suggested command.

Env: The Fuck 3.32 using Python 3.13.2 and ZSH 5.9

It seems the rule doesn't work: https://github.com/nvbn/thefuck/... | open | 2025-02-25T14:44:07Z | 2025-03-18T08:25:40Z | https://github.com/nvbn/thefuck/issues/1500 | [] | Hipska | 1 |
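
One way such a rule could work, sketched under assumptions: the `Command` tuple below is a hypothetical stand-in rather than thefuck's own class, and `match` / `get_new_command` only mirror the general shape of a rule. The idea is to take the command that brew itself suggests from the error output shown above.

```python
import re
from collections import namedtuple

# Hypothetical stand-in for the command object a rule receives.
Command = namedtuple("Command", ["script", "output"])

# brew prints the suggested command between backticks.
SUGGESTION = re.compile(r"Use `(brew upgrade[^`]*)` instead")


def match(command):
    """Return True when brew rejected `brew update <formula>`."""
    return (
        command.script.startswith("brew update")
        and SUGGESTION.search(command.output) is not None
    )


def get_new_command(command):
    """Return the command that brew suggested in its error output."""
    return SUGGESTION.search(command.output).group(1)


# The script and output from the console session in this issue.
example = Command(
    script="brew update go",
    output=(
        "Error: This command updates brew itself, "
        "and does not take formula names.\n"
        "Use `brew upgrade go` instead."
    ),
)
```

Reading the suggestion from the output, instead of rebuilding it from the script, keeps the sketch independent of how the formula names were typed.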

ymcui/Chinese-LLaMA-Alpaca | nlp | 128 | Vocabulary merging question | A question for the experts: I trained a Chinese vocabulary, myly.model, based on [sentencepiece](https://github.com/google/sentencepiece) on a domain-specific Chinese corpus. How can I merge it with LLaMa's original vocabulary, tokenizer.model? | closed | 2023-04-11T17:04:27Z | 2023-05-06T04:18:26Z | https://github.com/ymcui/Chinese-LLaMA-Alpaca/issues/128 | [] | jamestch | 9 |

vimalloc/flask-jwt-extended | flask | 133 | Default invalid token callback may not be secure | Hi, I'm quite new to this extension, and please correct me if I'm wrong.

While playing around with the `@jwt_protected` endpoint and trying to customize my `invalid_token_loader` callback, I noticed that we can get different error messages depending on how we tamper with the token.

I understand it's reasonable to diff... | closed | 2018-03-16T22:29:56Z | 2018-03-26T21:17:03Z | https://github.com/vimalloc/flask-jwt-extended/issues/133 | [] | CristianoYL | 2 |

thp/urlwatch | automation | 232 | Identify jobs not only by URL, but also by filters and/or POST data | I want to check for changes in the "article-content" and the "table of contents" sections on the same web page. Both sections are in named classes. This is my config:

```

kind: url

name: GDPRTableOfContents

url: https://ico.org.uk/for-organisations/guide-to-the-general-data-protection-regulation-gdpr/

filter... | closed | 2018-05-10T15:51:46Z | 2020-07-10T13:16:18Z | https://github.com/thp/urlwatch/issues/232 | [] | cjohnsonuk | 7 |

deepset-ai/haystack | nlp | 8,524 | Allow subclassing of `Document` | I want to subclass `Document`. An issue arises when using built-in components that use `haystack.Document` as a type, because of the type checking that is performed in the pipeline:

```python

PipelineConnectError: Cannot connect 'cleaner.documents' with 'empty_doc_remover.documents': their declared input and output types do ... | closed | 2024-11-08T14:32:23Z | 2025-03-03T15:00:24Z | https://github.com/deepset-ai/haystack/issues/8524 | [

"P3"

] | tsoernes | 0 |

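littlecodersh/ItChat | api | 687 | How can I still get message notifications on the mobile client while the script is running? | Running itchat is equivalent to logging in through the web client, so the mobile client no longer rings or vibrates for new messages. But sometimes I still want timely ringtone and vibration alerts. What can I do? | closed | 2018-07-01T18:26:35Z | 2018-07-17T02:48:45Z | https://github.com/littlecodersh/ItChat/issues/687 | [] | caiqiqi | 2 |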

ray-project/ray | tensorflow | 51,416 | [Ray Data | Core ] | ### What happened + What you expected to happen

I am running SAC on a custom environment, and use Ray Data to load a small csv file for training. I keep encountering the following error message about having too many positional arguments:

Exception occurred in Ray Data or Ray Core internal code. If you continue to se... | open | 2025-03-17T07:11:48Z | 2025-03-18T18:35:11Z | https://github.com/ray-project/ray/issues/51416 | [

"bug",

"triage",

"data"

] | teen4ever | 0 |

plotly/dash-core-components | dash | 601 | This fix correctly handles the `x` action case - only additional fix is that it might be setting one of the date props superfluously if already / still `None`. | This fix correctly handles the `x` action case - only additional fix is that it might be setting one of the date props superfluously if already / still `None`.

----------

Leaving the scope of this specific issue / fix and looking into the logic of this component as a whole. Multiple scenarios seem wrongly implemente... | closed | 2019-08-08T20:35:31Z | 2019-08-08T20:35:53Z | https://github.com/plotly/dash-core-components/issues/601 | [] | byronz | 0 |

HumanSignal/labelImg | deep-learning | 648 | Terminal error: "ZeroDivisionError: float division by zero," program crashes when attempting to create YOLO training samples on large images | I am trying to label images (with bounding boxes) with the YOLO format, but the program keeps crashing when I try to label large images (works fine with Pascal VOC samples). I have several UAV images (dimensions 4000 x 3000 pixels) taken at low altitude, and I need to label these images, as they are very high-resolutio... | closed | 2020-09-17T20:04:18Z | 2022-01-07T15:56:36Z | https://github.com/HumanSignal/labelImg/issues/648 | [] | ib124 | 1 |

pandas-dev/pandas | python | 60,928 | ENH: Control resampling at halfyear with origin | ### Pandas version checks

- [x] I have checked that this issue has not already been reported.

- [x] I have confirmed this bug exists on the [latest version](https://pandas.pydata.org/docs/whatsnew/index.html) of pandas.

- [ ] I have confirmed this bug exists on the [main branch](https://pandas.pydata.org/docs/dev/ge... | closed | 2025-02-13T22:52:27Z | 2025-03-03T18:21:17Z | https://github.com/pandas-dev/pandas/issues/60928 | [

"Enhancement",

"Frequency",

"Resample"

] | rwijtvliet | 8 |

alteryx/featuretools | data-science | 2,673 | Remove premium primitives from docs to be able to release it | closed | 2024-02-16T18:02:46Z | 2024-02-16T19:14:28Z | https://github.com/alteryx/featuretools/issues/2673 | [] | tamargrey | 0 | |

erdewit/ib_insync | asyncio | 542 | Error for pnlSingle | Hi,

I am using

`ib.reqPnLSingle('account', '', fill.contract.conId)`

and I am receiving:

Error for pnlSingle:

Traceback (most recent call last):

File "/home/p/.pyenv/versions/3.6.4/lib/python3.6/site-packages/ib_insync/decoder.py", line 185, in handler

for (typ, field) in zip(types, fields[skip:])]

F... | closed | 2023-01-17T12:05:30Z | 2023-01-19T09:06:41Z | https://github.com/erdewit/ib_insync/issues/542 | [] | piotrgolawski | 1 |

ultralytics/ultralytics | python | 19,108 | Scores for all classes for each prediction box | ### Search before asking

- [x] I have searched the Ultralytics YOLO [issues](https://github.com/ultralytics/ultralytics/issues) and [discussions](https://github.com/orgs/ultralytics/discussions) and found no similar questions.

### Question

I want be able to get the scores across all classes for a prediction.

Examp... | open | 2025-02-06T18:58:41Z | 2025-02-07T23:18:02Z | https://github.com/ultralytics/ultralytics/issues/19108 | [

"question",

"detect"

] | bharathsivaram10 | 6 |

ResidentMario/geoplot | matplotlib | 66 | Add dependency to doc | Hi,

I'm beginning to use your library and just wanted to share the troubles I went through to install the library from `pip`.

It would be nice to have a Dependencies section in the README of this project.

I used this information [from cartopy](https://scitools.org.uk/cartopy/docs/v0.15/installing.html#requ... | closed | 2018-12-11T12:04:10Z | 2018-12-16T04:45:16Z | https://github.com/ResidentMario/geoplot/issues/66 | [] | SylvainLan | 2 |

dmlc/gluon-cv | computer-vision | 910 | Combine datasets in a single dataloader | I would like to experiment with combining multiple datasets to retrain object detection but I am unable to find an easy way to do so.

Using https://gluon-cv.mxnet.io/build/examples_detection/train_yolo_v3.html as a base example, my first idea would be to change

` for i, batch in enumerate(train_data):`

... | closed | 2019-08-15T09:38:24Z | 2021-06-01T07:11:00Z | https://github.com/dmlc/gluon-cv/issues/910 | [

"Stale"

] | douglas125 | 2 |

google-research/bert | nlp | 476 | Crash issue when best_non_null_entry is None on SQuAD 2.0 | If the n best entries are all null, we would get 'None' for best_non_null_entry and the program will crash in the next few lines.

As a workaround, I assign `score_diff = FLAGS.null_score_diff_threshold + 1.0` in `run_squad.py` when this happens.

Please fix it in the official release.

```

... | open | 2019-03-05T01:26:38Z | 2019-03-05T01:26:38Z | https://github.com/google-research/bert/issues/476 | [] | xianzhez | 0 |
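
The workaround described in this issue can be sketched in isolation. This is not the code from `run_squad.py`: `Entry`, `pick_answer`, and the logit values are hypothetical, and only `best_non_null_entry`, `score_diff`, and the null-score threshold come from the issue text. The sketch assumes that a `score_diff` above the threshold leads to the empty (no-answer) prediction.

```python
from collections import namedtuple

# Hypothetical minimal n-best entry.
Entry = namedtuple("Entry", ["text", "start_logit", "end_logit"])


def pick_answer(score_null, best_non_null_entry, null_score_diff_threshold):
    """Return the predicted text, or "" when no answer is predicted."""
    if best_non_null_entry is None:
        # Workaround from the issue: push the diff above the threshold
        # instead of reading attributes from None.
        score_diff = null_score_diff_threshold + 1.0
    else:
        score_diff = (
            score_null
            - best_non_null_entry.start_logit
            - best_non_null_entry.end_logit
        )
    if score_diff > null_score_diff_threshold:
        return ""
    return best_non_null_entry.text
```

With the guard in place, an n-best list that contains only null entries yields the empty answer rather than an exception.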

PokemonGoF/PokemonGo-Bot | automation | 5,556 | Sniper is assuming false VIPs on social mode | It seems to be reporting false VIPs when under social mode. I'll investigate it tomorrow because I'm sick and my head hurts right now. You can close all the other issues about this.

| closed | 2016-09-20T01:07:10Z | 2016-09-20T02:42:17Z | https://github.com/PokemonGoF/PokemonGo-Bot/issues/5556 | [

"Bug"

] | YvesHenri | 2 |

apache/airflow | automation | 47,373 | Deferred TI object has no attribute 'next_method' | ### Apache Airflow version

3.0.0b1

### If "Other Airflow 2 version" selected, which one?

_No response_

### What happened?

RetryOperator is failing.

```

[2025-03-05T07:12:25.188823Z] ERROR - Task failed with exception logger="task" error_detail=

[{"exc_type":"AttributeError","exc_value":"'RuntimeTaskInstance' obje... | closed | 2025-03-05T07:39:18Z | 2025-03-12T08:31:50Z | https://github.com/apache/airflow/issues/47373 | [

"kind:bug",

"priority:high",

"area:core",

"affected_version:3.0.0beta"

] | atul-astronomer | 10 |

flasgger/flasgger | rest-api | 424 | Missing git tag for 0.9.5 release | It would be nice to keep PyPI releases and git tags in sync :) | closed | 2020-08-01T05:43:04Z | 2020-08-01T15:28:29Z | https://github.com/flasgger/flasgger/issues/424 | [] | felixonmars | 1 |
