repo_name stringlengths 9 75 | topic stringclasses 30

values | issue_number int64 1 203k | title stringlengths 1 976 | body stringlengths 0 254k | state stringclasses 2

values | created_at stringlengths 20 20 | updated_at stringlengths 20 20 | url stringlengths 38 105 | labels listlengths 0 9 | user_login stringlengths 1 39 | comments_count int64 0 452 |

|---|---|---|---|---|---|---|---|---|---|---|---|

ageitgey/face_recognition | machine-learning | 1,560 | image de-duplication by comparing faces images. | * face_recognition version:1.3.0

* Python version:3.11.6

* Operating System:Ubuntu(23.10)

### Description

Requirement:

I have 2 folders containing n no.of images, and each image may have different faces in them. So I need to extract only unique faces from images folder.

I have a folder of images which have mul... | open | 2024-04-16T08:42:36Z | 2024-04-16T08:42:36Z | https://github.com/ageitgey/face_recognition/issues/1560 | [] | Raghucharan16 | 0 |

thtrieu/darkflow | tensorflow | 557 | NaN training loss *after* first iteration | I am attempting to further train the yolo network on the coco dataset as a starting point for "training my own data." I converted the coco json into .xml annotations, but when I try to train, I get NaN loss starting at the second step. Most issues regarding NaN loss seem to be centered around incorrect annotations, h... | closed | 2018-02-02T20:39:53Z | 2022-05-15T16:29:16Z | https://github.com/thtrieu/darkflow/issues/557 | [] | matlabninja | 7 |

davidsandberg/facenet | tensorflow | 412 | How to run your code to mark one's name in one image?(accomplish face recognition) | If i want to test one image,and the result will cirle out the faces in the image and label his or her name beside the bounding boxes,so how to run this code to train my own model and how to modify this code to accomplish my goal?

Thanks,very much! | closed | 2017-08-06T06:48:12Z | 2022-05-31T16:38:57Z | https://github.com/davidsandberg/facenet/issues/412 | [] | Victoria2333 | 13 |

yihong0618/running_page | data-visualization | 386 | 小白不会折腾 | 请问想导出悦跑圈该怎么操作呀,不会这些东西 | closed | 2023-03-14T08:28:35Z | 2024-03-01T10:09:48Z | https://github.com/yihong0618/running_page/issues/386 | [] | woodanemone | 5 |

amdegroot/ssd.pytorch | computer-vision | 44 | RGB vs BGR? | Hello,

I was looking at your implementation and I believe the input to your model is an image with RGB ordering. I was also looking at the [keras implementation](https://github.com/rykov8/ssd_keras) and they use BGR values. I have been also testing with an [map evaluation script](https://github.com/oarriaga/single_sho... | closed | 2017-07-28T16:38:43Z | 2017-09-22T10:14:39Z | https://github.com/amdegroot/ssd.pytorch/issues/44 | [] | oarriaga | 4 |

public-apis/public-apis | api | 3,649 | Responsive-Login-Form-master5555.zip |

| closed | 2023-09-26T16:36:14Z | 2023-09-26T19:45:32Z | https://github.com/public-apis/public-apis/issues/3649 | [] | Shopeepromo | 0 |

babysor/MockingBird | deep-learning | 653 | 能否提供数据包的格式 | 如果我想使用自己的数据包,我应该如何去格式化我的数据

谢谢

Edit 1:

这边的是magicdata_dev_set的格式,我是否把我自己的数据包根据这个结构去做格式就可以了?

```

.

└── MAGICDATA_dev_set/

├── TRANS.txt

├── speaker_id_1/

│ ├── utterance_1.wav

│ ├── utterance_2.wav

│ └── ...

├── speaker_id_2/

│ ├── utterance_3.wav

│ ├── utterance... | closed | 2022-07-18T03:56:01Z | 2022-07-18T06:23:05Z | https://github.com/babysor/MockingBird/issues/653 | [] | markm812 | 2 |

CorentinJ/Real-Time-Voice-Cloning | deep-learning | 1,299 | 01453367 | Pero que seguro | open | 2024-05-21T13:42:25Z | 2024-05-21T13:43:43Z | https://github.com/CorentinJ/Real-Time-Voice-Cloning/issues/1299 | [] | Oscar199632 | 0 |

huggingface/datasets | pytorch | 6,899 | List of dictionary features get standardized | ### Describe the bug

Hi, i’m trying to create a HF dataset from a list using Dataset.from_list.

Each sample in the list is a dict with the same keys (which will be my features). The values for each feature are a list of dictionaries, and each such dictionary has a different set of keys. However, the datasets librar... | open | 2024-05-15T14:11:35Z | 2024-05-15T14:11:35Z | https://github.com/huggingface/datasets/issues/6899 | [] | sohamparikh | 0 |

biolab/orange3 | numpy | 7,051 | Box Plot: Sometimes the statistic does not appear under the graph. | **What's wrong?**

When the graph increases a lot vertically, the text of t'student, ANOVA, etc. is not visible.

**How can we reproduce the problem?**

Load dataset "Bank Marketing"

Add widget Box Plot

Connect both

In Box Plot choose the variable "age" and for Subgroups "education" or "month"

No text appears below the... | open | 2025-03-23T22:48:03Z | 2025-03-23T23:05:02Z | https://github.com/biolab/orange3/issues/7051 | [

"bug report"

] | gmolledaj | 1 |

dask/dask | pandas | 11,433 | `array.broadcast_shapes` to return a tuple of `int`, not a tuple of NumPy scalars | <!-- Please do a quick search of existing issues to make sure that this has not been asked before. -->

Before NumPy v2, the `repr` of NumPy scalars returned a plain number. This changed in [NEP 51](https://numpy.org/neps/nep-0051-scalar-representation.html), which changes how the result of `broadcast_shapes()` is pr... | closed | 2024-10-16T13:39:49Z | 2024-10-16T16:44:57Z | https://github.com/dask/dask/issues/11433 | [

"array"

] | trexfeathers | 4 |

Asabeneh/30-Days-Of-Python | numpy | 544 | Lack of error handling for invalid inputs. | ['The provided code appears to be a function that summarizes text using sentence extraction. However, there are potential issues with how it handles invalid inputs.', 'Current Behavior:', 'The code assumes that the input text is valid and does not perform any checks for empty or null inputs. If an empty string or null ... | open | 2024-07-03T12:07:05Z | 2024-07-03T12:07:05Z | https://github.com/Asabeneh/30-Days-Of-Python/issues/544 | [] | aakashrajaraman2 | 0 |

zihangdai/xlnet | nlp | 267 | colab notebook can not run under tensorflow 2.0 | XLNet-imdb-GPU.ipynb

Error: module 'tensorflow._api.v2.train' has no attribute 'Optimizer'

Add this fix:

%tensorflow_version 1.x | open | 2020-06-10T03:41:23Z | 2020-06-10T03:41:23Z | https://github.com/zihangdai/xlnet/issues/267 | [] | jlff | 0 |

neuml/txtai | nlp | 7 | Add unit tests and integrate Travis CI | Add testing framework and integrate Travis CI | closed | 2020-08-17T14:36:45Z | 2021-05-13T15:02:42Z | https://github.com/neuml/txtai/issues/7 | [] | davidmezzetti | 0 |

matterport/Mask_RCNN | tensorflow | 2,900 | ModuleNotFoundError: No module named 'maturin' | open | 2022-11-07T06:20:55Z | 2022-11-07T06:20:55Z | https://github.com/matterport/Mask_RCNN/issues/2900 | [] | xuqq0318 | 0 | |

marshmallow-code/apispec | rest-api | 395 | Representing openapi's "Any Type" | I am trying to use `marshmallow.fields.Raw` to represent a "arbitrary json" field. Currently I do not see a way to represent a field in marshmallow that will produce a openapi property that is empty when passed through apispec.

The code in `apispec.marshmallow.openapi.OpenAPIConverter.field2type_and_format` (see htt... | closed | 2019-02-22T00:05:34Z | 2025-01-21T18:22:07Z | https://github.com/marshmallow-code/apispec/issues/395 | [] | kaya-zekioglu | 4 |

ScrapeGraphAI/Scrapegraph-ai | machine-learning | 349 | Problem with scrapegraphai/graphs/pdf_scraper_graph.py | Hello,

output of FetchNode being fed directly into RAGNode won't work because RagNode is expecting the second argument as a list of str. However, FetchNode is outputing a li... | closed | 2024-06-06T04:53:16Z | 2024-06-16T11:33:15Z | https://github.com/ScrapeGraphAI/Scrapegraph-ai/issues/349 | [] | tindo2003 | 6 |

roboflow/supervision | pytorch | 676 | Add legend text on video frames | ### Search before asking

- [X] I have searched the Supervision [issues](https://github.com/roboflow/supervision/issues) and found no similar feature requests.

### Question

Hi All!

I'm trying to find a way to add a legend text at each frame (with the number of frame assigned) during the object tracking. Does supe... | closed | 2023-12-15T15:05:19Z | 2023-12-18T08:58:53Z | https://github.com/roboflow/supervision/issues/676 | [

"question"

] | dimpolitik | 4 |

huggingface/datasets | deep-learning | 7,071 | Filter hangs | ### Describe the bug

When trying to filter my custom dataset, the process hangs, regardless of the lambda function used. It appears to be an issue with the way the Images are being handled. The dataset in question is a preprocessed version of https://huggingface.co/datasets/danaaubakirova/patfig where notably, I hav... | open | 2024-07-25T15:29:05Z | 2024-07-25T15:36:59Z | https://github.com/huggingface/datasets/issues/7071 | [] | lucienwalewski | 0 |

donnemartin/data-science-ipython-notebooks | tensorflow | 8 | Add requirements file to help with installation for users who prefer not to use Anaconda | closed | 2015-07-12T11:35:04Z | 2015-07-14T11:47:19Z | https://github.com/donnemartin/data-science-ipython-notebooks/issues/8 | [

"enhancement"

] | donnemartin | 0 | |

seleniumbase/SeleniumBase | web-scraping | 2,842 | Suddenly unable to bypass CloudFlare challenge (Ubuntu Server) | Hello, overnight my instances of seleniumbase became unable to bypass the CloudFlare challenge ( which uses CloudFlare turnstile ).

I was using an older version of SB so I updated to latest ( 4.27.4 ), and it is still not passing the challenge.

depends on postgrest (>=0.10.8,<0.11.0), supabase (>=1.0.4,<2.0.0) requires postgrest (>=0.10.8,<0.11.0).

Because postgrest (0.10.8) d... | closed | 2023-08-31T14:55:38Z | 2023-09-18T15:03:05Z | https://github.com/supabase/supabase-py/issues/533 | [] | digi604 | 1 |

huggingface/text-generation-inference | nlp | 2,362 | AttributeError: 'Idefics2ForConditionalGeneration' object has no attribute 'model' | ### System Info

1xL40 node on Runpod

Latest `huggingface/text-generation-inference:latest` docker image.

Command: `--model-id HuggingFaceM4/idefics2-8b --port 8080 --max-input-length 3000 --max-total-tokens 4096 --max-batch-prefill-tokens 4096 --speculate 3 --lora-adapters orionsoftware/rater-adapter-v0.0.1`

#... | open | 2024-08-06T10:38:39Z | 2024-09-11T15:43:35Z | https://github.com/huggingface/text-generation-inference/issues/2362 | [] | komninoschatzipapas | 2 |

jupyter/nbgrader | jupyter | 1,681 | Installation: nbgrader menus don't show up in jupyterhub | ### Operating system

Ubuntu 20.04.5 LTS

### `nbgrader version`

0.8.1

### `jupyterhub version` (if used with JupyterHub)

1.5.0 20220918101831

### `jupyter notebook --version`

6.4.12

### Expected behavior

after `pip install nbrader` the Jupyter hub web UI contains Formgrader, Courses and Assignment menus

### A... | closed | 2022-10-05T08:10:04Z | 2022-10-07T07:32:49Z | https://github.com/jupyter/nbgrader/issues/1681 | [] | kliegr | 4 |

waditu/tushare | pandas | 1,338 | python调取daily数据是发生未知错误 | ID:361673

Traceback (most recent call last):

File "C:/Users/KING/Desktop/meiduo/Desktop/test5.py", line 41, in <module>

df = pro.daily(ts_code=i, start_date=start, end_date=end)

File "D:\Python37\lib\site-packages\tushare\pro\client.py", line 44, in query

raise Exception(result['msg'])

Exception: ... | open | 2020-04-13T07:02:58Z | 2020-04-13T07:02:58Z | https://github.com/waditu/tushare/issues/1338 | [] | zbqing | 0 |

lyhue1991/eat_tensorflow2_in_30_days | tensorflow | 98 | 3-1,3-2,3-3 | 您好,请在在3-1,3-2,3-3的分类模型正向传播的函数中: @tf.function(input_signature=[tf.TensorSpec(shape = [None,2], dtype = tf.float32)]),shape的参数该如何定义 | open | 2022-10-26T08:34:23Z | 2022-10-26T08:34:23Z | https://github.com/lyhue1991/eat_tensorflow2_in_30_days/issues/98 | [] | gaopfnice | 0 |

torchbox/wagtail-grapple | graphql | 290 | bug: order is not applied to search results | When using both searchQuery and order, the results are not ordered according to order. | closed | 2023-01-05T18:42:31Z | 2023-01-14T02:40:31Z | https://github.com/torchbox/wagtail-grapple/issues/290 | [

"bug"

] | dopry | 1 |

tflearn/tflearn | tensorflow | 389 | variable_scope() got multiple values for argument 'reuse' | I'm getting the following error:

```

Traceback (most recent call last):

File "book.py", line 15, in <module>

net = tflearn.fully_connected(net, 64)

File "/usr/local/lib/python3.5/site-packages/tflearn/layers/core.py", line 146, in fully_connected

with tf.variable_scope(scope, name, values=[incoming], reuse... | closed | 2016-10-11T19:19:11Z | 2016-10-15T23:55:48Z | https://github.com/tflearn/tflearn/issues/389 | [] | ror6ax | 8 |

tqdm/tqdm | pandas | 958 | Bug in tqdm.write() in MINGW64 on Windows 10 | - [x] I have marked all applicable categories:

+ [ ] exception-raising bug

+ [x] visual output bug

+ [ ] documentation request (i.e. "X is missing from the documentation." If instead I want to ask

- [x] I have visited the [source website], and in particular

read the [known issues]

- [x] I have sear... | open | 2020-05-01T10:20:11Z | 2020-05-01T10:20:22Z | https://github.com/tqdm/tqdm/issues/958 | [] | charlesbaynham | 0 |

mlfoundations/open_clip | computer-vision | 527 | Customized pretrained models | Hi, thanks for awesome repo. Is there any way to load a pre-trained model and change its layer config. For instance, I need to load ViT-B-16-plus-240, change the embed dim and after that I train it. Please note that I need to initialize the model's layer weights to the pre-trained model except for the layers which are ... | closed | 2023-05-10T17:22:59Z | 2023-05-18T16:52:20Z | https://github.com/mlfoundations/open_clip/issues/527 | [] | kyleub | 2 |

dgtlmoon/changedetection.io | web-scraping | 2,879 | Exception: 'ascii' codec can't encode character '\xf6' in position 5671: ordinal not in range(128) | **Describe the bug**

I get the error now on latest version: 0.48.05

Exception: 'ascii' codec can't encode character '\xf6' in position 5720: ordinal not in range(128)

**Version**

*Exact version* in the top right area: 0.48.05

**How did you install?**

Docker

**To Reproduce**

Steps to reproduce the b... | closed | 2025-01-02T21:58:40Z | 2025-01-03T08:13:55Z | https://github.com/dgtlmoon/changedetection.io/issues/2879 | [

"triage"

] | doggi87 | 1 |

0b01001001/spectree | pydantic | 240 | Deactive validation_error_status value | Is there a way to remove the `validation_error_status` feature for specific endpoints?

Use case: A `GET` endpoint with no query parameters but path parameters only, so it will never be possible to return a `422` or any error with the `ValidationError` form. | closed | 2022-07-27T17:02:51Z | 2023-03-24T02:26:59Z | https://github.com/0b01001001/spectree/issues/240 | [] | Joseda8 | 2 |

autokey/autokey | automation | 271 | trying to emulate shift+end with autokey | ## Classification:

Question

## Reproducibility:

Always

## Version

AutoKey version: autokey-gtk 0.95.1

Used GUI (Gtk, Qt, or both): gtk

If the problem is known to be present in more than one version, please list all of those.

Installed via: (PPA, pip3, …).

sudo apt-get install autokey-gtk

L... | closed | 2019-04-13T06:38:36Z | 2019-04-23T18:28:25Z | https://github.com/autokey/autokey/issues/271 | [] | opensas | 3 |

gtalarico/django-vue-template | rest-api | 46 | Initial settings: disableHostCheck, Cross-Origin | Hi, I appreciate your work!

When following the installation guide, it is not working out of the box. In

`vue.config.js`

I have to add

```

devServer: {

disableHostCheck: true,

...

}

```

to see the vue start page.

The web beowser console is repeatedly generating Cross-Origin blocks. So is some... | open | 2020-01-16T17:44:34Z | 2020-01-19T16:48:33Z | https://github.com/gtalarico/django-vue-template/issues/46 | [] | totobrei | 1 |

robotframework/robotframework | automation | 5,250 | Allow removing tags using `-tag` syntax also in `Test Tags` | `Test Tags` from `*** Settings ***` and `[Tags]` from Test Case behave differently when removing tags: while it is possible to remove tags with Test Case's `[Tag] -something`, Settings `Test Tags -something` introduces a new tag `-something`.

Running tests with these robot files (also [attached](https://github.com/... | open | 2024-10-30T05:56:30Z | 2024-11-01T11:05:54Z | https://github.com/robotframework/robotframework/issues/5250 | [

"enhancement",

"priority: medium",

"backwards incompatible",

"effort: medium"

] | romanliv | 1 |

autogluon/autogluon | scikit-learn | 4,681 | torch.load Compatibility Issue: Unsupported Global fastcore.foundation.L with weights_only=True | Check the documentation of torch.load to learn more about types accepted by default with weights_only https://pytorch.org/docs/stable/generated/torch.load.html.

Detailed Traceback:

Traceback (most recent call last):

File "C:\Users\celes\anaconda3\Lib\site-packages\autogluon\core\trainer\abstract_trainer.py", line ... | closed | 2024-11-23T11:41:14Z | 2024-11-23T14:04:34Z | https://github.com/autogluon/autogluon/issues/4681 | [

"enhancement"

] | celestinoxp | 0 |

pyg-team/pytorch_geometric | deep-learning | 8,967 | /lib/python3.10/site-packages/torch_geometric/utils/sparse.py:268 : Sparse CSR tensor support is in beta state | ### 🐛 Describe the bug

Hello,

I updated my pytorch to 2.2.1+cu121 using pip, and also updated pyg by `pip install torch_geometric`. Then I found there is a warning when I imported the dataset:

`/lib/python3.10/site-packages/torch_geometric/utils/sparse.py:268 : Sparse CSR tensor support is in beta state, please... | open | 2024-02-26T01:22:59Z | 2024-03-07T13:44:29Z | https://github.com/pyg-team/pytorch_geometric/issues/8967 | [

"bug"

] | RX28666 | 15 |

netbox-community/netbox | django | 17,774 | The rename of SSO from Microsoft Azure AD to Entra ID doesn't work as expected | ### Deployment Type

Self-hosted

### Triage priority

N/A

### NetBox Version

v4.1.4

### Python Version

3.10

### Steps to Reproduce

Update from NetBox v4.1.1 to v4.1.4 (SSO with Entra ID enabled)

### Expected Behavior

According to the #15829, the new label **Microsoft Entra ID** was ex... | closed | 2024-10-16T11:58:52Z | 2025-01-17T03:02:38Z | https://github.com/netbox-community/netbox/issues/17774 | [

"type: bug",

"status: accepted",

"severity: low"

] | lucafabbri365 | 8 |

glato/emerge | data-visualization | 16 | SyntaxError: invalid syntax when starting emerge | Getting the following error when trying to start emerge:

```sh

(app) ➜ app git:(master) emerge

Traceback (most recent call last):

File "/Users/dillon.jones/.pyenv/versions/app/bin/emerge", line 33, in <module>

sys.exit(load_entry_point('emerge-viz==1.1.0', 'console_scripts', 'emerge')())

File "/Users/d... | closed | 2022-02-17T17:04:17Z | 2022-02-25T19:45:28Z | https://github.com/glato/emerge/issues/16 | [

"bug"

] | dj0nes | 2 |

lk-geimfari/mimesis | pandas | 1,408 | finance.company() presented inconsistently | # Bug report

<!--

Hi, thanks for submitting a bug. We appreciate that.

But, we will need some information about what's wrong to help you.

-->

## What's wrong

The names of companies are not presented consistently within and between locales. Within locales some company names include the suffix (e.g. Ltd., C... | closed | 2023-09-07T09:17:32Z | 2023-09-13T13:35:25Z | https://github.com/lk-geimfari/mimesis/issues/1408 | [

"enhancement"

] | lunik1 | 1 |

autogluon/autogluon | computer-vision | 4,406 | Improve CPU training times for catboost | Related to https://github.com/catboost/catboost/issues/2722

Problem: Catboost takes 16x more time to train than a similar Xgboost model.

```

catboost: 1.2.5

xgboost: 2.0.3

autogluon: 1.1.1

Python: 3.10.14

OS: Windows 11 Pro (10.0.22635)

CPU: Intel(R) Core(TM) i7-1165G7

GPU: Integrated ... | closed | 2024-08-18T03:19:42Z | 2024-08-20T04:36:45Z | https://github.com/autogluon/autogluon/issues/4406 | [

"enhancement",

"wontfix",

"module: tabular"

] | crossxwill | 1 |

airtai/faststream | asyncio | 1,384 | Bug: Publisher Direct Usage | **Describe the bug**

Can't use direct publishing

[Documentation](https://faststream.airt.ai/latest/getting-started/publishing/direct/)

**How to reproduce**

```python

from faststream import FastStream

from faststream.rabbit import RabbitBroker

broker = RabbitBroker("amqp://guest:guest@localhost:5672")

ap... | closed | 2024-04-18T15:41:31Z | 2024-04-18T16:55:06Z | https://github.com/airtai/faststream/issues/1384 | [

"bug"

] | taras0024 | 4 |

nikitastupin/clairvoyance | graphql | 118 | Parameter to limit the amount of fields sent | There is a limitation in the amount of fields that can be sent at root level in some implementations, the following screenshots showcase the problem:

Request

Response

instead plt.close ("all"), how to do it? I can't find the official do... | closed | 2021-03-19T16:42:27Z | 2021-03-21T06:18:18Z | https://github.com/matplotlib/mplfinance/issues/360 | [

"question"

] | jaried | 7 |

pytest-dev/pytest-mock | pytest | 91 | UnicodeEncodeError in detailed introspection of assert_called_with | Comparing called arguments with `assert_called_with` fails with `UnicodeEncodeError` when one of the arguments (either on the left or right) is a unicode string, non ascii.

Python 2.7.13

Below are two test cases that looks like they *should* work:

```python

def test_assert_called_with_unicode_wrong_argument(moc... | closed | 2017-09-15T08:00:02Z | 2017-09-15T23:57:05Z | https://github.com/pytest-dev/pytest-mock/issues/91 | [

"bug"

] | AndreasHogstrom | 2 |

pallets/quart | asyncio | 130 | Weird error on page load | Hi,

So I wrote a simple discord bot and tried making a dashboard for it.

After loggin in it should go to the dashboard that lists all servers. (Note this worked when I ran everything local (127.0.0.1)

This is the error:

```

Traceback (most recent call last):

File "/usr/local/lib/python3.8/dist-packages/ai... | closed | 2021-07-05T18:28:03Z | 2022-07-05T01:58:55Z | https://github.com/pallets/quart/issues/130 | [] | rem9000 | 2 |

python-restx/flask-restx | flask | 166 | [From flask-restplus #589] Stop using failing ujson as default serializer | Flask-restx uses `ujson` for serialization by default, falling back to normal `json` if unavailable.

As has been mentioned before `ujson` is quite flawed, with some people reporting rounding errors.

Additionally, `ujson.dumps` does not take the `default` argument, which allows one to pass any custom method as the s... | open | 2020-06-30T12:58:32Z | 2024-09-04T03:33:07Z | https://github.com/python-restx/flask-restx/issues/166 | [

"bug",

"good first issue"

] | Anogio | 3 |

Nemo2011/bilibili-api | api | 100 | 【提问】如何应对Cookies中的SESSDATA和bili_jct频繁刷新 | **Python 版本:** 3.8.5

**模块版本:** 3.1.3

**运行环境:** Linux

---

无论是在3.1.3的bilibili_api.utils.Verify,抑或是当前最新版的bilibili_api.utils.Credential,用户认证都需要用到Cookies中的SESSDATA和bili_jct。尽管这种方式和大多SDK的AK/SK认证不同,但在过去两年左右时间里,我们的服务使用bilibili_api进行自动上传视频总是能成功,这归功于Cookies中的SESSDATA和bili_jct在很长一段时间保持不变。

但最近,总会因为SESSDATA和bili_jct频繁变... | closed | 2022-11-04T07:35:32Z | 2023-01-30T00:59:14Z | https://github.com/Nemo2011/bilibili-api/issues/100 | [] | nicliuqi | 10 |

gunthercox/ChatterBot | machine-learning | 2,370 | Bot | Halo

| closed | 2024-06-19T14:08:38Z | 2024-08-20T11:28:37Z | https://github.com/gunthercox/ChatterBot/issues/2370 | [] | wr-tiger | 0 |

streamlit/streamlit | python | 10,521 | Slow download of csv file when using the inbuild download as csv function for tables displayed as dataframes in Edge Browser | ### Checklist

- [x] I have searched the [existing issues](https://github.com/streamlit/streamlit/issues) for similar issues.

- [x] I added a very descriptive title to this issue.

- [x] I have provided sufficient information below to help reproduce this issue.

### Summary

Issue is only in MS Edge Browser:

When pressin... | open | 2025-02-26T08:43:56Z | 2025-03-03T11:49:27Z | https://github.com/streamlit/streamlit/issues/10521 | [

"type:bug",

"feature:st.dataframe",

"status:confirmed",

"priority:P3",

"feature:st.download_button",

"feature:st.data_editor"

] | LazerLars | 2 |

holoviz/panel | matplotlib | 6,991 | Make it possible and easy to pyscript editor with Panel | I hope that one day it would be possible and easy to use pyscript editor with Panel for embedding on web pages etc.

Currently I cannot get it working.

## Reproducible example

**mini-coi.js**

```javascript

/*! coi-serviceworker v0.1.7 - Guido Zuidhof and contributors, licensed under MIT */

/*! mini-coi - A... | open | 2024-07-16T06:48:19Z | 2024-07-17T11:07:34Z | https://github.com/holoviz/panel/issues/6991 | [

"TRIAGE"

] | MarcSkovMadsen | 4 |

gradio-app/gradio | data-visualization | 10,583 | Unable to correctly handle file download with multiple deployments | ### Describe the bug

I notice a weird problem while using the download functionality inside gr.File() when I deploy my app in multiple pods.

Consider the following case and two pods:

<img width="1887" alt="Image" src="https://github.com/user-attachments/assets/d001cf2d-1c20-493b-aab7-5e317b4c099d" />

- I have a fun... | open | 2025-02-13T06:35:06Z | 2025-02-28T17:53:24Z | https://github.com/gradio-app/gradio/issues/10583 | [

"bug",

"cloud"

] | jamie0725 | 1 |

vaexio/vaex | data-science | 1,368 | [FEATURE-REQUEST]:Porting str.extract functionality of pandas | Hi Team,

There is a 'str.extract' function that exists in pandas.The main use is to extract relevant groups of text from string.

The same is not available in vaex.Is it feasible to port it?

PFB the functionality

is helpful, but it's unclear how to extend this to multi-node TPU setups.

Currently using `tpu v4-256` with `tf.distribute.cluster_resolver.TPUClusterResolver` and `tf.distribute.TPUStra... | open | 2024-10-15T13:04:50Z | 2025-01-27T19:07:04Z | https://github.com/keras-team/keras/issues/20356 | [

"type:support",

"stat:awaiting keras-eng"

] | rivershah | 5 |

replicate/cog | tensorflow | 1,489 | how to import docker images? | I used cog to push to my private registry. I pulled from another machine. Can I import docker images? avoid building it again?

Because My network here is terrible bad (China) Try to build almost 48 hours but failed. | open | 2024-01-17T10:13:33Z | 2024-01-17T10:13:33Z | https://github.com/replicate/cog/issues/1489 | [] | deerleo | 0 |

roboflow/supervision | tensorflow | 1,787 | how to use yolov11s-seg supervision onnx runtime? | dear @onuralpszr i saw similar case on #1626 and tried some customization with my own usecase for segmentation but doesn't seem to properly working

here is how I am exporting my model with ultralytics

```python

ft_loaded_best_model.export(

format="onnx",

nms=True,

data="/content/disease__instance_segmented/data.yam... | closed | 2025-02-15T14:26:55Z | 2025-02-17T17:32:33Z | https://github.com/roboflow/supervision/issues/1787 | [

"question"

] | pranta-barua007 | 23 |

onnx/onnx | tensorflow | 5,978 | Need to know the setuptools version for using onnx in developer mode | # Ask a Question

I am trying to install onnx using `pip install -e .`. But I get the following error

> Traceback (most recent call last):

> File "/project/setup.py", line 321, in <module>

> setuptools.setup(

> File "/usr/local/lib/python3.10/dist-packages/setuptools/__init__.py", line 153, in setup

>... | closed | 2024-02-29T16:12:38Z | 2024-03-02T13:45:06Z | https://github.com/onnx/onnx/issues/5978 | [

"question"

] | Abhishek-TyRnT | 2 |

neuml/txtai | nlp | 747 | Fix issue with setting quantize=False in HFTrainer pipeline | Setting quantize to False is causing an Exception. | closed | 2024-07-12T16:33:42Z | 2024-07-15T00:54:49Z | https://github.com/neuml/txtai/issues/747 | [

"bug"

] | davidmezzetti | 0 |

plotly/dash-bio | dash | 429 | Can someone help me on how to implement the whole Circos app please. | **Describe the bug**

Am having a lot of trouble to implement the whole circos app on windows.

I can't install the dash-bio-utils.

Having an issue for installing parmed

**To Reproduce**

Steps to reproduce the behavior:

- pip3 install dash-bio-utils

**Expected behavior**

A clear and concise description of what ... | closed | 2019-10-19T20:31:40Z | 2022-09-27T09:44:28Z | https://github.com/plotly/dash-bio/issues/429 | [] | davilen | 3 |

django-import-export/django-import-export | django | 1,452 | how to ONLY export what I want? | Thanks for this app.

I'm using it to export data from django admin.

But I face a problem, how to ONLY export the data after filtering.

Just like there are 30000 records in the DB table, after filtering, I get 200 records. How to ONLY export these 200 records?

I do not want to export them all.

Thank you.

| closed | 2022-06-23T10:32:31Z | 2023-04-12T12:58:08Z | https://github.com/django-import-export/django-import-export/issues/1452 | [

"question"

] | monalisir | 7 |

plotly/dash | data-visualization | 2,227 | [BUG] Dash Background Callbacks do not work if you import SQLAlchemy | Dash dependencies:

```

dash 2.6.1

dash-bootstrap-components 1.2.1

dash-core-components 2.0.0

dash-html-components 2.0.0

dash-table 5.0.0

```

- OS: macOS Monterey

- Browser: Chrome

- Version: 105.0.5195.102

If I take the first Background Callbacks ... | closed | 2022-09-13T07:47:48Z | 2024-07-24T15:13:58Z | https://github.com/plotly/dash/issues/2227 | [] | jongillham | 1 |

CPJKU/madmom | numpy | 73 | unify negative indices behaviour of FramedSignal | The behaviour of negative indices for the `FramedSignal` is not consistent:

- if a single frame at position -1 is requested, the frame left of the first one is returned (as documented),

- if a slice [-1:] is requested, the last frame is returned.

The idea of returning the frame left of the first one was to be able to ... | closed | 2016-01-29T11:01:58Z | 2016-02-18T12:05:10Z | https://github.com/CPJKU/madmom/issues/73 | [] | superbock | 0 |

miguelgrinberg/Flask-Migrate | flask | 9 | multidb support | Alembic and Flask-SQLAlchemy has support to multidb. Flask-Migrate should work with them.

| closed | 2013-10-09T17:22:52Z | 2019-06-13T22:50:41Z | https://github.com/miguelgrinberg/Flask-Migrate/issues/9 | [] | iurisilvio | 9 |

pyjanitor-devs/pyjanitor | pandas | 750 | [DOC] Update Pull Request Template with `netlify` option | # Brief Description of Fix

<!-- Please describe the fix in terms of a "before" and "after". In other words, what's not so good about the current docs

page, and what you would like to see it become.

Example starter wording is provided. -->

Currently, the recommended approach for reviewing documentation updates... | open | 2020-09-18T00:52:33Z | 2020-10-19T00:41:45Z | https://github.com/pyjanitor-devs/pyjanitor/issues/750 | [

"good first issue",

"docfix",

"available for hacking",

"hacktoberfest"

] | loganthomas | 5 |

modAL-python/modAL | scikit-learn | 66 | missing 'inputs' positional argument with ActiveLearner function | All of my relevant code:

```python

#!/usr/bin/env python3.5

from data_generator import data_generator as dg

# standard imports

from keras.models import load_model

from keras.utils import to_categorical

from keras.wrappers.scikit_learn import KerasClassifier

from os import listdir

import pandas as pd

imp... | open | 2020-01-24T16:15:25Z | 2020-02-28T07:58:26Z | https://github.com/modAL-python/modAL/issues/66 | [] | zbrasseaux | 3 |

davidsandberg/facenet | computer-vision | 1,201 | How to judge the unknown face | I want to modify your code to output unknown faces when they don't exist in the dataset, instead of randomly outputting a face information | open | 2021-06-06T11:02:21Z | 2021-06-06T11:03:02Z | https://github.com/davidsandberg/facenet/issues/1201 | [] | niminjian | 1 |

pytorch/vision | machine-learning | 8,735 | `_skip_resize` ignored on detector inferenece | ### 🐛 Describe the bug

### Background

The FCOS object detector accepts `**kwargs`, of which is the `_skip_resize` flag, to be passed directly to `GeneralizedRCNNTransform` at FCOS init, [here](https://github.com/pytorch/vision/blob/main/torchvision/models/detection/fcos.py#L420). If not specified, any input imag... | open | 2024-11-20T07:56:31Z | 2024-12-01T11:32:10Z | https://github.com/pytorch/vision/issues/8735 | [] | talcs | 3 |

mitmproxy/mitmproxy | python | 7,172 | request dissect curl upload file size error. | #### Problem Description

As photo show blew, when I use curl upload file and use file_content = flow.request.multipart_form.get(b'file') get file content and len(file_content) is not correct, thanks.

<img width="951" alt="image" src="https://github.com/user-attachments/assets/433b1604-687e-468d-aee7-031c9eed6586">

... | closed | 2024-09-09T07:29:03Z | 2024-09-09T09:06:03Z | https://github.com/mitmproxy/mitmproxy/issues/7172 | [

"kind/triage"

] | zjwangmin | 4 |

allenai/allennlp | pytorch | 5,019 | Caption-Based Image Retrieval Model | We want to implement the Caption-Based Image Retrieval task from https://api.semanticscholar.org/CorpusID:199453025.

The [COCO](https://cocodataset.org/) and [Flickr30k](https://www.kaggle.com/hsankesara/flickr-image-dataset) datasets contain a large number of images with image captions. The task here is to train a ... | open | 2021-02-24T23:05:18Z | 2021-08-28T00:27:13Z | https://github.com/allenai/allennlp/issues/5019 | [

"Contributions welcome",

"Models",

"hard"

] | dirkgr | 0 |

dbfixtures/pytest-postgresql | pytest | 319 | How to enable the plugin for gitlab-CI / dockerized tests | ```yaml

# .gitlab-ci.yml

image: python:3.6

services:

## https://docs.gitlab.com/ee/ci/docker/using_docker_images.html#available-settings-for-services

- name: kartoza/postgis:11.5-2.5

alias: db

variables:

# In gitlab-ci, the host connection name should be the service name or alias

# (with al... | closed | 2020-08-17T21:59:27Z | 2021-04-20T15:22:05Z | https://github.com/dbfixtures/pytest-postgresql/issues/319 | [] | dazza-codes | 2 |

ydataai/ydata-profiling | jupyter | 1,479 | Add a report on outliers | ### Missing functionality

I'm missing an easy report to see outliers.

### Proposed feature

An outlier to me is some value more than 3 std dev away from the mean.

I calculate this as:

```python

mean = X.mean()

std = X.std()

lower, upper = mean - 3*std, mean + 3*std

outliers = X[(X < lower) | (X > upper)]

... | open | 2023-10-15T08:25:24Z | 2023-10-16T20:56:52Z | https://github.com/ydataai/ydata-profiling/issues/1479 | [

"feature request 💬"

] | svaningelgem | 1 |

QuivrHQ/quivr | api | 3,503 | Mine good responses + with context | Crafted manually -> use intent classifier to get most asked '*type'* of questions | closed | 2024-11-28T08:47:01Z | 2025-03-03T16:07:01Z | https://github.com/QuivrHQ/quivr/issues/3503 | [

"Stale"

] | linear[bot] | 2 |

matplotlib/mplfinance | matplotlib | 248 | Candle stick colors incorrectly colored | Hi Daniel,

Im running the following code and getting incorrect colors for the cande sticks. Output and screenshot are given below. Please advise.

Code

```

import mplfinance as mpf

import pandas as pd

print("mpf version:", mpf.__version__)

AAL_csv = pd.read_csv('./data/F.csv', index_col='timestamp', parse_... | closed | 2020-08-24T08:27:28Z | 2020-08-24T23:58:29Z | https://github.com/matplotlib/mplfinance/issues/248 | [

"question"

] | lcobiac | 4 |

BeanieODM/beanie | pydantic | 269 | Allow comparison operators for datetime | It would be great if it were possible to find documents by datetime field using comparison operators, e.g:

```python

products = await Product.find(Product.date < datetime.datetime.now()).to_list()

``` | closed | 2022-05-14T11:19:50Z | 2023-02-05T02:40:56Z | https://github.com/BeanieODM/beanie/issues/269 | [

"Stale"

] | rdfsx | 2 |

taverntesting/tavern | pytest | 341 | Recursive string interpolation | I'd like to use recursive string substitution when building the name for a variable. I know this uses the limited Python formatting, but test data that depends on multiple parameters (environment, running instance, etc) is more flexibly picked up from an external file like this.

Example (imagine ENV is something com... | closed | 2019-04-17T15:07:36Z | 2019-05-09T14:00:57Z | https://github.com/taverntesting/tavern/issues/341 | [] | bogdanbranescu | 2 |

shaikhsajid1111/facebook_page_scraper | web-scraping | 17 | No posts were found! | Hey! Thanks for your script.

But I was trying to run your example and get the 'no posts were found' error.

Is it because of the new layout?

Thanks! | open | 2022-03-03T16:55:31Z | 2022-08-14T09:13:15Z | https://github.com/shaikhsajid1111/facebook_page_scraper/issues/17 | [] | abigmeh | 9 |

sinaptik-ai/pandas-ai | data-visualization | 594 | I need to use Petals LLM on to PandasAI | ### 🚀 The feature

Hello PandasAI team,

I would like to propose a new feature for the PandasAI library: adding support for the Petals Language Learning Model (LLM).

Petals LLM operates on a peer-to-peer (P2P) network and allows us to use large models like meta-llama/Llama-2-70b-hf in a distributed manner. This c... | closed | 2023-09-25T20:40:11Z | 2024-06-01T00:18:16Z | https://github.com/sinaptik-ai/pandas-ai/issues/594 | [] | databenti | 2 |

coqui-ai/TTS | python | 2,510 | [Bug] Unable to find compute_embedding_from_clip | ### Describe the bug

Unable to find compute_embedding_from_clip

### To Reproduce

tts --text "This is a demo text." --speaker_wav "my_voice.wav"

### Expected behavior

_No response_

### Logs

```shell

> tts_models/en/ljspeech/tacotron2-DDC is already downloaded.

> vocoder_models/en/ljspeech/hifiga... | closed | 2023-04-12T22:52:08Z | 2023-04-21T09:55:55Z | https://github.com/coqui-ai/TTS/issues/2510 | [

"bug"

] | faizulhaque | 5 |

jwkvam/bowtie | jupyter | 77 | document subscribing functions to more than one event | closed | 2016-12-31T22:59:26Z | 2017-01-03T05:00:41Z | https://github.com/jwkvam/bowtie/issues/77 | [] | jwkvam | 0 | |

scikit-image/scikit-image | computer-vision | 7,641 | import paddleocr and raise_build_error occurs | ### Description:

I just import paddleocr in a python file, an ImportError occurs:

```shell

python test_import.py

/home/fengt/anaconda3/envs/py37/lib/python3.7/site-packages/paddle/fluid/core.py:214: UserWarning: Load version

/home/fengt/local/usr/lib/x86_64-linux-gnu/libgomp.so.1 failed

warnings.warn("Load {} f... | open | 2024-12-20T03:13:03Z | 2025-01-09T00:03:56Z | https://github.com/scikit-image/scikit-image/issues/7641 | [

":bug: Bug"

] | Tom89757 | 1 |

netbox-community/netbox | django | 18,553 | Virtualization: Modifying a cluster field also modifies member virtual machine sites | ### Deployment Type

NetBox Cloud

### NetBox Version

v4.2.2

### Python Version

3.12

### Steps to Reproduce

1. Create a cluster with a blank scope

2. Create some VM's, assign them to the cluster and assign them to a site

3. Edit a field on the cluster (eg description) and save

### Expected Behavior

Virtual Machi... | open | 2025-01-31T21:24:21Z | 2025-03-03T21:24:00Z | https://github.com/netbox-community/netbox/issues/18553 | [

"type: bug",

"status: under review",

"severity: medium"

] | cruse1977 | 1 |

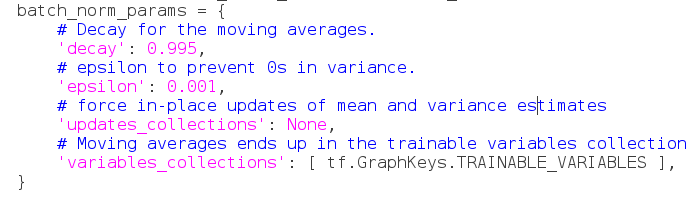

davidsandberg/facenet | computer-vision | 527 | how to set optional parameters for slim.batch_norm | here is my batch_norm_params, which is soon fed into normalizer_params.

however, when i print tf.trainable_variables, there are only mean, variance and beta for BN, missing gamma..

```

This code generates the following exception:

```P... | closed | 2023-09-14T17:20:50Z | 2023-09-15T13:24:28Z | https://github.com/aws/aws-sdk-pandas/issues/2461 | [

"bug"

] | CarlosDomingues | 2 |

SALib/SALib | numpy | 345 | Morris method normal distribution sample | Hi,

I was wondering how to create a sample with a normal distribution using the Morris sampler.

Since 'dists' is not yet supported in the morris sampler, I tried a workaround (tested with the saltelli-sampler that has 'dists' and it worked), but I receive lots of infinite-values in the sample with the morris metho... | open | 2020-09-10T14:45:09Z | 2020-10-24T07:25:22Z | https://github.com/SALib/SALib/issues/345 | [] | MatthiVH | 4 |

graphql-python/graphene-sqlalchemy | graphql | 112 | Generate Input Arguments from SQLAlchemy Class? | Hello,

Do you know if it's possible to generate input arguments dynamically from the SQLAlchemy class that will be transformed by the mutation?

Example:

My input arguments for a `CreatePerson` mutation look like this:

```python

class CreatePersonInput(graphene.InputObjectType):

"""Arguments to create a pe... | open | 2018-02-09T05:44:41Z | 2018-04-24T18:13:29Z | https://github.com/graphql-python/graphene-sqlalchemy/issues/112 | [] | alexisrolland | 3 |

jina-ai/serve | fastapi | 6,166 | After killing the process, it becomes a zombie process | 1. nohup jina flow --uses flow.yml --name bf7e2d0198df3388a73525a3f3c7f87f --workspace bf7e2d0198df3388a73525a3f3c7f87f >bf7e2d0198df3388a73525a3f3c7f87f/jina.log 2>&1 &

2. ps aux|grep 'jina flow '|grep 'name bf7e2d0198df3388a73525a3f3c7f87f' |grep -v grep |awk '{print $2}'| xargs echo

outputs are: 1719527 1719529 17... | closed | 2024-04-28T02:15:05Z | 2024-11-13T00:22:57Z | https://github.com/jina-ai/serve/issues/6166 | [

"Stale"

] | iamfengdy | 10 |

microsoft/nni | tensorflow | 5,149 | Remove trials from experiment | <!-- Please only use this template for submitting enhancement requests -->

**What would you like to be added**:

Remove trials from experiment, either in the web page or in the command line.

**Why is this needed**:

Sometime one trial may get strange result due to numerical issue, which is difficult to anticipa... | open | 2022-09-30T11:55:03Z | 2022-10-08T09:35:44Z | https://github.com/microsoft/nni/issues/5149 | [

"new feature",

"WebUI",

"need more info"

] | DDDOH | 4 |

huggingface/transformers | deep-learning | 36,626 | save_only_model with FSDP throws FileNotFoundError error | ### System Info

* Transformers (4.50.0.dev0) main branch at commit [94ae1ba](https://github.com/huggingface/transformers/commit/94ae1ba5b55e79ba766582de8a199d8ccf24a021)

* (also tried) transformers==4.49

* python==3.12

* accelerate==1.0.1

### Who can help?

@muellerzr @SunMarc @ArthurZucker

### Information

- [ ] T... | closed | 2025-03-10T06:56:16Z | 2025-03-13T16:17:37Z | https://github.com/huggingface/transformers/issues/36626 | [

"bug"

] | kmehant | 1 |

Kanaries/pygwalker | pandas | 677 | [BUG] When using the chat feature, the app crashes after a period of loading. | **Describe the bug**

When using the chat feature, the app crashes after a period of loading.

I launched Pygwalker from the .py file on my computer. Then a web page will open, and I use the chat feature on that page. After a while, it will crash.

The data I use: https://www.kaggle.com/datasets/dgomonov/new-york-city-a... | open | 2025-03-05T03:55:48Z | 2025-03-07T03:39:23Z | https://github.com/Kanaries/pygwalker/issues/677 | [

"bug",

"P1"

] | vanbolin | 5 |

scrapy/scrapy | python | 6,705 | Provide coroutine/Future alternatives to public Deferred APIs | If we want better support for native asyncio, we need to somehow provide `async def` alternatives to such public APIs as `CrawlerProcess.crawl()`, `ExecutionEngine.download()` or `ExecutionEngine.stop()`. It doesn't seem possible right away, because users can expect that e.g. `addCallback()` works on a result of any su... | open | 2025-03-08T17:15:47Z | 2025-03-08T17:15:47Z | https://github.com/scrapy/scrapy/issues/6705 | [

"enhancement",

"discuss",

"asyncio"

] | wRAR | 0 |

Lightning-AI/pytorch-lightning | deep-learning | 19,718 | Training stuck when running on Slurm with multiprocessing | ### Bug description

Hi,

I'm trying to train a model on Slurm using a single GPU, and in the training_step I call multiprocessing.Pool() to parallel some function calls (function is executes on every example in the training data).

When I run multiprocessing.Pool from the training_step, the call never ends. I added mu... | open | 2024-03-31T15:31:36Z | 2024-03-31T16:03:55Z | https://github.com/Lightning-AI/pytorch-lightning/issues/19718 | [

"question",

"environment: slurm",

"ver: 2.1.x"

] | talshechter | 1 |

Josh-XT/AGiXT | automation | 1,205 | 3D Printing Extension | ### Feature/Improvement Description

I would like to make automated prints using octoprint maybe or some other api/sdk allowing to print on 3d printing with slicing functions or just throwing gcode or stl files inside.

### Proposed Solution

Octoprint may work..... there is one I found called https://github.com/dougbr... | closed | 2024-06-07T07:16:12Z | 2025-01-20T02:34:59Z | https://github.com/Josh-XT/AGiXT/issues/1205 | [

"type | request | new feature"

] | birdup000 | 1 |

MaartenGr/BERTopic | nlp | 2,149 | TypeError: unhashable type: 'numpy.ndarray' | Hi.

I follow the tutorial [here ](https://huggingface.co/docs/hub/en/bertopic#:~:text=topic%2C%20prob%20%3D%20topic_model.transform(%22This%20is%20an%20incredible%20movie!%22)%0Atopic_model.topic_labels_%5Btopic%5D)

but got error

`topic_model.topic_labels_[topic]`

```

-------------------------------------------... | closed | 2024-09-13T11:43:16Z | 2024-09-13T13:04:21Z | https://github.com/MaartenGr/BERTopic/issues/2149 | [] | cindyangelira | 2 |

skypilot-org/skypilot | data-science | 4,255 | Implement Automatic Bucket Creation and Data Transfer in `with_data` API | To implement the `with_data` API in #4254:

- **Automatic Bucket Creation**

Add logic to create a storage bucket automatically when `with_data` is called. The API should link the upstream task’s output path to this bucket, streamlining data storage setup.

- **Data Transfer and Downstream Access**

Enable ... | open | 2024-11-04T00:43:48Z | 2024-12-19T23:09:06Z | https://github.com/skypilot-org/skypilot/issues/4255 | [] | andylizf | 1 |

ckan/ckan | api | 8,162 | PEDIR DINERO FALSO EN LÍNEA Telegram@Goodeuro BILLETES FALSOS DISPONIBLES EN LÍNEA |

PEDIR DINERO FALSO EN LÍNEA Telegram@Goodeuro

BILLETES FALSOS DISPONIBLES EN LÍNEA

Compre billetes de euro falsos de alta calidad en líneaA. Compra dinero falso en una tienda de confianza y no te ganes la vida pagando

Los billetes falsos se han vuelto extremadamente populares hoy en día debido a sus obvio... | closed | 2024-04-07T06:50:03Z | 2024-04-07T12:15:13Z | https://github.com/ckan/ckan/issues/8162 | [] | akimevice | 0 |

Subsets and Splits

No community queries yet

The top public SQL queries from the community will appear here once available.