id int64 599M 3.29B | url stringlengths 58 61 | html_url stringlengths 46 51 | number int64 1 7.72k | title stringlengths 1 290 | state stringclasses 2 values | comments int64 0 70 | created_at timestamp[s]date 2020-04-14 10:18:02 2025-08-05 09:28:51 | updated_at timestamp[s]date 2020-04-27 16:04:17 2025-08-05 11:39:56 | closed_at timestamp[s]date 2020-04-14 12:01:40 2025-08-01 05:15:45 ⌀ | user_login stringlengths 3 26 | labels listlengths 0 4 | body stringlengths 0 228k ⌀ | is_pull_request bool 2 classes |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

1,108,392,141 | https://api.github.com/repos/huggingface/datasets/issues/3603 | https://github.com/huggingface/datasets/pull/3603 | 3,603 | Add British Library books dataset | closed | 4 | 2022-01-19T17:53:05 | 2022-01-31T17:22:51 | 2022-01-31T17:01:49 | davanstrien | [] | This pull request adds a dataset of text from digitised (primarily 19th Century) books from the British Library. This collection has previously been used for training language models, e.g. https://github.com/dbmdz/clef-hipe/blob/main/hlms.md. It would be nice to make this dataset more accessible for others to use throu... | true |

1,108,247,870 | https://api.github.com/repos/huggingface/datasets/issues/3602 | https://github.com/huggingface/datasets/pull/3602 | 3,602 | Update url for conll2003 | closed | 2 | 2022-01-19T15:35:04 | 2022-01-20T16:23:03 | 2022-01-19T15:43:53 | lhoestq | [] | Following https://github.com/huggingface/datasets/issues/3582 I'm changing the download URL of the conll2003 data files, since the previous host doesn't have the authorization to redistribute the data | true |

1,108,207,131 | https://api.github.com/repos/huggingface/datasets/issues/3601 | https://github.com/huggingface/datasets/pull/3601 | 3,601 | Add conll2003 licensing | closed | 0 | 2022-01-19T15:00:41 | 2022-01-19T17:17:28 | 2022-01-19T17:17:28 | lhoestq | [] | Following https://github.com/huggingface/datasets/issues/3582, this PR updates the licensing section of the CoNLL2003 dataset. | true |

1,108,131,878 | https://api.github.com/repos/huggingface/datasets/issues/3600 | https://github.com/huggingface/datasets/pull/3600 | 3,600 | Use old url for conll2003 | closed | 0 | 2022-01-19T13:56:49 | 2022-01-19T14:16:28 | 2022-01-19T14:16:28 | lhoestq | [] | As reported in https://github.com/huggingface/datasets/issues/3582 the CoNLL2003 data files are not available in the master branch of the repo that used to host them.

For now we can use the URL from an older commit to access the data files | true |

1,108,111,607 | https://api.github.com/repos/huggingface/datasets/issues/3599 | https://github.com/huggingface/datasets/issues/3599 | 3,599 | The `add_column()` method does not work if used on dataset sliced with `select()` | closed | 1 | 2022-01-19T13:36:50 | 2022-01-28T15:35:57 | 2022-01-28T15:35:57 | ThGouzias | [

"bug"

] | Hello, I posted this as a question on the forums ([here](https://discuss.huggingface.co/t/add-column-does-not-work-if-used-on-dataset-sliced-with-select/13893)):

I have a dataset with 2000 entries

> dataset = Dataset.from_dict({'colA': list(range(2000))})

and from which I want to extract the first one thousan... | false |

1,108,107,199 | https://api.github.com/repos/huggingface/datasets/issues/3598 | https://github.com/huggingface/datasets/issues/3598 | 3,598 | Readme info not being parsed to show on Dataset card page | closed | 4 | 2022-01-19T13:32:29 | 2022-01-21T10:20:01 | 2022-01-21T10:20:01 | davidcanovas | [

"bug"

] | ## Describe the bug

The info contained in the README.md file is not being shown in the dataset main page. Basic info and table of contents are properly formatted in the README.

## Steps to reproduce the bug

# Sample code to reproduce the bug

The README file is this one: https://huggingface.co/datasets/softcatal... | false |

1,108,092,864 | https://api.github.com/repos/huggingface/datasets/issues/3597 | https://github.com/huggingface/datasets/issues/3597 | 3,597 | ERROR: File "setup.py" or "setup.cfg" not found. Directory cannot be installed in editable mode: /content | closed | 2 | 2022-01-19T13:19:28 | 2022-08-05T12:35:51 | 2022-02-14T08:46:34 | amitkml | [

"bug"

] | ## Bug

Installing `datasets` with the streaming extra gives the following error.

## Steps to reproduce the bug

```python

! git clone https://github.com/huggingface/datasets.git

! cd datasets

! pip install -e ".[streaming]"

```

## Actual results

Cloning into 'datasets'...

remote: Enumerating objects: 50816, done.

remot... | false |

1,107,345,338 | https://api.github.com/repos/huggingface/datasets/issues/3596 | https://github.com/huggingface/datasets/issues/3596 | 3,596 | Loss of cast `Image` feature on certain dataset method | closed | 7 | 2022-01-18T20:44:01 | 2022-01-21T18:07:28 | 2022-01-21T18:07:28 | davanstrien | [

"bug"

] | ## Describe the bug

When a column is cast to an `Image` feature, the cast type appears to be lost during certain operations. I first noticed this when using the `push_to_hub` method on a dataset that contained URLs pointing to images which had been cast to an `Image`. This also happens when using select on a data... | false |

1,107,260,527 | https://api.github.com/repos/huggingface/datasets/issues/3595 | https://github.com/huggingface/datasets/pull/3595 | 3,595 | Add ImageNet toy datasets from fastai | closed | 1 | 2022-01-18T19:03:35 | 2023-09-24T09:39:07 | 2022-09-30T14:39:35 | mariosasko | [

"dataset contribution"

] | Adds the ImageNet toy datasets from FastAI: Imagenette, Imagewoof and Imagewang.

TODOs:

* [ ] add dummy data

* [ ] add dataset card

* [ ] generate `dataset_info.json` | true |

1,107,174,619 | https://api.github.com/repos/huggingface/datasets/issues/3594 | https://github.com/huggingface/datasets/pull/3594 | 3,594 | fix multiple language downloading in mC4 | closed | 1 | 2022-01-18T17:25:19 | 2022-01-19T11:22:57 | 2022-01-18T19:10:22 | polinaeterna | [] | If we try to access multiple languages of the [mC4 dataset](https://github.com/huggingface/datasets/tree/master/datasets/mc4), it will throw an error. For example, if we do

```python

mc4_subset_two_langs = load_dataset("mc4", languages=["st", "su"])

```

we got

```

FileNotFoundError: Couldn't find file at https:/... | true |

1,107,070,852 | https://api.github.com/repos/huggingface/datasets/issues/3593 | https://github.com/huggingface/datasets/pull/3593 | 3,593 | Update README.md | closed | 0 | 2022-01-18T15:52:16 | 2022-01-20T17:14:53 | 2022-01-20T17:14:53 | borgr | [] | Towards license of Tweet Eval parts | true |

1,107,026,723 | https://api.github.com/repos/huggingface/datasets/issues/3592 | https://github.com/huggingface/datasets/pull/3592 | 3,592 | Add QuickDraw dataset | closed | 1 | 2022-01-18T15:13:39 | 2022-06-09T10:04:54 | 2022-06-09T09:56:13 | mariosasko | [] | Add the QuickDraw dataset.

TODOs:

* [x] add dummy data

* [x] add dataset card

* [x] generate `dataset_info.json` | true |

1,106,928,613 | https://api.github.com/repos/huggingface/datasets/issues/3591 | https://github.com/huggingface/datasets/pull/3591 | 3,591 | Add support for time, date, duration, and decimal dtypes | closed | 2 | 2022-01-18T13:46:05 | 2022-01-31T18:29:34 | 2022-01-20T17:37:33 | mariosasko | [] | Add support for the pyarrow time (maps to `datetime.time` in python), date (maps to `datetime.date` in python), duration (maps to `datetime.timedelta` in python), and decimal (maps to `decimal.Decimal` in python) dtypes. This should be helpful when writing scripts for time-series datasets. | true |

1,106,784,860 | https://api.github.com/repos/huggingface/datasets/issues/3590 | https://github.com/huggingface/datasets/pull/3590 | 3,590 | Update ANLI README.md | closed | 0 | 2022-01-18T11:22:53 | 2022-01-20T16:58:41 | 2022-01-20T16:58:41 | borgr | [] | Update license and little things concerning ANLI | true |

1,106,766,114 | https://api.github.com/repos/huggingface/datasets/issues/3589 | https://github.com/huggingface/datasets/pull/3589 | 3,589 | Pin torchmetrics to fix the COMET test | closed | 0 | 2022-01-18T11:03:49 | 2022-01-18T11:04:56 | 2022-01-18T11:04:55 | lhoestq | [] | Torchmetrics 0.7.0 got released and has issues with `transformers` (see https://github.com/PyTorchLightning/metrics/issues/770)

I'm pinning it to 0.6.0 in the CI, since 0.7.0 makes the COMET metric test fail. COMET requires torchmetrics==0.6.0 anyway. | true |

1,106,749,000 | https://api.github.com/repos/huggingface/datasets/issues/3588 | https://github.com/huggingface/datasets/pull/3588 | 3,588 | Update HellaSwag README.md | closed | 0 | 2022-01-18T10:46:15 | 2022-01-20T16:57:43 | 2022-01-20T16:57:43 | borgr | [] | Adding information from the git repo and paper that were missing | true |

1,106,719,182 | https://api.github.com/repos/huggingface/datasets/issues/3587 | https://github.com/huggingface/datasets/issues/3587 | 3,587 | No module named 'fsspec.archive' | closed | 0 | 2022-01-18T10:17:01 | 2022-08-11T09:57:54 | 2022-01-18T10:33:10 | shuuchen | [

"bug"

] | ## Describe the bug

Cannot import datasets after installation.

## Steps to reproduce the bug

```shell

$ python

Python 3.9.7 (default, Sep 16 2021, 13:09:58)

[GCC 7.5.0] :: Anaconda, Inc. on linux

Type "help", "copyright", "credits" or "license" for more information.

>>> import datasets

Traceback (most recent... | false |

1,106,455,672 | https://api.github.com/repos/huggingface/datasets/issues/3586 | https://github.com/huggingface/datasets/issues/3586 | 3,586 | Revisit `enable/disable_` toggle function prefix | closed | 0 | 2022-01-18T04:09:55 | 2022-03-14T15:01:08 | 2022-03-14T15:01:08 | jaketae | [

"enhancement"

] | As discussed in https://github.com/huggingface/transformers/pull/15167, we should revisit the `enable/disable_` toggle function prefix, potentially in favor of `set_enabled_`. Concretely, this translates to

- De-deprecating `disable_progress_bar()`

- Adding `enable_progress_bar()`

- On the caching side, adding `en... | false |

1,105,821,470 | https://api.github.com/repos/huggingface/datasets/issues/3585 | https://github.com/huggingface/datasets/issues/3585 | 3,585 | Datasets streaming + map doesn't work for `Audio` | closed | 1 | 2022-01-17T12:55:42 | 2022-01-20T13:28:00 | 2022-01-20T13:28:00 | patrickvonplaten | [

"bug",

"duplicate"

] | ## Describe the bug

When using audio datasets in streaming mode, applying a `map(...)` before iterating leads to an error as the key `array` does not exist anymore.

## Steps to reproduce the bug

```python

from datasets import load_dataset

ds = load_dataset("common_voice", "en", streaming=True, split="train")... | false |

1,105,231,768 | https://api.github.com/repos/huggingface/datasets/issues/3584 | https://github.com/huggingface/datasets/issues/3584 | 3,584 | https://huggingface.co/datasets/huggingface/transformers-metadata | closed | 0 | 2022-01-17T00:18:14 | 2022-02-14T08:51:27 | 2022-02-14T08:51:27 | ecankirkic | [

"wontfix",

"dataset-viewer"

] | ## Dataset viewer issue for '*name of the dataset*'

**Link:** *link to the dataset viewer page*

*short description of the issue*

Am I the one who added this dataset ? Yes-No

| false |

1,105,195,144 | https://api.github.com/repos/huggingface/datasets/issues/3583 | https://github.com/huggingface/datasets/issues/3583 | 3,583 | Add The Medical Segmentation Decathlon Dataset | open | 5 | 2022-01-16T21:42:25 | 2022-03-18T10:44:42 | null | omarespejel | [

"dataset request",

"vision"

] | ## Adding a Dataset

- **Name:** *The Medical Segmentation Decathlon Dataset*

- **Description:** The underlying data set was designed to explore the axis of difficulties typically encountered when dealing with medical images, such as small data sets, unbalanced labels, multi-site data, and small objects.

- **Paper:*... | false |

1,104,877,303 | https://api.github.com/repos/huggingface/datasets/issues/3582 | https://github.com/huggingface/datasets/issues/3582 | 3,582 | conll 2003 dataset source url is no longer valid | closed | 9 | 2022-01-15T23:04:17 | 2022-07-20T13:06:40 | 2022-01-21T16:57:32 | rcanand | [

"bug",

"dataset bug"

] | ## Describe the bug

Loading `conll2003` dataset fails because it was removed (just yesterday 1/14/2022) from the location it is looking for.

## Steps to reproduce the bug

```python

from datasets import load_dataset

load_dataset("conll2003")

```

## Expected results

The dataset should load.

## Actual r... | false |

1,104,857,822 | https://api.github.com/repos/huggingface/datasets/issues/3581 | https://github.com/huggingface/datasets/issues/3581 | 3,581 | Unable to create a dataset from a parquet file in S3 | open | 1 | 2022-01-15T21:34:16 | 2022-02-14T08:52:57 | null | regCode | [

"bug",

"enhancement"

] | ## Describe the bug

Trying to create a dataset from a parquet file in S3.

## Steps to reproduce the bug

```python

import s3fs

from datasets import Dataset

s3 = s3fs.S3FileSystem(anon=False)

with s3.open(PATH_LTR_TOY_CLEAN_DATASET, 'rb') as s3file:

dataset = Dataset.from_parquet(s3file)

```

## Expe... | false |

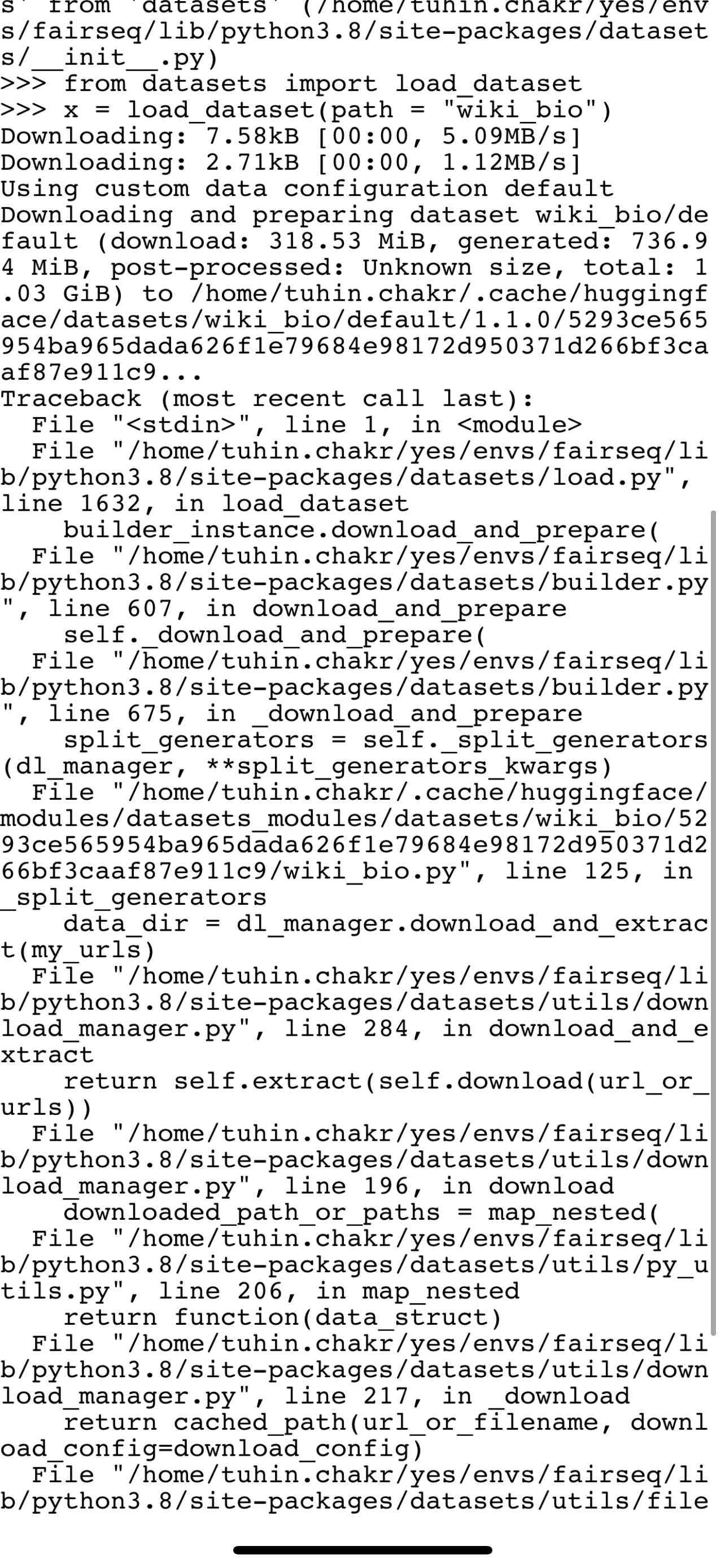

1,104,663,242 | https://api.github.com/repos/huggingface/datasets/issues/3580 | https://github.com/huggingface/datasets/issues/3580 | 3,580 | Bug in wiki bio load | closed | 4 | 2022-01-15T10:04:33 | 2022-01-31T08:38:09 | 2022-01-31T08:38:09 | tuhinjubcse | [

"dataset bug"

] |

wiki_bio is failing to load because of a broken Drive link. Can someone fix this?

dataset.save_to_disk("normal_save")

# save ... | false |

1,102,598,241 | https://api.github.com/repos/huggingface/datasets/issues/3577 | https://github.com/huggingface/datasets/issues/3577 | 3,577 | Add The Mexican Emotional Speech Database (MESD) | open | 0 | 2022-01-13T23:49:36 | 2022-01-27T14:14:38 | null | omarespejel | [

"dataset request",

"speech"

] | ## Adding a Dataset

- **Name:** *The Mexican Emotional Speech Database (MESD)*

- **Description:** *Contains 864 voice recordings with six different prosodies: anger, disgust, fear, happiness, neutral, and sadness. Furthermore, three voice categories are included: female adult, male adult, and child.*

- **Paper:** *... | false |

1,102,059,651 | https://api.github.com/repos/huggingface/datasets/issues/3576 | https://github.com/huggingface/datasets/pull/3576 | 3,576 | Add PASS dataset | closed | 0 | 2022-01-13T17:16:07 | 2022-01-20T16:50:48 | 2022-01-20T16:50:47 | mariosasko | [] | This PR adds the PASS dataset.

Closes #3043 | true |

1,101,947,955 | https://api.github.com/repos/huggingface/datasets/issues/3575 | https://github.com/huggingface/datasets/pull/3575 | 3,575 | Add Arrow type casting to struct for Image and Audio + Support nested casting | closed | 9 | 2022-01-13T15:36:59 | 2022-11-29T11:14:16 | 2022-01-21T13:22:27 | lhoestq | [] | ## Intro

1. Currently, it's not possible to have nested features containing Audio or Image.

2. Moreover one can keep an Arrow array as a StringArray to store paths to images, but such arrays can't be directly concatenated to another image array if it's stored an another Arrow type (typically, a StructType).

3... | true |

1,101,781,401 | https://api.github.com/repos/huggingface/datasets/issues/3574 | https://github.com/huggingface/datasets/pull/3574 | 3,574 | Fix qa4mre tags | closed | 0 | 2022-01-13T13:56:59 | 2022-01-13T14:03:02 | 2022-01-13T14:03:01 | lhoestq | [] | The YAML tags were invalid. I also fixed the dataset mirroring logging that failed because of this issue [here](https://github.com/huggingface/datasets/actions/runs/1690109581) | true |

1,101,157,676 | https://api.github.com/repos/huggingface/datasets/issues/3573 | https://github.com/huggingface/datasets/pull/3573 | 3,573 | Add Mauve metric | closed | 1 | 2022-01-13T03:52:48 | 2022-01-20T15:00:08 | 2022-01-20T15:00:08 | jthickstun | [] | Add support for the [Mauve](https://github.com/krishnap25/mauve) metric introduced in this [paper](https://arxiv.org/pdf/2102.01454.pdf) (Neurips, 2021). | true |

1,100,634,244 | https://api.github.com/repos/huggingface/datasets/issues/3572 | https://github.com/huggingface/datasets/issues/3572 | 3,572 | ConnectionError in IndicGLUE dataset | closed | 3 | 2022-01-12T17:59:36 | 2022-09-15T21:57:34 | 2022-09-15T21:57:34 | sahoodib | [

"dataset bug"

] | While I am trying to load IndicGLUE dataset (https://huggingface.co/datasets/indic_glue) it is giving me with the error:

```

ConnectionError: Couldn't reach https://storage.googleapis.com/ai4bharat-public-indic-nlp-corpora/evaluations/wikiann-ner.tar.gz (error 403) | false |

1,100,519,604 | https://api.github.com/repos/huggingface/datasets/issues/3571 | https://github.com/huggingface/datasets/pull/3571 | 3,571 | Add missing tasks to MuchoCine dataset | closed | 0 | 2022-01-12T16:07:32 | 2022-01-20T16:51:08 | 2022-01-20T16:51:07 | mariosasko | [] | Addresses the 2nd bullet point in #2520.

I'm also removing the licensing information, because I couldn't verify that it is correct. | true |

1,100,480,791 | https://api.github.com/repos/huggingface/datasets/issues/3570 | https://github.com/huggingface/datasets/pull/3570 | 3,570 | Add the KMWP dataset (extension of #3564) | closed | 3 | 2022-01-12T15:33:08 | 2022-10-01T06:43:16 | 2022-10-01T06:43:16 | sooftware | [

"dataset contribution"

] | New pull request of #3564 (Add the KMWP dataset) | true |

1,100,478,994 | https://api.github.com/repos/huggingface/datasets/issues/3569 | https://github.com/huggingface/datasets/pull/3569 | 3,569 | Add the DKTC dataset (Extension of #3564) | closed | 9 | 2022-01-12T15:31:29 | 2022-10-01T06:43:05 | 2022-10-01T06:43:04 | sooftware | [

"dataset contribution"

] | New pull request of #3564. (for DKTC)

| true |

1,100,380,631 | https://api.github.com/repos/huggingface/datasets/issues/3568 | https://github.com/huggingface/datasets/issues/3568 | 3,568 | Downloading Hugging Face Medical Dialog Dataset NonMatchingSplitsSizesError | closed | 1 | 2022-01-12T14:03:44 | 2022-02-14T09:32:34 | 2022-02-14T09:32:34 | fabianslife | [

"dataset bug"

I wanted to download the Medical Dialog Dataset from Hugging Face, using this GitHub link:

https://github.com/huggingface/datasets/tree/master/datasets/medical_dialog

After downloading the raw datasets from google drive, i unpacked everything and put it in the same folder as the medical_dialog.py which is:

```

... | false |

1,100,296,696 | https://api.github.com/repos/huggingface/datasets/issues/3567 | https://github.com/huggingface/datasets/pull/3567 | 3,567 | Fix push to hub to allow individual split push | closed | 1 | 2022-01-12T12:42:58 | 2023-09-24T09:54:19 | 2022-07-27T12:11:11 | thomasw21 | [] | # Description of the issue

If one pushes a single split to a dataset repo, the dataset is uploaded and the config is overwritten. However, the splits from the previous config are lost, even though their data is still in the repo.

The new flow is the following:

- query the old config from the repo

- update into a new co... | true |

1,100,155,902 | https://api.github.com/repos/huggingface/datasets/issues/3566 | https://github.com/huggingface/datasets/pull/3566 | 3,566 | Add initial electricity time series dataset | closed | 2 | 2022-01-12T10:21:32 | 2022-02-15T13:31:48 | 2022-02-15T13:31:48 | kashif | [] | Here is an initial prototype time series dataset | true |

1,099,296,693 | https://api.github.com/repos/huggingface/datasets/issues/3565 | https://github.com/huggingface/datasets/pull/3565 | 3,565 | Add parameter `preserve_index` to `from_pandas` | closed | 2 | 2022-01-11T15:26:37 | 2022-01-12T16:11:27 | 2022-01-12T16:11:27 | Sorrow321 | [] | Added optional parameter, so that user can get rid of useless index preserving. [Issue](https://github.com/huggingface/datasets/issues/3563) | true |

1,099,214,403 | https://api.github.com/repos/huggingface/datasets/issues/3564 | https://github.com/huggingface/datasets/pull/3564 | 3,564 | Add the KMWP & DKTC dataset. | closed | 3 | 2022-01-11T14:14:08 | 2022-01-12T15:33:49 | 2022-01-12T15:33:28 | sooftware | [] | Add the DKTC dataset.

- https://github.com/tunib-ai/DKTC | true |

1,099,070,368 | https://api.github.com/repos/huggingface/datasets/issues/3563 | https://github.com/huggingface/datasets/issues/3563 | 3,563 | Dataset.from_pandas preserves useless index | closed | 1 | 2022-01-11T12:07:07 | 2022-01-12T16:11:27 | 2022-01-12T16:11:27 | Sorrow321 | [

"bug"

] | ## Describe the bug

Let's say that you want to create a Dataset object from pandas dataframe. Most likely you will write something like this:

```

import pandas as pd

from datasets import Dataset

df = pd.read_csv('some_dataset.csv')

# Some DataFrame preprocessing code...

dataset = Dataset.from_pandas(df)

`... | false |

1,098,341,351 | https://api.github.com/repos/huggingface/datasets/issues/3562 | https://github.com/huggingface/datasets/pull/3562 | 3,562 | Allow multiple task templates of the same type | closed | 0 | 2022-01-10T20:32:07 | 2022-01-11T14:16:47 | 2022-01-11T14:16:47 | mariosasko | [] | Add support for multiple task templates of the same type. Fixes (partially) #2520.

CC: @lewtun | true |

1,098,328,870 | https://api.github.com/repos/huggingface/datasets/issues/3561 | https://github.com/huggingface/datasets/issues/3561 | 3,561 | Cannot load ‘bookcorpusopen’ | closed | 3 | 2022-01-10T20:17:18 | 2022-02-14T09:19:27 | 2022-02-14T09:18:47 | HUIYINXUE | [

"bug",

"dataset bug"

] | ## Describe the bug

Cannot load 'bookcorpusopen'

## Steps to reproduce the bug

```python

dataset = load_dataset('bookcorpusopen')

```

or

```python

dataset = load_dataset('bookcorpusopen',script_version='master')

```

## Actual results

ConnectionError: Couldn't reach https://the-eye.eu/public/AI/pile_pre... | false |

1,098,280,652 | https://api.github.com/repos/huggingface/datasets/issues/3560 | https://github.com/huggingface/datasets/pull/3560 | 3,560 | Run pyupgrade for Python 3.6+ | closed | 3 | 2022-01-10T19:20:53 | 2022-01-31T13:38:49 | 2022-01-31T09:37:34 | bryant1410 | [] | Run the command:

```bash

pyupgrade $(find . -name "*.py" -type f) --py36-plus

```

This mainly avoids unnecessary list creations and removes code that is no longer needed for Python 3.6+.

It was originally part of #3489.

Tip for reviewing faster: use the CLI (`git diff`) and scroll. | true |

1,098,178,222 | https://api.github.com/repos/huggingface/datasets/issues/3559 | https://github.com/huggingface/datasets/pull/3559 | 3,559 | Fix `DuplicatedKeysError` and improve card in `tweet_qa` | closed | 0 | 2022-01-10T17:27:40 | 2022-01-12T15:13:58 | 2022-01-12T15:13:57 | mariosasko | [] | Fix #3555 | true |

1,098,025,866 | https://api.github.com/repos/huggingface/datasets/issues/3558 | https://github.com/huggingface/datasets/issues/3558 | 3,558 | Integrate Milvus (pymilvus) library | open | 5 | 2022-01-10T15:20:29 | 2022-03-05T12:28:36 | null | mariosasko | [

"enhancement"

] | Milvus is a popular open-source vector database. We should add a new vector index to support this project. | false |

1,097,946,034 | https://api.github.com/repos/huggingface/datasets/issues/3557 | https://github.com/huggingface/datasets/pull/3557 | 3,557 | Fix bug in `ImageClassifcation` task template | closed | 3 | 2022-01-10T14:09:59 | 2022-01-11T15:47:52 | 2022-01-11T15:47:52 | mariosasko | [] | Fixes a bug in the `ImageClassification` task template which requires specifying class labels twice in dataset scripts. Additionally, this PR refactors the API around the classification task templates for cleaner `labels` handling.

CC: @lewtun @nateraw | true |

1,097,907,724 | https://api.github.com/repos/huggingface/datasets/issues/3556 | https://github.com/huggingface/datasets/pull/3556 | 3,556 | Preserve encoding/decoding with features in `Iterable.map` call | closed | 0 | 2022-01-10T13:32:20 | 2022-01-18T19:54:08 | 2022-01-18T19:54:07 | mariosasko | [] | As described in https://github.com/huggingface/datasets/issues/3505#issuecomment-1004755657, this PR uses a generator expression to encode/decode examples with `features` (which are set to None in `map`) before applying a map transform.

Fix #3505 | true |

1,097,736,982 | https://api.github.com/repos/huggingface/datasets/issues/3555 | https://github.com/huggingface/datasets/issues/3555 | 3,555 | DuplicatedKeysError when loading tweet_qa dataset | closed | 1 | 2022-01-10T10:53:11 | 2022-01-12T15:17:33 | 2022-01-12T15:13:56 | LeonieWeissweiler | [

"bug"

] | When loading the tweet_qa dataset with `load_dataset('tweet_qa')`, the following error occurs:

`DuplicatedKeysError: FAILURE TO GENERATE DATASET !

Found duplicate Key: 2a167f9e016ba338e1813fed275a6a1e

Keys should be unique and deterministic in nature

`

Might be related to issues #2433 and #2333

- `datasets` ... | false |

1,097,711,367 | https://api.github.com/repos/huggingface/datasets/issues/3554 | https://github.com/huggingface/datasets/issues/3554 | 3,554 | ImportError: cannot import name 'is_valid_waiter_error' | closed | 3 | 2022-01-10T10:32:04 | 2022-02-14T09:35:57 | 2022-02-14T09:35:57 | danielbellhv | [

"bug"

] | Based on [SO post](https://stackoverflow.com/q/70606147/17840900).

I'm following along to this [Notebook][1], cell "**Loading the dataset**".

Kernel: `conda_pytorch_p36`.

I run:

```

! pip install datasets transformers optimum[intel]

```

Output:

```

Requirement already satisfied: datasets in /home/ec2-u... | false |

1,097,252,275 | https://api.github.com/repos/huggingface/datasets/issues/3553 | https://github.com/huggingface/datasets/issues/3553 | 3,553 | set_format("np") no longer works for Image data | closed | 5 | 2022-01-09T17:18:13 | 2022-10-14T12:03:55 | 2022-10-14T12:03:54 | cgarciae | [

"bug"

] | ## Describe the bug

`dataset.set_format("np")` no longer works for image data, previously you could load the MNIST like this:

```python

dataset = load_dataset("mnist")

dataset.set_format("np")

X_train = dataset["train"]["image"][..., None] # <== No longer a numpy array

```

but now it doesn't work, `set_format(... | false |

1,096,985,204 | https://api.github.com/repos/huggingface/datasets/issues/3552 | https://github.com/huggingface/datasets/pull/3552 | 3,552 | Add the KMWP & DKTC dataset. | closed | 0 | 2022-01-08T17:12:14 | 2022-01-11T14:13:30 | 2022-01-11T14:13:30 | sooftware | [] | Add the KMWP & DKTC dataset.

Additional notes:

- Both datasets will be released on January 10 through the GitHub link below.

- https://github.com/tunib-ai/DKTC

- https://github.com/tunib-ai/KMWP

- So it doesn't work as a link at the moment, but the code will work soon (after it is released on January 10). | true |

1,096,561,111 | https://api.github.com/repos/huggingface/datasets/issues/3551 | https://github.com/huggingface/datasets/pull/3551 | 3,551 | Add more compression types for `to_json` | closed | 8 | 2022-01-07T18:25:02 | 2022-07-10T14:36:55 | 2022-02-21T15:58:15 | bhavitvyamalik | [] | This PR adds `bz2`, `xz`, and `zip` (WIP) for `to_json`. I also plan to add `infer` like how `pandas` does it | true |

1,096,522,377 | https://api.github.com/repos/huggingface/datasets/issues/3550 | https://github.com/huggingface/datasets/issues/3550 | 3,550 | Bug in `openbookqa` dataset | closed | 1 | 2022-01-07T17:32:57 | 2022-05-04T06:33:00 | 2022-05-04T06:32:19 | lucadiliello | [

"bug",

"dataset bug"

] | ## Describe the bug

Dataset entries contain a typo.

## Steps to reproduce the bug

```python

>>> from datasets import load_dataset

>>> obqa = load_dataset('openbookqa', 'main')

>>> obqa['train'][0]

```

## Expected results

```python

{'id': '7-980', 'question_stem': 'The sun is responsible for', 'choices'... | false |

1,096,426,996 | https://api.github.com/repos/huggingface/datasets/issues/3549 | https://github.com/huggingface/datasets/pull/3549 | 3,549 | Fix sem_eval_2018_task_1 download location | closed | 2 | 2022-01-07T15:37:52 | 2022-01-27T15:52:03 | 2022-01-27T15:52:03 | maxpel | [] | This changes the download location of sem_eval_2018_task_1 files to include the test set labels as discussed in https://github.com/huggingface/datasets/issues/2745#issuecomment-954588500_ with @lhoestq. | true |

1,096,409,512 | https://api.github.com/repos/huggingface/datasets/issues/3548 | https://github.com/huggingface/datasets/issues/3548 | 3,548 | Specify the feature types of a dataset on the Hub without needing a dataset script | closed | 1 | 2022-01-07T15:17:06 | 2022-01-20T14:48:38 | 2022-01-20T14:48:38 | lhoestq | [

"enhancement"

] | **Is your feature request related to a problem? Please describe.**

Currently if I upload a CSV with paths to audio files, the column type is string instead of Audio.

**Describe the solution you'd like**

I'd like to be able to specify the types of the column, so that when loading the dataset I directly get the feat... | false |

1,096,405,515 | https://api.github.com/repos/huggingface/datasets/issues/3547 | https://github.com/huggingface/datasets/issues/3547 | 3,547 | Datasets created with `push_to_hub` can't be accessed in offline mode | closed | 18 | 2022-01-07T15:12:25 | 2024-02-15T17:41:24 | 2023-12-21T15:13:12 | TevenLeScao | [

"bug"

] | ## Describe the bug

In offline mode, one can still access previously-cached datasets. This fails with datasets created with `push_to_hub`.

## Steps to reproduce the bug

in Python:

```

import datasets

mpwiki = datasets.load_dataset("teven/matched_passages_wikidata")

```

in bash:

```

export HF_DATASETS_OFFLIN... | false |

1,096,367,684 | https://api.github.com/repos/huggingface/datasets/issues/3546 | https://github.com/huggingface/datasets/pull/3546 | 3,546 | Remove print statements in datasets | closed | 1 | 2022-01-07T14:30:24 | 2022-01-07T18:09:16 | 2022-01-07T18:09:15 | mariosasko | [] | This is a second time I'm removing print statements in our datasets, so I've added a test to avoid these issues in the future. | true |

1,096,189,889 | https://api.github.com/repos/huggingface/datasets/issues/3545 | https://github.com/huggingface/datasets/pull/3545 | 3,545 | fix: 🐛 pass token when retrieving the split names | closed | 3 | 2022-01-07T10:29:22 | 2022-01-10T10:51:47 | 2022-01-10T10:51:46 | severo | [] | null | true |

1,095,784,681 | https://api.github.com/repos/huggingface/datasets/issues/3544 | https://github.com/huggingface/datasets/issues/3544 | 3,544 | Ability to split a dataset in multiple files. | open | 0 | 2022-01-06T23:02:25 | 2022-01-06T23:02:25 | null | Dref360 | [

"enhancement"

] | Hello,

**Is your feature request related to a problem? Please describe.**

My use case is that I have one writer that adds columns and multiple workers reading the same `Dataset`. Each worker should have access to columns added by the writer when they reload the dataset.

I understand that we shouldn't overwrite... | false |

1,095,226,438 | https://api.github.com/repos/huggingface/datasets/issues/3543 | https://github.com/huggingface/datasets/issues/3543 | 3,543 | Allow loading community metrics from the hub, just like datasets | closed | 5 | 2022-01-06T11:26:26 | 2022-05-31T20:59:14 | 2022-05-31T20:53:37 | eladsegal | [

"enhancement",

"generic discussion"

] | **Is your feature request related to a problem? Please describe.**

Currently, I can load a metric implemented by me by providing the local path to the file in `load_metric`.

However, there is no option to do it with the metric uploaded to the hub.

This means that if I want to allow other users to use it, they must d... | false |

1,095,088,485 | https://api.github.com/repos/huggingface/datasets/issues/3542 | https://github.com/huggingface/datasets/pull/3542 | 3,542 | Update the CC-100 dataset card | closed | 0 | 2022-01-06T08:35:18 | 2022-01-06T18:37:44 | 2022-01-06T18:37:44 | aajanki | [] | * summary from the dataset homepage

* more details about the data structure

* this dataset does not contain annotations | true |

1,095,033,828 | https://api.github.com/repos/huggingface/datasets/issues/3541 | https://github.com/huggingface/datasets/issues/3541 | 3,541 | Support 7-zip compressed data files | open | 1 | 2022-01-06T07:11:03 | 2022-07-19T10:18:30 | null | albertvillanova | [

"enhancement"

] | **Is your feature request related to a problem? Please describe.**

We should support 7-zip compressed data files:

- [x] in `extract`:

- #4672

- [ ] in `iter_archive`: for streaming mode

both in streaming and non-streaming modes.

| false |

1,094,900,336 | https://api.github.com/repos/huggingface/datasets/issues/3540 | https://github.com/huggingface/datasets/issues/3540 | 3,540 | How to convert torch.utils.data.Dataset to datasets.arrow_dataset.Dataset? | open | 0 | 2022-01-06T02:13:42 | 2022-01-06T02:17:39 | null | CindyTing | [

"enhancement"

] | Hi,

I use torch.utils.data.Dataset to define my own data, but I need to use the 'map' function of datasets.arrow_dataset.Dataset later, so I hope to convert torch.utils.data.Dataset to datasets.arrow_dataset.Dataset.

Here is an example.

```

from torch.utils.data import Dataset

from datasets.arrow_dataset import ... | false |

1,094,813,242 | https://api.github.com/repos/huggingface/datasets/issues/3539 | https://github.com/huggingface/datasets/pull/3539 | 3,539 | Research wording for nc licenses | closed | 1 | 2022-01-05T23:01:38 | 2022-01-06T18:58:20 | 2022-01-06T18:58:19 | meg-huggingface | [] | null | true |

1,094,756,755 | https://api.github.com/repos/huggingface/datasets/issues/3538 | https://github.com/huggingface/datasets/pull/3538 | 3,538 | Readme usage update | closed | 0 | 2022-01-05T21:26:28 | 2022-01-05T23:34:25 | 2022-01-05T23:24:15 | meg-huggingface | [] | Noticing that the recent commit throws a lot of errors in the automatic checks. It looks to me that those errors are simply errors that were already there (metadata issues), unrelated to what I've just changed, but worth another look to make sure. | true |

1,094,738,734 | https://api.github.com/repos/huggingface/datasets/issues/3537 | https://github.com/huggingface/datasets/pull/3537 | 3,537 | added PII statements and license links to data cards | closed | 0 | 2022-01-05T20:59:21 | 2022-01-05T22:02:37 | 2022-01-05T22:02:37 | mcmillanmajora | [] | Updates for the following datacards:

multilingual_librispeech

openslr

speech commands

superb

timit_asr

vctk | true |

1,094,645,771 | https://api.github.com/repos/huggingface/datasets/issues/3536 | https://github.com/huggingface/datasets/pull/3536 | 3,536 | update `pretty_name` for all datasets | closed | 1 | 2022-01-05T18:45:05 | 2022-07-10T14:36:54 | 2022-01-12T22:59:45 | bhavitvyamalik | [] | This PR updates `pretty_name` for all datasets. Previous PR #3498 had done this for only first 200 datasets | true |

1,094,633,214 | https://api.github.com/repos/huggingface/datasets/issues/3535 | https://github.com/huggingface/datasets/pull/3535 | 3,535 | Add SVHN dataset | closed | 0 | 2022-01-05T18:29:09 | 2022-01-12T14:14:35 | 2022-01-12T14:14:35 | mariosasko | [] | Add the SVHN dataset.

Additional notes:

* compared to the TFDS implementation, exposes additional the "full numbers" config

* adds the streaming support for `os.path.splitext` and `scipy.io.loadmat`

* adds `h5py` to the requirements list for the dummy data test | true |

1,094,352,449 | https://api.github.com/repos/huggingface/datasets/issues/3534 | https://github.com/huggingface/datasets/pull/3534 | 3,534 | Update wiki_dpr README.md | closed | 0 | 2022-01-05T13:29:44 | 2022-02-17T13:45:56 | 2022-01-05T14:16:51 | lhoestq | [] | Some infos of wiki_dpr were missing as noted in https://github.com/huggingface/datasets/issues/3510, I added them and updated the tags and the examples

Close #3510. | true |

1,094,156,147 | https://api.github.com/repos/huggingface/datasets/issues/3533 | https://github.com/huggingface/datasets/issues/3533 | 3,533 | Task search function on hub not working correctly | open | 3 | 2022-01-05T09:36:30 | 2022-05-12T14:45:57 | null | patrickvonplaten | [

"bug"

] | When I want to look at all datasets of the category: `speech-processing` *i.e.* https://huggingface.co/datasets?task_categories=task_categories:speech-processing&sort=downloads , then the following dataset doesn't show up for some reason:

- https://huggingface.co/datasets/speech_commands

even thought it's task t... | false |

1,094,035,066 | https://api.github.com/repos/huggingface/datasets/issues/3532 | https://github.com/huggingface/datasets/pull/3532 | 3,532 | Give clearer instructions to add the YAML tags | closed | 1 | 2022-01-05T06:47:52 | 2022-01-17T15:54:37 | 2022-01-17T15:54:36 | albertvillanova | [] | Fix #3531.

CC: @julien-c @VictorSanh | true |

1,094,033,280 | https://api.github.com/repos/huggingface/datasets/issues/3531 | https://github.com/huggingface/datasets/issues/3531 | 3,531 | Give clearer instructions to add the YAML tags | closed | 0 | 2022-01-05T06:44:20 | 2022-01-17T15:54:36 | 2022-01-17T15:54:36 | albertvillanova | [

"bug"

] | ## Describe the bug

As reported by @julien-c, many community datasets contain the line `YAML tags:` at the top of the YAML section in the header of the README file. See e.g.: https://huggingface.co/datasets/bigscience/P3/commit/a03bea08cf4d58f268b469593069af6aeb15de32

Maybe we should give clearer instruction/hints... | false |

1,093,894,732 | https://api.github.com/repos/huggingface/datasets/issues/3530 | https://github.com/huggingface/datasets/pull/3530 | 3,530 | Update README.md | closed | 0 | 2022-01-05T01:32:07 | 2022-01-05T12:50:51 | 2022-01-05T12:50:50 | meg-huggingface | [] | Removing reference to "Common Voice" in Personal and Sensitive Information section.

Adding link to license.

Correct license type in metadata. | true |

1,093,846,356 | https://api.github.com/repos/huggingface/datasets/issues/3529 | https://github.com/huggingface/datasets/pull/3529 | 3,529 | Update README.md | closed | 0 | 2022-01-04T23:52:47 | 2022-01-05T12:50:15 | 2022-01-05T12:50:14 | meg-huggingface | [] | Updating licensing information & personal and sensitive information. | true |

1,093,844,616 | https://api.github.com/repos/huggingface/datasets/issues/3528 | https://github.com/huggingface/datasets/pull/3528 | 3,528 | Update README.md | closed | 0 | 2022-01-04T23:48:11 | 2022-01-05T12:49:41 | 2022-01-05T12:49:40 | meg-huggingface | [] | Updating license with appropriate capitalization & a link.

Updating Personal and Sensitive Information to address PII concern. | true |

1,093,840,707 | https://api.github.com/repos/huggingface/datasets/issues/3527 | https://github.com/huggingface/datasets/pull/3527 | 3,527 | Update README.md | closed | 0 | 2022-01-04T23:39:41 | 2022-01-05T00:23:50 | 2022-01-05T00:23:50 | meg-huggingface | [] | Adding licensing information. | true |

1,093,833,446 | https://api.github.com/repos/huggingface/datasets/issues/3526 | https://github.com/huggingface/datasets/pull/3526 | 3,526 | Update license to bookcorpus dataset card | closed | 2 | 2022-01-04T23:25:23 | 2022-09-30T10:23:38 | 2022-09-30T10:21:20 | meg-huggingface | [

"dataset contribution"

] | Not entirely sure, following the links here, but it seems the relevant license is at https://github.com/soskek/bookcorpus/blob/master/LICENSE | true |

1,093,831,268 | https://api.github.com/repos/huggingface/datasets/issues/3525 | https://github.com/huggingface/datasets/pull/3525 | 3,525 | Adding license information for Openbookcorpus | closed | 3 | 2022-01-04T23:20:36 | 2022-04-20T09:54:30 | 2022-04-20T09:48:10 | meg-huggingface | [] | Not entirely sure, following the links here, but it seems the relevant license is at https://github.com/soskek/bookcorpus/blob/master/LICENSE | true |

1,093,826,723 | https://api.github.com/repos/huggingface/datasets/issues/3524 | https://github.com/huggingface/datasets/pull/3524 | 3,524 | Adding link to license. | closed | 0 | 2022-01-04T23:11:48 | 2022-01-05T12:31:38 | 2022-01-05T12:31:37 | meg-huggingface | [] | null | true |

1,093,819,227 | https://api.github.com/repos/huggingface/datasets/issues/3523 | https://github.com/huggingface/datasets/pull/3523 | 3,523 | Added links to licensing and PII message in vctk dataset | closed | 0 | 2022-01-04T22:56:58 | 2022-01-06T19:33:50 | 2022-01-06T19:33:50 | mcmillanmajora | [] | null | true |

1,093,807,586 | https://api.github.com/repos/huggingface/datasets/issues/3522 | https://github.com/huggingface/datasets/issues/3522 | 3,522 | wmt19 is broken (zh-en) | closed | 1 | 2022-01-04T22:33:45 | 2022-05-06T16:27:37 | 2022-05-06T16:27:37 | AjayP13 | [

"bug",

"dataset bug"

] | ## Describe the bug

A clear and concise description of what the bug is.

## Steps to reproduce the bug

```python

from datasets import load_dataset

dataset = load_dataset("wmt19", 'zh-en')

```

## Expected results

The dataset should download.

## Actual results

`ConnectionError: Couldn't reach ftp://cwmt-wm... | false |

1,093,797,947 | https://api.github.com/repos/huggingface/datasets/issues/3521 | https://github.com/huggingface/datasets/pull/3521 | 3,521 | Vivos license update | closed | 0 | 2022-01-04T22:17:47 | 2022-01-04T22:18:16 | 2022-01-04T22:18:16 | mcmillanmajora | [] | Updated the license information with the link to the license text | true |

1,093,747,753 | https://api.github.com/repos/huggingface/datasets/issues/3520 | https://github.com/huggingface/datasets/pull/3520 | 3,520 | Audio datacard update - first pass | closed | 2 | 2022-01-04T20:58:25 | 2022-01-05T12:30:21 | 2022-01-05T12:30:20 | meg-huggingface | [] | Filling out data card "Personal and Sensitive Information" for speech datasets to note PII concerns | true |

1,093,655,205 | https://api.github.com/repos/huggingface/datasets/issues/3519 | https://github.com/huggingface/datasets/pull/3519 | 3,519 | CC100: Using HTTPS for the data source URL fixes load_dataset() | closed | 0 | 2022-01-04T18:45:54 | 2022-01-05T17:28:34 | 2022-01-05T17:28:34 | aajanki | [] | Without this change the following script (with any lang parameter) consistently fails. After changing to the HTTPS URL, the script works as expected.

```python

from datasets import load_dataset

dataset = load_dataset("cc100", lang="en")

```

This is the error produced by the previous script:

```sh

Using cus... | true |

1,093,063,455 | https://api.github.com/repos/huggingface/datasets/issues/3518 | https://github.com/huggingface/datasets/issues/3518 | 3,518 | Add PubMed Central Open Access dataset | closed | 3 | 2022-01-04T06:54:35 | 2022-01-17T15:25:57 | 2022-01-17T15:25:57 | albertvillanova | [

"dataset request"

] | ## Adding a Dataset

- **Name:** PubMed Central Open Access

- **Description:** The PMC Open Access Subset includes more than 3.4 million journal articles and preprints that are made available under license terms that allow reuse.

- **Paper:** *link to the dataset paper if available*

- **Data:** https://www.ncbi.nlm.... | false |

1,092,726,651 | https://api.github.com/repos/huggingface/datasets/issues/3517 | https://github.com/huggingface/datasets/pull/3517 | 3,517 | Add CPPE-5 dataset | closed | 1 | 2022-01-03T18:31:20 | 2022-01-19T02:23:37 | 2022-01-05T18:53:02 | mariosasko | [] | Adds the recently released CPPE-5 dataset. | true |

1,092,657,738 | https://api.github.com/repos/huggingface/datasets/issues/3516 | https://github.com/huggingface/datasets/pull/3516 | 3,516 | dataset `asset` - change to raw.githubusercontent.com URLs | closed | 0 | 2022-01-03T16:43:57 | 2022-01-03T17:39:02 | 2022-01-03T17:39:01 | VictorSanh | [] | Changed the URLs to the ones it was automatically re-directing.

Before, the download was failing | true |

1,092,624,695 | https://api.github.com/repos/huggingface/datasets/issues/3515 | https://github.com/huggingface/datasets/issues/3515 | 3,515 | `ExpectedMoreDownloadedFiles` for `evidence_infer_treatment` | closed | 1 | 2022-01-03T15:58:38 | 2022-02-14T13:21:43 | 2022-02-14T13:21:43 | VictorSanh | [

"bug",

"dataset bug"

] | ## Describe the bug

I am trying to load a dataset called `evidence_infer_treatment`. The first subset (`1.1`) works fine but the second returns an error (`2.0`). It downloads a file but crashes during the checksums.

## Steps to reproduce the bug

```python

>>> from datasets import load_dataset

>>> load_dataset("e... | false |

1,092,606,383 | https://api.github.com/repos/huggingface/datasets/issues/3514 | https://github.com/huggingface/datasets/pull/3514 | 3,514 | Fix to_tf_dataset references in docs | closed | 1 | 2022-01-03T15:31:39 | 2022-01-05T18:52:48 | 2022-01-05T18:52:48 | mariosasko | [] | Fix the `to_tf_dataset` references in the docs. The currently failing example of usage will be fixed by #3338. | true |

1,092,569,802 | https://api.github.com/repos/huggingface/datasets/issues/3513 | https://github.com/huggingface/datasets/pull/3513 | 3,513 | Add desc parameter to filter | closed | 0 | 2022-01-03T14:44:18 | 2022-01-05T18:31:25 | 2022-01-05T18:31:25 | mariosasko | [] | Fix #3317 | true |

1,092,359,973 | https://api.github.com/repos/huggingface/datasets/issues/3512 | https://github.com/huggingface/datasets/issues/3512 | 3,512 | No Data format found | closed | 1 | 2022-01-03T09:41:11 | 2022-01-17T13:26:05 | 2022-01-17T13:26:05 | shazzad47 | [

"dataset-viewer"

] | ## Dataset viewer issue for '*name of the dataset*'

**Link:** *link to the dataset viewer page*

*short description of the issue*

Am I the one who added this dataset ? Yes-No

| false |

1,092,170,411 | https://api.github.com/repos/huggingface/datasets/issues/3511 | https://github.com/huggingface/datasets/issues/3511 | 3,511 | Dataset | closed | 2 | 2022-01-03T02:03:23 | 2022-01-03T08:41:26 | 2022-01-03T08:23:07 | MIKURI0114 | [

"dataset-viewer"

] | ## Dataset viewer issue for '*name of the dataset*'

**Link:** *link to the dataset viewer page*

*short description of the issue*

Am I the one who added this dataset ? Yes-No

| false |

1,091,997,004 | https://api.github.com/repos/huggingface/datasets/issues/3510 | https://github.com/huggingface/datasets/issues/3510 | 3,510 | `wiki_dpr` details for Open Domain Question Answering tasks | closed | 2 | 2022-01-02T11:04:01 | 2022-02-17T13:46:20 | 2022-02-17T13:46:20 | pk1130 | [] | Hey guys!

Thanks for creating the `wiki_dpr` dataset!

I am currently trying to use the dataset for context retrieval using DPR on NQ questions and need details about what each of the files and data instances mean, which version of the Wikipedia dump it uses, etc. Please respond at your earliest convenience regard... | false |

1,091,214,808 | https://api.github.com/repos/huggingface/datasets/issues/3507 | https://github.com/huggingface/datasets/issues/3507 | 3,507 | Discuss whether support canonical datasets w/o dataset_infos.json and/or dummy data | closed | 17 | 2021-12-30T17:04:25 | 2022-11-04T15:31:38 | 2022-11-04T15:31:37 | albertvillanova | [

"enhancement",

"generic discussion"

] | I open this PR to have a public discussion about this topic and make a decision.

As previously discussed, once we have the metadata in the dataset card (README file, containing both Markdown info and YAML tags), what is the point of having also the JSON metadata (dataset_infos.json file)?

On the other hand, the d... | false |

1,091,166,595 | https://api.github.com/repos/huggingface/datasets/issues/3506 | https://github.com/huggingface/datasets/pull/3506 | 3,506 | Allows DatasetDict.filter to have batching option | closed | 0 | 2021-12-30T15:22:22 | 2022-01-04T10:24:28 | 2022-01-04T10:24:27 | thomasw21 | [] | - Related to: #3244

- Fixes: #3503

We extends `.filter( ... batched: bool)` support to DatasetDict. | true |

1,091,150,820 | https://api.github.com/repos/huggingface/datasets/issues/3505 | https://github.com/huggingface/datasets/issues/3505 | 3,505 | cast_column function not working with map function in streaming mode for Audio features | closed | 1 | 2021-12-30T14:52:01 | 2022-01-18T19:54:07 | 2022-01-18T19:54:07 | ashu5644 | [

"bug"

] | ## Describe the bug

I am trying to use Audio class for loading audio features using custom dataset. I am able to cast 'audio' feature into 'Audio' format with cast_column function. On using map function, I am not getting 'Audio' casted feature but getting path of audio file only.

I am getting features of 'audio' of s... | false |

1,090,682,230 | https://api.github.com/repos/huggingface/datasets/issues/3504 | https://github.com/huggingface/datasets/issues/3504 | 3,504 | Unable to download PUBMED_title_abstracts_2019_baseline.jsonl.zst | closed | 10 | 2021-12-29T18:23:20 | 2024-05-20T09:44:59 | 2022-02-17T15:04:25 | ToddMorrill | [

"bug",

"dataset bug"

] | ## Describe the bug

I am unable to download the PubMed dataset from the link provided in the [Hugging Face Course (Chapter 5 Section 4)](https://huggingface.co/course/chapter5/4?fw=pt).

https://the-eye.eu/public/AI/pile_preliminary_components/PUBMED_title_abstracts_2019_baseline.jsonl.zst

## Steps to reproduce ... | false |

1,090,472,735 | https://api.github.com/repos/huggingface/datasets/issues/3503 | https://github.com/huggingface/datasets/issues/3503 | 3,503 | Batched in filter throws error | closed | 0 | 2021-12-29T12:01:04 | 2022-01-04T10:24:27 | 2022-01-04T10:24:27 | gpucce | [

"bug"

] | I hope this is really a bug, I could not find it among the open issues

## Describe the bug

using `batched=False` in DataSet.filter throws error

```python

TypeError: filter() got an unexpected keyword argument 'batched'

```

but in the docs it is lister as an argument.

## Steps to reproduce the bug

```python

... | false |

1,090,438,558 | https://api.github.com/repos/huggingface/datasets/issues/3502 | https://github.com/huggingface/datasets/pull/3502 | 3,502 | Add QuALITY | closed | 1 | 2021-12-29T10:58:46 | 2022-10-03T09:36:14 | 2022-10-03T09:36:14 | jaketae | [

"dataset contribution"

] | Fixes #3441. | true |
