id (int64) | url (string) | html_url (string) | number (int64) | title (string) | state (string, 2 classes) | comments (int64) | created_at (timestamp[s]) | updated_at (timestamp[s]) | closed_at (timestamp[s], nullable) | user_login (string) | labels (list) | body (string, nullable) | is_pull_request (bool) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

612,583,126 | https://api.github.com/repos/huggingface/datasets/issues/50 | https://github.com/huggingface/datasets/pull/50 | 50 | [Tests] test only for fast test as a default | closed | 1 | 2020-05-05T12:59:22 | 2020-05-05T13:02:18 | 2020-05-05T13:02:16 | patrickvonplaten | [] | Test only for one config on circle ci to speed up testing. Add all config test as a slow test.

@mariamabarham @thomwolf | true |
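
A minimal sketch of how a test can be marked as slow, assuming the gate is a `RUN_SLOW` environment variable as the test commands elsewhere in this table suggest. The `slow` decorator and `ExampleTest` class are hypothetical names used for illustration, not the library's actual test code.

```python
import os
import unittest


def slow(test_case):
    """Skip the decorated test unless RUN_SLOW=1 is set (hypothetical helper)."""
    run_slow = os.environ.get("RUN_SLOW", "0") == "1"
    return unittest.skipUnless(run_slow, "slow test; set RUN_SLOW=1 to run it")(test_case)


class ExampleTest(unittest.TestCase):
    def test_one_config(self):
        # Fast test: always runs, covers a single config.
        self.assertTrue(True)

    @slow
    def test_all_configs(self):
        # Slow test: only runs when RUN_SLOW=1 is set in the environment.
        self.assertTrue(True)
```

The environment variable is read when the decorator is applied, so it has to be set before the test module is imported.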

612,545,483 | https://api.github.com/repos/huggingface/datasets/issues/49 | https://github.com/huggingface/datasets/pull/49 | 49 | fix flatten nested | closed | 0 | 2020-05-05T11:55:13 | 2020-05-05T13:59:26 | 2020-05-05T13:59:25 | lhoestq | [] | | true |

612,504,687 | https://api.github.com/repos/huggingface/datasets/issues/48 | https://github.com/huggingface/datasets/pull/48 | 48 | [Command Convert] remove tensorflow import | closed | 0 | 2020-05-05T10:41:00 | 2020-05-05T11:13:58 | 2020-05-05T11:13:56 | patrickvonplaten | [] | Remove all tensorflow import statements. | true |

612,446,493 | https://api.github.com/repos/huggingface/datasets/issues/47 | https://github.com/huggingface/datasets/pull/47 | 47 | [PyArrow Feature] fix py arrow bool | closed | 0 | 2020-05-05T08:56:28 | 2020-05-05T10:40:28 | 2020-05-05T10:40:27 | patrickvonplaten | [] | To me it seems that `bool` can only be accessed with `bool_` when looking at the pyarrow types: https://arrow.apache.org/docs/python/api/datatypes.html. | true |

612,398,190 | https://api.github.com/repos/huggingface/datasets/issues/46 | https://github.com/huggingface/datasets/pull/46 | 46 | [Features] Strip str key before dict look-up | closed | 0 | 2020-05-05T07:31:45 | 2020-05-05T08:37:45 | 2020-05-05T08:37:44 | patrickvonplaten | [] | The dataset `anli.py` currently fails because it tries to look up a key `1\n` in a dict that only has the key `1`. Added an if statement to strip key if it cannot be found in dict. | true |
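
The fallback described in this row can be sketched roughly as follows. The helper name `lookup_label` and the sample mapping are assumptions for illustration; the actual change in the PR may look different.

```python
def lookup_label(mapping, key):
    """Return mapping[key]; if the key is missing, retry with whitespace stripped."""
    if key not in mapping and isinstance(key, str):
        # e.g. "1\n" read from a file becomes "1"
        key = key.strip()
    return mapping[key]


label_ids = {"0": 0, "1": 1}
print(lookup_label(label_ids, "1\n"))  # prints 1
```

Stripping only after a failed look-up keeps keys that legitimately contain whitespace working as before.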

612,386,583 | https://api.github.com/repos/huggingface/datasets/issues/45 | https://github.com/huggingface/datasets/pull/45 | 45 | [Load] Separate Module kwargs and builder kwargs. | closed | 0 | 2020-05-05T07:09:54 | 2022-10-04T09:32:11 | 2020-05-08T09:51:22 | patrickvonplaten | [] | Kwargs for the `load_module` fn should be passed with `module_xxxx` to `builder_kwargs` of `load` fn.

This is a follow-up PR of: https://github.com/huggingface/nlp/pull/41 | true |

611,873,486 | https://api.github.com/repos/huggingface/datasets/issues/44 | https://github.com/huggingface/datasets/pull/44 | 44 | [Tests] Fix tests for datasets with no config | closed | 0 | 2020-05-04T13:25:38 | 2020-05-04T13:28:04 | 2020-05-04T13:28:03 | patrickvonplaten | [] | Forgot to fix the `None` problem for datasets that have no config in this PR: https://github.com/huggingface/nlp/pull/42 | true |

611,773,279 | https://api.github.com/repos/huggingface/datasets/issues/43 | https://github.com/huggingface/datasets/pull/43 | 43 | [Checksums] If no configs exist prevent to run over empty list | closed | 3 | 2020-05-04T10:39:42 | 2022-10-04T09:32:02 | 2020-05-04T13:18:03 | patrickvonplaten | [] | `movie_rationales` e.g. has no configs. | true |

611,754,343 | https://api.github.com/repos/huggingface/datasets/issues/42 | https://github.com/huggingface/datasets/pull/42 | 42 | [Tests] allow tests for builders without config | closed | 0 | 2020-05-04T10:06:22 | 2020-05-04T13:10:50 | 2020-05-04T13:10:48 | patrickvonplaten | [] | Some dataset scripts have no configs - the tests have to be adapted for this case.

In this case the dummy data will be saved as:

- natural_questions

-> dummy

-> -> 1.0.0 (version num)

-> -> -> dummy_data.zip

| true |
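
A small sketch of the directory layout listed in this row. The function name `dummy_data_path` is hypothetical, and the handling of a config name (an extra folder between `dummy` and the version) is an assumption; only the no-config layout is stated in the row itself.

```python
from pathlib import PurePosixPath


def dummy_data_path(dataset_name, version, config_name=None):
    """Build the relative path of the dummy data archive for a dataset script."""
    parts = [dataset_name, "dummy"]
    if config_name is not None:
        # Assumption: datasets with configs get one extra folder per config.
        parts.append(config_name)
    parts += [version, "dummy_data.zip"]
    return str(PurePosixPath(*parts))


print(dummy_data_path("natural_questions", "1.0.0"))
# prints natural_questions/dummy/1.0.0/dummy_data.zip
```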

611,739,219 | https://api.github.com/repos/huggingface/datasets/issues/41 | https://github.com/huggingface/datasets/pull/41 | 41 | [Load module] allow kwargs into load module | closed | 0 | 2020-05-04T09:42:11 | 2020-05-04T19:39:07 | 2020-05-04T19:39:06 | patrickvonplaten | [] | Currently it is not possible to force a re-download of the dataset script.

This simple change allows passing ``force_reload=True`` as ``builder_kwargs`` in the ``load.py`` function. | true |

611,721,308 | https://api.github.com/repos/huggingface/datasets/issues/40 | https://github.com/huggingface/datasets/pull/40 | 40 | Update remote checksums instead of overwrite | closed | 0 | 2020-05-04T09:13:14 | 2020-05-04T11:51:51 | 2020-05-04T11:51:49 | lhoestq | [] | When the user uploads a dataset on S3, checksums are also uploaded with the `--upload_checksums` parameter.

If the user uploads the dataset in several steps, then the remote checksums file was previously overwritten. Now it's going to be updated with the new checksums. | true |
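
The difference between overwriting and updating can be illustrated with a sketch like the one below. It assumes the checksums can be treated as a mapping from URL to checksum; the function name `merge_checksums` and the sample URLs are hypothetical, and the real file format is not shown in this row.

```python
def merge_checksums(remote, new):
    """Return the remote checksums updated with the newly computed ones."""
    merged = dict(remote)  # keep entries from earlier uploads
    merged.update(new)     # add new entries, refresh changed ones
    return merged


remote = {"https://example.com/train.zip": "aaa"}
new = {"https://example.com/test.zip": "bbb"}
print(merge_checksums(remote, new))
```

With a plain overwrite, the `train.zip` entry from the first upload step would be lost after the second step.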

611,712,135 | https://api.github.com/repos/huggingface/datasets/issues/39 | https://github.com/huggingface/datasets/pull/39 | 39 | [Test] improve slow testing | closed | 0 | 2020-05-04T08:58:33 | 2020-05-04T08:59:50 | 2020-05-04T08:59:49 | patrickvonplaten | [] | | true |

611,677,656 | https://api.github.com/repos/huggingface/datasets/issues/38 | https://github.com/huggingface/datasets/issues/38 | 38 | [Checksums] Error for some datasets | closed | 3 | 2020-05-04T08:00:16 | 2020-05-04T09:48:20 | 2020-05-04T09:48:20 | patrickvonplaten | [] | The checksums command works very nicely for `squad`. But for `crime_and_punish` and `xnli`,

the same bug happens:

When running:

```

python nlp-cli nlp-cli test xnli --save_checksums

```

leads to:

```

File "nlp-cli", line 33, in <module>

service.run()

File "/home/patrick/python_bin/nlp/commands... | false |

611,670,295 | https://api.github.com/repos/huggingface/datasets/issues/37 | https://github.com/huggingface/datasets/pull/37 | 37 | [Datasets ToDo-List] add datasets | closed | 8 | 2020-05-04T07:47:39 | 2022-10-04T09:32:17 | 2020-05-08T13:48:23 | patrickvonplaten | [] | ## Description

This PR acts as a dashboard to see which datasets are added to the library and work.

Circle CI should always be green so that we can be sure that newly added datasets are functional.

This PR should not be merged.

## Progress

**For the following datasets the test commands**:

```

RUN_SLOW... | true |

611,528,349 | https://api.github.com/repos/huggingface/datasets/issues/36 | https://github.com/huggingface/datasets/pull/36 | 36 | Metrics - refactoring, adding support for download and distributed metrics | closed | 3 | 2020-05-03T23:00:17 | 2020-05-11T08:16:02 | 2020-05-11T08:16:00 | thomwolf | [] | Refactoring metrics to have a loading API similar to that of the datasets, and improving the import system.

# Import system

The import system has been upgraded. There are now three types of imports allowed:

1. `library` imports (identified as "absolute imports")

```python

import seqeval

```

=> we'll test all the impor... | true |

611,413,731 | https://api.github.com/repos/huggingface/datasets/issues/35 | https://github.com/huggingface/datasets/pull/35 | 35 | [Tests] fix typo | closed | 0 | 2020-05-03T13:23:49 | 2020-05-03T13:24:21 | 2020-05-03T13:24:20 | patrickvonplaten | [] | @lhoestq - currently the slow tests fail with:

```

_____________________________________________________________________________________ DatasetTest.test_load_real_dataset_xnli _____________________________________________________________________________________

... | true |

611,385,516 | https://api.github.com/repos/huggingface/datasets/issues/34 | https://github.com/huggingface/datasets/pull/34 | 34 | [Tests] add slow tests | closed | 0 | 2020-05-03T11:01:22 | 2020-05-03T12:18:30 | 2020-05-03T12:18:29 | patrickvonplaten | [] | This PR adds a slow test that downloads the "real" dataset. The test is decorated as "slow" so that it will not automatically run on circle ci.

Before uploading a dataset, one should test that this test passes, manually by running

```

RUN_SLOW=1 pytest tests/test_dataset_common.py::DatasetTest::test_load_real_d... | true |

611,052,081 | https://api.github.com/repos/huggingface/datasets/issues/33 | https://github.com/huggingface/datasets/pull/33 | 33 | Big cleanup/refactoring for clean serialization | closed | 1 | 2020-05-01T23:45:57 | 2020-05-03T12:17:34 | 2020-05-03T12:17:33 | thomwolf | [] | This PR cleans many base classes to re-build them as `dataclasses`. We can thus use a simple serialization workflow for `DatasetInfo`, including its `Features` and `SplitDict` based on `dataclasses` `asdict()`.

The resulting code is a lot shorter, can be easily serialized/deserialized, dataset info are human-readab... | true |

610,715,580 | https://api.github.com/repos/huggingface/datasets/issues/32 | https://github.com/huggingface/datasets/pull/32 | 32 | Fix map caching notebooks | closed | 0 | 2020-05-01T11:55:26 | 2020-05-03T12:15:58 | 2020-05-03T12:15:57 | lhoestq | [] | Previously, caching results with `.map()` didn't work in notebooks.

To reuse a result, `.map()` serializes the functions with `dill.dumps` and then it hashes it.

The problem is that when using `dill.dumps` to serialize a function, it also saves its origin (filename + line no.) and the origin of all the `globals` th... | true |

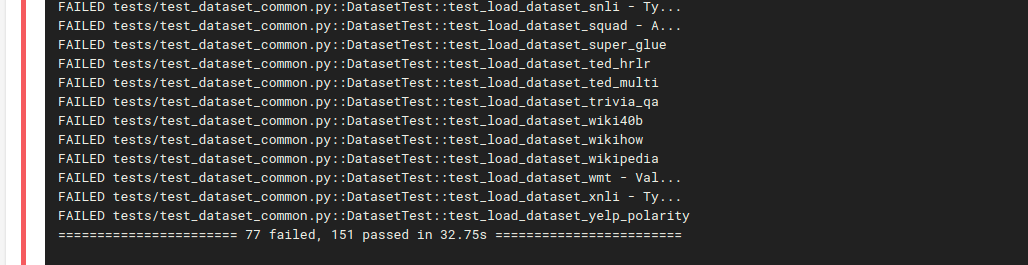

610,677,641 | https://api.github.com/repos/huggingface/datasets/issues/31 | https://github.com/huggingface/datasets/pull/31 | 31 | [Circle ci] Install a virtual env before running tests | closed | 0 | 2020-05-01T10:11:17 | 2020-05-01T22:06:16 | 2020-05-01T22:06:15 | patrickvonplaten | [] | Install a virtual env before running tests to avoid running into sudo issues when dynamically downloading files.

Same number of tests now pass / fail as on my local computer:

... | true |

610,549,072 | https://api.github.com/repos/huggingface/datasets/issues/30 | https://github.com/huggingface/datasets/pull/30 | 30 | add metrics which require download files from github | closed | 0 | 2020-05-01T04:13:22 | 2022-10-04T09:31:58 | 2020-05-11T08:19:54 | mariamabarham | [] | To download files from github, I copied the `load_dataset_module` and its dependencies (without the builder) in `load.py` to `metrics/metric_utils.py`. I made the following changes:

- copy the needed files in a folder`metric_name`

- delete all other files that are not needed

For metrics that require an external... | true |

610,243,997 | https://api.github.com/repos/huggingface/datasets/issues/29 | https://github.com/huggingface/datasets/pull/29 | 29 | Hf_api small changes | closed | 1 | 2020-04-30T17:06:43 | 2020-04-30T19:51:45 | 2020-04-30T19:51:44 | julien-c | [] | From Patrick:

```python

from nlp import hf_api

api = hf_api.HfApi()

api.dataset_list()

```

works :-) | true |

610,241,907 | https://api.github.com/repos/huggingface/datasets/issues/28 | https://github.com/huggingface/datasets/pull/28 | 28 | [Circle ci] Adds circle ci config | closed | 0 | 2020-04-30T17:03:35 | 2020-04-30T19:51:09 | 2020-04-30T19:51:08 | patrickvonplaten | [] | @thomwolf can you take a look and set up circle ci on:

https://app.circleci.com/projects/project-dashboard/github/huggingface

I think for `nlp` only admins can set it up, which I guess is you :-) | true |

610,230,476 | https://api.github.com/repos/huggingface/datasets/issues/27 | https://github.com/huggingface/datasets/pull/27 | 27 | [Cleanup] Removes all files in testing except test_dataset_common | closed | 0 | 2020-04-30T16:45:21 | 2020-04-30T17:39:25 | 2020-04-30T17:39:23 | patrickvonplaten | [] | As far as I know, all files in `tests` were old `tfds test files` so I removed them. We can still look them up on the other library. | true |

610,226,047 | https://api.github.com/repos/huggingface/datasets/issues/26 | https://github.com/huggingface/datasets/pull/26 | 26 | [Tests] Clean tests | closed | 0 | 2020-04-30T16:38:29 | 2020-04-30T20:12:04 | 2020-04-30T20:12:03 | patrickvonplaten | [] | the abseil testing library (https://abseil.io/docs/python/quickstart.html) is better than the one I had before, so I decided to switch to that and changed the `setup.py` config file.

Abseil has more support and a cleaner API for parametrized testing I think.

I added a list of all dataset scripts that are currentl... | true |

609,708,863 | https://api.github.com/repos/huggingface/datasets/issues/25 | https://github.com/huggingface/datasets/pull/25 | 25 | Add script csv datasets | closed | 3 | 2020-04-30T08:28:08 | 2022-10-04T09:32:13 | 2020-05-07T21:14:49 | jplu | [] | This is a PR allowing to create datasets from local CSV files. A usage might be:

```python

import nlp

ds = nlp.load(

path="csv",

name="bbc",

dataset_files={

nlp.Split.TRAIN: ["datasets/dummy_data/csv/train.csv"],

nlp.Split.TEST: ["datasets/dummy_data/csv/test.csv"]

},

c... | true |

609,064,987 | https://api.github.com/repos/huggingface/datasets/issues/24 | https://github.com/huggingface/datasets/pull/24 | 24 | Add checksums | closed | 5 | 2020-04-29T13:37:29 | 2020-04-30T19:52:50 | 2020-04-30T19:52:49 | lhoestq | [] | ### Checksums files

They are stored next to the dataset script in urls_checksums/checksums.txt.

They are used to check the integrity of the datasets downloaded files.

I kept the same format as tensorflow-datasets.

There is one checksums file for all configs.

### Load a dataset

When you do `load("squad")`, i... | true |

608,508,706 | https://api.github.com/repos/huggingface/datasets/issues/23 | https://github.com/huggingface/datasets/pull/23 | 23 | Add metrics | closed | 0 | 2020-04-28T18:02:05 | 2022-10-04T09:31:56 | 2020-05-11T08:19:38 | mariamabarham | [] | This PR is a draft for adding metrics (sacrebleu and seqeval are added)

use case examples:

`import nlp`

**sacrebleu:**

```

refs = [['The dog bit the man.', 'It was not unexpected.', 'The man bit him first.'],

['The dog had bit the man.', 'No one was surprised.', 'The man had bitten the dog.']]

sys = ['... | true |

608,298,586 | https://api.github.com/repos/huggingface/datasets/issues/22 | https://github.com/huggingface/datasets/pull/22 | 22 | adding bleu score code | closed | 0 | 2020-04-28T13:00:50 | 2020-04-28T17:48:20 | 2020-04-28T17:48:08 | mariamabarham | [] | This PR adds the BLEU score metric to the lib. It can be tested by running the following code.

` from nlp.metrics import bleu

hyp1 = "It is a guide to action which ensures that the military always obeys the commands of the party"

ref1a = "It is a guide to action that ensures that the military forces always being... | true |

607,914,185 | https://api.github.com/repos/huggingface/datasets/issues/21 | https://github.com/huggingface/datasets/pull/21 | 21 | Cleanup Features - Updating convert command - Fix Download manager | closed | 2 | 2020-04-27T23:16:55 | 2020-05-01T09:29:47 | 2020-05-01T09:29:46 | thomwolf | [] | This PR makes a number of changes:

# Updating `Features`

Features are a complex mechanism provided in `tfds` to be able to modify a dataset on-the-fly when serializing to disk and when loading from disk.

We don't really need this because (1) it hides too much from the user and (2) our datatype can be directly ... | true |

607,313,557 | https://api.github.com/repos/huggingface/datasets/issues/20 | https://github.com/huggingface/datasets/pull/20 | 20 | remove boto3 and promise dependencies | closed | 0 | 2020-04-27T07:39:45 | 2020-04-27T16:04:17 | 2020-04-27T14:15:45 | lhoestq | [] | With the new download manager, we don't need `promise` anymore.

I also removed `boto3` as in [this pr](https://github.com/huggingface/transformers/pull/3968) | true |

606,400,645 | https://api.github.com/repos/huggingface/datasets/issues/19 | https://github.com/huggingface/datasets/pull/19 | 19 | Replace tf.constant for TF | closed | 1 | 2020-04-24T15:32:06 | 2020-04-29T09:27:08 | 2020-04-25T21:18:45 | jplu | [] | Replace the simple tf.constant type of Tensor with tf.ragged.constant, which allows examples of different sizes in a tf.data.Dataset.

Now the training works with TF. Here is the same example as for PT in Colab:

```python

import tensorflow as tf

import nlp

from transformers import BertTokenizerFast, TFBertF... | true |

606,109,196 | https://api.github.com/repos/huggingface/datasets/issues/18 | https://github.com/huggingface/datasets/pull/18 | 18 | Updating caching mechanism - Allow dependency in dataset processing scripts - Fix style and quality in the repo | closed | 1 | 2020-04-24T07:39:48 | 2020-04-29T15:27:28 | 2020-04-28T16:06:28 | thomwolf | [] | This PR has a lot of content (might be hard to review, sorry, in particular because I fixed the style in the repo at the same time).

# Style & quality:

You can now install the style and quality tools with `pip install -e .[quality]`. This will install black, the compatible version of isort and flake8.

You can then ... | true |

605,753,027 | https://api.github.com/repos/huggingface/datasets/issues/17 | https://github.com/huggingface/datasets/pull/17 | 17 | Add Pandas as format type | closed | 0 | 2020-04-23T18:20:14 | 2020-04-27T18:07:50 | 2020-04-27T18:07:48 | jplu | [] | As detailed in the title ^^ | true |

605,661,462 | https://api.github.com/repos/huggingface/datasets/issues/16 | https://github.com/huggingface/datasets/pull/16 | 16 | create our own DownloadManager | closed | 4 | 2020-04-23T16:08:07 | 2021-05-05T18:25:24 | 2020-04-25T21:25:10 | lhoestq | [] | I tried to create our own - and way simpler - download manager, by replacing all the complicated stuff with our own `cached_path` solution.

With this implementation, I tried `dataset = nlp.load('squad')` and it seems to work fine.

For the implementation, what I did exactly:

- I copied the old download manager

- I... | true |

604,906,708 | https://api.github.com/repos/huggingface/datasets/issues/15 | https://github.com/huggingface/datasets/pull/15 | 15 | [Tests] General Test Design for all dataset scripts | closed | 10 | 2020-04-22T16:46:01 | 2022-10-04T09:31:54 | 2020-04-27T14:48:02 | patrickvonplaten | [] | The general idea is similar to how testing is done in `transformers`. There is one general `test_dataset_common.py` file which has a `DatasetTesterMixin` class. This class implements all of the logic that can be used in a generic way for all dataset classes. The idea is to keep each individual dataset test file as mini... | true |

604,761,315 | https://api.github.com/repos/huggingface/datasets/issues/14 | https://github.com/huggingface/datasets/pull/14 | 14 | [Download] Only create dir if not already exist | closed | 0 | 2020-04-22T13:32:51 | 2022-10-04T09:31:50 | 2020-04-23T08:27:33 | patrickvonplaten | [] | This was quite annoying to find out :D.

Some datasets are saved in the same directory, so we should only create a new directory if it doesn't already exist. | true |
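
One common way to get this behaviour in Python is `os.makedirs(..., exist_ok=True)`. The sketch below uses a hypothetical helper `ensure_dir` and a temporary directory; it is not necessarily how the PR implemented the fix.

```python
import os
import tempfile


def ensure_dir(path):
    """Create the directory (and parents) only if it does not exist yet."""
    os.makedirs(path, exist_ok=True)
    return path


with tempfile.TemporaryDirectory() as root:
    target = os.path.join(root, "downloads", "shared")
    ensure_dir(target)  # first dataset creates the directory
    ensure_dir(target)  # second dataset reuses it without raising
    print(os.path.isdir(target))  # prints True
```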

604,547,951 | https://api.github.com/repos/huggingface/datasets/issues/13 | https://github.com/huggingface/datasets/pull/13 | 13 | [Make style] | closed | 3 | 2020-04-22T08:10:06 | 2024-11-20T13:42:58 | 2020-04-23T13:02:22 | patrickvonplaten | [] | Added Makefile and applied make style to all.

make style runs the following code:

```

style:

black --line-length 119 --target-version py35 src

isort --recursive src

```

It's the same code that is run in `transformers`. | true |

604,518,583 | https://api.github.com/repos/huggingface/datasets/issues/12 | https://github.com/huggingface/datasets/pull/12 | 12 | [Map Function] add assert statement if map function does not return dict or None | closed | 3 | 2020-04-22T07:21:24 | 2022-10-04T09:31:53 | 2020-04-24T06:29:03 | patrickvonplaten | [] | IMO, if a function is provided that is not a print statement (-> returns variable of type `None`) or a function that updates the datasets (-> returns variable of type `dict`), then a `TypeError` should be raised.

Not sure whether you had cases in mind where the user should do something else @thomwolf , but I think ... | true |
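
The check proposed in this row could look roughly like the sketch below. The helper name `check_map_output` and the error message are assumptions; the row only states that a `TypeError` should be raised when the mapped function returns something other than a `dict` or `None`.

```python
def check_map_output(result):
    """Accept None (side-effect only) or a dict (dataset update); reject the rest."""
    if result is not None and not isinstance(result, dict):
        raise TypeError(
            "The mapped function must return a dict or None, got {}".format(type(result).__name__)
        )
    return result


check_map_output(None)             # e.g. a function that only prints
check_map_output({"text": "abc"})  # e.g. a function that updates the example
```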

603,921,624 | https://api.github.com/repos/huggingface/datasets/issues/11 | https://github.com/huggingface/datasets/pull/11 | 11 | [Convert TFDS to HFDS] Extend script to also allow just converting a single file | closed | 0 | 2020-04-21T11:25:33 | 2022-10-04T09:31:46 | 2020-04-21T20:47:00 | patrickvonplaten | [] | Adds another argument to be able to convert only a single file | true |

603,909,327 | https://api.github.com/repos/huggingface/datasets/issues/10 | https://github.com/huggingface/datasets/pull/10 | 10 | Name json file "squad.json" instead of "squad.py.json" | closed | 0 | 2020-04-21T11:04:28 | 2022-10-04T09:31:44 | 2020-04-21T20:48:06 | patrickvonplaten | [] | | true |

603,894,874 | https://api.github.com/repos/huggingface/datasets/issues/9 | https://github.com/huggingface/datasets/pull/9 | 9 | [Clean up] Datasets | closed | 1 | 2020-04-21T10:39:56 | 2022-10-04T09:31:42 | 2020-04-21T20:49:58 | patrickvonplaten | [] | Clean up `nlp/datasets` folder.

As I understood, eventually the `nlp/datasets` shall not exist anymore at all.

The folder `nlp/datasets/nlp` is kept for the moment, but won't be needed in the future, since it will live on S3 (actually it already does) at: `https://s3.console.aws.amazon.com/s3/buckets/datasets.h... | true |

601,783,243 | https://api.github.com/repos/huggingface/datasets/issues/8 | https://github.com/huggingface/datasets/pull/8 | 8 | Fix issue 6: error when the citation is missing in the DatasetInfo | closed | 0 | 2020-04-17T08:04:26 | 2020-04-29T09:27:11 | 2020-04-20T13:24:12 | jplu | [] | | true |

601,780,534 | https://api.github.com/repos/huggingface/datasets/issues/7 | https://github.com/huggingface/datasets/pull/7 | 7 | Fix issue 5: allow empty datasets | closed | 0 | 2020-04-17T07:59:56 | 2020-04-29T09:27:13 | 2020-04-20T13:23:48 | jplu | [] | | true |

600,330,836 | https://api.github.com/repos/huggingface/datasets/issues/6 | https://github.com/huggingface/datasets/issues/6 | 6 | Error when citation is not given in the DatasetInfo | closed | 3 | 2020-04-15T14:14:54 | 2020-04-29T09:23:22 | 2020-04-29T09:23:22 | jplu | [] | The following error is raised when the `citation` parameter is missing when we instantiate a `DatasetInfo`:

```

Traceback (most recent call last):

File "<stdin>", line 1, in <module>

File "/home/jplu/dev/jplu/datasets/src/nlp/info.py", line 338, in __repr__

citation_pprint = _indent('"""{}"""'.format(self.... | false |

600,295,889 | https://api.github.com/repos/huggingface/datasets/issues/5 | https://github.com/huggingface/datasets/issues/5 | 5 | ValueError when a split is empty | closed | 3 | 2020-04-15T13:25:13 | 2020-04-29T09:23:05 | 2020-04-29T09:23:05 | jplu | [] | When a split is empty either TEST, VALIDATION or TRAIN I get the following error:

```

Traceback (most recent call last):

File "<stdin>", line 1, in <module>

File "/home/jplu/dev/jplu/datasets/src/nlp/load.py", line 295, in load

ds = dbuilder.as_dataset(**as_dataset_kwargs)

File "/home/jplu/dev/jplu/data... | false |

600,185,417 | https://api.github.com/repos/huggingface/datasets/issues/4 | https://github.com/huggingface/datasets/issues/4 | 4 | [Feature] Keep the list of labels of a dataset as metadata | closed | 6 | 2020-04-15T10:17:10 | 2020-07-08T16:59:46 | 2020-05-04T06:11:57 | jplu | [] | It would be useful to keep the list of the labels of a dataset as metadata. Either directly in the `DatasetInfo` or in the Arrow metadata. | false |

600,180,050 | https://api.github.com/repos/huggingface/datasets/issues/3 | https://github.com/huggingface/datasets/issues/3 | 3 | [Feature] More dataset outputs | closed | 3 | 2020-04-15T10:08:14 | 2020-05-04T06:12:27 | 2020-05-04T06:12:27 | jplu | [] | Add the following dataset outputs:

- Spark

- Pandas | false |

599,767,671 | https://api.github.com/repos/huggingface/datasets/issues/2 | https://github.com/huggingface/datasets/issues/2 | 2 | Issue to read a local dataset | closed | 5 | 2020-04-14T18:18:51 | 2020-05-11T18:55:23 | 2020-05-11T18:55:22 | jplu | [] | Hello,

As proposed by @thomwolf, I open an issue to explain what I'm trying to do without success. What I want to do is to create and load a local dataset, the script I have done is the following:

```python

import os

import csv

import nlp

class BbcConfig(nlp.BuilderConfig):

def __init__(self, **kwarg... | false |

599,457,467 | https://api.github.com/repos/huggingface/datasets/issues/1 | https://github.com/huggingface/datasets/pull/1 | 1 | changing nlp.bool to nlp.bool_ | closed | 0 | 2020-04-14T10:18:02 | 2022-10-04T09:31:40 | 2020-04-14T12:01:40 | mariamabarham | [] | | true |
