id int64 599M 3.29B | url stringlengths 58 61 | html_url stringlengths 46 51 | number int64 1 7.72k | title stringlengths 1 290 | state stringclasses 2

values | comments int64 0 70 | created_at timestamp[s]date 2020-04-14 10:18:02 2025-08-05 09:28:51 | updated_at timestamp[s]date 2020-04-27 16:04:17 2025-08-05 11:39:56 | closed_at timestamp[s]date 2020-04-14 12:01:40 2025-08-01 05:15:45 ⌀ | user_login stringlengths 3 26 | labels listlengths 0 4 | body stringlengths 0 228k ⌀ | is_pull_request bool 2

classes |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

634,544,977 | https://api.github.com/repos/huggingface/datasets/issues/251 | https://github.com/huggingface/datasets/pull/251 | 251 | Better access to all dataset information | closed | 0 | 2020-06-08T11:56:50 | 2020-06-12T08:13:00 | 2020-06-12T08:12:58 | thomwolf | [] | Moves all the dataset info down one level from `dataset.info.XXX` to `dataset.XXX`

This way it's easier to access `dataset.feature['label']` for instance

Also, add the original split instructions used to create the dataset in `dataset.split`

Ex:

```

from nlp import load_dataset

stsb = load_dataset('glue', name=... | true |

634,416,751 | https://api.github.com/repos/huggingface/datasets/issues/250 | https://github.com/huggingface/datasets/pull/250 | 250 | Remove checksum download in c4 | closed | 1 | 2020-06-08T09:13:00 | 2020-08-25T07:04:56 | 2020-06-08T09:16:59 | lhoestq | [] | There was a line from the original tfds script that was still there and causing issues when loading the c4 script. This one should fix #233 and allow anyone to load the c4 script to generate the dataset | true |

633,393,443 | https://api.github.com/repos/huggingface/datasets/issues/249 | https://github.com/huggingface/datasets/issues/249 | 249 | [Dataset created] some critical small issues when I was creating a dataset | closed | 2 | 2020-06-07T12:58:54 | 2020-06-12T08:28:51 | 2020-06-12T08:28:51 | richarddwang | [] | Hi, I successfully created a dataset and has made a pr #248.

But I have encountered several problems when I was creating it, and those should be easy to fix.

1. Not found dataset_info.json

should be fixed by #241 , eager to wait it be merged.

2. Forced to install `apach_beam`

If we should install it, then it m... | false |

633,390,427 | https://api.github.com/repos/huggingface/datasets/issues/248 | https://github.com/huggingface/datasets/pull/248 | 248 | add Toronto BooksCorpus | closed | 11 | 2020-06-07T12:54:56 | 2020-06-12T08:45:03 | 2020-06-12T08:45:02 | richarddwang | [] | 1. I knew there is a branch `toronto_books_corpus`

- After I downloaded it, I found it is all non-english, and only have one row.

- It seems that it cites the wrong paper

- according to papar using it, it is called `BooksCorpus` but not `TornotoBooksCorpus`

2. It use a text mirror in google drive

- `bookscorpu... | true |

632,380,078 | https://api.github.com/repos/huggingface/datasets/issues/247 | https://github.com/huggingface/datasets/pull/247 | 247 | Make all dataset downloads deterministic by applying `sorted` to glob and os.listdir | closed | 3 | 2020-06-06T11:02:10 | 2020-06-08T09:18:16 | 2020-06-08T09:18:14 | patrickvonplaten | [] | This PR makes all datasets loading deterministic by applying `sorted()` to all `glob.glob` and `os.listdir` statements.

Are there other "non-deterministic" functions apart from `glob.glob()` and `os.listdir()` that you can think of @thomwolf @lhoestq @mariamabarham @jplu ?

**Important**

It does break backward c... | true |

632,380,054 | https://api.github.com/repos/huggingface/datasets/issues/246 | https://github.com/huggingface/datasets/issues/246 | 246 | What is the best way to cache a dataset? | closed | 2 | 2020-06-06T11:02:07 | 2020-07-09T09:15:07 | 2020-07-09T09:15:07 | Mistobaan | [] | For example if I want to use streamlit with a nlp dataset:

```

@st.cache

def load_data():

return nlp.load_dataset('squad')

```

This code raises the error "uncachable object"

Right now I just fixed with a constant for my specific case:

```

@st.cache(hash_funcs={pyarrow.lib.Buffer: lambda b: 0})

```... | false |

631,985,108 | https://api.github.com/repos/huggingface/datasets/issues/245 | https://github.com/huggingface/datasets/issues/245 | 245 | SST-2 test labels are all -1 | closed | 10 | 2020-06-05T21:41:42 | 2021-12-08T00:47:32 | 2020-06-06T16:56:41 | jxmorris12 | [] | I'm trying to test a model on the SST-2 task, but all the labels I see in the test set are -1.

```

>>> import nlp

>>> glue = nlp.load_dataset('glue', 'sst2')

>>> glue

{'train': Dataset(schema: {'sentence': 'string', 'label': 'int64', 'idx': 'int32'}, num_rows: 67349), 'validation': Dataset(schema: {'sentence': 'st... | false |

631,869,155 | https://api.github.com/repos/huggingface/datasets/issues/244 | https://github.com/huggingface/datasets/pull/244 | 244 | Add Allociné Dataset | closed | 3 | 2020-06-05T19:19:26 | 2020-06-11T07:47:26 | 2020-06-11T07:47:26 | TheophileBlard | [] | This is a french binary sentiment classification dataset, which was used to train this model: https://huggingface.co/tblard/tf-allocine.

Basically, it's a french "IMDB" dataset, with more reviews.

More info on [this repo](https://github.com/TheophileBlard/french-sentiment-analysis-with-bert). | true |

631,735,848 | https://api.github.com/repos/huggingface/datasets/issues/243 | https://github.com/huggingface/datasets/pull/243 | 243 | Specify utf-8 encoding for GLUE | closed | 1 | 2020-06-05T16:33:00 | 2020-06-17T21:16:06 | 2020-06-08T08:42:01 | patpizio | [] | #242

This makes the GLUE-MNLI dataset readable on my machine, not sure if it's a Windows-only bug. | true |

631,733,683 | https://api.github.com/repos/huggingface/datasets/issues/242 | https://github.com/huggingface/datasets/issues/242 | 242 | UnicodeDecodeError when downloading GLUE-MNLI | closed | 2 | 2020-06-05T16:30:01 | 2020-06-09T16:06:47 | 2020-06-08T08:45:03 | patpizio | [] | When I run

```python

dataset = nlp.load_dataset('glue', 'mnli')

```

I get an encoding error (could it be because I'm using Windows?) :

```python

# Lots of error log lines later...

~\Miniconda3\envs\nlp\lib\site-packages\tqdm\std.py in __iter__(self)

1128 try:

-> 1129 for obj in iterable:... | false |

631,703,079 | https://api.github.com/repos/huggingface/datasets/issues/241 | https://github.com/huggingface/datasets/pull/241 | 241 | Fix empty cache dir | closed | 2 | 2020-06-05T15:45:22 | 2020-06-08T08:35:33 | 2020-06-08T08:35:31 | lhoestq | [] | If the cache dir of a dataset is empty, the dataset fails to load and throws a FileNotFounfError. We could end up with empty cache dir because there was a line in the code that created the cache dir without using a temp dir. Using a temp dir is useful as it gets renamed to the real cache dir only if the full process is... | true |

631,434,677 | https://api.github.com/repos/huggingface/datasets/issues/240 | https://github.com/huggingface/datasets/issues/240 | 240 | Deterministic dataset loading | closed | 4 | 2020-06-05T09:03:26 | 2020-06-08T09:18:14 | 2020-06-08T09:18:14 | patrickvonplaten | [] | When calling:

```python

import nlp

dataset = nlp.load_dataset("trivia_qa", split="validation[:1%]")

```

the resulting dataset is not deterministic over different google colabs.

After talking to @thomwolf, I suspect the reason to be the use of `glob.glob` in line:

https://github.com/huggingface/nlp/blob/2e0... | false |

631,340,440 | https://api.github.com/repos/huggingface/datasets/issues/239 | https://github.com/huggingface/datasets/issues/239 | 239 | [Creating new dataset] Not found dataset_info.json | closed | 5 | 2020-06-05T06:15:04 | 2020-06-07T13:01:04 | 2020-06-07T13:01:04 | richarddwang | [] | Hi, I am trying to create Toronto Book Corpus. #131

I ran

`~/nlp % python nlp-cli test datasets/bookcorpus --save_infos --all_configs`

but this doesn't create `dataset_info.json` and try to use it

```

INFO:nlp.load:Checking datasets/bookcorpus/bookcorpus.py for additional imports.

INFO:filelock:Lock 1397953257... | false |

631,260,143 | https://api.github.com/repos/huggingface/datasets/issues/238 | https://github.com/huggingface/datasets/issues/238 | 238 | [Metric] Bertscore : Warning : Empty candidate sentence; Setting recall to be 0. | closed | 1 | 2020-06-05T02:14:47 | 2020-06-29T17:10:19 | 2020-06-29T17:10:19 | astariul | [

"metric bug"

] | When running BERT-Score, I'm meeting this warning :

> Warning: Empty candidate sentence; Setting recall to be 0.

Code :

```

import nlp

metric = nlp.load_metric("bertscore")

scores = metric.compute(["swag", "swags"], ["swags", "totally something different"], lang="en", device=0)

```

---

**What am I do... | false |

631,199,940 | https://api.github.com/repos/huggingface/datasets/issues/237 | https://github.com/huggingface/datasets/issues/237 | 237 | Can't download MultiNLI | closed | 3 | 2020-06-04T23:05:21 | 2020-06-06T10:51:34 | 2020-06-06T10:51:34 | patpizio | [] | When I try to download MultiNLI with

```python

dataset = load_dataset('multi_nli')

```

I get this long error:

```python

---------------------------------------------------------------------------

OSError Traceback (most recent call last)

<ipython-input-13-3b11f6be4cb9> in <m... | false |

631,099,875 | https://api.github.com/repos/huggingface/datasets/issues/236 | https://github.com/huggingface/datasets/pull/236 | 236 | CompGuessWhat?! dataset | closed | 9 | 2020-06-04T19:45:50 | 2020-06-11T09:43:42 | 2020-06-11T07:45:21 | aleSuglia | [] | Hello,

Thanks for the amazing library that you put together. I'm Alessandro Suglia, the first author of CompGuessWhat?!, a recently released dataset for grounded language learning accepted to ACL 2020 ([https://compguesswhat.github.io](https://compguesswhat.github.io)).

This pull-request adds the CompGuessWhat?! ... | true |

630,952,297 | https://api.github.com/repos/huggingface/datasets/issues/235 | https://github.com/huggingface/datasets/pull/235 | 235 | Add experimental datasets | closed | 6 | 2020-06-04T15:54:56 | 2020-06-12T15:38:55 | 2020-06-12T15:38:55 | yjernite | [] | ## Adding an *experimental datasets* folder

After using the 🤗nlp library for some time, I find that while it makes it super easy to create new memory-mapped datasets with lots of cool utilities, a lot of what I want to do doesn't work well with the current `MockDownloader` based testing paradigm, making it hard to ... | true |

630,534,427 | https://api.github.com/repos/huggingface/datasets/issues/234 | https://github.com/huggingface/datasets/issues/234 | 234 | Huggingface NLP, Uploading custom dataset | closed | 4 | 2020-06-04T05:59:06 | 2020-07-06T09:33:26 | 2020-07-06T09:33:26 | Nouman97 | [] | Hello,

Does anyone know how we can call our custom dataset using the nlp.load command? Let's say that I have a dataset based on the same format as that of squad-v1.1, how am I supposed to load it using huggingface nlp.

Thank you! | false |

630,432,132 | https://api.github.com/repos/huggingface/datasets/issues/233 | https://github.com/huggingface/datasets/issues/233 | 233 | Fail to download c4 english corpus | closed | 5 | 2020-06-04T01:06:38 | 2021-01-08T07:17:32 | 2020-06-08T09:16:59 | donggyukimc | [] | i run following code to download c4 English corpus.

```

dataset = nlp.load_dataset('c4', 'en', beam_runner='DirectRunner'

, data_dir='/mypath')

```

and i met failure as follows

```

Downloading and preparing dataset c4/en (download: Unknown size, generated: Unknown size, total: Unknown size) to /home/adam/.... | false |

630,029,568 | https://api.github.com/repos/huggingface/datasets/issues/232 | https://github.com/huggingface/datasets/pull/232 | 232 | Nlp cli fix endpoints | closed | 1 | 2020-06-03T14:10:39 | 2020-06-08T09:02:58 | 2020-06-08T09:02:57 | lhoestq | [] | With this PR users will be able to upload their own datasets and metrics.

As mentioned in #181, I had to use the new endpoints and revert the use of dataclasses (just in case we have changes in the API in the future).

We now distinguish commands for datasets and commands for metrics:

```bash

nlp-cli upload_data... | true |

629,988,694 | https://api.github.com/repos/huggingface/datasets/issues/231 | https://github.com/huggingface/datasets/pull/231 | 231 | Add .download to MockDownloadManager | closed | 0 | 2020-06-03T13:20:00 | 2020-06-03T14:25:56 | 2020-06-03T14:25:55 | lhoestq | [] | One method from the DownloadManager was missing and some users couldn't run the tests because of that.

@yjernite | true |

629,983,684 | https://api.github.com/repos/huggingface/datasets/issues/230 | https://github.com/huggingface/datasets/pull/230 | 230 | Don't force to install apache beam for wikipedia dataset | closed | 0 | 2020-06-03T13:13:07 | 2020-06-03T14:34:09 | 2020-06-03T14:34:07 | lhoestq | [] | As pointed out in #227, we shouldn't force users to install apache beam if the processed dataset can be downloaded. I moved the imports of some datasets to avoid this problem | true |

629,956,490 | https://api.github.com/repos/huggingface/datasets/issues/229 | https://github.com/huggingface/datasets/pull/229 | 229 | Rename dataset_infos.json to dataset_info.json | closed | 1 | 2020-06-03T12:31:44 | 2020-06-03T12:52:54 | 2020-06-03T12:48:33 | aswin-giridhar | [] | As the file required for the viewing in the live nlp viewer is named as dataset_info.json | true |

629,952,402 | https://api.github.com/repos/huggingface/datasets/issues/228 | https://github.com/huggingface/datasets/issues/228 | 228 | Not able to access the XNLI dataset | closed | 4 | 2020-06-03T12:25:14 | 2020-07-17T17:44:22 | 2020-07-17T17:44:22 | aswin-giridhar | [

"nlp-viewer"

] | When I try to access the XNLI dataset, I get the following error. The option of plain_text get selected automatically and then I get the following error.

```

FileNotFoundError: [Errno 2] No such file or directory: '/home/sasha/.cache/huggingface/datasets/xnli/plain_text/1.0.0/dataset_info.json'

Traceback:

File "/... | false |

629,845,704 | https://api.github.com/repos/huggingface/datasets/issues/227 | https://github.com/huggingface/datasets/issues/227 | 227 | Should we still have to force to install apache_beam to download wikipedia ? | closed | 3 | 2020-06-03T09:33:20 | 2020-06-03T15:25:41 | 2020-06-03T15:25:41 | richarddwang | [] | Hi, first thanks to @lhoestq 's revolutionary work, I successfully downloaded processed wikipedia according to the doc. 😍😍😍

But at the first try, it tell me to install `apache_beam` and `mwparserfromhell`, which I thought wouldn't be used according to #204 , it was kind of confusing me at that time.

Maybe we s... | false |

628,344,520 | https://api.github.com/repos/huggingface/datasets/issues/226 | https://github.com/huggingface/datasets/pull/226 | 226 | add BlendedSkillTalk dataset | closed | 1 | 2020-06-01T10:54:45 | 2020-06-03T14:37:23 | 2020-06-03T14:37:22 | mariamabarham | [] | This PR add the BlendedSkillTalk dataset, which is used to fine tune the blenderbot. | true |

628,083,366 | https://api.github.com/repos/huggingface/datasets/issues/225 | https://github.com/huggingface/datasets/issues/225 | 225 | [ROUGE] Different scores with `files2rouge` | closed | 3 | 2020-06-01T00:50:36 | 2020-06-03T15:27:18 | 2020-06-03T15:27:18 | astariul | [

"Metric discussion"

] | It seems that the ROUGE score of `nlp` is lower than the one of `files2rouge`.

Here is a self-contained notebook to reproduce both scores : https://colab.research.google.com/drive/14EyAXValB6UzKY9x4rs_T3pyL7alpw_F?usp=sharing

---

`nlp` : (Only mid F-scores)

>rouge1 0.33508031962733364

rouge2 0.145743337761... | false |

627,791,693 | https://api.github.com/repos/huggingface/datasets/issues/224 | https://github.com/huggingface/datasets/issues/224 | 224 | [Feature Request/Help] BLEURT model -> PyTorch | closed | 6 | 2020-05-30T18:30:40 | 2023-08-26T17:38:48 | 2021-01-04T09:53:32 | adamwlev | [

"enhancement"

] | Hi, I am interested in porting google research's new BLEURT learned metric to PyTorch (because I wish to do something experimental with language generation and backpropping through BLEURT). I noticed that you guys don't have it yet so I am partly just asking if you plan to add it (@thomwolf said you want to do so on Tw... | false |

627,683,386 | https://api.github.com/repos/huggingface/datasets/issues/223 | https://github.com/huggingface/datasets/issues/223 | 223 | [Feature request] Add FLUE dataset | closed | 3 | 2020-05-30T08:52:15 | 2020-12-03T13:39:33 | 2020-12-03T13:39:33 | lbourdois | [

"dataset request"

] | Hi,

I think it would be interesting to add the FLUE dataset for francophones or anyone wishing to work on French.

In other requests, I read that you are already working on some datasets, and I was wondering if FLUE was planned.

If it is not the case, I can provide each of the cleaned FLUE datasets (in the form... | false |

627,586,690 | https://api.github.com/repos/huggingface/datasets/issues/222 | https://github.com/huggingface/datasets/issues/222 | 222 | Colab Notebook breaks when downloading the squad dataset | closed | 6 | 2020-05-29T22:55:59 | 2020-06-04T00:21:05 | 2020-06-04T00:21:05 | carlos-aguayo | [] | When I run the notebook in Colab

https://colab.research.google.com/github/huggingface/nlp/blob/master/notebooks/Overview.ipynb

breaks when running this cell:

| false |

627,300,648 | https://api.github.com/repos/huggingface/datasets/issues/221 | https://github.com/huggingface/datasets/pull/221 | 221 | Fix tests/test_dataset_common.py | closed | 1 | 2020-05-29T14:12:15 | 2020-06-01T12:20:42 | 2020-05-29T15:02:23 | tayciryahmed | [] | When I run the command `RUN_SLOW=1 pytest tests/test_dataset_common.py::LocalDatasetTest::test_load_real_dataset_arcd` while working on #220. I get the error ` unexpected keyword argument "'download_and_prepare_kwargs'"` at the level of `load_dataset`. Indeed, this [function](https://github.com/huggingface/nlp/blob/ma... | true |

627,280,683 | https://api.github.com/repos/huggingface/datasets/issues/220 | https://github.com/huggingface/datasets/pull/220 | 220 | dataset_arcd | closed | 2 | 2020-05-29T13:46:50 | 2020-05-29T14:58:40 | 2020-05-29T14:57:21 | tayciryahmed | [] | Added Arabic Reading Comprehension Dataset (ARCD): https://arxiv.org/abs/1906.05394 | true |

627,235,893 | https://api.github.com/repos/huggingface/datasets/issues/219 | https://github.com/huggingface/datasets/pull/219 | 219 | force mwparserfromhell as third party | closed | 0 | 2020-05-29T12:33:17 | 2020-05-29T13:30:13 | 2020-05-29T13:30:12 | lhoestq | [] | This should fix your env because you had `mwparserfromhell ` as a first party for `isort` @patrickvonplaten | true |

627,173,407 | https://api.github.com/repos/huggingface/datasets/issues/218 | https://github.com/huggingface/datasets/pull/218 | 218 | Add Natual Questions and C4 scripts | closed | 0 | 2020-05-29T10:40:30 | 2020-05-29T12:31:01 | 2020-05-29T12:31:00 | lhoestq | [] | Scripts are ready !

However they are not processed nor directly available from gcp yet. | true |

627,128,403 | https://api.github.com/repos/huggingface/datasets/issues/217 | https://github.com/huggingface/datasets/issues/217 | 217 | Multi-task dataset mixing | open | 26 | 2020-05-29T09:22:26 | 2022-10-22T00:45:50 | null | ghomasHudson | [

"enhancement",

"generic discussion"

] | It seems like many of the best performing models on the GLUE benchmark make some use of multitask learning (simultaneous training on multiple tasks).

The [T5 paper](https://arxiv.org/pdf/1910.10683.pdf) highlights multiple ways of mixing the tasks together during finetuning:

- **Examples-proportional mixing** - sam... | false |

626,896,890 | https://api.github.com/repos/huggingface/datasets/issues/216 | https://github.com/huggingface/datasets/issues/216 | 216 | ❓ How to get ROUGE-2 with the ROUGE metric ? | closed | 3 | 2020-05-28T23:47:32 | 2020-06-01T00:04:35 | 2020-06-01T00:04:35 | astariul | [] | I'm trying to use ROUGE metric, but I don't know how to get the ROUGE-2 metric.

---

I compute scores with :

```python

import nlp

rouge = nlp.load_metric('rouge')

with open("pred.txt") as p, open("ref.txt") as g:

for lp, lg in zip(p, g):

rouge.add([lp], [lg])

score = rouge.compute()

```

... | false |

626,867,879 | https://api.github.com/repos/huggingface/datasets/issues/215 | https://github.com/huggingface/datasets/issues/215 | 215 | NonMatchingSplitsSizesError when loading blog_authorship_corpus | closed | 12 | 2020-05-28T22:55:19 | 2025-01-04T00:03:12 | 2022-02-10T13:05:45 | cedricconol | [

"dataset bug"

] | Getting this error when i run `nlp.load_dataset('blog_authorship_corpus')`.

```

raise NonMatchingSplitsSizesError(str(bad_splits))

nlp.utils.info_utils.NonMatchingSplitsSizesError: [{'expected': SplitInfo(name='train',

num_bytes=610252351, num_examples=532812, dataset_name='blog_authorship_corpus'),

'recorded... | false |

626,641,549 | https://api.github.com/repos/huggingface/datasets/issues/214 | https://github.com/huggingface/datasets/pull/214 | 214 | [arrow_dataset.py] add new filter function | closed | 13 | 2020-05-28T16:21:40 | 2020-05-29T11:43:29 | 2020-05-29T11:32:20 | patrickvonplaten | [] | The `.map()` function is super useful, but can IMO a bit tedious when filtering certain examples.

I think, filtering out examples is also a very common operation people would like to perform on datasets.

This PR is a proposal to add a `.filter()` function in the same spirit than the `.map()` function.

Here is a ... | true |

626,587,995 | https://api.github.com/repos/huggingface/datasets/issues/213 | https://github.com/huggingface/datasets/pull/213 | 213 | better message if missing beam options | closed | 0 | 2020-05-28T15:06:57 | 2020-05-29T09:51:17 | 2020-05-29T09:51:16 | lhoestq | [] | WDYT @yjernite ?

For example:

```python

dataset = nlp.load_dataset('wikipedia', '20200501.aa')

```

Raises:

```

MissingBeamOptions: Trying to generate a dataset using Apache Beam, yet no Beam Runner or PipelineOptions() has been provided in `load_dataset` or in the builder arguments. For big datasets it has to ru... | true |

626,580,198 | https://api.github.com/repos/huggingface/datasets/issues/212 | https://github.com/huggingface/datasets/pull/212 | 212 | have 'add' and 'add_batch' for metrics | closed | 0 | 2020-05-28T14:56:47 | 2020-05-29T10:41:05 | 2020-05-29T10:41:04 | lhoestq | [] | This should fix #116

Previously the `.add` method of metrics expected a batch of examples.

Now `.add` expects one prediction/reference and `.add_batch` expects a batch.

I think it is more coherent with the way the ArrowWriter works. | true |

626,565,994 | https://api.github.com/repos/huggingface/datasets/issues/211 | https://github.com/huggingface/datasets/issues/211 | 211 | [Arrow writer, Trivia_qa] Could not convert TagMe with type str: converting to null type | closed | 7 | 2020-05-28T14:38:14 | 2020-07-23T10:15:16 | 2020-07-23T10:15:16 | patrickvonplaten | [

"enhancement"

] | Running the following code

```

import nlp

ds = nlp.load_dataset("trivia_qa", "rc", split="validation[:1%]") # this might take 2.3 min to download but it's cached afterwards...

ds.map(lambda x: x, load_from_cache_file=False)

```

triggers a `ArrowInvalid: Could not convert TagMe with type str: converting to n... | false |

626,504,243 | https://api.github.com/repos/huggingface/datasets/issues/210 | https://github.com/huggingface/datasets/pull/210 | 210 | fix xnli metric kwargs description | closed | 0 | 2020-05-28T13:21:44 | 2020-05-28T13:22:11 | 2020-05-28T13:22:10 | lhoestq | [] | The text was wrong as noticed in #202 | true |

626,405,849 | https://api.github.com/repos/huggingface/datasets/issues/209 | https://github.com/huggingface/datasets/pull/209 | 209 | Add a Google Drive exception for small files | closed | 3 | 2020-05-28T10:40:17 | 2020-05-28T15:15:04 | 2020-05-28T15:15:04 | airKlizz | [] | I tried to use the ``nlp`` library to load personnal datasets. I mainly copy-paste the code for ``multi-news`` dataset because my files are stored on Google Drive.

One of my dataset is small (< 25Mo) so it can be verified by Drive without asking the authorization to the user. This makes the download starts directly... | true |

626,398,519 | https://api.github.com/repos/huggingface/datasets/issues/208 | https://github.com/huggingface/datasets/pull/208 | 208 | [Dummy data] insert config name instead of config | closed | 0 | 2020-05-28T10:28:19 | 2020-05-28T12:48:01 | 2020-05-28T12:48:00 | patrickvonplaten | [] | Thanks @yjernite for letting me know. in the dummy data command the config name shuold be passed to the dataset builder and not the config itself.

Also, @lhoestq fixed small import bug introduced by beam command I think. | true |

625,932,200 | https://api.github.com/repos/huggingface/datasets/issues/207 | https://github.com/huggingface/datasets/issues/207 | 207 | Remove test set from NLP viewer | closed | 3 | 2020-05-27T18:32:07 | 2022-02-10T13:17:45 | 2022-02-10T13:17:45 | chrisdonahue | [

"nlp-viewer"

] | While the new [NLP viewer](https://huggingface.co/nlp/viewer/) is a great tool, I think it would be best to outright remove the option of looking at the test sets. At the very least, a warning should be displayed to users before showing the test set. Newcomers to the field might not be aware of best practices, and smal... | false |

625,842,989 | https://api.github.com/repos/huggingface/datasets/issues/206 | https://github.com/huggingface/datasets/issues/206 | 206 | [Question] Combine 2 datasets which have the same columns | closed | 2 | 2020-05-27T16:25:52 | 2020-06-10T09:11:14 | 2020-06-10T09:11:14 | airKlizz | [] | Hi,

I am using ``nlp`` to load personal datasets. I created summarization datasets in multi-languages based on wikinews. I have one dataset for english and one for german (french is getting to be ready as well). I want to keep these datasets independent because they need different pre-processing (add different task-... | false |

625,839,335 | https://api.github.com/repos/huggingface/datasets/issues/205 | https://github.com/huggingface/datasets/pull/205 | 205 | Better arrow dataset iter | closed | 0 | 2020-05-27T16:20:21 | 2020-05-27T16:39:58 | 2020-05-27T16:39:56 | lhoestq | [] | I tried to play around with `tf.data.Dataset.from_generator` and I found out that the `__iter__` that we have for `nlp.arrow_dataset.Dataset` ignores the format that has been set (torch or tensorflow).

With these changes I should be able to come up with a `tf.data.Dataset` that uses lazy loading, as asked in #193. | true |

625,655,849 | https://api.github.com/repos/huggingface/datasets/issues/204 | https://github.com/huggingface/datasets/pull/204 | 204 | Add Dataflow support + Wikipedia + Wiki40b | closed | 0 | 2020-05-27T12:32:49 | 2020-05-28T08:10:35 | 2020-05-28T08:10:34 | lhoestq | [] | # Add Dataflow support + Wikipedia + Wiki40b

## Support datasets processing with Apache Beam

Some datasets are too big to be processed on a single machine, for example: wikipedia, wiki40b, etc. Apache Beam allows to process datasets on many execution engines like Dataflow, Spark, Flink, etc.

To process such da... | true |

625,515,488 | https://api.github.com/repos/huggingface/datasets/issues/203 | https://github.com/huggingface/datasets/pull/203 | 203 | Raise an error if no config name for datasets like glue | closed | 0 | 2020-05-27T09:03:58 | 2020-05-27T16:40:39 | 2020-05-27T16:40:38 | lhoestq | [] | Some datasets like glue (see #130) and scientific_papers (see #197) have many configs.

For example for glue there are cola, sst2, mrpc etc.

Currently if a user does `load_dataset('glue')`, then Cola is loaded by default and it can be confusing. Instead, we should raise an error to let the user know that he has to p... | true |

625,493,983 | https://api.github.com/repos/huggingface/datasets/issues/202 | https://github.com/huggingface/datasets/issues/202 | 202 | Mistaken `_KWARGS_DESCRIPTION` for XNLI metric | closed | 1 | 2020-05-27T08:34:42 | 2020-05-28T13:22:36 | 2020-05-28T13:22:36 | phiyodr | [] | Hi!

The [`_KWARGS_DESCRIPTION`](https://github.com/huggingface/nlp/blob/7d0fa58641f3f462fb2861dcdd6ce7f0da3f6a56/metrics/xnli/xnli.py#L45) for the XNLI metric uses `Args` and `Returns` text from [BLEU](https://github.com/huggingface/nlp/blob/7d0fa58641f3f462fb2861dcdd6ce7f0da3f6a56/metrics/bleu/bleu.py#L58) metric:

... | false |

625,235,430 | https://api.github.com/repos/huggingface/datasets/issues/201 | https://github.com/huggingface/datasets/pull/201 | 201 | Fix typo in README | closed | 2 | 2020-05-26T22:18:21 | 2020-05-26T23:40:31 | 2020-05-26T23:00:56 | LysandreJik | [] | true | |

625,226,638 | https://api.github.com/repos/huggingface/datasets/issues/200 | https://github.com/huggingface/datasets/pull/200 | 200 | [ArrowWriter] Set schema at first write example | closed | 1 | 2020-05-26T21:59:48 | 2020-05-27T09:07:54 | 2020-05-27T09:07:53 | lhoestq | [] | Right now if the schema was not specified when instantiating `ArrowWriter`, then it could be set with the first `write_table` for example (it calls `self._build_writer()` to do so).

I noticed that it was not done if the first example is added via `.write`, so I added it for coherence. | true |

625,217,440 | https://api.github.com/repos/huggingface/datasets/issues/199 | https://github.com/huggingface/datasets/pull/199 | 199 | Fix GermEval 2014 dataset infos | closed | 2 | 2020-05-26T21:41:44 | 2020-05-26T21:50:24 | 2020-05-26T21:50:24 | stefan-it | [] | Hi,

this PR just removes the `dataset_info.json` file and adds a newly generated `dataset_infos.json` file. | true |

625,200,627 | https://api.github.com/repos/huggingface/datasets/issues/198 | https://github.com/huggingface/datasets/issues/198 | 198 | Index outside of table length | closed | 2 | 2020-05-26T21:09:40 | 2020-05-26T22:43:49 | 2020-05-26T22:43:49 | casajarm | [] | The offset input box warns of numbers larger than a limit (like 2000) but then the errors start at a smaller value than that limit (like 1955).

> ValueError: Index (2000) outside of table length (2000).

> Traceback:

> File "/home/sasha/.local/lib/python3.7/site-packages/streamlit/ScriptRunner.py", line 322, in _ru... | false |

624,966,904 | https://api.github.com/repos/huggingface/datasets/issues/197 | https://github.com/huggingface/datasets/issues/197 | 197 | Scientific Papers only downloading Pubmed | closed | 3 | 2020-05-26T15:18:47 | 2020-05-28T08:19:28 | 2020-05-28T08:19:28 | antmarakis | [] | Hi!

I have been playing around with this module, and I am a bit confused about the `scientific_papers` dataset. I thought that it would download two separate datasets, arxiv and pubmed. But when I run the following:

```

dataset = nlp.load_dataset('scientific_papers', data_dir='.', cache_dir='.')

Downloading: 10... | false |

624,901,266 | https://api.github.com/repos/huggingface/datasets/issues/196 | https://github.com/huggingface/datasets/pull/196 | 196 | Check invalid config name | closed | 13 | 2020-05-26T13:52:51 | 2020-05-26T21:04:56 | 2020-05-26T21:04:55 | lhoestq | [] | As said in #194, we should raise an error if the config name has bad characters.

Bad characters are those that are not allowed for directory names on windows. | true |

624,858,686 | https://api.github.com/repos/huggingface/datasets/issues/195 | https://github.com/huggingface/datasets/pull/195 | 195 | [Dummy data command] add new case to command | closed | 1 | 2020-05-26T12:50:47 | 2020-05-26T14:38:28 | 2020-05-26T14:38:27 | patrickvonplaten | [] | Qanta: #194 introduces a case that was not noticed before. This change in code helps community users to have an easier time creating the dummy data. | true |

624,854,897 | https://api.github.com/repos/huggingface/datasets/issues/194 | https://github.com/huggingface/datasets/pull/194 | 194 | Add Dataset: Qanta | closed | 3 | 2020-05-26T12:44:35 | 2020-05-26T16:58:17 | 2020-05-26T13:16:20 | patrickvonplaten | [] | Fixes dummy data for #169 @EntilZha | true |

624,655,558 | https://api.github.com/repos/huggingface/datasets/issues/193 | https://github.com/huggingface/datasets/issues/193 | 193 | [Tensorflow] Use something else than `from_tensor_slices()` | closed | 7 | 2020-05-26T07:19:14 | 2020-10-27T15:28:11 | 2020-10-27T15:28:11 | astariul | [] | In the example notebook, the TF Dataset is built using `from_tensor_slices()` :

```python

columns = ['input_ids', 'token_type_ids', 'attention_mask', 'start_positions', 'end_positions']

train_tf_dataset.set_format(type='tensorflow', columns=columns)

features = {x: train_tf_dataset[x] for x in columns[:3]}

label... | false |

624,397,592 | https://api.github.com/repos/huggingface/datasets/issues/192 | https://github.com/huggingface/datasets/issues/192 | 192 | [Question] Create Apache Arrow dataset from raw text file | closed | 4 | 2020-05-25T16:42:47 | 2021-12-18T01:45:34 | 2020-10-27T15:20:22 | mrm8488 | [] | Hi guys, I have gathered and preprocessed about 2GB of COVID papers from CORD dataset @ Kggle. I have seen you have a text dataset as "Crime and punishment" in Apache arrow format. Do you have any script to do it from a raw txt file (preprocessed as for BERT like) or any guide?

Is the worth of send it to you and add i... | false |

624,394,936 | https://api.github.com/repos/huggingface/datasets/issues/191 | https://github.com/huggingface/datasets/pull/191 | 191 | [Squad es] add dataset_infos | closed | 0 | 2020-05-25T16:35:52 | 2020-05-25T16:39:59 | 2020-05-25T16:39:58 | patrickvonplaten | [] | @mariamabarham - was still about to upload this. Should have waited with my comment a bit more :D | true |

624,124,600 | https://api.github.com/repos/huggingface/datasets/issues/190 | https://github.com/huggingface/datasets/pull/190 | 190 | add squad Spanish v1 and v2 | closed | 5 | 2020-05-25T08:08:40 | 2020-05-25T16:28:46 | 2020-05-25T16:28:45 | mariamabarham | [] | This PR add the Spanish Squad versions 1 and 2 datasets.

Fixes #164 | true |

624,048,881 | https://api.github.com/repos/huggingface/datasets/issues/189 | https://github.com/huggingface/datasets/issues/189 | 189 | [Question] BERT-style multiple choice formatting | closed | 2 | 2020-05-25T05:11:05 | 2020-05-25T18:38:28 | 2020-05-25T18:38:28 | sarahwie | [] | Hello, I am wondering what the equivalent formatting of a dataset should be to allow for multiple-choice answering prediction, BERT-style. Previously, this was done by passing a list of `InputFeatures` to the dataloader instead of a list of `InputFeature`, where `InputFeatures` contained lists of length equal to the nu... | false |

623,890,430 | https://api.github.com/repos/huggingface/datasets/issues/188 | https://github.com/huggingface/datasets/issues/188 | 188 | When will the remaining math_dataset modules be added as dataset objects | closed | 3 | 2020-05-24T15:46:52 | 2020-05-24T18:53:48 | 2020-05-24T18:53:48 | tylerroost | [] | Currently only the algebra_linear_1d is supported. Is there a timeline for making the other modules supported. If no timeline is established, how can I help? | false |

623,627,800 | https://api.github.com/repos/huggingface/datasets/issues/187 | https://github.com/huggingface/datasets/issues/187 | 187 | [Question] How to load wikipedia ? Beam runner ? | closed | 2 | 2020-05-23T10:18:52 | 2020-05-25T00:12:02 | 2020-05-25T00:12:02 | richarddwang | [] | When `nlp.load_dataset('wikipedia')`, I got

* `WARNING:nlp.builder:Trying to generate a dataset using Apache Beam, yet no Beam Runner or PipelineOptions() has been provided. Please pass a nlp.DownloadConfig(beam_runner=...) object to the builder.download_and_prepare(download_config=...) method. Default values will be ... | false |

623,595,180 | https://api.github.com/repos/huggingface/datasets/issues/186 | https://github.com/huggingface/datasets/issues/186 | 186 | Weird-ish: Not creating unique caches for different phases | closed | 2 | 2020-05-23T06:40:58 | 2020-05-23T20:22:18 | 2020-05-23T20:22:17 | zphang | [] | Sample code:

```python

import nlp

dataset = nlp.load_dataset('boolq')

def func1(x):

return x

def func2(x):

return None

train_output = dataset["train"].map(func1)

valid_output = dataset["validation"].map(func1)

print()

print(len(train_output), len(valid_output))

# Output: 9427 9427

```

Th... | false |

623,172,484 | https://api.github.com/repos/huggingface/datasets/issues/185 | https://github.com/huggingface/datasets/pull/185 | 185 | [Commands] In-detail instructions to create dummy data folder | closed | 1 | 2020-05-22T12:26:25 | 2020-05-22T14:06:35 | 2020-05-22T14:06:34 | patrickvonplaten | [] | ### Dummy data command

This PR adds a new command `python nlp-cli dummy_data <path_to_dataset_folder>` that gives in-detail instructions on how to add the dummy data files.

It would be great if you can try it out by moving the current dummy_data folder of any dataset in `./datasets` with `mv datasets/<dataset_s... | true |

623,120,929 | https://api.github.com/repos/huggingface/datasets/issues/184 | https://github.com/huggingface/datasets/pull/184 | 184 | Use IndexError instead of ValueError when index out of range | closed | 0 | 2020-05-22T10:43:42 | 2020-05-28T08:31:18 | 2020-05-28T08:31:18 | richarddwang | [] | **`default __iter__ needs IndexError`**.

When I want to create a wrapper of arrow dataset to adapt to fastai,

I don't know how to initialize it, so I didn't use inheritance but use object composition.

I wrote sth like this.

```

clas HF_dataset():

def __init__(self, arrow_dataset):

self.dset = arrow_datas... | true |

623,054,270 | https://api.github.com/repos/huggingface/datasets/issues/183 | https://github.com/huggingface/datasets/issues/183 | 183 | [Bug] labels of glue/ax are all -1 | closed | 2 | 2020-05-22T08:43:36 | 2020-05-22T22:14:05 | 2020-05-22T22:14:05 | richarddwang | [] | ```

ax = nlp.load_dataset('glue', 'ax')

for i in range(30): print(ax['test'][i]['label'], end=', ')

```

```

-1, -1, -1, -1, -1, -1, -1, -1, -1, -1, -1, -1, -1, -1, -1, -1, -1, -1, -1, -1, -1, -1, -1, -1, -1, -1, -1, -1, -1, -1,

``` | false |

622,646,770 | https://api.github.com/repos/huggingface/datasets/issues/182 | https://github.com/huggingface/datasets/pull/182 | 182 | Update newsroom.py | closed | 0 | 2020-05-21T17:07:43 | 2020-05-22T16:38:23 | 2020-05-22T16:38:23 | yoavartzi | [] | Updated the URL for Newsroom download so it's more robust to future changes. | true |

622,634,420 | https://api.github.com/repos/huggingface/datasets/issues/181 | https://github.com/huggingface/datasets/issues/181 | 181 | Cannot upload my own dataset | closed | 6 | 2020-05-21T16:45:52 | 2020-06-18T22:14:42 | 2020-06-18T22:14:42 | korakot | [] | I look into `nlp-cli` and `user.py` to learn how to upload my own data.

It is supposed to work like this

- Register to get username, password at huggingface.co

- `nlp-cli login` and type username, passworld

- I have a single file to upload at `./ttc/ttc_freq_extra.csv`

- `nlp-cli upload ttc/ttc_freq_extra.csv`

... | false |

622,556,861 | https://api.github.com/repos/huggingface/datasets/issues/180 | https://github.com/huggingface/datasets/pull/180 | 180 | Add hall of fame | closed | 0 | 2020-05-21T14:53:48 | 2020-05-22T16:35:16 | 2020-05-22T16:35:14 | clmnt | [] | powered by https://github.com/sourcerer-io/hall-of-fame | true |

622,525,410 | https://api.github.com/repos/huggingface/datasets/issues/179 | https://github.com/huggingface/datasets/issues/179 | 179 | [Feature request] separate split name and split instructions | closed | 2 | 2020-05-21T14:10:51 | 2020-05-22T13:31:08 | 2020-05-22T13:31:07 | yjernite | [] | Currently, the name of an nlp.NamedSplit is parsed in arrow_reader.py and used as the instruction.

This makes it impossible to have several training sets, which can occur when:

- A dataset corresponds to a collection of sub-datasets

- A dataset was built in stages, adding new examples at each stage

Would it be ... | false |

621,979,849 | https://api.github.com/repos/huggingface/datasets/issues/178 | https://github.com/huggingface/datasets/pull/178 | 178 | [Manual data] improve error message for manual data in general | closed | 0 | 2020-05-20T18:10:45 | 2020-05-20T18:18:52 | 2020-05-20T18:18:50 | patrickvonplaten | [] | `nlp.load("xsum")` now leads to the following error message:

I guess the manual download instructions for `xsum` can also be improved. | true |

621,975,368 | https://api.github.com/repos/huggingface/datasets/issues/177 | https://github.com/huggingface/datasets/pull/177 | 177 | Xsum manual download instruction | closed | 0 | 2020-05-20T18:02:41 | 2020-05-20T18:16:50 | 2020-05-20T18:16:49 | mariamabarham | [] | true | |

621,934,638 | https://api.github.com/repos/huggingface/datasets/issues/176 | https://github.com/huggingface/datasets/pull/176 | 176 | [Tests] Refactor MockDownloadManager | closed | 0 | 2020-05-20T17:07:36 | 2020-05-20T18:17:19 | 2020-05-20T18:17:18 | patrickvonplaten | [] | Clean mock download manager class.

The print function was not of much help I think.

We should think about adding a command that creates the dummy folder structure for the user. | true |

621,929,428 | https://api.github.com/repos/huggingface/datasets/issues/175 | https://github.com/huggingface/datasets/issues/175 | 175 | [Manual data dir] Error message: nlp.load_dataset('xsum') -> TypeError | closed | 0 | 2020-05-20T17:00:32 | 2020-05-20T18:18:50 | 2020-05-20T18:18:50 | sshleifer | [] | v 0.1.0 from pip

```python

import nlp

xsum = nlp.load_dataset('xsum')

```

Issue is `dl_manager.manual_dir`is `None`

```python

---------------------------------------------------------------------------

TypeError Traceback (most recent call last)

<ipython-input-42-8a32f06... | false |

621,928,403 | https://api.github.com/repos/huggingface/datasets/issues/174 | https://github.com/huggingface/datasets/issues/174 | 174 | nlp.load_dataset('xsum') -> TypeError | closed | 0 | 2020-05-20T16:59:09 | 2020-05-20T17:43:46 | 2020-05-20T17:43:46 | sshleifer | [] | false | |

621,764,932 | https://api.github.com/repos/huggingface/datasets/issues/173 | https://github.com/huggingface/datasets/pull/173 | 173 | Rm extracted test dirs | closed | 2 | 2020-05-20T13:30:48 | 2020-05-22T16:34:36 | 2020-05-22T16:34:35 | lhoestq | [] | All the dummy data used for tests were duplicated. For each dataset, we had one zip file but also its extracted directory. I removed all these directories

Furthermore instead of extracting next to the dummy_data.zip file, we extract in the temp `cached_dir` used for tests, so that all the extracted directories get r... | true |

621,377,386 | https://api.github.com/repos/huggingface/datasets/issues/172 | https://github.com/huggingface/datasets/issues/172 | 172 | Clone not working on Windows environment | closed | 2 | 2020-05-20T00:45:14 | 2020-05-23T12:49:13 | 2020-05-23T11:27:52 | codehunk628 | [] | Cloning in a windows environment is not working because of use of special character '?' in folder name ..

Please consider changing the folder name ....

Reference to folder -

nlp/datasets/cnn_dailymail/dummy/3.0.0/3.0.0/dummy_data-zip-extracted/dummy_data/uc?export=download&id=0BwmD_VLjROrfM1BxdkxVaTY2bWs/dailymail/s... | false |

621,199,128 | https://api.github.com/repos/huggingface/datasets/issues/171 | https://github.com/huggingface/datasets/pull/171 | 171 | fix squad metric format | closed | 5 | 2020-05-19T18:37:36 | 2020-05-22T13:36:50 | 2020-05-22T13:36:48 | lhoestq | [] | The format of the squad metric was wrong.

This should fix #143

I tested with

```python3

predictions = [

{'id': '56be4db0acb8001400a502ec', 'prediction_text': 'Denver Broncos'}

]

references = [

{'answers': [{'text': 'Denver Broncos'}], 'id': '56be4db0acb8001400a502ec'}

]

``` | true |

621,119,747 | https://api.github.com/repos/huggingface/datasets/issues/170 | https://github.com/huggingface/datasets/pull/170 | 170 | Rename anli dataset | closed | 0 | 2020-05-19T16:26:57 | 2020-05-20T12:23:09 | 2020-05-20T12:23:08 | lhoestq | [] | What we have now as the `anli` dataset is actually the αNLI dataset from the ART challenge dataset. This name is confusing because `anli` is also the name of adversarial NLI (see [https://github.com/facebookresearch/anli](https://github.com/facebookresearch/anli)).

I renamed the current `anli` dataset by `art`. | true |

621,099,682 | https://api.github.com/repos/huggingface/datasets/issues/169 | https://github.com/huggingface/datasets/pull/169 | 169 | Adding Qanta (Quizbowl) Dataset | closed | 5 | 2020-05-19T16:03:01 | 2020-05-26T12:52:31 | 2020-05-26T12:52:31 | EntilZha | [] | This PR adds the qanta question answering datasets from [Quizbowl: The Case for Incremental Question Answering](https://arxiv.org/abs/1904.04792) and [Trick Me If You Can: Human-in-the-loop Generation of Adversarial Question Answering Examples](https://www.aclweb.org/anthology/Q19-1029/) (adversarial fold)

This part... | true |

620,959,819 | https://api.github.com/repos/huggingface/datasets/issues/168 | https://github.com/huggingface/datasets/issues/168 | 168 | Loading 'wikitext' dataset fails | closed | 6 | 2020-05-19T13:04:29 | 2020-05-26T21:46:52 | 2020-05-26T21:46:52 | itay1itzhak | [] | Loading the 'wikitext' dataset fails with Attribute error:

Code to reproduce (From example notebook):

import nlp

wikitext_dataset = nlp.load_dataset('wikitext')

Error:

---------------------------------------------------------------------------

AttributeError Traceback (most rece... | false |

620,908,786 | https://api.github.com/repos/huggingface/datasets/issues/167 | https://github.com/huggingface/datasets/pull/167 | 167 | [Tests] refactor tests | closed | 1 | 2020-05-19T11:43:32 | 2020-05-19T16:17:12 | 2020-05-19T16:17:10 | patrickvonplaten | [] | This PR separates AWS and Local tests to remove these ugly statements in the script:

```python

if "/" not in dataset_name:

logging.info("Skip {} because it is a canonical dataset")

return

```

To run a `aws` test, one should now run the following command:

```python

pytest -s... | true |

620,850,218 | https://api.github.com/repos/huggingface/datasets/issues/166 | https://github.com/huggingface/datasets/issues/166 | 166 | Add a method to shuffle a dataset | closed | 4 | 2020-05-19T10:08:46 | 2020-06-23T15:07:33 | 2020-06-23T15:07:32 | thomwolf | [

"generic discussion"

] | Could maybe be a `dataset.shuffle(generator=None, seed=None)` signature method.

Also, we could maybe have a clear indication of which method modify in-place and which methods return/cache a modified dataset. I kinda like torch conversion of having an underscore suffix for all the methods which modify a dataset in-pl... | false |

620,758,221 | https://api.github.com/repos/huggingface/datasets/issues/165 | https://github.com/huggingface/datasets/issues/165 | 165 | ANLI | closed | 0 | 2020-05-19T07:50:57 | 2020-05-20T12:23:07 | 2020-05-20T12:23:07 | douwekiela | [] | Can I recommend the following:

For ANLI, use https://github.com/facebookresearch/anli. As that paper says, "Our dataset is not

to be confused with abductive NLI (Bhagavatula et al., 2019), which calls itself αNLI, or ART.".

Indeed, the paper cited under what is currently called anli says in the abstract "We int... | false |

620,540,250 | https://api.github.com/repos/huggingface/datasets/issues/164 | https://github.com/huggingface/datasets/issues/164 | 164 | Add Spanish POR and NER Datasets | closed | 2 | 2020-05-18T22:18:21 | 2020-05-25T16:28:45 | 2020-05-25T16:28:45 | mrm8488 | [

"dataset request"

] | Hi guys,

In order to cover multilingual support a little step could be adding standard Datasets used for Spanish NER and POS tasks.

I can provide it in raw and preprocessed formats. | false |

620,534,307 | https://api.github.com/repos/huggingface/datasets/issues/163 | https://github.com/huggingface/datasets/issues/163 | 163 | [Feature request] Add cos-e v1.0 | closed | 10 | 2020-05-18T22:05:26 | 2020-06-16T23:15:25 | 2020-06-16T18:52:06 | sarahwie | [

"dataset request"

] | I noticed the second release of cos-e (v1.11) is included in this repo. I wanted to request inclusion of v1.0, since this is the version on which results are reported on in [the paper](https://www.aclweb.org/anthology/P19-1487/), and v1.11 has noted [annotation](https://github.com/salesforce/cos-e/issues/2) [issues](ht... | false |

620,513,554 | https://api.github.com/repos/huggingface/datasets/issues/162 | https://github.com/huggingface/datasets/pull/162 | 162 | fix prev files hash in map | closed | 3 | 2020-05-18T21:20:51 | 2020-05-18T21:36:21 | 2020-05-18T21:36:20 | lhoestq | [] | Fix the `.map` issue in #160.

This makes sure it takes the previous files when computing the hash. | true |

620,487,535 | https://api.github.com/repos/huggingface/datasets/issues/161 | https://github.com/huggingface/datasets/issues/161 | 161 | Discussion on version identifier & MockDataLoaderManager for test data | open | 12 | 2020-05-18T20:31:30 | 2020-05-24T18:10:03 | null | EntilZha | [

"generic discussion"

] | Hi, I'm working on adding a dataset and ran into an error due to `download` not being defined on `MockDataLoaderManager`, but being defined in `nlp/utils/download_manager.py`. The readme step running this: `RUN_SLOW=1 pytest tests/test_dataset_common.py::DatasetTest::test_load_real_dataset_localmydatasetname` triggers ... | false |

620,448,236 | https://api.github.com/repos/huggingface/datasets/issues/160 | https://github.com/huggingface/datasets/issues/160 | 160 | caching in map causes same result to be returned for train, validation and test | closed | 7 | 2020-05-18T19:22:03 | 2020-05-18T21:36:20 | 2020-05-18T21:36:20 | dpressel | [

"dataset bug"

] | hello,

I am working on a program that uses the `nlp` library with the `SST2` dataset.

The rough outline of the program is:

```

import nlp as nlp_datasets

...

parser.add_argument('--dataset', help='HuggingFace Datasets id', default=['glue', 'sst2'], nargs='+')

...

dataset = nlp_datasets.load_dataset(*args.... | false |

620,420,700 | https://api.github.com/repos/huggingface/datasets/issues/159 | https://github.com/huggingface/datasets/issues/159 | 159 | How can we add more datasets to nlp library? | closed | 1 | 2020-05-18T18:35:31 | 2020-05-18T18:37:08 | 2020-05-18T18:37:07 | Tahsin-Mayeesha | [] | false | |

620,396,658 | https://api.github.com/repos/huggingface/datasets/issues/158 | https://github.com/huggingface/datasets/pull/158 | 158 | add Toronto Books Corpus | closed | 0 | 2020-05-18T17:54:45 | 2020-06-11T07:49:15 | 2020-05-19T07:34:56 | mariamabarham | [] | This PR adds the Toronto Books Corpus.

.

It on consider TMX and plain text files (Moses) defined in the table **Statistics and TMX/Moses Downloads** [here](http://opus.nlpl.eu/Books.php ) | true |

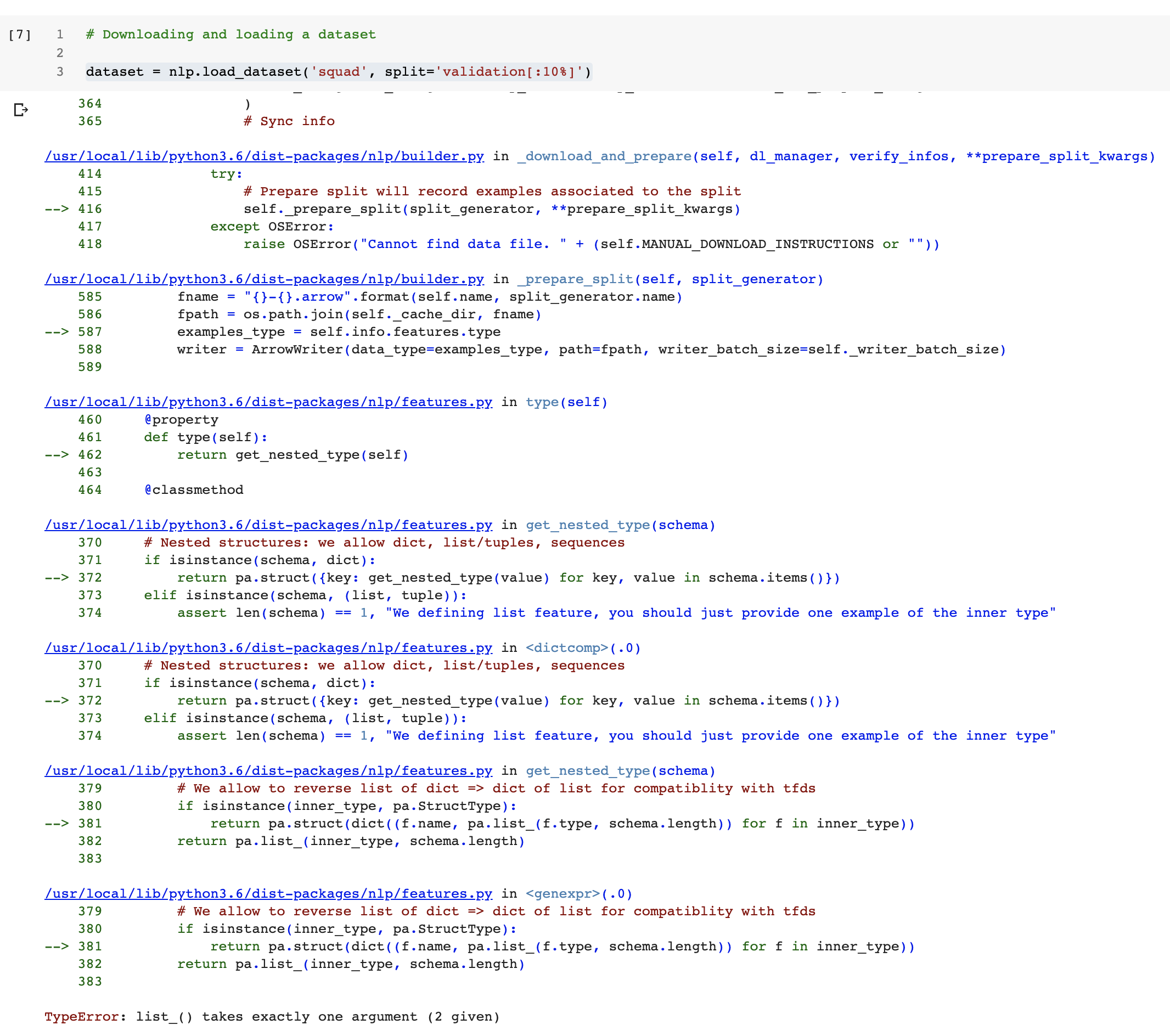

620,356,542 | https://api.github.com/repos/huggingface/datasets/issues/157 | https://github.com/huggingface/datasets/issues/157 | 157 | nlp.load_dataset() gives "TypeError: list_() takes exactly one argument (2 given)" | closed | 11 | 2020-05-18T16:46:38 | 2020-06-05T08:08:58 | 2020-06-05T08:08:58 | saahiluppal | [] | I'm trying to load datasets from nlp but there seems to have error saying

"TypeError: list_() takes exactly one argument (2 given)"

gist can be found here

https://gist.github.com/saahiluppal/c4b878f330b10b9ab9762bc0776c0a6a | false |

620,263,687 | https://api.github.com/repos/huggingface/datasets/issues/156 | https://github.com/huggingface/datasets/issues/156 | 156 | SyntaxError with WMT datasets | closed | 7 | 2020-05-18T14:38:18 | 2020-07-23T16:41:55 | 2020-07-23T16:41:55 | tomhosking | [] | The following snippet produces a syntax error:

```

import nlp

dataset = nlp.load_dataset('wmt14')

print(dataset['train'][0])

```

```

Traceback (most recent call last):

File "/home/tom/.local/lib/python3.6/site-packages/IPython/core/interactiveshell.py", line 3326, in run_code

exec(code_obj, self.... | false |

620,067,946 | https://api.github.com/repos/huggingface/datasets/issues/155 | https://github.com/huggingface/datasets/pull/155 | 155 | Include more links in README, fix typos | closed | 1 | 2020-05-18T09:47:08 | 2020-05-28T08:31:57 | 2020-05-28T08:31:57 | bharatr21 | [] | Include more links and fix typos in README | true |

620,059,066 | https://api.github.com/repos/huggingface/datasets/issues/154 | https://github.com/huggingface/datasets/pull/154 | 154 | add Ubuntu Dialogs Corpus datasets | closed | 0 | 2020-05-18T09:34:48 | 2020-05-18T10:12:28 | 2020-05-18T10:12:27 | mariamabarham | [] | This PR adds the Ubuntu Dialog Corpus datasets version 2.0. | true |

619,972,246 | https://api.github.com/repos/huggingface/datasets/issues/153 | https://github.com/huggingface/datasets/issues/153 | 153 | Meta-datasets (GLUE/XTREME/...) – Special care to attributions and citations | open | 4 | 2020-05-18T07:24:22 | 2020-05-18T21:18:16 | null | thomwolf | [

"generic discussion"

] | Meta-datasets are interesting in terms of standardized benchmarks but they also have specific behaviors, in particular in terms of attribution and authorship. It's very important that each specific dataset inside a meta dataset is properly referenced and the citation/specific homepage/etc are very visible and accessibl... | false |

619,971,900 | https://api.github.com/repos/huggingface/datasets/issues/152 | https://github.com/huggingface/datasets/pull/152 | 152 | Add GLUE config name check | closed | 5 | 2020-05-18T07:23:43 | 2020-05-27T22:09:12 | 2020-05-27T22:09:12 | bharatr21 | [] | Fixes #130 by adding a name check to the Glue class | true |

Subsets and Splits

No community queries yet

The top public SQL queries from the community will appear here once available.